Submitted: 29 September 2025

Posted: 30 September 2025


Abstract

Keywords:

1. Introduction

- (1) deploy the BudCAM devices for in-field data acquisition and on-device processing;

- (2) develop and validate a YOLOv11-based detection framework on images from the bud break databases built in 2024 and 2025; and

- (3) establish a post-processing pipeline that converts raw detections into a smoothed time series for phenological analysis.
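Objective (3) can be illustrated with a minimal sketch. It is not the authors' pipeline: it simply assumes that per-image bud counts are first cleaned with a rolling median/MAD outlier filter and then smoothed with an exponentially weighted moving average. The function names, window size, cutoff `k`, and smoothing factor `alpha` are all hypothetical choices made for illustration.

```python
from statistics import median


def mad_filter(values, window=5, k=3.0):
    """Replace points farther than k scaled MADs from the local median.

    Assumption: a centred rolling window over the raw counts; the constant
    1.4826 rescales the MAD so it is comparable to a standard deviation
    for normally distributed data.
    """
    half = window // 2
    cleaned = []
    for i, v in enumerate(values):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        neighbourhood = values[lo:hi]
        med = median(neighbourhood)
        mad = 1.4826 * median(abs(x - med) for x in neighbourhood)
        # When the MAD is zero the point is left untouched (a known limitation).
        is_outlier = mad > 0 and abs(v - med) > k * mad
        cleaned.append(med if is_outlier else v)
    return cleaned


def ewma(values, alpha=0.3):
    """Simple exponential smoothing: s_t = alpha * x_t + (1 - alpha) * s_{t-1}."""
    smoothed = []
    for v in values:
        if not smoothed:
            smoothed.append(v)  # initialise with the first observation
        else:
            smoothed.append(alpha * v + (1 - alpha) * smoothed[-1])
    return smoothed


# Hypothetical daily bud counts with one spurious spike (30).
raw_counts = [0, 1, 2, 30, 4, 5, 6]
cleaned_counts = mad_filter(raw_counts)
smoothed_counts = ewma(cleaned_counts)
```

A smaller `alpha` gives a smoother but more delayed curve, so it would need tuning against the imaging interval.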

2. Materials and Methods

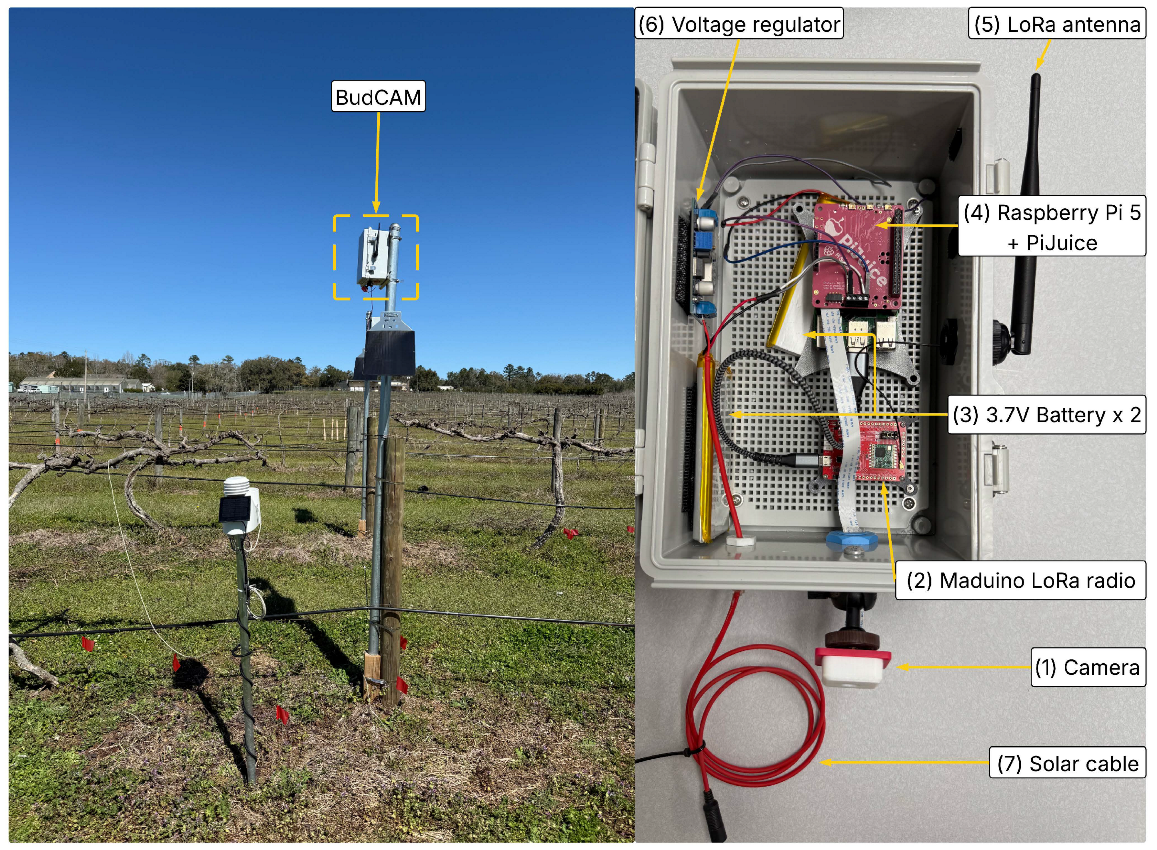

2.1. Hardware Development

2.2. Experiment Site and Data Collection

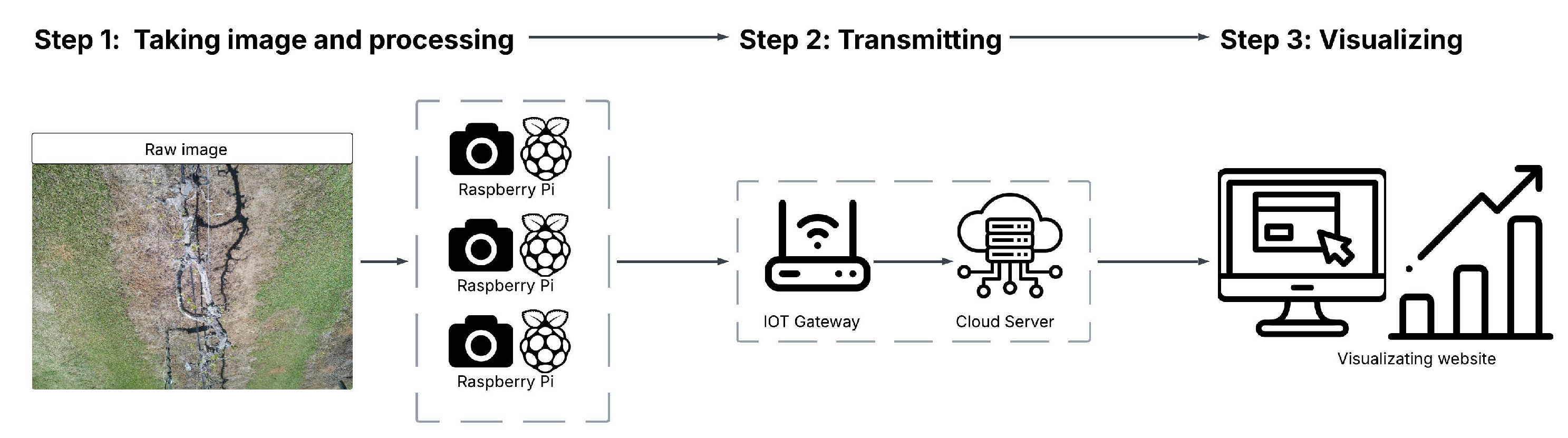

2.3. Software Development for BudCAM

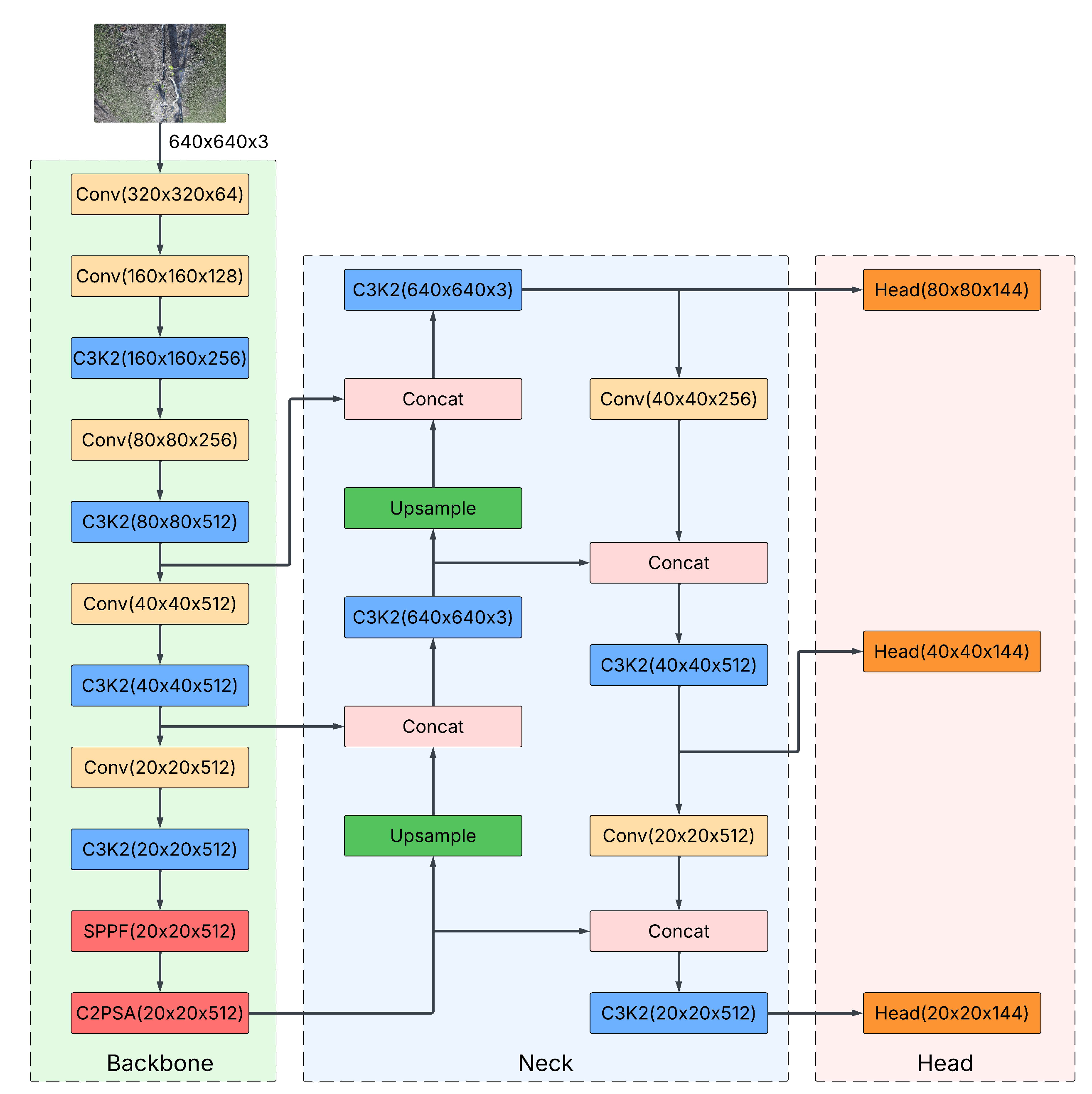

2.3.1. Detection Algorithm

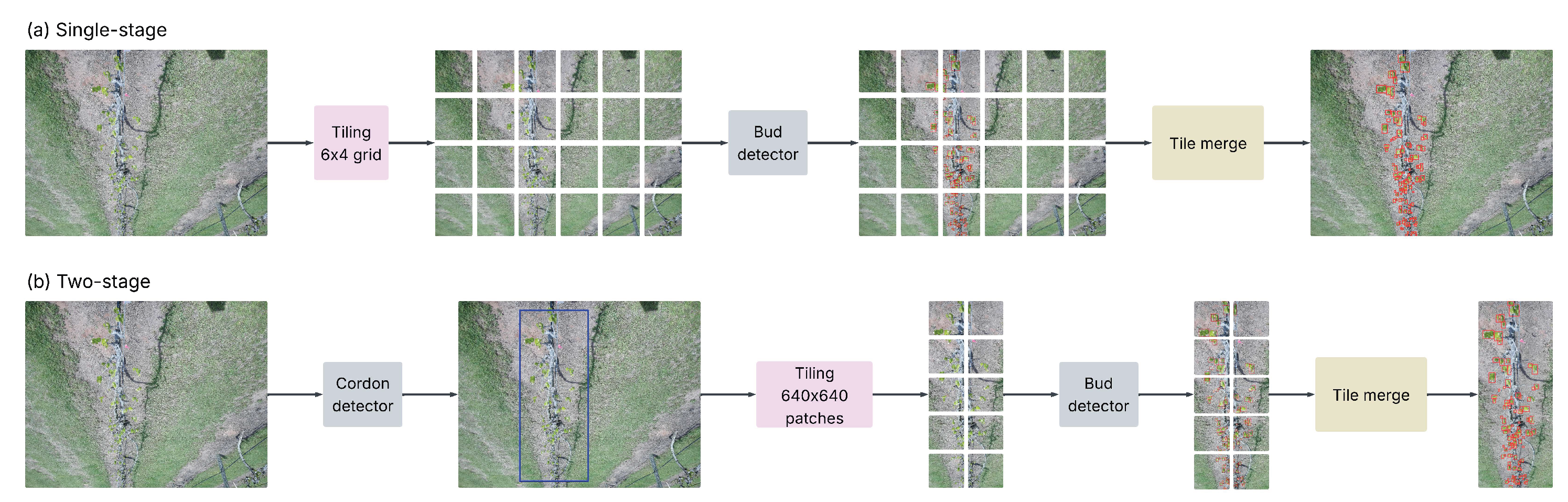

- (1) Single-Stage Bud Detector: A YOLO model was trained directly to detect buds from images, outputting bounding boxes and confidence scores for each detected bud (Figure 4(a)).

- (2) Two-Stage Pipeline: A grapevine cordon detection model first identifies the main grapevine structure. When a cordon prediction exceeds a confidence threshold, the corresponding region is cropped from the original image and passed to a second YOLO model trained specifically for bud detection. Images in which no cordon meets the confidence threshold are skipped to avoid false detections in non-vine areas (Figure 4(b)).
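The control flow of the two-stage pipeline can be sketched as follows. This is an illustration under stated assumptions, not the deployed BudCAM code: the two detectors are hypothetical callables standing in for the trained YOLO models, the image is a plain nested list rather than a real image array, the 0.5 threshold is a placeholder, and every cordon above the threshold is processed.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Detection = Tuple[float, float, float, float, float]  # x1, y1, x2, y2, confidence
Image = Sequence[Sequence[int]]  # rows of pixels; stand-in for a real image array
Detector = Callable[[Image], List[Detection]]  # hypothetical model wrapper


def crop(image: Image, x1: int, y1: int, x2: int, y2: int) -> List[List[int]]:
    """Cut the region [x1, x2) x [y1, y2) out of a row-major image."""
    return [list(row[x1:x2]) for row in image[y1:y2]]


def two_stage_detect(
    image: Image,
    cordon_detector: Detector,
    bud_detector: Detector,
    cordon_conf: float = 0.5,  # placeholder threshold, not taken from the paper
) -> Optional[List[Detection]]:
    """Return bud boxes in full-image coordinates, or None if the image is skipped."""
    height, width = len(image), len(image[0])
    cordons = [d for d in cordon_detector(image) if d[4] > cordon_conf]
    if not cordons:
        return None  # no confident cordon: skip the image entirely

    buds: List[Detection] = []
    for cx1, cy1, cx2, cy2, _ in cordons:
        # Clamp the cordon box to the image before cropping.
        x1, y1 = max(0, int(cx1)), max(0, int(cy1))
        x2, y2 = min(width, int(cx2)), min(height, int(cy2))
        region = crop(image, x1, y1, x2, y2)
        for bx1, by1, bx2, by2, conf in bud_detector(region):
            # Shift crop-local bud boxes back into full-image coordinates.
            buds.append((bx1 + x1, by1 + y1, bx2 + x1, by2 + y1, conf))
    return buds
```

Returning `None` for skipped images, rather than an empty list, keeps "no cordon found" distinguishable from "cordon found but no buds detected" in later analysis.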

2.3.2. Dataset Preparation

2.3.3. Model Training Configuration

2.3.4. Inference Strategies on the 2024 Dataset

- (1) Resized Cordon Crop test set (Resized-CC): Each cropped cordon region was resized to the model’s 640×640 input size. This approach tests how well the model handles significant downscaling, where tiny objects such as buds risk losing critical detail.

- (2) Sliced Cordon Crop evaluation (Sliced-CC): Although the baseline tiling strategy is effective, it may split buds located at tile boundaries, which can result in missed detections. Sliced-CC was therefore evaluated as an alternative that addresses this "object fragmentation" problem with a sliding-window technique: each cordon crop was divided into 640×640 slices with a slice-overlap ratio r (r = 0.2, 0.5, and 0.75). After the model processed each slice individually, the resulting detections were remapped to the original image coordinates and merged using non-maximum suppression (NMS).
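The Sliced-CC procedure (overlapping slices, remapping, NMS) can be sketched in a few lines. This is a simplified illustration, not the implementation used in the study: `detect_slice` is a hypothetical callback that runs the bud model on the slice whose top-left corner is `(ox, oy)` and returns slice-local boxes, the greedy NMS below is the textbook variant, and the 0.5 IoU threshold is an assumed value.

```python
from typing import Callable, List, Tuple

Box = Tuple[float, float, float, float, float]  # x1, y1, x2, y2, confidence


def slice_origins(length: int, size: int = 640, overlap: float = 0.2) -> List[int]:
    """Start offsets of `size`-wide windows covering `length` pixels."""
    if length <= size:
        return [0]
    stride = max(1, round(size * (1 - overlap)))
    origins = list(range(0, length - size, stride))
    origins.append(length - size)  # last window sits flush with the edge
    return origins


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: List[Box], iou_thr: float = 0.5) -> List[Box]:
    """Greedy NMS: keep the most confident box, drop overlapping duplicates."""
    kept: List[Box] = []
    for box in sorted(boxes, key=lambda b: b[4], reverse=True):
        if all(iou(box, k) <= iou_thr for k in kept):
            kept.append(box)
    return kept


def sliced_detect(
    width: int,
    height: int,
    detect_slice: Callable[[int, int, int], List[Box]],  # hypothetical model call
    size: int = 640,
    overlap: float = 0.2,
    iou_thr: float = 0.5,
) -> List[Box]:
    """Run the detector on every slice and merge results in crop coordinates."""
    merged: List[Box] = []
    for oy in slice_origins(height, size, overlap):
        for ox in slice_origins(width, size, overlap):
            for x1, y1, x2, y2, conf in detect_slice(ox, oy, size):
                # Remap slice-local coordinates to the full cordon crop.
                merged.append((x1 + ox, y1 + oy, x2 + ox, y2 + oy, conf))
    return nms(merged, iou_thr)
```

With 640-pixel slices, overlap ratios of 0.2, 0.5, and 0.75 correspond to strides of 512, 320, and 160 pixels, so the number of slices per crop, and with it the number of duplicate detections that NMS must resolve, grows quickly as r increases.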

2.4. Setup of Real-World Testing

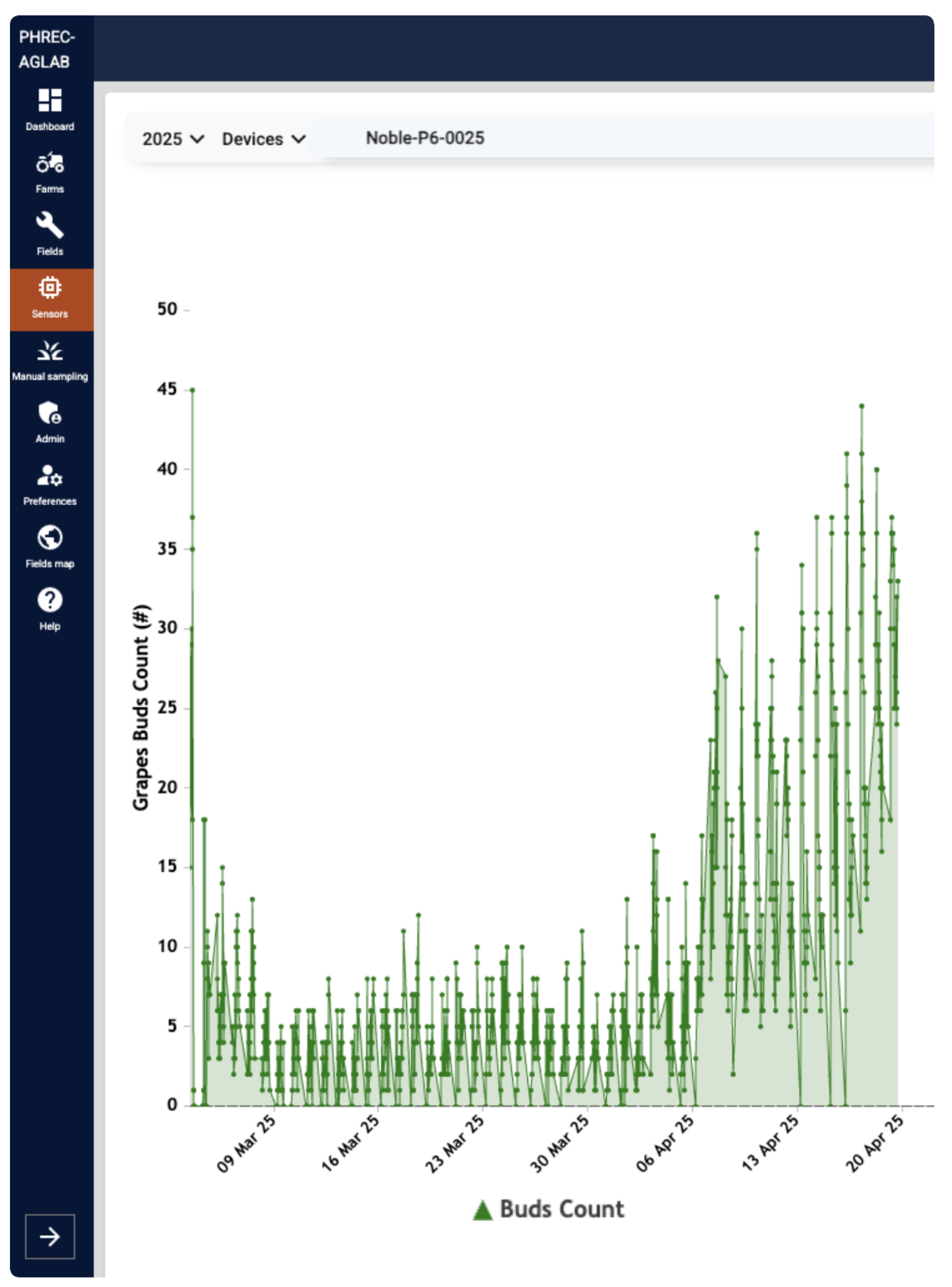

2.5. Time-Series Post-Processing

3. Results and Discussion

3.1. Model Performance and Inference Strategy Evaluation

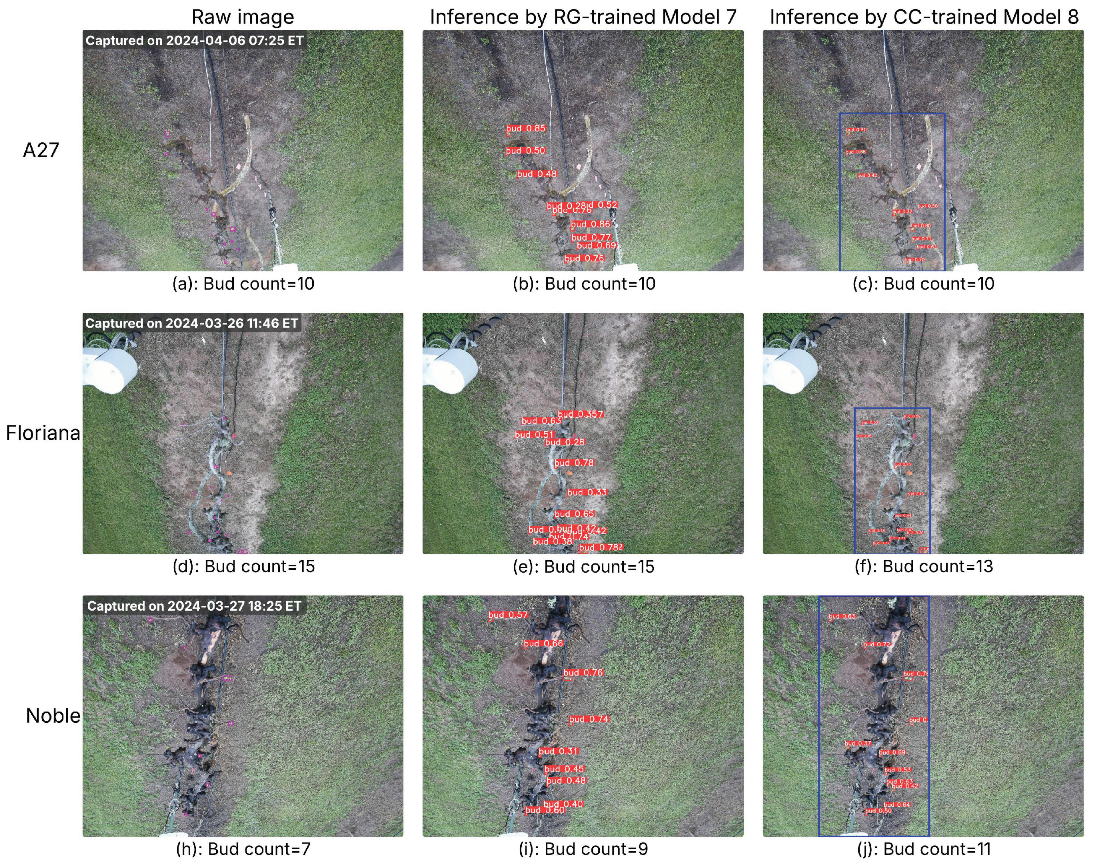

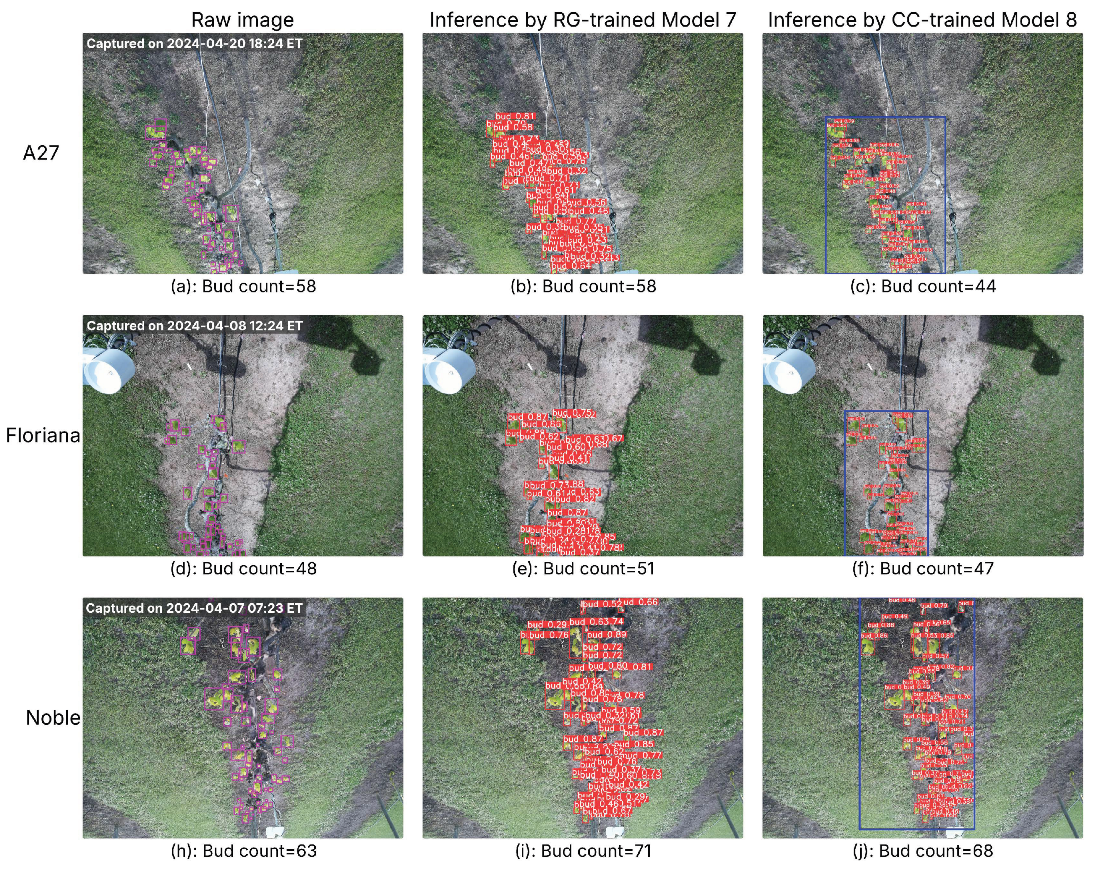

- (1) RG-trained Model 7, used with a pre-processing step that tiles each raw image into a 6×4 grid.

- (2) CC-trained Model 8, used within the two-stage pipeline where a cropped cordon region is tiled into 640×640 patches for bud detection.
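The 6×4 grid pre-processing used with Model 7 amounts to simple integer arithmetic on the image size. The sketch below is an assumption-laden illustration: it treats 6 as the number of columns and 4 as the number of rows, and the 4656×3456 frame in the usage lines is a hypothetical size chosen only because it divides evenly into 776×864 px tiles.

```python
from typing import List, Tuple


def grid_tiles(width: int, height: int, cols: int = 6, rows: int = 4) -> List[Tuple[int, int, int, int]]:
    """Return (x1, y1, x2, y2) tile boxes in row-major order.

    Integer division spreads any remainder across tiles, so the grid
    always covers the full image without gaps or overlap.
    """
    tiles = []
    for r in range(rows):
        for c in range(cols):
            x1 = c * width // cols
            x2 = (c + 1) * width // cols
            y1 = r * height // rows
            y2 = (r + 1) * height // rows
            tiles.append((x1, y1, x2, y2))
    return tiles


# Hypothetical frame size (assumption): 6 * 776 = 4656 px wide, 4 * 864 = 3456 px high.
tiles = grid_tiles(4656, 3456)
first_tile = tiles[0]   # top-left tile
last_tile = tiles[-1]   # bottom-right tile
```

Because tiles do not overlap, a bud that straddles a tile border is cut in two, which is the fragmentation issue that the sliced inference strategy is meant to address.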

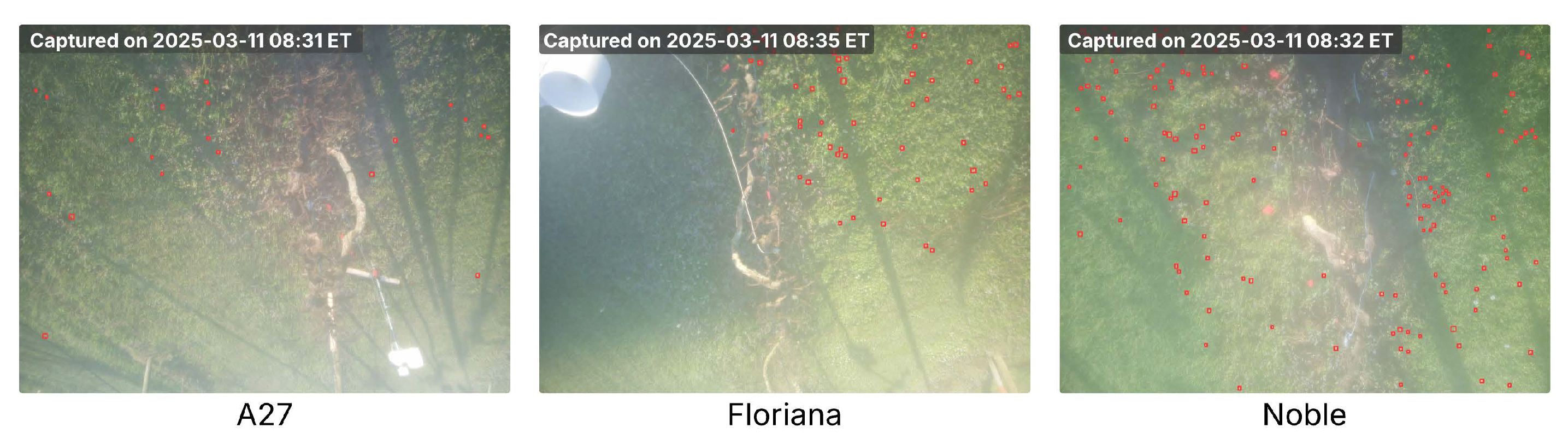

3.2. Dataset Comparison and 2024 Processed Image Examples

3.3. Comparison with Related Methods

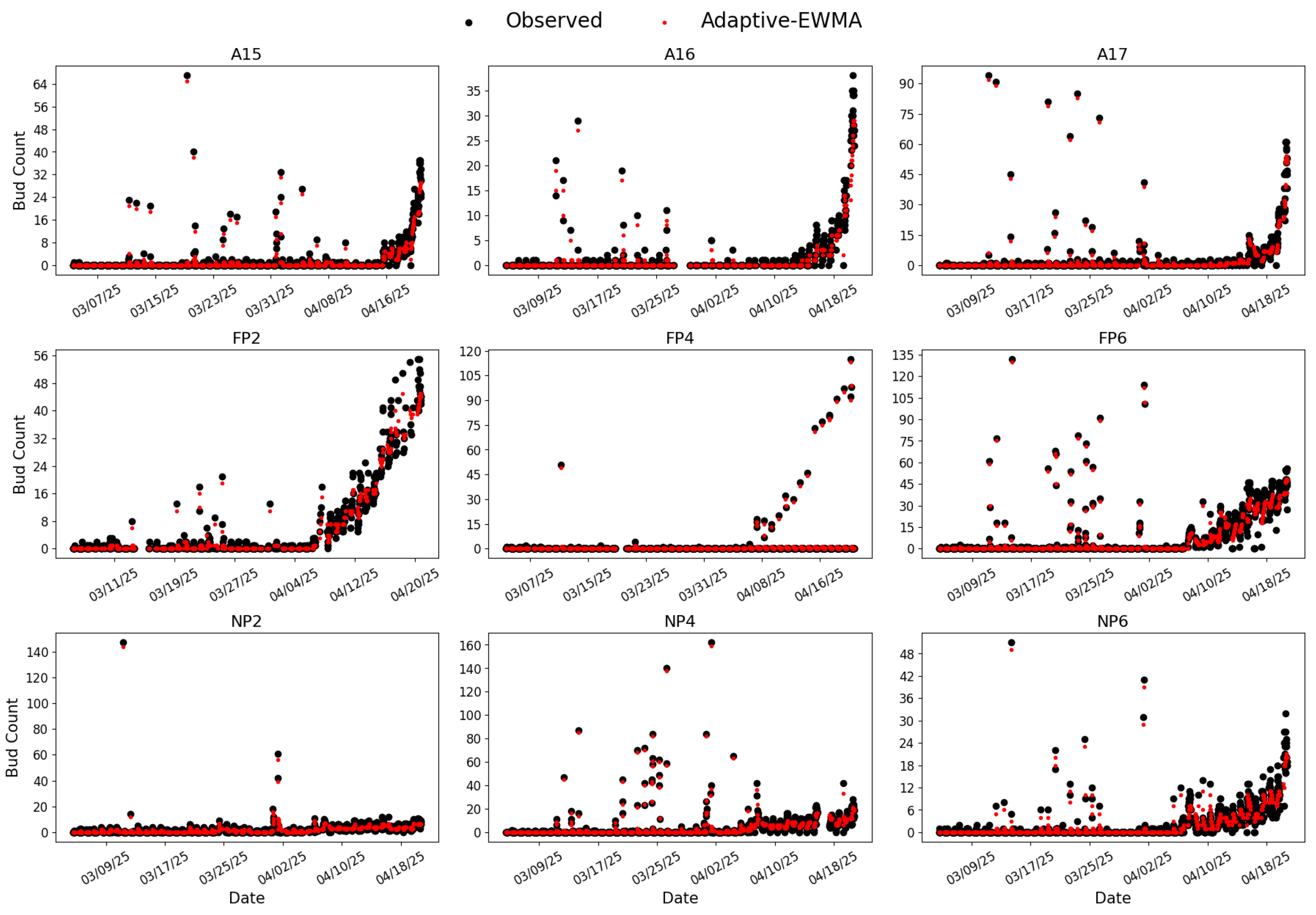

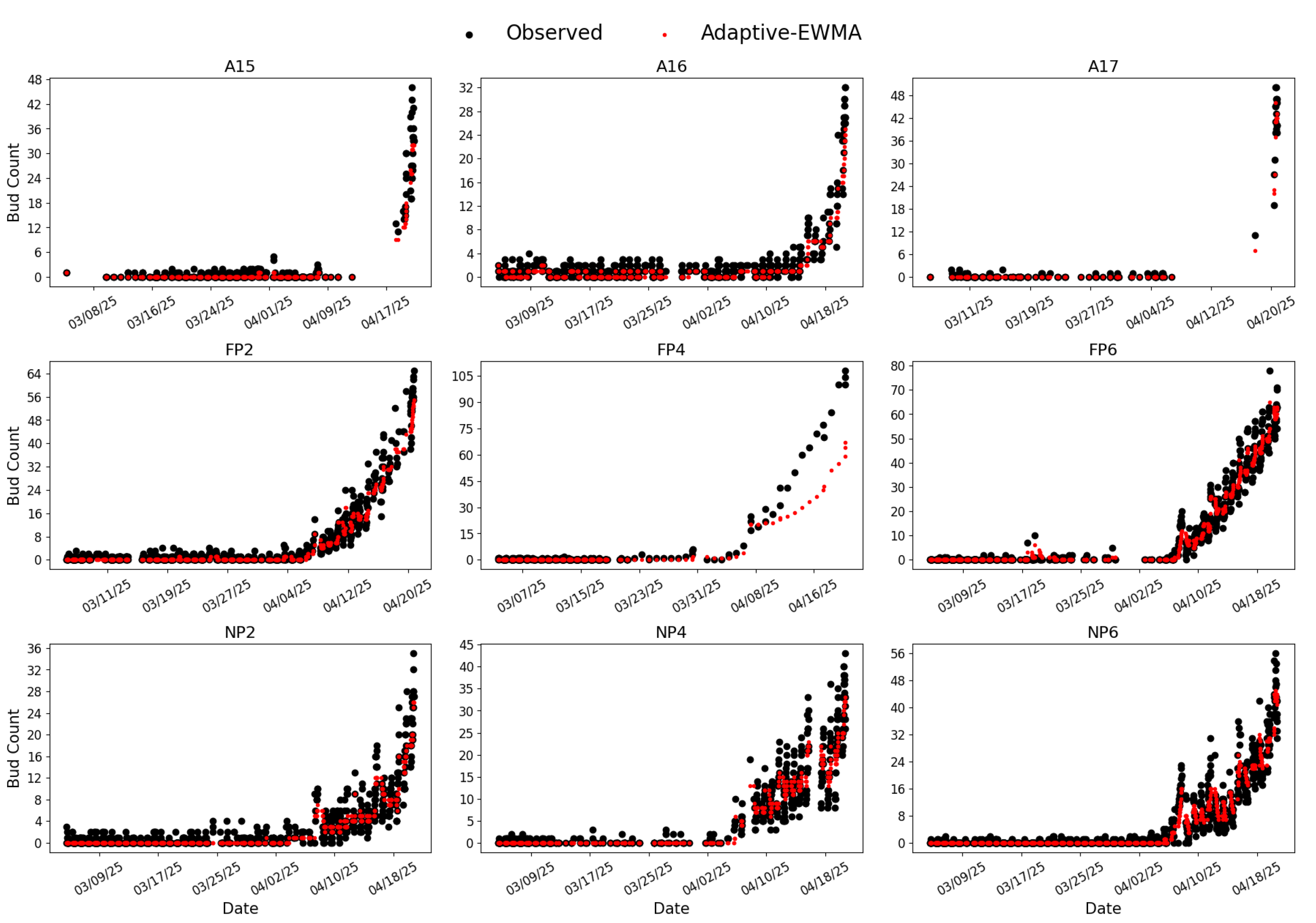

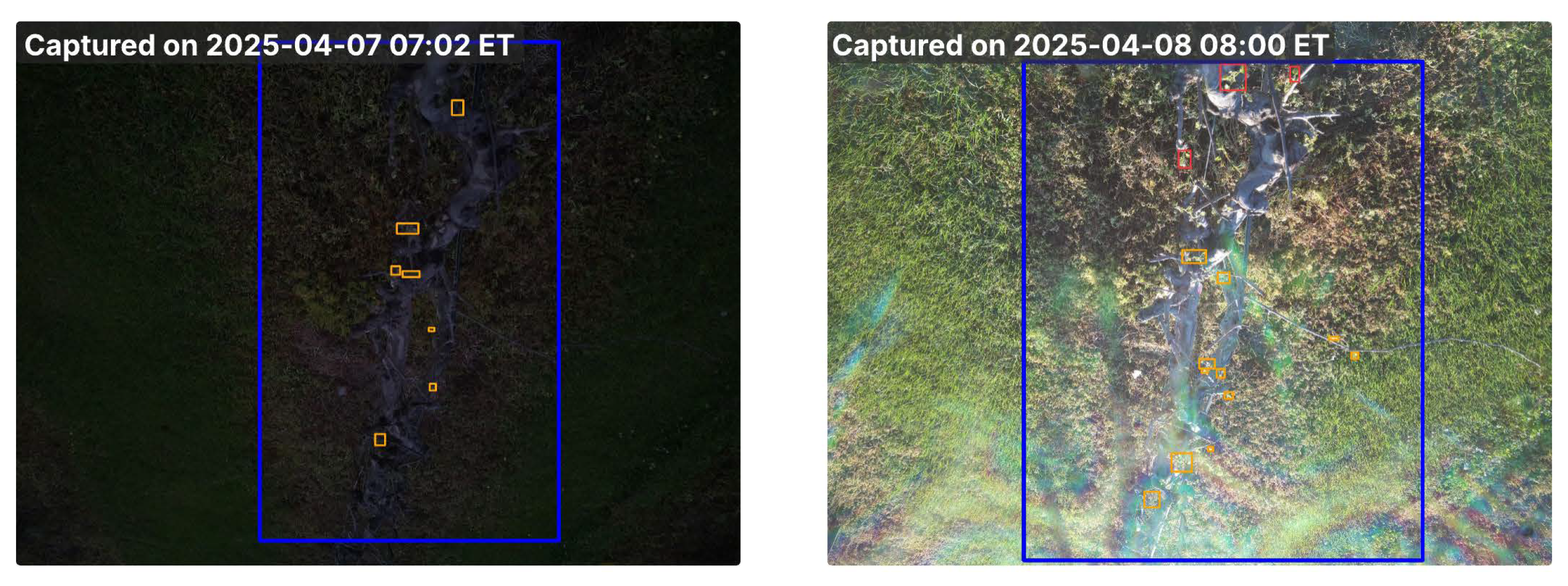

3.4. Real-World Inference in 2025

4. Conclusions

Acknowledgments

References

- Hickey, C.C.; Smith, E.D.; Cao, S.; Conner, P. Muscadine (Vitis rotundifolia Michx., syn. Muscandinia rotundifolia (Michx.) Small): The resilient, native grape of the southeastern US. Agriculture 2019, 9, 131.

- Reta, K.; Netzer, Y.; Lazarovitch, N.; Fait, A. Canopy management practices in warm environment vineyards to improve grape yield and quality in a changing climate. A review A vademecum to vine canopy management under the challenge of global warming. Scientia Horticulturae 2025, 341, 113998.

- Zhang, H.; Li, F.; Liu, S.; Zhang, L.; Su, H.; Zhu, J.; Ni, L.M.; Shum, H.Y. Dino: Detr with improved denoising anchor boxes for end-to-end object detection. arXiv preprint arXiv:2203.03605, 2022.

- Li, J.; Li, J.; Zhao, X.; Su, X.; Wu, W. Lightweight detection networks for tea bud on complex agricultural environment via improved YOLO v4. Computers and Electronics in Agriculture 2023, 211, 107955.

- Gui, Z.; Chen, J.; Li, Y.; Chen, Z.; Wu, C.; Dong, C. A lightweight tea bud detection model based on Yolov5. Computers and Electronics in Agriculture 2023, 205, 107636.

- Xia, X.; Chai, X.; Zhang, N.; Sun, T. Visual classification of apple bud-types via attention-guided data enrichment network. Computers and Electronics in Agriculture 2021, 191, 106504.

- Grimm, J.; Herzog, K.; Rist, F.; Kicherer, A.; Toepfer, R.; Steinhage, V. An adaptable approach to automated visual detection of plant organs with applications in grapevine breeding. Biosystems Engineering 2019, 183, 170–183.

- Rudolph, R.; Herzog, K.; Töpfer, R.; Steinhage, V.; et al. Efficient identification, localization and quantification of grapevine inflorescences and flowers in unprepared field images using Fully Convolutional Networks. Vitis 2019, 58, 95–104.

- Marset, W.V.; Pérez, D.S.; Díaz, C.A.; Bromberg, F. Towards practical 2D grapevine bud detection with fully convolutional networks. Computers and Electronics in Agriculture 2021, 182, 105947.

- Pérez, D.S.; Bromberg, F.; Diaz, C.A. Image classification for detection of winter grapevine buds in natural conditions using scale-invariant features transform, bag of features and support vector machines. Computers and Electronics in Agriculture 2017, 135, 81–95.

- Zhang, H.; Wu, C.; Zhang, Z.; Zhu, Y.; Lin, H.; Zhang, Z.; Sun, Y.; He, T.; Mueller, J.; Manmatha, R.; et al. networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp.

- Khanam, R.; Hussain, M. What is YOLOv5: A deep look into the internal features of the popular object detector. arXiv preprint arXiv:2407.20892, 2024.

- Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. Mobilenets: Efficient convolutional neural networks for mobile vision applications. arXiv preprint arXiv:1704.04861, 2017.

- Park, J.; Woo, S.; Lee, J.Y.; Kweon, I.S. Bam: Bottleneck attention module. arXiv preprint arXiv:1807.06514, 2018.

- Gevorgyan, Z. SIoU loss: More powerful learning for bounding box regression. arXiv preprint arXiv:2205.12740, 2022.

- Zheng, Z.; Wang, P.; Ren, D.; Liu, W.; Ye, R.; Hu, Q.; Zuo, W. Enhancing geometric factors in model learning and inference for object detection and instance segmentation. IEEE Transactions on Cybernetics 2021, 52, 8574–8586.

- Chen, X.; Liu, T.; Han, K.; Jin, X.; Wang, J.; Kong, X.; Yu, J. TSP-yolo-based deep learning method for monitoring cabbage seedling emergence. European Journal of Agronomy 2024, 157, 127191.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp.

- Goyal, A.; Bochkovskiy, A.; Deng, J.; Koltun, V. Non-deep networks. Advances in Neural Information Processing Systems 2022, 35, 6789–6801.

- Pawikhum, K.; Yang, Y.; He, L.; Heinemann, P. Development of a Machine vision system for apple bud thinning in precision crop load management. Computers and Electronics in Agriculture 2025, 236, 110479.

- Khanam, R.; Hussain, M. Yolov11: An overview of the key architectural enhancements. arXiv preprint arXiv:2410.17725, 2024.

- Roboflow. Roboflow, 2025.

- Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp.

- Liang, W.z.; Oboamah, J.; Qiao, X.; Ge, Y.; Harveson, B.; Rudnick, D.R.; Wang, J.; Yang, H.; Gradiz, A. CanopyCAM–an edge-computing sensing unit for continuous measurement of canopy cover percentage of dry edible beans. Computers and Electronics in Agriculture 2023, 204, 107498.

- Press, W.H. Numerical recipes 3rd edition: The art of scientific computing; Cambridge University Press, 2007.

- Zaman, B.; Lee, M.H.; Riaz, M.; Abujiya, M.R. An adaptive approach to EWMA dispersion chart using Huber and Tukey functions. Quality and Reliability Engineering International 2019, 35, 1542–1581.

- Huber, P.J. Robust statistics. In International Encyclopedia of Statistical Science; Springer, 2011; pp. 1248–1251.

- Rousseeuw, P.J.; Croux, C. Alternatives to the median absolute deviation. Journal of the American Statistical Association 1993, 88, 1273–1283.

- Leys, C.; Ley, C.; Klein, O.; Bernard, P.; Licata, L. Detecting outliers: Do not use standard deviation around the mean, use absolute deviation around the median. Journal of Experimental Social Psychology 2013, 49, 764–766.

- Brown, R.G. Smoothing, forecasting and prediction of discrete time series; Courier Corporation, 2004.

| Vine ID | Cultivar | Harvest Date | Start Date | End Date |
|---|---|---|---|---|
| FP2 | Floriana | 8/15/2024 | 3/24/2024 | 4/15/2024 |
| FP4 | Floriana | 8/15/2024 | 3/25/2024 | 4/8/2024 |
| FP6 | Floriana | 8/15/2024 | 3/26/2024 | 4/9/2024 |
| NP2 | Noble | 8/15/2024 | 3/26/2024 | 4/9/2024 |
| NP4 | Noble | 8/15/2024 | 3/26/2024 | 4/13/2024 |
| NP6 | Noble | 8/15/2024 | 3/24/2024 | 4/11/2024 |
| A27P15 | A27 | 8/20/2024 | 4/2/2024 | 4/20/2024 |
| A27P16 | A27 | 8/20/2024 | 3/30/2024 | 4/15/2024 |
| A27P17 | A27 | 8/20/2024 | 4/1/2024 | 4/20/2024 |

| Vine ID | Cultivar | Training | Validation | Test | Total |
|---|---|---|---|---|---|
| FP2 | Floriana | 168 | 52 | 43 | 263 |
| FP4 | Floriana | 133 | 24 | 30 | 187 |
| FP6 | Floriana | 233 | 51 | 47 | 331 |
| NP2 | Noble | 177 | 33 | 42 | 252 |
| NP4 | Noble | 299 | 80 | 65 | 444 |
| NP6 | Noble | 95 | 19 | 17 | 131 |
| A27P15 | A27 | 321 | 61 | 74 | 456 |
| A27P16 | A27 | 91 | 9 | 10 | 110 |
| A27P17 | A27 | 343 | 69 | 70 | 482 |
| – | Floriana total | 534 | 127 | 120 | 781 |
| – | Noble total | 571 | 132 | 124 | 827 |
| – | A27 total | 755 | 139 | 154 | 1048 |

| Dataset | Processing Strategy | Pipeline Usage | Training | Validation | Test | Total |
|---|---|---|---|---|---|---|
| RG | Raw image tiled into grid (776×864 px per tile) | Single-stage detector | 15,872 | 3387 | 3308 | 22,567 |
| CC | Cordon cropped and tiled into patches | Two-stage pipeline | 19,666 | 4147 | 4119 | 27,956 |

| Config. | Rotation | Translate | Scale | Shear | Perspective | Flip |
|---|---|---|---|---|---|---|
| Config. 1 | 45° | 40% | 30% | 10% | 0 | Yes |
| Config. 2 | 10° | 10% | 0 | 5% | 0.001 | Yes |
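As a rough illustration, the two augmentation configurations could be written as keyword arguments in the style of the Ultralytics training hyperparameters (`degrees`, `translate`, `scale`, `shear`, `perspective`, `fliplr`). Several points here are assumptions rather than facts from the table: that training used the Ultralytics package, that percentages map to fractions, that the shear values map directly onto the `shear` argument (which Ultralytics interprets in degrees), and that "Flip: Yes" means a horizontal flip with probability 0.5.

```python
# Assumed mapping of the augmentation table onto Ultralytics-style keyword names.
# Values marked "assumed" are interpretations, not settings confirmed by the source.
CONFIG_1 = {
    "degrees": 45.0,     # rotation range of +/- 45 degrees
    "translate": 0.40,   # 40% translation, expressed as a fraction
    "scale": 0.30,       # 30% scale gain, expressed as a fraction
    "shear": 10.0,       # assumed: table lists 10%, the argument expects degrees
    "perspective": 0.0,
    "fliplr": 0.5,       # assumed: "Yes" read as horizontal flip with p = 0.5
}

CONFIG_2 = {
    "degrees": 10.0,
    "translate": 0.10,
    "scale": 0.0,
    "shear": 5.0,        # assumed, see note above
    "perspective": 0.001,
    "fliplr": 0.5,       # assumed
}


def train_kwargs(augmentation: dict, **overrides) -> dict:
    """Merge an augmentation config with extra training arguments (hypothetical helper)."""
    merged = dict(augmentation)
    merged.update(overrides)
    return merged


# Hypothetical usage (dataset file name is a placeholder):
# from ultralytics import YOLO
# YOLO("yolo11m.pt").train(data="buds.yaml", **train_kwargs(CONFIG_2, imgsz=640))
```

Keeping each configuration in a single dictionary makes it straightforward to log exactly which settings produced a given model.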

| Model ID | Model Size | Fine-Tuned | Data Augmentation |
|---|---|---|---|
| Model 1 | Medium | No | None |
| Model 2 | Medium | Yes | None |
| Model 3 | Large | No | None |
| Model 4 | Large | Yes | None |
| Model 5 | Medium | No | Config. 1 |
| Model 6 | Medium | Yes | Config. 1 |
| Model 7 | Medium | No | Config. 2 |
| Model 8 | Medium | Yes | Config. 2 |

| Model ID | Final Training Epoch | Test mAP@0.5 (%) |
|---|---|---|
| **Trained on Raw Grid (RG) Dataset** | | |
| Model 1 | 70 ± 25 | 75.9 ± 2.4 |
| Model 2 | 79 ± 34 | 80.3 ± 1.1 |
| Model 3 | 77 ± 25 | 76.0 ± 1.5 |
| Model 4 | 69 ± 24 | 78.5 ± 1.6 |
| Model 5 | 315 ± 69 | 85.1 ± 0.4 |
| Model 6 | 229 ± 30 | 85.3 ± 0.2 |
| Model 7 | 441 ± 70 | 86.0 ± 0.1 |
| Model 8 | 238 ± 43 | 85.7 ± 0.1 |
| **Trained on Cordon-Cropped (CC) Dataset** | | |
| Model 1 | 77 ± 4 | 79.1 ± 0.3 |
| Model 2 | 72 ± 10 | 78.3 ± 0.3 |
| Model 3 | 76 ± 4 | 79.2 ± 0.1 |
| Model 4 | 71 ± 9 | 78.7 ± 0.2 |
| Model 5 | 512 ± 60 | 84.4 ± 0.1 |
| Model 6 | 297 ± 103 | 84.4 ± 0.4 |
| Model 7 | 435 ± 27 | 84.7 ± 0.1 |
| Model 8 | 278 ± 45 | 85.0 ± 0.1 |

| Model | Baseline | Resized-CC | Sliced-CC |
|---|---|---|---|
| Model 7 (RG) | 86.0 ± 0.1 | 58.2 ± 1.3 | 74.8 ± 0.7 |
| Model 8 (CC) | 85.0 ± 0.1 | 52.6 ± 1.0 | 80.0 ± 0.2 |

| Model | Slice-overlap ratio 0.2 | Slice-overlap ratio 0.5 | Slice-overlap ratio 0.75 |
|---|---|---|---|
| Model 7 (RG) | 74.8 ± 0.7 | 72.0 ± 0.7 | 66.3 ± 0.6 |
| Model 8 (CC) | 80.0 ± 0.2 | 76.4 ± 0.4 | 69.2 ± 0.5 |

| Method | Model | Dataset | View / Camera Angle | Platform | Performance |
|---|---|---|---|---|---|
| BudCAM (this work) | YOLOv11-m (RG, M7) | 2,656 images; px | Nadir, 1–3 m above vines | Raspberry Pi 5 | 86.0 (mAP@0.5) |
| | YOLOv11-m (CC, M8) | | | | 85.0 (mAP@0.5) |
| Grimm et al. [7] | UNet-style (VGG16 encoder) | 542 images; up to px | Side view, within ∼2 m | – | 79.7 (F1) |
| Rudolph et al. [8] | FCN | 108 images; px | Side view, within ∼1 m | – | 75.2 (F1) |
| Marset et al. [9] | FCN–MobileNet | 698 images; px | – | – | 88.6 (F1) |
| Xia et al. [6] | ResNeSt50 | 31,158 images; up to px | 0.4–0.8 m | – | 92.4 (F1) |
| Li et al. [4] | YOLOv4 | 7,723 images; px | – | – | 85.2 (mAP@0.5) |
| Gui et al. [5] | YOLOv5-l | 1,000 images; px | – | – | 92.7 (mAP@0.5) |
| Chen et al. [17] | YOLOv8-n | 4,058 images; px | UAV top view, ∼3 m AGL | – | 99.4 (mAP@0.5) |
| Pawikhum et al. [20] | YOLOv8-n | 1,500 images | Multi-angles, ∼0.5 m | Edge (embedded) | 59.0 (mAP@0.5) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).