Submitted:

26 September 2025

Posted:

16 December 2025

You are already at the latest version

Abstract

This study is preliminary methodological studies. RAG (Retrieval Augmented Generation) is a text-generative AI model that combines search-based and text-generative-based AI models. Because original data can be used as external search data for RAG, it is not affected by incorrect data from the internet introduced by fine-tuning. Furthermore, it is possible to construct an original generative AI model that has expert knowledge. Although the LlamaIndex library currently exists for implementing RAG, text vectorization is performed using an approach similar to doc2Vec, creating issues that affect the accuracy of the generative AI’s answers. Therefore, in this study, we propose a Property Graph RAG that can define meaning when indexing text by applying the Property Graph Index to LlamaIndex. Evaluation experiments were conducted using 10 real estate datasets and various cases including sales prices, On Foot Time to Nearest Station (min), and Exclusive Floor Area (m²), and the results confirmed that the proposed generative AI model offers more accurate answers than Prompt Refinement and Text_To_SQL for property search indexing.

Keywords:

1. Introduction

2. Prior Related Research and Methods

2.1. Situating the Proposed Approach Within the Existing Literature

2.2. Studying Technologies for Improving Accuracy of Generative AI Responses

2.2.1. RAG (Retrieval Augmented Generation) [1]

2.2.2. Chunking Strategy [2]

2.2.3. Metadata Filtering [3,4]

2.2.4. Prompt Refinement [5]

2.2.5. Knowledge Graph Index [6]

2.2.6. Text_To_SQL [8]

3. Property Graph RAG (Proposed Method)

- LLMs (Large Language Models): Section 3.1.

- LlamaIndex: Section 3.2.

- External information md file configured for RAG: Section 3.3.

- Property Graph Index: Section 3.4.

3.1. LLMs (Large Language Models)

3.2. LlamaIndex

- Creating structured data to pass external information to the LLM;

- Requesting the LLM to process and answer questions based on the created structured data.

- CPU/GPU: GPU;

- RAM: 32GB;

- OS (ver): Windows 11;

- Python (ver): Python 3.13;

- LlamaIndex (ver): llama_cpp_python-0.3.2-cp313.

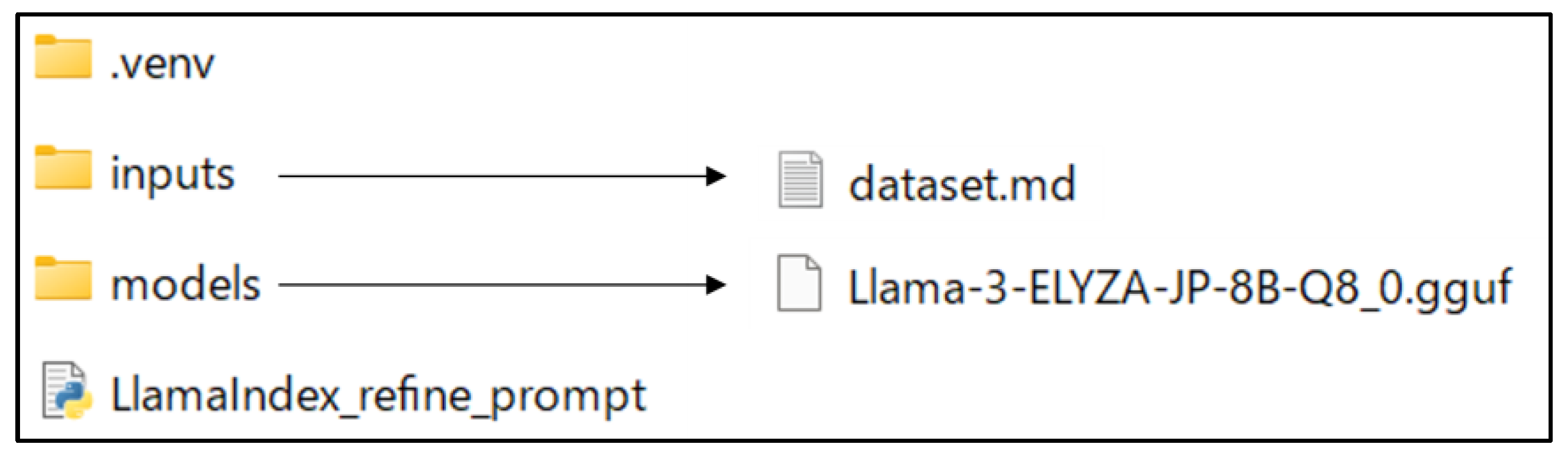

3.3. Overall Architecture of the Proposed Generative AI

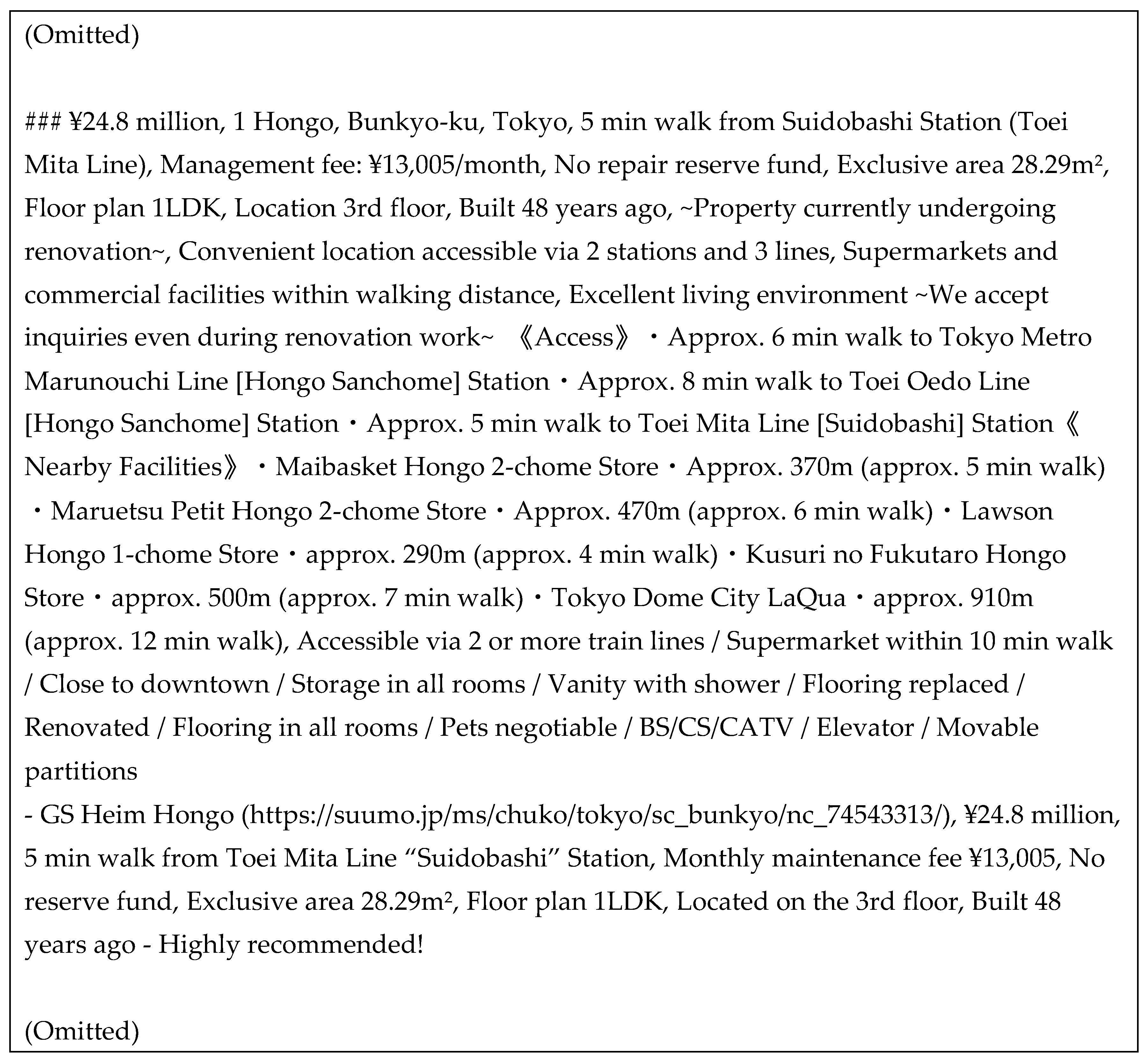

3.4. External Search File for RAG

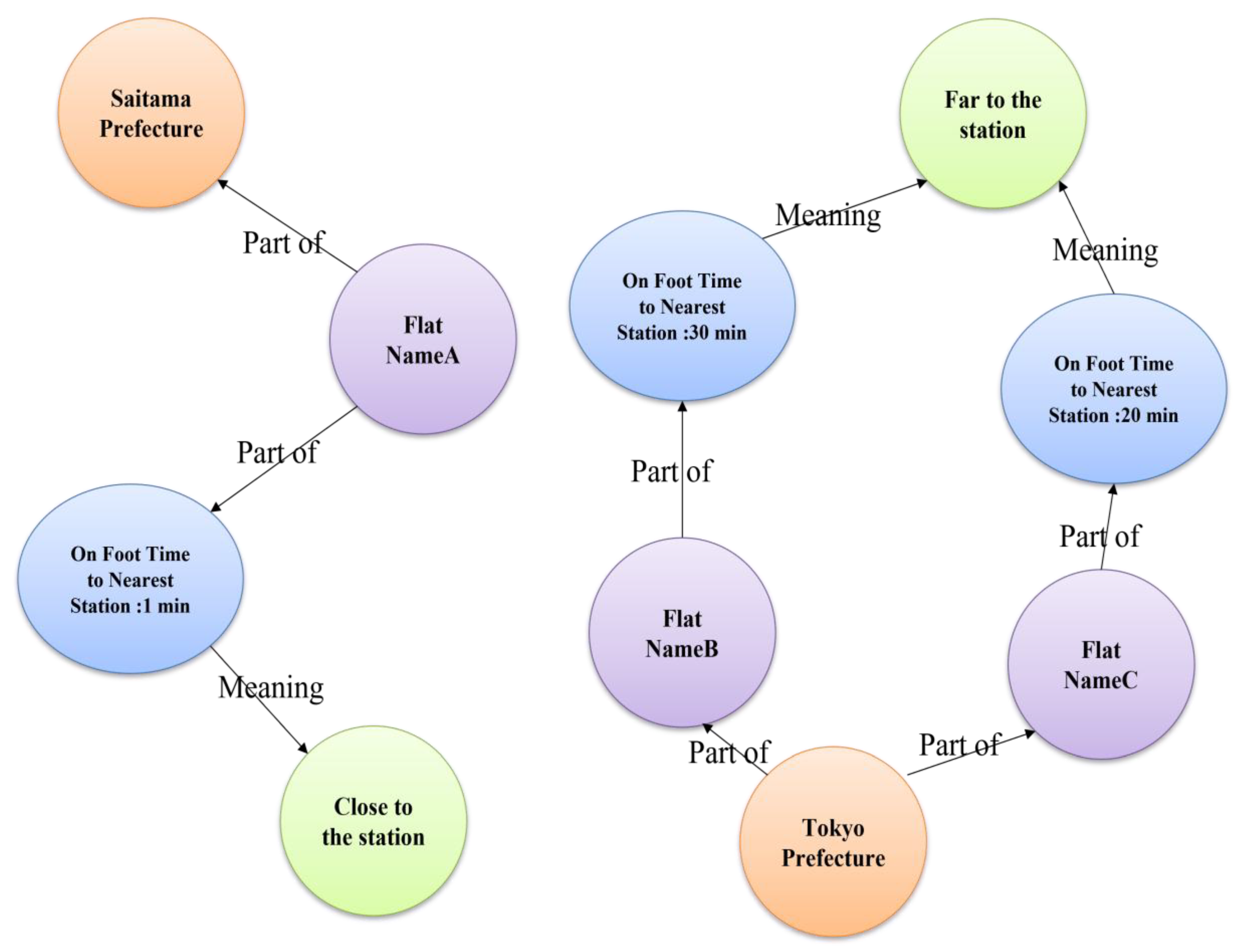

3.5. Property Graph Index

| Algorithm 1. Semantic definition using property graphs. |

| graph_store = SimplePropertyGraphStore() entities1 = [ EntityNode(label="FLAT",name="Flat NameA",properties={" Address": "3-24-13 Tamagawa, Setagaya-ku, Tokyo","Management Fee (¥/month)":9000," Building Age (Years) ": 41," Floor Level": 1," Floor Plan Type": "1LDK","Parking": None}), ] (Omitted) relations1 = [ Relation(label="CLOSEST", source_id=entities1[0].id,target_id=entities2[0].id, properties={"Walking Time (min)":5," Close from the station"}), ] (Omitted) graph_store.upsert_nodes(entities1) graph_store.upsert_relations(relations1) |

| Algorithm 2. Reflect the Property Graph Index in the query engine. |

| index = PropertyGraphIndex.from_existing( llm=llm, property_graph_store=graph_store, # optional, neo4j also supports vectors directly #vector_store=vector_store, #embed_kg_nodes=True, embed_model=embed_model ) query_engine = index.as_query_engine( include_text=False, llm=llm, ) print("=================================") while True: req_msg = input('\n Question: ') req_msg = req_msg.strip() if req_msg == "":continue if req_msg == "\q":break res_msg = query_engine.query(req_msg) print('\n', str(res_msg).strip()) |

4. Evaluation Experiment

4.1. Outline of Evaluation Experiment

-

Matching-based, which includes the following:

- ✓

- F1 Score: Harmonic mean of Recall and Precision;

- ✓

- ROUGE (Recall-Oriented Understudy for Gisting Evaluation): Calculated using Recall, Precision, and F1 Score;

- ✓

- BLEU (Bilingual Evaluation Understudy): Calculated based on the matching rate of n-grams (one word at a time, two words at a time, etc.) between the original text and the generated text;

- Generation-based, including the following:

- Human-based.

4.2. Evaluation Standard

4.3. Implementation of the Comparison Generative AI

4.3.1. RAG Prompt Refinement

4.3.2. Text-to-SQL

| Algorithm 3. Create a database for Text-to-SQL from Table 1. |

| import sqlite3 import pandas as pd df = pd.read_csv("table1.csv",encoding='utf-8') dbname = 'SAMPLE.db' conn = sqlite3.connect(dbname) cur = conn.cursor() df.to_sql(name='manssion', con=conn, if_exists='replace') |

| Algorithm 4. Implementation of Text-to-SQL. |

| engine = create_engine("sqlite:///SAMPLE.db") sql_database = SQLDatabase(engine=engine) query_engine = NLSQLTableQueryEngine( sql_database=sql_database, tables=["manssion"], llm=llm, embed_model=embed_model, ) while True: req_msg = input('\n Question: ') req_msg = req_msg.strip() if req_msg == "":continue if req_msg == "\q":break res_msg = query_engine.query(req_msg) print('\n', str(res_msg).strip()) |

4.4. Evaluation Experiment Results and Discussion

| Question: Please answer a low selling price used flat. |

| ### Please recommend a used flat near Futakotamagawa Station that is older, has fewer floors, has the smallest rooms, and lacks parking, but features a studio layout, has a low selling price, and low maintenance fees. |

4.5. Discussion on Comparing Proposed Generative AI and Existing Real Estate Search Websites

5. Conclusion and Limitations of This Study

References

- Gao, Yunfan; et al. Retrieval-augmented generation for large language models: A survey. arXiv 2023, arXiv:2312.10997. [Google Scholar]

- Vaibhav Kumar:Chunking Strategies for RAG in Generative AI,ADaSci, https://adasci.org/chunking-strategies-for-rag-in-generative-ai/(Last accessed date: 2025:3/15).

- Ishita Kaur:AI Metadata Extraction and Filtering from Scientific Research Articles, crossml, https://www.crossml.com/ai-metadata-extraction/ (Last accessed date: 2025:3/15).

- Jon Saad-Falcon, Joe Barrow, Alexa Siu, Ani Nenkova, David Seunghyun Yoon, Ryan A. Rossi, Franck Dernoncourt: PDFTriage: Question Answering over Long, Structured Documents, https://doi.org/10.48550/arXiv.2309.08872.

- Anders Giovanni Møller, Luca Maria Aiello :Prompt Refinement or Fine-tuning? Best Practices for using LLMs in Computational Social Science Tasks, https://doi.org/10.48550/arXiv.2408.01346(Last accessed date: 2025:3/21).

- Knowledge Graph Index: https://docs.llamaindex.ai/en/stable/examples/index_structs/knowledge_graph/KnowledgeGraphDemo/ (Last accessed date: 2025:3/15).

- Property Graph Index: https://docs.llamaindex.ai/en/stable/module_guides/indexing/lpg_index_guide/ (Last accessed date: 2025:3/15).

- Text_To_SQL :https://docs.llamaindex.ai/en/stable/use_cases/text_to_sql/(Last accessed date:2025:3/15).

- llamaindex Docment: https://docs.llamaindex.ai/en/stable/optimizing/basic_strategies/basic_strategies/ (Last accessed date: 2024/9/14).

- The LlamaIndex Team: Introducing the Property Graph Index: A Powerful New Way to Build Knowledge Graphs with LLMs, https://www.llamaindex.ai/blog/introducing-the-property-graph-index-a-powerful-new-way-to-build-knowledge-graphs-with-llms(Last accessed date: 2025/3/19).

- Long Ouyang et al.: Training language models to follow instructions with human feedback, https://arxiv.org/abs/2203.02155 (Last accessed date: 2024/9/17).

| 1 | |

| 2 | |

| 3 | |

| 4 |

| High City Futakotamagawa | Brands Minami-Azabu | ・・・ | Lions Mansion Plaza Odakyu Sagamihara | |

| Selling Price (JPY) | 9,980,000 | 74,800,000 | ・・・ | 31,800,000 |

| Location | 3-24-13 Tamagawa, Setagaya-ku, Tokyo | 1-27-13 Minami-Azabu, Minato-ku, Tokyo | ・・・ | 1-22-13 Sounami, Minami Ward, Sagamihara City, Kanagawa Prefecture |

| Nearest Station | Futakotamagawa Station (Tokyu Den-en-toshi Line) | Azabujuban Station (Toei Oedo Line) | ・・・ | Odakyu Odawara Line Odakyu Sagamihara Station |

| On Foot Time to Nearest Station (min) | 5 | 8 | ・・・ | 5 |

| Management Fee (JPY per month) | 9,000 | 13,000 | ・・・ | 14,600 |

| Building Age (Years) | 41 | 13 | ・・・ | 21 |

| Floor Level | 1 | 8 | ・・・ | 5 |

| Floor Plan Type | 1LDK | 2LDK | ・・・ | 3LDK |

| Exclusive Floor Area (m²) | 13.43 | 38.38 | ・・・ | 77.53 |

| Parking | None | Available | ・・・ | Available |

| Score | Reason | ||

| Proposed generative AI | Q1 | 1 | Because it proposed the property with the lowest price. |

| Q2 | 1 | Because it proposed the property with the lowest price. | |

| Q3 | 0 | Because it proposed the property for which management fee was the third lowest. | |

| Q4 | 1 | Because it proposed the property with the biggest room and closest to the station. | |

| Q5 | 1 | Because it proposed a property with low management fees and a spacious room. | |

| Total | 4.0 | ||

| RAG Refine Prompt | Q1 | 1 | Because it proposed the property with the lowest price. |

| Q2 | 1 | Because it proposed the property with the lowest price. | |

| Q3 | 1 | Because it proposed the property for which management fee was lowest. | |

| Q4 | 0 | Because it proposed the property with the third-most spacious rooms and ninth-longest time on foot. | |

| Q5 | 0.5 | Because it proposed the property that had the lowest price, but ninth-largest room and fifth-lowest management fee. | |

| Total | 3.5 | ||

| Text_To_SQL | Q1 | 0 | Because it proposed the property with the second-lowest price. |

| Q2 | 0 | Because it could not propose a property. | |

| Q3 | 0 | Because it proposed the property with the second-lowest management fee. | |

| Q4 | 0 | Because it could not propose a property. | |

| Q5 | 0 | Because it could not propose a property. | |

| Total | 0 |

| Q | A | |||

| Proposed generative AI | RAG Refine Prompt | Text_To_SQL | ||

| 1 | (Include 1 Item of Table 1) Please tell me the property with the lowest price. →Correct Answer: Rubelle Kumagaya |

○Rubelle Kumagaya at ¥9,800,000 is the lowest property price. | ○The property with the lowest price is Rubelle Kumagaya, with a selling price of ¥9,800,000. | ×High City Futakotamagawa at ¥9,980,000 is the lowest price. →Second-lowest property price. |

| 2 | (Include 1 Item of Table 1) Please tell me the property with the lowest property price. →Correct: Rubelle Kumagaya |

○Rubelle Kumagaya at ¥9,800,000 is the lowest. | ○The property with the lowest price is Rubelle Kumagaya, with a selling price of ¥9,800,000. | ×We apologize, but there is no property information available around the specified station. |

| 3 | (Include 1 Item of Table 1) Please tell me the property with the lowest management fee. →Correct: Minami-Osawa Residence |

×Palette Studio Ginza 2-chome at ¥10,820. →Management fee of ¥10,820 is the third-lowest property →Proposed generative AI did not define meaning in Management fees |

○The property with the lowest management fee is Minami-Osawa Residence, with a management fee of ¥6,000 per month | ×High City Futakotamagawa at ¥9,980,000 has the lowest management fee. →Second-lowest property price. |

| 4 | (Include 2 Item of Table 1) Please tell me about properties near the station with spacious rooms. →Correct: Minami-Osawa Residence |

〇The following properties are near the station and have spacious rooms. 1. Park House Tsunashima (omitted) 67.82 m² and 9 minutes on foot from the nearest station 2. Minami-Osawa Residence (omitted) 85.97 m² and 1 minutes on foot from the nearest station |

×The older apartment “Queen City Ichikawa Fierte” is a 2SLDK on the 2nd floor with a floor area of 70.94m², located 13 minutes on foot from the nearest station, JR Sobu/Chuo Slow Line Ichikawa Station. →70.94m² is the third-most spacious room →13 minutes is the rooms that ninth-longest time on foot |

×We apologize, but no matching properties were found. |

| 5 | (Include 3 Item of Table 1) Please tell me about properties with low prices and management fees and spacious rooms. →Correct answer: Minami-Osawa Residence. Because two out of three items are correct as shown below ・×JPY 30,900,000 is sixth-lowest price ・〇85.97 m² is most large room ・〇JPY 6000/month is lowest price →△ evaluation answer: Rubelle Kumagaya. Because one out of three items is correct as shown below →〇JPY 9,800,000 is lowest price →×53.43m² is nineth-largest room →×JPY 12,290 per month is fifth-lowest price |

〇The following property matches your criteria. Minami-Osawa Residence (omitted) This property has low management fees and a spacious room. |

△The used condominium “Rubelle Kumagaya” is priced at ¥9,800,000 with an exclusive area of 53.43m². Management fees are ¥12,290 per month. | ×We apologize, but no matching properties were found. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).