Submitted: 24 September 2025

Posted: 24 September 2025

Abstract

Keywords:

1. Introduction

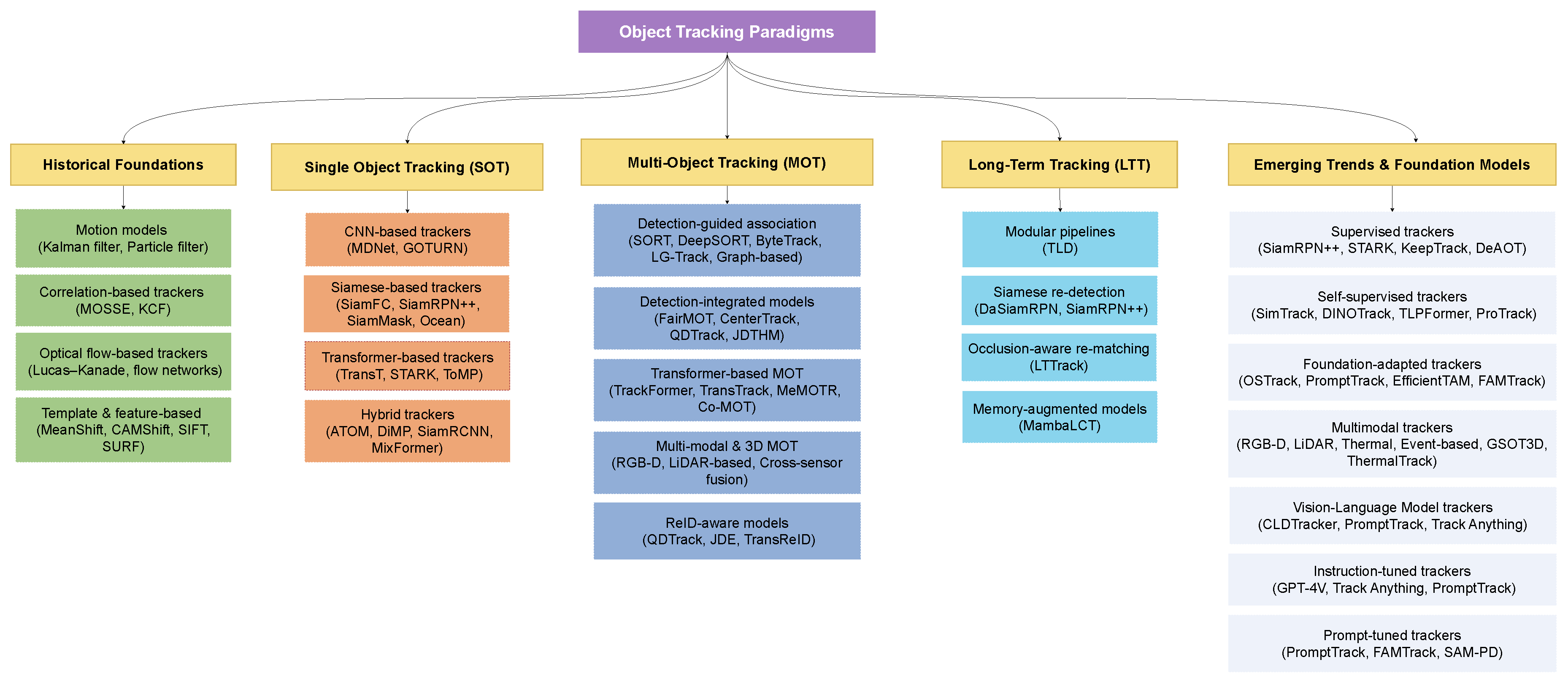

- Presenting a structured taxonomy of object tracking paradigms.

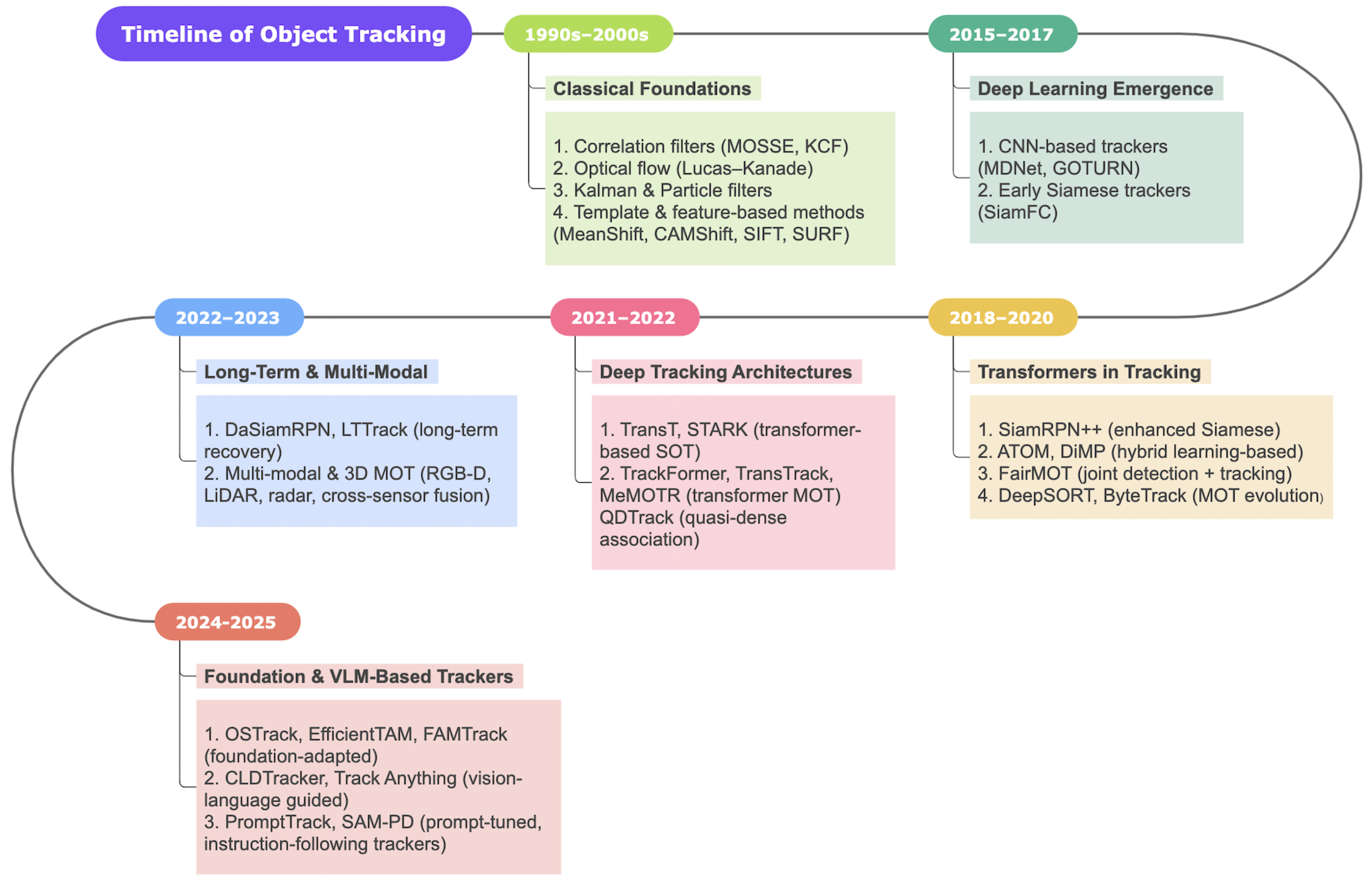

- Reviewing traditional tracking methods such as correlation filters, optical flow, and probabilistic filters.

- Detailing deep learning-based methods, including CNN-based, Siamese-based, and transformer-based trackers.

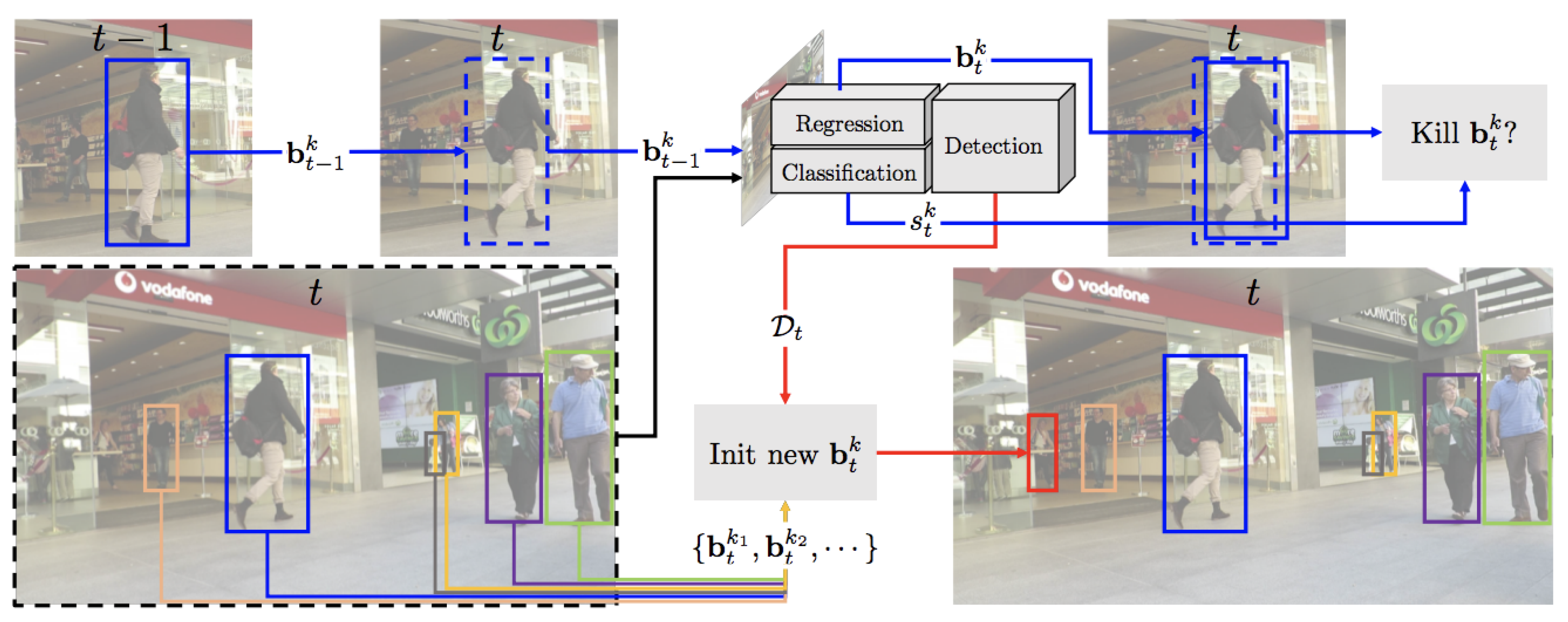

- Highlighting key advances in multi-object tracking, with a focus on data association, identity preservation, and joint detection-tracking architectures.

- Discussing recent developments in open-vocabulary and multimodal tracking using foundation models.

- Summarizing widely-used datasets and

2. Problem Formulation and Taxonomy

- Feature Extraction: Transforming raw pixels into semantically meaningful embeddings using deep neural networks, such as convolutional or transformer-based backbones.

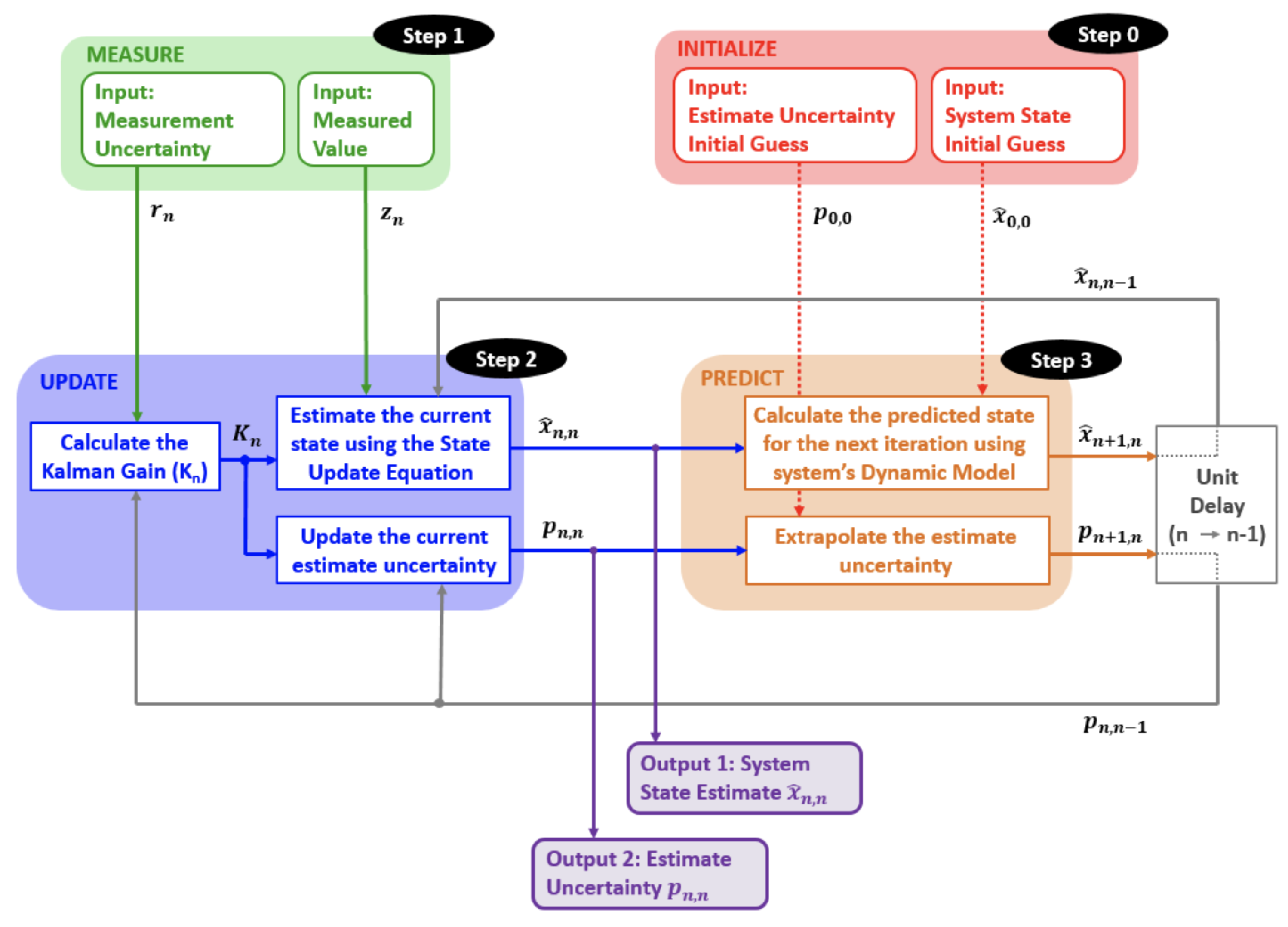

- Motion Modeling: Capturing temporal dynamics using techniques like optical flow, Kalman filters, or learned motion predictors to estimate object displacement across frames.

- Data Association: Linking estimated object states across time by matching detections or predictions using appearance similarity, spatial proximity, or temporal consistency.

- Model Update: Adapting the tracking model online to accommodate changes in object appearance, scale, pose, and environmental context.
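The four components listed above can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not the formulation of any specific tracker in this survey: feature extraction is stubbed out (embeddings are passed in directly), motion modeling is a constant-velocity extrapolation rather than a full Kalman filter, association is greedy rather than optimal, and the names `Track`, `associate`, `w_app`, and `min_score` are hypothetical.

```python
import numpy as np

def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    x1, y1 = max(a[0], b[0]), max(a[1], b[1])
    x2, y2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, x2 - x1) * max(0.0, y2 - y1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def cosine(u, v):
    """Cosine similarity between two appearance embeddings."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v) + 1e-12))

class Track:
    """Hypothetical per-object state: box, velocity, appearance embedding."""
    def __init__(self, track_id, box, feat):
        self.id = track_id
        self.box = np.asarray(box, dtype=float)
        self.vel = np.zeros(4)
        self.feat = np.asarray(feat, dtype=float)

    def predict(self):
        # Motion modeling: constant-velocity extrapolation of the box.
        return self.box + self.vel

    def update(self, box, feat, momentum=0.9):
        # Model update: refresh velocity and blend in the new appearance.
        box = np.asarray(box, dtype=float)
        self.vel = box - self.box
        self.box = box
        self.feat = momentum * self.feat + (1 - momentum) * np.asarray(feat, dtype=float)

def associate(tracks, det_boxes, det_feats, w_app=0.5, min_score=0.3):
    """Data association: greedy matching on a mix of IoU and appearance."""
    pairs = []
    for ti, t in enumerate(tracks):
        pred = t.predict()
        for di, (b, f) in enumerate(zip(det_boxes, det_feats)):
            score = (1 - w_app) * iou(pred, b) + w_app * cosine(t.feat, np.asarray(f, dtype=float))
            pairs.append((score, ti, di))
    pairs.sort(reverse=True)
    matches, used_t, used_d = {}, set(), set()
    for score, ti, di in pairs:
        if score < min_score:
            break
        if ti in used_t or di in used_d:
            continue
        matches[ti] = di
        used_t.add(ti)
        used_d.add(di)
    return matches

# Two existing tracks and two detections that arrive in swapped order.
tracks = [Track(0, [0, 0, 10, 10], [1, 0]), Track(1, [50, 50, 60, 60], [0, 1])]
det_boxes = [[51, 50, 61, 60], [1, 0, 11, 10]]
det_feats = [[0, 1], [1, 0]]
matches = associate(tracks, det_boxes, det_feats)
for ti, di in matches.items():
    tracks[ti].update(det_boxes[di], det_feats[di])
```

Greedy matching is used here only to keep the sketch dependency-free; an optimal assignment solver (for example the Hungarian algorithm) is the usual substitute when many objects compete for the same detections.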

3. Historical Foundations of Object Tracking

3.1. Correlation-Based Tracking

3.2. Optical Flow-Based Tracking

3.3. Motion Modeling with Kalman and Particle Filters

3.4. Template and Feature-Based Tracking

4. Single Object Tracking (SOT)

4.1. CNN-Based Trackers

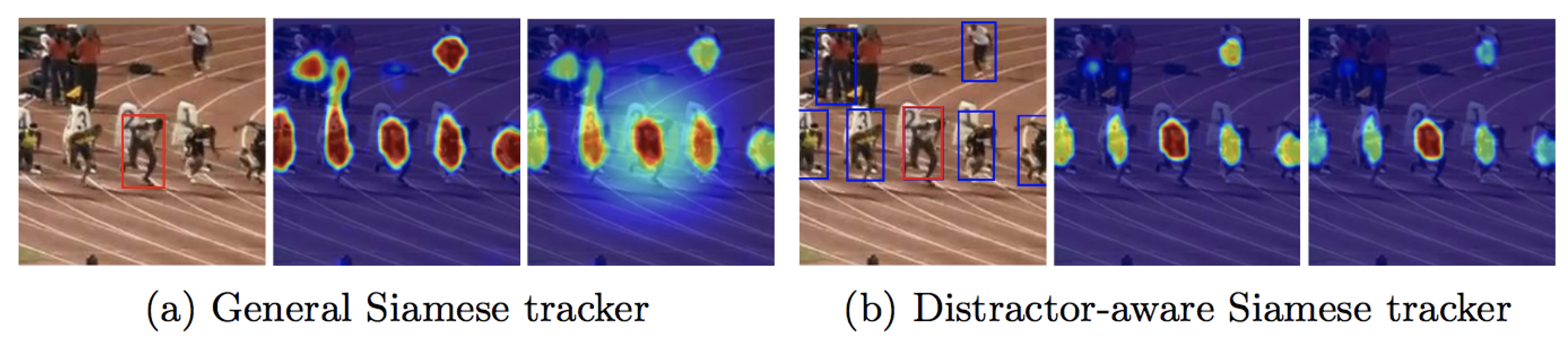

4.2. Siamese-Based Trackers

4.3. Transformer-Based Trackers

4.4. Hybrid Tracking Architectures

5. Single Object Tracking

6. Multi-Object Tracking (MOT)

6.1. Detection-Guided Association Models

6.1.1. SORT Extensions: From Motion-Only to ReID-Aware

| Method | Category | Backbone | Key Strength | Key Weakness | Performance (Dataset/Metric) |

|---|---|---|---|---|---|

| MDNet [12] | CNN | Conv + FC (multi-domain) | Learns domain-invariant representations through multi-domain training; robust under challenging conditions such as heavy occlusion and background clutter; demonstrates strong generalization to unseen objects. | Computationally expensive due to frequent online updates; suffers from low inference speed, limiting real-time usability; performance degrades in long sequences with rapid appearance variation. | AUC: 0.678 (OTB100) |

| GOTURN [13] | CNN | Dual-input CNN regressor | Extremely fast (>100 FPS), enabling real-time deployment; simple fully offline pipeline; no online fine-tuning needed; efficient feed-forward regression. | Lacks adaptation to target appearance changes; brittle under occlusion, deformation, and scale variation; struggles with long-term robustness in cluttered environments. | AUC: 0.46 (OTB100) |

| TLD-CNN [14] | CNN | CNN + online learner | Combines detection and tracking, allowing recovery from failures; online learning enables adaptation to dynamic targets; can re-detect objects after drift or loss. | Online updates prone to noise accumulation, leading to drift; high complexity compared to simpler Siamese models; unstable in highly cluttered or fast-moving scenarios. | Precision: ∼0.56 (OTB100) |

| SiamFC [15] | Siamese | Shared CNN encoder | Lightweight and efficient design; achieves real-time operation (86 FPS); end-to-end similarity learning via cross-correlation; robust against moderate distractors. | Relies on fixed template without update, limiting adaptability; weak handling of scale and aspect ratio changes; fails under long occlusion or drastic appearance variation. | AUC: 0.582 (OTB100) |

| SiamRPN [16] | Siamese | Siamese CNN + RPN | Incorporates region proposal network (RPN) for accurate localization; handles scale variation better than SiamFC; improved robustness for short- to mid-term tracking. | Strong dependency on anchor design introduces rigidity; limited adaptability to unseen object classes; inference cost increases compared to SiamFC. | EAO: 0.41 (VOT2018) |

| SiamRPN++ [17] | Siamese | ResNet-50 Siamese | Leverages deep residual features for strong representation; improved receptive fields and robustness; achieves state-of-the-art accuracy on multiple benchmarks. | Computationally heavier than earlier Siamese trackers; constrained by anchor-based formulation; reduced efficiency in embedded or resource-limited systems. | AUC: 0.696 (OTB100) |

| TransT [20] | Transformer | Cross/self-attention modules | Exploits global context through self- and cross-attention; anchor-free design avoids hand-crafted priors; generalizes well to diverse object categories. | High computational overhead during inference; requires large-scale pretraining for stability; slower than Siamese models on resource-limited devices. | AUC: 0.649 (LaSOT) |

| STARK [21] | Transformer | Encoder–decoder Transformer | Models temporal dependencies explicitly with spatio-temporal attention; stable under jitter, occlusion, and appearance changes; strong bounding box regression accuracy. | Memory- and compute-intensive; performance sensitive to sequence design; not suitable for lightweight or mobile scenarios. | AUC: 0.678 (LaSOT) |

| ToMP [22] | Transformer | Transformer + predictor | Performs dense feature matching with strong robustness to appearance changes; eliminates need for frequent online updates; flexible prediction capability. | Dense patch-token representation increases latency; higher complexity hinders real-time performance; resource-demanding for large-scale deployment. | AUC: 0.70 (TrackingNet) |

| TLD [28] | Hybrid | Optical flow + detector | First to propose tracking–learning–detection loop; global re-detection enables recovery from failures; adaptive appearance models for long-term use. | Online updates prone to drift; handcrafted features limit robustness; unstable in rapidly changing or dynamic scenes. | AUC: 0.53 (OTB100) |

| ATOM [23] | Hybrid | ResNet + regressor head | Accurate bounding box estimation via dedicated regression head; robust under challenging appearance changes; strong baseline for hybrid trackers. | Requires per-sequence optimization online; prevents real-time deployment; increased latency compared to lightweight models. | AUC: 0.669 (LaSOT) |

| DiMP [24] | Hybrid | ResNet + meta-learner | Discriminative classification combined with meta-learned updates; adapts quickly to new targets; competitive accuracy on long sequences. | Still requires online optimization; additional training complexity; slower compared to pure Siamese architectures. | AUC: 0.678 (LaSOT) |

| SiamRCNN [25] | Hybrid | Siamese backbone + R-CNN | Achieves high accuracy under challenging conditions; integrates detection for robust target estimation; flexible handling of aspect ratio and scale. | Computationally heavy two-stage design; significantly slower inference; complex pipeline compared to single-stage trackers. | AUC: 0.64 (LaSOT) |

| SiamBAN [26] | Hybrid | Siamese + unified head | Anchor-free classification and regression head improves flexibility; balanced trade-off between accuracy and efficiency; robust across diverse conditions. | Still limited by fixed template; lacks strong recovery under long occlusion; less competitive for long-term tracking. | AUC: 0.63 (LaSOT) |

| MixFormer [27] | Hybrid | Transformer encoder + joint head | Unified CNN+Transformer architecture with fewer parameters; competitive performance across datasets; strong robustness to appearance variation. | Requires extensive pretraining to achieve best performance; heavier than lightweight Siamese designs; reduced efficiency in embedded scenarios. | AUC: 0.704 (LaSOT) |
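Most rows in the table above report AUC, the area under the success plot. As a rough sketch of how that number is typically obtained: for each overlap threshold, compute the fraction of frames whose predicted box exceeds that IoU with the ground truth, then average over thresholds. The choice of 21 evenly spaced thresholds in [0, 1] is an assumption that mirrors common OTB-style toolkits; individual benchmarks may use a different grid, and the function name `success_auc` is hypothetical.

```python
import numpy as np

def success_auc(ious, num_thresholds=21):
    """Area under the success plot for a sequence of per-frame IoUs.

    Returns the AUC together with the thresholds and the success curve.
    """
    ious = np.asarray(ious, dtype=float)
    thresholds = np.linspace(0.0, 1.0, num_thresholds)
    # Success rate at threshold t: share of frames with IoU strictly above t.
    success = np.array([(ious > t).mean() for t in thresholds])
    return float(success.mean()), thresholds, success

# Per-frame IoUs for a hypothetical short sequence (0.0 marks a lost frame).
example_ious = [0.82, 0.75, 0.40, 0.0, 0.66]
auc, thresholds, success = success_auc(example_ious)
```

Because the curve is averaged over all thresholds, AUC rewards both keeping the target (non-zero IoU) and localizing it tightly (high IoU), which is why it is preferred over a single-threshold success rate.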

6.1.2. Detector-Driven Propagation

6.1.3. Recall-Boosting Trackers

6.1.4. Confidence-Aware Association

6.1.5. Graph-Based and Group-Aware Association

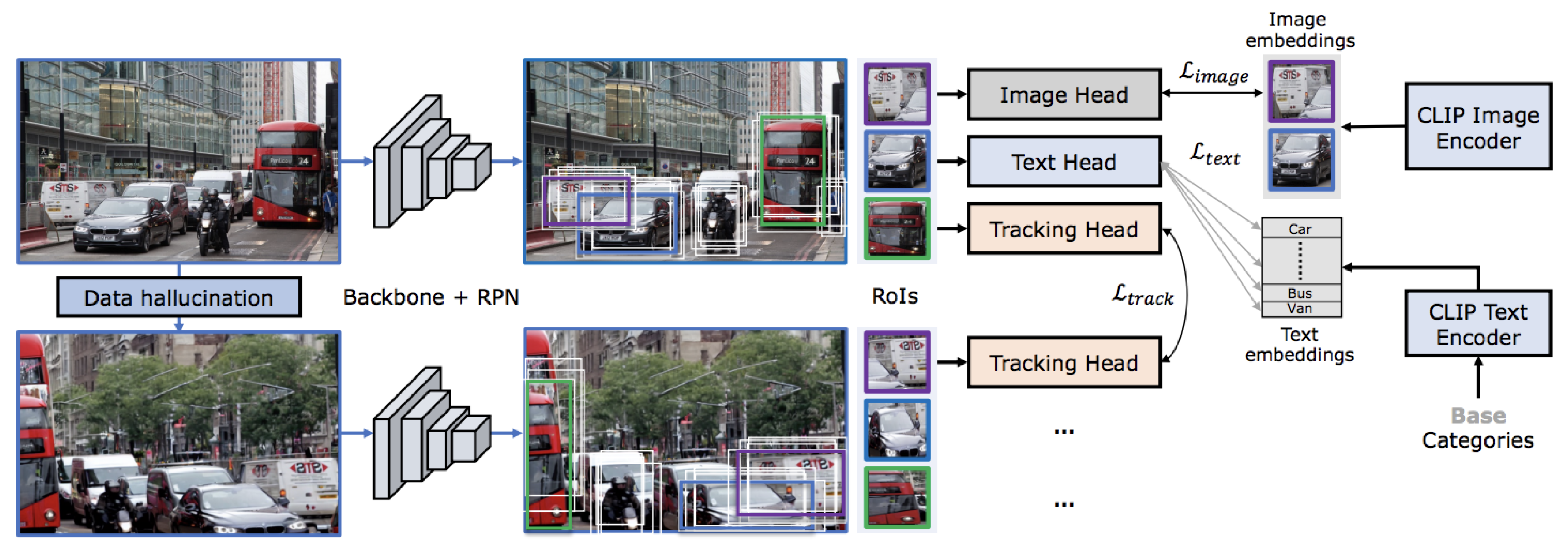

6.2. Detection-Integrated Tracking Models

6.2.1. Dual-head networks

6.2.2. Query-modular designs

6.2.3. Higher-order graph formulations

6.2.4. Keypoint-driven propagation

6.2.5. Quasi-dense association

6.3. Transformer-Based MOT Architectures

6.3.1. Query-based frameworks

6.3.2. Cross-frame aligned attention

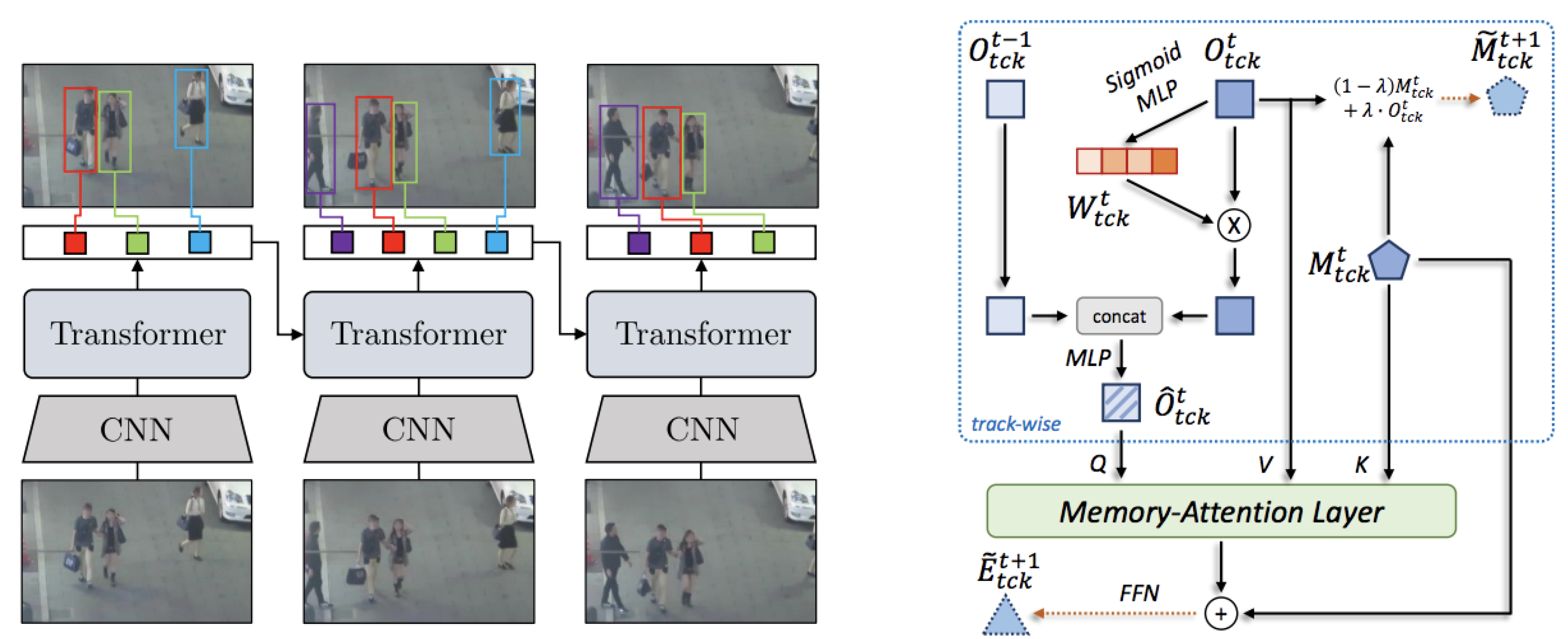

6.3.3. Memory-augmented models

6.3.4. Conflict-resolution attention

6.4. Multi-Modal and 3D Multi-Object Tracking

6.4.1. RGB-D tracking

6.4.2. LiDAR-based 3D MOT

6.4.3. Cross-sensor fusion

| Method | Category | Backbone | Key Strength | Key Weakness | Performance (Dataset/Metric) |

|---|---|---|---|---|---|

| DeepSORT [33] | Detection-Guided | CNN ReID + Kalman | Handles occlusion by integrating appearance features with motion; modular and easy to integrate into existing detectors; widely used baseline for MOT pipelines. | Relies heavily on detector quality; identity switches when embeddings drift; weak under scale variation and crowded scenes. | MOTA: 61.4 (MOT17) |

| StrongSORT [34] | Detection-Guided | Kalman + suppression + CNN ReID | Enhances DeepSORT with outlier suppression; improves ID stability in cluttered environments; handles partial occlusion better; open-source and fast in practice. | Still experiences residual ID switches in heavy occlusion; sensitive to noisy embeddings; performance tied to detector backbone. | IDF1: 72.5 (MOT17) |

| Tracktor++ [35] | Detection-Guided | Detector regression head | Simple and efficient design; reuses detector regression to propagate boxes; avoids explicit association; competitive with minimal engineering. | Limited by detector recall; weak under occlusion and motion blur; less effective for multi-class tracking. | MOTA: 53.5 (MOT17) |

| ByteTrack [36] | Detection-Guided | Association + simple motion | Strong recall by keeping low-confidence detections; robust in crowded scenes; balances precision and recall effectively; competitive real-time speed. | Does not leverage embeddings; prone to ID drift under occlusion; struggles with long-term re-identification. | HOTA: 63.1 (MOT20) |

| MR2-ByteTrack [37] | Detection-Guided | Resolution-aware association stack | Lightweight and embedded-friendly; resilient under low-resolution and noisy detections; stable on edge devices with limited resources. | Accuracy drops significantly during long occlusions; limited generalization across diverse benchmarks. | MOTA: 60.2 (MOT20) |

| LG-Track [38] | Detection-Guided | Local-Global Association + CNN ReID | Combines local motion with global context; reduces ID switches by balancing short-term and long-term cues; lightweight design with solid accuracy. | Struggles under dense occlusion; performance sensitive to hyperparameter tuning; still tied to detector quality. | MOTA: 66.2 (MOT17) |

| Deep LG-Track [39] | Detection-Guided | Deep Local-Global Features + Kalman | Extends LG-Track with deep hierarchical features; handles long-term occlusion better; improved embedding robustness; achieves state-of-the-art stability. | Computationally heavier than LG-Track; requires careful training data; scalability limited in real-time scenarios. | IDF1: 74.1 (MOT20) |

| RTAT [40] | Detection-Guided | Two-Stage Association (Motion + ReID) | Robust two-stage association that first filters candidates by motion, then refines with appearance; improves robustness under occlusion and noisy detections. | Extra association stage increases latency; dependent on motion modeling assumptions; weaker in long occlusions. | MOTA: 69.8 (MOT17) |

| Wu et al. [41] | Detection-Guided | Graph Matching + Appearance Embeddings | Uses graph-based association for global consistency; better at maintaining IDs across fragmented detections; reduces error accumulation. | Sensitive to graph construction errors; scalability issues on large scenes; requires strong embeddings. | IDF1: 70.6 (MOT17) |

| FairMOT [42] | Detection-Integrated | Shared CNN with detection + ReID heads | Jointly optimizes detection and embeddings; avoids trade-offs between cascaded pipelines; balanced accuracy and efficiency; strong ID preservation. | Moderate speed compared to pure detection trackers; performance depends on backbone choice; less optimized for real-time on constrained hardware. | IDF1: 72.3 (MOT17) |

| CenterTrack [46] | Detection-Integrated | CenterNet + motion offset head | Real-time tracking via center-based detection; simple online association; effective balance of accuracy and efficiency. | No explicit appearance model; fragile identity handling under occlusion; weak at long-term re-identification. | MOTA: 67.8 (MOT17) |

| QDTrack [47] | Detection-Integrated | CNN with quasi-dense matching | Quasi-dense similarity supervision improves embeddings; learns ReID signals without explicit labels; robust local feature matching. | High computational demand; identity drift under prolonged clutter; scalability issues for large benchmarks. | IDF1: 71.1 (MOT17) |

| Speed-FairMOT [43] | Detection-Integrated | Lightweight CNN + Joint Detection-Tracking | Optimized for speed with reduced backbone; achieves real-time performance on edge devices; preserves FairMOT joint detection-tracking design. | Sacrifices accuracy for speed; limited robustness in crowded or complex scenes; weaker embeddings. | FPS: 45, MOTA: 59.7 (MOT17) |

| TBDQ-Net [44] | Detection-Integrated | Transformer + Query Matching | Efficient query-based detection-tracking framework; reduces redundant computations; competitive accuracy with better speed-accuracy tradeoff. | Query design limits scalability; harder to adapt to unseen objects; performance tied to transformer efficiency. | MOTA: 68.3 (MOT20) |

| JDTHM [45] | Detection-Integrated | Joint Detection-Tracking Heatmap | Uses joint heatmap representation for detection and tracking; improves spatial consistency and reduces ID switches; efficient training pipeline. | Limited generalization to non-standard scenes; struggles in low-resolution inputs; identity drift under long occlusion. | IDF1: 71.2 (MOT17) |

| TrackFormer [48] | Transformer | Transformer encoder–decoder | End-to-end query-based detection and tracking; propagates identity with track queries; avoids handcrafted association modules. | Slower inference than modular methods; heavy GPU demand; sensitive to hyperparameters. | HOTA: 58.4 (MOT17) |

| TransTrack [51] | Transformer | Transformer with cross-frame attention | Cross-frame aligned attention improves spatial consistency; effective for short-term identity preservation; integrates detection and tracking. | ID persistence weak under long occlusion; requires careful initialization; heavier than CNN-based trackers. | IDF1: 60.9 (MOT17) |

| ABQ-Track [52] | Transformer | Transformer with anchor queries | Encodes positional priors via anchor queries; reduces ID switches in crowded environments; competitive accuracy with fewer parameters. | Anchor design adds rigidity; reduced generalization across datasets; not robust to unseen layouts. | HOTA: 61.7 (MOT20) |

| MeMOTR [53] | Transformer | Memory-Augmented Transformer | Integrates long-term memory into transformer decoder; robust under long occlusion; captures contextual dependencies over frames. | Computationally heavy; memory module increases complexity; requires large-scale training data. | MOTA: 70.9 (MOT20) |

| Co-MOT [54] | Transformer | Cooperative Transformer + ReID | Uses cooperative transformer layers to share context among objects; excels in crowded scenes; strong re-identification accuracy. | High GPU demand; longer inference time; requires careful parameter balancing. | IDF1: 76.4 (MOT20) |
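The table above mixes MOTA and IDF1, which measure different things: MOTA penalizes detection errors and identity switches, while IDF1 measures how consistently identities are preserved. A small sketch of the standard definitions, MOTA = 1 - (FN + FP + IDSW) / GT and IDF1 = 2·IDTP / (2·IDTP + IDFP + IDFN), is given below. The error counts in the usage lines are made up for illustration and do not correspond to any method in the table.

```python
def mota(num_false_neg, num_false_pos, num_id_switches, num_gt):
    """Multiple Object Tracking Accuracy from sequence-level error counts.

    num_gt is the total number of ground-truth boxes over all frames.
    MOTA can be negative when errors outnumber ground-truth objects.
    """
    if num_gt <= 0:
        raise ValueError("num_gt must be positive")
    return 1.0 - (num_false_neg + num_false_pos + num_id_switches) / num_gt

def idf1(id_true_pos, id_false_pos, id_false_neg):
    """Identity F1: harmonic mean of identity precision and identity recall."""
    denom = 2 * id_true_pos + id_false_pos + id_false_neg
    return 2 * id_true_pos / denom if denom > 0 else 0.0

# Hypothetical counts for one sequence.
example_mota = mota(num_false_neg=10, num_false_pos=5, num_id_switches=5, num_gt=100)
example_idf1 = idf1(id_true_pos=80, id_false_pos=20, id_false_neg=20)
```

Since the two metrics weight errors differently, a tracker can rank well on one and poorly on the other, so values in the table are only comparable within the same metric and dataset.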

- Early fusion: Raw sensor data is concatenated prior to feature extraction [64], though misalignments can degrade results.

- Late fusion: Predictions from individual modalities are merged [65], which is flexible but prevents deep cross-modal reasoning.

- Deep fusion: Learned attention modules integrate features at intermediate layers [66], capturing cross-modal correlations.
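The three fusion strategies above can be contrasted in a minimal NumPy sketch. Everything here is illustrative: `encoder` is a stand-in single linear layer with random weights, the late-fusion weight `alpha` is hypothetical, and the deep-fusion step uses plain scaled dot-product cross-attention without the learned query/key/value projections that a real module from the cited works would contain.

```python
import numpy as np

def encoder(x, w):
    """Stand-in feature extractor: one linear layer followed by ReLU."""
    return np.maximum(x @ w, 0.0)

def early_fusion(rgb, depth, w_joint):
    # Concatenate raw per-sample inputs first, then extract features once.
    return encoder(np.concatenate([rgb, depth], axis=-1), w_joint)

def late_fusion(score_rgb, score_depth, alpha=0.5):
    # Merge the predictions that each modality produced independently.
    return alpha * score_rgb + (1 - alpha) * score_depth

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def deep_fusion(feat_rgb, feat_depth):
    """Cross-attention at the feature level: RGB tokens attend to depth tokens."""
    d = feat_rgb.shape[-1]
    attn = softmax(feat_rgb @ feat_depth.T / np.sqrt(d))
    # Residual connection keeps the RGB features and adds attended depth context.
    return feat_rgb + attn @ feat_depth, attn

rng = np.random.default_rng(0)
rgb, depth = rng.normal(size=(5, 8)), rng.normal(size=(5, 4))
early = early_fusion(rgb, depth, rng.normal(size=(12, 16)))
late = late_fusion(np.array([0.9, 0.2]), np.array([0.7, 0.4]))
deep, attn = deep_fusion(rng.normal(size=(5, 16)), rng.normal(size=(7, 16)))
```

Note that early fusion requires the two inputs to be aligned sample by sample (both have 5 rows here), whereas the attention-based variant accepts token sets of different sizes (5 versus 7), which is one reason it tolerates imperfect cross-sensor alignment better.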

6.5. ReID-Aware Models

6.5.1. Quasi-dense similarity learning

6.5.2. Joint detection and embedding

6.5.3. Transformer-based re-identification

| Method | Category | Backbone | Key Strength | Key Weakness | Performance (Dataset/Metric) |

|---|---|---|---|---|---|

| RGB-D Tracking [58] | Multi-Modal/3D | Dual encoders + attention fusion | Depth cues improve occlusion handling and scale estimation; foreground/background separation; enhanced robustness in indoor scenarios. | Depth noise or missing data outdoors reduces reliability; sensor calibration errors degrade performance. | MOTA: 46.2 (RGBD-Tracking) |

| CS Fusion [66] | Multi-Modal/3D | RGB + LiDAR + Radar + IMU stack | Cross-sensor fusion complements appearance, geometry, and velocity cues; robust under weather and illumination challenges; improves generalization. | Sensitive to sensor synchronization and calibration; computationally expensive; deployment complexity in real-world setups. | AMOTA: 56.3 (nuScenes) |

| DS-KCF [56] | Multi-Modal/3D | Depth-aware Correlation Filter | Early depth-aware tracker; combines RGB and depth cues; robust against background clutter and partial occlusion. | Limited scalability to large datasets; handcrafted correlation filters less robust than deep features. | Success Rate: 72.1 (RGB-D benchmark) |

| OTR [57] | Multi-Modal/3D | RGB-D Correlation Filter + ReID | Exploits both depth and appearance; improved occlusion handling in RGB-D scenes; maintains IDs across viewpoint changes. | Depth sensor noise affects accuracy; limited to RGB-D applications; heavier computation than 2D trackers. | Precision: 74.5 (RGB-D benchmarks) |

| DPANet [58] | Multi-Modal/3D | Dual Path Attention Network | Fuses RGB and depth with attention mechanisms; adaptive weighting improves robustness; handles occlusion well. | Needs high-quality depth input; expensive feature fusion; generalization limited to RGB-D datasets. | MOTA: 62.3 (MOT-RGBD) |

| AB3DMOT [59] | Multi-Modal/3D | Kalman + 3D Bounding Box Association | Widely used 3D MOT baseline; fast and simple; effective for LiDAR-based tracking in autonomous driving. | Limited by detection quality; struggles in long occlusion; ignores appearance cues. | AMOTA: 67.5 (KITTI) |

| CenterPoint [60] | Multi-Modal/3D | Center-Based 3D Detection + Tracking | Center-based pipeline for LiDAR; accurate and efficient; strong baseline for 3D MOT in autonomous driving. | Requires high-quality LiDAR; misses small/occluded objects; limited in multi-modal fusion. | AMOTA: 78.1 (nuScenes) |

| JDE [68] | ReID-Aware | CNN detector + embedding head | Unified backbone for joint detection and embedding; reduced inference latency; optimized for real-time applications. | Embeddings weaker than specialized ReID models; suffers under heavy occlusion; trade-off between detection and ReID accuracy. | MOTA: 64.4 (MOT16) |

| TransReID [69] | ReID-Aware | Transformer with CAPE | Captures long-range dependencies; part-aware embedding improves viewpoint robustness; strong results across cameras. | High computational cost; domain-shift sensitivity; needs large-scale pretraining for stability. | IDF1: 78.0 (Market-1501) |

7. Long-Term Tracking (LTT)

7.0.4. Early modular frameworks

7.0.5. Siamese re-detection models

7.0.6. Occlusion-aware re-matching

7.0.7. Memory-augmented approaches

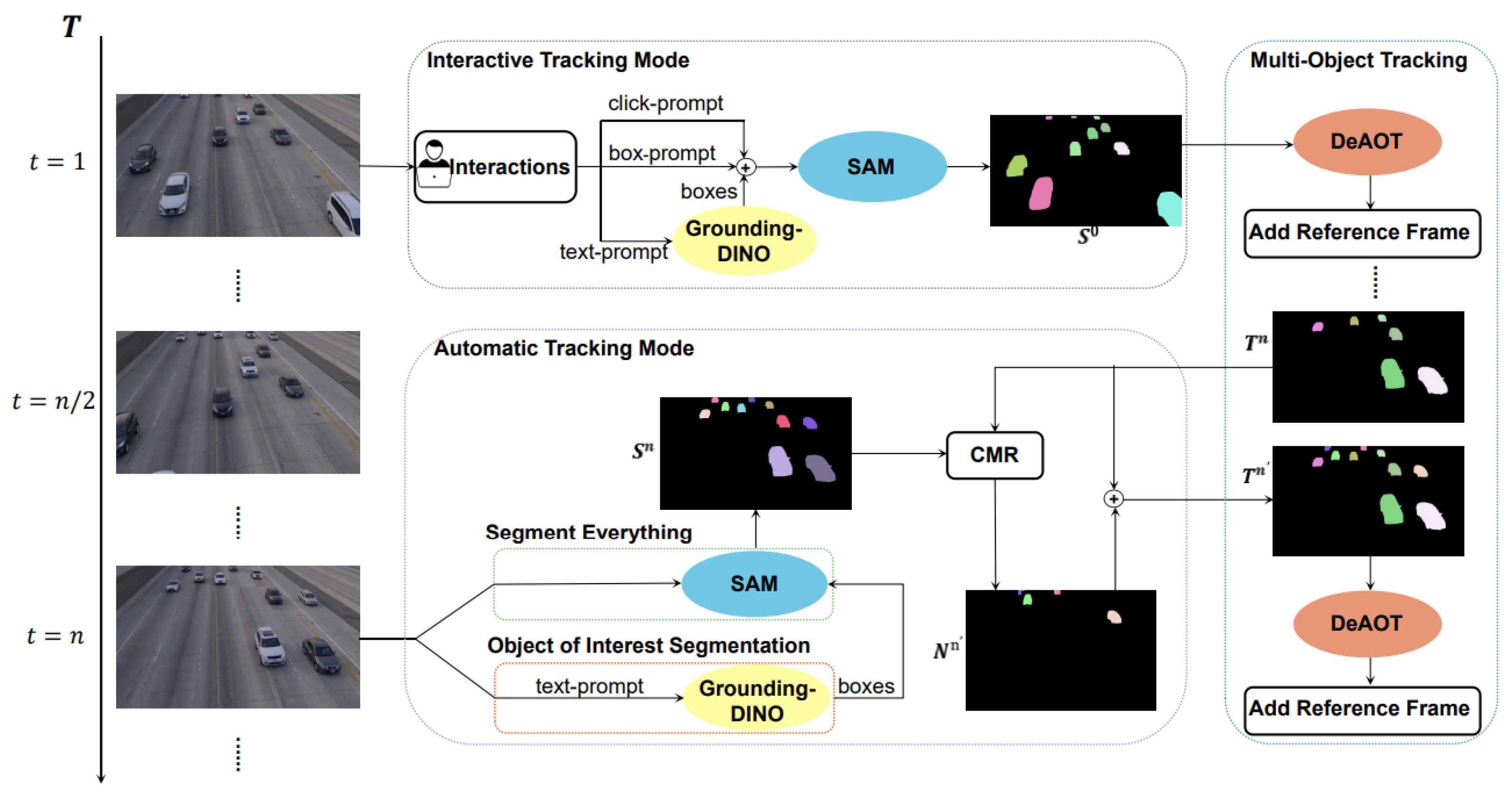

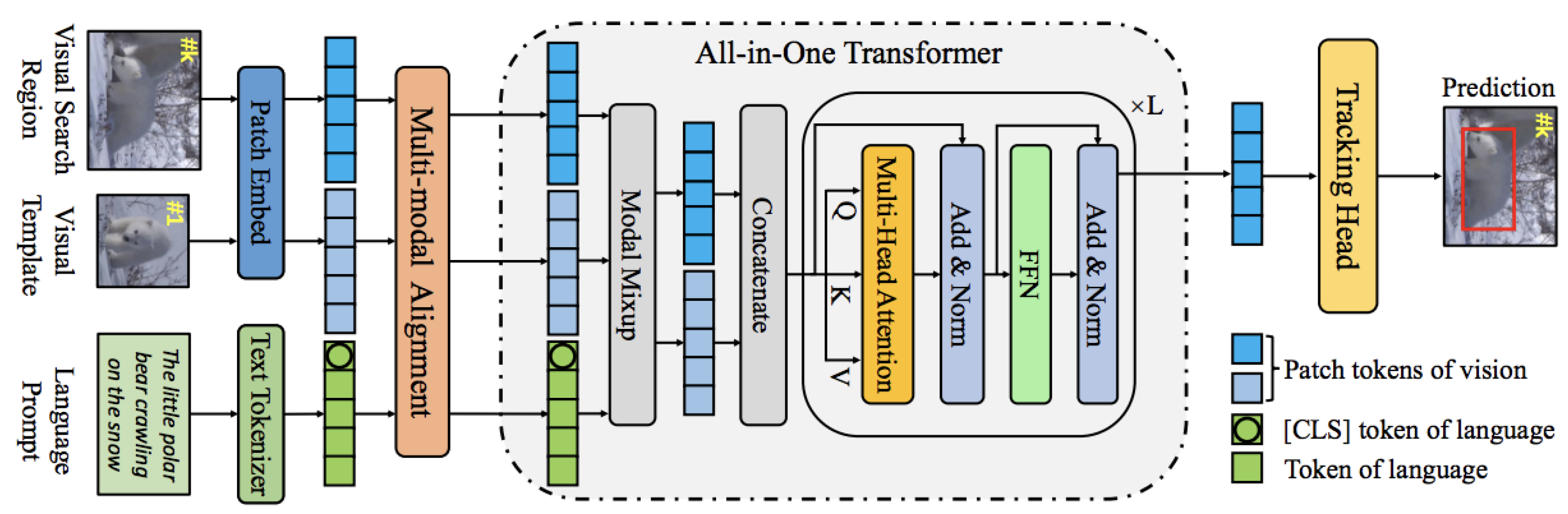

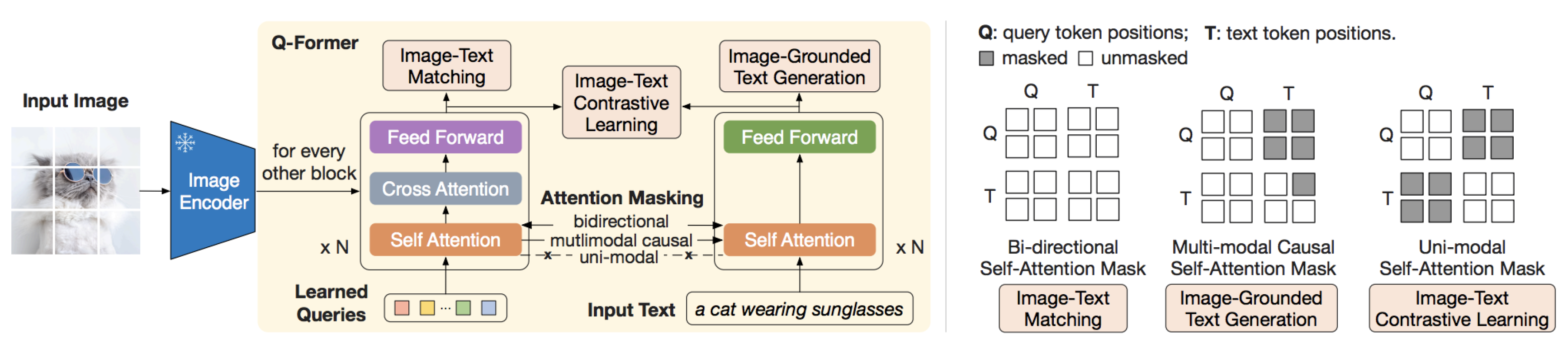

8. Emerging Trends: Vision-Language and Foundation Model-Based Tracking

8.1. Unified Taxonomy of Tracking Paradigms

8.1.1. Supervised Trackers

8.1.2. Self-Supervised Trackers

8.1.3. Foundation-Adapted Trackers

8.1.4. Multimodal Trackers

8.1.5. Vision-Language Model (VLM)-Powered Trackers

8.1.6. Instruction-Tuned Trackers

8.1.7. Prompt-Tuned Trackers

8.2. Meta-Analysis of Transferability

8.2.1. Cross-Model Representation Reuse

8.2.2. Cross-Dataset Transfer Evaluation

8.2.3. Modality-Level Transfer Insights

8.2.4. Challenges and Opportunities in Transferability

8.3. Roadmap for Promptable Tracking

8.3.1. Foundations and Paradigms of Promptable Tracking

8.3.2. Challenges in Prompt Understanding and Temporal Consistency

8.3.3. Emerging Techniques in Promptable Tracking

| Method | Backbone | Params | Key Metric / Result | Inference Speed (FPS) | Supported Tasks | Code |

|---|---|---|---|---|---|---|

| TrackAnything [98] | SAM ViT | 300M | AUC 0.65 (LaSOT) | 15 | SOT, MOT, Promptable | Link |

| CLDTracker [96] | GPT-4V + Transformer | 800M+ | F1 0.78 (EgoTrack++) | 5 | Text-guided MOT | Link |

| EfficientTAM [87] | Lite ViT + Memory | 250M | AUC 0.65 (DAVIS) | 25 | FM-assist SOT | Link |

| SAM-PD [88] | SAM ViT | 300M | IoU 0.80 (DAVIS) | 12 | FM-assist SOT | Link |

| SAM-Track [89] | SAM + DeAOT | 350M | F1 0.75 (YouTube-VOS) | 8 | FM-assist MOT | Link |

| SAMURAI [121] | SAM2 + Memory Gate | 320M | AUC 0.68 (LaSOT) | 15 | FM-assist SOT | Link |

| OVTrack [108] | Transformer + CLIP | 400M | mHOTA 0.70 (MOT20) | 10 | Open-vocab MOT | Link |

| LaMOTer [118] | Cross-modal Transformer | 350M | MOTA 68.2 (MOT17) | 7 | Text-guided MOT | Link |

| PromptTrack [113] | CLIP + Transformer | 600M | AUC 0.64 (LaSOT) | 9 | Promptable SOT | Link |

| UniVS [124] | Shared Encoder-Decoder | 550M | F1 0.72 (TREK-150) | 11 | Unified Multimodal | Link |

| ViPT [125] | Transformer + VisPrompt | 300M | AUC 0.60 (LaSOT) | 14 | Promptable SOT | Link |

| MemVLT [126] | Memory-Attn + Lang Fuse | 700M | Recall 0.75 (Ego4D) | 6 | Memory-based VLM | Link |

| DINOTrack [127] | DINOv2 + Transformer | 400M | AUC 0.66 (LaSOT) | 11 | SOT | Link |

| VIMOT [92] | Multimodal | 400M | HOTA 68.4 (MOT) | 14 | Multimodal MOT | N/A |

| BLIP-2 [120] | Vision Transformer | 1.6B | Multimodal Fusion | - | Foundation Model | Link |

| GroundingDINO [128] | Transformer | 300M | Zero-shot Detection | 15 | Multimodal Detection | Link |

| Flamingo [119] | Perceiver | 80B | Multimodal Few-Shot | - | Foundation Model | Link |

| SAM2MOT [129] | Grounded-SAM + Transformer | 350M | mAP 0.70 (DAVIS) | 10 | Segmentation-based MOT | Link |

| DTLLM-VLT [130] | CLIP + LLM Gen | 700M+ | AUC 0.62 (VOT2023) | 6 | Promptable SOT | Link |

| DUTrack [114] | Hybrid Attention | 500M | F1 0.74 (TREK-150) | 8 | Text-guided MOT | Link |

| UVLTrack [117] | Joint Encoder | 400M | AUC 0.66 (LaSOT) | 9 | Promptable SOT | Link |

| All-in-One [99] | Vis + Lang Encoder-Decoder | 800M | AUC 0.70 (LaSOT) | 5 | Multimodal SOT | Link |

| Grounded-SAM [131] | GroundingDINO + SAM | 350M | mHOTA 0.68 (MOT20) | 10 | Open-vocab MOT | Link |

8.4. Ethical and Robustness Considerations

8.4.1. Fairness and Bias

8.4.2. Robustness and Security

8.4.3. Transparency and Regulation

9. Benchmarks and Datasets

9.1. Single Object Tracking (SOT) Benchmarks

9.2. Multi-Object Tracking (MOT) Benchmarks

9.3. Long-Term Tracking (LTT) Benchmarks

9.4. Benchmarks for Vision-Language and Prompt-Based Tracking

| Benchmark | Category | Eval Metrics | Dataset Size | Description | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| OTB-2013 [143] | SOT | Precision, SR | 50 RGB clips | Early small-scale benchmark for SOT; low-res, short sequences. | Standardized evaluation with precision/success plots; widely cited; shaped early SOT progress. | Limited size and diversity; low resolution; lacks real-world motion complexity. |

| VOT [144] | SOT | EAO, Acc., Robust. | Annual RGB sets | Annual short-term benchmark with resets. | Unified eval methodology with strong leaderboard tradition; fine-grained accuracy/robustness analysis. | Limited to short-term; resets bias results; datasets change yearly. |

| LaSOT [106] | SOT/LTT | AUC, Precision | 1,400 long videos | Large-scale dataset with long sequences across 70 categories. | Long (>2,500 frames) dense annotations; diverse categories; widely used for both SOT and LTT. | Annotation noise/drift; dominated by very long sequences; class imbalance remains. |

| TrackingNet [145] | SOT | AUC, SR | 30K YouTube clips | Large-scale dataset sampled from YouTube-BB. | Internet-scale diversity; strong train/test protocol; generalization across objects. | Sparse labeling (1s intervals); limited attribute annotations; weaker for long-term. |

| GOT-10k [146] | SOT | mAO, mSR | 10K videos | One-shot generalization dataset with disjoint classes. | Forces generalization; clean protocol; balanced evaluation. | Limited categories (563); less diverse motion/scene content. |

| UAV123 [111] | SOT | Precision, SR | 123 UAV videos | UAV sequences with aerial motion. | Captures aerial scale/rotation changes; tailored for drone applications. | Narrow UAV focus; fewer object categories; overhead bias. |

| FELT [90] | SOT/LTT | Long-term SR | 1.6M frames | Multi-camera dataset with extremely long videos. | Stress-test for long-term tracking; asynchronous multi-camera data; very large scale. | Sparse labeling; high compute demands; difficult to simulate in lab. |

| NT-VOT211 [147] | SOT | Night AUC, Robust. | 211 videos | Night-time, low-light tracking dataset. | First benchmark for night tracking; evaluates blur/noise robustness. | Domain-specific (night only); lacks cross-condition coverage. |

| OOTB [148] | SOT | Angular IoU, SR | 100+ satellite clips | Satellite imagery with oriented bounding boxes. | First orbital benchmark; introduces rotation-aware evaluation. | Sparse dynamics; limited categories; specialized to satellites. |

| GSOT3D [91] | SOT | 3D IoU, Depth Acc. | RGB-D + LiDAR | Multi-modal 3D-aware tracking dataset. | Enables RGB-D + LiDAR evaluation; supports sensor fusion. | Calibration issues; limited outdoor scenarios. |

| MOT15 [149] | MOT | MOTA, MOTP | 22 pedestrian scenes | First MOTChallenge dataset. | Established MOT evaluation; easy to reproduce; lightweight. | Outdated detectors; small scale; limited environments. |

| MOT17 [31] | MOT | MOTA, IDF1, HOTA | 7 scenes × 3 dets | Pedestrian benchmark with multiple detector inputs. | Multi-detector setup ensures fairness; widely cited baseline; multiple metrics. | Scene reuse; limited domain diversity; pedestrian-only focus. |

| MOT20 [150] | MOT | MOTA, IDF1 | 4 street scenes | Crowded pedestrian benchmark. | Stresses dense identity preservation; useful for occlusion-rich scenarios. | Pedestrian-only; very limited scene variety. |

| KITTI [152] | MOT | 3D IoU, ID Sw | 21 AV scenes | Driving dataset with LiDAR + stereo. | Multi-modal 2D/3D annotations; influential for AV tracking. | Driving-only domain; small size vs. modern AV datasets. |

| BDD100K [151] | MOT | MOTA, Track Recall | 100K frames | Driving dataset with diverse conditions. | Large-scale, diverse weather/lighting; multi-class. | Sparse MOT annotations; mainly detection-focused. |

| TAO [153] | MOT | Track mAP, mIoU | 2.9K videos | Long-tail multi-class dataset. | Covers LVIS/COCO classes; multi-domain, open-world. | Sparse temporal annotations; low update frequency. |

| DanceTrack [32] | MOT | IDF1, HOTA | 100+ dance videos | Human non-rigid motion benchmark. | Tests pose variation and non-rigid identity tracking. | Human-only focus; narrow application. |

| EgoTracks [154] | MOT | IDF1, Temp Recall | Headcam videos | Egocentric occlusion-heavy dataset. | Captures first-person occlusion/motion bias; challenging evaluation. | Strong ego bias; noisy head-movement; small scale. |

| OVTrack [104] | MOT/VLM | mHOTA, Recall | Open-vocab videos | MOT with natural-language queries. | First open-vocab MOT; enables free-form prompt evaluation. | Prompt bias; evolving protocols; reproducibility challenges. |

| OxUvA [157] | LTT | TPR, Abstain | 366 videos | Long-term occlusion-focused dataset. | Introduces visibility flags and abstain metric; strong LTT protocol. | Sparse object categories; unusual evaluation rules. |

| UAV20L [111] | LTT | Success, Recall | 20 UAV sequences | Long UAV-specific dataset. | Motion + exits evaluation; relevant for drones. | Narrow UAV-only domain; low frame-rate. |

| LaSOT-Ext [160] | LTT | SR, AUC | 3K videos | Extension of LaSOT with more balanced classes. | Improves balance across categories; builds on LaSOT. | Annotation drift in some sequences; lacks explicit motion cues. |

| TREK-150 [159] | LTT | MaxGM | 150 AR/VR clips | AR/VR-specific benchmark. | Rich AR/VR coverage; stresses adaptation. | Tuning difficulty; broad domain adaptation required. |

| BURST [158] | VLM | Grounding Acc. | 140K frames | Natural language grounding benchmark. | Diverse phrases; grounding focus; bursty events. | Ambiguous phrasing; inconsistent annotations. |

| LVBench [160] | VLM | QA Acc., Recall | 200K pairs | QA-driven VL benchmark. | Combines QA + tracking; large scale; diverse content. | Coarse-grained queries; requires LLM-based evaluation. |

| TNL2K-VLM [161] | VLM | SR, Acc. | 2K queries | Natural language-driven tracking. | First NL-based tracking benchmark; supports flexible text prompts. | Small compared to LaSOT/BURST; limited variety of queries. |

10. Future Directions

10.1. Agentic and Adaptive Tracking Systems

10.2. Integration of Vision-Language and Foundation Models

10.3. Unified and Modular Architectures

10.4. Benchmarking and Evaluation in Complex Real-World Scenarios

10.5. Ethical and Robustness Considerations

11. Conclusions

References

- Bolme, D.S.; Beveridge, J.R.; Draper, B.A.; Lui, Y.M. Visual object tracking using adaptive correlation filters. In Proceedings of the CVPR, 2010, pp. 2544–2550.

- Henriques, J.F.; Caseiro, R.; Martins, P.; Batista, J. High-speed tracking with kernelized correlation filters. IEEE TPAMI 2015, 37, 583–596.

- Lucas, B.D.; Kanade, T. An iterative image registration technique with an application to stereo vision. In Proceedings of the IJCAI, 1981, pp. 674–679.

- Kalman, R.E. A new approach to linear filtering and prediction problems. Journal of Basic Engineering 1960, 82, 35–45.

- Arulampalam, M.; Maskell, S.; Gordon, N.; Clapp, T. A tutorial on particle filters for online nonlinear/non-Gaussian Bayesian tracking. IEEE Transactions on Signal Processing 2002, 50, 174–188.

- Welch, G.; Bishop, G. An Introduction to the Kalman Filter. https://www.kalmanfilter.net/default.aspx, 2006. Accessed: 2025-07-23.

- Comaniciu, D.; Ramesh, V.; Meer, P. Real-time tracking of non-rigid objects using mean shift. In Proceedings of the CVPR, 2000, pp. 142–149.

- Bradski, G.R. Computer vision face tracking for use in a perceptual user interface. Intel Technology Journal 1998, 2, 12–21.

- Lowe, D.G. Distinctive image features from scale-invariant keypoints. International Journal of Computer Vision 2004, 60, 91–110.

- Bay, H.; Tuytelaars, T.; Van Gool, L. SURF: Speeded up robust features. In Proceedings of the ECCV, 2006, pp. 404–417.

- Wu, Y.; Lim, J.; Yang, M.H. Object tracking benchmark. IEEE TPAMI 2015, 37, 1834–1848.

- Nam, H.; Han, B. Learning multi-domain convolutional neural networks for visual tracking. In Proceedings of the CVPR, 2016, pp. 4293–4302.

- Held, D.; Thrun, S.; Savarese, S. Learning to track at 100 FPS with deep regression networks. In Proceedings of the ECCV, 2016, pp. 749–765.

- Nam, H.; Han, B. Modeling and propagating CNNs in a tree structure for visual tracking. arXiv preprint arXiv:1608.07242, 2016.

- Bertinetto, L.; Valmadre, J.; Henriques, J.F.; Vedaldi, A.; Torr, P.H. Fully-convolutional siamese networks for object tracking. In Proceedings of the ECCV Workshops, 2016.

- Li, B.; Yan, J.; Wu, W.; Zhu, Z.; Hu, X. High performance visual tracking with siamese region proposal network. In Proceedings of the CVPR, 2018, pp. 8971–8980.

- Li, B.; Wu, W.; Wang, Q.; Zhang, F.; Xing, J.; Yan, J. SiamRPN++: Evolution of Siamese visual tracking with very deep networks. In Proceedings of the CVPR, 2019, pp. 4282–4291.

- Wang, Q.; Zhang, L.; Bertinetto, L.; Hu, W.; Torr, P.H. Fast online object tracking and segmentation: A unifying approach. In Proceedings of the CVPR, 2019, pp. 1328–1338.

- Zhang, Z.; Peng, H.; Fu, J.; Hu, W. Ocean: Object-aware anchor-free tracking. In Proceedings of the ECCV, 2020, pp. 771–787.

- Chen, X.; Wang, B.; Wang, S.; Yang, Y.; Tai, Y.W.; Tang, C.K. Transformer tracking. In Proceedings of the CVPR, 2021, pp. 8126–8135.

- Yan, B.; Peng, H.; Fu, J.; Wang, D.; Lu, H. Learning spatio-temporal transformer for visual tracking. In Proceedings of the ICCV, 2021, pp. 10448–10457.

- Mayer, C.; Danelljan, M.; Bhat, G.; Paul, M.; Paudel, D.P.; Yu, F.; Gool, L.V. Transforming Model Prediction for Tracking, 2022, [arXiv:cs.CV/2203.11192].

- Danelljan, M.; Bhat, G.; Khan, F.S.; Felsberg, M. ATOM: Accurate tracking by overlap maximization. In Proceedings of the CVPR, 2019, pp. 4660–4669.

- Bhat, G.; Danelljan, M.; Gool, L.V.; Timofte, R. Learning discriminative model prediction for tracking. In Proceedings of the ICCV, 2019, pp. 6182–6191.

- Voigtlaender, P.; Luiten, J.; Torr, P.H.; Leibe, B. Siam R-CNN: Visual tracking by re-detection. In Proceedings of the CVPR, 2020, pp. 6578–6588.

- Chen, Z.; Zhong, B.; Li, G. Siamese box adaptive network for visual tracking. In Proceedings of the CVPR, 2020, pp. 6668–6677.

- Cui, Y.; Chu, Q.; Wang, H.; Ouyang, W.; Li, Z.; Luo, J. MixFormer: End-to-end tracking with iterative mixed attention. In Proceedings of the CVPR, 2022, pp. 13707–13716.

- Kalal, Z.; Mikolajczyk, K.; Matas, J. Tracking-learning-detection. IEEE TPAMI 2012, 34, 1409–1422.

- Luo, W.; Xing, J.; Milan, A.; Zhang, X.; Liu, W.; Kim, T.K. MOTDT: A unified framework for joint multiple object tracking and object detection. IEEE Transactions on Pattern Analysis and Machine Intelligence 2021.

- Bewley, A.; Ge, Z.; Ott, L.; Ramos, F.; Upcroft, B. Simple online and realtime tracking. In Proceedings of the ICIP, 2016, pp. 3464–3468.

- Milan, A.; Leal-Taixé, L.; Reid, I.; Roth, S.; Schindler, K. MOT16: A benchmark for multi-object tracking. arXiv preprint arXiv:1603.00831 2016.

- Sun, P.; Zhang, Y.; Yu, H.; Yuan, Z.; Wang, Y.; Sun, J. DanceTrack: Multi-object tracking in uniform appearance and diverse motion. In Proceedings of the CVPR, 2022, pp. 5351–5360.

- Wojke, N.; Bewley, A.; Paulus, D. Simple online and realtime tracking with a deep association metric. In Proceedings of the ICIP, 2017, pp. 3645–3649.

- Du, Y.; Zhao, Z.; Song, Y.; Zhao, Y. StrongSORT: Make DeepSORT Great Again, 2022. arXiv:2202.13514.

- Bergmann, P.; Meinhardt, T.; Leal-Taixé, L. Tracking without bells and whistles. In Proceedings of the ICCV, 2019.

- Zhang, Y.; Sun, P.; Jiang, Y.; Yu, D.; Weng, C.H.; Yuan, Z.; Luo, P.; Wang, X. ByteTrack: Multi-Object Tracking by Associating Every Detection Box. In Proceedings of the ECCV, 2022.

- Bompani, L.; Rusci, M.; Palossi, D.; Conti, F.; Benini, L. MR2-ByteTrack: Multi-resolution rescored ByteTrack for ultra-low-power embedded systems. In Proceedings of the CVPR Workshops, 2024.

- Meng, T.; Fu, C.; Huang, M.; Wang, X.; He, J. Localization-Guided Track: A deep association MOT framework based on localization confidence. arXiv preprint arXiv:2312.00781 2023.

- Meng, T.; He, J.; et al. Deep LG-Track: Confidence-Aware Localization for Reliable Multi-Object Tracking. ArXiv 2025.

- Guo, S.; Liu, R.; Abe, N. RTAT: A Robust Two-stage Association Tracker for Multi-Object Tracking, 2024, [arXiv:cs.CV/2408.07344].

- Wu, Y.; Sheng, H.; Wang, S.; Liu, Y.; Xiong, Z.; Ke, W. Group Guided Data Association for Multiple Object Tracking. In Proceedings of the Asian Conference on Computer Vision (ACCV), December 2022, pp. 520–535.

- Zhang, Y.; Wang, C.; Wang, W.; Zeng, W. FairMOT: On the fairness of detection and re-identification in multiple object tracking. International Journal of Computer Vision (IJCV) 2020.

- Ju, C.; Li, Z.; Terakado, K.; Namiki, A. Speed-FairMOT: Multi-class Multi-Object Tracking for Real-Time Robotic Control. IET Computer Vision 2025.

- Jia, S.; Hu, S.; Cao, Y.; Yang, F.; Lu, X.; Lu, X. Tracking by Detection and Query: An Efficient End-to-End Framework for Multi-Object Tracking, 2025, [arXiv:cs.CV/2411.06197].

- Cui, Z.; Dai, Y.; Duan, Y.; Tao, X. Joint Detection and Multi-Object Tracking Based on Hypergraph Matching. Applied Sciences 2024, 14, 11098.

- Zhou, X.; Wang, D.; Krähenbühl, P. Tracking Objects as Points. In Proceedings of the ECCV, 2020.

- Pang, J.; Qiu, L.; Li, X.; Chen, H.; Li, Q.; Darrell, T.; Yu, F. Quasi-Dense Similarity Learning for Multiple Object Tracking, 2021, [arXiv:cs.CV/2006.06664].

- Meinhardt, T.; Kirillov, A.; Leal-Taixé, L.; Feichtenhofer, C. TrackFormer: Multi-Object Tracking with Transformers. In Proceedings of the CVPR, 2022.

- Meinhardt, T.; Kirillov, A.; Leal-Taixe, L.; Feichtenhofer, C. TrackFormer: Multi-Object Tracking with Transformers, 2022, [arXiv:cs.CV/2101.02702].

- Gao, R.; Wang, L. MeMOTR: Long-Term Memory-Augmented Transformer for Multi-Object Tracking, 2024, [arXiv:cs.CV/2307.15700].

- Sun, P.; Cao, J.; Jiang, Y.; Xu, Z.; Xie, L.; Yuan, Z.; Luo, P.; Kitani, K. TransTrack: Multiple Object Tracking with Transformer. arXiv preprint arXiv:2012.15460 2020.

- Wang, Q.; Lu, C.; Gao, L.; He, G. Transformer-Based Multiple-Object Tracking via Anchor-Based-Query and Template Matching. Sensors 2024, 24.

- Gao, R.; Wang, L. MeMOTR: Long-Term Memory-Augmented Transformer for Multi-Object Tracking. In Proceedings of the ICCV, 2023.

- Yan, F.; Luo, W.; Zhong, Y.; Gan, Y.; Ma, L. Co-MOT: Boosting End-to-End Transformer-based Multi-Object Tracking via Coopetition Label Assignment. In Proceedings of the ICLR, 2025.

- Psalta, A.; Tsironis, V.; Karantzalos, K. Transformer-based assignment decision network for multiple object tracking, 2025, [arXiv:cs.CV/2208.03571].

- Hannuna, S.; Camplani, M.; Hall, J.; Mirmehdi, M.; Damen, D.; Burghardt, T.; Paiement, A.; Tao, L. DS-KCF: a real-time tracker for RGB-D data. J. Real-Time Image Process. 2019, 16, 1439–1458.

- Kart, U.; Danelljan, M.; Van Gool, L.; Timofte, R. Object tracking by reconstruction with view-specific discriminative correlation filters. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018, pp. 1339–1348.

- Chen, Z.; Cong, R.; Xu, Q.; Huang, Q. DPANet: Depth Potentiality-Aware Gated Attention Network for RGB-D Salient Object Detection. IEEE Transactions on Image Processing 2021, 30, 7012–7024.

- Weng, X.; Wang, J.; Held, D.; Kitani, K. 3D multi-object tracking: A baseline and new evaluation metrics. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2020, pp. 11011–11018.

- Yin, T.; Zhou, X.; Krähenbühl, P. Center-based 3D object detection and tracking. In Proceedings of the CVPR, 2021, pp. 11784–11793.

- Li, Y.; Chen, Y.; Qi, X.; Li, Z.; Sun, J.; Jia, J. Unifying Voxel-based Representation with Transformer for 3D Object Detection, 2022, [arXiv:cs.CV/2206.00630].

- Caesar, H.; Bankiti, V.; Lang, A.H.; et al. nuScenes: A multimodal dataset for autonomous driving. CVPR 2020, pp. 11621–11631.

- Sun, P.; Kretzschmar, H.; Dotiwalla, X.; et al. Scalability in perception for autonomous driving: Waymo open dataset. CVPR 2020, pp. 2446–2454.

- Vishwanath, A.; Lee, M.; He, Y. FusionTrack: Early Sensor Fusion for Robust Multi-Object Tracking. In Proceedings of the WACV, 2024.

- Pang, J.; Zhang, C.; Liu, Y.; et al. MMMOT: Multi-modality memory for robust 3D object tracking. In Proceedings of the ECCV, 2022.

- Luo, C.; Ma, Y.; et al. Multimodal Transformer for 3D Object Detection. In Proceedings of the CVPR, 2022.

- Bastani, F.; He, S.; Madden, S. Self-Supervised Multi-Object Tracking with Cross-input Consistency. In Proceedings of the Advances in Neural Information Processing Systems; Ranzato, M.; Beygelzimer, A.; Dauphin, Y.; Liang, P.; Vaughan, J.W., Eds. Curran Associates, Inc., 2021, Vol. 34, pp. 13695–13706.

- Wang, Z.; Zheng, L.; Liu, Y.; Li, Y.; Wang, S. Towards Real-Time Multi-Object Tracking, 2020, [arXiv:cs.CV/1909.12605].

- He, S.; Luo, H.; Wang, P.; Wang, F.; Li, H.; Jiang, W. TransReID: Transformer-based Object Re-Identification, 2021, [arXiv:cs.CV/2102.04378].

- Zhu, Z.; Wang, Q.; Bo, L.; Wu, W.; Yan, J.; Hu, W. Distractor-aware siamese networks for visual object tracking. In Proceedings of the European Conference on Computer Vision (ECCV). Springer, 2018, pp. 103–119.

- Lin, J.; Liang, G.; Zhang, R. LTTrack: Rethinking the Tracking Framework for Long-Term Multi-Object Tracking. IEEE Transactions on Circuits and Systems for Video Technology 2024, 34, 9866–9881.

- Li, X.; Zhong, B.; Liang, Q.; Li, G.; Mo, Z.; Song, S. MambaLCT: Boosting Tracking via Long-term Context State Space Model 2024. [arXiv:cs.CV/2412.13615].

- Rezatofighi, H.; Tsoi, N.; Gwak, J.; Sadeghian, A.; Reid, I.; Savarese, S. Generalized Intersection over Union: A Metric and A Loss for Bounding Box Regression. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2019, pp. 658–666.

- Zheng, Z.; Wang, P.; Liu, W.; Li, J.; Ye, R.; Ren, D. Distance-IoU Loss: Faster and Better Learning for Bounding Box Regression. Proceedings of the AAAI Conference on Artificial Intelligence 2020, 34, 12993–13000.

- Mayer, C.; Danelljan, M.; Van Gool, L.; Timofte, R. Learning Target Association to Keep Track of What Not to Track. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2021, pp. 13444–13454.

- Yang, L.; Yao, A.; Li, F.; Yang, L.; Fan, L.; Zhang, L.; Xu, C. DeAOT: Learning Decomposed Features for Arbitrary Object Tracking. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023, pp. 23018–23028.

- Xu, T.; Li, C.; Wang, L. SimTrack: Self-Supervised Learning for Visual Object Tracking via Contrastive Similarity. In Proceedings of the CVPR, 2023.

- He, M.; Zhou, X.; Singh, A. DINOTrack: Self-Distilled Visual Tracking with Dense Patch Features. In Proceedings of the ECCV, 2024.

- Caron, M.; Touvron, H.; Misra, I.; et al. Emerging Properties in Self-Supervised Vision Transformers. In Proceedings of the ICCV, 2021.

- Kwon, Y.; Zhang, Y.; Chen, W. TLPFormer: Temporally Masked Transformers for Long-Term Tracking. In Proceedings of the NeurIPS, 2024.

- Li, J.; Xu, A.; Wu, L. ProTrack: Motion-Preserving Self-Supervised Pretraining for Robust Tracking. In Proceedings of the ICLR, 2024.

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning transferable visual models from natural language supervision. International Conference on Machine Learning (ICML) 2021.

- Oquab, M.; Darcet, T.; Moutakanni, T.; et al. DINOv2: Learning Robust Visual Features without Supervision. arXiv preprint arXiv:2304.07193 2023.

- Kirillov, A.; Mintun, E.; Ravi, N.; Mao, H.; Rolland, C.; Schmidt, L.; et al. Segment anything. IEEE/CVF International Conference on Computer Vision (ICCV) 2023.

- Ye, M.; Ma, J.; Wang, J.; Ji, R.; Shao, L. OSTrack: Transformer Tracking with Robust Template Updating. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022, pp. 7798–7807.

- Lin, L.; Tang, C.; Zhan, J.; Chen, K.; Zhou, P.; et al. Prompt-Track: Pseudo-Prompt Tuning for Open-Set Tracking. arXiv preprint arXiv:2305.04982 2023.

- Xiong, Y.; Zhou, C.; Xiang, X.; Wu, L.; Zhu, C.; Liu, Z.; et al. Efficient Track Anything. arXiv preprint arXiv:2411.18933 2024.

- Zhou, T.; Luo, W.; Ye, Q.; Shi, Z.; Chen, J. SAM-PD: How Far Can SAM Take Us in Tracking and Segmenting Anything in Videos. arXiv preprint arXiv:2403.04194 2024.

- Cheng, Y.; Li, L.; Xu, Y.; Li, X.; Yang, Z.; Wang, W.; Yang, Y. Segment and Track Anything. arXiv preprint arXiv:2305.06558 2023.

- Wang, C.; Zhang, L.; Yang, F.; et al. FELT: A Large-Scale Event-Based Long-Term Tracking Benchmark. In Proceedings of the CVPR, 2024.

- Jiao, Y.; Li, Y.; Ding, J.; Yang, Q.; Fu, S.; Fan, H.; Zhang, L. GSOT3D: Towards Generic 3D Single Object Tracking in the Wild, 2024, [arXiv:cs.CV/2412.02129].

- Feng, S.; Li, X.; Xia, C.; Liao, J.; Zhou, Y.; Li, S.; Hua, X. VIMOT: A Tightly Coupled Estimator for Stereo Visual-Inertial Navigation and Multiobject Tracking. IEEE Transactions on Instrumentation and Measurement 2023, 72, 1–14.

- Xu, M.; Zhang, X.; Zhang, B.; Wang, Y.; Li, W.; Yan, S. ThermalTrack: RGB-T Tracking with Thermal-aware Attention and Fusion. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023, pp. 4827–4836.

- Li, J.; Li, D.; Xiong, C.; Hoi, S.C. BLIP: Bootstrapping language-image pre-training for unified vision-language understanding and generation. In Proceedings of the International Conference on Machine Learning (ICML), 2022, pp. 12888–12900.

- OpenAI. GPT-4V(ision). OpenAI Research, 2023. https://openai.com/research/gpt-4v-system-card.

- Alansari, M.; Javed, S.; Ganapathi, I.I.; et al. CLDTracker: Comprehensive Language Description Framework for Robust Visual Tracking. arXiv preprint arXiv:2505.23704 2025.

- Zhu, J.; Zhang, C.; Qiu, Y.; Liu, Y.; Wang, L.; Yan, J. PromptTrack: Prompt-driven Visual Object Tracking. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023, pp. 1500–1509.

- Yang, J.; Gao, M.; Li, Z.; Gao, S.; Wang, F.; Zheng, F. Track Anything: Segment Anything Meets Videos, 2023, [arXiv:cs.CV/2304.11968].

- Zhang, C.; Sun, X.; Liu, L.; Yang, Y. All-in-One: Exploring Unified Vision-Language Tracking with Multi-Modal Alignment. arXiv preprint arXiv:2307.03373 2023.

- Fang, J.; Qin, H.; Liu, Q.; Wang, Y. PromptTrack: Prompt-Driven Open-Vocabulary Object Tracking. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023, pp. 14376–14386.

- Lin, L.; Li, H.; Zhang, J.; Shi, Q.; Zhang, J.; Tao, D. FAMTrack: Prompting Foundation Models for Open-Set Video Object Segmentation. arXiv preprint arXiv:2310.20081 2023.

- Fang, Y.; Wang, W.; Zhang, L.; Yu, X.; Lu, T.; Zhang, Y.; Dai, J.; Li, Z.; Yuan, L.; Lu, X. EVA: Exploring the Limits of Masked Visual Representation Learning at Scale. arXiv preprint arXiv:2303.11331 2023.

- Wang, Y.; Li, S.; Wei, Y.; Shi, J.; Yu, Z.; Zhang, X.; Bai, X.; Wang, Z.; Wei, Y.; Zeng, M.; et al. InternImageV2: Co-designing Recipes and Architecture for Effective Visual Representation Learning. arXiv preprint arXiv:2403.04704 2024.

- Zang, Y.; Qi, H.; et al. Open-Vocabulary Multi-Object Tracking. In Proceedings of the CVPR, 2023.

- Lukezic, A.; Galoogahi, H.; Vojir, T.; Kristan, M. TREK-150: A Comprehensive Benchmark for Tracking Across Diverse Tasks. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023.

- Fan, H.; Lin, L.; Yang, F.; Chu, P.; Deng, G.; Yu, H.; Bai, H.; Xu, Y.; Liao, J.; Ling, H. LaSOT: A high-quality benchmark for large-scale single object tracking. In Proceedings of the CVPR, 2019.

- Wang, W.; Zhang, E.; Xu, Y.; Liang, S.; Luo, Y.; Li, Z.; Dai, J.; Li, H. InternImage V2: Explore Further the Versatile Plain Vision Backbone. arXiv preprint arXiv:2311.16454 2023.

- Yang, F.; Liang, Z.; Zhou, J.; Wang, J. OVTrack: Open-Vocabulary Multi-Object Tracking with Pre-trained Vision and Language Models. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023.

- Baik, S.; Lee, J.; Huang, D.A.; Malisiewicz, T.; Fathi, A.; Wang, X.; Feichtenhofer, C.; Ross, D.A. TREK-150: A Benchmark for Tracking Every Thing in the Wild. In Proceedings of the CVPR, 2023.

- Grauman, K.; Westbury, A.; Bettadapura, V.; et al. Ego4D: Around the World in 3,000 Hours of Egocentric Video. International Journal of Computer Vision 2022, 130, 33–72.

- Mueller, M.; Smith, N.; Ghanem, B. A benchmark and simulator for UAV tracking. In Proceedings of the ECCV, 2016.

- Zhu, P.; Wen, L.; Bian, X.; Ling, H.; Hu, Q. Vision meets drones: A challenge. In Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 778–795.

- Wu, D.; Han, W.; Liu, Y.; Wang, T.; Xu, C.Z.; Zhang, X.; Shen, J. Language Prompt for Autonomous Driving, 2025, [arXiv:cs.CV/2309.04379].

- Li, X.; Zhong, B.; Liang, Q.; Mo, Z.; Nong, J.; Song, S. Dynamic Updates for Language Adaptation in Visual-Language Tracking, 2025, [arXiv:cs.CV/2503.06621].

- Zheng, Y.; Lin, Y.; Zhu, C.; He, Y.; Liang, Y.; Wu, Y. ThermalTrack: Multi-modal Tracking with Thermal Guidance. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023.

- Zhao, X.; Hu, Y.; Wang, H.; Xu, Y.; Yu, J.; Xie, Y.; Wang, Z.; Chen, F. FELT: Fast Event-based Learned Tracking. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023.

- Ma, Y.; Tang, Y.; Yang, W.; et al. Unifying Visual and Vision-Language Tracking via Contrastive Learning. arXiv preprint arXiv:2401.11228 2024.

- Li, Y.; Liu, X.; Liu, L.; Fan, H.; Zhang, L. LaMOT: Language-Guided Multi-Object Tracking. arXiv preprint arXiv:2406.08324 2024.

- Alayrac, J.B.; Donahue, J.; Luc, P.; Miech, A.; Barr, I.; Hasson, Y.; et al. Flamingo: a Visual Language Model for Few-Shot Learning. arXiv preprint arXiv:2204.14198 2022.

- Li, J.; Wortsman, M.; Hua, X.; Tan, P.; Batra, D.; Parikh, D.; Girshick, R. BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2023.

- Yang, C.; Huang, H.; Chai, W.; Jiang, Z.; Hwang, J. SAMURAI: Adapting Segment Anything Model for Zero-Shot Visual Tracking with Motion-Aware Memory. arXiv preprint arXiv:2411.11922 2024.

- Huang, L.; Zhao, Y.; Zhang, S.; Zhang, L. Towards robust long-term tracking. In Proceedings of the CVPR, 2021.

- Zhou, M.; Chen, L.; Smith, J. Ethical Considerations in Vision-Language Tracking Systems. arXiv preprint arXiv:2403.01234 2024.

- Li, M.; Li, S.; Zhang, X.; Zhang, L. UniVS: Unified and Universal Video Segmentation with Prompts as Queries, 2024, [arXiv:cs.CV/2402.18115].

- Zhu, Y.; et al. Visual Prompt Multi-Modal Tracking. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023.

- Feng, X.; Li, X.; Hu, S.; Zhang, D.; Wu, M.; Zhang, J.; Chen, X.; Huang, K. MemVLT: Vision-Language Tracking with Adaptive Memory-based Prompts. In Proceedings of the Advances in Neural Information Processing Systems; Globerson, A.; Mackey, L.; Belgrave, D.; Fan, A.; Paquet, U.; Tomczak, J.; Zhang, C., Eds. Curran Associates, Inc., 2024, Vol. 37, pp. 14903–14933.

- Tumanyan, N.; Singer, A.; Bagon, S.; Dekel, T. DINO-Tracker: Taming DINO for Self-Supervised Point Tracking in a Single Video, 2024, [arXiv:cs.CV/2403.14548].

- Liu, W.; He, H.; Chen, H.; et al. Grounding DINO: Marrying DINO with Grounding for Open-Set Object Detection. arXiv preprint arXiv:2303.05499 2023.

- Jiang, J.; Wang, Z.; Zhao, M.; Li, Y.; et al. SAM2MOT: Tracking by Segmentation Paradigm with Segment Anything 2. arXiv preprint arXiv:2504.04519 2025.

- Li, X.; Feng, X.; Hu, S.; et al. DTLLM-VLT: Diverse Text Generation for Visual-Language Tracking Based on LLM. arXiv preprint arXiv:2405.12139 2024.

- Ren, T.; Liu, S.; Zeng, A.; Lin, J.; Li, K.; Cao, H.; Chen, J.; et al. Grounded SAM: Assembling Open-World Models for Diverse Visual Tasks. arXiv preprint arXiv:2401.14159 2024.

- Buolamwini, J.; Gebru, T. Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification. In Proceedings of the 1st Conference on Fairness, Accountability and Transparency. PMLR, 2018, pp. 77–91.

- Zhao, J.; Wang, T.; Yatskar, M.; Ordonez, V.; Chang, K.W. Men Also Like Shopping: Reducing Gender Bias Amplification using Corpus-level Constraints. In Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing, 2017, pp. 2979–2989.

- Shankar, S.; Garg, S.; Garg, S.; Bolukbasi, T.; Narayanan, A.; Mislove, A.; Jurafsky, D. Towards Mitigating Social Biases in Large Language Models. arXiv preprint arXiv:2301.01561 2023.

- Schramowski, P.; Santini, T.; Henzinger, T.A.; Krenn, M.; Kersting, K.; Holzinger, A. Large pre-trained language models contain human-like biases of destructive behavior. Nature Machine Intelligence 2022, 4, 261–268.

- Zhang, Y.; Li, W.; Chen, L.; Zhao, X.; Liu, W. Robustness of Vision-Language Models: A Review. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2023, pp. 1234–1245.

- Madry, A.; Makelov, A.; Schmidt, L.; Tsipras, D.; Vladu, A. Towards Deep Learning Models Resistant to Adversarial Attacks. In Proceedings of the International Conference on Learning Representations, 2018.

- Cohen, J.M.; Rosenfeld, E.; Kolter, J.Z. Certified Adversarial Robustness via Randomized Smoothing. In Proceedings of the International Conference on Machine Learning. PMLR, 2019, pp. 1310–1320.

- Carlini, N.; Tramer, F.; Wallace, E.; Jagielski, M.; Herbert-Voss, A.; Lee, K.; Roberts, D.; Brown, T.; Song, D.; Erlingsson, U.; et al. Extracting training data from large language models. arXiv preprint arXiv:2012.07805 2021.

- Song, C.; Ristenpart, T.; Shmatikov, V. Membership inference attacks against generative models. In Proceedings of the 2021 ACM SIGSAC Conference on Computer and Communications Security, 2021, pp. 1647–1661.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization. In Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 618–626.

- Ribeiro, M.T.; Singh, S.; Guestrin, C. “Why Should I Trust You?” Explaining the Predictions of Any Classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2016, pp. 1135–1144.

- Wu, Y.; Lim, J.; Yang, M.H. Online object tracking: A benchmark. In Proceedings of the CVPR, 2013, pp. 2411–2418.

- Kristan, M.; et al. The visual object tracking VOT2016 challenge results. In Proceedings of the ECCV Workshops, 2016.

- Muller, M.; Bibi, A.; Ghanem, B. TrackingNet: A Large-Scale Dataset and Benchmark for Object Tracking in the Wild. In Proceedings of the ECCV, 2018.

- Huang, L.; Zhao, X.; Huang, K. GOT-10k: A large high-diversity benchmark for generic object tracking in the wild. IEEE TPAMI 2019.

- Liu, M.; Zhao, J.; Wang, Q.; et al. NT-VOT211: Night-Time Visual Object Tracking Benchmark. arXiv preprint arXiv:2402.09876 2024.

- Chen, Y.; Tang, Y.; Xiao, Y.; Yuan, Q.; Zhang, Y.; Liu, F.; He, J.; Zhang, L. Satellite video single object tracking: A systematic review and an oriented object tracking benchmark. ISPRS Journal of Photogrammetry and Remote Sensing 2024, 210, 212–240.

- Leal-Taixé, L.; Milan, A.; et al. MOTChallenge 2015: Towards a benchmark for multi-target tracking. arXiv preprint arXiv:1504.01942 2015.

- Dendorfer, P.; Rezatofighi, H.; Milan, A.; Shi, J.; Cremers, D.; Reid, I.; Roth, S.; Leal-Taixé, L. MOT20: A benchmark for multi object tracking in crowded scenes. arXiv preprint arXiv:2003.09003 2020.

- Yu, F.; Chen, H.; et al. BDD100K: A Diverse Driving Video Database with Scalable Annotation Tooling. In Proceedings of the CVPR, 2020.

- Geiger, A.; Lenz, P.; Urtasun, R. Are we ready for autonomous driving? The KITTI vision benchmark suite. In Proceedings of the CVPR, 2012.

- Dave, A.; Khoreva, A.; Ramanan, D. TAO: A large-scale benchmark for tracking any object. In Proceedings of the ECCV, 2020.

- Meinhardt, T.; Kirillov, A.; et al. EgoTracks: Egocentric Object Tracking in the Wild. In Proceedings of the CVPR, 2023.

- Fabbri, M.; Lanzi, F.; et al. MOTSynth: How can synthetic data help pedestrian detection and tracking? In Proceedings of the ICCV, 2021.

- Dendorfer, P.; Rezatofighi, H.; Milan, A.; Shi, J.; Cremers, D.; Reid, I.; Roth, S.; Schindler, K.; Leal-Taixé, L. MOT20: A benchmark for multi object tracking in crowded scenes, 2020, [arXiv:cs.CV/2003.09003].

- Valmadre, J.; Bertinetto, L.; Henriques, J.F.; Vedaldi, A.; Torr, P.H. Long-term tracking in the wild: A benchmark. In Proceedings of the ECCV, 2018.

- Athar, A.; Luiten, J.; Voigtlaender, P.; Khurana, T.; Dave, A.; Leibe, B.; Ramanan, D. BURST: A Benchmark for Unifying Object Recognition, Segmentation and Tracking in Video, 2022, [arXiv:cs.CV/2209.12118].

- Dunnhofer, M.; Furnari, A.; Farinella, G.M.; Micheloni, C. Visual Object Tracking in First Person Vision. International Journal of Computer Vision (IJCV) 2022.

- Wang, W.; He, Z.; Hong, W.; Cheng, Y.; Zhang, X.; Qi, J.; Gu, X.; Huang, S.; Xu, B.; Dong, Y.; et al. LVBench: An Extreme Long Video Understanding Benchmark 2025. [arXiv:cs.CV/2406.08035].

- Wang, X.; Shu, X.; Zhang, Z.; Jiang, B.; Wang, Y.; Tian, Y.; Wu, F. Towards More Flexible and Accurate Object Tracking with Natural Language: Algorithms and Benchmark. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021, pp. 13763–13773.

- Zhang, J.; et al. Reinforcement learning for visual object tracking: A review and outlook. arXiv preprint arXiv:2301.XXXXX 2023.

- Finn, C.; Abbeel, P.; Levine, S. Model-agnostic meta-learning for fast adaptation of deep networks. In Proceedings of the 34th International Conference on Machine Learning (ICML), 2017.

- Houlsby, N.; Giurgiu, A.; Jastrzebski, S.; et al. Parameter-efficient transfer learning for NLP. In Proceedings of the International Conference on Machine Learning, 2019.

- Hinton, G.; Vinyals, O.; Dean, J. Distilling the knowledge in a neural network. arXiv preprint arXiv:1503.02531 2015.

- Elsken, T.; Metzen, J.H.; Hutter, F. Neural architecture search: A survey. Journal of Machine Learning Research 2019, 20, 1–21.

- Kaissis, G.; Makowski, M.R.; Rückert, D.; Braren, R.F. Secure, privacy-preserving and federated machine learning in medical imaging. Nature Machine Intelligence 2021, 3, 305–315.

- Kendall, A.; Gal, Y. What uncertainties do we need in Bayesian deep learning for computer vision? In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2017, Vol. 30.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).