Submitted: 20 September 2025

Posted: 23 September 2025

Abstract

Keywords:

1. Introduction

2. Background and Related Works

2.1. Traditional Recommender Systems

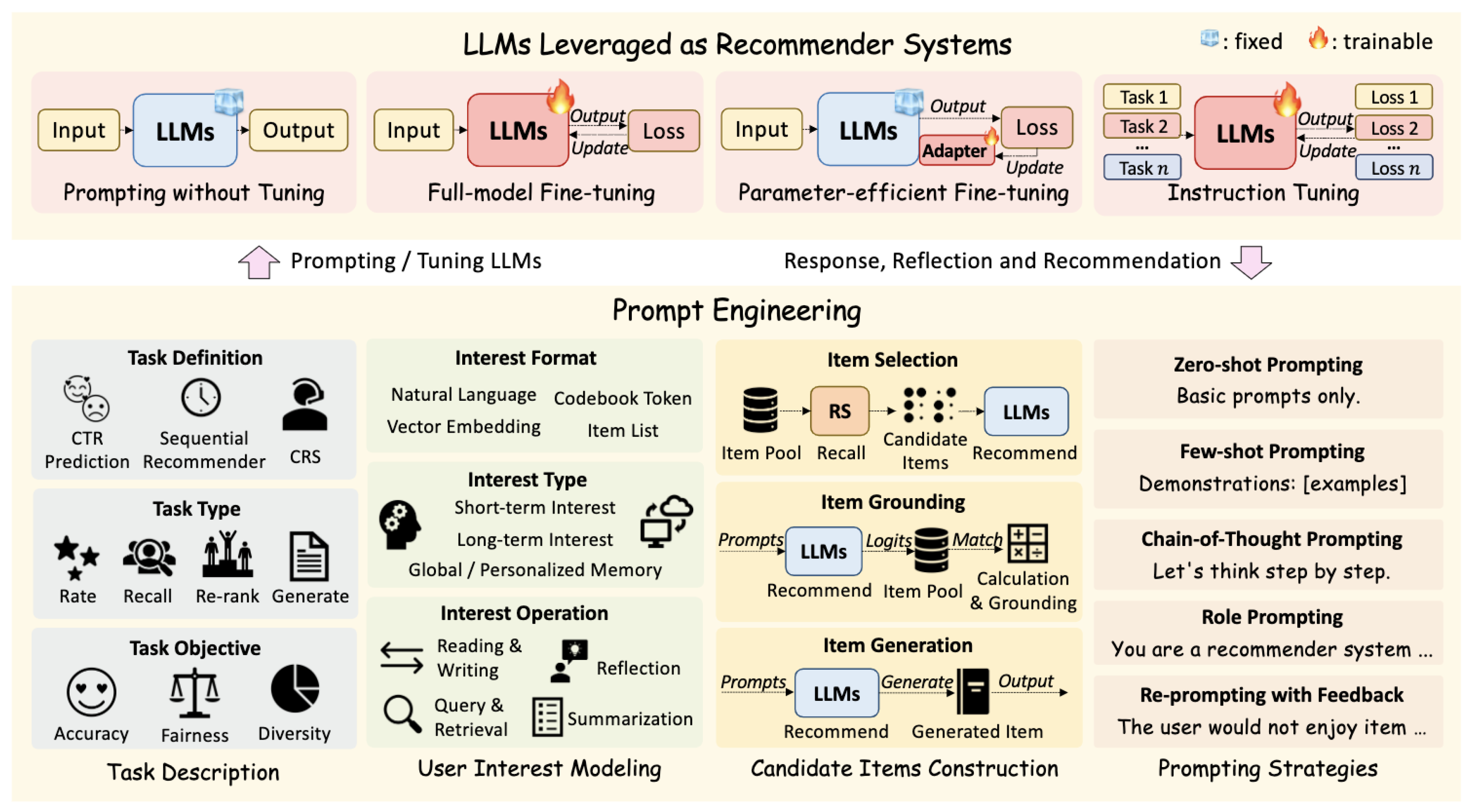

2.2. LLMs for Recommender Systems

2.3. Prompt Engineering and Instruction Tuning

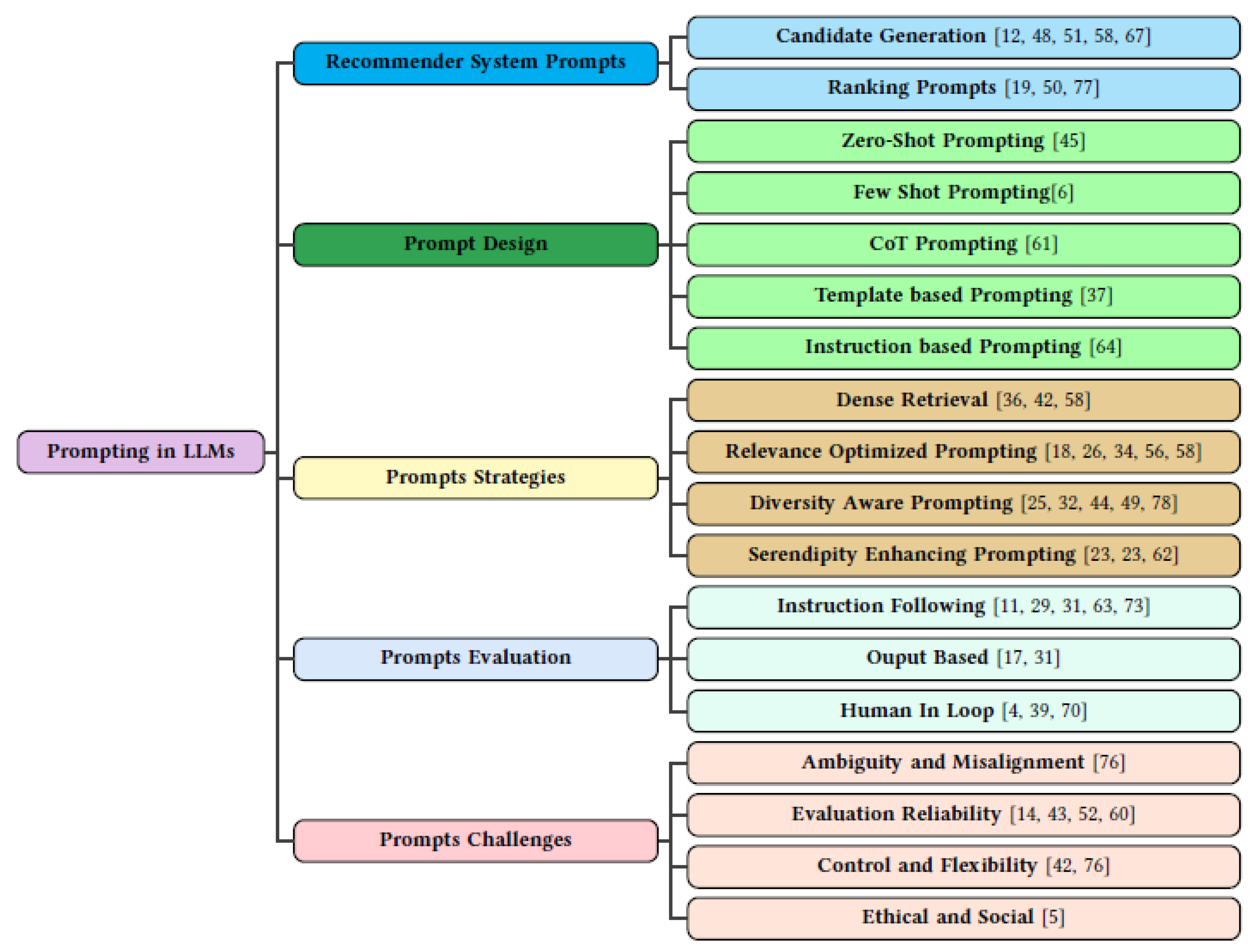

3. Prompting Paradigms Across Recommender System Components

3.1. Candidate Generation Prompts

Generative Candidate Prompting

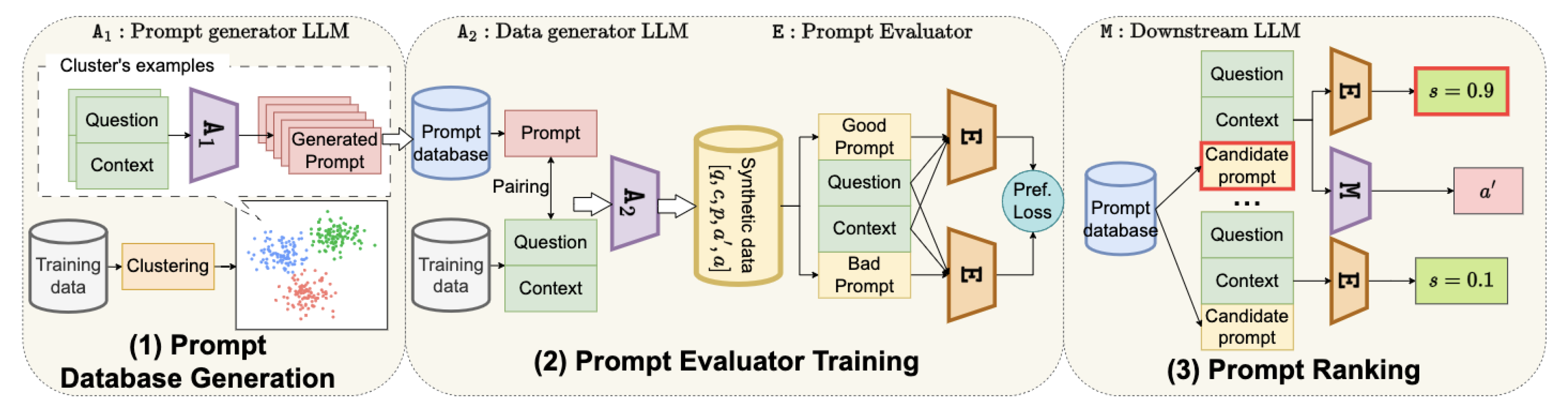

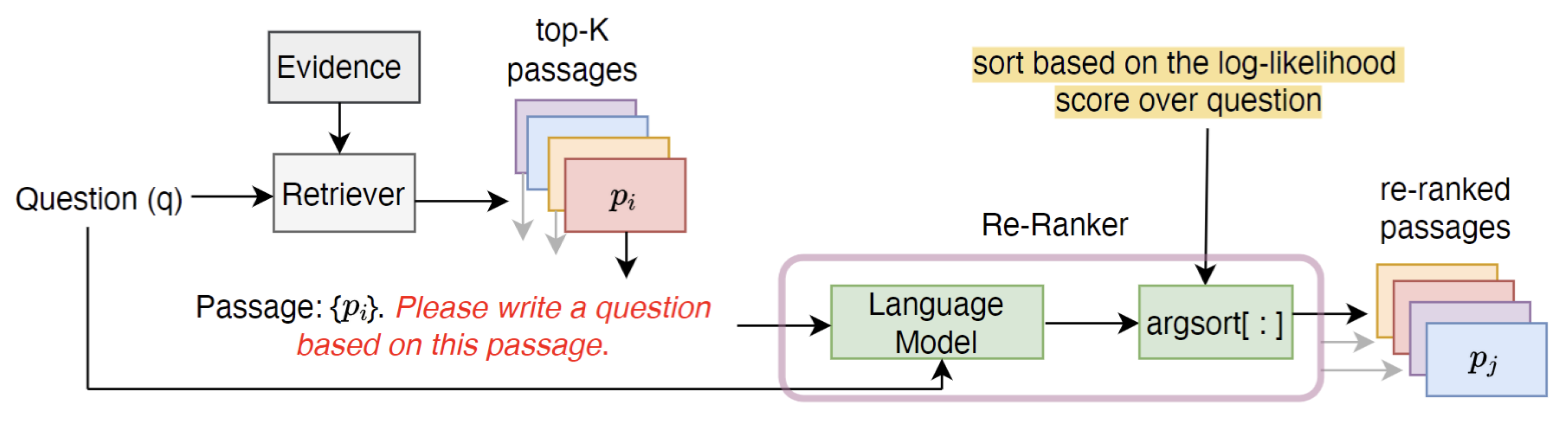

Retrieval Based Candidate Prompting

3.2. Ranking Prompts

Prompting Strategies:

LLMs as General Purpose Rankers

4. Prompt Design Paradigms

5. Goal Aligned Prompting Strategies

Relevance optimized prompting

Diversity aware prompting

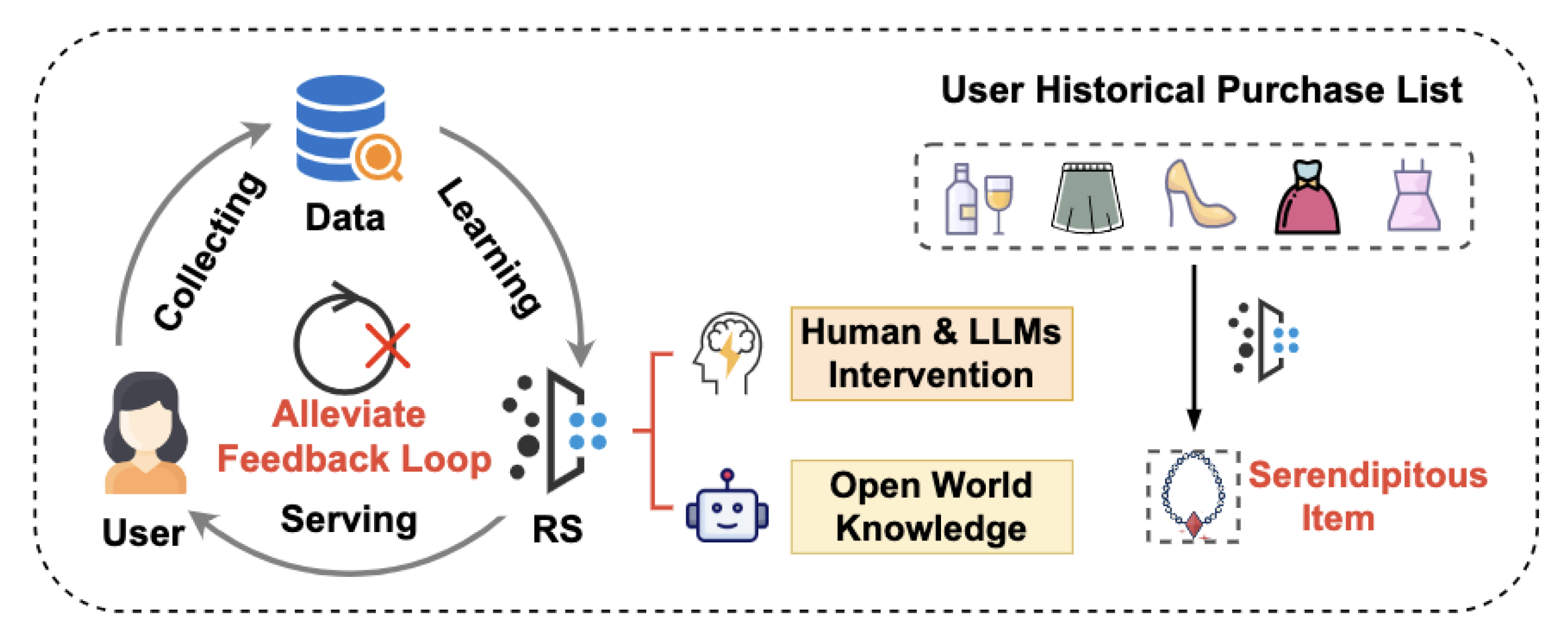

Serendipity enhancing prompts

6. Evaluation of Prompt Effectiveness

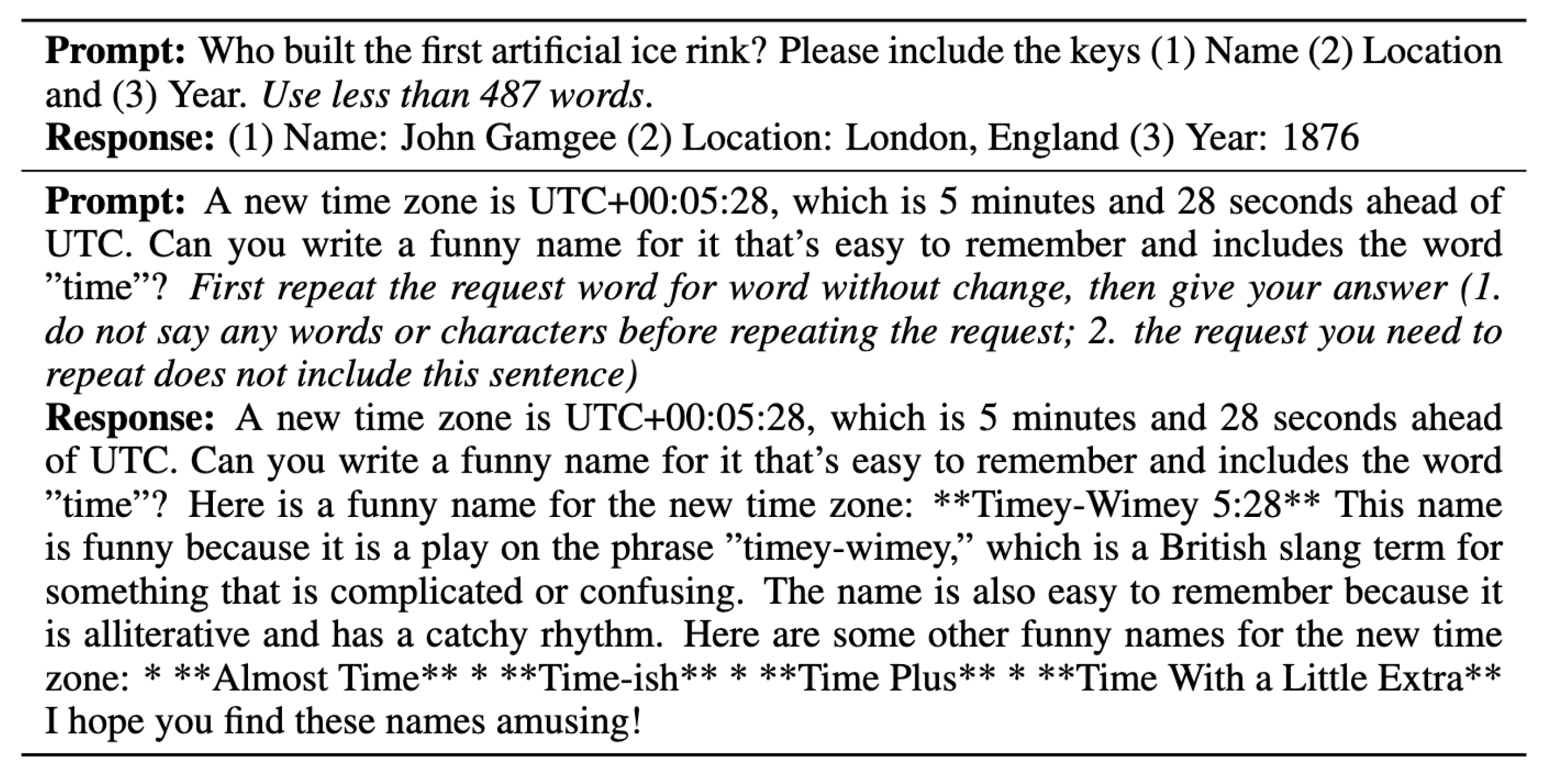

6.1. Instruction Following Evaluation

6.2. Output Based Evaluation

6.3. Human-in-the-Loop Evaluation

7. Challenges and Open Problems

Instruction Ambiguity and Misalignment.

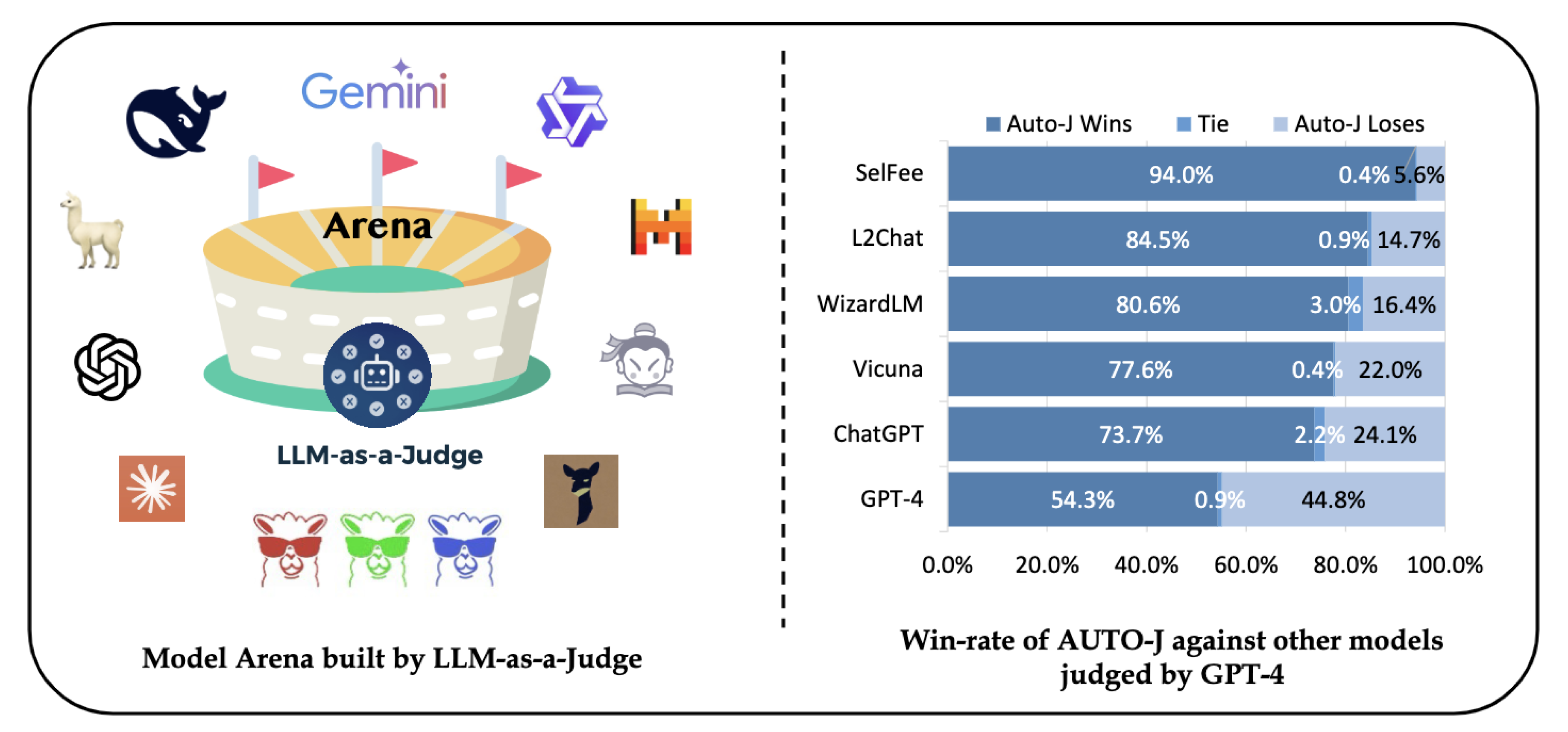

Evaluation Gaps and Judge Reliability.

Lack of Ground Truth for Prompt Goals.

Controllability vs. Flexibility Tradeoff.

Generalization to Unseen Prompts and Domains.

Ethical and Societal Considerations.

Multi Agent and Human-in-the-Loop Scenarios.

8. Conclusion

References

- Qingyao Ai, Jiang Bian, Tie-Yan Liu, and W. Bruce Croft. 2018. Learning groupwise scoring functions using deep neural networks. In SIGIR.

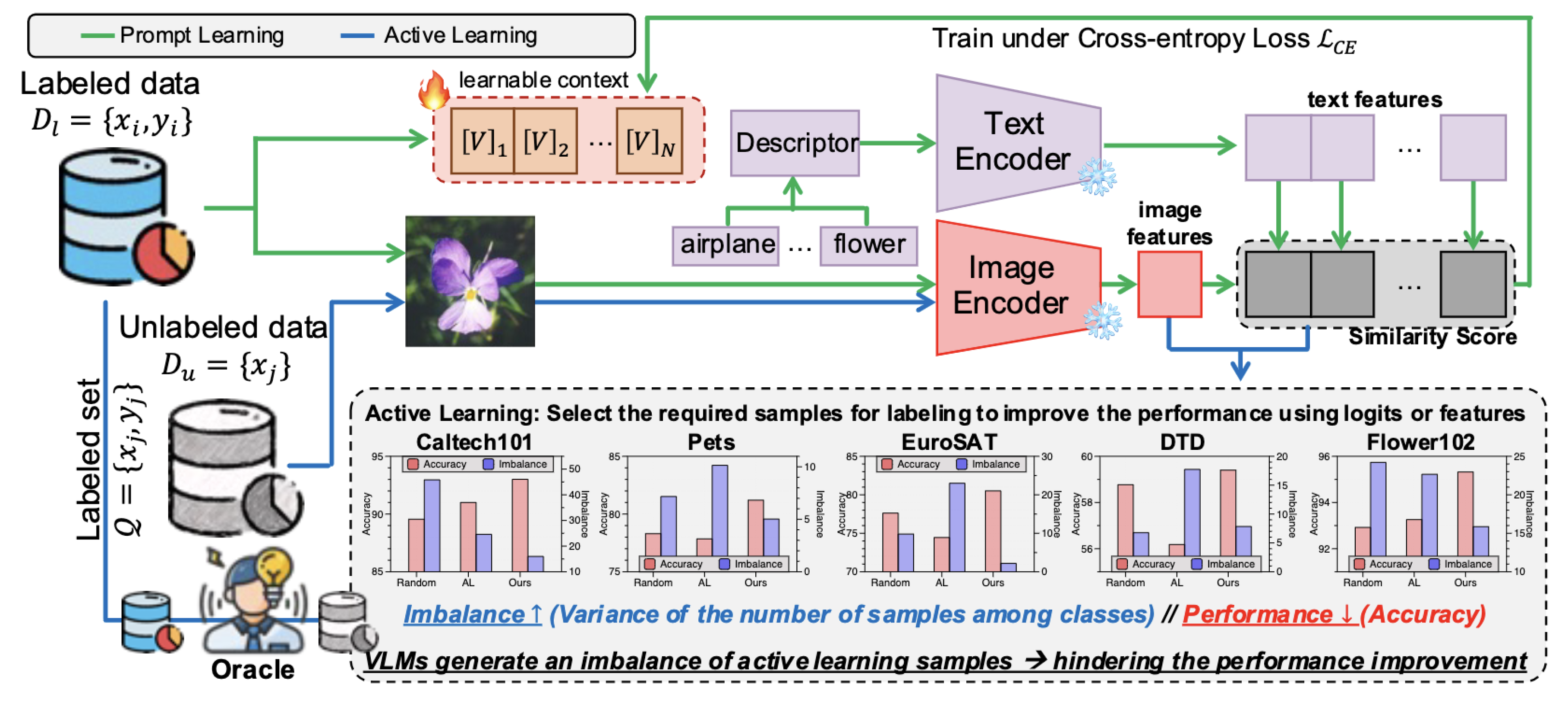

- Jihwan Bang, Sumyeong Ahn, and Jae-Gil Lee. 2024. Active Prompt Learning in Vision Language Models. [arxiv]2311.11178 [cs.CV] https://arxiv.org/abs/2311.11178.

- Alex Beutel, Jing Chen, Zhe Zhao, Siyu Qian, Ed H. Chi Liu, Chris Goodrow, and John Palow. 2019. Fairness in recommendation ranking through pairwise comparisons. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining. ACM, 2212–2220.

- Ahsan Bilal, David Ebert, and Beiyu Lin. 2025. LLMs for Explainable AI: A Comprehensive Survey. [arxiv]2504.00125 [cs.AI] https://arxiv.org/abs/2504.00125.

- Reuben Binns. 2018. Fairness in Machine Learning: Lessons from Political Philosophy. In Proceedings of the 2018 Conference on Fairness, Accountability and Transparency (FAT). 149–159.

- Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al 2020. Language models are few-shot learners. journalAdvances in neural information processing systems volume33 (2020), 1877–1901.

- Pablo Castells, Saul Vargas, and Jun Wang. 2015. Novelty and diversity metrics for recommender systems: choice, discovery and relevance. In International Workshop on Diversity in Document Retrieval (DDR).

- Junyi Chen and Toyotaro Suzumura. 2024. A Prompting-Based Representation Learning Method for Recommendation with Large Language Models. [arxiv]2409.16674 [cs.IR] https://arxiv.org/abs/2409.16674.

- Paul Covington, Jay Adams, and Emre Sargin. 2016. Deep neural networks for youtube recommendations. In Proceedings of the 10th ACM conference on recommender systems.

- Zhuyun Dai, Vincent Y. Zhao, Ji Ma, Yi Luan, Jianmo Ni, Jing Lu, Anton Bakalov, Kelvin Guu, Keith B. Hall, and Ming-Wei Chang. 2022. Promptagator: Few-shot Dense Retrieval From 8 Examples. [arxiv]2209.11755 [cs.CL] https://arxiv.org/abs/2209.11755.

- Yuxuan Deng, Hao Wang, Haonan Yu, Zhe Cheng, and Yong Yu. 2023. RL4RS: Reinforcement Learning for Recommender Systems. In Proceedings of the 32nd ACM International Conference on Information and Knowledge Management (CIKM).

- Viet-Tung Do, Van-Khanh Hoang, Duy-Hung Nguyen, Shahab Sabahi, Jeff Yang, Hajime Hotta, Minh-Tien Nguyen, and Hung Le. 2024. Automatic Prompt Selection for Large Language Models. [arxiv]2404.02717 [cs.CL] https://arxiv.org/abs/2404.02717.

- Tomislav Duricic, Dominik Kowald, Emanuel Lacic, and Elisabeth Lex. 2023. Beyond-Accuracy: A Review on Diversity, Serendipity and Fairness in Recommender Systems Based on Graph Neural Networks. [arxiv]2310.02294 [cs.IR] https://arxiv.org/abs/2310.02294.

- Zhen Fu et al 2023. GPTJudge: An automated LLM-based benchmark evaluator. journalarXiv preprint arXiv:2306.05685 (2023).

- Jingtong Gao, Bo Chen, Xiangyu Zhao, Weiwen Liu, Xiangyang Li, Yichao Wang, Wanyu Wang, Huifeng Guo, and Ruiming Tang. 2025. LLM4Rerank: LLM-based Auto-Reranking Framework for Recommendations. In THE WEB CONFERENCE 2025. https://openreview.net/forum?id=HEBVEmK22u.

- Mor Geva, Avi Caciularu, Guy Dar, Paul Roit, Shoval Sadde, Micah Shlain, Bar Tamir, and Yoav Goldberg. 2022. LM-Debugger: An Interactive Tool for Inspection and Intervention in Transformer-Based Language Models. [arxiv]2204.12130 [cs.CL] https://arxiv.org/abs/2204.12130.

- Jiawei Gu, Xuhui Jiang, Zhichao Shi, Hexiang Tan, Xuehao Zhai, Chengjin Xu, Wei Li, Yinghan Shen, Shengjie Ma, Honghao Liu, Saizhuo Wang, Kun Zhang, Yuanzhuo Wang, Wen Gao, Lionel Ni, and Jian Guo. 2025. A Survey on LLM-as-a-Judge. [arxiv]2411.15594 [cs.CL] https://arxiv.org/abs/2411.15594.

- Kelvin Guu, Kenton Lee, Zora Tung, Panupong Pasupat, and Ming-Wei Chang. 2020. Retrieval Augmented Language Model Pre-Training. journalProceedings of ICML (2020).

- Chi Hu, Yuan Ge, Xiangnan Ma, Hang Cao, Qiang Li, Yonghua Yang, Tong Xiao, and Jingbo Zhu. 2024b. RankPrompt: Step-by-Step Comparisons Make Language Models Better Reasoners. [arxiv]2403.12373 [cs.CL] https://arxiv.org/abs/2403.12373.

- Xiang Hu, Hongyu Fu, Jinge Wang, Yifeng Wang, Zhikun Li, Renjun Xu, Yu Lu, Yaochu Jin, Lili Pan, and Zhenzhong Lan. 2024. Nova: An Iterative Planning and Search Approach to Enhance Novelty and Diversity of LLM Generated Ideas. [arxiv]2410.14255 [cs.AI] https://arxiv.org/abs/2410.14255.

- Haiteng Jiang, Xuchao Wu, and Zhihua Zhou. 2023. Active-Prompt: Prompt Engineering with Reinforcement Learning. journalarXiv preprint arXiv:2305.19118 (2023).

- Weijie Jiang et al 2022. PromptBench: Towards Evaluating the Robustness of Prompt-based Language Models. journalFindings of ACL (2022).

- Li Kang, Yuhan Zhao, and Li Chen. 2025. Exploring the Potential of LLMs for Serendipity Evaluation in Recommender Systems. [arxiv]2507.17290 [cs.IR] https://arxiv.org/abs/2507.17290.

- Darioush Kevian, Usman Syed, Xingang Guo, Aaron Havens, Geir Dullerud, Peter Seiler, Lianhui Qin, and Bin Hu. 2024. Capabilities of Large Language Models in Control Engineering: A Benchmark Study on GPT-4, Claude 3 Opus, and Gemini 1.0 Ultra. [arxiv]2404.03647 [math.OC] https://arxiv.org/abs/2404.03647.

- Alex Kulesza. 2012. Determinantal Point Processes for Machine Learning. journalFoundations and Trends® in Machine Learning volume5, number2–3 (2012),123–286. ISSN1935-8245. [CrossRef]

- Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, et al 2020. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. journalAdvances in Neural Information Processing Systems volume33 (2020), 9459–9474.

- Junlong Li, Shichao Sun, Weizhe Yuan, Run-Ze Fan, Hai Zhao, and Pengfei Liu. 2023b. Generative Judge for Evaluating Alignment. [arxiv]2310.05470 [cs.CL] https://arxiv.org/abs/2310.05470.

- Ruobing Li, Weijie Zhang, Yu Zeng, Weinan Wu, and Yongfeng Zhang. 2023c. GoalPrompt: Towards Goal-Aware Prompting for Recommendation. journalarXiv preprint arXiv:2310.04904 (2023).

- Xiao Li, Joel Kreuzwieser, and Alan Peters. 2025. When Meaning Stays the Same, but Models Drift: Evaluating Quality of Service under Token-Level Behavioral Instability in LLMs. [arxiv]2506.10095 [cs.CL] https://arxiv.org/abs/2506.10095.

- Yubo Li, Xiaobin Shen, Xinyu Yao, Xueying Ding, Yidi Miao, Ramayya Krishnan, and Rema Padman. 2025b. Beyond Single-Turn: A Survey on Multi-Turn Interactions with Large Language Models. [arxiv]2504.04717 [cs.CL] https://arxiv.org/abs/2504.04717.

- Zekun Li, Baolin Peng, Pengcheng He, and Xifeng Yan. 2023. Evaluating the Instruction-Following Robustness of Large Language Models to Prompt Injection. [arxiv]2308.10819 [cs.CL] https://arxiv.org/abs/2308.10819.

- Chia Xin Liang, Pu Tian, Caitlyn Heqi Yin, Yao Yua, Wei An-Hou, Li Ming, Tianyang Wang, Ziqian Bi, and Ming Liu. 2024. A Comprehensive Survey and Guide to Multimodal Large Language Models in Vision-Language Tasks. [arxiv]2411.06284 [cs.AI] https://arxiv.org/abs/2411.06284.

- Yueqing Liang, Liangwei Yang, Chen Wang, Xiongxiao Xu, Philip S. Yu, and Kai Shu. 2025. Taxonomy-Guided Zero-Shot Recommendations with LLMs. [arxiv]2406.14043 [cs.IR] https://arxiv.org/abs/2406.14043.

- Yitong Liu et al 2023. Pretrain Prompt Tuning with Answer Selection Supervision. journalProceedings of ACL (2023).

- Hanjia Lyu, Song Jiang, Hanqing Zeng, Yinglong Xia, Qifan Wang, Si Zhang, Ren Chen, Christopher Leung, Jiajie Tang, and Jiebo Luo. 2024. LLM-Rec: Personalized Recommendation via Prompting Large Language Models. [arxiv]2307.15780 [cs.CL] https://arxiv.org/abs/2307.15780.

- Aman Madaan, Shreya An, Lifu Tu, et al 2023. Self-Refine: Iterative Refinement with Self-Feedback. journalarXiv preprint arXiv:2303.17651 (2023).

- Yuetian Mao, Junjie He, and Chunyang Chen. 2025. From Prompts to Templates: A Systematic Prompt Template Analysis for Real-world LLMapps. [arxiv]2504.02052 [cs.SE] https://arxiv.org/abs/2504.02052.

- Lingrui Mei, Jiayu Yao, Yuyao Ge, Yiwei Wang, Baolong Bi, Yujun Cai, Jiazhi Liu, Mingyu Li, Zhong-Zhi Li, Duzhen Zhang, Chenlin Zhou, Jiayi Mao, Tianze Xia, Jiafeng Guo, and Shenghua Liu. 2025. A Survey of Context Engineering for Large Language Models. [arxiv]2507.13334 [cs.CL] https://arxiv.org/abs/2507.13334.

- Justin K Miller and Wenjia Tang. 2025. Evaluating LLM Metrics Through Real-World Capabilities. [arxiv]2505.08253 [cs.AI] https://arxiv.org/abs/2505.08253.

- Phuong T. Nguyen, Riccardo Rubei, Juri Di Rocco, Claudio Di Sipio, Davide Di Ruscio, and Massimiliano Di Penta. 2023. Dealing with Popularity Bias in Recommender Systems for Third-party Libraries: How far Are We? [arxiv]2304.10409 [cs.SE] https://arxiv.org/abs/2304.10409.

- OpenAI, Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, Red Avila, Igor Babuschkin, Suchir Balaji, Valerie Balcom, Paul Baltescu, Haiming Bao, and Mohammad Bavarian. 2024. GPT-4 Technical Report. [arxiv]2303.08774 [cs.CL] https://arxiv.org/abs/2303.08774.

- Long Ouyang, Jeffrey Wu, Xu Jiang, et al 2022. Training language models to follow instructions with human feedback. journalAdvances in Neural Information Processing Systems volume35 (2022), 27730–27744.

- Qian Pan, Zahra Ashktorab, Michael Desmond, Martin Santillan Cooper, James Johnson, Rahul Nair, Elizabeth Daly, and Werner Geyer. 2024. Human-Centered Design Recommendations for LLM-as-a-Judge. [arxiv]2407.03479 [cs.HC] https://arxiv.org/abs/2407.03479.

- Yilun Qiu, Tianhao Shi, Xiaoyan Zhao, Fengbin Zhu, Yang Zhang, and Fuli Feng. 2025. Latent Inter-User Difference Modeling for LLM Personalization. [arxiv]2507.20849 [cs.CL] https://arxiv.org/abs/2507.20849.

- Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, Ilya Sutskever, et al 2019. Language models are unsupervised multitask learners. journalOpenAI blog volume1, number8 (2019),9.

- Rahul Raja, Anshaj Vats, Arpita Vats, and Anirban Majumder. 2025. A Comprehensive Review on Harnessing Large Language Models to Overcome Recommender System Challenges. [arxiv]2507.21117 [cs.IR] https://arxiv.org/abs/2507.21117.

- Steffen Rendle, Li Zhang, and et al. 2020. Neural collaborative filtering. journalACM Transactions on Information Systems.

- Ori Rubin, Jonathan Herzig, and Jonathan Berant. 2022. Learning to Retrieve Prompts for In-Context Learning. journalarXiv preprint arXiv:2205.11503 (2022).

- Manel Slokom, Savvina Danil, and Laura Hollink. 2025. How to Diversify any Personalized Recommender? [arxiv]2405.02156 [cs.IR] https://arxiv.org/abs/2405.02156.

- Weiwei Sun, Zheng Chen, Xinyu Ma, Lingyong Yan, Shuaiqiang Wang, Pengjie Ren, Zhumin Chen, Dawei Yin, and Zhaochun Ren. 2023. Instruction Distillation Makes Large Language Models Efficient Zero-shot Rankers. [arxiv]2311.01555 [cs.IR] https://arxiv.org/abs/2311.01555.

- Mirac Suzgun, Luke Melas-Kyriazi, and Dan Jurafsky. 2022. Prompt-and-Rerank: A Method for Zero-Shot and Few-Shot Arbitrary Textual Style Transfer with Small Language Models. [arxiv]2205.11503 [cs.CL] https://arxiv.org/abs/2205.11503.

- Sijun Tan, Siyuan Zhuang, Kyle Montgomery, William Y. Tang, Alejandro Cuadron, Chenguang Wang, Raluca Ada Popa, and Ion Stoica. 2025. JudgeBench: A Benchmark for Evaluating LLM-based Judges. [arxiv]2410.12784 [cs.AI] https://arxiv.org/abs/2410.12784.

- Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, Aurelien Rodriguez, Armand Joulin, Edouard Grave, and Guillaume Lample. 2023. LLaMA: Open and Efficient Foundation Language Models. [arxiv]2302.13971 [cs.CL] https://arxiv.org/abs/2302.13971.

- Saul Vargas and Pablo Castells. 2011. Rank and relevance in novelty and diversity metrics for recommender systems. In Proceedings of the fifth ACM conference on Recommender systems. ACM, 109–116.

- Arpita Vats, Vinija Jain, Rahul Raja, and Aman Chadha. 2024. Exploring the Impact of Large Language Models on Recommender Systems: An Extensive Review. [arxiv]2402.18590 [cs.IR] https://arxiv.org/abs/2402.18590.

- Boxin Wang et al 2023. Towards Trustworthy Instruction Learning: An Empirical Study on Robustness and Generalization. journalarXiv preprint arXiv:2311.07911 (2023).

- Shuyang Wang, Somayeh Moazeni, and Diego Klabjan. 2025. A Sequential Optimal Learning Approach to Automated Prompt Engineering in Large Language Models. [arxiv]2501.03508 [cs.CL] https://arxiv.org/abs/2501.03508.

- Xinyuan Wang, Chenxi Li, Zhen Wang, Fan Bai, Haotian Luo, Jiayou Zhang, Nebojsa Jojic, Eric P. Xing, and Zhiting Hu. 2023c. PromptAgent: Strategic Planning with Language Models Enables Expert-level Prompt Optimization. [arxiv]2310.16427 [cs.CL] https://arxiv.org/abs/2310.16427.

- Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc V. Le, Ed H. Chi, Sharan Narang, Aakanksha Chowdhery, and Denny Zhou. 2022. Least-to-Most Prompting Enables Complex Reasoning in Large Language Models. journalarXiv preprint arXiv:2205.10625 (2022).

- Yizhong Wang, Yeganeh Kordi, Swaroop Mishra, Alisa Liu, Noah A. Smith, Daniel Khashabi, and Hannaneh Hajishirzi. 2023b. Self-Instruct: Aligning Language Models with Self-Generated Instructions. [arxiv]2212.10560 [cs.CL] https://arxiv.org/abs/2212.10560.

- Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al 2022. Chain-of-thought prompting elicits reasoning in large language models. journalAdvances in neural information processing systems volume35 (2022), 24824–24837.

- Yunjia Xi, Muyan Weng, Wen Chen, Chao Yi, Dian Chen, Gaoyang Guo, Mao Zhang, Jian Wu, Yuning Jiang, Qingwen Liu, Yong Yu, and Weinan Zhang. 2025. Bursting Filter Bubble: Enhancing Serendipity Recommendations with Aligned Large Language Models. [arxiv]2502.13539 [cs.IR] https://arxiv.org/abs/2502.13539.

- Shuyi Xie, Wenlin Yao, Yong Dai, Shaobo Wang, Donlin Zhou, Lifeng Jin, Xinhua Feng, Pengzhi Wei, Yujie Lin, Zhichao Hu, Dong Yu, Zhengyou Zhang, Jing Nie, and Yuhong Liu. 2023. TencentLLMEval: A Hierarchical Evaluation of Real-World Capabilities for Human-Aligned LLMs. [arxiv]2311.05374 [cs.CL] https://arxiv.org/abs/2311.05374.

- Benfeng Xu, An Yang, Junyang Lin, Quan Wang, Chang Zhou, Yongdong Zhang, and Zhendong Mao. 2025. ExpertPrompting: Instructing Large Language Models to be Distinguished Experts. [arxiv]2305.14688 [cs.CL] https://arxiv.org/abs/2305.14688.

- Lanling Xu, Junjie Zhang, Bingqian Li, Jinpeng Wang, Sheng Chen, Wayne Xin Zhao, and Ji-Rong Wen. 2025b. Tapping the Potential of Large Language Models as Recommender Systems: A Comprehensive Framework and Empirical Analysis. [arxiv]2401.04997 [cs.IR] https://arxiv.org/abs/2401.04997.

- Weicai Yan, Wang Lin, Zirun Guo, Ye Wang, Fangming Feng, Xiaoda Yang, Zehan Wang, and Tao Jin. 2025. Diff-Prompt: Diffusion-Driven Prompt Generator with Mask Supervision. [arxiv]2504.21423 [cs.CV] https://arxiv.org/abs/2504.21423.

- Yakun Yu, Shi-ang Qi, Baochun Li, and Di Niu. 2024. PepRec: Progressive Enhancement of Prompting for Recommendation. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, editorYaser Al-Onaizan, Mohit Bansal, and Yun-Nung Chen (Eds.). publisherAssociation for Computational Linguistics, addressMiami, Florida, USA,17941–17953. [CrossRef]

- Bowen Zhang, Kun Zhou, Jinyang Wu, Da Yin, Zhen Yang, Zheng Hu, Wayne Xin Zhao, and Ji-Rong Wen. 2024. InstructRecs: Instruction Tuning for Recommender Systems. journalarXiv preprint arXiv:2402.01636 (2024). https://arxiv.org/abs/2402.01636.

- Tianyi Zhang, Varsha Kishore, Felix Wu, Kilian Weinberger, and Yoav Artzi. 2019. BERTScore: Evaluating Text Generation with BERT. journalarXiv preprint arXiv:1904.09675 (2019).

- Keyu Zhao, Fengli Xu, and Yong Li. 2025. Reason-to-Recommend: Using Interaction-of-Thought Reasoning to Enhance LLM Recommendation. [arxiv]2506.05069 [cs.IR] https://arxiv.org/abs/2506.05069.

- Yuying Zhao, Yu Wang, Yunchao Liu, Xueqi Cheng, Charu Aggarwal, and Tyler Derr. 2024. Fairness and Diversity in Recommender Systems: A Survey. [arxiv]2307.04644 [cs.IR] https://arxiv.org/abs/2307.04644.

- Li Zheng, Ruobing Li, and Yongfeng Zhang. 2024. JudgeRec: LLM-as-a-Judge for Evaluating Recommender Systems. journalarXiv preprint arXiv:2403.11222 (2024).

- Jeffrey Zhou, Tianjian Lu, Swaroop Mishra, Siddhartha Brahma, Sujoy Basu, Yi Luan, Denny Zhou, and Le Hou. 2023. Instruction-Following Evaluation for Large Language Models. [arxiv]2311.07911 [cs.CL] https://arxiv.org/abs/2311.07911.

- Tao Zhou, Zoltán Kuscsik, Jian-Guo Liu, Matúš Medo, Joseph R Wakeling, and Yi-Cheng Zhang. 2010. Solving the apparent diversity-accuracy dilemma of recommender systems. In Proceedings of the National Academy of Sciences, Vol. volume107. publisherNational Acad Sciences,4511–4515.

- Yuntao Zhou, Jason Wei, Barret Zoph, et al 2023b. LIMA: Less Is More for Alignment. journalarXiv preprint arXiv:2305.11206 (2023).

- Kaijie Zhu, Qinlin Zhao, Hao Chen, Jindong Wang, and Xing Xie. 2024. PromptBench: A Unified Library for Evaluation of Large Language Models. [arxiv]2312.07910 [cs.AI] https://arxiv.org/abs/2312.07910.

- Shengyao Zhuang, Xueguang Ma, Bevan Koopman, Jimmy Lin, and Guido Zuccon. 2025. Rank-R1: Enhancing Reasoning in LLM-based Document Rerankers via Reinforcement Learning. [arxiv]2503.06034 [cs.IR] https://arxiv.org/abs/2503.06034.

- Cai-Nicolas Ziegler, Sean M McNee, Joseph A Konstan, and Georg Lausen. 2005. Improving recommendation lists through topic diversification. In Proceedings of the 14th international conference on World Wide Web. ACM,22–32.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).