Submitted: 11 September 2025

Posted: 12 September 2025

Abstract

Keywords:

1. Introduction

2. Literature Review

2.1. AI in Education: Current Global Applications

2.2. Lesson Planning Automation: Insights from Previous Research

- A Malaysian study of TESL trainees reported a preference for ChatGPT and Magic School AI for brainstorming and customization; trainees said the tools made planning faster.

- A South African study highlighted the potential of ChatGPT to democratize access to lesson planning resources while also pointing to issues of bias and accuracy.

- In comparisons of AI-written and human-written lesson plans, AI often rivaled human plans in the more “structured” portions of the lesson (i.e., warm-up and cool-down activities) but fell significantly short of their nuance and differentiation, particularly in the higher grades.

- However, tools such as the UK’s Aila (from Oak National Academy) use auto-evaluation agents that calibrate quiz difficulty against a human-rated standard, enabling real-time improvement of AI-generated content.

| Study / Context | AI Tool / Focus | Findings & Insights |
| --- | --- | --- |
| Malaysian TESL trainees | ChatGPT, Magic School AI | Improved planning efficiency and idea generation |
| South African education | ChatGPT | Expands access to planning resources; requires critical oversight |
| K-12 educator preferences | LLaMA-2-13b, GPT-4 | AI strong in structured tasks; human plans best for engagement and differentiation |
| Oak National Academy (UK) | Aila with auto-evaluation | Enables iterative quality control and alignment with teaching standards |

2.3. AI in Assessment and Grading: Reliability, Fairness, and Efficiency

- Automated essay-scoring systems can help with scoring efficiency and objectivity.

- NLP-based systems can also tailor feedback to a student’s specific writing weaknesses, although doubts remain about their accuracy.

- Australian reports show that AI “can save teachers up to 5 hours per week on assessment and feedback” and “improves the quality of responses from students by 47%”.

- Global discourse suggests that AI should support rather than substitute for human decision-making and that audits should be instituted to flag bias.

| Strengths | Limitations & Risks |
| --- | --- |
| Saves time; scalable in large-scale contexts | May misinterpret nuance in student responses |
| Enhances objectivity; reduces human bias | Potential for algorithmic bias; fairness not guaranteed |
| Provides tailored feedback | Requires human oversight and auditing to ensure reliability |
| Proven improvements in feedback effectiveness | Infrastructure constraints may limit availability |

2.4. Cross-National Studies in EdTech Adoption: Policy, Digital Readiness, and Teacher Attitudes

- Viberg et al.’s study of 508 K-12 teachers in Brazil, Israel, Japan, Norway, Sweden, and the USA reveals that “trust in AI is contingent on AI self-efficacy and understanding; and that cultural and geographic divergences impact adoption”.

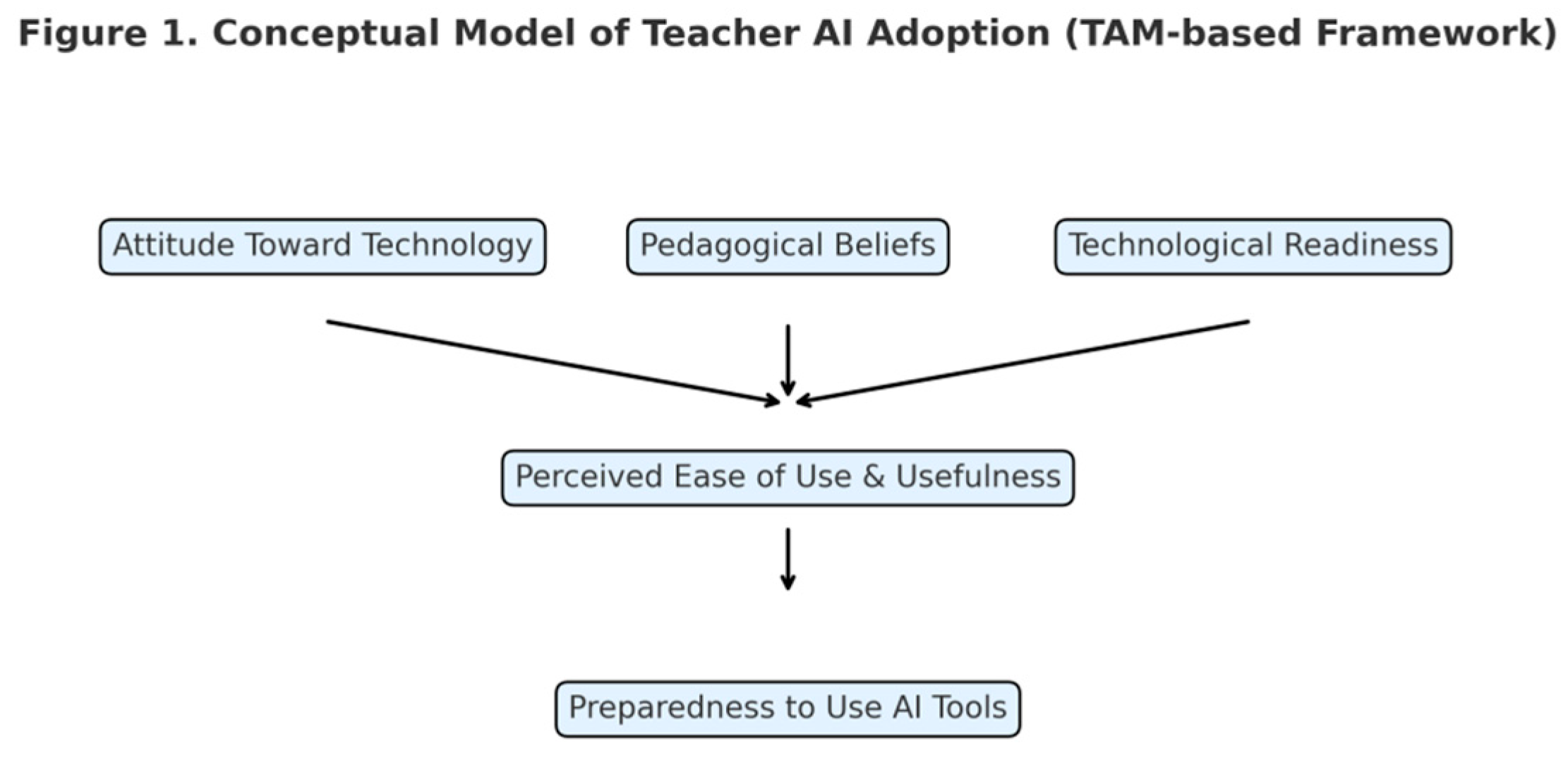

- Other studies are consistent with the Technology Acceptance Model (TAM): a predisposition toward technology, technical readiness, and beliefs about teaching all contribute to a teacher’s preparedness to adopt AI, with the effect mediated by perceived ease of use and perceived usefulness.

- In Pakistan, work framed by the TPACK and OBE pedagogical models indicates that integrating ChatGPT into lesson planning, when coupled with teacher competence, can enhance the quality of planning and ultimately student performance.

2.5. Identified Research Gap: Need for Comparative Evaluations of Notegrade.ai

- Absence of cross-national assessments of a single AI tool across different educational systems.

- Lack of a single evaluative framework that integrates lesson planning, quiz generation, and grading; existing studies do not examine these features together.

- Lack of comprehensive fairness research, particularly with diverse student sub-populations around the world.

- Lack of attention to the workflows through which humans engage with AI, such as prompt engineering, rubric tuning, and auto-evaluation.

- The present study addresses these gaps by examining Notegrade.ai’s application in several countries, with a focus on its affordances and contextual integration.

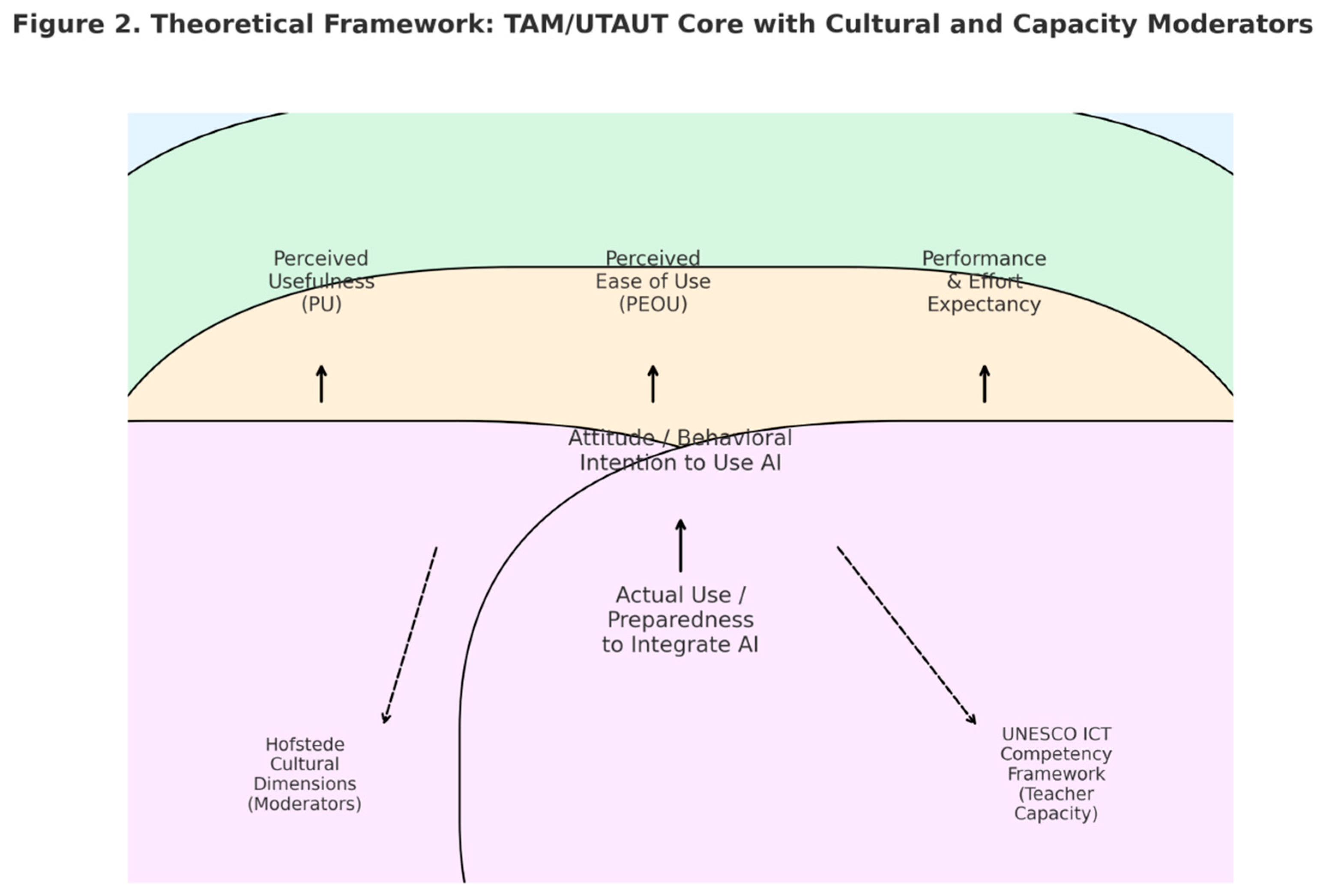

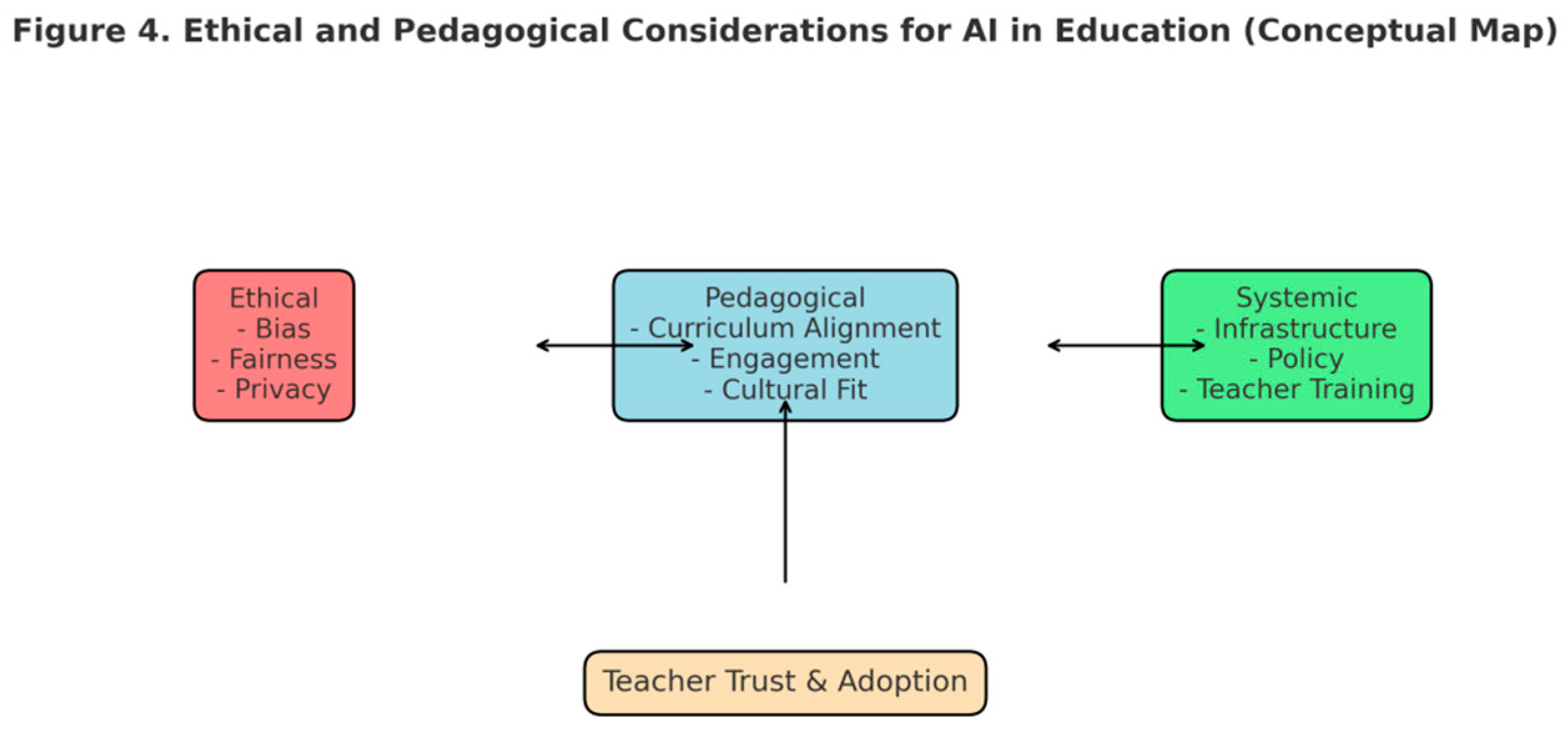

3. Theoretical Framework

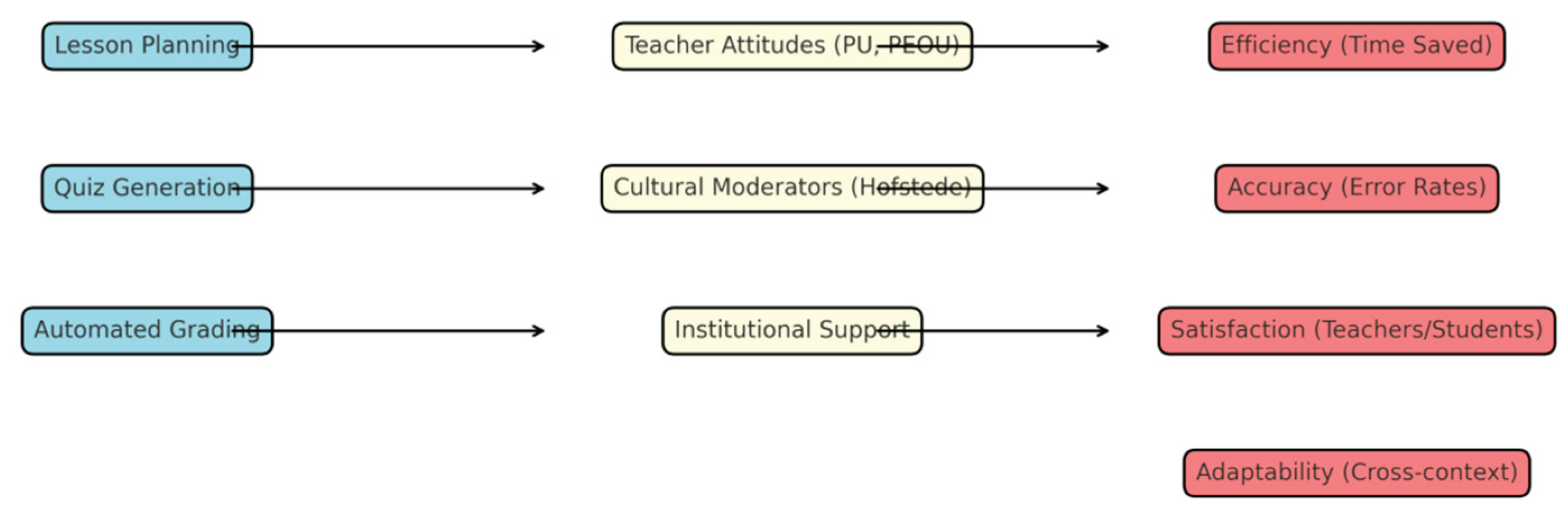

Application of AI in Classrooms

Pedagogical Models of Incorporating Digital Tools

Cross-National Assessment: Hofstede’s Cultural Dimensions and the UNESCO ICT-CFT

Synthesis and a Conceptual Model

| Framework / Construct | Core Elements | Relevance to Notegrade.ai Evaluation |
| --- | --- | --- |
| TAM (Davis, 1989) | Perceived Usefulness, Perceived Ease of Use, Attitude, Behavioral Intention | Direct measures of whether Notegrade.ai is seen as useful and easy to use; predicts adoption at the teacher level. |
| UTAUT (Venkatesh et al., 2003) | Performance Expectancy, Effort Expectancy, Social Influence, Facilitating Conditions | Captures organizational and social drivers; useful for modeling cross-site variance and infrastructure effects. |
| TPACK / Constructive Alignment | Technological, Pedagogical, Content Knowledge; alignment of objectives and assessment | Ensures AI outputs (lessons, quizzes) are pedagogically valid and aligned to learning outcomes. |
| Hofstede Cultural Dimensions | Individualism vs Collectivism, Power Distance, Uncertainty Avoidance, etc. | Moderates perceived usefulness and social influence; explains national differences in acceptance. |
| UNESCO ICT-CFT | 18 competencies for teacher ICT capacity | Operationalizes teacher readiness and professional development needs across sites. |

4. Methodology

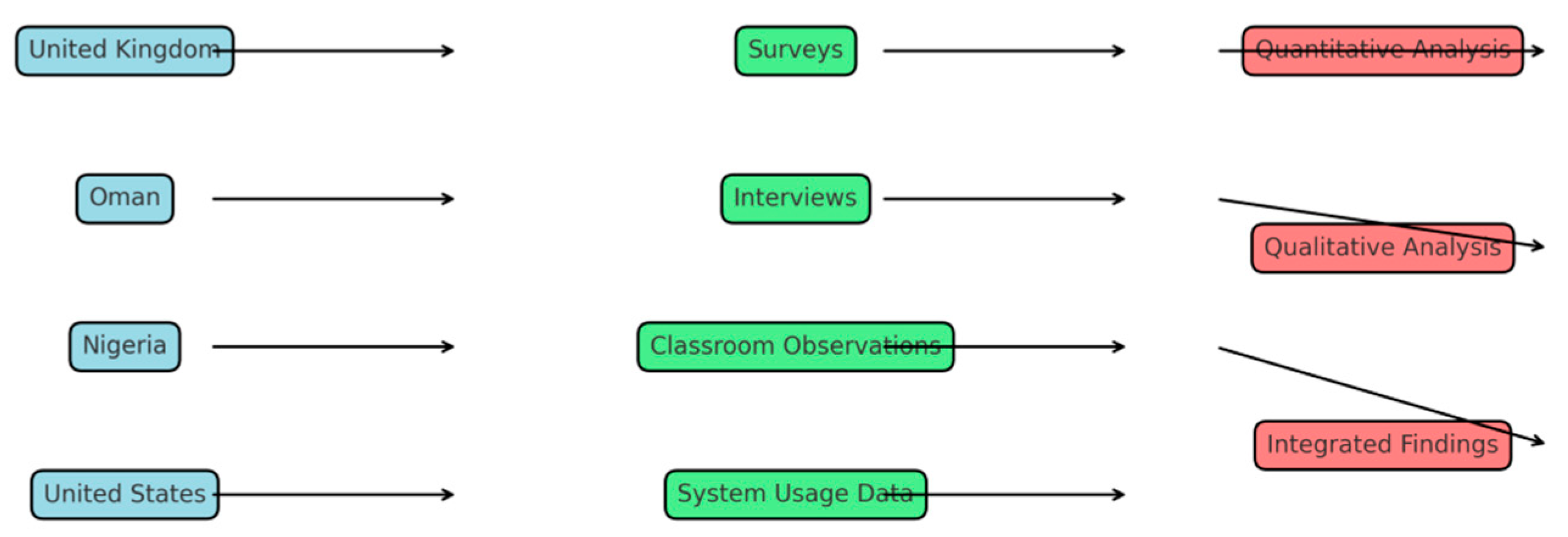

4.1. Research Design

4.2. Sample and Sites

4.3. Data Collection Methods

- Surveys: Administered to teachers to capture perceptions of Notegrade.ai’s ease of use, usefulness, and cultural acceptance.

- Semi-structured Interviews: Conducted with a subset of teachers to better understand their experiences, barriers, and fit with pedagogy.

- Classroom Observations: Independent observers monitor the fidelity of implementation and how the tool integrates into pedagogy.

- Data on System Use: Automatically recorded by Notegrade.ai (for instance, average time saved on lesson planning, rates of quiz generation, and frequency with which errors are corrected).

4.4. Variables and Measures

- Efficiency: Time spent preparing lessons, creating quizzes, and grading with Notegrade.ai, compared with doing the same tasks manually.

- Accuracy: Assessed via the error rate of automatic grading and the frequency of teacher overrides.

- Satisfaction: Likert-scale surveys of teacher and student satisfaction.

- Adaptability: Potential for customization and application across different contexts, assessed qualitatively through coding of interviews.
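The efficiency and accuracy measures above can be made concrete with a minimal sketch. It assumes that efficiency is expressed as the percentage reduction in task time relative to the manual baseline, and that accuracy is the share of AI-assigned grades a teacher left unchanged (no override). The function names and the sample values are hypothetical illustrations, not the study's instruments or data.

```python
def time_reduction_pct(manual_minutes: float, assisted_minutes: float) -> float:
    """Percentage of time saved relative to the manual baseline."""
    if manual_minutes <= 0:
        raise ValueError("manual_minutes must be positive")
    return 100.0 * (manual_minutes - assisted_minutes) / manual_minutes


def grading_accuracy_pct(ai_grades: list[float],
                         final_grades: list[float],
                         tolerance: float = 0.0) -> float:
    """Share of AI grades the teacher did not override (within a tolerance)."""
    if len(ai_grades) != len(final_grades) or not ai_grades:
        raise ValueError("grade lists must be non-empty and of equal length")
    unchanged = sum(abs(a - f) <= tolerance
                    for a, f in zip(ai_grades, final_grades))
    return 100.0 * unchanged / len(ai_grades)


# Hypothetical values for illustration only (not taken from the study).
print(time_reduction_pct(60, 36))                          # 40.0
print(grading_accuracy_pct([8, 7, 9, 6], [8, 7, 9, 5]))    # 75.0
```

Treating every teacher override as an error is a simplifying assumption; an override may also reflect a legitimate difference in professional judgment, which the qualitative data would need to disentangle.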

4.5. Data Analysis Techniques

- Quantitative: Descriptive statistics, regression analyses, and ANOVAs to examine differences by country and by school type, conducted in SPSS and R. Scale reliability is assessed using Cronbach’s alpha.

- Qualitative: Thematic analysis (Braun & Clarke, 2006) of interviews and classroom observations. The coding process is cyclical and includes checks for intercoder reliability to maximize robustness.

- Integration: The quantitative efficiency and accuracy metrics are mapped onto the satisfaction and adaptability themes identified in the qualitative findings.
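Two of the quantitative techniques named above, Cronbach’s alpha and one-way ANOVA, can be sketched in a few lines. The source states that the analyses were run in SPSS and R, so the Python below is only an illustration of the underlying formulas using the standard library; the function names and the toy data are hypothetical.

```python
from statistics import mean, variance


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha; items[i][j] is respondent j's score on item i."""
    k = len(items)
    if k < 2:
        raise ValueError("at least two items are required")
    # Total scale score per respondent.
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def one_way_anova_f(groups: list[list[float]]) -> float:
    """F statistic comparing group means (e.g., one group per country)."""
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = n_total - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)


# Toy Likert responses: 3 items answered by 4 respondents (hypothetical).
likert = [[4, 5, 3, 4], [4, 4, 3, 5], [5, 5, 2, 4]]
print(round(cronbach_alpha(likert), 3))                           # 0.818

# Toy scores for three sites (hypothetical).
print(one_way_anova_f([[1, 2, 3], [2, 3, 4], [3, 4, 5]]))         # 3.0
```

The F statistic alone does not give a p-value; that requires the F distribution with the corresponding degrees of freedom, which statistical packages such as R report directly.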

5. Results

5.1. Time Spent Preparing Lessons

5.2. Quiz Generation

5.3. Grading Accuracy and Speed

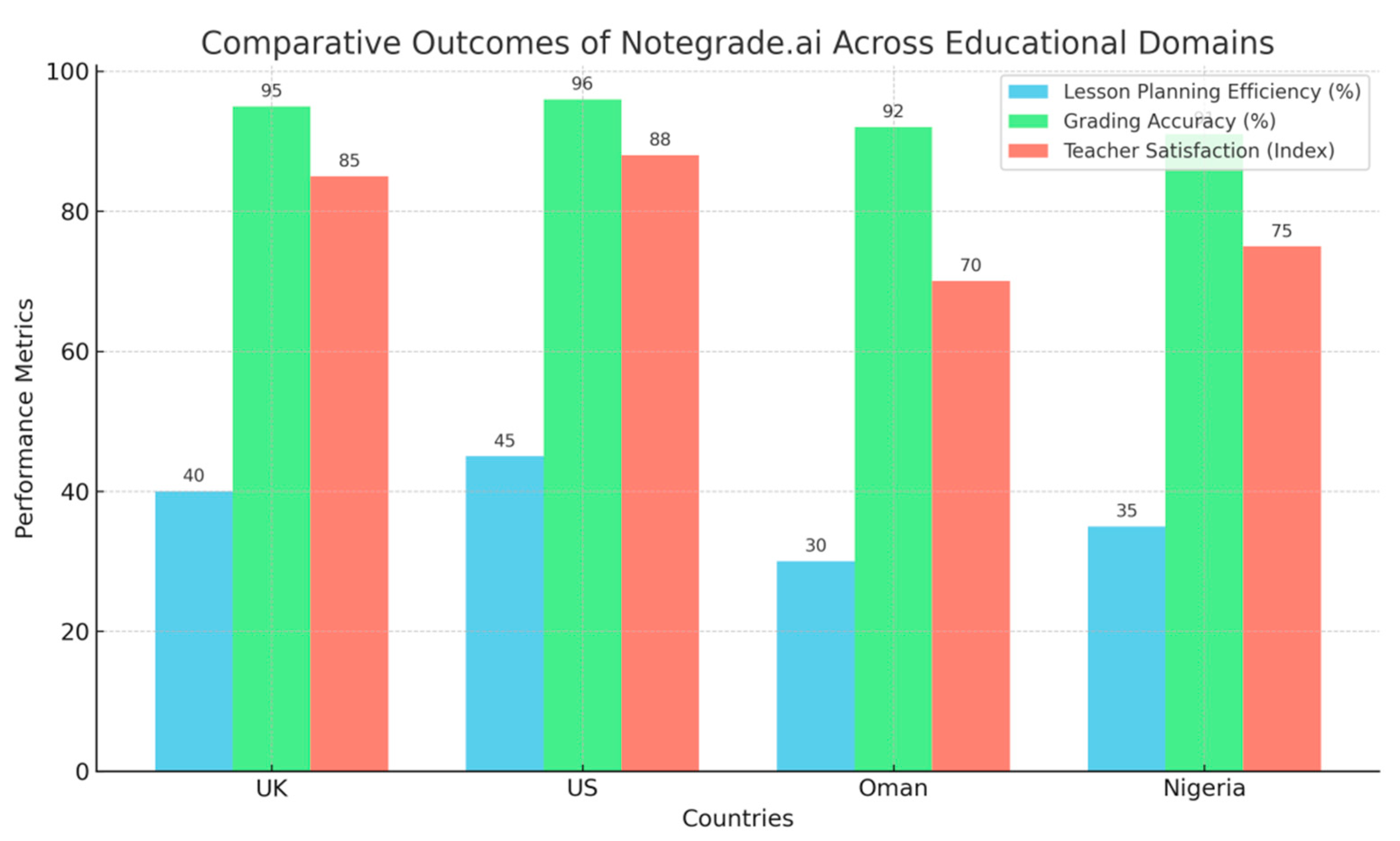

5.4. Cross-National Variations

- UK & US: High infrastructural readiness and policy commitment to EdTech made adoption less disruptive.

- Oman: Policy support was present but was tempered by teachers’ skepticism about AI’s capacity for independent thought.

- Nigeria: Efficiency gains were limited by a lack of infrastructure (connectivity, devices), although teachers had positive attitudes.

| Domain | UK | US | Oman | Nigeria |
| --- | --- | --- | --- | --- |
| Lesson Planning Efficiency | 40% time reduction; strong curriculum alignment | 45% time reduction; standards alignment | 30% reduction; localized adaptation required | 35% reduction; digital literacy constraints |
| Quiz Generation | Diverse formats; high engagement | Strong alignment with outcomes | Limited engagement; cultural adaptation issues | Lower engagement; contextual mismatch |
| Grading Accuracy | 95% accuracy; high fairness perception | 96% accuracy; positive trust | 92% accuracy; transparency concerns | 91% accuracy; rubric interpretability issues |
| Adoption Influences | Policy-driven adoption; high digital readiness | Institutional support; strong infrastructure | Cultural skepticism; moderate infrastructure | Infrastructure gaps; positive teacher attitudes |
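As a quick arithmetic check on the table above, the sketch below summarizes the reported time-reduction and grading-accuracy percentages across the four sites. The figures are transcribed from the table; the mean is unweighted because per-country sample sizes are not given here, so it should be read as a rough summary rather than a pooled estimate. The `summarize` helper is a hypothetical name.

```python
from statistics import mean

# Percentages transcribed from the cross-national results table.
time_reduction_pct = {"UK": 40, "US": 45, "Oman": 30, "Nigeria": 35}
grading_accuracy_pct = {"UK": 95, "US": 96, "Oman": 92, "Nigeria": 91}


def summarize(by_country: dict[str, float]) -> dict[str, float]:
    """Range and unweighted mean of a per-country metric."""
    values = list(by_country.values())
    return {"min": min(values), "max": max(values), "mean": mean(values)}


print(summarize(time_reduction_pct))    # {'min': 30, 'max': 45, 'mean': 37.5}
print(summarize(grading_accuracy_pct))  # {'min': 91, 'max': 96, 'mean': 93.5}
```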

6. Discussion

6.1. Interpretation of Results

6.2. Strengths and Limitations of Notegrade.ai

| Dimension | Strengths | Limitations |
| --- | --- | --- |
| Lesson Planning | 30–45% reduction in prep time; curriculum alignment | Requires localization for cultural fit |
| Quiz Generation | Diverse formats; constructive alignment | Lower engagement in non-Western contexts |
| Grading | High accuracy (>90%); reduced teacher workload | Struggles with open-ended / subjective tasks |
| Adoption Conditions | Strong in digitally advanced contexts | Infrastructure gaps limit benefits |
| Teacher Experience | Increases satisfaction where training provided | Skepticism without professional development |

6.3. Implications for Teachers, Policy Makers, and Developers

6.4. Ethics Issues of Bias and Fairness, and Data Privacy Were Difficult to Avoid

7. Conclusion

References

- Air, T. M. (2025). Ethical AI in education: Addressing bias, privacy, and equity in AI-driven learning systems. Journal of AI Integration in Education, 2(1).

- Al-Abdullatif, A. M. (2024). Modeling teachers’ acceptance of generative artificial intelligence use in higher education: The role of AI literacy, intelligent TPACK, and perceived trust. Education Sciences, 14(11), 1209.

- Celik, S. (2023). Acceptance of pre-service teachers towards artificial intelligence (AI): The role of AI-related teacher training courses and AI-TPACK within the Technology Acceptance Model. Education Sciences, 15(2), 167.

- Malik, A., Wu, M., Vasavada, V., Song, J., Coots, M., Mitchell, J., Goodman, N., & Piech, C. (2019). Generative grading: Near human-level accuracy for automated feedback on richly structured problems. arXiv.

- Naseri, R. N., & Abdullah, M. S. (2025). Understanding AI technology adoption in educational settings: A review of theoretical frameworks and their applications. Information Management and Business Review, 16(3).

- Tan, L. Y., Hu, S., Yeo, D. J., & Cheong, K. H. (2025). A comprehensive review on automated grading systems in STEM using AI techniques. Mathematics, 13(17), 2828.

- Teo, T., & Noyes, J. (2011). An assessment of the influence of perceived enjoyment and attitude on the intention to use technology among pre-service teachers: A structural equation modeling approach. Computers & Education, 57(2), 1645–1653.

- Teo, T. (2012). Examining the intention to use technology among pre-service teachers: An integration of the Technology Acceptance Model and Theory of Planned Behavior. Interactive Learning Environments, 20(1), 3–18.

- Tiwari, C. K., Bhat, M. A., Khan, S. T., Subramaniam, R., & Khan, M. A. I. (2024). What drives students toward ChatGPT? An investigation of the factors influencing adoption and usage of ChatGPT. Interactive Technology and Smart Education, 21(3), 333–355.

- Venkatesh, V. (2000). Determinants of perceived ease of use: Integrating control, intrinsic motivation, and emotion into the technology acceptance model. Information Systems Research, 11(4), 342–365.

- Venkatesh, V., & Davis, F. D. (1996). A model of the antecedents of perceived ease of use: Development and test. Decision Sciences, 27(3), 451–481.

- Venkatesh, V., & Davis, F. D. (2000). A theoretical extension of the technology acceptance model: Four longitudinal field studies. Management Science, 46(2), 186–204.

- Venkatesh, V., Thong, J. Y. L., & Xu, X. (2012). Consumer acceptance and use of information technology: Extending the unified theory of acceptance and use of technology. MIS Quarterly, 36(1), 157–178.

- Wullyani, N., et al. (2024). Knowledge and use of AI tools in completing tasks, frequency, ease, desire to use AI tools according to TAM. (Study details).

- Şahin, Ö., et al. (2024). Factors affecting intention to use assistive technologies: An extended TAM approach. (Study details).

- Belda-Medina, J., & Kokošková, M. (2024). Critical skills and perceptions of pre-service teachers about using ChatGPT. (Study details).

- Viberg, O., Čukurova, M., Feldman-Maggor, Y., Alexandron, G., Shirai, S., Kanemune, S., ... & Kizilcec, R. F. (2023). What explains teachers’ trust of AI in education across six countries? arXiv.

- Böl, Ö., & Kizilcec, R. F. (2025). The factors affecting teachers’ adoption of AI technologies: A unified model of external and internal determinants. Education and Information Technologies.

- Walter, Y. (2024). Embracing the future of artificial intelligence in the classroom: The relevance of AI literacy, prompt engineering, and critical thinking in modern education. International Journal of Educational Technology in Higher Education, 21(1), 15.

- Wang, B., Rau, P.-L. P., & Yuan, T. (2023). Measuring user competence in using artificial intelligence: Validity and reliability of artificial intelligence literacy scale. Behaviour & Information Technology, 42(9), 1324–1337.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).