Submitted: 07 September 2025

Posted: 09 September 2025


Abstract

Keywords:

1. Introduction

- A. Topology of Review

2. Literature Review

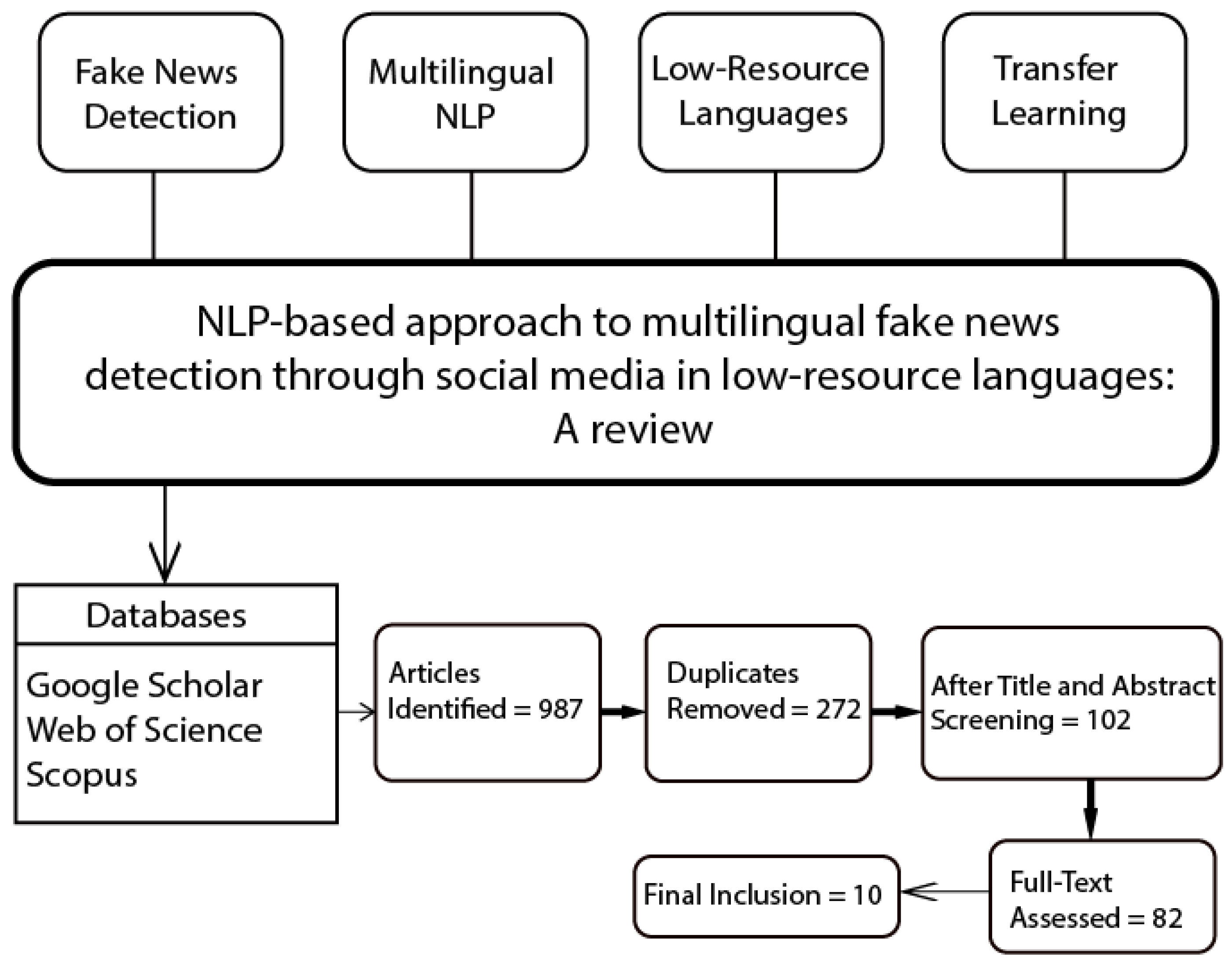

3. Methodology

- A. Collection of Articles

- B. Search Strategy

- Only studies published between 2015 and 2025 were included to reflect recent research trends.

- Preference was given to papers that discussed technical aspects such as feature extraction, model architecture, data preprocessing, and transfer learning techniques for cross-linguistic adaptation.

- Preference was also given to papers that reported results using performance metrics such as accuracy, precision, recall, F1-score, and AUC.

- Only peer-reviewed journal articles and conference papers were included to maintain the integrity of the review. Editorials, posters, and technical reports were excluded because they were not considered peer-reviewed sources.
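The performance metrics named in the selection criteria above follow standard definitions based on confusion-matrix counts. The sketch below is illustrative only: the names `ConfusionCounts` and `classification_metrics` and the example counts are assumptions made for this illustration, not values taken from any reviewed study. AUC is omitted because it requires ranked prediction scores rather than counts.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Binary confusion-matrix counts, with 'fake' treated as the positive class."""
    tp: int  # fake items predicted as fake
    fp: int  # real items predicted as fake
    fn: int  # fake items predicted as real
    tn: int  # real items predicted as real


def safe_div(num: float, den: float) -> float:
    """Return num / den, or 0.0 when the denominator is zero."""
    return num / den if den else 0.0


def classification_metrics(c: ConfusionCounts) -> dict:
    """Compute accuracy, precision, recall, and F1-score from the counts."""
    accuracy = safe_div(c.tp + c.tn, c.tp + c.fp + c.fn + c.tn)
    precision = safe_div(c.tp, c.tp + c.fp)
    recall = safe_div(c.tp, c.tp + c.fn)
    # F1 is the harmonic mean of precision and recall.
    f1 = safe_div(2 * precision * recall, precision + recall)
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


if __name__ == "__main__":
    # Hypothetical counts chosen only to illustrate the formulas.
    example = ConfusionCounts(tp=40, fp=10, fn=20, tn=30)
    for name, value in classification_metrics(example).items():
        print(f"{name}: {value:.3f}")
```

Reporting precision and recall alongside accuracy matters when the fake and real classes are imbalanced, since accuracy alone can look high for a model that rarely predicts the minority class.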

4. Research Findings

- A. NLP Techniques for Fake News Detection

- a) Text Classification

- b) Sentiment Analysis

- c) Named Entity Recognition (NER)

- B.

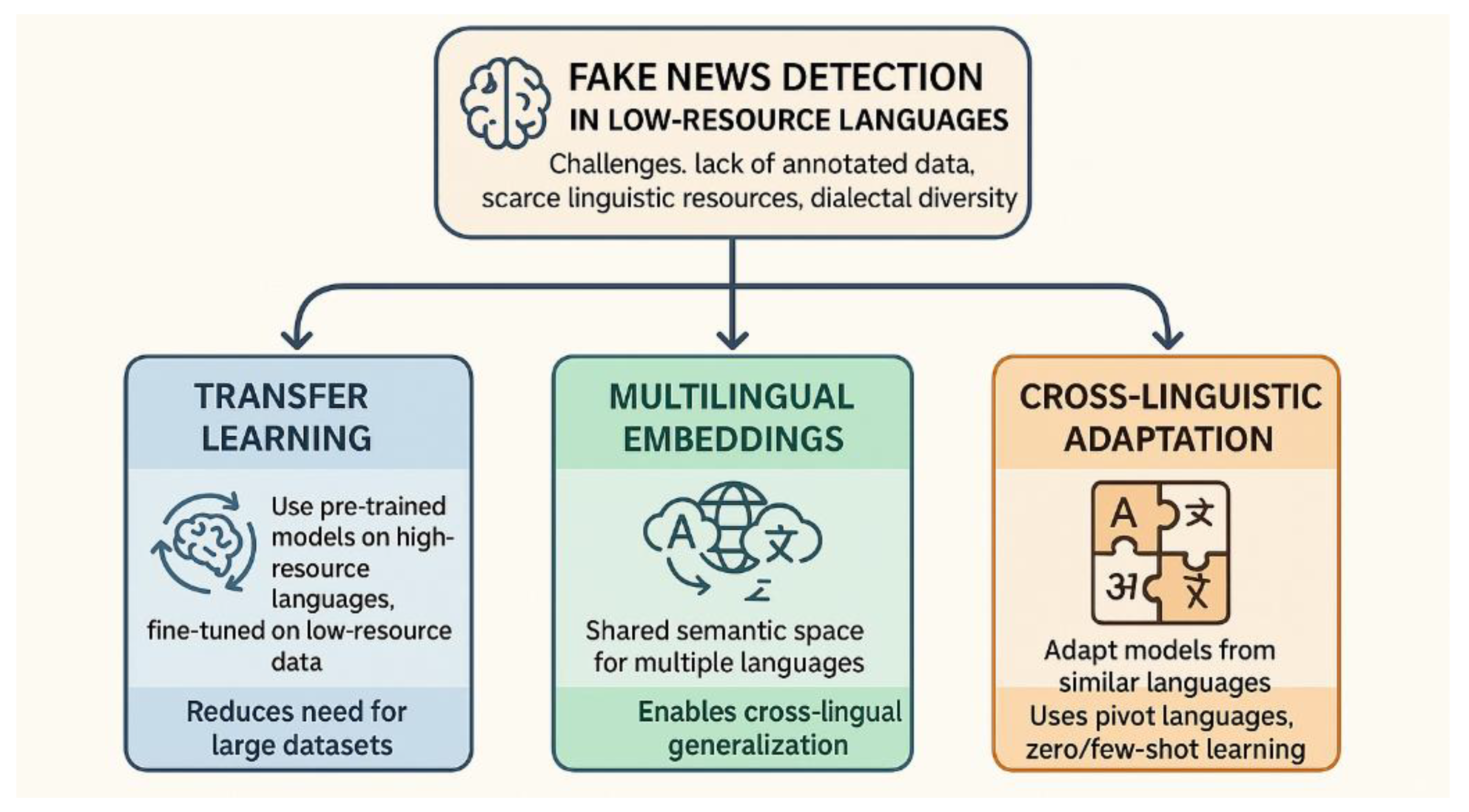

- State-of-the-ArtApproaches in Low-Resource Languages

- a) Transfer Learning

- b) Multilingual Embeddings

- c) Cross-linguistic Adaptation

- C. Critical Analysis of Existing Models

- a) Strengths

- i. Cross-Linguistic Generalization

- ii. Scalability and Flexibility

- iii. Improved Textual Feature Extraction

- iv. Enhanced Model Robustness

- b) Limitations

- i. Data Scarcity and Quality

- ii. Limited Cultural Context Understanding

- iii. Inadequate Adaptation to Low-Resource Languages

- iv. High Computational Requirements

- c) Opportunities for Improvement

- i. Development of Multilingual Datasets

- ii. Incorporation of Cultural and Contextual Knowledge

- iii. Optimization for Low-Resource Environments

5. Challenges

- A. Scarcity of Annotated Data

- B. Linguistic Diversity

- C. Model Adaptability

| Challenge | Description | Implications | Potential Approaches |
|---|---|---|---|
| Scarcity of Annotated Data | Limited availability of manually labeled datasets in low-resource languages due to high costs and time required for annotation. | Hinders model training and validation; leads to poor performance and low accuracy. | Use of transfer learning, data augmentation, and unsupervised learning methods. |
| Linguistic Diversity | High variation in dialects, orthography, syntax, and presence of primarily oral languages. | Models trained on one dialect may not generalize to others; limited written data hinders the development of these models. | Develop dialect-specific resources; leverage multilingual embeddings and speech-to-text technologies. |
| Model Adaptability | Difficulty in adapting NLP models trained on high-resource languages to low-resource contexts. | Reduced performance and generalization; failure to capture unique linguistic features. | Design lightweight, adaptable architectures; train models on multilingual datasets; integrate meta-learning. |
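Data augmentation, listed in the table as one response to annotation scarcity, can take many forms. The sketch below shows one very simple token-level variant (random swap and random deletion) using only the Python standard library. It is an assumption made for illustration and not the method of any specific reviewed paper; the function names, the deletion probability, and the whitespace tokenization are all hypothetical choices, and whitespace splitting in particular does not suit languages without space-delimited words.

```python
import random


def random_swap(tokens, n_swaps, rng):
    """Return a copy of tokens with n_swaps randomly chosen position pairs exchanged."""
    out = list(tokens)
    if len(out) < 2:
        return out
    for _ in range(n_swaps):
        i, j = rng.sample(range(len(out)), 2)
        out[i], out[j] = out[j], out[i]
    return out


def random_deletion(tokens, p, rng):
    """Drop each token with probability p, always keeping at least one token."""
    if not tokens:
        return []
    kept = [t for t in tokens if rng.random() >= p]
    return kept if kept else [rng.choice(tokens)]


def augment(sentence, n_copies=2, seed=0):
    """Create n_copies perturbed variants of a sentence; the label stays unchanged."""
    rng = random.Random(seed)  # seeded so the variants are reproducible
    tokens = sentence.split()  # assumption: whitespace-delimited tokens
    variants = []
    for _ in range(n_copies):
        swapped = random_swap(tokens, n_swaps=1, rng=rng)
        variants.append(" ".join(random_deletion(swapped, p=0.1, rng=rng)))
    return variants


if __name__ == "__main__":
    # Hypothetical headline used only to demonstrate the calls.
    for variant in augment("officials deny the viral claim about the vaccine", n_copies=3):
        print(variant)
```

Perturbations of this kind can change the meaning of short texts, so augmented samples are usually spot-checked before they are added to a training set.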

6. Future Directions

7. Conclusions

References

- Dr. B. Dhiman, “The Rise and Impact of Misinformation and Fake News on Digital Youth: A Critical Review,” SSRN Electronic Journal, 2023. [CrossRef]

- De, D. Bandyopadhyay, B. Gain, and A. Ekbal, “A Transformer-Based Approach to Multilingual Fake News Detection in Low-Resource Languages,” ACM Transactions on Asian and Low-Resource Language Information Processing, vol. 21, no. 1, 2022. [CrossRef]

- H. Jand, “Natural Language Processing with Speech Recognition,” International Journal of Advanced Research Trends in Engineering and Technology (IJARTET), 2018.

- Bhardwaj, P. Khanna, S. Kumar, and Pragya, “Generative Model for NLP Applications based on Component Extraction,” in Procedia Computer Science, 2020. [CrossRef]

- M. Tsai, “Stylometric Fake News Detection Based on Natural Language Processing Using Named Entity Recognition: In-Domain and Cross-Domain Analysis,” Electronics (Switzerland), vol. 12, no. 17, 2023. [CrossRef]

- R. Mohawesh, X. Liu, H. M. Arini, Y. Wu, and H. Yin, “Semantic graph based topic modelling framework for multilingual fake news detection,” AI Open, vol. 4, 2023. [CrossRef]

- Y. Zhang et al., “Stance-level Sarcasm Detection with BERT and Stance-centered Graph Attention Networks,” ACM Trans Internet Technol, vol. 23, no. 2, 2023. [CrossRef]

- K. Sharifani, M. Amini, Y. Akbari, and J. A. Godarzi, “Operating Machine Learning across Natural Language Processing Techniques for Improvement of Fabricated News Model,” International Journal of Science and Information System Research, vol. 12, no. 9, 2022.

- M. Q. Alnabhan, P. Branco, and M. Alnabhan, “Fake News Detection Using Deep Learning: A Systematic Literature Review.” [CrossRef]

- J. Alghamdi, Y. Lin, and S. Luo, “Fake news detection in low-resource languages: A novel hybrid summarization approach,” Knowl Based Syst, vol. 296, Jul. 2024. [CrossRef]

- M. J. G. Fagundes, N. T. Roman, and L. A. Digiampietri, “The Use of Syntactic Information in fake news detection: A Systematic Review,” SBC Reviews on Computer Science, vol. 4, no. 1, pp. 1–10, Mar. 2024. [CrossRef]

- P. Krasadakis, E. Sakkopoulos, and V. S. Verykios, “A Survey on Challenges and Advances in Natural Language Processing with a Focus on Legal Informatics and Low-Resource Languages,” Feb. 01, 2024, Multidisciplinary Digital Publishing Institute (MDPI). [CrossRef]

- P. Meesad, “Thai Fake News Detection Based on Information Retrieval, Natural Language Processing and Machine Learning,” SN Comput Sci, vol. 2, no. 6, Nov. 2021. [CrossRef]

- K. Agras and B. Atay, “A Novel Transformer-Based Deep Learning Pipeline for Multilingual Fake News Detection,” 2024. [CrossRef]

- R. F. Cekinel, P. Karagoz, and C. Coltekin, “Cross-Lingual Learning vs. Low-Resource Fine-Tuning: A Case Study with Fact-Checking in Turkish,” Mar. 2024, [Online]. Available: http://arxiv.org/abs/2403. 0041.

- S. Han, “Cross-lingual Transfer Learning for Fake News Detector in a Low-Resource Language,” Aug. 2022, [Online]. Available: http://arxiv.org/abs/2208. 1248.

- M. Zovikoğlu and U. Çetin, “Detecting misinformation on social networks with natural language processing,” 2024. [Online]. Available: https://dergipark.org.

- Ziyaden, A. Yelenov, F. Hajiyev, S. Rustamov, and A. Pak, “Text data augmentation and pre-trained Language Model for enhancing text classification of low-resource languages,” PeerJ Comput Sci, vol. 10, 2024. [CrossRef]

- P. Mookdarsanit and L. Mookdarsanit, “The COVID-19 fake news detection in Thai social texts,” Bulletin of Electrical Engineering and Informatics, vol. 10, no. 2, 2021. [CrossRef]

- F. Farhangian, R. M. O. Cruz, and G. D. C. Cavalcanti, “Fake news detection: Taxonomy and comparative study,” Information Fusion, vol. 103, 2024. [CrossRef]

- B. Hu, Z. Mao, and Y. Zhang, “An overview of fake news detection: From a new perspective,” 2024. [CrossRef]

- J. A. Nasir and Z. U. Din, “Syntactic structured framework for resolving reflexive anaphora in Urdu discourse using multilingual NLP,” KSII Transactions on Internet and Information Systems, vol. 15, no. 4, 2021. [CrossRef]

- S. Ranathunga, E. S. A. Lee, M. Prifti Skenduli, R. Shekhar, M. Alam, and R. Kaur, “Neural Machine Translation for Low-resource Languages: A Survey,” ACM Comput Surv, vol. 55, no. 11, 2023. [CrossRef]

- B. Ramesh Reddy, T. Tejaswi, P. Naveen, J. Giresh, and B. Vamsi, “Optimising the Detection of Fake News in Multilingual Exposition using Machine Learning Techniques,” in Proceedings of the 7th International Conference on Intelligent Computing and Control Systems, ICICCS 2023, 2023. [CrossRef]

- R. Wijayanti, M. L. Khodra, K. Surendro, and D. H. Widyantoro, “Learning bilingual word embedding for automatic text summarization in low resource language,” Journal of King Saud University - Computer and Information Sciences, vol. 35, no. 4, 2023. [CrossRef]

- T. Mahmud, M. Ptaszynski, J. Eronen, and F. Masui, “Cyberbullying detection for low-resource languages and dialects: Review of the state of the art,” Inf Process Manag, vol. 60, no. 5, 2023. [CrossRef]

- S. Na, P. Na, T. Na, and Dr. Z. Nasim, “Verify: Breakthrough accuracy in the Urdu fake news detection using Text classification,” in Proceedings of the 36th Pacific Asia Conference on Language, Information and Computation, 2022.

- S. A. F. Azhar, F. Hidayat, M. H. Azfarezat, G. Z. Nabiilah, and Rojali, “Efficiency of Fake News Detection with Text Classification Using Natural Language Processing,” J Theor Appl Inf Technol, vol. 101, no. 22, 2023.

- Y. Yang et al., “Multilingual universal sentence encoder for semantic retrieval,” in Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2020. [CrossRef]

- W. Cuenca, C. González-Fernández, A. Fernández-Isabel, I. Martín de Diego, and A. G. Martín, “Combining Conceptual Graphs and Sentiment Analysis for Fake News Detection,” in Studies in Computational Intelligence, vol. 955, 2022. [CrossRef]

- B. Mohamed, H. Haytam, and F. Abdelhadi, “Applying Fuzzy Logic and Neural Network in Sentiment Analysis for Fake News Detection: Case of Covid-19,” in Studies in Computational Intelligence, vol. 1001, 2022. [CrossRef]

- H. Taherdoost and M. Madanchian, “Artificial Intelligence and Sentiment Analysis: A Review in Competitive Research,” 2023. [CrossRef]

- Ligthart, C. Catal, and B. Tekinerdogan, “Systematic reviews in sentiment analysis: a tertiary study,” Artif Intell Rev, vol. 54, no. 7, 2021. [CrossRef]

- J. Li, A. Sun, J. Han, and C. Li, “A Survey on Deep Learning for Named Entity Recognition,” IEEE Trans Knowl Data Eng, vol. 34, no. 1, 2022. [CrossRef]

- M. Ehrmann, A. Hamdi, E. L. Pontes, M. Romanello, and A. Doucet, “Named Entity Recognition and Classification in Historical Documents: A Survey,” ACM Comput Surv, vol. 56, no. 2, 2023. [CrossRef]

- Budi and R. R. Suryono, “Application of named entity recognition method for Indonesian datasets: a review,” 2023. [CrossRef]

- Z. Sun and X. Li, “Named Entity Recognition Model Based on Feature Fusion,” Information (Switzerland), vol. 14, no. 2, 2023. [CrossRef]

- H. Wang, L. Zhou, J. Duan, and L. He, “Cross-Lingual Named Entity Recognition Based on Attention and Adversarial Training,” Applied Sciences (Switzerland), vol. 13, no. 4, 2023. [CrossRef]

- M. S. Hernández, “Beliefs and attitudes of Canarians towards the Chilean linguistic variety,” Lenguas Modernas, no. 62, pp. 183–209, 2023. [CrossRef]

- M. Iman, H. R. Arabnia, and K. Rasheed, “A Review of Deep Transfer Learning and Recent Advancements,” 2023. [CrossRef]

- Z. Zhu, K. Lin, A. K. Jain, and J. Zhou, “Transfer Learning in Deep Reinforcement Learning: A Survey,” IEEE Trans Pattern Anal Mach Intell, vol. 45, no. 11, 2023. [CrossRef]

- K. Hämmerl, J. Libovický, and A. Fraser, “Combining Static and Contextualised Multilingual Embeddings,” in Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2022. [CrossRef]

- H. Licht, “Cross-Lingual Classification of Political Texts Using Multilingual Sentence Embeddings,” Political Analysis, vol. 31, no. 3, 2023. [CrossRef]

- B. Dhyani, “Transfer Learning in Natural Language Processing: A Survey,” Mathematical Statistician and Engineering Applications, vol. 70, no. 1, 2021. [CrossRef]

- B. Palani and S. Elango, “CTrL-FND: content-based transfer learning approach for fake news detection on social media,” International Journal of System Assurance Engineering and Management, vol. 14, no. 3, 2023. [CrossRef]

- M. Ghayoomi and M. Mousavian, “Deep transfer learning for COVID-19 fake news detection in Persian,” Expert Syst, vol. 39, no. 8, 2022. [CrossRef]

- S. Schwarz, A. Theophilo, and A. Rocha, “Emet: Embeddings from multilingual-encoder transformer for fake news detection,” in ICASSP, IEEE International Conference on Acoustics, Speech and Signal Processing - Proceedings, 2020. [CrossRef]

- S. Kasim, “One True Pairing: Evaluating Effective Language Pairings for Fake News Detection Employing Zero-Shot Cross-Lingual Transfer,” in Communications in Computer and Information Science, 2023. [CrossRef]

- X. Han, W. Zhao, N. Ding, Z. Liu, and M. Sun, “PTR: Prompt Tuning with Rules for Text Classification,” AI Open, vol. 3, 2022. [CrossRef]

- Yang, X. Hu, G. Xiao, and Y. Shen, “A Survey of Knowledge Enhanced Pre-trained Language Models,” ACM Transactions on Asian and Low-Resource Language Information Processing, 2024. [CrossRef]

- J. Howard and S. Ruder, “Universal language model fine-tuning for text classification,” in ACL 2018 - 56th Annual Meeting of the Association for Computational Linguistics, Proceedings of the Conference (Long Papers), 2018. [CrossRef]

- S. Gururaja, R. Dutt, T. Liao, and C. Rosé, “Linguistic representations for fewer-shot relation extraction across domains,” in Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2023. [CrossRef]

- Z. Wang and D. Hershcovich, “On Evaluating Multilingual Compositional Generalization with Translated Datasets,” in Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2023. [CrossRef]

- Farokhian, V. Rafe, and H. Veisi, “Fake news detection using dual BERT deep neural networks,” Multimed Tools Appl, vol. 83, no. 15, 2024. [CrossRef]

- J. Alghamdi, Y. Lin, and S. Luo, “Towards COVID-19 fake news detection using transformer-based models,” Knowl Based Syst, vol. 274, 2023. [CrossRef]

- G. Kuling, B. Curpen, and A. L. Martel, “BI-RADS BERT and Using Section Segmentation to Understand Radiology Reports,” J Imaging, vol. 8, no. 5, 2022. [CrossRef]

- Y. Wang et al., “A comparison of word embeddings for the biomedical natural language processing,” J Biomed Inform, vol. 87, 2018. [CrossRef]

- X. Shi, M. Hu, J. Deng, F. Ren, P. Shi, and J. Yang, “Integration of Multi-Branch GCNs Enhancing Aspect Sentiment Triplet Extraction,” Applied Sciences (Switzerland), vol. 13, no. 7, 2023. [CrossRef]

- Y. Zhao, T. Xue, and G. Liu, “Research on the robustness of neural machine translation systems in word order perturbation,” Chinese Journal of Network and Information Security, vol. 9, no. 5, 2023. [CrossRef]

- Mohammed and R. Prasad, “Building lexicon-based sentiment analysis model for low-resource languages,” MethodsX, vol. 11, 2023. [CrossRef]

- Haq, W. Qiu, J. Guo, and P. Tang, “NLPashto: NLP Toolkit for Low-resource Pashto Language,” International Journal of Advanced Computer Science and Applications, vol. 14, no. 6, 2023. [CrossRef]

- Z. Zeng and S. Bhat, “Idiomatic expression identification using semantic compatibility,” Trans Assoc Comput Linguist, vol. 9, 2021. [CrossRef]

- Seema Singh and Dr. Piyush Pratap Singh, “Transitive and Intransitive Verb Analysis for Idiomatic Expression Understanding: An NLP-based Framework,” International Journal of Advanced Research in Science, Communication and Technology, 2023. [CrossRef]

- C. Raffel et al., “Exploring the limits of transfer learning with a unified text-to-text transformer,” Journal of Machine Learning Research, vol. 21, 2020.

- R. Careem, G. Johar, and A. Khatibi, “Deep neural networks optimization for resource-constrained environments: techniques and models,” Indonesian Journal of Electrical Engineering and Computer Science, vol. 33, no. 3, 2024. [CrossRef]

- J. Kreutzer et al., “Quality at a Glance: An Audit of Web-Crawled Multilingual Datasets,” Trans Assoc Comput Linguist, vol. 10, 2022. [CrossRef]

- C. Fuchs, “Cultural and contextual affordances in language MOOCs: Student perspectives,” 2020. [CrossRef]

- Wang, M. Feng, B. Zhou, B. Xiang, and S. Mahadevan, “Efficient hyper-parameter optimization for NLP applications,” in Conference Proceedings - EMNLP 2015: Conference on Empirical Methods in Natural Language Processing, 2015. [CrossRef]

- D. Dementieva and A. Panchenko, “Fake news detection using multilingual evidence,” in Proceedings - 2020 IEEE 7th International Conference on Data Science and Advanced Analytics, DSAA 2020, 2020. [CrossRef]

- Z. Tan et al., “Large Language Models for Data Annotation and Synthesis: A Survey,” Dec. 2024, [Online]. Available: http://arxiv.org/abs/2402. 1344.

- L. Alzubaidi et al., “A survey on deep learning tools dealing with data scarcity: definitions, challenges, solutions, tips, and applications,” J Big Data, vol. 10, no. 1, 2023. [CrossRef]

- P. Joshi, S. Santy, A. Budhiraja, K. Bali, and M. Choudhury, “The state and fate of linguistic diversity and inclusion in the NLP world,” in Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2020. [CrossRef]

- Edwards, Multilingualism: Understanding Linguistic Diversity. 2012. [CrossRef]

- Abdedaiem, A. H. Dahou, and M. A. Cheragui, “Fake News Detection in Low Resource Languages using SetFit Framework,” Inteligencia Artificial, vol. 26, no. 72, 2023. [CrossRef]

- E. Raja, B. Soni, and S. K. Borgohain, “Fake news detection in Dravidian languages using transfer learning with adaptive finetuning,” Eng Appl Artif Intell, vol. 126, 2023. [CrossRef]

- F. Gereme, W. Zhu, T. Ayall, and D. Alemu, “Combating fake news in ‘low-resource’ languages: Amharic fake news detection accompanied by resource crafting,” Information (Switzerland), vol. 12, no. 1, 2021. [CrossRef]

- B. Zoph, D. Yuret, J. May, and K. Knight, “Transfer learning for low-resource neural machine translation,” in EMNLP 2016 - Conference on Empirical Methods in Natural Language Processing, Proceedings, 2016. [CrossRef]

- Y. Chen et al., “Cross-modal Ambiguity Learning for Multimodal Fake News Detection,” in WWW 2022 - Proceedings of the ACM Web Conference 2022, 2022. [CrossRef]

- Shu, S. Wang, and H. Liu, “Beyond news contents: The role of social context for fake news detection,” in WSDM 2019 - Proceedings of the 12th ACM International Conference on Web Search and Data Mining, 2019. [CrossRef]

- D. Dementieva, M. Kuimov, and A. Panchenko, “Multiverse: Multilingual Evidence for Fake News Detection,” J Imaging, vol. 9, no. 4, 2023. [CrossRef]

- Park and S. Chai, “Constructing a User-Centered Fake News Detection Model by Using Classification Algorithms in Machine Learning Techniques,” IEEE Access, vol. 11, 2023. [CrossRef]

- Y. Dou, K. Shu, C. Xia, P. S. Yu, and L. Sun, “User Preference-aware Fake News Detection,” in SIGIR 2021 - Proceedings of the 44th International ACM SIGIR Conference on Research and Development in Information Retrieval, 2021. [CrossRef]

| Statistic | Value |
|---|---|
| Number of languages spoken worldwide | Approximately 7,000 |
| Percentage of languages classified as low-resource | Over 90% |
| Number of people using social media globally | 4.7 billion (as of 2023) |
| Percentage of social media users in low-resource language regions | 25% |
| Increase in fake news incidents on social media (2019-2023) | 78% increase |
| Languages with substantial NLP resources | Fewer than 100 |
| Number of research papers on fake news detection in low-resource languages (2018-2023) | Less than 5% of all studies |

| Author, Year | Target Variable | Input | Architecture | Pre-Processing | Dataset | Outcome | Output Result |
|---|---|---|---|---|---|---|---|
| Alnabhan et al., 2023 | Fake News Detection | Social Media Text | CNN, BiLSTM, BERT, Hybrid Models | Data Augmentation, Class Balancing | 178 articles | Fake News Detection | High Accuracy with Class Imbalance Issues Addressed |
| Alghamdi et al., 2024 | Fake News Detection | Social Media Text | Hybrid Extractive-Abstractive Summarization | Domain Transfer, Data Reduction | Various Domains | Cross-Domain Transfer | Improved Model Versatility |
| Fagundes et al., 2024 | Fake News Detection | Social Media Text | Deep Syntactic Representations | Feature Combination | 20 papers | Syntactic Information Usage | Enhanced Detection with Deep Syntactic Features |
| Krasadakis et al., 2024 | Fake News Detection | Legal Documents | NER, EL, RelEx, Coref | Custom Dataset Challenges | Legal Texts | Legal Citation Management | Improved Multilingual NLP Applications |
| Meesad et al., 2021 | Fake News Detection in Thai | Thai News Articles | Naïve Bayes, Logistic Regression, LSTM | Word Segmentation | 41,448 samples | Fake News Detection | LSTM Model Most Accurate, Addressed Thai Language Challenges |
| Bahmanyar et al., 2024 | Fake News Detection in Multiple Languages | Multilingual Text | Transformer-based Models | Data Filtering, Zero-Shot Learning | 16 Languages | Multilingual Fake News Detection | 97.38% Accuracy, Cross-Lingual Transfer |
| Schmidt et al., 2024 | Fake News Detection | Statement Text | LLaMA Models (Various Sizes) | Stylistic Feature Tuning | Snopes and FCTR1000 Datasets | Fake News Detection | High Accuracy with Contextual Improvement |
| Abboud et al., 2022 | Fake News Detection in Low-Resource Languages | Multilingual Text | Cross-Lingual Transfer Learning | Machine-Translated Data | Various Low-Resource Languages | Low-Resource Fake News Detection | Improved Accuracy with Cross-Lingual Features |
| Masis et al., 2024 | Fake News Detection | News Titles | Logistic Regression | Title-Based Feature Extraction | 19,000 entries | Fake News Detection | Effective with Title-Only Input |
| Ziyaden et al., 2024 | Fake News Detection | Social Media Text | Text Augmentation Techniques | Back-Translation, Neural Network-Based | Various Languages | Enhanced Model Performance | Improved Accuracy with Augmentation Strategies |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).