Submitted: 22 August 2025

Posted: 22 August 2025


Abstract

Keywords:

1. Introduction

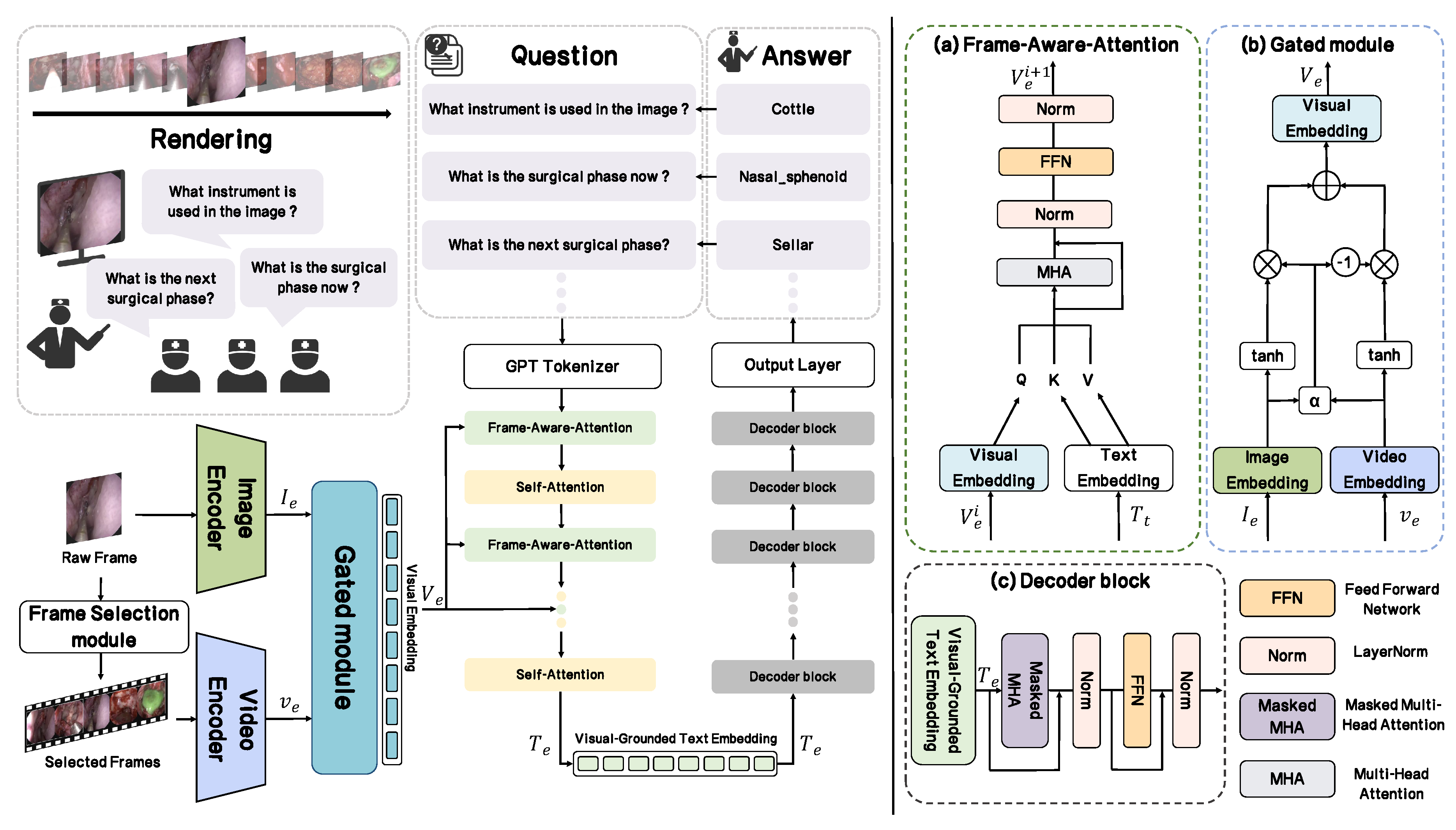

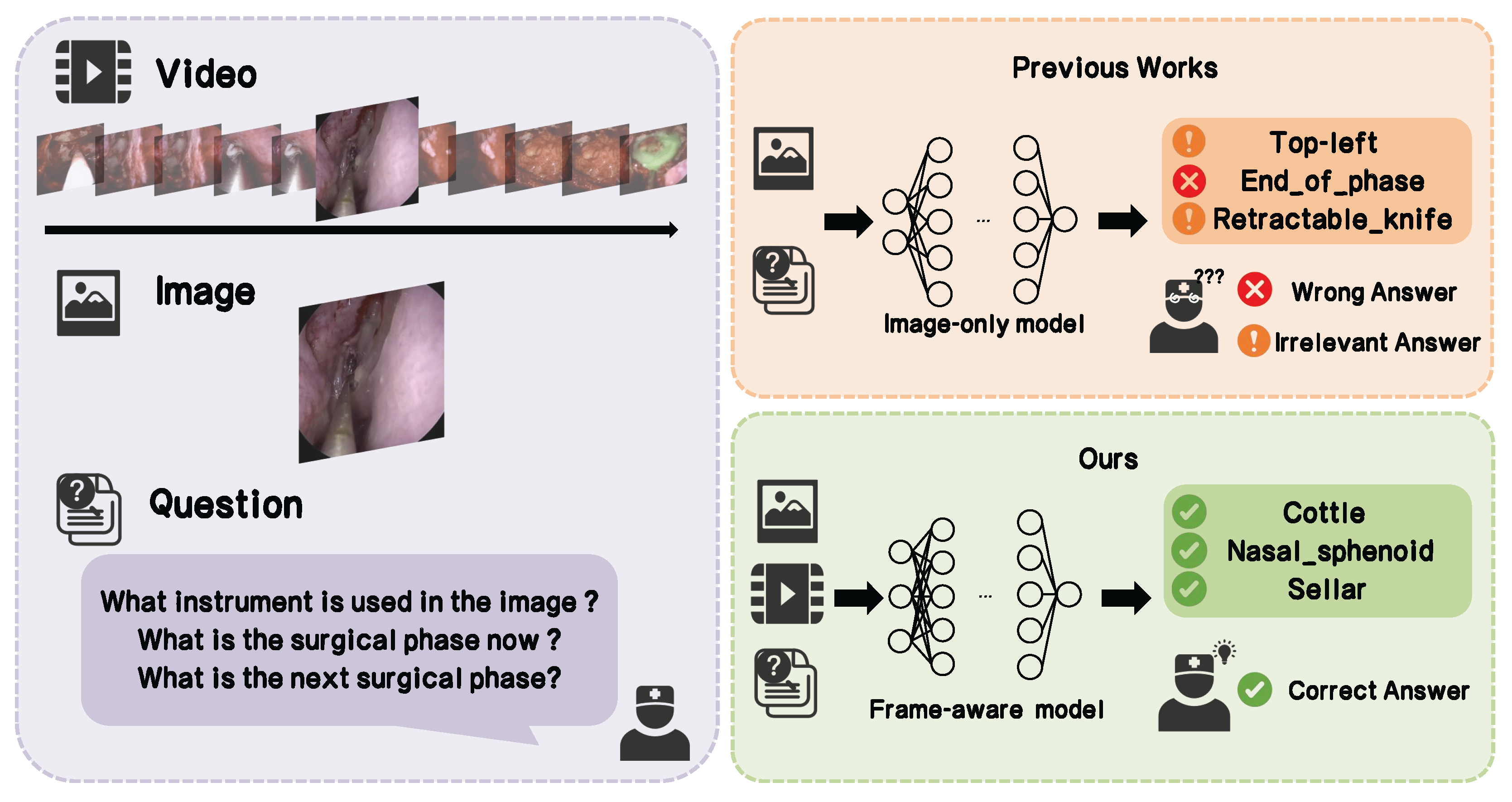

- We propose a frame-aware network, SFN-ESVQA, that incorporates video data to capture the temporal dynamics of surgical procedures. Frame-aware sampling first selects the relevant frames. The network then fuses the video and image modalities into a visual embedding and integrates visual and textual features through the proposed visual-guided attention, which yields a better joint understanding of the question and the video.

- We design a standardized evaluation protocol and introduce a novel metric, the Irrelevant Answer Rate (IAR), to quantify how often a model produces irrelevant responses. We also propose a tailored loss function that explicitly penalizes such irrelevant outputs, strengthening the model’s ability to generate appropriate answers.

- We conduct extensive experiments on the EndoVis18-VQA [18] and PitVQA [4] datasets. Compared with the strongest baseline, PitVQA-Net [4], SFN-ESVQA improves balanced accuracy by 35.28 and 27.83 percentage points and F1-score by 25.44 and 29.86 percentage points on the two datasets, respectively, indicating strong potential for postoperative review and surgical training.
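The gains quoted above are absolute differences, in percentage points, between SFN-ESVQA and the strongest baseline, PitVQA-Net [4], in the main results table. The short Python check below recomputes them from those table entries; the dictionary layout and the helper name `gains_in_points` are illustrative only.

```python
# Scores copied from the main results table (PitVQA-Net [4] vs. SFN-ESVQA).
BASELINE = {
    "EndoVis18-VQA": {"F1": 0.6165, "B.Acc": 0.4506},
    "PitVQA": {"F1": 0.5952, "B.Acc": 0.5822},
}
SFN_ESVQA = {
    "EndoVis18-VQA": {"F1": 0.8709, "B.Acc": 0.8034},
    "PitVQA": {"F1": 0.8938, "B.Acc": 0.8605},
}


def gains_in_points(base, new):
    """Absolute improvement of `new` over `base`, in percentage points (2 d.p.)."""
    return {
        dataset: {metric: round(100 * (new[dataset][metric] - score), 2)
                  for metric, score in metrics.items()}
        for dataset, metrics in base.items()
    }


if __name__ == "__main__":
    for dataset, gains in gains_in_points(BASELINE, SFN_ESVQA).items():
        print(dataset, gains)
```

This reproduces the 35.28/27.83-point B.Acc gains and the 25.44/29.86-point F1 gains. Expressed as relative improvements, the numbers would be considerably larger (for example, 0.3528/0.4506 ≈ 78% for B.Acc on EndoVis18-VQA), so the unit matters when comparing against other reports.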

2. Related Work

2.1. Medical Image-Text VQA

2.2. Videos on Endoscopic Surgery

2.3. Comparison with Video-Grounded QA: Focusing on Frame Awareness

3. Method

3.1. Overview

3.1.1. Challenges and Overview

- Limited temporal understanding. Most methods process frames in isolation or with naive sampling, failing to capture long-range temporal cues critical for interpreting surgical workflow.

- Insufficient cross-modal integration. Visual and textual features are often fused late or without adaptive weighting, which weakens the alignment between the question and relevant visual regions or frames.

- Weak control over answer relevance. Models may output syntactically valid but semantically irrelevant answers, especially when visual context is ambiguous or dominated by spurious cues.

3.1.2. Framework

3.2. Understanding Surgical Video: Frame-Aware Sampling

3.3. Fusion for Dynamic Visual Representation: Gated Module

3.4. Multi-Modal Fusion: Visual-Guided Text Encoder

3.5. Answer Generation: Decoder and Output Layer

3.6. IAR Loss: Relevance-Aware Answer Regularization
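The relevance penalty is described above only as a loss term that explicitly penalizes answers outside the question's expected answer type. One hypothetical way to realize such a term, sketched here in plain Python and not necessarily the authors' formulation, adds to the usual cross-entropy a type-level term: the negative log of the probability mass the classifier places on answers of the expected type. The names `relevance_penalized_loss` and `class_types` and the weight `lam` are our own assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def relevance_penalized_loss(logits, target, class_types, lam=0.5, eps=1e-12):
    """Hypothetical IAR-style loss for one sample (an assumption, not the paper's exact loss).

    logits      -- unnormalized scores, one per answer class
    target      -- index of the ground-truth answer class
    class_types -- answer-type label for each class (e.g. "location", "instrument")
    lam         -- weight of the relevance penalty
    """
    probs = softmax(logits)
    ce = -math.log(max(probs[target], eps))  # standard cross-entropy term
    expected_type = class_types[target]
    # Probability mass on answers whose type matches the question's expected type.
    in_type_mass = sum(p for p, t in zip(probs, class_types) if t == expected_type)
    penalty = -math.log(max(in_type_mass, eps))  # zero when all mass is in-type
    return ce + lam * penalty


if __name__ == "__main__":
    types = ["location", "location", "instrument", "instrument"]
    # Same probability on the correct answer (index 0); the remaining mass goes
    # to an in-type wrong answer (first call) or an off-type answer (second call).
    print(relevance_penalized_loss([0.0, 2.0, 0.0, 0.0], 0, types))
    print(relevance_penalized_loss([0.0, 0.0, 2.0, 0.0], 0, types))
```

Because the cross-entropy term is identical in both calls, only the penalty separates a relevant-but-wrong prediction from an irrelevant one, which mirrors the distinction IAR draws between the two kinds of error.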

4. Experiments

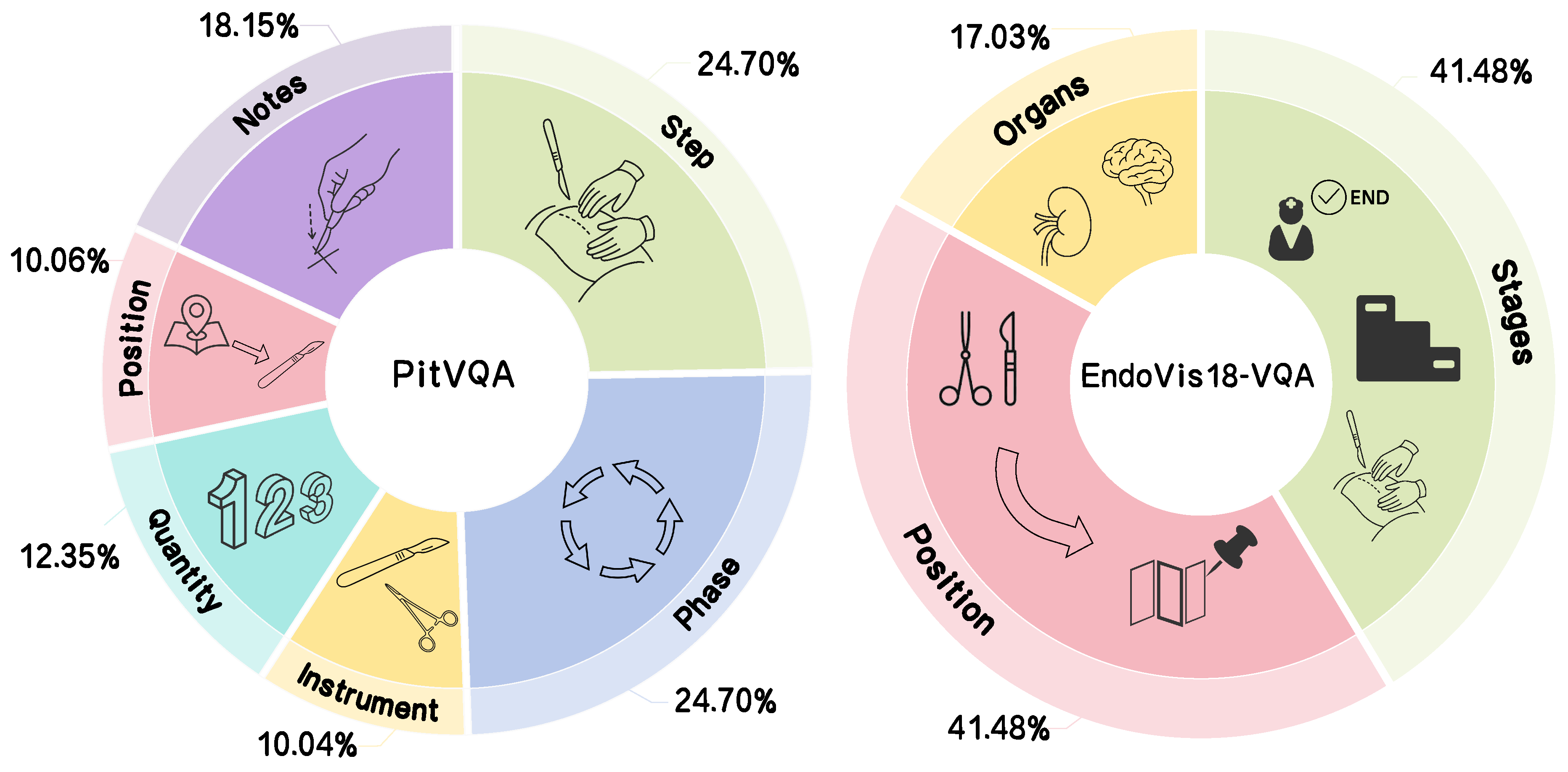

4.1. Dataset Description

4.2. Implementation Details

4.3. Evaluation Metrics

- F1-score: F1 summarizes precision and recall as their harmonic mean, where $P = \frac{TP}{TP+FP}$ and $R = \frac{TP}{TP+FN}$. It is defined as follows: $$\mathrm{F1} = \frac{2PR}{P+R}.$$

- Balanced Accuracy: B.Acc averages per-class recall to reduce the effect of class imbalance, where $C$ is the number of classes, $c$ indexes classes, and $TP_c$ and $FN_c$ denote the true positives and false negatives for class $c$ under a one-vs-rest view. This metric is defined as follows: $$\mathrm{B.Acc} = \frac{1}{C}\sum_{c=1}^{C}\frac{TP_c}{TP_c + FN_c}.$$

- Recall: Recall measures how well the model retrieves relevant positives: $$\mathrm{Recall} = \frac{TP}{TP+FN}.$$

- Irrelevant Answer Rate: In addition, we introduce a dedicated evaluation metric, the Irrelevant Answer Rate (IAR), which measures the proportion of irrelevant answers among all incorrect responses. An answer is deemed irrelevant if its semantic category does not match the question’s expected answer type (for example, naming an instrument in response to a question that asks for a location). IAR is calculated as $$\mathrm{IAR} = \frac{N_{\mathrm{irr}}}{N_{\mathrm{irr}} + N_{\mathrm{inc}}},$$ where $N_{\mathrm{irr}}$ is the number of generated answers that fall outside the expected answer category, and $N_{\mathrm{inc}}$ is the number of answers that match the expected answer type but contain incorrect content.
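The four metrics can be computed from predicted and ground-truth answer labels roughly as sketched below. This is a plain-Python illustration under our own assumptions: answers are treated as class labels, `answer_type` is a hypothetical mapping from each answer to its semantic category, and F1 and Recall are computed from a single set of counts because the averaging scheme behind the reported F1 and Recall is not stated here.

```python
from collections import Counter


def precision_recall_f1(tp, fp, fn):
    """Precision, recall and F1 (their harmonic mean) from raw counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def balanced_accuracy(y_true, y_pred):
    """Mean one-vs-rest recall TP_c / (TP_c + FN_c) over classes present in y_true."""
    support = Counter(y_true)  # TP_c + FN_c for each class c
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)  # TP_c
    return sum(hits[c] / n for c, n in support.items()) / len(support)


def irrelevant_answer_rate(y_true, y_pred, answer_type):
    """Share of incorrect answers whose category differs from the expected one."""
    n_irr = n_inc = 0
    for t, p in zip(y_true, y_pred):
        if t == p:
            continue  # correct answers are excluded from IAR
        if answer_type.get(p) != answer_type[t]:
            n_irr += 1  # wrong answer type: irrelevant
        else:
            n_inc += 1  # right answer type, wrong content
    wrong = n_irr + n_inc
    return n_irr / wrong if wrong else 0.0


if __name__ == "__main__":
    # Toy example with a hypothetical answer vocabulary.
    answer_type = {"left": "location", "right": "location",
                   "suturing": "phase", "dissection": "phase"}
    y_true = ["left", "right", "suturing", "dissection"]
    y_pred = ["left", "left", "suturing", "right"]
    print(balanced_accuracy(y_true, y_pred))
    print(irrelevant_answer_rate(y_true, y_pred, answer_type))
    print(precision_recall_f1(tp=8, fp=2, fn=2))
```

In the toy example, predicting "left" for "right" is a relevant but incorrect answer, while predicting "right" for "dissection" is irrelevant, so IAR is 0.5.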

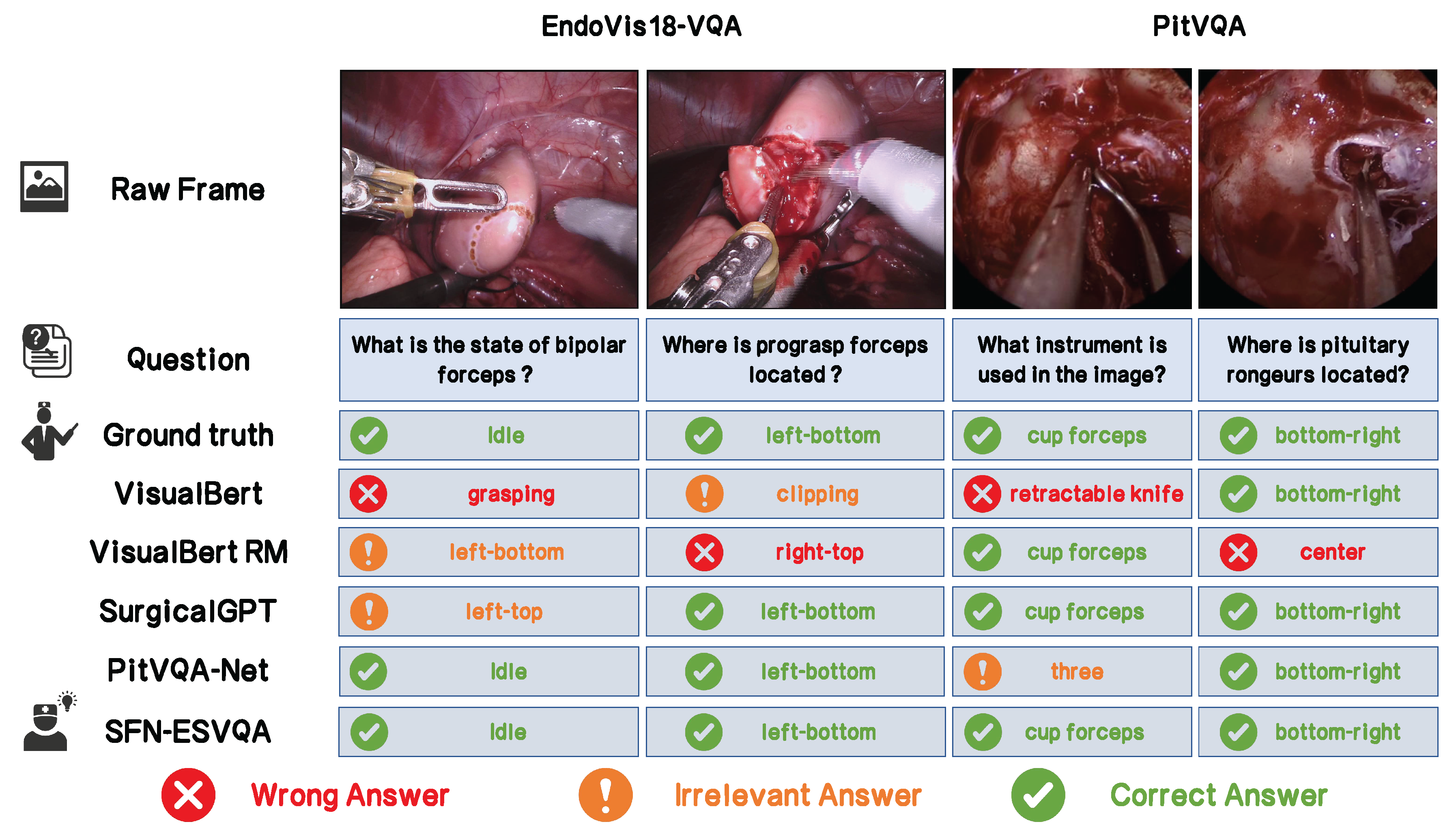

4.4. Results: SFN-ESVQA Versus SOTA Baselines

4.5. Ablation Study

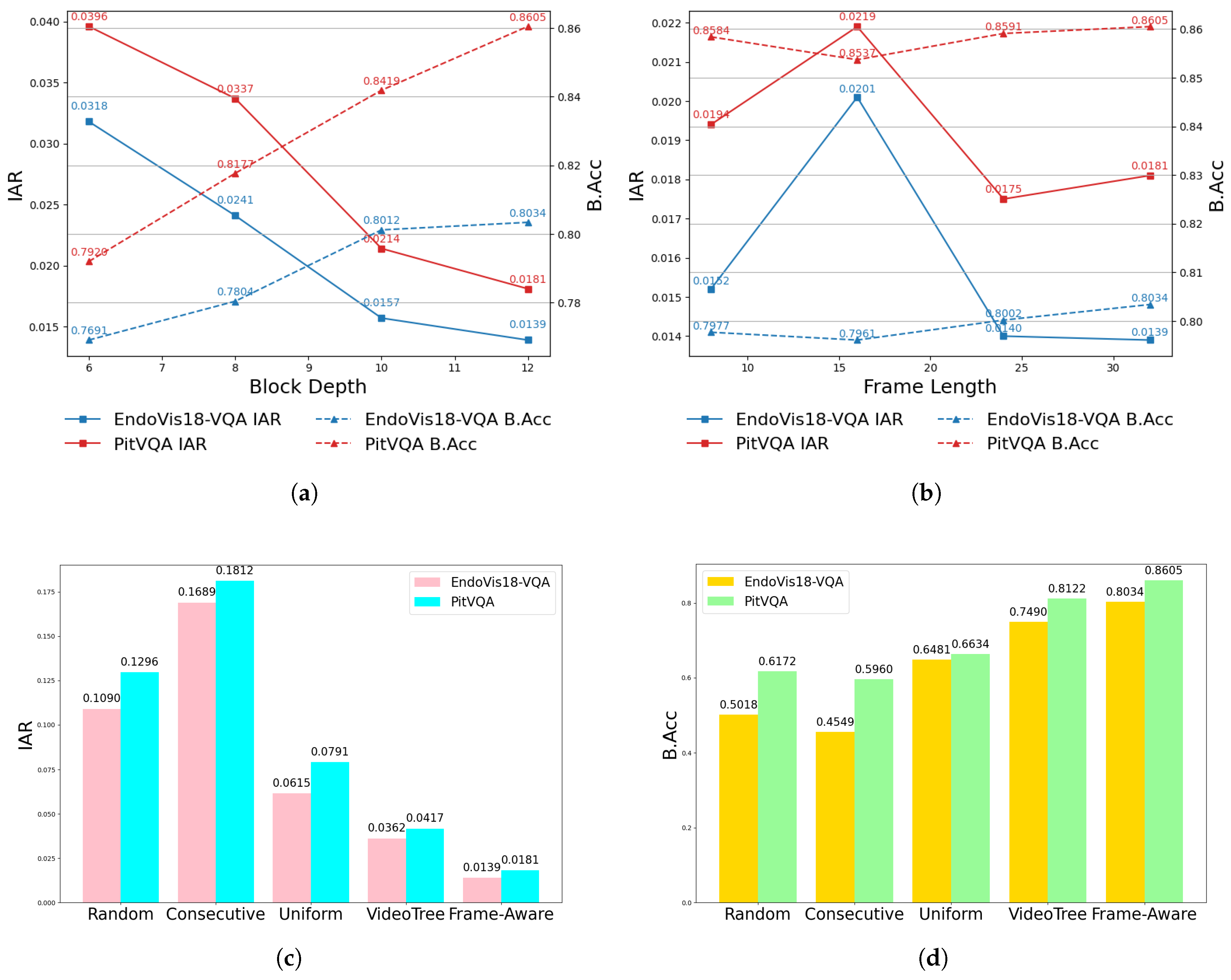

- Block Depth: Figure 5(a) shows the influence of block depth in the Frame-Aware Attention module. We varied this hyperparameter from 6 to 12. Both IAR (lower is better) and B.Acc improve steadily on both datasets as depth increases, suggesting that additional model capacity yields better fusion and supporting the use of a deeper backbone in SFN-ESVQA.

- Frame Length: Figure 5(b) illustrates the effect of the frame length, which sets the size of the temporal context window drawn around the raw frame associated with the input question. Performance improves as the frame length grows up to 16 frames and declines beyond that, suggesting that overly long sequences introduce redundancy or noise. Even so, the overall trend shows that the model remains robust across the tested lengths.

- Sampling Approach: Figure 5(c) and Figure 5(d) compare several frame sampling strategies: Random (selecting arbitrary frames), Consecutive (choosing temporally adjacent frames around the raw frame), Uniform (evenly spaced sampling from 1600 frames), and VideoTree [66] (hierarchical temporal coverage). In contrast, our Frame-Aware method adaptively selects key frames based on temporal consistency and proximity to the input, allowing SFN-ESVQA to better capture surgical dynamics. This improves comprehension of the video context and reduces the generation of irrelevant answers.
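The three simple baselines can be sketched in a few lines of Python. The helper names, the centring of the consecutive window on the raw frame, and the segment-midpoint rule for uniform sampling are our own illustrative assumptions, and the sketch makes no attempt to reproduce VideoTree [66] or the proposed Frame-Aware selector. It also assumes `k` does not exceed the number of frames.

```python
import random


def random_sampling(num_frames, k, seed=None):
    """Random: k distinct frame indices drawn uniformly, returned in temporal order."""
    rng = random.Random(seed)
    return sorted(rng.sample(range(num_frames), k))


def consecutive_sampling(num_frames, k, raw_idx):
    """Consecutive: k adjacent frames centred on the raw frame, clipped to the video."""
    start = raw_idx - k // 2
    start = max(0, min(start, num_frames - k))  # keep the window inside [0, num_frames)
    return list(range(start, start + k))


def uniform_sampling(num_frames, k):
    """Uniform: k evenly spaced frames, taking the midpoint of each equal-length segment."""
    step = num_frames / k
    return [int(i * step + step / 2) for i in range(k)]


if __name__ == "__main__":
    # 1600 frames as in the text; K and RAW are illustrative choices.
    NUM_FRAMES, K, RAW = 1600, 16, 800
    print(random_sampling(NUM_FRAMES, K, seed=0))
    print(consecutive_sampling(NUM_FRAMES, K, RAW))
    print(uniform_sampling(NUM_FRAMES, K))
```

With 1600 frames and 16 samples, uniform sampling returns indices 50, 150, ..., 1550, whereas the consecutive window around frame 800 covers frames 792 to 807; the contrast illustrates the trade-off between global temporal coverage and local detail around the raw frame.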

5. Discussion and Conclusions

Acknowledgments

References

1. Y. Di, H. Shi, J. Fan, J. Bao, G. Huang, and Y. Liu, “Efficient federated recommender system based on slimify module and feature sharpening module,” Knowledge and Information Systems, pp. 1–34, 2025.

2. S. Li, B. Li, B. Sun, and Y. Weng, “Towards visual-prompt temporal answer grounding in instructional video,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 46, no. 12, pp. 8836–8853, 2024.

3. X. Liang, Y. He, M. Tao, Y. Xia, J. Wang, T. Shi, J. Wang, and J. Yang, “CMAT: A multi-agent collaboration tuning framework for enhancing small language models,” arXiv preprint arXiv:2404.01663, 2024.

4. R. He, M. Xu, A. Das, D. Z. Khan, S. Bano, H. J. Marcus, D. Stoyanov, M. J. Clarkson, and M. Islam, “PitVQA: Image-grounded text embedding LLM for visual question answering in pituitary surgery,” in International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer, 2024, pp. 488–498.

5. J. Zhang, B. Li, and S. Zhou, “Hierarchical modeling for medical visual question answering with cross-attention fusion,” Applied Sciences, vol. 15, no. 9, p. 4712, 2025.

6. D. T. Guerrero, M. Asaad, A. Rajesh, A. Hassan, and C. E. Butler, “Advancing surgical education: the use of artificial intelligence in surgical training,” The American Surgeon, vol. 89, no. 1, pp. 49–54, 2023.

7. P. Satapathy, A. H. Hermis, S. Rustagi, K. B. Pradhan, B. K. Padhi, and R. Sah, “Artificial intelligence in surgical education and training: opportunities, challenges, and ethical considerations–correspondence,” International Journal of Surgery, vol. 109, no. 5, pp. 1543–1544, 2023.

8. A. Das, D. Z. Khan, S. C. Williams, J. G. Hanrahan, A. Borg, N. L. Dorward, S. Bano, H. J. Marcus, and D. Stoyanov, “A multi-task network for anatomy identification in endoscopic pituitary surgery,” in International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer, 2023, pp. 472–482.

9. H. Ge, L. Hao, Z. Xu, Z. Lin, B. Li, S. Zhou, H. Zhao, and Y. Liu, “ClinKD: Cross-modal clinical knowledge distiller for multi-task medical images,” arXiv preprint arXiv:2502.05928, 2025.

10. P. V. Tomazic, F. Sommer, A. Treccosti, H. R. Briner, and A. Leunig, “3D endoscopy shows enhanced anatomical details and depth perception vs 2D: a multicentre study,” European Archives of Oto-Rhino-Laryngology, vol. 278, no. 7, pp. 2321–2326, 2021.

11. L. P. Sturm, J. A. Windsor, P. H. Cosman, P. Cregan, P. J. Hewett, and G. J. Maddern, “A systematic review of skills transfer after surgical simulation training,” Annals of Surgery, vol. 248, no. 2, pp. 166–179, 2008.

12. D. W. Bates and A. A. Gawande, “Error in medicine: what have we learned?” 2000.

13. C. Wang, C. Nie, and Y. Liu, “Evaluating supervised learning models for fraud detection: A comparative study of classical and deep architectures on imbalanced transaction data,” arXiv preprint arXiv:2505.22521, 2025.

14. S. M. Kilminster and B. C. Jolly, “Effective supervision in clinical practice settings: a literature review,” Medical Education, vol. 34, no. 10, pp. 827–840, 2000.

15. L. Seenivasan, M. Islam, G. Kannan, and H. Ren, “SurgicalGPT: End-to-end language-vision GPT for visual question answering in surgery,” in International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer, 2023, pp. 281–290.

16. G. Wang, L. Bai, W. J. Nah, J. Wang, Z. Zhang, Z. Chen, J. Wu, M. Islam, H. Liu, and H. Ren, “Surgical-LVLM: Learning to adapt large vision-language model for grounded visual question answering in robotic surgery,” arXiv preprint arXiv:2405.10948, 2024.

17. L. Seenivasan, M. Islam, A. K. Krishna, and H. Ren, “Surgical-VQA: Visual question answering in surgical scenes using transformer,” in International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer, 2022, pp. 33–43.

18. M. Allan, S. Kondo, S. Bodenstedt, S. Leger, R. Kadkhodamohammadi, I. Luengo, F. Fuentes, E. Flouty, A. Mohammed, M. Pedersen et al., “2018 robotic scene segmentation challenge,” arXiv preprint arXiv:2001.11190, 2020.

19. D. A. Hudson and C. D. Manning, “GQA: A new dataset for real-world visual reasoning and compositional question answering,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 6700–6709.

20. W. Hou, Y. Cheng, K. Xu, Y. Hu, W. Li, and J. Liu, “Memory-augmented multimodal LLMs for surgical VQA via self-contained inquiry,” arXiv preprint arXiv:2411.10937, 2024.

21. S. Antol, A. Agrawal, J. Lu, M. Mitchell, D. Batra, C. L. Zitnick, and D. Parikh, “VQA: Visual question answering,” in Proceedings of the IEEE International Conference on Computer Vision, 2015, pp. 2425–2433.

22. T. M. Thai, A. T. Vo, H. K. Tieu, L. N. Bui, and T. T. Nguyen, “UIT-Saviors at MEDVQA-GI 2023: Improving multimodal learning with image enhancement for gastrointestinal visual question answering,” arXiv preprint arXiv:2307.02783, 2023.

23. K. Uehara and T. Harada, “K-VQG: Knowledge-aware visual question generation for common-sense acquisition,” in Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2023, pp. 4401–4409.

24. B. N. Patro, S. Kumar, V. K. Kurmi, and V. P. Namboodiri, “Multimodal differential network for visual question generation,” arXiv preprint arXiv:1808.03986, 2018.

25. S. Tascon-Morales, P. Márquez-Neila, and R. Sznitman, “Localized questions in medical visual question answering,” in International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer, 2023, pp. 361–370.

26. Y. Di, X. Wang, H. Shi, C. Fan, R. Zhou, R. Ma, and Y. Liu, “Personalized consumer federated recommender system using fine-grained transformation and hybrid information sharing,” IEEE Transactions on Consumer Electronics, 2025.

27. A. Krizhevsky, I. Sutskever, and G. E. Hinton, “ImageNet classification with deep convolutional neural networks,” Advances in Neural Information Processing Systems, vol. 25, 2012.

28. W. Zaremba, I. Sutskever, and O. Vinyals, “Recurrent neural network regularization,” arXiv preprint arXiv:1409.2329, 2014.

29. S. Hochreiter and J. Schmidhuber, “Long short-term memory,” Neural Computation, vol. 9, no. 8, pp. 1735–1780, 1997.

30. K. Cho, B. Van Merriënboer, C. Gulcehre, D. Bahdanau, F. Bougares, H. Schwenk, and Y. Bengio, “Learning phrase representations using RNN encoder-decoder for statistical machine translation,” arXiv preprint arXiv:1406.1078, 2014.

31. M. Malinowski, M. Rohrbach, and M. Fritz, “Ask your neurons: A neural-based approach to answering questions about images,” in Proceedings of the IEEE International Conference on Computer Vision, 2015, pp. 1–9.

32. R. Xie, L. Jiang, X. He, Y. Pan, and Y. Cai, “A weakly supervised and globally explainable learning framework for brain tumor segmentation,” in 2024 IEEE International Conference on Multimedia and Expo (ICME). IEEE, 2024, pp. 1–6.

33. J. Devlin, M.-W. Chang, K. Lee, and K. Toutanova, “BERT: Pre-training of deep bidirectional transformers for language understanding,” in Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), 2019, pp. 4171–4186.

34. Y. Liu, X. Qin, Y. Gao, X. Li, and C. Feng, “SETransformer: A hybrid attention-based architecture for robust human activity recognition,” arXiv preprint arXiv:2505.19369, 2025.

35. H. Tan and M. Bansal, “LXMERT: Learning cross-modality encoder representations from transformers,” in Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing, 2019.

36. J. Lu, D. Batra, D. Parikh, and S. Lee, “ViLBERT: Pretraining task-agnostic visiolinguistic representations for vision-and-language tasks,” Advances in Neural Information Processing Systems, vol. 32, 2019.

37. X. Li, X. Yin, C. Li, X. Hu, P. Zhang, L. Zhang, L. Wang, H. Hu, L. Dong, F. Wei, Y. Choi, and J. Gao, “Oscar: Object-semantics aligned pre-training for vision-language tasks,” in European Conference on Computer Vision (ECCV), 2020.

38. L. H. Li, M. Yatskar, D. Yin, C.-J. Hsieh, and K.-W. Chang, “VisualBERT: A simple and performant baseline for vision and language,” arXiv preprint arXiv:1908.03557, 2019.

39. D. N. Kamtam, J. B. Shrager, S. D. Malla, N. Lin, J. J. Cardona, J. J. Kim, and C. Hu, “Deep learning approaches to surgical video segmentation and object detection: A scoping review,” Computers in Biology and Medicine, vol. 194, p. 110482, 2025.

40. S. Ren, K. He, R. Girshick, and J. Sun, “Faster R-CNN: Towards real-time object detection with region proposal networks,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 39, no. 6, pp. 1137–1149, 2016.

41. D. Sharma, S. Purushotham, and C. K. Reddy, “MedFuseNet: An attention-based multimodal deep learning model for visual question answering in the medical domain,” Scientific Reports, vol. 11, no. 1, p. 19826, 2021.

42. Y. Khare, V. Bagal, M. Mathew, A. Devi, U. D. Priyakumar, and C. Jawahar, “MMBERT: Multimodal BERT pretraining for improved medical VQA,” in 2021 IEEE 18th International Symposium on Biomedical Imaging (ISBI). IEEE, 2021, pp. 1033–1036.

43. L. Li, S. Lu, Y. Ren, and A. W.-K. Kong, “Set you straight: Auto-steering denoising trajectories to sidestep unwanted concepts,” arXiv preprint arXiv:2504.12782, 2025.

44. L. Bai, M. Islam, L. Seenivasan, and H. Ren, “Surgical-VQLA: Transformer with gated vision-language embedding for visual question localized-answering in robotic surgery,” in 2023 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2023, pp. 6859–6865.

45. L. Bai, M. Islam, and H. Ren, “CAT-ViL: Co-attention gated vision-language embedding for visual question localized-answering in robotic surgery,” in International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer, 2023, pp. 397–407.

46. P. Wang, S. Bai, S. Tan, S. Wang, Z. Fan, J. Bai, K. Chen, X. Liu, J. Wang, W. Ge et al., “Qwen2-VL: Enhancing vision-language model’s perception of the world at any resolution,” arXiv preprint arXiv:2409.12191, 2024.

47. C. Loukas, “Video content analysis of surgical procedures,” Surgical Endoscopy, vol. 32, pp. 553–568, 2018.

48. W. Wu, X. Qiu, S. Song, Z. Chen, X. Huang, F. Ma, and J. Xiao, “Image augmentation agent for weakly supervised semantic segmentation,” arXiv preprint arXiv:2412.20439, 2024.

49. M. Kawka, T. M. Gall, C. Fang, R. Liu, and L. R. Jiao, “Intraoperative video analysis and machine learning models will change the future of surgical training,” Intelligent Surgery, vol. 1, pp. 13–15, 2022.

50. O. Zisimopoulos, E. Flouty, I. Luengo, P. Giataganas, J. Nehme, A. Chow, and D. Stoyanov, “DeepPhase: Surgical phase recognition in CATARACTS videos,” in Medical Image Computing and Computer Assisted Intervention–MICCAI 2018: 21st International Conference, Granada, Spain, September 16-20, 2018, Proceedings, Part IV. Springer, 2018, pp. 265–272.

51. I. Oropesa, P. Sánchez-González, M. K. Chmarra, P. Lamata, A. Fernández, J. A. Sánchez-Margallo, F. W. Jansen, J. Dankelman, F. M. Sánchez-Margallo, and E. J. Gómez, “EVA: Laparoscopic instrument tracking based on endoscopic video analysis for psychomotor skills assessment,” Surgical Endoscopy, vol. 27, pp. 1029–1039, 2013.

52. I. Funke, S. T. Mees, J. Weitz, and S. Speidel, “Video-based surgical skill assessment using 3D convolutional neural networks,” International Journal of Computer Assisted Radiology and Surgery, vol. 14, pp. 1217–1225, 2019.

53. P. Mascagni, D. Alapatt, T. Urade, A. Vardazaryan, D. Mutter, J. Marescaux, G. Costamagna, B. Dallemagne, and N. Padoy, “A computer vision platform to automatically locate critical events in surgical videos: documenting safety in laparoscopic cholecystectomy,” Annals of Surgery, vol. 274, no. 1, pp. e93–e95, 2021.

54. A. J. Hung, R. Bao, I. O. Sunmola, D.-A. Huang, J. H. Nguyen, and A. Anandkumar, “Capturing fine-grained details for video-based automation of suturing skills assessment,” International Journal of Computer Assisted Radiology and Surgery, vol. 18, no. 3, pp. 545–552, 2023.

55. Y. Zhong, J. Xiao, W. Ji, Y. Li, W. Deng, and T.-S. Chua, “Video question answering: Datasets, algorithms and challenges,” arXiv preprint arXiv:2203.01225, 2022.

56. K.-H. Zeng, T.-H. Chen, C.-Y. Chuang, Y.-H. Liao, J. C. Niebles, and M. Sun, “Leveraging video descriptions to learn video question answering,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 31, no. 1, 2017.

57. D. Gao, S. Lu, W. Zhou, J. Chu, J. Zhang, M. Jia, B. Zhang, Z. Fan, and W. Zhang, “EraseAnything: Enabling concept erasure in rectified flow transformers,” in Forty-second International Conference on Machine Learning, 2025.

58. J. Gao, R. Ge, K. Chen, and R. Nevatia, “Motion-appearance co-memory networks for video question answering,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 6576–6585.

59. J. Xu, T. Mei, T. Yao, and Y. Rui, “MSR-VTT: A large video description dataset for bridging video and language,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 5288–5296.

60. M. Tapaswi, Y. Zhu, R. Stiefelhagen, A. Torralba, R. Urtasun, and S. Fidler, “MovieQA: Understanding stories in movies through question-answering,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 4631–4640.

61. X. Li, J. Song, L. Gao, X. Liu, W. Huang, X. He, and C. Gan, “Beyond RNNs: Positional self-attention with co-attention for video question answering,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 33, no. 01, 2019, pp. 8658–8665.

62. J. Lei, L. Li, L. Zhou, Z. Gan, T. L. Berg, M. Bansal, and J. Liu, “Less is more: ClipBERT for video-and-language learning via sparse sampling,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 7331–7341.

63. H. Ben-Younes, R. Cadene, M. Cord, and N. Thome, “MUTAN: Multimodal Tucker fusion for visual question answering,” in Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 2612–2620.

64. Z. Yu, J. Yu, J. Fan, and D. Tao, “Multi-modal factorized bilinear pooling with co-attention learning for visual question answering,” in Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 1821–1830.

65. Z. Yu, J. Yu, C. Xiang, J. Fan, and D. Tao, “Beyond bilinear: Generalized multimodal factorized high-order pooling for visual question answering,” IEEE Transactions on Neural Networks and Learning Systems, vol. 29, no. 12, pp. 5947–5959, 2018.

66. Z. Wang, S. Yu, E. Stengel-Eskin, J. Yoon, F. Cheng, G. Bertasius, and M. Bansal, “VideoTree: Adaptive tree-based video representation for LLM reasoning on long videos,” in Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 3272–3283.

| Models | EndoVis18-VQA [18] F1-score | EndoVis18-VQA [18] B.Acc | EndoVis18-VQA [18] Recall | PitVQA [4] F1-score | PitVQA [4] B.Acc | PitVQA [4] Recall |
|---|---|---|---|---|---|---|
| Mutan [63] (2017) | 0.4565 | - | 0.4969 | - | - | - |
| MFB [64] (2017) | 0.3622 | - | 0.4235 | - | - | - |
| MFH [65] (2018) | 0.4224 | - | 0.4835 | - | - | - |
| VisualBert [38] (2019) | 0.3745 | 0.3474 | 0.4282 | 0.4286 | 0.4358 | 0.4549 |
| VisualBert RM [17] (2019) | 0.3583 | 0.3422 | 0.4079 | 0.4281 | 0.3892 | 0.4103 |
| SurgicalGPT [15] (2023) | 0.4649 | 0.3543 | 0.4649 | 0.5261 | 0.5090 | 0.5397 |
| PitVQA-Net [4] (2024) | 0.6165 | 0.4506 | 0.4849 | 0.5952 | 0.5822 | 0.5917 |
| SFN-ESVQA (2025) | 0.8709 | 0.8034 | 0.8350 | 0.8938 | 0.8605 | 0.8981 |

| Models | EndoVis18-VQA [18] IAR | PitVQA [4] IAR |
|---|---|---|
| SurgicalGPT [15] (2023) | 0.1903 | 0.2381 |
| PitVQA-Net [4] (2024) | 0.1730 | 0.1856 |
| SFN-ESVQA (2025) | 0.0139 | 0.0181 |

| Frame-aware | IAR-loss | EndoVis18-VQA [18] F1-score | EndoVis18-VQA [18] B.Acc | EndoVis18-VQA [18] Recall | EndoVis18-VQA [18] IAR | PitVQA [4] F1-score | PitVQA [4] B.Acc | PitVQA [4] Recall | PitVQA [4] IAR |
|---|---|---|---|---|---|---|---|---|---|
| ✗ | ✗ | 0.6165 | 0.4506 | 0.4849 | 0.1730 | 0.5952 | 0.5822 | 0.5917 | 0.1856 |
| ✗ | ✓ | 0.6541 | 0.4810 | 0.5134 | 0.1406 | 0.6218 | 0.6096 | 0.6170 | 0.1579 |
| ✓ | ✗ | 0.8611 | 0.7969 | 0.8201 | 0.0147 | 0.8792 | 0.8410 | 0.8804 | 0.0219 |
| ✓ | ✓ | 0.8709 | 0.8034 | 0.8350 | 0.0139 | 0.8938 | 0.8605 | 0.8981 | 0.0181 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).