Submitted:

22 August 2025

Posted:

26 August 2025

You are already at the latest version

Abstract

AI systems may reproduce and amplify societal biases present in their training data as decision-making becomes more automated. The acknowledged biases present significant barriers to equity, accountability, and the ethical application of AI. This research review evaluates the efficacy of synthetic data generation through generative AI and knowledge-based methodologies in alleviating dataset bias and enhancing equality in AI systems. This study analyzes recent advancements in fairness-aware generative modeling, including text-to-image fairness algorithms like Fair Diffusion and FairCoT, knowledge-driven approaches such as DECAF and counterfactual GANs, as well as comprehensive frameworks like FairGAN and FairGen. The paper examines theoretical frameworks and empirical evaluations in graphical and tabular formats. The production of synthetic data can improve demographic representation and guarantee that results align with defined fairness standards. However, drawbacks still exist regarding the quality of annotations, scalability, equity trade-offs, and ethical considerations. So in this paper, we outline the potential research directions, including multimodal fairness frameworks, interactive refinement with human feedback, and fairness pretraining for foundational models. This analysis of ours indicates that not only are these approaches effective, but also they can be applied in various contexts. However, the success of these approaches relies on thorough implementation and continuous monitoring with a strong allegiance to ethical AI principles.

Keywords:

1. Introduction

2. Background and Motivation

2.1. Types of Bias in Machine Learning Datasets

- Representation Bias occurs when certain subgroups are underrepresented in the training data, leading models to perform poorly or unfairly on those populations [5].

- Measurement Bias involves systematic errors in how features or outcomes are recorded, often due to flawed instruments or subjective labeling [5].

- Historical Bias reflects societal inequities embedded in real-world data; even perfect sampling can capture skewed distributions if the underlying reality is unjust [1].

- Selection Bias results from non-random sampling processes that disproportionately include or exclude specific groups [5].

2.2. Fairness Definitions and Formalizations

- Statistical Parity: Ensures that outcomes are equally distributed across groups [5].

- Equalized Odds: Demands both true positive rates (TPR) and false positive rates (FPR) be equal across groups.

- Counterfactual Fairness (Kusner et al., 2017): A model is counterfactually fair if for any individual, the prediction remains unchanged under a counterfactual change of the protected attribute, holding all else equal.

| Metric | Definition | Use Case |

|---|---|---|

| Statistical Parity | Simple group fairness check | |

| Equal Opportunity | Focuses on true positives | |

| Equalized Odds | TPR and FPR are equal across groups | Balances both TPR and FPR |

| Counterfactual Fairness | Prediction remains unchanged under counterfactual change of A | Causal model-based fairness |

2.3. Synthetic Data and Generative Models

- Generative Adversarial Networks (GANs): Introduced by Goodfellow et al. [10] (2014), GANs involve a generator G and discriminator D trained in a minimax game:

- Variational Autoencoders (VAEs): These models optimize a lower bound on the log-likelihood using variational inference and are effective for learning disentangled representations [5].

- Diffusion Models: Recently popularized for text-to-image synthesis, diffusion models learn to reverse a Markovian noise process. They iteratively denoise a random Gaussian vector into a realistic image using learned score functions [3].

2.4. Motivation for Fairness-Driven Synthetic Data

3. Synthetic Data for Bias Mitigation in Image Generation

3.1. Text-to-Image Fairness Strategies

- Fair Diffusion (Wei et al. [3]) modifies the denoising process by adding conditioning vectors that encode fairness constraints. The score function of the diffusion model is modified to push samples toward group-balanced distributions during inference.

- FairCoT (Chung et al. [4]) introduces a chain-of-thought prompting method that decomposes the generation into sequential reasoning steps. Each step is guided by fairness-aware instructions, improving representation across intersectional attributes (e.g., “a female firefighter with East Asian features”).

- Prompt Engineering and Rewriting: A practical strategy involves rephrasing prompts to explicitly include underrepresented group attributes. For example, “a doctor” might be expanded to “a Black woman doctor with curly hair,” improving both specificity and demographic coverage [9].

3.2. Fairness Metrics for Image Generation

- Group Coverage (GC): Measures the proportion of demographic groups represented in generated samples. A higher GC indicates more equitable representation [3].

- Fréchet Inception Distance (FID) stratified by group: Assesses sample quality while comparing inter-group fidelity.

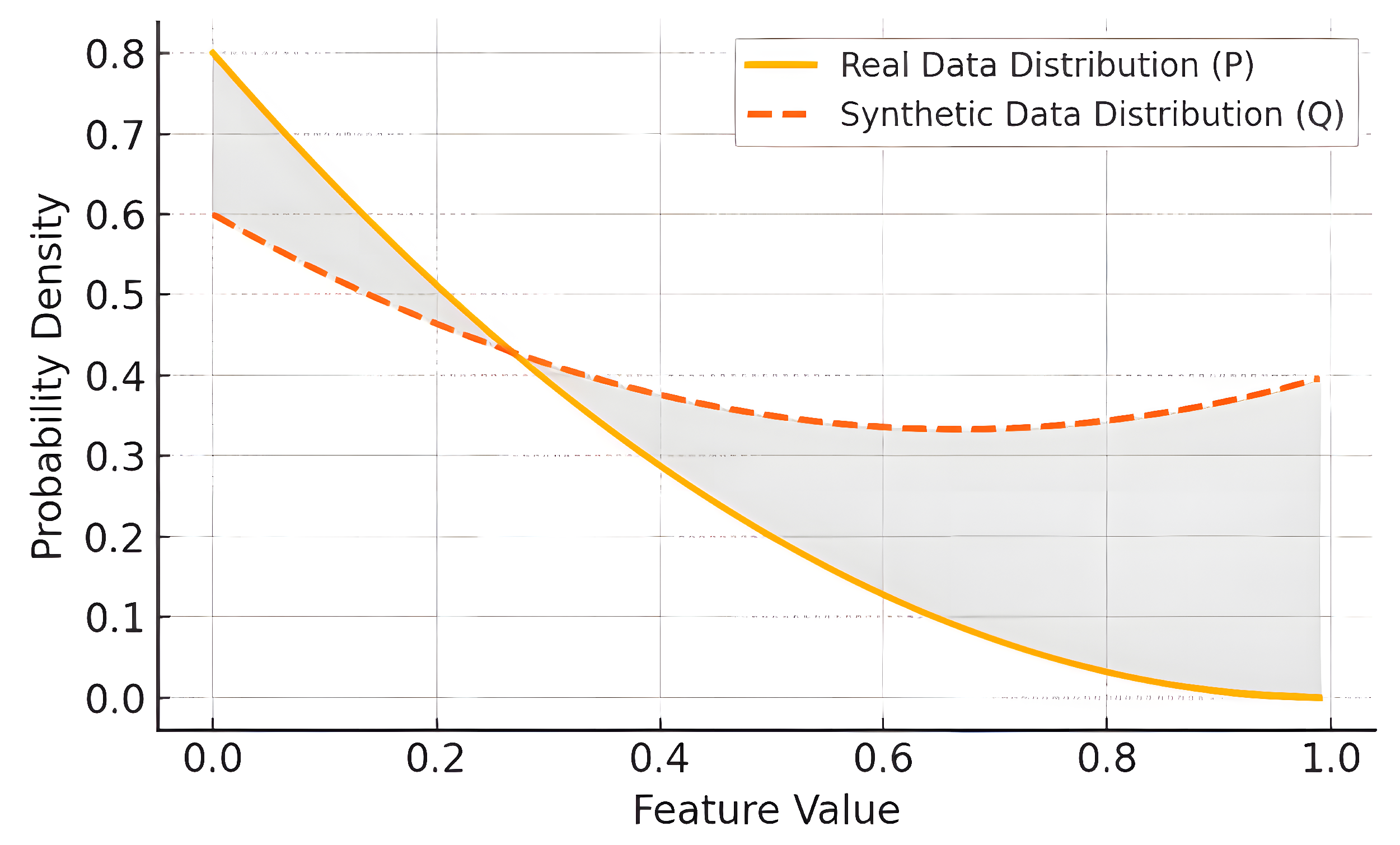

- KL Divergence between group-wise distributions in real vs. synthetic data:

3.3. Dataset-Level Interventions

3.4. Fair Representations and Latent Interventions

4. Knowledge-Driven Generative Models

4.1. DECAF: Causally-Aware Generative Networks

4.2. Counterfactual Fairness in GANs

4.3. Theoretical Implications and Practical Challenges

5. General Fairness-Aware Generative Approaches

5.1. FairGAN: Dual-Discriminator Fairness Optimization

5.2. FairGen: Data Preprocessing and Architecture-Independent Bias Mitigation

5.3. Ethical Models and Frameworks for Fairness-Aware Generation

6. Challenges and Limitations

6.1. Dependence on Accurate Annotations and Representations

6.2. Incomplete Fairness Formalizations

6.3. Causal Modeling Assumptions

6.4. Trade-offs Between Fairness, Utility, and Privacy

6.5. Scalability and Computational Costs

6.6. Risk of Fairness-Washing and Misuse

6.7. Ethical Subjectivity and Sociotechnical Boundaries

7. Ethical, Social, and Regulatory Implications

7.1. Fairness-Washing and Superficial Compliance

7.2. Cultural Representation and Identity Ethics

7.3. Transparency, Explainability, and Auditing

7.4. Regulatory Frameworks and Compliance

- Demonstrable fairness improvements (e.g., reduced disparate impact).

- Documentation of generative model behavior and dataset provenance.

- Privacy-preserving mechanisms such as differential privacy.

7.5. Democratic Oversight and Participatory Governance

7.6. Risks of Dual Use and Generative Harms

8. Future Directions

8.1. Integration of Text-to-Image Strategies into Tabular and Multimodal Fairness Pipelines

- Prompt-based instance generation using language models (e.g., GPT) to diversify underrepresented image-text combinations.

- Latent direction modulation, where attribute vectors in image latent space are aligned with fairness targets through optimization (as in “Latent Directions”).

- Real-time fairness feedback, where metrics such as KL divergence and demographic parity guide progressive synthesis rounds.

8.2. Interactive Fairness Refinement with Human Feedback Loops

8.3. Compositional and Intersectional Fairness Modeling

8.4. Fairness-Aware Pretraining for Foundation Models

- Fairness-aware prompt tuning.

- Diversity-aware negative sampling.

- Representation balancing using causal priors, as in DECAF [1].

8.5. Domain-Transferable Fairness Constraints

8.6. Benchmarking, Standardization, and Toolkits

9. Conclusions

Author Contributions

Conflicts of Interest

References

- van Breugel, M.; Kooti, P.; Rojas, J.M.; Horvitz, E. DECAF: Generating Fair Synthetic Data Using Causally-Aware Generative Networks. arXiv preprint, arXiv:2106.02757 2021.

- Xu, X.; Yuan, T.; Ji, S. FairGAN: Fairness-aware Generative Adversarial Networks. In Proc. IEEE Int. Conf. Big Data 2018. [Google Scholar]

- Wei, T.; Liu, M.; Wu, Y. Fair Diffusion: Achieving Fairness in Text-to-Image Generation via Score-Based Denoising. arXiv preprint, arXiv:2303.09867 2023.

- Chung, J.; Li, S.; Zhao, R. FairCoT: Fairness via Chain-of-Thought Prompting in Text-to-Image Models. arXiv preprint, arXiv:2401.04982 2024.

- Kusner, A.; Loftus, J.; Russell, C.; Silva, R. Counterfactual Fairness. In Adv. Neural Inf. Process. Syst. (NeurIPS) 2017. [Google Scholar]

- Abroshan, A.; Lee, M.; Balzano, L. FairGAN++: Mitigating Intersectional Bias with Counterfactual Fairness Objectives. arXiv preprint, arXiv:2207.12345 2022.

- Jakkaraju, R.; Muraleedharan, S. Frameworks for Ethical Generative AI: Evaluation Metrics and Deployment Governance. AI & Society 2025, 40, 112–132. [Google Scholar]

- Chaudhary, D.; Patel, K.; Gupta, R. FairGen: Architecture-Agnostic Bias Mitigation in Synthetic Data Generation. In Proc. AAAI Conf. Artif. Intell. 2022. [Google Scholar]

- Wired. Fake Pictures of People of Color Won’t Fix AI Bias. Wired Magazine, Feb. 2024. [Online]. Available online: https://www.wired.com/story/google-images-ai-diversity-fake-pictures/.

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; et al. Generative Adversarial Nets. In Adv. Neural Inf. Process. Syst. 2014. [Google Scholar]

- Goyal, P.; Dettmers, T.; Zhou, J. Steerable Latent Directions for Controlled Representation in GANs. In Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR) 2023. [Google Scholar]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).