Submitted: 15 August 2025

Posted: 18 August 2025

Abstract

Keywords:

1. Introduction

2. Related Works

3. Materials and Methods Used for the Proposed System

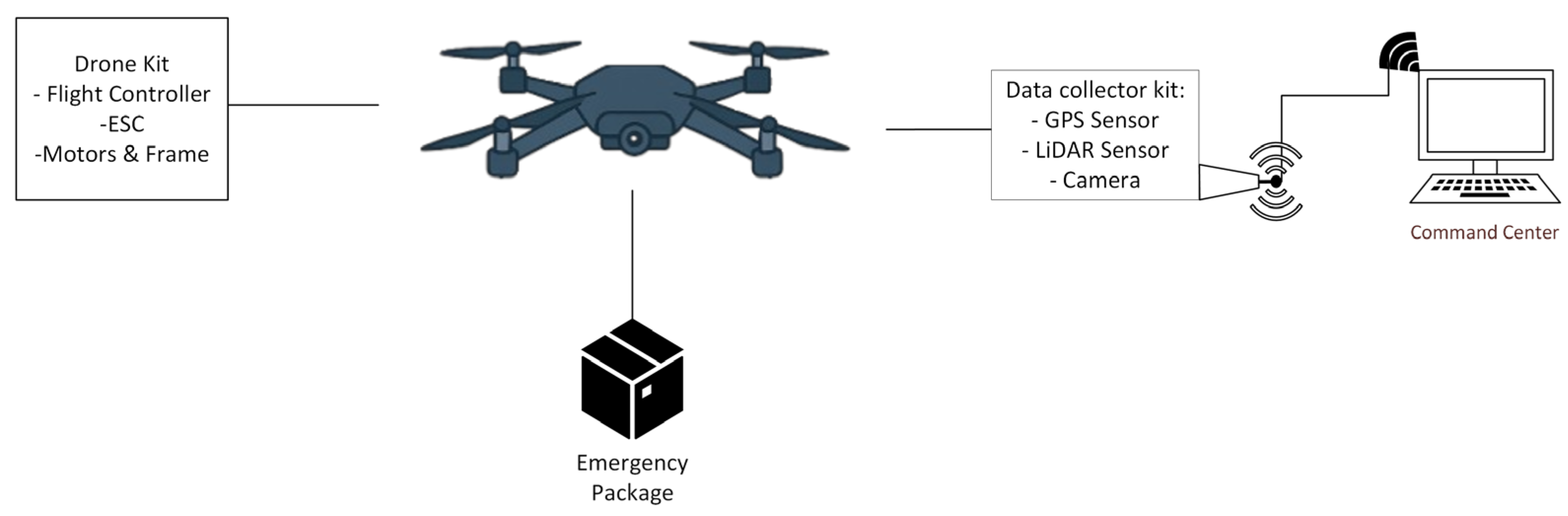

3.1. Flight Module

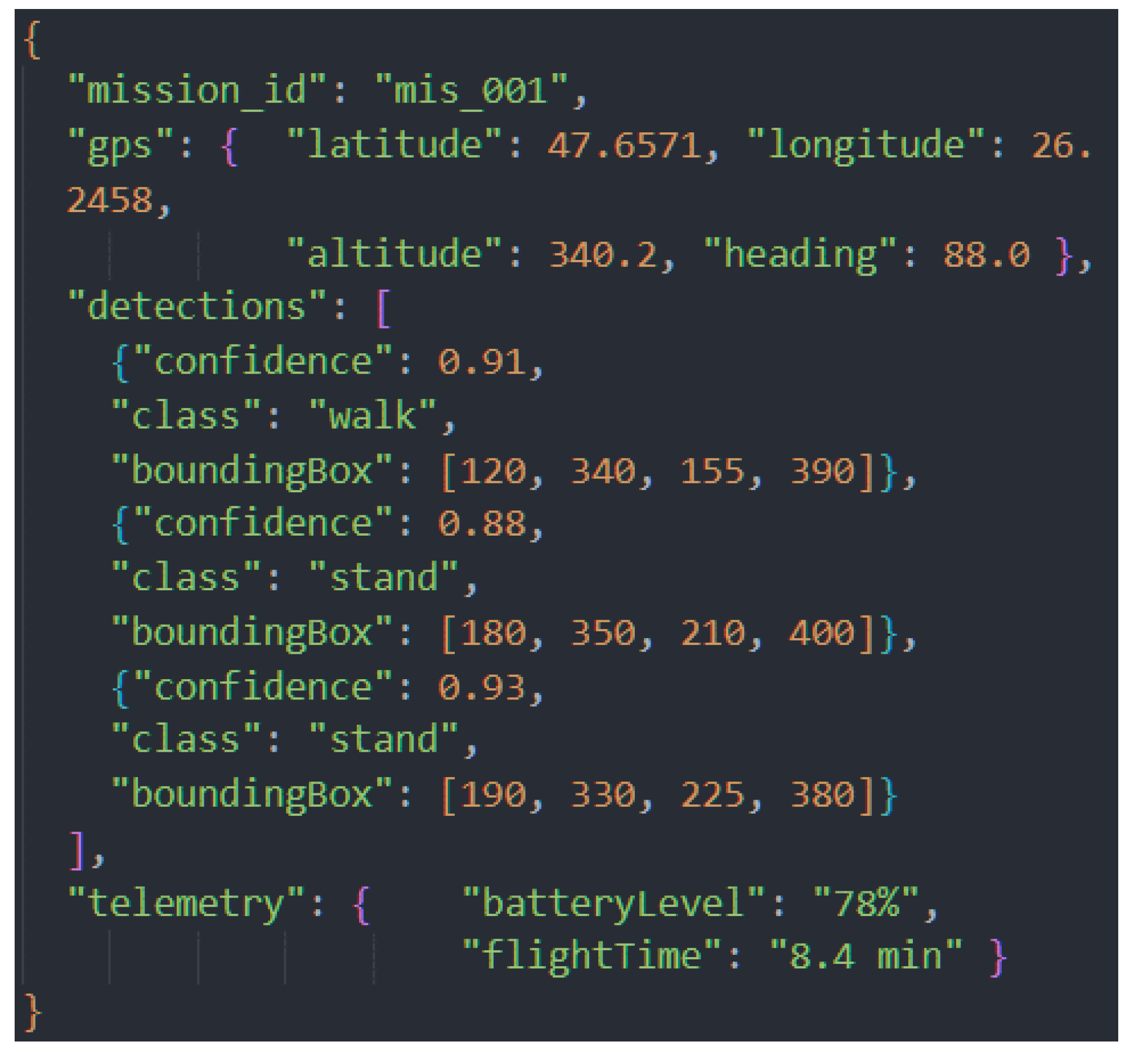

3.2. Data Acquisition Module

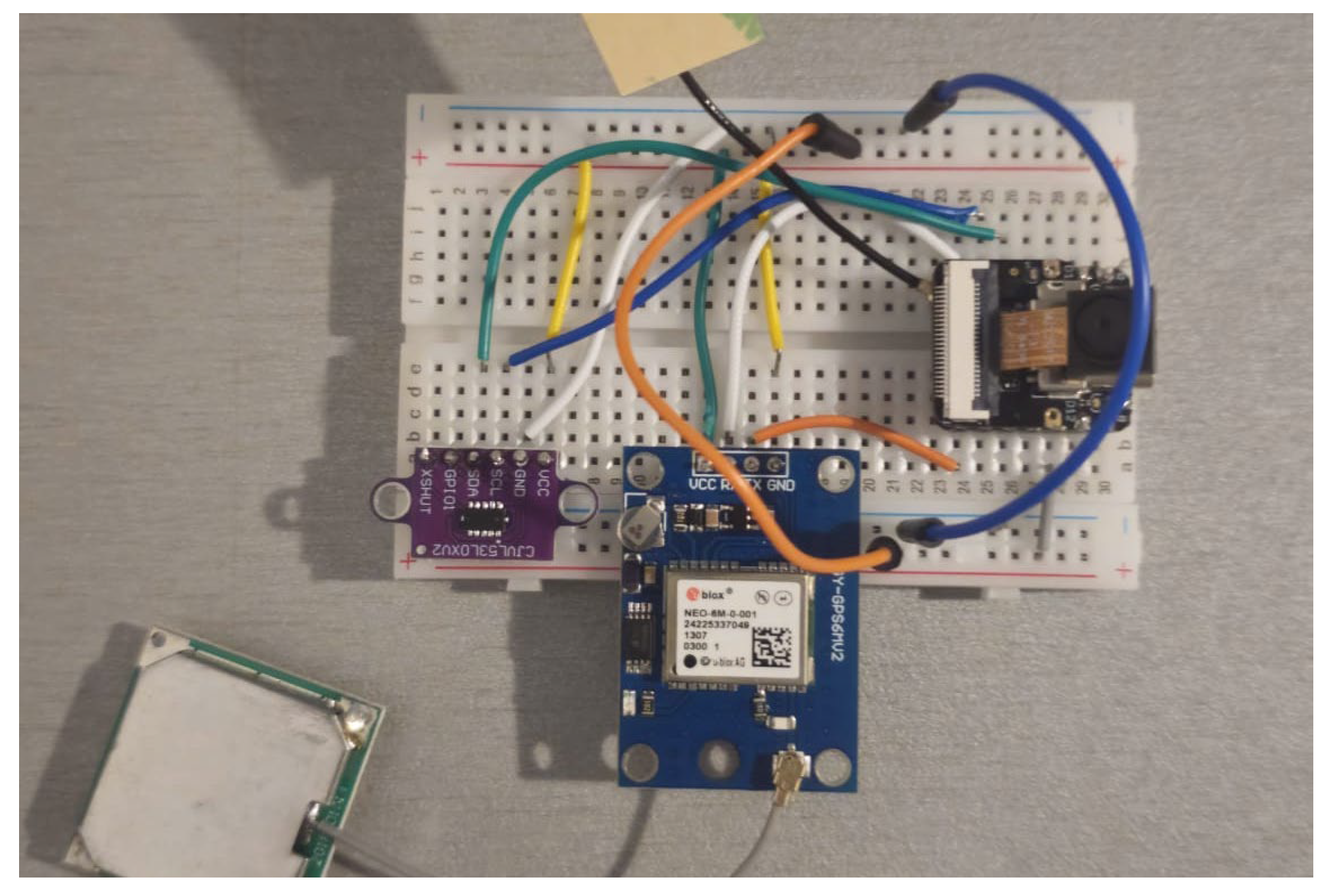

- Main MPU: It features a 32-bit, dual-core Tensilica Xtensa LX7 processor that operates at speeds of up to 240 MHz. It is also equipped with 8 MB of PSRAM and 8 MB of Flash memory, which provides ample storage for processing sensor data and managing video streams.

- Camera: Although the ESP32S3 MPU is equipped with an OV2640 camera sensor, a superior OV5640 camera module has been added to enhance image quality and transmission speed. This enables higher-resolution capture and faster access times, providing the analysis module with higher-quality visual data at a cost-effective price.

- Distance sensor (LIDAR): A TOF LIDAR laser distance sensor (VL53LDK) is used. The sensor has a maximum measurement range of 2 meters and an accuracy of ±3%. Although it is not designed for large-scale mapping, it enables proximity awareness and estimation of the terrain profile immediately below the drone. This data is essential for low-altitude flight and for the victim location estimation algorithm.

- GPS module: A GY-NEO6MV2 module is integrated to provide positioning data with a horizontal accuracy of 2.5 meters (circular error probable) and an update rate of 1 Hz.

3.3. Delivery Module

3.4. Drone Command Center

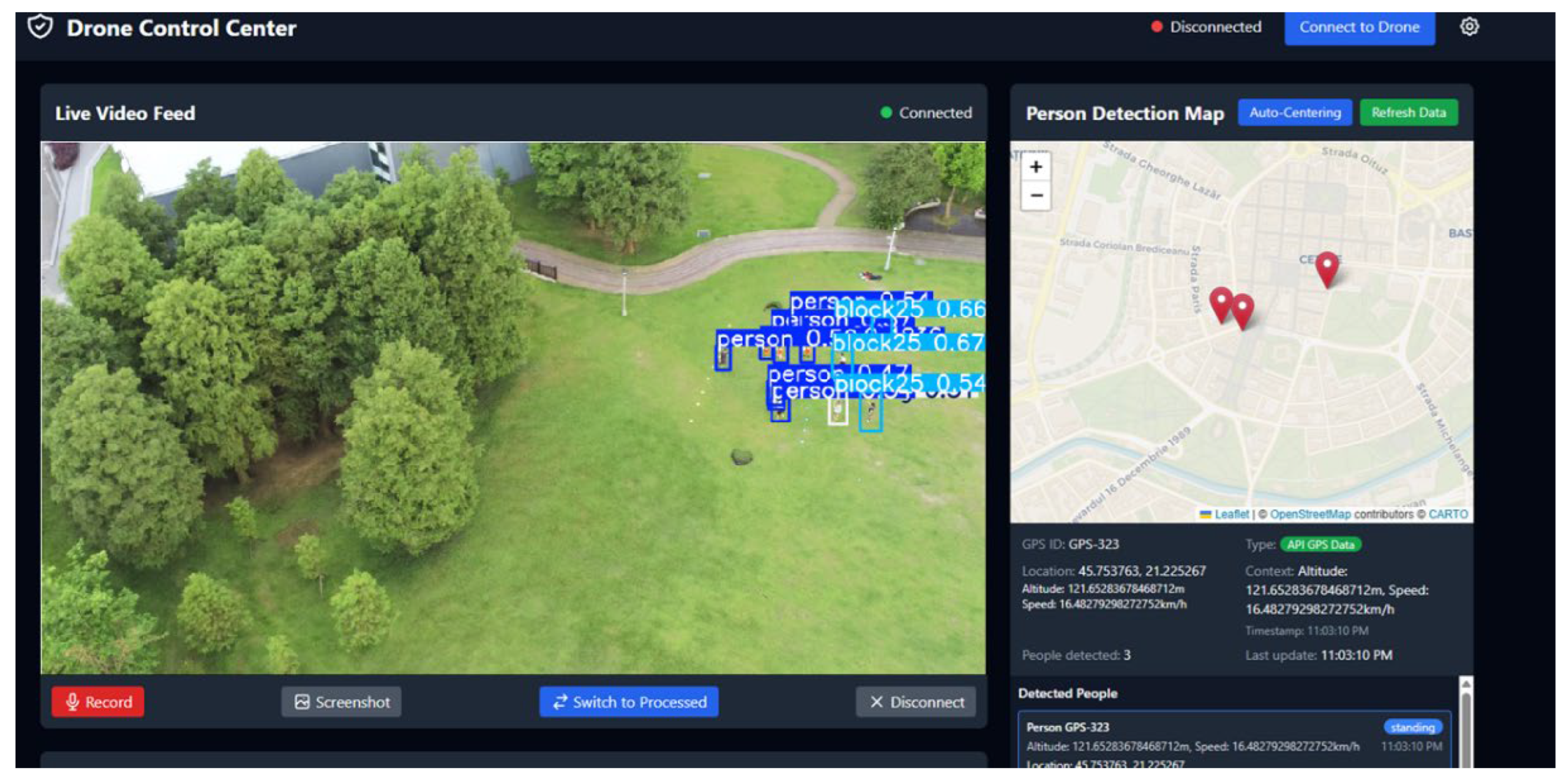

- Human-Machine Interface (HMI): The front end gives the human operator complete situational awareness. A key feature is the interactive map, which displays the drone’s position in real time, the precise location of any detected individuals, and other relevant geospatial data. At the same time, the operator can view the live video stream, annotated in real time by the detection module, which highlights victims and classifies their status (e.g., walking, standing, or lying down). Figure 6 shows the visual interface of the central application, which consolidates these data streams.

- Perceptual and cognitive processing: One of the fundamental architectural decisions behind our system is to decouple AI-intensive processing from the aerial platform and host it at the GCS level. The drone acts as an advanced data collection platform, transmitting video and telemetry data to the ground station. Here, the backend takes this data and performs two critical tasks:

  - a. Visual detection: Uses the YOLO11 object detection model to analyze the video stream and extract semantic information. This off-board approach allows the use of complex, high-precision computational models that would otherwise exceed the hardware capabilities of resource-limited aerial platforms.

  - b. Agentic Reasoning: The GCS hosts the entire cognitive-agentic architecture, which is detailed in Section 6. All interactions between AI agents, including contextual analysis, risk assessment, and recommendation generation, take place at the ground server level.

4. Visual Detection and Classification with YOLO

4.1. Construction of the Dataset and Class Taxonomy

- C2A Dataset: Human Detection in Disaster Scenarios [26]. This dataset is designed to improve human detection in disaster contexts.

- NTUT 4K Drone Photo Dataset for Human Detection [27]. This dataset is designed to identify human behavior and includes detailed annotations for classifying human actions.

- States/Actions: person, push, riding, sit, stand, walk, watchphone

- Visibility/Occlusion: block25 (25% occlusion), block50 (50% occlusion), block75 (75% occlusion).

4.2. Data Preprocessing and Hyperparameter Optimization

- Data augmentation: The techniques applied consisted exclusively of fixed rotations at 90°, random rotations between -15° and +15°, and shear deformations of ±10°. The purpose of these transformations was to artificially simulate the variety of viewing angles and target orientations that naturally occur in dynamic landscapes filmed by a drone, forcing the model to learn features that are invariant to rotation and perspective.

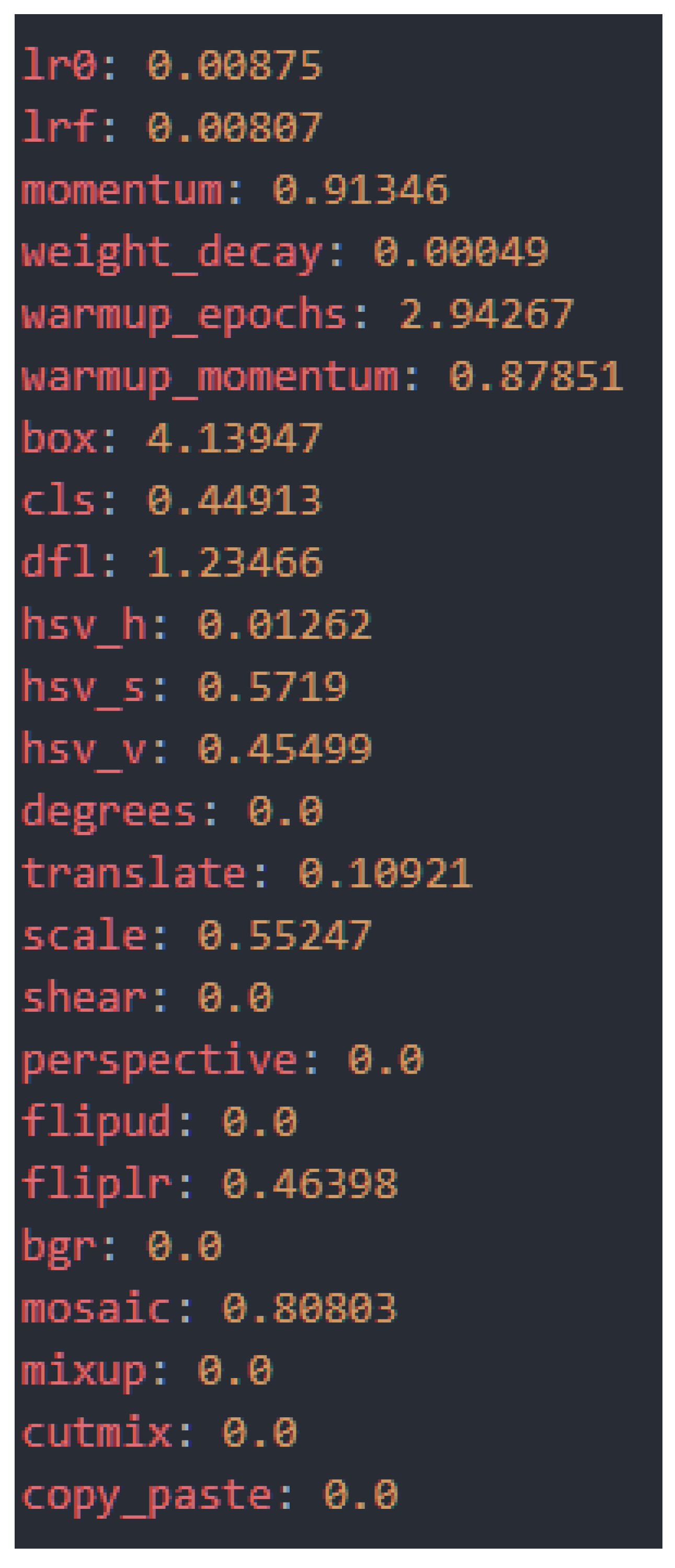

- Hyperparameter optimization: Through a process of evolutionary tuning consisting of 100 iterations, we determined the optimal set of hyperparameters for the final training. This method automatically explores the configuration space to find the combination that maximizes performance. The resulting hyperparameters, which define everything from the learning rate to the loss weights and augmentation strategies, are shown in Figure 7.
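The evolutionary tuning described above can be illustrated with a minimal, mutation-only sketch. It is not the authors' tuning code: the `evolve` helper, the `toy_fitness` function, the starting values, and the bounds are all assumptions made for illustration, and the toy fitness stands in for a full training-and-validation run. The names `lr0` and `box` are used only as example hyperparameters.

```python
import random


def evolve(fitness, base, bounds, iterations=100, sigma=0.2, seed=0):
    """Mutation-only evolutionary search: perturb the best config, keep improvements."""
    rng = random.Random(seed)
    best = dict(base)
    best_score = fitness(best)
    for _ in range(iterations):
        candidate = {}
        for name, value in best.items():
            low, high = bounds[name]
            # Multiplicative Gaussian mutation, clipped to the allowed range.
            mutated = value * (1.0 + rng.gauss(0.0, sigma))
            candidate[name] = min(high, max(low, mutated))
        score = fitness(candidate)
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


def toy_fitness(cfg):
    # Hypothetical stand-in for "train, then validate"; peaks at lr0=0.01, box=7.5.
    return -((cfg["lr0"] - 0.01) / 0.01) ** 2 - ((cfg["box"] - 7.5) / 7.5) ** 2


base = {"lr0": 0.05, "box": 3.0}                     # illustrative starting point
bounds = {"lr0": (1e-5, 0.1), "box": (1.0, 20.0)}    # illustrative search ranges
best, score = evolve(toy_fitness, base, bounds, iterations=100)
print(best, score)
```

Each iteration mutates the best configuration found so far and keeps the candidate only if the fitness improves, so the returned score can never be worse than that of the starting configuration.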

4.3. Experimental Setup and Validation of Overall Performance

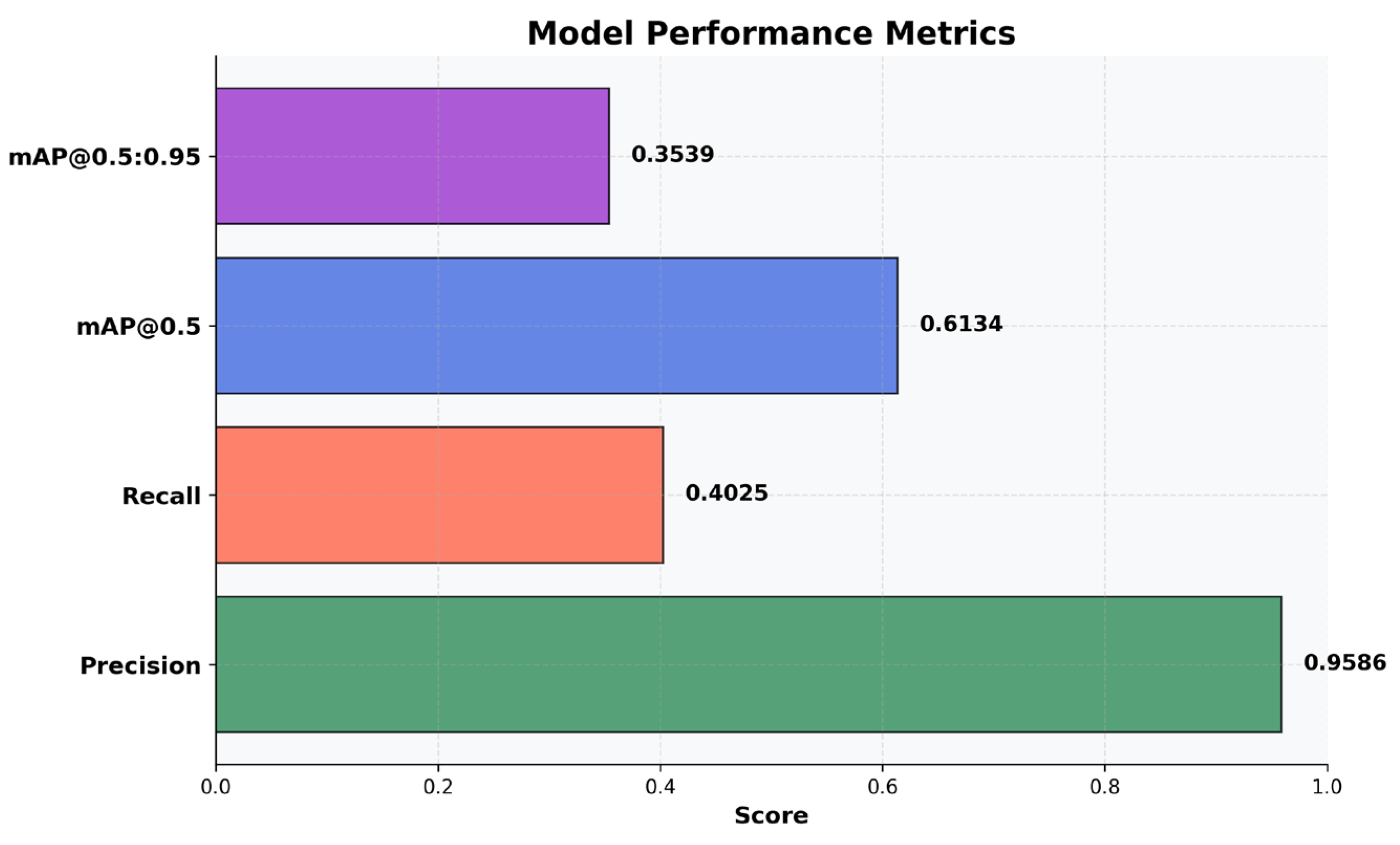

- Precision (0.9586 / 95.86%) indicates the proportion of correct detections out of the total number of detections performed. The high value reflects a low probability of erroneous predictions and high confidence in the model’s results.

- Recall (0.4025 / 40.25%) expresses the ability to identify the objects present in the image. The relatively low value means that about 60% of objects are missed. This is explained by the increased complexity of the training set due to the concatenation of two datasets, which reduces raw performance but increases the generalization and versatility of the model.

- mAP@0.5 (0.6134) summarizes the precision-recall trade-off as a mean average precision, counting a detection as correct when its intersection over union (IoU) with the ground truth is at least 50%. The result indicates balanced performance, despite the low Recall.

- mAP@0.5:0.95 (0.3539) measures the accuracy of localization averaged over strict IoU thresholds (50%–95%). The value, significantly lower than mAP@0.5, suggests that, although the model detects objects, the generated bounding boxes are not always well adjusted to their contours.
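The quantities behind these metrics can be sketched in a few lines. The helpers below are illustrative, not the evaluation code used in the study: `iou` computes the overlap ratio that the mAP thresholds refer to, and `precision_recall` derives both ratios from detection counts. The counts in the usage lines are hypothetical and were chosen only to reproduce a high-precision, low-recall pattern.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1 = max(box_a[0], box_b[0])
    iy1 = max(box_a[1], box_b[1])
    ix2 = min(box_a[2], box_b[2])
    iy2 = min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Two 10x10 boxes shifted by half a width overlap with IoU = 1/3,
# so the prediction would not count as correct at the 0.5 threshold.
overlap = iou((0, 0, 10, 10), (5, 0, 15, 10))

# Hypothetical counts: few false alarms, many missed objects.
precision, recall = precision_recall(tp=40, fp=2, fn=60)
print(overlap, precision, recall)
```

With these hypothetical counts, precision is about 0.95 while recall is 0.40: the detector is rarely wrong when it fires, but it misses most of the objects.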

4.4. Granular Analysis: From Aggregate Metrics to Error Patterns

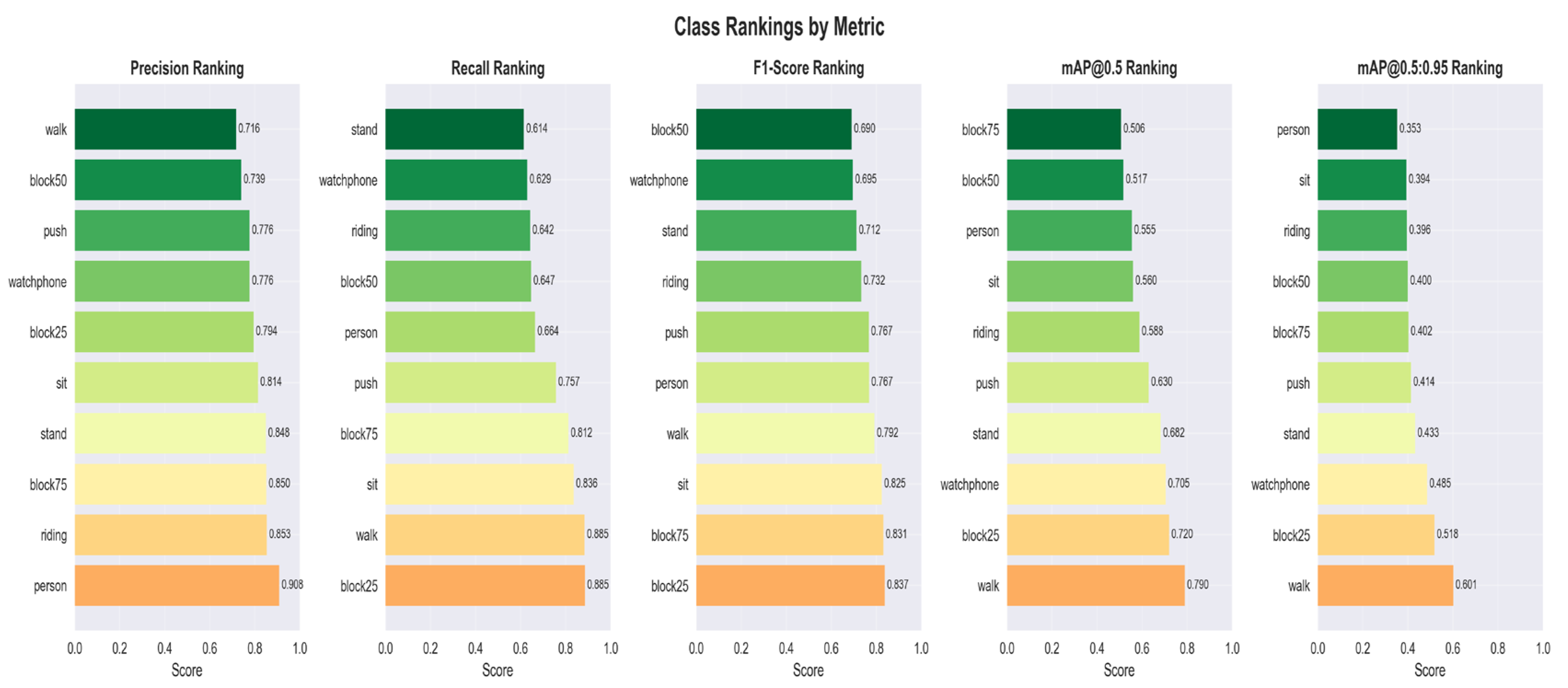

4.4.1. Classes Performance

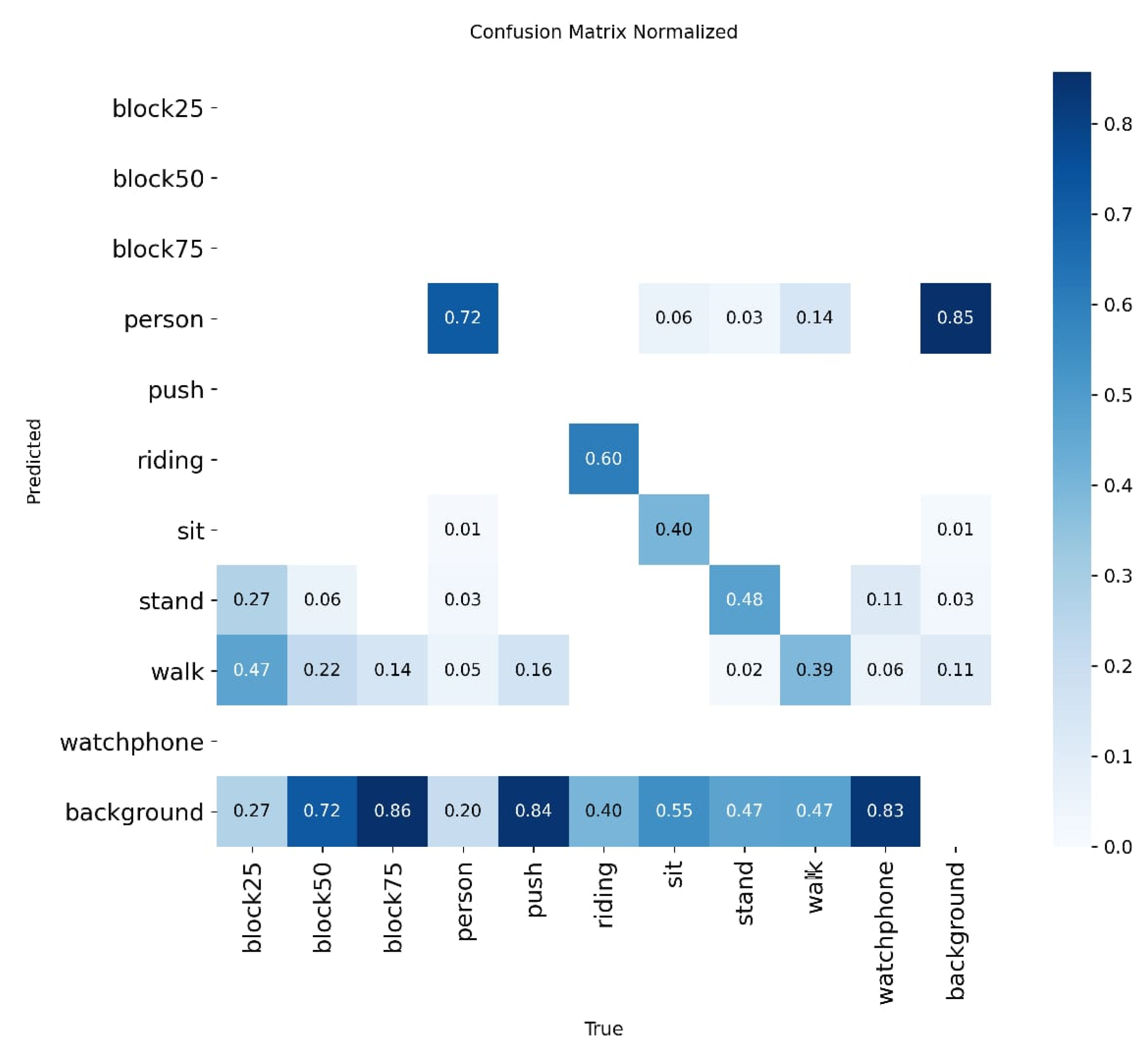

4.4.2. Diagnosing Error Patterns with the Confusion Matrix

- A. Analysis of Semantic Confusion and Critical Distinctions

- B. Performance vs. Background

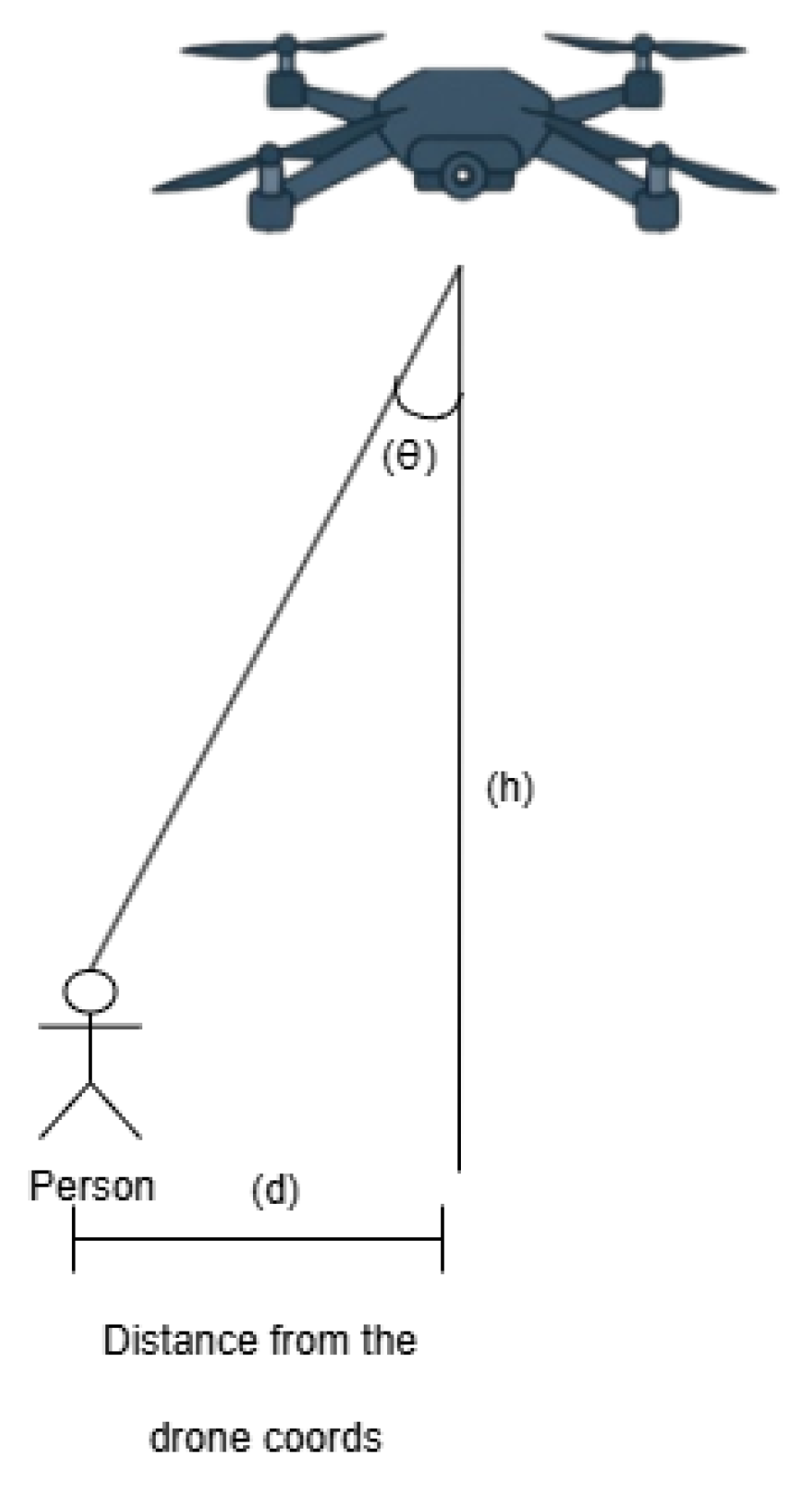

5. Geolocation of Detected Targets

5.1. Methodology for Estimating Position

- Drone position: The geographical coordinates (latitude, longitude) of the drone, provided by the GPS module;

- h: Height of the drone above the ground;

- Vertical deviation angle: The angle between the vertical axis of the camera and the line of sight to the person. It is calculated from the vertical position of the person in the image and the vertical field of view (FOV) of the camera;

- Drone orientation: The azimuth measured from North, provided by the magnetometer integrated into the flight controller’s inertial measurement unit (IMU). It is crucial for defining the projection direction of the visual vector [29];

- Target position: The estimated geographical coordinates of the person, which are the final result of the calculation.

- Flight height (h): 27 m;

- GPS position of the drone: (45.7723°, 22.144°);

- Drone orientation: 30° (azimuth measured from North);

- Vertical deviation angle: 25° (calculated from the target position in the image).

- Earth’s radius (R): 6,371,000 m;

- Angular distance: the ground distance to the target divided by R;

- Azimuth: the drone orientation, 30°;

- Drone latitude: 45.7723°;

- Drone longitude: 22.144°.
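The inputs listed above are sufficient for a worked calculation. The sketch below is one plausible reading of the method, not a reproduction of the authors' formulas: it assumes flat terrain, so that the ground distance is h·tan(vertical deviation angle), and it uses the standard spherical destination-point formula with the drone orientation as the bearing. The function name and its parameters are illustrative.

```python
import math

EARTH_RADIUS_M = 6_371_000.0


def estimate_target_position(lat_deg, lon_deg, height_m, heading_deg, vertical_angle_deg):
    """Project the line of sight onto flat ground and offset the drone position."""
    # Assumption: flat terrain, so ground distance follows from simple trigonometry.
    ground_distance = height_m * math.tan(math.radians(vertical_angle_deg))
    angular_distance = ground_distance / EARTH_RADIUS_M
    bearing = math.radians(heading_deg)
    lat1 = math.radians(lat_deg)
    lon1 = math.radians(lon_deg)
    # Destination point on a sphere, given start point, bearing and angular distance.
    lat2 = math.asin(
        math.sin(lat1) * math.cos(angular_distance)
        + math.cos(lat1) * math.sin(angular_distance) * math.cos(bearing)
    )
    lon2 = lon1 + math.atan2(
        math.sin(bearing) * math.sin(angular_distance) * math.cos(lat1),
        math.cos(angular_distance) - math.sin(lat1) * math.sin(lat2),
    )
    return math.degrees(lat2), math.degrees(lon2), ground_distance


# Example values from the text: h = 27 m, position (45.7723°, 22.144°),
# orientation 30°, vertical deviation angle 25°.
lat, lon, dist = estimate_target_position(45.7723, 22.144, 27.0, 30.0, 25.0)
print(f"{lat:.6f}, {lon:.6f}, {dist:.2f} m")
```

Under these assumptions the target lies roughly 12.6 m from the point directly below the drone, to the north-east. At this scale the result is dominated by errors in the GPS fix, heading, and height rather than by the choice of Earth model.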

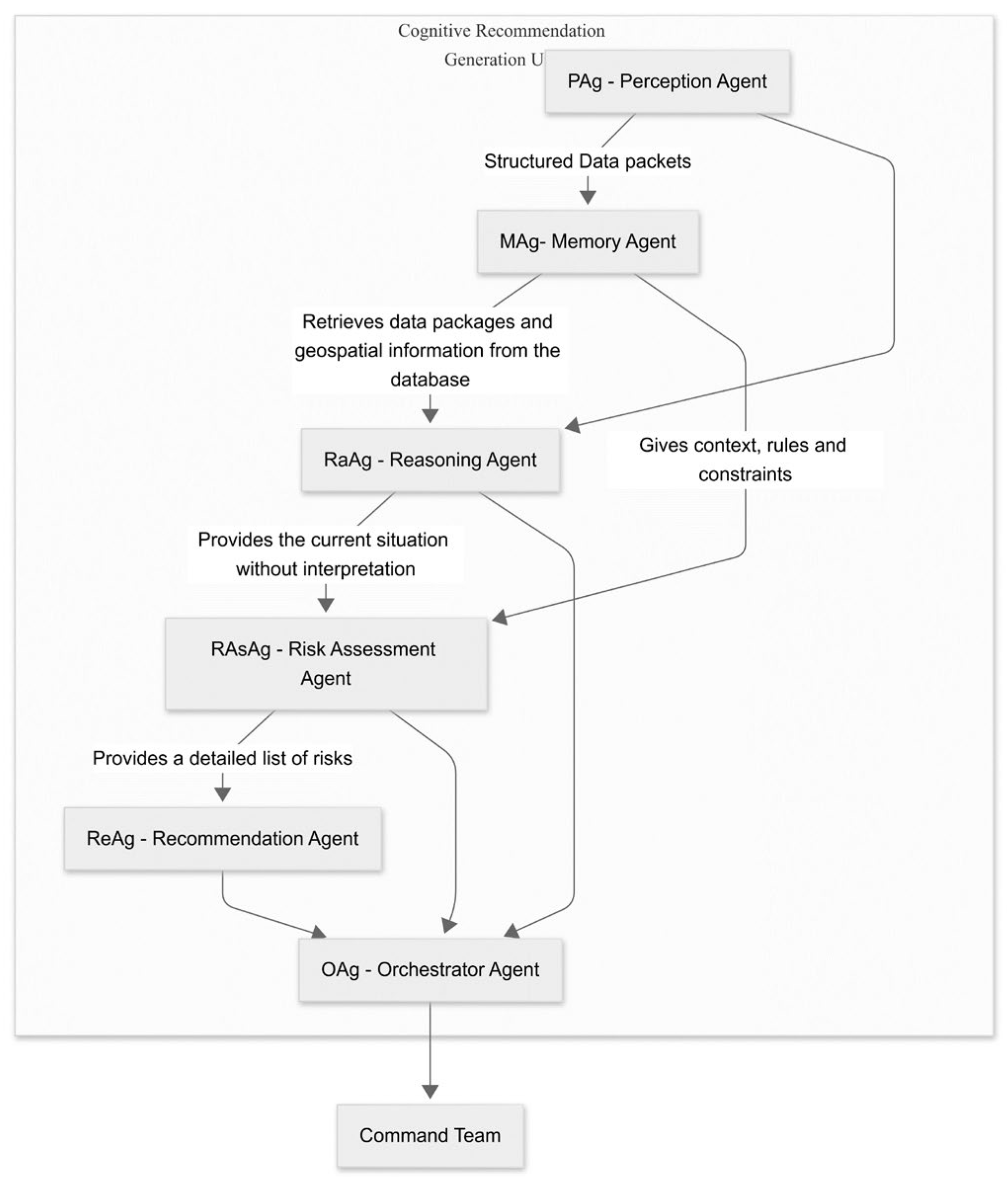

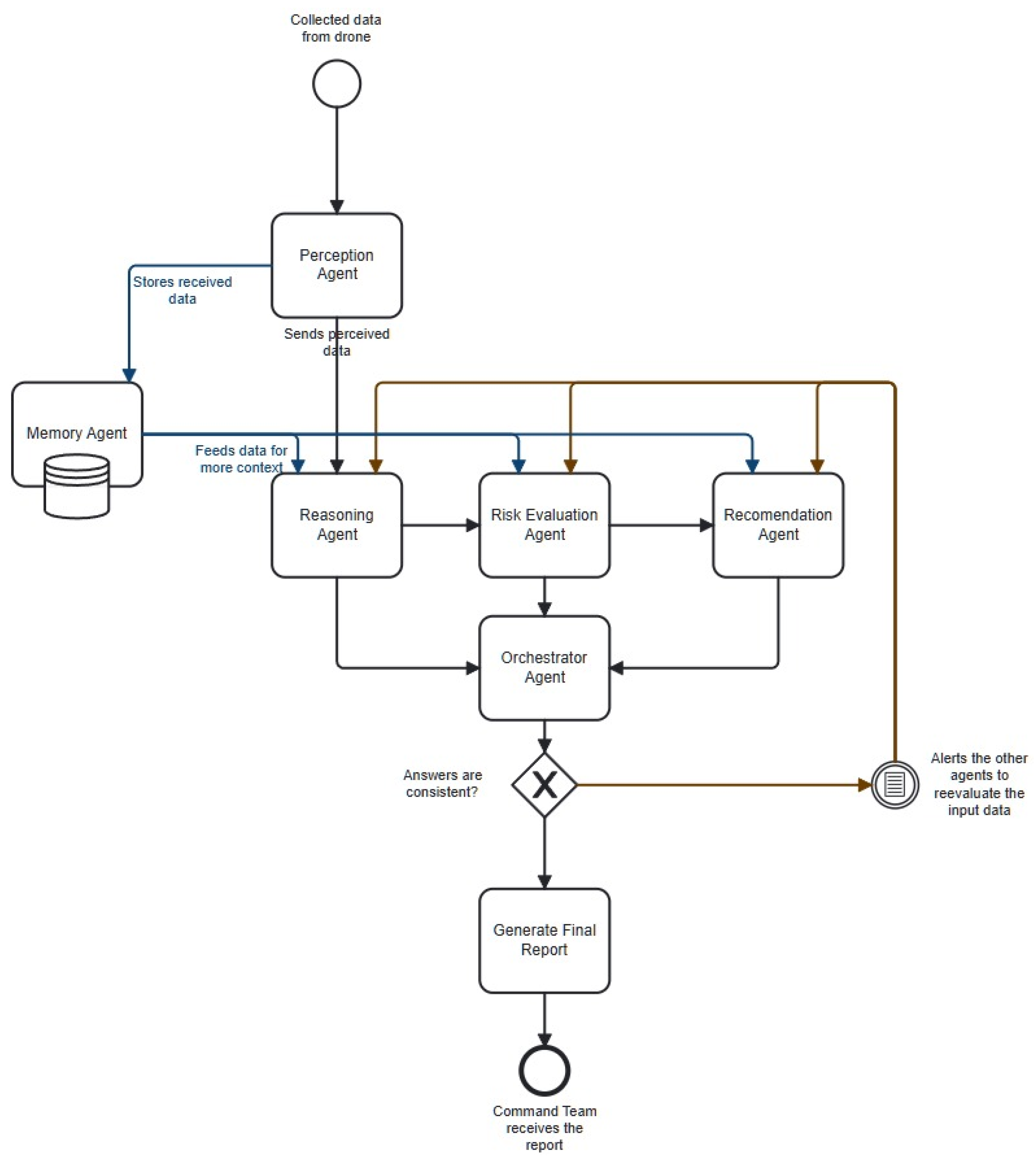

6. Cognitive Agent Architecture

6.1. Components of the Proposed Cognitive-Agentic Architecture

6.1.1. Integration of External Data Into the Cognitive-Agentic System

6.1.2. MAg–Memory Agent

6.1.3. RaAg–System Reasoning

6.1.4. RAsAg–Risk Assessment Agent

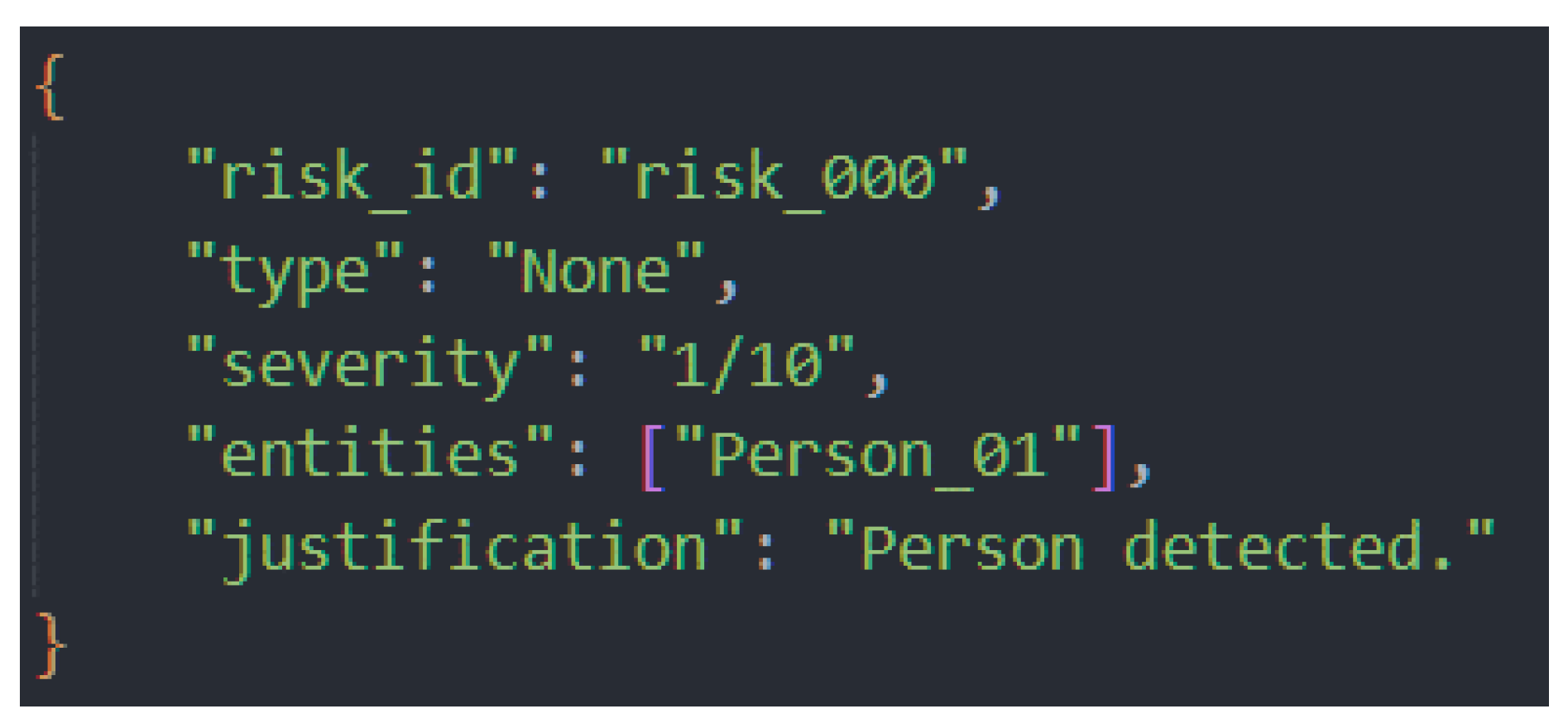

- Rule_ID: A unique identifier for traceability (e.g., R-MED-01).

- Condition (C): A description of the prerequisites that must be met for the rule to act.

- Action (A): The process of generating the structured risk report, specifying the Type, Severity, and Justification.

- Severity (S): A numerical value that dictates the order of execution in case multiple rules are triggered simultaneously. A higher value indicates a higher priority.

- Type of risk

- Severity level: a numerical value, where 1 means low risk and 10 means high risk

- Entities involved: ID of the person or area affected or coordinates

- Justification: A brief explanation of the rules that led to the assessment
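The rule and report structures described above can be sketched as plain data classes. This is an illustrative sketch, not the system's implementation: the two rule IDs and severities follow the examples given in the paper, while the fact keys (`state_change`, `posture`, `inactive_s`, `person_id`) and the 60-second threshold are hypothetical choices made to keep the example runnable.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Rule:
    rule_id: str                        # unique identifier for traceability
    severity: int                       # higher value = higher priority
    risk_type: str
    condition: Callable[[Dict], bool]   # prerequisites for the rule to act
    justification: str


@dataclass(frozen=True)
class RiskReport:
    risk_type: str
    severity: int                       # 1 = low risk, 10 = high risk
    entities: List[str]
    justification: str


RULES = [
    Rule("R-MED-01", 10, "Sudden Collapse",
         lambda f: f.get("state_change") == "active_to_inactive",   # hypothetical key
         "Abrupt change from an active to an inactive state."),
    Rule("R-MED-02", 9, "Critical Inactivity",
         lambda f: f.get("posture") == "lying" and f.get("inactive_s", 0) >= 60,  # hypothetical threshold
         "Person in a vulnerable position for an extended period."),
]


def assess(facts: Dict, rules: List[Rule] = RULES) -> List[RiskReport]:
    """Return one report per triggered rule, ordered by descending severity."""
    triggered = sorted((r for r in rules if r.condition(facts)),
                       key=lambda r: r.severity, reverse=True)
    return [RiskReport(r.risk_type, r.severity, [facts["person_id"]],
                       f"{r.rule_id}: {r.justification}")
            for r in triggered]


facts = {"person_id": "P-01", "state_change": "active_to_inactive",
         "posture": "lying", "inactive_s": 90}
reports = assess(facts)
print([(r.risk_type, r.severity) for r in reports])
```

Sorting by severity reflects the stated purpose of that field: when several rules fire at once, the highest-priority report is handled first.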

6.1.5. ReAg–Recommendation Agent

6.1.6. Consolidate and Interpret (Orchestrator Agent)

6.1.7. Inter-agent Communication and Operational Flow

7. Results

7.1. Validation Methodology

- Information Integrity: The ability to maintain the consistency and accuracy of data as it moves through the cognitive cycle (from PAg to the final report).

- Temporal Coherence (Memory): The effectiveness of the Memory Agent (MAg) in maintaining a persistent state of the environment to avoid redundant alerts and adapt to evolving situations.

- Accuracy of Hazard Identification: The accuracy of the system in identifying the most significant threat in a given context.

- Self-Correction Capability: The system’s ability to detect and rectify internal logical inconsistencies, a key feature of the Orchestrator Agent.

7.2. First Scenario – Low Risk Situation

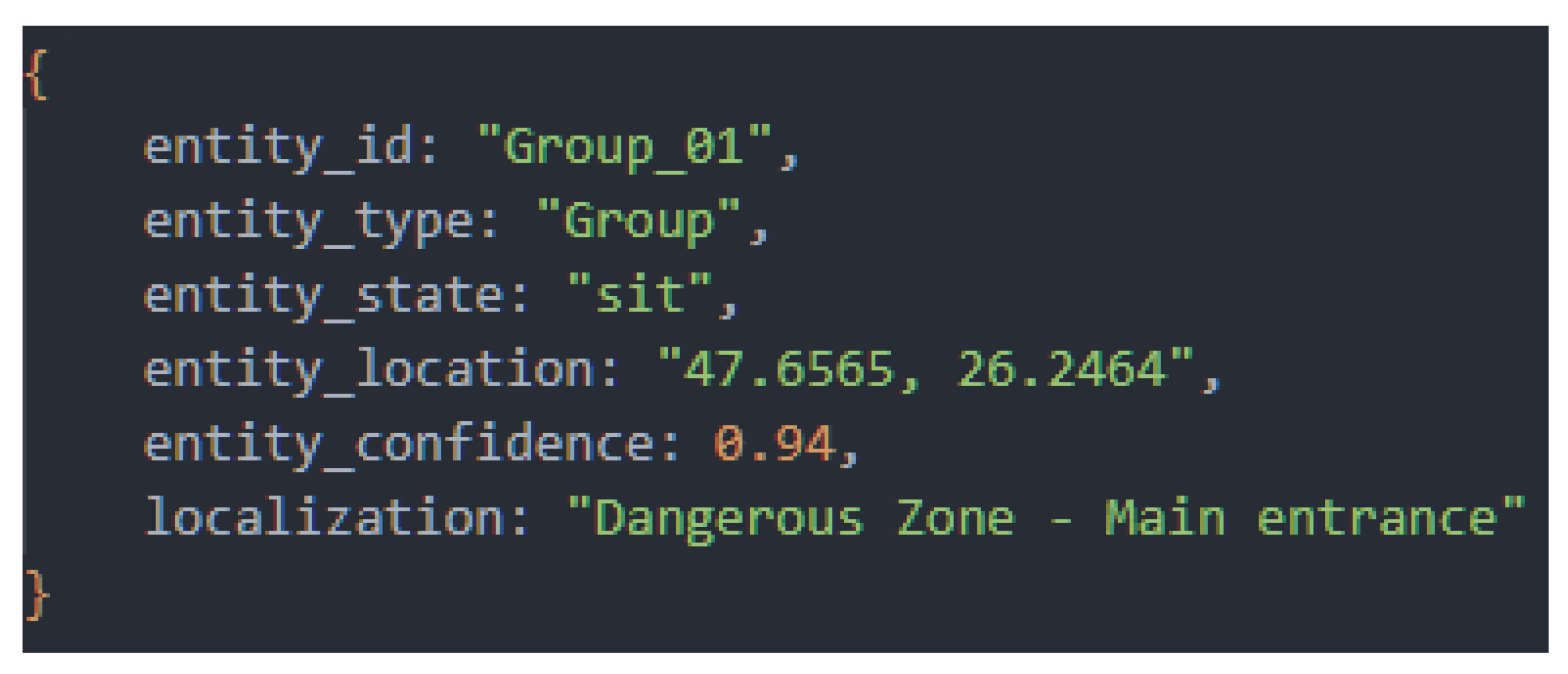

7.2.1. Initial Data

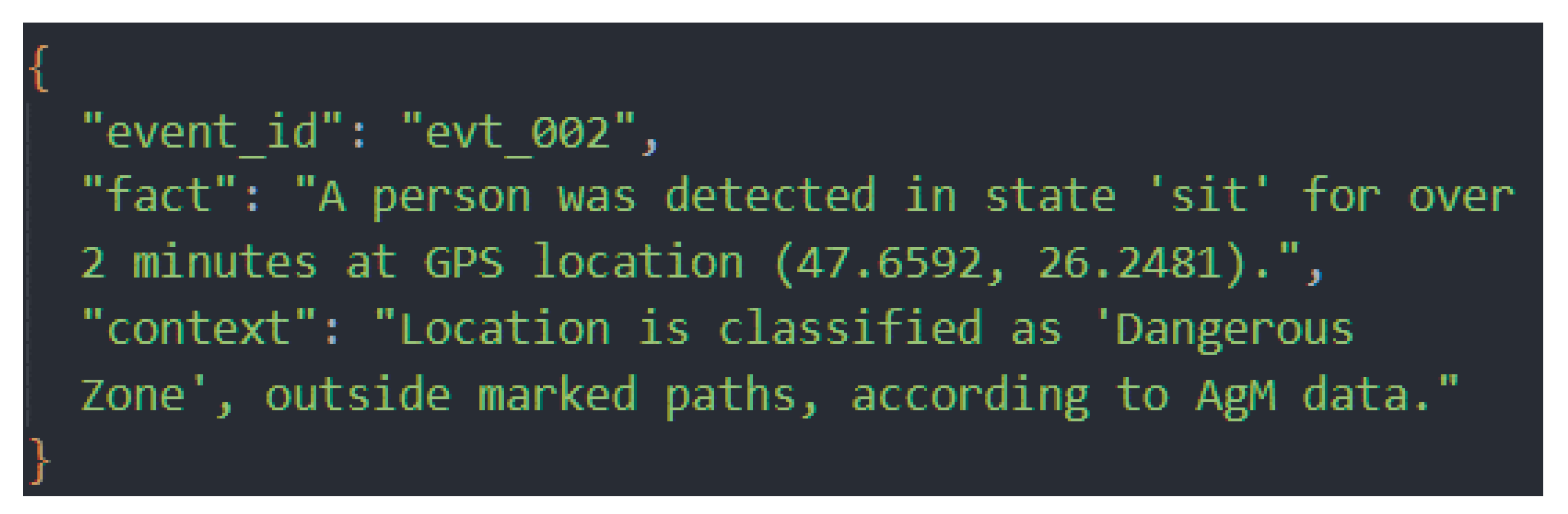

7.2.2. Contextual Enrichment (RaAg)

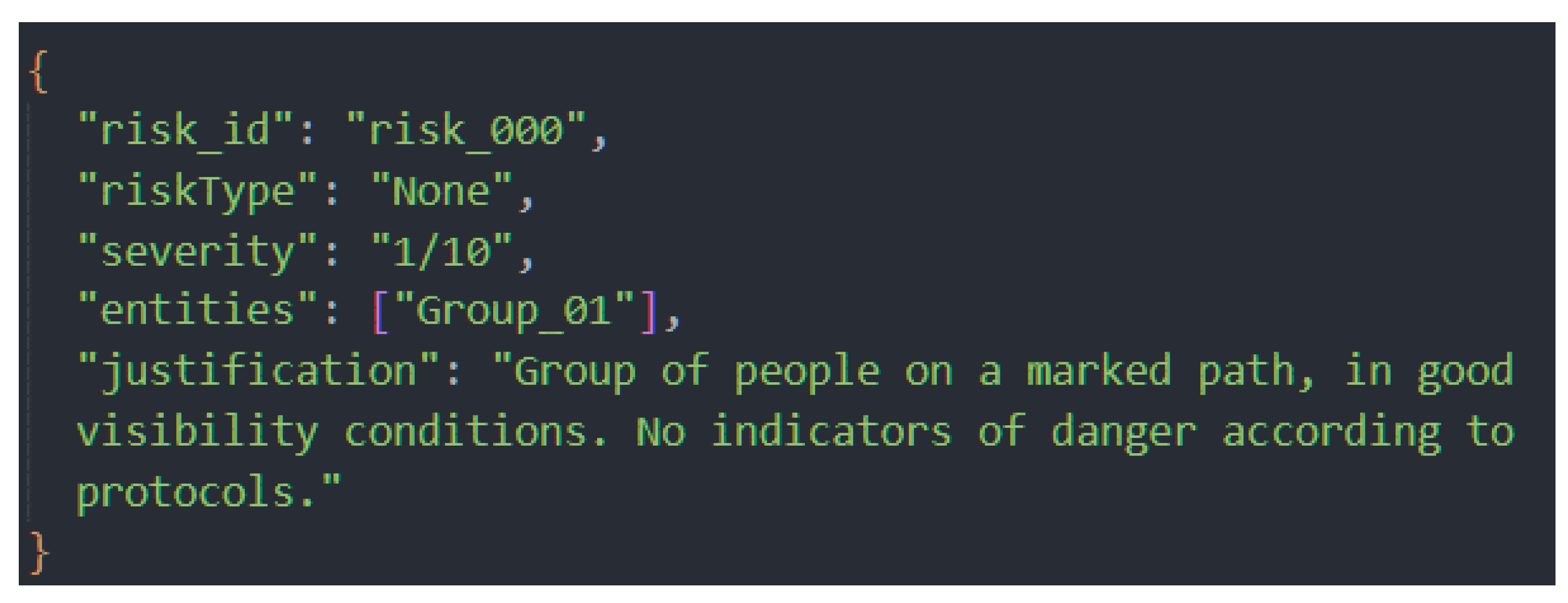

7.2.3. Risk Assessment (RAsAg)

7.2.4. Determining the Response Protocol (ReAg)

7.2.5. Final Validation and Display in GCS

- context: “group of people on a marked path”;

- assessment of “low risk (1/10)”;

- selected response protocol.

- The pins corresponding to individuals were marked in green;

- The operator received an informative, non-intrusive notification.

7.3. Second Scenario – High Risk and Self-Correction

7.3.1. Description of the Initial Situation

7.3.2. Deliberate Error and System Response

7.3.3. Self-Correcting Mechanism

7.3.4. Final Result

- marking the victim on the interactive map with a red pin;

- displaying the detailed report in a prominent window;

- enabling immediate intervention by the operator.

7.4. Third Scenario – Demonstration of Adaptation

- An update to an existing event

- A completely new situation

- Reducing operator cognitive fatigue by limiting unnecessary alerts

- Increasing operational accuracy by consolidating information

- Improving information continuity in long-term missions
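The distinction between an update to an existing event and a completely new situation can be illustrated with a small memory sketch. It is not the Memory Agent's actual logic: the `EventMemory` class, the purely spatial matching criterion, and the 10 m radius are assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Event:
    event_id: int
    lat: float
    lon: float
    status: str
    updates: int = 0


def distance_m(lat1, lon1, lat2, lon2):
    """Equirectangular approximation, adequate for distances of a few hundred meters."""
    r = 6_371_000.0
    x = math.radians(lon2 - lon1) * math.cos(math.radians((lat1 + lat2) / 2))
    y = math.radians(lat2 - lat1)
    return r * math.hypot(x, y)


class EventMemory:
    def __init__(self, radius_m: float = 10.0):   # hypothetical matching radius
        self.radius_m = radius_m
        self.events: List[Event] = []

    def observe(self, lat: float, lon: float, status: str) -> Tuple[str, Event]:
        """Classify a detection as an update to a stored event or as a new one."""
        for event in self.events:
            if distance_m(event.lat, event.lon, lat, lon) <= self.radius_m:
                event.lat, event.lon, event.status = lat, lon, status
                event.updates += 1
                return "update", event
        event = Event(len(self.events) + 1, lat, lon, status)
        self.events.append(event)
        return "new", event


memory = EventMemory()
first, _ = memory.observe(45.77230, 22.14400, "standing")
second, same = memory.observe(45.77231, 22.14401, "lying")    # about 1.4 m away
third, _ = memory.observe(45.78000, 22.14400, "walking")      # several hundred meters away
print(first, second, third, len(memory.events))
```

Only detections classified as new would need to raise a fresh alert, while updates refine an existing entry. This is the mechanism by which a persistent memory can limit redundant notifications to the operator.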

7.5. Cognitive Performance Analysis

8. Discussion

9. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Sun, J.; Li, B.; Jiang, Y.; Wen, C.-y. A Camera-Based Target Detection and Positioning UAV System for Search and Rescue (SAR) Purposes. Sensors 2016, 16, 1778. https://doi.org/10.3390/s16111778.

- Pensieri, Maria Gaia, Mauro Garau, and Pier Matteo Barone. “Drones as an integral part of remote sensing technologies to help missing people.” Drones 4.2 (2020): 15.

- Ashish, Naveen, et al. “Situational awareness technologies for disaster response.” Terrorism informatics: Knowledge management and data mining for homeland security. Boston, MA: Springer US, 2008. 517-544.

- Kutpanova, Zarina, et al. “Multi-UAV path planning for multiple emergency payloads delivery in natural disaster scenarios.” Journal of Electronic Science and Technology 23.2 (2025): 100303.

- Kang, Dae Kun, et al. “Optimising disaster response: opportunities and challenges with Uncrewed Aircraft System (UAS) technology in response to the 2020 Labour Day wildfires in Oregon, USA.” International Journal of Wildland Fire 33.8 (2024).

- R. Arnold, J. Jablonski, B. Abruzzo and E. Mezzacappa, “Heterogeneous UAV Multi-Role Swarming Behaviors for Search and Rescue,” 2020 IEEE Conference on Cognitive and Computational Aspects of Situation Management (CogSIMA), Victoria, BC, Canada, 2020, pp. 122-128, doi: 10.1109/CogSIMA49017.2020.9215994.

- Alotaibi, Ebtehal Turki, Shahad Saleh Alqefari, and Anis Koubaa. “LSAR: Multi-UAV collaboration for search and rescue missions.” IEEE Access 7 (2019): 55817-55832.

- Zak, Yuval, Yisrael Parmet, and Tal Oron-Gilad. “Facilitating the work of unmanned aerial vehicle operators using artificial intelligence: an intelligent filter for command-and-control maps to reduce cognitive workload.” Human Factors 65.7 (2023): 1345-1360.

- Zhang, Wenjuan, et al. “Unmanned aerial vehicle control interface design and cognitive workload: A constrained review and research framework.” 2016 IEEE international conference on systems, man, and cybernetics (SMC). IEEE, 2016.

- Jiang, Peiyuan, et al. “A Review of Yolo algorithm developments.” Procedia computer science 199 (2022): 1066-1073.

- Sapkota, Ranjan, Konstantinos I. Roumeliotis, and Manoj Karkee. “UAVs Meet Agentic AI: A Multidomain Survey of Autonomous Aerial Intelligence and Agentic UAVs.” arXiv preprint arXiv:2506.08045 (2025).

- Jones, Brennan, Anthony Tang, and Carman Neustaedter. “RescueCASTR: Exploring Photos and Live Streaming to Support Contextual Awareness in the Wilderness Search and Rescue Command Post.” Proceedings of the ACM on Human-Computer Interaction 6.CSCW1 (2022): 1-32.

- Volpi, Michele, and Vittorio Ferrari. “Semantic segmentation of urban scenes by learning local class interactions.” Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops. 2015.

- Kutpanova, Zarina, et al. “Multi-UAV path planning for multiple emergency payloads delivery in natural disaster scenarios.” Journal of Electronic Science and Technology 23.2 (2025): 100303.

- M. Atif, R. Ahmad, W. Ahmad, L. Zhao and J. J. P. C. Rodrigues, “UAV-Assisted Wireless Localization for Search and Rescue,” in IEEE Systems Journal, vol. 15, no. 3, pp. 3261-3272, Sept. 2021, doi: 10.1109/JSYST.2020.3041573.

- S. Hayat, E. Yanmaz, T. X. Brown and C. Bettstetter, “Multi-objective UAV path planning for search and rescue,” 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 2017, pp. 5569-5574, doi: 10.1109/ICRA.2017.7989656.

- Liu, C.; Szirányi, T. Real-Time Human Detection and Gesture Recognition for On-Board UAV Rescue. Sensors 2021, 21, 2180. https://doi.org/10.3390/s21062180.

- D. Cavaliere, S. Senatore and V. Loia, “Proactive UAVs for Cognitive Contextual Awareness,” in IEEE Systems Journal, vol. 13, no. 3, pp. 3568-3579, Sept. 2019, doi: 10.1109/JSYST.2018.2817191.

- M. Yahya, M. A. Fattah, M. S. Saeed, Design and Analysis of a Hybrid VTOL-Fixed Wing UAV for Extended Endurance Missions, Engineering Science and Technology, an International Journal, Vol. 23, No. 6, pp. 1356–1365, 2020.

- T. Toschi et al., Evaluation of DJI Matrice 300 RTK Performance in Photogrammetric Surveys with Zenmuse P1 and L1 Sensors, ISPRS Archives, Vol. XLIII-B1-2022, pp. 339–346, 2022. https://doi.org/10.5194/isprs-archives-XLIII-B1-2022-339-2022.

- T. Toschi et al., Evaluation of DJI Matrice 300 RTK Performance in Photogrammetric Surveys with Zenmuse P1 and L1 Sensors, ISPRS Archives, Vol. XLIII-B1-2022, pp. 339–346, 2022. https://doi.org/10.5194/isprs-archives-XLIII-B1-2022-339-2022.

- Liang, Junbiao. “A review of the development of YOLO object detection algorithm.” Appl. Comput. Eng 71.1 (2024): 39-46.

- Wang, Xin, et al. “Yolo-erf: lightweight object detector for uav aerial images.” Multimedia Systems 29.6 (2023): 3329-3339.

- Terven, Juan, Diana-Margarita Córdova-Esparza, and Julio-Alejandro Romero-González. “A comprehensive review of yolo architectures in computer vision: From yolov1 to yolov8 and yolo-nas.” Machine learning and knowledge extraction 5.4 (2023): 1680-1716.

- Ragab, Mohammed Gamal, et al. “A comprehensive systematic review of YOLO for medical object detection (2018 to 2023).” IEEE Access 12 (2024): 57815-57836.

- Nihal, Ragib Amin, et al. “UAV-Enhanced Combination to Application: Comprehensive analysis and benchmarking of a human detection dataset for disaster scenarios.” International Conference on Pattern Recognition. Cham: Springer Nature Switzerland, 2024. 145-162.

- https://www.kaggle.com/datasets/kuantinglai/ntut-4k-drone-photo-dataset-for-human-detection/data.

- Zhao, Xiaoyue, et al. “Detection, tracking, and geolocation of moving vehicle from uav using monocular camera.” IEEE Access 7 (2019): 101160-101170.

- Mallick, Mahendra. “Geolocation using video sensor measurements.” 2007 10th International Conference on Information Fusion. IEEE, 2007.

- Cai, Y.; Zhou, Y.; Zhang, H.; Xia, Y.; Qiao, P.; Zhao, J. Review of Target Geo-Location Algorithms for Aerial Remote Sensing Cameras without Control Points. Appl. Sci. 2022, 12, 12689. https://doi.org/10.3390/app122412689.

- Thrun, Sebastian. “Toward a framework for human-robot interaction.” Human–Computer Interaction 19.1-2 (2004): 9-24.

- Brooks, Rodney A. “Intelligence without representation.” Artificial intelligence 47.1-3 (1991): 139-159.

- Gat, Erann, R. Peter Bonasso, and Robin Murphy. “On three-layer architectures.” Artificial Intelligence and Mobile Robots 195 (1998): 210.

- Laird, John E., Christian Lebiere, and Paul S. Rosenbloom. “A standard model of the mind: Toward a common computational framework across artificial intelligence, cognitive science, neuroscience, and robotics.” Ai Magazine 38.4 (2017): 13-26.

- Karoudis, Konstantinos, and George D. Magoulas. “An architecture for smart lifelong learning design.” Innovations in smart learning. Singapore: Springer Singapore, 2016. 113-118.

- Webb, Taylor, Keith J. Holyoak, and Hongjing Lu. “Emergent analogical reasoning in large language models.” Nature Human Behaviour 7.9 (2023): 1526-1541.

- Cavaliere, Danilo, Sabrina Senatore, and Vincenzo Loia. “Proactive UAVs for cognitive contextual awareness.” IEEE Systems Journal 13.3 (2018): 3568-3579.

- Zheng, Yu, Yujia Zhu, and Lingfeng Wang. “Consensus of heterogeneous multi-agent systems.” IET Control Theory & Applications 5.16 (2011): 1881-1888.

- Russell, Stuart, and Peter Norvig. Artificial Intelligence: A Modern Approach. Prentice-Hall, Englewood Cliffs, 1995.

- Bousetouane, Fouad. “Physical AI Agents: Integrating Cognitive Intelligence with Real-World Action.” arXiv preprint arXiv:2501.08944 (2025).

- Romero, Marcos Lima, and Ricardo Suyama. “Agentic AI for Intent-Based Industrial Automation.” arXiv preprint arXiv:2506.04980 (2025).

- Weiss, Michael, and Franz Stetter. “A hierarchical blackboard architecture for distributed AI systems.” Proceedings Fourth International Conference on Software Engineering and Knowledge Engineering. IEEE, 1992.

- Yao, Shunyu, et al. “React: Synergizing reasoning and acting in language models.” International Conference on Learning Representations (ICLR). 2023.

- Wang, Lei, et al. “A survey on large language model based autonomous agents.” Frontiers of Computer Science 18.6 (2024): 186345.

- Park, Joon Sung, et al. “Generative agents: Interactive simulacra of human behavior.” Proceedings of the 36th annual acm symposium on user interface software and technology. 2023.

| Comparison criterion | The proposed drone | Fixed-wing VTOL UAV [19] | DJI Matrice 300 RTK [20,21] |
| --- | --- | --- | --- |
| Drone type | Custom-built multi-motor (quadcopter) | Fixed wing + VTOL | Multi-motor (quadcopter) |
| Payload (kg) | 0.5–1.0 (delivery module 0.3–0.7) | ~1.0 | 2.7 |
| Hardware configuration | 920 KV motors, 2650 mAh LiPo battery, STM32F405 flight controller, camera sensors, LiDAR, GPS | Electric motor with propulsion propeller + VTOL lift propeller, LiPo batteries | Coaxial electric motors, compatible with Zenmuse P1/L1 sensors |
| Autonomy (min) | 12–15 | 90 (up to 4–10 hours in extended configurations) | 55 |
| AI capabilities | YOLO11 + cognitive-agentic architecture (LLM) | Autonomous routing and position maintenance systems in hover-forward transitions | Integrated AI functions for mapping and automatic inspection |
| Maximum range (km) | 0.5–1.0 | 50–200 | 15 |

| Rule_ID | Severity | Condition | Action |
| --- | --- | --- | --- |
| R-MED-01 | 10 | Sudden Collapse: An abrupt change from an active to an inactive state. | Immediately transmit coordinates to the SAR team. Switch drone to ‘hover’ mode for monitoring. |
| R-MED-02 | 9 | Critical Inactivity: A person in a vulnerable position for an extended period. | Prepare first-aid package delivery. Mark victim on GCS map. |
| R-ENV-01 | 8 | Environmental Hazard: A person located in a known natural danger zone. | Mark danger zone on GCS map. Plan safe extraction route. Notify operator about zonal hazard. |
| R-BHV-01 | 7 | Mass Evacuation: Coordinated fleeing behavior of a group. | Monitor group’s flee path. Scan origin area to identify the source of danger. Notify operator about the unusual group event. |
| R-VUL-01 | 8 | Trapped Victim: High occlusion and prolonged inactivity suggest entrapment. | Initiate proximity scan with LiDAR. Mark point of interest for ground investigation. Alert operator about possible entrapment. |

| Cognitive Metrics | Scenario 1 (Low Risk) | Scenario 2 (High Risk) | Scenario 3 (With Forced Error) |
| --- | --- | --- | --- |
| Total decision time (seconds) | 11 | 14 | 18 |
| Risk assessment accuracy | Correct (1/10) | Correct (9/10) | Initially incorrect, corrected to 9/10 |
| Self-correction success | N/A | N/A | Yes |
| Long-term memory calls (MAg) | 1 | 2 | 3 |
| Fidelity of final report vs. situation | High | High | High (after correction) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).