Submitted: 11 August 2025

Posted: 13 August 2025

Abstract

Keywords:

1. Introduction

- In-distribution: Samples closely resemble the training data (e.g., images of white cats or gray dogs in the previous example).

- Distorted in-distribution (distribution shift): Samples exhibit visual perturbations such as blur or color shift (e.g., gray cats viewed as distorted variants).

- Out-of-distribution (OOD): Samples differ entirely from training data, including unseen categories (e.g., white bears) or synthetic noise.

- Proposal of a confidence score based on block scaling: A novel method for quantifying sample uncertainty using block scaling quality (BSQ). This approach requires only one model and supports the use of pretrained networks.

- Experimental comparison: Evaluation of the proposed approach against MC dropout and vanilla approaches on in-distribution, distorted, and OOD datasets. Empirical results indicate that our method is better calibrated under distortion and produces fewer overconfident predictions on OOD data.

2. Related Work

3. Proposed Approach

- Generation of image masks. We define a rectangular mask whose height and width, in pixels, are the mask dimensions. Each pixel in the mask receives independently sampled uniform random values in the red, green, and blue channels, allowing diverse contextual substitution when the mask is overlaid onto the original image. The mask is typically much smaller than the input image (for example, 3 × 3 pixels for the 32 × 32 CIFAR-10 images in the experiments). Empirical observations suggest that mask size has limited impact on final performance.

- Construction of masked images. For a single test image, we generate a set of masked variants by sliding the mask from bottom-left to top-right in steps of a fixed hop size. For each variant, the mask is placed with its bottom-left corner at the current sliding location; pixels covered by the mask take the mask values, and all other pixels keep the values of the test image.

- Classification of generated images. Each masked image is passed through the classification model to obtain a softmax output vector with one entry per class. Overall, there are as many softmax vectors as masked images.

- Majority voting for class prediction. Initially, the vote count of every class is set to zero. For each masked image, the predicted class is the one with the largest softmax output, and the vote count of that class is incremented by one. The final class label for the original image I is the class that receives the most votes.

- Confidence score computation. We then compute the mean and standard deviation of the softmax scores for the voted class across the masked images. The mean reflects the model’s average confidence, while the standard deviation captures its sensitivity to localized perturbations. A low standard deviation implies stable predictions; a high one suggests the model relies on spatially localized features. To map these values to a normalized confidence score in [0, 1], we apply a scaling function based on the arctangent of the inverse variance. The final confidence score, Eq. (2), combines the two terms through a weighting factor in [0, 1] that balances average confidence and sensitivity-based adjustment.
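The five steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the `classify` callback (any function returning a softmax vector), and in particular the way the mean and the arctangent term are combined are hypothetical, since the exact form of Eq. (2) is not reproduced in this text.

```python
import numpy as np

def make_mask(h, w, rng):
    """Random RGB mask: every pixel and channel is drawn independently and uniformly."""
    return rng.integers(0, 256, size=(h, w, 3), dtype=np.uint8)

def masked_variants(image, mask, hop):
    """Yield copies of `image` with `mask` overlaid at each sliding position."""
    H, W, _ = image.shape
    h, w, _ = mask.shape
    # Start at the bottom-left corner and move toward the top-right.
    for top in range(H - h, -1, -hop):
        for left in range(0, W - w + 1, hop):
            variant = image.copy()
            variant[top:top + h, left:left + w] = mask
            yield variant

def bsq_predict(image, classify, mask, hop, alpha=0.5):
    """Return (voted label, confidence score) for one test image."""
    # One softmax vector per masked variant.
    probs = np.stack([classify(v) for v in masked_variants(image, mask, hop)])
    # Majority vote over the per-variant predictions.
    votes = np.bincount(probs.argmax(axis=1), minlength=probs.shape[1])
    label = int(votes.argmax())
    # Mean and standard deviation of the softmax score of the voted class.
    mu = probs[:, label].mean()
    sigma = probs[:, label].std()
    # ASSUMPTION: hypothetical stand-in for Eq. (2). The arctangent of the
    # inverse variance is rescaled to [0, 1] and mixed with the mean.
    stability = (2.0 / np.pi) * np.arctan(1.0 / (sigma ** 2 + 1e-12))
    score = alpha * mu + (1.0 - alpha) * stability
    return label, float(score)
```

With a pretrained network, `classify` would wrap the model's forward pass and softmax; because only the inputs are perturbed, a single model suffices and no retraining is needed.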

4. Experiments and Results

4.1. Evaluation Metrics

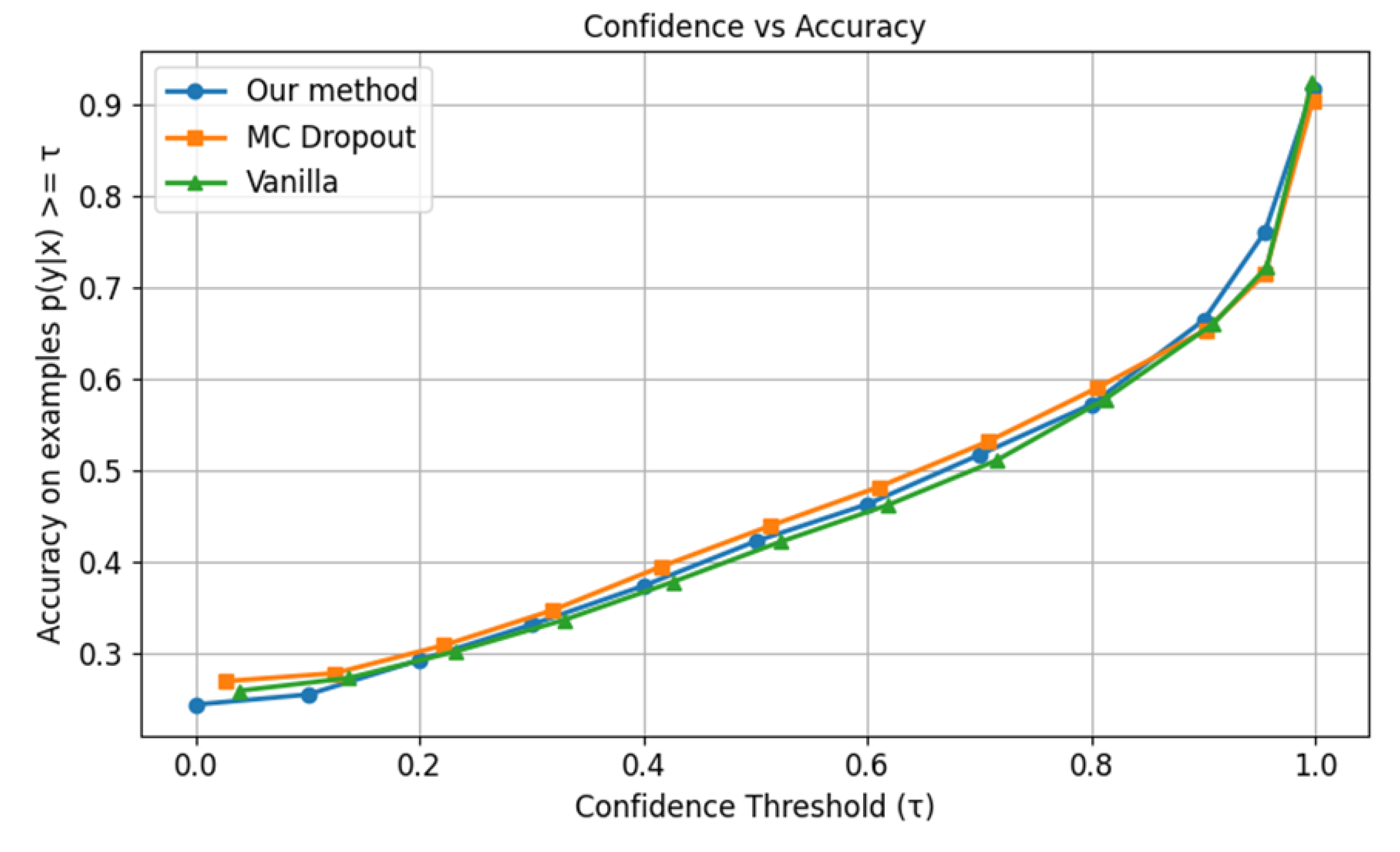

4.1.1. Accuracy vs. Confidence Curve

4.1.2. Expected Calibration Error (ECE)

4.1.3. Brier Score

4.1.4. Negative Log-Likelihood

4.1.5. Entropy

4.1.6. Using the Metrics in Experiments

4.2. Experimental Datasets

4.2.1. CIFAR-10

4.2.2. ImageNet 2012

4.2.3. iNaturalist 2018

4.2.4. Places365 Dataset

4.2.5. Distorted in-Distribution Datasets

4.2.6. Out-of-Distribution Datasets

4.3. Comparison Targets

4.4. Model Architectures and Configuration

4.5. Experiment I: CIFAR-10

4.6. Experiment II: ImageNet 2012

4.7. Experiment III: iNaturalist 2018

4.8. Experiment IV: Places365

4.9. Experiment V: Ensemble Approaches

4.10. Summary of Experimental Results

We conducted four experiments (Subsections 4.5–4.8) to evaluate the relative performance of the proposed approach, MC dropout, and vanilla baseline methods. One additional experiment (Subsection 4.9) explored the feasibility of fusing two confidence scores. The findings are summarized as follows:

- In-distribution datasets: The proposed method performs stably and remains comparable to the other two approaches. Although it does not consistently outperform them, it is reliable in standard settings.

- Distorted (distribution-shift) datasets: In high-confidence regions, the proposed method achieves higher cumulative accuracy and lower ECE, indicating stronger calibration performance and heightened sensitivity to data degradation.

- OOD datasets: The method exhibits a denser concentration of samples in high-entropy regions, effectively reducing overconfident predictions and enhancing the model’s awareness of unfamiliar inputs.

- Class-imbalanced datasets (e.g., iNaturalist 2018): Relative to the other two methods, the proposed approach better mitigates prediction bias arising from unstable dropout, demonstrating enhanced robustness and interpretability.

- Ensemble performance: When integrated with MC dropout, the proposed method performs notably well under distortion scenarios, achieving lower ECE and a higher entropy peak center value than either method in isolation, which highlights its potential for synergistic integration.
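The "cumulative accuracy in high-confidence regions" referred to above can be illustrated with a short sketch of an accuracy vs. confidence curve. It assumes the common definition in which, for each threshold, accuracy is computed only over the samples whose confidence score is at least that threshold; the function name and return format are hypothetical.

```python
import numpy as np

def accuracy_vs_confidence(conf, correct, thresholds):
    """For each threshold t, report accuracy over samples with confidence >= t."""
    conf = np.asarray(conf, dtype=float)
    correct = np.asarray(correct, dtype=float)
    curve = []
    for t in thresholds:
        kept = conf >= t
        # Accuracy is undefined when no sample passes the threshold.
        acc = correct[kept].mean() if kept.any() else float("nan")
        curve.append((float(t), float(acc), int(kept.sum())))
    return curve
```

A well-behaved confidence score should give a curve that rises with the threshold, since the retained samples are those the model is most sure about.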

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| BNN | Bayesian Neural Network |
| BSQ | Block Scaling Quality |
| ECE | Expected Calibration Error |
| MC | Monte Carlo |
| NLL | Negative Log-Likelihood |
| OOD | Out-of-Distribution |
| UQ | Uncertainty Quantification |

References

- Mohri, M.; Rostamizadeh, A.; Talwalkar, A. Foundations of Machine Learning; The MIT Press: Cambridge, MA, USA, 2012.

- Scheirer, W. J.; de Rezende Rocha, A.; Sapkota, A.; Boult, T. E. Toward open set recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 1757–1772.

- Hendrycks, D.; Gimpel, K. A baseline for detecting misclassified and out-of-distribution examples in neural networks. In International Conference on Learning Representations, Toulon, France, 24–26 April 2017.

- Guo, C.; Pleiss, G.; Sun, Y.; Weinberger, K. Q. On calibration of modern neural networks. In International Conference on Machine Learning, Sydney, Australia, 6–11 August 2017.

- Lakshminarayanan, B.; Pritzel, A.; Blundell, C. Simple and scalable predictive uncertainty estimation using deep ensembles. Adv. Neural Inf. Process. Syst. 2017, 30, 6405–6416.

- Blundell, C.; Cornebise, J.; Kavukcuoglu, K.; Wierstra, D. Weight uncertainty in neural networks. In International Conference on Machine Learning, Lille, France, 6–11 July 2015.

- Gal, Y.; Ghahramani, Z. Dropout as a Bayesian approximation: representing model uncertainty in deep learning. In International Conference on Machine Learning, New York City, NY, USA, 19–24 June 2016.

- Ovadia, Y.; et al. Can you trust your model’s uncertainty? Evaluating predictive uncertainty under dataset shift. Adv. Neural Inf. Process. Syst. 2019, 32, 13991–14002.

- MNIST Dataset. Available online: https://www.kaggle.com/datasets/hojjatk/mnist-dataset (accessed on 1 August 2025).

- You, S. D.; Lin, K.-R.; Liu, C.-H. Estimating classification accuracy for unlabeled datasets based on block scaling. Int. J. Eng. Technol. Innov. 2023, 13, 313–327.

- You, S. D.; Liu, H.-C.; Liu, C.-H. Predicting classification accuracy of unlabeled datasets using multiple deep neural networks. IEEE Access 2022, 10, 1–12.

- Abdar, M.; et al. A review of uncertainty quantification in deep learning: Techniques, applications and challenges. Information Fusion 2021, 76, 243–297.

- Kristoffersson Lind, S.; Xiong, Z.; Forssén, P.-E.; Krüger, V. Uncertainty quantification metrics for deep regression. Pattern Recognit. Lett. 2024, 186, 91–97.

- Pavlovic, M. Understanding model calibration - A gentle introduction and visual exploration of calibration and the expected calibration error (ECE). arXiv 2025, arXiv:2501.19047v2. Available online: https://arxiv.org/html/2501.19047v2.

- Si, C.; Zhao, C.; Min, S.; Boyd-Graber, J. Re-examining calibration: The case of question answering. arXiv 2022, arXiv:2205.12507. Available online: https://arxiv.org/pdf/2205.12507.

- Brier, G. W. Verification of forecasts expressed in terms of probability. Mon. Weather Rev. 1950, 78, 1–3.

- Gneiting, T.; Raftery, A. E. Strictly proper scoring rules, prediction, and estimation. J. Am. Stat. Assoc. 2007, 102, 359–378.

- Shannon, C. E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423.

- Krizhevsky, A. Learning multiple layers of features from tiny images. University of Toronto, 2009.

- The CIFAR-10 Dataset. Available online: https://www.cs.toronto.edu/~kriz/cifar.html (accessed on 1 August 2025).

- Deng, J.; Dong, W.; Socher, R.; Li, L.-J.; Li, K.; Li, F.-F. ImageNet: A large-scale hierarchical image database. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009.

- Russakovsky, O.; et al. ImageNet large scale visual recognition challenge. Int. J. Comput. Vis. 2015, 115, 211–252.

- Van Horn, G.; et al. The iNaturalist Species Classification and Detection Dataset. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018.

- Visipedia/Inat_comp. Available online: https://github.com/visipedia/inat_comp (accessed on 1 August 2025).

- Zhou, B.; Lapedriza, A.; Khosla, A.; Oliva, A.; Torralba, A. Places: A 10 million image database for scene recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 1452–1464.

- Places Download. Available online: http://places2.csail.mit.edu/download.html (accessed on 1 August 2025).

- Models and pre-trained weights. Available online: https://docs.pytorch.org/vision/main/models.html (accessed on 1 August 2025).

- CSAILVision/Places365. Available online: https://github.com/CSAILVision/places365 (accessed on 1 August 2025).

| Metric | Priority | Preference | Cal. steps |
|---|---|---|---|
| Acc. vs. Conf. | Primary | Problem dependent | All |
| ECE | Primary | ↓ | All |
| BS | Secondary | ↓ | 1-3 |
| NLL | Secondary | ↓ | 1-3 |
| Entropy | Secondary | ↑ | 1-3 |
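As a rough illustration of the four scalar metrics in the table above, the following sketch uses their standard textbook definitions. The function names, the equal-width binning with 10 bins for ECE, and the unnormalized (summed over classes) form of the Brier score are assumptions; the paper's exact conventions may differ.

```python
import numpy as np

def expected_calibration_error(conf, correct, n_bins=10):
    """Weighted average gap between accuracy and mean confidence per bin."""
    conf = np.asarray(conf, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Interior edges assign each sample to one of n_bins equal-width bins.
    idx = np.clip(np.digitize(conf, edges[1:-1]), 0, n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        in_bin = idx == b
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - conf[in_bin].mean())
            ece += in_bin.mean() * gap
    return float(ece)

def brier_score(probs, labels):
    """Mean squared distance between softmax vectors and one-hot labels."""
    onehot = np.eye(probs.shape[1])[labels]
    return float(np.mean(np.sum((probs - onehot) ** 2, axis=1)))

def negative_log_likelihood(probs, labels, eps=1e-12):
    """Mean negative log-probability assigned to the true class."""
    p_true = probs[np.arange(len(labels)), labels]
    return float(-np.mean(np.log(p_true + eps)))

def predictive_entropy(probs, eps=1e-12):
    """Shannon entropy (in nats) of each softmax vector."""
    return -np.sum(probs * np.log(probs + eps), axis=1)
```

Lower ECE, Brier score, and NLL are better on labeled data, whereas higher entropy is desirable on OOD inputs, where confident predictions are unwarranted.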

| Item | Value |
|---|---|
| Training set | 50,000 |
| Test set | 10,000 |
| Image size | 32 × 32 pixels |
| Image channel | 3 (R, G, B) |
| No. of classes | 10 |

| Item | Value |
|---|---|
| Training set | 1,281,167 |
| Validation set | 50,000 |
| Test set | 100,000 (no label) |
| Image size | Variable (typically 224 × 224) |
| Image channel | 3 (R, G, B) |
| No. of classes | 1,000 |

| Item | Value |
|---|---|
| Training set | 437,513 |
| Validation set | 24,426 |
| Test set | 149,394 (no label) |
| Image size | Variable (typically 224 × 224) |
| Image channel | 3 (R, G, B) |
| No. of classes | 8,142 |
| No. of super classes | 14 |

| Item | Standard | Challenge |
|---|---|---|
| Training set | 1,800,000 | 8,000,000 |
| Validation set | 36,000 | 36,000 |
| Test set | 149,394 (no label) | |
| Image size | Variable, typically 224 × 224 or 256 × 256 | Variable, typically 224 × 224 or 256 × 256 |
| Image channel | 3 | 3 |
| No. of classes | 365 | 434 |

| Category | Distortion |
|---|---|
| Blur | Glass blur, Defocus blur, Zoom blur, Gaussian blur |
| Noise | Impulse noise, Shot noise, Speckle noise, Gaussian noise |
| Transformation | Elastic transform, Pixelate |
| Color | Saturation, Brightness, Contrast |
| Environment | Fog, Spatter, Frost (not for iNaturalist 2018 and Places365) |

| Item | CIFAR-10 | ImageNet 2012 | iNaturalist 2018 | Places365 |
|---|---|---|---|---|
| Framework | TensorFlow | PyTorch | PyTorch | PyTorch |
| Structure | ResNet-20 V1 | ResNet-50 | ResNet-50 | VGG-16 |
| Training epochs | 200 | Pre-trained | 50 | Pre-trained |
| Batch size or pre-trained weights | 7 | IMAGENET1K_V1 | 80 | CSAIL Vision |
| Optimizer | Adam | - | SGD | - |
| Learning rate | 0.000717 | - | 0.1 | - |
| Momentum | - | - | 0.9 | - |
| Loss | Categorical cross entropy | Categorical cross entropy | Categorical cross entropy | Categorical cross entropy |

| Item | CIFAR-10 | ImageNet 2012 | iNaturalist 2018 | Places365 |
|---|---|---|---|---|
| Mask size | 3 × 3 | 20 × 20 | 20 × 20 | 20 × 20 |
| Hop size | 1 × 1 | 10 × 10 | 10 × 10 | 10 × 10 |
| Weighting factor in Eq. (2) | 1/2 | 1/2 | 1/2 | 1/2 |
| In-distribution images | 10,000 | 10,000 | 5,000 | 5,000 |
| Distorted images | 80,000 | 32,000 | 15,000 | 15,000 |
| OOD images | 26,032 | 1,000 | 1,000 | 1,000 |
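To give a sense of the inference cost implied by these settings, the number of masked variants per test image can be estimated from the mask and hop sizes. The sketch below assumes that the mask always stays fully inside the image and that the larger datasets are resized to 224 × 224 before masking; both are assumptions, and the helper name is hypothetical.

```python
def num_masked_images(image_hw, mask_hw, hop_hw):
    """Count sliding positions of a mask that stays fully inside the image."""
    rows = (image_hw[0] - mask_hw[0]) // hop_hw[0] + 1
    cols = (image_hw[1] - mask_hw[1]) // hop_hw[1] + 1
    return rows * cols

# CIFAR-10 setting: 32 x 32 image, 3 x 3 mask, hop 1 x 1.
print(num_masked_images((32, 32), (3, 3), (1, 1)))        # 900
# ImageNet-style setting: 224 x 224 image, 20 x 20 mask, hop 10 x 10.
print(num_masked_images((224, 224), (20, 20), (10, 10)))  # 441
```

Under these assumptions, each test image requires several hundred forward passes of the same model, which is the main computational cost of the approach.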

| Metric | Proposed | MC dropout | Vanilla |
|---|---|---|---|
| Accuracy | 87.80% | ~91.2% | ~90.5% |
| ECE | 0.0693 | ~0.01 | ~0.05 |
| Brier Score | 0.2036 | ~0.13 | ~0.15 |

| Metric | Proposed | MC dropout | Vanilla |
|---|---|---|---|
| Accuracy | 75.94% | ~74.55% | ~75.0% |
| ECE | 0.026 | ~0.015 | ~0.040 |
| Brier Score | 0.342 | ~0.35 | ~0.34 |
| NLL | 0.998 | ~1.1 | ~1.1 |

| Metric | Proposed | MC dropout | Vanilla |
|---|---|---|---|
| ECE | 0.055 | 0.070 | 0.007 |
| Brier Score | 0.053 | 0.101 | 0.048 |
| NLL | 0.109 | 0.196 | 0.104 |

| Metric | Proposed | MC dropout | Vanilla |
|---|---|---|---|
| ECE | 0.062 | 0.047 | 0.043 |
| Brier Score | 0.461 | 0.443 | 0.449 |
| NLL | 1.165 | 1.112 | 1.108 |

| Metric | Proposed | MC dropout | Vanilla | Ensemble |
|---|---|---|---|---|
| ECE | 0.062 | 0.047 | 0.043 | 0.079 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).