Submitted: 05 August 2025

Posted: 06 August 2025


Abstract

Keywords:

1. Introduction

1.1. Background

1.2. Issue

- Insufficient data volume and quality: Industrial data is often sparse, noisy, and inconsistent due to sensor malfunctions, heterogeneous sources, or environmental variability. Preprocessing is time-consuming, and accurate labeling is costly and error-prone [25,26]. These issues degrade model performance and impede the generation of insights that support sustainability.

- Limited model adaptability and transferability: ML/DL models trained for specific machines, production lines, or factories often fail to generalize across different settings. Overfitted models lack robustness, while overly generic models miss critical domain-specific dynamics. Furthermore, many existing forecasting models provide only point estimates, lacking mechanisms to quantify uncertainty—an essential capability in sustainability-critical or safety-sensitive applications [27].

To address these challenges, an effective framework should:

- Adapt to concept drift while preserving model performance over time,

- Quantify predictive uncertainty to improve confidence in decisions and support risk-aware operations,

- Generalize effectively across diverse processes, equipment, and industrial contexts.

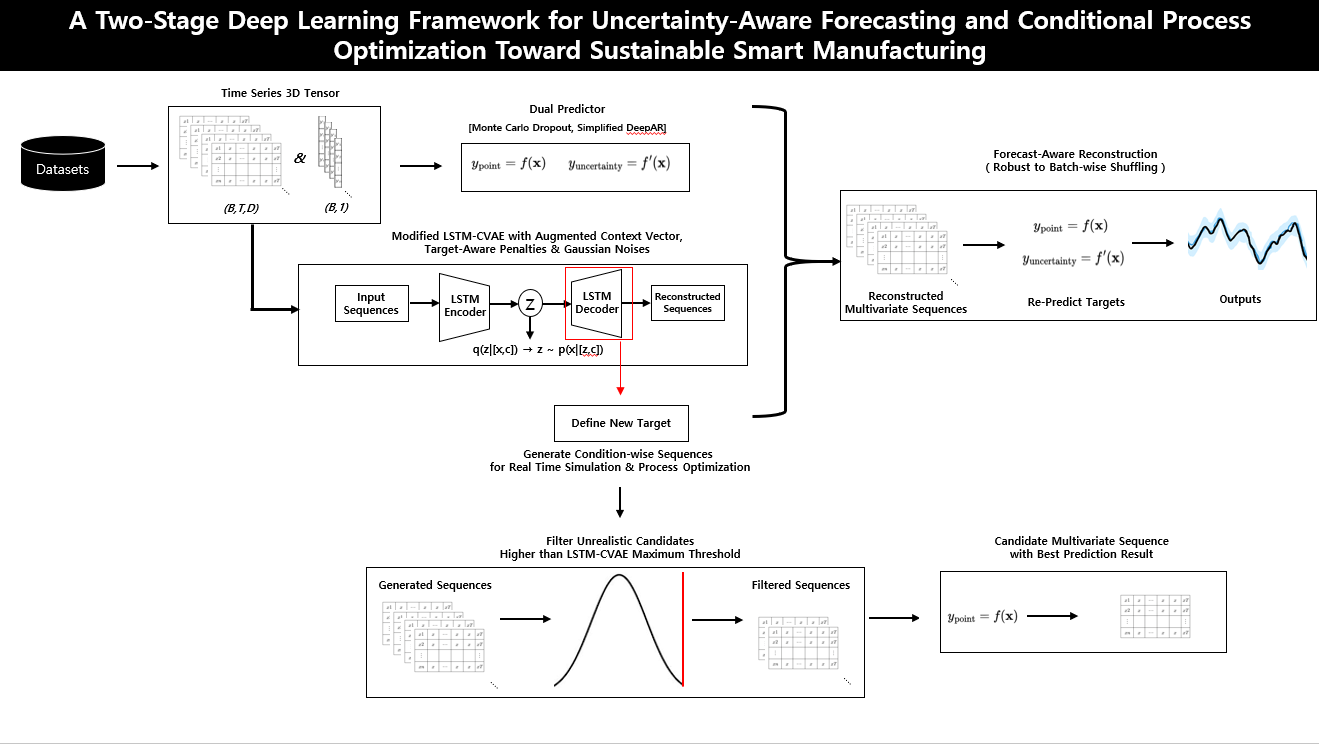

1.3. Our Idea

- Uncertainty-aware forecasting module: Provides calibrated point predictions along with probabilistic confidence intervals, enabling risk-aware decision support under dynamic conditions.

- Conditional generative module (LSTM-CVAE): Reconstructs realistic multivariate input trajectories conditioned on desired outcomes or target distributions, allowing controllable scenario generation.

1.4. Contributions

- Multivariate Sequence Reconstruction with Forecast Consistency: We propose an end-to-end deep learning framework that unifies uncertainty-aware probabilistic forecasting with multivariate sequence reconstruction. By recursively predicting outcomes from reconstructed inputs, the model ensures both point-wise accuracy and distributional reliability. Temporal dependencies are preserved, enabling the system to realistically capture process volatility. This conditional generation supports sustainable operations by reducing resource consumption, minimizing waste, and optimizing energy use.

- Two-Stage Filtering for Sustainable Scenario Control: We design a novel two-stage filtering mechanism that improves the realism and control of generated operational sequences. Sequences with high reconstruction errors are filtered out based on the learned MAE distribution, and the best-aligned sequence is selected based on target accuracy. This enhances scenario flexibility and decision controllability, supporting adaptive and resource-efficient production planning under uncertainty.

- Temporal Robustness Under Disrupted Conditions: Our framework demonstrates robustness to batch-order variations by preserving local temporal patterns rather than relying on fixed sequence order. This enables stable performance even under dynamically changing production schedules—an essential property for resilient, real-time decision-making in sustainable manufacturing environments.

- Generalizability Across Sustainable Manufacturing Domains: We validate the framework on diverse real-world datasets, including sugar and biomaterial manufacturing processes, as well as open-source benchmarks. The results confirm strong generalization performance, supporting its applicability across domains to enhance operational efficiency and contribute to sustainability goals.

2. Literature Review

2.1. ML and DL Applications in Manufacturing

2.2. Uncertainty Forecasting in Manufacturing Quality Management

2.3. ML and DL Optimization in Manufacturing

3. Methods

(1) Prediction Model Specification (Section 3.1): We implement probabilistic forecasting using Monte Carlo Dropout (MCD) and a likelihood-based model (simplified DeepAR).

(2) Forecast Evaluation and Predictor Selection (Section 3.2): Model performance is evaluated using:

- a Balanced MAE score [51], which combines point accuracy with sensitivity to volatility;

- a Unified Uncertainty Score for the uncertainty forecasts (Section 3.2.2).

These metrics are used for dual-model selection: one model is chosen for point forecasting and another for uncertainty estimation.

(3) Modified LSTM-Conditional VAE (Section 3.3):

- The encoder augments each condition vector (target value) with recent statistical features from prior input sequences and learns latent distributions via KL annealing with target-aware penalties, thereby enhancing its ability to capture key temporal patterns.

- The decoder reconstructs multivariate sequences using sampled latent vectors and augmented condition vectors.

- To improve generalization and robustness, Gaussian noise is injected post hoc into both the encoder and the decoder.

(4) Three-Stage Validation (Section 3.4): To evaluate whether the reconstructed sequences preserve temporally meaningful and predictive patterns, we perform three evaluations:

- Reconstruction Evaluation: Assess the temporal reconstruction quality by measuring MAE differences in point values, variability, and volatility.

- Downstream Forecast Evaluation: Use the best-trained point-wise and uncertainty-aware predictors to evaluate whether the reconstructed inputs preserve forecast-relevant dynamics, by computing their point and uncertainty forecast errors on the reconstructed sequences.

- Robustness Check: Test the model's ability to capture both local patterns and global dynamics by evaluating performance under batch-wise temporal shuffling, which removes any dependence on strict batch-to-batch order.

(5) Multivariate Sequence Generation (Section 3.5): Conditioned on a defined or forecasted target, the trained decoder generates multiple candidate sequences, which are then filtered through a two-stage selection process:

- Discard sequences with reconstruction error exceeding the MAE threshold observed during training.

- Select the sequence whose point-wise predicted target is closest to the conditioning value.

This process ensures that the selected candidate sequence maintains empirical validity while remaining consistent with the forecast target.
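The two-stage selection described above can be illustrated with a short sketch. It is not the authors' implementation: `two_stage_select`, the precomputed `recon_errors` list and the `predict` callable (a stand-in for the trained point-wise predictor) are hypothetical names, and how the reconstruction error of a generated sequence is obtained is left to the caller.

```python
from typing import Callable, List, Optional, Sequence, Tuple


def two_stage_select(
    candidates: Sequence[Sequence[float]],
    recon_errors: Sequence[float],
    predict: Callable[[Sequence[float]], float],
    target: float,
    mae_threshold: float,
) -> Optional[Tuple[int, float]]:
    """Return (index, prediction) of the selected candidate, or None if all are discarded."""
    # Stage 1: discard candidates whose reconstruction MAE exceeds the training threshold.
    kept: List[int] = [i for i, err in enumerate(recon_errors) if err <= mae_threshold]
    if not kept:
        return None
    # Stage 2: among the survivors, keep the one whose predicted target is closest to the condition.
    preds = {i: predict(candidates[i]) for i in kept}
    best = min(kept, key=lambda i: abs(preds[i] - target))
    return best, preds[best]


# Toy usage: the "predictor" just averages the sequence (hypothetical stand-in).
cands = [[1.0, 1.2], [2.0, 2.4], [3.0, 2.8]]
errors = [0.10, 0.50, 0.20]
print(two_stage_select(cands, errors, lambda s: sum(s) / len(s), target=2.5, mae_threshold=0.3))
```

In the toy run, candidate 1 is removed in stage 1 despite having the closest prediction, which illustrates why the realism filter is applied before target matching.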

3.1. Prediction Model Specification

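1. Monte Carlo Dropout (MCD): Dropout [56] is applied during both training and inference to enable stochastic sampling. For each input, N forward passes generate output samples $\hat{y}_1, \dots, \hat{y}_N$, and the uncertainty interval is computed as:

$$\hat{\mu} \pm n\,\hat{\sigma}, \qquad \hat{\mu} = \frac{1}{N}\sum_{i=1}^{N}\hat{y}_i, \qquad \hat{\sigma} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}\left(\hat{y}_i - \hat{\mu}\right)^2} \tag{1}$$

Here, $\hat{\mu}$ and $\hat{\sigma}$ denote the sample mean and standard deviation, and $n$ is the confidence factor (e.g., $n = 1.96$ for a 95% interval) [57]. MCD is implemented on several backbones, such as LSTM and CNN-LSTM [58], enabling uncertainty-aware forecasting via stochastic inference.

2. Likelihood-Based Model (Simplified DeepAR): This model simplifies the original DeepAR by removing the autoregressive decoder and using a single LSTM layer to predict the parameters of a Gaussian distribution [59] directly. Unlike the original recursive approach, all target distribution parameters are inferred at once from the input sequence, eliminating the need for iterative decoding. The standard deviation is constrained to be positive via the softplus activation [60]:

$$\sigma = \log\left(1 + e^{h_\sigma}\right) \tag{2}$$

where $h_\sigma$ is the unconstrained LSTM output for the scale parameter. The model is trained with the Gaussian negative log-likelihood (NLL) loss [61]:

$$\mathcal{L}_{\text{NLL}} = \frac{1}{T}\sum_{t=1}^{T}\left[\frac{1}{2}\log\left(2\pi\sigma_t^2\right) + \frac{\left(y_t - \mu_t\right)^2}{2\sigma_t^2}\right] \tag{3}$$

where $\mu_t$ and $\sigma_t$ are the predicted mean and standard deviation for sample $t$, $y_t$ is the observed target, and $T$ is the number of samples. The predicted mean serves as the point forecast, and the uncertainty interval is computed as for MCD (Eq. 1), with $\mu$ and $\sigma$ in place of $\hat{\mu}$ and $\hat{\sigma}$.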
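The two predictors reduce to a few formulas, sketched below in plain Python under stated assumptions. A toy linear model with dropout kept active at inference stands in for the LSTM or CNN-LSTM backbone (for illustration only); `mcd_interval` computes the mean ± n·std interval of Eq. 1, while `softplus` and `gaussian_nll` correspond to the positivity constraint on σ and the Gaussian NLL loss. All function names are hypothetical, and the small `eps` floor on σ is an added safeguard rather than part of the stated method.

```python
import math
import random
import statistics
from typing import List, Sequence, Tuple


def toy_dropout_forward(x: Sequence[float], weights: Sequence[float], p: float, rng: random.Random) -> float:
    """One stochastic pass of a toy linear model with inverted dropout left ON at inference."""
    total = 0.0
    for xi, wi in zip(x, weights):
        if rng.random() >= p:              # each unit is kept with probability 1 - p
            total += xi * wi / (1.0 - p)   # rescale so the expected output is unchanged
    return total


def mcd_interval(samples: Sequence[float], n: float = 1.96) -> Tuple[float, float, float]:
    """Mean of N stochastic passes and the interval mean +/- n * std (1/N normalization)."""
    mu = statistics.fmean(samples)
    sigma = statistics.pstdev(samples)
    return mu, mu - n * sigma, mu + n * sigma


def softplus(h: float) -> float:
    """log(1 + exp(h)) in a numerically stable form; always positive."""
    return max(h, 0.0) + math.log1p(math.exp(-abs(h)))


def gaussian_nll(y: float, mu: float, h_sigma: float, eps: float = 1e-6) -> float:
    """Per-sample Gaussian NLL with sigma = softplus(h_sigma) + eps (eps is an added floor)."""
    sigma = softplus(h_sigma) + eps
    return 0.5 * math.log(2.0 * math.pi * sigma ** 2) + (y - mu) ** 2 / (2.0 * sigma ** 2)


rng = random.Random(0)
x, w = [1.0, 2.0, 3.0], [0.5, -0.2, 0.1]
samples: List[float] = [toy_dropout_forward(x, w, p=0.2, rng=rng) for _ in range(200)]
mu, lower, upper = mcd_interval(samples)
print(f"MCD point forecast {mu:.3f}, 95% interval [{lower:.3f}, {upper:.3f}]")
```

The same `mcd_interval` logic applies to the simplified DeepAR model once its predicted mean and softplus-constrained σ are available, since both models share the Eq. 1 interval.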

3.2. Forecast Measurements and Predictor Selection

3.2.1. Balanced Point Forecast Metric

- Absolute MAE — captures the average absolute deviation between predicted and true values (original MAE score).

- Differential MAE — measures the accuracy in predicting the changes (first differences) in the time series.
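The two ingredients above can be computed directly; how they are combined into the Balanced MAE is not stated in this section. The sketch below therefore assumes, purely for illustration, a weighted average with a hypothetical weight `alpha` (0.5 by default); the function names are also hypothetical.

```python
from typing import List, Sequence


def mae(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute error between two equal-length, non-empty sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("sequences must be non-empty and of equal length")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def first_differences(seq: Sequence[float]) -> List[float]:
    """x_t - x_{t-1} for t = 1..T-1."""
    return [seq[t] - seq[t - 1] for t in range(1, len(seq))]


def balanced_mae(y_true: Sequence[float], y_pred: Sequence[float], alpha: float = 0.5) -> float:
    """Hypothetical combination: alpha * absolute MAE + (1 - alpha) * differential MAE."""
    if len(y_true) < 2:
        raise ValueError("need at least two points to form first differences")
    absolute = mae(y_true, y_pred)
    differential = mae(first_differences(y_true), first_differences(y_pred))
    return alpha * absolute + (1.0 - alpha) * differential


y_true = [1.0, 2.0, 3.0, 4.0]
print(balanced_mae(y_true, [2.5, 2.5, 2.5, 2.5]))  # flat forecast
print(balanced_mae(y_true, [1.5, 2.5, 3.5, 4.5]))  # offset forecast that tracks the changes
```

This prints 1.0 and 0.25: the flat forecast has an absolute MAE of 1.0 and also misses every change, whereas the offset forecast has an absolute MAE of 0.5 and a differential MAE of 0, so the differential term rewards forecasts that follow the series' volatility.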

3.2.2. Uncertainty Forecast Metric

3.2.3. Dual Predictor Selection Strategy
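The dual selection strategy outlined in the Methods overview (one model for point forecasting, another for uncertainty estimation) amounts to two independent minimizations, which is why the two roles can be filled by different models, as the best-model table shows for datasets B and C. The sketch assumes lower values are better for both the Balanced MAE and the Unified Uncertainty Score; the dictionary keys and model names are hypothetical, and the scores are made up.

```python
from typing import Dict, Tuple


def select_dual(scores: Dict[str, Dict[str, float]]) -> Tuple[str, str]:
    """Pick one model per role; assumes lower is better for both metrics."""
    point_model = min(scores, key=lambda name: scores[name]["balanced_mae"])
    uncertainty_model = min(scores, key=lambda name: scores[name]["uncertainty_score"])
    return point_model, uncertainty_model


# Made-up scores for illustration only (not results from this study).
demo = {
    "model_a": {"balanced_mae": 0.30, "uncertainty_score": 0.40},
    "model_b": {"balanced_mae": 0.35, "uncertainty_score": 0.25},
}
print(select_dual(demo))
```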

3.3. Modified LSTM-Conditional VAE Architecture

3.3.1. Augmented Condition Vector
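The Methods overview states that each condition vector (the target value) is augmented with recent statistical features from prior input sequences. Which statistics are used is not specified here; the sketch below assumes, hypothetically, the per-feature mean and standard deviation over the N most recent sequences (presumably the "Recent Batch Size (N)" entry in the configuration table). `augment_condition` is a hypothetical name.

```python
import statistics
from typing import List, Sequence


def augment_condition(target: float, recent_sequences: Sequence[Sequence[Sequence[float]]]) -> List[float]:
    """Concatenate the target with per-feature mean and std over the N most recent sequences.

    recent_sequences: N sequences, each of shape (time steps, features).
    Mean and std are a hypothetical choice of "recent statistical features".
    """
    n_features = len(recent_sequences[0][0])
    means: List[float] = []
    stds: List[float] = []
    for f in range(n_features):
        values = [step[f] for seq in recent_sequences for step in seq]
        means.append(statistics.fmean(values))
        stds.append(statistics.pstdev(values))
    return [target] + means + stds


recent = [[[1.0, 10.0], [3.0, 10.0]], [[5.0, 10.0], [7.0, 10.0]]]  # N = 2, T = 2, F = 2
print(augment_condition(0.5, recent))
```

With F input features, the augmented condition grows from 1 to 1 + 2F entries, which lets the encoder and decoder see the recent operating regime alongside the desired target.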

3.3.2. Conditional LSTM Encoder

3.3.3. Latent Sampling via Reparameterization
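Latent sampling via reparameterization writes the stochastic latent vector as a deterministic function of the encoder outputs plus independent standard-normal noise, so gradients can flow through the mean and variance. A minimal sketch, assuming the encoder outputs a mean and a log-variance per latent dimension (a common convention, not confirmed in this section):

```python
import math
import random
from typing import List, Sequence


def reparameterize(mu: Sequence[float], log_var: Sequence[float], rng: random.Random) -> List[float]:
    """z = mu + sigma * eps, with sigma = exp(0.5 * log_var) and eps ~ N(0, I)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


rng = random.Random(42)
draws = [reparameterize([1.0], [math.log(4.0)], rng)[0] for _ in range(20000)]
mean = sum(draws) / len(draws)
std = math.sqrt(sum((d - mean) ** 2 for d in draws) / len(draws))
print(round(mean, 2), round(std, 2))  # sample mean near 1, sample std near 2
```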

3.3.4. Conditional LSTM Decoder

3.3.5. Loss Function with Target-Aware Penalty
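The overview states that latent distributions are learned via KL annealing with target-aware penalties, and the configuration table lists a KL weight, a target-aware threshold (upper or lower 95th percentile) and a penalty weight p. The exact penalty is not given in this section, so the sketch below is hypothetical: it counts the reconstruction error (1 + p) times for samples whose target lies in the chosen tail, and it uses a linear KL warm-up (the cyclical schedule of [68] is an alternative). The default maximum KL weight of 0.44 mirrors dataset A's KL weight, assuming that entry is the final annealed weight; all names are hypothetical.

```python
import math
from typing import Sequence


def kl_to_standard_normal(mu: Sequence[float], log_var: Sequence[float]) -> float:
    """Closed-form KL(N(mu, diag(exp(log_var))) || N(0, I)), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


def annealed_kl_weight(epoch: int, warmup_epochs: int, max_weight: float) -> float:
    """Linear warm-up from 0 to max_weight over warmup_epochs."""
    return max_weight * min(1.0, epoch / warmup_epochs)


def cvae_loss(
    x: Sequence[float],
    x_hat: Sequence[float],
    mu: Sequence[float],
    log_var: Sequence[float],
    target: float,
    threshold: float,
    epoch: int,
    warmup_epochs: int = 10,
    max_kl_weight: float = 0.44,
    p: float = 1.0,
    upper_tail: bool = True,
) -> float:
    """Reconstruction MAE + hypothetical target-aware penalty + annealed KL term."""
    recon = sum(abs(a - b) for a, b in zip(x, x_hat)) / len(x)
    # Hypothetical penalty: extra weight p on the reconstruction error for targets in the tail.
    in_tail = target > threshold if upper_tail else target < threshold
    penalty = p * recon if in_tail else 0.0
    beta = annealed_kl_weight(epoch, warmup_epochs, max_kl_weight)
    return recon + penalty + beta * kl_to_standard_normal(mu, log_var)


# Same reconstruction, target below vs. above an upper-tail threshold of 1.0:
print(cvae_loss([1.0, 2.0], [1.5, 2.5], [0.0], [0.0], target=0.0, threshold=1.0, epoch=0))
print(cvae_loss([1.0, 2.0], [1.5, 2.5], [0.0], [0.0], target=2.0, threshold=1.0, epoch=0))
```

This prints 0.5 and then 1.0: with p = 1.0, an identical reconstruction error costs twice as much when the conditioning target falls in the tail, which pushes the model to reconstruct those rarer operating conditions more carefully.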

3.4. Three-Stage Validation

3.4.1. Reconstruction Evaluation

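- Point Values: MAE between the original and reconstructed values.

- Standard Deviation (Variability): MAE between the standard deviations of the original and reconstructed sequences.

- First Differences (Volatility): MAE between the first differences of the original and reconstructed sequences.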
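The three reconstruction measures described in the overview (MAE differences in point values, variability and volatility) can be sketched for univariate sequences as follows. The aggregation (averaging per-sequence MAEs, and computing the standard deviation per sequence) is an assumption; `reconstruction_report` is a hypothetical name.

```python
import statistics
from typing import Dict, List, Sequence


def _mae(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def _diff(seq: Sequence[float]) -> List[float]:
    return [seq[t] - seq[t - 1] for t in range(1, len(seq))]


def reconstruction_report(originals: Sequence[Sequence[float]], recons: Sequence[Sequence[float]]) -> Dict[str, float]:
    """MAE on point values, on per-sequence standard deviations, and on first differences."""
    point = statistics.fmean([_mae(o, r) for o, r in zip(originals, recons)])
    variability = _mae([statistics.pstdev(o) for o in originals], [statistics.pstdev(r) for r in recons])
    volatility = statistics.fmean([_mae(_diff(o), _diff(r)) for o, r in zip(originals, recons)])
    return {"point": point, "std": variability, "first_diff": volatility}


print(reconstruction_report([[1.0, 2.0, 3.0, 4.0]], [[1.5, 2.5, 3.5, 4.5]]))  # constant offset
print(reconstruction_report([[1.0, 3.0, 1.0, 3.0]], [[2.0, 2.0, 2.0, 2.0]]))  # over-smoothed
```

The two toy cases show why three measures are reported: a constant offset only shows up in the point MAE, while an over-smoothed reconstruction that sits at the mean is penalized mainly through the variability and volatility terms.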

3.4.2. Downstream Forecast Evaluation

3.4.3. Robustness Evaluation of Forecast-Aware Reconstruction (Conditional Generation) under Temporal Disruption
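Batch-wise temporal shuffling can be read as permuting the order of contiguous blocks of samples while leaving the order inside each block intact, so local temporal patterns survive but the global batch-to-batch sequence does not. The sketch assumes that reading; the block size and the function name `batch_wise_shuffle` are hypothetical.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def batch_wise_shuffle(samples: Sequence[T], batch_size: int, seed: int = 0) -> List[T]:
    """Shuffle the order of contiguous batches while keeping the order inside each batch."""
    batches = [list(samples[i:i + batch_size]) for i in range(0, len(samples), batch_size)]
    random.Random(seed).shuffle(batches)
    return [item for batch in batches for item in batch]


print(batch_wise_shuffle(list(range(9)), batch_size=3, seed=1))
```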

3.5. Multivariate Sequence Generation

3.5.1. Conditional Input Generation

3.5.2. Two-Stage Filtering

4. Experiment Results

4.1. Data Collection and Preprocessing

4.2. Prediction Model Configuration and Results

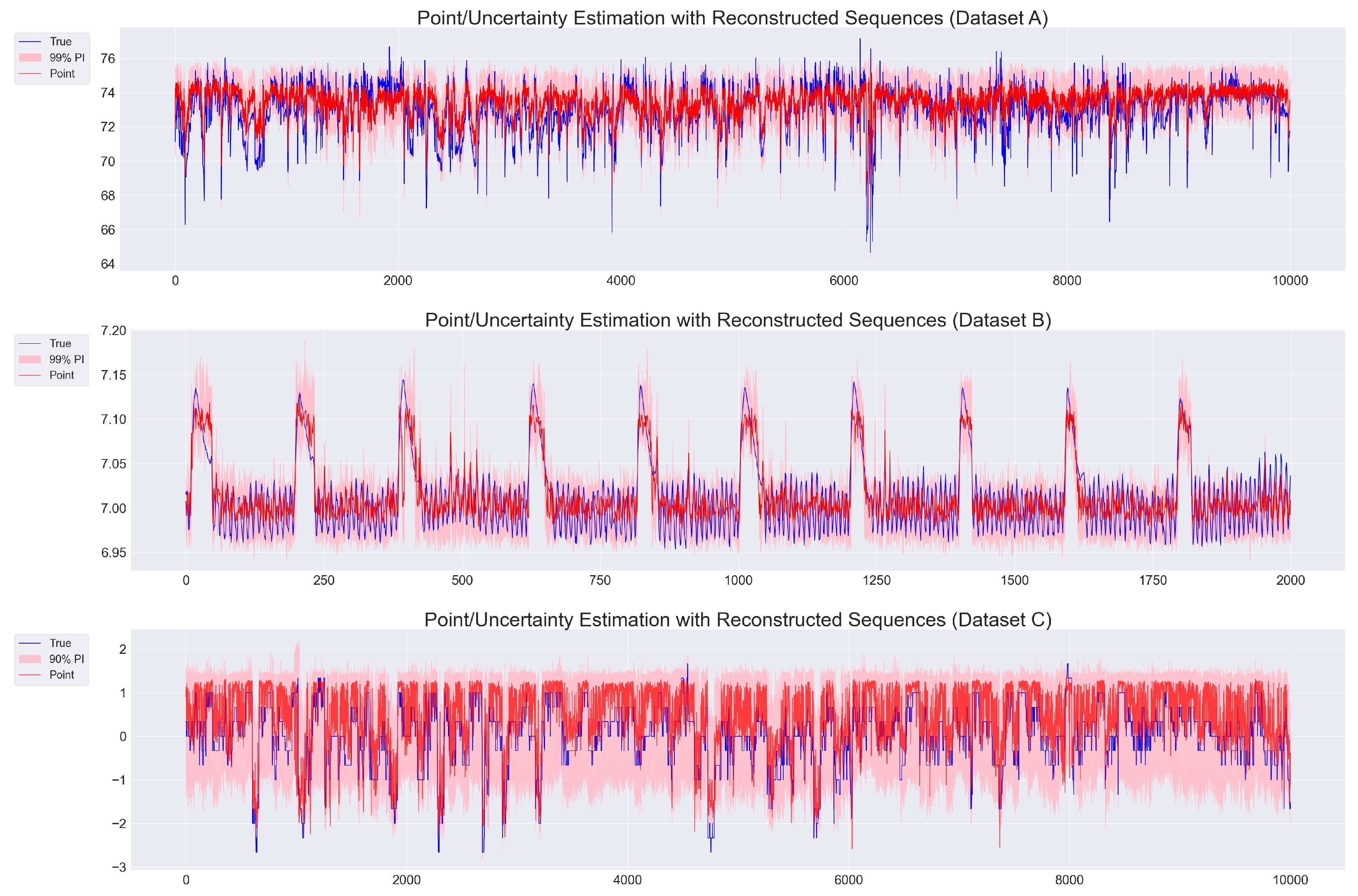

4.3. Reconstruction Model Configuration and Results

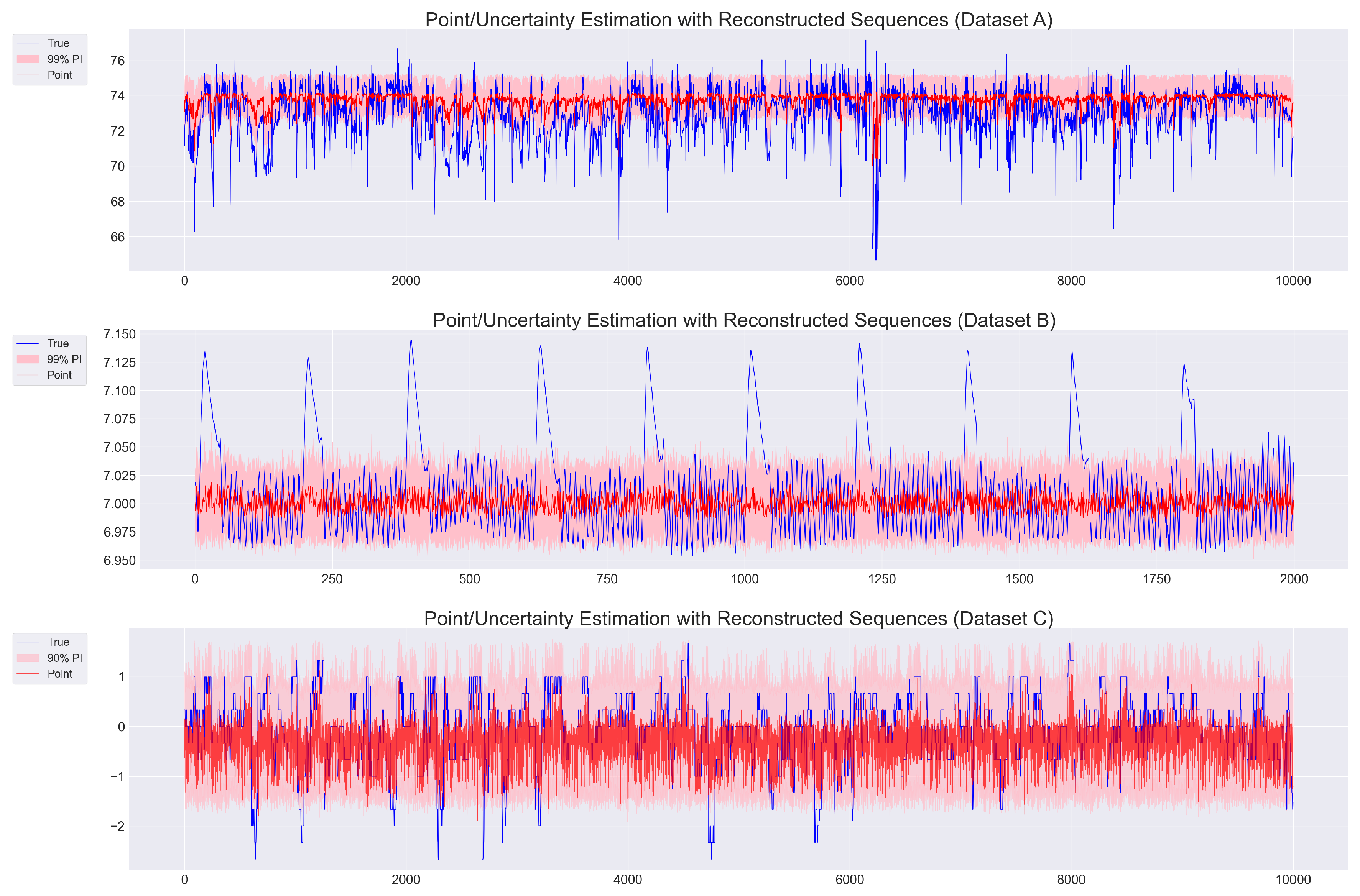

4.4. Evaluation Under Batch-Wise Temporal Shuffling

4.5. Multivariate Sequence Generation Evaluation

5. Discussion

6. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Lu, Y. Industry 4.0: A survey on technologies, applications and open research issues. Journal of Industrial Information Integration 2017, 6, 1–10.

- Nizam, H.; Zafar, S.; Lv, Z.; Wang, F.; Hu, X. Real-time deep anomaly detection framework for multivariate time-series data in industrial IoT. IEEE Sensors Journal, 22(23).

- Yao, Y.; Yang, M.; Wang, J.; Xie, M. Multivariate time-series prediction in industrial processes via a deep hybrid network under data uncertainty. IEEE Transactions on Industrial Informatics, 19(2).

- Jordan, M. I.; Mitchell, T. M. Machine learning: Trends, perspectives, and prospects. Science, 349(6245).

- Gupta, C.; Farahat, A. Deep learning for industrial AI: Challenges, new methods and best practices. In: Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, 2020; pp. 3571–3572.

- Xu, J.; Kovatsch, M.; Mattern, D.; Mazza, F.; Harasic, M.; Paschke, A.; Lucia, S. A review on AI for smart manufacturing: Deep learning challenges and solutions. Applied Sciences, 12(16).

- Lee, J.; Davari, H.; Singh, J.; Pandhare, V. Industrial Artificial Intelligence for Industry 4.0-based manufacturing systems. Manufacturing Letters, 18.

- Bousdekis, A.; Lepenioti, K.; Apostolou, D.; Mentzas, G. A review of data-driven decision-making methods for Industry 4.0 maintenance applications. Electronics, 10(7).

- Alloghani, M.; Al-Jumeily, D.; Mustafina, J.; Hussain, A.; Aljaaf, A. J. A systematic review on supervised and unsupervised machine learning algorithms for data science. In: Supervised and Unsupervised Learning for Data Science.

- Roy, D.; Srivastava, R.; Jat, M.; Karaca, M. S. A complete overview of analytics techniques: descriptive, predictive, and prescriptive. In: Decision Intelligence Analytics and the Implementation of Strategic Business Management.

- Iftikhar, N.; Baattrup-Andersen, T.; Nordbjerg, F. E.; Bobolea, E.; Radu, P. B. Data Analytics for Smart Manufacturing: A Case Study. In: DATA, 2019; pp. 392–399.

- Han, K.; Wang, W.; Guo, J. Research on a Bearing Fault Diagnosis Method Based on a CNN-LSTM-GRU Model. Machines, 12(12).

- Kang, Z.; Catal, C.; Tekinerdogan, B. Remaining useful life (RUL) prediction of equipment in production lines using artificial neural networks. Sensors, 21(3).

- Lepenioti, K.; Pertselakis, M.; Bousdekis, A.; Louca, A.; Lampathaki, F.; Apostolou, D.; Anastasiou, S. Machine learning for predictive and prescriptive analytics of operational data in smart manufacturing. In: Advanced Information Systems Engineering Workshops: CAiSE 2020 International Workshops, Grenoble, France, 8–12 June 2020, Proceedings 32; Springer International Publishing, 2020; pp. 5–16.

- Zhao, R.; Yan, R.; Chen, Z.; Mao, K.; Wang, P.; Gao, R. X. Deep learning and its applications to machine health monitoring. Mechanical Systems and Signal Processing, 115.

- Awodiji, T. O. Industrial Big Data Analytics and Cyber-Physical Systems for Future Maintenance & Service Innovation. In: CS & IT Conference Proceedings, 2021.

- Attal, F.; Mohammed, S.; Dedabrishvili, M.; Chamroukhi, F.; Oukhellou, L.; Amirat, Y. Physical human activity recognition using wearable sensors. Sensors, 15(12).

- Muzaffar, S.; Afshari, A. Short-term load forecasts using LSTM networks. Energy Procedia, 158.

- Grigorescu, S.; Trasnea, B.; Cocias, T.; Macesanu, G. A survey of deep learning techniques for autonomous driving. Journal of Field Robotics, 37(3).

- Kotsiopoulos, T.; Sarigiannidis, P.; Ioannidis, D.; Tzovaras, D. Machine learning and deep learning in smart manufacturing: The smart grid paradigm. Computer Science Review, 40.

- Plathottam, S. J.; Rzonca, A.; Lakhnori, R.; Iloeje, C. O. A review of artificial intelligence applications in manufacturing operations. Journal of Advanced Manufacturing and Processing, 5(3).

- Wuest, T.; Weimer, D.; Irgens, C.; Thoben, K. D. Machine learning in manufacturing: advantages, challenges, and applications. Production & Manufacturing Research, 4(1).

- Shi, Y.; Wei, P.; Feng, K.; Feng, D. C.; Beer, M. A survey on machine learning approaches for uncertainty quantification of engineering systems. Machine Learning for Computational Science and Engineering, 1(1).

- Yong, B. X.; Brintrup, A. Bayesian autoencoders with uncertainty quantification: Towards trustworthy anomaly detection. Expert Systems with Applications, 209.

- Escobar, C. A.; McGovern, M. E.; Morales-Menendez, R. Quality 4.0: a review of big data challenges in manufacturing. Journal of Intelligent Manufacturing, 32(8).

- Xie, J.; Sun, L.; Zhao, Y. F. On the data quality and imbalance in machine learning-based design and manufacturing—A systematic review. Engineering, 45(2).

- Serradilla, O.; Zugasti, E.; Rodriguez, J.; Zurutuza, U. Deep learning models for predictive maintenance: a survey, comparison, challenges and prospects. Applied Intelligence, 52(10).

- Chatterjee, S.; Chaudhuri, R.; Vrontis, D.; Papadopoulos, T. Examining the impact of deep learning technology capability on manufacturing firms: moderating roles of technology turbulence and top management support. Annals of Operations Research, 339(1).

- Weichert, D.; Link, P.; Stoll, A.; Rüping, S.; Ihlenfeldt, S.; Wrobel, S. A review of machine learning for the optimization of production processes. The International Journal of Advanced Manufacturing Technology, 104(5).

- Ramesh, K.; Indrajith, M. N.; Prasanna, Y. S.; Deshmukh, S. S.; Parimi, C.; Ray, T. Comparison and assessment of machine learning approaches in manufacturing applications. Industrial Artificial Intelligence, 3(1).

- Kim, S.; Seo, H.; Lee, E. C. Advanced Anomaly Detection in Manufacturing Processes: Leveraging Feature Value Analysis for Normalizing Anomalous Data. Electronics, 13(7).

- Liu, W.; Yan, L.; Ma, N.; Wang, G.; Ma, X.; Liu, P.; Tang, R. Unsupervised deep anomaly detection for industrial multivariate time series data. Applied Sciences, 14(2).

- Tercan, H.; Meisen, T. Machine learning and deep learning based predictive quality in manufacturing: a systematic review. Journal of Intelligent Manufacturing, 33(7).

- Zhang, H.; Jiang, S.; Gao, D.; Sun, Y.; Bai, W. A Review of Physics-Based, Data-Driven, and Hybrid Models for Tool Wear Monitoring. Machines, 12(12).

- Hermann, F.; Michalowski, A.; Brünnette, T.; Reimann, P.; Vogt, S.; Graf, T. Data-driven prediction and uncertainty quantification of process parameters for directed energy deposition. Materials, 16(23).

- Incorvaia, G.; Hond, D.; Asgari, H. Uncertainty quantification of machine learning model performance via anomaly-based dataset dissimilarity measures. Electronics, 13(5).

- Kompa, B.; Snoek, J.; Beam, A. L. Empirical frequentist coverage of deep learning uncertainty quantification procedures. Entropy, 23(12).

- Çekik, R.; Turan, A. Deep Learning for Anomaly Detection in CNC Machine Vibration Data: A RoughLSTM-Based Approach. Applied Sciences, 15(6).

- Khdoudi, A.; Masrour, T.; El Hassani, I.; El Mazgualdi, C. A deep-reinforcement-learning-based digital twin for manufacturing process optimization. Systems, 12(2).

- Dharmadhikari, S.; Menon, N.; Basak, A. A reinforcement learning approach for process parameter optimization in additive manufacturing. Additive Manufacturing, 71.

- Ma, Y.; Kassler, A.; Ahmed, B. S.; Krakhmalev, P.; Thore, A.; Toyser, A.; Lindbäck, H. Using deep reinforcement learning for zero defect smart forging. In: SPS2022; IOS Press, 2022; pp. 701–712.

- Kim, J. Y.; Yu, J.; Kim, H.; Ryu, S. DRL-Based Injection Molding Process Parameter Optimization for Adaptive and Profitable Production. arXiv preprint arXiv:2505.10988, 2025.

- Malashin, I.; Martysyuk, D.; Tynchenko, V.; Gantimurov, A.; Semikolenov, A.; Nelyub, V.; Borodulin, A. Machine Learning-Based Process Optimization in Biopolymer Manufacturing: A Review. Polymers, 16(23).

- Chung, J.; Shen, B.; Kong, Z. J. Anomaly detection in additive manufacturing processes using supervised classification with imbalanced sensor data based on generative adversarial network. Journal of Intelligent Manufacturing, 35(5).

- Harford, S.; Karim, F.; Darabi, H. Generating adversarial samples on multivariate time series using variational autoencoders. IEEE/CAA Journal of Automatica Sinica, 8(9).

- Bashar, M. A.; Nayak, R. Time series anomaly detection with adjusted-LSTM GAN. International Journal of Data Science and Analytics.

- Feng, C. A cGAN Ensemble-based Uncertainty-aware Surrogate Model for Offline Model-based Optimization in Industrial Control Problems. In: 2024 International Joint Conference on Neural Networks (IJCNN); IEEE, 2024; pp. 1–8.

- Liu, Q.; Cai, P.; Abueidda, D.; Vyas, S.; Koric, S.; Gomez-Bombarelli, R.; Geubelle, P. Univariate conditional variational autoencoder for morphogenic pattern design in frontal polymerization-based manufacturing. Computer Methods in Applied Mechanics and Engineering, 438.

- Gal, Y.; Ghahramani, Z. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. In: International Conference on Machine Learning; PMLR, 2016; pp. 1050–1059.

- Salinas, D.; Flunkert, V.; Gasthaus, J.; Januschowski, T. DeepAR: Probabilistic forecasting with autoregressive recurrent networks. International Journal of Forecasting, 36(3).

- Hodson, T. O. Root mean square error (RMSE) or mean absolute error (MAE): When to use them or not. Geoscientific Model Development Discussions, 2022.

- Gneiting, T.; Balabdaoui, F.; Raftery, A. E. Probabilistic forecasts, calibration and sharpness. Journal of the Royal Statistical Society Series B: Statistical Methodology, 69(2).

- Pearce, T.; Brintrup, A.; Zaki, M.; Neely, A. High-quality prediction intervals for deep learning: A distribution-free, ensembled approach. In: International Conference on Machine Learning; PMLR, 2018; pp. 4075–4084.

- Suh, S.; Chae, D. H.; Kang, H. G.; Choi, S. Echo-state conditional variational autoencoder for anomaly detection. In: 2016 International Joint Conference on Neural Networks (IJCNN); IEEE, 2016; pp. 1015–1022.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Computation, 9(8).

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: a simple way to prevent neural networks from overfitting. The Journal of Machine Learning Research, 15(1).

- Bland, J. M.; Altman, D. G. Transformations, means, and confidence intervals. BMJ: British Medical Journal, 312(7038).

- Lu, W.; Li, J.; Li, Y.; Sun, A.; Wang, J. A CNN-LSTM-based model to forecast stock prices. Complexity, 2020.

- Zhang, X. Gaussian distribution. In: Encyclopedia of Machine Learning and Data Mining; Springer: Boston, MA, 2016; pp. 1–5.

- Zheng, H.; Yang, Z.; Liu, W.; Liang, J.; Li, Y. Improving deep neural networks using softplus units. In: 2015 International Joint Conference on Neural Networks (IJCNN); IEEE, 2015; pp. 1–4.

- Yao, H.; Zhu, D. L.; Jiang, B.; Yu, P. Negative log likelihood ratio loss for deep neural network classification. In: Proceedings of the Future Technologies Conference (FTC) 2019: Volume 1; Springer International Publishing, 2020; pp. 276–282.

- Dufva, J. Machine Learning-Based Uncertainty Quantification for Postmortem Interval Prediction from Metabolomics Data. 2025.

- Lee, D. U.; Luk, W.; Villasenor, J. D.; Cheung, P. Y. A Gaussian noise generator for hardware-based simulations. IEEE Transactions on Computers, 53(12).

- Luchnikov, I. A.; Ryzhov, A.; Stas, P. J.; Filippov, S. N.; Ouerdane, H. Variational autoencoder reconstruction of complex many-body physics. Entropy, 21(11).

- Pinheiro Cinelli, L.; Araújo Marins, M.; Barros da Silva, E. A.; Lima Netto, S. Variational autoencoder. In: Variational Methods for Machine Learning with Applications to Deep Networks; Springer International Publishing: Cham, 2021; pp. 111–149.

- Blei, D. M.; Kucukelbir, A.; McAuliffe, J. D. Variational inference: A review for statisticians. Journal of the American Statistical Association, 112(518).

- Joyce, J. M. Kullback-Leibler divergence. In: International Encyclopedia of Statistical Science; Springer: Berlin, Heidelberg, 2011; pp. 720–722.

- Fu, H.; Li, C.; Liu, X.; Gao, J.; Celikyilmaz, A.; Carin, L. Cyclical annealing schedule: A simple approach to mitigating KL vanishing. arXiv preprint arXiv:1903.10145, 2019.

- Hansen, P. R.; Lunde, A. Estimating the persistence and the autocorrelation function of a time series that is measured with error. Econometric Theory, 30(1).

- Cabello-Solorzano, K.; Ortigosa de Araujo, I.; Peña, M.; Correia, L.; Tallón-Ballesteros, A. J. The impact of data normalization on the accuracy of machine learning algorithms: a comparative analysis. In: International Conference on Soft Computing Models in Industrial and Environmental Applications; Springer Nature Switzerland: Cham, 2023; pp. 344–353.

- Banerjee, C.; Mukherjee, T.; Pasiliao Jr, E. An empirical study on generalizations of the ReLU activation function. In: Proceedings of the 2019 ACM Southeast Conference, 2019; pp. 164–167.

- Zamanlooy, B.; Mirhassani, M. Efficient VLSI implementation of neural networks with hyperbolic tangent activation function. IEEE Transactions on Very Large Scale Integration (VLSI) Systems, 22(1).

- Naseri, H.; Mehrdad, V. Novel CNN with investigation on accuracy by modifying stride, padding, kernel size and filter numbers. Multimedia Tools and Applications, 82(15).

- Zhang, Z. Improved Adam optimizer for deep neural networks. In: 2018 IEEE/ACM 26th International Symposium on Quality of Service (IWQoS); IEEE, 2018; pp. 1–2.

- Ji, Z.; Li, J.; Telgarsky, M. Early-stopped neural networks are consistent. Advances in Neural Information Processing Systems, 34.

- Smith, K. E.; Smith, A. O. Conditional GAN for timeseries generation. arXiv preprint arXiv:2006.16477, 2020.

- Panaretos, V. M.; Zemel, Y. Statistical aspects of Wasserstein distances. Annual Review of Statistics and Its Application, 6(1).

- Tolstikhin, I.; Bousquet, O.; Gelly, S.; Schoelkopf, B. Wasserstein auto-encoders. arXiv preprint arXiv:1711.01558, 2017.

- Lee, S. K.; Ko, H. Generative Machine Learning in Adaptive Control of Dynamic Manufacturing Processes: A Review. arXiv preprint arXiv:2505.00210, 2025.

- Lee, J.; Jang, J.; Tang, Q.; Jung, H. Recipe Based Anomaly Detection with Adaptable Learning: Implications on Sustainable Smart Manufacturing. Sensors, 25(5).

- Tynchenko, V.; Kukartseva, O.; Tynchenko, Y.; Kukartsev, V.; Panfilova, T.; Kravtsov, K.; Malashin, I. Predicting Tilapia Productivity in Geothermal Ponds: A Genetic Algorithm Approach for Sustainable Aquaculture Practices. Sustainability, 16(21).

- Huang, W.; Cui, Y.; Li, H.; Wu, X. Effective probabilistic neural networks model for model-based reinforcement learning USV. IEEE Transactions on Automation Science and Engineering.

- Kang, H.; Kang, P. Transformer-based multivariate time series anomaly detection using inter-variable attention mechanism. Knowledge-Based Systems, 290.

| Dataset | Domain | Raw Size | Shape (Rows × Cols) | Target Variable |
|---|---|---|---|---|
| A (Private) | Sugar | 71,820 | 71,820 × 29 | Brix concentration |
| B (Private) | Bio | 9,201 | 9,201 × 15 | pH concentration |
| C (Public) | Sensor | 99,573 | 99,573 × 25 | Raw Sensor_02 |

| Dataset | Split | Input Shape | Target Shape |
|---|---|---|---|
| A | Train | 59,980 × 20 × 28 | 59,980 × 1 |
| | Val | 1,241 × 20 × 28 | 1,241 × 1 |
| | Test | 10,000 × 20 × 28 | 10,000 × 1 |
| B | Train | 6,415 × 85 × 14 | 6,415 × 1 |
| | Val | 531 × 85 × 14 | 531 × 1 |
| | Test | 2,000 × 85 × 14 | 2,000 × 1 |
| C | Train | 83,940 × 60 × 24 | 83,940 × 1 |
| | Val | 5,454 × 60 × 24 | 5,454 × 1 |
| | Test | 10,000 × 60 × 24 | 10,000 × 1 |
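The input shapes above follow a samples × window length × features layout, and in every dataset the feature count is one less than the raw column count (28 of 29, 14 of 15, 24 of 25), consistent with the target column being excluded from the inputs. A sliding-window construction producing this layout might look as follows; `make_windows` is a hypothetical helper, and pairing each window with the target at the next time step is an assumption, since the alignment is not stated here.

```python
from typing import List, Sequence, Tuple


def make_windows(
    rows: Sequence[Sequence[float]], window: int, target_col: int
) -> Tuple[List[List[List[float]]], List[List[float]]]:
    """Build (samples x window x features) inputs and (samples x 1) targets.

    The target column is dropped from the inputs; each window is paired with the
    target at the step right after it (an assumed alignment).
    """
    X: List[List[List[float]]] = []
    y: List[List[float]] = []
    for end in range(window, len(rows)):
        block = rows[end - window:end]
        X.append([[v for j, v in enumerate(r) if j != target_col] for r in block])
        y.append([rows[end][target_col]])
    return X, y


rows = [[float(t), float(10 * t), float(100 * t)] for t in range(6)]  # 6 time steps, 3 columns
X, y = make_windows(rows, window=2, target_col=0)
print(len(X), len(X[0]), len(X[0][0]), y[0])
```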

| Dataset | Measure Type | Best Model | Result |
|---|---|---|---|
| A | Balanced-MAE | DeepAR | 0.6673 |
| | Unified Uncertainty Score | DeepAR | 0.1776 |
| B | Balanced-MAE | MCD CNN-LSTM | 0.1742 |
| | Unified Uncertainty Score | DeepAR | 0.2549 |
| C | Balanced-MAE | MCD CNN-LSTM | 0.3178 |
| | Unified Uncertainty Score | DeepAR | 0.3306 |

| Parameter | Dataset A | Dataset B | Dataset C |
|---|---|---|---|
| Model architecture | 128–20–128 | 64–16–64 | 32–8–32 |
| Best epoch (early stopping) | 23/500 | 81/200 | 39/500 |
| Batch size | 64 | 32 | 64 |
| Optimizer | Adam | Adam | Adam |
| KL Weight | 0.440 | 1.00 | 0.760 |
| Validation MAE Loss | 0.0227 | 0.0376 | 0.0030 |
| Recent Batch Size (N) | 10 | 10 | 30 |
| Target-Aware threshold | Upper 95th Percentile | Upper 95th Percentile | Lower 95th Percentile |
| Penalty weight (p) | 1.0 | 1.0 | 1.0 |
| Encoder noise (Gaussian std) | 0.5 | 0.1 | 0.001 |
| Post-hoc noise (Gaussian std) | 0.015 | 0.2 | 0.01 |

| Model | MAE (Point Values) | MAE (Std Dev.) | MAE (First Differences) | Point / Uncertainty Error |
|---|---|---|---|---|
| Dataset A | | | | |
| Proposed | 5.1387 | 11.4274 | 1.8455 | 0.7516/0.1805 |
| Mean Reversion | 4.8363 | 11.4224 | 1.1245 | 1.1228/0.2456 |
| LSTM-CVAE | 5.0352 | 11.9609 | 1.2786 | 1.01691/0.2582 |
| LSTM-WCVAE | 5.2271 | 12.0363 | 2.2277 | 1.1855/0.2801 |
| LSTM-CGAN | 12.6540 | 19.7733 | 4.1229 | 1.6908/0.4080 |
| LSTM-WCGAN | 6.2006 | 14.4517 | 5.5122 | 1.3956/0.3238 |
| Dataset B | | | | |
| Proposed | 10.7326 | 15.7482 | 9.8223 | 0.0191/0.2604 |
| Mean Reversion | 8.1444 | 11.4462 | 0.3268 | 0.0304/0.3042 |
| LSTM-CVAE | 9.6854 | 12.8046 | 0.8718 | 0.0299/0.2970 |
| LSTM-WCVAE | 10.0363 | 12.9578 | 1.8012 | 0.0302/0.2915 |
| LSTM-CGAN | 28.0970 | 63.6527 | 11.5516 | 0.0391/0.3864 |
| LSTM-WCGAN | 11.2080 | 15.0513 | 5.9422 | 0.0308/0.3127 |
| Dataset C | | | | |
| Proposed | 13.0457 | 27.4023 | 10.2044 | 0.4585/0.2948 |
| Mean Reversion | 9.3925 | 18.1818 | 3.6464 | 0.6926/0.3450 |
| LSTM-CVAE | 7.3437 | 10.2652 | 7.5356 | 0.5039/0.3060 |
| LSTM-WCVAE | 24.2246 | 55.4900 | 7.6082 | 0.5616/0.3831 |
| LSTM-CGAN | 27.6172 | 61.3529 | 7.0803 | 0.7971/0.5492 |
| LSTM-WCGAN | 10.8584 | 23.1551 | 12.3627 | 0.8194/0.4304 |

| Dataset | MAE (Point Values) | MAE (Std Dev.) | MAE (First Differences) | Point / Uncertainty Error |
|---|---|---|---|---|
| A | | | | |
| B | | | | |
| C | | | | |

| Dataset | Target Type | MAE Threshold | Candidate Seqs | Target Value | Best Seq Index | Best Prediction |
|---|---|---|---|---|---|---|
| A | Point | 0.4054 | 822 / 1,000 | 74.25 | 674 | 74.2492 |
| | Lower | | 812 / 1,000 | 72.10 | 720 | 72.7877 |
| | Upper | | 846 / 1,000 | 75.00 | 4 | 75.0135 |
| | Min | | 867 / 1,000 | 60.08 | 499 | 65.32 |
| | Max | | 877 / 1,000 | 77.37 | 868 | 75.83 |
| B | Point | 0.3037 | 899 / 1,000 | 7.0016 | 22 | 7.0015 |
| | Lower | | 898 / 1,000 | 6.9700 | 281 | 6.9732 |
| | Upper | | 899 / 1,000 | 7.1300 | 593 | 7.1076 |
| | Min | | 898 / 1,000 | 6.9535 | 897 | 6.9726 |
| | Max | | 898 / 1,000 | 7.1527 | 592 | 7.1260 |
| C | Point | 0.1271 | 981 / 1,000 | -1.11 | 886 | -1.1123 |
| | Lower | | 970 / 1,000 | -2.10 | 719 | -2.0997 |
| | Upper | | 987 / 1,000 | 1.05 | 838 | 1.0468 |
| | Min | | 953 / 1,000 | -4.90 | 57 | -4.0312 |
| | Max | | 989 / 1,000 | 1.67 | 649 | 1.3597 |

| Dataset | Target Type | MAE Threshold | Candidate Seqs | Target Value | Best Seq Index | Best Prediction |
|---|---|---|---|---|---|---|
| A | Point | 0.4054 | 781 / 1,000 | 74.25 | 351 | 74.2512 |
| | Lower | | 784 / 1,000 | 72.10 | 559 | 72.0962 |
| | Upper | | 796 / 1,000 | 75.00 | 140 | 74.9187 |
| | Min | | 792 / 1,000 | 60.08 | 44 | 65.3882 |
| | Max | | 753 / 1,000 | 77.37 | 671 | 75.7628 |
| B | Point | 0.3037 | 869 / 1,000 | 7.0016 | 655 | 7.0015 |
| | Lower | | 898 / 1,000 | 6.9700 | 868 | 6.9746 |
| | Upper | | 871 / 1,000 | 7.1300 | 189 | 7.1240 |
| | Min | | 868 / 1,000 | 6.9535 | 769 | 6.9747 |
| | Max | | 816 / 1,000 | 7.1527 | 176 | 7.1356 |
| C | Point | 0.1271 | 980 / 1,000 | -1.11 | 162 | -1.1083 |
| | Lower | | 967 / 1,000 | -2.10 | 541 | -2.0978 |
| | Upper | | 985 / 1,000 | 1.05 | 142 | 1.0501 |
| | Min | | 953 / 1,000 | -4.90 | 583 | -4.1495 |
| | Max | | 986 / 1,000 | 1.67 | 647 | 1.3514 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).