Submitted: 03 August 2025

Posted: 05 August 2025


Abstract

Keywords:

1. Introduction

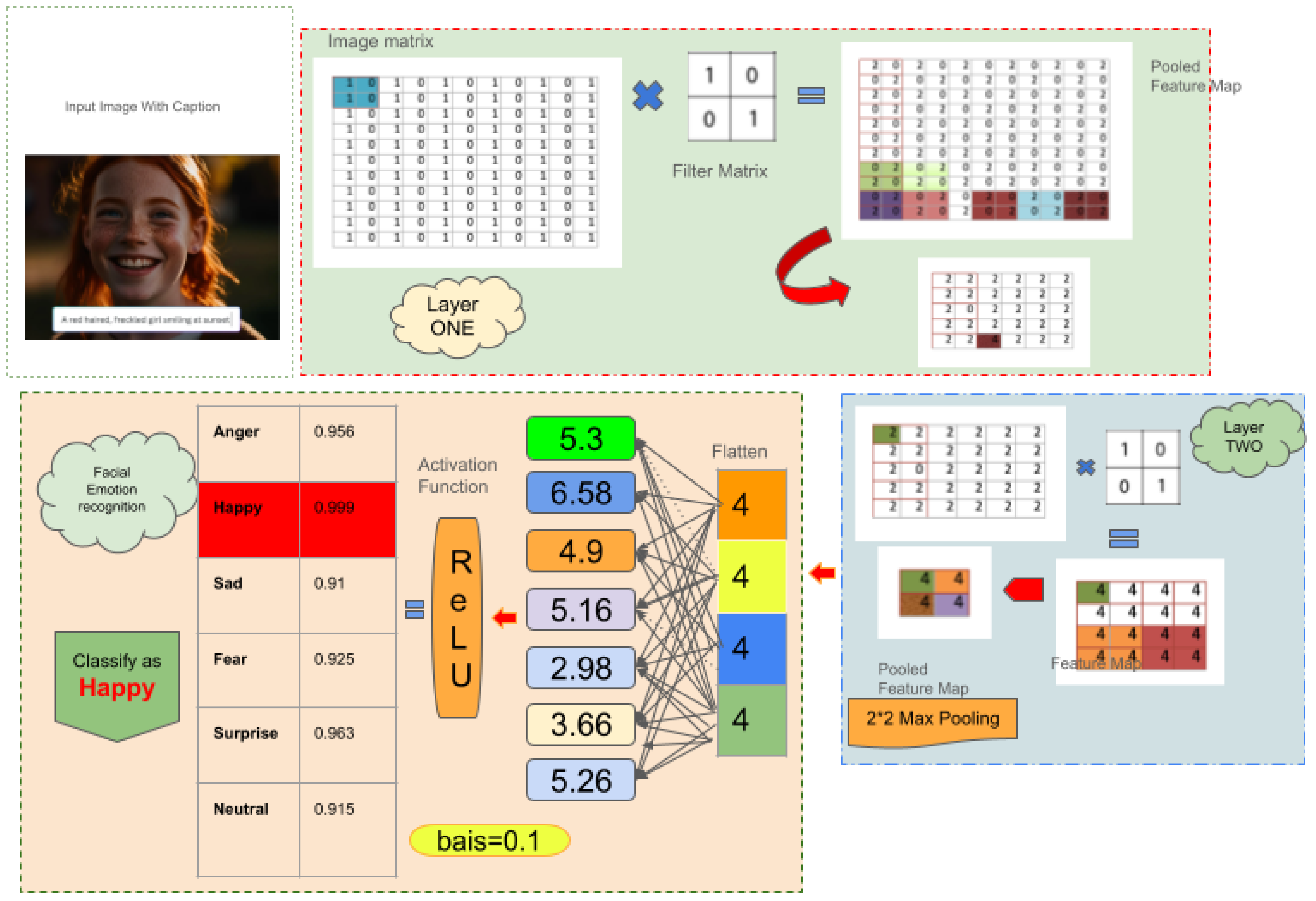

2. FER Architecture

- **Face Detection Layer**: Localizes and tracks faces in unconstrained environments. It is designed to be robust to challenges such as occlusions and changing lighting conditions.

- **Feature Extraction Layer**: Uses CNNs to identify the salient facial features and action units essential for understanding emotion.

- **Emotion Prediction Layer**: Classifies the extracted features into discrete emotion classes using pre-trained deep models.

- **Contextual Processing Layer**: Adds external data sources, such as body movements, eye gaze, and speech, to enrich and contextualize emotional inference.

- **Multimodal Integration Layer**: Aggregates the outputs of different modalities (e.g., facial, auditory, and textual inputs) using Multimodal Large Language Models (MLLMs) to build a comprehensive emotional profile [6].
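To make "aggregating the outputs of different modalities" concrete at the data level, the sketch below uses a deliberately simple stand-in: weighted late fusion, i.e. averaging per-modality class-probability vectors. This is not the MLLM-based integration described above; the modality names, weights, and probability values are hypothetical, and only the seven class labels are taken from the results table.

```python
from typing import Dict, List, Optional

# Seven emotion classes, as listed in the results table.
EMOTIONS = ["Anger", "Disgust", "Fear", "Happy", "Neutral", "Sad", "Surprise"]


def late_fusion(modality_probs: Dict[str, List[float]],
                weights: Optional[Dict[str, float]] = None) -> List[float]:
    """Weighted average of per-modality class-probability vectors.

    Missing modalities (e.g. no audio) are simply absent from the dict;
    unlisted modalities get weight 1.0. If every input vector sums to 1,
    so does the fused vector.
    """
    if not modality_probs:
        raise ValueError("need at least one modality")
    weights = weights or {}
    fused = [0.0] * len(EMOTIONS)
    total_w = 0.0
    for name, probs in modality_probs.items():
        if len(probs) != len(EMOTIONS):
            raise ValueError(f"{name}: expected {len(EMOTIONS)} probabilities")
        w = weights.get(name, 1.0)
        total_w += w
        for i, p in enumerate(probs):
            fused[i] += w * p
    return [f / total_w for f in fused]


# Hypothetical per-modality outputs (each sums to 1).
face = [0.05, 0.02, 0.03, 0.60, 0.20, 0.05, 0.05]
audio = [0.10, 0.05, 0.05, 0.40, 0.30, 0.05, 0.05]
text = [0.05, 0.05, 0.05, 0.30, 0.45, 0.05, 0.05]

fused = late_fusion({"face": face, "audio": audio, "text": text}, weights={"face": 2.0})
top = EMOTIONS[max(range(len(fused)), key=fused.__getitem__)]
print(top, [round(p, 3) for p in fused])
```

Giving the face modality double weight here is an arbitrary illustrative choice; an MLLM-based layer would instead reason over the modalities jointly rather than averaging fixed scores.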

3. Related Studies

4. Dataset Development

5. Methodology

5.1. Data Preprocessing

5.2. Visual Approach

- **Convolution Operation**: For each convolution filter, an output value is computed at every spatial location of the input feature map.

- **Activation Function (ReLU)**: The output of the convolution is passed through a non-linear activation function.

- **Max Pooling**: Pooling reduces the spatial dimensions by keeping the maximum value within each pooling window.

- **Flattening**: After the final convolution and pooling layers, the feature maps are flattened into a 1D vector.

- **Fully Connected Layer with Bias and ReLU**: Each neuron computes a weighted sum of its inputs plus a bias, followed by an activation function.

- **Output Layer with Softmax**: Produces a probability for each of the K emotion categories (e.g., Happy, Sad, Angry).

- **Classification**: The predicted emotion is the class with the highest probability.
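In standard notation, the seven steps above can be written as follows. The symbols are chosen here for illustration and are not taken from the original: X is the input map, F_f the k×k weights of filter f (f = 1, …, N) with bias b_f, p the pooling window size, W_1, b_1 and W_2, b_2 the fully connected weights and biases, and K the number of emotion classes. Convolution is written as cross-correlation (no kernel flip), as most deep learning frameworks implement it, and max(0, ·) is applied element-wise.

```latex
\begin{aligned}
Z_f[i,j] &= \sum_{m=0}^{k-1}\sum_{n=0}^{k-1} F_f[m,n]\, X[i+m,\, j+n] + b_f && \text{(convolution)}\\
A_f[i,j] &= \max\bigl(0,\; Z_f[i,j]\bigr) && \text{(ReLU)}\\
P_f[i,j] &= \max_{0 \le m,n < p} A_f[p\,i + m,\; p\,j + n] && \text{(max pooling)}\\
\mathbf{v} &= \operatorname{flatten}\bigl(P_1, \dots, P_N\bigr) && \text{(flattening)}\\
\mathbf{h} &= \max\bigl(0,\; W_1\mathbf{v} + \mathbf{b}_1\bigr) && \text{(fully connected + ReLU)}\\
\mathbf{z} &= W_2\mathbf{h} + \mathbf{b}_2, \qquad \hat{y}_c = \frac{e^{z_c}}{\sum_{j=1}^{K} e^{z_j}} && \text{(softmax)}\\
\hat{c} &= \arg\max_{c \in \{1,\dots,K\}} \hat{y}_c && \text{(classification)}
\end{aligned}
```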

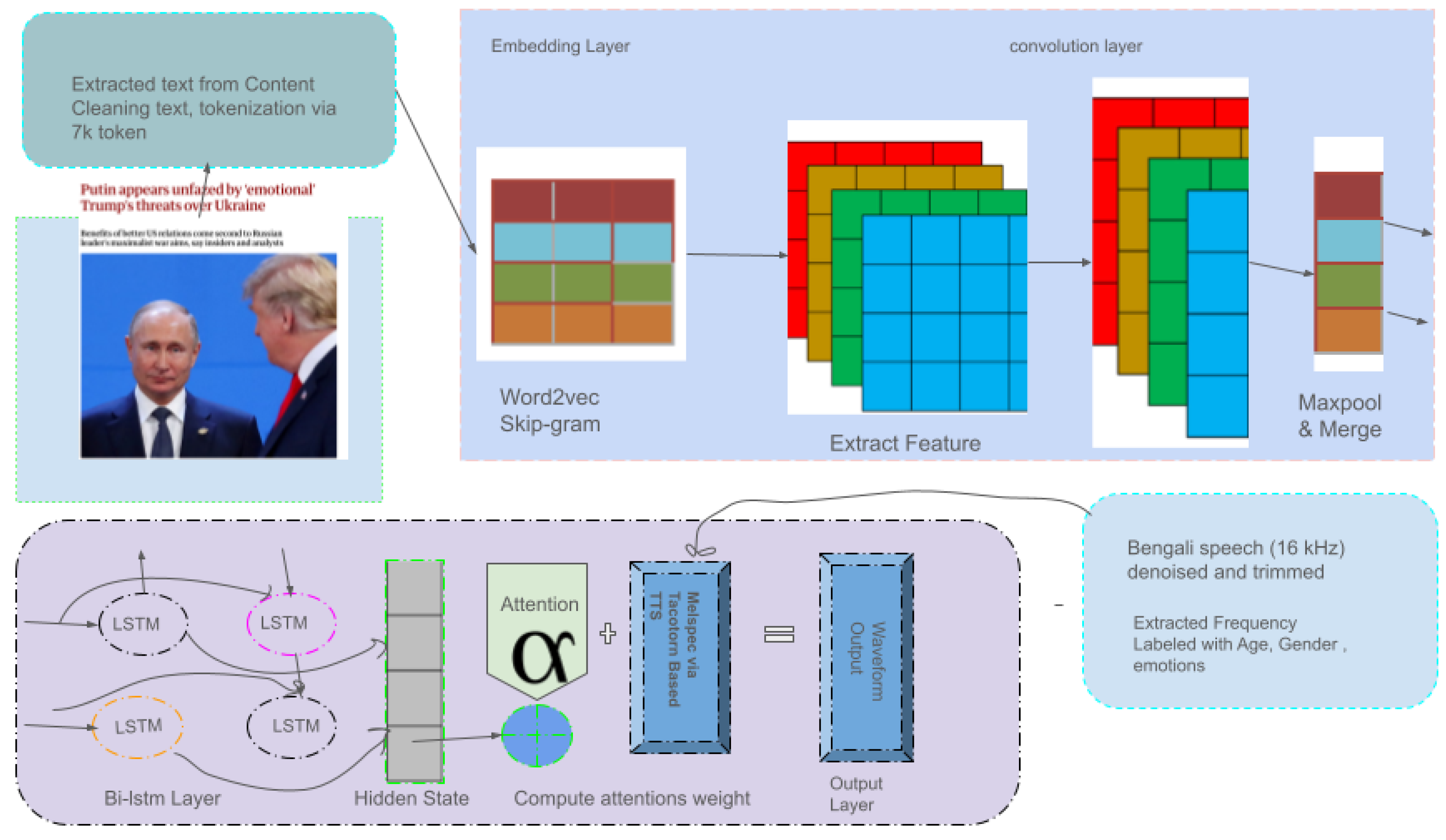
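The forward pass described in this subsection can be sketched in NumPy as below. This is an illustrative sketch under stated assumptions, not the authors' Best_FER network: the layer sizes (a 48×48 grayscale input, four 3×3 filters, 2×2 pooling, 32 hidden units) are hypothetical, the weights are random and untrained (so the predicted label is meaningless), and the helper names `conv2d`, `max_pool`, and `forward` are introduced here for illustration. Only the seven class labels come from the results table.

```python
import numpy as np

EMOTIONS = ["Anger", "Disgust", "Fear", "Happy", "Neutral", "Sad", "Surprise"]


def conv2d(x, kernels, bias):
    """Valid (no padding), stride-1 cross-correlation.
    x: (H, W); kernels: (N, k, k); bias: (N,). Returns (N, H-k+1, W-k+1)."""
    n, k, _ = kernels.shape
    h, w = x.shape
    out = np.empty((n, h - k + 1, w - k + 1))
    for i in range(h - k + 1):
        for j in range(w - k + 1):
            patch = x[i:i + k, j:j + k]
            # Sum of element-wise products for every filter at location (i, j).
            out[:, i, j] = np.tensordot(kernels, patch, axes=([1, 2], [0, 1])) + bias
    return out


def relu(z):
    return np.maximum(0.0, z)


def max_pool(x, p=2):
    """Non-overlapping p x p max pooling per feature map; leftover rows/cols are dropped."""
    n, h, w = x.shape
    h2, w2 = h // p, w // p
    blocks = x[:, :h2 * p, :w2 * p].reshape(n, h2, p, w2, p)
    return blocks.max(axis=(2, 4))


def softmax(z):
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()


def forward(x, params):
    a = max_pool(relu(conv2d(x, params["kernels"], params["conv_b"])))
    v = a.reshape(-1)                                  # flattening
    h = relu(params["W1"] @ v + params["b1"])          # fully connected + ReLU
    probs = softmax(params["W2"] @ h + params["b2"])   # K = 7 class probabilities
    return probs, int(np.argmax(probs))                # predicted class index


rng = np.random.default_rng(0)
params = {
    "kernels": rng.normal(size=(4, 3, 3)) * 0.1,
    "conv_b": np.zeros(4),
    "W1": rng.normal(size=(32, 4 * 23 * 23)) * 0.01,  # 48 -> 46 (conv) -> 23 (pool)
    "b1": np.zeros(32),
    "W2": rng.normal(size=(7, 32)) * 0.1,
    "b2": np.zeros(7),
}
x = rng.random((48, 48))
probs, pred = forward(x, params)
print(EMOTIONS[pred], probs.round(3))
```

The explicit loops in `conv2d` keep the correspondence with the convolution sum visible; a real model would use a framework's optimized convolution layer and learned weights.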

5.3. Textual and Audio Approach

5.4. Multimodal Approach

- A facial emotion recognition (FER) module,

- A sequence generation module (text decoder),

- A Tacotron 2 + WaveGlow-based speech synthesis pipeline.

1. Stage I: Facial Emotion Recognition (FER)

2. Stage II: Emotion-to-Text Feedback Generation

3. Stage III: Speech Synthesis with Tacotron 2 + WaveGlow

Final Output
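The three-stage flow (FER, then emotion-to-text feedback, then speech synthesis) can be sketched as a chain of interchangeable callables. This is a minimal sketch of the data handoff only: `run_pipeline`, `Feedback`, and the three stand-in stages are hypothetical. `fake_classify` replaces the FER model, `template_text` is a fixed template rather than the paper's sequence-generation decoder, and `fake_tts` merely encodes the text where a Tacotron 2 + WaveGlow pipeline would return a waveform.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Feedback:
    emotion: str
    confidence: float
    text: str
    audio: bytes


def run_pipeline(image: object,
                 classify: Callable[[object], Sequence[float]],
                 labels: Sequence[str],
                 generate_text: Callable[[str, float], str],
                 synthesize: Callable[[str], bytes]) -> Feedback:
    """Chain the three stages; each stage is injected as a callable."""
    probs = classify(image)                                # Stage I: FER class probabilities
    best = max(range(len(probs)), key=lambda i: probs[i])  # most probable class
    emotion, confidence = labels[best], float(probs[best])
    text = generate_text(emotion, confidence)              # Stage II: emotion -> feedback text
    audio = synthesize(text)                               # Stage III: feedback text -> speech
    return Feedback(emotion, confidence, text, audio)


# --- Hypothetical stand-ins, for illustration only ---
LABELS = ["Anger", "Disgust", "Fear", "Happy", "Neutral", "Sad", "Surprise"]


def fake_classify(image):
    return [0.05, 0.02, 0.03, 0.60, 0.20, 0.05, 0.05]


def template_text(emotion, confidence):
    return f"You seem {emotion.lower()} (confidence {confidence:.0%})."


def fake_tts(text):
    return text.encode("utf-8")  # placeholder for a synthesized waveform


result = run_pipeline(None, fake_classify, LABELS, template_text, fake_tts)
print(result.text)  # You seem happy (confidence 60%).
```

Injecting each stage as a callable keeps the stages decoupled, so a trained FER model, a learned text decoder, or a real TTS backend can be swapped in without changing the orchestration code.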

6. Results and Performance Analysis

6.1. Performance of Pre-Trained Models

6.1.1. ResNet-50

6.1.2. MobileNetV2

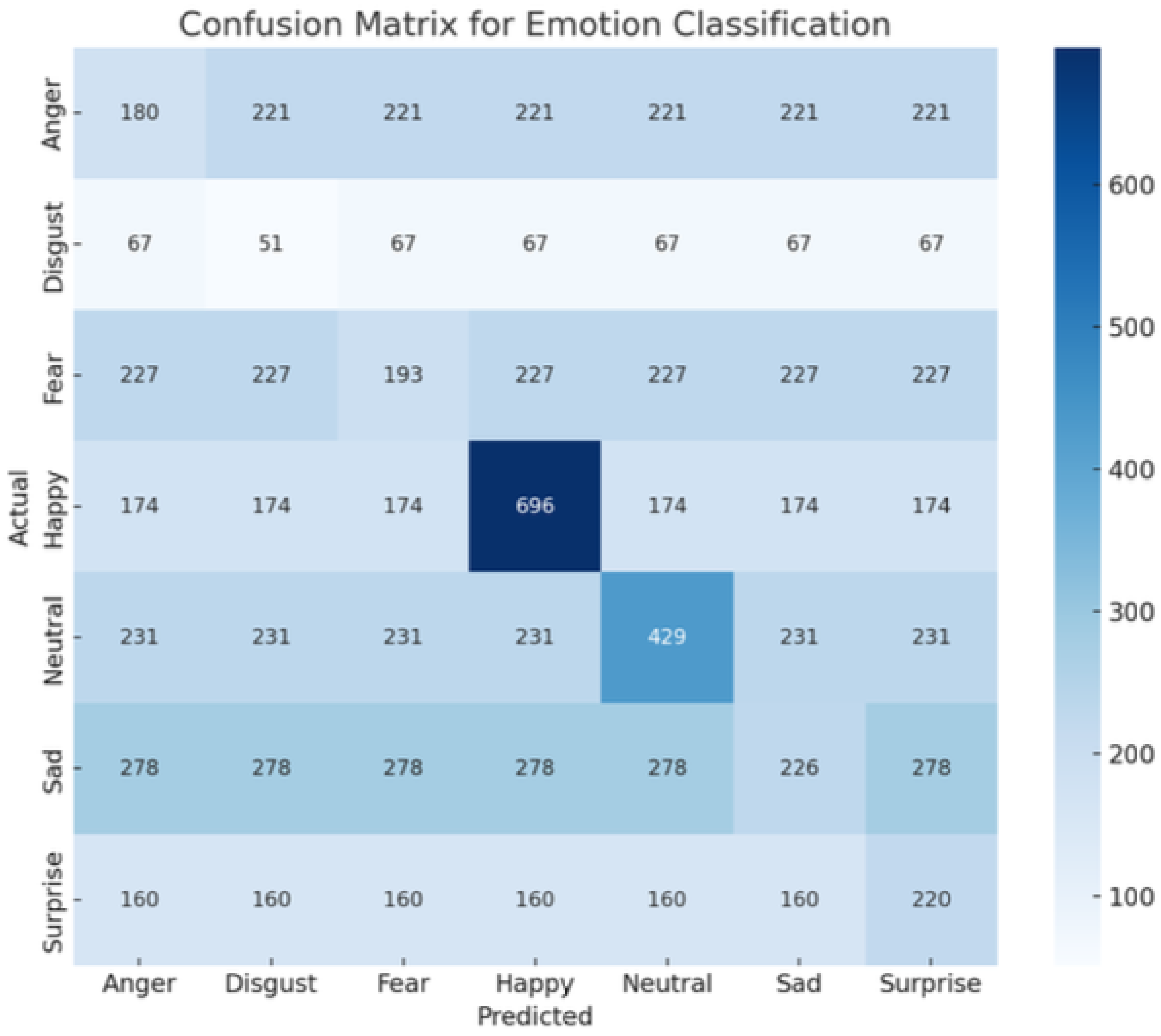

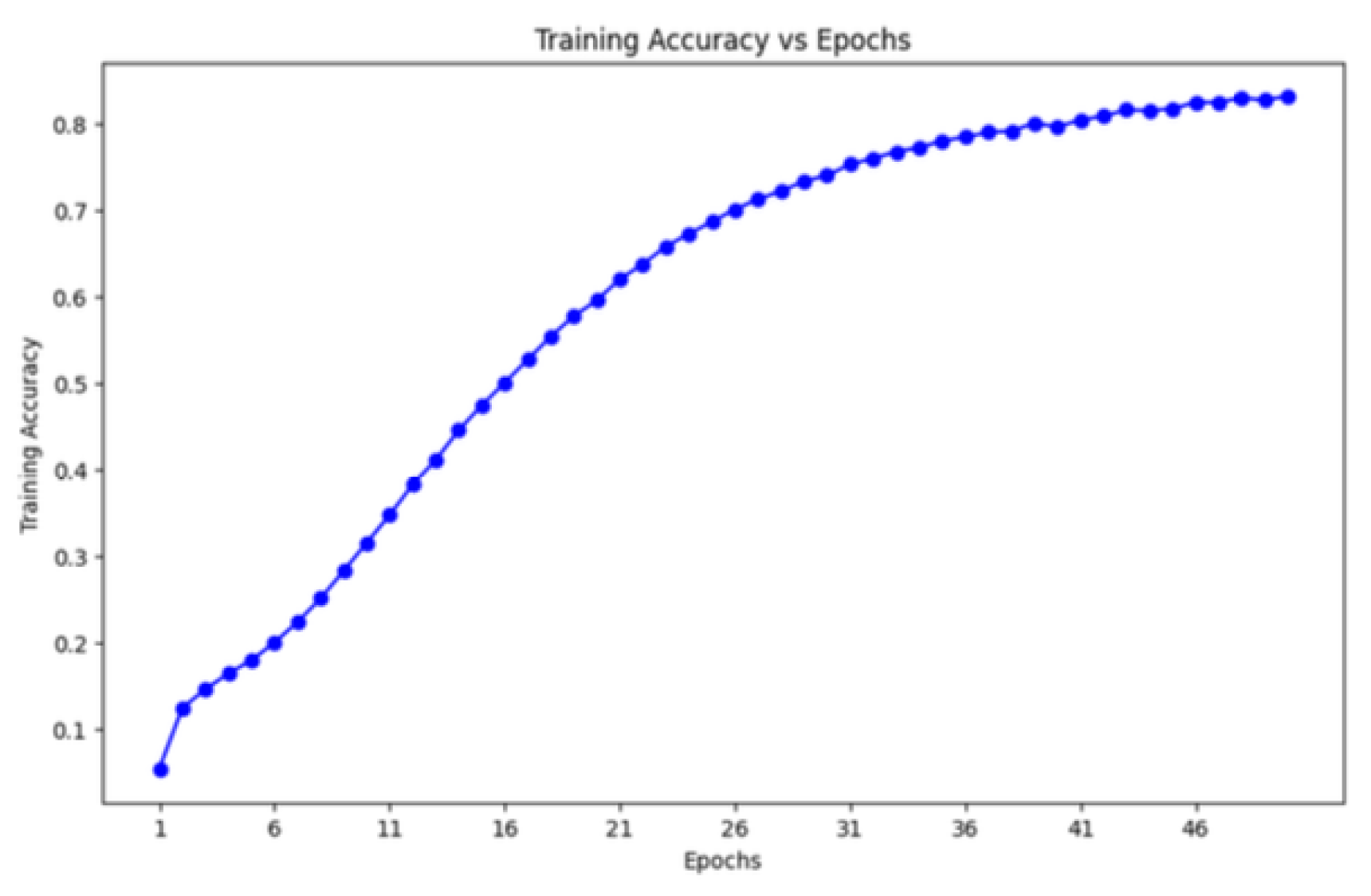

6.2. Performance of the Proposed Model

| Class | Precision | Recall | F1-Score | Support |
|---|---|---|---|---|
| Anger | 0.45 | 0.50 | 0.47 | 401 |
| Disgust | 0.44 | 0.48 | 0.46 | 118 |
| Fear | 0.46 | 0.40 | 0.43 | 420 |
| Happy | 0.80 | 0.78 | 0.79 | 870 |
| Neutral | 0.65 | 0.65 | 0.65 | 660 |
| Sad | 0.45 | 0.42 | 0.43 | 504 |
| Surprise | 0.58 | 0.62 | 0.60 | 380 |
| Accuracy | | | 0.58 | 3607 |
| Macro Avg | 0.55 | 0.55 | 0.55 | 3607 |
| Weighted Avg | 0.58 | 0.58 | 0.58 | 3607 |
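The summary rows follow the usual classification-report conventions: the macro average is the unweighted mean over the seven classes, while the weighted average weights each class by its support. The short check below, using the per-class values copied from the table, recomputes each F1-score as the harmonic mean of precision and recall and reproduces the reported macro averages. The helper names are introduced here for illustration.

```python
# Per-class (precision, recall, f1, support), copied from the table above.
REPORT = {
    "Anger":    (0.45, 0.50, 0.47, 401),
    "Disgust":  (0.44, 0.48, 0.46, 118),
    "Fear":     (0.46, 0.40, 0.43, 420),
    "Happy":    (0.80, 0.78, 0.79, 870),
    "Neutral":  (0.65, 0.65, 0.65, 660),
    "Sad":      (0.45, 0.42, 0.43, 504),
    "Surprise": (0.58, 0.62, 0.60, 380),
}


def f1(precision, recall):
    """Harmonic mean of precision and recall (0 when both are 0)."""
    return 0.0 if precision + recall == 0 else 2 * precision * recall / (precision + recall)


def macro_avg(values):
    """Unweighted mean: every class counts equally, regardless of support."""
    return sum(values) / len(values)


def weighted_avg(values, supports):
    """Support-weighted mean: frequent classes (e.g. Happy) count more."""
    return sum(v * s for v, s in zip(values, supports)) / sum(supports)


precisions = [p for p, _, _, _ in REPORT.values()]
recalls = [r for _, r, _, _ in REPORT.values()]
f1s = [f for _, _, f, _ in REPORT.values()]

recomputed_f1 = {cls: round(f1(p, r), 2) for cls, (p, r, _, _) in REPORT.items()}
print(recomputed_f1)
print("macro P/R/F1:", round(macro_avg(precisions), 2),
      round(macro_avg(recalls), 2), round(macro_avg(f1s), 2))
```

Because the macro average ignores support, the weak minority classes (Disgust, Fear, Sad) pull it below the weighted average, which is dominated by the large Happy and Neutral classes.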

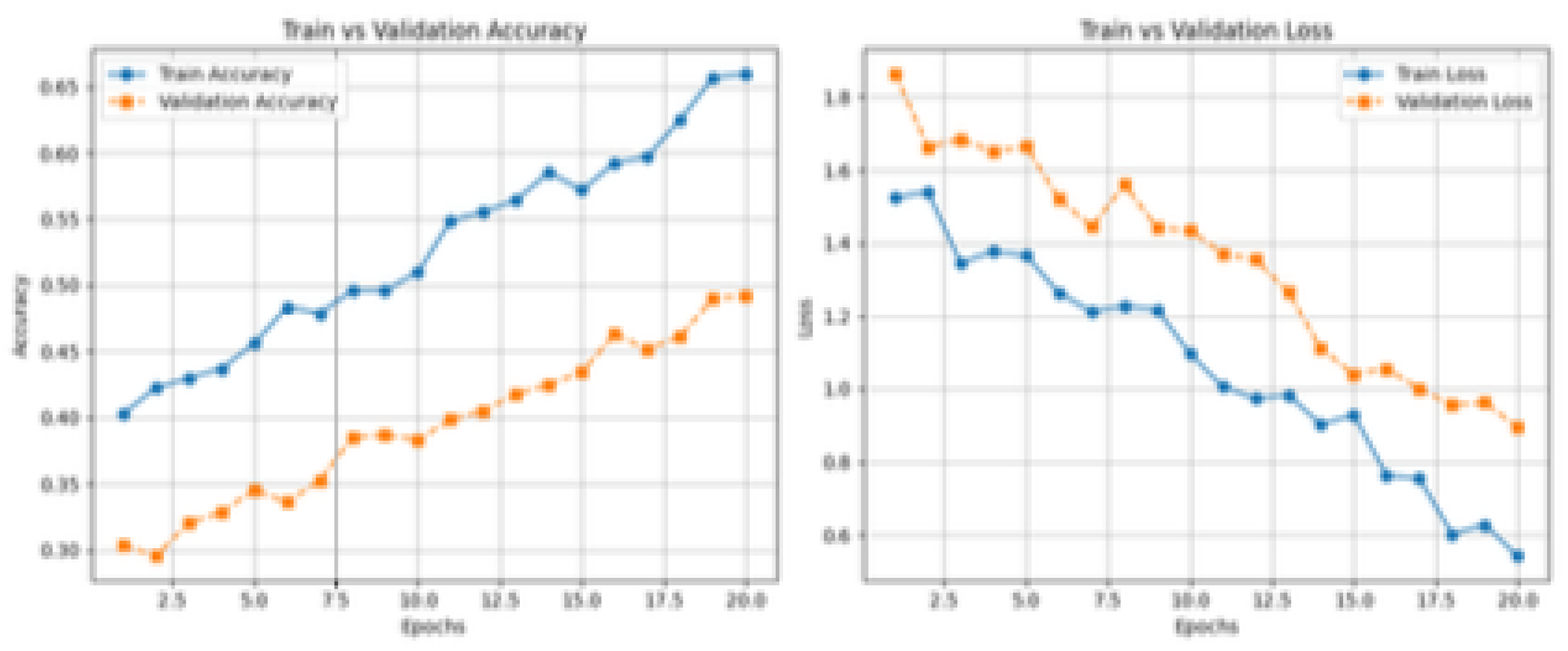

6.3. Feedback Generation Performance

7. Discussion

| Model | Training Accuracy (%) | Validation Accuracy (%) |
|---|---|---|
| ResNet-50 | 56.59 | 33.42 |
| MobileNetV2 | 60.82 | 45.69 |
| Best_FER (Proposed Model) | 65.12 | 50.34 |
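One simple way to read this table is the generalization gap, i.e. training accuracy minus validation accuracy in percentage points. The snippet below is only arithmetic on the reported numbers and makes no claim about the causes of the gap.

```python
# (training accuracy %, validation accuracy %), copied from the table above.
RESULTS = {
    "ResNet-50": (56.59, 33.42),
    "MobileNetV2": (60.82, 45.69),
    "Best_FER (Proposed Model)": (65.12, 50.34),
}

gaps = {model: round(train - val, 2) for model, (train, val) in RESULTS.items()}
for model, gap in gaps.items():
    print(f"{model:28s} train - val = {gap:.2f} pp")
```

By this measure, the proposed model has both the highest validation accuracy and the smallest train-validation gap of the three, with MobileNetV2 close behind and ResNet-50 showing the largest gap.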

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations


References

- Scherer, M.J. Assistive Technology: Matching Device and Consumer for Successful Rehabilitation; American Psychological Association: Washington, DC, USA, 2002.

- Graves, A.; Schmidhuber, J. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Netw. 2005, 18, 602–610.

- Hossain, S.A.; Rahman, M.L.; Ahmed, F. A review on Bangla phoneme production and perception for computational approaches. In Proceedings of the 7th WSEAS International Conference on Mathematical Methods and Computational Techniques in Electrical Engineering, 2005; pp. 346–354.

- Fathurahman, K.; Lestari, D.P. Support vector machine-based automatic music transcription for transcribing polyphonic music into MusicXML. In Proceedings of the 2015 International Conference on Electrical Engineering and Informatics (ICEEI), Bali, Indonesia, 10–11 August 2015; pp. 535–539.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Yu, F.; Koltun, V. Multi-scale context aggregation by dilated convolutions. arXiv 2015, arXiv:1511.07122.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Chollet, F. The limitations of deep learning. In Deep Learning with Python; Manning Publications: Shelter Island, NY, USA, 2017.

- Smith, L.N. Cyclical learning rates for training neural networks. In Proceedings of the 2017 IEEE Winter Conference on Applications of Computer Vision (WACV), Santa Rosa, CA, USA, 24–31 March 2017; pp. 464–472.

- Baltrušaitis, T.; Ahuja, C.; Morency, L.P. Multimodal machine learning: A survey and taxonomy. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 41, 423–443.

- Buda, M.; Maki, A.; Mazurowski, M.A. A systematic study of the class imbalance problem in convolutional neural networks. Neural Netw. 2018, 106, 249–259.

- Smith, L.N. A Disciplined Approach to Neural Network Hyper-Parameters: Part 1 – Learning Rate, Batch Size, Momentum, and Weight Decay. arXiv 2018, arXiv:1803.09820. Available online: https://arxiv.org/abs/1803.09820 (accessed on 22 July 2025).

- Shorten, C.; Khoshgoftaar, T. A survey on image data augmentation for deep learning. J. Big Data 2019, 6, 60.

- K., N.; Patil, A. Multimodal Emotion Recognition Using Cross-Modal Attention and 1D Convolutional Neural Networks. Available online: https://api.semanticscholar.org/CorpusID:226202203 (accessed on 22 July 2025).

- Nadimuthu, S.; Dhanaraj, K.R.; Kanthan, J. Brain tumor classification using convolution neural network. J. Phys.: Conf. Ser. 2021, 1916, 012206.

- Maithri, M.; Raghavendra, U.; Gudigar, A.; et al. Automated emotion recognition: Current trends and future perspectives. Comput. Methods Programs Biomed. 2022, 215, 106646.

- Monica, S.; Mary, R.R. Face and emotion recognition from real-time facial expressions using deep learning algorithms. In Congress on Intelligent Systems; Saraswat, M., Sharma, H., Balachandran, K., Kim, J.H., Bansal, J.C., Eds.; Springer Nature Singapore: Singapore, 2022; pp. 451–460.

- Wang, Y.; Song, W.; Tao, W.; et al. A systematic review on affective computing: Emotion models, databases, and recent advances. arXiv 2022, arXiv:2203.06935.

- Venkatakrishnan, R.; Goodarzi, M.; Canbaz, M.A. Exploring large language models’ emotion detection abilities: Use cases from the Middle East. In Proceedings of the 2023 IEEE Conference on Artificial Intelligence (CAI), Santa Clara, CA, USA, 5–7 June 2023; pp. 241–244.

- Bian, Y.; Küster, D.; Liu, H.; Krumhuber, E.G. Understanding naturalistic facial expressions with deep learning and multimodal large language models. Sensors 2024, 24, 126.


- Dahal, A.; Moulik, S. The multi-model stacking and ensemble framework for human activity recognition. IEEE Sens. Lett. 2024, in press.

- Li, D.; Liu, X.; Xing, B.; et al. EALD-MLLM: Emotion analysis in long-sequential and de-identity videos with multi-modal large language model. arXiv 2024, arXiv:2405.00574.

- Ouali, S. Deep learning for Arabic speech recognition using convolutional neural networks. J. Electr. Syst. 2024, 20, 3032–3039.

| Class | Train | Val | Test | Total | NW | NUW | AW |
|---|---|---|---|---|---|---|---|
| Happy | 2580 | 322 | 323 | 3225 | 28940 | 9330 | 11.16 |
| Angry | 1760 | 215 | 218 | 2193 | 19670 | 7084 | 11.01 |
| Disgust | 1320 | 165 | 170 | 1655 | 14390 | 4992 | 11.36 |
| Fear | 1420 | 178 | 180 | 1778 | 15791 | 5320 | 11.10 |
| Sad | 1900 | 240 | 240 | 2380 | 21980 | 7103 | 12.04 |
| Surprise | 1550 | 195 | 195 | 1940 | 17991 | 6453 | 11.79 |
| Neutral | 2820 | 355 | 359 | 3534 | 30885 | 10420 | 10.97 |
| Total | 13450 | 1670 | 1685 | 16785 | 149647 | 50702 | - |
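The per-class counts are consistent with a roughly 80/10/10 train/validation/test split within every class. The short check below, using the seven class rows copied from the table, confirms that each row's splits add up to its Total column and prints the per-class proportions.

```python
# Per-class counts (train, val, test, total), copied from the table above.
SPLITS = {
    "Happy": (2580, 322, 323, 3225),
    "Angry": (1760, 215, 218, 2193),
    "Disgust": (1320, 165, 170, 1655),
    "Fear": (1420, 178, 180, 1778),
    "Sad": (1900, 240, 240, 2380),
    "Surprise": (1550, 195, 195, 1940),
    "Neutral": (2820, 355, 359, 3534),
}

ratios = {}
for cls, (train, val, test, total) in SPLITS.items():
    # Each row's three splits should add up to its Total column.
    assert train + val + test == total, cls
    ratios[cls] = (train / total, val / total, test / total)
    tr, va, te = ratios[cls]
    print(f"{cls:9s} train {tr:.1%}  val {va:.1%}  test {te:.1%}")
```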

| Emotion | Text Samples | Audio Samples | Avg. Words | Avg. Duration (s) |
|---|---|---|---|---|
| Happy | 912 | 703 | 8.6 | 2.1 |
| Sad | 840 | 680 | 9.1 | 2.3 |
| Angry | 721 | 615 | 7.4 | 2.0 |
| Fear | 603 | 520 | 8.2 | 2.2 |
| Disgust | 497 | 412 | 7.9 | 2.1 |
| Surprise | 672 | 541 | 8.7 | 2.0 |
| Neutral | 820 | 690 | 7.8 | 1.9 |
| Total | 6065 | 4161 | - | - |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).