Submitted:

10 July 2025

Posted:

03 August 2025


Abstract

Keywords:

1. Introduction

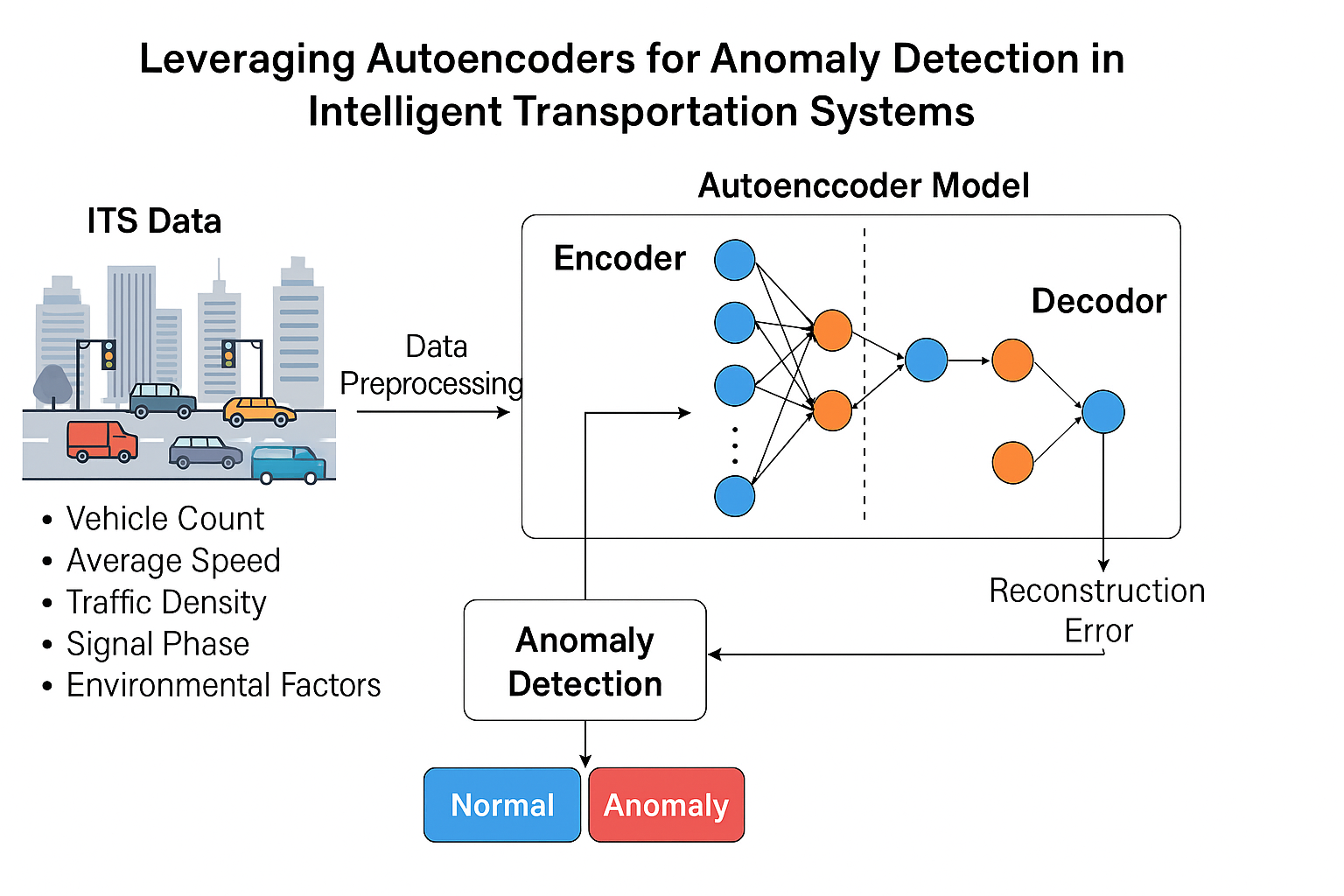

- We design and implement a deep autoencoder model optimized for multi-modal transportation data (e.g., traffic flow, vehicle speed, sensor signals).

- We evaluate the model’s performance on benchmark ITS datasets and assess its robustness to noise and missing values.

- We demonstrate the model’s potential to detect a variety of anomalies, including traffic congestion spikes, sensor failures, and potential cyber intrusions.

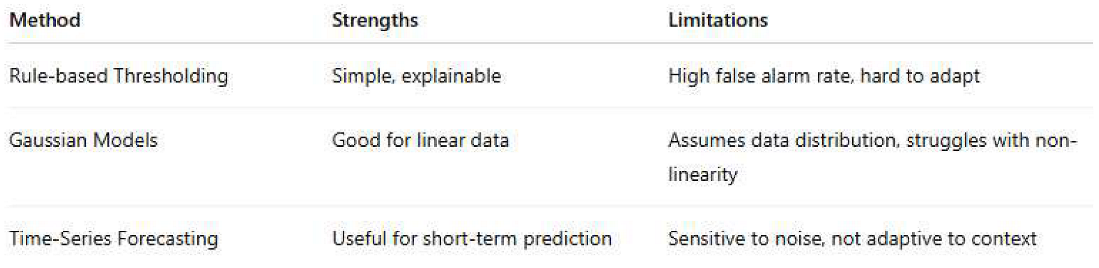

2. Related Work

2.1. Traditional Anomaly Detection in ITS


2.2. Machine Learning Approaches in ITS Anomaly Detection

- SVMs in detecting abnormal lane-switching behavior [Author, Year]

- Random Forests for classifying congestion vs. normal traffic

- Reinforcement learning in routing anomalies

2.3. Autoencoders for Anomaly Detection

- [Author et al., 2021] used LSTM-autoencoders to detect anomalies in vehicle trajectory data.

- [Author et al., 2022] applied convolutional autoencoders for detecting sensor faults in smart traffic lights.

- Hybrid models combining autoencoders with isolation forests have shown promise for cross-domain robustness [Author, 2023].


3. Methodology

3.1. Data Collection and Preprocessing

- Vehicle count per lane per second

- Average vehicle speed

- Traffic signal phase status

- Sensor timestamps

- Environmental data (e.g., weather, light conditions)

- Normalization: All input features are scaled using Min-Max normalization to fit within the [0, 1] range.

- Missing value handling: Gaps in data due to faulty sensors are imputed using forward filling and local interpolation.

- Sequence generation: To capture temporal patterns, data is segmented into fixed-length sequences (e.g., 60-second windows).
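The three preprocessing steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' pipeline: the function names, the `stride` parameter, and the sample readings are hypothetical, and only forward filling is shown (local interpolation is omitted).

```python
from typing import List, Optional, Sequence


def forward_fill(values: Sequence[Optional[float]]) -> List[Optional[float]]:
    """Replace each None with the most recent observed value.

    Leading gaps (before any observation) are left as None.
    """
    filled: List[Optional[float]] = []
    last: Optional[float] = None
    for v in values:
        if v is not None:
            last = v
        filled.append(last)
    return filled


def min_max_scale(values: Sequence[float]) -> List[float]:
    """Scale values to the [0, 1] range; a constant series maps to zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def make_windows(values: Sequence[float], length: int, stride: int = 1) -> List[List[float]]:
    """Cut a series into fixed-length windows (stride is an assumed parameter)."""
    return [list(values[i:i + length])
            for i in range(0, len(values) - length + 1, stride)]


# Hypothetical per-second speed readings with two sensor gaps.
raw = [40.0, None, 44.0, 50.0, None, 60.0]
filled = forward_fill(raw)
scaled = min_max_scale(filled)
windows = make_windows(scaled, length=3)  # the paper's example uses 60-second windows
```

In practice the minimum and maximum would normally be computed on the training partition only and reused for validation and test data, so that no information leaks from held-out data into the scaling.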

3.2. Autoencoder Architecture

- Input layer matching the size of the feature vector

- Encoder: 3 dense layers reducing dimensionality progressively

- Bottleneck layer: Captures compressed representation

- Decoder: 3 dense layers mirroring the encoder in reverse

- Output layer: Same size as the input, used for reconstruction

3.3. Training Process

- Optimizer: Adam

- Learning rate: 0.001

- Epochs: 100

- Batch size: 64

- Early stopping: Applied to prevent overfitting
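The early-stopping rule can be illustrated with a small helper that scans a validation-loss curve. This is a sketch only: the paper does not report a patience value or a minimum improvement, so `patience` and `min_delta` are assumptions, and the loss values are made up.

```python
from typing import Sequence


def early_stopping_epoch(val_losses: Sequence[float], patience: int = 10,
                         min_delta: float = 0.0) -> int:
    """Return the 1-based epoch at which training would stop.

    Training stops once the validation loss has failed to improve by more
    than min_delta for `patience` consecutive epochs. If that never
    happens, the final epoch is returned.
    """
    best = float("inf")
    stale = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best - min_delta:
            best = loss
            stale = 0
        else:
            stale += 1
            if stale >= patience:
                return epoch
    return len(val_losses)


# Settings listed above; patience is NOT reported and is assumed here.
config = {"optimizer": "adam", "learning_rate": 0.001,
          "epochs": 100, "batch_size": 64}

# Hypothetical validation-loss curve: improves for 3 epochs, then plateaus.
losses = [0.50, 0.30, 0.20, 0.21, 0.22, 0.20, 0.23]
stop = early_stopping_epoch(losses, patience=3)
```

With this curve the best loss is reached at epoch 3, and the three following epochs bring no strict improvement, so the helper stops at epoch 6, well before the 100-epoch budget.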

3.4. Anomaly Scoring and Detection

- $x_i$ is the original input feature

- $\hat{x}_i$ is the reconstructed feature

- $n$ is the number of features
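These symbols are consistent with a mean squared reconstruction error, $e = \frac{1}{n}\sum_{i=1}^{n}(x_i - \hat{x}_i)^2$, which matches the MSE loss used for training. The sketch below assumes that form and flags a sample when its error exceeds a threshold; the nearest-rank percentile rule and the value of `q` are assumptions, since the exact thresholding rule is not given here.

```python
import math
from typing import Sequence


def reconstruction_error(x: Sequence[float], x_hat: Sequence[float]) -> float:
    """Mean squared error between an input vector and its reconstruction."""
    if len(x) != len(x_hat):
        raise ValueError("x and x_hat must have the same length")
    n = len(x)
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / n


def percentile_threshold(errors: Sequence[float], q: float = 95.0) -> float:
    """Nearest-rank q-th percentile of validation errors (q is assumed)."""
    ordered = sorted(errors)
    rank = max(1, math.ceil(q * len(ordered) / 100.0))
    return ordered[rank - 1]


def is_anomaly(error: float, threshold: float) -> bool:
    """Flag a sample whose reconstruction error exceeds the threshold."""
    return error > threshold


# Hypothetical scaled feature vector and its reconstruction.
err = reconstruction_error([0.2, 0.4, 0.6, 0.8], [0.2, 0.5, 0.6, 0.6])

# Hypothetical validation-set errors used to pick the threshold.
val_errors = [0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07, 0.08, 0.09, 0.50]
threshold = percentile_threshold(val_errors, q=90.0)
```

A percentile rule ties the threshold to the error distribution of held-out data, so the expected share of flagged normal samples is controlled by `q`.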

| Parameter | Value |
|---|---|
| Input Features | 10 (after preprocessing) |
| Encoder Layers | [64, 32, 16] |
| Bottleneck Size | 8 |
| Decoder Layers | [16, 32, 64] |
| Activation Functions | ReLU (encoder/decoder) |
| Output Activation | Linear |
| Loss Function | Mean Squared Error (MSE) |
| Optimizer | Adam |
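As a quick check on model size, the layer widths in the table can be turned into weight-matrix shapes and a trainable-parameter count. The sketch assumes fully connected layers with one bias per unit, which is the usual default but is not stated in the table; the helper names are illustrative.

```python
from typing import List, Sequence, Tuple

INPUT_DIM = 10            # input features after preprocessing
ENCODER = [64, 32, 16]    # encoder layer widths
BOTTLENECK = 8            # compressed representation
DECODER = [16, 32, 64]    # decoder layer widths


def dense_shapes(input_dim: int, widths: Sequence[int]) -> List[Tuple[int, int]]:
    """Return (fan_in, fan_out) for each consecutive dense layer."""
    sizes = [input_dim] + list(widths)
    return list(zip(sizes[:-1], sizes[1:]))


def count_parameters(shapes: Sequence[Tuple[int, int]]) -> int:
    """Weights plus one bias per output unit (bias use is an assumption)."""
    return sum(fan_in * fan_out + fan_out for fan_in, fan_out in shapes)


# Encoder, bottleneck, decoder, then an output layer the size of the input.
layer_widths = ENCODER + [BOTTLENECK] + DECODER + [INPUT_DIM]
shapes = dense_shapes(INPUT_DIM, layer_widths)
total_parameters = count_parameters(shapes)
```

Under these assumptions the network has 8 dense layers and 6,898 trainable parameters, small enough to train quickly on the hardware described in Section 4.3.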

4. Experimental Setup

4.1. Dataset Description

- Vehicle count per lane (VC)

- Average speed (AS)

- Traffic density (TD)

- Signal phase duration (SPD)

- Environmental factors: temperature, visibility, precipitation

- Sudden congestion

- Sensor signal loss

- Abnormally low speed (e.g., due to accidents)

- Cyber manipulation (e.g., spoofed data)

4.2. Data Partitioning

| Partition | Proportion | Purpose |
|---|---|---|
| Training Set | 70% | Train the autoencoder on normal data |
| Validation Set | 15% | Select anomaly detection threshold |
| Test Set | 15% | Evaluate detection accuracy |
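A 70/15/15 partition can be sketched as follows. The split is done in time order here, which is an assumption (the table does not say whether samples were shuffled), and the function name and integer-percentage arguments are illustrative.

```python
from typing import List, Sequence, Tuple


def chronological_split(samples: Sequence, train_pct: int = 70,
                        val_pct: int = 15) -> Tuple[List, List, List]:
    """Split samples into train/validation/test without shuffling.

    Integer percentages avoid floating-point rounding at the boundaries;
    whatever remains after train and validation becomes the test set.
    """
    n = len(samples)
    train_end = n * train_pct // 100
    val_end = train_end + n * val_pct // 100
    items = list(samples)
    return items[:train_end], items[train_end:val_end], items[val_end:]


# Hypothetical dataset of 200 time-ordered windows (indices stand in for data).
train, val, test = chronological_split(range(200))
```

Because the autoencoder is trained on normal data only, any labelled anomalies falling in the training range would additionally need to be filtered out before fitting.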

4.3. Hardware and Software Environment

- CPU: Intel Core i7-11700 @ 2.50 GHz

- GPU: NVIDIA RTX 3060 (12 GB VRAM)

- RAM: 32 GB DDR4

- OS: Ubuntu 22.04 LTS

- Frameworks:

  - Python 3.9

  - TensorFlow 2.12 / Keras

  - Scikit-learn

  - Matplotlib / Seaborn for visualization

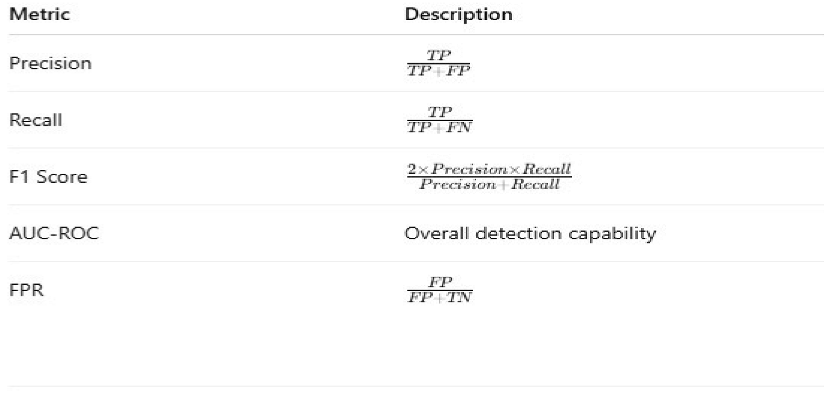

4.4. Evaluation Metrics

- Precision: The fraction of detected anomalies that are true anomalies.

- Recall: The fraction of actual anomalies that are detected.

- F1-Score: Harmonic mean of precision and recall.

- AUC-ROC: Area under the receiver operating characteristic curve.

- False Positive Rate (FPR): Rate at which normal data is misclassified as anomalous.
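The metrics above follow directly from confusion-matrix counts. The sketch below computes them in plain Python, with label 1 denoting an anomaly; AUC-ROC is computed with the pairwise ranking formulation (the probability that a random anomaly scores higher than a random normal sample, ties counting one half). The labels and scores are made-up illustration data.

```python
from typing import Dict, Sequence


def detection_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Precision, recall, F1, and false positive rate for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    fpr = fp / (fp + tn) if fp + tn else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "fpr": fpr}


def auc_roc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the fraction of (anomaly, normal) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical ground truth, thresholded predictions, and anomaly scores.
y_true = [0, 0, 0, 0, 1, 1, 0, 1]
y_pred = [0, 0, 1, 0, 1, 1, 0, 0]
scores = [0.1, 0.2, 0.6, 0.1, 0.9, 0.8, 0.3, 0.4]

metrics = detection_metrics(y_true, y_pred)
auc = auc_roc(y_true, scores)
```

Precision, recall, F1, and FPR depend on the chosen threshold, whereas AUC-ROC is computed from the raw scores and summarizes performance across all thresholds.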

4.5. Baseline Models for Comparison

| Model | Type | Notes |
|---|---|---|
| One-Class SVM | Unsupervised | Learns boundary around normal data |
| Isolation Forest | Tree-Based Ensemble | Detects anomalies via data isolation |
| PCA-based Detector | Statistical | Uses reconstruction from principal components |
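The three baselines can be set up with scikit-learn roughly as follows. This is a sketch on synthetic data, not the configuration used in the experiments: every hyperparameter (`nu`, `contamination`, `n_components`, the kernel) is an assumption, and the data are random stand-ins for scaled traffic features.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import IsolationForest
from sklearn.svm import OneClassSVM

rng = np.random.default_rng(0)

# Synthetic "normal" samples with 10 scaled features, plus one obvious outlier.
X_train = rng.normal(0.5, 0.05, size=(500, 10))
X_test = np.vstack([rng.normal(0.5, 0.05, size=(20, 10)),
                    np.full((1, 10), 0.95)])

# One-Class SVM: learns a boundary around the normal training data.
ocsvm = OneClassSVM(kernel="rbf", gamma="scale", nu=0.05).fit(X_train)
svm_labels = ocsvm.predict(X_test)        # +1 = inlier, -1 = outlier

# Isolation Forest: anomalies are isolated by fewer random splits.
iforest = IsolationForest(n_estimators=100, contamination=0.05,
                          random_state=0).fit(X_train)
forest_labels = iforest.predict(X_test)   # +1 = inlier, -1 = outlier

# PCA detector: score each sample by its reconstruction error.
pca = PCA(n_components=3).fit(X_train)
X_rec = pca.inverse_transform(pca.transform(X_test))
pca_errors = np.mean((X_test - X_rec) ** 2, axis=1)
```

The PCA detector is the closest linear analogue of the autoencoder: both score a sample by how poorly it is reconstructed from a low-dimensional representation.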

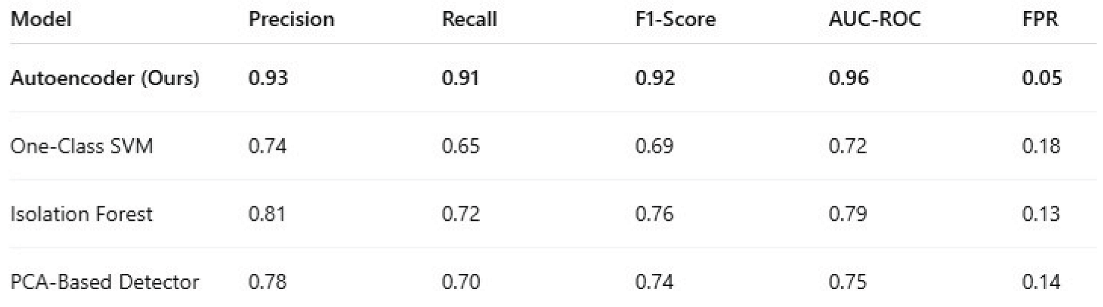

5. Results and Discussion

5.1. Performance Comparison


5.2. Error Distribution and Thresholding

5.3. Robustness Evaluation

- Noisy data: Gaussian noise was added to simulate sensor disturbances.

- Missing data: 10% of feature values were randomly masked.
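Both perturbations can be reproduced with a few lines of standard-library Python. The noise standard deviation (`sigma`) is not stated above and is assumed here; masking is shown on a single flat list of values, with `None` marking a masked entry.

```python
import random
from typing import List, Optional, Sequence


def add_gaussian_noise(values: Sequence[float], sigma: float,
                       rng: random.Random) -> List[float]:
    """Add zero-mean Gaussian noise to every value (sigma is assumed)."""
    return [v + rng.gauss(0.0, sigma) for v in values]


def mask_random(values: Sequence[float], fraction: float,
                rng: random.Random) -> List[Optional[float]]:
    """Replace a fixed fraction of values, chosen at random, with None."""
    k = round(fraction * len(values))
    hidden = set(rng.sample(range(len(values)), k))
    return [None if i in hidden else v for i, v in enumerate(values)]


rng = random.Random(42)                      # fixed seed for repeatability
clean = [i / 99 for i in range(100)]         # hypothetical scaled feature values
noisy = add_gaussian_noise(clean, sigma=0.05, rng=rng)
masked = mask_random(clean, fraction=0.10, rng=rng)
```

Masked entries would then pass through the same imputation step as real sensor gaps before being scored by the model.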

5.4. Comparison with Baseline Models

- Greater adaptability to diverse traffic behaviors

- Fewer false positives, reducing operator fatigue

- High detection rates for rare and subtle anomalies

5.5. Real-World Implications

- Detect traffic jams, accidents, or abnormal slowdowns

- Identify sensor malfunctions or spoofed data

- Enhance cybersecurity by flagging unexpected system behaviors

6. Conclusion and Future Work

References

- Eziama, E., Tepe, K., Balador, A., Nwizege, K. S., & Jaimes, L. M. (2018, December). Malicious node detection in vehicular ad-hoc network using machine learning and deep learning. In 2018 IEEE Globecom Workshops (GC Wkshps) (pp. 1–6). IEEE.

- Hasan, M. M., Jahan, M., & Kabir, S. (2023). A trust model for edge-driven vehicular ad hoc networks using fuzzy logic. IEEE Transactions on Intelligent Transportation Systems, 4037.

- Rashid, K., Saeed, Y., Ali, A., Jamil, F., Alkanhel, R., & Muthanna, A. (2023). An adaptive real-time malicious node detection framework using machine learning in vehicular ad-hoc networks (VANETs). Sensors.

- Sultana, R., Grover, J., & Tripathi, M. (2024). Intelligent defense strategies: Comprehensive attack detection in VANET with deep reinforcement learning. Pervasive and Mobile Computing.

- Zhao, J., Huang, F., Liao, L., & Zhang, Q. (2023). Blockchain-based trust management model for vehicular ad hoc networks. IEEE Internet of Things Journal, 8132.

- Volikatla, H., Thomas, J., Gondi, K., Indugu, V. V. R., & Bandaru, V. K. R. (2022). AI-driven data insights: Leveraging machine learning in SAP Cloud for predictive analytics. International Journal of Digital Innovation, 3.

- Volikatla, H., Thomas, J., Gondi, K., Bandaru, V. K. R., & Indugu, V. V. R. (2020). Enhancing SAP Cloud Architecture with AI/ML: Revolutionizing IT Operations and Business Processes. Journal of Big Data and Smart Systems, 1.

- Volikatla, H., Thomas, J., Bandaru, V. K. R., Gondi, D. S., & Indugu, V. V. R. (2021). AI/ML-Powered Automation in SAP Cloud: Transforming Enterprise Resource Planning. International Journal of Digital Innovation, 2.

- Abadi, M., Barham, P., Chen, J., Chen, Z., Davis, A., Dean, J., ... & Zheng, X. (2016). TensorFlow: A system for large-scale machine learning.

- Ahmed, M., Mahmood, A. N., & Hu, J. (2016). A survey of network anomaly detection techniques. Journal of Network and Computer Applications, 60, 19–31.

- Baldi, P. (2012). Autoencoders, unsupervised learning, and deep architectures. In Proceedings of ICML Workshop on Unsupervised and Transfer Learning, 37–49.

- Cao, W., Wang, D., Li, J., Zhou, H., Li, L. E., & Yu, P. S. (2018). BRITS: Bidirectional recurrent imputation for time series. Advances in Neural Information Processing Systems, 31.

- Chandola, V., Banerjee, A., & Kumar, V. (2009). Anomaly detection: A survey. ACM Computing Surveys.

- Chen, C., Zhang, J., Qiu, M., Wu, D., Zhang, Y., & Long, K. (2020). A survey on anomaly detection in road traffic using visual surveillance. IEEE Transactions on Intelligent Transportation Systems, 22, 3897–3916.

- Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep learning. MIT Press.

- Guo, W., & Zhang, C. (2022). Autoencoder-based anomaly detection in traffic data for smart cities. Sensors.

- He, K., Zhang, X., Ren, S., & Sun, J. (2016). Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 770–778.

- Hochreiter, S., & Schmidhuber, J. (1997). Long short-term memory. Neural Computation, 9, 1735–1780.

- Jain, A., & Sharma, R. (2021). Anomaly detection in intelligent transportation systems using hybrid deep learning model. Journal of Big Data, 8, 134.

- Kingma, D. P., & Welling, M. (2014). Auto-encoding variational Bayes. arXiv preprint arXiv:1312.6114.

- Kim, H., Kim, T., & Kim, H. (2021). Deep autoencoder-based anomaly detection for road traffic sensors. IEEE Access.

- Li, Y., Liu, S., Liu, Y., & Jiang, C. (2019). A survey on deep learning for anomaly detection. Complexity, 2019, 1–23.

- Liu, F. T., Ting, K. M., & Zhou, Z. H. (2008). Isolation forest. In 2008 Eighth IEEE International Conference on Data Mining (pp. 413–422). IEEE.

- Lv, Y., Duan, Y., Kang, W., Li, Z., & Wang, F. Y. (2015). Traffic flow prediction with big data: A deep learning approach. IEEE Transactions on Intelligent Transportation Systems.

- Ng, A. (2011). Sparse autoencoder. CS294A Lecture Notes, Stanford University.

- Sakurada, M., & Yairi, T. (2014). Anomaly detection using autoencoders with nonlinear dimensionality reduction. In Proceedings of the MLSDA 2014 2nd Workshop on Machine Learning for Sensory Data Analysis, 4–11.

- Yu, R., Li, Y., Shahabi, C., Demiryurek, U., & Liu, Y. (2017). Deep learning: A generic approach for extreme condition traffic prediction. In Proceedings of the 2017 SIAM International Conference on Data Mining, 777–785.

- Zhang, Y., Zheng, Y., & Qi, D. (2017). Deep spatio-temporal residual networks for citywide crowd flows prediction. In Proceedings of the AAAI Conference on Artificial Intelligence, 31(1).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).