2. Literature Review

While cybersecurity behavior is shaped by a wide range of organizational, cultural, and infrastructural influences, our focus is restricted to individual-level predictors. In line with the study’s goal—to build interpretable models from user-reported data—we exclude factors such as organizational security culture, policy enforcement, and community-level deterrents, which, though important (e.g., Glaspie & Karwowski, 2018; Lahcen et al., 2020), fall outside the scope of our end-user-centered framework.

This section is organized into three parts.

Section 2.1 introduces the five thematic domains that served as predictors in our modeling framework: Work–Life Blurring, Risk Rationalization, Cybersecurity Behavior, Digital Literacy, and Personality Traits. These domains were selected for their conceptual distinctiveness, empirical grounding, and applicability in behaviorally driven cybersecurity research. Each domain reflects a specific pathway through which users may become vulnerable to digital threats, and their operationalization in the survey enabled interpretable, item-level analysis.

Section 2.2 turns to the outcome side of our model: the typology of cybersecurity incidents reported by participants. Drawing on prior work in digital behavior research, we classify incidents into mild and serious categories to capture the full spectrum of consequences. We also introduce composite outcome variables designed to improve robustness in classification.

Finally, Section 2.3 reviews prior approaches to predictive modeling in cybersecurity, focusing on how user-level vulnerability has been estimated using survey-based scoring tools, real-time behavioral analytics, and hybrid systems that combine static and dynamic inputs. Despite growing calls for human-centered cybersecurity, surprisingly little is known about the actual predictive power of behavioral and psychological variables in forecasting real-world incidents. Much of the existing literature offers conceptual frameworks or retrospective accounts but stops short of rigorously testing whether behavioral indicators meaningfully outperform, or even complement, technical or organizational predictors. As such, the empirical strength of the behavioral perspective remains an open question. This study seeks to address that gap by evaluating the predictive contributions of multiple behavioral, cognitive, and dispositional domains in explaining incident occurrence. This context provides a methodological foundation for our use of logistic regression and situates our approach within ongoing efforts to operationalize human-centered cybersecurity through scalable and adaptive predictive tools.

Together, these three sections provide the conceptual and empirical basis for the predictive models presented in the remainder of the paper.

2.1. Behavioral-Cognitive Domains of Cyber Risk

This section presents the five-domain conceptual framework that underpins our predictive modeling of cybersecurity incident risk.

Although Work–Life Blurring (WLB) emerged in this study as a weaker direct predictor of cybersecurity incidents, its theoretical relevance as a contextual amplifier of digital risk remains compelling. WLB refers to the erosion of traditional boundaries between professional and personal domains, often driven by ubiquitous connectivity, mobile technologies, and flexible work arrangements. Drawing from boundary theory and segmentation–integration models (Ashforth et al., 2000; Kossek et al., 2012; Nippert-Eng, 1996), WLB captures individuals’ capacity (or lack thereof) to maintain psychological detachment and role clarity in hybrid environments. Prior studies have linked WLB to increased technostress, digital fatigue, and self-regulatory depletion, all of which are hypothesized precursors to risky digital behavior (Derks et al., 2014; Mazmanian et al., 2013; Wajcman et al., 2010). Recent conceptual reviews employing the Antecedents–Decisions–Outcomes (ADO) framework further underscore the multidimensionality of WLB, highlighting how various antecedents, including organizational expectations and digital norms, interact with individual coping strategies and behavioral outcomes (Singh et al., 2022). Other empirical evidence suggests that WLB may not directly cause security lapses but instead creates gray zones in which secure and insecure behaviors coexist without clear norms or oversight (Borkovich & Skovira, 2020; Thilagavathy & Geetha, 2023).

In operational terms, our survey captured WLB through items measuring difficulty in detachment from work-related communications, frequency of cross-domain device sharing, and perceived expectations of constant availability.

Risk Rationalization (RR) refers to cognitive mechanisms by which individuals justify their security-compromising actions. This concept extends Bandura’s (1999) theory of moral disengagement and is aligned with neutralization theory from criminology, as applied in information systems security contexts (Siponen & Vance, 2010; Willison et al., 2018). Users may, for instance, rationalize bypassing a security step as a necessity due to work urgency or consider it harmless if no immediate consequence follows (Cheng et al., 2014; Posey et al., 2011). Recent studies have reframed such justifications as a form of “cybersecurity hygiene discounting,” wherein individuals diminish the perceived importance of routine protective behaviours—such as software updates or secure password use—based on convenience or perceived irrelevance (Siponen et al., 2024). This reframing builds on and expands traditional neutralization theory by emphasizing not only post-hoc rationalizations but also proactive cognitive shortcuts that devalue protective norms. Empirical work further confirms that specific rationalization techniques, such as denial of responsibility and metaphor of the ledger, significantly predict misuse of organizational information systems, even when policy awareness and deterrence are present (Mohammed et al., 2025). These developments highlight RR not merely as a residual factor but as a central cognitive mechanism actively shaping security compliance and noncompliance in workplace settings.

Our survey included RR items reflecting common justifications: blaming inconvenience, shifting responsibility, or citing lack of clarity in policy requirements.

Cybersecurity Behaviour (CB) encompasses tangible user actions that either mitigate or amplify digital risk exposure. Foundational research has linked insecure practices (such as habitual password reuse, neglecting software updates, and sharing personal devices) to elevated organizational vulnerabilities (Hadlington, 2018; Pfleeger et al., 2014; Vance et al., 2012). Parallel findings have also emerged in higher education, where survey-based models have revealed distinct behavioral dimensions shaping students’ cybersecurity awareness and practices (Bognár & Bottyán, 2024). Conversely, secure behaviours like enabling multi-factor authentication (MFA) and regularly updating credentials have been shown to significantly reduce breach likelihood (Bulgurcu et al., 2010; Ng et al., 2009). Later empirical work continues to support these findings. Zwilling et al. (2020), in a cross-national survey, found that despite relatively high awareness, individuals often failed to adopt protective behaviours such as using strong and unique passwords or enabling MFA. Recent studies reinforce the continued importance of cybersecurity behaviours. A 2025 systematic review of MFA in digital payment systems found that, despite its clear protective benefits, MFA remains underutilized due to usability challenges and misalignment with NIST standards (Tran-Truong et al., 2025). In parallel, usage trends show many small and medium-sized businesses still lagging: 65% reported not implementing MFA, often citing cost and lack of awareness. Regarding password hygiene, Radwan and Zejnilovic (2025) reported that nearly half of observed user logins are compromised due to password reuse. Complementary data from JumpCloud (Blanton, 2024) revealed that up to 30% of organizational breaches involve password sharing, reuse, or phishing, highlighting how user behaviour continues to drive risk. These findings underscore the persistent relevance of secure digital habits and the necessity of tracking them at the user level for effective cybersecurity risk assessment.

Our behavioural scale was designed to reflect these ongoing risks by including both negatively and positively framed items that capture users' actual digital practices—such as MFA usage and password management—rather than relying solely on self-perceived awareness.

Digital Literacy (DL) incorporates both general digital skills and cybersecurity-specific awareness. Foundational scholarship by Gilster (1997) first described digital literacy as the capacity to “…understand and use information from a variety of digital sources,” establishing early recognition of its multifaceted nature. Subsequent work by DiMaggio and Hargittai (2001) expanded this to include both access to technology and the skills to use it effectively. Hargittai (2005) further refined the concept, emphasizing users’ navigational competence and evaluative judgment online. Park (2011) showed that knowing how the internet works, how companies use data, and what privacy policies mean helps people take better control of their online privacy, highlighting why digital literacy matters for cybersecurity behaviour. Later, van Deursen and van Dijk (2014) introduced a layered model of digital literacy encompassing operational, formal, and critical dimensions, areas crucial for interpreting security-related signals. Recent studies reinforce DL’s role in cybersecurity resilience. A qualitative study found that low digital literacy increases the vulnerability of both individuals and organizations to cyberattacks (Ramadhany et al., 2025). In Vietnam, Phan et al. (2025) reported that higher digital literacy predicts better personal information security behaviors, indicating that stronger technical and policy awareness leads to safer online actions. Broader evidence also shows that people with higher digital literacy are significantly better at spotting phishing scams and other online threats (Ismael, 2025). These findings underscore that DL is not just theoretical; they confirm that it is a measurable and trainable asset essential for reducing cybersecurity risk.

Our DL scale thus captures confidence and competence in managing digital risk—evaluating email legitimacy, interpreting URLs, and identifying secure connections. Items also reflect functional digital fluency, procedural autonomy, and emotional self-regulation, including technostress.

Personality Traits (P) have long been recognized as foundational predictors of individual behaviour across domains, including cybersecurity. The Big Five model (McCrae & Costa, 1999) remains the most widely adopted framework in this context, encompassing five dimensions: Openness to Experience, Conscientiousness, Extraversion, Agreeableness, and Neuroticism. Several other traits frequently investigated in cybersecurity research (such as Impulsivity, Vigilance, or Trust Propensity) can be understood as facets or behavioural expressions rooted in these five broader domains. Impulsivity is often conceptualized as a facet of low Conscientiousness (e.g., lack of discipline, poor self-control) or high Neuroticism (e.g., emotional reactivity, stress-driven decisions). Its inclusion in cybersecurity research reflects its strong predictive value for risky behaviour (Gratian et al., 2018; Hadlington, 2017; McCormac et al., 2017). Research on personality and privacy suggests that individuals high in Agreeableness and Openness to Experience often show heightened concern for personal data and privacy-preserving behavior. For example, they tend to value ethical norms and communal responsibility, which motivates cautious sharing and adherence to privacy settings (Junglas et al., 2008; Li et al., 2012; Shappie et al., 2020). Conversely, empirical findings in online behavior indicate that higher Openness is sometimes associated with more extensive information disclosure (such as increased posting activity and less restrictive privacy settings on social platforms), suggesting a complex, context-dependent relationship (Halevi et al., 2013). Thus, while the theoretical expectation is that Agreeableness and Openness promote privacy-conscious behavior, actual outcomes may vary depending on the context and on how the trait manifests.

Our measurement of personality traits reflects a behaviorally grounded adaptation of the Big Five. Openness was assessed through items reflecting curiosity toward new technologies and willingness to explore digital tools. Conscientiousness captured attention to digital organization and task follow-through. Extraversion was reflected in social media participation and online community engagement, while Agreeableness focused on conflict aversion and valuing others' digital privacy. Neuroticism was indexed by anxiety about unpredictability and loss of digital control.

2.2. Self-Reported Cybersecurity Incidents: Typology and Prior Research

To model cybersecurity risk effectively, it is essential not only to identify predictive factors but also to define meaningful outcome variables. In our study, we classify six self-reported incident types into two severity categories. Mild incidents (INC1–INC3) include account lockouts, forced password resets, or unauthorized login notifications, while Serious incidents (INC4–INC6) refer to financial loss, impersonation, or total device compromise. This two-tiered typology reflects both user-level consequences and broader systemic risks. It aligns with existing empirical categorizations that distinguish digital harms by severity, user awareness, and operational disruption (Buil-Gil et al., 2023; Redmiles et al., 2016; Wash & Cooper, 2018). Although self-reports are susceptible to recall bias and semantic variability, structured survey instruments grounded in concrete behavioral language can yield valid insights into cybersecurity exposure, especially when log data are unavailable (Buil-Gil et al., 2023). These instruments are particularly effective when paired with typological frameworks that make distinctions users can easily understand and recall. Recent conceptual advances also support the importance of severity-based models. Conard (2024) proposed the Cybersecurity Incident Severity Scale (CISS), a multidimensional framework that formalizes severity by integrating technical, operational, and human-centric impact factors. Drawing on analogies from emergency response and public health (e.g., the Richter and NIH stroke scales), the CISS model allows researchers and practitioners to assign meaningful weight to diverse outcomes, including disruptions to workflow, psychological distress, or reputational damage. While our study does not adopt CISS scoring directly, it shares the foundational aim of quantifying cyber harm beyond a single binary breach/no-breach outcome.

In our survey, participants responded to six binary (yes/no) items indicating whether they had experienced specific cybersecurity incidents in the past. In addition to analysing these individual outcomes, we also constructed composite variables, for example AtLeastOneMild and AtLeastOneSerious, to improve statistical robustness and capture the breadth of user-reported experiences. This approach allows us to map behavioural predictors to cybersecurity consequences with greater granularity and practical relevance.
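As an illustration of how such composite outcomes could be derived from the six binary items, the following minimal Python sketch flags a respondent as AtLeastOneMild or AtLeastOneSerious when any item in the corresponding severity group is answered "yes". The item keys INC1 to INC6 follow the labels used above; the 0/1 coding, the function name, and the example respondents are assumptions made for illustration and are not taken from the study's data or code.

```python
from typing import Dict, List

# Severity groups as described in the text: INC1-INC3 mild, INC4-INC6 serious.
MILD_ITEMS: List[str] = ["INC1", "INC2", "INC3"]
SERIOUS_ITEMS: List[str] = ["INC4", "INC5", "INC6"]


def add_composites(response: Dict[str, int]) -> Dict[str, int]:
    """Return a copy of one respondent's answers with two composite flags.

    Assumes each incident item is coded 1 (yes) or 0 (no).
    """
    coded = dict(response)
    coded["AtLeastOneMild"] = int(any(response[k] == 1 for k in MILD_ITEMS))
    coded["AtLeastOneSerious"] = int(any(response[k] == 1 for k in SERIOUS_ITEMS))
    return coded


# Hypothetical respondents, invented for illustration only.
respondents = [
    {"INC1": 0, "INC2": 1, "INC3": 0, "INC4": 0, "INC5": 0, "INC6": 0},
    {"INC1": 0, "INC2": 0, "INC3": 0, "INC4": 0, "INC5": 0, "INC6": 0},
    {"INC1": 1, "INC2": 0, "INC3": 0, "INC4": 0, "INC5": 1, "INC6": 0},
]

coded_respondents = [add_composites(r) for r in respondents]

for row in coded_respondents:
    print(row["AtLeastOneMild"], row["AtLeastOneSerious"])
```

Because a composite is positive whenever any of its component items is positive, it has a base rate at least as high as that of its most frequent component, which is one reason such variables can be easier to model than rare individual incidents.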

2.3. Predictive Modeling in Cybersecurity Research

Historically, much cybersecurity research has focused on predicting behavioural intentions or compliance-related patterns—such as willingness to follow security policies, password reuse, or responses to phishing simulations (Hanus & Wu, 2016; McCormac et al., 2017). While such models are valuable for understanding user psychology and identifying risk-prone behaviour, they are often limited in practical application, as their response variables typically reflect proxy measures—like self-reported intentions or isolated behavioural tendencies—rather than actual, user-reported security incidents. The challenge, then, is how to bridge the gap between theoretically grounded survey constructs and the operational prediction of real-world security events that carry tangible consequences for users and organizations alike.

Our study addresses this gap by using actual self-reported incidents as outcome variables, including both mild and serious events. This approach is conceptually consistent with the call for human-centered modeling in cybersecurity (Diesch et al., 2020; Glaspie & Karwowski, 2018) yet methodologically optimized for operational application: each predictor reflects a directly observable, interpretable, and potentially modifiable aspect of individual digital behavior.

While some may argue that survey-based models lack the immediacy or behavioral granularity of telemetry-based systems, recent work has demonstrated that well-constructed surveys can yield meaningful and predictive insights into incident susceptibility. Notably, de Bruin (2022) developed multilevel models using survey data from over 27,000 EU citizens and 9,000 enterprise users, linking individual characteristics—such as prior incident experience, digital confidence, and national cultural context—to actual phishing simulation outcomes and configuration behaviors. Although the study focused on behavioral outcomes rather than incident labels per se, its integration of cultural, organizational, and psychological predictors showed that survey-derived data can approximate real-world risk conditions when designed thoughtfully.

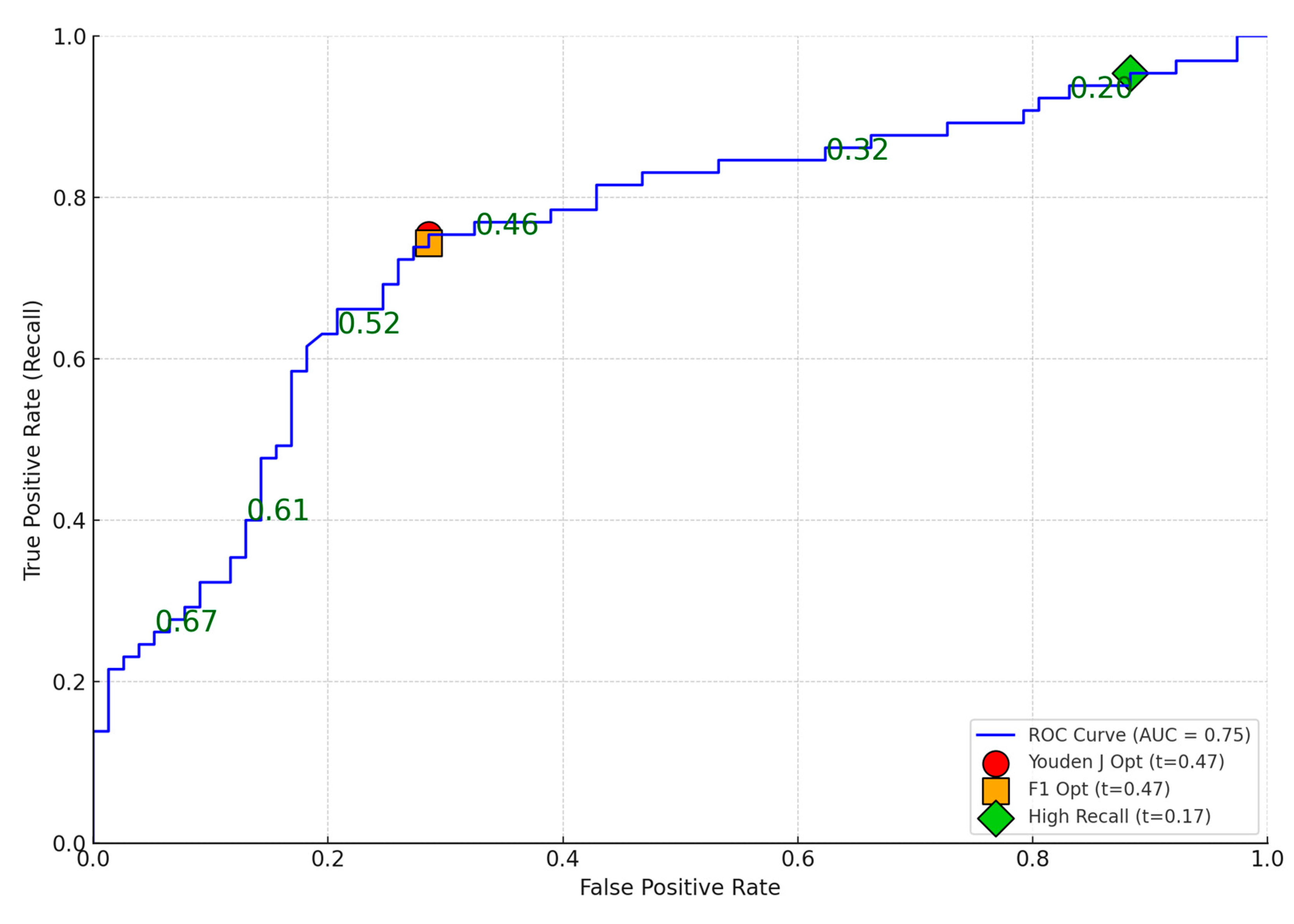

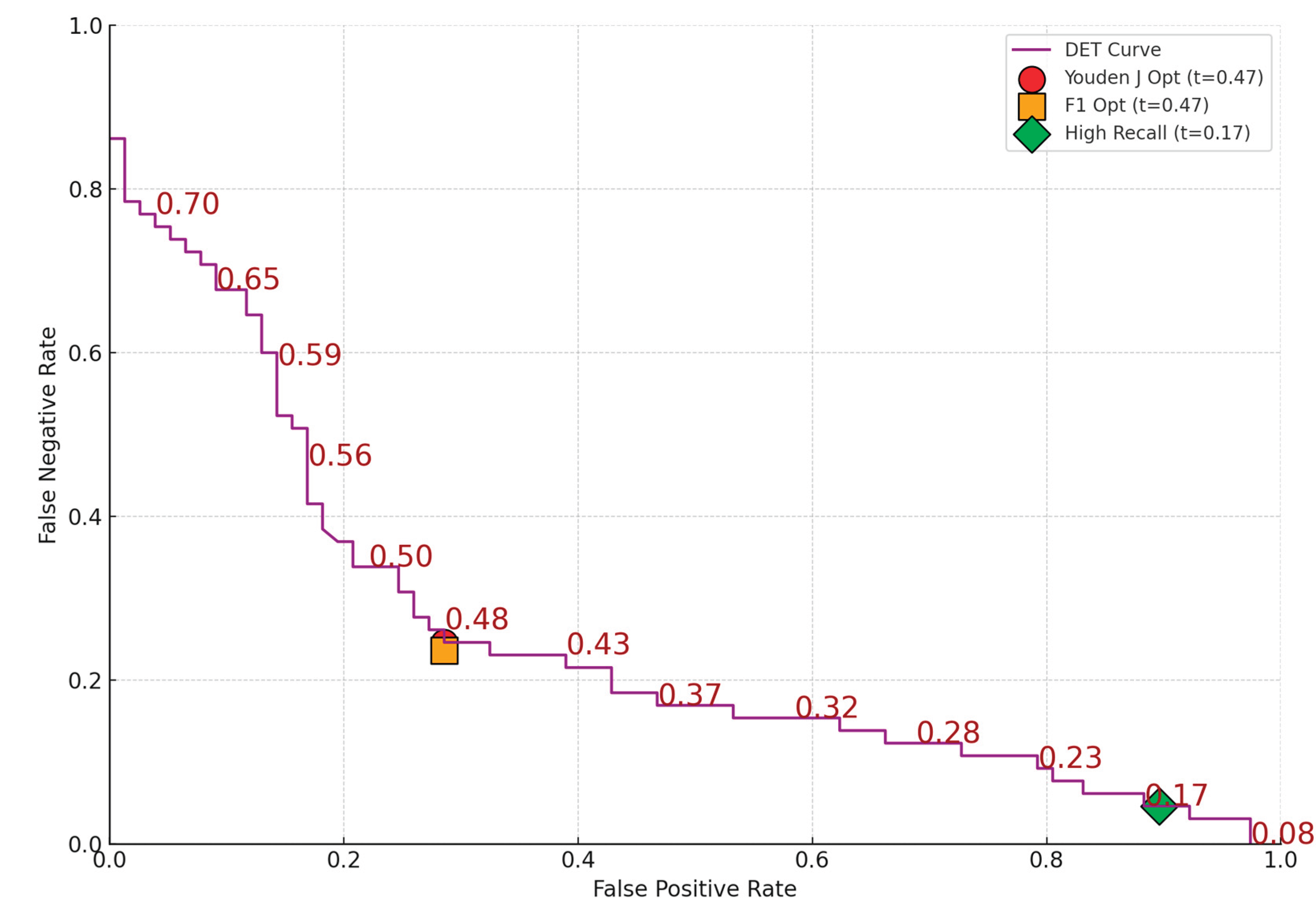

Our approach builds on and extends this logic. Rather than predicting behavioral proxy outcomes (e.g., clicks in phishing simulations), we directly model self-reported security incidents—a more ecologically valid and practically relevant endpoint. The use of logistic regression on item-level survey data offers two core advantages. First, it circumvents the need for strict unidimensionality, which, as our factor analysis showed, was lacking in domains based on diverse behavioral indicators (Lahcen et al., 2020). Second, it allows each survey item to serve as a transparent predictor, which can be immediately interpreted, acted upon, and potentially integrated into personalized risk assessments or training interventions.
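For reference, the item-level logistic model described above can be written in its standard textbook form. The notation is an assumption introduced here for illustration: $Y_i$ is a binary incident outcome for respondent $i$, $x_{ij}$ is that respondent's answer to survey item $j$, and $\beta_0, \beta_1, \dots, \beta_k$ are the coefficients to be estimated.

$$
\Pr(Y_i = 1 \mid x_{i1}, \dots, x_{ik}) = \frac{1}{1 + \exp\left(-\left(\beta_0 + \sum_{j=1}^{k} \beta_j x_{ij}\right)\right)}
$$

Equivalently, the log-odds are linear in the items:

$$
\log \frac{\Pr(Y_i = 1)}{1 - \Pr(Y_i = 1)} = \beta_0 + \sum_{j=1}^{k} \beta_j x_{ij}
$$

Under this form, $\exp(\beta_j)$ is the multiplicative change in the odds of reporting an incident for a one-unit increase in item $j$, holding the other items fixed. This is what makes each item directly interpretable without first aggregating items into a unidimensional scale score.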

In operational contexts, survey-based predictive models can be transformed into practical scoring tools. These scores—whether expressed numerically or categorized into levels such as low, medium, or high—enable organizations to identify users with elevated susceptibility to cybersecurity incidents based solely on self-reported inputs. When integrated into onboarding procedures, periodic training, or targeted assessments, such tools allow for scalable, privacy-preserving interventions, including just-in-time prompts, personalized awareness campaigns, or access restrictions. Importantly, this approach maintains interpretability and transparency, distinguishing it from opaque machine learning systems often embedded in security infrastructure. However, survey-based models may lack temporal sensitivity, prompting researchers and practitioners to explore hybrid approaches that combine static user characteristics with real-time telemetry. One increasingly adopted solution is User and Entity Behaviour Analytics (UEBA), which enhances detection by linking baseline profiles with dynamic activity patterns such as login behaviour, device usage, or authentication anomalies (Khaliq et al., 2020). In such frameworks, survey-based scores can function as front-end risk indicators or baseline inputs that are subsequently refined through behavioural monitoring (Diesch et al., 2020; Danish, 2024). These developments reflect a growing consensus that predictive human-risk modelling is most effective when embedded within adaptive, multi-channel security architectures that integrate cognitive, dispositional, and behavioural dimensions in real time.
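A minimal Python sketch of such a scoring tool is given below, assuming a logistic model has already been fitted. All specifics are hypothetical: the item names, the intercept and coefficient values, and the cut-offs for the low, medium, and high levels are invented for illustration and are not estimates or thresholds from this study.

```python
import math
from typing import Dict

# Hypothetical log-odds coefficients; NOT estimates from the study.
INTERCEPT = -2.0
COEFFICIENTS: Dict[str, float] = {
    "reuses_passwords": 0.9,   # assumed risk-increasing item
    "uses_mfa": -0.7,          # assumed protective item
    "shares_devices": 0.5,     # assumed risk-increasing item
}


def predicted_probability(answers: Dict[str, int]) -> float:
    """Map binary survey answers (0/1) to a predicted incident probability."""
    linear = INTERCEPT + sum(
        coef * answers.get(item, 0) for item, coef in COEFFICIENTS.items()
    )
    return 1.0 / (1.0 + math.exp(-linear))


def risk_level(probability: float, low_cut: float = 0.15, high_cut: float = 0.30) -> str:
    """Bucket a probability into low/medium/high using assumed cut-offs."""
    if probability < low_cut:
        return "low"
    if probability < high_cut:
        return "medium"
    return "high"


# Hypothetical users, invented for illustration only.
users = {
    "user_a": {"reuses_passwords": 0, "uses_mfa": 1, "shares_devices": 0},
    "user_b": {"reuses_passwords": 1, "uses_mfa": 0, "shares_devices": 0},
    "user_c": {"reuses_passwords": 1, "uses_mfa": 0, "shares_devices": 1},
}

levels = {name: risk_level(predicted_probability(a)) for name, a in users.items()}

for name, level in levels.items():
    print(name, level)
```

In practice the cut-offs would need to be chosen from the fitted model's calibration and the organization's tolerance for false positives; the fixed values here only show the mechanics of turning a transparent, item-level model into a categorical score.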