Submitted: 27 July 2025

Posted: 28 July 2025


Abstract

Keywords:

1. Introduction

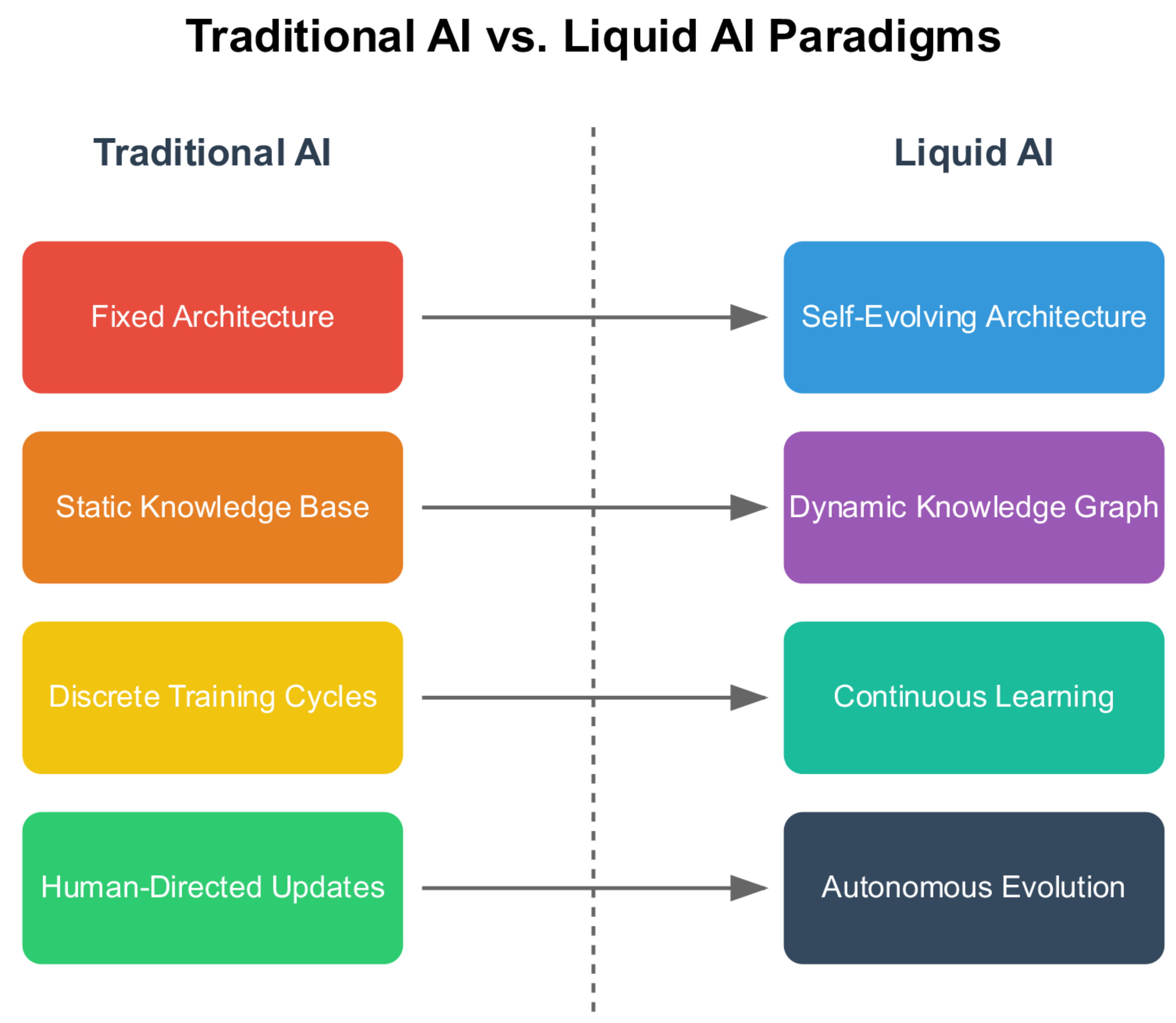

2. Current Limitations and Theoretical Opportunities

2.1. Architectural Constraints in Contemporary AI

| Capability | Liquid AI (Ours) | EWC [81] | MAML [17] | DARTS [82] | PackNet [70] | QMIX [83] |
|---|---|---|---|---|---|---|
| Architectural Adaptation | | | | | | |
| Runtime Architecture Modification | ✓ | × | × | × | × | × |
| Topological Plasticity | ✓ | × | × | × | × | × |
| Autonomous Structural Evolution | ✓ | × | × | × | × | × |
| Pre-deployment Architecture Search | N/A | × | × | ✓ | × | × |
| Learning Capabilities | | | | | | |
| Continual Learning | ✓ | ✓ | ✓ | × | ✓ | × |
| Catastrophic Forgetting Prevention | ✓ | ✓ | ✓ | N/A | ✓ | N/A |
| Cross-Domain Knowledge Transfer | ✓ | Limited | ✓ | × | Limited | × |
| Zero-Shot Task Adaptation | ✓ | × | ✓ | × | × | × |
| Self-Supervised Learning | ✓ | × | × | × | × | × |
| Knowledge Management | | | | | | |
| Dynamic Knowledge Graphs | ✓ | × | × | × | × | × |
| Entropy-Guided Optimization | ✓ | × | × | × | × | × |
| Cross-Domain Reasoning | ✓ | × | Limited | × | × | × |
| Temporal Knowledge Evolution | ✓ | × | × | × | × | × |
| Multi-Agent Capabilities | | | | | | |
| Emergent Agent Specialization | ✓ | N/A | N/A | N/A | N/A | × |
| Dynamic Agent Topology | ✓ | N/A | N/A | N/A | N/A | × |
| Collective Intelligence | ✓ | N/A | N/A | N/A | N/A | ✓ |
| Autonomous Role Assignment | ✓ | N/A | N/A | N/A | N/A | × |
| Performance Characteristics | | | | | | |
| Sustained Improvement | ✓ | × | × | × | × | × |
| Resource Efficiency | Adaptive | Fixed | Fixed | Fixed | Fixed | Fixed |
| Scalability | Unlimited | Limited | Limited | Limited | Limited | Moderate |
| Interpretability | Dynamic | Low | Low | Moderate | Low | Low |
| Deployment Flexibility | | | | | | |
| Online Adaptation | ✓ | Limited | Limited | × | Limited | Limited |
| Distributed Deployment | ✓ | × | × | × | × | ✓ |
| Hardware Agnostic | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |
| Real-Time Operation | ✓ | ✓ | ✓ | × | ✓ | ✓ |

Five Fundamental Limitations

- Parameter Rigidity
- Knowledge Fragmentation
- Human-Dependent Evolution
- Catastrophic Forgetting
- Limited Meta-Learning

2.2. Theoretical Foundations from Natural Systems

2.3. Core Contributions and Article Structure

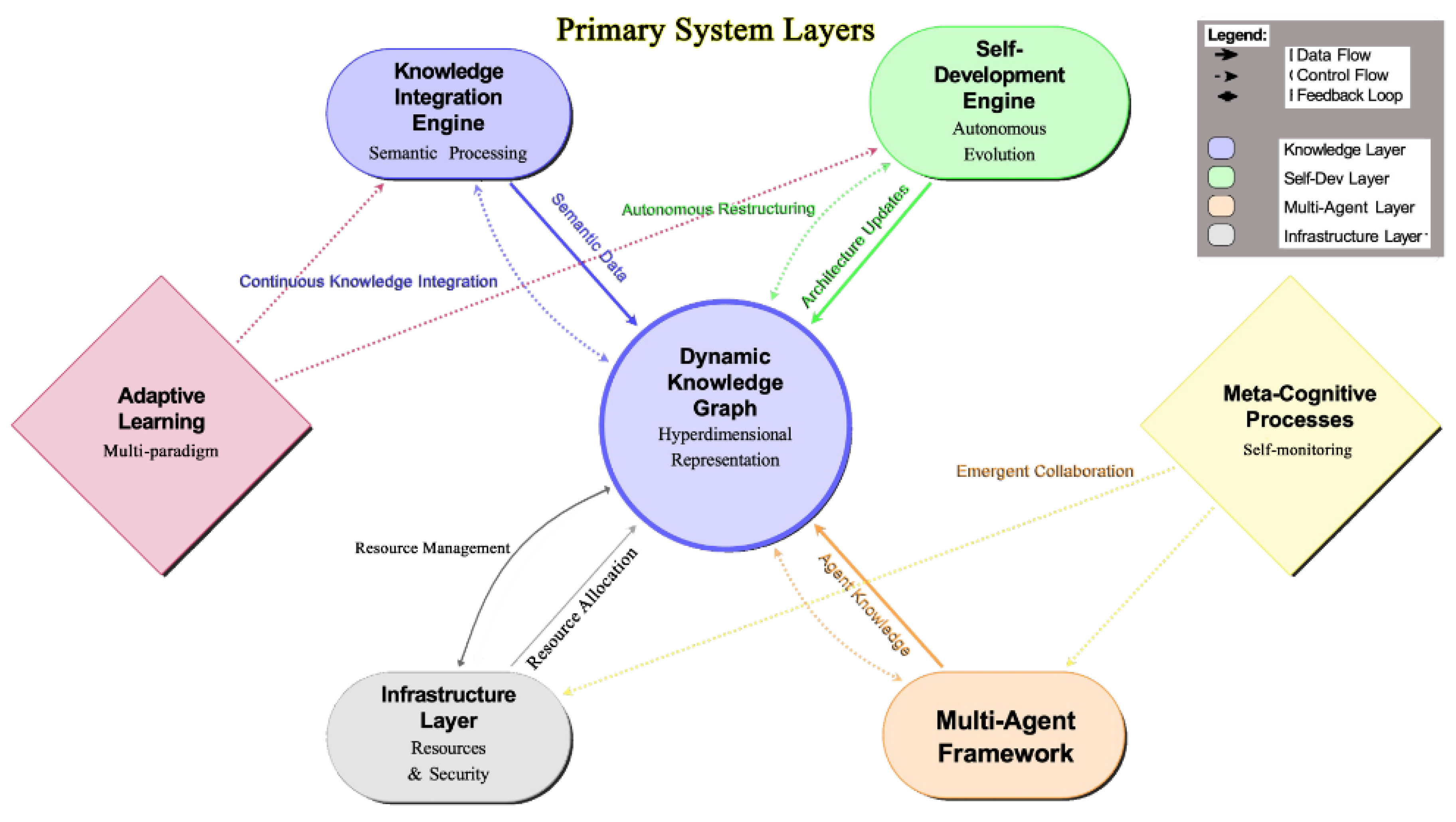

3. Liquid AI Architecture

3.1. Architectural Overview

3.2. Core System Components

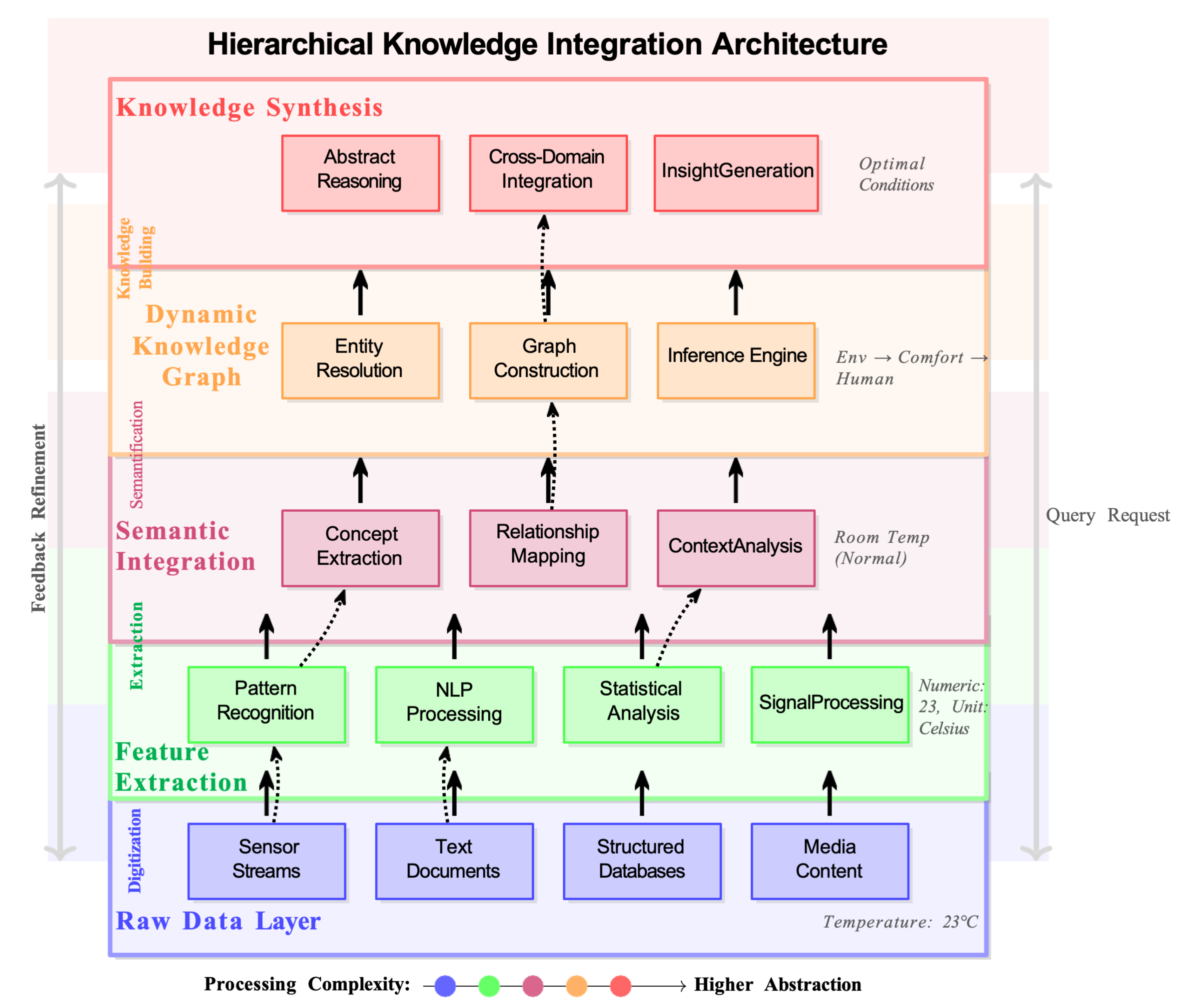

3.2.1. Dynamic Knowledge Graph

Algorithm 1. Dynamic Knowledge Graph Update

3.2.2. Self-Development Engine

Algorithm 2. Self-Development Process

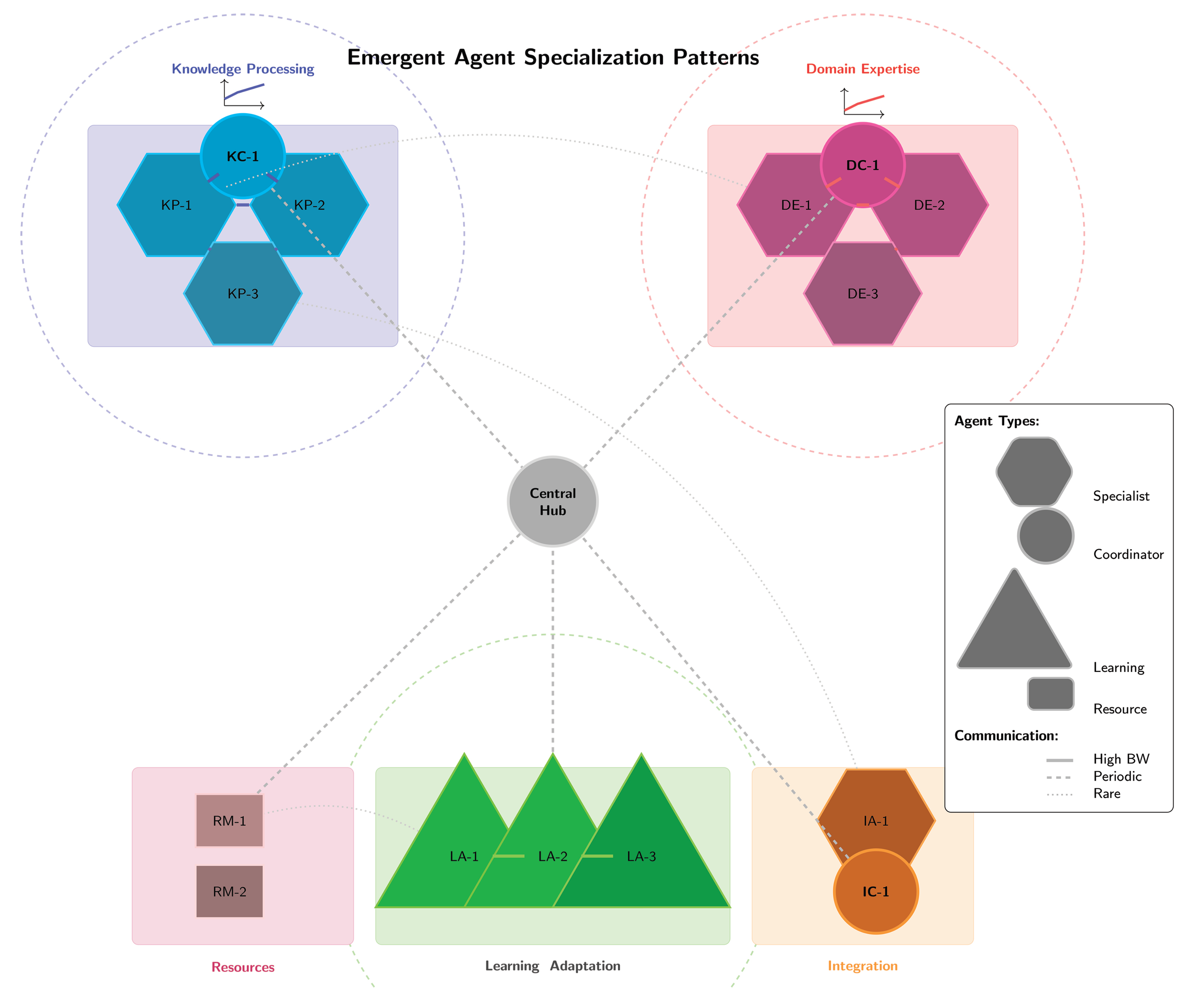

3.2.3. Multi-Agent Collaborative Framework

3.2.4. Adaptive Learning Mechanisms

3.2.5. Meta-Cognitive Processes

3.3. Information Flow and System Dynamics

3.3.1. Temporal Evolution

3.3.2. Information Propagation

3.3.3. Stability and Convergence

3.3.4. Information-Theoretic Optimization

3.3.5. Adaptive Computational Graphs

3.4. System Boundaries and Theoretical Guarantees

3.4.1. Environmental Interaction

3.4.2. Secure Containment

3.4.3. Theoretical Performance Bounds

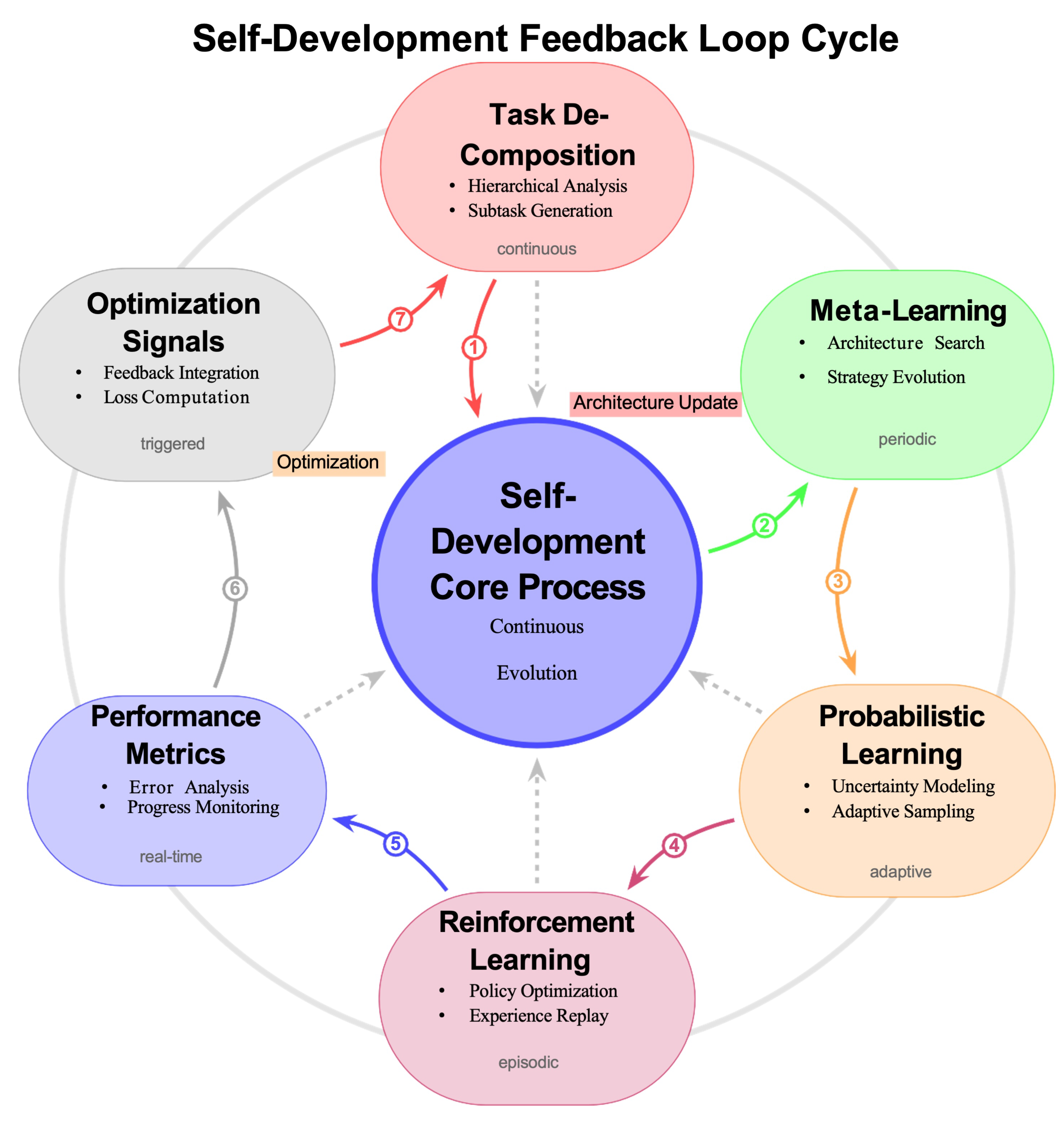

4. Self-Development Mechanisms

4.1. Foundational Principles of Self-Development

4.2. Hierarchical Task Decomposition

4.3. Meta-Learning for Architectural Adaptation

4.3.1. Bilevel Optimization

Algorithm 3. Online Bayesian Architecture Optimization

4.4. Probabilistic Program Synthesis

4.5. Reinforcement Learning for Architectural Evolution

4.6. Optimization Algorithms and Theoretical Analysis

4.7. Integrated Self-Development Framework

Algorithm 4. Adaptive Architecture Evolution

5. Multi-Agent Collaboration Framework

5.1. Theoretical Foundations of Multi-Agent Systems

5.2. Agent Architecture and Capabilities

5.3. Emergent Specialization and Dynamic Topology

Algorithm 5. Adaptive Agent Topology Evolution

5.4. Coordination Mechanisms

5.4.1. Decentralized Consensus

Algorithm 6. Adaptive Consensus Protocol

5.4.2. Hierarchical Organization

Algorithm 7. Meta-Agent Formation

5.5. Distributed Learning and Credit Assignment

5.5.1. Collaborative Policy Optimization

5.5.2. Multi-Agent Credit Assignment

6. Knowledge Integration Engine

6.1. Dynamic Knowledge Representation

6.1.1. Hyperdimensional Graph Neural Networks

Algorithm 8. Hyperdimensional Graph Evolution

6.2. Information-Theoretic Knowledge Organization

6.2.1. Transformer-Based Relational Reasoning

6.2.2. Cross-Domain Knowledge Synthesis

6.3. Distributed Knowledge Management

6.3.1. Federated Knowledge Aggregation

6.3.2. Semantic Memory and Retrieval

6.4. Uncertainty Quantification

6.5. Computational Infrastructure for Knowledge Processing

Algorithm 9. Adaptive Load Balancing

7. Implementation Considerations

7.1. Computational Complexity Analysis

7.1.1. Asymptotic Complexity

Algorithm 10. Efficient Knowledge Graph Query

7.2. Distributed Architecture and Resource Management

7.2.1. Hierarchical Processing

7.2.2. Dynamic Resource Allocation

7.3. Security and Privacy Considerations

7.4. Deployment and Monitoring

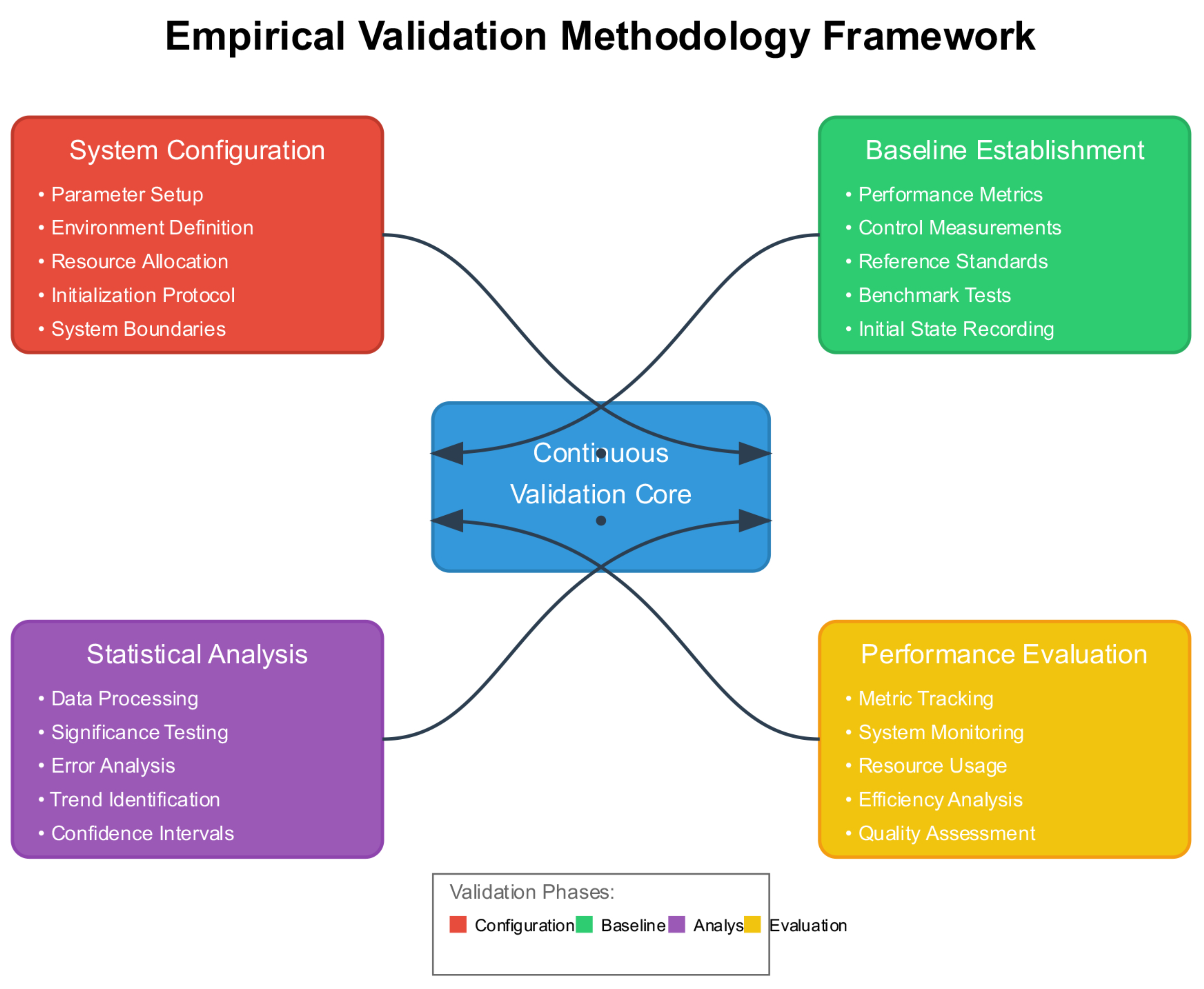

8. Evaluation Methodology

8.1. Challenges in Evaluating Adaptive Systems

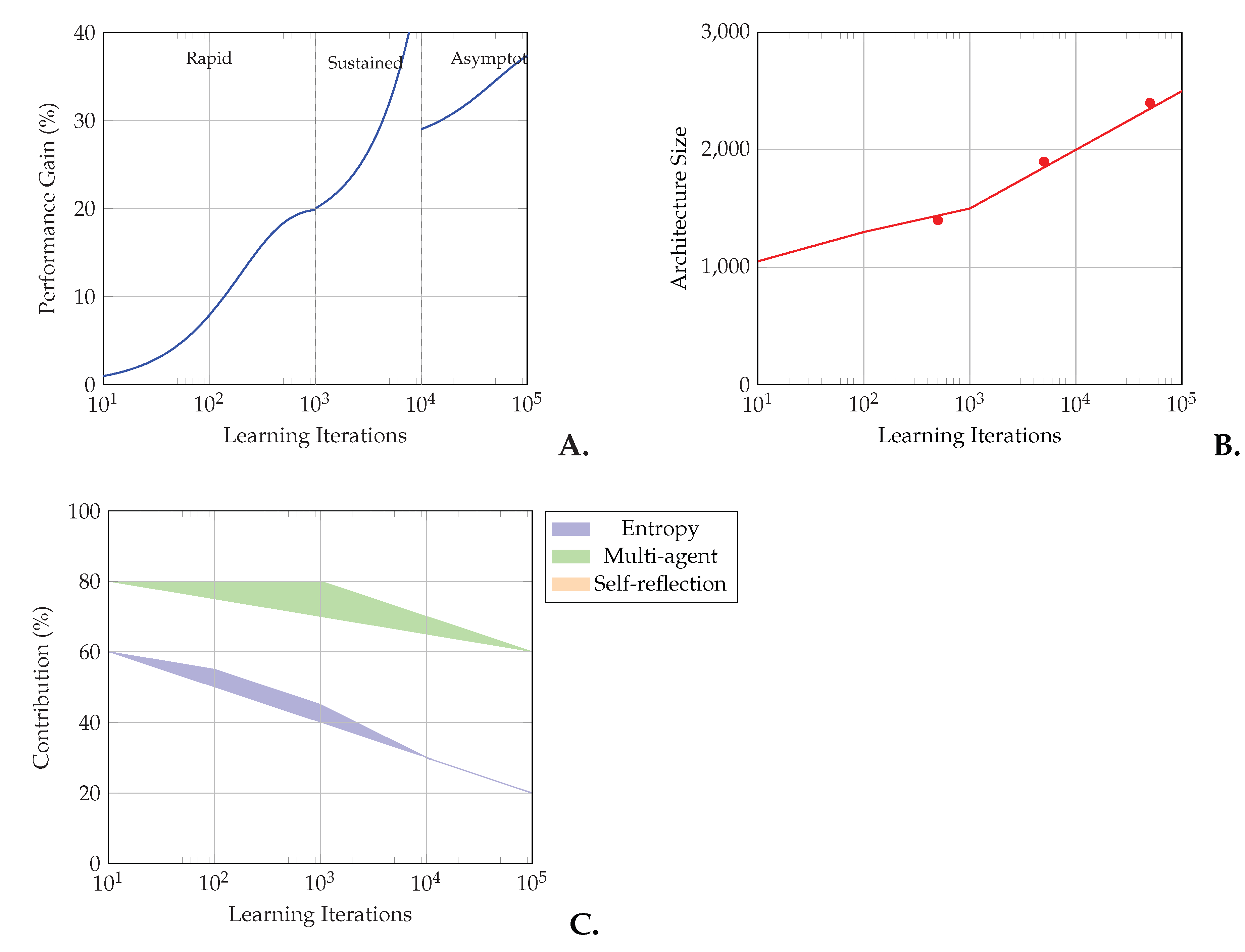

8.2. Temporal Performance Metrics

8.2.1. Capability Evolution Tracking

8.2.2. Multi-Domain Evaluation

Algorithm 11. Multi-Domain Task Construction

Algorithm 12. Adaptive Learning Assessment

8.3. Human-AI Interaction Evaluation

Algorithm 13. Interactive Adaptation Assessment

8.4. Safety and Deployment Validation

Algorithm 14. Safe Deployment for Self-Modifying Systems

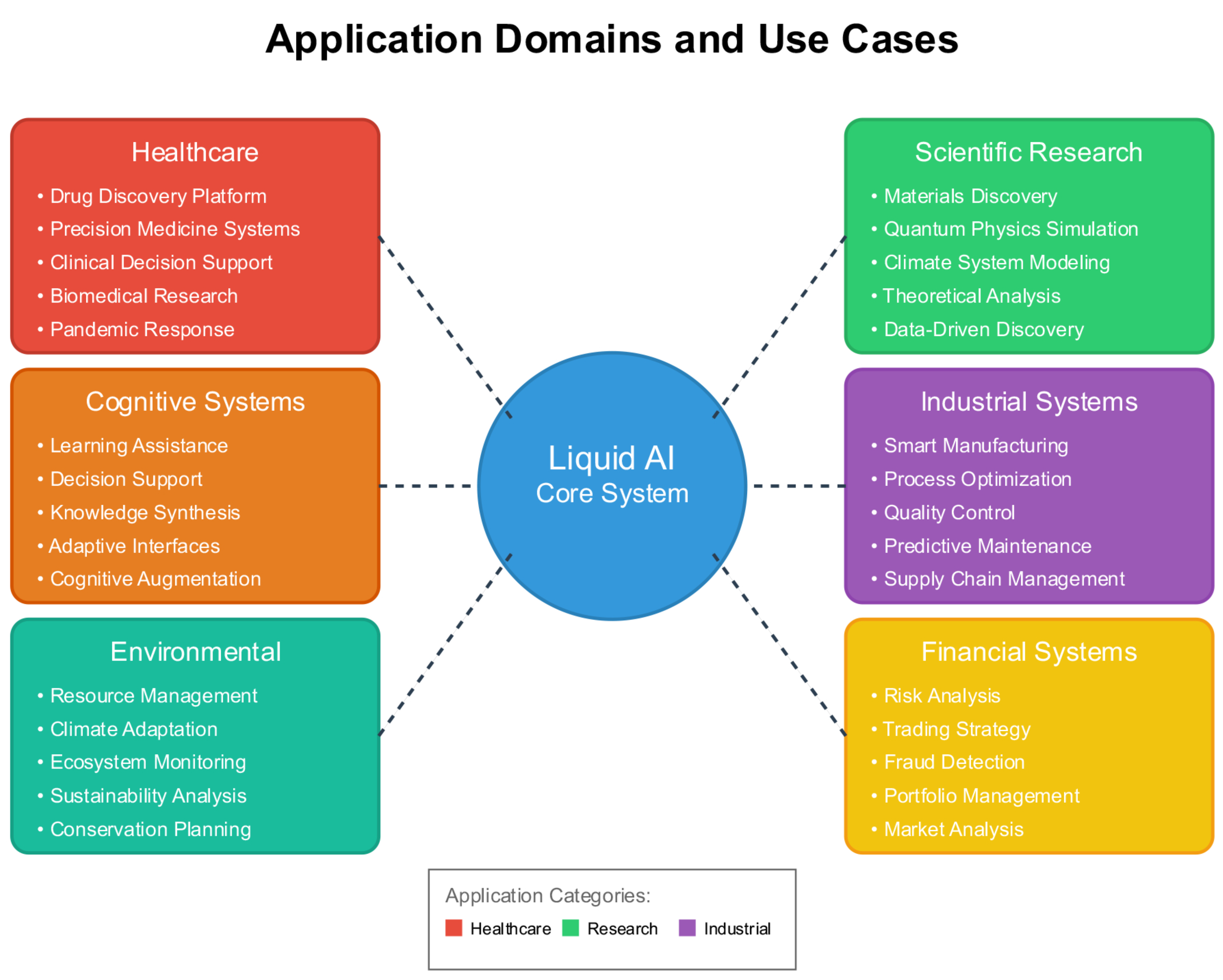

9. Applications and Use Cases

9.1. Healthcare and Biomedical Applications

9.2. Scientific Discovery

9.3. Industrial and Infrastructure Systems

10. Future Directions and Implications

10.1. Philosophical and Theoretical Implications

10.2. Technical Research Challenges

10.3. Societal Considerations

| Aspect | Challenge | Mitigation Strategy | Research Needs |
|---|---|---|---|
| Autonomy | Self-modification may lead to unintended behaviors | Bounded modification spaces, continuous monitoring | Formal verification methods for dynamic systems |
| Transparency | Evolving architectures complicate interpretability | Maintain modification logs, interpretable components | Dynamic explanation generation techniques |
| Accountability | Unclear responsibility for emergent decisions | Clear governance frameworks, audit trails | Legal frameworks for autonomous AI |
| Fairness | Potential for bias amplification | Active bias detection and mitigation | Fairness metrics for evolving systems |
| Privacy | Distributed knowledge may leak sensitive information | Differential privacy, secure computation | Privacy-preserving knowledge integration |
| Safety | Unpredictable emergent behaviors | Conservative modification bounds, rollback mechanisms | Safety verification for self-modifying systems |
| Control | Difficulty in stopping runaway evolution | Multiple kill switches, consensus requirements | Robust control mechanisms |
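Three of the mitigation strategies in the table (bounded modification spaces, modification logs, and rollback mechanisms) can be illustrated together in a few lines. The following is only a minimal sketch under stated assumptions, not the article's method: the class `GuardedConfig`, the function `monitored_update`, the 10% relative bound, and the `health_check` callback are all hypothetical names and values chosen for illustration.

```python
from copy import deepcopy
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class GuardedConfig:
    """Hypothetical wrapper around a set of numeric architecture parameters."""
    params: Dict[str, float]
    max_rel_change: float = 0.1  # assumed bound: at most 10% change per step
    log: List[dict] = field(default_factory=list)                   # modification log
    history: List[Dict[str, float]] = field(default_factory=list)  # snapshots for rollback

    def propose(self, name: str, new_value: float) -> bool:
        """Apply a change only if it stays inside the bounded modification space."""
        old = self.params[name]
        limit = self.max_rel_change * max(abs(old), 1e-8)
        accepted = abs(new_value - old) <= limit
        # Every proposal is logged, whether or not it is accepted.
        self.log.append({"param": name, "old": old, "new": new_value, "accepted": accepted})
        if accepted:
            self.history.append(deepcopy(self.params))
            self.params[name] = new_value
        return accepted

    def rollback(self) -> None:
        """Restore the most recent snapshot, if one exists."""
        if self.history:
            self.params = self.history.pop()


def monitored_update(cfg: GuardedConfig, name: str, new_value: float,
                     health_check: Callable[[Dict[str, float]], bool]) -> bool:
    """Apply a bounded change, then roll it back if the health check fails."""
    if not cfg.propose(name, new_value):
        return False
    if not health_check(cfg.params):
        cfg.rollback()
        return False
    return True
```

In this sketch an out-of-bounds proposal is rejected before it is applied, while an in-bounds proposal that later fails monitoring is reverted from the snapshot; both cases leave an entry in the log, which is what an audit trail would need.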

10.4. Long-Term Research Trajectories

11. Conclusions

Supplementary Materials

Author Contributions

References

1. LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.
2. Goodfellow, I.; Bengio, Y.; Courville, A. Deep learning. MIT Press, 2016.
3. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is all you need. Advances in Neural Information Processing Systems 2017, 30, 5998–6008.
4. Sutton, R.S.; Barto, A.G. Reinforcement learning: An introduction. MIT Press, 2018.
5. Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; ... Amodei, D. Language models are few-shot learners. Advances in Neural Information Processing Systems 2020, 33, 1877–1901.
6. Chowdhery, A.; Narang, S.; Devlin, J.; Bosma, M.; Mishra, G.; Roberts, A.; ... Fiedel, N. PaLM: Scaling language modeling with pathways. Journal of Machine Learning Research 2023, 24, 1–113.
7. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. IEEE Conference on Computer Vision and Pattern Recognition 2016, 770–778.
8. Howard, J.; Ruder, S. Universal language model fine-tuning for text classification. Annual Meeting of the Association for Computational Linguistics 2018, 328–339.
9. Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; ... Chen, W. LoRA: Low-rank adaptation of large language models. International Conference on Learning Representations 2022.
10. Holtmaat, A.; Svoboda, K. Experience-dependent structural synaptic plasticity in the mammalian brain. Nature Reviews Neuroscience 2009, 10, 647–658.
11. Ruder, S. An overview of multi-task learning in deep neural networks. arXiv 2017, arXiv:1706.05098.
12. Crawshaw, M. Multi-task learning with deep neural networks: A survey. arXiv 2020, arXiv:2009.09796.
13. Hutter, F.; Kotthoff, L.; Vanschoren, J. Automated machine learning: Methods, systems, challenges. Springer, 2019.
14. Elsken, T.; Metzen, J.H.; Hutter, F. Neural architecture search: A survey. Journal of Machine Learning Research 2019, 20, 1997–2017.
15. Kirkpatrick, J.; Pascanu, R.; Rabinowitz, N.; Veness, J.; Desjardins, G.; Rusu, A.A.; ... Hadsell, R. Overcoming catastrophic forgetting in neural networks. Proceedings of the National Academy of Sciences 2017, 114, 3521–3526.
16. Parisi, G.I.; Kemker, R.; Part, J.L.; Kanan, C.; Wermter, S. Continual lifelong learning with neural networks: A review. Neural Networks 2019, 113, 54–71.
17. Finn, C.; Abbeel, P.; Levine, S. Model-agnostic meta-learning for fast adaptation of deep networks. International Conference on Machine Learning 2017, 1126–1135.
18. Nichol, A.; Achiam, J.; Schulman, J. On first-order meta-learning algorithms. arXiv 2018, arXiv:1803.02999.
19. Draganski, B.; Gaser, C.; Busch, V.; Schuierer, G.; Bogdahn, U.; May, A. Neuroplasticity: Changes in grey matter induced by training. Nature 2004, 427, 311–312.
20. Bonabeau, E.; Dorigo, M.; Theraulaz, G. Swarm intelligence: From natural to artificial systems. Oxford University Press, 1999.
21. Rusu, A.A.; Rabinowitz, N.C.; Desjardins, G.; Soyer, H.; Kirkpatrick, J.; Kavukcuoglu, K.; ... Hadsell, R. Progressive neural networks. arXiv 2016, arXiv:1606.04671.
22. Kazemi, S.M.; Goel, R.; Jain, K.; Kobyzev, I.; Sethi, A.; Forsyth, P.; Poupart, P. Representation learning for dynamic graphs: A survey. Journal of Machine Learning Research 2020, 21, 2648–2720.
23. Andreas, J.; Rohrbach, M.; Darrell, T.; Klein, D. Neural module networks. IEEE Conference on Computer Vision and Pattern Recognition 2016, 39–48.
24. Ji, S.; Pan, S.; Cambria, E.; Marttinen, P.; Yu, P.S. A survey on knowledge graphs: Representation, acquisition, and applications. IEEE Transactions on Neural Networks and Learning Systems 2021, 33, 494–514.
25. White, C.; Nolen, S.; Savani, Y. Exploring the loss landscape in neural architecture search. International Conference on Machine Learning 2021, 10962–10973.
26. Ru, B.; Wan, X.; Dong, X.; Osborne, M. Interpretable neural architecture search via Bayesian optimisation with Weisfeiler-Lehman kernels. International Conference on Learning Representations 2021.
27. Zhang, K.; Yang, Z.; Başar, T. Multi-agent reinforcement learning: A selective overview of theories and algorithms. Handbook of Reinforcement Learning and Control 2021, 321–384.
28. Gronauer, S.; Diepold, K. Multi-agent deep reinforcement learning: A survey. Artificial Intelligence Review 2022, 55, 895–943.
29. Laird, J.E.; Lebiere, C.; Rosenbloom, P.S. A standard model of the mind: Toward a common computational framework across artificial intelligence, cognitive science, neuroscience, and robotics. AI Magazine 2019, 40, 13–26.
30. Kotseruba, I.; Tsotsos, J.K. 40 years of cognitive architectures: Core cognitive abilities and practical applications. Artificial Intelligence Review 2020, 53, 17–94.
31. Schmidhuber, J. Gödel machines: Fully self-referential optimal universal self-improvers. Artificial General Intelligence 2007, 199–226.
32. Jia, X.; De Brabandere, B.; Tuytelaars, T.; Van Gool, L. Dynamic filter networks. Advances in Neural Information Processing Systems 2016, 29, 667–675.
33. Friston, K. Active inference and artificial curiosity. arXiv 2017, arXiv:1709.07470.
34. Amodei, D.; Olah, C.; Steinhardt, J.; Christiano, P.; Schulman, J.; Mané, D. Concrete problems in AI safety. arXiv 2016, arXiv:1606.06565.
35. Hendrycks, D.; Carlini, N.; Schulman, J.; Steinhardt, J. Unsolved problems in ML safety. arXiv 2021, arXiv:2109.13916.
36. He, X.; Zhao, K.; Chu, X. AutoML: A survey of the state-of-the-art. Knowledge-Based Systems 2021, 212, 106622.
37. Karmaker, S.K.; Hassan, M.M.; Smith, M.J.; Xu, L.; Zhai, C.; Veeramachaneni, K. AutoML to date and beyond: Challenges and opportunities. ACM Computing Surveys 2021, 54, 1–36.
38. Lake, B.M.; Ullman, T.D.; Tenenbaum, J.B.; Gershman, S.J. Building machines that learn and think like people. Behavioral and Brain Sciences 2017, 40, E253.
39. Pateria, S.; Subagdja, B.; Tan, A.H.; Quek, C. Hierarchical reinforcement learning: A comprehensive survey. ACM Computing Surveys 2021, 54, 1–35.
40. Franceschi, L.; Frasconi, P.; Salzo, S.; Grazzi, R.; Pontil, M. Bilevel programming for hyperparameter optimization and meta-learning. International Conference on Machine Learning 2018, 1568–1577.
41. Lorraine, J.; Vicol, P.; Duvenaud, D. Optimizing millions of hyperparameters by implicit differentiation. International Conference on Artificial Intelligence and Statistics 2020, 1540–1552.
42. Lowe, R.; Wu, Y.; Tamar, A.; Harb, J.; Abbeel, P.; Mordatch, I. Multi-agent actor-critic for mixed cooperative-competitive environments. Advances in Neural Information Processing Systems 2017, 30, 6379–6390.
43. Shoham, Y.; Leyton-Brown, K. Multiagent systems: Algorithmic, game-theoretic, and logical foundations. Cambridge University Press, 2008.
44. Boyd, S.; Parikh, N.; Chu, E.; Peleato, B.; Eckstein, J. Distributed optimization and statistical learning via the alternating direction method of multipliers. Foundations and Trends in Machine Learning 2011, 3, 1–122.
45. Kennedy, J.; Eberhart, R. Swarm intelligence. Morgan Kaufmann, 2001.
46. Chalkiadakis, G.; Elkind, E.; Wooldridge, M. Computational aspects of cooperative game theory. Synthesis Lectures on Artificial Intelligence and Machine Learning 2022, 16, 1–219.
47. Olfati-Saber, R.; Fax, J.A.; Murray, R.M. Consensus and cooperation in networked multi-agent systems. Proceedings of the IEEE 2007, 95, 215–233.
48. Hogan, A.; Blomqvist, E.; Cochez, M.; d’Amato, C.; Melo, G.D.; Gutierrez, C.; ... Zimmermann, A. Knowledge graphs. ACM Computing Surveys 2021, 54, 1–37.
49. Wang, Q.; Mao, Z.; Wang, B.; Guo, L. Knowledge graph embedding: A survey of approaches and applications. IEEE Transactions on Knowledge and Data Engineering 2017, 29, 2724–2743.
50. Castro, M.; Liskov, B. Practical Byzantine fault tolerance. Proceedings of the Third Symposium on Operating Systems Design and Implementation 1999, 173–186.
51. Wu, Z.; Pan, S.; Chen, F.; Long, G.; Zhang, C.; Yu, P.S. A comprehensive survey on graph neural networks. IEEE Transactions on Neural Networks and Learning Systems 2021, 32, 4–24.
52. Zhou, J.; Cui, G.; Hu, S.; Zhang, Z.; Yang, C.; Liu, Z.; ... Sun, M. Graph neural networks: A review of methods and applications. AI Open 2020, 1, 57–81.
53. Gal, Y.; Ghahramani, Z. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. International Conference on Machine Learning 2016, 1050–1059.
54. Strubell, E.; Ganesh, A.; McCallum, A. Energy and policy considerations for deep learning in NLP. Annual Meeting of the Association for Computational Linguistics 2019, 3645–3650.
55. Xu, K.; Hu, W.; Leskovec, J.; Jegelka, S. How powerful are graph neural networks? International Conference on Learning Representations 2019.
56. Hernández-Orallo, J.; Martínez-Plumed, F.; Schmid, U.; Siebers, M.; Dowe, D.L. Computer models solving intelligence test problems: Progress and implications. Artificial Intelligence 2016, 230, 74–107.
57. Shoeybi, M.; Patwary, M.; Puri, R.; LeGresley, P.; Casper, J.; Catanzaro, B. Megatron-LM: Training multi-billion parameter language models using model parallelism. arXiv 2019, arXiv:1909.08053.
58. Rajbhandari, S.; Rasley, J.; Ruwase, O.; He, Y. ZeRO: Memory optimizations toward training trillion parameter models. International Conference for High Performance Computing, Networking, Storage and Analysis 2020, 1–16.
59. Chen, C.; Wang, K.; Wu, Q. Efficient checkpointing and recovery for distributed systems. IEEE Transactions on Parallel and Distributed Systems 2015, 26, 3301–3313.
60. Jouppi, N.P.; Young, C.; Patil, N.; Patterson, D.; Agrawal, G.; Bajwa, R.; ... Yoon, D.H. In-datacenter performance analysis of a tensor processing unit. ACM/IEEE International Symposium on Computer Architecture 2017, 1–12.
61. NVIDIA. NVIDIA A100 tensor core GPU architecture. NVIDIA Technical Report, 2020.
62. Micikevicius, P.; Narang, S.; Alben, J.; Diamos, G.; Elsen, E.; Garcia, D.; ... Wu, H. Mixed precision training. International Conference on Learning Representations 2018.
63. Kotthoff, L. Algorithm selection for combinatorial search problems: A survey. Data Mining and Constraint Programming 2016, 149–190.
64. Dwork, C.; Roth, A. The algorithmic foundations of differential privacy. Foundations and Trends in Theoretical Computer Science 2014, 9, 211–407.
65. Evans, D.; Kolesnikov, V.; Rosulek, M. A pragmatic introduction to secure multi-party computation. Foundations and Trends in Privacy and Security 2018, 2, 70–246.
66. Cohen, J.; Rosenfeld, E.; Kolter, Z. Certified adversarial robustness via randomized smoothing. International Conference on Machine Learning 2019, 1310–1320.
67. Carlini, N.; Wagner, D. Towards evaluating the robustness of neural networks. IEEE Symposium on Security and Privacy 2017, 39–57.
68. Hernández-Orallo, J. Evaluation in artificial intelligence: From task-oriented to ability-oriented measurement. Artificial Intelligence Review 2017, 48, 397–447.
69. Chollet, F. On the measure of intelligence. arXiv 2019, arXiv:1911.01547.
70. Kerschke, P.; Hoos, H.H.; Neumann, F.; Trautmann, H. Automated algorithm selection: Survey and perspectives. Evolutionary Computation 2019, 27, 3–45.
71. Vamathevan, J.; Clark, D.; Czodrowski, P.; Dunham, I.; Ferran, E.; Lee, G.; ... Zhao, S. Applications of machine learning in drug discovery and development. Nature Reviews Drug Discovery 2019, 18, 463–477.
72. Johnson, K.B.; Wei, W.Q.; Weeraratne, D.; Frisse, M.E.; Misulis, K.; Rhee, K.; ... Snowdon, J.L. Precision medicine, AI, and the future of personalized health care. Clinical and Translational Science 2021, 14, 86–93.
73. Alamo, T.; Reina, D.G.; Mammarella, M.; Abella, A. Covid-19: Open-data resources for monitoring, modeling, and forecasting the epidemic. Electronics 2020, 9, 827.
74. Himanen, L.; Geurts, A.; Foster, A.S.; Rinke, P. Data-driven materials science: Status, challenges, and perspectives. Advanced Science 2019, 6, 1900808.
75. Reichstein, M.; Camps-Valls, G.; Stevens, B.; Jung, M.; Denzler, J.; Carvalhais, N. Deep learning and process understanding for data-driven Earth system science. Nature 2019, 566, 195–204.
76. Zhong, R.Y.; Xu, X.; Klotz, E.; Newman, S.T. Intelligent manufacturing in the context of Industry 4.0: A review. Engineering 2017, 3, 616–630.
77. Zhang, Q.; Li, H.; Liao, Y. Smart grid: A review of recent developments and future challenges. International Journal of Electrical Power & Energy Systems 2018, 103, 481–490.
78. Dreyfus, H.L. What computers still can’t do: A critique of artificial reason. MIT Press, 1992.
79. Chalmers, D. The singularity: A philosophical analysis. Journal of Consciousness Studies 2010, 17, 7–65.
80. Clark, A. Supersizing the mind: Embodiment, action, and cognitive extension. Oxford University Press, 2008.
81. Kirkpatrick, J.; Pascanu, R.; Rabinowitz, N.; Veness, J.; Desjardins, G.; Rusu, A.A.; ... Hadsell, R. Overcoming catastrophic forgetting in neural networks. Proceedings of the National Academy of Sciences 2017, 114, 3521–3526.
82. Liu, H.; Simonyan, K.; Yang, Y. DARTS: Differentiable architecture search. International Conference on Learning Representations 2019.
83. Rashid, T.; Samvelyan, M.; Schroeder, C.; Farquhar, G.; Foerster, J.; Whiteson, S. QMIX: Monotonic value function factorisation for deep multi-agent reinforcement learning. International Conference on Machine Learning 2018, 4295–4304.

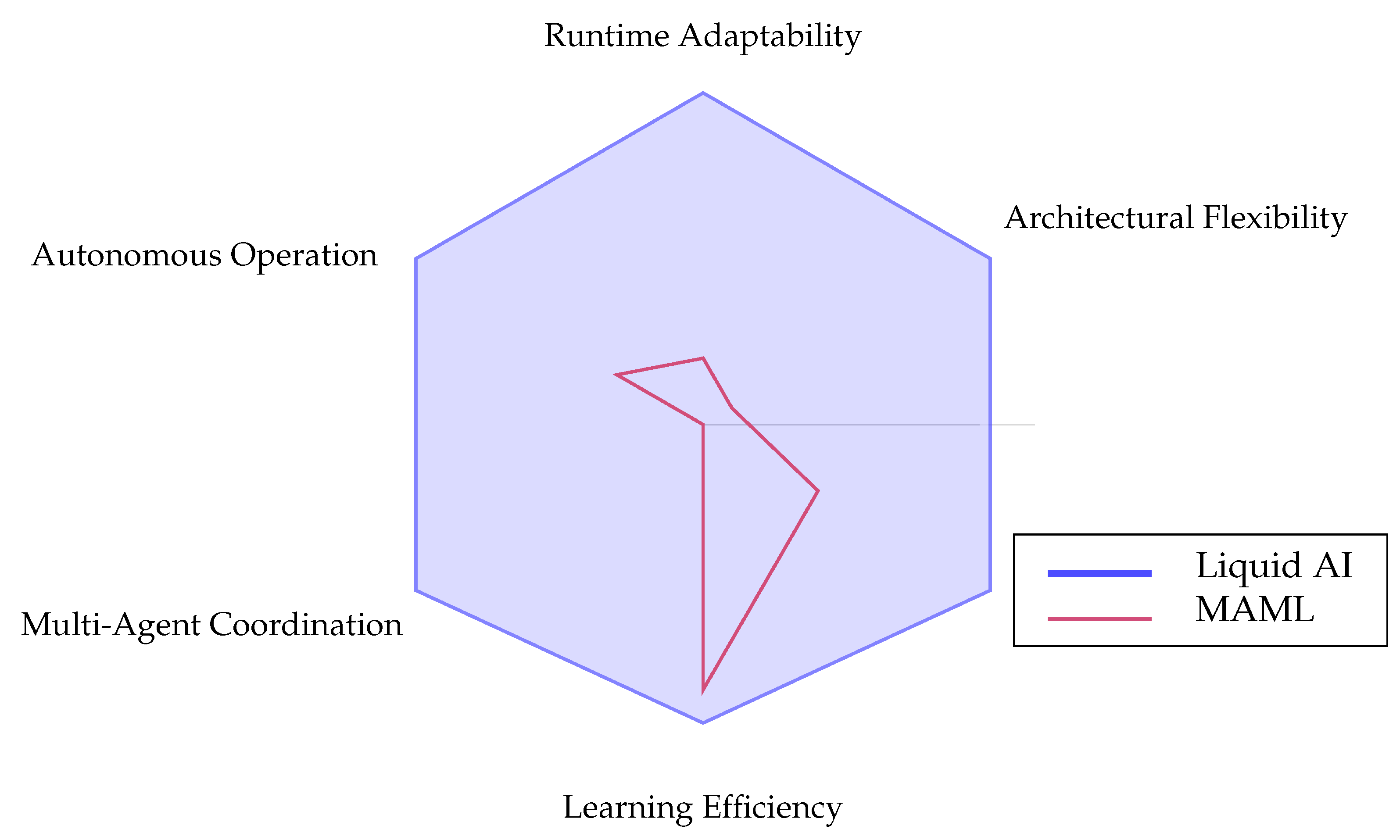


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).