Submitted: 27 July 2025

Posted: 28 July 2025


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Model Collapse Phenomenon

2.2. Information Theory in Deep Learning

2.3. Human Feedback in AI Training

3. Theoretical Framework

3.1. Generative AI as Lossy Communication Channels

- Input (X): Original training data distribution.

- Channel: The AI model with parameters θ and architectural constraints.

- Output (Yᵢ): Synthetic data generated at iteration i.

- Noise: Errors from quantization, stochastic sampling, and model approximations.

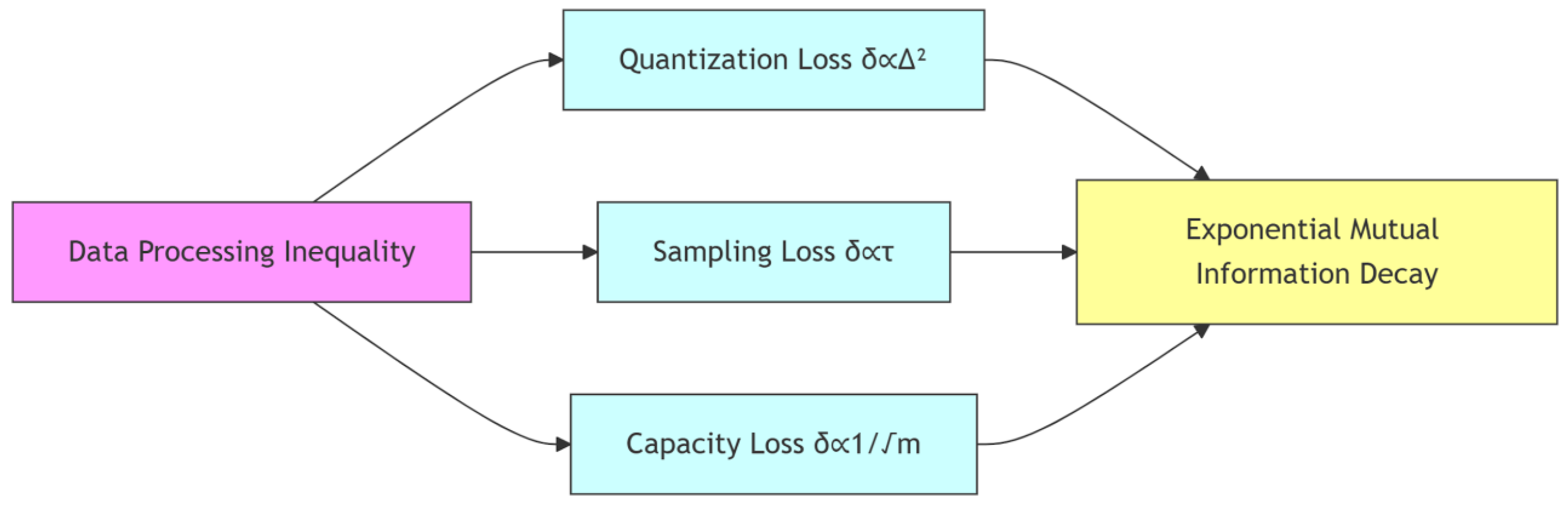
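The channel view above can be stated as a worked inequality. The sketch below assumes that each generation is trained only on the previous generation's output, so that the iterations form a Markov chain; that assumption, and the reading of δᵢ as the per-iteration loss in mutual information, are made here for illustration and are not spelled out in the list above.

```latex
% Assumption: generation i+1 depends on X only through Y_i (Markov chain).
X \to Y_1 \to Y_2 \to \cdots \to Y_i \to Y_{i+1}
\quad\Longrightarrow\quad
I(X; Y_{i+1}) \le I(X; Y_i) \le \cdots \le I(X; Y_1)

% Assumed definition of the per-iteration loss:
\delta_i := I(X; Y_i) - I(X; Y_{i+1}) \ge 0,
\qquad
I(X; Y_{i+1}) = I(X; Y_1) - \sum_{k=1}^{i} \delta_k
```

The first line is the data processing inequality applied to the chain; the second only rewrites it as a telescoping sum, so it adds no new claim beyond the Markov assumption.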

3.2. Sources of Information Loss

- Quantization Effects: Weight quantization (e.g., from 32-bit to 16-bit) introduces noise. For a uniform (linear) quantizer with step size Δ, the mean squared error is approximately Δ²/12 when the rounding error is uniformly distributed over one step [19].

- Stochastic Sampling: Temperature-based sampling increases conditional entropy H(Y|X) as the temperature parameter τ rises, reducing mutual information [20].

- Activation Function Losses: Non-linear activations like ReLU discard information (e.g., negative values), creating bottlenecks where I(X; f(X)) < I(X; X) [21].

- Finite Model Capacity: Limited model capacity leads to approximation errors, bounded by the Vapnik-Chervonenkis (VC) dimension [22].
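Two of the loss sources listed above can be checked numerically with a few lines of Python. This is a minimal sketch, not the authors' method: the helper names `quantization_mse` and `softmax_entropy` are hypothetical, the inputs are drawn uniformly from [-1, 1], and the logits are arbitrary example values. It illustrates that rounding to a uniform grid gives a mean squared error close to Δ²/12, and that the entropy of a temperature-scaled softmax grows with τ.

```python
import math
import random


def quantization_mse(step, n_samples=200_000, seed=0):
    """Empirical MSE of rounding uniform samples in [-1, 1] to a grid of width `step`."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x = rng.uniform(-1.0, 1.0)
        q = step * round(x / step)  # nearest grid point
        total += (x - q) ** 2
    return total / n_samples


def softmax_entropy(logits, tau):
    """Shannon entropy (in nats) of softmax(logits / tau)."""
    scaled = [z / tau for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    norm = sum(exps)
    probs = [e / norm for e in exps]
    return -sum(p * math.log(p) for p in probs if p > 0.0)


if __name__ == "__main__":
    step = 0.01
    print("empirical MSE:", quantization_mse(step))
    print("step^2 / 12  :", step ** 2 / 12)

    logits = [2.0, 1.0, 0.1]  # arbitrary example logits (assumption)
    for tau in (0.5, 1.0, 2.0):
        print("tau =", tau, "entropy =", softmax_entropy(logits, tau))
```

The entropy printed for the sampling step is that of a single output distribution; treating it as a proxy for H(Y|X) assumes the same behaviour holds on average over inputs.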

3.3. Quantitative Analysis of Information Decay

4. Predicted Empirical Manifestations Based on DPI

- Exponential Decay Tendency: Mutual information is expected to decay approximately as I(X;Yᵢ) = I(X;Y₁) ⋅ e^{-λ(i-1)}, so that the expression reduces to I(X;Y₁) at i = 1. Decay rates are theoretically concentrated in the range λ ∈ [0.2, 0.4] per iteration, and higher model complexity likely accelerates decay.

- Loss Source Hierarchy: Architectural constraints are projected to dominate information loss (estimated >30% of total degradation), significantly exceeding quantization effects (δquant ∝ Δ²) and sampling stochasticity (δsamp ∝ τ).

- Hybrid Training Threshold: Preliminary analysis indicates that maintaining I(X;Yᵢ)/I(X;Y₁) > 0.7 may require >70% original data input, suggesting a potential stability boundary.
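To make the predicted decay rates concrete, the following sketch works through the arithmetic of the exponential model. It takes the exponential form above at face value and measures k as the number of iterations elapsed after a reference generation, so the retained fraction is e^{-λk}. The function names are hypothetical, and the 0.7 threshold is borrowed from the hybrid training bullet purely as an example value.

```python
import math


def retained_fraction(lam, k):
    """Fraction of the reference mutual information left after k further iterations."""
    return math.exp(-lam * k)


def half_life(lam):
    """Number of iterations after which the retained fraction equals 0.5."""
    return math.log(2.0) / lam


def iterations_until_below(lam, threshold):
    """Smallest whole number of iterations k with exp(-lam * k) < threshold."""
    k = 0
    while retained_fraction(lam, k) >= threshold:
        k += 1
    return k


if __name__ == "__main__":
    for lam in (0.2, 0.4):  # endpoints of the predicted range
        print("lambda =", lam)
        print("  half-life (iterations):", round(half_life(lam), 2))
        print("  iterations until ratio < 0.7:", iterations_until_below(lam, 0.7))
```

Under this model, with no original data mixed back in, the ratio falls below 0.7 after 2 iterations for λ = 0.2 and after 1 iteration for λ = 0.4, and the half-life is roughly 3.5 and 1.7 iterations respectively.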

5. Implications for AI Development

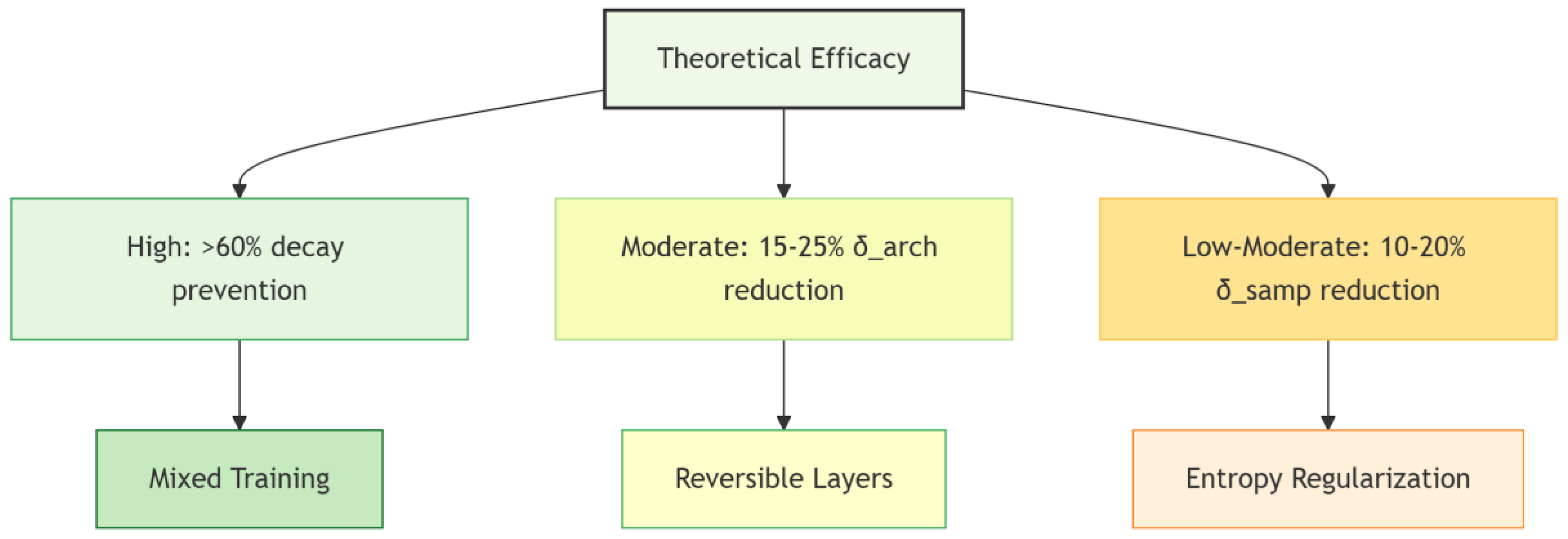

5.1. Speculative Mitigation Framework

| Strategy | Mechanism | Theoretical Efficacy |
| --- | --- | --- |
| Mixed Training | Breaks Markov chain via X→Yᵢ→X_{human}→Yᵢ₊₁ | High efficacy (>60% decay prevention) |
| Reversible Layers [26] | Preserves information (‖∇f‖≈1) | Moderate efficacy (15–25% δarch reduction) |
| Entropy Regularization [27] | Minimizes H(Y\|X) | Low-moderate efficacy (10–20% δsamp reduction) |

5.2. Role of Human Feedback

6. Limitations and Scope

- Model Simplification: Predictions derive from abstracted channel models. Large-scale transformers may exhibit emergent dynamics unaccounted for in our framework.

- Information-Theoretic Challenges: Analytical computation of mutual information in high-dimensional spaces remains fundamentally limited by the curse of dimensionality, though variational bounds offer theoretical estimation frameworks.

- Domain Specificity: Collapse thresholds likely vary across data modalities (e.g., discrete text vs. continuous image spaces).

- Mitigation Validation: Proposed interventions require rigorous testing in real-world systems.

7. Future Work

- Large-Scale Validation: Testing DPI-based analysis on LLMs with >1B parameters, using datasets like Common Crawl or ImageNet.

- Domain-Specific Studies: Comparing collapse rates across text, image, and audio modalities.

- Advanced Mitigation: Developing architectures with reversible layers or entropy-regularized sampling to minimize δᵢ.

- Theoretical Bounds: Deriving tighter bounds on δᵢ under realistic assumptions about noise and model capacity.

8. Broader Implications

9. Conclusion

- Exponential mutual information decay (λ ∈ [0.2, 0.4] per iteration)

- Dominance of architectural constraints (>30% information loss)

- Critical stability threshold (>70% human data input)

- A formal foundation for analyzing synthetic data degradation

- Quantitatively falsifiable hypotheses for future empirical work

- Design principles for collapse-resistant AI systems

Appendix A. Proof of Approximation Loss Bound

Acknowledgments

References

- Kaplan, J., et al. (2020). Scaling laws for neural language models. arXiv:2001.08361.

- Villalobos, P., et al. (2022). Will we run out of data? arXiv:2211.04325.

- Borji, A. (2023). A categorical archive of ChatGPT failures. arXiv:2302.03494.

- Shumailov, I., et al. (2023). The curse of recursion. Proceedings of the 40th ICML, 31564–31579.

- Gao, R., et al. (2021). Self-consuming generative models go MAD. Proceedings of the 41st ICML, 14259–14286.

- Casco-Rodriguez, J., Alemohammad, S., Luzi, L., Imtiaz, A., Babaei, H., LeJeune, D., Siahkoohi, A., & Baraniuk, R. (2023). Self-consuming generative models go MAD. LatinX in AI at Neural Information Processing Systems Conference 2023.

- Hataya, R., et al. (2023). Will large-scale generative models corrupt future datasets? Proceedings of the IEEE/CVF ICCV, 1801–1810.

- Russell, S. (2019). Human compatible: Artificial intelligence and the problem of control. Viking.

- Dafoe, A., et al. (2021). Cooperative AI. Nature, 593, 33–36.

- Martínez, G., et al. (2023). How bad is training on synthetic data? arXiv:2404.05090.

- MacKay, D. J. (2003). Information theory, inference and learning algorithms. Cambridge University Press.

- Alemi, A. A., et al. (2016). Deep variational information bottleneck. arXiv:1612.00410.

- Tishby, N., & Zaslavsky, N. (2015). Deep learning and the information bottleneck principle. Proceedings of the ITW 2015, Jerusalem, Israel, 1–5.

- Russo, D., & Zou, J. (2016). Controlling bias in adaptive data analysis. Proceedings of the 19th AISTATS, 1232–1240.

- Shwartz-Ziv, R., & Tishby, N. (2017). Opening the black box of deep neural networks. arXiv:1703.00810.

- Baevski, A., et al. (2020). wav2vec 2.0. Advances in Neural Information Processing Systems, 33, 12449–12460.

- Shannon, C. E. (1948). A mathematical theory of communication. The Bell System Technical Journal, 27(3), 379–423.

- Cover, T. M., & Thomas, J. A. (2006). Elements of information theory (2nd ed.). Wiley-Interscience.

- Jacob, B., et al. (2018). Quantization and training of neural networks. Proceedings of the IEEE CVPR, 2704–2713.

- Ackley, D. H., et al. (1985). A learning algorithm for Boltzmann machines. Cognitive Science, 9(1), 147–169.

- Arpit, D., et al. (2017). A closer look at memorization in deep networks. Proceedings of the 34th ICML, 233–242.

- Vapnik, V. (1995). The nature of statistical learning theory. Springer Science & Business Media.

- Ouyang, L., et al. (2022). Training language models with human feedback. Advances in Neural Information Processing Systems, 35, 27730–27744.

- Poli, M., et al. (2024). Information propagation... Advances in Neural Information Processing Systems, 37, 15824–15837.

- Christiano, P., et al. (2023). Deep reinforcement learning from human preferences. Advances in Neural Information Processing Systems, 36, 18945–18960.

- Gomez, A. N., et al. (2017). The reversible residual network. arXiv:1707.04585.

- Pereyra, G., et al. (2017). Regularizing neural networks by penalizing confident output distributions. arXiv:1701.06548.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).