Submitted: 05 April 2026

Posted: 08 April 2026

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Conventional and Convolution-based Techniques

2.2. Attention-based Techniques

2.3. State-Space Based Techniques

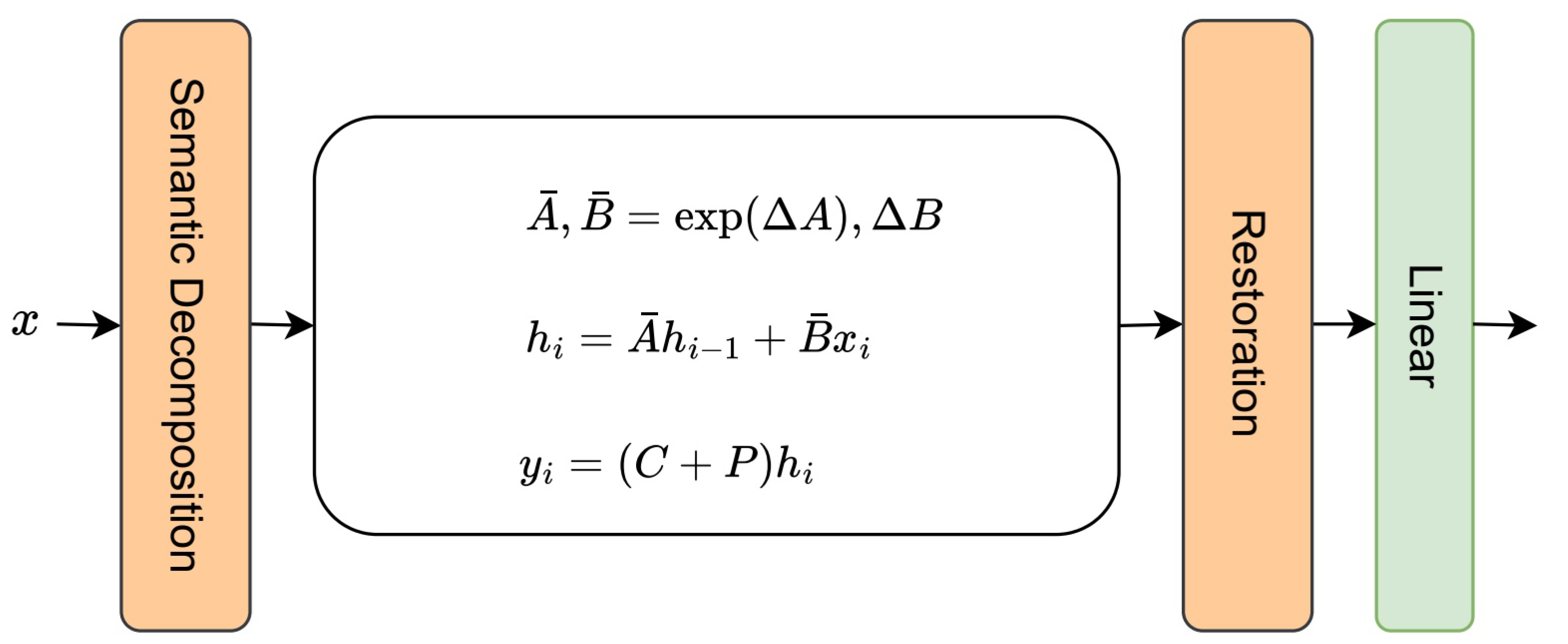

3. Semantic-Injected State Modeling

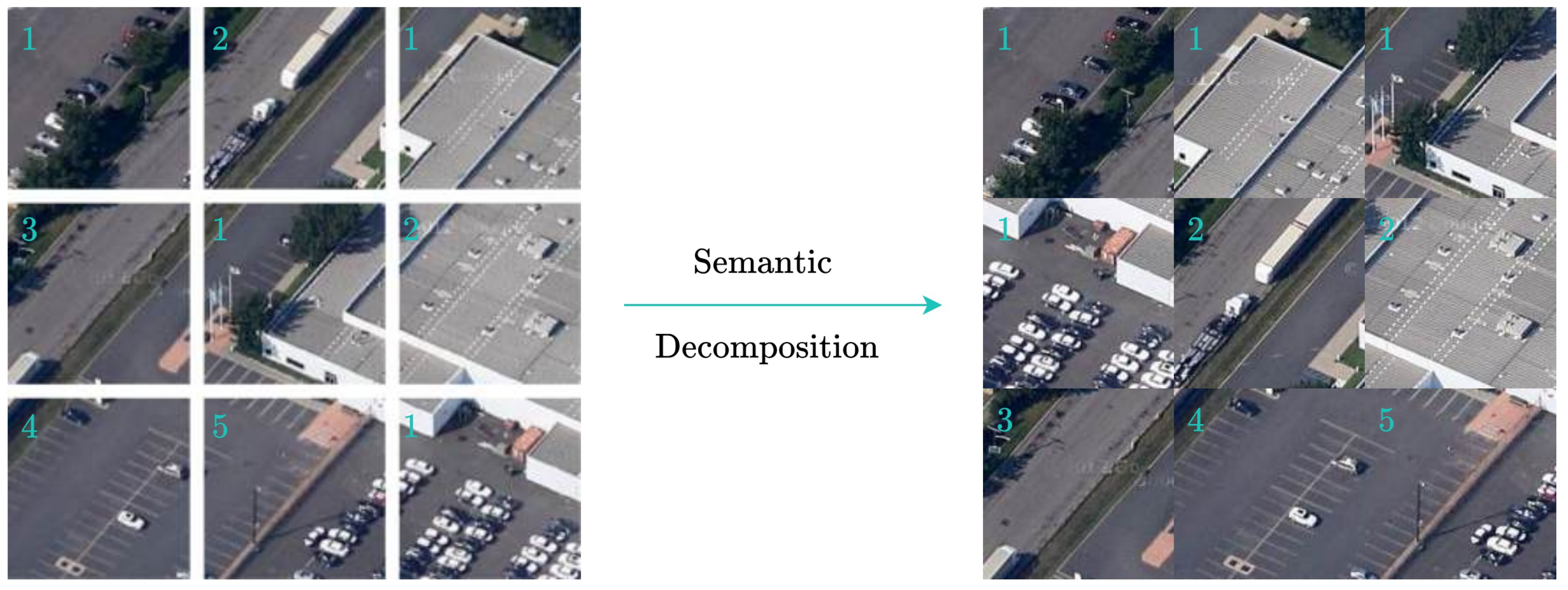

3.1. Semantic Decomposition and Prototype Anchoring

3.2. Semantic Injection Mechanism

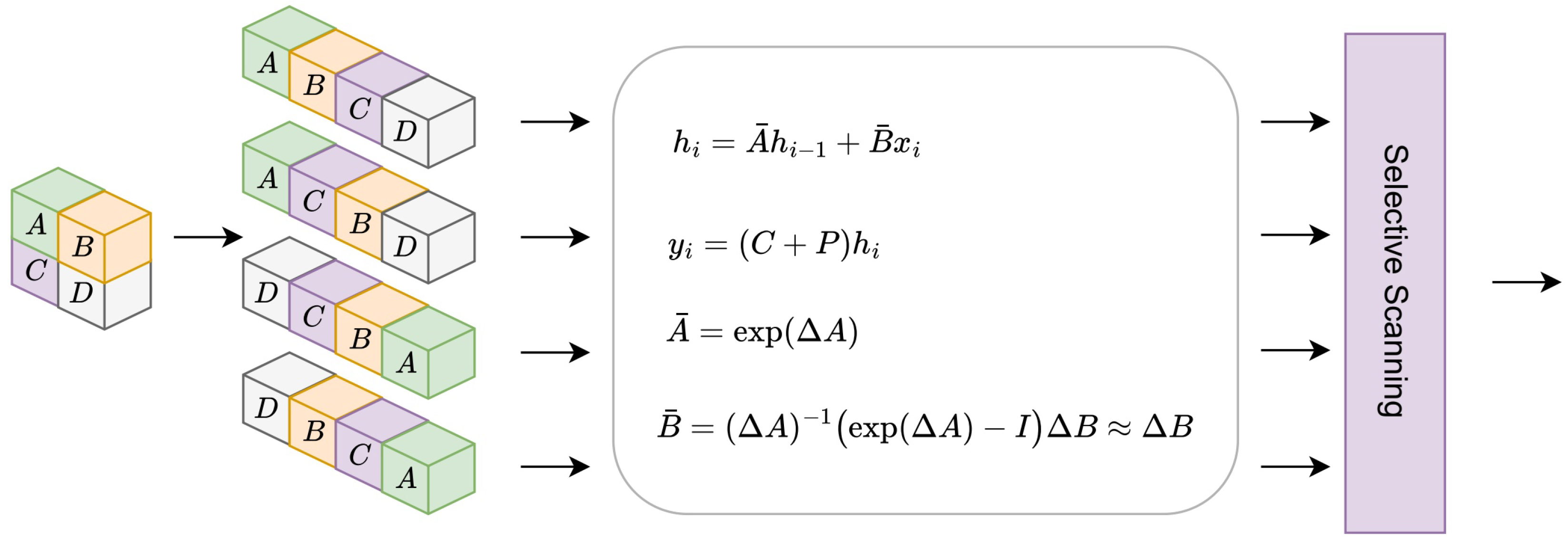

3.3. 2D State Modeling Module

3.3.1. Computational Complexity Analysis

3.4. Semantic Injection State Modeling Block

3.5. Geographically-Chunked Parallel Processing

4. Methodology

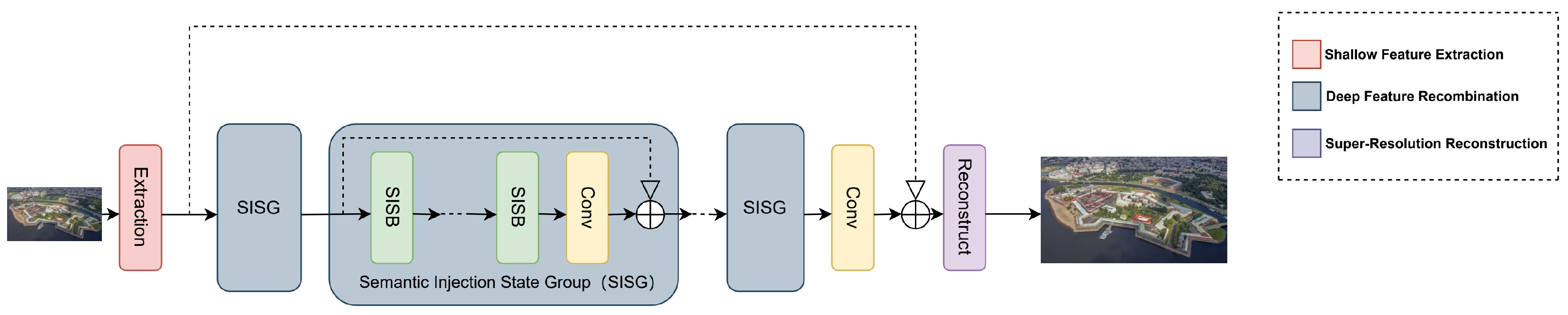

4.1. Model Architecture

4.2. Semantic-Injected State-Space Group (SISG) Architecture

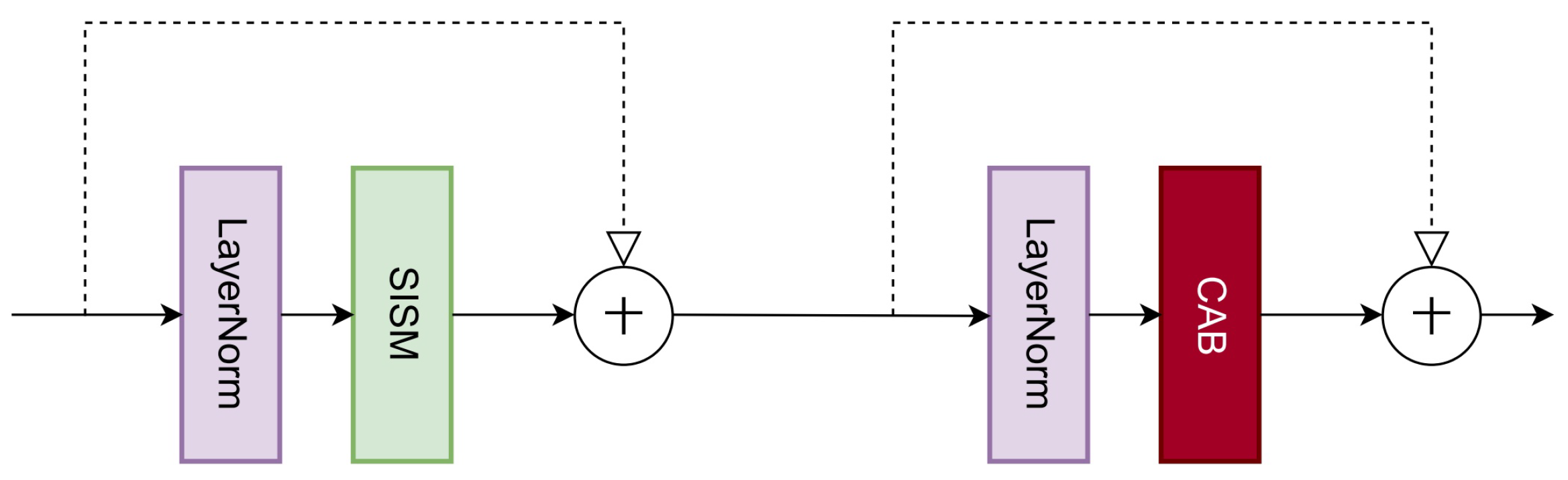

4.2.1. Semantic-Injected State-Space Block (SISB)

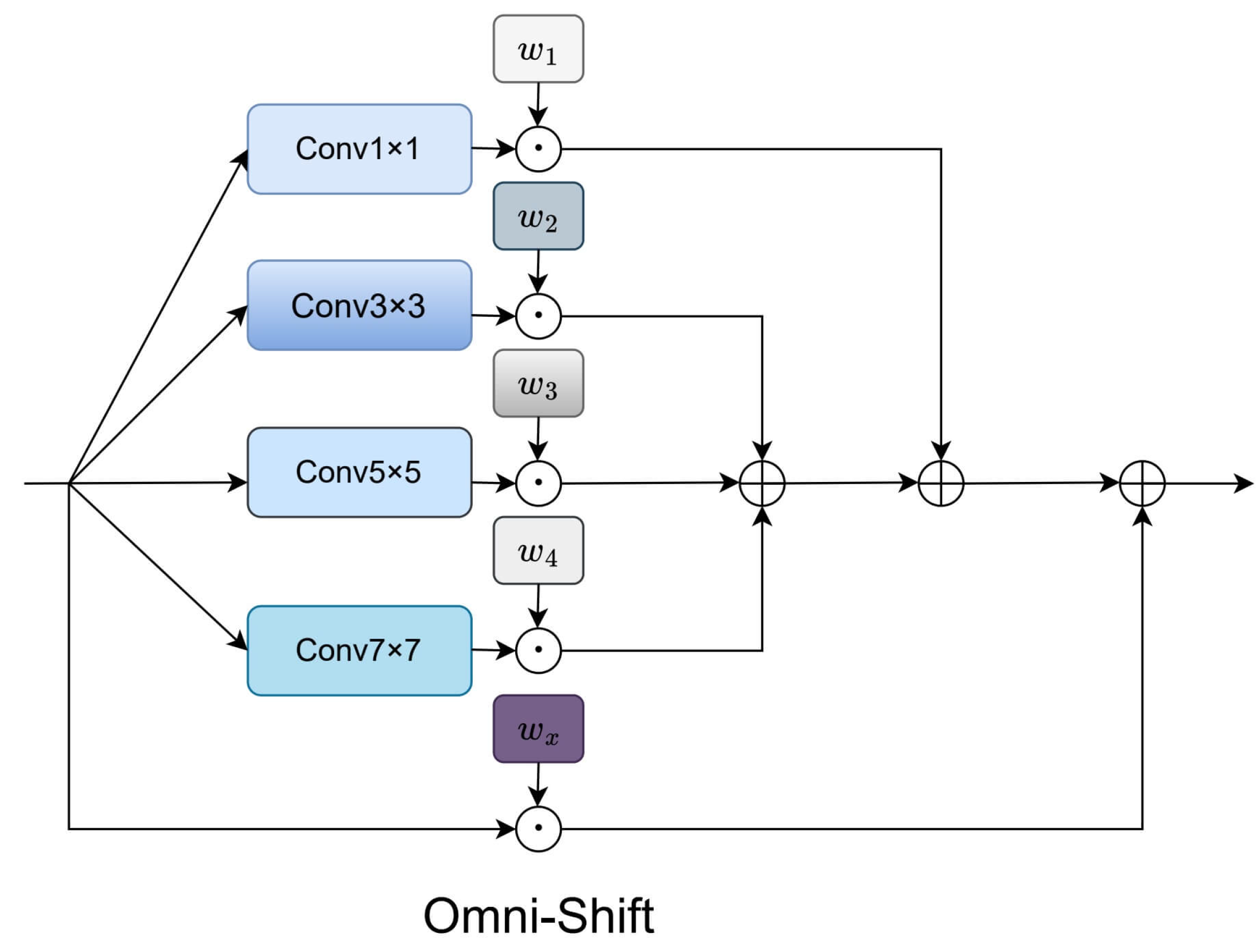

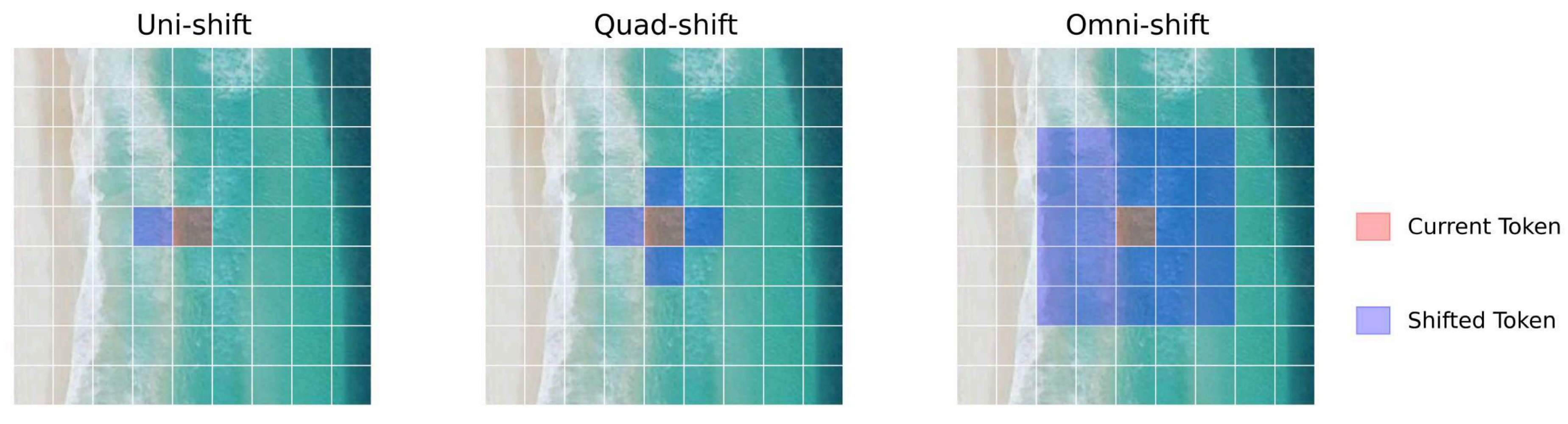

4.3. Omni-Shift Mechanism

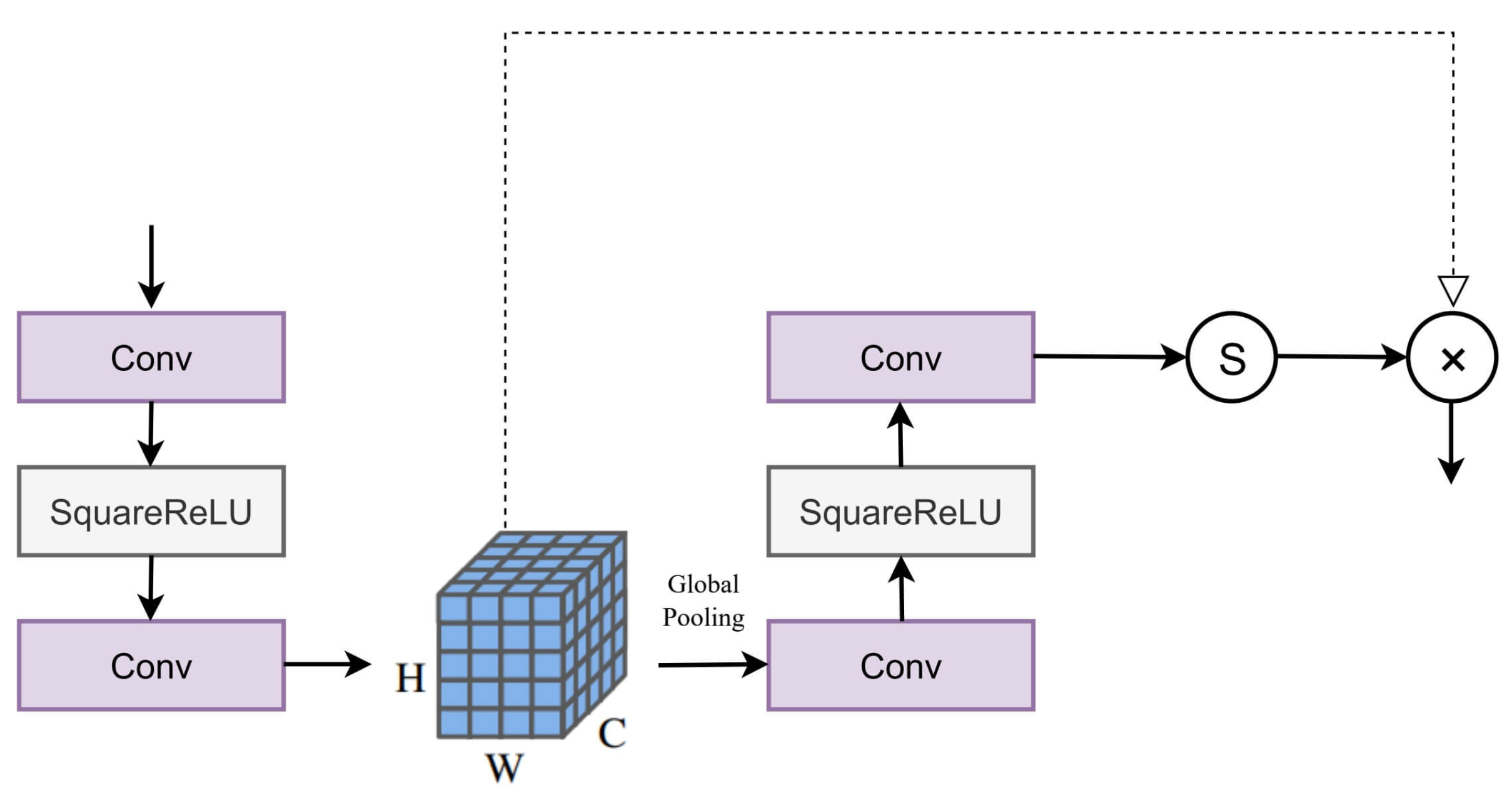

4.4. Channel Attention

4.5. Loss Function and Optimization

5. Experimental Settings

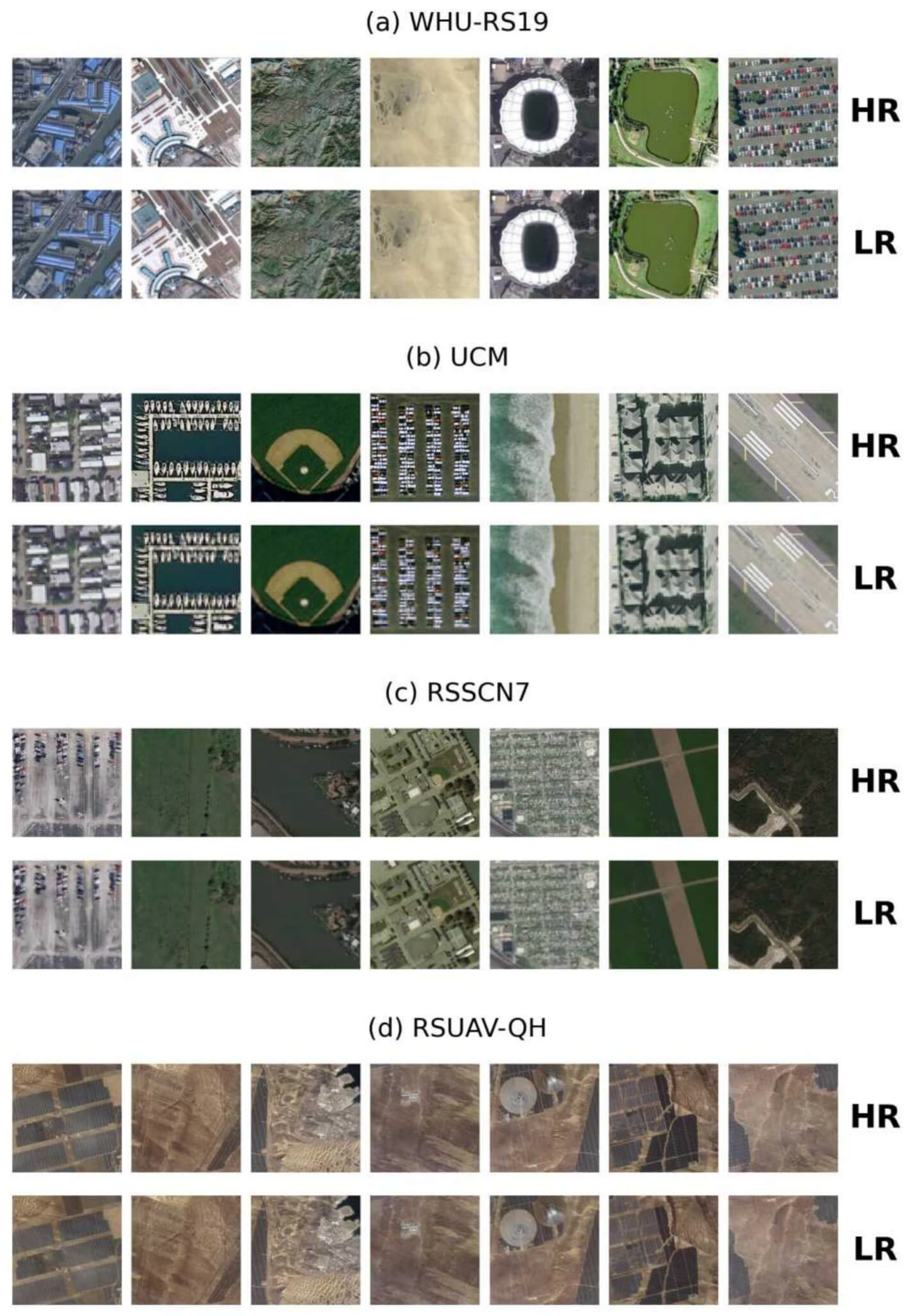

5.1. Datasets for UAV-Based Ecological Monitoring

5.2. Training Settings

6. Experimental Results

6.1. Quantitative Results

| Datasets | Method | PSNR ↑ | SSIM ↑ | NIQE ↓ | LPIPS ↓ | RMSE ↓ | SAM ↓ |
|---|---|---|---|---|---|---|---|
| RSUAV-QH | SRCNN [21] | 21.1437 | 0.7093 | 9.8234 | 0.3373 | 7.7725 | 0.1506 |
| | VDSR [22] | 21.3548 | 0.7346 | 10.3282 | 0.3338 | 7.5716 | 0.1602 |
| | SwinIR [16] | 22.6073 | 0.7891 | 8.8427 | 0.2909 | 7.6129 | 0.1528 |
| | HAT [25] | 23.8924 | 0.8617 | 8.3129 | 0.2189 | 6.4297 | 0.1583 |
| | MambaIR [33] | 24.8382 | 0.8365 | 9.3182 | 0.2469 | 6.6814 | 0.1478 |
| | MambaIRv2 [34] | 23.6127 | 0.8173 | 9.5198 | 0.2504 | 7.1163 | 0.1476 |
| | SIMSR (Ours) | 24.9281 | 0.8665 | 7.9073 | 0.2135 | 6.2426 | 0.1463 |
| UCM | SRCNN [21] | 21.0571 | 0.7018 | 9.9216 | 0.3467 | 7.8808 | 0.1542 |
| | VDSR [22] | 21.2666 | 0.7280 | 10.4365 | 0.3422 | 7.6733 | 0.1644 |
| | SwinIR [16] | 22.5205 | 0.7812 | 8.9439 | 0.2983 | 7.7241 | 0.1565 |
| | HAT [25] | 24.7515 | 0.8540 | 9.6211 | 0.2254 | 6.5343 | 0.1625 |
| | MambaIR [33] | 23.8029 | 0.8291 | 9.4208 | 0.2531 | 6.7818 | 0.1514 |
| | MambaIRv2 [34] | 23.5250 | 0.8104 | 8.4174 | 0.2568 | 7.2239 | 0.1514 |
| | SIMSR (Ours) | 24.8312 | 0.8598 | 8.1124 | 0.2198 | 6.3469 | 0.1501 |
| WHU-RS19 | SRCNN [21] | 23.2700 | 0.7069 | 8.1523 | 0.3589 | 8.0819 | 0.1516 |
| | VDSR [22] | 23.7775 | 0.7122 | 7.1796 | 0.3682 | 8.1232 | 0.1427 |
| | SwinIR [16] | 23.4011 | 0.5908 | 7.8451 | 0.4720 | 7.8556 | 0.1473 |
| | HAT [25] | 23.6580 | 0.5993 | 8.3204 | 0.4633 | 8.6052 | 0.1422 |
| | MambaIR [33] | 23.7002 | 0.7084 | 6.6657 | 0.4052 | 8.0293 | 0.1506 |
| | MambaIRv2 [34] | 23.9886 | 0.7208 | 7.5345 | 0.3755 | 7.9644 | 0.1501 |
| | SIMSR (Ours) | 24.2634 | 0.7296 | 6.5012 | 0.3544 | 7.8764 | 0.1406 |

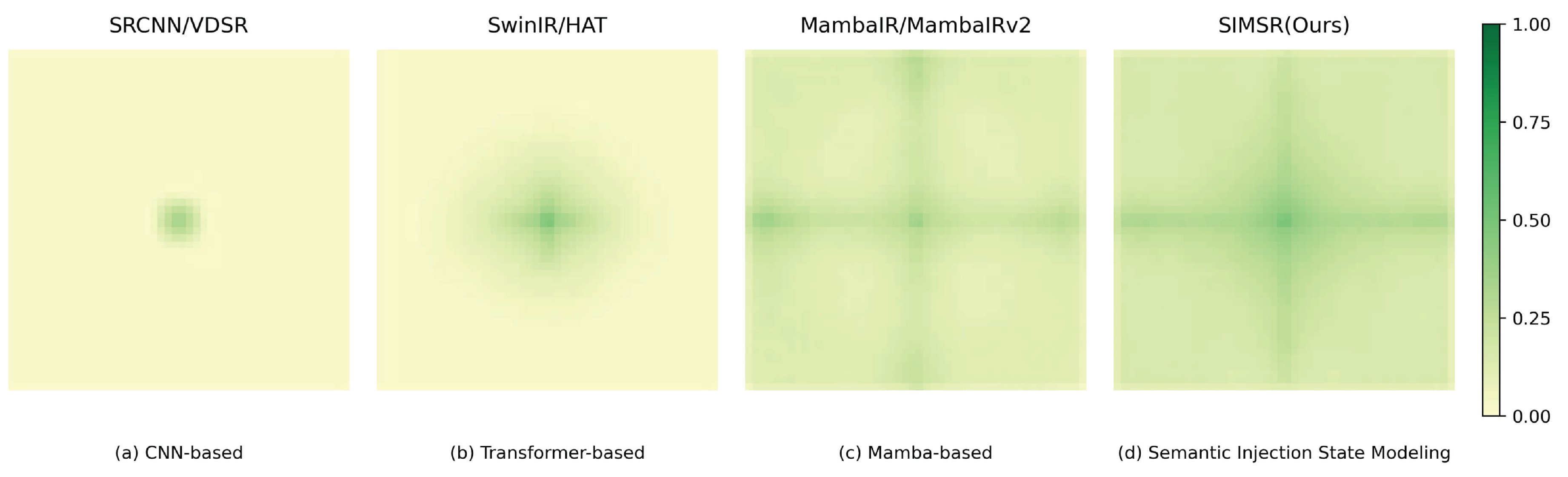

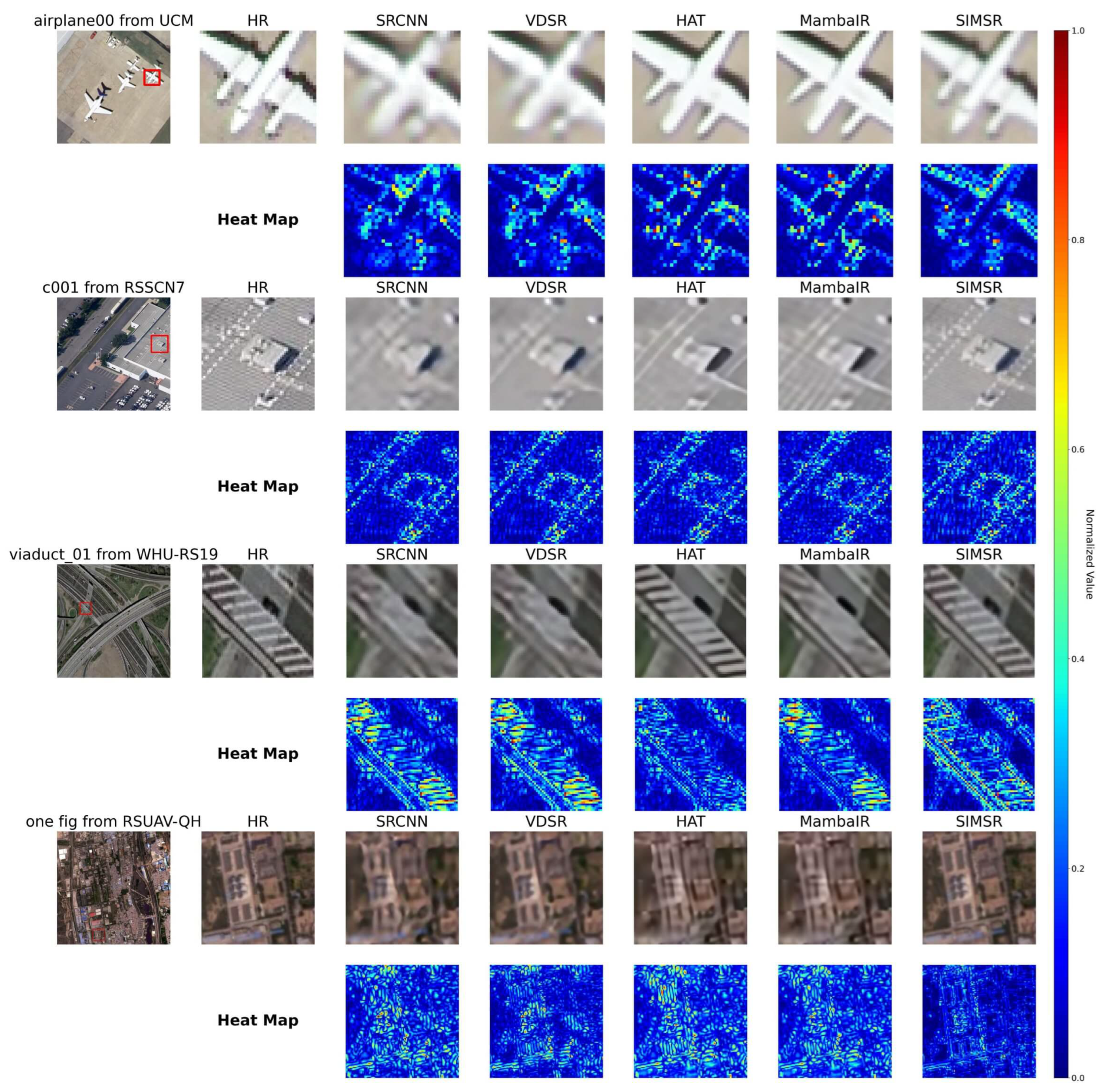

6.2. Qualitative Results and Feature Analysis

| Classes | Method | PSNR ↑ | SSIM ↑ | NIQE ↓ | LPIPS ↓ | RMSE ↓ | SAM ↓ |
|---|---|---|---|---|---|---|---|
| aGrass | SRCNN [21] | 31.6593 | 0.7717 | 7.3087 | 0.4076 | 5.4812 | 0.1617 |
| | VDSR [22] | 31.7324 | 0.7729 | 7.1464 | 0.4024 | 5.4694 | 0.1614 |
| | SwinIR [16] | 31.7514 | 0.7647 | 7.5602 | 0.3620 | 5.3454 | 0.1579 |
| | HAT [25] | 31.7586 | 0.7733 | 7.2579 | 0.4036 | 5.4552 | 0.1565 |
| | MambaIR [33] | 32.5437 | 0.7951 | 7.3111 | 0.3375 | 4.9799 | 0.1548 |
| | MambaIRv2 [34] | 32.8623 | 0.8202 | 7.1536 | 0.3357 | 6.1316 | 0.1587 |
| | SIMSR (Ours) | 32.9074 | 0.8257 | 7.0268 | 0.3312 | 4.8296 | 0.1539 |
| bField | SRCNN [21] | 30.9887 | 0.6972 | 7.7422 | 0.4384 | 5.8524 | 0.1579 |
| | VDSR [22] | 31.0113 | 0.6880 | 7.9297 | 0.4175 | 5.8004 | 0.1592 |
| | SwinIR [16] | 31.0884 | 0.6996 | 7.5414 | 0.4309 | 5.8324 | 0.1563 |
| | HAT [25] | 31.1053 | 0.6998 | 7.6590 | 0.4331 | 5.8254 | 0.1546 |
| | MambaIR [33] | 31.5853 | 0.7124 | 7.7017 | 0.3976 | 5.5454 | 0.1542 |
| | MambaIRv2 [34] | 31.8543 | 0.7381 | 7.6077 | 0.3744 | 7.2795 | 0.1544 |
| | SIMSR (Ours) | 31.9009 | 0.7428 | 7.4724 | 0.3713 | 5.4483 | 0.1530 |
| cIndustry | SRCNN [21] | 23.8071 | 0.6530 | 7.5993 | 0.3959 | 7.7559 | 0.1540 |
| | VDSR [22] | 24.2170 | 0.6841 | 6.9130 | 0.4155 | 7.7071 | 0.1544 |
| | SwinIR [16] | 24.2811 | 0.6874 | 7.0884 | 0.3713 | 7.4907 | 0.1543 |
| | HAT [25] | 24.5127 | 0.6972 | 7.0539 | 0.3946 | 7.6396 | 0.1539 |
| | MambaIR [33] | 24.5198 | 0.6976 | 7.3548 | 0.3897 | 7.6512 | 0.1525 |
| | MambaIRv2 [34] | 25.1669 | 0.7423 | 6.1057 | 0.3465 | 7.5097 | 0.1522 |
| | SIMSR (Ours) | 25.2485 | 0.7488 | 5.8562 | 0.3416 | 7.2029 | 0.1501 |
| dRiverLake | SRCNN [21] | 26.0556 | 0.7788 | 6.8601 | 0.3210 | 6.1159 | 0.1512 |
| | VDSR [22] | 28.9093 | 0.7741 | 6.7854 | 0.3498 | 5.7623 | 0.1517 |
| | SwinIR [16] | 28.9152 | 0.7847 | 6.8352 | 0.3793 | 5.8470 | 0.1517 |
| | HAT [25] | 29.0360 | 0.7872 | 6.8792 | 0.3729 | 5.8266 | 0.1632 |
| | MambaIR [33] | 29.0572 | 0.7875 | 6.8479 | 0.3748 | 5.8134 | 0.1675 |
| | MambaIRv2 [34] | 29.4932 | 0.8008 | 6.8506 | 0.3075 | 5.4813 | 0.1698 |
| | SIMSR (Ours) | 29.5927 | 0.8072 | 6.7513 | 0.3035 | 5.2786 | 0.1508 |
| eForest | SRCNN [21] | 26.3516 | 0.5835 | 9.0943 | 0.5012 | 7.9684 | 0.1637 |
| | VDSR [22] | 26.3947 | 0.5854 | 8.9165 | 0.5087 | 7.9465 | 0.1537 |
| | SwinIR [16] | 26.4321 | 0.5852 | 8.8184 | 0.4994 | 7.9394 | 0.1514 |
| | HAT [25] | 26.4655 | 0.5713 | 8.8667 | 0.4525 | 7.9155 | 0.1509 |
| | MambaIR [33] | 26.8391 | 0.5948 | 9.2291 | 0.4448 | 7.7438 | 0.1625 |
| | MambaIRv2 [34] | 30.2330 | 0.8339 | 6.4067 | 0.3120 | 8.2871 | 0.1586 |
| | SIMSR (Ours) | 30.2738 | 0.8414 | 6.3091 | 0.3061 | 7.6905 | 0.1502 |
| fResident | SRCNN [21] | 22.9982 | 0.6361 | 8.5432 | 0.4148 | 8.2945 | 0.1669 |
| | VDSR [22] | 23.2386 | 0.6630 | 8.3945 | 0.4454 | 8.2801 | 0.1571 |
| | SwinIR [16] | 23.4050 | 0.6661 | 8.4604 | 0.3976 | 8.0717 | 0.1560 |
| | HAT [25] | 23.4675 | 0.6743 | 8.1616 | 0.4290 | 8.2068 | 0.1543 |
| | MambaIR [33] | 23.4765 | 0.6749 | 8.4901 | 0.4248 | 8.2172 | 0.1541 |
| | MambaIRv2 [34] | 27.6244 | 0.6900 | 9.4581 | 0.4196 | 8.1094 | 0.1564 |
| | SIMSR (Ours) | 27.6757 | 0.6955 | 8.0123 | 0.4127 | 7.8572 | 0.1508 |
| gParking | SRCNN [21] | 23.2839 | 0.6139 | 7.5217 | 0.4232 | 7.7822 | 0.1560 |
| | VDSR [22] | 23.5637 | 0.6429 | 6.8784 | 0.4400 | 7.7423 | 0.1573 |
| | SwinIR [16] | 23.6548 | 0.6469 | 7.0965 | 0.3974 | 7.5371 | 0.1558 |
| | HAT [25] | 23.8184 | 0.6568 | 6.7592 | 0.4190 | 7.6764 | 0.1553 |
| | MambaIR [33] | 23.8386 | 0.6578 | 6.9988 | 0.4155 | 7.6839 | 0.1552 |
| | MambaIRv2 [34] | 25.0994 | 0.7680 | 7.3963 | 0.3538 | 7.4748 | 0.1545 |
| | SIMSR (Ours) | 25.1659 | 0.7766 | 6.6415 | 0.3489 | 7.3752 | 0.1533 |

7. Ablation Study and Deeper Analysis

7.1. Cross-Dataset Component Analysis

7.2. Semantic Mask Robustness Analysis

7.3. Endpoint Device Deployment Performance

7.4. Ablation Study with Detailed Component Analysis

7.5. Computational Efficiency and Resource Analysis

7.6. Efficiency and Complexity Analysis

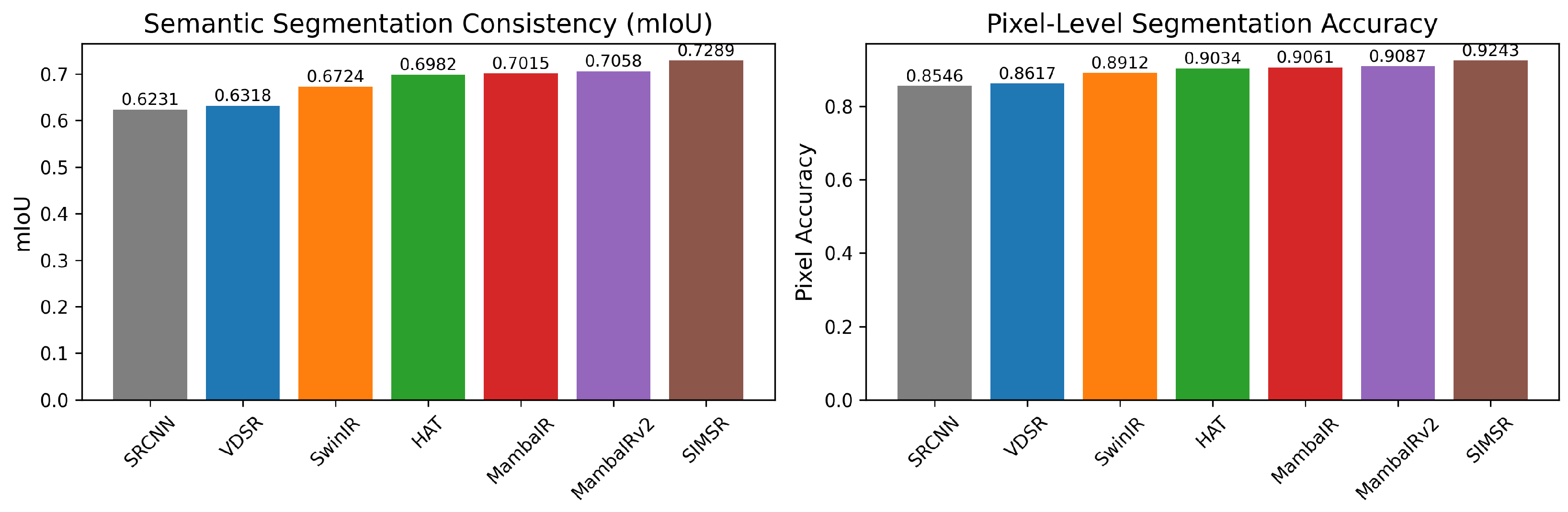

7.7. Semantic Consistency Evaluation

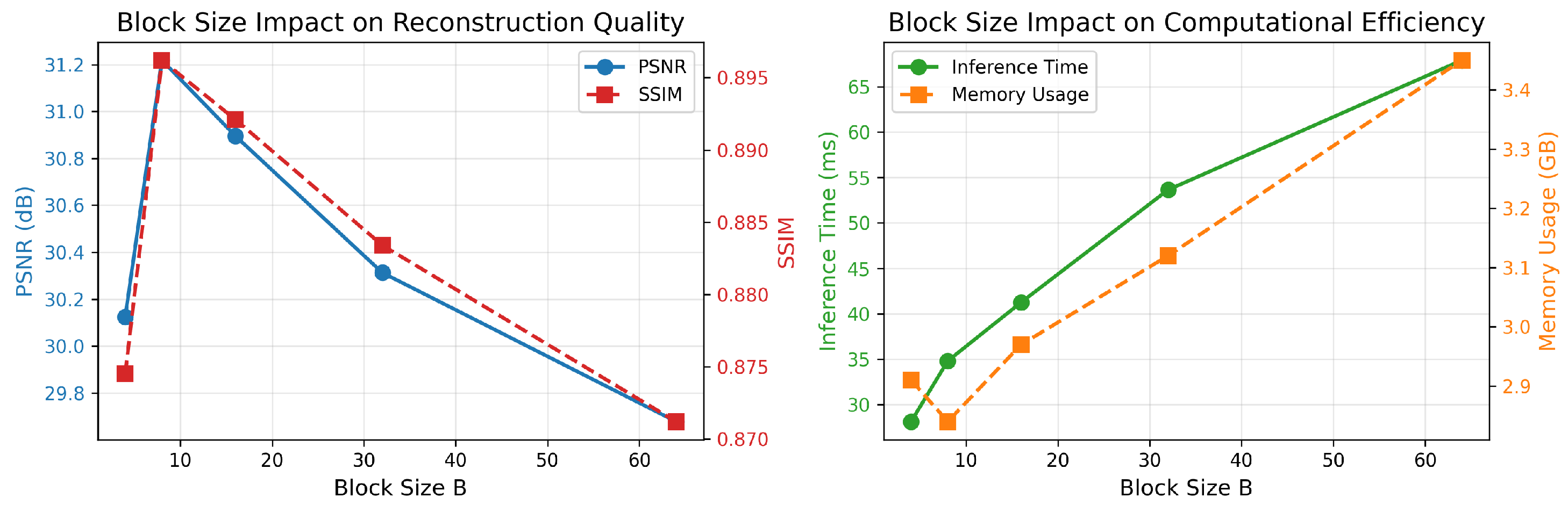

7.8. Impact of Semantic Block Size

7.9. Geographically-Chunked Processing Analysis

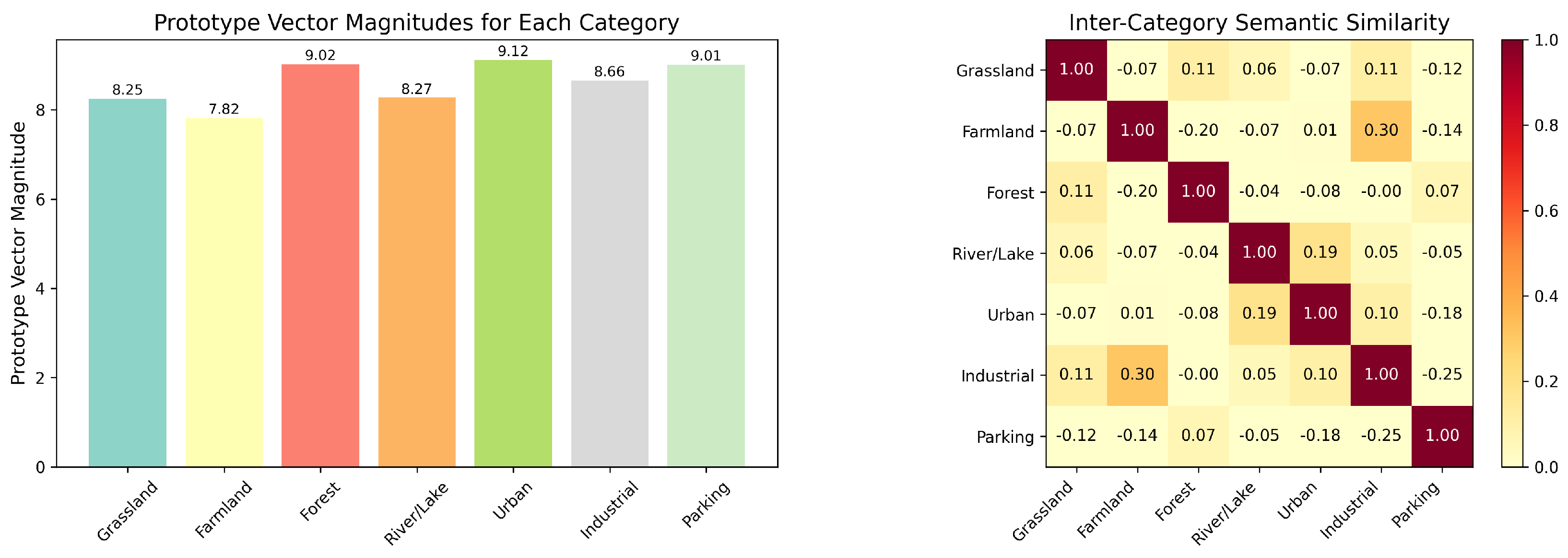

7.10. Semantic Prototype Analysis

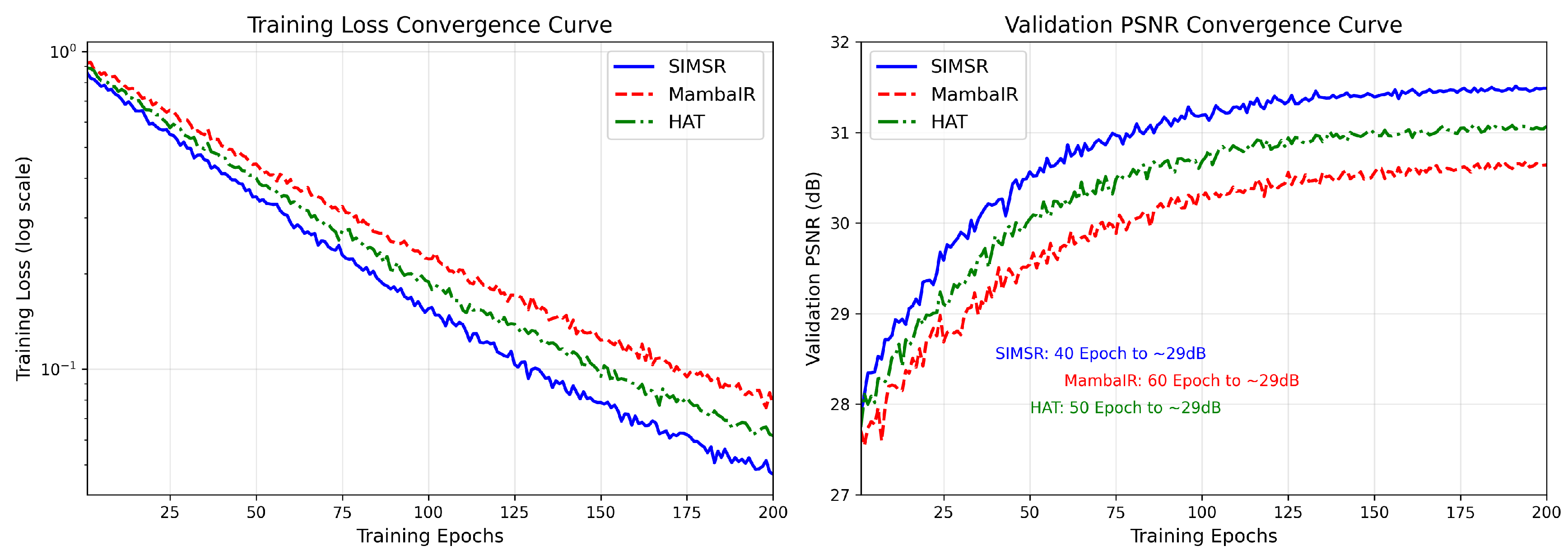

7.11. Training Dynamics Analysis

8. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

References

- Jcgm, J.; et al. Evaluation of measurement data—Guide to the expression of uncertainty in measurement. Int. Organ. Stand. Geneva ISBN 2008, 50, 134.

- Drake Jr, P.J. Dimensioning and tolerancing handbook; McGraw-Hill, 1999.

- Huete, A.; Didan, K.; Miura, T.; Rodriguez, E.P.; Gao, X.; Ferreira, L.G. Overview of the radiometric and biophysical performance of the MODIS vegetation indices. Remote sensing of environment 2002, 83, 195–213.

- Petropoulos, G. Remote Sensing of Land Surface Turbulent Fluxes and Soil Moisture 2013.

- Li, S.; Dragicevic, S.; Castro, F.A.; Sester, M.; Winter, S.; Coltekin, A.; Pettit, C.; Jiang, B.; Haworth, J.; Stein, A.; et al. Geospatial big data handling theory and methods: A review and research challenges. ISPRS journal of Photogrammetry and Remote Sensing 2016, 115, 119–133.

- Platel, A.; Sandino, J.; Shaw, J.; Bollard, B.; Gonzalez, F. Advancing Sparse Vegetation Monitoring in the Arctic and Antarctic: A Review of Satellite and UAV Remote Sensing, Machine Learning, and Sensor Fusion. Remote Sensing 2025, 17. [CrossRef]

- Albanwan, H.; Qin, R.; Liu, J.K. Remote Sensing-Based 3D Assessment of Landslides: A Review of the Data, Methods, and Applications. Remote Sensing 2024, 16. [CrossRef]

- Kumar, S.; Meena, R.S.; Sheoran, S.; Jangir, C.K.; Jhariya, M.K.; Banerjee, A.; Raj, A. Chapter 5 - Remote sensing for agriculture and resource management. In Natural Resources Conservation and Advances for Sustainability; Jhariya, M.K.; Meena, R.S.; Banerjee, A.; Meena, S.N., Eds.; Elsevier, 2022; pp. 91–135. [CrossRef]

- Mathieu, R.; Freeman, C.; Aryal, J. Mapping private gardens in urban areas using object-oriented techniques and very high-resolution satellite imagery. Landscape and Urban Planning 2007, 81, 179–192. [CrossRef]

- Li, J.; Pei, Y.; Zhao, S.; Xiao, R.; Sang, X.; Zhang, C. A Review of Remote Sensing for Environmental Monitoring in China. Remote Sensing 2020, 12. [CrossRef]

- Stöcker, C.; Bennett, R.; Nex, F.; Gerke, M.; Zevenbergen, J. Review of the Current State of UAV Regulations. Remote Sensing 2017, 9. [CrossRef]

- Song, Y.; Sun, L.; Bi, J.; Quan, S.; Wang, X. DRGAN: A Detail Recovery-Based Model for Optical Remote Sensing Images Super-Resolution. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–13. [CrossRef]

- Chung, M.; Jung, M.; Kim, Y. Enhancing Remote Sensing Image Super-Resolution Guided by Bicubic-Downsampled Low-Resolution Image. Remote Sensing 2023, 15. [CrossRef]

- Zhang, Y.; Li, K.; Li, K.; Wang, L.; Zhong, B.; Fu, Y. Image super-resolution using very deep residual channel attention networks. In Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 286–301.

- de França e Silva, N.R.; Chaves, M.E.D.; Luciano, A.C.d.S.; Sanches, I.D.; de Almeida, C.M.; Adami, M. Sugarcane Yield Estimation Using Satellite Remote Sensing Data in Empirical or Mechanistic Modeling: A Systematic Review. Remote Sensing 2024, 16. [CrossRef]

- Liang, J.; Cao, J.; Sun, G.; Zhang, K.; Van Gool, L.; Timofte, R. SwinIR: Image restoration using Swin Transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 1833–1844.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the Advances in Neural Information Processing Systems, 2017, pp. 5998–6008.

- Somvanshi, S.; Monzurul Islam, M.; Sultana Mimi, M.; Bashar Polock, S.B.; Chhetri, G.; Das, S. From S4 to Mamba: A Comprehensive Survey on Structured State Space Models. arXiv e-prints 2025, p. arXiv:2503.18970, [arXiv:stat.ML/2503.18970]. [CrossRef]

- Wang, Y.; Yuan, W.; Xie, F.; Lin, B. ESatSR: Enhancing Super-Resolution for Satellite Remote Sensing Images with State Space Model and Spatial Context. Remote Sensing 2024, 16. [CrossRef]

- Keys, R. Cubic convolution interpolation for digital image processing. IEEE Transactions on Acoustics, Speech, and Signal Processing 1981, 29, 1153–1160.

- Dong, C.; Loy, C.C.; He, K.; Tang, X. Image super-resolution using deep convolutional networks. IEEE Transactions on Pattern Analysis and Machine Intelligence 2015, 38, 295–307.

- Kim, J.; Lee, J.K.; Lee, K.M. Accurate image super-resolution using very deep convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 1646–1654.

- Zhang, Z.; Liu, J.; Wang, L. Swinfir: Rethinking the swinir for image restoration and enhancement. In Proceedings of the 2022 IEEE International Conference on Multimedia and Expo (ICME). IEEE, 2022, pp. 1–6.

- Chen, X.; Wang, Y.; Wu, G.; Chen, J.; Liu, J. Activating more pixels in image super-resolution transformer. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 11612–11621.

- Chen, X.; Wang, X.; Zhang, W.; Kong, X.; Qiao, Y.; Zhou, J.; Dong, C. HAT: Hybrid Attention Transformer for Image Restoration, 2024, [arXiv:cs.CV/2309.05239].

- Chen, C.; Yang, H.; Chen, S.; Xi, F.; Liu, Z. A Data-Driven Motion Compensation Scheme for Compressed Sensing SAR Image Restoration. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–18. [CrossRef]

- Luan, X.; Fan, H.; Wang, Q.; Yang, N.; Liu, S.; Li, X.; Tang, Y. FMambaIR: A Hybrid State-Space Model and Frequency Domain for Image Restoration. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–14. [CrossRef]

- Zhou, Y.; Suo, J.; Wang, Y.; Su, J.; Xiao, W.; Hong, Z.; Ranjan, R.; Wang, L.; Wen, Z. MMCANet A Multimodal and Cross-Attention Network for Cloud Removal and Exploration of Progressive Remote Sensing Images Restoration Algorithm. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–13. [CrossRef]

- Zhang, W.; Qu, Q.; Qiu, A.; Li, Z.; Liu, X.; Li, Y. Efficient Denoising of Ultrasonic Logging While Drilling Images: Multinoise Diffusion Denoising and Distillation. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–17. [CrossRef]

- Huang, Z.; Yang, Y.; Yu, H.; Li, Q.; Shi, Y.; Zhang, Y.; Fang, H. RCST: Residual Context-Sharing Transformer Cascade to Approximate Taylor Expansion for Remote Sensing Image Denoising. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–15. [CrossRef]

- Cui, Y.; Bin Waheed, U.; Chen, Y. Unsupervised Deep Learning for DAS-VSP Denoising Using Attention-Based Deep Image Prior. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–14. [CrossRef]

- Zhang, Y.; Li, K.; Li, K.; Wang, L.; Zhong, B.; Fu, Y. Image Super-Resolution Using Very Deep Residual Channel Attention Networks. In Proceedings of the European Conference on Computer Vision (ECCV), September 2018.

- Zhao, W.; Wang, L.; Zhang, K. MambaIR: A Simple and Efficient State Space Model for Image Restoration. arXiv preprint arXiv:2403.09963 2024.

- Guo, H.; Guo, Y.; Zha, Y.; Zhang, Y.; Li, W.; Dai, T.; Xia, S.T.; Li, Y. MambaIRv2: Attentive State Space Restoration, 2025, [arXiv:eess.IV/2411.15269].

- Zhu, L.; Liao, B.; Zhang, Q.; Wang, X.; Liu, W.; Wang, X. Vision Mamba: Efficient Visual Representation Learning with Bidirectional State Space Model, 2024, [arXiv:cs.CV/2401.09417].

- Liu, Y.; Tian, Y.; Zhao, Y.; Yu, H.; Xie, L.; Wang, Y.; Ye, Q.; Jiao, J.; Liu, Y. VMamba: Visual State Space Model. In Proceedings of the Advances in Neural Information Processing Systems; Globerson, A.; Mackey, L.; Belgrave, D.; Fan, A.; Paquet, U.; Tomczak, J.; Zhang, C., Eds. Curran Associates, Inc., 2024, Vol. 37, pp. 103031–103063.

- Zhang, H.; Zhu, Y.; Wang, D.; Zhang, L.; Chen, T.; Wang, Z.; Ye, Z. A Survey on Visual Mamba. Applied Sciences 2024, 14. [CrossRef]

- He, X.; Cao, K.; Zhang, J.; Yan, K.; Wang, Y.; Li, R.; Xie, C.; Hong, D.; Zhou, M. Pan-Mamba: Effective pan-sharpening with state space model. Information Fusion 2025, 115, 102779. [CrossRef]

- Zhu, Q.; Zhang, G.; Zou, X.; Wang, X.; Huang, J.; Li, X. ConvMambaSR: Leveraging State-Space Models and CNNs in a Dual-Branch Architecture for Remote Sensing Imagery Super-Resolution. Remote Sensing 2024, 16. [CrossRef]

- Lim, B.; Son, S.; Kim, H.; Nah, S.; Lee, K.M. Enhanced deep residual networks for single image super-resolution. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, 2017, pp. 136–144.

- Zou, Q.; Ni, L.; Zhang, T.; Wang, Q. Deep Learning Based Feature Selection for Remote Sensing Scene Classification. IEEE Geoscience and Remote Sensing Letters 2015, 12, 2321–2325. [CrossRef]

- Yang, Y.; Newsam, S.D. Bag-of-visual-words and spatial extensions for land-use classification. In Proceedings of the 18th ACM SIGSPATIAL International Symposium on Advances in Geographic Information Systems, ACM-GIS 2010, November 3-5, 2010, San Jose, CA, USA, Proceedings; Agrawal, D.; Zhang, P.; Abbadi, A.E.; Mokbel, M.F., Eds. ACM, 2010, pp. 270–279. [CrossRef]

- Dai, D.; Yang, W. Satellite Image Classification via Two-Layer Sparse Coding With Biased Image Representation. IEEE Geoscience and Remote Sensing Letters 2011, 8, 173–176. [CrossRef]

- Wang, Z.; Bovik, A.; Sheikh, H.; Simoncelli, E. Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing 2004, 13, 600–612. [CrossRef]

- Yuhas, R.; Goetz, A.; Boardman, J. Discrimination among semi-arid landscape endmembers using the Spectral Angle Mapper (SAM) algorithm 1992.

- Mittal, A.; Soundararajan, R.; Bovik, A.C. Making a “Completely Blind” Image Quality Analyzer. IEEE Signal Processing Letters 2013, 20, 209–212. [CrossRef]

- Zhang, R.; Isola, P.; Efros, A.A.; Shechtman, E.; Wang, O. The Unreasonable Effectiveness of Deep Features as a Perceptual Metric. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2018.

- Gu, J.; Dong, C. Interpreting Super-Resolution Networks with Local Attribution Maps. In Proceedings of the Computer Vision and Pattern Recognition, 2021.

- Sundararajan, M.; Taly, A.; Yan, Q. Axiomatic Attribution for Deep Networks. In Proceedings of the 34th International Conference on Machine Learning; Precup, D.; Teh, Y.W., Eds. PMLR, 06–11 Aug 2017, Vol. 70, Proceedings of Machine Learning Research, pp. 3319–3328.

| Dataset Type | Component | PSNR ↑ | SSIM ↑ | SAM ↓ | LPIPS ↓ |
|---|---|---|---|---|---|
| Agricultural (RSSCN7 Grassland) | Baseline (w/o Semantic) | 30.8245 | 0.8126 | 0.1593 | 0.3418 |
| | + Semantic Decomposition | 32.2176 | 0.8194 | 0.1547 | 0.3362 |
| | + 2D Scanning | 32.5941 | 0.8238 | 0.1532 | 0.3314 |
| | Full SIMSR | 32.9074 | 0.8257 | 0.1539 | 0.3312 |
| Urban (UCM Residential) | Baseline (w/o Semantic) | 26.3874 | 0.6865 | 0.1574 | 0.4263 |
| | + Semantic Decomposition | 27.1247 | 0.6928 | 0.1526 | 0.4175 |
| | + 2D Scanning | 27.4382 | 0.6941 | 0.1512 | 0.4148 |
| | Full SIMSR | 27.6757 | 0.6955 | 0.1508 | 0.4127 |
| Natural Texture (WHU-RS19 Forest) | Baseline (w/o Semantic) | 29.6842 | 0.8327 | 0.1563 | 0.3184 |
| | + Semantic Decomposition | 30.0128 | 0.8386 | 0.1521 | 0.3127 |
| | + 2D Scanning | 30.1845 | 0.8409 | 0.1509 | 0.3083 |
| | Full SIMSR | 30.2738 | 0.8414 | 0.1502 | 0.3061 |
| Regular Structure (RSSCN7 Parking) | Baseline (w/o Semantic) | 24.3728 | 0.7624 | 0.1568 | 0.3627 |
| | + Semantic Decomposition | 24.9163 | 0.7712 | 0.1549 | 0.3546 |
| | + 2D Scanning | 25.0847 | 0.7745 | 0.1537 | 0.3512 |
| | Full SIMSR | 25.1659 | 0.7766 | 0.1533 | 0.3489 |

| Segmentation Error Rate (%) | PSNR ↑ | SSIM ↑ | NIQE ↓ | LPIPS ↓ | mIoU of SR Output ↑ | Degradation vs. Perfect Mask (%) |
|---|---|---|---|---|---|---|
| 0 (Ground Truth) | 31.2179 | 0.8962 | 4.7261 | 0.1342 | 0.7289 | 0.00 |
| 5 | 31.0436 | 0.8917 | 4.8125 | 0.1387 | 0.7214 | -0.56 |
| 10 | 30.8754 | 0.8873 | 4.9013 | 0.1435 | 0.7138 | -1.10 |
| 15 | 30.6248 | 0.8821 | 5.0147 | 0.1498 | 0.7046 | -1.90 |
| 20 | 30.3429 | 0.8765 | 5.1382 | 0.1563 | 0.6951 | -2.80 |
| Random Mask | 28.7154 | 0.8426 | 5.8942 | 0.2014 | 0.6327 | -8.01 |
| No Semantic | 27.4973 | 0.8521 | 5.1783 | 0.2094 | 0.6832 | -11.92 |
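
The last column of the table above appears to be the relative change in PSNR with respect to the ground-truth-mask row. This reading is inferred from the reported numbers and is not stated in the source. The sketch below recomputes the column under that assumption; `relative_change_pct` is an illustrative helper name. The recomputed values agree with the table to within 0.01 percentage points (the Random Mask row comes out at about -8.02 against the reported -8.01).

```python
def relative_change_pct(value: float, reference: float) -> float:
    """Signed percentage change of `value` relative to `reference`."""
    return (value - reference) / reference * 100.0


# PSNR values copied from the table above; the 0% error row is the reference.
reference_psnr = 31.2179
psnr_by_setting = {
    "5": 31.0436,
    "10": 30.8754,
    "15": 30.6248,
    "20": 30.3429,
    "Random Mask": 28.7154,
    "No Semantic": 27.4973,
}

# Assumption: "Degradation" means relative PSNR change, rounded to two decimals.
degradation = {
    setting: round(relative_change_pct(psnr, reference_psnr), 2)
    for setting, psnr in psnr_by_setting.items()
}

for setting, change in degradation.items():
    print(f"{setting}: {change:+.2f}%")
```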

| Device / Method | Resolution | FPS | Power (W) | Memory (MB) | Latency (ms) | PSNR ↑ | Energy per Frame (J) |
|---|---|---|---|---|---|---|---|
| NVIDIA Jetson AGX Xavier (30W Max) | | | | | | | |
| SRCNN | 512×512 | 18.42 | 26.4 | 512 | 54.3 | 21.1437 | 1.433 |
| VDSR | 512×512 | 15.73 | 27.1 | 628 | 63.6 | 21.3548 | 1.723 |
| SwinIR | 512×512 | 3.82 | 29.3 | 1424 | 261.8 | 22.6073 | 7.670 |
| HAT | 512×512 | 3.45 | 29.8 | 1582 | 289.9 | 23.8924 | 8.639 |
| MambaIR | 512×512 | 7.64 | 28.7 | 1268 | 130.9 | 24.8382 | 3.756 |
| MambaIRv2 | 512×512 | 6.98 | 29.1 | 1342 | 143.3 | 23.6127 | 4.169 |
| SIMSR | 512×512 | 24.16 | 25.2 | 1024 | 41.4 | 24.9281 | 1.043 |
| Desktop GPU (NVIDIA RTX 4090) for Reference | | | | | | | |
| SIMSR | 512×512 | 67.82 | 285.6 | 2912 | 14.7 | 24.9281 | 4.211 |
| Resolution Scaling on Jetson AGX Xavier | | | | | | | |
| SIMSR | 256×256 | 87.45 | 22.7 | 364 | 11.4 | 28.3472 | 0.260 |
| SIMSR | 512×512 | 24.16 | 25.2 | 1024 | 41.4 | 24.9281 | 1.043 |
| SIMSR | 1024×1024 | 5.83 | 28.6 | 3842 | 171.5 | 21.5746 | 4.906 |
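
The Latency and Energy per Frame columns in the table above are consistent with being derived from the FPS and Power columns (latency ≈ 1000 / FPS, energy ≈ Power / FPS). The sketch below checks that reading against three rows. The relationship is inferred from the reported numbers, not stated by the source, and the function names are illustrative.

```python
def latency_ms(fps: float) -> float:
    """Per-frame latency in milliseconds, assuming frames are processed one at a time."""
    return 1000.0 / fps


def energy_per_frame_j(power_w: float, fps: float) -> float:
    """Energy per frame in joules: average power (W = J/s) divided by frames per second."""
    return power_w / fps


# (label, FPS, power in W) copied from the table above.
rows = [
    ("SRCNN on Jetson AGX Xavier", 18.42, 26.4),
    ("SIMSR on Jetson AGX Xavier", 24.16, 25.2),
    ("SIMSR on RTX 4090", 67.82, 285.6),
]

for label, fps, power in rows:
    print(f"{label}: {latency_ms(fps):.1f} ms, {energy_per_frame_j(power, fps):.3f} J/frame")
```

Read this way, the desktop GPU delivers lower latency for SIMSR but spends roughly four times the energy per frame of the Jetson (4.211 J against 1.043 J).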

| ID | Configuration | PSNR ↑ | SSIM ↑ | NIQE ↓ | LPIPS ↓ | RMSE ↓ | SAM ↓ | FLOPs (G) |
|---|---|---|---|---|---|---|---|---|
| A1 | Baseline (ResNet backbone + 1D scan) | 25.8264 | 0.7121 | 6.8472 | 0.3415 | 7.8921 | 0.1683 | 52.34 |
| A2 | A1 + Channel Attention | 26.1248 | 0.7246 | 6.6357 | 0.3278 | 7.6345 | 0.1652 | 53.67 |
| A3 | A2 + Omni-Shift (Uni-directional) | 26.3844 | 0.7276 | 6.4470 | 0.3147 | 6.5315 | 0.1711 | 54.21 |
| A4 | A2 + Omni-Shift (Quad-directional) | 26.6158 | 0.7445 | 6.2692 | 0.3125 | 6.4983 | 0.1705 | 55.38 |
| A5 | A2 + Omni-Shift (Ours) | 27.0418 | 0.7643 | 5.9904 | 0.3024 | 6.4954 | 0.1668 | 56.74 |
| B1 | A5 + Naive Attention Backbone | 27.4862 | 0.7954 | 5.7623 | 0.2789 | 6.3127 | 0.1624 | 58.92 |
| B2 | A5 + SISM Backbone (w/o 2D scan) | 27.7597 | 0.7945 | 6.1033 | 0.2855 | 6.3229 | 0.1812 | 57.31 |
| B3 | A5 + SISM Backbone (with 2D scan) | 27.8730 | 0.8183 | 5.7611 | 0.2582 | 6.2835 | 0.1735 | 59.84 |
| C1 | B3 + MLP(ReLU) Feature Transform | 30.8137 | 0.9247 | 5.5504 | 0.1717 | 4.7993 | 0.1592 | 60.12 |
| C2 | B3 + MLP(GELU) Feature Transform | 30.8710 | 0.9347 | 5.3733 | 0.1523 | 4.7711 | 0.1569 | 60.35 |
| C3 | B3 + Channel Attention (Ours) | 31.0816 | 0.9765 | 4.7990 | 0.1097 | 4.6922 | 0.1454 | 60.78 |
| D1 | C3 w/o Semantic Decomposition | 30.1427 | 0.9124 | 5.2146 | 0.1895 | 5.1283 | 0.1578 | 58.69 |
| D2 | C3 w/o Geographic Chunking | 30.5968 | 0.9372 | 5.0387 | 0.1473 | 4.8564 | 0.1521 | 68.42 |
| D3 | Full SIMSR | 31.2179 | 0.8962 | 4.7261 | 0.1342 | 4.5821 | 0.1384 | 60.78 |

| Model | Params (M) | FLOPs (G) | Memory (MB) | Throughput (FPS) | Energy (J/frame) | Quality-Efficiency Score* |
|---|---|---|---|---|---|---|
| SRCNN | 0.58 | 8.23 | 412 | 65.8 | 0.876 | 2.47 |
| VDSR | 0.66 | 12.45 | 498 | 54.2 | 1.124 | 2.14 |
| SwinIR | 2.78 | 154.95 | 1424 | 9.8 | 8.942 | 1.82 |
| HAT | 2.82 | 142.95 | 1582 | 9.2 | 9.874 | 1.89 |
| MambaIR | 2.87 | 121.34 | 1268 | 19.3 | 4.826 | 2.12 |
| MambaIRv2 | 2.92 | 132.34 | 1342 | 17.6 | 5.327 | 1.98 |
| SIMSR | 2.12 | 60.78 | 1024 | 42.6 | 2.314 | 3.01 |

| Implementation | FLOPs (G) | Training | Inference |
|---|---|---|---|
| Naive PyTorch | 89.23 | 12h 23m | 1h 21m 10s |
| Triton (Element-wise, FP32) | 71.23 | 4h 56m | 17m 57s |
| Triton (Element-wise, BF16) | 71.23 | 4h 45m | 13m 50s |
| SIMSR (Chunk-wise, BF16) | 60.78 | 1h 25m | 7m 29s |

| Method | Params (M) | FLOPs (G) | Memory (GB) | Inference (ms) | Training (hr) | L2 Hit (%) | Bandwidth (GB/s) |
|---|---|---|---|---|---|---|---|
| SRCNN | 0.58 | 8.23 | 1.12 | 15.24 | 3.2 | 42.3 | 182.4 |
| VDSR | 0.66 | 12.45 | 1.34 | 18.67 | 4.1 | 45.7 | 175.8 |
| SwinIR | 2.78 | 154.95 | 3.89 | 126.45 | 5.4 | 41.2 | 210.3 |
| HAT | 2.82 | 142.95 | 4.12 | 134.28 | 5.0 | 44.7 | 204.1 |
| MambaIR | 2.87 | 121.34 | 3.45 | 97.82 | 4.8 | 48.5 | 163.7 |
| MambaIRv2 | 2.92 | 132.34 | 3.67 | 102.45 | 5.1 | 54.1 | 168.9 |
| SIMSR | 2.12 | 60.78 | 2.84 | 34.82 | 1.4 | 86.7 | 78.2 |

| Method | mIoU ↑ | Pixel Accuracy ↑ | Boundary F1 ↑ |
|---|---|---|---|
| SRCNN | 0.6231 | 0.8546 | 0.7124 |
| VDSR | 0.6318 | 0.8617 | 0.7215 |
| SwinIR | 0.6724 | 0.8912 | 0.7568 |
| HAT | 0.6982 | 0.9034 | 0.7812 |
| MambaIR | 0.7015 | 0.9061 | 0.7834 |
| MambaIRv2 | 0.7058 | 0.9087 | 0.7891 |
| SIMSR | 0.7289 | 0.9243 | 0.8142 |

| Block Size B | PSNR ↑ | SSIM ↑ | Inference (ms) | Memory (GB) | FLOPs (G) | Cache Hit (%) |
|---|---|---|---|---|---|---|
| 4 | 30.1247 | 0.8745 | 28.15 | 2.91 | 58.24 | 84.2 |
| 8 | 31.2179 | 0.8962 | 34.82 | 2.84 | 60.78 | 86.7 |
| 16 | 30.8954 | 0.8921 | 41.27 | 2.97 | 63.15 | 82.4 |
| 32 | 30.3128 | 0.8834 | 53.64 | 3.12 | 67.82 | 78.9 |
| 64 | 29.6783 | 0.8712 | 67.89 | 3.45 | 72.41 | 73.5 |

| Chunking Strategy | L1 Hit (%) | L2 Hit (%) | L3 Hit (%) | Bandwidth (GB/s) | Compute Util. (%) | Power (W) |
|---|---|---|---|---|---|---|
| No chunking (global) | 62.3 | 41.8 | 78.5 | 210.2 | 68.2 | 312.4 |
| Fixed grid chunking | 78.5 | 63.4 | 85.7 | 155.9 | 82.4 | 285.7 |
| Random chunking | 71.2 | 58.9 | 81.3 | 178.3 | 75.6 | 298.1 |
| Semantic-guided | 92.7 | 86.7 | 94.2 | 78.2 | 95.3 | 267.8 |

| Category | Grass | Field | Forest | River/Lake | Urban | Industrial | Parking |
|---|---|---|---|---|---|---|---|
| Grass | 1.0000 | 0.8264 | 0.7951 | 0.3142 | 0.5127 | 0.4673 | 0.4892 |
| Field | 0.8264 | 1.0000 | 0.7689 | 0.2987 | 0.5346 | 0.4815 | 0.5037 |
| Forest | 0.7951 | 0.7689 | 1.0000 | 0.3248 | 0.4876 | 0.4539 | 0.4721 |
| River/Lake | 0.3142 | 0.2987 | 0.3248 | 1.0000 | 0.2135 | 0.1984 | 0.2276 |
| Urban | 0.5127 | 0.5346 | 0.4876 | 0.2135 | 1.0000 | 0.8214 | 0.7945 |
| Industrial | 0.4673 | 0.4815 | 0.4539 | 0.1984 | 0.8214 | 1.0000 | 0.7682 |
| Parking | 0.4892 | 0.5037 | 0.4721 | 0.2276 | 0.7945 | 0.7682 | 1.0000 |
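
The matrix above is symmetric with a unit diagonal, which is what a pairwise cosine similarity between per-class prototype vectors would produce. The source does not state the similarity measure, so the sketch below is only one plausible reading. The prototype vectors are toy values invented for illustration, and `cosine_similarity` and `similarity_matrix` are hypothetical helper names.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def similarity_matrix(prototypes):
    """Pairwise cosine similarity between named prototype vectors."""
    names = list(prototypes)
    return {
        a: {b: cosine_similarity(prototypes[a], prototypes[b]) for b in names}
        for a in names
    }


# Toy 3-D prototypes (NOT from the paper); real prototypes would be learned features.
toy_prototypes = {
    "Grass": [1.0, 0.2, 0.0],
    "Field": [0.9, 0.4, 0.1],
    "River/Lake": [0.0, 0.1, 1.0],
}

sim = similarity_matrix(toy_prototypes)
for name, row in sim.items():
    print(name, {other: round(value, 4) for other, value in row.items()})
```

With these toy vectors, the two vegetation-like classes score close to each other and far from the water class, mirroring the block structure visible in the reported matrix.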

| Method | Epochs to 29dB | Final PSNR | Training Stability | LR Schedule | Batch Size |
|---|---|---|---|---|---|
| SRCNN | 80 | 25.83 | Moderate | Step decay | 16 |
| VDSR | 75 | 26.35 | High | Step decay | 16 |
| SwinIR | 65 | 28.52 | Moderate | Cosine | 8 |
| HAT | 60 | 28.79 | High | Cosine | 8 |
| MambaIR | 55 | 29.12 | High | Cosine | 16 |
| MambaIRv2 | 50 | 29.45 | High | Cosine | 16 |
| SIMSR | 40 | 31.22 | Very High | Cosine | 16 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.