Submitted: 22 July 2025

Posted: 23 July 2025

Abstract

Keywords:

Introduction

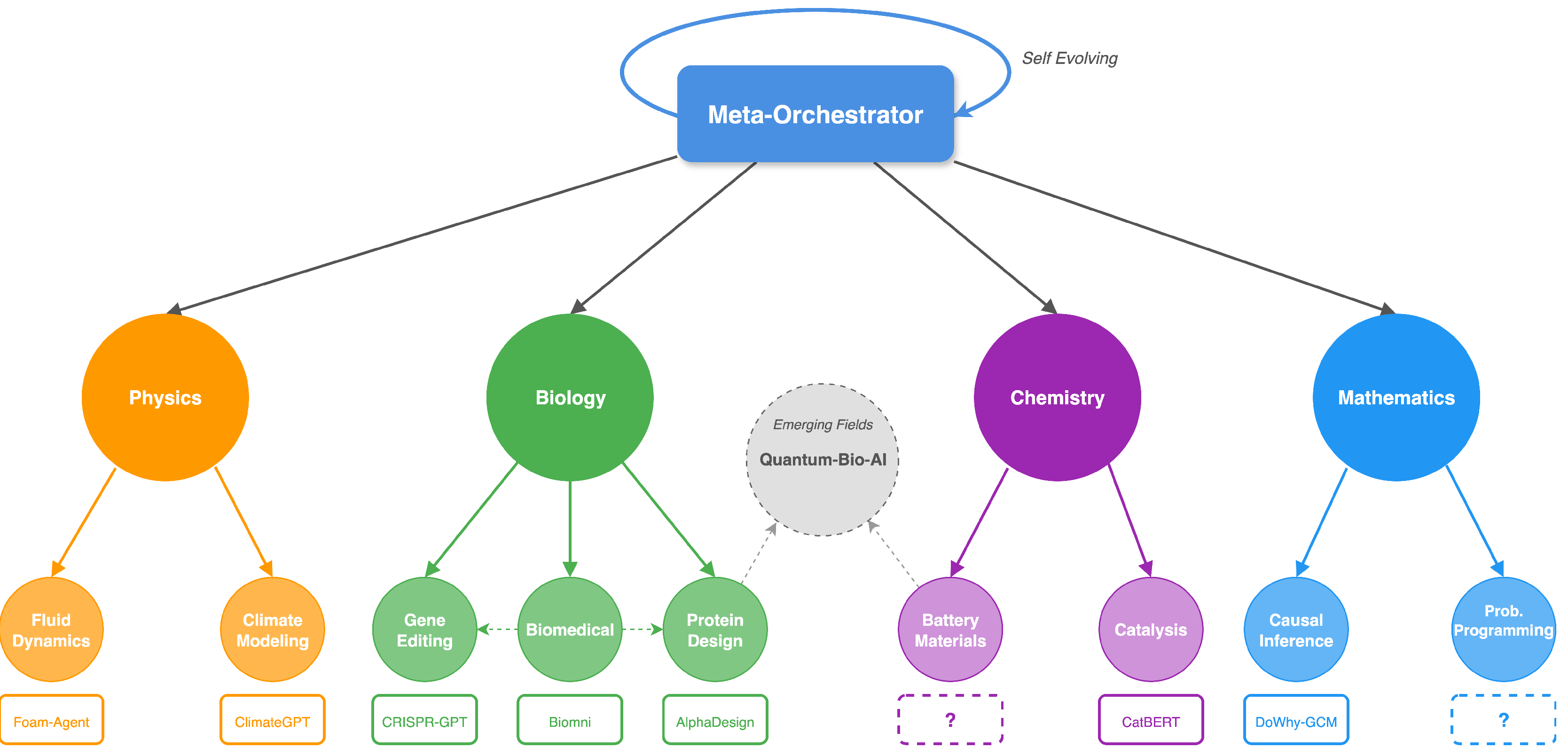

Hierarchical Self-Evolving Architecture

Algorithm 1: Dynamic Agent Spawning

Protocol-Based Interoperability

Algorithm 2: Hierarchical Task Decomposition

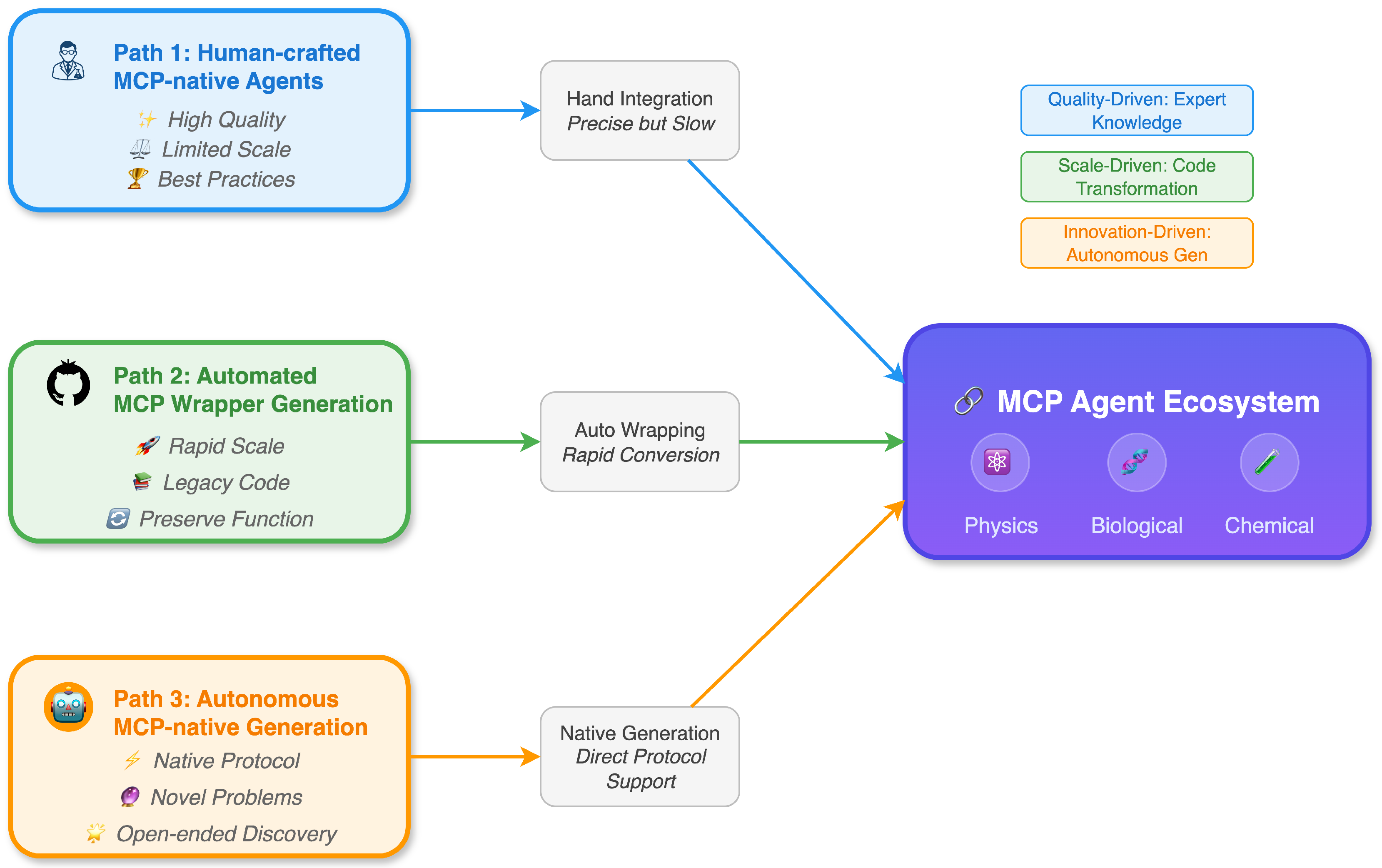

Three Implementation Pathways

| | Path 1 | Path 2 | Path 3 |
|---|---|---|---|
| | Human-crafted | Automated transformation | Autonomous generation |
| Quality | High | Medium | Variable |
| Scalability | Low | High | High |
| Novel capabilities | Limited | No | Yes |
| Development effort | High | Medium | Low (once developed) |

Case Study: Adaptive Materials Discovery

Discussion and Future Directions


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).