Submitted: 21 July 2025

Posted: 22 July 2025


Abstract

Keywords:

1. Introduction

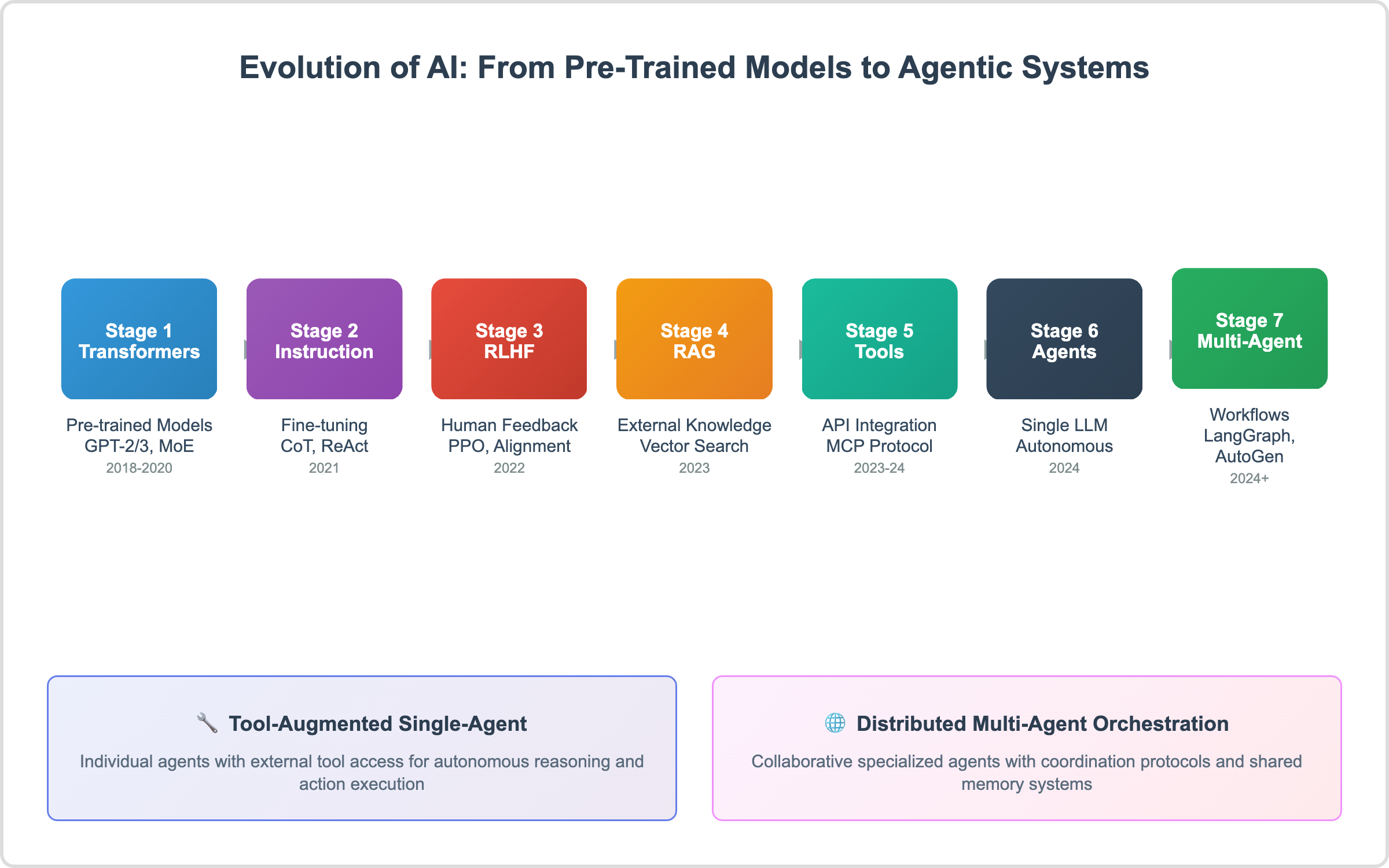

2. Evolution of AI: From Pre-Trained Models to Agentic Systems

2.1. Stage 1: Transformer-Based Pre-Trained Models (2018–2020)

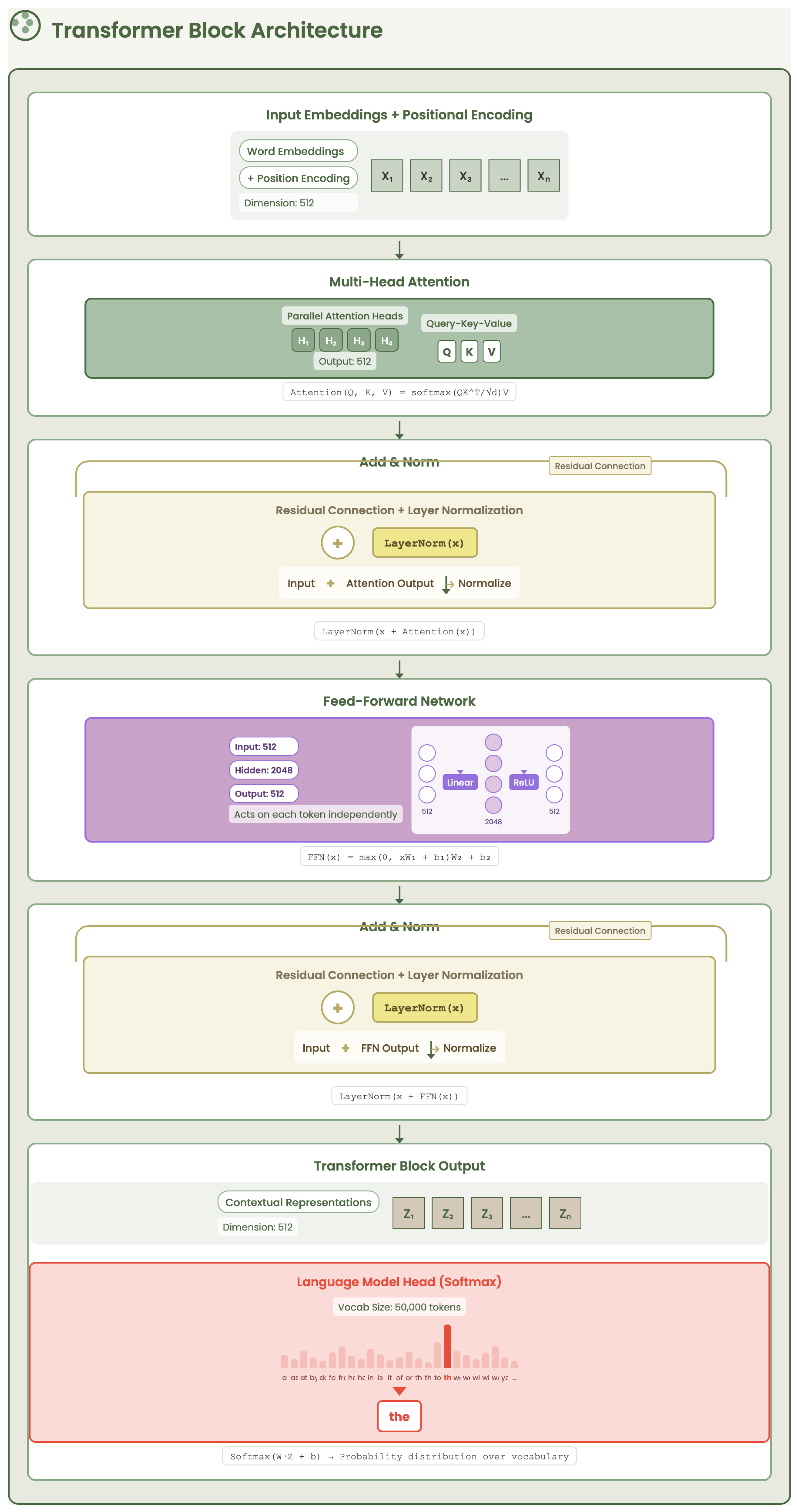

2.1.1. Transformer Architecture Fundamentals

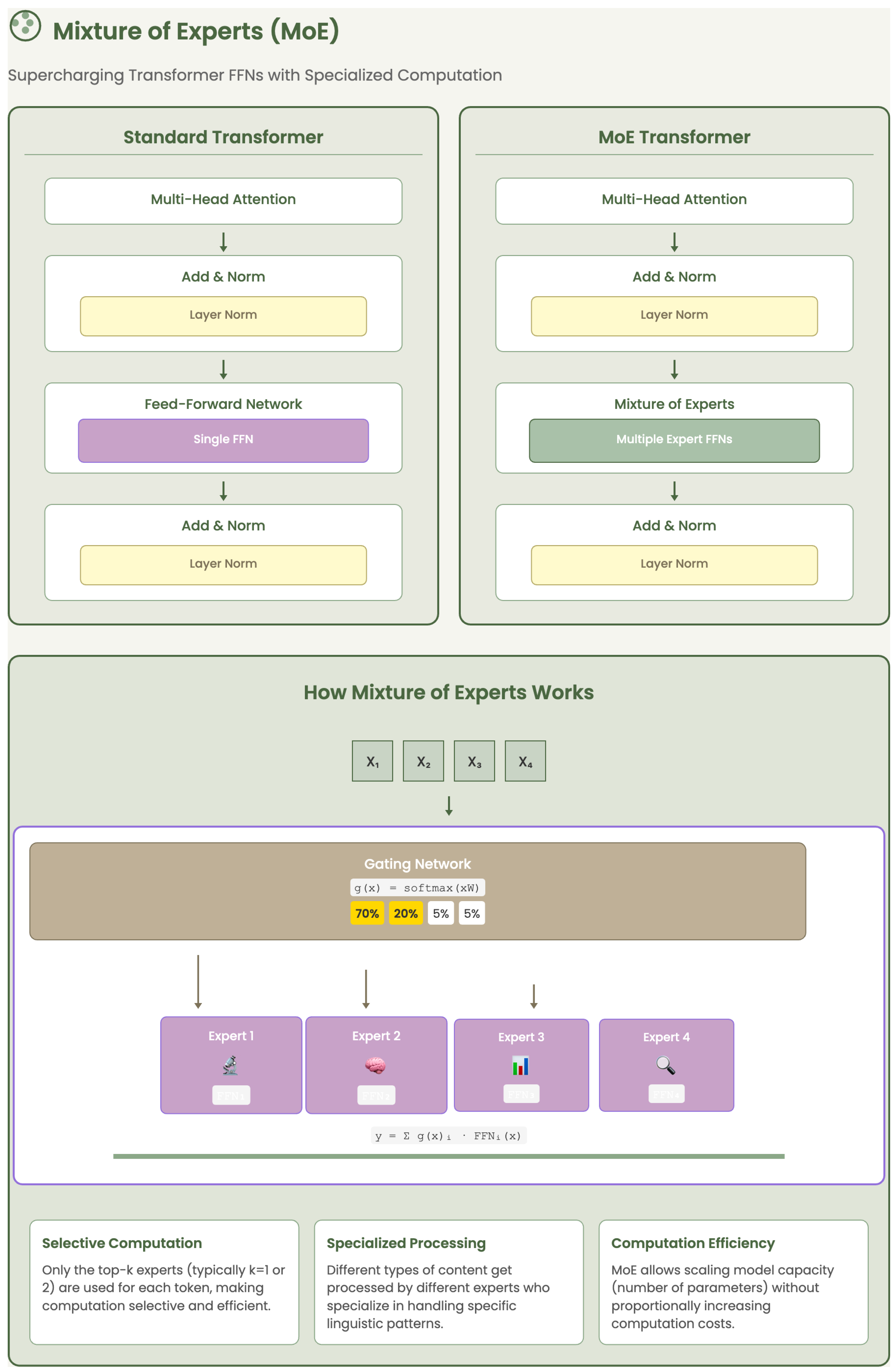

2.1.2. Mixture of Experts (MoE)

2.1.3. Pre-Trained Models: GPT-2 and GPT-3

2.2. Stage 2: Instruction Fine-Tuning (2021)

- Controllability: The model responds more accurately to varied user intents.

- Safety and factuality: Instruction-tuned models are less likely to hallucinate or produce harmful content.

- Task-specific performance: Instruction tuning helps models generalize across downstream tasks without task-specific retraining.

- Chain-of-Thought (CoT): Introduces intermediate reasoning steps that help the model work through problems (e.g., math or logic), making its outputs more traceable and reducing logical errors [16]. Example: To solve “What is 15% of 80?”, the model first converts 15% to a decimal (0.15), then multiplies: 0.15 × 80 = 12.

- Tree-of-Thought (ToT): Explores multiple reasoning paths like a decision tree, which prevents premature conclusions and encourages diverse solution paths [17]. Example: For the question “How can a company increase profits?”, the model evaluates different branches:

  - Option 1: Cut operational costs.
  - Option 2: Raise product prices.
  - Option 3: Expand to new markets.

- ReAct (Reasoning + Acting): Combines reasoning with real-time action (e.g., tool use or fact-checking), improving factual grounding [18]. Example:

  - Q: “What is the capital of France?”
  - Thought: I need to verify.
  - Action: Search “capital of France”.
  - Observation: Paris.
  - Final Answer: Paris.
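To make the Tree-of-Thought strategy concrete, the following Python sketch runs a breadth-first search that expands every kept reasoning path, scores the candidates, and keeps only the best few at each depth. In ToT the language model itself proposes and evaluates thoughts; here the hypothetical `propose` and `score` functions are deterministic stand-ins on a toy puzzle (reach 11 from 1 using `+3` and `*2` steps) so that the search logic runs without a model. It is a minimal illustration under these assumptions, not the reference implementation from [17].

```python
from typing import Callable, Dict, List, Tuple

# A "thought" is a partial reasoning path, here a tuple of step labels.
Thought = Tuple[str, ...]


def tree_of_thought_bfs(
    root: Thought,
    propose: Callable[[Thought], List[Thought]],
    score: Callable[[Thought], float],
    depth: int,
    beam_width: int,
) -> Thought:
    """Breadth-first Tree-of-Thought search with a fixed beam width.

    Every kept path is expanded into candidate next thoughts, all candidates
    are scored, and only the `beam_width` best survive to the next depth,
    so the search does not commit to a single branch too early.
    """
    frontier: List[Thought] = [root]
    for _ in range(depth):
        candidates = [child for path in frontier for child in propose(path)]
        if not candidates:
            break
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
    return max(frontier, key=score)


# Hypothetical stand-ins for the LLM "propose" and "evaluate" calls:
# a toy puzzle that reaches TARGET from START using "+3" and "*2" steps.
START, TARGET = 1, 11
OPS: Dict[str, Callable[[int], int]] = {"+3": lambda x: x + 3, "*2": lambda x: x * 2}


def evaluate(path: Thought) -> int:
    value = START
    for op in path:
        value = OPS[op](value)
    return value


def propose(path: Thought) -> List[Thought]:
    return [path + (op,) for op in OPS]


def score(path: Thought) -> float:
    # Higher is better: negative distance from the target value.
    return -abs(TARGET - evaluate(path))


best = tree_of_thought_bfs((), propose, score, depth=3, beam_width=2)
print(best, evaluate(best))  # ('+3', '*2', '+3') 11
```

For the profit question above, the three options would be the first-level thoughts and an LLM-backed `score` would rank them; `beam_width` controls how many branches stay alive, trading cost against the risk of pruning a good path early.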

2.3. Stage 3: Reinforcement Learning from Human Feedback (RLHF)

- High-risk investment strategies,

- Balanced ethical advice,

- Legitimate freelance opportunities.

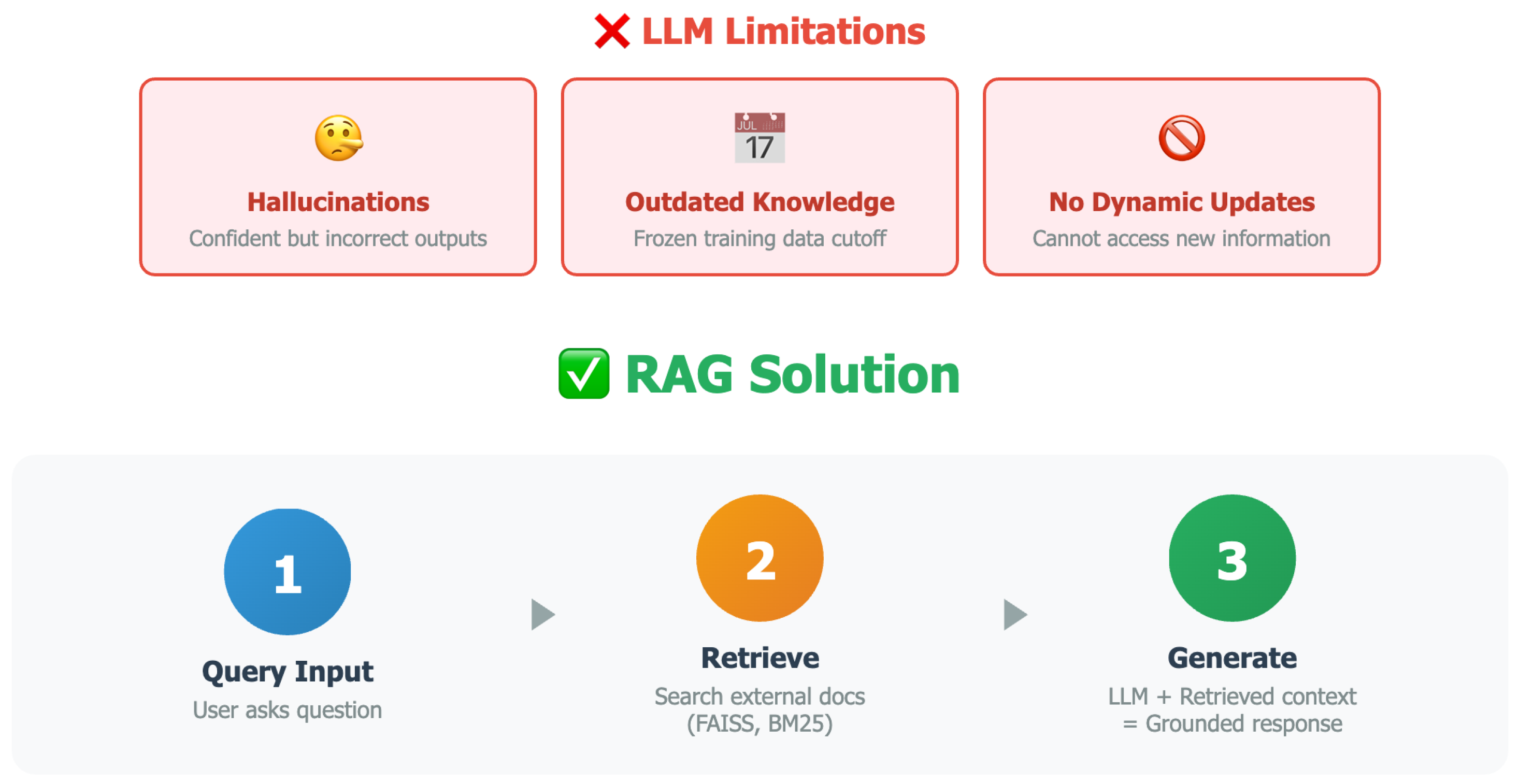

2.4. Stage 4: Retrieval-Augmented Generation (RAG)

- Modularity: Knowledge updates can be made by modifying the retrieval corpus without retraining the model.

- Efficiency: Retrieval systems are more computationally efficient than repeated fine-tuning for each domain or update.

- Transparency: The retrieved documents provide interpretability and support for the generated response.
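The retrieve-then-generate pattern behind these properties can be sketched in a few lines. The example below assumes a toy bag-of-words retriever scored by cosine similarity (production systems typically use BM25 or dense embeddings with a vector index such as FAISS) and stops at building the grounded prompt; the call to a generator model is omitted. The class and function names are illustrative only.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def tokenize(text: str) -> List[str]:
    """Lowercase word tokens; a deliberately simple stand-in for a real tokenizer."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class Retriever:
    """In-memory corpus: knowledge is updated by adding documents, not by retraining."""

    def __init__(self) -> None:
        self.docs: List[Tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.docs.append((text, Counter(tokenize(text))))

    def search(self, query: str, k: int = 1) -> List[str]:
        q = Counter(tokenize(query))
        ranked = sorted(self.docs, key=lambda d: cosine(q, d[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(query: str, passages: List[str]) -> str:
    """Place numbered retrieved passages in the prompt so the answer can cite them."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return f"Answer using only the sources below.\n{context}\nQuestion: {query}"


r = Retriever()
r.add("The Eiffel Tower is located in Paris.")
r.add("Mount Fuji is the highest mountain in Japan.")
query = "Where is the Eiffel Tower?"
print(build_prompt(query, r.search(query, k=1)))
```

Adding a document with `Retriever.add` changes what later queries retrieve without touching any model weights, which is the modularity property listed above; numbering the passages in the prompt lets the generated answer point back to its sources.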

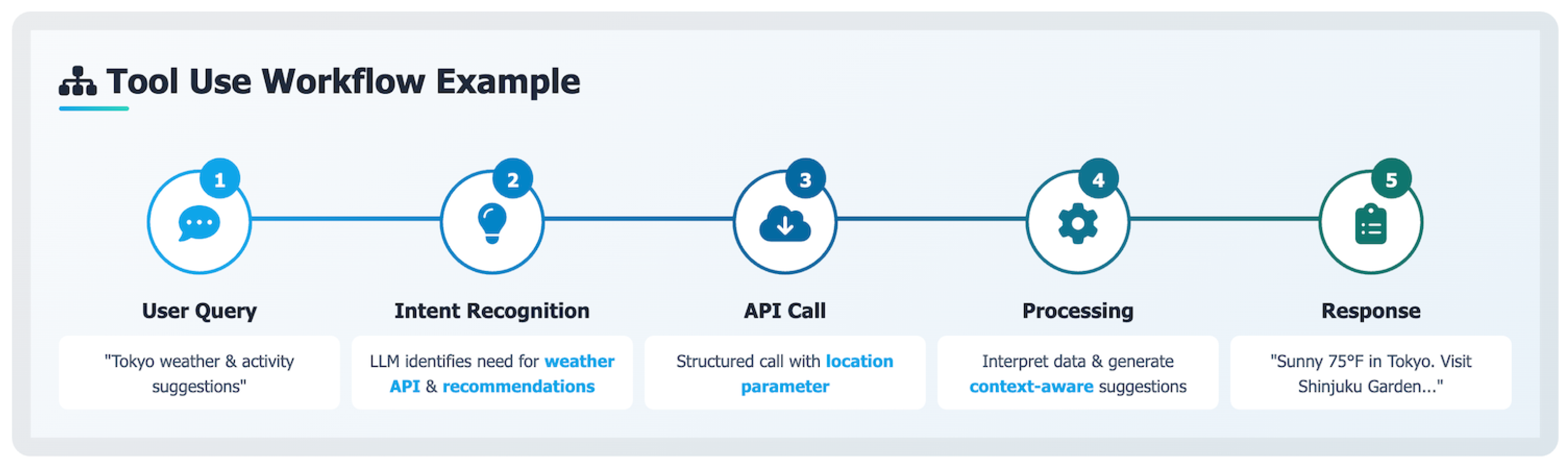

2.5. Stage 5: Tools and APIs Integration

From Text to Action.

Training for Intent Understanding.

How It Works.

- Tool Registration: Each tool is described with a name, description, and input/output schema.

- Function Call Prediction: The LLM is prompted (or fine-tuned) to detect when a tool is needed and how to call it.

- Tool Execution: A backend system receives the tool call, executes it, and returns the result.

- Result Injection: The result is returned to the LLM, which then generates a complete response.

Example.

"Check the weather in Paris tomorrow and summarize the forecast."

"Tomorrow in Paris, the weather will be sunny with a high of 24°C."

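The four steps above can be traced on the Paris weather example with the following sketch. It assumes a hypothetical `get_weather` tool that returns canned data, and a stub in place of the model's function-call prediction; the `name`/`arguments` JSON shape and the JSON-Schema-style `parameters` field are illustrative and loosely resemble common function-calling formats rather than reproducing any specific provider's API.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class Tool:
    name: str
    description: str
    parameters: Dict[str, Any]              # JSON-Schema-style argument description
    fn: Callable[..., Dict[str, Any]]


# Step 1: tool registration. get_weather is hypothetical and returns canned data.
def get_weather(city: str, day: str) -> Dict[str, Any]:
    return {"city": city, "day": day, "condition": "sunny", "high_c": 24}


REGISTRY: Dict[str, Tool] = {
    "get_weather": Tool(
        name="get_weather",
        description="Get the weather forecast for a city on a given day.",
        parameters={
            "type": "object",
            "properties": {"city": {"type": "string"}, "day": {"type": "string"}},
            "required": ["city", "day"],
        },
        fn=get_weather,
    )
}


# Step 2: function call prediction. A real LLM would emit this JSON from the
# user message; this stub ignores its input and returns a fixed call.
def predict_tool_call(user_message: str) -> str:
    return json.dumps({"name": "get_weather", "arguments": {"city": "Paris", "day": "tomorrow"}})


# Step 3: tool execution by the backend.
def execute(call_json: str) -> Dict[str, Any]:
    call = json.loads(call_json)
    tool = REGISTRY[call["name"]]
    return tool.fn(**call["arguments"])


# Step 4: result injection. The LLM would write the final answer from the
# result; a template stands in for that generation step.
def respond(user_message: str) -> str:
    result = execute(predict_tool_call(user_message))
    return (f"{result['day'].capitalize()} in {result['city']}, the weather will be "
            f"{result['condition']} with a high of {result['high_c']}°C.")


print(respond("Check the weather in Paris tomorrow and summarize the forecast."))
# Tomorrow in Paris, the weather will be sunny with a high of 24°C.
```

In a real deployment, step 2 and the final sentence would both come from the LLM; only the registry and the executor are ordinary backend code.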
The Role of MCP.

- Unification: A common protocol replaces hand-crafted tool-call mechanisms with a structured, extensible specification.

- Context-awareness: Tools are only made available to the model within a relevant context, improving safety and reasoning efficiency.

- Automation-readiness: Because tools are self-describing, models can auto-generate calls, parse responses, and chain actions in autonomous workflows.
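The three properties can be illustrated with a small, hypothetical tool server in which each tool carries its own description and input schema, visibility is scoped to a conversation context, and calls are checked against the schema before execution. This is not the MCP SDK: the actual Model Context Protocol specifies its own JSON-RPC message formats, and names such as `ToolServer`, `contexts`, `apply_tax` and `convert_currency` are assumptions made for this sketch.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set


@dataclass
class ToolSpec:
    """A self-describing tool: the model reads name, description and input_schema."""
    name: str
    description: str
    input_schema: Dict[str, Any]                        # JSON-Schema-style arguments
    handler: Callable[..., Any]
    contexts: Set[str] = field(default_factory=set)     # hypothetical: where the tool is offered


class ToolServer:
    """Hypothetical server that scopes tool visibility to the active context."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolSpec] = {}

    def register(self, spec: ToolSpec) -> None:
        self._tools[spec.name] = spec

    def list_tools(self, context: str) -> List[Dict[str, Any]]:
        # Only descriptors for tools relevant to this context are shown to the model.
        return [
            {"name": t.name, "description": t.description, "input_schema": t.input_schema}
            for t in self._tools.values()
            if context in t.contexts
        ]

    def call(self, context: str, name: str, arguments: Dict[str, Any]) -> Any:
        spec = self._tools.get(name)
        if spec is None or context not in spec.contexts:
            raise PermissionError(f"tool {name!r} is not available in context {context!r}")
        # Because the schema is machine-readable, calls can be checked before execution.
        missing = [k for k in spec.input_schema.get("required", []) if k not in arguments]
        if missing:
            raise ValueError(f"missing required arguments: {missing}")
        return spec.handler(**arguments)


def number_args(*names: str) -> Dict[str, Any]:
    """Build an object schema whose listed fields are required numbers."""
    return {"type": "object", "properties": {n: {"type": "number"} for n in names},
            "required": list(names)}


server = ToolServer()
server.register(ToolSpec("apply_tax", "Add sales tax to an amount.", number_args("amount", "rate"),
                         lambda amount, rate: round(amount * (1 + rate), 2), {"finance"}))
server.register(ToolSpec("convert_currency", "Convert an amount at a given exchange rate.",
                         number_args("amount", "rate"),
                         lambda amount, rate: round(amount * rate, 2), {"finance"}))
server.register(ToolSpec("delete_file", "Delete a file.",
                         {"type": "object", "properties": {"path": {"type": "string"}},
                          "required": ["path"]},
                         lambda path: f"deleted {path}", {"admin"}))

# In a "finance" conversation the model sees only finance tools and can chain them.
visible = [t["name"] for t in server.list_tools("finance")]
subtotal = server.call("finance", "apply_tax", {"amount": 100, "rate": 0.2})
total = server.call("finance", "convert_currency", {"amount": subtotal, "rate": 0.9})
print(visible, subtotal, total)
```

Because the `finance` context never lists `delete_file`, the model cannot be offered that tool there, and a call that omits a required argument is rejected before it reaches the handler.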

Insights

2.6. Stage 6: Single LLM Agents

- Reasoning: The agent breaks down the task into logical steps using CoT.

- Acting: It takes concrete actions (e.g., API calls, tool invocation) based on its reasoning.

- Observing: It assesses the outcomes of its actions and updates its knowledge or next steps.

- Planning: Based on observations, it revises its strategy to continue toward the goal.
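A minimal sketch of this reason-act-observe-plan loop follows. The agent, its `lookup_price` tool and the price catalogue are hypothetical, and simple rules stand in for the LLM that would normally produce the plan and decide how to revise it; the point is the control flow, in which a failed action leads the agent to rewrite its remaining plan rather than stop.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AgentState:
    goal: str
    plan: List[str]                                   # remaining steps
    observations: List[str] = field(default_factory=list)
    answer: Optional[str] = None


# Hypothetical tool and catalogue; a real agent would call an external API.
PRICES: Dict[str, float] = {"apple": 0.5, "bread": 2.25}


def lookup_price(item: str) -> Optional[float]:
    return PRICES.get(item)


def run_agent(goal: str, items: List[str], max_steps: int = 10) -> AgentState:
    # Reasoning: decompose the goal into one lookup per item plus a final sum.
    state = AgentState(goal=goal, plan=[f"lookup:{i}" for i in items] + ["sum"])
    total = 0.0
    for _ in range(max_steps):                        # hard cap prevents endless loops
        if not state.plan:
            break
        step = state.plan.pop(0)
        if step.startswith("lookup:"):
            item = step.split(":", 1)[1]
            price = lookup_price(item)                        # Acting
            state.observations.append(f"{item} -> {price}")   # Observing
            if price is None:
                # Planning: revise the remaining plan in light of the failure.
                if item.endswith("s"):
                    state.plan.insert(0, f"lookup:{item[:-1]}")  # retry singular form
                continue
            total += price
        elif step == "sum":
            state.answer = f"Total: {total:.2f}"
    return state


result = run_agent("Total cost of groceries", ["apples", "bread"])
print(result.observations)  # ['apples -> None', 'apple -> 0.5', 'bread -> 2.25']
print(result.answer)        # Total: 2.75
```

The `max_steps` cap is a common safeguard: because the agent can add steps to its own plan, an upper bound keeps a misbehaving policy from looping forever.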

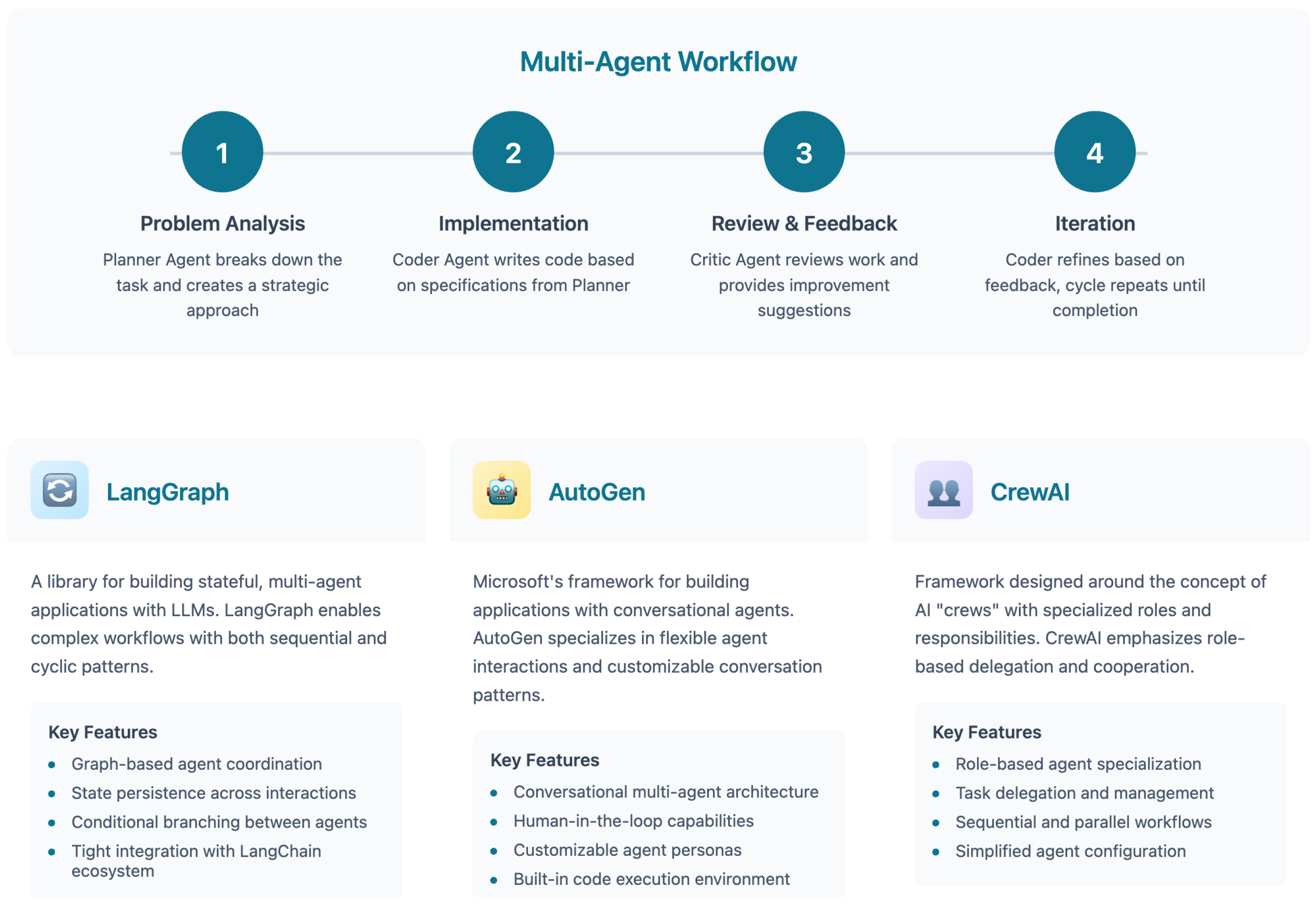

2.7. Stage 7: Agentic AI Workflows

Core Capabilities.

- Task Decomposition: A central planner agent breaks down complex problems into subtasks.

- Specialized Roles: Different agents are assigned domain-specific functions (e.g., coding, data analysis, summarization).

- Memory Sharing: Shared context or memory buffers enable continuity across agents.

Illustrative Example.

- The Planner Agent parses a user request: “Find recent AI papers on agentic systems and summarize their contributions.”

- It dispatches subtasks to a Search Agent, a Summarizer Agent, and a Citation Verifier Agent.

- Each agent performs its function independently—querying arXiv, writing summaries, or validating references.

- The Supervisor Agent integrates results and decides whether to loop, revise, or finalize the response.
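The planner, specialist and supervisor roles in this example can be sketched as plain functions that share one memory dictionary. The search results are placeholders rather than a real arXiv query, the summarizer simply keeps each abstract's first sentence, and a paper without an identifier counts as an unverifiable citation; all names are hypothetical. The agents run sequentially here for simplicity, whereas a framework could dispatch them concurrently.

```python
from typing import Any, Callable, Dict, List

Memory = Dict[str, Any]  # shared buffer that every agent reads and writes

# Placeholder search results standing in for a real arXiv query (not real papers).
CANNED_RESULTS = [
    {"title": "Paper A", "abstract": "Proposes a planner agent. It improves task success.", "id": "A-001"},
    {"title": "Paper B", "abstract": "Studies shared memory for agents.", "id": None},
]


def search_agent(mem: Memory) -> None:
    mem["papers"] = list(CANNED_RESULTS)


def summarizer_agent(mem: Memory) -> None:
    # First sentence of each abstract stands in for an LLM-written summary.
    mem["summaries"] = {
        p["title"]: p["abstract"].split(". ")[0].rstrip(".") + "." for p in mem["papers"]
    }


def citation_verifier_agent(mem: Memory) -> None:
    # A paper without an identifier is treated as an unverifiable reference.
    mem["unverified"] = [p["title"] for p in mem["papers"] if not p["id"]]


ROLES: Dict[str, Callable[[Memory], None]] = {
    "search": search_agent,
    "summarize": summarizer_agent,
    "verify": citation_verifier_agent,
}


def planner(request: str) -> List[str]:
    # Task decomposition; an LLM planner would derive these subtasks from the request.
    return ["search", "summarize", "verify"]


def supervisor(mem: Memory) -> str:
    if mem["unverified"]:
        # Revise: drop unverifiable papers so the next round re-summarizes the rest.
        mem["papers"] = [p for p in mem["papers"] if p["title"] not in mem["unverified"]]
        return "revise"
    return "finalize"


def run_workflow(request: str, max_rounds: int = 3) -> Memory:
    mem: Memory = {"request": request}
    subtasks = planner(request)
    for _ in range(max_rounds):
        for task in subtasks:
            ROLES[task](mem)                  # agents run one after another
        if supervisor(mem) == "finalize":
            break
        subtasks = ["summarize", "verify"]    # loop over the revised paper set
    mem["final"] = "\n".join(f"{t}: {s}" for t, s in mem["summaries"].items())
    return mem


out = run_workflow("Find recent AI papers on agentic systems and summarize their contributions.")
print(out["final"])  # Paper A: Proposes a planner agent.
```

In this run the verifier flags Paper B, the supervisor drops it and requests a revision, and the second round finalizes with only Paper A, illustrating the loop, revise, or finalize decision described above.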

Advantages Over Stage 6.

Strategic Impact.

Outlook.

3. Agentic AI Programming Frameworks

3.1. AutoGen: Conversational Multi-Agent Coordination

3.2. LangGraph: Graph-Based Workflow Orchestration

3.3. CrewAI: Role-Based Agent Orchestration

3.4. Comparative Analysis of Frameworks

Strategic Insight.

Outlook.

4. Strategic Discussion and Future Outlook

5. Conclusions

References

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. Advances in Neural Information Processing Systems 2017, 30.

- Shazeer, N.; Mirhoseini, A.; Maziarz, K.; Davis, A.; Le, Q.; Hinton, G.; Dean, J. Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer. arXiv 2017, arXiv:1701.06538.

- Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I. Language Models are Unsupervised Multitask Learners. OpenAI Blog 2019.

- Brown, T.B.; Mann, B.; Ryder, N.; et al. Language Models are Few-Shot Learners. Advances in Neural Information Processing Systems 2020, 33.

- Ouyang, L.; Wu, J.; Jiang, X.; et al. Training Language Models to Follow Instructions with Human Feedback. arXiv 2022, arXiv:2203.02155.

- Christiano, P.F.; Leike, J.; Brown, T.B.; et al. Deep Reinforcement Learning from Human Preferences. Advances in Neural Information Processing Systems 2017, 30.

- Lewis, P.; Perez, E.; Piktus, A.; et al. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. Advances in Neural Information Processing Systems 2020, 33.

- Microsoft. AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation. https://microsoft.github.io/autogen, 2024.

- LangChain. LangGraph: A Framework for Multi-Agent Workflows. https://langchain.com/langgraph, 2024.

- CrewAI Team. CrewAI: Orchestrating Role-Based AI Agents. https://crewai.com, 2024.

- CodeAct Team. CodeAct: A Framework for Code-Based AI Agents. https://codeact.org, 2024.

- Sennrich, R.; Haddow, B.; Birch, A. Neural Machine Translation of Rare Words with Subword Units. In Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics; 2016.

- Shoeybi, M.; Patwary, M.; Puri, R.; LeGresley, P.; Casper, J.; Catanzaro, B. Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism. arXiv 2019, arXiv:1909.08053.

- Lepikhin, D.; Lee, H.; Xu, Y.; et al. GShard: Scaling Giant Models with Conditional Computation and Automatic Sharding. arXiv 2020, arXiv:2006.16668.

- Liu, P.; Yuan, W.; Fu, J.; Jiang, Z.; Hayashi, H.; Neubig, G. Pre-train, Prompt, and Predict: A Systematic Survey of Prompting Methods in Natural Language Processing. ACM Computing Surveys 2023, 55, 1–35.

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Ichter, B.; Xia, F.; Chi, E.; Le, Q.V.; Zhou, D. Chain of Thought Prompting Elicits Reasoning in Large Language Models. arXiv 2022, arXiv:2201.11903.

- Yao, S.; Yu, D.; Zhao, J.; Shafran, I.; Griffiths, T.L.; Cao, Y.; Narasimhan, K. Tree of Thoughts: Deliberate Problem Solving with Large Language Models. Advances in Neural Information Processing Systems 2023, 36.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.; Cao, Y. ReAct: Synergizing Reasoning and Acting in Language Models. arXiv 2022, arXiv:2210.03629.

- Wang, X.; Wei, J.; Schuurmans, D.; Le, Q.; Chi, E.H.; Narang, S.; Chowdhery, A.; Zhou, D. Self-Consistency Improves Chain of Thought Reasoning in Language Models. arXiv 2022, arXiv:2203.11171.

- Bai, Y.; Jones, A.; Ndousse, K.; Askell, A.; Chen, A.; Goldie, A.; Mirhoseini, A.; Olsson, C.; Saunders, W.; Schulman, J.; et al. Training a Helpful and Harmless Assistant with Reinforcement Learning from Human Feedback. arXiv 2022, arXiv:2204.05862.

- Bradley, R.A.; Terry, M.E. Rank analysis of incomplete block designs: I. The method of paired comparisons. Biometrika 1952, 39, 324–345.

- Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal policy optimization algorithms. arXiv 2017, arXiv:1707.06347.

- Gao, L.; Schulman, J.; Hilton, J. Scaling Laws for Reward Model Overoptimization. arXiv 2022, arXiv:2210.10760.

- Huang, J.; Gu, S.S.; Hou, L.; Wu, Y.; Wang, X.; Yu, H.; Han, J. Large Language Models Can Self-Improve. arXiv 2022, arXiv:2210.11610.

- Johnson, J.; Douze, M.; Jégou, H. Billion-scale similarity search with GPUs. IEEE Transactions on Big Data 2019, 7, 535–547.

- Robertson, S.; Zaragoza, H. The probabilistic relevance framework: BM25 and beyond. Foundations and Trends in Information Retrieval 2009, 3, 333–389.

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; et al. Toolformer: Language Models Can Teach Themselves to Use Tools. arXiv 2023, arXiv:2302.04761.

- OpenAI. Function Calling with GPT-4 and GPT-3.5. https://platform.openai.com/docs/guides/function-calling, 2023.

- Anthropic. Model Context Protocol (MCP): Towards Safer Tool Use with Language Models. https://www.anthropic.com/index/mcp, 2024.

- Richards, T.B. AutoGPT: An Experimental Open-Source Attempt to Make GPT-4 Fully Autonomous. https://github.com/Torantulino/Auto-GPT, 2023.

- Shinn, N.; Cassano, F.; Gopinath, A.; et al. Reflexion: Language Agents with Verbal Reinforcement Learning. arXiv 2023, arXiv:2303.11366.

- Li, G.; Hammoud, H.A.A.K.; Itani, H.; et al. CAMEL: Communicative Agents for Mind Exploration of Large Scale Language Model Society. arXiv 2023, arXiv:2303.17760.

- Park, J.S.; O’Brien, J.; Cai, C.J.; et al. Generative Agents: Interactive Simulacra of Human Behavior. arXiv 2023, arXiv:2304.03442.

- Wang, W.; Dong, L.; Cheng, H.; Liu, X.; Yan, X.; Gao, J.; Wei, F. Augmenting Language Models with Long-Term Memory. Advances in Neural Information Processing Systems 2023, 36. arXiv:2306.07174.

- Koubaa, A. The Rise of Autonomous Intelligence with Agentic AI. In Proceedings of the Alfaisal University Presentation; 2025.

| Feature | LangGraph | AutoGen | CrewAI |
|---|---|---|---|
| Coordination Model | Graph-Based | Conversational | Role-Based |
| Replay / Debugging | Time Travel | Human Intervention | Recent Task Replay |
| Ease of Use | Low (Technical) | Moderate | High (User-Friendly) |
| Scalability | Enterprise-Ready | Enterprise-Ready | Suitable for Lightweight Use |
| Integration | LangChain Ecosystem | LLM + Tools (e.g., Browsing, Code) | YAML + Predefined Tools |
| Best Fit | Research, Complex Workflows | Task Automation, Dialogues | Prototyping, Team Simulations |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).