Submitted: 16 July 2025

Posted: 17 July 2025


Abstract

Keywords:

1. Introduction

2. Problem Statement

3. Literature Review

3.1. Image Segmentation Techniques for Urban Environments

3.2. Advancements in Deep Learning for Urban Scene Analysis

3.3. Utilization of Attention Mechanisms in Urban Scene Segmentation

3.4. Context-Aware Segmentation Approaches

4. Problem Formulation

4.1. Dataset Replacement

4.2. Introduction of a Competitive Layer

4.3. Model Light-weighting

4.4. Accuracy Preservation Amidst Model Reduction

4.5. Optimization Goal

5. Analysis

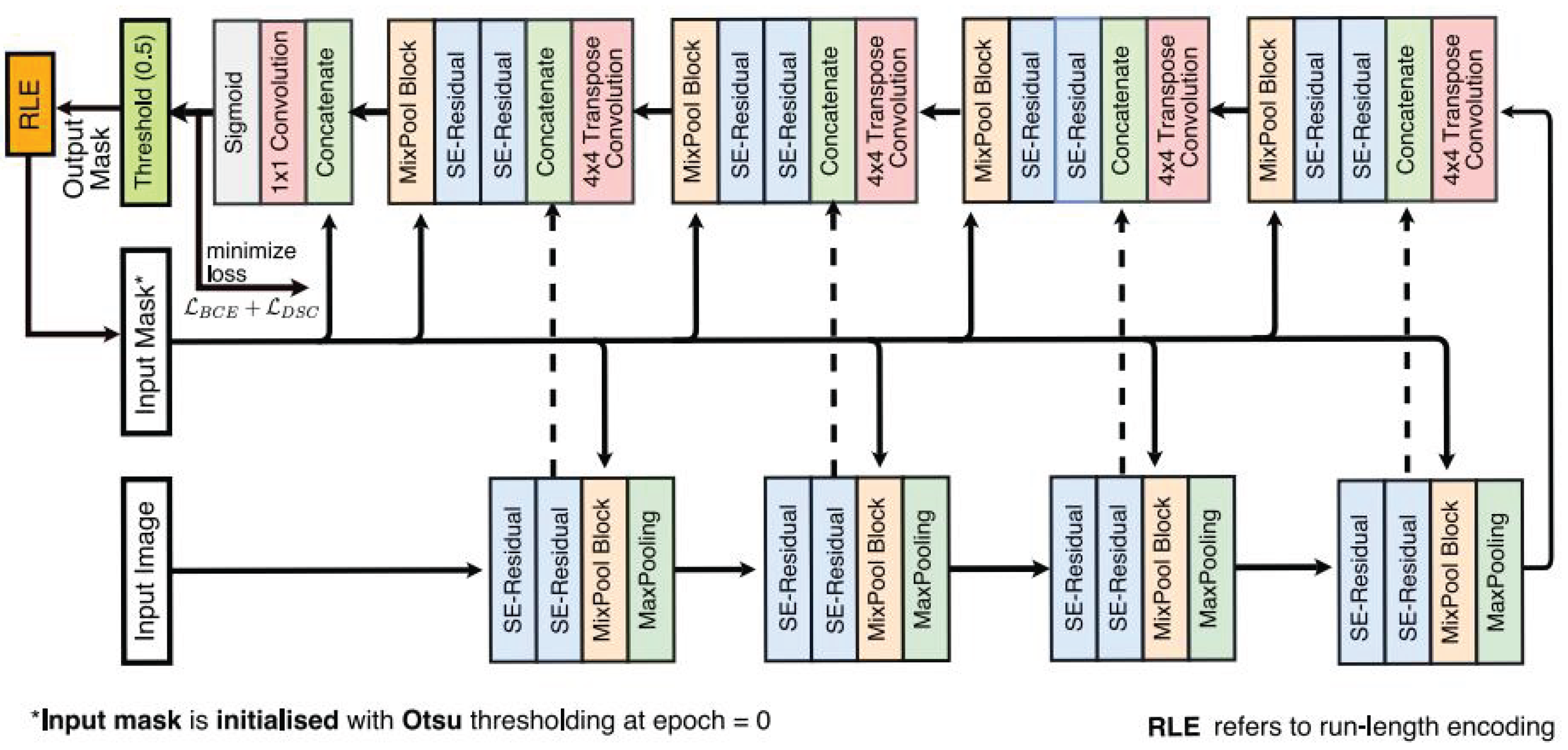

5.1. Original FANet Model: Principles and Application

5.2. Improved FANet Model with New Dataset "Cityscapes"

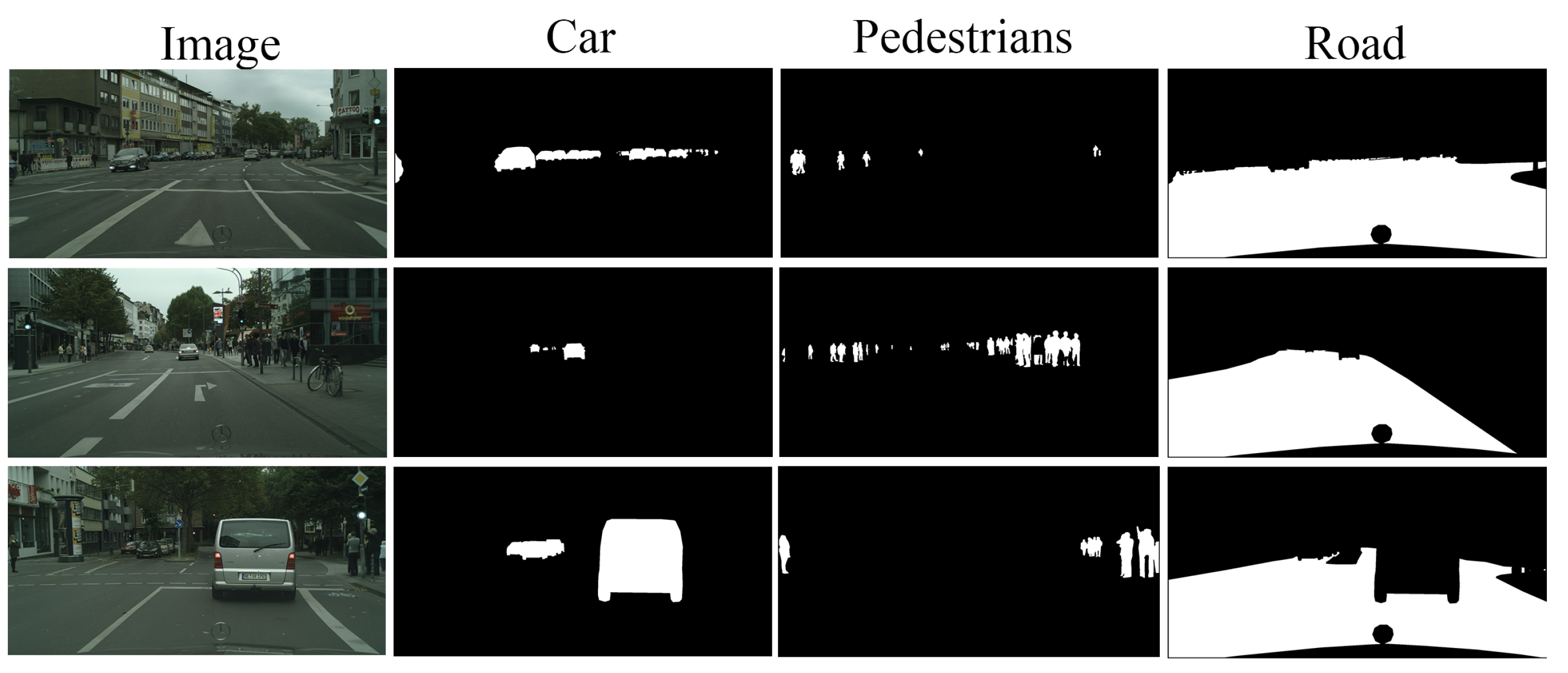

5.2.1. Dataset Description

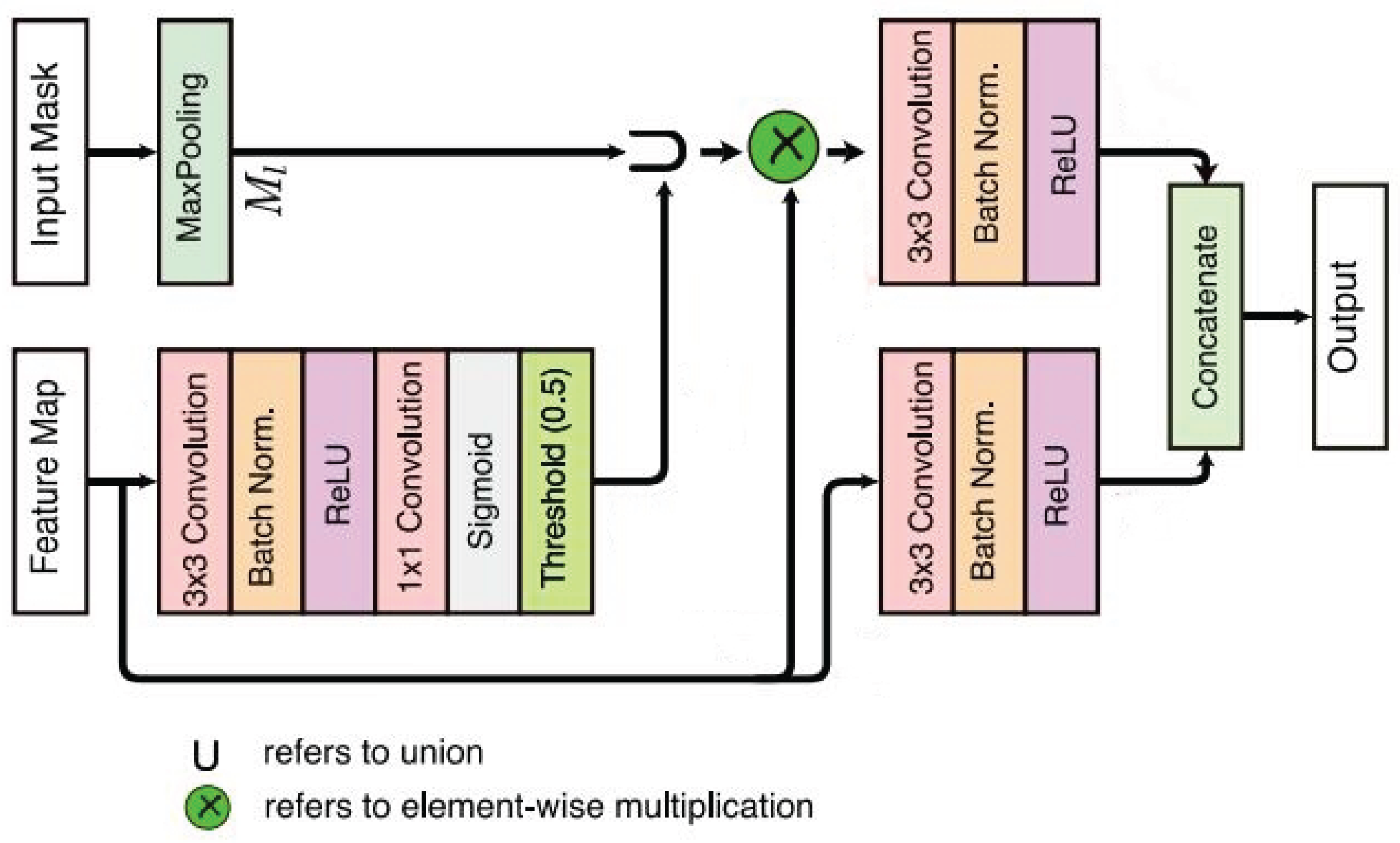

5.2.2. Adaptation to Cityscapes Dataset by Introducing a Competitive Layer

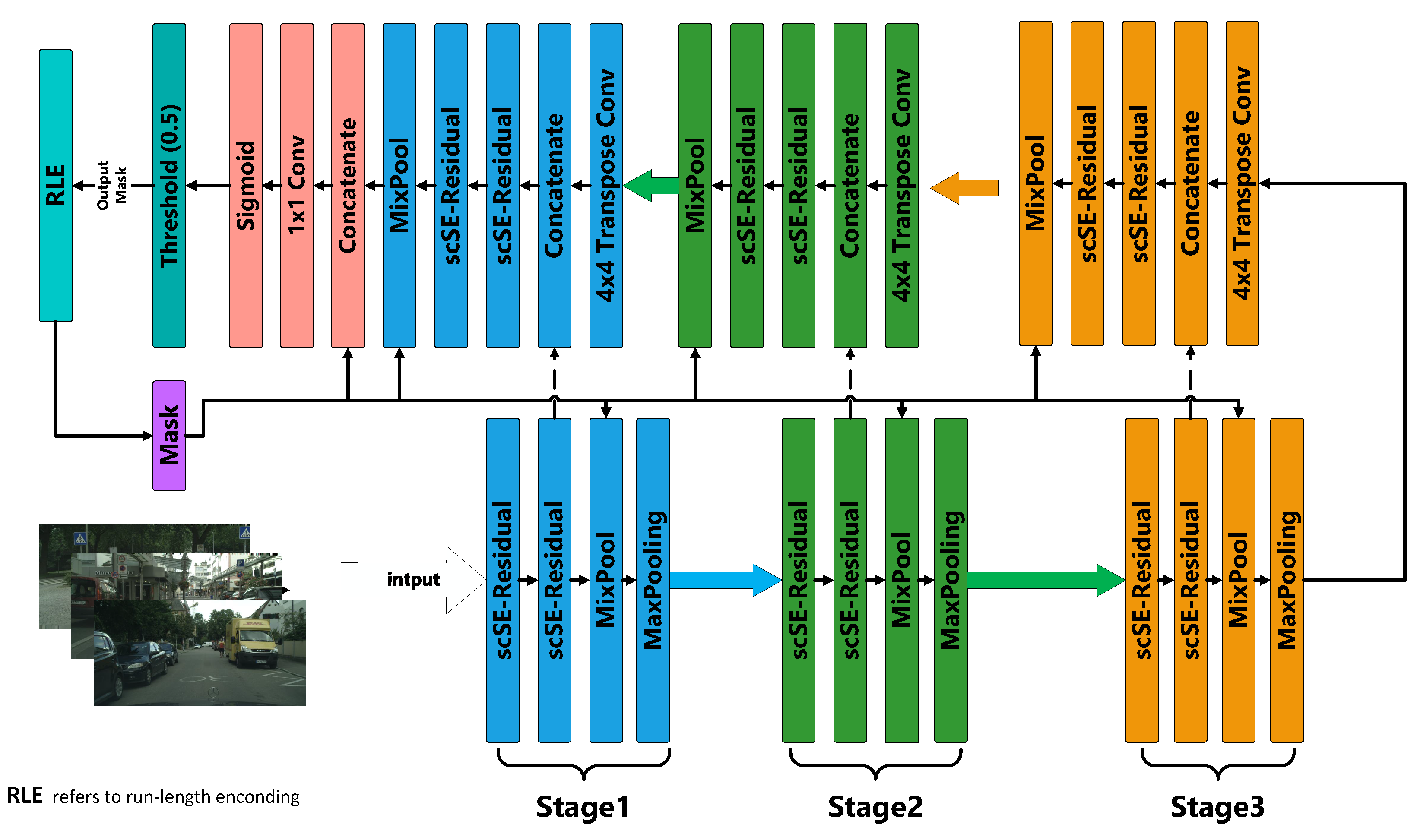

5.2.3. Model Light-Weighting

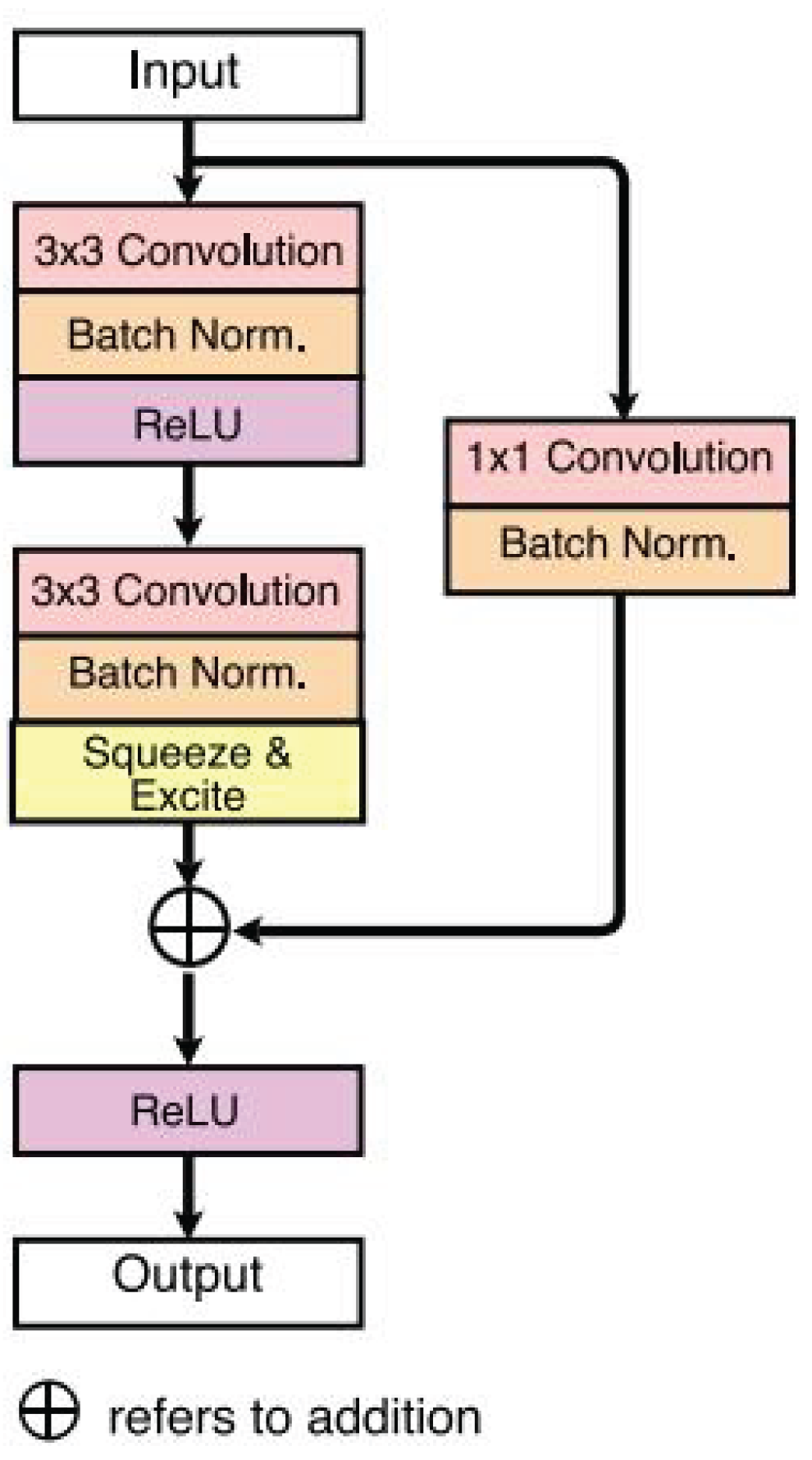

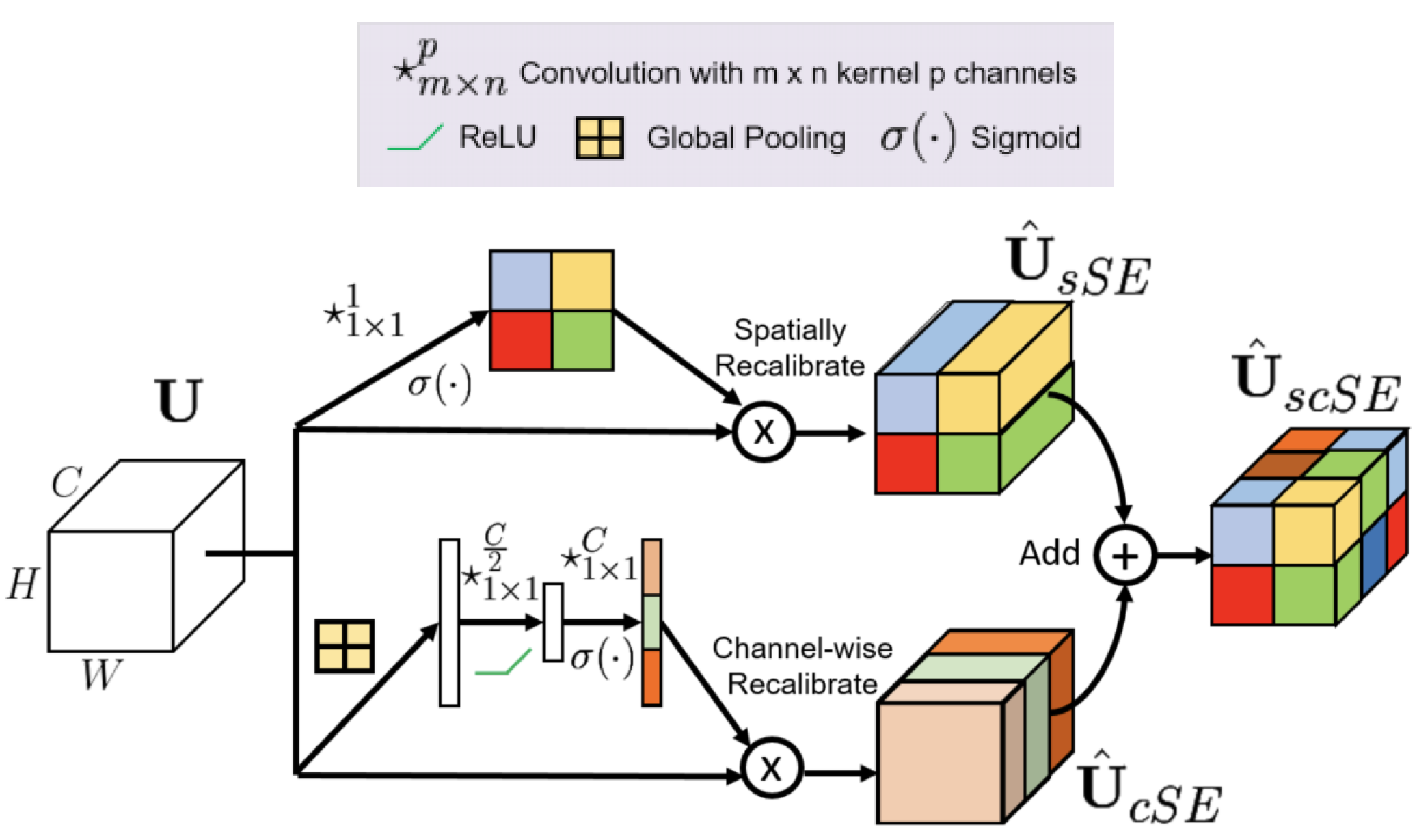

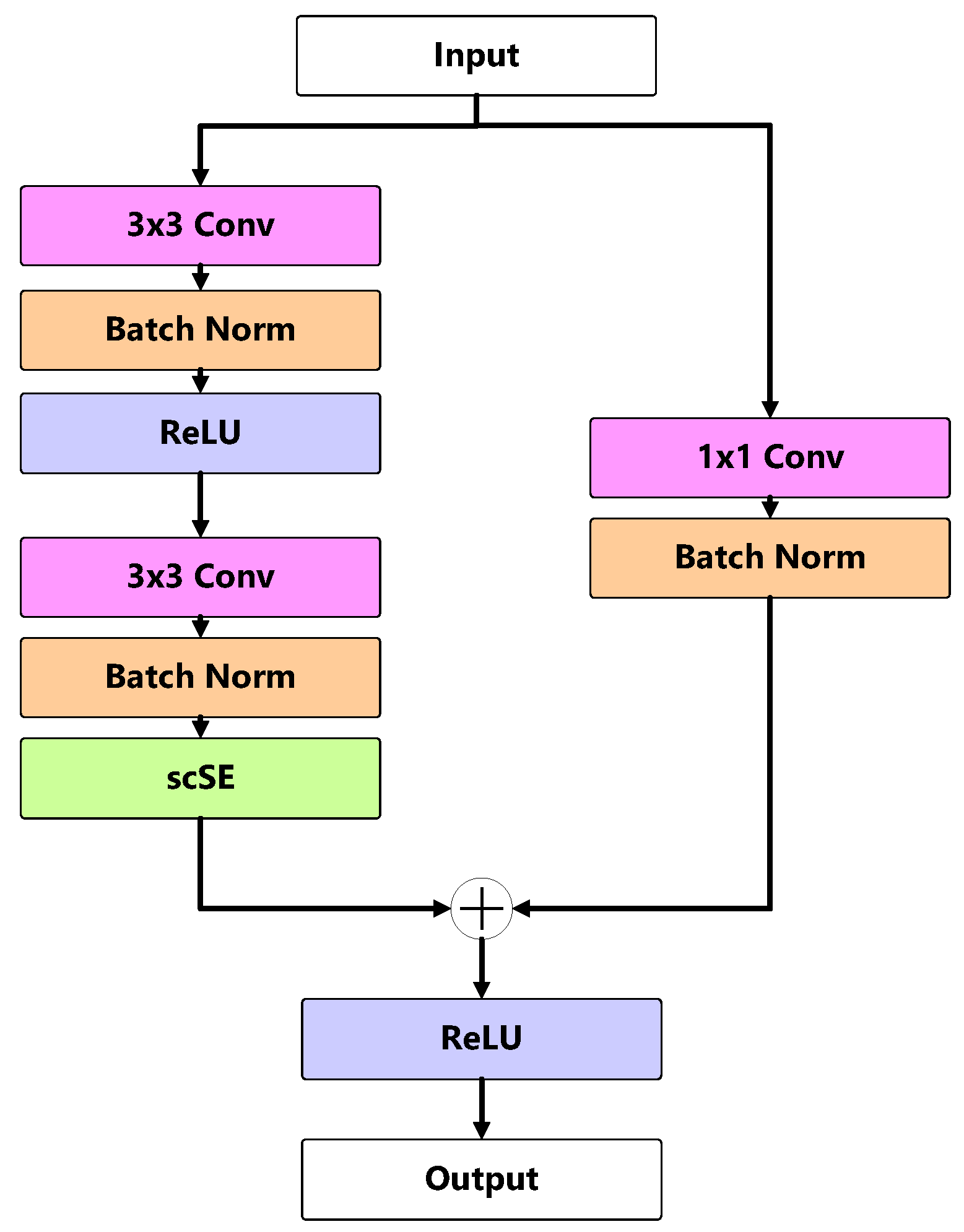

5.2.4. Optimization with Attention Mechanism Modules

6. Experimental Results

6.1. Implementation Details

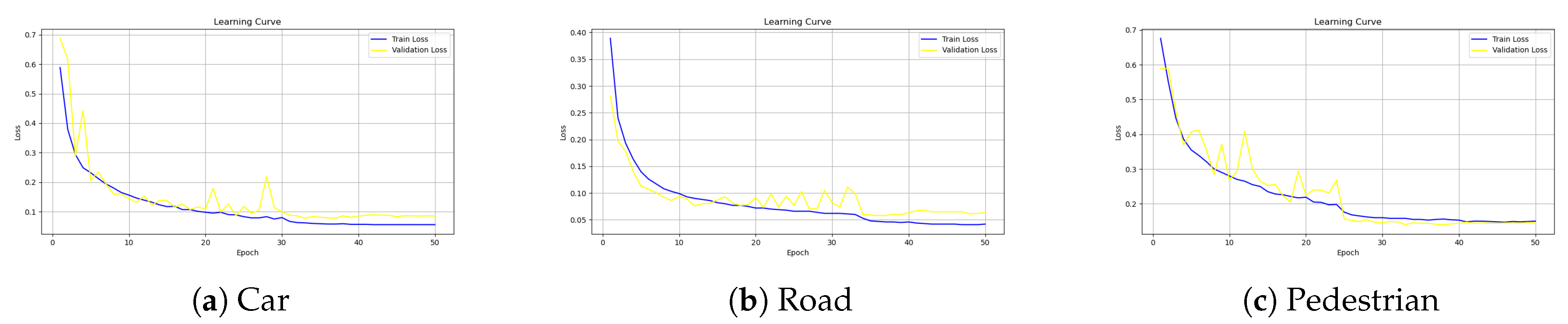

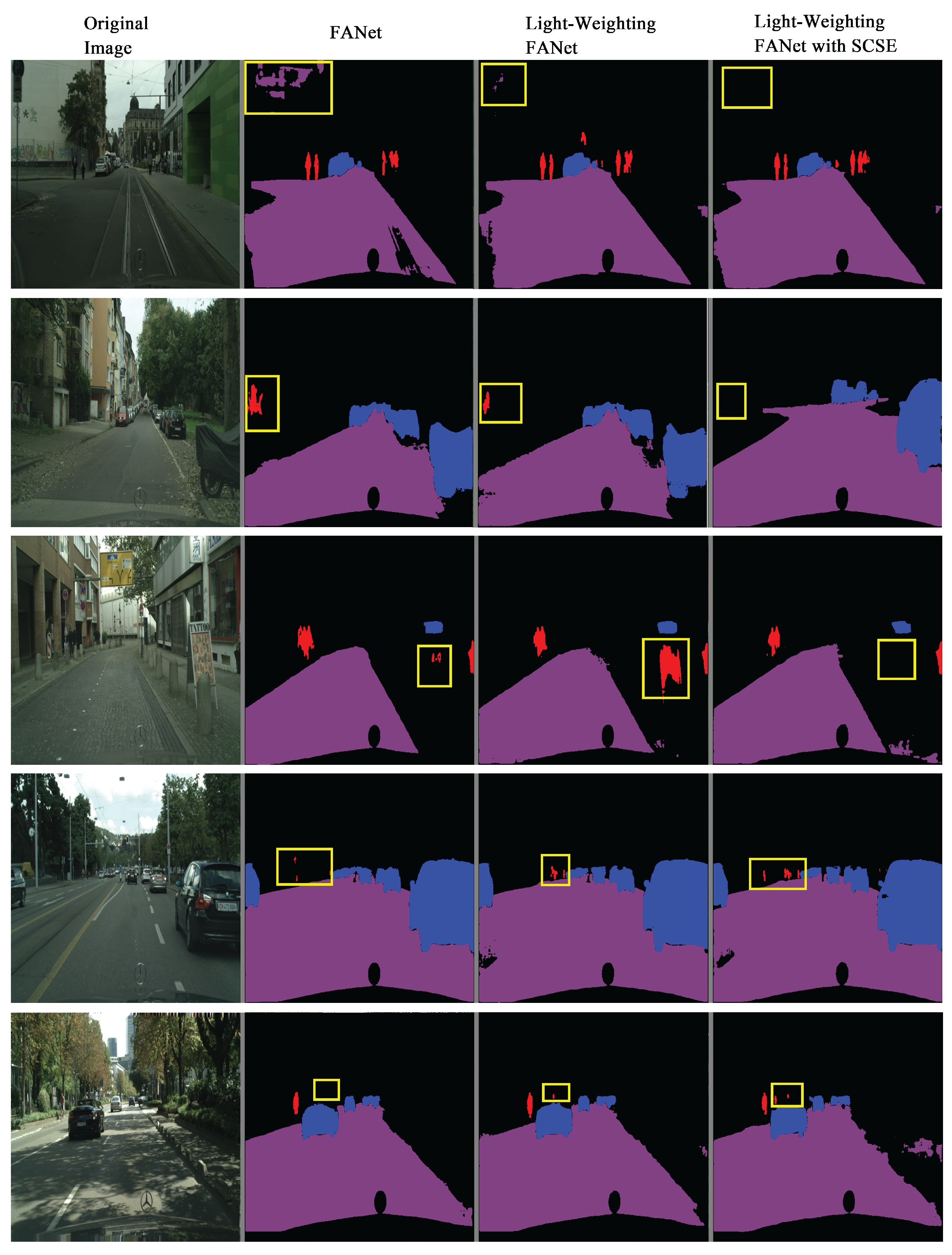

6.2. Results and Analysis

7. Discussion

8. Conclusions

| Method | Category | Jaccard | F1 | Recall | Specificity | Accuracy | F2 | mIoU | Model size (MB) |
|---|---|---|---|---|---|---|---|---|---|
| Original | car | 0.0263 | 0.0322 | 0.1682 | 0.9046 | 0.915596 | 0.037733 | 0.4614 | 30 |
| Light-weighting | car | 0.0246 | 0.029 | 0.1641 | 0.905 | 0.905954 | 0.034053 | 0.4606 | 7.6 |
| Light-weighting+scSE | car | 0.0267 | 0.0329 | 0.1669 | 0.9047 | 0.915748 | 0.038513 | 0.4612 | 7.8 |
| Original | people | 0.4528 | 0.5533 | 0.572 | 0.9176 | 0.995089 | 0.549075 | 0.684 | 30 |
| Light-weighting | people | 0.4939 | 0.6054 | 0.6823 | 0.9169 | 0.995032 | 0.62651 | 0.7048 | 7.6 |
| Light-weighting+scSE | people | 0.5413 | 0.6495 | 0.6709 | 0.9184 | 0.996241 | 0.645008 | 0.7292 | 7.8 |
| Original | path | 0.0006 | 0.0012 | 0.1094 | 0.6625 | 0.655846 | 0.002224 | 0.3286 | 30 |
| Light-weighting | path | 0.0005 | 0.001 | 0.1082 | 0.6719 | 0.644969 | 0.001973 | 0.3326 | 7.6 |
| Light-weighting+scSE | path | 0.0004 | 0.0008 | 0.1088 | 0.6638 | 0.656963 | 0.001557 | 0.3282 | 7.8 |
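For readers unfamiliar with the metric columns, the following is a minimal sketch of how Jaccard, F1, F2, recall, specificity, and accuracy are conventionally computed for a single class from a predicted mask and a ground-truth mask. It is an illustration under stated assumptions, not the evaluation code used to produce the table: the function name `binary_segmentation_metrics`, the `eps` smoothing term, and the toy masks are hypothetical. The mIoU column is omitted because the source does not state how it is averaged. Note also that identities such as F1 = 2J / (1 + J) hold for a single image but need not hold for values averaged over a dataset.

```python
import numpy as np


def binary_segmentation_metrics(pred, target, eps=1e-7):
    """Per-image metrics for one class, given two masks of equal shape.

    `eps` is an assumed smoothing term that avoids division by zero
    when a mask is empty; it is not taken from the source.
    """
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)

    # Confusion-matrix counts over all pixels.
    tp = np.sum(pred & target)
    fp = np.sum(pred & ~target)
    fn = np.sum(~pred & target)
    tn = np.sum(~pred & ~target)

    precision = tp / (tp + fp + eps)
    recall = tp / (tp + fn + eps)

    def f_beta(beta):
        # F-beta weights recall beta times as heavily as precision.
        b2 = beta ** 2
        return (1 + b2) * precision * recall / (b2 * precision + recall + eps)

    return {
        "jaccard": tp / (tp + fp + fn + eps),
        "f1": f_beta(1.0),
        "f2": f_beta(2.0),
        "recall": recall,
        "specificity": tn / (tn + fp + eps),
        "accuracy": (tp + tn) / (tp + fp + fn + tn + eps),
    }


# Toy 2x4 masks (hypothetical data, for illustration only).
pred = np.array([[1, 1, 0, 0],
                 [0, 1, 0, 0]])
target = np.array([[1, 0, 0, 0],
                   [0, 1, 1, 0]])

metrics = binary_segmentation_metrics(pred, target)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

In this toy case there are 2 true positives, 1 false positive, 1 false negative, and 4 true negatives, so the Jaccard index is 2/4 and the accuracy is 6/8. Dataset-level figures like those in the table would typically be obtained by averaging such per-image values or by accumulating the counts first; the source does not say which.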

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).