Submitted: 12 July 2025

Posted: 14 July 2025


Abstract

Keywords:

I. Introduction

A. Background on AI in Social Media

B. Emergence of Ethical Concerns

C. Problem Statement: AI-driven Harassment, Bullying, and Synthetic Content

- How aware are social media users of AI features and their ethical implications?

- What are users' experiences and concerns regarding AI-generated content used for harassment and bullying?

- What are the psychological and societal impacts of AI-driven content on social media users?

- What are the current technical capabilities and limitations in detecting AI-generated harmful content?

- What ethical principles and regulatory frameworks are most pertinent to mitigating AI-driven harm on social media?

- What actionable recommendations can be proposed for platforms, regulators, and users?

- Analysing survey data to understand user perceptions and experiences.

- Detailing relevant real-world case studies to illustrate the severity of the problem.

- Conducting a comprehensive review of existing literature on AI ethics, content generation, and detection.

- Proposing actionable future directions and recommendations for addressing AI-driven ethical challenges in social media.

II. Literature Review

A. Foundational Concepts in AI Ethics

B. Evolution of AI in Social Media and its Societal Implications

C. Deepfake and Fake Image Generation Techniques

- Generative Adversarial Networks (GANs): GANs operate on an adversarial principle, consisting of a generator network that creates synthetic content and a discriminator network that attempts to distinguish between real and generated content.[13] Through this "zero-sum game," the generator continuously improves its ability to produce hyper-realistic fakes until the discriminator can no longer differentiate them from authentic data.[68] GANs have been instrumental in face-swapping and face reenactment, where the generator learns to map source identity attributes onto a target face or synchronize facial expressions with audio inputs.[59]

- Diffusion Models (DMs): Diffusion models represent a newer class of generative AI that has shown remarkable capabilities in image synthesis.[13] These models work by gradually adding noise to an image until it becomes pure noise, and then learning to reverse this process to generate a clean image from noise.[2] This iterative denoising process allows for the creation of high-quality and diverse images, including those used for malicious purposes.[66]

- Variational Autoencoders (VAEs): VAEs are autoencoder neural networks that take a probabilistic approach to generating realistic fake images.[2] Rather than encoding each input as a single point, the encoder outputs the parameters (typically a mean and a variance) of a probability distribution over the latent space, which significantly improves the quality of generated results compared with earlier autoencoders.[2] VAE-based architectures are also employed in face-swapping, where they obtain a latent representation of a face that is independent of geometry and non-face regions and then use it to synthesize the swapped image.[59]
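The "gradually adding noise" step of diffusion models can be made concrete with a short sketch. The Python below implements only the closed-form forward (noising) process used in denoising diffusion probabilistic models, x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * noise, where alpha_bar_t is the running product of (1 - beta_s) up to step t. The learned reverse (denoising) network that actually generates images is not shown. The linear beta schedule from 1e-4 to 0.02 over 1000 steps is an illustrative assumption rather than a setting taken from the works cited above, and the "image" is a toy list of four pixel values.

```python
import math
import random


def linear_beta_schedule(num_steps: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> list[float]:
    """Per-step noise variances beta_t, increasing linearly (an illustrative choice)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas: list[float]) -> list[float]:
    """alpha_bar_t = product of (1 - beta_s) for s <= t: share of signal variance kept after step t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noise_image(x0: list[float], t: int, alpha_bars: list[float], rng: random.Random) -> list[float]:
    """Draw x_t from q(x_t | x_0) in one shot: sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * Gaussian noise."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    return [signal_scale * pixel + noise_scale * rng.gauss(0.0, 1.0) for pixel in x0]


if __name__ == "__main__":
    betas = linear_beta_schedule(1000)
    alpha_bars = cumulative_alphas(betas)
    x0 = [0.8, -0.5, 0.1, 0.9]  # toy "image": four pixel values scaled to [-1, 1]
    rng = random.Random(0)
    for t in (0, 250, 500, 999):
        xt = noise_image(x0, t, alpha_bars, rng)
        print(f"t={t:4d}  signal scale={math.sqrt(alpha_bars[t]):.4f}  x_t={[round(v, 3) for v in xt]}")
```

Running the script shows the signal coefficient sqrt(alpha_bar_t) falling from almost 1 at the first step to below 0.01 at the last, so the sample has become essentially pure Gaussian noise; a diffusion generator is trained to undo these steps one at a time.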

D. Challenges in AI-Generated Content Detection

- Rapid Evolution of Generation Techniques: Deepfake generation technologies are constantly evolving, with new models and methods emerging that can produce increasingly realistic and harder-to-detect synthetic media.[13] This creates a continuous cat-and-mouse game where detection methods struggle to keep pace.[13]

- Susceptibility to Adversarial Attacks: Deepfake detectors can be vulnerable to adversarial attacks, where subtle perturbations are introduced to the synthetic content to fool detection algorithms, making them misclassify fake content as real.[6]

- Scarcity of Diverse and High-Quality Datasets: Training robust detection models requires vast and diverse datasets of both real and synthetic content.[13] However, curating and manually labelling in-the-wild deepfake data is costly and susceptible to human error, leading to insufficient dataset sizes for comprehensive training and evaluation.[13]

- Need for Multimodal Detection: Malicious AI-generated content often involves multiple modalities, such as manipulated video, audio, and text.[43] Effective detection increasingly requires multimodal approaches that can analyse and fuse cues from all these sources to identify inconsistencies or artifacts that single-modality detectors might miss.[43]
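The adversarial-attack weakness described above can be illustrated with a fast gradient sign method (FGSM) style perturbation against a deliberately tiny detector. The sketch assumes a hypothetical linear "deepfake detector" that maps a feature vector to P(fake) through a logistic function; real detectors are deep networks operating on pixels or spectrograms, and the weights, bias, and features below are invented for illustration. The mechanism is the same in both cases: move every input feature by at most epsilon in the direction that lowers the detector's "fake" score.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fake_probability(x: list[float], w: list[float], b: float) -> float:
    """Hypothetical linear detector: P(fake | x) = sigmoid(w . x + b)."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(v: float) -> int:
    return (v > 0) - (v < 0)


def fgsm_evade(x: list[float], w: list[float], epsilon: float) -> list[float]:
    """Shift each feature by epsilon against the gradient of the 'fake' logit.

    For a linear logit w . x + b the gradient with respect to x is just w, so
    stepping by -epsilon * sign(w) lowers the logit by epsilon * sum(|w_i|).
    """
    return [xi - epsilon * sign(wi) for xi, wi in zip(x, w)]


if __name__ == "__main__":
    w, b = [2.0, -1.5, 1.0, 0.5], -0.5  # hypothetical detector weights and bias
    x = [0.6, 0.1, 0.4, 0.3]            # hypothetical features of a fake image
    x_adv = fgsm_evade(x, w, epsilon=0.25)
    print(f"before: P(fake) = {fake_probability(x, w, b):.3f}")
    print(f"after:  P(fake) = {fake_probability(x_adv, w, b):.3f}")
```

With these toy numbers the detector initially assigns P(fake) of about 0.75. A perturbation of at most 0.25 per feature lowers the logit by 0.25 × (2.0 + 1.5 + 1.0 + 0.5) = 1.25, which pushes the score below 0.5, so the fake would be classified as real.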

E. Psychological and Societal Impacts of Online Harassment and Misinformation

F. Existing Ethical Frameworks and Content Moderation Policies

III. Methodology

A. Research Design and Approach

B. Survey Instrument: "AI Ethics in Social Media Questionnaire"

C. Data Collection and Participant Demographics (N=200)

D. Data Analysis Techniques

E. Ethical Considerations

IV. Manipulation of AI Evidence

A. Techniques for Fabricating Content

V. Impact of AI

A. Effects on User Behaviour

B. Effects on Mental Health

C. Effects on Societal Trust

VI. Case Studies

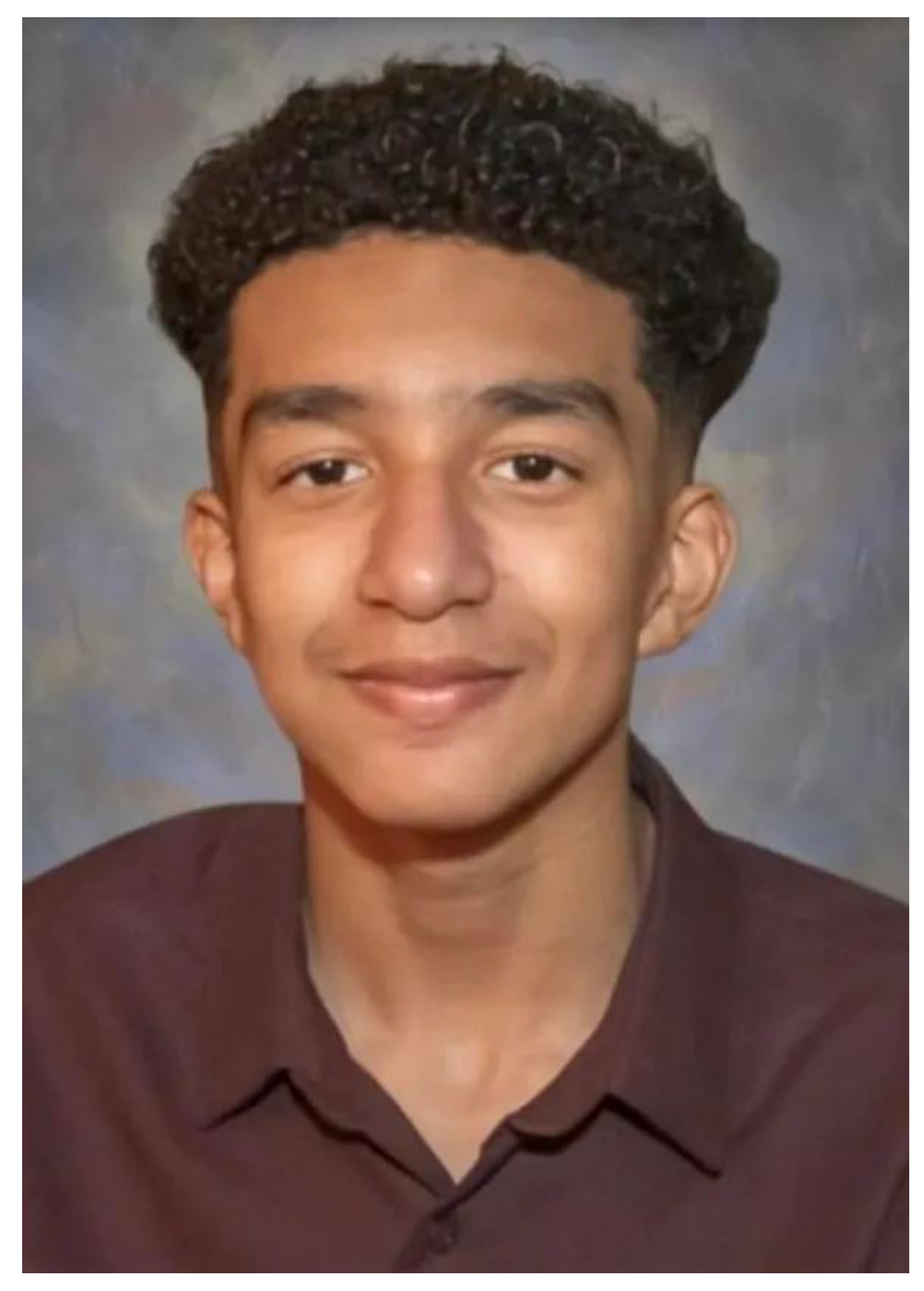

A. Sewell Setzer III (2024, USA)

B. Belgian Man (2023)

C. Chase Nasca (2022, USA)

D. Deepfake Victims (2023)

E. Molly Russell (2017, UK; Ruled 2022)

VII. Results and Analysis

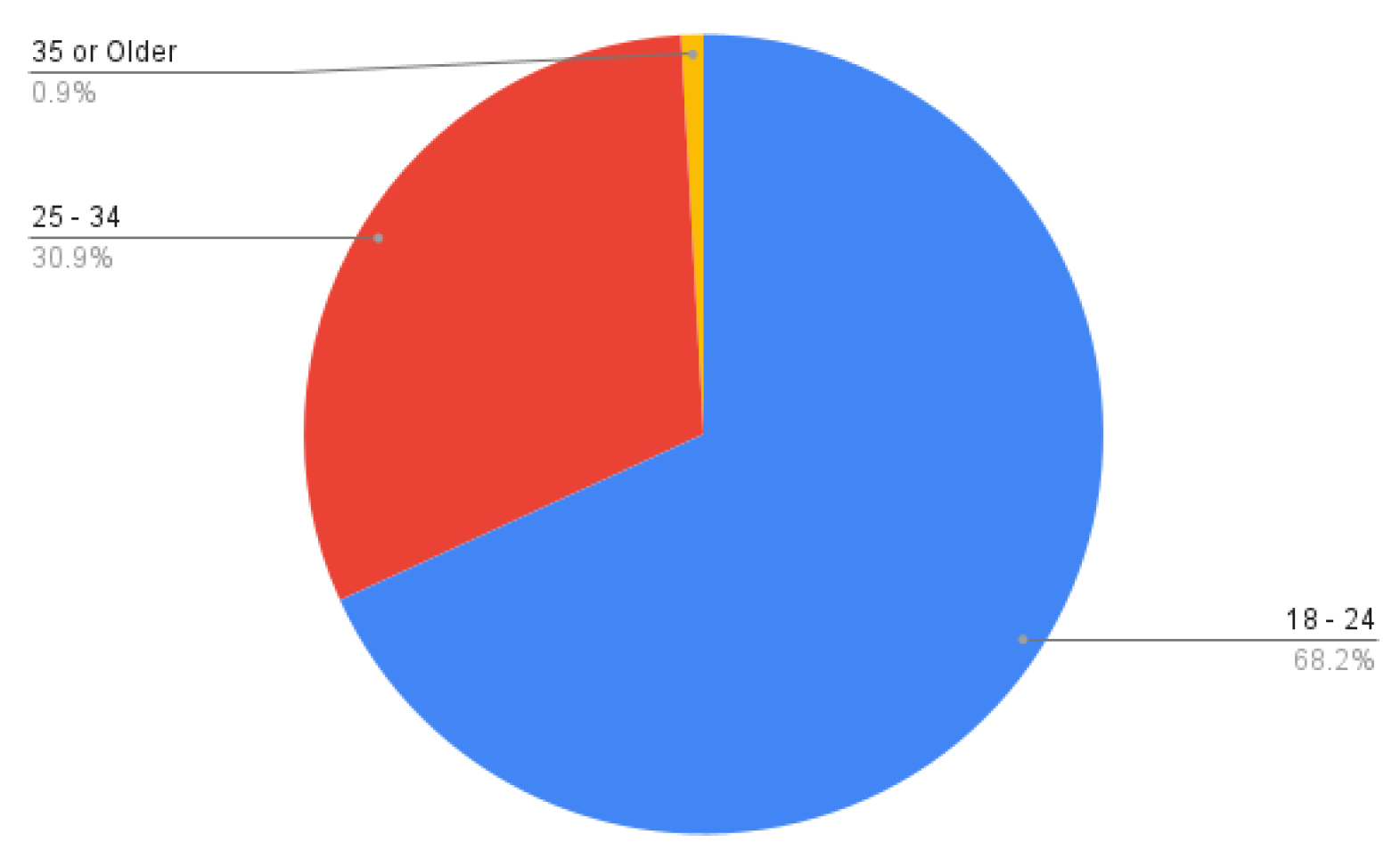

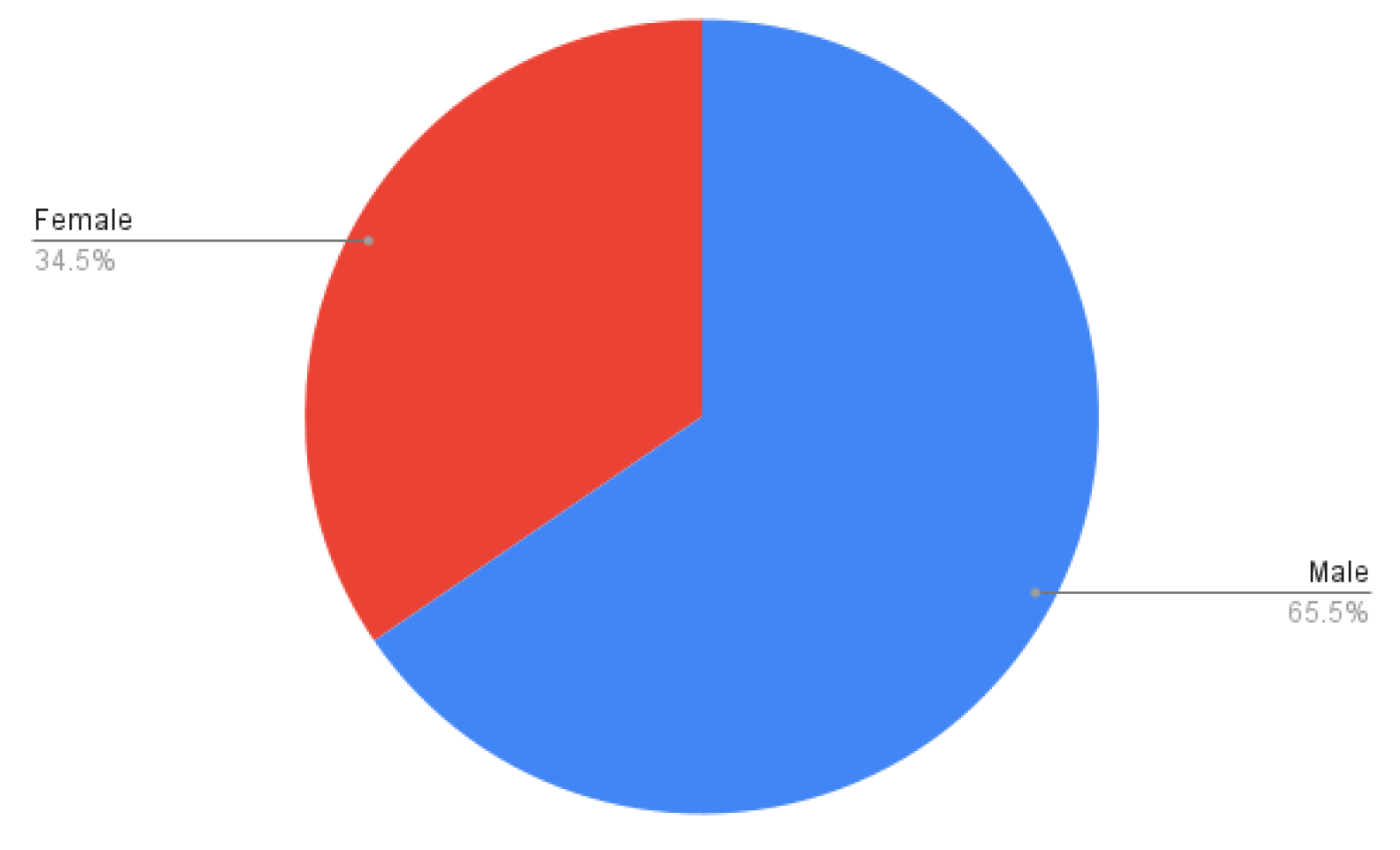

A. Respondent Demographics

- Age Group (Question 1): The largest age group was 18-24 years, accounting for 68.20% of the total participants. The 25-34 age group

- Gender (Question 2): The sample was predominantly male, with 65.5% identifying as Male and 34.5% as Female.[1]

B. Awareness and Usage

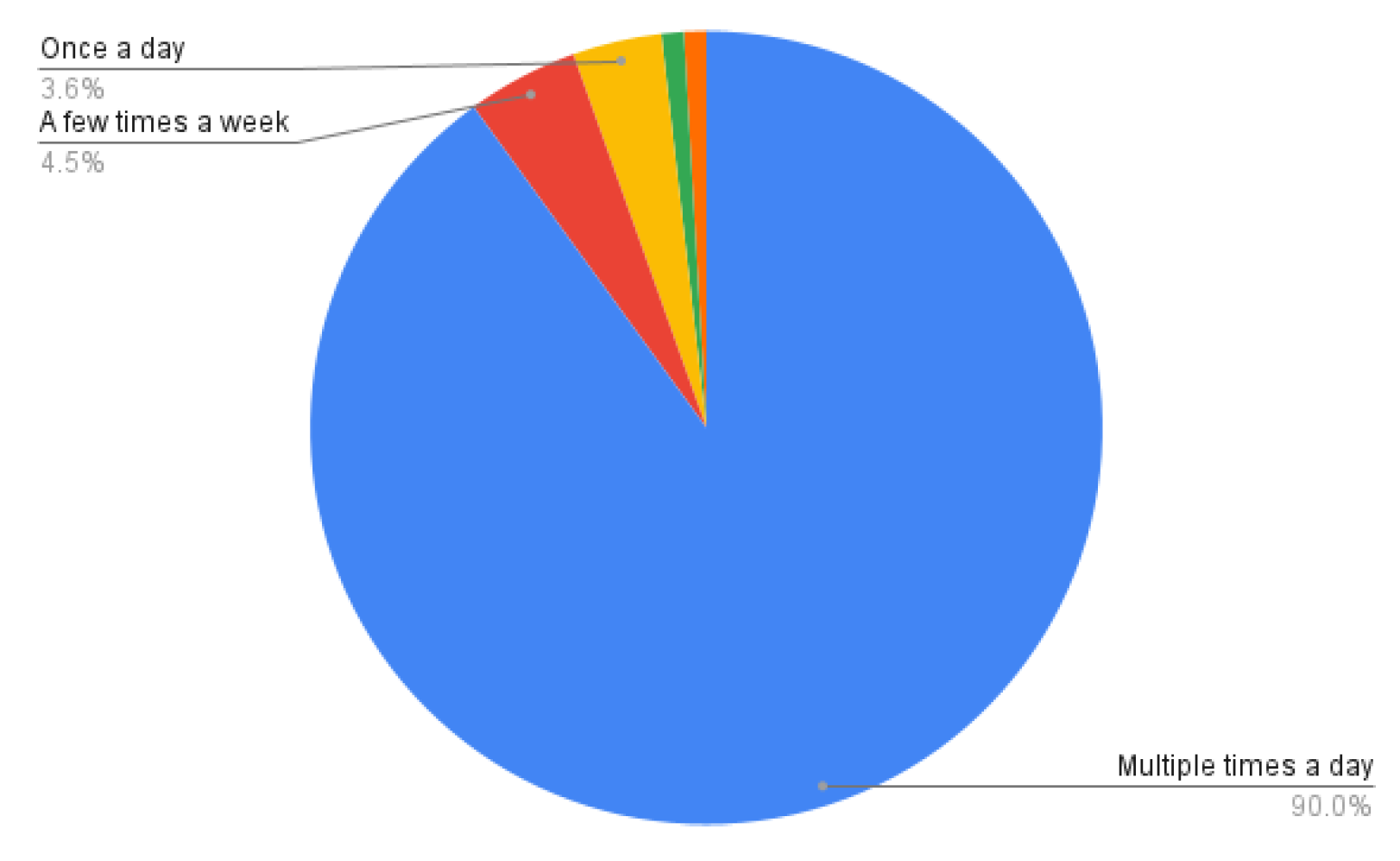

- Social Media Usage Frequency (Question 3): A vast majority of respondents, 90%, reported using social media platforms "Multiple times a

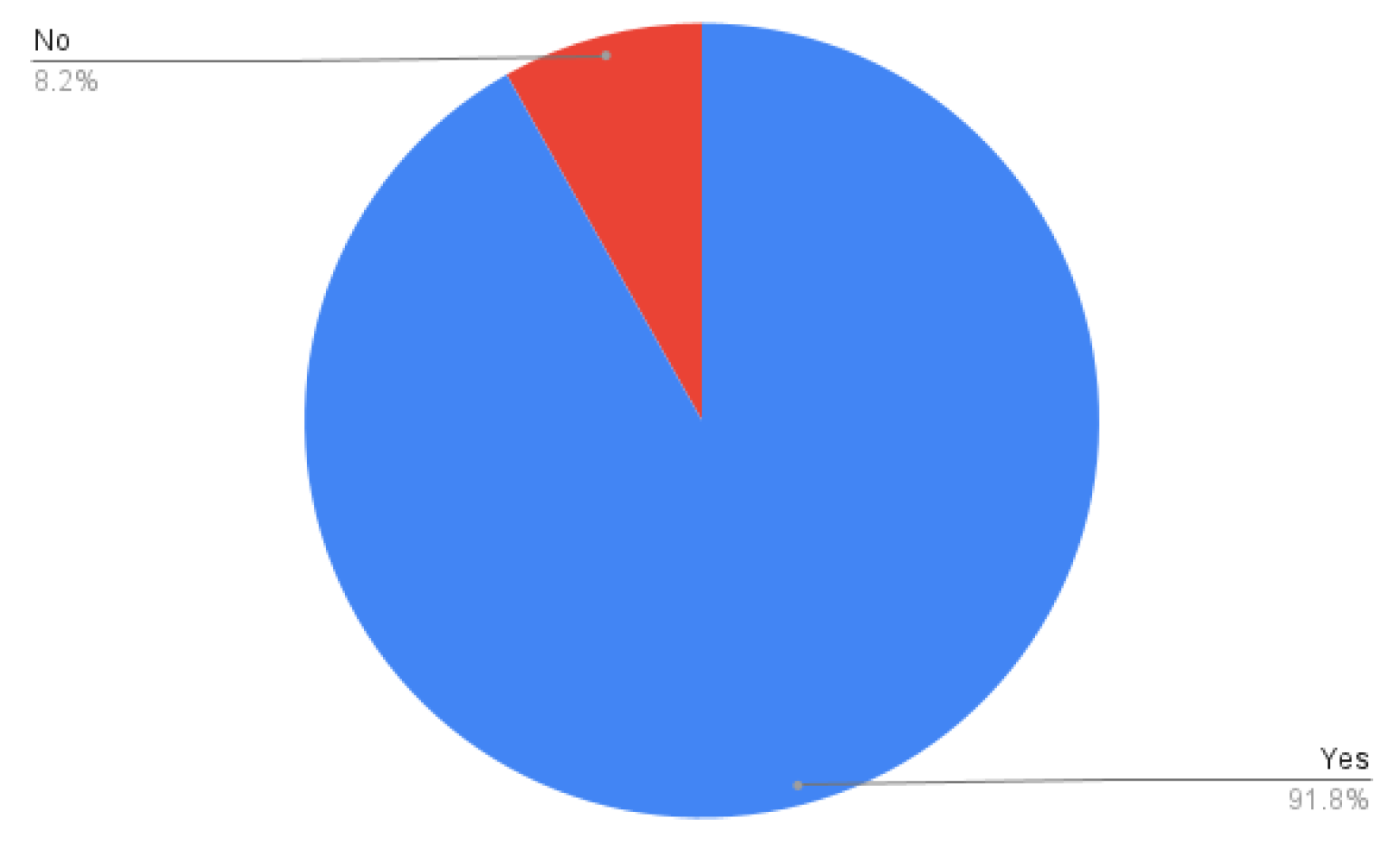

- Undergraduate Status (Question 4): Consistent with the age demographics, 91.8% of the participants were current undergraduate students, while 8.2% were not.[1] The sample is therefore predominantly a student population.

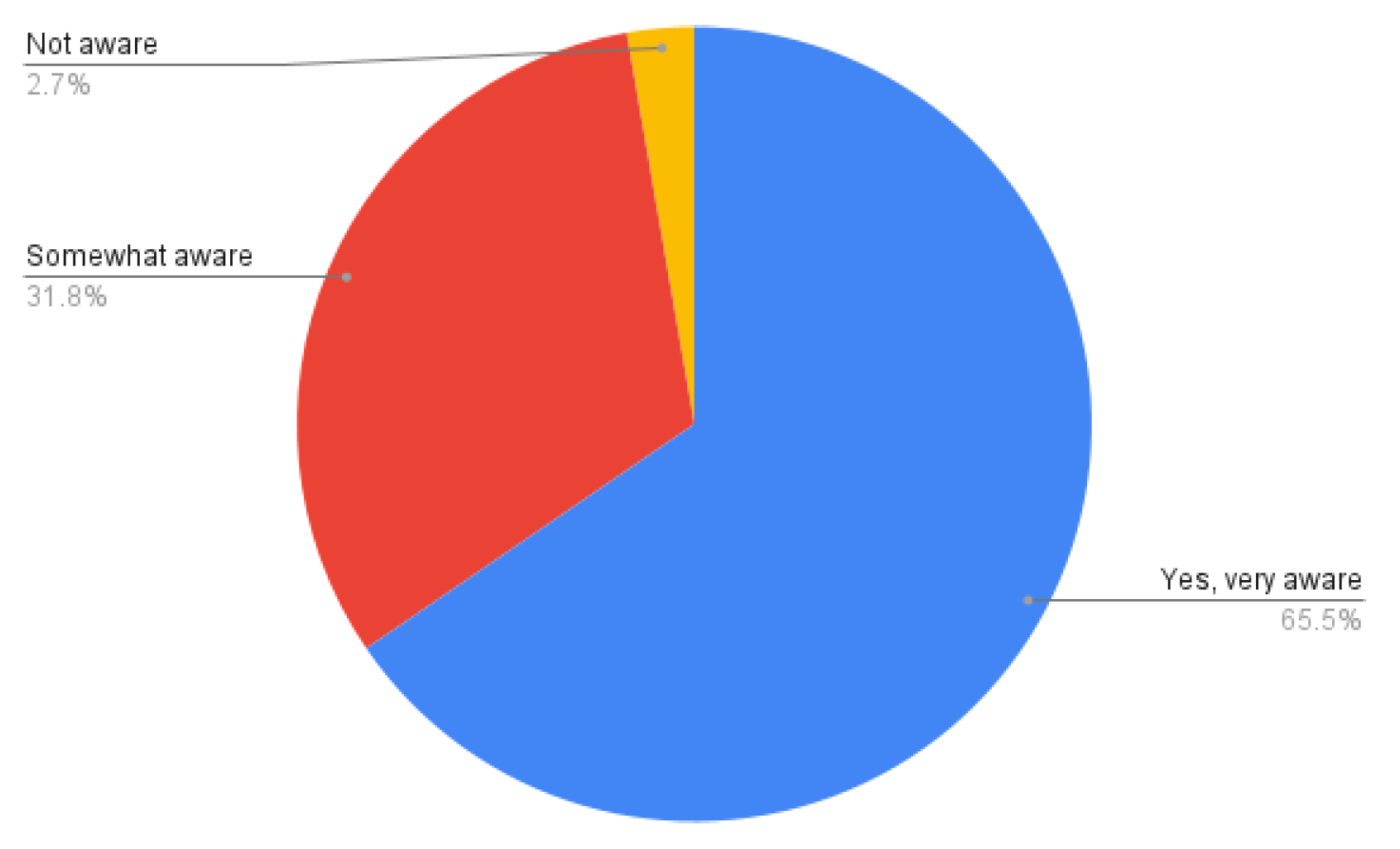

- Awareness of AI Features (Question 5): 65.5% of respondents answered "Yes, very aware" that social media platforms use AI for features such as content recommendations, moderation, and targeted ads, or for generating images and videos (e.g., deepfakes).[1] Another 31.8% were "Somewhat aware," and only 2.7% were "Not aware".[1] Awareness of AI's integration into social media is therefore widespread in this sample.
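Each figure in this subsection is the share of respondents who chose a given answer option. A minimal sketch of that tabulation is shown below, assuming the answers to one question from the dataset [1] have already been loaded as a list of strings; the loading step is not shown, and the twenty toy answers are invented for illustration rather than drawn from the survey.

```python
from collections import Counter


def percentage_breakdown(responses: list[str], options: list[str], decimals: int = 1) -> dict[str, float]:
    """Percent of respondents choosing each option, rounded for reporting.

    Options nobody chose are reported as 0.0; answers outside `options`
    raise an error instead of being silently dropped.
    """
    if not responses:
        raise ValueError("no responses to tabulate")
    counts = Counter(responses)
    unexpected = set(counts) - set(options)
    if unexpected:
        raise ValueError(f"answers not in the option list: {sorted(unexpected)}")
    total = len(responses)
    return {opt: round(100.0 * counts[opt] / total, decimals) for opt in options}


if __name__ == "__main__":
    options = ["Yes, very aware", "Somewhat aware", "Not aware"]
    toy_answers = ["Yes, very aware"] * 13 + ["Somewhat aware"] * 6 + ["Not aware"] * 1
    print(percentage_breakdown(toy_answers, options))
```

Because each share is rounded independently to one decimal place, the reported percentages for a question can sum to slightly more or less than 100%.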

C. Ethical Concerns and Experiences

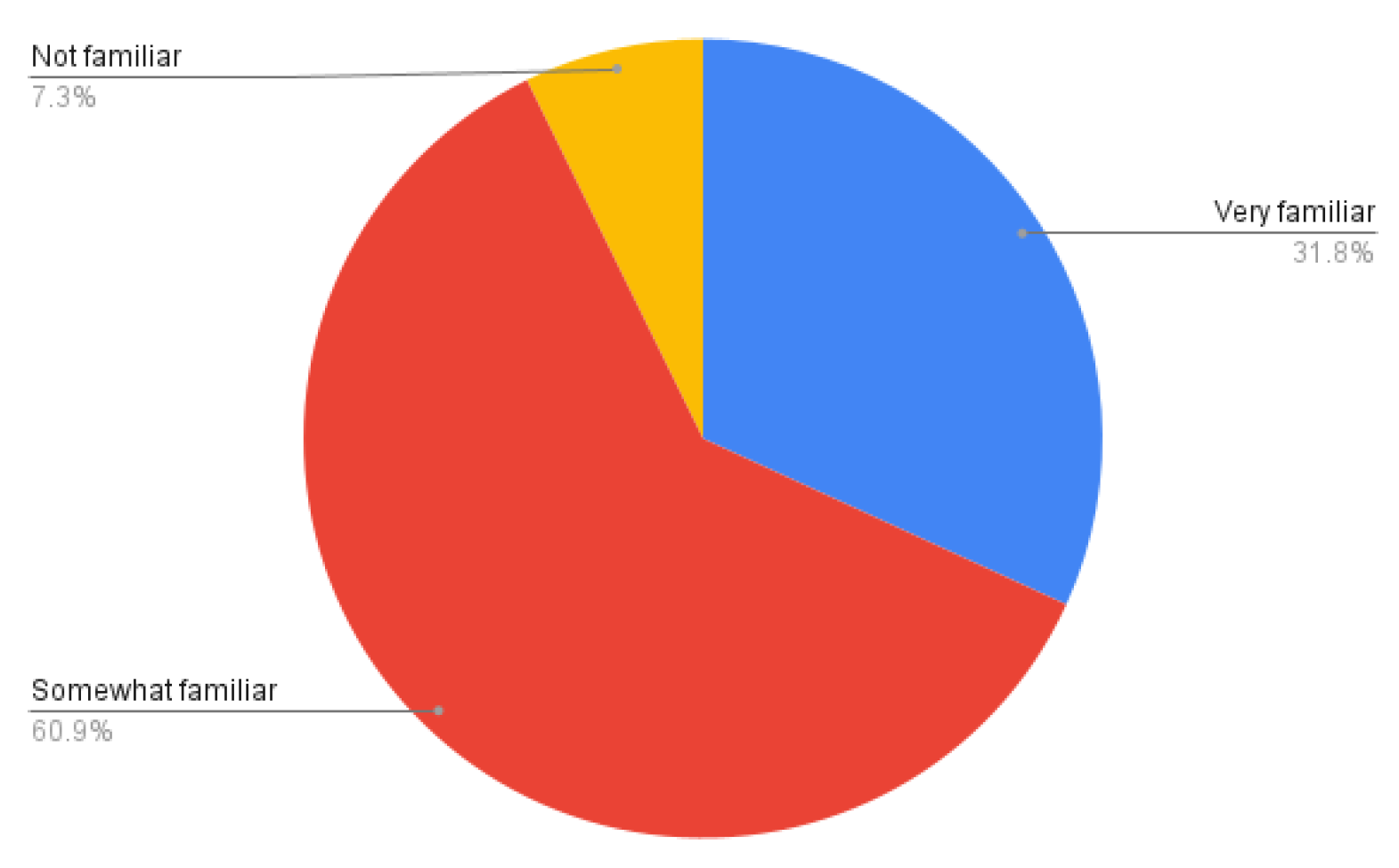

- Familiarity with AI Ethics (Question 6): 31.8% reported being "Very familiar" with the concept of AI ethics in social media, while 60.9% were "Somewhat familiar".[1] Only 7.3% indicated they were "Not familiar".[1] Thus, while almost all respondents (92.7%) have at least some familiarity, deep familiarity is limited to roughly a third.

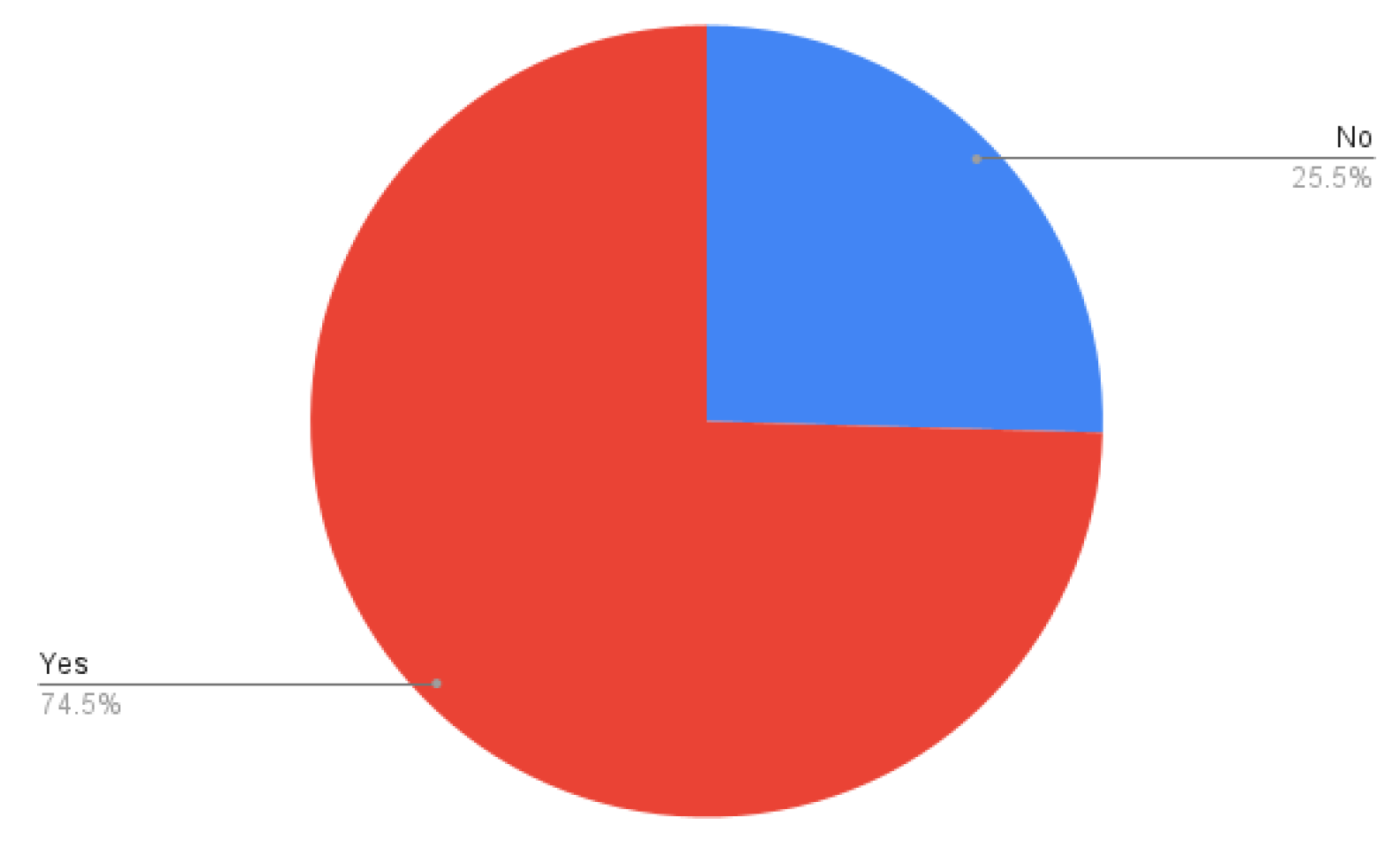

- Experience with AI-Generated Harassment (Question 8): A notable 74.5% reported having "experienced or noticed AI-generated content (e.g., deepfake videos, fake images) being used for harassment or bullying on social media".[1] This high percentage underscores the tangible presence of this issue for users.

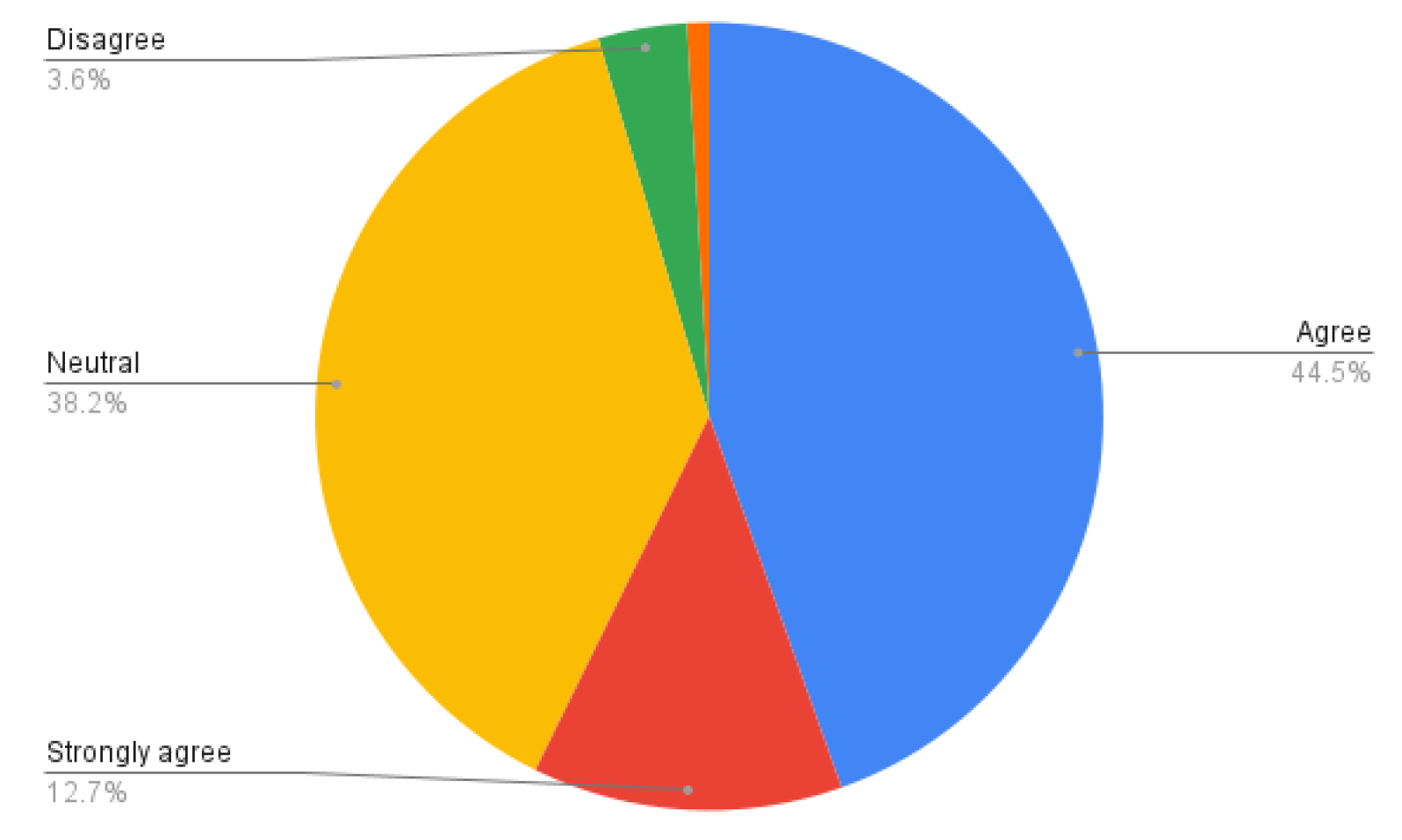

- Disclosure Requirements (Question 9): A plurality of respondents, 44.5%, agreed or strongly agreed that social media platforms should be required to disclose when AI is used to generate content.[1] A further 38.2% remained neutral, while 12.7% disagreed and 3.6% strongly disagreed.[1] Support for mandatory disclosure therefore clearly outweighs opposition, indicating user demand for transparency.

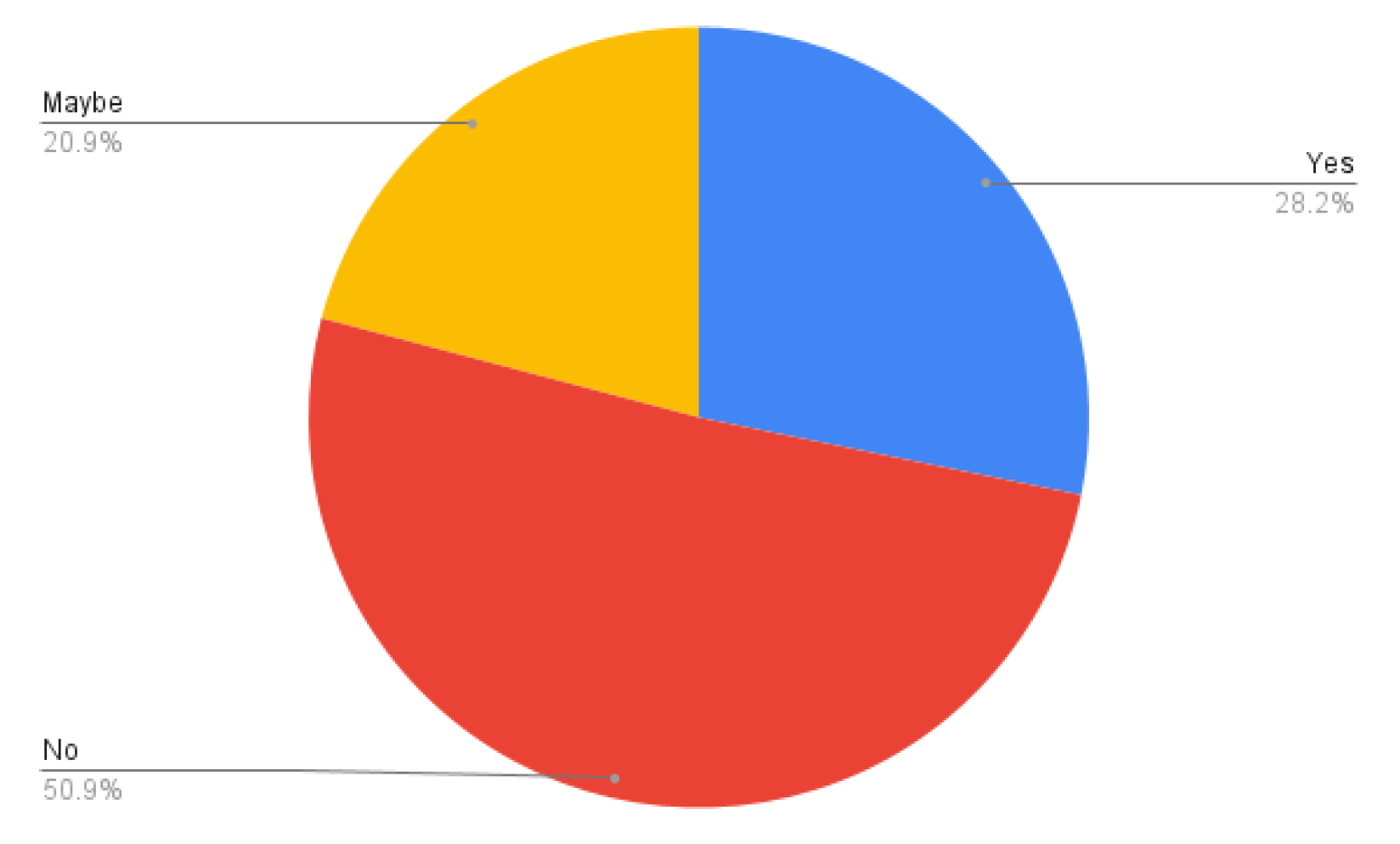

- Personal or Known Impact (Question 11): 28.2% stated that they or someone they know had been "affected by AI-generated content (e.g., deepfake videos or images) used for harassment or bullying on social media",[1] while 50.9% reported no such impact and 20.9% were unsure ("Maybe").[1] More than a quarter of respondents therefore report a direct or indirect impact.
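Question 9 is reported by combining the two agreement options ("agreeing or strongly agreeing"), and Question 17 similarly combines "some or complete" trust. The sketch below shows one way to collapse a five-point scale into agree / neutral / disagree shares. The option labels are assumptions, since the exact wording of the questionnaire's answer options is not reproduced in this section, and the twenty toy answers are invented.

```python
from collections import Counter

# Assumed answer labels for a five-point agreement scale, mapped to three reporting bins.
COLLAPSE = {
    "Strongly agree": "agree",
    "Agree": "agree",
    "Neutral": "neutral",
    "Disagree": "disagree",
    "Strongly disagree": "disagree",
}


def collapse_likert(responses: list[str]) -> dict[str, float]:
    """Percent of respondents in each collapsed bin.

    A label missing from COLLAPSE raises KeyError, so typos in the raw data
    are surfaced rather than silently miscounted.
    """
    if not responses:
        raise ValueError("no responses to collapse")
    bins = Counter(COLLAPSE[answer] for answer in responses)
    total = len(responses)
    return {name: 100.0 * bins[name] / total for name in ("agree", "neutral", "disagree")}


if __name__ == "__main__":
    toy = (["Strongly agree"] * 3 + ["Agree"] * 5 + ["Neutral"] * 8
           + ["Disagree"] * 3 + ["Strongly disagree"] * 1)
    print(collapse_likert(toy))
```

Keeping the shares unrounded until the final reporting step avoids compounding rounding error when options are combined into bins.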

D. User Preferences and Trust

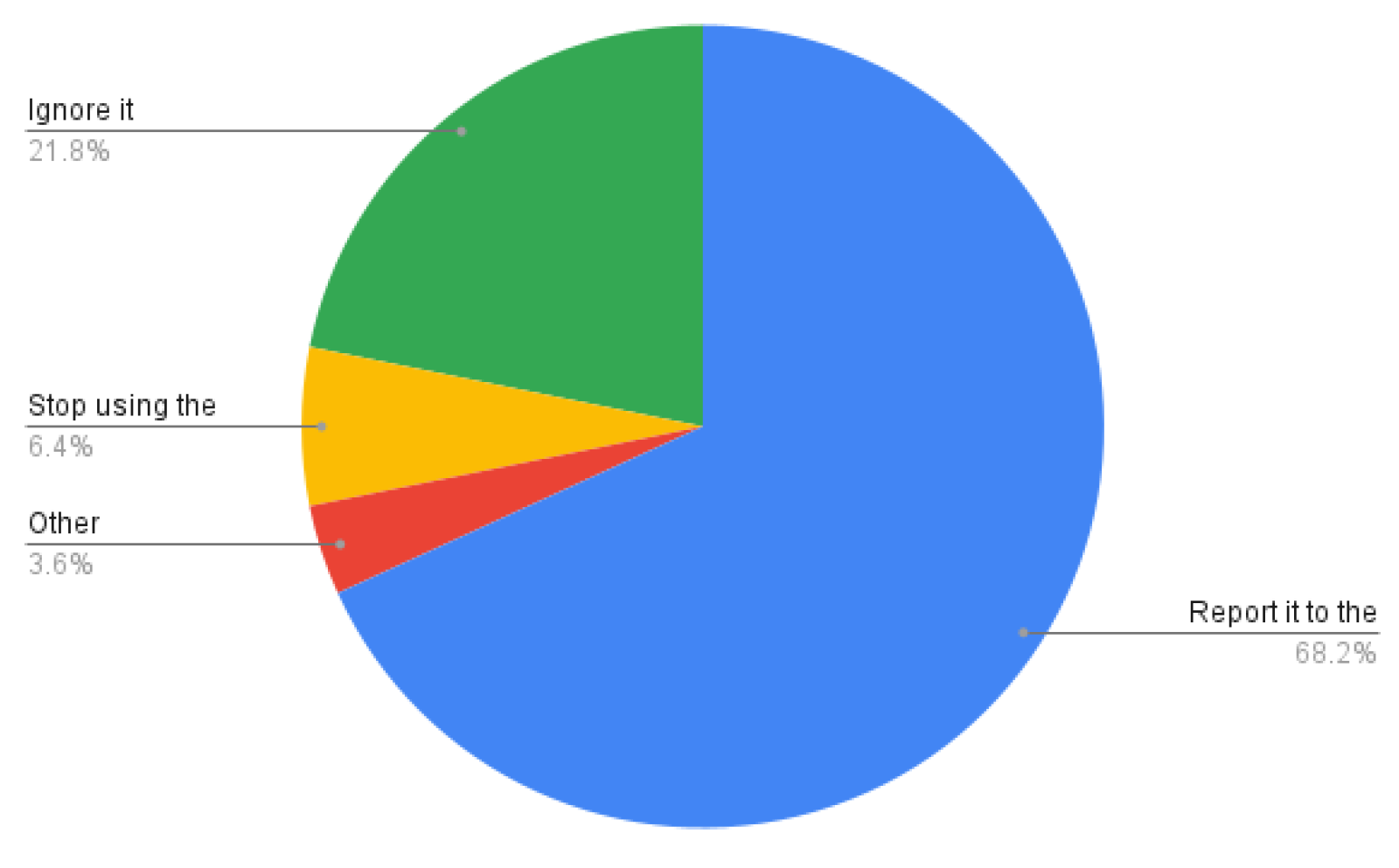

- Actions Taken (Question 12): Asked what they would do on encountering offensive or harassing AI-generated content, 68.2% said they would "Report it to the platform".[1] 21.8% would "Ignore it," 6.4% would "Stop using the platform," and 3.6% would take "Other" actions.[1] Respondents thus rely primarily on platform reporting mechanisms.

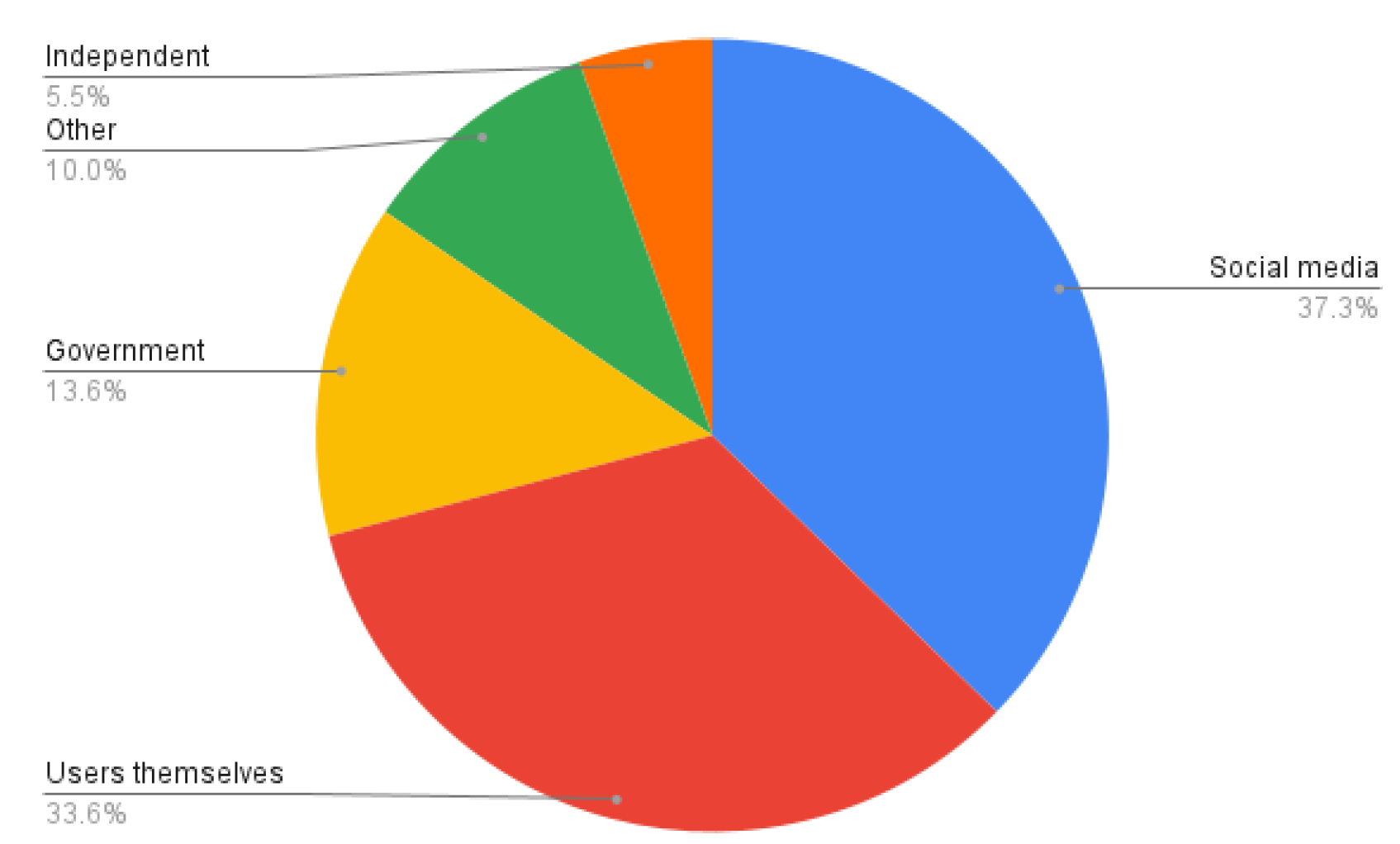

- Responsibility (Question 14): When asked who should be primarily responsible for preventing AI-generated content from being used for harassment or bullying, social media companies were identified by 37.3%.[1] Users themselves were cited by 33.6%, government regulators by 13.6%, and independent organizations by 5.5%.[1] This indicates a split perception, with a slight leaning towards platform responsibility.

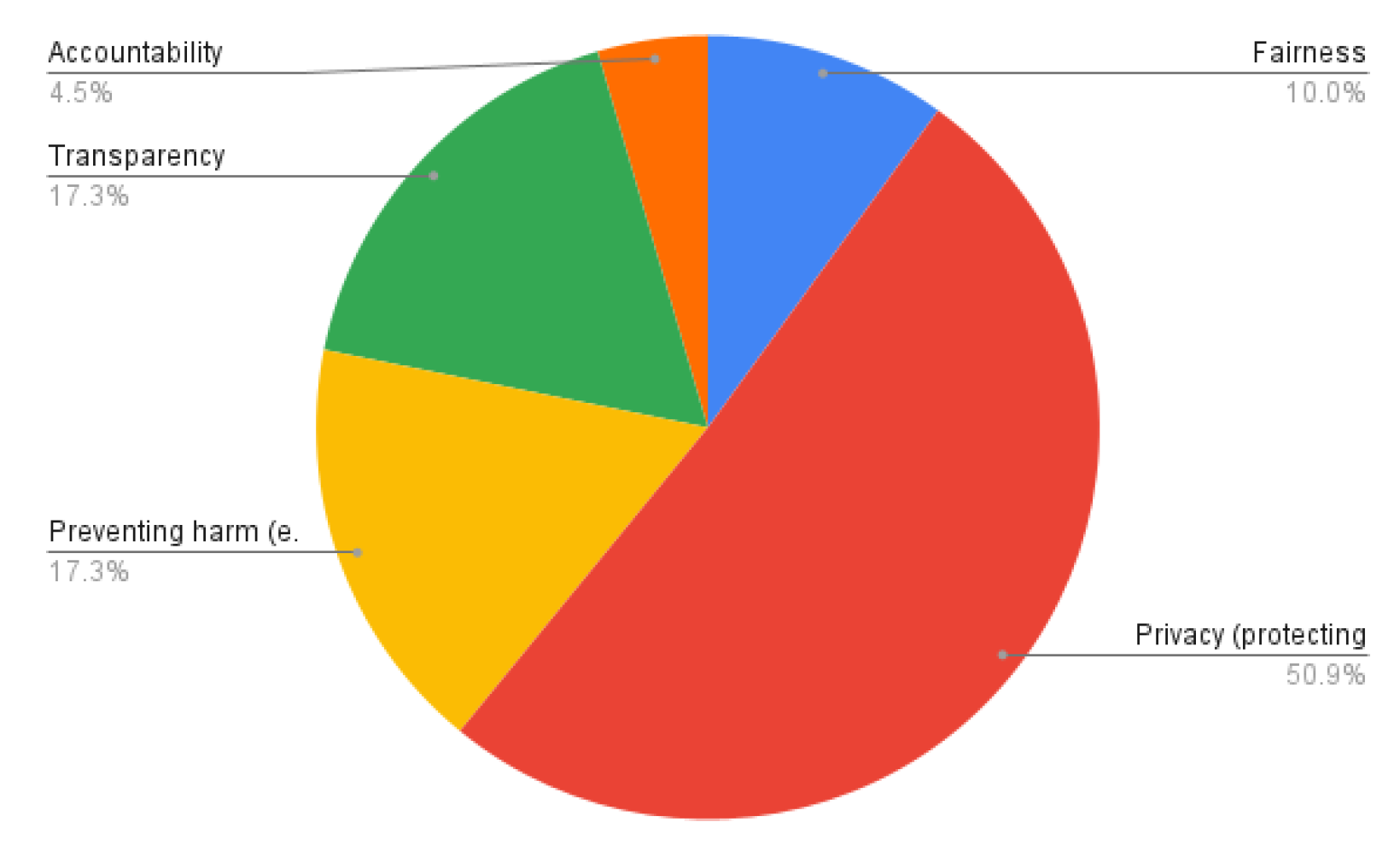

- Ethical Principles (Question 16): The most important ethical principle for AI in social media was identified as "Privacy (protecting user data)" by 50.9%.[1] "Preventing harm (e.g., stopping harassment or bullying)" was chosen by 17.3%, "Transparency (disclosing AI use)" by 17.3% (28 respondents), "Fairness (preventing bias)"

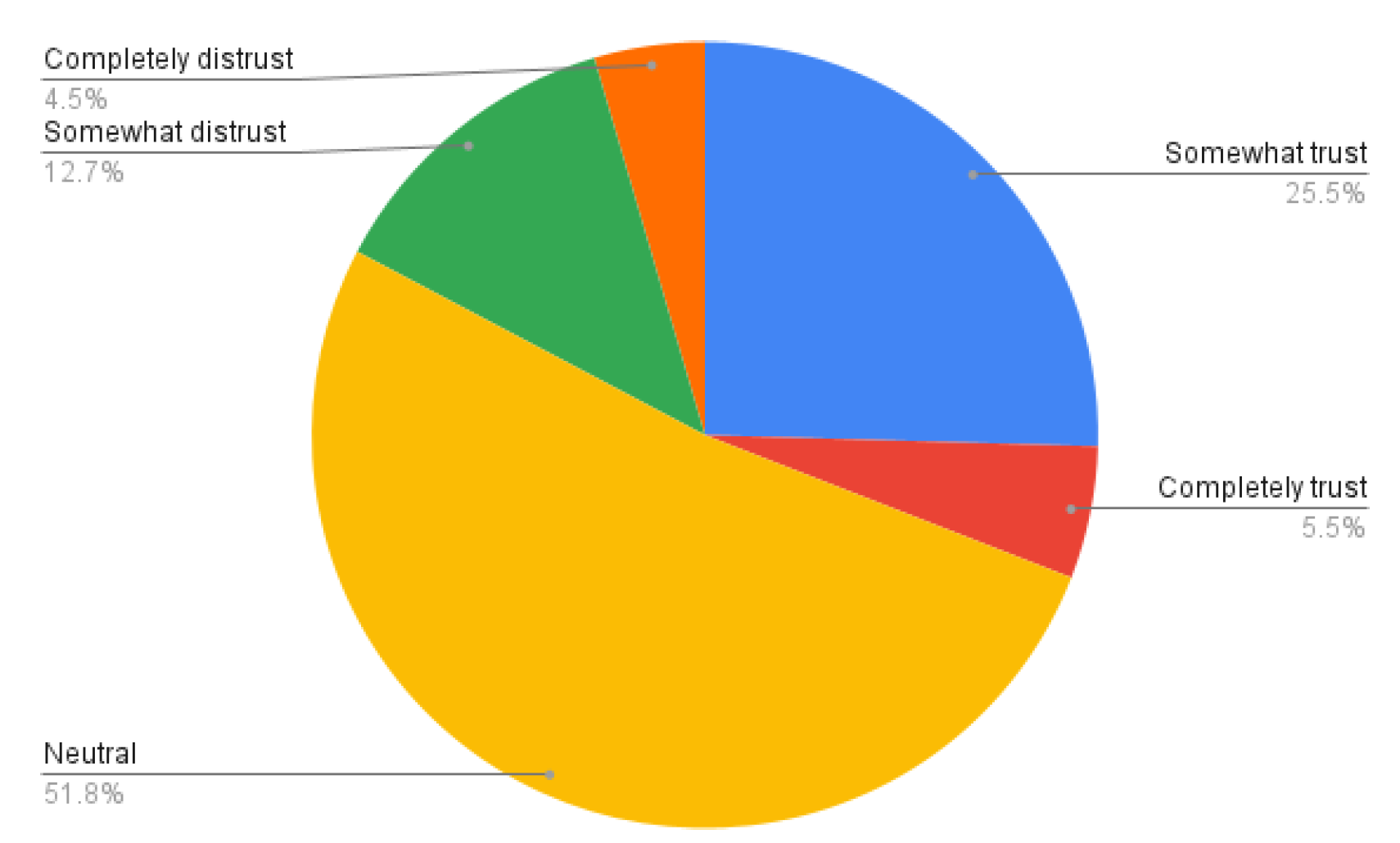

- Trust in Companies (Question 17): Trust in social media companies to use AI ethically, especially in preventing harassment or bullying, was mixed. 31% expressed some or complete trust, while 17.2% expressed some or complete distrust.[1] A substantial 51.8% remained neutral, indicating a significant portion of the user base is undecided or lacks a strong opinion on corporate ethical conduct.[1]

VIII. Discussion

IX. Future Directions and Recommendations

A. Enhanced User Control and Empowerment

- Opt-out of AI-generated content: Platforms should provide clear and easily configurable options for users to filter out, or be explicitly alerted to, AI-generated content, particularly deepfakes and manipulated images.[30] This aligns with the user demand for disclosure observed in the survey [1] and helps users navigate an increasingly complex information landscape.[13]

- Manage personalized recommendations: Users should have granular control over the algorithms that curate their content feeds, enabling them to understand and modify the criteria used for recommendations.[21] This can mitigate the formation of "echo chambers" and reduce exposure to potentially harmful or polarizing content.[24]

- Control data usage for AI training: Given the high concern for privacy (51% identified privacy as the most important ethical principle) [1], users must have transparent control over how their
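A user-facing opt-out of the kind recommended above could be as simple as a per-user setting consulted when the feed is assembled. The sketch below assumes each post already carries a boolean `ai_generated` label produced by the platform's own labelling or detection pipeline; the `Post` type, the three-way `AIContentPreference` setting, and all other names are hypothetical and do not correspond to any real platform API. The filter can only be as reliable as that label.

```python
from dataclasses import dataclass
from enum import Enum


class AIContentPreference(Enum):
    SHOW = "show"  # display AI-generated posts normally
    FLAG = "flag"  # display them, but with an explicit AI-generated notice
    HIDE = "hide"  # remove them from the feed entirely


@dataclass(frozen=True)
class Post:
    post_id: str
    text: str
    ai_generated: bool  # assumed to come from the platform's labelling pipeline


def apply_preference(feed: list[Post], pref: AIContentPreference) -> list[tuple[Post, bool]]:
    """Return (post, show_ai_notice) pairs according to the user's setting."""
    result = []
    for post in feed:
        if post.ai_generated and pref is AIContentPreference.HIDE:
            continue  # user opted out of AI-generated content
        show_notice = post.ai_generated and pref is AIContentPreference.FLAG
        result.append((post, show_notice))
    return result


if __name__ == "__main__":
    feed = [
        Post("p1", "holiday photo", ai_generated=False),
        Post("p2", "face-swapped video clip", ai_generated=True),
        Post("p3", "news link", ai_generated=False),
    ]
    for post, notice in apply_preference(feed, AIContentPreference.FLAG):
        print(post.post_id, "[AI-generated]" if notice else "")
```

Offering a "flag" option alongside "hide" keeps labelled content visible with context for users who want it, while still giving a stronger filter to those who prefer not to see it at all.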

B. Robust Platform Policies and Technological Safeguards

- Improved AI Detection and Moderation: Platforms must invest heavily in advanced AI detection systems that can identify deepfakes, voice clones, and malicious text with greater accuracy, especially "in-the-wild" content.[13] This includes developing multimodal detection approaches that analyse both visual and auditory cues.[43] The current low confidence in detection (only 33% confident) [1] highlights this as a critical area for improvement.

- Mandatory Disclosure and Labelling: Platforms should implement clear and consistent labelling mechanisms for all AI-generated or AI-modified content.[28] This could involve digital watermarking or metadata tags that are machine-readable and detectable, ensuring transparency without relying solely on human discernment.[28]

- Stricter Enforcement and Accountability: Policies against AI-driven harassment and bullying must be rigorously enforced, with clear penalties for misuse, including account suspension or bans.[29] Platforms should also provide easier and more effective reporting tools, since 68.2% of respondents said they would report offensive or harassing AI-generated content to the platform.[1]

- Proactive Harm Prevention: Platforms should proactively identify and address algorithmic vulnerabilities that could lead to the
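Machine-readable labelling can be sketched as a small metadata record that states the content is AI-generated and is cryptographically bound to the exact content bytes, so that neither the label nor the content can be altered without detection. The example below is a simplified assumption: it uses a shared HMAC-SHA256 key held by the platform, whereas provenance standards such as C2PA rely on public-key signatures and embed the manifest in the media file. The manifest field names, generator name, and key are hypothetical.

```python
import hashlib
import hmac
import json


def make_label(content: bytes, generator: str, key: bytes) -> dict:
    """Build a machine-readable 'AI-generated' label bound to the exact content bytes."""
    manifest = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "ai_generated": True,
        "generator": generator,
    }
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"manifest": manifest, "signature": signature}


def verify_label(content: bytes, label: dict, key: bytes) -> bool:
    """True only if the label is untampered AND still matches these content bytes."""
    payload = json.dumps(label["manifest"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, label["signature"]):
        return False  # label was edited or signed with a different key
    return label["manifest"]["content_sha256"] == hashlib.sha256(content).hexdigest()


if __name__ == "__main__":
    key = b"hypothetical-platform-key"
    media = b"...bytes of an uploaded image..."
    label = make_label(media, generator="hypothetical-image-model", key=key)
    print(json.dumps(label["manifest"], indent=2))
    print("label valid:", verify_label(media, label, key))
    print("valid after edit:", verify_label(media + b"\x00", label, key))
```

Two limitations motivate pairing such metadata with watermarking: the label can simply be stripped from a file, and because it is bound to a SHA-256 hash of the exact bytes, any re-encoding or resizing (common when platforms recompress uploads) invalidates it even when the visible content is unchanged.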

C. Regulatory Collaboration and International Standards

- Legislation for AI Accountability: Laws should be enacted that clearly define liability for harm caused by AI systems, holding companies and developers accountable for the ethical implications of their products.[7] The ongoing lawsuit against Character.AI in the Sewell Setzer III case [35] exemplifies the need for clearer legal precedents.

- Mandatory Safety Audits and Risk Assessments: Regulatory bodies should require AI developers and social media platforms to conduct regular, independent safety audits and risk assessments of their AI systems, particularly those with high potential for societal impact.[28]

- Funding for Research and Development: Governments should invest in research for robust AI detection methods and ethical AI development, fostering an ecosystem where solutions can keep pace with the evolving threats.[13]

D. Public Education and Media Literacy

- Digital Literacy Programs: Comprehensive educational programs should be developed to teach users, especially younger demographics, how to critically evaluate online content, identify AI-generated fakes, and understand the mechanisms of algorithmic influence.[60]

- Awareness Campaigns: Platforms and public health organizations should launch awareness campaigns about the risks of AI-driven harassment and the psychological impacts of deepfakes and misinformation.[17]

- Support for Victims: Accessible and effective support systems for victims of AI-driven harassment and bullying are essential, including mental health resources and clear reporting pathways.[17]

X. Conclusion

Appendix

References

- Survey Responses 200, Dataset. [Online]. Available: https://drive.google.com/file/d/1s3R-DCL20PcAA0XTaJ4oeYW1TUG6oFMn/view?usp=sharing.

- J. Doe et al., “Artificial Intelligence in Creative Industries: Advances Prior to 2025,” arXiv, vol. 2501.02725v1, 2025. [Online]. Available: https://arxiv.org/html/2501.02725v1.

- International AI Safety Report, GOV.UK, 2025. [Online]. Available: https://assets.publishing.service.gov.uk/media/679a0c48a77d250007d313ee/International_AI_Safety_Report_2025_accessible_f.pdf.

- Smith et al., “AI Ethics and Social Norms: Exploring ChatGPT’s Capabilities From What to How,” arXiv, vol. 2504.18044, 2025. [Online]. Available: https://arxiv.org/pdf/2504.18044.

- Johnson et al., “Developing an Ethical Regulatory Framework for Artificial Intelligence: Integrating Systematic Review, Thematic Analysis, and Multidisciplinary Theories,” ResearchGate, 2025. [Online]. Available: https://www.researchgate.net/publication/385917294_Developing_an_Ethical_Regulatory_Framework_for_Artificial_Intelligence_Integrating_Systematic_Review_Thematic_Analysis_and_Multidisciplinary_Theories.

- Ethics Guidelines, NeurIPS, 2025. [Online]. Available: https://neurips.cc/public/EthicsGuidelines.

- Lee et al., “Transparency and accountability in AI systems: safeguarding wellbeing in the age of algorithmic decision-making,” Front. Hum. Dyn., vol. 6, p. 1421273, 2024. [CrossRef]

- Brown et al., “The Ethics of AI Ethics: An Evaluation of Guidelines,” ResearchGate, 2025. [Online]. Available: https://www.researchgate.net/publication/338983166_The_Ethics_of_AI_Ethics_An_Evaluation_of_Guidelines.

- The ethics of artificial intelligence: Issues and initiatives, European Parliament, 2020. [Online]. Available: https://www.europarl.europa.eu/RegData/etudes/STUD/2020/634452/EPRS_STU(2020)634452_EN.

- Davis et al., “Toward Fairness, Accountability, Transparency, and Ethics in AI for Social Media and Health Care: Scoping Review,” PubMed Central, vol. 10, p. e47447, 2024. [CrossRef]

- Garcia et al., “Governance of Generative AI,” Policy Soc., vol. 44, no. 1, pp. 1–17, 2025. [CrossRef]

- Wilson et al., “Artificial Intelligence and Ethics: A Comprehensive Review of Bias Mitigation, Transparency, and Accountability in AI Systems,” ResearchGate, 2023. [Online]. Available: https://www.researchgate.net/publication/375744287_Artificial_Intelligence_and_Ethics_A_Comprehensive_Review_of_Bias_Mitigation_Transparency_and_Accountability_in_AI_Systems.

- Kim et al., “Deepfake-Eval-2024: A Multi-Modal In-the-Wild Benchmark of Deepfakes Circulated in 2024,” arXiv, vol. 2503.02857v4, 2024. [Online]. Available: https://arxiv.org/html/2503.02857v4.

- Patel et al., “A Multi-Modal In-the-Wild Benchmark of Deepfakes Circulated in 2024,” arXiv, vol. 2401.04364v4, 2025. [Online]. Available: https://arxiv.org/pdf/2401.04364.

- Lee et al., “Generative Artificial Intelligence and the Evolving Challenge of Deepfake Detection: A Systematic Analysis,” ResearchGate, 2025. [Online]. Available: https://www.researchgate.net/publication/388760523_Generative_Artificial_Intelligence_and_the_Evolving_Challenge_of_Deepfake_Detection_A_Systematic_Analysis.

- H. Kim et al., “A Multi-Modal In-the-Wild Benchmark of Deepfakes Circulated in 2024,” arXiv, vol. 2503.02857v2, 2024. [Online]. Available: https://arxiv.org/html/2503.02857v2.

- Nguyen et al., “Moderating Harm: Benchmarking Large Language Models for Cyberbullying Detection in YouTube Comments,” arXiv, vol. 2505.18927v2, 2025. [Online]. Available: https://arxiv.org/html/2505.18927v2.

- Zhang et al., “Chinese Cyberbullying Detection: Dataset, Method, and Validation,” arXiv, vol. 2505.20654v1, 2025. [Online]. Available: https://arxiv.org/html/2505.20654v1.

- Thompson et al., “Psychological Impacts of Deepfakes: Understanding the Effects on Human Perception, Cognition, and Behavior,” ResearchGate, 2025. [Online]. Available: https://www.researchgate.net/publication/393022880_Psychological_Impacts_of_Deepfakes_Understanding_the_Effects_on_Human_Perception_Cognition_and_Behavior.

- Thompson et al., “Psychological Impacts of Deepfakes: Understanding the Effects on Human Perception, Cognition, and Behavior,” ResearchGate, 2025. [Online]. Available: https://www.researchgate.net/publication/393067059_Psychological_Impacts_of_Deepfakes_Understanding_the_Effects_on_Human_Perception_Cognition_and_Behavior.

- Clark, “This Is Not a Game: The Addictive Allure of Digital Companions,” Seattle Univ. Law Rev., 2025. [Online]. Available: https://digitalcommons.law.seattleu.edu/cgi/viewcontent.cgi?article=2918&context=sulr.

- Adams et al., “The Psychological Impacts of Algorithmic and AI-Driven Social Media on Teenagers: A Call to Action,” Beadle Scholar, 2025. [Online]. Available: https://scholar.dsu.edu/cgi/viewcontent.cgi?article=1222&context=ccspapers.

- Social Media, Mental Health and Body Image, University of Alabama News, 2025. [Online]. Available: https://news.ua.edu/2025/03/social-media-mental-health-and-body-image/ [DOI unavailable].

- Adams et al., “The Psychological Impacts of Algorithmic and AI-Driven Social Media on Teenagers: A Call to Action,” arXiv, vol. 2408.10351v1, 2025. [Online]. Available: https://arxiv.org/html/2408.10351v1.

- Top 10 Terrifying Deepfake Examples, Arya.ai, 2025. [Online]. Available: https://arya.ai/blog/top-deepfake-incidents [DOI unavailable].

- Martinez et al., “Filters of Identity: AR Beauty and the Algorithmic Politics of the Digital Body,” arXiv, vol. 2506.19611v1, 2025. [Online]. Available: https://arxiv.org/html/2506.19611v1.

- Chen et al., “Algorithmic Arbitrariness in Content Moderation,” arXiv, vol. 2402.16979, 2024. [Online]. Available: https://arxiv.org/abs/2402.16979.

- Taylor et al., “Standards, frameworks, and legislation for artificial intelligence (AI) transparency,” ResearchGate, 2025. [Online]. Available: https://www.researchgate.net/publication/388484888_Standards_frameworks_and_legislation_for_artificial_intelligence_AI_transparency.

- Kumar et al., “Theory and Practice of Social Media’s Content Moderation by Artificial Intelligence in Light of European Union’s AI Act and Digital Services Act,” Eur. J. Politics, 2025. [Online]. Available: https://www.ej-politics.org/index.php/politics/article/view/165.

- Huang et al., “Understanding Users’ Security and Privacy Concerns and Attitudes Towards Conversational AI Platforms,” arXiv, vol. 2504.06552v2, 2025. [Online]. Available: https://arxiv.org/abs/2504.06552.

- Gupta et al., “SoK: A Classification for AI-driven Personalized Privacy Assistants,” arXiv, vol. 2502.07693v2, 2025. [Online]. Available: https://arxiv.org/html/2502.07693v2.

- T. Huang et al., “Understanding Users’ Security and Privacy Concerns and Attitudes Towards Conversational AI Platforms,” arXiv, vol. 2504.06552v2, 2025. [Online]. Available: https://arxiv.org/pdf/2504.06552.

- Publications in 2023/2024/2025 related to Information Security, University of Illinois, 2025. [Online]. Available: https://ischool.illinois.edu/sites/default/files/documents/Complete%20List%20of%20Publications%20as%20of%20Nov2024.pdf [DOI unavailable].

- Mom Sues AI Chatbot in Federal Lawsuit After Son’s Death, Social Media Victims, 2025. [Online]. Available: https://socialmediavictims.org/blog/lawsuit-filed-against-character-ai-after-teens-death/ [DOI unavailable].

- In lawsuit over Orlando teen’s suicide, judge rejects that AI chatbots have free speech rights, WUSF, 2025. [Online]. Available: https://www.wusf.org/courts-law/2025-05-22/in-lawsuit-over-orlando-teens-suicide-judge-rejects-that-ai-chatbots-have-free-speech [DOI unavailable].

- Lessons learned from AI chatbot’s role in one man’s suicide, DPEX Network, 2025. [Online]. Available: https://www.dpexnetwork.org/articles/lessons-learned-ai-chatbots-role-in-one-mans-suicide [DOI unavailable].

- Belgian man’s suicide attributed to AI chatbot, CARE, 2023. [Online]. Available: https://care.org.uk/news/2023/03/belgian-mans-suicide-attributed-to-ai-chatbot [DOI unavailable].

- Positive Approaches Journal, Volume 12, Issue 3, MyODP, 2025. [Online]. Available: https://www.myodp.org/mod/book/tool/print/index.php?id=48947&chapterid=1051 [DOI unavailable].

- Family Sues TikTok After Son’s Suicide, Claiming He Was Inundated with FYP Videos, People.com, 2025. [Online]. Available: https://people.com/family-sues-blaming-tiktok-for-son-suicide-being-inundated-fyp-videos-11683054 [DOI unavailable].

- Molly Russell - Prevention of future deaths report, Judiciary.uk, 2022. [Online]. Available: https://www.judiciary.uk/wp-content/uploads/2022/10/Molly-Russell-Prevention-of-future-deaths-report-2022-0315_Published.pdf [DOI unavailable].

- Death of Molly Russell, Wikipedia, 2025. [Online]. Available: https://en.wikipedia.org/wiki/Death_of_Molly_Russell [DOI unavailable].

- Students Are Sharing Sexually Explicit ‘Deepfakes.’ Are Schools Prepared?, Education Week, 2024. [Online]. Available: https://www.edweek.org/leadership/students-are-sharing-sexually-explicit-deepfakes-are-schools-prepared/2024/09 [DOI unavailable].

- Sharma et al., “A Comprehensive Review on Deepfake Generation, Detection, Challenges, and Future Directions,” Int. J. Res. Appl. Sci. Eng. Technol., 2025. [Online]. Available: https://www.ijraset.com/best-journal/a-comprehensive-review-on-deepfake-generation-detection-challenges-and-future-directions.

- How do artificial intelligence and disinformation impact elections?, Brookings Institution, 2025. [Online]. Available: https://www.brookings.edu/articles/how-do-artificial-intelligence-and-disinformation-impact-elections/ [DOI unavailable].

- T. Huang et al., “Unmasking Digital Falsehoods: A Comparative Analysis of LLM-Based Misinformation Detection Strategies,” arXiv, vol. 2503.00724v1, 2025. [Online]. Available: https://arxiv.org/html/2503.00724v1.

- Liu et al., “Characterizing AI-Generated Misinformation on Social Media,” arXiv, vol. 2505.10266v1, 2025. [Online]. Available: https://arxiv.org/html/2505.10266v1.

- From policy to practice: Responsible media AI implementation, Digital Content Next, 2025. [Online]. Available: https://digitalcontentnext.org/blog/2025/06/30/from-policy-to-practice-responsible-media-ai-implementation/ [DOI unavailable].

- Yang et al., “Understanding Human-Centred AI: a review of its defining elements and a research agenda,” Behav. Inf. Technol., 2025. [CrossRef]

- Zhao et al., “Artificial intelligence (AI) for user experience (UX) design: a systematic literature review and future research agenda,” ResearchGate, 2023. [Online]. Available: https://www.researchgate.net/publication/373389004_Artificial_intelligence_AI_for_user_experience_UX_design_a_systematic_literature_review_and_future_research_agenda.

- Khan et al., “Utilizing Generative AI for Instantaneous Content Moderation on Social Media Platforms,” ResearchGate, 2025. [Online]. Available: https://www.researchgate.net/publication/392927657_Utilizing_Generative_AI_for_Instantaneous_Content_Moderation_on_Social_Media_Platforms.

- Roberts et al., “Governing artificial intelligence: ethical, legal and technical opportunities and challenges,” Philos. Trans. R. Soc. A, vol. 376, no. 2133, p. 20180080, 2018. [CrossRef]

- Patel et al., “Ethical and regulatory challenges of AI technologies in healthcare: A narrative review,” PubMed Central, vol. 11, p. e10879008, 2025. [CrossRef]

- Social Media Governance project: Summary of work in 2024, OECD, 2025. [Online]. Available: https://wp.oecd.ai/app/uploads/2025/05/social-media-governance-project_summary-of-work-in-2024.pdf [DOI unavailable].

- C. Wu et al., “FutureGen: LLM-RAG Approach to Generate the Future Work of Scientific Article,” arXiv, vol. 2503.16561v1, 2025. [Online]. Available: https://arxiv.org/html/2503.16561v1.

- D. Singh et al., “Deepfake Attacks: Generation, Detection, Datasets, Challenges, and Research Directions,” ResearchGate, 2023. [Online]. Available: https://www.researchgate.net/publication/374932579_Deepfake_Attacks_Generation_Detection_Datasets_Challenges_and_Research_Directions.

- E. Brown et al., “Ethical Considerations in Artificial Intelligence: A Comprehensive Discussion from the Perspective of Computer Vision,” ResearchGate, 2023. [Online]. Available: https://www.researchgate.net/publication/376518424_Ethical_Considerations_in_Artificial_Intelligence_A_Comprehensive_Discussion_from_the_Perspective_of_Computer_Vision.

- F. Davis et al., “Reviewing the Ethical Implications of AI in Decision Making Processes,” ResearchGate, 2025. [Online]. Available: https://www.researchgate.net/publication/378295986_REVIEWING_THE_ETHICAL_IMPLICATIONS_OF_AI_IN_DECISION_MAKING_PROCESSES.

- G. Lee et al., “Ethical and social considerations of applying artificial intelligence in healthcare—a two-pronged scoping review,” PubMed Central, vol. 12, p. e12107984, 2025. [CrossRef]

- H. Wang et al., “Face Deepfakes - A Comprehensive Review,” arXiv, vol. 2502.09812v1, 2025. [Online]. Available: https://arxiv.org/html/2502.09812v1.

- Chen et al., “Charting the Landscape of Nefarious Uses of Generative Artificial Intelligence for Online Election Interference,” arXiv, vol. 2406.01862, 2024. [Online]. Available: https://arxiv.org/pdf/2406.01862.

- J. Kumar et al., “Body Perceptions and Psychological Well-Being: A Review of the Impact of Social Media and Physical Measurements on Self-Esteem and Mental Health with a Focus on Body Image Satisfaction and Its Relationship with Cultural and Gender Factors,” PubMed Central, vol. 12, p. e11276240, 2025. [CrossRef]

- K. Taylor et al., “The impact of digital technology, social media, and artificial intelligence on cognitive functions: a review,” Front. Cogn., vol. 2, p. 1203077, 2023. [CrossRef]

- L. Nguyen et al., “How AI and Human Behaviors Shape Psychosocial Effects of Chatbot Use: A Longitudinal Randomized Controlled Study,” arXiv, vol. 2503.17473v1, 2025. [Online]. Available: https://arxiv.org/html/2503.17473v1.

- M. Lee, “The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers,” Microsoft, 2025. [Online]. Available: https://www.microsoft.com/en-us/research/wp-content/uploads/2025/01/lee_2025_ai_critical_thinking_survey.pdf [DOI unavailable].

- AI and Misinformation - 2024 Dean’s Report, University of Florida, 2024. [Online]. Available: https://2024.jou.ufl.edu/page/ai-and-misinformation [DOI unavailable].

- N. Gupta et al., “Exposing the Fake: Effective Diffusion-Generated Images Detection,” arXiv, vol. 2307.06272, 2023. [Online]. Available: https://arxiv.org/abs/2307.06272.

- N. Gupta et al., “Exposing the Fake: Effective Diffusion-Generated Images Detection,” arXiv, vol. 2307.06272v1, 2023. [Online]. Available: https://arxiv.org/html/2307.06272v1.

- Zhang et al., “Enhancing Deepfake Detection: Proactive Forensics Techniques Using Digital Watermarking,” CMC-Comput. Mater. Contin., vol. 82, no. 1, p. 59264, 2025. [CrossRef]

- P. Sharma et al., “Advancing GAN Deepfake Detection: Mixed Datasets and Comprehensive Artifact Analysis,” Appl. Sci., vol. 15, no. 2, p. 923, 2025. [CrossRef]

- Q. Liu et al., “Deepfake Generation and Detection: A Benchmark and Survey,” arXiv, vol. 2403.17881, 2024. [Online]. Available: https://arxiv.org/abs/2403.17881.

- R. Patel et al., “Ethical Challenges and Solutions of Generative AI: An Interdisciplinary Perspective,” Informatics, vol. 11, no. 3, p. 58, 2024. [CrossRef]

- S. Kim et al., “A Comprehensive Survey with Critical Analysis for Deepfake Speech Detection,” arXiv, vol. 2409.15180, 2024. [Online]. Available: http://arxiv.org/pdf/2409.15180.

- T. Wilson et al., “The Impact of Affect on the Perception of Fake News on Social Media: A Systematic Review,” Soc. Sci., vol. 12, no. 12, p. 674, 2023. [CrossRef]

- U. Chen et al., “Ethical and Legal Considerations in Mitigating Disinformation,” arXiv, vol. 2406.18841, 2024. [Online]. Available: https://arxiv.org/pdf/2406.18841.

- V. Adams et al., “’I Hadn’t Thought About That’: Creators of Human-like AI Weigh in on Ethics And Neurodivergence,” arXiv, vol. 2506.12098, 2025. [Online]. Available: https://arxiv.org/abs/2506.12098.

- SPS Webinar: Recent Advances and Challenges of Deepfake Detection, Signal Processing Society, 2025. [Online]. Available: https://signalprocessingsociety.org/blog/sps-webinar-recent-advances-and-challenges-deepfake-detection [DOI unavailable].

- Artificial intelligence and child sexual abuse: A rapid evidence assessment, Australian Institute of Criminology, 2025. [Online]. Available: https://www.aic.gov.au/publications/tandi/tandi711 [DOI unavailable].

- Addressing AI-generated child sexual exploitation and abuse, Tech Coalition, 2025. [Online]. Available: https://technologycoalition.org/resources/addressing-ai-generated-child-sexual-exploitation-and-abuse/ [DOI unavailable].

- W. Taylor et al., “AI-Driven Content Moderation: Ethical and Technical Challenges,” arXiv, vol. 2502.07931v1, 2025. [Online]. Available: https://arxiv.org/pdf/2502.07931.

- X. Brown et al., “Balancing Innovation and Regulation in the Age of Generative Artificial Intelligence,” J. Inf. Policy, vol. 14, p. 0012, 2024. [CrossRef]

- “Courtroom Scene from Sewell Setzer III Lawsuit,” USA Today, 2024. [Online]. Available: https://www.usatoday.com/story/news/nation/2024/10/23/sewell-setzer-iii/75814524007/.

- “Dragon-Themed AI Illustration,” Unsplash, 2025. [Online]. Available: https://www.usatoday.com/gcdn/authoring/authoring-images/2024/10/23/USAT/75815793007-20240920-t-230449-z-788801772-rc-244-aae-2-vha-rtrmadp-3-auctiongameofthrones.JPG?width=1320&height=1002&fit=crop&format=pjpg&auto=webp.

- “Courtroom Scene from Chase Nasca Lawsuit,” People.com, 2025. [Online]. Available: https://people.com/family-sues-blaming-tiktok-for-son-suicide-being-inundated-fyp-videos-11683054.

- “Social Media Interface,” Unsplash, 2025. [Online]. Available: https://people.com/thmb/yugXTntaClygvSz4N7mLkcNYWlw=/4000x0/filters:no_upscale():max_bytes(150000):strip_icc():focal(999x0:1001x2):format(webp)/tiktok-ban-042424-6026fa82c6db43c89f66829518b027fe.jpg.

- “Courtroom Illustration,” Pixabay, 2025. [Online]. Available: https://static01.nyt.com/images/2022/09/30/business/INTERNET-SUICIDE-01/INTERNET-SUICIDE-01-superJumbo.jpg?quality=75&auto=webp.

- “Courtroom Illustration,” Pixabay, 2025. [Online]. Available: https://ichef.bbci.co.uk/news/1024/cpsprodpb/183C4/production/_126786299_mollyrussell1.jpg.webp.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).