Submitted: 02 July 2025

Posted: 03 July 2025

Abstract

Keywords:

1. Introduction

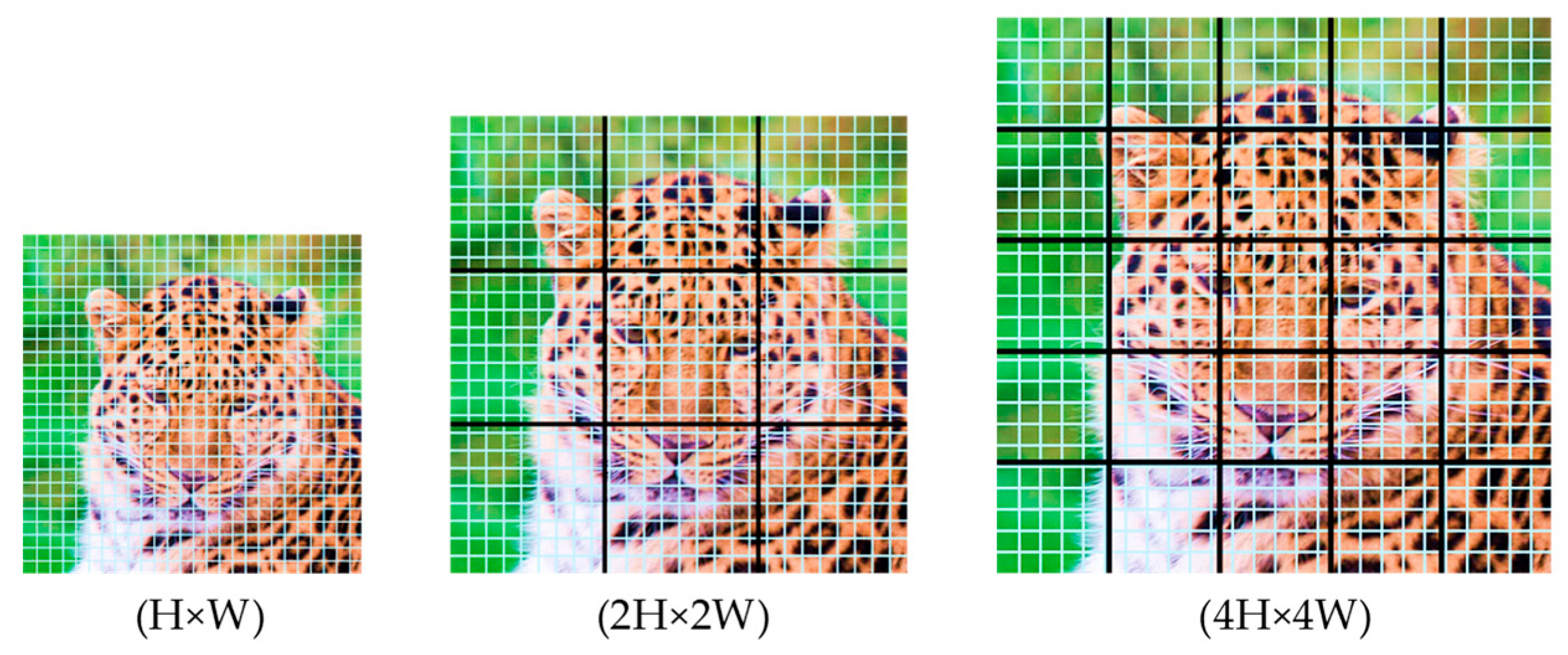

- A multi-scale ViT discriminator that replaces the CNN-based discriminator of StyleGAN2-ADA. It employs efficient grid-based self-attention to capture both local textures and global image structure.

- Two patch-level augmentations, Patch Dropout and Patch Shuffle, that operate directly on token embeddings. These augmentations are adjusted dynamically based on discriminator feedback, reinforcing local detail preservation and global coherence.

- Transformer-specific loss adaptations, including token-level R1 gradient penalties and Path Length Regularization (PLR) applied directly to the ViT class token, to improve stability and convergence.

- A training recipe tailored to data-limited regimes that incorporates relative position encoding, token-wise normalization, and ADA heuristics to stabilize adversarial learning.
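The contributions above mention augmentations that adjust based on discriminator feedback, but this section does not spell out the update rule. The following is a minimal sketch that assumes the ADA overfitting heuristic of Karras et al. [3]: the augmentation probability `p` is nudged up when the mean sign of the discriminator outputs on real images exceeds a target, and down otherwise. The function name `update_aug_probability` is hypothetical, and the defaults (`target=0.6`, `interval=4`, `ada_kimg=500`) are assumed from the public StyleGAN2-ADA configuration rather than taken from this paper.

```python
def update_aug_probability(p, real_logits, batch_size,
                           target=0.6, interval=4, ada_kimg=500):
    """Return the augmentation probability after one ADA-style adjustment.

    Assumption: the rule follows StyleGAN2-ADA, not necessarily this paper.
    """
    # Overfitting indicator r_t: mean sign of discriminator outputs on reals.
    signs = [(x > 0) - (x < 0) for x in real_logits]
    r_t = sum(signs) / len(signs)
    # Fixed step, sized so p can move from 0 to 1 within ada_kimg * 1000 images.
    step = (batch_size * interval) / (ada_kimg * 1000)
    direction = 1 if r_t > target else -1 if r_t < target else 0
    # Clamp to a valid probability.
    return min(1.0, max(0.0, p + direction * step))


# Discriminator is confident on all reals: r_t = 1.0 > 0.6, so p increases.
p_up = update_aug_probability(0.0, [2.1, 0.7, 1.3, 0.4], batch_size=64)

# r_t = 0.5 < 0.6, so p would decrease, but it is clamped at 0.
p_down = update_aug_probability(0.0, [2.1, 0.7, 1.3, -0.2], batch_size=64)
```

In a training loop, `real_logits` would be accumulated over the last `interval` minibatches, and the resulting `p` would gate how often the patch-level augmentations are applied.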

2. Related Works

2.1. Motivation

3. Research Methodology

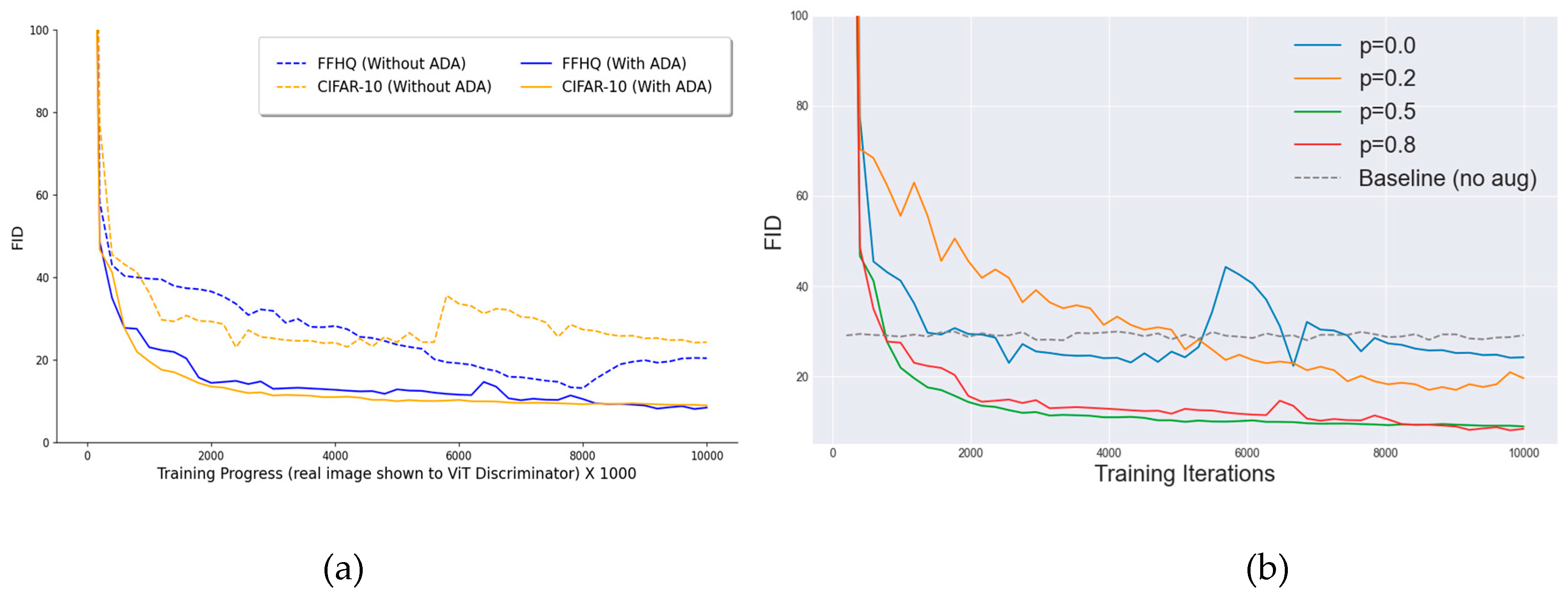

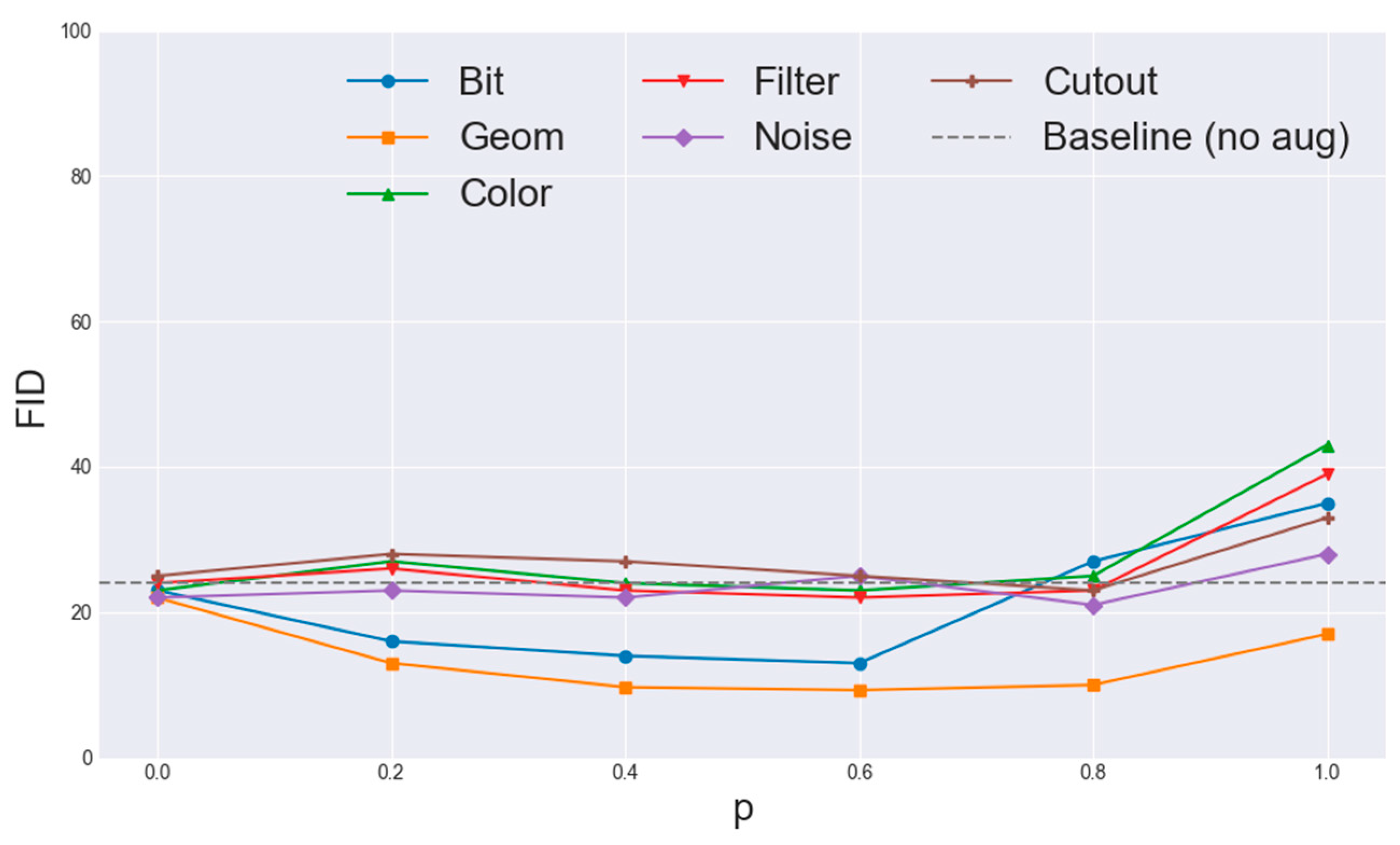

3.1. Augmentation Pipeline

- Patch Dropout randomly eliminates a fraction of image tokens at the input level. Specifically, before the patch embeddings are fed into the transformer blocks, a subset of tokens is randomly sampled and removed, while the positional embeddings of the remaining tokens are preserved. This method exploits the spatial redundancy of image data, compelling the discriminator to infer global structure from incomplete observations and thereby improving generalization.

- Patch Shuffle randomly rearranges the positions of patch embeddings within the image. Although this increases training data variability, it can introduce bias because it alters the data distribution. Consequently, Patch Shuffle is applied selectively and sparingly across layers, balancing the bias-variance tradeoff and promoting robust feature extraction by forcing the discriminator to recognize global coherence despite shuffled spatial information.
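The two augmentations described above can be sketched on plain Python lists. This is an illustration under stated assumptions, not the authors' implementation: tokens are lists of floats, the positional table `pos_embed` and the helper names `patch_dropout`, `patch_shuffle`, and `add_positions` are hypothetical, and `keep_ratio` is an assumed parameter. Dropout keeps each surviving token's original position index, so its positional embedding is preserved; shuffle permutes token contents while the position slots stay fixed.

```python
import random


def patch_dropout(tokens, keep_ratio, rng):
    """Keep a random subset of tokens; return (kept_tokens, kept_positions)."""
    n = len(tokens)
    n_keep = max(1, round(n * keep_ratio))
    # Sample without replacement, then sort so survivors stay in raster order.
    kept_positions = sorted(rng.sample(range(n), n_keep))
    return [tokens[i] for i in kept_positions], kept_positions


def patch_shuffle(tokens, rng):
    """Randomly permute token contents; return (shuffled_tokens, permutation)."""
    perm = list(range(len(tokens)))
    rng.shuffle(perm)
    return [tokens[i] for i in perm], perm


def add_positions(tokens, positions, pos_embed):
    """Add each token's positional embedding, looked up by position index."""
    return [[t + p for t, p in zip(tok, pos_embed[idx])]
            for tok, idx in zip(tokens, positions)]


rng = random.Random(0)
tokens = [[float(i)] * 4 for i in range(16)]     # 16 patch tokens, dimension 4
pos_embed = [[0.01 * i] * 4 for i in range(16)]  # hypothetical positional table

# Patch Dropout: survivors keep the embedding of their original position.
kept, kept_pos = patch_dropout(tokens, keep_ratio=0.75, rng=rng)
kept = add_positions(kept, kept_pos, pos_embed)

# Patch Shuffle: contents move, position slots 0..15 stay where they are.
shuffled, perm = patch_shuffle(tokens, rng)
shuffled = add_positions(shuffled, list(range(16)), pos_embed)
```

With `keep_ratio=0.75`, the discriminator would see 12 of the 16 tokens in this example, which also shortens the sequence passed to self-attention.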

3.2. CNN-Based Generator

3.3. ViT-Based Discriminator

3.4. Training Protocol

3.5. Loss Functions and Modifications

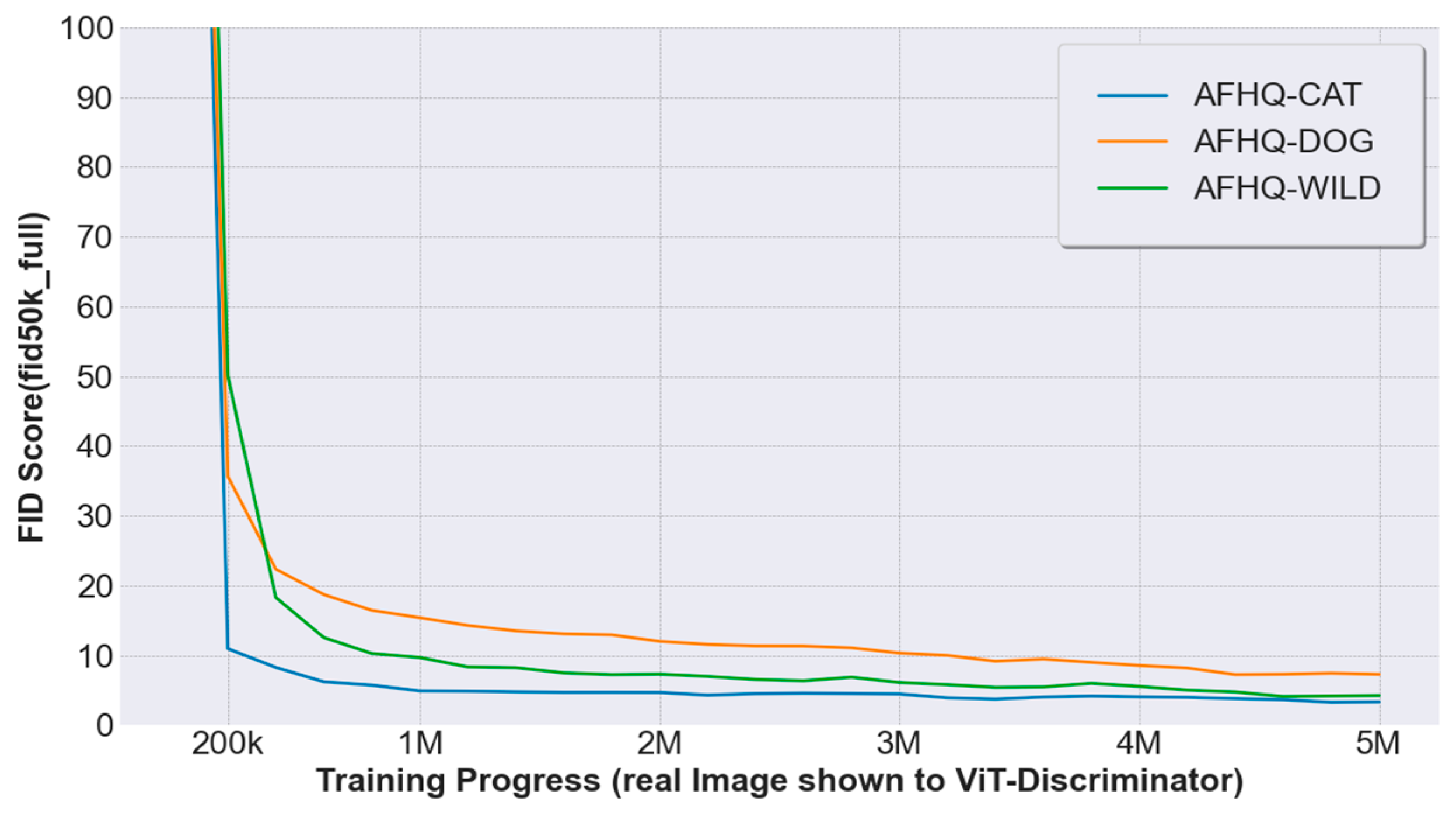

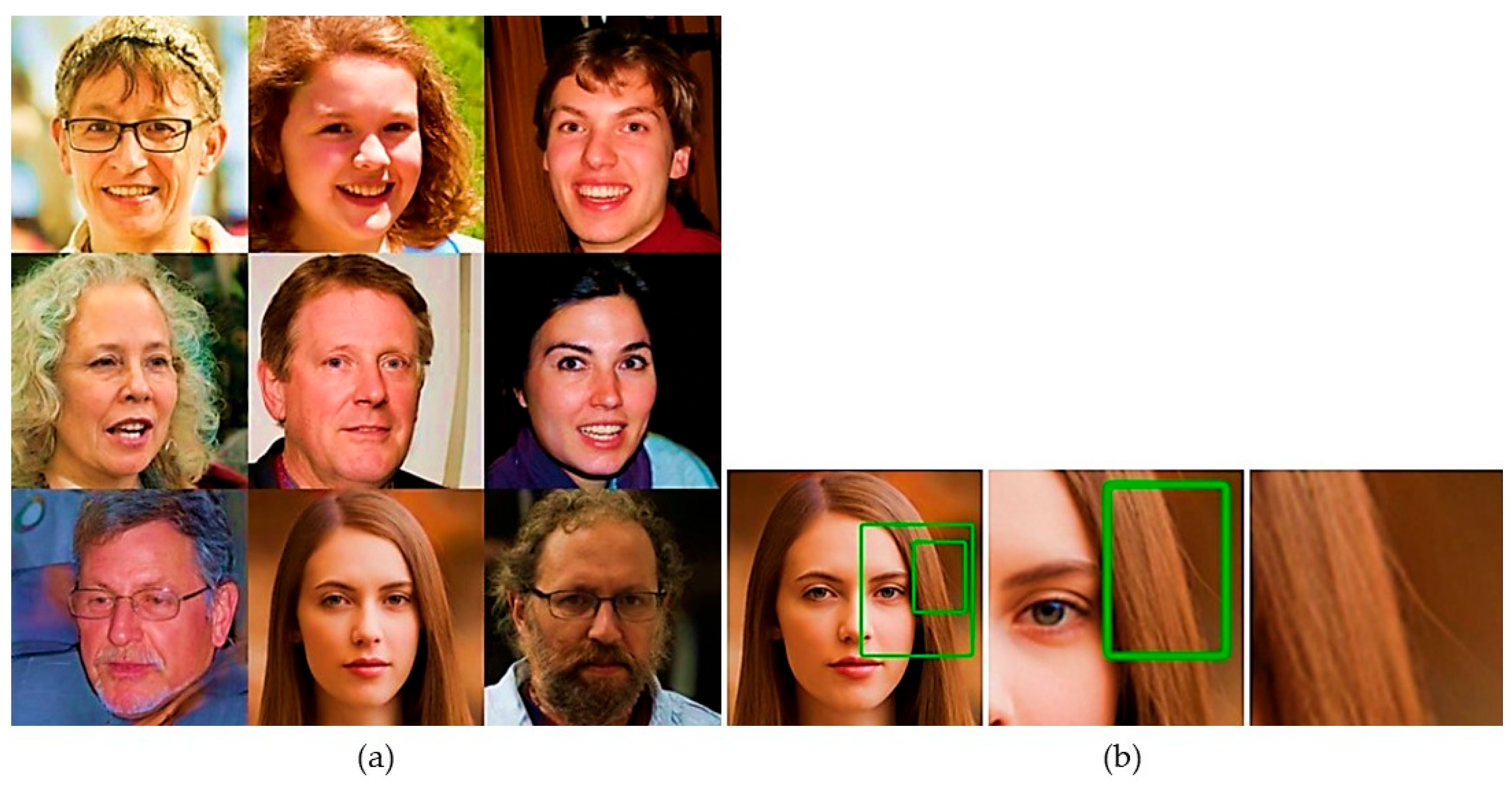

4. Experimental Results

4.1. Experimental Setup

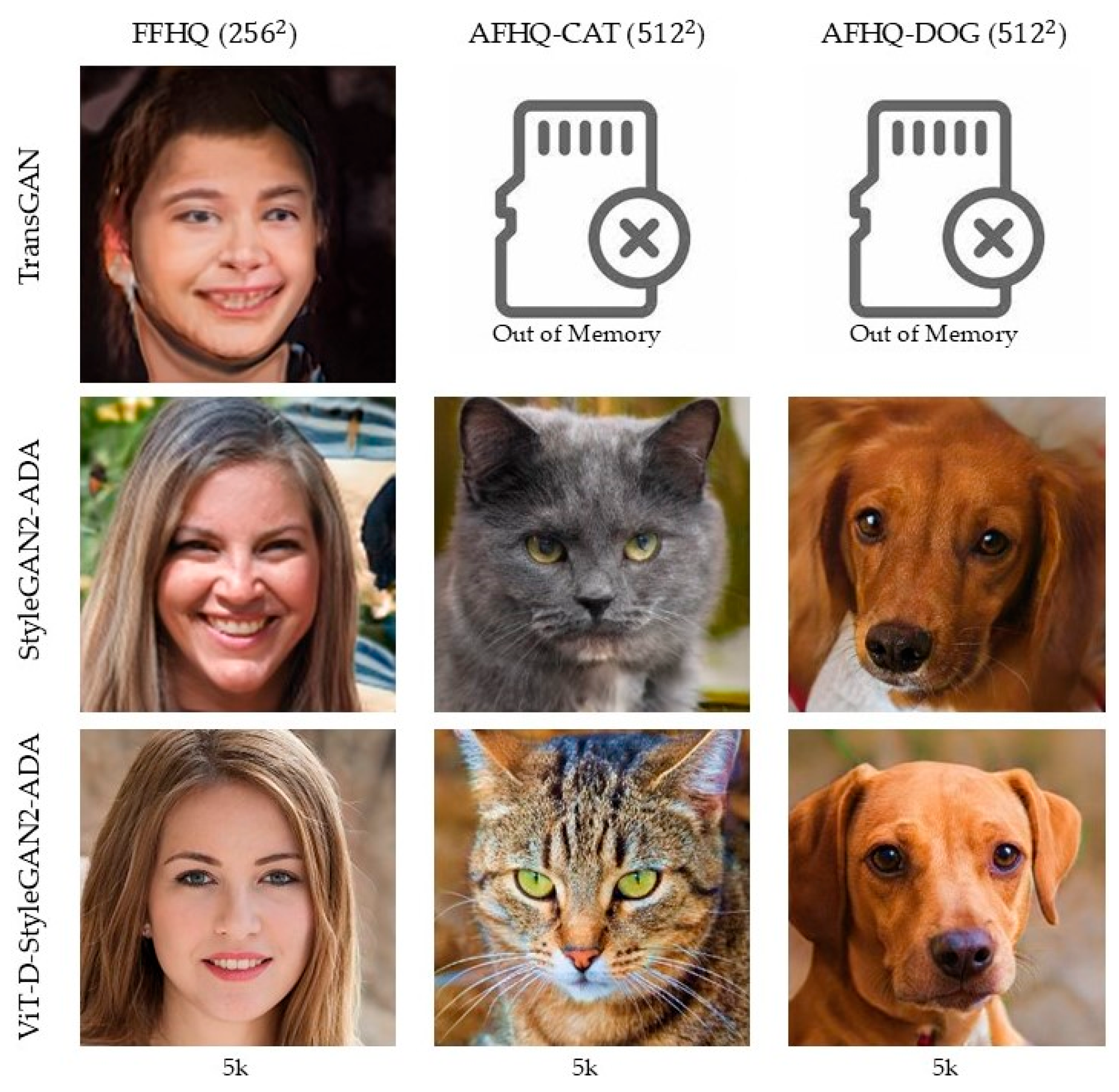

4.2. Comparison of Results on Small-Scale Datasets

4.3. Ablation Studies

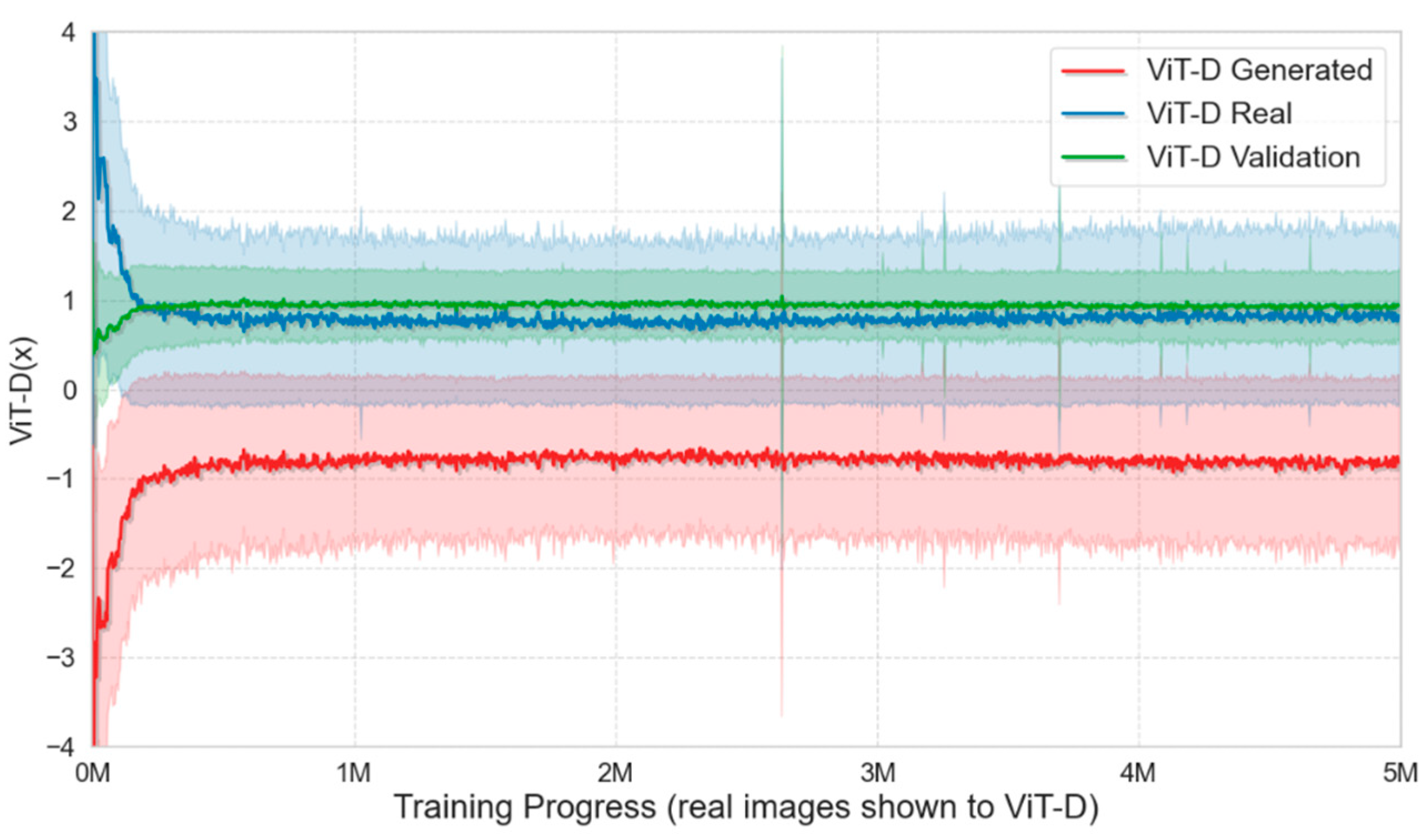

4.4. Training Observations and Augmentation Impact

4.5. Loss Function and Energy Consumption

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Jiang, Y.; Gong, X.; Liu, D.; Cheng, Y.; Fang, C.; Shen, X.; Yang, J.; Zhou, P.; Wang, Z. EnlightenGAN: Deep light enhancement without paired supervision. IEEE Trans. Image Process. 2021, 30, 2340–2349. [CrossRef] [PubMed]

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative adversarial nets. Neural Inf. Process. Syst. 2014, 27.

- Karras, T.; Aittala, M.; Hellsten, J.; Laine, S.; Lehtinen, J.; Aila, T. Training generative adversarial networks with limited data. Neural Inf. Process. Syst. 2020, 33, 12104–12114.

- Showrov, A.; Aziz, M.; Nabil, H.; Jim, J.; Kabir, M.; Mridha, M.; Asai, N.; Shin, J. Generative adversarial networks (GANs) in medical imaging: Advancements, applications, and challenges. IEEE Access 2024, 12, 35728–35753. [CrossRef]

- Arjovsky, M.; Bottou, L. Towards principled methods for training generative adversarial networks. In Proceedings of the International Conference on Learning Representations, 2017.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; Uszkoreit, J. An image is worth 16×16 words: Transformers for image recognition at scale. arXiv 2021. arXiv:2010.11929.

- Jiang, Y.; Chang, S.; Wang, Z. TransGAN: Two pure transformers can make one strong GAN, and that can scale up. Neural Inf. Process. Syst. 2021, 34, 14745–14758.

- Lee, K.; Chang, H.; Jiang, L.; Zhang, H.; Tu, Z.; Liu, C. ViTGAN: Training GANs with vision transformers. In Proceedings of the International Conference on Learning Representations, 2022.

- Wang, Y.; Wu, C.; Herranz, L.; van de Weijer, J.; Gonzalez-García, A.; Raducanu, B. Transferring GANs: Generating images from limited data. In Proceedings of the European Conference on Computer Vision, 2018; pp. 218–234.

- Zhao, S.; Liu, Z.; Lin, J.; Zhu, J.-Y.; Han, S. Differentiable augmentation for data-efficient GAN training. Neural Inf. Process. Syst. 2020, 33, 7559–7570.

- Zhao, Z.; Singh, S.; Lee, H.; Zhang, Z.; Odena, A.; Zhang, H. Improved consistency regularization for GANs. In Proceedings of the AAAI Conference on Artificial Intelligence, 2021; Volume 35, No. 12.

- Salimans, T.; Goodfellow, I.; Zaremba, W.; Cheung, V.; Radford, A.; Chen, X. Improved techniques for training GANs. Neural Inf. Process. Syst. 2016, 29.

- Isola, P.; Zhu, J.-Y.; Zhou, T.; Efros, A.A. Image-to-image translation with conditional adversarial networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 1125–1134.

- Ho, J.; Ermon, S. Generative adversarial imitation learning. Neural Inf. Process. Syst. 2016, 29.

- Karras, T.; Laine, S.; Aittala, M.; Hellsten, J.; Lehtinen, J.; Aila, T. Analyzing and improving the image quality of StyleGAN. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 8107–8116.

- Gulrajani, I.; Ahmed, F.; Arjovsky, M.; Dumoulin, V.; Courville, A. Improved training of Wasserstein GANs. Neural Inf. Process. Syst. 2017, 30.

- Karras, T.; Laine, S.; Aila, T. A style-based generator architecture for generative adversarial networks. Neural Comput. Appl. 2019, 31, 789–798.

- Brock, A.; Donahue, J.; Simonyan, K. Large scale GAN training for high-fidelity natural image synthesis. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019.

- Miyato, T.; Kataoka, T.; Koyama, M.; Yoshida, Y. Spectral normalization for generative adversarial networks. arXiv 2018. arXiv:1802.05957.

- Cubuk, E.; Zoph, B.; Shlens, J.; Le, Q.V. RandAugment: Practical automated data augmentation with a reduced search space. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, WA, USA, 14–19 June 2020.

- Zhang, H.; Zhang, Z.; Odena, A.; Lee, H. Consistency regularization for generative adversarial networks. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019.

- Aksac, A.; Demetrick, D.J.; Ozyer, T.; Alhajj, R. BreCaHAD: A dataset for breast cancer histopathological annotation and diagnosis. BMC Res. Notes 2019, 12, 400. [CrossRef] [PubMed]

- Karras, T.; Aila, T.; Laine, S.; Lehtinen, J. Progressive growing of GANs for improved quality, stability, and variation. In Proceedings of the International Conference on Learning Representations, Vancouver, Canada, 30 April–3 May 2018.

- Hirose, S.; Wada, N.; Katto, J.; Sun, H. ViT-GAN: Using vision transformer as discriminator with adaptive data augmentation. In 2021 3rd International Conference on Computer Communication and the Internet (ICCCI), 2021; pp. 185–189.

- Zhao, Y.; Li, C.; Yu, P.; Gao, J.; Chen, C. Feature quantization improves GAN training. arXiv 2020. arXiv:2004.02088.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, Canada, 10–17 October 2021; pp. 10012–10022.

- Dong, Y.; Cordonnier, J.-B.; Loukas, A. Attention is not all you need: Pure attention loses rank doubly exponentially with depth. Proc. Mach. Learn. Res. 2021, 139, 460–470.

- Liu, Y.; Matsoukas, C.; Strand, F.; Azizpour, H.; Smith, K. PatchDropout: Economizing vision transformers using patch dropout. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 3–8 January 2023.

- Kang, G.; Dong, X.; Zheng, L.; Yang, Y. PatchShuffle regularization. arXiv 2017. arXiv:1707.07103.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Neural Inf. Process. Syst. 2017, 30, 5998–6008.

- Bertasius, G.; Wang, H.; Torresani, L. Is space-time attention all you need for video understanding? In Proceedings of the 38th International Conference on Machine Learning, Virtual Event, 18–24 July 2021; Volume 2, No. 3.

- Kumar, M.; Weissenborn, D.; Kalchbrenner, N. Colorization transformer. In Proceedings of the International Conference on Learning Representations, Virtual Event, 3–7 May 2021.

- Shaw, P.; Uszkoreit, J.; Vaswani, A. Self-Attention with relative position representations. In Proceedings of NAACL, Minneapolis, MN, USA, 2–7 June 2018; pp. 464–468.

- Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P.J. Exploring the limits of transfer learning with a unified text-to-text transformer. J. Mach. Learn. Res. 2020, 21, 1–67.

- Hu, H.; Zhang, Z.; Xie, Z.; Lin, S. Local relation networks for image recognition. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, South Korea, 27 October–2 November 2019; pp. 3464–3473.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. Commun. ACM 2017, 60, 84–90. [CrossRef]

- Ba, J.L.; Kiros, J.R.; Hinton, G.E. Layer normalization. arXiv 2016. arXiv:1607.06450.

- Ulyanov, D.; Vedaldi, A.; Lempitsky, V. Instance normalization: The missing ingredient for fast stylization. arXiv 2016. arXiv:1607.08022.

- Chandar, S.; Sankar, C.; Vorontsov, E.; Kahou, S.E.; Bengio, Y. Towards non-saturating recurrent units for modelling long-term dependencies. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019; Volume 33, No. 01.

- Arjovsky, M.; Chintala, S.; Bottou, L. Wasserstein generative adversarial networks. In Proceedings of the International Conference on Machine Learning, 2017.

| Dataset | Size | StyleGAN2-ADA | + ViT-D (Ours) | + ViT-D + DiffAug (Ours) | + ViT-D + bCR (Ours) |
|---|---|---|---|---|---|
| FFHQ | 5k | 10.96 | 10.69 | 11.02 | 10.13 |
| FFHQ | 10k | 8.13 | 7.87 | 10.11 | 8.24 |
| AFHQ WILD | 5k | 3.05 | 4.12 | 4.98 | 3.30 |
| AFHQ DOG | 5k | 7.40 | 6.35 | 6.84 | 5.81 |
| AFHQ CAT | 5k | 3.55 | 3.29 | 4.78 | 3.43 |

| Method | CIFAR-10 FID↓ | CIFAR-10 IS↑ |
|---|---|---|
| ViTGAN [7] | 5.03 | 9.43 |
| TransGAN [8] | 8.96 | 8.34 |
| ProGAN [18] | 15.88 | 8.62 |
| BigGAN [23] | 14.32 | 9.31 |
| FQ-GAN [25] | 5.72 | 8.53 |
| StyleGAN2-ADA [3] | 4.76 | 9.98 |
| +ViT-D (Ours) | 3.57 | 10.68 |
| +ViT-D + DiffAug (Ours) | 4.11 | 9.93 |
| +ViT-D + bCR (Ours) | 3.01 | 10.97 |

| Method | FFHQ | AFHQ WILD | AFHQ DOG | AFHQ CAT | Average |
|---|---|---|---|---|---|
| Baseline | 8.13 | 3.41 | 7.40 | 3.55 | 5.62 |
| +ViT-D | 7.87 | 4.12 | 6.35 | 3.29 | 5.41 |
| +ViT-D + DiffAug | 10.11 | 4.98 | 6.84 | 4.78 | 6.68 |
| +ViT-D + bCR | 7.92 | 3.23 | 5.81 | 3.12 | 5.10 |

| Dataset | Number of Runs | Memory Usage (GB) | Time per Run (days) | GPU-years (Dual RTX 3090) | Electricity (MWh) |
|---|---|---|---|---|---|
| AFHQ | 24 | 8.2 ± 0.5 | 12.5 | 0.41 | |
| CIFAR-10 | 16 | 2.7 ± 0.5 | 2.5 | 0.05 | |
| FFHQ | 100 | 4.5 ± 0.5 | 4.9 | 0.67 | |
| | | | | | 8.96 |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).