Submitted: 28 June 2025

Posted: 01 July 2025


Abstract

Keywords:

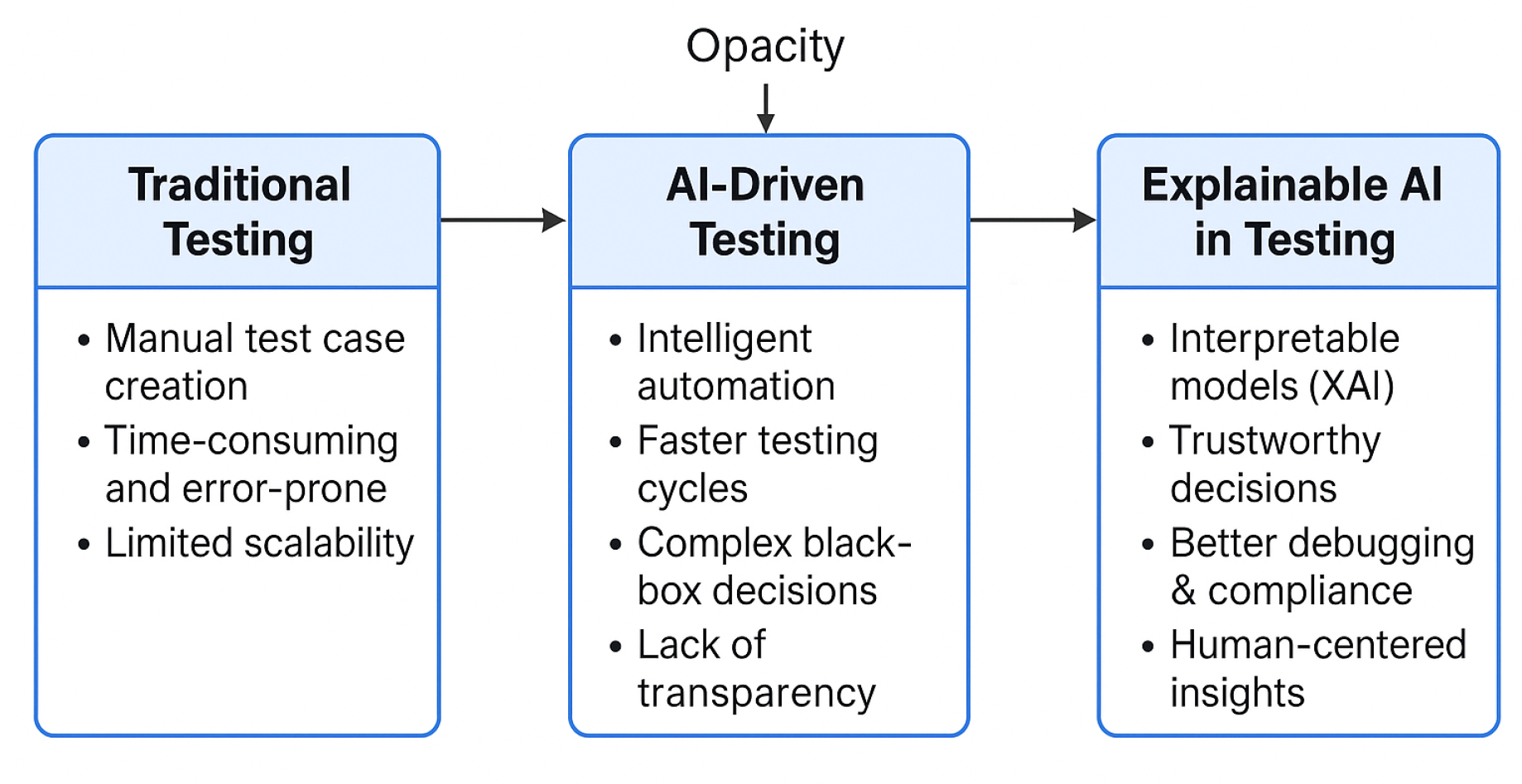

1. Introduction

2. Literature Review

| Author(s) | Focus Area | Key Contribution |
|---|---|---|
| Tufano et al. [1] | Multi-agent orchestration for CI/CD | AutoDev: a GPT-4-based system for autonomous test generation and pipeline management |
| Kambala et al. [2] | DevOps security enhancement | Anomaly detection, rollback automation, and intelligent agents for proactive security |
| Goyal et al. [3] | Resource optimization in CI/CD | Serverless and microservice-based modeling for dynamic allocation of test resources |
| Nishat et al. [4] | ML container security | Automated threat modeling and auditability integrated into CI/CD pipelines |
| Loevenich et al. [5] | Adaptive cyber defense | Deep reinforcement learning agents that adapt to live threat environments |
| Rahman and Hossain [6] | Bug prediction and prioritization | Prediction of intelligent test sequences using decision trees and feedback learning |
| Alam and Akhter [7] | Developer trust in AI pipelines | Study of how explainability affects developers' acceptance of a secure SDLC |
| Deloitte [8] | AI system security lifecycle | Secure Software Development Lifecycle (SSDL) model emphasizing explainability and auditing |

2.1. Enhanced Developer Trust and Adoption

2.2. Improved Debugging and Root Cause Analysis

2.3. Stakeholder Communication and Compliance

2.4. Smarter Test Optimization and Resource Allocation

2.5. Facilitation of Continuous Learning

2.6. Better Integration in CI/CD Pipelines

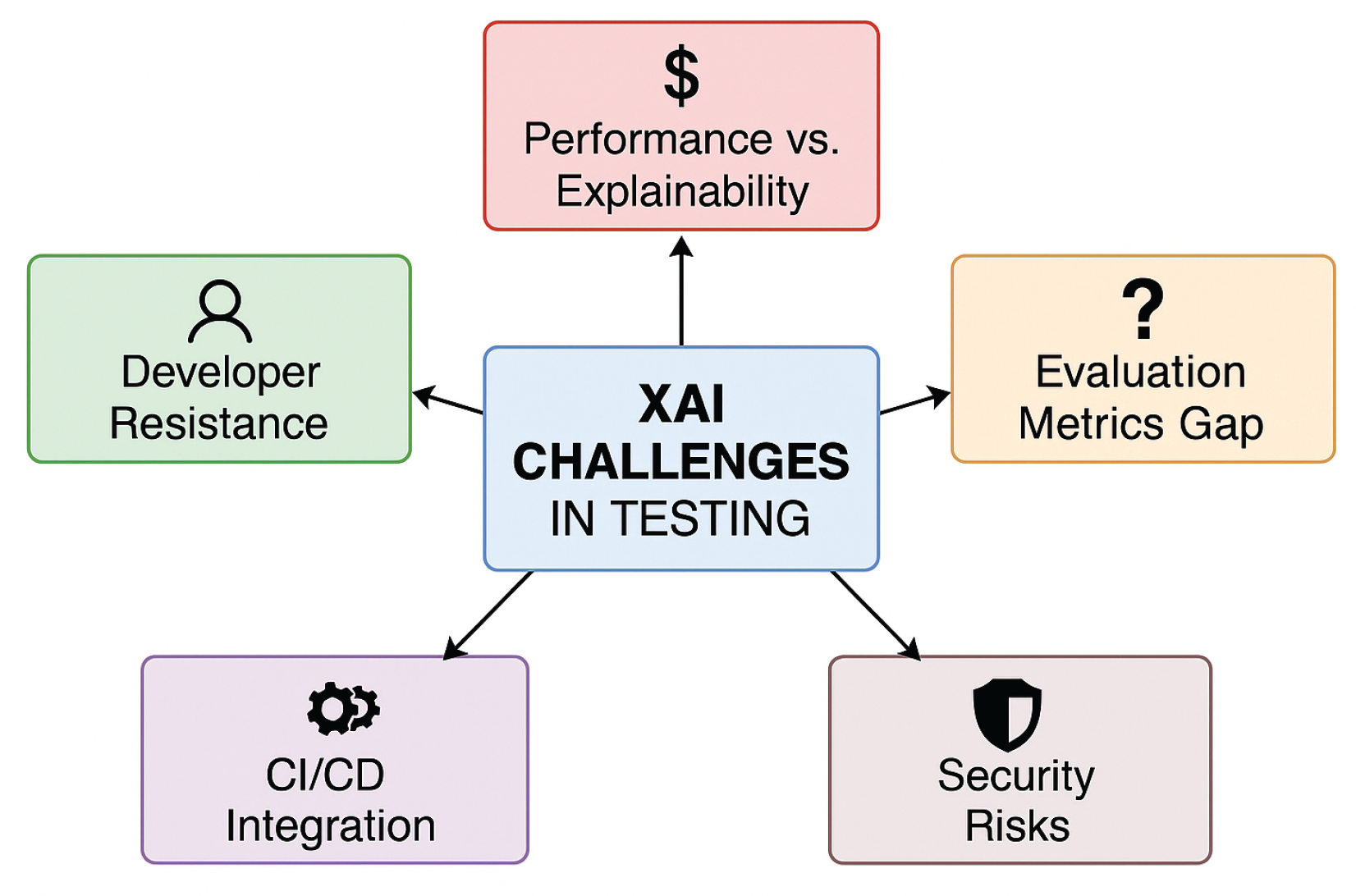

3. Challenges

3.1. Trade-off Between Performance and Explainability

3.2. Lack of Standardized Evaluation Metrics

3.3. Developer Resistance and Cognitive Overhead

3.4. Integration Complexity in CI/CD Environments

3.5. Security and Privacy Risks of Explanations

4. Discussion

| Aspect | Benefits | Challenges |
|---|---|---|
| Security Integration | End-to-end security across CI/CD stages using autonomous agents | Requires continuous policy updates and threat intelligence integration |
| Explainability (XAI) | Improves developer trust and compliance through explainable alerts | Explainable models may add inference overhead and complexity |
| Automation | Reduces manual effort via self-healing and rollback mechanisms | High initial setup cost; agents require tuning |
| Scalability | Microservice-based agent deployment supports distributed pipelines | May introduce orchestration complexity in large environments |
| Adoption Readiness | Supports integration with common DevOps tools (GitLab, Jenkins, etc.) | Legacy systems may need significant customization |
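To make the "explainable alerts" row more concrete, the following is a minimal Python sketch, not the authors' implementation, of an alert that is explainable by construction: a logistic risk score over pipeline-event features whose per-feature contributions (weight × value) are returned alongside the decision. The feature names, weights, bias, and the `score_event` and `ExplainedAlert` names are hypothetical placeholders; a real deployment would learn the weights from labelled pipeline events.

```python
from __future__ import annotations

import math
from dataclasses import dataclass

# Hypothetical weights for a linear (logistic) risk model over pipeline-event
# features; in practice these would be learned from labelled events.
WEIGHTS = {
    "secret_pattern_hits": 1.8,
    "unsigned_artifact": 1.2,
    "new_dependency_count": 0.4,
    "test_coverage_drop_pct": 0.05,
}
BIAS = -3.0


@dataclass
class ExplainedAlert:
    risk: float                        # logistic score in (0, 1)
    fired: bool                        # True if risk >= threshold
    reasons: list[tuple[str, float]]   # (feature, logit contribution), largest |value| first


def score_event(features: dict[str, float], threshold: float = 0.5) -> ExplainedAlert:
    """Score a pipeline event and return the decision together with its explanation."""
    # In a linear model each feature's contribution to the logit is exactly
    # weight * value, so the explanation needs no post-hoc approximation.
    contributions = {name: w * features.get(name, 0.0) for name, w in WEIGHTS.items()}
    logit = BIAS + sum(contributions.values())
    risk = 1.0 / (1.0 + math.exp(-logit))
    reasons = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return ExplainedAlert(risk=risk, fired=risk >= threshold, reasons=reasons)


if __name__ == "__main__":
    alert = score_event({"secret_pattern_hits": 2, "unsigned_artifact": 1, "new_dependency_count": 3})
    print(f"risk={alert.risk:.3f} fired={alert.fired}")
    for name, contribution in alert.reasons:
        print(f"  {name}: {contribution:+.2f}")
```

For the sample event, the logit is -3.0 + 3.6 + 1.2 + 1.2 = 3.0, so the risk is roughly 0.95 and `secret_pattern_hits` heads the list of reasons. A linear model gives exact rather than approximated explanations at some cost in predictive power, which is one side of the trade-off discussed in Section 3.1.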

5. Limitations

| Limitation | Proposed Mitigation |
|---|---|
| Lack of real-world deployment | Begin pilot testing in academic and industrial CI/CD environments |
| Resource overhead of agents | Use lightweight inference models; enable asynchronous execution |
| False positives by ML agents | Reduce through feedback loops and developer annotations |
| Complex legacy system integration | Provide plug-and-play Docker-based micro-agents |
| Agent trust and security | Implement a zero-trust security model and internal sandboxing |
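One simple form the "feedback loops and developer annotations" mitigation could take, sketched here under the assumption that developers label each raised alert as either a confirmed issue or a false positive, is to re-tune the alert threshold on those labels. The hypothetical `tune_threshold` helper below picks the lowest threshold whose precision on annotated alerts meets a target; because the number of confirmed issues still flagged can only fall as the threshold rises, the lowest passing threshold keeps the most of them.

```python
from __future__ import annotations


def tune_threshold(
    annotated: list[tuple[float, bool]], target_precision: float = 0.8
) -> float | None:
    """Return the lowest score threshold whose precision on developer-annotated
    alerts is at least target_precision, or None if no threshold qualifies.

    annotated: (model score, label) pairs, where label is True when a developer
    confirmed the alert and False when it was marked as a false positive.
    """
    # Only observed scores change which alerts are flagged, so they are the
    # only candidate thresholds worth checking; try them from lowest to highest.
    for threshold in sorted({score for score, _ in annotated}):
        flagged = [label for score, label in annotated if score >= threshold]
        precision = sum(flagged) / len(flagged)  # flagged is never empty here
        if precision >= target_precision:
            return threshold
    return None


if __name__ == "__main__":
    feedback = [(0.95, True), (0.90, True), (0.80, False), (0.70, True), (0.60, False), (0.40, False)]
    print(tune_threshold(feedback, target_precision=0.75))  # prints 0.7
```

With this sample feedback, a threshold of 0.7 flags four alerts, three of which were confirmed (precision 0.75); raising the target to 0.8 pushes the threshold up to 0.9.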

6. Future Work

| Focus Area | Description |
|---|---|
| Standardized Metrics | Develop human-centered frameworks to assess explanation quality, focusing on clarity, usefulness, and decision impact. |
| Explainability-by-Design | Create testing tools that embed explainability from the ground up using interpretable models and reasoning engines. |
| Security-Aware XAI | Research secure explanation techniques using differential privacy or selective disclosure to prevent information leakage. |
| Human-AI Collaboration | Conduct longitudinal studies on how developers use and trust XAI outputs over time. |
| Real-World Benchmarking | Deploy XAI tools in CI/CD pipelines, document their performance, and compare results across domains to guide real-world adoption. |
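The Security-Aware XAI direction names differential privacy and selective disclosure as candidate safeguards. A minimal Python sketch of how the two could combine follows, assuming the explanation is a per-feature attribution vector and that the caller has already bounded its L1 sensitivity with respect to the data being protected (the hard part in practice, which this sketch does not address). It applies the standard Laplace mechanism, with noise scale sensitivity/ε on every attribution, and then optionally discloses only the top-k noisy attributions. The function names and parameters are illustrative assumptions, not an existing library API.

```python
from __future__ import annotations

import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two i.i.d. exponential draws with mean `scale`
    # is distributed as Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def privatize_attributions(
    attributions: dict[str, float],
    l1_sensitivity: float,
    epsilon: float,
    top_k: int | None = None,
    seed: int | None = None,
) -> dict[str, float]:
    """Laplace mechanism over a feature-attribution vector, then optional top-k disclosure."""
    if epsilon <= 0 or l1_sensitivity <= 0:
        raise ValueError("epsilon and l1_sensitivity must be positive")
    rng = random.Random(seed)
    scale = l1_sensitivity / epsilon  # standard Laplace-mechanism calibration
    noisy = {name: value + laplace_noise(scale, rng) for name, value in attributions.items()}
    if top_k is not None:
        # Selecting features from the already-noised values is post-processing,
        # so it does not weaken the epsilon guarantee.
        keep = sorted(noisy, key=lambda n: abs(noisy[n]), reverse=True)[:top_k]
        noisy = {n: noisy[n] for n in keep}
    return noisy


if __name__ == "__main__":
    raw = {"secret_pattern_hits": 2.4, "unsigned_artifact": -0.8, "new_dependency_count": 0.3}
    print(privatize_attributions(raw, l1_sensitivity=1.0, epsilon=0.5, top_k=2, seed=7))
```

A smaller ε gives a stronger privacy guarantee but noisier explanations, so the choice of ε is itself a point on the explainability/security trade-off this row targets.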

6.1. Development of Standardized Metrics

6.2. Explainability-by-Design Testing Tools

6.3. Security-Aware XAI Techniques

6.4. Human-AI Collaboration Studies

6.5. Real-World Case Studies and Benchmarking

7. Conclusions

References

- M. Tufano et al., “AutoDev: LLM Agents for End-to-End Software Engineering,” arXiv 2024, arXiv:2403.06952.

- V. Kambala, “Intelligent Software Agents for CD Pipelines,” arXiv 2024, arXiv:2403.08299.

- A. Goyal, “Optimizing CI/CD Pipelines with ML,” Int. J. Comput. Sci. Trends Technol. 2024, 12.

- A. Nishat, “Enhancing CI/CD Pipelines and Container Security Through Machine Learning and Advanced Automation,” EasyChair Preprint No. 15622, Dec. 2024.

- J. Loevenich et al., “Design and Evaluation of an Autonomous Cyber Defence Agent using DRL and an Augmented LLM,” SSRN Preprint, Apr. 2024.

- A. Rahman and M. H. Hossain, “Optimizing Continuous Integration and Continuous Deployment Using Machine Learning,” ASTRJ 2021, 10, 99–104.

- M. M. Alam and M. Akhter, “Perception of Threats in Secure Software Development Lifecycle using AI-based Approaches,” IJCRT 2021, 9.

- Deloitte, “Secure Software Development Lifecycle (SSDL) for Precision AI,” Deloitte Report, 2024.

- A. B. Arrieta et al., “Explainable Artificial Intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI,” Information Fusion 2020, 58, 82–115.

- F. Doshi-Velez and B. Kim, “Towards a rigorous science of interpretable machine learning,” arXiv 2017, arXiv:1702.08608.

- R. Guidotti et al., “A survey of methods for explaining black box models,” ACM Computing Surveys (CSUR) 2018, 51, 1–42.

- D. Gunning, “Explainable Artificial Intelligence (XAI),” DARPA, [Online]. Available: https://www.darpa.mil/program/explainable-artificial-intelligence.

- S. Chakraborty, A. Alam, and V. N. Gudivada, “A survey of explainable AI in software engineering,” Journal of Systems and Software 2022, 191, 111361.

- P. Linardatos, V. Papastefanopoulos, and S. Kotsiantis, “Explainable AI: A review of machine learning interpretability methods,” Entropy 2021, 23, 18.

- Z. B. Bucinca, A. Malaya, A. Siddiqui, and K. Z. Gajos, “Trust and understanding in human-AI partnerships,” in Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, pp. 1–14, 2021.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).