Submitted: 18 June 2025

Posted: 19 June 2025


Abstract

Keywords:

1. Introduction

- Non-deterministic and unstable convergence. Symbolic regression employs modified genetic programming (GP) to discover polynomial expressions [15,16], but its tree-based evolutionary search struggles to converge in high-dimensional spaces. The resulting formulas are acutely sensitive to hyperparameters such as initialization conditions, population size, and mutation rate. Users therefore cannot easily tell whether an identified formula is truly suited to the task or is merely an artifact of random perturbation, which hurts the legibility of task-driven neurons.

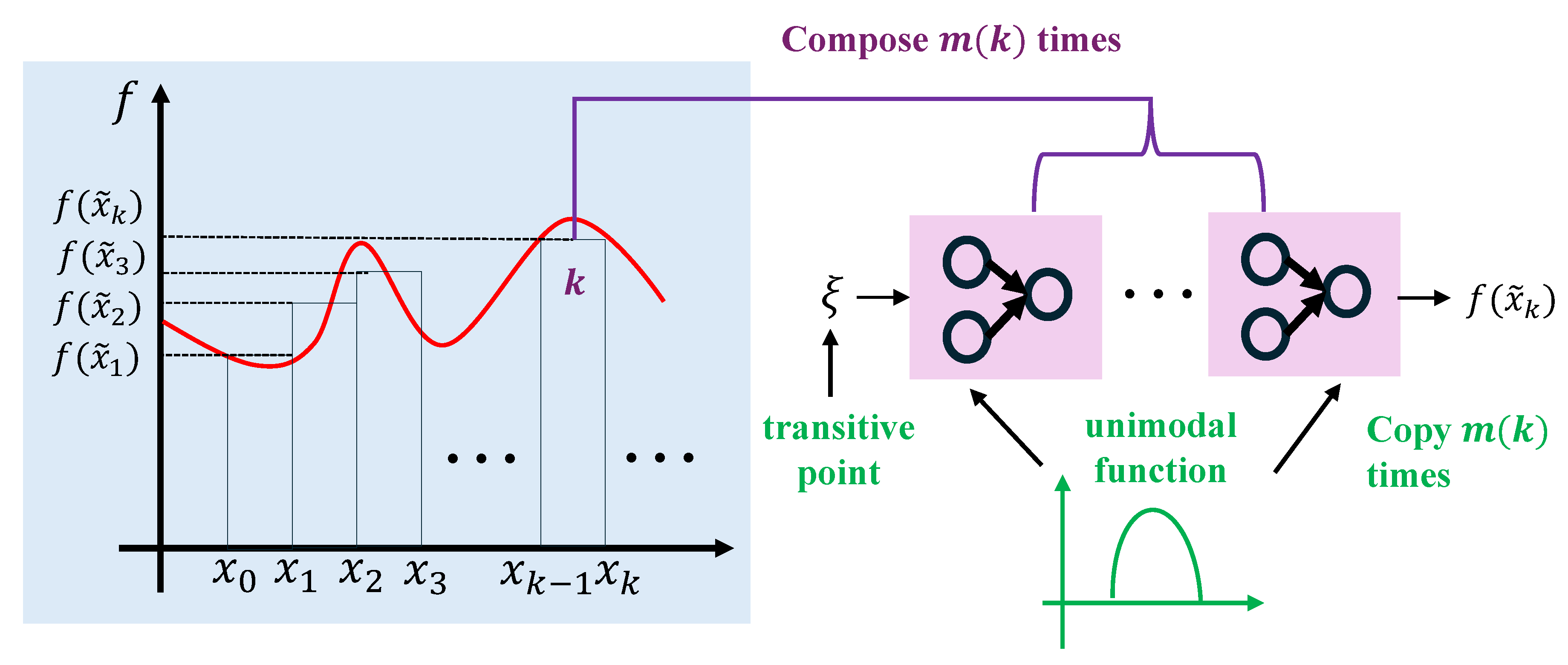

- Necessity of task-driven neurons. In deep learning theory, it has been shown [17,18,19] that certain activation functions allow a neural network with a fixed number of neurons to approximate any continuous function with arbitrarily small error. These functions are termed “super-expressive” activation functions. This property lets a network achieve a precise approximation without increasing its structural complexity. Given the theoretical advantages obtained by adjusting activation functions, a natural question arises: can the super-expressive property be achieved by revising the aggregation function while retaining common activation functions?

- We introduce tensor decomposition to prototype stable task-driven neurons by stabilizing the search for a formula suited to the given task. Our study is a major modification to NeuronSeek.

- We theoretically show that task-driven neurons with common activation functions also enjoy the “super-super-expressive” property, which puts the task-driven neuron on a solid theoretical foundation. The novelty of our construction lies in that it fixes both the number and values of parameters, while the previous construction only fixes the number of parameters.

- Systematic experiments on public datasets and real-world applications demonstrate that NeuronSeek-TD achieves both better performance and greater stability than NeuronSeek-SR. Its performance is also competitive with other state-of-the-art baselines.
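To make the first contribution more concrete, the following minimal sketch shows how a CP-style (rank-R) factorization can parameterize the quadratic term of a polynomial neuron. It is an illustration under stated assumptions, not the NeuronSeek-TD implementation: the choice of a CP factorization, the restriction to the second-order term, and all names (`quadratic_dense`, `quadratic_cp`, `dense_from_factors`) are hypothetical.

```python
import random


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def quadratic_dense(W, x):
    """Second-order term x^T W x with a dense n-by-n coefficient matrix W."""
    n = len(x)
    return sum(W[i][j] * x[i] * x[j] for i in range(n) for j in range(n))


def quadratic_cp(A, B, x):
    """Same term with W = sum_r a_r b_r^T, evaluated as sum_r (a_r.x)(b_r.x)."""
    return sum(dot(a, x) * dot(b, x) for a, b in zip(A, B))


def dense_from_factors(A, B):
    """Rebuild the dense matrix W from the rank-R factors (for checking only)."""
    n = len(A[0])
    return [[sum(a[i] * b[j] for a, b in zip(A, B)) for j in range(n)]
            for i in range(n)]


rng = random.Random(0)           # fixed seed so the sketch is reproducible
n, rank = 6, 2                   # assumed input dimension and CP rank
A = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(rank)]
B = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(rank)]
x = [rng.uniform(-1, 1) for _ in range(n)]

W = dense_from_factors(A, B)
dense_value = quadratic_dense(W, x)
cp_value = quadratic_cp(A, B, x)

dense_params = n * n             # coefficients in the dense form
cp_params = 2 * n * rank         # coefficients in the factorized form
```

In this sketch the factorized form evaluates the same quadratic term with `2 * n * rank` coefficients instead of `n * n`, and every coefficient is an ordinary continuous parameter that gradient-based optimization can fit, in contrast to a search over expression trees.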

2. Related Works

2.1. Neuronal Diversity and Task-Driven Neurons

2.2. Symbolic Regression and Deep Learning

2.3. Super-Expressive Activation Functions

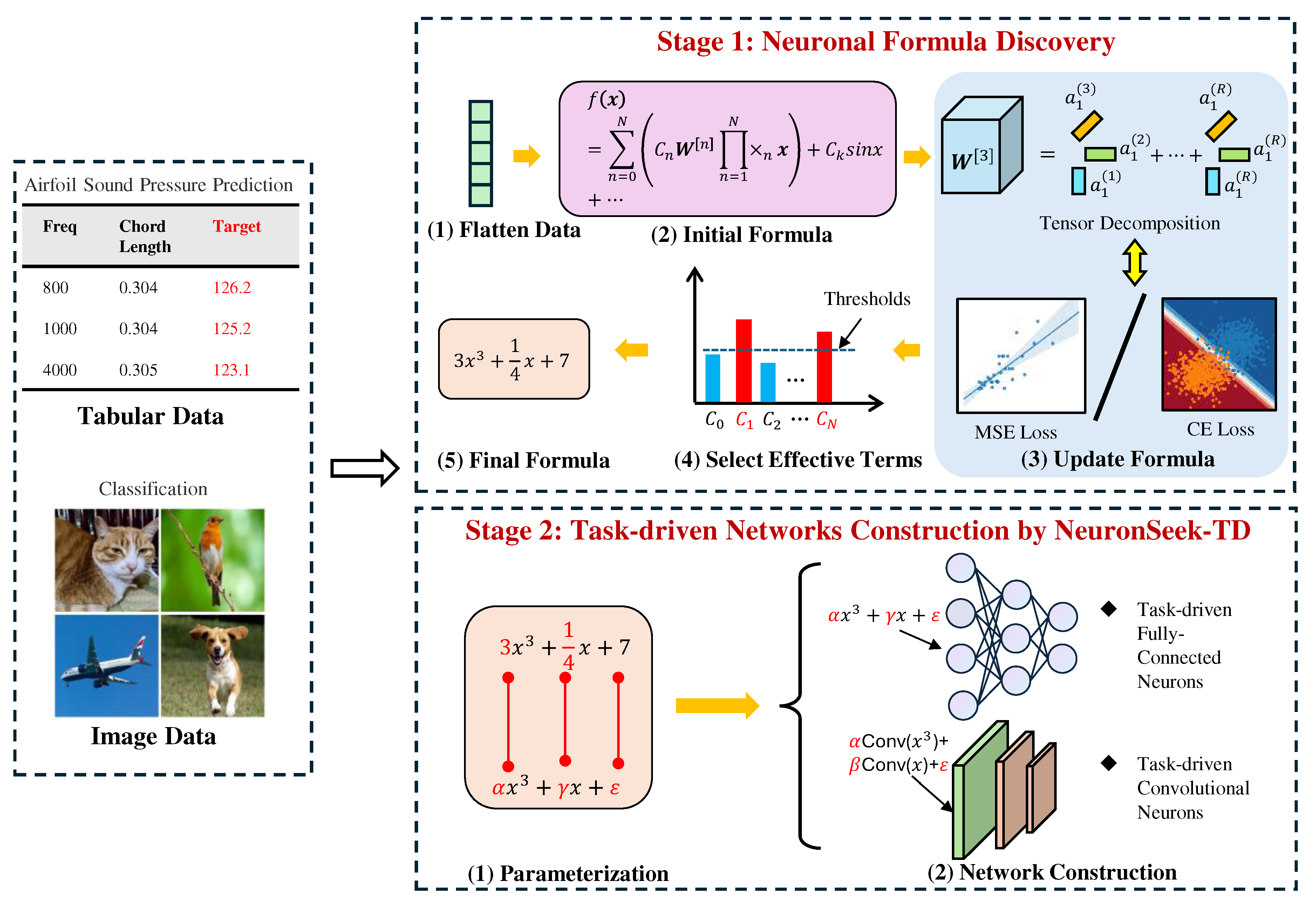

3. Task-Driven Neuron Based on Tensor Decomposition (NeuronSeek-TD)

3.1. NeuronSeek-SR

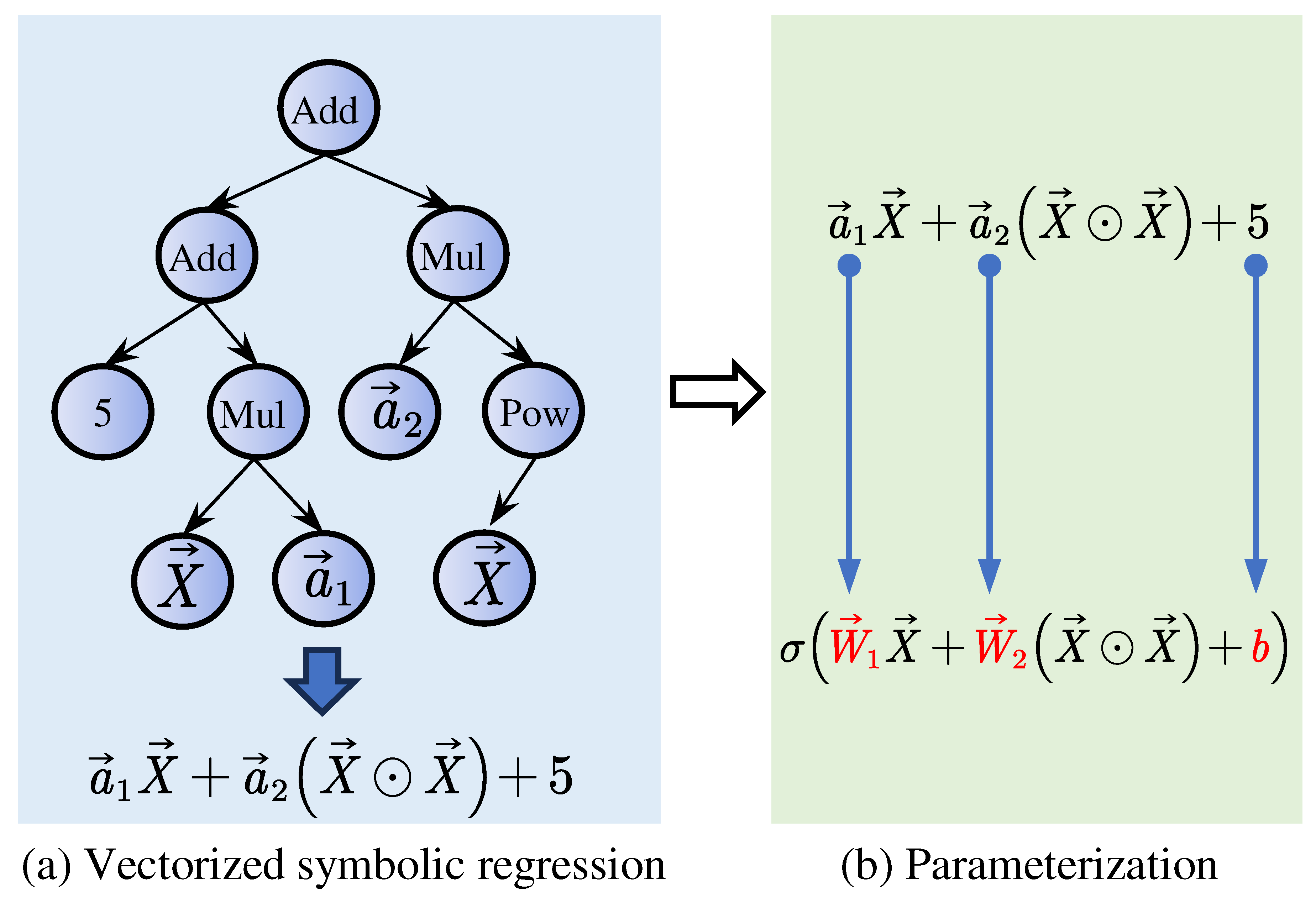

3.1.1. Vectorized SR

3.1.2. Parameterization

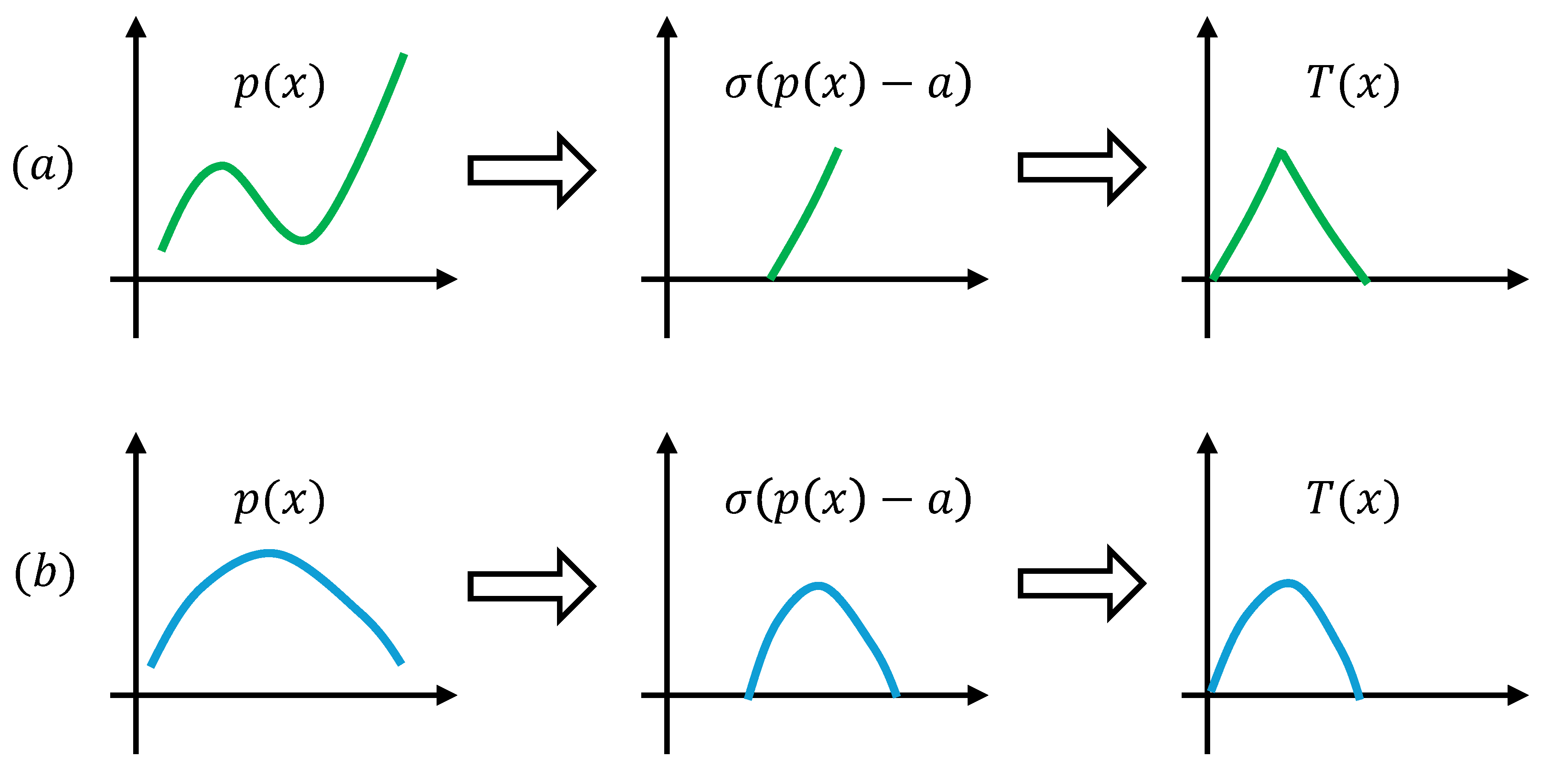

3.2. NeuronSeek-TD

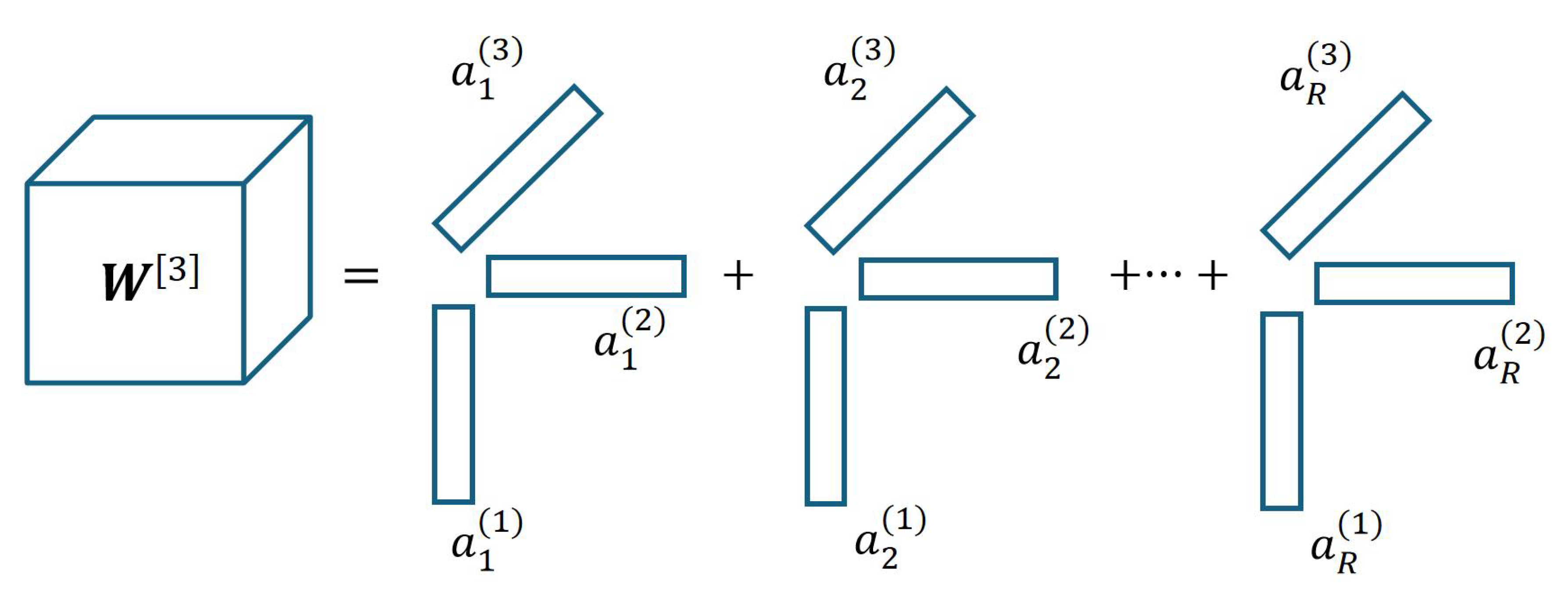

3.2.1. Neuronal Formula Discovery

3.2.2. Task-Driven Network Construction

4. Super-Expressive Property Theory of Task-Driven Neuron

5. Comparative Experiments on NeuronSeek

5.1. Experiment on Synthetic Data

5.1.1. Experimental Settings

5.1.2. Experimental Results

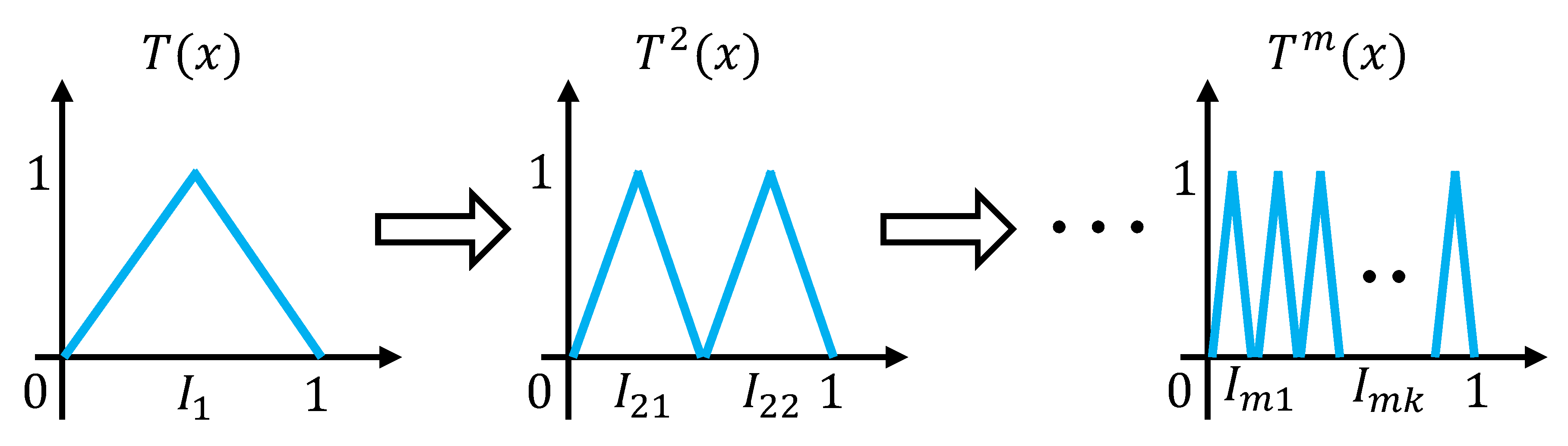

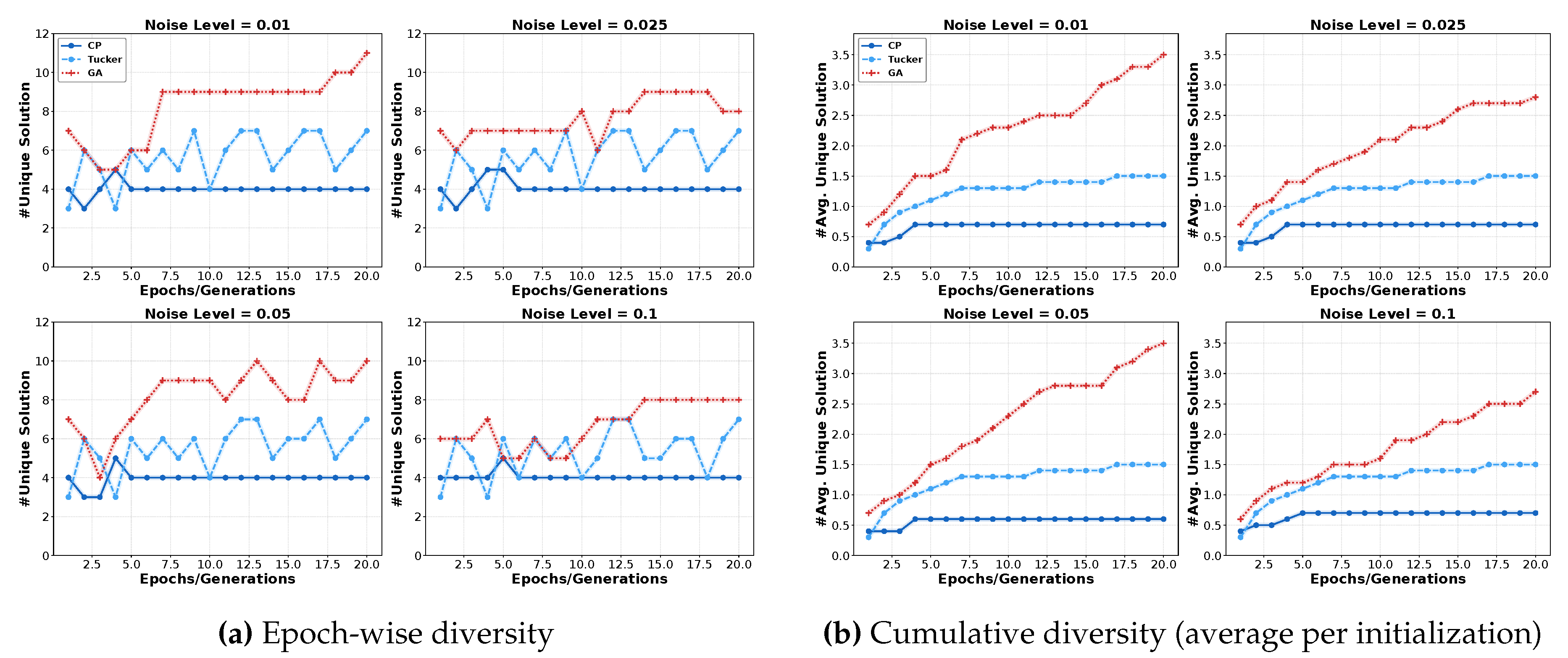

5.2. Experiments on Uniqueness and Stability

5.2.1. Experimental Settings

- Epoch-wise diversity represents the number of different formulas within each epoch (or generation) across all initializations. For instance, and are considered identical, whereas and are distinct.

- Cumulative diversity measures the average number of unique formulas identified per initialization as the optimization goes on.
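The two metrics above can be sketched as follows. This is a minimal illustration, not the authors' evaluation code: it assumes each formula has already been reduced to a canonical, hashable key (so that formulas the paper treats as identical map to the same key), and the function names and the toy `runs` data are hypothetical.

```python
from typing import Hashable, List, Sequence


def epoch_wise_diversity(runs: Sequence[Sequence[Hashable]]) -> List[int]:
    """Number of distinct formulas at each epoch, across all initializations.

    runs[i][t] is the canonical formula found by initialization i at epoch t.
    """
    n_epochs = min(len(run) for run in runs)
    return [len({run[t] for run in runs}) for t in range(n_epochs)]


def cumulative_diversity(runs: Sequence[Sequence[Hashable]]) -> List[float]:
    """Average number of unique formulas seen so far per initialization."""
    n_epochs = min(len(run) for run in runs)
    seen = [set() for _ in runs]          # formulas seen so far, per run
    averages = []
    for t in range(n_epochs):
        for formulas, run in zip(seen, runs):
            formulas.add(run[t])
        averages.append(sum(len(f) for f in seen) / len(runs))
    return averages


# Toy data: three initializations, three epochs, formulas as canonical strings.
runs = [
    ["x", "x+x^2", "x+x^2"],
    ["x^2", "x+x^2", "x+x^2"],
    ["x", "x", "x+x^2"],
]
epoch_div = epoch_wise_diversity(runs)
cumulative_div = cumulative_diversity(runs)
```

Under these definitions, a stable method drives the epoch-wise diversity toward 1 as all initializations agree on one formula, and its cumulative diversity stays low because each initialization visits few distinct formulas.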

5.2.2. Experimental Results

5.3. Superiority of NeuronSeek-TD over NeuronSeek-SR

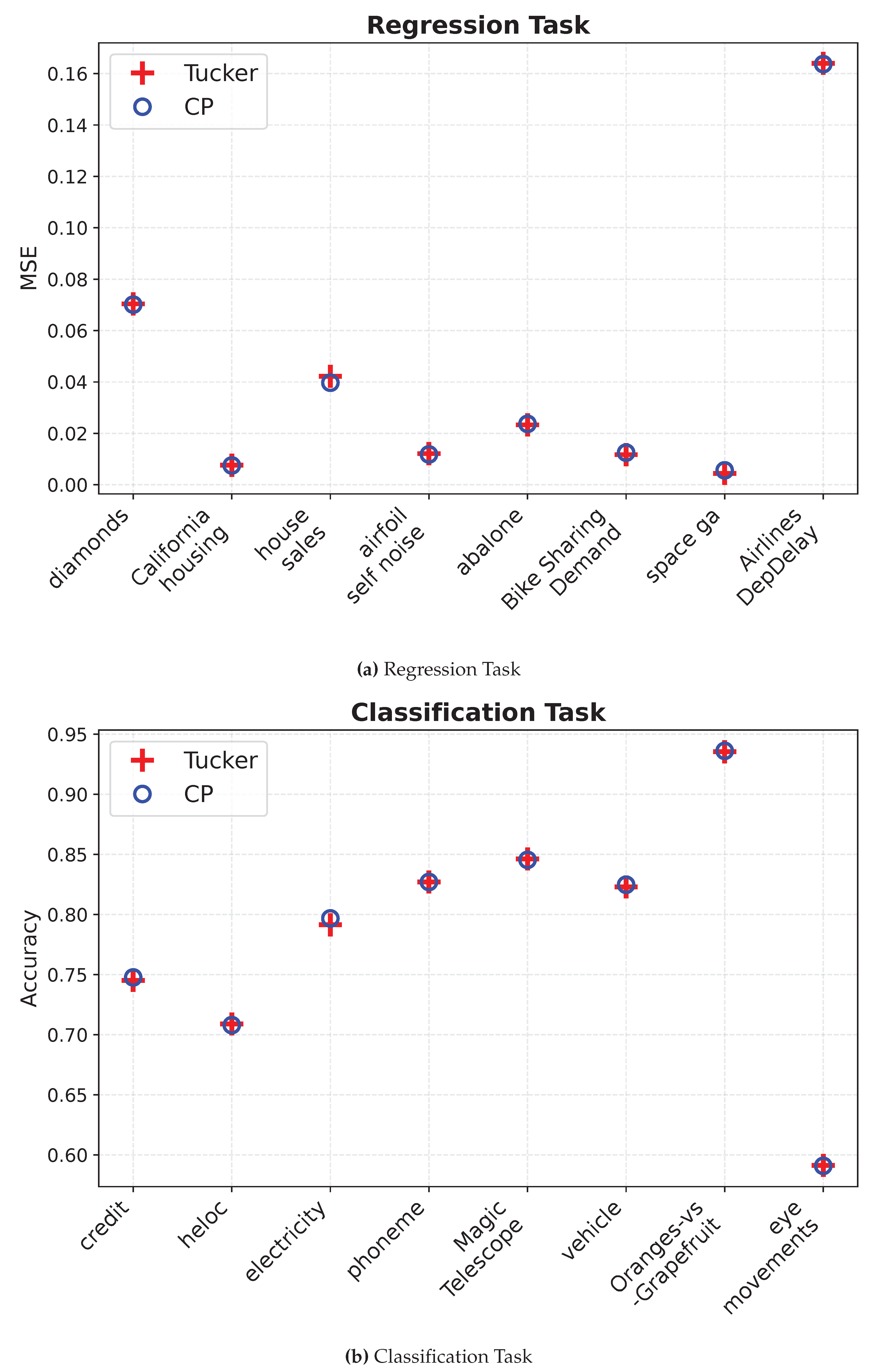

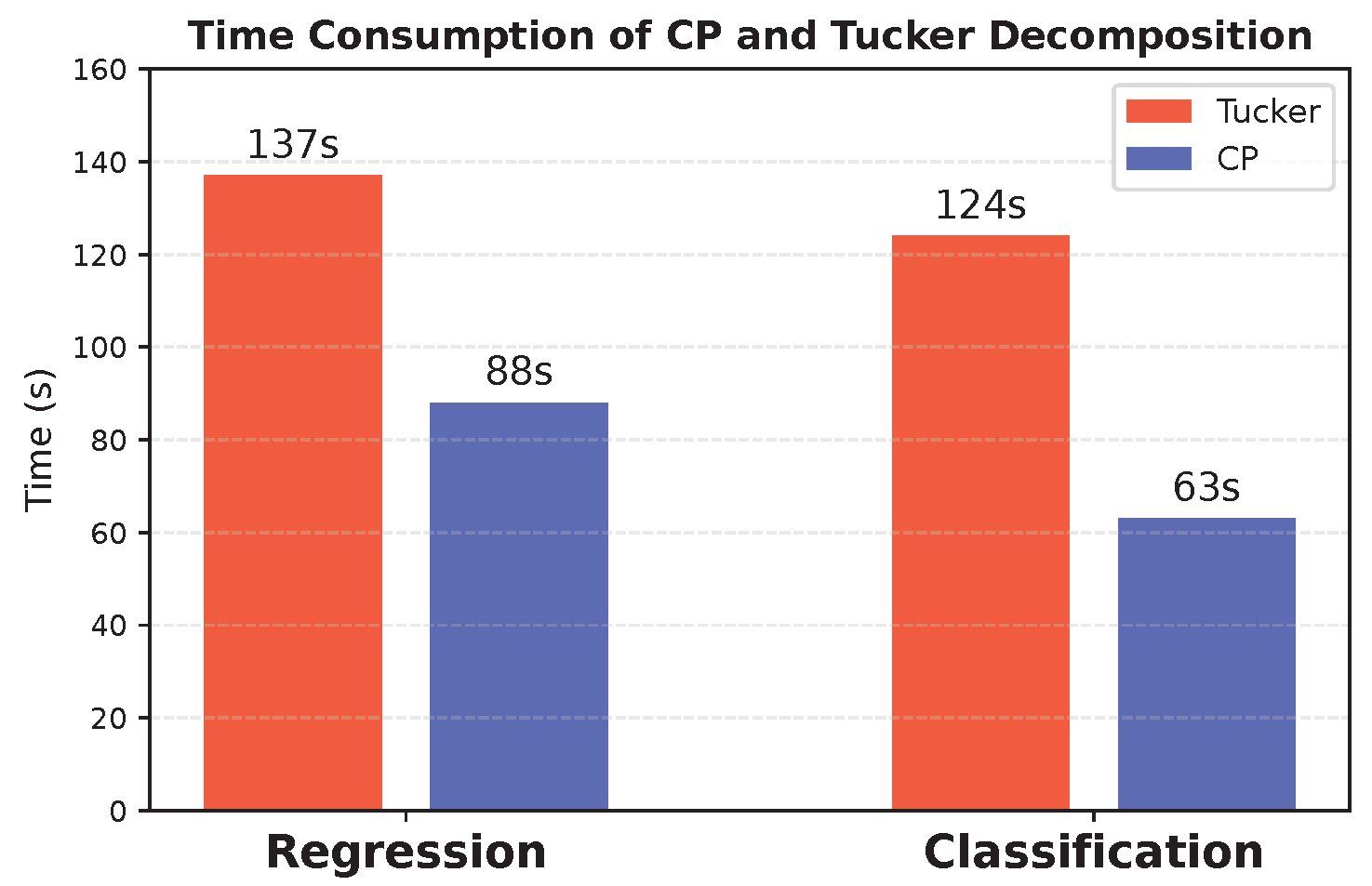

5.4. Comparison of Tensor Decomposition Methods

5.4.1. Experimental Settings

5.4.2. Experimental Results

6. Competitive Experiments of NeuronSeek-TD over Other Baselines

6.1. Experiment on Tabular Data

6.2. Experiments on Images

7. Discussions and Conclusions

References

- Yang, X.; Song, Z.; King, I.; Xu, Z. A Survey on Deep Semi-Supervised Learning. IEEE Transactions on Knowledge and Data Engineering 2023, 35, 8934–8954. [CrossRef]

- Wang, S.; Cao, J.; Yu, P.S. Deep Learning for Spatio-Temporal Data Mining: A Survey. IEEE Transactions on Knowledge and Data Engineering 2022, 34, 3681–3700. [CrossRef]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016, pp. 770–778.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2017, pp. 5998–6008.

- MacAvaney, S.; Soldaini, L.; Yates, A. Mamba: Learning neural IR models with random walks. In Proceedings of the 44th International ACM SIGIR Conference on Research and Development in Information Retrieval (SIGIR), 2021, pp. 1642–1646.

- Zador, A.; Escola, S.; Richards, B.; et al. Catalyzing next-generation Artificial Intelligence through NeuroAI. Nature Communications 2023, 14, 1597. [CrossRef]

- Fan, F.L.; Li, Y.; Zeng, T.; Wang, F.; Peng, H. Towards NeuroAI: introducing neuronal diversity into artificial neural networks. Med-X 2025, 3, 2.

- Peng, H.; Xie, P.; Liu, L.; et al. Morphological diversity of single neurons in molecularly defined cell types. Nature 2021, 598, 174–181. [CrossRef]

- Xu, Z.; Yu, F.; Xiong, J.; Chen, X. Quadralib: A Performant Quadratic Neural Network Library for Architecture Optimization and Design Exploration. In Proceedings of Machine Learning and Systems, 2022, Vol. 4, pp. 503–514.

- Chrysos, G.G.; Moschoglou, S.; Bouritsas, G.; Deng, J.; Panagakis, Y.; Zafeiriou, S. Deep Polynomial Neural Networks. IEEE Transactions on Pattern Analysis and Machine Intelligence 2020.

- Xu, W.; Song, Y.; Gupta, S.; Jia, D.; Tang, J.; Lei, Z.; Gao, S. Dmixnet: a dendritic multi-layered perceptron architecture for image recognition. Artificial Intelligence Review 2025, 58, 129.

- Fan, F.L.; Wang, M.; Dong, H.; Ma, J.; Zeng, T. No One-Size-Fits-All Neurons: Task-based Neurons for Artificial Neural Networks. arXiv preprint 2024.

- Schmidt, M.; Lipson, H. Distilling free-form natural laws from experimental data. Science 2009, 324, 81–85.

- Bartlett, D.J.; Desmond, H.; Ferreira, P.G. Exhaustive Symbolic Regression. IEEE Transactions on Evolutionary Computation 2023.

- Espejo, P.G.; Ventura, S.; Herrera, F. A survey on the application of genetic programming to classification. IEEE Transactions on Systems, Man, and Cybernetics, Part C (Applications and Reviews) 2009, 40, 121–144.

- de Carvalho, M.G.; Laender, A.H.F.; Goncalves, M.A.; da Silva, A.S. A Genetic Programming Approach to Record Deduplication. IEEE Transactions on Knowledge and Data Engineering 2012, 24, 399–412. [CrossRef]

- Yarotsky, D. Elementary superexpressive activations. In Proceedings of the International Conference on Machine Learning. PMLR, 2021, pp. 11932–11940.

- Shen, Z.; Yang, H.; Zhang, S. Deep Network Approximation: Achieving Arbitrary Accuracy with Fixed Number of Neurons. Journal of Machine Learning Research 2022, 23, 1–60.

- Shen, Z.; Yang, H.; Zhang, S. Neural network approximation: Three hidden layers are enough. Neural Networks 2021, 141, 160–173. [CrossRef]

- Makke, N.; Chawla, S. Interpretable Scientific Discovery with Symbolic Regression: A Review. arXiv preprint arXiv:2211.10873 2023.

- Kolda, T.G.; Bader, B.W. Tensor decompositions and applications. SIAM Review 2009, 51, 455–500.

- Fukushima, K. Recent advances in the deep CNN neocognitron. Nonlinear Theory and Its Applications, IEICE 2019, 10, 304–321. [CrossRef]

- Zhang, Z.; Ding, X.; Liang, X.; Zhou, Y.; Qin, B.; Liu, T. Brain and Cognitive Science Inspired Deep Learning: A Comprehensive Survey. IEEE Transactions on Knowledge and Data Engineering 2025, 37, 1650–1671. [CrossRef]

- Plant, C.; Zherdin, A.; Sorg, C.; Meyer-Baese, A.; Wohlschläger, A.M. Mining Interaction Patterns among Brain Regions by Clustering. IEEE Transactions on Knowledge and Data Engineering 2014, 26, 2237–2249. [CrossRef]

- Zoumpourlis, G.; Doumanoglou, A.; Vretos, N.; Daras, P. Non-linear convolution filters for CNN-based learning. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), 2017, pp. 4761–4769.

- Jiang, Y.; Yang, F.; Zhu, H.; Zhou, D.; Zeng, X. Nonlinear CNN: Improving CNNs with Quadratic Convolutions. Neural Computing and Applications 2020, 32, 8507–8516.

- Mantini, P.; Shah, S.K. CQNN: Convolutional Quadratic Neural Networks. In Proceedings of the 2020 25th International Conference on Pattern Recognition (ICPR), 2021, pp. 9819–9826. [CrossRef]

- Goyal, M.; Goyal, R.; Lall, B. Improved Polynomial Neural Networks with Normalised Activations. In Proceedings of the 2020 International Joint Conference on Neural Networks (IJCNN). IEEE, 2020, pp. 1–8.

- Bu, J.; Karpatne, A. Quadratic Residual Networks: A New Class of Neural Networks for Solving Forward and Inverse Problems in Physics Involving PDEs. In Proceedings of the 2021 SIAM International Conference on Data Mining (SDM). SIAM, 2021, pp. 675–683.

- Fan, F.; Cong, W.; Wang, G. A New Type of Neurons for Machine Learning. International Journal for Numerical Methods in Biomedical Engineering 2018, 34, e2920.

- Chrysos, G.; Moschoglou, S.; Bouritsas, G.; Deng, J.; Panagakis, Y.; Zafeiriou, S.P. Deep Polynomial Neural Networks. IEEE Transactions on Pattern Analysis and Machine Intelligence 2021.

- Chrysos, G.G.; Georgopoulos, M.; Deng, J.; Kossaifi, J.; Panagakis, Y.; Anandkumar, A. Augmenting Deep Classifiers with Polynomial Neural Networks. In Proceedings of the European Conference on Computer Vision. Springer, 2022, pp. 692–716.

- Chrysos, G.G.; Wang, B.; Deng, J.; Cevher, V. Regularization of polynomial networks for image recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 16123–16132.

- Kiyani, E.; Shukla, K.; Karniadakis, G.E.; Karttunen, M. A framework based on symbolic regression coupled with extended physics-informed neural networks for gray-box learning of equations of motion from data. Computer Methods in Applied Mechanics and Engineering 2023, 415, 116258.

- Wang, R.; Sun, D.; Wong, R.K. Symbolic Minimization on Relational Data. IEEE Transactions on Knowledge and Data Engineering 2023, 35, 9307–9318. [CrossRef]

- Sahoo, S.; Lampert, C.; Martius, G. Learning Equations for Extrapolation and Control. In Proceedings of the 35th International Conference on Machine Learning. PMLR, 2018, Vol. 80, pp. 4442–4450.

- Tohme, T.; Khojasteh, M.J.; Sadr, M.; Meyer, F.; Youcef-Toumi, K. ISR: Invertible Symbolic Regression. arXiv preprint arXiv:2405.06848 2024. [CrossRef]

- Liu, Z.; Wang, Y.; Vaidya, S.; Ruehle, F.; Halverson, J.; Soljačić, M.; Hou, T.Y.; Tegmark, M. KAN: Kolmogorov-Arnold Networks. arXiv preprint arXiv:2404.19756 2024.

- Li, C.; Liu, X.; Li, W.; Wang, C.; Liu, H.; Liu, Y.; Chen, Z.; Yuan, Y. U-KAN makes strong backbone for medical image segmentation and generation. In Proceedings of the AAAI Conference on Artificial Intelligence, 2025, Vol. 39, pp. 4652–4660.

- Toscano, J.D.; Oommen, V.; Varghese, A.J.; Zou, Z.; Ahmadi Daryakenari, N.; Wu, C.; Karniadakis, G.E. From PINNs to PIKANs: Recent advances in physics-informed machine learning. Machine Learning for Computational Science and Engineering 2025, 1, 1–43.

- Kamienny, P.A.; d’Ascoli, S.; Lample, G.; Charton, F. End-to-end Symbolic Regression with Transformers. In Proceedings of the Advances in Neural Information Processing Systems; Koyejo, S.; Mohamed, S.; Agarwal, A.; Belgrave, D.; Cho, K.; Oh, A., Eds. Curran Associates, Inc., 2022, Vol. 35, pp. 10269–10281.

- Kim, S.; et al. Integration of neural network-based symbolic regression in deep learning for scientific discovery. IEEE Transactions on Neural Networks and Learning Systems 2020, 32, 4166–4177.

- Valipour, M.; You, B.; Panju, M.H.; Ghodsi, A. SymbolicGPT: A Generative Transformer Model for Symbolic Regression. In Proceedings of the Second Workshop on Efficient Natural Language and Speech Processing (ENLSP-II), NeurIPS, 2022.

- Newell, A.; Simon, H. The logic theory machine–A complex information processing system. IRE Transactions on information theory 1956, 2, 61–79.

- Taha, I.A.; Ghosh, J. Symbolic Interpretation of Artificial Neural Networks. IEEE Transactions on Knowledge and Data Engineering 1999, 11, 448–463. [CrossRef]

- Smolensky, P. Connectionist AI, symbolic AI, and the brain. Artificial Intelligence Review 1987, 1, 95–109.

- Foggia, P.; Genna, R.; Vento, M. Symbolic vs. Connectionist Learning: An Experimental Comparison in a Structured Domain. IEEE Transactions on Knowledge and Data Engineering 2001, 13, 176–195. [CrossRef]

- Fu, L. Knowledge Discovery by Inductive Neural Networks. IEEE Transactions on Knowledge and Data Engineering 1999, 11, 992–998. [CrossRef]

- Maiorov, V.; Pinkus, A. Lower bounds for approximation by MLP neural networks. Neurocomputing 1999, 25, 81–91. [CrossRef]

- Zhang, S.; Lu, J.; Zhao, H. On Enhancing Expressive Power via Compositions of Single Fixed-Size ReLU Network. In Proceedings of the 40th International Conference on Machine Learning; Krause, A.; Brunskill, E.; Cho, K.; Engelhardt, B.; Sabato, S.; Scarlett, J., Eds. PMLR, 2023, Vol. 202, pp. 41452–41487.

- Guo, W.; Kotsia, I.; Patras, I. Tensor Learning for Regression. IEEE Transactions on Image Processing 2012.

- Yuan, L.; Li, C.; Cao, J.; Zhao, Q. Randomized Tensor Ring Decomposition and Its Application to Large-scale Data Reconstruction. In Proceedings of the ICASSP 2019 - 2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2019, pp. 2127–2131. [CrossRef]

- Grasedyck, L. Hierarchical Singular Value Decomposition of Tensors. SIAM Journal on Matrix Analysis and Applications 2010, 31, 2029–2054. [CrossRef]

- Tancik, M.; Srinivasan, P.; Mildenhall, B.; Fridovich-Keil, S.; Raghavan, N.; Singhal, U.; Ramamoorthi, R.; Barron, J.; Ng, R. Fourier Features Let Networks Learn High Frequency Functions in Low Dimensional Domains. In Proceedings of the Advances in Neural Information Processing Systems, 2020, Vol. 33.

- Fan, F.L.; Li, M.; Wang, F.; Lai, R.; Wang, G. On expressivity and trainability of quadratic networks. IEEE Transactions on Neural Networks and Learning Systems 2023.

- Katok, A.; Hasselblatt, B. Introduction to the Modern Theory of Dynamical Systems; Cambridge University Press, 1995.

- Huang, W. On complete chaotic maps with tent-map-like structures. Chaos, Solitons & Fractals 2005, 24, 287–299. [CrossRef]

- Değirmenci, N.; Koçak, Ş. Existence of a dense orbit and topological transitivity: when are they equivalent? Acta Mathematica Hungarica 2003, 99, 185–187.

- Chen, T.; Guestrin, C. XGBoost: A Scalable Tree Boosting System. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD), 2016, pp. 785–794.

- Ke, G.; Meng, Q.; Finley, T.; Wang, T.; Chen, W.; Ma, W.; Ye, Q.; Liu, T.Y. LightGBM: A Highly Efficient Gradient Boosting Decision Tree. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2017, Vol. 30, pp. 1–9.

- Hancock, J.T.; Khoshgoftaar, T.M. CatBoost for Big Data: An Interdisciplinary Review. Journal of Big Data 2020, 7, 1–45.

- Arik, S.Ö.; Pfister, T. TabNet: Attentive Interpretable Tabular Learning. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), 2021, Vol. 35, pp. 6679–6687.

- Huang, X.; Khetan, A.; Cvitkovic, M.; Karnin, Z. TabTransformer: Tabular Data Modeling Using Contextual Embeddings. arXiv preprint arXiv:2012.06678 2020.

- Gorishniy, Y.; Rubachev, I.; Khrulkov, V.; Babenko, A. Revisiting Deep Learning Models for Tabular Data. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2021, Vol. 34, pp. 18932–18943.

- Chen, J.; Liao, K.; Wan, Y.; Chen, D.Z.; Wu, J. DANets: Deep Abstract Networks for Tabular Data Classification and Regression. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), 2022, Vol. 36, pp. 3930–3938.

- Huang, G.; Liu, Z.; Van Der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 4700–4708.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 7132–7141.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2015, pp. 1–9.

- Krizhevsky, A.; Hinton, G.; et al. Learning multiple layers of features from tiny images 2009.

- Netzer, Y.; Wang, T.; Coates, A.; Bissacco, A.; Wu, B.; Ng, A.Y.; et al. Reading digits in natural images with unsupervised feature learning. In Proceedings of the NIPS workshop on deep learning and unsupervised feature learning. Granada, 2011, Vol. 2011, p. 4.

- Coates, A.; Ng, A.; Lee, H. An analysis of single-layer networks in unsupervised feature learning. In Proceedings of the Fourteenth International Conference on Artificial Intelligence and Statistics. JMLR Workshop and Conference Proceedings, 2011, pp. 215–223.

- White, C.; Safari, M.; Sukthanker, R.; Ru, B.; Elsken, T.; Zela, A.; Dey, D.; Hutter, F. Neural architecture search: Insights from 1000 papers. arXiv preprint arXiv:2301.08727 2023.


| Works | Formulations |
|---|---|
| Zoumpourlis et al. (2017) [25] | |
| Fan et al. (2018) [30] | |
| Jiang et al. (2019) [26] | |
| Mantini & Shah (2021) [27] | |
| Goyal et al. (2020) [28] | |
| Bu & Karpatne (2021) [29] | |
| Xu et al. (2022) [9] | |
| Fan et al. (2024) [12] | Task-driven polynomial |

| Ground Truth Formula | SR | TD | SR | TD | SR | TD | Time (s), SR | Time (s), TD |
|---|---|---|---|---|---|---|---|---|
| | 0.0013 | 0.0008 | 0.0108 | 0.0120 | 0.0232 | 0.0138 | 13.94 | 4.46 |
| | 0.0007 | 0.0006 | 0.0163 | 0.0095 | 0.0191 | 0.0166 | 12.75 | 6.69 |
| | 0.0235 | 0.0028 | 0.2489 | 0.0102 | 0.1905 | 0.1706 | 28.77 | 7.73 |
| | 0.0016 | 0.0012 | 0.0139 | 0.0101 | 0.0147 | 0.0116 | 22.01 | 8.82 |
| | 0.0095 | 0.0067 | 0.0116 | 0.0086 | 0.0495 | 0.0064 | 15.16 | 5.80 |
| | 0.0011 | 0.0002 | 0.0230 | 0.0165 | 0.0561 | 0.0193 | 24.77 | 9.44 |
| | 0.0055 | 0.0014 | 0.0125 | 0.0104 | 0.0416 | 0.0109 | 15.80 | 8.21 |
| | 0.0102 | 0.0062 | 0.0134 | 0.0111 | 0.0170 | 0.0126 | 13.30 | 8.24 |

| Datasets | Instances | Features | Classes | Metrics | Structure (TN) | Structure (NeuronSeek-TD) | Test results (NeuronSeek-SR) | Test results (NeuronSeek-TD) |
|---|---|---|---|---|---|---|---|---|
| California housing | 20640 | 8 | continuous | MSE | 6-4-1 | 6-3-1 | 0.0720 ± 0.0024 | 0.0701 ± 0.0014 |
| house sales | 21613 | 15 | continuous | MSE | 6-4-1 | 6-4-1 | 0.0079 ± 0.0008 | 0.0075 ± 0.0004 |
| airfoil self-noise | 1503 | 5 | continuous | MSE | 4-1 | 3-1 | 0.0438 ± 0.0065 | 0.0397 ± 0.0072 |
| diamonds | 53940 | 9 | continuous | MSE | 4-1 | 4-1 | 0.0111 ± 0.0039 | 0.0102 ± 0.0015 |
| abalone | 4177 | 8 | continuous | MSE | 5-1 | 4-1 | 0.0239 ± 0.0024 | 0.0237 ± 0.0027 |
| Bike Sharing Demand | 17379 | 12 | continuous | MSE | 5-1 | 5-1 | 0.0176 ± 0.0026 | 0.0125 ± 0.0146 |
| space ga | 3107 | 6 | continuous | MSE | 2-1 | 2-1 | 0.0057 ± 0.0029 | 0.0056 ± 0.0030 |
| Airlines DepDelay | 8000 | 5 | continuous | MSE | 4-1 | 4-1 | 0.1645 ± 0.0055 | 0.1637 ± 0.0052 |
| credit | 16714 | 10 | 2 | ACC | 6-2 | 6-2 | 0.7441 ± 0.0092 | 0.7476 ± 0.0092 |
| heloc | 10000 | 22 | 2 | ACC | 18-2 | 17-2 | 0.7077 ± 0.0145 | 0.7089 ± 0.0113 |
| electricity | 38474 | 8 | 2 | ACC | 5-2 | 5-2 | 0.7862 ± 0.0075 | 0.7967 ± 0.0087 |
| phoneme | 3172 | 5 | 2 | ACC | 8-5 | 7-5 | 0.8242 ± 0.0207 | 0.8270 ± 0.0252 |
| MagicTelescope | 13376 | 10 | 2 | ACC | 6-2 | 6-2 | 0.8449 ± 0.0092 | 0.8454 ± 0.0079 |
| vehicle | 846 | 18 | 4 | ACC | 10-2 | 10-2 | 0.8176 ± 0.0362 | 0.8247 ± 0.0354 |
| Oranges-vs-Grapefruit | 10000 | 5 | 2 | ACC | 8-2 | 7-2 | 0.9305 ± 0.0160 | 0.9361 ± 0.0240 |
| eye movements | 7608 | 20 | 2 | ACC | 10-2 | 10-2 | 0.5849 ± 0.0145 | 0.5908 ± 0.0164 |

| Dataset | Task | Data Size | Features |
|---|---|---|---|
| Electron Collision Prediction | Regression | 99,915 | 16 |
| Asteroid Prediction | Regression | 137,636 | 19 |
| Heart Failure Detection | Classification | 1,190 | 11 |
| Stellar Classification | Classification | 100,000 | 17 |

| Method | Electron Collision (MSE) | Asteroid Prediction (MSE) | Heart Failure (F1) | Stellar Classification (F1) |
|---|---|---|---|---|
| XGBoost | 0.0094 ± 0.0006 | 0.0646 ± 0.1031 | 0.8810 ± 0.02 | 0.9547 ± 0.002 |
| LightGBM | 0.0056 ± 0.0004 | 0.1391 ± 0.1676 | 0.8812 ± 0.01 | 0.9656 ± 0.002 |
| CatBoost | 0.0028 ± 0.0002 | 0.0817 ± 0.0846 | 0.8916 ± 0.01 | 0.9676 ± 0.002 |
| TabNet | 0.0040 ± 0.0006 | 0.0627 ± 0.0939 | 0.8501 ± 0.03 | 0.9269 ± 0.043 |
| TabTransformer | 0.0038 ± 0.0008 | 0.4219 ± 0.2776 | 0.8682 ± 0.02 | 0.9534 ± 0.002 |
| FT-Transformer | 0.0050 ± 0.0020 | 0.2136 ± 0.2189 | 0.8577 ± 0.02 | 0.9691 ± 0.002 |
| DANets | 0.0076 ± 0.0009 | 0.1709 ± 0.1859 | 0.8948 ± 0.03 | 0.9681 ± 0.002 |
| NeuronSeek-SR | 0.0016 ± 0.0005 | 0.0513 ± 0.0551 | 0.8874 ± 0.05 | 0.9613 ± 0.002 |
| NeuronSeek-TD (ours) | 0.0011 ± 0.0003 | 0.0502 ± 0.0800 | 0.9023 ± 0.03 | 0.9714 ± 0.001 |

| Model | CIFAR10 | CIFAR100 | SVHN | STL10 |
|---|---|---|---|---|
| ResNet18 | 0.9419 | 0.7385 | 0.9638 | 0.6724 |
| NeuronSeek-ResNet18 | 0.9464 | 0.7582 | 0.9655 | 0.7005 |
| DenseNet121 | 0.9386 | 0.7504 | 0.9636 | 0.6216 |
| NeuronSeek-DenseNet121 | 0.9399 | 0.7613 | 0.9696 | 0.6638 |
| SeResNet101 | 0.9336 | 0.7382 | 0.9650 | 0.5583 |
| NeuronSeek-SeResNet101 | 0.9385 | 0.7720 | 0.9685 | 0.6560 |
| GoogleNet | 0.9375 | 0.7378 | 0.9644 | 0.7241 |
| NeuronSeek-GoogleNet | 0.9400 | 0.7519 | 0.9658 | 0.7298 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).