Submitted: 13 June 2025

Posted: 13 June 2025

Abstract

Keywords:

CCS CONCEPTS

- Computing methodologies → Neural networks

1. Introduction

2. Related Work

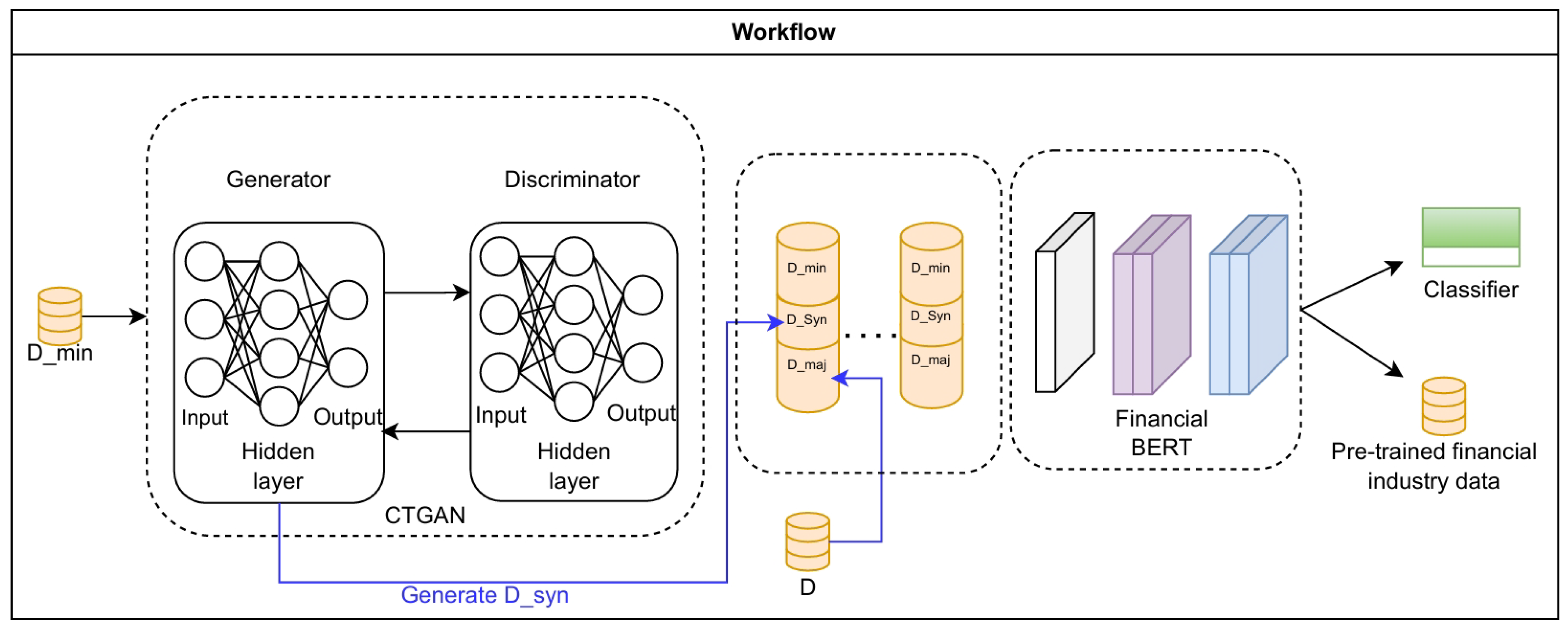

3. Methodology

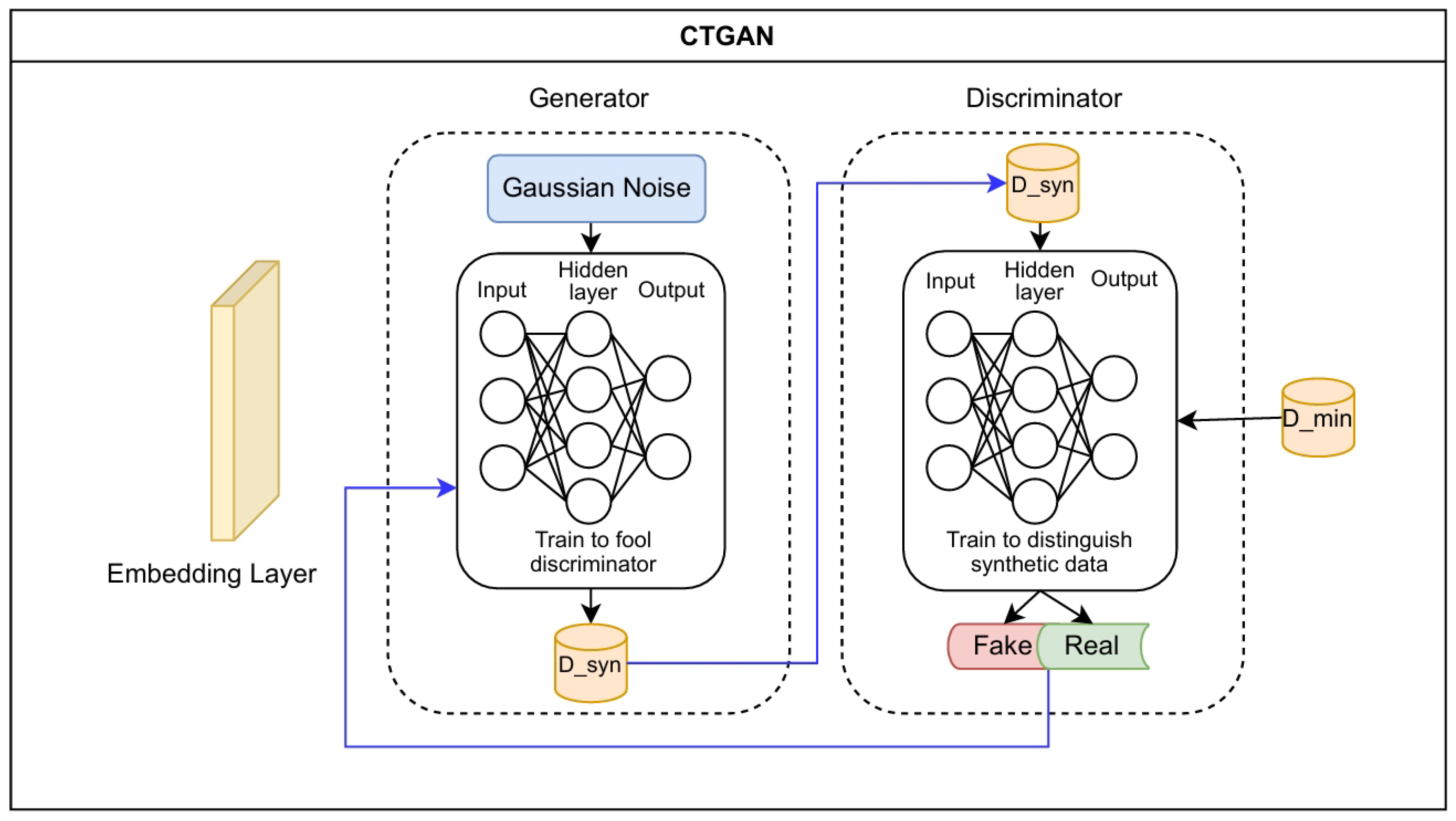

3.1. Synthetic Data Generation via Conditional Tabular Generative Adversarial Networks (CTGAN)

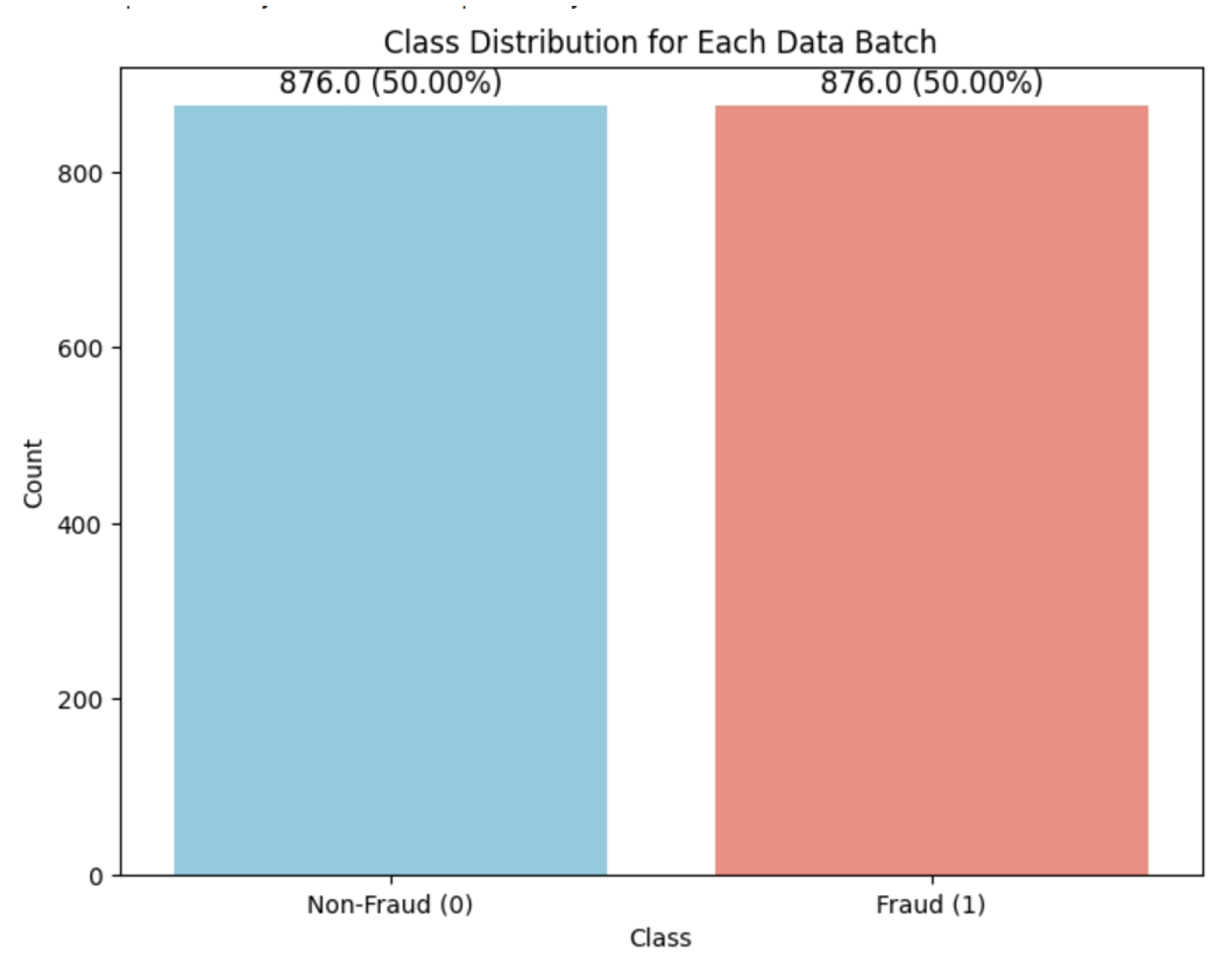

3.2. Stratified Data Preparation for Balanced Batch Training

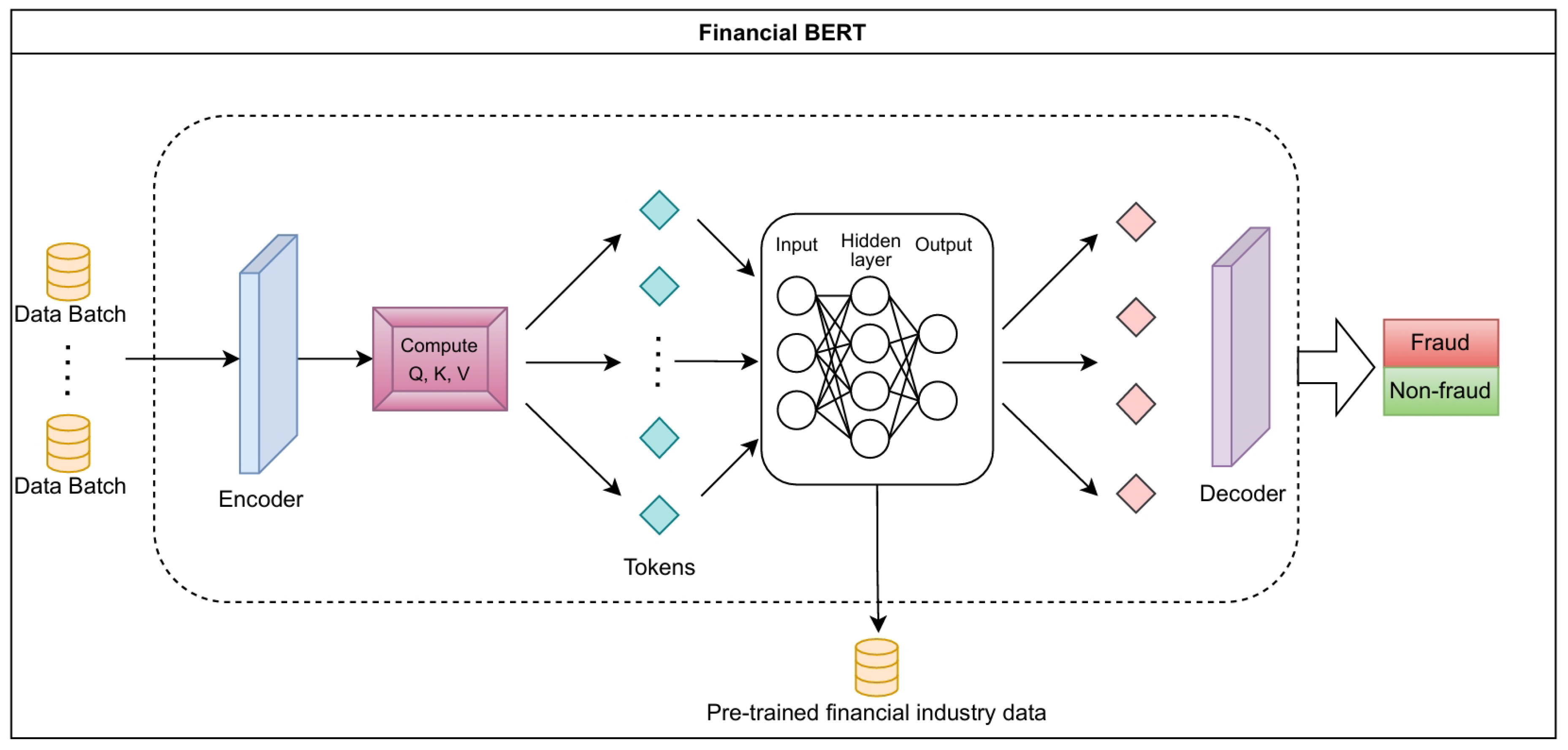

3.3. FinBERT Classifier

4. Experiments

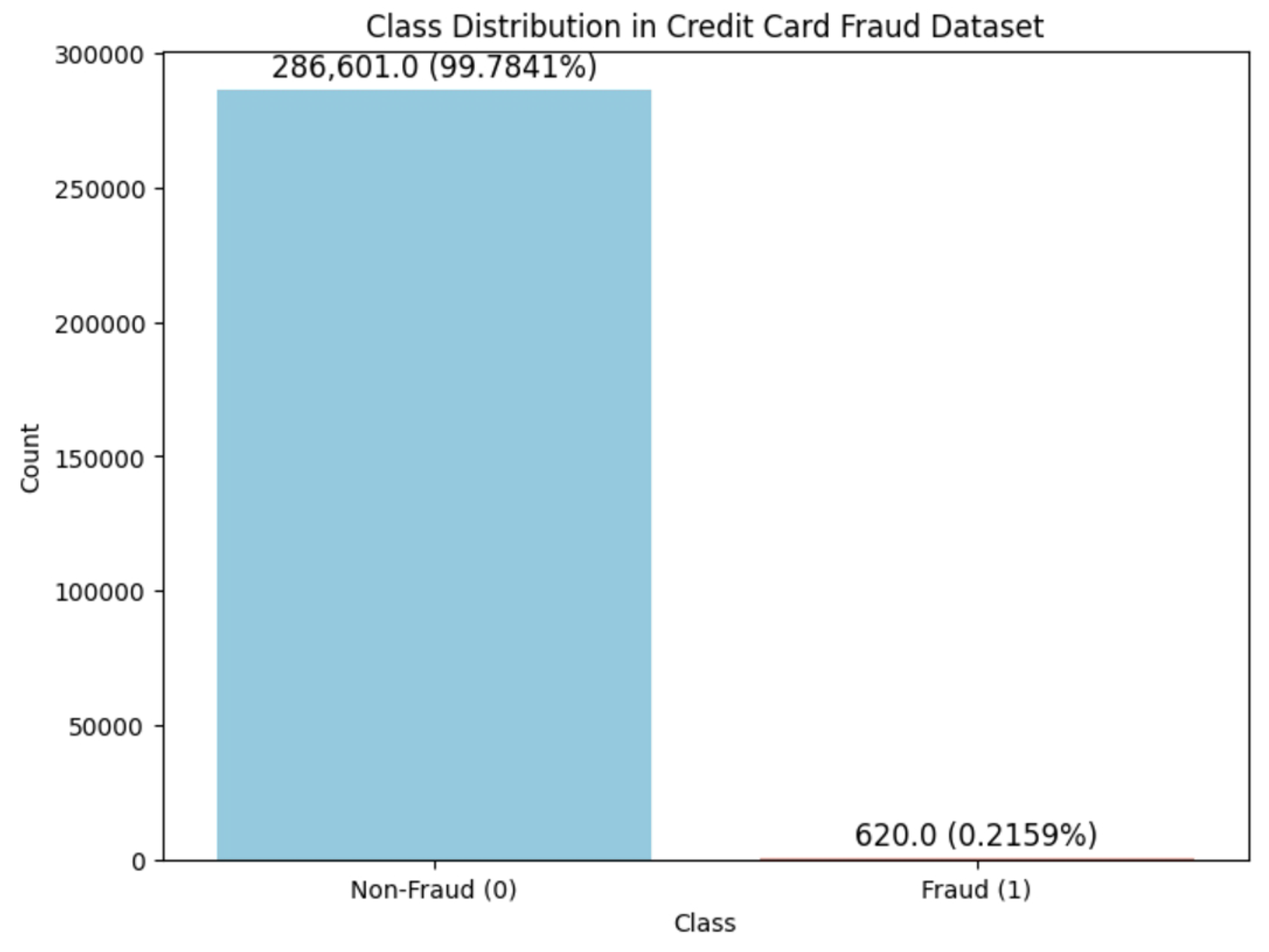

4.1. Dataset and Baselines

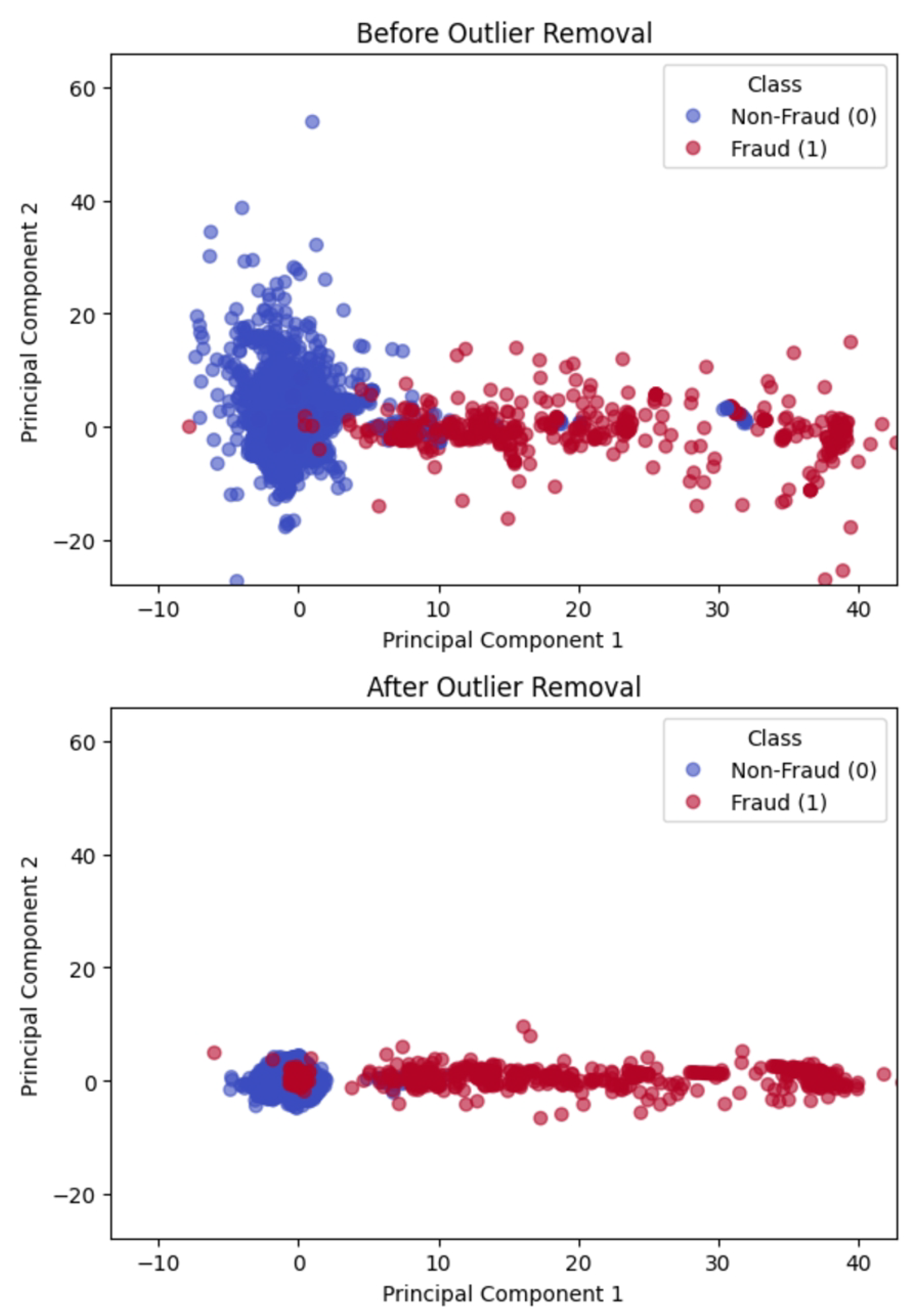

4.2. Outlier Detection

4.3. Experiment Setup

Evaluation Metrics

- Precision: Precision measures the proportion of correctly identified positive cases among all cases predicted as positive:

  $$\mathrm{Precision} = \frac{TP}{TP + FP},$$

  where $TP$ and $FP$ denote the numbers of true positives and false positives, respectively. High precision indicates that few of the cases flagged as positive are false positives.

- Recall: Recall, or sensitivity, is the proportion of correctly identified positive cases among all actual positives:

  $$\mathrm{Recall} = \frac{TP}{TP + FN},$$

  where $FN$ denotes the number of false negatives. High recall is critical in fraud detection because it reduces the risk of missed fraudulent cases.

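- F1-Score: The F1-score, defined as the harmonic mean of precision and recall, balances the two metrics and is therefore suited to evaluating model performance on imbalanced data:

  $$F_1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}.$$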

- Accuracy: Accuracy, the ratio of correctly classified instances to total instances, provides an overall performance measure:

  $$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN},$$

  where $TN$ denotes the number of true negatives.

- AUC-ROC Score: The AUC-ROC score is the area under the ROC curve, which plots the true positive rate (recall) against the false positive rate. It ranges from 0 to 1; a high AUC score means the model consistently separates fraudulent from non-fraudulent cases across all possible classification thresholds. It is particularly useful for evaluating performance on an imbalanced dataset.
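The metrics listed above can be illustrated with a minimal Python sketch. It is not the evaluation code used in the experiments; the function names (`confusion_counts`, `classification_metrics`, `auc_roc`) and the toy labels and scores are hypothetical and chosen only for illustration. The sketch assumes binary labels with 1 denoting the positive (fraudulent) class, and it computes AUC-ROC through the equivalent pairwise-ranking formulation: the fraction of positive/negative pairs in which the positive case receives the higher score, with ties counted as one half.

```python
from typing import Dict, Sequence, Tuple


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[int, int, int, int]:
    """Return (TP, FP, FN, TN) for binary labels, where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return tp, fp, fn, tn


def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Precision, recall, F1 and accuracy from confusion-matrix counts.

    Assumption: a ratio with a zero denominator is reported as 0.0.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "accuracy": accuracy}


def auc_roc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC-ROC as the probability that a random positive outranks a random negative.

    Quadratic in the number of samples, so suitable only for small examples.
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC-ROC needs at least one positive and one negative sample")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    # Toy data (hypothetical): 3 fraudulent and 5 legitimate cases.
    y_true = [1, 1, 1, 0, 0, 0, 0, 0]
    y_pred = [1, 1, 0, 1, 0, 0, 0, 0]
    scores = [0.9, 0.8, 0.3, 0.7, 0.4, 0.2, 0.1, 0.05]
    print(classification_metrics(*confusion_counts(y_true, y_pred)))
    print(auc_roc(y_true, scores))
```

Unlike the first four metrics, `auc_roc` takes continuous scores rather than thresholded predictions, which is why it is insensitive to the choice of classification threshold.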

Experiment Conditions

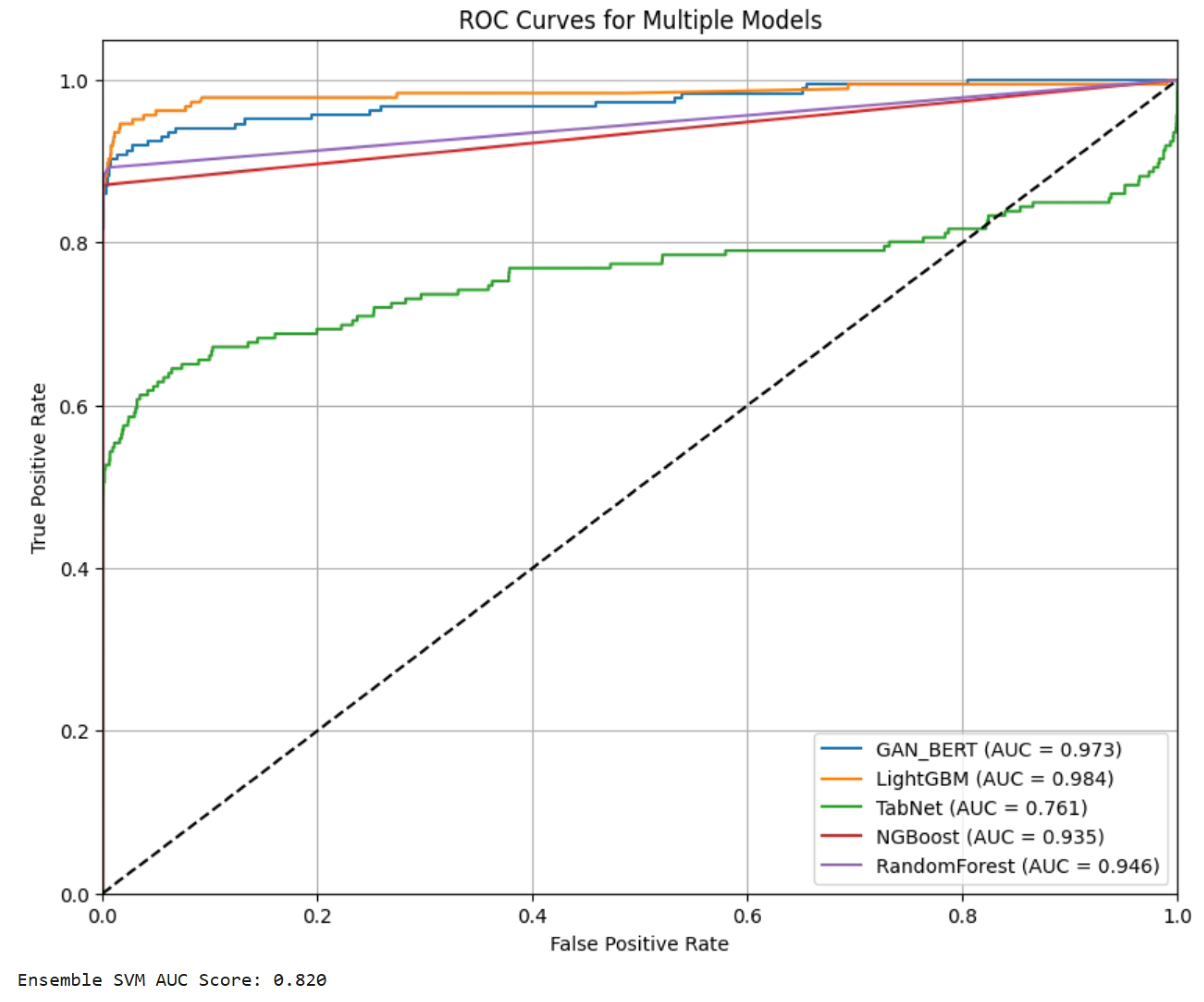

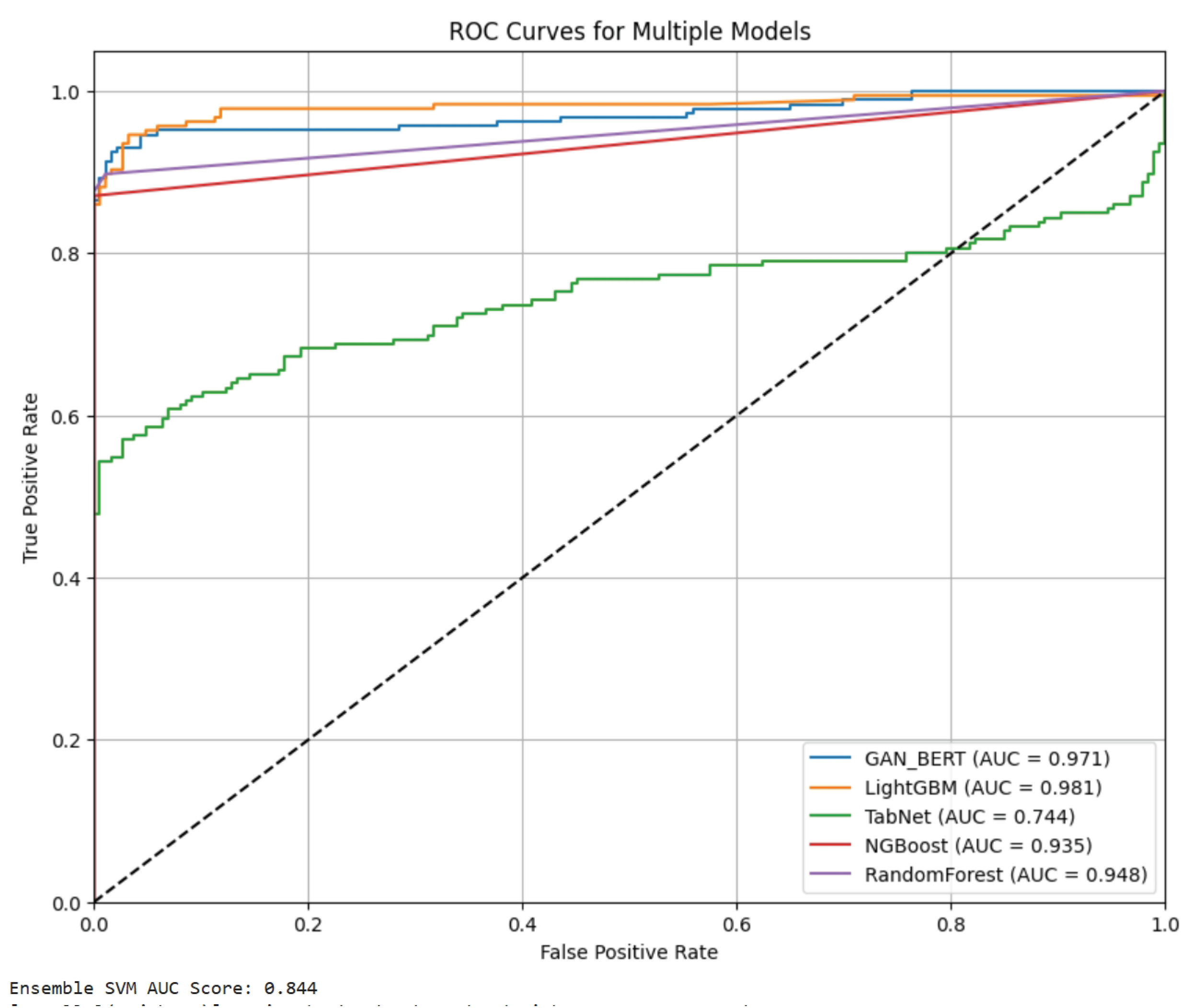

5. Main Results

6. Conclusions


| Model | Precision | Recall | F1-Score | Accuracy |
| --- | --- | --- | --- | --- |
| GAN_BERT | 0.998 ± 0.001 | 0.999 ± 0.001 | 0.999 ± 0.001 | 0.997 ± 0.001 |
| LightGBM | 0.999 ± 0.001 | 0.996 ± 0.001 | 0.997 ± 0.001 | 0.995 ± 0.001 |
| TabNet | 0.994 ± 0.001 | 0.999 ± 0.001 | 0.997 ± 0.001 | 0.994 ± 0.001 |
| NGBoost | 0.997 ± 0.001 | 0.999 ± 0.001 | 0.998 ± 0.001 | 0.995 ± 0.001 |
| EnsembleSVM | 0.997 ± 0.001 | 0.999 ± 0.001 | 0.998 ± 0.001 | 0.997 ± 0.001 |
| RandomForest | 0.998 ± 0.001 | 0.999 ± 0.001 | 0.999 ± 0.001 | 0.998 ± 0.001 |

| Model | Precision | Recall | F1-Score | Accuracy |
| --- | --- | --- | --- | --- |
| GAN_BERT | 0.901 ± 0.057 | 0.838 ± 0.027 | 0.862 ± 0.009 | 0.997 ± 0.001 |
| LightGBM | 0.672 ± 0.001 | 0.893 ± 0.001 | 0.767 ± 0.001 | 0.995 ± 0.001 |
| TabNet | 0.999 ± 0.001 | 0.387 ± 0.001 | 0.558 ± 0.001 | 0.994 ± 0.001 |
| NGBoost | 0.997 ± 0.001 | 0.683 ± 0.001 | 0.812 ± 0.001 | 0.995 ± 0.001 |
| EnsembleSVM | 0.999 ± 0.001 | 0.681 ± 0.006 | 0.809 ± 0.006 | 0.997 ± 0.001 |
| RandomForest | 0.999 ± 0.001 | 0.759 ± 0.001 | 0.873 ± 0.001 | 0.998 ± 0.001 |

| Model | Precision | Recall | F1-Score | Accuracy |
| --- | --- | --- | --- | --- |
| GAN_BERT | 0.860 ± 0.019 | 0.998 ± 0.002 | 0.929 ± 0.011 | 0.917 ± 0.014 |
| LightGBM | 0.896 ± 0.008 | 0.983 ± 0.008 | 0.938 ± 0.007 | 0.931 ± 0.001 |
| TabNet | 0.620 ± 0.001 | 0.999 ± 0.001 | 0.765 ± 0.001 | 0.694 ± 0.001 |
| NGBoost | 0.759 ± 0.001 | 0.999 ± 0.001 | 0.863 ± 0.001 | 0.841 ± 0.001 |
| EnsembleSVM | 0.750 ± 0.027 | 0.999 ± 0.001 | 0.854 ± 0.022 | 0.832 ± 0.024 |
| RandomForest | 0.818 ± 0.008 | 0.999 ± 0.001 | 0.900 ± 0.004 | 0.891 ± 0.006 |

| Model | Precision | Recall | F1-Score | Accuracy |
| --- | --- | --- | --- | --- |
| GAN_BERT | 0.978 ± 0.036 | 0.837 ± 0.027 | 0.909 ± 0.021 | 0.917 ± 0.014 |
| LightGBM | 0.887 ± 0.102 | 0.868 ± 0.016 | 0.878 ± 0.018 | 0.931 ± 0.001 |
| TabNet | 0.999 ± 0.001 | 0.387 ± 0.001 | 0.558 ± 0.001 | 0.694 ± 0.001 |
| NGBoost | 0.999 ± 0.001 | 0.683 ± 0.001 | 0.812 ± 0.001 | 0.841 ± 0.001 |
| EnsembleSVM | 0.999 ± 0.001 | 0.682 ± 0.023 | 0.798 ± 0.031 | 0.832 ± 0.024 |
| RandomForest | 0.999 ± 0.001 | 0.764 ± 0.014 | 0.874 ± 0.009 | 0.891 ± 0.006 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).