Submitted: 25 May 2025

Posted: 26 May 2025

Abstract

Keywords:

1. Introduction

2. Model Construction

2.1. Enhanced Learning Model

2.2. Ad Sorting Model

2.3. Personalized Recommendation Model

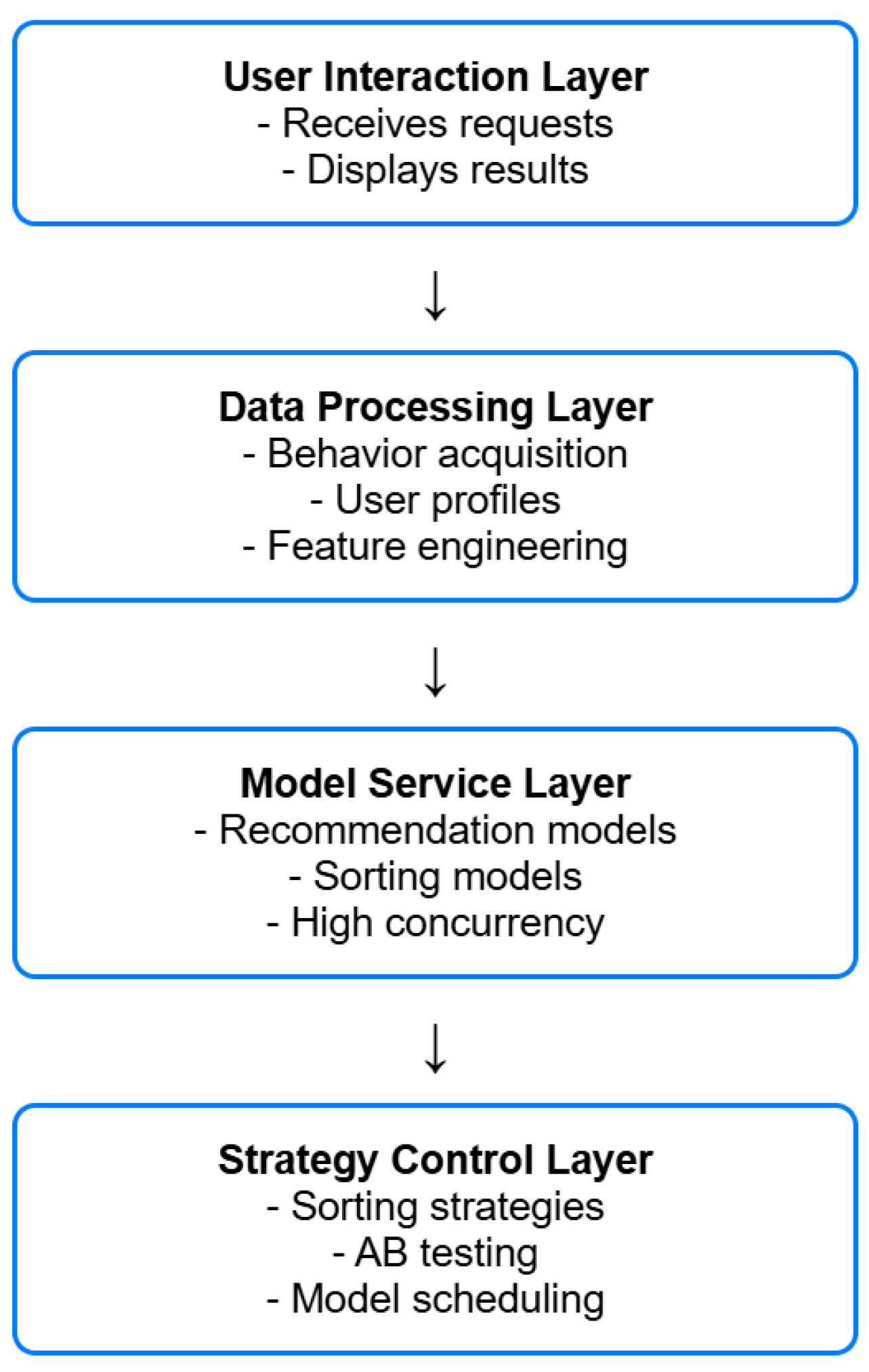

3. System Design

3.1. System Architecture

3.2. Data Processing Flow

3.3. Model Training and Updating

3.4. System Deployment and Go-Live

5. Experimental Results and Analysis

5.1. Experimental Environment Configuration

5.2. Experimental Design

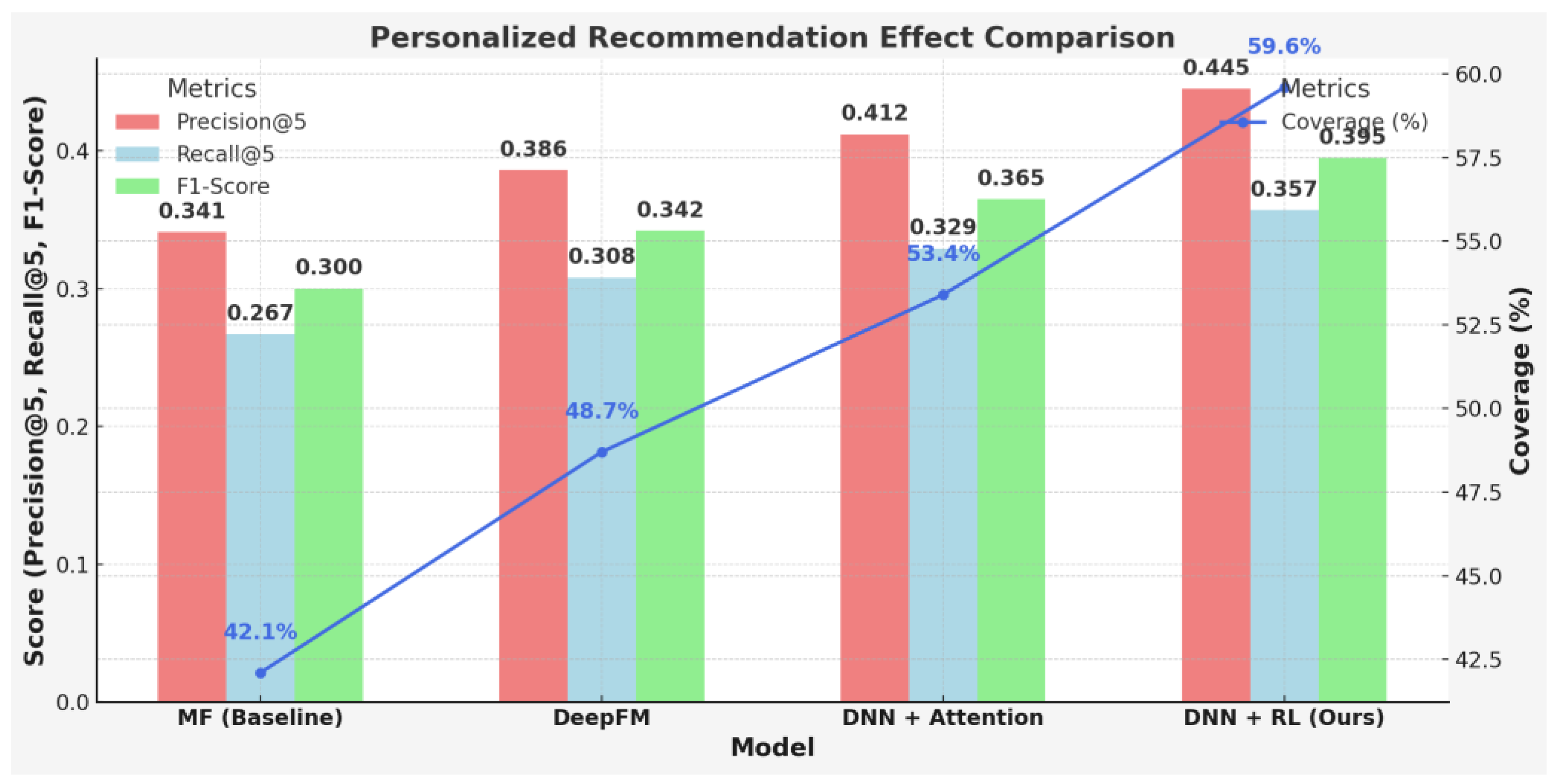

5.3. Experimental Results

5.4. Performance Evaluation

6. Conclusions

| Model | CTR (%) | NDCG@5 | ARP | Training Time (s) |
| --- | --- | --- | --- | --- |
| Logistic Regression | 7.43 | 0.732 | 2.87 | 45 |
| GBDT | 8.36 | 0.759 | 2.65 | 97 |
| DQN | 9.81 | 0.803 | 2.12 | 186 |
| PPO | 10.26 | 0.819 | 1.98 | 220 |

| Model | Precision@5 | Recall@5 | F1-Score | Coverage (%) |
| --- | --- | --- | --- | --- |
| MF (Baseline) | 0.341 | 0.267 | 0.300 | 42.1 |
| DeepFM | 0.386 | 0.308 | 0.342 | 48.7 |
| DNN + Attention | 0.412 | 0.329 | 0.365 | 53.4 |
| DNN + RL (Ours) | 0.445 | 0.357 | 0.395 | 59.6 |
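
The F1-Score column can be cross-checked against the Precision@5 and Recall@5 columns. The sketch below assumes F1 is the harmonic mean of precision and recall (the standard definition; the table itself does not state the formula), and the helper name `f1_score` is illustrative only.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (assumed definition of F1)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (Precision@5, Recall@5, reported F1-Score) copied from the table above.
reported = {
    "MF (Baseline)": (0.341, 0.267, 0.300),
    "DeepFM": (0.386, 0.308, 0.342),
    "DNN + Attention": (0.412, 0.329, 0.365),
    "DNN + RL (Ours)": (0.445, 0.357, 0.395),
}

# Recompute F1 from the rounded precision and recall values.
recomputed = {name: f1_score(p, r) for name, (p, r, _) in reported.items()}

# Largest absolute difference between recomputed and reported F1.
max_gap = max(abs(recomputed[name] - reported[name][2]) for name in reported)

for name, value in recomputed.items():
    print(f"{name}: recomputed F1 = {value:.4f}, reported = {reported[name][2]:.3f}")
```

Under this assumption the recomputed values stay within roughly 0.001 of the reported ones, which is plausibly a rounding or truncation effect in the published figures.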

| Metric | Baseline System | RL System |
| --- | --- | --- |
| Average Response Time (ms) | 85 | 47 |
| Max QPS (Queries/sec) | 950 | 1230 |
| CPU Usage (%) | 63 | 58 |
| GPU Usage (%) | 48 | 71 |
| Memory Usage (GB) | 15.2 | 17.6 |
| Throughput (req/min) | 57000 | 72500 |
| System Availability (%) | 98.3 | 99.1 |
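
To compare the two systems on a common scale, the rows above can be expressed as percentage changes relative to the baseline. This is only an illustrative calculation on the tabulated numbers; the helper `relative_change` and the dictionary layout are assumptions, not part of the original system.

```python
def relative_change(baseline: float, new: float) -> float:
    """Percentage change of `new` relative to `baseline`."""
    return (new - baseline) / baseline * 100.0


# (baseline system, RL system) values copied from the table above.
metrics = {
    "Average Response Time (ms)": (85, 47),
    "Max QPS (Queries/sec)": (950, 1230),
    "CPU Usage (%)": (63, 58),
    "GPU Usage (%)": (48, 71),
    "Memory Usage (GB)": (15.2, 17.6),
    "Throughput (req/min)": (57000, 72500),
    "System Availability (%)": (98.3, 99.1),
}

# Percentage change per metric; negative values mean the RL system is lower.
changes = {name: relative_change(b, n) for name, (b, n) in metrics.items()}

for name, pct in changes.items():
    print(f"{name}: {pct:+.1f}%")
```

On these figures, average response time falls by about 45% and maximum QPS rises by about 29%, while GPU usage grows by about 48% and memory usage by about 16%.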

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).