1. Introduction

The discrete Fourier transform (DFT) is an orthogonal transform [1] which, for the case of N input/output samples, may be expressed in normalized form via the equation

$$X[k] = \frac{1}{\sqrt{N}} \sum_{n=0}^{N-1} x[n]\, W_N^{nk}, \qquad k = 0, 1, \ldots, N-1 \tag{1}$$

where the input/output data vectors belong to $\mathbb{C}^N$, the linear space of complex-valued N-tuples, and the transform kernel is expressed in terms of powers of $W_N$, where

$$W_N = \exp(-i 2\pi / N), \qquad i = \sqrt{-1},$$

the primitive Nth complex root of unity [3, 15]. Fast solutions to the DFT are referred to generically as fast Fourier transform (FFT) algorithms [4-6] and, when the transform length is expressible as some power of a fixed integer R – whereby the algorithm is referred to as a fixed-radix FFT with radix R – enable the associated arithmetic complexity to be reduced from $O(N^2)$ to just $O(N \log_R N)$.
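As an illustration of the normalized definition, the following minimal sketch evaluates the DFT directly at a cost of order $N^2$ operations. The function names `dft_normalized` and `energy` are hypothetical, and the negative sign in the exponent of $W_N$ is the usual convention, assumed here rather than taken from the text.

```python
import cmath
import math


def dft_normalized(x):
    """Direct O(N^2) evaluation of the normalized DFT of the sequence x."""
    n_samples = len(x)
    # W_N = exp(-i*2*pi/N): primitive N-th root of unity (sign is an assumption).
    w_n = cmath.exp(-2j * math.pi / n_samples)
    scale = 1.0 / math.sqrt(n_samples)
    return [
        scale * sum(x[n] * w_n ** ((n * k) % n_samples) for n in range(n_samples))
        for k in range(n_samples)
    ]


def energy(v):
    """Sum of squared magnitudes, used to check that the transform preserves norm."""
    return sum(abs(s) ** 2 for s in v)
```

With the $1/\sqrt{N}$ scaling the transform preserves the energy of the data vector, so `energy(dft_normalized(x))` should agree with `energy(x)` up to rounding error.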

The complex-valued exponential terms, $W_N^{nk}$, appearing in Eqtn. 1 each comprise two trigonometric components, with each such pair being more commonly referred to as a twiddle factor [4-6]. The twiddle factors must be fed to each instance of the FFT’s butterfly, this being the computational engine that carries out the algorithm’s repetitive arithmetic operations and which, for a radix-R transform, produces R complex-valued outputs from R complex-valued inputs.

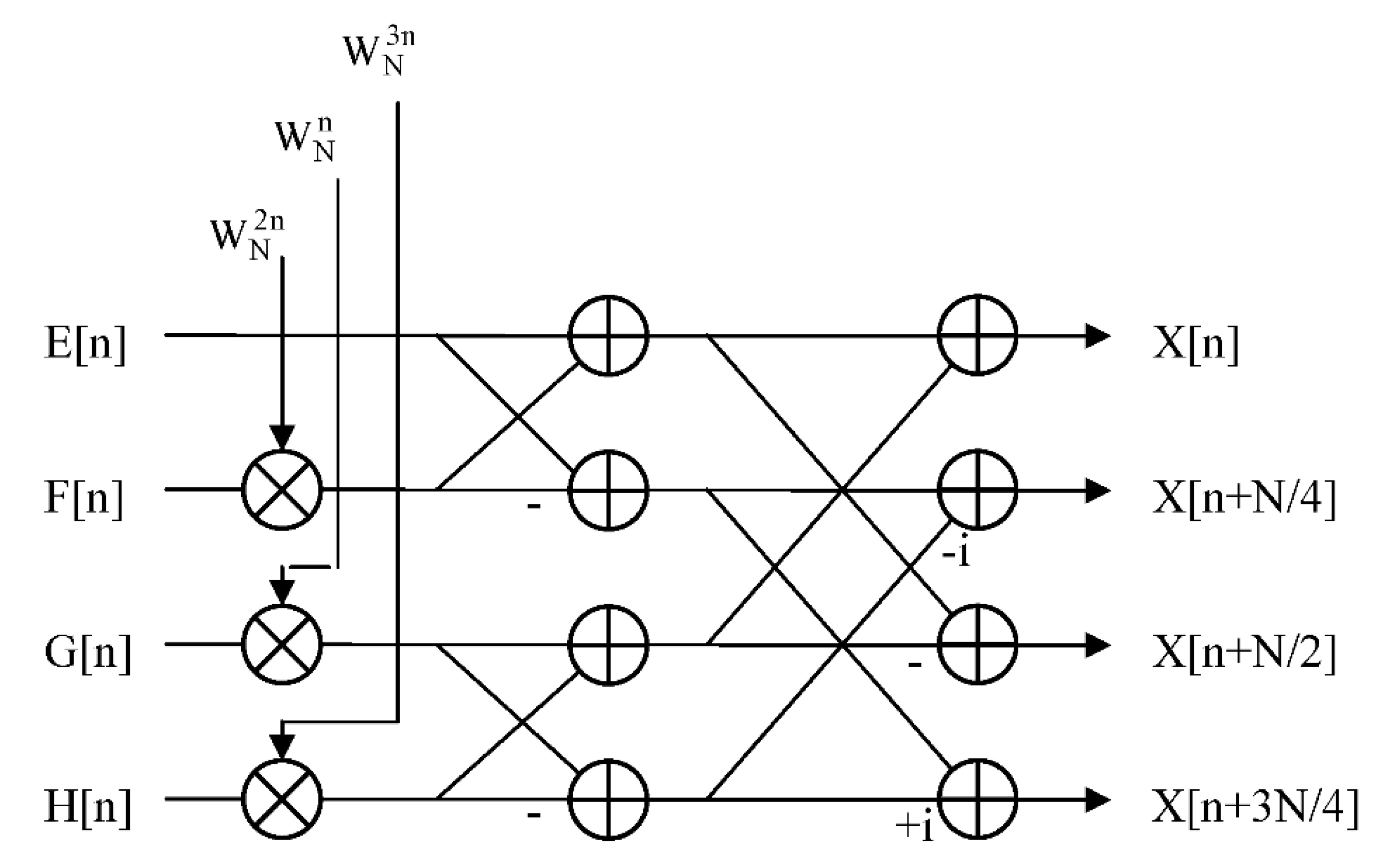

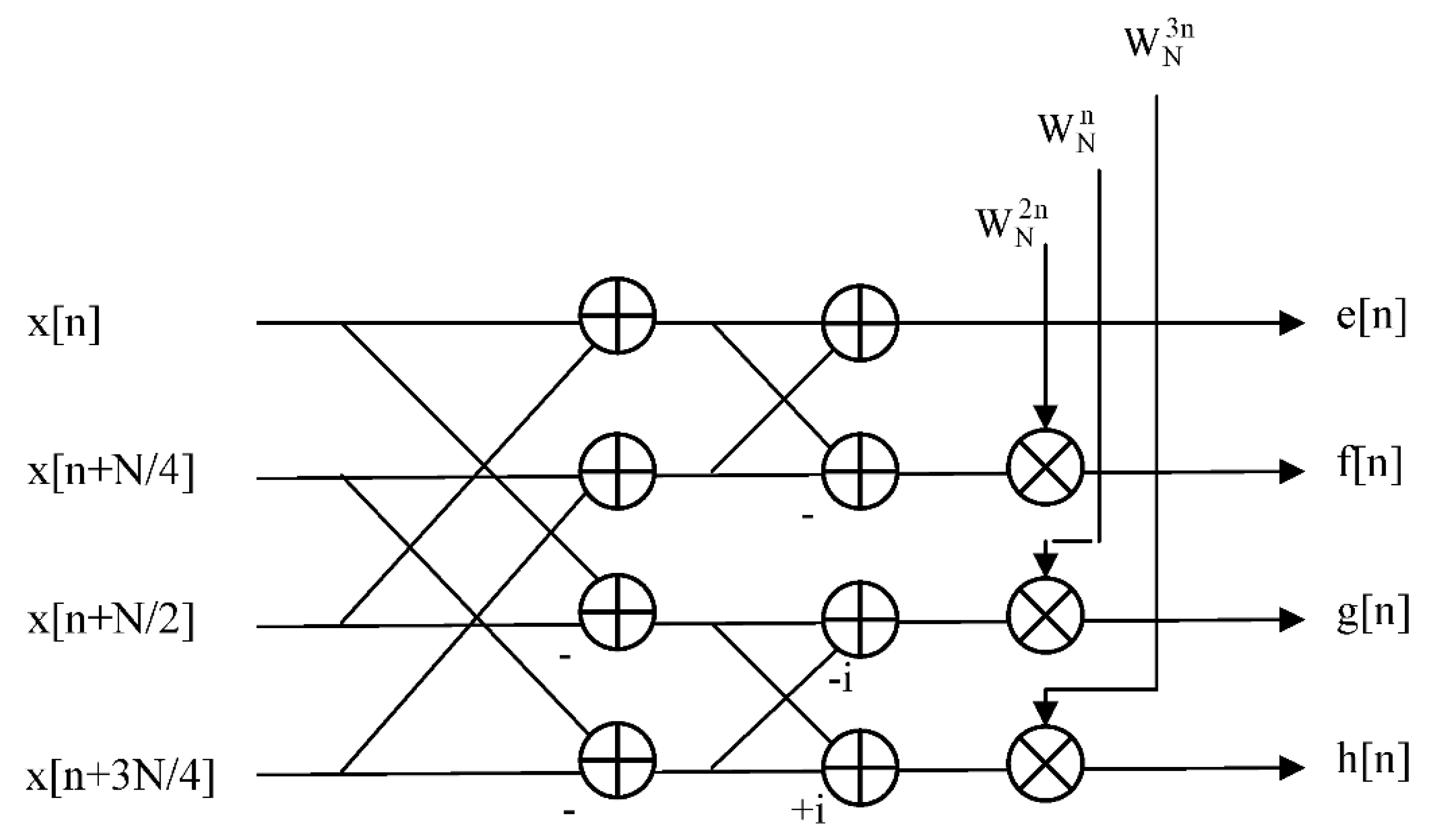

The twiddle factor requirement, more exactly, is that for a radix-R FFT algorithm there will be R-1 non-trivial twiddle factors to be applied to each butterfly. For a decimation-in-time (DIT) type FFT design [4-6], with typically digit-reverse (DR)-ordered inputs and naturally (NAT)-ordered outputs, the twiddle factors are applied to the butterfly inputs (see Figure 1), whilst for a decimation-in-frequency (DIF) type FFT design [4-6], with typically NAT-ordered inputs and DR-ordered outputs, the twiddle factors are applied to the butterfly outputs (see Figure 2). Each twiddle factor, as stated above, possesses two components, one defined by the sine function and the other by the cosine function, which may be either retrieved directly from the coefficient memory or generated on-the-fly in order to carry out the necessary processing for the FFT butterfly.
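The difference between the two butterfly types can be sketched for the radix-4 case as follows. This is an illustrative sketch only: the function names are hypothetical, the twiddle factors are computed by direct exponentiation rather than by any of the schemes discussed later, and the assignment of the three non-trivial twiddle factors to inputs (or outputs) 1 to 3 is an assumed ordering.

```python
import cmath
import math


def twiddles(n, k, radix=4):
    """Return the (radix - 1) non-trivial twiddle factors W_N^(q*k), q = 1..radix-1."""
    return [cmath.exp(-2j * math.pi * q * k / n) for q in range(1, radix)]


def dft4(b):
    """Twiddle-free 4-point DFT core: only multiplications by +/-1 and +/-i."""
    b0, b1, b2, b3 = b
    return [
        b0 + b1 + b2 + b3,
        b0 - 1j * b1 - b2 + 1j * b3,
        b0 - b1 + b2 - b3,
        b0 + 1j * b1 - b2 - 1j * b3,
    ]


def butterfly_dit(a, tw):
    """DIT butterfly: twiddles scale inputs 1..3 before the 4-point core."""
    return dft4([a[0]] + [a[q] * tw[q - 1] for q in range(1, 4)])


def butterfly_dif(a, tw):
    """DIF butterfly: twiddles scale outputs 1..3 after the 4-point core."""
    y = dft4(a)
    return [y[0]] + [y[q] * tw[q - 1] for q in range(1, 4)]
```

In both cases one input (or output) passes through unscaled, which is why only R-1 = 3 twiddle factors are needed per radix-4 butterfly.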

Note that the DR-ordered and NAT-ordered index mappings referred to above may each be applied to either the input or output data sets for both the DIT and DIF variations of the fixed-radix FFT, yielding a total of four possible combinations for any fixed-radix transform: namely, DIT$_{NR}$, DIT$_{RN}$, DIF$_{NR}$ and DIF$_{RN}$, where ‘N’ refers to the NAT-ordered mapping and ‘R’ to the DR-ordered mapping, and where the first letter of each pair corresponds to the input data mapping and the second letter to the output data mapping [5]. The DIT$_{RN}$ and DIF$_{NR}$ radix-4 butterflies correspond to those already illustrated in Figs. 1 and 2, respectively, from which one can easily visualize how the designs may be straightforwardly generalized to higher radices. For the case of an arbitrary radix-R DIT algorithm, for example, the twiddle factors will be applied to all but one of the R butterfly inputs, whereas with the corresponding DIF algorithm, the twiddle factors will be applied to all but one of the R butterfly outputs.
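The DR-ordered index mapping itself may be sketched as a reversal of the base-R digits of each index. The function names below are hypothetical and the sketch assumes that the transform length is an exact power of the radix.

```python
def digit_reverse(index, radix, num_digits):
    """Reverse the order of the base-`radix` digits of `index`."""
    reversed_index = 0
    for _ in range(num_digits):
        index, digit = divmod(index, radix)
        reversed_index = reversed_index * radix + digit
    return reversed_index


def digit_reversed_order(n, radix):
    """Return the DR-ordered permutation of 0..n-1 for a length-n, radix-`radix` FFT."""
    num_digits, size = 0, 1
    while size < n:
        size *= radix
        num_digits += 1
    if size != n:
        raise ValueError("n must be an exact power of the radix")
    return [digit_reverse(i, radix, num_digits) for i in range(n)]
```

For a radix of two the mapping reduces to the familiar bit-reversal, and in all cases applying the mapping twice restores the NAT ordering.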

When the DFT is viewed as a matrix operator, Eqtn. 1 becomes a matrix-vector product, with the effect of the radix-R FFT being to factorize the N×N DFT matrix into a product of $\log_R N$ sparse matrices, with each sparse matrix being of size N×N and comprising N/R sub-matrices, each of size R×R, where each sub-matrix corresponds in turn to the operation of a radix-R butterfly. Thus, each sparse matrix factor produces N partial-DFT outputs through the execution of N/R radix-R butterflies. The effect of such a factorization is thus to reduce the computation of the original length-N transform to the execution of $\log_R N$ computational stages, with each stage comprising N/R radix-R butterflies, where the execution of a given stage can only commence once that of its predecessor has been completed, and with the FFT output being produced on completion of the final computational stage. From this analysis, it is evident that the computational efficiency of the fixed-radix FFT depends directly upon the computational efficiency of the individual butterfly.
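The stage structure described above can be made concrete with a small in-place radix-4 DIF sketch (NAT-ordered input, DR-ordered output) that counts the stages and butterflies it executes. This is a minimal illustration under stated assumptions rather than an efficient design: the function names are hypothetical, the $1/\sqrt{N}$ normalization is omitted, and the twiddle factors are computed by direct exponentiation.

```python
import cmath
import math


def dft_direct(x):
    """Unnormalized O(N^2) reference DFT, used only for checking."""
    n = len(x)
    return [
        sum(x[j] * cmath.exp(-2j * math.pi * j * k / n) for j in range(n))
        for k in range(n)
    ]


def dft4(b):
    """Twiddle-free 4-point DFT core."""
    b0, b1, b2, b3 = b
    return [
        b0 + b1 + b2 + b3,
        b0 - 1j * b1 - b2 + 1j * b3,
        b0 - b1 + b2 - b3,
        b0 + 1j * b1 - b2 - 1j * b3,
    ]


def digit_reverse(index, radix, num_digits):
    """Reverse the order of the base-`radix` digits of `index`."""
    reversed_index = 0
    for _ in range(num_digits):
        index, digit = divmod(index, radix)
        reversed_index = reversed_index * radix + digit
    return reversed_index


def fft_radix4_dif(x):
    """Return (DR-ordered spectrum, number of stages, number of butterflies)."""
    data = list(x)
    n = len(data)
    stages = butterflies = 0
    span = n  # length of the sub-transforms handled by the current stage
    while span > 1:
        if span % 4:
            raise ValueError("length must be a power of 4")
        quarter = span // 4
        for start in range(0, n, span):
            for k in range(quarter):
                idx = [start + k + q * quarter for q in range(4)]
                y = dft4([data[i] for i in idx])
                # DIF: twiddles W_span^(m*k) are applied to the butterfly outputs.
                for m, i in enumerate(idx):
                    data[i] = y[m] * cmath.exp(-2j * math.pi * m * k / span)
                butterflies += 1
        span = quarter
        stages += 1
    return data, stages, butterflies
```

For a length-N input the sketch executes $\log_4 N$ stages of N/4 butterflies each, and the NAT-ordered spectrum is recovered by reading output position `digit_reverse(k, 4, stages)` for each frequency index k.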

Also, an efficient parallel implementation of the fixed-radix FFT invariably requires an efficient parallel mechanism for the generation or computation of the twiddle factors, unless the DFT is solved by approximate means via the adoption of a suitably defined sparse FFT algorithm [8, 11]. This is particularly so for the processing of long (of order one million samples, as might be encountered with the processing of wideband signals embedded in astronomical data) to ultra-long (of order one billion samples, as might be encountered with the processing of ultra-wideband signals embedded in cosmic microwave data) data sets [13]. It is essential that the full set of R-1 non-trivial terms required by each radix-R butterfly, whether applied to the inputs or the outputs, be available simultaneously, in order to avoid potential timing issues and delays, and be produced at a rate that is consistent with the processing rate of the FFT.

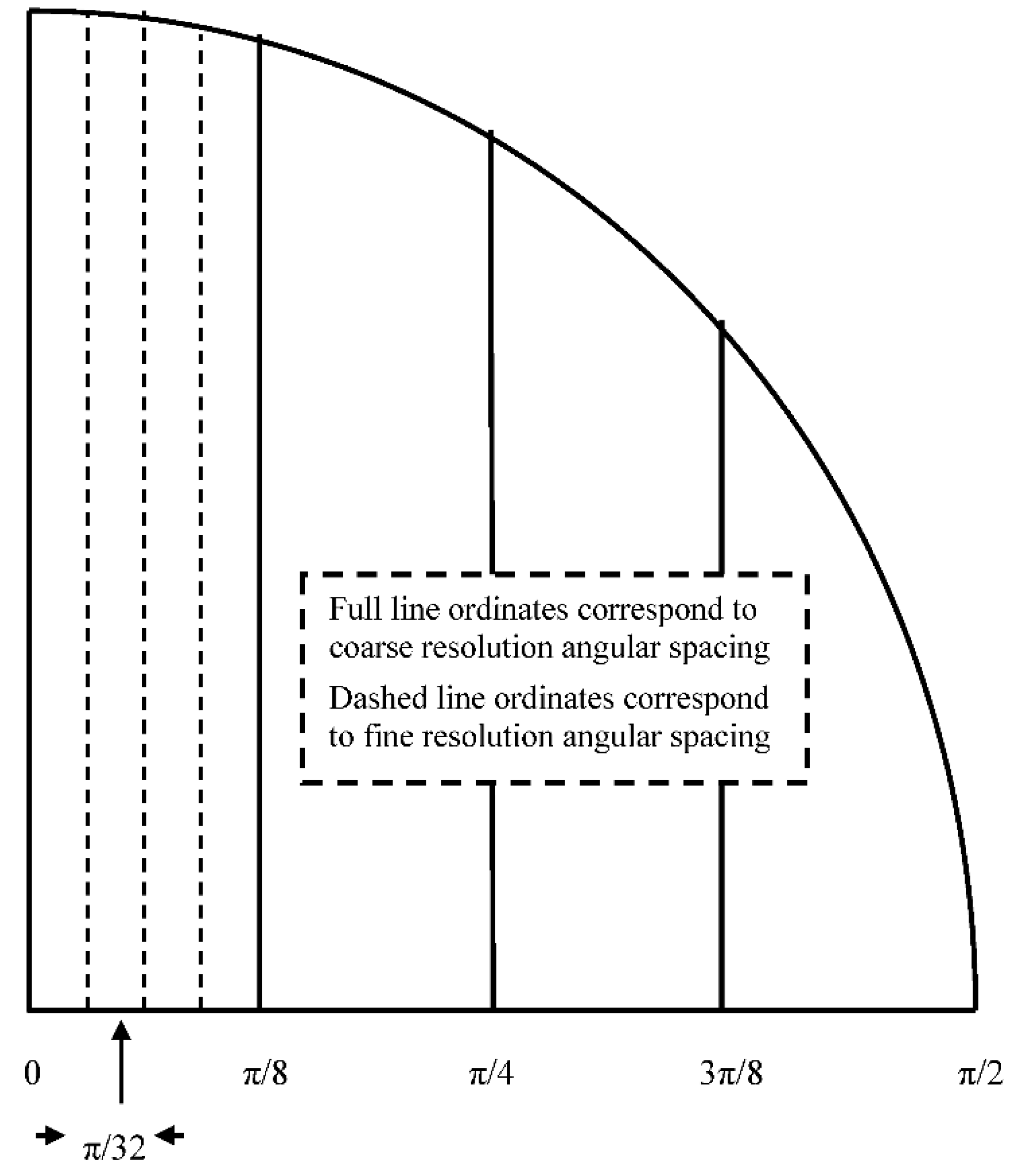

The computational efficiency of the twiddle factor generation for the fixed-radix FFT may be optimized by trading off the arithmetic component of the resource requirement against the memory component through the combined use of trigonometric identities and appropriately defined look-up tables (LUTs), which may be of either single-level type (comprising a single LUT with a fixed angular resolution) or multi-level type (comprising multiple single-level LUTs, each with its own distinct fixed angular resolution) [10, 12]. Although the FFT radix can actually take on any integer value, the most commonly used radices are two and four, primarily for the flexibility that they offer (generating a large number of possible transform sizes for various possible applications) and for their ease of implementation. The attraction of choosing a solution based upon the radix-4 factorization, rather than the more familiar radix-2, is its greater computational efficiency, in terms of both a reduced arithmetic requirement (that is, of the required numbers of multiplications and additions) and a reduced number of memory accesses for the retrieval of data from memory (four complex-valued samples, each comprising real and imaginary components, being accessed at a time instead of just two).
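The memory-versus-arithmetic trade-off can be illustrated with a generic two-level LUT, in which a coarse-resolution table and a fine-resolution table are combined through the angle-addition identities. The sketch below is an assumption made for illustration and is not necessarily the LUT organization adopted later in the text: the class name `TwoLevelLUT` and its parameters are hypothetical, and no use is made of the symmetries of the sine and cosine functions to shrink the tables further.

```python
import math


class TwoLevelLUT:
    """Two-level sine/cosine LUT for angles 2*pi*index/n, index = 0..n-1."""

    def __init__(self, n, fine_size):
        if n % fine_size:
            raise ValueError("fine_size must divide n")
        self.n = n
        self.fine_size = fine_size
        step = 2.0 * math.pi / n
        # Coarse table: angular resolution of fine_size * step.
        self.coarse = [
            (math.cos(step * fine_size * j), math.sin(step * fine_size * j))
            for j in range(n // fine_size)
        ]
        # Fine table: angular resolution of step, covering one coarse interval.
        self.fine = [(math.cos(step * j), math.sin(step * j)) for j in range(fine_size)]

    def cos_sin(self, index):
        """Combine one coarse and one fine entry via the angle-addition identities."""
        hi, lo = divmod(index % self.n, self.fine_size)
        cc, sc = self.coarse[hi]
        cf, sf = self.fine[lo]
        return cc * cf - sc * sf, sc * cf + cc * sf

    def twiddle(self, index):
        """Return W_N^index = cos(theta) - i*sin(theta)."""
        c, s = self.cos_sin(index)
        return complex(c, -s)
```

For n = 1024 and a fine table of 32 entries, for example, the two tables hold 64 sine/cosine pairs in total rather than the 1024 pairs of a single-level table of the same resolution, at the cost of four multiplications and two additions per twiddle factor.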

There is, in turn, the potential for exploiting greater parallelism, at the arithmetic level, via the use of a larger sized butterfly, thereby offering the possibility of achieving a higher computational density (that is, throughput per unit area of silicon [9]) when implemented in silicon with a suitably chosen computing device and architecture. As a result, we will be particularly concerned with the radix-4 version of the FFT algorithm, together with the two-level version of the LUT, as these two algorithmic choices facilitate ease of illustration and offer the potential for flexible, computationally-efficient FFT designs.

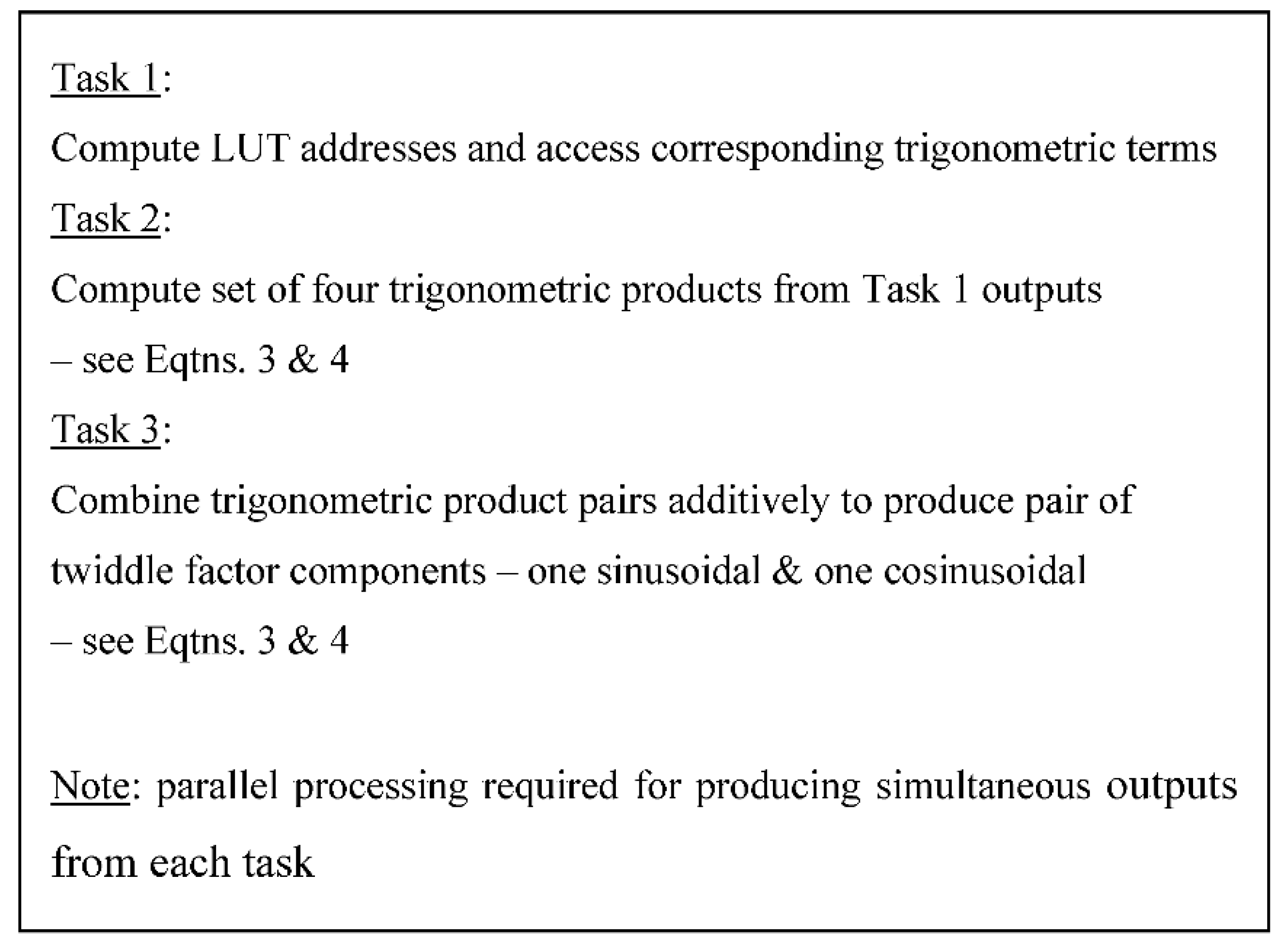

Thus, following this introductory section, complexity considerations for both sequential and parallel computing devices are discussed in Section 2, followed by the derivation in Section 3 of a collection of formulae for some of the standard trigonometric identities which, together with suitably defined LUTs of both single-level and multi-level types, as discussed in Section 4, will be required for the efficient computation of the twiddle factors.

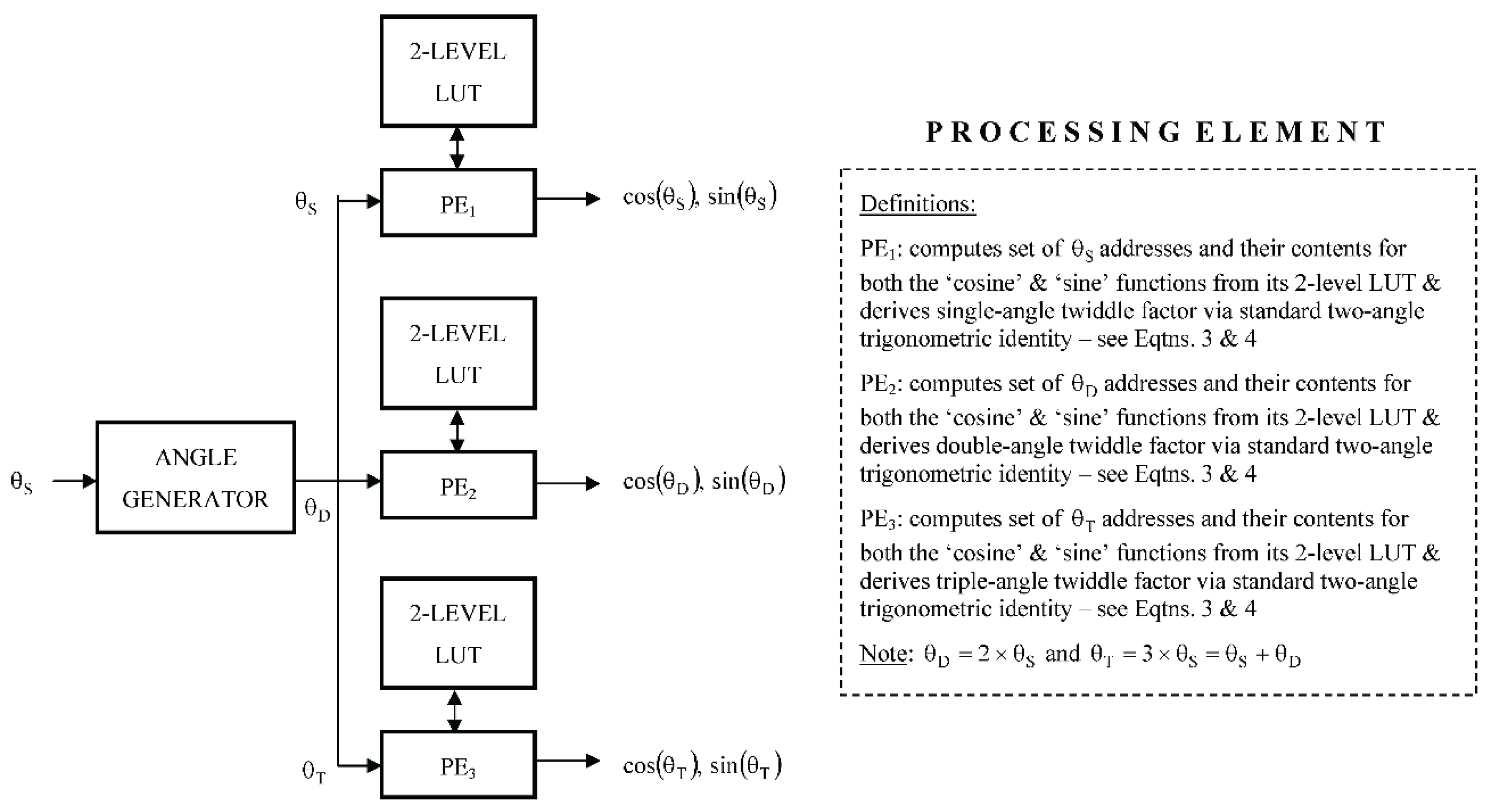

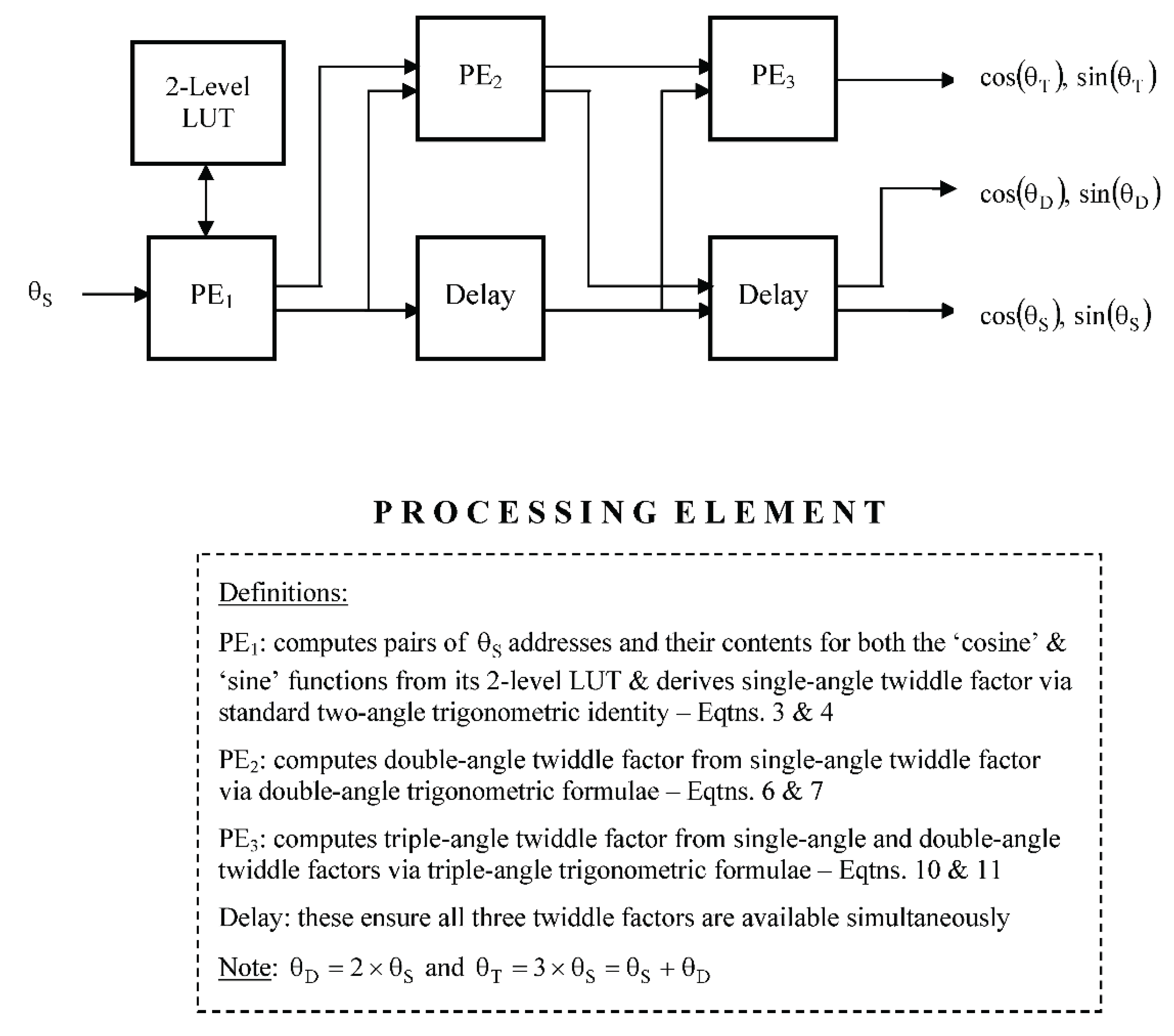

Section 5 then describes, in some detail, two different parallel computing solutions to the task of twiddle factor computation, where the computation of each twiddle factor is assigned its own processing element (PE). The first scheme is based upon the adoption of the single-instruction multiple-data (SIMD) technique [2], as applied in the spatial domain, whereby the PEs operate independently upon their own individual LUTs and may thus be executed simultaneously, whilst the second scheme is based upon the adoption of the pipelining technique [2], as applied in the temporal domain, whereby the operation of all but the first LUT-based PE is based upon second-order recursion using previously computed PE outputs. Detailed examples of these two approaches are provided using a radix-4 butterfly combined with two-level LUTs, and a brief comparative analysis is provided, for a silicon-based hardware implementation, in terms of both the space-complexity, as expressed in terms of their relative arithmetic and memory components, and the time-complexity, as expressed in terms of their associated latencies and throughputs. Finally, a brief summary and conclusions are provided in Section 6.
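To give a flavour of the second-order recursion underlying the pipelined scheme, the sketch below derives the remaining twiddle factor components of a butterfly from the first pair using the standard identities $\cos((m+1)\theta) = 2\cos\theta\cos(m\theta) - \cos((m-1)\theta)$ and $\sin((m+1)\theta) = 2\cos\theta\sin(m\theta) - \sin((m-1)\theta)$. The choice of these particular identities is an assumption made for illustration, the function name is hypothetical, and in a real design the first pair would be supplied by the LUT-based PE rather than by the calling code.

```python
import math


def twiddles_by_recursion(cos1, sin1, radix=4):
    """Return [(cos(m*theta), sin(m*theta))] for m = 1..radix-1.

    Only the first pair (cos1, sin1) is supplied; every later pair is obtained
    from the two preceding pairs by a second-order recursion.
    """
    cos_prev, sin_prev = 1.0, 0.0  # m = 0 term
    cos_cur, sin_cur = cos1, sin1  # m = 1 term (e.g. from a LUT)
    pairs = [(cos_cur, sin_cur)]
    for _ in range(radix - 2):
        cos_next = 2.0 * cos1 * cos_cur - cos_prev
        sin_next = 2.0 * cos1 * sin_cur - sin_prev
        cos_prev, sin_prev = cos_cur, sin_cur
        cos_cur, sin_cur = cos_next, sin_next
        pairs.append((cos_cur, sin_cur))
    return pairs
```

Each step of the recursion depends on the two preceding outputs, which is what makes the computation a natural fit for a pipeline of PEs rather than for independent, simultaneously executing ones.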