Submitted: 10 May 2025

Posted: 13 May 2025

Abstract

Keywords:

1. Introduction

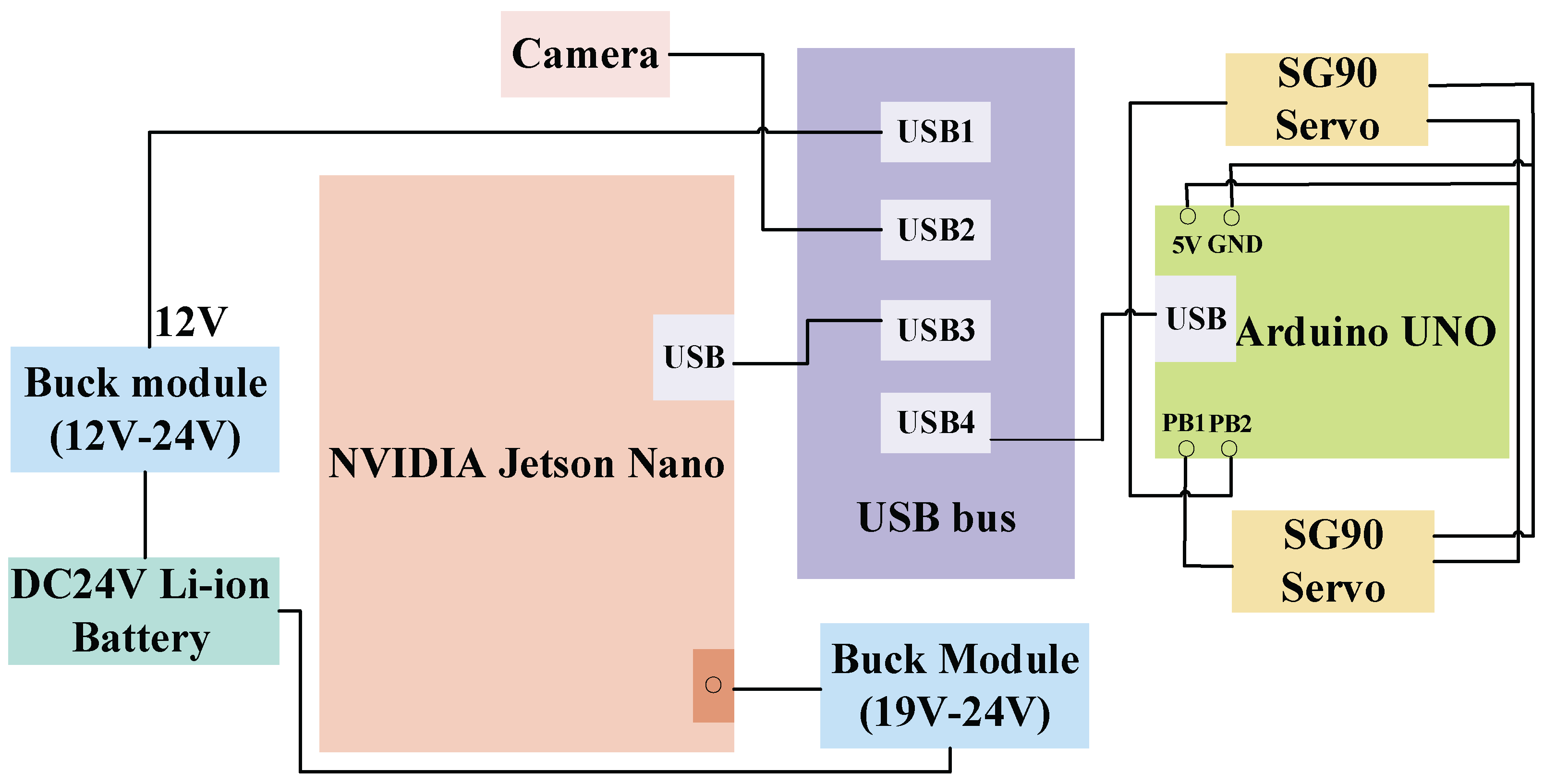

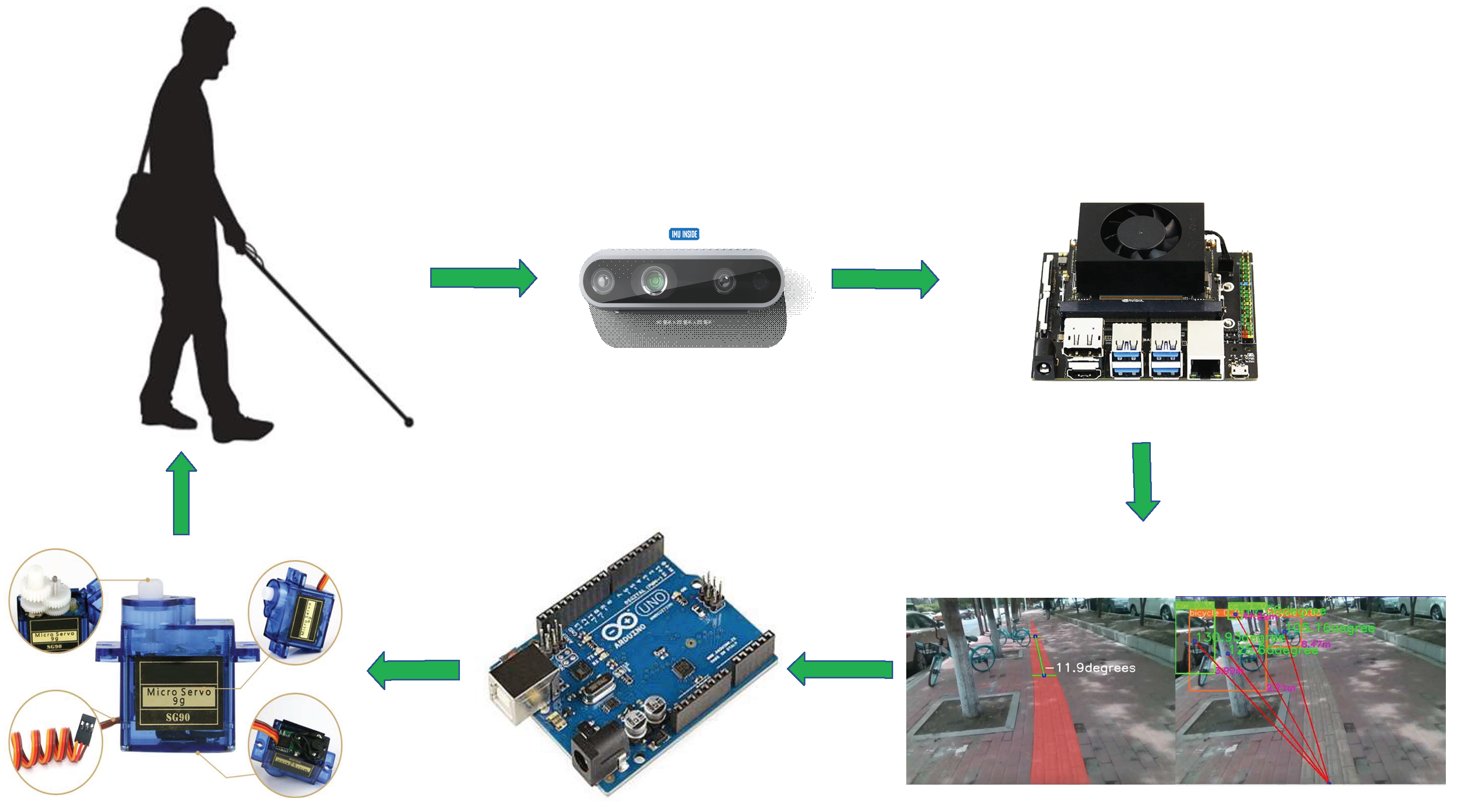

- A practical hardware system for a travel assistance device for blind users is built. An NVIDIA Jetson Nano embedded platform serves as the edge computing device, a D435i depth camera senses the environment, and an Arduino microcontroller drives an SG90 servo to deliver vibration feedback. Beyond meeting the functional requirements, the device is designed for portability and practicality, providing a stable hardware foundation for travel assistance.

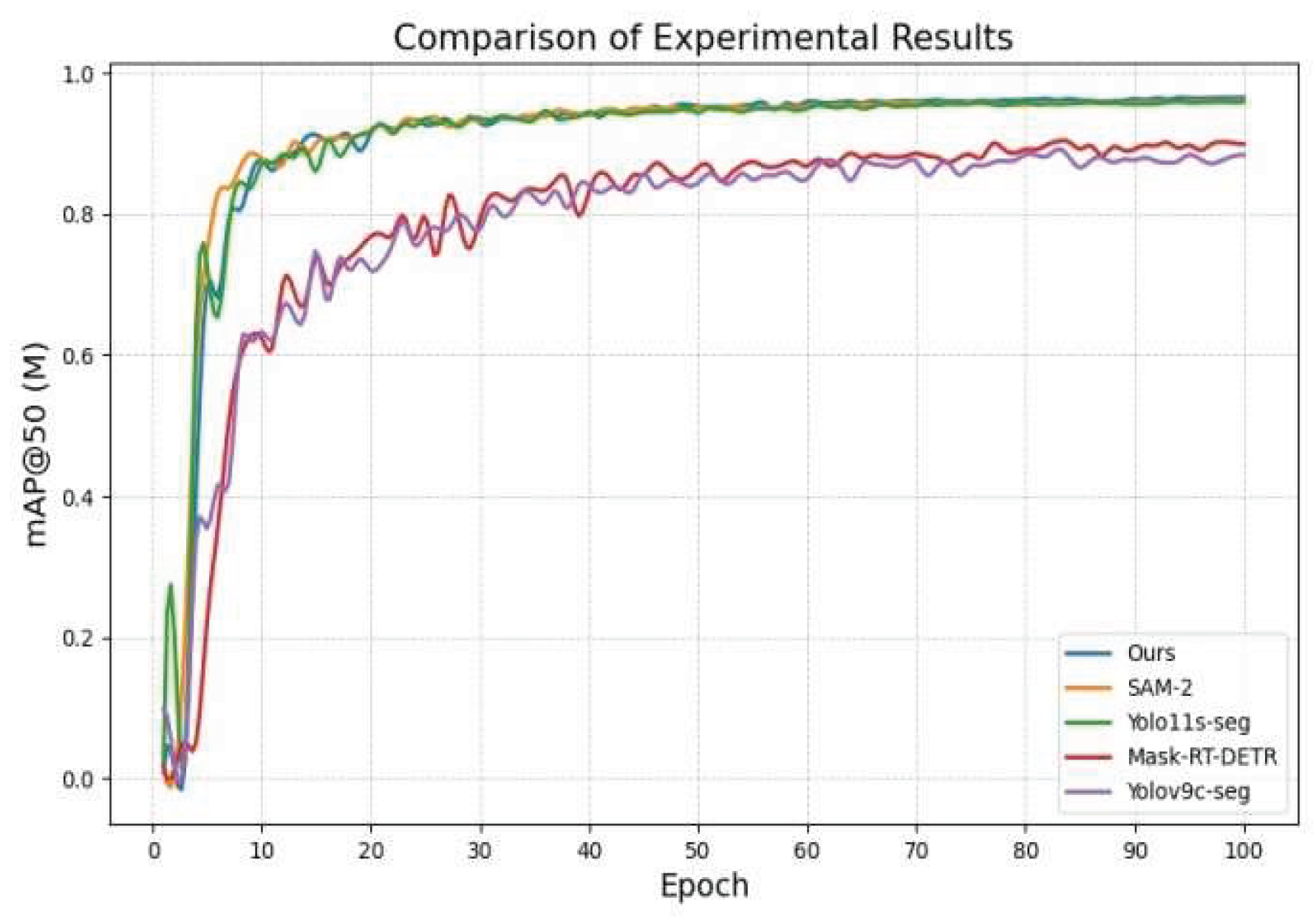

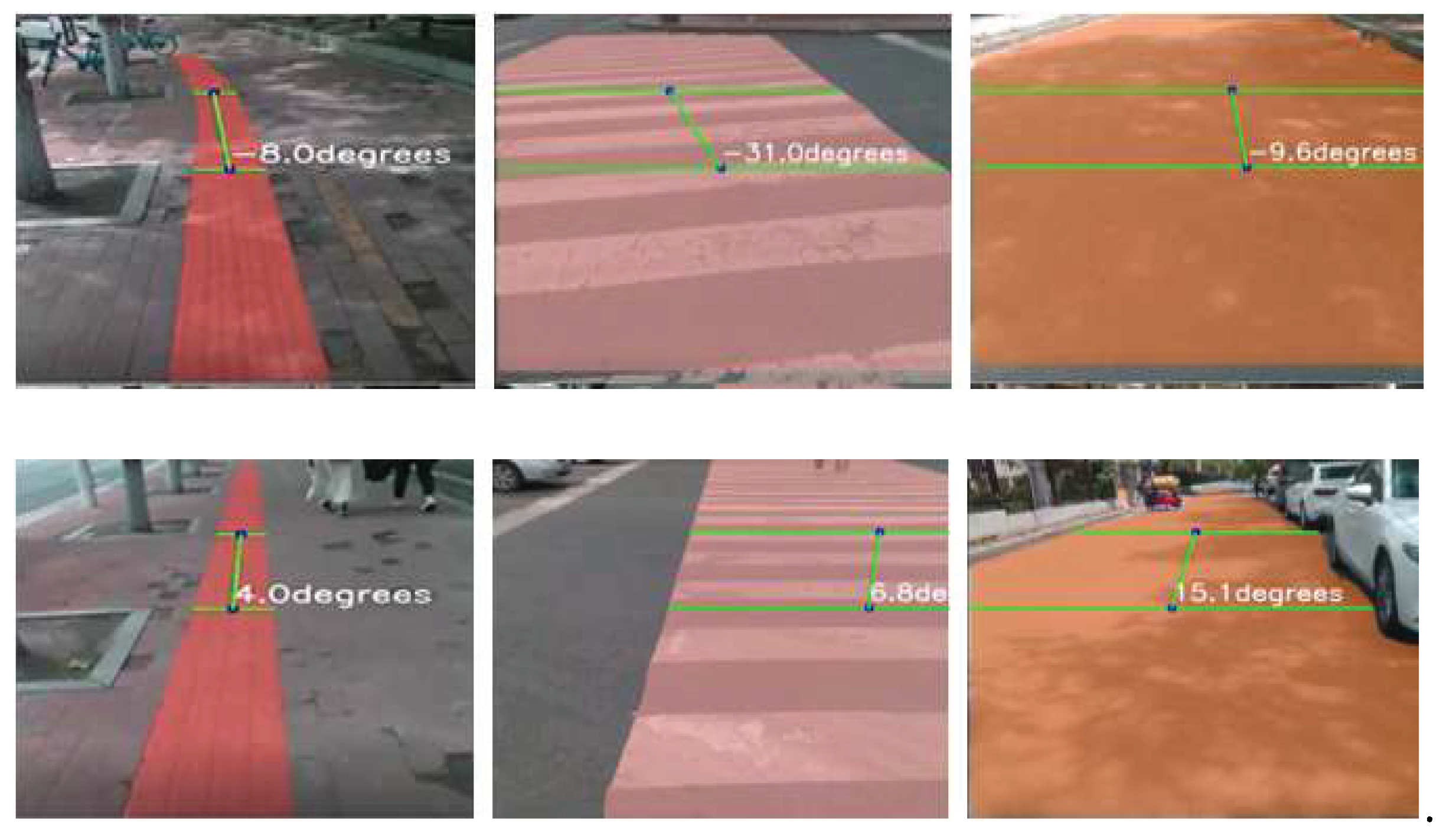

- To address the limited computing power of edge devices, a new lightweight object detection and segmentation network is designed. The network consists of three parts: a multi-scale attention feature extraction backbone, a dual-stream feature fusion module that incorporates the Mamba architecture, and an adaptive context-aware detection and segmentation head. Compared with the baseline network, accuracy improves on both tasks: the mAPmask for road segmentation rises by 2.7 percentage points, and the mAP for obstacle detection rises by 3.1 percentage points. At the same time, model size, parameter count, and GFLOPs all decrease, while the frame rate stays high (more than 95 FPS for road segmentation and more than 90 FPS for obstacle detection). This balance of accuracy and efficiency lets the model run well on low-power embedded devices and meet the real-time needs of blind travel.

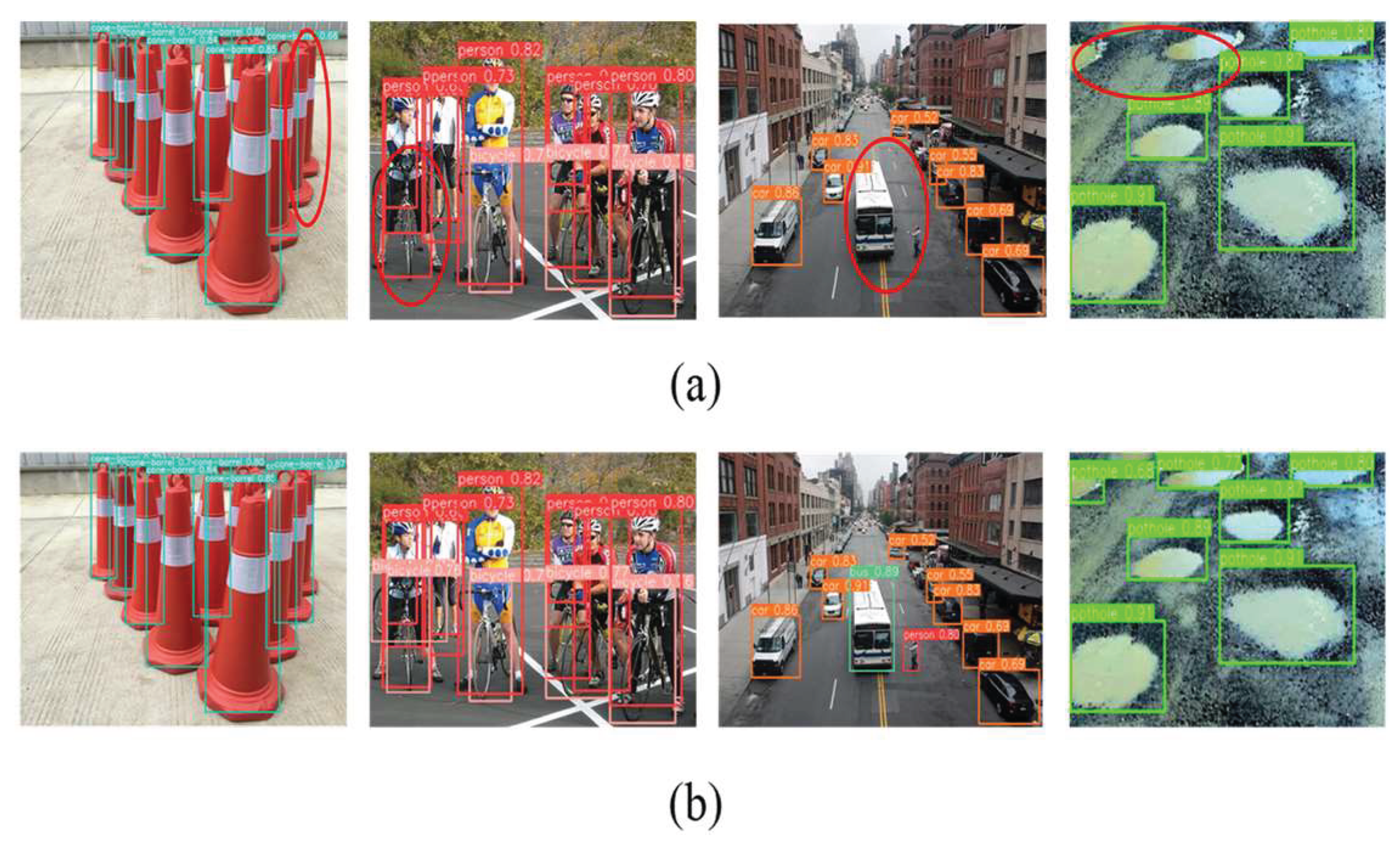

- Extensive experiments verify the effectiveness of the device and the algorithm. For data collection and processing, multi-source data is integrated, augmented, and annotated to ensure data quality. The ablation experiments show the contribution of each module, and the comparative experiments show that the algorithm outperforms typical networks such as YOLOv9c-seg and YOLOv10n in accuracy, model size, and real-time performance on the road segmentation and obstacle detection tasks: the road segmentation mAPmask reaches 0.979 with a model of only 5.1 MB, and the obstacle detection mAP reaches 0.757 with a model of only 5.2 MB. These results demonstrate the method's potential in practical applications and support the development of travel assistance technology for the blind.
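
The frame rates quoted in the list above translate directly into a per-frame time budget, which is one way to read the real-time claim. The following is a minimal sketch of that arithmetic only. The function name `frame_budget_ms` is illustrative and does not come from the paper, and the FPS values are the lower bounds quoted in the list, not measurements on any particular device.

```python
def frame_budget_ms(fps: float) -> float:
    """Return the time available per frame, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Lower bounds quoted in the contribution list (frames per second).
REPORTED_FPS = {"road segmentation": 95, "obstacle detection": 90}

if __name__ == "__main__":
    for task, fps in REPORTED_FPS.items():
        print(f"{task}: {frame_budget_ms(fps):.2f} ms per frame at {fps} FPS")
```

At 95 FPS each frame has roughly 10.5 ms available, and at 90 FPS roughly 11.1 ms.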

2. Related Work

3. Hardware System Build

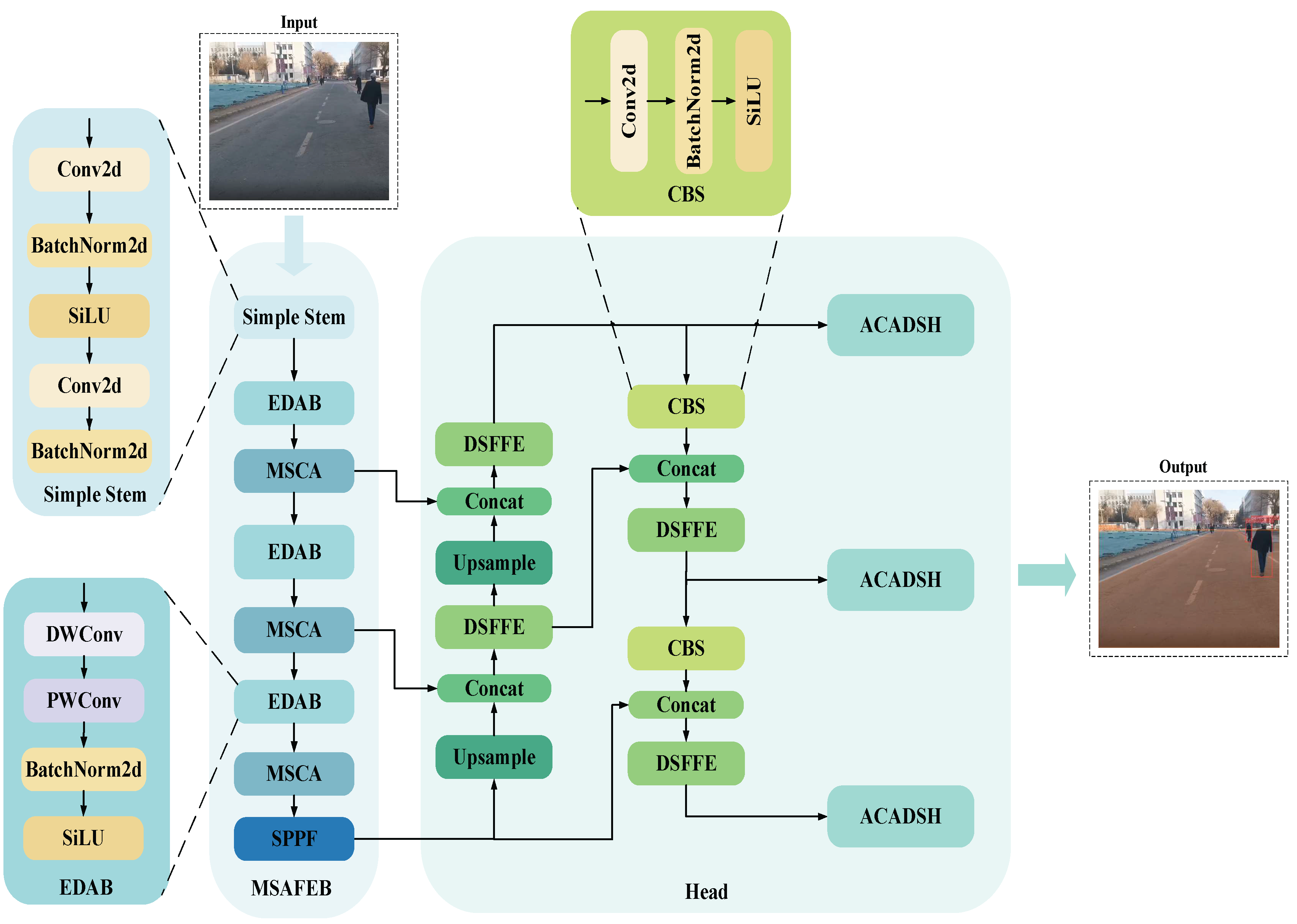

4. Method

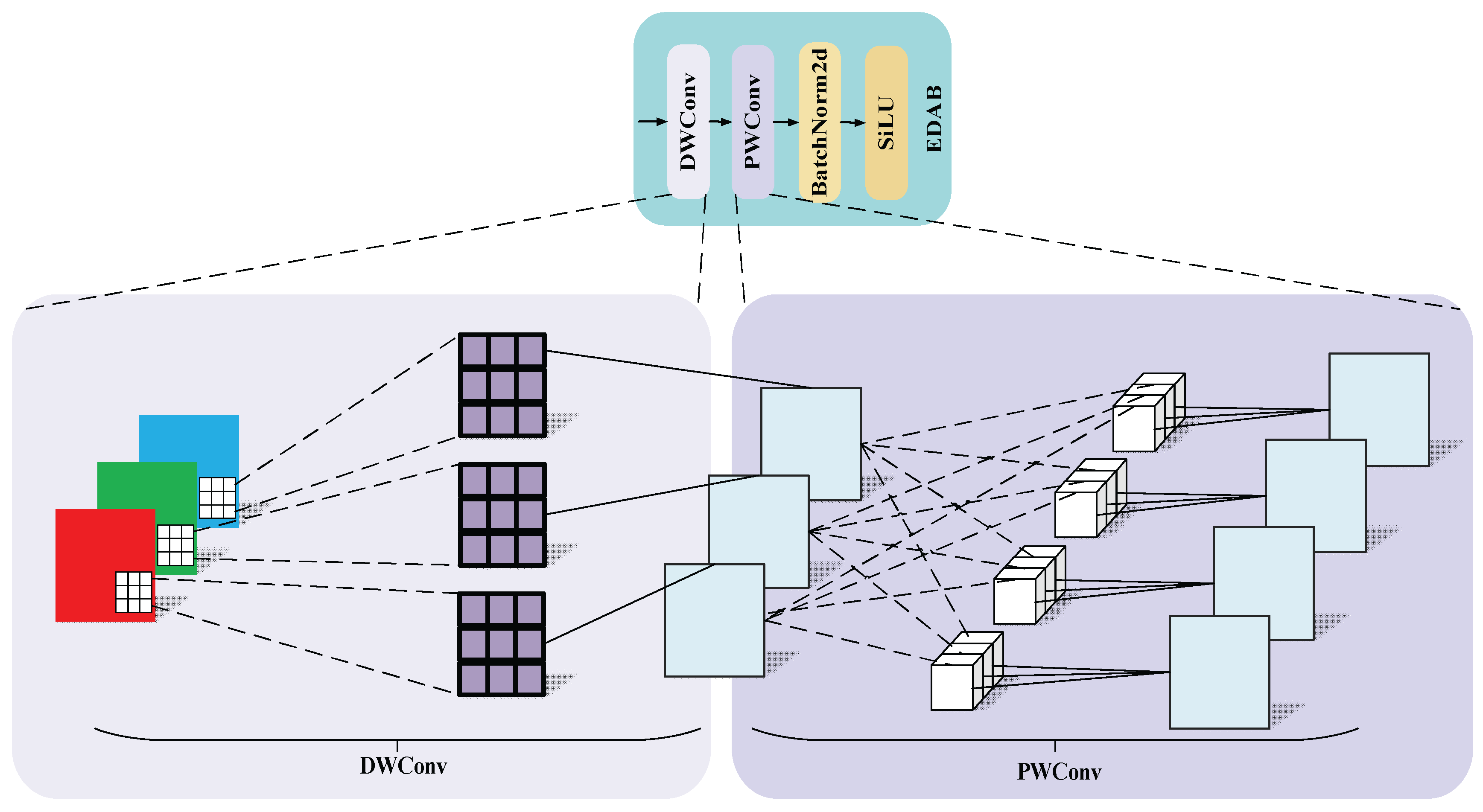

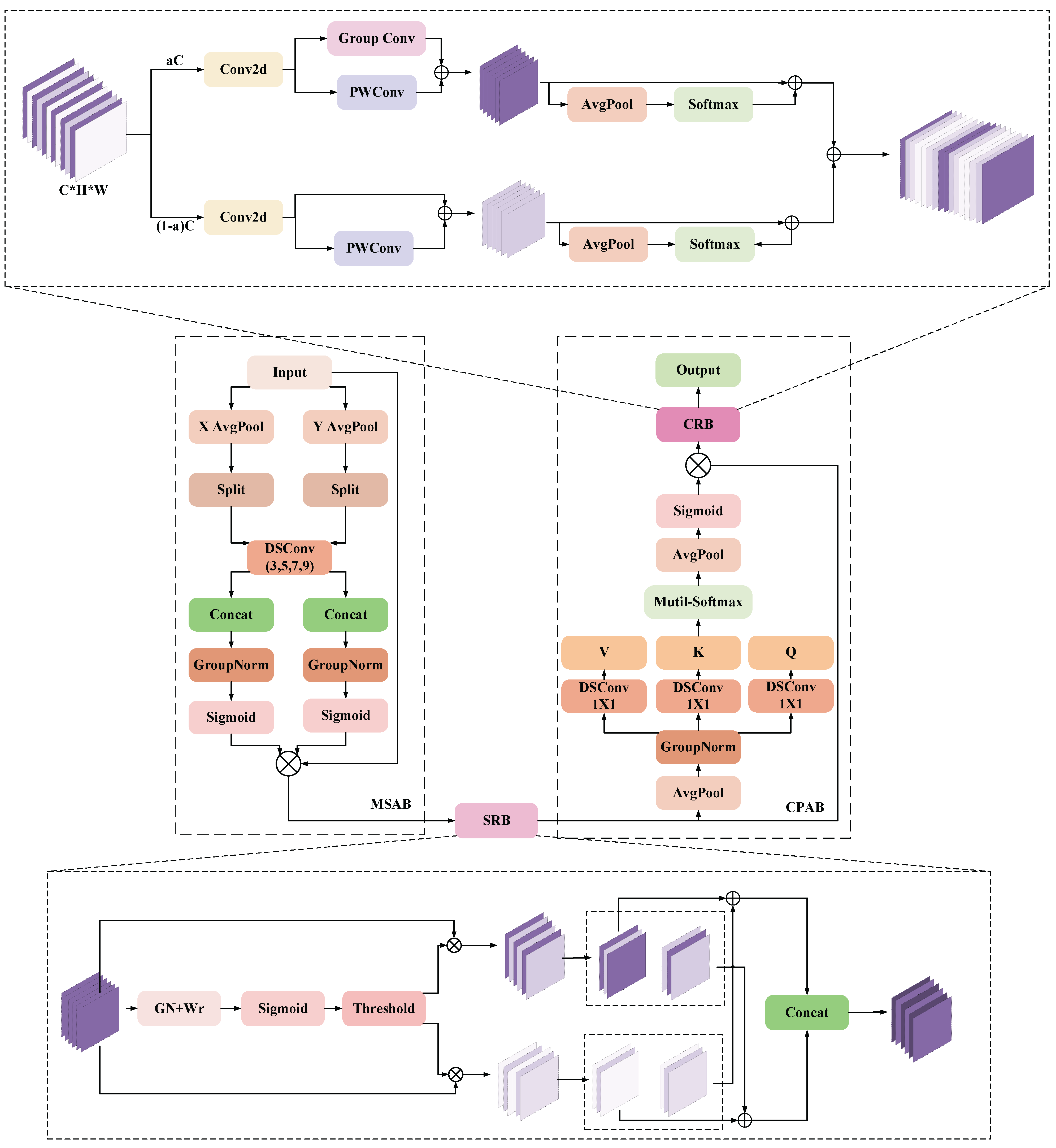

4.1. Multi-Scale Attention Feature Extraction Backbone

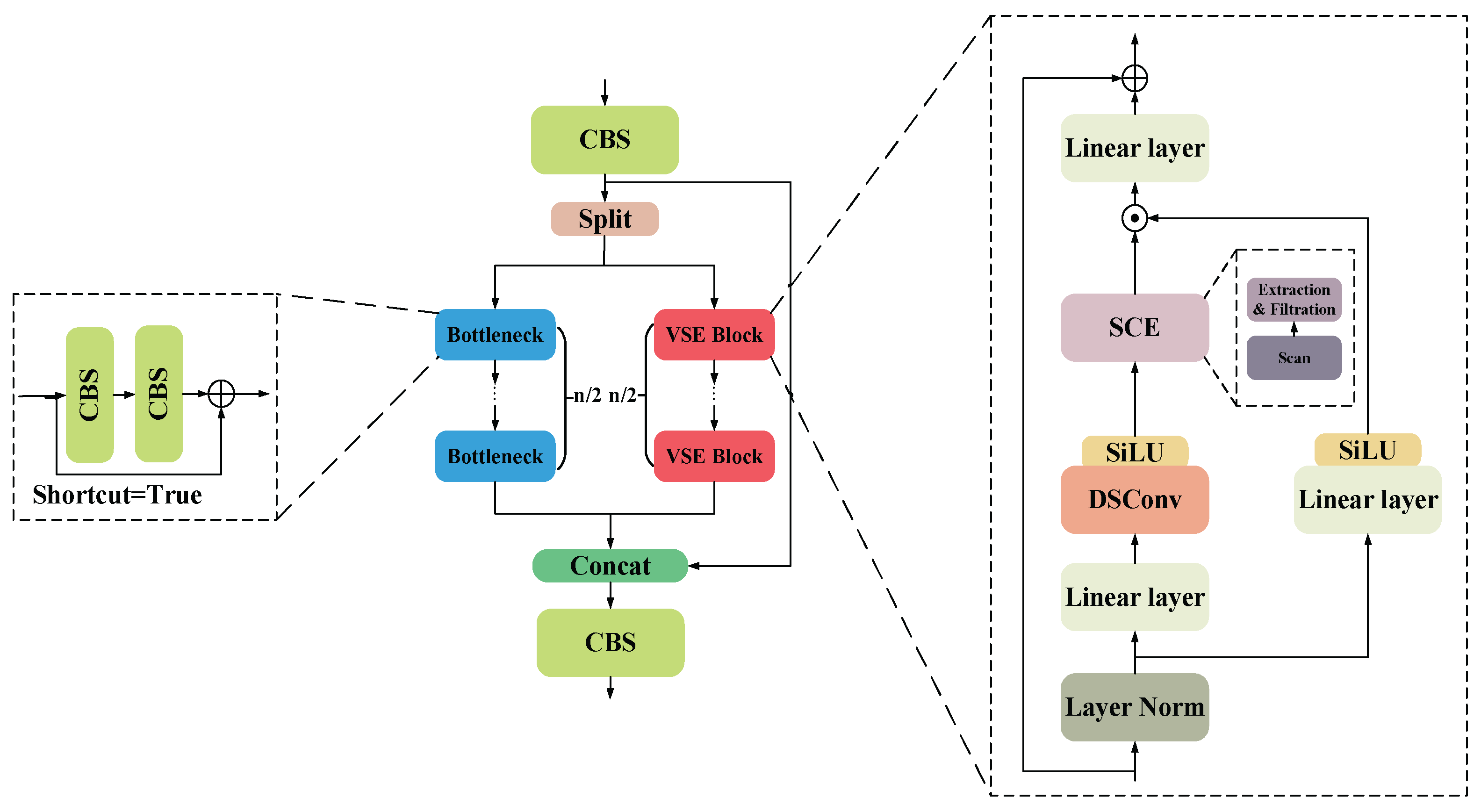

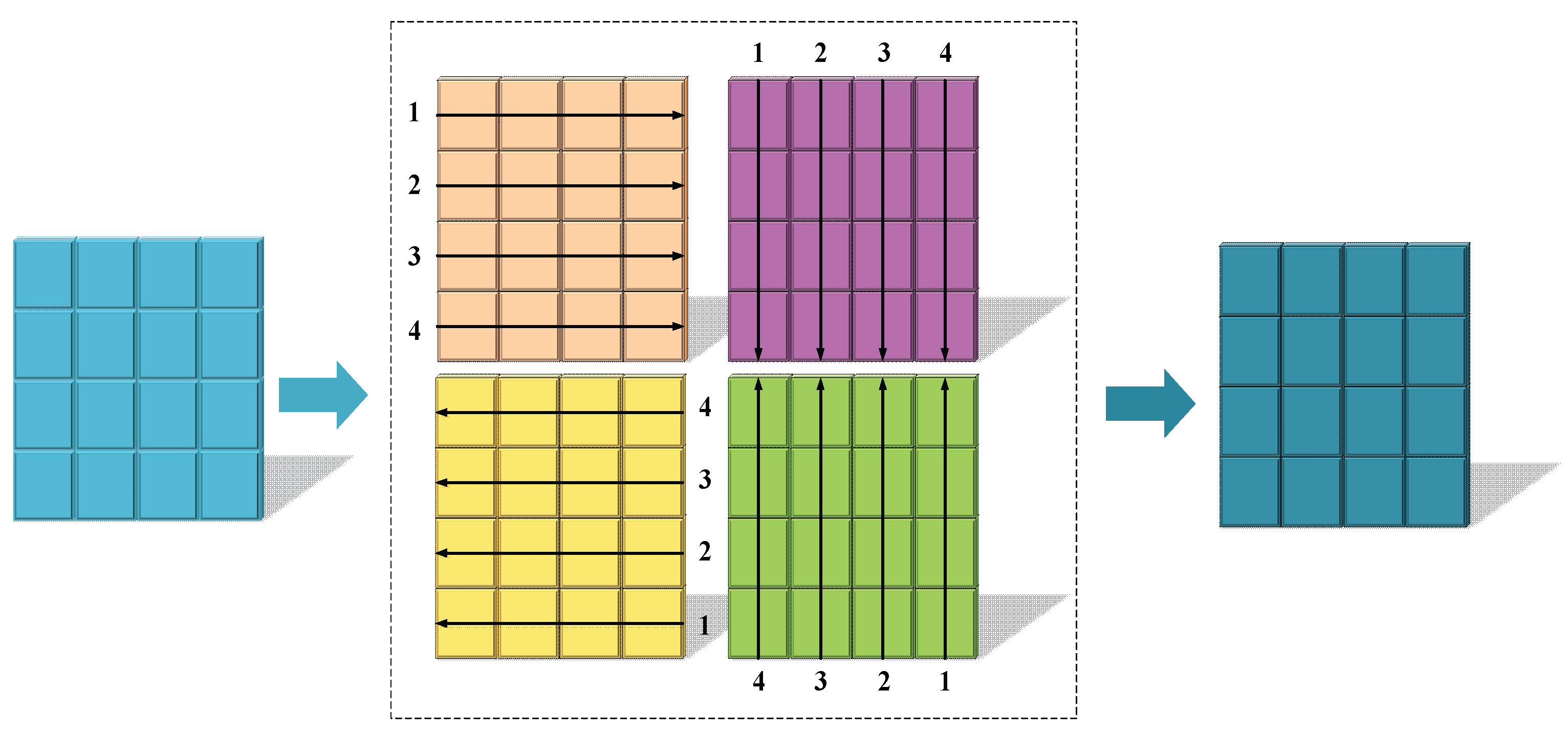

4.2. Dual-Stream Feature Fusion Module

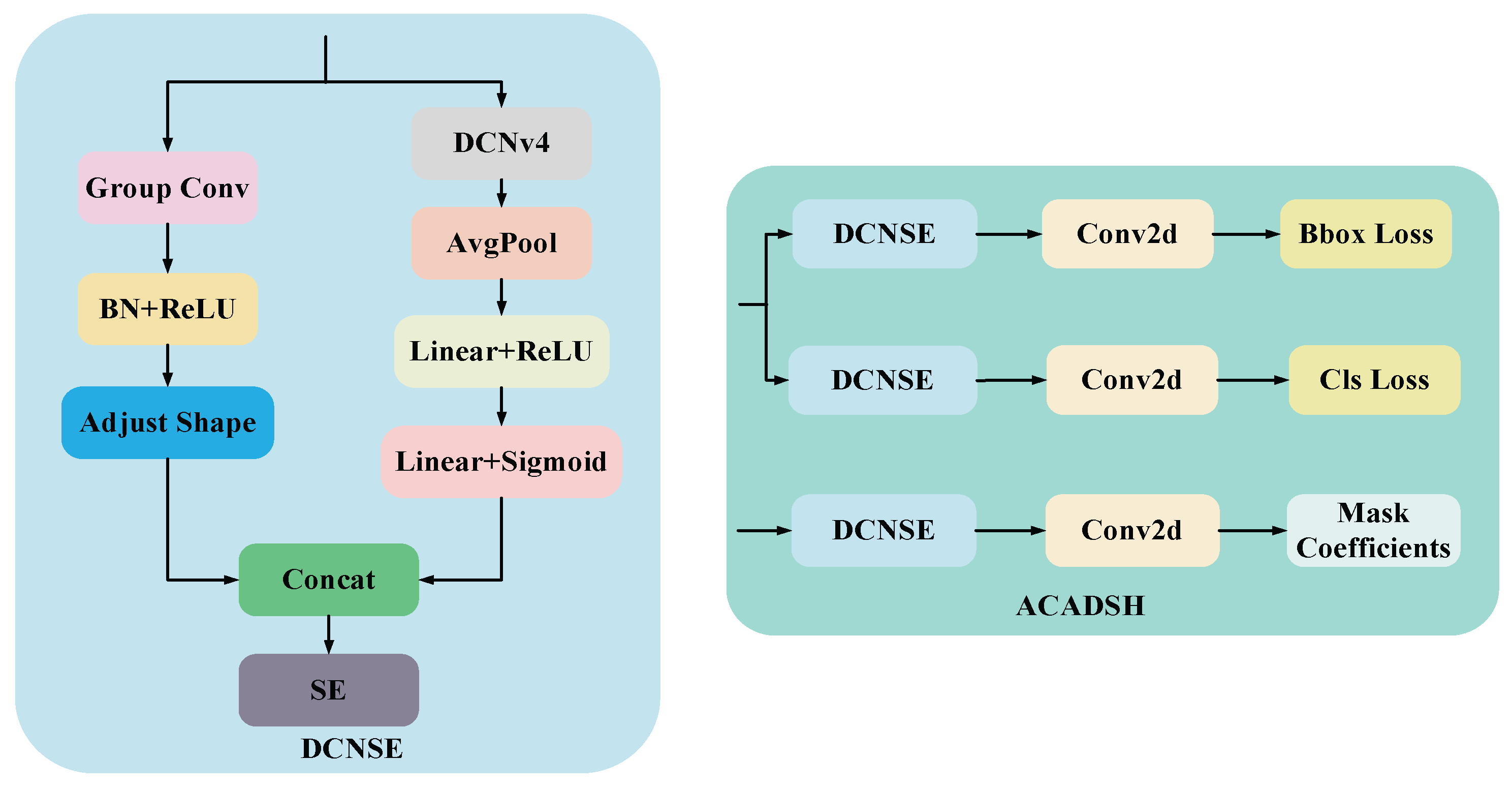

4.3. Adaptive Context-Aware Detection and Segmentation Head

5. Experiments and Results

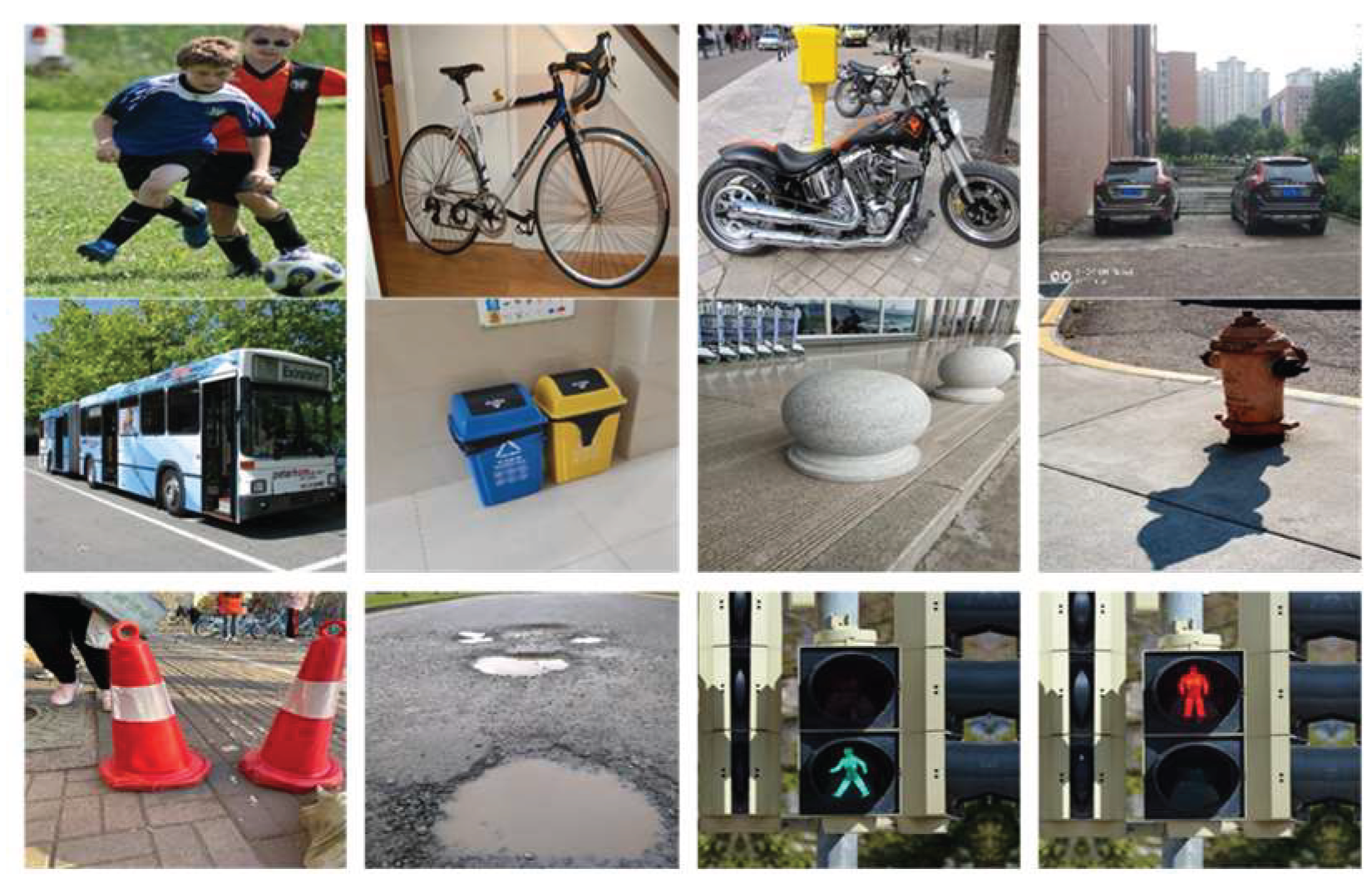

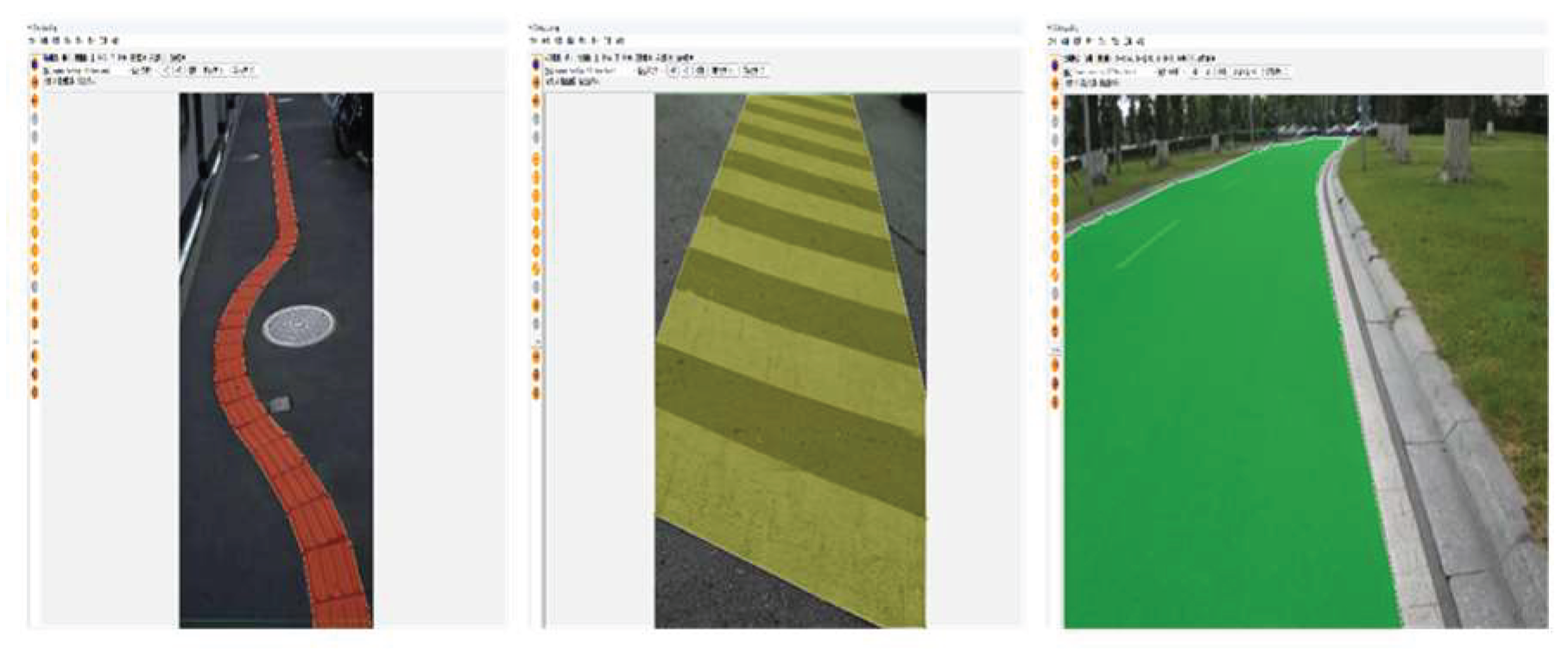

5.1. Data Acquisition and Production

5.2. Image Processing

5.3. Experimental Setup and Environment

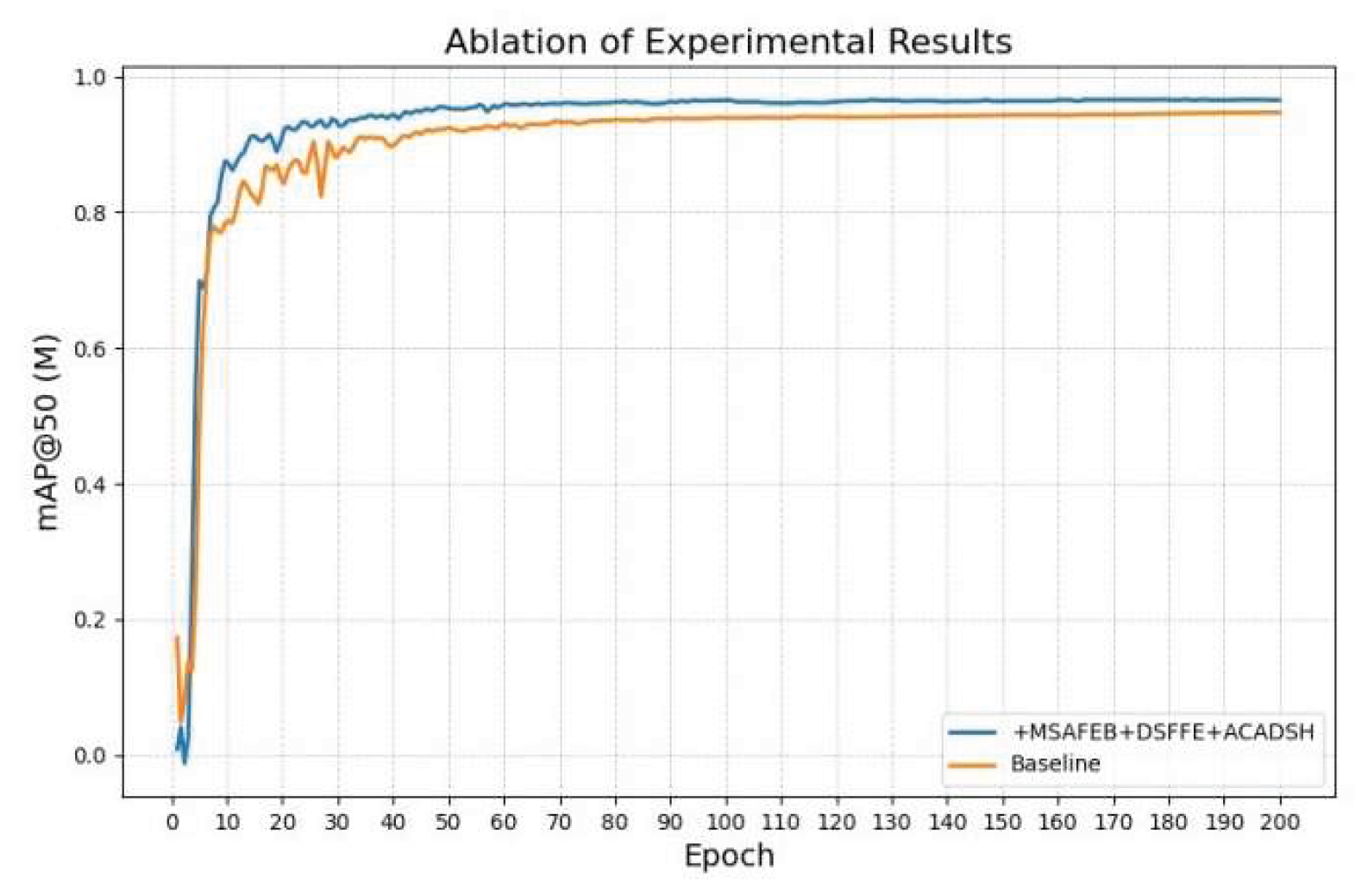

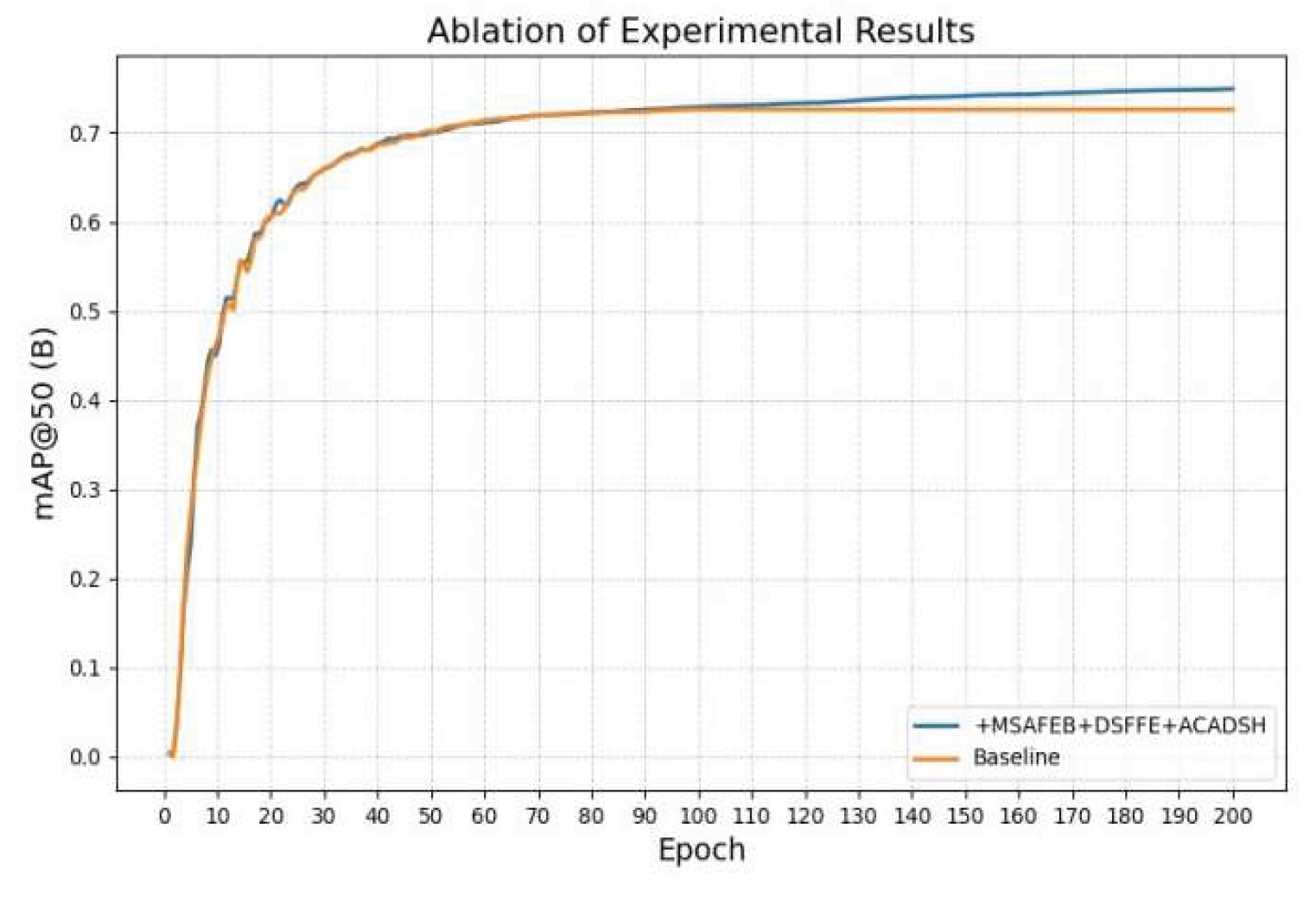

5.4. Ablation Experiments

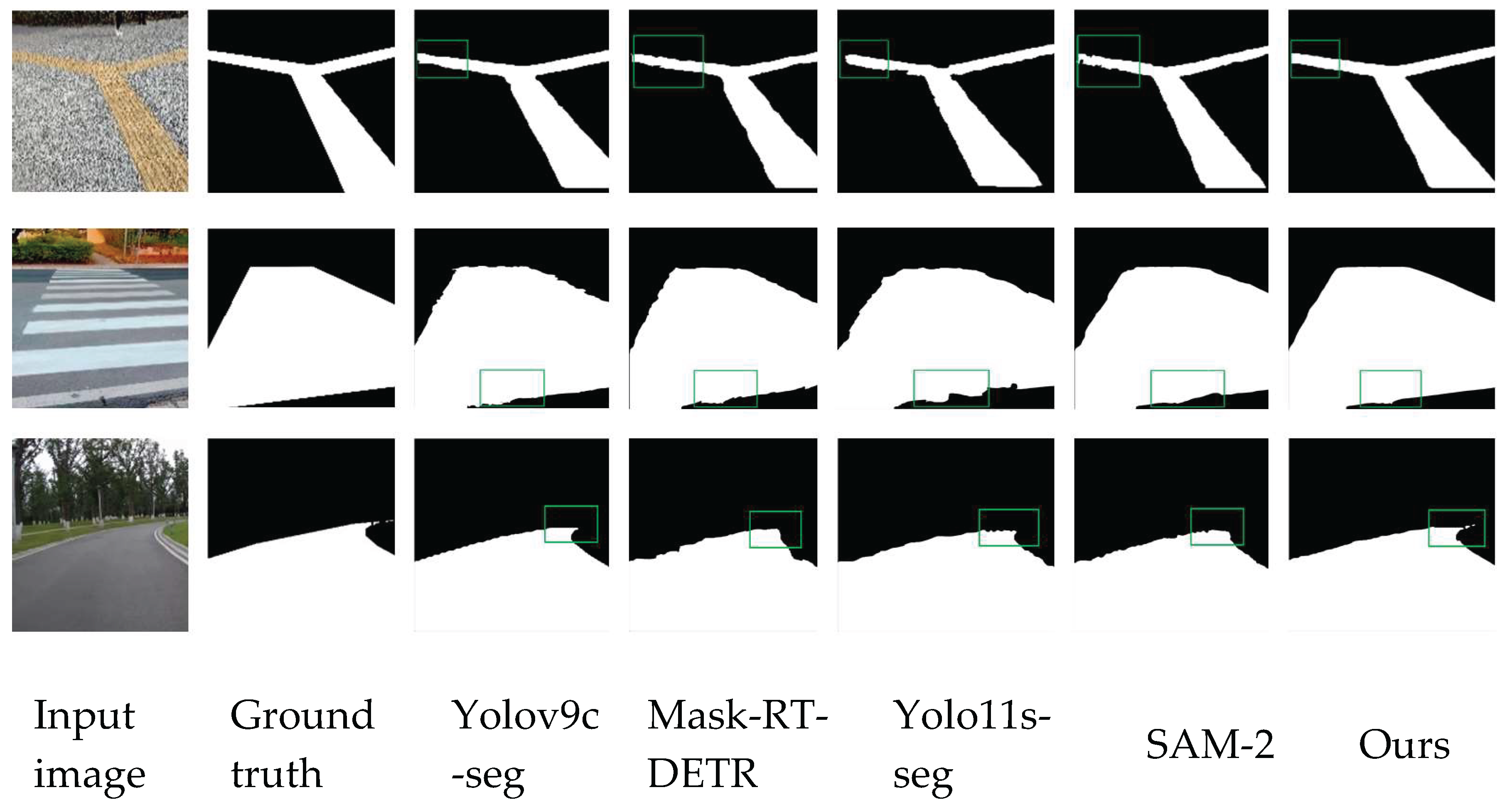

5.5. Comparative Experiments

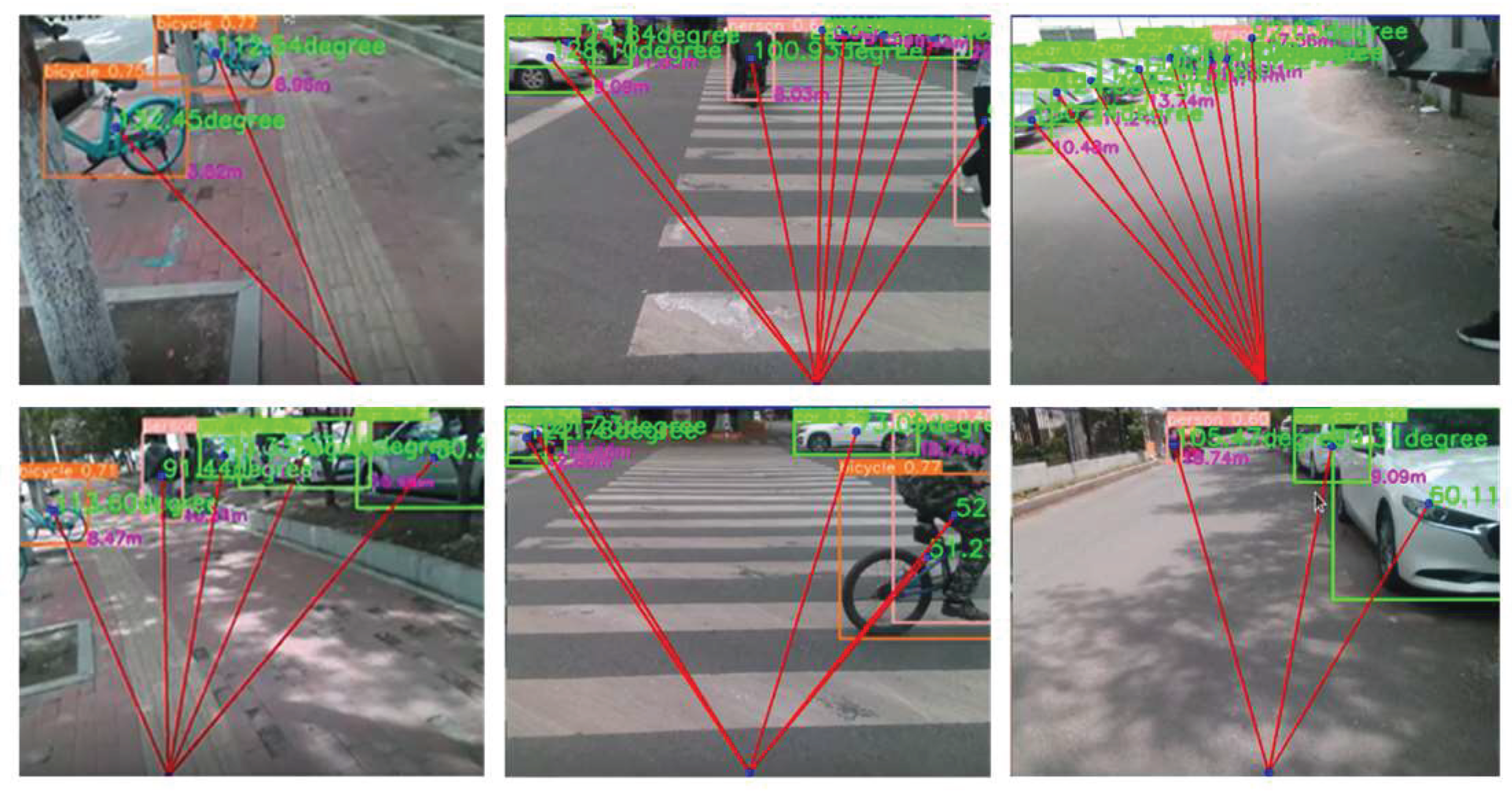

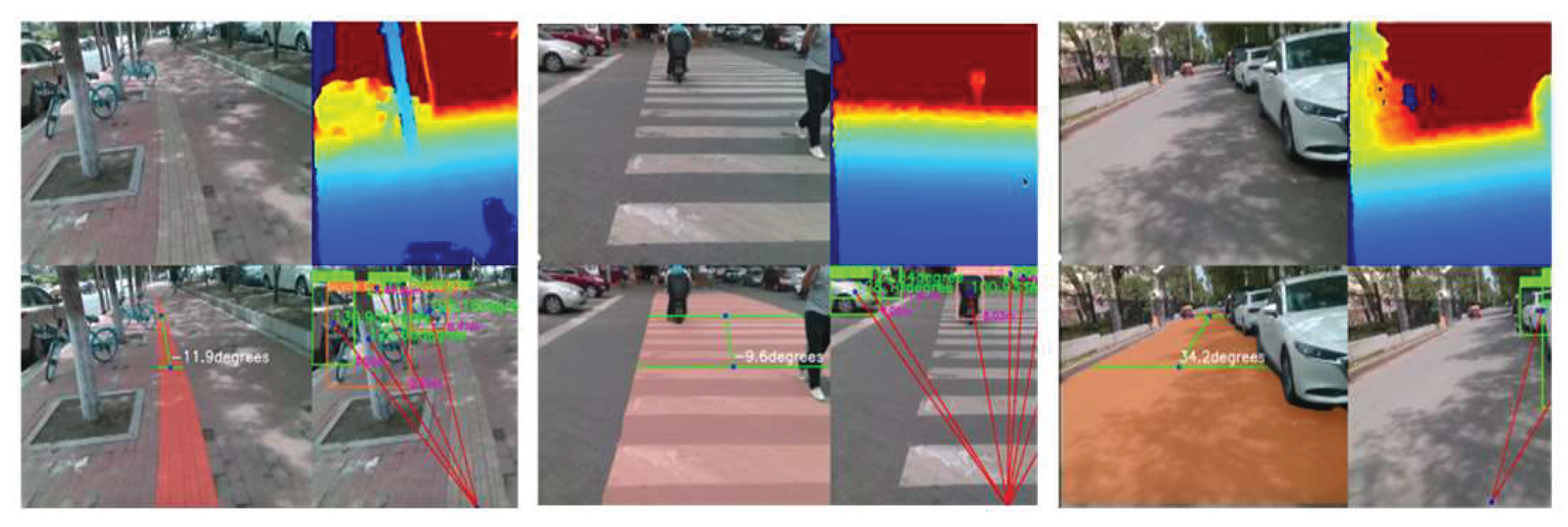

5.6. Algorithm Results Visualization and Analysis

5.7. Real-World Scenario Test Visualization and Analysis

6. Conclusion

| NVIDIA Jetson Nano | Specification |
|---|---|
| GPU | 128-core Maxwell |
| CPU | Quad-core ARM A57 @ 1.43 GHz |
| Memory | 4 GB 64-bit LPDDR4, 25.6 GB/s |
| Storage | microSD (not included) |
| Video encoding | 4K @ 30, 4x 1080p @ 30, 9x 720p @ 30 (H.264/H.265) |
| Video decoding | 4K @ 60, 2x 4K @ 30, 8x 1080p @ 30 (H.264/H.265) |
| Camera | 1x MIPI CSI-2 D-PHY lanes |
| Connectivity | Gigabit Ethernet, M.2 Key E |
| Display | HDMI 2.0 and eDP 1.4 |
| USB interface | 4x USB 3.0, USB 2.0 Micro-B |
| Other | GPIO, I2C, I2S, SPI, UART |
| Mechanical | 69 mm x 45 mm, 260-pin edge connector |

| Item | Configuration |
|---|---|
| Operating System | Ubuntu 20.04 |
| Graphics Card | GeForce RTX 3060 (12 GB) |
| CUDA Version | 11.8 |
| Python | 3.8.16 |
| Deep Learning Framework | PyTorch 1.13.1 |
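
When reproducing experiments, it can help to compare the local environment against the versions listed in the table above. The following is a minimal sketch, assuming PyTorch may or may not be installed. The helper names `collect_versions` and `mismatches` are hypothetical, and the expected values are copied from the table rather than taken from any published configuration file.

```python
import platform

# Versions listed in the environment table; used only for comparison.
EXPECTED = {"python": "3.8.16", "torch": "1.13.1", "cuda": "11.8"}


def collect_versions() -> dict:
    """Gather the local Python, PyTorch, and CUDA versions (None if unavailable)."""
    found = {"python": platform.python_version(), "torch": None, "cuda": None}
    try:
        import torch  # optional: left as None when PyTorch is not installed

        # Drop any local build suffix such as "+cu117".
        found["torch"] = torch.__version__.split("+")[0]
        found["cuda"] = torch.version.cuda
    except ImportError:
        pass
    return found


def mismatches(found: dict, expected: dict = EXPECTED) -> dict:
    """Map each differing key to a (found, expected) pair."""
    return {k: (found.get(k), v) for k, v in expected.items() if found.get(k) != v}


if __name__ == "__main__":
    for key, (have, want) in mismatches(collect_versions()).items():
        print(f"{key}: found {have}, table lists {want}")
```

A mismatch does not necessarily prevent the code from running; the check only makes version differences visible before results are compared.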

| Model | Model size (MB)↓ | Parameters (M)↓ | GFLOPs↓ | mAPmask↑ | FPS↑ |
|---|---|---|---|---|---|
| Baseline | 6.8 | 3.26 | 12.1 | 0.952 | 67 |
| +MSAFEB | 5.6 | 2.74 | 10.2 | 0.963 | 94 |
| +DSFFM | 6.4 | 3.18 | 11.8 | 0.966 | 85 |
| +ACADSH | 5.9 | 2.96 | 10.5 | 0.961 | 88 |
| +MSAFEB+DSFFM | 5.9 | 2.84 | 10.9 | 0.971 | 95 |
| +MSAFEB+ACADSH | 5.4 | 2.79 | 10.3 | 0.972 | 94 |
| +DSFFM+ACADSH | 6.1 | 3.09 | 11.2 | 0.971 | 90 |
| +MSAFEB+DSFFM+ACADSH | 5.1 | 2.69 | 9.8 | 0.979 | 98 |
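
The gap between the first and last rows of the road segmentation ablation table can be summarized as relative reductions and a percentage-point gain. The following is a minimal sketch of that arithmetic. The `Row` type and the function names are illustrative, and the numbers are copied from the table above.

```python
from typing import NamedTuple


class Row(NamedTuple):
    size: float      # model size as listed in the table
    params: float    # parameter count as listed in the table
    gflops: float
    map_mask: float
    fps: float


# First (baseline) and last (all three modules) rows of the table.
BASELINE = Row(6.8, 3.26, 12.1, 0.952, 67)
FULL = Row(5.1, 2.69, 9.8, 0.979, 98)


def relative_reduction_pct(before: float, after: float) -> float:
    """Percentage by which `after` is smaller than `before`."""
    return (before - after) / before * 100.0


def summarize(base: Row, new: Row) -> dict:
    """Compare two table rows; reductions are in percent, mAP gain in points."""
    return {
        "size_reduction_pct": round(relative_reduction_pct(base.size, new.size), 1),
        "params_reduction_pct": round(relative_reduction_pct(base.params, new.params), 1),
        "gflops_reduction_pct": round(relative_reduction_pct(base.gflops, new.gflops), 1),
        "map_gain_points": round((new.map_mask - base.map_mask) * 100.0, 1),
        "fps_gain": new.fps - base.fps,
    }


if __name__ == "__main__":
    print(summarize(BASELINE, FULL))
```

On these values, the full model is 25.0% smaller, has 17.5% fewer parameters and 19.0% fewer GFLOPs, gains 2.7 mAPmask percentage points, and runs 31 FPS faster than the baseline.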

| Model | Model size (MB)↓ | Parameters (M)↓ | GFLOPs↓ | mAP↑ | FPS↑ |
|---|---|---|---|---|---|
| Baseline | 6.3 | 3.01 | 8.2 | 0.726 | 73 |
| +MSAFEB | 5.7 | 2.86 | 7.9 | 0.741 | 93 |
| +DSFFM | 6.2 | 2.97 | 6.7 | 0.732 | 87 |
| +ACADSH | 5.9 | 2.91 | 7.3 | 0.728 | 85 |
| +MSAFEB+DSFFM | 5.4 | 2.64 | 6.5 | 0.749 | 97 |
| +MSAFEB+ACADSH | 5.8 | 2.63 | 7.1 | 0.743 | 95 |
| +DSFFM+ACADSH | 6.0 | 2.81 | 6.9 | 0.741 | 92 |
| +MSAFEB+DSFFM+ACADSH | 5.2 | 2.47 | 6.1 | 0.757 | 98 |
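
One way to read the obstacle detection ablation table is to ask which configurations are not beaten on every metric by some other row, that is, which rows lie on the Pareto front. The following is a minimal sketch of that check. The helper names are illustrative, the values are copied from the table above in row order, and "DSFFM" denotes the dual-stream feature fusion module.

```python
from typing import Dict, List, Tuple

Metrics = Tuple[float, float, float, float, float]

# (model size, parameters, GFLOPs, mAP, FPS) for each configuration.
ROWS: Dict[str, Metrics] = {
    "Baseline": (6.3, 3.01, 8.2, 0.726, 73),
    "MSAFEB": (5.7, 2.86, 7.9, 0.741, 93),
    "DSFFM": (6.2, 2.97, 6.7, 0.732, 87),
    "ACADSH": (5.9, 2.91, 7.3, 0.728, 85),
    "MSAFEB+DSFFM": (5.4, 2.64, 6.5, 0.749, 97),
    "MSAFEB+ACADSH": (5.8, 2.63, 7.1, 0.743, 95),
    "DSFFM+ACADSH": (6.0, 2.81, 6.9, 0.741, 92),
    "MSAFEB+DSFFM+ACADSH": (5.2, 2.47, 6.1, 0.757, 98),
}


def as_costs(m: Metrics) -> Metrics:
    """Flip the sign of mAP and FPS so that lower is better on every axis."""
    size, params, gflops, m_ap, fps = m
    return (size, params, gflops, -m_ap, -fps)


def dominates(a: Metrics, b: Metrics) -> bool:
    """True if `a` is no worse than `b` on every metric and better on at least one."""
    ca, cb = as_costs(a), as_costs(b)
    return all(x <= y for x, y in zip(ca, cb)) and any(x < y for x, y in zip(ca, cb))


def pareto_front(rows: Dict[str, Metrics]) -> List[str]:
    """Names of rows that no other row dominates."""
    return [
        name
        for name, m in rows.items()
        if not any(dominates(other, m) for other_name, other in rows.items() if other_name != name)
    ]


if __name__ == "__main__":
    print(pareto_front(ROWS))
```

On the values in the table, the configuration with all three modules is the only non-dominated row: it has the smallest model size, parameter count, and GFLOPs together with the highest mAP and FPS.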

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).