1. Introduction

Object Re-identification (ReID) focuses on retrieving specific object instances across diverse viewpoints [1,2,3,4,5] and has gained significant attention within the computer vision community due to its broad range of practical applications. Substantial advancements have been made in both supervised [6,7,8,9] and unsupervised ReID tasks [5,10,11], with most approaches employing backbone models originally designed for generic image classification tasks [12,13].

Unsupervised domain adaptation (UDA) for object ReID aims to transfer knowledge learned from a labeled source domain to accurately measure inter-instance affinities in an unlabeled target domain. Typical ReID tasks, such as person ReID and vehicle ReID, involve source and target domain datasets that do not share identical class identities. State-of-the-art UDA methods [11,14,15,16,17,18] generally adopt a two-stage training paradigm: (1) supervised pre-training on the source domain and (2) unsupervised fine-tuning on the target domain. During the second stage, pseudo-labeling strategies have demonstrated effectiveness in recent works [11,16,17]. These strategies iteratively alternate between generating pseudo-class labels through clustering target-domain instances and refining the network by training on these pseudo-classes. This iterative process enables the pre-trained source-domain network to adapt to the target domain by capturing inter-sample relationships, despite the inherent noise in pseudo-class labels.

The integration of global features, which encapsulate coarse semantic information, and local features, which provide fine-grained details, has proven effective in enhancing algorithm performance. CORE-ReID [11] introduced the Ensemble Fusion framework, which combines global and local features with the Efficient Channel Attention Block (ECAB). ECAB leverages inter-channel relationships to guide the model’s attention toward salient structures within the input image. Although CORE-ReID achieves competitive results in Person ReID under the UDA setting, it has three main limitations. First, ECAB is applied exclusively to local features, leaving global features unenhanced. Second, CORE-ReID only supports deep and complex backbone networks, such as ResNet50, ResNet101, and ResNet152, while neglecting shallower architectures like ResNet18 and ResNet34, which offer computational efficiency and are well-suited for resource-constrained environments. Finally, CORE-ReID is limited to the Person ReID task, restricting its applicability to other ReID scenarios.

In this paper, we present CORE-ReID V2, an enhanced version of CORE-ReID that addresses its limitations and introduces several novel contributions. CORE-ReID V2 not only achieves superior performance in UDA Person ReID but also extends its applicability to Object ReID tasks and supports lightweight backbone networks, such as ResNet18 and ResNet34, making it suitable for real-time systems and mobile devices. Building on the principles of LF2 [

17] and CORE-ReID, we design a mean-teacher-based framework that iteratively learns multi-view features and refines noisy pseudo-labels through multiple clustering steps. We introduce the Ensemble Fusion++ module in CORE-ReID V2, which adaptively enhances both local and global features. This module applies ECAB to local features and the Simplified Efficient Channel Attention Block (SECAB) to global features, resulting in a fused representation that provides a more comprehensive feature set. Furthermore, we improve clustering outcomes by incorporating the KMeans++ [

19] initialization strategy, which balances randomness and centroid selection to enhance cluster quality. To validate the framework, we pre-train the model on a source domain that integrates camera-aware style-transferred data for Person ReID and domain-aware style-transferred data for Vehicle ReID. Additionally, we adopt a teacher-student architecture for iterative domain adaptation, where the teacher network captures global features while the student network refines diverse local features, both contributing to a more effective pseudo-labeling process. To summarize, the key contributions of CORE-ReID V2 are as follows:

Advanced Data Augmentation Techniques: The framework integrates novel data augmentation strategies, such as Local Grayscale Patch Replacement and Random Image-to-Grayscale Conversion, for the UDA task. These methods introduce diversity in the training data, enhancing the model's stability.

Dynamic and Flexible Backbone Support: CORE-ReID V2 extends compatibility to smaller backbone architectures, including ResNet18 and ResNet34, without compromising performance. This flexibility allows for deployment in resource-constrained environments while maintaining high accuracy.

Expansion to Vehicle and General Object ReID: Unlike its predecessor, which focused solely on person re-identification, CORE-ReID V2 extends its scope to Vehicle Re-identification and, more broadly, to general Object Re-identification. This expansion demonstrates its versatility and adaptability across various domains.

Introduction of Ensemble Fusion++: The framework incorporates the SECAB into the global feature extraction pipeline to enhance feature representation by dynamically emphasizing informative channels, thereby improving discrimination between instances.

2. Related Work

Extensive research has been conducted on UDA for Object ReID and on knowledge transfer techniques, such as knowledge distillation, which enable well-trained models to transfer expertise and improve learning in complex domain scenarios. UDA methods generally fall into two categories: domain translation, which aligns visual styles between domains, and pseudo-labeling, which iteratively clusters target samples to generate pseudo-labels. While pseudo-labeling has demonstrated superior performance, both methods face challenges related to domain shifts and noisy labels. Moreover, the fusion of global and local features has proven effective in various tasks, including classification, object detection, and semantic segmentation, by integrating contextual information with fine-grained details. Building on these insights, our work refines the CORE-ReID [11] fusion module to achieve a better balance between global and local features, leading to improved performance across multiple ReID tasks, including Person and Vehicle ReID.

2.1. UDA for Object ReID

UDA has gained significant attention for its ability to reduce reliance on costly manual annotations. By utilizing labeled data from a source domain, UDA enhances model performance in a target domain without requiring target-specific annotations. Research in Object ReID has primarily concentrated on Person ReID and Vehicle ReID [

20]. Existing UDA approaches for ReID can be broadly grouped into two categories: domain translation-based methods and pseudo-label-based methods [

21,

22].

Domain translation-based methods: These methods align the visual style of labeled source domain images with that of the target domain. The translated images, along with their original ground-truth labels, are then used for training [

23].

Several methods attempt to map source and target distributions to mitigate domain shifts [

24,

25,

26,

27,

28]. Saenko et al. [

24] introduced a domain adaptation technique based on cross-domain transformations by learning a regularized non-linear transformation that brings source domain points closer to the target domain. In [

25], the Geodesic Flow Kernel (GFK) was proposed to address domain shifts by integrating an infinite number of subspaces that capture geometric and statistical changes between the source and target domains. Similarly, Fernando et al. [

26] developed a mapping function to align the source subspace with the target subspace for improved adaptation. Correlation Alignment (CORAL) [

27] addressed domain shifts by computing the covariance statistics of each domain and applying a whitening and re-coloring linear transformation to align the source feature with the target domain. The Disentanglement Then Reconstruction (DTR) framework [

28] enhanced alignment by disentangling the distributions and reconstructing them to ensure consistency across domains.

Another line of research [

29,

30,

31,

32] adopts adversarial approaches to learn transformations in the pixel space between domains. Methods like PixelDA [

29], PTGAN [

30], and SBSGAN [

31] enforce pixel-level constraints to preserve color consistency during domain translation. CoGAN [

32] extends this concept by learning joint distributions, such as the joint distribution of color and depth images or face images with varying attributes.

Other methods focus on discovering a domain-invariant feature space to bridge domain gaps [22,33,34,35,36,37,38]. SPGAN [22] and CGAN-TM [35] improve feature-level similarity between translated and original images. Deep Adaptation Network (DAN) [37] employs the Maximum Mean Discrepancy (MMD) [39,40,41] to align feature distributions across domains. Similarly, Ganin et al. [33] and Ajakan et al. [42] introduced a domain confusion loss to encourage the learning of domain-invariant features. Hoffman et al. [36] proposed the Intermediate Domain Module (IDM) to generate intermediate domain representations dynamically by mixing the hidden features of the source and target domains through two domain features. CyCADA [38] combines both pixel-level and feature-level adaptation to improve domain adaptation.

Pseudo-label-based methods: The second category, pseudo-labeling methods [

11,

14,

15,

16,

17,

43,

44,

45,

46], models the relationships between unlabeled target-domain data with generated pseudo labels. Fan et al. [

43] proposed the progressive unsupervised learning (PUL) method that alternates between assigning labels to unlabeled samples and optimizing the network using the generated targets. This iterative refinement aligns the model’s representations more closely with the target domain, enhancing adaptation over time. Lin et al. [

44] developed a bottom-up clustering framework enhanced by a repelled loss mechanism, which aims to increase the discriminative power of learned features while mitigating intra-cluster variations. Similarly, UDAP [

14] proposed a self-training scheme that iteratively minimizes loss functions using clustering-based pseudo labels. SSG [16], LF2 [17], and CORE-ReID [11] further contributed to this category by introducing techniques to assign pseudo labels to both global and local features. Ge et al. [15] proposed Mutual Mean Teaching (MMT), which combines offline hard pseudo labels and online soft pseudo labels in an alternating training process. By iteratively refining both the pseudo labels and the feature representations throughout training, MMT improves the model’s capacity to handle domain shifts. SpCL [45] advanced this field by using a hybrid memory module that stores centroids of labeled source domain images alongside un-clustered target instances and target domain clusters. This hybrid memory provides additional supervision to the feature extractor while minimizing a unified contrastive loss over the three types of stored information. Additionally, Zheng et al. [46] developed the Uncertainty-Guided Noise Resilient Network (UNRN), which evaluates the reliability of predicted pseudo labels for target domain samples. By incorporating uncertainty estimates into the training process, UNRN becomes more robust to noisy pseudo labels, thereby improving performance in domain adaptation scenarios.

Pseudo-labeling methods analyze data at different levels of detail, allowing them to capture small differences within the target domain. As a result, they achieve better performance than domain translation-based approaches and continue to lead in accuracy across most public datasets [

11,

15,

45,

46].

2.2. Knowledge Transfer

Knowledge transfer, the process of passing knowledge from a well-trained neural network (referred to as the teacher model) to another model (the student model), has gained considerable attention in recent years [

47,

48,

49,

50]. The core idea is to provide consistent training supervision for both labeled and unlabeled data through predictions generated by various models. Knowledge transfer techniques help student networks become more accurate and generalize better, as the teacher model’s output implicitly contains rich information about the relationships between training samples and their underlying distribution [

51]. In this way, knowledge transfer serves as a regularizer, enhancing the student model’s performance. For example, Laine and Aila [

52] introduced the Mean Teacher model which averaged model weights across multiple training iterations to guide supervision for unlabeled data. In contrast, Deep Mutual Learning (DML) [

53], proposed by Zhang et al., shifts from the traditional teacher-student framework by employing a group of student models that train collaboratively, providing mutual supervision and facilitating the exploration of diverse feature representations. Ge et al. introduced MMT [

15], which adopts an alternative training method that uses both offline refined hard pseudo-labels and online refined soft pseudo-labels. MEB-Net [

54] further builds on this by using three networks (six models in total) to conduct mutual mean teacher training and generate pseudo-labels.

2.3. Feature Fusion

The feature fusion of global and local features has proven highly effective across various computer vision tasks, including classification [

55,

56,

57,

58], object detection [

59,

60,

61,

62,

63], semantic segmentation [

64,

65,

66,

67], and more [

68].

In image classification, global features capture the overall structure and appearance, while local features focus on fine-grained details. Combining both types provides complementary information, enhancing the model's ability to generalize across variations such as pose, lighting, and occlusions. For example, Tian et al. [

55] proposed a vehicle model recognition system using an iterative discrimination CNN based on selective multi-convolutional region feature extraction. Their SMCR model combines global and local features to boost classification accuracy. Similarly, Lu et al. [

56] introduced a script identification framework that leverages both global CNNs, trained on segmented images, and local CNNs, trained on image patches. He et al. [

57] presented a traffic sign recognition approach that integrates global and local features using histograms of oriented gradients (HOG), color histograms, and edge features. Suh et al. [

58] employed fusion layers to concatenate global and local features for shipping label image classification, improving image quality verification. These studies demonstrated that fusing global and local features consistently improves classification performance compared to models relying on whole-image analysis alone.

In object detection, global features provide spatial awareness of objects within a scene, while local features capture subtle patterns, such as textures and edges, which are essential for accurate detection under occlusions. Cong et al. [

59] proposed an end-to-end co-salient object detection network that uses collaborative learning to enhance inter-image relationships. Their model includes a global correspondence module to extract interactive information across images and a local correspondence module to capture pairwise relationships. Li et al. [

60] developed an anchor-free object detector that leverages a global-local feature extraction transformer (GLFT) to capture semantic information from both micro- and macro-level perspectives.

In semantic segmentation, the fusion of global and local features improves pixel-level predictions by combining overall scene context with localized information, especially in complex environments. Yang et al. [

64] introduced AFNet, which uses a multi-path encoder to extract diverse features, a multi-path attention fusion module, and a fine-grained attention fusion module to combine high-level abstract and low-level spatial features. Tian et al. [65] extended this concept with two encoders to extract both global high-order interactive features and local low-order features. These encoders form the backbone of the global and local feature fusion network (GLFFNet), enabling effective segmentation of remote sensing images through a dual-encoder structure. Later, Zhou et al. [

66] proposed a local–global multi-scale fusion network (LGMFNet) for building segmentation in SAR images. LGMFNet includes a dual encoder-decoder structure, with a transformer-based auxiliary encoder complementing the CNN-based primary encoder. The global–local semantic aggregation module (GLSM) is also introduced to bridge the two encoders, enabling semantic guidance across multiple scales through a specialized fusion decoder.

Inspired by these advances, feature fusion techniques have gained traction in domain adaptation for Object Re-identification. Self-Similarity Grouping (SSG) [16] was the first approach to apply both global and local features for unsupervised domain adaptation (UDA) in Person ReID. However, SSG faces two challenges: first, using a single network for feature extraction often introduces noisy pseudo-labels, and second, it performs clustering independently on global and local features, potentially assigning multiple inconsistent pseudo-labels to the same sample. To address these limitations, LF2 [17] was proposed to fuse global and local features into a unified representation, reducing noise and improving clustering consistency. Building on this idea, CORE-ReID [11] introduced an Ensemble Fusion module equipped with the ECAB, which effectively fuses global and local features.

3. Materials and Methods

Despite advancements in domain translation-based methods, these often suffer from a persistent domain gap between translated images and real target domain images, which can adversely impact performance. To address this issue, our approach employs a pseudo-labeling strategy, which enables data analysis at multiple levels of granularity. This method has demonstrated superior performance compared to domain translation-based techniques [

11,

15,

45,

46].

While existing pseudo-labeling frameworks such as Deep Mutual Learning (DML) [

53], MMT [

15], and MEB-Net [

54] have proven effective, they suffer from limitations due to their heavy reliance on pseudo-labels generated by the teacher model. These pseudo-labels can be noisy or inaccurate, adversely affecting model training. To mitigate this issue, we utilize a teacher-student network paradigm, where the student network is trained on labeled source domain data, and the teacher network is iteratively refined using the Mean Teacher method. Furthermore, we incorporate the Ensemble Fusion++ module, which enhances feature extraction by adaptively refining both local and global representations and is therefore expected to produce more stable and reliable pseudo-labels than existing approaches.

In CORE-ReID [

11], the Efficient Channel Attention Block (ECAB) was primarily applied to local features, restricting the full potential of the Ensemble Fusion module. In CORE-ReID V2, we extend and enhance this module to ensure that both global and local features undergo comprehensive optimization. This enhancement results in a more balanced and discriminative feature representation, improving generalization across diverse ReID tasks. Additionally, the improved Ensemble Fusion++ is not only effective for Person ReID but also demonstrates strong domain adaptation capabilities in Vehicle ReID, further validating its versatility in Object ReID.

This section outlines the methodology and materials used in CORE-ReID V2 for unsupervised domain adaptation (UDA) in Object ReID. The proposed framework consists of two main stages: (1) pre-training on a labeled source domain and (2) fine-tuning on an unlabeled target domain.

3.1. Overview

3.1.1. CORE-ReID V1 and CORE-ReID V2

CORE-ReID V1: A Baseline for Unsupervised Domain Adaptation in Person Re-identification: CORE-ReID V1 was introduced as a framework to address Unsupervised Domain Adaptation (UDA) in Person Re-identification (ReID). It effectively tackled domain shifts between camera views by leveraging Camera-Aware Style Transfer for synthetic data generation, Random Grayscale Patch Replacement for data augmentation, and K-Means Clustering for pseudo-labeling. Additionally, the Ensemble Fusion module with Efficient Channel Attention Block (ECAB) played a crucial role in integrating local and global features, improving the model’s performance in cross-domain scenarios.

Despite its success, CORE-ReID V1 had several limitations:

Limited Application Domain: The framework was specifically designed for Person ReID, restricting its applicability to other ReID tasks such as Vehicle ReID and Object ReID.

Synthetic Data Generation Challenge: The Camera-Aware Style Transfer method relied on predefined camera information, making it ineffective when the number of cameras was unspecified.

Inefficient Data Augmentation: The Random Grayscale Patch Replacement technique only operated locally, limiting its effectiveness in learning color-invariant features.

Clustering Limitations: The K-Means clustering used random centroid initialization, leading to poor centroid placement, slow convergence, high variance in clustering results, and imbalanced cluster sizes.

Feature Fusion Issue: The ECAB module enhanced only local features, neglecting improvements to global representations.

Restricted Backbone Support: The framework exclusively supported deep networks such as ResNet50, ResNet101, and ResNet152, making it computationally expensive and unsuitable for lightweight applications.

CORE-ReID V2: Expanding Scope, Enhancing Performance: To overcome these limitations, CORE-ReID V2 is proposed as an enhancement over CORE-ReID V1, expanding its capabilities to Vehicle ReID and Object ReID while introducing architectural and methodological improvements.

Expanded Application Scope: Unlike CORE-ReID V1, which was restricted to Person ReID, CORE-ReID V2 extends its applicability to Vehicle ReID and Object ReID, making it a versatile framework for various ReID tasks.

Advanced Synthetic Data Generation: CORE-ReID V2 incorporates both Camera-Aware Style Transfer and Domain-Aware Style Transfer, allowing effective synthetic data generation even when the number of cameras is unknown.

Improved Data Augmentation: A new grayscale patch replacement strategy considers both local grayscale transformation and global grayscale conversion, leading to better feature generalization across domains.

Enhanced Clustering with Greedy K-Means++: Instead of relying on random initialization, CORE-ReID V2 employs Greedy K-Means++, which selects well-spread initial centroids. This reduces redundancy among centroids so that fewer iterations are required, lowers run-to-run randomness for more stable and consistent results, and yields a better centroid distribution, leading to improved clustering performance.

Ensemble Fusion++ for Comprehensive Feature Enhancement: CORE-ReID V2 introduces Ensemble Fusion++, which integrates both ECAB and SECAB, ensuring that global features are enhanced alongside local features, leading to a more balanced and comprehensive feature representation.

Flexible Backbone Support: CORE-ReID V2 broadens its applicability by supporting lightweight networks such as ResNet18 and ResNet34, alongside ResNet50, ResNet101, and ResNet152. This allows deployment in computationally constrained environments, such as real-time and edge-based applications.

CORE-ReID V2 represents a substantial advancement over CORE-ReID V1 by expanding its scope beyond Person ReID, improving clustering stability, introducing adaptive feature enhancement mechanisms, and supporting lightweight architectures.
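To make the clustering change concrete, the following is a minimal sketch of greedy k-means++ seeding in plain Python. It is not the CORE-ReID V2 implementation: the function names, the `n_trials` parameter, and the toy 2-D points are illustrative assumptions. At each step, several candidates are drawn with probability proportional to their squared distance to the nearest chosen centroid, and the candidate that most reduces the total clustering cost is kept.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def greedy_kmeans_pp(points, k, n_trials=3, seed=0):
    """Pick k initial centroids with greedy k-means++ seeding (illustrative)."""
    rng = random.Random(seed)
    centers = [points[rng.randrange(len(points))]]
    # closest[i] is the squared distance from points[i] to its nearest centroid.
    closest = [sq_dist(p, centers[0]) for p in points]
    while len(centers) < k:
        if sum(closest) == 0:
            break  # every point already coincides with a chosen centroid
        best_cand, best_closest, best_cost = None, None, float("inf")
        for _ in range(n_trials):
            # D^2 sampling: far-away points are more likely to be drawn.
            idx = rng.choices(range(len(points)), weights=closest, k=1)[0]
            cand = points[idx]
            new_closest = [min(d, sq_dist(p, cand))
                           for p, d in zip(points, closest)]
            cost = sum(new_closest)
            # Greedy step: keep the candidate with the lowest resulting cost.
            if cost < best_cost:
                best_cand, best_closest, best_cost = cand, new_closest, cost
        centers.append(best_cand)
        closest = best_closest
    return centers


# Toy data: three well-separated groups of 2-D "features" (hypothetical).
points = [(0.0, 0.0), (0.1, 0.2), (5.0, 5.0), (5.2, 4.9), (10.0, 0.0), (9.8, 0.3)]
centers = greedy_kmeans_pp(points, k=3)
```

Setting `n_trials=1` recovers ordinary k-means++ seeding; larger values trade extra distance computations for a lower expected initial cost.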

Table 1 shows the summary of these improvements.

3.1.2. Problem Definition and Methodology

Problem definition: We represent the customized labeled source domain data as $D_S = \{(x_{S,i}, y_{S,i})\}_{i=1}^{N_S}$, where $x_{S,i}$ and $y_{S,i}$ denote the source image and its corresponding ground truth identity label, respectively, and $N_S$ is the total number of source images. Similarly, the unlabeled target domain data is denoted as $D_T = \{x_{T,i}\}_{i=1}^{N_T}$, where $x_{T,i}$ indicates the target image, and $N_T$ is the number of target images. Identity labels are unavailable for the images in the target domain dataset, and it is important to note that the identities across the source and target domains do not overlap. The objective of Unsupervised Domain Adaptation (UDA) for Object ReID is to transfer knowledge from the source domain $D_S$ to the target domain $D_T$. To accomplish this, we propose the CORE-ReID V2 framework, designed to achieve effective knowledge transfer through a pseudo-label-based method.

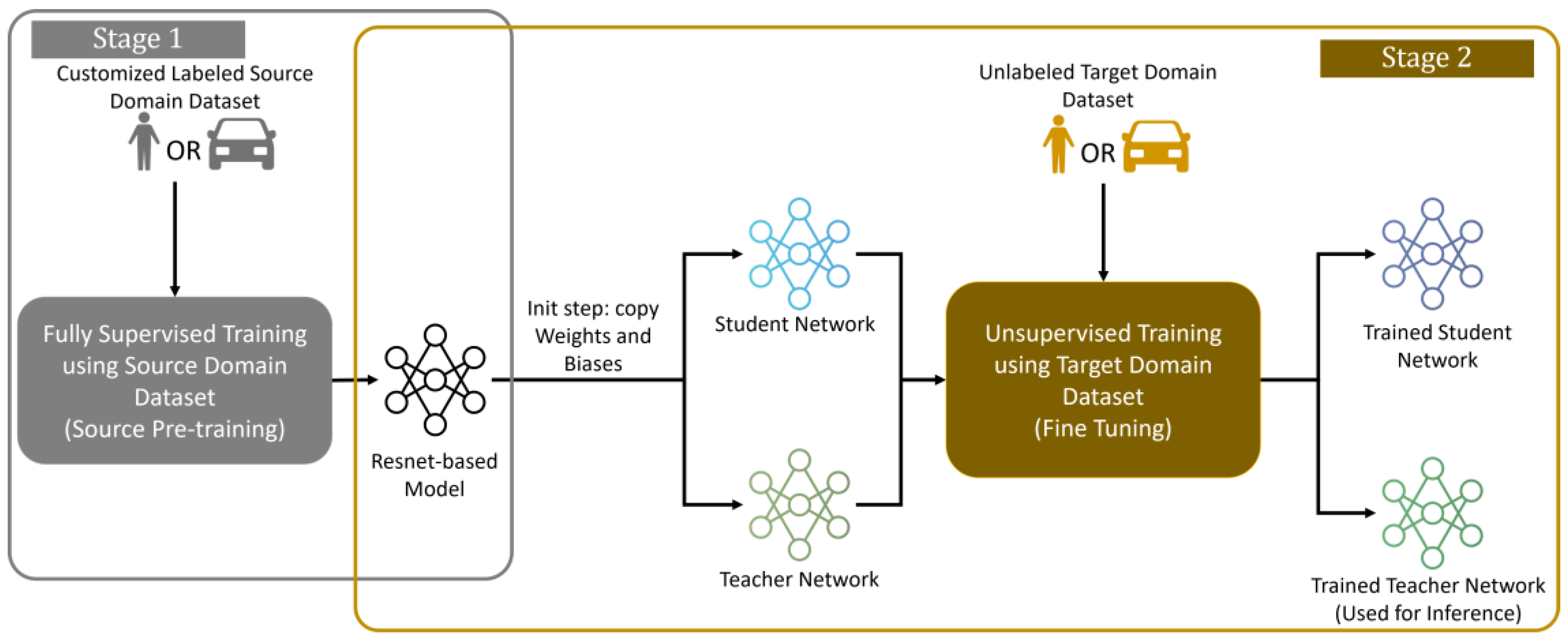

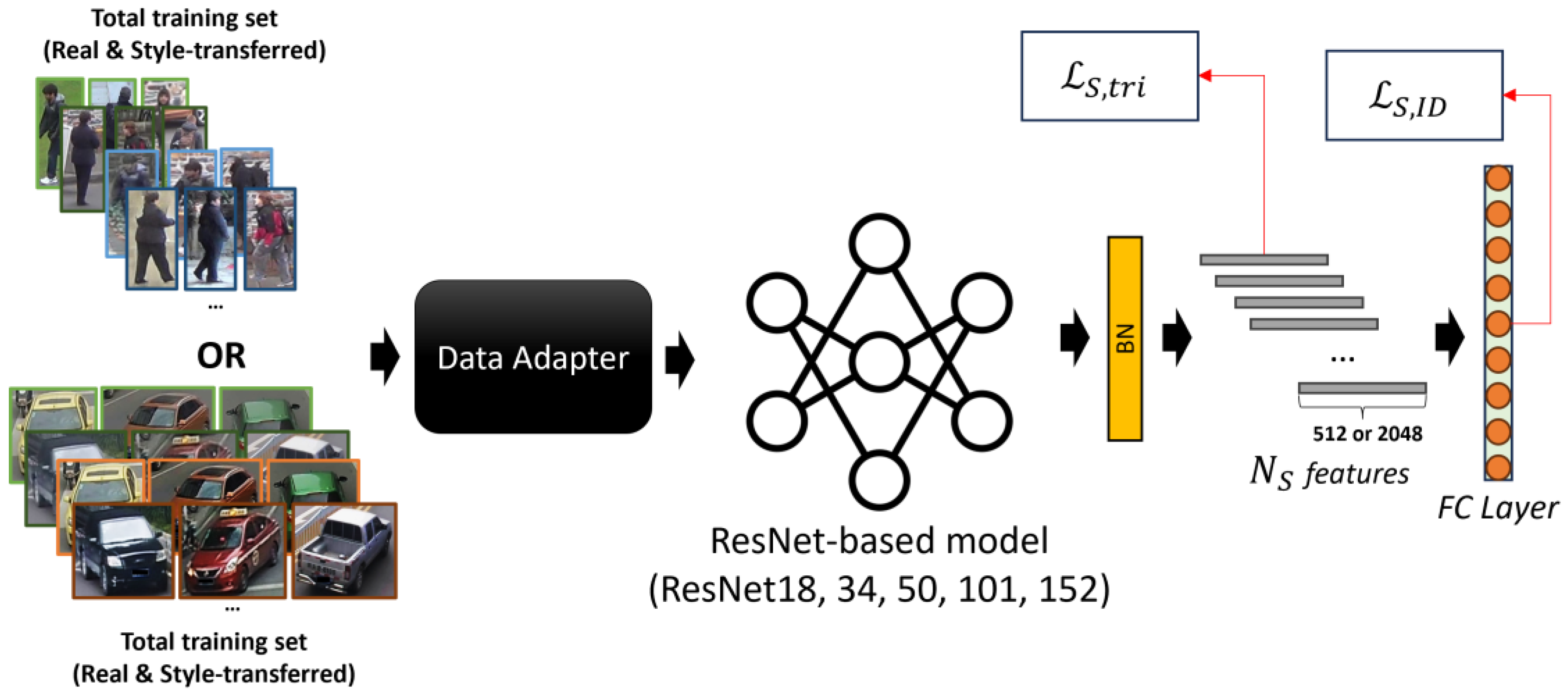

Methodology: We adopt a pseudo-label-based approach by dividing the process into two stages: pre-training the model on the source domain using a fully supervised strategy, followed by fine-tuning it on the target domain through an unsupervised learning approach (Figure 1).

Depending on the specific task (Person ReID or Vehicle ReID), the appropriate dataset is utilized. Our method uses a pair of teacher–student networks. After training the model on a customized labeled source domain dataset, the parameters of the pre-trained model are copied to both the student and teacher networks as an initialization step for the fine-tuning stage. During fine-tuning, we first train the student model and then optimize the teacher model using the Mean Teacher method [69]. This is because averaging model weights across multiple training steps generally yields a more accurate model than relying solely on the final weights [70]. Following the Mean Teacher method, the teacher model uses Exponential Moving Average (EMA) weight parameters of the student model instead of directly sharing weights. This approach allows the teacher network to aggregate information after every step, rather than every epoch, improving consistency. To minimize computational costs, only the teacher model is used during inference.
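The EMA update described above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the framework's code: parameters are stored in ordinary dictionaries, the smoothing coefficient `alpha` and its value are hypothetical, and the "gradient step" on the student is a stand-in increment.

```python
def ema_update(teacher, student, alpha=0.999):
    """Move each teacher parameter toward the student parameter (in place)."""
    for name, s_value in student.items():
        teacher[name] = alpha * teacher[name] + (1.0 - alpha) * s_value
    return teacher


# Both networks start from the same pre-trained weights.
pretrained = {"w": 1.0, "b": 0.0}
student = dict(pretrained)
teacher = dict(pretrained)

for step in range(3):
    # Stand-in for one optimizer step on the student network.
    student["w"] += 0.1
    student["b"] -= 0.05
    # The teacher is refreshed after every step, not once per epoch.
    ema_update(teacher, student, alpha=0.9)
```

Because the teacher averages over the student's trajectory, its weights change more smoothly than the student's, which is the property the pseudo-labeling stage relies on.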

3.2. Source-Domain Pre-Training

3.2.1. Image-to-Image Translation

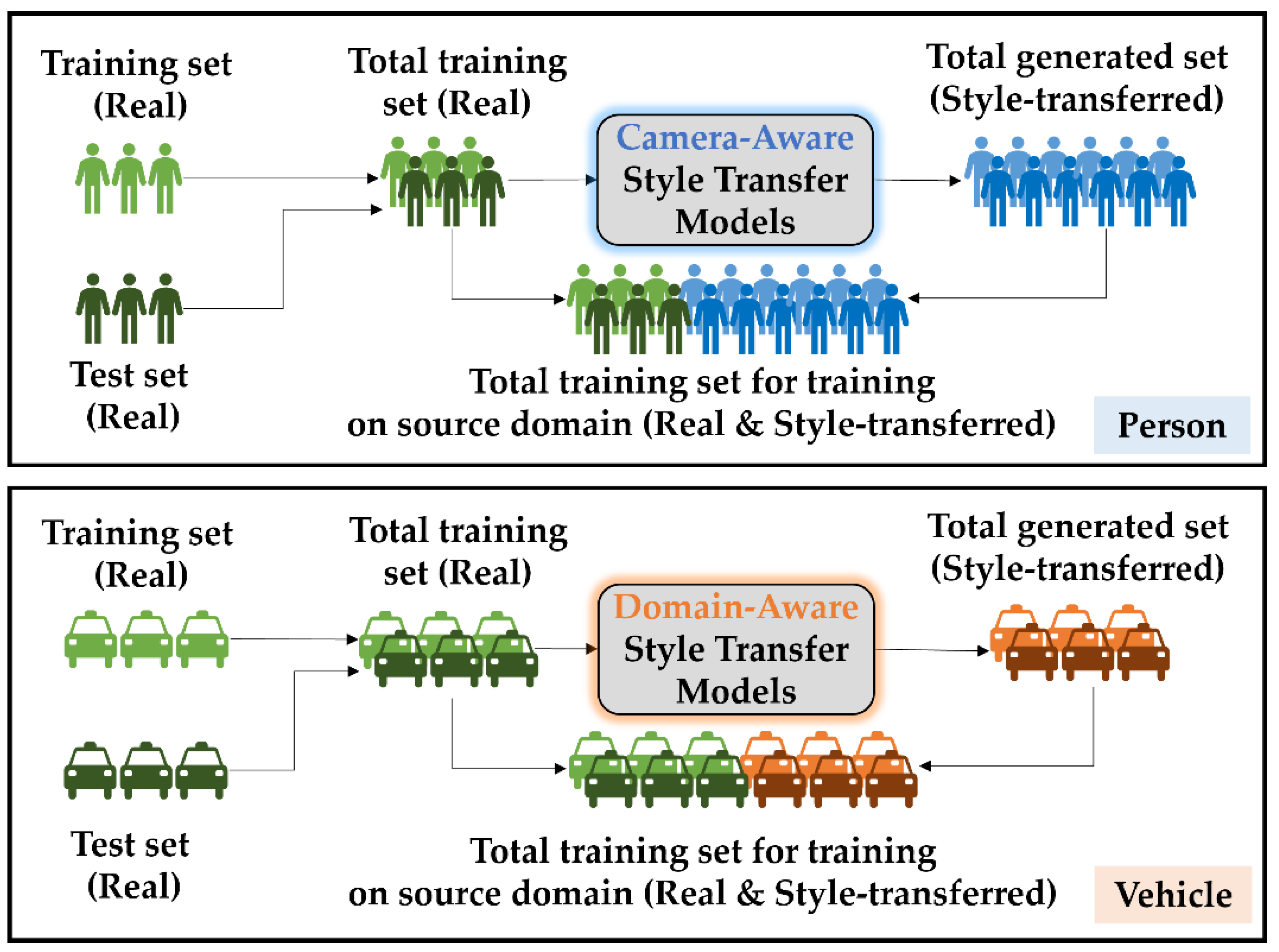

Inheriting from CORE-ReID, we employ CycleGAN to generate additional training samples by treating the stylistic variations across different cameras as distinct domains for the Person ReID task. This involves training image-to-image translation models using CycleGAN for images captured from various camera views within the dataset. Our goal is to train on a source domain $D_S$ and evaluate the algorithm during the fine-tuning phase on a different target domain $D_T$. By incorporating test data into the training set, similar to DGNet++ [71], we can fully leverage the available data in $D_S$. In the Person ReID task, for a source domain dataset containing images from

different cameras, we utilize

generative models to produce data in both

and

directions. The final training set is a combination of the original real images and the style-transferred images from both the training and test sets within the source domain dataset. These style-transferred images retain the labels of their corresponding real images.

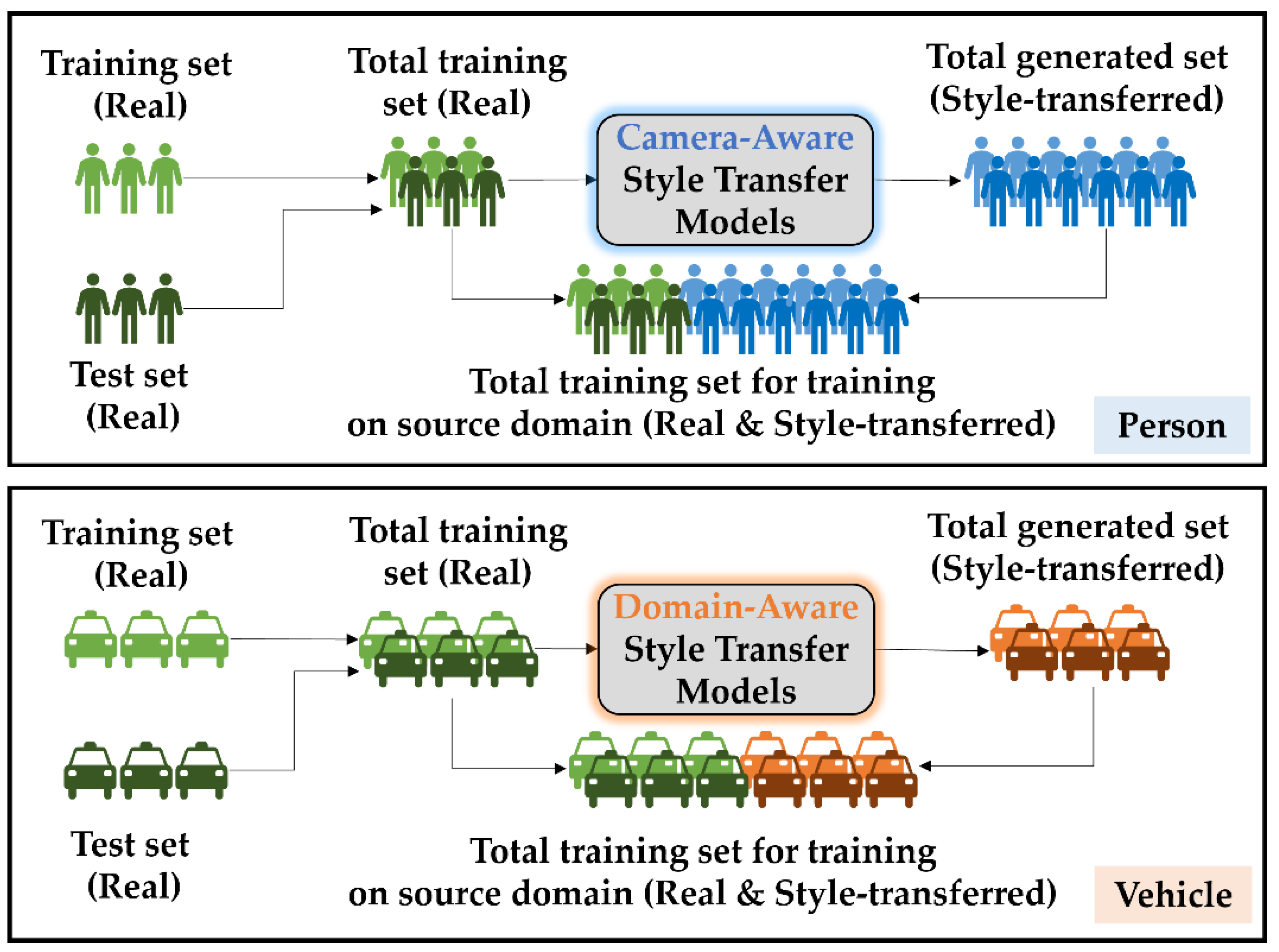

In the case of Vehicle ReID, due to the simpler nature of vehicle features and the large number of cameras used (some datasets do not provide the number of cameras used), we adopt domain-aware transfer models instead of camera-aware models. As a result, only a single transfer model is needed to generate style-transferred images from the source domain to the target domain (Figure 2).

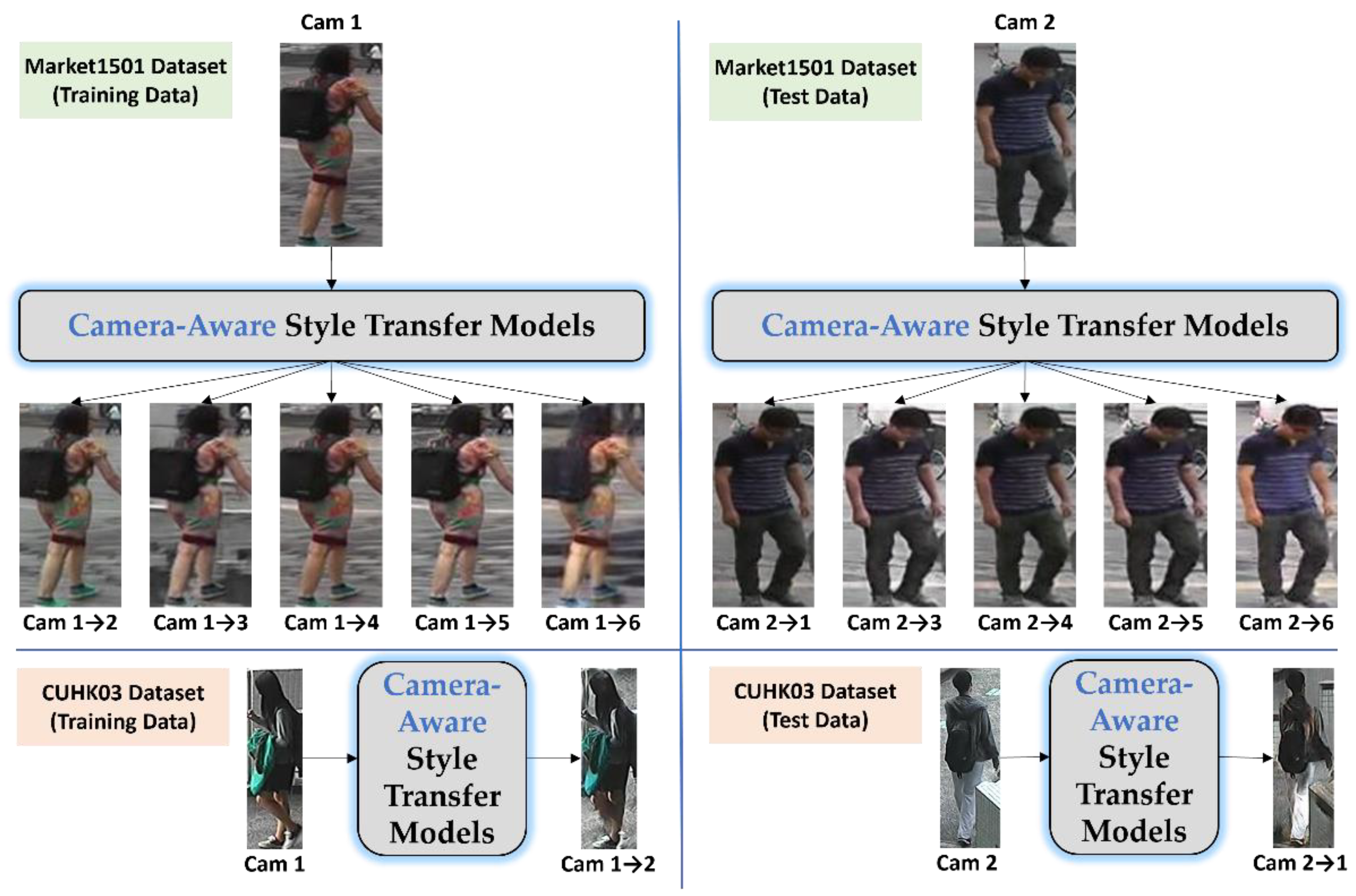

Figure 3 illustrates two representative examples from both the training and test sets in the Market-1501 and CUHK03 datasets, where image styles have been modified according to camera views. This adjustment showcases our approach to data augmentation, where images are transformed to mimic the visual characteristics associated with each camera’s unique viewpoint and color distribution. By aligning image styles with camera perspectives, this method effectively reduces the inconsistencies in appearance caused by differences in lighting, angles, and color shifts across camera views. This approach helps the model generalize better, thus mitigating overfitting in Convolutional Neural Networks (CNNs). Furthermore, incorporating camera-specific style information allows the model to learn more robust pedestrian features that are less sensitive to variations across different camera setups, leading to enhanced performance in ReID tasks.

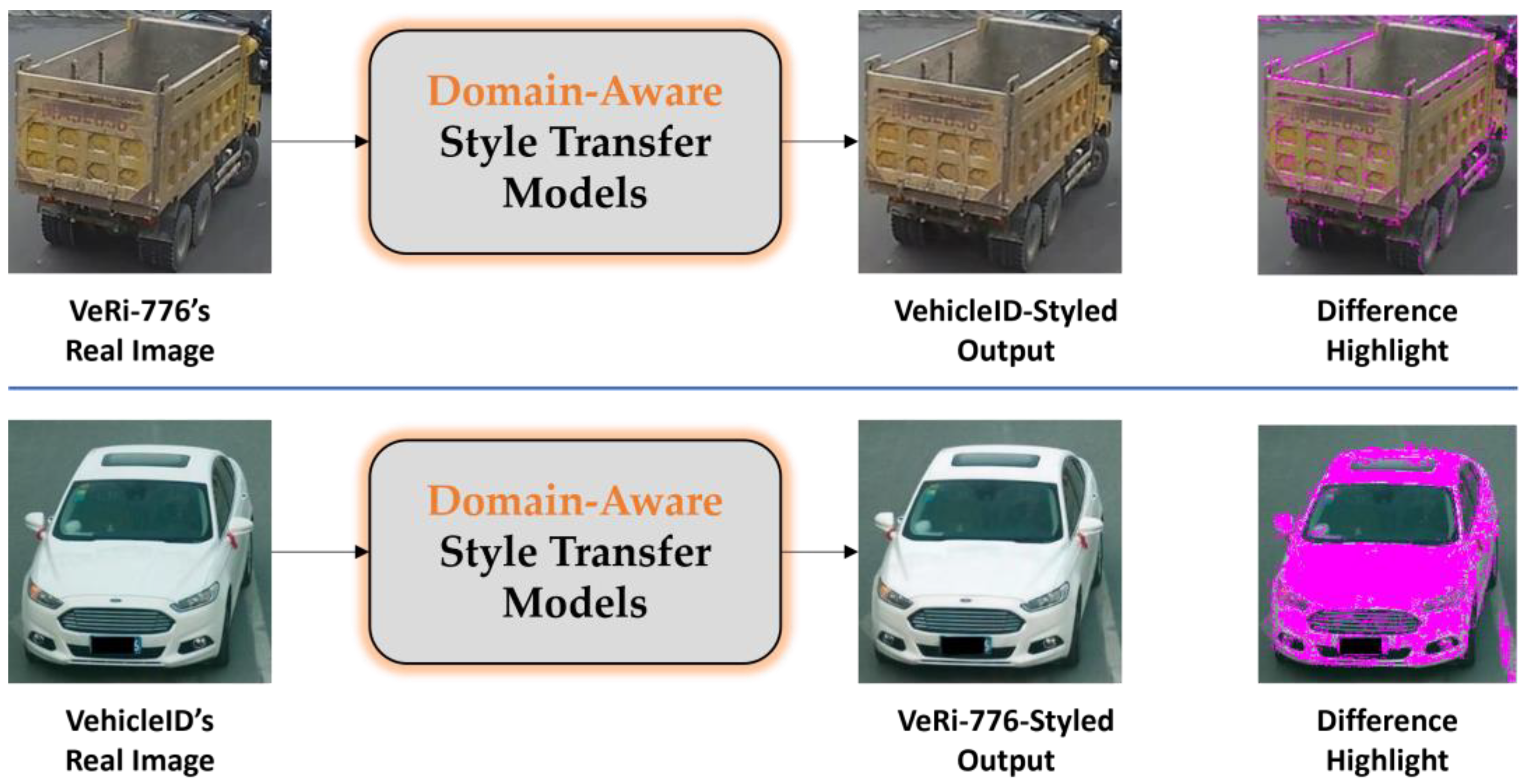

Given the simpler nature of vehicle features and the large number of cameras involved (with some datasets not specifying the number of cameras), we aim to utilize labels from the source domain along with target-domain-style-transferred images to create a shared-features domain dataset. This approach involves developing domain-aware style transfer models to bridge the feature gap between the two domains by transforming source domain images into target-domain-style outputs.

Figure 4 shows two examples of input images from the VeRi-776 [1] and VehicleID [72] datasets, with the pixel differences between the input and output images highlighted in pink.

3.2.2. Fully Supervised Pre-Training

Like many existing UDA approaches [17] that rely on a model pre-trained on a source dataset, we employ a ResNet-based model pre-trained on ImageNet as the backbone network. In this setup, the original final fully connected (FC) layer is removed and replaced with two new layers. The first is a batch normalization layer with either 2,048 or 512 features, depending on the specific ResNet architecture. The second is an FC layer with $M_S$ dimensions, where $M_S$ represents the number of identities (classes) in the source dataset $D_S$ (Figure 5).

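In our training process, we define the number of identities for the full training set in the source domain as:

$$M_S = M_{S,\mathrm{train}} + M_{S,\mathrm{test}},$$

where $M_{S,\mathrm{train}}$ and $M_{S,\mathrm{test}}$ represent the number of identities in the original training and test sets of $D_S$, respectively. For each labeled image $x_{S,i}$ and its ground truth identity $y_{S,i}$ in the source domain data $D_S$, with $N_S$ representing the total number of images, we train the model using both identity classification (cross-entropy) loss $L_{S,\mathrm{ID}}$ and triplet loss $L_{S,\mathrm{TRI}}$. The identity classification loss is applied to the final fully connected (FC) layer, handling the task as a classification problem, while the triplet loss, applied after batch normalization, is used for feature verification. The loss functions are defined as follows:

$$L_{S,\mathrm{ID}} = \frac{1}{N_S} \sum_{i=1}^{N_S} L_{ce}\big(C_S(f(x_{S,i})),\, y_{S,i}\big),$$

$$L_{S,\mathrm{TRI}} = \frac{1}{N_S} \sum_{i=1}^{N_S} \max\big(0,\; \lVert f(x_{S,i}) - f(x_{S,i,p}) \rVert_2 - \lVert f(x_{S,i}) - f(x_{S,i,n}) \rVert_2 + m\big),$$

where $f(x_{S,i})$ is the feature of the source image $x_{S,i}$, $L_{ce}$ is the cross-entropy loss, and $C_S$ is a learnable classifier in the source domain: $f(x_{S,i}) \rightarrow \{1, 2, \ldots, M_S\}$. $\lVert \cdot \rVert_2$ denotes the $L_2$-norm distance, the subscripts $i,p$ and $i,n$ are the hardest positive and hardest negative feature indices in each mini-batch for the sample $x_{S,i}$, and $m$ represents the triplet distance margin. Using a balance parameter $\kappa$, the total loss for source-domain pre-training is:

$$L_S = L_{S,\mathrm{ID}} + \kappa\, L_{S,\mathrm{TRI}}.$$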

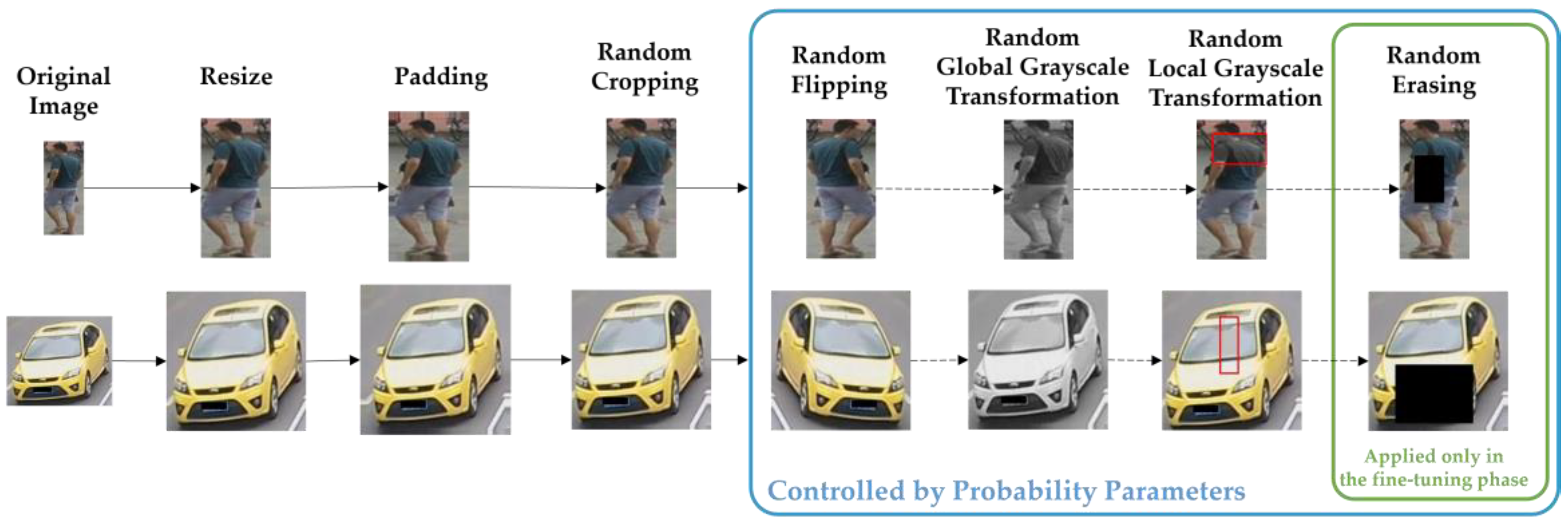
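As a worked illustration of the pre-training objective, the sketch below computes a cross-entropy identity loss and a batch-hard triplet loss on toy data in plain Python and combines them with a balance weight. It is a sketch under assumptions rather than the authors' code: the function names, the symbol `kappa` for the balance parameter, the margin value 0.3, and the averaging over valid anchors are all illustrative choices.

```python
import math


def cross_entropy(logits, label):
    """Cross-entropy of one sample, using a numerically stable log-sum-exp."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum - logits[label]


def l2(a, b):
    """Euclidean (L2) distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def batch_hard_triplet(features, labels, margin=0.3):
    """Mean hinge loss over anchors, using hardest positive and negative."""
    losses = []
    for i, (f, y) in enumerate(zip(features, labels)):
        pos = [l2(f, g) for j, (g, t) in enumerate(zip(features, labels))
               if t == y and j != i]
        neg = [l2(f, g) for g, t in zip(features, labels) if t != y]
        if not pos or not neg:
            continue  # anchor has no valid positive or negative in the batch
        # Hardest positive = farthest same-identity sample;
        # hardest negative = closest different-identity sample.
        losses.append(max(0.0, max(pos) - min(neg) + margin))
    return sum(losses) / len(losses) if losses else 0.0


def pretrain_loss(logits, features, labels, kappa=1.0, margin=0.3):
    """Identity loss plus kappa times the triplet loss."""
    ce = sum(cross_entropy(z, y) for z, y in zip(logits, labels)) / len(labels)
    return ce + kappa * batch_hard_triplet(features, labels, margin)


# Toy mini-batch: two identities, two samples each (hypothetical values).
features = [[0.0, 0.0], [0.0, 1.0], [3.0, 0.0], [3.0, 1.0]]
labels = [0, 0, 1, 1]
logits = [[0.0, 0.0]] * 4  # an untrained classifier predicting uniformly
total = pretrain_loss(logits, features, labels)
```

In this toy batch the identities are already separated by more than the margin, so the triplet term is zero and the total equals the cross-entropy of a uniform two-class prediction, ln 2.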

The model is expected to achieve strong performance on fully labeled source-domain data, but its performance significantly drops when applied directly to the unlabeled target domain. Before feeding images into the network, we utilize the “Data Adapter” component (Figure 6) to preprocess them by resizing to a specific size depending on the type of object. We then apply several data augmentation techniques, including edge padding, random cropping, and random horizontal flipping. To address color deviation, we incorporate random color dropout through global and local grayscale transformations [73], preserving key information while minimizing overfitting and enhancing the model's generalization. These approaches specifically balance the model’s weighting of color features and color-independent features, resulting in improved feature robustness in the neural network.

We employ the global grayscale transformation to a training batch with a set probability

, then feed it into the model for training. This process is defined as:

, where

represents the grayscale conversion function using the NTSC formula (

),

denotes the input image and

is the randomly grayscaled image. This function computes a pixel-wise weighted sum of the red, green, and blue channels of the original RGB image, resulting in a grayscale output. Importantly, the labels remain consistent between the converted grayscale image and the original. The procedure for the global grayscale transformation is outlined in Algorithm 1.

|

Algorithm 1: Global Grayscale Transformation |

Input: Input image ;

Grayscale transformation probability . |

|

Output: Randomly grayscale image . |

|

Initialization: . |

| 1: |

if then

|

| 2: |

. |

| 3: |

else |

| 4: |

. |

| 5: |

return . |

| 6: |

end |
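As a rough illustration of the global grayscale transformation, the following sketch converts an RGB image to grayscale with a given probability using the standard NTSC luma weights (0.299, 0.587, 0.114). The image representation (rows of `(r, g, b)` tuples), the function names, and the choice to replicate the gray value across three channels are assumptions made for this example.

```python
import random

NTSC_WEIGHTS = (0.299, 0.587, 0.114)  # standard NTSC luma coefficients

def to_grayscale(image):
    """Replace each RGB pixel by its NTSC luma, replicated over three channels."""
    gray_image = []
    for row in image:
        gray_row = []
        for r, g, b in row:
            luma = NTSC_WEIGHTS[0] * r + NTSC_WEIGHTS[1] * g + NTSC_WEIGHTS[2] * b
            gray_row.append((luma, luma, luma))
        gray_image.append(gray_row)
    return gray_image

def global_grayscale(image, probability, rng=random):
    """Return a grayscale copy with the given probability, else the input image."""
    if rng.random() < probability:
        return to_grayscale(image)
    return image
```

The identity label of the image is untouched by this operation, so the transformed sample can be used with the original label during training.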

To enhance model adaptability to significant biases from localized color dropout, we apply a local grayscale transformation to each visible image

in the training batch using the following equation:

where

generates a random rectangular region within the image

. The transformed sample is represented by

. During model training, local grayscale transformation is applied randomly to images in each batch with a probability

. This involves selecting a random rectangular region within the image and replacing it with the grayscale pixels of that same region. Consequently, images with mixed grayscale levels are generated, aiding the model in learning with color-variant features without altering object structure. The process includes several parameters:

and

define the minimum and maximum size ratios of the rectangle relative to the full image area; the rectangle’s area

is computed by sampling from

, where

is the input image area;

is a coefficient that sets the rectangle’s shape ratio within the interval

; coordinates

and

for the rectangle's top-left corner are generated randomly. If the rectangle exceeds image boundaries, new coordinates and dimensions are selected. This approach produces images with grayscale sections that vary in intensity without impacting the core structure, allowing the model to learn features invariant to color variations. The full procedure for local grayscale transformation is detailed in Algorithm 2.

|

Algorithm 2: Local Grayscale Transformation |

Input: Input image ;

Grayscale transformation probability ;

Area ratio range (low to high) and ;

Aspect ratio . |

|

Output: Randomly transformed image . |

Initialization: ;

, ;

. |

| 1: |

if then

|

| 2: |

; return . |

| 3: |

else |

| 4: |

while do

|

| 5: |

; |

| 6: |

; |

| 7: |

; |

| 8: |

, ; |

| 9: |

if and then

|

| 10: |

; |

| 11: |

; |

| 12: |

; |

| 13: |

return . |

| 14: |

end

|

| 15: |

end |

| 16: |

end |
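The local grayscale procedure can be sketched in the same style. This is a hedged sketch, not the authors' code: the parameter names, the symmetric aspect-ratio interval `(aspect_low, 1 / aspect_low)`, and the `max_attempts` cap on resampling are assumptions introduced to keep the example self-contained and guaranteed to terminate.

```python
import math
import random

def luma(pixel):
    """NTSC grayscale value of one (r, g, b) pixel."""
    r, g, b = pixel
    return 0.299 * r + 0.587 * g + 0.114 * b

def local_grayscale(image, probability, area_low, area_high, aspect_low,
                    rng=random, max_attempts=100):
    """Grayscale one random rectangle of an image given as rows of (r, g, b)."""
    if rng.random() >= probability:
        return image  # transformation skipped for this sample
    height, width = len(image), len(image[0])
    for _ in range(max_attempts):
        target_area = rng.uniform(area_low, area_high) * height * width
        aspect = rng.uniform(aspect_low, 1.0 / aspect_low)
        rect_h = int(round(math.sqrt(target_area * aspect)))
        rect_w = int(round(math.sqrt(target_area / aspect)))
        top = rng.randint(0, height - 1)
        left = rng.randint(0, width - 1)
        if rect_h < 1 or rect_w < 1:
            continue
        if top + rect_h > height or left + rect_w > width:
            continue  # rectangle leaves the image: draw a new one
        result = [list(row) for row in image]
        for y in range(top, top + rect_h):
            for x in range(left, left + rect_w):
                value = luma(image[y][x])
                result[y][x] = (value, value, value)
        return result
    return image  # no valid rectangle found within the attempt budget
```

Only the pixels inside the sampled rectangle change, so object structure outside and inside the region is preserved while color information is locally removed.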

3.2.3. Implementation Details

To perform camera-aware image-to-image translation for generating synthetic data, we train 30 generative models for the Market-1501 dataset and 2 models for the CUHK03 dataset. These numbers correspond to the number of ordered camera pairs in each dataset: $6 \times 5 = 30$ for the six cameras of Market-1501 and $2 \times 1 = 2$ for the two camera views in CUHK03. For domain-aware image-to-image translation, we train 2 generative models (VeRi-776 to VehicleID and the reverse). During training, all input images are first resized to 286×286 pixels and then cropped to 256×256 pixels. We use the Adam optimizer to train all models from scratch, with a batch size of 8. The learning rate is initialized at 0.0002 for the Generator and 0.0001 for the Discriminator. These rates are kept constant for the first 30 epochs and then linearly decayed to near zero over the subsequent 20 epochs according to a lambda learning rate schedule.
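The learning rate schedule for the generative models (constant for 30 epochs, then linear decay over 20 epochs) can be written as a multiplicative factor on the base rate. The sketch below is an assumption about the exact form: it uses a `decay_epochs + 1` denominator, a common convention for lambda schedules that leaves the final rate small but non-zero, and the function name is hypothetical.

```python
def linear_decay_factor(epoch, constant_epochs=30, decay_epochs=20):
    """Multiplier on the base rate: 1.0 at first, then a linear ramp toward zero."""
    overshoot = max(0, epoch + 1 - constant_epochs)
    return 1.0 - overshoot / float(decay_epochs + 1)

# Per-epoch learning rates for the two networks (epochs are 0-indexed).
generator_lr = [0.0002 * linear_decay_factor(e) for e in range(50)]
discriminator_lr = [0.0001 * linear_decay_factor(e) for e in range(50)]
```

Under this convention the last epoch still trains with 1/21 of the initial rate, which matches the description of decaying to near zero rather than exactly zero.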

For pre-training, we adopt ResNet101 as the backbone (with support for other ResNet architectures as well). The initial learning rate is set to 0.00035 and reduced by a factor of 0.1 at the 40th and 70th epochs; training runs for 350 epochs in total, with a 10-epoch warmup period. Each training batch consists of 32 identities, with 4 images per identity, resulting in a final batch size of 128. The balance parameter for computing the total loss is set to 1. Regarding preprocessing, each image is resized to 256×128 pixels for the Person ReID task and 256×256 pixels for the Vehicle ReID task. The resized images are padded with 10 pixels using edge padding, followed by random cropping back to their original resized dimensions. Additional augmentation techniques include random horizontal flipping, global grayscale transformation, and local grayscale transformation, applied with probabilities of 0.5, 0.05, and 0.4, respectively. Images are then converted to 32-bit floating-point pixel values normalized to the range [0, 1]. The RGB channels are further normalized by subtracting mean values of (0.485, 0.456, 0.406) and dividing by standard deviations of (0.229, 0.224, 0.225).

3.3. Target-Domain Fine-Tuning

3.3.1. Overall Algorithm

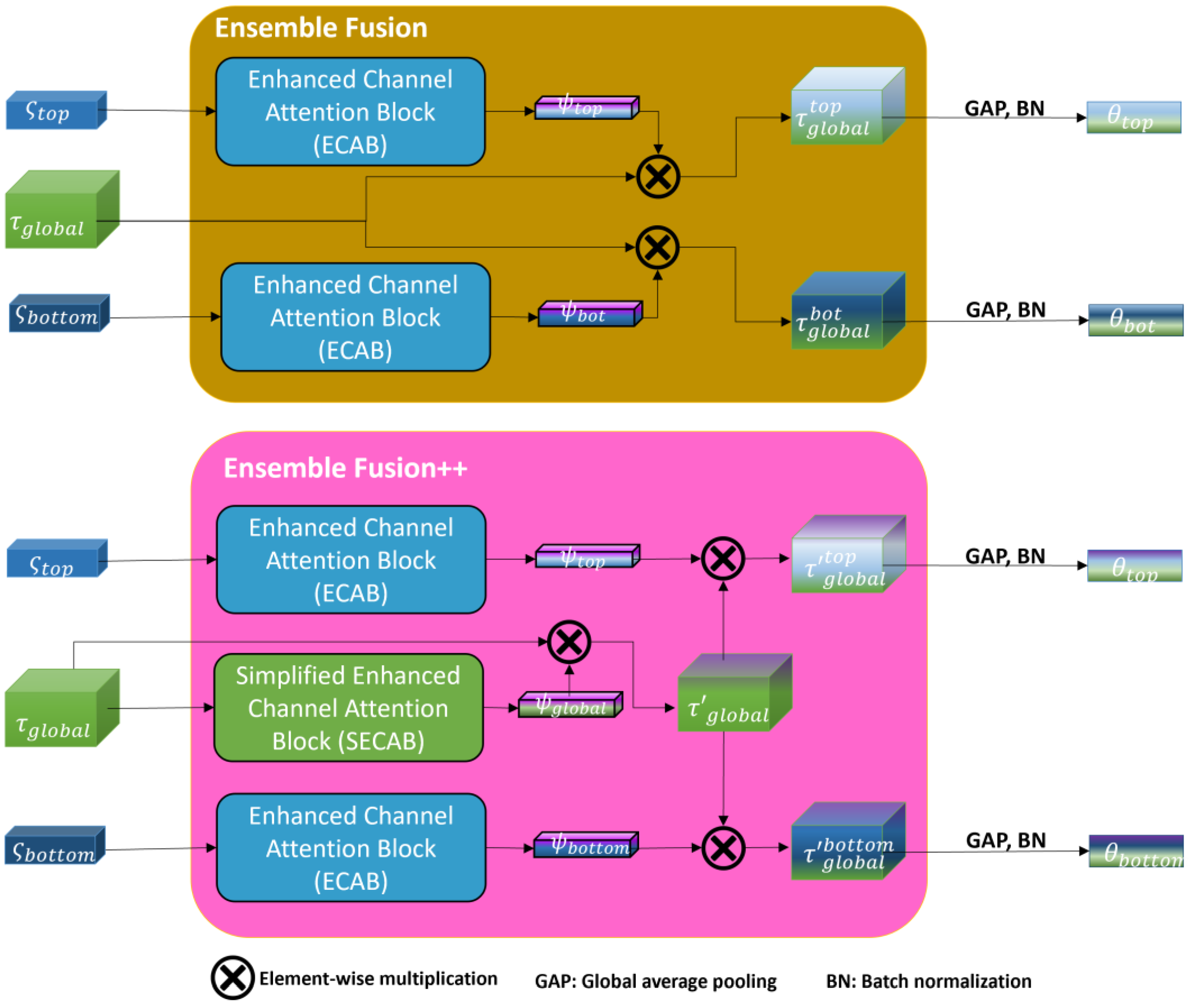

In this phase, we use the pre-trained model to perform comprehensive optimization. We present our CORE-ReID V2 framework (

Figure 7) along with Efficient Channel Attention Block (ECAB) and Simplified Efficient Channel Attention Block (SECAB) in Ensemble Fusion++.

Building upon the strategies utilized in SSG [

16], LF

2 [

17], and CORE-ReID [

11], our objective is to enable the model to dynamically integrate both global and local features. This approach allows for feature representations that encompass comprehensive global and detailed local information. To further enhance these feature representations, we incorporate ECAB and SECAB modules during the fusion process. By organizing multiple clusters based on global and fused features, we aim to generate more reliable pseudo-labels, thereby reducing the risk of ambiguous learning.

To refine these pseudo-labels, we implement a teacher-student network pair grounded in the mean-teacher framework. We feed the same unlabeled image from the target domain into both the teacher and student networks. During iteration $i$, the student network's parameters, $\theta_s^{(i)}$, are updated through backpropagation on the target-domain training objective. In parallel, the teacher network's parameters, $\theta_t^{(i)}$, are maintained as a moving average of the student network's parameters $\theta_s^{(i)}$. This is controlled by the temporal momentum coefficient $\eta$, which is restricted to the range $[0, 1)$. The update rule is defined as:

$$\theta_t^{(i)} = \eta\, \theta_t^{(i-1)} + (1 - \eta)\, \theta_s^{(i)}$$
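The moving-average update of the teacher network described above can be sketched as follows. Parameters are modeled as a dictionary of floats for simplicity; the function and argument names are hypothetical, and a real implementation would apply the same rule tensor by tensor.

```python
def update_teacher(teacher, student, momentum):
    """In-place moving average: teacher <- m * teacher + (1 - m) * student."""
    for name, student_value in student.items():
        teacher[name] = momentum * teacher[name] + (1.0 - momentum) * student_value
    return teacher
```

With a momentum close to 1, the teacher changes slowly and smooths out the noise in individual student updates.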

We utilize the K-means algorithm for clustering to assign pseudo-labels to the data. As a result, each sample is assigned three pseudo-labels (global, top, and bottom). The target domain dataset is defined as: , where and represents the total number of images in the target dataset . The pseudo-label indicates that is derived from the clustering results . These are obtained using the combined feature with its flipped counterpart generated by BMFN, denoted as . Here, stands for the number of distinct identities (classes) in the clustering outcome .

Before computing the loss function, we use BMFN to extract optimized features from networks

,

,

and

from the Ensemble Fusion++. Given an image

in the target dataset, along with its flipped version

, we extract the feature maps

and the flipped feature maps

for

and

. The BMFN output is computed as follows:
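As a hedged illustration, the sketch below assumes that BMFN averages the feature of an image with the feature of its horizontally flipped version and then applies L2 normalization. This reading of the method, along with the function name and the `eps` guard, is an assumption of this example rather than a statement of the authors' exact formula.

```python
import math

def bmfn(feature, flipped_feature, eps=1e-12):
    """Average a feature with its flipped counterpart, then L2-normalize."""
    mean = [(a + b) / 2.0 for a, b in zip(feature, flipped_feature)]
    norm = math.sqrt(sum(v * v for v in mean))
    return [v / max(norm, eps) for v in mean]
```

Averaging the two views makes the resulting descriptor less sensitive to left-right orientation, and the normalization puts all descriptors on the unit sphere before clustering or retrieval.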

After obtaining multiple pseudo-labels, we generate three new target-domain datasets to train the student network. The pseudo-labels derived from the local fusion features, denoted as

, are used to calculate the softmax triplet loss for the corresponding local features

from the student network:

where

and

are the parameters of the teacher and student networks, respectively. The optimized local feature from the student network is denoted as

, with

. Here,

and

represent the hardest positive and negative samples relative to the anchor image

in the target domain.

In a similar fashion to supervised learning, we utilize the cluster results

of the globally clustered feature

as pseudo-labels to compute the classification loss

and the global triplet loss

. These losses are defined as follows:

where

represents the fully connected classification layer of the student network, mapping

to the set

. The notation

indicates the

-norm distance.

The total loss is computed by combining the different losses with weighting parameters

:

During the inference phase, the Ensemble Fusion++ process is bypassed and only the optimized teacher network is used, which reduces computational overhead. Specifically, the global feature map from the teacher network is split into two segments, referred to as the top and bottom features (the student network's feature map is split in the same way during training). These segments undergo global average pooling, after which the two local features and the global feature are concatenated. Finally, normalization and the BMFN method are applied to obtain the final feature representation for inference.

3.3.2. Ensemble Fusion++ Component

To extract the fusion features, we horizontally divide the final global feature map of the student network into two segments (top and bottom) and apply global average pooling to each, yielding the top and bottom local features. Unlike the Ensemble Fusion component in CORE-ReID [11], the final global feature map from the teacher network is further enhanced using the proposed SECAB module. The top and bottom features from the student network, along with the global feature from the teacher network, are then utilized for adaptive feature fusion through the Ensemble Fusion++ module, which includes learnable parameters.

The top and bottom student features are processed by ECAB, while the teacher's global feature is processed by SECAB for adaptive fusion. The enhanced attention maps generated by ECAB for the top and bottom branches are each combined with the SECAB output through element-wise multiplication, resulting in the top and bottom ensemble fusion feature maps. These maps undergo Global Average Pooling (GAP) and batch normalization, yielding the top and bottom fusion features. These features are then fed into the BMFN for predicting pseudo-labels using clustering algorithms in subsequent steps.

The process within Ensemble Fusion++ (

Figure 8) can be summarized as follows:

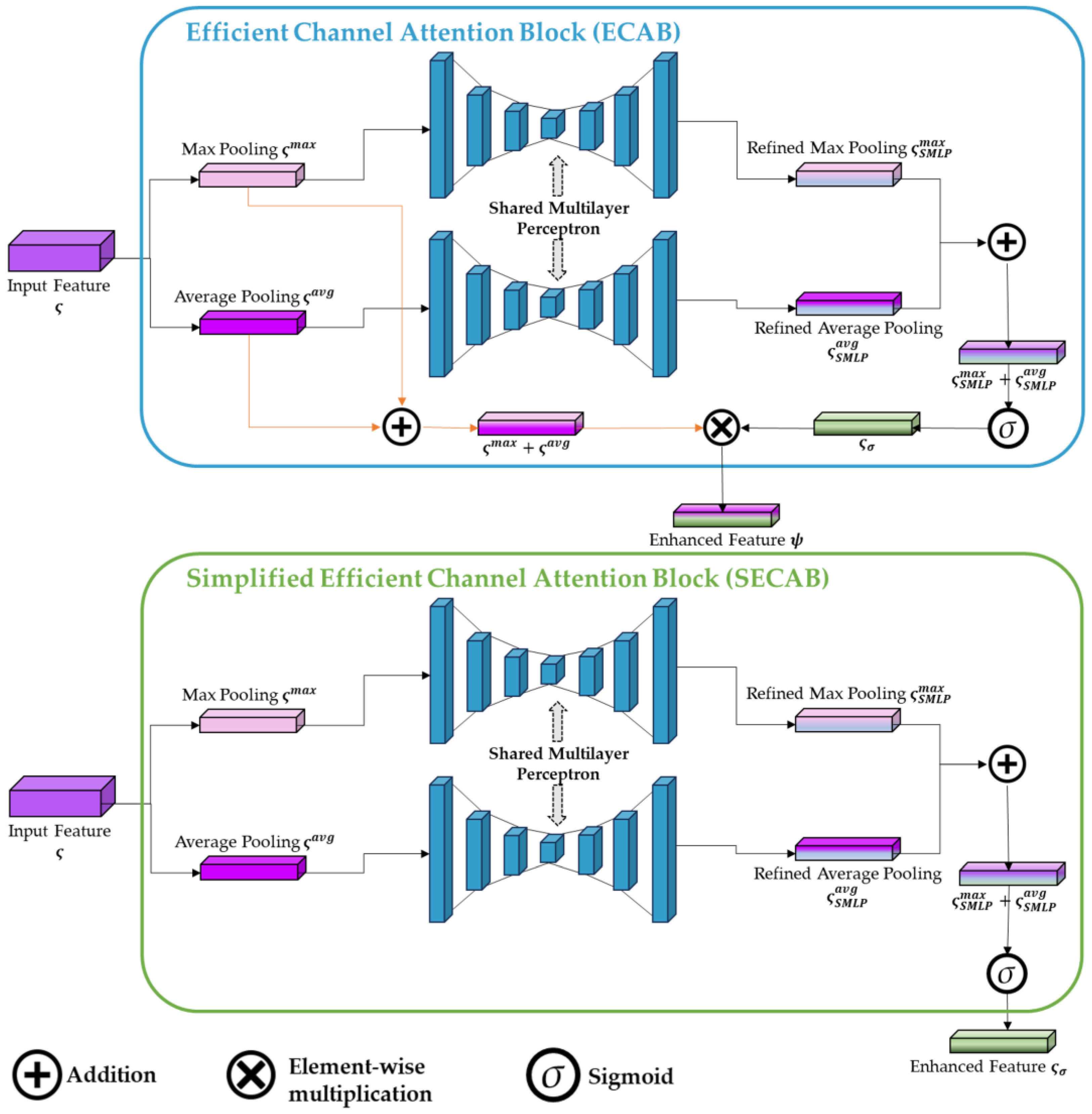

3.3.3. SECAB

The importance of attention has been extensively explored in previous literature [

74,

75,

76]. Attention not only guides where to focus but also enhances the representation of relevant features. Inspired by the ECAB [

11], we introduce a new component named SECAB (

Figure 9), a straightforward yet impactful attention module for feed-forward convolutional neural networks to enhance the global feature. This module enhances representation power through attention mechanisms that emphasize crucial features while suppressing unnecessary ones. We generate a channel attention map by leveraging inter-channel relationships within features. Each channel of a feature map serves as a feature detector, and channel attention directs focus towards the most meaningful aspects of an input image. To efficiently compute channel attention, we compress the spatial dimension of the input feature map.

We utilize both average-pooling and max-pooling features simultaneously to aggregate spatial information. These operations squeeze the spatial dimensions of the input to 1x1 using adaptive pooling, which aggregates global spatial information.

Figure 9 shows the design of SECAB. Given an intermediate input feature map

, where

denote the number of channels, width, and height, respectively. The max-pooled and average-pooled outputs are then fed into a Shared Multilayer Perceptron (SMP) to obtain the refined features

and

. The SMP has multiple hidden layers with reduction rate

and the same expansion rate, with ReLU as the activation function. The two refined features are summed, and a sigmoid activation squashes the sum to the range between 0 and 1, producing a channel-wise attention mask. The enhanced attention map

is calculated as:
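The channel attention computation described in this subsection (spatial average and max pooling, a shared multilayer perceptron, summation, and a sigmoid) can be sketched without any deep learning library. This is a simplified sketch under stated assumptions: the feature map is a nested list of channels, the shared perceptron is passed in as a list of weight matrices without biases, and the function names are hypothetical. How the mask is subsequently combined with the input is left out.

```python
import math

def shared_mlp(vector, layers):
    """Apply the same linear layers to a vector, with ReLU between layers."""
    for index, matrix in enumerate(layers):
        vector = [sum(w * x for w, x in zip(row, vector)) for row in matrix]
        if index < len(layers) - 1:
            vector = [max(0.0, x) for x in vector]
    return vector

def channel_attention_mask(feature_map, layers):
    """Return one sigmoid weight per channel of a nested-list feature map."""
    # Squeeze each channel to a single value by average and max pooling.
    averaged = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in feature_map]
    peaked = [max(map(max, ch)) for ch in feature_map]
    # The same perceptron processes both pooled descriptors; outputs are summed.
    summed = [a + m for a, m in zip(shared_mlp(averaged, layers),
                                    shared_mlp(peaked, layers))]
    return [1.0 / (1.0 + math.exp(-s)) for s in summed]
```

Because both pooled descriptors pass through the same weights, the module adds few parameters while still using two complementary summaries of each channel.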

3.3.4. Greedy K-Means++

In the K-means clustering problem, we are given a set of points

in a

-dimensional space and a specified number of clusters

. The objective is to identify a set of

centroids

that minimizes the total sum of squared distances from each point in

to its nearest centroid. Specifically, if we define the cost of a point

with respect to a set of centers

as

, the goal is to find

such that

and the cost

is minimized. In practice, a simple way to initialize centroids is to select a random subset of

with the size

. However, this random approach does not guarantee any approximation bounds and can perform poorly in certain cases, such as when

well-separated clusters exist along a single line. Arthur and Vassilvitskii [

19] propose a probabilistic seeding method known as K-means++, which improves centroid initialization by favoring points that are far from already-selected centroids, while still maintaining a degree of randomness. Empirical results demonstrate that K-means++ consistently outperforms random seeding on real-world datasets [

19]

.

The Greedy K-means++ algorithm [

77] refines this process further: rather than committing to a single sampled point, it draws several candidates at each step and greedily keeps the one that yields the lowest clustering cost. The algorithm operates as follows. At each step, it samples

candidate

from a distribution constructed based on the current centroid configuration. Then, for each candidate

, the algorithm calculates the new cost

that would result from adding this candidate to the set of centroids. Next, the candidate center that minimizes this cost is selected as the next centroid. In our implementation,

is typically set to

By systematically evaluating multiple candidates at each step, Greedy K-means++ ensures better centroid initialization and improved clustering outcomes (Algorithm 3).

|

Algorithm 3: Greedy K-means++ seeding |

Input: The number of images in target dataset ;

The number of the clusters ;

The number of candidate centers. |

|

Output: The set of centers . |

|

Initialization: Uniformly independently sample . |

| 1: |

Let and set . |

| 2: |

for do

|

| 3: |

Sample independently; |

| 4: |

Sample with probability ; |

| 5: |

Let ; |

| 6: |

Set . |

| 7: |

return

|
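The seeding procedure of Algorithm 3 can be sketched in plain Python as follows. The function names and the requirement to pass the number of candidates explicitly are choices made for this example; candidates after the first center are drawn with probability proportional to their squared distance to the nearest chosen center, as in K-means++.

```python
import random

def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def greedy_kmeans_pp(points, k, num_candidates, rng=random):
    """Greedy K-means++ seeding: sample candidates, keep the cheapest one."""
    # First center: best of several uniformly sampled candidates.
    first_trials = [rng.choice(points) for _ in range(num_candidates)]
    first = min(first_trials, key=lambda c: sum(sq_dist(p, c) for p in points))
    centers = [first]
    closest = [sq_dist(p, first) for p in points]
    while len(centers) < k:
        if sum(closest) == 0:
            raise ValueError("fewer distinct points than requested centers")
        # Candidates are drawn proportionally to the current squared distances.
        candidates = rng.choices(points, weights=closest, k=num_candidates)
        best_cost, best_closest, best_center = None, None, None
        for candidate in candidates:
            updated = [min(d, sq_dist(p, candidate))
                       for p, d in zip(points, closest)]
            cost = sum(updated)
            if best_cost is None or cost < best_cost:
                best_cost, best_closest, best_center = cost, updated, candidate
        centers.append(best_center)
        closest = best_closest
    return centers
```

Evaluating several candidates per step costs more than plain K-means++ seeding, but each chosen center is the one that reduces the total cost the most among the sampled options.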

3.3.5. Detailed Implementation

The training process lasts 80 epochs, with each epoch consisting of 400 iterations. A fixed learning rate of 0.00035 for the Person ReID task (0.00007 for the Vehicle ReID task) is maintained throughout, and the Adam optimizer is employed with a weight decay of 0.0005 to ensure stable convergence. Clustering operations utilize the K-means algorithm with Greedy K-means++ initialization, where the maximum number of iterations is capped at 100, striking a balance between computational efficiency and solution accuracy. The mini-batch size is set to 512, allowing for efficient centroid updates without processing the entire dataset. An early stopping criterion is applied, terminating clustering if no improvement in inertia is observed over 50 consecutive mini-batches. To address the issue of empty clusters, a reassignment ratio of 0.05 is used, ensuring robustness under dynamic data distributions. For centroid initialization, 1,500 data points are used for global features, while 900 data points are allocated for both top and bottom local features.
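The clustering settings listed above can be collected in a small configuration helper. The keyword names below mirror the constructor arguments of scikit-learn's `MiniBatchKMeans`; whether the authors used that library is an assumption of this sketch, and the helper name is hypothetical. The example cluster count of 900 is taken from the Market → CUHK setting reported in the ablation study.

```python
def clustering_kwargs(n_clusters, init_size):
    """Keyword arguments matching the clustering settings stated in the text."""
    return {
        "n_clusters": n_clusters,
        "init": "k-means++",          # seeding strategy
        "max_iter": 100,              # cap on iterations
        "batch_size": 512,            # mini-batch size
        "max_no_improvement": 50,     # early stopping on inertia
        "reassignment_ratio": 0.05,   # handles empty or tiny clusters
        "init_size": init_size,       # samples used for centroid initialization
    }

# Global features use 1,500 initialization samples; local features use 900.
global_config = clustering_kwargs(n_clusters=900, init_size=1500)
```

If scikit-learn is available, such a dictionary could be unpacked into the constructor as `MiniBatchKMeans(**global_config)`.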

In the temporal ensemble regularization process, the momentum parameter () is set to 0.999. To balance the contributions of the various components in the loss function, we assign weights as follows: and . For the Ensemble Fusion++ module, a reduction ratio and expansion rate () of 4 are utilized, along with 5 hidden layers () for both ECAB and SECAB components.

The data adapter resizes input images to 256×128 for the Person ReID task and 256×256 for the Vehicle ReID task. Edge padding of 10 pixels is applied before randomly cropping the images back to their respective dimensions (256×128 or 256×256). Data augmentation strategies include random horizontal flipping, global grayscale transformation, local grayscale transformation, and random erasing, applied with probabilities of 0.5, 0.05, 0.4, and 0.5, respectively. These steps ensure a robust and diverse training dataset to improve generalization performance.

4. Results

In this section, we present experimental results, comparing our method against state-of-the-art (SOTA) techniques on widely-used datasets for the task of Unsupervised Domain Adaptation (UDA) for Object ReID.

4.1. Dataset Description

We evaluate the effectiveness of our proposal by conducting evaluations on three benchmark datasets: Market-1501 [

78], CUHK03 [

79], and MSMT17 [

30] for Person ReID and two benchmark datasets: VeRi-776 [

1] and VehicleID [

72] for Vehicle ReID.

Market-1501 [

78] contains 32,668 images of 1,501 individuals captured from six different camera views. The training set includes 12,936 images representing 751 identities, while the testing set comprises 3,368 query images and 19,732 gallery images, covering the remaining 750 identities.

CUHK03 [

79] features 14,097 images of 1,467 unique individuals, recorded by 6 campus cameras, with each identity captured by 2 cameras. The dataset provides two types of annotations: manually labeled bounding boxes and those generated by an automatic detector. For both training and testing, we utilize the manually annotated bounding boxes. Additionally, we follow a more rigorous testing protocol proposed in [

80], which splits the dataset into 767 identities (7,365 images) for training and 700 identities for testing, with 5,332 images in the gallery and 1,400 images in the query set.

MSMT17 [

30] is a large-scale dataset comprising 126,441 bounding boxes of 4,101 identities, recorded by 12 outdoor and 3 indoor cameras (15 cameras in total) during three periods of the day (morning, noon, and afternoon) over 4 different days. The training set includes 32,621 images featuring 1,041 identities, while the testing set contains 93,820 images representing 3,060 identities. The testing set is further divided into 11,659 query images and 82,161 gallery images. Notably, MSMT17 is significantly larger in scale than both Market-1501 and CUHK03.

VeRi-776 [

1] was collected from 20 real-world surveillance cameras in an urban area under diverse conditions, including variations in orientation, illumination, and occlusion. It comprises over 50,000 images of 776 vehicles and approximately 9,000 trajectories. The dataset provides a variety of labels, including identity annotations, vehicle attributes, and spatiotemporal information. It is divided into two subsets for training and testing: the training set contains 37,778 images of 576 vehicles, while the test set consists of 11,579 images of the remaining 200 vehicles.

VehicleID [

72] contains vehicle images captured by real-world cameras during the daytime. Each subject in the dataset has numerous images taken from the front and back, with some images annotated with model information to aid vehicle identification. The training set comprises 110,178 images of 13,134 vehicles. The test set is divided into three sections: Test800, with 6,532 query images and 800 gallery images of 800 vehicles; Test1600, with 11,385 query images and 1,600 gallery images of 1,600 vehicles; and Test2400, with 17,638 query images and 2,400 gallery images of 2,400 subjects. Following the evaluation protocol of the authors [

72], each testing subset is split into query and gallery sets by randomly selecting one image per vehicle for the gallery set, while the remaining images of each vehicle form the query set.

The comprehensive overview of the datasets utilized in this document is presented in

Table 2.

4.2. Evaluation Metrics

For the cross-domain Object ReID task, we utilize Rank-$k$ accuracy (where $k \in \{1, 5, 10\}$) and mean average precision (mAP) to evaluate overall performance on test images.

Rank Ratio Accuracy (Rank-): The ranking process involves comparing the features extracted from a query object image

with all images in the gallery. This comparison results in a list of images sorted in descending order of similarity, with the most similar images appearing at the top. According to the ground truth of the selected dataset, the position within this sorted list where an image corresponds to the same object as the query image determines its rank. The Rank-

metric reflects the algorithm's accuracy in correctly identifying object images within the top

ranks among the retrieved results for each query:

Here,

represents the total number of probe images queried from the gallery, and

is a binary function:

Mean Average Precision (mAP): In object ReID, where models produce a ranked list of images, it is crucial to consider the position of each image within the list. For each probe image, the average precision (AP) is calculated as follows:

where

N is the total number of images in the gallery set. The values

and

represent the precision at the

-th position in the ranking list and a binary function, respectively. If the probe matches the

-th element, then

; otherwise,

. The mean average precision (mAP) across all probe images is then computed using the

values:

Here, denotes the total number of probe images queried, and is the average precision calculated for each probe image .
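The two metrics can be illustrated with a short sketch. Each query is represented by a list of booleans giving, in ranked order, whether each gallery image shares the query's identity. The function names are hypothetical, and normalizing the average precision by the number of correct matches is the common convention assumed here.

```python
def rank_k_hit(ranked_matches, k):
    """1 if any of the top-k gallery entries shares the query identity, else 0."""
    return int(any(ranked_matches[:k]))

def average_precision(ranked_matches):
    """Mean of the precision values at each position holding a correct match."""
    hits, precisions = 0, []
    for position, is_match in enumerate(ranked_matches, start=1):
        if is_match:
            hits += 1
            precisions.append(hits / position)
    return sum(precisions) / hits if hits else 0.0

def evaluate(all_ranked_matches, k):
    """Return (Rank-k accuracy, mAP) averaged over all queries."""
    n = len(all_ranked_matches)
    rank_k = sum(rank_k_hit(m, k) for m in all_ranked_matches) / n
    mean_ap = sum(average_precision(m) for m in all_ranked_matches) / n
    return rank_k, mean_ap
```

Rank-k only checks whether a correct match appears early in the list, while mAP also rewards placing all correct matches near the top, which is why the two numbers can diverge noticeably on datasets with many gallery images per identity.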

4.3. Benchmark on Person ReID

Our study begins by comparing CORE-ReID V2 with state-of-the-art (SOTA) methods on two domain adaptation tasks: Market ➝ CUHK and CUHK ➝ Market (Table 3). We then expand the evaluation to include two additional tasks: Market ➝ MSMT and CUHK ➝ MSMT (Table 4). In these comparisons, “Baseline” refers to the CORE-ReID method developed in our previous work, while CORE-ReID V2 represents the framework proposed in this paper. Additionally, CORE-ReID V2 Tiny is a lightweight version utilizing the smaller ResNet18 backbone. The evaluation metrics include mAP (%) and Rank-k (R-k) accuracy (%).

Table 3.

Experimental results of the proposed CORE-ReID V2 framework and SOTA methods (Acc %) on Market-1501 and CUHK03 datasets. Bold values represent the best results while underlined values indicate the second-best performance. Superscript a denotes that the method uses multiple source datasets.


| Method | Reference | Market ➝ CUHK mAP | Market ➝ CUHK R-1 | Market ➝ CUHK R-5 | Market ➝ CUHK R-10 | CUHK ➝ Market mAP | CUHK ➝ Market R-1 | CUHK ➝ Market R-5 | CUHK ➝ Market R-10 |
|---|---|---|---|---|---|---|---|---|---|
| SNRᵃ [81] | CVPR 2020 | 17.5 | 17.1 | - | - | 52.4 | 77.8 | - | - |
| UDAR [14] | PR 2020 | 20.9 | 20.3 | - | - | 56.6 | 77.1 | - | - |
| QAConv50ᵃ [82] | ECCV 2020 | 32.9 | 33.3 | - | - | 66.5 | 85.0 | - | - |
| M3Lᵃ [83] | CVPR 2021 | 35.7 | 36.5 | - | - | 62.4 | 82.7 | - | - |
| MetaBINᵃ [84] | CVPR 2021 | 43.0 | 43.1 | - | - | 67.2 | 84.5 | - | - |
| DFH-Baseline [85] | CVPR 2022 | 10.2 | 11.2 | - | - | 13.2 | 31.1 | - | - |
| DFHᵃ [85] | CVPR 2022 | 27.2 | 30.5 | - | - | 31.3 | 56.5 | - | - |
| METAᵃ [86] | ECCV 2022 | 47.1 | 46.2 | - | - | 76.5 | 90.5 | - | - |
| ACLᵃ [87] | ECCV 2022 | 49.4 | 50.1 | - | - | 76.8 | 90.6 | - | - |
| RCFA [88] | Electronics 2023 | 17.7 | 18.5 | 33.6 | 43.4 | 34.5 | 63.3 | 78.8 | 83.9 |
| CRS [89] | JSJTU 2023 | - | - | - | - | 65.3 | 82.5 | 93.0 | 95.9 |
| MTI [90] | JVCIR 2024 | 16.3 | 16.2 | - | - | - | - | - | - |
| PAOA+ᵃ [91] | WACV 2024 | 50.3 | 50.9 | - | - | 77.9 | 91.4 | - | - |
| Baseline (CORE-ReID) [11] | Software 2024 | 62.9 | 61.0 | 79.6 | 87.2 | 83.6 | 93.6 | 97.3 | 98.7 |
| CORE-ReID V2 Tiny (ResNet18) | Ours | 33.0 | 31.9 | 48.9 | 59.1 | 60.3 | 83.4 | 91.8 | 94.7 |
| CORE-ReID V2 | Ours | 66.4 | 66.9 | 83.4 | 88.9 | 84.5 | 93.9 | 97.6 | 98.7 |

Table 4.

Experimental results of the proposed CORE-ReID V2 framework and SOTA methods (Acc %) from Market-1501 and CUHK03 source datasets to the target domain MSMT17 dataset. Bold values represent the best results while underlined values indicate the second-best performance. Superscript a denotes that the method uses multiple source datasets, and superscript b indicates that the implementation is based on the authors' code.


| Method | Reference | Market ➝ MSMT mAP | Market ➝ MSMT R-1 | Market ➝ MSMT R-5 | Market ➝ MSMT R-10 | CUHK ➝ MSMT mAP | CUHK ➝ MSMT R-1 | CUHK ➝ MSMT R-5 | CUHK ➝ MSMT R-10 |
|---|---|---|---|---|---|---|---|---|---|
| NRMT [92] | ECCV 2020 | 19.8 | 43.7 | 56.5 | 62.2 | - | - | - | - |
| DG-Net++ [71] | ECCV 2020 | 22.1 | 48.4 | - | - | - | - | - | - |
| MMT [15] | ICLR 2020 | 22.9 | 52.5 | - | - | 13.5ᵇ | 30.9ᵇ | 44.4ᵇ | 51.1ᵇ |
| UDAR [14] | PR 2020 | 12.0 | 30.5 | - | - | 11.3 | 29.6 | - | - |
| Dual-Refinement [93] | ArXiv 2020 | 25.1 | 53.3 | 66.1 | 71.5 | - | - | - | - |
| SNRᵃ [81] | CVPR 2020 | - | - | - | - | 7.7 | 22.0 | - | - |
| QAConv50ᵃ [82] | ECCV 2020 | - | - | - | - | 17.6 | 46.6 | - | - |
| M3Lᵃ [83] | CVPR 2021 | - | - | - | - | 17.4 | 38.6 | - | - |
| MetaBINᵃ [84] | CVPR 2021 | - | - | - | - | 18.8 | 41.2 | - | - |
| RDSBN [94] | CVPR 2021 | 30.9 | 61.2 | 73.1 | 77.4 | - | - | - | - |
| ClonedPerson [95] | CVPR 2022 | 14.6 | 41.0 | - | - | 13.4 | 42.3 | - | - |
| METAᵃ [86] | ECCV 2022 | - | - | - | - | 24.4 | 52.1 | - | - |
| ACLᵃ [87] | ECCV 2022 | - | - | - | - | 21.7 | 47.3 | - | - |
| CLM-Net [96] | NCA 2022 | 29.0 | 56.6 | 69.0 | 74.3 | - | - | - | - |
| CRS [89] | JSJTU 2023 | 22.9 | 43.6 | 56.3 | 62.7 | 22.2 | 42.5 | 55.7 | 62.4 |
| HDNet [97] | IJMLC 2023 | 25.9 | 53.4 | 66.4 | 72.1 | - | - | - | - |
| DDNet [98] | AI 2023 | 28.5 | 59.3 | 72.1 | 76.8 | - | - | - | - |
| CaCL [99] | ICCV 2023 | 36.5 | 66.6 | 75.3 | 80.1 | - | - | - | - |
| PAOA+ᵃ [91] | WACV 2024 | - | - | - | - | 26.0 | 52.8 | - | - |
| OUDA [100] | WACV 2024 | 20.2 | 46.1 | - | - | - | - | - | - |
| M-BDA [101] | VCIR 2024 | 26.7 | 51.4 | 64.3 | 68.7 | - | - | - | - |
| UMDA [102] | VCIR 2024 | 32.7 | 62.4 | 72.7 | 78.4 | - | - | - | - |
| Baseline (CORE-ReID) [11] | Software 2024 | 41.9 | 69.5 | 80.3 | 84.4 | 40.4 | 67.3 | 79.0 | 83.1 |
| CORE-ReID V2 Tiny (ResNet18) | Ours | 35.8 | 64.7 | 76.6 | 80.8 | 18.8 | 44.2 | 57.1 | 62.3 |
| CORE-ReID V2 | Ours | 44.1 | 71.3 | 82.4 | 86.0 | 40.7 | 68.7 | 79.7 | 83.4 |

The results show that CORE-ReID V2 outperforms existing SOTA methods on all four tasks, demonstrating the effectiveness of our approach. By incorporating the Ensemble Fusion++ component with ECAB and the proposed SECAB, CORE-ReID V2 achieves consistent improvements over the original CORE-ReID, with mAP gains between 0.3 and 3.5 percentage points across the four tasks. Notably, CORE-ReID V2 surpasses PAOA+ by large margins, achieving mAP improvements of 16.1 and 6.6 percentage points on the Market ➝ CUHK and CUHK ➝ Market tasks, respectively, even though PAOA+ utilizes additional training data. Additionally, our framework delivers significant enhancements over CaCL and PAOA+, achieving mAP gains of 7.6 and 14.7 percentage points on the Market ➝ MSMT and CUHK ➝ MSMT tasks, respectively.

4.4. Benchmark on Vehicle ReID

We evaluate CORE-ReID V2 against state-of-the-art methods on the VehicleID ➝ VeRi-776 (Table 5) and VeRi-776 ➝ VehicleID (Table 6) tasks. "Baseline" refers to the implementation based on CORE-ReID [11] with the Ensemble Fusion component, while CORE-ReID V2 is the proposed algorithm. CORE-ReID V2 Tiny is a lightweight variant using ResNet18. Metrics include mAP (%) and Rank-k (R-k) accuracy (%).

Across both transfer scenarios (VeRi-776 ➝ VehicleID and VehicleID ➝ VeRi-776), the proposed CORE-ReID V2 framework demonstrates superior performance compared to existing state-of-the-art (SOTA) methods. Specifically, our evaluation includes supervised approaches such as FACT and Mixed Diff+CCL, as well as unsupervised Person ReID methods PUL and UDAR. Additionally, we incorporate leading SOTA techniques, including PAL, MMT, SPCL, PLM, CSP+FCD, and DMDU, for a comprehensive comparative analysis. For the VeRi-776 ➝ VehicleID adaptation task, CORE-ReID V2 achieves 67.0%, 63.02%, and 57.99% mAP across the three test modes, surpassing the DMDU method by 5.21, 6.29, and 4.02 percentage points, respectively. In the VehicleID ➝ VeRi-776 transfer scenario, MGR-GCL and MATNet+DMDU attain 48.73% and 49.25% mAP, respectively. CORE-ReID V2 outperforms all competing methods, achieving an mAP of 49.50% and a Rank-1 accuracy of 80.15%, establishing it as a new SOTA approach.

4.5. Ablation Study

To validate our approach, we employ Grad-CAM [

111] to visualize feature maps at the global feature level. Key features for each person and vehicle are highlighted using heatmaps, where color intensity indicates importance - blue represents less significant regions, while red denotes the most crucial areas for Object Re-identification. As illustrated in

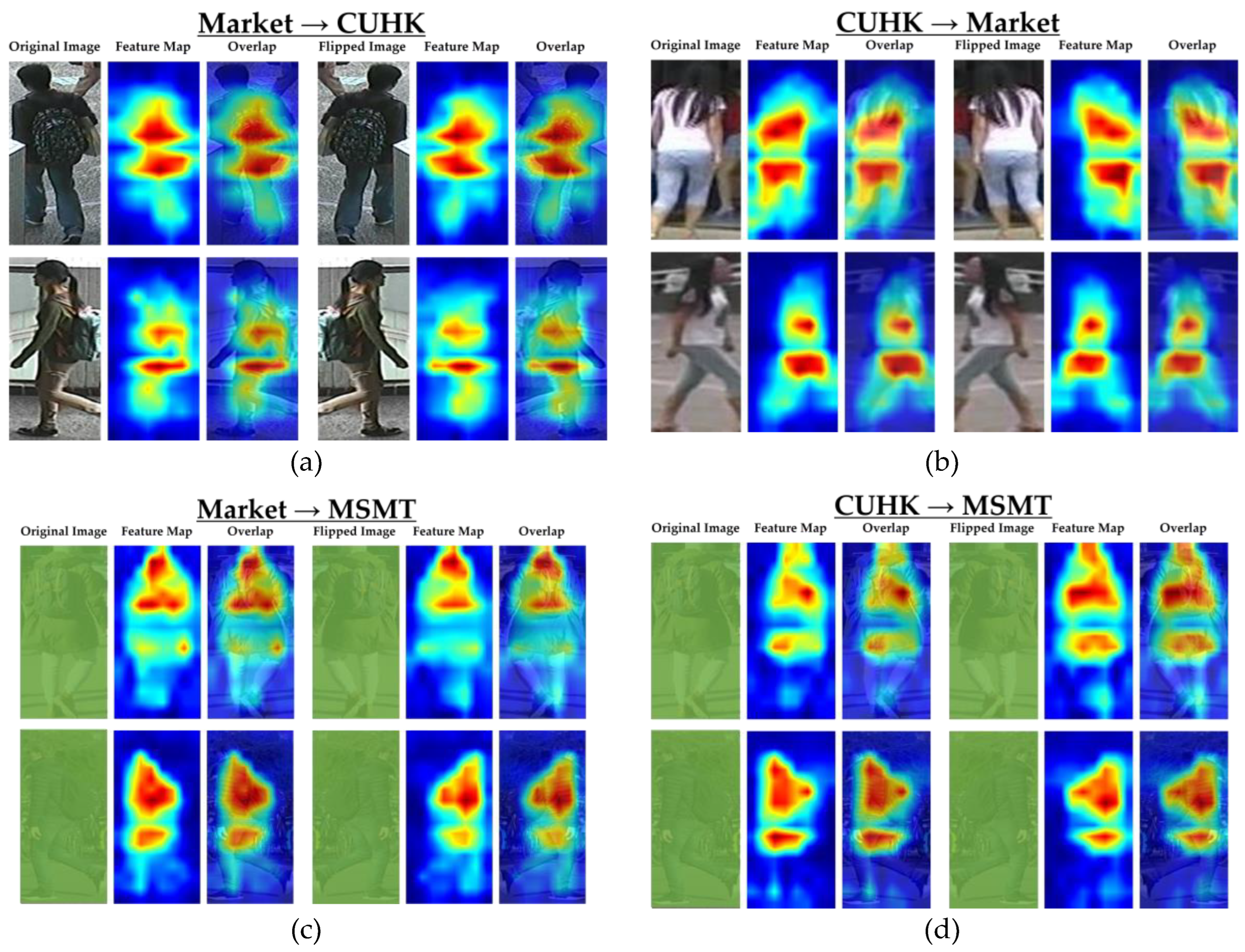

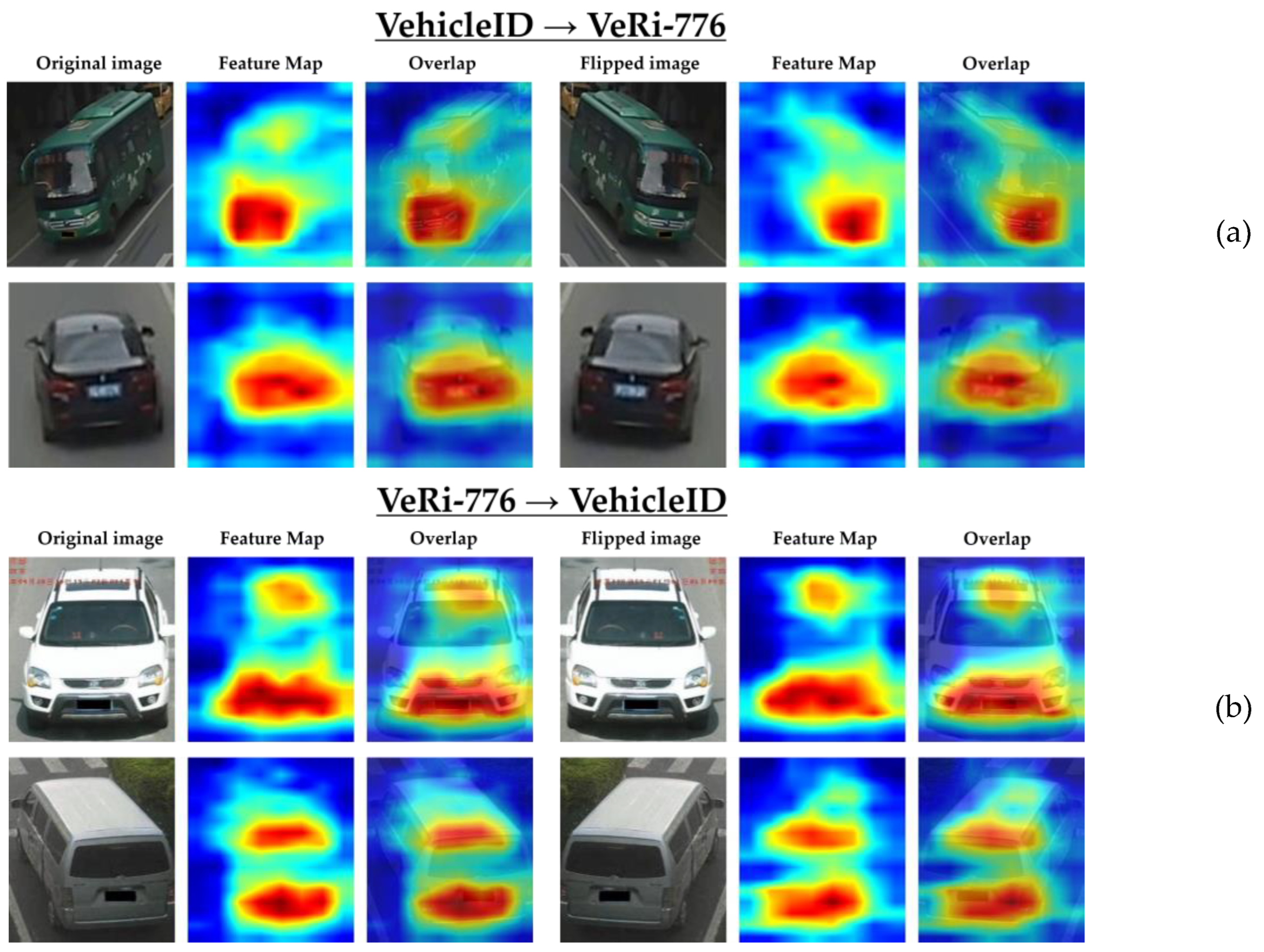

Figure 10 and

Figure 11, the essential features are concentrated on the target person’s body and the vehicle’s structure. Furthermore, the heatmaps exhibit similar distributions between the original and flipped images, reinforcing the performance of our method. This consistency aligns with the accuracy results reported above, further validating the effectiveness of CORE-ReID V2.

In the Market ➝ MSMT and CUHK ➝ MSMT transfer scenarios, the Market ➝ MSMT model demonstrates a slightly superior ability to extract important features. The heatmaps reveal a more concentrated distribution in the middle and lower body regions for both the original and flipped images. This observation may explain the higher accuracy achieved by the Market ➝ MSMT model compared to the CUHK ➝ MSMT model, as reported in

Table 4.

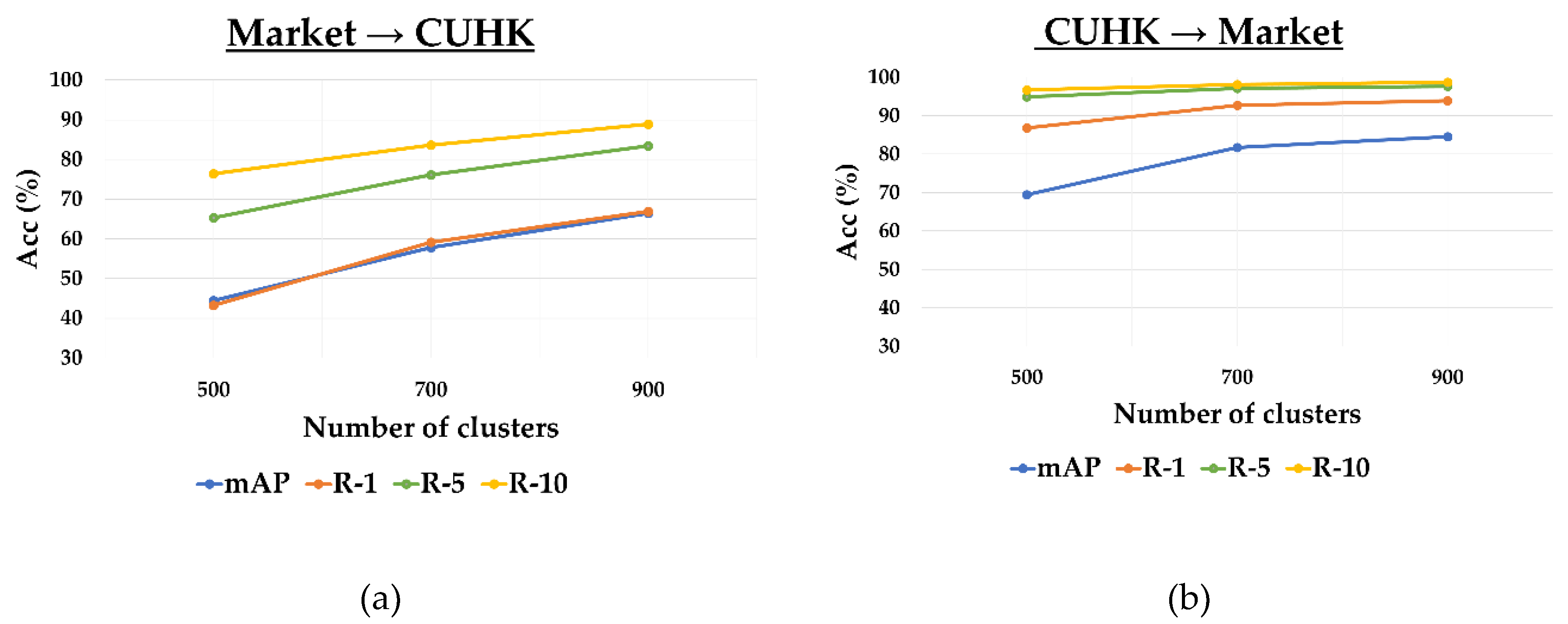

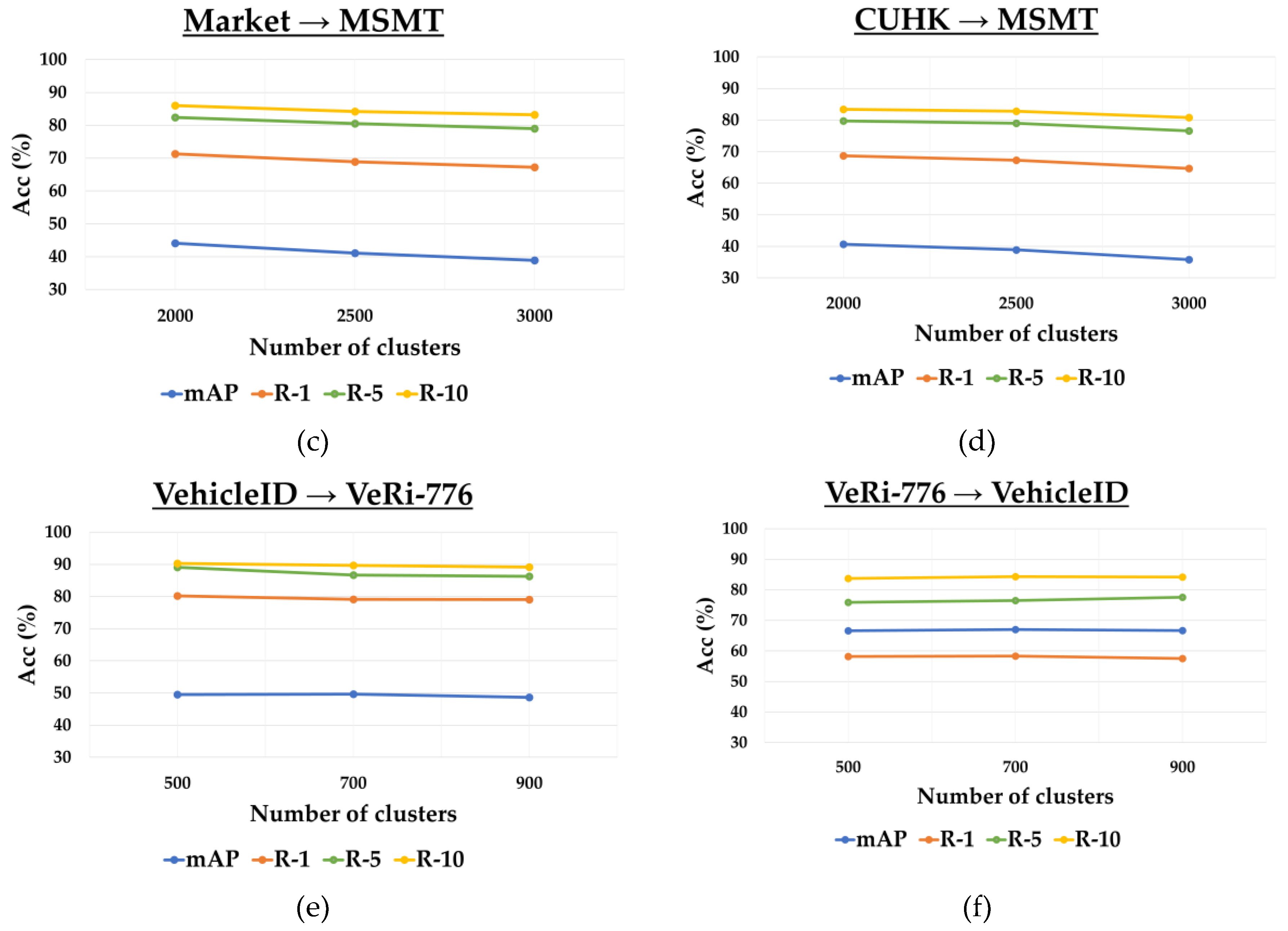

K-means Clustering Settings: We utilize the K-means clustering approach to generate pseudo-labels for the target domain, with parameters varying across different datasets. As shown in Table 7, our framework achieves optimal performance on Market → CUHK, CUHK → Market, Market → MSMT, CUHK → MSMT, VehicleID → VeRi-776, and VeRi-776 → VehicleID Small with cluster settings of 900, 900, 2000, 2000, 500, and 700, respectively.

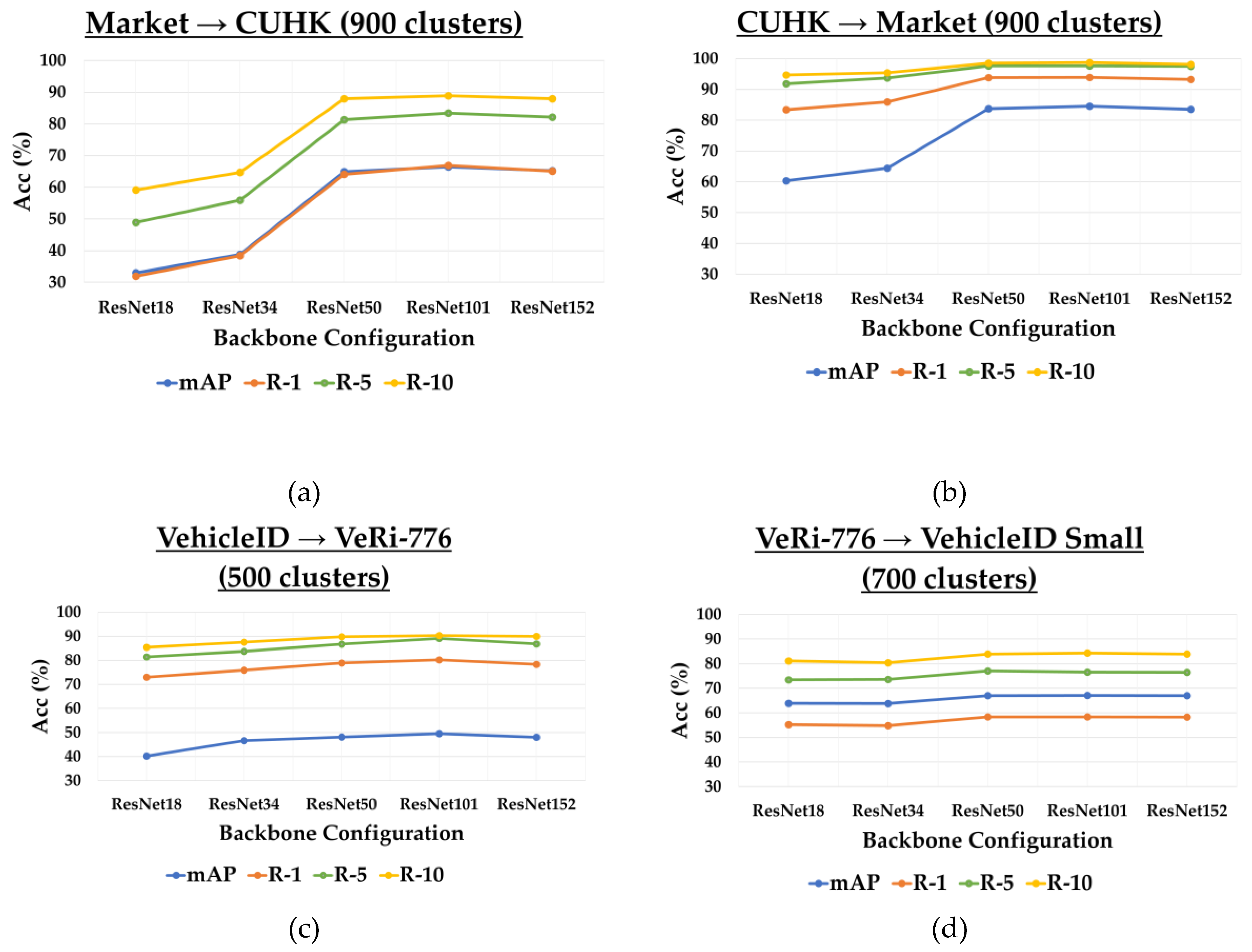

Figure 12 shows that the performance of our approach varies depending on the dataset pairs and the clustering parameter values (

) utilized.

Greedy K-means++ Initialization: We enhance clustering performance by employing the Greedy K-means++ initialization strategy, which combines randomized candidate sampling with greedy centroid selection to improve cluster quality. This approach not only strengthens feature learning but also yields more stable pseudo-label generation, addressing challenges associated with ambiguous learning.

Table 8 presents the experimental results comparing greedy K-means++ initialization with a random approach.

SECAB Configuration: We use SECAB to enhance global features, leveraging attention mechanisms to emphasize crucial features while suppressing unnecessary ones. To validate the effectiveness of SECAB, we conduct an experiment by removing it from our network, as shown in

Table 9.

Backbone Settings: We assess the performance of both complex backbones (ResNet50, ResNet101, and ResNet152) and lightweight backbones (ResNet18 and ResNet34) for unsupervised domain adaptation in Object ReID. Through extensive experiments and analysis, we gain insights into the impact of backbone architecture on overall performance, as well as its computational efficiency and suitability for resource-constrained environments, as shown in Table 10. Among the tested configurations, ResNet101 delivers the best performance in the Market → CUHK, CUHK → Market, VehicleID → VeRi-776, and VeRi-776 → VehicleID scenarios. All experiments were conducted on two machines, each equipped with dual Quadro RTX 8000 GPUs.

Figure 13 demonstrates that lightweight backbones, such as ResNet18 and ResNet34, are also supported, highlighting the adaptability of our framework to various backbone architectures.

5. Conclusions

In this paper, we introduced CORE-ReID V2, an enhanced framework designed to address limitations in its predecessor, CORE-ReID, while extending its applicability to Object ReID tasks. By incorporating the novel Ensemble Fusion++ module, which adaptively enhances both local and global features, and utilizing advanced clustering techniques such as greedy K-means++ initialization, CORE-ReID V2 achieves superior performance in Unsupervised Domain Adaptation (UDA) for Person and Vehicle ReID tasks. Furthermore, support for lightweight backbones like ResNet18 and ResNet34 makes the framework suitable for real-time and resource-constrained applications. Experimental results on widely used UDA Person ReID and Vehicle ReID datasets demonstrate that CORE-ReID V2 outperforms state-of-the-art methods, showcasing its strength and adaptability. These contributions not only push the boundaries of UDA-based Object ReID but also provide a solid foundation for further exploration in this domain.

Despite its advancements, CORE-ReID V2 has several limitations that warrant attention. While the framework performs well on benchmark datasets, its scalability to larger and more diverse datasets with millions of instances remains unexplored, which could introduce challenges in both performance and efficiency. Furthermore, the framework's primary focus on Person and Vehicle ReID tasks leaves broader Object ReID applications, such as animal or product identification, unexplored. Moreover, its reliance on the quality of pseudo-labels makes it vulnerable to performance degradation in noisy or highly complex scenarios.

Future work will address these limitations in three directions. First, we will incorporate distributed training techniques and more efficient clustering algorithms to enhance scalability for large datasets. Second, we will extend the framework to a broader range of Object ReID tasks, such as animal, product, or scene-specific ReID, by incorporating domain-specific priors and augmentation strategies. Third, we will explore advanced techniques, such as contrastive learning and adversarial regularization, to mitigate the impact of noisy pseudo-labels and improve model performance. We hope these directions will unlock the full potential of CORE-ReID V2 and inspire future advancements in UDA for Object ReID.

Author Contributions

Writing—original draft, T.Q.N. and O.D.A.P.; conceptualization, T.Q.N., O.D.A.P. and S.A.I; methodology, T.Q.N. and O.D.A.P.; software, T.Q.N., O.D.A.P. and S.A.I.; validation, T.Q.N., H.D.P. and R.T.; formal analysis, T.Q.N. and O.D.A.P.; investigation, T.Q.N., O.D.A.P. and S.A.I.; resources, T.Q.N., O.D.A.P., H.D.P. and R.T.; data curation, T.Q.N., S.A.I., H.D.P. and R.T.; writing—review and editing, T.Q.N., O.D.A.P., S.A.I., H.D.P. and R.T.; visualization, T.Q.N., H.D.P. and R.T.; supervision, O.D.A.P.; project administration, T.Q.N.; funding acquisition, T.Q.N. and O.D.A.P. All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported by a Grant-in-Aid for Scientific Research (KAKENHI) from the Japan Society for the Promotion of Science (JSPS). Grant number: 25K15160.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Acknowledgments

The authors sincerely appreciate the support of CyberCore Co., Ltd. for providing the necessary training environments. They also extend their gratitude to the anonymous reviewers for their insightful feedback, which has contributed to enhancing the quality of this paper. Additionally, the authors would like to thank Raymond Swannack, a Ph.D. candidate at the Graduate School of Information and Computer Science, Iwate Prefectural University, for his assistance in proofreading the manuscript.

Conflicts of Interest

The authors declare that they have no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| ECAB | Efficient Channel Attention Block |
| BMFN | Bidirectional Mean Feature Normalization |
| CBAM | Convolutional Block Attention Module |
| CNN | Convolutional Neural Network |
| CORE-ReID | Comprehensive Optimization and Refinement through Ensemble fusion in Domain Adaptation for Person Re-identification |
| HHL | Hetero-Homogeneous Learning |
| MMFA | Multi-task Mid-level Feature Alignment |
| MMT | Mutual Mean-Teaching |
| Object ReID | Object Re-identification |
| SECAB | Simplified Efficient Channel Attention Block |
| SOTA | State-Of-The-Art |
| SSG | Self-Similarity Grouping |
| UDA | Unsupervised Domain Adaptation |
| UNRN | Uncertainty-Guided Noise-Resilient Network |

References

- Liu, X.; Liu, W.; Mei, T.; Ma, H. A Deep Learning-Based Approach to Progressive Vehicle Re-identification for Urban Surveillance. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 2016; pp. 869–884.

- Liu, X.; Liu, W.; Mei, T.; Ma, H. PROVID: Progressive and Multimodal Vehicle Reidentification for Large-Scale Urban Surveillance. IEEE Transactions on Multimedia 2018, 20, 645-658. [CrossRef]

- Wang, Z.; He, L.; Tu, X.; Zhao, J.; Gao, X.; Shen, S. Robust Video-Based Person Re-Identification by Hierarchical Mining. IEEE Transactions on Circuits and Systems for Video Technology 32, 8179 - 8191. [CrossRef]

- Zheng, L.; Yang, Y.; Hauptmann, A.G. Person Re-identification: Past, Present and Future. arXiv 2016, arXiv:1610.02984.

- Chang, Z.; Zheng, S. Revisiting Multi-Granularity Representation via Group Contrastive Learning for Unsupervised Vehicle Re-identification. ArXiv 2024, abs/2410.21667. [CrossRef]

- Luo, H.; Jiang, W.; Gu, Y.; Liu, F.; Liao, X.; Lai, S. A Strong Baseline and Batch Normalization Neck for Deep Person Re-Identification. IEEE Transactions on Multimedia 22, 2597 - 2609. [CrossRef]

- Sharma, C.; Kapil, S.R.; Chapman, D. Person Re-Identification with a Locally Aware Transformer. ArXiv 2021, abs/2106.03720. [CrossRef]

- Chen, W.; Xu, X.; Jia, J.; Luo, H.; Wang, Y.; Wang, F. Beyond Appearance: A Semantic Controllable Self-Supervised Learning Framework for Human-Centric Visual Tasks. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17-24 June 2023; pp. 15050-15061.

- Almeida, E.; Silva, B.; Batista, J. Strength in Diversity: Multi-Branch Representation Learning for Vehicle Re-Identification. In Proceedings of the 2023 IEEE 26th International Conference on Intelligent Transportation Systems (ITSC), Bilbao, Spain, 24-28 September 2023; pp. 4690-4696.

- Li, J.; Gong, X. Prototypical Contrastive Learning-based CLIP Fine-tuning for Object Re-identification. arXiv 2023. [CrossRef]

- Nguyen, T.Q.; Prima, O.D.A.; Hotta, K. CORE-ReID: Comprehensive Optimization and Refinement through Ensemble Fusion in Domain Adaptation for Person Re-Identification. Software 2024, 3, 227-249. [CrossRef]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27-30 June 2016; pp. 770-778.

- Touvron, H.; Cord, M.; Douze, M.; Massa, F.; Sablayrolles, A.; Jegou, H. Training Data-efficient Image Transformers & Distillation Through Attention. In Proceedings of the 38th International Conference on Machine Learning, Virtual, 2021; pp. 10347 - 10357.

- Song, L.; Wang, C.; Zhang, L.; Du, B.; Zhang, Q.; Huang, C.; Wang, X. Unsupervised Domain Adaptive Re-identification: Theory and Practice. Pattern Recognition 2020, 102, 11. [CrossRef]

- Ge, Y.; Chen, D.; Li, H. Mutual Mean-Teaching: Pseudo Label Refinery for Unsupervised Domain Adaptation on Person Re-identification. ArXiv 2020. [CrossRef]

- Fu, Y.; Wei, Y.; Wang, G.; Zhou, Y.; Shi, H.; Huang, T.S. Self-Similarity Grouping: A Simple Unsupervised Cross Domain Adaptation Approach for Person Re-Identification. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea (South), 27 October 2019 - 02 November 2019; pp. 6111-6120.

- Ding, J.; Zhou, X. Learning Feature Fusion for Unsupervised Domain Adaptive Person Re-identification. In Proceedings of the 2022 26th International Conference on Pattern Recognition (ICPR), Montreal, QC, Canada, 21–25 August 2022; pp. 2613-2619.

- Zhou, R.; Wang, Q.; Cao, L.; Xu, J.; Zhu, X.; Xiong, X.; Zhang, H.; Zhong, Y. Dual-Level Viewpoint-Learning for Cross-Domain Vehicle Re-Identification. Electronics 2024, 13. [CrossRef]

- Arthur, D.; Vassilvitskii, S. K-means++: The Advantages of Careful Seeding. In Proceedings of the Eighteenth Annual ACM-SIAM Symposium on Discrete Algorithms, New Orleans, Louisiana, 2007; pp. 1027 - 1035.

- He, S.; Luo, H.; Wang, P.; Wang, F.; Li, H.; Jiang, W. TransReID: Transformer-based Object Re-Identification. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10-17 October 2021; pp. 15013-15022.

- Chen, Y.; Zhu, X.; Gong, S. Instance-Guided Context Rendering for Cross-Domain Person Re-Identification. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea (South), 27 October - 02 November 2019; pp. 232-242.

- Deng, W.; Zheng, L.; Ye, Q.; Kang, G.; Yang, Y.; Jiao, J. Image-Image Domain Adaptation with Preserved Self-Similarity and Domain-Dissimilarity for Person Re-identification. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18-23 June 2018; pp. 994-1003.

- Zhang, X.; Ge, Y.; Qiao, Y.; Li, H. Refining Pseudo Labels with Clustering Consensus over Generations for Unsupervised Object Re-identification. In Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20-25 June 2021; pp. 3435-3444.

- Saenko, K.; Kulis, B.; Fritz, M.; Darrell, T. Adapting Visual Category Models to New Domains. In Proceedings of the Computer Vision – ECCV 2010, Berlin, Heidelberg, 5-11 September, 2010; pp. 213–226.