Submitted:

23 May 2025

Posted:

26 May 2025


Abstract

Keywords:

1. Introduction

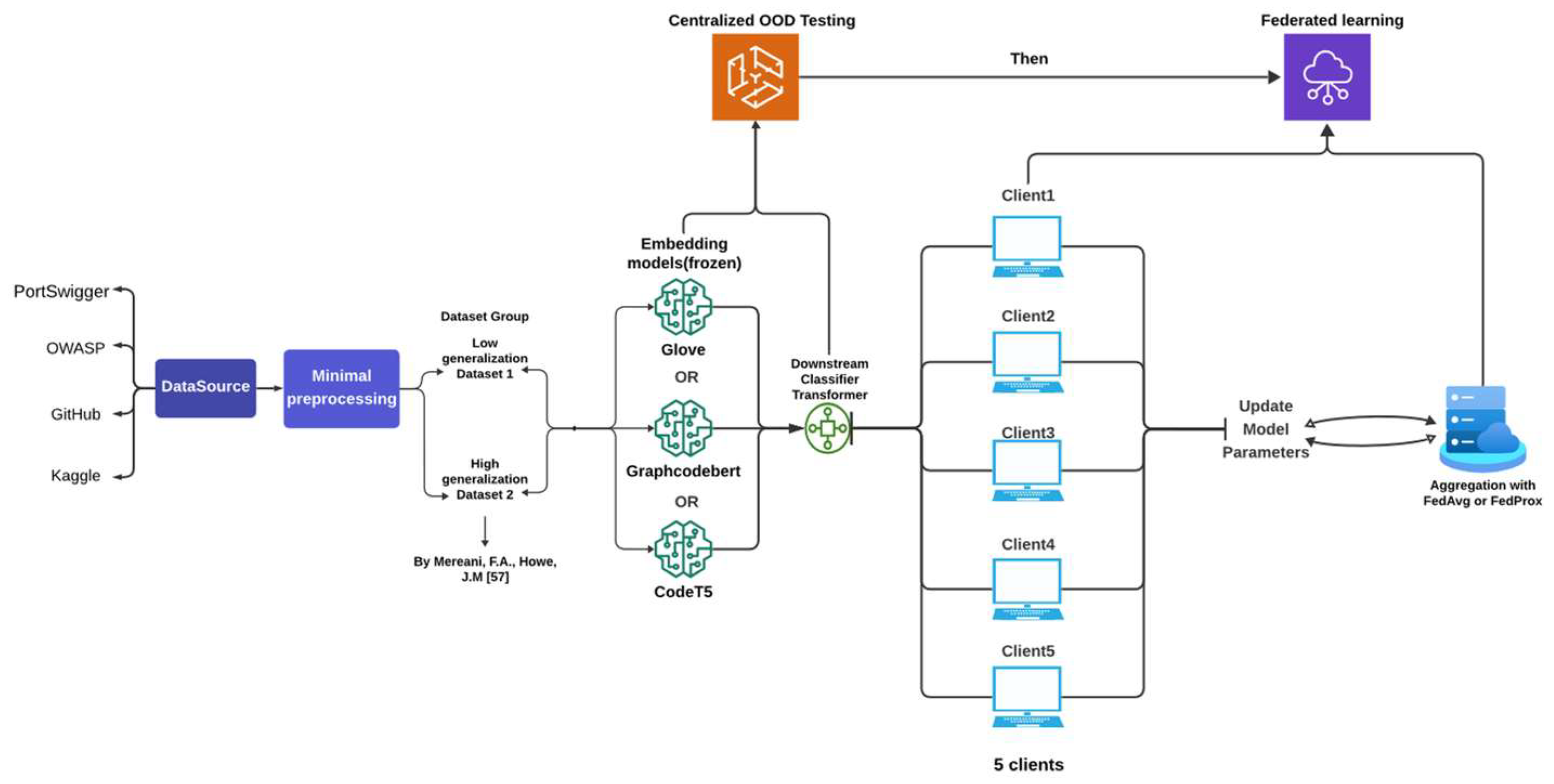

- We design a federated learning (FL) framework for XSS detection under structurally non-IID client distributions, incorporating diverse XSS types, obfuscation styles, and attack patterns. This setup reflects real-world asymmetry, where some clients contain partial or ambiguous indicators and others contain clearer attacks. Importantly, structural divergence also affects negatives, whose heterogeneity is a key yet underexplored factor in generalisation failure. Our framework enables the study of bidirectional OOD, where fragmented negatives cause high false positive rates under distribution mismatch.

- Unlike prior work that mixes lexical or contextual features across splits, we maintain strict structural separation between training and testing data. By using an external dataset [57] as an OOD domain, we isolate bidirectional distributional shifts across both classes under FL. Our analysis shows that generalisation failure can be driven by structurally complex benign samples, not only by rare or obfuscated attacks, emphasizing the importance of structure-aware dataset design.

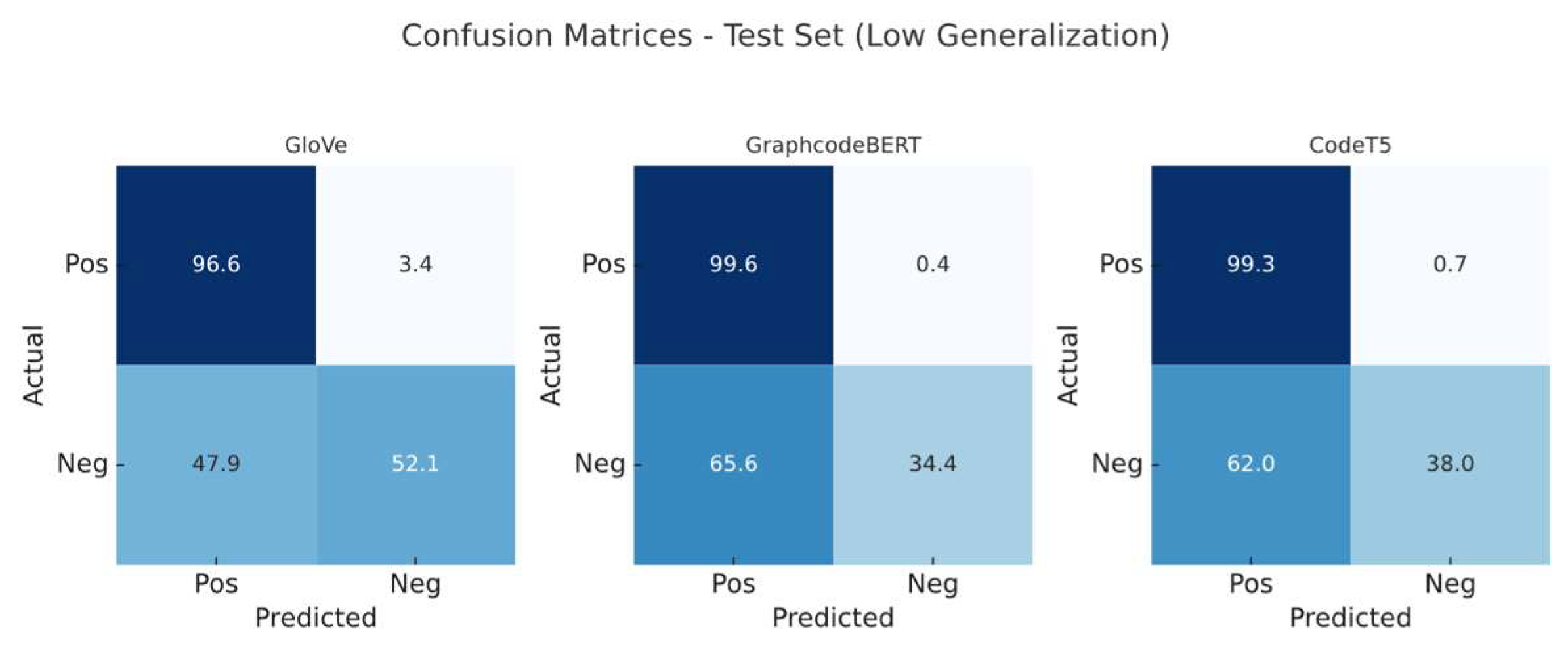

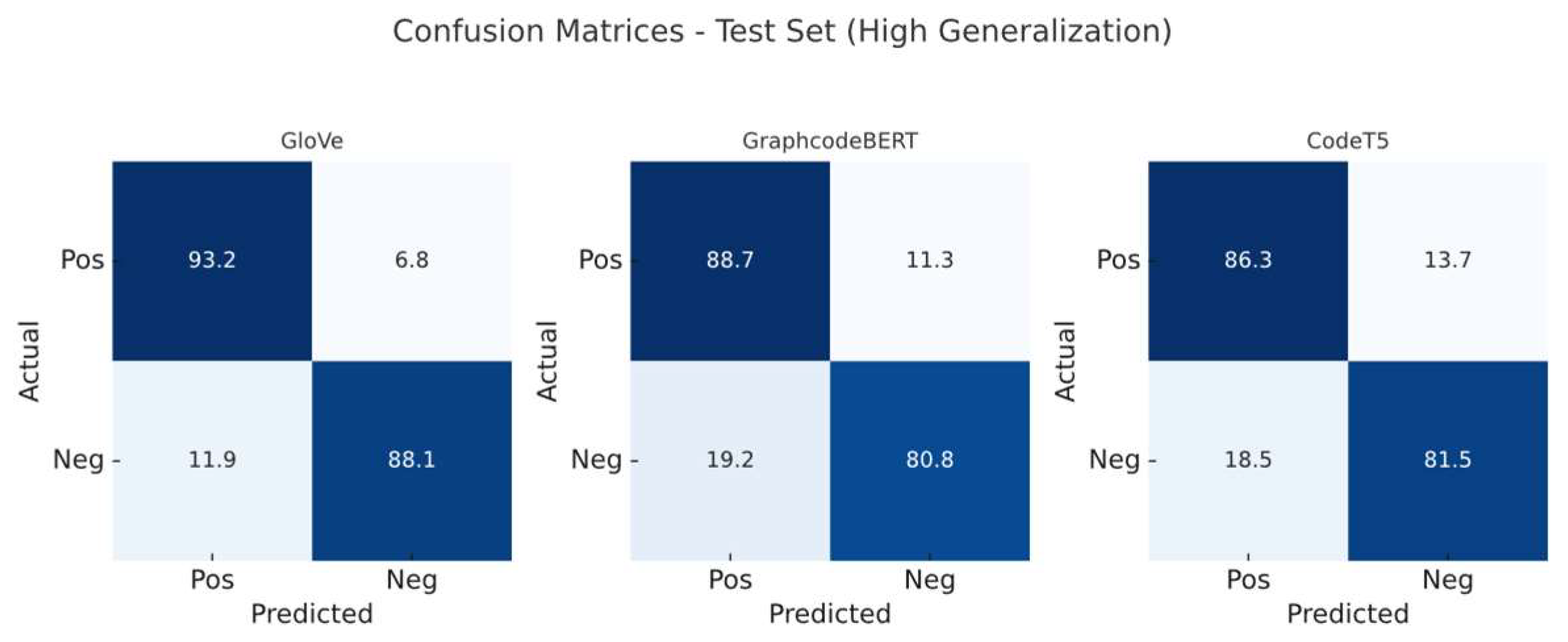

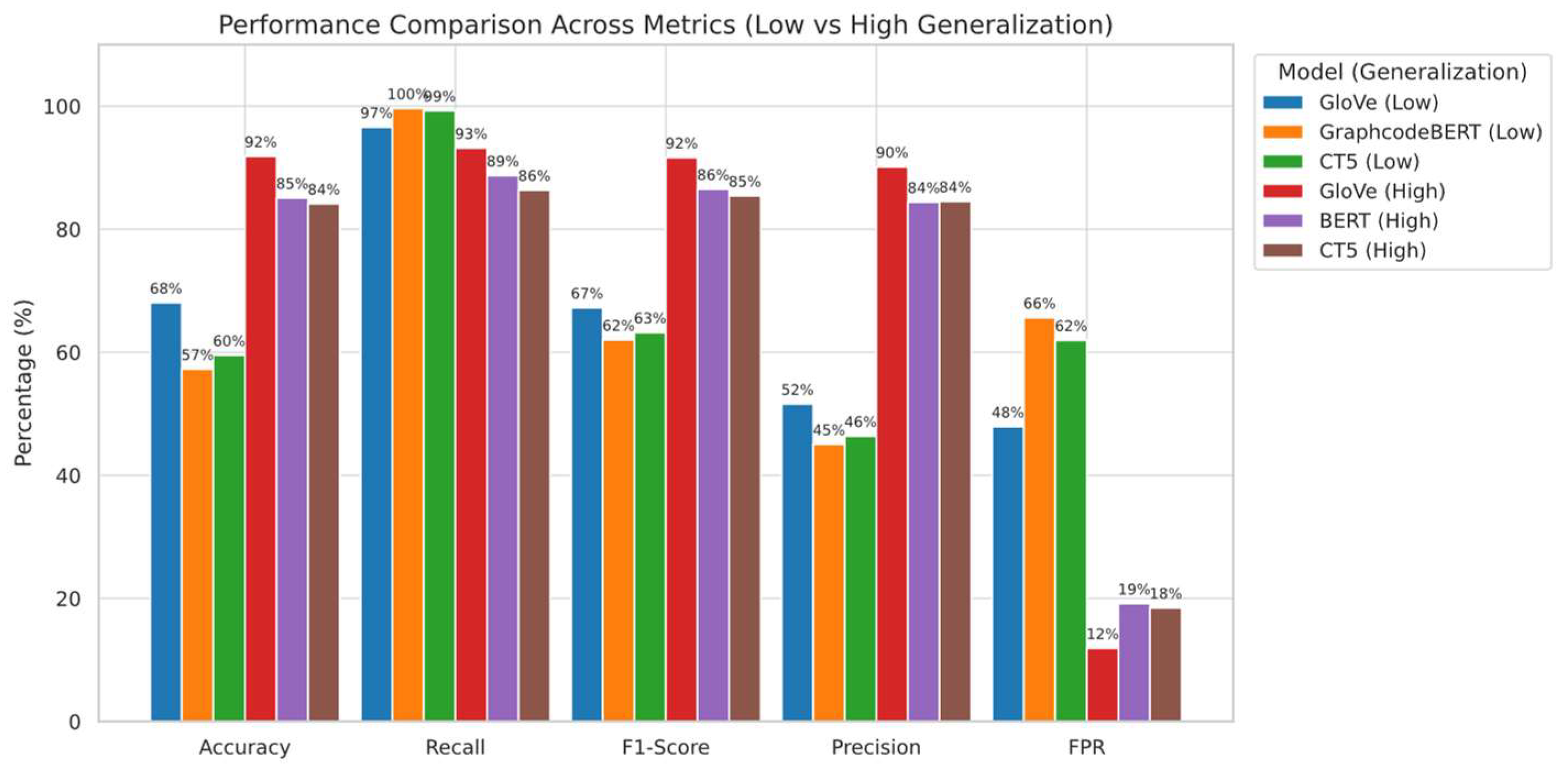

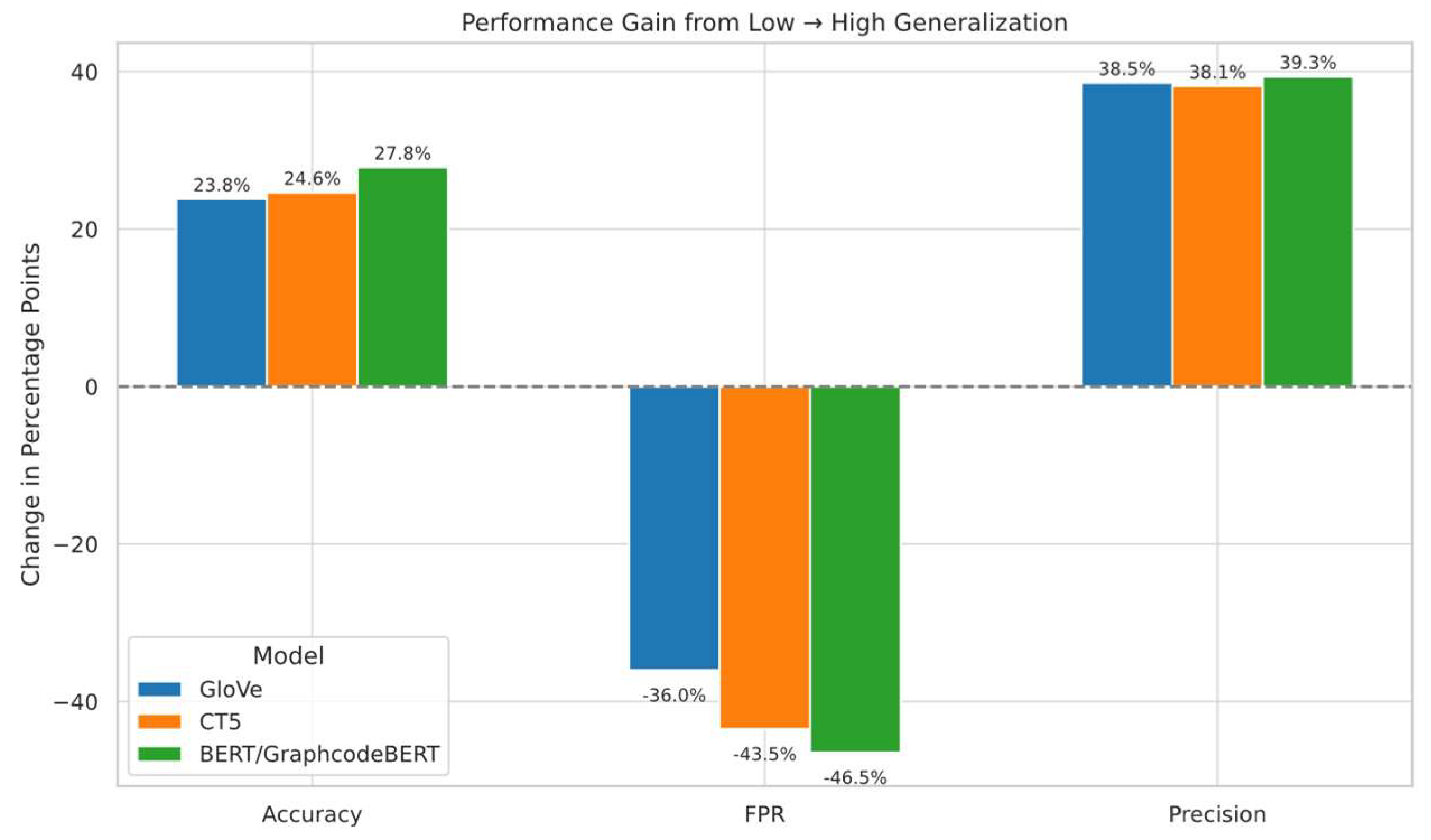

- We compare three embedding models (GloVe [24], CodeT5 [26], GraphCodeBERT [25]) in centralised and federated settings, showing that generalisation depends more on embedding compatibility with class heterogeneity than on model capacity. Using divergence metrics and ablation studies, we demonstrate that structurally complex and underrepresented negatives lead to severe false positives. Static embeddings like GloVe show more robust generalisation under structural OOD, indicating that stability relies more on representational resilience than expressiveness.

2. Related work

3. Methodology and Experimental Design

3.1. Settings and Rationale

3.1.1. Experiment Environment

3.1.2. Embedding Selection Rationale

- GloVe-6B-300d (static embedding): A word embedding model that maps words to fixed-dimensional vectors based on co-occurrence statistics.

- GraphCodeBERT-base (BERT-derived, pre-trained with data flow graphs): A transformer trained on code using masked language modeling, edge prediction, and token-graph alignment. It models syntax and variable dependencies, making it suited to well-structured XSS payloads.

- CodeT5-base (sequence-to-sequence, code-aware): A unified encoder-decoder model pre-trained on large-scale code corpora. In our setting, we utilize the encoder component to extract contextual embeddings. CodeT5 captures both local and global structural patterns through its masked span prediction and identifier-aware objectives, making it suitable for modeling fragmented or obfuscated payloads that lack explicit syntax trees.
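The defining property of a static embedding, as described for GloVe above, is that a token always maps to the same vector regardless of its context. The sketch below illustrates this with a toy lookup table. The three-dimensional vectors, the regex tokenizer, and the mean-pooling step are all assumptions made for illustration; they are not the paper's preprocessing pipeline or the real GloVe-6B-300d vectors.

```python
import re

# Hypothetical 3-dimensional vectors standing in for GloVe-6B-300d entries.
TOY_VECTORS = {
    "script": [0.9, 0.1, 0.0],
    "alert": [0.8, 0.2, 0.1],
    "img": [0.1, 0.9, 0.0],
}
UNKNOWN = [0.0, 0.0, 0.0]  # assumed fallback for out-of-vocabulary tokens


def tokenize(payload):
    """Lowercase the payload and split it into alphanumeric runs (assumed tokenizer)."""
    return re.findall(r"[a-z0-9]+", payload.lower())


def embed(payload, vectors=TOY_VECTORS):
    """Mean-pool the fixed vectors of all tokens in the payload."""
    toks = tokenize(payload)
    if not toks:
        return list(UNKNOWN)
    dim = len(UNKNOWN)
    total = [0.0] * dim
    for tok in toks:
        vec = vectors.get(tok, UNKNOWN)
        for i in range(dim):
            total[i] += vec[i]
    return [x / len(toks) for x in total]


if __name__ == "__main__":
    print(embed("<script>alert(1)</script>"))
```

Because the lookup ignores context, an obfuscated token that is missing from the vocabulary simply falls back to the unknown vector, whereas a contextual encoder would produce a representation that depends on the surrounding tokens.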

3.1.3. Freeze Embedding

3.1.4. Downstream Classifier

3.1.5. Optimization and Aggregation

3.2. Dataset Design and Explanation

3.2.1. Dataset Construction

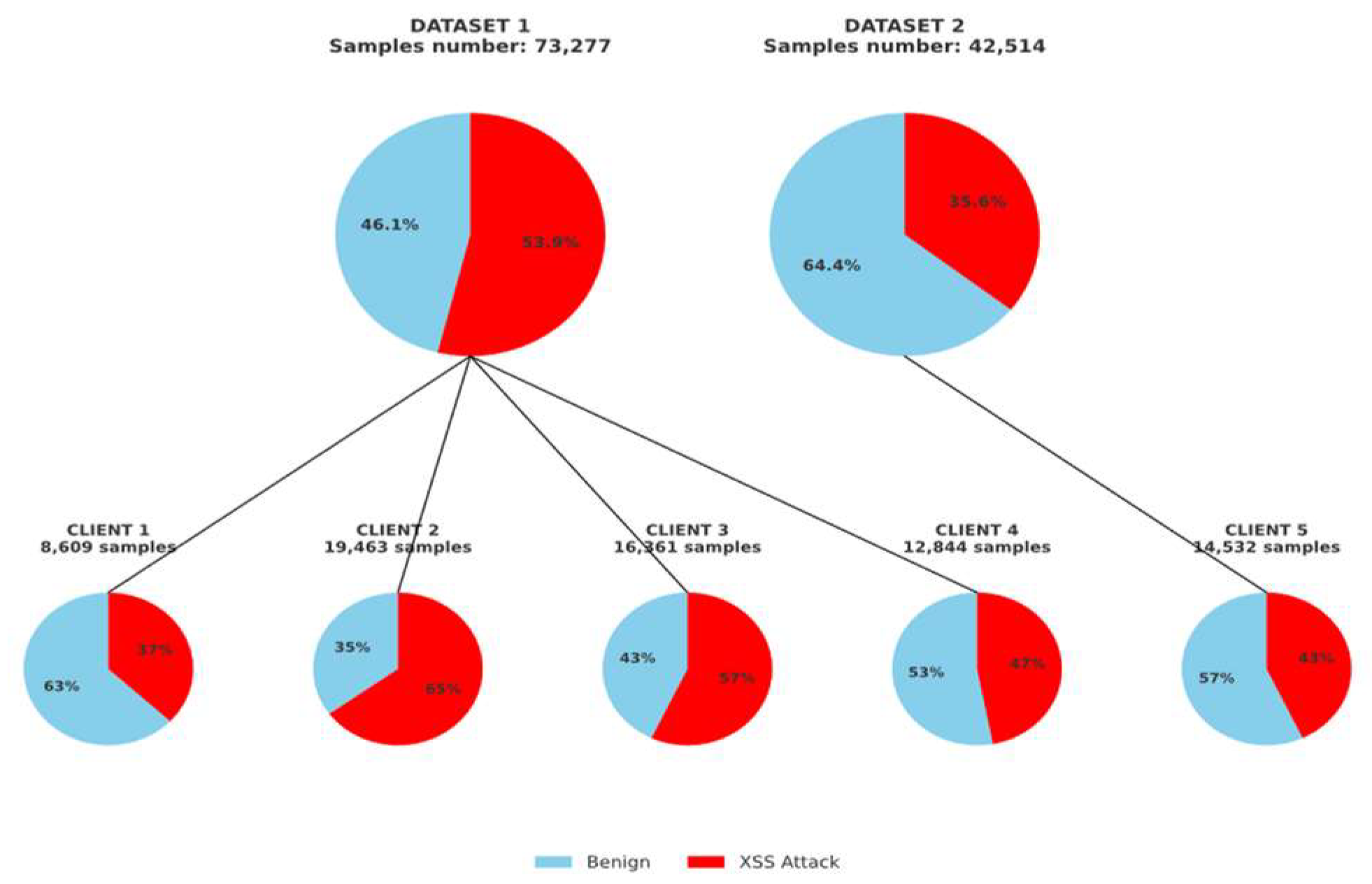

- Dataset 1: A manually curated training set (73,277 samples; 39,134 positives) sourced from OWASP, GitHub, and PortSwigger. It includes diverse XSS types (Reflected, Stored, DOM-based) and obfuscation styles. Positive samples are often partial or fragmented payloads, while negative samples are heterogeneous, including mixed-format code snippets, incomplete traces, and unrelated injections.

- Dataset 2: A structurally consistent test set (42,514 samples; 15,137 positives) from [57], dominated by fully-formed Reflected XSS payloads (~95.7%) with high lexical and syntactic regularity. Its negative samples are more cleanly separated (e.g., full URLs, plain text), resulting in lower structural ambiguity.

- We partition Dataset 1 across five clients with attack-type and source-specific imbalance;

- We use Dataset 2 as an out-of-distribution (OOD) test set to evaluate generalisation under structural shift.
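A partition with attack-type imbalance across five clients can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's actual split: the function name `partition_non_iid`, the `dominant_share` parameter, and the rule of giving each attack type one "home" client are all hypothetical.

```python
import random
from collections import Counter


def partition_non_iid(samples, num_clients=5, dominant_share=0.8, seed=0):
    """Assign (payload, attack_type) samples to clients with type-specific skew.

    Each attack type gets one "home" client. A sample goes to its home client
    with probability dominant_share, otherwise to a uniformly random client.
    """
    rng = random.Random(seed)
    types = sorted({attack_type for _, attack_type in samples})
    home = {t: i % num_clients for i, t in enumerate(types)}
    clients = [[] for _ in range(num_clients)]
    for payload, attack_type in samples:
        if rng.random() < dominant_share:
            target = home[attack_type]
        else:
            target = rng.randrange(num_clients)
        clients[target].append((payload, attack_type))
    return clients


def type_histogram(client):
    """Count how many samples of each attack type a client holds."""
    return Counter(attack_type for _, attack_type in client)


if __name__ == "__main__":
    toy = [(f"payload-{t}-{i}", t)
           for t in ("reflected", "stored", "dom", "benign")
           for i in range(300)]
    for idx, client in enumerate(partition_non_iid(toy)):
        print(idx, dict(type_histogram(client)))
```

With four types and five clients, one client receives only spill-over samples, which mimics a participant with sparse and mixed local data.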

3.2.3. Semantic-Preserving Substitution and Lexical Regularisation

3.2.4. Quantitative Lexical-Level Analysis Reveals Distributional Divergence

3.2.5. Visualisation of Different Datasets’ Positive Samples

3.3. Experimental Procedure Overview

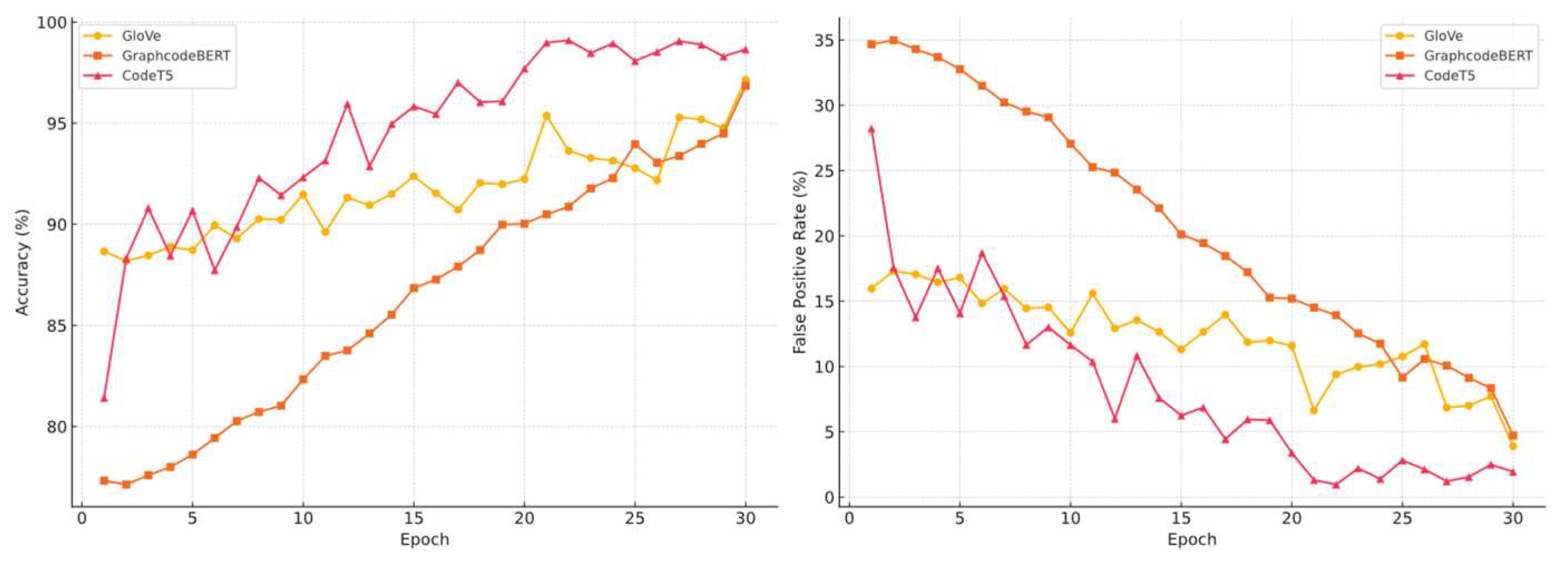

- Centralized Embedding Evaluation: We tested three embedding models (GloVe, GraphCodeBERT, and CodeT5) under centralised settings, using Dataset 1 for training and Dataset 2 for testing. This setup evaluates each model’s ability to generalise to unseen attack structures in an OOD context.

- Dataset Swap OOD Test: To further explore the impact of feature distribution divergence, we reversed the datasets: training on Dataset 2 and testing on Dataset 1. This demonstrates how models trained on one domain generalise (or fail to generalise) to structurally distinct inputs.

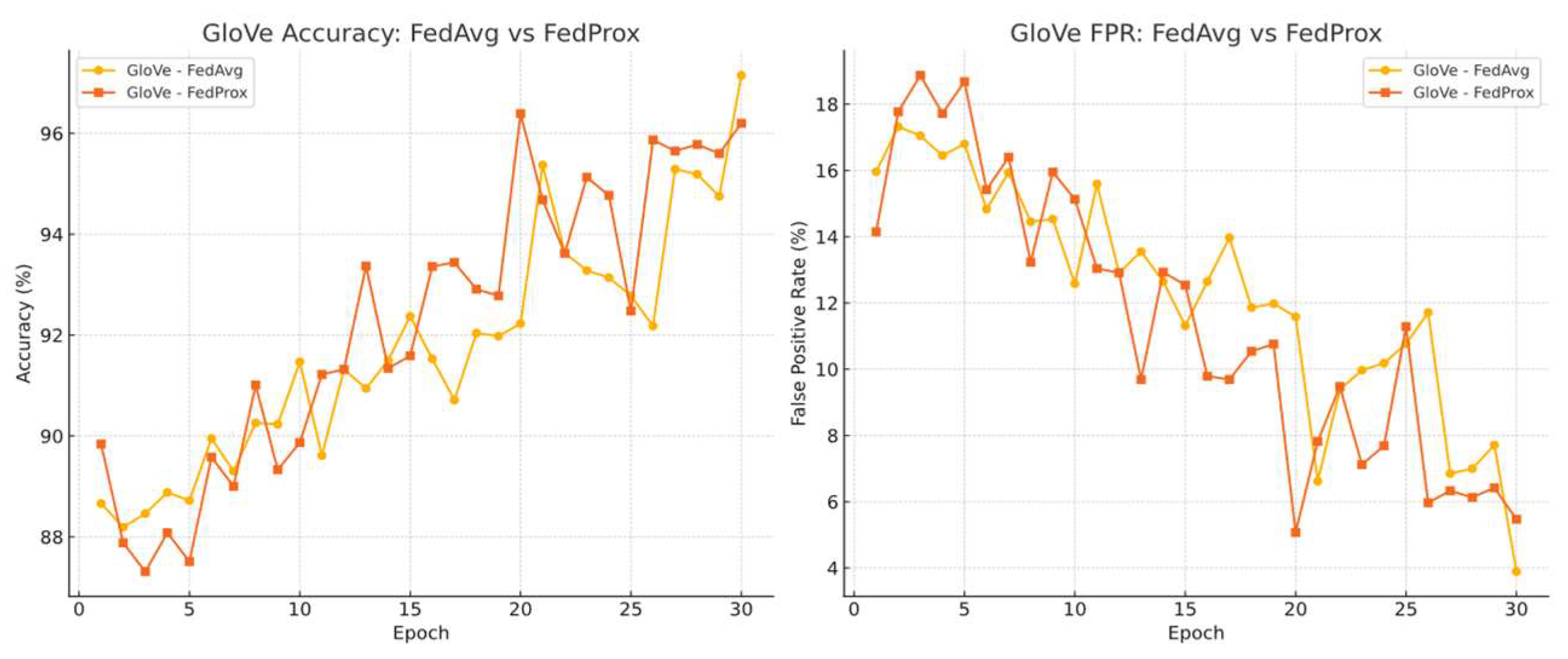

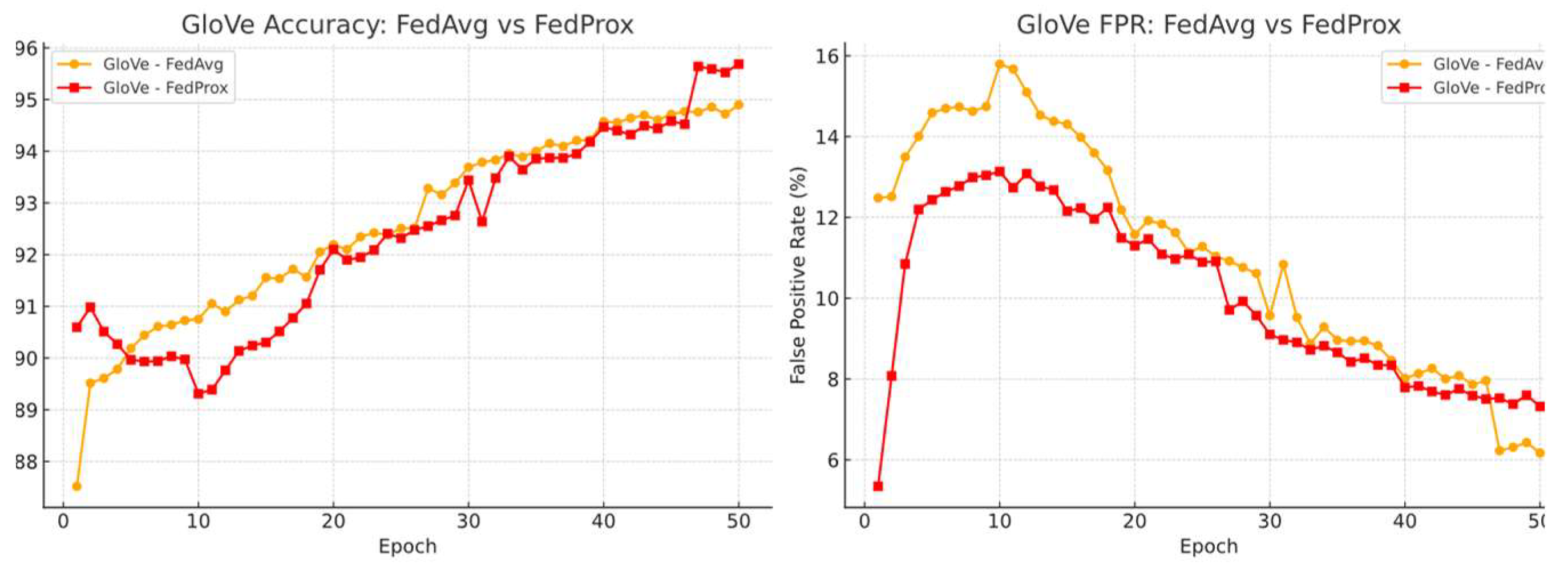

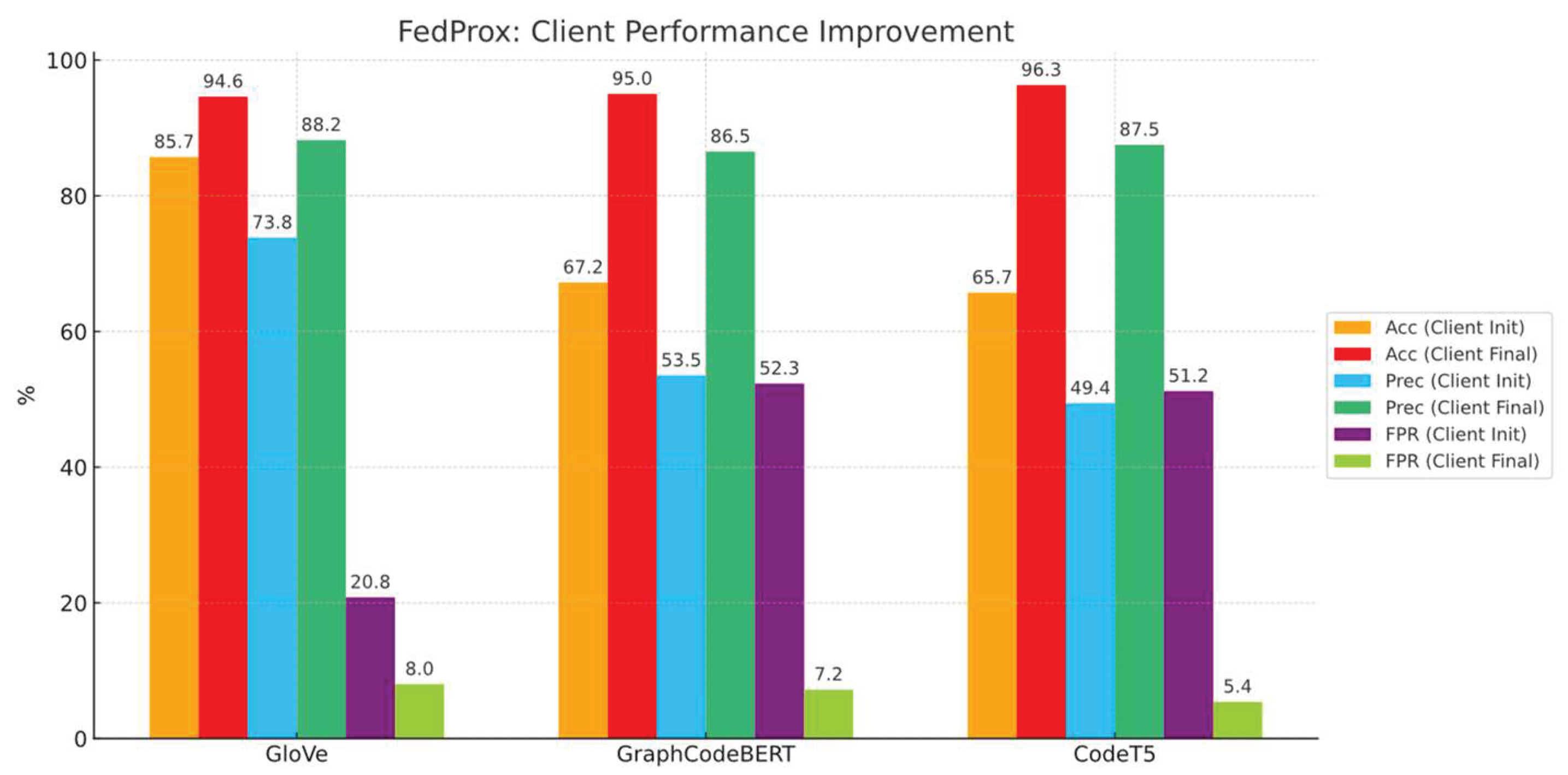

- Federated Learning with Non-IID Clients: We simulated a more realistic extreme horizontal FL setup with five clients. Dataset 1 and Dataset 2 were partitioned across clients to introduce heterogeneous distributions. Each client was trained locally and evaluated on unseen data from the other dataset. We used FedAvg and FedProx for aggregation, evaluating accuracy, false positive rate, recall, and precision.

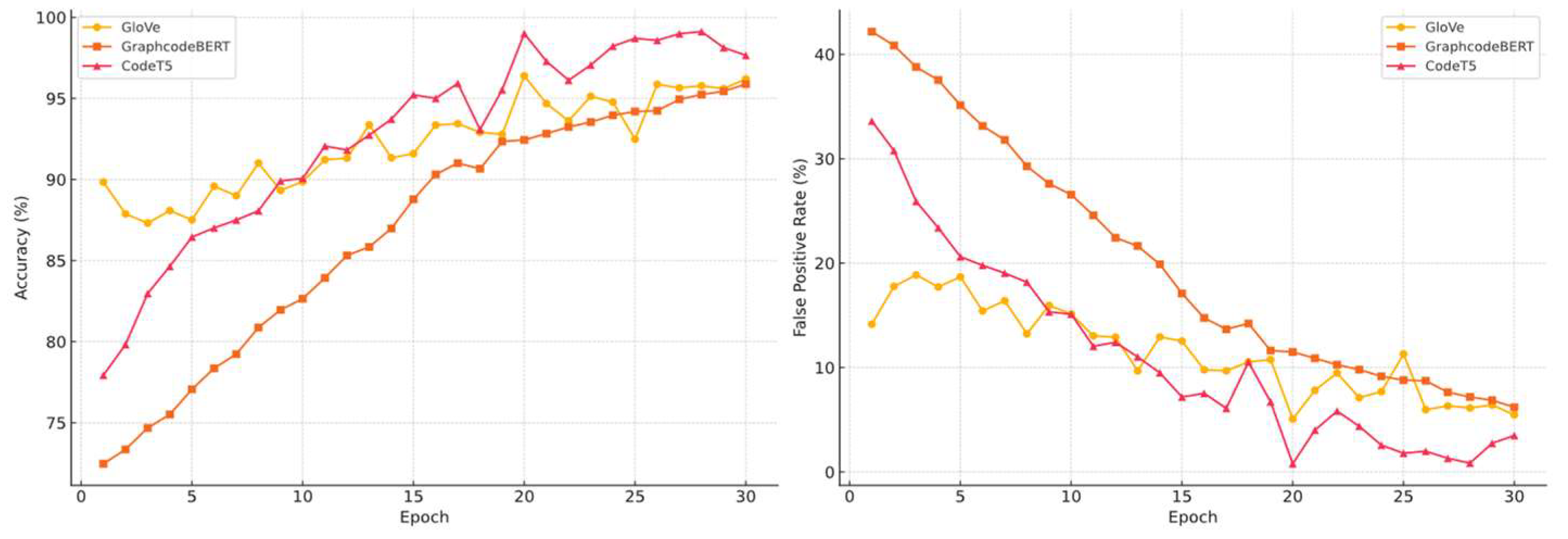

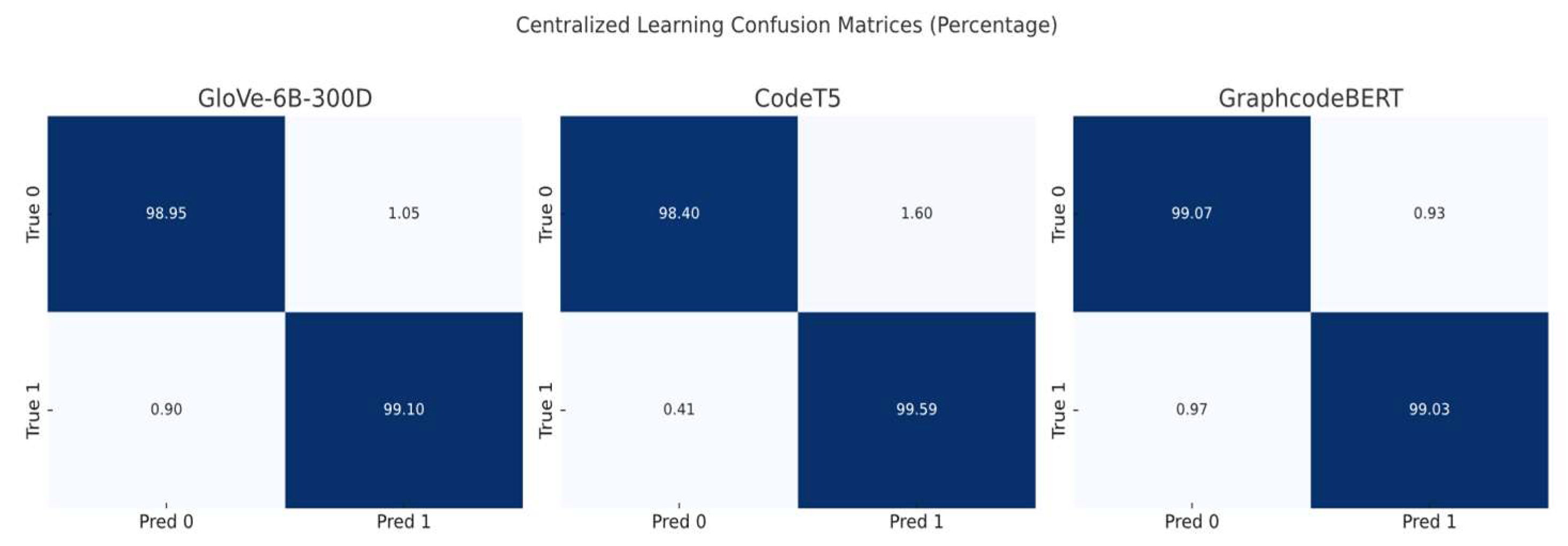

- Centralised In-Distribution Control Test: As a baseline, we trained and evaluated classifiers built on the three embedding models on a single, fully centralised set that merges both datasets. This setup lets us contrast truly centralised learning with our federated-learning regime, isolate any performance gains attributable to data decentralisation, and expose the limits of federated learning when distributional heterogeneity is removed.
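The two aggregation schemes named in the federated step differ mainly on the client side. FedAvg averages client parameters weighted by local sample count, while FedProx adds a proximal term $\frac{\mu}{2}\lVert w - w^{\text{global}}\rVert^2$ to each client's local objective. The sketch below shows both on plain Python lists. The function names, learning rate, and `mu` value are illustrative assumptions, not the configuration used in the experiments.

```python
def fedavg(client_weights, client_sizes):
    """Sample-size-weighted average of client parameter vectors (FedAvg)."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


def fedprox_step(local_w, global_w, grad, lr=0.1, mu=0.01):
    """One local gradient step with the FedProx proximal term.

    The local objective is F_k(w) + (mu / 2) * ||w - w_global||^2, so the
    gradient used for the update is grad F_k(w) + mu * (w - w_global).
    Setting mu = 0 recovers a plain SGD step.
    """
    return [
        w - lr * (g + mu * (w - gw))
        for w, gw, g in zip(local_w, global_w, grad)
    ]


if __name__ == "__main__":
    # Two toy clients holding 1 and 3 samples respectively.
    print(fedavg([[1.0, 2.0], [3.0, 4.0]], [1, 3]))
    print(fedprox_step([1.0], [0.0], [0.0], lr=0.1, mu=1.0))
```

The proximal term pulls each client's parameters back toward the current global model, which limits client drift when local distributions diverge.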

4. Independent Client Testing with OOD Distributed Data

4.1. Generalisation Performance Analysis

4.1.1. Sensitivity of Embeddings to Regularization Under OOD

4.2. Embedding Level Analysis

4.2.1. Kernel-Based Statistical Validation of OOD Divergence

5. Federated Learning Tests Under Non-IID Scenarios

5.1. Federated Learning Settings

5.1.1. Dataset Distribution

5.1.2. Federated Learning Setup

5.1.3. Aggregation Algorithms

5.2. Federated Learning Performance

5.2.1. Centralised No Data Isolation Testing Baseline

5.3. Federated Learning Result Analysis

6. Conclusions

7. Limitations and Future Work

- Incorporating Partial Participation with Invariant Learning. Our current setup assumes synchronous client participation per round, whereas real-world FL often involves dropout or intermittent availability. While we do not explicitly simulate asynchronous updates, recent methods such as FEDIIR [55] have shown robustness under partial participation by implicitly aligning inter-client gradients to learn invariant relationships. Extending such approaches to our structure-variant OOD setting may improve robustness in realistic, non-synchronous FL environments.

- Data Quality as a Structural Bottleneck. A key challenge in federated XSS detection lies not in algorithmic optimisation, but in the difficulty of acquiring high-quality, generalisable data across all clients. Our results suggest that if no clients possess substantial structural diversity or sufficient sample representation, the global model’s generalisation ability will be severely impaired, even with robust aggregation. Federated learning in XSS detection contexts fundamentally depends on partial data sufficiency among clients. As part of future work, we plan to expand the dataset to include more structurally complex XSS payloads, especially context-dependent polyglot attacks that combine HTML, CSS, and JavaScript in highly obfuscated forms. Such samples are essential to better simulate real-world, evasive behaviours and stress-test federated models under extreme structural variability.

- Deployment Feasibility and Optimisation Needs. While the current framework employs a lightweight Transformer classifier, future work may explore further simplification of the downstream classifier through distilled models (e.g., TinyBERT), linear-attention architectures (e.g., Performer), or hybrid convolution-attention designs to reduce computational overhead and improve real-world deployability.

Abbreviations

References

- Alqura’n, R., et al.: Advancing XSS Detection in IoT over 5G: A Cutting-Edge Artificial Neural Network Approach. IoT 5(3), 478–508 (2024).

- Jazi, M., Ben-Gal, I.: Federated Learning for XSS Detection: A Privacy-Preserving Approach. In: Proceedings of the 16th International Joint Conference on Knowledge Discovery, Knowledge Engineering and Knowledge Management, pp. 283–293. SCITEPRESS, Porto, Portugal (2024).

- Tan, X., Xu, Y., Wu, T., Li, B.: Detection of Reflected XSS Vulnerabilities Based on Paths-Attention Method. Appl. Sci. 13(13), 7895 (2023).

- Fang, Y., Li, Y., Liu, L., Huang, C.: DeepXSS: Cross Site Scripting Detection Based on Deep Learning. In: Proceedings of the 2018 International Conference on Computing and Artificial Intelligence, pp. 47–51. ACM, Chengdu (2018).

- Abu Al-Haija, Q.: Cost-effective detection system of cross-site scripting attacks using hybrid learning approach. Results Eng. 19, 101266 (2023).

- Nagarjun, P., Shakeel, S.: Ensemble Methods to Detect XSS Attacks. Int. J. Adv. Comput. Sci. Appl. 11(5) (2020).

- Tariq, I., et al.: Resolving cross-site scripting attacks through genetic algorithm and reinforcement learning. Expert Syst. Appl. 168, 114386 (2021).

- MITRE: CWE Top 25 Most Dangerous Software Weaknesses. https://cwe.mitre.org/top25/archive/2023/2023_top25_list.html (2023). Accessed 18 Aug 2024.

- Sakazi, I., Grolman, E., Elovici, Y., Shabtai, A.: STFL: Utilizing a Semi-Supervised, Transfer-Learning, Federated-Learning Approach to Detect Phishing URL Attacks. In: 2024 International Joint Conference on Neural Networks (IJCNN), pp. 1–10. IEEE, Yokohama, Japan (2024).

- Bakır, R., Bakır, H.: Swift Detection of XSS Attacks: Enhancing XSS Attack Detection by Leveraging Hybrid Semantic Embeddings and AI Techniques. Arab. J. Sci. Eng. (2024).

- Li, L., et al.: A review of applications in federated learning. Comput. Ind. Eng. 149, 106854 (2020).

- Rathore, S., Sharma, P.K., Park, J.H.: XSSClassifier: An Efficient XSS Attack Detection Approach Based on Machine Learning Classifier on SNSs. J. Inf. Process. Syst. 13(4), 1014–1028 (2017).

- Byun, J.-E., Song, J.: A general framework of Bayesian network for system reliability analysis using junction tree. Reliab. Eng. Syst. Saf. 216, 107952 (2021).

- Côté, P.-O., et al.: Data cleaning and machine learning: a systematic literature review. Autom. Softw. Eng. 31(2), 54 (2024).

- Kaur, J., Garg, U., Bathla, G.: Detection of cross-site scripting (XSS) attacks using machine learning techniques: a review. Artif. Intell. Rev. 56(11), 12725–12769 (2023).

- Rodríguez-Galán, G., Torres, J.: Personal data filtering: a systematic literature review comparing the effectiveness of XSS attacks in web applications vs cookie stealing. Annals of Telecommunications (2024).

- Fang, Y., et al.: RLXSS: Optimizing XSS Detection Model to Defend Against Adversarial Attacks Based on Reinforcement Learning. Future Internet 11(8), 177 (2019).

- Zhao, Y., et al.: Federated Learning with Non-IID Data. arXiv preprint arXiv:1806.00582 (2018). https://doi.org/10.48550/arXiv.1806.00582.

- Flower Framework Documentation. https://flower.ai/docs/framework/_modules/flwr/server/strategy/fedprox.html#FedProx (2024). Accessed 20 Sep 2024.

- Thajeel, I.K., et al.: Machine and Deep Learning-based XSS Detection Approaches: A Systematic Literature Review. J. King Saud Univ. Comput. Inf. Sci. 35(7), 101628 (2023).

- McMahan, H.B., et al.: Communication-Efficient Learning of Deep Networks from Decentralised Data. In: Proceedings of the 20th International Conference on Artificial Intelligence and Statistics, pp. 1273–1282. PMLR (2017).

- Li, T., et al.: Federated Optimization in Heterogeneous Networks. arXiv preprint arXiv:1812.06127 (2020). https://arxiv.org/abs/1812.06127.

- Blanchard, P., et al.: Byzantine-Tolerant Machine Learning. arXiv preprint arXiv:1703.02757 (2017). https://arxiv.org/abs/1703.02757.

- Pennington, J., et al.: GloVe: Global Vectors for Word Representation. https://nlp.stanford.edu/projects/GloVe/ (2014). Accessed 20 Oct 2024.

- Guo, D., et al.: GraphCodeBERT: Pre-training Code Representations with Data Flow. arXiv preprint arXiv:2009.08366 (2021). https://arxiv.org/abs/2009.08366.

- Wang, Y., Wang, W., Joty, S., Hoi, S.C.H.: CodeT5: Identifier-aware Unified Pre-trained Encoder-Decoder Models for Code Understanding and Generation. In: Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing (EMNLP), pp. 8696–8708 (2021).

- NF-ToN-IoT Dataset. https://staff.itee.uq.edu.au/marius/NIDS_datasets/ (2024). Accessed 20 Aug 2024.

- CICIDS2017 Dataset. https://www.unb.ca/cic/datasets/ids-2017.html (2024). Accessed 18 Aug 2024.

- Sarhan, M., Layeghy, S., Portmann, M.: Towards a Standard Feature Set for Network Intrusion Detection System Datasets. Mobile Netw. Appl. 27(1), 357–370 (2022).

- Qin, Q., et al.: Detecting XSS with Random Forest and Multi-Channel Feature Extraction. Comput. Mater. Contin. 80(1), 843–874 (2024).

- Sun, Z., Niu, X., Wei, E.: Understanding Generalisation of Federated Learning via Stability: Heterogeneity Matters. In: Proceedings of the 39th International Conference on Machine Learning, pp. 1–15. PMLR (2022).

- Chan, D.M., et al.: T-SNE-CUDA: GPU-Accelerated T-SNE and its Applications to Modern Data. In: 2018 30th International Symposium on Computer Architecture and High Performance Computing, pp. 330–338. IEEE, Lyon (2018).

- Vahidian, S., et al.: Rethinking Data Heterogeneity in Federated Learning: Introducing a New Notion and Standard Benchmarks. IEEE Trans. Artif. Intell. 5(3), 1386–1397 (2024).

- Lin, T.-Y., et al.: Focal Loss for Dense Object Detection. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 2980–2988. IEEE, Venice (2017).

- Rieke, N., et al.: The future of digital health with federated learning. npj Digit. Med. 3(1), 119 (2020).

- Li, Q., et al.: Federated Learning on Non-IID Data Silos: An Experimental Study. arXiv preprint arXiv:2102.02079 (2021). https://arxiv.org/abs/2102.02079.

- Khramtsova, E., et al.: Federated Learning For Cyber Security: SOC Collaboration For Malicious URL Detection. In: 2020 IEEE 40th International Conference on Distributed Computing Systems, pp. 1316–1321. IEEE, Singapore (2020).

- Zhou, Y., Wang, P.: An ensemble learning approach for XSS attack detection with domain knowledge and threat intelligence. Comput. Secur. 82, 261–269 (2019).

- Hannousse, A., Yahiouche, S., Nait-Hamoud, M.C.: Twenty-two years since revealing cross-site scripting attacks: A systematic mapping and a comprehensive survey. Comput. Sci. Rev. 52, 100634 (2024).

- Wang, T., Zhai, L., Yang, T., Luo, Z., Liu, S.: Selective privacy-preserving framework for large language models fine-tuning. Information Sciences 678, 121000 (2024).

- Du, H., Liu, S., Zheng, L., Cao, Y., Nakamura, A., Chen, L.: Privacy in Fine-tuning Large Language Models: Attacks, Defenses, and Future Directions (2025).

- Kirchner, R., Möller, J., Musch, M., Klein, D., Rieck, K., Johns, M.: Dancer in the Dark: Synthesizing and Evaluating Polyglots for Blind Cross-Site Scripting. In: Proceedings of the 33rd USENIX Security Symposium, Philadelphia, PA, USA, 14–16 August 2024. https://www.usenix.org/conference/usenixsecurity24/presentation/kirchner.

- OpenAI: GPT-4 Technical Report. https://openai.com/research/gpt-4 (2023).

- DeepSeek AI: DeepSeek-Coder-6.7B-Instruct. https://huggingface.co/deepseek-ai/deepseek-coder-6.7b-instruct (2024). Accessed Sep 2024.

- Chen, H., Zhao, H., Gao, Y., Liu, Y., Zhang, Z.: Parameter-Efficient Federal-Tuning Enhances Privacy Preserving for Speech Emotion Recognition. In: ICASSP 2025 - 2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 1–5. IEEE, Hyderabad, India (2025).

- Rao, B., Zhang, J., Wu, D., Zhu, C., Sun, X., Chen, B.: Privacy Inference Attack and Defense in Centralized and Federated Learning: A Comprehensive Survey. IEEE Trans. Artif. Intell. 6, 333–353 (2025).

- Peterson, D., Kanani, P., Marathe, V.J.: Private Federated Learning with Domain Adaptation. http://arxiv.org/abs/1912.06733 (2019).

- Zhang, J., Li, C., Qi, J., He, J.: A Survey on Class Imbalance in Federated Learning. http://arxiv.org/abs/2303.11673 (2023).

- Lin, J.: Divergence Measures Based on the Shannon Entropy. IEEE Transactions on Information Theory 37(1), 145–151 (1991).

- Arjovsky, M., Chintala, S., Bottou, L.: Wasserstein GAN. arXiv preprint arXiv:1701.07875 (2017).

- Gretton, A., Borgwardt, K.M., Rasch, M.J., Schölkopf, B., Smola, A.: A Kernel Two-Sample Test. Journal of Machine Learning Research 13, 723–773 (2012).

- Sun, W., Fang, C., Miao, Y., You, Y., Yuan, M., Chen, Y., Zhang, Q., Guo, A., Chen, X., Liu, Y., Chen, Z.: Abstract Syntax Tree for Programming Language Understanding and Representation: How Far Are We? http://arxiv.org/abs/2312.00413 (2023).

- Pimenta, I., Silva, D., Moura, E., Silveira, M., Gomes, R.L.: Impact of Data Anonymization in Machine Learning Models. In: Proceedings of the 13th Latin-American Symposium on Dependable and Secure Computing (LADC 2024), pp. 188–191. ACM (2024).

- Rahman, A., Iqbal, A., Ahmed, E., Tanvirahmedshuvo, Ontor, M.R.H.: Privacy-Preserving Machine Learning: Techniques, Challenges, and Future Directions in Safeguarding Personal Data Management. Frontline Marketing Management and Economics Journal 4(12), 84–106 (2024).

- Guo, Y., Li, J., Wang, X., Liu, Y., Wu, Y., Wang, Y.: Out-of-Distribution Generalization of Federated Learning via Implicit Invariant Relationships. In: Proceedings of the 40th International Conference on Machine Learning (ICML 2023), PMLR 202, pp. 11560–11584 (2023).

- Pei, J., Liu, W., Li, J., Wang, L., Liu, C.: A Review of Federated Learning Methods in Heterogeneous Scenarios. IEEE Trans. Consumer Electron. 70, 5983–5999 (2024).

- Mereani, F.A., Howe, J.M.: Detecting Cross-Site Scripting Attacks Using Machine Learning. In: Hassanien, A.E., Tolba, M.F., Kim, T.-h. (eds.) Advanced Machine Learning Technologies and Applications. AISC, vol. 723, pp. 200–210. Springer, Cham (2018).

- McInnes, L., Healy, J., Melville, J.: UMAP: Uniform Manifold Approximation and Projection for Dimension Reduction. arXiv preprint arXiv:1802.03426 (2018).

- Liao, X., Liu, W., Zhou, P., Yu, F., Xu, J., Wang, J., Wang, W., Chen, C., Zheng, X.: FOOGD: Federated Collaboration for Both Out-of-distribution Generalization and Detection.

- Gao, C., Zhang, X., Han, M., Liu, H.: A review on cyber security named entity recognition. Front Inform Technol Electron Eng. 22, 1153–1168 (2021).

- Wang, H., Singhal, A., Liu, P.: Tackling imbalanced data in cybersecurity with transfer learning: a case with ROP payload detection. Cybersecurity 6, 2 (2023).

- Al-Shehari, T., Kadrie, M., Al-Mhiqani, M.N., Alfakih, T., Alsalman, H., Uddin, M., Ullah, S.S., Dandoush, A.: Comparative evaluation of data imbalance addressing techniques for CNN-based insider threat detection. Sci Rep. 14, 24715 (2024).

- Assessment of Dynamic Open-source Cross-site Scripting Filters for Web Application. KSII TIIS. 15 (2021).

- Pramanick, N., Srivastava, S., Mathew, J., Agarwal, M.: Enhanced IDS Using BBA and SMOTE-ENN for Imbalanced Data for Cybersecurity. SN COMPUT. SCI. 5, 875 (2024).

| Function Name Examples | Rationale |
| --- | --- |
| Console.error | Outputs an error message to the console. |
| confirm | Displays a confirmation dialog asking the user to confirm an action. |
| prompt | Displays a prompt to input information. |

| Metrics | Baseline (IID) | Negative samples | Positive samples |
| --- | --- | --- | --- |
| Top-100 TF-IDF | 70-90 | 20 ± 1 | 63 ± 1 |
| Jaccard similarity | 70-90% | 10.50% ± 1 | 45.98% ± 1 |
| Cosine similarity | 0.85-0.95 | 0.2230 ± 0.01 | 0.4988 ± 0.01 |
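Lexical overlap measures of this kind can be computed directly from token statistics. The sketch below shows one plausible formulation: Jaccard similarity over the two corpora's vocabularies and cosine similarity over their term-frequency vectors. The tokenizer and the use of raw counts (rather than TF-IDF weights) are assumptions for illustration; the source does not specify the exact computation.

```python
import math
import re
from collections import Counter


def tokens(text):
    """Lowercase the text and split it into alphanumeric/underscore runs (assumed tokenizer)."""
    return re.findall(r"[a-z0-9_]+", text.lower())


def jaccard(corpus_a, corpus_b):
    """Vocabulary overlap: |A ∩ B| / |A ∪ B|."""
    vocab_a = {t for doc in corpus_a for t in tokens(doc)}
    vocab_b = {t for doc in corpus_b for t in tokens(doc)}
    union = vocab_a | vocab_b
    return len(vocab_a & vocab_b) / len(union) if union else 0.0


def cosine(corpus_a, corpus_b):
    """Cosine similarity between corpus-level term-frequency vectors."""
    freq_a = Counter(t for doc in corpus_a for t in tokens(doc))
    freq_b = Counter(t for doc in corpus_b for t in tokens(doc))
    dot = sum(freq_a[t] * freq_b[t] for t in freq_a.keys() & freq_b.keys())
    norm_a = math.sqrt(sum(v * v for v in freq_a.values()))
    norm_b = math.sqrt(sum(v * v for v in freq_b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


if __name__ == "__main__":
    a = ["alert script"]
    b = ["alert img"]
    print(jaccard(a, b), cosine(a, b))
```

Low values on both measures for one class, as reported for the negative samples, indicate that the two datasets share little vocabulary for that class.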

| Embedding Model | Accuracy | FPR | Recall | Precision | Test Dataset Type |
| --- | --- | --- | --- | --- | --- |
| GloVe-6B-300d | 98.12±1% | 1.31±1% | 98.45±1% | 98.29±1% | 20% of Same dataset |
| CodeT5 | 98.30±1% | 2.21±2% | 98.31±1% | 98.16±1% | 20% of Same dataset |
| GraphCodeBERT | 99.24±0.5% | 0.87±2% | 99.40±0.5% | 99.02±0.5% | 20% of Same dataset |

| Embedding Model | Accuracy | FPR | Precision | Recall | Positive Sample |
| --- | --- | --- | --- | --- | --- |
| GraphCodeBERT | 56.80% | 66.22% | 44.82% | 99.69% | Dataset 1 |
| GraphCodeBERT | 71.57% | 68.19% | 68.39% | 99.70% | Dataset 2 |
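The four reported metrics follow from the binary confusion matrix, with XSS treated as the positive class. The sketch below is a minimal reference implementation, written to show how near-perfect recall can coexist with a high false positive rate when many benign samples are flagged. The function name and the 0/1 label encoding are assumptions.

```python
def detection_metrics(y_true, y_pred):
    """Compute accuracy, FPR, recall and precision for binary labels (1 = XSS)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    def ratio(num, den):
        # Return 0.0 when a denominator is empty instead of raising.
        return num / den if den else 0.0

    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "fpr": ratio(fp, fp + tn),        # benign samples flagged as attacks
        "recall": ratio(tp, tp + fn),     # attacks that were caught
        "precision": ratio(tp, tp + fp),  # flagged samples that were attacks
    }


if __name__ == "__main__":
    # Every attack is caught, but half of the benign samples are flagged.
    print(detection_metrics([1, 1, 0, 0], [1, 1, 1, 0]))
```

In the toy call, recall is 1.0 while the false positive rate is 0.5, the same qualitative pattern as the out-of-distribution rows: the classifier rarely misses attacks but mislabels a large share of structurally unfamiliar benign inputs.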

| Embedding Model | Accuracy | Recall | Precision | FPR | Classifier Hyperparameters |
| --- | --- | --- | --- | --- | --- |
| GloVe-6B-300d | 65.84% | 98.53% | 50.65% | 51.79% | Lr = 0.005, dropout = 0.1 |
| GloVe-6B-300d | 69.31% | 98.08% | 53.38% | 46.21% | Lr = 0.001, dropout = 0.1 |
| GloVe-6B-300d | 79.00% | 94.74% | 63.41% | 29.49% | Lr = 0.001, dropout = 0.5 |
| GraphCodeBERT | 56.80% | 99.69% | 44.82% | 66.22% | Lr = 0.001, dropout = 0.1 |
| GraphCodeBERT | 57.25% | 99.63% | 45.03% | 65.24% | Lr = 0.0005, dropout = 0.3 |
| CodeT5 | 59.50% | 99.26% | 46.36% | 61.95% | Lr = 0.001, dropout = 0.1 |
| CodeT5 | 66.42% | 97.86% | 51.09% | 51.06% | Lr = 0.0005, dropout = 0.3 |

| Comparison | JSD | WD |
| --- | --- | --- |
| GraphCodeBERT | 0.2444 | 0.0758 |
| GloVe | 0.3402 | 0.0562 |
| CodeT5 | 0.3008 | 0.0237 |
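For reference, Jensen-Shannon divergence (JSD) and Wasserstein distance (WD) can be sketched for simple inputs as below. This is a minimal illustration under stated assumptions: JSD is computed between two discrete distributions using base-2 logarithms, and WD is the one-dimensional Wasserstein-1 distance between two equal-sized samples. How the embeddings were reduced to such inputs, and which logarithm base was used, is not stated in the source.

```python
import math


def kl_divergence(p, q):
    """Kullback-Leibler divergence in bits; terms with p_i = 0 contribute zero."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def jensen_shannon(p, q):
    """JSD(p, q) = 0.5 * KL(p || m) + 0.5 * KL(q || m), with m = (p + q) / 2."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


def wasserstein_1d(xs, ys):
    """Wasserstein-1 distance between two equal-sized one-dimensional samples.

    For equal-sized samples, the optimal transport plan matches sorted values
    pairwise, so the distance is the mean absolute difference after sorting.
    """
    if len(xs) != len(ys) or not xs:
        raise ValueError("samples must be non-empty and of equal size")
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)


if __name__ == "__main__":
    print(jensen_shannon([0.5, 0.5], [0.9, 0.1]))
    print(wasserstein_1d([0.0, 1.0], [1.0, 2.0]))
```

With base-2 logarithms, JSD is bounded between 0 (identical distributions) and 1 (disjoint support), which makes values comparable across embedding models. WD instead depends on the scale of the underlying feature values.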

| Embedding Model | Accuracy | FPR | Precision | Recall | F1 |
| --- | --- | --- | --- | --- | --- |
| GraphCodeBERT | 99.92 / 95.02% | 0.69 / 6.76% | 99.94 / 86.48% | 99.94 / 99.49% | 99.94 / 92.86% |
| GloVe-6B-300d | 98.63 / 94.06% | 1.35 / 9.69% | 99.69 / 86.84% | 99.61 / 98.87% | 99.65 / 93.25% |
| CodeT5 | 99.64 / 96.13% | 0.31 / 3.19% | 99.70 / 94.48% | 99.74 / 99.04% | 99.04 / 96.77% |

| Embedding Model | Accuracy | FPR | Precision | Recall | F1-Score |
| --- | --- | --- | --- | --- | --- |
| GloVe-6B-300d | 99.01%±1.2 | 1.05%±1.4 | 98.56%±1.5 | 99.10%±1.5 | 98.83%±1.1 |
| CodeT5 | 98.90%±0.5 | 1.60%±1.1 | 97.83%±0.5 | 99.59%±0.3 | 98.70%±1.4 |
| GraphCodeBERT | 99.05%±0.7 | 0.93%±1.2 | 98.72%±1.5 | 99.03%±0.5 | 98.87%±1.2 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).