Submitted: 28 April 2025

Posted: 29 April 2025


Abstract

Keywords:

1. Introduction

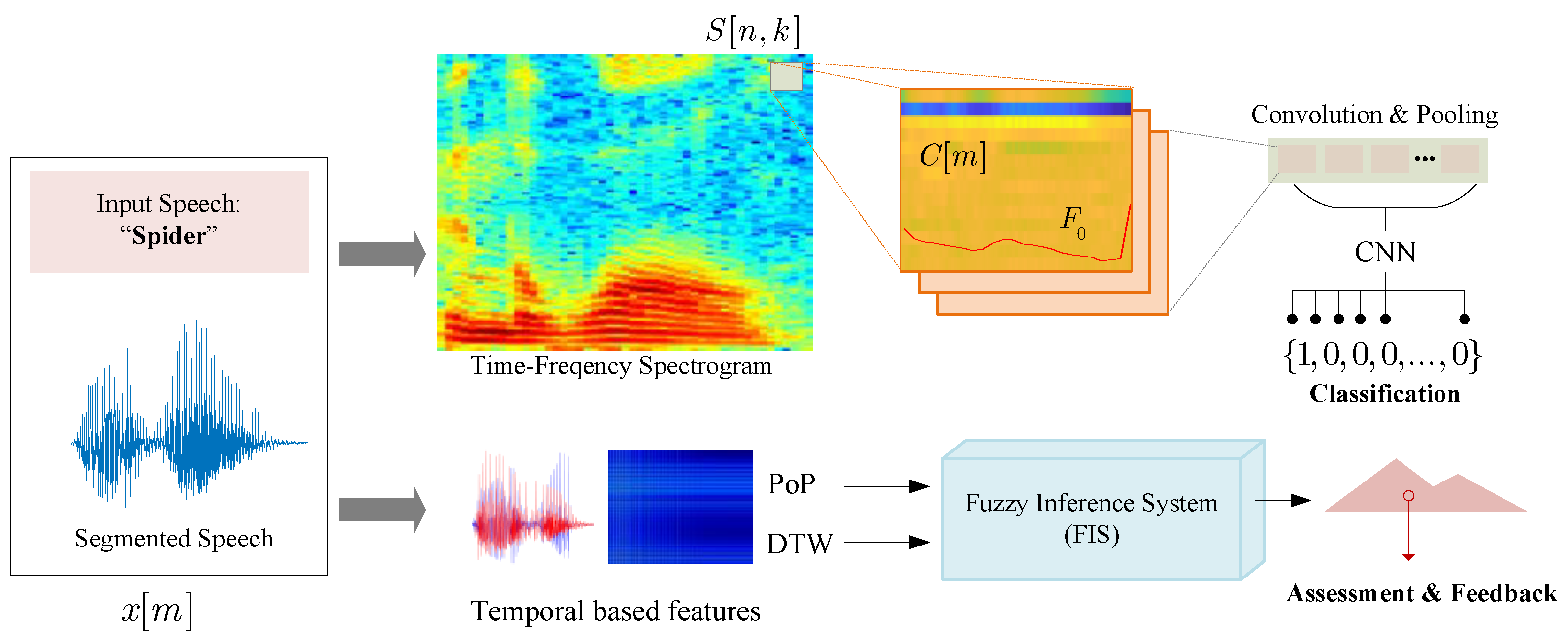

2. Materials and Methods

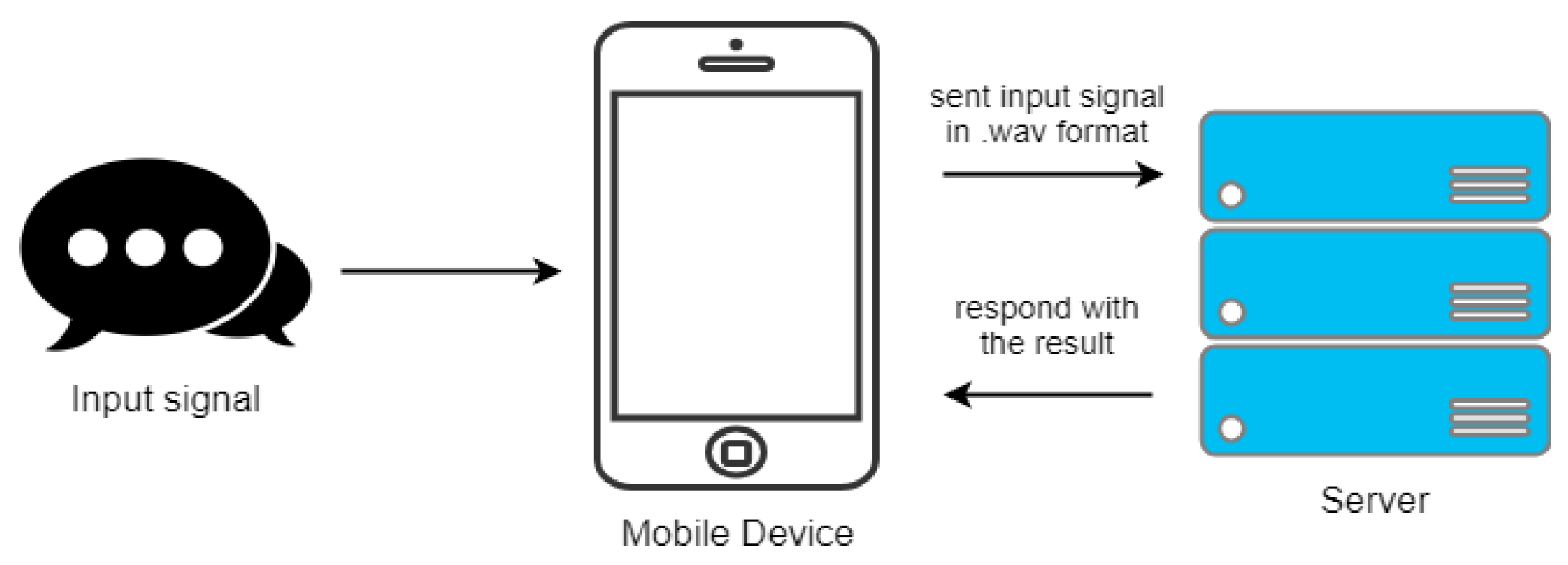

2.1. System Architecture

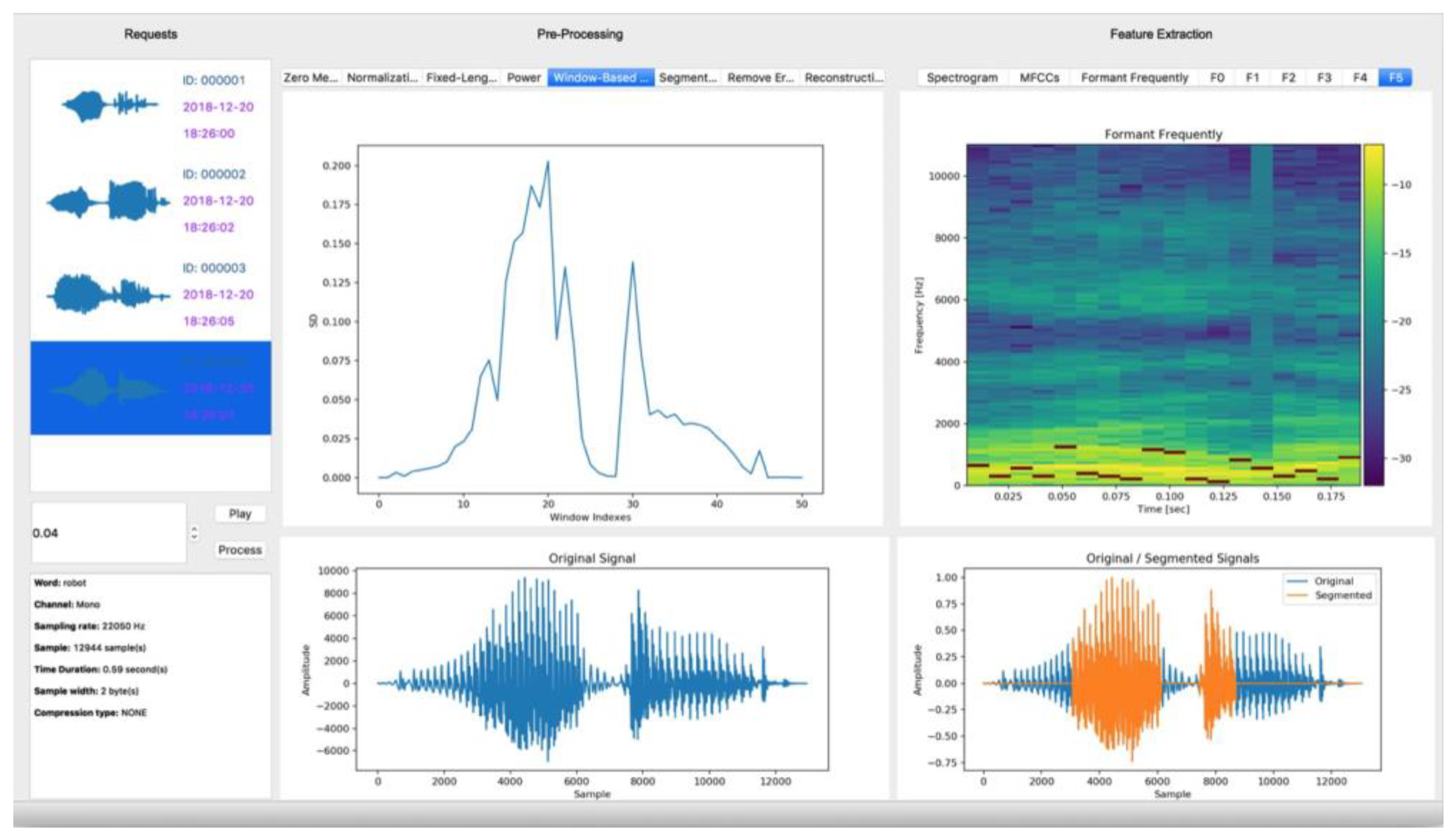

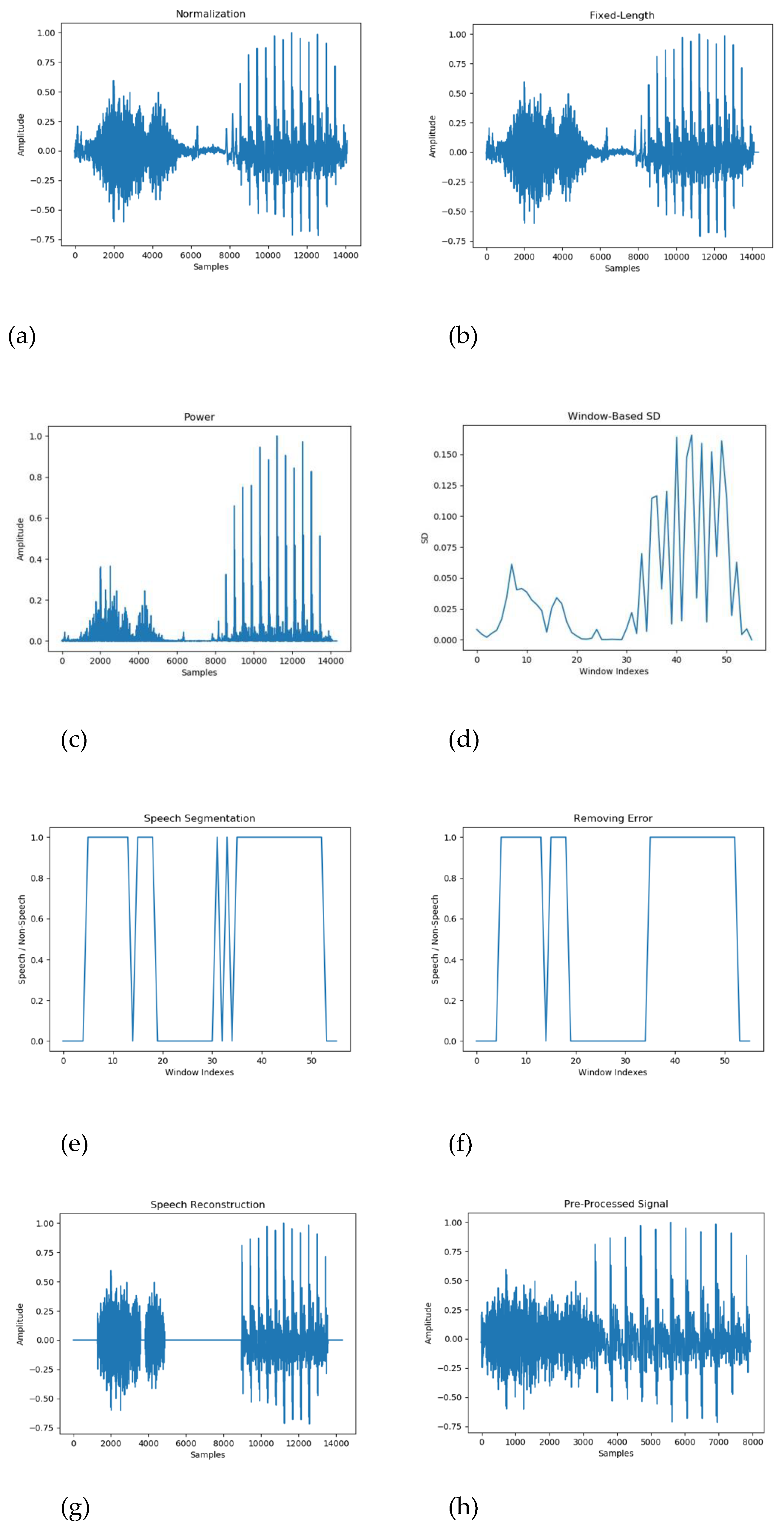

2.2. Pre-Processing Techniques

2.2.1. Speech Signal Normalization

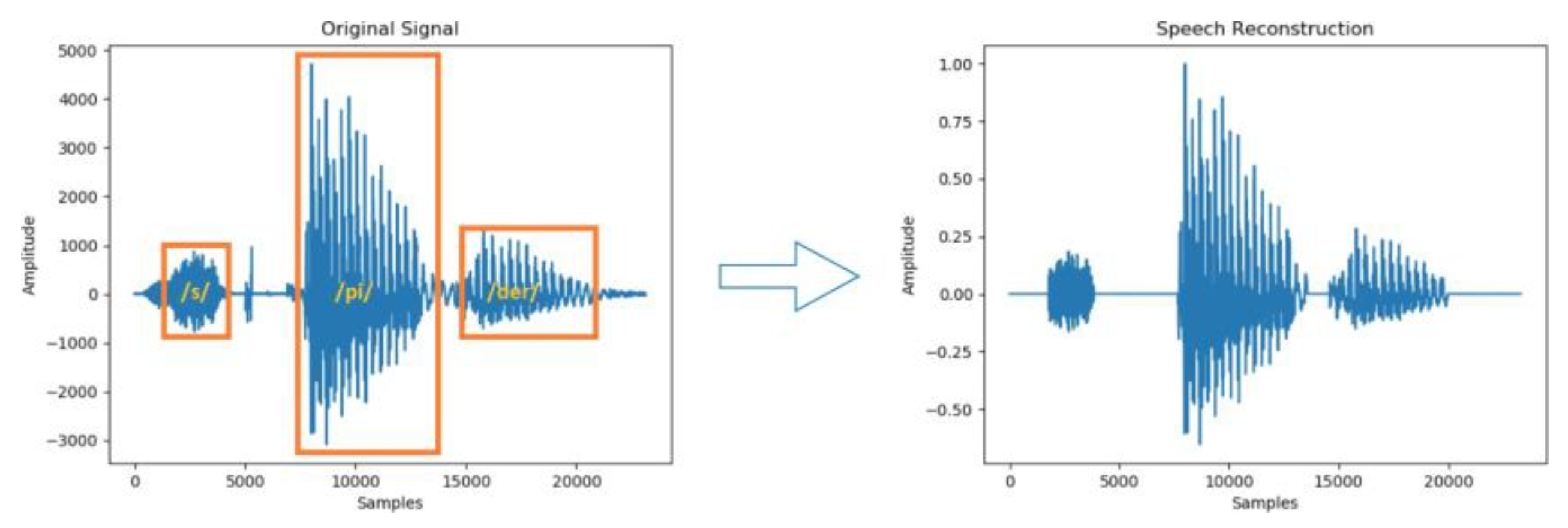

2.2.2. Speech Segmentation

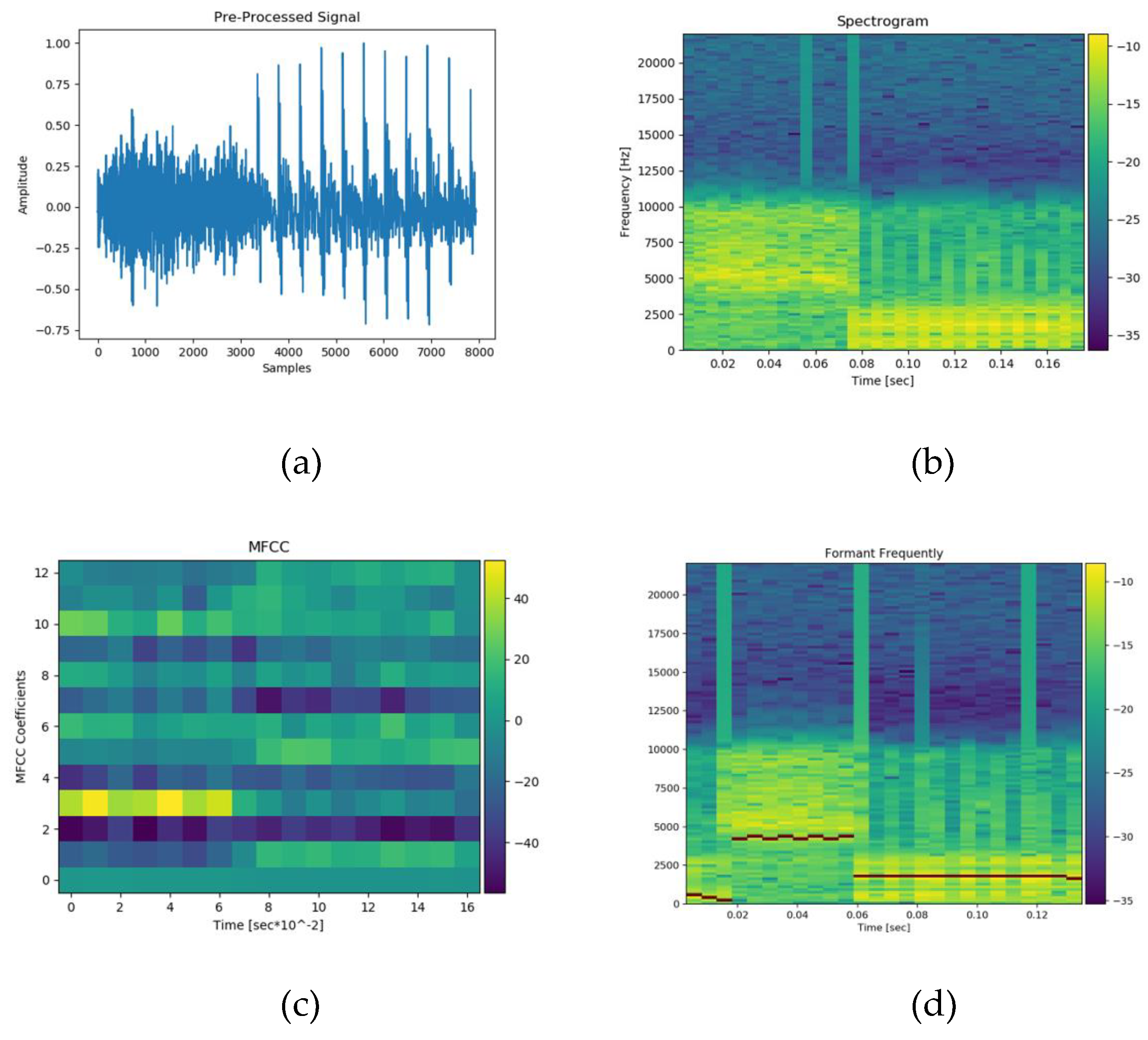

2.3. Feature Extraction and Model Training

2.3.1. Spectrogram Analysis

2.3.2. Mel-Frequency Cepstral Coefficients

- Applying a mel-scale filter bank to map the linear frequency scale onto a non-linear mel scale.

- Taking the logarithm of the mel-filtered spectrum to compress dynamic range.

- Applying Discrete Cosine Transform (DCT) to obtain the final coefficients.
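The three steps listed above can be sketched in plain NumPy. This is a minimal illustration, not the authors' pipeline: the function names, the 26-filter bank, the 13 retained coefficients, the 512-point FFT and the 16 kHz sample rate are all assumptions chosen for the example, and the input is random noise rather than speech.

```python
import numpy as np

def hz_to_mel(hz):
    return 2595.0 * np.log10(1.0 + hz / 700.0)

def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def mel_filter_bank(n_filters, n_fft, sample_rate):
    """Triangular filters whose centres are evenly spaced on the mel scale."""
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(sample_rate / 2.0), n_filters + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mel_points) / sample_rate).astype(int)
    bank = np.zeros((n_filters, n_fft // 2 + 1))
    for i in range(1, n_filters + 1):
        left, centre, right = bins[i - 1], bins[i], bins[i + 1]
        for k in range(left, centre):       # rising edge of the triangle
            bank[i - 1, k] = (k - left) / (centre - left)
        for k in range(centre, right):      # falling edge of the triangle
            bank[i - 1, k] = (right - k) / (right - centre)
    return bank

def dct_ii(x, n_coeffs):
    """Orthonormal DCT-II along the last axis, keeping the first n_coeffs terms."""
    n = x.shape[-1]
    k = np.arange(n_coeffs)[:, None]
    m = np.arange(n)[None, :]
    basis = np.cos(np.pi * k * (2 * m + 1) / (2 * n))
    scale = np.full(n_coeffs, np.sqrt(2.0 / n))
    scale[0] = np.sqrt(1.0 / n)
    return (x @ basis.T) * scale

def mfcc_from_power_spectrum(power_spec, sample_rate, n_filters=26, n_coeffs=13):
    n_fft = 2 * (power_spec.shape[-1] - 1)
    bank = mel_filter_bank(n_filters, n_fft, sample_rate)
    mel_energies = power_spec @ bank.T            # step 1: mel-scale filter bank
    log_energies = np.log(mel_energies + 1e-10)   # step 2: logarithm (offset avoids log(0))
    return dct_ii(log_energies, n_coeffs)         # step 3: DCT

# Hypothetical input: 10 frames of 400 samples (25 ms at 16 kHz) of random noise.
rng = np.random.default_rng(0)
frames = rng.standard_normal((10, 400))
power_spec = np.abs(np.fft.rfft(frames, n=512)) ** 2 / 512
coeffs = mfcc_from_power_spectrum(power_spec, sample_rate=16000)
print(coeffs.shape)  # (10, 13)
```

Each row of `coeffs` holds the coefficients for one frame. Stacking rows over time gives the kind of two-dimensional MFCC array that a classifier can take as input.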

2.3.3. Formant Frequency Analysis

2.3.4. Model Training and Optimization

- Loss function: Cross-entropy

- Optimizer: Adam (learning rate: 0.001)

- Epochs: 50

- Batch size: 32

- Dataset split: 75% training, 15% validation, 15% testing
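To make the listed settings concrete, the sketch below applies cross-entropy loss and the Adam update rule with a learning rate of 0.001, 50 epochs and a batch size of 32. It is only an illustration under stated assumptions: a linear softmax classifier stands in for the actual network, the Adam constants other than the learning rate are the commonly used defaults, and the data are synthetic (8 classes, 13 features).

```python
import numpy as np

LEARNING_RATE = 0.001
EPOCHS = 50
BATCH_SIZE = 32

def softmax(logits):
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def cross_entropy(probs, labels):
    """Mean negative log-probability assigned to the correct class."""
    return -np.mean(np.log(probs[np.arange(len(labels)), labels] + 1e-12))

class Adam:
    def __init__(self, shape, lr=LEARNING_RATE, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = np.zeros(shape)  # running mean of gradients
        self.v = np.zeros(shape)  # running mean of squared gradients
        self.t = 0

    def step(self, param, grad):
        self.t += 1
        self.m = self.beta1 * self.m + (1 - self.beta1) * grad
        self.v = self.beta2 * self.v + (1 - self.beta2) * grad ** 2
        m_hat = self.m / (1 - self.beta1 ** self.t)  # bias correction
        v_hat = self.v / (1 - self.beta2 ** self.t)
        return param - self.lr * m_hat / (np.sqrt(v_hat) + self.eps)

def train(features, labels, n_classes, seed=0):
    rng = np.random.default_rng(seed)
    weights = np.zeros((features.shape[1], n_classes))
    optimizer = Adam(weights.shape)
    history = []
    for _ in range(EPOCHS):
        order = rng.permutation(len(features))  # reshuffle every epoch
        for start in range(0, len(features), BATCH_SIZE):
            idx = order[start:start + BATCH_SIZE]
            probs = softmax(features[idx] @ weights)
            probs[np.arange(len(idx)), labels[idx]] -= 1.0  # gradient w.r.t. logits
            grad = features[idx].T @ probs / len(idx)
            weights = optimizer.step(weights, grad)
        history.append(cross_entropy(softmax(features @ weights), labels))
    return weights, history

# Synthetic stand-in data: 320 samples, 13 features, 8 classes.
rng = np.random.default_rng(1)
labels = rng.integers(0, 8, size=320)
centres = rng.standard_normal((8, 13)) * 3.0
features = centres[labels] + rng.standard_normal((320, 13))
weights, history = train(features, labels, n_classes=8)
print(f"loss after epoch 1: {history[0]:.3f}, after epoch {EPOCHS}: {history[-1]:.3f}")
```

In a Keras-based setup the same choices would normally be passed to the framework's own optimizer and loss objects rather than implemented by hand; the hand-written version here only exposes what each setting controls.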

3. Results

3.1. Data Preparation

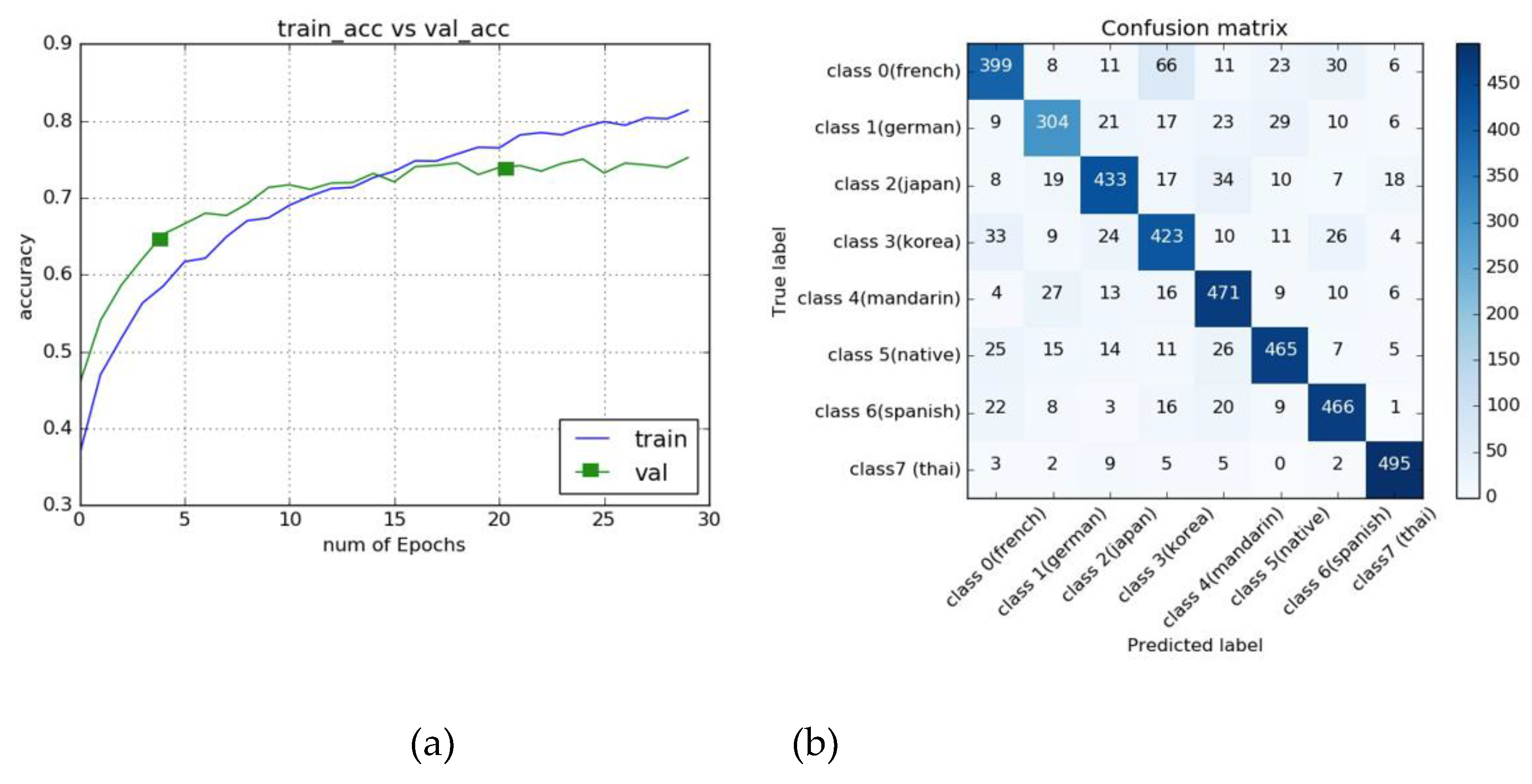

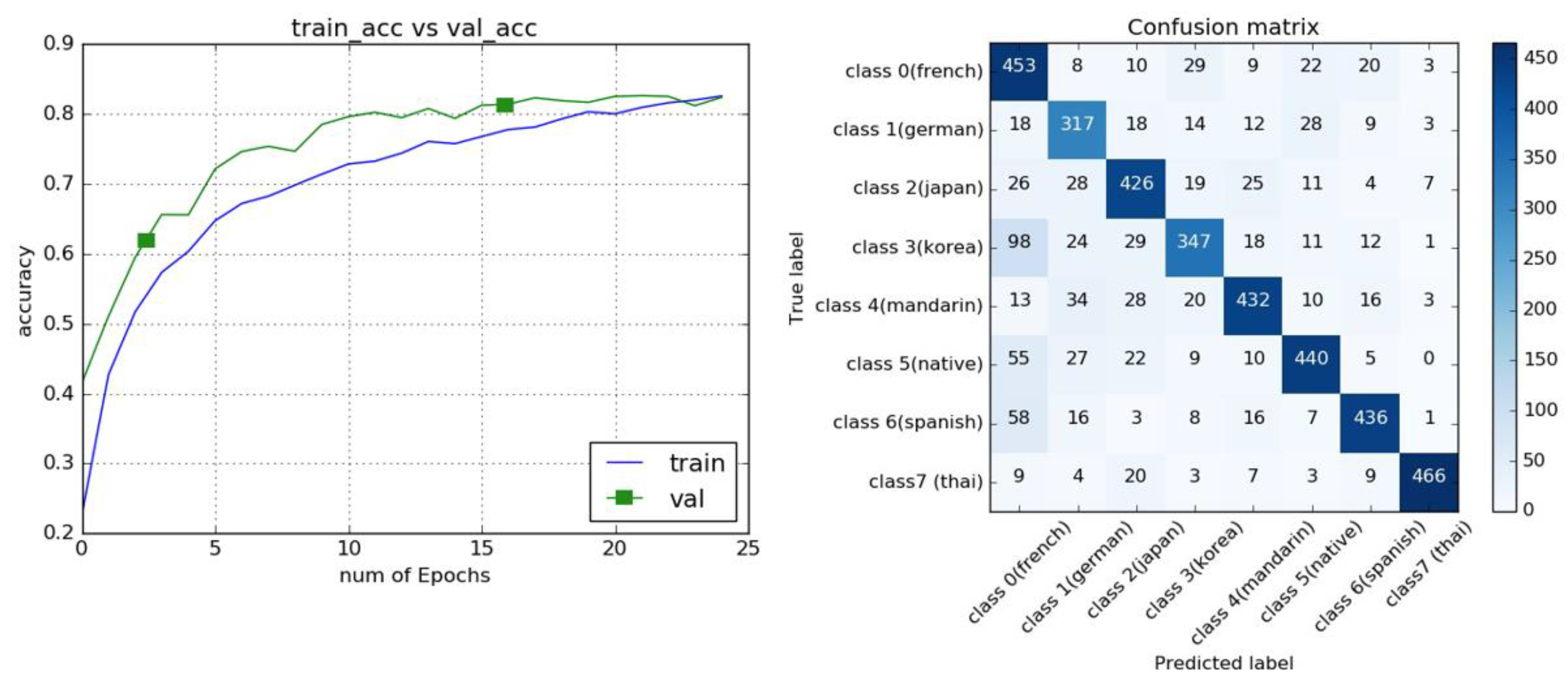

3.2. Model Training and Test Results

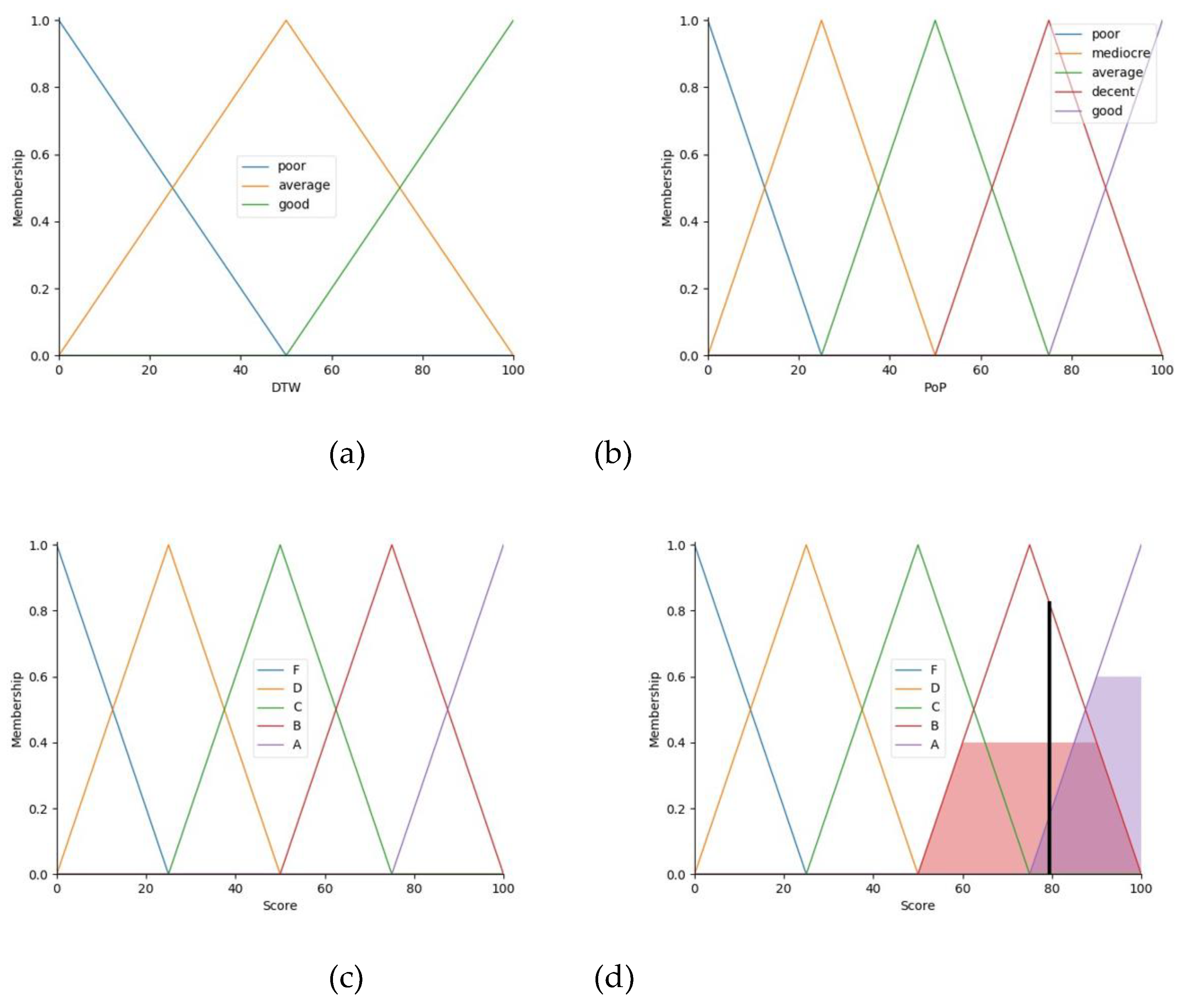

3.3. Period of Phonetic (PoP)

3.4. Dynamic Time Warping (DTW)

3.5. Knowledge Base

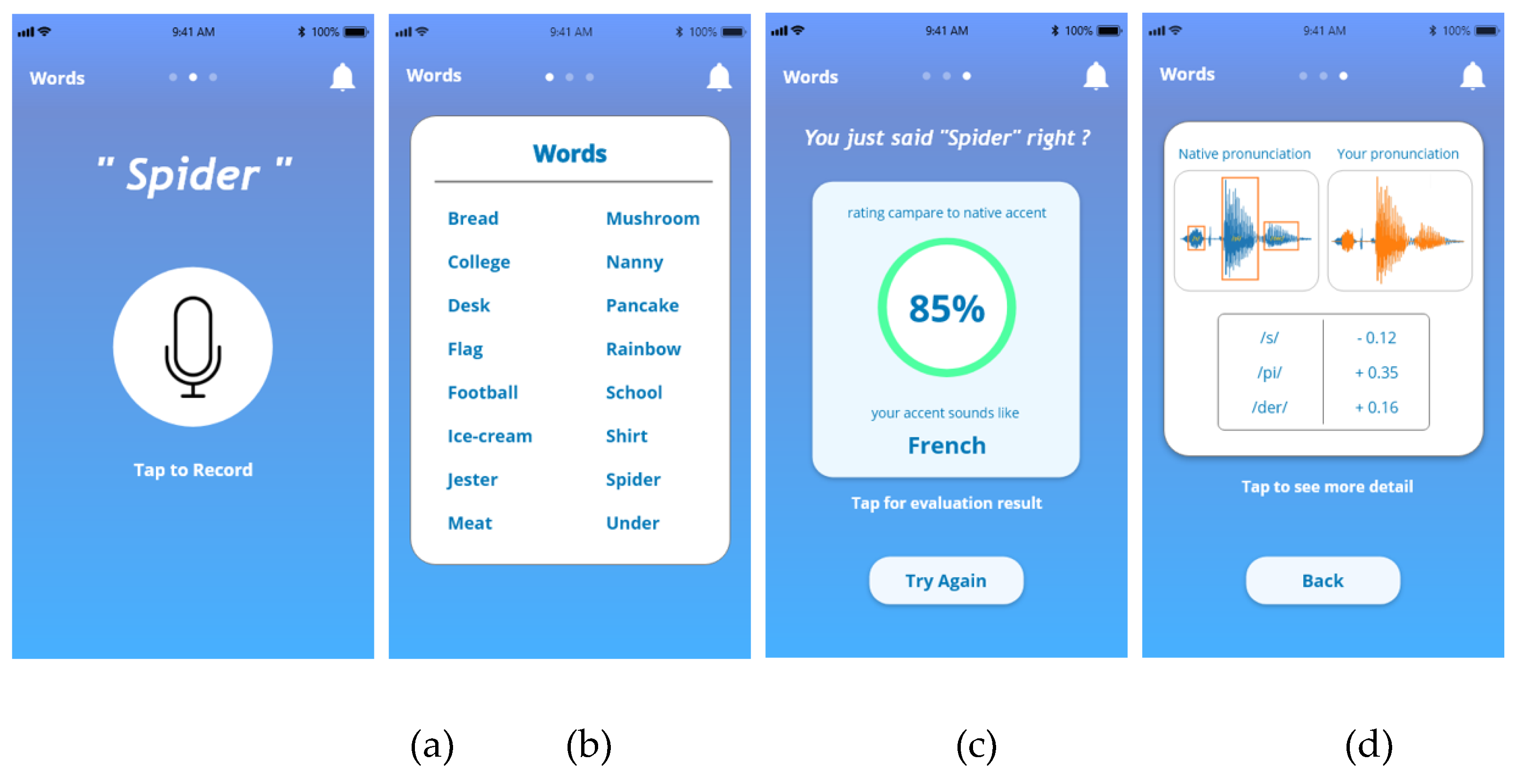

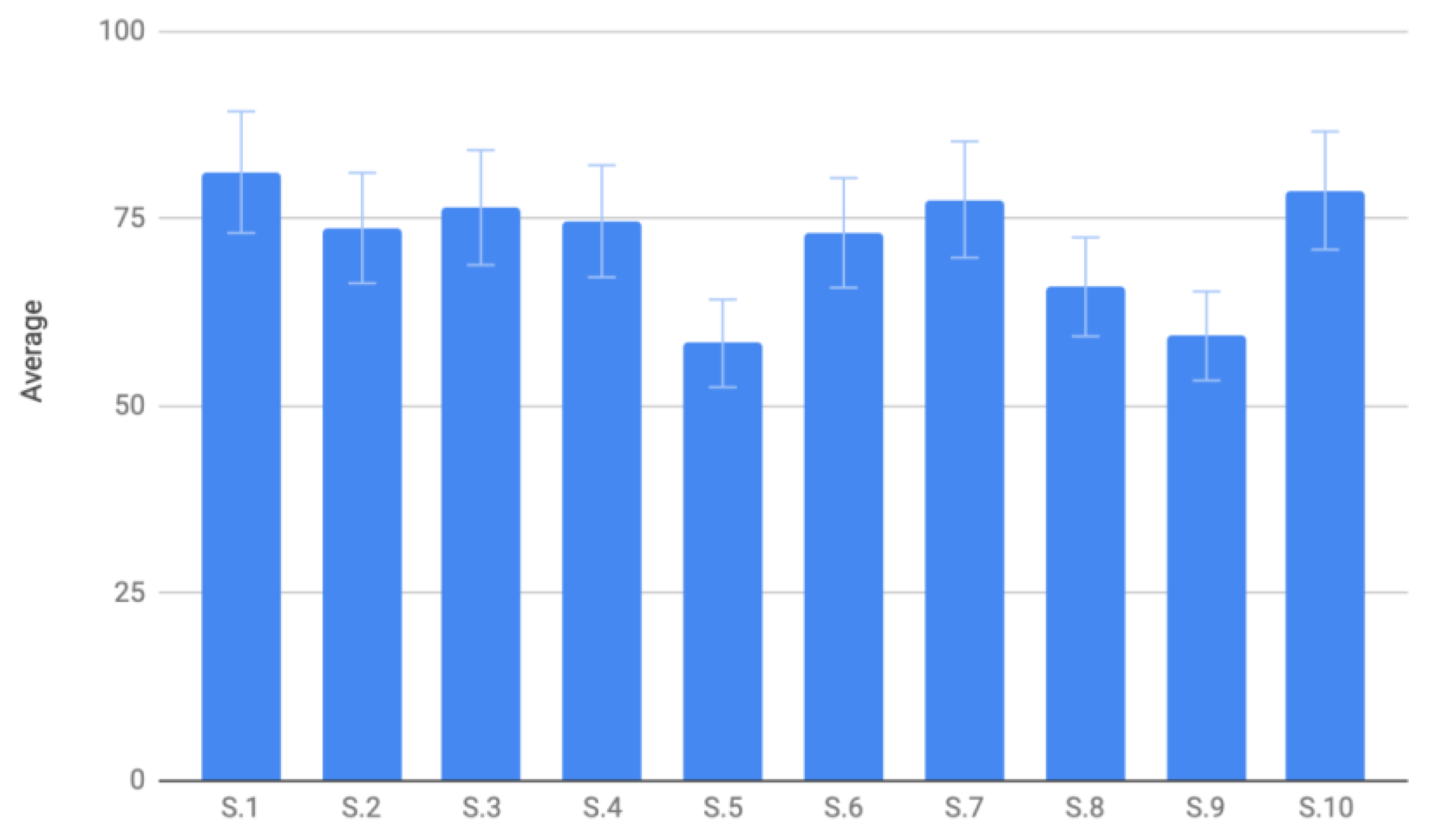

3.6. Mobile-Assisted Language Learning (MALL)

4. Discussion

4.1. Comparison of Study Findings with Existing Literature

4.2. System Potential and Challenges in Practical Application

4.3. Importance of Improved Pronunciation Feedback

4.4. Limitations and Future Directions

5. Conclusions

Funding

Acknowledgments

Conflicts of Interest


| Library | Version | Purpose |
|---|---|---|
| TensorFlow | 1.13.1 | Machine learning library |
| Keras | 2.2.4 | High-level neural network API written in Python |
| SciPy | 1.10 | Data management and computation |
| PythonSpeechFeatures | 0.6 | Extraction of MFCCs and filterbank energies |
| Matplotlib | 3.0.0 | Plotting library for generating figures |
| Flask | 1.0.2 | RESTful request dispatching |
| NumPy | 2.0 | Core library for scientific computing |

| Category | Number of recordings |
|---|---|
| Native | 2200 |
| French | 2150 |
| German | 1650 |
| Mandarin | 2200 |
| Spanish | 2200 |
| Japanese | 2200 |
| Korean | 2200 |
| Thai | 2195 |
| Total | 16995 |

| Number of items | Speech material |
|---|---|
| 160 | Hearing in Noise Test for Children sentences |
| 10 | Digit words |
| 48 | Multi-syllabic Lexical Neighborhood Test words |
| 50 | Northwestern University-Children’s Perception of Speech words |
| 100 | Lexical Neighborhood Test words |
| 50 | Lexical Neighborhood Sentence Test sentences |
| 40 | Pediatric Speech Intelligibility sentences |
| 20 | Pediatric Speech Intelligibility words |
| 339 | Bamford-Kowal-Bench sentences |
| 150 | Phonetically Balanced Kindergarten words |
| 72 | Spondee words |
| 100 | Word Intelligibility by Picture Identification words |

| Iter. | MFCC 28×28: Test Acc. [%] | MFCC 28×28: Time [s] | MFCC 64×48: Test Acc. [%] | MFCC 64×48: Time [s] | MFCC 128×48: Test Acc. [%] | MFCC 128×48: Time [s] |
|---|---|---|---|---|---|---|
| 1 | 40.48 | 26 | 45.37 | 123 | 45.21 | 552 |
| 5 | 57.73 | 130 | 60.16 | 612 | 60.43 | 2734 |
| 10 | 64.21 | 261 | 65.54 | 1231 | 63.94 | 5467 |
| 15 | 67.67 | 391 | 69.45 | 1830 | 66.40 | 8251 |
| 20 | 70.29 | 522 | 70.72 | 2421 | 67.05 | 11024 |
| 30 | 73.89 | 781 | 74.27 | 3636 | 67.51 | 16591 |

| Iter. | MFCC 28×28: Test Acc. [%] | MFCC 28×28: Time [s] | MFCC 64×48: Test Acc. [%] | MFCC 64×48: Time [s] | MFCC 128×48: Test Acc. [%] | MFCC 128×48: Time [s] |
|---|---|---|---|---|---|---|
| 1 | 34.21 | 26 | 38.51 | 122 | 38.29 | 552 |
| 5 | 63.48 | 130 | 71.62 | 615 | 68.43 | 2734 |
| 10 | 70.45 | 260 | 76.19 | 1231 | 77.13 | 5467 |
| 15 | 75.11 | 390 | 78.35 | 1846 | 80.84 | 8251 |
| 20 | 76.83 | 520 | 79.97 | 2459 | 81.94 | 11024 |
| 30 | 78.78 | 780 | 81.16 | 3690 | 82.86 | 16591 |

| DTW \ PoP | Poor (0-20) | Mediocre (20-40) | Average (40-60) | Decent (60-80) | Good (80-100) |
|---|---|---|---|---|---|
| Poor (0-33) | F | F | D | D | D |
| Average (34-66) | C | C | B | A | A |
| Good (67-100) | C | B | A | A | A |
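The grading matrix above amounts to a two-way lookup from a DTW score and a PoP score to a letter grade, which can be sketched as follows. The function and constant names are hypothetical. Because the PoP bands share their end points (20, 40, 60, 80), the sketch assumes a boundary value belongs to the lower band; likewise, a DTW value strictly between 33 and 34 is assumed to fall in the Average band.

```python
import bisect

# Upper bounds of every band except the last, taken from the grading matrix.
DTW_UPPER_BOUNDS = [33, 66]            # Poor, Average, Good
POP_UPPER_BOUNDS = [20, 40, 60, 80]    # Poor, Mediocre, Average, Decent, Good

# Rows follow the DTW bands, columns follow the PoP bands.
GRADES = [
    ["F", "F", "D", "D", "D"],  # DTW Poor (0-33)
    ["C", "C", "B", "A", "A"],  # DTW Average (34-66)
    ["C", "B", "A", "A", "A"],  # DTW Good (67-100)
]

def grade(dtw_score, pop_score):
    """Return the letter grade for a pair of scores, each in the range 0-100."""
    if not (0 <= dtw_score <= 100 and 0 <= pop_score <= 100):
        raise ValueError("scores must lie between 0 and 100")
    # bisect_left places a value equal to a bound in the lower band (assumption).
    row = bisect.bisect_left(DTW_UPPER_BOUNDS, dtw_score)
    col = bisect.bisect_left(POP_UPPER_BOUNDS, pop_score)
    return GRADES[row][col]

print(grade(50, 50))  # B
print(grade(90, 10))  # C
print(grade(20, 90))  # D
```

Keeping the matrix as data rather than nested conditionals means a change to any cell or band boundary touches only the constants.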

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).