Submitted: 18 April 2025

Posted: 21 April 2025

Abstract

Keywords:

1. Introduction

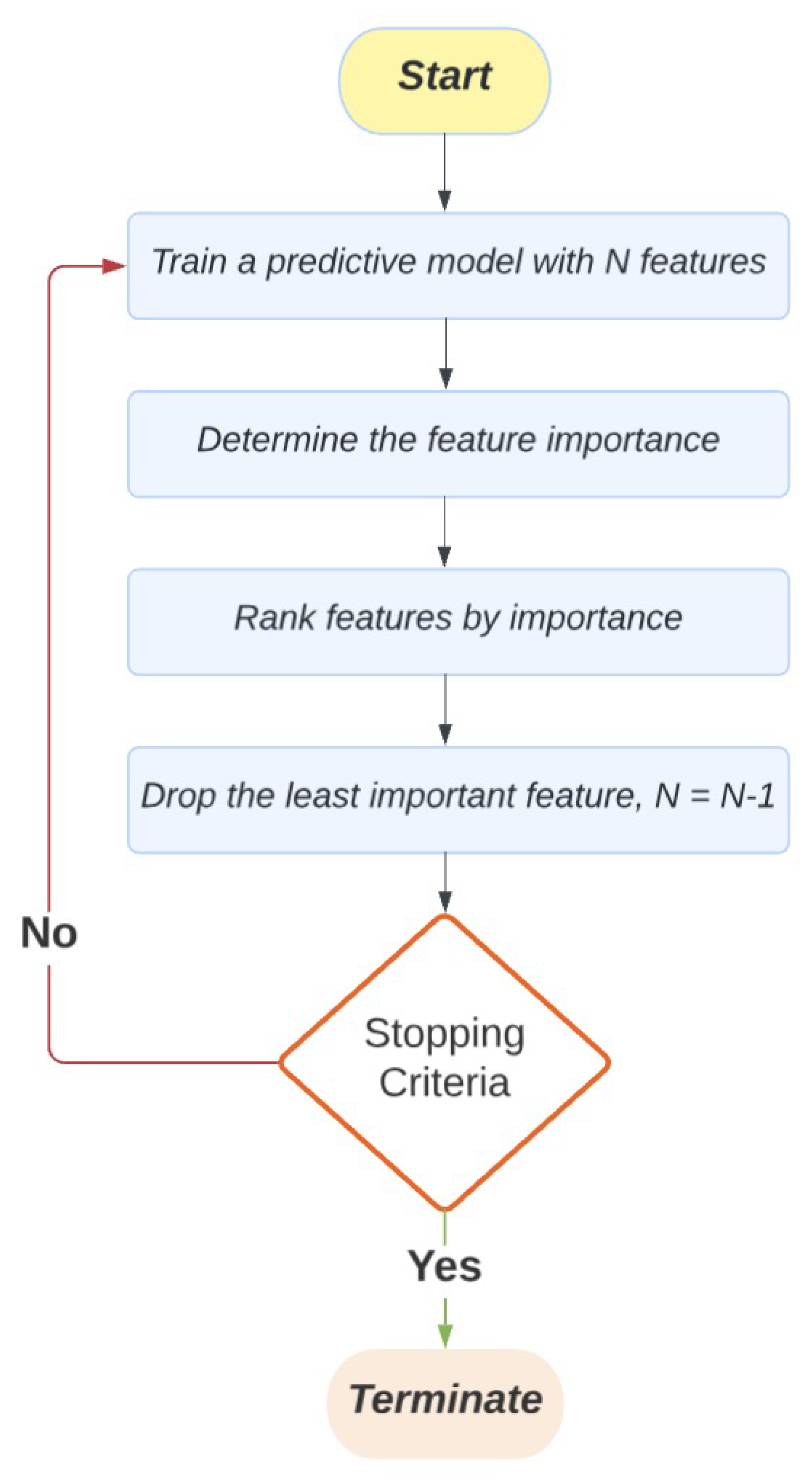

2. A Narrative Review of RFE Algorithms and Their Applications in EDM

2.1. The Original RFE Algorithm

2.2. Variants of the RFE Algorithm

2.2.1. RFE Wrapped with Different ML Models

2.2.2. Combinations of ML Models or Feature Importance Metrics

2.2.3. Modifications to the RFE Process

2.2.4. RFE Hybridized with Other Feature Selection or Dimension Reduction Methods

3. Methods

3.1. Datasets and Data Preprocessing

3.2. Model Training, Validation, and Testing

4. Results

4.1. Results for the Educational Dataset

4.1.1. Baseline: SVR-RFE

4.1.2. RF-RFE

4.1.3. RFE with Local Search Operators

4.1.4. Enhanced RFE

4.1.5. Summary of Regression Findings

4.2. Results for the Health Dataset

4.2.1. Baseline: SVR-RFE

4.2.2. RF-RFE

4.2.3. Enhanced RFE

4.2.4. RFE with Local Search Operators

4.3. Summary of Classification Findings

5. Discussion

5.1. Limitations and Directions for Future Research

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AUC | Area under the Curve |
| CV | Cross-validation |
| DT | Decision Trees |
| EDM | Educational Data Mining |
| GI | Gini index |
| LASSO | Least Absolute Shrinkage and Selection Operator |
| LR | Logistic Regression |
| LLM | Large Language Models |
| MAE | Mean Absolute Error |
| ML | Machine Learning |
| MOOC | Massive Open Online Courses |
| NLP | Natural Language Processing |
| PCA | Principal Component Analysis |
| PSI | Problem Solving and Inquiry |
| RF | Random Forests |
| RFE | Recursive Feature Elimination |
| RMSE | Root Mean Square Error |
| SMOTE | Synthetic Minority Oversampling Technique |
| SVM | Support Vector Machine |
| SVR | Support Vector Regression |
| TIMSS | Trends in International Mathematics and Science Study |


| Algorithm | Number of Features | RMSE | MAE | R² |
|---|---|---|---|---|
| SVR-RFE (Baseline) | 82 | 57.357 | 45.957 | 0.359 |
| RF-RFE | 108 | 56.474 | 44.377 | 0.379 |
| Enhanced RFE | 62 | 58.234 | 46.577 | 0.340 |
| RFE with local search operator | 85 | 57.442 | 46.091 | 0.357 |

| Algorithm | Number of Features | F1 | Precision | Recall |
|---|---|---|---|---|
| SVR-RFE (Baseline) | 118 | 0.438 | 0.284 | 0.962 |
| RF-RFE | 110 | 0.260 | 0.619 | 0.165 |
| Enhanced RFE | 106 | 0.640 | 0.633 | 0.663 |
| RFE with local search operator | 106 | 0.618 | 0.613 | 0.638 |
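As a reading aid for the classification table, F1 is the harmonic mean of precision and recall. The minimal sketch below recomputes F1 from the rounded precision and recall values in the table. The helper name `f1_score` and the `rows` dictionary are illustrative, not part of the study's code. Recomputed values need not match the reported F1 exactly: the inputs are rounded to three decimals, and the table does not state whether the reported scores were averaged over folds or classes, so that possibility is only an assumption here.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; returns 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1), copied from the classification table
rows = {
    "SVR-RFE (Baseline)": (0.284, 0.962, 0.438),
    "RF-RFE": (0.619, 0.165, 0.260),
    "Enhanced RFE": (0.633, 0.663, 0.640),
    "RFE with local search operator": (0.613, 0.638, 0.618),
}

# Recompute F1 from the rounded precision and recall of each algorithm
recomputed = {name: round(f1_score(p, r), 3) for name, (p, r, _) in rows.items()}

for name, (_, _, reported) in rows.items():
    print(f"{name}: recomputed F1 = {recomputed[name]:.3f}, reported F1 = {reported:.3f}")
```

The comparison shows why F1 is reported alongside precision and recall: the baseline has very high recall but low precision, and RF-RFE has the reverse pattern, so both end up with a much lower F1 than the two more balanced variants.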

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).