Submitted: 14 April 2025

Posted: 15 April 2025


Abstract

Keywords:

Introduction

0.1. Historical Context and Significance

0.2. Types of Hallucination

0.3. Challenges and Open Research Questions

- A structured taxonomy of hallucination types across different NLP tasks

- An analysis of state-of-the-art detection methods, including probabilistic, contrastive, and retrieval-based approaches

- A detailed discussion on mitigation techniques, including reinforcement learning, prompt optimization, and adversarial fine-tuning

- A review of evaluation metrics and benchmarks, such as FEVER, TruthfulQA, and the Hallucination Evaluation Benchmark (HALL-E)

- Research gaps and future directions, including the need for standardized hallucination definitions, real-time detection techniques, and multimodal hallucination evaluation

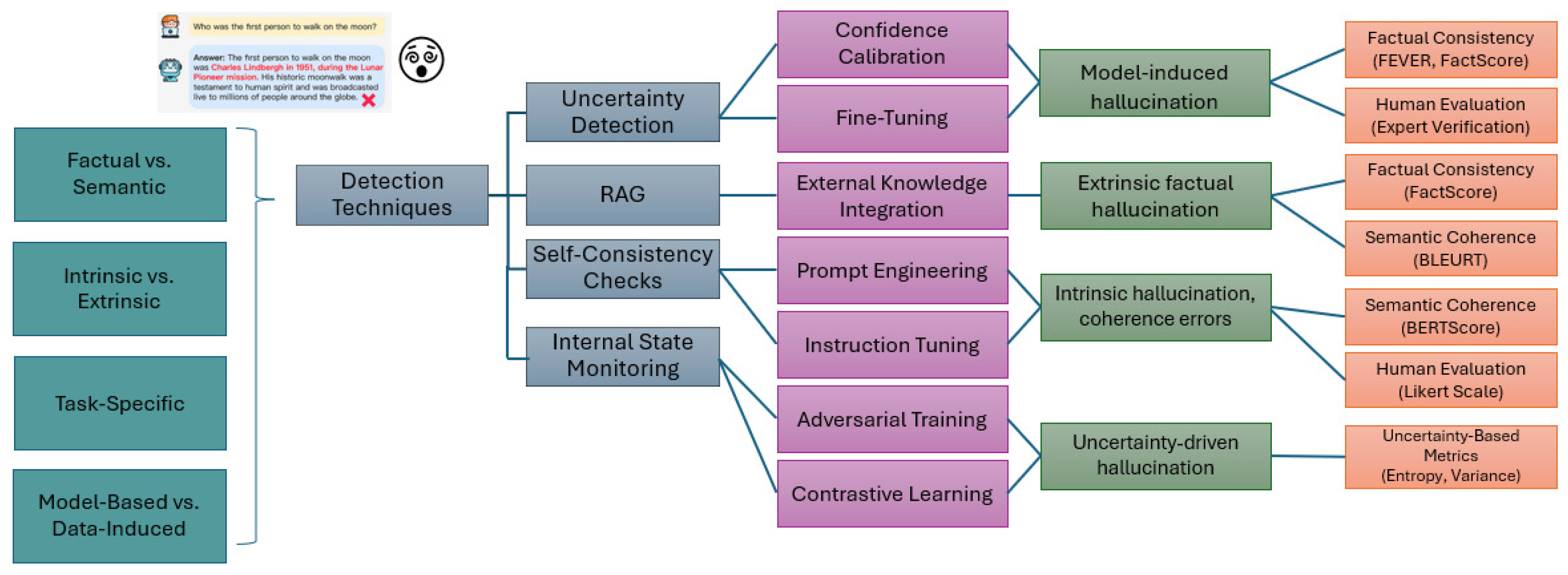

1. Taxonomy of Hallucination

1.1. Intrinsic vs. Extrinsic Hallucination

1.2. Factual vs. Semantic Hallucination

1.3. Task-Specific Hallucination Categories

1.4. Model-Based vs. Data-Induced Hallucination

1.5. Summary of Hallucination Taxonomy

2. Detection Techniques

2.1. Uncertainty Estimation

2.2. Retrieval-Augmented Generation (RAG) and External Fact Verification

2.3. Self-Consistency Checks

2.4. Internal State Monitoring

2.5. Benchmarking and Evaluation of Detection Methods

3. Mitigation Strategies

3.1. Fine-Tuning and Reinforcement Learning from Human Feedback (RLHF)

3.2. Retrieval-Augmented Generation (RAG) and External Knowledge Integration

3.3. Prompt Engineering and Instruction Tuning

3.4. Adversarial Training and Contrastive Learning

3.5. Hybrid Approaches and Multimodal Mitigation Strategies

3.6. Contrastive Learning for Hallucination Mitigation

4. Evaluation Metrics and Benchmarks

4.1. Evaluation Metrics for Hallucination

4.1.1. Factual Consistency Metrics

4.1.2. Semantic Coherence and Fluency Metrics

4.1.3. Uncertainty-Based Metrics

4.1.4. Human Evaluation Metrics

4.2. Benchmarks and Datasets for Hallucination Evaluation

5. Research Gaps and Future Directions

5.1. Lack of Standardized Hallucination Definitions and Taxonomies

5.2. Limitations of Existing Hallucination Detection Techniques

5.3. Challenges in Hallucination Mitigation Strategies

5.4. Addressing Hallucination in Multimodal and Multilingual Models

5.5. Real-World Deployment and Trustworthy AI Considerations

5.6. Ethical Considerations and Explainability in Hallucination Detection

6. Conclusion

References

- Susan Zhang, Stephen Roller, Naman Goyal, Mikel Artetxe, Moya Chen, Shuohui Chen, Christopher Dewan, Mona Diab, Xian Li, Xi Victoria Lin, et al. Opt: Open pre-trained transformer language models. arXiv, 2022; arXiv:2205.01068.

- Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al. Llama 2: Open foundation and fine-tuned chat models. arXiv, 2023; arXiv:2307.09288.

- Ziwei Ji, Nayeon Lee, Rita Frieske, Tiezheng Yu, Dan Su, Yan Xu, Etsuko Ishii, Ye Jin Bang, Andrea Madotto, and Pascale Fung. Survey of hallucination in natural language generation. ACM computing surveys, 55(12):1–38, 2023.

- Vipula Rawte, Amit Sheth, and Amitava Das. A survey of hallucination in large foundation models. arXiv, 2023; arXiv:2309.05922.

- Lei Huang, Weijiang Yu, Weitao Ma, Weihong Zhong, Zhangyin Feng, Haotian Wang, Qianglong Chen, Weihua Peng, Xiaocheng Feng, Bing Qin, et al. A survey on hallucination in large language models: Principles, taxonomy, challenges, and open questions. ACM Transactions on Information Systems, 43(2):1–55, 2025.

- Stephanie Lin, Jacob Hilton, and Owain Evans. Truthfulqa: Measuring how models mimic human falsehoods. arXiv, 2021; arXiv:2109.07958.

- Joshua Maynez, Shashi Narayan, Bernd Bohnet, and Ryan McDonald. On faithfulness and factuality in abstractive summarization. arXiv, 2020; arXiv:2005.00661.

- Ari Holtzman, Jan Buys, Li Du, Maxwell Forbes, and Yejin Choi. The curious case of neural text degeneration. arXiv, 2019; arXiv:1904.09751.

- Kurt Shuster, Spencer Poff, Moya Chen, Douwe Kiela, and Jason Weston. Retrieval augmentation reduces hallucination in conversation. arXiv, 2021; arXiv:2104.07567.

- Zheng Zhao, Shay B Cohen, and Bonnie Webber. Reducing quantity hallucinations in abstractive summarization. arXiv, 2020; arXiv:2009.13312.

- Nuno M Guerreiro, Elena Voita, and André FT Martins. Looking for a needle in a haystack: A comprehensive study of hallucinations in neural machine translation. arXiv, 2022; arXiv:2208.05309.

- Yue Zhang, Yafu Li, Leyang Cui, Deng Cai, Lemao Liu, Tingchen Fu, Xinting Huang, Enbo Zhao, Yu Zhang, Yulong Chen, et al. Siren’s song in the ai ocean: a survey on hallucination in large language models. arXiv, 2023; arXiv:2309.01219.

- Bhuwan Dhingra, Manaal Faruqui, Ankur Parikh, Ming-Wei Chang, Dipanjan Das, and William W Cohen. Handling divergent reference texts when evaluating table-to-text generation. arXiv, 2019; arXiv:1906.01081.

- Amos Azaria and Tom Mitchell. The internal state of an llm knows when it’s lying. arXiv, 2023; arXiv:2304.13734.

- Haoyue Bai, Xuefeng Du, Katie Rainey, Shibin Parameswaran, and Yixuan Li. Out-of-distribution learning with human feedback. arXiv, 2024; arXiv:2408.07772.

- Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. Training language models to follow instructions with human feedback. Advances in neural information processing systems, 35:27730–27744, 2022.

- Sean Welleck, Ilia Kulikov, Stephen Roller, Emily Dinan, Kyunghyun Cho, and Jason Weston. Neural text generation with unlikelihood training. arXiv, 2019; arXiv:1908.04319.

- Sean Welleck, Jason Weston, Arthur Szlam, and Kyunghyun Cho. Dialogue natural language inference. arXiv, 2018; arXiv:1811.00671.

- Zhen Lin, Shubhendu Trivedi, and Jimeng Sun. Generating with confidence: Uncertainty quantification for black-box large language models. arXiv, 2023; arXiv:2305.19187.

- Potsawee Manakul, Adian Liusie, and Mark JF Gales. Selfcheckgpt: Zero-resource black-box hallucination detection for generative large language models. arXiv, 2023; arXiv:2303.08896.

- Muqing Miao and Michael Kearns. Hallucination, monofacts, and miscalibration: An empirical investigation. arXiv, 2025; arXiv:2502.08666.

- Yuheng Huang, Jiayang Song, Zhijie Wang, Shengming Zhao, Huaming Chen, Felix Juefei-Xu, and Lei Ma. Look before you leap: An exploratory study of uncertainty measurement for large language models. arXiv, 2023; arXiv:2307.10236.

- Artidoro Pagnoni, Vidhisha Balachandran, and Yulia Tsvetkov. Understanding factuality in abstractive summarization with frank: A benchmark for factuality metrics. arXiv, 2021; arXiv:2104.13346.

- Lorenz Kuhn, Yarin Gal, and Sebastian Farquhar. Semantic uncertainty: Linguistic invariances for uncertainty estimation in natural language generation. arXiv, 2023; arXiv:2302.09664.

- Saurav Kadavath, Tom Conerly, Amanda Askell, Tom Henighan, Dawn Drain, Ethan Perez, Nicholas Schiefer, Zac Hatfield-Dodds, Nova DasSarma, Eli Tran-Johnson, et al. Language models (mostly) know what they know. arXiv, 2022; arXiv:2207.05221.

- Shiliang Sun, Zhilin Lin, and Xuhan Wu. Hallucinations of large multimodal models: Problem and countermeasures. Information Fusion, page 102970, 2025.

- Nick McKenna, Tianyi Li, Liang Cheng, Mohammad Javad Hosseini, Mark Johnson, and Mark Steedman. Sources of hallucination by large language models on inference tasks. arXiv, 2023; arXiv:2305.14552.

- Collin Burns, Haotian Ye, Dan Klein, and Jacob Steinhardt. Discovering latent knowledge in language models without supervision. arXiv, 2022; arXiv:2212.03827.

- Tianhang Zhang, Lin Qiu, Qipeng Guo, Cheng Deng, Yue Zhang, Zheng Zhang, Chenghu Zhou, Xinbing Wang, and Luoyi Fu. Enhancing uncertainty-based hallucination detection with stronger focus. arXiv, 2023; arXiv:2311.13230.

- Andrey Malinin and Mark Gales. Uncertainty estimation in autoregressive structured prediction. arXiv, 2020; arXiv:2002.07650.

- Gabriel Yanci Arteaga. Hallucination detection in llms: Using bayesian neural network ensembling, 2024.

- Yijun Xiao and William Yang Wang. On hallucination and predictive uncertainty in conditional language generation. arXiv, 2021; arXiv:2103.15025.

- Liangru Xie, Hui Liu, Jingying Zeng, Xianfeng Tang, Yan Han, Chen Luo, Jing Huang, Zhen Li, Suhang Wang, and Qi He. A survey of calibration process for black-box llms. arXiv, 2024; arXiv:2412.12767.

- Kavita Ganesan. Rouge 2.0: Updated and improved measures for evaluation of summarization tasks. arXiv, 2018; arXiv:1803.01937.

- Charlotte Nicks, Eric Mitchell, Rafael Rafailov, Archit Sharma, Christopher D Manning, Chelsea Finn, and Stefano Ermon. Language model detectors are easily optimized against. In The twelfth international conference on learning representations, 2023.

- Kaitlyn Zhou, Dan Jurafsky, and Tatsunori Hashimoto. Navigating the grey area: How expressions of uncertainty and overconfidence affect language models. arXiv, 2023; arXiv:2302.13439.

- Weijia Shi, Xiaochuang Han, Mike Lewis, Yulia Tsvetkov, Luke Zettlemoyer, and Wen-tau Yih. Trusting your evidence: Hallucinate less with context-aware decoding. In Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 2: Short Papers), pages 783–791, 2024.

- Harsha Nori, Yin Tat Lee, Sheng Zhang, Dean Carignan, Richard Edgar, Nicolo Fusi, Nicholas King, Jonathan Larson, Yuanzhi Li, Weishung Liu, et al. Can generalist foundation models outcompete special-purpose tuning? case study in medicine. arXiv, 2023; arXiv:2311.16452.

- Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, et al. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in neural information processing systems, 33:9459–9474, 2020.

- Jie Ren, Jiaming Luo, Yao Zhao, Kundan Krishna, Mohammad Saleh, Balaji Lakshminarayanan, and Peter J Liu. Out-of-distribution detection and selective generation for conditional language models. arXiv, 2022; arXiv:2209.15558.

- Roi Cohen, May Hamri, Mor Geva, and Amir Globerson. Lm vs lm: Detecting factual errors via cross examination. arXiv, 2023; arXiv:2305.13281.

- Huiling Tu, Shuo Yu, Vidya Saikrishna, Feng Xia, and Karin Verspoor. Deep outdated fact detection in knowledge graphs. In 2023 IEEE International Conference on Data Mining Workshops (ICDMW), pages 1443–1452. IEEE, 2023.

- Fen Wang, Bomiao Wang, Xueli Shu, Zhen Liu, Zekai Shao, Chao Liu, and Siming Chen. Chartinsighter: An approach for mitigating hallucination in time-series chart summary generation with a benchmark dataset. arXiv, 2025; arXiv:2501.09349.

- Takeshi Kojima, Shixiang Shane Gu, Machel Reid, Yutaka Matsuo, and Yusuke Iwasawa. Large language models are zero-shot reasoners. Advances in neural information processing systems, 35:22199–22213, 2022.

- Junyi Li, Xiaoxue Cheng, Wayne Xin Zhao, Jian-Yun Nie, and Ji-Rong Wen. Halueval: A large-scale hallucination evaluation benchmark for large language models. arXiv, 2023; arXiv:2305.11747.

- James Thorne, Andreas Vlachos, Christos Christodoulopoulos, and Arpit Mittal. Fever: a large-scale dataset for fact extraction and verification. arXiv, 2018; arXiv:1803.05355.

- Mandar Joshi, Eunsol Choi, Daniel S Weld, and Luke Zettlemoyer. Triviaqa: A large scale distantly supervised challenge dataset for reading comprehension. arXiv, 2017; arXiv:1705.03551.

- Wenhao Yu, Hongming Zhang, Xiaoman Pan, Kaixin Ma, Hongwei Wang, and Dong Yu. Chain-of-note: Enhancing robustness in retrieval-augmented language models. arXiv, 2023; arXiv:2311.09210.

- Haoyue Bai, Gregory Canal, Xuefeng Du, Jeongyeol Kwon, Robert D Nowak, and Yixuan Li. Feed two birds with one scone: Exploiting wild data for both out-of-distribution generalization and detection. In International Conference on Machine Learning, pages 1454–1471. PMLR, 2023.

- Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding. arXiv, 2020; arXiv:2009.03300.

- Ian J Goodfellow, Jonathon Shlens, and Christian Szegedy. Explaining and harnessing adversarial examples. arXiv, 2014; arXiv:1412.6572.

- Or Honovich, Leshem Choshen, Roee Aharoni, Ella Neeman, Idan Szpektor, and Omri Abend. Evaluating factual consistency in knowledge-grounded dialogues via question generation and question answering. arXiv, 2021; arXiv:2104.08202.

- Nouha Dziri, Andrea Madotto, Osmar Zaïane, and Avishek Joey Bose. Neural path hunter: Reducing hallucination in dialogue systems via path grounding. arXiv, 2021; arXiv:2104.08455.

- Prakhar Gupta, Chien-Sheng Wu, Wenhao Liu, and Caiming Xiong. Dialfact: A benchmark for fact-checking in dialogue. arXiv, 2021; arXiv:2110.08222.

- Tobias Falke, Leonardo FR Ribeiro, Prasetya Ajie Utama, Ido Dagan, and Iryna Gurevych. Ranking generated summaries by correctness: An interesting but challenging application for natural language inference. In Proceedings of the 57th annual meeting of the association for computational linguistics, pages 2214–2220, 2019.

- Wojciech Kryściński, Bryan McCann, Caiming Xiong, and Richard Socher. Evaluating the factual consistency of abstractive text summarization. arXiv, 2019; arXiv:1910.12840.

- Saadia Gabriel, Asli Celikyilmaz, Rahul Jha, Yejin Choi, and Jianfeng Gao. Go figure: A meta evaluation of factuality in summarization. arXiv, 2020; arXiv:2010.12834.

- Yang Feng, Wanying Xie, Shuhao Gu, Chenze Shao, Wen Zhang, Zhengxin Yang, and Dong Yu. Modeling fluency and faithfulness for diverse neural machine translation. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 34, pages 59–66, 2020.

- Katherine Lee, Orhan Firat, Ashish Agarwal, Clara Fannjiang, and David Sussillo. Hallucinations in neural machine translation. 2018.

- Clément Rebuffel, Marco Roberti, Laure Soulier, Geoffrey Scoutheeten, Rossella Cancelliere, and Patrick Gallinari. Controlling hallucinations at word level in data-to-text generation. Data Mining and Knowledge Discovery, pages 1–37, 2022.

- Sam Wiseman, Stuart M Shieber, and Alexander M Rush. Challenges in data-to-document generation. arXiv, 2017; arXiv:1707.08052.

- Ondřej Dušek and Zdeněk Kasner. Evaluating semantic accuracy of data-to-text generation with natural language inference. arXiv, 2020; arXiv:2011.10819.

- Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

- Tianrui Guan, Fuxiao Liu, Xiyang Wu, Ruiqi Xian, Zongxia Li, Xiaoyu Liu, Xijun Wang, Lichang Chen, Furong Huang, Yaser Yacoob, et al. Hallusionbench: an advanced diagnostic suite for entangled language hallucination and visual illusion in large vision-language models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 14375–14385, 2024.

- I-Chun Chern, Steffi Chern, Shiqi Chen, Weizhe Yuan, Kehua Feng, Chunting Zhou, Junxian He, Graham Neubig, Pengfei Liu, et al. Factool: Factuality detection in generative ai–a tool augmented framework for multi-task and multi-domain scenarios. arXiv, 2023; arXiv:2307.13528.

- Sewon Min, Kalpesh Krishna, Xinxi Lyu, Mike Lewis, Wen-tau Yih, Pang Wei Koh, Mohit Iyyer, Luke Zettlemoyer, and Hannaneh Hajishirzi. Factscore: Fine-grained atomic evaluation of factual precision in long form text generation. arXiv, 2023; arXiv:2305.14251.

- Ran Tian, Shashi Narayan, Thibault Sellam, and Ankur P Parikh. Sticking to the facts: confident decoding for faithful data-to-text generation. arXiv, 2019; arXiv:1910.08684.

- Tianyi Zhang, Varsha Kishore, Felix Wu, Kilian Q Weinberger, and Yoav Artzi. Bertscore: Evaluating text generation with bert. arXiv, 2019; arXiv:1904.09675.

- Thibault Sellam, Dipanjan Das, and Ankur P Parikh. Bleurt: Learning robust metrics for text generation. arXiv, 2020; arXiv:2004.04696.

- Ping Jiang, Xiaoheng Deng, Shaohua Wan, Huamei Qi, and Shichao Zhang. Confidence-enhanced mutual knowledge for uncertain segmentation. IEEE Transactions on Intelligent Transportation Systems, 25(1):725–737, 2023.

- Yarin Gal and Zoubin Ghahramani. Dropout as a bayesian approximation: Representing model uncertainty in deep learning. In international conference on machine learning, pages 1050–1059. PMLR, 2016.

- Nayeon Lee, Wei Ping, Peng Xu, Mostofa Patwary, Pascale N Fung, Mohammad Shoeybi, and Bryan Catanzaro. Factuality enhanced language models for open-ended text generation. Advances in Neural Information Processing Systems, 35:34586–34599, 2022.

- Yingbo Zhang, Shumin Ren, Jiao Wang, Chaoying Zhan, Mengqiao He, Xingyun Liu, Rongrong Wu, Jing Zhao, Cong Wu, Chuanzhu Fan, et al. Expertise or hallucination? a comprehensive evaluation of chatgpt’s aptitude in clinical genetics. IEEE Transactions on Big Data, 2025.

| Category | Definition | Example | Key References |
|---|---|---|---|
| Intrinsic Hallucination | Internal inconsistency within the generated text | A summary contradicting itself | Ji et al. (2023) [3] |
| Extrinsic Hallucination | Misinformation that diverges from the input or real-world knowledge | A fabricated fact in a generated response | Huang et al. (2025) [5,22] |
| Factual Hallucination | Statements that contradict real-world facts | Incorrect scientific claims | Rawte et al. (2023) [4] |
| Semantic Hallucination | Fluent but logically incoherent responses | An irrelevant chatbot reply | Zhang et al. (2023) [29] |
| Task-Specific Hallucination | Hallucinations in different NLP tasks | Incorrect translations, misleading summaries, hallucinated image descriptions | Dale et al. (2022), Bai et al. (2023) [15] |
| Model-Based Hallucination | Hallucination due to training biases or fine-tuning strategies | Errors introduced by reinforcement learning objectives | Burns et al. (2023) [28], Ouyang et al. (2022) [16] |
| Data-Induced Hallucination | Hallucination due to incomplete or biased training data | Incorrect outputs stemming from flawed datasets | Zhao et al. (2021) [10] |

| Method | Principle | Strengths | Limitations | Key References |
|---|---|---|---|---|
| Uncertainty Estimation | Measures confidence in model outputs | Detects low-confidence hallucinations | Less effective for confidently incorrect statements | Ji et al. (2023) [3], Liu et al. (2023) [45] |
| Retrieval-Augmented Generation (RAG) | Compares output against retrieved facts | High accuracy in factual consistency | Requires high-quality external sources | Lewis et al. (2020) [39], Yu et al. (2023) [48] |
| Self-Consistency Checks | Compares multiple generated outputs | Detects variance-based hallucinations | Computationally expensive | Wang et al. (2023) [43], Kojima et al. (2022) [44] |
| Internal State Monitoring | Analyzes hidden activations of LLMs | Directly probes model knowledge | Requires model access | Azaria & Mitchell (2023) [14], Burns et al. (2023) [28] |
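
The self-consistency row above compares multiple generated outputs for the same prompt. A minimal Python sketch of that idea follows, assuming the answers have already been sampled from a model. The helper names `normalize`, `agreement_score`, and `is_suspect`, the exact-match comparison, and the 0.5 threshold are hypothetical choices made for illustration; they are not taken from the cited works.

```python
from collections import Counter
from typing import List, Tuple


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so trivially different strings match."""
    return " ".join(answer.lower().split())


def agreement_score(samples: List[str]) -> Tuple[str, float]:
    """Return the most frequent normalized answer and the fraction of samples that agree with it."""
    if not samples:
        raise ValueError("at least one sample is required")
    counts = Counter(normalize(s) for s in samples)
    majority, count = counts.most_common(1)[0]
    return majority, count / len(samples)


def is_suspect(samples: List[str], threshold: float = 0.5) -> bool:
    """Flag a prompt as a possible hallucination when agreement falls below the (assumed) threshold."""
    _, score = agreement_score(samples)
    return score < threshold


# Hypothetical samples for one prompt; a real pipeline would draw these from the model.
samples = ["Paris", "paris ", "Lyon", "Paris"]
majority, score = agreement_score(samples)
print(majority, score)      # paris 0.75
print(is_suspect(samples))  # False
```

Exact string matching is the simplest possible comparison. Free-form answers would likely need a semantic similarity or entailment check instead, which also accounts for much of the computational cost noted in the table.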

| Category | Task / Method | Principle | Strengths | Limitations & Key References |
|---|---|---|---|---|
| Evaluation Metrics | Dialogue | Measures factual consistency in conversational AI | Detects inconsistencies | Sensitive to open-ended dialogue, may require human validation [9,52,53,54] |
| Evaluation Metrics | Summarization | Evaluates faithfulness of generated summaries | Captures factual errors | Struggles with abstractive models that paraphrase well [23,55,56,57] |
| Evaluation Metrics | Translation | Checks alignment between source and translated output | Identifies extrinsic hallucination | Limited for low-resource languages [11,42,58,59] |
| Evaluation Metrics | Data-to-Text | Assesses alignment of structured data with text output | Task-specific, improves reliability | May not generalize well across datasets [60,61,62] |
| Evaluation Metrics | Multimodal | Validates consistency between image and generated text | Reduces visual-text mismatches | Requires strong vision-language benchmarks [14,15,26] |
| Evaluation Metrics | RAG | Compares generated text with retrieved knowledge | Enhances factual accuracy | Relies on knowledge quality and retrieval effectiveness [9,39,40] |
| Mitigation Strategies | Fine-Tuning & RLHF | Trains on curated datasets, optimizes via reward models | Reduces factual hallucinations, aligns with human intent | May reinforce biases, risk of overfitting [16,28] |
| Mitigation Strategies | Retrieval-Augmented Generation (RAG) | Uses external databases for fact verification | Enhances factuality | Dependent on retrieval source quality [39,48] |
| Mitigation Strategies | Prompt Engineering & Instruction Tuning | Guides models using structured prompts | Lightweight, computationally cheap | Temporary fix, requires frequent updates [44,63] |
| Mitigation Strategies | Adversarial Training | Exposes model to adversarial examples | Improves model robustness | Computationally expensive, requires large adversarial datasets [50,51] |
| Mitigation Strategies | Hybrid & Multimodal Approaches | Combines multiple mitigation techniques | Increases adaptability | Complex to implement, needs careful balancing [15,64] |

| Category | Metric / Benchmark | Application | Key References |
|---|---|---|---|
| Factual Consistency | FEVER Score, FactScore, Entity-Level Fact Checking | Summarization, Question Answering | Thorne et al. (2018) [46], Kryscinski et al. (2020) [56] |
| Semantic Coherence | BERTScore, BLEURT, Self-BLEU | Open-ended text generation | Zhang et al. (2020) [68], Sellam et al. (2020) [69] |
| Uncertainty Estimation | Entropy-Based Confidence, Prediction Variance Analysis | Long-form text, Multi-step reasoning | Jiang et al. (2023) [70], Liu et al. (2023) |
| Human Evaluation | Likert-Scale Ratings, Expert Verification | High-risk AI applications | Nori et al. (2023) [38], Zhang et al. (2023) [68] |
| Benchmarks | FEVER, HALL-E, TruthfulQA, ERBench, HaluEval | Standardized hallucination assessment | Thorne et al. (2018) [46], Shuster et al. (2022) [9], Lin et al. (2022), Yu et al. (2023) [48] |
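
The "Entropy-Based Confidence" entry in the table above can be illustrated with a small worked example. The Python sketch below assumes access to the model's next-token probability distribution at each generation step, and averages the Shannon entropy over the sequence. The function names and the 1.0-nat threshold are hypothetical and would need tuning on held-out data; this is one plausible reading of the metric, not the formulation used in the cited works.

```python
import math
from typing import Sequence


def token_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy, in nats, of a single next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def mean_entropy(distributions: Sequence[Sequence[float]]) -> float:
    """Average per-token entropy over a generated sequence."""
    if not distributions:
        raise ValueError("at least one distribution is required")
    return sum(token_entropy(d) for d in distributions) / len(distributions)


def is_low_confidence(distributions: Sequence[Sequence[float]], threshold: float = 1.0) -> bool:
    """Flag a generation whose mean entropy exceeds the (assumed) threshold."""
    return mean_entropy(distributions) > threshold


# Toy distributions over a 4-token vocabulary (hypothetical values).
confident = [[0.97, 0.01, 0.01, 0.01], [0.90, 0.05, 0.03, 0.02]]
uncertain = [[0.25, 0.25, 0.25, 0.25], [0.30, 0.30, 0.20, 0.20]]
print(is_low_confidence(confident))  # False
print(is_low_confidence(uncertain))  # True
```

A score of this kind only captures uncertainty the model itself expresses, so a confidently wrong statement would still pass the check.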

| Research Gap | Future Direction | Key References |
|---|---|---|
| Lack of Standardized Taxonomy | Develop unified hallucination classification frameworks | Ji et al. (2023) [3], Rawte et al. (2023) [4] |
| Limitations of Detection Methods | Hybrid uncertainty + fact verification models | Lewis et al. (2020) [39], Jiang et al. (2023) [70] |
| Challenges in Mitigation Strategies | Adaptive fine-tuning and self-supervised learning | Ouyang et al. (2022) [16], Bai et al. (2022) [49] |
| Hallucination in Multimodal Models | Cross-modal grounding for vision-language AI | Azaria & Mitchell (2023) [14], Zhang et al. (2023) [68] |
| Trustworthy AI and Deployment | Human-AI hybrid verification systems | Nori et al. (2023) [38], Yu et al. (2023) [48] |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).