Submitted: 12 April 2025

Posted: 14 April 2025

Abstract

Keywords:

1. Introduction

1.1. Neural Radiance Fields (NeRF)

1.2. 3D Gaussian Splatting (3DGS)

1.3. Practical Contribution

1.4. Theoretical Contribution

2. Methodology

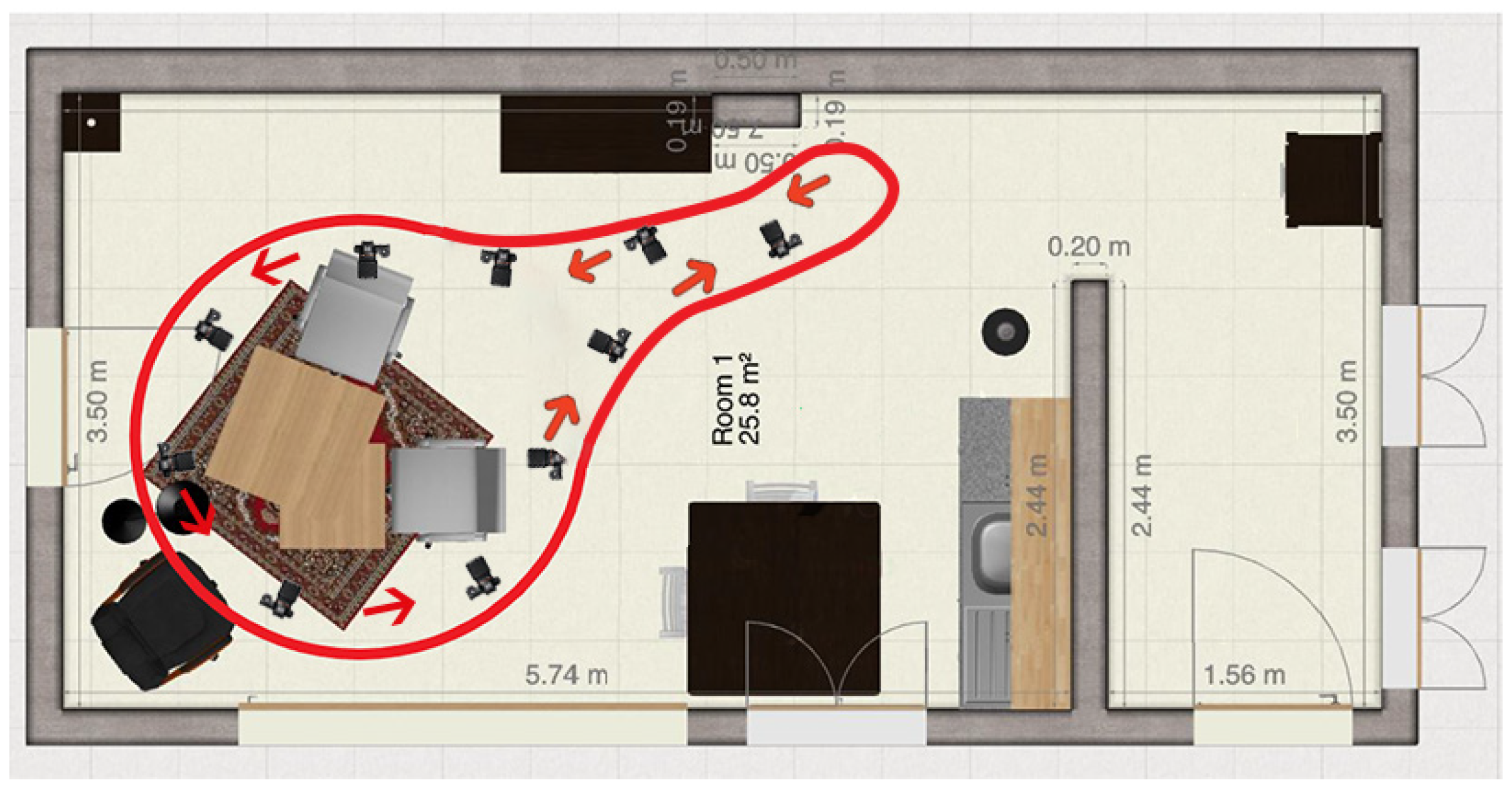

2.1. Camera Setup

2.2. Data Capture Process

2.3. Environmental Setup

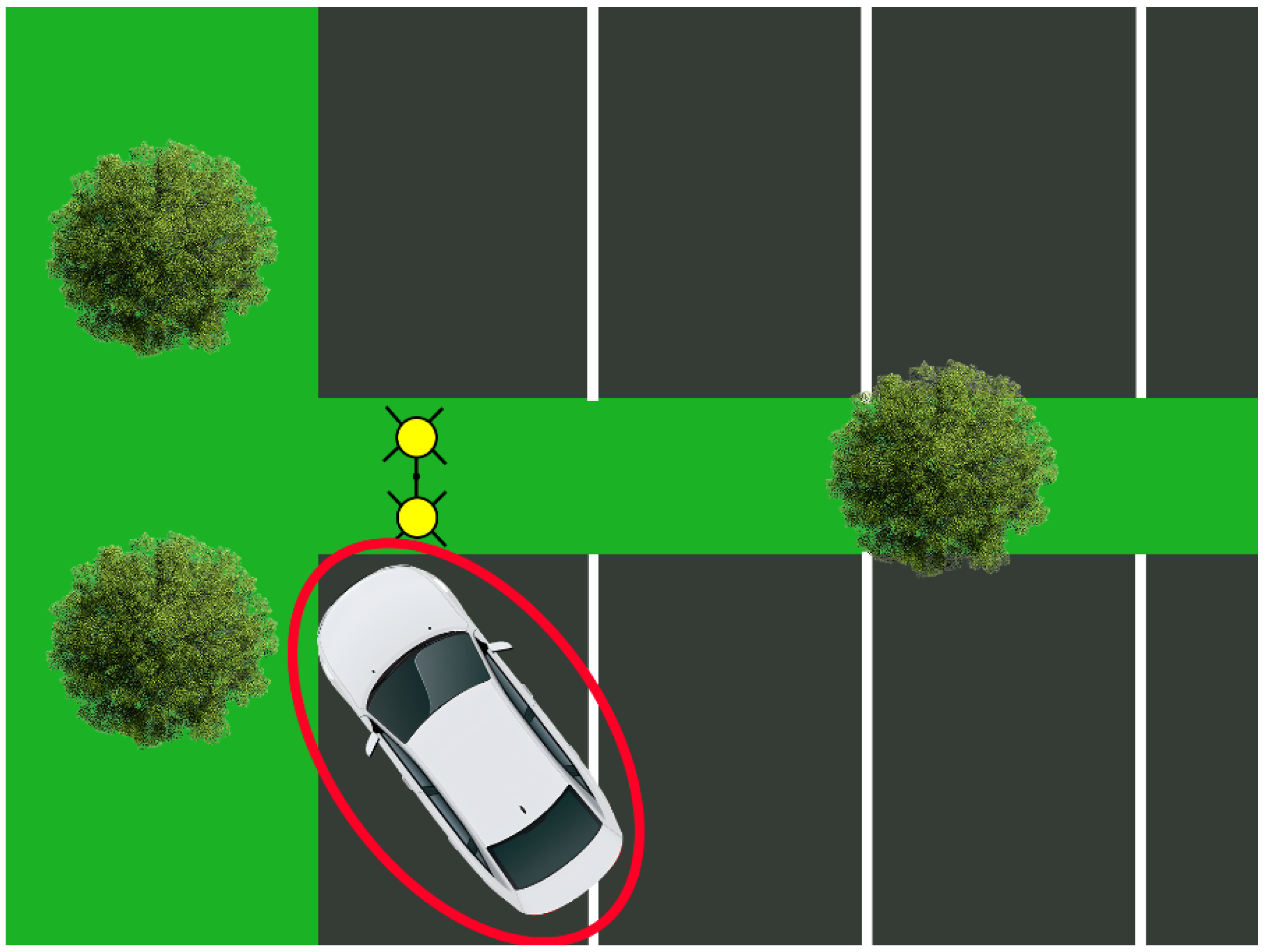

Outdoor Environment

2.4. Experimental Design

2.4.1. Algorithm Comparison

2.4.2. Evaluation Criteria

3. Results

3.1. Comparison 1: Outdoor Environment

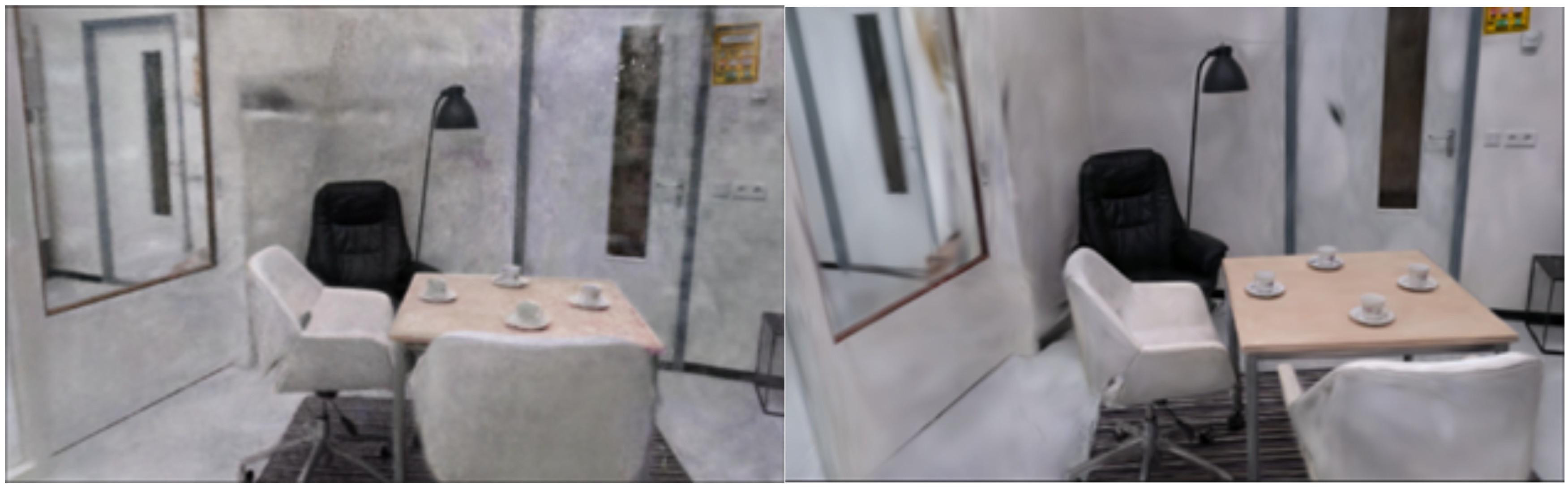

3.2. Comparison 2: Indoor Environment

4. Discussion

4.1. Interpretation of Results

4.2. Comparison with Existing Literature

4.3. Practical Implications

4.4. Limitations

4.5. Future Work

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- E. M. Robinson, “Photogrammetry,” Crime Scene Photography: Third Edition, pp. 411–453, Jan. 2016.

- M. M. Houck, F. Crispino, and T. McAdam, “Photogrammetry and 3D Reconstruction,” The Science of Crime Scenes, pp. 361–377, Jan. 2018.

- I. Kalisperakis, L. Grammatikopoulos, E. Petsa, and G. Karras, “A Structured-Light Approach for the Reconstruction of Complex Objects,” Geoinformatics FCE CTU, vol. 6, pp. 259–266, Dec. 2011, doi: 10.14311/GI.6.32.

- Y. Feng, R. Wu, X. Liu, and L. Chen, “Three-Dimensional Reconstruction Based on Multiple Views of Structured Light Projectors and Point Cloud Registration Noise Removal for Fusion,” Sensors, vol. 23, no. 21, p. 8675, Oct. 2023, doi: 10.3390/S23218675.

- B. Mildenhall, P. P. Srinivasan, M. Tancik, J. T. Barron, R. Ramamoorthi, and R. Ng, “NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis,” Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics), vol. 12346 LNCS, pp. 405–421, Mar. 2020, doi: 10.1007/978-3-030-58452-8_24.

- T. Nguyen-Phuoc, F. Liu, and L. Xiao, “SNeRF: Stylized Neural Implicit Representations for 3D Scenes,” ACM Trans Graph, vol. 41, no. 4, p. 11, Jul. 2022, doi: 10.1145/3528223.3530107.

- J. Kulhanek and T. Sattler, “Tetra-NeRF: Representing Neural Radiance Fields Using Tetrahedra,” 2023, Accessed: Jul. 15, 2024. [Online]. Available: https://github.com/jkulhanek/tetra-nerf.

- M. Tancik et al., “Nerfstudio: A Modular Framework for Neural Radiance Field Development,” Proceedings - SIGGRAPH 2023 Conference Papers, Feb. 2023, doi: 10.1145/3588432.3591516.

- T. Müller, A. Evans, C. Schied, and A. Keller, “Instant Neural Graphics Primitives with a Multiresolution Hash Encoding,” ACM Trans Graph, vol. 41, no. 4, p. 102, 2022, doi: 10.1145/3528223.3530127.

- R. Liang, J. Zhang, H. Li, C. Yang, Y. Guan, and N. Vijaykumar, “SPIDR: SDF-based Neural Point Fields for Illumination and Deformation,” Oct. 2022, Accessed: Jul. 15, 2024. [Online]. Available: https://arxiv.org/abs/2210.08398v3.

- C. Reiser et al., “MERF: Memory-Efficient Radiance Fields for Real-time View Synthesis in Unbounded Scenes,” ACM Trans Graph, vol. 42, no. 4, Feb. 2023, doi: 10.1145/3592426.

- B. Kerbl, G. Kopanas, T. Leimkuehler, and G. Drettakis, “3D Gaussian Splatting for Real-Time Radiance Field Rendering,” ACM Trans Graph, vol. 42, no. 4, p. 14, Aug. 2023, doi: 10.1145/3592433.

- G. Chen and W. Wang, “A Survey on 3D Gaussian Splatting,” Jan. 2024, Accessed: Mar. 31, 2025. [Online]. Available: https://arxiv.org/abs/2401.03890v6.

- D. Rangelov, S. Waanders, K. Waanders, M. van Keulen, and R. Miltchev, “Impact of Data Capture Methods on 3D Reconstruction with Gaussian Splatting,” Journal of Imaging, vol. 11, no. 2, p. 65, Feb. 2025, doi: 10.3390/JIMAGING11020065.

- D. Rangelov, S. Waanders, K. Waanders, M. van Keulen, and R. Miltchev, “Impact of Camera Settings on 3D Reconstruction Quality: Insights from NeRF and Gaussian Splatting,” Sensors, vol. 24, no. 23, p. 7594, Nov. 2024, doi: 10.3390/S24237594.

- Sony, “Sony Alpha 7C Full-Frame Mirrorless Camera - Black| ILCE7C.” Accessed: Dec. 22, 2024. [Online]. Available: https://electronics.sony.com/imaging/interchangeable-lens-cameras/all-interchangeable-lens-cameras/p/ilce7c-b?srsltid=AfmBOoo5N6vG9O3tR3d9p7ZKy9YqWMPZSzdnQnfZjfl4XP9WE2vRx1bz.

- Sigma, “14mm F1.4 DG DN | Art | Lenses | SIGMA Corporation.” Accessed: Dec. 22, 2024. [Online]. Available: https://www.sigma-global.com/en/lenses/a023_14_14.

- Jawset, “Jawset Postshot.” Accessed: Dec. 22, 2024. [Online]. Available: https://www.jawset.com/.

- W. Bian, Z. Wang, K. Li, J. W. Bian, and V. A. Prisacariu, “NoPe-NeRF: Optimising Neural Radiance Field with No Pose Prior,” Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, vol. 2023-June, pp. 4160–4169, Dec. 2022, doi: 10.1109/CVPR52729.2023.00405.

- Y. Xia, H. Tang, R. Timofte, and L. Van Gool, “SiNeRF: Sinusoidal Neural Radiance Fields for Joint Pose Estimation and Scene Reconstruction,” BMVC 2022 - 33rd British Machine Vision Conference Proceedings, Oct. 2022, Accessed: Apr. 12, 2025. [Online]. Available: https://arxiv.org/abs/2210.04553v1.

- S. J. Garbin, M. Kowalski, M. Johnson, J. Shotton, and J. Valentin, “FastNeRF: High-Fidelity Neural Rendering at 200FPS,” Proceedings of the IEEE International Conference on Computer Vision, pp. 14326–14335, Mar. 2021, doi: 10.1109/ICCV48922.2021.01408.

- W. Xiao et al., “Neural Radiance Fields for the Real World: A Survey,” Jan. 2025, Accessed: Apr. 12, 2025. [Online]. Available: http://arxiv.org/abs/2501.13104.

- J. T. Barron, B. Mildenhall, D. Verbin, P. P. Srinivasan, and P. Hedman, “Mip-NeRF 360: Unbounded Anti-Aliased Neural Radiance Fields,” Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, vol. 2022-June, pp. 5460–5469, Nov. 2021, doi: 10.1109/CVPR52688.2022.00539.

- A. Amamra, Y. Amara, K. Boumaza, and A. Benayad, “Crime scene reconstruction with RGB-D sensors,” in Proceedings of the 2019 Federated Conference on Computer Science and Information Systems, FedCSIS 2019, Institute of Electrical and Electronics Engineers Inc., Sep. 2019, pp. 391–396. doi: 10.15439/2019F225.

- G. Galanakis et al., “A Study of 3D Digitisation Modalities for Crime Scene Investigation,” Forensic Sciences, vol. 1, no. 2, pp. 56–85, Jul. 2021, doi: 10.3390/FORENSICSCI1020008.

- A. N. Sazaly, M. F. M. Ariff, and A. F. Razali, “3D Indoor Crime Scene Reconstruction from Micro UAV Photogrammetry Technique,” Engineering, Technology & Applied Science Research, vol. 13, no. 6, pp. 12020–12025, Dec. 2023, doi: 10.48084/ETASR.6260.

- C. Villa, N. Lynnerup, and C. Jacobsen, “A Virtual, 3D Multimodal Approach to Victim and Crime Scene Reconstruction,” Diagnostics, vol. 13, no. 17, p. 2764, Aug. 2023, doi: 10.3390/DIAGNOSTICS13172764.

- M. A. Maneli and O. E. Isafiade, “3D Forensic Crime Scene Reconstruction Involving Immersive Technology: A Systematic Literature Review,” IEEE Access, vol. 10, pp. 88821–88857, 2022, doi: 10.1109/ACCESS.2022.3199437.

- S. Kottner, M. J. Thali, and D. Gascho, “Using the iPhone’s LiDAR technology to capture 3D forensic data at crime and crash scenes,” Forensic Imaging, vol. 32, p. 200535, Mar. 2023, doi: 10.1016/J.FRI.2023.200535.

| Criteria | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| Noise | Too much noise is present, and nothing can be seen | Too much noise is present, but the environment is visible | Some noise is present; however, the outline of the environment is still visible | Almost no noise is present, and the environment is clearly visible | There is no noise |
| Details | The reconstruction appears pixelated, yet it is discernible that an object is present in that location | The reconstruction is pixelated, but the object type (e.g. table, chair, paper) can still be discerned | Object types are easily identified | The object can be accurately identified, including brand information | Extremely detailed; there is no discernible difference between the model and the video |
| Processing time | More than 24 hours | More than 12 hours, up to 24 hours | More than 4 hours, up to 12 hours | More than 2 hours, up to 4 hours | Less than 2 hours |
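
The processing-time row of the rubric above amounts to a threshold lookup, which can be sketched in a few lines of Python. This is only an illustration, not the authors' tooling: the function names `parse_hms` and `processing_time_score` are hypothetical, and the rubric does not say how a run of exactly 2 hours is scored, so assigning it a 5 here is an assumption.

```python
from datetime import timedelta


def parse_hms(text: str) -> timedelta:
    """Parse a duration written as hh:mm:ss, the format used in the results tables."""
    hours, minutes, seconds = (int(part) for part in text.split(":"))
    return timedelta(hours=hours, minutes=minutes, seconds=seconds)


def processing_time_score(duration: timedelta) -> int:
    """Map a processing time to the 1-5 score of the rubric (hypothetical helper)."""
    hours = duration.total_seconds() / 3600
    if hours > 24:
        return 1
    if hours > 12:
        return 2
    if hours > 4:
        return 3
    if hours > 2:
        return 4
    # Assumption: a run of exactly 2 hours falls into the top band.
    return 5


if __name__ == "__main__":
    # Times taken from the results tables of this paper.
    for label, hms in [("Outdoor NeRF", "01:03:09"), ("Indoor 3DGS", "00:42:23")]:
        print(label, processing_time_score(parse_hms(hms)))
```

All four reported runs finish in under 2 hours, so each one lands in the top band of this criterion; the rubric therefore does not separate the two algorithms on processing time, and the raw durations carry that information instead.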

| Environment | Algorithm | Processing time score (hh:mm:ss) | Noise | Details |
|---|---|---|---|---|
| Outdoor | NeRF | 5 (01:03:09) | 3 | 4 |
| Outdoor | 3D Gaussian Splatting | 5 (00:52:24) | 4 | 4 |

| Environment | Algorithm | Processing time score (hh:mm:ss) | Noise | Details |
|---|---|---|---|---|
| Indoor | NeRF | 5 (01:14:07) | 3 | 3 |
| Indoor | 3D Gaussian Splatting | 5 (00:42:23) | 4 | 4 |
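
Because both algorithms receive the same processing-time score in the tables above, the relative difference is easier to see from the raw durations. The sketch below converts the reported hh:mm:ss values to seconds and computes the percentage reduction of 3D Gaussian Splatting relative to NeRF. The helper names `to_seconds` and `time_reduction_percent` are hypothetical, and the calculation is simple arithmetic on the reported values rather than an analysis performed by the authors.

```python
def to_seconds(hms: str) -> int:
    """Convert an hh:mm:ss string to a number of seconds."""
    hours, minutes, seconds = (int(part) for part in hms.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def time_reduction_percent(baseline: str, candidate: str) -> float:
    """Percentage by which `candidate` is shorter than `baseline`."""
    base = to_seconds(baseline)
    return 100.0 * (base - to_seconds(candidate)) / base


# Processing times as reported in the outdoor and indoor results tables.
REPORTED_TIMES = {
    "Outdoor": {"NeRF": "01:03:09", "3D Gaussian Splatting": "00:52:24"},
    "Indoor": {"NeRF": "01:14:07", "3D Gaussian Splatting": "00:42:23"},
}

if __name__ == "__main__":
    for environment, times in REPORTED_TIMES.items():
        reduction = time_reduction_percent(
            times["NeRF"], times["3D Gaussian Splatting"]
        )
        print(f"{environment}: 3DGS took {reduction:.1f}% less time than NeRF")
```

On the reported values this gives a reduction of roughly 17% outdoors and roughly 43% indoors. Each figure comes from a single run per configuration, so it should be read as indicative only.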

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).