Submitted: 08 April 2025

Posted: 08 April 2025


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. 3D Modeling Using NeRF

2.2. 3D Modeling Using 3DGS

2.3. Large-Scale 3D Scene Reconstruction

3. Methodology

3.1. Architecture Overview

3.2. Point Cloud Filtering

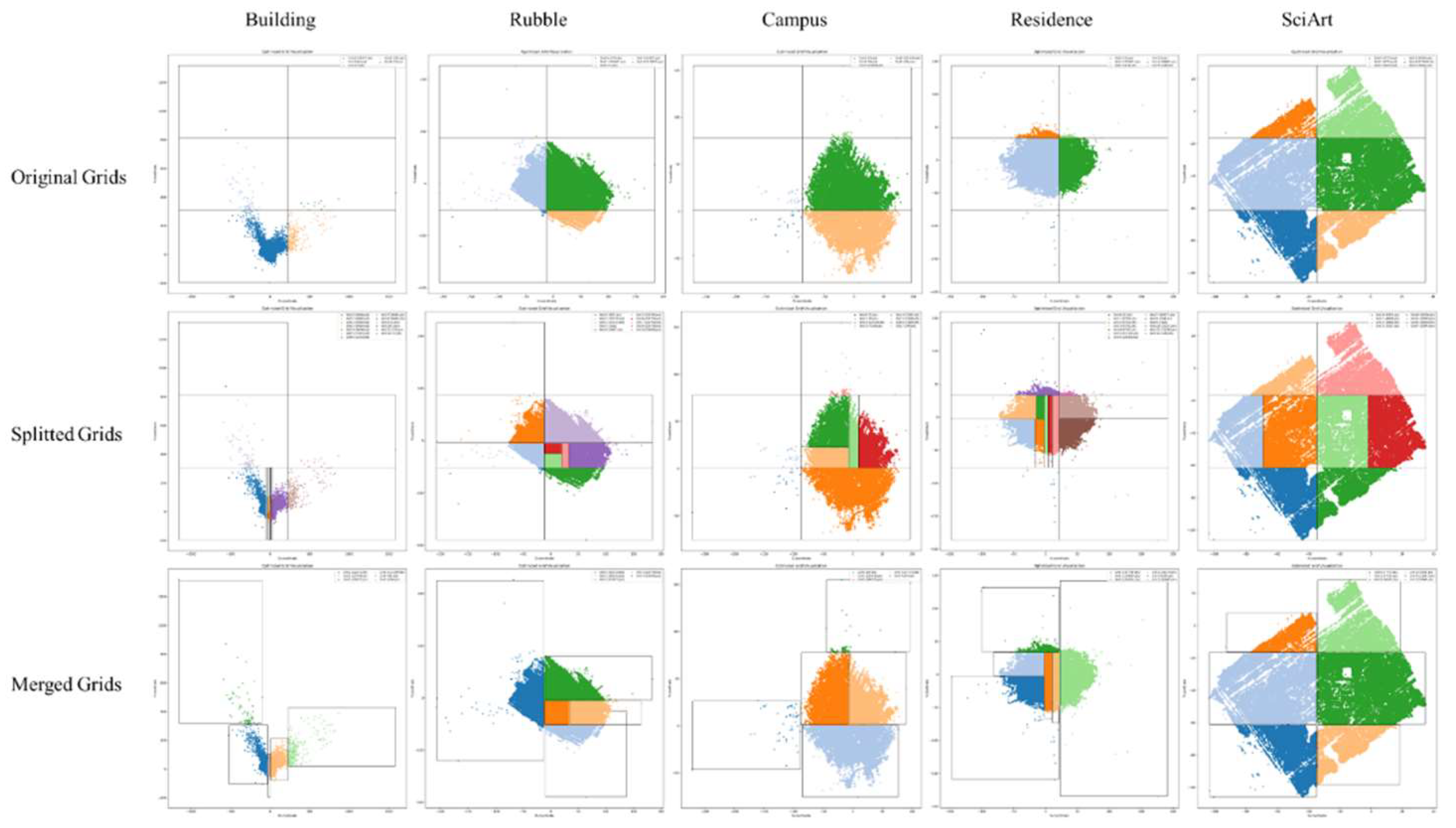

3.3. Grid-Based Scene Segmentation

3.3.1. Create Initial Grid

3.3.2. Grid Partitioning

3.3.3. Grid Merging

3.4. Evaluation Metrics
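The result tables in this paper report PSNR, LPIPS, and SSIM. As a reminder of what the PSNR columns measure, the following is a minimal sketch of the standard definition, assuming pixel values normalized to [0, 1] and images flattened into equal-length sequences. The function name `psnr` and the `max_value` parameter are illustrative and not taken from the authors' code.

```python
import math


def psnr(reference, rendered, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences.

    Assumption: both inputs are flat sequences of floats in [0, max_value].
    """
    if len(reference) != len(rendered) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error over all pixel values.
    mse = sum((r - x) ** 2 for r, x in zip(reference, rendered)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Higher PSNR and SSIM indicate a closer match to the ground-truth image, while lower LPIPS indicates better perceptual similarity.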

4. Experiments and Results

4.1. Data

4.1.1. Mill19

4.1.2. Urban Scene 3D

4.1.3. Self-Collected

| Dataset | Original Resolution | Sampling Resolution | Original Images | COLMAP Images |
|---|---|---|---|---|
| Rubble | 4608×3456 | 1152×864 | 1657 | 1657 |
| Building | 4608×3456 | 1152×864 | 1920 | 685 |
| Campus | 5472×3648 | 1368×912 | 2129 | 1290 |
| Residence | 5472×3648 | 1368×912 | 2582 | 2346 |
| SciArt | 5472×3648 | 1368×912 | 3620 | 668 |
| NJU | 5280×3956 | 1320×989 | 304 | 286 |
| CMCC-NanjingIDC | 5280×3956 | 1320×989 | 2520 | 2098 |
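The relationship between the two resolution columns and between the two image-count columns can be checked with a few lines of arithmetic. The sketch below copies the values from the table above. Reading the last column as the number of images retained after COLMAP processing is an assumption, and the helper names are hypothetical.

```python
# (dataset, original (w, h), sampled (w, h), original images, COLMAP images),
# copied from the dataset table.
ROWS = [
    ("Rubble", (4608, 3456), (1152, 864), 1657, 1657),
    ("Building", (4608, 3456), (1152, 864), 1920, 685),
    ("Campus", (5472, 3648), (1368, 912), 2129, 1290),
    ("Residence", (5472, 3648), (1368, 912), 2582, 2346),
    ("SciArt", (5472, 3648), (1368, 912), 3620, 668),
    ("NJU", (5280, 3956), (1320, 989), 304, 286),
    ("CMCC-NanjingIDC", (5280, 3956), (1320, 989), 2520, 2098),
]


def downsample_factor(original, sampled):
    """Per-axis ratio between the original and the sampled resolution."""
    return original[0] / sampled[0], original[1] / sampled[1]


def retained_fraction(n_original, n_colmap):
    """Share of the original images that appear in the COLMAP column."""
    return n_colmap / n_original


for name, original, sampled, n_original, n_colmap in ROWS:
    fx, fy = downsample_factor(original, sampled)
    kept = retained_fraction(n_original, n_colmap)
    print(f"{name}: {fx:.0f}x / {fy:.0f}x downsampling, {kept:.1%} of images retained")
```

Every dataset in the table is downsampled by a factor of 4 along each axis, while the share of retained images varies widely, from all of Rubble to under a fifth of SciArt.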

4.2. Preprocessing

4.3. Training Details

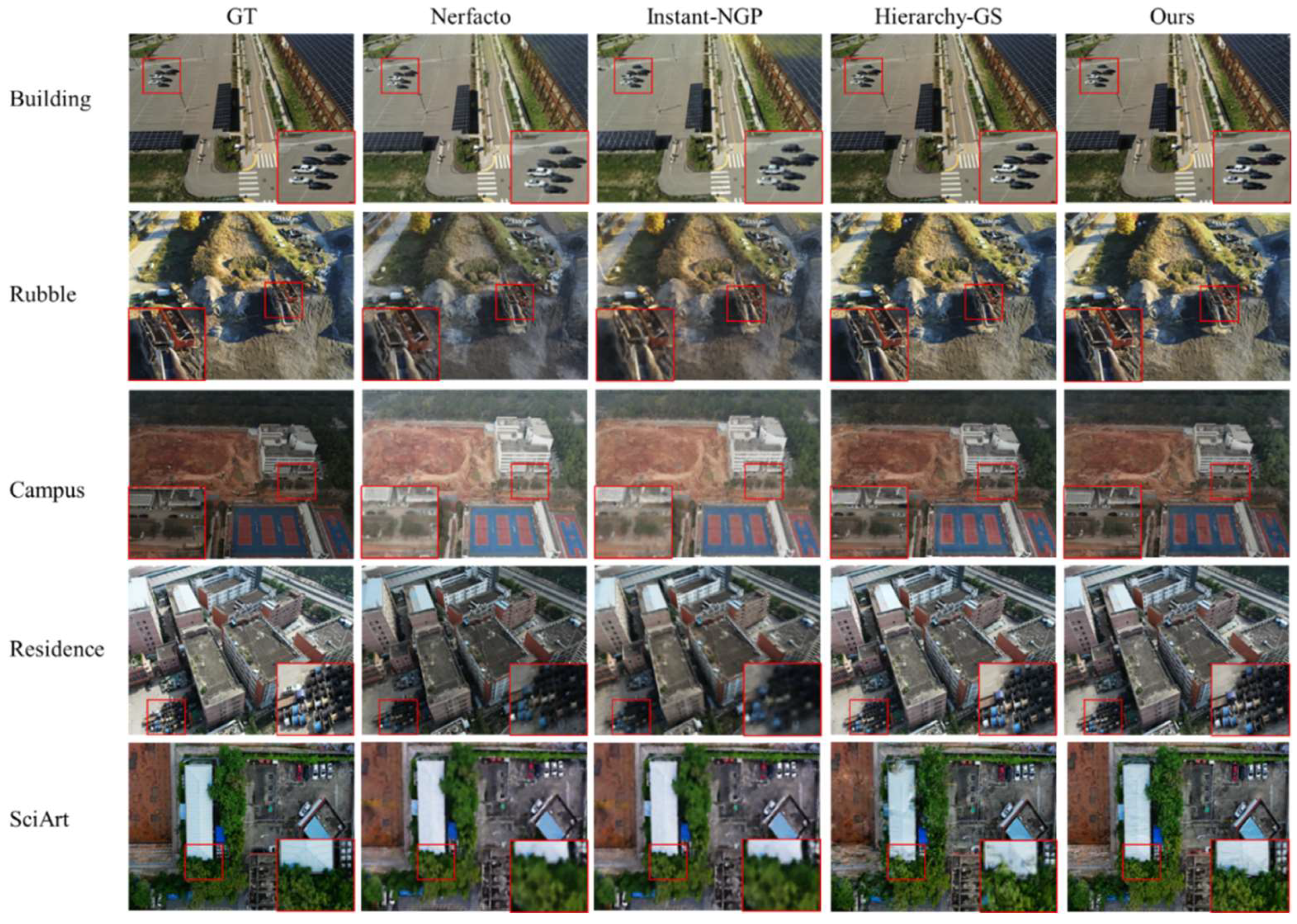

4.4. Comparison with SOTA Implicit Methods

4.4.1. Single Block

4.4.2. Full Scene

5. Discussion

5.1. Importance of Filter

5.2. Ablation Analysis

5.3. Shortcoming

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Zhu, H.; Zhang, Z.; Zhao, J.; Duan, H.; Ding, Y.; Xiao, X.; Yuan, J. Scene Reconstruction Techniques for Autonomous Driving: A Review of 3D Gaussian Splatting. Artif. Intell. Rev. 2024, 58, 30. [CrossRef]

- Cui, B.; Tao, W.; Zhao, H. High-Precision 3D Reconstruction for Small-to-Medium-Sized Objects Utilizing Line-Structured Light Scanning: A Review. Remote Sensing 2021, 13, 4457. [CrossRef]

- Chen, W.; Xu, H.; Zhou, Z.; Liu, Y.; Sun, B.; Kang, W.; Xie, X. CostFormer: Cost Transformer for Cost Aggregation in Multi-View Stereo 2023.

- Ding, Y.; Yuan, W.; Zhu, Q.; Zhang, H.; Liu, X.; Wang, Y.; Liu, X. TransMVSNet: Global Context-Aware Multi-View Stereo Network with Transformers. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New Orleans, LA, USA, June 2022; pp. 8575–8584.

- Kato, H.; Ushiku, Y.; Harada, T. Neural 3D Mesh Renderer. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Salt Lake City, UT, June 2018; pp. 3907–3916.

- Berger, M.; Tagliasacchi, A.; Seversky, L.M.; Alliez, P.; Guennebaud, G.; Levine, J.A.; Sharf, A.; Silva, C.T. A Survey of Surface Reconstruction from Point Clouds. Computer Graphics Forum 2017, 36, 301–329. [CrossRef]

- Häne, C.; Tulsiani, S.; Malik, J. Hierarchical Surface Prediction for 3D Object Reconstruction. In Proceedings of the 2017 International Conference on 3D Vision (3DV); October 2017; pp. 412–420.

- Izadi, S.; Kim, D.; Hilliges, O.; Molyneaux, D.; Newcombe, R.; Kohli, P.; Shotton, J.; Hodges, S.; Freeman, D.; Davison, A.; et al. KinectFusion: Real-Time 3D Reconstruction and Interaction Using a Moving Depth Camera. In Proceedings of the 24th Annual ACM Symposium on User Interface Software and Technology; ACM: Santa Barbara, CA, USA, October 16 2011; pp. 559–568.

- Fuentes Reyes, M.; d’Angelo, P.; Fraundorfer, F. Comparative Analysis of Deep Learning-Based Stereo Matching and Multi-View Stereo for Urban DSM Generation. Remote Sensing 2024, 17, 1. [CrossRef]

- Wang, T.; Gan, V.J.L. Enhancing 3D Reconstruction of Textureless Indoor Scenes with IndoReal Multi-View Stereo (MVS). Automation in Construction 2024, 166, 105600. [CrossRef]

- Huang, H.; Yan, X.; Zheng, Y.; He, J.; Xu, L.; Qin, D. Multi-View Stereo Algorithms Based on Deep Learning: A Survey. Multimed. Tools Appl. 2024. [CrossRef]

- Park, J.J.; Florence, P.; Straub, J.; Newcombe, R.; Lovegrove, S. DeepSDF: Learning Continuous Signed Distance Functions for Shape Representation. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Long Beach, CA, USA, June 2019; pp. 165–174.

- Mescheder, L.; Oechsle, M.; Niemeyer, M.; Nowozin, S.; Geiger, A. Occupancy Networks: Learning 3D Reconstruction in Function Space. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Long Beach, CA, USA, June 2019; pp. 4455–4465.

- Chen, Z.; Zhang, H. Learning Implicit Fields for Generative Shape Modeling. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Long Beach, CA, USA, June 2019; pp. 5932–5941.

- Michalkiewicz, M.; Pontes, J.K.; Jack, D.; Baktashmotlagh, M.; Eriksson, A. Deep Level Sets: Implicit Surface Representations for 3D Shape Inference 2019.

- Mildenhall, B.; Srinivasan, P.P.; Tancik, M.; Barron, J.T.; Ramamoorthi, R.; Ng, R. NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis. Commun. ACM 2022, 65, 99–106. [CrossRef]

- Kerbl, B.; Kopanas, G.; Leimkuehler, T.; Drettakis, G. 3D Gaussian Splatting for Real-Time Radiance Field Rendering. ACM Trans. Graph. 2023, 42, 1–14. [CrossRef]

- Chen, G.; Wang, W. A Survey on 3D Gaussian Splatting 2024.

- Barron, J.T.; Mildenhall, B.; Tancik, M.; Hedman, P.; Martin-Brualla, R.; Srinivasan, P.P. Mip-NeRF: A Multiscale Representation for Anti-Aliasing Neural Radiance Fields. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Montreal, QC, Canada, October 2021; pp. 5835–5844.

- Cen, J.; Zhou, Z.; Fang, J.; Yang, C.; Shen, W.; Xie, L.; Jiang, D.; Zhang, X.; Tian, Q. Segment Anything in 3D with NeRFs 2023.

- Chen, Z.; Funkhouser, T.; Hedman, P.; Tagliasacchi, A. MobileNeRF: Exploiting the Polygon Rasterization Pipeline for Efficient Neural Field Rendering on Mobile Architectures; 2023; pp. 16569–16578.

- Deng, C. NeRDi: Single-View NeRF Synthesis With Language-Guided Diffusion As General Image Priors.

- Garbin, S.J.; Kowalski, M.; Johnson, M.; Shotton, J.; Valentin, J. FastNeRF: High-Fidelity Neural Rendering at 200FPS. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Montreal, QC, Canada, October 2021; pp. 14326–14335.

- Hu, T.; Liu, S.; Chen, Y.; Shen, T.; Jia, J. EfficientNeRF: Efficient Neural Radiance Fields; 2022; pp. 12902–12911.

- Jia, Z.; Wang, B.; Chen, C. Drone-NeRF: Efficient NeRF Based 3D Scene Reconstruction for Large-Scale Drone Survey. Image Vis. Comput. 2024, 143, 104920. [CrossRef]

- Johari, M.M.; Lepoittevin, Y.; Fleuret, F. GeoNeRF: Generalizing NeRF with Geometry Priors. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New Orleans, LA, USA, June 2022; pp. 18344–18347.

- Mari, R.; Facciolo, G.; Ehret, T. Sat-NeRF: Learning Multi-View Satellite Photogrammetry With Transient Objects and Shadow Modeling Using RPC Cameras. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: New Orleans, LA, USA, June 2022; pp. 1310–1320.

- Xu, Q.; Xu, Z.; Philip, J.; Bi, S.; Shu, Z.; Sunkavalli, K.; Neumann, U. Point-NeRF: Point-Based Neural Radiance Fields; 2022; pp. 5438–5448.

- Zhang, G.; Xue, C.; Zhang, R. SuperNeRF: High-Precision 3-D Reconstruction for Large-Scale Scenes. IEEE Trans. Geosci. Remote Sens. 2024, 62, 1–13. [CrossRef]

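- Zhao, Q.; She, J.; Wan, Q. A Review of Research Progress on Neural Radiance Fields Applied to Large-Scale Real-Scene 3D Visualization. National Remote Sensing Bulletin 2023, 1–20. (In Chinese) [CrossRef]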

- Wang, Z.; Wu, S.; Xie, W.; Chen, M.; Prisacariu, V.A. NeRF--: Neural Radiance Fields Without Known Camera Parameters 2022.

- Ma, L.; Li, X.; Liao, J.; Zhang, Q.; Wang, X.; Wang, J.; Sander, P.V. Deblur-NeRF: Neural Radiance Fields From Blurry Images; 2022; pp. 12861–12870.

- Zeng, J.; Bao, C.; Chen, R.; Dong, Z.; Zhang, G.; Bao, H.; Cui, Z. Mirror-NeRF: Learning Neural Radiance Fields for Mirrors with Whitted-Style Ray Tracing. In Proceedings of the 31st ACM International Conference on Multimedia; ACM: Ottawa, ON, Canada, October 26 2023; pp. 4606–4615.

- Pumarola, A.; Corona, E.; Pons-Moll, G.; Moreno-Noguer, F. D-NeRF: Neural Radiance Fields for Dynamic Scenes. In Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Nashville, TN, USA, June 2021; pp. 10313–10322.

- Xu, H.; Alldieck, T.; Sminchisescu, C. H-NeRF: Neural Radiance Fields for Rendering and Temporal Reconstruction of Humans in Motion. In Proceedings of the Advances in Neural Information Processing Systems; Curran Associates, Inc., 2021; Vol. 34, pp. 14955–14966.

- Wang, C.; Chai, M.; He, M.; Chen, D.; Liao, J. CLIP-NeRF: Text-and-Image Driven Manipulation of Neural Radiance Fields. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New Orleans, LA, USA, June 2022; pp. 3825–3834.

- Low, W.F.; Lee, G.H. Robust E-NeRF: NeRF from Sparse & Noisy Events under Non-Uniform Motion. In Proceedings of the 2023 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Paris, France, October 1 2023; pp. 18289–18300.

- Neff, T.; Stadlbauer, P.; Parger, M.; Kurz, A.; Mueller, J.H.; Chaitanya, C.R.A.; Kaplanyan, A.; Steinberger, M. DONeRF: Towards Real-Time Rendering of Compact Neural Radiance Fields Using Depth Oracle Networks. Computer Graphics Forum 2021, 40, 45–59. [CrossRef]

- Reiser, C.; Peng, S.; Liao, Y.; Geiger, A. KiloNeRF: Speeding up Neural Radiance Fields with Thousands of Tiny MLPs. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Montreal, QC, Canada, October 2021; pp. 14315–14325.

- Müller, T.; Evans, A.; Schied, C.; Keller, A. Instant Neural Graphics Primitives with a Multiresolution Hash Encoding. ACM Trans. Graph. 2022, 41, 1–15. [CrossRef]

- Chen, A.; Xu, Z.; Zhao, F.; Zhang, X.; Xiang, F.; Yu, J.; Su, H. MVSNeRF: Fast Generalizable Radiance Field Reconstruction from Multi-View Stereo. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Montreal, QC, Canada, October 2021; pp. 14104–14113.

- Verbin, D.; Hedman, P.; Mildenhall, B.; Zickler, T.; Barron, J.T.; Srinivasan, P.P. Ref-NeRF: Structured View-Dependent Appearance for Neural Radiance Fields. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); June 2022; pp. 5481–5490.

- Guo, Y.-C.; Kang, D.; Bao, L.; He, Y.; Zhang, S.-H. NeRFReN: Neural Radiance Fields with Reflections. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New Orleans, LA, USA, June 2022; pp. 18388–18397.

- Bao, Y.; Ding, T.; Huo, J.; Liu, Y.; Li, Y.; Li, W.; Gao, Y.; Luo, J. 3D Gaussian Splatting: Survey, Technologies, Challenges, and Opportunities 2024.

- Yan, Z.; Low, W.F.; Chen, Y.; Lee, G.H. Multi-Scale 3D Gaussian Splatting for Anti-Aliased Rendering. In Proceedings of the 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Seattle, WA, USA, June 16 2024; pp. 20923–20931.

- Lee, B.; Lee, H.; Sun, X.; Ali, U.; Park, E. Deblurring 3D Gaussian Splatting 2024.

- Yu, Z.; Chen, A.; Huang, B.; Sattler, T.; Geiger, A. Mip-Splatting: Alias-Free 3D Gaussian Splatting.

- Lin, Y.; Dai, Z.; Zhu, S.; Yao, Y. Gaussian-Flow: 4D Reconstruction with Dynamic 3D Gaussian Particle 2023.

- Chen, Y.; Chen, Z.; Zhang, C.; Wang, F.; Yang, X.; Wang, Y.; Cai, Z.; Yang, L.; Liu, H.; Lin, G. GaussianEditor: Swift and Controllable 3D Editing with Gaussian Splatting 2023.

- Fang, J.; Wang, J.; Zhang, X.; Xie, L.; Tian, Q. GaussianEditor: Editing 3D Gaussians Delicately with Text Instructions 2023.

- Ye, M.; Danelljan, M.; Yu, F.; Ke, L. Gaussian Grouping: Segment and Edit Anything in 3D Scenes 2023.

- Li, Z.; Zheng, Z.; Wang, L.; Liu, Y. Animatable Gaussians: Learning Pose-Dependent Gaussian Maps for High-Fidelity Human Avatar Modeling 2023.

- Fan, Z.; Wang, K.; Wen, K.; Zhu, Z.; Xu, D.; Wang, Z. LightGaussian: Unbounded 3D Gaussian Compression with 15x Reduction and 200+ FPS 2023.

- Jiang, Y.; Shen, Z.; Wang, P.; Su, Z.; Hong, Y.; Zhang, Y.; Yu, J.; Xu, L. HiFi4G: High-Fidelity Human Performance Rendering via Compact Gaussian Splatting 2023.

- Xie, Z.; Zhang, J.; Li, W.; Zhang, F.; Zhang, L. S-NeRF: Neural Radiance Fields for Street Views 2023.

- Wan, Q.; Guan, Y.; Zhao, Q.; Wen, X.; She, J. Constraining the Geometry of NeRFs for Accurate DSM Generation from Multi-View Satellite Images. ISPRS Int. J. Geo-Inf. 2024, 13, 243. [CrossRef]

- Turki, H.; Ramanan, D.; Satyanarayanan, M. Mega-NeRF: Scalable Construction of Large-Scale NeRFs for Virtual Fly-Throughs. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New Orleans, LA, USA, June 2022; pp. 12912–12921.

- Tancik, M.; Casser, V.; Yan, X.; Pradhan, S.; Mildenhall, B.P.; Srinivasan, P.; Barron, J.T.; Kretzschmar, H. Block-NeRF: Scalable Large Scene Neural View Synthesis. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New Orleans, LA, USA, June 2022; pp. 8238–8248.

- Lin, J.; Li, Z.; Tang, X.; Liu, J.; Liu, S.; Liu, J.; Lu, Y.; Wu, X.; Xu, S.; Yan, Y.; et al. VastGaussian: Vast 3D Gaussians for Large Scene Reconstruction. In Proceedings of the 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Seattle, WA, USA, June 16 2024; pp. 5166–5175.

- Ren, K.; Jiang, L.; Lu, T.; Yu, M.; Xu, L.; Ni, Z.; Dai, B. Octree-GS: Towards Consistent Real-Time Rendering with LOD-Structured 3D Gaussians 2024.

- Chen, Y.; Lee, G.H. DoGaussian: Distributed-Oriented Gaussian Splatting for Large-Scale 3D Reconstruction Via Gaussian Consensus 2024.

- Kerbl, B.; Meuleman, A.; Kopanas, G.; Wimmer, M.; Lanvin, A.; Drettakis, G. A Hierarchical 3D Gaussian Representation for Real-Time Rendering of Very Large Datasets. ACM Trans. Graph. 2024, 43, 1–15. [CrossRef]

| Grid / Points | Building | Rubble | Campus | Residence | SciArt |
|---|---|---|---|---|---|
| Grid 0 | 8738 | 102052 | 44354 | 114020 | 38764 |
| Grid 1 | 59739 | 720013 | 99433 | 410024 | 64313 |
| Grid 2 | 45488 | 164498 | 64408 | 135380 | 52740 |
| Grid 3 | 108314 | 201176 | 40313 | 79331 | 40785 |
| Grid 4 | 252504 | 185314 | 236387 | 234410 | 67292 |
| Grid 5 | 11003 | 73592 | 34348 | 117398 | 119335 |
| Max/Min | 28.9 | 9.78 | 6.88 | 5.17 | 3.08 |
| Mean | 80964.33 | 241107.5 | 86540.5 | 181760.5 | 63871.5 |
| Std | 91645.16 | 239718.5 | 77137.25 | 123491.26 | 29581.68 |
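The three summary rows can be reproduced from the per-grid point counts. The sketch below does so for the Building column. The Std value of that column appears consistent with the sample (n − 1) standard deviation, which is what `statistics.stdev` computes. The function name `imbalance_stats` is illustrative and not part of the authors' code.

```python
import statistics

# Per-grid point counts for the Building scene, copied from the table above.
BUILDING_POINTS = [8738, 59739, 45488, 108314, 252504, 11003]


def imbalance_stats(counts):
    """Summarize how unevenly points are spread across grid cells."""
    return {
        "max_min": max(counts) / min(counts),  # ratio of densest to sparsest cell
        "mean": statistics.mean(counts),
        "std": statistics.stdev(counts),  # sample standard deviation (n - 1)
    }


stats = imbalance_stats(BUILDING_POINTS)
print({key: round(value, 2) for key, value in stats.items()})
```

A large Max/Min ratio, such as the 28.9 reported for Building, means the densest cell holds many times more points than the sparsest one.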


| Method | Building PSNR | Building LPIPS | Building SSIM | Rubble PSNR | Rubble LPIPS | Rubble SSIM | Campus PSNR | Campus LPIPS | Campus SSIM | Residence PSNR | Residence LPIPS | Residence SSIM | SciArt PSNR | SciArt LPIPS | SciArt SSIM |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 3DGS | 27.83 | 0.180 | 0.872 | 28.84 | 0.173 | 0.877 | 24.30 | 0.256 | 0.783 | 24.56 | 0.197 | 0.831 | 19.88 | 0.576 | 0.484 |
| Octree-GS | 27.63 | 0.171 | 0.857 | 28.35 | 0.209 | 0.857 | 24.19 | 0.277 | 0.769 | 24.29 | 0.207 | 0.825 | 21.83 | 0.399 | 0.608 |
| Hierarchy-GS | 27.36 | 0.184 | 0.870 | 27.28 | 0.238 | 0.837 | 24.78 | 0.346 | 0.766 | 23.72 | 0.247 | 0.799 | 22.14 | 0.387 | 0.611 |
| Ours | 28.15 | 0.179 | 0.875 | 28.42 | 0.204 | 0.855 | 24.91 | 0.233 | 0.799 | 24.36 | 0.221 | 0.821 | 21.49 | 0.461 | 0.562 |

| Method | Building | Rubble | Campus | Residence | SciArt |
|---|---|---|---|---|---|
| 3DGS | 18.8 GB | 19.7 GB | 22.6 GB | 22.8 GB | OOM |
| Octree-GS | 19.7 GB | 20.0 GB | 20.2 GB | 20.1 GB | 22.4 GB |
| Hierarchy-GS | 10.8 GB | 12.6 GB | 11.8 GB | 12.2 GB | 13.3 GB |
| Ours | 9.4 GB | 12.4 GB | 11.5 GB | 11.3 GB | 12.9 GB |

| Method | Building PSNR | Building LPIPS | Building SSIM | Rubble PSNR | Rubble LPIPS | Rubble SSIM | Campus PSNR | Campus LPIPS | Campus SSIM | Residence PSNR | Residence LPIPS | Residence SSIM | SciArt PSNR | SciArt LPIPS | SciArt SSIM |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Nerfacto-big | 15.70 | 0.465 | 0.325 | 18.38 | 0.452 | 0.440 | 18.05 | 0.537 | 0.463 | 16.46 | 0.405 | 0.464 | 17.31 | 0.758 | 0.363 |
| Instant-NGP | 20.47 | 0.460 | 0.574 | 18.67 | 0.537 | 0.525 | 19.53 | 0.625 | 0.529 | 16.16 | 0.533 | 0.495 | 20.28 | 0.713 | 0.453 |
| Hierarchy-GS | 26.28 | 0.210 | 0.836 | 26.73 | 0.246 | 0.830 | 23.62 | 0.364 | 0.731 | 21.47 | 0.282 | 0.702 | 20.05 | 0.426 | 0.558 |
| Ours | 26.67 | 0.185 | 0.844 | 27.36 | 0.221 | 0.846 | 23.74 | 0.344 | 0.745 | 22.89 | 0.239 | 0.799 | 20.38 | 0.441 | 0.561 |

| Model | Dataset | PSNR↑ | LPIPS↓ | SSIM↑ |
|---|---|---|---|---|
| Complete | NJU | 27.58 | 0.161 | 0.904 |
| Complete | CMCC-NanjingIDC | 24.66 | 0.283 | 0.787 |
| Complete | Rubble | 27.36 | 0.221 | 0.846 |
| Remove Grid-based Scene Segmentation | NJU | 27.23 | 0.162 | 0.895 |
| Remove Grid-based Scene Segmentation | CMCC-NanjingIDC | 24.59 | 0.291 | 0.779 |
| Remove Grid-based Scene Segmentation | Rubble | 26.81 | 0.247 | 0.831 |
| Remove Point Cloud Filter | NJU | 27.12 | 0.166 | 0.897 |
| Remove Point Cloud Filter | CMCC-NanjingIDC | 24.10 | 0.303 | 0.772 |
| Remove Point Cloud Filter | Rubble | 26.32 | 0.254 | 0.825 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).