Submitted: 07 April 2025

Posted: 08 April 2025

Abstract

Keywords:

1. Results

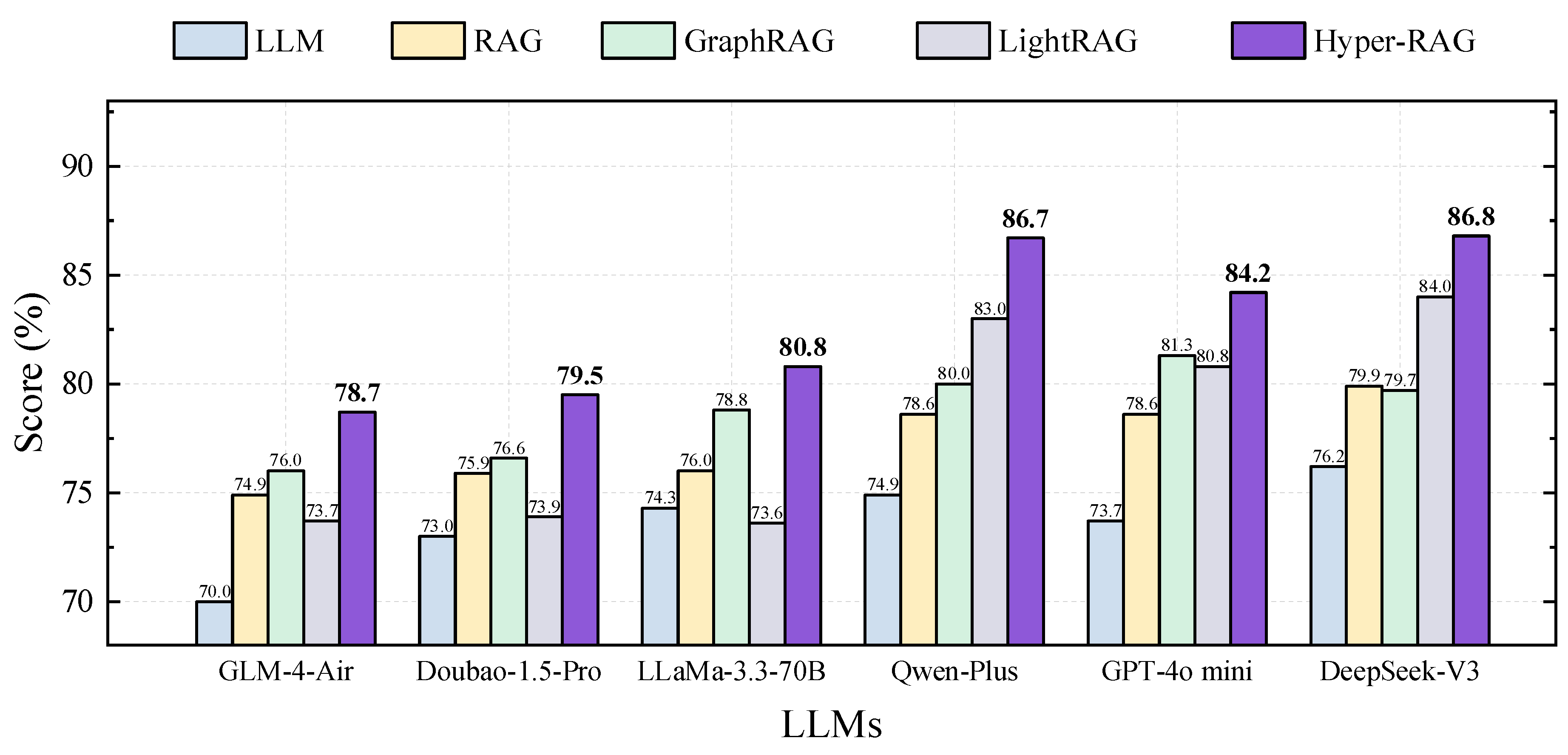

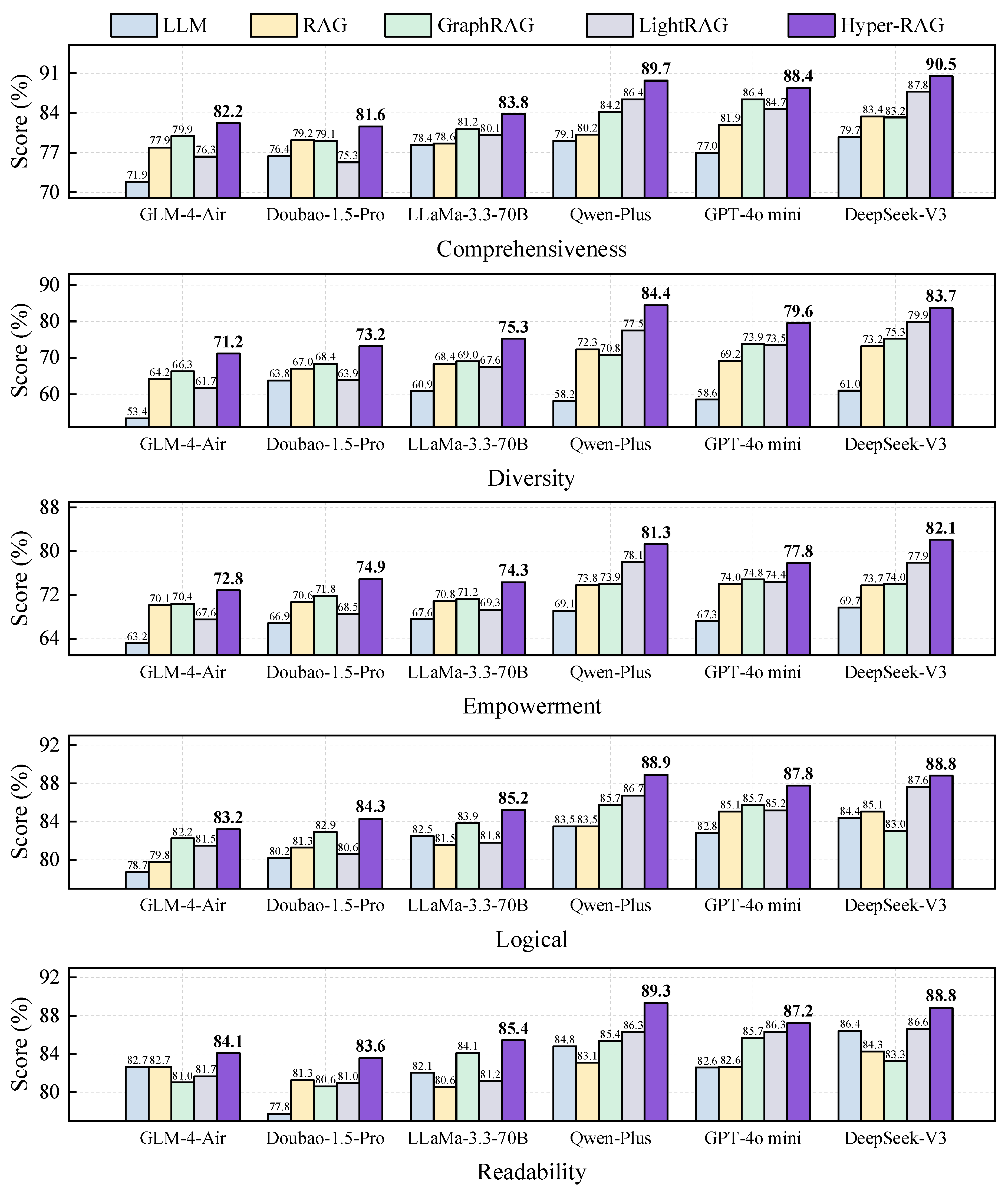

1.1. Performance of Integrating with Diverse LLMs

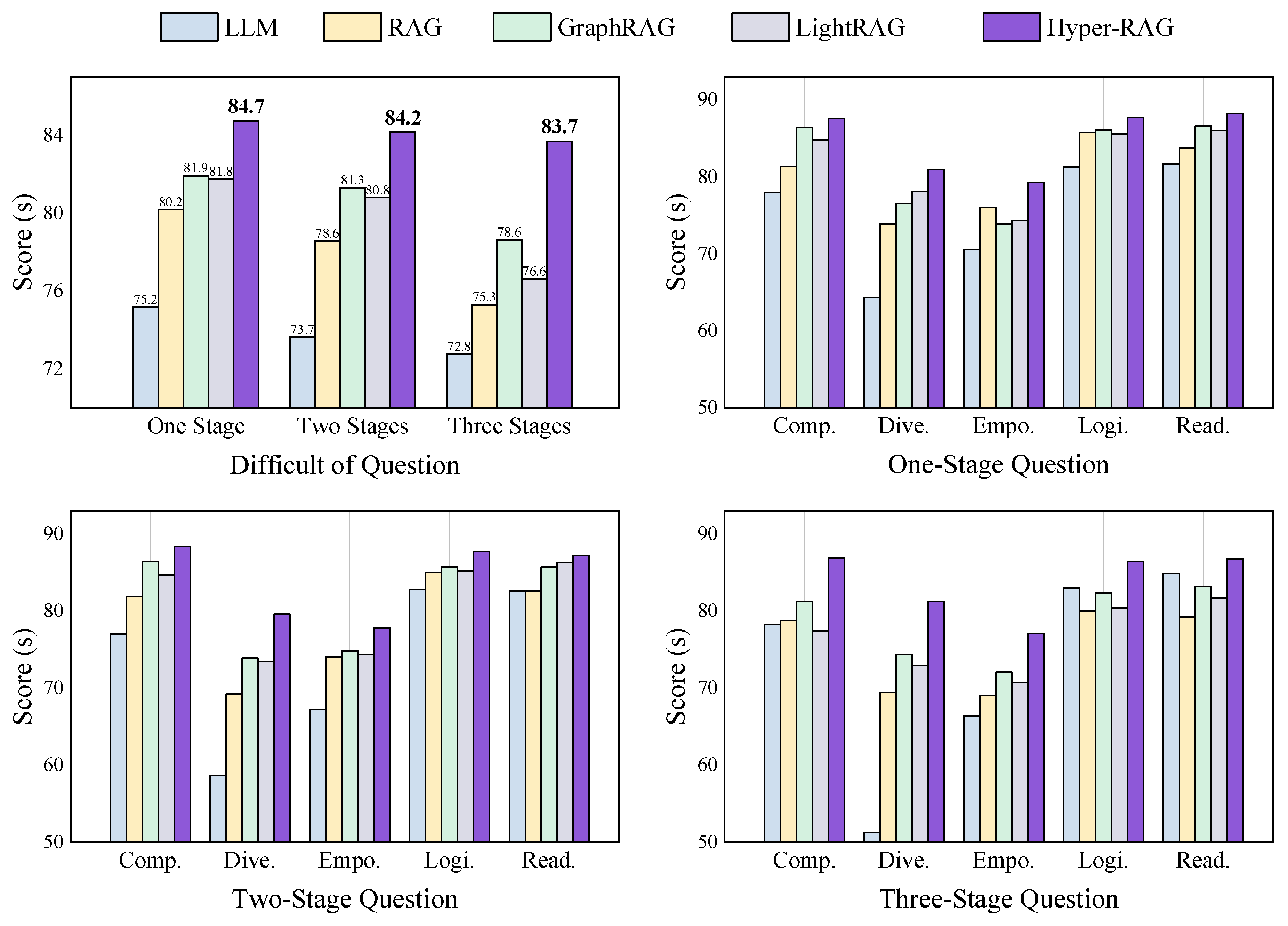

1.2. Performance of Different Questioning Strategies

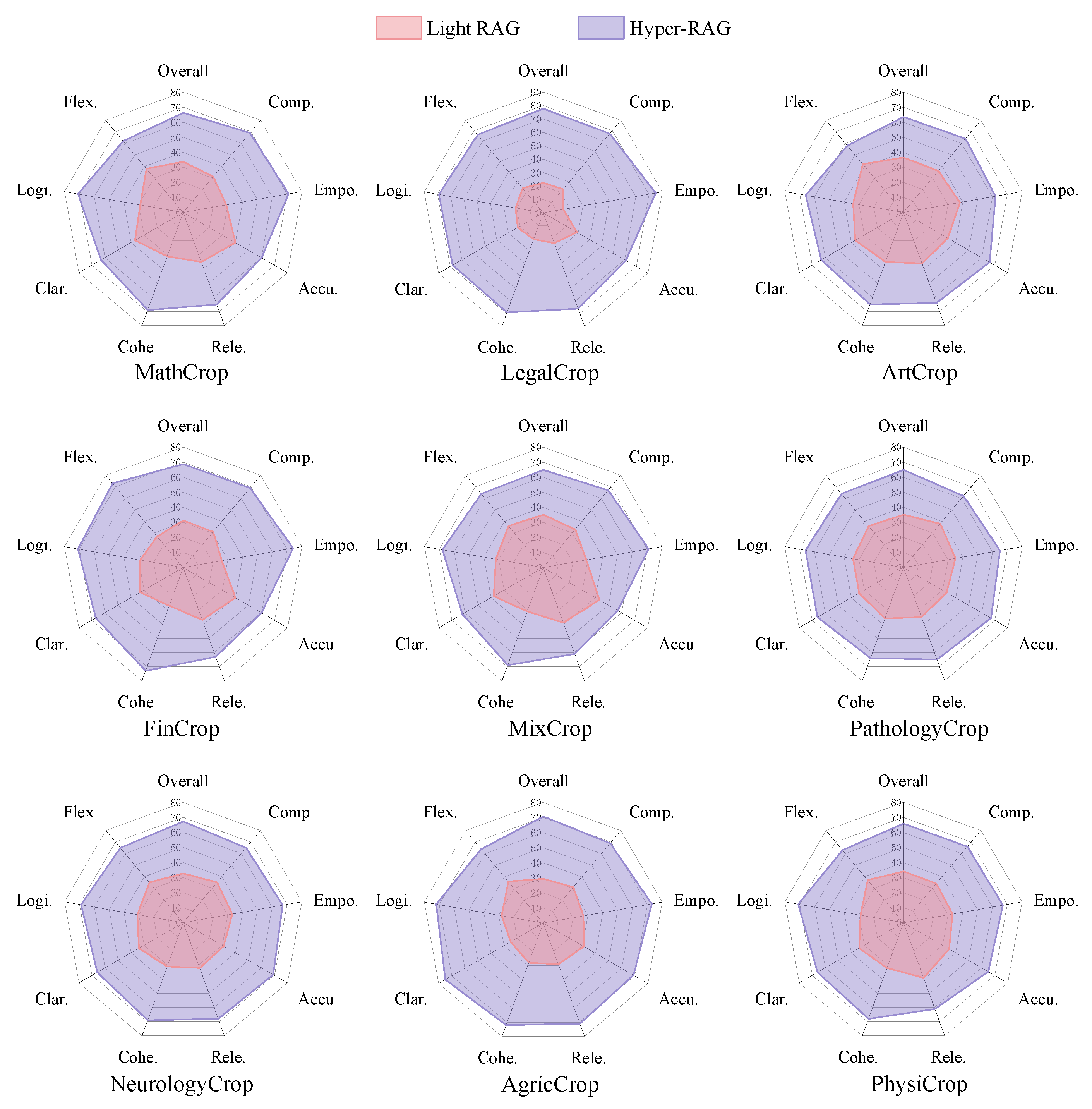

1.3. Performance in Diverse Domains

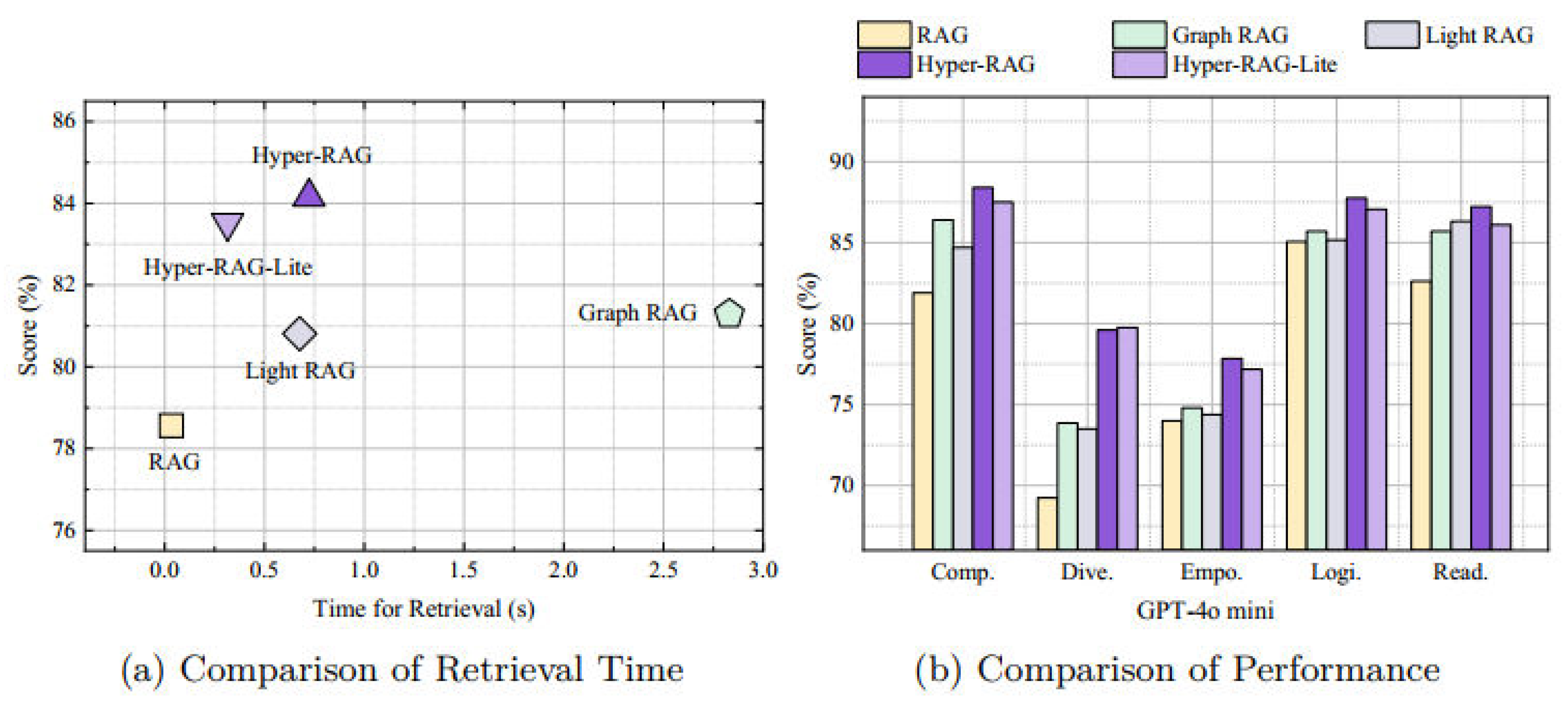

1.4. Experiments with Different Knowledge Representations

1.5. Efficiency Analysis

2. Discussion

3. Methods

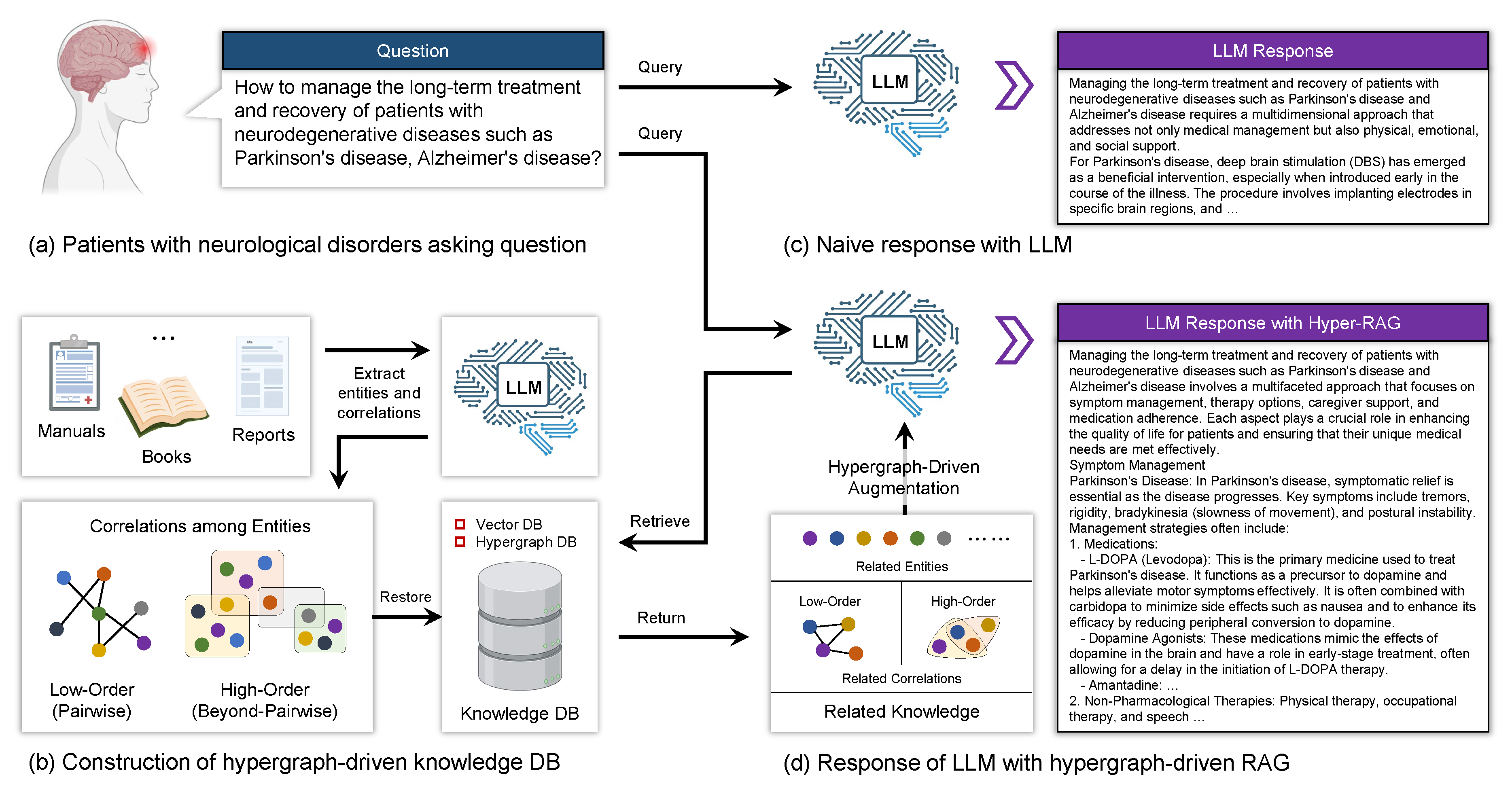

3.1. Framework Schema

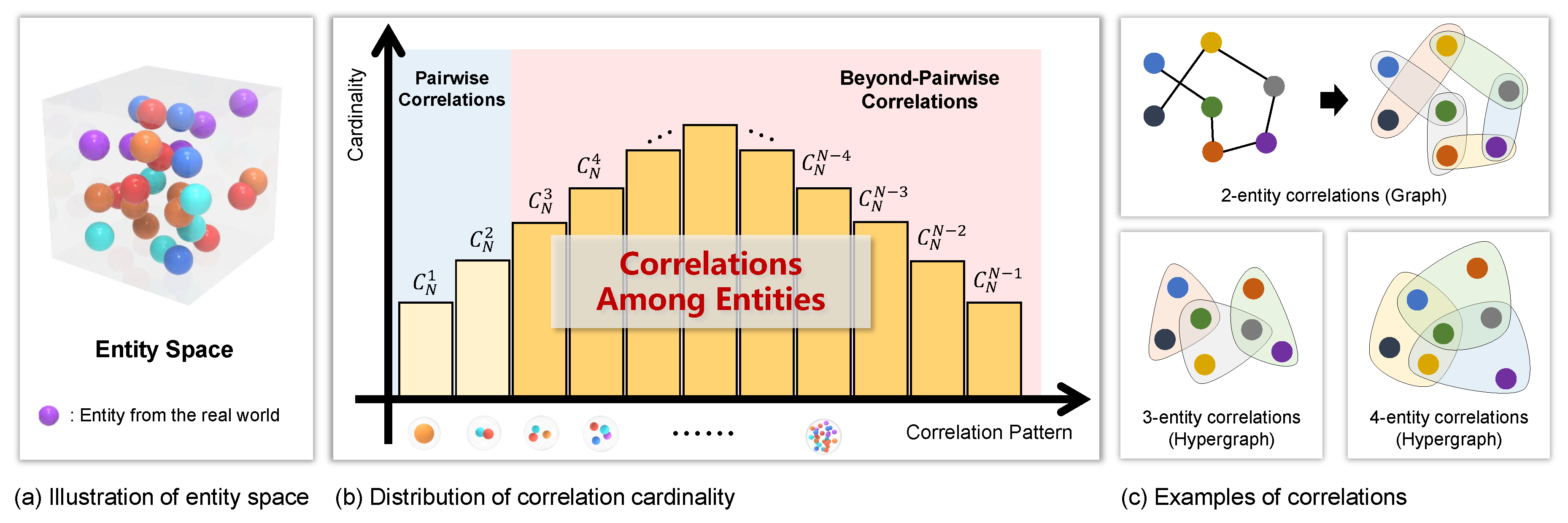

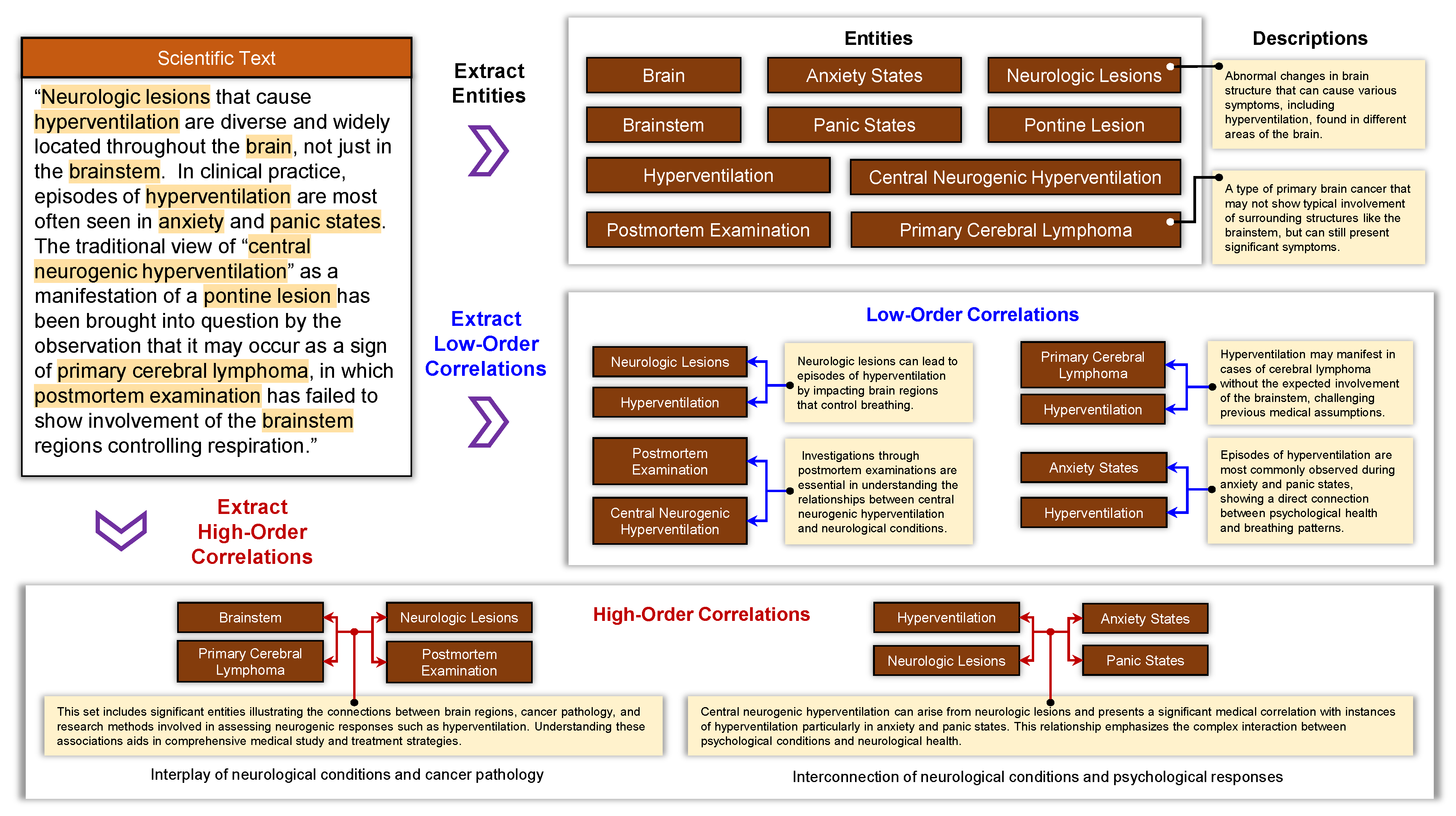

3.2. Knowledge Extraction

3.3. Knowledge Indexing

Vector Database

Hypergraph Database

Integration of Vector and Hypergraph Databases

3.4. Knowledge Retrieval and LLMs Augmentation

3.5. Dataset

4. Evaluation Criteria

4.1. Scoring-Based Assessment

- Comprehensiveness (0-100): Assesses whether the response sufficiently addresses all relevant aspects of the question without omitting critical information.

- Diversity (0-100): Evaluates the richness of the content, including additional related knowledge beyond the direct answer.

- Empowerment (0-100): Measures the credibility of the response, ensuring it is free from hallucinations and instills confidence in the reader regarding its accuracy.

- Logical (0-100): Determines the coherence and clarity of the response, ensuring that the arguments are logically structured and well-articulated.

- Readability (0-100): Examines the organization and formatting of the response, ensuring it is easy to read and understand.

Each score range corresponds to one of five levels. For Comprehensiveness, the levels are:

- Level 1 | 0-20: The answer is extremely one-sided, leaving out key parts or important aspects of the question.

- Level 2 | 20-40: The answer has some content but misses many important aspects and is not comprehensive enough.

- Level 3 | 40-60: The answer is more comprehensive, covering the main aspects of the question, but there are still some omissions.

- Level 4 | 60-80: The answer is comprehensive, covering most aspects of the question with few omissions.

- Level 5 | 80-100: The answer is extremely comprehensive, covering all aspects of the question with no omissions, enabling the reader to gain a complete understanding.
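The level ranges above share their endpoints (for example, 20 appears in both Level 1 and Level 2), and the text does not say which level a boundary score belongs to. The following minimal sketch maps a score to a level under the assumption that a boundary score falls into the higher level; `score_to_level` and `LEVEL_BOUNDARIES` are illustrative names, not part of the original evaluation code.

```python
from bisect import bisect_right

# Cut points between adjacent levels, taken from the rubric ranges.
# Assumption: a score exactly on a cut point belongs to the higher level.
LEVEL_BOUNDARIES = [20, 40, 60, 80]


def score_to_level(score: float) -> int:
    """Map a 0-100 score to a rubric level from 1 to 5."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be in [0, 100], got {score}")
    # bisect_right counts how many cut points are <= score.
    return bisect_right(LEVEL_BOUNDARIES, score) + 1


if __name__ == "__main__":
    for s in (0, 19.9, 20, 55, 80, 100):
        print(s, "-> Level", score_to_level(s))
```

If boundary scores should instead fall into the lower level, replacing `bisect_right` with `bisect_left` changes only the behavior at 20, 40, 60, and 80.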

4.2. Selection-Based Assessment

- Comprehensiveness: How much detail does the answer provide to cover all aspects and details of the question?

- Empowerment: How well does the answer help the reader understand and make informed judgments about the topic?

- Accuracy: How well does the answer align with factual truth and avoid hallucination based on the retrieved context?

- Relevance: How precisely does the answer address the core aspects of the question without including unnecessary information?

- Coherence: How well does the system integrate and synthesize information from multiple sources into a logically flowing response?

- Clarity: How well does the system provide complete information while avoiding unnecessary verbosity and redundancy?

- Logical: How well does the system maintain consistent logical arguments without contradicting itself across the response?

- Flexibility: How well does the system handle various question formats, tones, and levels of complexity?
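One plausible way to aggregate a selection-based assessment is a per-criterion win rate. The sketch below assumes that, for each question, a judge names one preferred method per criterion; this record format and the names `win_rates` and `SELECTION_CRITERIA` are hypothetical and are not taken from the original evaluation pipeline.

```python
from collections import Counter

# The eight selection criteria listed above.
SELECTION_CRITERIA = (
    "Comprehensiveness", "Empowerment", "Accuracy", "Relevance",
    "Coherence", "Clarity", "Logical", "Flexibility",
)


def win_rates(judgments: list[dict[str, str]], method: str) -> dict[str, float]:
    """Return, per criterion, the fraction of questions on which `method` was preferred.

    Each judgment is assumed to map every criterion name to the winning method's name.
    """
    if not judgments:
        raise ValueError("at least one judgment is required")
    wins = Counter()
    for judgment in judgments:
        for criterion in SELECTION_CRITERIA:
            if judgment[criterion] == method:
                wins[criterion] += 1
    return {c: wins[c] / len(judgments) for c in SELECTION_CRITERIA}


if __name__ == "__main__":
    # Two made-up judgments comparing hypothetical methods "A" and "B".
    first = {c: "A" for c in SELECTION_CRITERIA}
    second = {c: "B" for c in SELECTION_CRITERIA}
    second["Accuracy"] = "A"
    print(win_rates([first, second], "A"))
```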

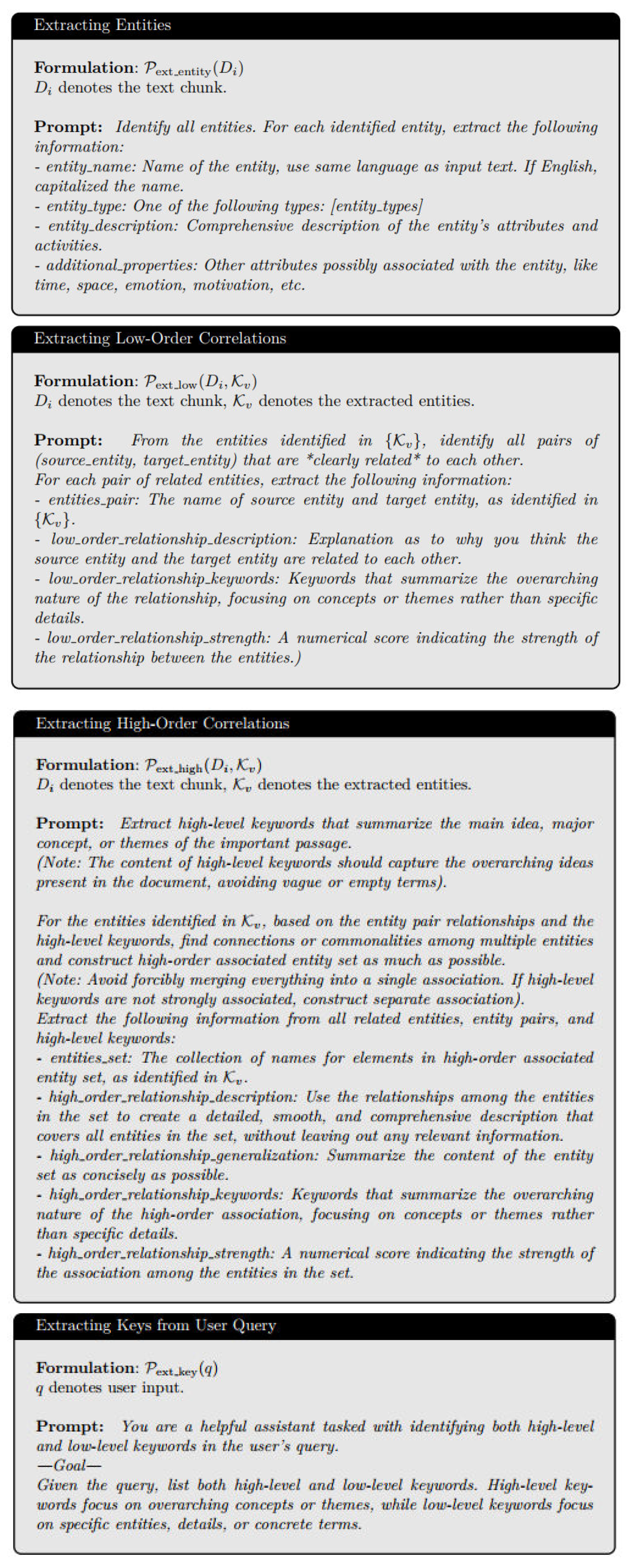

5. Prompts

5.1. Extracting Entities, Correlations and Keywords

5.2. Evaluation


| Dataset | Domain | # Tokens | # Chunks | # Questions |
|---|---|---|---|---|
| NeurologyCorp | Medicine | 1,968,716 | 1,790 | 2,173 |
| PathologyCorp | Medicine | 905,760 | 824 | 2,530 |
| MathCrop | Mathematics | 3,863,538 | 3,513 | 3,976 |
| AgricCorp | Agriculture | 1,993,515 | 1,813 | 2,472 |
| FinCorp | Finance | 3,825,459 | 3,478 | 2,698 |
| PhysiCrop | Physics | 2,179,328 | 1,982 | 2,673 |
| LegalCrop | Law | 4,956,748 | 4,507 | 2,787 |
| ArtCrop | Art | 3,692,286 | 3,357 | 2,993 |
| MixCorp | Mix | 615,355 | 560 | 2,797 |

| Type | Examples |
|---|---|
| One-Stage Question | What is the role of the ventrolateral preoptic nucleus in the flip-flop mechanism described for transitions between sleep and wakefulness? |
| Two-Stage Question | Identify the anatomical origin of the corticospinal and corticobulbar tracts, and explain how the identified structures contribute to the control of voluntary movement. |
| Three-Stage Question | How does the corticospinal system function in terms of movement control, and specifically, what are the roles and interconnections of the basal ganglia and the thalamus in modulating these movements, including the effects of lesions in these areas on movement disorders? |

| Method | D | | | Comprehensiveness | Diversity | Empowerment | Logical | Readability | Overall | Rank |
|---|---|---|---|---|---|---|---|---|---|---|
| LLM | x | x | x | 77.00 | 58.60 | 67.26 | 82.80 | 82.60 | 73.65 | 8 |
| - | x | ✓ | x | 83.40 | 74.96 | 74.72 | 86.06 | 86.24 | 81.08 | 6 |
| - | x | x | ✓ | 84.40 | 75.00 | 75.68 | 86.46 | 86.22 | 81.55 | 5 |
| - | x | ✓ | ✓ | 85.90 | 78.34 | 77.14 | 87.02 | 86.70 | 83.02 | 3 |
| RAG | ✓ | x | x | 81.90 | 69.24 | 74.00 | 85.06 | 82.62 | 78.56 | 7 |
| - | ✓ | ✓ | x | 85.80 | 77.20 | 76.56 | 86.58 | 86.84 | 82.60 | 4 |
| - | ✓ | x | ✓ | 88.26 | 78.80 | 77.52 | 87.34 | 87.04 | 83.79 | 2 |
| Hyper-RAG | ✓ | ✓ | ✓ | 88.40 | 79.60 | 77.84 | 87.76 | 87.22 | 84.16 | 1 |
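The text does not state how the Overall column is derived, but every row of the table above is consistent with an unweighted mean of the five criterion scores, rounded to two decimals. The sketch below reproduces that arithmetic for two rows; the function name `overall_score` is illustrative, and the averaging rule is an inference from the reported numbers rather than a documented formula.

```python
from statistics import mean


def overall_score(scores: tuple[float, float, float, float, float]) -> float:
    """Unweighted mean of the five criterion scores, rounded to two decimals.

    Order: comprehensiveness, diversity, empowerment, logical, readability.
    Assumption: equal weights, inferred from the table rather than stated in the text.
    """
    return round(mean(scores), 2)


# Criterion scores copied from the first and last rows of the table.
llm_only = (77.00, 58.60, 67.26, 82.80, 82.60)
hyper_rag = (88.40, 79.60, 77.84, 87.76, 87.22)

if __name__ == "__main__":
    print("LLM:", overall_score(llm_only))        # table reports 73.65
    print("Hyper-RAG:", overall_score(hyper_rag))  # table reports 84.16
```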

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).