Submitted: 02 April 2025

Posted: 07 April 2025

Abstract

Keywords:

1. Introduction

2. Methods

2.1. Research Design

2.2. Search Strategy

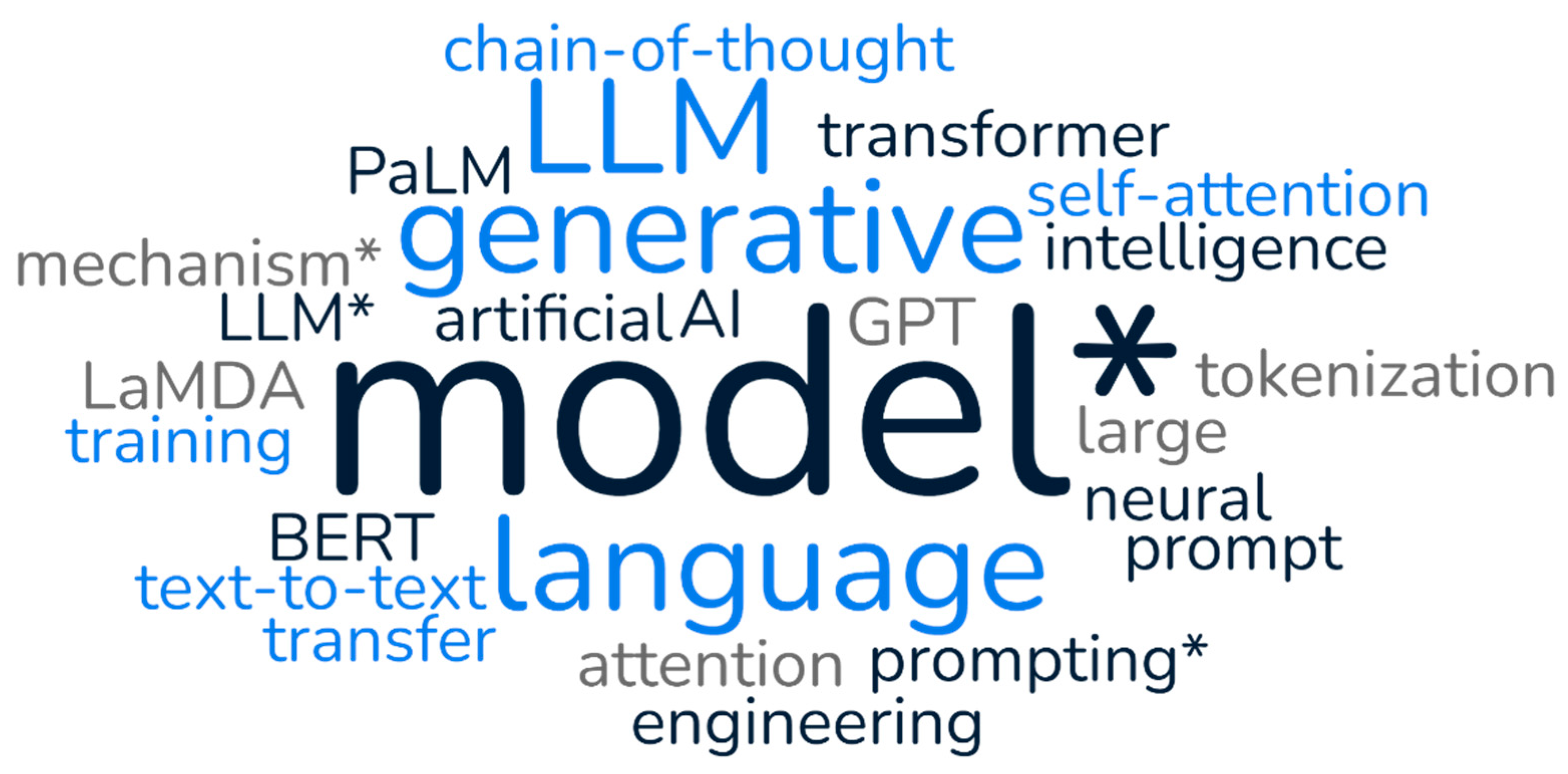

- "generative AI" OR "generative artificial intelligence"

- "large language model*" OR "LLM*"

- "transformer model*" OR "attention mechanism*"

- "GPT" OR "BERT" OR "LaMDA" OR "PaLM"

- "neural language model*"

- "self-attention"

- "LLM model*"

- "LLM training"

- "chain-of-thought prompting*"

- "prompt engineering"

- "tokenization"

- "text-to-text transfer"
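The search terms above can be assembled into a single Boolean query string. The following is a minimal sketch, assuming the groups are joined with OR; the source does not state how the groups were combined or which database syntax was used, so `build_query` and the joining rule are illustrative assumptions rather than the authors' procedure.

```python
# Search-term groups copied from the list above. Each string is either a
# single quoted phrase or an OR-expression of quoted phrases.
SEARCH_GROUPS = [
    '"generative AI" OR "generative artificial intelligence"',
    '"large language model*" OR "LLM*"',
    '"transformer model*" OR "attention mechanism*"',
    '"GPT" OR "BERT" OR "LaMDA" OR "PaLM"',
    '"neural language model*"',
    '"self-attention"',
    '"LLM model*"',
    '"LLM training"',
    '"chain-of-thought prompting*"',
    '"prompt engineering"',
    '"tokenization"',
    '"text-to-text transfer"',
]


def build_query(groups, operator="OR"):
    """Wrap each group in parentheses and join the groups with `operator`.

    The default operator (OR) is an assumption; the source does not say
    how the groups were combined.
    """
    return f" {operator} ".join(f"({group})" for group in groups)


query = build_query(SEARCH_GROUPS)
print(query)
```

Wildcard (`*`) and phrase-quoting support differs between bibliographic databases, so the resulting string would likely need adapting to each one.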

2.3. Inclusion and Exclusion Criteria

Inclusion criteria:

- Peer-reviewed journal articles, conference proceedings, and technical reports

- Literature focusing on generative AI and large language models

- Studies examining technical architecture, applications, limitations, or future directions

- Publications in English

- Literature published between 2017 and March 2025

- Websites of institutions (European Commission) and blogs of major LLM providers (OpenAI, DeepSeek, Google)

Exclusion criteria:

- Non-English publications

- Opinion pieces without substantial technical or empirical content

- Studies focusing exclusively on other AI technologies without significant discussion of generative AI or LLMs

- Duplicate publications or multiple reports of the same study
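The criteria above can be read as a screening filter over candidate records. The sketch below is illustrative only: the `Record` fields, the source-type labels, the exact window boundaries (1 January 2017 to 31 March 2025), and title-based duplicate detection are all assumptions, and the topical-relevance judgement is modeled as a flag set by a human reviewer.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Record:
    """Hypothetical candidate record; field names are assumptions."""
    title: str
    language: str      # language code, e.g. "en"
    published: date
    source_type: str   # one of ALLOWED_TYPES, or something else (e.g. "opinion")
    on_topic: bool     # reviewer judgement: substantively about generative AI / LLMs


# Source types treated as eligible (labels are illustrative).
ALLOWED_TYPES = {
    "journal", "conference", "technical_report",
    "institutional_website", "provider_blog",
}
WINDOW_START = date(2017, 1, 1)   # assumed start of "2017"
WINDOW_END = date(2025, 3, 31)    # assumed end of "March 2025"


def passes_screening(record):
    """Apply the language, date, source-type, and topic criteria."""
    return (
        record.language == "en"
        and WINDOW_START <= record.published <= WINDOW_END
        and record.source_type in ALLOWED_TYPES
        and record.on_topic
    )


def screen(records):
    """Drop duplicates (by normalized title), then apply the criteria."""
    seen = set()
    kept = []
    for record in records:
        key = record.title.strip().lower()
        if key in seen:
            continue  # duplicate publication or repeated report
        seen.add(key)
        if passes_screening(record):
            kept.append(record)
    return kept
```

In practice duplicate detection would more likely rely on DOIs or fuzzy title matching; exact normalized titles are used here only to keep the sketch short.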

2.4. Data Extraction and Synthesis

- Publication details (authors, year, journal/conference)

- Study objectives and methodology

- Key findings and contributions

- Technical details of models or architectures discussed

- Applications and use cases

- Limitations and challenges identified

- Future research directions proposed
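The extraction fields above map naturally onto a fixed record structure. The following is a hedged sketch of one way such a form could be represented and exported; the class name, field names, and CSV export are assumptions for illustration, not the authors' tooling.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExtractionRecord:
    """One row of a hypothetical data-extraction form (field names assumed)."""
    authors: str
    year: int
    venue: str                        # journal or conference
    objectives_and_methodology: str
    key_findings: str
    technical_details: str            # models or architectures discussed
    applications: str
    limitations: str
    future_directions: str


def to_csv(records):
    """Serialize extraction records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(ExtractionRecord)]
    )
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()
```

Keeping the fields in one typed structure makes it easier to check that every included study was coded against the same items before synthesis.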

2.5. Quality Assessment and Limitations of Review Methodology

3. Results

3.1. Historical Evolution of Large Language Models

3.1.1. Early Foundations (1880s-1950s)

3.1.2. Machine Learning and Neural Networks (1950s-1980s)

3.1.3. Statistical Approaches and Small Language Models (1980s-1990s)

3.1.4. Deep Learning and Neural Networks (1990s-2010s)

3.1.5. The Transformer Revolution (2017-Present)

3.1.6. Current Landscape (2021-Present)

3.2. Technical Architecture of Generative LLMs

3.2.1. Transformer Architecture

3.2.2. Components of Modern LLMs

3.2.3. Architectural Variations

3.2.4. Scaling Properties and Emergent Capabilities

3.2.5. Efficiency Innovations

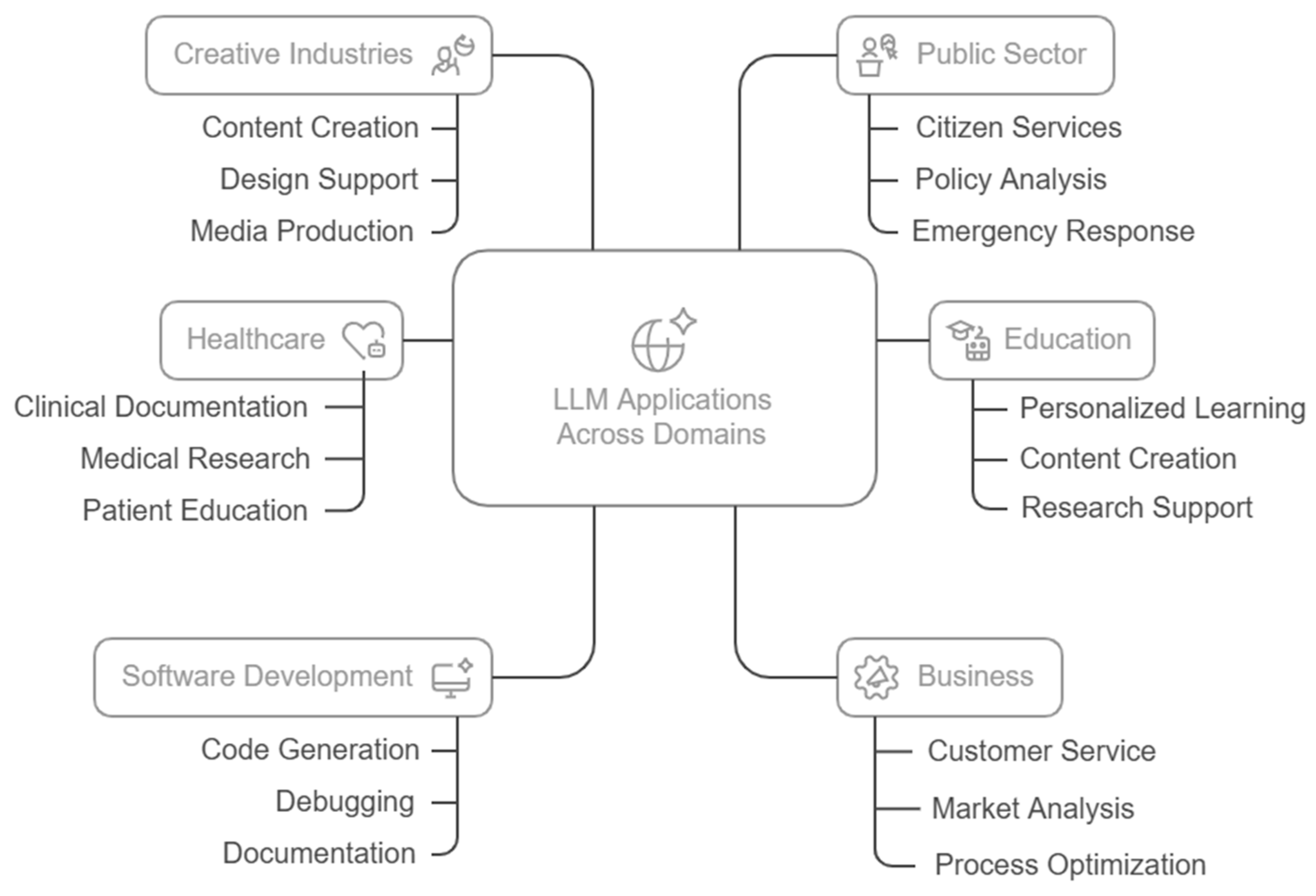

3.3. Applications and Use Cases

3.3.1. Natural Language Understanding and Text Generation

3.3.2. Educational Applications

3.3.3. Healthcare Applications

3.3.4. Software Development and Programming

3.3.5. Business and Enterprise Applications

3.3.6. Creative and Media Applications

3.3.7. Public Sector and Governance

3.4. Limitations and Challenges

3.4.1. Technical Limitations

3.4.2. Ethical Concerns

3.4.3. Regulatory and Compliance Challenges

3.4.4. Environmental Impact

3.4.5. Implementation and Integration Challenges

3.5. Future Directions and Emerging Trends

3.5.1. Advancements in Model Architecture

3.5.2. Efficiency Improvements

3.5.3. Domain-Specific Specialization

3.5.4. Enhanced Reasoning Capabilities

3.5.5. Ethical AI and Responsible Development

3.5.6. Regulatory and Governance Frameworks

3.5.7. Integration with Other Technologies

4. Discussion

4.1. Implications for Research and Practice

4.1.1. Research Implications

4.1.2. Practical Implications

4.2. Ethical Considerations

4.3. Limitations of the Current Review

5. Conclusions

Acknowledgments

Conflicts of Interest

| Model Family | Architecture Type | Parameters | Key Features | Training Approach | Notable Capabilities | Limitations |
| --- | --- | --- | --- | --- | --- | --- |
| GPT Series | Decoder-only | GPT-3: 175B; GPT-4: ~1.7T (estimated) | Autoregressive generation; masked self-attention | Generative pre-training followed by fine-tuning | Text generation; few-shot learning; in-context learning | Limited bidirectional context; tendency to hallucinate |
| BERT | Encoder-only | BERT-Large: 340M; RoBERTa: 355M | Bidirectional attention; masked language modeling | Masked token prediction; next sentence prediction | Strong text understanding; classification tasks; question answering | Limited generation capabilities; fixed context window |
| T5 | Encoder-decoder | T5-11B: 11B | Text-to-text framework; complete transformer architecture | Unified text-to-text approach for all NLP tasks | Versatile across tasks; strong transfer learning | Larger computational requirements; complex training process |
| PaLM | Decoder-only | PaLM: 540B; PaLM 2: ~340B | Pathways architecture; efficient training | Trained on diverse multilingual data | Multilingual capabilities; strong reasoning; code generation | Proprietary architecture; limited public information |
| LLaMA | Decoder-only | LLaMA 2: 7B-70B | Optimized for efficiency; open weights | Trained on diverse text corpora | Open-source accessibility; efficient fine-tuning | Smaller parameter count than largest models; limited multimodal capabilities |
| Claude | Decoder-only | Claude 2: ~140B (estimated) | Constitutional AI approach; RLHF training | Trained with constitutional principles | Safety and alignment focus; long context window | Less information on architecture; proprietary system |
| Gemini | Multimodal transformer | Gemini Ultra: ~1T (estimated) | Multimodal from ground up; advanced reasoning | Trained on multimodal data | Strong multimodal reasoning; advanced problem-solving | Proprietary architecture; high computational requirements |
| Mistral | Decoder-only | Mistral 7B: 7B; Mixtral 8x7B: 47B | Grouped-query attention; mixture of experts | Efficient training techniques | Strong performance despite smaller size; efficient inference | Newer architecture with less testing; smaller parameter count |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).