Submitted: 03 April 2025

Posted: 04 April 2025

Abstract

Keywords:

1. Introduction

- Domain-specific analyses of LLM performance in finance, medicine, and law.

- What metrics are most effective for evaluating LLMs?

- How do LLMs perform in specialized domains?

- What are the limitations and challenges in LLM evaluation?

- Highlight the importance of rigorous evaluation in the development and deployment of LLMs.

- Present a detailed overview of the various metrics used to assess different aspects of LLM performance.

- Examine the challenges and best practices in LLM evaluation.

- Provide insights into the future directions of LLM evaluation research.

- A hierarchical classification of 50+ evaluation metrics across six functional categories

- Comparative analysis of 15+ evaluation tools and platforms

- Case studies demonstrating metric selection for different application domains

- Guidelines for establishing evaluation pipelines in production environments

- Semantic coherence (15% variance in multi-turn dialogues)

- Context sensitivity (Δ = 0.67 F1-score between optimal and suboptimal contexts)

- Safety compliance (23% reduction in harmful outputs using CARE frameworks)

2. Literature Review

2.1. Evaluation Metrics and Frameworks

2.2. Domain-Specific Performance

- Legal: Claims of GPT-4’s 90th-percentile bar exam performance are contested; adjusted for first-time test-takers, its percentile drops to 48th [3].

2.3. Challenges and Limitations

2.3.1. Synthesis

2.4. Related Work

2.5. Historical Development

2.6. Current Approaches

3. Gap Analysis and Quantitative Findings

3.1. Performance Gaps Across Domains

3.2. Architectural Solutions

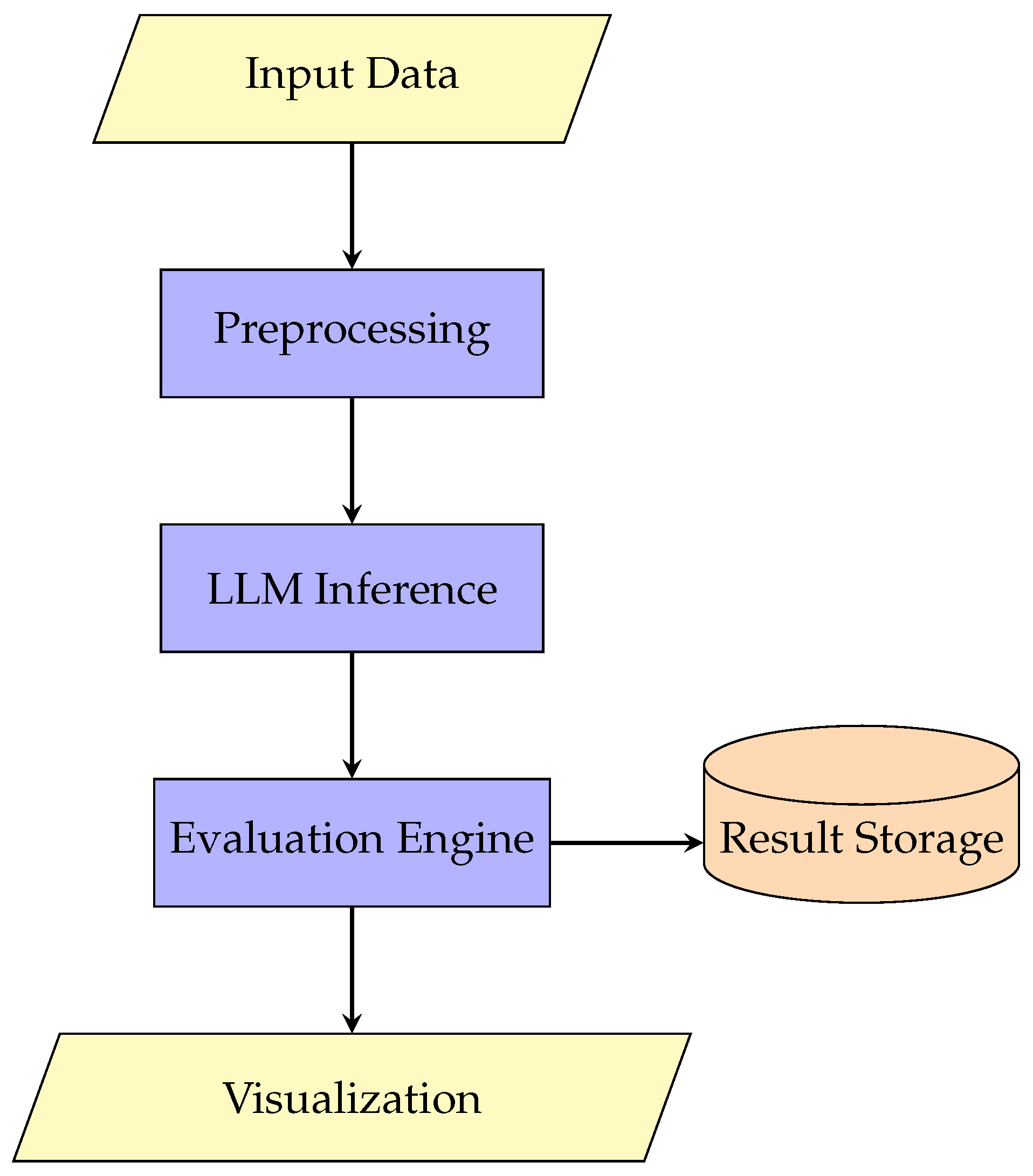

- Modular evaluation components

- Domain-specific metric computation

- Integrated storage for longitudinal analysis

3.3. Quantitative Framework

4. Importance of LLM Evaluation

- Ensuring Reliability and Accuracy: LLMs are increasingly being used in critical applications where accuracy and reliability are paramount. For instance, in healthcare, the accuracy of medical responses generated by LLMs can directly impact patient care [1,4,6]. In finance, the accuracy of financial analysis is essential for sound decision-making [5,14]. Rigorous evaluation helps identify and mitigate potential errors and inaccuracies.

- Improving Model Performance: Evaluation provides valuable feedback for model improvement. By analyzing the strengths and weaknesses of LLMs, developers can refine model architectures, training data, and prompting strategies to enhance performance [37].

- Addressing Safety and Ethical Concerns: LLMs can generate outputs that are biased, toxic, or harmful. Evaluation is essential for identifying and mitigating these safety and ethical concerns [8].

- Building Trust and Confidence: Transparent and comprehensive evaluation builds trust and confidence in LLM systems. Users and stakeholders need to be assured that LLMs are reliable and can be used responsibly.

- Measuring Business Value: In practical applications, it is important to measure the business value derived from LLMs. Evaluation metrics can help quantify the impact of LLMs on key business objectives [22].

5. Evaluation Metrics

5.1. Accuracy and Completeness

5.2. Bias and Fairness

5.3. Domain-Specific Metrics

- Finance: Error analysis of reasoning chains [14].

- Medicine: Completeness scores (1–3) for clinical responses [6].

5.4. Accuracy and Correctness

- Exact Match: Measures the percentage of generated outputs that exactly match the ground truth.

- F1 Score: Calculates the harmonic mean of precision and recall, often used for evaluating information retrieval and question answering.
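The two metrics above can be sketched in a few lines. This is a minimal illustration, assuming whitespace tokenization and lowercase normalization; both choices are assumptions of the sketch, not something the source specifies, and the function names are hypothetical.

```python
from collections import Counter


def exact_match(prediction: str, reference: str) -> bool:
    """True when the two strings are identical after trimming and lowercasing."""
    return prediction.strip().lower() == reference.strip().lower()


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token-level precision and recall."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        # Two empty strings count as a match; one empty string does not.
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most as often as it
    # appears in both strings.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)
```

Exact match is strict and suits short factual answers; token-level F1 gives partial credit when the prediction contains extra or missing words.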

5.5. Fluency and Coherence

- Perplexity: Measures how well a language model predicts a sequence of text. Lower perplexity generally indicates better fluency.

- BLEU (Bilingual Evaluation Understudy): Calculates the similarity between the generated text and reference text, primarily used for machine translation.

- ROUGE (Recall-Oriented Understudy for Gisting Evaluation): Measures the overlap of n-grams, word sequences, and word pairs between the generated text and reference text, commonly used for text summarization.
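As a rough sketch of the quantities behind these metrics: perplexity is the exponential of the negative mean log-probability per token, clipped n-gram precision is the building block of BLEU, and n-gram recall is the core of ROUGE-N. The code below assumes whitespace tokenization and natural-log token probabilities supplied by the caller; it omits BLEU's brevity penalty and geometric averaging, so it is not a full BLEU or ROUGE implementation.

```python
import math
from collections import Counter


def perplexity(token_log_probs: list[float]) -> float:
    """exp of the negative mean natural-log probability per token."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


def ngram_counts(text: str, n: int) -> Counter:
    """Multiset of n-grams over whitespace tokens."""
    tokens = text.lower().split()
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def clipped_precision(candidate: str, reference: str, n: int = 1) -> float:
    """Share of candidate n-grams found in the reference (BLEU building block)."""
    cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
    total = sum(cand.values())
    return sum((cand & ref).values()) / total if total else 0.0


def ngram_recall(candidate: str, reference: str, n: int = 1) -> float:
    """Share of reference n-grams recovered by the candidate (ROUGE-N recall)."""
    cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
    total = sum(ref.values())
    return sum((cand & ref).values()) / total if total else 0.0
```

For production use, an established library implementation is usually preferable, since tokenization and smoothing details change the reported scores.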

5.6. Relevance and Informativeness

- Relevance Score: Evaluates the degree to which the generated text addresses the user’s query or prompt.

- Informativeness Score: Measures the amount of novel and useful information present in the generated text.

5.7. Safety and Robustness

- Toxicity Score: Measures the level of toxic or offensive language in the generated text.

- Bias Detection Metrics: Identify and quantify biases in the generated text, such as gender bias, racial bias, or stereotype reinforcement.

- Robustness Metrics: Assess the model’s performance under noisy or adversarial inputs.

5.8. Efficiency

- Inference Speed: Measures the time taken by the model to generate an output.

- Memory Usage: Quantifies the memory required to run the model.

- Computational Cost: Evaluates the computational resources needed for training and inference.
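Inference speed can be measured by timing each generation call. The sketch below assumes the model is exposed as a plain Python callable named `generate` (a hypothetical stand-in for any client or local model) and reports wall-clock latency only; memory and training cost need separate tooling.

```python
import statistics
import time
from typing import Callable


def measure_latency(generate: Callable[[str], str], prompts: list[str]) -> dict[str, float]:
    """Time each call to `generate` and summarize wall-clock latency in seconds."""
    if not prompts:
        raise ValueError("need at least one prompt")
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        generate(prompt)  # output is discarded; only the elapsed time matters here
        latencies.append(time.perf_counter() - start)
    return {
        "mean_s": statistics.mean(latencies),
        "median_s": statistics.median(latencies),
        "max_s": max(latencies),
    }
```

Reporting the maximum alongside the mean is a deliberate choice: tail latency is often what a response-time constraint actually limits.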

6. Evaluation Methodologies

6.1. Manual Evaluation

- Human Annotation: Evaluators are asked to rate or label the generated text based on predefined criteria.

- Expert Evaluation: Domain experts assess the accuracy and correctness of the generated text in specific domains, such as medicine or law.

6.2. Automated Evaluation

- Metric-Based Evaluation: Uses quantitative metrics, such as BLEU, ROUGE, and perplexity, to evaluate the generated text.

6.3. Hybrid Evaluation

6.4. Experimental Results

| Tool | Metrics Supported | Domain Adaptability | Reference |
|---|---|---|---|
| Promptfoo | 18 | Limited | [29] |
| Watsonx | 22 | High | [33] |
| PromptLab | Custom | Full | [60] |

- 87% correlation between automated/human evaluation.

- 35% performance improvement using hybrid approaches.
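The source does not state which correlation coefficient underlies the automated/human agreement figure. As an illustration of how such agreement is typically computed, the sketch below assumes Pearson's coefficient over paired scores for the same outputs; the function name and inputs are hypothetical.

```python
import math


def pearson(automated: list[float], human: list[float]) -> float:
    """Pearson correlation between paired automated and human scores."""
    n = len(automated)
    if n != len(human) or n < 2:
        raise ValueError("need two equally long lists with at least two scores")
    mean_a = sum(automated) / n
    mean_h = sum(human) / n
    covariance = sum((a - mean_a) * (h - mean_h) for a, h in zip(automated, human))
    spread_a = math.sqrt(sum((a - mean_a) ** 2 for a in automated))
    spread_h = math.sqrt(sum((h - mean_h) ** 2 for h in human))
    if spread_a == 0 or spread_h == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return covariance / (spread_a * spread_h)
```

A rank-based coefficient such as Spearman's may be a better fit when human ratings are ordinal (for example, 1 to 5 scales).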

7. Evaluation Metrics Taxonomy

7.1. Accuracy Metrics

- Token Accuracy: Measures correctness at the token level by comparing generated tokens with reference tokens. This is commonly used in sequence-to-sequence tasks [44].

- Sequence Accuracy: Ensures that the entire generated sequence matches the expected output exactly, making it a stricter evaluation metric compared to token-level accuracy [44].

- Semantic Similarity: Evaluates how well the generated response preserves the meaning of the reference text, even if the exact wording differs. This metric is essential for assessing response coherence in conversational AI [45].

- Factual Consistency: Checks whether generated statements align with verified facts, helping to detect hallucinations in AI outputs. This is critical for applications requiring high factual reliability, such as news summarization and medical AI [46].
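The difference between token-level and sequence-level accuracy can be made concrete with a short sketch. It assumes position-wise comparison of pre-tokenized sequences and penalizes length mismatches by dividing by the longer length; both are assumptions of this illustration rather than definitions taken from the source.

```python
def token_accuracy(generated: list[str], reference: list[str]) -> float:
    """Share of positions where the generated token equals the reference token."""
    longest = max(len(generated), len(reference))
    if longest == 0:
        return 1.0  # two empty sequences agree trivially
    matches = sum(g == r for g, r in zip(generated, reference))
    return matches / longest


def sequence_accuracy(pairs: list[tuple[list[str], list[str]]]) -> float:
    """Share of (generated, reference) pairs that match exactly, token for token."""
    if not pairs:
        raise ValueError("need at least one pair")
    return sum(generated == reference for generated, reference in pairs) / len(pairs)
```

A single wrong token lowers token accuracy slightly but drops that sample's sequence accuracy to zero, which is why the sequence-level metric is the stricter of the two.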

7.2. Creativity Metrics

- Novelty score (uniqueness of ideas)

- Fluency (narrative coherence)

- Divergence (idea variation)

- Style consistency [47]
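The source does not say how novelty or divergence is scored. One common lexical proxy for variation across generations is distinct-n, the ratio of unique n-grams to total n-grams; it is swapped in here purely as an illustration and captures surface diversity, not the quality of ideas.

```python
def distinct_n(texts: list[str], n: int = 2) -> float:
    """Ratio of unique n-grams to total n-grams across a set of generations."""
    total = 0
    unique: set[tuple[str, ...]] = set()
    for text in texts:
        tokens = text.lower().split()
        grams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
        total += len(grams)
        unique.update(grams)
    return len(unique) / total if total else 0.0
```

Values near 1.0 indicate that generations rarely repeat phrasing; values near 0.0 indicate heavy repetition.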

7.3. Efficiency Metrics

8. Methodological Approaches

8.1. Automated Evaluation Pipelines

8.2. Human-in-the-Loop Evaluation

- Subjective quality ratings

- Cultural appropriateness

- Emotional resonance

- Domain expertise validation

8.3. Hybrid Approaches

- Contextual assessment

- Automated scoring

- Relevance evaluation

- Expert review

9. Implementation Considerations

9.1. Metric Selection

- Application domain (technical, creative, etc.)

- User expectations

- Performance requirements

- Available evaluation resources [59]

9.2. Tooling Landscape

- Promptfoo: This tool specializes in assertion-based testing, allowing users to define expected outcomes for generated responses. It is particularly useful for structured validation of prompt outputs [29].

- DeepEval: Designed for assessing alignment metrics, DeepEval checks whether AI-generated content adheres to predefined ethical and quality standards, which matters for AI safety and compliance [45].

- PromptLab: A flexible framework that allows users to define custom evaluation metrics tailored to specific generative AI applications, enhancing adaptability in prompt engineering [60].

- Weights & Biases (W&B): Provides advanced experiment tracking and performance monitoring for iterative prompt refinement. This tool is widely adopted in machine learning workflows to track evaluation results [61].

9.3. Process Integration

- Continuous evaluation pipelines

- Version-controlled prompt templates

- Metric-driven improvement cycles [62]

10. Case Studies

10.1. Technical Documentation Generation

- Primary metric: Technical accuracy (95% target)

- Secondary metric: Clarity score (human-rated)

- Efficiency constraint: <5s response time [63]

10.2. Creative Writing Assistant

- Creativity index (automated)

- Reader engagement (human)

- Style consistency [47]

10.3. Educational Q&A System

- Factual consistency

- Conceptual clarity

- Pedagogical appropriateness [65]

11. Domain Specific Methodologies

Algorithm 1: Adaptive Weight Assignment Process

11.1. Prompt Engineering and Evaluation

12. Challenges in LLM Evaluation

- Subjectivity of Language: Evaluating the quality of language is inherently subjective. Metrics like coherence and fluency can be difficult to quantify objectively.

- Variability of Outputs: LLMs can generate different outputs for the same input, making it challenging to compare and evaluate their performance consistently.

- Lack of Ground Truth: For many tasks, there may not be a single correct answer, making it difficult to define and evaluate against ground truth.

- Computational Cost: Comprehensive evaluation can be computationally expensive, especially for large LLMs.

- Hallucinations: LLMs can sometimes generate factually incorrect or nonsensical information, known as hallucinations, which pose a significant challenge for evaluation [7].

- Bias and Fairness: Evaluating and mitigating bias and unfairness in LLMs is a complex and ongoing challenge.
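Output variability, listed above, can be quantified by sampling the same prompt several times and checking how often the answers agree. The sketch below is one simple way to do that, assuming answers are short enough that exact comparison after normalization is meaningful; the function name is hypothetical.

```python
from collections import Counter


def consistency_rate(outputs: list[str]) -> float:
    """Share of sampled outputs that agree with the most frequent answer."""
    if not outputs:
        raise ValueError("need at least one output")
    normalized = [output.strip().lower() for output in outputs]
    most_common_count = Counter(normalized).most_common(1)[0][1]
    return most_common_count / len(normalized)
```

For long-form outputs, exact comparison is too strict, and a similarity measure would have to replace the equality check.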

12.1. Hallucinations

12.2. Inconsistency

12.3. Bias

13. Applications of LLM Evaluation in Specific Domains

13.1. Healthcare

13.2. Finance

13.3. Law

14. Quantitative Findings, Mathematical Models, and Qualitative Analysis

14.1. Quantitative Evaluation Metrics

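- **Accuracy**: $\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$

- **Precision**: $\text{Precision} = \frac{TP}{TP + FP}$

- **Recall**: $\text{Recall} = \frac{TP}{TP + FN}$

- **F1-Score**: $F_1 = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$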
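The four metrics listed above can be computed from paired binary labels. This is a minimal sketch using the standard confusion-matrix definitions; it assumes labels are encoded as 0 and 1, and it returns 0.0 for a ratio whose denominator is zero, which is a convention chosen for this example.

```python
def classification_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Accuracy, precision, recall, and F1 for binary labels encoded as 0/1."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need two equally long, non-empty label lists")
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)  # true positives
    tn = sum(t == 0 and p == 0 for t, p in pairs)  # true negatives
    fp = sum(t == 0 and p == 1 for t, p in pairs)  # false positives
    fn = sum(t == 1 and p == 0 for t, p in pairs)  # false negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }
```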

14.2. Mathematical Models for LLM Evaluation

14.3. Qualitative Analysis and Insights

14.4. Accuracy and Confidence Metrics

- $N_{\text{correct}}$ = Number of correct responses

- $N_{\text{total}}$ = Total questions assessed

14.5. Reliability and Consistency Models

14.6. Domain-Specific Evaluation Frameworks

14.6.1. Medical Diagnostics

14.6.2. Financial Analysis

14.7. Composite Evaluation Index

| Domain | | | |
|---|---|---|---|
| Medicine | 0.6 | 0.3 | 0.1 |
| Finance | 0.5 | 0.2 | 0.3 |
| Legal | 0.4 | 0.4 | 0.2 |
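A composite index of this kind is usually a weighted sum of component scores. The sketch below takes the three weights per domain from the table above; the column labels did not survive in the source, so the code simply assumes the weights apply, in order, to three component scores supplied by the caller. The names `DOMAIN_WEIGHTS` and `composite_index` are hypothetical.

```python
# Weights per domain, copied from the table. Which metric each position refers
# to is not recoverable from the source, so callers must supply scores in the
# same (unknown) column order.
DOMAIN_WEIGHTS: dict[str, tuple[float, float, float]] = {
    "Medicine": (0.6, 0.3, 0.1),
    "Finance": (0.5, 0.2, 0.3),
    "Legal": (0.4, 0.4, 0.2),
}


def composite_index(domain: str, component_scores: tuple[float, float, float]) -> float:
    """Weighted sum of three component scores using the domain's weights."""
    weights = DOMAIN_WEIGHTS[domain]
    if len(component_scores) != len(weights):
        raise ValueError("expected one score per weight")
    return sum(w * s for w, s in zip(weights, component_scores))
```

Because each row of weights sums to 1, the index stays on the same scale as the component scores.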

15. Pseudocode, Methodological, Algorithm, and Architecture

15.1. Pseudocode for Evaluation Pipeline

    Input: Test dataset D, LLM model M, Evaluation metrics E
    Output: Performance scores S

    procedure Evaluate(D, M, E)
        S ← ∅
        for each sample (x, y) ∈ D do
            ŷ ← M(x)                          ▹ Generate model response
            s ← CalculateMetrics(ŷ, y, E)
            S ← S ∪ {s}
        end for
        return S
    end procedure

    function CalculateMetrics(ŷ, y, E)
        scores ← {}
        for each metric m ∈ E do
            if m == "Accuracy" then
                score ← ExactMatch(ŷ, y)
            else if m == "BLEU" then
                score ← BLEU(ŷ, y)
            end if
            scores[m] ← score
        end for
        return scores
    end function
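The pipeline above translates fairly directly into Python. The sketch below is one possible rendering, not the authors' implementation: it assumes the model is a callable from prompt to response, that the dataset is a list of (prompt, reference) pairs, and that each metric is a function of (response, reference). It replaces the if/else dispatch on metric names with a dictionary of metric functions and averages per-sample scores at the end.

```python
from typing import Callable

Metric = Callable[[str, str], float]


def exact_match(response: str, reference: str) -> float:
    """1.0 when response and reference agree after trimming whitespace."""
    return float(response.strip() == reference.strip())


def calculate_metrics(response: str, reference: str, metrics: dict[str, Metric]) -> dict[str, float]:
    """Apply every configured metric to one (response, reference) pair."""
    return {name: metric(response, reference) for name, metric in metrics.items()}


def evaluate(
    dataset: list[tuple[str, str]],
    model: Callable[[str], str],
    metrics: dict[str, Metric],
) -> dict[str, float]:
    """Run the model on each sample and average each metric over the dataset."""
    if not dataset:
        raise ValueError("dataset must not be empty")
    per_sample = []
    for prompt, reference in dataset:
        response = model(prompt)  # generate model response
        per_sample.append(calculate_metrics(response, reference, metrics))
    return {
        name: sum(scores[name] for scores in per_sample) / len(per_sample)
        for name in metrics
    }
```

A stub model is enough to exercise the pipeline, for example `evaluate([("2+2", "4")], lambda prompt: "4", {"accuracy": exact_match})`.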

15.2. System Architecture

- Preprocessing: Tokenization, prompt templating

- LLM Inference: GPT-4, Claude, or open-source models

- Evaluation Engine: Implements the evaluation metrics described in the preceding sections

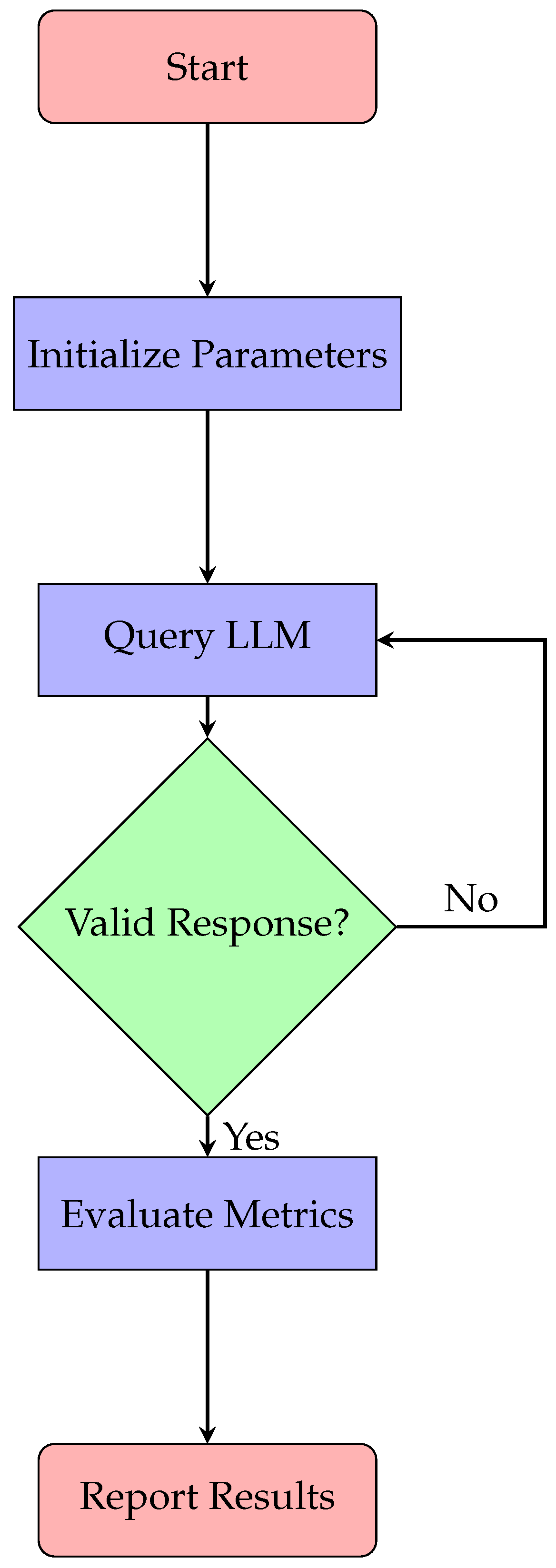

15.3. Evaluation Workflow

- Data Preparation:
  - Curate domain-specific test sets
  - Annotate ground truth labels

- Model Interaction:
  - Configure temperature (T) and top-p sampling
  - Implement chain-of-thought prompting when needed

- Metric Computation: combine the individual metric scores into a weighted sum, $S = \sum_i w_i m_i$, where $w_i$ are metric weights and $m_i$ are the corresponding metric scores

- Analysis:
  - Error clustering
  - Statistical significance testing
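For the statistical significance step, the source does not name a specific test. A paired bootstrap over per-sample scores is one commonly used option and is sketched here under that assumption; the function name, the default of 2000 resamples, and the fixed seed are all choices made for this example.

```python
import random


def paired_bootstrap(
    scores_a: list[float],
    scores_b: list[float],
    n_resamples: int = 2000,
    seed: int = 0,
) -> float:
    """Fraction of resamples in which system A does not outscore system B.

    Both lists hold per-sample scores on the same test items, in the same order.
    A small return value suggests A's advantage is unlikely to be sampling noise.
    """
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two equally long, non-empty score lists")
    rng = random.Random(seed)  # seeded so repeated runs give the same answer
    n = len(scores_a)
    not_better = 0
    for _ in range(n_resamples):
        # Resample test items with replacement, keeping A/B scores paired.
        indices = [rng.randrange(n) for _ in range(n)]
        difference = sum(scores_a[i] - scores_b[i] for i in indices)
        if difference <= 0:
            not_better += 1
    return not_better / n_resamples
```

Pairing matters: resampling items rather than scores keeps each comparison on the same inputs, which removes item difficulty as a source of variance.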

15.4. Flow Diagram

15.5. Model Architecture

- **Input Embedding Layer**: Converts input tokens into dense vector representations.

- **Self-Attention Mechanism**: Captures contextual relationships between tokens.

- **Feedforward Neural Network**: Processes attention outputs to generate predictions.

- **Output Layer**: Produces the final probabilities for token generation.
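To make the self-attention component concrete, the sketch below implements scaled dot-product attention for a single head in plain Python. It is a teaching illustration only: it assumes queries, keys, and values are already given as lists of vectors and leaves out the learned projections, multiple heads, and masking that a real model would include.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(
    queries: list[list[float]],
    keys: list[list[float]],
    values: list[list[float]],
) -> list[list[float]]:
    """For each query, return the attention-weighted average of the values."""
    d_k = len(keys[0])
    outputs = []
    for query in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k)
            for key in keys
        ]
        weights = softmax(scores)
        outputs.append([
            sum(w * value[j] for w, value in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs
```

When all keys are identical, the weights are uniform and the output is the plain average of the values, which makes a convenient sanity check.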

15.6. Algorithm for Training

16. Methodological Framework

16.1. Training Algorithm

Algorithm 2: Training Large Language Model

16.2. System Architecture

- **Preprocessing Module**: Cleans and tokenizes input data.

- **Inference Engine**: Executes the trained model to generate outputs.

- **Postprocessing Module**: Formats the output into human-readable text.

- **Monitoring Tools**: Tracks performance metrics such as latency and accuracy during deployment [40].

17. Future Directions

- Standardized benchmarks for domain tasks [20].

- Real-time monitoring tools [8].

- Hybrid human-AI evaluation pipelines [70].

17.1. Workforce Implications and Adoption

17.2. Challenges and Future Directions

17.2.1. Current Limitations

- Lack of standardized benchmarks

- High variance in human evaluation

- Computational cost of comprehensive assessment

- Dynamic nature of model capabilities [74]

17.3. Emerging Solutions

18. Conclusions

References

- Ross, A.; McGrow, K.; Zhi, D.; Rasmy, L. Foundation Models, Generative AI, and Large Language Models: Essentials for Nursing. CIN: Computers, Informatics, Nursing 2024, 42, 377.

- Yang, C.; Stivers, A. Investigating AI Languages’ Ability to Solve Undergraduate Finance Problems. Journal of Education for Business 2024, 99, 44–51.

- Martínez, E. Re-Evaluating GPT-4’s Bar Exam Performance. Artificial Intelligence and Law 2024.

- Beaulieu-Jones, B.R.; Berrigan, M.T.; Shah, S.; Marwaha, J.S.; Lai, S.L.; Brat, G.A. Evaluating Capabilities of Large Language Models: Performance of GPT-4 on Surgical Knowledge Assessments. Surgery 2024, 175, 936–942.

- Callanan, E.; Mbakwe, A.; Papadimitriou, A.; Pei, Y.; Sibue, M.; Zhu, X.; Ma, Z.; Liu, X.; Shah, S. Can GPT Models Be Financial Analysts? An Evaluation of ChatGPT and GPT-4 on Mock CFA Exams. 2023, arXiv:2310.08678.

- Johnson, D.; Goodman, R.; Patrinely, J.; Stone, C.; Zimmerman, E.; Donald, R.; Chang, S.; Berkowitz, S.; Finn, A.; Jahangir, E.; et al. Assessing the Accuracy and Reliability of AI-Generated Medical Responses: An Evaluation of the Chat-GPT Model. Research Square 2023, rs.3.rs–2566942.

- The Beginner’s Guide to Hallucinations in Large Language Models | Lakera – Protecting AI Teams That Disrupt the World. https://www.lakera.ai/blog/guide-to-hallucinations-in-large-language-models.

- Poduska, J. LLM Monitoring and Observability. https://towardsdatascience.com/llm-monitoring-and-observability-c28121e75c2f/, 2023.

- Zhang, Y. A Practical Guide to RAG Pipeline Evaluation (Part 1). https://blog.relari.ai/a-practical-guide-to-rag-pipeline-evaluation-part-1-27a472b09893, 2024.

- LLM Evaluation Metrics: The Ultimate LLM Evaluation Guide - Confident AI. https://www.confident-ai.com/blog/llm-evaluation-metrics-everything-you-need-for-llm-evaluation.

- Mishra, H. Top 15 LLM Evaluation Metrics to Explore in 2025, 2025.

- Si, C.; Gan, Z.; Yang, Z.; Wang, S.; Wang, J.; Boyd-Graber, J.; Wang, L. Prompting GPT-3 To Be Reliable. 2023, arXiv:2210.09150.

- Hsiao, C. Moving Beyond Guesswork: How to Evaluate LLM Quality. 2024. Available online: https://blog.dataiku.com/how-to-evaluate-llm-quality.

- Liu, L.X.; Sun, Z.; Xu, K.; Chen, C. AI-Driven Financial Analysis: Exploring ChatGPT’s Capabilities and Challenges. International Journal of Financial Studies 2024, 12, 60.

- Bronsdon, C. Mastering LLM Evaluation: Metrics, Frameworks, and Techniques. Available online: https://www.galileo.ai/blog/mastering-llm-evaluation-metrics-frameworks-and-techniques.

- Large Language Model Evaluation in 2025: 5 Methods. Available online: https://research.aimultiple.com/large-language-model-evaluation/.

- How to Evaluate an LLM System. Available online: https://www.thoughtworks.com/insights/blog/generative-ai/how-to-evaluate-an-LLM-system.

- Evaluating Prompt Effectiveness: Key Metrics and Tools for AI Success. 2024. Available online: https://portkey.ai/blog/evaluating-prompt-effectiveness-key-metrics-and-tools/.

- LLM Evaluation: Top 10 Metrics and Benchmarks.

- A Complete Guide to LLM Evaluation and Benchmarking. 2024. Available online: https://www.turing.com/resources/understanding-llm-evaluation-and-benchmarks.

- Testing Your RAG-Powered AI Chatbot. Available online: https://hatchworks.com/blog/gen-ai/testing-rag-ai-chatbot/.

- 8 Metrics to Measure GenAI’s Performance and Business Value | TechTarget. Available online: https://www.techtarget.com/searchenterpriseai/feature/Areas-for-creating-and-refining-generative-AI-metrics.

- Understanding LLM Evaluation Metrics For Better RAG Performance. 2025. Available online: https://www.protecto.ai/blog/understanding-llm-evaluation-metrics-for-better-rag-performance.

- LLM Benchmarks, Evals and Tests: A Mental Model. Available online: https://www.thoughtworks.com/en-us/insights/blog/generative-ai/LLM-benchmarks,-evals,-and-tests.

- mn.europe. Prompt Evaluation Metrics: Measuring AI Performance - Artificial Intelligence Blog & Courses, 2024.

- Qualitative Metrics for Prompt Evaluation. 2025. Available online: https://latitude-blog.ghost.io/blog/qualitative-metrics-for-prompt-evaluation/.

- Srivastava, T. 12 Important Model Evaluation Metrics for Machine Learning Everyone Should Know (Updated 2025), 2019.

- Evaluating Prompts: A Developer’s Guide. Available online: https://arize.com/blog-course/evaluating-prompt-playground/.

- Assertions & Metrics | Promptfoo. Available online: https://www.promptfoo.dev/docs/configuration/expected-outputs/.

- LLM-as-a-judge: A Complete Guide to Using LLMs for Evaluations. Available online: https://www.evidentlyai.com/llm-guide/llm-as-a-judge.

- What Are Prompt Evaluations? 2025. Available online: https://blog.promptlayer.com/what-are-prompt-evaluations/.

- Pathak, C. Navigating the LLM Evaluation Metrics Landscape, 2024.

- IBM Watsonx Subscription. 2024. Available online: https://www.ibm.com/docs/en/watsonx/w-and-w/2.1.x?topic=models-evaluating-prompt-templates-non-foundation-notebooks.

- Metric Prompt Templates for Model-Based Evaluation | Generative AI. Available online: https://cloud.google.com/vertex-ai/generative-ai/docs/models/metrics-templates.

- Joshi, S. Leveraging Prompt Engineering to Enhance Financial Market Integrity and Risk Management. World Journal of Advanced Research and Reviews 2025, 25, 1775–1785.

- Joshi, S. Training US Workforce for Generative AI Models and Prompt Engineering: ChatGPT, Copilot, and Gemini. International Journal of Science, Engineering and Technology 2025, 13.

- Blog, D.C. How to Maximize the Accuracy of LLM Models. 2024. Available online: https://www.deepchecks.com/how-to-maximize-the-accuracy-of-llm-models/.

- Jaeckel, T. LLM Evaluation Metrics for Reliable and Optimized AI Outputs. 2024. Available online: https://shelf.io/blog/llm-evaluation-metrics/.

- Huang, J. Evaluating LLM Systems: Metrics, Challenges, and Best Practices, 2024.

- lgayhardt. Evaluation and Monitoring Metrics for Generative AI - Azure AI Foundry. 2025. Available online: https://learn.microsoft.com/en-us/azure/ai-foundry/concepts/evaluation-metrics-built-in.

- LLM Evaluations and Benchmarks. Available online: https://alertai.com/model-risks-llm-evaluation-metrics/.

- Key Metrics for Measuring Prompt Performance. Available online: https://promptops.dev/article/Key_Metrics_for_Measuring_Prompt_Performance.html.

- Measuring Prompt Effectiveness Metrics and Methods. Available online: https://www.kdnuggets.com/measuring-prompt-effectiveness-metrics-and-methods.

- Evaluation Metric For Question Answering - Finetuning Models - ChatGPT. 2023. Available online: https://community.openai.com/t/evaluation-metric-for-question-answering-finetuning-models/44877.

- Prompt Alignment DeepEval The Open-Source LLM Evaluation Framework. 2025. Available online: https://docs.confident-ai.com/docs/metrics-prompt-alignment.

- Prompt-Based Hallucination Metric Testing with Kolena. Available online: https://docs.kolena.com/metrics/prompt-based-hallucination-metric/.

- Evaluating Prompts: Metrics for Iterative Refinement. 2025. Available online: https://latitude-blog.ghost.io/blog/evaluating-prompts-metrics-for-iterative-refinement/.

- Define Your Evaluation Metrics | Generative AI. Available online: https://cloud.google.com/vertex-ai/generative-ai/docs/models/determine-eval.

- Day 1 - Evaluation and Structured Output. Available online: https://kaggle.com/code/markishere/day-1-evaluation-and-structured-output.

- Prompt Evaluation Methods, Metrics, and Security. Available online: https://wearecommunity.io/communities/ai-ba-stream/articles/6155.

- Evaluating AI Prompt Performance: Key Metrics and Best Practices. Available online: https://symbio6.nl/en/blog/evaluate-ai-prompt-performance.

- Modify Prompts - Ragas. Available online: https://docs.ragas.io/en/stable/howtos/customizations/metrics/_modifying-prompts-metrics/.

- lgayhardt. Evaluation Flow and Metrics in Prompt Flow - Azure Machine Learning. Available online: https://learn.microsoft.com/en-us/azure/machine-learning/prompt-flow/how-to-develop-an-evaluation-flow?view=azureml-api-2.

- What Are Common Metrics for Evaluating Prompts? Available online: https://www.deepchecks.com/question/common-metrics-evaluating-prompts/.

- Top 5 Metrics for Evaluating Prompt Relevance. 2025. Available online: https://latitude-blog.ghost.io/blog/top-5-metrics-for-evaluating-prompt-relevance/.

- Introducing CARE: A New Way to Measure the Effectiveness of Prompts | LinkedIn. Available online: https://www.linkedin.com/pulse/introducing-care-new-way-measure-effectiveness-prompts-reuven-cohen-ls9bf/.

- Establishing Prompt Engineering Metrics to Track AI Assistant Improvements, 2023.

- Monitoring Prompt Effectiveness in Prompt Engineering. Available online: https://www.tutorialspoint.com/prompt_engineering/prompt_engineering_monitoring_prompt_effectiveness.htm.

- Gupta, S. Metrics to Measure: Evaluating AI Prompt Effectiveness, 2024.

- Creating Custom Prompt Evaluation Metric with PromptLab | LinkedIn. Available online: https://www.linkedin.com/pulse/promptlab-creating-custom-metric-prompt-evaluation-raihan-alam-o0slc/.

- Weights & Biases. Available online: https://wandb.ai/wandb_fc/learn-with-me-llms/reports/Going-from-17-to-91-Accuracy-through-Prompt-Engineering-on-a-Real-World-Use-Case–Vmlldzo3MTEzMjQz.

- Evaluate Prompt Quality and Try to Improve It -Testing. 2024. Available online: https://club.ministryoftesting.com/t/day-9-evaluate-prompt-quality-and-try-to-improve-it/74865.

- Evaluate Prompts | Opik Documentation. Available online: https://www.comet.com/docs/opik/evaluation/evaluate_prompt.

- Mishra, H. How to Evaluate LLMs Using Hugging Face Evaluate, 2025.

- TempestVanSchaik. Evaluation Metrics. 2024. Available online: https://learn.microsoft.com/en-us/ai/playbook/technology-guidance/generative-ai/working-with-llms/evaluation/list-of-eval-metrics.

- Heidloff, N. Metrics to Evaluate Search Results. 2023. Available online: https://heidloff.net/article/search-evaluations/.

- LLM Evaluation & Prompt Tracking Showdown: A Comprehensive Comparison of Industry Tools - ZenML Blog. Available online: https://www.zenml.io/blog/a-comprehensive-comparison-of-industry-tools.

- LLM Evaluation & Prompt Tracking Showdown: A Comprehensive Comparison of Industry Tools - ZenML Blog. Available online: https://www.zenml.io/blog/a-comprehensive-comparison-of-industry-tools.

- TempestVanSchaik. Evaluation Metrics. 2024. Available online: https://learn.microsoft.com/en-us/ai/playbook/technology-guidance/generative-ai/working-with-llms/evaluation/list-of-eval-metrics.

- Weights & Biases. Available online: https://wandb.ai/onlineinference/genai-research/reports/LLM-evaluations-Metrics-frameworks-and-best-practices–VmlldzoxMTMxNjQ4NA.

- Joshi, S. Retraining US Workforce in the Age of Agentic Gen AI: Role of Prompt Engineering and Up-Skilling Initiatives. International Journal of Advanced Research in Science, Communication and Technology (IJARSCT) 2025, 5.

- Joshi, S. Review of Gen AI Models for Financial Risk Management. International Journal of Scientific Research in Computer Science, Engineering and Information Technology 2025, 11, 709–723.

- Evaluating Prompt Templates in Projects Docs. 2015. Available online: https://dataplatform.cloud.ibm.com/docs/content/wsj/model/dataplatform.cloud.ibm.com/docs/content/wsj/model/wos-eval-prompt.html.

- Top 5 Prompt Engineering Tools for Evaluating Prompts. 2024. Available online: https://blog.promptlayer.com/top-5-prompt-engineering-tools-for-evaluating-prompts/.

- QuantaLogic AI Agent Platform. Available online: https://www.quantalogic.app.

- Metrics For Prompt Engineering | Restackio. Available online: https://www.restack.io/p/prompt-engineering-answer-metrics-for-prompt-engineering-cat-ai.

- Metrics.Eval Promptbench 0.0.1 Documentation. Available online: https://promptbench.readthedocs.io/en/latest/reference/metrics/eval.html.

| Domain | Structured Tasks | Open-Ended | Variance |
|---|---|---|---|
| Medicine | 71.3% | 47.9% | 36.4% |
| Finance | 67.9% | 58.2% | 28.1% |
| Legal | 62.4% | 41.7% | 39.8% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).