Submitted: 25 March 2025

Posted: 28 March 2025


Abstract

Keywords:

1. Introduction

- a new architecture and a model for multi-person pose forecasting that achieves state-of-the-art results

- a Multi-person joint distance loss (MPJD) and Velocity loss (VL) to encourage the model to learn interaction and movement dynamics

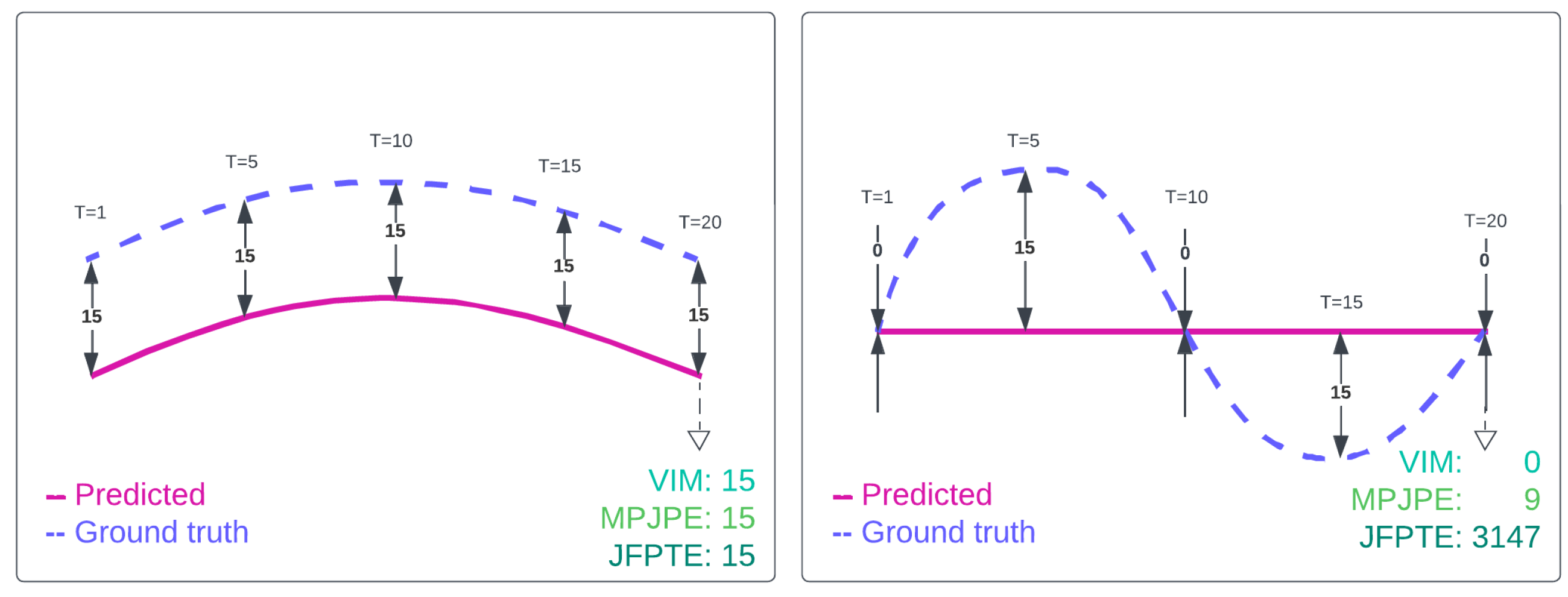

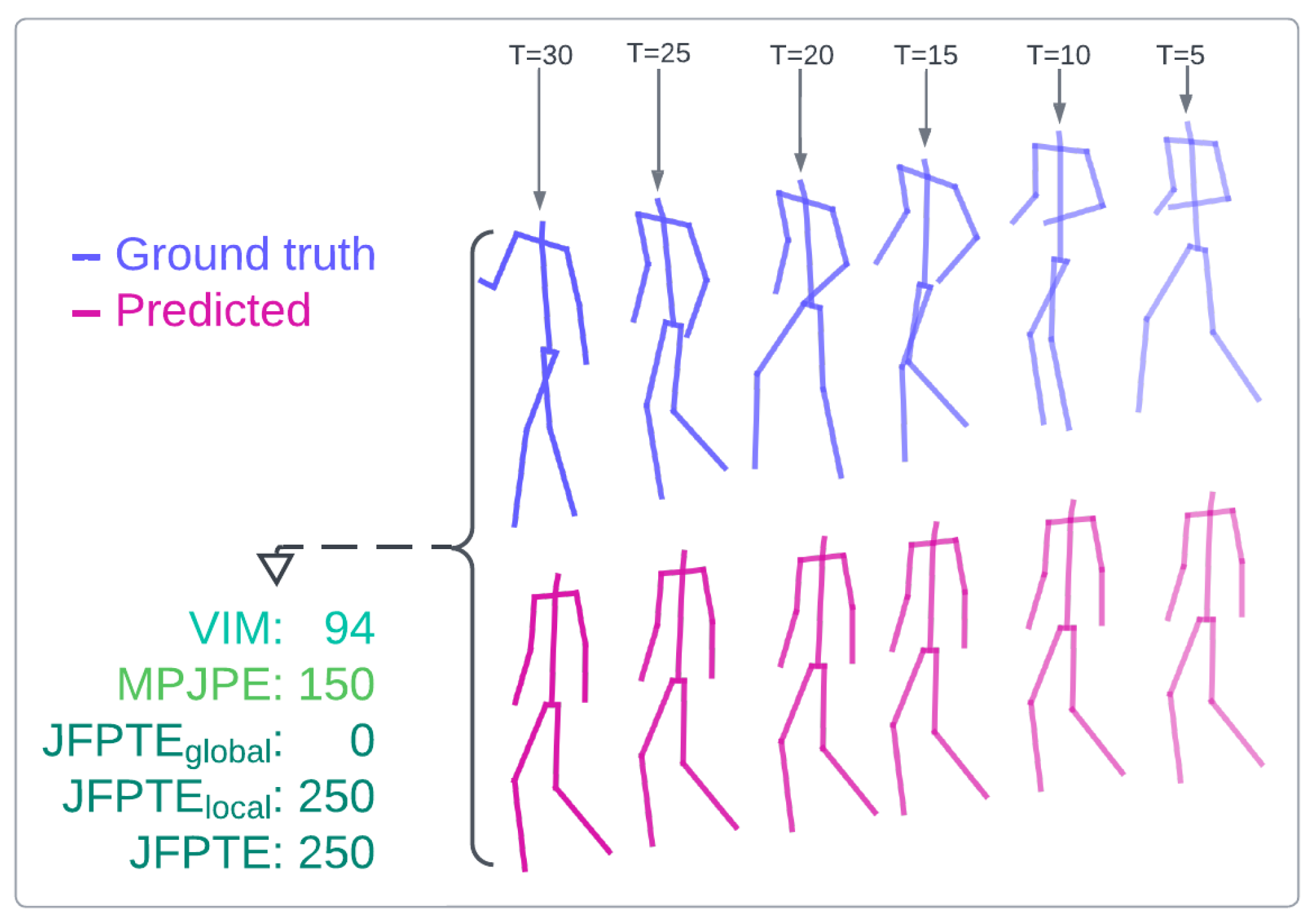

- a new evaluation metric for pose forecasting that considers the movement trajectory and the final position (FJPTE)

2. Related Work

3. Background of Graph Convolutional Networks and Transformers

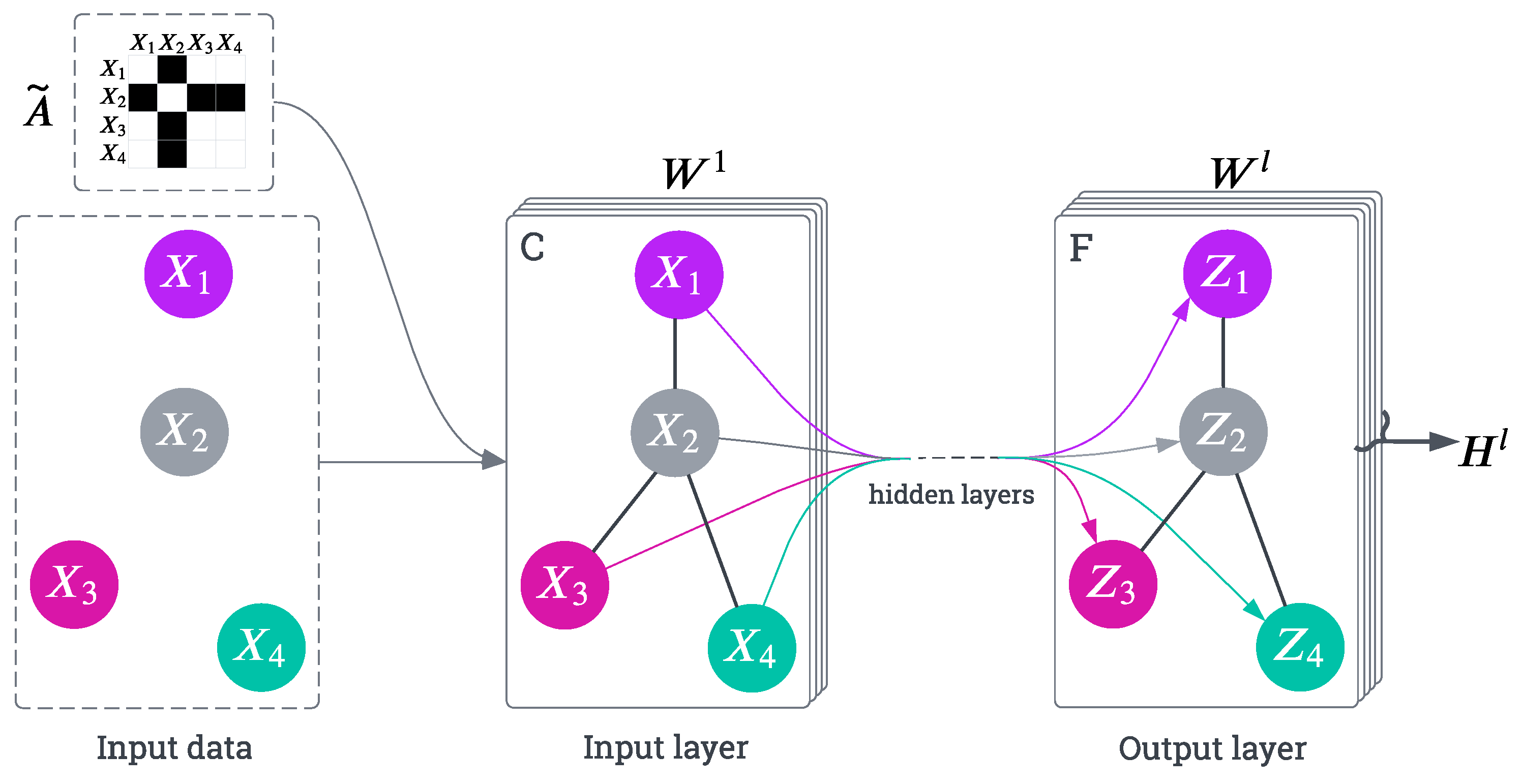

3.1. Graph Convolutional Networks

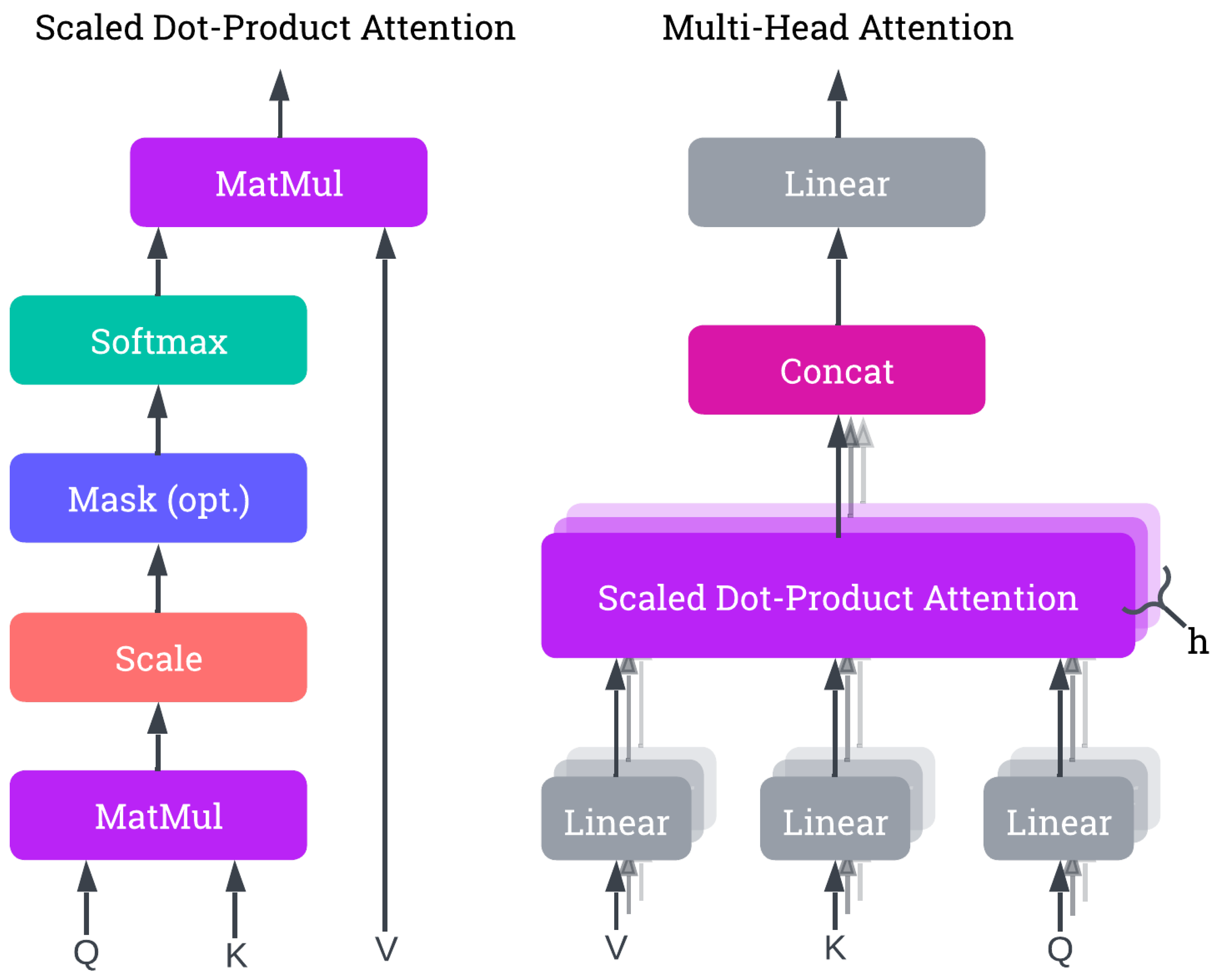

3.2. Transformer Architecture

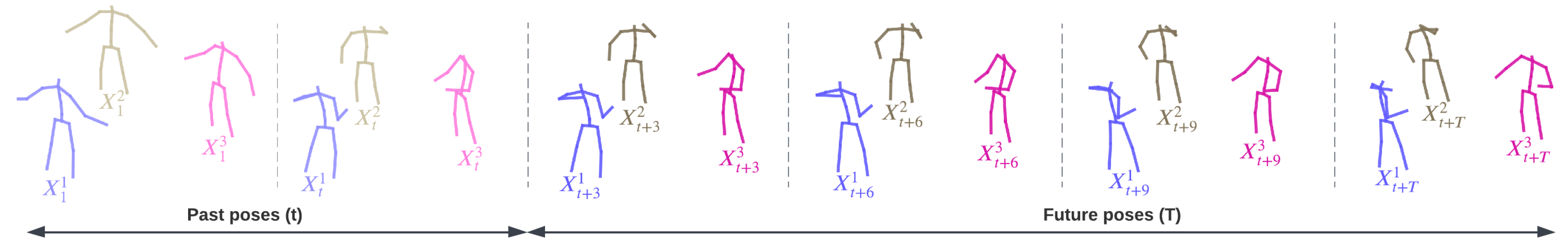

4. Problem Formulation for Multi-Person Forecasting

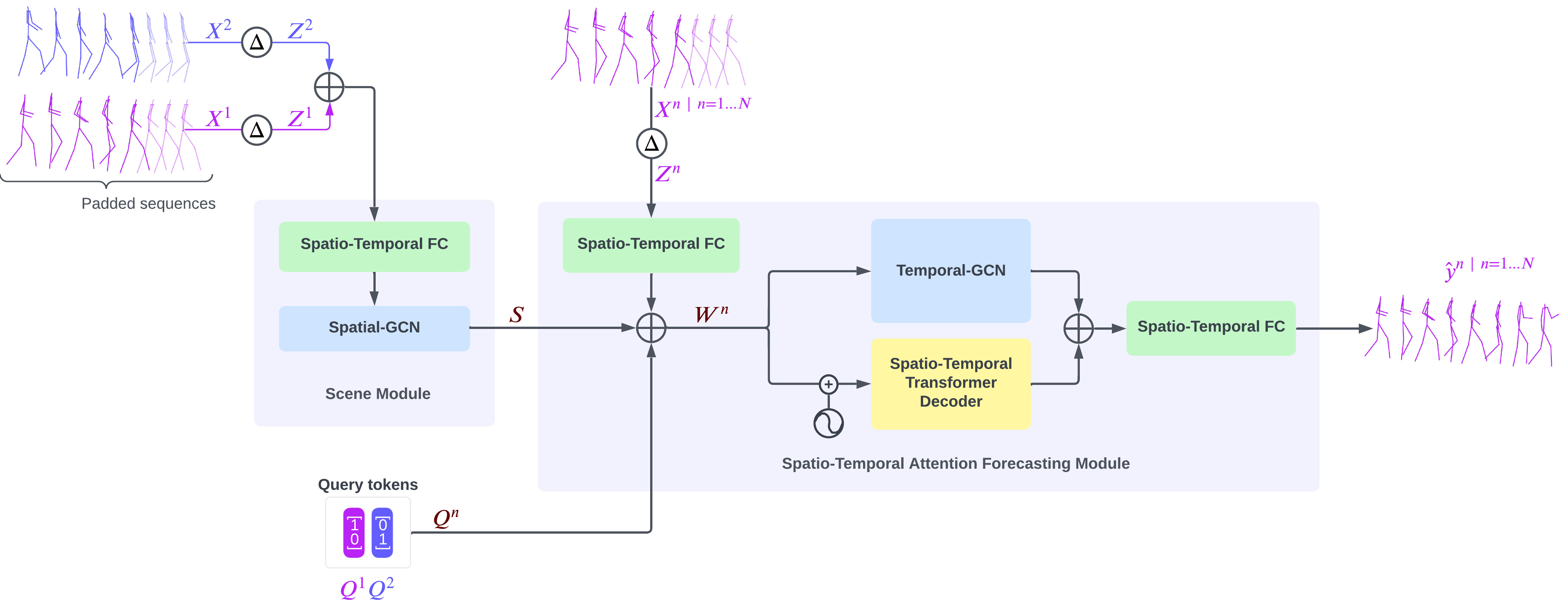

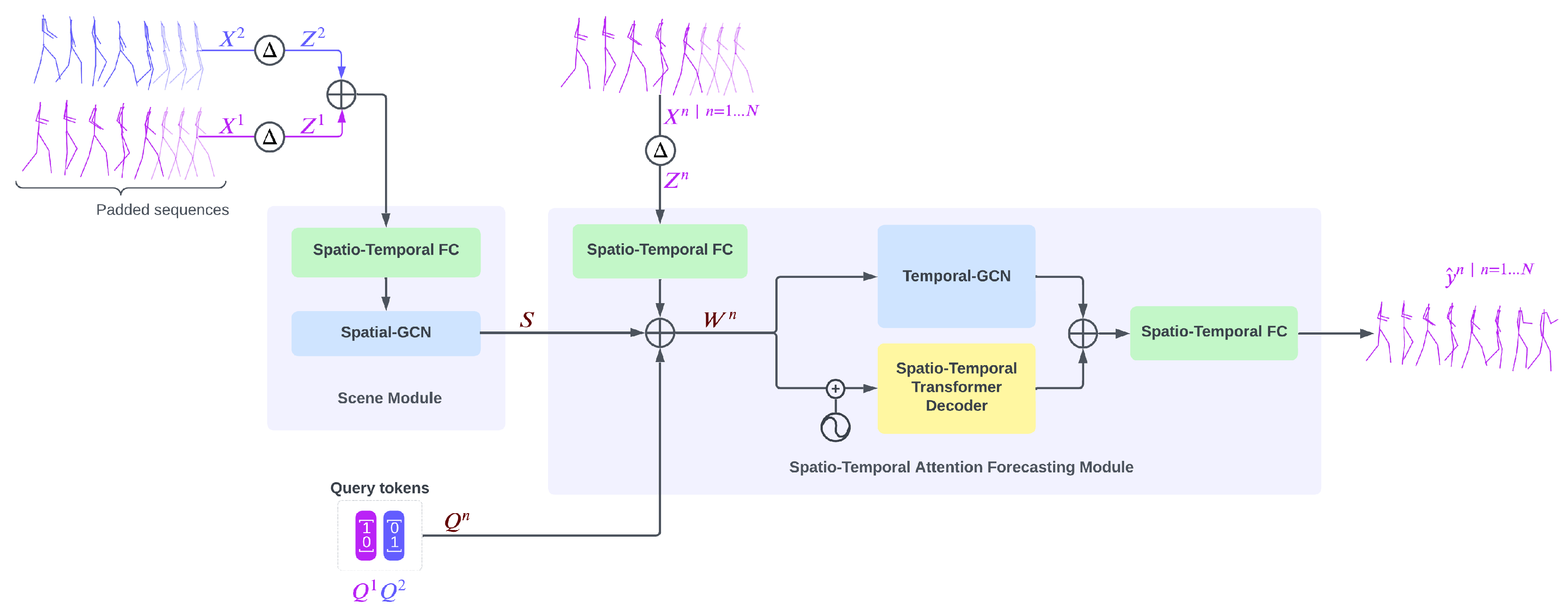

5. Proposed Architecture and Model

5.1. Scene Module

5.2. Spatio-Temporal Attention Forecasting Module

5.3. Data Preprocessing

5.4. Data Augmentation

5.5. Training

6. Experimental Results

6.1. Metrics
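
The results tables report MPJPE alongside VIM. As a point of reference, MPJPE (mean per-joint position error) is conventionally defined as the Euclidean distance between each predicted joint and its ground-truth counterpart, averaged over all joints and frames. The following is a minimal sketch of that conventional definition, not the authors' evaluation code; the function name `mpjpe` and the nested-sequence data layout (frames, then joints, then x/y/z coordinates) are assumptions made for illustration.

```python
import math
from typing import Sequence

Joint = Sequence[float]      # assumed layout: (x, y, z)
Pose = Sequence[Joint]       # all joints of one person in one frame
Motion = Sequence[Pose]      # one pose per forecast frame


def mpjpe(pred: Motion, target: Motion) -> float:
    """Average Euclidean distance between matching joints over all frames."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same number of frames")
    total, count = 0.0, 0
    for pred_pose, target_pose in zip(pred, target):
        if len(pred_pose) != len(target_pose):
            raise ValueError("each frame must have the same number of joints")
        for p, t in zip(pred_pose, target_pose):
            total += math.dist(p, t)  # per-joint L2 distance
            count += 1
    if count == 0:
        raise ValueError("no joints to evaluate")
    return total / count


# One frame, two joints: the first is off by a 3-4-5 triangle, the second is exact.
pred = [[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]]
target = [[(3.0, 4.0, 0.0), (1.0, 0.0, 0.0)]]
print(mpjpe(pred, target))
```

The result is expressed in whatever unit the joint coordinates use. Reporting the error at a single forecast horizon, as the tables do, would amount to evaluating the pose sequences up to or at that horizon, depending on the benchmark's convention.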

6.2. Datasets

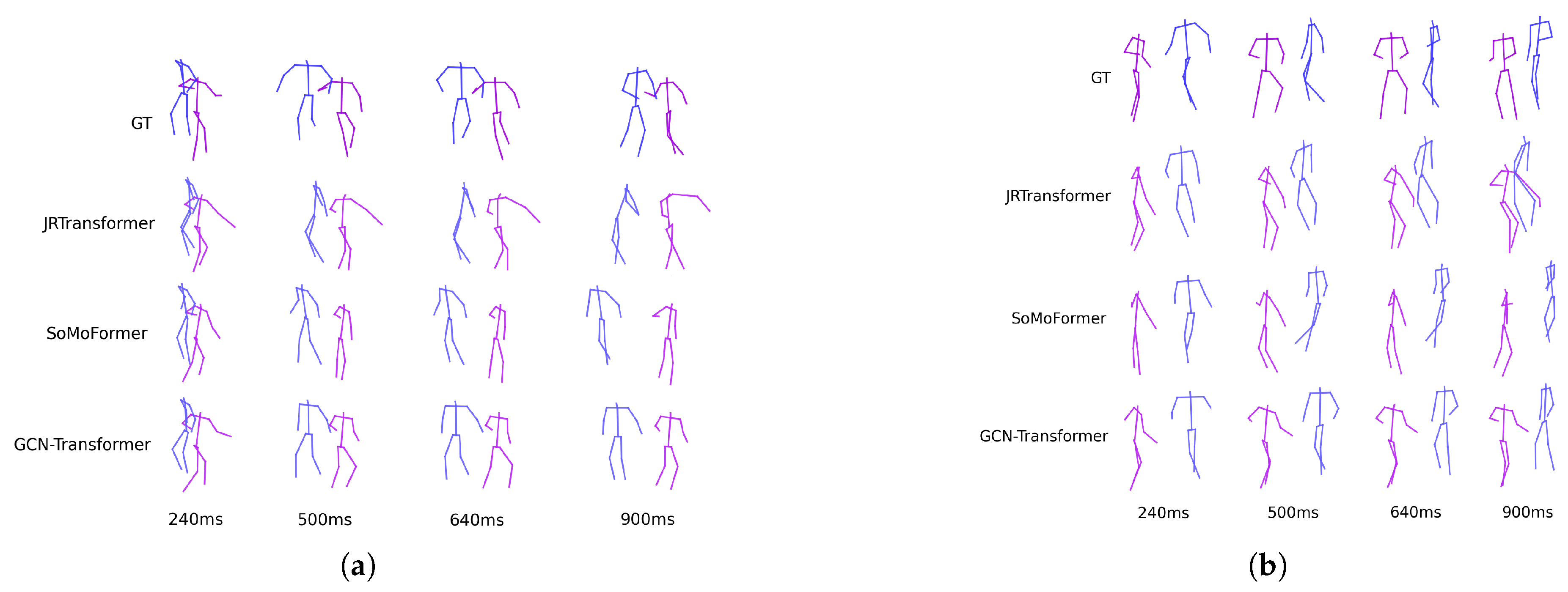

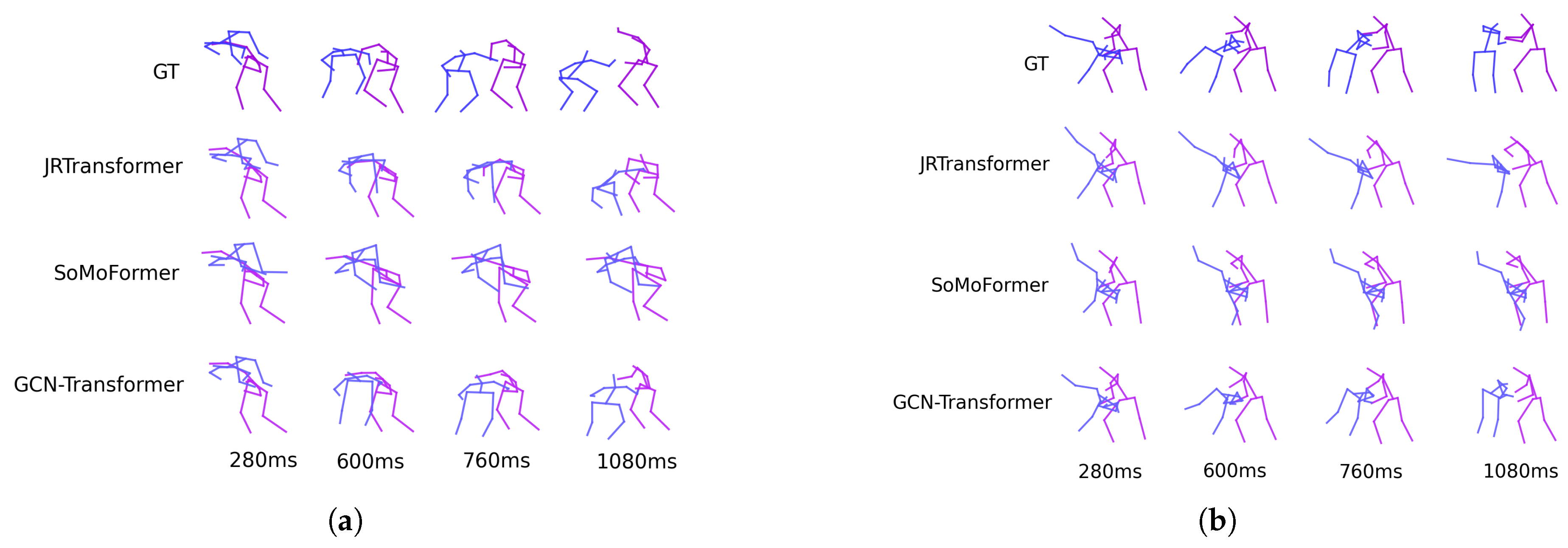

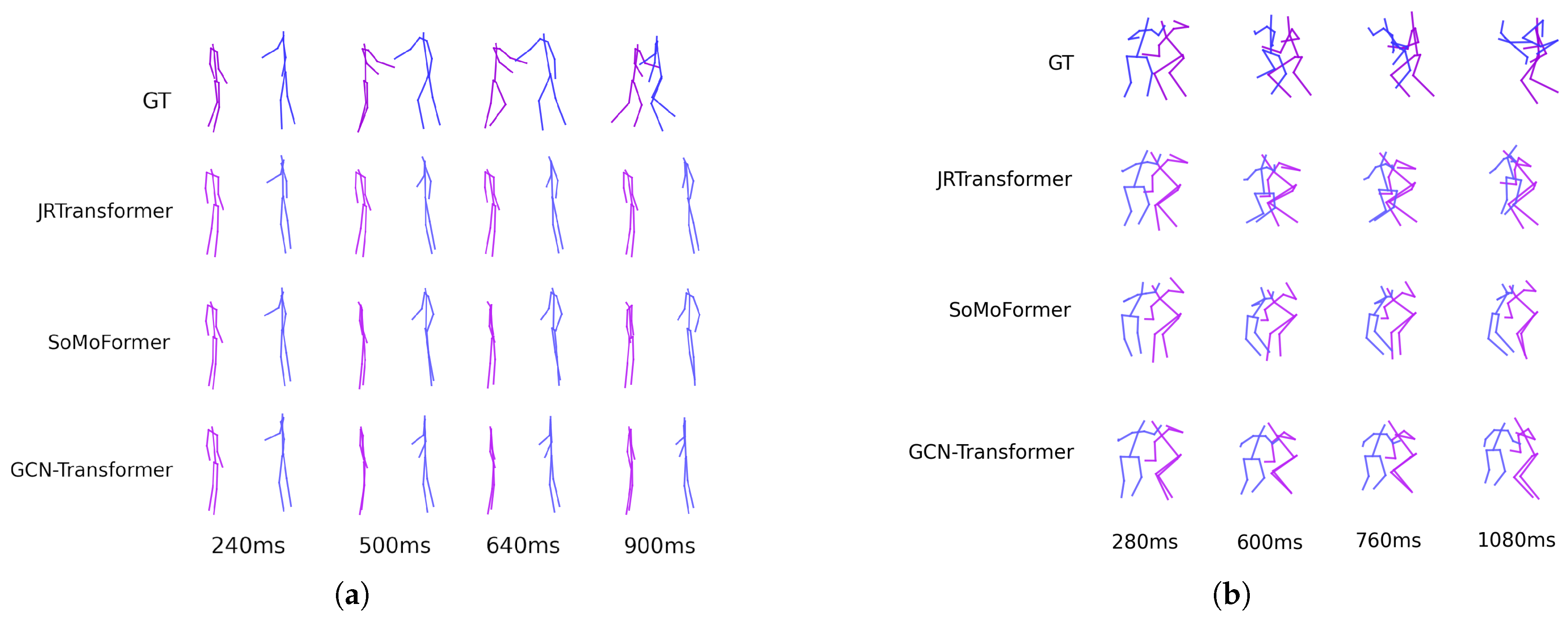

6.3. Results on SoMoF Benchmark

6.4. Results on ExPI dataset

7. Ablation Study

8. FJPTE: Final Joint Position and Trajectory Error

9. Limitations

10. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Chiu, H.K.; Adeli, E.; Wang, B.; Huang, D.A.; Niebles, J.C. Action-agnostic human pose forecasting. In Proceedings of the 2019 IEEE Winter Conference on Applications of Computer Vision (WACV). IEEE, 2019, pp. 1423–1432.

- Huang, Y.; Bi, H.; Li, Z.; Mao, T.; Wang, Z. STGAT: Modeling spatial-temporal interactions for human trajectory prediction. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 6272–6281.

- Mao, W.; Liu, M.; Salzmann, M.; Li, H. Learning trajectory dependencies for human motion prediction. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 9489–9497.

- Medjaouri, O.; Desai, K. HR-STAN: High-resolution spatio-temporal attention network for 3D human motion prediction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 2540–2549.

- He, X.; Zhang, W.; Li, X.; Zhang, X. TEA-GCN: Transformer-Enhanced Adaptive Graph Convolutional Network for Traffic Flow Forecasting. Sensors 2024, 24.

- Jiang, J.; Yan, K.; Xia, X.; Yang, B. A Survey of Deep Learning-Based Pedestrian Trajectory Prediction: Challenges and Solutions. Sensors 2025, 25.

- Guo, W.; Du, Y.; Shen, X.; Lepetit, V.; Alameda-Pineda, X.; Moreno-Noguer, F. Back to MLP: A simple baseline for human motion prediction. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2023, pp. 4809–4819.

- Bouazizi, A.; Holzbock, A.; Kressel, U.; Dietmayer, K.; Belagiannis, V. MotionMixer: MLP-based 3D Human Body Pose Forecasting. In Proceedings of the Thirty-First International Joint Conference on Artificial Intelligence, IJCAI-22; Raedt, L.D., Ed. International Joint Conferences on Artificial Intelligence Organization, 7 2022, pp. 791–798. Main Track.

- Parsaeifard, B.; Saadatnejad, S.; Liu, Y.; Mordan, T.; Alahi, A. Learning decoupled representations for human pose forecasting. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 2294–2303.

- Wang, C.; Wang, Y.; Huang, Z.; Chen, Z. Simple baseline for single human motion forecasting. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 2260–2265.

- Jaramillo, I.E.; Chola, C.; Jeong, J.G.; Oh, J.H.; Jung, H.; Lee, J.H.; Lee, W.H.; Kim, T.S. Human Activity Prediction Based on Forecasted IMU Activity Signals by Sequence-to-Sequence Deep Neural Networks. Sensors 2023, 23.

- Wang, J.; Xu, H.; Narasimhan, M.; Wang, X. Multi-person 3D motion prediction with multi-range transformers. Advances in Neural Information Processing Systems 2021, 34, 6036–6049.

- Vendrow, E.; Kumar, S.; Adeli, E.; Rezatofighi, H. SoMoFormer: Multi-Person Pose Forecasting with Transformers. arXiv 2022, arXiv:2208.14023.

- Šajina, R.; Ivašić-Kos, M. MPFSIR: An Effective Multi-Person Pose Forecasting Model With Social Interaction Recognition. IEEE Access 2023, 11, 84822–84833.

- Xu, Q.; Mao, W.; Gong, J.; Xu, C.; Chen, S.; Xie, W.; Zhang, Y.; Wang, Y. Joint-Relation Transformer for Multi-Person Motion Prediction. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 9816–9826.

- Peng, X.; Mao, S.; Wu, Z. Trajectory-aware body interaction transformer for multi-person pose forecasting. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 17121–17130.

- Peng, X.; Zhou, X.; Luo, Y.; Wen, H.; Ding, Y.; Wu, Z. The MI-Motion Dataset and Benchmark for 3D Multi-Person Motion Prediction. arXiv 2023, arXiv:2306.13566.

- Adeli, V.; Ehsanpour, M.; Reid, I.; Niebles, J.C.; Savarese, S.; Adeli, E.; Rezatofighi, H. Tripod: Human trajectory and pose dynamics forecasting in the wild. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 13390–13400.

- Mao, W.; Hartley, R.I.; Salzmann, M.; et al. Contact-aware human motion forecasting. Advances in Neural Information Processing Systems 2022, 35, 7356–7367.

- Zhong, C.; Hu, L.; Zhang, Z.; Ye, Y.; Xia, S. Spatio-temporal gating-adjacency GCN for human motion prediction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 6447–6456.

- Jeong, J.; Park, D.; Yoon, K.J. Multi-agent Long-term 3D Human Pose Forecasting via Interaction-aware Trajectory Conditioning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 1617–1628.

- Tanke, J.; Zhang, L.; Zhao, A.; Tang, C.; Cai, Y.; Wang, L.; Wu, P.C.; Gall, J.; Keskin, C. Social diffusion: Long-term multiple human motion anticipation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 9601–9611.

- Xu, S.; Wang, Y.X.; Gui, L. Stochastic multi-person 3D motion forecasting. In Proceedings of the Eleventh International Conference on Learning Representations, 2023.

- Li, L.; Pagnucco, M.; Song, Y. Graph-based spatial transformer with memory replay for multi-future pedestrian trajectory prediction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 2231–2241.

- Aydemir, G.; Akan, A.K.; Güney, F. Adapt: Efficient multi-agent trajectory prediction with adaptation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 8295–8305.

- Hu, X.; Zhang, Z.; Fan, Z.; Yang, J.; Yang, J.; Li, S.; He, X. GCN-Transformer-Based Spatio-Temporal Load Forecasting for EV Battery Swapping Stations under Differential Couplings. Electronics 2024, 13, 3401.

- Xiong, L.; Su, L.; Wang, X.; Pan, C. Dynamic adaptive graph convolutional transformer with broad learning system for multi-dimensional chaotic time series prediction. Applied Soft Computing 2024, 157, 111516.

- Zhai, K.; Nie, Q.; Ouyang, B.; Li, X.; Yang, S. Hopfir: Hop-wise graphformer with intragroup joint refinement for 3D human pose estimation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 14985–14995.

- Cheng, H.; Wang, J.; Zhao, A.; Zhong, Y.; Li, J.; Dong, L. Joint graph convolution networks and transformer for human pose estimation in sports technique analysis. Journal of King Saud University - Computer and Information Sciences 2023, 35, 101819.

- Bruna, J.; Zaremba, W.; Szlam, A.; LeCun, Y. Spectral networks and locally connected networks on graphs. arXiv 2013, arXiv:1312.6203.

- Atwood, J.; Towsley, D. Diffusion-convolutional neural networks. Advances in Neural Information Processing Systems 2016, 29.

- Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv 2016, arXiv:1609.02907.

- Cui, Q.; Sun, H.; Yang, F. Learning dynamic relationships for 3D human motion prediction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 6519–6527.

- Vaswani, A. Attention is all you need. Advances in Neural Information Processing Systems 2017.

- Martínez-González, A.; Villamizar, M.; Odobez, J.M. Pose transformers (POTR): Human motion prediction with non-autoregressive transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 2276–2284.

- Peng, X.; Shen, Y.; Wang, H.; Nie, B.; Wang, Y.; Wu, Z. SoMoFormer: Social-Aware Motion Transformer for Multi-Person Motion Prediction. arXiv 2022, arXiv:2208.09224.

- Mao, W.; Liu, M.; Salzmann, M. History repeats itself: Human motion prediction via motion attention. In Proceedings of the Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part XIV 16. Springer, 2020, pp. 474–489.

- Von Marcard, T.; Henschel, R.; Black, M.J.; Rosenhahn, B.; Pons-Moll, G. Recovering accurate 3D human pose in the wild using IMUs and a moving camera. In Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 601–617.

- Mahmood, N.; Ghorbani, N.; Troje, N.F.; Pons-Moll, G.; Black, M.J. AMASS: Archive of motion capture as surface shapes. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 5442–5451.

- Carnegie Mellon University Motion Capture Database.

- Mehta, D.; Sotnychenko, O.; Mueller, F.; Xu, W.; Sridhar, S.; Pons-Moll, G.; Theobalt, C. Single-shot multi-person 3D pose estimation from monocular RGB. In Proceedings of the 2018 International Conference on 3D Vision (3DV). IEEE, 2018, pp. 120–130.

- Guo, W.; Bie, X.; Alameda-Pineda, X.; Moreno-Noguer, F. Multi-person extreme motion prediction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 13053–13064.

- Šajina, R.; Ivašić-Kos, M. 3D Pose Estimation and Tracking in Handball Actions Using a Monocular Camera. Journal of Imaging 2022, 8, 308.

- Lie, W.N.; Vann, V. Estimating a 3D Human Skeleton from a Single RGB Image by Fusing Predicted Depths from Multiple Virtual Viewpoints. Sensors 2024, 24.

| Method | VIM 100ms | VIM 240ms | VIM 500ms | VIM 640ms | VIM 900ms | VIM Overall | MPJPE 100ms | MPJPE 240ms | MPJPE 500ms | MPJPE 640ms | MPJPE 900ms | MPJPE Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Zero Velocity | 29.35 | 53.56 | 94.52 | 112.68 | 143.10 | 86.65 | 55.28 | 87.98 | 146.10 | 173.30 | 223.16 | 137.16 |
| DViTA [9] | 17.40 | 35.62 | 72.06 | 90.87 | 127.27 | 68.65 | 32.09 | 54.48 | 100.03 | 124.07 | 173.01 | 96.74 |
| LTD [3] | 18.07 | 34.88 | 68.16 | 85.07 | 116.83 | 64.60 | 33.57 | 55.21 | 97.57 | 119.58 | 163.69 | 93.92 |
| TBIformer [16] | 17.62 | 34.67 | 67.50 | 84.01 | 116.38 | 64.03 | 32.26 | 53.65 | 95.61 | 117.22 | 160.99 | 91.94 |
| MRT [12] | 15.31 | 31.23 | 63.16 | 79.61 | 111.86 | 60.24 | 27.97 | 47.64 | 87.87 | 108.93 | 151.96 | 84.88 |
| SocialTGCN [17] | 12.84 | 27.41 | 58.12 | 74.59 | 107.19 | 56.03 | 23.10 | 40.24 | 76.91 | 96.89 | 139.01 | 75.23 |
| JRTransformer [15] | 11.17 | 25.73 | 56.50 | 73.19 | 106.87 | 54.69 | 18.44 | 35.38 | 72.26 | 92.42 | 135.12 | 70.73 |
| MPFSIR [14] | 11.57 | 25.37 | 54.04 | 69.65 | 101.13 | 52.35 | 20.31 | 35.69 | 69.58 | 88.36 | 128.37 | 68.46 |
| Future Motion [10] | 10.76 | 24.52 | 54.14 | 69.58 | 100.81 | 51.96 | 18.66 | 34.38 | 69.76 | 88.91 | 129.18 | 68.18 |
| SoMoFormer [13] | 10.45 | 23.10 | 49.76 | 64.30 | 93.34 | 48.19 | 17.63 | 32.42 | 63.86 | 81.20 | 117.97 | 62.62 |
| GCN-Transformer | 10.14 | 22.54 | 48.81 | 63.67 | 94.94 | 48.02 | 17.11 | 31.48 | 62.62 | 80.14 | 118.14 | 61.90 |
| GCN-Transformer* | 9.82 | 21.80 | 46.61 | 60.88 | 91.95 | 46.21 | 16.41 | 30.36 | 60.31 | 76.94 | 113.36 | 59.48 |

| Method | VIM 120ms | VIM 280ms | VIM 600ms | VIM 760ms | VIM 1080ms | VIM Overall | MPJPE 120ms | MPJPE 280ms | MPJPE 600ms | MPJPE 760ms | MPJPE 1080ms | MPJPE Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Zero Velocity | 25.61 | 48.66 | 84.39 | 97.41 | 118.10 | 74.84 | 46.16 | 74.66 | 124.32 | 145.22 | 181.33 | 114.34 |
| DViTA [9] | 15.44 | 35.27 | 74.43 | 91.44 | 119.51 | 67.22 | 28.31 | 51.63 | 100.85 | 124.49 | 167.98 | 94.65 |
| LTD [3] | 16.22 | 32.94 | 62.73 | 74.60 | 92.84 | 55.87 | 28.83 | 48.73 | 87.37 | 104.82 | 135.61 | 81.07 |
| TBIformer [16] | 16.96 | 35.09 | 67.95 | 81.22 | 103.02 | 60.85 | 30.59 | 52.55 | 95.63 | 115.19 | 150.33 | 88.86 |
| MRT [12] | 15.32 | 32.07 | 61.84 | 74.04 | 94.59 | 55.57 | 27.79 | 47.91 | 87.01 | 104.80 | 137.22 | 80.95 |
| SocialTGCN [17] | 16.79 | 32.71 | 62.61 | 75.24 | 99.15 | 57.30 | 31.14 | 50.58 | 89.18 | 106.95 | 140.68 | 83.71 |
| JRTransformer [15] | 8.40 | 21.14 | 46.20 | 57.63 | 76.94 | 42.06 | 13.57 | 28.01 | 58.47 | 73.27 | 101.04 | 54.87 |
| MPFSIR [14] | 9.15 | 23.05 | 52.31 | 65.49 | 92.46 | 48.49 | 15.56 | 30.55 | 64.84 | 81.81 | 114.94 | 61.54 |
| Future Motion [10] | 16.94 | 34.83 | 68.45 | 83.33 | 108.03 | 62.32 | 30.51 | 52.37 | 96.06 | 116.88 | 156.04 | 90.37 |
| SoMoFormer [13] | 9.43 | 23.88 | 54.78 | 68.71 | 92.38 | 49.84 | 15.22 | 31.08 | 67.33 | 85.37 | 119.37 | 63.67 |
| GCN-Transformer | 8.32 | 20.84 | 44.56 | 54.81 | 74.66 | 40.64 | 13.37 | 27.63 | 57.27 | 71.25 | 97.71 | 53.45 |

| Method | 100ms | 240ms | 500ms | 640ms | 900ms | Overall |
|---|---|---|---|---|---|---|
| Baseline | 15.39 | 28.53 | 55.90 | 68.72 | 93.92 | 52.49 |
| + Temporal-GCN | 12.69 | 28.96 | 58.96 | 69.74 | 89.56 | 51.98 |
| + MPJD loss | 11.08 | 28.80 | 57.52 | 67.55 | 87.95 | 50.58 |
| + Velocity loss | 12.21 | 28.30 | 56.12 | 66.42 | 87.67 | 50.14 |
| + Augmentation | 7.56 | 19.66 | 44.72 | 56.08 | 75.12 | 40.63 |
| Baseline | 31.81 | 45.19 | 77.03 | 93.68 | 127.60 | 75.06 |
| + Temporal-GCN | 23.99 | 41.47 | 79.33 | 96.38 | 127.61 | 73.76 |
| + MPJD loss | 18.09 | 37.54 | 76.08 | 92.69 | 123.51 | 69.58 |
| + Velocity loss | 22.79 | 39.90 | 75.28 | 91.15 | 121.77 | 70.18 |
| + Augmentation | 11.68 | 24.35 | 53.50 | 68.34 | 96.97 | 50.97 |

| Method | FJPTE_local 100ms | FJPTE_local 240ms | FJPTE_local 500ms | FJPTE_local 640ms | FJPTE_local 900ms | FJPTE_local Overall | FJPTE_global 100ms | FJPTE_global 240ms | FJPTE_global 500ms | FJPTE_global 640ms | FJPTE_global 900ms | FJPTE_global Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Zero Velocity | 65.36 | 97.18 | 142.35 | 158.79 | 178.72 | 128.48 | 91.12 | 146.51 | 241.69 | 284.08 | 363.52 | 225.38 |
| DViTA [9] | 55.15 | 91.84 | 147.91 | 168.07 | 194.29 | 131.45 | 47.60 | 81.35 | 162.46 | 212.71 | 319.11 | 164.65 |
| LTD [3] | 48.96 | 78.96 | 127.59 | 145.98 | 170.41 | 114.38 | 52.86 | 88.66 | 159.64 | 201.40 | 290.96 | 158.70 |
| TBIformer [16] | 55.24 | 88.28 | 138.76 | 156.81 | 178.97 | 123.61 | 51.19 | 84.53 | 150.47 | 190.78 | 283.36 | 152.07 |
| MRT [12] | 56.38 | 90.59 | 143.17 | 162.19 | 186.11 | 127.69 | 46.74 | 77.70 | 147.95 | 189.65 | 279.84 | 148.37 |
| SocialTGCN [17] | 51.50 | 83.54 | 137.45 | 157.54 | 183.19 | 122.64 | 39.76 | 65.92 | 132.28 | 175.90 | 271.09 | 136.99 |
| JRTransformer [15] | 41.20 | 72.47 | 124.75 | 145.87 | 174.81 | 111.82 | 26.87 | 54.81 | 122.92 | 166.64 | 264.94 | 127.24 |
| MPFSIR [14] | 43.53 | 75.36 | 127.59 | 148.60 | 180.67 | 115.15 | 27.37 | 51.27 | 109.84 | 151.17 | 248.05 | 117.54 |
| Future Motion [10] | 42.74 | 72.22 | 122.18 | 140.77 | 165.83 | 108.75 | 31.04 | 54.72 | 117.86 | 158.93 | 249.45 | 122.40 |
| SoMoFormer [13] | 37.69 | 65.48 | 111.48 | 128.79 | 154.44 | 99.58 | 26.13 | 48.37 | 104.01 | 139.66 | 217.92 | 107.22 |
| GCN-Transformer | 37.22 | 63.78 | 109.06 | 126.12 | 152.72 | 97.78 | 24.35 | 47.42 | 107.12 | 146.38 | 234.51 | 111.96 |
| GCN-Transformer* | 36.76 | 62.29 | 104.96 | 121.68 | 147.97 | 94.73 | 23.63 | 45.89 | 102.05 | 138.45 | 228.94 | 107.79 |

| Method | FJPTE_local 120ms | FJPTE_local 280ms | FJPTE_local 600ms | FJPTE_local 760ms | FJPTE_local 1080ms | FJPTE_local Overall | FJPTE_global 120ms | FJPTE_global 280ms | FJPTE_global 600ms | FJPTE_global 760ms | FJPTE_global 1080ms | FJPTE_global Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Zero Velocity | 76.63 | 119.52 | 182.09 | 205.19 | 240.31 | 164.75 | 79.80 | 127.56 | 201.88 | 230.77 | 280.05 | 184.01 |
| DViTA [9] | 56.91 | 101.25 | 176.21 | 206.20 | 252.27 | 158.57 | 45.58 | 83.58 | 164.19 | 202.36 | 271.01 | 153.34 |
| LTD [3] | 60.27 | 97.73 | 159.16 | 182.82 | 217.66 | 143.53 | 47.42 | 80.89 | 141.84 | 169.41 | 215.70 | 131.05 |
| TBIformer [16] | 67.38 | 109.04 | 174.85 | 200.29 | 239.29 | 158.17 | 50.23 | 86.97 | 155.57 | 184.96 | 238.15 | 143.18 |
| MRT [12] | 65.77 | 107.77 | 173.87 | 199.12 | 236.71 | 156.65 | 43.80 | 75.45 | 133.75 | 162.58 | 214.24 | 125.96 |
| SocialTGCN [17] | 72.62 | 110.05 | 174.62 | 201.84 | 247.24 | 161.27 | 52.04 | 83.27 | 149.11 | 178.12 | 237.98 | 140.10 |
| JRTransformer [15] | 37.98 | 71.62 | 130.94 | 155.35 | 197.44 | 118.67 | 26.21 | 52.63 | 102.44 | 126.11 | 168.75 | 95.23 |
| MPFSIR [14] | 41.12 | 77.88 | 145.78 | 174.01 | 225.03 | 132.76 | 27.21 | 54.68 | 112.28 | 140.63 | 207.33 | 108.43 |
| Future Motion [10] | 64.87 | 105.26 | 175.12 | 206.69 | 247.48 | 159.88 | 48.70 | 86.51 | 160.21 | 197.70 | 270.41 | 152.71 |
| SoMoFormer [13] | 41.91 | 80.52 | 150.92 | 179.58 | 224.17 | 135.42 | 28.82 | 57.92 | 118.39 | 148.45 | 204.18 | 111.55 |
| GCN-Transformer | 38.39 | 71.60 | 125.41 | 146.24 | 181.17 | 112.56 | 26.67 | 52.74 | 100.23 | 122.83 | 172.73 | 95.04 |

| Method | SoMoF 100ms | SoMoF 240ms | SoMoF 500ms | SoMoF 640ms | SoMoF 900ms | SoMoF Overall | ExPI 120ms | ExPI 280ms | ExPI 600ms | ExPI 760ms | ExPI 1080ms | ExPI Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Zero Velocity | 156.48 | 243.69 | 384.04 | 442.87 | 542.24 | 353.86 | 156.43 | 247.07 | 383.97 | 435.95 | 520.36 | 348.76 |
| DViTA [9] | 102.75 | 173.20 | 310.36 | 380.78 | 513.40 | 296.10 | 102.48 | 184.82 | 340.40 | 408.56 | 523.29 | 311.91 |
| LTD [3] | 101.82 | 167.62 | 287.23 | 347.38 | 461.37 | 273.08 | 107.69 | 178.62 | 301.01 | 352.23 | 433.36 | 274.58 |
| TBIformer [16] | 106.43 | 172.81 | 289.23 | 347.59 | 462.33 | 275.68 | 117.61 | 196.01 | 330.42 | 385.25 | 477.45 | 301.35 |
| MRT [12] | 103.11 | 168.29 | 291.12 | 351.84 | 465.95 | 276.06 | 109.58 | 183.22 | 307.63 | 361.70 | 450.95 | 282.62 |
| SocialTGCN [17] | 91.26 | 149.46 | 269.73 | 333.44 | 454.28 | 259.63 | 124.66 | 193.32 | 323.73 | 379.95 | 485.22 | 301.38 |
| JRTransformer [15] | 68.07 | 127.29 | 247.68 | 312.51 | 439.75 | 239.06 | 64.19 | 124.25 | 233.39 | 281.46 | 366.19 | 213.90 |
| MPFSIR [14] | 70.91 | 126.63 | 237.44 | 299.78 | 428.72 | 232.69 | 68.33 | 132.56 | 258.06 | 314.65 | 432.35 | 241.19 |
| Future Motion [10] | 73.78 | 126.94 | 240.04 | 299.70 | 415.28 | 231.15 | 113.57 | 191.77 | 335.33 | 404.39 | 517.89 | 312.59 |
| SoMoFormer [13] | 63.82 | 113.85 | 215.50 | 268.45 | 372.35 | 206.79 | 70.73 | 138.44 | 269.31 | 328.03 | 428.35 | 246.97 |
| GCN-Transformer | 61.57 | 111.21 | 216.17 | 272.50 | 387.22 | 209.73 | 65.07 | 124.34 | 225.64 | 269.07 | 353.90 | 207.60 |
| GCN-Transformer* | 60.39 | 108.19 | 207.01 | 260.13 | 376.91 | 202.53 | - | - | - | - | - | - |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).