Submitted: 14 March 2025

Posted: 20 March 2025

Abstract

Keywords:

1. Introduction

2. Existential Risk and the Astronomical Value Thesis

Decreasing the probability of a particular risk by one in a million would result in an additional 10,000 expected years of civilization, and that would be at least 100 times better than making things go twice as well during this period (p. 68).

Even if we use the most conservative [population estimate], which entirely ignores the possibility of space colonisation and software minds, we find that… the expected value of reducing existential risk by a mere one millionth of one percentage point is at least a hundred times the value of a million human lives (pp. 18–19).

Assuming that on average people have lives of significantly positive welfare, according to total utilitarianism… premature human extinction would be astronomically bad… Even if there are ‘only’ 10¹⁴ lives to come (as on our restricted estimate), a reduction in near-term risk of extinction by one millionth of one percentage point would be equivalent in value to a million lives saved; on our main estimate of 10²⁴ expected future lives, this becomes ten quadrillion (10¹⁶) lives saved (pp. 10–11).
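The arithmetic in the passage above can be checked directly. The following is a minimal sketch, not part of the quoted paper: the function name `lives_saved_equivalent` is hypothetical, and the sketch assumes that "one millionth of one percentage point" means an absolute risk reduction of 10⁻⁸ and that expected value scales linearly with that reduction.

```python
from fractions import Fraction

# Assumption: "one millionth of one percentage point" is an absolute
# reduction in extinction probability of 1e-6 * 1e-2 = 1e-8.
RISK_REDUCTION = Fraction(1, 10**6) * Fraction(1, 100)


def lives_saved_equivalent(expected_future_lives, risk_reduction=RISK_REDUCTION):
    """Expected future lives gained from a small absolute cut in extinction risk."""
    return expected_future_lives * risk_reduction


# Restricted estimate (10^14 future lives) and main estimate (10^24).
restricted = lives_saved_equivalent(10**14)
main = lives_saved_equivalent(10**24)

print(restricted)  # 1000000 (a million lives)
print(main)        # 10000000000000000 (ten quadrillion lives)
```

Exact fractions are used instead of floats so that the very small probability does not introduce rounding error.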

3. The Perils of Projection

[T]here is no evidence that geopolitical or economic forecasters can predict anything ten years out beyond the excruciatingly obvious… These limits on predictability are the predictable results of the butterfly dynamics of nonlinear systems. In my [expert political judgment] research, the accuracy of expert predictions declined toward chance five years out (Tetlock & Gardner, 2015).

4. Strong Axiological Cluelessness

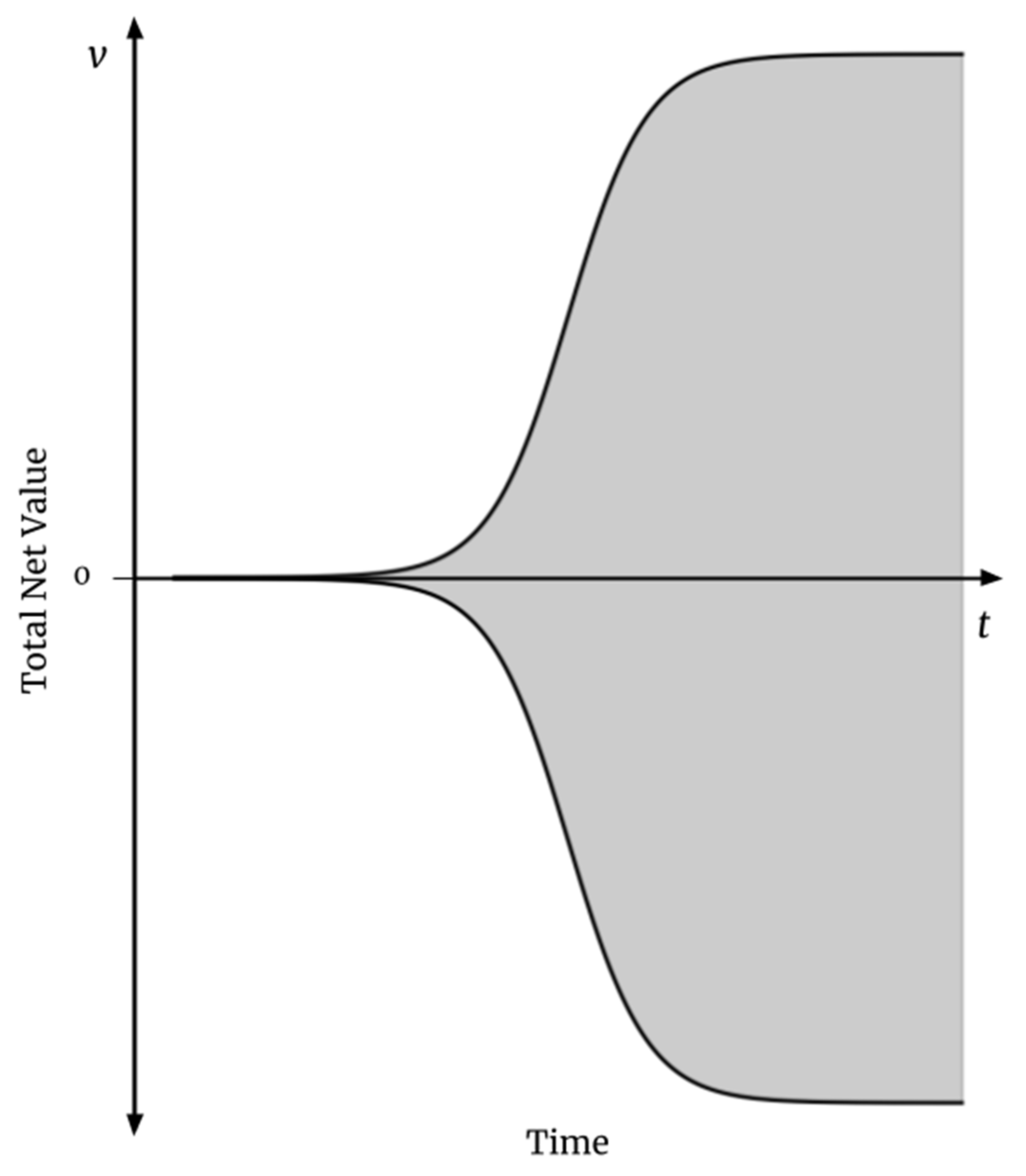

4.1. Expected Value of Humanity’s Continued Existence into the Long-Term Future Under Strong Axiological Cluelessness

4.1.1. Objections

4.2. Cost-Effectiveness of (most) Existential Risk Reduction Under Strong Axiological Cluelessness

5. What If I Don’t Buy Strong Axiological Cluelessness?

5.1. Expected Value of Humanity’s Continued Existence into the Axiologically Uncertain Long-Term Future

5.2. Cost-Effectiveness of (Most) Existential Risk Reduction Under Weak Axiological Cluelessness

6. Implications

The highest far-future ex ante benefits that are attainable without net near-future harm are many times greater than the highest attainable near-future ex ante benefits (Greaves and MacAskill, 2021 p. 4).

The example of [artificial superintelligence] risk also ensures that our argument goes through even if, in expectation, the continuation of civilisation into the future would be bad (Althaus and Gloor 2018; Arrhenius and Bykvist 1995: ch. 3; Benatar 2006). If this were true, then reducing the risk of human extinction would no longer be a good thing, in expectation. But in the AI lock-in scenarios we have considered, there will be a long-lasting civilisation either way. By working on AI safety and policy, we aim to make the trajectory of that civilisation better, whether or not it starts out already ‘better than nothing’ (2021 p. 18).

7. Conclusion

References

- Althaus, David, and Gloor, Lukas. 2016. “Reducing risks of astronomical suffering: A neglected priority.” Center on Long-Term Risk. Available online: https://longtermrisk.org/reducing-risks-of-astronomical-suffering-a-neglected-priority/.

- Anthis, Jacy Reese and Paez, Eze. 2021. “Moral circle expansion: A promising strategy to impact the far future.” Futures.

- Armstrong et al. 2014. “The errors, insights and lessons of famous AI predictions – and what they mean for the future.” Journal of Experimental & Theoretical Artificial Intelligence 26:317-342.

- Beckstead, Nicholas. 2013. On the overwhelming importance of shaping the far future. Ph.D. thesis, Rutgers University. Available online: https://rucore.libraries.rutgers.edu/rutgers-lib/40469/PDF/1/play/.

- Beckstead, Nick and Thomas, Teruji. 2023. “A paradox for tiny probabilities and enormous values.” Noûs 58:431–455.

- Bernard, D.R. 2020. “Estimating long-term treatment effects without long-term outcome data.” Global Priorities Institute Working Paper No. 11-2020.

- Bostrom, Nick. 2013. “Existential risk prevention as a global priority.” Global Policy 4:15–31.

- —. 2014. Superintelligence: Paths, dangers, strategies. Oxford University Press.

- Bramble, Ben and Fischer, Bob. 2015. The moral complexities of eating meat. Oxford University Press.

- Browning, H. and Veit, W. 2022. “Longtermism and animals.” Preprint.

- Christensen et al. 2018. “Uncertainty in forecasts of long-run economic growth.” Proceedings of the National Academy of Sciences 115:5409–5414.

- Ekman, Paul and Davidson, Richard J. 1994. The nature of emotion: Fundamental questions. Oxford University Press.

- Faria, Catia. 2022. Animal ethics in the wild: Wild animal suffering and intervention in nature. Cambridge University Press.

- Feldman, Fred. 2004. “Classic objections to hedonism.” In Pleasure and the good life: Concerning the nature, varieties, and plausibility of hedonism, 38–54. Oxford University Press.

- Geruso, Michael, and Spears, Dean. 2023. “With a whimper: Depopulation and longtermism.” Population Wellbeing Initiative Working Paper 2304.

- Gillies, Donald. 2000. Philosophical theories of probability. Routledge.

- GiveWell. 2024. “GiveWell's cost-effectiveness analyses.” Available online: https://www.givewell.org/how-we-work/our-criteria/cost-effectiveness/cost-effectiveness-models.

- Granger, Clive W.J. and Jeon, Yongil. 2007. “Long-term forecasting and evaluation.” International Journal of Forecasting 23:539–551.

- Greaves, Hilary. 2016. “Cluelessness.” Proceedings of the Aristotelian Society 116:311–339.

- Greaves, Hilary and MacAskill, William. 2019. “The case for strong longtermism.” Global Priorities Institute Working Paper 7-2019.

- Hagen, Edward H. 2011. “Evolutionary theories of depression: A critical review.” The Canadian Journal of Psychiatry 56:716–726.

- Heyhoe et al. 2017. “Climate models, scenarios, and projections.” In technical report, Climate Science Special Report: Fourth National Climate Assessment, Volume I.

- Horta, Oscar. 2010. “Debunking the idyllic view of natural processes: Population dynamics and suffering in the wild.” Télos 17:73-88.

- —. 2015. “The problem of evil in nature: Evolutionary bases of the prevalence of disvalue.” Relations 3:17-32.

- Intergovernmental Panel on Climate Change (IPCC). 2021. “Climate change 2021: The physical science basis.” Sixth Assessment Report of the Intergovernmental Panel on Climate Change.

- International Monetary Fund (IMF). 2024. World economic outlook: April 2024. Available online: https://www.imf.org/en/Publications/WEO/Issues/2024/04/16/world-economic-outlook-april-2024.

- Johannsen, Kyle. 2020. Wild animal ethics: The moral and political problem of wild animal suffering. Routledge.

- Kaneda, Toshiko and Bremner, Jason. 2014. “Understanding population projections: Assumptions behind the numbers.” Technical report, Population Reference Bureau.

- Karvetski, Christopher W. 2021. “Superforecasters: A decade of stochastic dominance.” Technical White Paper, October 2021. Good Judgment Inc. Available online: https://goodjudgment.com/wp-content/uploads/2021/10/Superforecasters-A-Decade-of-Stochastic-Dominance.pdf.

- Lazarus, Richard S. 1991. Emotion and adaptation. Oxford University Press.

- Marks, Isaac M., and Nesse, Randolph M. 1994. “Fear and fitness: An evolutionary analysis of anxiety disorders.” Ethology and Sociobiology 15:247-261.

- Masayuki, Morikawa. 2019. “Uncertainty in long-term economic forecasts.” RIETI Discussion Paper Series 19-J-058.

- Millett, P., and Snyder-Beattie, A. 2017. “Existential risk and cost-effective biosecurity.” Health Security 15:373–380.

- Moore, Andrew. 2019. “Hedonism.” The Stanford Encyclopedia of Philosophy. Available online: https://plato.stanford.edu/archives/win2019/entries/hedonism/.

- Muehlhauser, Luke. 2019. “How feasible is long-range forecasting?” Technical report, Open Philanthropy.

- National Academies of Sciences, Engineering, and Medicine. 2000. Beyond six billion: Forecasting the world's population. The National Academies Press.

- Nesse, Randolph M., and Ellsworth, Phoebe C. 2009. “Evolution, emotions, and emotional disorders.” American Psychologist 64:129–139.

- Nesse, Randolph M. 2000. “Is depression an adaptation?” Archives of General Psychiatry 57:14-20.

- Newberry, Toby. 2021. “How many lives does the future hold?” Global Priorities Institute Technical Report No. T2-2021.

- Ng, Yew-Kwang. 1995. “Towards welfare biology: Evolutionary economics of animal consciousness and suffering.” Biology and Philosophy 10:255–285.

- Our World in Data. 2024a. “Life expectancy.” Available online: https://ourworldindata.org/grapher/life-expectancy.

- —. 2024b. “Childhood Deaths from the Five Most Lethal Infectious Diseases Worldwide.” Available online: https://ourworldindata.org/grapher/childhood-deaths-from-the-five-most-lethal-infectious-diseases-worldwide.

- O’Brien, Gary David. 2024. “The case for animal-inclusive longtermism.” Journal of Moral Philosophy (published online ahead of print 2024).

- Paltsev et al. 2021. Global change outlook 2021. MIT Joint Program on the Science and Policy of Global Change.

- Pinker, Steven. 2011. The better angels of our nature: Why violence has declined. Penguin Books.

- Plant, Michael. 2022. “The meat eater problem.” Journal of Controversial Ideas 2:1–21.

- Popper, Karl. 1950. “Indeterminism in quantum physics and in classical physics.” The British Journal for the Philosophy of Science 1:117–133.

- —. 1994. The poverty of historicism. Routledge Classics.

- Rantala, Markus J., and Luoto, Severi. 2022. “Evolutionary perspectives on depression.” In Evolutionary Psychiatry: Current Perspectives on Evolution and Mental Health, 117–133. Cambridge University Press.

- Ritchie, Hannah. 2023. “The UN has made population projections for more than 50 years – how accurate have they been?” Our World in Data. Available online: https://ourworldindata.org/population-projections.

- Roser, Max, and Ortiz-Ospina, Esteban. 2016. “Global health.” Available online: https://ourworldindata.org/health-meta.

- Russell, Jeffrey Sanford. 2023. “On two arguments for fanaticism.” Noûs 58:565–595.

- Singer, Peter. 2011. The expanding circle: Ethics, evolution, and moral progress. Princeton University Press.

- —. 2023. Animal liberation now: The definitive classic renewed. Harper Perennial.

- Tarsney, Christian. 2023a. “The epistemic challenge to longtermism.” Synthese 201:1–37.

- —. 2023b. “Against anti-fanaticism.” Global Priorities Institute Working Paper No. 15-2023.

- Tetlock, Philip E., and Gardner, Dan M. 2015. Superforecasting: The art and science of prediction. Crown Publishing Group.

- Tetlock, Philip E. 2005. Expert political judgment: How good is it? How can we know? Princeton University Press.

- Tetlock et al. 2023. “Long-range subjective-probability forecasts of slow-motion variables in world politics: Exploring limits on expert judgment.” Futures & Foresight Science 6:1–22.

- Thorstad, David. forthcoming. “Mistakes in the moral mathematics of existential risk.” Ethics.

- Tomasik, Brian. 2015a. “The importance of wild-animal suffering.” Relations 3:133-152.

- —. 2015b. “Risks of astronomical future suffering.” Center on Long-Term Risk. Available online: https://longtermrisk.org/risks-of-astronomical-future-suffering/.

- United Nations. 2024. World population prospects 2024. United Nations, Department of Economic and Social Affairs, Population Division.

- US Census Bureau. 2024. “US population clock.” Available online: https://www.census.gov/popclock/.

- Wilkinson, Hayden. 2022. “In defense of fanaticism.” Ethics 132:832–862.

- Williamson, Jon. 2018. “Justifying the principle of indifference.” European Journal for Philosophy of Science 8:559–586.

- Wuebbles et al. 2017. “Executive summary.” In technical report, Climate Science Special Report: Fourth National Climate Assessment, Volume I.

| N (biothreats/century) | Original C/NLR ($/life-year) |
|---|---|
| 0.005 to 0.02 | 0.125 to 5.00 |
| 1.6 × 10⁻⁶ to 8 × 10⁻⁵ | 31.00 to 1,600 |
| 5 × 10⁻⁵ to 1.4 × 10⁻⁴ | 18.00 to 50.00 |

| N (biothreats/century) | Original C/NLR ($/life-year) | Strongly Axiologically Clueless C/NLR ($/life-year) |
|---|---|---|
| 0.005 to 0.02 | 0.125 to 5.00 | 31.57 to 126 |
| 1.6 × 10⁻⁶ to 8 × 10⁻⁵ | 31.00 to 1,600 | 7,891 to 394,571 |
| 5 × 10⁻⁵ to 1.4 × 10⁻⁴ | 18.00 to 50.00 | 4,509 to 12,626 |

| Future population size | Probability needed for astronomical value (constant distribution) | Probability needed for astronomical value (normal distribution) |
|---|---|---|
| 10⁵⁸ (Bostrom, 2014 p. 101–102) | 50.0000000000001% | 50.0000000000001% |
| 10²⁴ (Greaves & MacAskill, 2021 p. 9; Newberry, 2021 p. 11–12) | 50.00000001% | 50.00000001% |
| 10¹⁸ (Greaves & MacAskill, 2021 p. 9; Newberry, 2021 p. 11–12) | 50.01% | 50.01% |
| 10¹⁴ (Greaves & MacAskill, 2021 p. 9; Newberry, 2021 p. 11–12) | 100% | 100% |
| 3 × 10¹⁰ = 30 billion (Geruso & Spears, 2023) | N/A | N/A |

| | N (biothreats/century) | Original C/NLR ($/life-year) | Strongly Axiologically Clueless C/NLR ($/life-year) | Weakly Axiologically Clueless C/NLR ($/life-year) |
|---|---|---|---|---|
| Probability of saving more net-positive life-years than negative ones | N/A | 100% | 50% | 50.1% |
| | 0.005 to 0.02 | 0.125 to 5.00 | 31.57 to 126 | 0.4 to 1.60 |
| | 1.6 × 10⁻⁶ to 8 × 10⁻⁵ | 31.00 to 1,600 | 7,891 to 394,571 | 99.83 to 4,991 |
| | 5 × 10⁻⁵ to 1.4 × 10⁻⁴ | 18.00 to 50.00 | 4,509 to 12,626 | 57.04 to 159.73 |

1. I’m thankful to David Thorstad for his feedback on this description.

2. Further on in their paper, Greaves and MacAskill (2021) discuss the ramifications of this assumption. I will address this in Section 6 of this paper, “Implications.”

3. By “to a decision-relevant extent,” I mean that the net expected value of humanity’s continued existence must be large enough to shift the decision of someone who aims to do the most impartial good they can with limited resources. For instance, the future’s net expected value is large to a decision-relevant extent if it means that existential risk mitigation saves more net-positive lives per dollar than other interventions do.

4. I haven’t discussed the following reservations in this paper, but I believe they deserve a brief mention. First, it appears plausible that, due to the suffering of non-human beings, total net value has not increased over time, turning the “outside view” consideration against astronomically positive expected value in the future. Second, plausible criteria for moral progress other than Singer’s tell a less clear story about moral progress. Third, it may be difficult to trust criteria for moral progress that were developed after the events that may have determined our moral views and that are applied ex post.

5. I thank Adeoluwawumi Adedoyin for his assistance in revising this description.

6. Popper (1950) offers the original proof, and this summary of it is described by Popper (1994, p. 9).

7. I’m thankful to Christopher Clay for his assistance with revising this explanation.

8. I emphasize the verifiability of these (and other) quantitative forecasts because judging the accuracy of long-term forecasts is difficult when they are made in qualitative terms (Muehlhauser, 2019).

9. Even if we think AI might become capable of reliable long-term forecasts, we do not yet have these forecasts, and we do not know what they will predict, or their implications for the future’s total net value. Hence, this possibility does not undermine strong axiological cluelessness until such forecasts are made. Until then, we should account for our enormous, or complete, uncertainty about the long-term future’s total net value.

10. I emphasize that the principle of indifference is only being applied to this particular case to avoid objections to a general form of the principle of indifference. I discuss this further in Section 4.1.1. On a separate note, because the future’s total net value qualifies as a continuous random variable, we can effectively ignore the possibility of the long-term future’s total net value being zero, as there is zero probability of the future net value being exactly equal to zero.

11. While strong axiological cluelessness permits us to consider the value of humans expected to live in the foreseeable future, I omit this value for two reasons. First, precisely estimating the number of years that our forecasts about the future’s net value remain accurate is outside of this paper’s scope. Second, this omission does not affect the point I intend to illustrate, as it appears implausible that the future’s expected population over the next millennium or so is astronomically large.

12. This assumes that a rational agent subscribes to Bayesian epistemology and the principle of indifference, or to other methods of assigning probabilities that produce the same outcome.

13. (10 × 0.9) + (−10 × 0.1) = 8

14. First, this example uses single point-estimate probabilities of two outcomes instead of a probability distribution over many possible states. Second, the exact probability of short-term interventions having a negative value is not necessarily 10 percent; it may be higher or lower. Finally, as mentioned above, with no certainty about the long-term future’s net value, ignoring indirect effects on non-human beings, the expected value of decreasing the probability of human extinction (or a permanent reduction in the size of the human population) becomes the number of humans that will die in such an event (eight billion for human extinction) multiplied by the percentage reduction in the risk of these outcomes.

15. I’m deeply grateful to David Thorstad for pressing me on this subject.

16. To derive the estimated life-years lost (L) in a biological existential catastrophe, Millett and Snyder-Beattie use the expected size of the human population, assuming a constant population of ten billion people on Earth for one million years.

17. For instance, arguing for strong longtermism, Greaves and MacAskill (2021) write, “higher [future population] numbers would make little difference to the arguments of this paper” (p. 9).

18. In this case, I did not create a normal probability distribution for modeling simplicity. As indicated by Table 3, this omission should not affect the results.

19. I say “perhaps” because Thorstad (forthcoming) proposes other plausible reasons why Millett and Snyder-Beattie’s model overestimates the cost-effectiveness of biorisk reduction. If these reasons were accounted for, the probability that we assign to futures full of net-positive life-years would need to rise to offset the reduced cost-effectiveness of such a revised model.

20. To clarify which existential risks are robust to strong axiological cluelessness, I’ll explain the distinction between s-risks and existential risks (x-risks). Neither of these two concepts is a subset of the other, but they sometimes overlap, such that a risk is considered both an s-risk and an x-risk, as in the prospect of “AI lock-in” (Althaus & Gloor, 2016). If we subscribe to strong axiological cluelessness, it is only in the reduction of risks considered to be both s-risks and x-risks that astronomical gains are to be found.
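The arithmetic behind notes 13, 14 and 16 can be sketched in a few lines. This is an illustrative sketch only: the helper `expected_value` and the variable names are hypothetical, the one-in-a-million risk reduction used for note 14 is an assumed example value that does not come from the paper, and the reduction is read as an absolute change in probability.

```python
from fractions import Fraction


def expected_value(outcomes):
    """Return the probability-weighted sum of (value, probability) pairs."""
    if sum(p for _, p in outcomes) != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(value * p for value, p in outcomes)


# Note 13: +10 with probability 0.9 and -10 with probability 0.1.
note_13 = expected_value([(10, Fraction(9, 10)), (-10, Fraction(1, 10))])

# Note 16: a constant ten billion people for one million years.
life_years_lost = 10**10 * 10**6

# Note 14: lives at stake times the absolute reduction in risk.
# The one-in-a-million reduction is an illustrative assumption only.
current_population = 8 * 10**9
lives_saved = current_population * Fraction(1, 10**6)

print(note_13)          # 8
print(life_years_lost)  # 10000000000000000
print(lives_saved)      # 8000
```

As note 14 points out, a two-outcome point estimate like this stands in for a full probability distribution over many possible states.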

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).