2.1. Deep Learning-Based Plant Recognition Methods

Convolutional neural networks can extract higher-dimensional spatial features than traditional statistical or machine learning methods and effectively capture local image details through convolutional kernels. Overhead images from unmanned aerial vehicles provide clear views of tree canopies and are commonly used for the identification and segmentation of tree species.

Moritake et al. [8] verified that ResNet, Swin, ViT, and ConvNeXt outperform human observers in tree species classification accuracy. Beloiu et al. [9] verified that Faster R-CNN can accurately classify four species (Norway spruce, silver fir, Scots pine, and European beech) with models trained for single and for multiple tree species. Zhang et al. [10] proposed a scale sequence residual method, SS Res U-Net, for frailejones extraction, in which features learned at small scales are gradually transferred to larger scales to achieve multi-scale information fusion while preserving the fine spatial details of interest. Santos et al. [11] evaluated the effectiveness of Faster R-CNN, YOLOv3, and RetinaNet for detecting an endangered tree species (Dipteryx alata Vogel). Ventura et al. [12] proposed HR SFANet, based on the structure of SFANet, to predict single trees in urban environments and then localize them using a peak-finding algorithm. Lv et al. [13] proposed MCAN, an attention-coupled network that uses a convolutional block attention module to strengthen feature extraction and the detection of local detail, improving individual tree extraction accuracy. Choi et al. [14] predicted the height, diameter, and location of street trees in Google Street View images based on a YOLO model, estimating tree profile parameters by determining each tree's position relative to the surface interface.
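The peak-finding localization step mentioned for HR SFANet [12] can be illustrated with a minimal sketch. It assumes the network outputs a 2D confidence map and treats a cell as a tree location when it exceeds a threshold and is strictly larger than its eight neighbours. The function name `find_peaks`, the threshold value, and the toy map are hypothetical and are not taken from the cited work.

```python
def find_peaks(conf_map, threshold=0.5):
    """Return (row, col) cells that are strict local maxima above `threshold`.

    conf_map: 2D list of floats (hypothetical per-pixel tree confidence).
    threshold: assumed cut-off below which cells are ignored.
    """
    rows, cols = len(conf_map), len(conf_map[0])
    peaks = []
    for r in range(rows):
        for c in range(cols):
            value = conf_map[r][c]
            if value < threshold:
                continue
            # Collect the values of the (up to eight) neighbouring cells.
            neighbours = [
                conf_map[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            ]
            if all(value > n for n in neighbours):
                peaks.append((r, c))
    return peaks


toy_map = [
    [0.1, 0.2, 0.1, 0.0],
    [0.2, 0.9, 0.2, 0.1],
    [0.1, 0.2, 0.1, 0.6],
    [0.0, 0.1, 0.3, 0.2],
]
tree_locations = find_peaks(toy_map)
```

In practice a smoothing step or a minimum distance between peaks is often added so that one crown does not yield several detections; that refinement is omitted here.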

The Transformer [15,16] can better capture long-distance dependencies, and a growing number of researchers have explored how to introduce the self-attention mechanism into image feature extraction methods. Yuan et al. [17] proposed a lightweight attention-enhanced YOLO method that uses the neck network CACSNet to enhance the detection of single-grained diseased trees and optimizes the loss function to improve localization accuracy. Amirkolaee et al. [18] proposed a semi-supervised TreeFormer for tree counting in satellite imagery. First, multi-scale features are acquired by the Transformer encoder; the encoder output is then processed by a dedicated feature fusion module and a tree density regressor. Finally, a tree counter token is introduced to regulate the network by calculating global tree counts for labeled and unlabeled images. Gibril et al. [19] proposed a SwinT-based instance segmentation framework for detecting individual date palm trees.

Satellite and airborne remote sensing images are limited to the canopy, whereas trunks and branches provide essential features for classifying specific tree species [20]. Managing urban greening often requires monitoring tree diseases, tree growth status, and tree species, which is usually done with natural images taken from level or overhead viewpoints. Li et al. [21] proposed TrunkNet, a tree trunk detection method for urban scenes, which adds a texture attention module to focus on bark features and improve detection accuracy. Benchallal et al. [22] proposed a semi-supervised ConvNeXt method that exploits large amounts of unlabeled data to train a model for weed species identification and characterization, reducing the need for labeled data. Qian et al. [23] increased the multi-scale feature fusion capability of the network by adding RFB and SA modules, realizing real-time identification and monitoring of Eichhornia crassipes under different environmental and weather conditions. Li et al. [24] proposed Ev2S_SHAP, a tree species identification and factor explanation model, to explain the effects of environmental and foliage factors on tree identification and recognition accuracy. Sun et al. [25] provided a dataset of plant images in natural scenes, together with a residual-network-based model, for large-scale plant species classification.

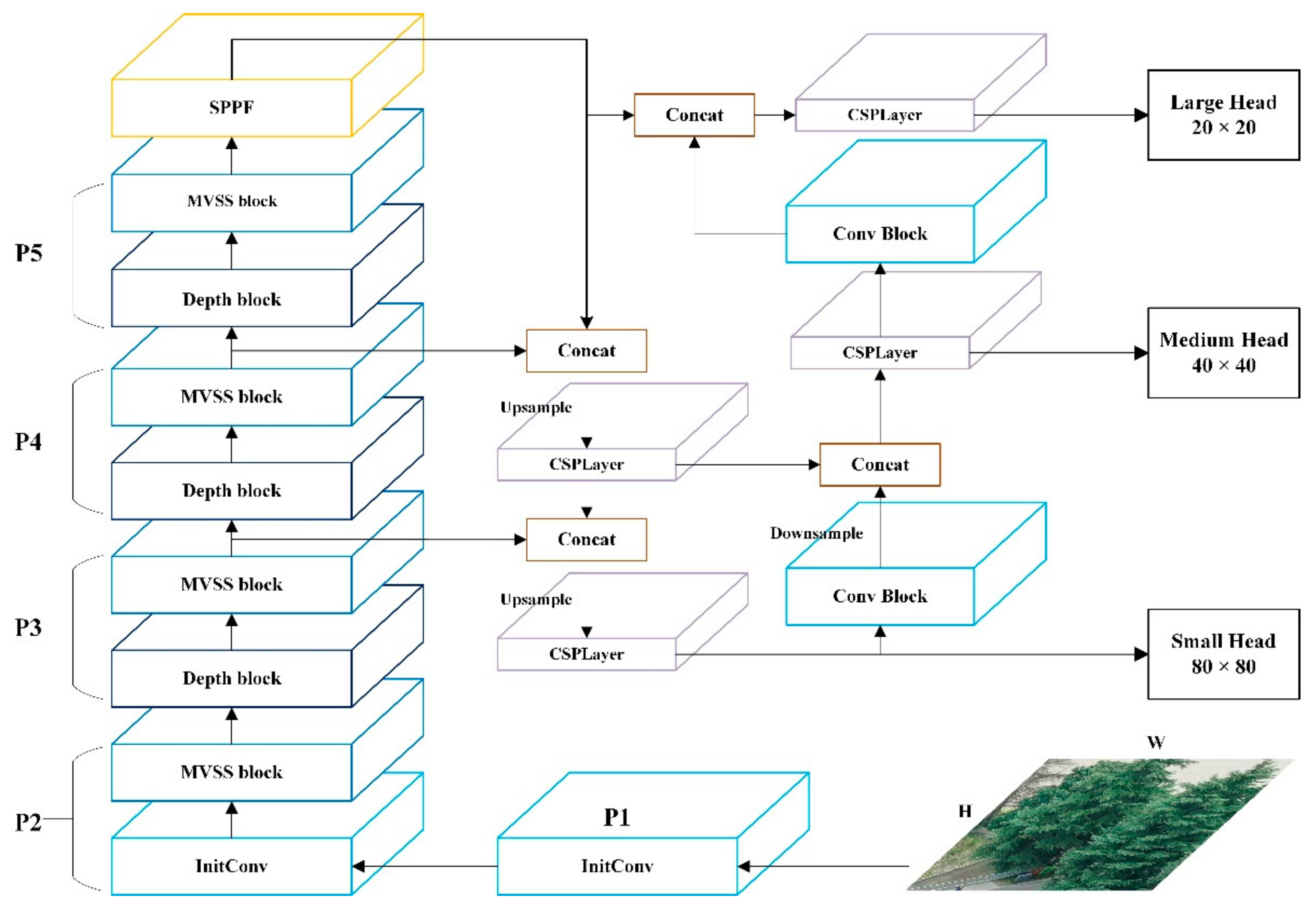

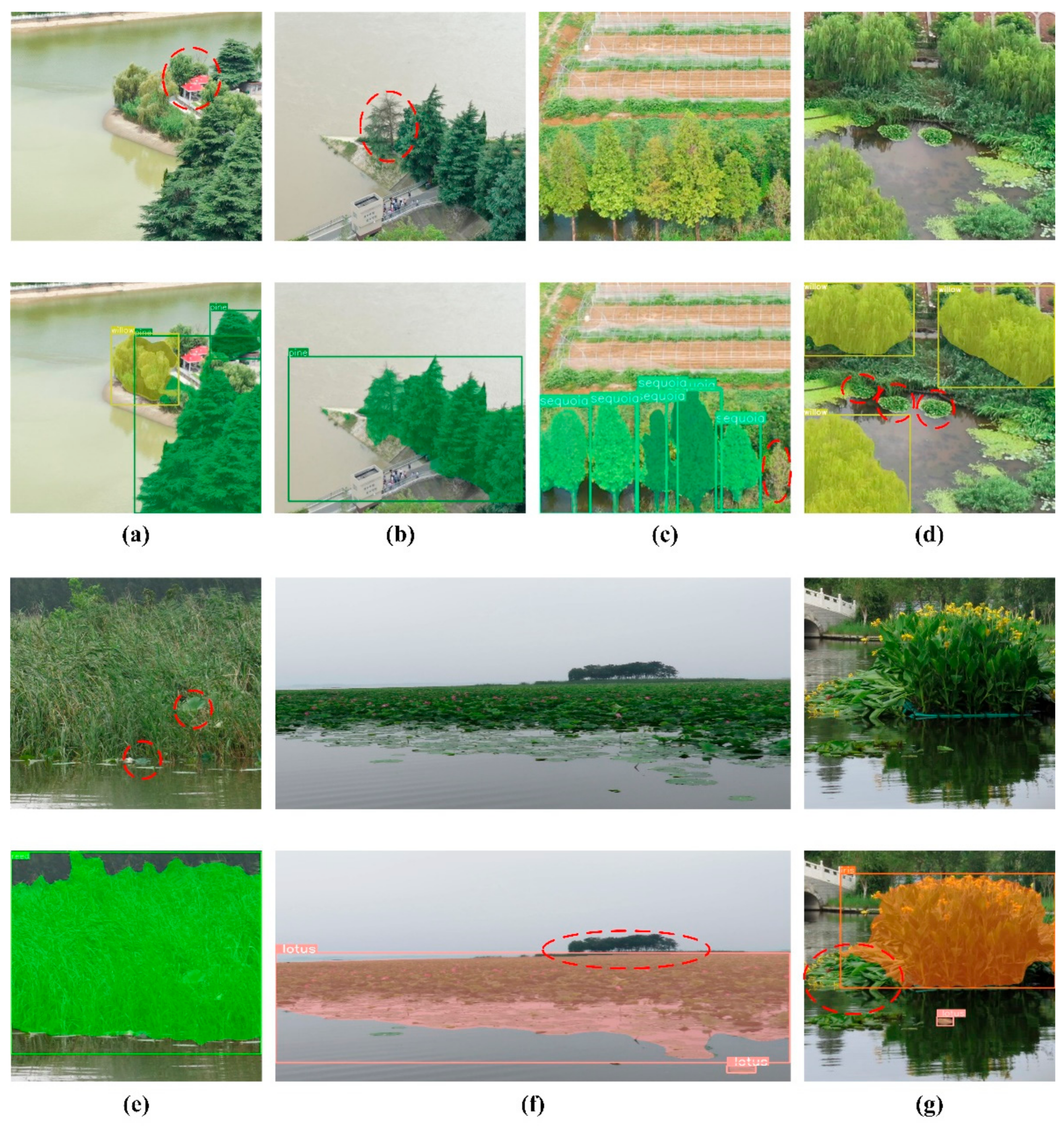

Existing plant recognition methods typically use datasets limited to single-viewpoint images. We address this limitation by constructing a dataset that reflects the spatial-temporal characteristics of plants in terms of climate and watershed, as encountered in unmanned equipment application scenarios. We also design a new YOLO structure to enhance the extraction accuracy of multi-scale feature information.

2.2. Mamba-Based Image Recognition Methods

The multi-head self-attention mechanism incurs quadratic time complexity, O(n²) in the sequence length n; although training can be parallelized, inference is relatively slow. Mamba [26] introduces a structured state space sequence model whose hidden state mediates the input-to-output mapping, achieving linear computational complexity. Because natural images, unlike language, have no obvious sequential order, how image patches are scanned has been a focus of research. For example, local scanning captures richer detail by dividing the image into smaller patches and then scanning each patch separately [27].
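The contrast between quadratic and linear complexity can be made concrete with a minimal sketch. It assumes a scalar, fixed-parameter discrete state space recurrence, h_t = a·h_{t-1} + b·x_t and y_t = c·h_t, which is a deliberate simplification: Mamba makes these parameters input-dependent and uses vector-valued states. The function names and parameter values below are illustrative only.

```python
def ssm_scan(inputs, a, b, c):
    """Run a scalar linear state space recurrence over a sequence.

    One pass over the sequence, so the cost grows linearly with its length.
    a, b, c are fixed scalars here (an assumption; Mamba's are input-dependent).
    """
    state = 0.0
    outputs = []
    for x in inputs:
        state = a * state + b * x   # hidden state update
        outputs.append(c * state)   # read-out from the hidden state
    return outputs


def attention_pair_count(n):
    """Number of query-key pairs scored by full self-attention over n tokens."""
    return n * n


impulse_response = ssm_scan([1.0, 0.0, 0.0], a=0.5, b=1.0, c=2.0)
```

For a sequence of 1000 tokens, the recurrence performs 1000 state updates, whereas full self-attention scores `attention_pair_count(1000)` = 1,000,000 token pairs.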

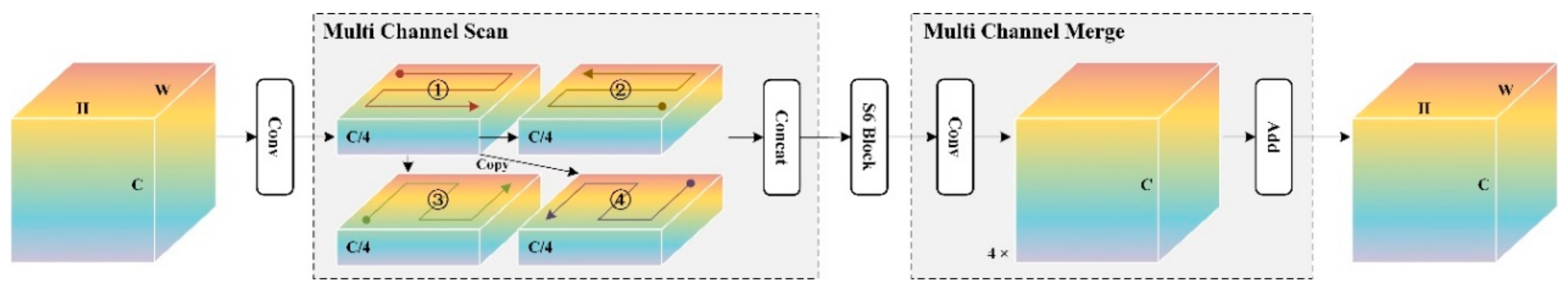

VMamba [28] proposed an SS2D module with a four-way scanning strategy, i.e., scanning from all four corners of the feature map simultaneously so that each element integrates information from all other locations along different directions, resulting in a global receptive field. EfficientVMamba [29] integrated an atrous-based selective scanning method with efficient skip sampling that exploits both global and local feature representations.
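A four-way scan of this kind can be sketched as follows. The sketch assumes the commonly described cross-scan layout, in which a feature map is flattened in row-major and column-major order and each sequence is also reversed, so that the four sequences start from the four corners. It is an illustration of the idea, not VMamba's actual implementation, and the function name `four_way_scan` is hypothetical.

```python
def four_way_scan(feature_map):
    """Flatten a 2D grid into four sequences, one per scan direction.

    Returns a dict with row-major, column-major, and their reversed orders
    (an assumed layout for illustration).
    """
    rows, cols = len(feature_map), len(feature_map[0])
    row_major = [feature_map[r][c] for r in range(rows) for c in range(cols)]
    col_major = [feature_map[r][c] for c in range(cols) for r in range(rows)]
    return {
        "row_forward": row_major,          # starts at the top-left corner
        "col_forward": col_major,          # top-left corner, going down first
        "row_reverse": row_major[::-1],    # starts at the bottom-right corner
        "col_reverse": col_major[::-1],    # bottom-right corner, going up first
    }


grid = [
    [0, 1, 2],
    [3, 4, 5],
]
scans = four_way_scan(grid)
```

Each sequence would then be processed by a sequence model and the four outputs mapped back to the grid and merged, which is how every position can receive information from all others.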

Many base models combined with Mamba have demonstrated strong performance in image classification, object detection, and semantic segmentation. Mamba-YOLO [30] builds on the YOLO architecture by combining the wavelet transform with the LSBlock and RGBlock modules to improve the modeling of local image dependencies for more accurate detection. VM-UNet [31] builds on the encoder-decoder structure of UNet and introduces VSS blocks with a new fusion mechanism to preserve spatial information at different scales of the network; the results show that VM-UNet outperforms multiple UNet variants in medical image segmentation. MambaVision [32] proposes a hybrid Mamba-Transformer architecture, and its experimental results show that adding self-attention blocks to the last layers of the Mamba architecture improves the model's ability to capture long-range spatial dependencies.

Mamba has also been widely used in remote sensing. Chen et al. [33] proposed a multipath scanning activation mechanism to improve the ability of the Mamba structure to model non-causal data. Yao et al. [34] addressed the high computational complexity of hyperspectral image classification, which is difficult to parallelize. They proposed PSS and GSSM to simplify sequence learning in the state domain and to rectify spectra in the spatial-spectral domain. Zhao et al. [35] proposed RS-Mamba, which uses an omnidirectional selective scanning module to model the global context of an image in multiple directions and to capture large spatial features from all directions. Extensive experiments on semantic segmentation and change detection for various types of ground features demonstrated better efficiency and accuracy than Transformer-based models.

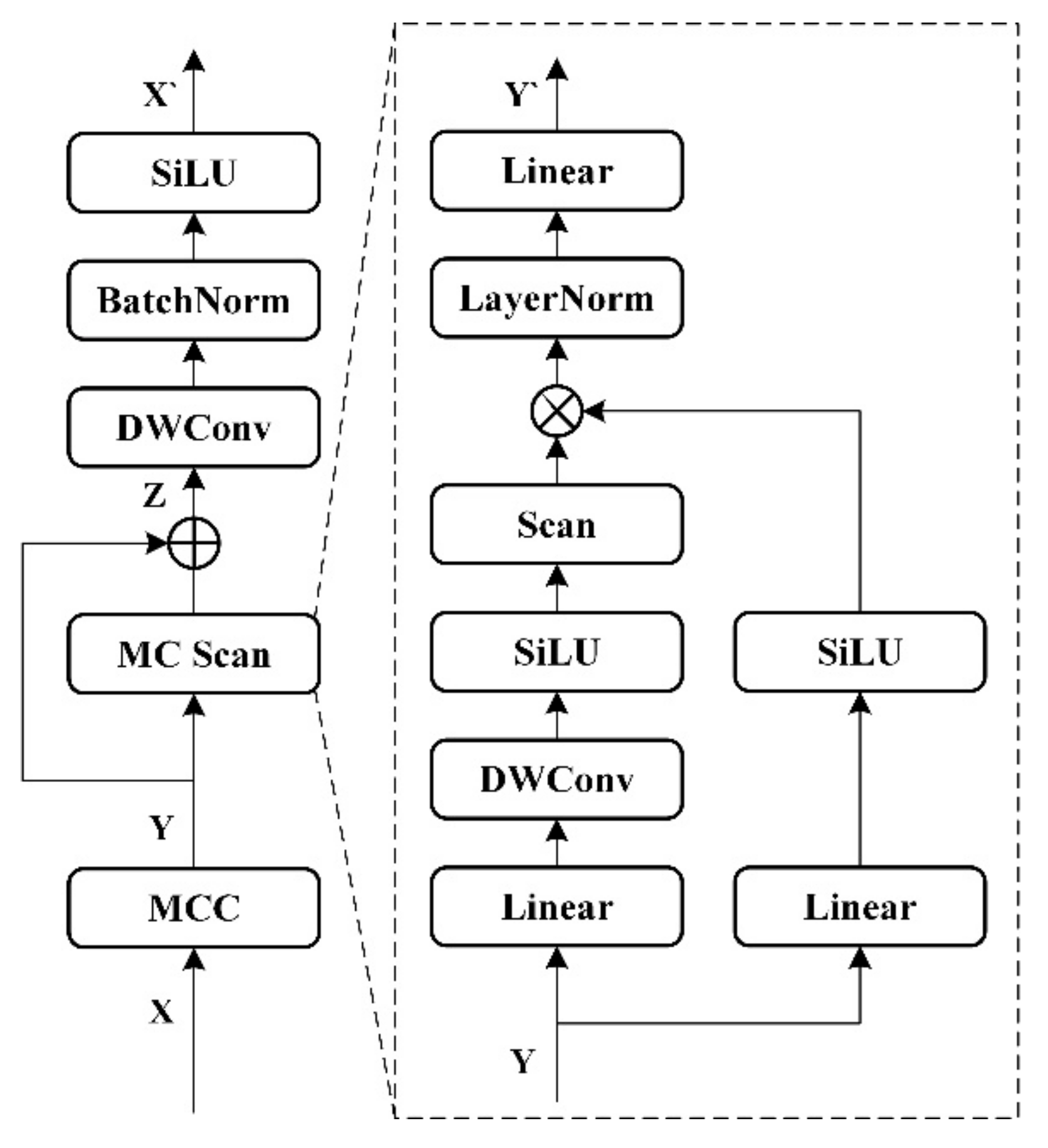

This paper addresses the high computational cost of the Mamba structure by reducing the number of channels with a convolution before scanning while leaving the scanning diversity unchanged (i.e., forward, reverse, forward flip, and reverse flip), thereby improving the ability to characterize local information in plant images.
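The effect of reducing channels before scanning can be sketched under stated assumptions. The example assumes a pointwise (1×1) convolution as the reduction step and counts scan workload as channels × sequence length × directions. The kernel size, the weights, and the workload measure are all illustrative choices and are not specified by the text above.

```python
def pointwise_conv(feature, weight):
    """Mix channels at every position (a 1x1 convolution, assumed here).

    feature: list of C_in channels, each a flat list of length L.
    weight:  C_out x C_in matrix; C_out < C_in reduces the channel count.
    """
    length = len(feature[0])
    return [
        [sum(w * channel[i] for w, channel in zip(row, feature))
         for i in range(length)]
        for row in weight
    ]


def scan_workload(channels, length, directions=4):
    """Rough count of elements visited when every channel is scanned
    along every direction (a hypothetical cost measure)."""
    return channels * length * directions


feature = [[1, 2, 3], [1, 1, 1], [0, 0, 0], [2, 2, 2]]  # 4 channels, 3 positions
weight = [[1, 0, 0, 0], [0, 1, 0, 1]]                    # 4 -> 2 channels
reduced = pointwise_conv(feature, weight)

cost_before = scan_workload(len(feature), len(feature[0]))
cost_after = scan_workload(len(reduced), len(reduced[0]))
```

Under this measure, halving the channels halves the scanning workload while all four scan directions are still applied to the reduced feature map.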