Submitted: 10 March 2025

Posted: 11 March 2025


Abstract

Keywords:

1. Introduction

- We construct a unified observation pose optimization method to ensure observation quality during motion.

- We build a factor graph for pose optimization that optimizes the observation trajectory globally, over all poses jointly.

- Based on the ellipsoid object model and the camera field of view (FOV), we construct three factors: an object completeness observation factor, a self-observation prevention factor, and a camera motion smoothness factor.

- We validate our method through simulation experiments, demonstrating improvements in object modeling accuracy, exploration efficiency, and camera localization precision.

2. Related Work

2.1. Information-Entropy Based ASLAM

2.2. Object Based ASLAM

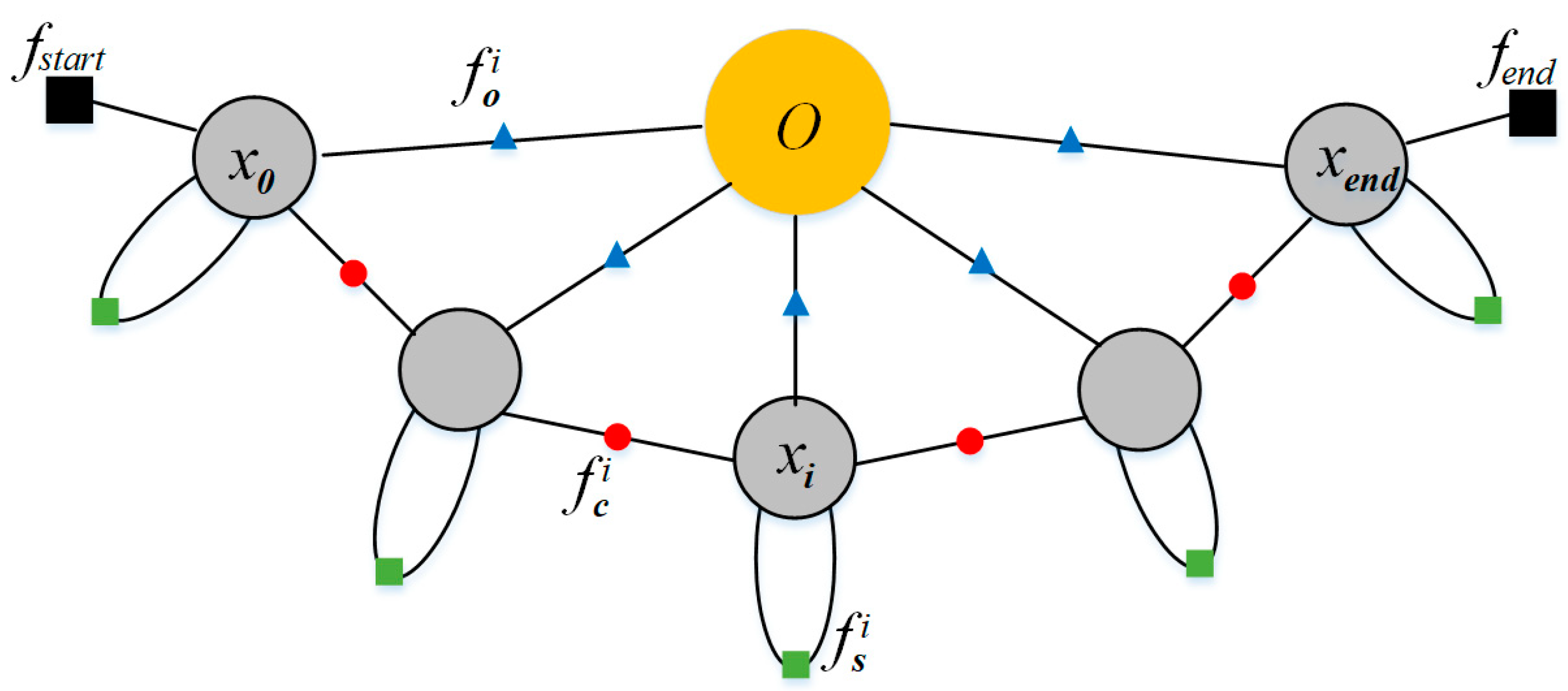

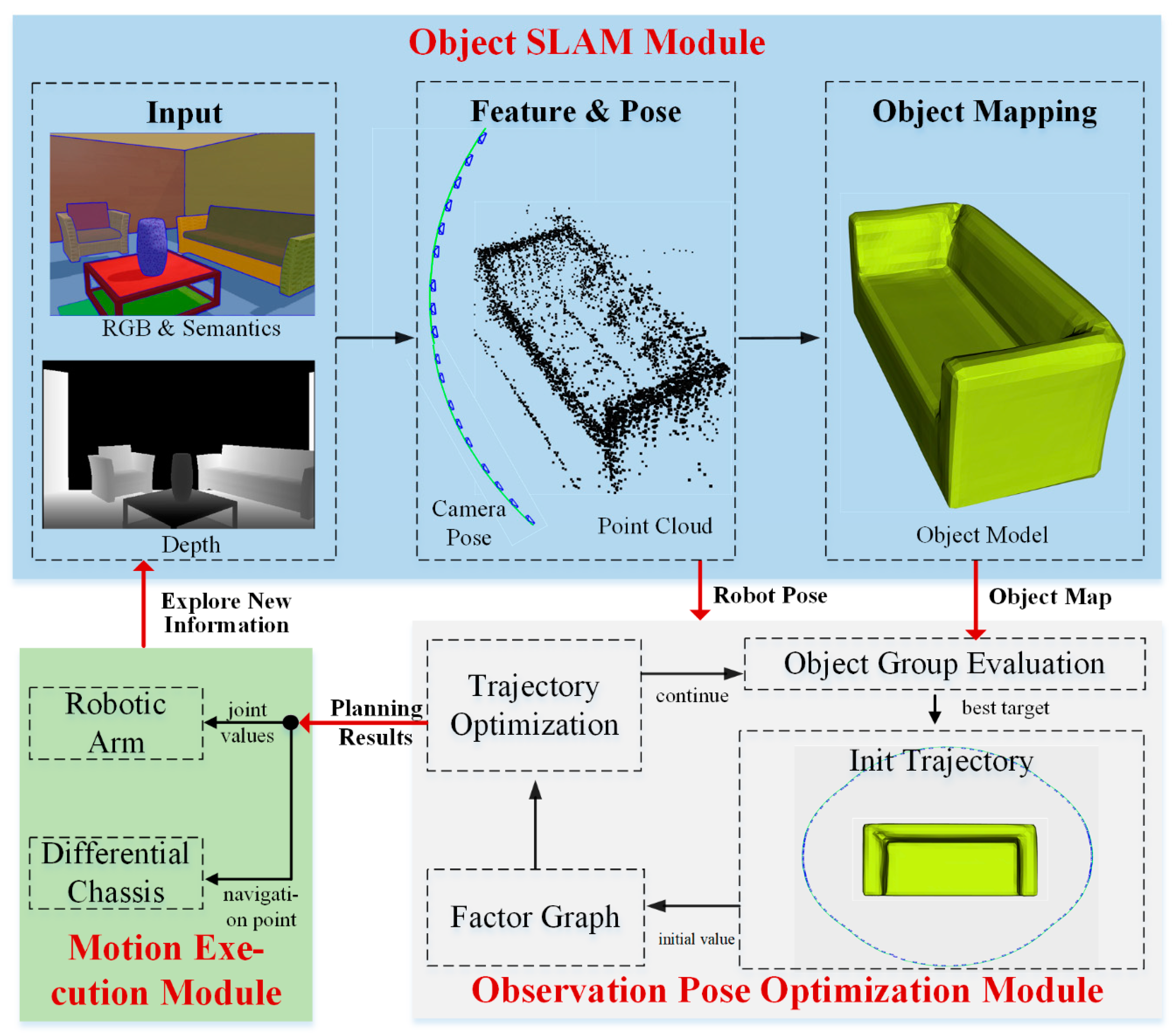

3. Factor Graph for Object Observation Pose Optimization

3.1. Scenario Analysis for Active Object SLAM

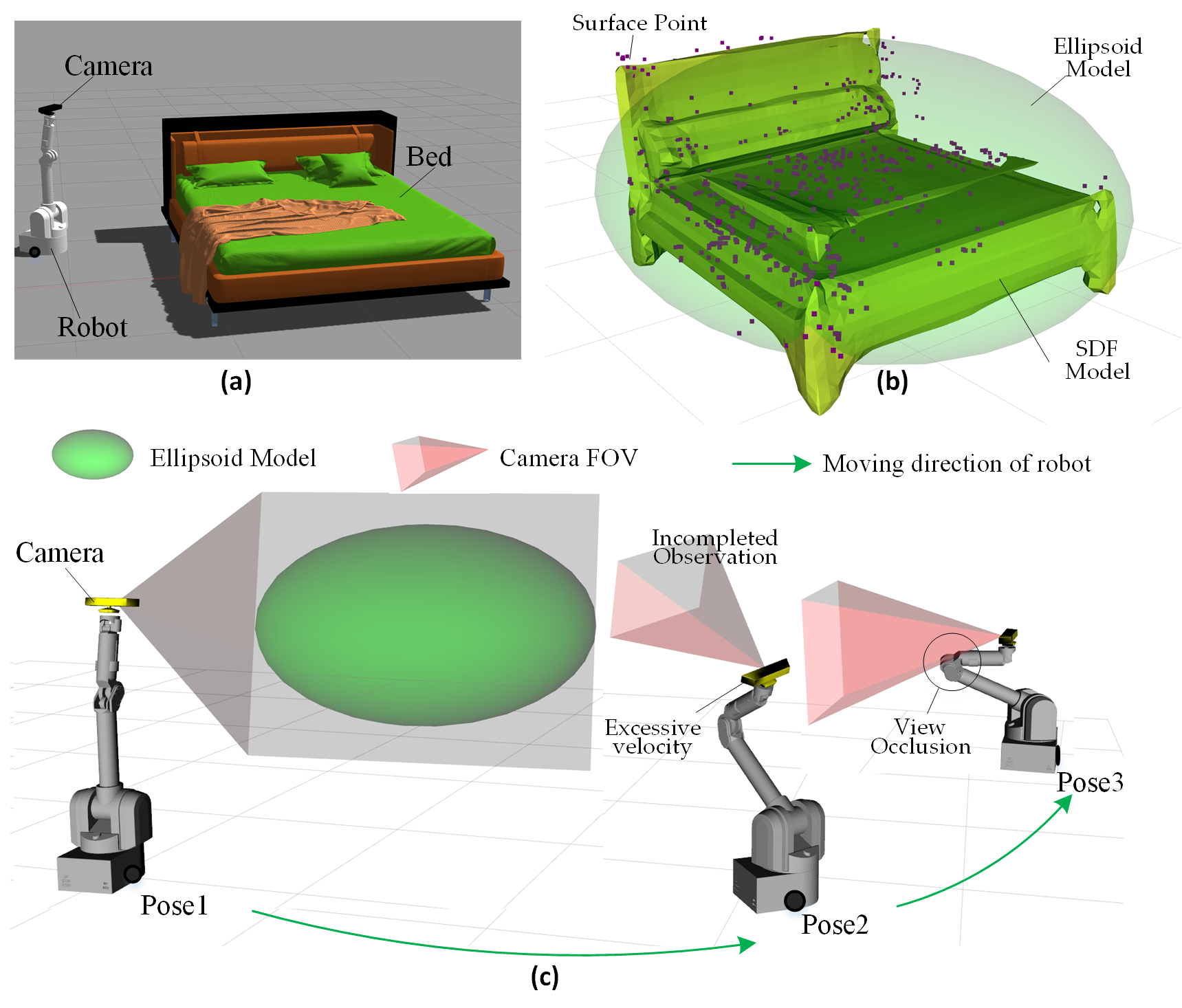

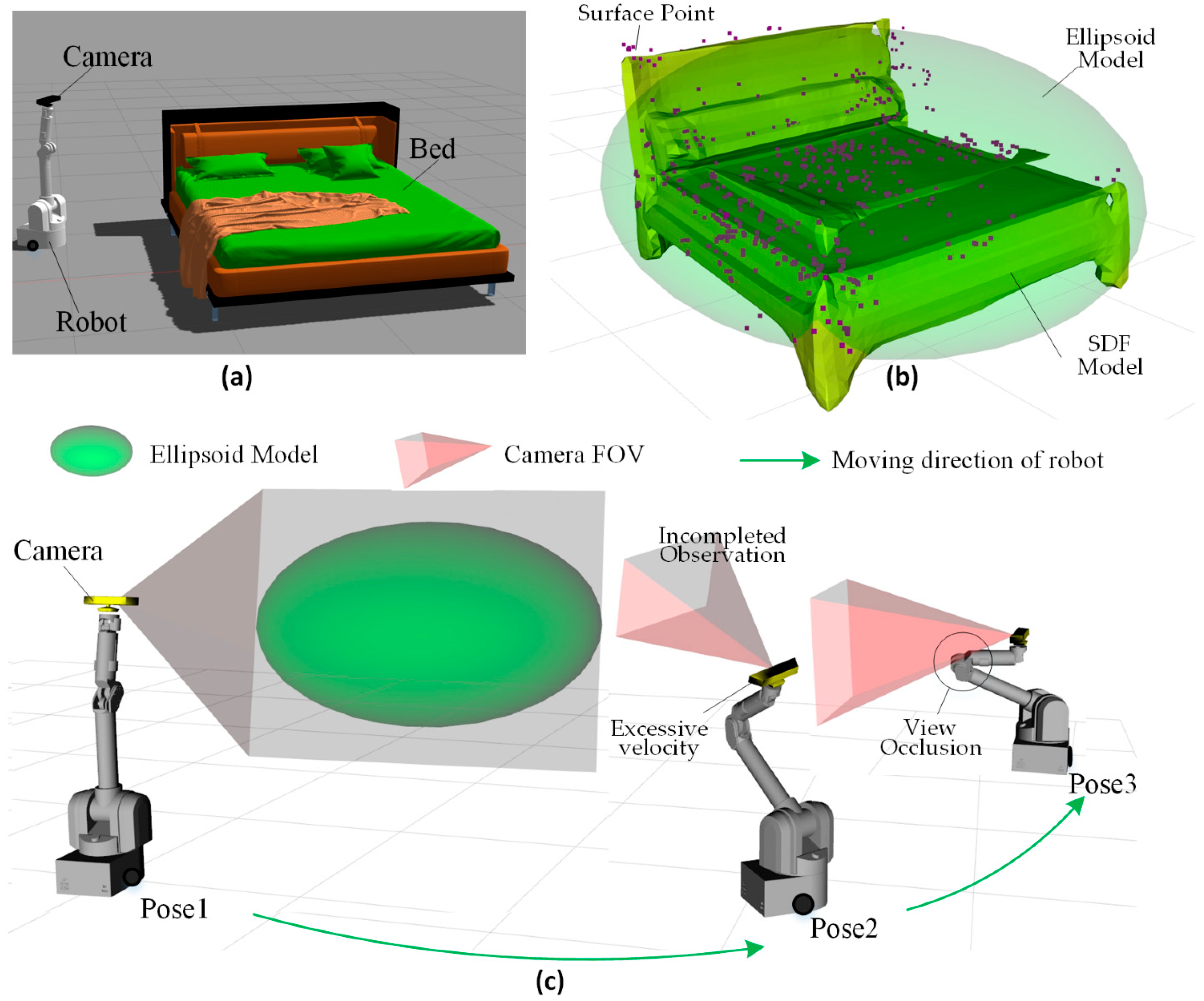

- Incomplete Observation: The bed is a large object, so it easily extends beyond the camera’s FOV, leading to incomplete observations. This increases frame-to-frame tracking errors and reduces object association accuracy. Incomplete observation may also result in incorrect object modeling or split the model into multiple erroneous parts, so it is a critical issue that must be avoided in object modeling. In Figure 1c, the camera at Pose1 has a complete view of the bed. At Pose2, however, the camera is tilted upward and captures only part of the bed, leading to incomplete observation.

- Robot Self-Occlusion of the Camera: In real-world scenarios, robots often have complex shapes and structures, which can cause parts of the robot to occlude its own camera. In Figure 1, the robot includes a 7-DoF robotic arm, and during movement it is difficult to prevent parts of the robot body from entering the camera’s FOV (as shown at Pose3 in Figure 1c). This reduces the effective observation range and increases the risk of incorrect feature extraction, incorrect object detection, and SLAM failure.

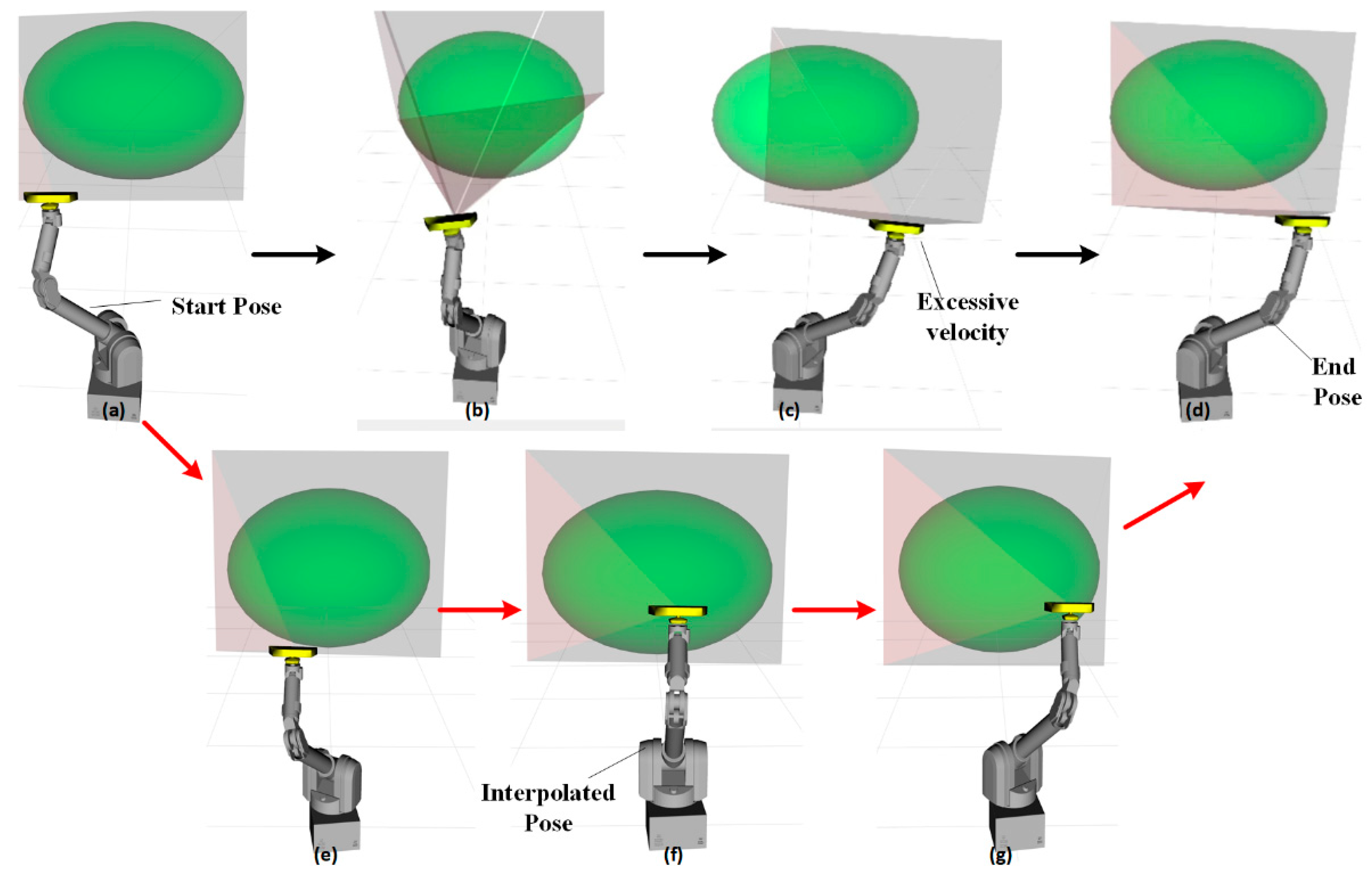

- Poor Camera Motion Smoothness: In Figure 1c, the three observation poses differ greatly in the robot’s chassis position and arm joint angles. This causes abrupt changes in the camera’s trajectory and excessively high camera speeds. Unstable camera motion may cause SLAM feature extraction and inter-frame tracking to fail. Therefore, keeping both the camera position and the camera velocity smooth is crucial for SLAM.

- Disconnection Between View Planning and Motion Planning: In traditional Active SLAM, motion planning merely executes the results of view planning and neglects the impact of the motion process on observation quality. As a result, issues such as incomplete object observation, camera self-occlusion, and motion oscillations may occur unpredictably during transitions between observation poses.

3.2. Observation Pose Optimization Based on Probabilistic Inference

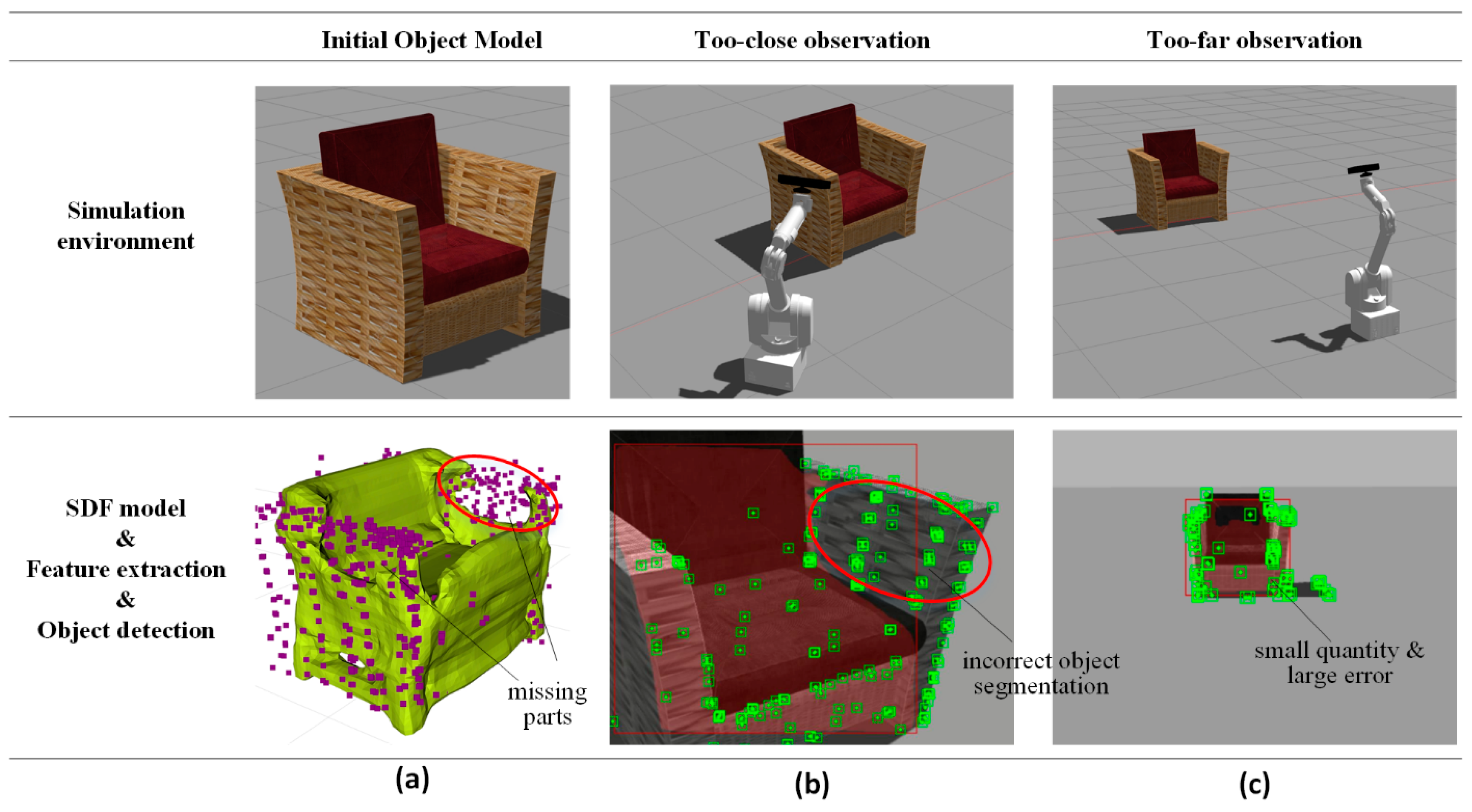

4. Object Completeness Observation Factor

4.1. Construction Idea

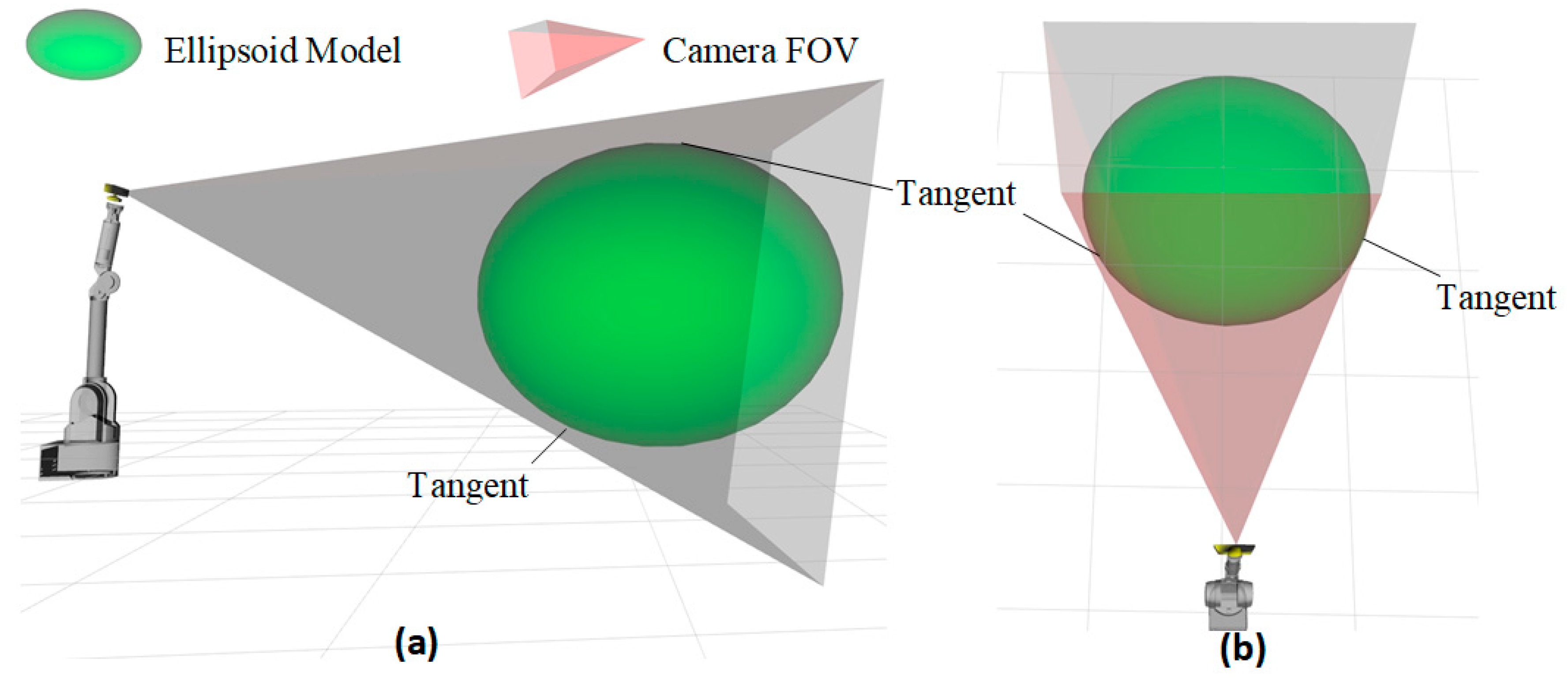

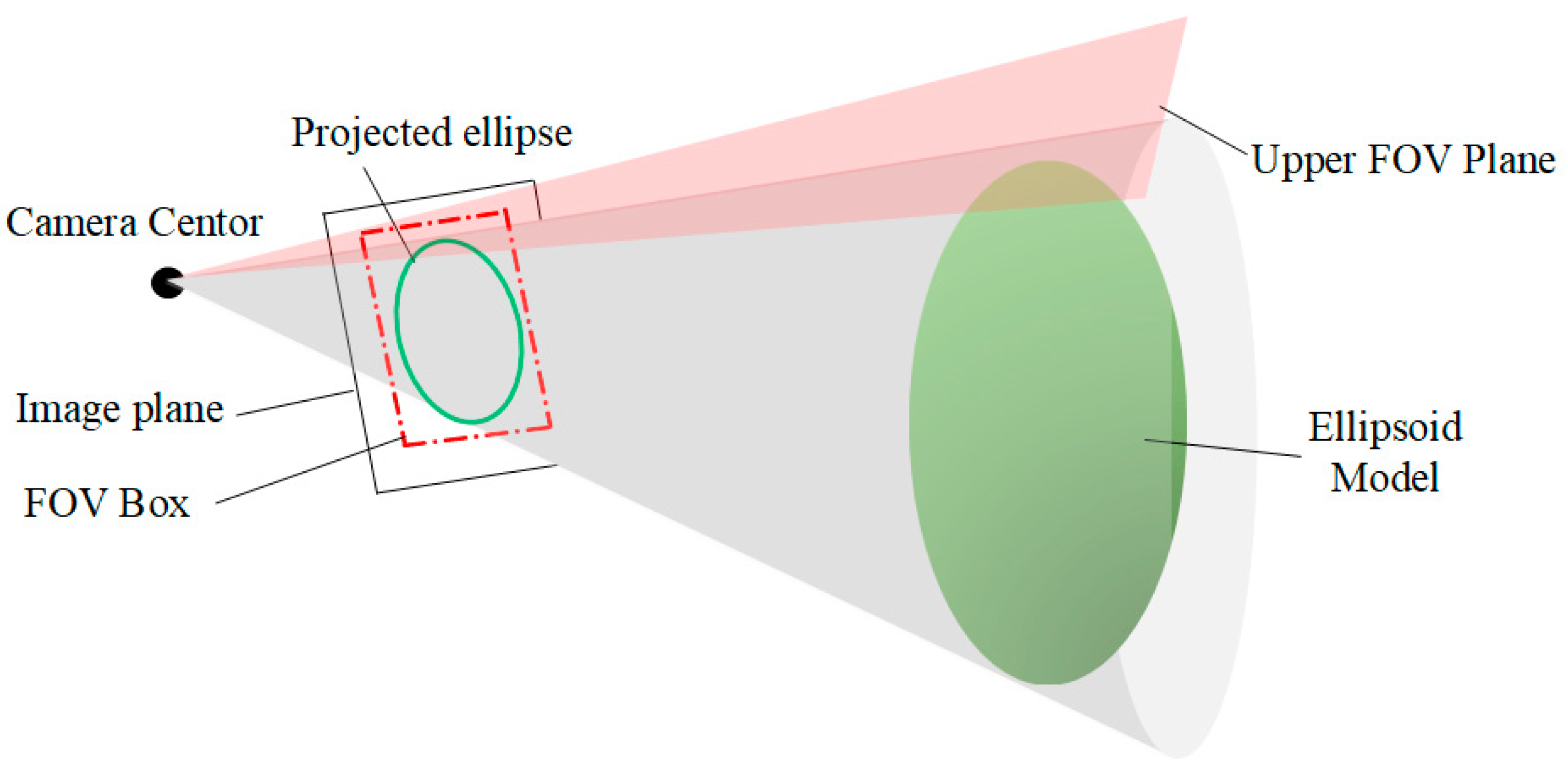

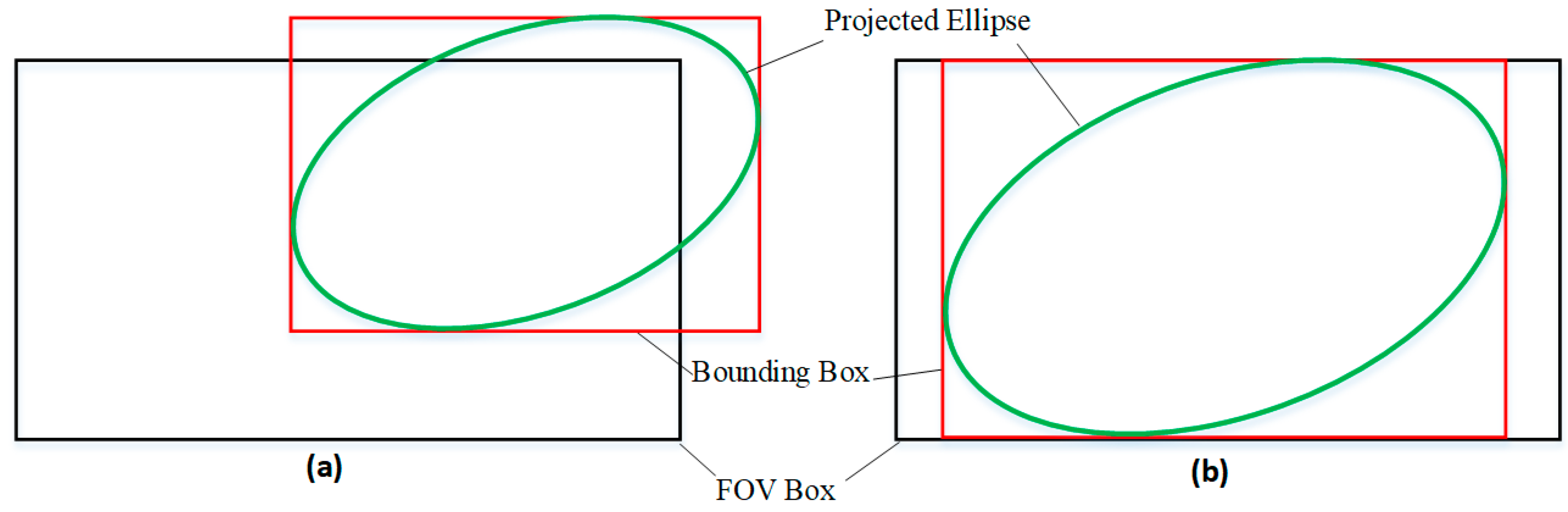

- The object must be within the camera’s FOV.

- The object must not be too far from the camera.
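The two conditions above can be expressed as a residual that vanishes when the object is fully inside the FOV and close enough. The following Python sketch is an illustration under our own assumptions, not the paper's factor definition: the ellipsoid (center c, rotation R, semi-axes s) is assumed to be already expressed in the camera frame with z pointing forward, the FOV is modeled by the four side planes of a pinhole frustum with assumed half-angles, and "not too far" is modeled by a hypothetical maximum center distance `d_max`. For a plane n·x + d = 0 with unit normal n pointing into the FOV, the ellipsoid lies on the inner side iff n·c + d ≥ ‖diag(s) Rᵀ n‖; equality means the ellipsoid is tangent to the plane, which is equivalent to the standard condition πᵀQ*π = 0 for the ellipsoid's dual quadric Q*.

```python
import math

def fov_planes(h_half_fov, v_half_fov):
    """Four side planes of a pinhole frustum in the camera frame (z forward).
    Each plane is (n, d) with unit normal n pointing into the FOV; all planes
    pass through the optical center, so d = 0."""
    th, tv = math.tan(h_half_fov), math.tan(v_half_fov)
    raw = [(1.0, 0.0, th), (-1.0, 0.0, th), (0.0, 1.0, tv), (0.0, -1.0, tv)]
    planes = []
    for nx, ny, nz in raw:
        norm = math.sqrt(nx * nx + ny * ny + nz * nz)
        planes.append(((nx / norm, ny / norm, nz / norm), 0.0))
    return planes

def support_half_width(R, semi_axes, n):
    """Half-width of the ellipsoid {c + R diag(s) u : |u| <= 1} along unit
    direction n, i.e. ||diag(s) R^T n||."""
    # (R^T n)_k = sum_i R[i][k] * n[i]
    rt_n = [sum(R[i][k] * n[i] for i in range(3)) for k in range(3)]
    return math.sqrt(sum((s * v) ** 2 for s, v in zip(semi_axes, rt_n)))

def completeness_residual(center, R, semi_axes, h_half_fov, v_half_fov, d_max):
    """Hinge residual: 0 when the whole ellipsoid is inside the FOV and its
    center is within d_max (hypothetical parameter) of the camera."""
    residual = 0.0
    for n, d in fov_planes(h_half_fov, v_half_fov):
        signed = sum(ni * ci for ni, ci in zip(n, center)) + d
        clearance = signed - support_half_width(R, semi_axes, n)
        residual += max(0.0, -clearance)  # part of the object outside this FOV plane
    distance = math.sqrt(sum(ci * ci for ci in center))
    residual += max(0.0, distance - d_max)  # penalize observing from too far away
    return residual
```

With semi-axes 0.5, half-angles of 45° and 30°, and `d_max = 5`, an ellipsoid centered 3 units straight ahead gives a residual of 0, while moving it to (3, 0, 3) pushes half of it past the right FOV plane and gives a residual of about 0.5. The hinge form keeps the factor inactive while the object is already well observed.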

4.2. Relationship Between the Ellipsoid and the Plane

4.3. Factor Construction

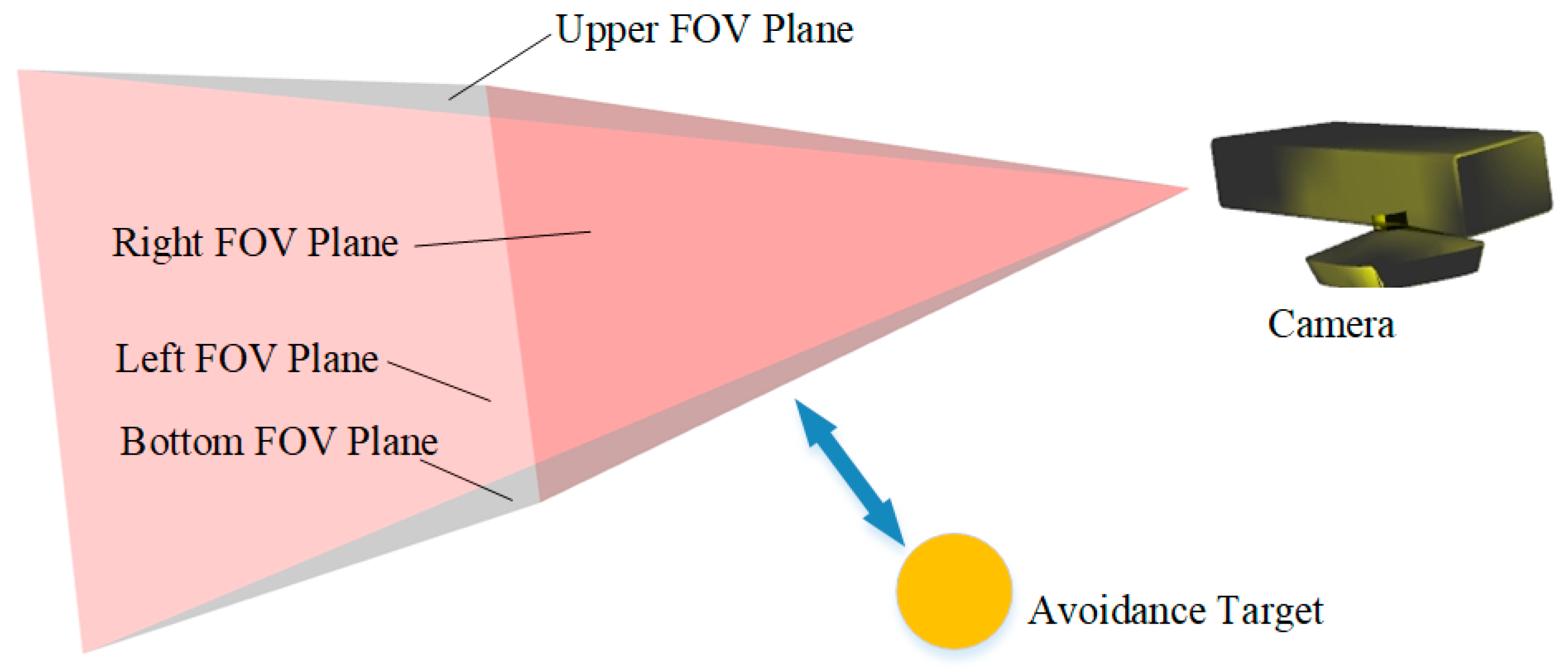

5. Self-Observation Prevention Factor

5.1. Construction Concept

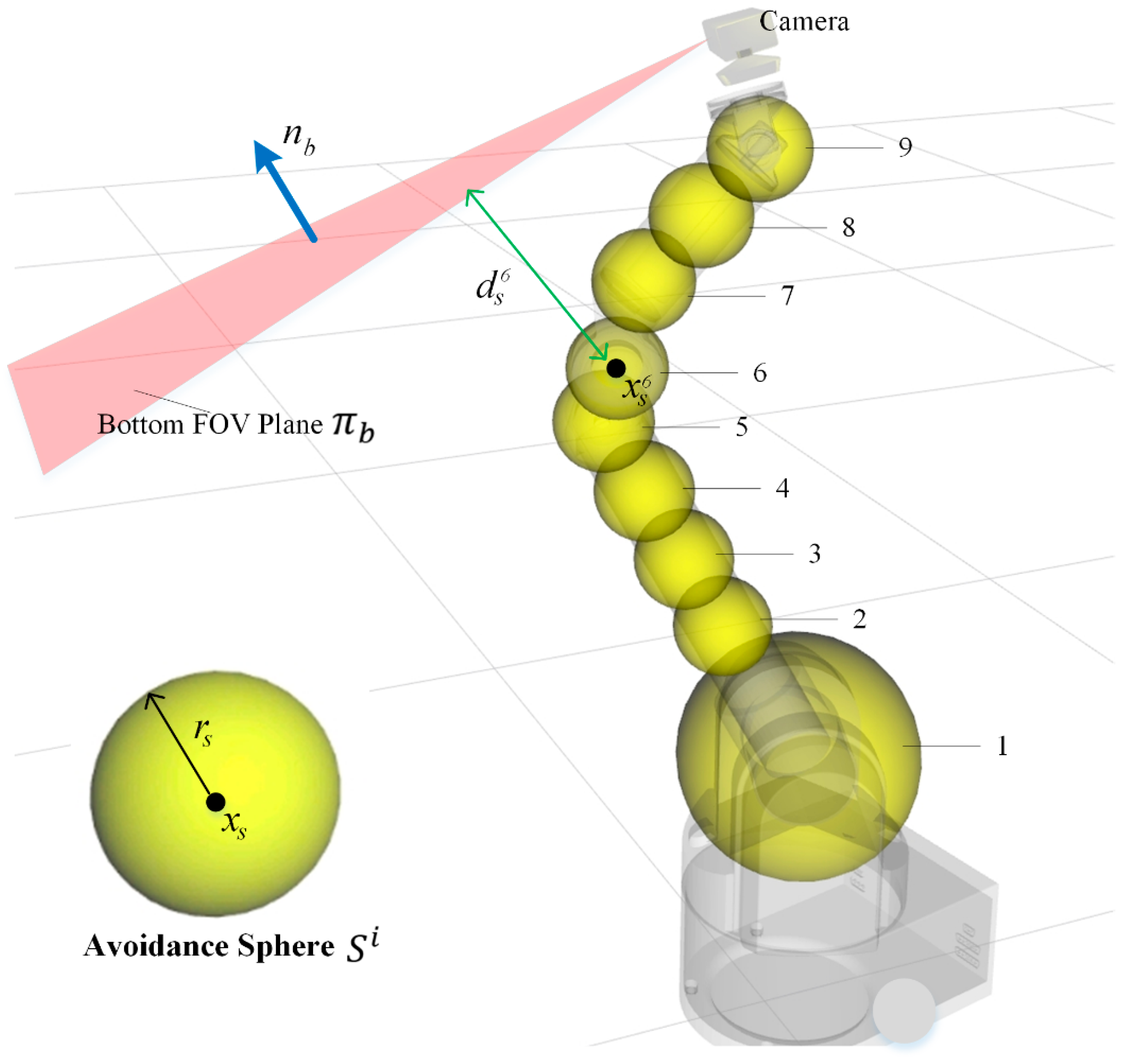

5.2. Avoidance Sphere Coordinate Calculation Based on Robot Kinematics

- Simplified Computation: Decomposing the robot body into a series of spheres significantly reduces computational complexity.

- Efficient Mathematical Representation: Spheres and planes have simple mathematical forms, which makes distance and gradient calculations easy.
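Both advantages can be seen in a short sketch. The Python code below is a hypothetical illustration, not the paper's implementation: it assumes a serial chain of revolute joints described by standard Denavit-Hartenberg parameters, attaches each avoidance sphere to a link frame with a fixed offset, and assumes the FOV planes (unit normals pointing into the FOV) are expressed in the same frame as the sphere centers, for example after transforming them with the current camera pose. Because the signed distance n·c + d − r of a sphere to a plane is affine in the sphere center c, its gradient with respect to c is simply n. A sphere is treated as not visible when it lies entirely outside at least one FOV plane, which is the usual conservative frustum-culling test.

```python
import math

def dh_transform(theta, d, a, alpha):
    """Standard Denavit-Hartenberg transform from link i-1 to link i (4x4 nested lists)."""
    ct, st = math.cos(theta), math.sin(theta)
    ca, sa = math.cos(alpha), math.sin(alpha)
    return [[ct, -st * ca, st * sa, a * ct],
            [st, ct * ca, -ct * sa, a * st],
            [0.0, sa, ca, d],
            [0.0, 0.0, 0.0, 1.0]]

def matmul4(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]

def sphere_centers(dh_params, joint_angles, spheres):
    """Return (center, radius) for each avoidance sphere in the base frame.
    dh_params: [(d, a, alpha), ...] for revolute joints.
    spheres: [(link_idx, offset_xyz, radius), ...], offset given in that link's frame."""
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    frames = []
    for (d, a, alpha), q in zip(dh_params, joint_angles):
        T = matmul4(T, dh_transform(q, d, a, alpha))  # chain link transforms
        frames.append(T)
    out = []
    for link, p, r in spheres:
        F = frames[link]
        c = [F[i][0] * p[0] + F[i][1] * p[1] + F[i][2] * p[2] + F[i][3] for i in range(3)]
        out.append((c, r))
    return out

def self_observation_cost(center, radius, planes):
    """Hinge cost that is 0 when the sphere lies entirely outside at least one
    FOV plane. Conservative: spheres near frustum corners may be reported as
    visible even when they are not."""
    # Margin by which the sphere is outside each plane; > 0 means fully outside it.
    best = max(-(sum(ni * ci for ni, ci in zip(n, center)) + d) - radius for n, d in planes)
    return max(0.0, -best)
```

In a factor, such a cost would be summed over all avoidance spheres, with the sphere centers recomputed from the current joint angles at every optimization step.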

5.3. Collision Detection

5.4. Factor Construction

6. Camera Motion Smoothness Factor

- Maintaining Temporal Consistency of the Trajectory:

- Controlling Speed Variations:
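The two goals above, temporal consistency of positions and limited speed changes, are often encoded together with a constant-velocity Gaussian-process prior between consecutive states, as in the GPMP2 formulation of Mukadam et al. The Python sketch below assumes that form; the paper's actual factor may differ. Each state holds a camera position p and velocity v, consecutive states are dt apart, and `qc` is an assumed isotropic power-spectral-density parameter. The error has a position part e_p = p₂ − p₁ − dt·v₁ and a velocity part e_v = v₂ − v₁, weighted by the inverse of Q = qc [[dt³/3, dt²/2], [dt²/2, dt]].

```python
def cv_prior_cost(p1, v1, p2, v2, dt, qc=1.0):
    """Squared Mahalanobis error of a constant-velocity GP prior between two states.
    p*, v*: position and velocity vectors of equal length; dt > 0; qc is assumed isotropic.
    Uses Q^{-1} = (1/qc) [[12/dt^3, -6/dt^2], [-6/dt^2, 4/dt]] for each dimension."""
    cost = 0.0
    for a1, b1, a2, b2 in zip(p1, v1, p2, v2):
        e_p = a2 - a1 - dt * b1  # deviation from constant-velocity extrapolation
        e_v = b2 - b1            # change in velocity between the two states
        cost += (12.0 / dt**3 * e_p**2 - 12.0 / dt**2 * e_p * e_v + 4.0 / dt * e_v**2) / qc
    return cost
```

A trajectory moving at constant velocity costs 0, while a position one unit beyond the constant-velocity prediction (dt = 1, qc = 1) costs 12, so abrupt changes in both position and velocity are penalized.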

7. Experiment

7.1. Active SLAM System Construction

7.2. Experimental Setup

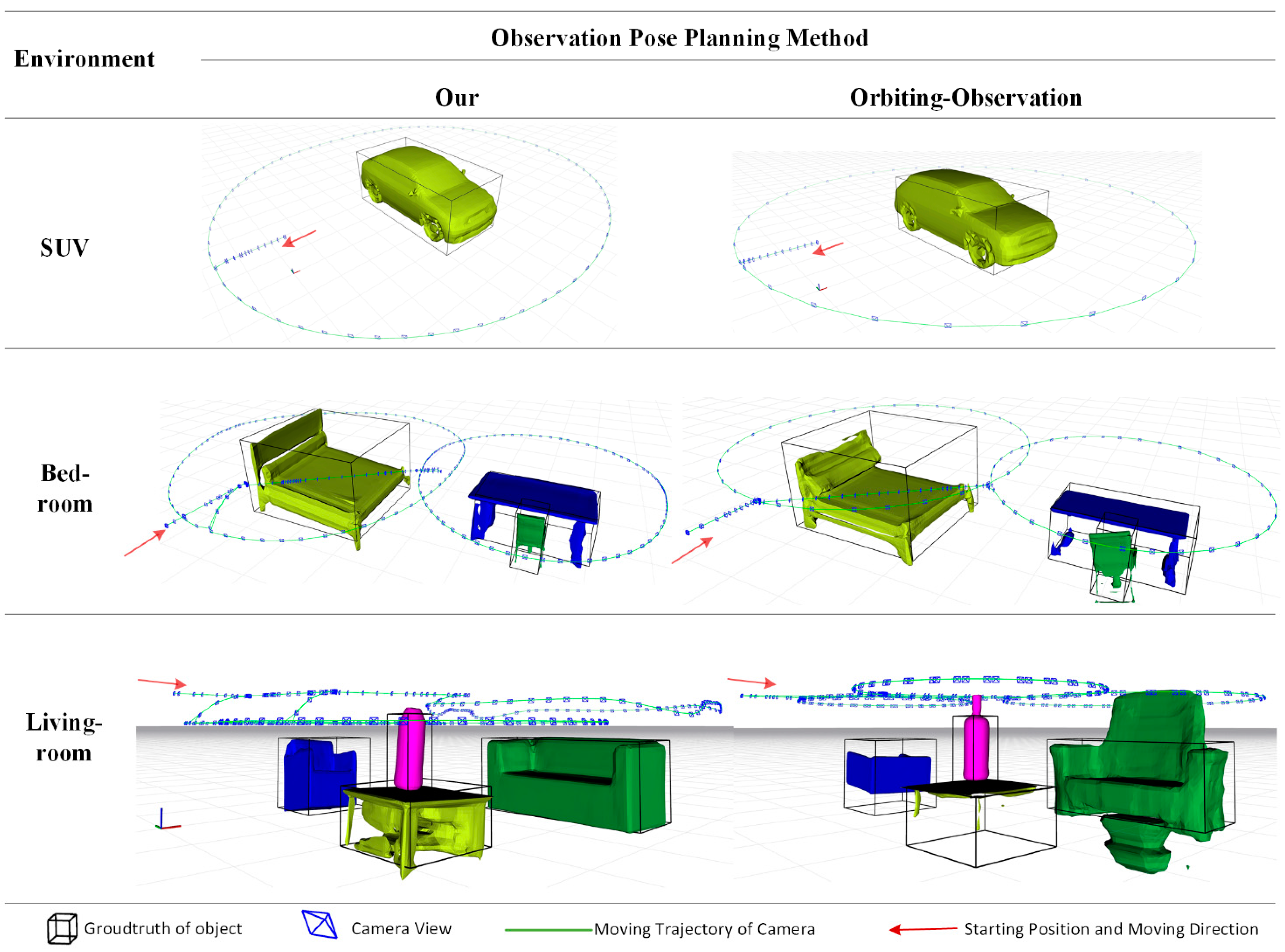

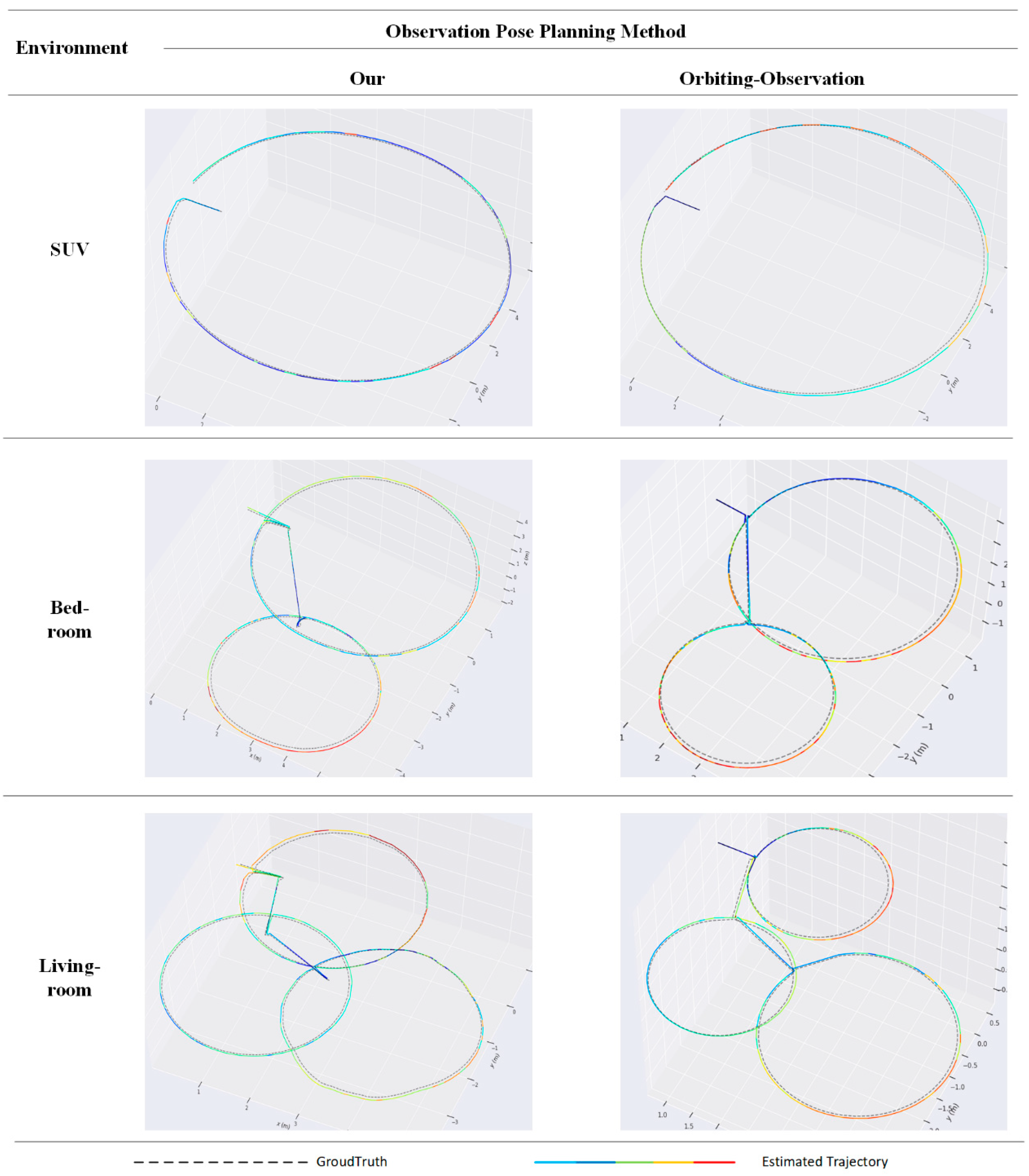

- Our method: Uses the proposed method in the observation pose optimization module.

7.3. Object Modeling Performance

- IoU (Intersection over Union): Measures the overlap ratio between the predicted model and the ground-truth model. After aligning the 3D bounding box of the object model with the ground-truth object’s center and orientation, we compute their 3D IoU, which reflects size accuracy. A higher IoU indicates greater overlap between the predicted model and the real object.

- Trans (Translation Error): Measures the Euclidean distance between the predicted position and the ground-truth position of the object’s center. A smaller value indicates lower error.

- Rot (Rotation Error): Measures the angular deviation between the predicted and ground-truth object orientations. A smaller value indicates more accurate rotation estimation.
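The three metrics can be computed as follows. This Python sketch reflects our reading of the definitions above and makes assumptions the text does not state: boxes are given by their extents along the ground-truth axes (so, after aligning center and orientation, the intersection extent along each axis is the smaller of the two extents), and the rotation error is taken as the geodesic angle between 3×3 rotation matrices, reported in degrees. The paper may use a different rotation measure, such as a yaw-only angle.

```python
import math

def aligned_box_iou(size_pred, size_gt):
    """3D IoU of two boxes after the predicted box is moved to the ground-truth
    center and orientation, so only their extents (dx, dy, dz) differ."""
    inter = math.prod(min(a, b) for a, b in zip(size_pred, size_gt))
    union = math.prod(size_pred) + math.prod(size_gt) - inter
    return inter / union

def translation_error(t_pred, t_gt):
    """Euclidean distance between predicted and ground-truth object centers."""
    return math.dist(t_pred, t_gt)

def rotation_error_deg(R_pred, R_gt):
    """Geodesic angle between two rotation matrices, acos((trace(R_gt^T R_pred) - 1) / 2)."""
    # trace(R_gt^T R_pred) equals the elementwise sum of R_gt[i][j] * R_pred[i][j]
    tr = sum(R_gt[i][j] * R_pred[i][j] for i in range(3) for j in range(3))
    cos_angle = max(-1.0, min(1.0, (tr - 1.0) / 2.0))  # clamp against round-off
    return math.degrees(math.acos(cos_angle))
```

For example, a predicted box of extents (1, 2, 2) against a ground-truth box of extents (2, 2, 2) gives an IoU of 0.5, and a prediction rotated 10° about the vertical axis gives a rotation error of 10°.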

7.4. Travel Distance and Mapping Time

- Length of Chassis Path: Measures the total path length traveled by the robot’s chassis during the mapping process. A smaller value indicates better path optimization.

- Motion Time: Consists of planning time and execution time. Planning time is the time required for observation pose planning, while execution time is the time the motion execution module takes to carry out the output of the observation pose optimization module. For our method, motion time is reported as planning time + execution time. Since the orbiting-observation method only requires motion computation during the observation pose planning phase, its planning time can be considered negligible (approximately zero). A smaller motion time indicates higher system efficiency.
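Both quantities are simple sums. The sketch below assumes, hypothetically, that the chassis trajectory is logged as a sequence of planar (x, y) positions, so the path length is the sum of the straight-line distances between consecutive samples; a coarse sampling rate would underestimate curved segments.

```python
import math

def chassis_path_length(waypoints):
    """Sum of straight-line segment lengths between consecutive chassis positions (x, y)."""
    return sum(math.dist(p, q) for p, q in zip(waypoints, waypoints[1:]))

def motion_time(planning_time, execution_time):
    """Motion time as defined above: planning time plus execution time.
    For the orbiting baseline the planning term is taken as approximately 0."""
    return planning_time + execution_time
```

As a consistency check on the reported results, the mean of the per-scene chassis path lengths for our method, (40.7893 + 18.4859 + 27.4061) / 3, rounds to 28.8938, the value in the average row.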

7.5. The Accuracy of SLAM

8. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Scene | Metric | Ours | Orbiting |
|---|---|---|---|
| SUV | IoU | 0.795 | 0.758 |
| | Trans | 0.141 | 0.314 |
| | Rot | 7.3 | 17.2 |
| Bedroom | IoU | 0.769 | 0.649 |
| | Trans | 0.189 | 0.327 |
| | Rot | 9.9 | 36.4 |
| Living room | IoU | 0.694 | 0.502 |
| | Trans | 0.152 | 0.362 |
| | Rot | 8.9 | 30.1 |
| Average | IoU | 0.753 | 0.636 |
| | Trans | 0.161 | 0.334 |
| | Rot | 8.7 | 27.9 |

| Scene | Metric | Ours | Orbiting |
|---|---|---|---|
| SUV | Length of Chassis Path | 40.7893 | 43.048 |
| | Motion Time | 5.3 + 109 | 170 |
| Bedroom | Length of Chassis Path | 18.4859 | 20.741 |
| | Motion Time | 9.2 + 330 | 487 |
| Living room | Length of Chassis Path | 27.4061 | 31.4954 |
| | Motion Time | 14.8 + 431 | 672 |
| Average | Length of Chassis Path | 28.8938 | 31.7615 |
| | Motion Time | 9.8 + 290 | 443 |

| Scene | Ours | Orbiting |
|---|---|---|
| SUV | 0.105279 | 0.110965 |
| Bedroom | 0.074610 | 0.086051 |
| Living room | 0.074924 | 0.093292 |
| Average | 0.08494 | 0.09677 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).