Submitted: 10 March 2025

Posted: 11 March 2025


Abstract

Keywords:

1. Introduction

1.1. Theme Background and Significance

1.2. Research Status

1.3. The Purpose of This Study

1.4. Brief Layout of the Paper

2. Prediction Method Based on Statistical Analysis Theory

2.1. Introduction

2.2. Specific Methods Listed

- (1) Historical average model (HA)

- (2) Autoregressive Integrated Moving Average model (ARIMA)

- (3) Kalman filter model
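Of the three models listed, the Kalman filter is the least self-explanatory, so a minimal sketch may help. It assumes a scalar random-walk state (for example, the flow at one detector) observed with noise; the function name `kalman_1d` and the variance values are illustrative assumptions, not taken from the paper.

```python
def kalman_1d(measurements, process_var=1.0, meas_var=4.0, x0=0.0, p0=1e3):
    """One-step-ahead predictions from a scalar random-walk Kalman filter.

    process_var and meas_var are assumed noise variances (illustrative values).
    Returns the list of predictions made before each measurement arrives,
    plus the final state estimate.
    """
    x, p = x0, p0
    predictions = []
    for z in measurements:
        # Predict: a random-walk state keeps its value, its uncertainty grows.
        p = p + process_var
        predictions.append(x)
        # Update: blend prediction and measurement using the Kalman gain.
        k = p / (p + meas_var)
        x = x + k * (z - x)
        p = (1.0 - k) * p
    return predictions, x


if __name__ == "__main__":
    flow = [100.0, 104.0, 98.0, 101.0, 103.0]
    preds, final = kalman_1d(flow)
    print(preds, final)
```

The predict and update steps repeat for every new measurement, which is the iterative structure that makes the filter adaptive. The multivariate case replaces the scalars with matrices.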

2.3. Disadvantages

3. Traditional Machine Learning Models

3.1. Introduction

3.2. Specific Methods

- (1) Feature extraction-based methods train regression models to solve practical traffic prediction problems. Their main advantage is that they are simple and easy to implement. Their main limitation is that they typically focus only on time series data and neglect the complexity of spatiotemporal relationships. Cheng et al. [14] proposed an adaptive K-Nearest Neighbors (KNN) algorithm that treats the spatial features of the road network as adaptive spatial neighbor nodes, time intervals, and spatiotemporal iso-value functions. The algorithm was evaluated on speed data collected from highways in California and urban roads in Beijing.

- (2) Gaussian process methods use multiple kernel functions to capture the internal features of traffic data while accounting for both spatial and temporal correlations. They are practical for traffic forecasting, but have higher computational complexity and greater data storage demands than feature extraction-based methods.

- (3) State space modeling methods assume that the observations are generated by a hidden Markov model, which captures hidden data structures well and naturally models uncertainty within the system, a desirable property for traffic flow prediction. However, these models have difficulty representing nonlinear relationships, so they are not always the best choice for modeling complex dynamic traffic data, particularly in long-term forecasting. Tu et al. [15] introduced a congestion pattern prediction model, SG-CNN, based on a hidden Markov model and compared it with the well-known ARIMA baseline for traffic prediction. Their experimental results showed that SG-CNN performed strongly.
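To make the feature extraction-based idea in item (1) concrete, here is a minimal sketch of plain KNN regression on lagged values of a single series. It is not the adaptive ST-KNN of Cheng et al. [14], which also adapts spatial neighbors and time windows; the function name `knn_forecast` and the parameters `window` and `k` are illustrative assumptions.

```python
import numpy as np


def knn_forecast(series, window=3, k=2):
    """Predict the next value as the mean of what followed the k most
    similar past windows (plain KNN regression on lag features)."""
    series = np.asarray(series, dtype=float)
    # Build (window -> next value) training pairs from the history.
    X = np.array([series[i:i + window] for i in range(len(series) - window)])
    y = series[window:]
    # The query is the most recent window, whose successor is unknown.
    query = series[-window:]
    dist = np.linalg.norm(X - query, axis=1)
    nearest = np.argsort(dist)[:k]
    return float(y[nearest].mean())


if __name__ == "__main__":
    speeds = [60, 55, 40, 35, 58, 54, 41, 36, 61, 56, 39]
    print(knn_forecast(speeds, window=3, k=2))
```

Because every query is compared against the whole history, the cost grows with the size of the dataset, which matches the efficiency concern usually raised for KNN on large traffic archives.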

4. Deep Learning Models

4.1. Introduction

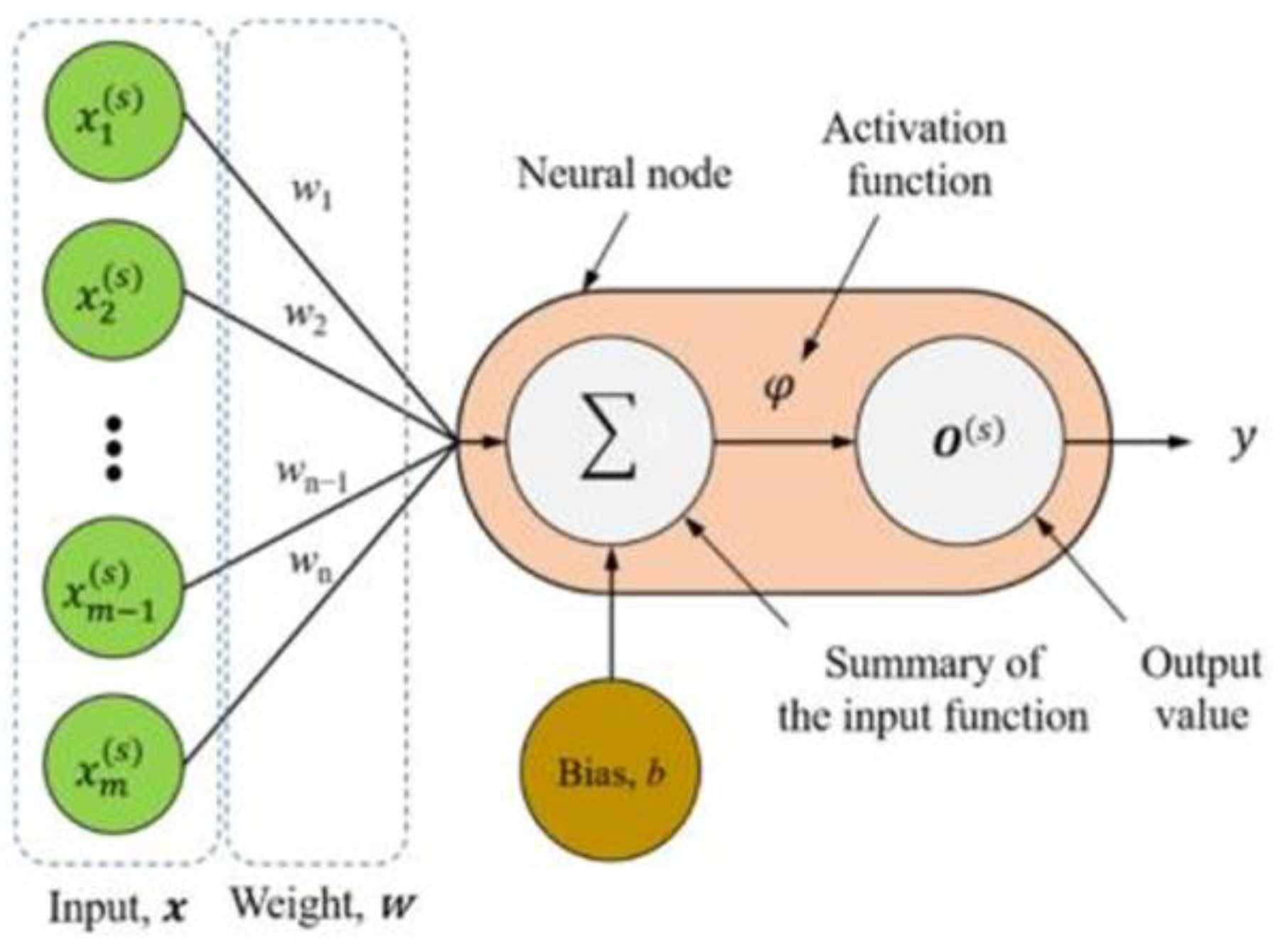

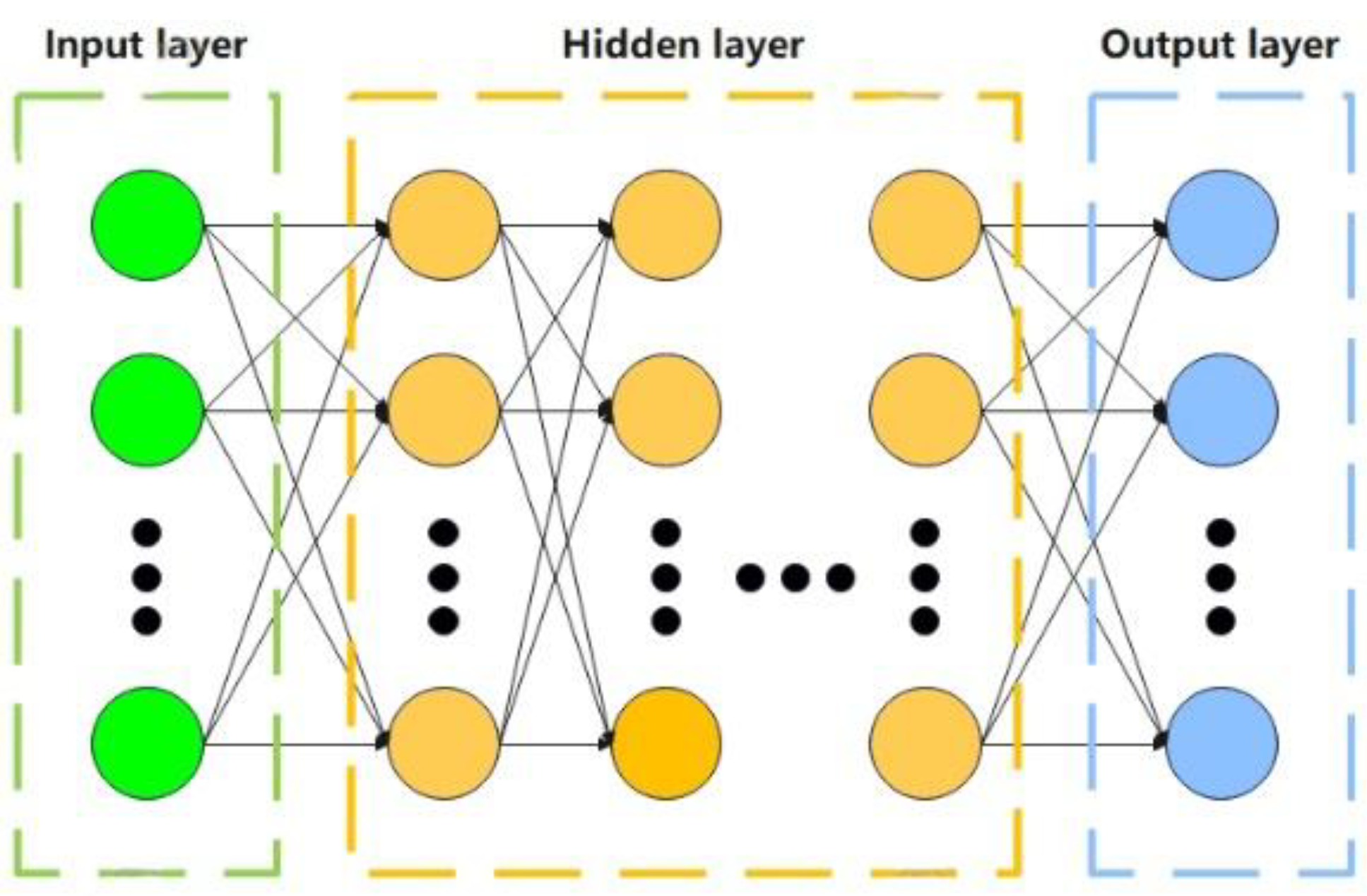

4.2. Multi-Layer Perceptron Network (MLP)

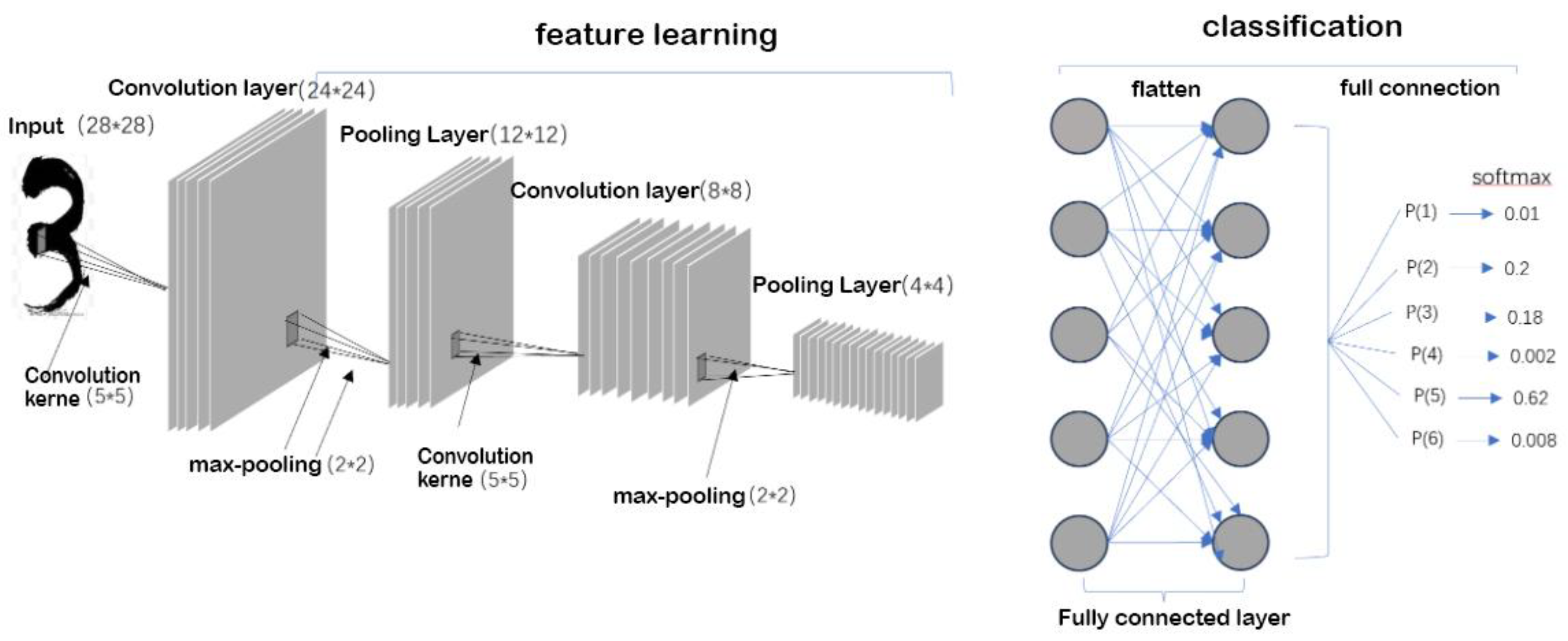

4.3. Convolutional Neural Networks (CNN)

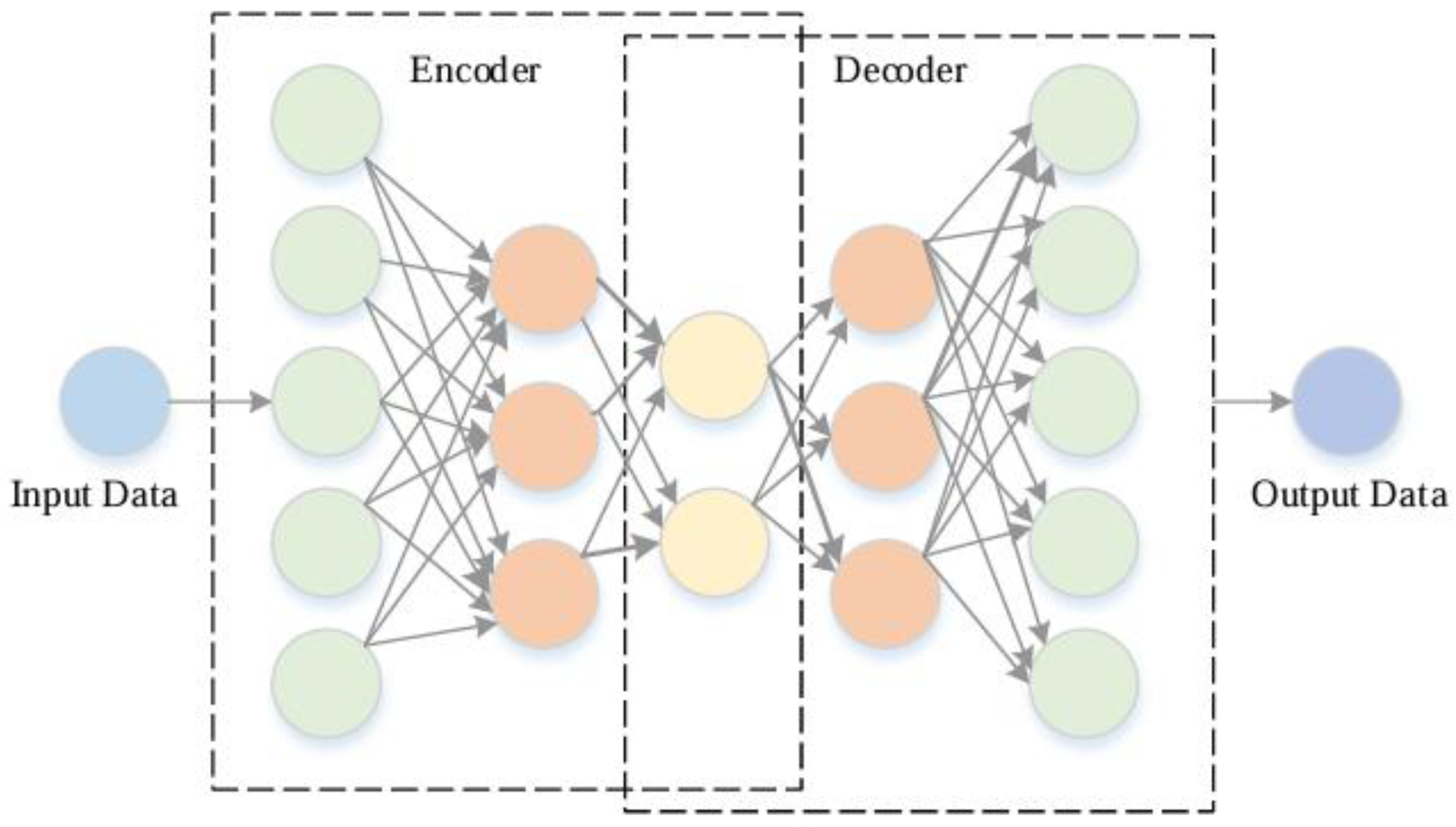

4.4. Autoencoder (AE)

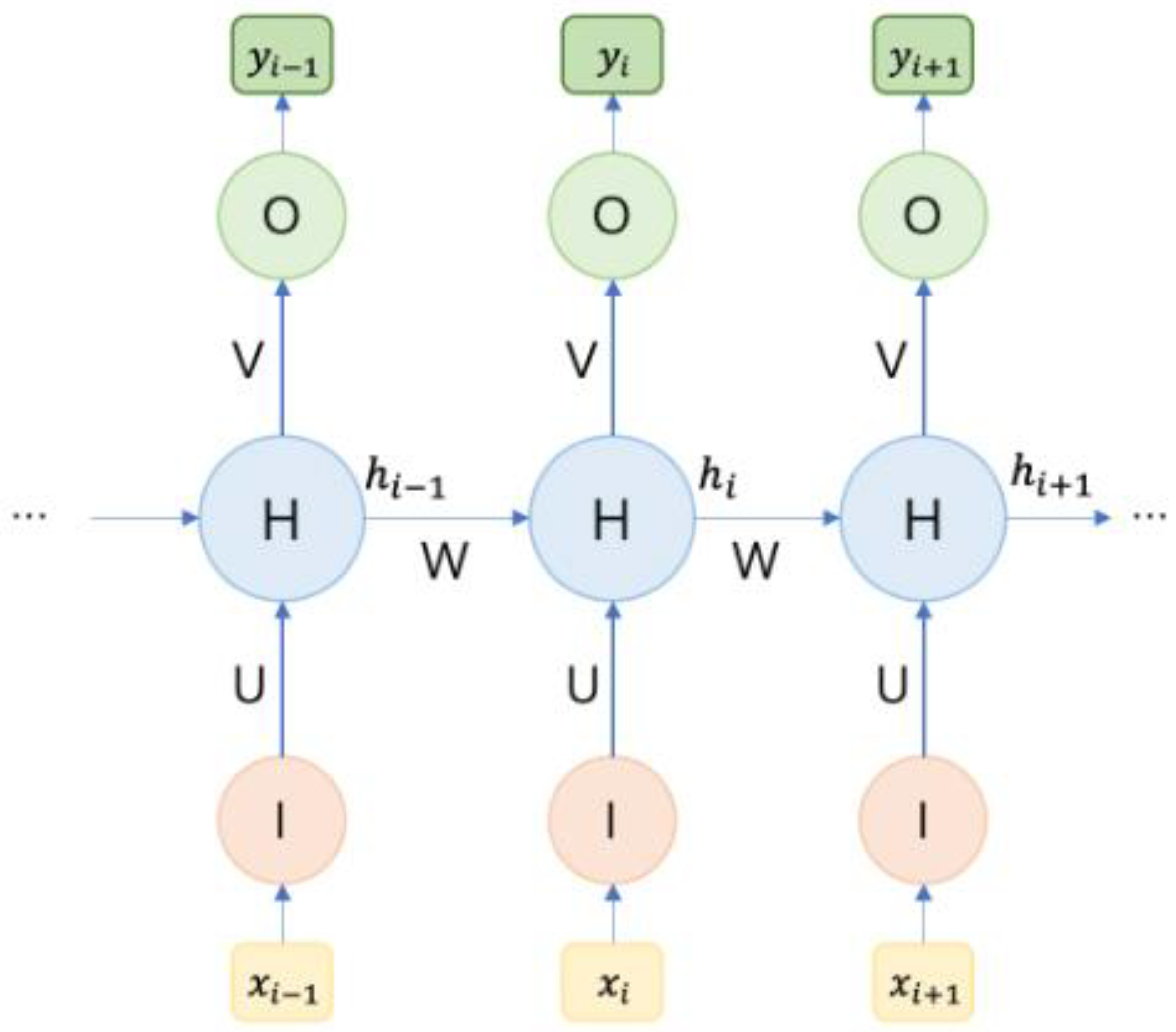

4.5. Recurrent Neural Networks (RNN)

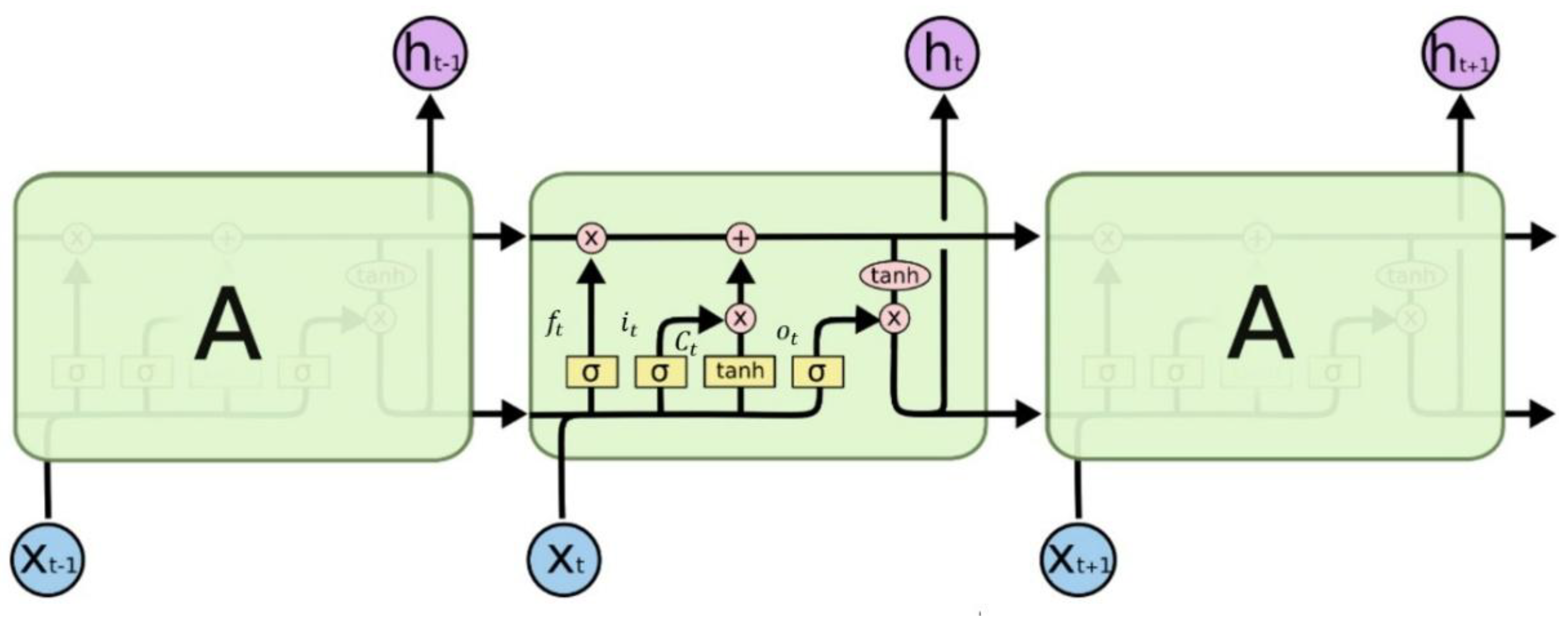

4.6. Long Short-Term Memory Recurrent Neural Networks (LSTM)

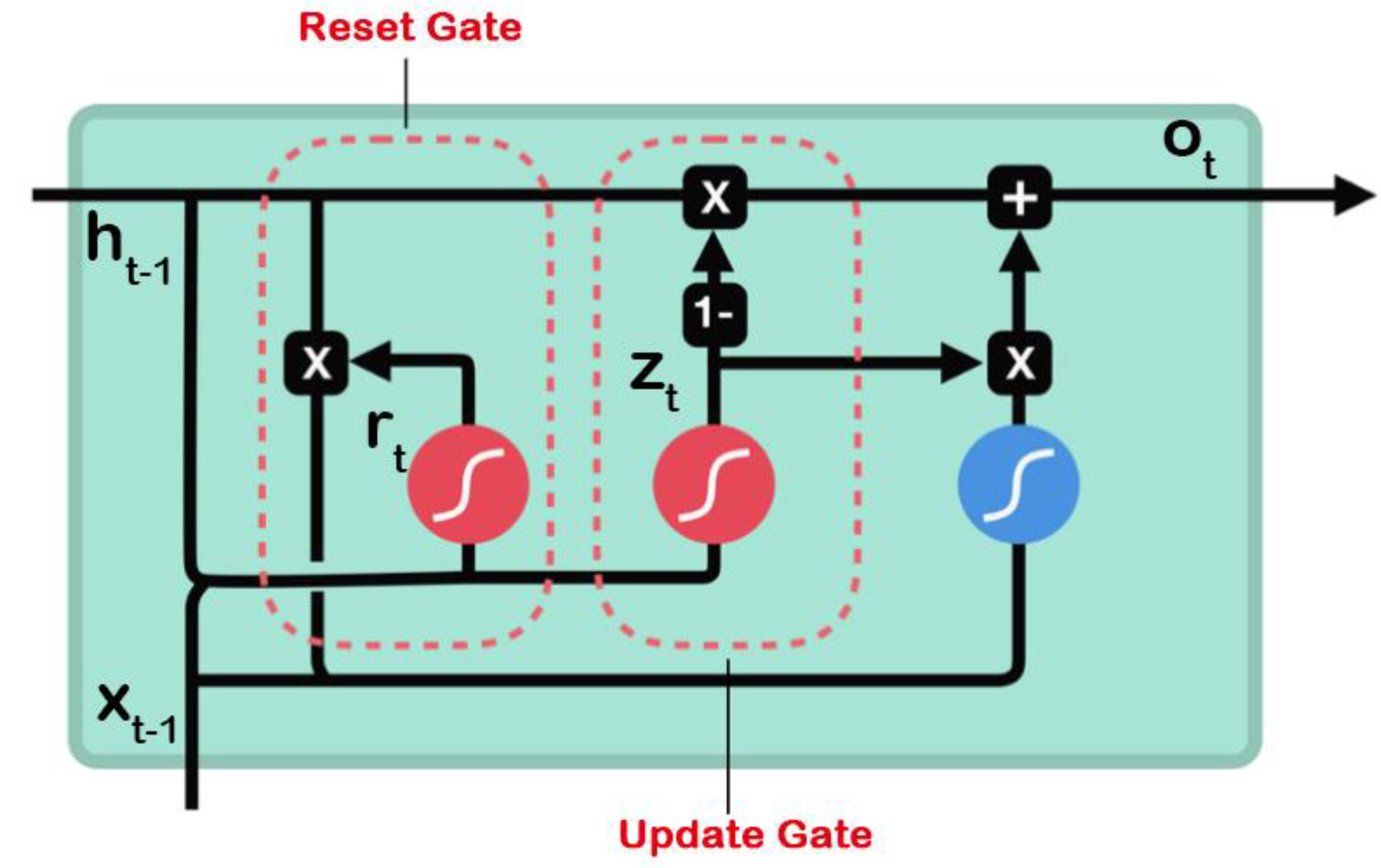

4.7. Gated Recurrent Unit (GRU)

4.8. Graph Convolutional Neural Networks (GCN)

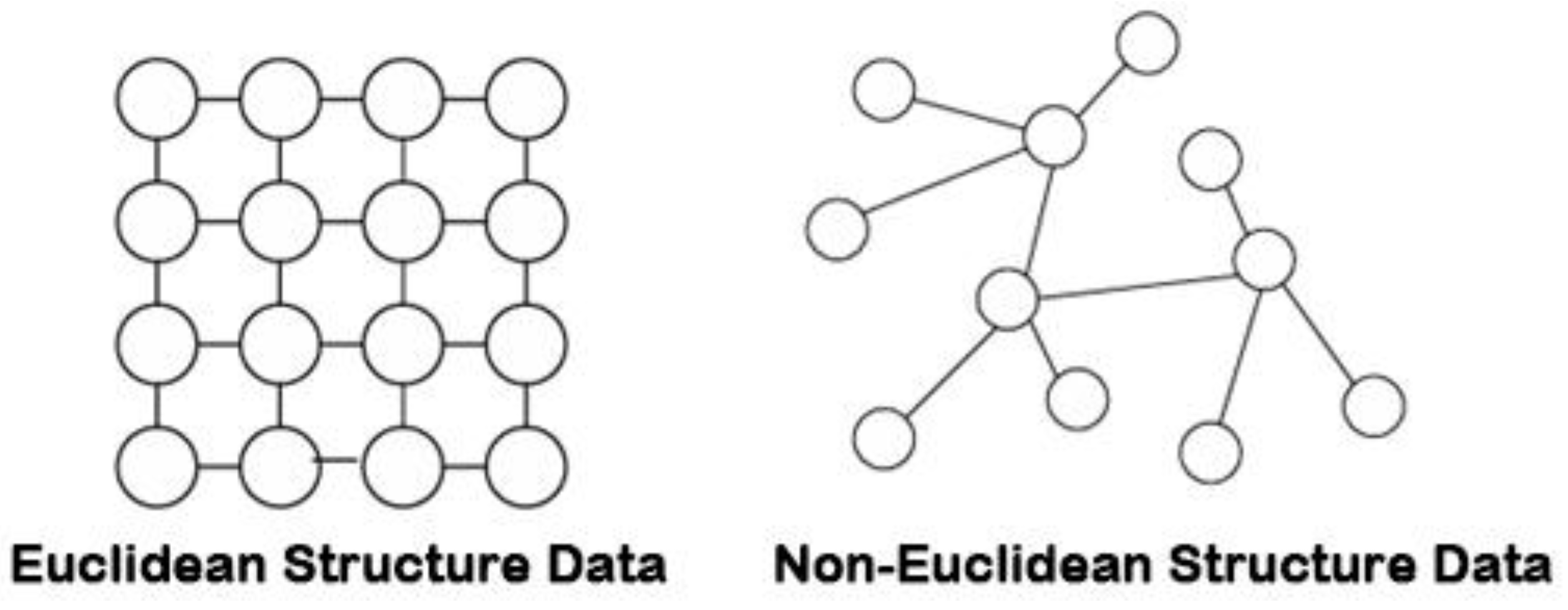

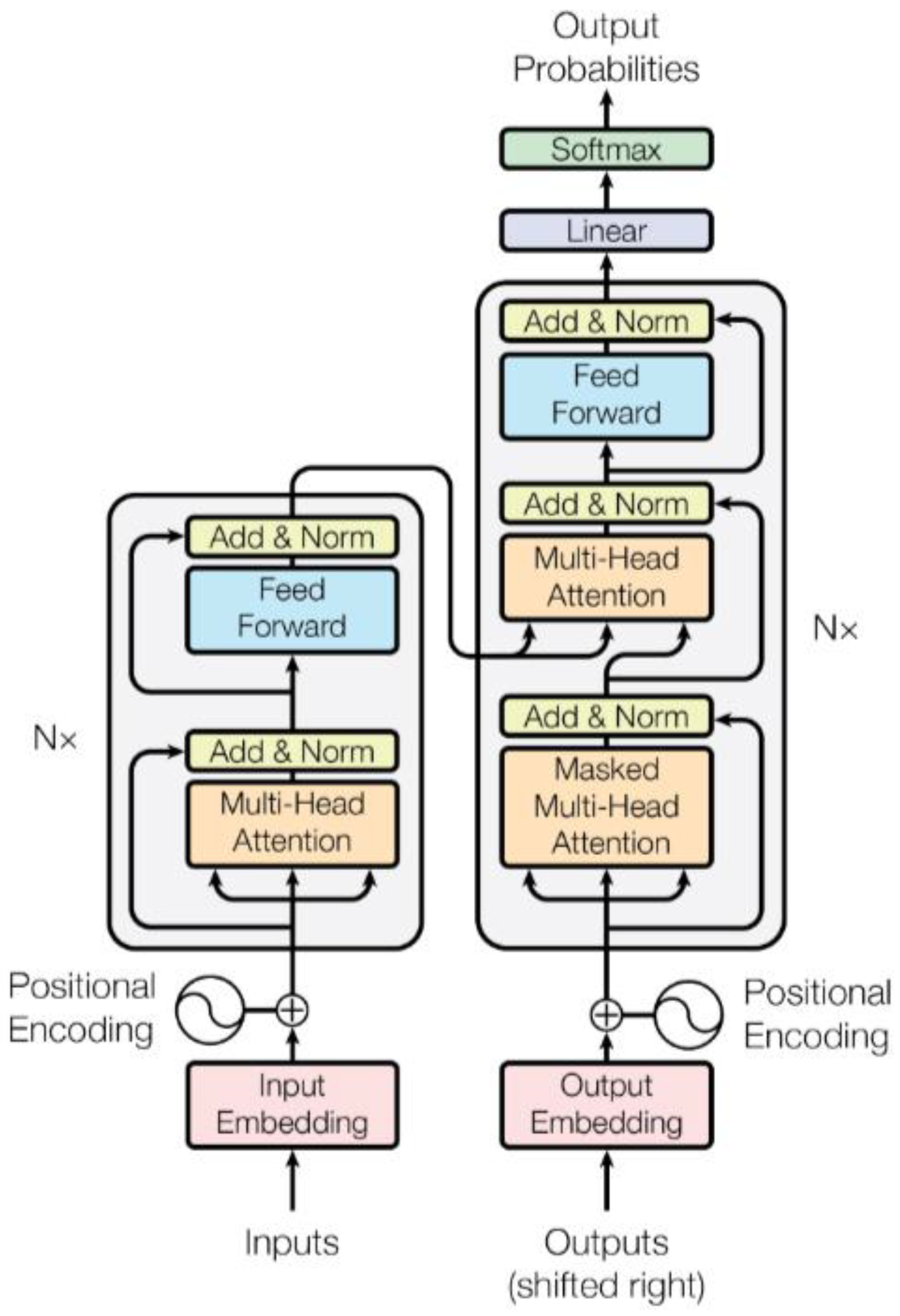

4.9. Attention Mechanism and Transformer

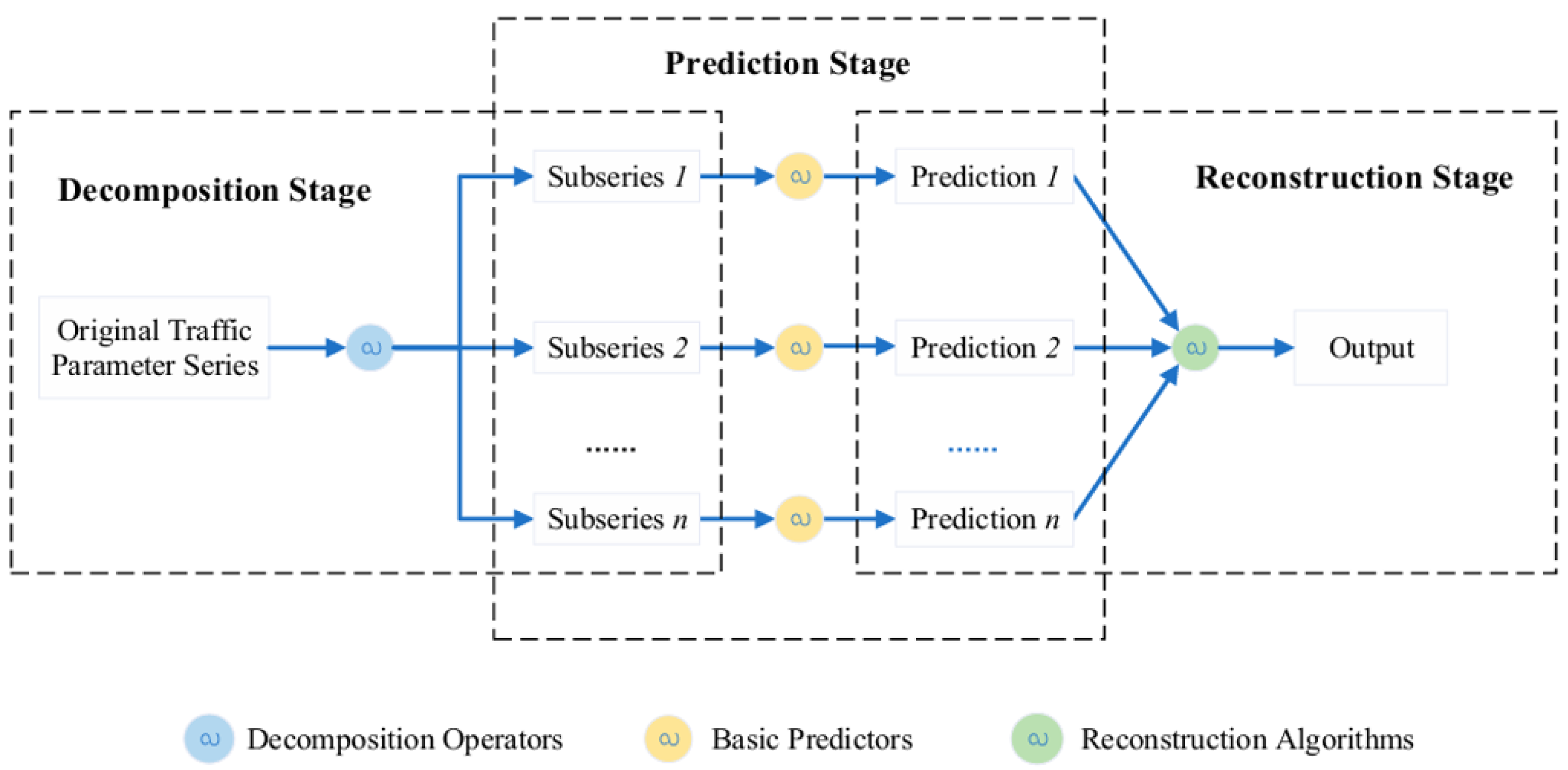

4.10. Hybrid Neural Network

5. Traffic Prediction Related Datasets

5.1. Stationary Traffic Data

- (1) PeMS: The Caltrans Performance Measurement System (PeMS) is a California traffic flow database that collects real-time data from more than 39,000 independent detectors on California highways. The detector data come mainly from highways and metropolitan areas. They include vehicle speed, flow, and congestion, and provide an important basis for traffic management, planning, and research. The minimum sampling interval is 5 minutes, which makes the data well suited to short-term prediction. Missing data can be filled in automatically with the historical average method. Commonly used subsets for traffic flow prediction include PeMS03, PeMS04, PeMS07, PeMS08, and PeMS-BAY.

- (2) METR-LA: This dataset contains traffic information collected by loop detectors on freeways in Los Angeles County. It covers 207 detectors from March 1 to June 30, 2012, with a sampling interval of 5 minutes.

- (3) Loop: The Loop dataset consists of loop detector data from the Seattle area, covering four highways: I-5, I-405, I-90, and SR-520. It contains traffic state data from 323 sensor stations, with a sampling interval of 5 minutes [40].

- (4) UVDS: UVDS is a Korean urban-area dataset containing data on major urban roads collected by 104 VDS sensors, with traffic characteristics such as traffic flow, vehicle type, traffic speed, and occupancy rate [41].
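The historical-average filling mentioned for PeMS can be sketched as follows. This is an illustration under assumptions, not the procedure PeMS itself runs: readings are assumed to arrive at 5-minute intervals (288 slots per day), to span whole days, and to have at least one valid value per time-of-day slot. The function name `fill_with_historical_average` is hypothetical.

```python
import numpy as np


def fill_with_historical_average(readings, slots_per_day=288):
    """Replace NaNs with the mean of the same time-of-day slot on other days.

    Assumes len(readings) is a multiple of slots_per_day and that every
    slot has at least one observed value.
    """
    data = np.asarray(readings, dtype=float)
    days = data.reshape(-1, slots_per_day)   # rows: days, columns: slots
    slot_means = np.nanmean(days, axis=0)    # historical average per slot
    filled = np.where(np.isnan(days), slot_means, days)
    return filled.reshape(-1)


if __name__ == "__main__":
    # Toy example with 4 slots per day instead of 288.
    flow = [10, 20, 30, 40, 12, np.nan, 34, 44, 14, 24, np.nan, 48]
    print(fill_with_historical_average(flow, slots_per_day=4))
```

Averaging by time-of-day slot rather than over the whole series preserves the daily rhythm of traffic, which a single global mean would flatten.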

5.2. Mobile Traffic Data

- (1) TaxiBJ Dataset: TaxiBJ is a Beijing taxi dataset that includes trajectory and meteorological data from over 34,000 taxis in the Beijing area over a period of 3 years. The trajectories are converted into inflow and outflow for each region. The sampling interval is 30 minutes, and the dataset is primarily used for traffic demand prediction.

- (2) Shanghai Taxi Dataset: This dataset, released by the Smart City Research Group at the Hong Kong University of Science and Technology, contains GPS reports from 4,000 taxis in Shanghai on February 20, 2007. The vehicle data are sampled at 1-minute intervals and include vehicle ID, timestamp, longitude and latitude, and speed.

- (3) SZ-taxi Dataset: The SZ-taxi dataset consists of taxi trajectory data from Shenzhen, covering January 1 to January 31, 2015. It focuses on the Luohu District of Shenzhen and includes data from 156 main roads. The traffic speed on each road is calculated every 15 minutes.

- (4) NYC Bike Dataset: The NYC Bike dataset records bicycle trajectories collected from the New York City Bike Share system. It includes data from 13,000 bicycles and 800 docking stations, providing detailed information about bike usage and movement across the city.
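The conversion of trajectories into regional inflow and outflow described for TaxiBJ can be sketched as below. The input format (one ordered list of region IDs per vehicle for a single time interval) and the function name `region_flows` are assumptions for illustration; the actual TaxiBJ preprocessing may differ.

```python
from collections import Counter


def region_flows(trajectories):
    """Count inflow and outflow per region for one time interval.

    Each trajectory is the ordered list of region IDs a vehicle occupied
    during the interval (hypothetical input format).
    """
    inflow, outflow = Counter(), Counter()
    for regions in trajectories:
        # Compare consecutive positions; a change of region is one crossing.
        for prev, curr in zip(regions, regions[1:]):
            if prev != curr:
                outflow[prev] += 1
                inflow[curr] += 1
    return inflow, outflow


if __name__ == "__main__":
    interval = [["A", "A", "B"], ["B", "C"], ["C", "C"]]
    inflow, outflow = region_flows(interval)
    print(dict(inflow), dict(outflow))
```

Repeating this for every interval (30 minutes in TaxiBJ) yields two values per region per time step, which is the grid-shaped input that demand prediction models typically consume.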

5.3. Common Evaluation Indicators

6. Method Comparison and Analysis

7. Conclusions and Future Perspectives

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Qingcai, C.; Wei, Z.; Rong, Z. Smart city construction practices in BFSP. In 2017 29th Chinese Control And Decision Conference (CCDC); IEEE, May 2017; pp. 2714–2717.

- Shen, X.; Zhang, Q. Thought on Smart City Construction Planning Based on System Engineering. In International Conference on Education, Management and Computing Technology (ICEMCT-16); Atlantis Press, April 2016; pp. 1156–1162.

- Lana, I.; Del Ser, J.; Velez, M.; Vlahogianni, E.I. Road Traffic Forecasting: Recent Advances and New Challenges. IEEE Intell. Transp. Syst. Mag. 2018, 10, 93–109.

- Daeho, K. Cooperative Traffic Signal Control with Traffic Flow Prediction in Multi-Intersection. Sensors 2020, 20, 137.

- Ahn, J.; Ko, E.; Kim, E.Y. Highway traffic flow prediction using support vector regression and Bayesian classifier. In 2016 International Conference on Big Data and Smart Computing (BigComp); 2016; pp. 239–244.

- Dai, G.; Ma, C.; Xu, X. Short-Term Traffic Flow Prediction Method for Urban Road Sections Based on Space–Time Analysis and GRU. IEEE Access 2019, 7, 143025–143035.

- Smith, B.L.; Demetsky, M.J. Traffic Flow Forecasting: Comparison of Modeling Approaches. J. Transp. Eng. 1997, 123, 261–266.

- Chen, C.; Hu, J.; Meng, Q.; Zhang, Y. Short-time traffic flow prediction with ARIMA-GARCH model. In 2011 IEEE Intelligent Vehicles Symposium (IV); 2011; pp. 607–612.

- Makridakis, S.; Hibon, M. ARMA Models and the Box-Jenkins Methodology. J. Forecast. 1997, 16, 147–163.

- Li, Q.; Li, R.; Ji, K.; Dai, W. Kalman filter and its application. In 2015 8th International Conference on Intelligent Networks and Intelligent Systems (ICINIS); IEEE, November 2015; pp. 74–77.

- Min, X.; Hu, J.; Chen, Q.; Zhang, T.; Zhang, Y. Short-term traffic flow forecasting of urban network based on dynamic STARIMA model. In 2009 12th International IEEE Conference on Intelligent Transportation Systems; IEEE, October 2009; pp. 1–6.

- Min, W.; Wynter, L. Real-time road traffic prediction with spatio-temporal correlations. Transp. Res. Part C: Emerg. Technol. 2011, 19, 606–616.

- Yin, X.; Wu, G.; Wei, J.; Shen, Y.; Qi, H.; Yin, B. Deep learning on traffic prediction: Methods, analysis, and future directions. IEEE Trans. Intell. Transp. Syst. 2021, 23, 4927–4943.

- Cheng, S.; Lu, F.; Peng, P.; Wu, S. Short-term traffic forecasting: An adaptive ST-KNN model that considers spatial heterogeneity. Comput. Environ. Urban Syst. 2018, 71, 186–198.

- Tu, Y.; Lin, S.; Qiao, J.; Liu, B. Deep traffic congestion prediction model based on road segment grouping. Appl. Intell. 2021, 51, 8519–8541.

- Ke, K.-C.; Huang, M.-S. Quality Prediction for Injection Molding by Using a Multilayer Perceptron Neural Network. Polymers 2020, 12, 1812.

- Slimani, N.; Slimani, I.; Sbiti, N.; Amghar, M. Machine Learning and statistic predictive modeling for road traffic flow. Int. J. Traffic Transp. Manag. 2021, 03, 17–24.

- Aljuaydi, F.; Wiwatanapataphee, B.; Wu, Y.H. Multivariate machine learning-based prediction models of freeway traffic flow under non-recurrent events. Alex. Eng. J. 2022, 65, 151–162.

- Girshick, R. Fast R-CNN. In Proceedings of the IEEE International Conference on Computer Vision; 2015; pp. 1440–1448.

- Bogaerts, T.; Masegosa, A.D.; Angarita-Zapata, J.S.; Onieva, E.; Hellinckx, P. A graph CNN-LSTM neural network for short and long-term traffic forecasting based on trajectory data. Transp. Res. Part C: Emerg. Technol. 2020, 112, 62–77.

- Palm, R.B. Prediction as a candidate for learning deep hierarchical models of data. Technical University of Denmark 2012, 5, 19–22.

- Kashyap, A.A.; Raviraj, S.; Devarakonda, A.; K, S.R.N.; V, S.K.; Bhat, S.J. Traffic flow prediction models – A review of deep learning techniques. Cogent Eng. 2021, 9.

- Zheng, H.; Lin, F.; Feng, X.; Chen, Y. A Hybrid Deep Learning Model With Attention-Based Conv-LSTM Networks for Short-Term Traffic Flow Prediction. IEEE Trans. Intell. Transp. Syst. 2020, 22, 6910–6920.

- Zhao, Z.; Chen, W.; Wu, X.; Chen, P.C.Y.; Liu, J. LSTM network: a deep learning approach for short-term traffic forecast. IET Intell. Transp. Syst. 2017, 11, 68–75.

- Fu, R.; Zhang, Z.; Li, L. Using LSTM and GRU neural network methods for traffic flow prediction. In 2016 31st Youth Academic Annual Conference of Chinese Association of Automation (YAC); IEEE, November 2016; pp. 324–328.

- Dey, R.; Salem, F.M. Gate-variants of gated recurrent unit (GRU) neural networks. In 2017 IEEE 60th International Midwest Symposium on Circuits and Systems (MWSCAS); IEEE, August 2017; pp. 1597–1600.

- Chung, J.; Gulcehre, C.; Cho, K.; Bengio, Y. Gated feedback recurrent neural networks. In International Conference on Machine Learning; PMLR, June 2015; pp. 2067–2075.

- Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv 2016, arXiv:1609.02907.

- Han, X.; Gong, S. LST-GCN: Long Short-Term Memory Embedded Graph Convolution Network for Traffic Flow Forecasting. Electronics 2022, 11, 2230.

- Hu, N.; Zhang, D.; Xie, K.; Liang, W.; Hsieh, M.-Y. Graph learning-based spatial-temporal graph convolutional neural networks for traffic forecasting. Connect. Sci. 2021, 34, 429–448.

- Zuo, D.; Li, M.; Zeng, L.; Wang, M.; Zhao, P. A Cross-Attention Based Diffusion Convolutional Recurrent Neural Network for Air Traffic Forecasting. In AIAA SCITECH 2025 Forum; 2025.

- Yao, Z.; Xia, S.; Li, Y.; Wu, G.; Zuo, L. Transfer Learning With Spatial–Temporal Graph Convolutional Network for Traffic Prediction. IEEE Trans. Intell. Transp. Syst. 2023, 24, 8592–8605.

- Bahdanau, D.; Cho, K.; Bengio, Y. Neural machine translation by jointly learning to align and translate. arXiv 2014, arXiv:1409.0473.

- Vaswani, A.; et al. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30.

- Vaswani, A.; et al. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30.

- Yan, H.; Ma, X.; Pu, Z. Learning Dynamic and Hierarchical Traffic Spatiotemporal Features With Transformer. IEEE Trans. Intell. Transp. Syst. 2021, 23, 22386–22399.

- Fan, Y.; Yeh, C.C.M.; Chen, H.; Wang, L.; Zhuang, Z.; Wang, J.; ...; Zhang, W. Spatial-Temporal Graph Sandwich Transformer for Traffic Flow Forecasting. In Joint European Conference on Machine Learning and Knowledge Discovery in Databases; Springer Nature Switzerland: Cham, September 2023; pp. 210–225.

- Chen, Y.; Wang, W.; Hua, X.; Zhao, D. Survey of Decomposition-Reconstruction-Based Hybrid Approaches for Short-Term Traffic State Forecasting. Sensors 2022, 22, 5263.

- Guo, S.; Lin, Y.; Feng, N.; Song, C.; Wan, H. Attention Based Spatial-Temporal Graph Convolutional Networks for Traffic Flow Forecasting. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, Hawaii, 27 January 2019; Volume 33, pp. 922–929.

- Cui, Z.; Ke, R.; Pu, Z.; Wang, Y. Deep bidirectional and unidirectional LSTM recurrent neural network for network-wide traffic speed prediction. arXiv 2018, arXiv:1801.02143.

- Bui, K.-H.N.; Yi, H.; Cho, J. UVDS: A new dataset for traffic forecasting with spatial-temporal correlation. In Asian Conference on Intelligent Information and Database Systems; Springer International Publishing: Cham, April 2021; pp. 66–77.

- Bui, K.-H.N.; Cho, J.; Yi, H. Spatial-temporal graph neural network for traffic forecasting: An overview and open research issues. Appl. Intell. 2021, 52, 2763–2774.

| Type | Dataset | Features | Sampling interval | Scale | Source |
|---|---|---|---|---|---|
| Stationary traffic data | PeMS | Flow, speed | 5 min | 39,000 detectors | https://github.com/Davidham3/ASTGCN |
| Stationary traffic data | METR-LA | Speed | 5 min | 207 detectors | https://github.com/liyaguang/DCRNN |
| Stationary traffic data | Loop | Speed | 5 min | 323 sensor stations | https://github.com/zhiyongc/Seattle-Loop-Data |
| Stationary traffic data | UVDS | Flow, speed | 5 min | 104 VDS sensors | [41] |
| Mobile traffic data | TaxiBJ | Flow | 30 min | 34,000 vehicles | https://github.com/TolicWang/DeepST/tree/master/data/TaxiBJ |
| Mobile traffic data | SZ-taxi | Flow, speed | 15 min | 156 roads | https://paperswithcode.com/dataset/sz-taxi |
| Mobile traffic data | NYC Bike | Flow | 60 min | 13,000 bicycles | https://paperswithcode.com/dataset/nycbike1 |
| Mobile traffic data | Shanghai Taxi Dataset | Speed | 1 min | 4,000 vehicles | https://www.cse.ust.hk/scrg/ |

| Model | PeMS08 MAE | PeMS08 RMSE | METR-LA MAE | METR-LA RMSE |
|---|---|---|---|---|
| ARIMA | 33.04 | 50.41 | 2.04 | 5.89 |
| MLP | 26.73 | 35.81 | 1.84 | 4.62 |
| LSTM | 27.34 | 37.43 | 1.86 | 4.66 |
| GRU | 26.95 | 36.61 | 1.86 | 4.71 |
| ASTGCN | 19.63 | 27.50 | 1.31 | 3.54 |
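The MAE and RMSE columns in the table above follow the standard definitions of mean absolute error and root mean squared error. A minimal sketch, with illustrative function names and toy values that are not taken from the paper's experiments:

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error: average magnitude of the prediction errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean squared error: penalizes large errors more than MAE."""
    return math.sqrt(
        sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    )


if __name__ == "__main__":
    observed = [10.0, 20.0, 30.0]   # toy values for illustration only
    predicted = [12.0, 18.0, 33.0]
    print(mae(observed, predicted), rmse(observed, predicted))
```

RMSE is never smaller than MAE on the same data, and the gap widens when a few errors are much larger than the rest, which is why the two are usually reported together.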

| Category | Model | Advantages | Disadvantages |
|---|---|---|---|
| Traditional statistical models | HA | The algorithm is simple, runs fast, and has low execution-time overhead. | It predicts future values from the mean of historical data. Such simple linear operations cannot represent the deep nonlinear relationships in spatiotemporal series, so it is not ideal for data with strong disturbances. |
| Traditional statistical models | ARIMA | The algorithm is simple and, given a large amount of uninterrupted data, achieves high prediction accuracy. It is particularly suitable for stable traffic flow. | Its modeling capability is relatively weak for nonlinear trends and very complex time series, and it is not suitable for non-stationary traffic flows. |
| Traditional statistical models | Kalman filter model | The algorithm is efficient, processes large amounts of data quickly, and is adaptive: it automatically adjusts its predictions based on historical data, which makes it suitable for traffic flow prediction. | Its iterative nature requires continuous matrix operations, so the time overhead is large, and it cannot adapt to nonlinear changes in traffic flow. |
| Machine learning models | KNN | Highly portable, simple in principle, and easy to implement. High accuracy and good adaptability to nonlinear and non-homogeneous data. Suitable for short-term traffic state prediction. | Processing large-scale datasets is inefficient, the model converges slowly, and the running time is long, so it may not meet the real-time requirements of road traffic flow prediction. |
| Deep learning models | MLP | Training is relatively simple, and many optimization methods are available. | Training may take a long time and require substantial computing resources. When the amount of data or the number of features is small, MLP may overfit. |
| Deep learning models | CNN | Effectively extracts the spatial characteristics and temporal dependencies of traffic flow data, with strong generalization ability and high prediction accuracy. | The complex structure makes training costly in computing resources and time. The CNN structure also cannot capture time dependencies well and has limited ability to process sequence data. |
| Deep learning models | AE | Learns the regularities and patterns of historical traffic data to predict future traffic flow, improving the efficiency and accuracy of traffic management. | For complex data distributions, training autoencoders can be difficult and requires careful tuning of the network structure and hyperparameters. |
| Deep learning models | RNN | Captures the dynamics of time series data and uses historical traffic flow information effectively to predict future trends. Suitable for traffic data with temporal dependence. | Prone to vanishing or exploding gradients during training, which makes long-term dependencies difficult to learn. |
| Deep learning models | LSTM | Its gating mechanism effectively alleviates the long-term dependency problem of traditional RNNs, so the model better captures long-term trends and cyclical changes in time series and improves prediction accuracy. | The model is relatively complex, takes a long time to train, and needs a large amount of historical data to achieve good results. |
| Deep learning models | GRU | Simpler than LSTM, yet it also mitigates the long-term dependency and vanishing gradient problems. | Its prediction performance is affected by data quality and feature selection. In complex scenarios, it may be slightly inferior to LSTM. |
| Deep learning models | GCN | Handles non-Euclidean structured data well, captures the relationships between nodes, and generalizes well. | Computational resource requirements are high, and overfitting may occur when processing large graphs. |
| Deep learning models | Transformer | Parallel computation greatly improves efficiency, and the self-attention mechanism lets the model consider all positions in the sequence at once, so it captures long-distance dependencies better. | Requires a large amount of historical data for training, and the computational cost is high. |
| Deep learning models | Hybrid Neural Network | Combines the advantages of multiple neural network models to achieve comprehensive learning and accurate prediction of traffic flow data. | The model is highly complex, and training and optimization are relatively difficult, requiring longer computing time and more computing resources. |
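The GCN row above credits the model with capturing relationships between nodes on non-Euclidean data. A minimal sketch of one graph convolution layer, following the propagation rule of Kipf and Welling as listed in the references, shows how each node (for example, a road sensor) mixes its own features with those of its neighbors. The function name `gcn_layer` and the toy graph are illustrative assumptions.

```python
import numpy as np


def gcn_layer(adjacency, features, weights):
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    a_hat = adjacency + np.eye(adjacency.shape[0])   # add self-loops
    deg = a_hat.sum(axis=1)                          # node degrees incl. self-loop
    d_inv_sqrt = np.diag(deg ** -0.5)
    a_norm = d_inv_sqrt @ a_hat @ d_inv_sqrt         # symmetric normalization
    return np.maximum(a_norm @ features @ weights, 0.0)


if __name__ == "__main__":
    # Toy road graph: three sensors in a line (0 - 1 - 2).
    A = np.array([[0.0, 1.0, 0.0],
                  [1.0, 0.0, 1.0],
                  [0.0, 1.0, 0.0]])
    X = np.array([[1.0], [2.0], [3.0]])   # one feature per sensor
    W = np.array([[1.0]])                 # fixed weight, normally learned
    print(gcn_layer(A, X, W))
```

The dense matrix products here scale poorly with the number of nodes, which is one concrete source of the high computational cost noted in the table for large graphs.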

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).