Submitted: 05 March 2025

Posted: 06 March 2025


Abstract

Keywords:

I. Introduction

II. Purpose of the Study

III. Related Work

- Artificial Neural Networks (ANNs): Inspired by the human brain, ANNs consist of interconnected units and can be trained with a variety of learning algorithms. They are particularly effective for complex tasks such as churn prediction. The Multi-Layer Perceptron (MLP), a common ANN model, is trained with the back-propagation algorithm. ANNs have outperformed Decision Trees and Logistic Regression in churn prediction scenarios [5,6].

- Support Vector Machines (SVMs): SVMs are supervised learning techniques that are adept at uncovering latent patterns in data. Kernel functions, such as the Gaussian radial basis function (RBF) and polynomial kernels, enhance their performance. Depending on the data characteristics, SVMs can outperform ANNs and Decision Trees in churn prediction [7,8].

- Logistic Regression (LR): LR, a probabilistic statistical classification method, predicts churn based on multiple predictor variables. With appropriate data pre-processing, LR's accuracy can rival that of Decision Trees [12].

- (A)

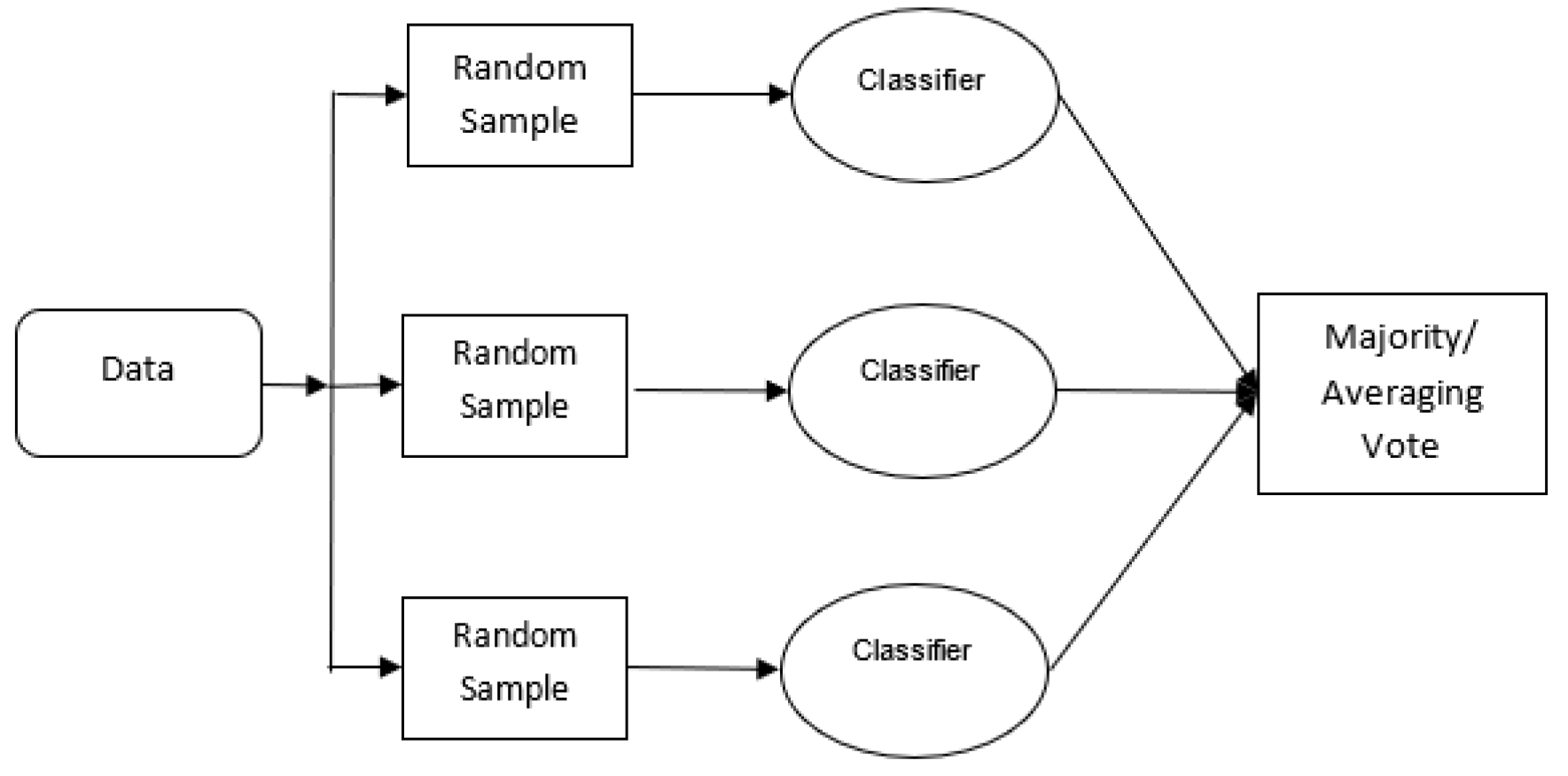

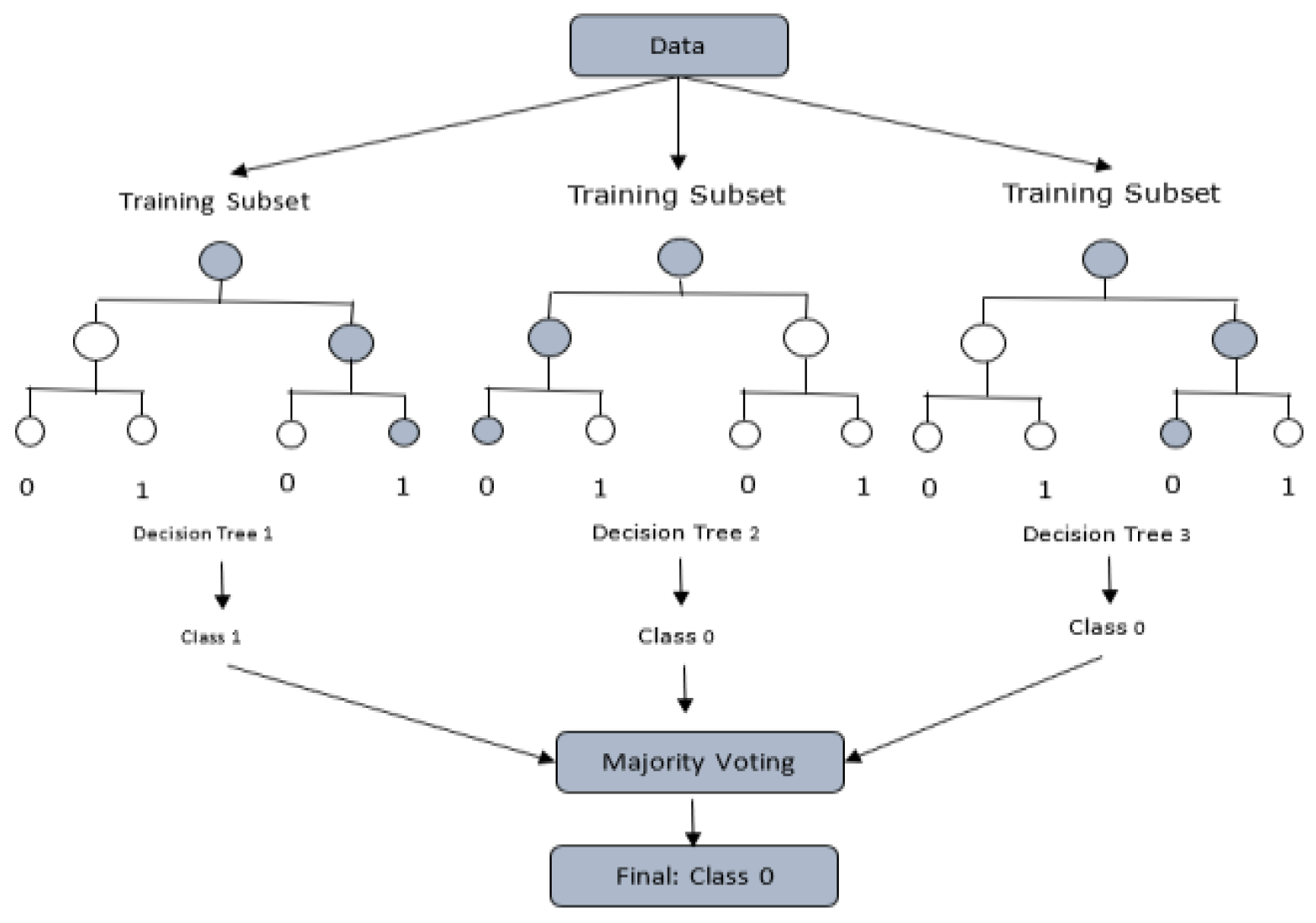

- Bagging: Bagging trains models on different subsets of the training data and combines their outputs through majority voting or averaging, as shown in Figure 1. Random Forests, an advancement of Decision Trees (DTs), use bagging to achieve better performance than individual DTs [13,14,15,16,17,18], as shown in Figure 2.

- (B)

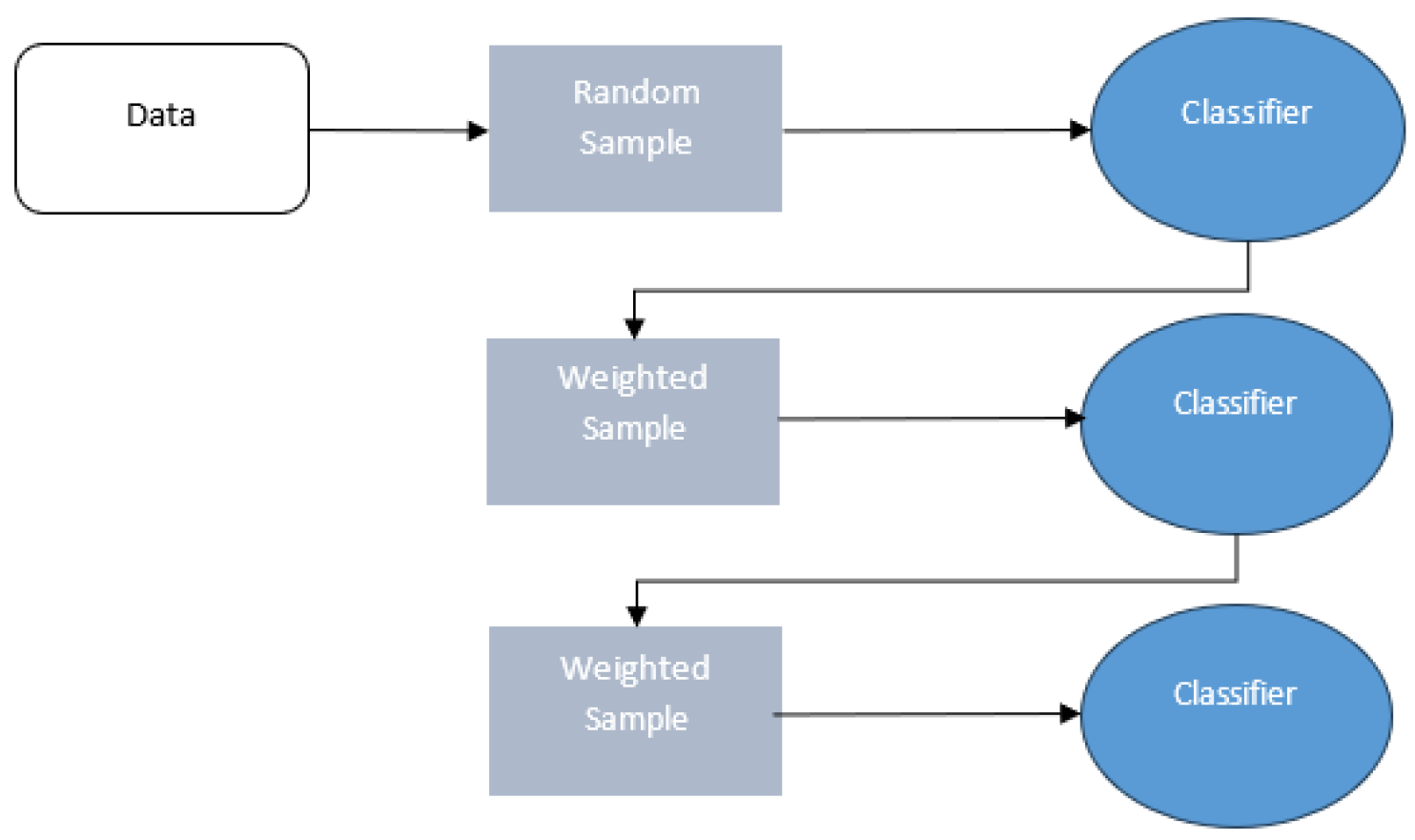

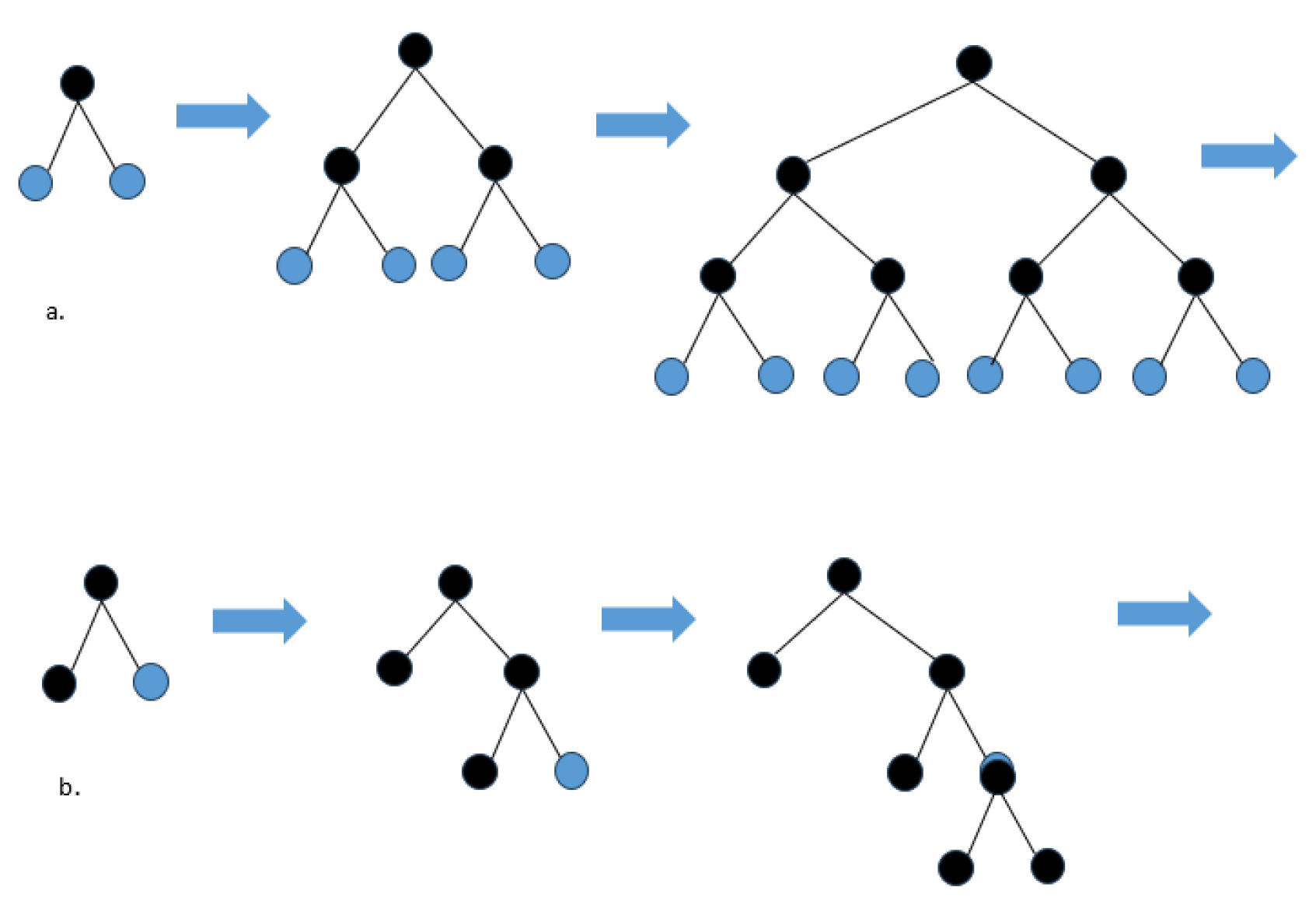

- Boosting: Boosting sequentially combines weak learners into a stronger model, reducing the model's bias, as shown in Figure 3. Gradient boosting implementations such as XGBoost, LightGBM, and CatBoost are notable examples. They address over-fitting through loss function optimization and handle categorical data and large-scale datasets effectively [13,19,20,21,22,23,24,25], as shown in Figure 4.
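The contrast between bagging and boosting can be illustrated with a minimal sketch in plain Python. It is not the implementation evaluated in this study: one-feature decision stumps stand in for Decision Trees, AdaBoost is used as a simple stand-in for the gradient boosting libraries named above, and the toy data and all function names (`fit_stump`, `bagging_fit`, `adaboost_fit`, and so on) are hypothetical.

```python
import math
import random
from collections import Counter


def stump_predict(stump, x):
    """Decision stump on one feature: +1 on one side of the threshold, -1 on the other."""
    threshold, polarity = stump
    return 1 if polarity * (x - threshold) > 0 else -1


def fit_stump(xs, ys, weights):
    """Pick the (threshold, polarity) pair with the lowest weighted training error."""
    best_err, best_stump = float("inf"), None
    for threshold in sorted(set(xs)):
        for polarity in (1, -1):
            stump = (threshold, polarity)
            err = sum(w for x, y, w in zip(xs, ys, weights)
                      if stump_predict(stump, x) != y)
            if err < best_err:
                best_err, best_stump = err, stump
    return best_stump


def bagging_fit(xs, ys, n_models, rng):
    """Bagging: train each stump independently on a bootstrap resample."""
    n = len(xs)
    models = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]  # sample with replacement
        models.append(fit_stump([xs[i] for i in idx],
                                [ys[i] for i in idx],
                                [1.0] * n))
    return models


def bagging_predict(models, x):
    """Combine the independently trained stumps by majority vote."""
    votes = Counter(stump_predict(m, x) for m in models)
    return votes.most_common(1)[0][0]


def adaboost_fit(xs, ys, n_rounds):
    """Boosting (AdaBoost): each round reweights the samples the previous stump got wrong."""
    n = len(xs)
    weights = [1.0 / n] * n
    ensemble = []
    for _ in range(n_rounds):
        stump = fit_stump(xs, ys, weights)
        preds = [stump_predict(stump, x) for x in xs]
        err = sum(w for w, p, y in zip(weights, preds, ys) if p != y)
        err = min(max(err, 1e-10), 1 - 1e-10)  # guard against log(0)
        alpha = 0.5 * math.log((1 - err) / err)  # vote strength of this stump
        ensemble.append((alpha, stump))
        weights = [w * math.exp(-alpha * y * p)
                   for w, p, y in zip(weights, preds, ys)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble


def adaboost_predict(ensemble, x):
    """Weighted vote of the sequentially trained stumps."""
    score = sum(alpha * stump_predict(stump, x) for alpha, stump in ensemble)
    return 1 if score > 0 else -1


# Hypothetical toy data: +1 (churner) only inside an interval of the feature,
# a pattern that no single stump can represent.
xs = list(range(10))
ys = [1 if 3 <= x <= 6 else -1 for x in xs]

single = fit_stump(xs, ys, [1.0 / len(xs)] * len(xs))
bagged = bagging_fit(xs, ys, n_models=25, rng=random.Random(0))
boosted = adaboost_fit(xs, ys, n_rounds=3)
```

In this sketch the bagged stumps are trained independently and vote with equal weight, whereas each boosted stump depends on the errors of its predecessors and votes with weight `alpha`. On the toy interval data, three boosting rounds are enough to fit a pattern that a single stump cannot, which illustrates the bias reduction described above.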

IV. Method

A. Training and Validation Process

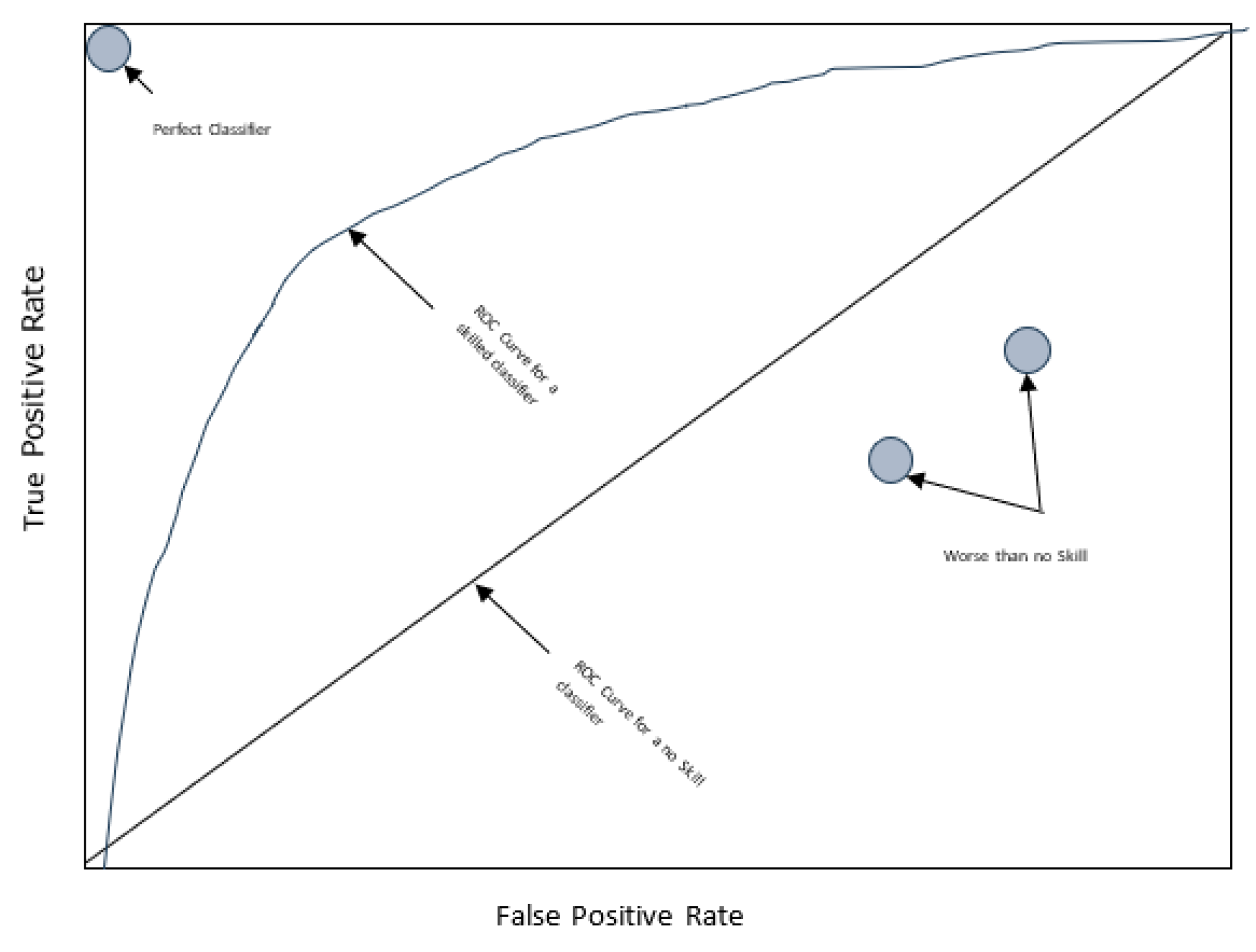

B. Evaluation Metrics

V. Results

A. Simulation Setup

B. Simulation Results

VI. Conclusions

References

- Alok, K.; Mayank, J. Ensemble Learning for AI Developers; Apress: Berkeley, CA, USA, 2020.

- Van Wezel, M.; Potharst, R. Improved customer choice predictions using ensemble methods. Eur. J. Oper. Res. 2007, 181, 436–452.

- Ullah, I.; Raza, B.; Malik, A.K.; Imran, M.; Islam, S.U.; Kim, S.W. A Churn Prediction Model Using Random Forest: Analysis of Machine Learning Techniques for Churn Prediction and Factor Identification in Telecom Sector. IEEE Access 2019, 7, 60134–60149.

- Lalwani, P.; Mishra, M.K.; Chadha, J.S.; Sethi, P. Customer churn prediction system: A machine learning approach. Computing 2021, 104, 271–294.

- Tarekegn, A.; Ricceri, F.; Costa, G.; Ferracin, E.; Giacobini, M. Predictive Modeling for Frailty Conditions in Elderly People: Machine Learning Approaches. JMIR Med. Inform. 2020, 8, e16678.

- Ahmed, M.; Afzal, H.; Siddiqi, I.; Amjad, M.F.; Khurshid, K. Exploring nested ensemble learners using overproduction and choose approach for churn prediction in telecom industry. Neural Comput. Appl. 2018, 32, 3237–3251.

- Shaaban, E.; Helmy, Y.; Khedr, A.; Nasr, M. A proposed churn prediction model. J. Eng. Res. Appl. 2012, 2, 693–697.

- Hur, Y.; Lim, S. Customer churning prediction using support vector machines in online auto insurance service. In Advances in Neural Networks, Proceedings of the ISNN 2005, Chongqing, China, 30 May–1 June 2005; Springer: Berlin/Heidelberg, Germany, 2005; pp. 928–933.

- Lee, S.J.; Siau, K. A review of data mining techniques. Ind. Manag. Data Syst. 2001, 101, 41–46.

- Mazhari, N.; et al. An overview of classification and its algorithms. In Proceedings of the 3rd Data Mining Conference (IDMC'09), Tehran, 2009.

- Linoff, G.S.; Berry, M.J. Data Mining Techniques: For Marketing, Sales, and Customer Relationship Management; John Wiley & Sons: Hoboken, NJ, USA, 2011.

- Jadhav, R.J.; Pawar, U.T. Churn prediction in telecommunication using data mining technology. IJACSA Edit. 2011, 2, 17–19.

- Witten, I.H.; Frank, E.; Hall, M.A. Data Mining: Practical Machine Learning Tools and Techniques; Elsevier Science & Technology: San Francisco, CA, USA, 2016.

- Ho, T.K. Random decision forests. In Proceedings of the 3rd International Conference on Document Analysis and Recognition, Montreal, QC, Canada, 14–16 August 1995; Volume 1.

- Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

- Karlberg, J.; Axen, M. Binary Classification for Predicting Customer Churn; Umeå University: Umeå, Sweden, 2020.

- Windridge, D.; Nagarajan, R. Quantum Bootstrap Aggregation. In Proceedings of the International Symposium on Quantum Interaction, San Francisco, CA, USA, 20–22 July 2016; Springer: Berlin/Heidelberg, Germany, 2017.

- Wang, J.C.; Hastie, T. Boosted Varying-Coefficient Regression Models for Product Demand Prediction. J. Comput. Graph. Stat. 2014, 23, 361–382.

- Al Daoud, E. Intrusion Detection Using a New Particle Swarm Method and Support Vector Machines. World Acad. Sci. Eng. Technol. 2013, 77, 59–62.

- Al Daoud, E.; Turabieh, H. New empirical nonparametric kernels for support vector machine classification. Appl. Soft Comput. 2013, 13, 1759–1765.

- Al Daoud, E. An Efficient Algorithm for Finding a Fuzzy Rough Set Reduct Using an Improved Harmony Search. Int. J. Mod. Educ. Comput. Sci. (IJMECS) 2015, 7, 16–23.

- Zhang, Y.; Haghani, A. A gradient boosting method to improve travel time prediction. Transp. Res. Part C Emerg. Technol. 2015, 58, 308–324.

- Dorogush, A.; Ershov, V.; Gulin, A. CatBoost: Gradient boosting with categorical features support. In Proceedings of the Thirty-first Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 1–7.

- Ke, G.; Meng, Q.; Finley, T.; Wang, T.; Chen, W.; Ma, W.; Ye, Q.; Liu, T.Y. Lightgbm: A highly efficient gradient boosting decision tree. In Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 2017; Volume 30.

- Klein, A.; Falkner, S.; Bartels, S.; Hennig, P.; Hutter, F. Fast Bayesian optimization of machine learning hyperparameters on large datasets. In Proceedings of the Machine Learning Research PMLR, Sydney, NSW, Australia, 6–11 August 2017; Volume 54, pp. 528–536.

- Imani, M.; Arabnia, H.R. Hyperparameter optimization and combined data sampling techniques in machine learning for customer churn prediction: a comparative analysis. Technologies 2023, 11, 167.

- Joudaki, M.; et al. Presenting a New Approach for Predicting and Preventing Active/Deliberate Customer Churn in Telecommunication Industry. In Proceedings of the International Conference on Security and Management (SAM); The Steering Committee of The World Congress in Computer Science, Computer Engineering and Applied Computing (WorldComp), 2011.

- Imani, M.; et al. The Impact of SMOTE and ADASYN on Random Forest and Advanced Gradient Boosting Techniques in Telecom Customer Churn Prediction. In Proceedings of the 2024 10th International Conference on Web Research (ICWR); IEEE, 2024.

- Joudaki, M.; Imani, M.; Arabnia, H.R. A New Efficient Hybrid Technique for Human Action Recognition Using 2D Conv-RBM and LSTM with Optimized Frame Selection. Technologies 2025, 13, 53.

- Imani, M.; Beikmohammadi, A.; Arabnia, H.R. Comprehensive Analysis of Random Forest and XGBoost Performance with SMOTE, ADASYN, and GNUS Under Varying Imbalance Levels. Technologies 2025, 13, 88.

- Christy, R. Customer Churn Prediction 2020, Version 1. 2020. Available online: https://www.kaggle.com/code/rinichristy/customer-churn-prediction-2020 (accessed on 20 January 2022).

| Actual Class | Predicted Class: Churners | Predicted Class: Non-churners |
| --- | --- | --- |
| Churners | TP | FN |
| Non-churners | FP | TN |
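As a minimal sketch of how the confusion-matrix cells above translate into evaluation metrics, the following Python function applies the standard definitions of precision, recall, and F1-score for the churner (positive) class, plus the false positive rate that forms the horizontal axis of an ROC curve. The function name `churn_metrics` and the example counts are hypothetical and are not taken from the experiments.

```python
def churn_metrics(tp, fn, fp, tn):
    """Standard metrics for the churner (positive) class from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0  # predicted churners that really churned
    recall = tp / (tp + fn) if (tp + fn) else 0.0     # actual churners that were caught
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of the two
    else:
        f1 = 0.0
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0
    return {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "false_positive_rate": false_positive_rate,
    }


# Hypothetical counts, chosen only to illustrate the arithmetic.
example = churn_metrics(tp=80, fn=20, fp=10, tn=890)
```

With these illustrative counts, precision is 80/90, recall is 80/100, and the F1-score is 160/190, which shows how a model can miss a fifth of the churners while still making few false alarms.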

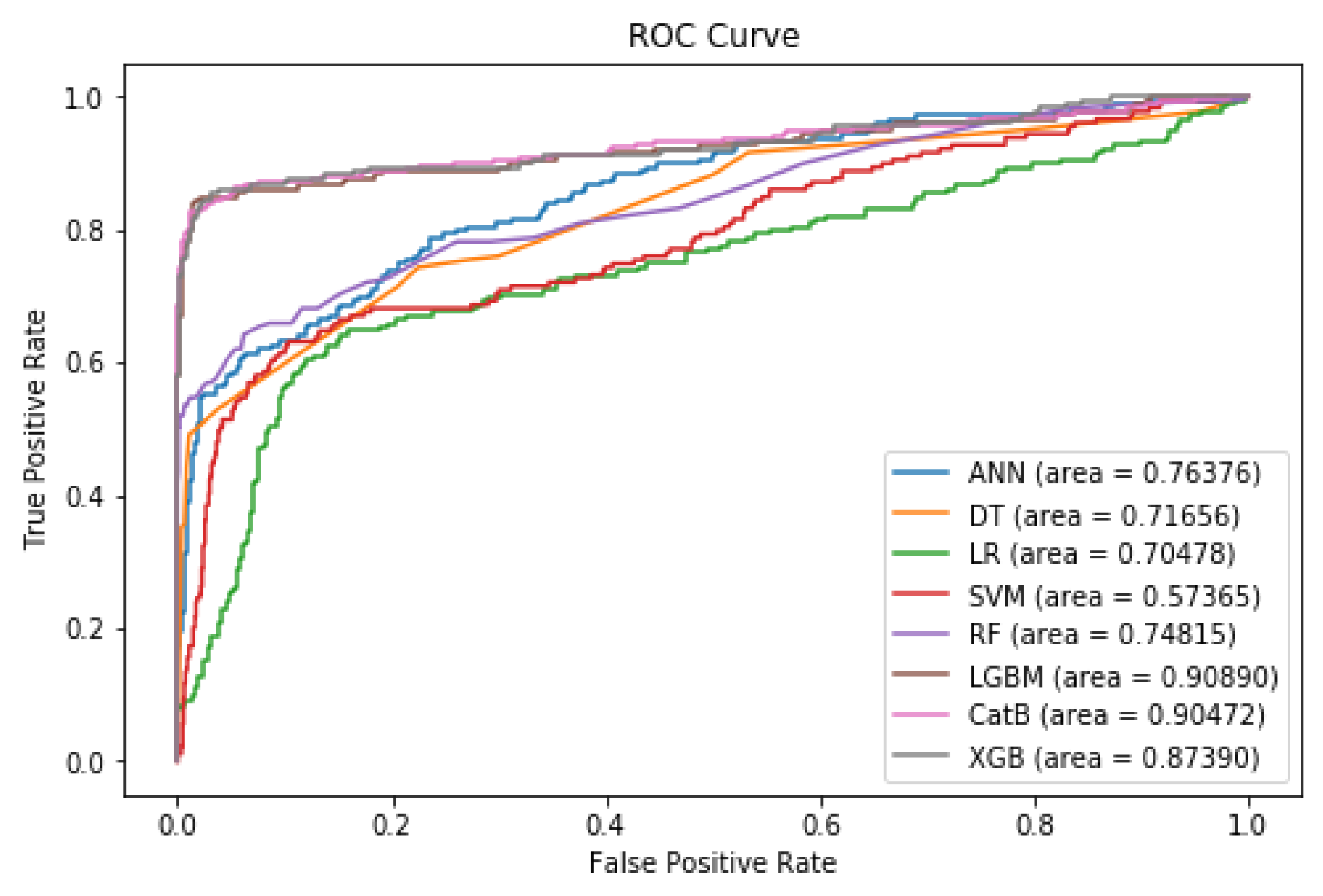

| Models | Precision (%) | Recall (%) | F1-score (%) | ROC AUC (%) |
| --- | --- | --- | --- | --- |
| DT | 91 | 72 | 77 | 72 |
| ANN | 85 | 76 | 80 | 77 |
| LR | 61 | 70 | 62 | 70 |
| SVM | 81 | 57 | 59 | 57 |
| RF | 96 | 75 | 81 | 75 |
| CatBoost | 90 | 90 | 90 | 90 |
| LightGBM | 94 | 91 | 92* | 91* |
| XGBoost | 96 | 87 | 91 | 87 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).