3. Methodology and Evaluation Framework

This section presents our methodology for evaluating embedding techniques and large language models (LLMs) in Information Retrieval (IR) and Question Answering (QA) tasks. We describe the frameworks, datasets, models, and evaluation metrics employed in our study, as well as the rationale behind our choices and the potential limitations of our approach.

3.1. Overview of Approach

Our study encompasses a comprehensive evaluation of state-of-the-art embedding techniques and LLMs for enhancing IR and QA tasks, with a focus on English and Italian languages. The key components of our methodology include:

A diverse set of datasets across different domains and languages

A Retrieval-Augmented Generation (RAG) pipeline for QA tasks

A range of embedding models and LLMs

A comprehensive evaluation framework combining traditional and LLM-based metrics

3.2. RAG Pipeline

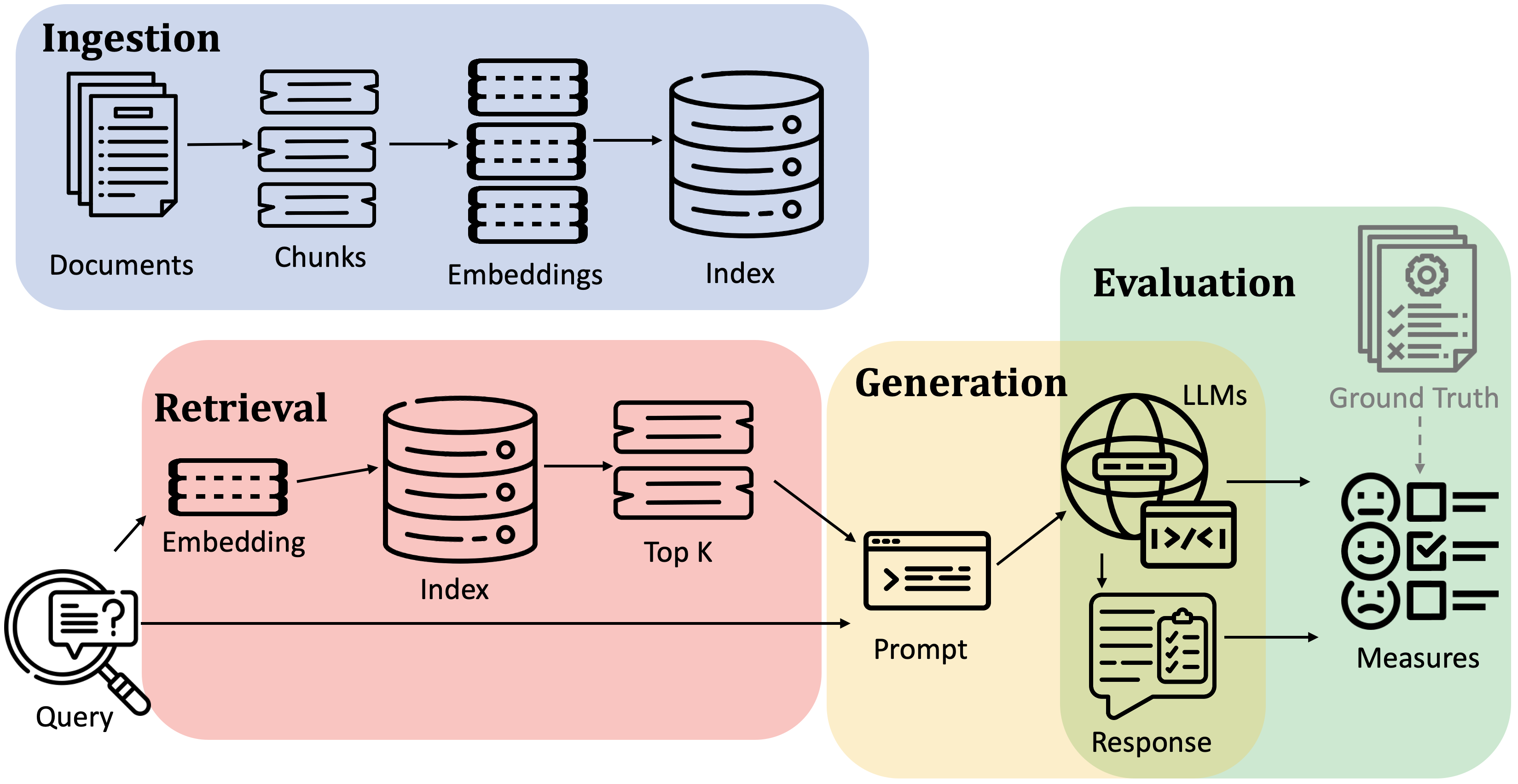

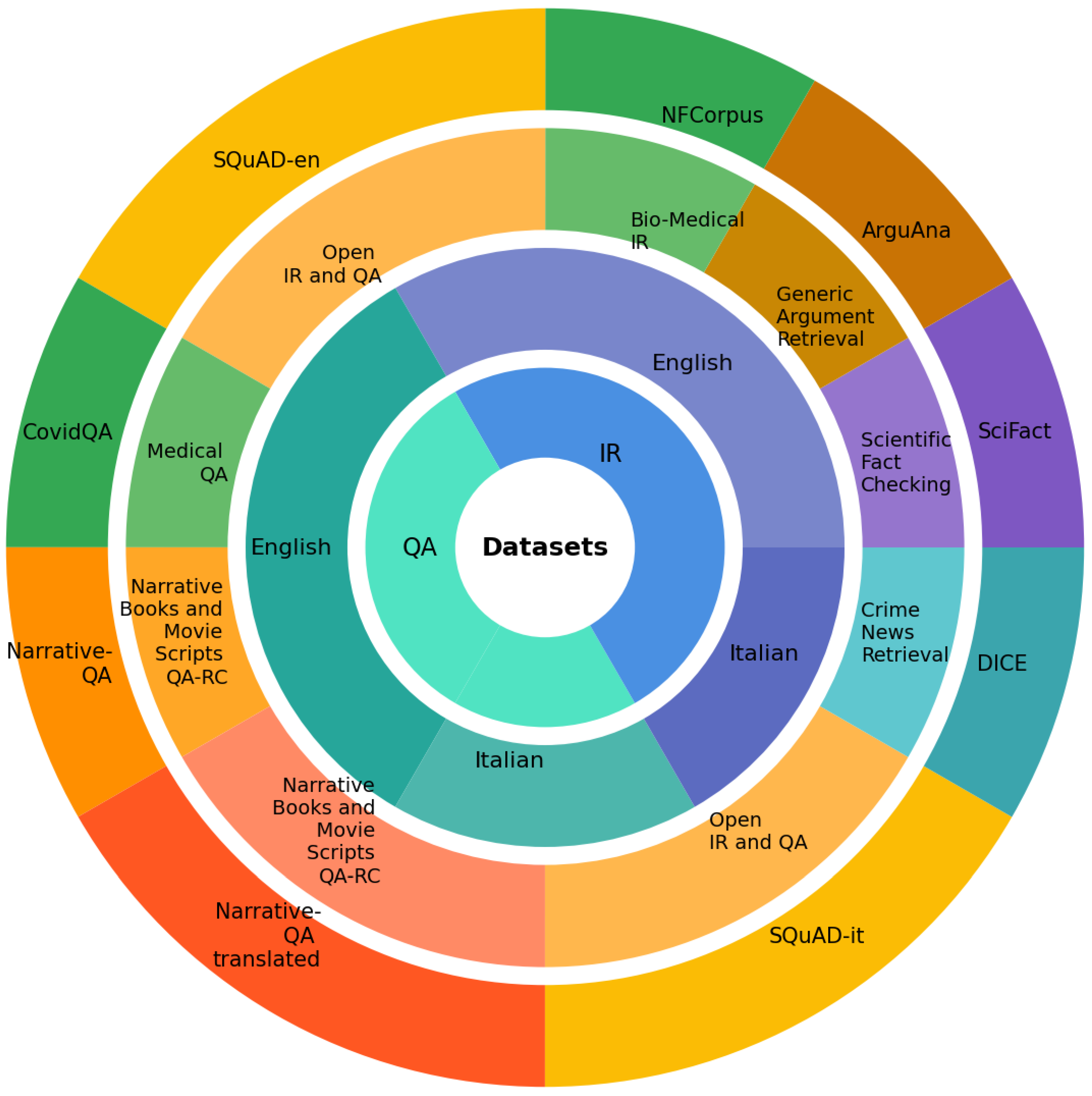

Our RAG pipeline consists of four main phases: ingestion, retrieval, generation, and evaluation, as illustrated in Figure 1. Each phase serves a specific function in the pipeline.

Ingestion. The initial phase processes input documents into manageable, searchable segments, referred to as "chunks". Different chunking strategies can be implemented; for visually oriented input documents such as PDFs, we exploit Document Layout Analysis to identify more meaningful split points. Each chunk is then embedded: the embedding step transforms its text into a high-dimensional vector that captures its semantic content. These vector representations are then ingested into a vector store such as Pinecone⁴, Weaviate⁵, or Milvus⁶. These vector databases are designed for efficient similarity search, and the embedding and indexing process is critical for rapid and accurate retrieval of information relevant to user queries.
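As a rough illustration of the simplest chunking strategy, the sketch below splits a document into fixed-size, non-overlapping chunks, mirroring the 512-token, no-sliding-window setting described in Section 3.6.2. It assumes whitespace tokenization as a stand-in for the embedding model's tokenizer, `chunk_tokens` is a hypothetical helper, and it does not attempt Document Layout Analysis.

```python
from typing import List


def chunk_tokens(text: str, chunk_size: int = 512) -> List[str]:
    """Split text into consecutive, non-overlapping chunks of at most chunk_size tokens.

    Assumption: whitespace tokenization stands in for the embedding model's tokenizer.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    tokens = text.split()
    # Step through the token list in strides equal to the chunk size (no overlap).
    return [
        " ".join(tokens[start:start + chunk_size])
        for start in range(0, len(tokens), chunk_size)
    ]


if __name__ == "__main__":
    doc = " ".join(f"w{i}" for i in range(1200))
    print([len(c.split()) for c in chunk_tokens(doc)])  # [512, 512, 176]
```

With a real tokenizer the chunk boundaries would fall at different words, but the stride logic is the same.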

Retrieval. Upon receiving a query, the system uses the same embedding model to convert the query into its vector form. This query vector is then used for a similarity search in the vector store to identify the k embeddings most similar to it, each corresponding to a previously indexed chunk. Because semantically similar texts map to nearby vectors, this search returns the chunks whose content is most relevant to the query. This step is pivotal in narrowing down the large amount of available information to the few chunks passed on for answer generation.
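The core operation of this step can be sketched as an exact top-k search by cosine similarity. This is a minimal illustration under the assumption that cosine similarity is the distance measure; vector stores such as Milvus typically use approximate nearest-neighbour indexes and configurable metrics instead of this brute-force scan, and `top_k_cosine` is a hypothetical name.

```python
from typing import List

import numpy as np


def top_k_cosine(query_vec: np.ndarray, chunk_matrix: np.ndarray, k: int = 10) -> List[int]:
    """Return the indices of the k chunk embeddings most cosine-similar to the query."""
    # Normalize the query and every row so that a dot product equals cosine similarity.
    q = query_vec / np.linalg.norm(query_vec)
    m = chunk_matrix / np.linalg.norm(chunk_matrix, axis=1, keepdims=True)
    scores = m @ q
    k = min(k, len(scores))
    # Sorting the negated scores yields indices in descending order of similarity.
    return np.argsort(-scores)[:k].tolist()


if __name__ == "__main__":
    chunks = np.array([[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]])
    print(top_k_cosine(np.array([1.0, 0.0]), chunks, k=2))  # [0, 2]
```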

Generation. In this phase, a large language model (LLM) processes the query enriched with retrieved context to generate the final answer. The system first formats retrieved chunks into structured prompts, which are combined with the original query. The LLM synthesizes this information to construct a coherent and informative response, leveraging its ability to understand context and generate natural language answers.
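The prompt-construction step amounts to filling a template with the retrieved chunks and the user question. The sketch below reuses the English prompt text reported in Table 4, with its `{retrieved_passages}` and `{human_question}` placeholders; joining passages with blank lines is an assumption, and `build_prompt` is a hypothetical helper, not the LangChain code used in the study.

```python
from typing import List

# English template as reported in Table 4 (placeholder names follow the table).
EN_TEMPLATE = (
    "You are a Question Answering system that is rewarded if the response is "
    "short, concise and straight to the point, use the following pieces of "
    "context to answer the question at the end. If the context doesn't provide "
    "the required information simply respond <no answer>.\n\n"
    "Context: {retrieved_passages}\n\n"
    "Question: {human_question}\n\n"
    "Answer:"
)


def build_prompt(question: str, passages: List[str], template: str = EN_TEMPLATE) -> str:
    """Join the retrieved passages (separator is an assumption) and fill the template."""
    context = "\n\n".join(passages)
    # str.format only parses the template, so braces inside passages are left untouched.
    return template.format(retrieved_passages=context, human_question=question)


if __name__ == "__main__":
    print(build_prompt("Who wrote it?", ["Passage A", "Passage B"]))
```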

Evaluation. The final phase evaluates the quality of the generated answers. We employ both ground-truth-dependent and ground-truth-independent metrics. Ground-truth-dependent metrics require a set of predefined correct answers against which the system’s outputs are compared, allowing for the assessment of correctness. In contrast, ground-truth-independent metrics evaluate responses based on their relevance to the question and do not depend on a predefined answer set. This dual approach provides insights into both the system’s correctness with respect to known answers and the overall quality of its generated text. In addition, the system can take human evaluations of question-answer pairs as input and use them to assess the reliability of the metrics and their agreement with human expectations.

3.3. Datasets for Information Retrieval and Question Answering

We utilize a diverse set of datasets to evaluate models across different languages, domains, and task types. This diversity allows us to assess the models’ generalization capabilities and domain adaptability.

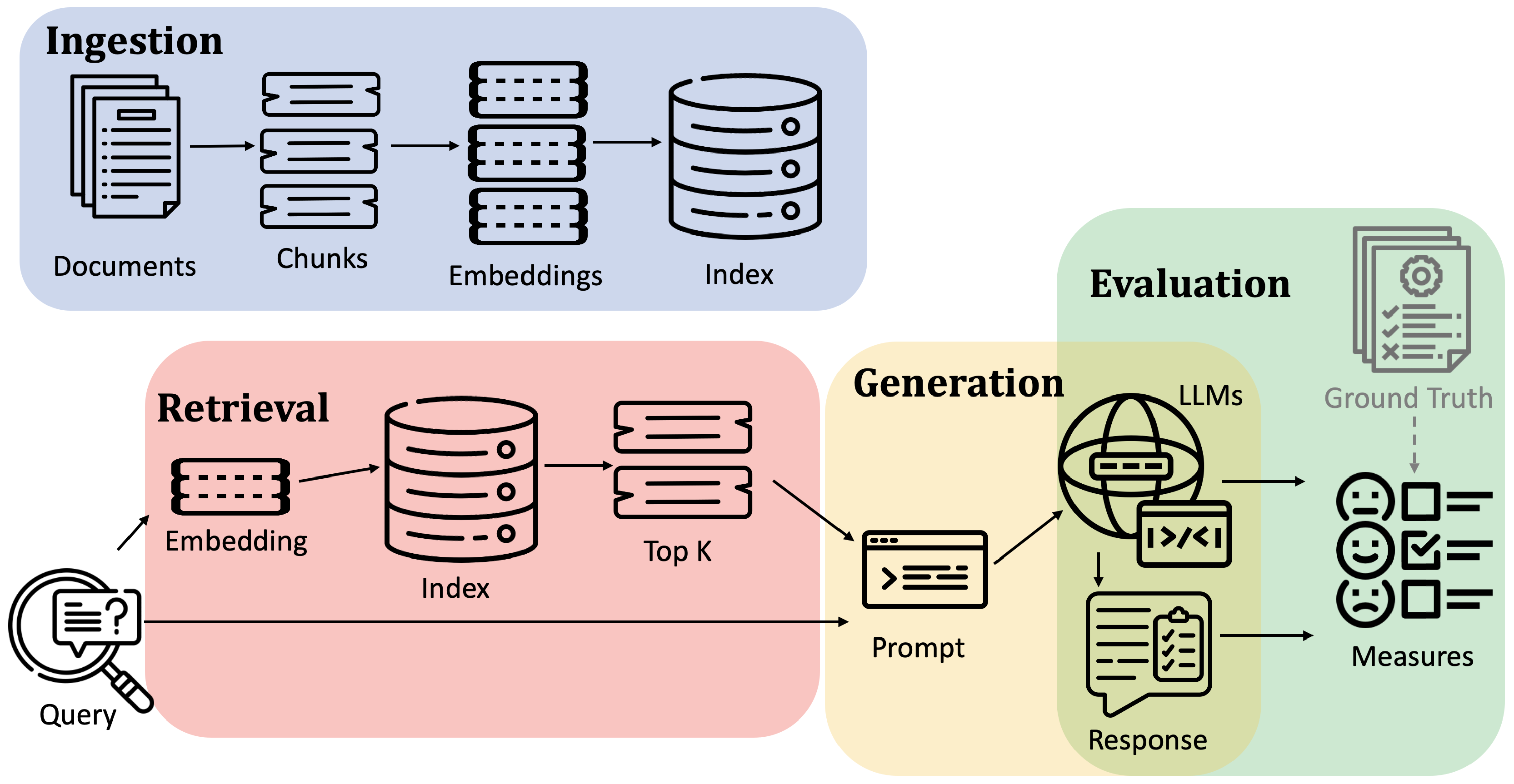

Table 1 provides an overview of the key datasets used in this study, and Figure 2 illustrates their distribution.

Below, we provide detailed descriptions of each dataset, including their specific characteristics and how they are used in our study:

SQuAD-en

SQuAD (Stanford Question Answering Dataset)⁷ is a benchmark dataset focused on reading comprehension for Question Answering and Passage Retrieval tasks. The initial release, SQuAD 1.1 [34], comprises over 100K question-answer pairs about passages from 536 articles. These pairs were created through crowdsourcing, with each query linked to both its answer and the source passage. A subsequent release, SQuAD 2.0 [35], introduced an additional 50K unanswerable questions designed to evaluate systems’ ability to identify when no answer exists in the given passage. SQuAD Open was developed for passage retrieval based on SQuAD 1.1 [36, 37]. This variant uses the original crowdsourced questions but enables open-domain search over a Wikipedia dump. Each SQuAD entry contains four key elements:

- (i) id: Unique entry identifier
- (ii) title: Wikipedia article title
- (iii) context: Source passage containing the answer
- (iv) answers: Gold-standard answers with context position indices

Our study used SQuAD 1.1 for both IR and QA tasks, selecting 150 tuples from the validation set of 10.6k entries (1.5%) due to resource constraints. We ensured these selections matched the corresponding SQuAD-it samples to enable direct cross-lingual comparison. For IR, we processed the documents by splitting them into paragraphs and generating embeddings for each paragraph. We used the same splits for QA to evaluate our RAG pipeline’s ability to generate answers.

SQuAD-it

The SQuAD 1.1 dataset has been translated into several languages, including Italian and Spanish. SQuAD-it⁸ [38], the Italian version of SQuAD 1.1, contains over 60K question-answer pairs translated from the original English dataset. For our evaluation of both Italian IR and QA capabilities, we selected 150 tuples from the test set of 7.6k entries (1.9% of the test set), a sample size dictated by our limited resources; we used random seed 433 for reproducibility. These samples directly correspond to the selected English SQuAD tuples, enabling parallel evaluation across languages. As with the English version, we processed the documents for IR by splitting them into paragraphs and generating embeddings for each segment, while using the same splits for QA evaluation.

DICE

Dataset of Italian Crime Event news (DICE)⁹ [39] is a specialized corpus for Italian NLP tasks, containing 10.3k online crime news articles from Gazzetta di Modena. The dataset includes automatically annotated information for each article. Each entry contains the following key fields:

- (i) id: Unique document identifier
- (ii) url: Article URL
- (iii) title: Article title
- (iv) subtitle: Article subtitle
- (v) publication date: Article publication date
- (vi) event date: Date of the reported crime event
- (vii) newspaper: Source newspaper name

We used DICE to evaluate IR performance in the specific domain of Italian crime news. In our experimental setting, we used the complete dataset (10.3k articles), with article titles serving as queries and their corresponding full texts as the retrieval corpus. The task involves retrieving the complete article text given its title, creating a one-to-one correspondence between queries and passages.

SciFact

SciFact¹⁰ [40] is a dataset designed for scientific fact-checking, containing 1.4K expert-written scientific claims paired with evidence from research abstracts. In the retrieval task, claims serve as queries to find supporting evidence from scientific literature. The complete dataset contains 5,183 research abstracts, with multiple abstracts potentially supporting each claim. For our evaluation, we used the BEIR version¹¹ of the dataset, which preserves all passages from the original collection. We specifically used the 300 queries from the original test set. Each corpus entry contains:

- (i) id: Unique text identifier
- (ii) title: Scientific article title
- (iii) text: Article abstract

ArguAna

ArguAna¹² [41] is a dataset of argument-counterargument pairs collected from the online debate platform iDebate¹³. The corpus contains 8,674 passages, comprising 4,299 arguments and 4,375 counterarguments. The dataset is designed to evaluate retrieval systems’ ability to find relevant counterarguments for given arguments. The evaluation set consists of 1,406 arguments serving as queries, each paired with a corresponding counterargument. The dataset is accessible through the BEIR datasets loader¹⁴. Each corpus entry contains:

- (i) id: Unique argument identifier
- (ii) title: Argument title
- (iii) text: Argument content
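The BEIR versions of ArguAna, SciFact, and NFCorpus share one on-disk layout. The study used the BEIR loader itself; the stdlib sketch below only shows, under the assumption of the standard BEIR layout (`corpus.jsonl`, `queries.jsonl`, and `qrels/<split>.tsv` with a `query-id`, `corpus-id`, `score` header), how corpus entries map to the id/title/text fields listed above. `load_beir_folder` is a hypothetical name.

```python
import csv
import json
from pathlib import Path
from typing import Dict, Tuple


def load_beir_folder(folder: str, split: str = "test") -> Tuple[Dict, Dict, Dict]:
    """Read a BEIR-style folder into (corpus, queries, qrels) dictionaries."""
    root = Path(folder)
    corpus: Dict[str, Dict[str, str]] = {}
    with open(root / "corpus.jsonl", encoding="utf-8") as f:
        for line in f:
            if line.strip():
                doc = json.loads(line)
                corpus[doc["_id"]] = {"title": doc.get("title", ""), "text": doc["text"]}

    queries: Dict[str, str] = {}
    with open(root / "queries.jsonl", encoding="utf-8") as f:
        for line in f:
            if line.strip():
                q = json.loads(line)
                queries[q["_id"]] = q["text"]

    qrels: Dict[str, Dict[str, int]] = {}
    with open(root / "qrels" / f"{split}.tsv", encoding="utf-8", newline="") as f:
        reader = csv.reader(f, delimiter="\t")
        next(reader)  # skip the header row: query-id, corpus-id, score
        for row in reader:
            if len(row) < 3:
                continue  # ignore blank or malformed lines
            qid, did, score = row[0], row[1], row[2]
            qrels.setdefault(qid, {})[did] = int(score)

    # Keep only the queries that have relevance judgments in this split.
    queries = {qid: text for qid, text in queries.items() if qid in qrels}
    return corpus, queries, qrels
```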

NFCorpus

NFCorpus [42] is a dataset designed for evaluating the retrieval of scientific nutrition information from PubMed. The dataset comprises 3,244 natural language queries in non-technical English, collected from NutritionFacts.org¹⁵. These queries are paired with 169,756 automatically generated relevance judgments across 9,964 medical documents. For our evaluation, we used the BEIR version of the dataset, containing 3,633 passages and 323 queries selected from the original set. The dataset allows multiple relevant passages per query. Each corpus entry contains:

- (i) id: Unique document identifier
- (ii) title: Document title
- (iii) text: Document content

CovidQA

CovidQA¹⁶ [43] is a manually curated question answering dataset focused on COVID-19 research, built from Kaggle’s COVID-19 Open Research Dataset Challenge (CORD-19)¹⁷ [44]. While too small for training purposes, the dataset is valuable for evaluating models’ zero-shot capabilities in the COVID-19 domain. The dataset contains 124 question-answer pairs referring to 27 questions across 85 unique research articles. Each query includes:

- (i) category: Semantic category
- (ii) subcategory: Specific subcategory
- (iii) query: Keyword-based query
- (iv) question: Natural language question form

Each answer entry contains:

- (i) id: Answer identifier
- (ii) title: Source document title
- (iii) answer: Answer text

In our evaluation, we used the complete CovidQA dataset to assess domain-specific QA capabilities. For each query, which is associated with a set of potentially relevant paper titles, our system retrieves chunks of 512 tokens from the vector store and generates answers. Since multiple answers are generated for a query (one for each title), we compute the mean value of evaluation metrics per query. Due to slight variations in paper titles between CovidQA and CORD-19, we matched documents using Jaccard similarity with a 0.7 threshold.
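The title-matching and per-query aggregation steps can be sketched as follows. This is an illustration under stated assumptions: Jaccard similarity is computed over lowercased alphanumeric word sets, a match requires a score of at least 0.7, and the helper names are hypothetical; the exact normalization used in the study is not specified here.

```python
import re
from statistics import mean
from typing import Dict, List, Optional, Set


def title_tokens(title: str) -> Set[str]:
    # Assumed normalization: lowercase, keep alphanumeric runs as word tokens.
    return set(re.findall(r"[a-z0-9]+", title.lower()))


def jaccard(a: str, b: str) -> float:
    """Jaccard similarity |A ∩ B| / |A ∪ B| between the word sets of two titles."""
    ta, tb = title_tokens(a), title_tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def match_title(covidqa_title: str, cord19_titles: List[str], threshold: float = 0.7) -> Optional[str]:
    """Return the most similar CORD-19 title if it reaches the threshold, else None."""
    best = max(cord19_titles, key=lambda t: jaccard(covidqa_title, t), default=None)
    if best is not None and jaccard(covidqa_title, best) >= threshold:
        return best
    return None


def per_query_mean(scores_by_title: Dict[str, float]) -> float:
    """Average a metric over the answers generated for each title of one query."""
    return mean(scores_by_title.values())
```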

NarrativeQA

NarrativeQA¹⁸ [45] is an English dataset for question answering over long narrative texts, including books and movie scripts. The dataset spans diverse genres and styles, testing models’ ability to comprehend and respond to complex queries about extended narratives. The NarrativeQA training set contains 1,102 documents (548 books and 554 movie scripts) and over 32k question-answer pairs. The test set contains 355 documents (177 books and 178 movie scripts) and over 10k question-answer pairs. Each entry contains:

- (i) document: Source book or movie script
- (ii) question: Query to be answered
- (iii) answers: List of valid answers

For our evaluation, we used a balanced subsample of the test set (about 1%, 100 pairs in total), consisting of 50 questions from books (covering 41 unique books) and 50 questions from movie scripts (covering 42 unique scripts). This sampling strategy was chosen to manage OpenAI API costs while maintaining representation of both narrative types; we used random seed 42 for reproducibility. We processed documents using 512-token chunks, retrieving relevant segments from the source document for each query.
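A seeded, balanced subsample of this kind can be drawn per group with a dedicated random generator. The sketch below is hypothetical: the `kind` field name and its values are illustrative, and because the result depends on item order and on how the generator is consumed, it is not expected to reproduce the exact sample used in the study.

```python
import random
from typing import Dict, List


def balanced_sample(items: List[dict], key: str, per_group: int, seed: int = 42) -> List[dict]:
    """Draw per_group items from each group defined by item[key], reproducibly."""
    rng = random.Random(seed)  # dedicated generator, independent of global random state
    groups: Dict[str, List[dict]] = {}
    for item in items:
        groups.setdefault(item[key], []).append(item)
    sample: List[dict] = []
    # Iterate groups in sorted order so the draw sequence does not depend on dict order.
    for group in sorted(groups):
        # rng.sample raises ValueError if a group has fewer than per_group items.
        sample.extend(rng.sample(groups[group], per_group))
    return sample


if __name__ == "__main__":
    data = [{"id": i, "kind": "book"} for i in range(200)]
    data += [{"id": 1000 + i, "kind": "movie"} for i in range(300)]
    print(len(balanced_sample(data, "kind", per_group=50)))  # 100
```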

NarrativeQA-cross-lingual

To evaluate cross-lingual capabilities, we created an Italian version of the NarrativeQA test set by maintaining the original English documents but translating the question-answer pairs into Italian. This approach allows us to assess how well LLMs can bridge the language gap between source documents and queries.

3.4. Models

3.4.1. Models Used for Information Retrieval

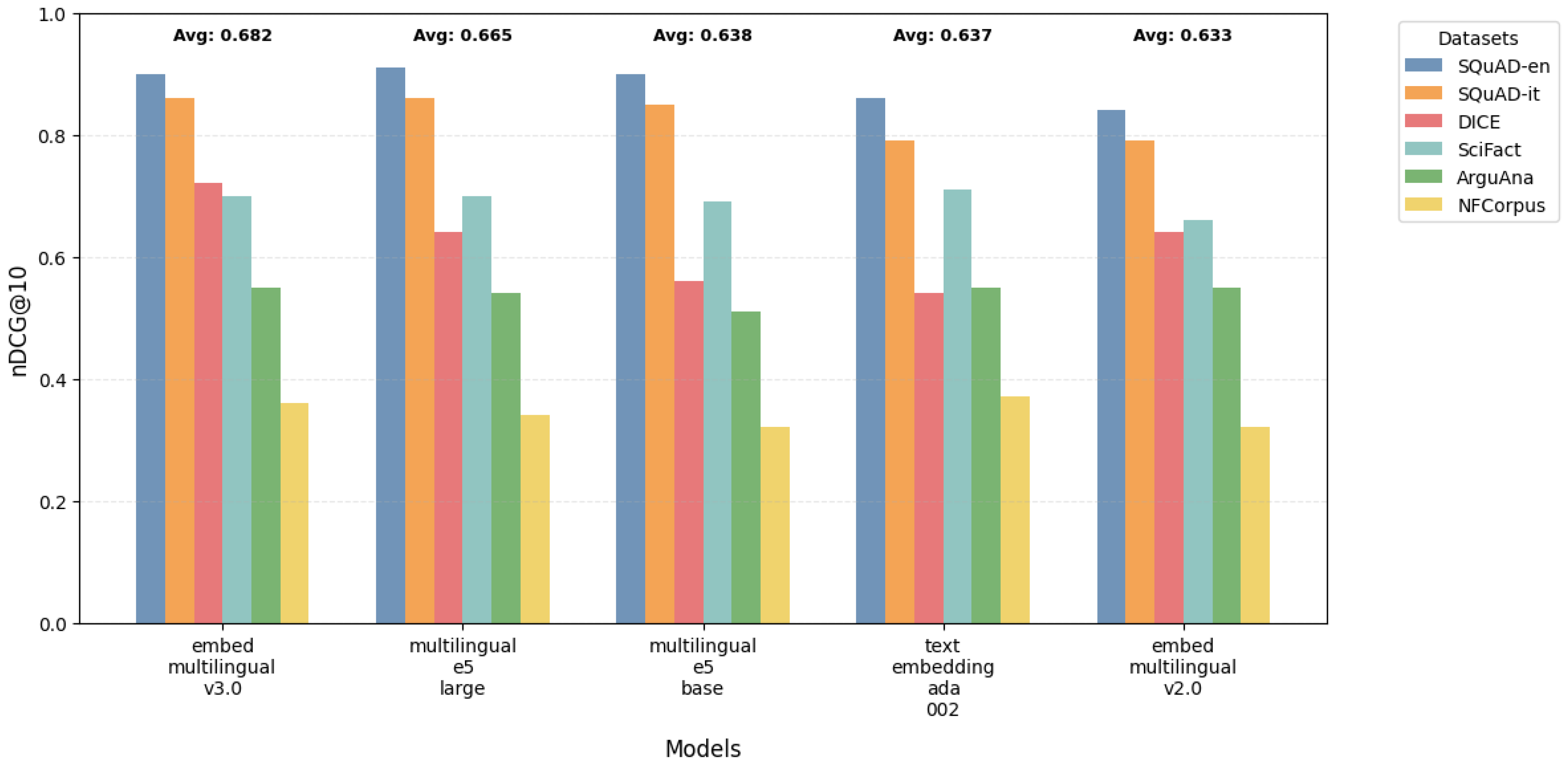

We evaluate a diverse set of embedding models, focusing on their performance in both English and Italian. All models were used with their default pretrained weights without additional fine-tuning.

Table 2 provides an overview of these models.

Rationale: This selection covers both language-specific and multilingual models, enabling us to assess cross-lingual performance and the effectiveness of specialized versus general-purpose embeddings.

3.4.2. Large Language Models for Question Answering

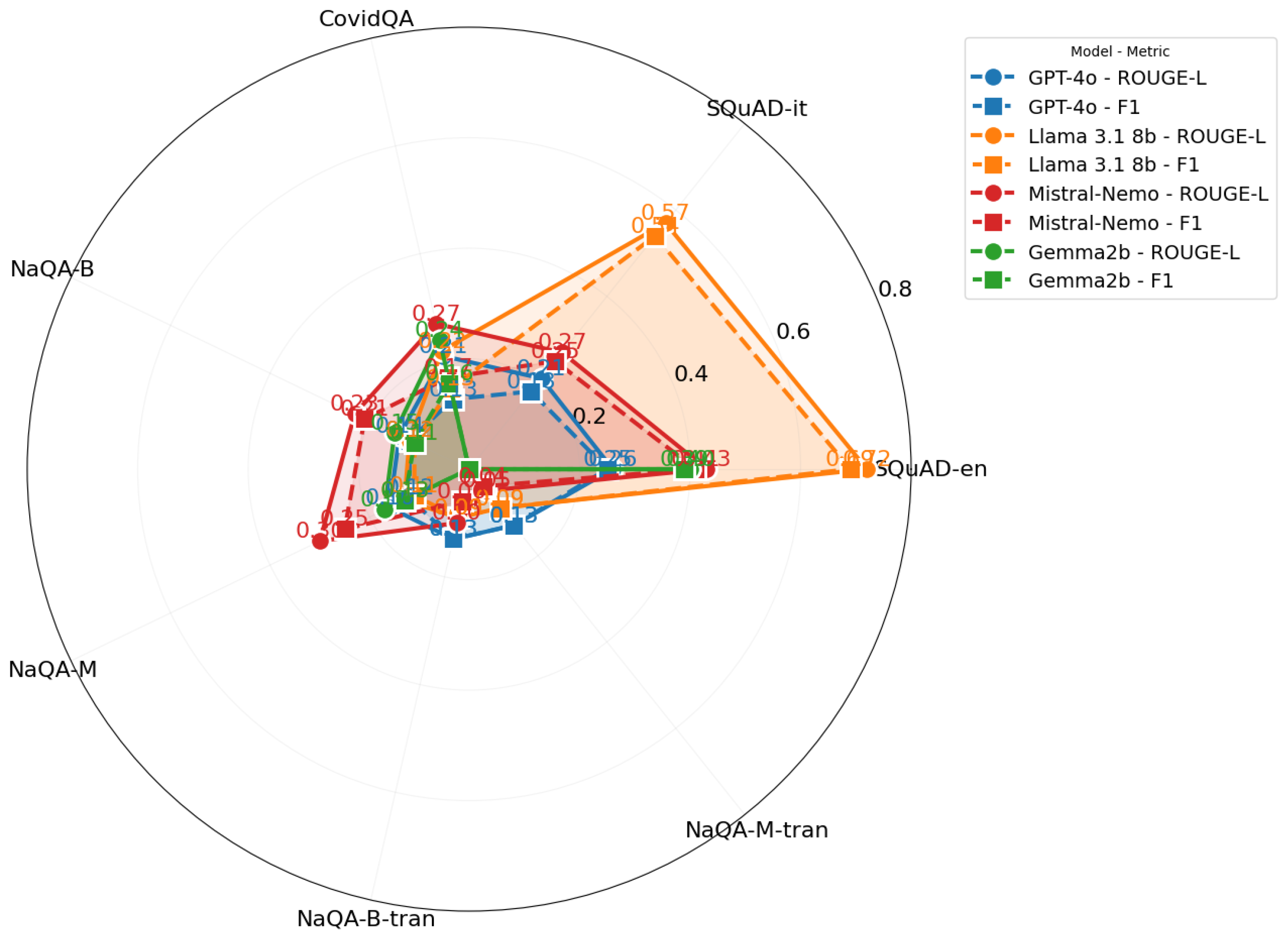

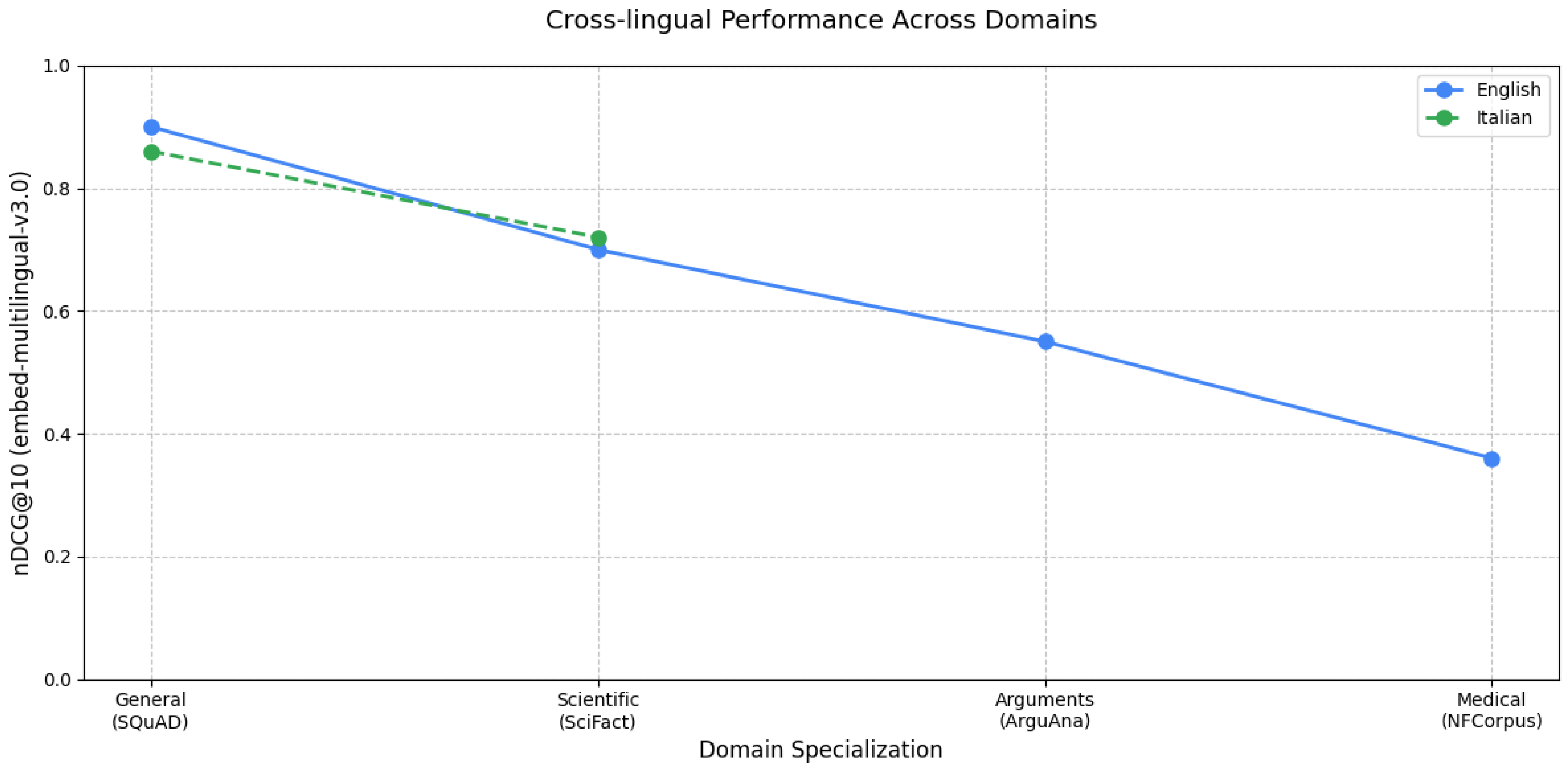

For QA tasks, we focus on RAG pipelines, which integrate dense retrieval with LLMs for answer generation. For the retrieval component of our RAG pipeline, we selected the Cohere embed-multilingual-v3.0 model based on its performance in our IR experiments: it consistently achieved the highest scores on both English (0.90) and Italian (0.86) tasks, making it well suited to cross-lingual retrieval. We configured it to retrieve the top 10 passages for each query, balancing context coverage with computational efficiency. We tested different LLMs for answer generation, comparing a widely used commercial API model with open-source alternatives.

Table 3 provides an overview of the LLMs used in our study.

Rationale: This selection of LLMs represents a range of model sizes and architectures, allowing us to assess the impact of these factors on QA performance. The number of parameters for the OpenAI GPT-4o model is not disclosed but is likely greater than 175 billion.

3.5. Evaluation Metrics

We employ a set of evaluation metrics to assess both IR and QA performance. We focus on NDCG for IR tasks and a combination of reference-based (e.g., BERTScore, ROUGE) and reference-free metrics (e.g., Answer Relevance, Groundedness) for QA tasks. This diverse set of metrics allows for a multifaceted evaluation, capturing different aspects of model performance.

3.5.1. IR Evaluation Metric

For IR tasks, we primarily use the Normalized Discounted Cumulative Gain (NDCG) metric:

Normalized Discounted Cumulative Gain (NDCG@k) [27]:

Definition: A ranking quality metric comparing rankings to an ideal order where relevant items are at the top.

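Formula:

$$\mathrm{NDCG@}k = \frac{\mathrm{DCG@}k}{\mathrm{IDCG@}k}, \qquad \mathrm{DCG@}k = \sum_{i=1}^{k} \frac{rel_i}{\log_2(i+1)}$$

where $\mathrm{DCG@}k$ is the Discounted Cumulative Gain at $k$, $\mathrm{IDCG@}k$ is the Ideal DCG at $k$ (the DCG of the ideal ranking), $rel_i$ is the graded relevance of the item at position $i$, and $k$ is a chosen cutoff point. DCG measures the total relevance of the items in a list, with a positional discount that reflects the diminishing value of items further down the list.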

Range: 0 to 1, where 1 indicates a perfect match with the ideal order.

Use: Primary metric for evaluating ranking quality, with k typically set to 10. NDCG is used for experimental evaluation in different IR works, such as [50] and [51].

Rationale: NDCG is chosen as our primary IR metric because it effectively captures ranking quality, considering both the relevance and the position of retrieved items. It is particularly useful for evaluating systems where the order of results matters, making it well suited for assessing the performance of our embedding models in retrieval tasks.

Implementation: Available in PyTorch, TensorFlow, and the BEIR framework.
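For concreteness, the NDCG@k computation can be sketched in a few lines. This sketch assumes the linear-gain form of DCG, $rel_i / \log_2(i+1)$; some implementations use an exponential gain $2^{rel_i} - 1$ instead, and BEIR delegates the computation to `pytrec_eval`. Function and argument names are illustrative only.

```python
import math
from typing import Dict, List, Sequence


def dcg_at_k(relevances: Sequence[float], k: int) -> float:
    """Linear-gain DCG: position i (1-based) is discounted by log2(i + 1)."""
    # enumerate() is 0-based, so position i + 1 gets the discount log2(i + 2).
    return sum(rel / math.log2(i + 2) for i, rel in enumerate(relevances[:k]))


def ndcg_at_k(ranked_ids: List[str], qrels: Dict[str, float], k: int = 10) -> float:
    """NDCG@k of a ranked list of document ids against graded judgments."""
    gains = [qrels.get(doc_id, 0.0) for doc_id in ranked_ids]
    # The ideal ranking places all judged documents in decreasing order of relevance.
    ideal = sorted(qrels.values(), reverse=True)
    idcg = dcg_at_k(ideal, k)
    return dcg_at_k(gains, k) / idcg if idcg > 0 else 0.0


if __name__ == "__main__":
    print(ndcg_at_k(["d1", "d2", "d3"], {"d1": 1}))  # 1.0
    print(round(ndcg_at_k(["d1", "d2", "d3"], {"d2": 1}), 4))  # 0.6309
```

In the one-to-one DICE setting, where each title has exactly one relevant article, this reduces to $1/\log_2(r+1)$ for a hit at rank $r \le 10$ and 0 otherwise.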

3.5.2. QA Evaluation Metrics

For QA tasks, we employ both reference-based and reference-free metrics. Reference-based metrics use provided gold answers and may focus on either word overlap or semantic similarity. Reference-free metrics do not require gold answers, instead using LLMs to evaluate candidate answers along different dimensions.

Reference-based metrics:

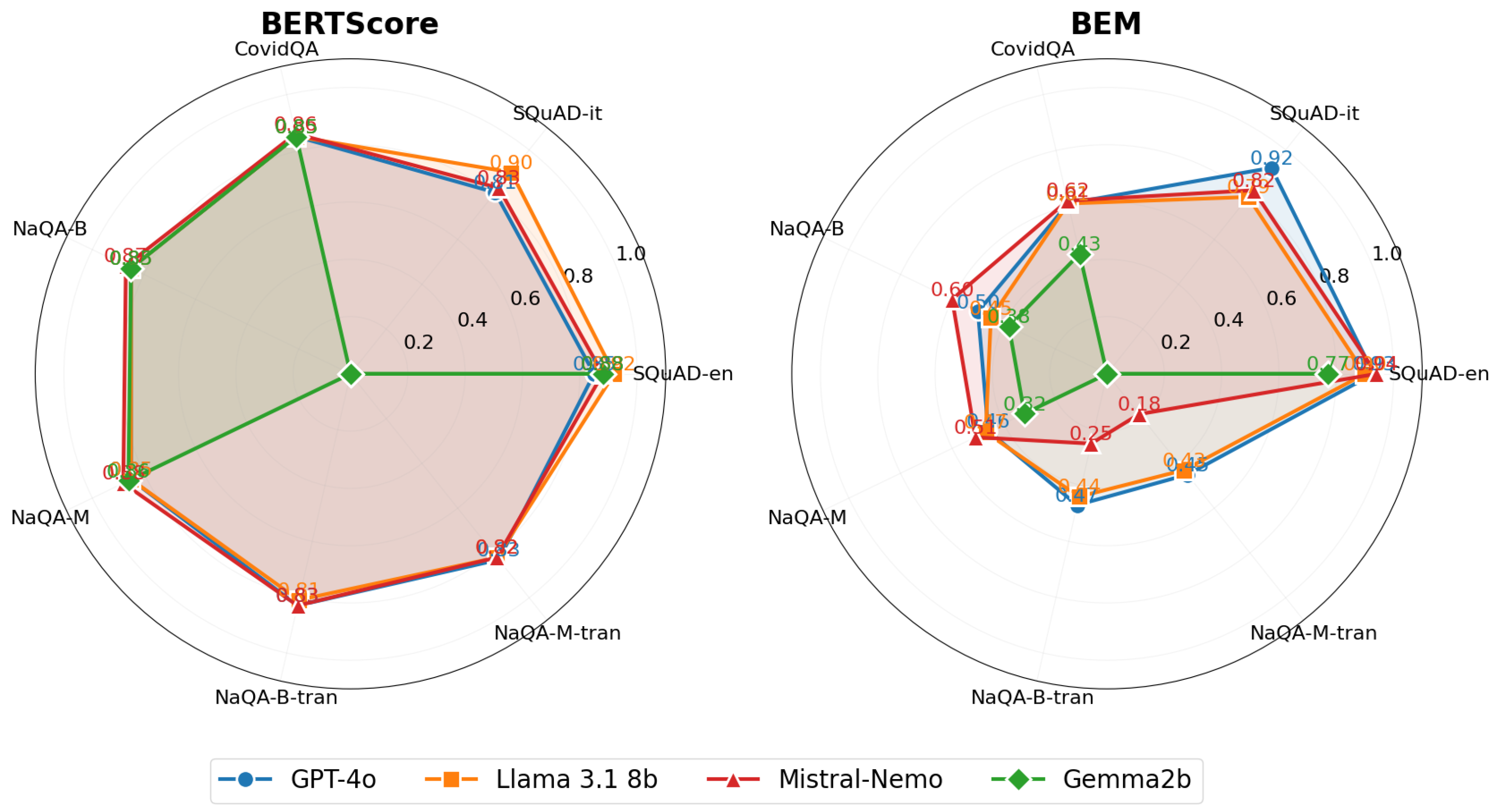

BERTScore [30]: Measures semantic similarity using contextual embeddings. BERTScore is a language generation evaluation metric based on pre-trained BERT contextual embeddings [11]. It matches each token in one sentence to its most similar token in the other by the cosine similarity of their embeddings, and aggregates these maximum similarities into precision, recall, and F1 scores. It can therefore reward candidates that are semantically similar to the reference but differ in form. This evaluation method is used in many papers, such as [52] and [53], and is often applied to question answering, summarization, and translation. It can be implemented using different libraries, including TensorFlow and HuggingFace.
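The greedy-matching idea behind BERTScore can be illustrated on precomputed token embeddings. This is a simplified sketch: it assumes the contextual embeddings are already available as arrays and omits the optional IDF weighting and baseline rescaling offered by the reference `bert-score` implementation; `greedy_bertscore` is a hypothetical name.

```python
from typing import Tuple

import numpy as np


def greedy_bertscore(cand_emb: np.ndarray, ref_emb: np.ndarray) -> Tuple[float, float, float]:
    """Precision, recall, and F1 from greedy cosine matching of token embeddings.

    cand_emb: (n_cand_tokens, dim), ref_emb: (n_ref_tokens, dim).
    """
    c = cand_emb / np.linalg.norm(cand_emb, axis=1, keepdims=True)
    r = ref_emb / np.linalg.norm(ref_emb, axis=1, keepdims=True)
    sim = c @ r.T  # pairwise cosine similarities, shape (n_cand, n_ref)
    precision = float(sim.max(axis=1).mean())  # each candidate token -> best reference token
    recall = float(sim.max(axis=0).mean())  # each reference token -> best candidate token
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom > 0 else 0.0
    return precision, recall, f1
```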

BEM (BERT-based Evaluation Metric) [54]: Uses a BERT model fine-tuned to assess answer equivalence. The model receives a question, a candidate answer, and a reference answer as input and returns a score quantifying the similarity between the candidate and reference answers. This evaluation method is used in some recent papers, such as [55] and [56]. It can be implemented using TensorFlow; the model trained for the answer equivalence task is available on TensorFlow Hub.

ROUGE [29]: Evaluates n-gram overlap between generated and reference answers. ROUGE (Recall-Oriented Understudy for Gisting Evaluation) is a set of metrics (ROUGE-1, ROUGE-2, ROUGE-L) used to evaluate text summarization and machine comprehension systems:

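ROUGE-N: Defined as the n-gram recall between a predicted text and a ground-truth text GT:

$$\text{ROUGE-N} = \frac{\sum_{g_n \in \mathrm{GT}} \mathrm{Count}_{\mathrm{match}}(g_n)}{\sum_{g_n \in \mathrm{GT}} \mathrm{Count}(g_n)}$$

where $g_n$ ranges over the n-grams of the ground-truth text and $\mathrm{Count}_{\mathrm{match}}(g_n)$ is the maximum number of times $g_n$ co-occurs in the candidate text and the ground-truth text. The denominator is the total number of n-grams occurring in the ground-truth text.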

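ROUGE-L: Calculates an F-measure based on the Longest Common Subsequence (LCS); the idea is that the longer the LCS of two texts, the more similar they are. Given the ground truth $X$ of length $m$ and the prediction $Y$ of length $n$, the formal definition is:

$$F_{lcs} = \frac{(1+\beta^2)\,R_{lcs}\,P_{lcs}}{R_{lcs} + \beta^2 P_{lcs}}$$

where

$$R_{lcs} = \frac{\mathrm{LCS}(X,Y)}{m} \quad \text{and} \quad P_{lcs} = \frac{\mathrm{LCS}(X,Y)}{n},$$

and $\beta$ controls the relative weight of recall with respect to precision.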

ROUGE metrics are very popular in NLP tasks involving text generation, such as summarization and question answering [57]. The advantage of ROUGE is that it allows us to estimate the quality of a generative model’s output in common NLP tasks in a largely language-independent way. Its main disadvantage is that, being based on surface-level n-gram overlap, it does not capture semantic similarity, so correct answers that paraphrase the reference can receive low scores.

ROUGE metrics are implemented in PyTorch, TensorFlow, and HuggingFace.
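The ROUGE-N and ROUGE-L definitions can be illustrated with a minimal implementation. This sketch assumes lowercased whitespace tokenization and no stemming, so its scores may differ slightly from library implementations that apply their own tokenizers or stemmers.

```python
from collections import Counter
from typing import List


def ngrams(tokens: List[str], n: int) -> Counter:
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n_recall(prediction: str, reference: str, n: int = 1) -> float:
    """ROUGE-N as n-gram recall against the ground-truth (reference) text."""
    pred = ngrams(prediction.lower().split(), n)
    ref = ngrams(reference.lower().split(), n)
    total = sum(ref.values())
    if total == 0:
        return 0.0
    # Counter & keeps the minimum count per n-gram, i.e. the clipped co-occurrence count.
    return sum((pred & ref).values()) / total


def lcs_length(x: List[str], y: List[str]) -> int:
    """Length of the longest common subsequence, via row-by-row dynamic programming."""
    prev = [0] * (len(y) + 1)
    for xi in x:
        curr = [0]
        for j, yj in enumerate(y, start=1):
            curr.append(prev[j - 1] + 1 if xi == yj else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(prediction: str, reference: str, beta: float = 1.0) -> float:
    """LCS-based F-measure; X is the ground truth (length m), Y the prediction (length n)."""
    x, y = reference.lower().split(), prediction.lower().split()
    if not x or not y:
        return 0.0
    lcs = lcs_length(x, y)
    if lcs == 0:
        return 0.0
    r, p = lcs / len(x), lcs / len(y)
    return (1 + beta ** 2) * r * p / (r + beta ** 2 * p)


if __name__ == "__main__":
    pred, ref = "the cat sat on the mat", "the cat is on the mat"
    print(round(rouge_n_recall(pred, ref), 4), round(rouge_l(pred, ref), 4))  # 0.8333 0.8333
```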

F1 Score: Harmonic mean of the precision and recall of word overlap between generated and reference answers:

$$F_1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$$

This score summarizes both aspects of a classification problem, precision and recall, in a single value. The F1 score is a very popular metric for evaluating Artificial Intelligence and Machine Learning systems on classification tasks [58]. In question answering, two popular benchmark datasets that use F1 as one of their evaluation metrics are SQuAD [34] and TriviaQA [59]. The advantages of the F1 score are:

It can handle unbalanced classes well.

It captures and summarizes in a single metric, both the aspects of Precision and Recall.

The main disadvantage is that, when reported alone, the F1 score can be harder to interpret, since different combinations of precision and recall can yield the same value. The F1 score can be used in both Information Extraction and Question Answering settings, and it is implemented in all the popular Machine/Deep Learning and Data Analysis libraries, such as Scikit-learn, PyTorch, and TensorFlow.
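Word-overlap F1 and Exact Match for QA are usually computed after answer normalization. The sketch below follows the normalization of the SQuAD v1.1 evaluation script (lowercasing, removing punctuation and the English articles a/an/the, collapsing whitespace); it is an illustration rather than the exact code used in this study, and the English article list would need adapting for Italian answers.

```python
import re
import string
from collections import Counter

PUNCTUATION = set(string.punctuation)


def normalize_answer(s: str) -> str:
    """Lowercase, strip punctuation, drop English articles, collapse whitespace."""
    s = s.lower()
    s = "".join(ch for ch in s if ch not in PUNCTUATION)
    s = re.sub(r"\b(a|an|the)\b", " ", s)
    return " ".join(s.split())


def exact_match(prediction: str, ground_truth: str) -> float:
    return float(normalize_answer(prediction) == normalize_answer(ground_truth))


def token_f1(prediction: str, ground_truth: str) -> float:
    """Harmonic mean of token precision and recall after normalization."""
    pred = normalize_answer(prediction).split()
    gold = normalize_answer(ground_truth).split()
    common = Counter(pred) & Counter(gold)  # multiset intersection of tokens
    num_same = sum(common.values())
    if num_same == 0:
        return 0.0
    precision = num_same / len(pred)
    recall = num_same / len(gold)
    return 2 * precision * recall / (precision + recall)
```

When a question has several gold answers, the usual convention is to take the maximum score over them.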

Reference-free metrics:

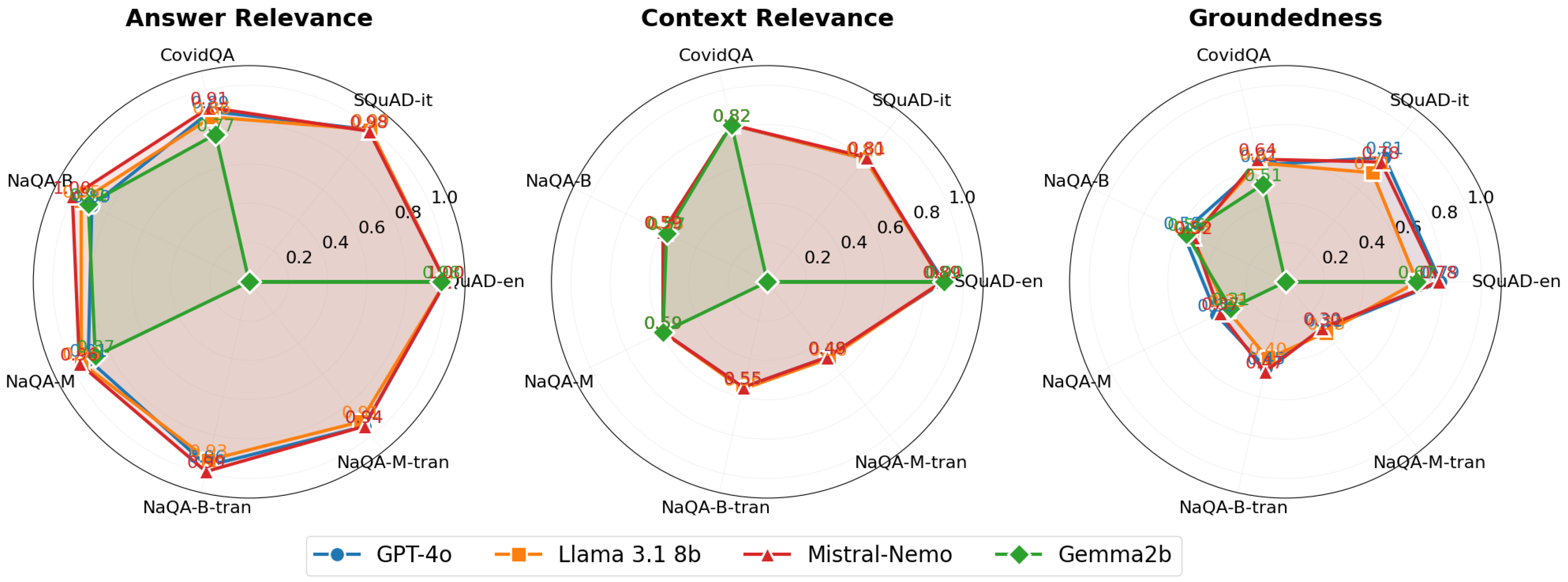

We then analyze three reference-free, LLM-based metrics: Context Relevance, Groundedness, and Answer Relevance. LLM-based metrics are recent and have been used in only a few papers, such as [60]. Retrieval Augmented Generation Assessment (RAGAs), introduced in [31], is an evaluation framework that uses Large Language Models to test RAG pipelines. Metrics of this kind are implemented in ARES³⁷ [33] and in the TruLens library; in this paper, we compute them with TruLens, using GPT-3.5-turbo as the evaluator LLM. The remaining metrics are implemented using standard libraries and custom scripts. Together, traditional IR metrics, reference-based QA metrics, and reference-free metrics provide a holistic view of model capabilities, allowing for nuanced comparisons across approaches and datasets.

Context Relevance: Evaluates the relevance of the retrieved context to the question, i.e., whether the returned passage is relevant for answering the given query. It is therefore useful for evaluating the retrieval (IR) component within the RAG pipeline.

Groundedness or Faithfulness: Assesses the degree to which the generated answer is supported by the documents retrieved in the RAG pipeline. It therefore measures whether the generated answer is faithful to the retrieved passage or contains hallucinated or extrapolated statements beyond it.

Answer Relevance: Measures the relevance of the generated answer to the query and retrieved passage.
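The general pattern behind these LLM-based metrics is to prompt a judge model with the question, context, and answer and to parse a numeric rating. The sketch below shows this pattern for groundedness only; the prompt wording, the 0 to 10 scale rescaled to [0, 1], and `groundedness_score` are hypothetical assumptions, not TruLens's actual prompts or API. `llm` stands for any callable that sends a prompt to a chat model (e.g., GPT-3.5-turbo) and returns its text reply.

```python
import re
from typing import Callable

# Hypothetical judge prompt; TruLens ships its own prompts, which are not reproduced here.
GROUNDEDNESS_PROMPT = (
    "You are grading a question-answering system.\n"
    "Rate from 0 to 10 how well every statement in the ANSWER is supported "
    "by the CONTEXT (0 = unsupported, 10 = fully supported). "
    "Reply with the number only.\n\n"
    "QUESTION: {question}\nCONTEXT: {context}\nANSWER: {answer}\n"
)


def groundedness_score(llm: Callable[[str], str], question: str, context: str, answer: str) -> float:
    """Ask an LLM judge for a 0-10 rating and rescale it to [0, 1]."""
    reply = llm(GROUNDEDNESS_PROMPT.format(question=question, context=context, answer=answer))
    # Take the first standalone integer between 0 and 10 in the reply.
    match = re.search(r"\b(10|[0-9])\b", reply)
    if match is None:
        raise ValueError(f"No score found in judge reply: {reply!r}")
    return int(match.group(1)) / 10.0
```

Context Relevance and Answer Relevance follow the same pattern with different instructions and inputs.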

Metric Classification:

We can classify all the previous metrics into two categories, according to whether they capture only syntactic aspects of the answer or also its semantics:

Syntactic metrics evaluate formal aspects of the response and include BLEU [61], ROUGE [29], Precision, Recall, F1, and Exact Match [34]. Because they focus on the formal properties of the text rather than its content or meaning, these metrics are generally considered less indicative of the semantic value of the generated responses.

Semantic metrics evaluate the meaning of the response and include BERTScore [30] and the BEM score [54]. We prefer the BEM score to BERTScore for its correlation with human evaluations, as reported in the original study, and because we empirically found that BERTScore tends to take values within a narrow interval of its possible range. The LLM-based metrics also belong to this group.

Manual Evaluation:

We conduct manual evaluations using a 5-point Likert scale. This method is less common because it is costly in both money and time, as it requires substantial work by human experts. We use manual evaluation principally to verify the reliability of automated evaluation metrics. Three independent human annotators with domain expertise evaluated the generated answers. For each evaluation session, the annotators were presented with the original question, the RAG system’s generated answer, and the ground truth from the dataset or customer answers. Annotators used the 5-point Likert scale to assess the quality of the generated answer with respect to the posed question, considering relevance, accuracy, and coherence. The scoring criteria were as follows:

Very Poor: The generated answer is totally incorrect or irrelevant to the question. This case indicates a failure of the system to comprehend the query or retrieve pertinent information.

Poor: The generated answer is predominantly incorrect but with glimpses of relevance suggesting some level of understanding or appropriate retrieval.

Neither: The generated answer mixes relevant and irrelevant information almost equally, showcasing the system’s partial success in addressing the query.

Good: The generated answer is largely correct but includes minor inaccuracies or irrelevant details, demonstrating a strong understanding and response to the question.

Very Good: Reserved for completely correct and fully relevant answers, reflecting an ideal outcome where the system accurately understood and responded to the query.

The annotators conducted their assessments independently to ensure unbiased evaluations. Upon completion, the scores for each question-answer pair were collected and compared. In cases of discrepancy, the annotators held a consensus discussion to agree on the most accurate score. This consensus process helped mitigate individual bias and brought different perspectives into the evaluation of the generated answers. The manual evaluation is particularly helpful for assessing the reliability and validity of our system’s automated evaluation metrics.

Inter-metric Correlation:

We use Spearman Rank Correlation [62] to assess the reliability of automated metrics against human evaluation. This non-parametric measure evaluates the statistical dependence between the rankings of two variables, i.e., how well their relationship can be described by a monotonic function. Because it is computed on ranks, it can be applied to both ordinal and continuous variables. The correlation coefficient ($\rho$) ranges from $-1$ to 1, where 1 indicates perfect positive correlation, 0 indicates no correlation, and $-1$ indicates perfect negative correlation.
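Because Likert scores contain many ties, Spearman's coefficient is best computed as the Pearson correlation of average ranks rather than with the tie-free shortcut formula. The sketch below does exactly that in pure Python; it should agree with `scipy.stats.spearmanr`, which uses the same tie handling, and the function names are illustrative.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the block of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = avg
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of the average ranks of x and y."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (vx * vy) ** 0.5


if __name__ == "__main__":
    human = [5, 4, 4, 2, 1]  # e.g. Likert scores for five answers
    metric = [0.91, 0.80, 0.85, 0.40, 0.35]
    print(round(spearman_rho(human, metric), 3))
```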

3.6. Experimental Design

Our experimental methodology aims to comprehensively evaluate embedding models and LLMs across multiple dimensions of IR and QA tasks. We structure our investigation around two complementary areas: (i) Information Retrieval performance, evaluating embedding models across domains and languages, and (ii) Question-answering capabilities, assessing LLM performance in RAG pipelines.

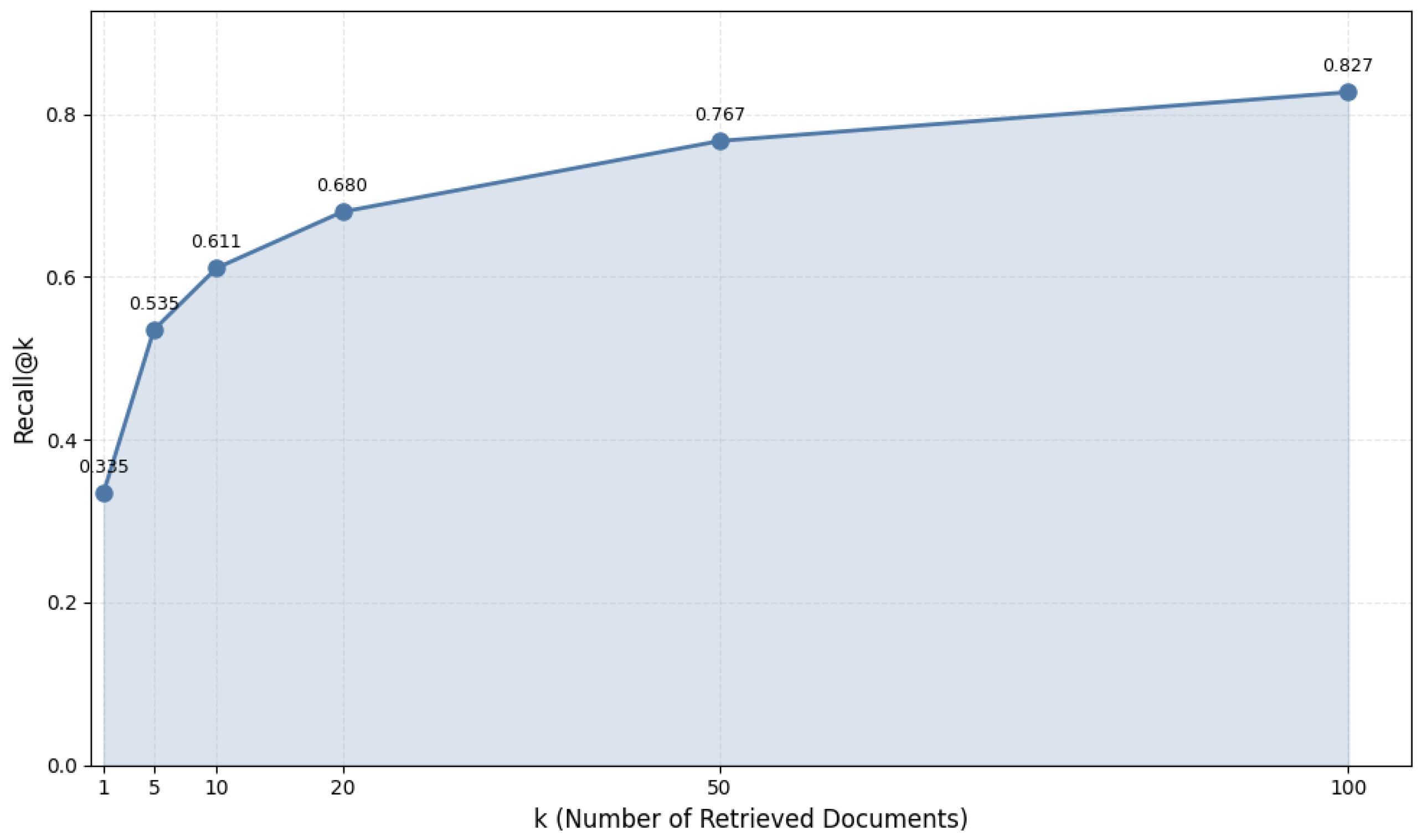

In the IR domain, we first evaluate embedding model performance across different domains, using datasets that span from general knowledge (SQuAD) to specialized scientific and medical content (SciFact, ArguAna, and NFCorpus). We complement this with a cross-language evaluation using Italian datasets (SQuAD-it and DICE) to assess how language-specific and multilingual models perform in non-English contexts. Additionally, we analyze the impact of retrieval size by varying the number of retrieved documents (k), with particular attention to recall metrics.

For QA tasks, our evaluation encompasses several dimensions. We assess LLM performance using both reference-based metrics (ROUGE-L, F1, BERTScore, BEM) and reference-free metrics (Answer Relevance, Context Relevance, Groundedness). We specifically test system capabilities in the general domain using SQuAD for both English and Italian, and in specialized domains using CovidQA for medical knowledge and NarrativeQA for narrative understanding. The cross-lingual aspect is explored using NarrativeQA with English documents and Italian queries, allowing us to measure the effectiveness of language transfer in QA contexts.

Across both IR and QA domains, we examine the relationship between model size and performance to understand scaling effects. Additionally, we perform manual assessments of system outputs to validate automated metrics and understand real-world effectiveness.

To ensure systematic evaluation, we implement the experiments following a structured methodology:

Dataset preparation: We preprocess and embed each dataset using the relevant embedding model

IR evaluation: For retrieval tasks, we implement top-k document retrieval and evaluate using NDCG@10

QA pipeline: For question answering, we implement the complete RAG pipeline and generate answers using multiple LLMs

Metric application: We apply our comprehensive set of evaluation metrics, including both reference-based and reference-free measures

Validation: We conduct the manual evaluation on carefully selected result subsets and analyze correlation with automated metrics

3.6.1. Hardware and Software Specifications

We conducted our experiments on the Google Colab platform³⁸. Our implementation uses Python with the following key components: (i) the LangChain framework for the RAG pipeline, (ii) the Milvus vector store for efficient similarity search, (iii) HuggingFace endpoints and the OpenAI and Cohere APIs for embedding models, and (iv) OpenAI and HuggingFace endpoints for large language models.

3.6.2. Procedure

For IR activities, we followed this procedure:

For QA tasks, we employed the following protocol:

- Data Preparation:
  - (a) Indexed documents using Cohere embed-multilingual-v3.0 (the best-performing IR model based on NDCG@10)
  - (b) Split documents into passages of 512 tokens without sliding windows, balancing semantic integrity with information relevance
- Query Processing: Encoded each query using the corresponding embedding model
- Retrieval Stage: Used Cohere embed-multilingual-v3.0 to retrieve the top-10 passages
- Answer Generation:
  - (a) Constructed bilingual prompts combining questions and retrieved passages
  - (b) Applied consistent prompt templates across all models and datasets
  - (c) Generated answers using each LLM

During generation, we employed the following prompt structure for both English and Italian tasks:

Table 4. Standardized prompts used for English and Italian QA tasks


English prompt:

You are a Question Answering system that is rewarded if the response is short, concise and straight to the point, use the following pieces of context to answer the question at the end. If the context doesn’t provide the required information simply respond <no answer>.

Context: {retrieved_passages}
Question: {human_question}
Answer:

Italian prompt:

Sei un sistema in grado di rispondere a domande e che viene premiato se la risposta è breve, concisa e dritta al punto, utilizza i seguenti pezzi di contesto per rispondere alla domanda alla fine. Se il contesto non fornisce le informazioni richieste, rispondi semplicemente <nessuna risposta>.

Context: {retrieved_passages}
Question: {human_question}
Answer:

This prompt structure provides explicit instructions and context to the language model, encouraging concise answers and an explicit "no answer" response, rather than a fabricated one, when the context lacks the required information.

- Evaluation:
  - (a) Computed reference-based metrics (BERTScore, BEM, ROUGE, BLEU, EM, F1) using generated answers and ground truth
  - (b) Used GPT-3.5-turbo to compute reference-free metrics (Answer Relevance, Groundedness, Context Relevance) through prompted evaluation

3.7. Cross-lingual and Domain Adaptation Experiments

To assess cross-lingual and domain adaptation capabilities:

- Cross-lingual:
  - (a) Evaluated multilingual models (e.g., E5, BGE) on both English and Italian datasets without fine-tuning
  - (b) Compared performance against monolingual models (BERTino for Italian)
- Domain Adaptation:
  - (a) Tested models trained on general-domain data (e.g., SQuAD) on specialized datasets (e.g., SciFact, NFCorpus)
  - (b) Analyzed performance changes when moving to domain-specific tasks

3.8. Reproducibility Measures

We implemented several measures to ensure experimental reproducibility:

All configurations, datasets (including the translated NarrativeQA), and detailed protocols are available in our public repository³⁹,⁴⁰.

3.9. Ethical Considerations

In conducting our experiments, we prioritized responsible research practices by following ethical guidelines. We ensured strict compliance with dataset licenses and usage agreements while maintaining complete transparency regarding our data sources and processing methods. This commitment to data rights and transparency forms the foundation of reproducible and ethical research.

For model deployment, we paid particular attention to the ethical use of API-based models like GPT-4o, adhering strictly to providers’ usage policies and rate limits. We thoroughly documented model limitations and potential biases in outputs, ensuring transparency about system capabilities and constraints. This documentation serves both to support reproducibility and to help future researchers understand the boundaries of these systems.

3.10. Limitations and Potential Biases

While our methodology strives for comprehensive evaluation, several important limitations warrant careful consideration when interpreting our results.

Our dataset selection, though diverse, cannot fully capture the complexity of real-world IR and QA scenarios. Despite including both general and specialized datasets, our coverage represents only a fraction of potential use cases across languages and domains. While our datasets span general knowledge (SQuAD), scientific content (SciFact), and specialized domains (NFCorpus), they cannot encompass the full breadth of linguistic variations and domain-specific applications.

Model accessibility imposed significant constraints on our evaluation scope. Access limitations to proprietary models and computational resource constraints prevented exhaustive experimentation with larger models.

The current state of evaluation metrics presents another important limitation. Though we employed both traditional metrics and specialized metrics, these measurements may not capture all nuanced aspects of model performance. This limitation becomes particularly apparent in complex QA tasks requiring sophisticated context understanding and reasoning capabilities. The challenge of quantifying aspects like answer relevance and factual accuracy remains an active area of research.

Practical resource constraints necessitated trade-offs in our experimental design. These limitations influenced our choices regarding sample sizes and the number of evaluation runs, though we worked to maximize the utility of available resources. For instance, our use of selected subsets from larger datasets (1.5-1.9% of original data) represents a necessary compromise between comprehensive evaluation and computational feasibility.

The temporal nature of our findings presents a final important consideration. Given the rapid evolution of NLP technology, our results represent a snapshot of model capabilities at a specific point in time. Future developments may shift the relative performance characteristics we observed, particularly as new models and architectures emerge.

These limitations collectively affect the generalizability of our results. To address these constraints, we have (i) maintained complete transparency in our experimental setup; (ii) documented all assumptions and methodological choices; (iii) employed diverse evaluation metrics where possible; and (iv) provided detailed documentation of our implementation choices.

While our findings have limitations, this approach ensures that they provide valuable insights within their defined scope and contribute meaningfully to the field’s understanding of multilingual IR and QA systems.