Submitted: 17 February 2025

Posted: 18 February 2025


Abstract

Keywords:

1. Introduction

2. Relevant Work

2.1. Supervised Fine-Tuning

2.2. Reinforcement Learning with Human Feedback (RLHF)

2.3. Constraint-Based Decoding

2.4. Prompt Engineering

2.5. Hybrid Constraint-Based Decoding and Prompt Engineering

3. Method

-

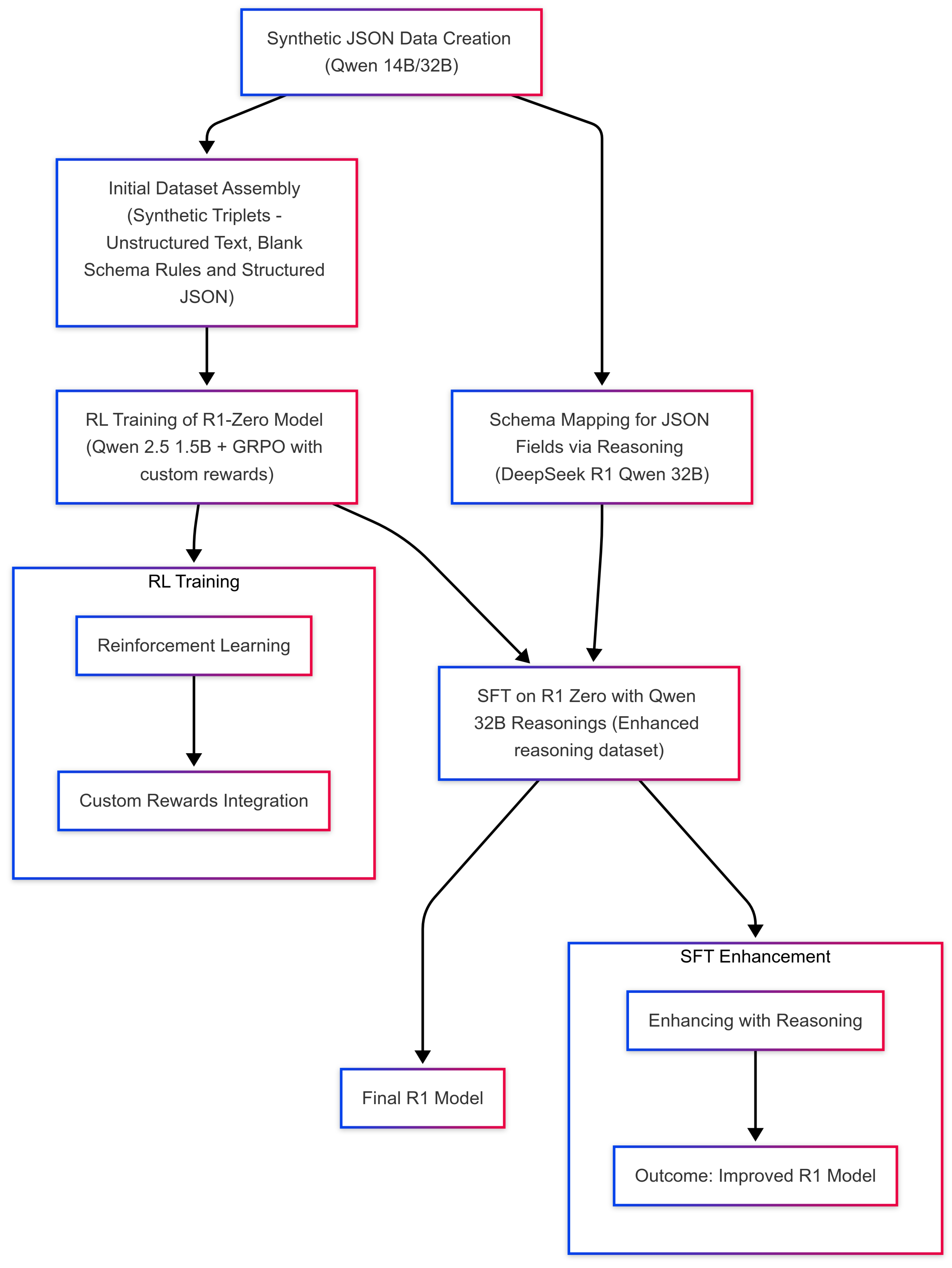

Build RL reasoning datasetCreate synthetic unstructured and structured data [10,11] in tandem using controlled prompts and Qwen 14B/32B [12],Reverse-engineer how unstructured text can map onto an empty JSON schema by engaging a distilled DeepSeek R1 Qwen 32B [13] to explain—step by step—how each schema field is populated.

-

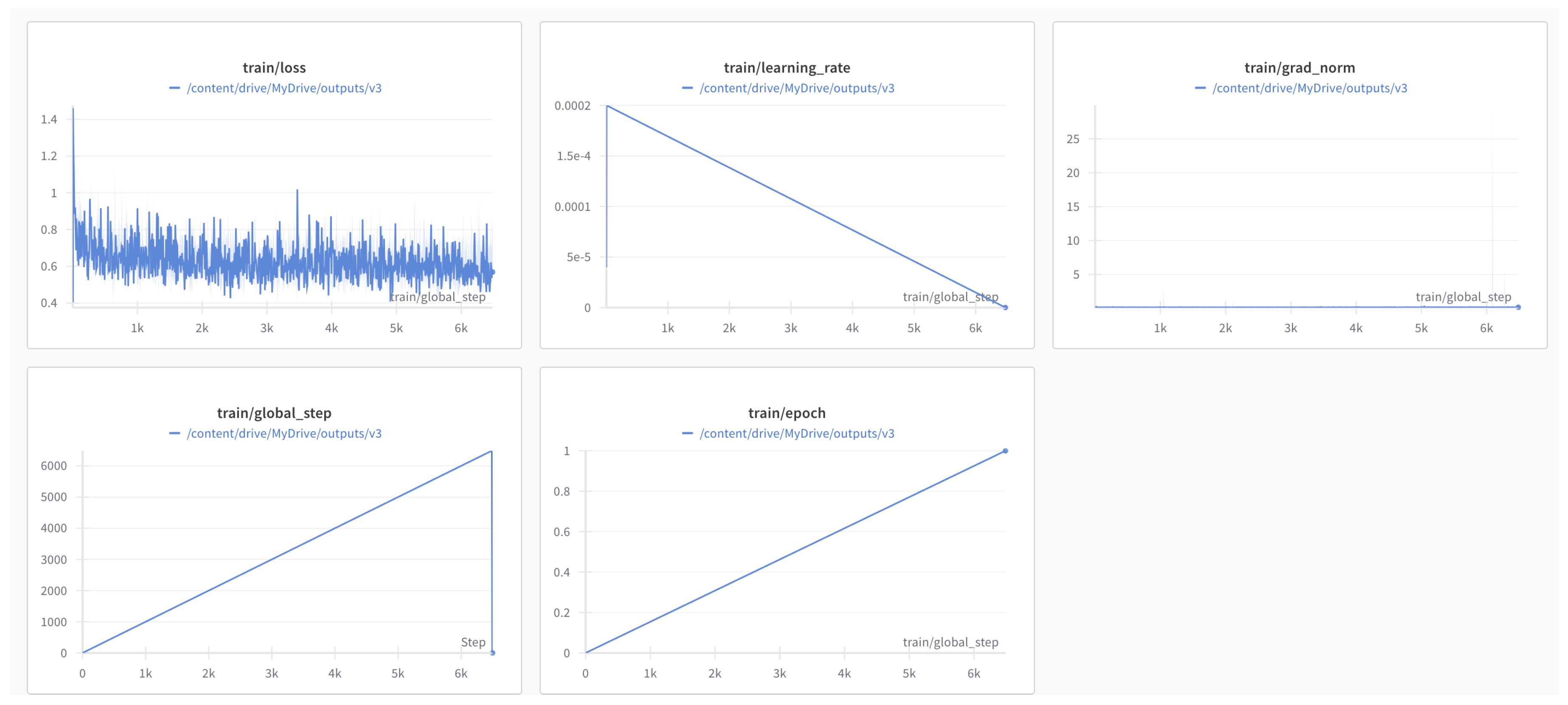

Train reasoning model with RL and SFT.Develop custom reward mechanisms that directly evaluate how well the outputs adhere to a predefined schema while balancing fluency, completeness, and correctness.Train R1-Zero reasoning model from Qwen 2.5 1.5B base model using RL [14,15] and synthetic unstructured-structured pair dataset, integrate custom rewards into GRPO [14] without altering the core policy optimization loop. The combined reward drives the training so that the model produces outputs that score highly on all relevant criteria.Fine tune R1-Zero model into R1 with supervised fine-tuning using reasoning dataset.

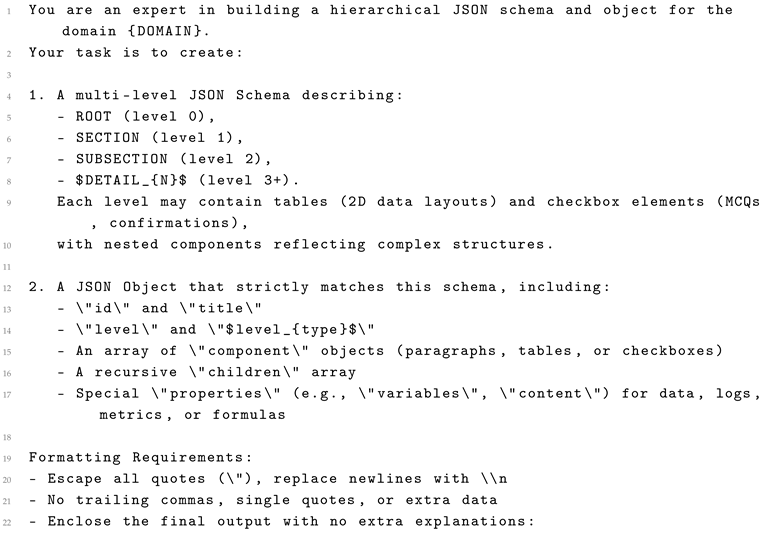

3.1. Generating Structured and Unstructured Data

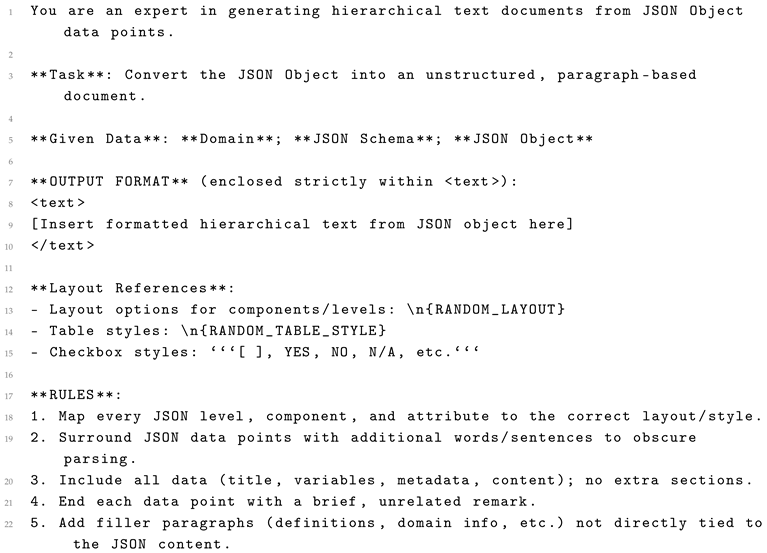

- In doing so, we create a synthetic corpus that covers a broad range of domain contexts, from general manufacturing logs to specialized quality assurance frameworks. Each piece of unstructured text is logically equivalent to a filled JSON schema, yet differs in structure, style, and formatting.
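Keeping each generated pair together makes the correspondence between the text and its filled schema checkable. The sketch below is illustrative only: the `SyntheticPair` record, the `leaf_values` and `missing_values` helpers, and the field names in the usage example are hypothetical, and the check is a cheap necessary condition (every filled-in value appears verbatim in the text), not a proof of logical equivalence.

```python
from dataclasses import dataclass
from typing import Any, Iterator, List


@dataclass
class SyntheticPair:
    """Hypothetical record for one unstructured/structured training pair."""
    schema: dict   # empty JSON schema the text should map onto
    filled: dict   # filled instance generated in tandem with the text
    text: str      # unstructured rendering of the same content


def leaf_values(obj: Any) -> Iterator[Any]:
    """Yield every scalar (non-dict, non-list) value in a nested JSON object."""
    if isinstance(obj, dict):
        for value in obj.values():
            yield from leaf_values(value)
    elif isinstance(obj, list):
        for item in obj:
            yield from leaf_values(item)
    else:
        yield obj


def missing_values(pair: SyntheticPair) -> List[Any]:
    """Return filled-in values that never appear verbatim (case-insensitive)
    in the text. An empty list is necessary, not sufficient, for the text
    and the filled JSON to carry the same information."""
    haystack = pair.text.lower()
    return [v for v in leaf_values(pair.filled)
            if v is not None and str(v).lower() not in haystack]
```

For example, a pair whose text reads "Batch B-104 was held at 37 °C; sign-off by J. Doe." and whose filled JSON holds those three values yields an empty list. Paraphrased values (e.g., "yes" for `true`) would be flagged, so a production filter would need looser matching.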

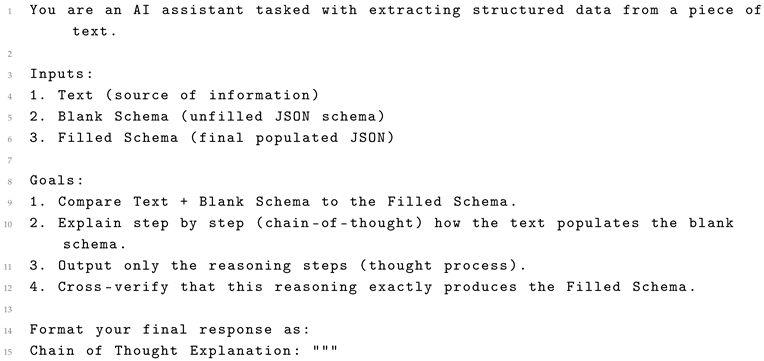

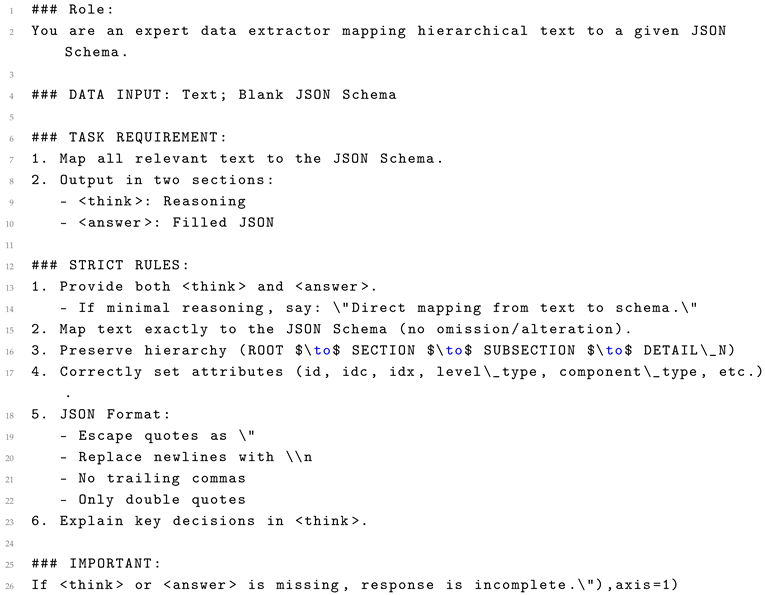

3.2. Reasoning Dataset: Reverse-Engineering from Text to Schema

- The LLM is instructed to output only its chain-of-thought reasoning, explicitly describing the mapping from text to schema. Such self-explaining prompts push the model to maintain strict schema fidelity while revealing the logic behind each structural decision. Because the prompt demands an explicit reasoning path, the LLM self-checks how each field is filled, reducing random or malformed output. The chain of thought not only helps ensure correctness but also documents how the text was interpreted, which is vital in regulated environments. By varying the domain (e.g., different types of QA reports) and the text layout style, we create a dataset that makes the LLM more robust to formatting quirks.
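A self-explaining prompt of this kind can be assembled mechanically from each synthetic pair. The sketch below assumes hypothetical wording and a hypothetical `build_reasoning_prompt` helper, and it assumes the generating model is shown the filled target alongside the empty schema; the exact prompt given to the distilled DeepSeek R1 Qwen 32B model is not specified in the text.

```python
import json


def build_reasoning_prompt(text: str, empty_schema: dict, filled: dict) -> str:
    """Assemble a hypothetical reverse-engineering prompt.

    The model sees the unstructured text, the empty schema, and the filled
    target, and is asked to output only the step-by-step reasoning that
    maps the text onto the schema.
    """
    return "\n\n".join([
        "You are given unstructured text, an empty JSON schema, and the filled schema.",
        "Explain step by step how each field of the schema is populated from the text.",
        "Output only your reasoning. Do not output the JSON itself.",
        "Text:\n" + text,
        "Empty schema:\n" + json.dumps(empty_schema, indent=2),
        "Filled schema:\n" + json.dumps(filled, indent=2),
    ])
```

Serializing both schemas with `json.dumps(..., indent=2)` keeps field names unambiguous for the model, and rotating the wording and text layout across pairs is one way to obtain the domain and style variation described above.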

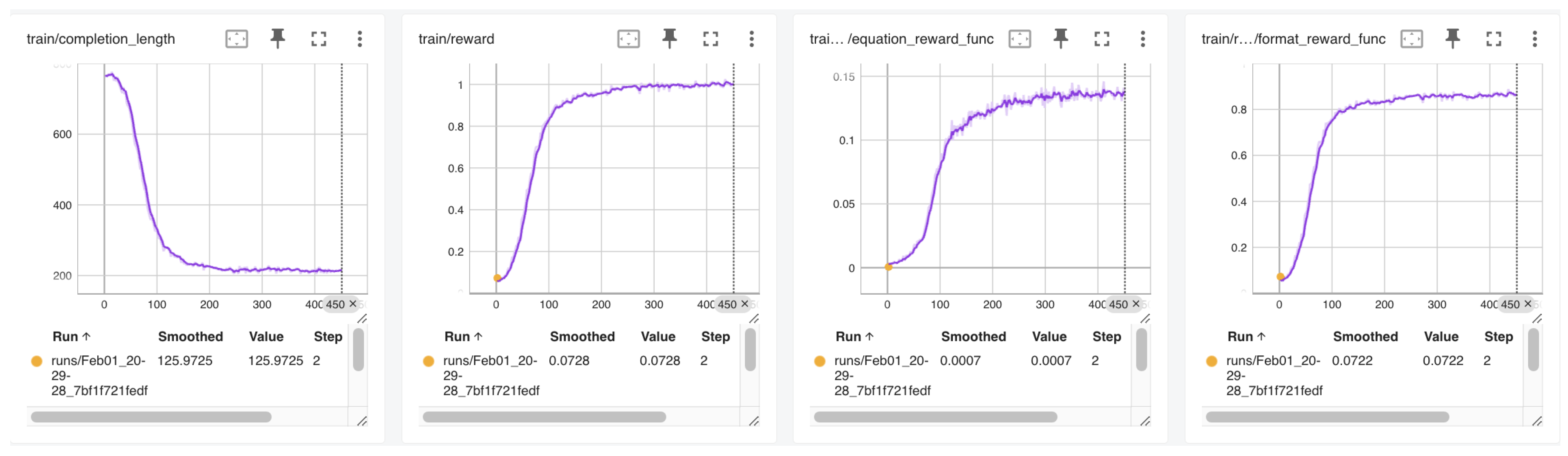

3.3. GRPO Training on a Small Foundation Model

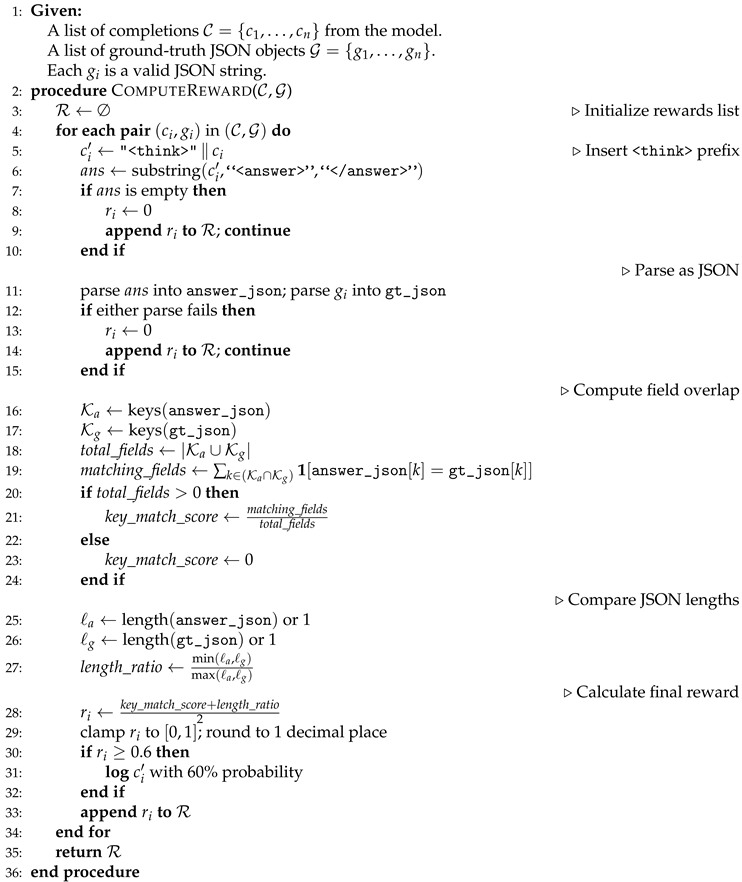

3.3.1. JSON-Based Reward

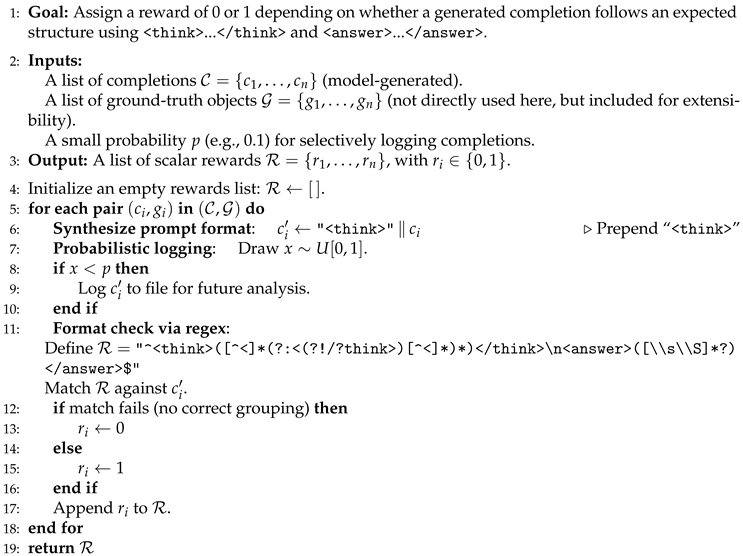

3.3.2. Format Verification Reward

Algorithm 1 JSON-Based Reward Computation
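A minimal sketch of a JSON-based reward, assuming it scores the fraction of ground-truth leaf fields that the model's answer reproduces exactly and assigns zero to unparseable output. The names `flatten`, `extract_json`, and `json_reward`, the `</think>` delimiter, and exact-match scoring are all assumptions for illustration; the paper's reward may weight or fuzzy-match fields differently.

```python
import json
from typing import Any, Dict


def flatten(obj: Any, prefix: str = "") -> Dict[str, Any]:
    """Map each leaf of nested JSON to a path such as 'lot.temp' or 'steps[0]'."""
    if isinstance(obj, dict):
        out: Dict[str, Any] = {}
        for key, value in obj.items():
            out.update(flatten(value, f"{prefix}.{key}" if prefix else str(key)))
        return out
    if isinstance(obj, list):
        items: Dict[str, Any] = {}
        for i, value in enumerate(obj):
            items.update(flatten(value, f"{prefix}[{i}]"))
        return items
    return {prefix: obj}


def extract_json(completion: str) -> Any:
    """Parse the answer after any </think> tag, from the first '{' to the
    last '}'. Returns None when no parseable object is found."""
    answer = completion.split("</think>")[-1]
    start, end = answer.find("{"), answer.rfind("}")
    if start == -1 or end < start:
        return None
    try:
        return json.loads(answer[start:end + 1])
    except json.JSONDecodeError:
        return None


def json_reward(completion: str, reference: Dict[str, Any]) -> float:
    """Fraction of reference leaf fields reproduced exactly (0.0 to 1.0).
    Unparseable or non-object output earns 0.0."""
    predicted = extract_json(completion)
    if not isinstance(predicted, dict):
        return 0.0
    truth, pred = flatten(reference), flatten(predicted)
    if not truth:
        return 0.0
    hits = sum(1 for path, value in truth.items() if pred.get(path) == value)
    return hits / len(truth)
```

Scoring by leaf path rather than whole-object equality gives partial credit, which yields a denser signal for RL than a pass/fail check.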

Algorithm 2 Format Verification Reward
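A format reward can be a simple layout check that is independent of field values. This sketch assumes an R1-style layout (reasoning inside `<think>...</think>`, followed by a single JSON object and nothing else); the regular expression, the `format_reward` name, and the 0.5 partial credit for correct tags with invalid JSON are hypothetical choices, not the paper's specification.

```python
import json
import re

# Hypothetical expected layout: reasoning inside <think>...</think>,
# then exactly one JSON object and nothing after it.
FORMAT_RE = re.compile(r"^\s*<think>(.+?)</think>\s*(\{.*\})\s*$", re.DOTALL)


def format_reward(completion: str) -> float:
    """1.0 if the completion follows the think-then-JSON layout and the
    trailing object parses; 0.5 if only the layout is right; 0.0 otherwise."""
    match = FORMAT_RE.match(completion)
    if match is None:
        return 0.0
    try:
        json.loads(match.group(2))
    except json.JSONDecodeError:
        return 0.5
    return 1.0
```

Keeping this check separate from the JSON-based reward lets training weight "is it well formed" and "is it correct" independently.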

Algorithm 3 GRPO with Multiple Reward Functions
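In GRPO [14], several completions are sampled per prompt and each completion's reward is normalized against its own group, so multiple reward functions only need to be combined into one scalar before that step. The sketch below covers just the reward aggregation and the group-relative advantage; the weights, the `combined_rewards` and `group_relative_advantages` names, and the `eps` value are assumptions, and the clipped policy update and KL penalty are left to the training loop, consistent with not altering the core optimization.

```python
import statistics
from typing import Callable, List, Sequence

# A reward function scores one completion against its reference object.
RewardFn = Callable[[str, dict], float]


def combined_rewards(completions: Sequence[str], reference: dict,
                     reward_fns: Sequence[RewardFn],
                     weights: Sequence[float]) -> List[float]:
    """Weighted sum of several reward functions for each sampled completion."""
    return [sum(w * fn(c, reference) for fn, w in zip(reward_fns, weights))
            for c in completions]


def group_relative_advantages(rewards: Sequence[float],
                              eps: float = 1e-4) -> List[float]:
    """Outcome-style GRPO advantage: subtract the group mean and divide by
    the group standard deviation (eps avoids division by zero when every
    completion in the group earns the same reward)."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]
```

For a group whose combined rewards are `[1.5, 2.5, 3.5]`, the middle completion gets an advantage of exactly zero and the other two receive equal and opposite advantages, so the policy is pushed toward outputs that beat their siblings on the combined criteria.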

3.4. Supervised Fine-Tuning

4. Evaluation

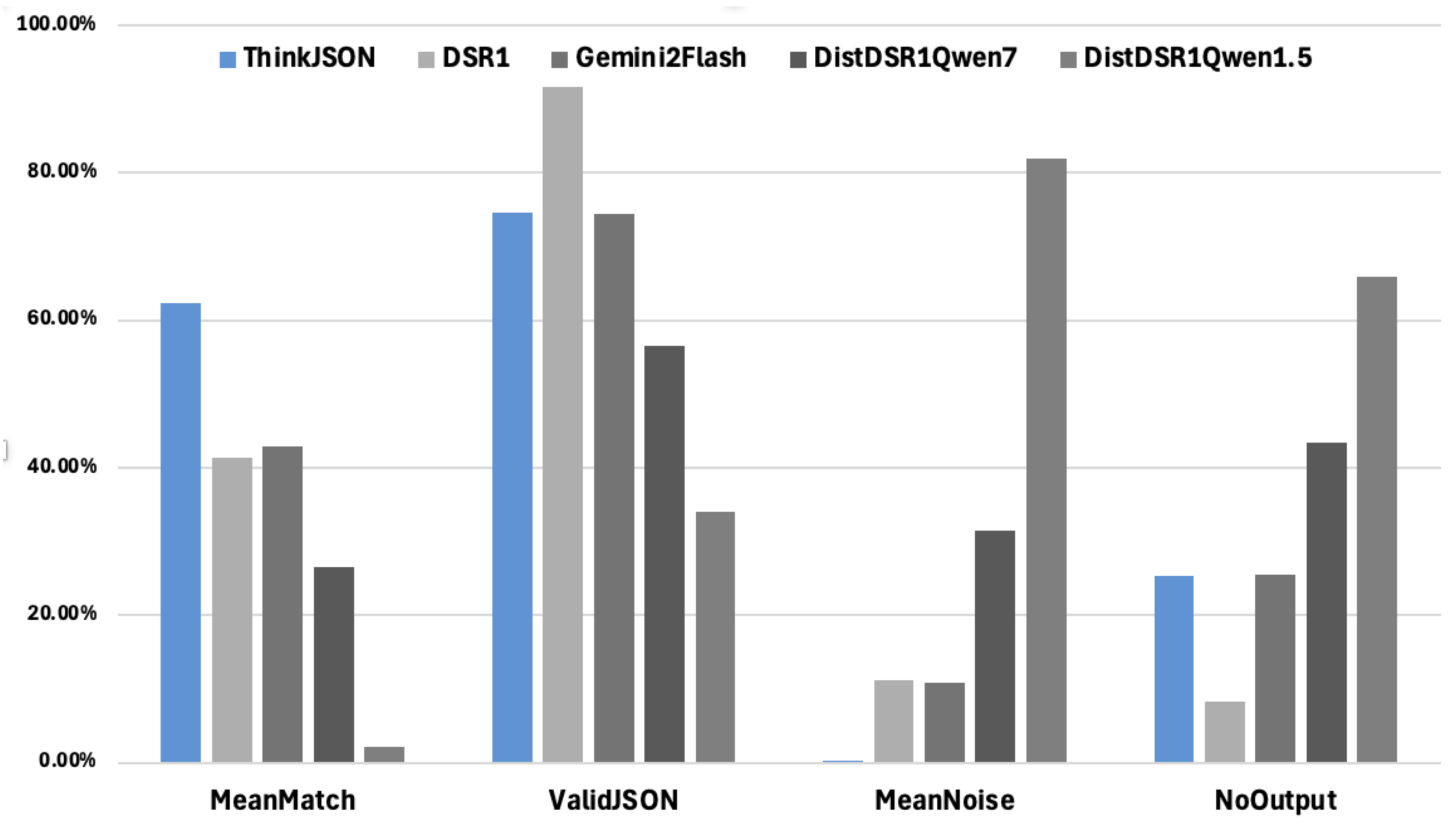

- Rows With No Output: Number of rows for which the model produced no structured output.

- Rows With Valid JSON: Number of rows resulting in syntactically valid JSON objects.

- Mean Match Percentage: Average proportion of fields correctly mapped.

- Mean Noise Percentage: Average proportion of extraneous or malformed tokens within the extracted JSON.
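The four metrics above can be computed from per-row model outputs and ground-truth objects. This is a sketch under stated assumptions: each output is the extracted JSON string (or empty/None), matching is exact per leaf field, rows with invalid JSON enter neither average, and noise is approximated as the share of predicted leaf fields that do not exist in the ground truth, a field-level stand-in for the token-level definition. The `evaluate` function and its result keys are hypothetical.

```python
import json
from typing import Any, Dict, List, Optional


def flatten(obj: Any, prefix: str = "") -> Dict[str, Any]:
    """Map each leaf of nested JSON to a path such as 'lot.temp' or 'steps[0]'."""
    if isinstance(obj, dict):
        out: Dict[str, Any] = {}
        for key, value in obj.items():
            out.update(flatten(value, f"{prefix}.{key}" if prefix else str(key)))
        return out
    if isinstance(obj, list):
        items: Dict[str, Any] = {}
        for i, value in enumerate(obj):
            items.update(flatten(value, f"{prefix}[{i}]"))
        return items
    return {prefix: obj}


def evaluate(outputs: List[Optional[str]], references: List[dict]) -> Dict[str, float]:
    """Compute no-output and valid-JSON row counts plus mean match/noise (%)."""
    no_output = valid = 0
    match_pcts: List[float] = []
    noise_pcts: List[float] = []
    for out, ref in zip(outputs, references):
        if out is None or not out.strip():
            no_output += 1
            continue
        try:
            pred = json.loads(out)
        except json.JSONDecodeError:
            continue  # produced output, but not valid JSON
        if not isinstance(pred, dict):
            continue
        valid += 1
        truth, got = flatten(ref), flatten(pred)
        hits = sum(1 for path, value in truth.items() if got.get(path) == value)
        extra = sum(1 for path in got if path not in truth)
        match_pcts.append(100.0 * hits / len(truth) if truth else 0.0)
        noise_pcts.append(100.0 * extra / len(got) if got else 0.0)
    return {
        "rows_with_no_output": no_output,
        "rows_with_valid_json": valid,
        "mean_match_pct": sum(match_pcts) / len(match_pcts) if match_pcts else 0.0,
        "mean_noise_pct": sum(noise_pcts) / len(noise_pcts) if noise_pcts else 0.0,
    }
```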

5. Discussion and Future Direction

References

1. Labant, M. Smart Biomanufacturing: From Piecemeal to All of a Piece. https://www.genengnews.com/topics/bioprocessing 2025.

2. M.L. et al. “We Need Structured Output”: Towards User-centered Constraints on Large Language Model Output. https://lxieyang.github.io/assets/files/pubs/llm-constraints-2024/llm-constraints-2024.pdf 2024.

3. S.G. et al. Generating Structured Outputs from Language Models: Benchmark and Studies. https://arxiv.org/html/2501.10868 2025.

4. C.S. et al. StructuredRAG: JSON Response Formatting with Large Language Models. https://arxiv.org/abs/2408.11061 2024.

5. Souza, T. Taming LLMs 2024.

6. D.L. et al. Large Language Model-Driven Structured Output: A Comprehensive Benchmark and Spatial Data Generation Framework. https://www.mdpi.com/2220-9964/13/11/405 2024.

7. Z.W. et al. Verifiable Format Control for Large Language Model Generations. https://arxiv.org/html/2502.04498 2025.

8. Y.D. et al. XGrammar: Flexible and Efficient Structured Generation Engine for Large Language Models. https://arxiv.org/pdf/2411.15100 2024.

9. Willard, B.T.; Louf, R. Efficient Guided Generation for Large Language Models. https://arxiv.org/pdf/2307.09702 2023.

10. A.M. et al. Self-Refine: Iterative Refinement with Self-Feedback. https://arxiv.org/abs/2303.17651 2023.

11. Y.W. et al. Self-Instruct: Aligning Language Models with Self-Generated Instructions. https://arxiv.org/abs/2212.10560 2022.

12. Qwen Team. Qwen2.5: A Party of Foundation Models. https://qwenlm.github.io/blog/qwen2.5 2024.

13. DeepSeek-AI. DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. https://arxiv.org/pdf/2501.12948 2025.

14. Z.S. et al. DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models. https://arxiv.org/pdf/arXiv:2402.03300 2024.

15. P.W. et al. Math-Shepherd: A Label-Free Step-by-Step Verifier for LLMs in Mathematical Reasoning. https://arxiv.org/pdf/2312.08935 2023.

16. C.D. et al. Reinforcement Learning Can Be More Efficient with Multiple Rewards. https://proceedings.mlr.press/v202/dann23a.html 2023.

17. Google AI Team. Generate Structured Output with the Gemini API. https://ai.google.dev/gemini-api/docs/structured-output?lang=python 2025.

18. N.E. et al. AI Maturity Model for GxP Application: A Foundation for AI Validation. https://ispe.org/pharmaceutical-engineering/march-april-2022/ai-maturity-model-gxp-application-foundation-ai 2022.

19. V.A. et al. An Overview on Pharmaceutical Regulatory Affairs Using Artificial Intelligence. https://www.ijpsjournal.com/article/An+Overview+on+Pharmaceutical+Regulatory+Affairs+Using+Artificial+Intelligence+ 2025.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).