Submitted: 10 February 2025

Posted: 12 February 2025

Abstract

Keywords:

1. Introduction

- derive the likelihood function from the truth function and source, enabling semantic probability predictions, thereby quantifying semantic information, and

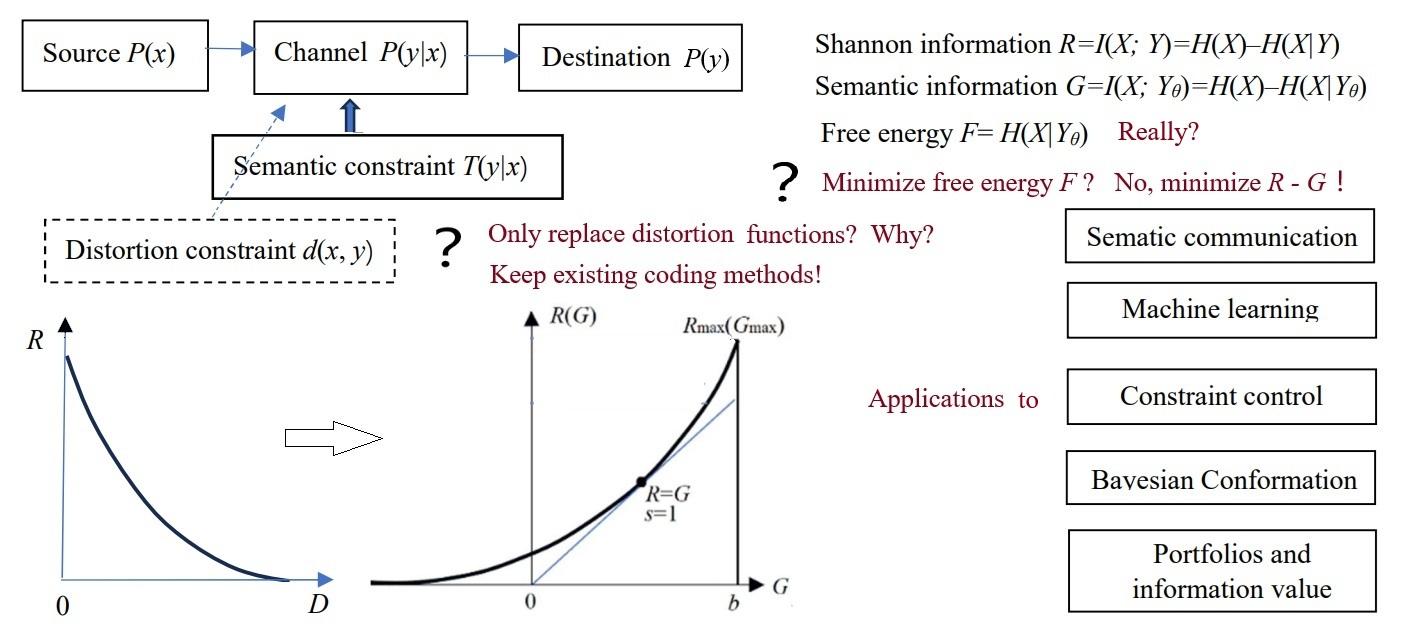

- replace the distortion constraint in Shannon's theory with semantic constraints, which include semantic distortion, semantic information quantity, and semantic information loss constraints.

2. From Shannon's Information Theory to the Semantic Information G Theory

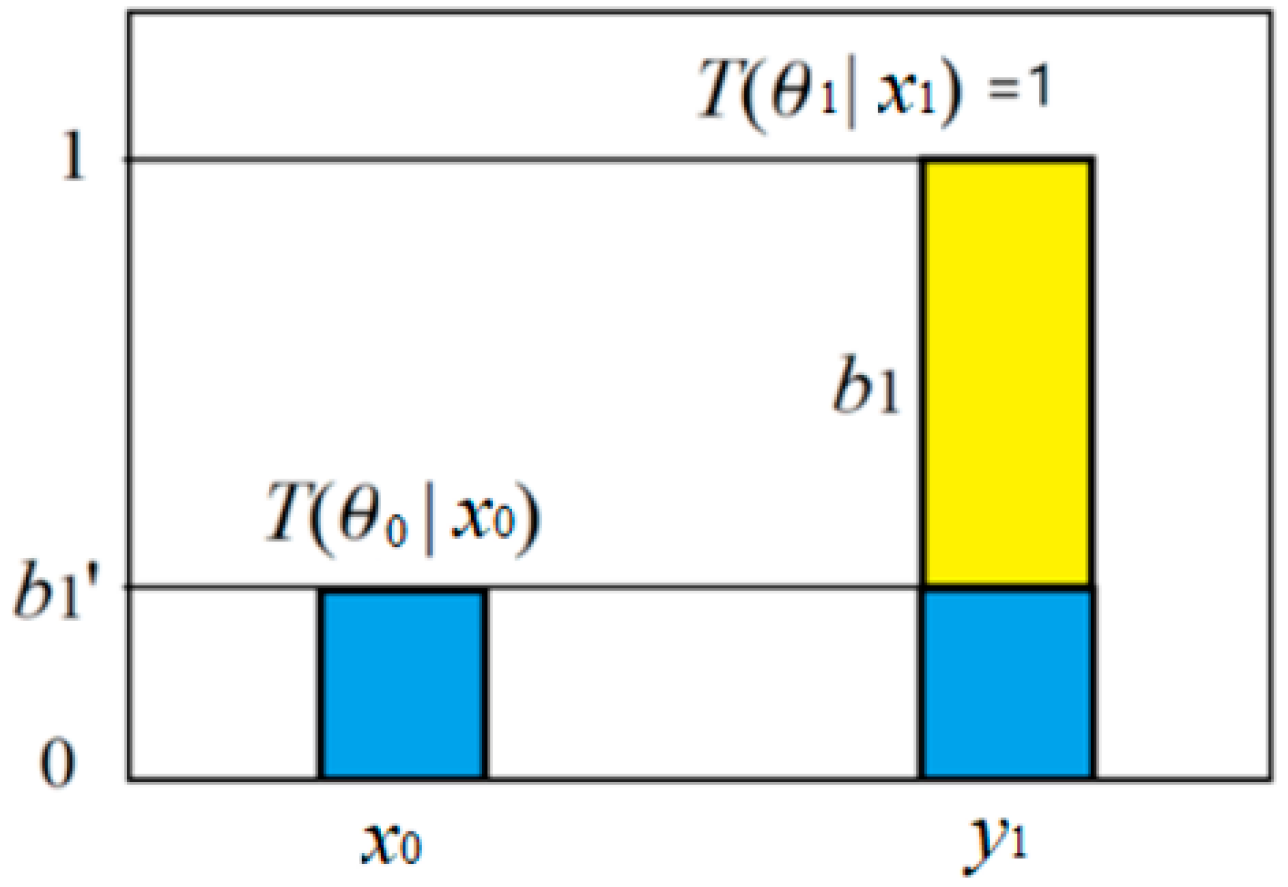

2.1. Semantics and Semantic Probabilistic Predictions

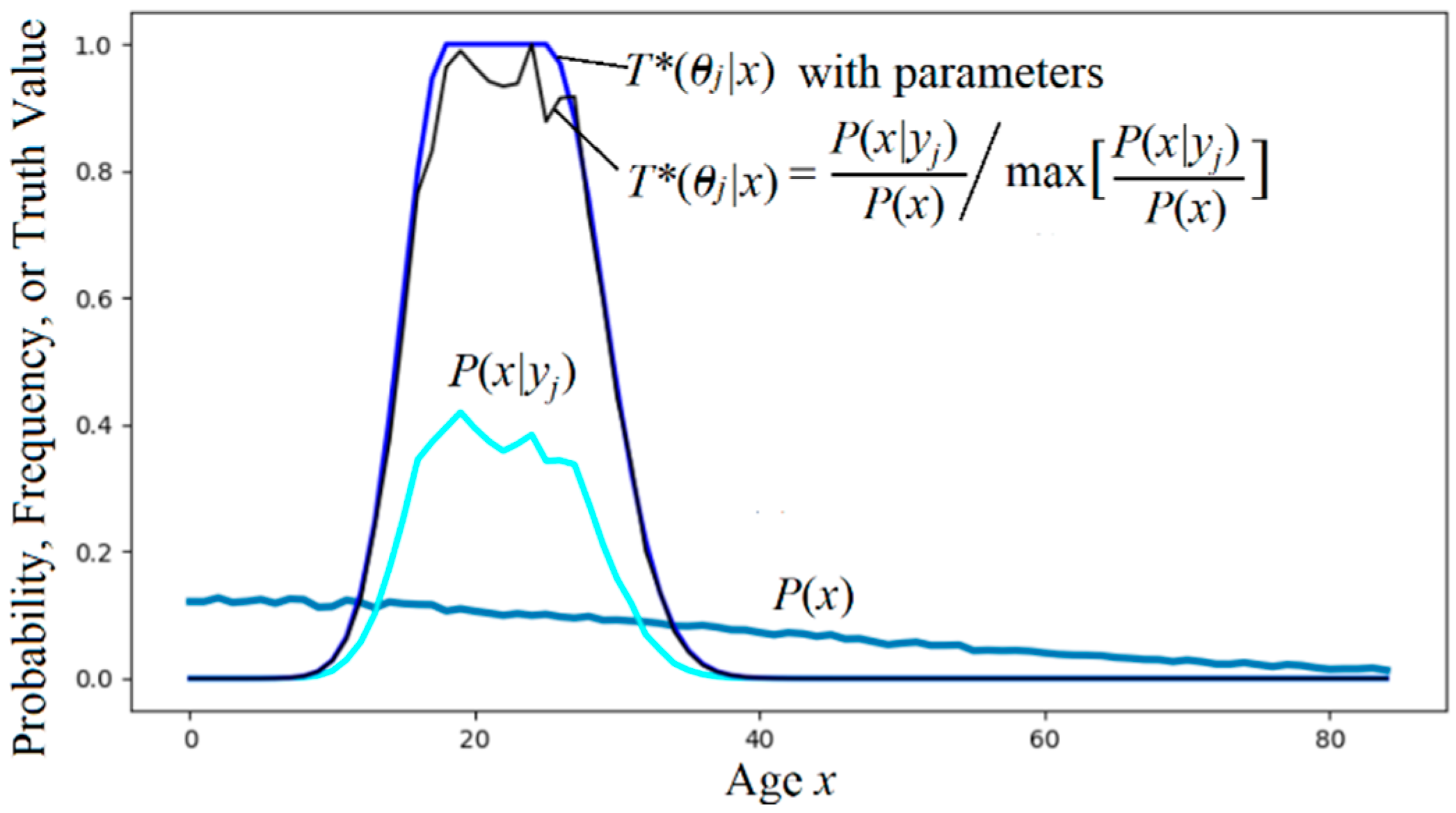

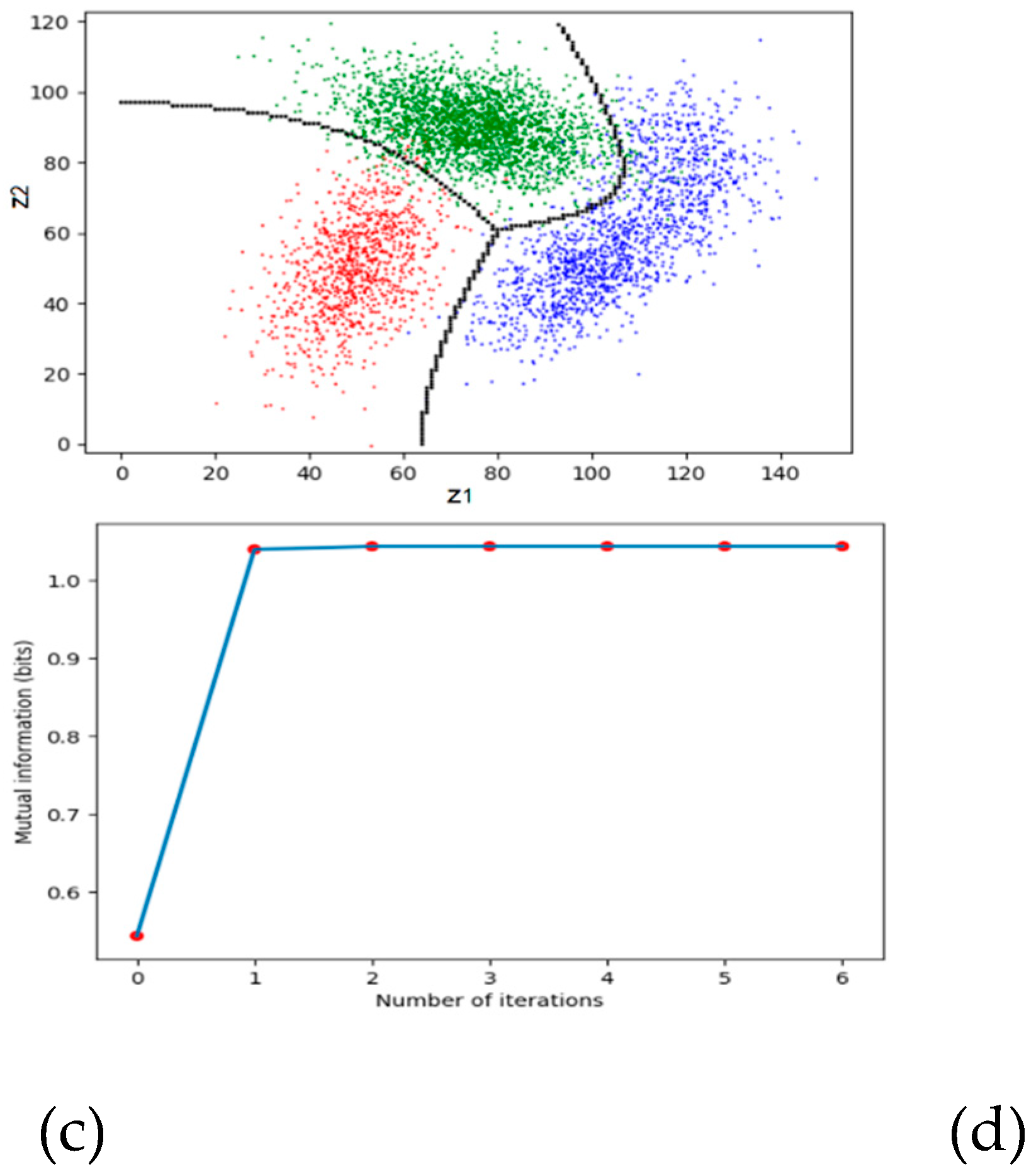

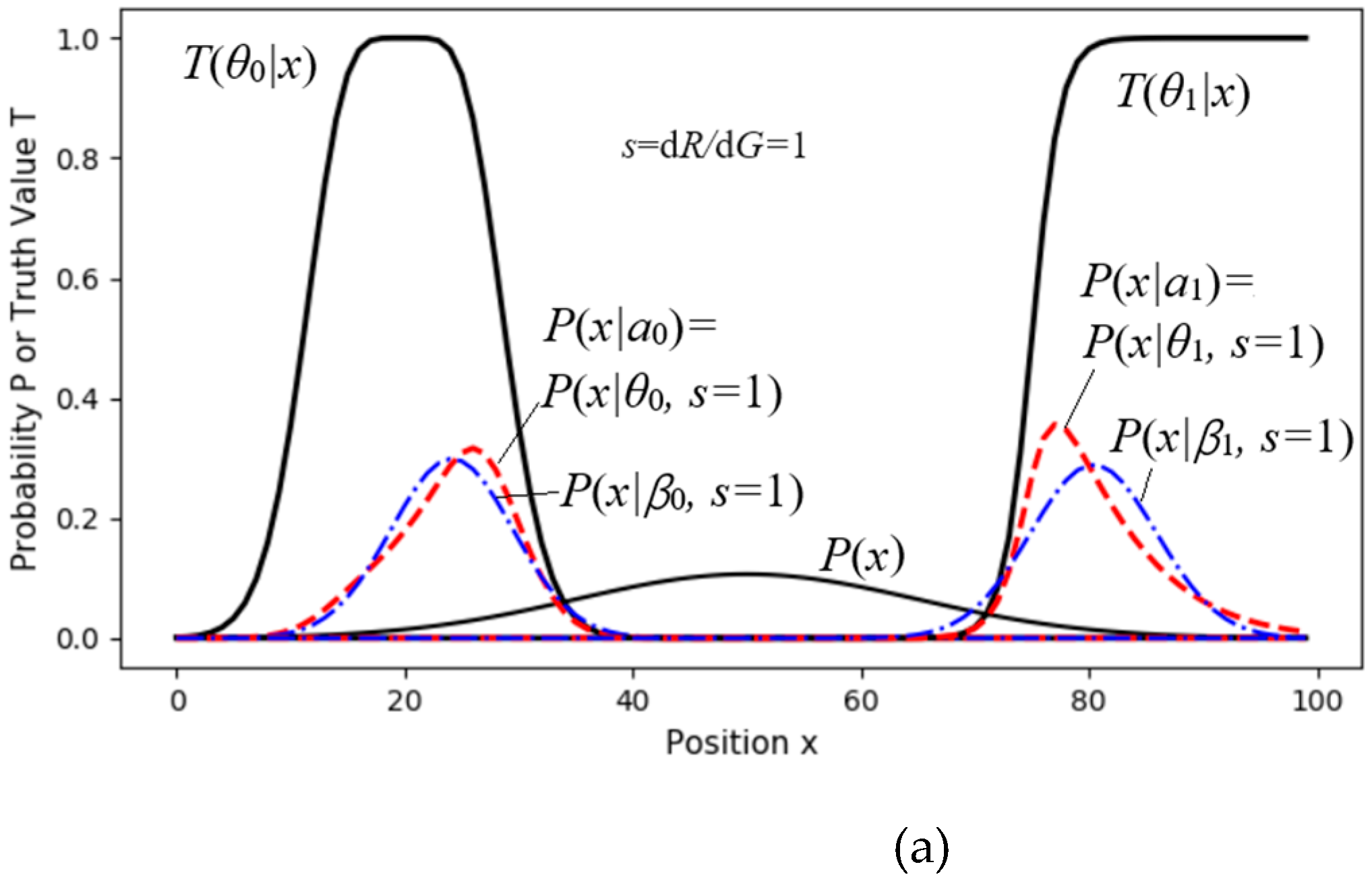

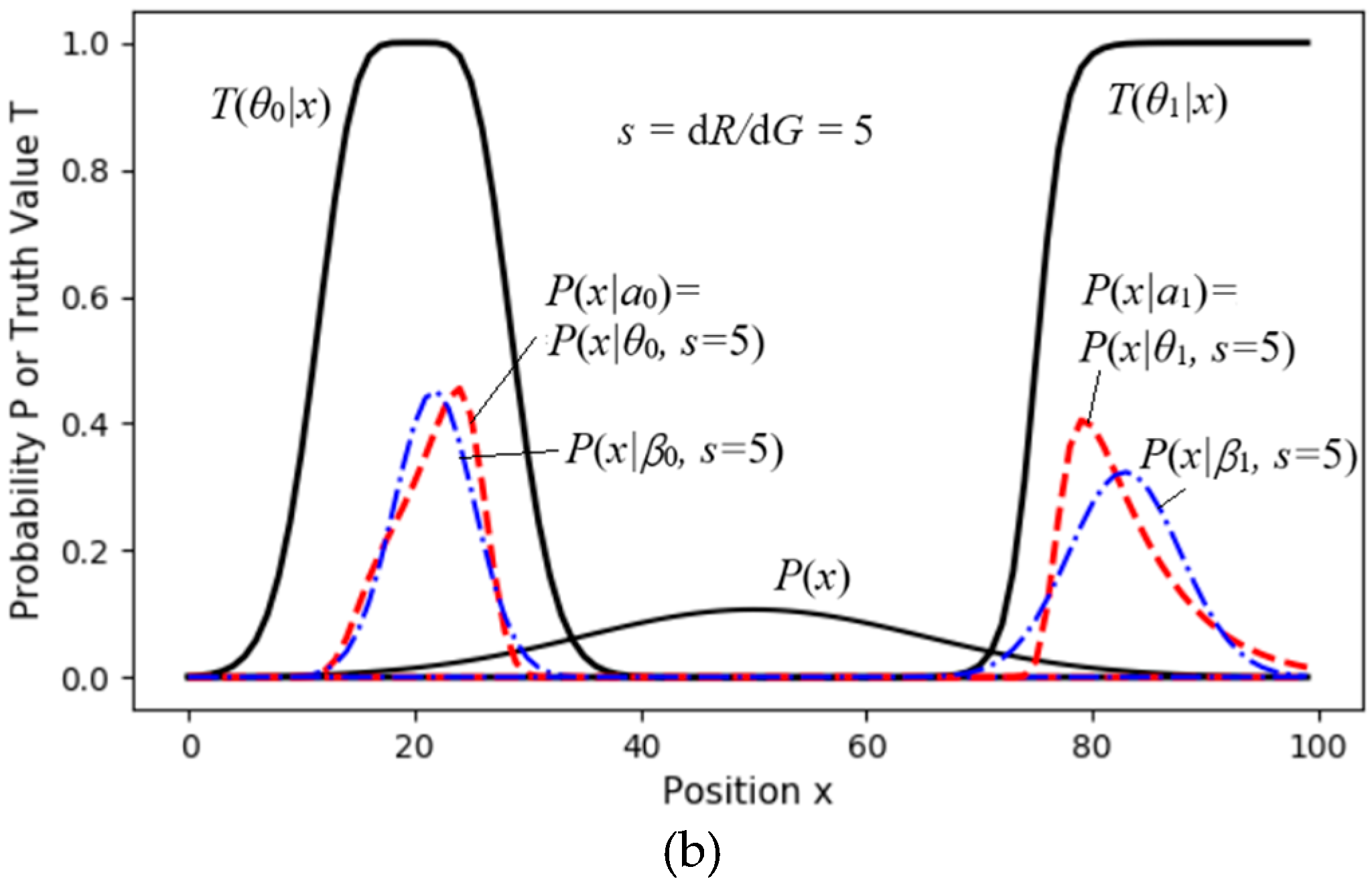

2.2. The P-T Probability Framework

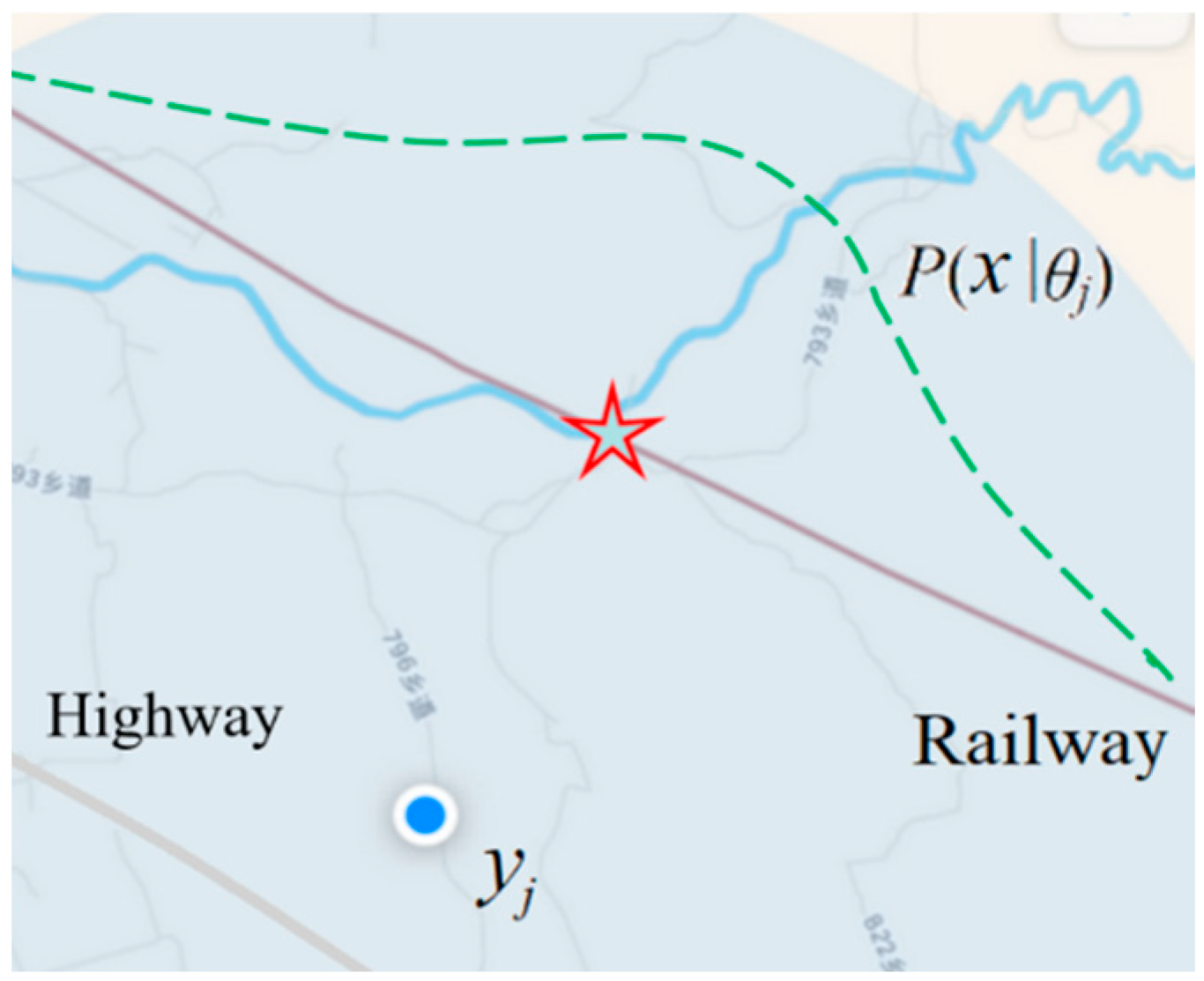

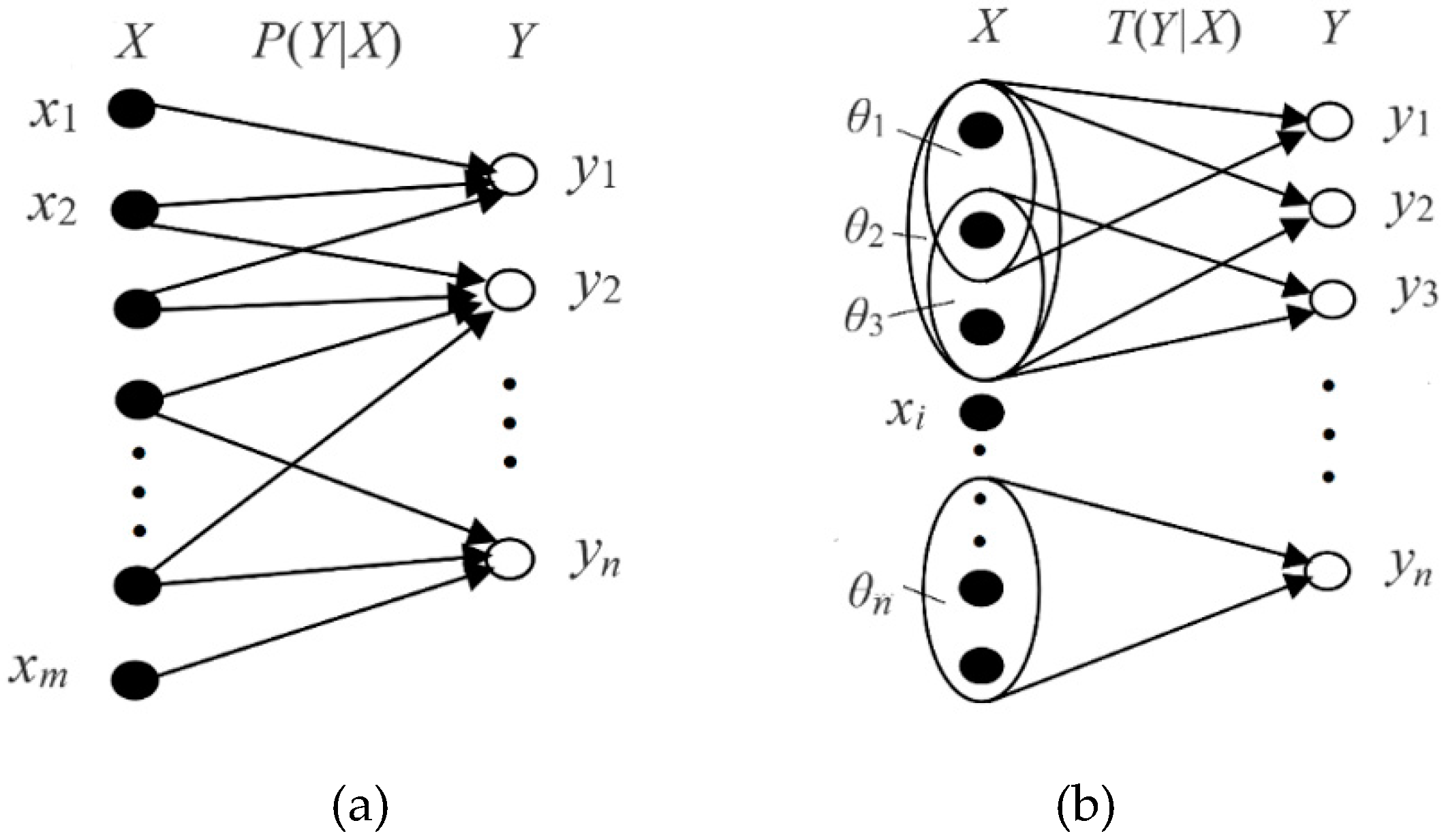

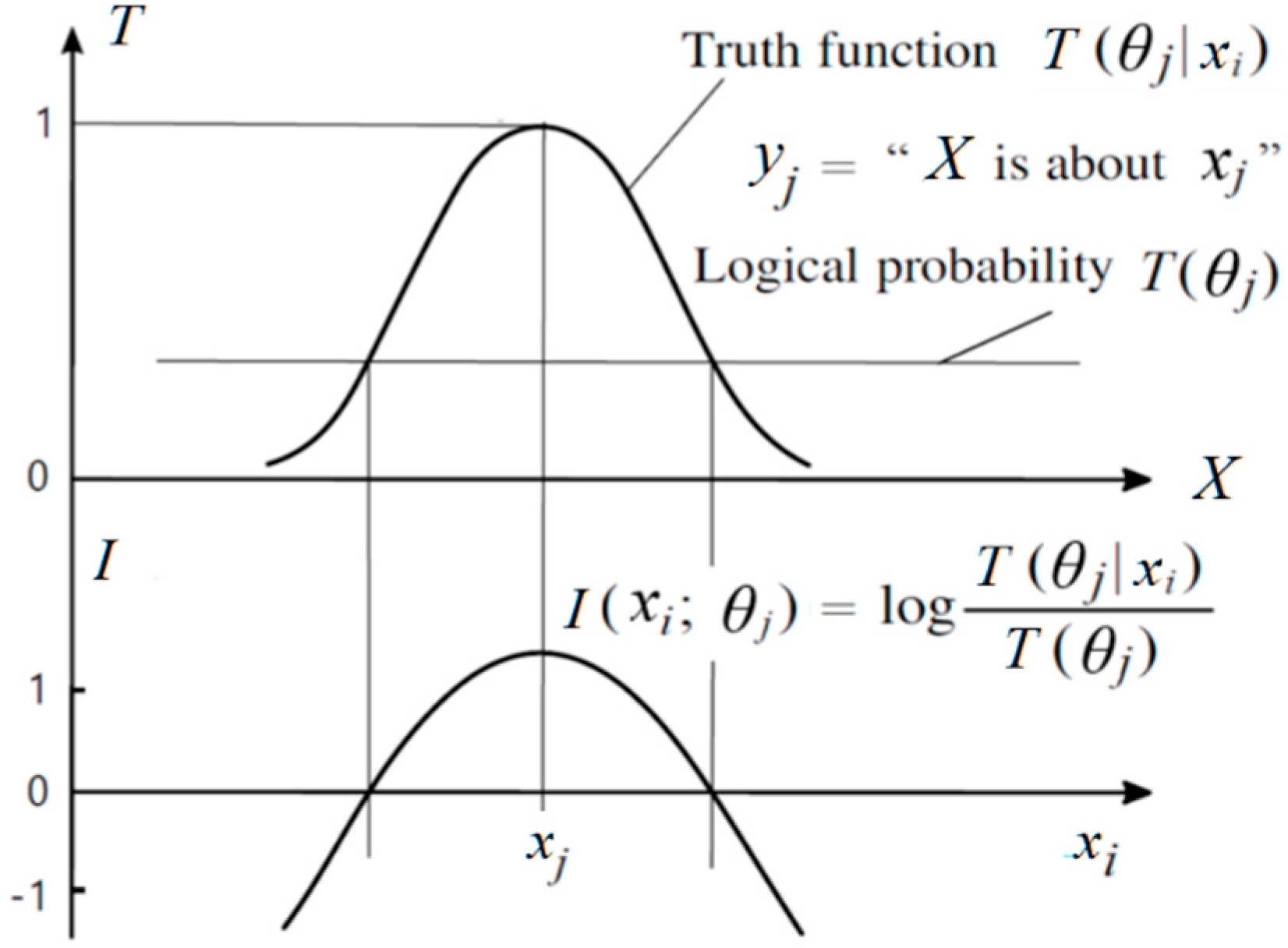

- X and Y are two random variables, taking x ϵ U={x1, x2, …} and y ϵ V ={y1, y2, …} as their values. For machine learning, xi is an instance, and yj is a label or hypothesis; yj(xi) is a proposition, and yj(x) is a proposition function.

- The θj is a fuzzy subset of the domain U, whose elements make yj true. We have yj(x) = "x ϵ θj". The θj can also be understood as a model or a set of model parameters.

- Probability defined by “=”, such as P(yj)≡P(Y = yj), is a statistical probability; probability defined by “ϵ”, such as P(X ϵ θj), is a logical probability. To distinguish P(Y = yj) and P(X ϵ θj), we define the logical probability of yj as T(yj)≡ T(θj)≡ P(X ϵ θj).

- T(yj|x) ≡ T(θj|x)≡ P(X ϵ θj|X = x)∈[0,1] is the truth function of yj and also the membership function mθj(x) of the fuzzy set θj, that is,

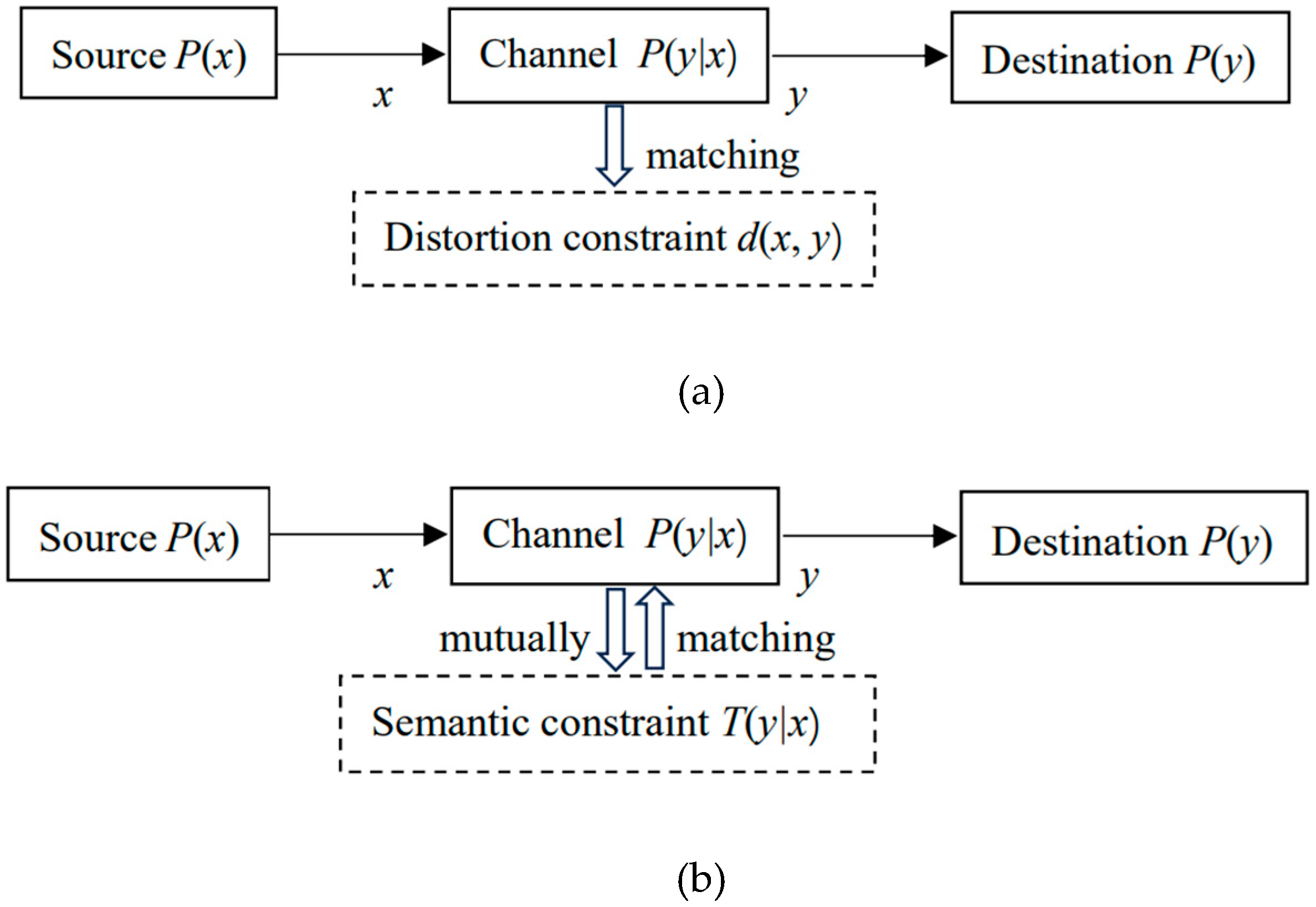
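The definitions above can be illustrated numerically. The following is a minimal sketch, assuming a finite domain U, that the logical probability is the average of the truth function under P(x), i.e., T(θj) = Σi P(xi)T(θj|xi), and that the semantic probability prediction takes the Bayes-like form P(x|θj) = P(x)T(θj|x)/T(θj). The function names and the example numbers are hypothetical and chosen only for illustration.

```python
def logical_probability(p_x, truth):
    """T(theta_j) = sum_i P(x_i) * T(theta_j | x_i) (assumed averaging form)."""
    return sum(p * t for p, t in zip(p_x, truth))


def semantic_prediction(p_x, truth):
    """P(x | theta_j) = P(x) * T(theta_j | x) / T(theta_j)."""
    t_theta = logical_probability(p_x, truth)
    if t_theta == 0:
        raise ValueError("T(theta_j) is zero; the prediction is undefined")
    return [p * t / t_theta for p, t in zip(p_x, truth)]


# Hypothetical example: four instances and a fuzzy label such as "x is large".
p_x = [0.4, 0.3, 0.2, 0.1]          # source distribution P(x)
truth_large = [0.0, 0.2, 0.8, 1.0]  # truth (membership) function T(theta_j | x)

t_large = logical_probability(p_x, truth_large)
p_x_given_large = semantic_prediction(p_x, truth_large)
```

Because the truth function takes values in [0,1] rather than summing to 1 over x, it is the division by T(θj) that turns the product P(x)T(θj|x) into a proper probability distribution.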

2.3. Semantic Channel and Semantic Communication Model

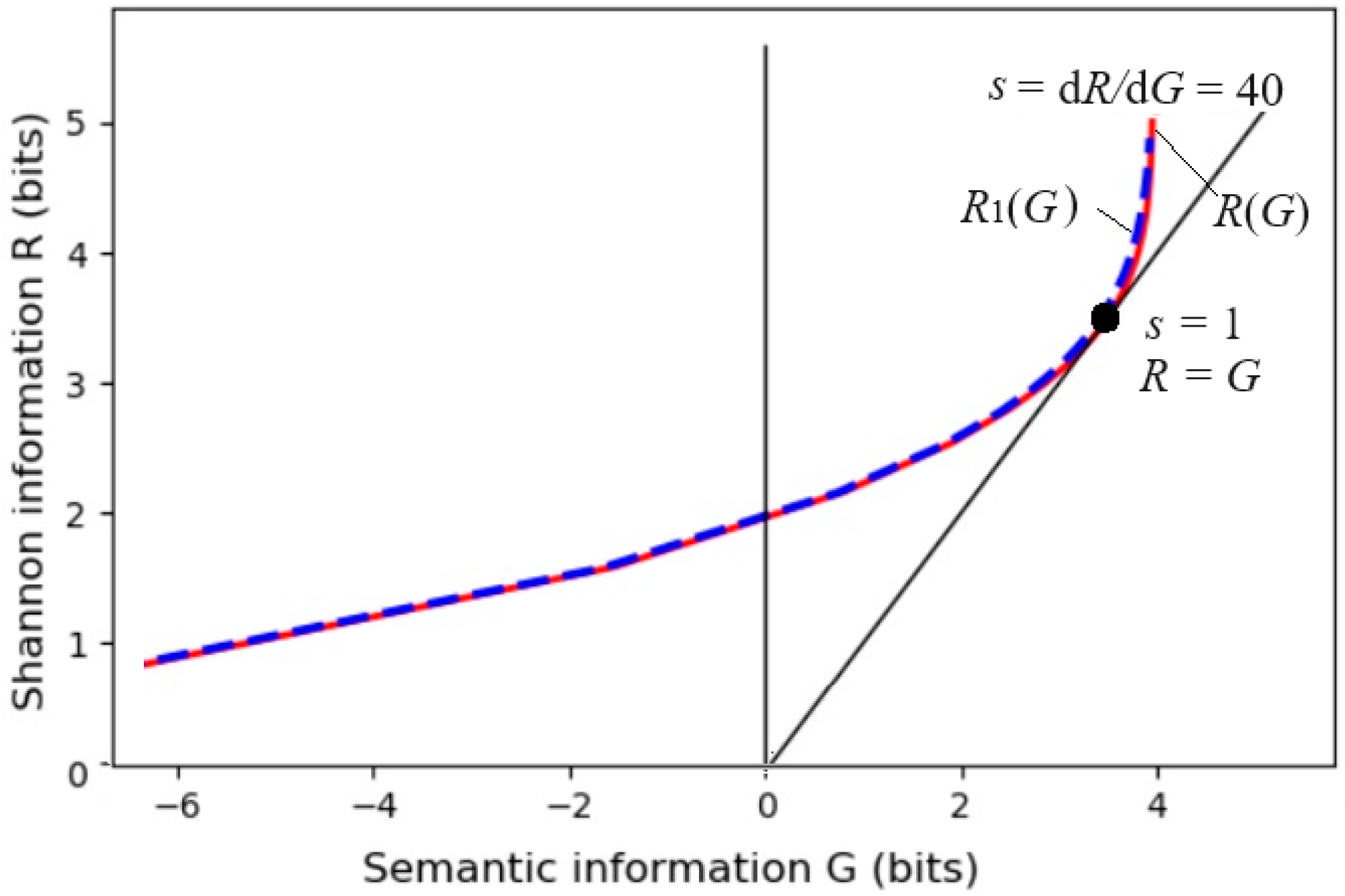

2.4. Generalizing Shannon Information Measure to Semantic Information G Measure

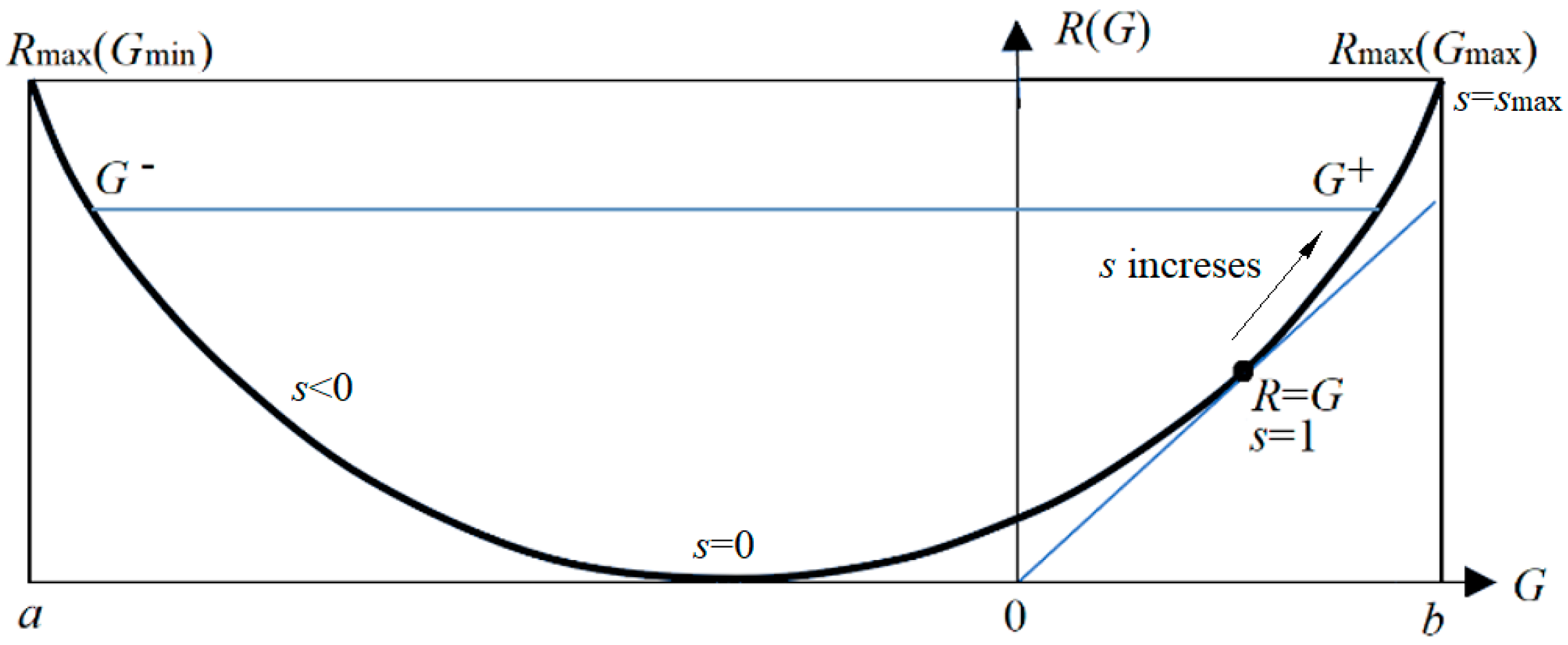

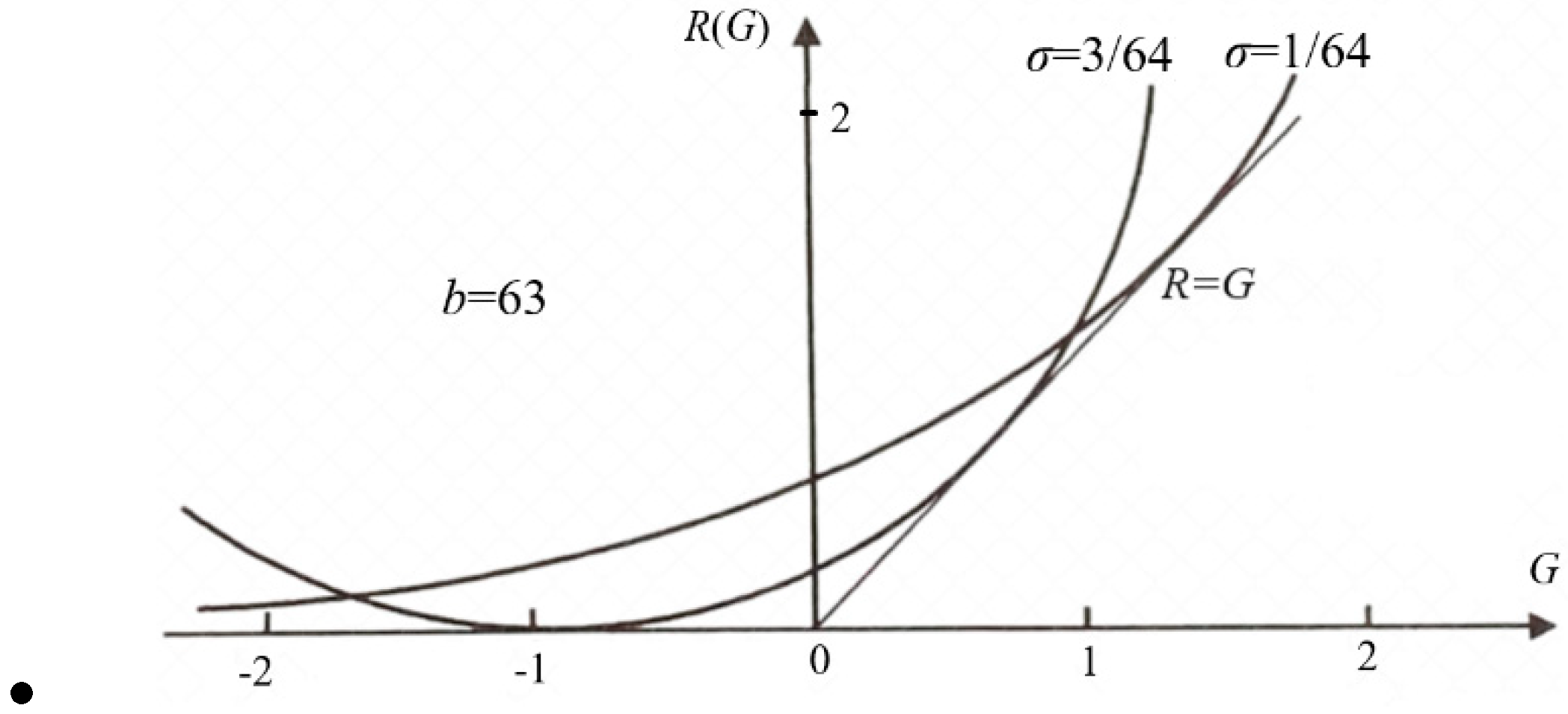

2.5. From the Information Rate-distortion Function to the Information Rate-fidelity Function

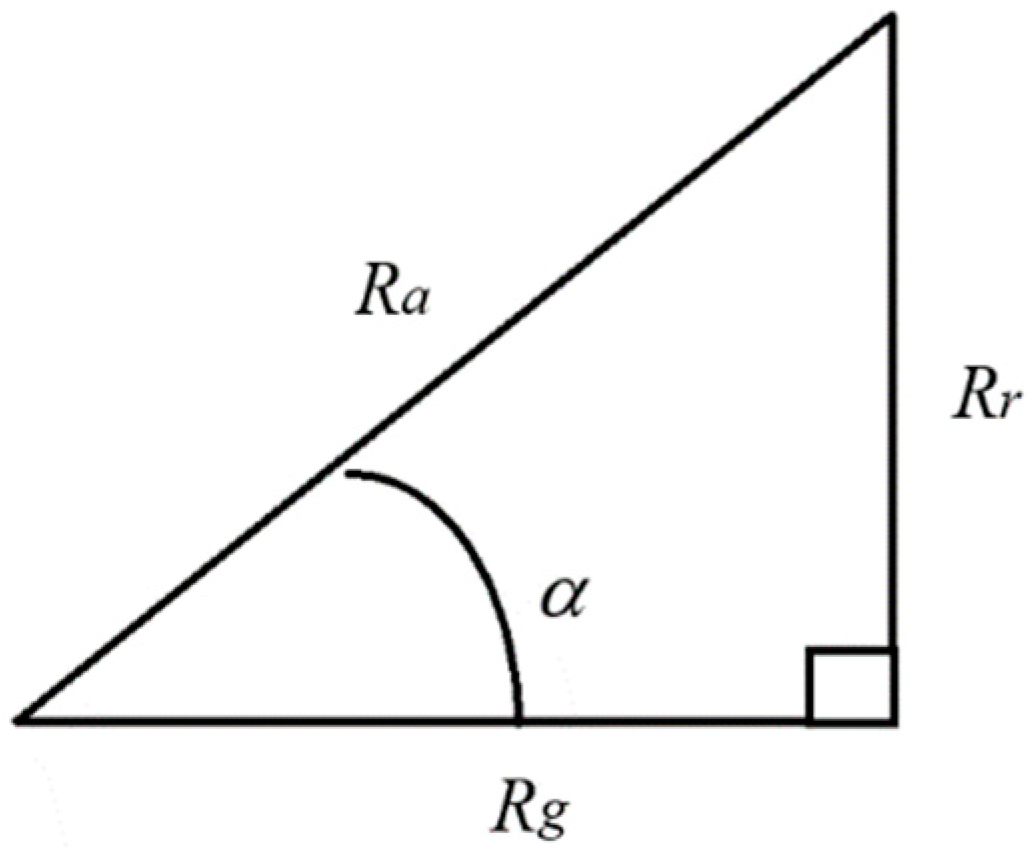

2.6. Semantic Channel Capacity

- Try to choose x that makes only one label's truth value 1 (to avoid ambiguity and reduce the logical probability of y);

- Encoding should make P(yj|xj)=1 as far as possible (to ensure that Y is used correctly);

- Choose P(x) so that each Y's probability and logical probability are as equal as possible (close to 1/n, thereby maximizing the semantic entropy).

3. Electronic Semantic Communication Optimization

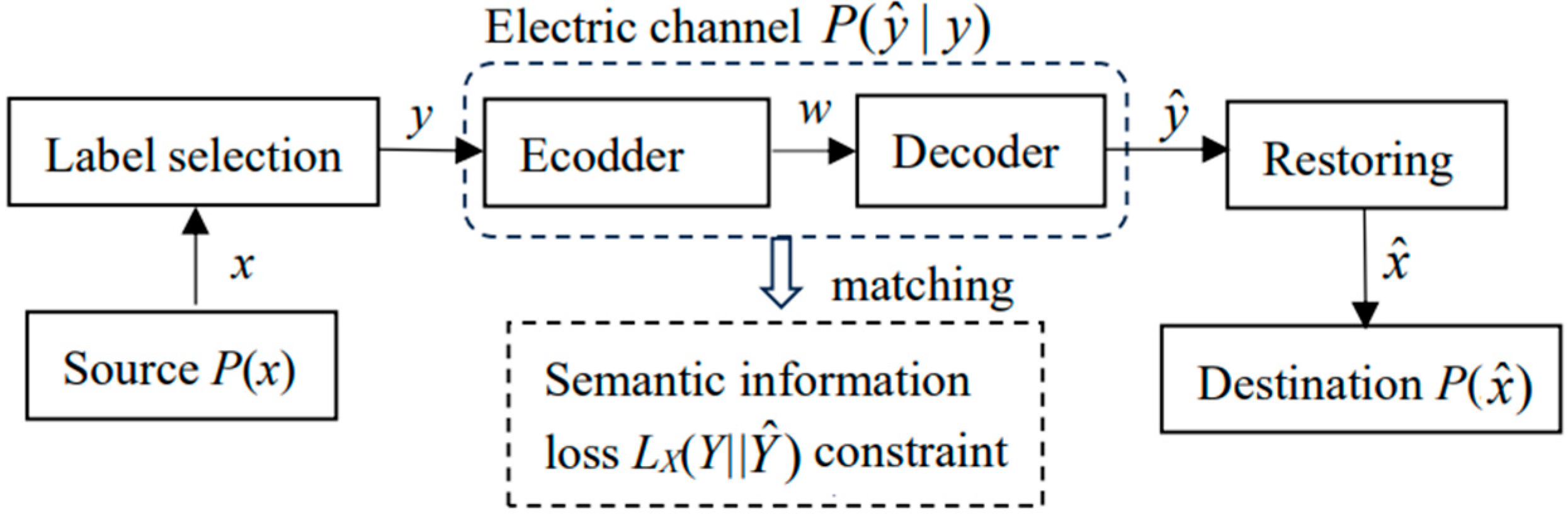

3.1. Electronic Semantic Communication Model

3.2. Optimization of Electronic Semantic Communication with Semantic Information Loss as Distortion

3.3. Experimental Results: Compress Image Data According to Visual Discrimination

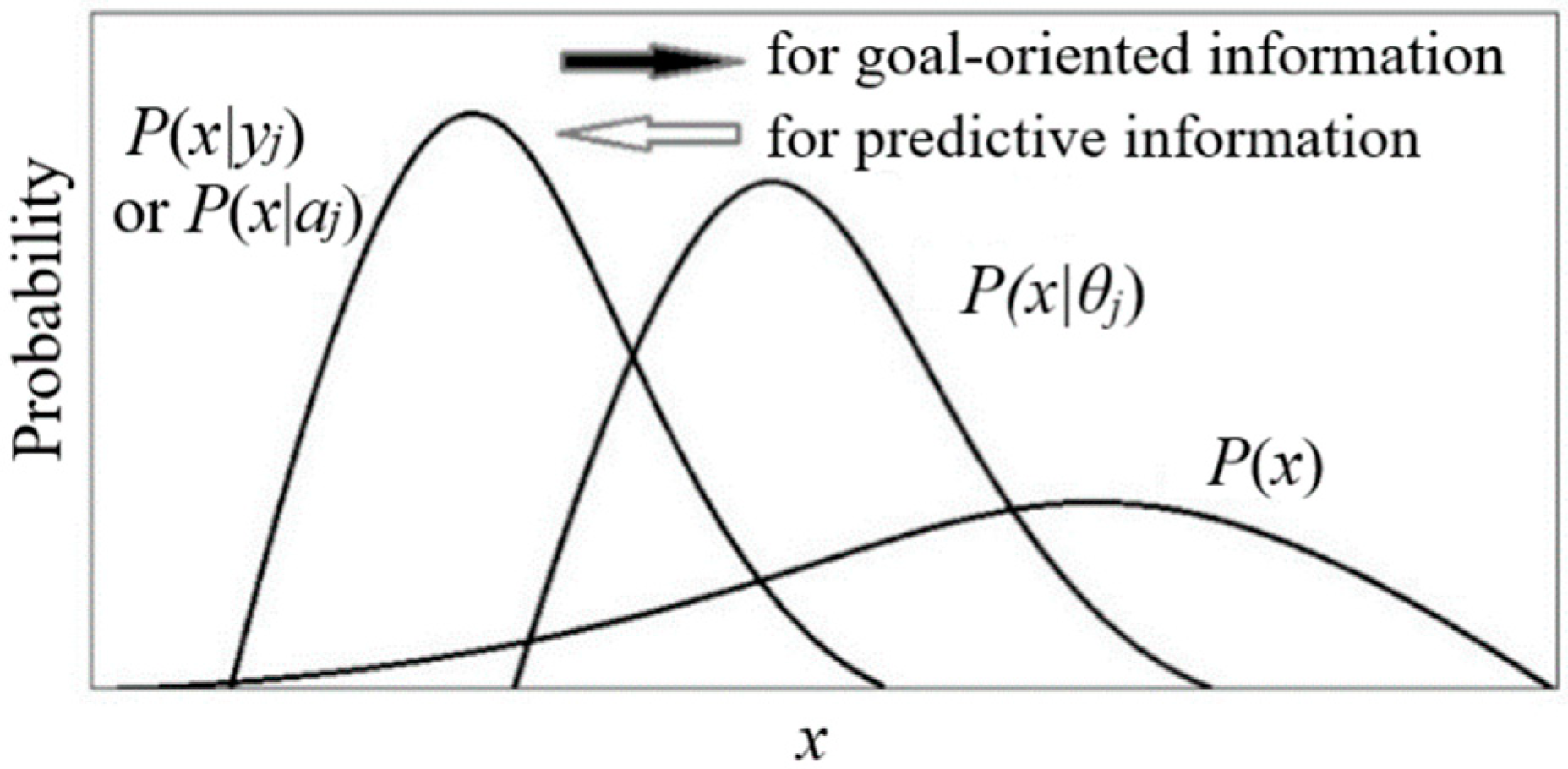

4. Goal-Oriented Information, Information Value, Physical Entropy and Free Energy

4.1. Three Kinds of Information Related to Value

4.2. Goal-Oriented Information

4.2.1. Similarities and Differences Between Goal-Oriented Information and Prediction Information

- "Workers' wages should preferably exceed 5000 dollars";

- "The age of death of the population had better exceed 80 years old";

- "The cruising distances of electric vehicles should preferably exceed 500 kilometers";

- "The error of train arrival time had better be less than one minute".

4.2.2. Optimization of Goal-Oriented Information

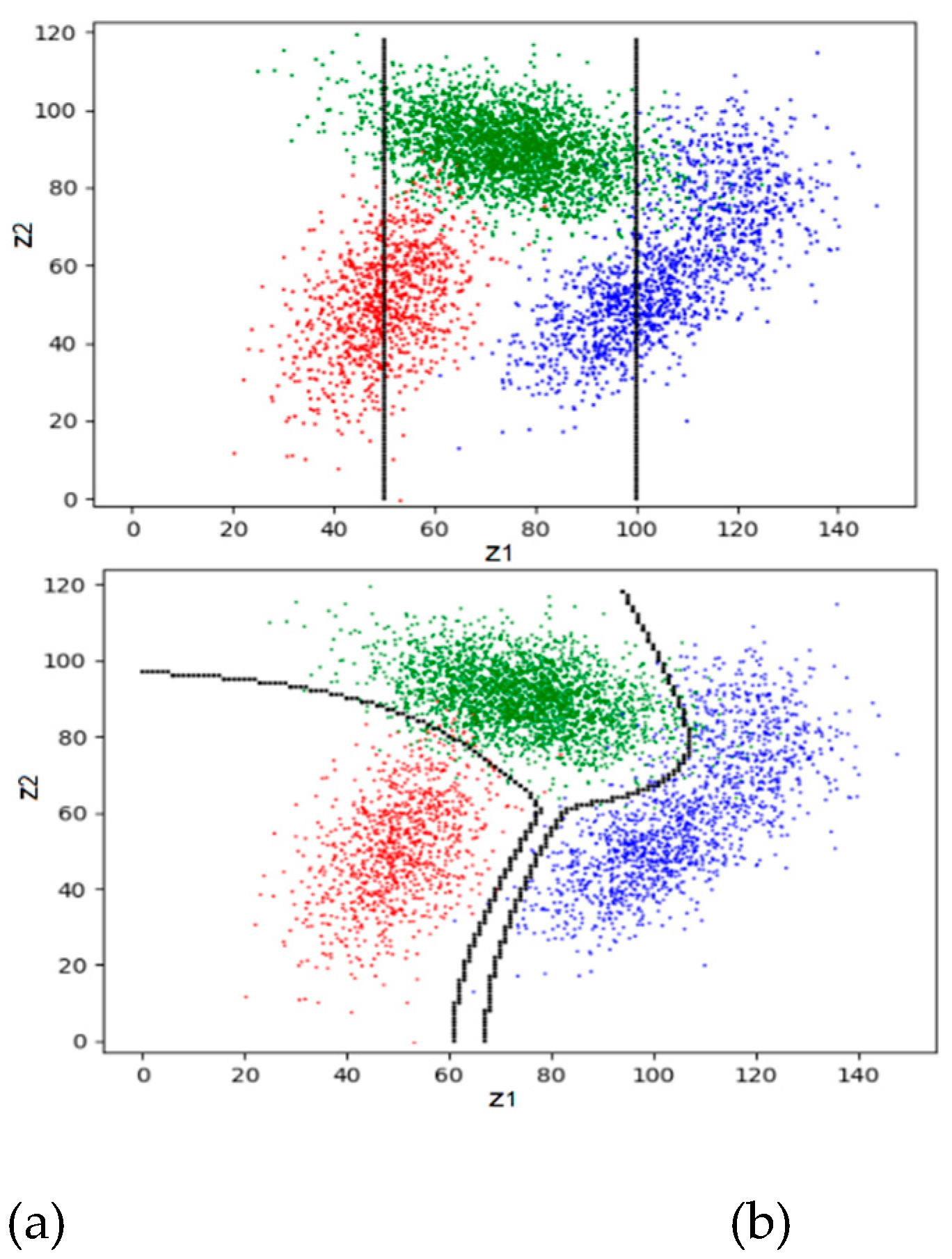

4.2.3. Experimental Results: Trade-Off Between Maximizing Purposiveness and MIE

4.3. Investment Portfolios and Information Values

4.3.1. Capital Growth Entropy

4.3.2. Generalization of Kelly's Formula

4.3.3. Risk Measurement, Investment Channels, and Investment Channel Capacity

4.3.4. Information Value Formula Based on Capital Growth Entropy

4.3.5. Comparison with Arrow's Information Value Formula

4.4. Information, Entropy, and Free Energy in Thermodynamic Systems

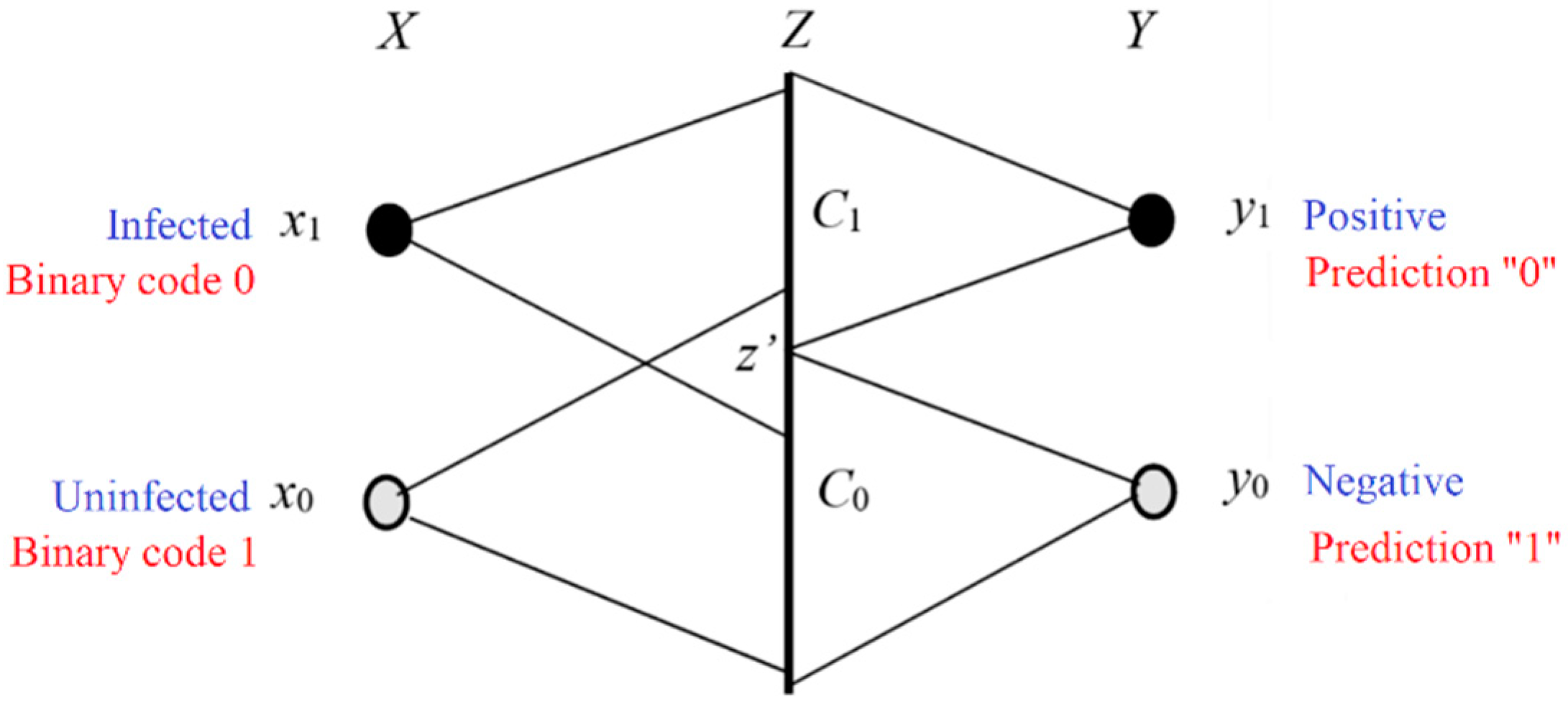

5. The G Theory for Machine Learning

5.1. Basic Methods of Machine Learning: Learning Functions and Optimization Criteria

- First, we use samples or sample distributions to train the learning functions with a specific criterion, such as maximum likelihood or RLS criterion;

- Then, we make probability predictions or classifications utilizing the learning function with minimum distortion, minimum loss, or maximum likelihood criteria.
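The two steps above can be sketched with a generic example. This is only an illustration under stated assumptions: the learning function is taken to be a one-dimensional Gaussian likelihood function, the training criterion is maximum likelihood, and classification picks the label with the highest likelihood. The names `fit_gaussian`, `log_likelihood`, and `classify`, and the toy data, are hypothetical and do not come from the paper.

```python
import math


def fit_gaussian(samples):
    """Step 1: maximum likelihood estimates of the mean and variance."""
    n = len(samples)
    mean = sum(samples) / n
    var = sum((s - mean) ** 2 for s in samples) / n
    return mean, var


def log_likelihood(x, mean, var):
    """Log of the Gaussian density with the given mean and variance at x."""
    return -0.5 * math.log(2 * math.pi * var) - (x - mean) ** 2 / (2 * var)


def classify(x, models):
    """Step 2: choose the label whose learned function gives x the highest likelihood."""
    return max(models, key=lambda label: log_likelihood(x, *models[label]))


# Hypothetical training samples for two labels.
training = {"y0": [1.0, 2.0, 3.0], "y1": [7.0, 8.0, 9.0]}
models = {label: fit_gaussian(xs) for label, xs in training.items()}

label_a = classify(2.5, models)
label_b = classify(7.2, models)
```

Other learning functions (truth, membership, or similarity functions) and other criteria would slot into the same two-step structure; only the fitting and scoring functions would change.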

5.2. For Multi-Label Learning and Classification

5.3. Maximum MI Classification for Unseen Instances

5.4. Explanation and Improvement of the EM Algorithm for Mixed Models

5.5. Semantic Variational Bayes: A Simple Method for Solving Hidden Variables

- Criteria: By definition, VB adopts the MFE (i.e., minimum semantic posterior entropy) criterion; however, when solving for P(y) with P(y|x) as the variation, it actually uses the maximum likelihood criterion, which makes the mixture model converge. In contrast, SVB uses the MID criterion, which is equivalent to the maximum likelihood criterion (for optimizing model parameters) plus the ME criterion.

- Variational method: VB uses only P(y) or P(y|x) as the variation, while SVB alternately uses P(y|x) and P(y) as the variation.

- Computational complexity: VB uses logarithmic and exponential functions to solve P(y|x) [65]; the calculation of P(y|x) in SVB is relatively simple (for the same task, i.e., when s=1).

- Constraints: VB only uses likelihood functions as constraint functions. In contrast, SVB allows using various learning functions (including likelihood, truth, membership, similarity, and distortion functions) as constraints. In addition, SVB can use the parameter s to enhance constraints.

5.6. Bayesian Confirmation and Causal Confirmation

- There are no suitable mathematical tools; for example, statistical and logical probabilities are not well distinguished.

- Many people do not distinguish between the confirmation of the relationship (i.e., →) in the major premise y→x and the confirmation of the consequent (i.e., x occurs).

- No confirmation measure can reasonably clarify the Raven Paradox.

5.7. Emerging and Potential Applications

- The membership function T(θj|x) or similarity function S(x, yj) proportional to P(yj|x) is used as the learning function. Its maximum value is generally 1, and its average is the partition function Zj.

- The estimated information or semantic information between x and yj is log[T(θj|x)/Zj] or log[S(x, yj)/Zj].

- The statistical probability distribution P(x, y) is still used when calculating the average information.
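The three points above can be combined into a small numerical sketch. It assumes a finite set of instances and labels, takes the partition function Zj to be the average of the learning function under P(x), and uses base-2 logarithms so that the result is in bits (the base is an assumption; the text writes only "log"). The function names and the example distributions are hypothetical.

```python
import math


def partition(p_x, truth_j):
    """Z_j: the average of the learning function T(theta_j | x) under P(x)."""
    return sum(p * t for p, t in zip(p_x, truth_j))


def semantic_info(truth_j, z_j, i):
    """log2[T(theta_j | x_i) / Z_j]; minus infinity when the truth value is 0."""
    t = truth_j[i]
    return math.log2(t / z_j) if t > 0 else float("-inf")


def average_semantic_info(p_xy, truth):
    """Average of log2[T(theta_j | x_i) / Z_j] over the statistical joint P(x_i, y_j)."""
    p_x = [sum(row) for row in p_xy]  # marginal P(x_i)
    total = 0.0
    for j, truth_j in enumerate(truth):
        z_j = partition(p_x, truth_j)
        for i in range(len(p_xy)):
            if p_xy[i][j] > 0:  # pairs that never occur contribute nothing
                total += p_xy[i][j] * semantic_info(truth_j, z_j, i)
    return total


# Hypothetical two-instance, two-label example with crisp truth functions.
p_xy = [[0.5, 0.0],   # P(x_0, y_0), P(x_0, y_1)
        [0.0, 0.5]]   # P(x_1, y_0), P(x_1, y_1)
truth = [[1.0, 0.0],  # T(theta_0 | x_0), T(theta_0 | x_1)
         [0.0, 1.0]]  # T(theta_1 | x_0), T(theta_1 | x_1)

g = average_semantic_info(p_xy, truth)
```

In this crisp, noiseless example the average semantic information is 1 bit, the same as the Shannon mutual information of the joint distribution. A pair with positive probability but zero truth value would drive the average to minus infinity, which reflects how strongly a flatly false label is penalized.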

6. Discussion and Summary

6.1. Why Is the G Theory a Generalization of Shannon's Information Theory?

- In addition to the probability prediction P(x|yj), the semantic probability prediction P(x|θj)=P(x)T(θj|x)/T(θj) is also used;

- The G measure also has coding meaning, which means the average code length saved by the semantic probability prediction.
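The two points above can be connected by a short derivation. It is a sketch under the assumptions that T(θj) = Σi P(xi)T(θj|xi) normalizes the semantic probability prediction and that the G measure is the average of the semantic information over the statistical distribution P(x, y); the symbol I(xi; θj) is introduced here only for illustration.

```latex
\begin{align}
I(x_i;\theta_j) &= \log\frac{P(x_i\mid\theta_j)}{P(x_i)}
                 = \log\frac{T(\theta_j\mid x_i)}{T(\theta_j)},\\
G &= \sum_j\sum_i P(x_i,y_j)\,\log\frac{P(x_i\mid\theta_j)}{P(x_i)}\\
  &= \underbrace{-\sum_i P(x_i)\log P(x_i)}_{\text{average code length using } P(x)}
     \;-\;
     \underbrace{\Bigl(-\sum_j\sum_i P(x_i,y_j)\log P(x_i\mid\theta_j)\Bigr)}_{\text{average code length using } P(x\mid\theta_j)}.
\end{align}
```

The first term is the Shannon entropy of the source, and the second is the cross-entropy of x under the semantic probability prediction, so their difference is the average code length saved by that prediction.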

6.2. What Is Information?

6.3. Relationships and Differences Between the G Theory and Other Semantic Information Theories

6.3.1. Carnap and Bar-Hillel's Semantic Information Theory

6.3.2. Dretske's Knowledge and Information Theory

- The information must correspond to facts and eliminate all other possibilities.

- The amount of information relates to the extent of uncertainty eliminated.

- Information used to gain knowledge must be true and accurate.

6.3.3. Floridi's Strong Semantic Information Theory

- The information must contain semantic content and be consistent with reality.

- False or misleading information cannot qualify as true information.

6.3.4. Other Semantic Information Theories

6.4. Relationship Between the G Theory and Kolmogorov Complexity Theory

6.5. Comparison of the MIE Principle and the MFE Principle

- The G theory regards Shannon's MI I(X; Y) as free energy, while Friston's theory regards the semantic posterior entropy H(X|Yθ) as free energy.

- The methods for finding the latent variable P(y) and the Shannon channel P(y|x) are different. Friston uses VB, and the G theory uses SVB.

6.6. Limitations and Areas That Need Exploration

6.7. Conclusion

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. Abbreviations

| Abbreviation | Full name |
| --- | --- |
| EM | Expectation-Maximization |
| EnM | Expectation-n-Maximization |
| GPS | Global Positioning System |
| G theory | Semantic information G theory (G means generalization) |
| InfoNCE | Information Noise-Contrastive Estimation |
| KL | Kullback–Leibler |
| LBI | Logical Bayes' Inference |
| ME | Maximum Entropy |
| MI | Mutual Information |
| MIE | Maximum Information Efficiency |
| MID | Minimum Information Difference |
| MINE | Mutual Information Neural Estimation |
| SVB | Semantic Variational Bayes |
| VB | Variational Bayes |

References

- Shannon, C.E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423.

- Weaver, W. Recent contributions to the mathematical theory of communication. In The Mathematical Theory of Communication, 1st ed.; Shannon, C.E., Weaver, W., Eds.; The University of Illinois Press: Urbana, IL, USA, 1963; pp. 93–117.

- Carnap, R.; Bar-Hillel, Y. An Outline of a Theory of Semantic Information; Tech. Rep. No. 247; Research Laboratory of Electronics, MIT: Cambridge, MA, USA, 1952.

- Lu, C. Shannon equations reform and applications. BUSEFAL 1990, 44, 45–52. Available online: https://www.listic.univ-smb.fr/production-scientifique/revue-busefal/version-electronique/ebusefal-44/ (accessed on 5 March 2019).

- Lu, C. A Generalized Information Theory; China Science and Technology University Press: Hefei, China, 1993; ISBN 7-312-00501-2. (in Chinese)

- Lu, C. Meanings of generalized entropy and generalized mutual information for coding. J. China Inst. Commun. 1994, 15, 37–44. (in Chinese)

- Lu, C. A generalization of Shannon’s information theory. Int. J. Gen. Syst. 1999, 28, 453–490.

- Lu, C. Semantic Information G Theory and Logical Bayesian Inference for Machine Learning. Information 2019, 10, 261.

- Dretske, F. Knowledge and the Flow of Information; The MIT Press: Cambridge, MA, USA, 1981; ISBN 0-262-04063-8.

- Wu, W. General source and general entropy. Journal of Beijing University of Posts and Telecommunications 1982, 5, 29–41. (in Chinese)

- Zhong, Y. A Theory of Semantic Information. Proceedings 2017, 1, 129.

- Floridi, L. Outline of a theory of strongly semantic information. Minds and Machines 2004, 14, 197–221.

- Floridi, L. Semantic conceptions of information. In Stanford Encyclopedia of Philosophy; Stanford University: Stanford, CA, USA, 2005; Available online: http://seop.illc.uva.nl/entries/information-semantic/ (accessed on 17 June 2020).

- D’Alfonso, S. On Quantifying Semantic Information. Information 2011, 2, 61–101.

- Xin, G.; Fan, P.; Letaief, K.B. Semantic Communication: A Survey of Its Theoretical Development. Entropy 2024, 26, 102.

- Strinati, E.C.; Barbarossa, S. 6G networks: Beyond Shannon towards semantic and goal-oriented communications. Comput. Netw. 2021, 190, 107930.

- Niu, K.; Zhang, P. A mathematical theory of semantic communication. Journal on Communications 2024, 45, 8–59.

- Davidson, D. Truth and meaning. Synthese 1967, 17, 304–323.

- Popper, K. Logik Der Forschung: Zur Erkenntnistheorie Der Modernen Naturwissenschaft; Springer: Vienna, Austria, 1935.

- Popper, K. Conjectures and Refutations, 1st ed.; Routledge: London and New York, 2002.

- Fisher, R.A. On the mathematical foundations of theoretical statistics. Philos. Trans. R. Soc. 1922, 222, 309–368.

- Zadeh, L.A. Fuzzy sets. Inf. Control 1965, 8, 338–353.

- Zadeh, L.A. Probability measures of fuzzy events. J. Math. Anal. Appl. 1968, 23, 421–427.

- Lu, C. The P–T probability framework for semantic communication, falsification, confirmation, and Bayesian reasoning. Philosophies 2020, 5, 25.

- Lu, C. Reviewing evolution of learning functions and semantic information measures for understanding deep learning. Entropy 2023, 25, 802.

- Lu, C. Channels’ confirmation and predictions’ confirmation: From the medical test to the raven paradox. Entropy 2020, 22, 384. Available online: https://www.mdpi.com/1099-4300/22/4/384.

- Lu, C. Causal Confirmation Measures: From Simpson’s Paradox to COVID-19. Entropy 2023, 25, 143.

- Lu, C. Using the Semantic Information G Measure to Explain and Extend Rate-Distortion Functions and Maximum Entropy Distributions. Entropy 2021, 23, 1050.

- Lu, C. Semantic Information G Theory for Range Control with Tradeoff between Purposiveness and Efficiency. Available online: https://arxiv.org/abs/2411.05789 (accessed on 1 January 2025).

- Lu, C. Semantic Variational Bayes Based on a Semantic Information Theory for Solving Latent Variables. Available online: https://doi.org/10.48550/arXiv.2408.13122 (accessed on 1 January 2025).

- Shannon, C.E. Coding theorems for a discrete source with a fidelity criterion. IRE Nat. Conv. Rec. 1959, 4, 142–163.

- Berger, T. Rate Distortion Theory; Prentice-Hall: Englewood Cliffs, NJ, USA, 1971.

- Zhou, J.P. Fundamentals of information theory. 1983. (in Chinese)

- Belghazi, M.I.; Baratin, A.; Rajeswar, S.; Ozair, S.; Bengio, Y.; Courville, A.; Hjelm, R.D. MINE: Mutual information neural estimation. In Proceedings of the 35th International Conference on Machine Learning, Stockholm, Sweden, 10–15 July 2018; pp. 1–44.

- Oord, A.V.D.; Li, Y.; Vinyals, O. Representation Learning with Contrastive Predictive Coding. Available online: https://arxiv.org/abs/1807.03748 (accessed on 10 January 2023).

- Hinton, G.E.; Salakhutdinov, R.R. Reducing the dimensionality of data with neural networks. Science 2006, 313, 504–507.

- Hjelm, R.D.; Fedorov, A.; Lavoie-Marchildon, S.; Grewal, K.; Trischler, A.; Bengio, Y. Learning Deep Representations by Mutual Information Estimation and Maximization. Available online: https://arxiv.org/abs/1808.06670 (accessed on 22 December 2022).

- Tschannen, M.; Djolonga, J.; Rubenstein, P.K.; Gelly, S.; Lucic, M. On Mutual Information Maximization for Representation Learning. Available online: https://arxiv.org/pdf/1907.13625.pdf (accessed on 23 February 2023).

- Tishby, N.; Zaslavsky, N. Deep learning and the information bottleneck principle. In Proceedings of the Information Theory Workshop (ITW), Jerusalem, Israel, 26 April–1 May 2015; pp. 1–5.

- Tarski, A. The semantic conception of truth: and the foundations of semantics. Philos. Phenomenol. Res. 1944, 4, 341–376.

- Kolmogorov, A.N. Grundbegriffe der Wahrscheinlichkeitsrechnung; Dover Publications: New York, NY, USA, 1950; Ergebnisse Der Mathematik (1933); translated as Foundations of Probability.

- von Mises, R. Probability, Statistics and Truth, 2nd ed.; George Allen and Unwin Ltd.: London, UK, 1957.

- Akaike, H. A new look at the statistical model identification. IEEE Trans. Autom. Control 1974, 19, 716–723.

- Hinton, G.E.; van Camp, D. Keeping the neural networks simple by minimizing the description length of the weights. In Proceedings of COLT; pp. 5–13.

- Neal, R.; Hinton, G. A view of the EM algorithm that justifies incremental, sparse, and other variants. In Learning in Graphical Models; Jordan, M.I., Ed.; MIT Press: Cambridge, MA, USA, 1999; pp. 355–368.

- Friston, K. The free-energy principle: a unified brain theory? Nat. Rev. Neurosci. 2010, 11, 127–138.

- Wang, P.Z. From the fuzzy statistics to the falling random subsets. In Advances in Fuzzy Sets, Possibility Theory and Applications; Wang, P.P., Ed.; Plenum Press: New York, NY, USA, 1983; pp. 81–96.

- Wang, P.Z. Fuzzy Sets and Falling Shadows of Random Set; Beijing Normal University Press: Beijing, China, 1985. (in Chinese)

- Farsad, N.; Rao, M.; Goldsmith, A. Deep learning for joint source-channel coding of text. In Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Calgary, AB, Canada, 15–20 April 2018; IEEE: New York, NY, USA, 2018; pp. 2326–2330.

- Güler, B.; Yener, A.; Swami, A. The semantic communication game. IEEE Trans. Cogn. Commun. Netw. 2018, 4, 787–802.

- Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.J. Bleu: A method for automatic evaluation of machine translation. In Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics, Philadelphia, PA, USA, 6–12 July 2002; pp. 311–318.

- Xie, H.; Qin, Z.; Li, G.Y.; Juang, B.H. Deep learning enabled semantic communication systems. IEEE Trans. Signal Process. 2021, 69, 2663–2675.

- Markowitz, H.M. Portfolio selection. The Journal of Finance 1952, 7, 77–91.

- Kelly, J.L. A new interpretation of information rate. Bell System Technical Journal 1956, 35, 917–926.

- Latané, H.A.; Tuttle, D.A. Criteria for Portfolio Building. The Journal of Finance 1967, 22, 359–373.

- Arrow, K.J. The economics of information: An exposition. Empirica 1996, 23, 119–128.

- Cover, T.M. Universal portfolios. Mathematical Finance 1991, 1, 1–29.

- Cover, T.M.; Thomas, J.A. Elements of Information Theory; John Wiley & Sons: New York, NY, USA, 2006.

- Lu, C. The Entropy Theory of Portfolios and Information Values; China Science and Technology University Press: Hefei, China, 1997; ISBN 7-312-00952-2F.36. (in Chinese)

- Jaynes, E.T. Probability Theory: The Logic of Science; Bretthorst, G.L., Ed.; Cambridge University Press: Cambridge, UK, 2003.

- Zhang, M.L.; Li, Y.K.; Liu, X.Y.; Geng, X. Binary relevance for multi-label learning: An overview. Front. Comput. Sci. 2018, 12, 191–202.

- Dempster, A.P.; Laird, N.M.; Rubin, D.B. Maximum Likelihood from Incomplete Data via the EM Algorithm. J. R. Stat. Soc. Ser. B 1977, 39, 1–38.

- Ueda, N.; Nakano, R. Deterministic annealing EM algorithm. Neural Networks 1998, 11, 271–282.

- Lu, C. Understanding and accelerating EM algorithm’s convergence by fair competition principle and rate-verisimilitude function. Available online: https://arxiv.org/abs/2104.12592 (accessed on 20 January 2025).

- Wikipedia. Variational Bayesian methods. Available online: https://en.wikipedia.org/wiki/Variational_Bayesian_methods (accessed on 22 December 2024).

- Beal, M.J. Variational algorithms for approximate Bayesian inference. Ph.D. Thesis, University College London, 2003.

- Koller, D. Probabilistic Graphical Models: Principles and Techniques; The MIT Press: Cambridge, MA, USA, 2009.

- Gottwald, S.; Braun, D.A. The Two Kinds of Free Energy and the Bayesian Revolution. Available online: https://arxiv.org/abs/2004.11763 (accessed on 20 January 2025).

- Hempel, C.G. Studies in the logic of confirmation. Mind 1945, 54, 1–26.

- Carnap, R. Logical Foundations of Probability, 1st ed.; University of Chicago Press: Chicago, IL, USA, 1950.

- Scheffler, I.; Goodman, N.J. Selective confirmation and the ravens: A reply to Foster. J. Philos. 1972, 69, 78–83.

- Fitelson, B.; Hawthorne, J. How Bayesian confirmation theory handles the paradox of the ravens. In The Place of Probability in Science; Eells, E., Fetzer, J., Eds.; Springer: Dordrecht, The Netherlands, 2010; pp. 247–276.

- Crupi, V.; Tentori, K.; Gonzalez, M. On Bayesian measures of evidential support: Theoretical and empirical issues. Philos. Sci. 2007, 74, 229–252.

- Greco, S.; Słowiński, R.; Szczęch, I. Properties of rule interestingness measures and alternative approaches to normalization of measures. Inf. Sci. 2012, 216, 1–16.

- Pearl, J. Causal inference in statistics: An overview. Stat. Surv. 2009, 3, 96–146.

- Lu, C. Decoding model of color vision and verifications. Acta Opt. Sin. 1989, 9, 158–163. (in Chinese)

- Lu, C. B-fuzzy quasi-Boolean algebra and a generalized mutual entropy formula. Fuzzy Syst. Math. 1991, 5, 76–80. (in Chinese)

- Lu, C. Explaining color evolution, color blindness, and color recognition by the decoding model of color vision. In Proceedings of the 11th IFIP TC 12 International Conference, IIP 2020, Hangzhou, China, 3–6 July 2020; Shi, Z., Vadera, S., Chang, E., Eds.; Springer: Cham, Switzerland, 2020; pp. 287–298.

- Hinton, G.E. Deep belief networks. Scholarpedia 2009, 4, 5947.

- Rae, J. Compression for AGI. Stanford MLSys Seminar. Available online: https://www.youtube.com/watch?v=dO4TPJkeaaU (accessed on 18 January 2025).

- Sutskever, I. An Observation on Generalization. Simons Institute: Berkeley. Available online: https://simons.berkeley.edu/talks/ilya-sutskever-openai-2023-08-14 (accessed on 18 January 2025).

- Burgin, M. Theory of Information: Fundamentality, Diversity and Unification; Knowledge Copyright Publishing: Beijing, China, 2015; ISBN 978-7-5130-3095-3. World Science. (in Chinese)

- De Luca, A.; Termini, S. A definition of a non-probabilistic entropy in setting of fuzzy sets. Inf. Control 1972, 20, 301–312.

- D’Alfonso, S. On quantifying semantic information. Information 2011, 2, 61–101.

- Basu, P.; Bao, J.; Dean, M.; Hendler, J. Preserving quality of information by using semantic relationships. Pervasive Mobile Comput. 2014, 11, 188–202.

- Melamed, D. Measuring semantic entropy. 1997. Available online: https://api.semanticscholar.org/CorpusID:7165973 (accessed on 20 January 2025).

- Guo, T.; Wang, Y.; Han, J.; et al. Semantic compression with side information: a rate-distortion perspective. Available online: https://arxiv.org/pdf/2208.06094 (accessed on 20 January 2025).

- Liu, J.; Zhang, W.; Poor, H.V. A rate-distortion framework for characterizing semantic information. In Proceedings of 2021 IEEE International Symposium on Information Theory (ISIT); IEEE Press: Piscataway, 2021; pp. 2894–2899.

- Kumar, T.; Bajaj, R.K.; Gupta, B. On some parametric generalized measures of fuzzy information, directed divergence and information improvement. Int. J. Comput. Appl. 2011, 30, 5–10.

- Ohlan, A.; Ohlan, R. Fundamentals of fuzzy information measures. In Generalizations of Fuzzy Information Measures; Springer: Cham, Switzerland, 2016.

- Klir, G. Generalized information theory. Fuzzy Sets Syst. 1991, 40, 127–142.

- Kolmogorov, A.N. Three approaches to the quantitative definition of information. Problems of Information Transmission 1965, 1, 1.

- Wikipedia. Semantic similarity. Available online: https://en.wikipedia.org/wiki/Semantic_similarity (accessed on 20 January 2025).

- Tao, X.; et al. Federated Edge Learning for 6G: Foundations, Methodologies, and Applications. Proceedings of the IEEE.

- Letaief, K.B.; et al. AI empowered wireless networks. IEEE Commun. Mag. 2019, 57, 84–90.

- Gündüz, D.; et al. Beyond transmitting bits: Context, semantics, and task-oriented communications. IEEE J. Sel. Areas Commun. 2022, 41, 5–41.

- Niu, K.; et al. A paradigm shift towards semantic communications. IEEE Communications Magazine 2022, 60, 113–119.

- Mikolov, T.; Chen, K.; Corrado, G.; Dean, J. Efficient estimation of word representations in vector space. Available online: arXiv:1301.3781 (accessed on 20 January 2025).

- Mikolov, T.; Sutskever, I.; Chen, K.; Corrado, G.S.; Dean, J. Distributed representations of words and phrases and their compositionality. Advances in Neural Information Processing Systems. Available online: arXiv:1310.4546 (accessed on 20 January 2025).

- Vaswani, A.; et al. Attention is all you need. Available online: https://arxiv.org/abs/1706.03762 (accessed on 18 January 2025).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).