Submitted: 07 February 2025

Posted: 10 February 2025


Abstract

Keywords:

1. Introduction

2. Key Features of HyperLLM

2.1. Multimodal Capabilities in HyperLLM

2.2. Advanced Reasoning and Adaptive Logic in HyperLLM

2.2.1. Advanced Reasoning

2.2.2. Adaptive Logic

2.2.3. Implementing Advanced Reasoning and Adaptive Logic in HyperLLM

2.3. Dynamic Learning & Continuous Adaptation in HyperLLM

2.4. Deep Personalization in HyperLLM

2.5. Computational Efficiency and Energy Efficiency in HyperLLM

3. Proposed Architecture of HyperLLM

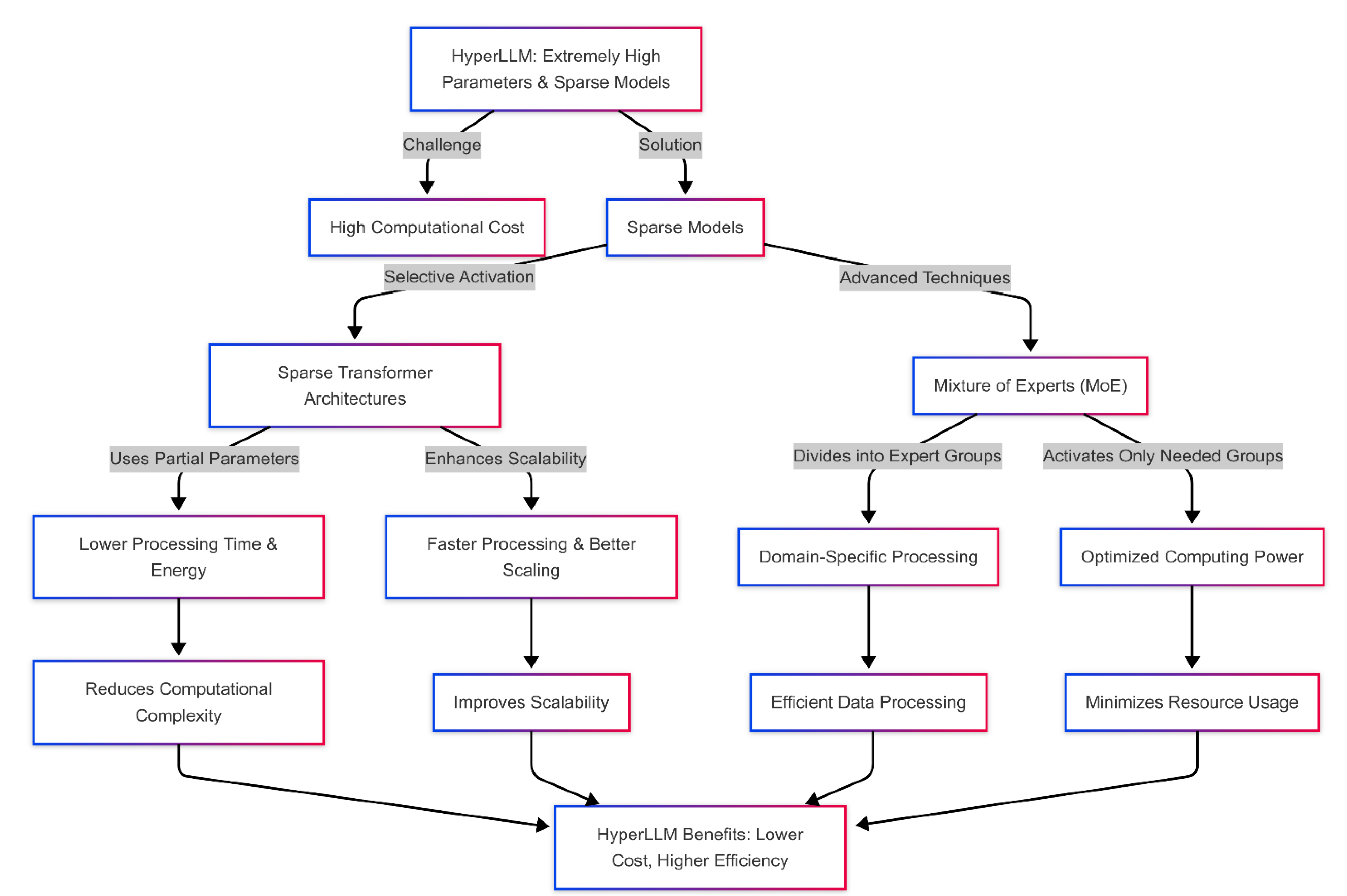

3.1. Extremely High Parameters and Sparse Models in HyperLLM

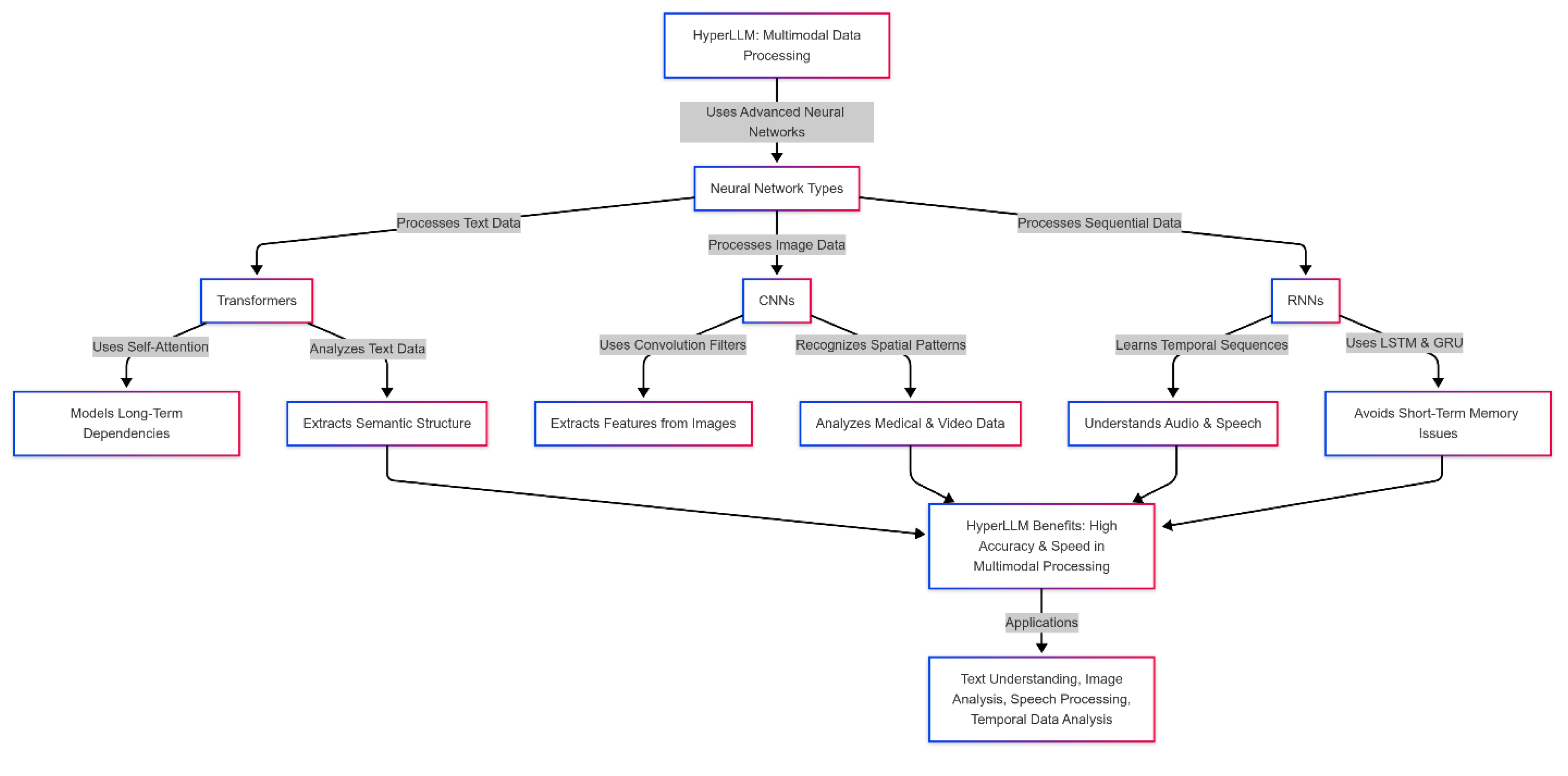

3.2. Combining Advanced Neural Networks (Transformers + CNNs + RNNs) in HyperLLM

- Transformers: Transformer networks are among the most advanced and widely used architectures in Natural Language Processing (NLP). They are designed to process sequential data such as text. Through the self-attention mechanism, which lets the model relate every input word to every other input word simultaneously, Transformers can capture long-range and complex dependencies between words and phrases within a sentence or paragraph. These properties allow HyperLLM to process long and complex texts with higher accuracy and efficiency. In HyperLLM, Transformers are used to understand and analyze text data: they uncover the semantic structure of a text and how its sentences relate to one another, which supports more accurate and meaningful text generation.

- Convolutional Neural Networks (CNNs): CNNs are traditionally used to process image data. They extract essential features from images using convolution filters together with dimensionality-reduction operations such as pooling. CNNs are particularly good at recognizing complex spatial patterns and structures, which is critical in image processing. In HyperLLM, CNNs analyze image data and other data with spatial structure (such as medical images or video frames). They allow the model to extract features such as edges, textures, and shapes and pass them to other networks (such as Transformers) for further analysis.

- Recurrent Neural Networks (RNNs): RNNs are designed to process sequential and temporal data and are widely used in audio processing, video analysis, and time-series prediction. Because they carry a hidden state forward from one step to the next, RNNs can learn temporal patterns in audio sequences and other continuous signals. In HyperLLM, RNNs process audio, speech, and other sequential data, enabling the model to analyze spoken language and capture the temporal and semantic relationships within an audio conversation. In addition, the more advanced LSTM and GRU variants, whose gating mechanisms mitigate the vanishing-gradient (short-term memory) problem of traditional RNNs, provide higher accuracy and efficiency on more complex data.
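To make the CNN feature-extraction step concrete, the following minimal Python sketch shows one convolution filter responding to an edge. It is an illustration under stated assumptions, not HyperLLM's implementation: the tiny grayscale image, the hand-written vertical-edge kernel (a trained CNN learns its kernel weights), and the helper names `conv2d_valid` and `relu` are all hypothetical.

```python
# Minimal sketch of the convolution step a CNN uses to extract edge features.
# Assumptions (hypothetical): a tiny grayscale image as nested lists, a fixed
# hand-written kernel, "valid" padding and stride 1. Like most deep-learning
# libraries, this computes cross-correlation (the kernel is not flipped).

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` and return the resulting feature map."""
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(ih - kh + 1):
        row = []
        for c in range(iw - kw + 1):
            # Weighted sum of the kh x kw patch currently under the kernel.
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out

def relu(feature_map):
    """Element-wise ReLU non-linearity applied after the convolution."""
    return [[max(0.0, v) for v in row] for row in feature_map]

# Dark left half (0) and bright right half (1): one vertical edge.
image = [[0, 0, 0, 1, 1, 1],
         [0, 0, 0, 1, 1, 1],
         [0, 0, 0, 1, 1, 1],
         [0, 0, 0, 1, 1, 1]]

# Hypothetical vertical-edge detector: responds to dark-to-bright transitions.
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]

features = relu(conv2d_valid(image, kernel))
print(features)
```

The feature map is non-zero only in the columns where the dark-to-bright transition falls inside the 3×3 window, so the filter localizes the edge. Stacking many such learned filters, with pooling in between, is what lets a CNN build up edge, texture, and shape features before handing them to other networks.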

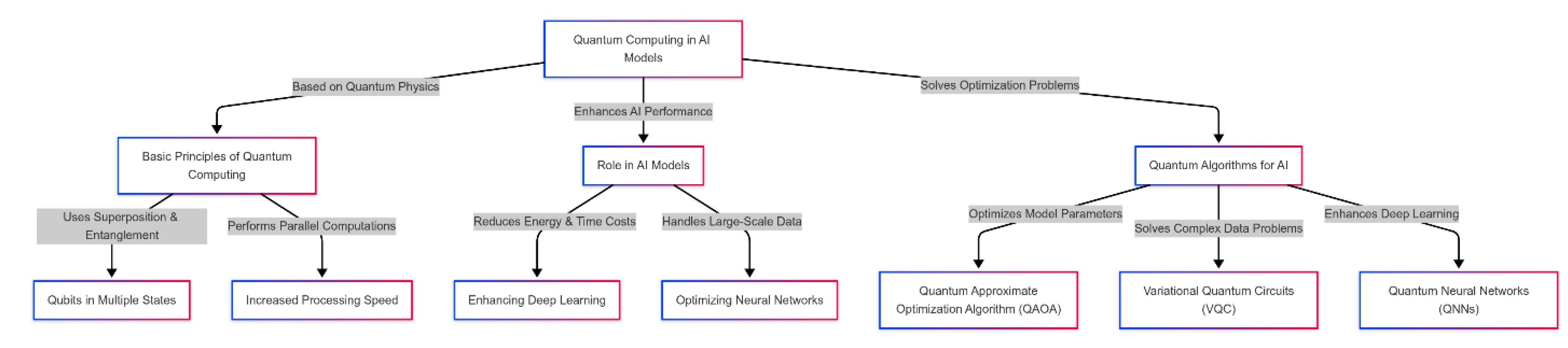

3.3. Quantum Computing in AI Models

- Basic principles of quantum computing: Quantum computing is based on the principles of quantum physics and exploits properties of quantum systems such as superposition and entanglement. In classical computing, information is stored and processed in binary form (zero or one); in quantum computing, quantum bits (qubits) can exist in a superposition of states at the same time. These properties allow quantum computers to explore many computational paths in parallel and, for certain classes of problems, to achieve significant speedups over classical methods.

- The role of quantum computing in AI models: In AI models, especially those that process complex, large-scale data, classical computing often faces high energy consumption and long processing times. Deep learning models and neural networks in particular require complex calculations that are time-consuming and costly. Quantum algorithms could substantially increase processing speed (exponentially, for some problem classes) and reduce energy consumption, especially in the parts of deep learning models that involve complex matrix operations.

- Quantum algorithms for optimization in AI: One of the biggest challenges for artificial intelligence models is optimizing model parameters while keeping computational complexity manageable. In this context, quantum algorithms can play a prominent role:

  - Quantum Approximate Optimization Algorithm (QAOA): This algorithm is particularly suited to hard combinatorial optimization problems in machine learning. QAOA may search the large parameter space of machine learning models more efficiently and help find better solutions.

  - Variational Quantum Circuits (VQC): VQC algorithms address optimization problems in complex data processing and deep learning models. They follow a hybrid quantum-classical scheme, in which a parameterized quantum circuit is evaluated while a classical optimizer tunes its parameters, which may reduce the time and cost of data processing.

  - Quantum Neural Networks (QNNs): Quantum neural networks are an important innovation in deep learning. QNNs use quantum computing power to train on and make predictions from complex data, potentially performing complex calculations faster and more accurately.

- Advantages of Using Quantum Computing in AI: Quantum computing could bring several benefits that significantly improve the performance and efficiency of AI systems. The main one is processing speed: by exploiting superposition and entanglement, quantum computers can perform certain complex calculations in parallel and, for suitable problems, much faster than classical methods. A second advantage is reduced computational complexity: quantum algorithms can help find better solutions, especially in optimization problems with large and complex search spaces. A third is potentially lower energy consumption than classical methods, which would make the technology attractive for large-scale, complex AI models. Ultimately, quantum computing could allow AI systems to analyze more complex, multifaceted data with greater accuracy, with significant implications for applications such as natural language processing, computer vision, and advanced simulations. Figure 3 represents the role of quantum computing in AI models, illustrating its basic principles, optimization algorithms, and how it enhances deep learning models by improving processing speed and reducing energy consumption.
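The hybrid quantum-classical loop behind VQCs can be illustrated with a toy example. The sketch below is a classical simulation under stated assumptions, not HyperLLM's method: the "circuit" is a single RY(θ) rotation applied to |0⟩, the cost to minimize is the expectation value ⟨Z⟩ = cos θ, gradients are obtained with the parameter-shift rule (two extra circuit evaluations per parameter), and all function names are hypothetical. On real hardware, `expectation_z` would be estimated from repeated measurements rather than computed exactly.

```python
import math

# Toy variational quantum circuit, simulated classically (hypothetical names).
# Circuit: RY(theta) applied to |0>; cost: <Z>, which equals cos(theta).

def ry_state(theta):
    """Real amplitudes of RY(theta)|0> = [cos(theta/2), sin(theta/2)]."""
    return [math.cos(theta / 2), math.sin(theta / 2)]

def expectation_z(theta):
    """'Quantum' step: <Z> = |a0|^2 - |a1|^2 for the simulated one-qubit state."""
    a0, a1 = ry_state(theta)
    return a0 * a0 - a1 * a1

def parameter_shift_grad(theta):
    """Exact gradient of <Z> from two shifted circuit evaluations."""
    shift = math.pi / 2
    return 0.5 * (expectation_z(theta + shift) - expectation_z(theta - shift))

def train_vqc(theta=0.1, lr=0.4, steps=100):
    """'Classical' outer loop: gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= lr * parameter_shift_grad(theta)
    return theta

theta_opt = train_vqc()
print(round(theta_opt, 4), round(expectation_z(theta_opt), 4))
```

The classical optimizer drives θ toward π, where ⟨Z⟩ reaches its minimum of -1 (the qubit is rotated to |1⟩). QAOA uses the same outer loop, but with a layered circuit of alternating problem-specific cost and mixer operators.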

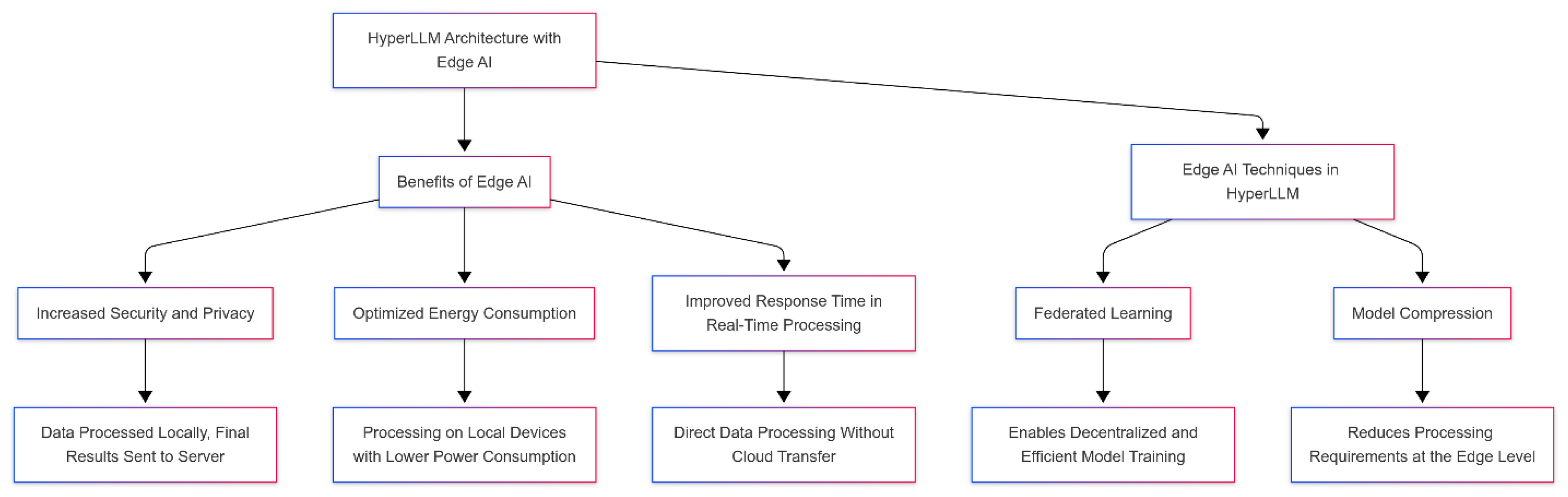

3.4. Using Edge AI for Faster Processing

4. Comparison with Existing Models

- Ability to process multimodal data: One of HyperLLM's prominent features is its ability to process multimodal data, handling text, voice, and images simultaneously and accurately. Unlike models such as GPT-4, which are primarily text-oriented, HyperLLM is designed to model complex relationships across different data types and analyze them in a coordinated manner. This makes it well suited to applications that require processing multiple data modalities and their interactions, such as augmented reality (AR), virtual reality (VR), and complex analytics in medicine and engineering.

- Scalability and large-scale processing: Thanks to sparse models and quantum computing, HyperLLM can scale effectively to large datasets. Unlike traditional dense models, sparse models activate only a subset of their parameters for each input, which reduces computational cost and improves performance at large scale. In contrast, models such as DeepSeek, designed mainly for specific or limited data, perform less well at larger scales. This scalability allows HyperLLM to handle massive projects with large data volumes, such as global forecasting and large-scale data-science models.

- Computational optimization using quantum computing: One of HyperLLM's distinctive features is its use of quantum computing. The model uses quantum algorithms such as QAOA and VQC to optimize processing, which could make it significantly more efficient than models such as GPT-4 and T5 that rely on substantial classical processing resources. Quantum computing has the potential to perform heavy computations with high speed and accuracy, allowing HyperLLM to perform better on complex and diverse data. This capability matters most in advanced data analytics and scientific prediction, where fast and accurate calculation is essential.

- Energy consumption and local processing with Edge AI: By leveraging Edge AI, HyperLLM can perform processing locally. Data need not be sent to data centers; instead, information is processed on local devices such as smartphones, Internet of Things (IoT) devices, or smart sensors. This approach lets HyperLLM consume less energy and respond significantly faster than models such as GPT-3, which rely on data centers for processing. It can substantially improve performance in applications such as on-device machine learning and real-time medical diagnosis systems.

- Security and Privacy: Data security and privacy are major challenges for machine learning models, especially in sensitive fields such as medicine and finance. HyperLLM uses local processing and federated learning so that data can be processed where it resides, without being sent to central servers. This is a significant advantage given concerns about privacy and unauthorized access to data. In contrast, models such as GPT-3, which send data to data centers, may raise additional security concerns, particularly when the data contain sensitive personal information.

- Processing Speed: HyperLLM uses Edge AI and distributed models to process data at very high speed. The model performs especially well in fields that require fast data processing, such as real-time medical diagnosis or instant financial analysis. Unlike models such as GPT-4, which require substantial resources for heavy workloads and large data volumes, HyperLLM can significantly increase processing speed through local processing and quantum optimizations.

- Compatibility with emerging technologies: Owing to quantum computing and Edge AI, HyperLLM can integrate with emerging technologies such as the Internet of Things (IoT), 5G, and augmented reality (AR). This is a significant advantage in advanced industries and large-scale projects, such as smart cities or the development of innovative medical systems. In contrast, models such as DeepSeek and BERT, which focus mainly on textual and structured data, may be less efficient than HyperLLM in these newer, more complex fields.
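Sparse models of the kind credited above with HyperLLM's scalability are often built as Mixture-of-Experts (MoE) layers, in which a gate routes each input to only a few experts. The sketch below assumes top-k gating with softmax renormalization over the selected experts; the experts are hypothetical scalar functions, and the gate logits are fixed numbers standing in for the output of a learned gating layer. It illustrates the general technique, not HyperLLM's actual routing.

```python
import math

def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(gate_logits, k):
    """Select the k experts with the highest gate logits and renormalize
    their weights with a softmax over just those k logits."""
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([gate_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, gate_logits, k=2):
    """Sparse MoE layer: evaluate only the k routed experts and mix their
    outputs. Returns the combined output and how many experts were run."""
    output = 0.0
    experts_run = 0
    for idx, weight in top_k_route(gate_logits, k):
        output += weight * experts[idx](x)
        experts_run += 1
    return output, experts_run

# Four hypothetical experts; in a real model each would be a feed-forward network.
experts = [
    lambda x: 2 * x,
    lambda x: x + 1,
    lambda x: -x,
    lambda x: x * x,
]
# Fixed stand-in for the output of a learned gating layer for this input.
gate_logits = [2.0, 1.0, -1.0, 0.0]

y, experts_run = moe_forward(3.0, experts, gate_logits, k=2)
print(y, experts_run)  # only 2 of the 4 experts are evaluated
```

The compute saving comes from `experts_run` staying at k regardless of how many experts exist, so total parameter count can grow while per-input cost stays roughly constant.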

5. Challenges and Considerations in Developing HyperLLM

5.1. HyperLLM Development Requires Massive Computation

- Data volume and computational complexity: Large language models require massive datasets to learn the complexities of language, including multilingual text, scientific literature, research papers, and extensive conversational data. Processing this data involves an enormous number of arithmetic operations on large numerical vectors and matrices, together with optimization algorithms such as AdamW that adjust the weights and improve model convergence. This process demands very high processing power and places a heavy burden on processing units.

- Large matrix operations and high processing load: Transformer models, which form the core of the HyperLLM architecture, rely on the self-attention mechanism to analyze dependencies between words at large scale. This operation depends on high-dimensional matrix multiplications and weighted aggregation, with a computational cost of $O(n^2 d)$, where $n$ is the number of tokens and $d$ is the embedding dimension. Very large models with billions of parameters require high-performance GPU and TPU resources, increasing hardware costs and energy consumption.

- Energy consumption and infrastructure costs: Running large models requires data centers with thousands of graphics processing units (GPUs) and tensor processing units (TPUs). These data centers consume a significant amount of electricity every year, which, in addition to heavy financial costs, has serious environmental impacts. Training a large language model such as GPT-4 reportedly consumes hundreds of thousands of kilowatt-hours of energy or more.

- Inference latency and slow response times: Even after training, inference remains computationally intensive, particularly in real-time applications such as intelligent assistants, translation systems, or medical chatbots. This leads to increased latency and reduced operational efficiency, especially in scenarios that demand fast processing and high scalability.
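The $O(n^2 d)$ cost of self-attention can be checked by counting operations in a plain implementation of scaled dot-product attention, $\mathrm{softmax}(QK^\top/\sqrt{d})\,V$. For simplicity, this sketch assumes a single attention head and sets $Q = K = V$ to the raw token vectors (a real Transformer applies learned projections first); the function name and token values are hypothetical.

```python
import math

def self_attention(q, k, v):
    """Single-head scaled dot-product attention: softmax(Q K^T / sqrt(d)) V.

    q, k, v are lists of n vectors of length d. Returns the n output vectors and
    the number of multiply-adds spent on Q K^T plus the weighted sum over V.
    """
    n, d = len(q), len(q[0])
    scale = 1.0 / math.sqrt(d)
    mult_adds = 0
    outputs = []
    for i in range(n):
        # Score query i against all n keys: n dot products of length d.
        scores = []
        for j in range(n):
            s = 0.0
            for t in range(d):
                s += q[i][t] * k[j][t]
                mult_adds += 1
            scores.append(s * scale)
        # Softmax over the n scores, shifted by the max for numerical stability.
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        weights = [e / z for e in exps]
        # Mix the n value vectors: another n * d multiply-adds.
        row = [0.0] * d
        for j in range(n):
            for t in range(d):
                row[t] += weights[j] * v[j][t]
                mult_adds += 1
        outputs.append(row)
    return outputs, mult_adds  # mult_adds == 2 * n * n * d

# Hypothetical 3-dimensional token vectors; Q = K = V for simplicity.
tokens = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 1.0, 1.0]]
_, ops_4 = self_attention(tokens, tokens, tokens)      # n = 4, d = 3
doubled = tokens * 2
_, ops_8 = self_attention(doubled, doubled, doubled)   # n = 8, d = 3
print(ops_4, ops_8)  # 96 384
```

Doubling the sequence length from 4 to 8 tokens quadruples the multiply-add count, while doubling $d$ would only double it; this quadratic dependence on $n$ is why long contexts dominate both training and inference cost.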

5.2. Bias Control and Ethical Issues in HyperLLM

- The impact of training data on model bias: Bias in language models such as HyperLLM usually stems from the quality and diversity of the training data. Large language models are trained on vast amounts of text collected from various sources, and this data may carry historical, cultural, or social biases. For example, if the model is trained on texts in which gender roles are stereotypically defined, it may reproduce those biases in its responses. Imbalances in the training data can also cause the model to favor particular groups, languages, or perspectives while underrepresenting others. Therefore, essential steps in controlling bias include diversifying the training dataset, removing or reducing biased data, and creating a reasonable balance between different perspectives.

- The role of model architecture in creating or reducing bias: Beyond the training data, the architecture of the model and the way it learns can also create or amplify bias. Because they rely on statistical patterns and superficial correlations in the data, many deep learning models may mistake spurious correlations for general rules. For example, if the training data contain more negative sentiment expressions about particular groups, the model may implicitly generalize this pattern. One key approach to this problem is to apply weight-adjustment and normalization techniques during training. Hybrid architectures that actively filter model outputs can also help reduce bias.

- Ethical challenges and implications of bias in AI systems: Bias in language models is more than a technical issue; it broadly affects social justice, automated decision-making, and public trust in AI technologies. If models such as HyperLLM, which are used in medical, legal, economic, and social fields, are biased, they can lead to unfair decisions, reproduce inequalities, and undermine the rights of affected groups. For example, a model trained on biased data and used in hiring may unfairly deprive people of job opportunities. Likewise, when processing less widely used languages or content about specific cultural groups, the model may fail to produce accurate information and thereby marginalize these groups.
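One simple way to create the balance between groups described above is to reweight training examples by inverse group frequency, so that under-represented groups contribute as much to the training loss as over-represented ones. The sketch assumes every example carries a group label; the labels, losses, and function names are hypothetical, and reweighting is only one of several mitigation techniques, not necessarily the one HyperLLM uses.

```python
from collections import Counter

def inverse_frequency_weights(group_labels):
    """Return one weight per example so that each group's weights sum to the
    same total, N / G, where N = number of examples and G = number of groups."""
    counts = Counter(group_labels)
    n, g = len(group_labels), len(counts)
    return [n / (g * counts[label]) for label in group_labels]

def weighted_mean_loss(losses, weights):
    """Weighted average of per-example losses (e.g. inside a training step)."""
    return sum(l * w for l, w in zip(losses, weights)) / sum(weights)

# Hypothetical dataset: group "A" is over-represented three to one.
labels = ["A", "A", "A", "B"]
weights = inverse_frequency_weights(labels)
# Each "A" example gets 4 / (2 * 3); the single "B" example gets 4 / (2 * 1) = 2.0.

# Hypothetical per-example losses: the model does worse on group "B".
losses = [0.2, 0.2, 0.2, 1.0]
print(weighted_mean_loss(losses, weights))
```

With uniform weights the average loss would be 0.4, dominated by group "A"; with inverse-frequency weights it becomes 0.6, the mean of the two group averages, so errors on the minority group weigh as much as errors on the majority group during optimization.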

5.3. Energy Sustainability and High Costs

- High energy consumption and environmental impact: Large language models such as HyperLLM require massive processing power for training and inference. This involves billions of matrix operations and parameter updates executed on GPUs, TPUs, and high-end servers, leading to significant power consumption and heat generation in data centers and creating challenges for energy resource management. Studies have shown that training a large language model can require several megawatt-hours of energy, corresponding to emissions of several tons of carbon dioxide (CO₂). In addition, growing demand for concurrent inference and fast responses at scale substantially increases energy consumption during the operational phase, which makes it harder to sustain these models on renewable energy sources.

- High infrastructure and computing costs: Implementing and running large models such as HyperLLM requires advanced, costly computing infrastructure. This includes high-performance hardware such as NVIDIA A100 GPUs and new-generation TPUs, which are expensive to operate because of the processing power they require. High energy consumption also increases the need for advanced cooling systems and raises the cost of maintaining and operating data centers. Continuous model updates are a further financial burden, as processing large volumes of new data and retraining complex models require extensive, expensive computing infrastructure. Together, these factors make developing and using advanced language models difficult and economically unviable for many small organizations and even some research institutions.
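The energy and emission figures discussed above follow from a simple back-of-the-envelope relation: facility energy ≈ number of accelerators × average power per accelerator × training hours × PUE (power usage effectiveness, the data-center overhead factor for cooling and power delivery), and operational emissions ≈ energy × grid carbon intensity. The sketch below encodes that relation; every input value is a hypothetical assumption chosen for illustration, not a measurement of HyperLLM or any published model.

```python
def training_energy_kwh(num_accelerators, watts_per_accelerator, hours, pue):
    """Facility energy in kWh: accelerator draw (kW) x hours, scaled by PUE."""
    return num_accelerators * watts_per_accelerator / 1000.0 * hours * pue

def emissions_tonnes_co2(energy_kwh, kg_co2_per_kwh):
    """Operational emissions in tonnes: energy x grid carbon intensity."""
    return energy_kwh * kg_co2_per_kwh / 1000.0

# Hypothetical scenario (all inputs are illustrative assumptions):
# 64 accelerators averaging 400 W for 10 days, PUE 1.2, 0.4 kg CO2 per kWh.
energy = training_energy_kwh(num_accelerators=64, watts_per_accelerator=400,
                             hours=24 * 10, pue=1.2)
co2 = emissions_tonnes_co2(energy, kg_co2_per_kwh=0.4)
print(f"{energy:,.1f} kWh, {co2:.2f} t CO2")
```

With these assumed inputs the estimate is about 7.4 MWh and roughly 3 t of CO₂, the same order of magnitude as the "several megawatt-hours, several tons" range cited above. Because every factor enters linearly, doubling the accelerator count, training time, or grid carbon intensity doubles the corresponding result, and lowering PUE or using a cleaner grid reduces it proportionally.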

6. Future Perspectives and Research Directions

6.1. The Role of Quantum Computing and New Algorithms in the Future of HyperLLM

6.2. HyperLLM in the Fields of Medicine, Education, Big Data Analytics and Industry

- Medicine: One of the most critical applications of HyperLLM in modern medicine is increasing the accuracy and speed of disease diagnosis systems and suggesting treatment methods based on multimodal data analysis. Because it can process text data (medical texts, scientific articles, and patient records), image data (medical imaging such as MRI, CT scans, and X-rays), and signal and sequence data (ECG, EEG, and genomic data), HyperLLM could support clinical diagnosis with very high accuracy. Sparse models and Mixture-of-Experts (MoE) architectures optimize processing and reduce computational costs in complex medical analyses. In addition, applying quantum computing (Quantum AI) to complex problems, such as molecular dynamics in drug discovery and the modeling of protein interactions, could accelerate research in biotechnology. In personalized medicine, HyperLLM can use federated learning and real-time Edge AI processing to provide treatment recommendations tailored to each patient without sending sensitive data to cloud processing centers, increasing data security and protecting patient privacy.

- Education: HyperLLM could play a key role in developing intelligent and personalized learning systems. Multimodal large language models (MLLMs) allow detailed analysis of how each individual learns and can provide educational content tailored to the learner's knowledge and abilities. For example, by using Transformer-CNN-RNN networks, this model can improve voice and text interactions and enhance augmented and virtual reality (AR/VR)-based learning systems. In language and conversation training systems, HyperLLM can adapt instruction to each learner by understanding linguistic and dialect differences in depth. In more advanced settings, models based on Edge AI and federated learning will allow these educational systems to run without depending on the Internet or cloud servers, reducing processing latency and increasing equitable access to innovative education in underserved areas.

- Big Data Analytics: HyperLLM can play a key role in predictive modeling, business trend analysis, and discovering hidden patterns in data. Sparse models and distributed processing structures allow the model to process massive amounts of structured and unstructured data without the scalability problems of traditional processing systems. For example, the Mixture-of-Experts (MoE) layers and attention mechanisms in HyperLLM can analyze real-time streaming data and extract hidden patterns. In addition, quantum algorithms such as QAOA and VQC could improve performance in complex analyses such as social network analysis, fraud detection in financial systems, and search algorithm optimization. Decentralized processing systems based on Edge AI and federated computing will also enable big data analysis without dependence on expensive cloud data centers, reducing processing costs and optimizing energy consumption.

- Industry: In industry, HyperLLM applications can include supply chain management, predicting industrial equipment failures, and optimizing production processes. Distributed AI and Edge AI models enable industrial devices to process sensor data and make real-time decisions autonomously. In this regard, hybrid quantum-classical learning can increase the efficiency of control systems and facilitate troubleshooting of complex equipment through deep learning-based modeling and IoT data processing. In the supply chain, HyperLLM can use Transformer models to analyze demand trends, predict resource shortages, and optimize logistics, increasing productivity and reducing operating costs. Furthermore, transfer learning and edge computing allow these models to be deployed in industrial environments without heavy computing infrastructure, which is of great importance in the automotive, semiconductor manufacturing, and energy management industries.
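The federated learning setting mentioned for medicine, education, big data, and industry can be illustrated with federated averaging (FedAvg): each site trains on its own data, and only model parameters are sent to a server, which averages them weighted by each site's sample count. This is a minimal sketch under stated assumptions, not HyperLLM's training procedure: a one-parameter linear model, plain gradient descent on each client, and hypothetical datasets generated from y = 3x. Real deployments add secure aggregation, client sampling, and far larger models.

```python
def local_update(w, data, lr=0.05, epochs=20):
    """Client-side training of y = w * x by gradient descent on mean squared
    error. The raw (x, y) pairs never leave this function; only w is returned."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def fed_avg(global_w, client_datasets, rounds=10):
    """Server loop: broadcast w, collect client models, and average them
    weighted by the number of local samples on each client."""
    for _ in range(rounds):
        updates = [(local_update(global_w, data), len(data)) for data in client_datasets]
        total = sum(n for _, n in updates)
        global_w = sum(w * n for w, n in updates) / total
    return global_w

# Hypothetical private datasets held by three sites (e.g. hospitals),
# all generated from y = 3x, so the shared model should approach w = 3.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(0.5, 1.5), (1.5, 4.5), (3.0, 9.0)],
    [(2.5, 7.5)],
]
w = fed_avg(0.0, clients)
print(round(w, 6))
```

Only the scalar `w` crosses the boundary between clients and server in each round, which is the property that lets federated learning keep raw patient, learner, or sensor records on-site while still training a shared model.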

7. Conclusions


| Parameter | HyperLLM | GPT-4 | DeepSeek | BERT | T5 | XLNet | GPT-3 | LaMDA |
|---|---|---|---|---|---|---|---|---|
| Multimodal Data Processing Ability | Very High (combines text, audio, and visual data) | High (focused on text processing) | Moderate (best for text data) | Moderate (text processing) | High (text and structured data) | High (multimodal models) | Moderate (best for text) | Very High (focused on conversational and text data) |
| Scalability | Excellent (high scalability with Sparse Models) | Very Good | Suitable (best for specific data types) | Good (moderate scalability) | Very Good | Sound (structured data processing) | Very Good | Good (focused on conversational tasks) |
| Computational Optimization | Excellent (Quantum computing & Sparse Models) | Good (advanced techniques) | Moderate (limited optimization) | Good (optimization for text) | Excellent (high optimization for structures) | Good (complex computations) | Moderate (heavy computations) | Good (optimized for conversational processing) |
| Energy Consumption | Very Low (Edge AI and Sparse Models) | Moderate (high computational needs) | Moderate (limited optimization) | Moderate (computationally intensive) | Low (specific optimizations) | Moderate (high energy consumption) | High (requires robust infrastructure) | Low (local processing and optimization) |
| Processing Speed | Very Fast (local processing with Edge AI) | Fast (with advanced processing) | Moderate (limited processing speed) | Moderate (delayed processing) | Fast (for diverse data types) | Moderate (complex computations) | Fast (quick processing) | Very Fast (real-time conversational processing) |
| Security and Privacy | Very High (local processing & Federated Learning) | Good (data protection) | Moderate (sensitive data processed) | Good (high security in text processing) | Suitable (optimized privacy measures) | Good (data protection) | Moderate (privacy concerns) | Very Good (focus on privacy in conversation) |
| Compatibility with Emerging Technologies | Excellent (Quantum Computing & Edge AI) | Good (utilizes emerging technologies) | Moderate (limited compatibility) | Suitable (compatible with algorithms) | Very Good (integration with new technologies) | Good (can integrate with technologies) | Suitable (uses emerging technologies) | Excellent (advanced in conversational language) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).