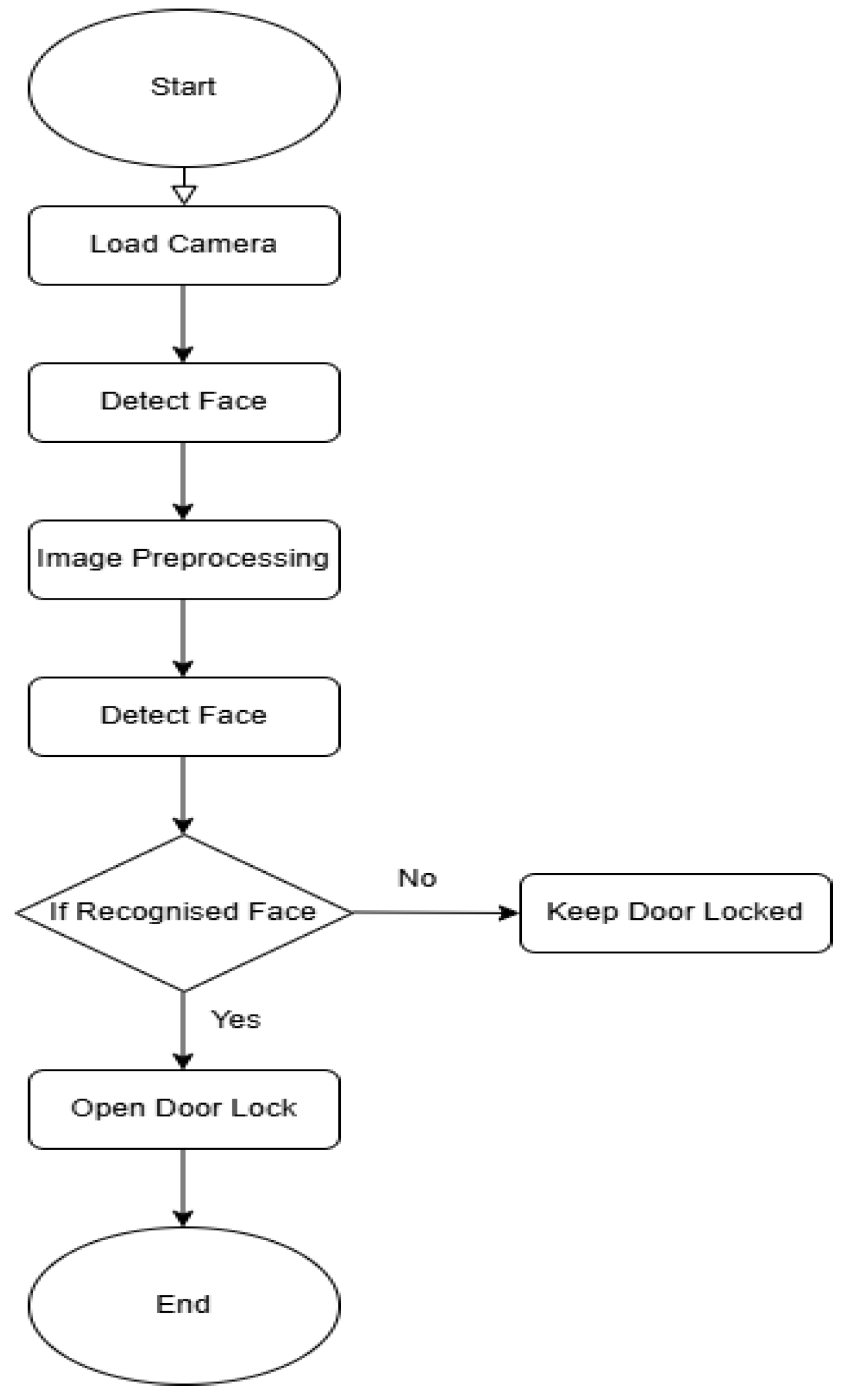

3. Design Methodology

Developing the Raspberry Pi-based facial recognition door lock required hardware design, software development, and rigorous testing to address modern access control challenges. This section describes the hardware integration, the development of the adaptive facial recognition software, and the system testing used to verify performance. The implementation prioritizes safety, reliability, scalability, and usability. It required careful integration of hardware and software, attention to the processing limitations of the Raspberry Pi, and mitigation of environmental variables.

The system's main processor, the Raspberry Pi, is connected to a camera module for real-time video and to a relay module that drives a solenoid lock for physical access. Each hardware component was selected for compatibility, cost, and suitability for low-power operation. Careful GPIO wiring during assembly ensured reliable communication between the Raspberry Pi and its peripherals. On the software side, the Python libraries OpenCV and DeepFace are used for facial recognition and emotion detection. Adaptive learning dynamically updates the authorized face database, preprocessing adjusts to environmental conditions, and real-time notifications inform users of access events. Together, the code coordinates hardware and software so that the system responds smoothly.

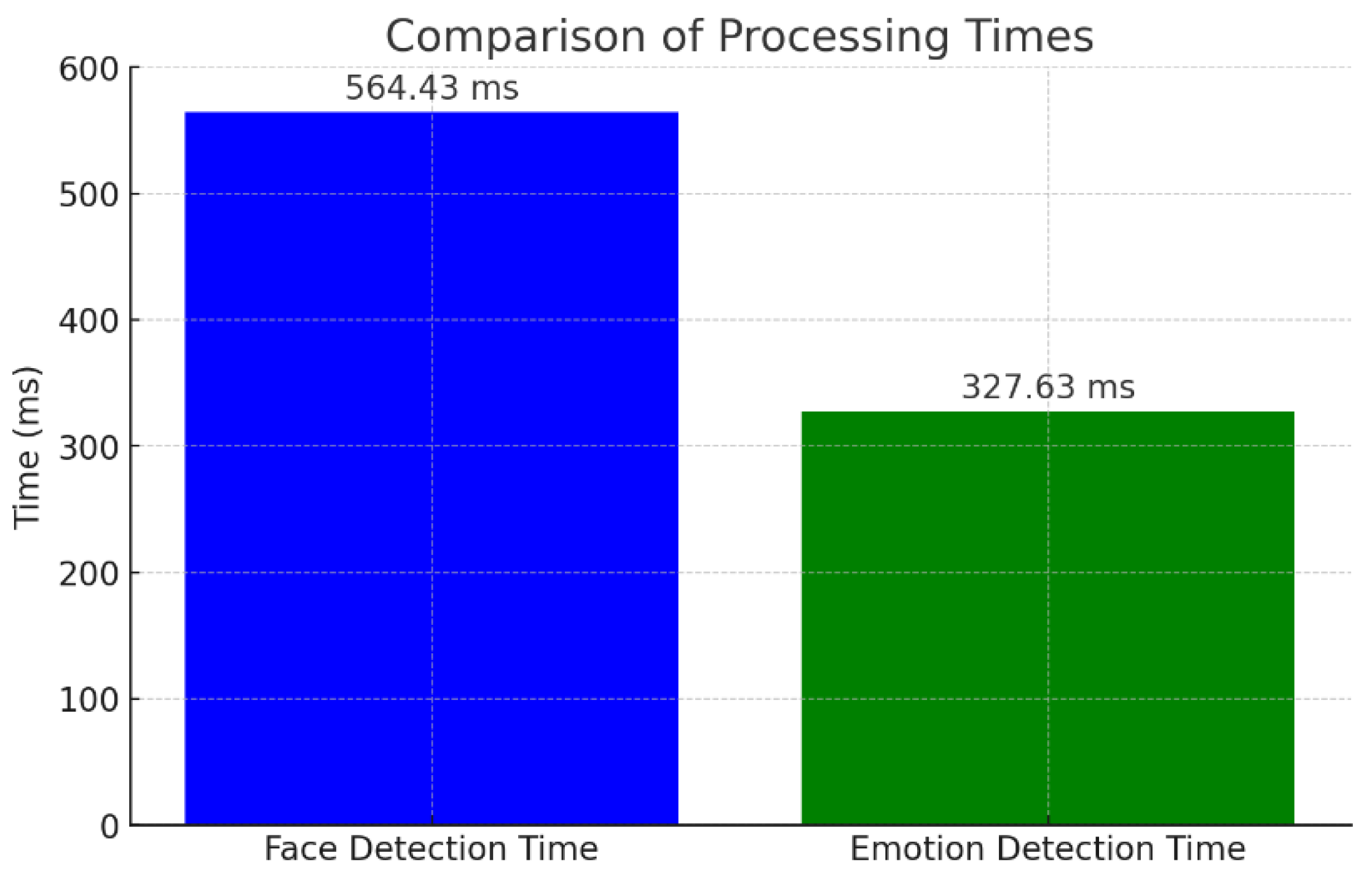

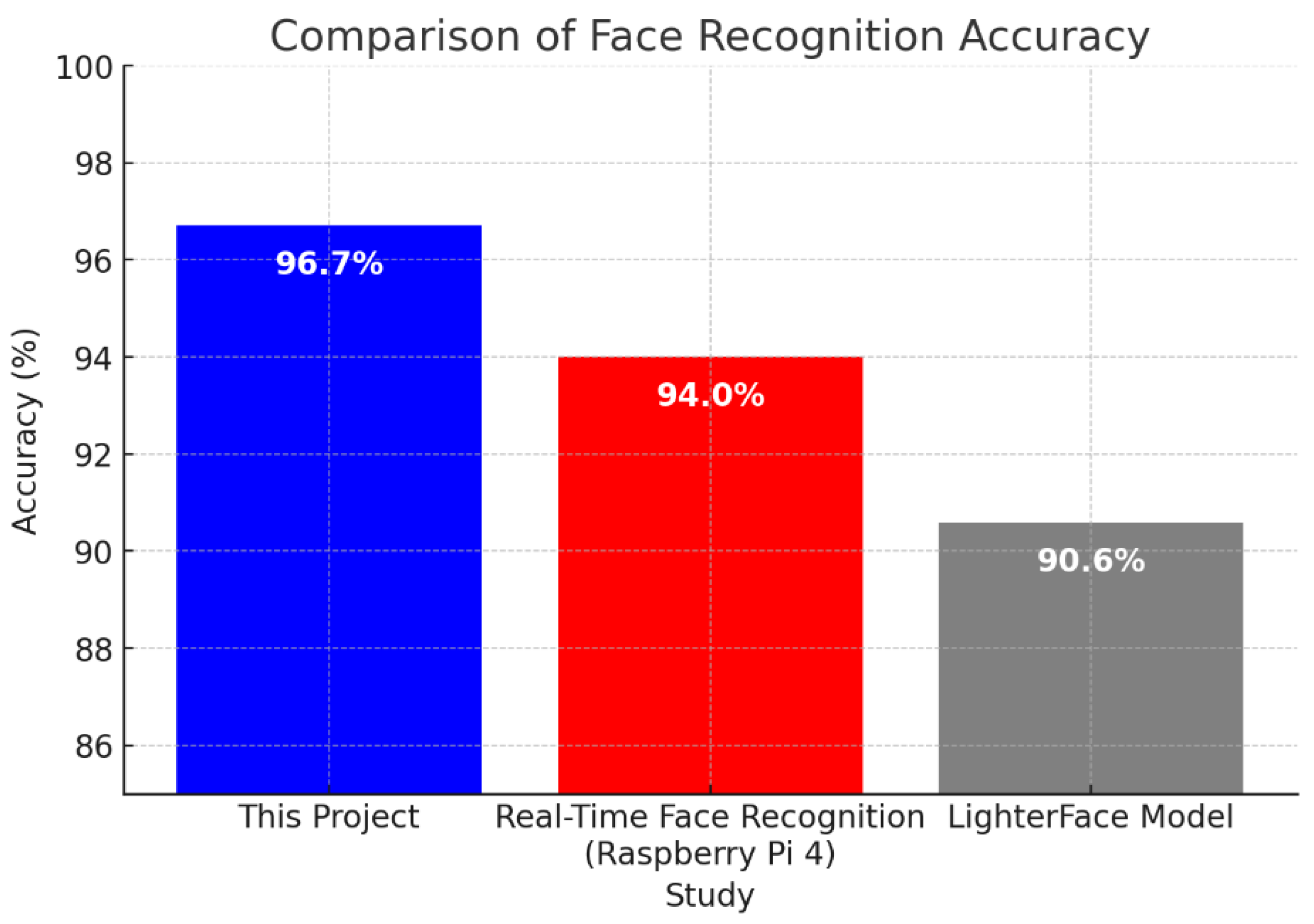

System stability and scalability require extensive testing and validation. The system was evaluated under various conditions, including lighting variations, distances, and facial orientations, to assess performance. Metrics such as detection accuracy, processing time, and emotion detection effectiveness were analyzed to ensure robustness. As highlighted in [7], implementing an embedded facial recognition system on Raspberry Pi requires balancing computational efficiency, image processing techniques, and algorithmic selection to achieve optimal performance under real-world conditions. Testing insights led to iterative system modifications, further enhancing functionality and reliability.

3.1. Hardware Implementation

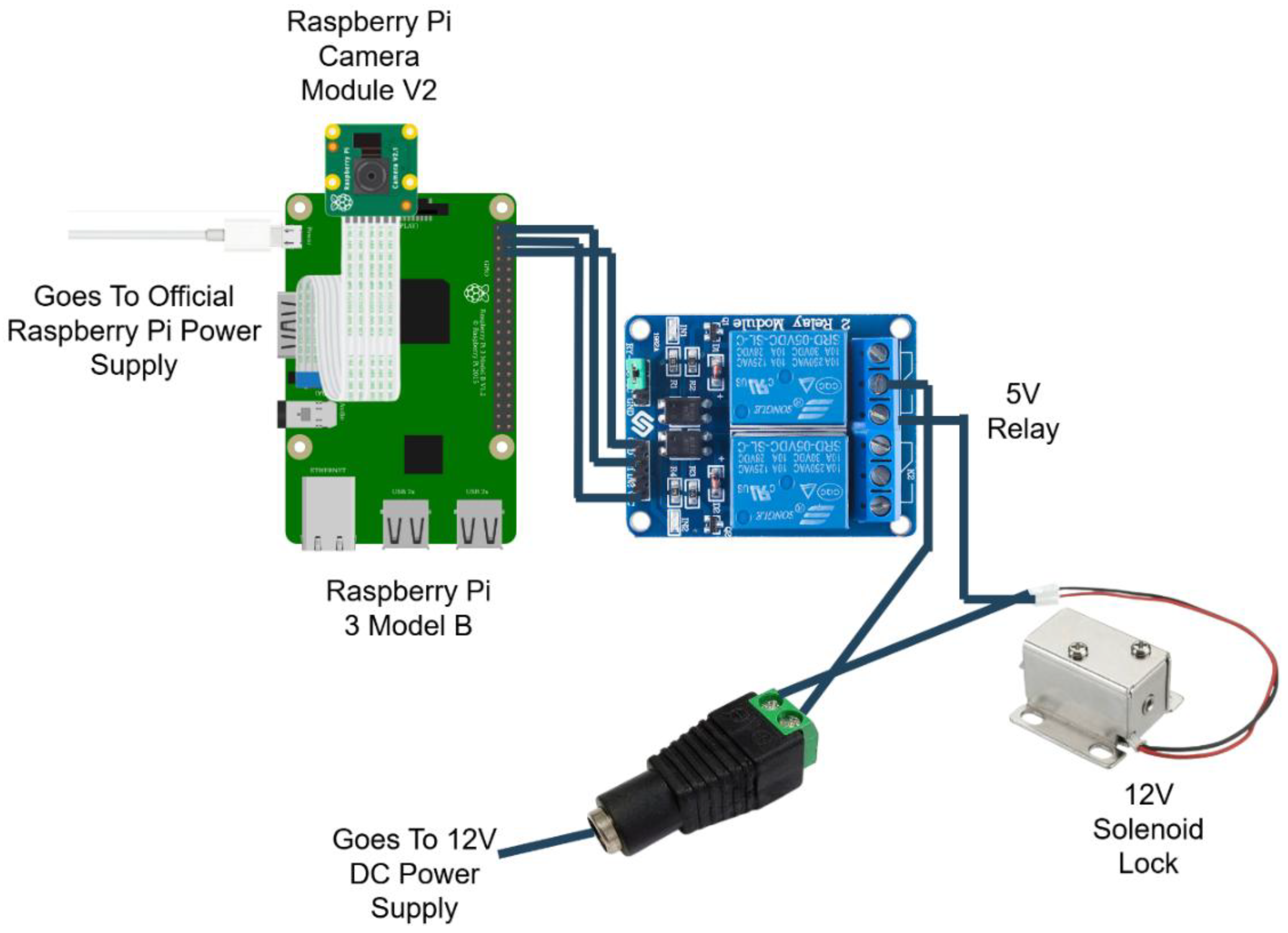

The hardware implementation of the Raspberry Pi-based facial recognition door lock system ensures smooth operation and reliability. This section details the setup, including components, roles, and connections. The Raspberry Pi Model 3B serves as the central processor, handling facial recognition, emotion detection, and device connectivity. It interfaces with a camera module for real-time video input and a relay module controlling the solenoid lock for secure access.

Supporting components such as DC barrel jacks, a microSD card, jumper wires, and power supplies ensure stable connections. The relay module lets the Raspberry Pi's low-voltage GPIO output safely switch the separate 12V solenoid lock circuit, while the 5V and 12V power supplies support system reliability. The Raspberry Pi stores the operating system and scripts on the microSD card.

Figure 2 provides a circuit schematic illustrating the interaction between hardware components, demonstrating the system's connectivity and functionality.

This diagram visually explains the flow of power and control signals within the system, offering clarity on the role and connectivity of each component. It simplifies the understanding of how the Raspberry Pi interacts with the solenoid lock through the relay module while maintaining separate power supplies for different components.

3.2. Hardware Assembly

This section describes the process of assembling the hardware components of the Raspberry Pi-based face recognition door lock system. The assembly integrates the Raspberry Pi, camera module, relay module, solenoid lock, and other necessary components into a functional setup. The objective is to ensure secure connections, operational reliability, and a well-organised layout for easy maintenance.

Insert the preloaded microSD card containing the operating system and software into the Raspberry Pi Model 3B's slot. Connect the Raspberry Pi Camera Module to the CSI (Camera Serial Interface) port, ensuring that the metal contacts on the ribbon cable face the CSI port for proper connectivity. Secure the Raspberry Pi on a stable platform or within a protective case to prevent damage during assembly. Position the relay module close to the Raspberry Pi for tidy and secure wiring. Connect the positive terminal of the 12V solenoid lock to the relay's NO (Normally Open) terminal. Run basic GPIO scripts on the Raspberry Pi to test the relay and solenoid lock, confirming that the relay triggers correctly and the solenoid lock responds as intended.

The hardware implementation forms the backbone of the system, integrating multiple components into a cohesive and functional setup. Each component, from the Raspberry Pi Model 3B to the solenoid lock and relay module, is vital in ensuring secure and reliable access control. The carefully designed circuit connections and the logical assembly process provide a robust foundation for the system. The power supply arrangement and adaptable configuration further enhance the system's efficiency and scalability. This hardware platform sets the stage for integration with the software and testing phases.
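The relay test mentioned in the assembly steps could look like the following minimal sketch. It assumes the RPi.GPIO library, a relay input wired to BCM pin 17, and a 3-second hold time; none of these are stated in the text, so they are illustrative choices. The `FakeGPIO` class is a hypothetical stand-in that lets the logic run on a machine without GPIO hardware.

```python
import time


class FakeGPIO:
    """Hypothetical stand-in that records pin writes so the sketch runs off-Pi."""
    BCM, OUT, HIGH, LOW = "BCM", "OUT", 1, 0

    def __init__(self):
        self.log = []

    def setmode(self, mode):
        self.log.append(("setmode", mode))

    def setup(self, pin, direction, initial=0):
        self.log.append(("setup", pin, initial))

    def output(self, pin, level):
        self.log.append(("output", pin, level))

    def cleanup(self):
        self.log.append(("cleanup",))


def pulse_relay(gpio, pin=17, hold_seconds=3.0, active_high=True):
    """Energise the relay for hold_seconds, then release it.

    pin and hold_seconds are assumed values; set active_high=False for
    relay boards that trigger on a LOW input.
    """
    on, off = (gpio.HIGH, gpio.LOW) if active_high else (gpio.LOW, gpio.HIGH)
    gpio.setmode(gpio.BCM)
    gpio.setup(pin, gpio.OUT, initial=off)
    try:
        gpio.output(pin, on)      # NO contact closes, powering the lock circuit
        time.sleep(hold_seconds)
    finally:
        gpio.output(pin, off)     # always release the relay, even on error
        gpio.cleanup()
```

On the Raspberry Pi itself, the same function would be called with the real module, for example `import RPi.GPIO as GPIO` followed by `pulse_relay(GPIO)`. A click from the relay and movement of the solenoid during the hold period indicate that the wiring is correct.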

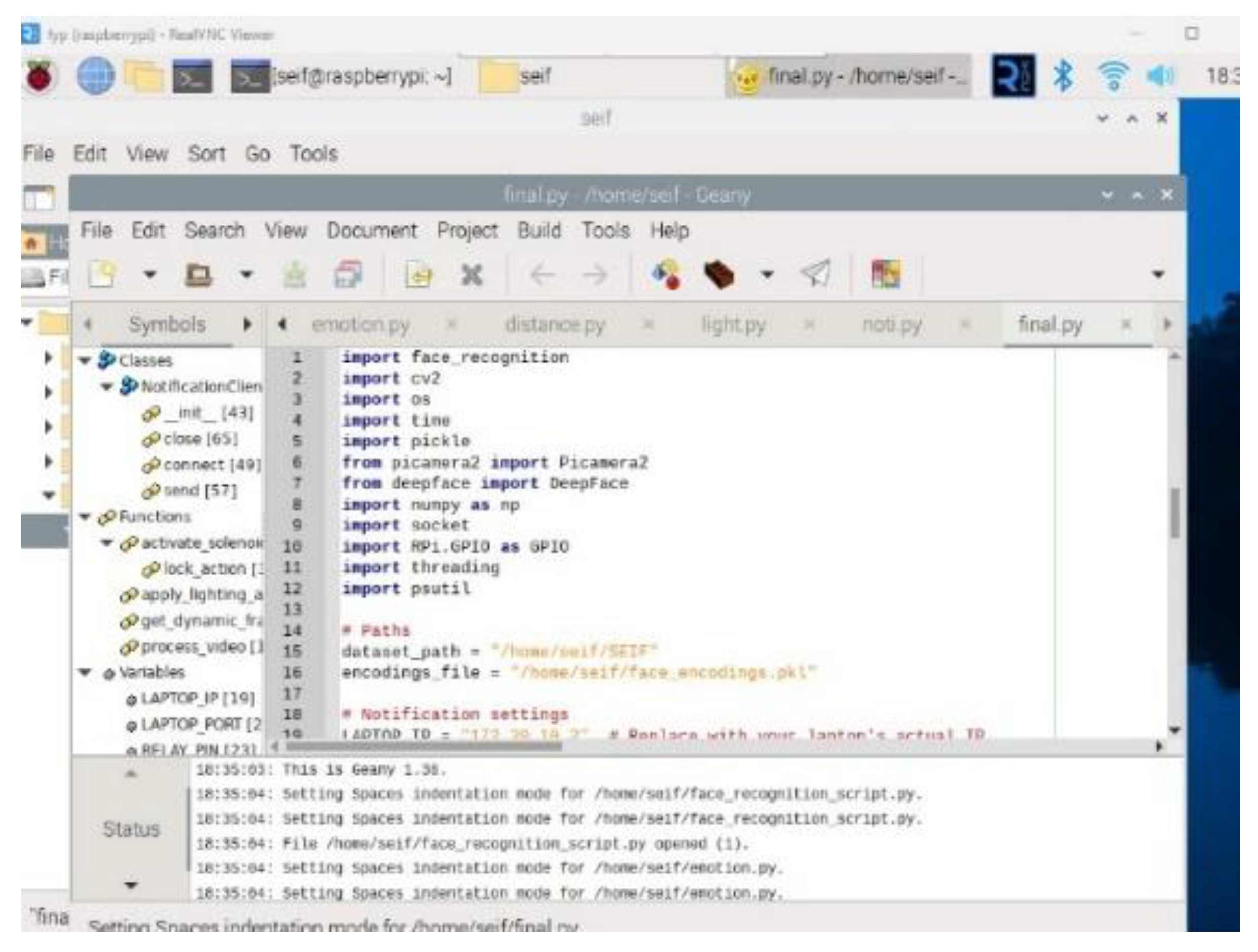

3.3. Software Implementation

The software implementation of the Raspberry Pi-based face recognition door lock system involves an intricate combination of algorithms and processes designed for efficiency, accuracy, and adaptability. The development began with a simulation phase, where the feasibility of the face recognition algorithm was tested using a laptop and a pre-trained CNN model. This simulation provided valuable insights into the algorithm's performance, forming the foundation for the subsequent hardware integration.

Building on this groundwork, the system was implemented on the Raspberry Pi to achieve real-time face detection, recognition, and dynamic adaptability. The system detects faces, recognizes authorized individuals, adapts to changing conditions, and notifies remote devices in real-time. The following subsections outline the implementation of key features, including the facial recognition algorithm, the notification system, emotion detection, and the dynamic addition of unknown faces to the authorized list.

The software components were developed with Python, leveraging libraries such as face_recognition, cv2, DeepFace, and others. Advanced preprocessing techniques, such as lighting normalization and gamma correction, ensure reliable performance under varying environmental conditions. These components collectively create a robust and efficient system capable of handling diverse use cases, such as recognizing faces at different distances, adapting to dynamic lighting conditions, and processing real-time notifications on remote devices. The project utilizes several essential Python libraries for face recognition, GPIO control, and notification handling, as shown in Figure 3.

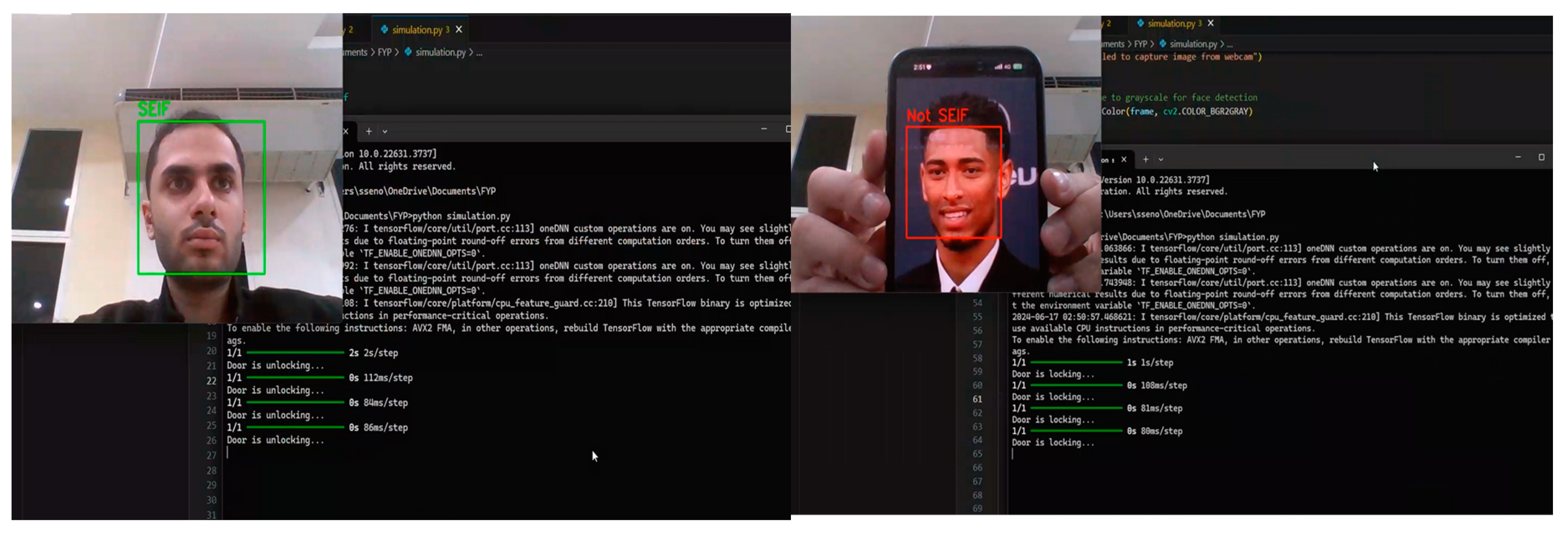

3.4. Simulation and Preliminary Testing

A simulation of the facial recognition system was used to test the algorithm's viability and real-time processing. The simulation included mock door lock and unlock behaviour driven by the facial recognition results. The setup comprised a Haar Cascade Classifier for detecting faces in the webcam stream, a pre-trained CNN model that classified detected faces as authorised (e.g., “SEIF”) or unauthorised (“NOT SEIF”), and the laptop webcam as the source of real-time frames. OpenCV and TensorFlow served as the core libraries for video processing and model execution.

The simulation followed three steps. First, in face detection, the Haar Cascade Classifier located faces in the video stream, which were then cropped and pre-processed for recognition: face images were resized to 224x224 pixels to meet the model's input requirements, normalised, and expanded. Second, the pre-trained CNN model classified each face as SEIF (authorised) or NOT SEIF. Third, the system simulated the door action: if SEIF was recognised, it displayed "Door is unlocking...", and for unknown faces it displayed "Door is locking...".
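The pre-processing and door-action steps can be sketched as follows. This is a dependency-free illustration, not the simulation code: the actual simulation used OpenCV and TensorFlow, whereas this sketch works on a grayscale crop stored as nested lists, uses nearest-neighbour sampling for the resize, and interprets "expanded" as adding a leading batch dimension. The function names are hypothetical.

```python
def preprocess_face(face, size=224):
    """Resize a grayscale crop (rows of 0-255 values) to size x size,
    scale to [0, 1], and wrap it in a batch dimension."""
    rows, cols = len(face), len(face[0])
    resized = [
        [face[r * rows // size][c * cols // size] for c in range(size)]
        for r in range(size)
    ]
    normalised = [[px / 255.0 for px in row] for row in resized]
    return [normalised]  # shape (1, size, size): assumed meaning of "expanded"


def door_action(label, authorised_label="SEIF"):
    """Return the message the simulation displays for a classified face."""
    if label == authorised_label:
        return "Door is unlocking..."
    return "Door is locking..."
```

In an OpenCV and NumPy pipeline, the same three operations would typically be a resize call, a division by 255, and the addition of a leading axis before the array is passed to the model.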

During the simulation, the system successfully detected and recognized faces in real time, as illustrated in Figure 4.

3.5. Facial Recognition Algorithm

Face recognition systems deployed on edge devices, such as the Raspberry Pi, must balance computational efficiency with recognition accuracy. Traditional deep learning models, such as CNN-based approaches, offer high accuracy but are computationally expensive, making them impractical for real-time processing on low-power devices. In contrast, lightweight algorithms such as the Histogram of Oriented Gradients (HOG) have demonstrated their effectiveness in edge computing scenarios, enabling efficient face detection without requiring GPU acceleration [8].

The Histogram of Oriented Gradients (HOG) algorithm has been widely used for efficient face detection [9]. This method provides real-time performance while maintaining accuracy, making it suitable for resource-constrained environments. HOG-based detection extracts key facial features using gradient orientation patterns, ensuring robustness in different environmental conditions. Additionally, its computational simplicity allows it to outperform more complex deep learning-based approaches in terms of processing speed on embedded hardware.

3.6. System Setup for Facial Recognition

Before delving into the algorithm details, it is essential to understand how the system is prepared to execute facial recognition effectively. The preparation involves the following elements.

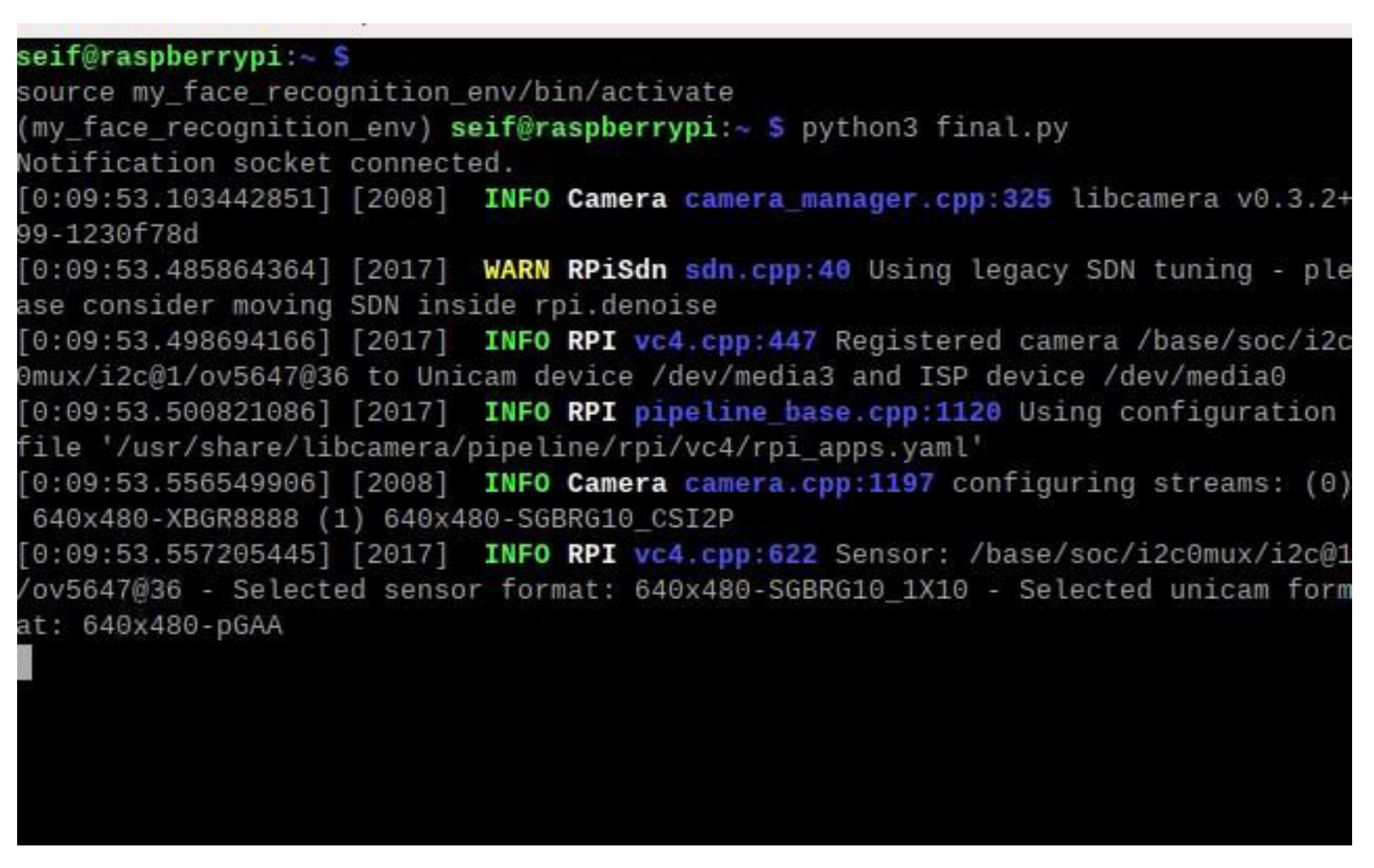

Virtual Environment: The facial recognition script is executed within a Python virtual environment on the Raspberry Pi. This ensures that all dependencies, such as OpenCV, DeepFace, and face_recognition libraries, are correctly managed and isolated from the base system. During the system setup, the script initializes the camera module and loads necessary libraries. The Raspberry Pi terminal logs provide a detailed summary of these initialization steps.

Figure 5 shows the Raspberry Pi terminal output during the initialization of the camera module, confirming successful detection and configuration.

Figure 6 shows the activation of the virtual environment in the Raspberry Pi terminal, ensuring isolated dependency management for the face recognition system.

Dataset of Authorized Faces: A preloaded dataset of photos of authorized individuals is stored on the Raspberry Pi. This dataset is processed during initialization to generate 128-dimensional encodings, which are stored in the encodings file. These encodings are later used for face matching during runtime.

RealVNC Viewer: RealVNC Viewer was utilized during development and testing to provide remote access to the Raspberry Pi. This tool allowed effective debugging, script execution monitoring, and system setup adjustments.

3.7. Process Overview

The face recognition process begins with face detection, where the HOG model identifies faces from key features such as the eyes, nose, and mouth, which keeps detection fast enough for real-time performance on the Raspberry Pi 3B. Once a face is detected, it undergoes face encoding, which converts it into a unique 128-dimensional vector that acts as a biometric fingerprint. The encodings of authorized individuals are pre-stored in the system's encodings_file. During face matching, the system compares the newly detected encoding with the stored ones using Euclidean distance. A face is recognized, and access is granted, if the distance falls below a threshold of 0.4.
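The matching rule can be illustrated with a short sketch. It assumes that the stored encodings are held in a dictionary mapping each name to one 128-dimensional vector; that layout, and the function name `best_match`, are illustrative choices rather than the system's actual data structures. The 0.4 threshold is the one stated above.

```python
import math


def best_match(candidate, known_encodings, threshold=0.4):
    """Return (name, distance) for the closest stored encoding, or
    ("Unknown", distance) when no stored encoding is closer than threshold."""
    best_name, best_dist = "Unknown", float("inf")
    for name, stored in known_encodings.items():
        # Euclidean distance between the two encoding vectors
        dist = math.sqrt(sum((a - b) ** 2 for a, b in zip(candidate, stored)))
        if dist < best_dist:
            best_name, best_dist = name, dist
    if best_dist >= threshold:
        return "Unknown", best_dist
    return best_name, best_dist
```

A lower threshold makes matching stricter, reducing false acceptances at the cost of more false rejections, which is why the value matters for an access control application.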

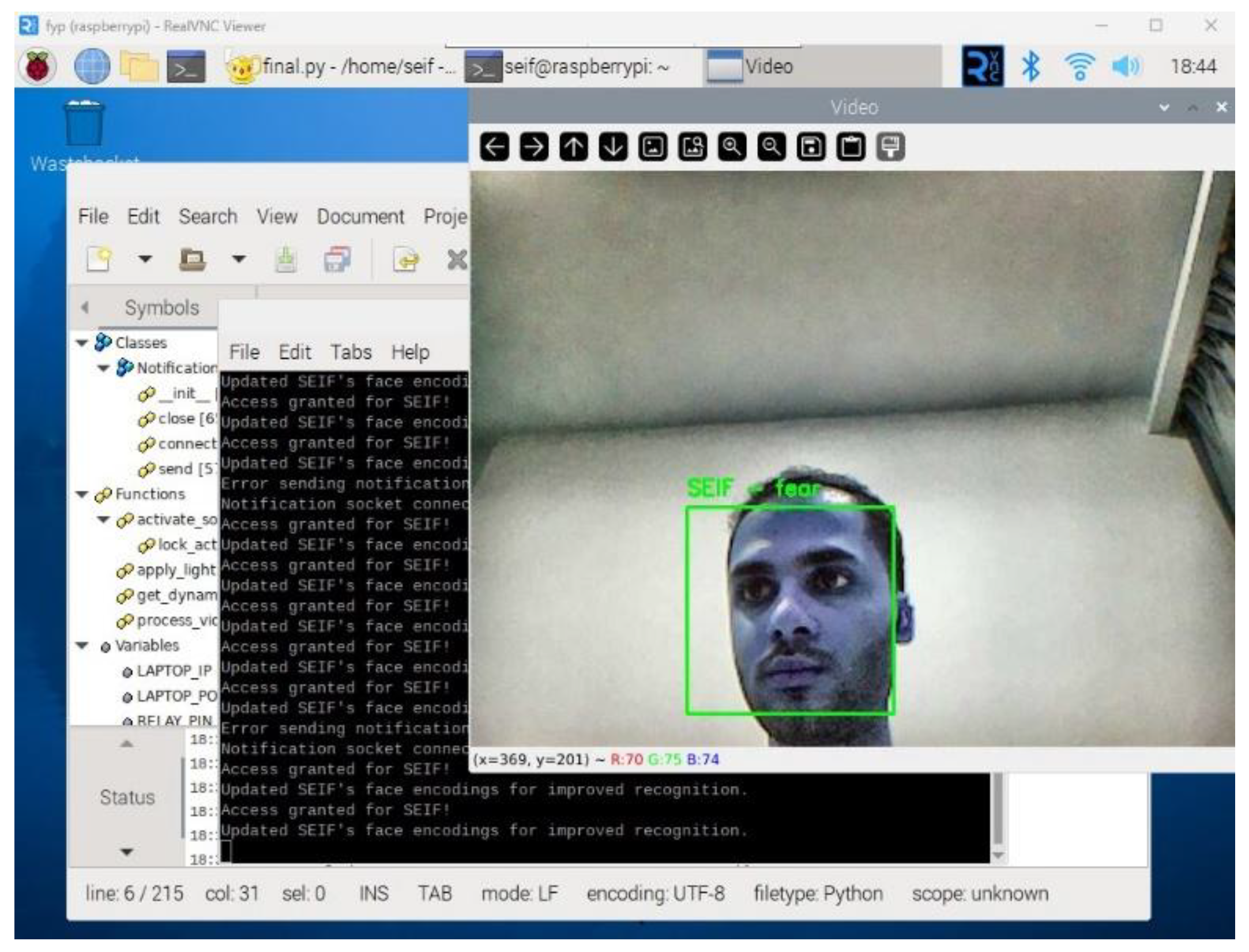

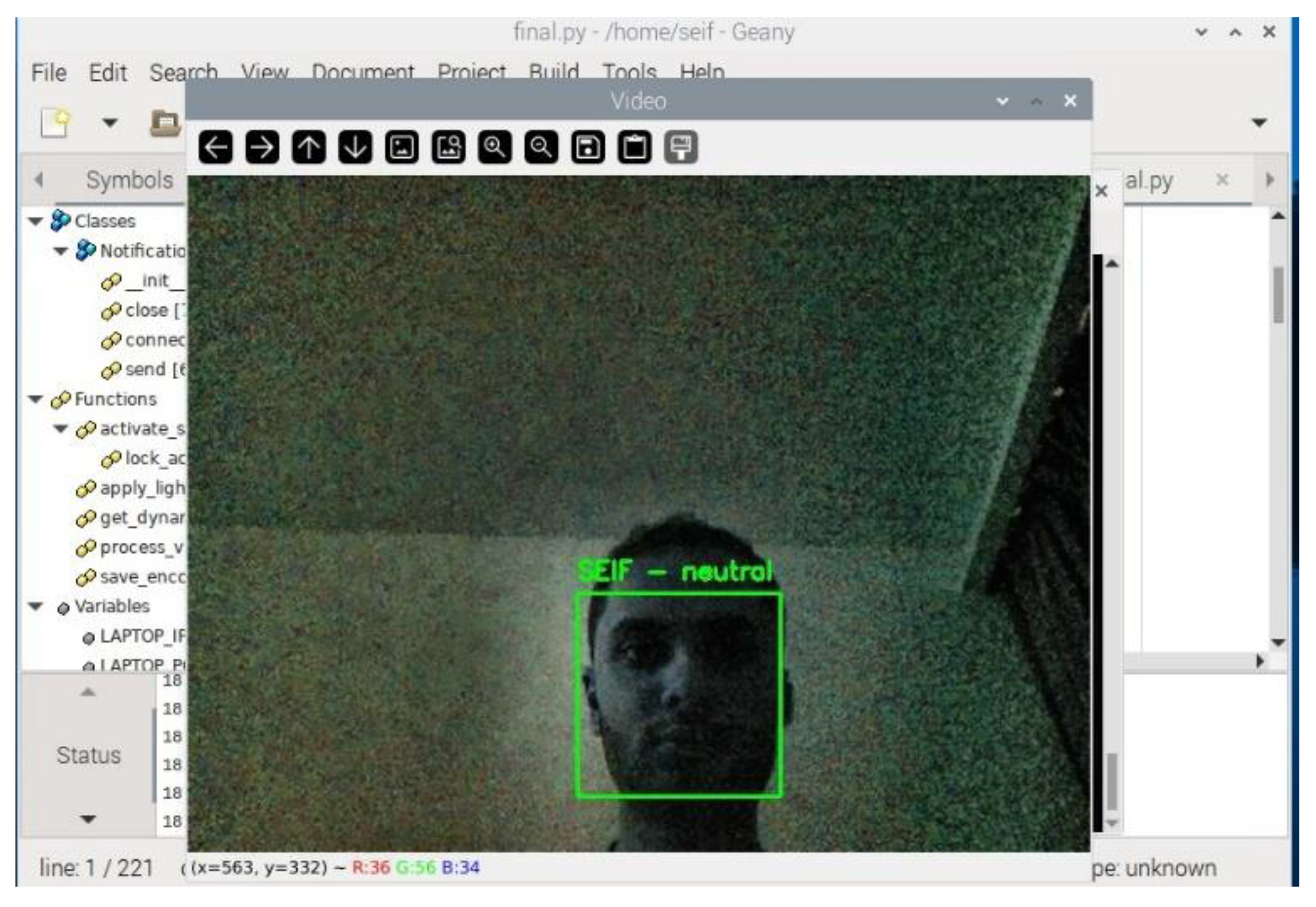

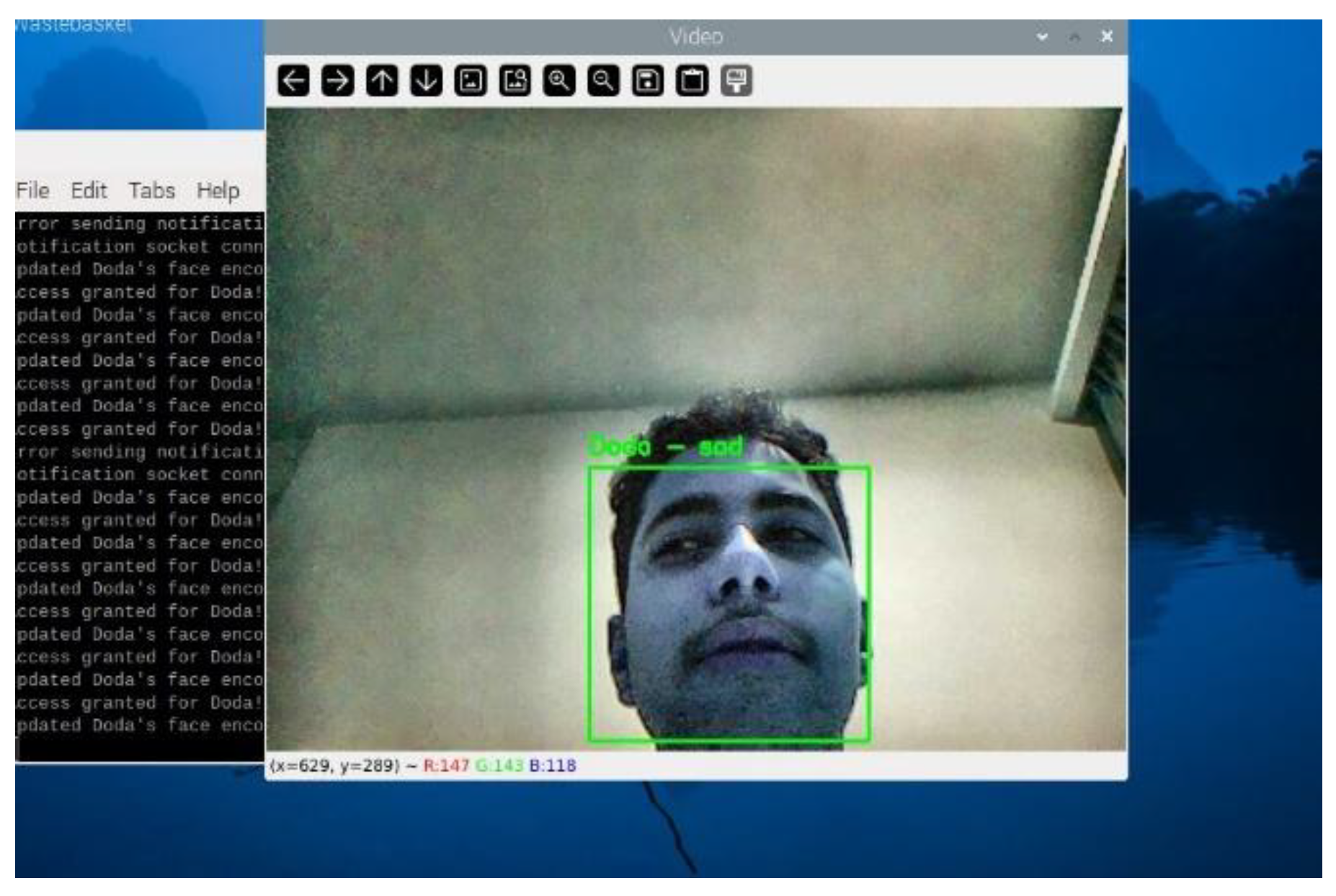

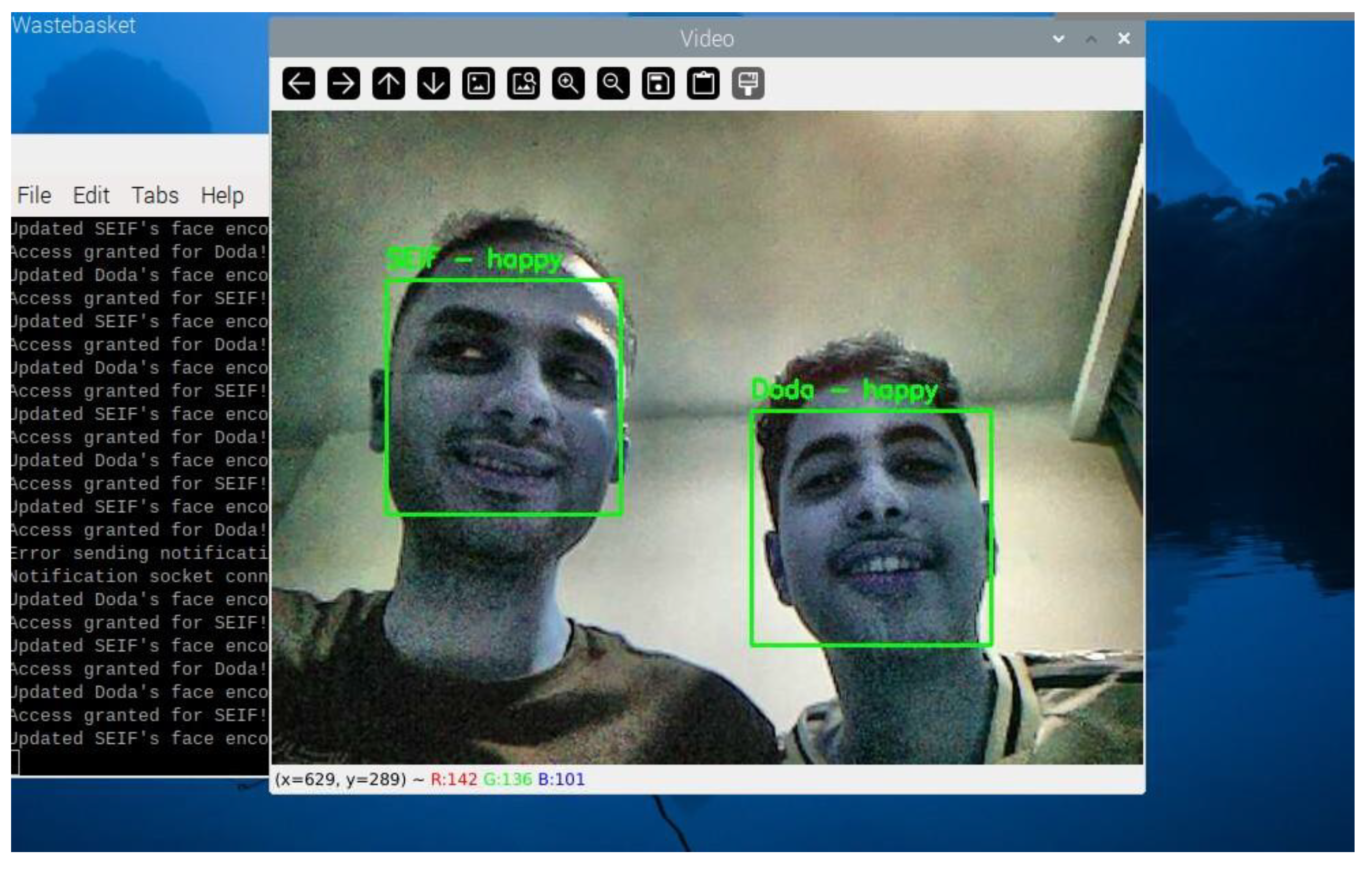

As the system processes video frames, it detects and recognizes faces in real time.

Figure 7 demonstrates the real-time face recognition process, displaying the detected face along with the identified emotion and access status.

To provide a structured overview of the facial recognition process, Table 1 summarizes the key steps involved in the algorithm. This table outlines the sequential operations, starting from capturing the frame to displaying the recognition results and triggering corresponding actions.

3.8. Lighting Normalization and Gamma Correction

Lighting inconsistencies, such as low-light conditions or bright backgrounds, can negatively impact face detection and recognition accuracy. To address this, the system implements two enhancements. Contrast Limited Adaptive Histogram Equalization (CLAHE) enhances image contrast by redistributing the intensity values across the image, producing more uniform brightness. This is particularly useful in dim or uneven lighting environments. Gamma correction adjusts the brightness dynamically, enhancing darker regions of the frame to reveal facial features. The gamma correction formula is:

$$I_{\text{corrected}} = 255 \times \left(\frac{I_{\text{original}}}{255}\right)^{1/\gamma}$$

Where:

$I_{\text{original}}$ is the original pixel intensity.

$\gamma$ is the gamma adjustment factor (e.g., $\gamma = 1.2$).

$I_{\text{corrected}}$ is the brightness-adjusted intensity.
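A minimal sketch of gamma correction with a lookup table follows. It assumes 8-bit intensities and the common form in which the normalised intensity is raised to the power 1/γ, so that γ = 1.2 brightens darker regions as described; the function names are hypothetical.

```python
def build_gamma_lut(gamma=1.2):
    """Precompute the corrected value for each of the 256 possible intensities.

    Assumed formula: corrected = 255 * (original / 255) ** (1 / gamma).
    """
    inv = 1.0 / gamma
    return [round(255 * (i / 255) ** inv) for i in range(256)]


def apply_gamma(pixels, gamma=1.2):
    """Apply gamma correction to a flat sequence of 8-bit intensities."""
    lut = build_gamma_lut(gamma)
    return [lut[p] for p in pixels]
```

Building the table once and indexing into it avoids recomputing a power for every pixel of every frame, which matters on a low-power processor. With OpenCV, an equivalent table could be applied to a whole image in one call.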

Lighting conditions significantly impact face detection accuracy.

Figure 8 illustrates how the implemented lighting normalization technique enhances visibility and ensures consistent facial recognition even in low-light environments.

3.9. Integration into the System

The algorithm is integrated into the system via a Python script that processes each video frame captured by the Raspberry Pi Camera Module V2. The script dynamically adjusts the brightness using the apply_lighting_adaptation function, which incorporates CLAHE and gamma correction. To ensure real-time performance, frames are resized to 25% of their original size for detection, and the detected face locations are then scaled back to the original dimensions for precise matching and visualization. Additionally, the system employs dynamic frame skipping, adjusting the number of frames processed based on CPU usage to avoid overloading the Raspberry Pi.
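The coordinate scaling step can be sketched as follows, assuming face locations are given as (top, right, bottom, left) tuples, the ordering used by the face_recognition library. The function name is hypothetical.

```python
def scale_locations(locations, scale=0.25):
    """Map boxes found on a frame shrunk by `scale` back to full resolution.

    Each box is a (top, right, bottom, left) tuple in pixels.
    """
    factor = 1.0 / scale
    return [tuple(int(round(v * factor)) for v in box) for box in locations]
```

With the 25% detection size used here, each coordinate is multiplied by 4, so a box detected at (10, 50, 40, 20) on the small frame corresponds to (40, 200, 160, 80) on the original frame.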

3.10. Adaptive Learning and Unknown Face Addition

The ability of the Raspberry Pi-based facial recognition system to adapt dynamically to new inputs is a major improvement. Unlike static face recognition systems, this system uses adaptive learning to update face encodings over time [10]. This feature improves recognition accuracy by learning from repeated interactions and evolving with the user. Traditional facial recognition systems rely on manually updated databases and must be retrained for new users. This work uses adaptive learning to dynamically update facial encodings and recognise new users without retraining. Incremental learning improves facial recognition accuracy by continuously revising facial encodings based on new observations [11]. This section details the design, implementation, and significance of these features, showing how adaptive learning improves usability, scalability, and reliability.

Adaptive Learning: Recognition Improvement Over Time. Every interaction refines the system's knowledge of authorised users through adaptive learning. This feature addresses a common facial recognition problem, namely modest differences in appearance caused by:

Lighting: Changes in illumination can alter how facial features appear.

Ageing: Subtle changes in facial contours over time.

Dynamic factors: Accessories like glasses or caps.

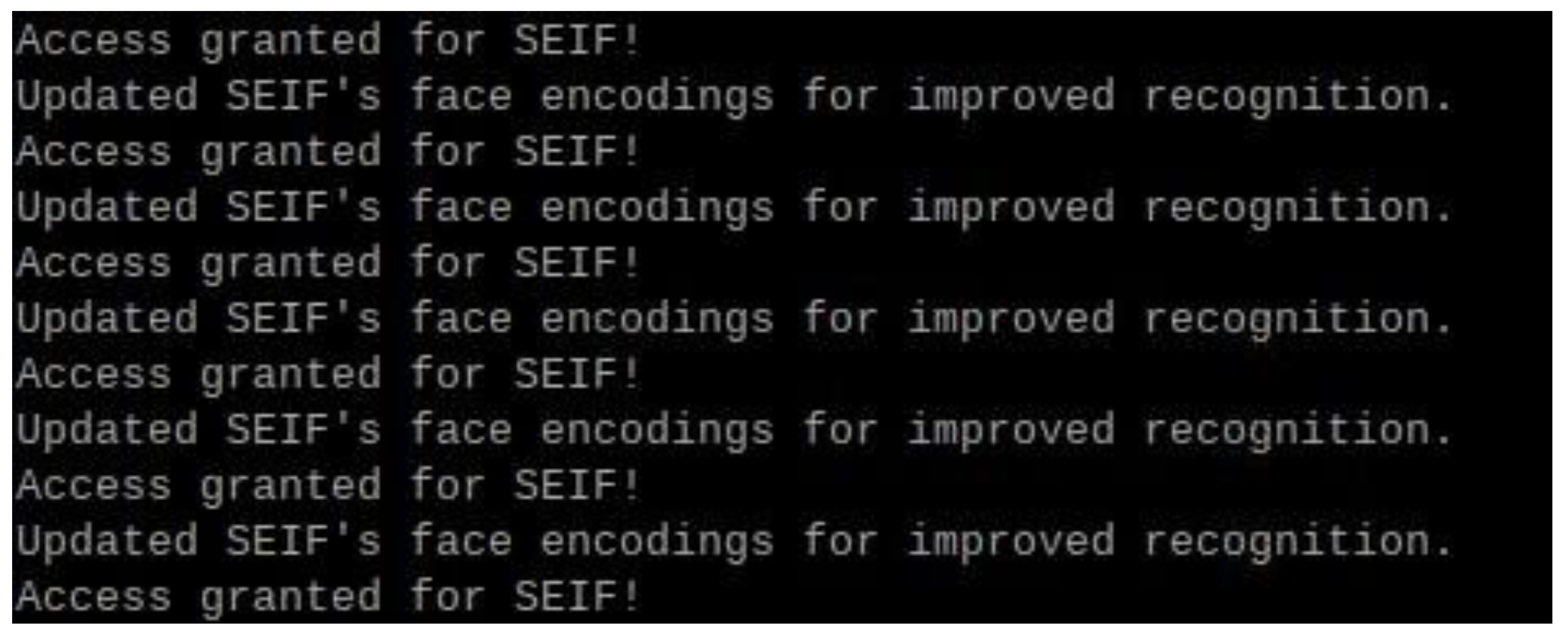

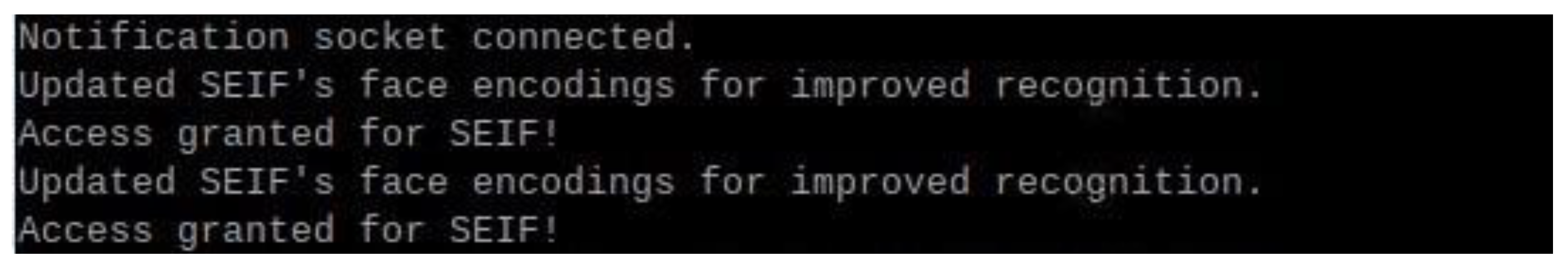

The system updates its encoding when it detects a known face. This continual learning process keeps the stored data current with the user's appearance, reducing false negatives and improving identification accuracy. During each identification cycle, the system compares the detected face's encoding to the stored ones. On a match, the stored encoding is updated with the new one. This captures small changes in the face over time, improving recognition reliability. Adaptive learning thus maintains recognition accuracy without manual updates: adaptability accommodates natural changes in user appearance, while efficiency ensures minimal retraining, as shown in Figure 9.
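The update step can be sketched as below. With the default `alpha=1.0` the stored encoding is simply replaced by the new observation, as the text describes. The `alpha` parameter itself is an assumption added for illustration: values below 1.0 would blend the old and new encodings instead of replacing outright.

```python
def update_encoding(stored, observed, alpha=1.0):
    """Return the refreshed encoding for a matched user.

    alpha=1.0 replaces the stored vector with the observed one;
    0 < alpha < 1 (an illustrative option) blends the two.
    """
    if len(stored) != len(observed):
        raise ValueError("encodings must have the same length")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    return [(1.0 - alpha) * s + alpha * o for s, o in zip(stored, observed)]
```

A blended update would change the stored encoding more gradually, so a single poorly lit frame has less influence than it does under full replacement.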

3.11. Unknown Face Detection and Dynamic Addition

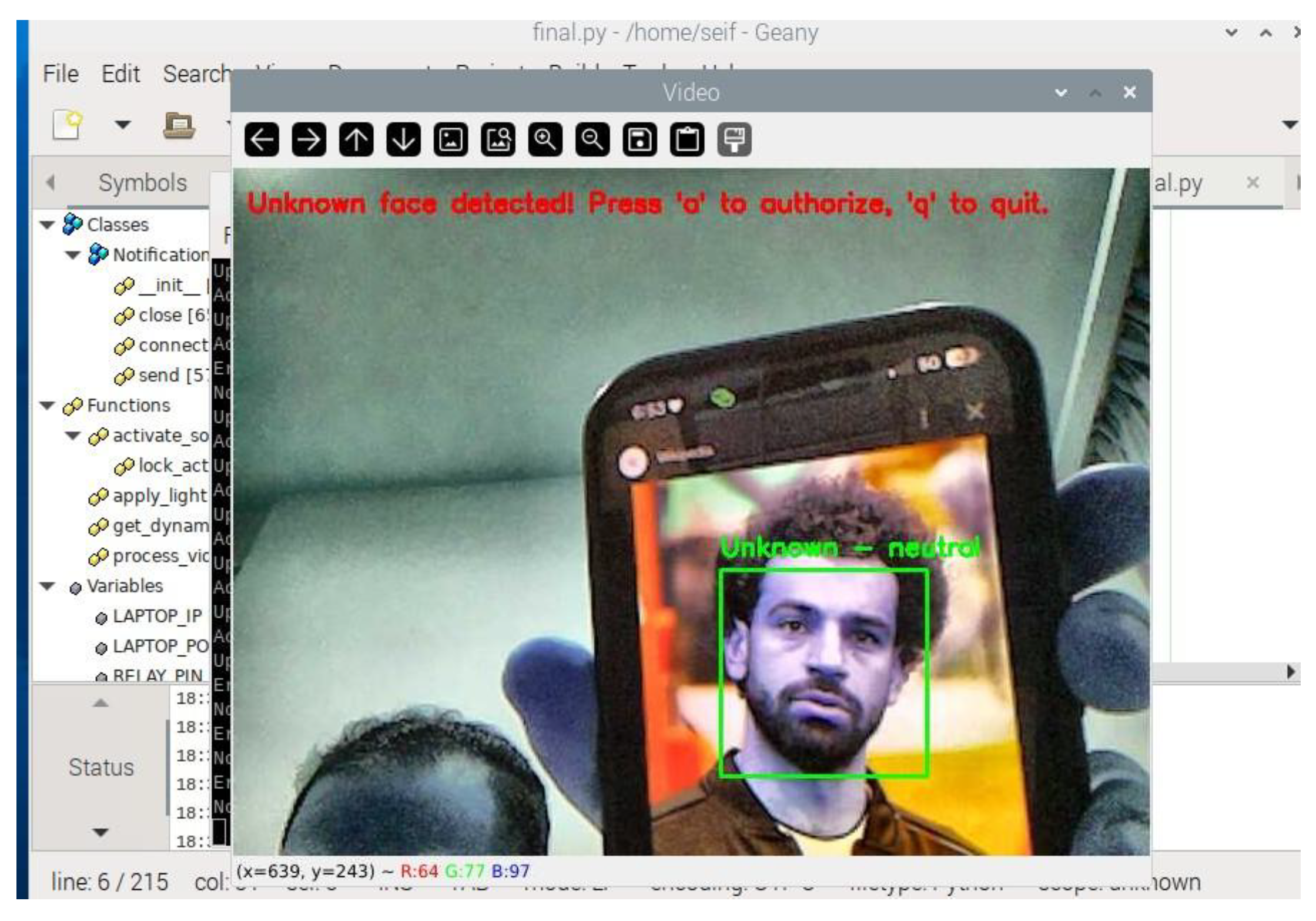

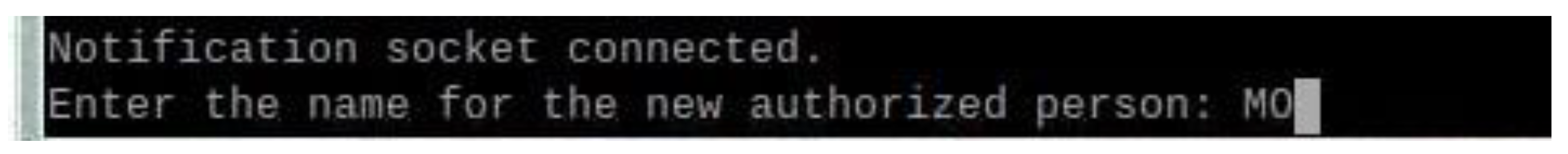

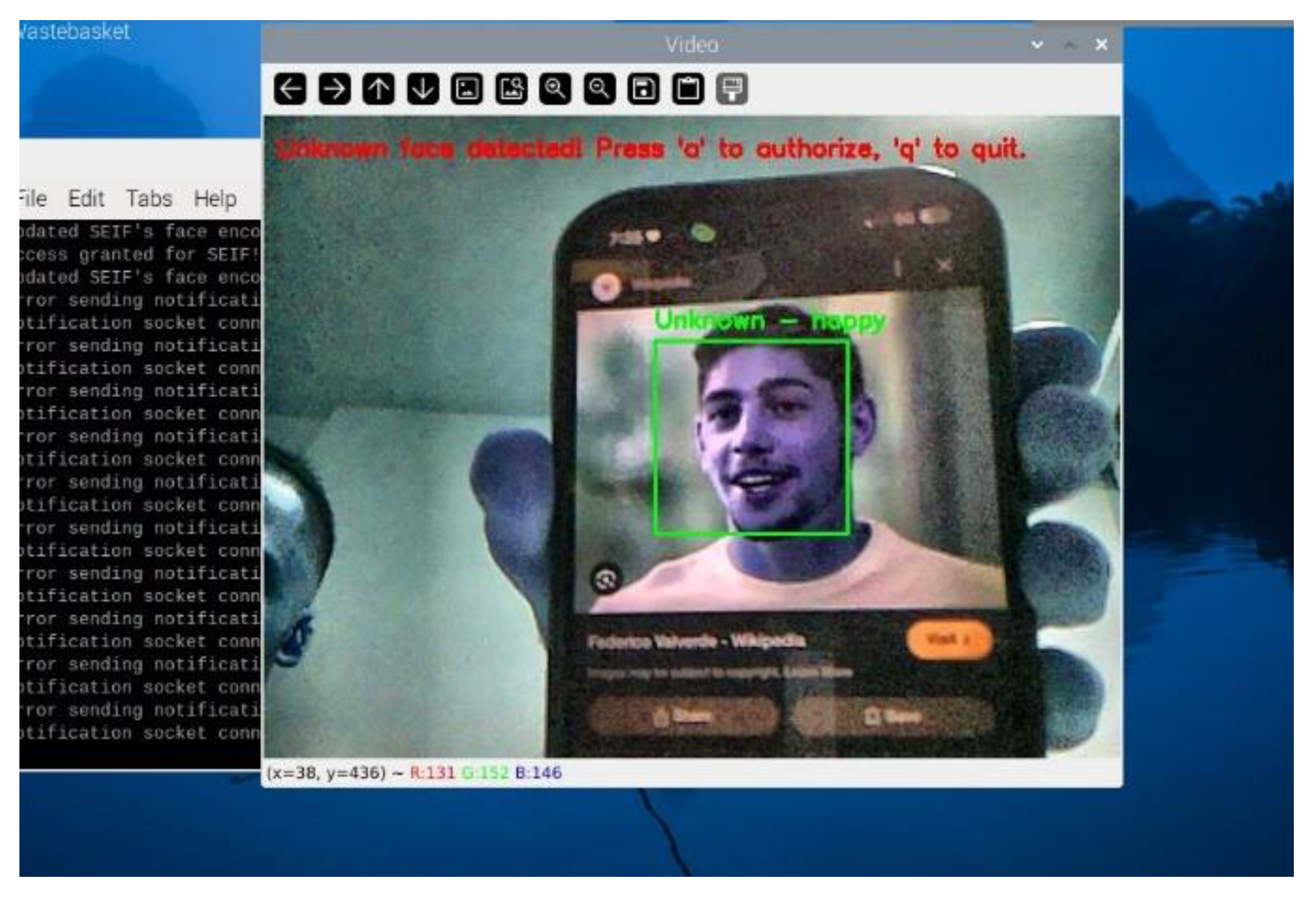

The system identifies faces that do not match stored encodings as "Unknown." This triggers a prompt to the user, allowing them to dynamically add new individuals to the list of authorized faces. This feature ensures scalability and eliminates the need for preloading datasets for every user. When an unrecognized face is detected, the system displays a real-time prompt on the Raspberry Pi terminal, requesting the user to decide whether to authorize the face. The prompt includes the following options:

This functionality is implemented in the Python script, where the system identifies faces as “Unknown” when no match is found in the encodings_file. Upon receiving user input to authorize the face, the system saves the corresponding 128-dimensional face encoding along with the name provided by the user. This interactive feature facilitates adaptive learning, as shown in Figure 10.
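The prompt logic can be sketched as follows. The exact prompt wording and options are not reproduced in the text, so the yes/no question and the name request below are hypothetical, as is the in-memory dictionary of known encodings. The `ask` parameter defaults to the built-in `input` and can be replaced for testing.

```python
def handle_unknown_face(encoding, known, ask=input):
    """Ask the operator whether to authorise an unknown face.

    Returns the assigned name if the face was added, otherwise None.
    The prompt text is illustrative only.
    """
    answer = ask("Unknown face detected. Authorise? [y/n]: ").strip().lower()
    if answer not in ("y", "yes"):
        return None
    name = ask("Enter a name for this person: ").strip()
    if not name:
        return None  # refuse to store an encoding without a name
    known[name] = list(encoding)
    return name
```

Keeping the decision with the operator means that no face is added to the authorised list without an explicit confirmation and a name.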

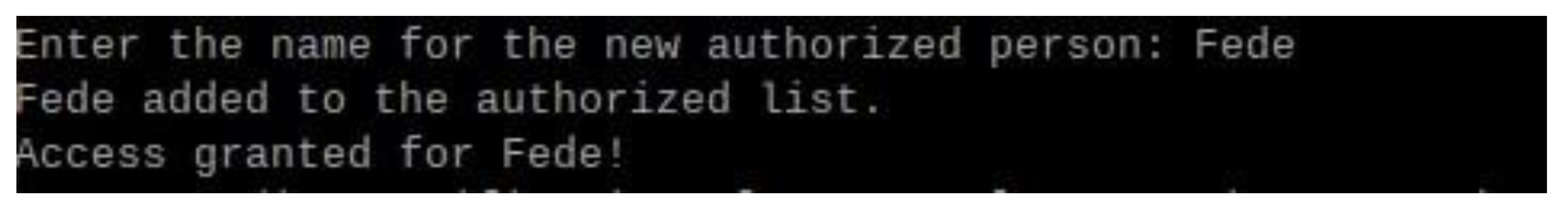

3.12. Storing New Face Encodings

Once a face is authorized, the system dynamically updates its dataset. The 128-dimensional encoding of the newly detected face, along with the name entered by the user, is stored in the encodings_file. This ensures that the face will be recognized in subsequent interactions without requiring a complete system restart or retraining process. This dynamic addition process, shown in Figure 11, is efficient and secure, maintaining the robustness of the face recognition system while adapting to new users.
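One way to persist a new encoding is sketched below. The on-disk format of the encodings_file is not described in the text, so this sketch assumes a pickle file holding parallel lists of names and encodings; the function names are hypothetical. The file is written to a temporary path and then renamed, so an interrupted write does not corrupt the existing data.

```python
import os
import pickle
import tempfile


def load_encodings(path):
    """Load the stored names and encodings, or an empty store if absent."""
    if not os.path.exists(path):
        return {"names": [], "encodings": []}
    with open(path, "rb") as fh:
        return pickle.load(fh)


def add_encoding(path, name, encoding):
    """Append one (name, encoding) pair and rewrite the file atomically."""
    data = load_encodings(path)
    data["names"].append(name)
    data["encodings"].append(list(encoding))
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "wb") as fh:
        pickle.dump(data, fh)
    os.replace(tmp_path, path)  # atomic swap of old file for new
    return data
```

Because the in-memory data returned by `add_encoding` already contains the new entry, the recognition loop can use it immediately without restarting.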

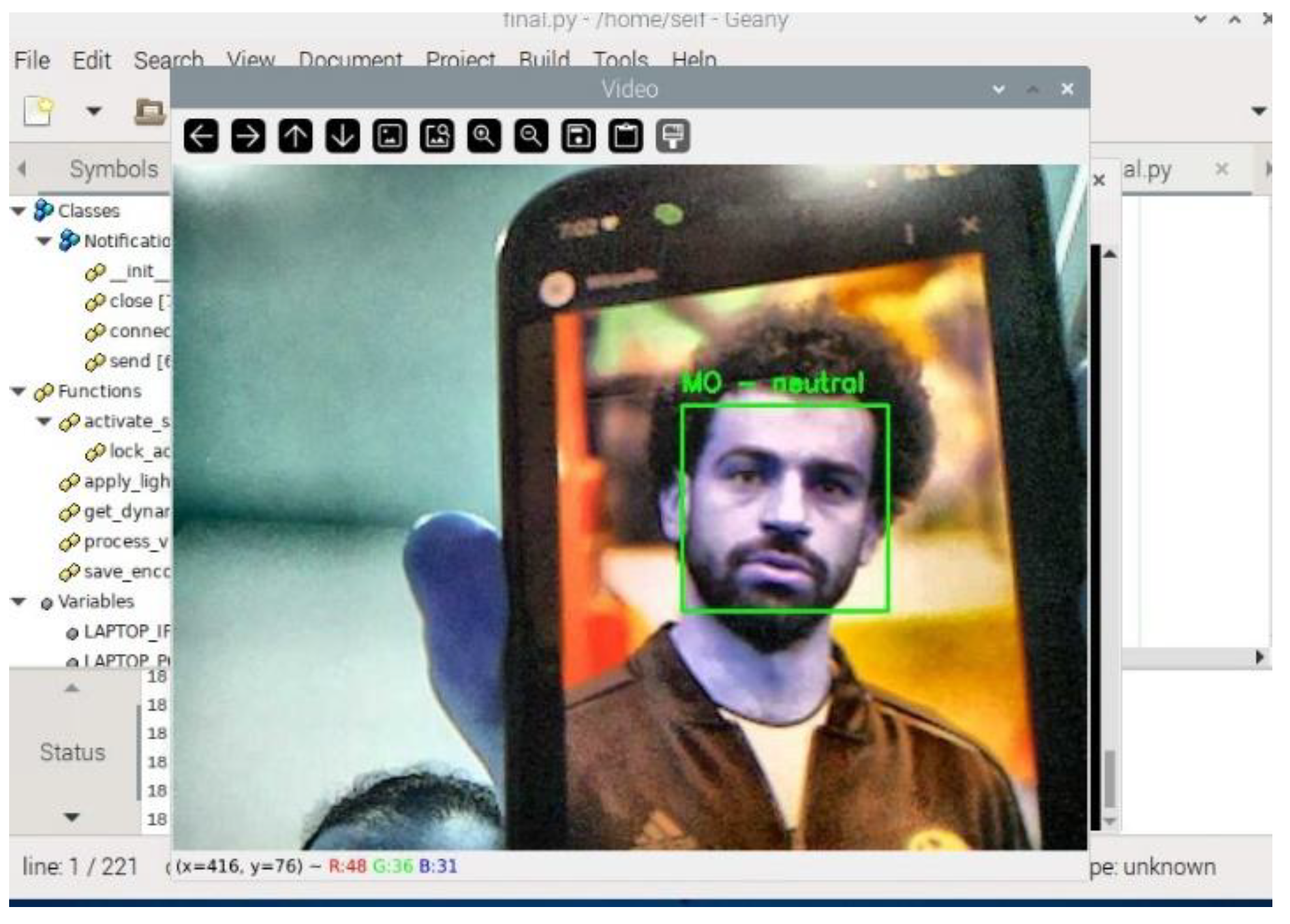

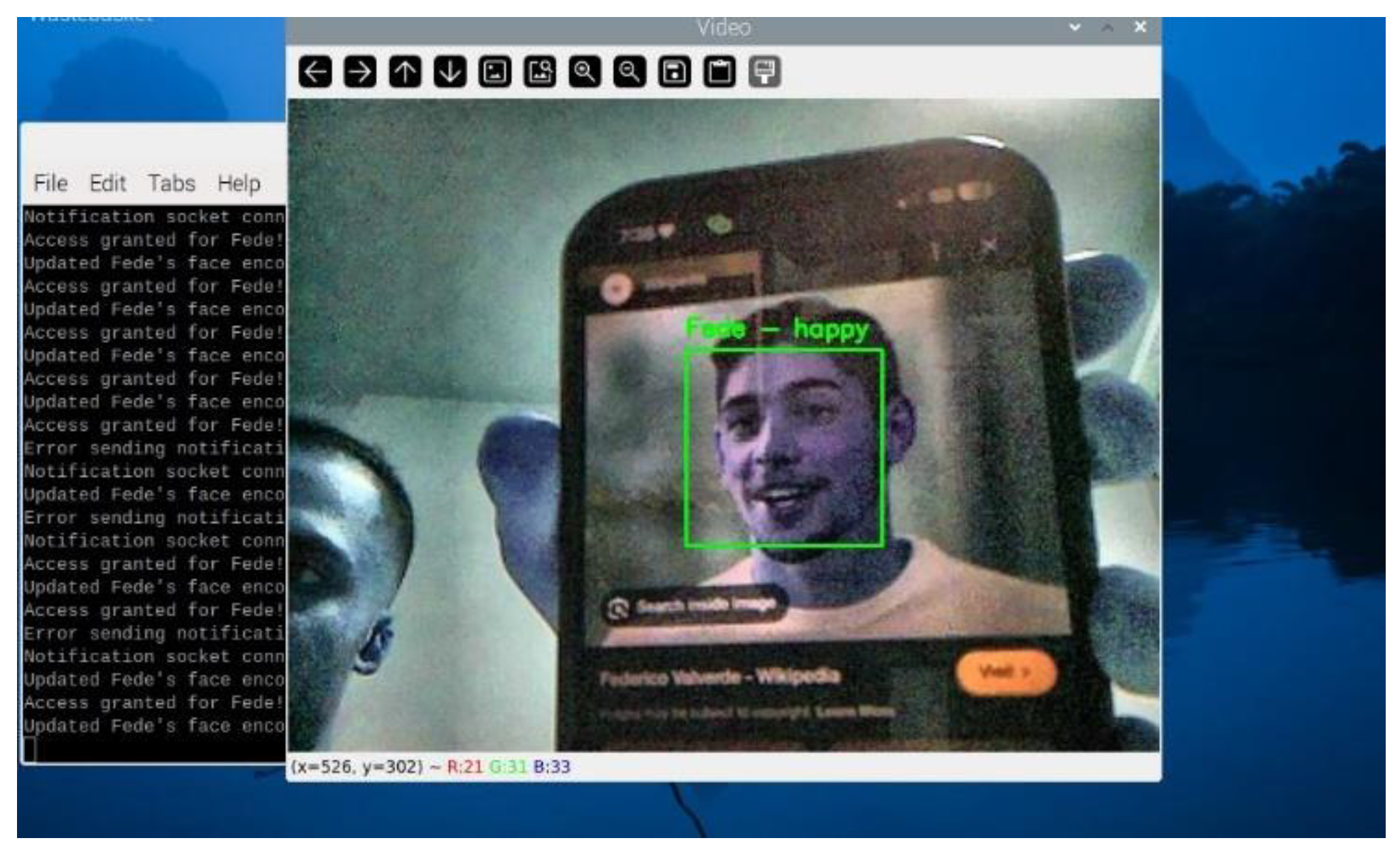

3.13. Recognizing Newly Added Faces

After adding a new face, the system immediately integrates the updated encoding into the recognition pipeline. Subsequent frames demonstrate the system’s ability to correctly identify the newly authorized individual by displaying their name and emotional state on the video stream. This step confirms the success of the adaptive learning feature and showcases the system's capability to evolve dynamically.

Once the unknown face is authorized and added to the system, it is successfully recognized in subsequent detections, as demonstrated in Figure 12.

3.14. Integration into the Recognition Workflow

The recognition workflow naturally incorporates the adaptive learning and dynamic addition features through the following steps.

Detect a Face: The system identifies a face within the frame and calculates its encoding.

Match with Stored Encodings: If a match is found, the stored encoding for the recognised individual is updated. If no match is found, the face is classified as "Unknown."

Prompt for Unknown Faces: The "Unknown" classification prompts the user to authorise the face and assign it a name.

Store New Encodings: The new encoding is saved permanently with its name for future identification.

Adding individuals dynamically keeps the system adaptable to changing user needs. Prompting the user before authorising unidentified individuals gives the user full control over access rights. Adaptive learning keeps the system accurate and relevant as users' appearances evolve. Together, adaptive learning and the dynamic addition of unfamiliar faces make the facial recognition door lock system more flexible, scalable, and easy to use. These features remove the need for retraining or manual dataset updates and help the system keep working well in real-world situations, providing a robust and user-friendly access control solution.

3.15. Emotion Detection

Emotion detection is a critical feature of the system, enhancing its capabilities beyond basic facial recognition. By analysing facial expressions in real-time, the system determines the dominant emotion displayed by a recognized or unknown individual. This functionality adds depth to the user experience and potential applications, such as detecting stress, happiness, or anxiety in specific contexts.

For enhanced facial recognition and emotion detection, the system integrates DeepFace, a deep learning-based facial analysis library capable of performing real-time facial verification and emotion classification [12]. DeepFace employs a convolutional neural network (CNN) model pre-trained on large datasets, supporting high accuracy in recognizing user expressions such as happiness, sadness, and anger. Recent studies highlight the effectiveness of CNN-based emotion recognition models, demonstrating their ability to extract subtle facial features that contribute to accurate classification [13]. The DeepFace library provides pre-trained models that classify facial expressions into predefined emotional categories: happiness, sadness, anger, surprise, fear, disgust, and neutrality. The library was selected for its high accuracy and efficient integration with Python, making it suitable for real-time processing on the Raspberry Pi.

The system captures frames from the Raspberry Pi Camera Module V2. Once a face is detected, its bounding box is used to isolate the facial region, which is passed to DeepFace’s emotion recognition module. The module outputs the probabilities for each emotion and identifies the dominant emotion. The detected emotion is displayed on the live video feed, as shown in Figure 13, and sent to the connected notification system. For example, if the dominant emotion detected for a user is fear, the system sends a notification stating, "Detected emotion: Fear."
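Selecting the dominant emotion and forming the notification text can be sketched as follows. The sketch operates on a plain dictionary of per-emotion scores rather than calling DeepFace directly, because the exact return structure of the library's analysis call depends on the installed version. The label spellings and function names below are assumptions for illustration.

```python
def dominant_emotion(scores):
    """Return the label with the highest score from a {label: score} mapping."""
    if not scores:
        raise ValueError("no emotion scores supplied")
    return max(scores, key=scores.get)


def emotion_notification(scores):
    """Build the notification text for the dominant emotion."""
    return "Detected emotion: {}.".format(dominant_emotion(scores).capitalize())
```

For a face whose highest score is under the label "fear", `emotion_notification` returns the message quoted above, which can then be passed to the notification system.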

3.16. Enhancements for Real-Time Performance

To ensure the emotion detection module operates efficiently on the Raspberry Pi, several optimizations were implemented. Because emotion detection is computationally intensive, the system skips a configurable number of frames based on CPU usage to balance performance and responsiveness. For example, during high CPU load, emotion detection is performed every 10th frame instead of every frame. The bounding box containing the facial region is resized to a fixed resolution before it is passed to DeepFace, which minimizes computation while preserving recognition accuracy. Detected emotions are displayed alongside the recognized name in the live video feed. For example, "SEIF – Happy" is overlaid on the bounding box of a recognized individual, as shown in Figure 14.
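The frame-skipping policy can be sketched as a small function that maps CPU usage to a processing interval. Only the "every 10th frame under high load" behaviour comes from the text; the 80% and 50% thresholds and the intermediate interval of 5 are hypothetical values chosen for illustration. The CPU reading itself would come from a system monitor such as the psutil package, which is also an assumption.

```python
def emotion_interval(cpu_percent, high_load=80.0, medium_load=50.0):
    """Return N such that emotion detection runs on every Nth frame.

    Thresholds are illustrative; only the interval of 10 under high
    load is taken from the description above.
    """
    if cpu_percent >= high_load:
        return 10
    if cpu_percent >= medium_load:
        return 5
    return 1


def should_run_emotion(frame_index, cpu_percent):
    """Decide whether to run emotion detection on this frame."""
    return frame_index % emotion_interval(cpu_percent) == 0
```

Face detection and recognition can still run on frames where emotion detection is skipped, so the overlay continues to show the most recent emotion result.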

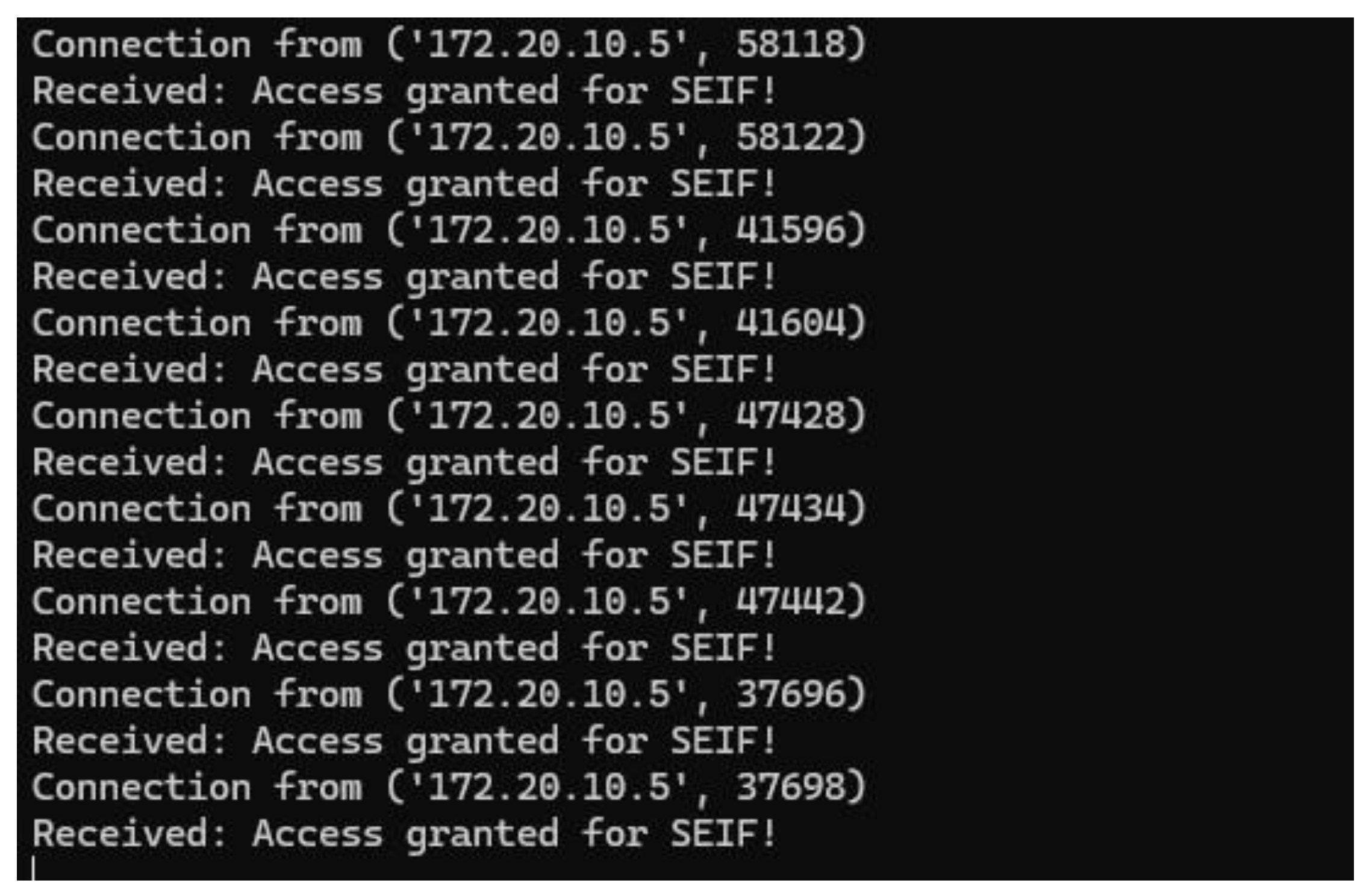

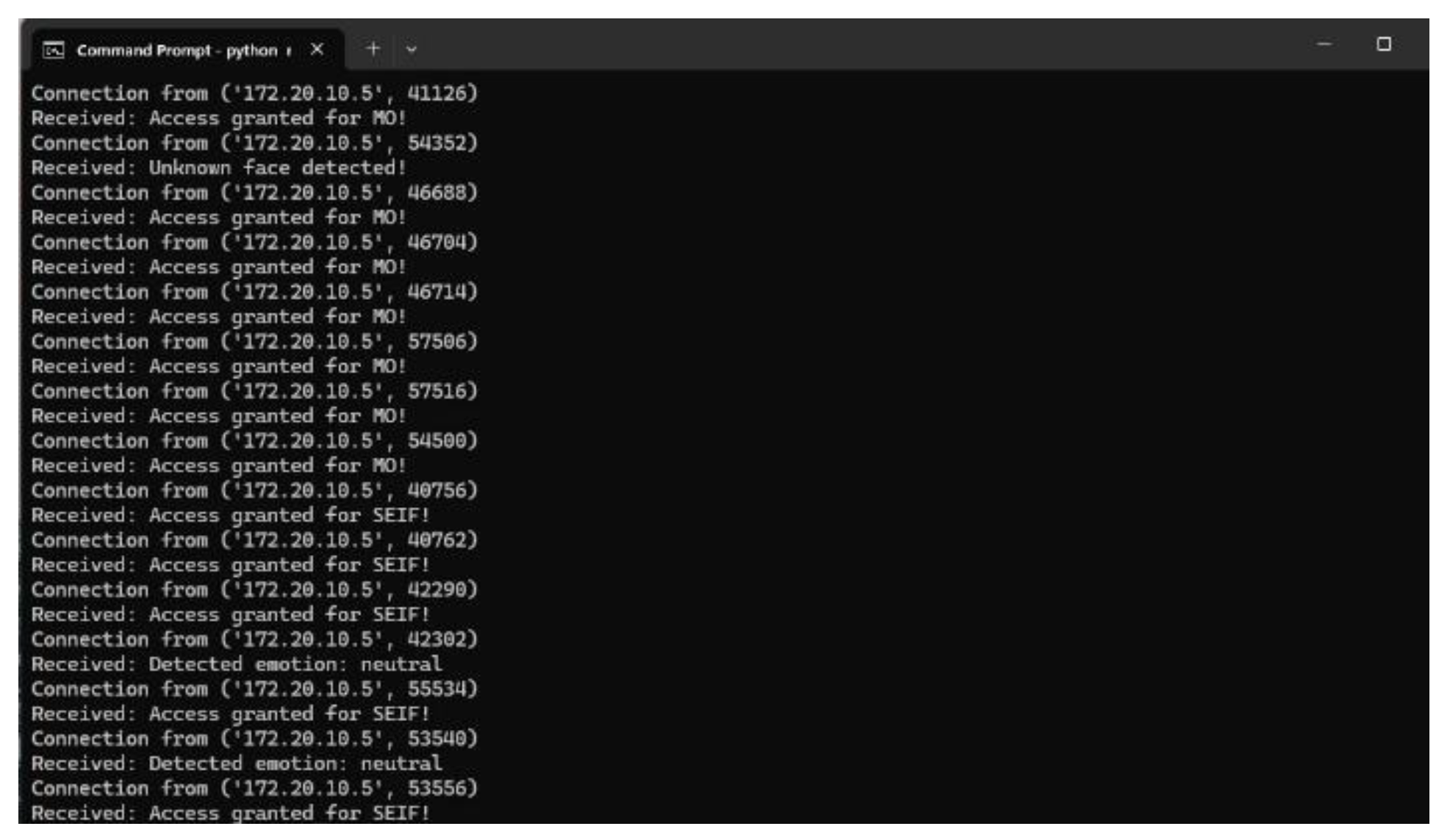

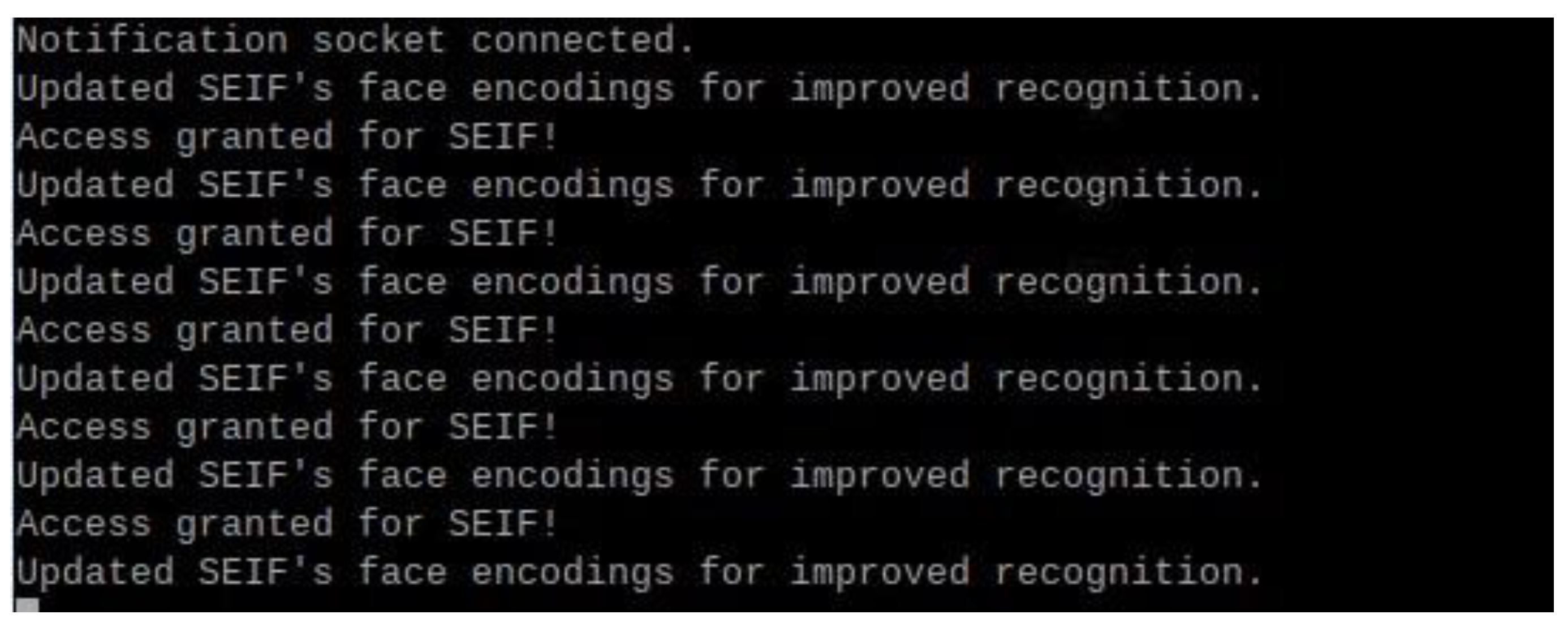

3.17. Notification System

The notification system is an essential part of the facial recognition door lock, providing real-time feedback on access events. It sends textual and auditory notifications for recognised faces, unknown faces, and emotions observed during recognition. It uses a socket-based client-server communication mechanism and requires no additional hardware or mobile apps. Real-time notifications using IoT protocols improve face recognition systems; this approach logs access events and provides real-time notifications [14]. The system offers the following benefits.

Real-time updates: Swift feedback on facial recognition, covering successful recognition, identification of unknown faces, and detected emotions.

Improved usability: Text-to-speech (TTS) on the server (laptop) delivers notifications in both text and audio formats.

Simplicity and efficiency: The system works without a smartphone app or cumbersome configuration, making it user-friendly.

The Raspberry Pi acts as the client and sends messages to the laptop server over a TCP socket. The server processes these alerts, displays them on the terminal, and plays a TTS alert. This keeps users informed of system activity, whether they are nearby or remote. The notification workflow proceeds in the following phases.

Notification trigger: A notification is initiated when the system detects an authorised face, an unknown face, or an emotion. The message includes the recognised person's name and detected emotion.

Client-side communication: The client connects to the server via TCP and transmits the notification.

Server-side processing: The server logs the notification in the terminal and reads it aloud using TTS.

User feedback: The user receives real-time updates on system activity, allowing them to act (e.g., authorise an unfamiliar face) or simply stay informed.

Real-time feedback from the notification system improves the usability and efficiency of the face recognition door lock. The socket-based architecture provides low-latency communication, while TTS provides intuitive auditory notifications. This approach meets the project's simplicity and accessibility goals by not requiring extra programs or hardware. The notification system is central to the system's functionality, reporting access by authorised users, alerting about unknown faces, and reporting observed emotions.
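The client-server exchange can be sketched with Python's standard socket module. This is a minimal illustration under several assumptions: each TCP connection carries exactly one UTF-8 message, the server handles one connection at a time, and the text-to-speech step is left to the `on_message` callback, which defaults to printing. The function names and message wording are hypothetical.

```python
import socket
import threading


def start_server(host="127.0.0.1", port=0, on_message=print):
    """Listen for notifications in a background thread.

    port=0 lets the operating system pick a free port. Returns the
    listening socket so the caller can read its address or close it.
    """
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    server.bind((host, port))
    server.listen()

    def serve():
        while True:
            try:
                conn, _addr = server.accept()
            except OSError:  # listening socket was closed
                break
            with conn:
                chunks = []
                while True:
                    data = conn.recv(1024)
                    if not data:  # client closed: message complete
                        break
                    chunks.append(data)
            on_message(b"".join(chunks).decode("utf-8"))

    threading.Thread(target=serve, daemon=True).start()
    return server


def send_notification(message, host, port, timeout=5.0):
    """Client side: connect, send one message, and close."""
    with socket.create_connection((host, port), timeout=timeout) as client:
        client.sendall(message.encode("utf-8"))
```

On the laptop, `on_message` could both print the text and hand it to a speech engine. On the Raspberry Pi, `send_notification` would be called with the laptop's address whenever a recognition event occurs.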