Submitted:

13 October 2025

Posted:

16 October 2025

Abstract

Keywords:

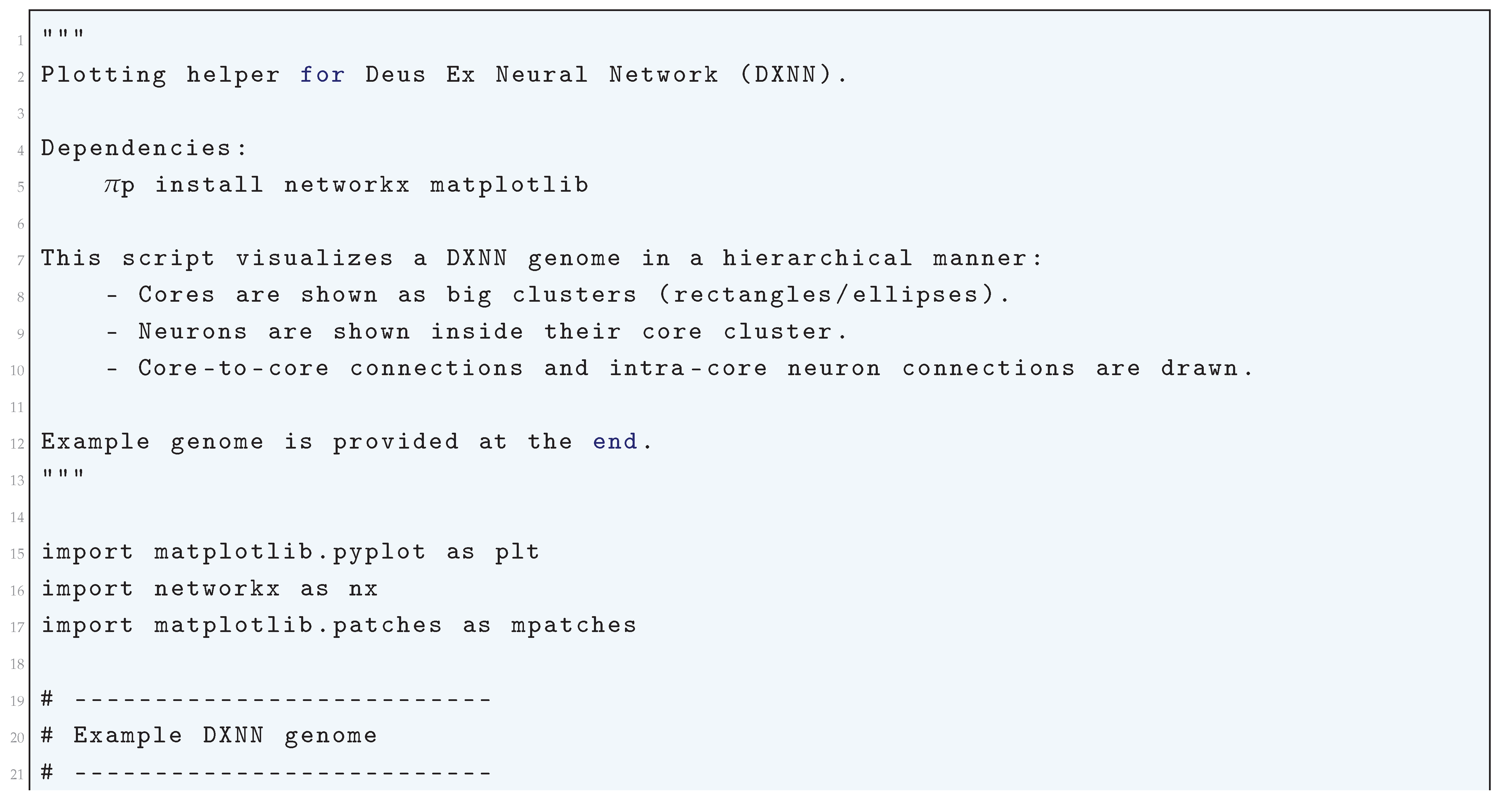

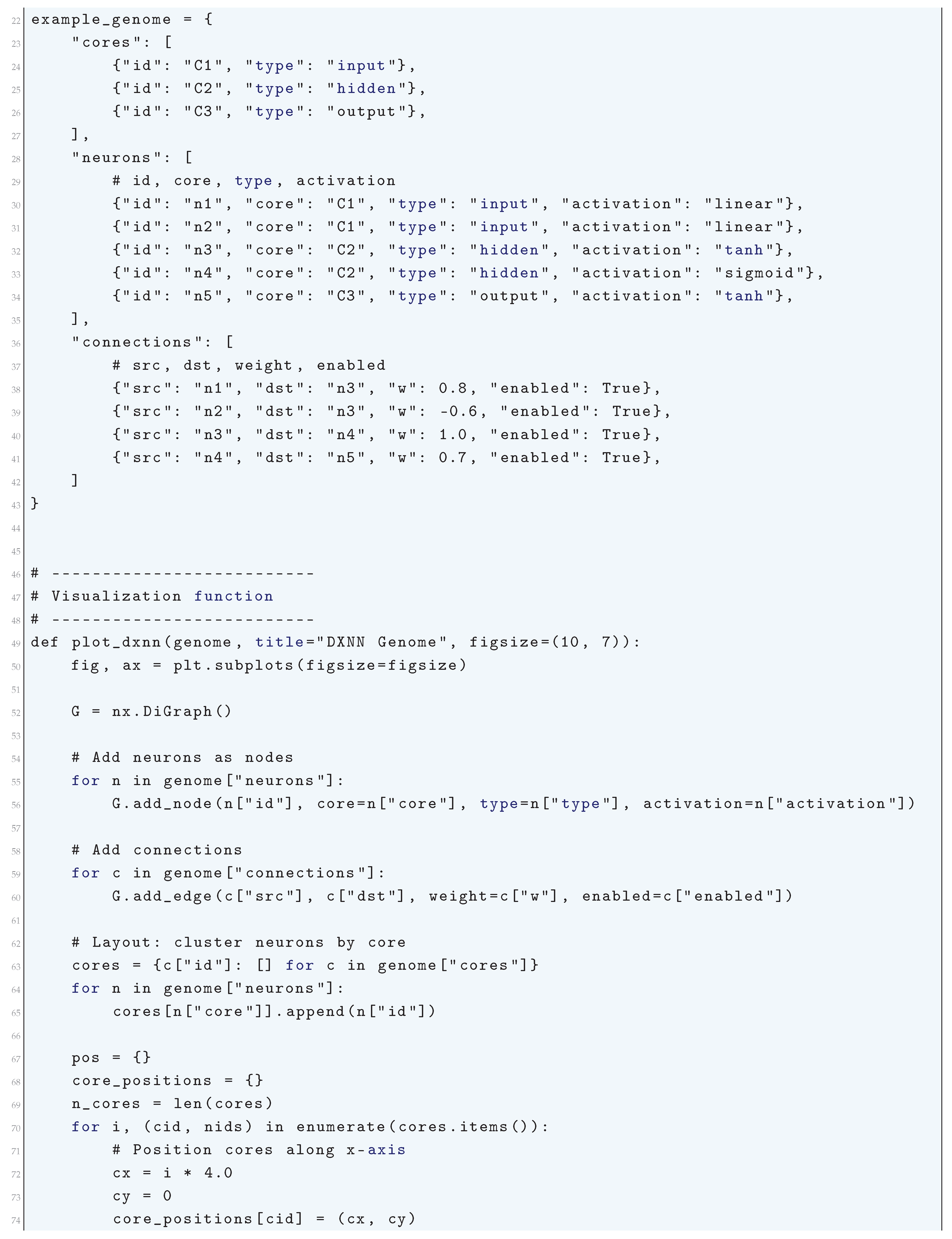

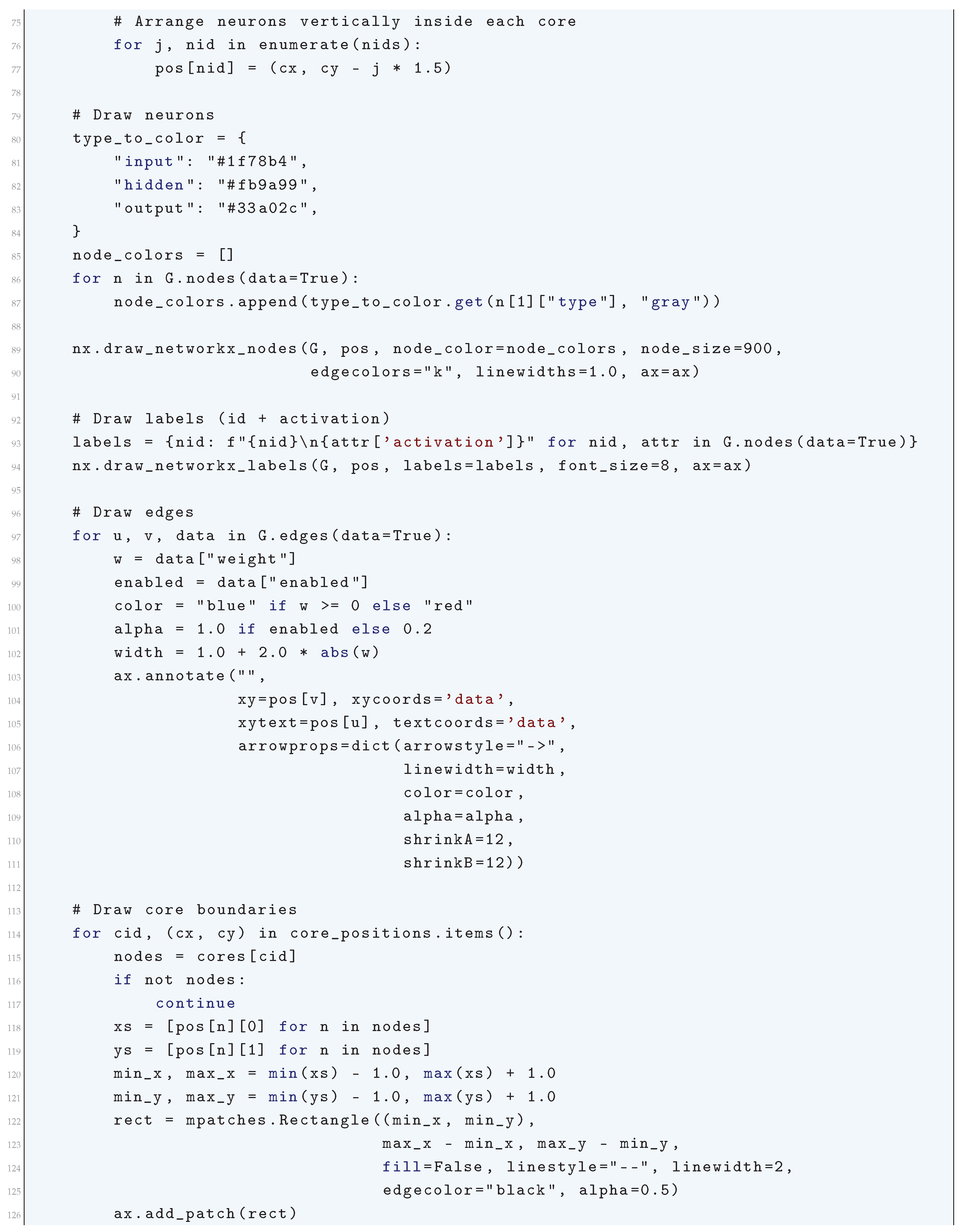

1. Mathematical Foundations

- Functional Approximation: Modeling complex, non-linear functions with neural networks. Functional approximation is a building block of deep learning and is central to how neural networks tackle hard problems. It refers to the ability of neural networks to approximate complex, high-dimensional, non-linear functions that are hard or impossible to model with standard mathematical methods.

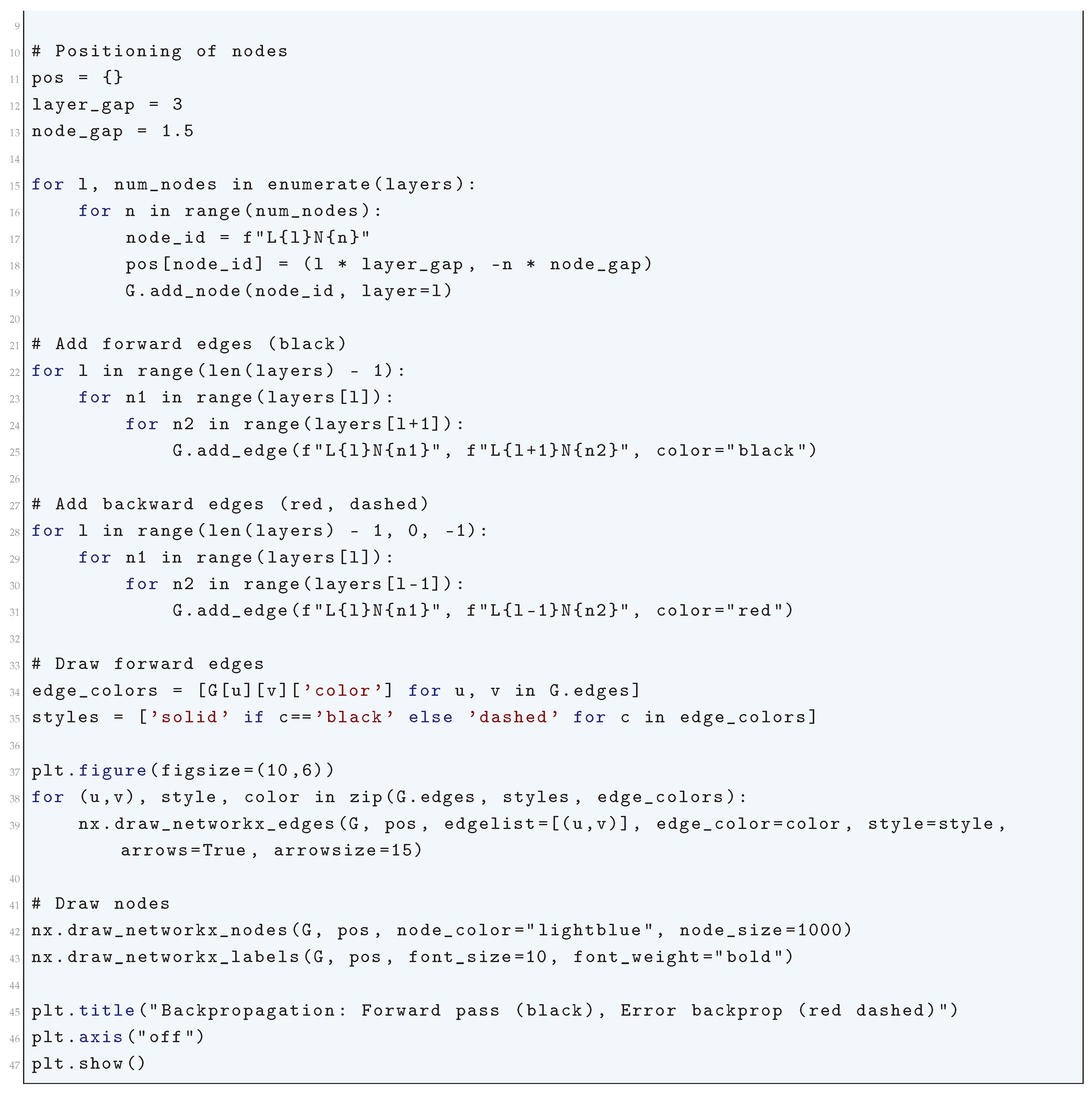

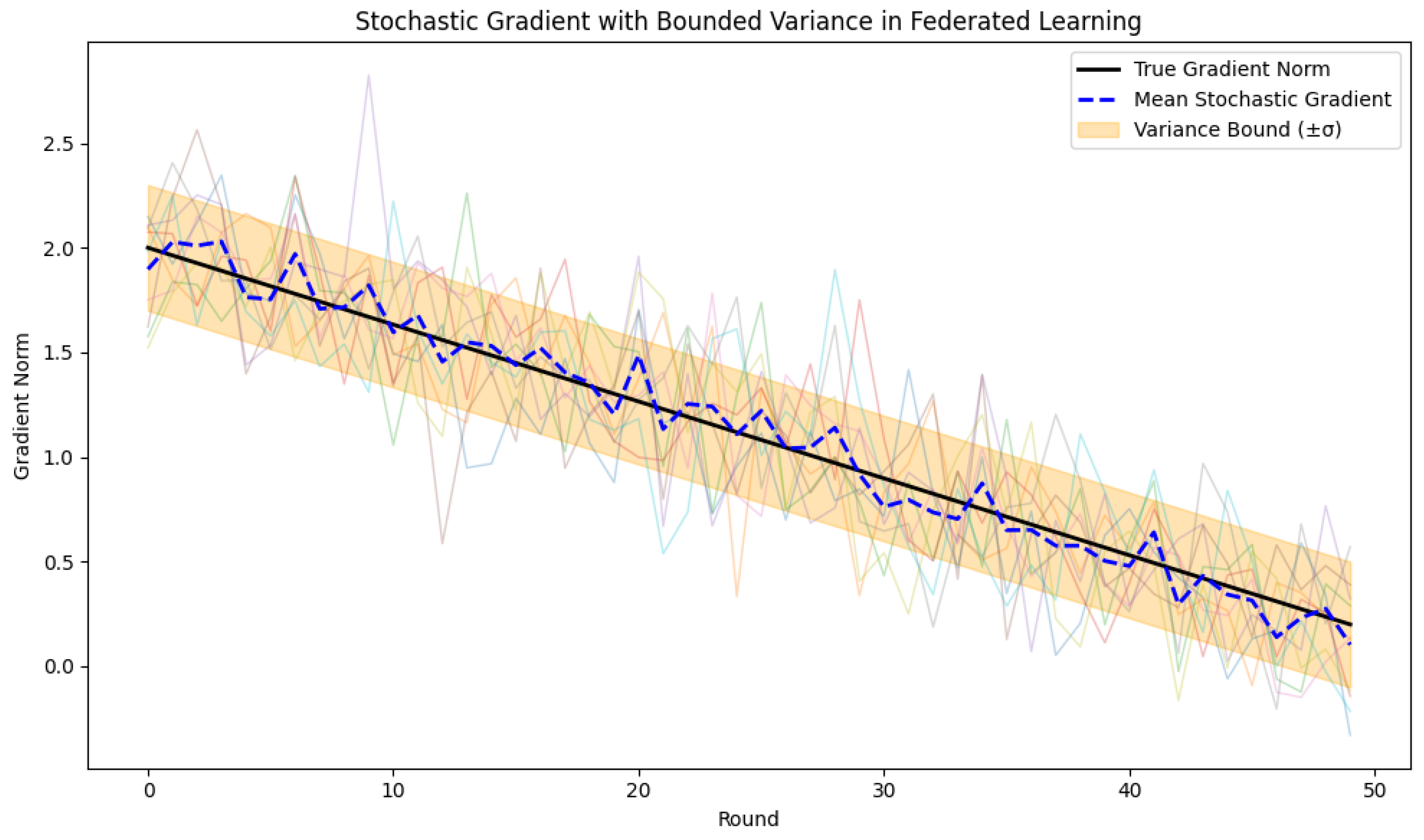

- Optimization Theory: Efficiently solving non-convex optimization problems. Optimization theory is central to deep learning because training a deep neural network amounts to finding the parameters (weights and biases) that minimize a given objective, commonly called the loss function. The objective usually measures the difference between the network outputs and the actual values. The optimization method controls the training process and determines how well a neural network can learn from data.

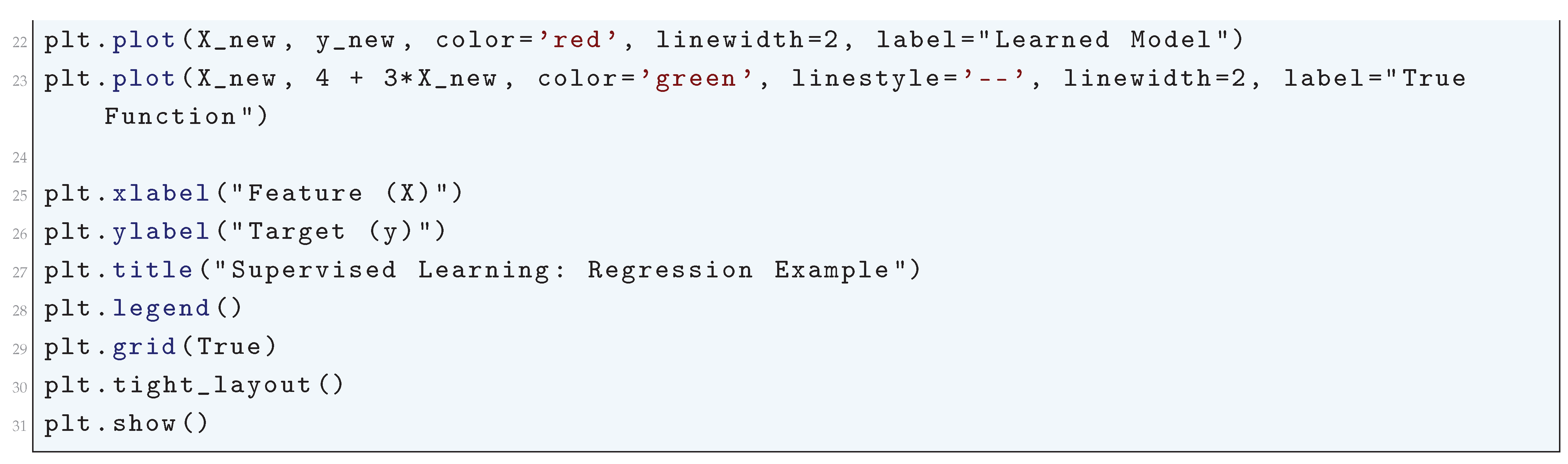

- Statistical Learning Theory: Generalization behavior on unseen data. Statistical Learning Theory (SLT) provides the mathematical basis for the generalization behavior of machine learning methods, including deep learning. It explains how models generalize from training data to novel data, which is essential for ensuring that deep learning models are accurate not only on the training set but also on new, unseen data. SLT addresses basic issues such as overfitting, the bias-variance tradeoff, and generalization error.
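To make the optimization view concrete, the following minimal sketch runs plain gradient descent on a mean-squared-error loss for a one-dimensional linear model. The data, the learning rate, and the names `fit_linear_gd` and `mse` are illustrative assumptions, not taken from the source.

```python
def fit_linear_gd(xs, ys, lr=0.5, steps=500):
    """Minimize the mean squared error of y ~ w*x + b by gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Residuals of the current model on every training point.
        residuals = [w * x + b - y for x, y in zip(xs, ys)]
        # Gradients of (1/n) * sum(residual^2) with respect to w and b.
        grad_w = 2.0 / n * sum(r * x for r, x in zip(residuals, xs))
        grad_b = 2.0 / n * sum(residuals)
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def mse(w, b, xs, ys):
    """Mean squared error of the model w*x + b on the given data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical noiseless data generated from y = 2x + 1.
xs = [i / 10 for i in range(11)]
ys = [2.0 * x + 1.0 for x in xs]
w, b = fit_linear_gd(xs, ys)
```

This toy loss is convex, so gradient descent reaches the global minimizer; the non-convex losses of deep networks offer no such guarantee, which is why the optimization method matters.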

1.1. Problem Definition: Risk Functional as a Mapping Between Spaces

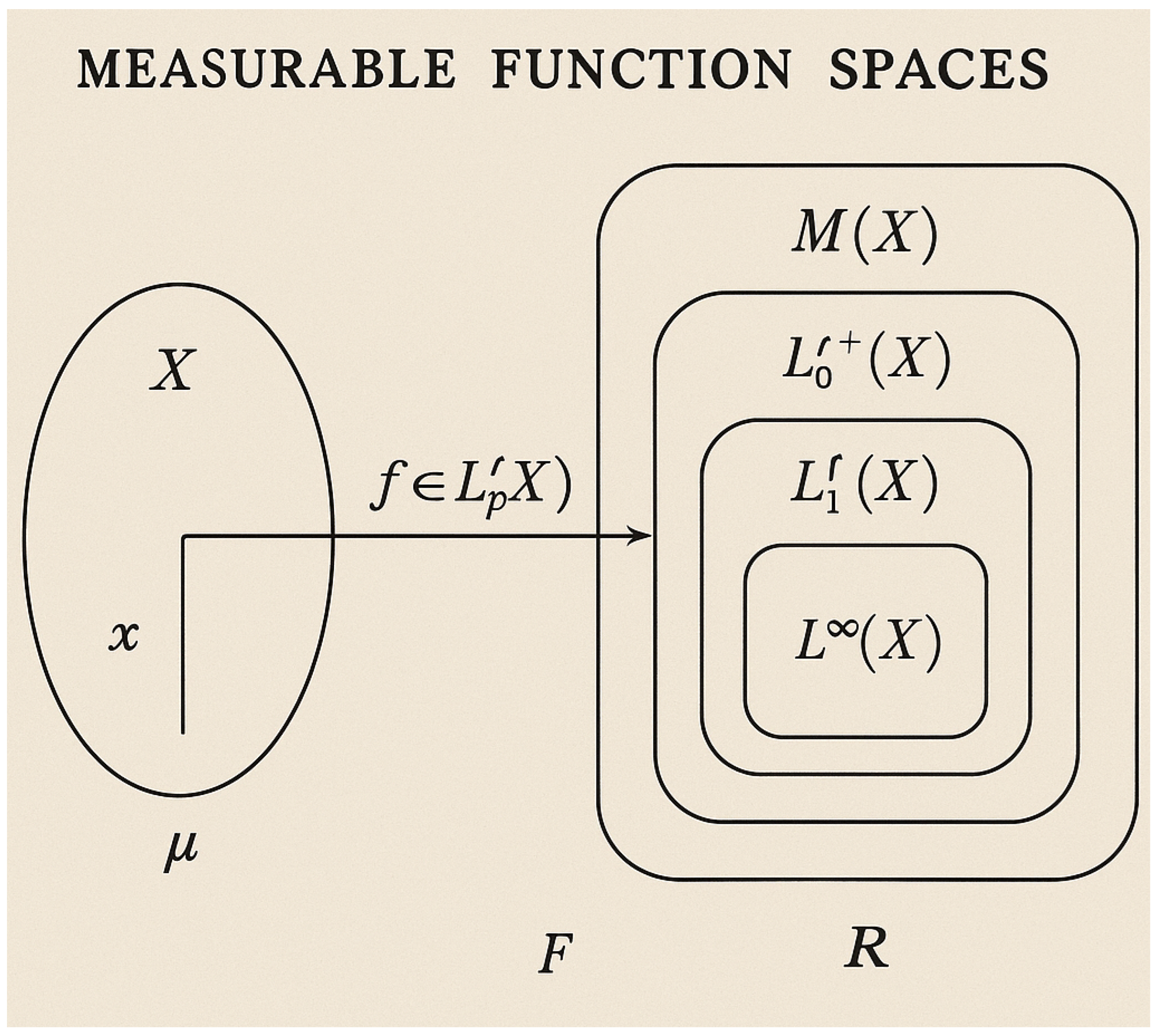

1.1.1. Measurable Function Spaces

1.1.1.1 Literature Review of Measurable Function Spaces

1.1.1.2 Analysis of Measurable Function Spaces

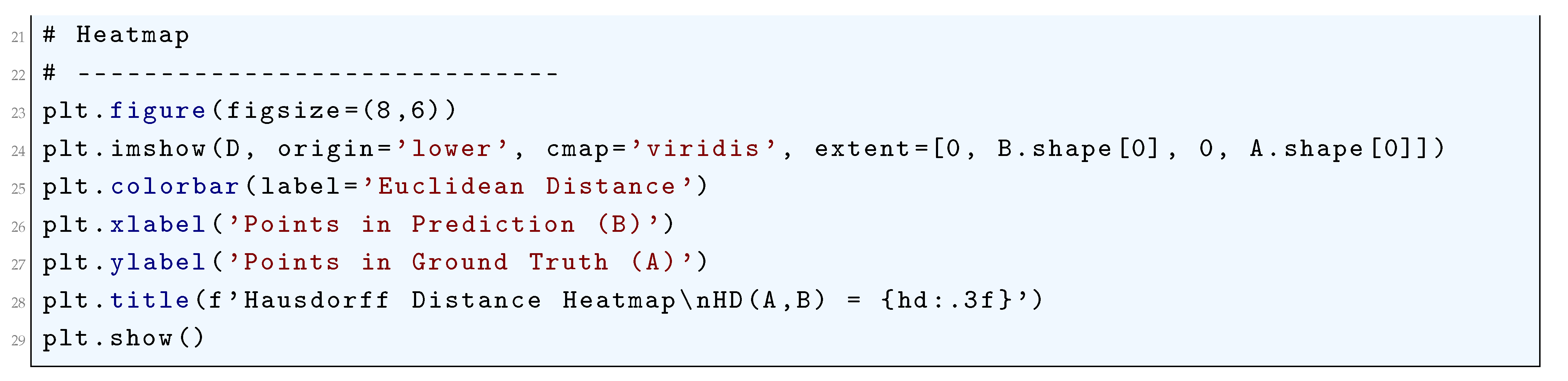

- f belongs to a hypothesis space .

- is a Borel probability measure over , satisfying .

1.1.2. Risk as a Functional

1.1.2.1 Literature Review of Risk as a Functional

1.1.2.2 Analysis of Risk as a Functional

1.2. Approximation Spaces for Neural Networks

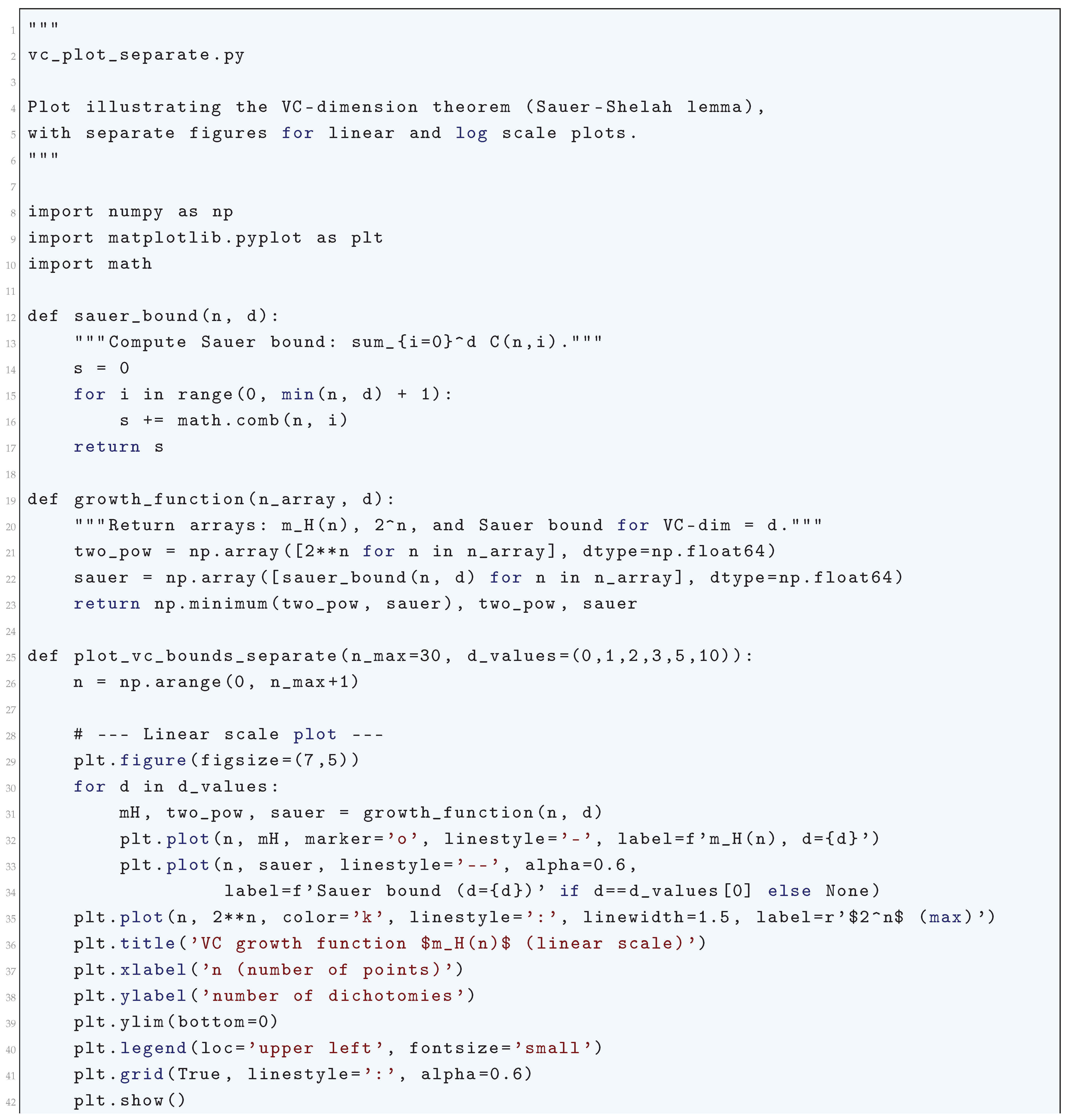

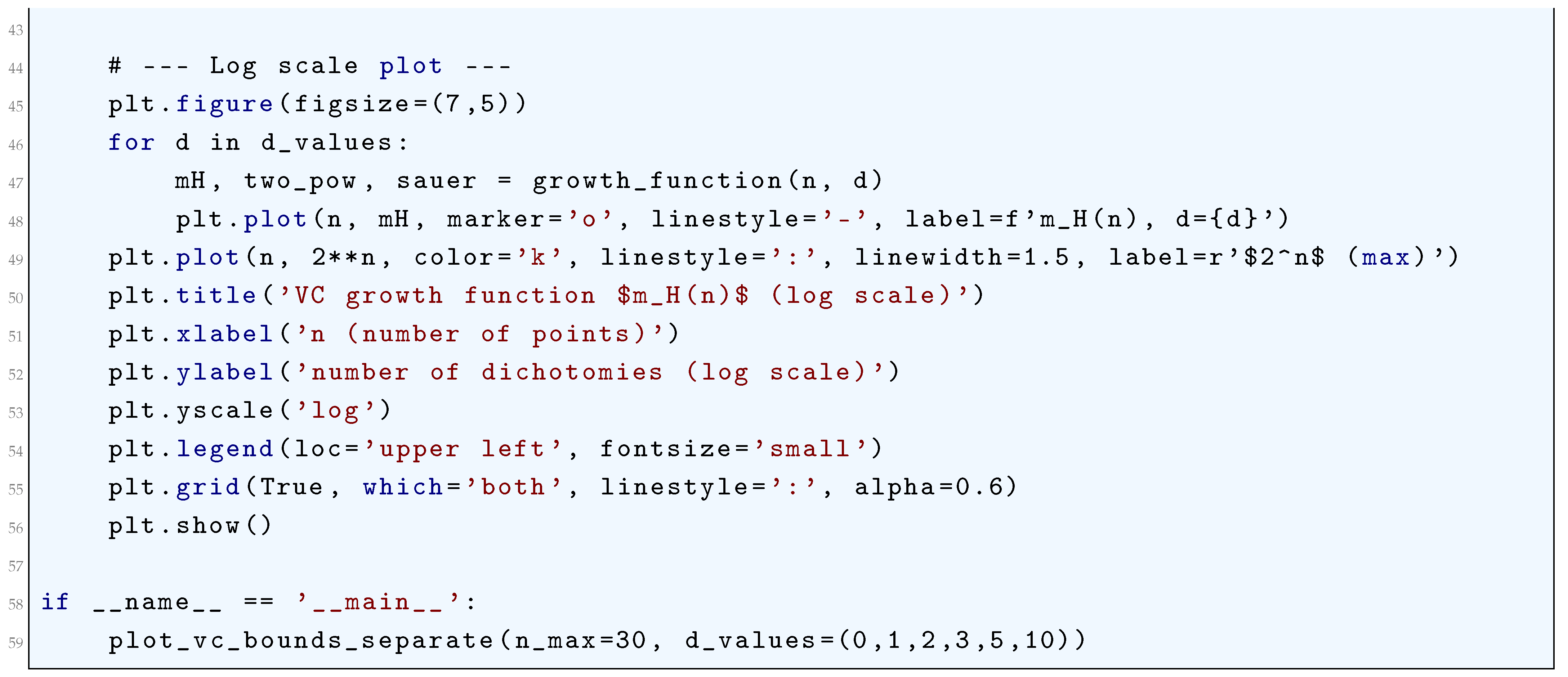

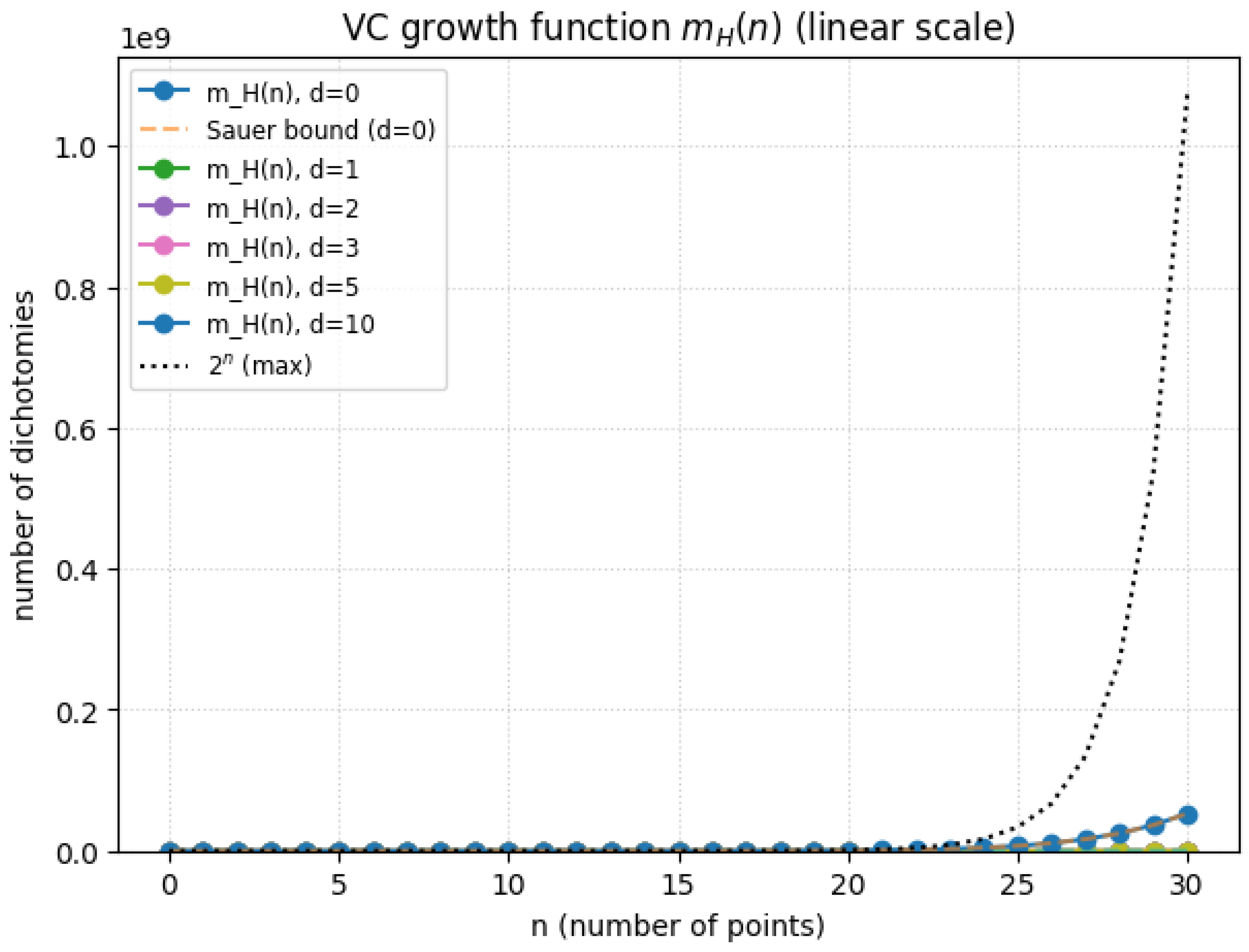

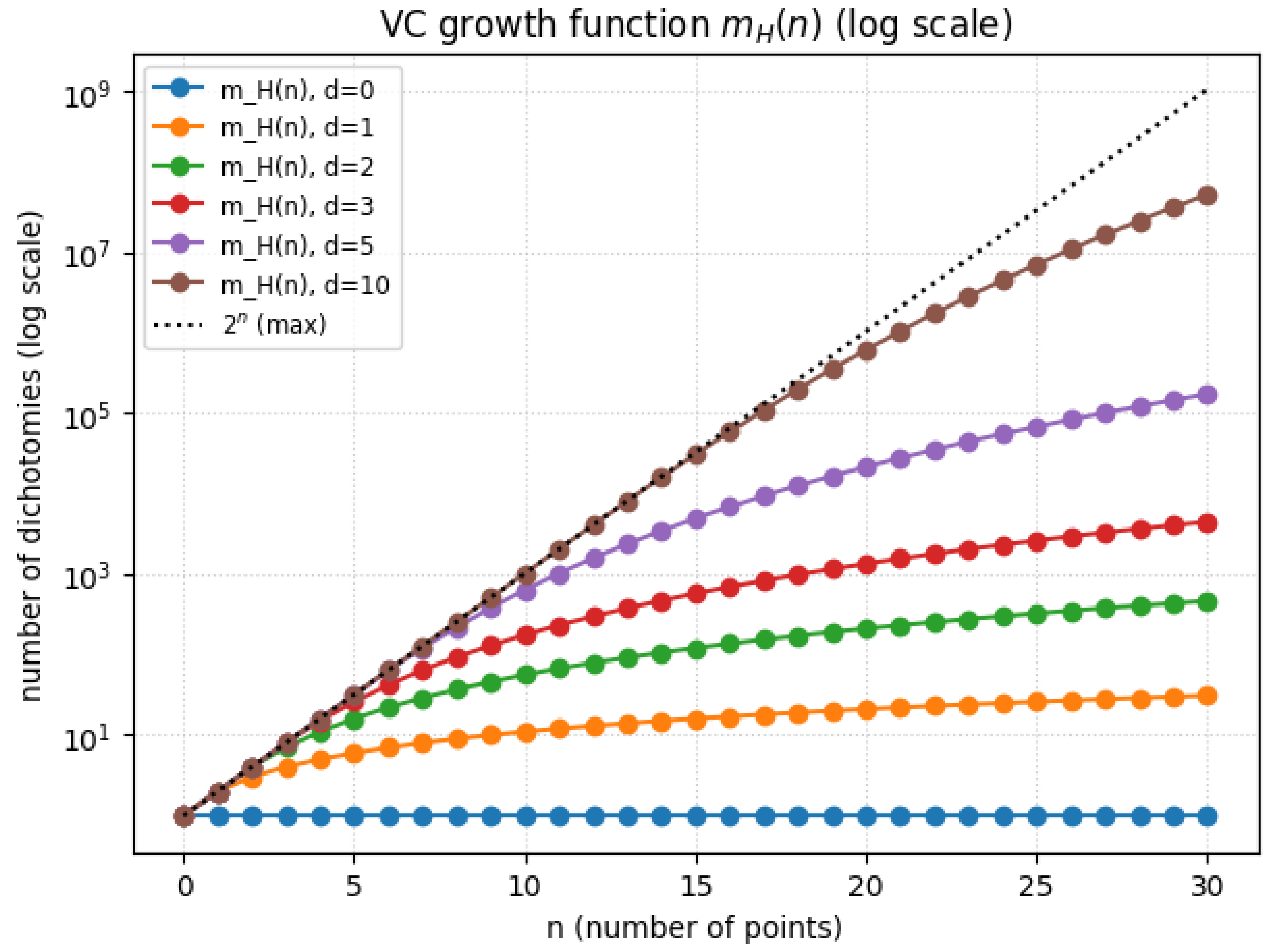

- VC-dimension theory for discrete hypotheses.

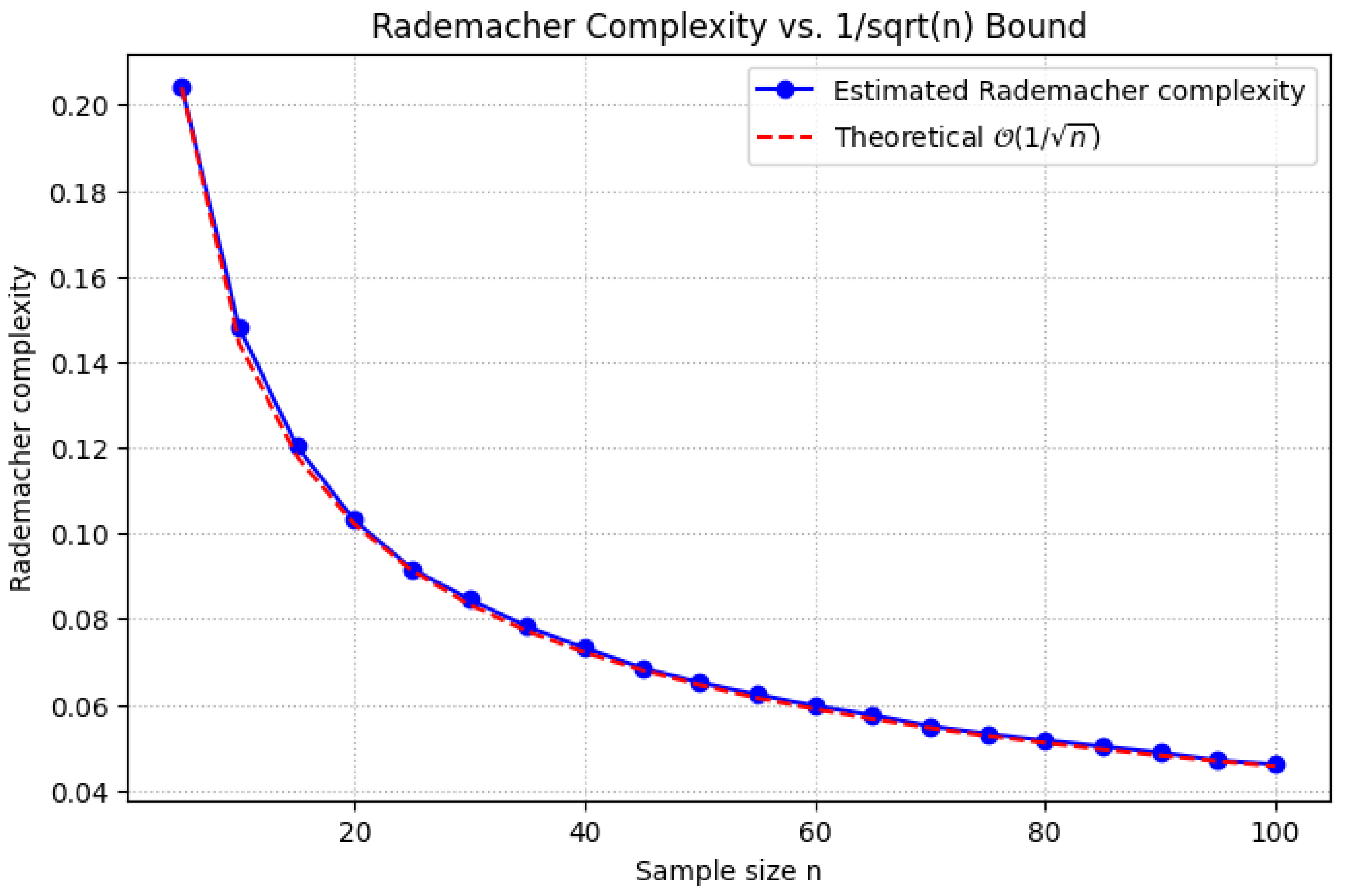

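- Rademacher complexity for continuous spaces: $\hat{\mathcal{R}}_N(\mathcal{F}) = \mathbb{E}_{\sigma}\left[\sup_{f \in \mathcal{F}} \frac{1}{N} \sum_{i=1}^{N} \sigma_i f(x_i)\right]$, where $\sigma_1, \dots, \sigma_N$ are i.i.d. Rademacher random variables.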
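As an illustration of the Rademacher complexity, the sketch below estimates the empirical quantity by Monte Carlo for the class of norm-bounded linear functions $\{x \mapsto \langle w, x \rangle : \|w\|_2 \le B\}$, for which the supremum has a closed form. The choice of function class, the sample, and the name `empirical_rademacher_linear` are assumptions made for this example.

```python
import math
import random


def empirical_rademacher_linear(points, radius=1.0, n_draws=2000, seed=0):
    """Monte Carlo estimate of the empirical Rademacher complexity of
    {x -> <w, x> : ||w||_2 <= radius} on a fixed sample."""
    rng = random.Random(seed)
    n = len(points)
    dim = len(points[0])
    total = 0.0
    for _ in range(n_draws):
        # Draw i.i.d. Rademacher signs sigma_i in {-1, +1}.
        sigma = [rng.choice((-1, 1)) for _ in range(n)]
        # For this class the supremum has a closed form:
        # sup_w (1/n) sum_i sigma_i <w, x_i> = (radius / n) * ||sum_i sigma_i x_i||_2.
        s = [sum(sig * x[j] for sig, x in zip(sigma, points)) for j in range(dim)]
        total += radius / n * math.sqrt(sum(v * v for v in s))
    return total / n_draws


# Hypothetical sample of 50 points in the square [-1, 1]^2.
rng = random.Random(1)
sample = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(50)]
estimate = empirical_rademacher_linear(sample)
# Classical upper bound for this class: radius * sqrt(sum_i ||x_i||^2) / n.
bound = math.sqrt(sum(x * x + y * y for x, y in sample)) / len(sample)
```

Comparing `estimate` with `bound` shows the typical $O(1/\sqrt{N})$ scaling for this class.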

1.2.1. VC-Dimension Theory for Discrete Hypotheses

1.2.1.1 Literature Review of VC-Dimension Theory for Discrete Hypotheses

1.2.1.2 Analysis of VC-Dimension Theory for Discrete Hypotheses

- Shattering Implies Non-empty Hypothesis Class: If a set S is shattered by H, then H is non-empty. This follows directly from the fact that for each labeling of S, there exists some $h \in H$ that produces the corresponding labeling. Therefore, H must contain at least one hypothesis.

- Upper Bound on Shattering: If the VC-dimension of a hypothesis class H is k, that is, k is the size of the largest set S that H can shatter, then no set of size greater than k can be shattered. This gives us the crucial result that: $\mathrm{VC}(H) = \max\{|S| : H \text{ shatters } S\}$.

- Implication for Generalization: A central result in the theory of statistical learning is the connection between VC-dimension and the generalization error. Specifically, the VC-dimension bounds the ability of a hypothesis class to generalize to unseen data. The higher the VC-dimension, the more complex the hypothesis class, and the more likely it is to overfit the training data, leading to poor generalization.

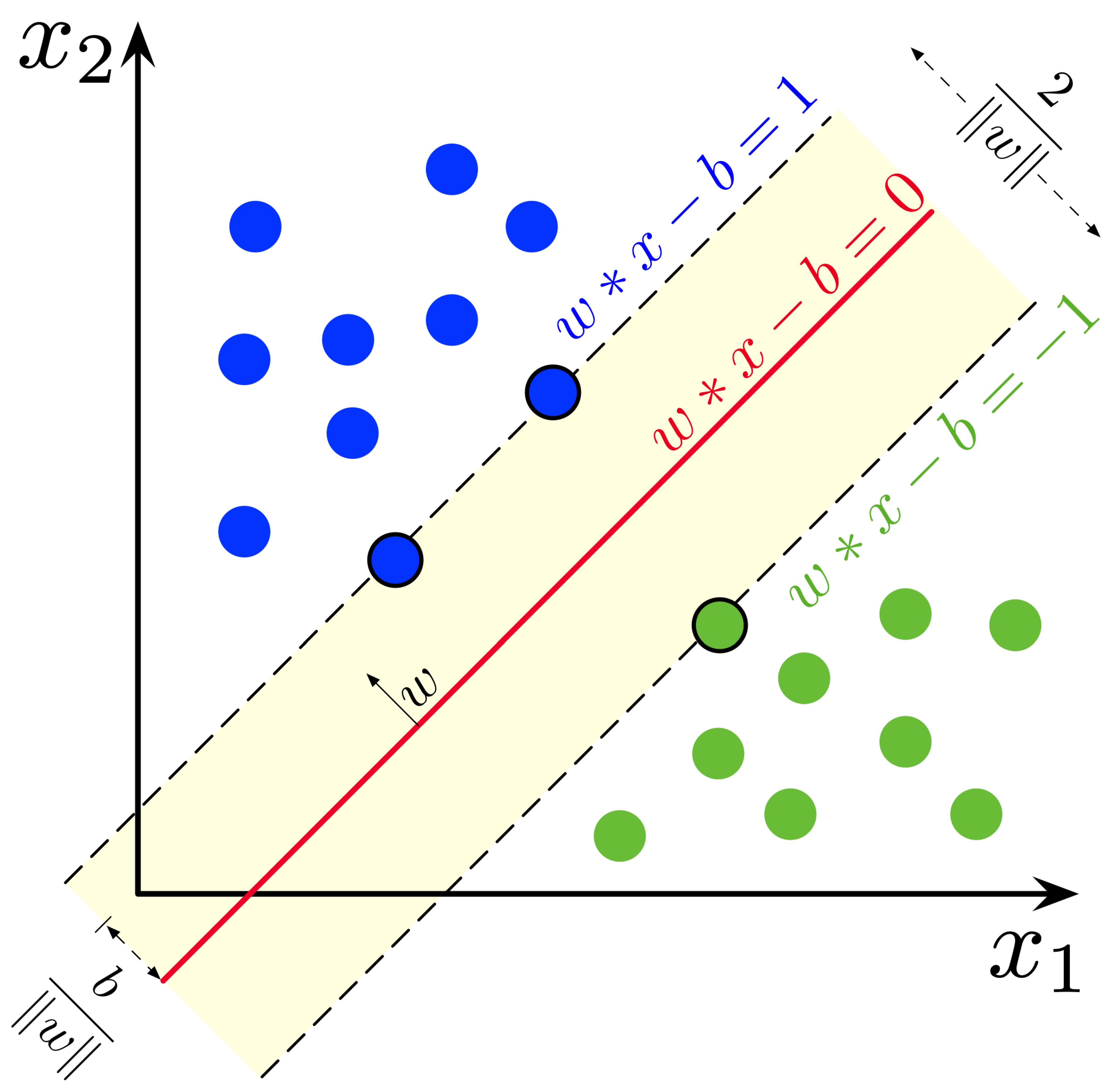

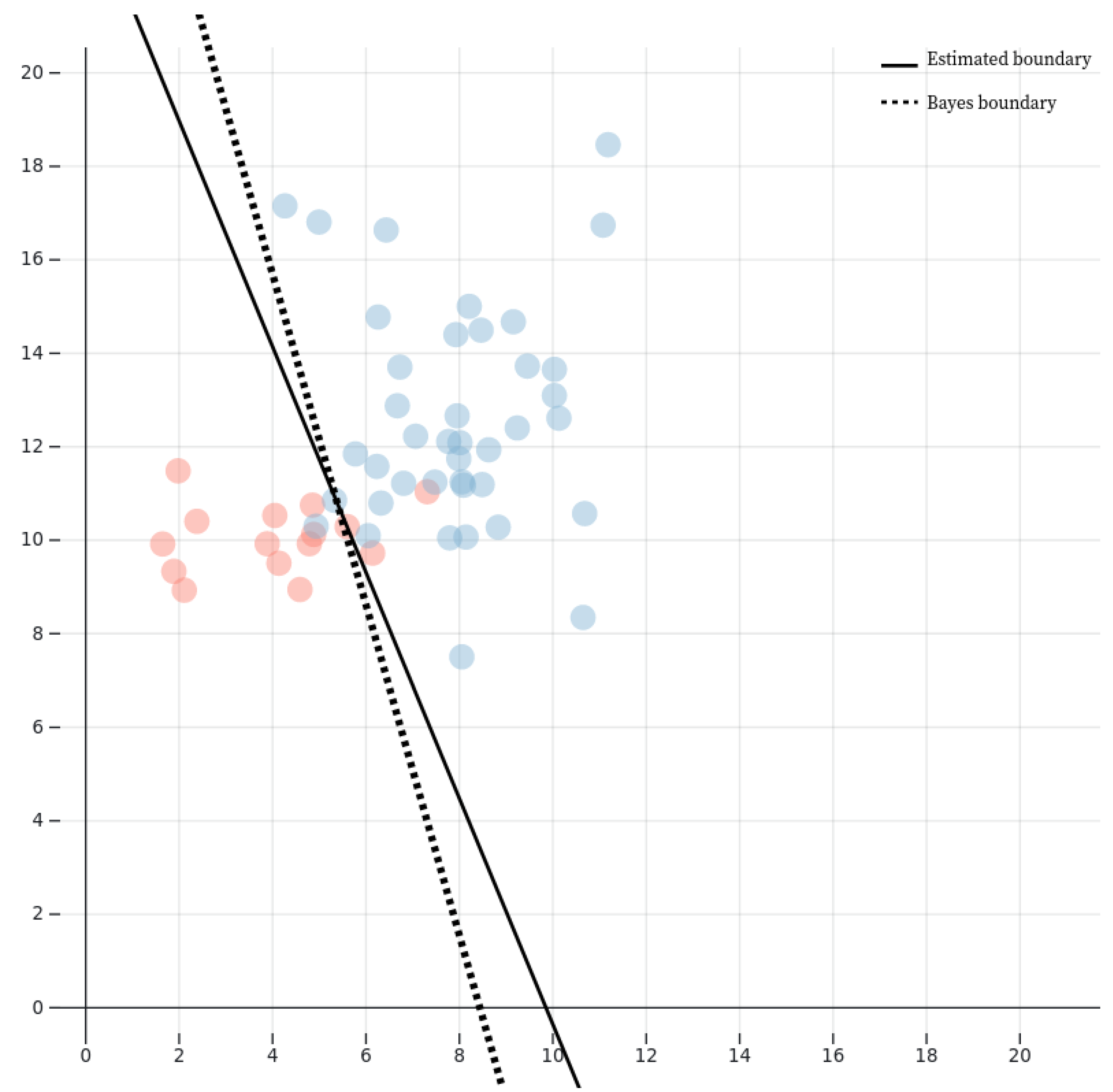

- Example 1: Linear Classifiers in $\mathbb{R}^2$: Consider the hypothesis class H consisting of linear classifiers in $\mathbb{R}^2$. These classifiers are defined by lines (hyperplanes in two dimensions): $h(x) = \operatorname{sign}(w^\top x + b)$, where $w \in \mathbb{R}^2$ is the weight vector and $b \in \mathbb{R}$ is the bias term. The VC-dimension of linear classifiers in $\mathbb{R}^2$ is 3. To see this, note that any set of 3 non-collinear points in $\mathbb{R}^2$ is shattered by H: every binary labeling of the 3 points is achieved by some linear classifier. However, no set of 4 points in $\mathbb{R}^2$ can be shattered (e.g., for the four vertices of a convex quadrilateral, the labeling that assigns one class to each diagonal is not linearly separable), so the VC-dimension is exactly 3.

- Example 2: Polynomial Classifiers of Degree d: Consider a polynomial hypothesis class in $\mathbb{R}^n$ of degree d. The hypothesis class H consists of classifiers defined by polynomials of the form: $p(x) = \sum_{|\alpha| \le d} a_\alpha x^\alpha$, where the $a_\alpha$ are coefficients and $x = (x_1, \dots, x_n)$. The VC-dimension of polynomial classifiers of degree d in $\mathbb{R}^n$ grows as $O(n^d)$ for fixed d, implying that the complexity of the hypothesis class increases rapidly with both the degree d and the dimension n of the input space.
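The shattering claim for linear classifiers in the plane can be checked by brute force. The sketch below enumerates every labeling of a point set and tests linear separability with a perceptron under an epoch cap. The perceptron, the cap, and the names `linearly_separable` and `shatters` are assumptions of this example: a cap that is hit is evidence of non-separability, not a proof.

```python
from itertools import product


def linearly_separable(points, labels, max_epochs=1000):
    """Perceptron check: True if a separating line is found within the cap."""
    w1, w2, b = 0.0, 0.0, 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for (x1, x2), y in zip(points, labels):
            # A point is misclassified when its signed score is not positive.
            if y * (w1 * x1 + w2 * x2 + b) <= 0:
                w1 += y * x1
                w2 += y * x2
                b += y
                mistakes += 1
        if mistakes == 0:
            return True
    return False


def shatters(points):
    """True if every +/-1 labeling of the points is linearly separable."""
    return all(
        linearly_separable(points, labels)
        for labels in product([-1, 1], repeat=len(points))
    )


triangle = [(0, 0), (1, 0), (0, 1)]        # three non-collinear points
collinear = [(0, 0), (1, 0), (2, 0)]       # three collinear points
square = [(0, 0), (1, 1), (1, 0), (0, 1)]  # vertices of a convex quadrilateral
```

Calling `shatters(triangle)` returns `True`, while `shatters(collinear)` and `shatters(square)` return `False`, matching the VC-dimension of 3.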

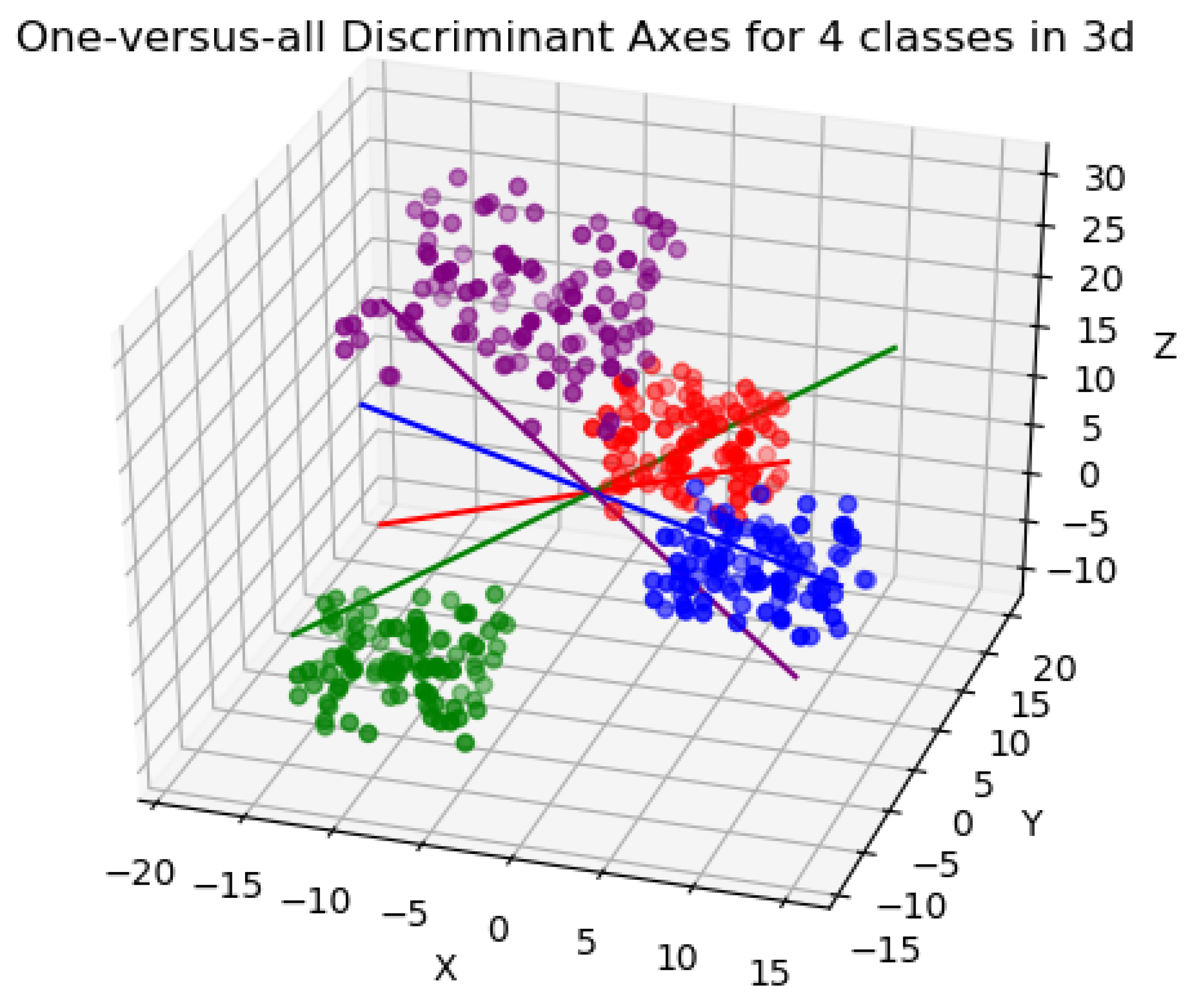

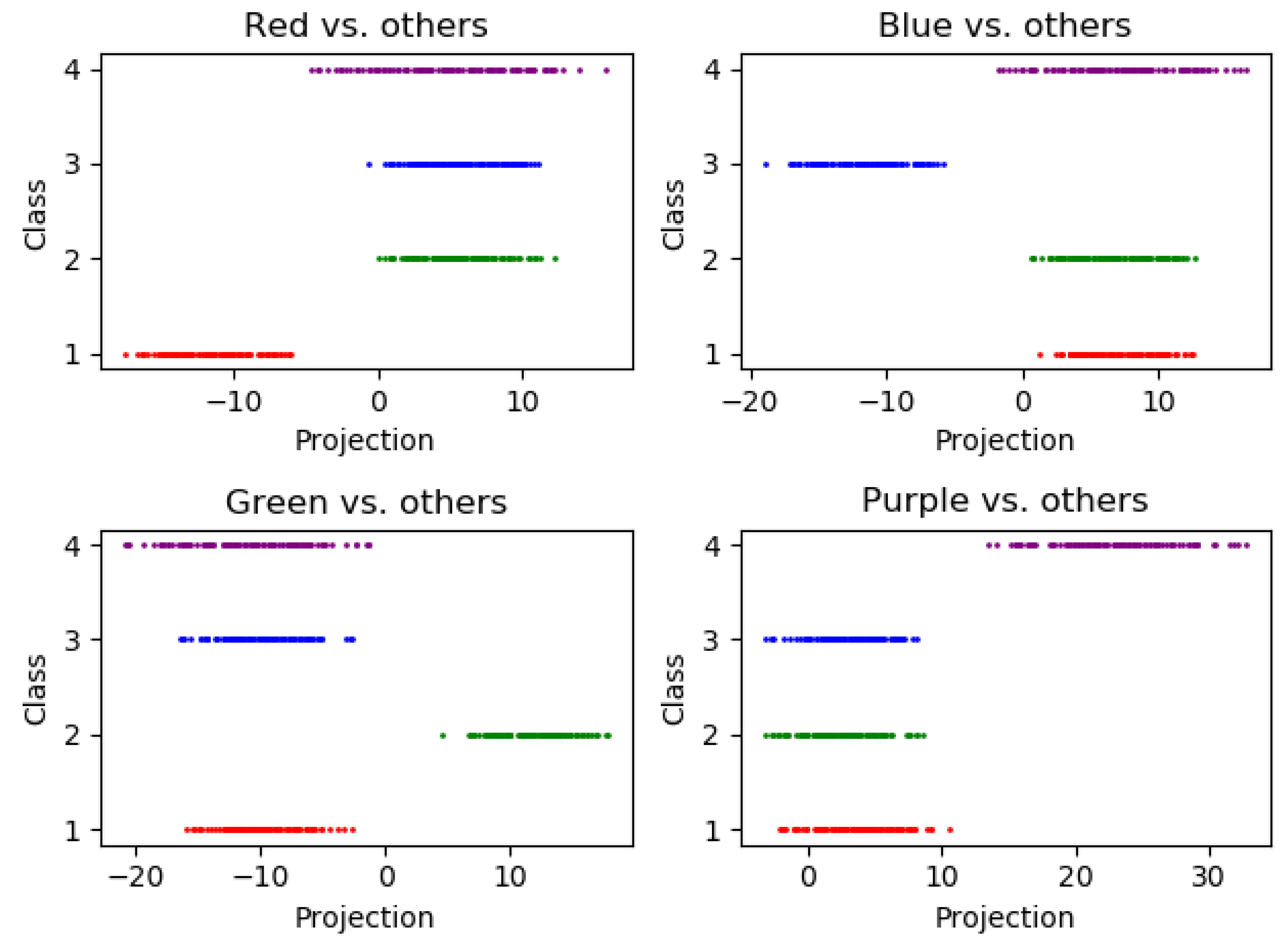

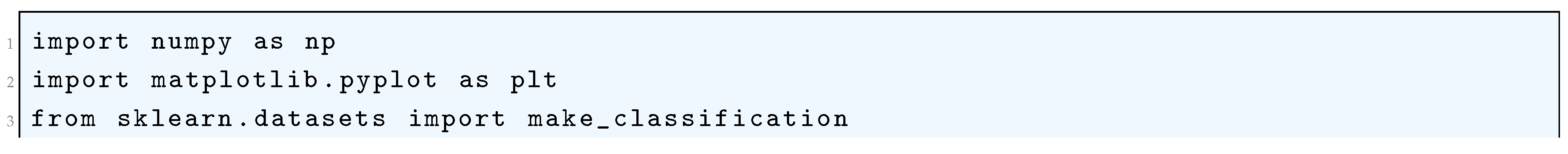

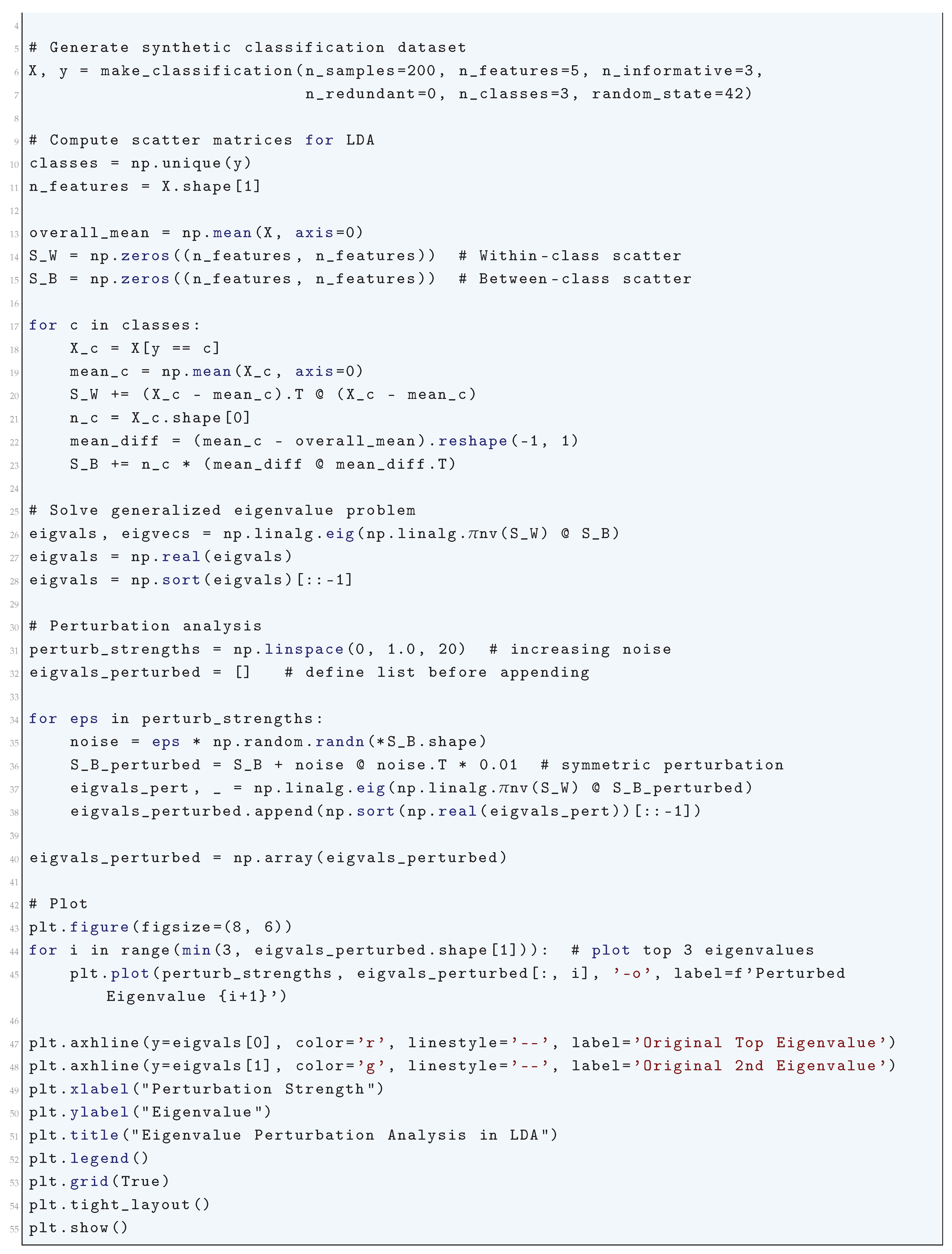

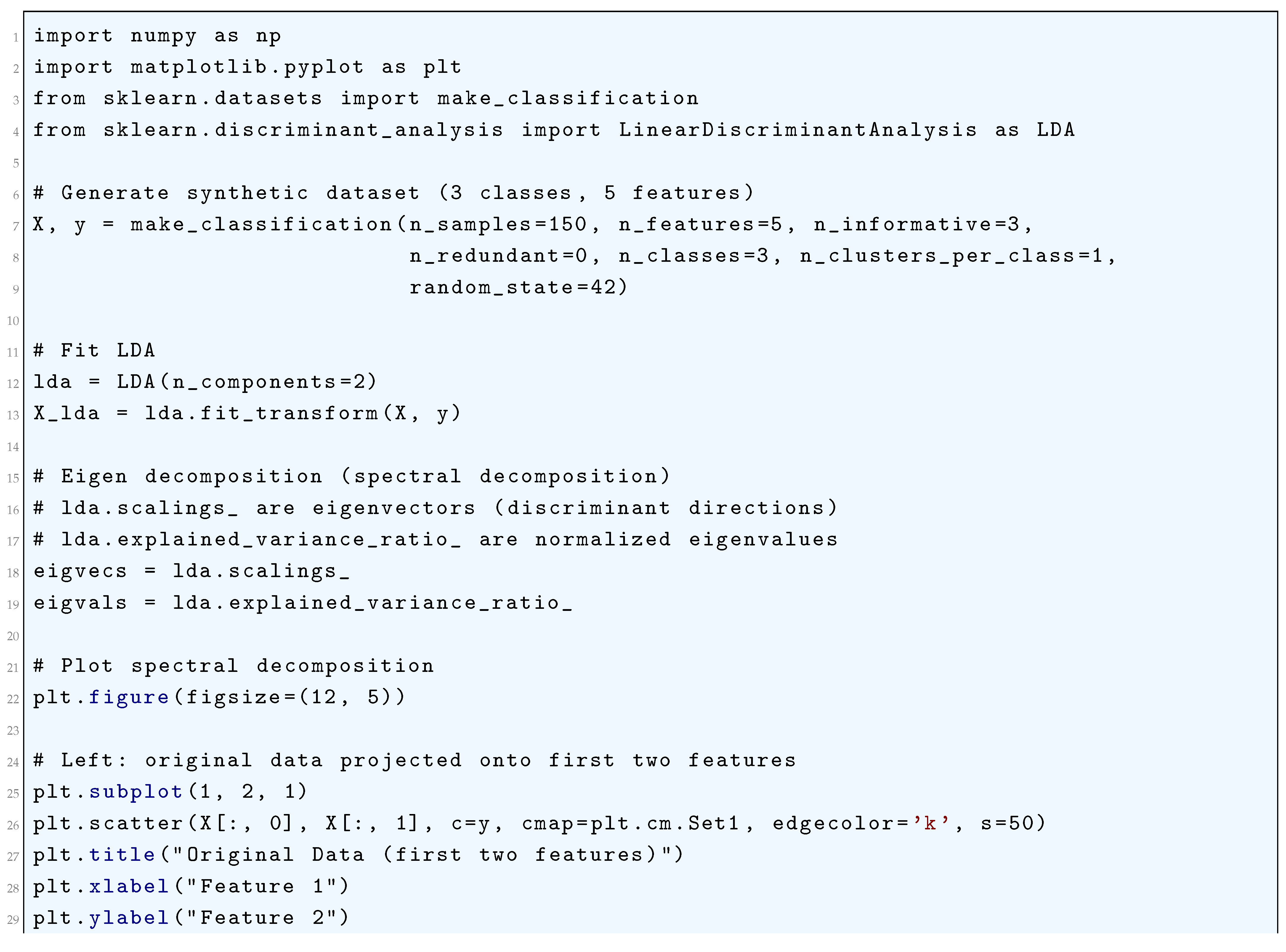

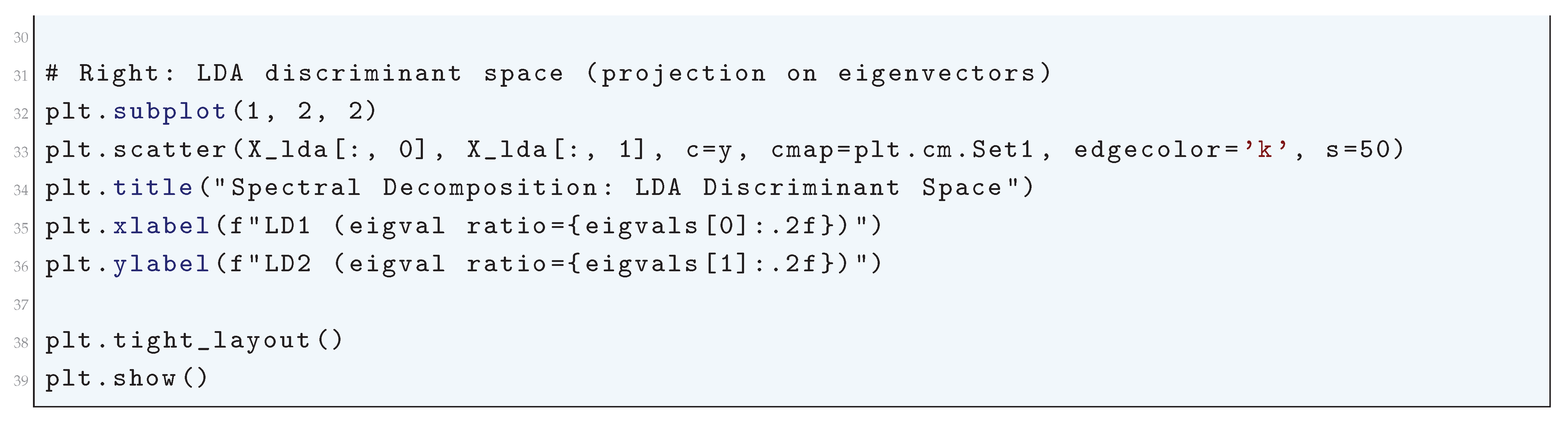

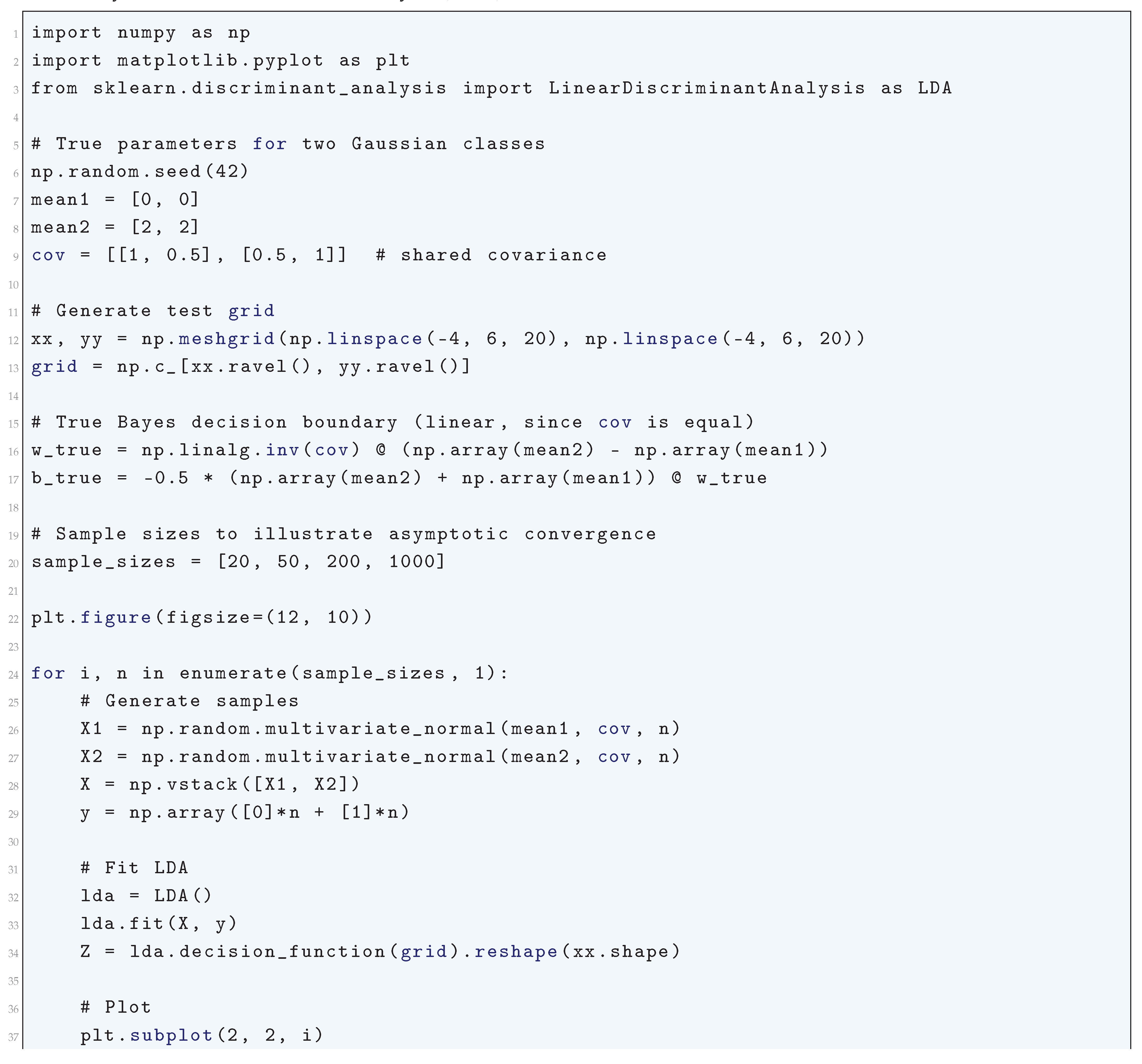

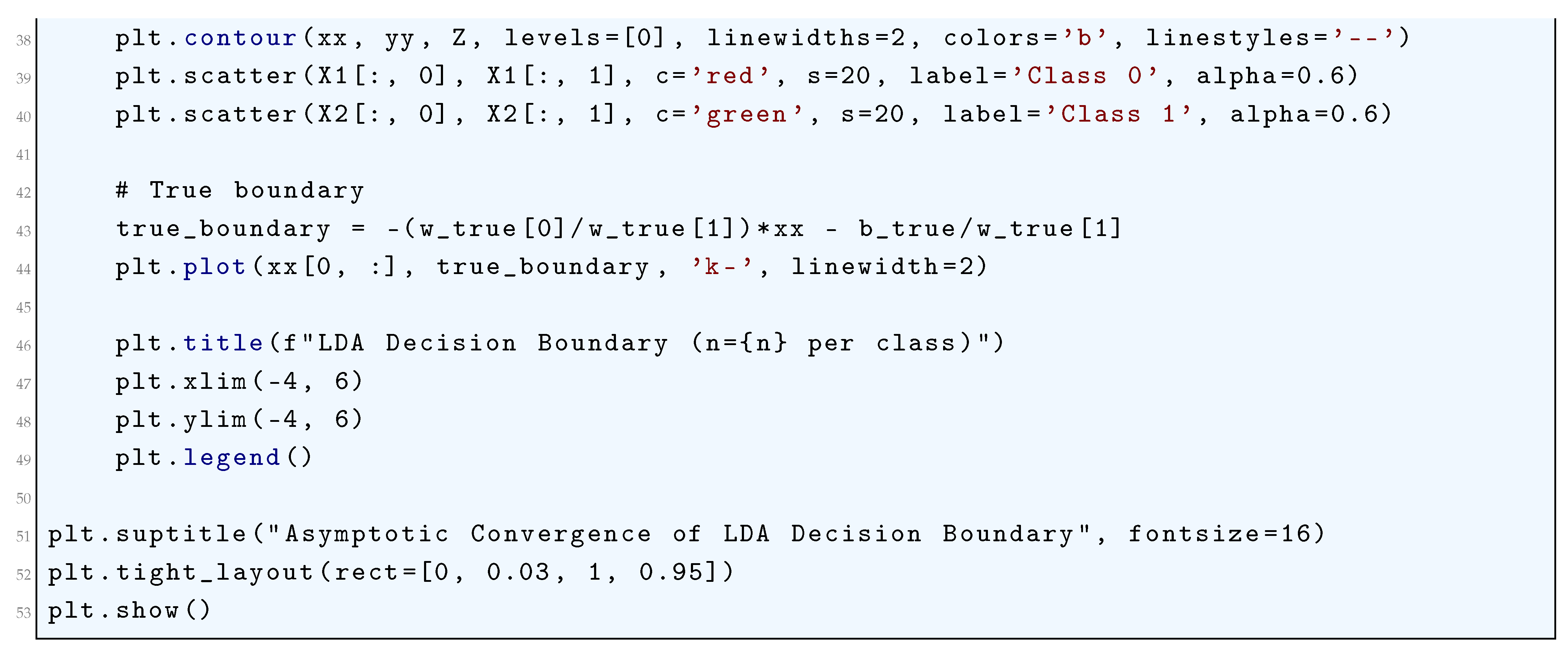

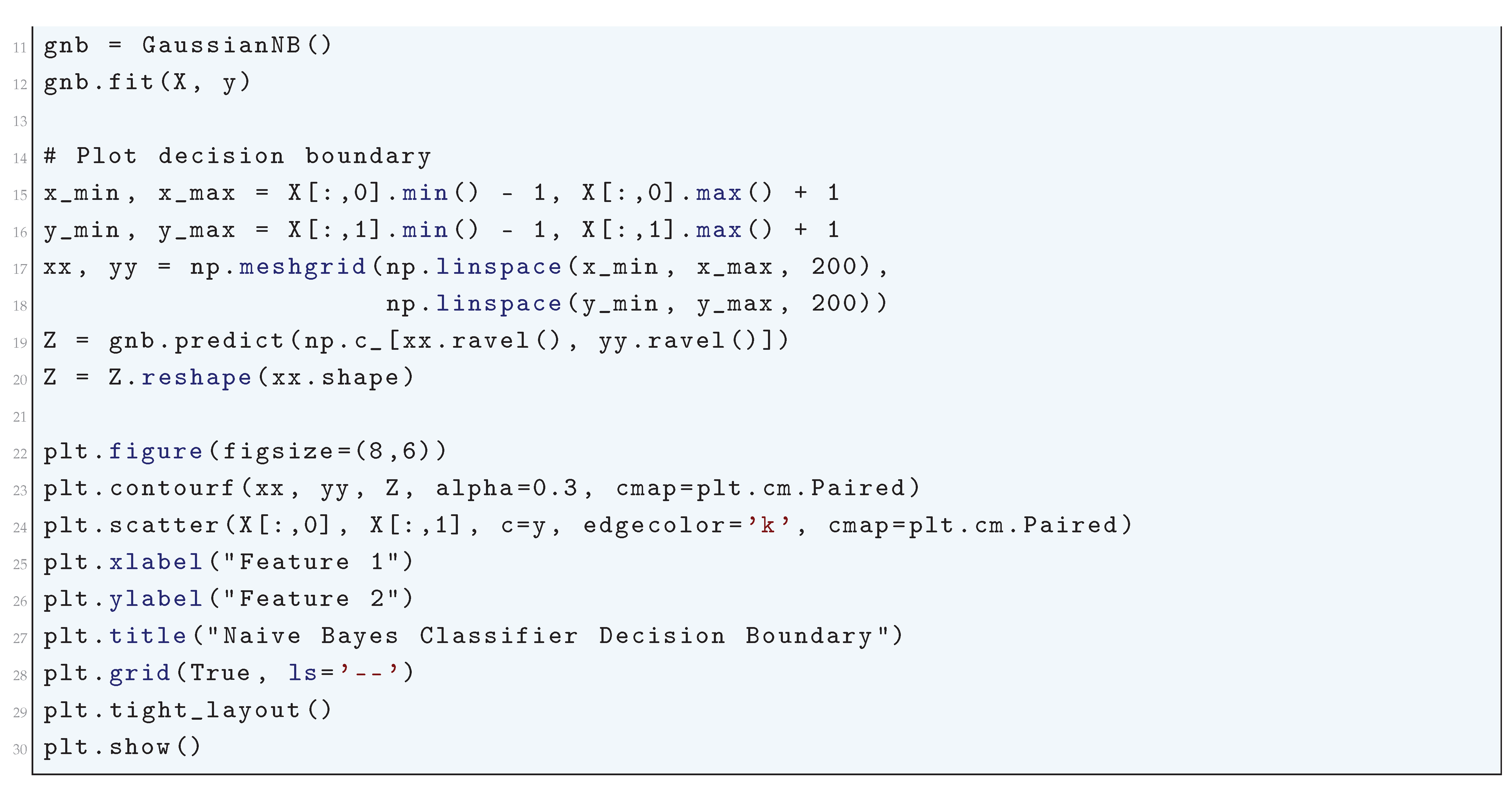

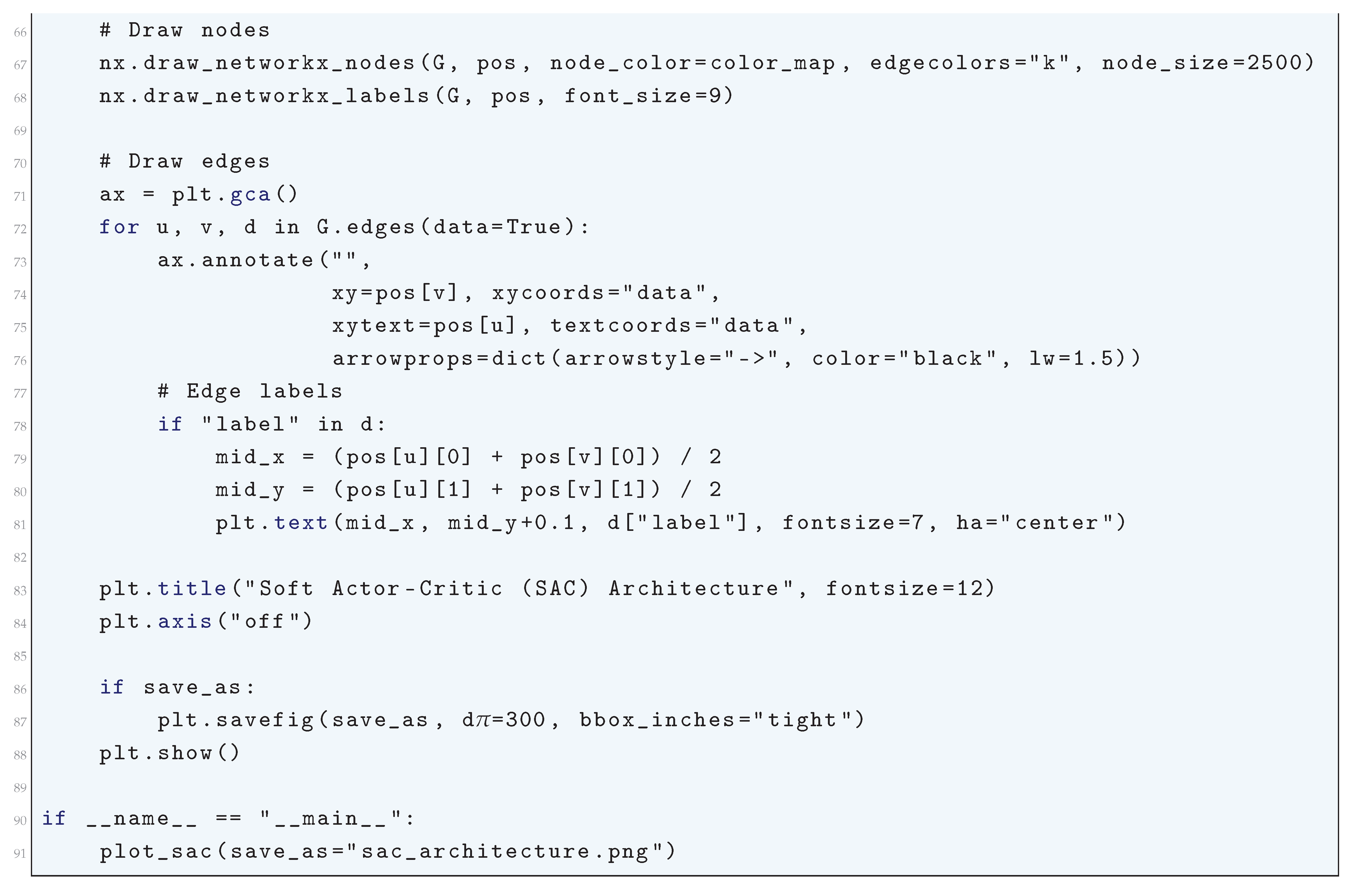

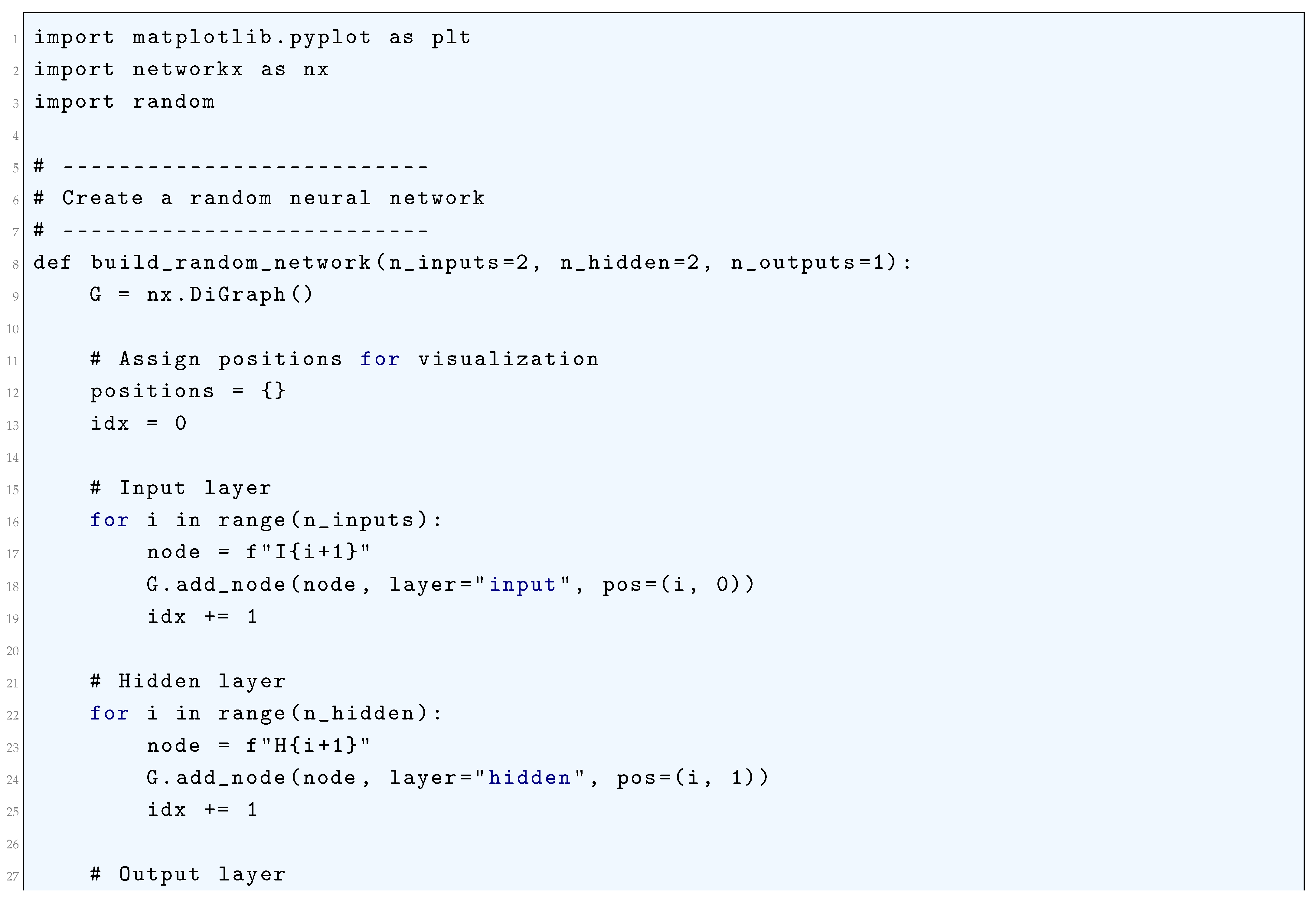

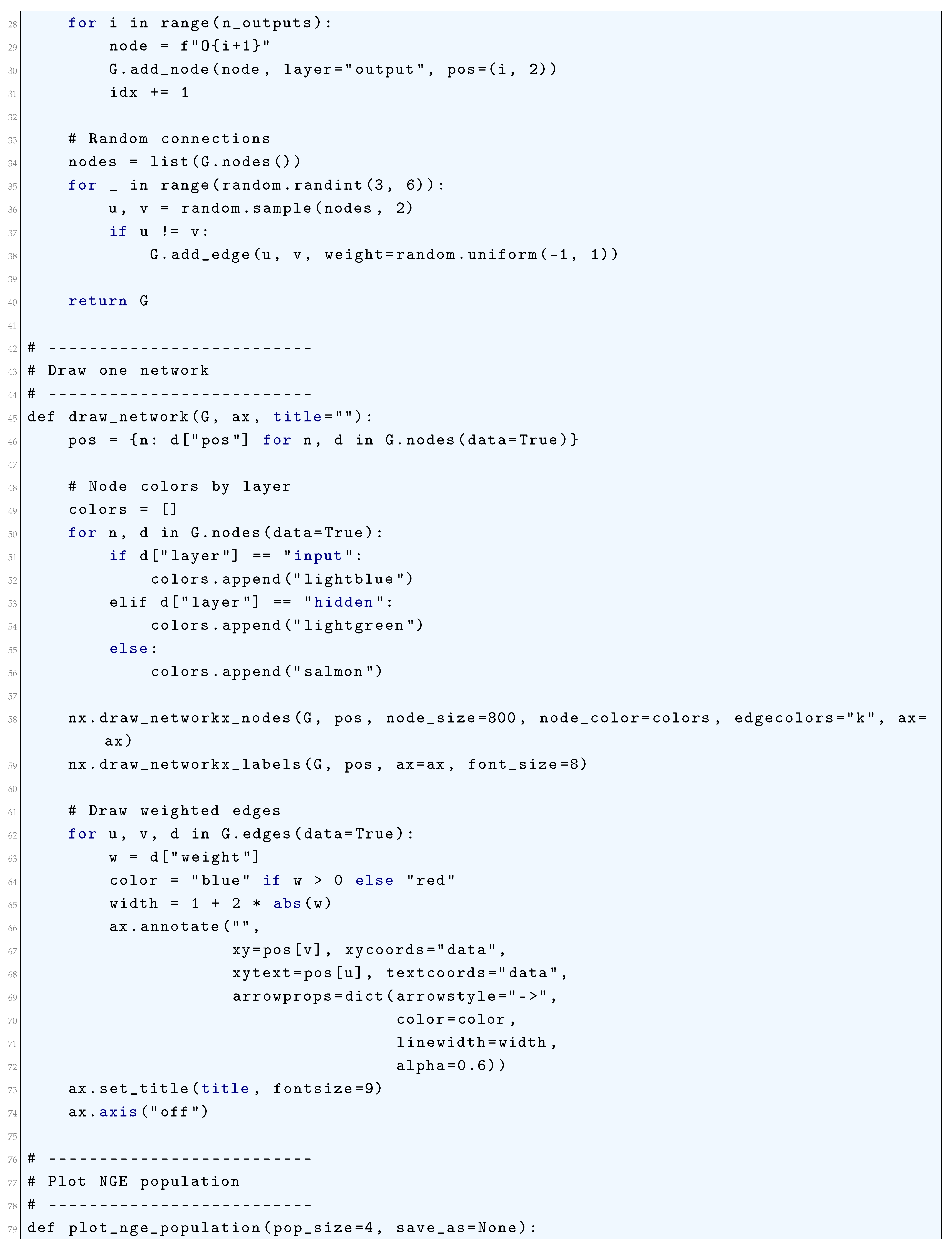

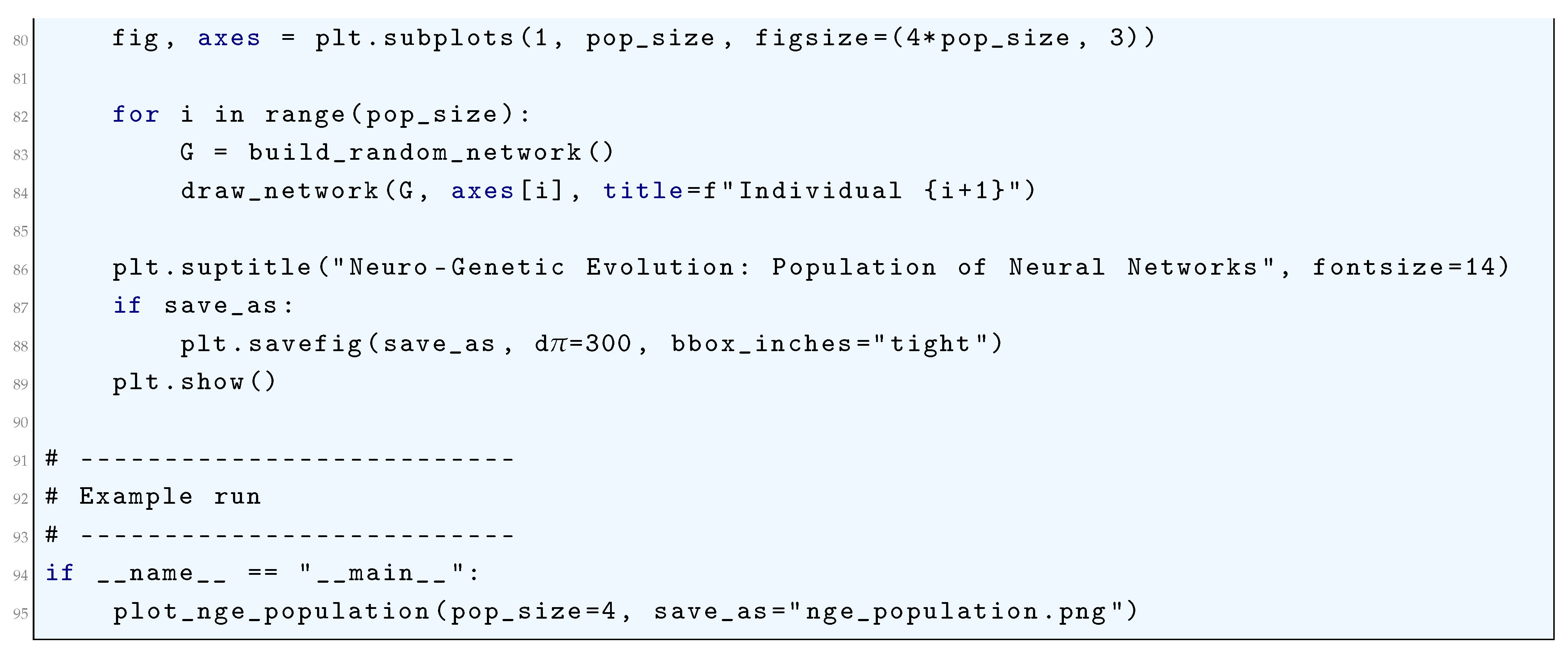

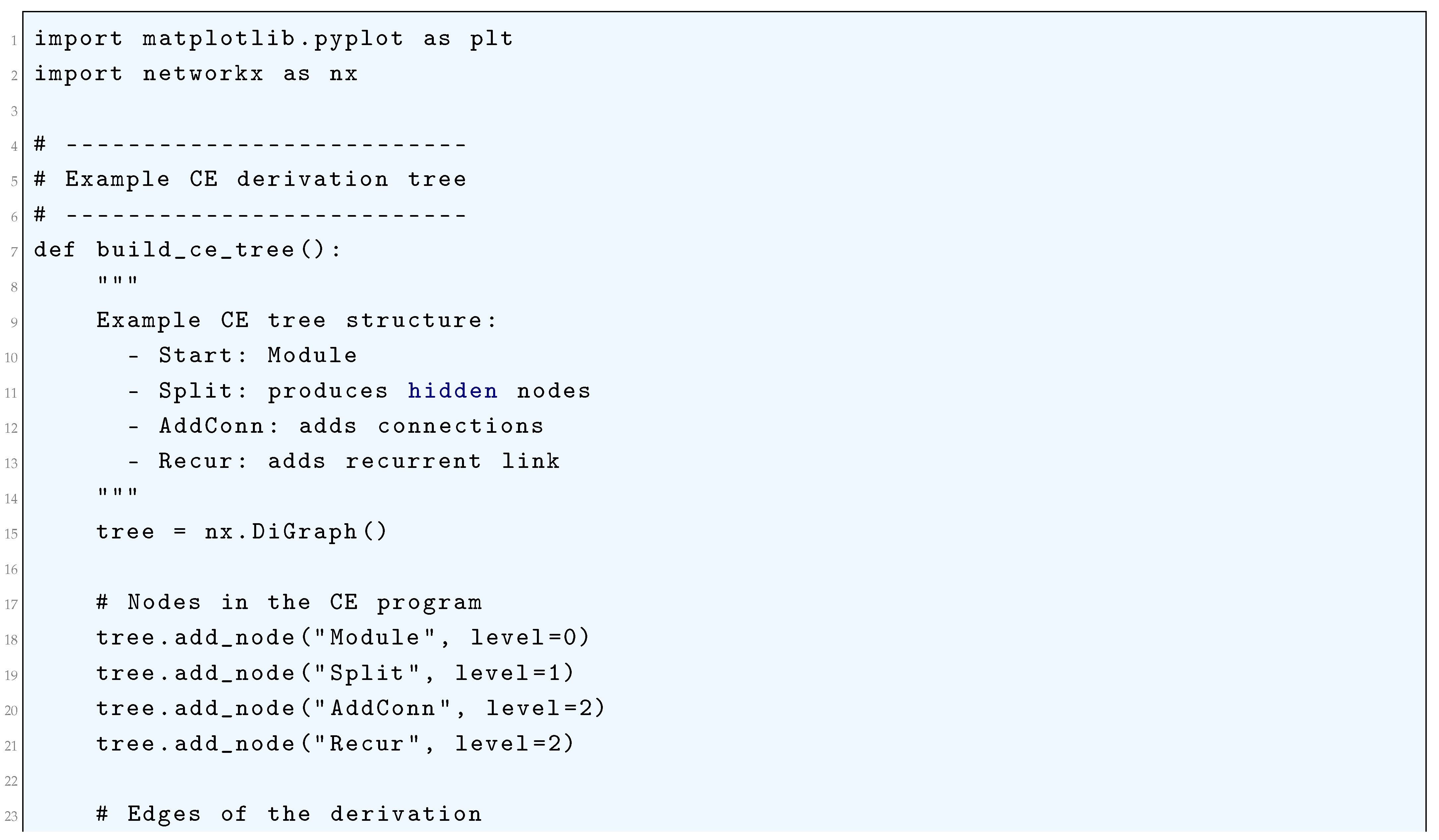

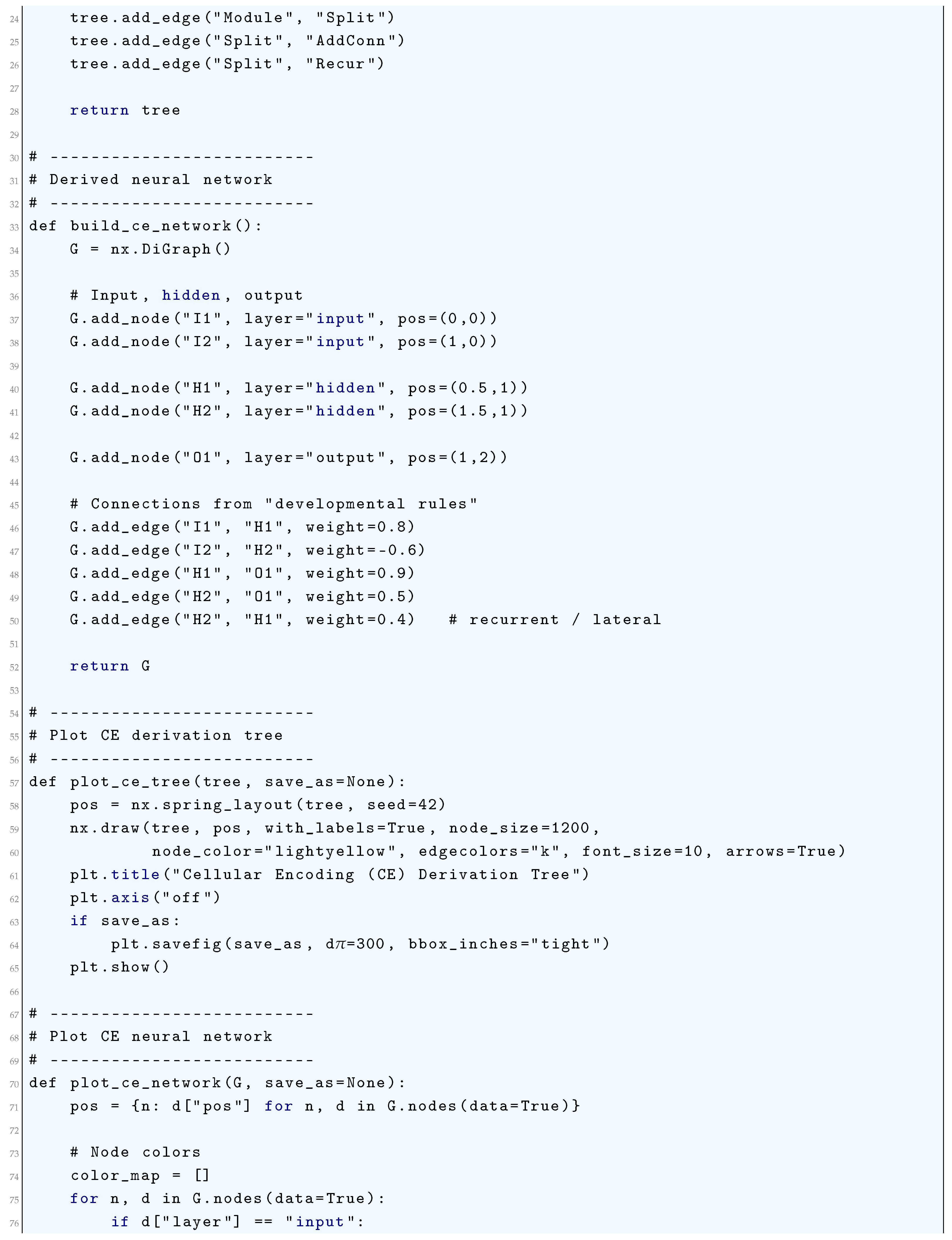

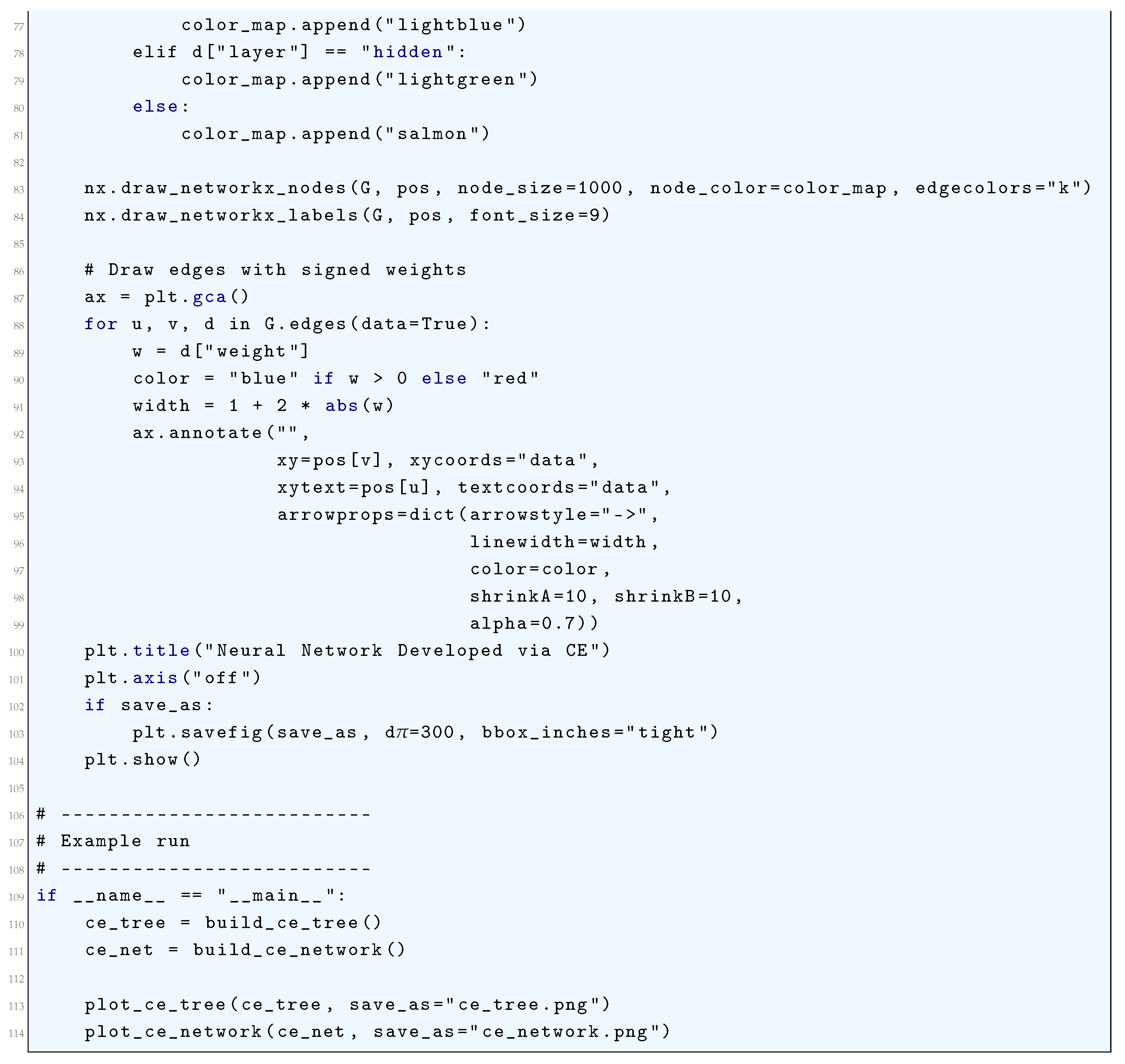

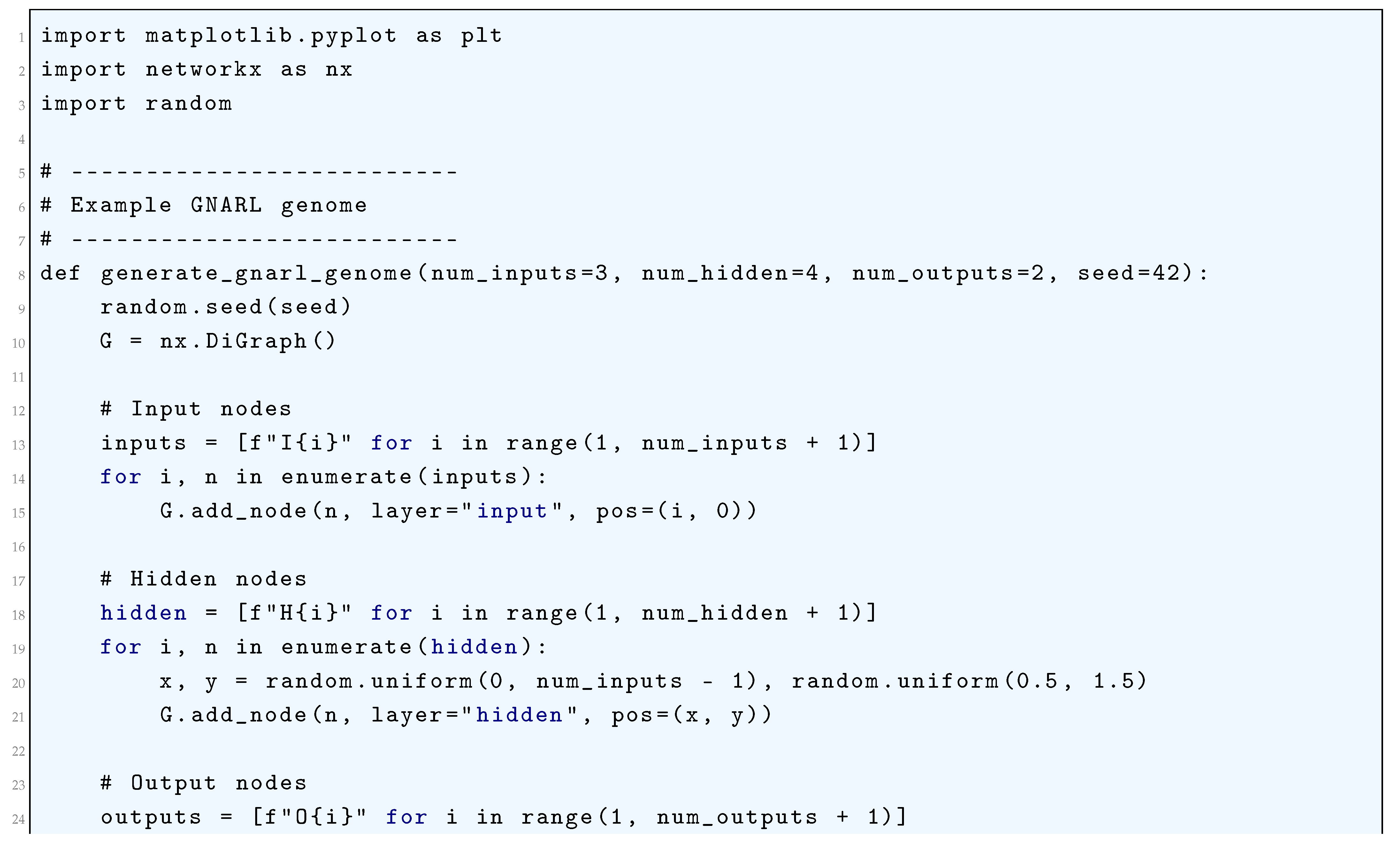

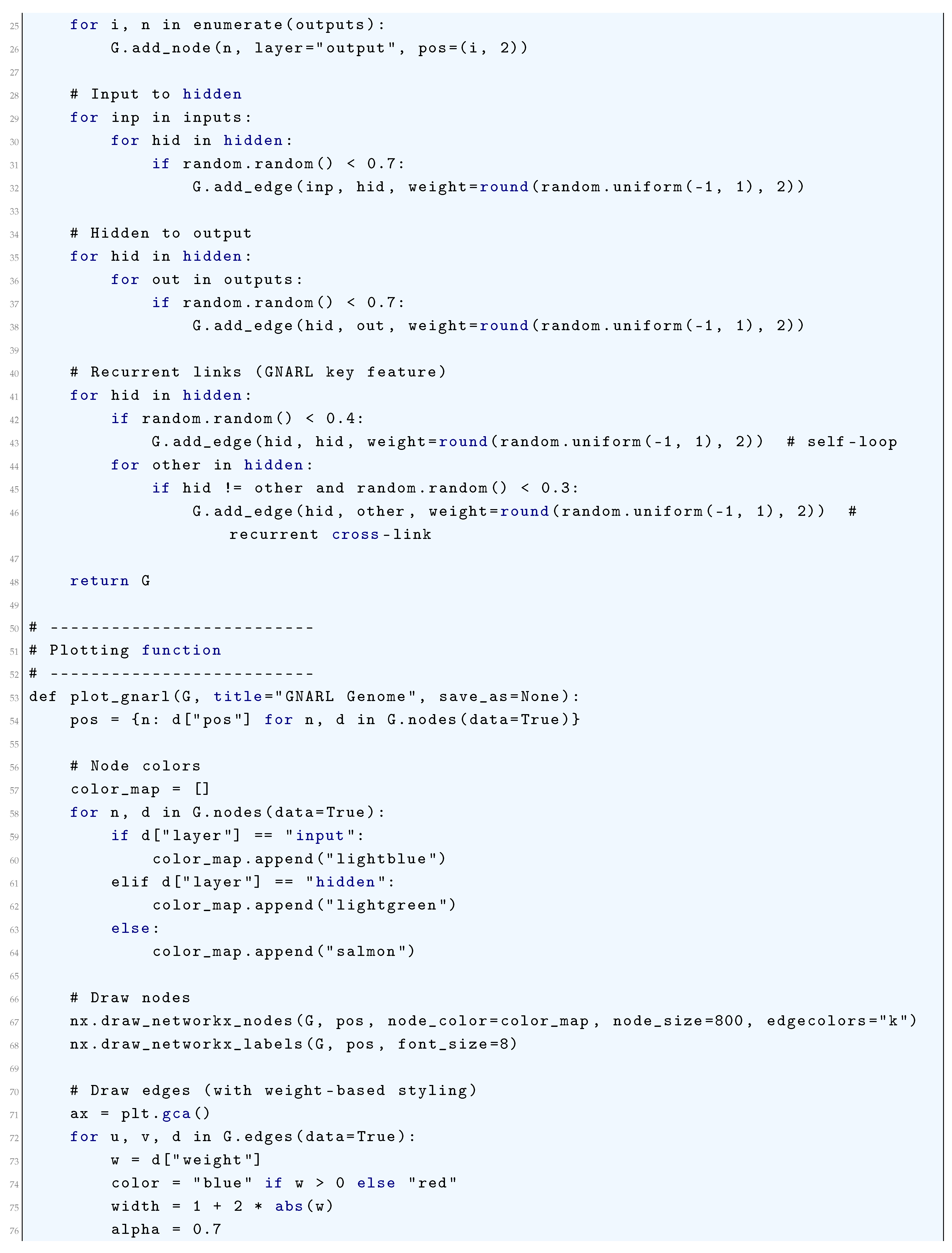

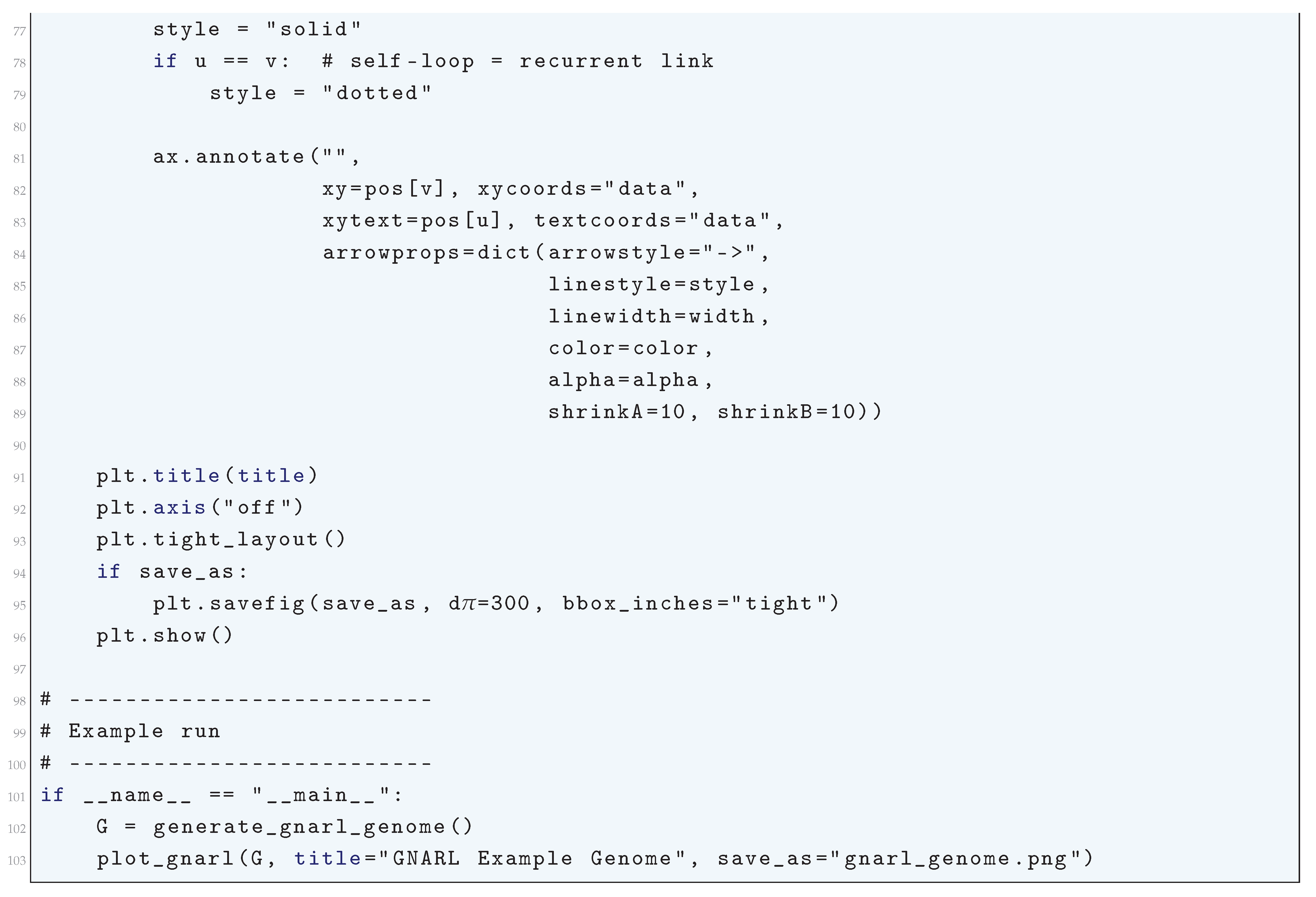

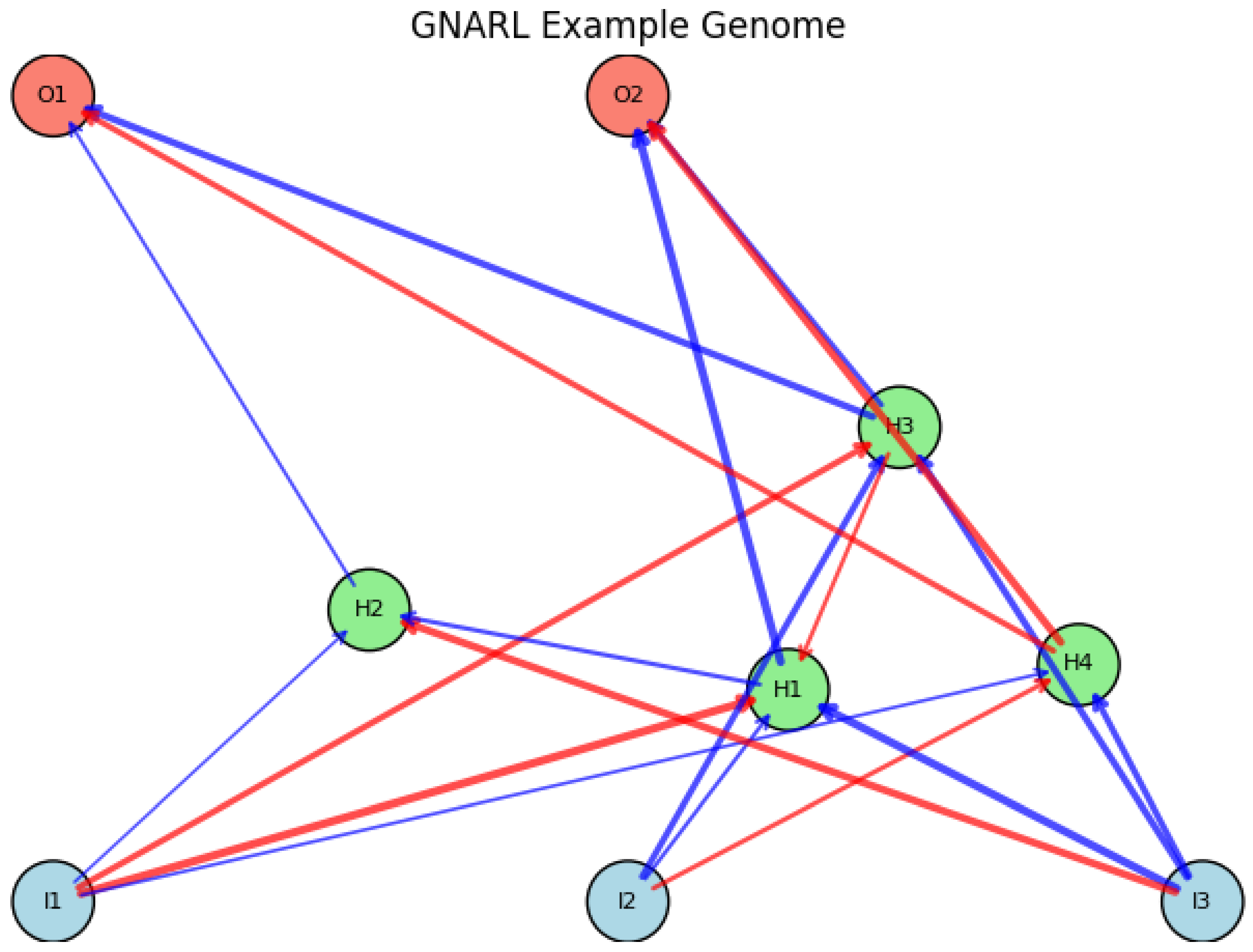

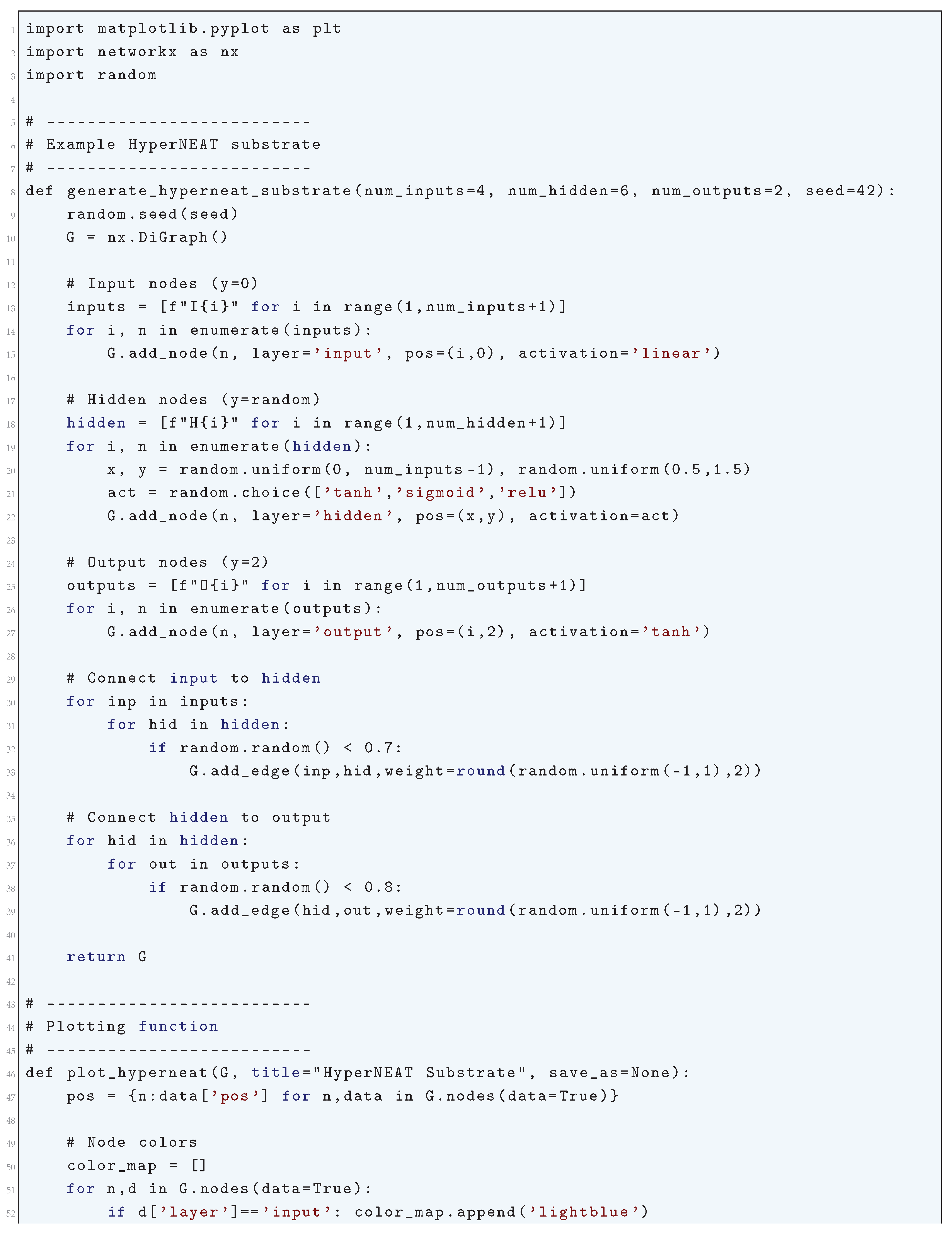

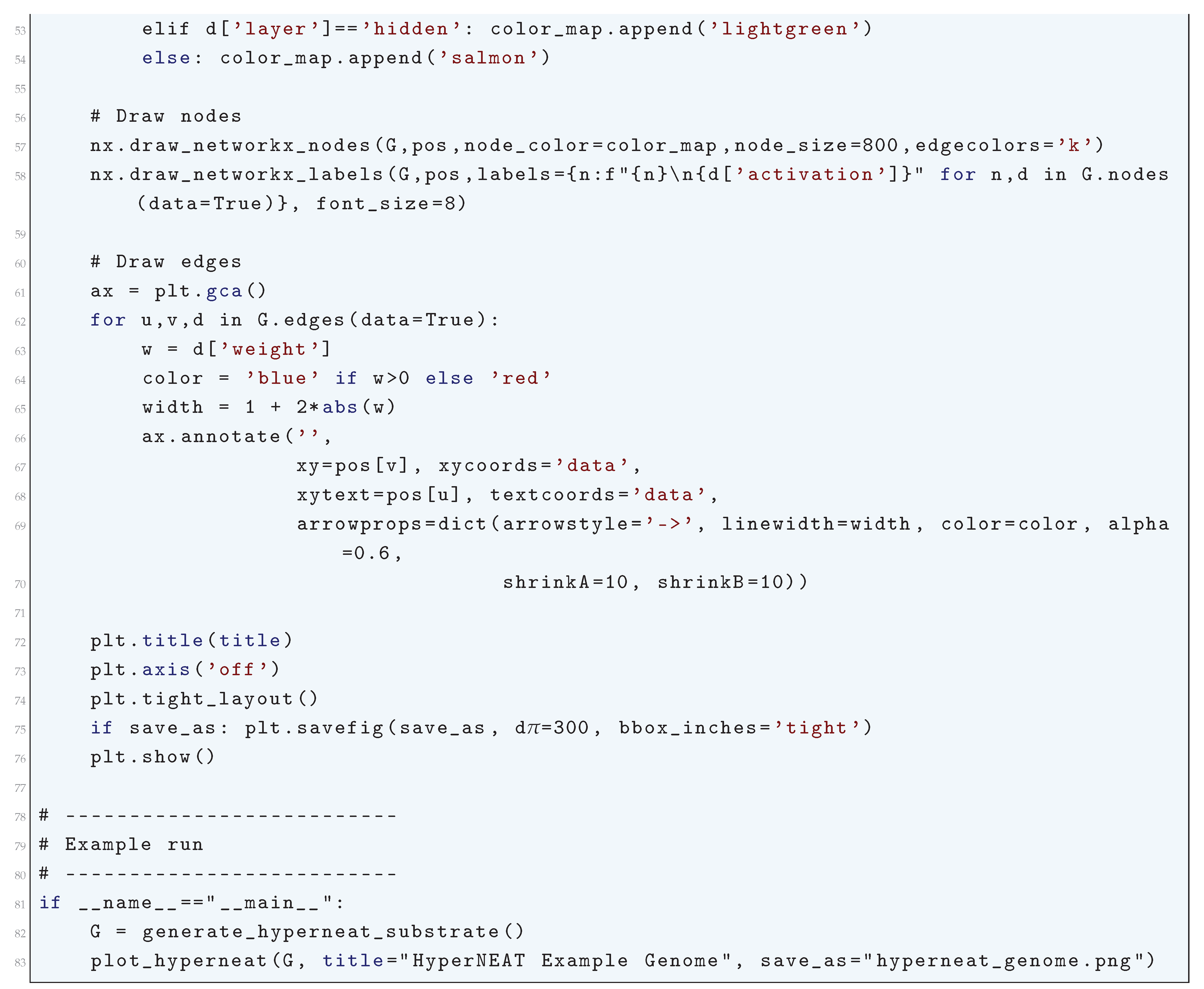

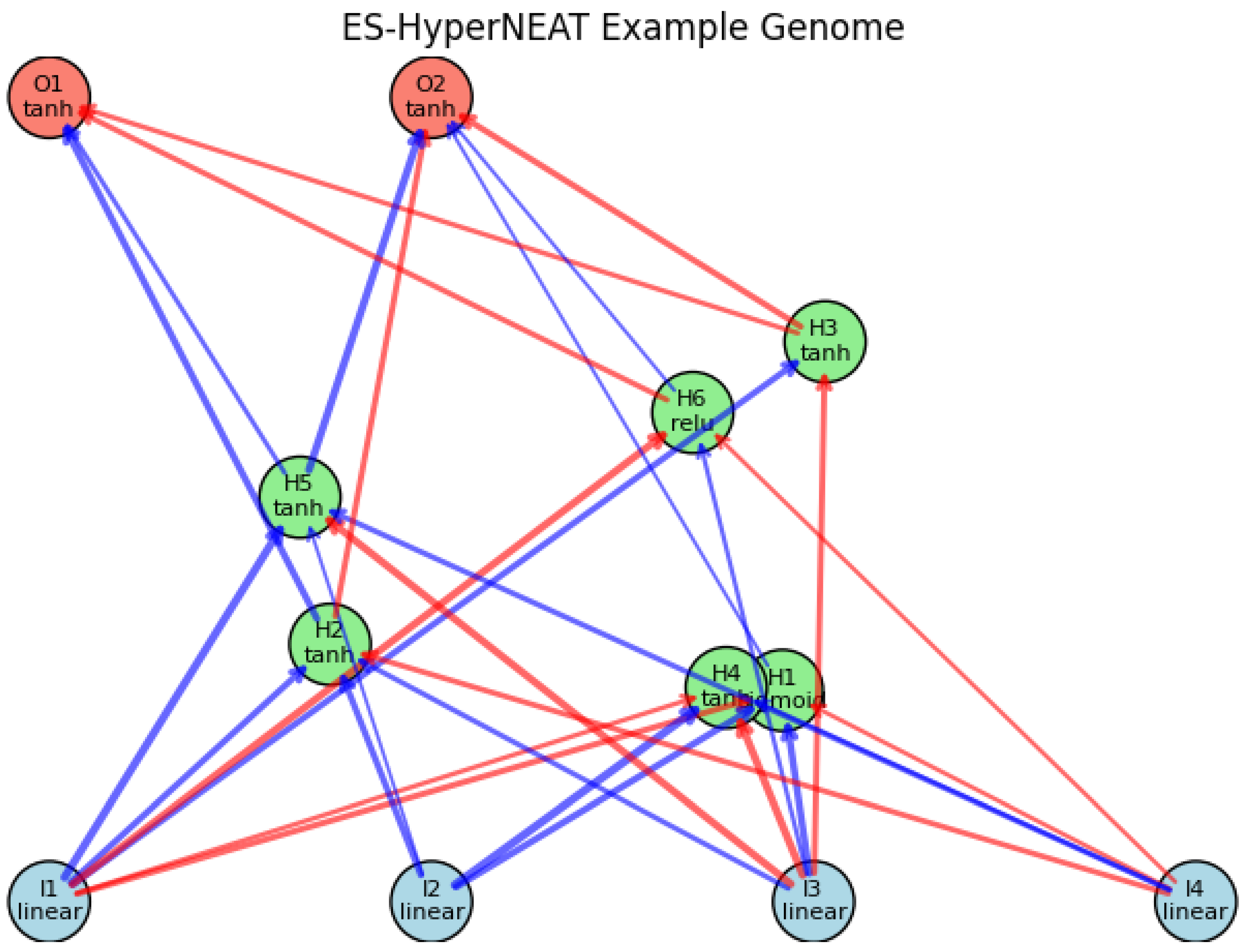

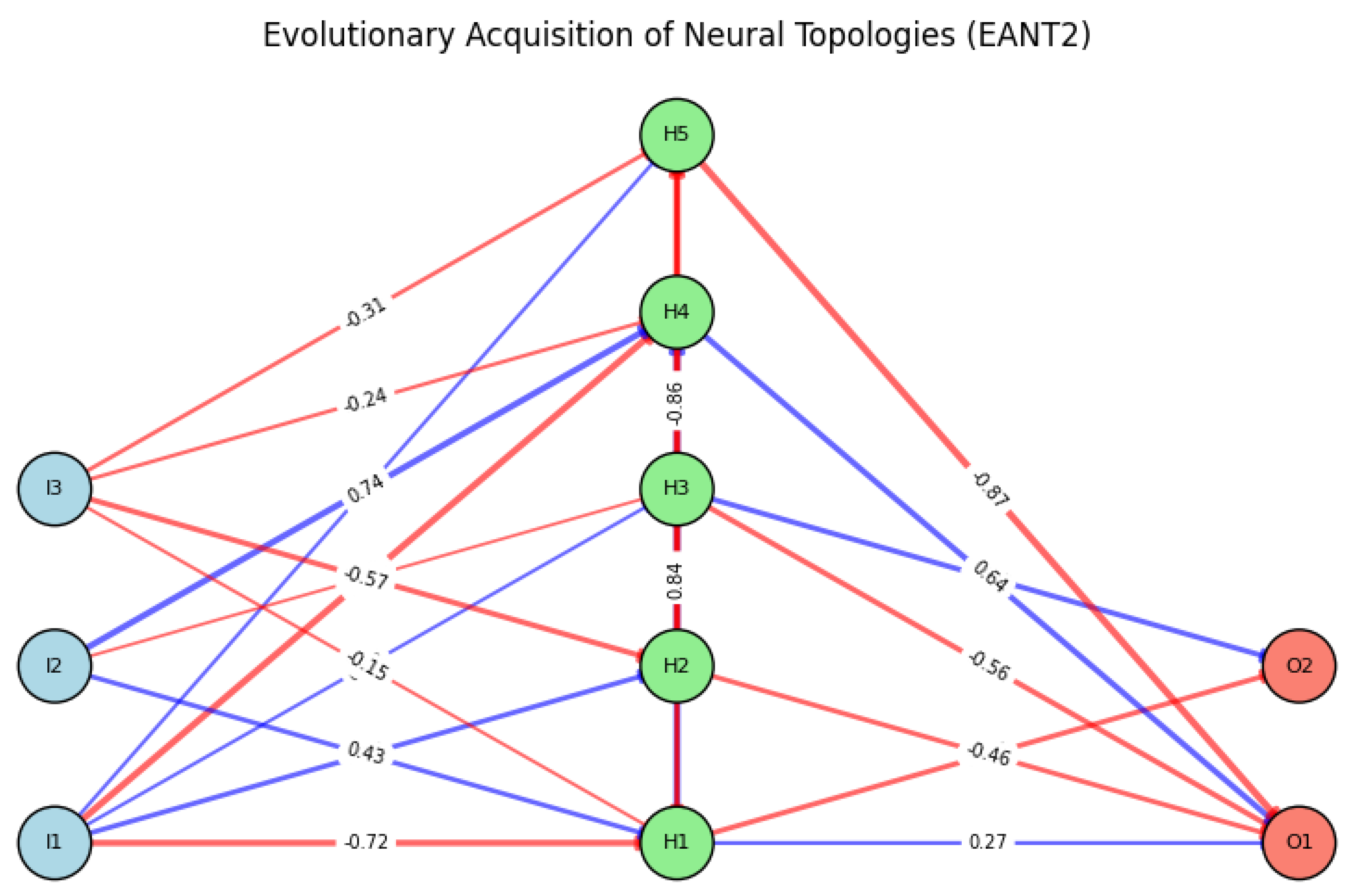

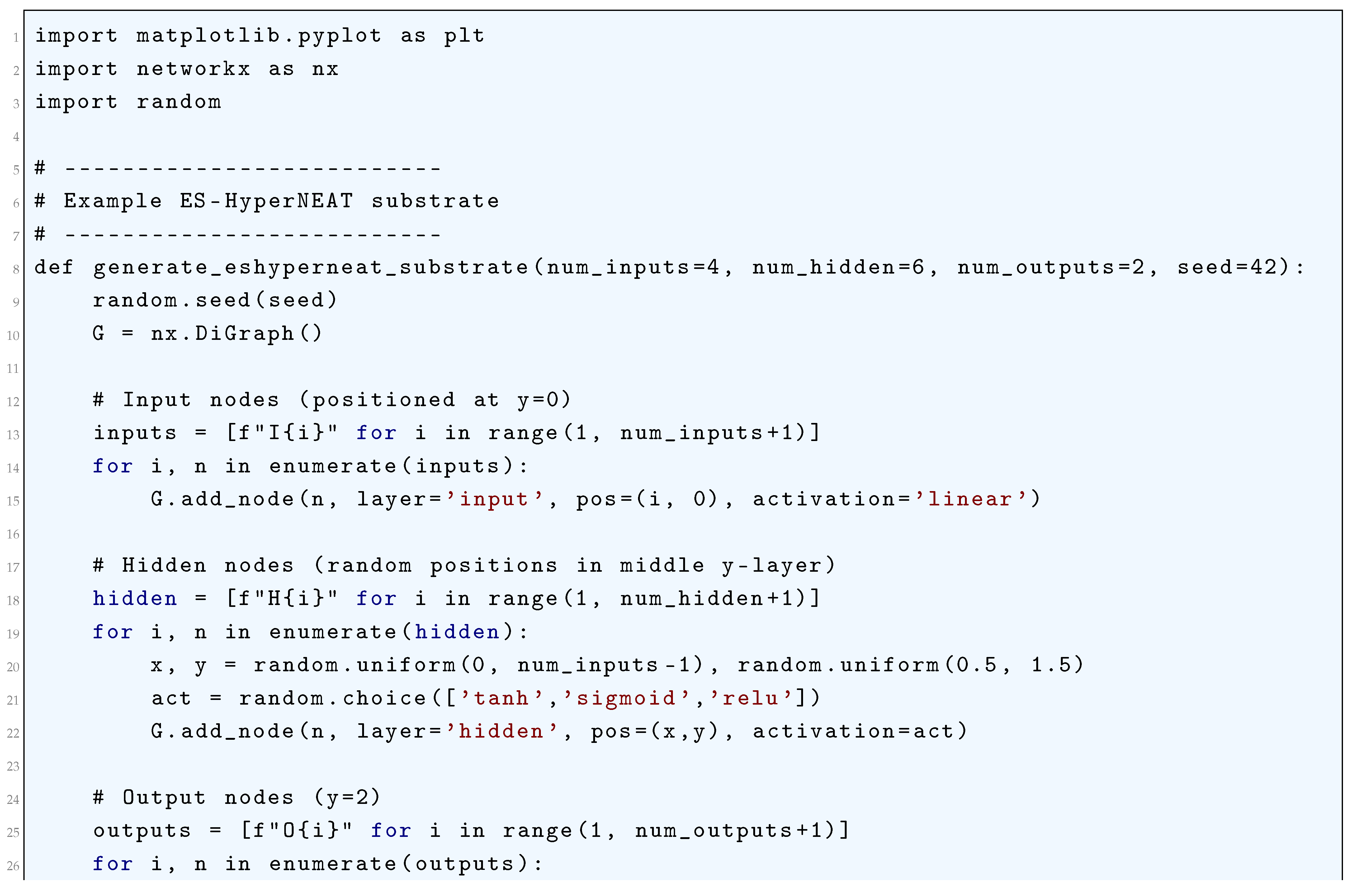

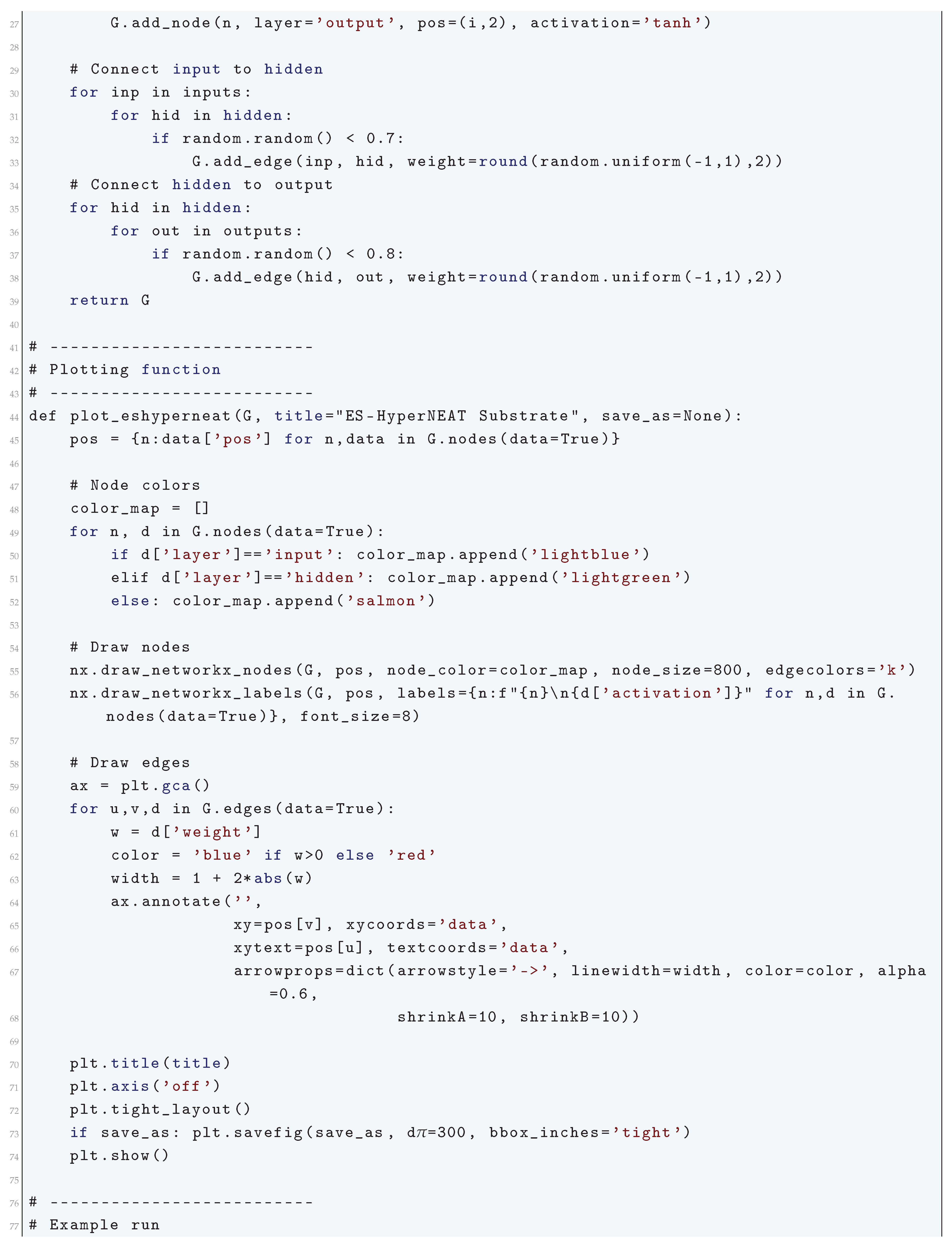

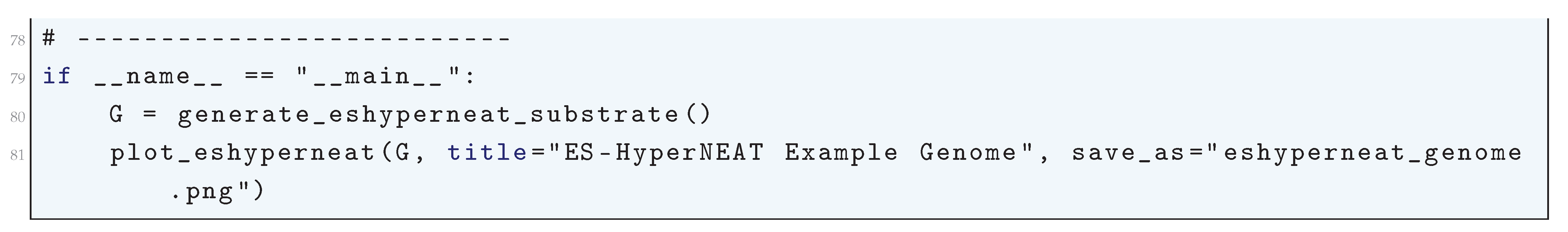

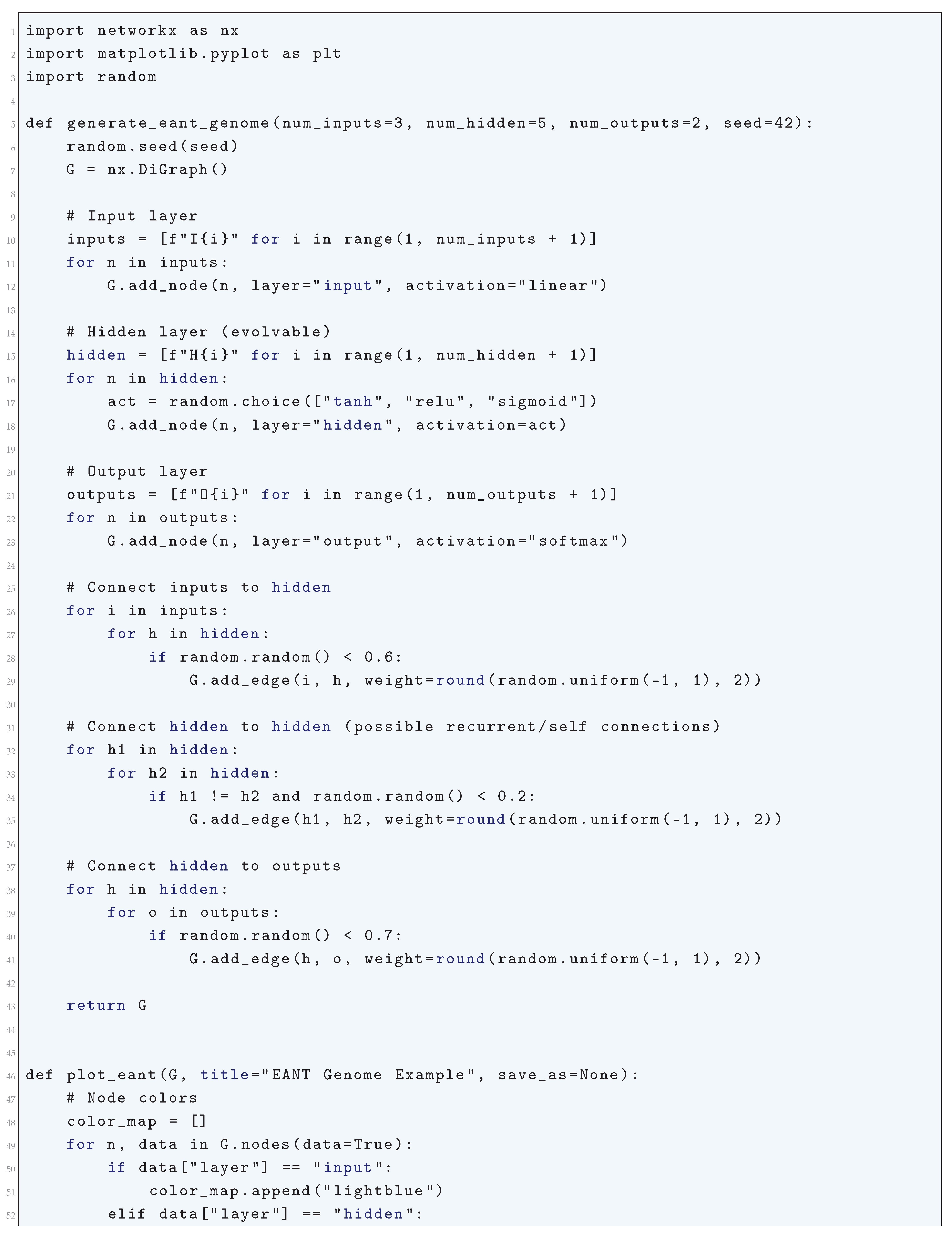

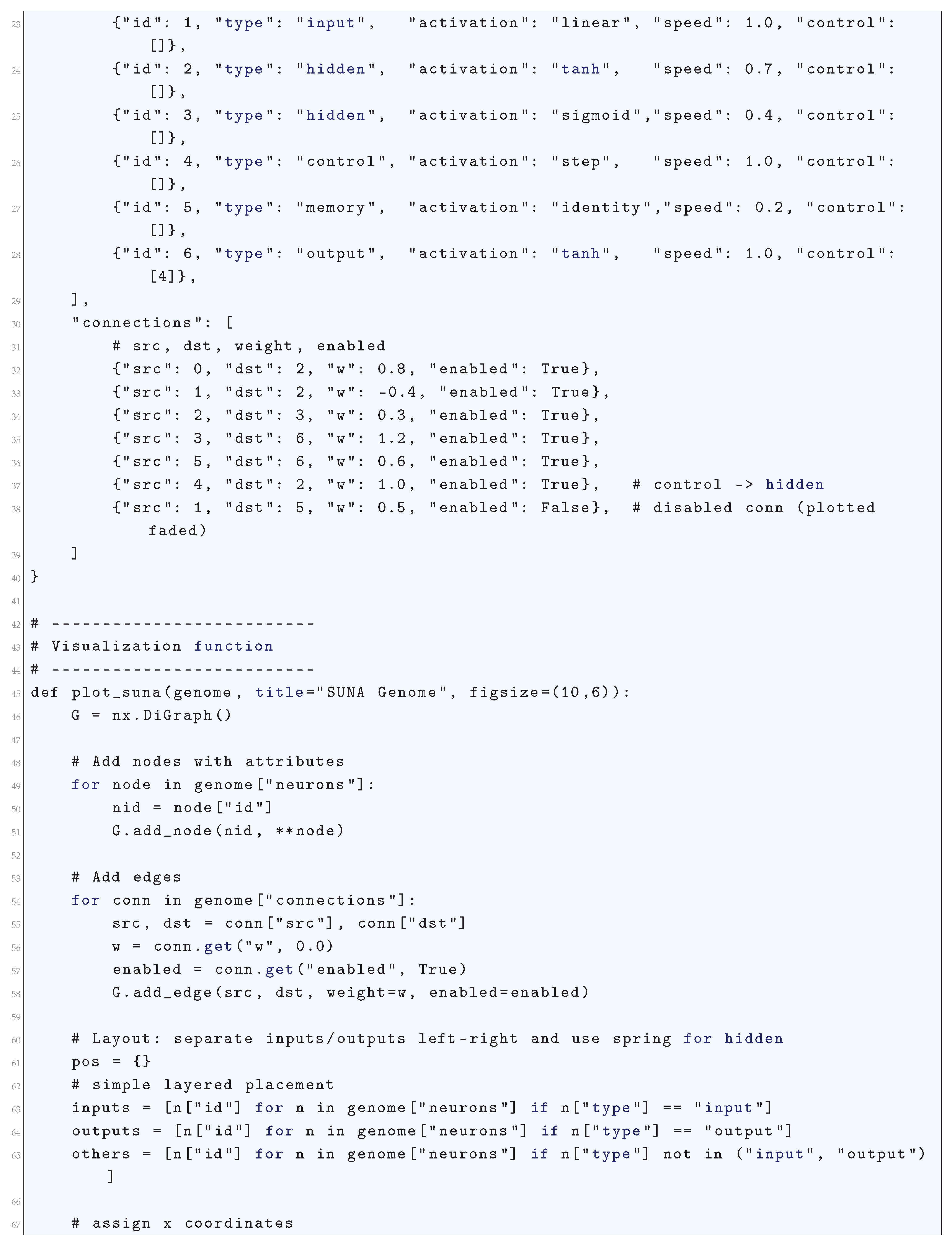

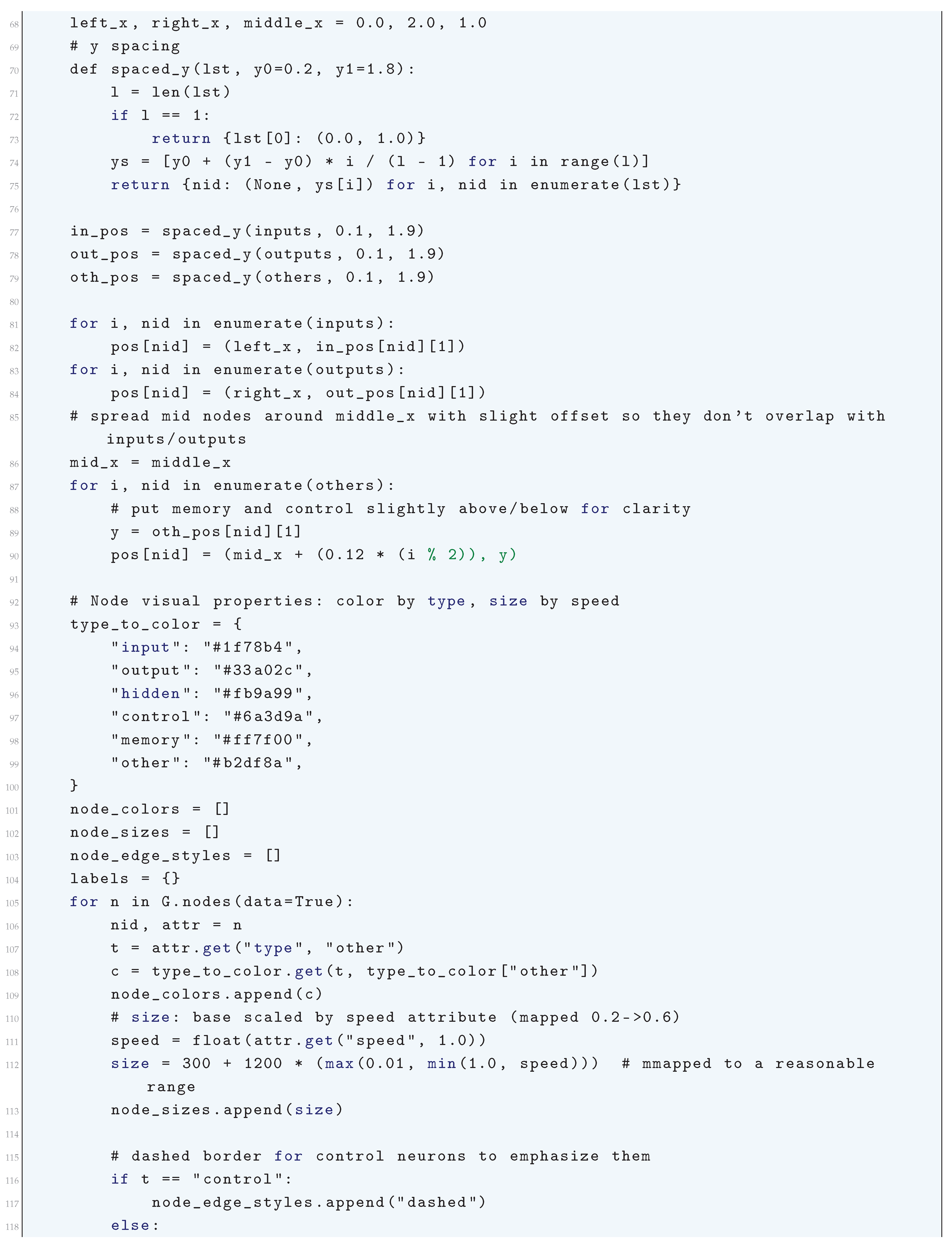

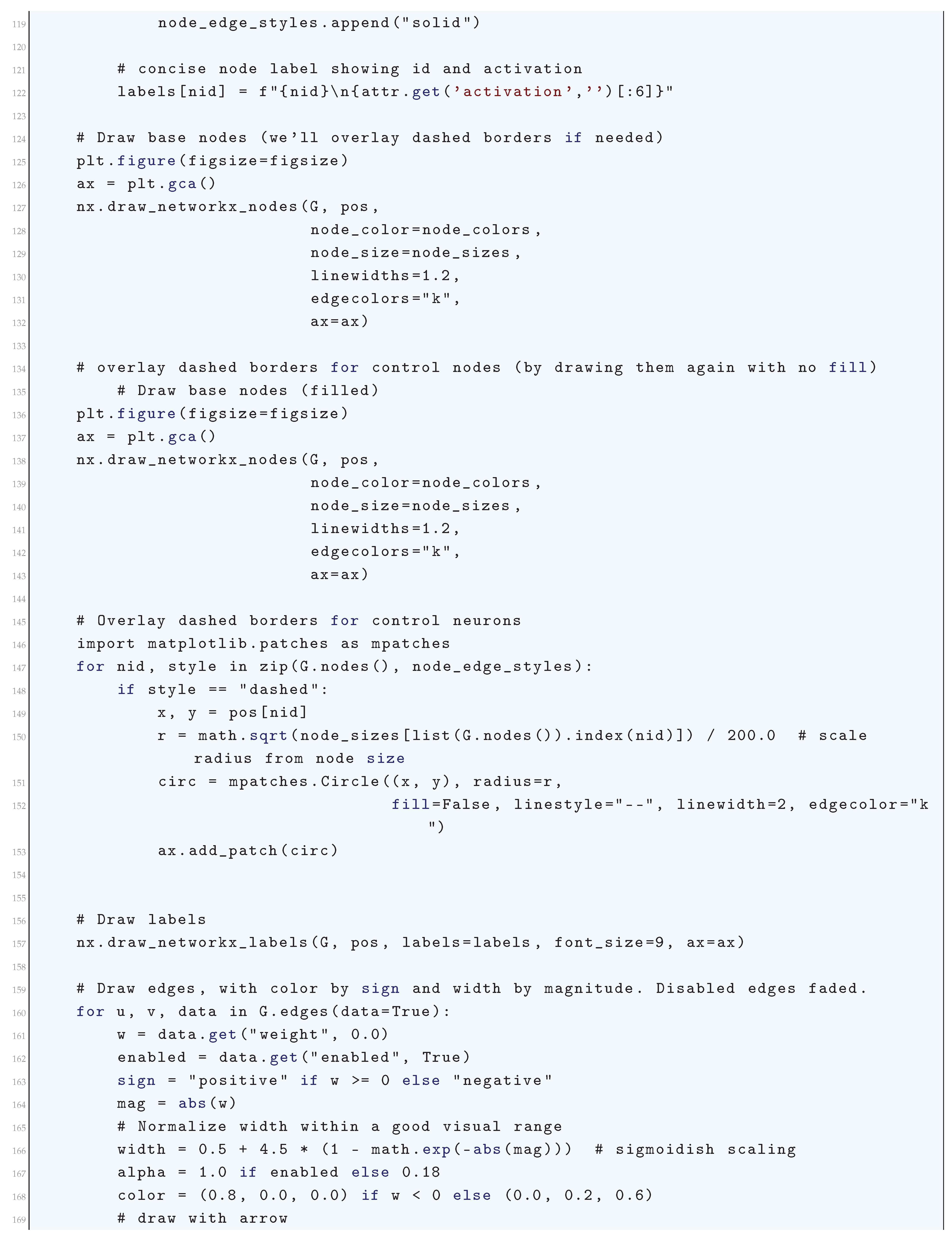

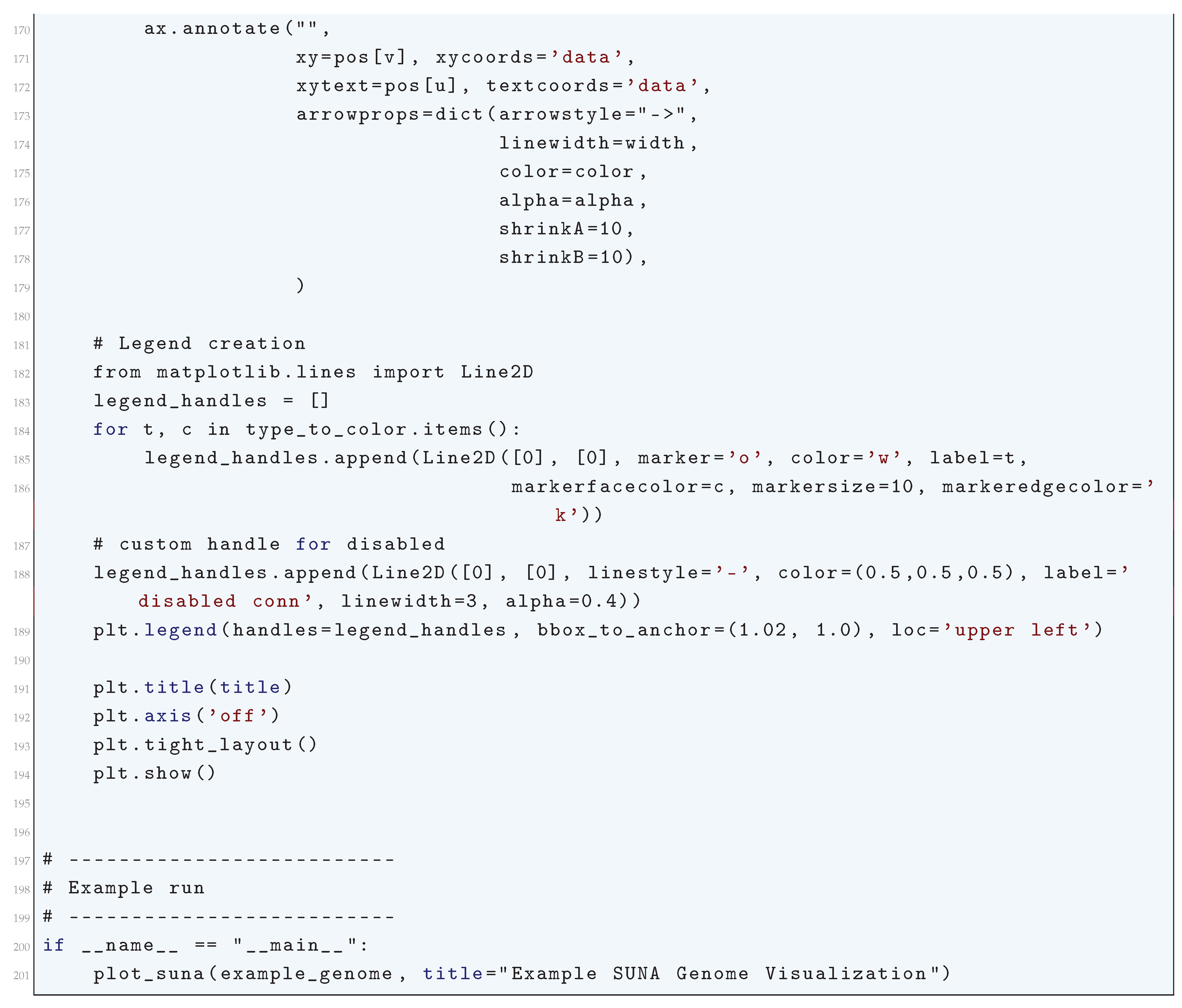

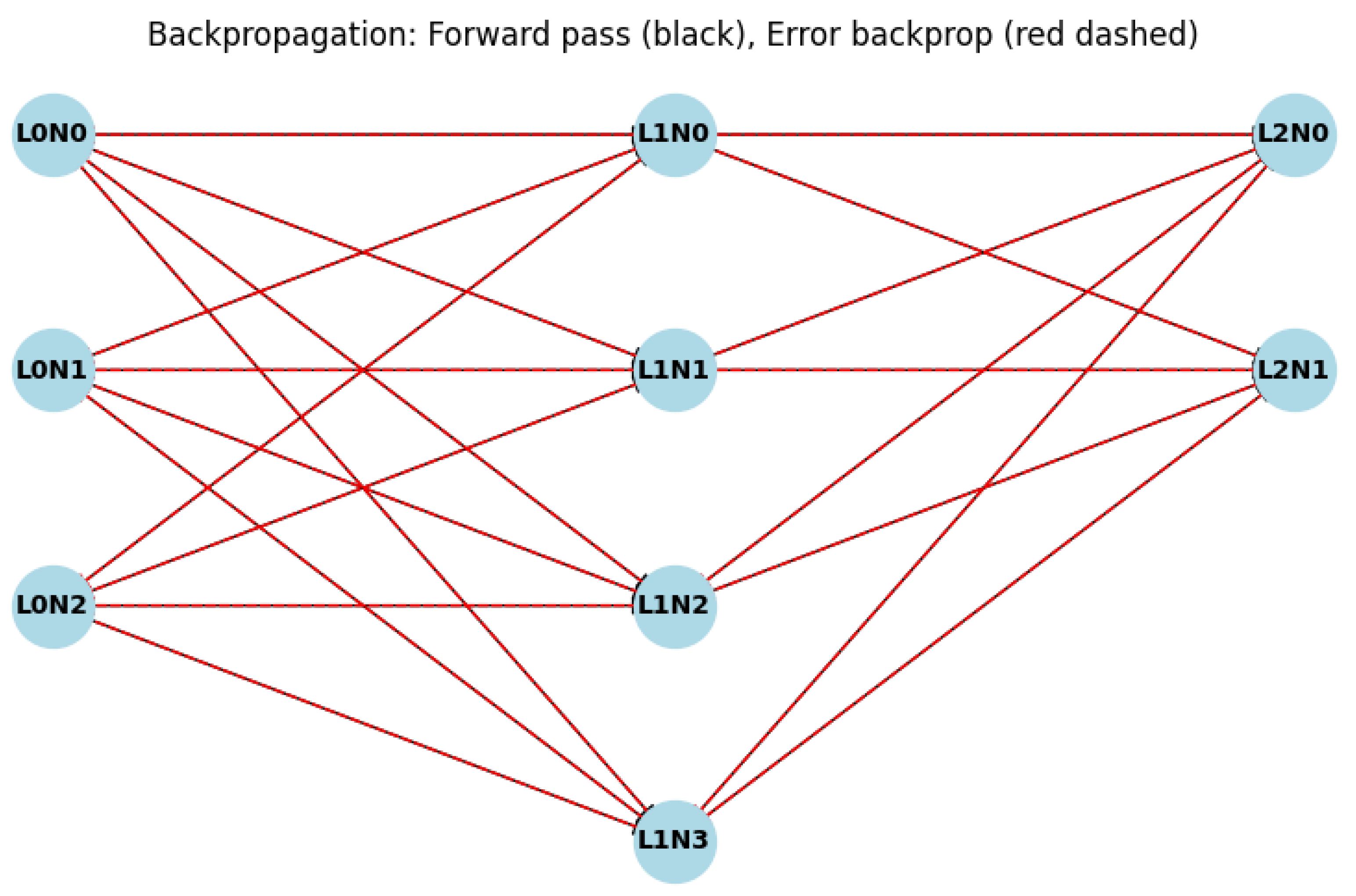

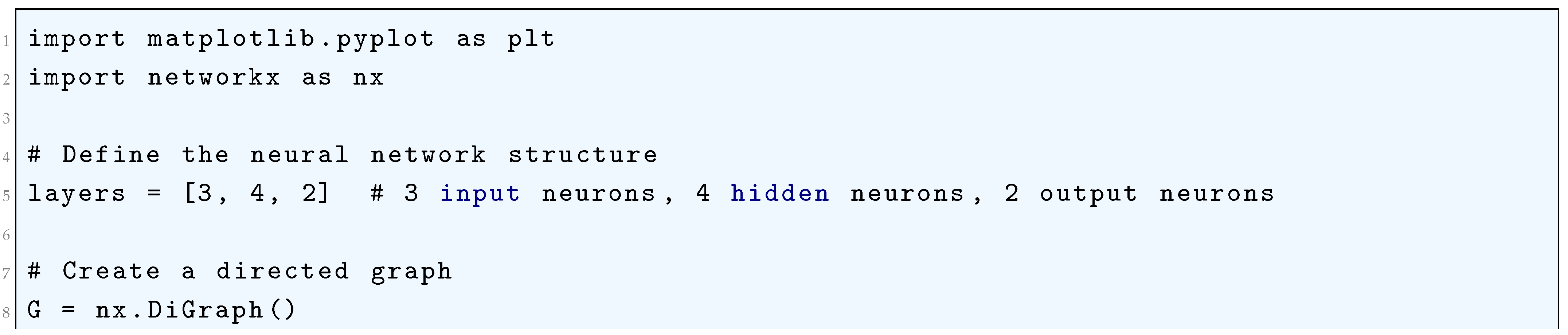

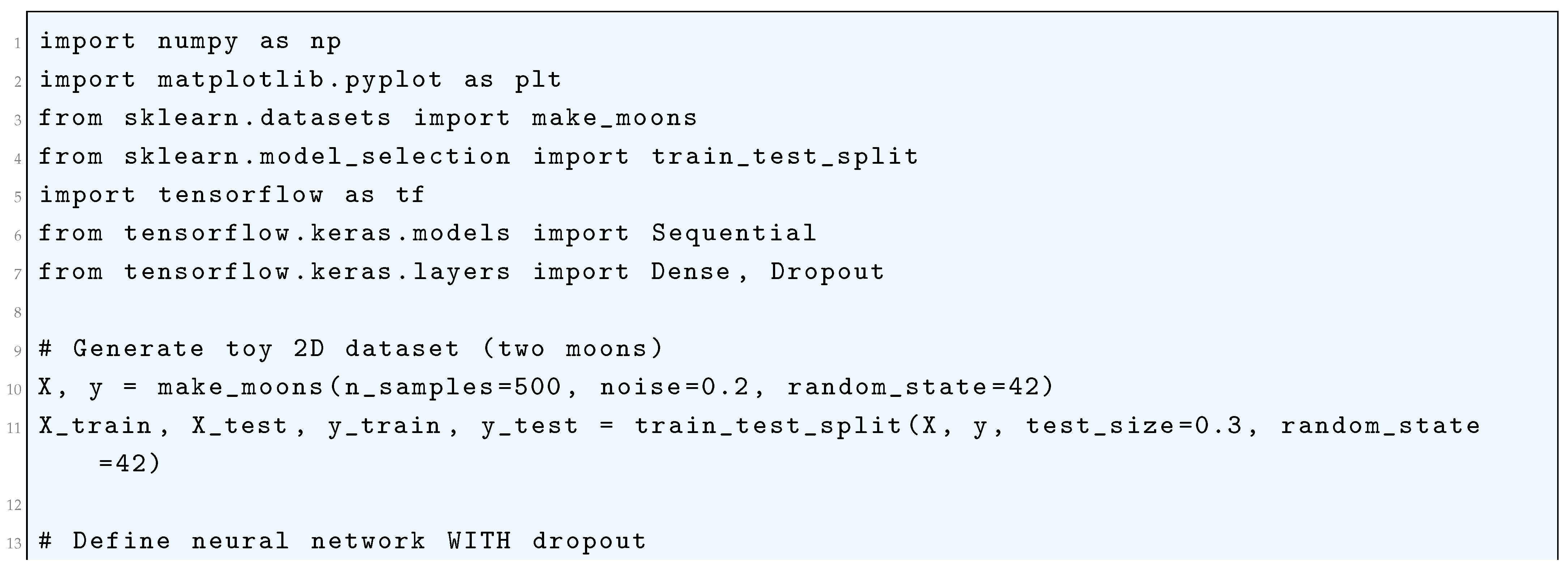

1.2.1.3 Python Code to Generate Figure 2 and Figure 3 Illustrating VC-Dimension Theory for Discrete Hypotheses

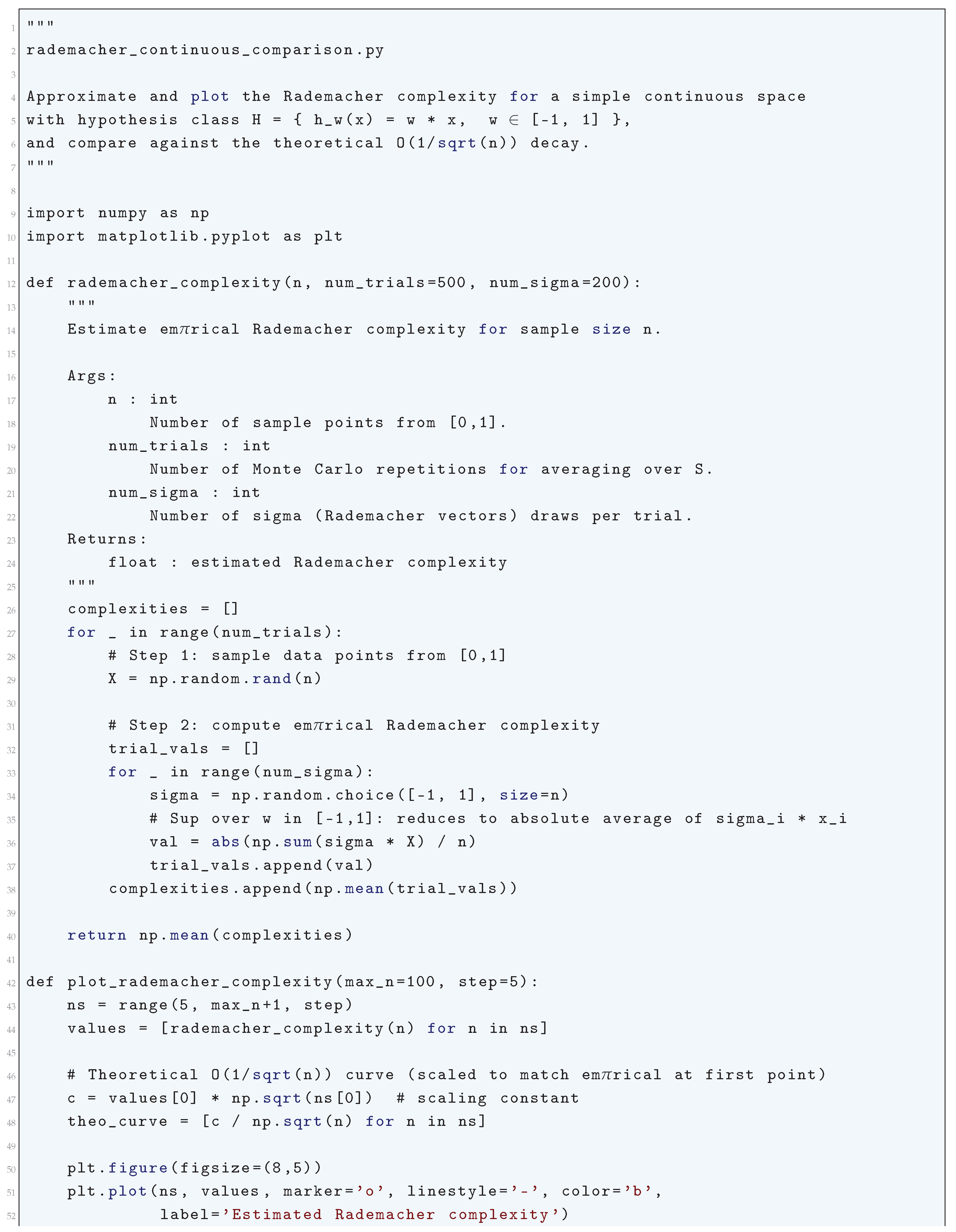

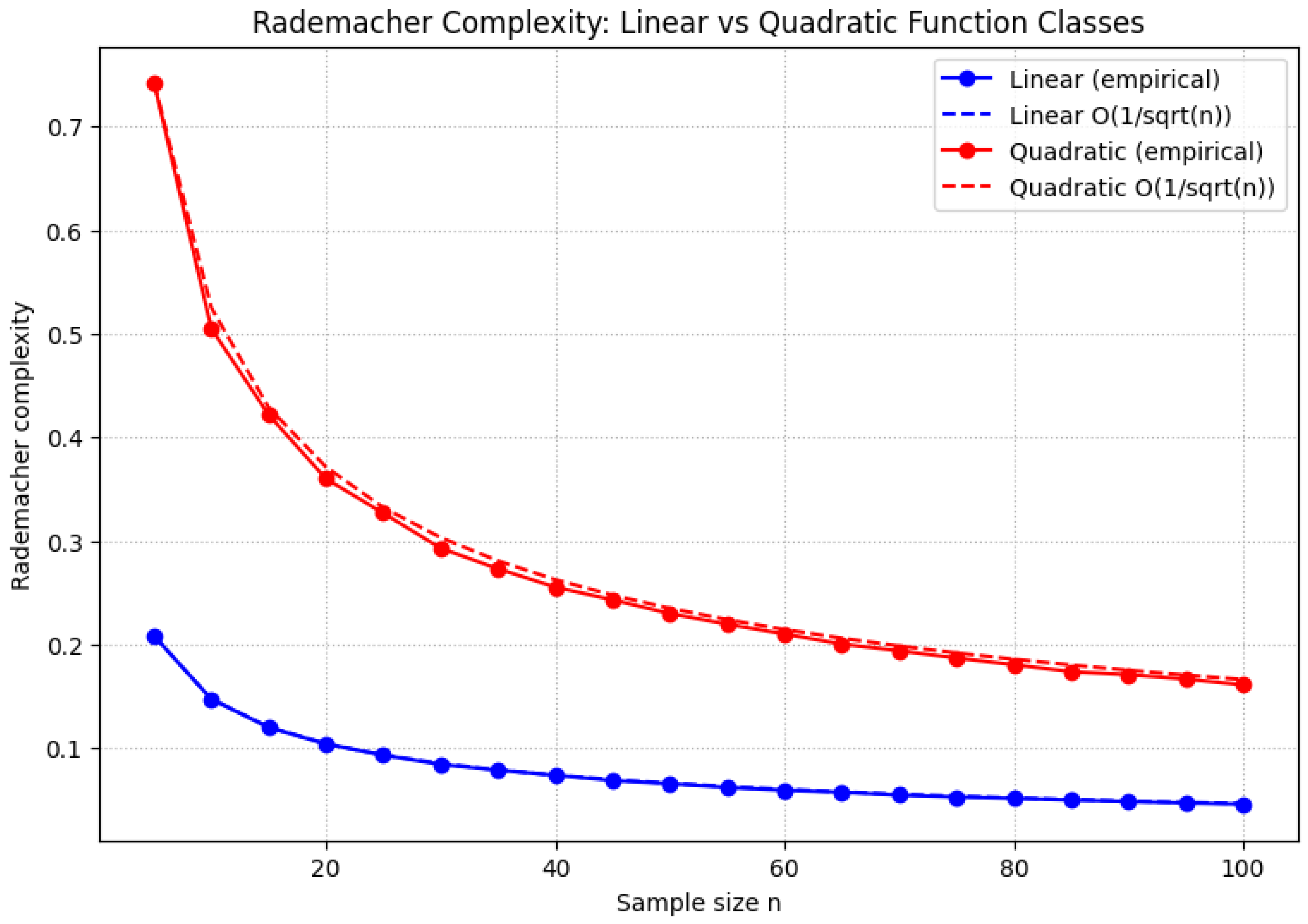

1.2.2. Rademacher Complexity for Continuous Spaces

1.2.2.1 Literature Review of Rademacher Complexity for Continuous Spaces

1.2.2.2 Analysis of Rademacher Complexity for Continuous Spaces

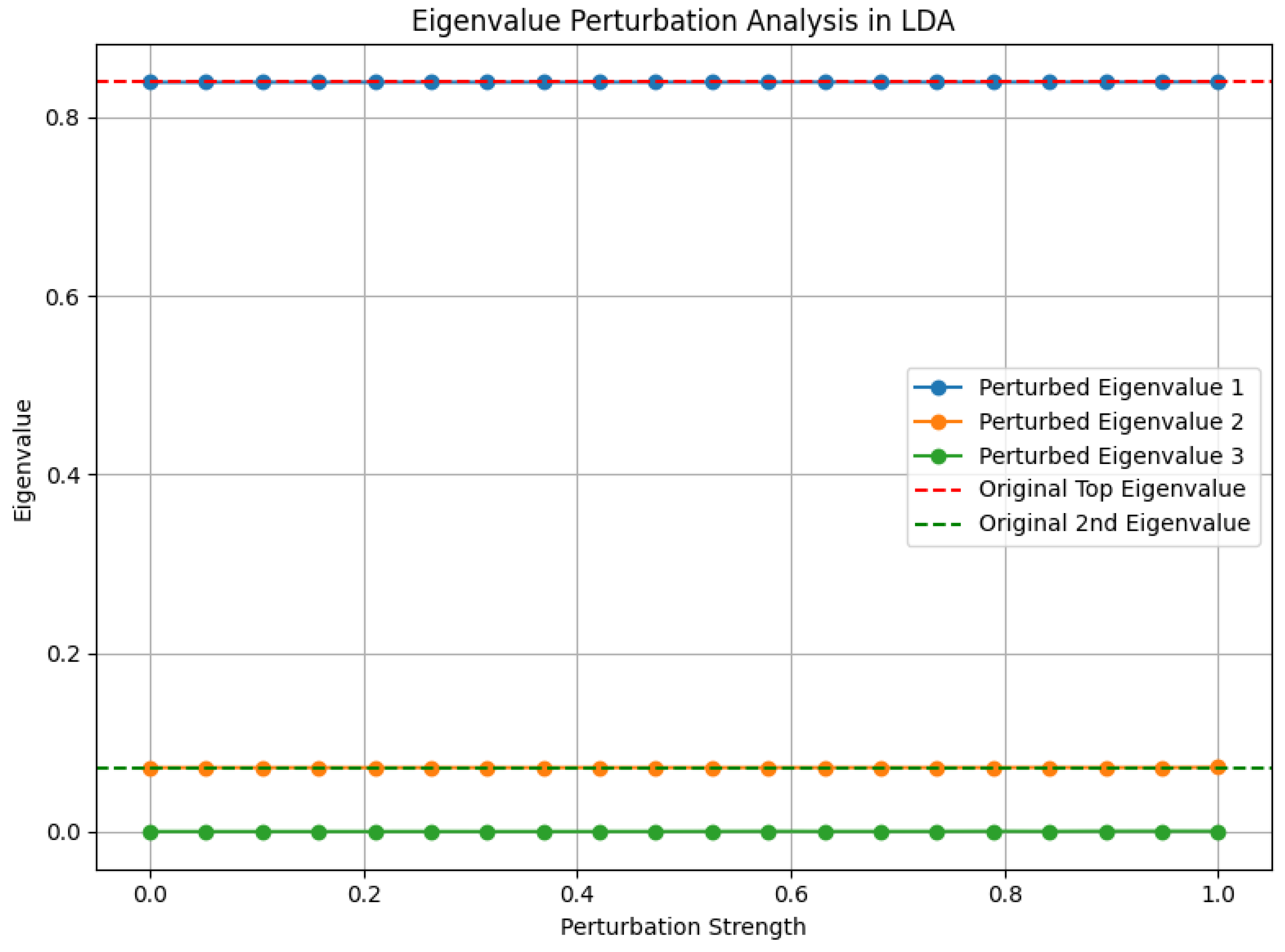

1.2.2.3 Python Code to Generate Figure 4 Illustrating Rademacher Complexity vs. Bound

1.2.2.4 Python Code to Generate Figure 5 Illustrating Rademacher Complexity: Linear vs Quadratic Function Classes

1.2.3. Sobolev Embeddings

1.2.3.1 Literature Review of Sobolev Embeddings

1.2.3.2 Analysis of Sobolev Embeddings

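- Semi-norm Dominance: The $W^{k,p}$-norm is controlled by the seminorm $|u|_{W^{k,p}(\Omega)}$, ensuring sensitivity to high-order derivatives.

- Poincaré Inequality: For Ω bounded, $u - u_\Omega$ (with $u_\Omega$ the mean of $u$ over Ω) satisfies: $\|u - u_\Omega\|_{L^p(\Omega)} \le C \|\nabla u\|_{L^p(\Omega)}$.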

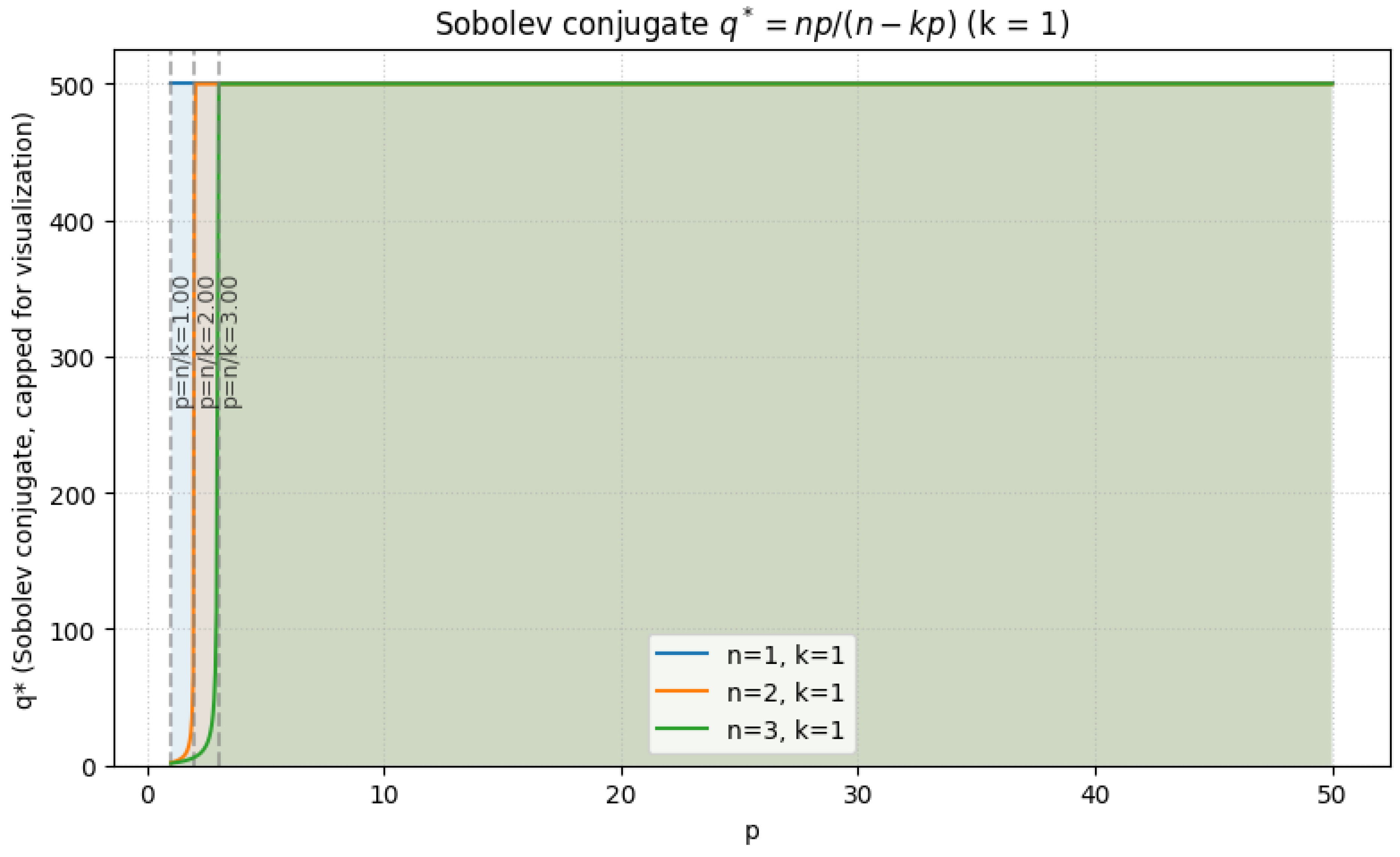

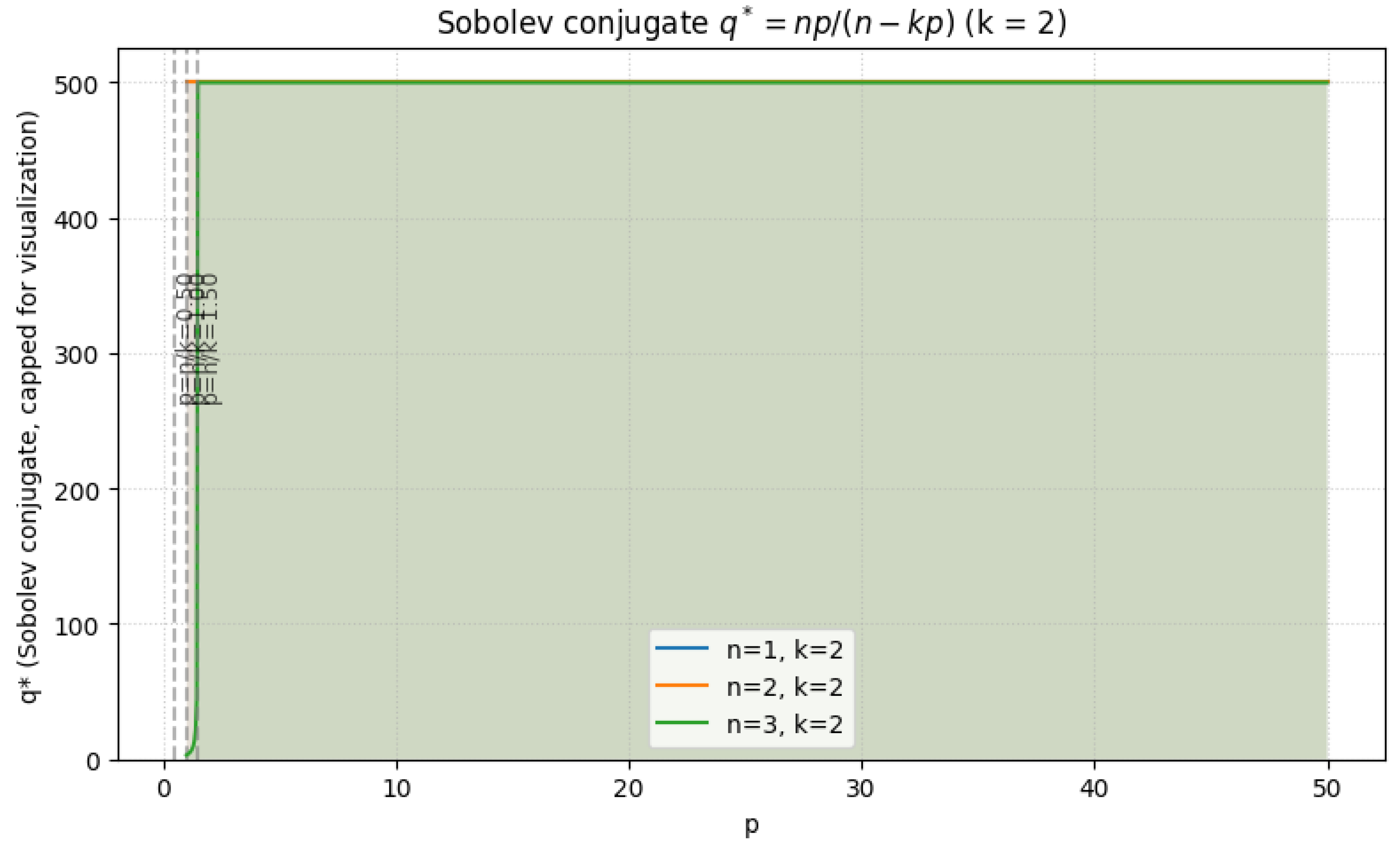

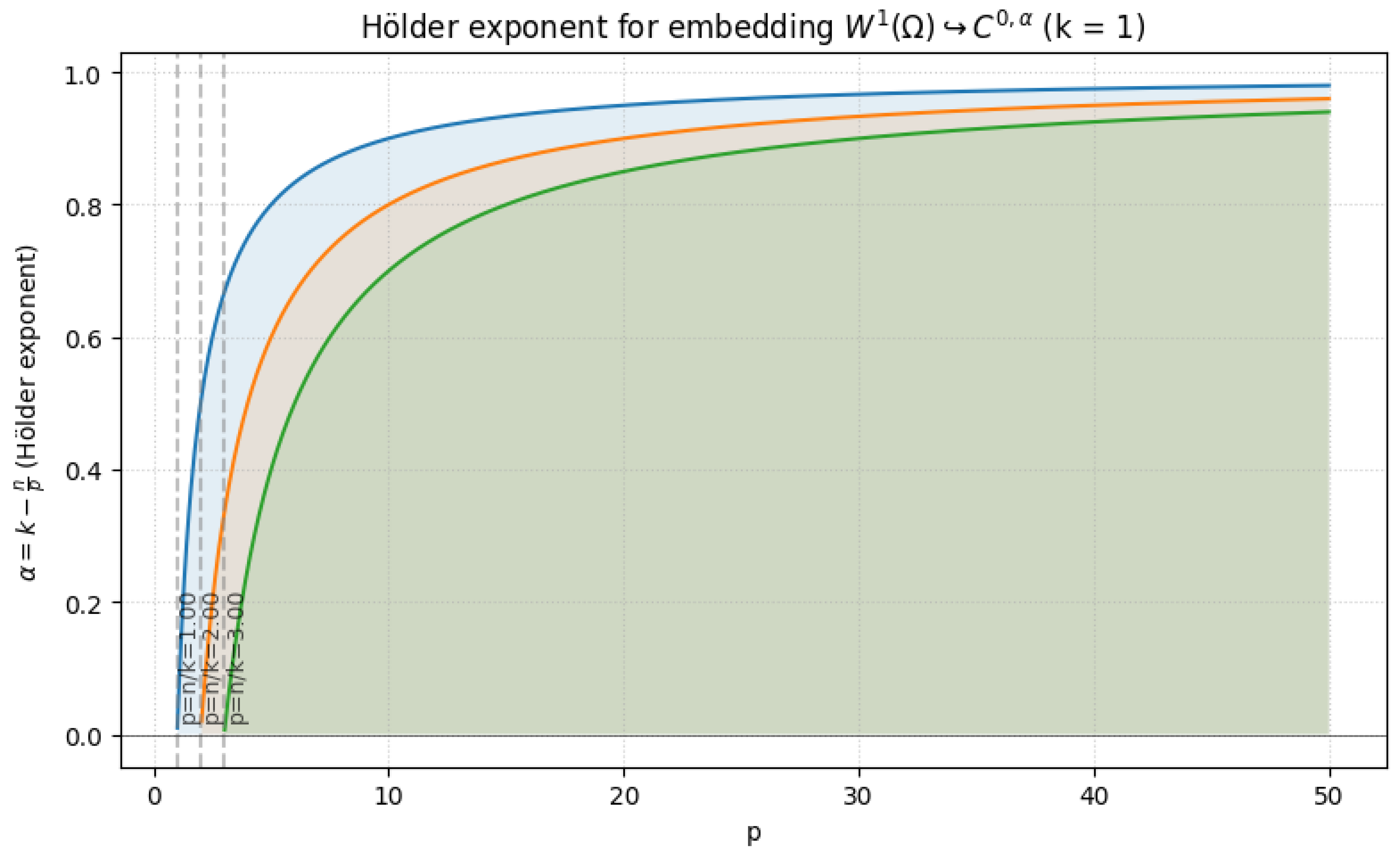

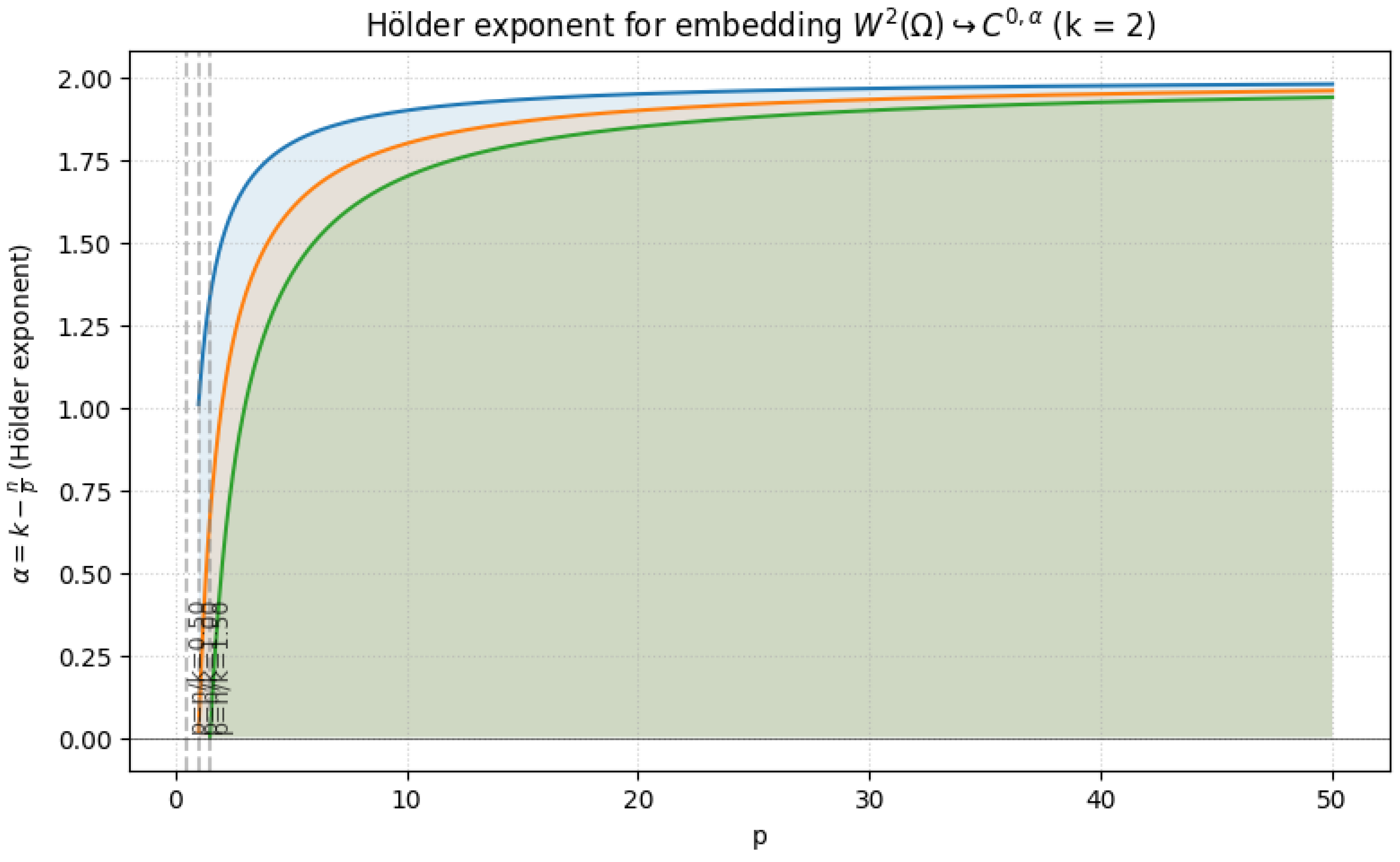

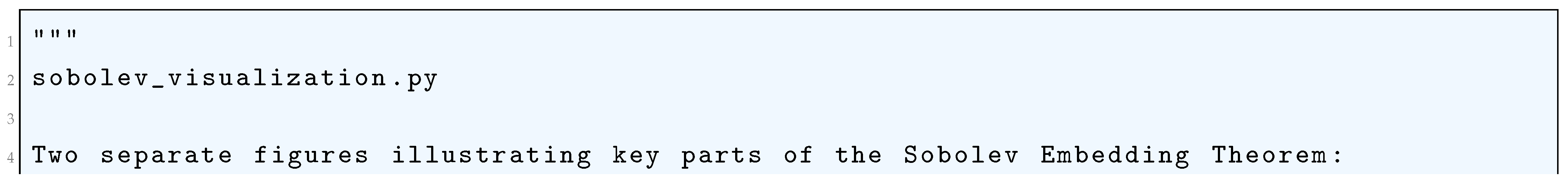

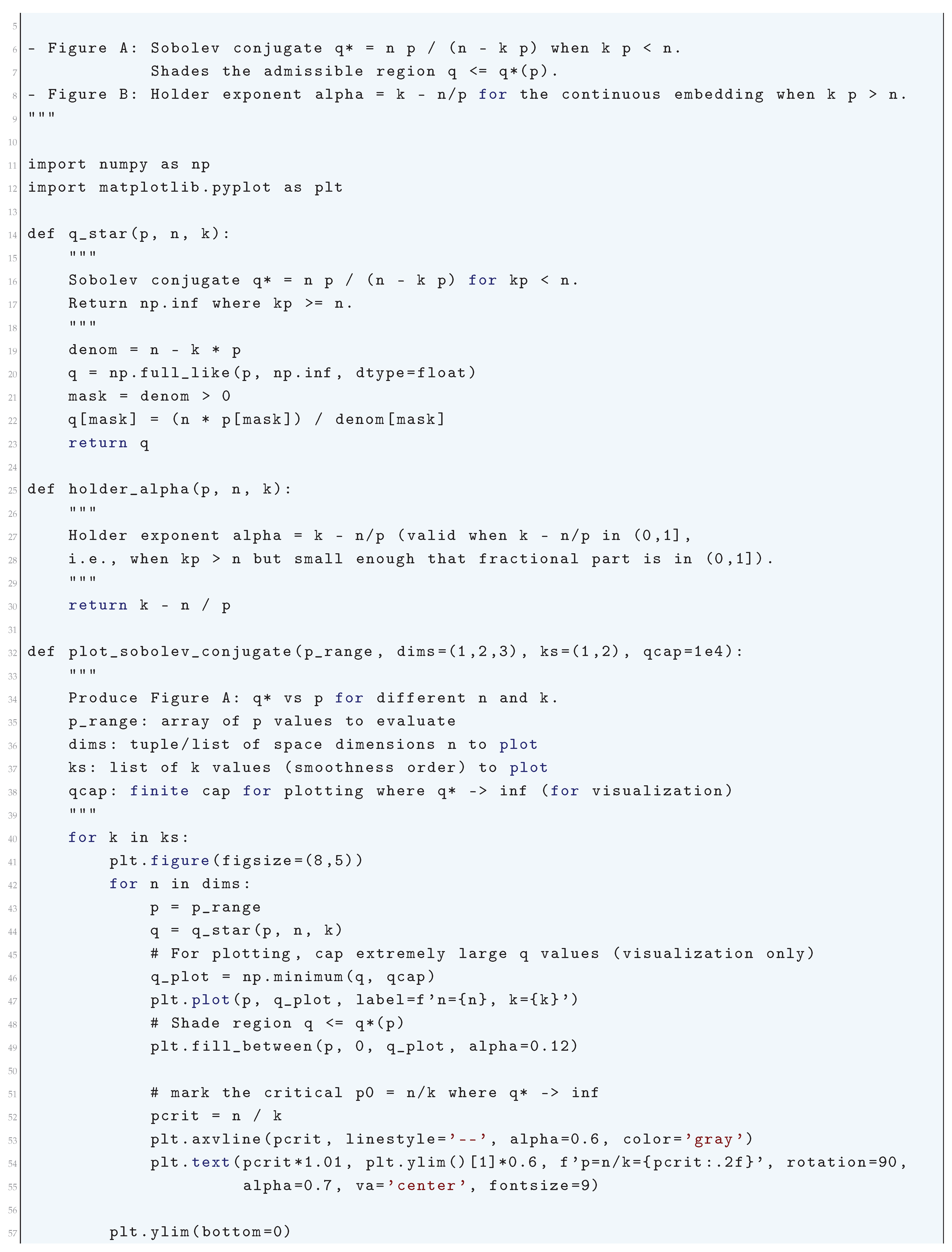

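Sobolev Embedding Theorem: Let $\Omega \subset \mathbb{R}^n$ be a bounded domain with Lipschitz boundary. Then:

- If $k > n/p$, $W^{k,p}(\Omega) \hookrightarrow C^{m,\alpha}(\overline{\Omega})$ with $m = \lfloor k - n/p \rfloor$ and $\alpha = k - n/p - m$.

- If $k = n/p$, $W^{k,p}(\Omega) \hookrightarrow L^q(\Omega)$ for $q < \infty$.

- If $k < n/p$, $W^{k,p}(\Omega) \hookrightarrow L^q(\Omega)$ where $\frac{1}{q} = \frac{1}{p} - \frac{k}{n}$.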
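The three regimes of the Sobolev embedding theorem can be turned into a small lookup. The sketch below uses exact rational arithmetic and takes the subcritical exponent to be the standard $q = np/(n - kp)$. The function name `sobolev_embedding_target` and the tuple return format are hypothetical choices for this example.

```python
from fractions import Fraction
from math import floor


def sobolev_embedding_target(k, n, p):
    """Target space of W^{k,p}(Omega) on a bounded Lipschitz domain in R^n,
    following the three cases k > n/p, k = n/p, and k < n/p."""
    k, n, p = Fraction(k), Fraction(n), Fraction(p)
    gap = k - n / p  # the sign of k - n/p selects the regime
    if gap > 0:
        m = floor(gap)
        alpha = gap - m
        # When gap is an integer this gives alpha = 0, a borderline case
        # that needs separate care and is not resolved by this formula.
        return ("Holder", m, alpha)
    if gap == 0:
        return ("Lq for every finite q", None, None)
    # Subcritical case: 1/q = 1/p - k/n, i.e. q = n*p / (n - k*p).
    q = n * p / (n - k * p)
    return ("Lq", q, None)
```

For example, `sobolev_embedding_target(1, 3, 2)` gives the exponent $q = 6$ for $W^{1,2}$ in three dimensions, and `sobolev_embedding_target(2, 1, 2)` gives $C^{1,1/2}$.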

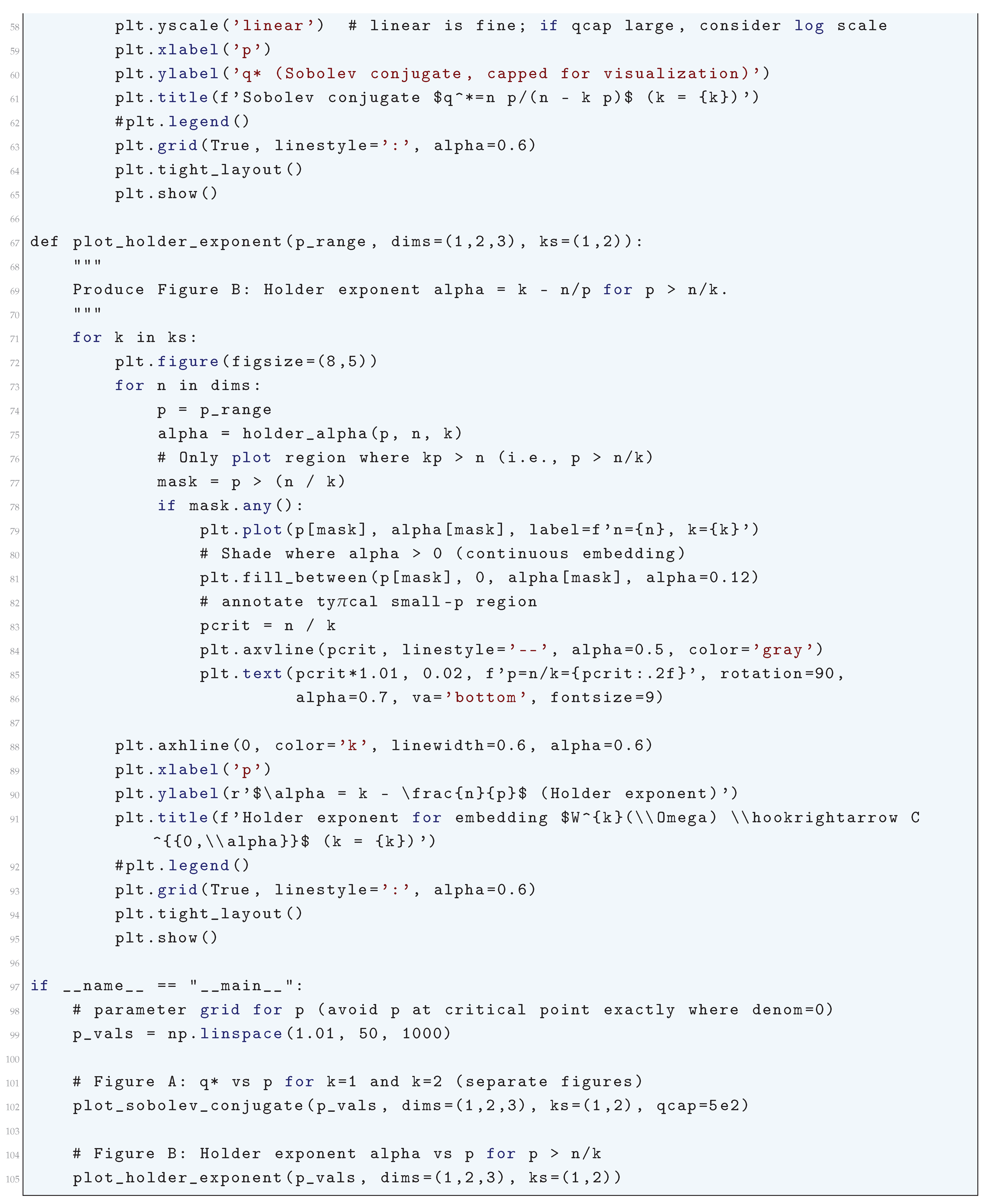

Rellich-Kondrachov Compactness Theorem: The embedding $W^{k,p}(\Omega) \hookrightarrow L^q(\Omega)$ is compact for $1 \le q < \frac{np}{n - kp}$ (with $k < n/p$). Compactness follows from:

- (a) Equicontinuity: $W^{k,p}$-boundedness ensures uniform control over oscillations.

- (b) Rellich’s Selection Principle: Strong convergence follows from uniform estimates and tightness.

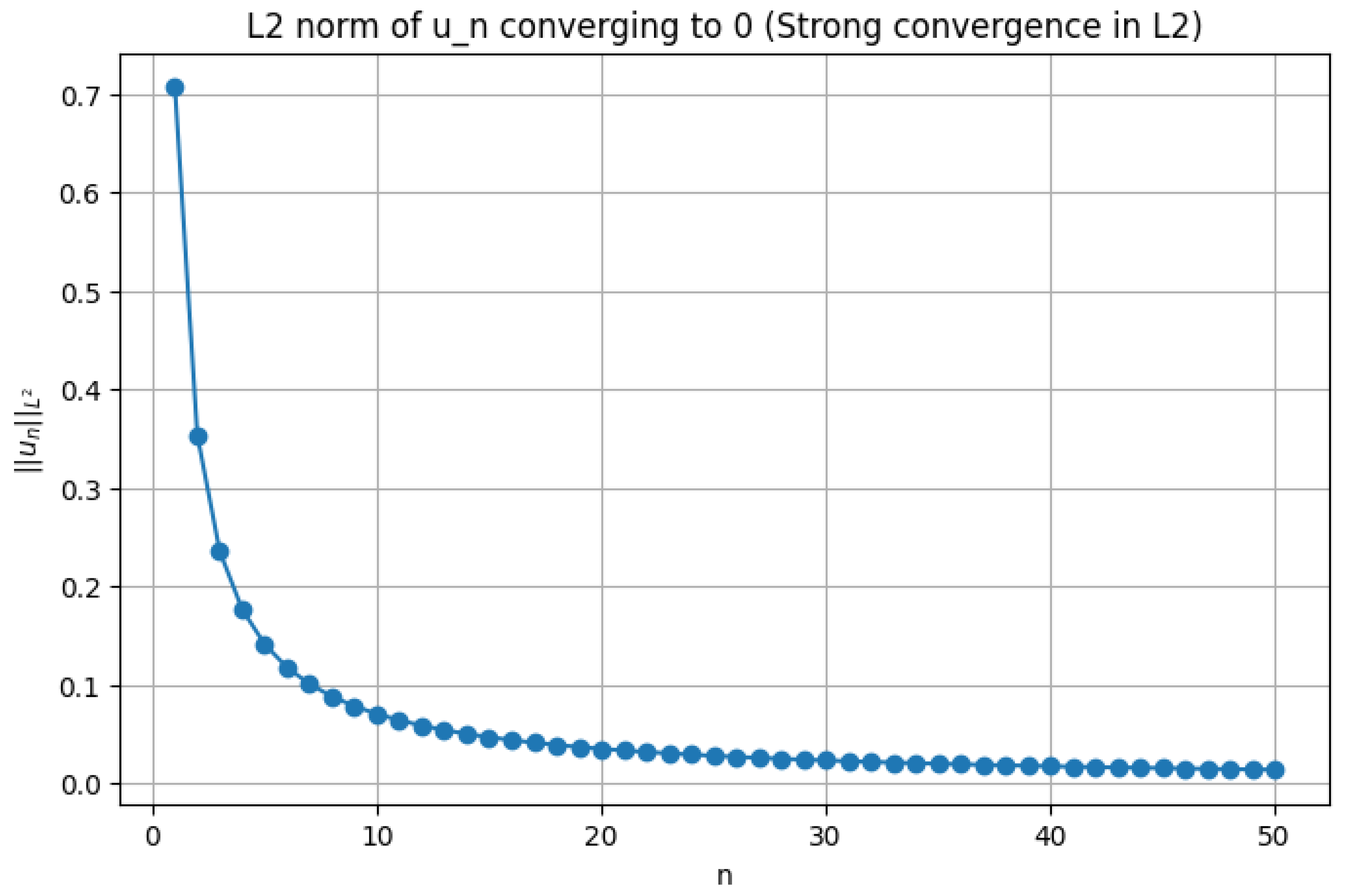

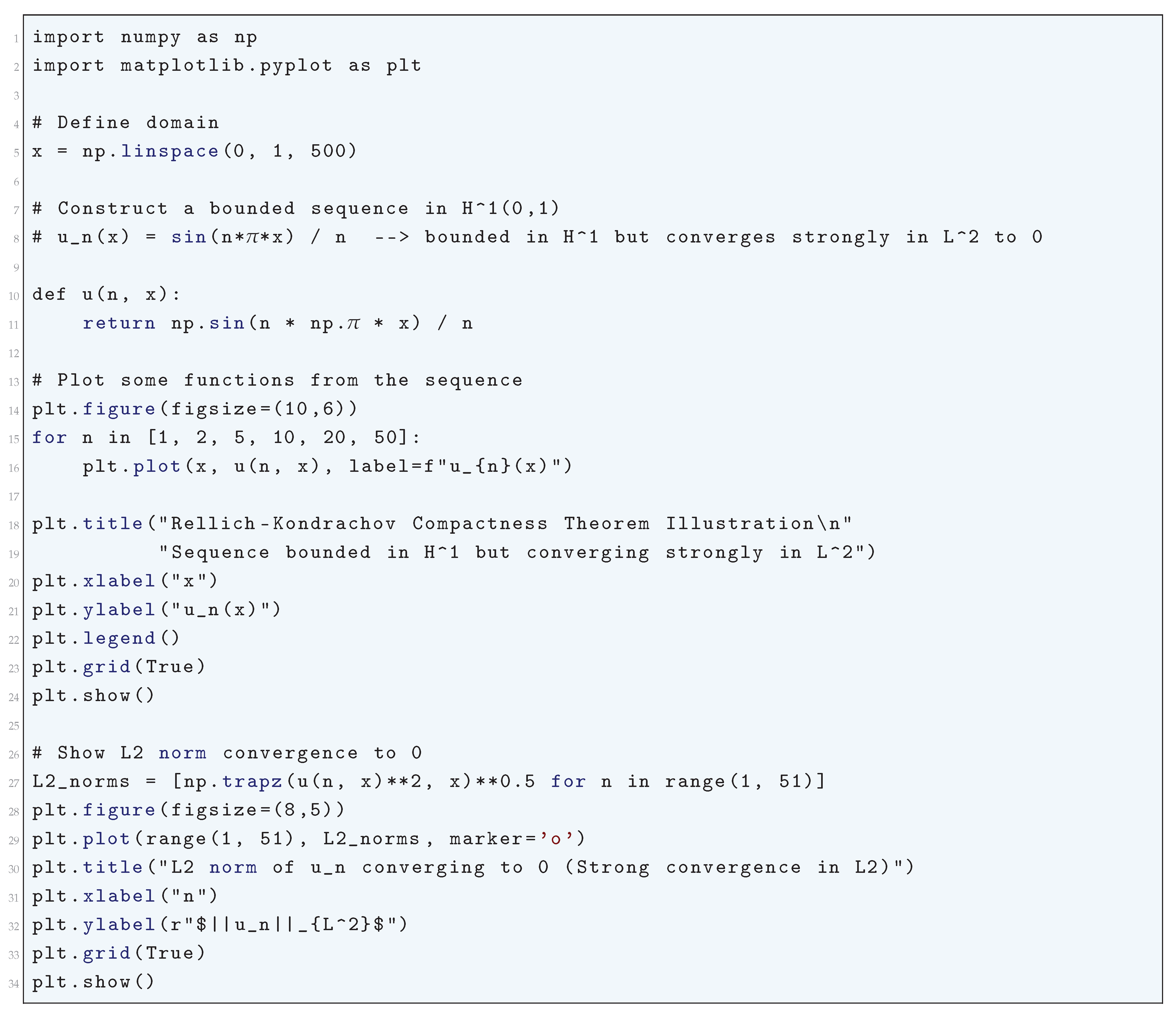

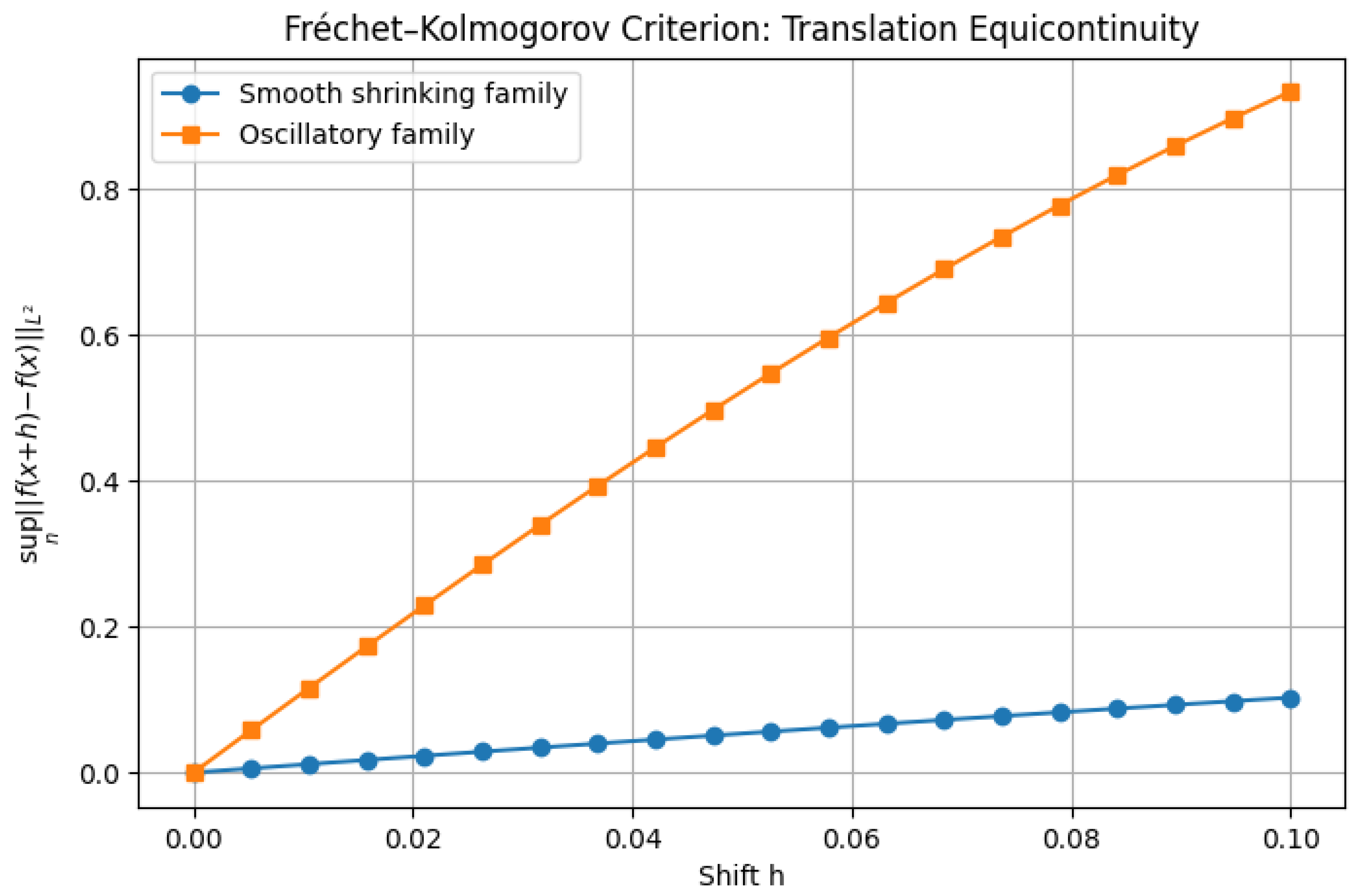

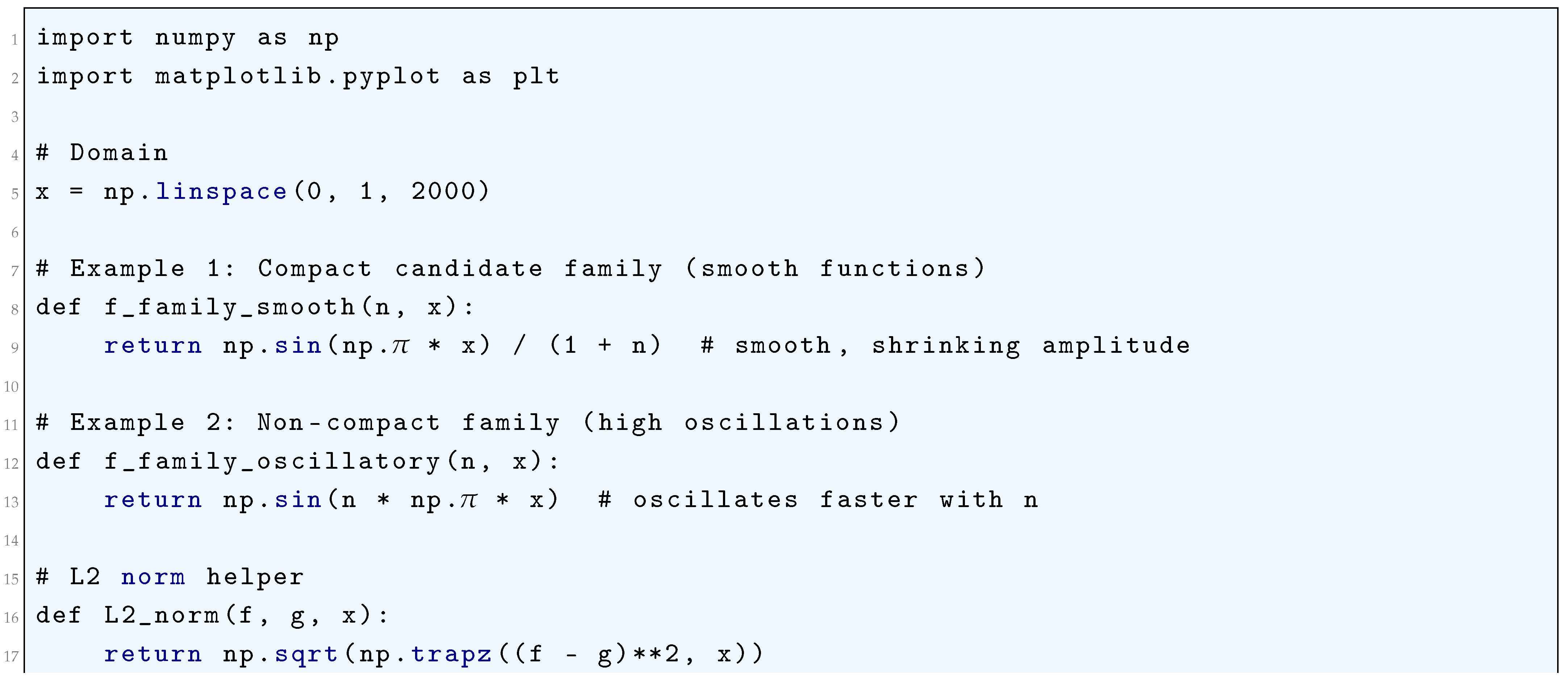

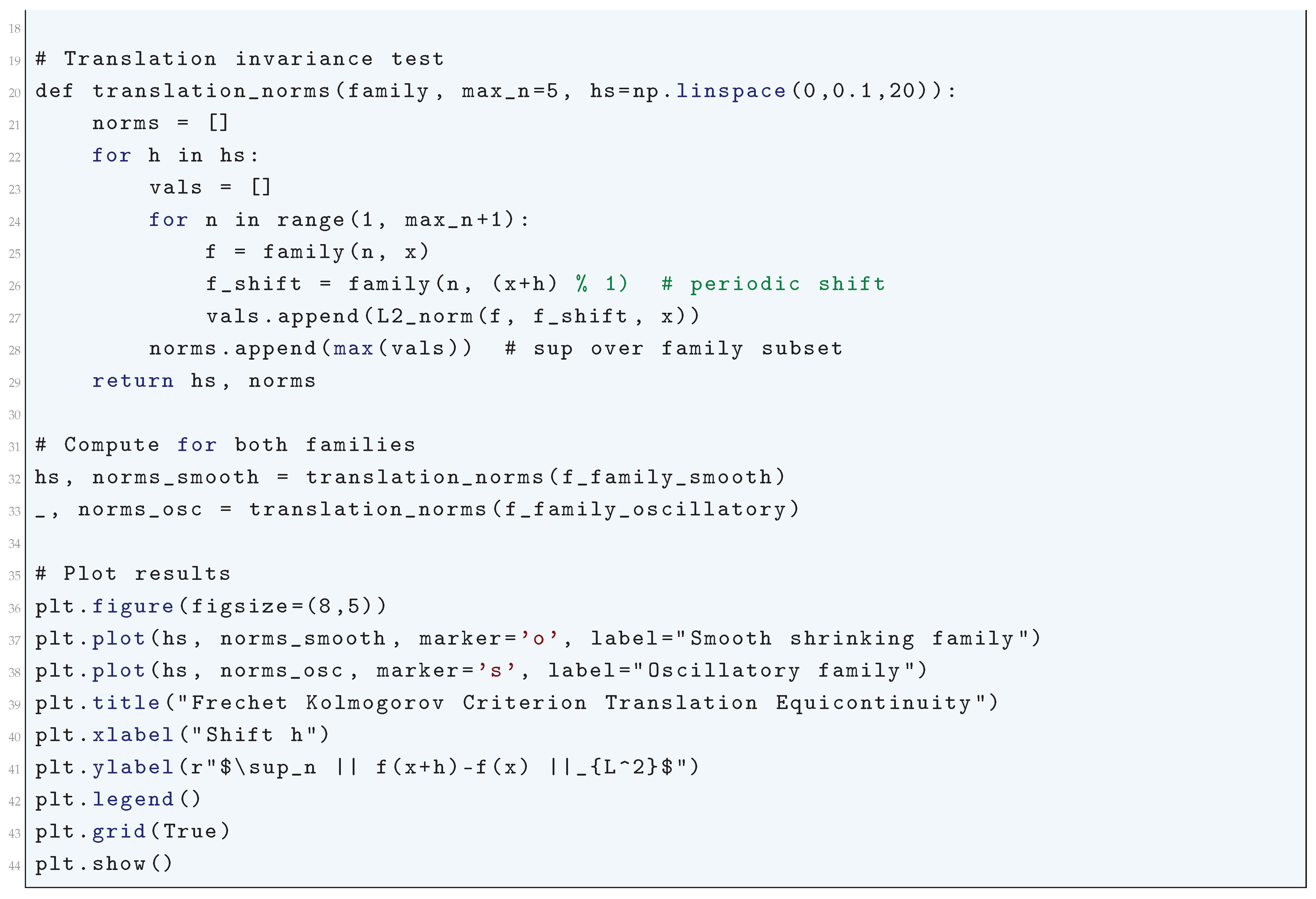

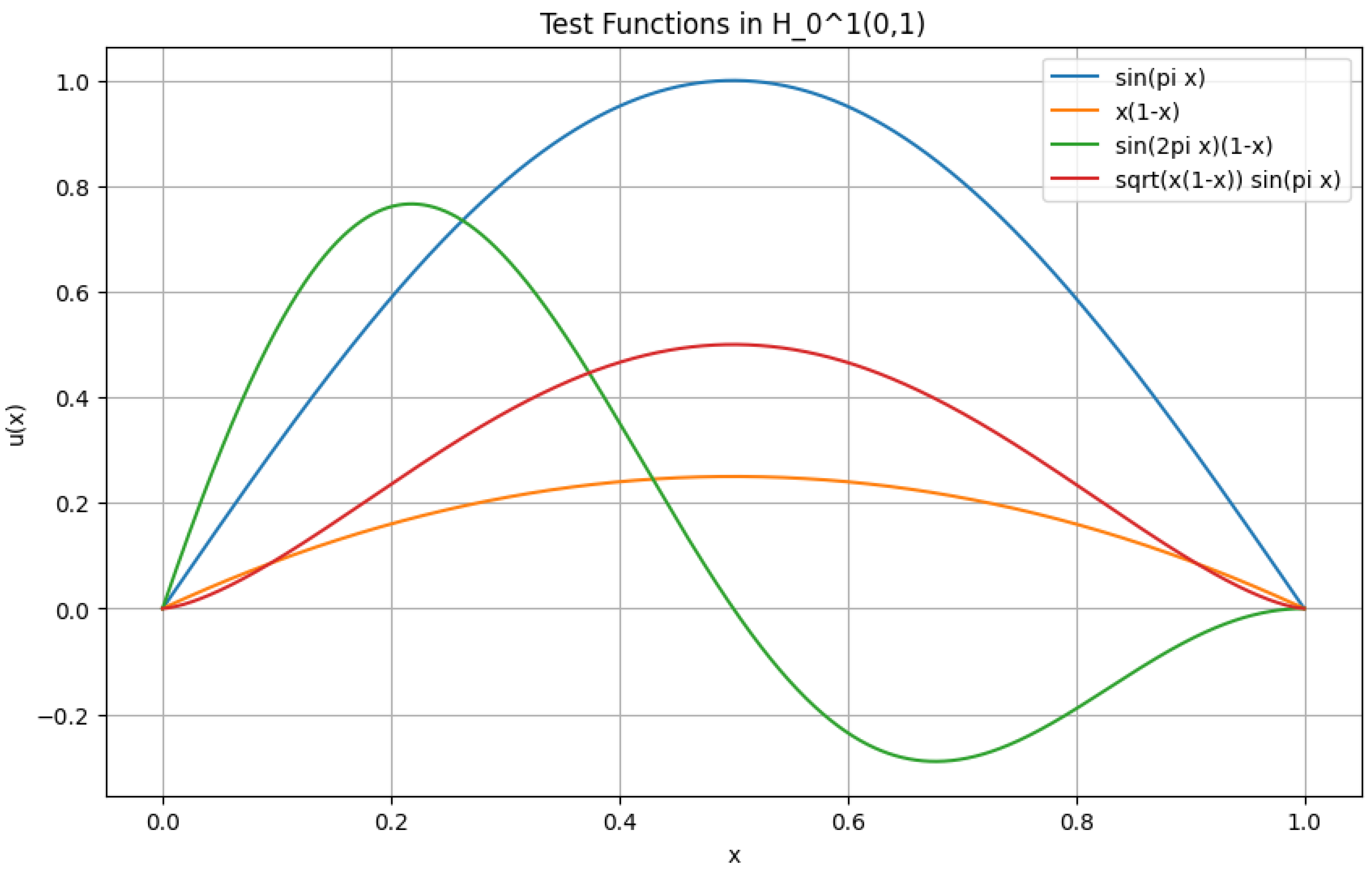

1.2.3.3 Python Code to Generate Figure 6, Figure 7, Figure 8, and Figure 9 Illustrating Sobolev Embeddings

1.2.4. Rellich-Kondrachov Compactness Theorem

1.2.4.1 Literature Review of Rellich-Kondrachov Compactness Theorem

1.2.4.2 Analysis of Rellich-Kondrachov Compactness Theorem

- The sequence does not oscillate excessively at small scales.

- The sequence does not escape to infinity in a way that prevents strong convergence.

1.2.4.3 Python Code to Generate Figure 10 and Figure 11 Illustrating Rellich-Kondrachov Compactness Theorem

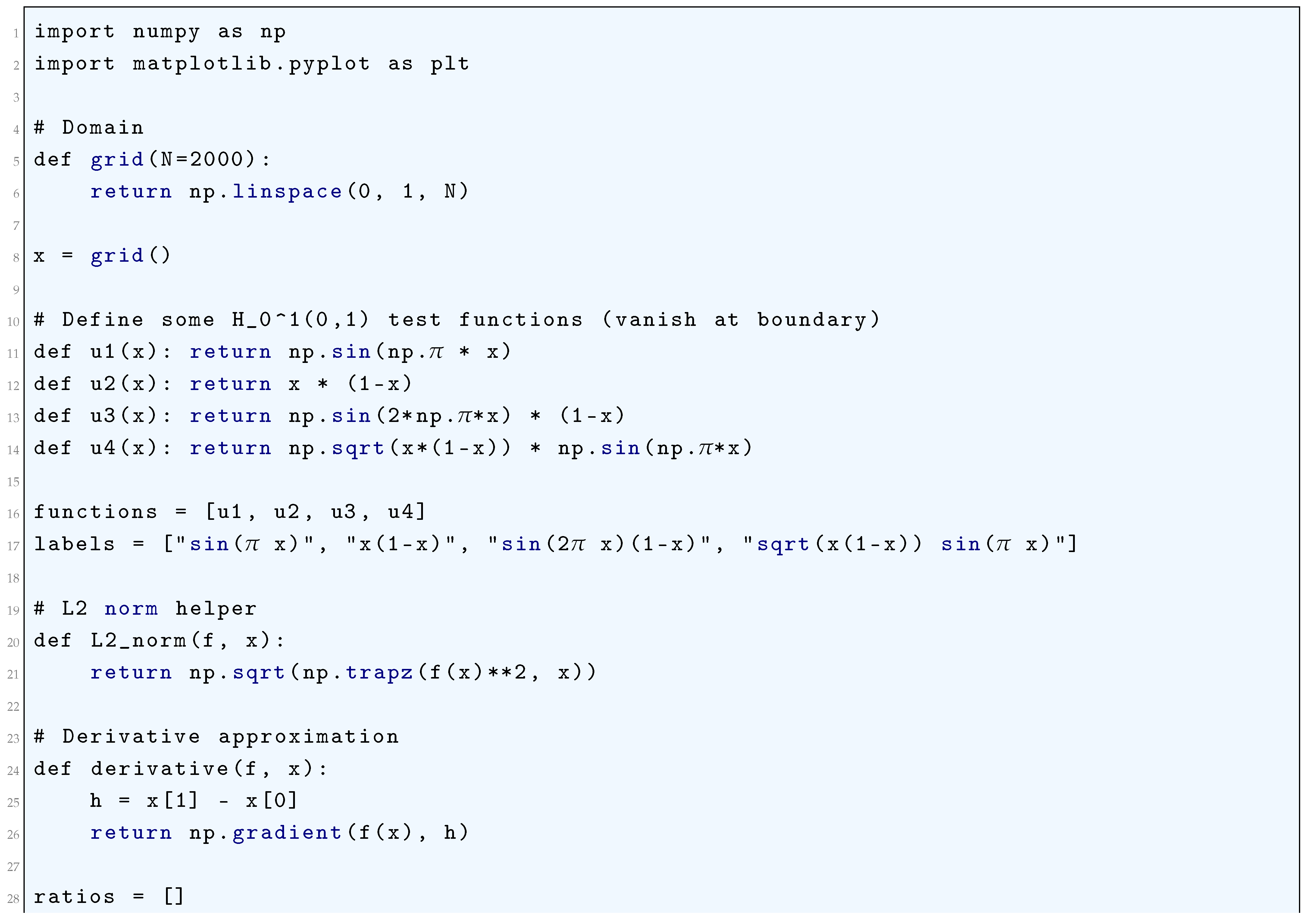

1.2.5. Fréchet-Kolmogorov Compactness Criterion

1.2.5.1 Literature Review of Fréchet-Kolmogorov Compactness Criterion

1.2.5.2 Analysis of Fréchet-Kolmogorov Compactness Criterion

1.2.5.3 Python Code to Generate Figure 12

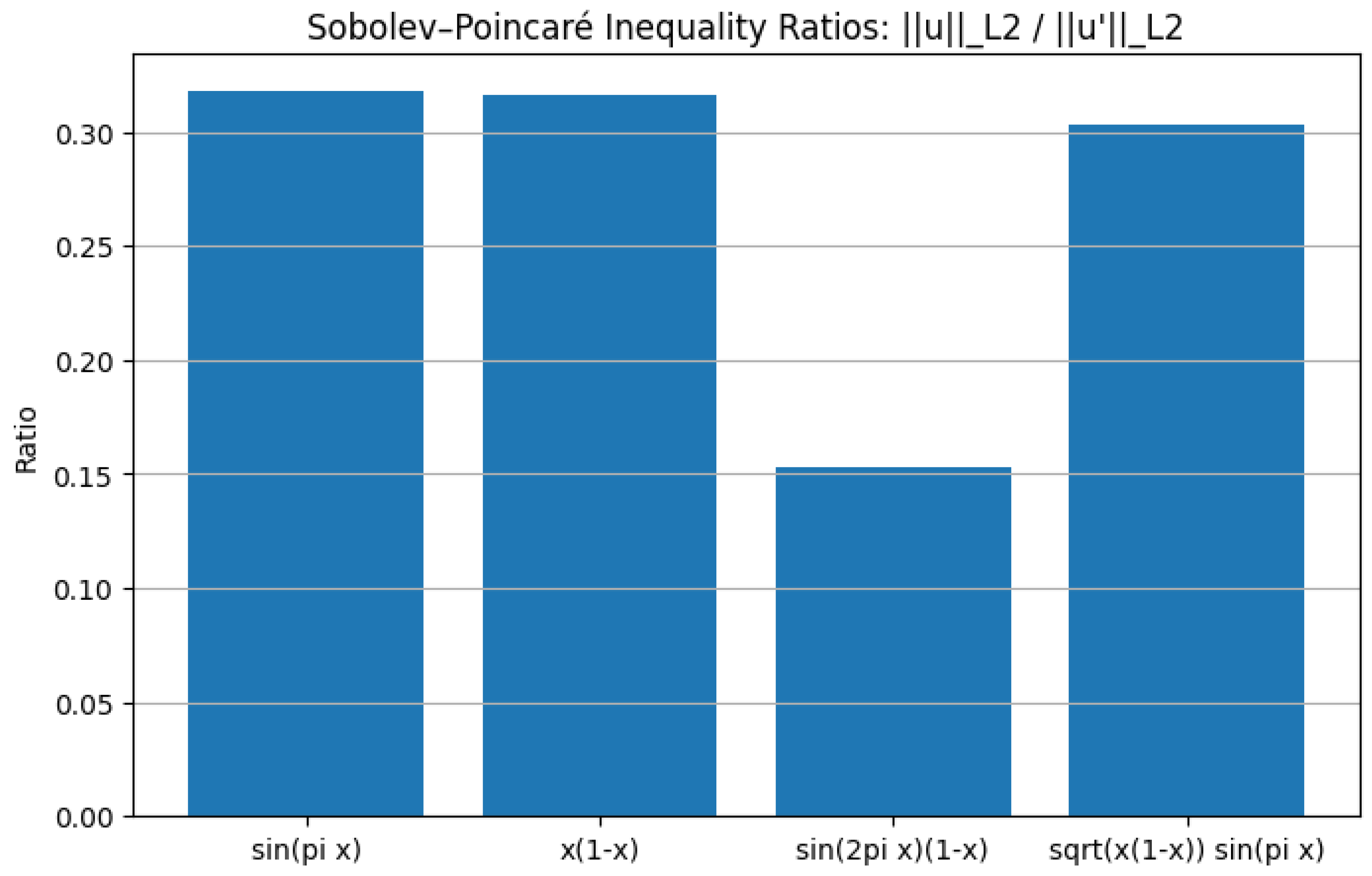

1.2.6. Sobolev-Poincaré Inequality

1.2.6.1 Literature Review of Sobolev-Poincaré Inequality

1.2.6.2 Analysis of Sobolev-Poincaré Inequality

- Regularity of PDE Solutions: The Sobolev-Poincaré inequality is crucial in proving the existence and regularity of weak solutions to elliptic PDEs.

- Compactness and Rellich-Kondrachov Theorem: It plays a role in proving the compact embedding of $W^{1,p}(\Omega)$ into $L^q(\Omega)$, which is fundamental in functional analysis.

- Control of Function Oscillations: It quantifies how much a function can deviate from its mean, which is used in various areas of mathematical physics and geometry.

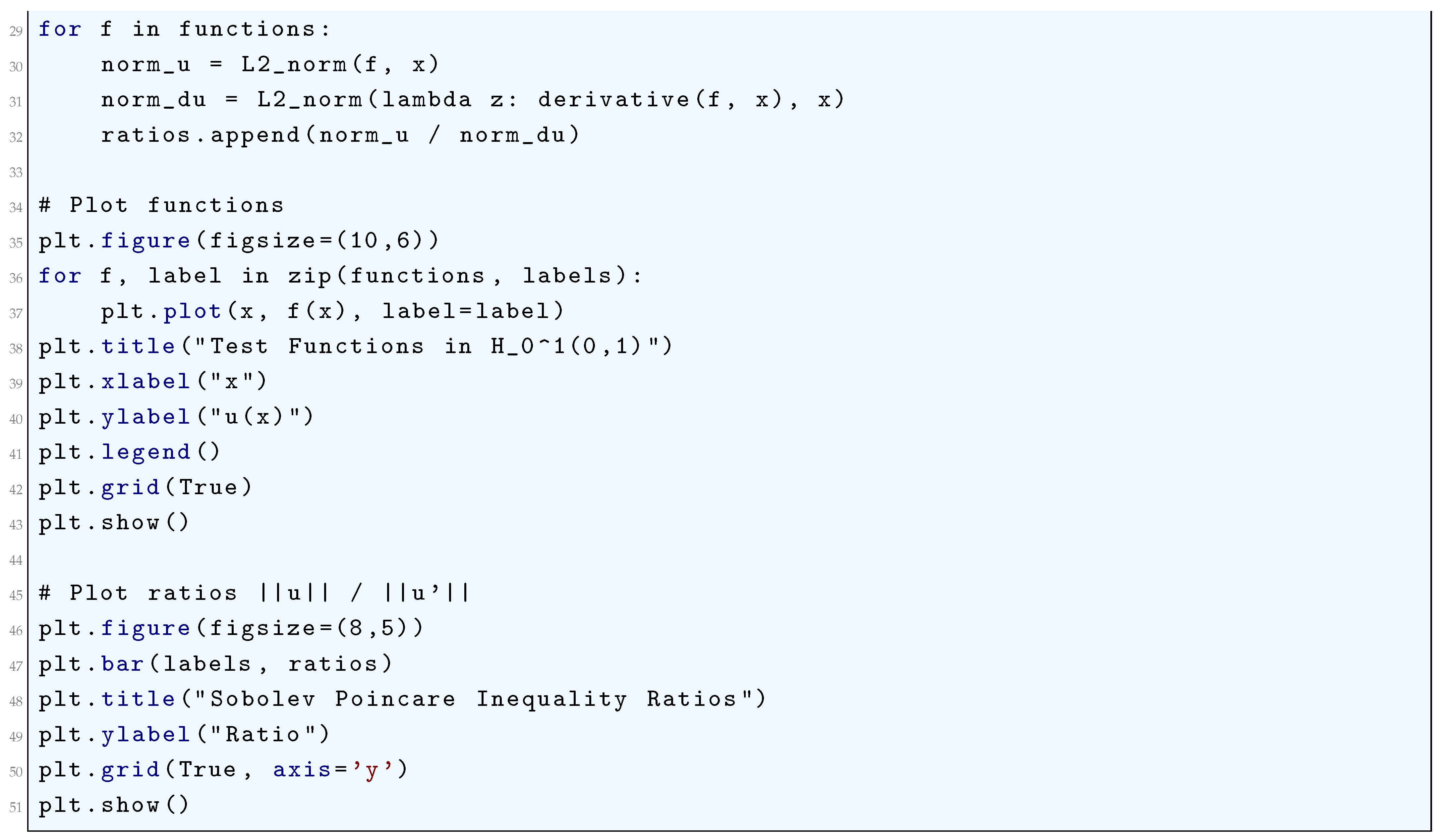

1.2.6.3 Python Code to Generate Figure 13 and Figure 14

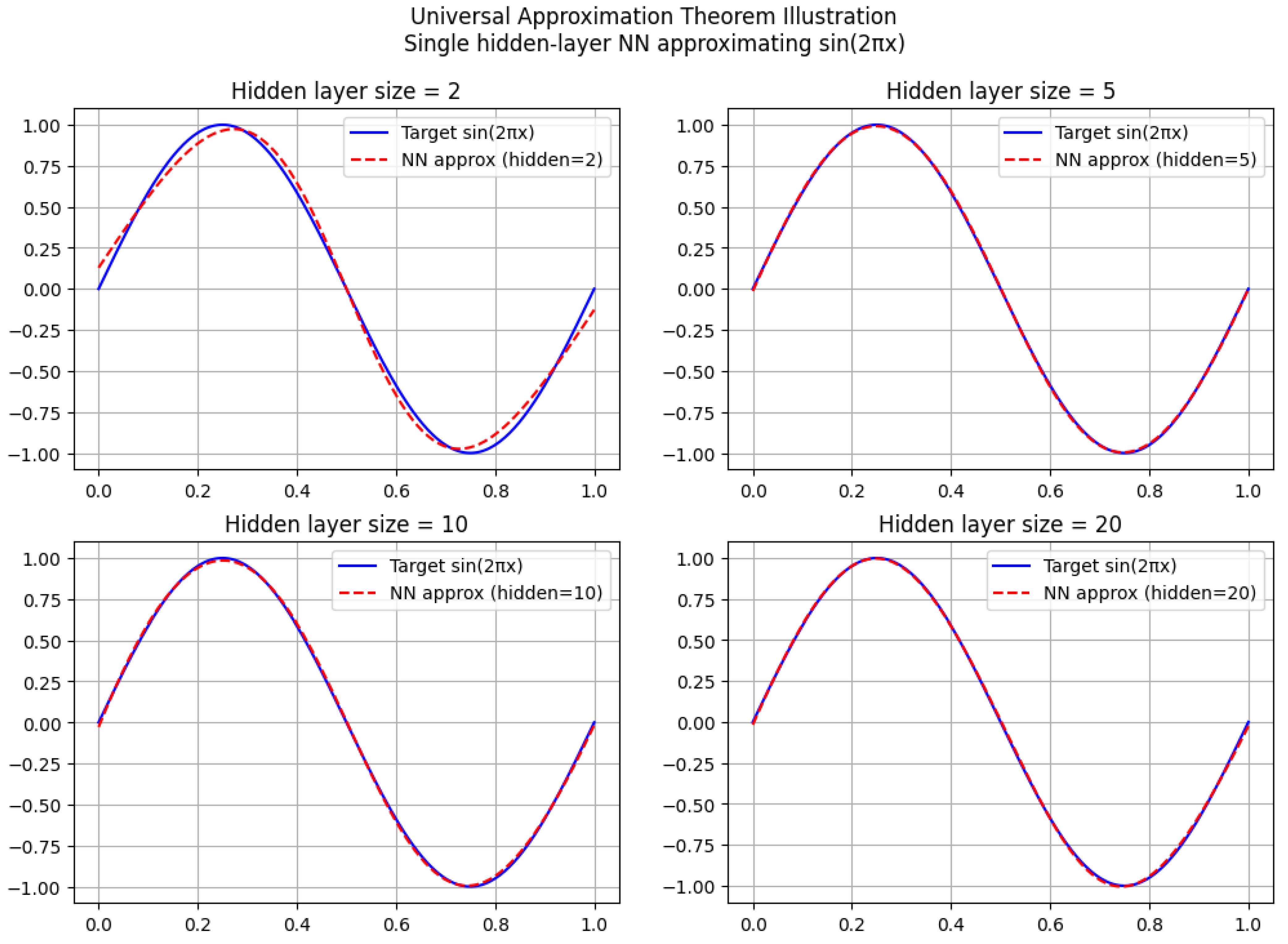

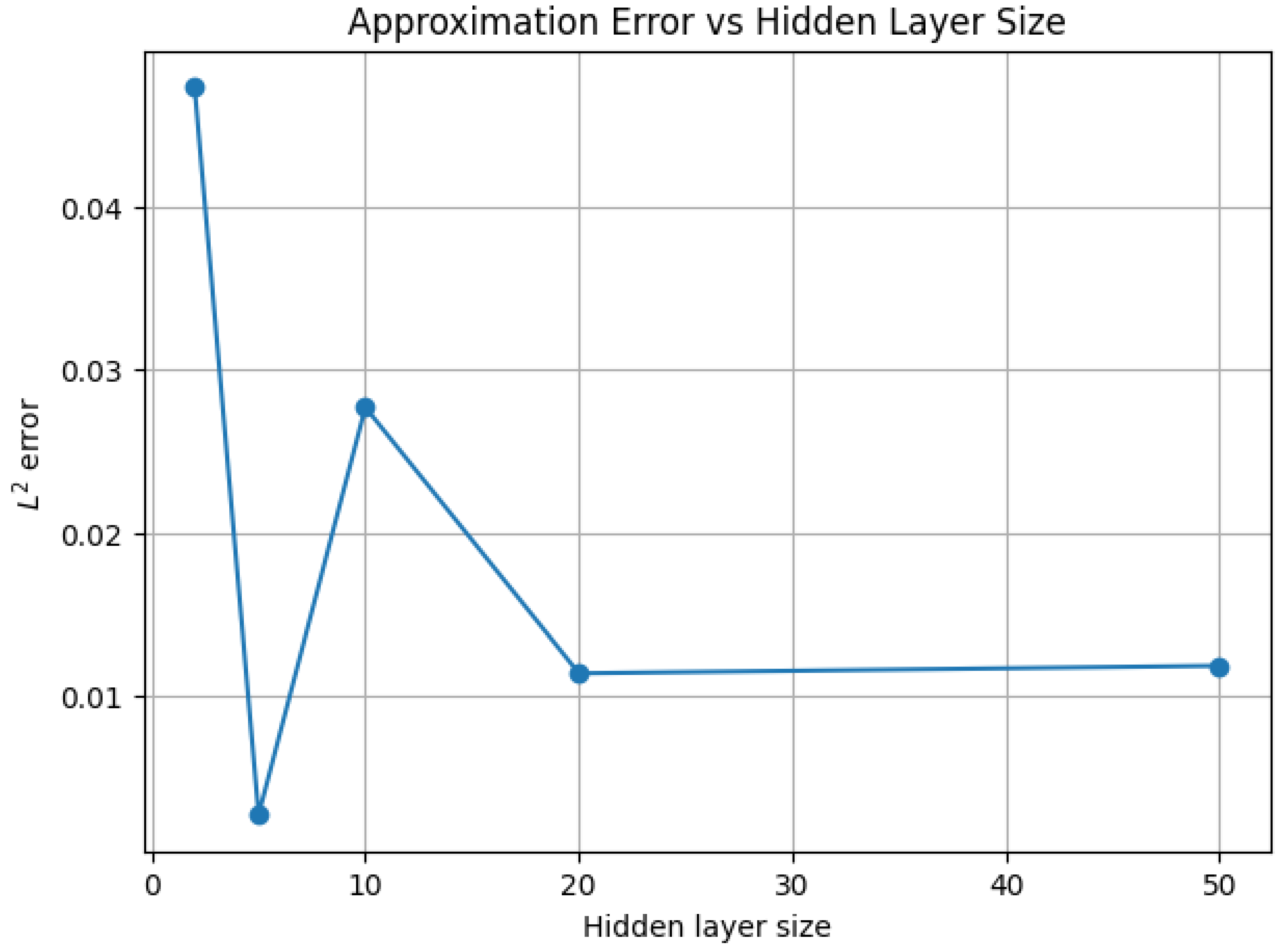

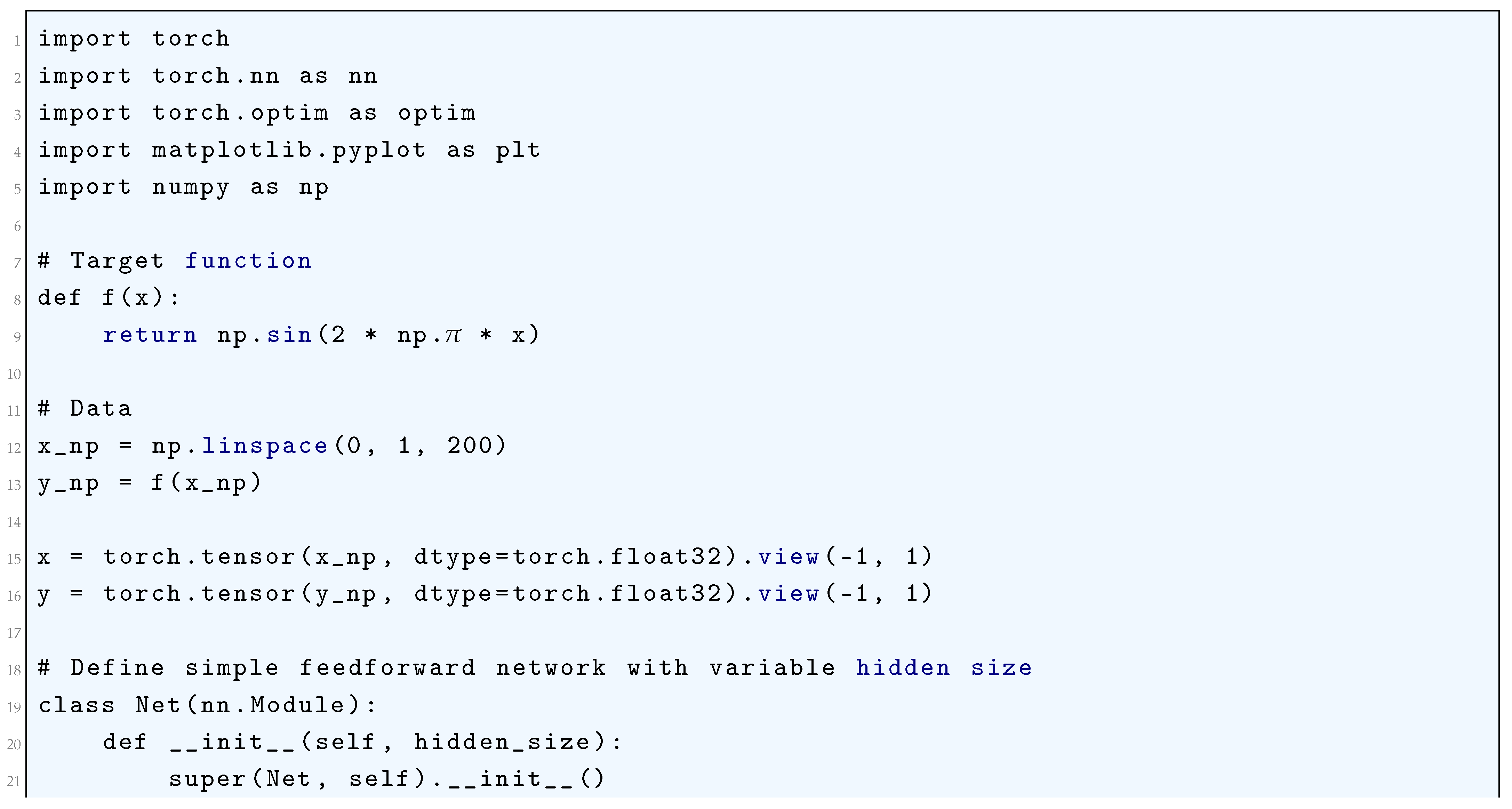

2. Universal Approximation Theorem: Refined Proof

2.1. Literature Review of Universal Approximation Theorem

2.2. Approximation Using Convolution Operators

2.2.1. Python Code to Generate Figure 15 and Figure 16

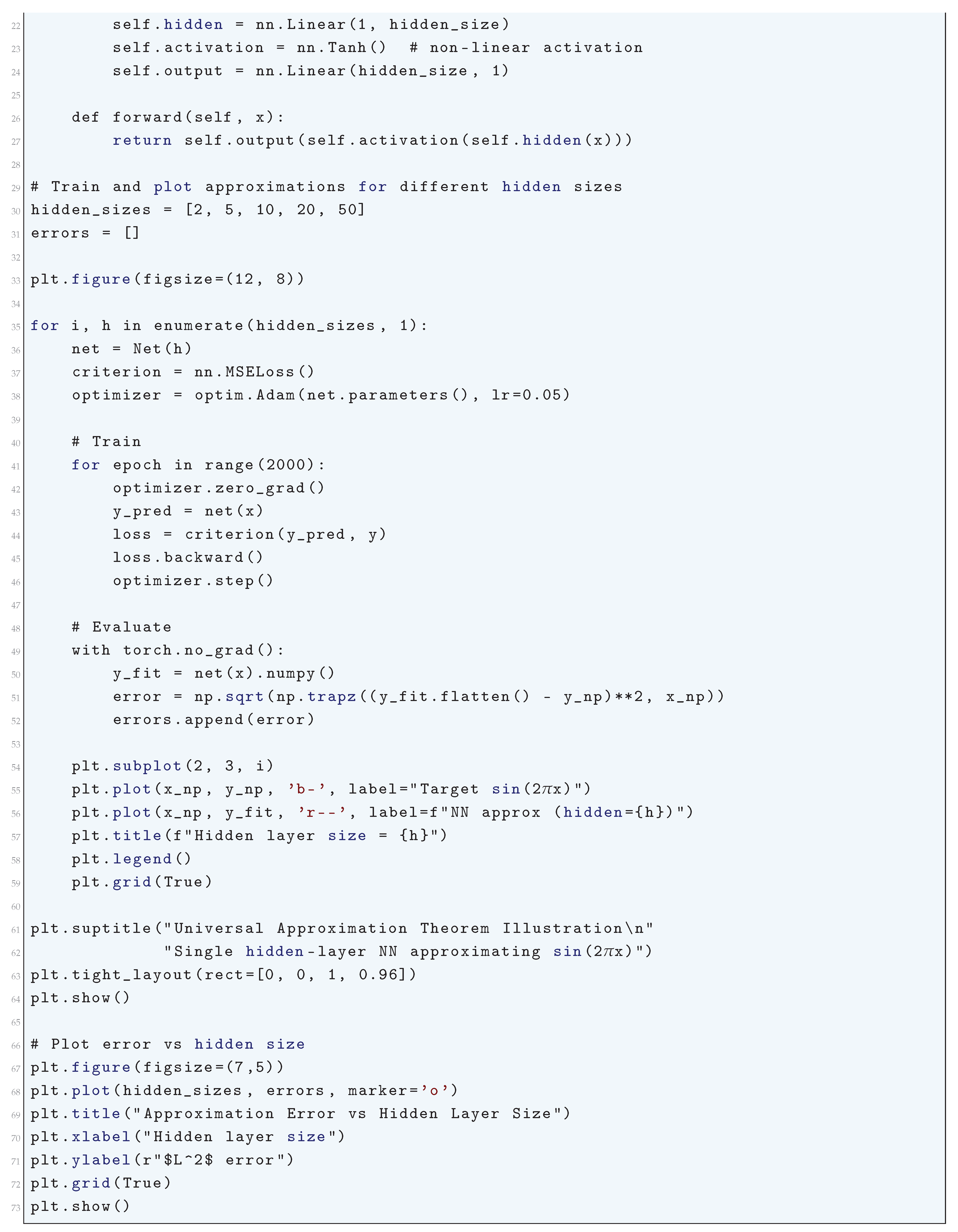

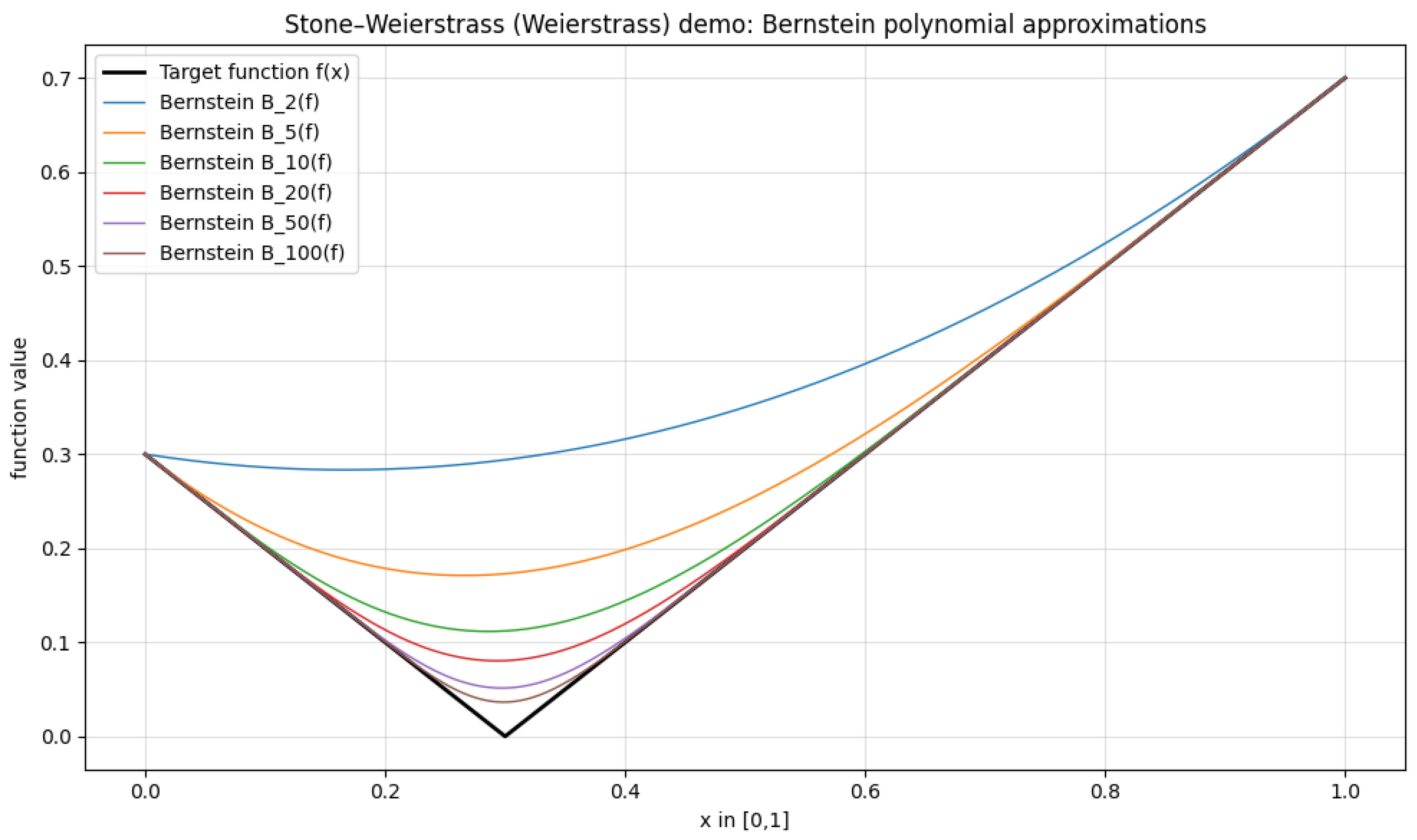

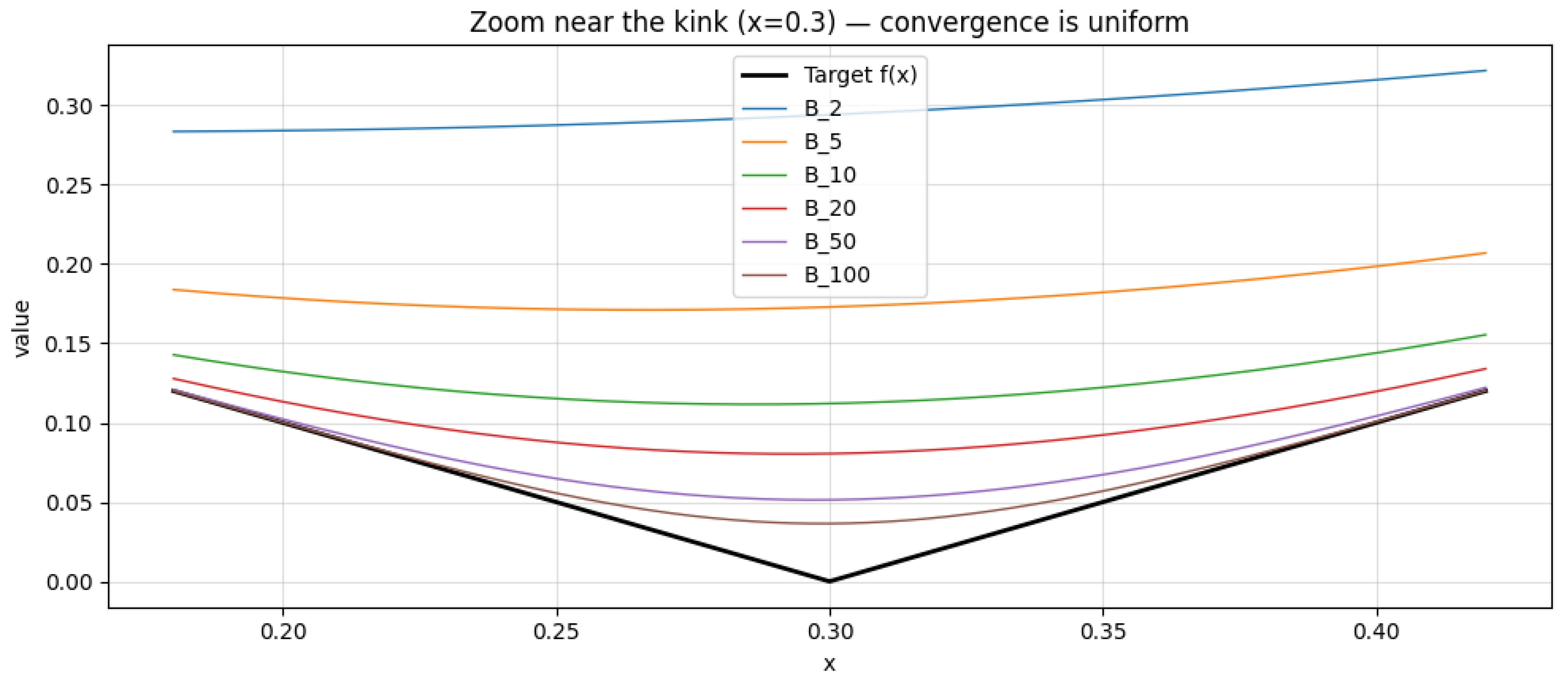

2.2.2. Stone-Weierstrass Application

2.2.2.1 Literature Review of Stone-Weierstrass Application

2.2.2.2 Analysis of Stone-Weierstrass Application

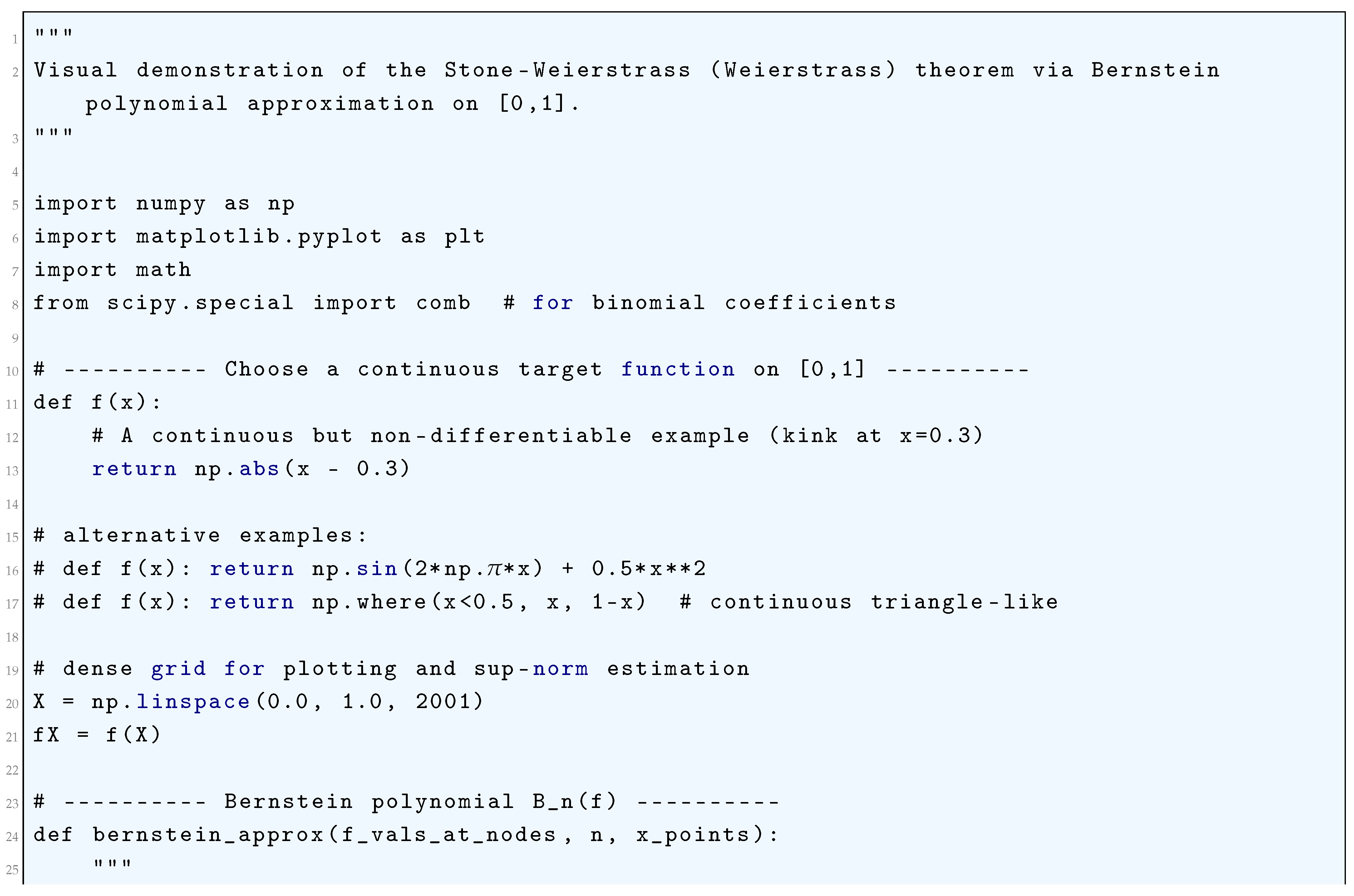

2.2.2.3 Python Code to Generate Figure 17, Figure 18 and Figure 19

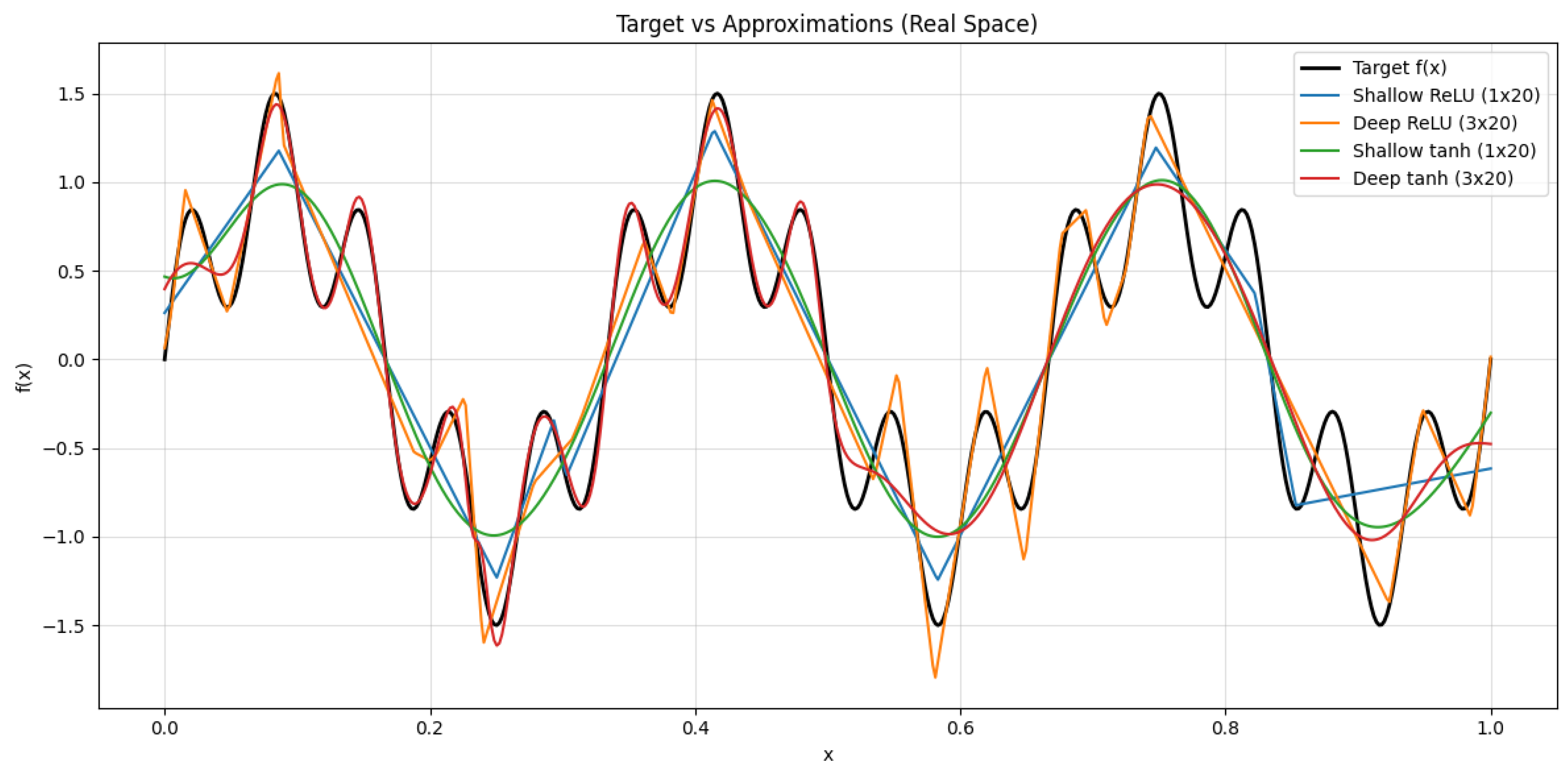

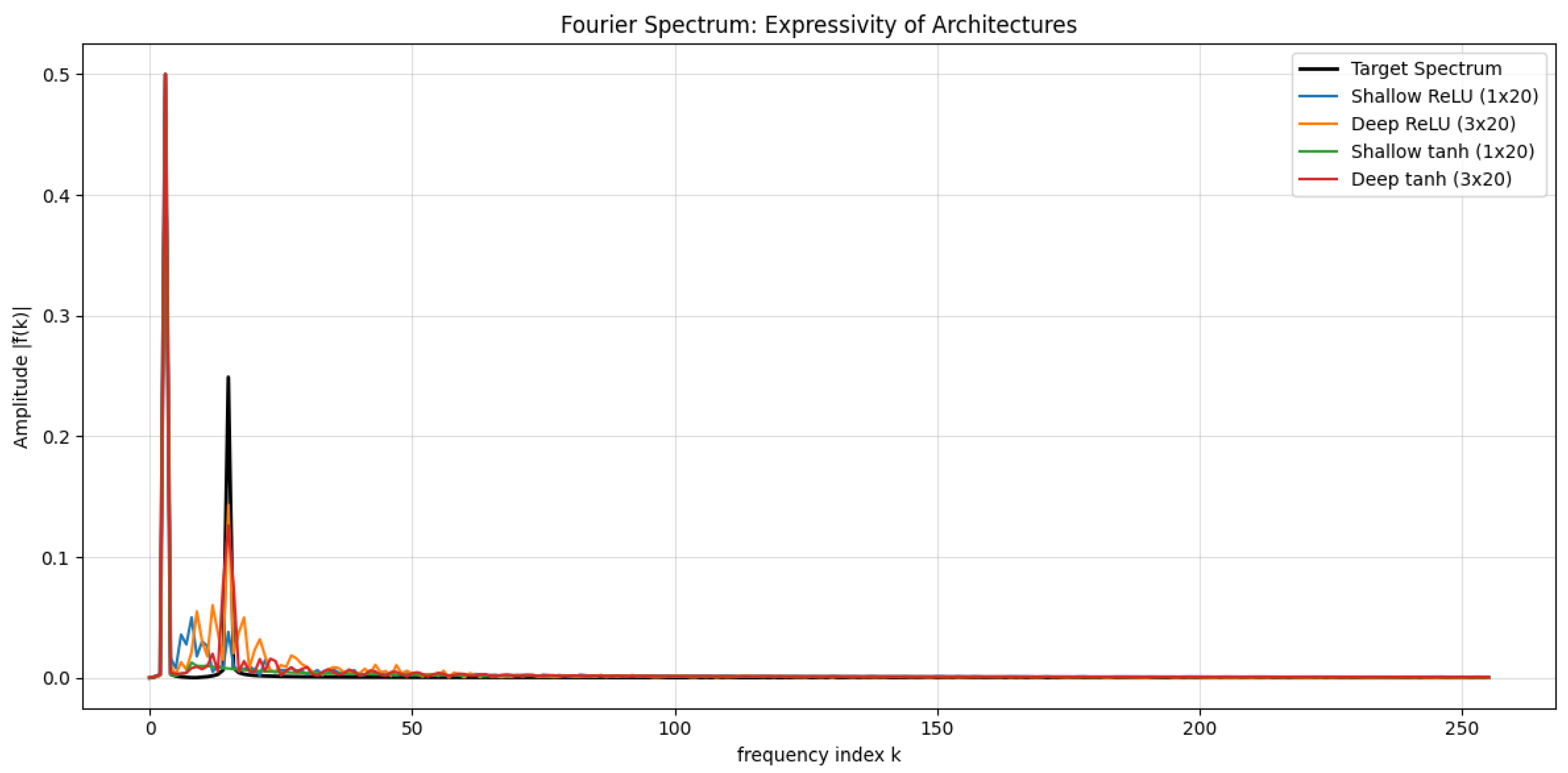

2.3. Depth vs. Width: Capacity Analysis

2.3.1. Bounding the Expressive Power

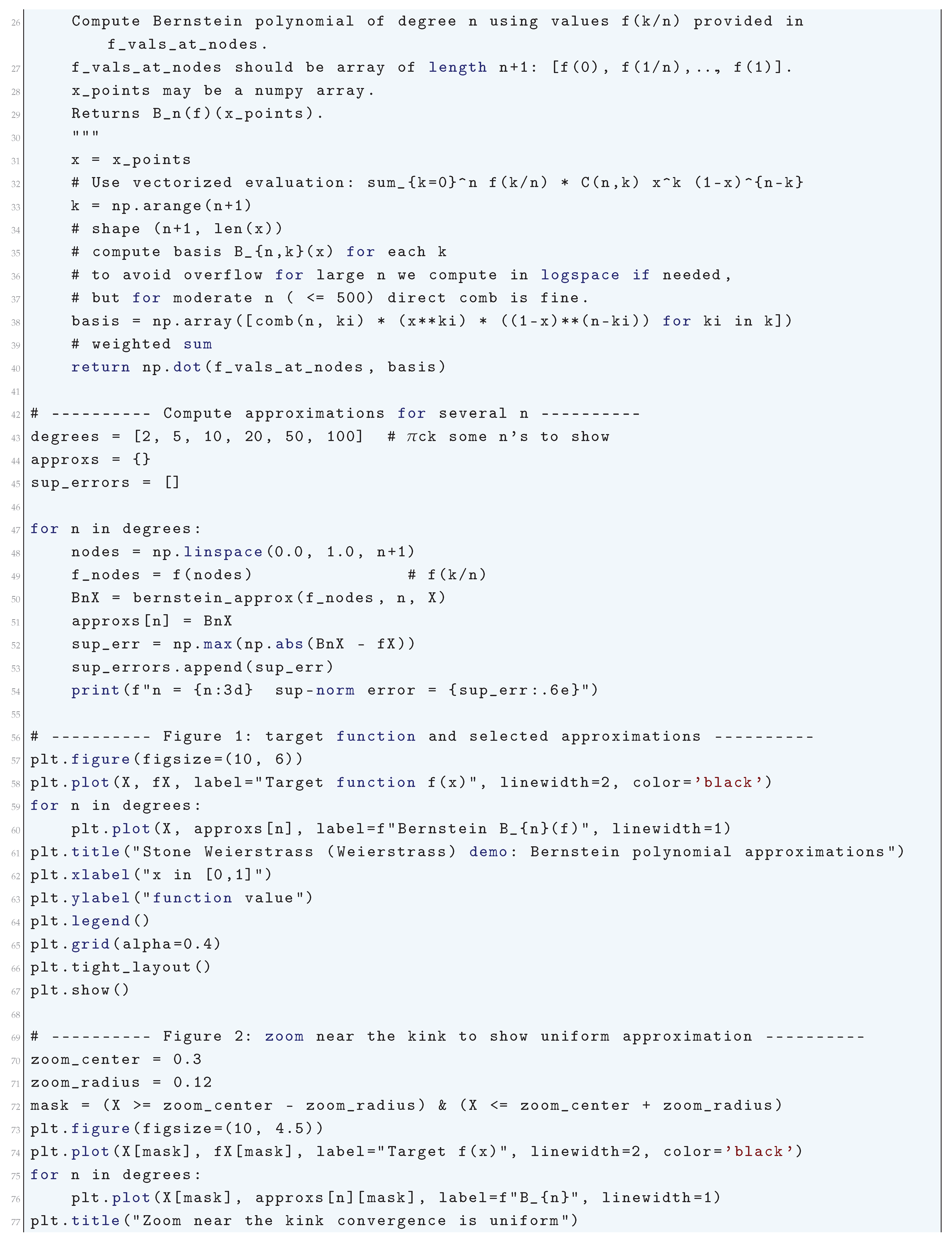

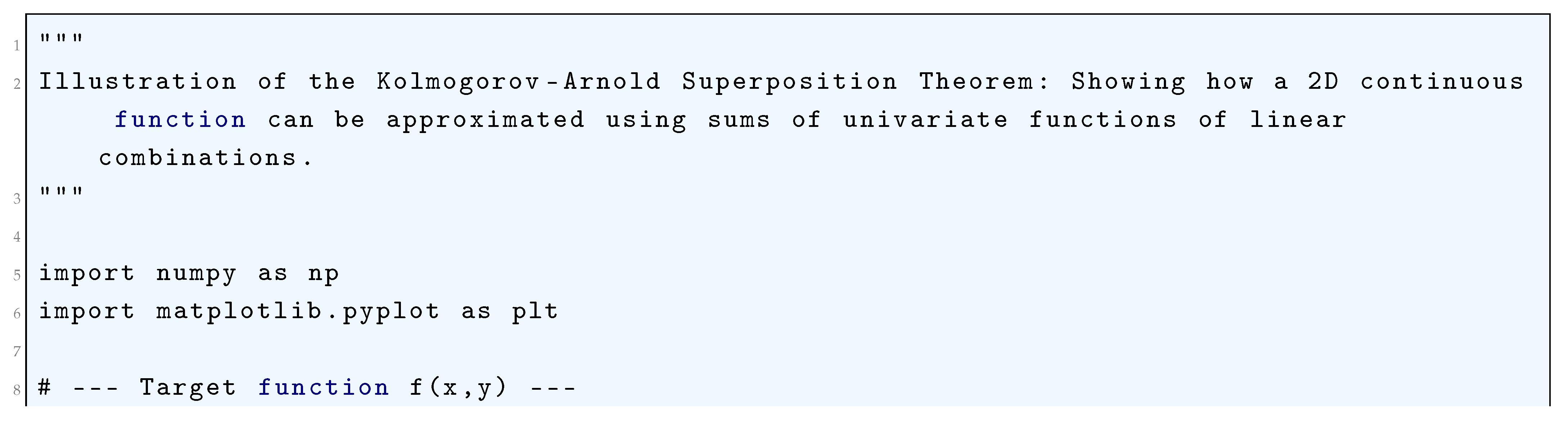

2.3.1.1 Literature Review of Kolmogorov-Arnold Superposition Theorem

| Paper | Main Contribution | Impact |
|---|---|---|
| Kolmogorov (1957) | Original KST theorem | Laid foundation for function decomposition |
| Arnold (1963) | Refinement using 2-variable functions | Made KST more practical for computation |
| Lorentz (2008) | KST in approximation theory | Linked KST to function approximation errors |
| Pinkus (1999) | KST in neural networks | Theoretical basis for deep learning |
| Perdikaris (2024) | Deep learning reinterpretation | Proposed Kolmogorov-Arnold Networks |
| Alhafiz (2025) | KST-based turbulence modeling | Improved CFD simulations |
| Lorencin (2024) | KST in naval propulsion | Optimized ship energy efficiency |

2.3.1.2 Analysis of Kolmogorov-Arnold Superposition Theorem

2.3.1.3 Python Code to Generate Figure 20

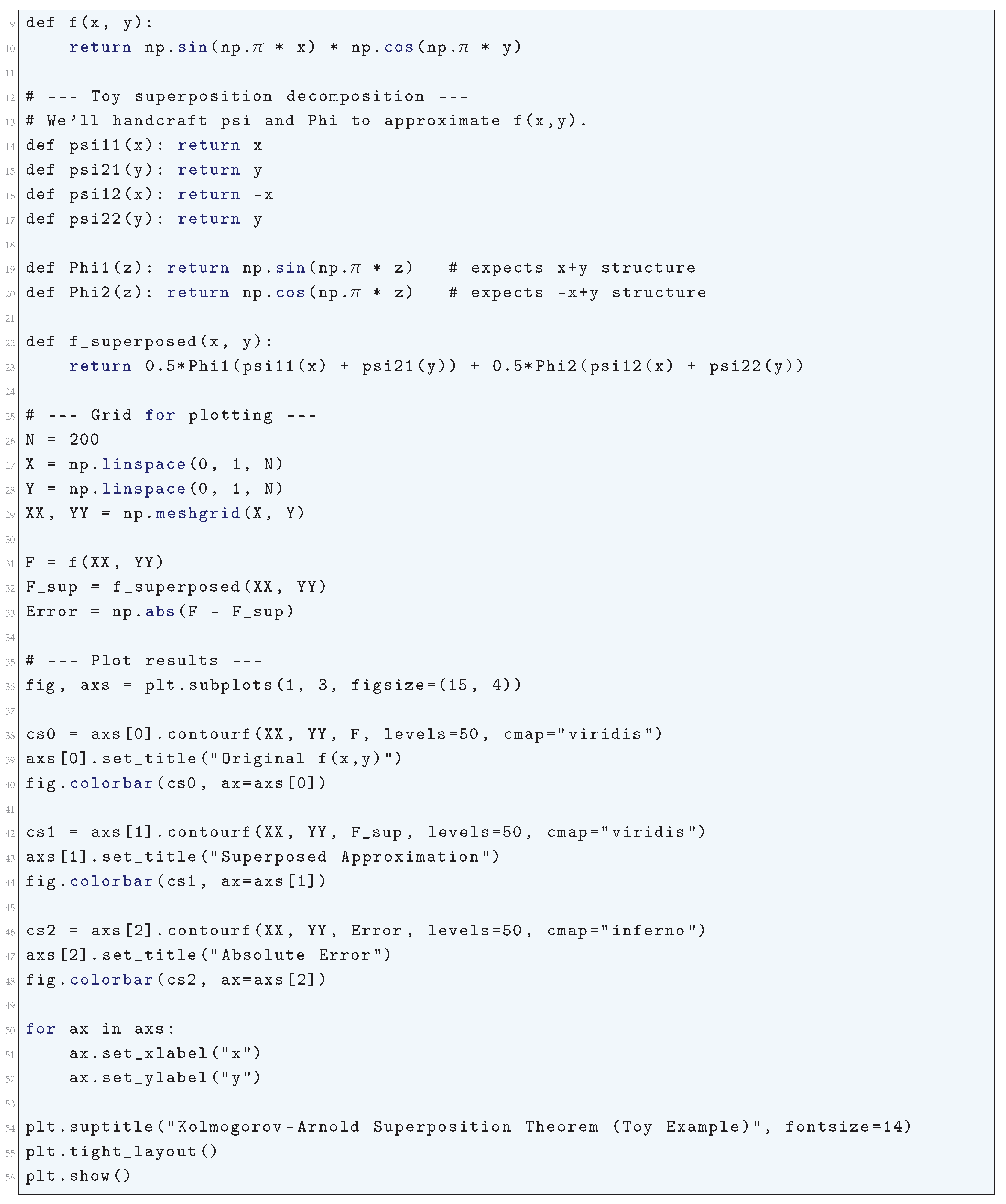

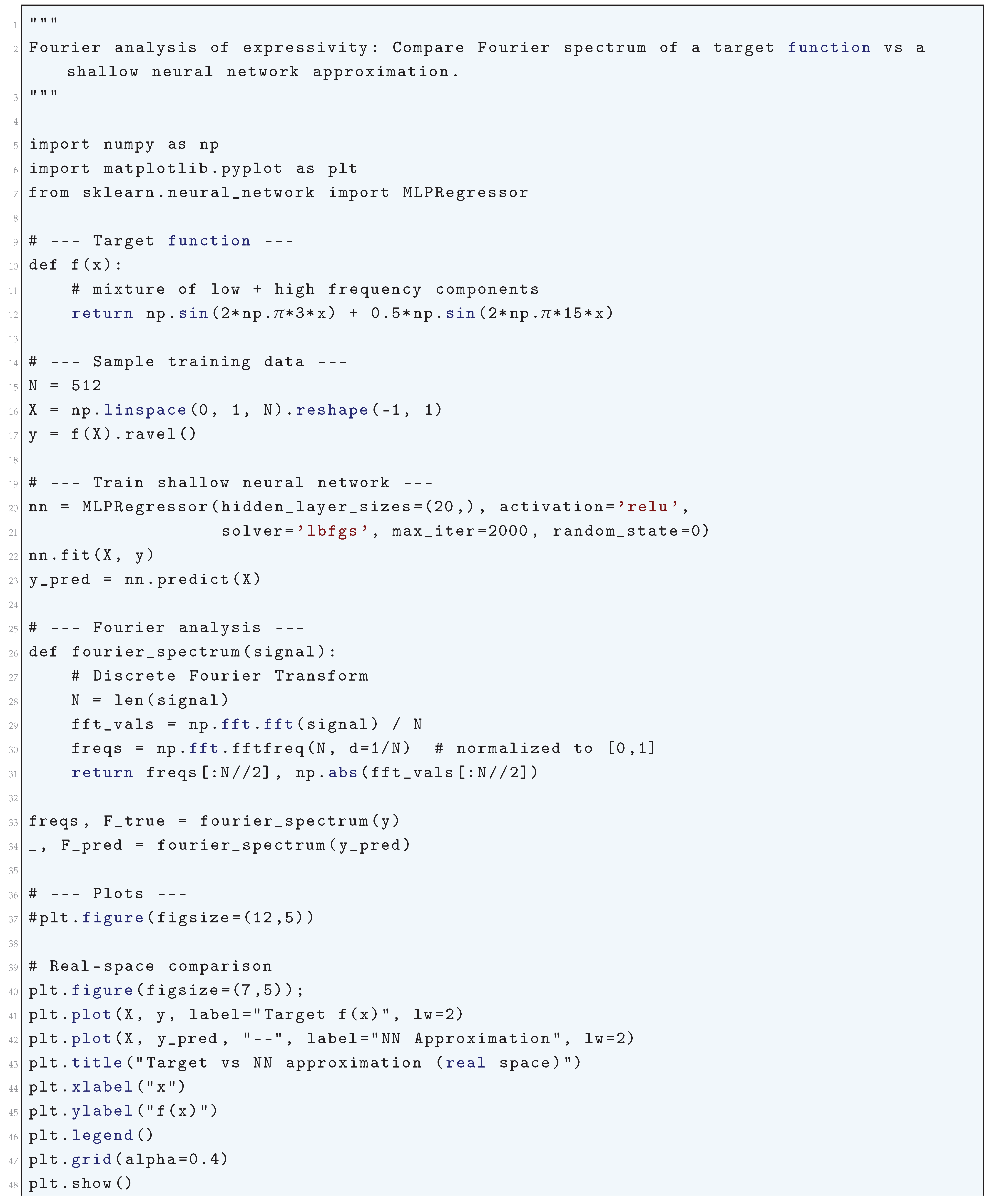

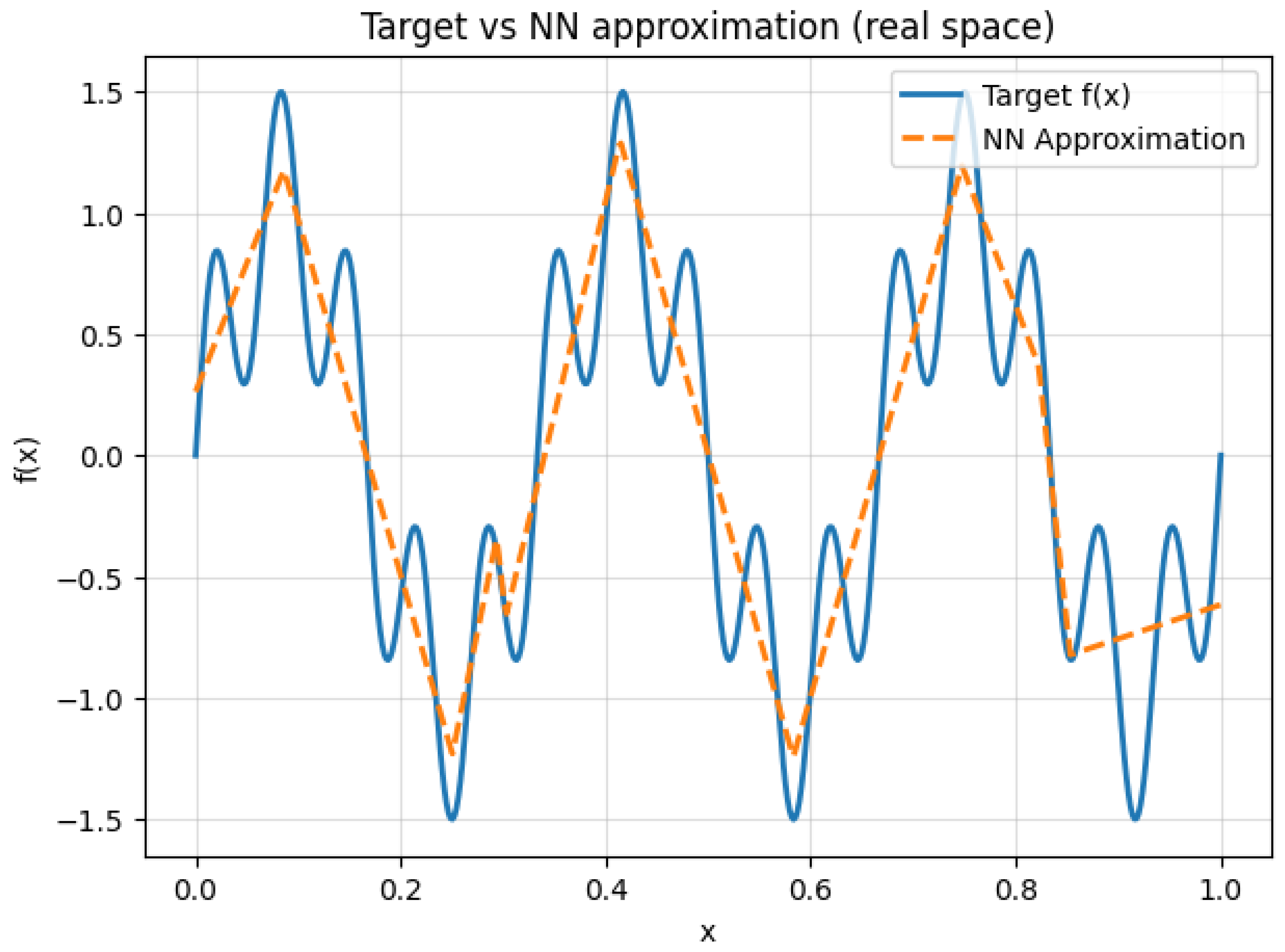

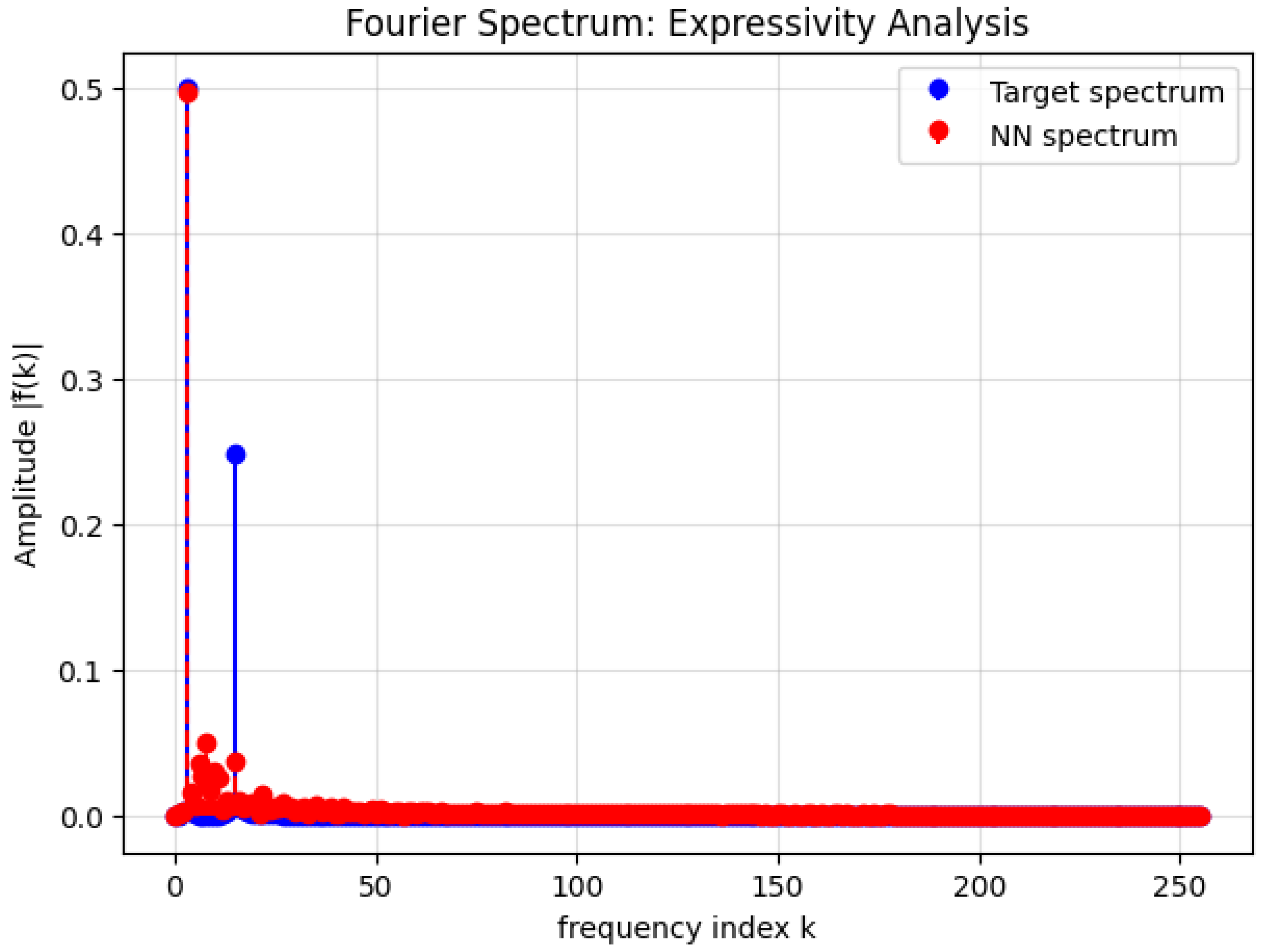

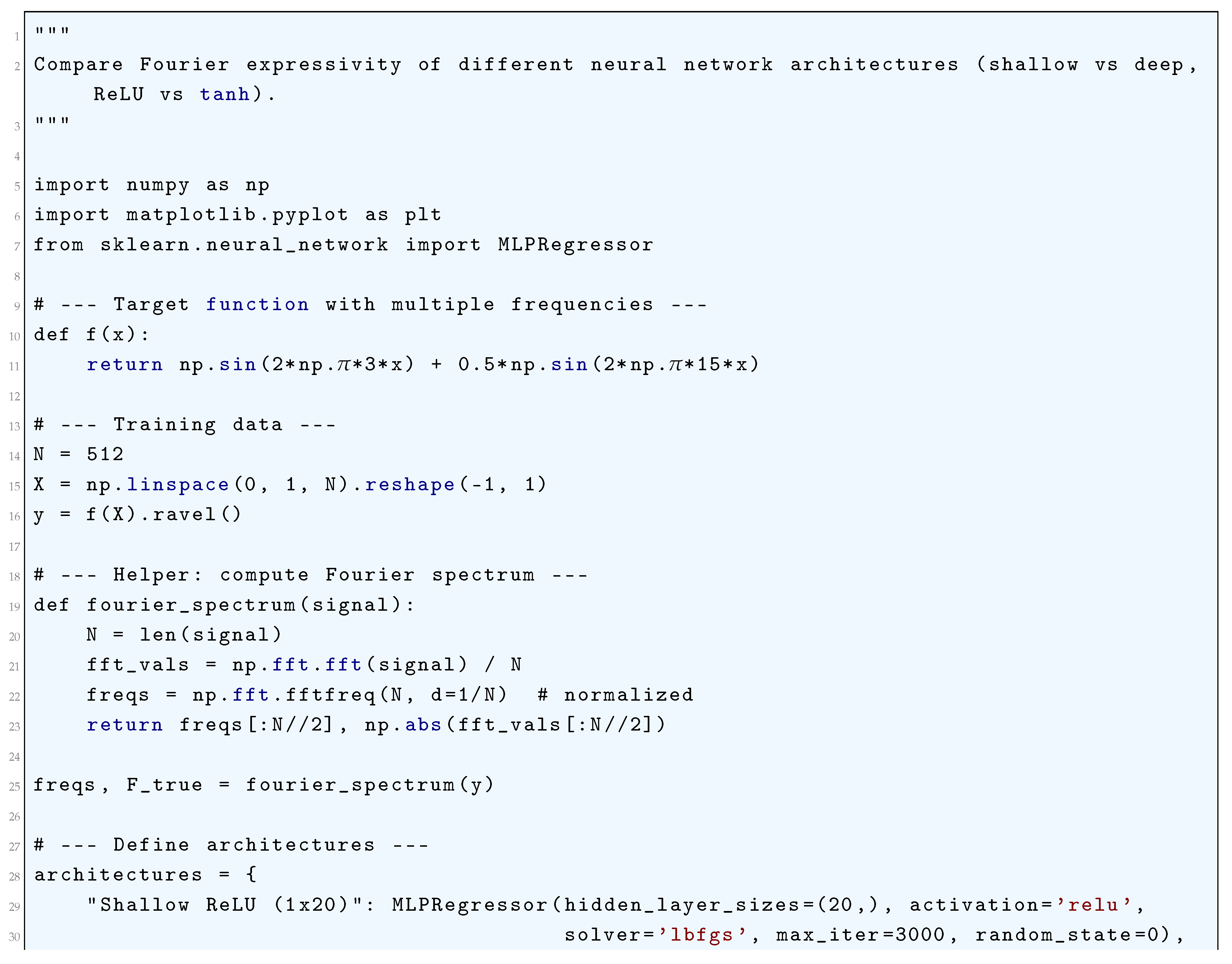

2.3.2. Fourier Analysis of Expressivity

2.3.2.1 Literature Review of Fourier Analysis of Expressivity

2.3.2.2 Analysis of Fourier Analysis of Expressivity

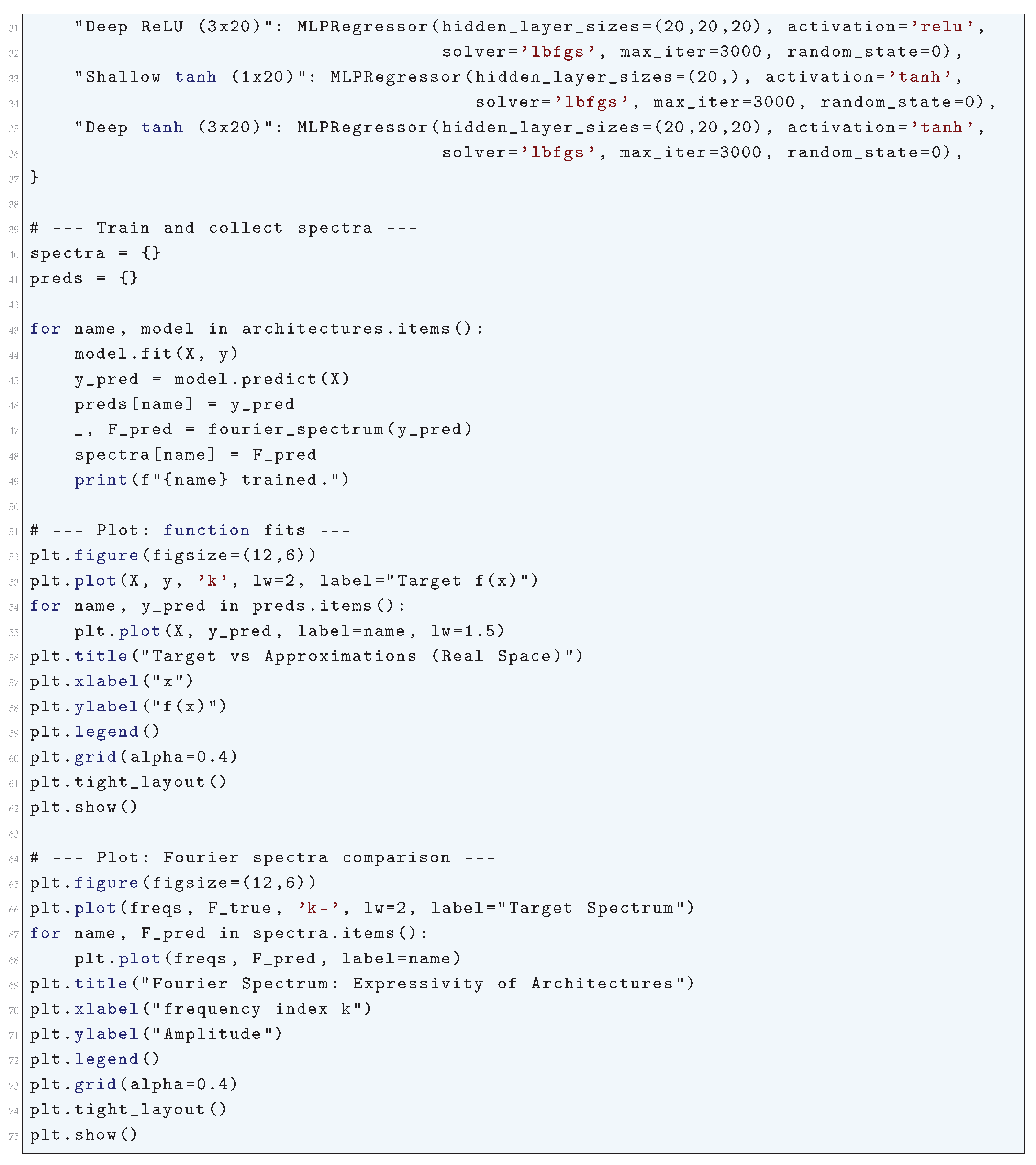

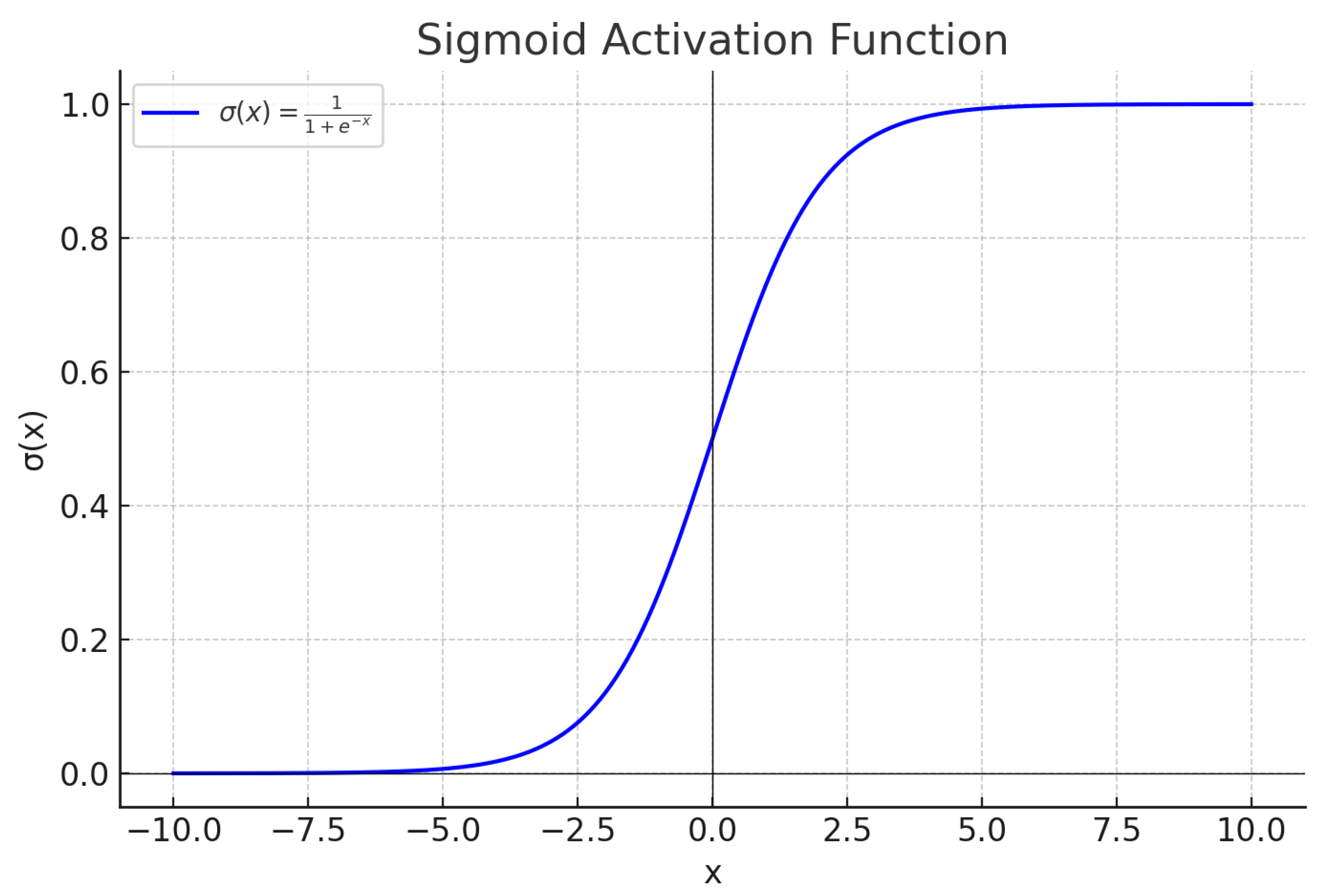

| Activation Function | Fourier Decay Rate | Effect on Frequency Learning |

| Sigmoid | (Exponential) | Strong low-pass filter, retains only low frequencies |

| Tanh | (Exponential) | Strong low-pass filter, smooth approximations |

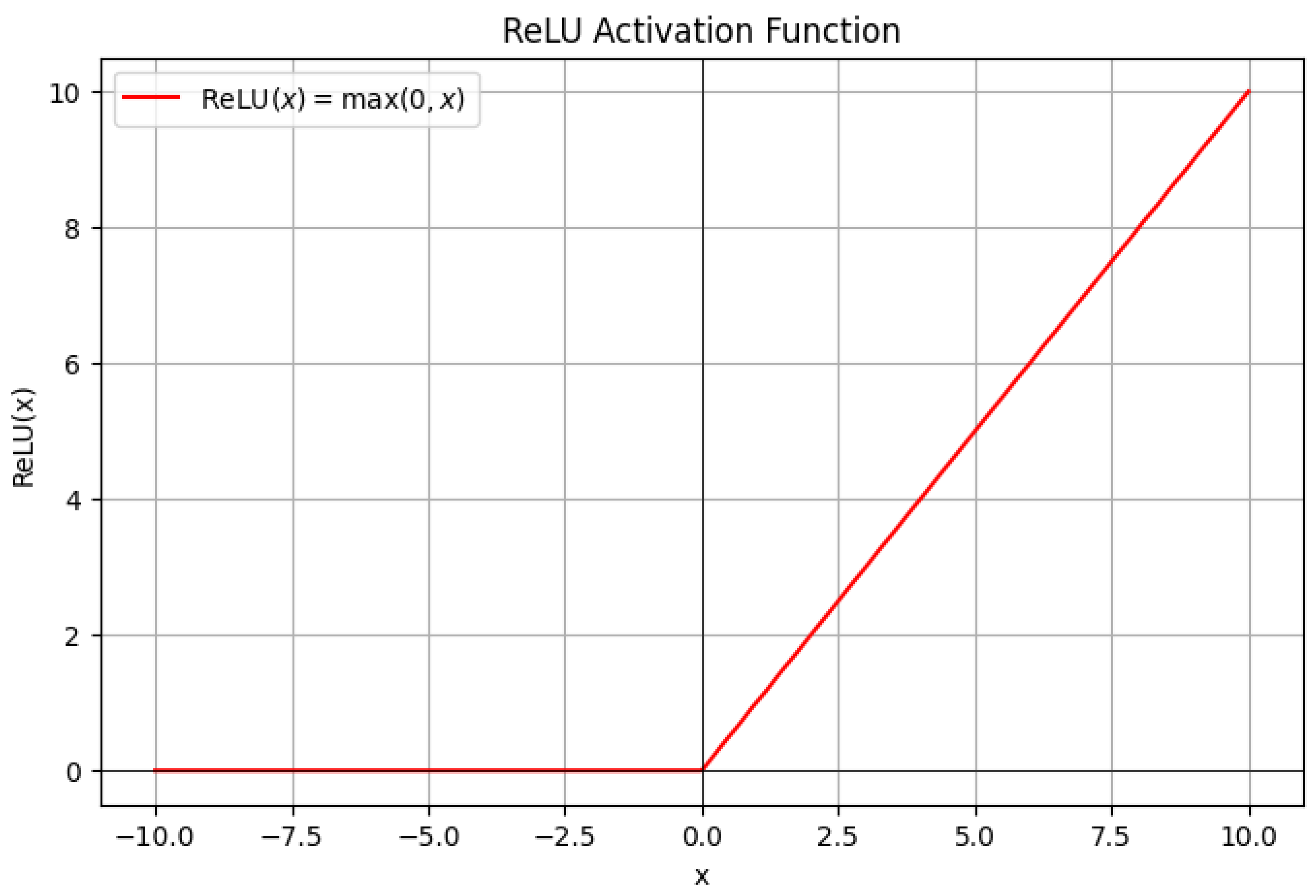

| ReLU | (Power-law) | Allows moderate frequency learning |

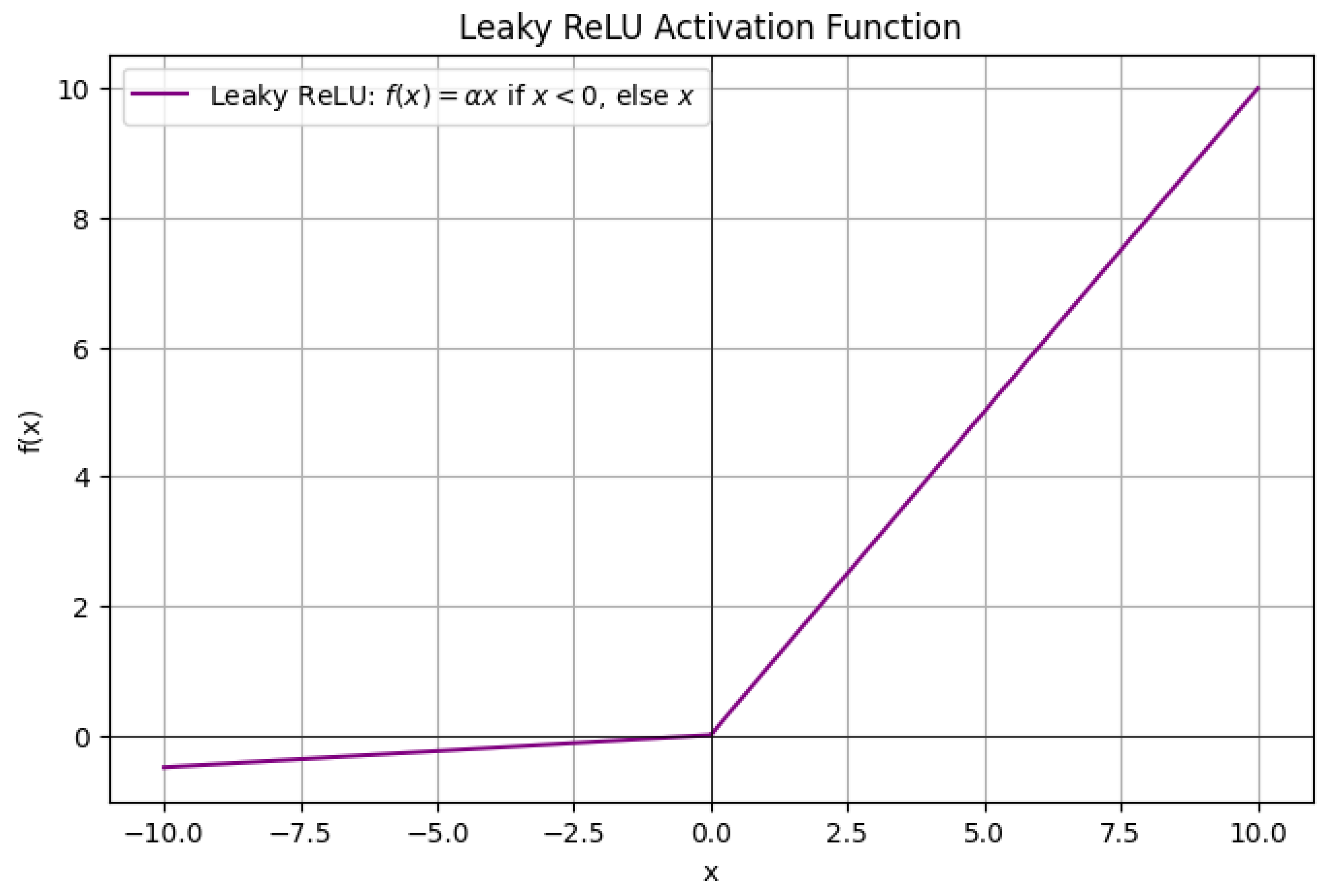

| Leaky ReLU | (Power-law) | Similar to ReLU with slightly improved high-frequency retention |

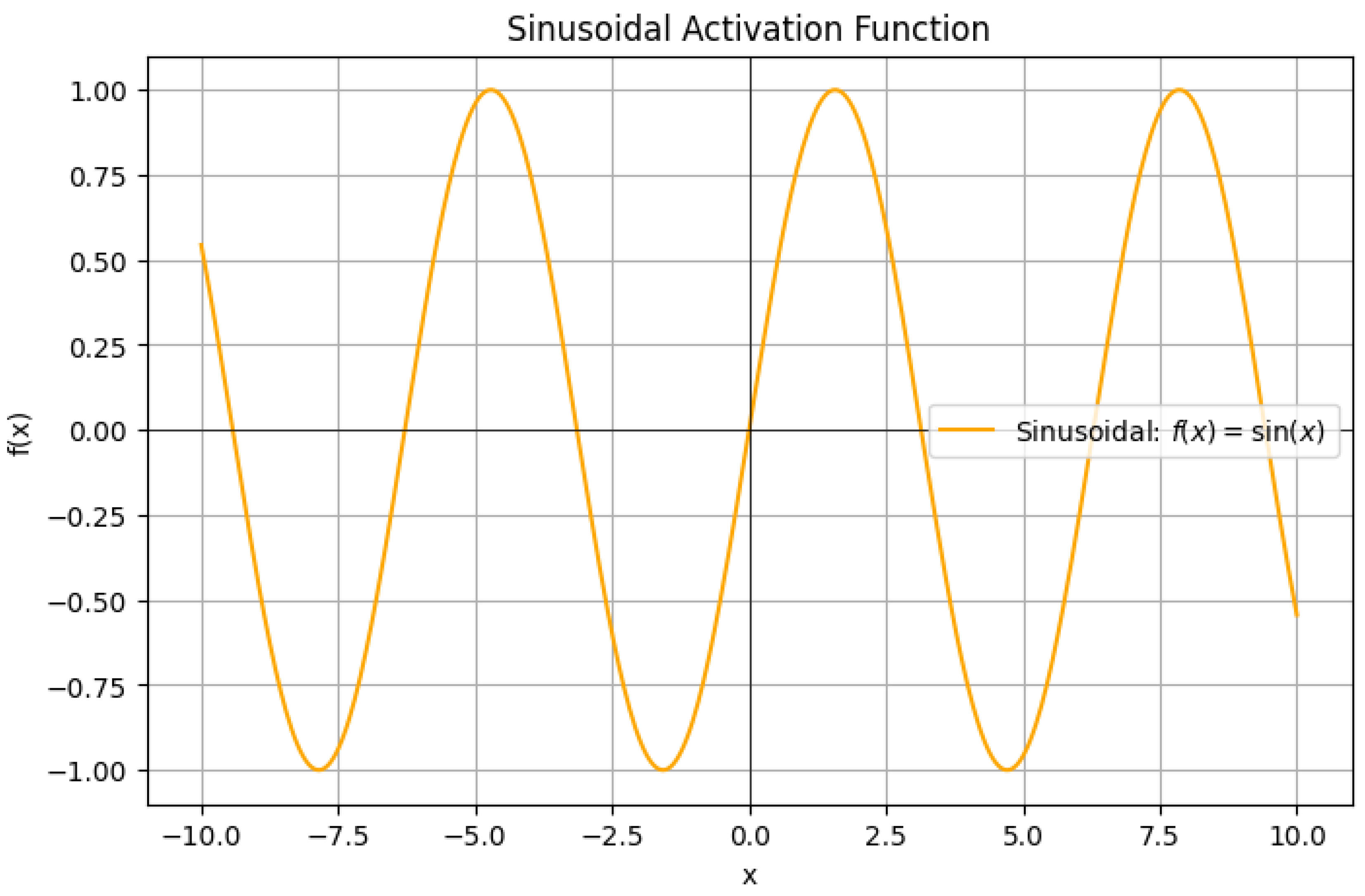

| Sinusoidal | No decay | Captures all frequencies, highly oscillatory functions |

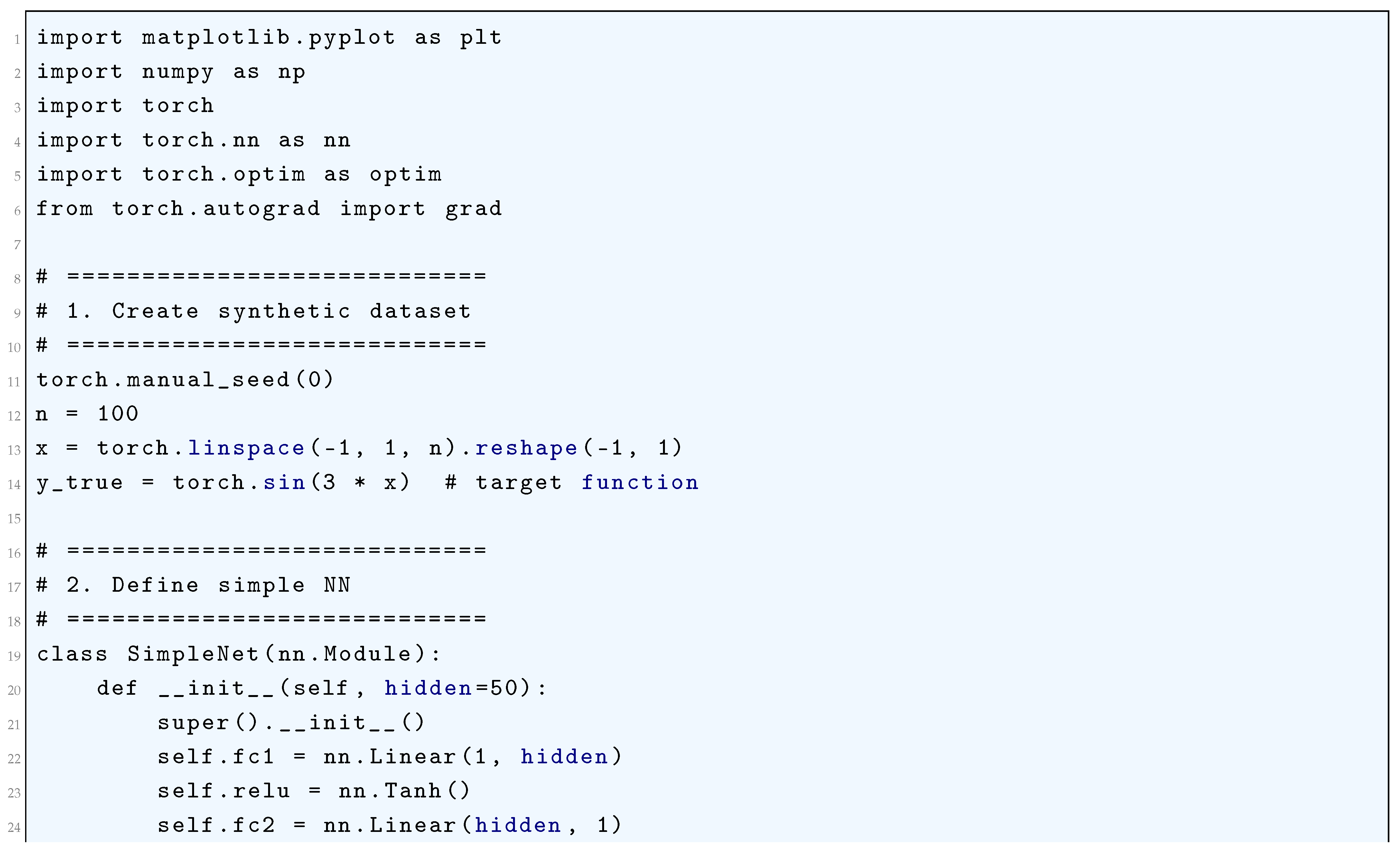

2.3.2.3 Python Code to Generate Figure 21 and Figure 22

2.3.2.4 Python Code to Generate Figure 23 and Figure 24

2.3.3. Fourier Transforms of Various Activation Functions

2.3.3.1 Fourier Transform of the Sigmoid Function

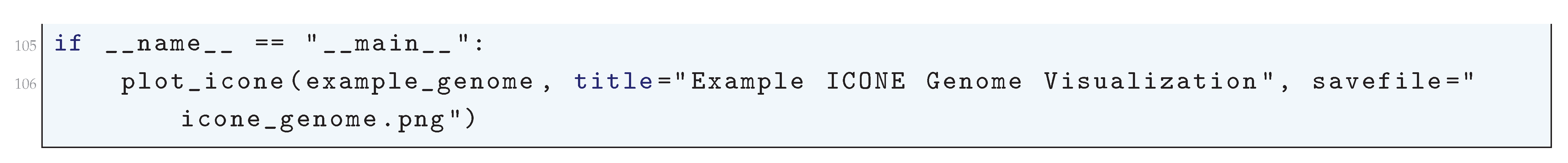

2.3.3.2 Fourier Transform of the Hyperbolic Tangent Function

2.3.3.3 Fourier Transform of the ReLU Function

2.3.3.4 Fourier Transform of the Leaky ReLU Function

2.3.3.5 Fourier Transform of the Sinusoidal Activation Function

2.4. The Connection Between Different Mathematics Problems and Deep Learning

2.4.1. Basel Problem and Deep Learning

2.4.1.1 The Basel Problem, Fourier Series, and Function Approximation in Deep Learning

2.4.1.2 The Role of the Basel Problem in Regularization and Weight Decay

2.4.1.3 Spectral Bias in Deep Learning and the Basel Problem

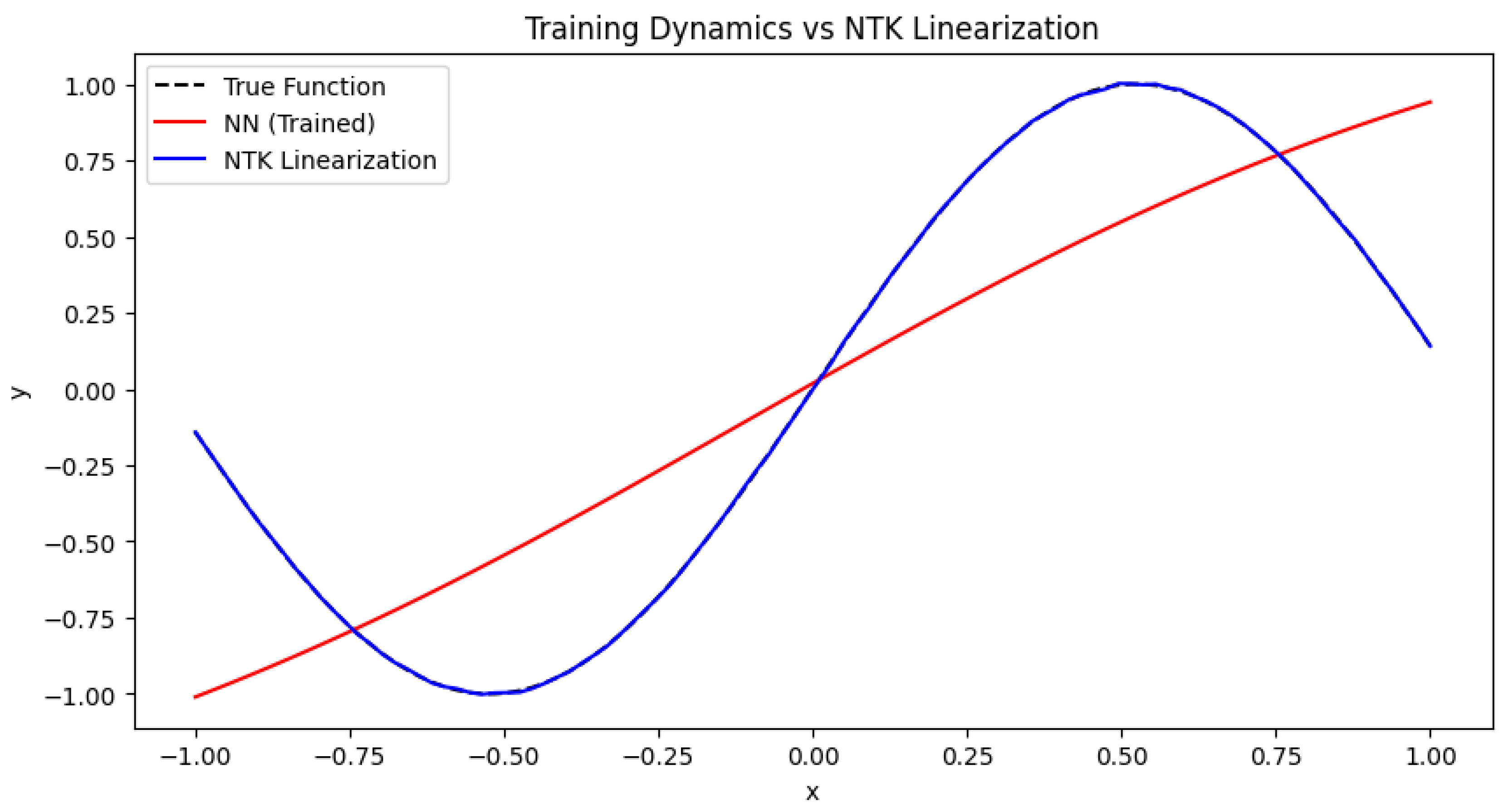

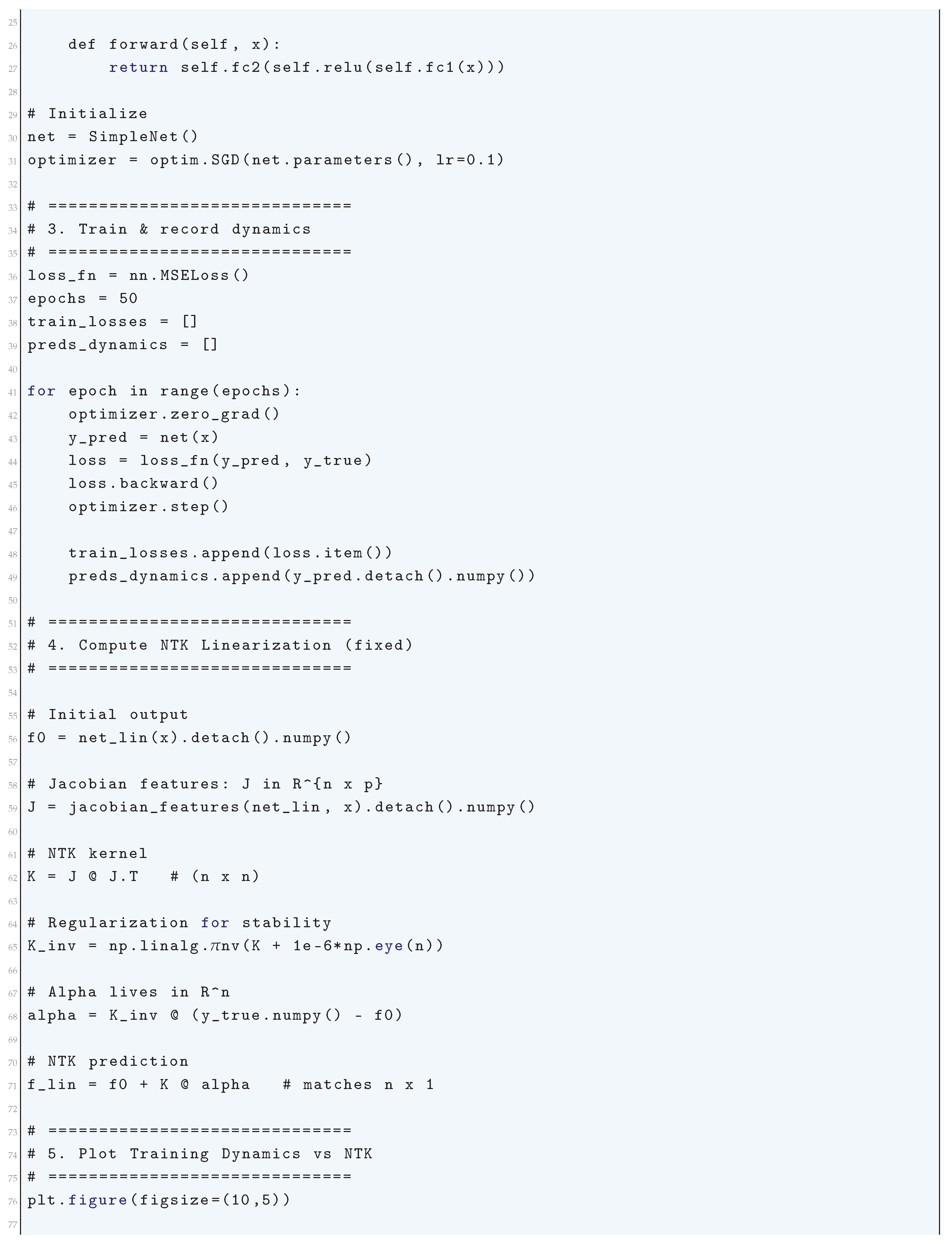

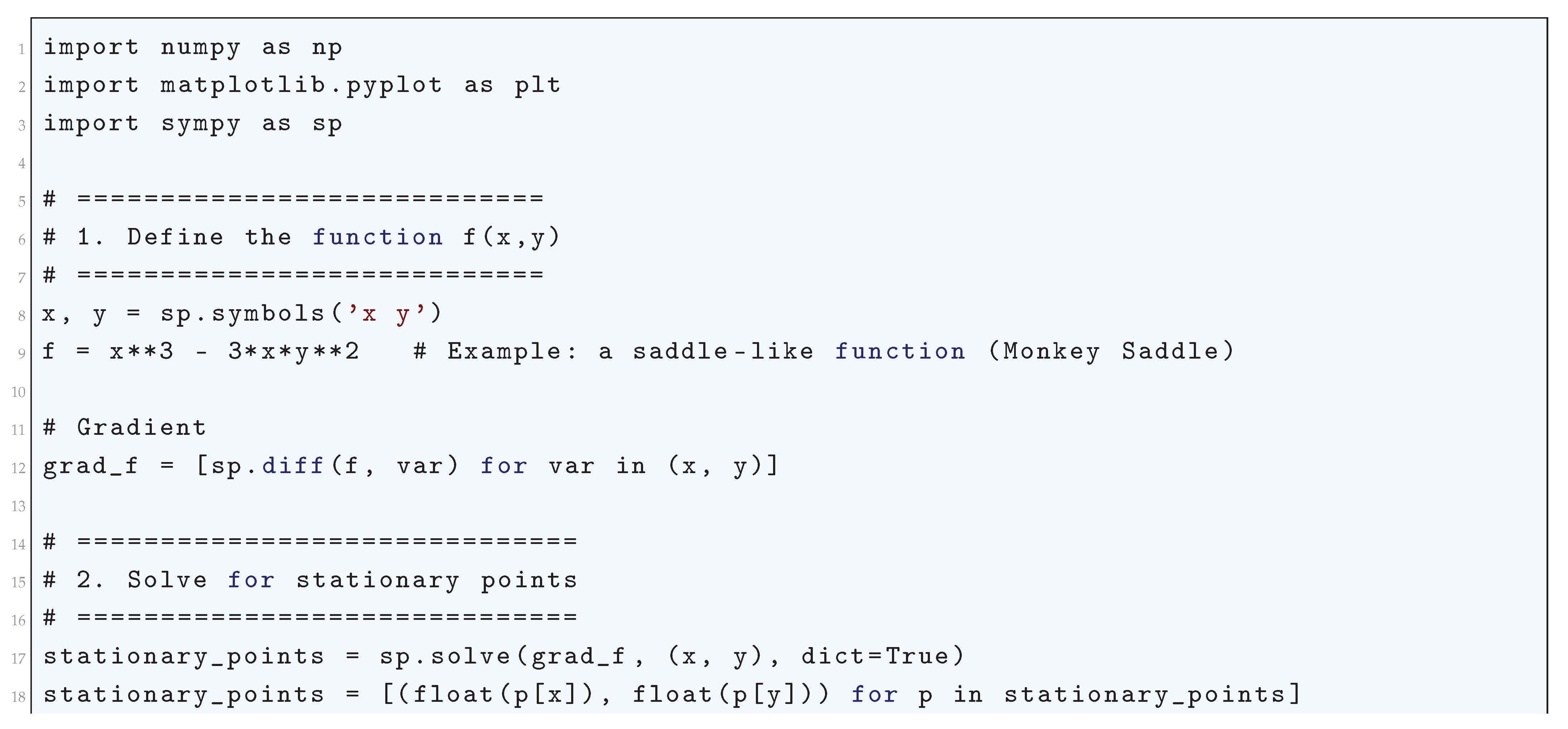

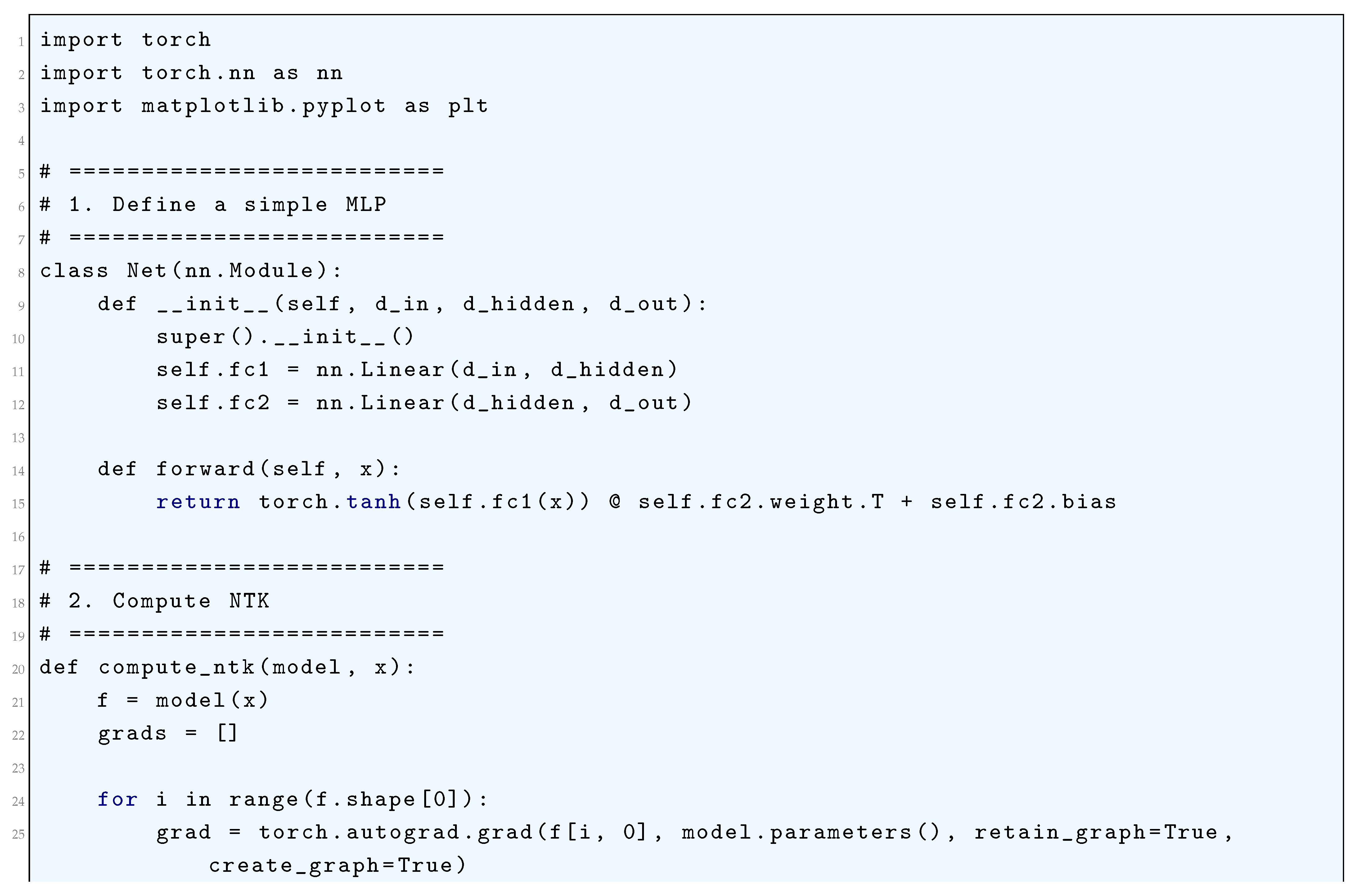

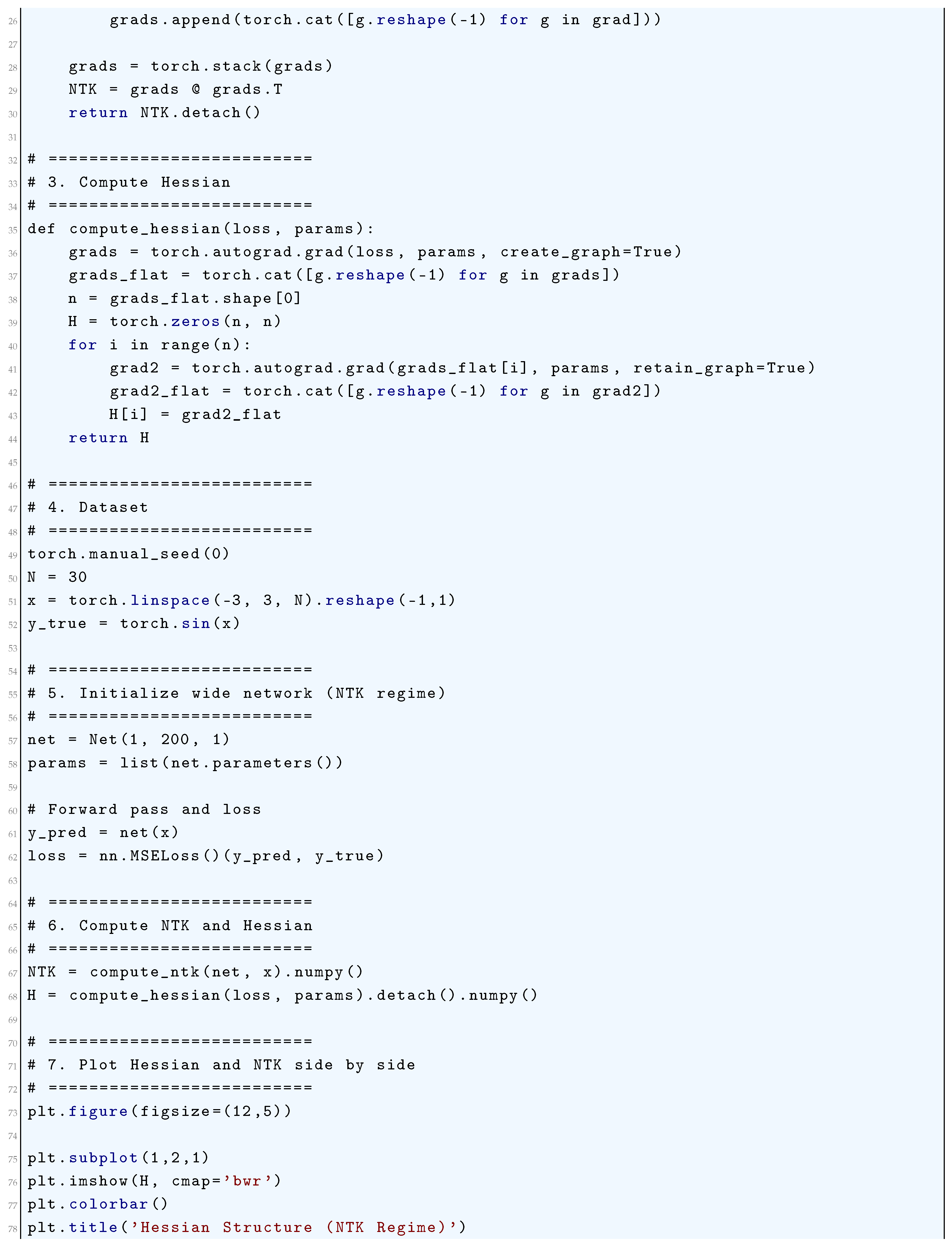

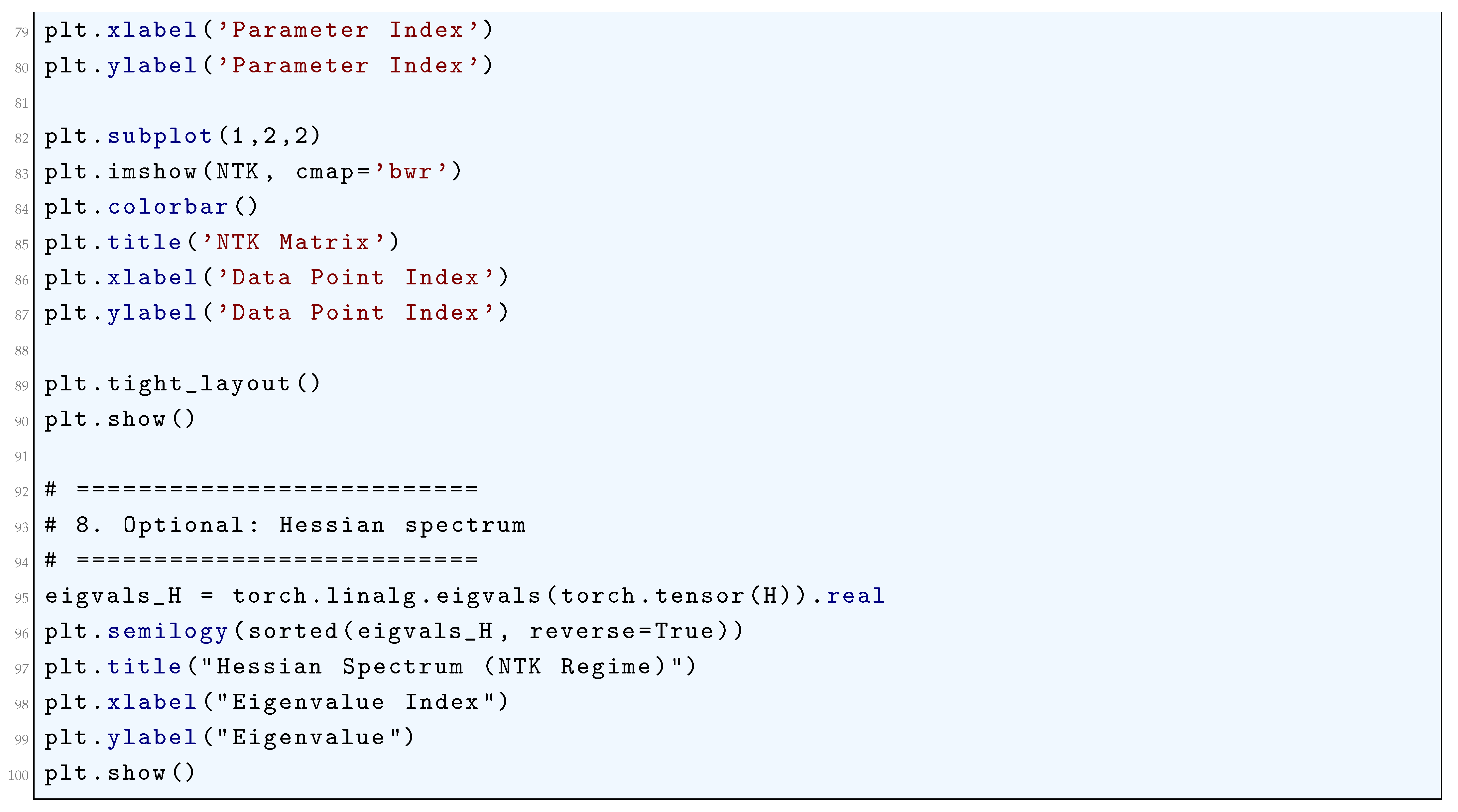

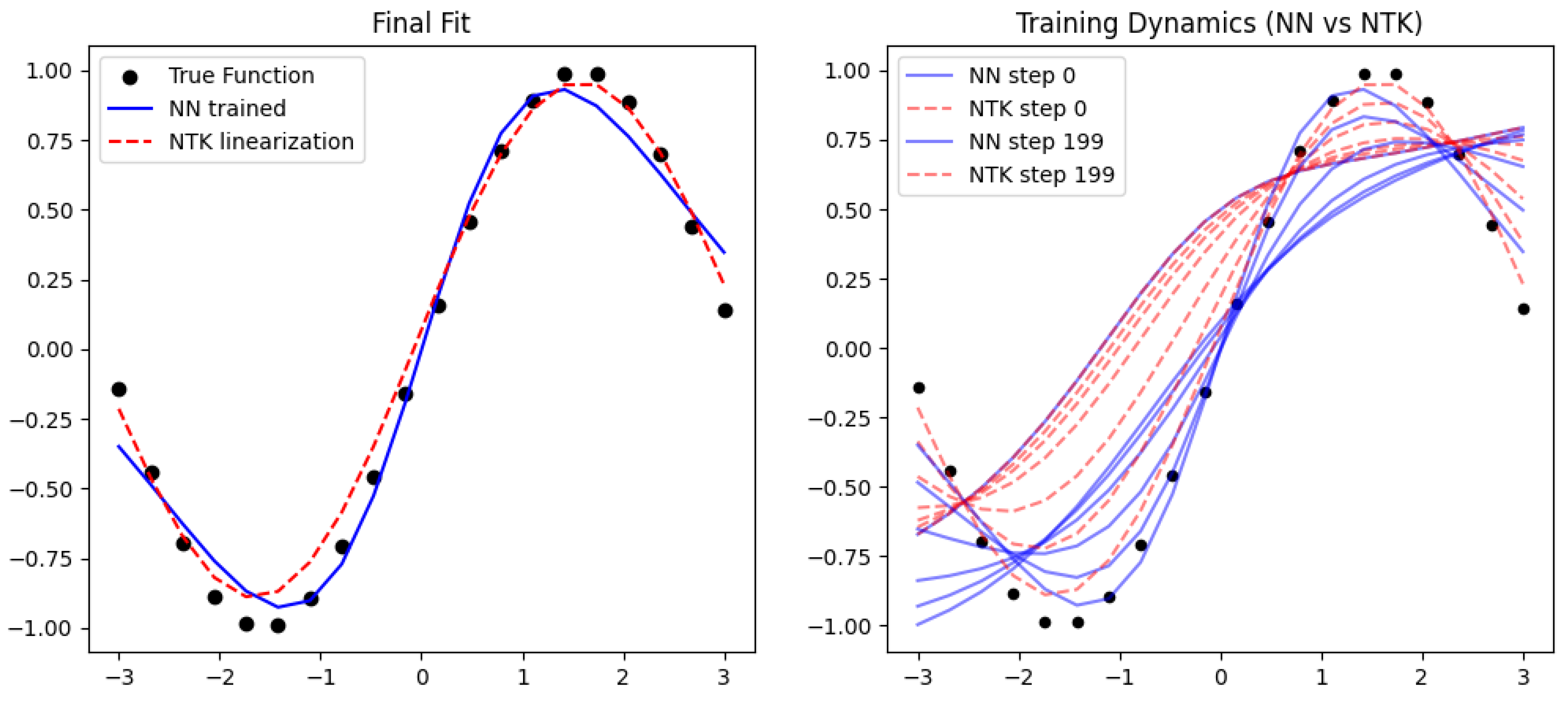

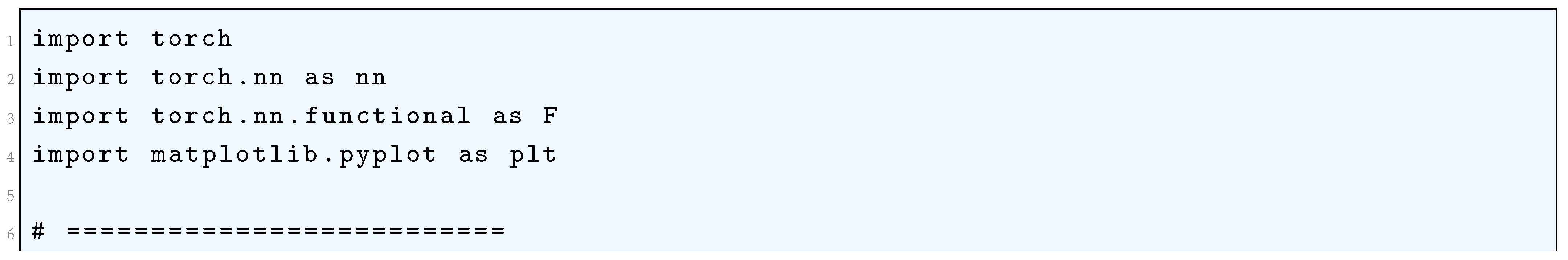

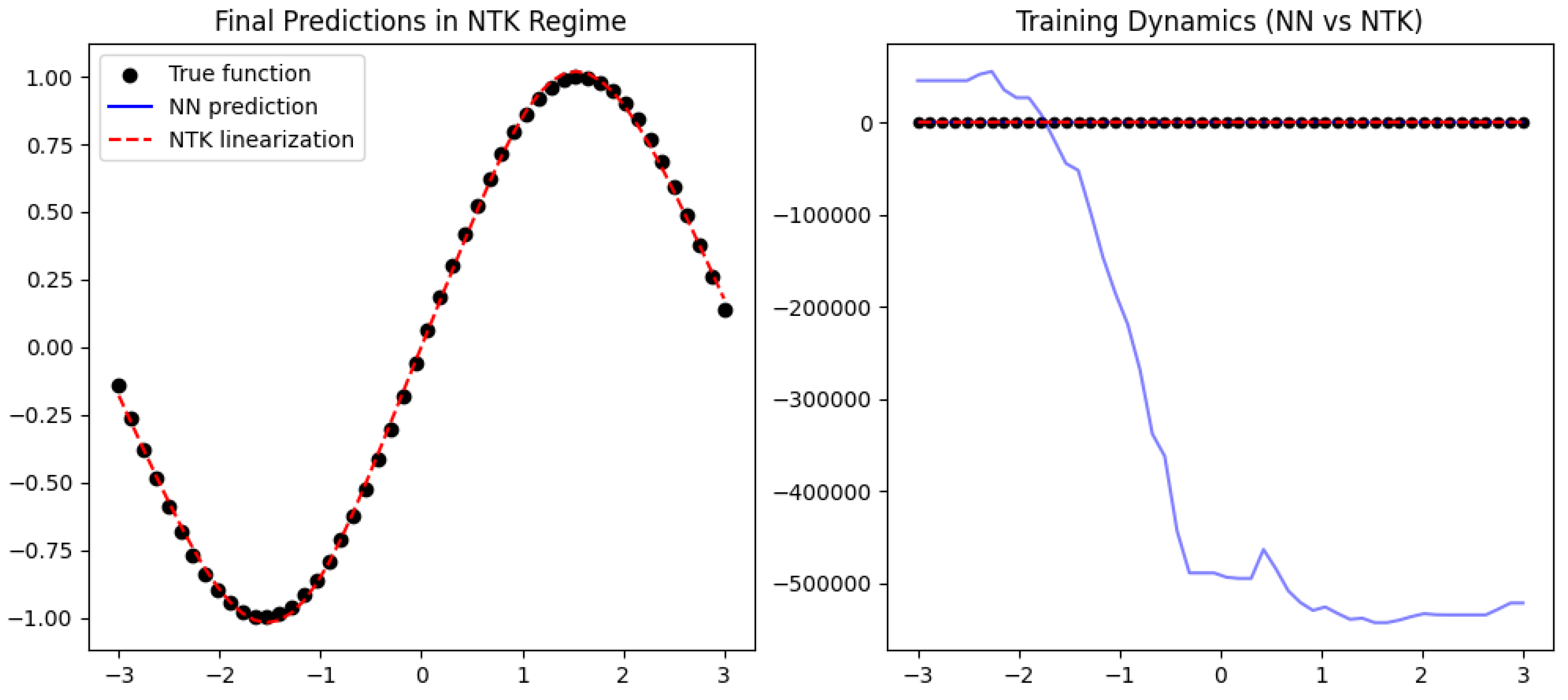

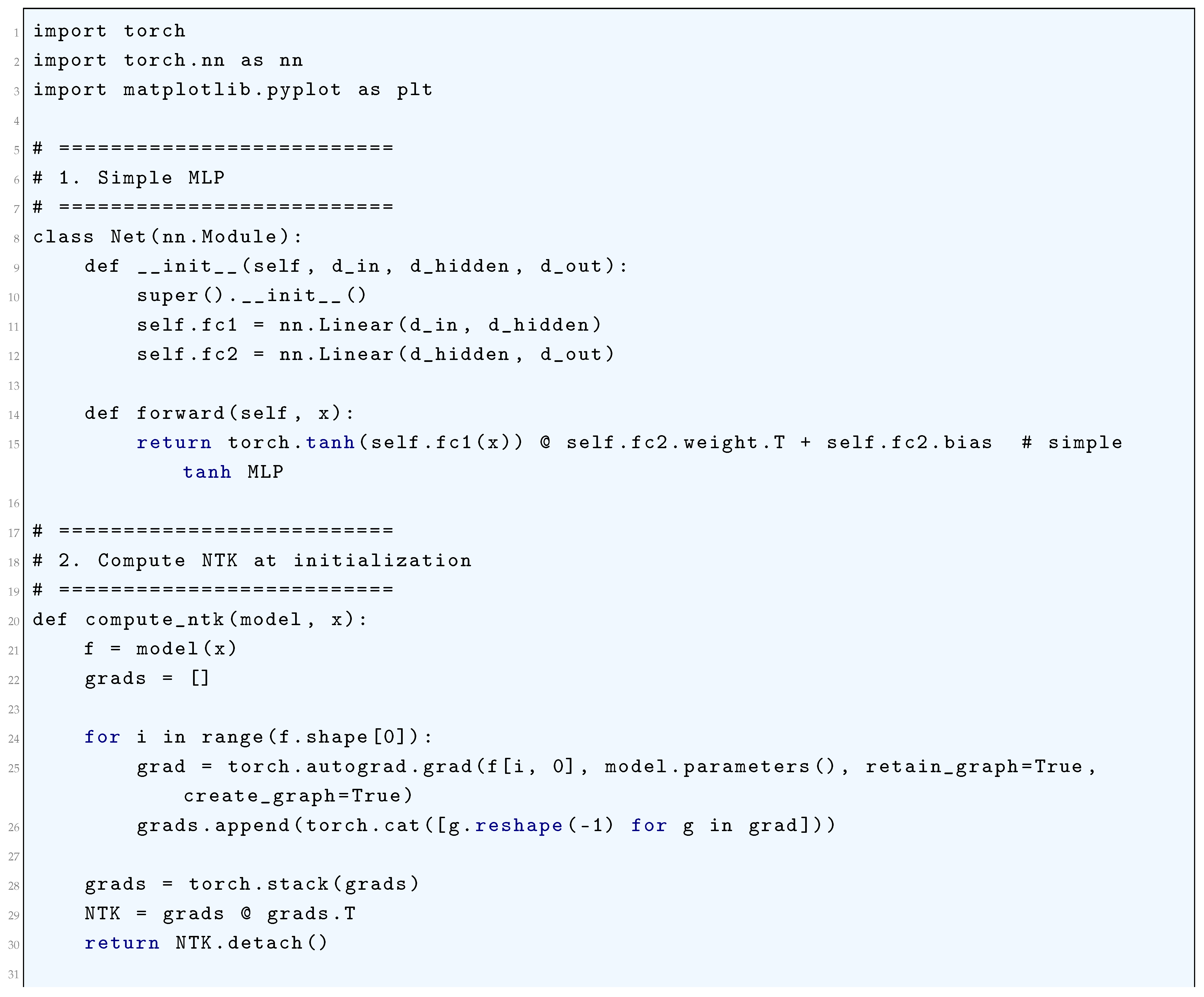

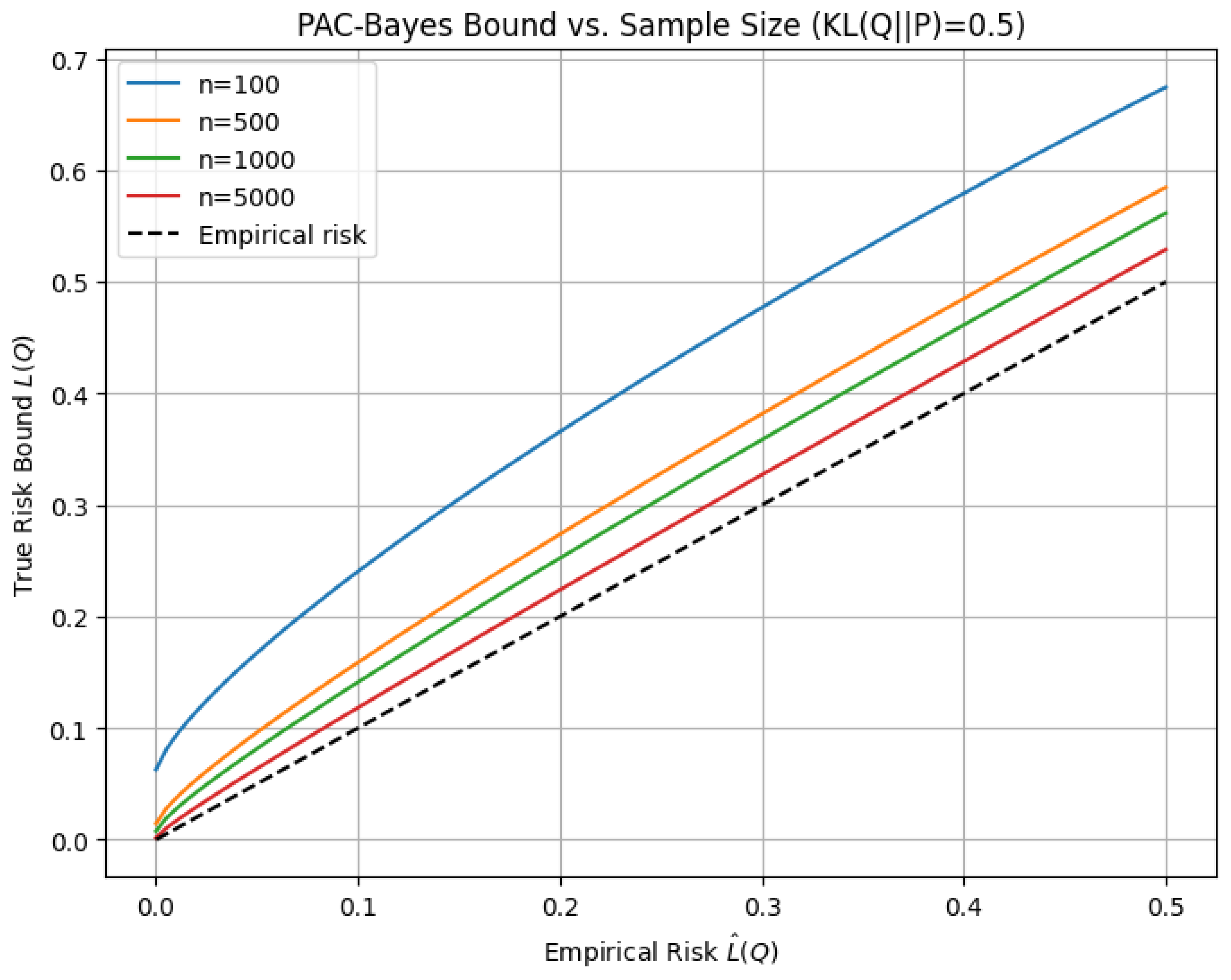

3. Training Dynamics and NTK Linearization

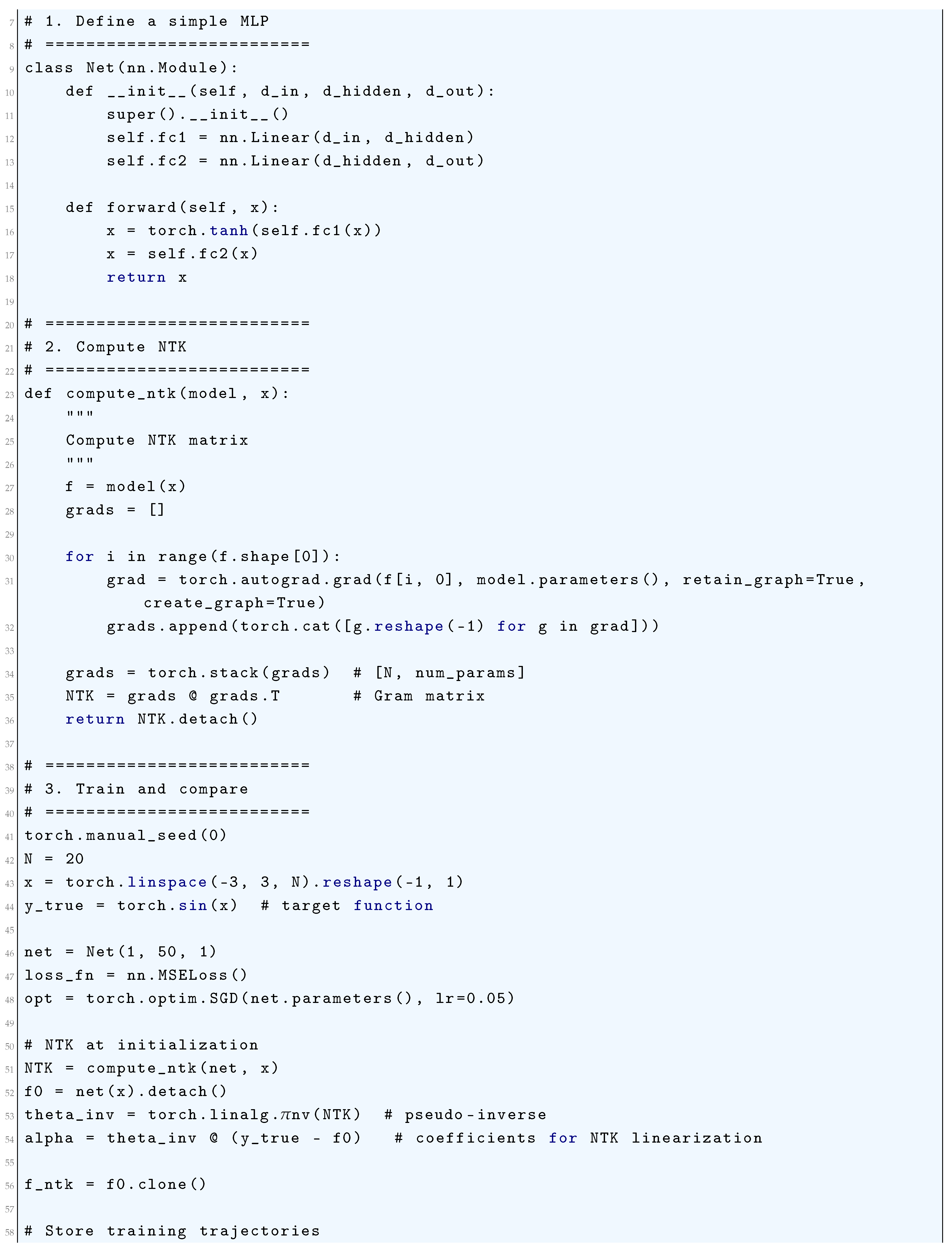

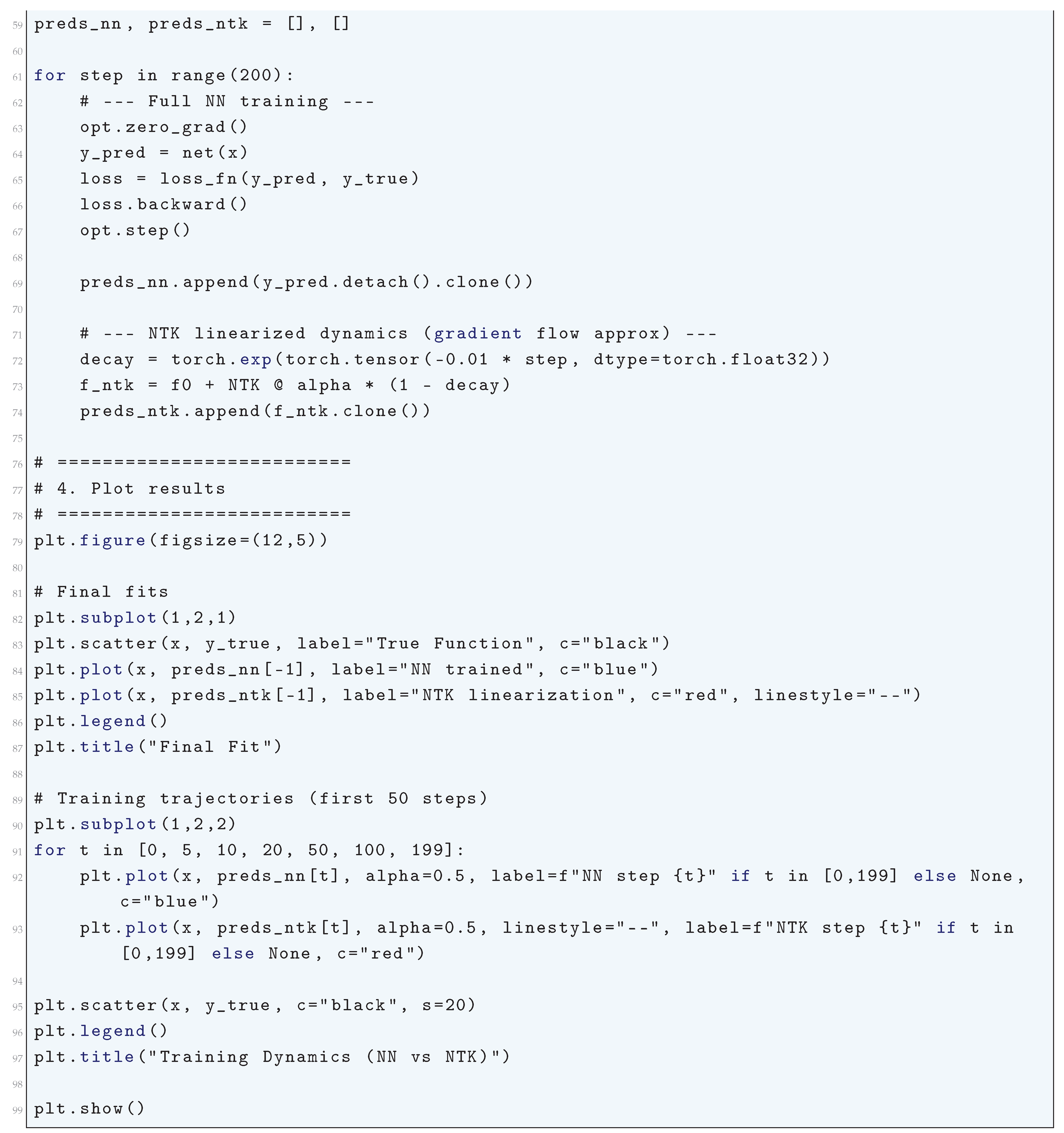

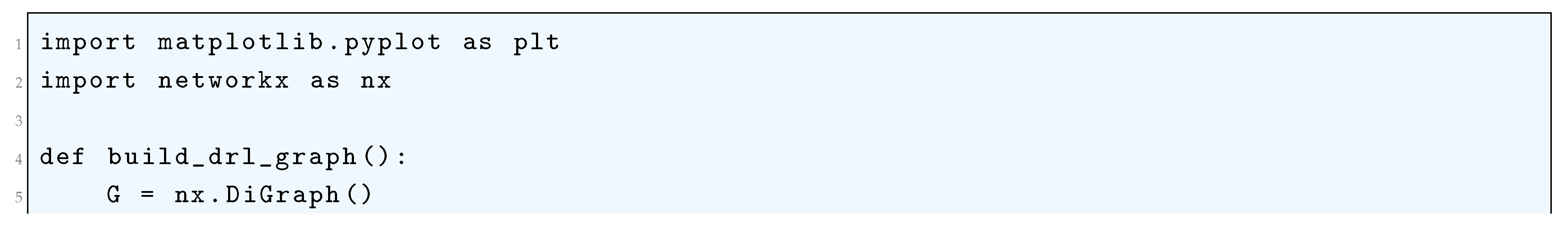

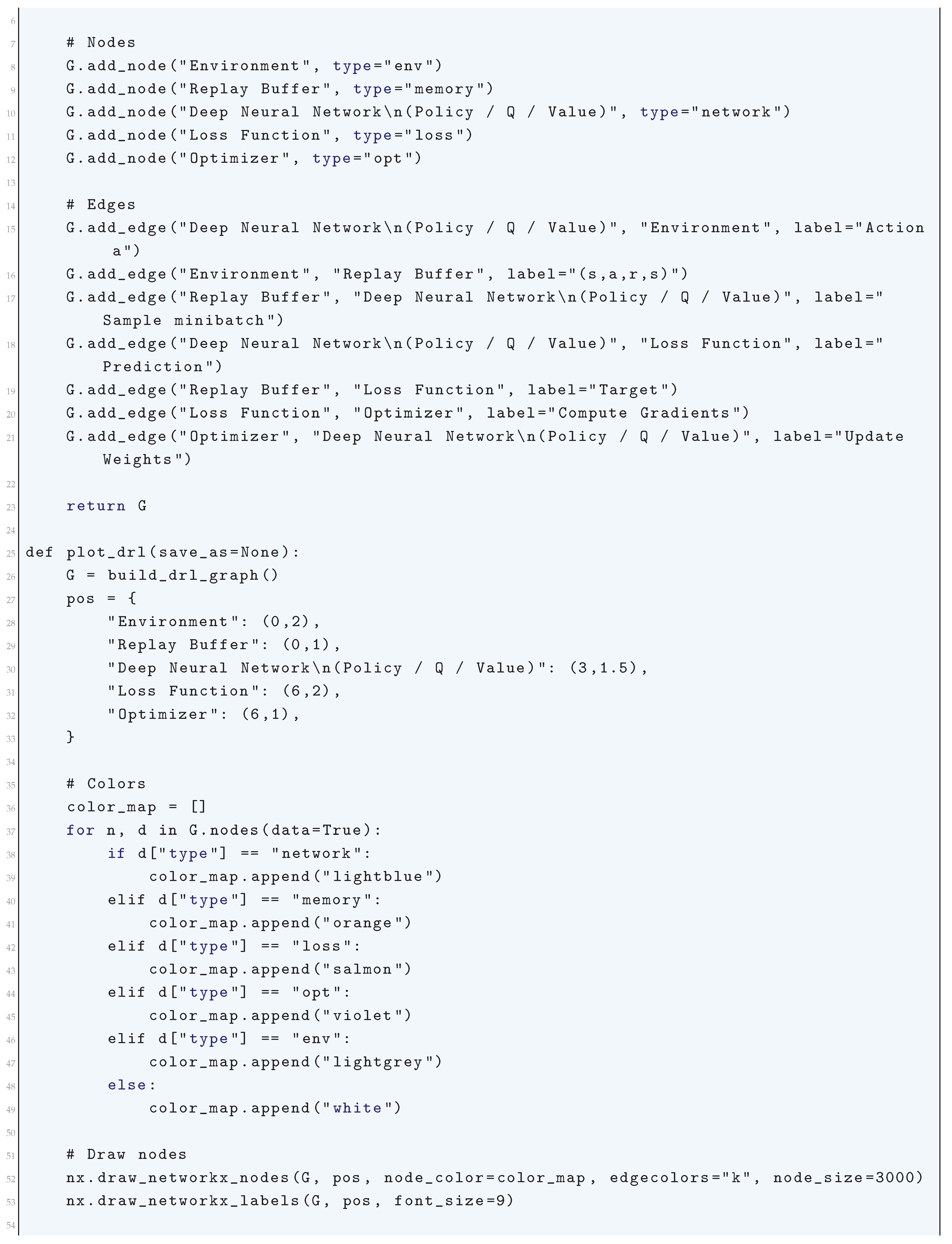

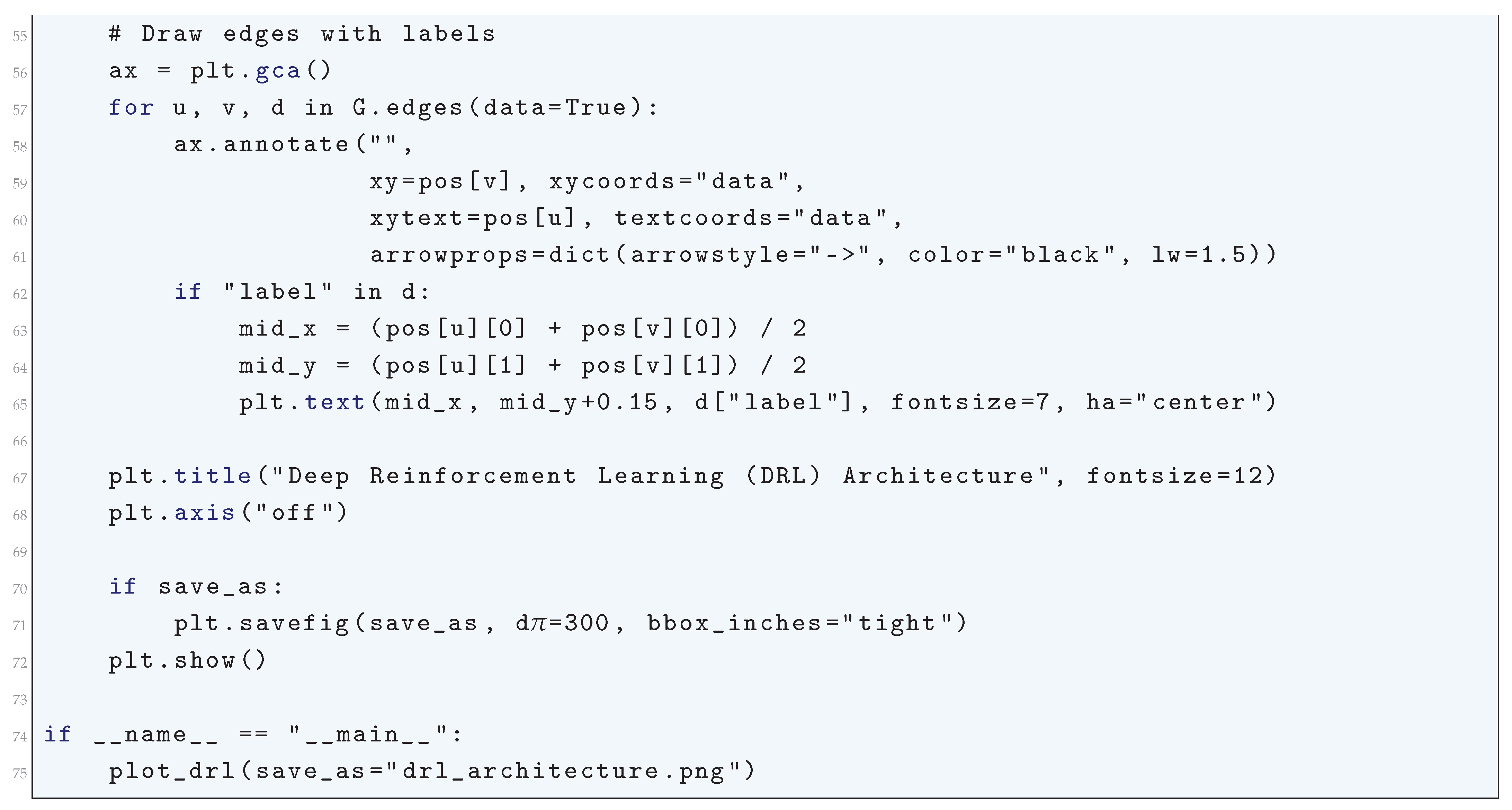

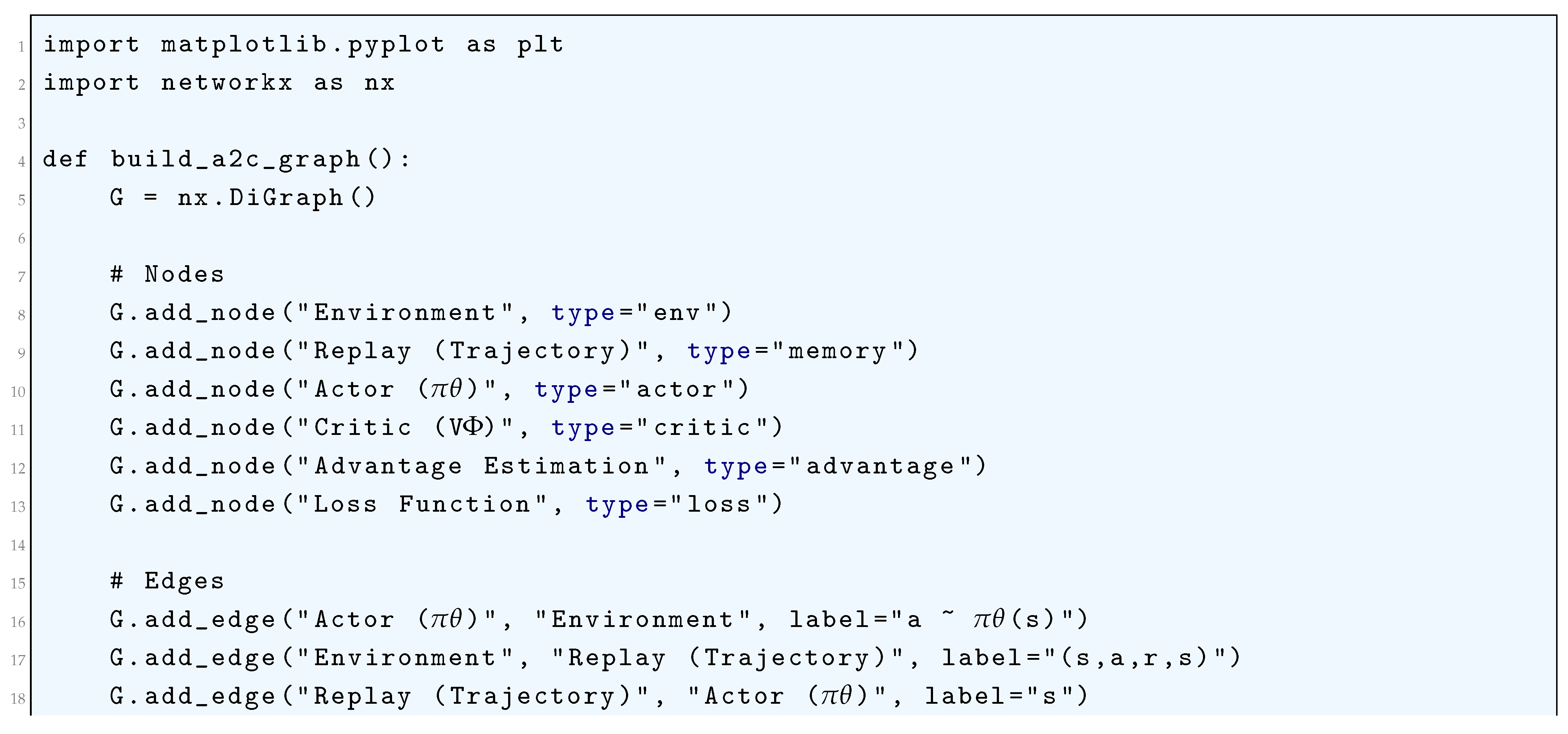

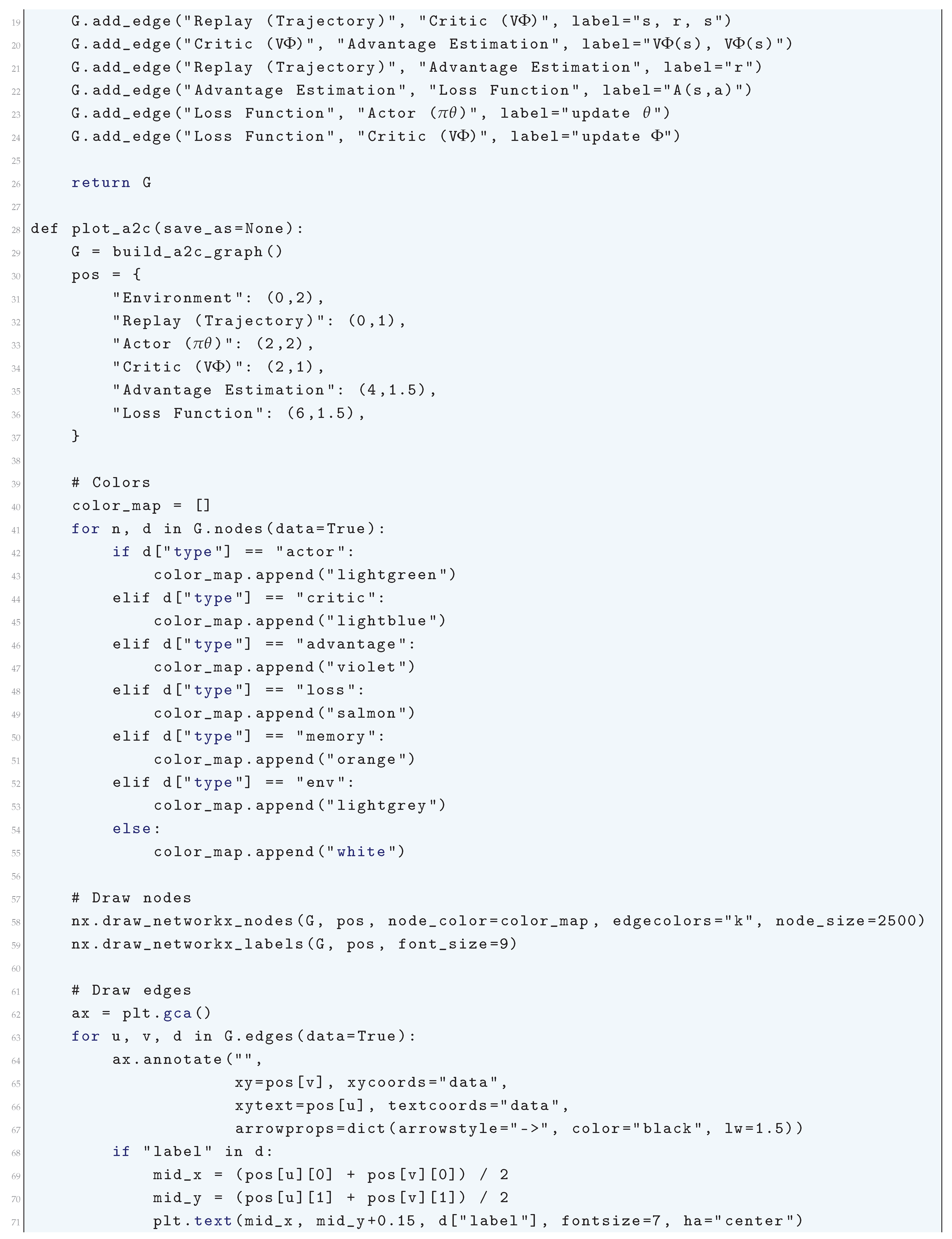

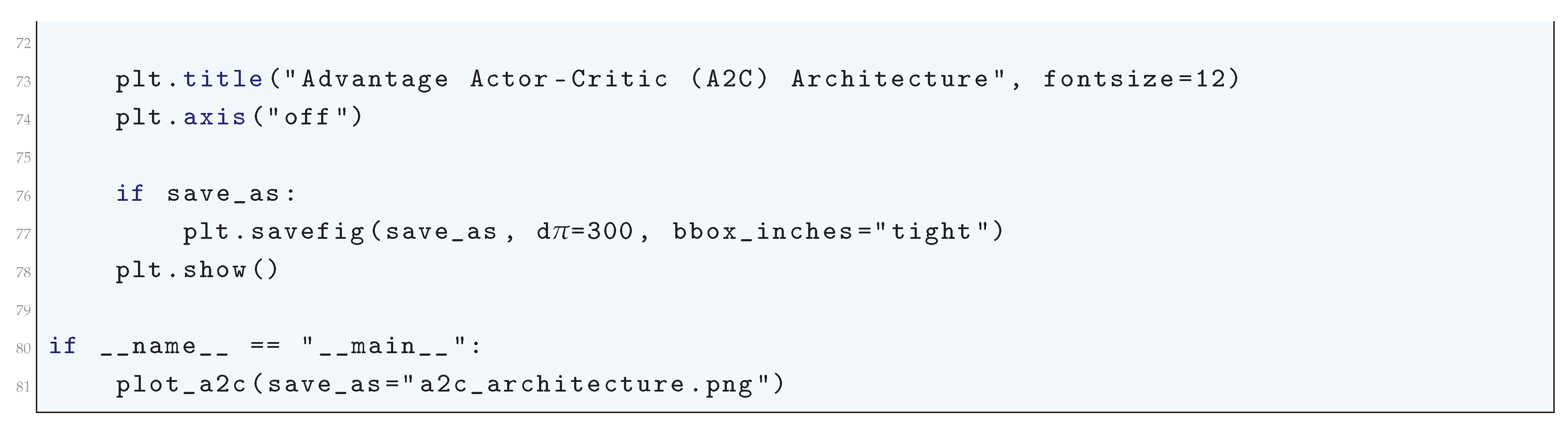

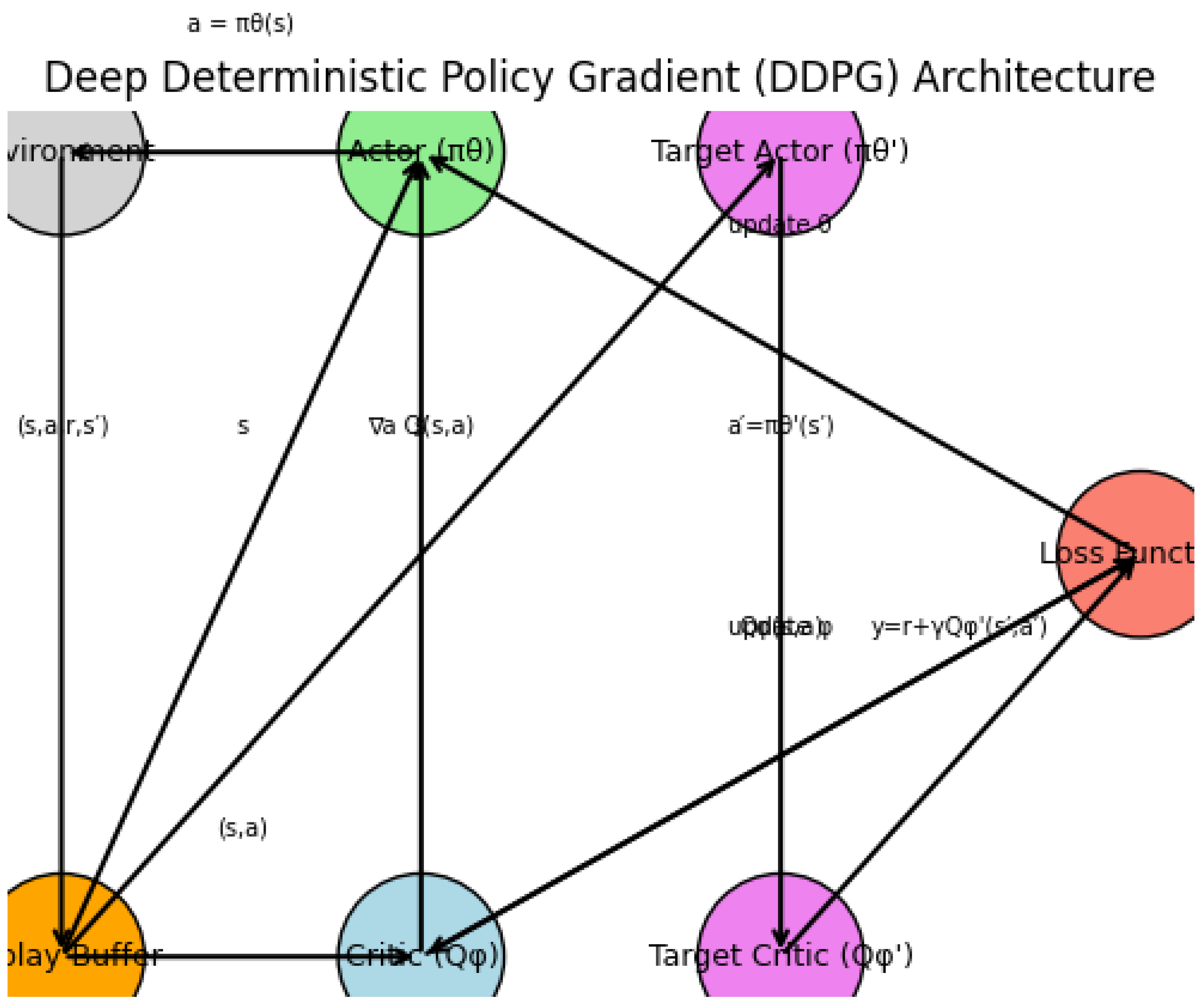

3.1. Python Code to Generate Figure 30 Illustrating the Training Dynamics vs NTK Linearization

3.2. Literature Review of Training Dynamics and NTK Linearization

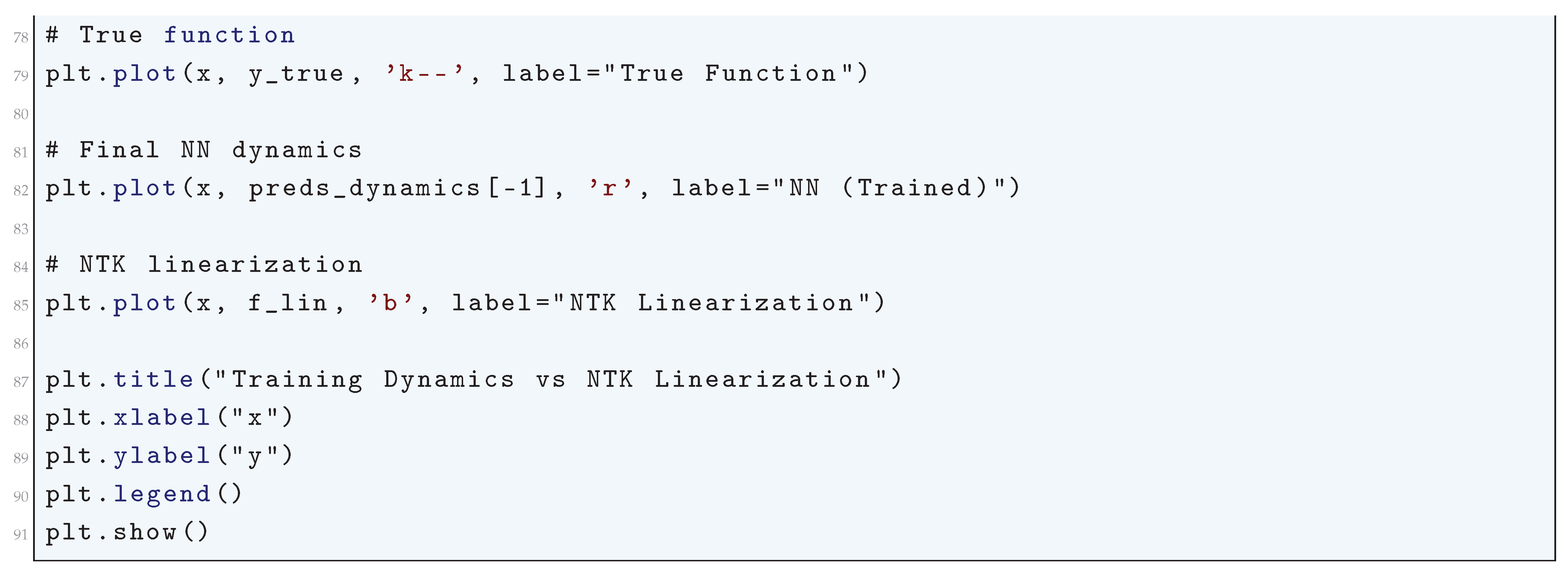

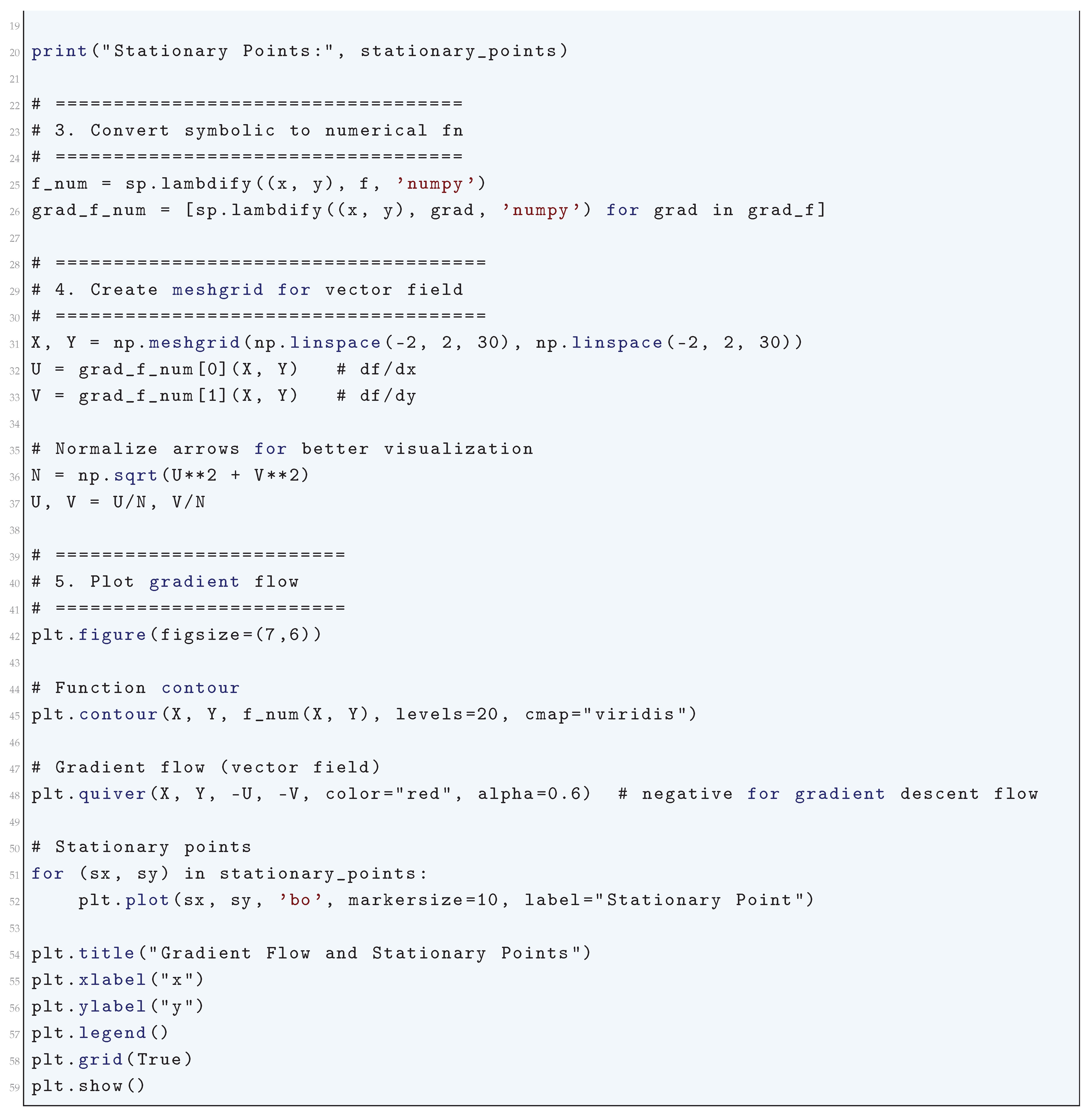

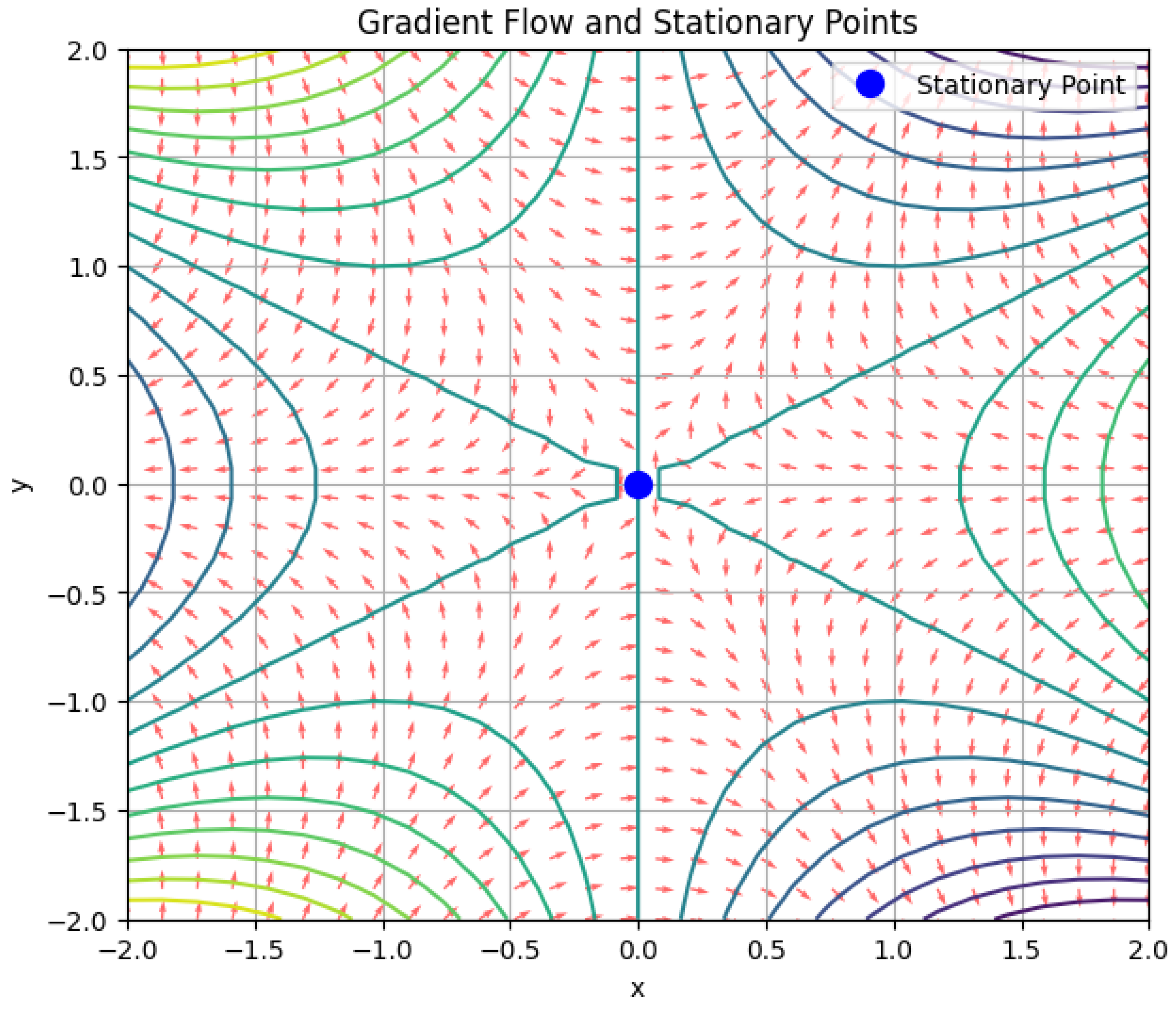

3.3. Gradient Flow and Stationary Points

3.3.1. Literature Review of Gradient Flow and Stationary Points

3.3.2. Analysis of Gradient Flow and Stationary Points

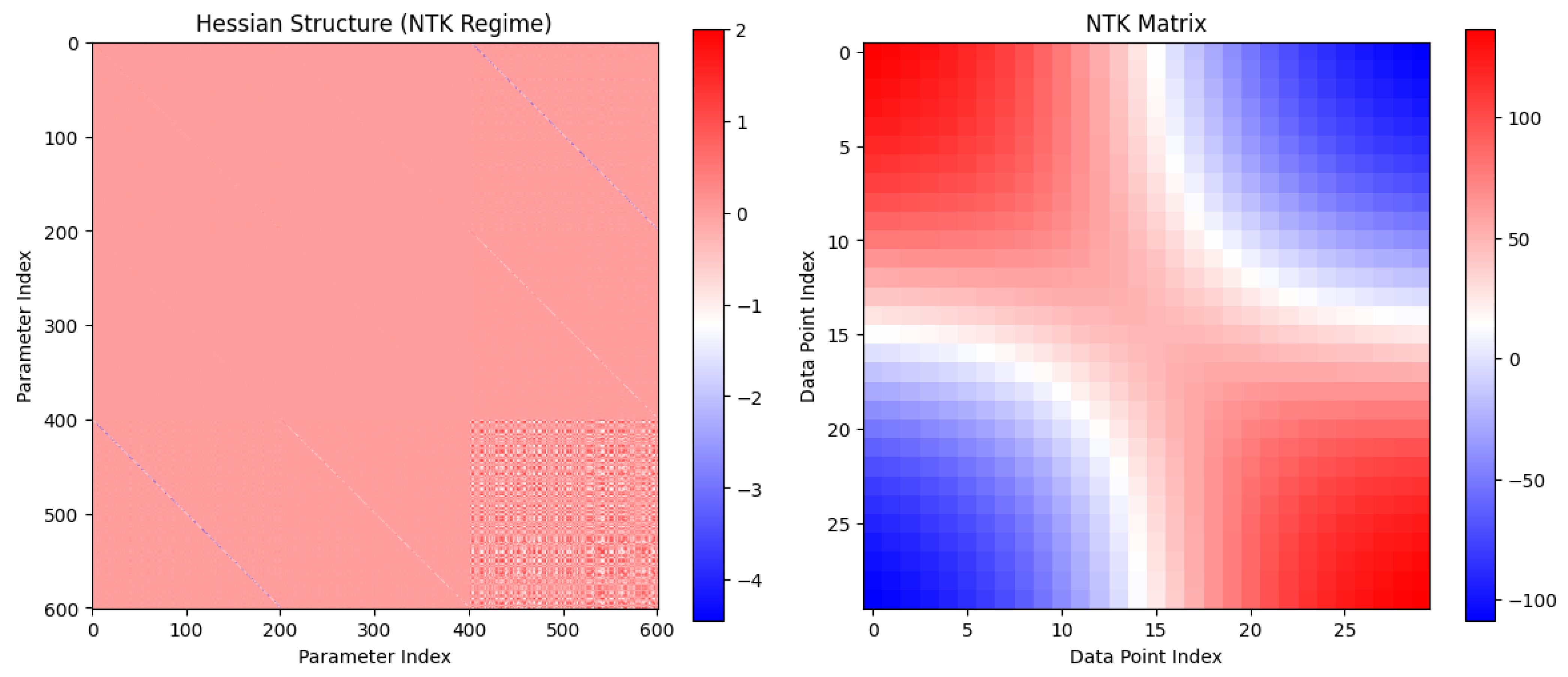

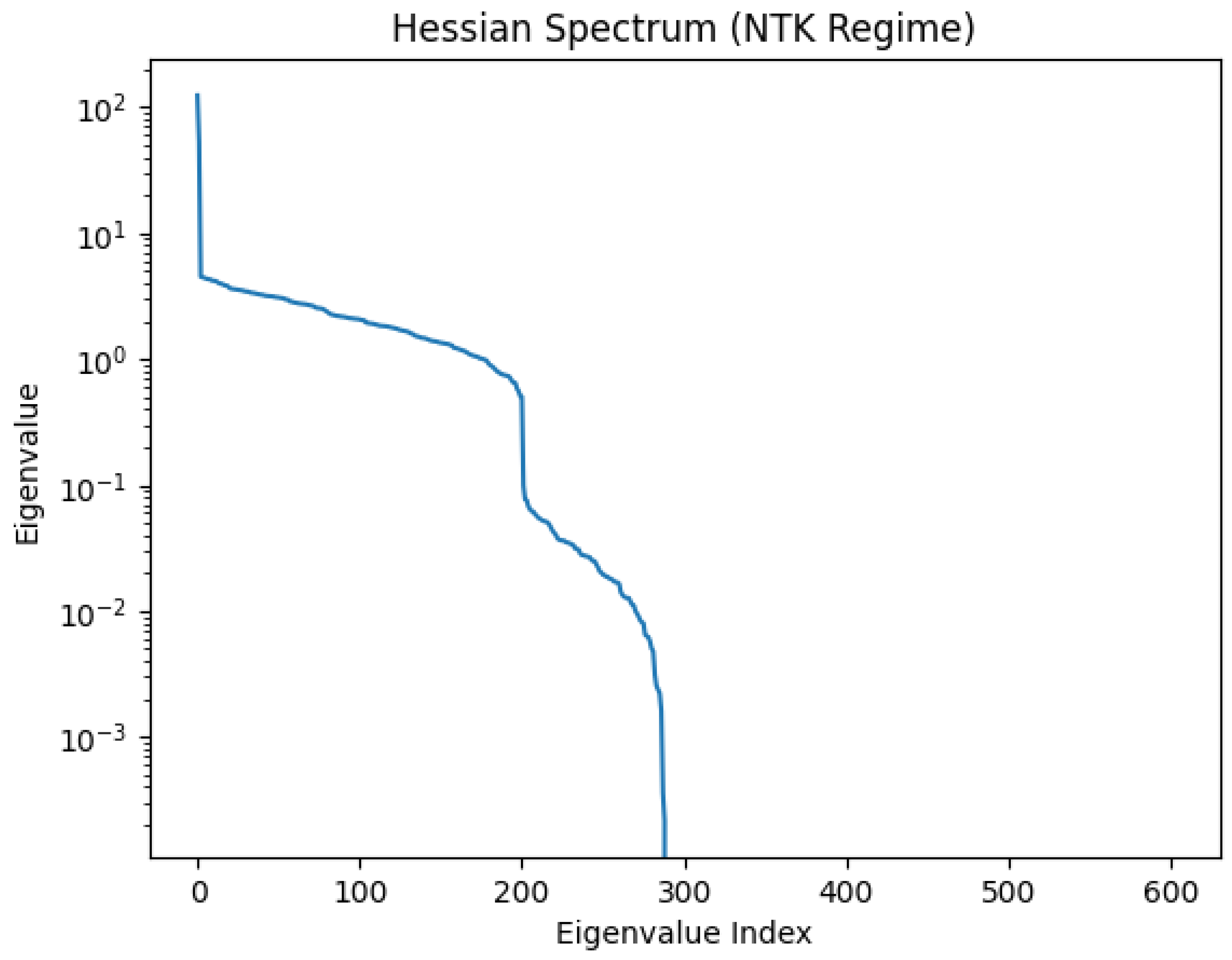

3.3.3. Hessian Structure

3.3.4. NTK Linearization

3.3.5. Python Code to Generate Figure 31 Illustrating the Gradient Flow and Stationary Points

3.3.6. Python Code to Generate Figure 32 and Figure 33 Illustrating the Hessian Structure

3.3.7. Python Code to Generate Figure 34 Illustrating the Final Fit & Training Dynamics (NN vs NTK)

3.4. NTK Regime

3.4.1. Literature Review of NTK Regime

3.4.2. Analysis of NTK Regime

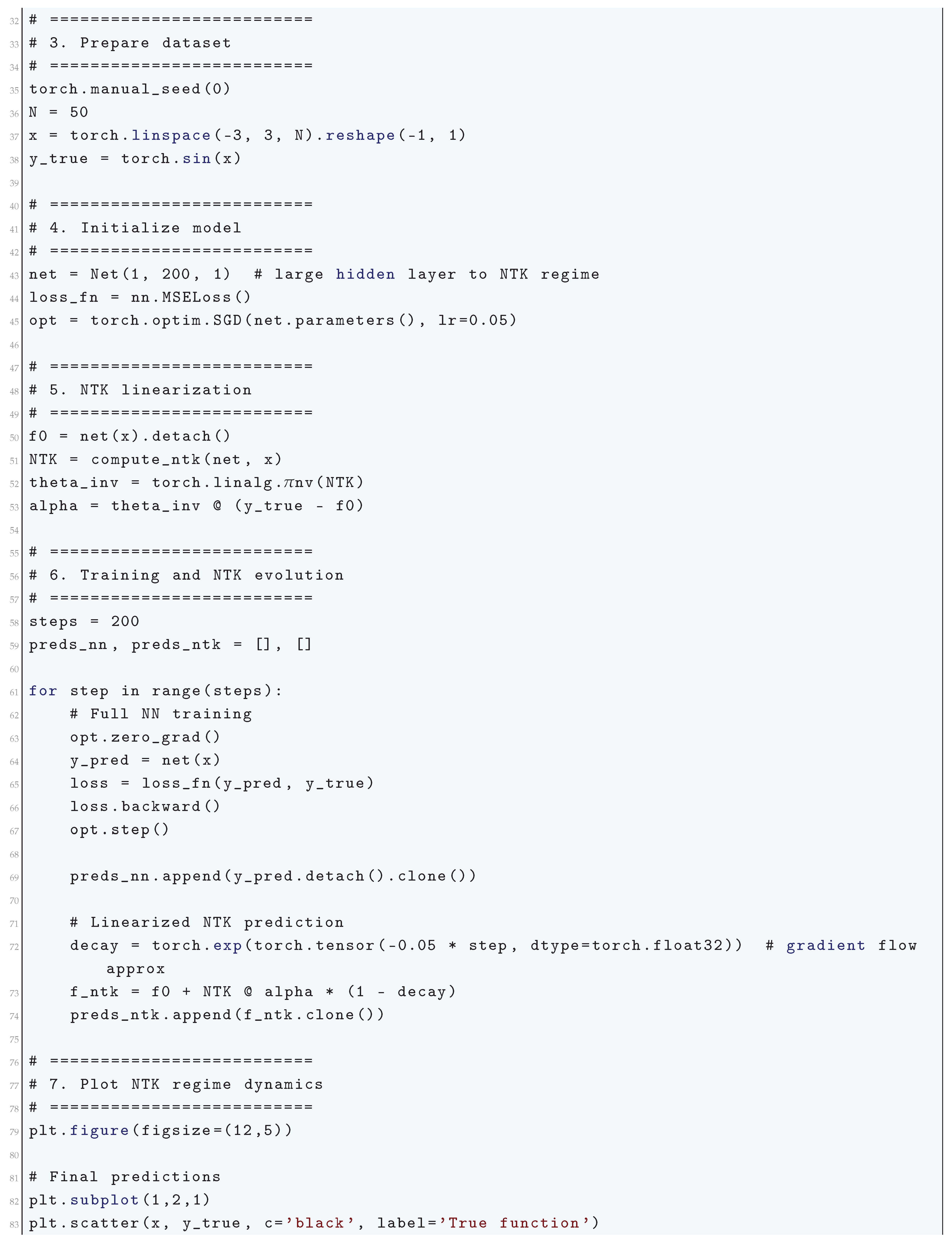

3.4.3. Python Code to Generate Figure 35 Illustrating the Final Predictions in NTK Regime & Training Dynamics (NN vs NTK)

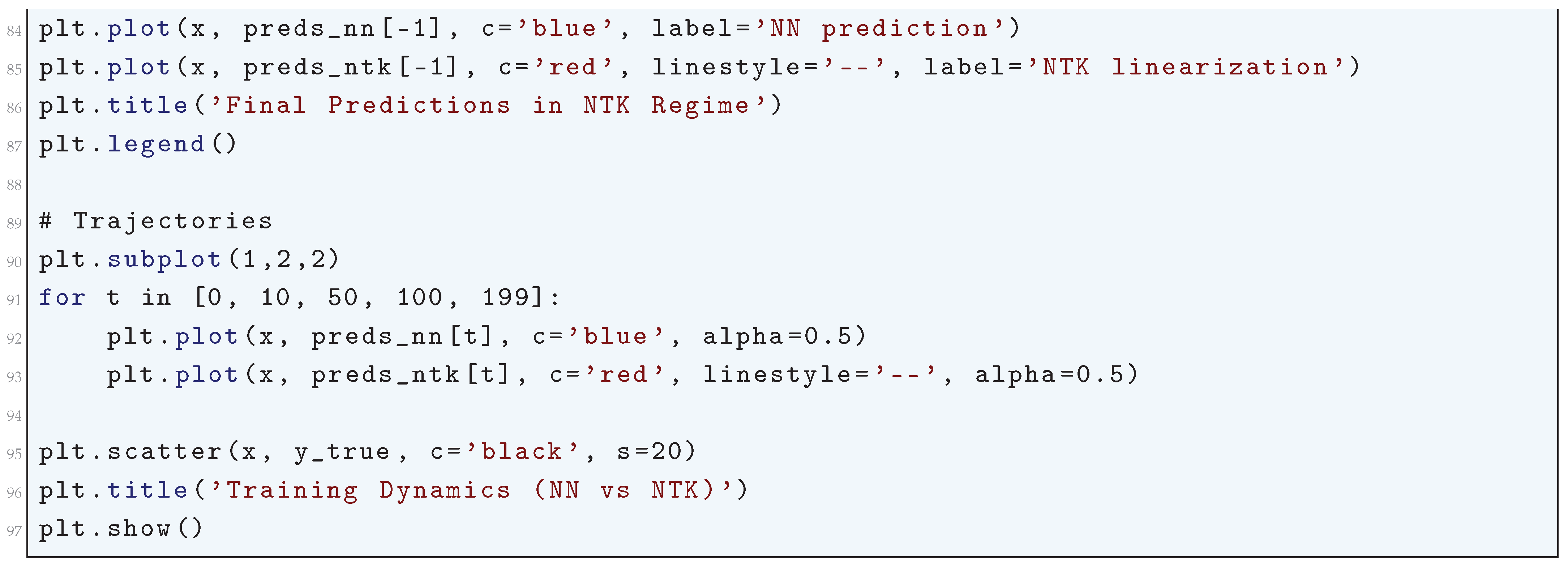

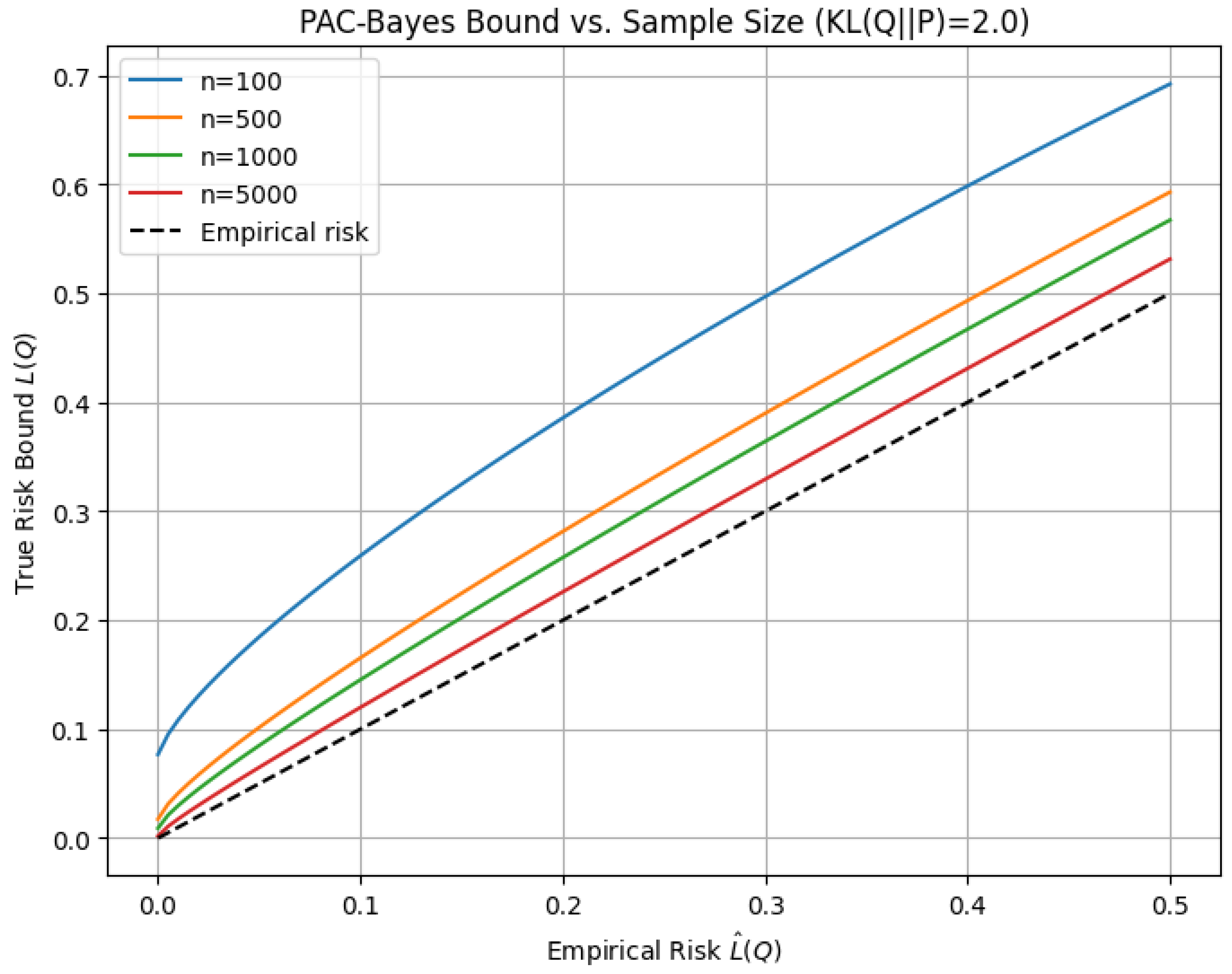

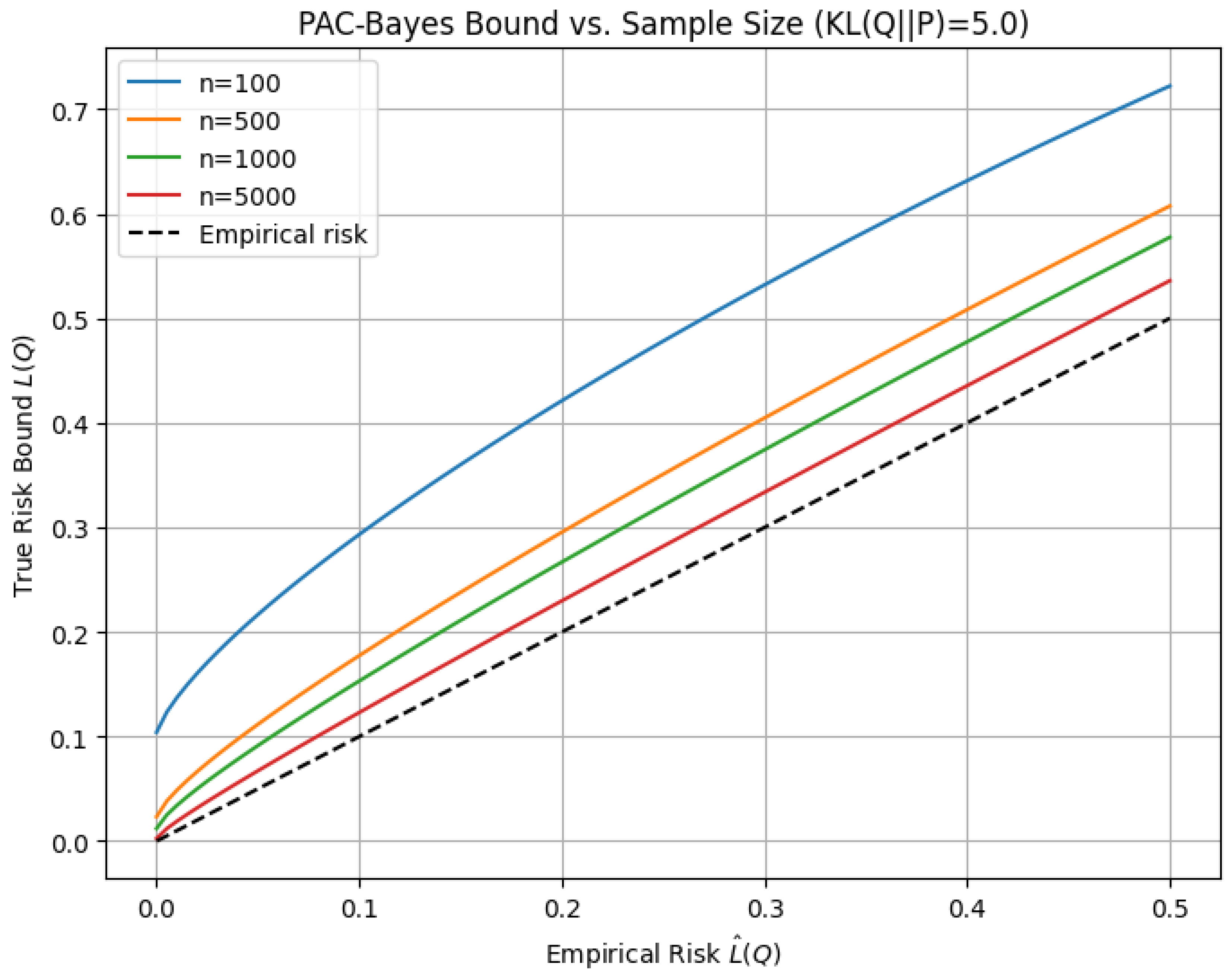

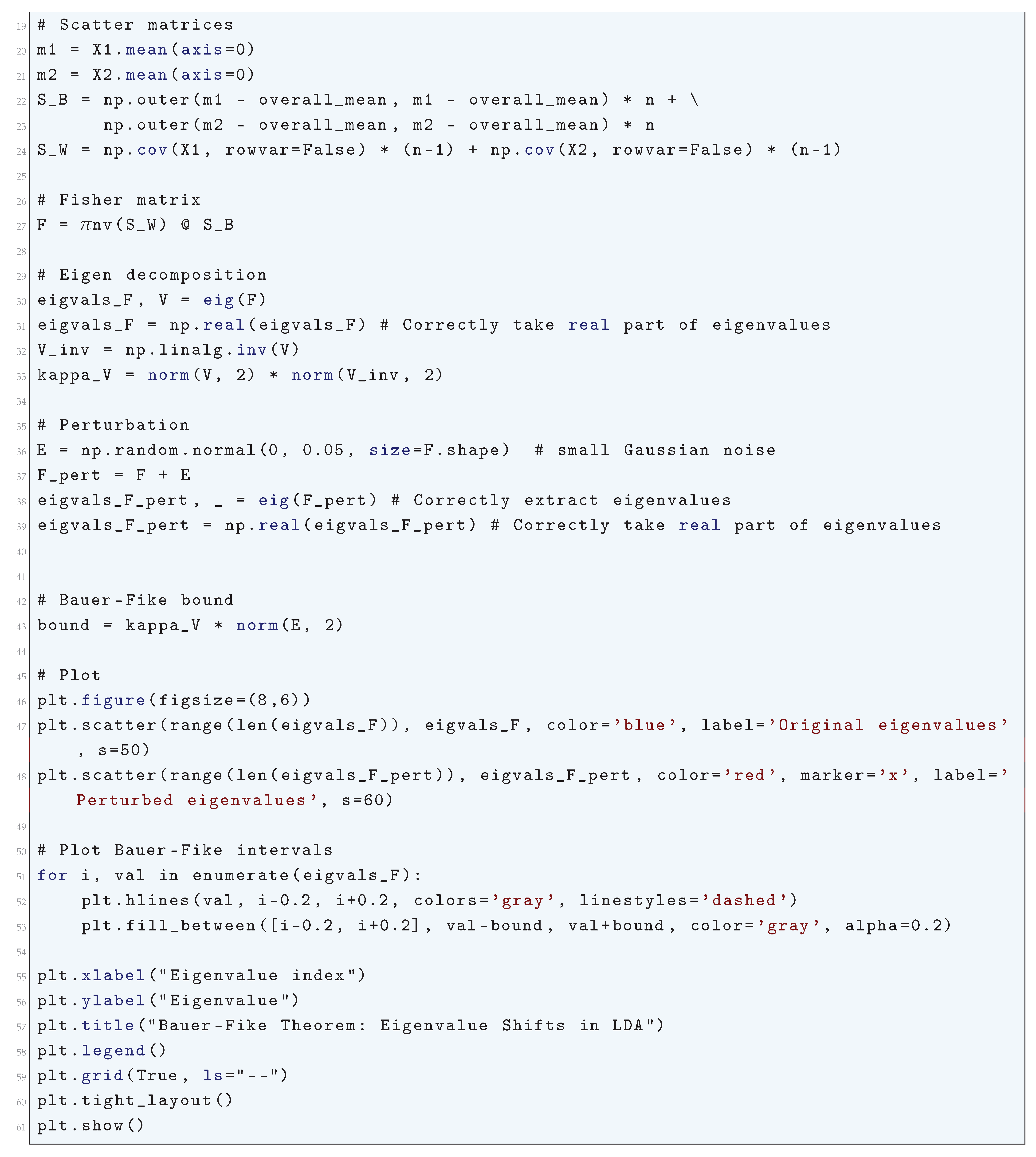

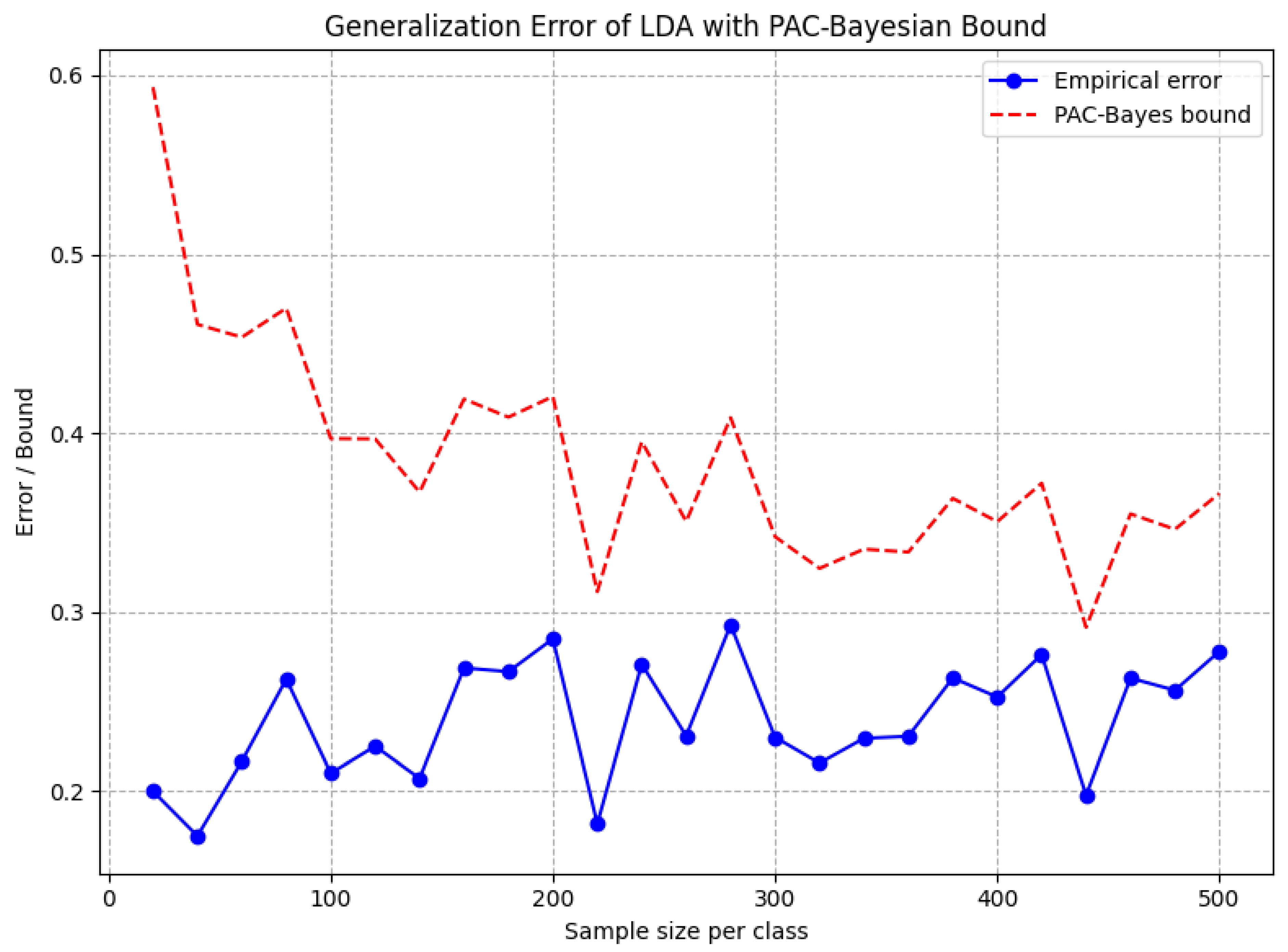

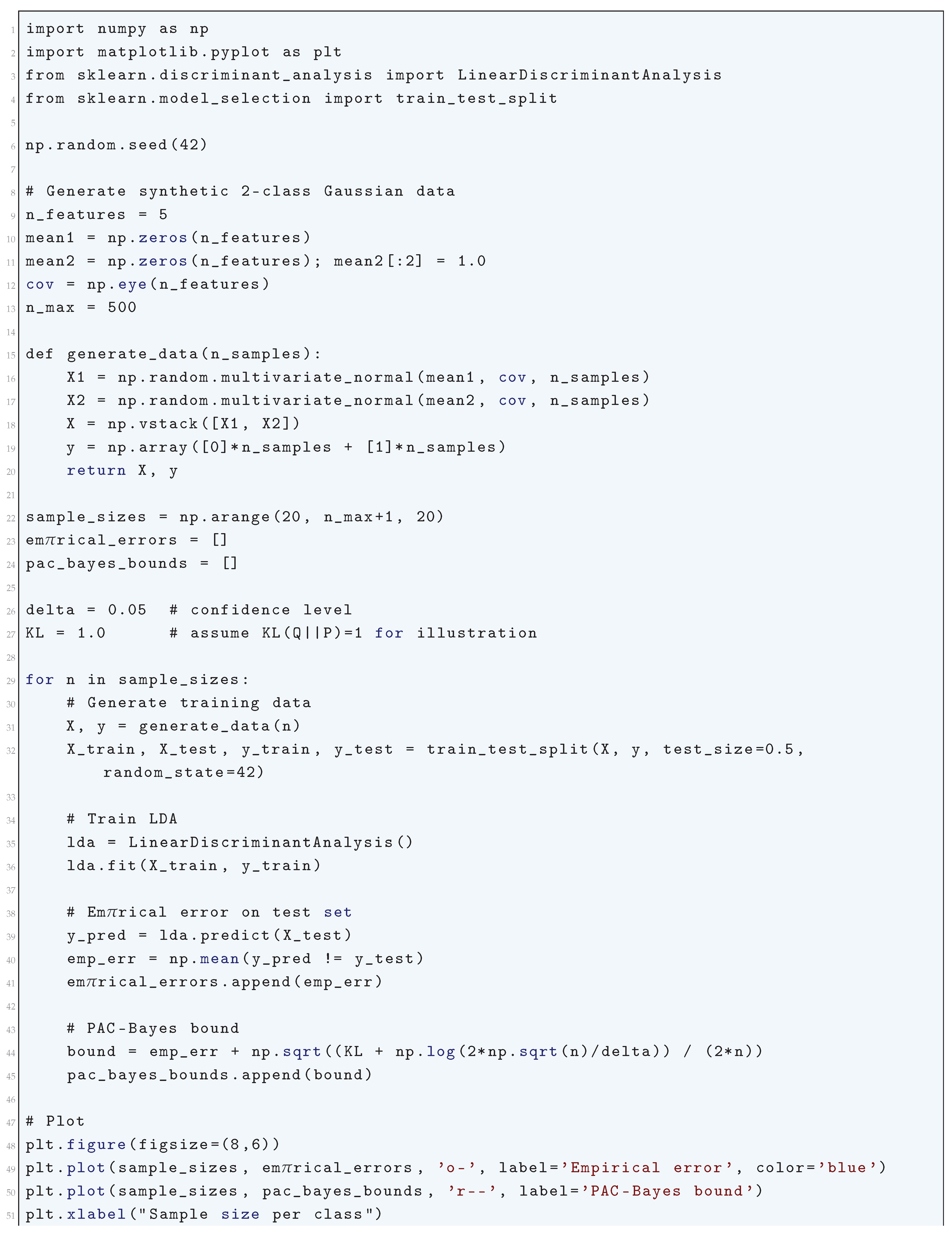

4. Generalization Bounds: PAC-Bayes and Spectral Analysis

4.1. PAC-Bayes Formalism

4.1.1. Literature Review of PAC-Bayes Formalism

4.1.2. Analysis of PAC-Bayes Formalism

- Empirical Risk: The empirical risk of the posterior Q captures how well Q fits the training data.

- Complexity: The KL divergence $\mathrm{KL}(Q \,\|\, P)$ ensures that Q remains close to the prior P, discouraging overfitting and promoting generalization.

- Confidence: The confidence term shrinks with increasing sample size, tightening the bound and enhancing reliability.
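How the three ingredients trade off can be seen numerically. The sketch below evaluates one commonly stated McAllester-style bound, $R(Q) \le \hat{R}(Q) + \sqrt{(\mathrm{KL}(Q\|P) + \ln(2\sqrt{n}/\delta)) / (2n)}$, for growing sample sizes. This particular form is an assumption of the example (the source's exact bound may differ), and the numerical inputs are hypothetical.

```python
import math


def pac_bayes_bound(empirical_risk, kl, n, delta=0.05):
    """Empirical risk plus a complexity/confidence term that shrinks with n."""
    # kl is KL(Q || P); delta is the allowed failure probability.
    complexity = (kl + math.log(2.0 * math.sqrt(n) / delta)) / (2.0 * n)
    return empirical_risk + math.sqrt(complexity)


# Hypothetical values: empirical risk 0.1 and KL(Q || P) = 5.
sample_sizes = (100, 1000, 10000)
bounds = [pac_bayes_bound(0.1, kl=5.0, n=n) for n in sample_sizes]
```

With the empirical risk and KL term held fixed, the bound approaches the empirical risk as the sample size grows.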

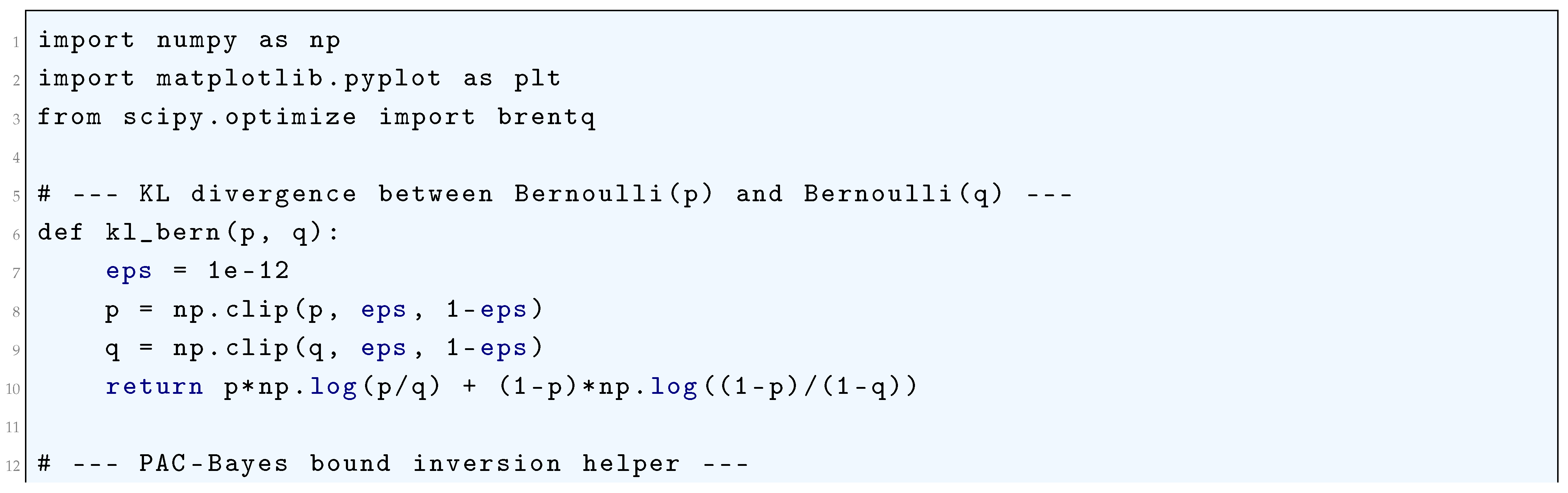

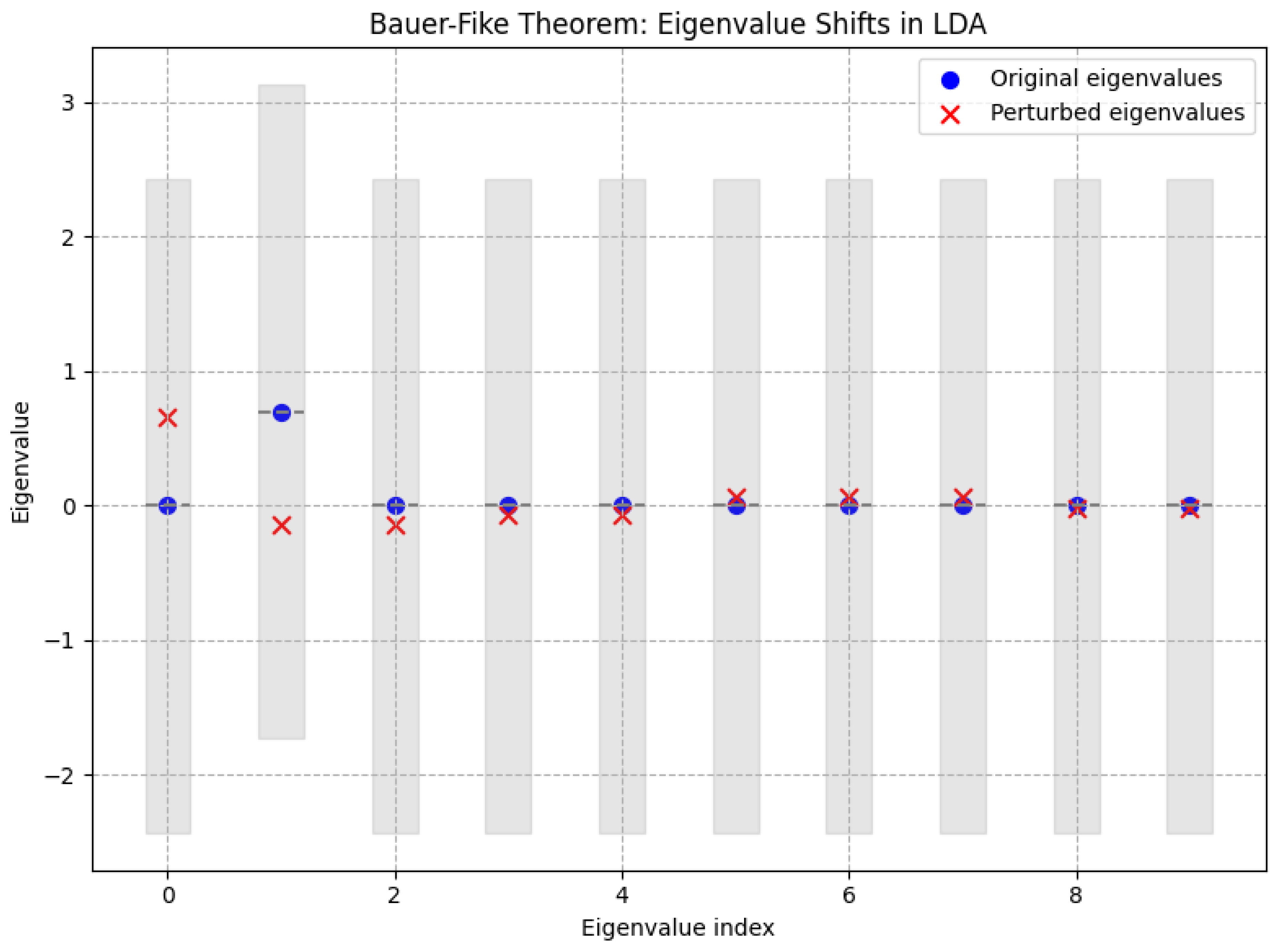

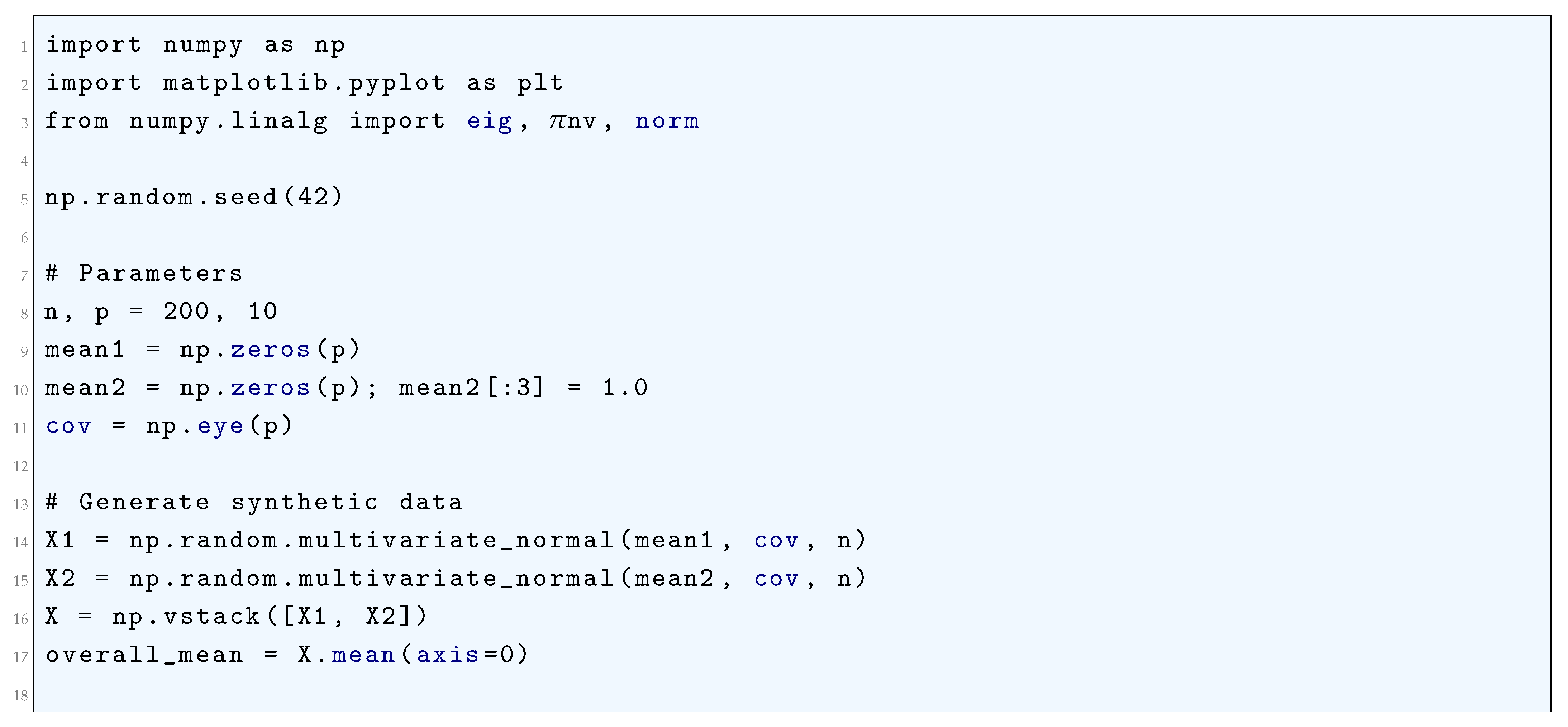

4.1.2.1 Python Code to Generate Figure 36, Figure 37, and Figure 38 Illustrating PAC-Bayes Bound vs. Sample Size

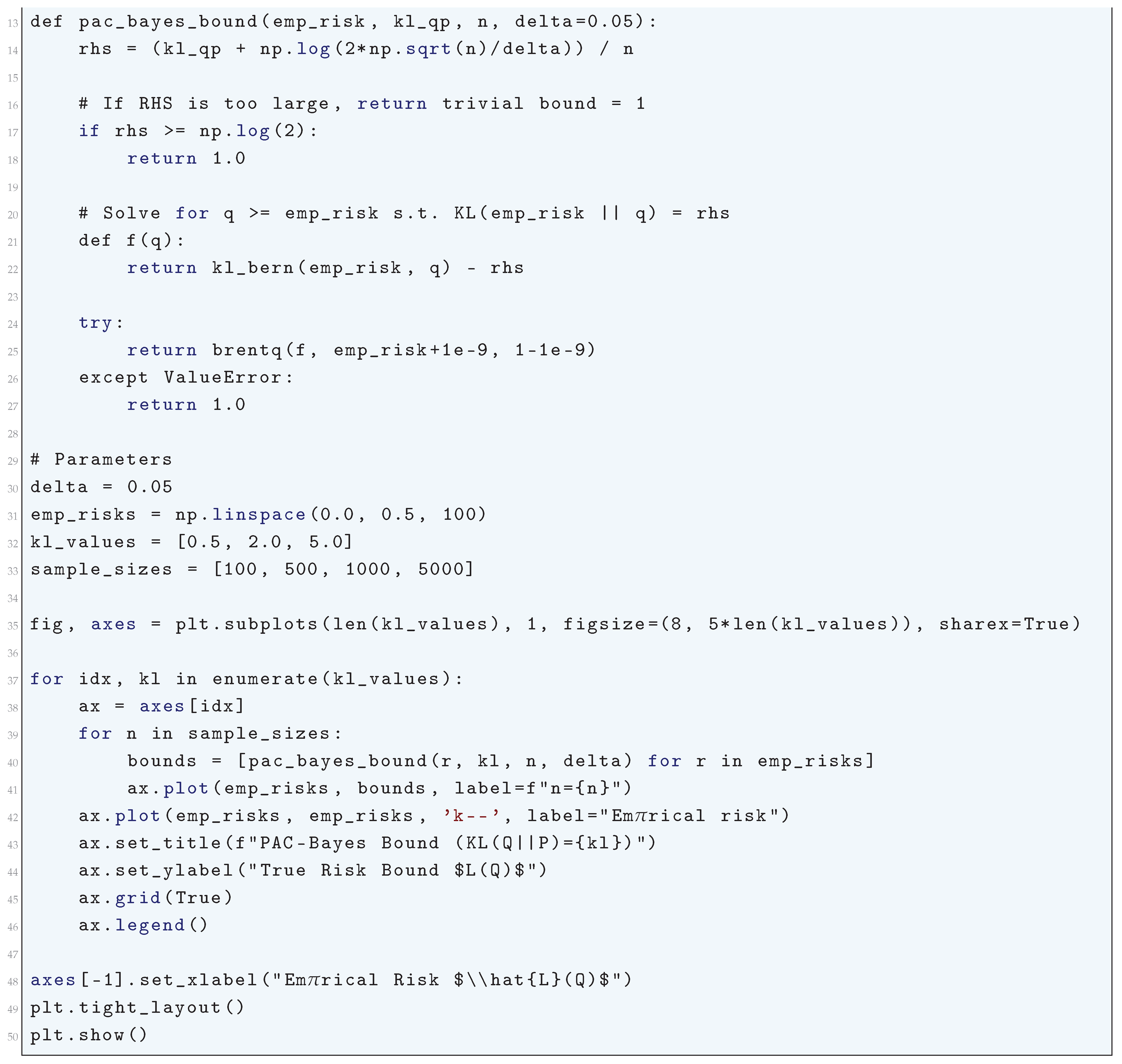

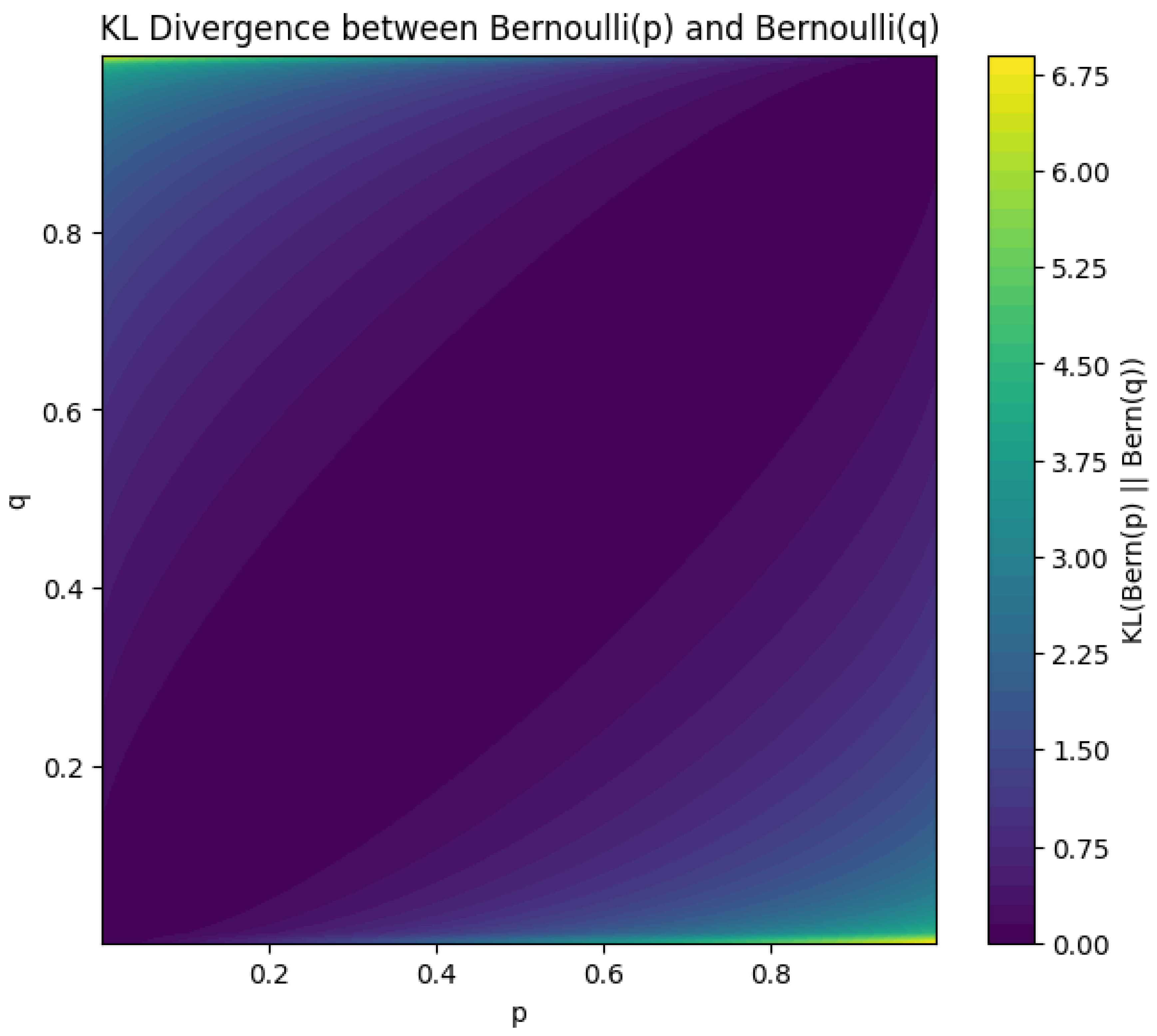

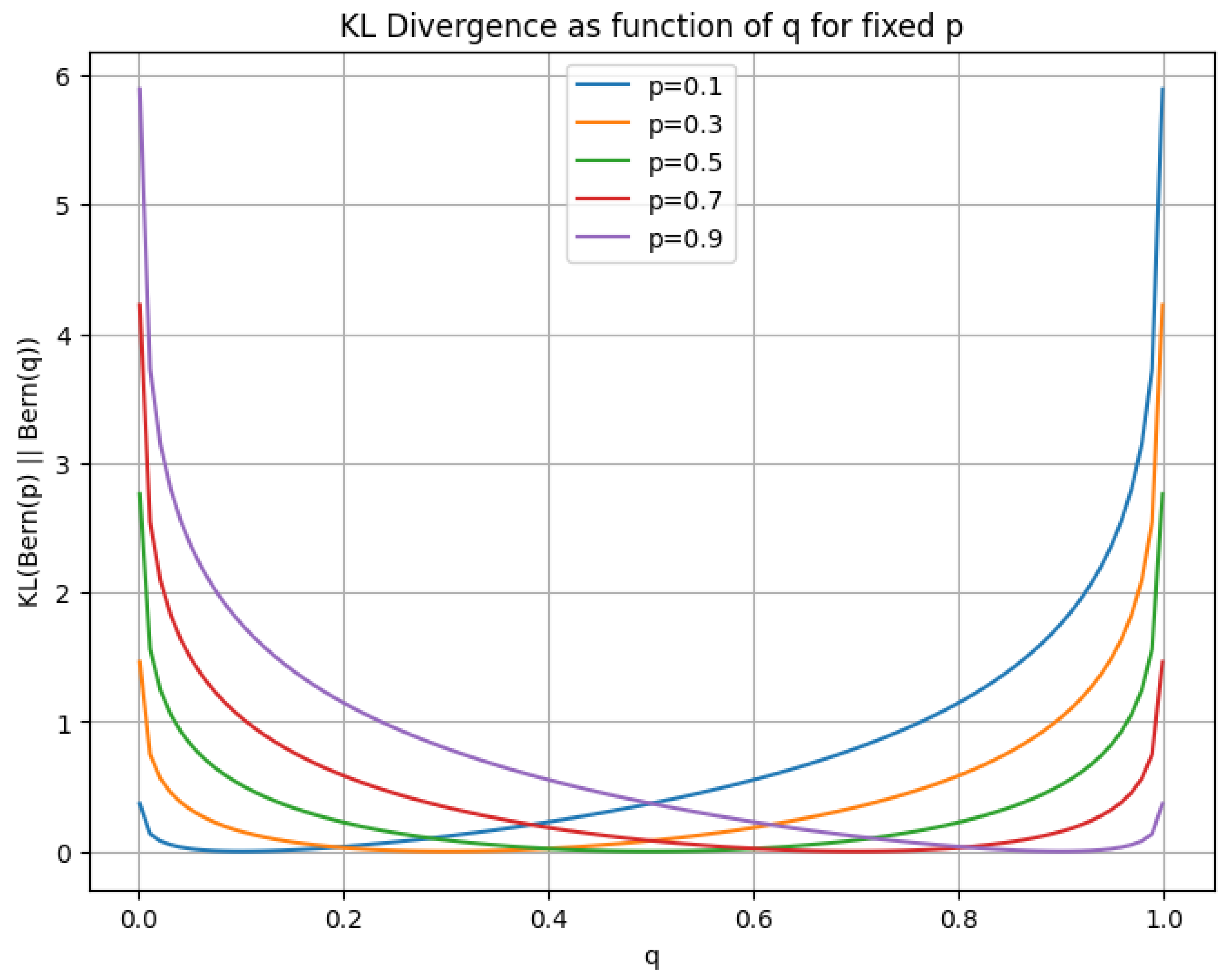

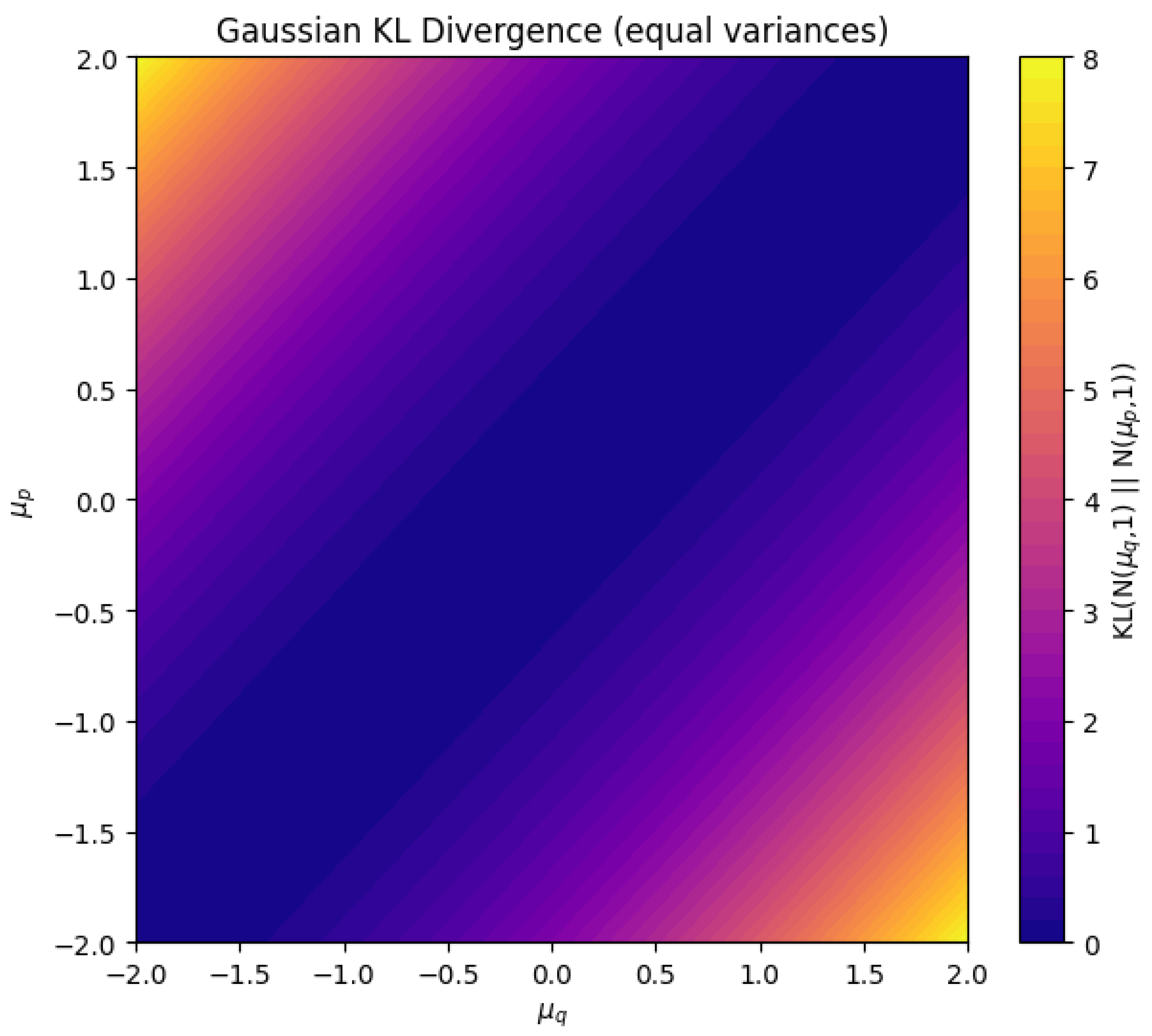

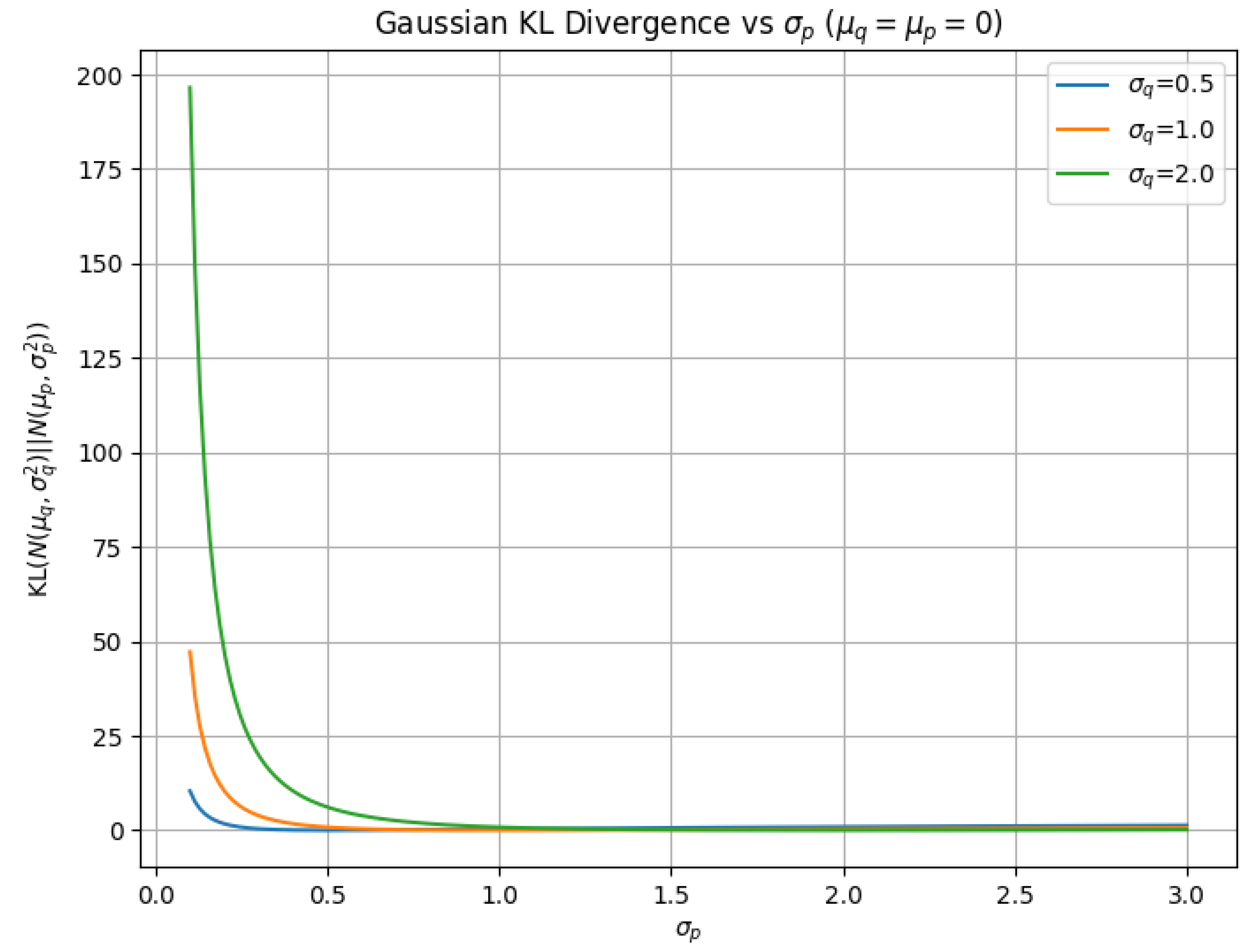

4.1.3. KL Divergence

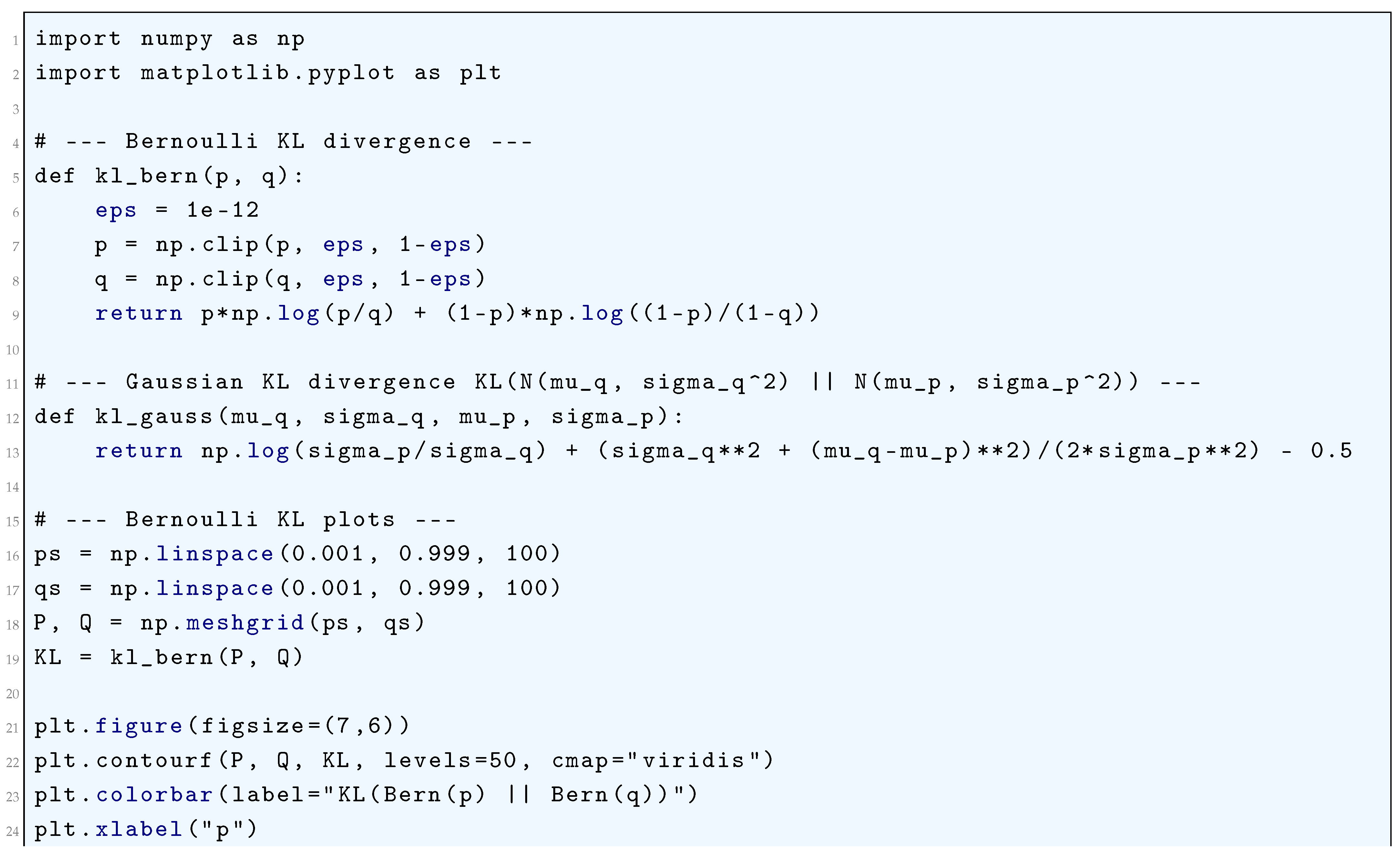

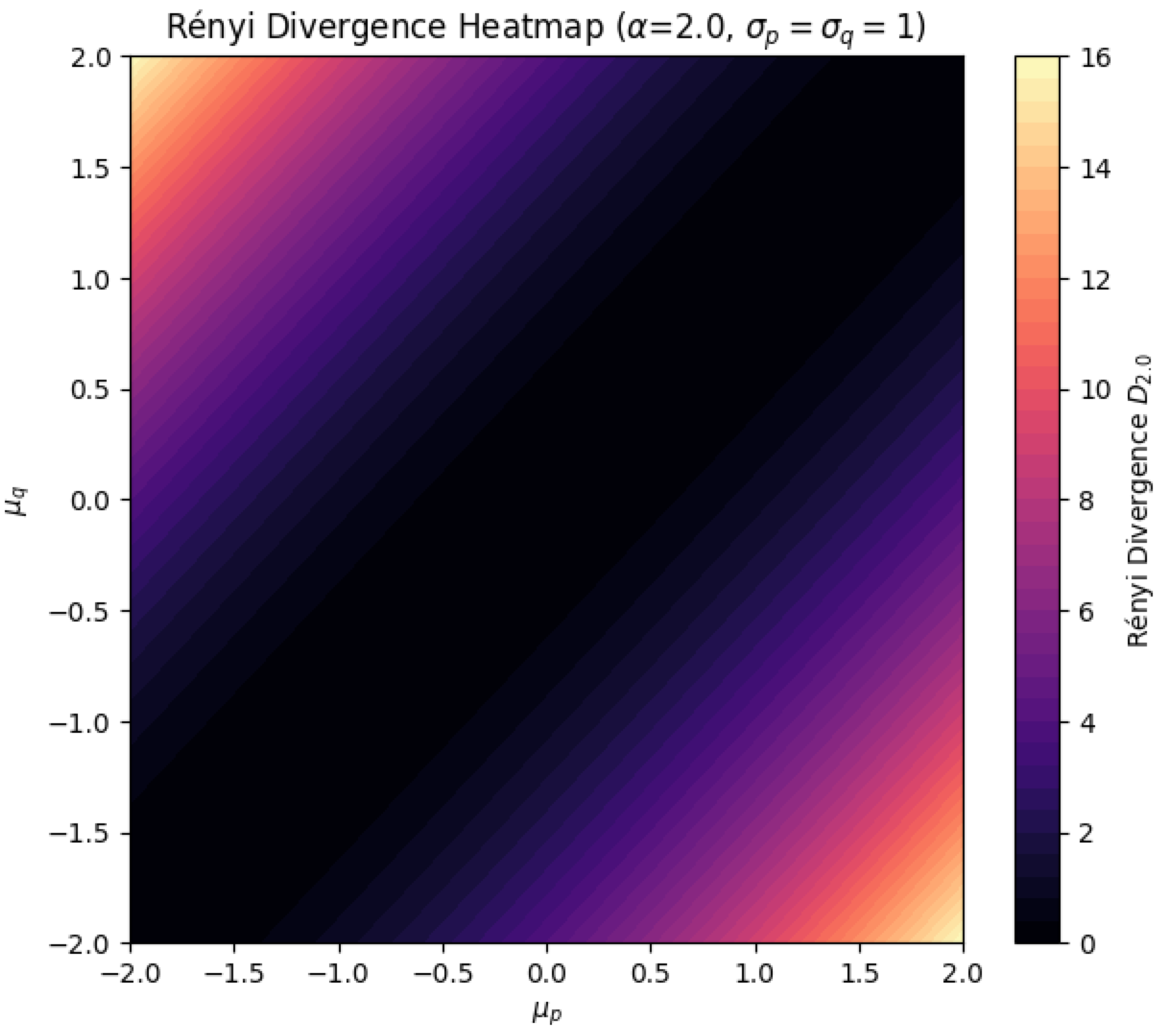

4.1.3.1 Python Code to Generate Figure 39, Figure 40 Illustrating KL Divergence in Bernoulli Random Variables and Figure 41, Figure 42 Illustrating KL Divergence in Gaussian Random Variables

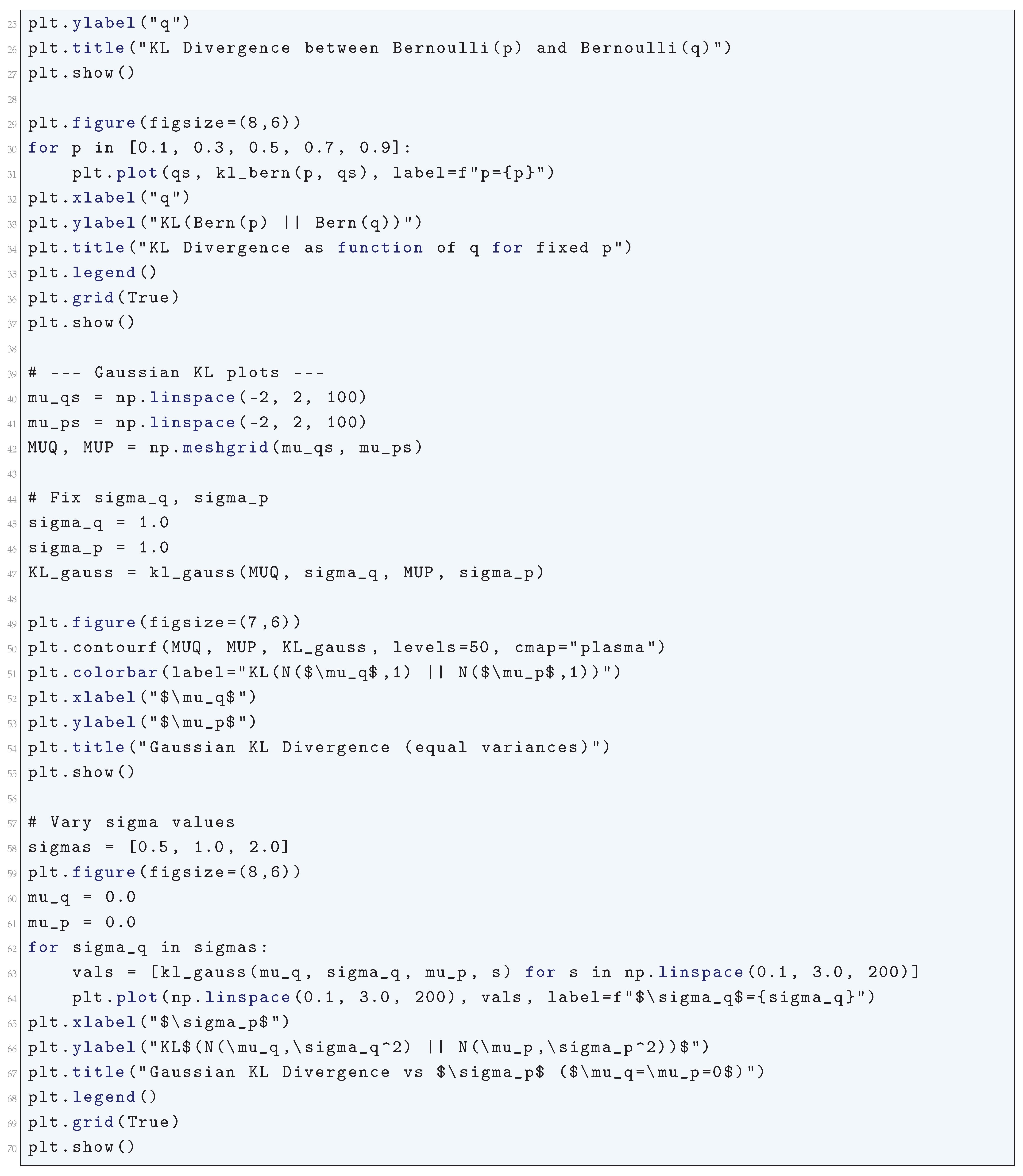

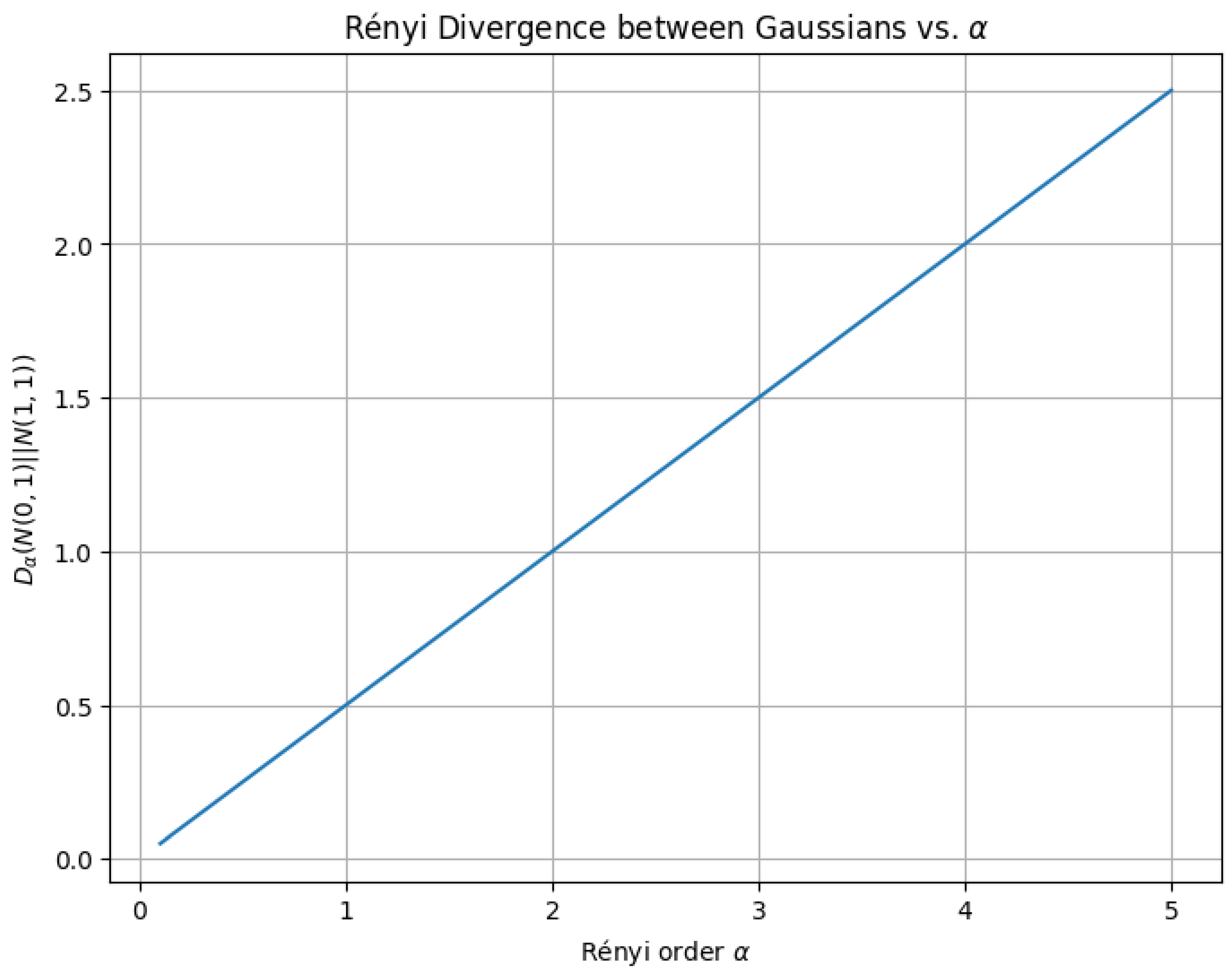

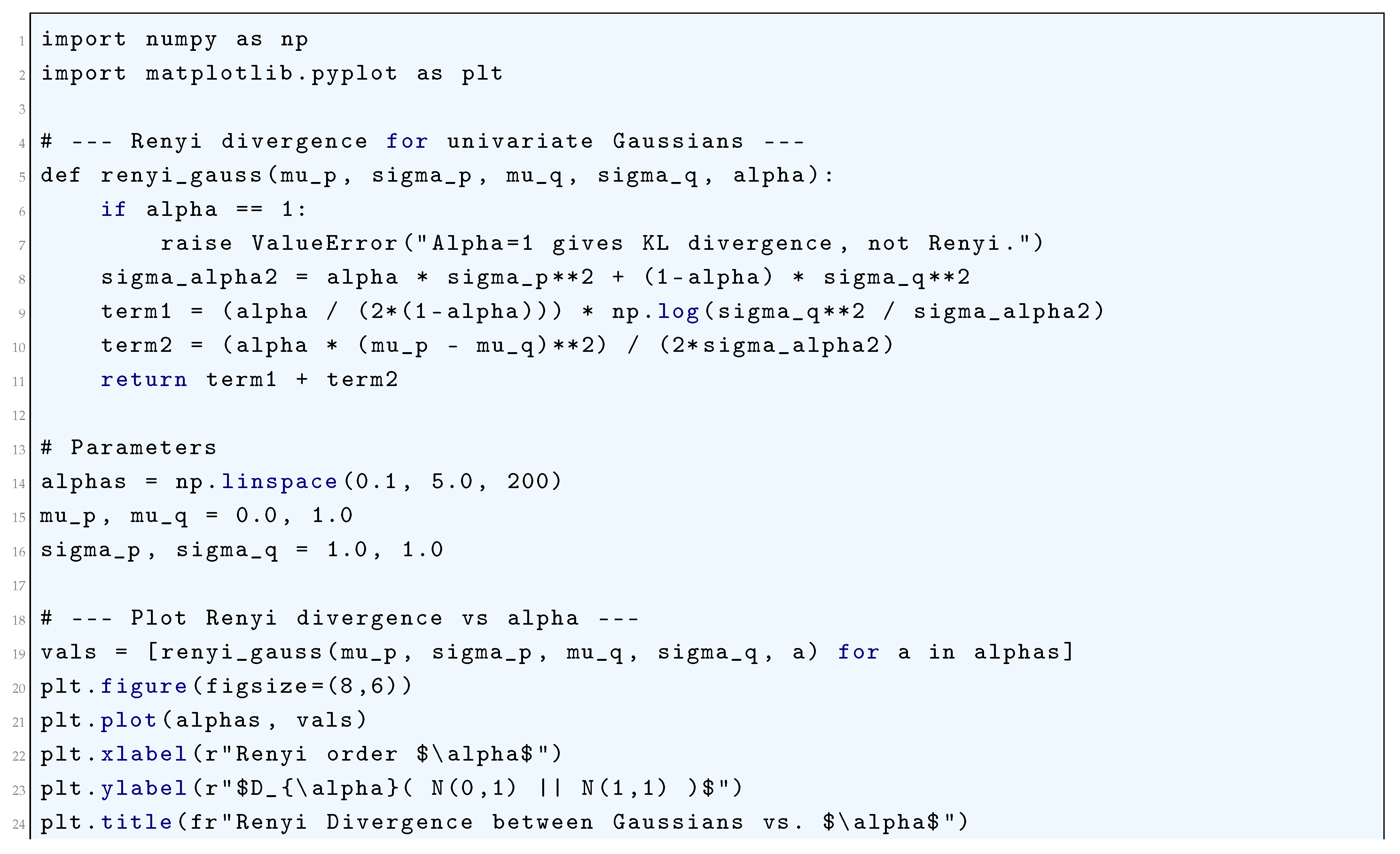

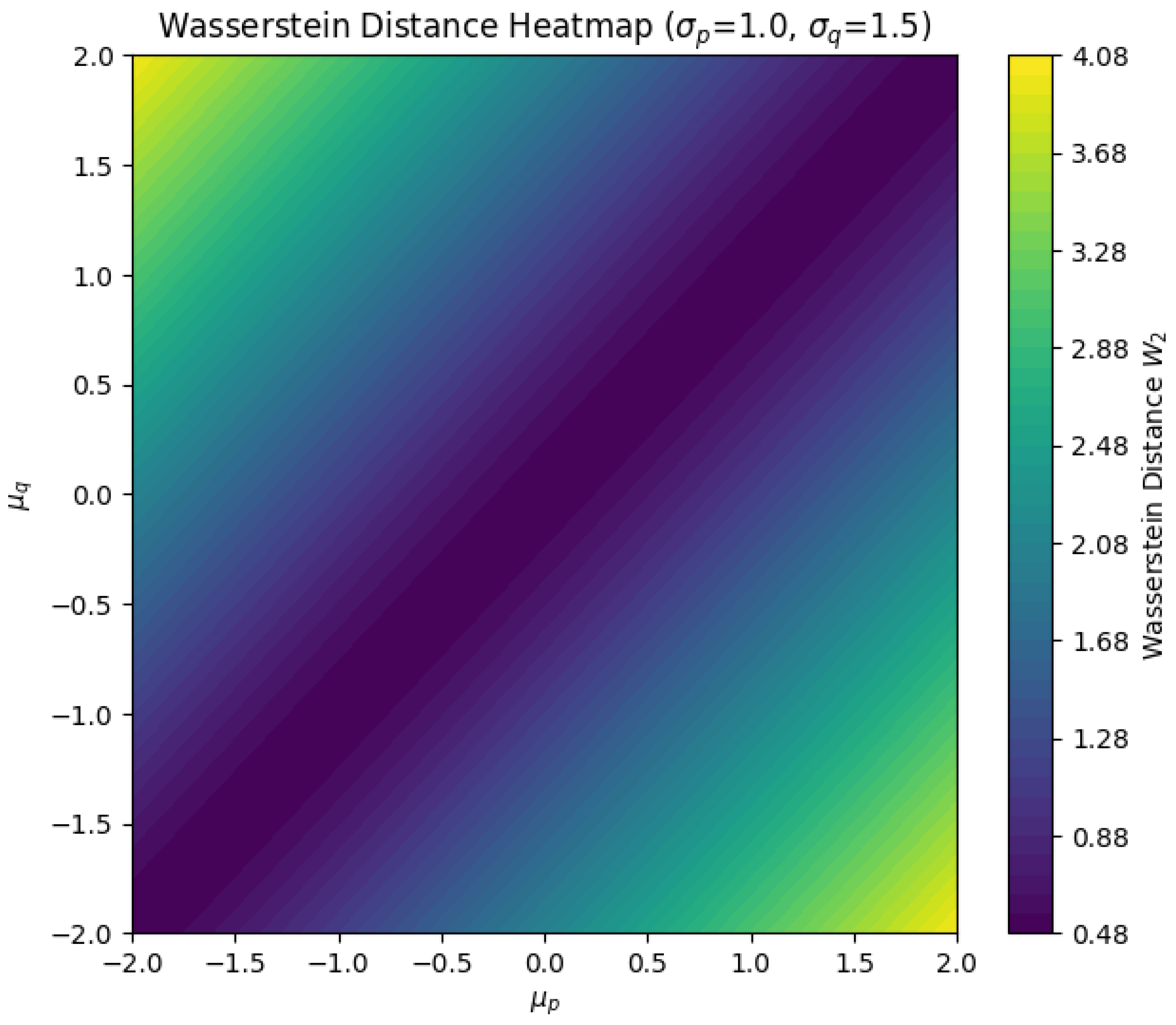

4.1.4. Rényi Divergence

4.1.4.1 Python Code to Generate Figure 43 and Figure 44 Illustrating Rényi Divergence

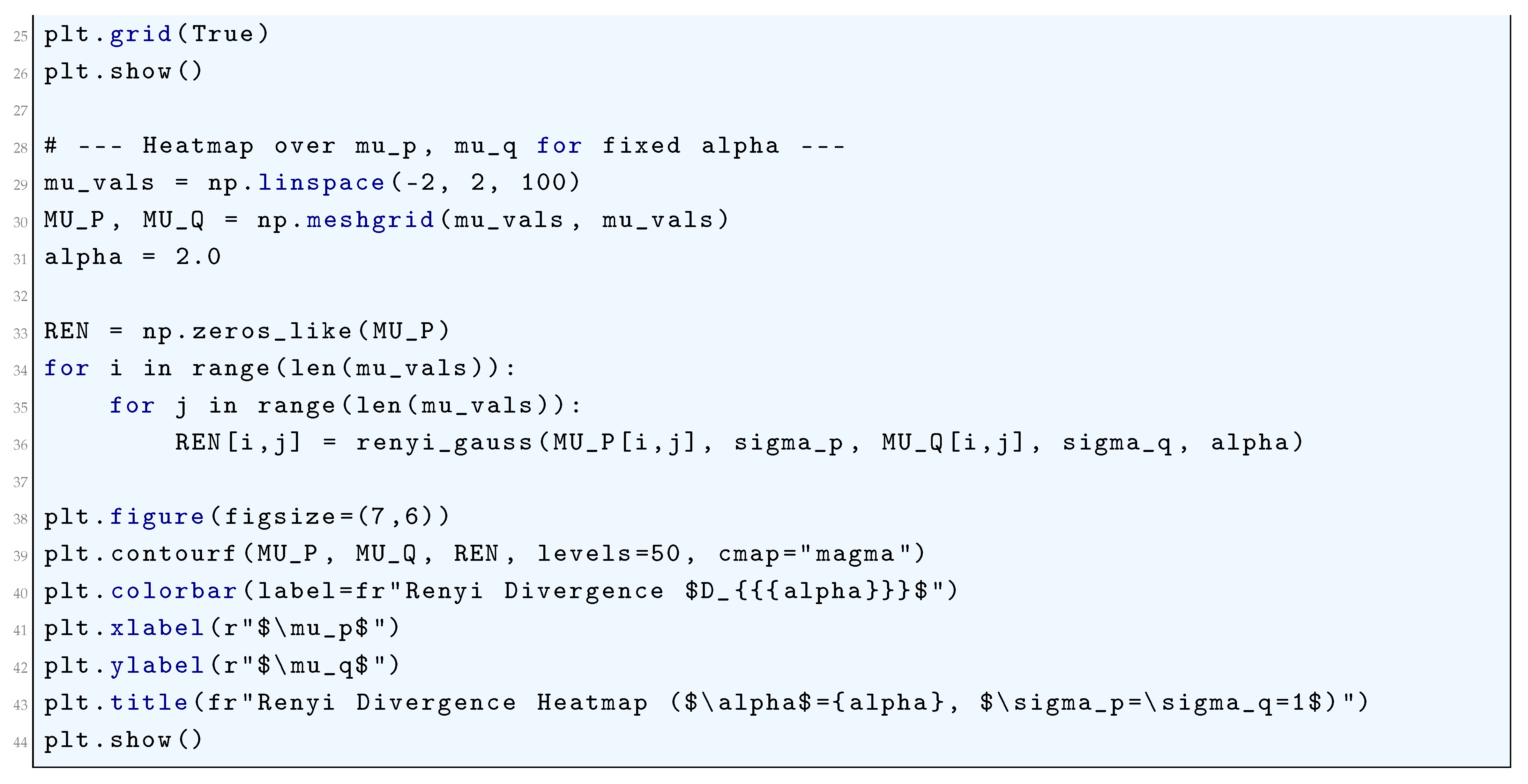

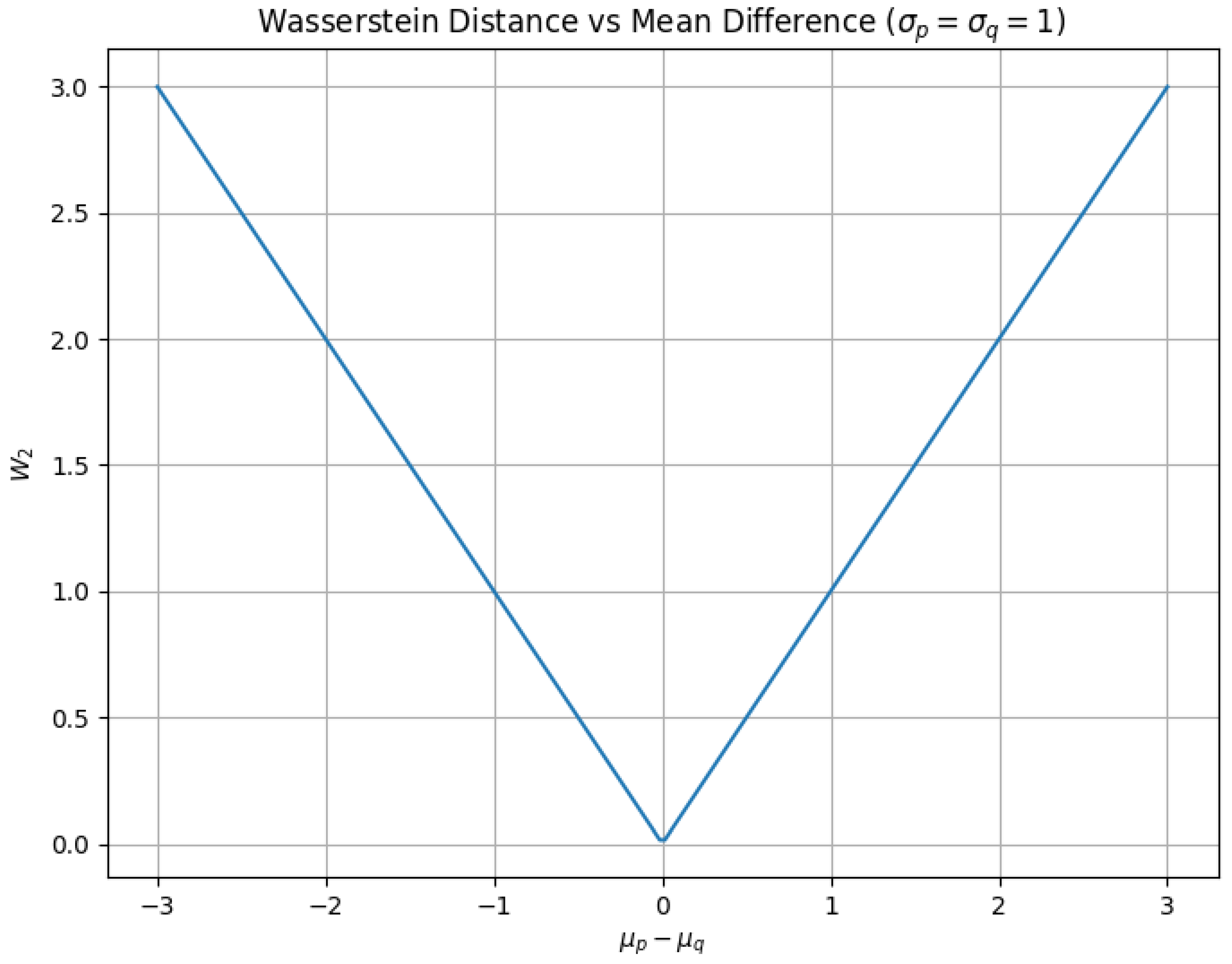

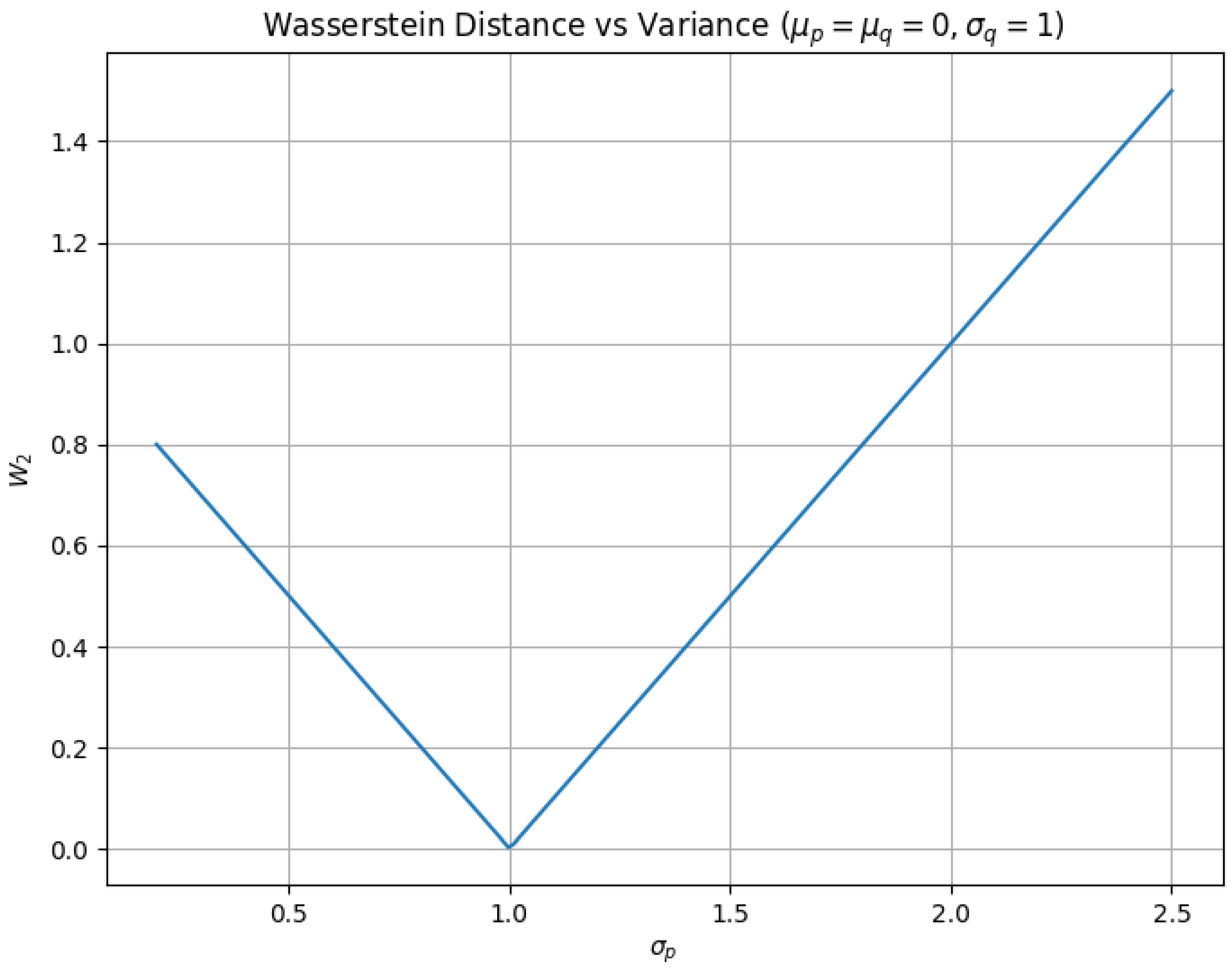

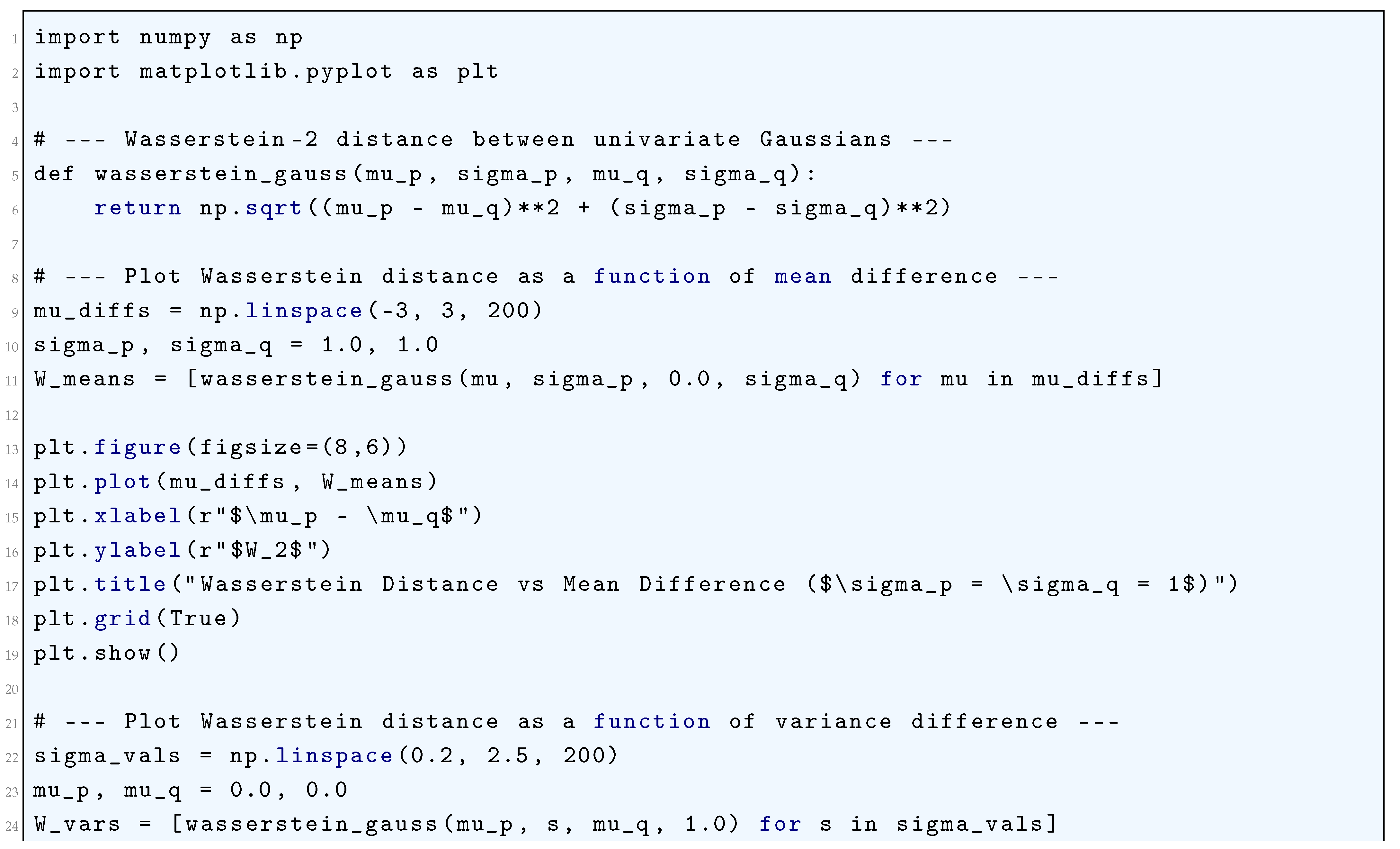

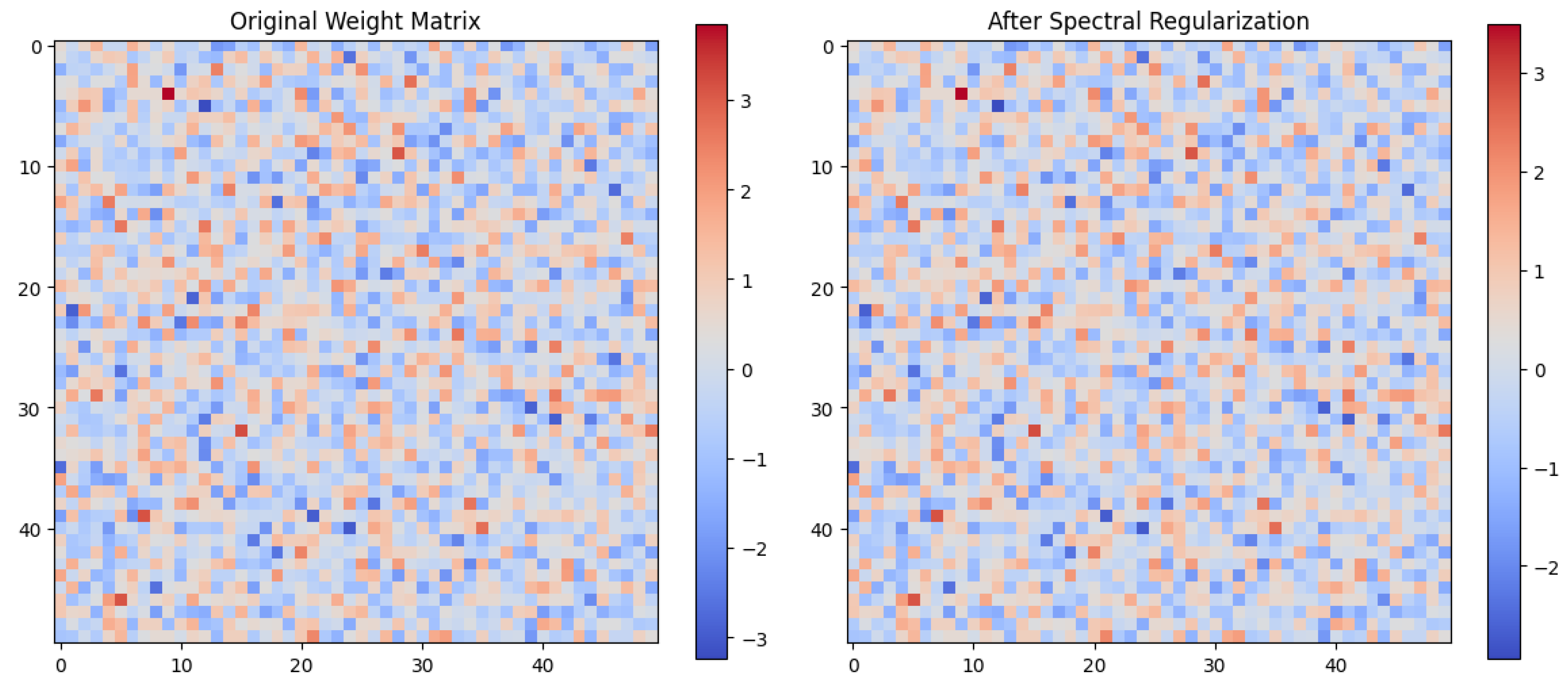

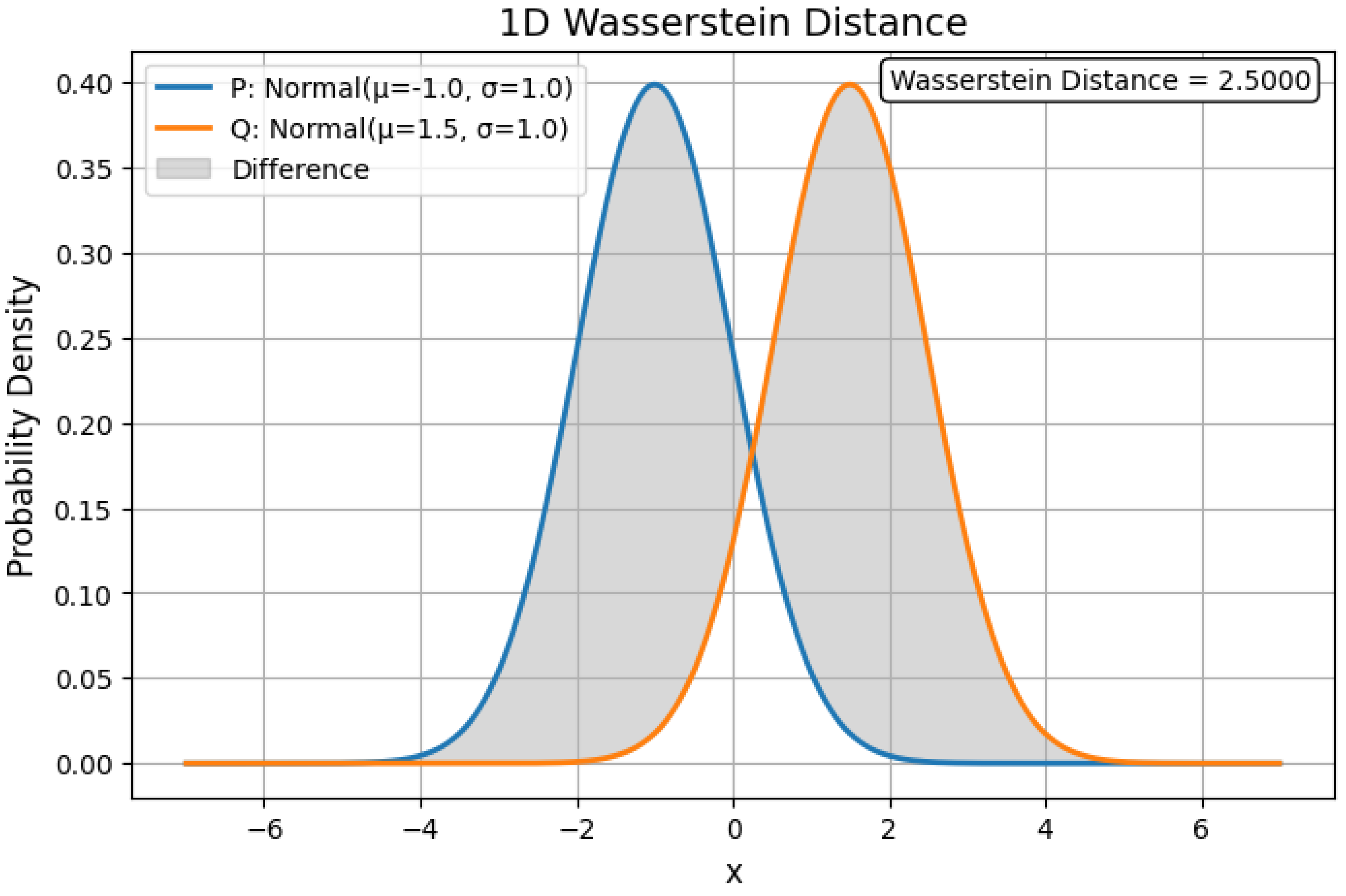

4.1.5. Wasserstein Distance

4.1.5.1 Python Code to Generate Figure 45, Figure 46, and Figure 47 Illustrating Wasserstein Distance

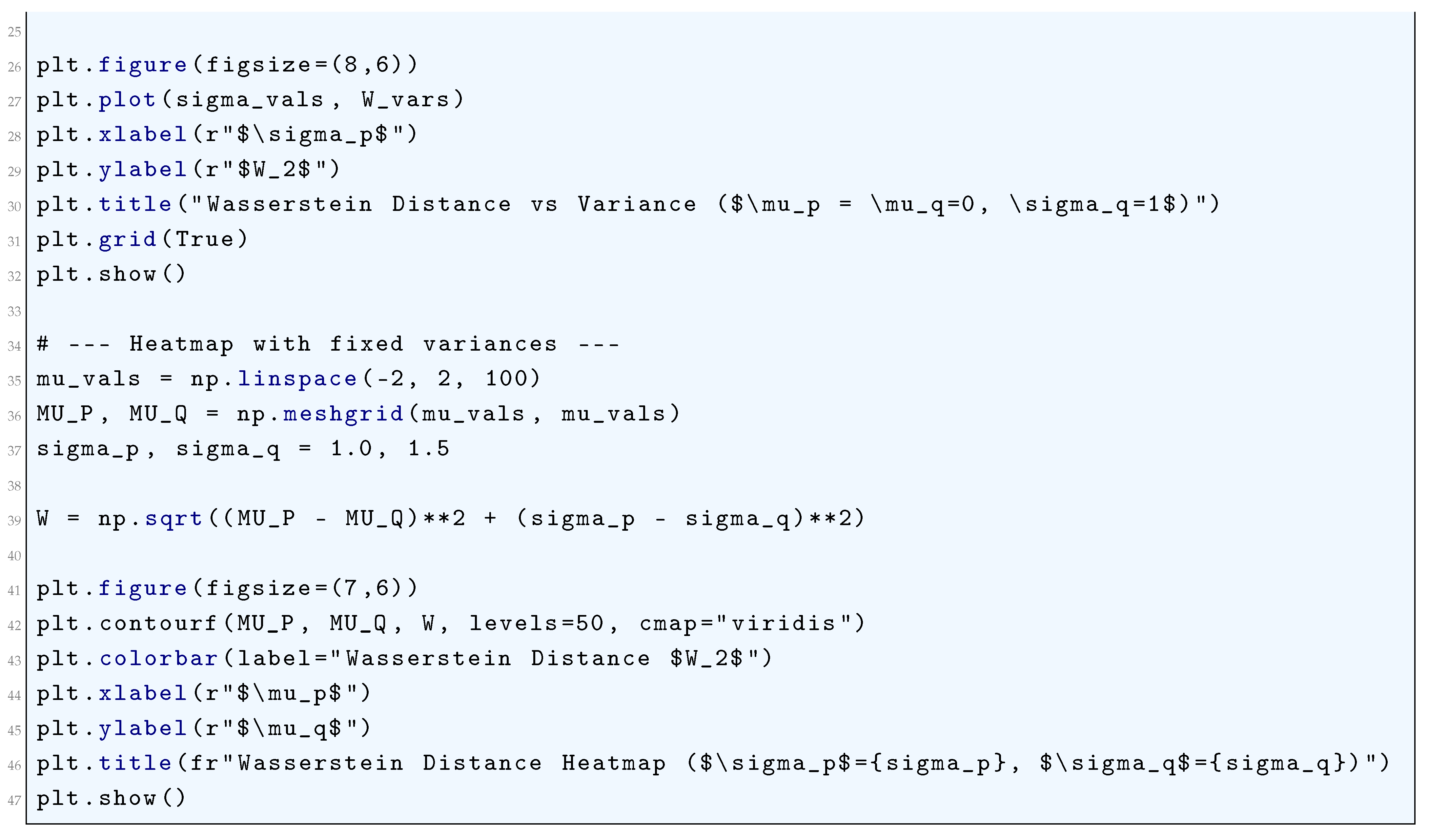

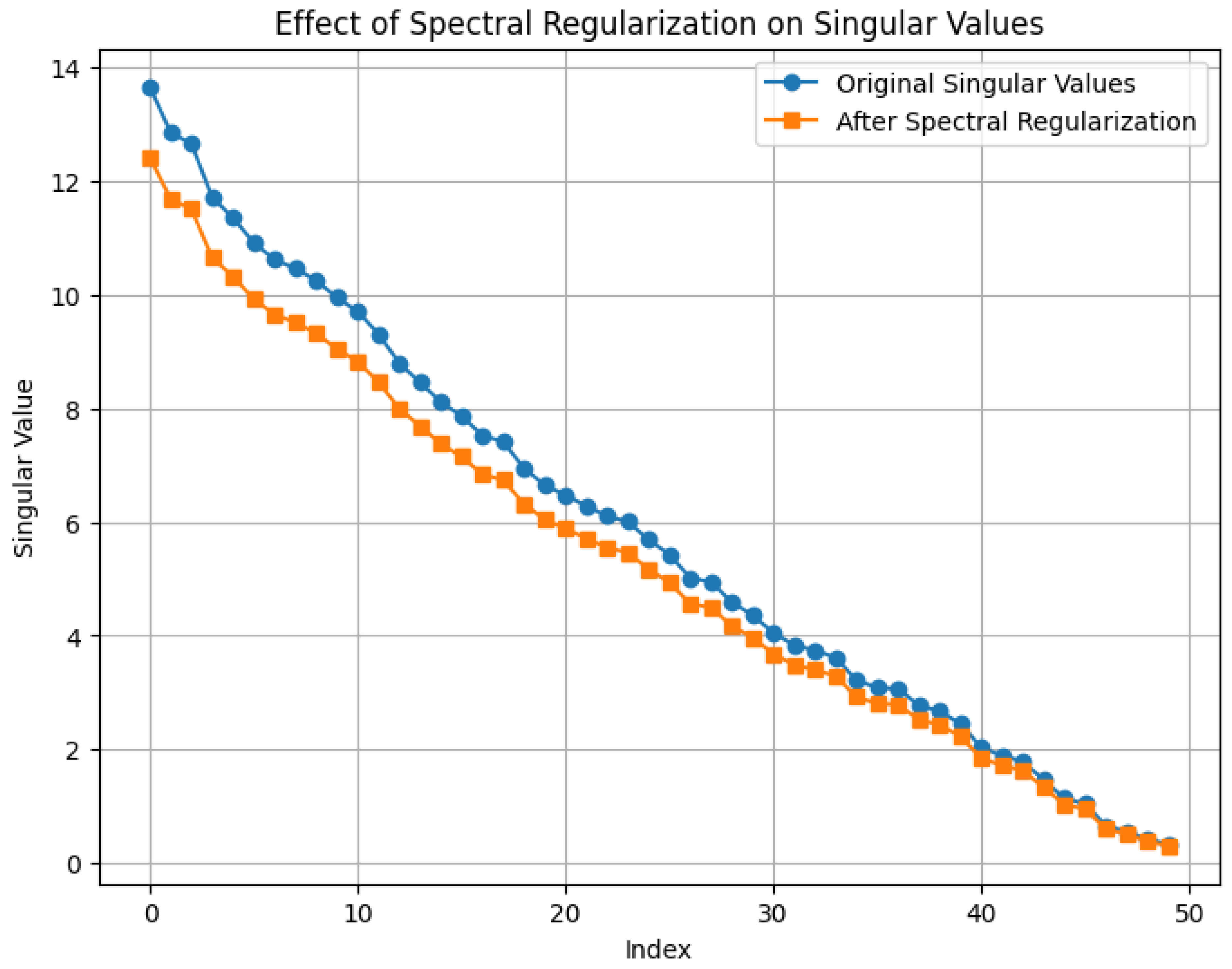

4.2. Spectral Regularization

4.2.1. Literature Review of Spectral Regularization

4.2.2. Analysis of Spectral Regularization

4.2.3. Python Code to Generate Figure 48 and Figure 49 Illustrating Spectral Regularization

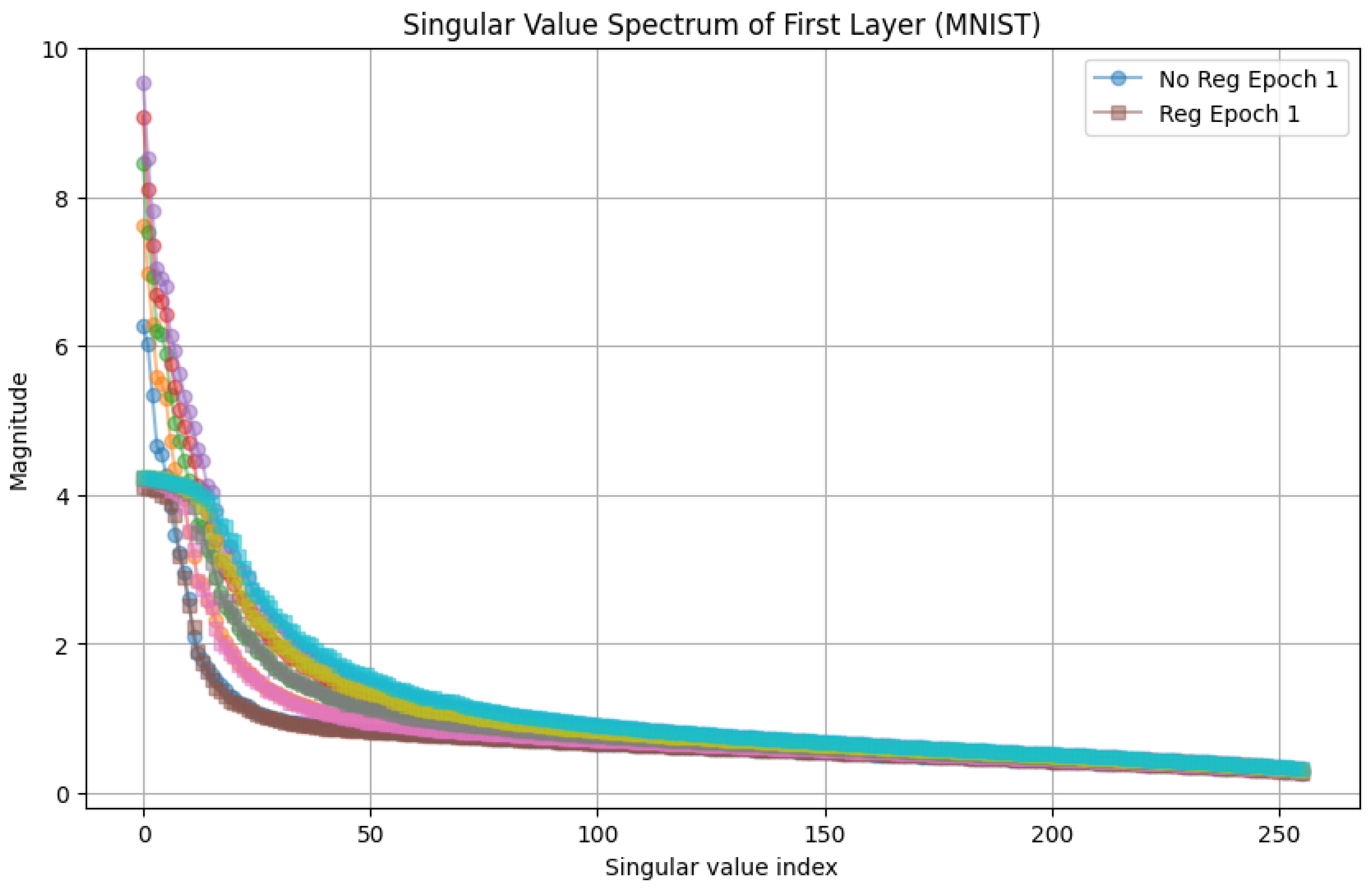

4.2.4. Python Code to Generate Figure 50 Illustrating Singular Value Spectrum of First Layer (MNIST)

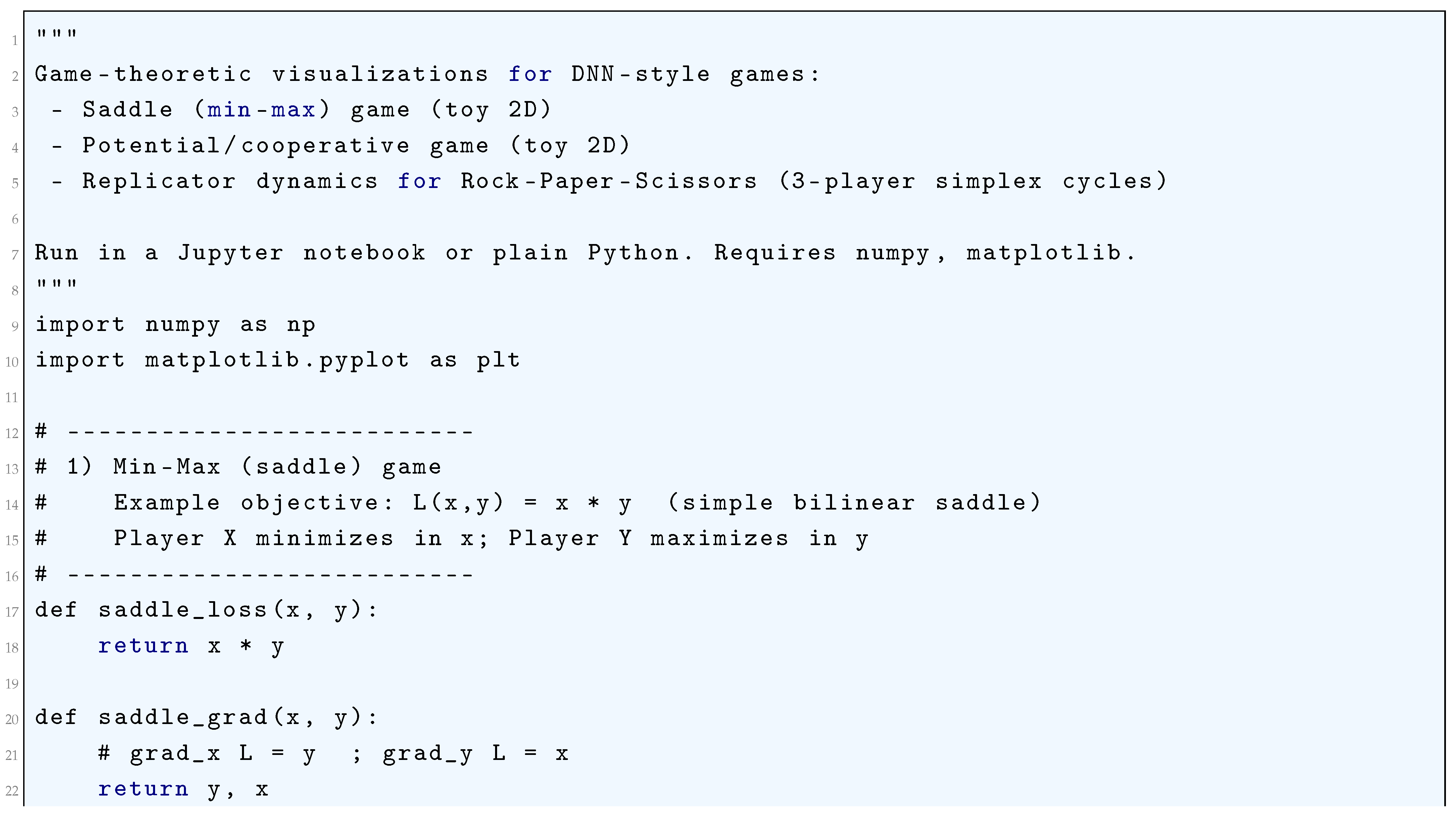

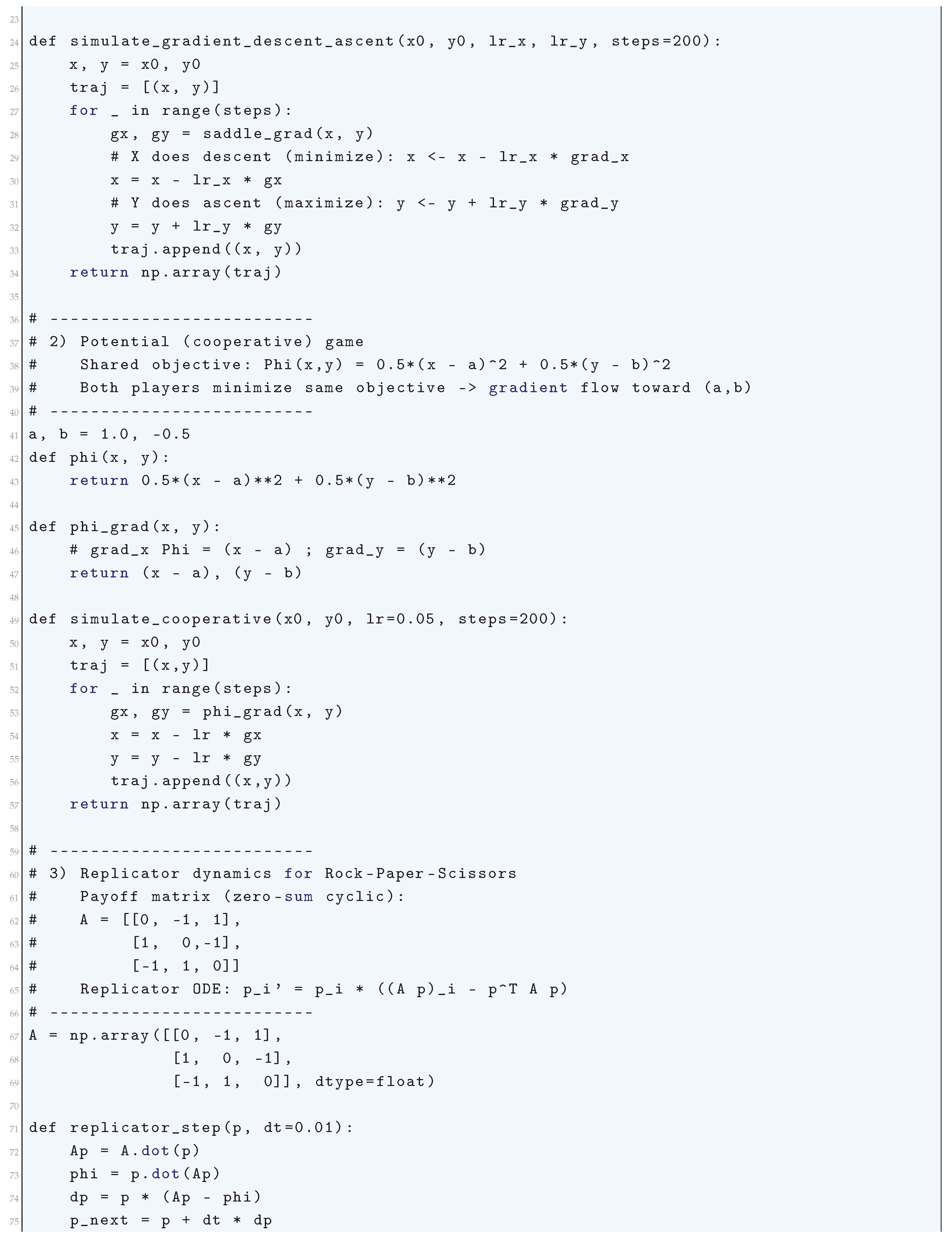

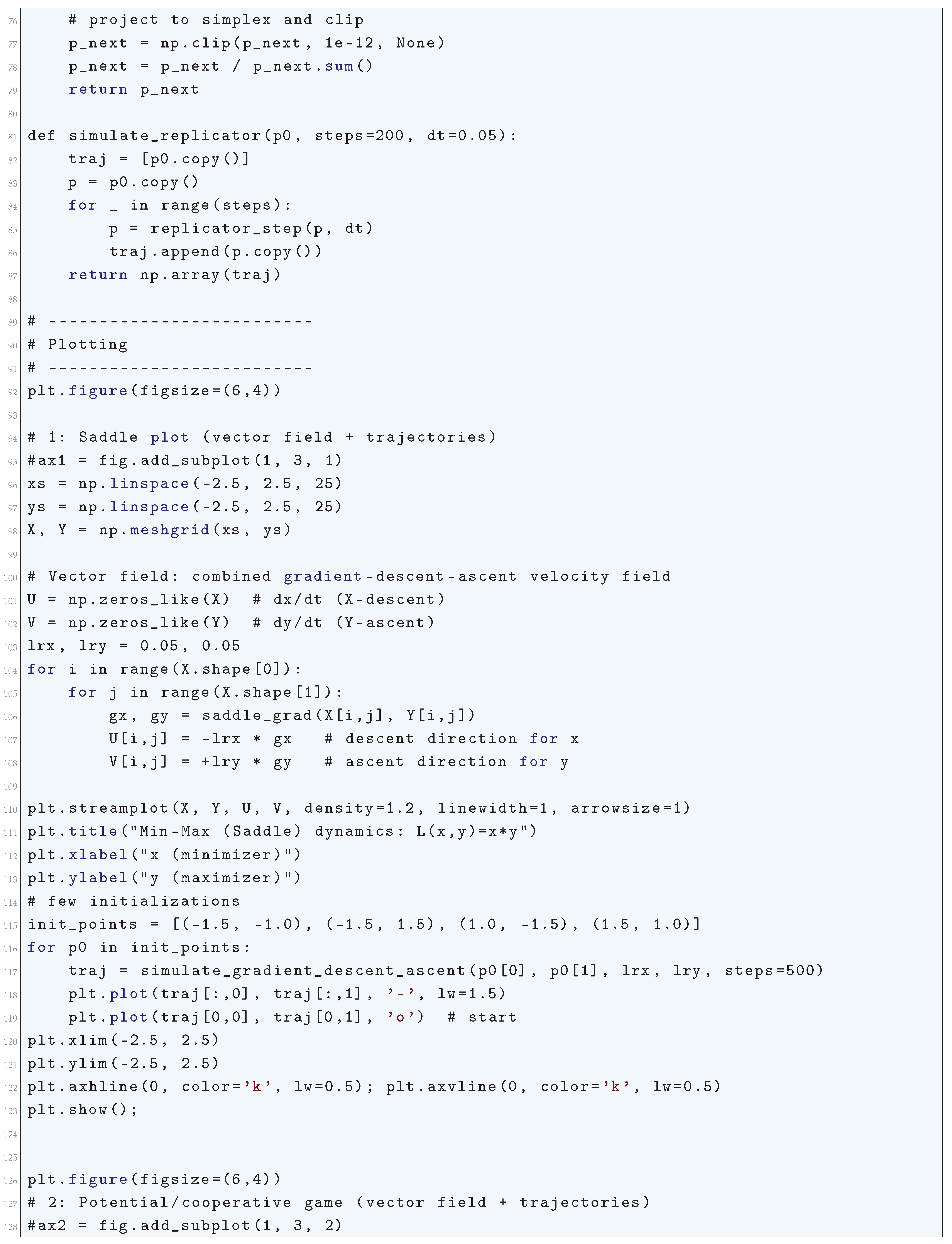

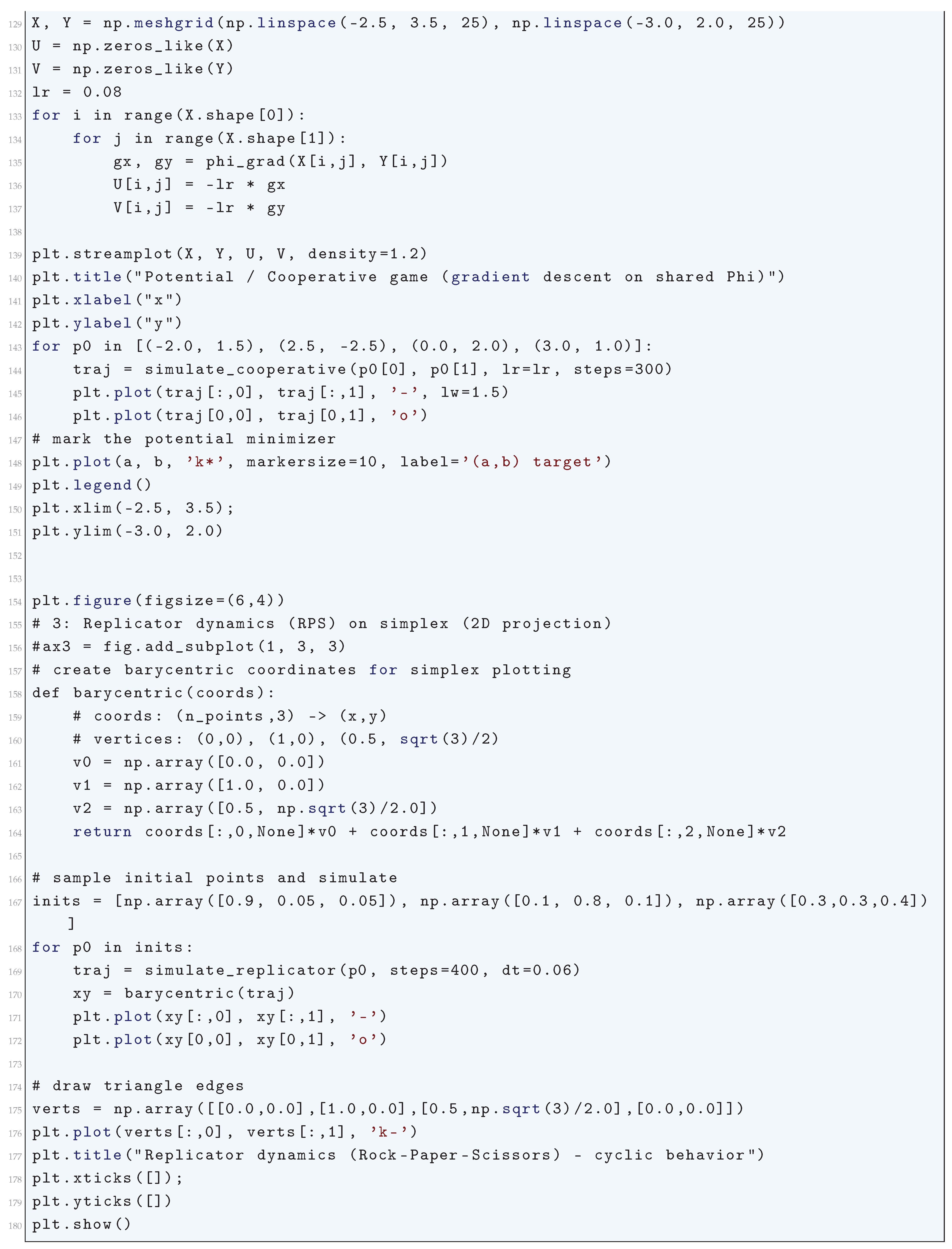

5. Game-Theoretic Formulations of Deep Neural Networks

5.1. Literature Review of Game-Theoretic Formulations of Deep Neural Networks

5.2. Analysis of Game-Theoretic Formulations of Deep Neural Networks

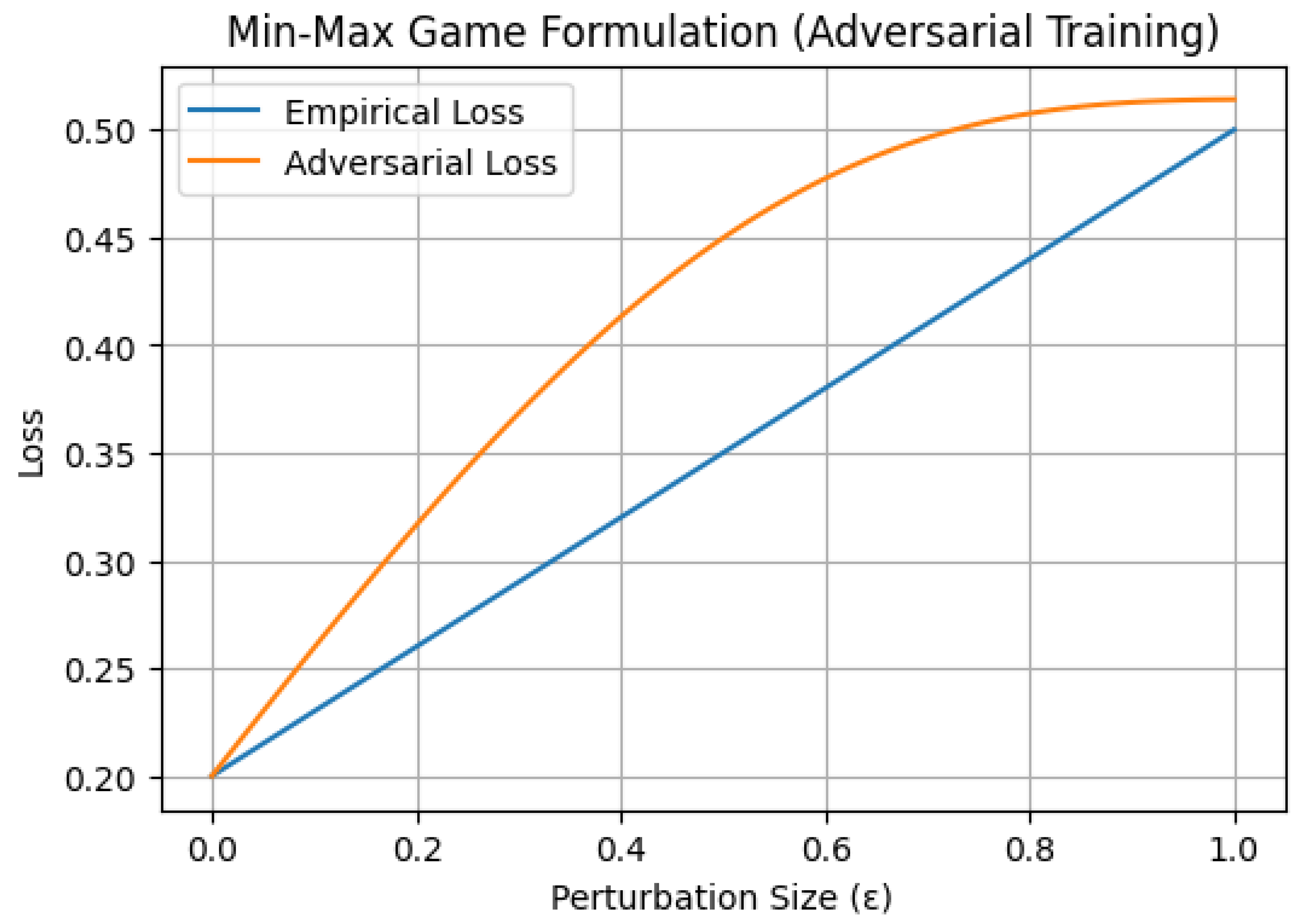

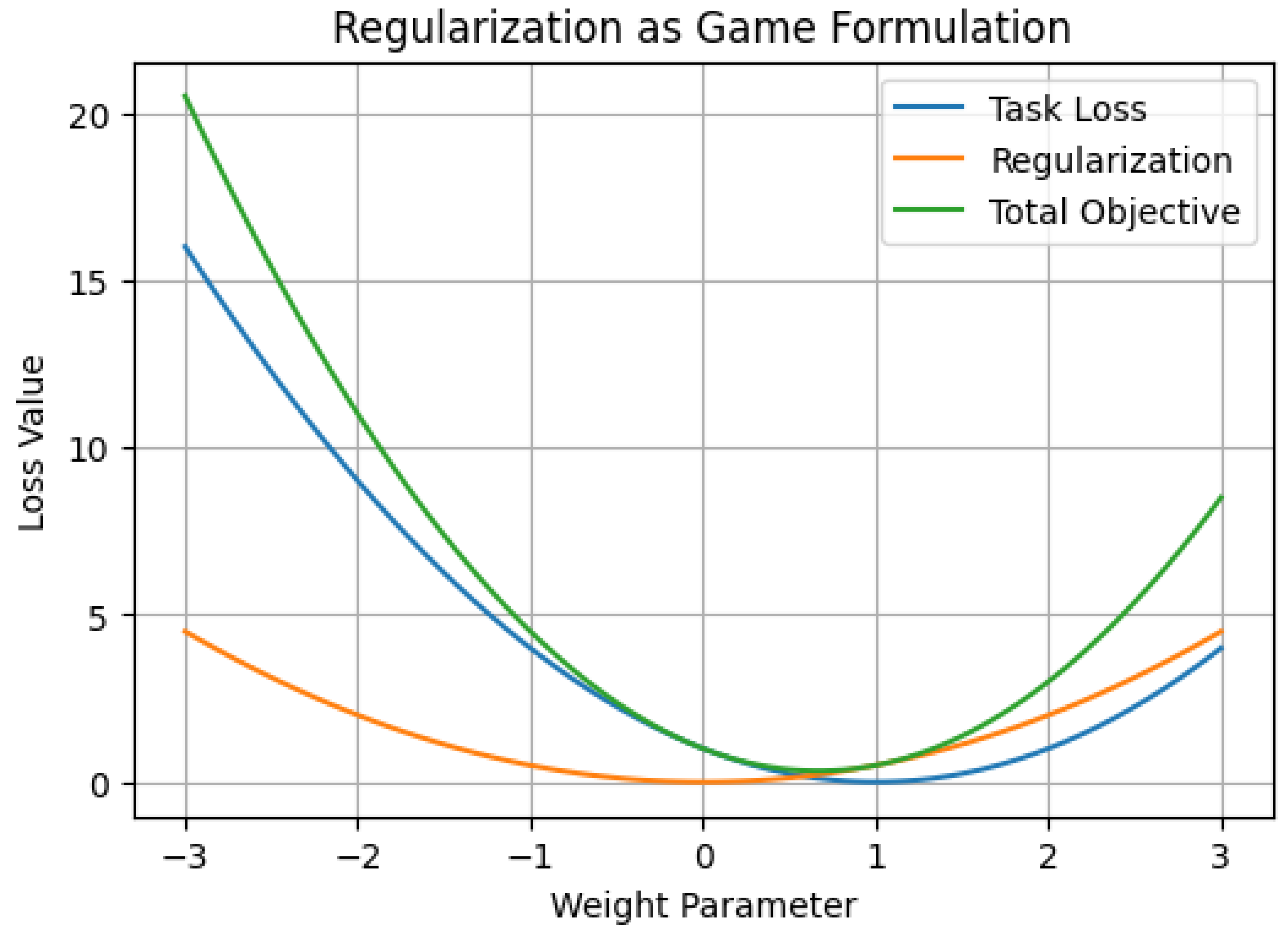

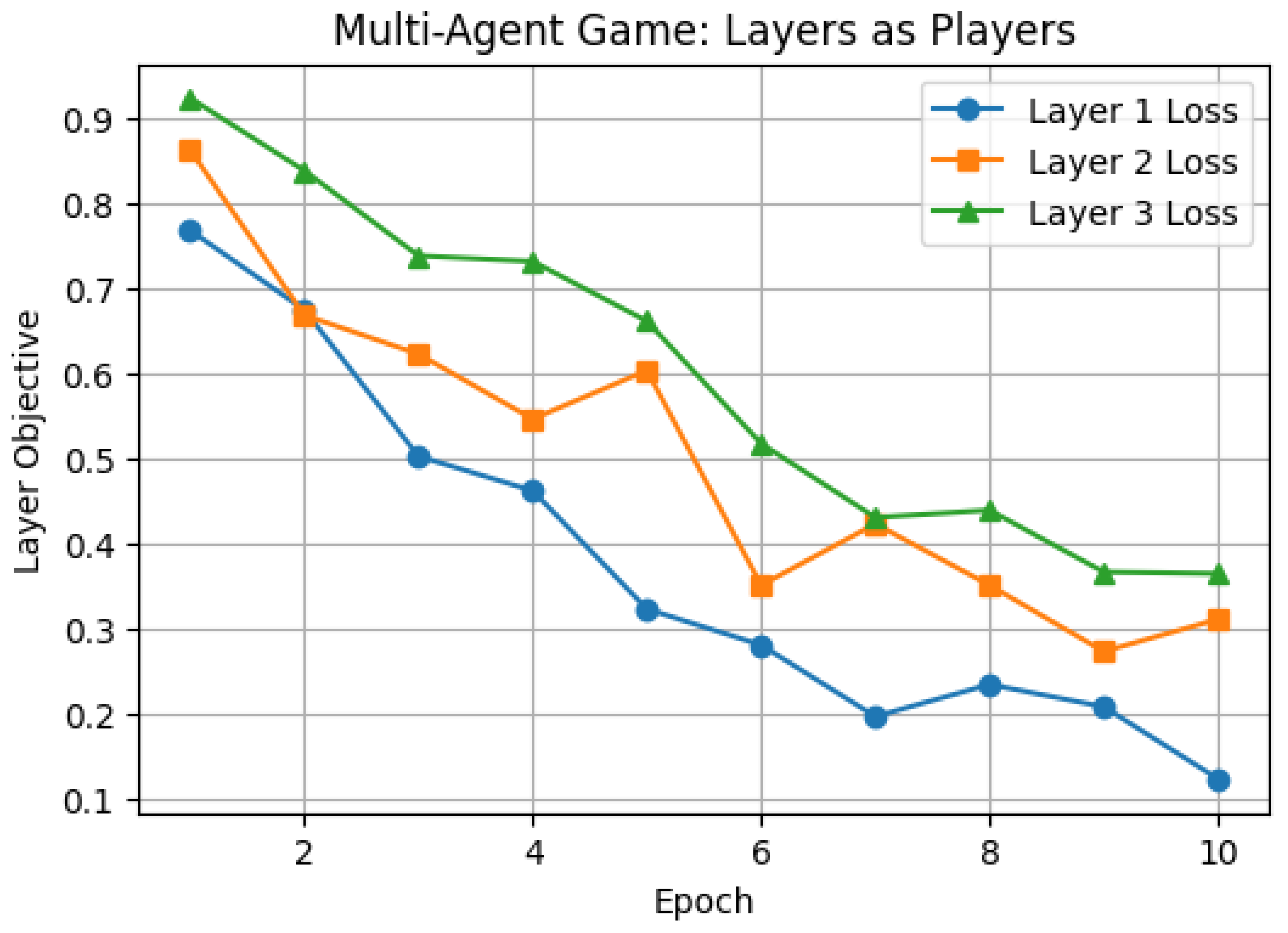

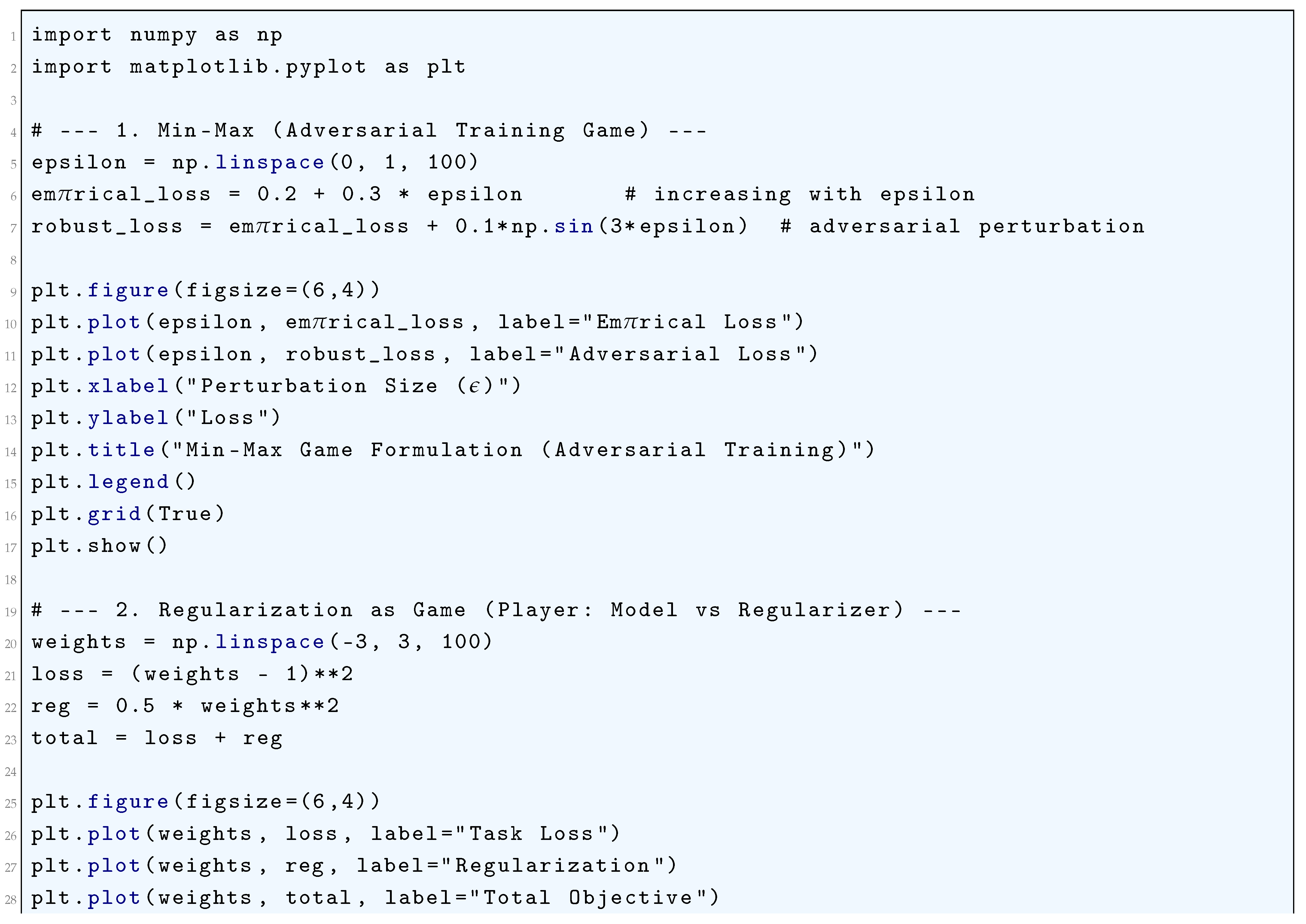

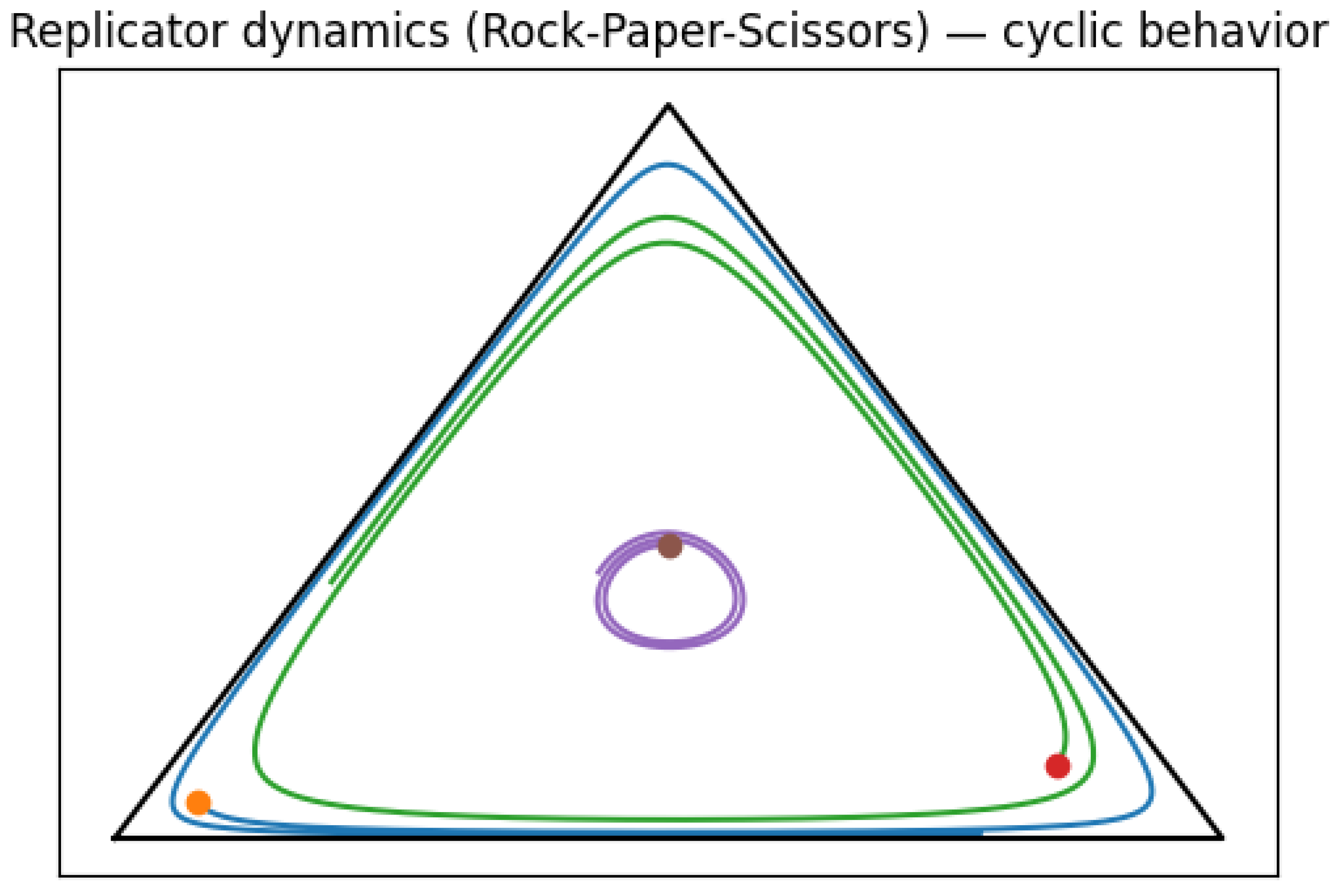

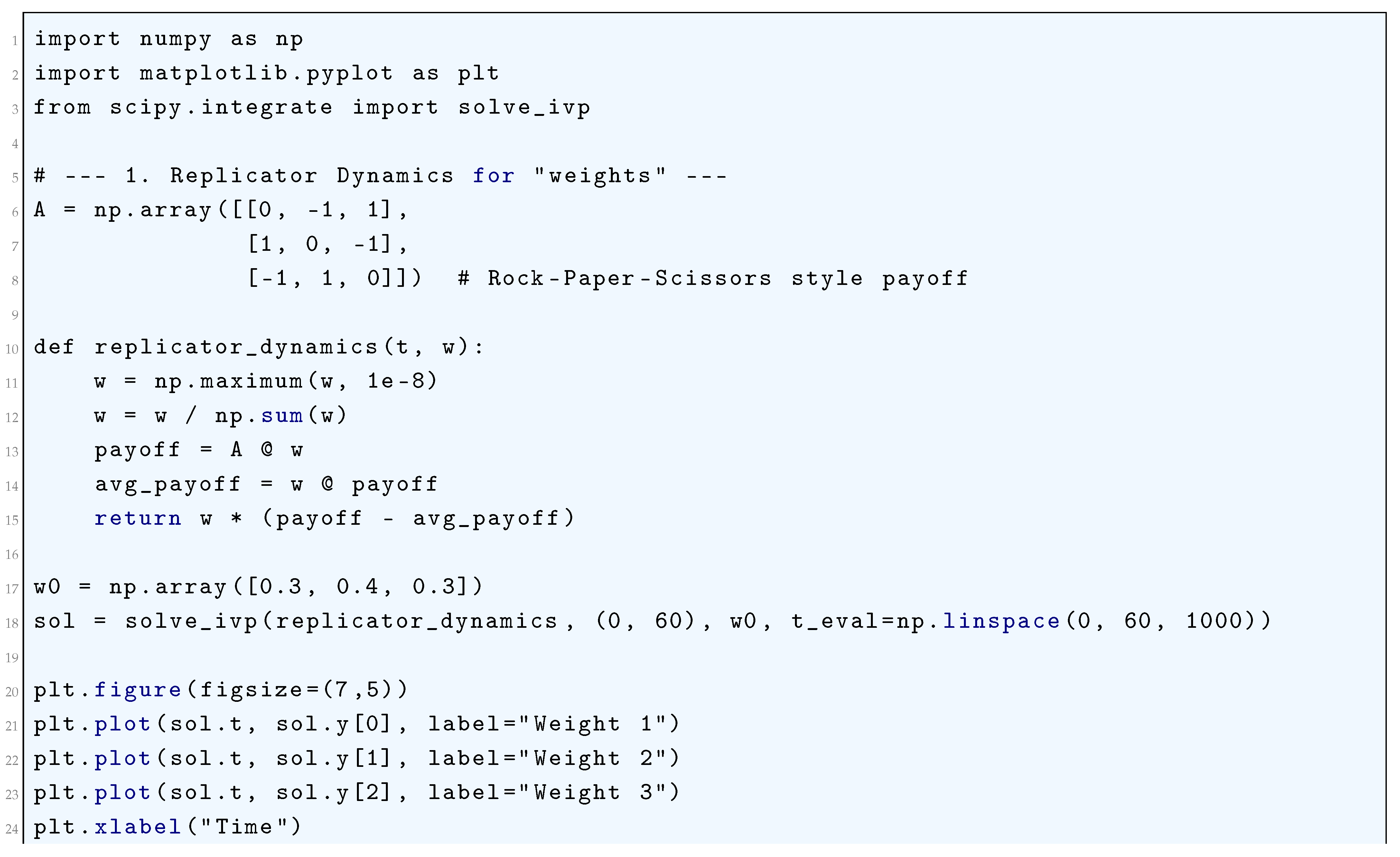

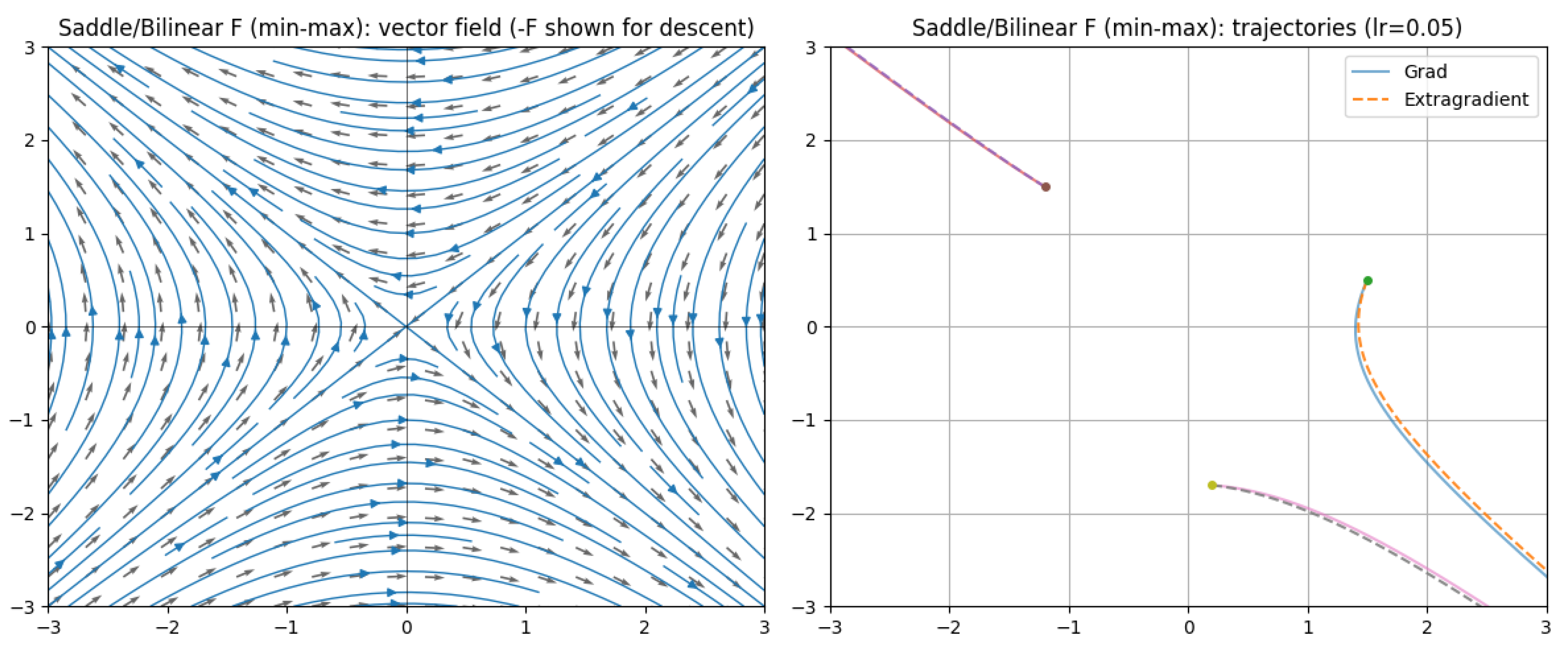

5.2.1. Python Code to Generate Figure 51, Figure 52, and Figure 53 Illustrating Game-Theoretic Formulations of Deep Neural Networks

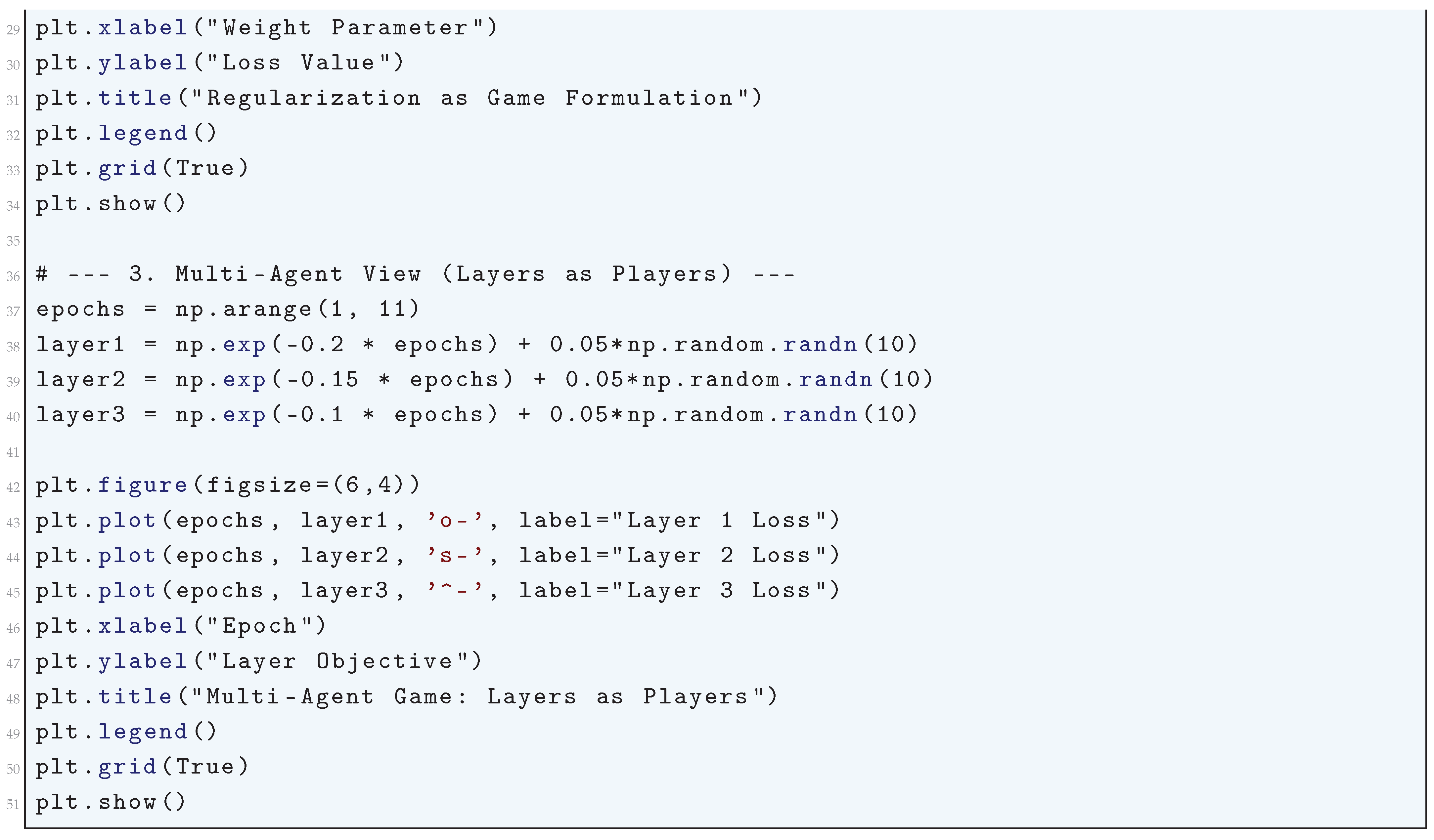

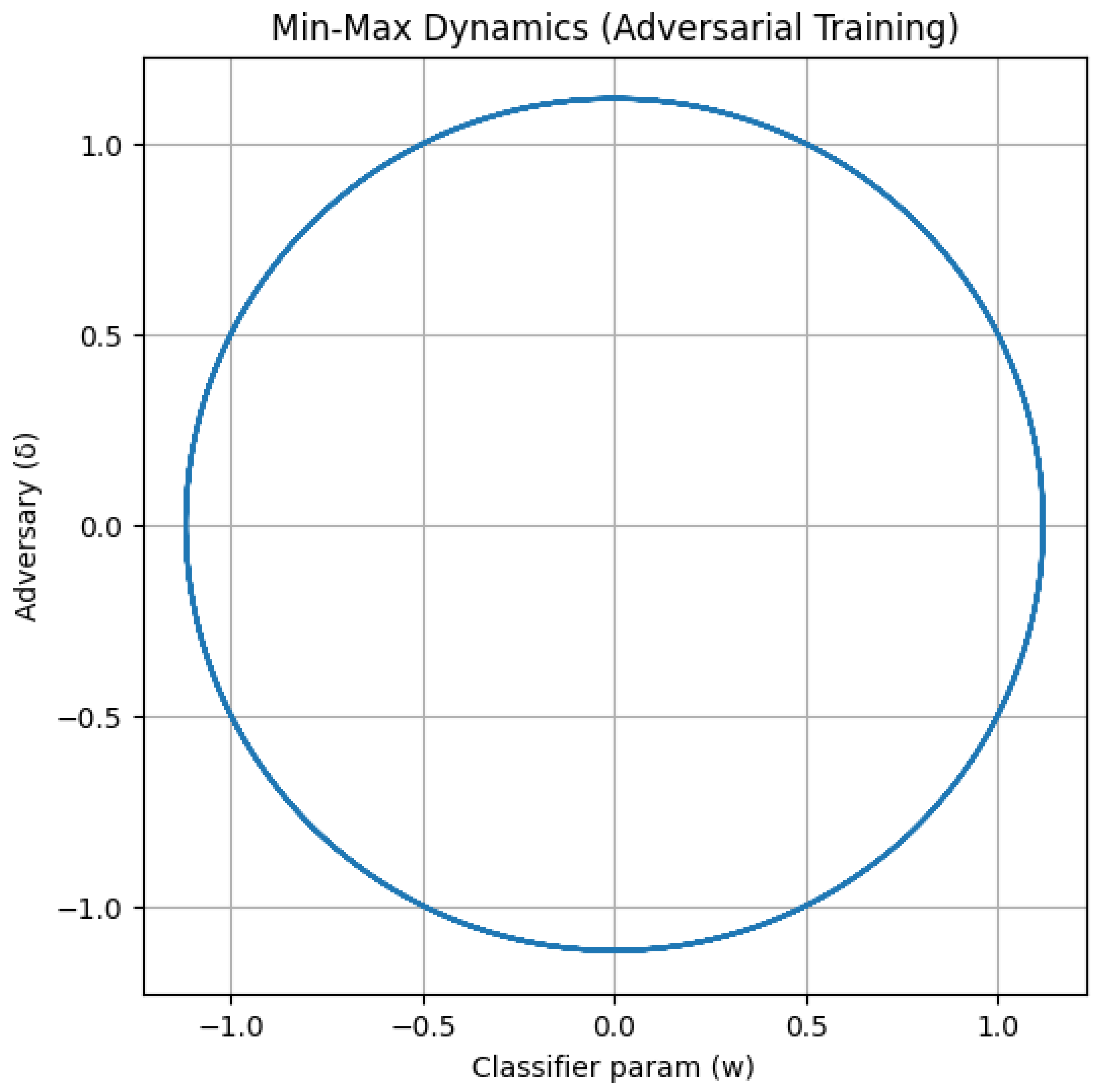

5.2.2. Min-Max (Saddle) Dynamics

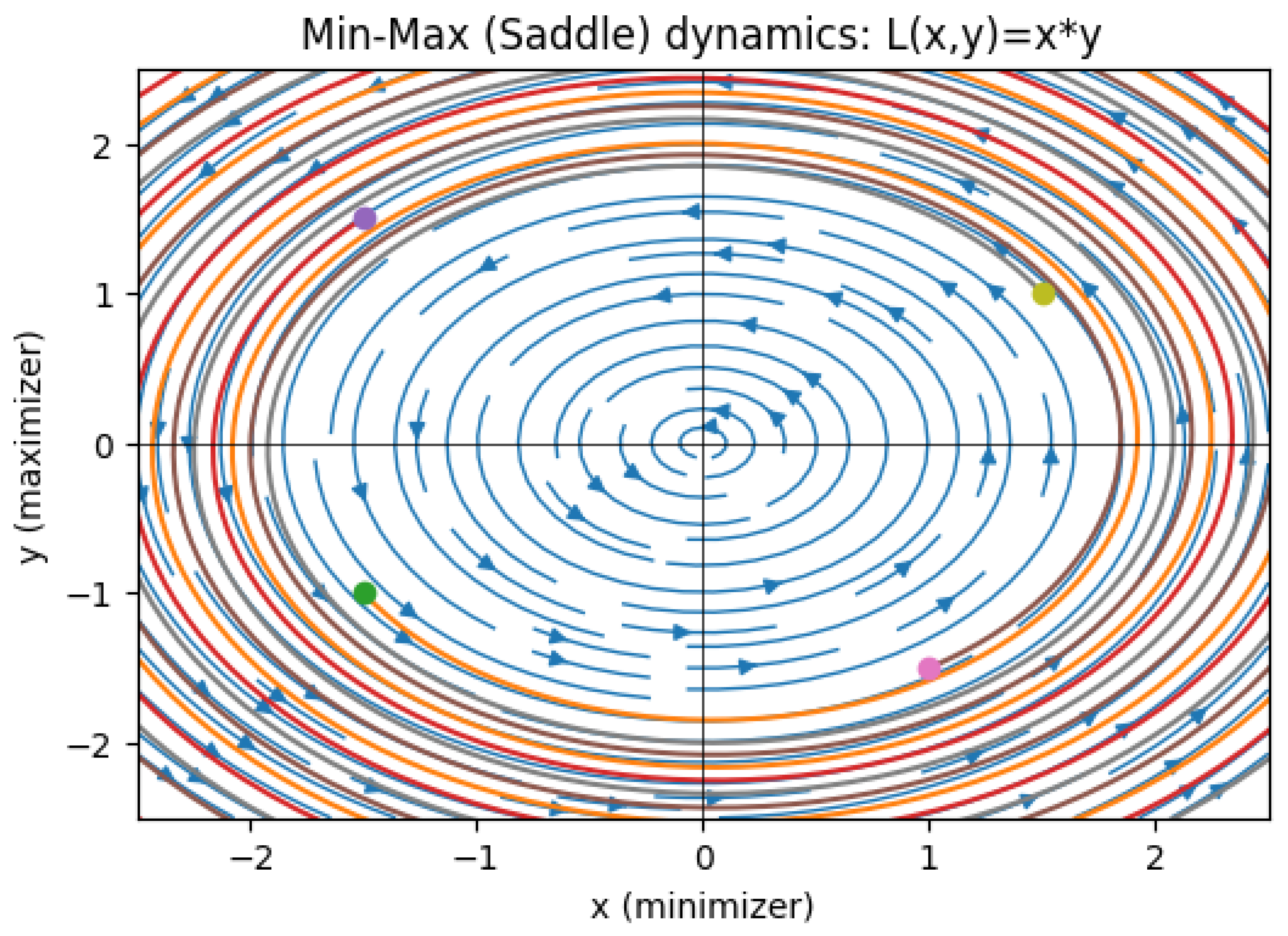

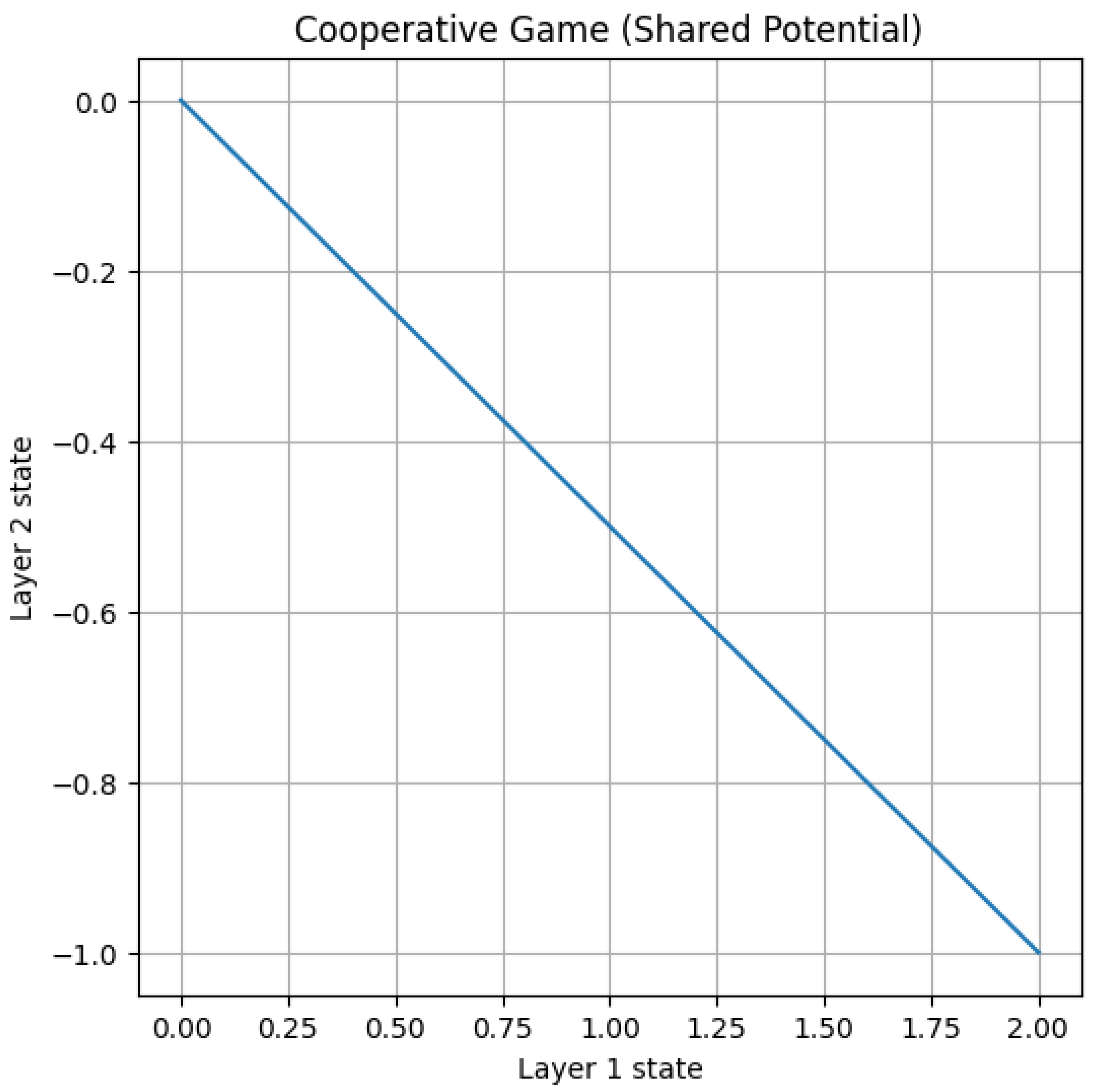

5.2.3. Potential/Cooperative Game (Gradient Descent on Shared Phi)

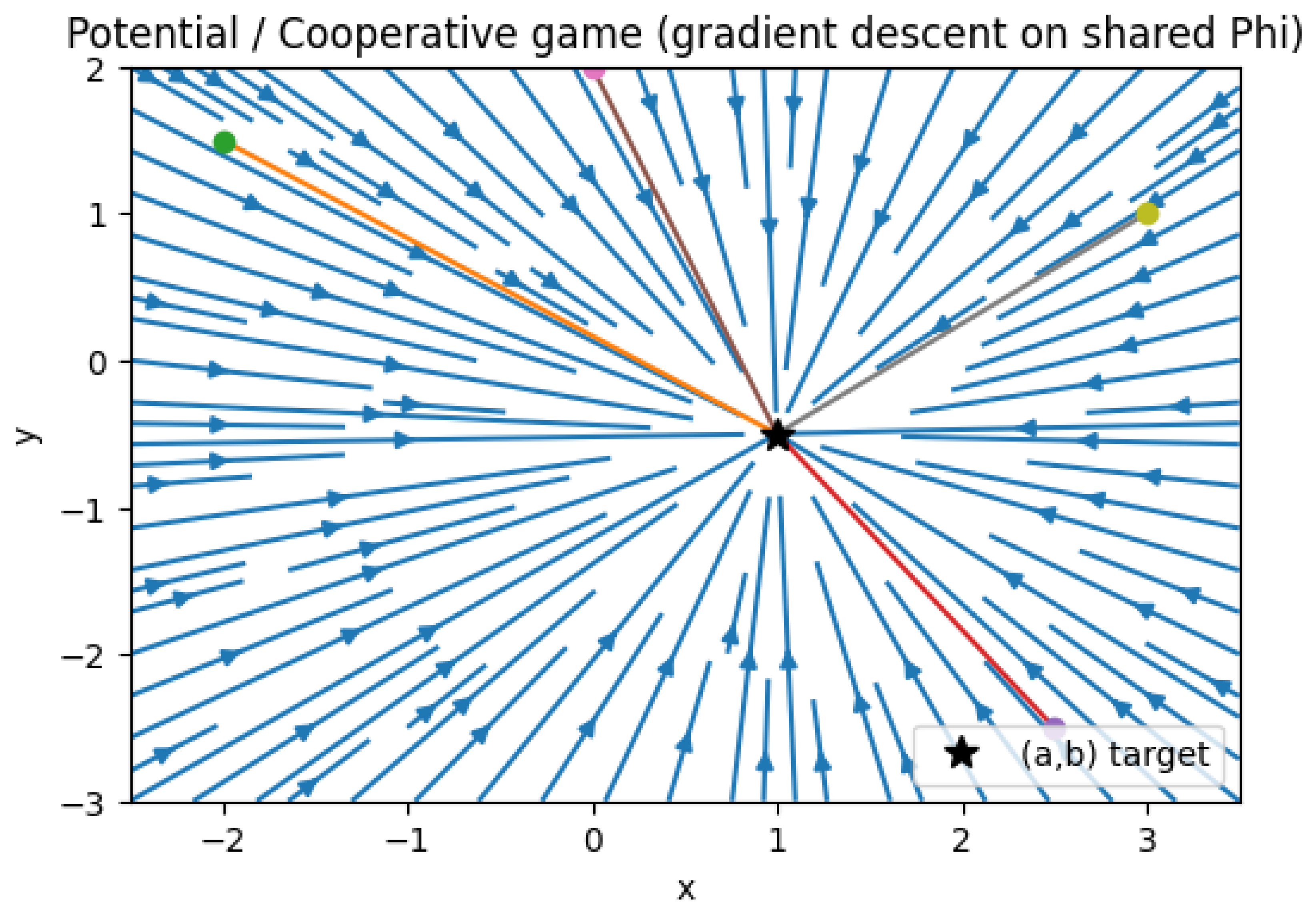

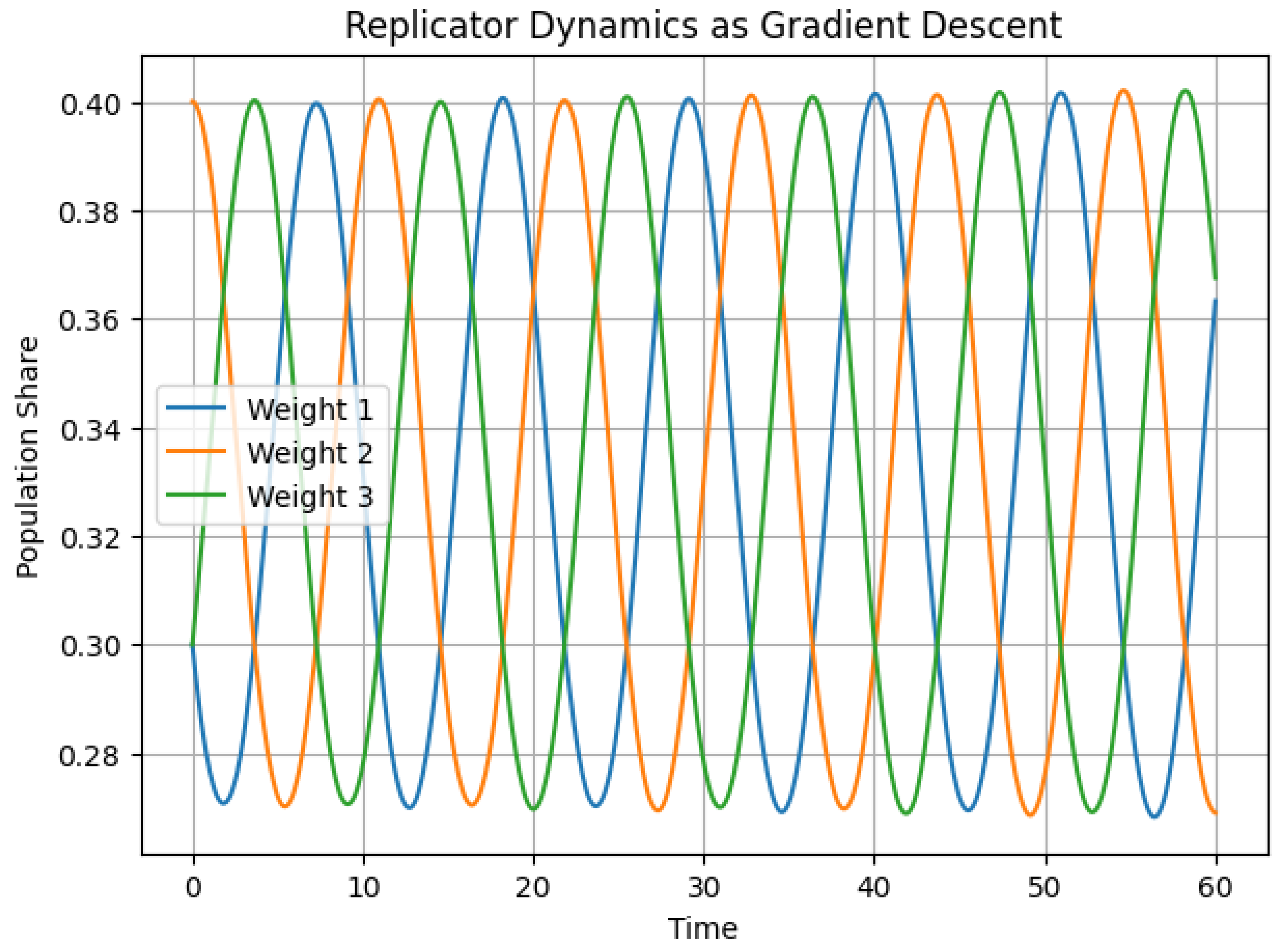

5.2.4. Replicator Dynamics (Rock-Paper-Scissors) — Cyclic Behavior

5.2.5. Python Code to Generate Figure 54, Figure 55 and Figure 56 Illustrating Min-Max (Saddle) Dynamics, Potential/Cooperative Game (Gradient Descent on Shared Phi), and Replicator Dynamics (Rock-Paper-Scissors) — Cyclic Behavior

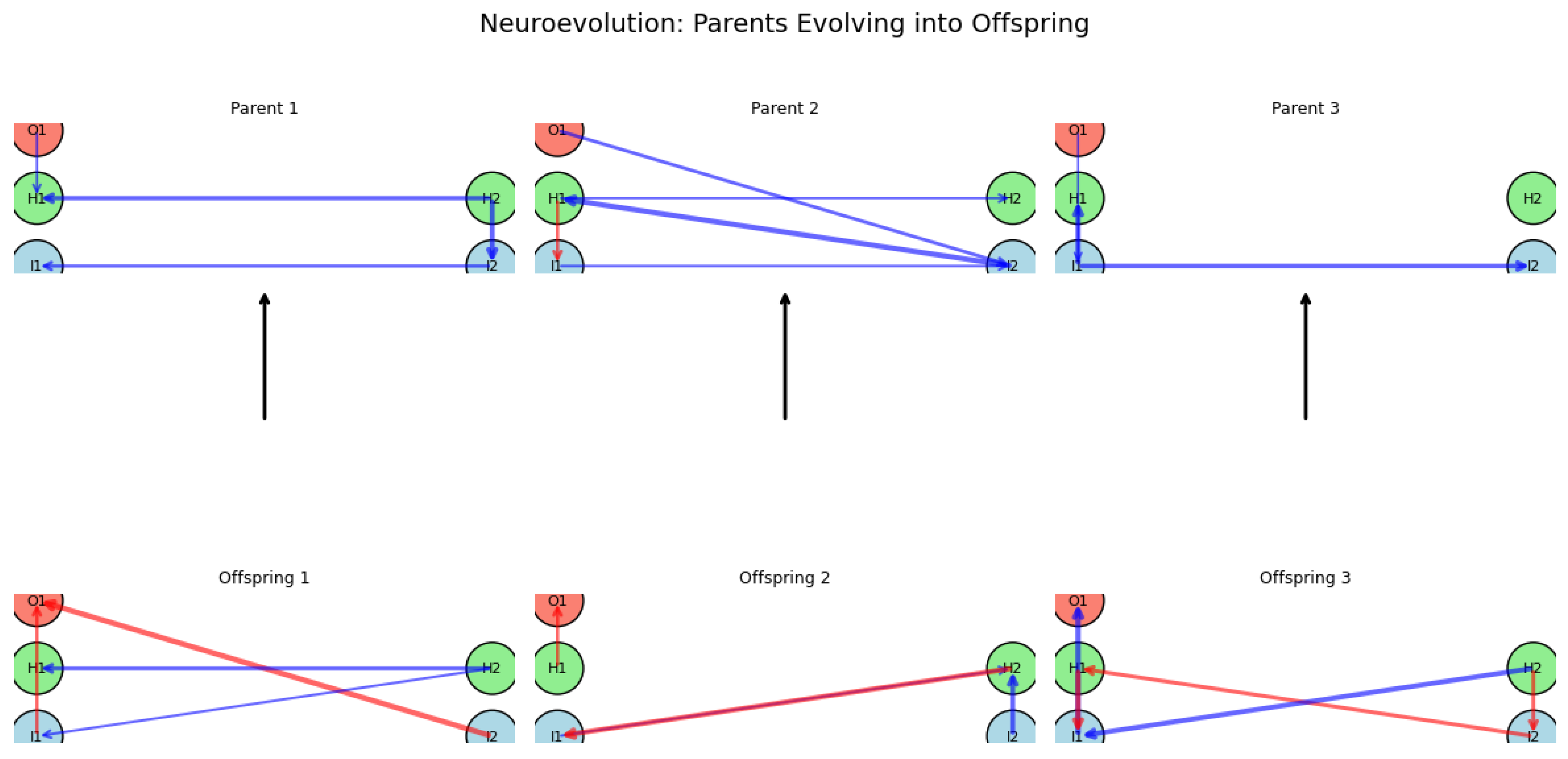

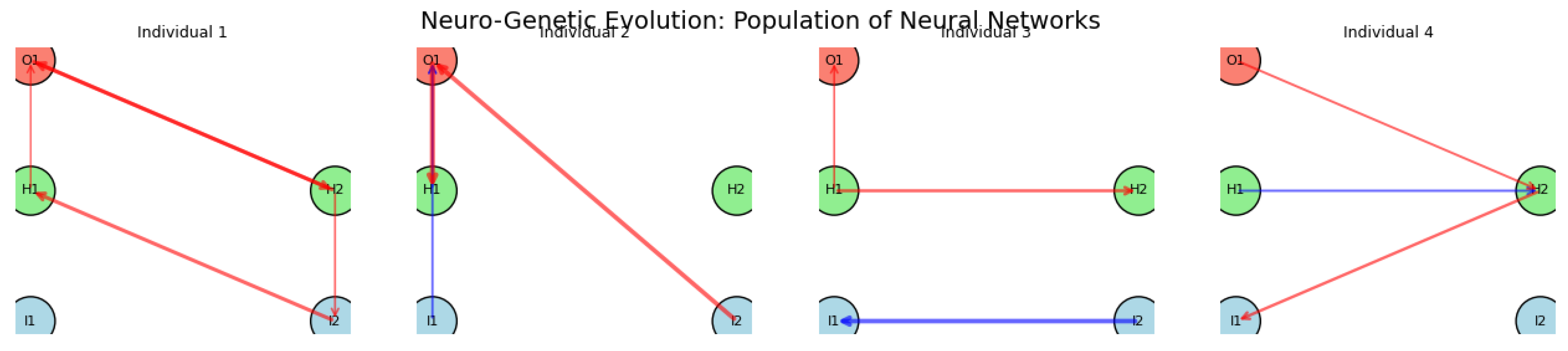

5.3. Game-Theoretic Formulations of Deep Neural Networks (DNNs) Through Evolutionary Game Dynamics

5.3.1. Python Code to Generate Figure 57, Figure 58 and Figure 59 Illustrating Game-Theoretic Formulations of Deep Neural Networks (DNNs) Through Evolutionary Game Dynamics

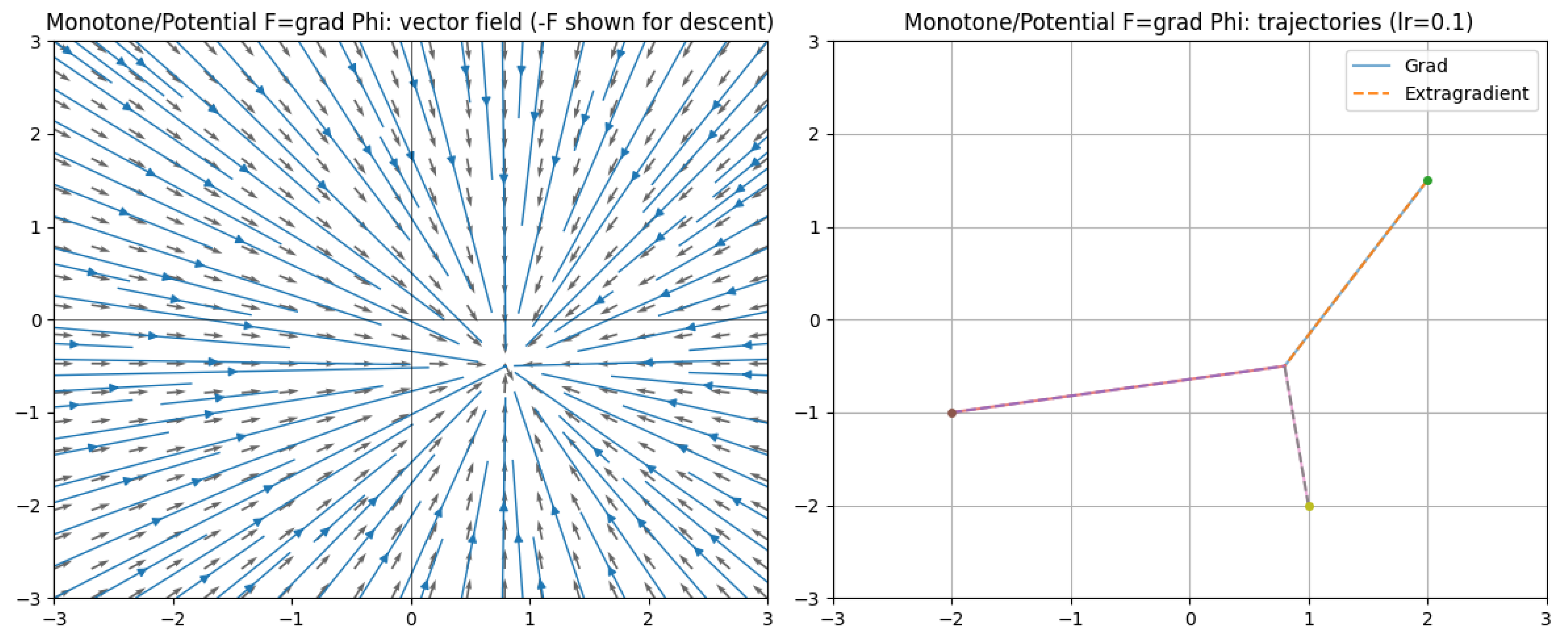

5.4. Analysis of Deep Neural Networks (DNNs) Through Variational Inequalities

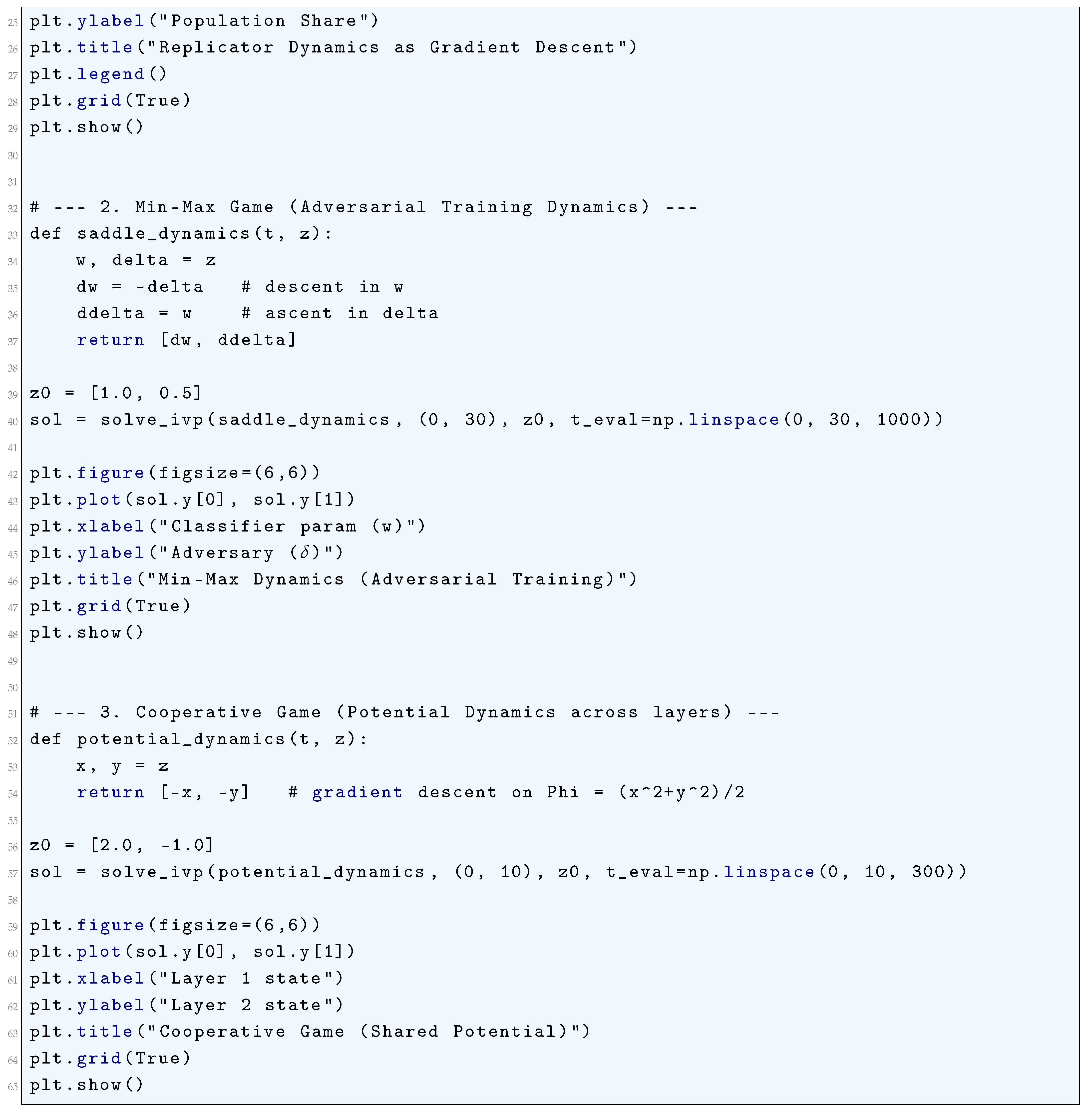

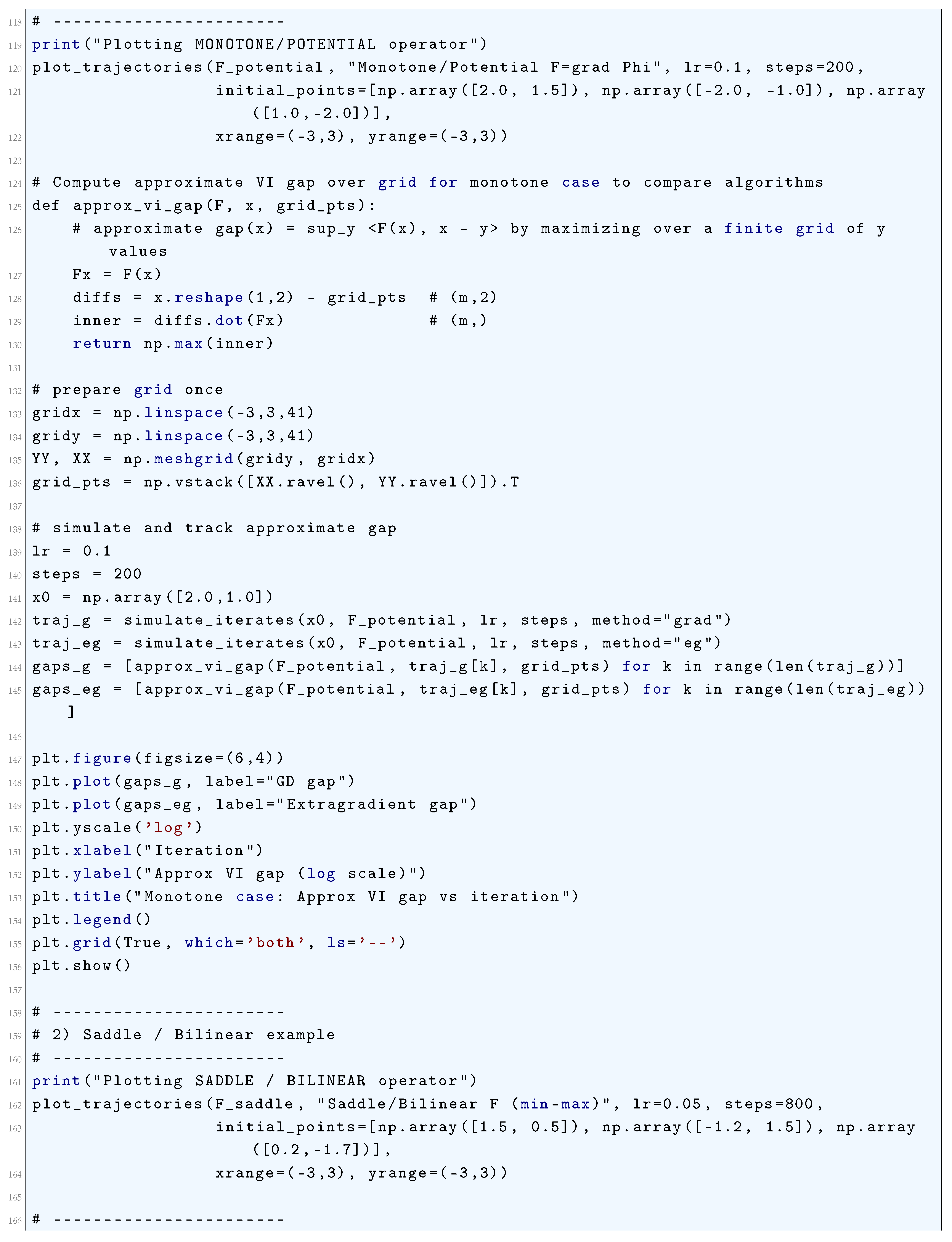

5.4.1. Monotone/Potential Operator (Gradient of a Convex Potential)

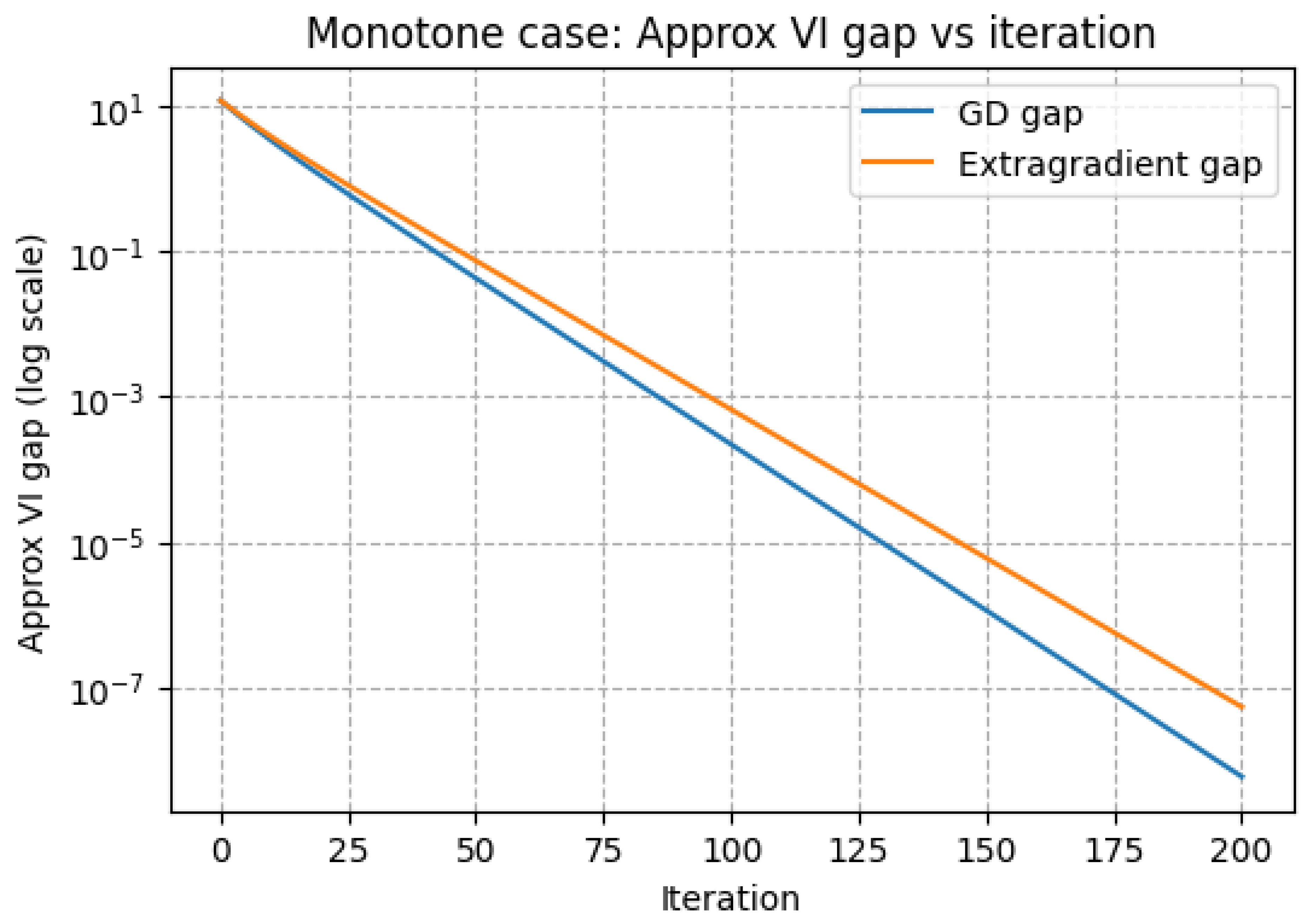

5.4.2. Saddle/Non-Monotone Bilinear Operator (Models min–max Behavior)

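- The block-Jacobian $J = \begin{pmatrix} 0 & A \\ -A^\top & 0 \end{pmatrix}$ with $J + J^\top = 0$,

- The identity $\langle F(z), z \rangle = 0$ for the bilinear case,

- Conservation of quadratic energies in continuous time, $\frac{d}{dt}\|z(t)\|^2 = 0$, and

- Discrete amplification $\|z_{k+1}\|^2 = \|z_k\|^2 + \eta^2 \|F(z_k)\|^2$ for explicit Euler.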
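The amplification under explicit Euler can be observed on the simplest bilinear game. The sketch below runs simultaneous gradient descent-ascent on the scalar objective $f(x, y) = xy$, for which the squared norm of the iterate grows by exactly $1 + \eta^2$ per step. The scalar objective, the step size, and the name `euler_gda` are assumptions chosen for illustration.

```python
def euler_gda(x, y, step, n_steps):
    """Simultaneous gradient descent-ascent on f(x, y) = x * y (explicit Euler)."""
    norms_sq = [x * x + y * y]
    for _ in range(n_steps):
        # x descends along df/dx = y, while y ascends along df/dy = x.
        x, y = x - step * y, y + step * x
        norms_sq.append(x * x + y * y)
    return norms_sq


step = 0.1
norms_sq = euler_gda(1.0, 0.0, step, 50)
# Ratio of consecutive squared norms; each equals 1 + step**2.
ratios = [later / earlier for earlier, later in zip(norms_sq, norms_sq[1:])]
```

The continuous-time flow conserves $x^2 + y^2$, so the growth here is purely an artifact of the discretization.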

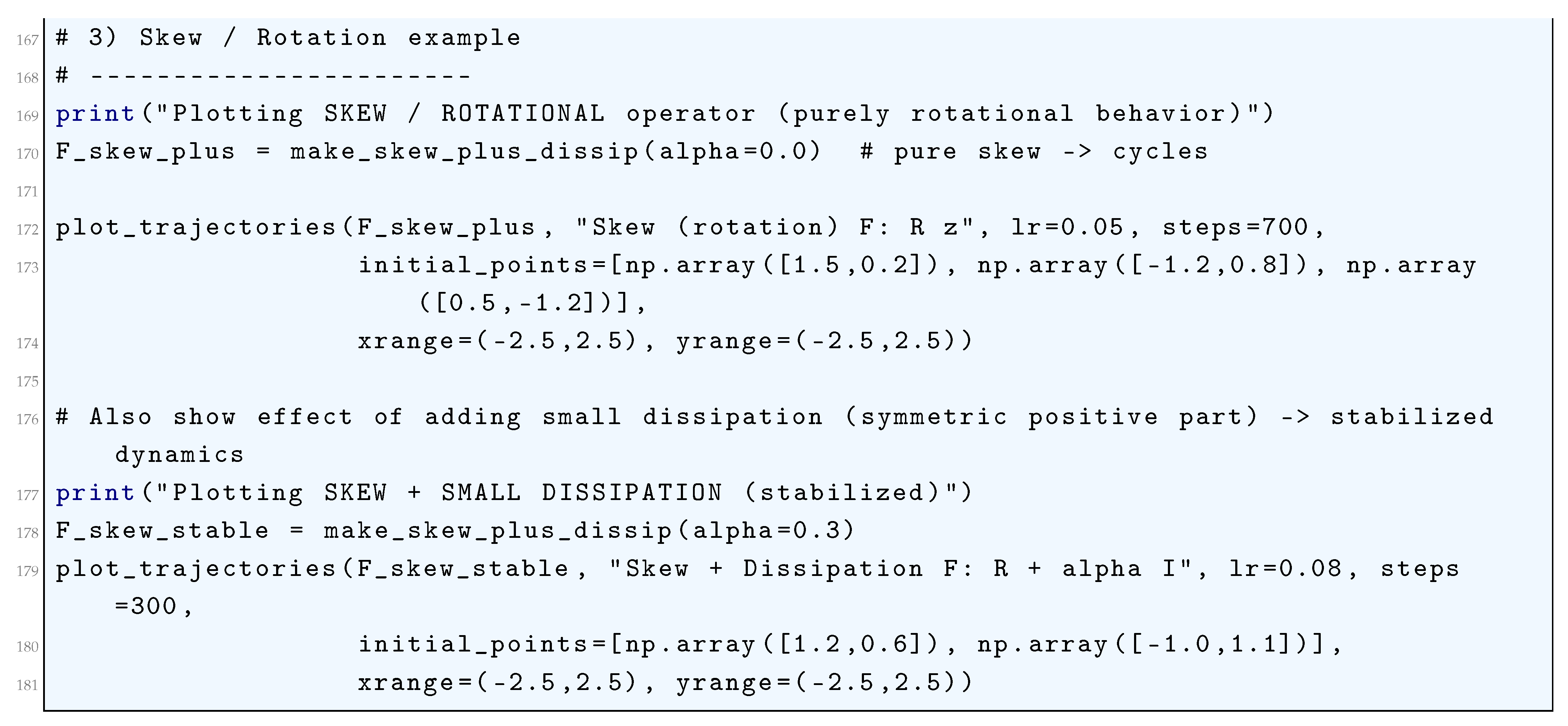

5.4.3. Skew (Rotation)

5.4.4. Skew + Dissipation

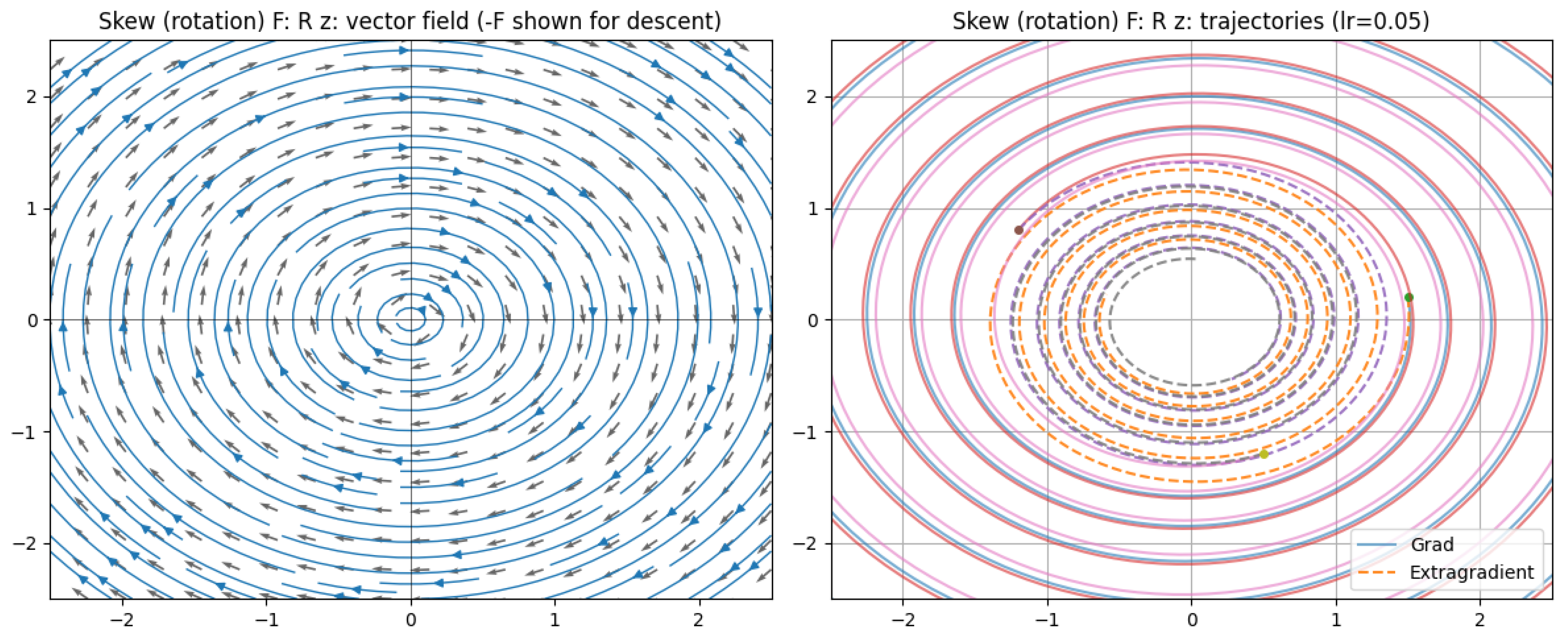

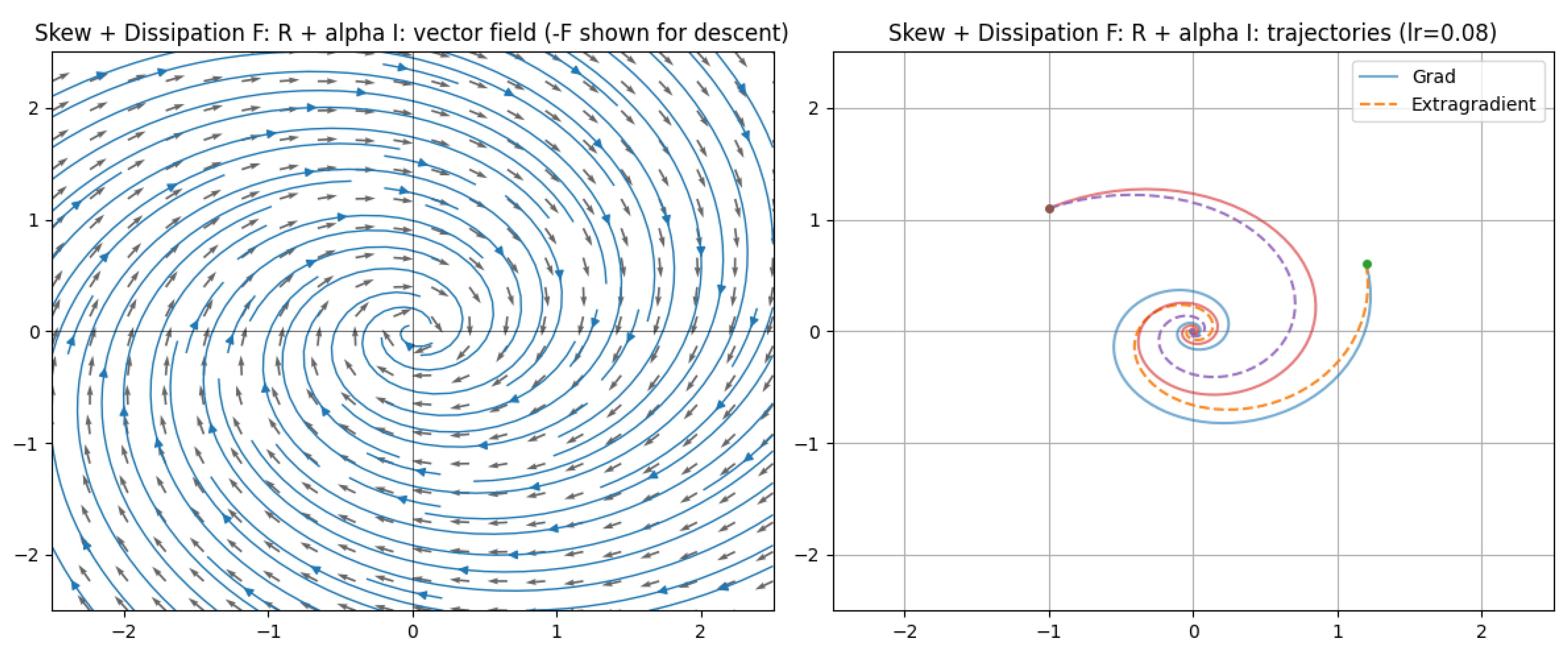

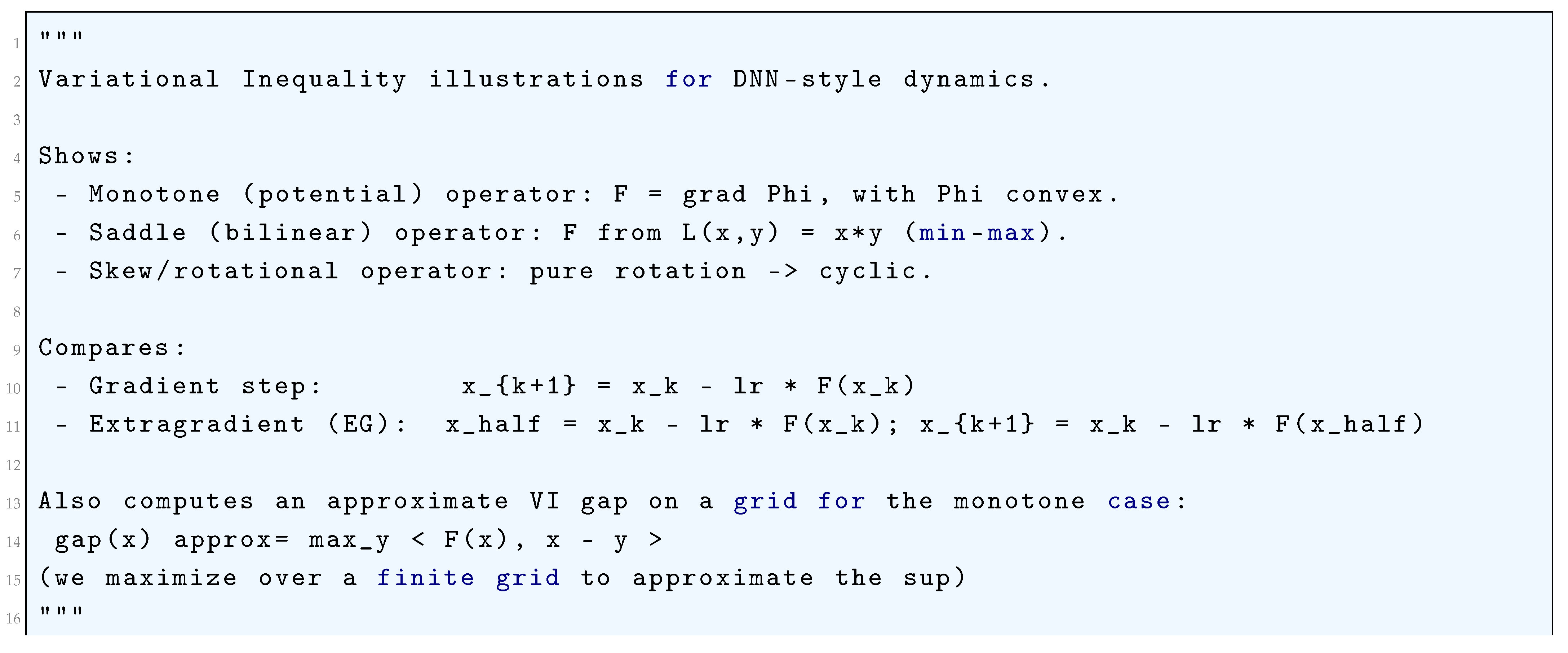

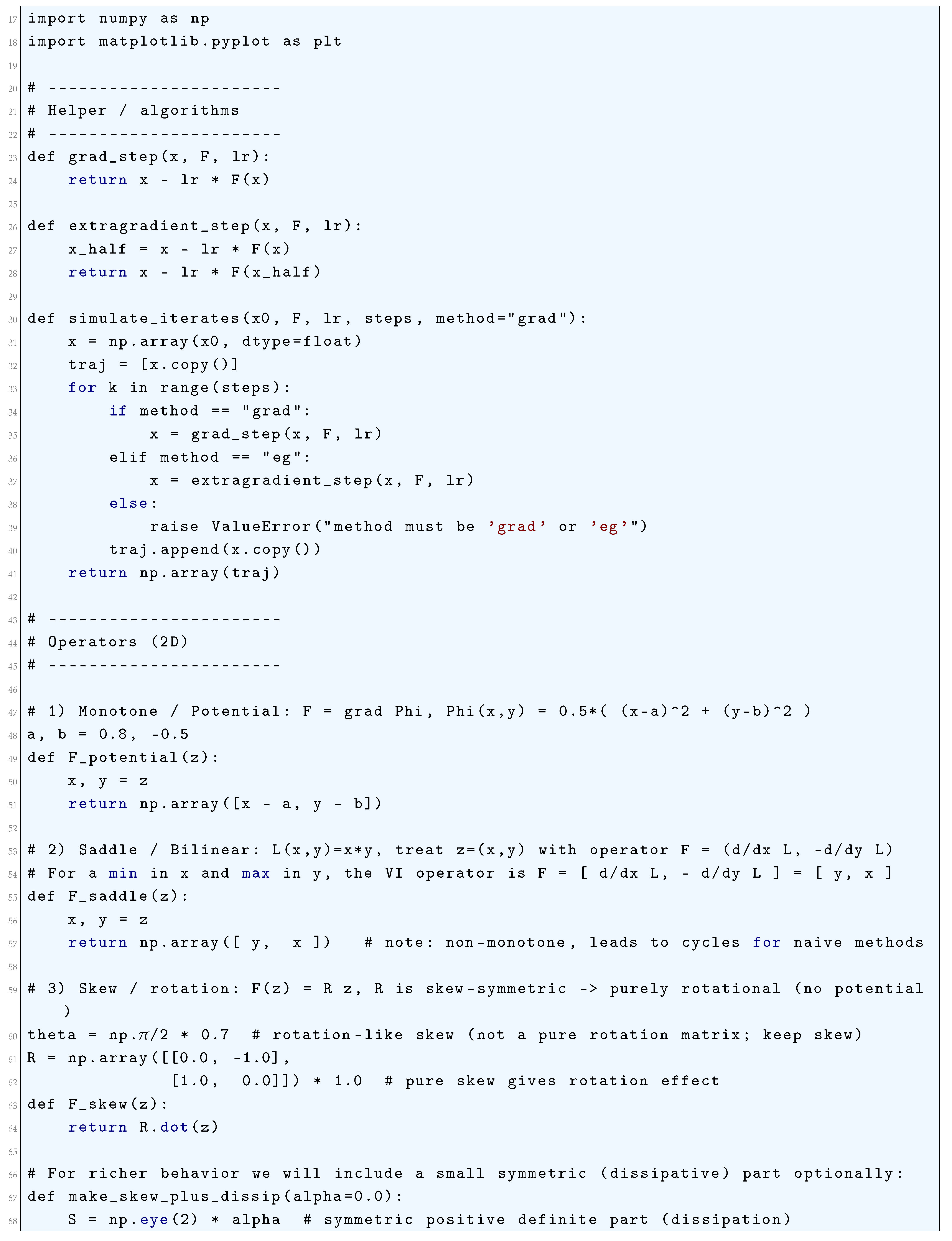

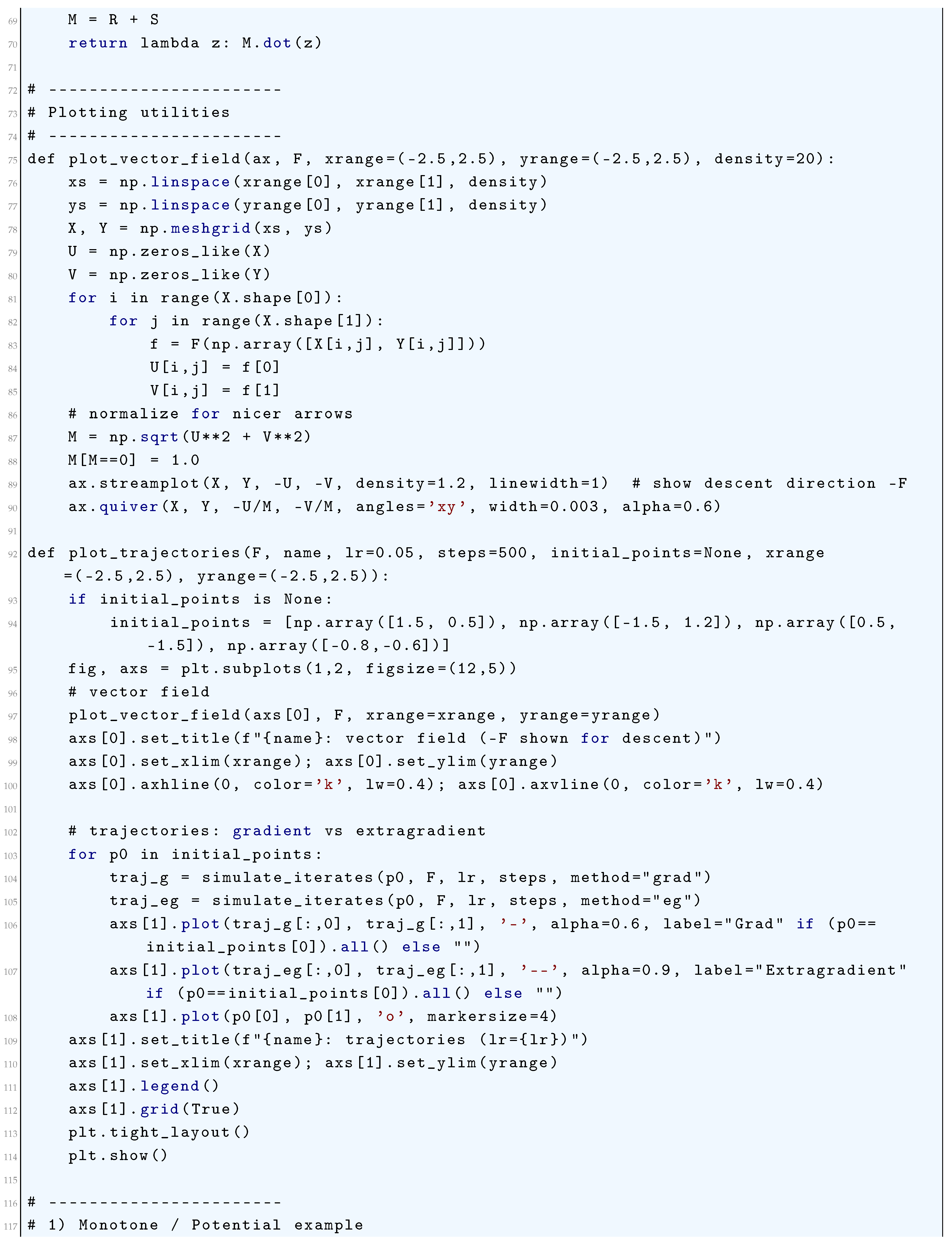

5.4.5. Python Code to Generate Figure 60, Figure 61 Illustrating Monotone/Potential Operator and Figure 62 Illustrating Saddle Bilinear F (min-max) and Figure 63 Illustrating Skew (Rotation) F: R z and Figure 64 Illustrating Skew + Dissipation F: R + Alpha I

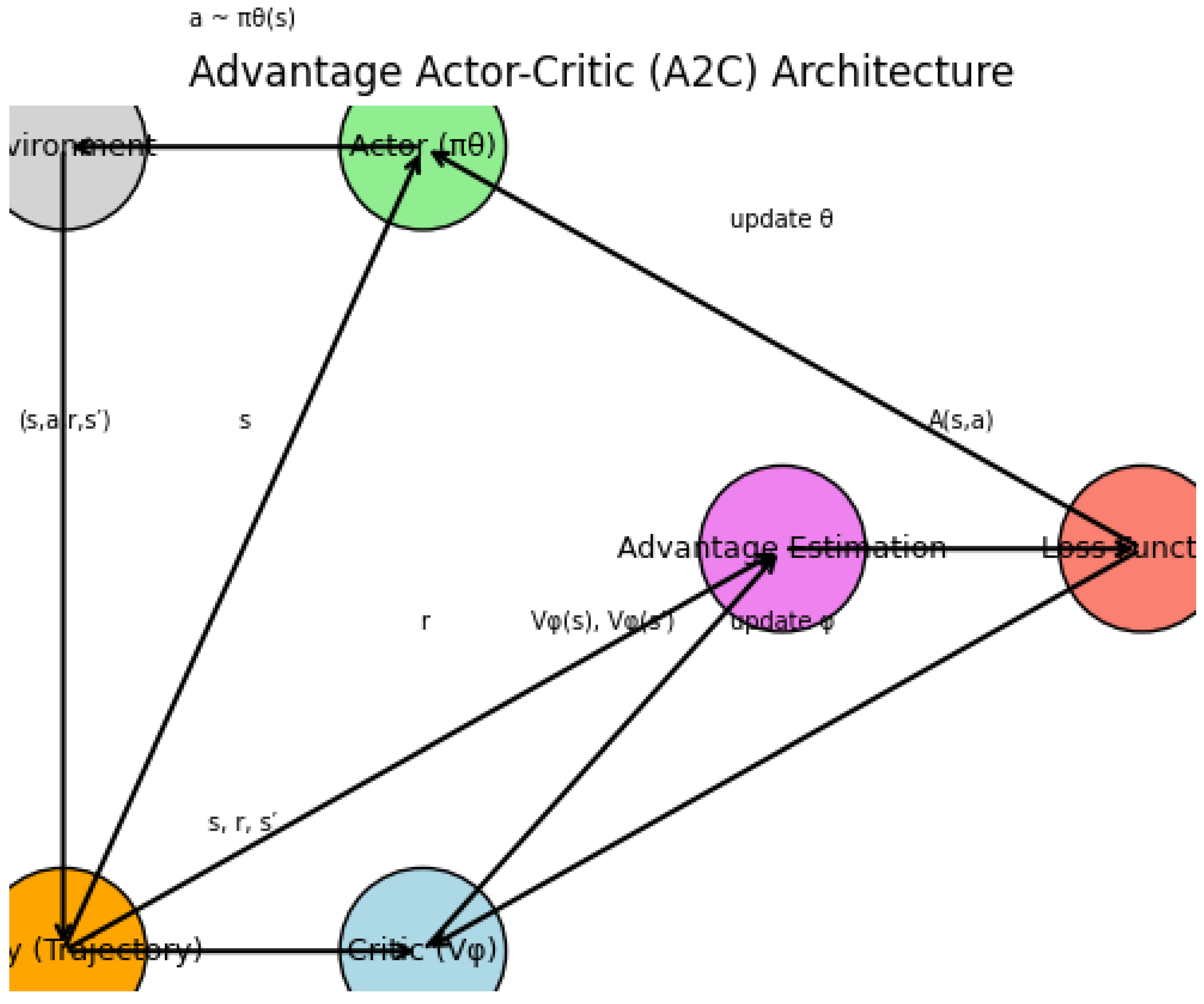

5.5. Optimal Control in Reinforcement Learning (RL)-Based Deep Neural Network (DNN) Training

5.5.1. Backpropagation as Pontryagin’s Maximum Principle (PMP)

5.5.2. The Method of Successive Approximation (MSA)

5.5.3. Differentiable Optimization Layers

5.5.4. Neural Ordinary Differential Equations (Neural ODEs)

5.5.5. Stochastic Optimal Control for RL and Robust Training

5.5.6. Stable and Efficient Network Design

5.5.7. Optimal Control of the Training Process Itself

5.5.8. Connections to Reinforcement Learning

5.6. Differential Game Theory and Training Dynamics

6. Optimal Transport Theory in Deep Neural Networks

6.1. Literature Review of Optimal Transport Theory in Deep Neural Networks

6.2. Analysis of Optimal Transport Theory in Deep Neural Networks

6.3. Jensen-Shannon Divergence

6.4. Matching Latent and Data Distributions in Probabilistic Autoencoders

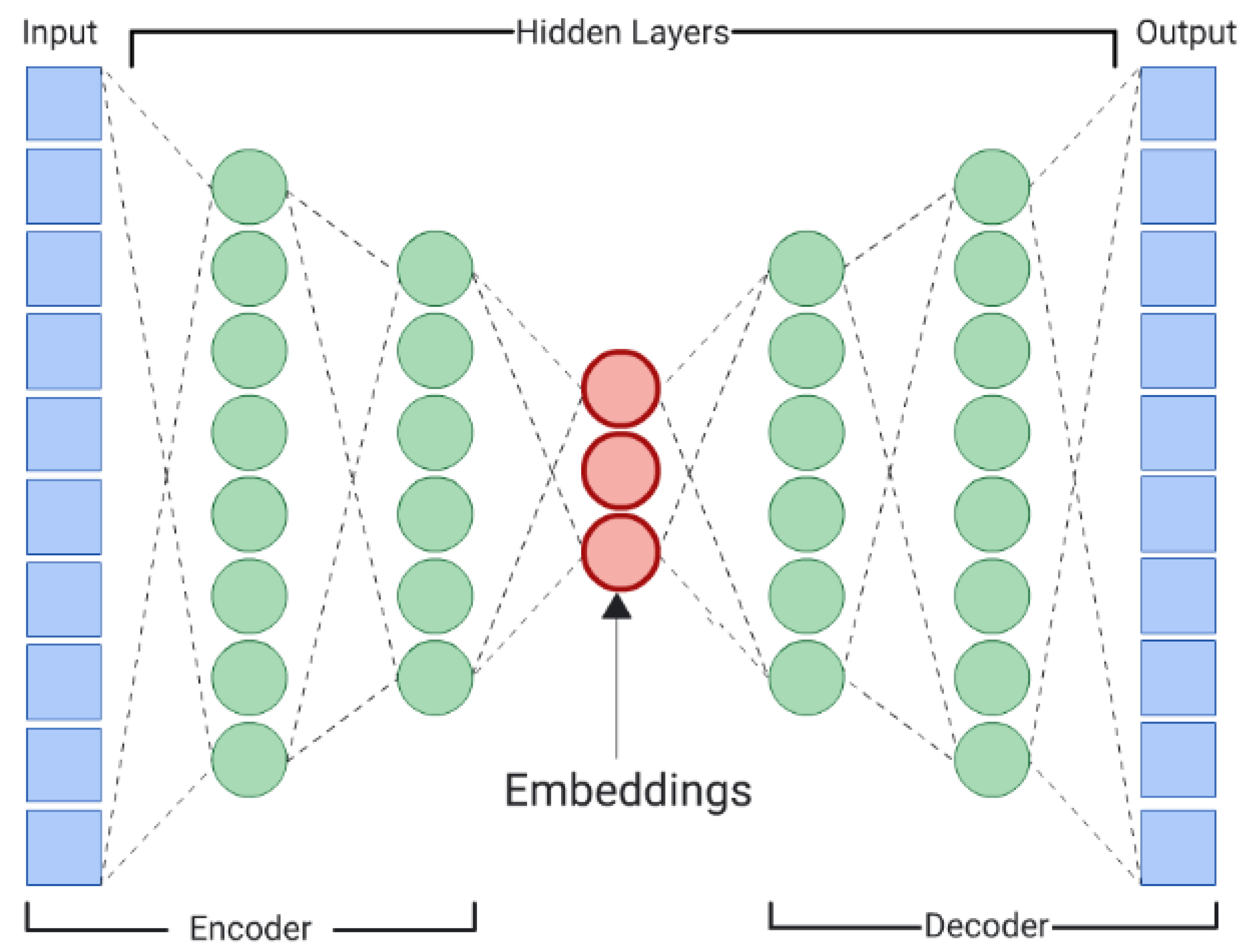

6.4.1. Probabilistic Autoencoders

6.4.2. 2-Wasserstein Distance

6.5. Optimal Transport (OT)-Based Priors in Bayesian Deep Learning

6.6. Application of Optimal Transport in Learning Energy-Based Models

6.7. Sinkhorn-Knopp Algorithm

6.7.1. Sinkhorn Distance

6.7.2. Wasserstein GANs

6.8. Kantorovich Duality

6.9. Entropy Regularization

6.9.1. Classical Monge-Kantorovich Formulation

6.9.2. Fenchel-Rockafellar Duality Theorem

6.10. Optimal Transport Theory-Based Training Dynamics and Sinkhorn Divergences

6.11. Optimal Transport Theory in Neural Architecture and Gradient Flows

6.12. Geometric Regularization and Barycenters

7. Categorical Foundations of Deep Learning

7.1. Introduction

7.1.1. Motivation: Why Category Theory for Deep Learning?

- Abstraction over computation: Neural networks are fundamentally morphisms (maps) between data representations, and Category Theory provides tools to study compositions of such maps.

- Generalization across architectures: Whether working with CNNs, RNNs, or Transformers, Category Theory allows us to describe them as instances of categorical constructs (e.g., monoidal categories, functors).

- Handling complex data flows: Many Deep Learning constructs (e.g., attention mechanisms, residual connections) are naturally expressed via universal properties (limits, adjunctions).

- Bridging discrete and continuous reasoning: Gradient-based optimization (backpropagation) can be formulated categorically via lenses or reverse derivative categories.

- Formal reasoning about model behavior.

- Systematic architecture design via compositionality.

- Novel generalizations (e.g., neural networks over graphs, higher-order interactions).

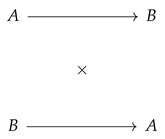

7.1.2. Limits of Traditional Mathematical Formalism in Modern Machine Learning

- Lack of Compositionality: Deep Learning models are built via hierarchical composition of layers, but linear algebra treats them as sequences of matrix operations without inherent structure. The category-theoretic solution is to represent networks as morphisms in a category, where composition is associative by definition.

- Poor Handling of Complex Data Types: Tensors, graphs, and sequences require ad-hoc notation. Backpropagation, for instance, is often derived via index manipulation rather than structurally. The category-theoretic solution is to use monoidal categories to model tensors and string diagrams for graphical reasoning.

- Opaque Optimization Dynamics: Gradient descent is typically analyzed via local approximations (Taylor expansions), obscuring global behavior. The category-theoretic solution is to use reverse derivative categories (RDCs) to give an abstract formulation of backpropagation.

- Difficulty in Generalizing Architectures: Novel components (e.g., attention, memory) are introduced empirically without a unifying framework. The category-theoretic solution is to use universal properties (e.g., adjunctions) to guide principled extensions.

- Weak Theoretical Guarantees: Traditional theory (e.g., VC dimension) fails to explain the success of overparametrized Deep Learning models. The category-theoretic solution is to use functorial semantics to connect learning to algebraic invariants.

7.1.3. Overview of the Chapter and Learning Goals

- Categories and Functors: At its core, a category formalizes the notion of objects (such as data spaces) and morphisms (such as neural network layers) that can be composed associatively. Functors extend this by mapping one category to another while preserving structure, allowing us to describe how neural architectures transform data systematically. We discuss the basic definitions and examples (e.g., FeedForwardNet, the category of neural networks).

- Universal Constructions: Universal constructions, including products and limits, characterize optimal ways to combine or relate objects. In Deep Learning, these appear in architectures like residual networks, where skip connections arise as coproducts, or attention mechanisms, which aggregate information universally. The ability to reason about such constructions enables principled architecture design rather than relying on intuition alone. We discuss products and coproducts and how they relate to branching architectures (e.g., ResNet skip connections).

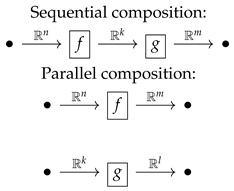

- Monoidal Categories: Monoidal categories introduce tensor products, capturing parallel computation and multi-dimensional data flow. This framework models operations in convolutional networks or transformers, where tensor contractions and parallel processing are fundamental. String diagrams, a graphical notation from monoidal categories, offer an intuitive way to visualize and manipulate complex network structures. We discuss the modeling of tensors and parallel computation (e.g., CNNs as functors).

- Lenses and Backpropagation: Differentiation and optimization, central to Deep Learning, are naturally expressed using lenses and reverse derivative categories. A lens pairs a forward pass (evaluation) with a backward pass (gradient propagation), abstracting backpropagation as a compositional process. This shifts gradient-based learning from an algorithmic procedure to a categorical construct, clarifying its mathematical essence. We discuss categorical differentiation via reverse derivative categories.

- Higher-Order Abstractions: Higher-order abstractions like adjunctions and monads further extend the expressive power of the framework. Adjunctions formalize relationships between paired functors, such as encoder-decoder networks in autoencoders, while monads model computational effects like stochasticity in dropout or reinforcement learning. These tools allow us to describe complex behaviors (such as memory, recursion, or attention) in a unified way. We discuss adjunctions, monads, and their role in attention and memory mechanisms.

- Design models more systematically.

- Analyze them more rigorously.

- Discover new architectures more confidently.

7.2. Category Theory Primer for Machine Learners

7.2.1. Basic Definitions: Categories, Functors, Natural Transformations, Adjunctions, Monoidal Categories

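7.2.1.1 Categories

A category $\mathcal{C}$ consists of:

- A collection of objects $\mathrm{Ob}(\mathcal{C})$ (e.g., sets, vector spaces).

- For every pair $A, B \in \mathrm{Ob}(\mathcal{C})$, a set of morphisms (arrows) $\mathrm{Hom}_{\mathcal{C}}(A, B)$.

- A composition rule ∘ such that for $f: A \to B$ and $g: B \to C$, there exists $g \circ f: A \to C$.

- An identity morphism $\mathrm{id}_A$ for each object $A$, satisfying: $f \circ \mathrm{id}_A = f = \mathrm{id}_B \circ f$ for every $f: A \to B$.

- Associativity: For $f: A \to B$, $g: B \to C$, and $h: C \to D$, $h \circ (g \circ f) = (h \circ g) \circ f$.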

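7.2.1.2 Functors

A (covariant) functor $F: \mathcal{C} \to \mathcal{D}$ between categories consists of:

- A mapping on objects, $A \mapsto F(A)$.

- For each morphism $f: A \to B$ in $\mathcal{C}$, a morphism $F(f): F(A) \to F(B)$ in $\mathcal{D}$, preserving:

  - Identities: $F(\mathrm{id}_A) = \mathrm{id}_{F(A)}$.

  - Composition: $F(g \circ f) = F(g) \circ F(f)$.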

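7.2.1.3 Natural Transformations

Given functors $F, G: \mathcal{C} \to \mathcal{D}$, a natural transformation $\eta: F \Rightarrow G$ assigns to each $A \in \mathcal{C}$ a morphism $\eta_A: F(A) \to G(A)$ such that for every $f: A \to B$ in $\mathcal{C}$, the following naturality condition holds: $\eta_B \circ F(f) = G(f) \circ \eta_A$.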

7.2.1.4 Adjunctions

7.2.1.5 Monoidal Categories

7.2.2. Use of String Diagrams and Abstraction and Generalization in Machine Learning

7.2.2.1 String Diagrams as Graphical Calculus for Neural Networks

7.2.2.2 Abstraction and Generalization in Machine Learning

7.2.3. Examples from Familiar Settings

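7.2.3.1 Category of Sets ($\mathbf{Set}$)

- Objects: Sets.

- Morphisms: Functions $f: A \to B$.

- Functors: The powerset functor $\mathcal{P}: \mathbf{Set} \to \mathbf{Set}$ maps A to its power set $\mathcal{P}(A)$ and $f: A \to B$ to the direct image $\mathcal{P}(f)(S) = \{f(a) : a \in S\}$.

- Natural Transformation: The singleton map $\eta_A: A \to \mathcal{P}(A)$, where $\eta_A(a) = \{a\}$, is natural because for any $f: A \to B$, $\mathcal{P}(f)(\eta_A(a)) = \{f(a)\} = \eta_B(f(a))$.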
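The powerset functor and the singleton natural transformation are small enough to check on finite sets. The sketch below models sets as `frozenset` values and verifies the naturality square and the two functor laws on sample data. The names `powerset_map` and `singleton` and the concrete functions `f` and `g` are hypothetical.

```python
def powerset_map(f):
    """Action of the powerset functor on a function: the direct image."""
    return lambda subset: frozenset(f(a) for a in subset)


def singleton(a):
    """Component of the natural transformation eta, sending a to {a}."""
    return frozenset([a])


A = [0, 1, 2, 3]
f = lambda a: a % 2   # a hypothetical function A -> B with B = {0, 1}
g = lambda b: b + 10  # a hypothetical function B -> C

# Naturality square: P(f)(eta_A(a)) == eta_B(f(a)) for every a in A.
naturality_holds = all(
    powerset_map(f)(singleton(a)) == singleton(f(a)) for a in A
)

# Functor laws, checked on the subset S = A.
S = frozenset(A)
preserves_composition = (
    powerset_map(lambda a: g(f(a)))(S) == powerset_map(g)(powerset_map(f)(S))
)
preserves_identity = powerset_map(lambda a: a)(S) == S
```

A finite check like this does not prove naturality in general, but it makes the commuting square concrete.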

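7.2.3.2 Category of Vector Spaces ($\mathbf{Vect}_k$)

- Objects: Vector spaces over a field $k$.

- Morphisms: Linear maps $f: V \to W$.

- Functors: The dual space functor maps V to $V^*$ and $f: V \to W$ to its transpose $f^*: W^* \to V^*$. Because it reverses the direction of arrows, it is a contravariant functor.

- Natural Transformation: The double dual embedding $\eta_V: V \to V^{**}$, where $\eta_V(v)(\varphi) = \varphi(v)$, is natural because for any linear $f: V \to W$, $f^{**} \circ \eta_V = \eta_W \circ f$.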

7.2.3.3 Category of Neural Networks (Informal Example)

- Objects: Data spaces (e.g., $\mathbb{R}^n$).

- Morphisms: Neural network layers (e.g., affine maps $x \mapsto Wx + b$).

- Functors: A training functor could map a network architecture to its trained parameter space.

7.2.4. Diagrams as Proofs and Reasoning Tools

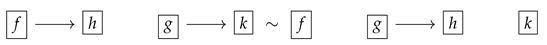

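7.2.4.1 Commutative Diagrams

A diagram commutes if all paths between two objects yield the same morphism. For example, the naturality square for $\eta: F \Rightarrow G$ commutes exactly when $\eta_B \circ F(f) = G(f) \circ \eta_A$ for every $f: A \to B$.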

7.2.4.2 Universal Properties via Diagrams

7.2.4.3 Applications in ML

- Backpropagation as a Functorial Diagram: The chain rule can be expressed as a commuting diagram in the category of differentiable functions.

- Attention Mechanisms: The query-key-value interaction in transformers can be modeled as a limit diagram.
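The compositional reading of the chain rule can be sketched with a lens, which pairs a forward map with a backward map that pulls gradients back. The `Lens` class below and its `then` method are a hypothetical minimal encoding for scalar functions, not an established library API.

```python
import math


class Lens:
    """A forward map paired with a backward map (x, dy) -> dx."""

    def __init__(self, forward, backward):
        self.forward = forward
        self.backward = backward

    def then(self, other):
        """Sequential composition: run self first, then other."""
        def forward(x):
            return other.forward(self.forward(x))

        def backward(x, dz):
            y = self.forward(x)          # recompute the intermediate value
            dy = other.backward(y, dz)   # pull the gradient back through other
            return self.backward(x, dy)  # then through self (chain rule)

        return Lens(forward, backward)


square = Lens(lambda x: x * x, lambda x, dy: 2.0 * x * dy)
sine = Lens(math.sin, lambda x, dy: math.cos(x) * dy)

sin_of_square = square.then(sine)  # x -> sin(x^2)
x0 = 0.7
grad = sin_of_square.backward(x0, 1.0)  # d/dx sin(x^2) evaluated at x0
```

The composite backward pass reproduces $\cos(x^2) \cdot 2x$ without the chain rule being written out for the composite by hand.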

7.2.4.3.1 Backpropagation as a Functorial Diagram

7.2.4.3.2 Attention Mechanisms

7.2.5. Summary

- Abstracting computation via categories and functors.

- Unifying structures (e.g., vector spaces, neural networks) under common principles.

- Enabling diagrammatic proofs for complex architectures.

7.3. Neural Networks as Composable Morphisms

7.3.1. Layers as Morphisms; Networks as Compositions

7.3.1.1 Neural Networks as Composable Functions

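Let each layer be a function $f_i: X_{i-1} \times \Theta_i \to X_i$, where:

- $X_{i-1}$ is the input space (e.g., $\mathbb{R}^{n_{i-1}}$),

- $\Theta_i$ is the parameter space (e.g., weights and biases $(W_i, b_i)$),

- $X_i$ is the output space.

- A network N with k layers is the composition: $N(x; \theta) = \big(f_k(\,\cdot\,, \theta_k) \circ \cdots \circ f_1(\,\cdot\,, \theta_1)\big)(x)$, where $\theta = (\theta_1, \dots, \theta_k) \in \Theta_1 \times \cdots \times \Theta_k$.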

7.3.1.2 Category-Theoretic Interpretation

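- Objects are spaces (e.g., $\mathbb{R}^n$),

- Morphisms $X \to Y$ are pairs $(P, f)$, where $f: X \times P \to Y$ is a smooth function and $P$ is the parameter space.

- Composition of morphisms $(P, f): X \to Y$ and $(Q, g): Y \to Z$ is given by $(P \times Q, h): X \to Z$, where $h(x, (p, q)) = g(f(x, p), q)$: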

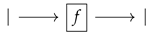

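- Identity morphism is given by $(1, \pi_X)$, where $1$ is the one-point parameter space and $\pi_X: X \times 1 \to X$ is the projection (no parameters).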
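Composition of parameterized maps can be prototyped directly. In the sketch below a morphism is a function of an input and a flat parameter list, and composition concatenates the parameter lists. The `Para` class, its `then` method, and the scalar layers are hypothetical simplifications (parameter spaces are Euclidean and stored as Python lists).

```python
class Para:
    """A parameterized map f: X x P -> Y, with P = R^n_params stored as a list."""

    def __init__(self, n_params, f):
        self.n_params = n_params
        self.f = f  # called as f(x, params)

    def then(self, other):
        """Compose self: X -> Y with other: Y -> Z; parameters are concatenated."""
        split = self.n_params

        def composite(x, params):
            # The first block of parameters feeds self, the rest feeds other.
            return other.f(self.f(x, params[:split]), params[split:])

        return Para(self.n_params + other.n_params, composite)


identity = Para(0, lambda x, p: x)               # no parameters
affine = Para(2, lambda x, p: p[0] * x + p[1])   # x -> w*x + b
relu = Para(0, lambda x, p: max(0.0, x))
scale = Para(1, lambda x, p: p[0] * x)

# Two bracketings of the same three-layer chain.
left = affine.then(relu).then(scale)
right = affine.then(relu.then(scale))
params = [2.0, -1.0, 3.0]
```

Both bracketings take the same concatenated parameter list and compute the same function, and composing with `identity` on either side leaves a layer unchanged.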

7.3.2. The Category of Parameterized Functions

7.3.2.1 Definition of the Category Para

- Objects: Euclidean spaces (or more generally, smooth manifolds).

- Morphisms: A morphism $X \to Y$ is a smooth function $f: X \times P \to Y$, where $P$ is the parameter space (also Euclidean).

- Composition: As defined above. Composition is associative, up to the canonical reassociation of products of parameter spaces, because function composition is associative.

- Identity: The identity morphism is the projection $\pi_X: X \times 1 \to X$.

- A parameter space $P$ (a measurable space, often $\mathbb{R}^d$)

- A measurable function $f: X \times P \to Y$

7.3.2.2 Functoriality of Neural Networks

- Each node maps to a space ,

- Each edge maps to a parameterized morphism ,

- Functoriality ensures that compositions in the graph correspond to compositions in Para.

- Object Preservation: (the underlying data space).

- Morphism Action: where .

- Compositionality: for composable , with parameter spaces concatenated:

- Identity Preservation: .

7.3.2.3 Monoidal Structure (for Parallel Composition)

- Tensor product ⊗ combines spaces and parameters:

7.3.3. Role of Associativity and Identity in Sequential Models

7.3.3.1 Associativity of Composition

- The associativity law in Para ensures that any bracketing of a chain of composable layers yields the same function: $(h \circ g) \circ f = h \circ (g \circ f)$.

- This justifies modular network design: we can group layers into submodules without changing behavior.

7.3.3.2 Identity Morphisms and Skip Connections

- The identity morphism allows for skip connections (e.g., ResNet): $y = x + f(x; \theta)$, where the first term is the identity applied to the input.

- Identity ensures that a “null” layer (doing nothing) is a valid network component.

7.3.3.3 Universality of Sequential Models

- The ability to compose morphisms arbitrarily allows for universal approximation (e.g., via deep feedforward networks).

- The categorical framework generalizes to recurrent networks by working in a category of dynamical systems, where morphisms are parameterized recurrent cells.

7.3.4. Conclusion

- Layers as morphisms, with networks as their compositions.

- A rigorous category of parameterized functions, supporting functorial and monoidal structures.

- Associativity and identity as fundamental properties enabling modular and correct-by-construction network design.

8. Open Set Learning

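- Gaussian Distribution Model (GDM): The fundamental assumption is that the feature distribution of each known class $c$ follows a multivariate normal distribution parameterized by the mean vector $\mu_c$ and covariance matrix $\Sigma_c$. The likelihood of a given sample $x \in \mathbb{R}^d$ belonging to class $c$ is: $p(x \mid c) = (2\pi)^{-d/2} |\Sigma_c|^{-1/2} \exp\left(-\tfrac{1}{2}(x - \mu_c)^\top \Sigma_c^{-1} (x - \mu_c)\right)$. To quantify the confidence in assigning $x$ to class $c$, we compute the Mahalanobis distance: $D_M(x, c) = \sqrt{(x - \mu_c)^\top \Sigma_c^{-1} (x - \mu_c)}$. A sample is rejected as unknown if: $\min_c D_M(x, c) > \tau$, where $\tau$ is a threshold chosen based on extreme value statistics.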

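- Gaussian Mixture Model (GMM): The Gaussian assumption can be generalized using a Gaussian Mixture Model (GMM), which represents each class as a weighted sum of multiple Gaussian components: $p(x \mid c) = \sum_{k=1}^{K} \pi_{c,k}\, \mathcal{N}(x \mid \mu_{c,k}, \Sigma_{c,k})$, where $\pi_{c,k}$ are the mixture weights satisfying $\sum_{k} \pi_{c,k} = 1$, and each Gaussian component $\mathcal{N}(x \mid \mu_{c,k}, \Sigma_{c,k})$ is a multivariate normal density with mean $\mu_{c,k}$ and covariance $\Sigma_{c,k}$. Unknown samples are rejected based on low likelihood scores: $\max_c p(x \mid c) < \tau$, where $\tau$ is a predefined threshold.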

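- Dirichlet Process Gaussian Mixture Model (DP-GMM): A Dirichlet Process (DP) prior can be introduced to allow the number of mixture components $K$ to grow dynamically. The prior over the mixture weights follows the stick-breaking construction

  $$\beta_k \sim \mathrm{Beta}(1, \alpha), \qquad \pi_k = \beta_k \prod_{j<k} (1 - \beta_j),$$

  where $\alpha$ is the concentration parameter controlling cluster sparsity. This enables automatic adaptation of the number of mixture components to better capture class distributions. The likelihood of $\mathbf{x}$ has the same mixture form as in the GMM,

  $$p(\mathbf{x} \mid c) = \sum_{k=1}^{\infty} \pi_{c,k}\, \mathcal{N}(\mathbf{x} \mid \boldsymbol{\mu}_{c,k}, \boldsymbol{\Sigma}_{c,k}),$$

  but with a nonparametric prior that allows more flexible decision boundaries.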

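- Extreme Value Theory (EVT) Models: The tail distribution of softmax probabilities is modeled using an Extreme Value Theory (EVT) approach. Given softmax scores $s$, we fit a Weibull distribution with scale $\lambda > 0$ and shape $\kappa > 0$ to the tail:

  $$F(s; \lambda, \kappa) = 1 - \exp\!\left(-\left(\frac{s}{\lambda}\right)^{\kappa}\right), \qquad s \ge 0.$$

  A sample is rejected if the fitted tail model assigns its top score a probability below a chosen threshold $\epsilon$:

  $$F(s_{\max}; \lambda, \kappa) < \epsilon.$$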

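- Bayesian Neural Networks (BNNs): BNNs introduce uncertainty estimation by placing priors over the network weights, $\mathbf{w} \sim p(\mathbf{w})$. Posterior inference is performed via Bayesian updating on the training data $\mathcal{D}$:

  $$p(\mathbf{w} \mid \mathcal{D}) = \frac{p(\mathcal{D} \mid \mathbf{w})\, p(\mathbf{w})}{p(\mathcal{D})}.$$

  A sample is rejected if the entropy of the predictive distribution is high:

  $$H\big[p(y \mid \mathbf{x}, \mathcal{D})\big] = -\sum_{c} p(y = c \mid \mathbf{x}, \mathcal{D}) \log p(y = c \mid \mathbf{x}, \mathcal{D}) > \tau_H,$$

  where $p(y \mid \mathbf{x}, \mathcal{D}) = \int p(y \mid \mathbf{x}, \mathbf{w})\, p(\mathbf{w} \mid \mathcal{D})\, d\mathbf{w}$ and $\tau_H$ is an entropy threshold.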

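- Support Vector Models (OC-SVM and SVDD). One-Class SVM (OC-SVM): Finds a hyperplane separating the training data from the origin in feature space by solving

  $$\min_{\mathbf{w}, \boldsymbol{\xi}, \rho} \; \frac{1}{2} \|\mathbf{w}\|^2 + \frac{1}{\nu n} \sum_{i=1}^{n} \xi_i - \rho$$

  subject to $\mathbf{w}^\top \phi(\mathbf{x}_i) \ge \rho - \xi_i$ and $\xi_i \ge 0$. A sample is rejected if

  $$\mathbf{w}^\top \phi(\mathbf{x}) - \rho < 0.$$

- Support Vector Data Description (SVDD): Finds a minimum enclosing hypersphere with center $\mathbf{a}$ and radius $R$:

  $$\min_{R, \mathbf{a}, \boldsymbol{\xi}} \; R^2 + C \sum_{i=1}^{n} \xi_i$$

  subject to

  $$\|\phi(\mathbf{x}_i) - \mathbf{a}\|^2 \le R^2 + \xi_i, \qquad \xi_i \ge 0.$$

  A sample is rejected if

  $$\|\phi(\mathbf{x}) - \mathbf{a}\|^2 > R^2.$$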
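The first model in the list above, a per-class Gaussian with Mahalanobis-based rejection, can be sketched in a few lines. This is a minimal two-dimensional illustration under stated assumptions: the toy data, the names `fit_gaussian_2d`, `mahalanobis_sq`, and `classify_open_set`, and the threshold are all hypothetical. In particular, the threshold here is the 99% chi-square quantile for two degrees of freedom (about 9.21), swapped in for simplicity instead of the extreme-value calibration mentioned in the text.

```python
import math


def fit_gaussian_2d(points):
    """Estimate the mean and (unbiased) covariance of 2D points."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / (n - 1)
    syy = sum((p[1] - my) ** 2 for p in points) / (n - 1)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / (n - 1)
    return (mx, my), ((sxx, sxy), (sxy, syy))


def mahalanobis_sq(x, mean, cov):
    """Squared Mahalanobis distance using the closed-form 2x2 inverse."""
    dx, dy = x[0] - mean[0], x[1] - mean[1]
    (a, b), (_, d) = cov
    det = a * d - b * b
    return (d * dx * dx - 2.0 * b * dx * dy + a * dy * dy) / det


def classify_open_set(x, class_models, tau_sq):
    """Return the nearest class label, or "unknown" if every class is too far."""
    best_label, best_d2 = None, math.inf
    for label, (mean, cov) in class_models.items():
        d2 = mahalanobis_sq(x, mean, cov)
        if d2 < best_d2:
            best_label, best_d2 = label, d2
    return best_label if best_d2 <= tau_sq else "unknown"


# Toy training features for two known classes (illustrative values only).
class_a = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1)]
class_b = [(5, 5), (6, 5), (5, 6), (4, 5), (5, 4)]
models = {"a": fit_gaussian_2d(class_a), "b": fit_gaussian_2d(class_b)}

TAU_SQ = 9.21  # assumed threshold on the squared distance (chi-square, 2 dof, 99%)

for query in [(0.5, 0.2), (5.1, 4.8), (2.5, 2.5)]:
    print(query, classify_open_set(query, models, TAU_SQ))
```

The third query lies midway between the two classes and is far from both in Mahalanobis terms, so it is rejected even though a closed-set classifier would be forced to pick one of the known labels.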

8.1. Literature Review of Deep Neural Network-Based Open Set Learning

| Author(s) | Contribution |

|---|---|

| Scheirer et al. (2012) [1215] | Introduced the concept of open space risk and proposed the 1-vs-Set Machine classifier, which minimizes both empirical and open space risk to identify unseen categories. |

| Bendale and Boult (2015) [1216] | Extended the framework to open world learning with the Nearest Non-Outlier (NNO) algorithm, allowing incremental learning from evolving data. |

| Busto and Gall (2017) [1217] | Proposed the Assign-and-Transform Iterative (ATI) method for domain adaptation when the target domain includes unknown classes not present in the source. |

| Saito et al. (2018) [1218] | Used adversarial training to align features of known classes and distinguish unknowns, improving generalization in open set domain adaptation scenarios. |

| Geng et al. (2020) [1219] | Offered a comprehensive taxonomy and theoretical framework for Open Set Learning methods, becoming a foundational survey in the field. |

| Chen et al. (2020) [1221] | Introduced Reciprocal Points to define extra-class space and improve the separation between known and unknown classes. |

| Authors (Year) | Citation Key | Contribution / Summary |

|---|---|---|

| Liu et al. (2020) | [1222] | Introduced the PEELER algorithm combining meta-learning and entropy maximization for few-shot open set recognition. |

| Kong and Ramanan (2021) | [1223] | Used GANs to generate diverse open-set examples, improving classifier robustness by explicitly modeling the open space. |

| Fang et al. (2021) | [1224] | Proposed generalization bounds and the Auxiliary Open-Set Risk (AOSR) algorithm for robust decision-making in open-world conditions. |

| Mandivarapu et al. (2022) | [1225] | Linked active learning with open set recognition by querying unknown instances for improved adaptivity. |

| Engelbrecht and du Preez (2020) | [1226] | Proposed a semi-supervised OSL model using positive and unlabeled learning to increase robustness to unknowns. |

| Zhou et al. (2024) | [1235] | Introduced a contrastive learning framework with an "unknown score" to enhance known-unknown separation. |

| Shao et al. (2022) | [1227] | Analyzed distributional shifts between training and testing phases, improving OSL generalization. |

| Park et al. (2024) | [1228] | Provided theoretical insights on distinguishing known and unknowns using Jacobian-based metrics in neural networks. |

| Liu et al. (2022) | [1230] | Developed a hybrid OSL object detection model combining labeled and unlabeled data for complex visual scenes. |

| Abouzaid et al. (2023) | [1236] | Used D-band FMCW radar with deep learning and open-set recognition for reliable material characterization. |

| Cevikalp et al. (2023) | [1237] | Unified anomaly detection and OSL using compact hypersphere models to define decision boundaries. |

| Palechor et al. (2023) | [1238] | Proposed large-scale ImageNet-based open-set protocols and a new validation metric for realistic OSL evaluation. |

| Cen et al. (2023) | [1240] | Introduced FS-KNNS for few-shot Unified Open-Set Recognition, analyzing uncertainty distributions and pretraining impacts. |

| Authors (Year) | Main Contribution |

|---|---|

| Huang et al. (2022) [1241] | Propose a semantic reconstruction approach that focuses on class-specific feature recovery, enhancing rejection of out-of-distribution samples by bridging the gap between known and unknown classes. |

| Wang et al. (2022) [1242] | Introduce an AUC-optimized objective function that trains deep networks to balance closed-set accuracy and unknown detection, improving open-set decision boundary learning. |

| Alliegro et al. (2022) [1243] | Present a benchmark dataset for 3D open-set learning in object point cloud classification, emphasizing the need for improved 3D feature representation. |

| Grieggs et al. (2021) [1244] | Apply OSL to handwriting recognition by leveraging human perception to identify transcription errors from unfamiliar handwriting styles, expanding OSL beyond classification. |

| Liu et al. (2022) [1230] | Propose a semi-supervised framework for open-world object detection using both labeled and unlabeled data, enabling dynamic adaptation to emerging object classes. |

| Grcić et al. (2022) [1245] | Combine anomaly detection and deep feature learning to enhance open-set semantic segmentation performance in dense prediction tasks. |

| Moon et al. (2022) [1246] | Introduce a simulator for generating synthetic unknown samples to improve model robustness against unfamiliar data distributions. |

| Kuchibhotla et al. (2022) [1248] | Develop an incremental learning framework for adapting to new unknown categories without retraining, suitable for continual learning and autonomous systems. |

| Katsumata et al. (2022) [1249] | Propose a GAN-based framework for semi-supervised open-set image generation that aligns synthesized images with both known and unknown class features. |

| Bao et al. (2022) [1250] | Extend OSL to temporal action recognition by detecting and localizing unseen human actions in video, applicable to surveillance and activity monitoring. |

| Dietterich and Guyer (2022) [1251] | Provide a theoretical analysis of why deep networks fail in open-set generalization, attributing it to feature familiarity levels and proposing architectural considerations. |

| Authors (Year) | Main Contribution |

|---|---|

| Cai et al. (2022) [1253] | Propose a method to localize unfamiliar samples in long-tailed distributions using feature similarity measures, enabling outlier rejection and integrating OSL with long-tailed classification. |

| Wang et al. (2022) [1254] | Present a framework for adapting open-world learning to user-defined tasks, enhancing model adaptability to dynamic real-world data distributions. |

| Zhang et al. (2022) [1256] | Introduce an architecture search algorithm tailored to OSL, highlighting the importance of network design in effectively rejecting unknown instances. |

| Lu et al. (2022) [1257] | Develop a prototype-based method that refines decision boundaries and improves open-set rejection by mining robust feature prototypes from known classes. |

| Xia et al. (2021) [1258] | Propose the Adversarial Motorial Prototype Framework (AMPF), using adversarial learning to refine class prototypes and explicitly model uncertainty boundaries. |

| Kong and Ramanan (2021) [1259] | Introduce OpenGAN, which uses GANs to synthesize OOD data for improving generalization, although requiring auxiliary OOD data. |

| Huang et al. (2021) [1260] | Present a semi-supervised cross-modal method (Trash to Treasure) for mining OOD samples from unlabeled data, with dependency on multi-modal data availability. |

| Wang et al. (2021) [1262] | Develop an energy-based model (EBM) for uncertainty calibration that offers principled confidence measures without OOD data, albeit with high computational cost. |

| Zhang and Ding (2021) [1263] | Adapt prototypical matching for zero-shot segmentation with open-set rejection, achieving efficiency but relying on pre-defined class embeddings. |

| Author(s) | Main Contribution |

|---|---|

| Girish et al. [1264] | Propose a framework for detecting GAN-generated images using contrastive learning and clustering to discover novel synthetic sources in open-world scenarios. |

| Wang et al. [1265] | Introduce a benchmark for open-world video object segmentation combining uncertainty estimation and spatio-temporal consistency to reject unknowns and learn new categories. |

| Cen et al. [1266] | Use deep metric learning with prototype-based margin separation to improve open-set semantic segmentation by distinguishing known and unknown classes. |

| Wu et al. [1267] | Present NGC, a framework combining graph-based label propagation and uncertainty-aware sample selection for robust learning under noisy and open-world conditions. |

| Bastan et al. [1268] | Address large-scale open-set logo detection using hierarchical clustering and outlier-aware loss to manage noisy real-world open-set data. |

| Saito et al. [1269] | Propose OpenMatch, a semi-supervised learning approach that integrates consistency regularization with open-set outlier rejection. |

| Esmaeilpour et al. [1270] | Extend CLIP to zero-shot open-set detection, leveraging vision-language models to detect novel categories without labeled data, but note limitations in fine-grained unknown discrimination. |

| Chen et al. [1272] | Introduce Adversarial Reciprocal Points Learning using adversarial optimization to define class boundaries while rejecting unknowns via a geometric margin constraint. |

| Guo et al. [1273] | Develop a Conditional Variational Capsule Network combining capsules and VAEs for hierarchical uncertainty modeling in open-set recognition. |

| Bao et al. [1274] | Apply Evidential Deep Learning and subjective logic to explicitly model epistemic uncertainty in video-based action recognition. |

| Sun et al. [1275] | Propose M2IOSR, an information-theoretic model that maximizes mutual information for compact, separable class manifolds and robust unknown rejection. |

| Hwang et al. [1276] | Address open-set panoptic segmentation using prototype learning to distinguish known and unknown objects, integrating metric learning in dense prediction. |

| Balasubramanian et al. [1278] | Focus on real-world detection of unknown traffic scenarios using ensemble diversity to improve uncertainty estimation and robustness. |

| Author(s) | Main Contribution |

|---|---|

| Salomon et al. [1285] | Apply metric learning to distinguish known from unknown classes in open-set face recognition with small galleries. |

| Jia and Chan [1284] | Incorporate margin-based constraints into feature learning to improve discriminability in OSR. |

| Jia and Chan [1283] | Learn robust representations through reconstruction of original images from augmented views to generalize to unknowns. |

| Yue et al. [1282] | Generate synthetic unknowns to refine decision boundaries using counterfactual reasoning, bridging OSR and zero-shot learning. |

| Cevikalp et al. [1281] | Model known classes as convex cones using a deep polyhedral conic classifier to enable open-set robustness. |

| Zhou et al. [1280] | Learn placeholder prototypes for potential unknown classes during training to dynamically adjust decision boundaries. |

| Jang and Kim [1279] | Introduce a teacher-explorer-student (TES) meta-learning framework where an explorer guides the student using challenging open-set samples. |

| Sun et al. [1287] | Propose a Conditional Gaussian Distribution Learning (CGDL) method to model class-conditional distributions for uncertainty-based OSR. |

| Perera et al. [1288] | Combine variational autoencoders (VAEs) with discriminative classifiers in a hybrid framework to separate known and unknown classes. |

| Ditria et al. [1289] | Present OpenGAN, which generates synthetic outliers to improve open-set detection by training the discriminator to reject unknowns. |

| Geng and Chen [1290] | Propose a collective decision framework that aggregates multiple classifiers to improve robustness in open-set scenarios. |

| Jang and Kim [1291] | Develop a One-vs-Rest deep probability model to estimate the probability of a sample belonging to an unknown class. |

| Zhang et al. [1292] | Explore hybrid models that combine discriminative and generative components for joint optimization of feature learning and OSR. |

| Author(s) | Main Contribution |

|---|---|

| Shao et al. [1293] | Developed Open-set Adversarial Defense by integrating OSR robustness into adversarial training, enabling resilience to both adversarial and unknown-class intrusions. |

| Yu et al. [1294] | Proposed a Multi-Task Curriculum Framework for semi-supervised OSR, balancing supervised and unsupervised learning to progressively handle unknown classes. |

| Miller et al. [1295] | Introduced Class Anchor Clustering, a distance-based loss to form compact class clusters while maximizing inter-class separation in the feature space. |

| Jia and Chan [1296] | Proposed MMF loss to enhance intra-class compactness and inter-class separation, improving discriminative feature learning in OSR. |

| Oliveira et al. [1300] | Extended OSR to semantic segmentation via Fully Convolutional Open Set Segmentation with uncertainty-aware pixel-wise rejection. |

| Yang et al. [1301] | Proposed S2OSC, a semi-supervised OSR framework combining consistency regularization and entropy minimization to exploit both labeled and unlabeled data. |

| Sun et al. [1302] | Used conditional probabilistic generative models to estimate likelihoods and reject unknowns based on uncertainty thresholds. |

| Yang et al. [1303] | Introduced Convolutional Prototype Network (CPN), learning prototypes for known classes and using distance-based rejection for OSR. |

| Dhamija et al. [1304] | Highlighted limitations in open set detection for object recognition and introduced an evaluation framework stressing real-world challenges. |

| Meyer and Drummond [1305] | Advocated for metric learning in robotic vision OSR, emphasizing active learning and incremental unknown class discovery. |

| Oza and Patel [1306] | Proposed a multi-task learning method using autoencoders for joint classification and reconstruction to detect outliers in OSR. |

| Yoshihashi et al. [1307] | Introduced CROSR, which combines classification and reconstruction to use reconstruction error as a cue for open set rejection. |

| Malalur and Jaakkola [1308] | Proposed an alignment-based matching network using metric learning for one-shot OSR with focus on feature alignment. |

| Schlachter et al. [1309] | Developed intra-class splitting to improve decision boundaries by subdividing known classes into more refined sub-clusters. |

| Imoscopi et al. [1310] | Focused on speaker identification in OSR using confidence thresholds in discriminatively trained neural networks. |

| Mundt et al. [1311] | Showed that uncertainty-based methods like softmax entropy and Monte Carlo dropout can rival generative models in OSR. |

| Author(s) | Main Contribution |

|---|---|

| Liu et al. [1313] | Proposed a decoupled learning framework for large-scale, long-tailed recognition that improves balance across head and tail classes while rejecting unknowns. |

| Perera and Patel [1314] | Explored deep transfer learning for novelty detection using pre-trained models to identify multiple unknown classes. |

| Xiong et al. [1315] | Presented a spatial divide-and-conquer framework for open-set to closed-set object counting, applying OSR concepts to counting tasks. |

| Yang et al. [1316] | Applied open-set recognition to human activity recognition using micro-Doppler radar signatures to distinguish known and unknown movements. |

| Oza and Patel [1317] | Introduced C2AE, a class-conditioned autoencoder that separates known and unknown samples using reconstruction error thresholds. |

| Liu et al. [1318] | Provided PAC-based theoretical guarantees for open category detection, offering bounds on detection error. |

| Venkataram et al. [1319] | Adapted CNNs for open-set text classification using prototype-based rejection mechanisms. |

| Hassen and Chan [1320] | Proposed a representation learning technique that models uncertainty to improve robustness to unknown data. |

| Shu et al. [1321] | Developed a framework for open-world classification, enabling discovery of new classes incrementally. |

| Dhamija et al. [1322] | Tackled overconfidence on unknowns by designing loss functions that reduce incorrect high-confidence predictions. |

| Zheng et al. [1324] | Investigated adversarial attacks in open-set systems and proposed defenses to enhance unknown-class detection reliability. |

| Author(s) | Main Contribution |

|---|---|

| Neal et al. [1325] | Introduced counterfactual image generation to simulate unknown classes and improve classifier robustness using synthetic outliers. |

| Rudd et al. [1326] | Proposed the Extreme Value Machine (EVM), leveraging EVT to model sample inclusion probabilities for open set recognition. |

| Vignotto and Engelke [1327] | Compared GPD and GEV classifiers for EVT-based modeling of tail distributions in open set recognition. |

| Cardoso et al. [1328] | Explored weightless neural networks using probabilistic memory structures, enabling dynamic adaptation to new data without retraining. |

| Rozsa et al. [1329] | Compared Softmax and Openmax under adversarial conditions, showing Openmax’s superior ability to reject uncertain samples. |

| Shu et al. [1330] | Developed DOC, a deep open classification framework for text, modeling semantic boundaries to detect unknown classes. |

| Ge et al. [1331] | Introduced Generative Openmax, synthesizing unknown class samples to improve multi-class open set classification. |

| Yu et al. [1332] | Used adversarial sample generation to train classifiers for distinguishing between known and unknown categories. |

| Vaze et al. [1231] | Claimed well-trained closed-set classifiers can inherently perform open set recognition without specific modifications. |

| Barcina-Blanco et al. [1232] | Provided a comprehensive literature review on OSL, highlighting its ties to out-of-distribution detection and uncertainty estimation. |

| iCGY96 (GitHub) [1233] | Curated a repository of papers and resources for open set learning research. |

8.2. Literature Review of Traditional Machine Learning Open Set Learning

8.2.1. Foundational Theoretical Frameworks of Open Set Recognition

8.2.2. Sparse and Hyperplane-Based Models for OSR

8.2.3. Support Vector and Nearest Neighbor Approaches

8.2.4. Domain Transfer and Zero-Shot Learning Integration

8.2.5. Application-Centric Advances: Face Recognition and Text Classification

8.2.6. Ensemble and Fusion-Based Enhancements

8.3. Mahalanobis Distance

8.4. Literature Review of Bayesian Formulation in Open Set Learning

8.4.1. Literature Review of Bayesian Neural Networks (BNNs) for OSL

8.4.2. Literature Review of Dirichlet-Based Uncertainty for OSL

8.4.3. Literature Review of Gaussian Processes (GPs) for OSL

8.4.4. Literature Review of Variational Autoencoders (VAEs) and Bayesian Generative Models

8.4.5. Critical Synthesis

8.5. Analysis of Bayesian Formulation in Open Set Learning

8.6. Gaussian Mixture Model (GMM)

8.7. Dirichlet Process Gaussian Mixture Model (DP-GMM)

8.8. Conjugate Normal-Inverse-Wishart (NIW) Distribution

8.9. Extreme Value Theory (EVT) Models

8.10. Bayesian Neural Networks (BNNs)

8.11. Support Vector Models

8.12. Support Vector Data Description

9. Zero-Shot Learning

9.1. Literature Review of Zero-Shot Learning

9.2. Analysis of Zero-Shot Learning

9.3. Energy-Based Models for Zero-Shot Learning

9.3.1. Loss Functions Used in Energy-Based Models for Zero-Shot Learning

9.3.2. Generative Constraints in Energy-Based Models for Zero-Shot Learning

9.3.3. Use of Inference in Energy-Based Models for Zero-Shot Learning

9.4. Meta-Learning Approaches for Zero-Shot Learning

9.4.1. Model-Agnostic Meta-Learning (MAML)

9.4.2. Meta-Embedding Strategy

9.4.3. Metric-Based Meta-Learning

9.4.4. Graph-Based Meta-Learning

9.4.5. Bayesian Meta-Learning

10. Neural Network Basics

10.1. Literature Review of Neural Network Basics

10.2. Perceptrons and Artificial Neurons

10.3. Feedforward Neural Networks

10.4. Activation Functions

10.5. Loss Functions

10.5.1. Loss Functions for Regression Tasks

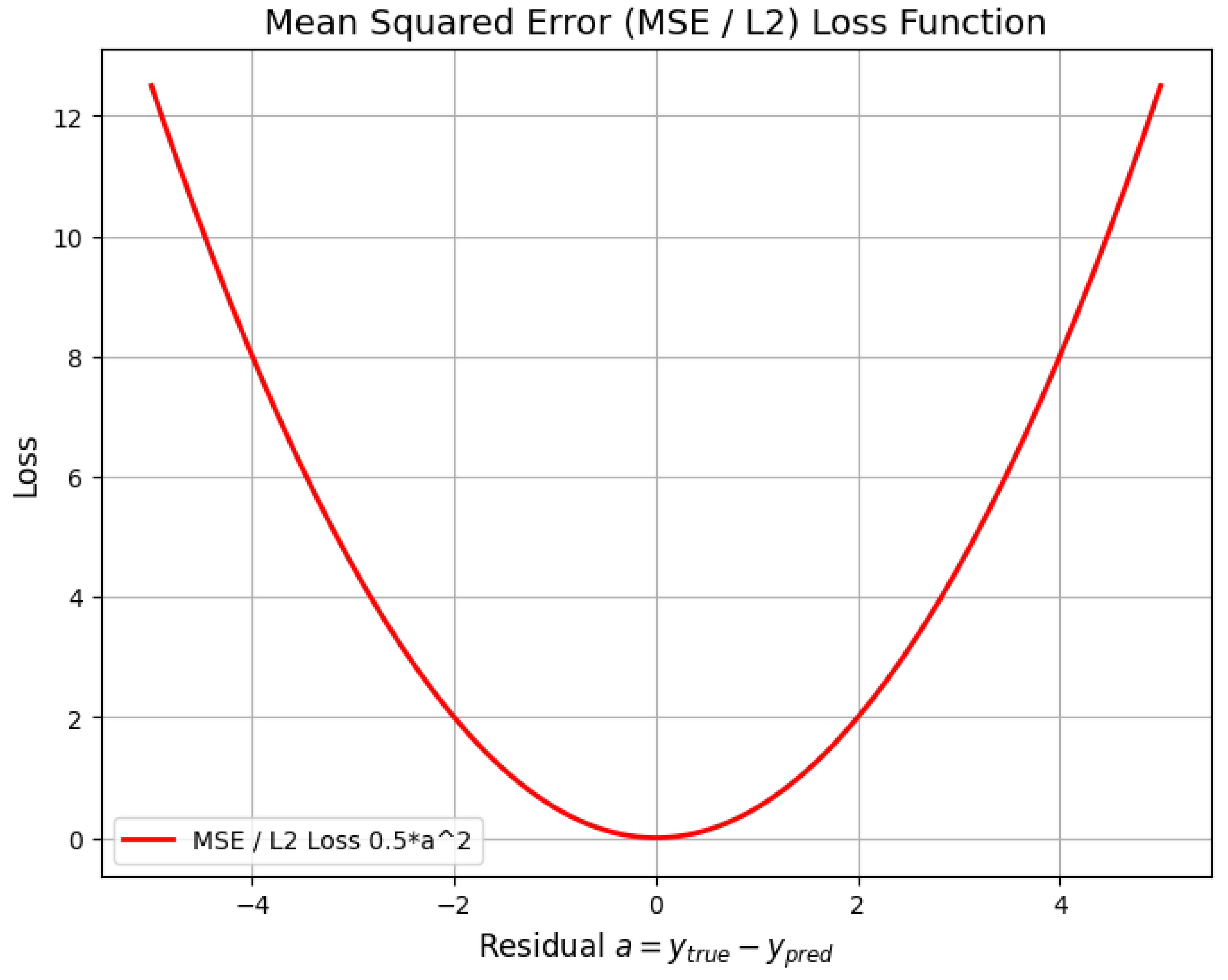

10.5.1.1 Mean Squared Error (MSE/L2 Loss)

10.5.1.2 Mean Absolute Error (MAE/L1 Loss)

10.5.1.3 Huber Loss (Smooth Mean Absolute Error)

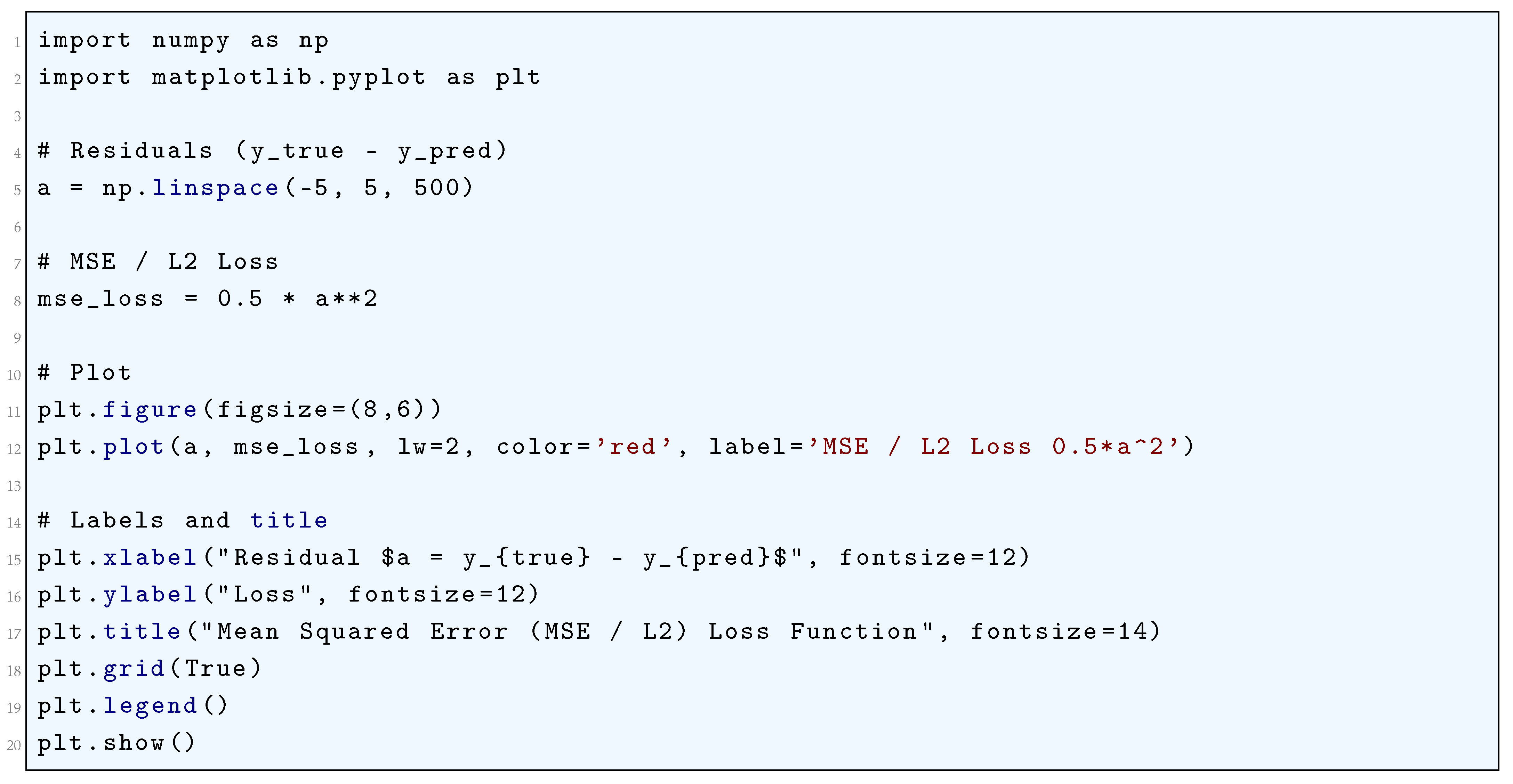

10.5.1.4 Python Code to Generate Figure 65 Illustrating Mean Squared Error (MSE/L2) Loss Function

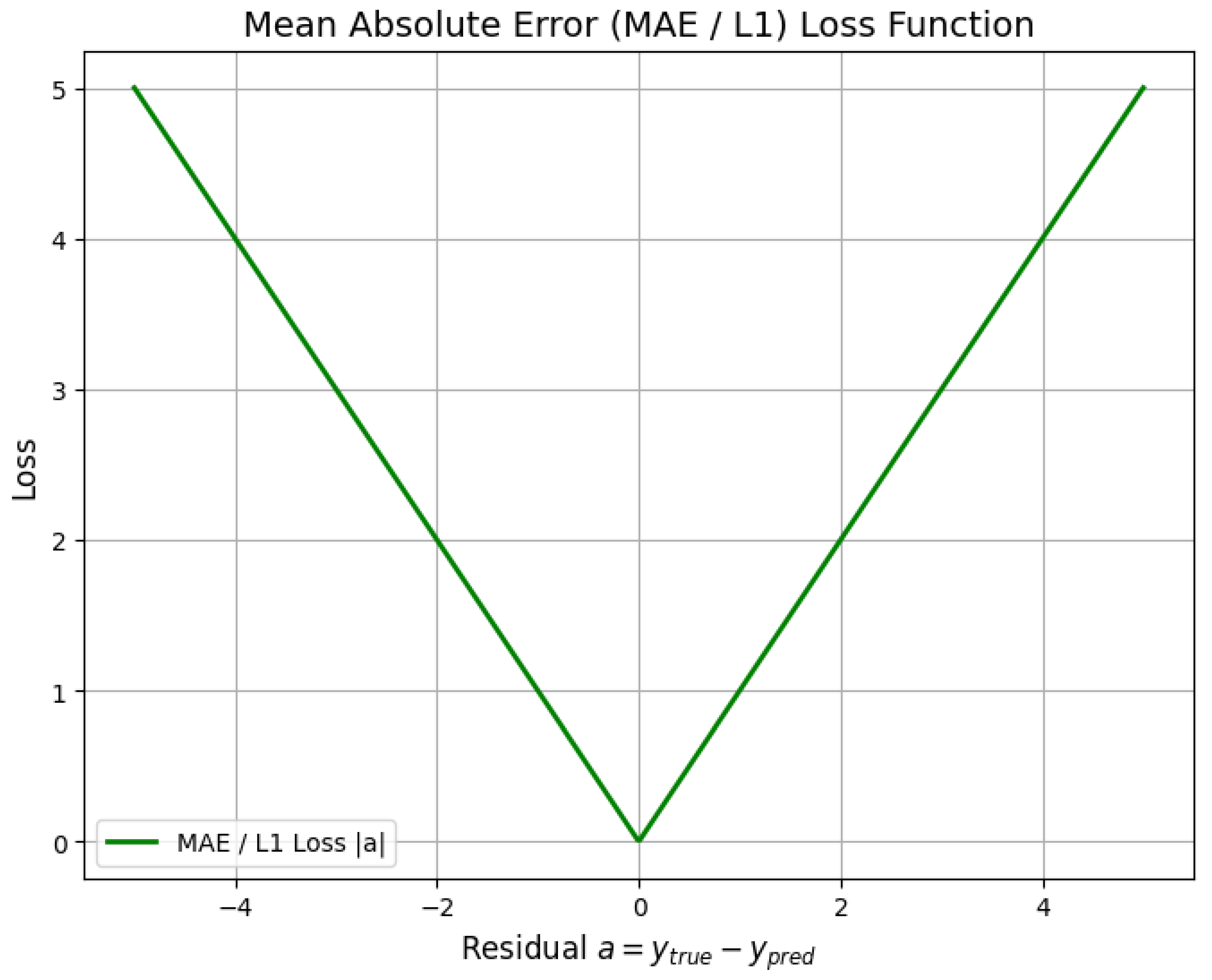

10.5.1.5 Python Code to Generate Figure 66 Illustrating Mean Absolute Error (MAE/L1) Loss Function

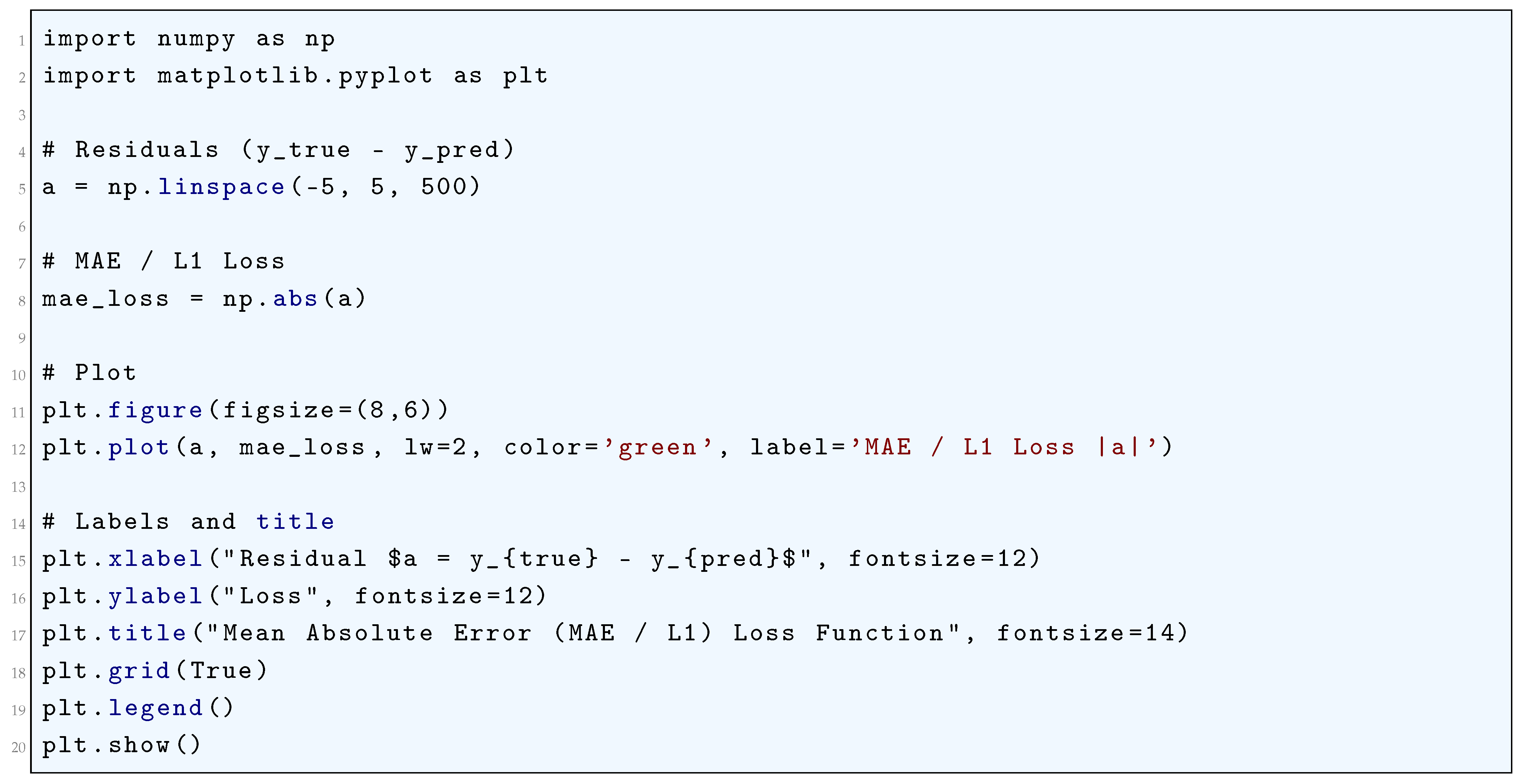

10.5.1.6 Python Code to Generate Figure 67 Illustrating Huber Loss Function

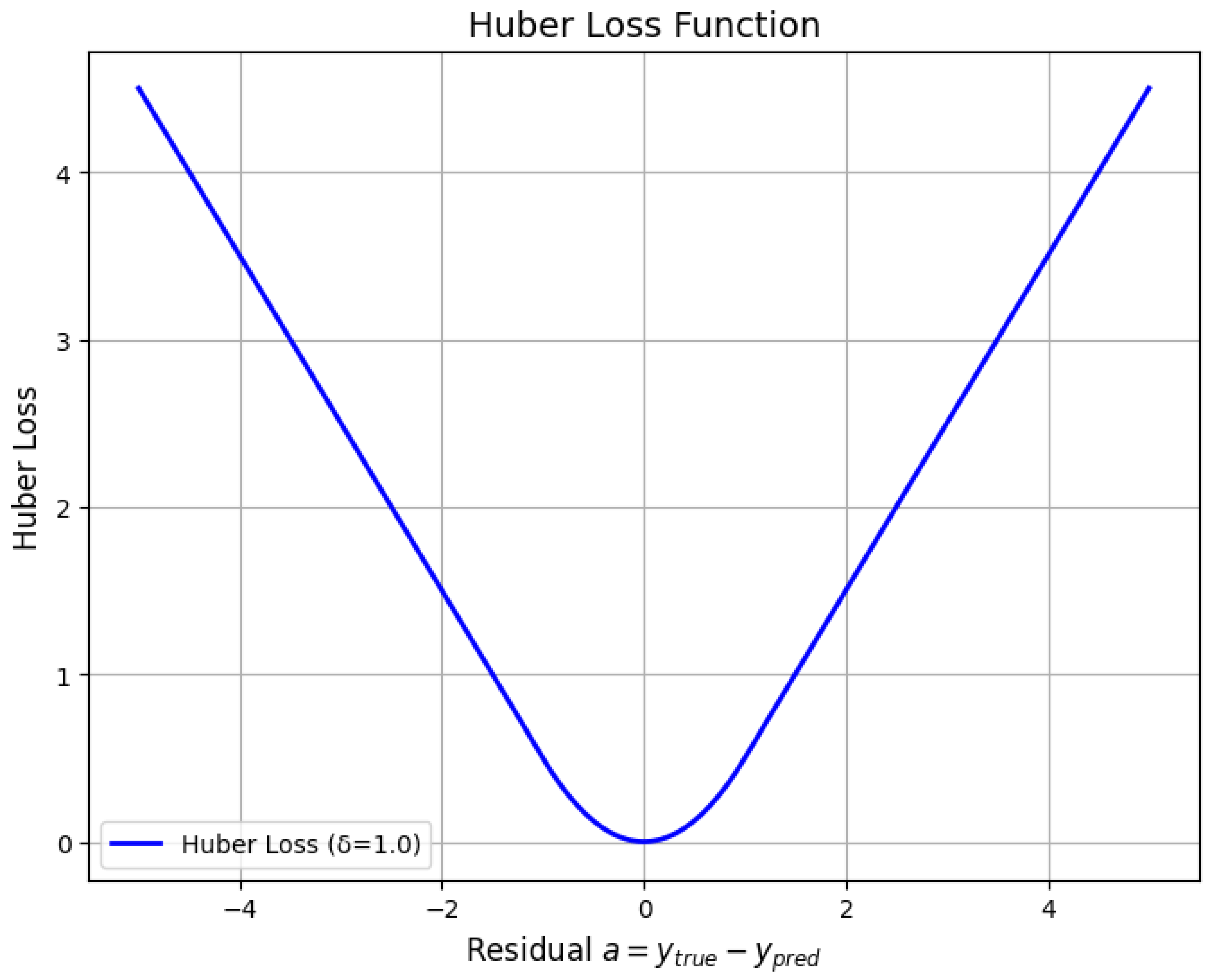

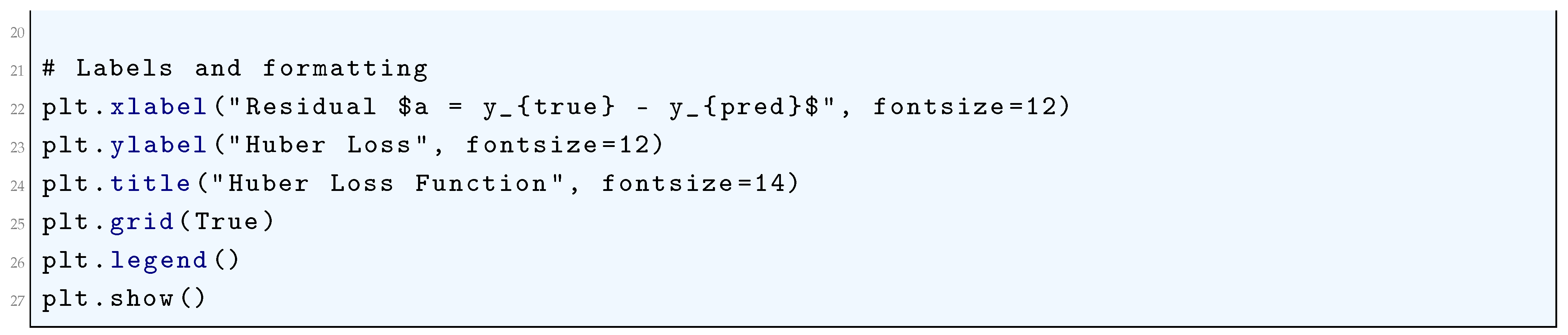

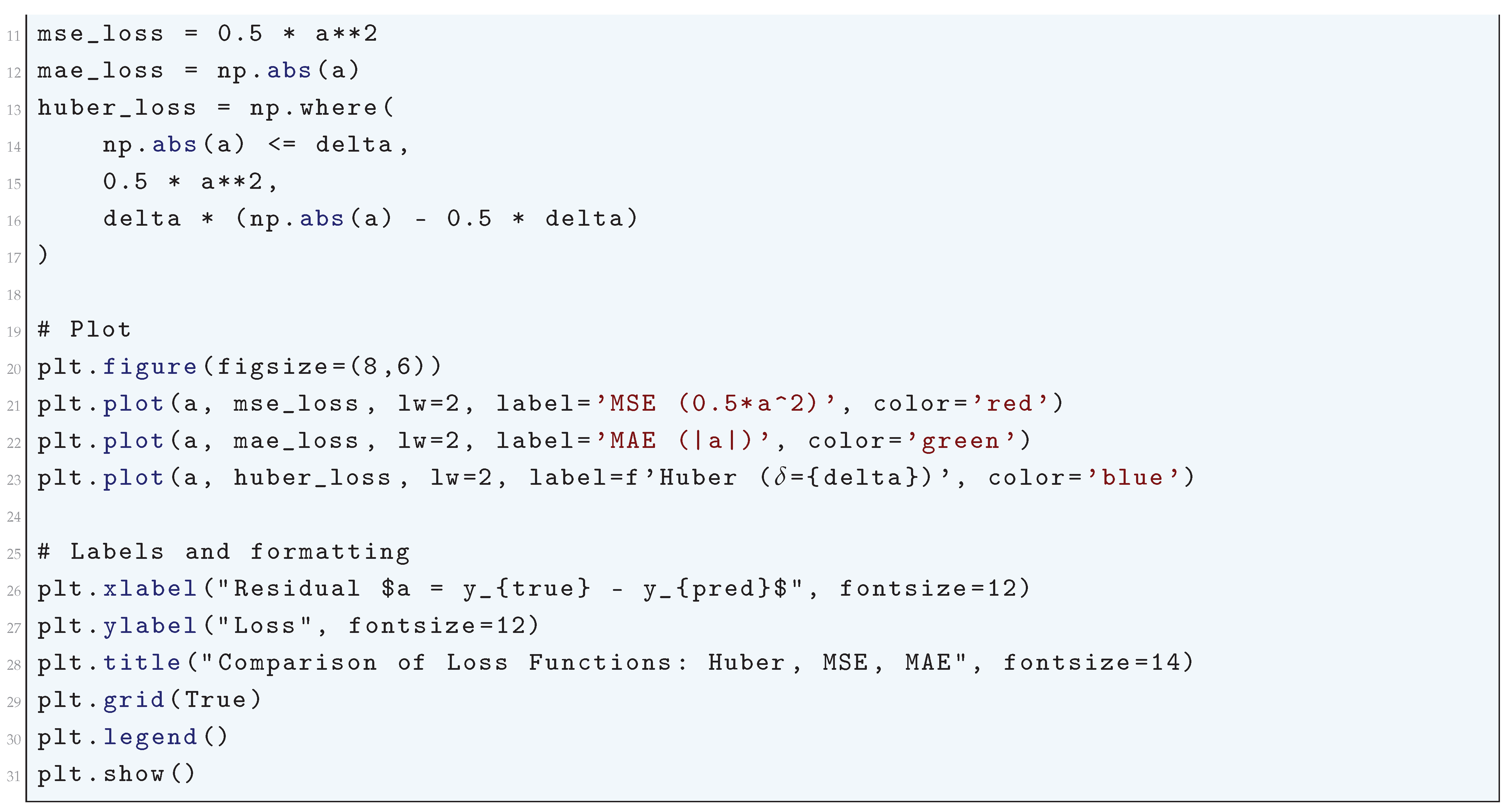

10.5.1.7 Python Code to Generate Figure 68 Comparing the Loss Functions: Huber, MSE, MAE
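As a minimal sketch of the quantities such a comparison plots, the three per-sample regression losses can be evaluated on a grid of residuals $r = y - \hat{y}$. The function names, the residual grid, and the choice $\delta = 1$ are illustrative assumptions, and plotting is left out so that the block depends only on the standard library.

```python
def squared_error(r):
    """Per-sample MSE / L2 term."""
    return r * r


def absolute_error(r):
    """Per-sample MAE / L1 term."""
    return abs(r)


def huber(r, delta=1.0):
    """Quadratic for |r| <= delta, linear beyond it; continuous at |r| = delta."""
    a = abs(r)
    if a <= delta:
        return 0.5 * r * r
    return delta * (a - 0.5 * delta)


residuals = [-3.0, -1.0, -0.5, 0.0, 0.5, 1.0, 3.0]
table = [(r, squared_error(r), absolute_error(r), huber(r)) for r in residuals]

print(f"{'r':>6} {'L2':>6} {'L1':>6} {'Huber':>6}")
for r, l2, l1, hub in table:
    print(f"{r:6.2f} {l2:6.2f} {l1:6.2f} {hub:6.3f}")
```

For large residuals the Huber value grows linearly like the absolute error, while near zero it is quadratic like the squared error, which is the robustness trade-off the comparison is meant to show.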

10.5.2. Loss Functions for Classification Tasks

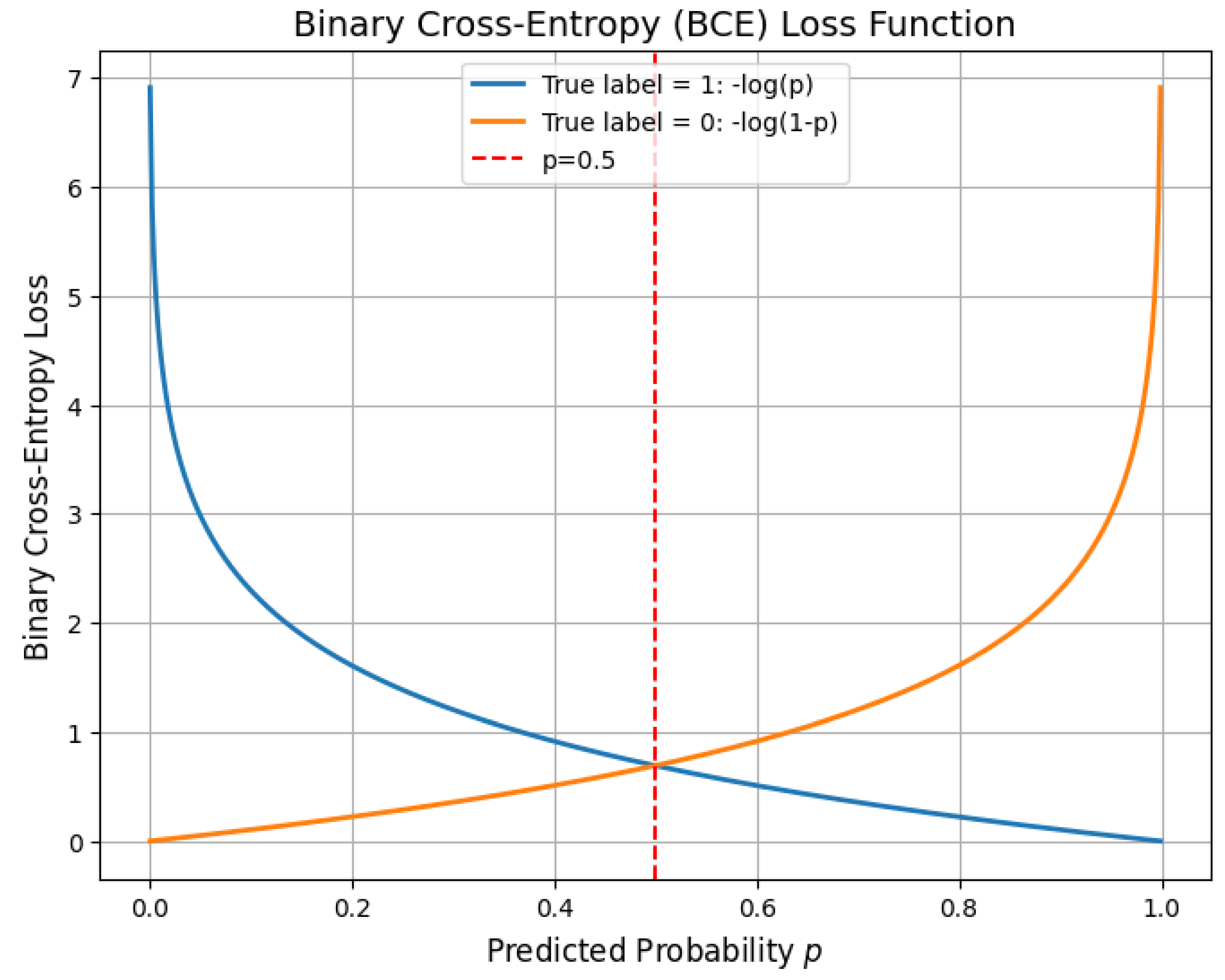

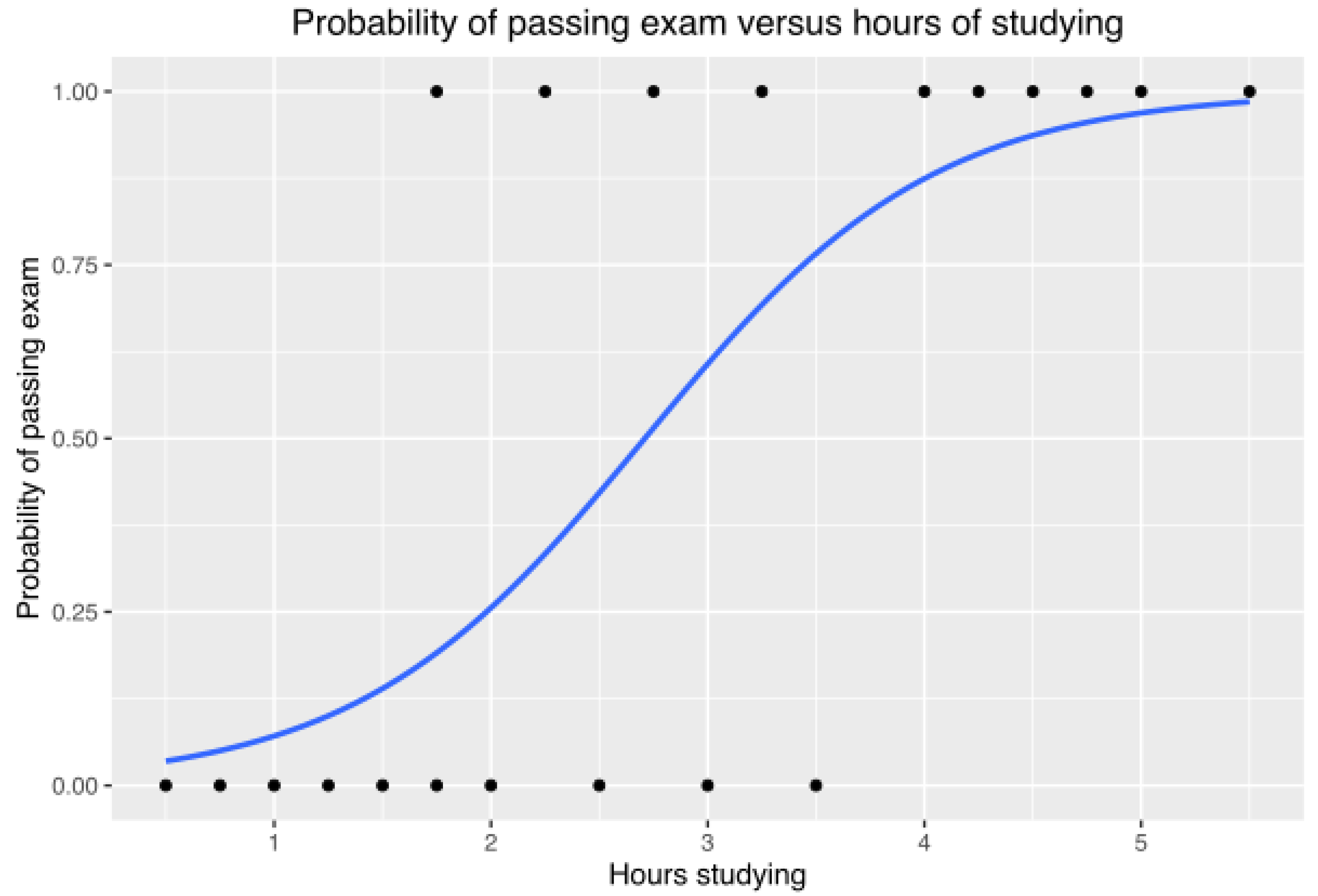

10.5.2.1 Binary Cross-Entropy (Log Loss)

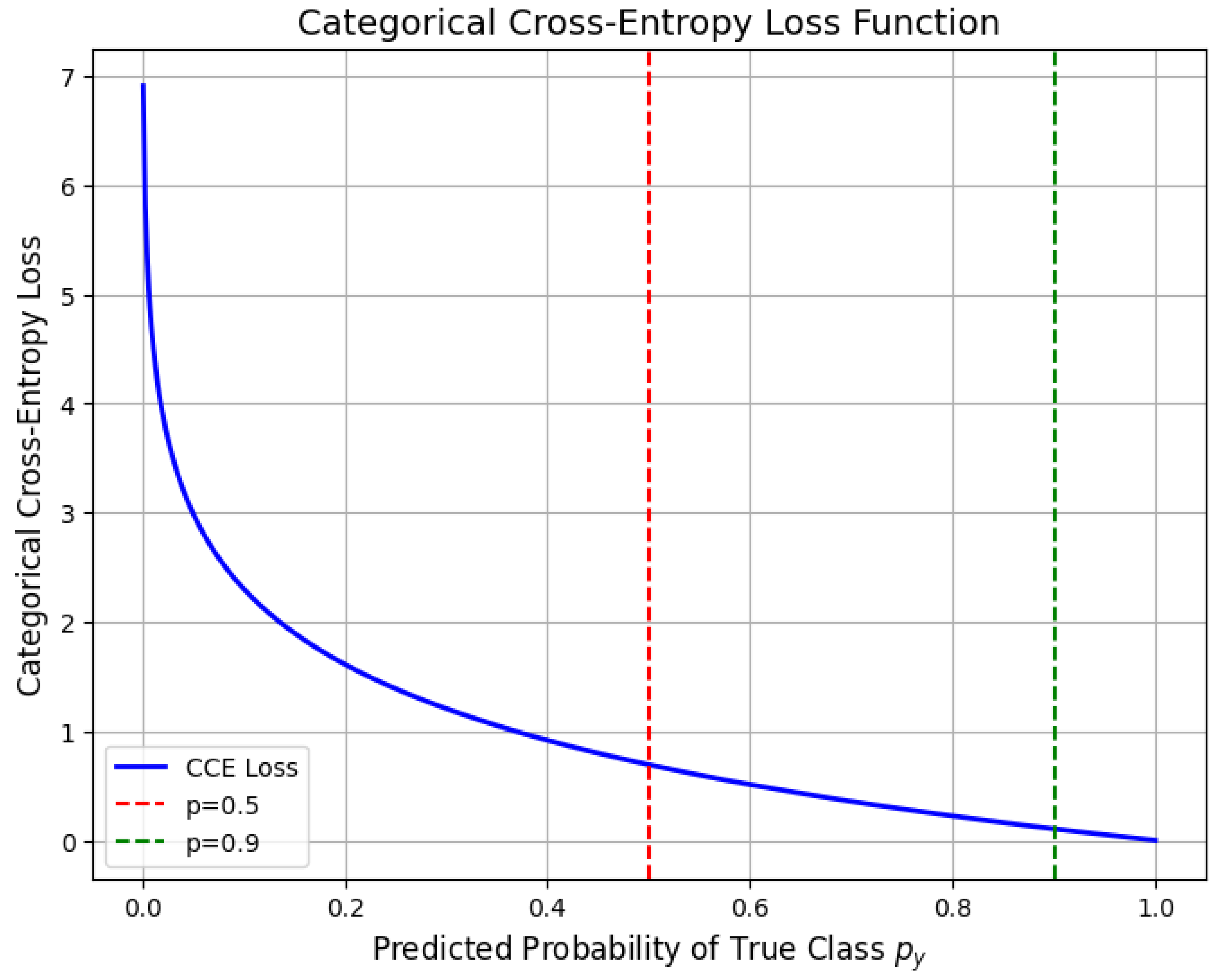

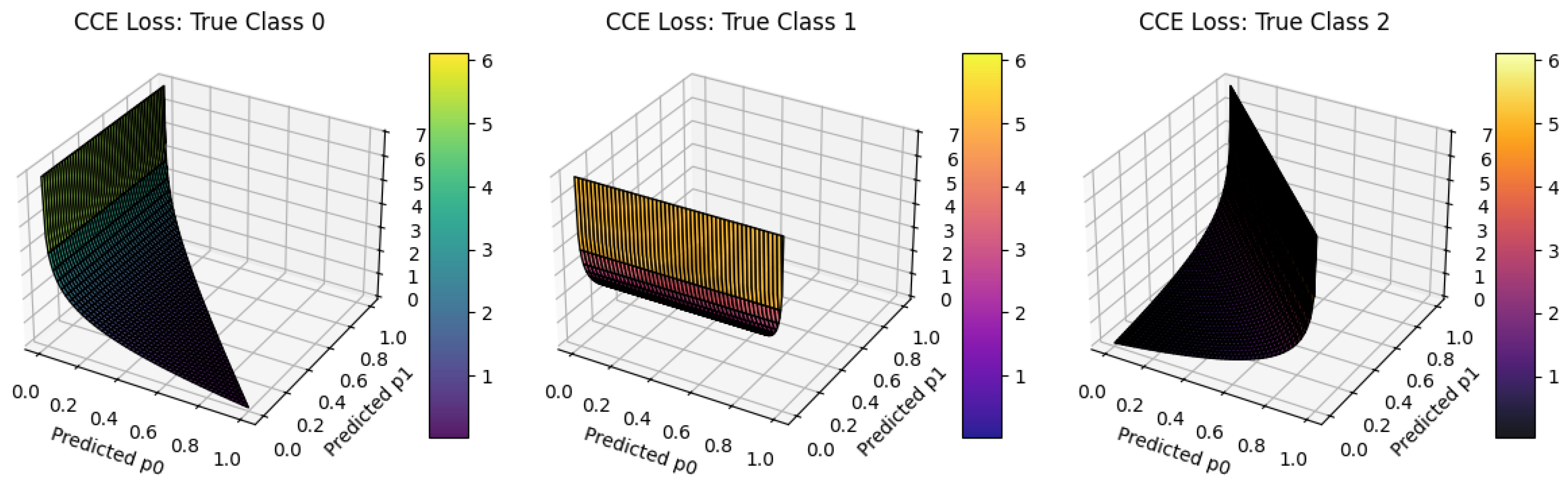

10.5.2.2 Categorical Cross-Entropy

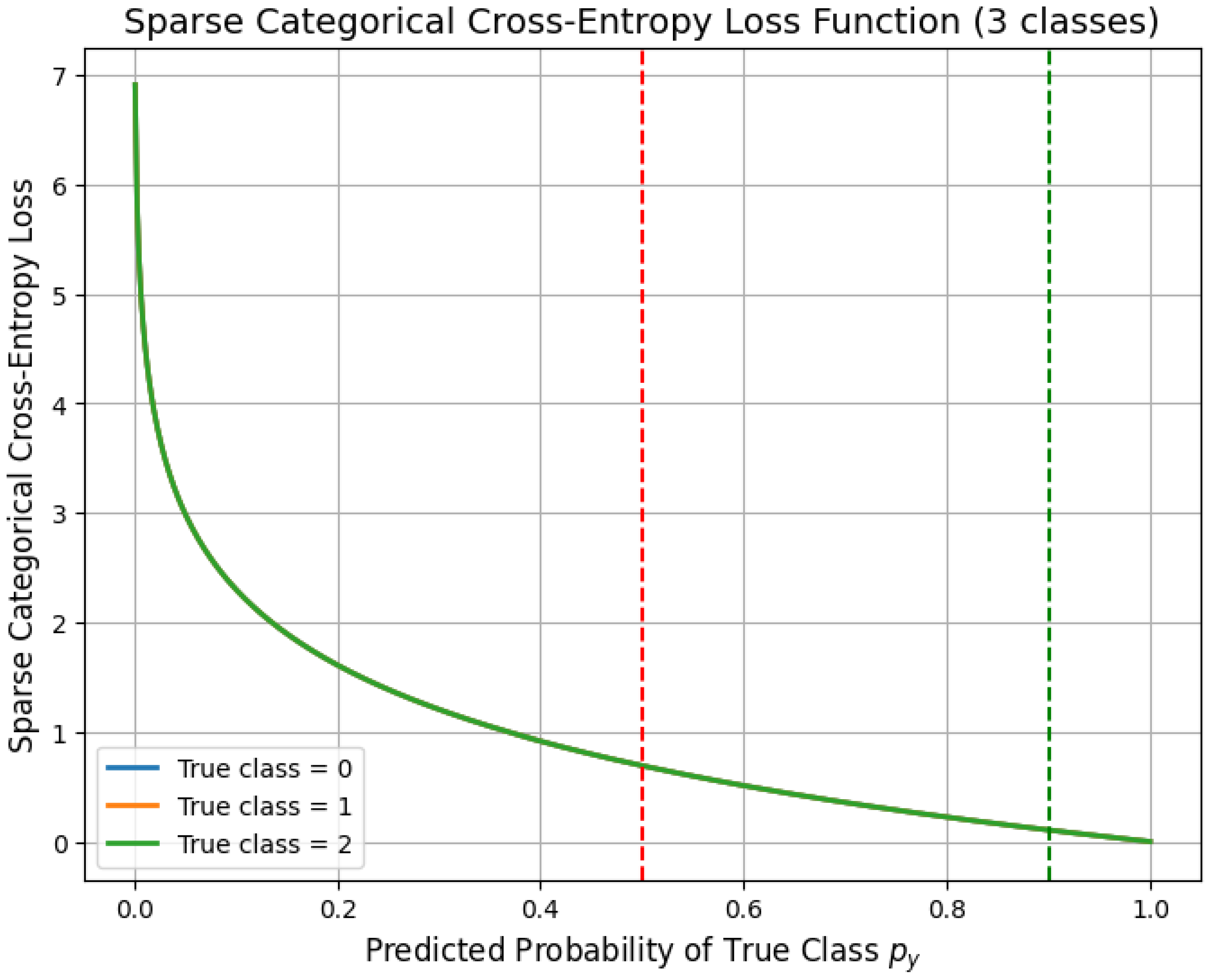

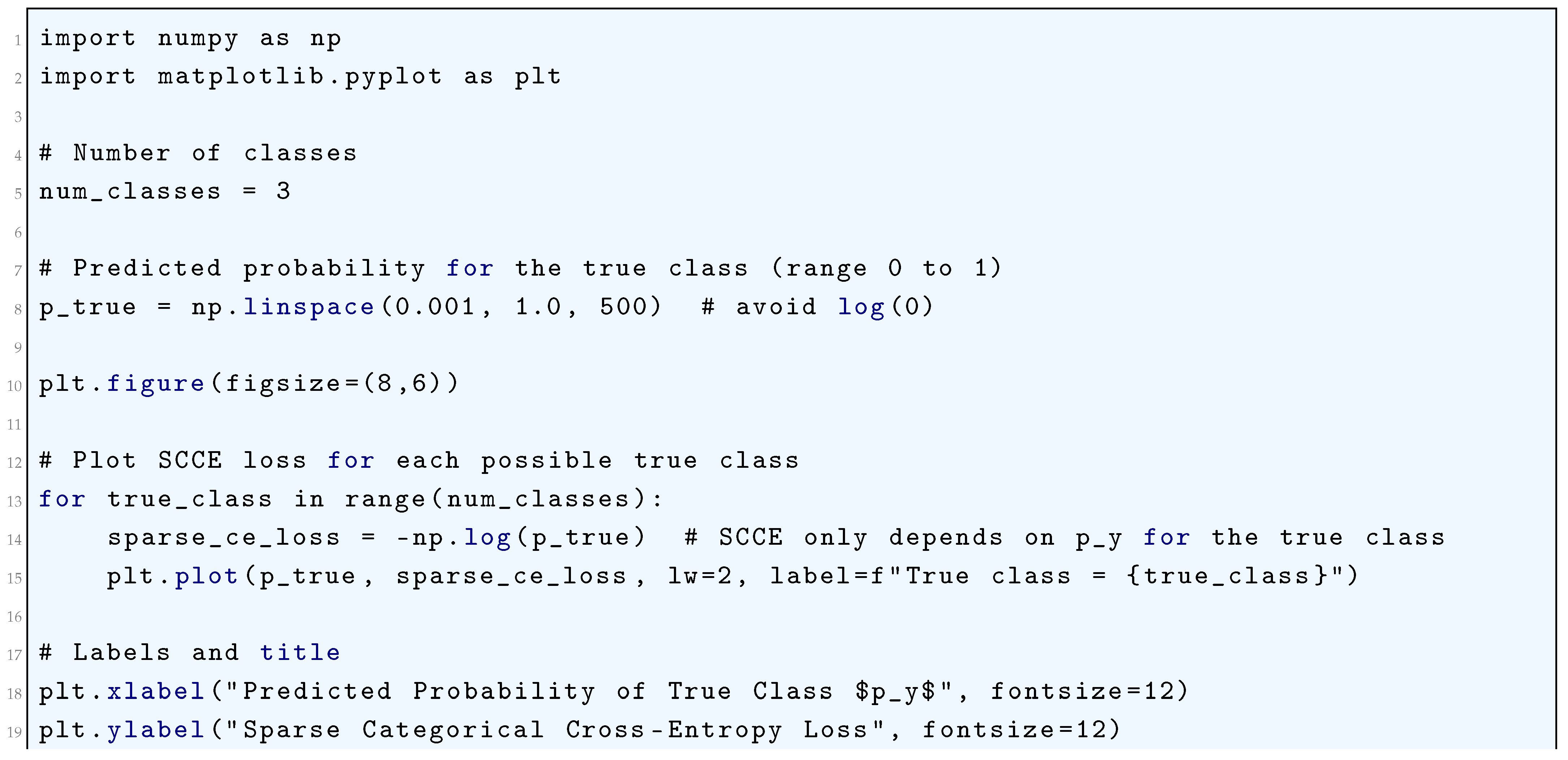

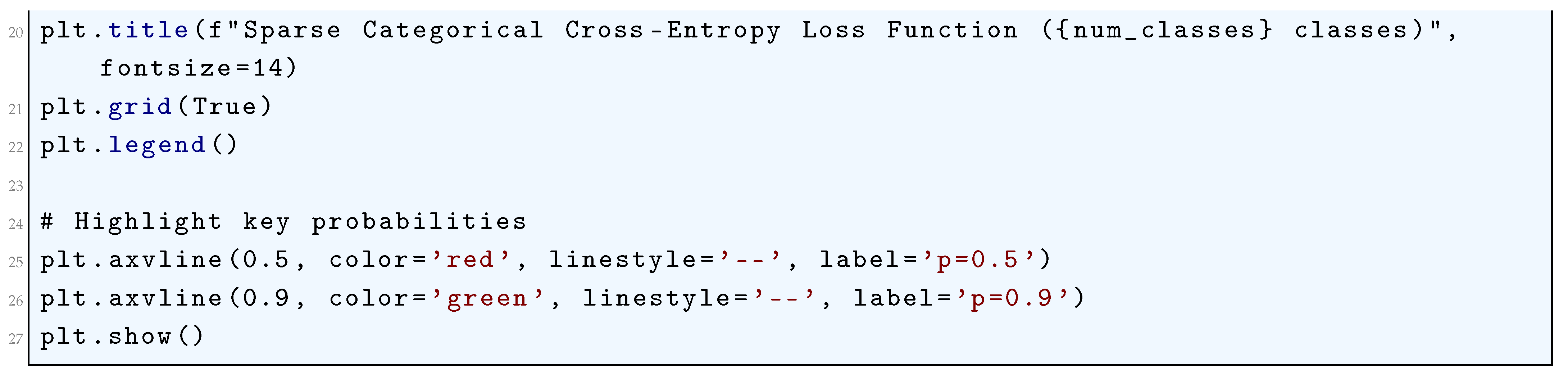

10.5.2.3 Sparse Categorical Cross-Entropy

10.5.2.4 Kullback-Leibler Divergence (KL Divergence)

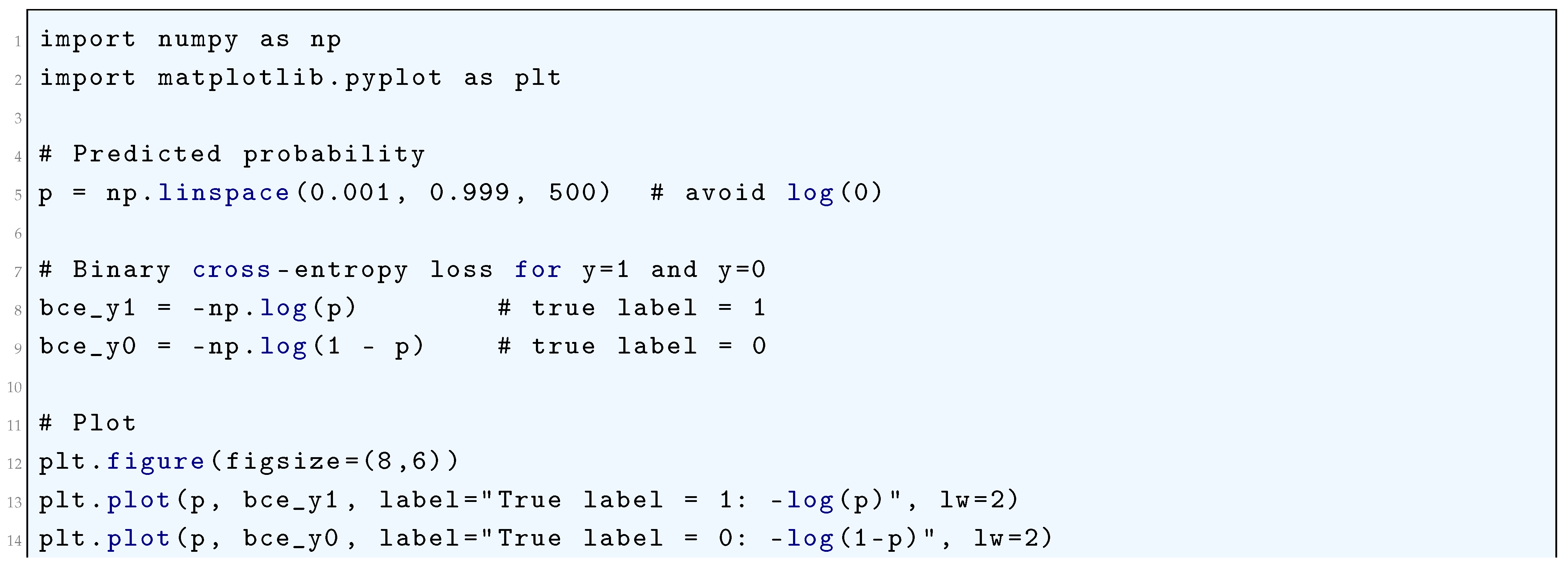

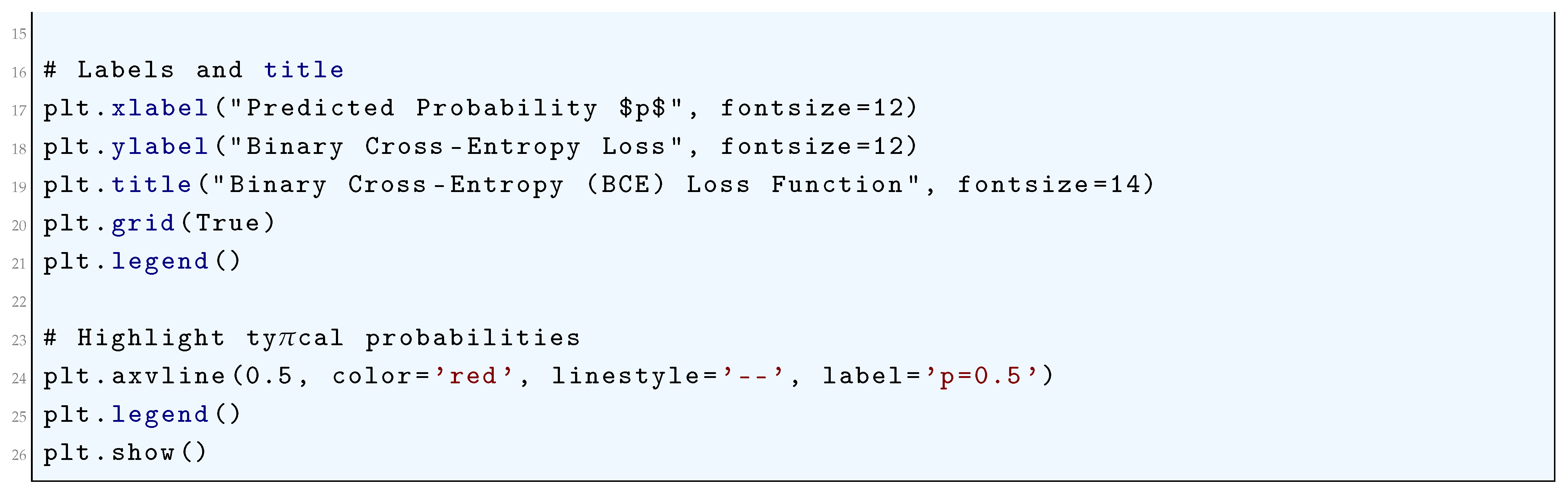

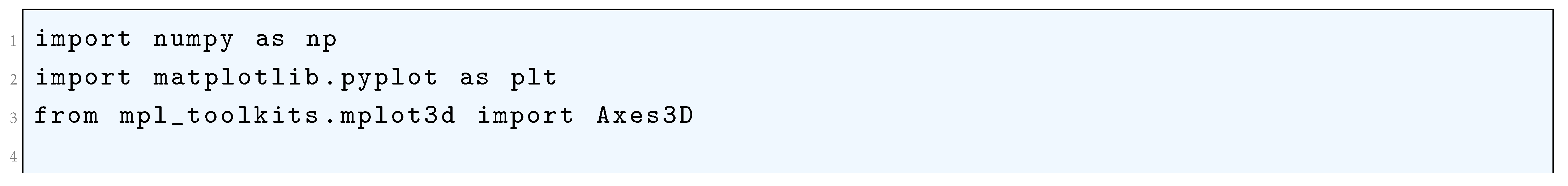

10.5.2.5 Python Code to Generate Figure 69 Illustrating Binary Cross-Entropy (BCE) Loss Function
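A minimal sketch of the binary cross-entropy computation, assuming labels in $\{0, 1\}$ and predicted probabilities in $[0, 1]$; the function names and the clipping constant `eps` are illustrative choices, and the example values are made up. Clipping keeps the logarithm finite when a prediction is exactly 0 or 1.

```python
import math


def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """-[y log p + (1 - y) log(1 - p)], with p clipped away from 0 and 1."""
    p = min(max(p_pred, eps), 1.0 - eps)
    return -(y_true * math.log(p) + (1 - y_true) * math.log(1.0 - p))


def mean_bce(labels, probs):
    """Average BCE over a batch of (label, probability) pairs."""
    losses = [binary_cross_entropy(y, p) for y, p in zip(labels, probs)]
    return sum(losses) / len(losses)


labels = [1, 0, 1, 1]
probs = [0.9, 0.2, 0.6, 0.05]  # the last prediction is confidently wrong

for y, p in zip(labels, probs):
    print(f"y={y} p={p:.2f} loss={binary_cross_entropy(y, p):.4f}")
print("mean:", round(mean_bce(labels, probs), 4))
```

The confidently wrong prediction dominates the batch mean, reflecting how the loss grows without bound as the probability assigned to the true label approaches zero.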

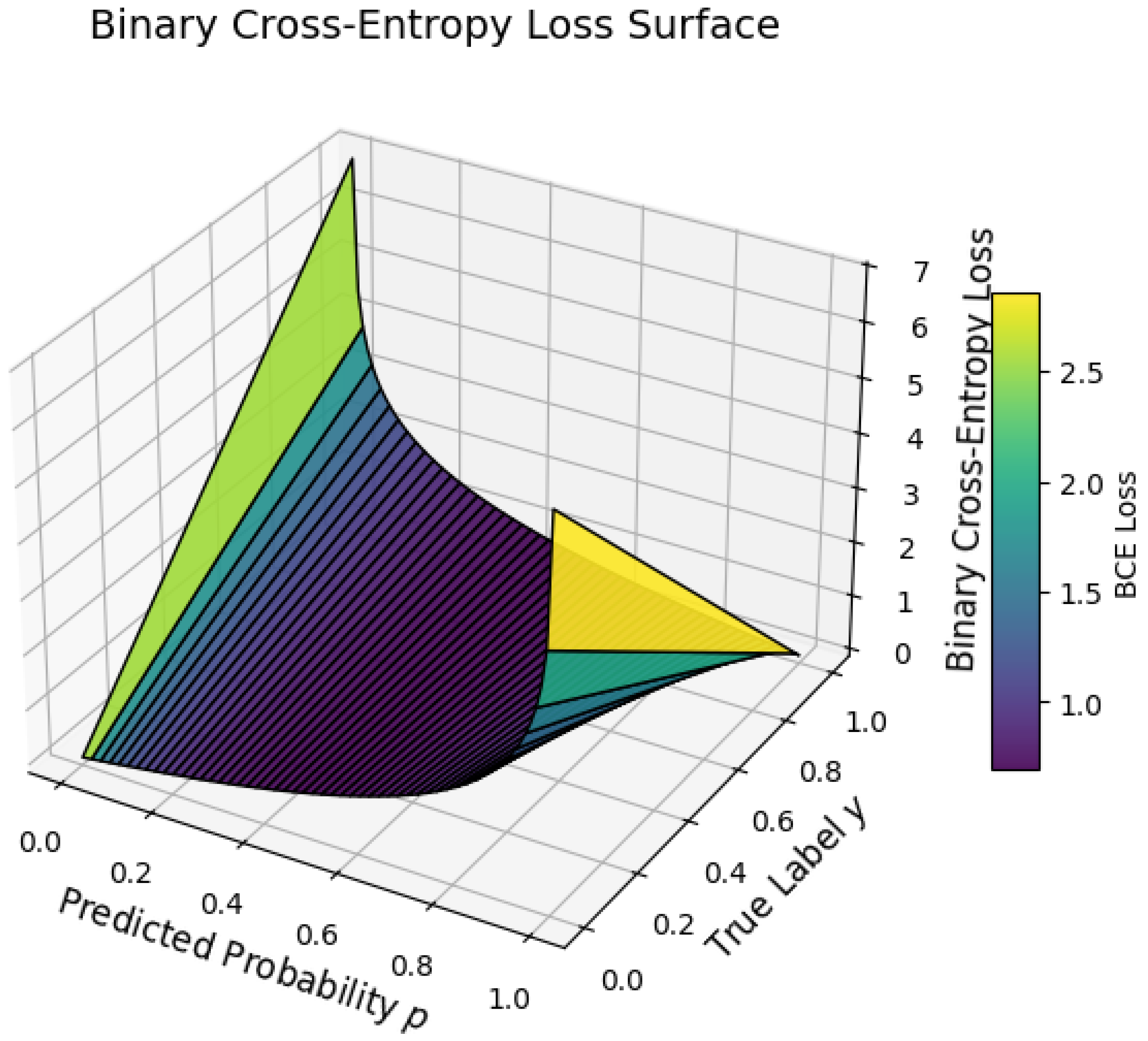

10.5.2.6 Python Code to Generate Figure 70 Illustrating Binary Cross-Entropy Loss Surface

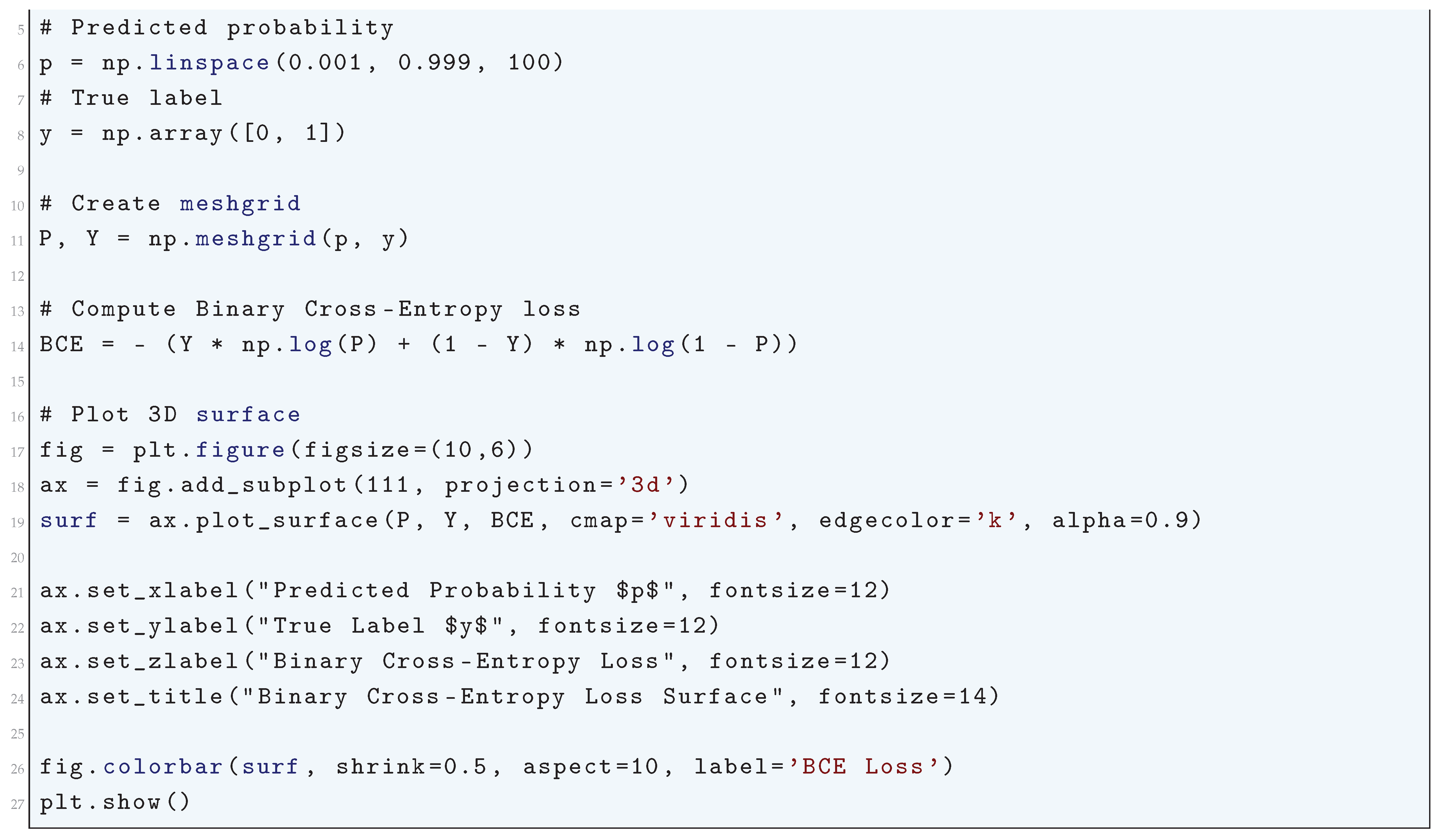

10.5.2.7 Python Code to Generate Figure 71 Illustrating Categorical Cross-Entropy Loss

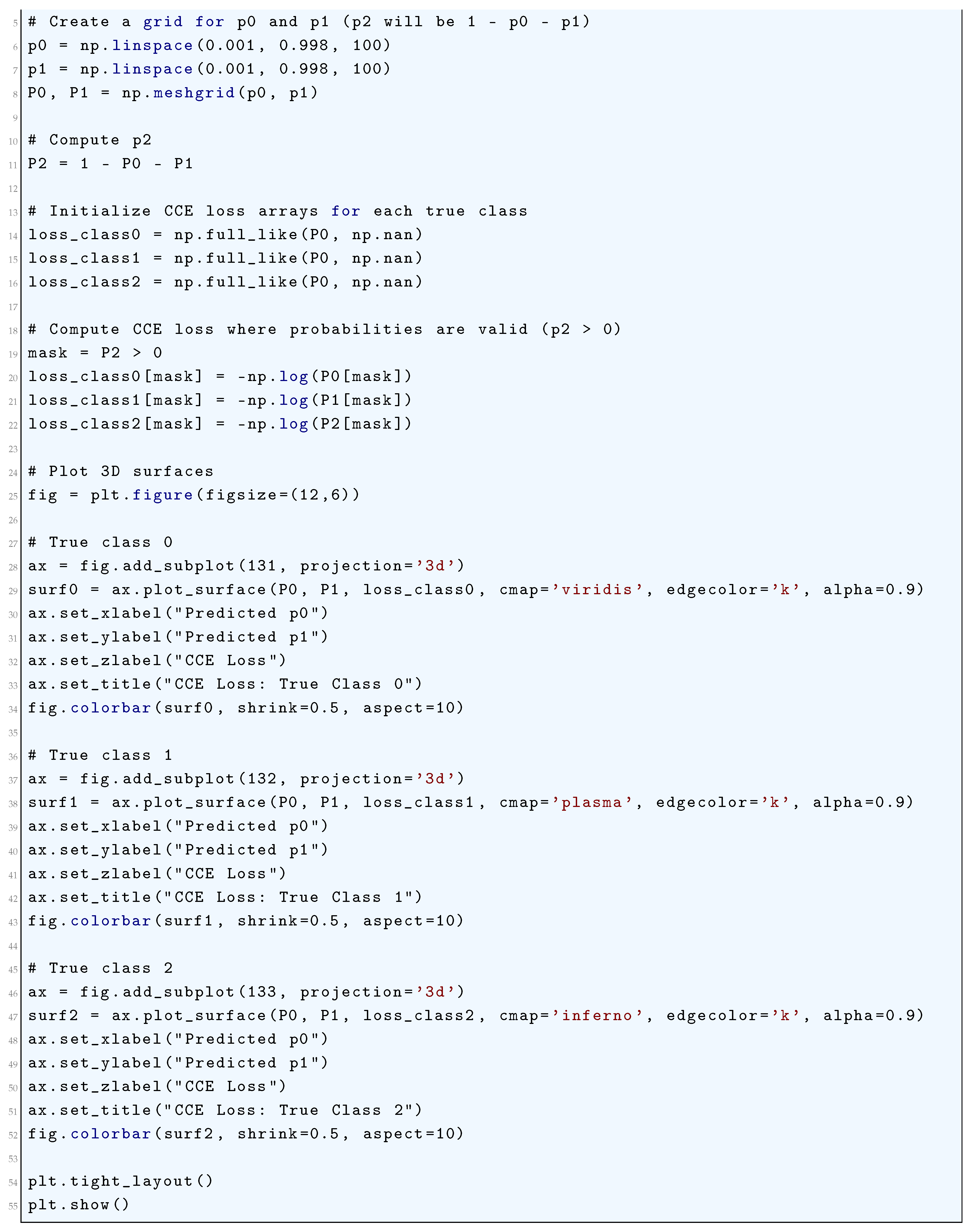

10.5.2.8 Python Code to Generate Figure 72 Illustrating Categorical Cross-Entropy Loss

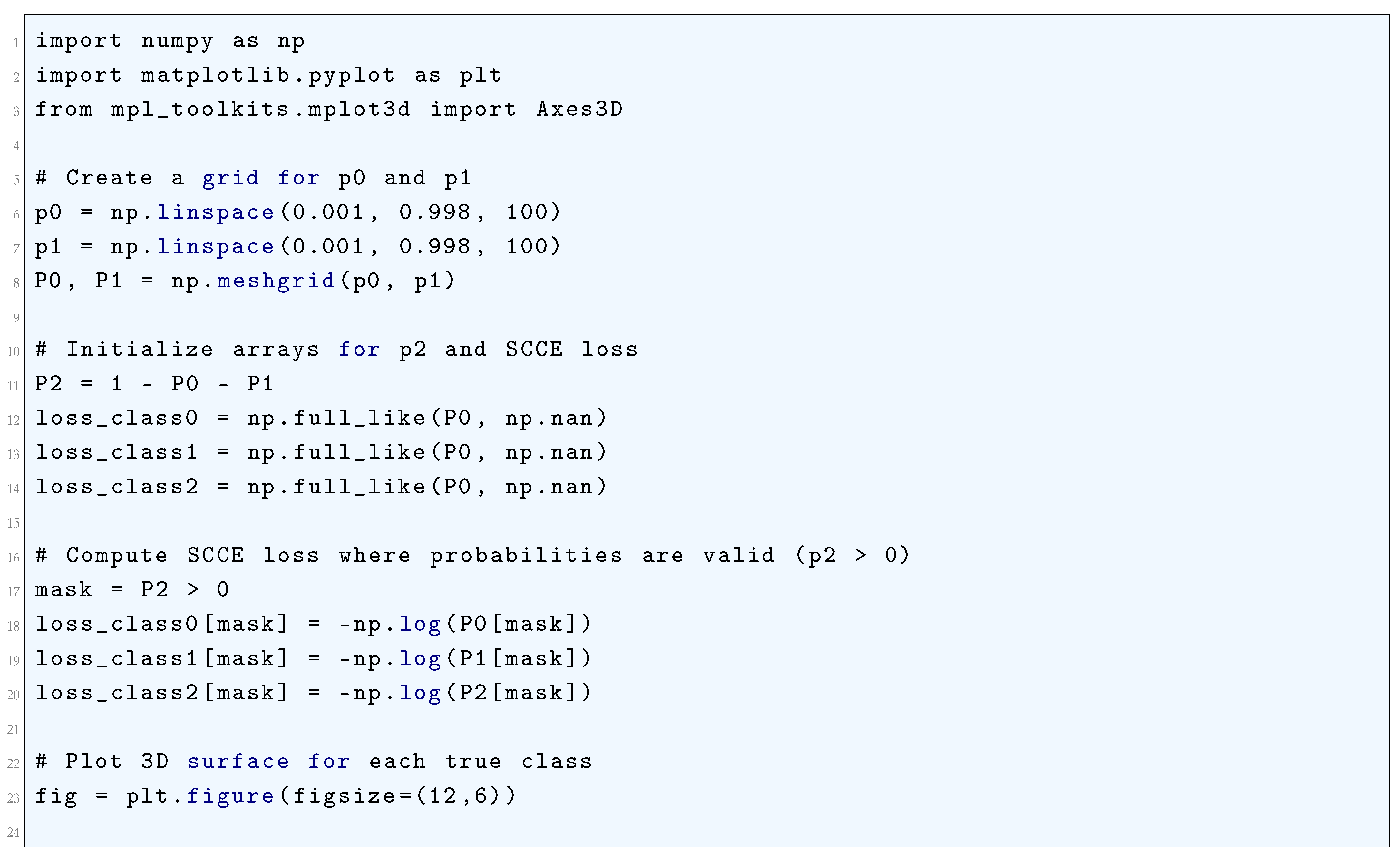

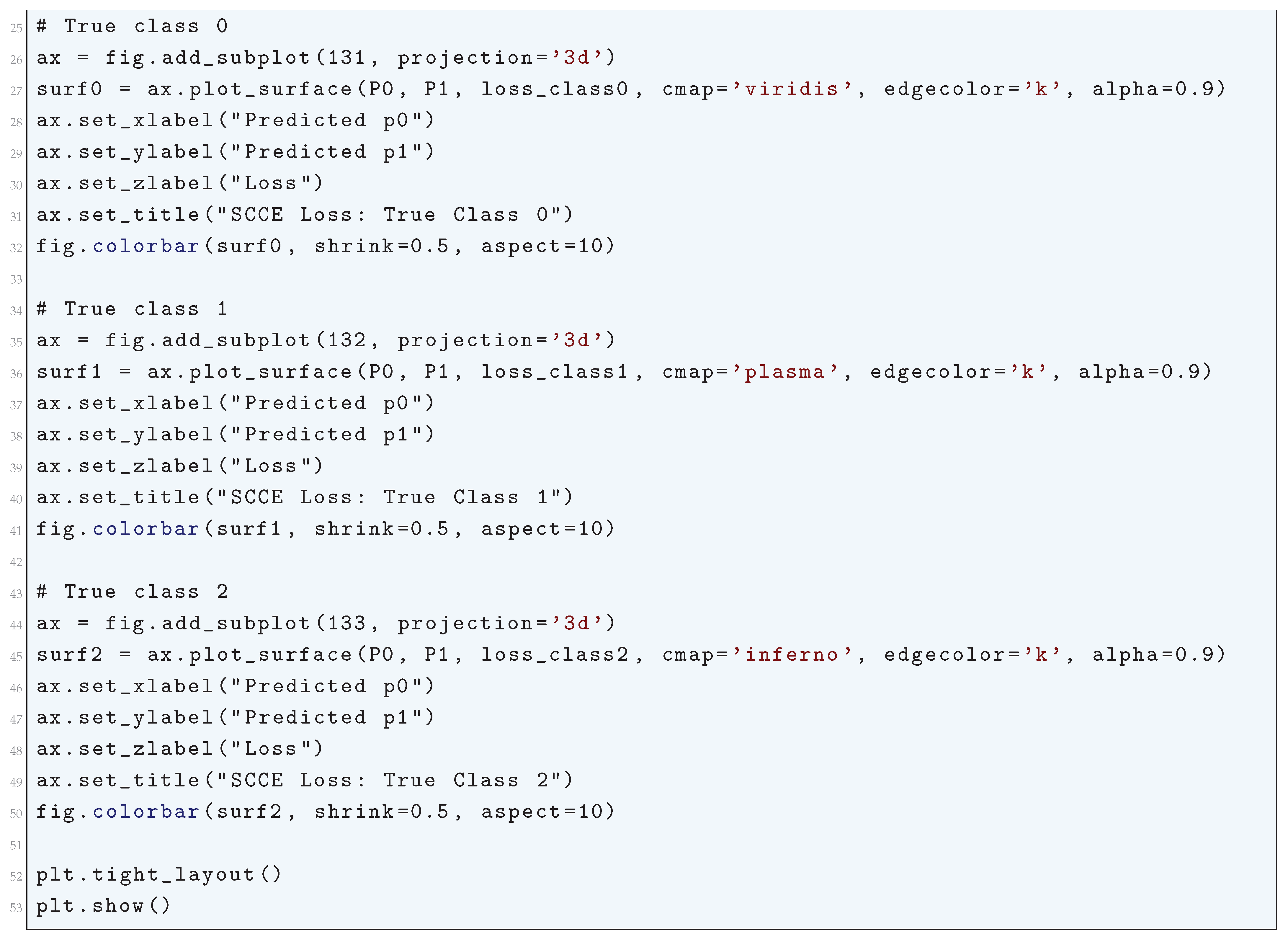

10.5.2.9 Python Code to Generate Figure 73 Illustrating Sparse Categorical Cross-Entropy Loss

10.5.2.10 Python Code to Generate Figure 74 Illustrating Surface Plot of Sparse Categorical Cross-Entropy Loss

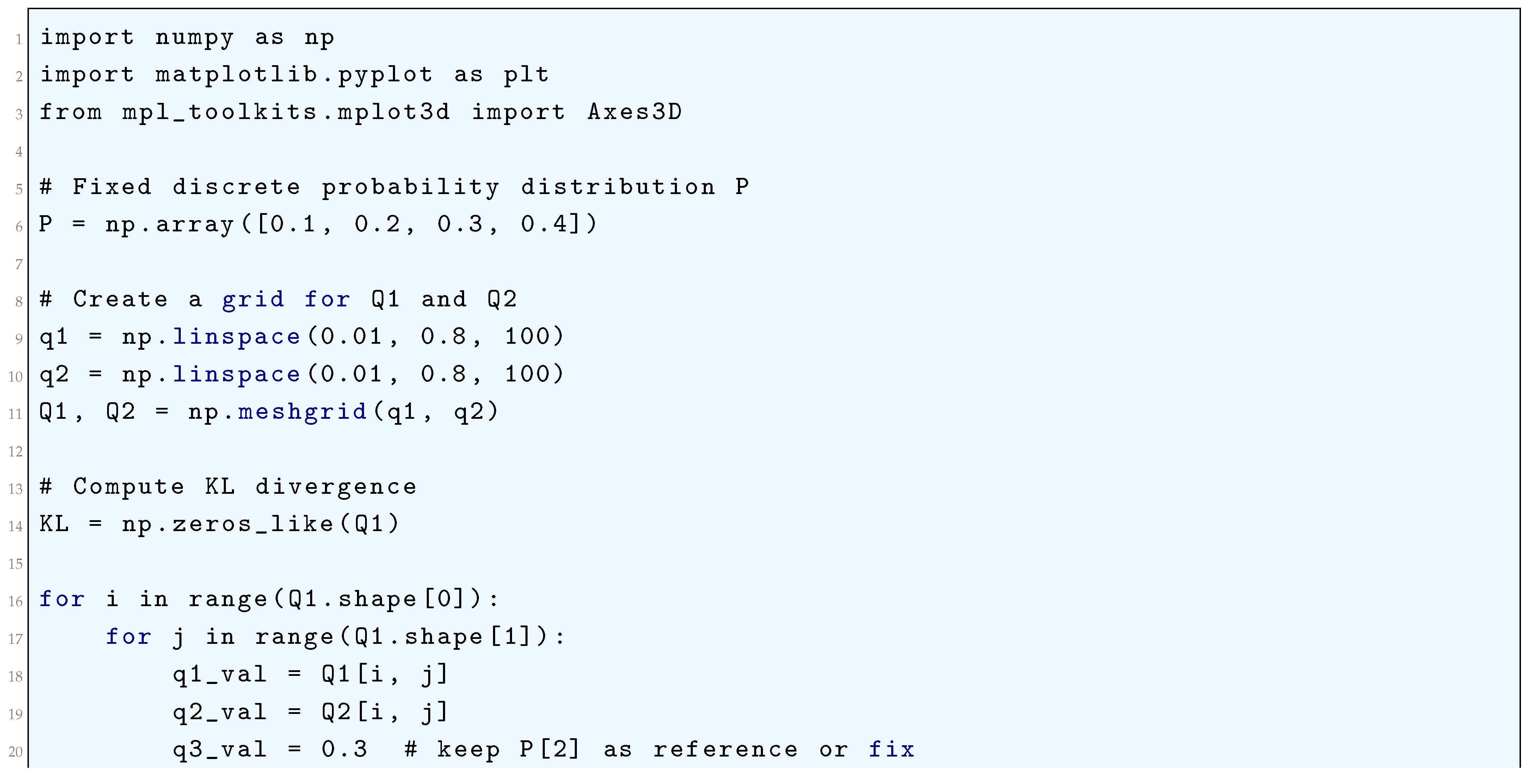

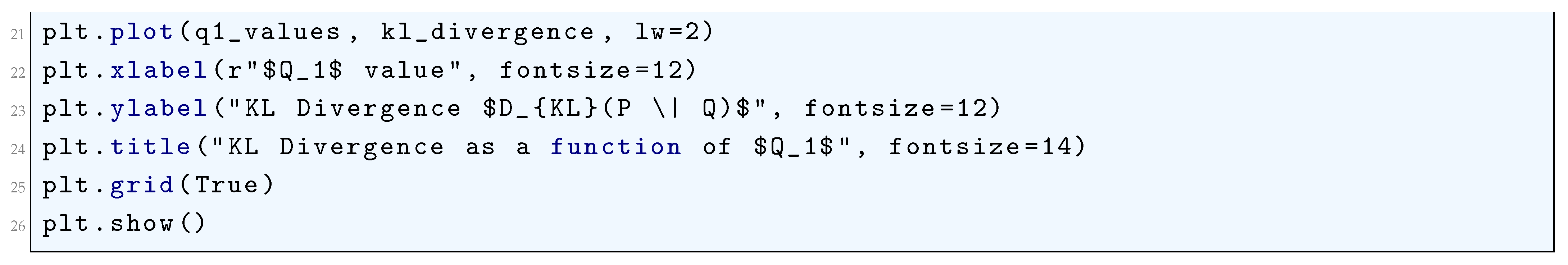

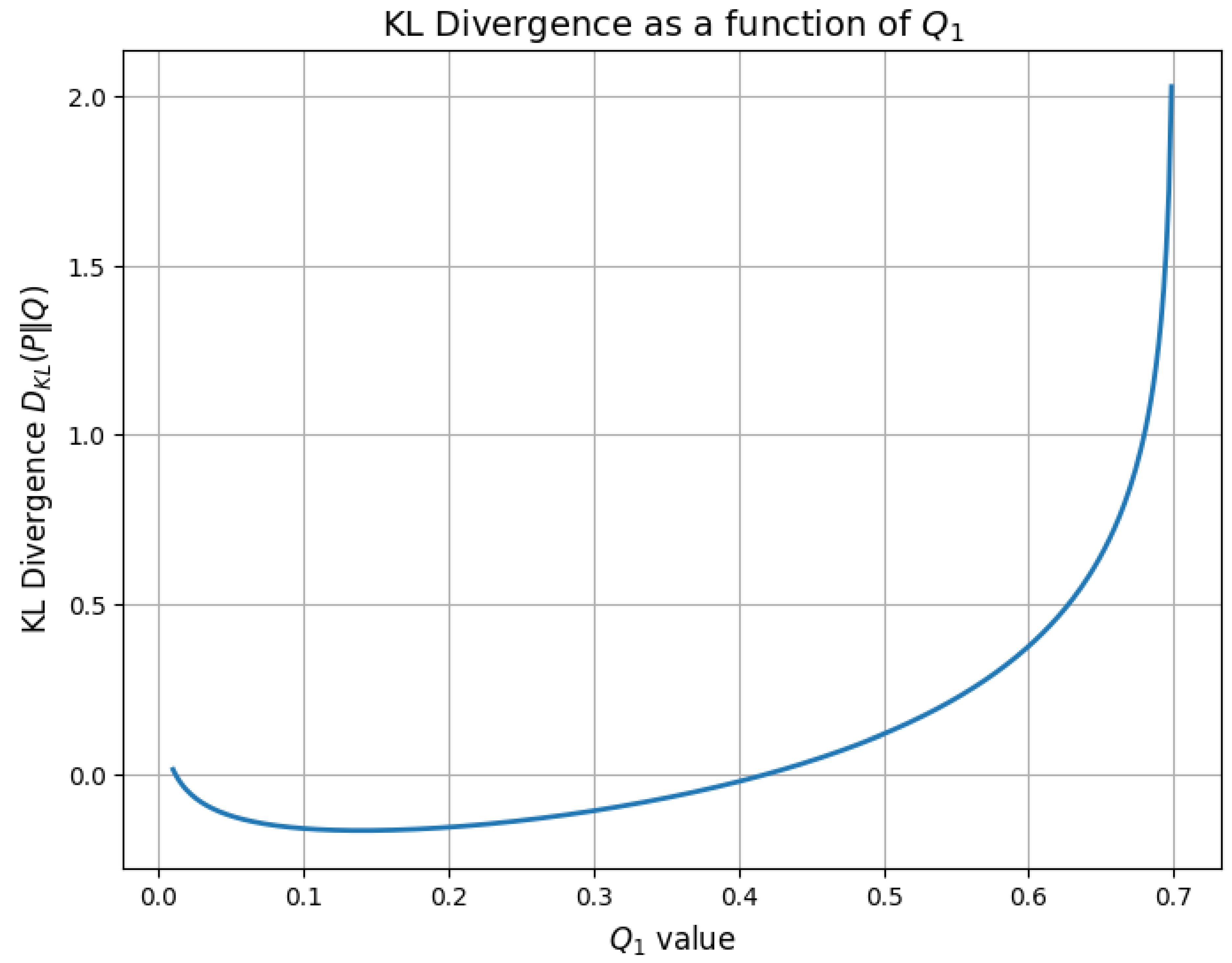

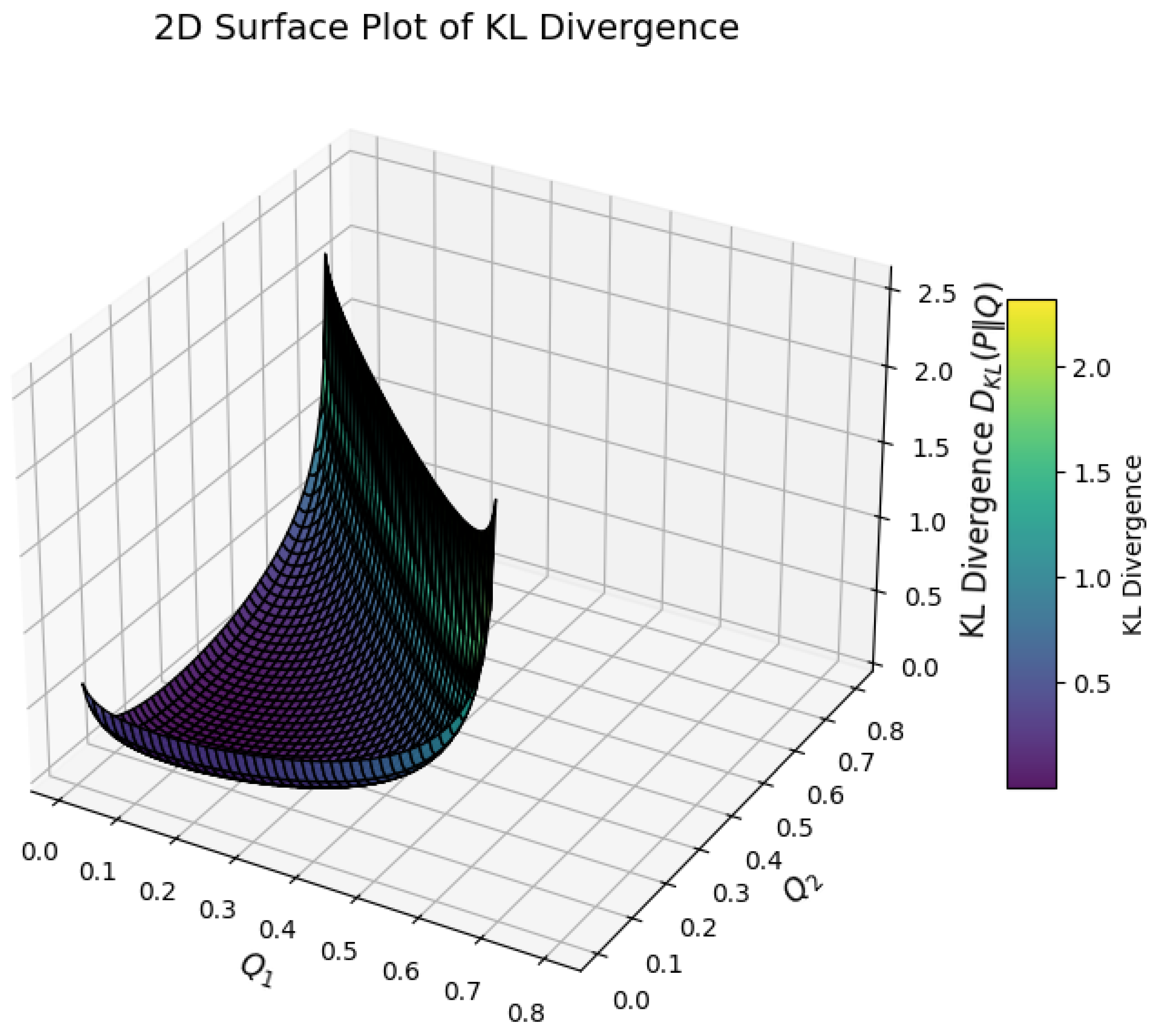

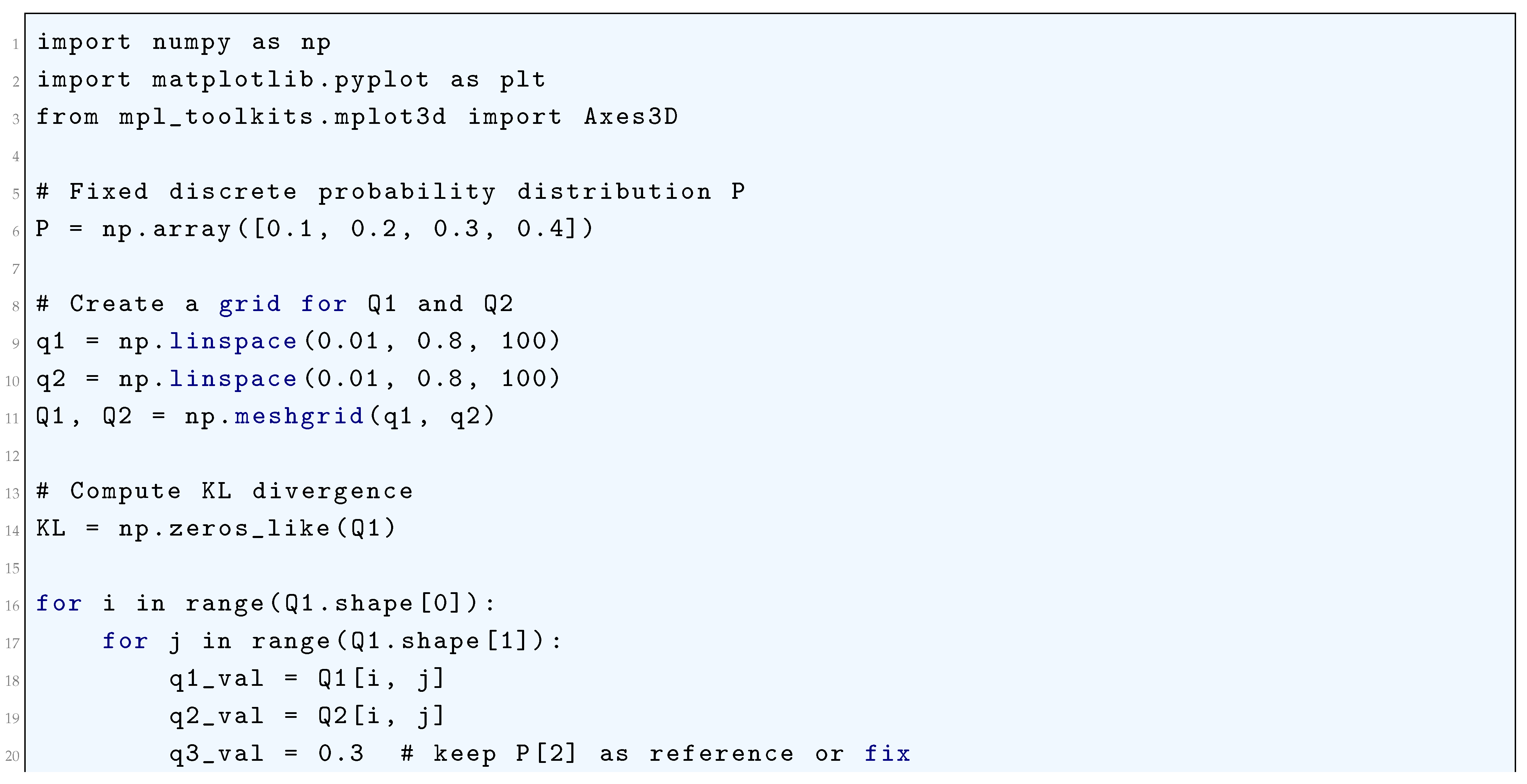

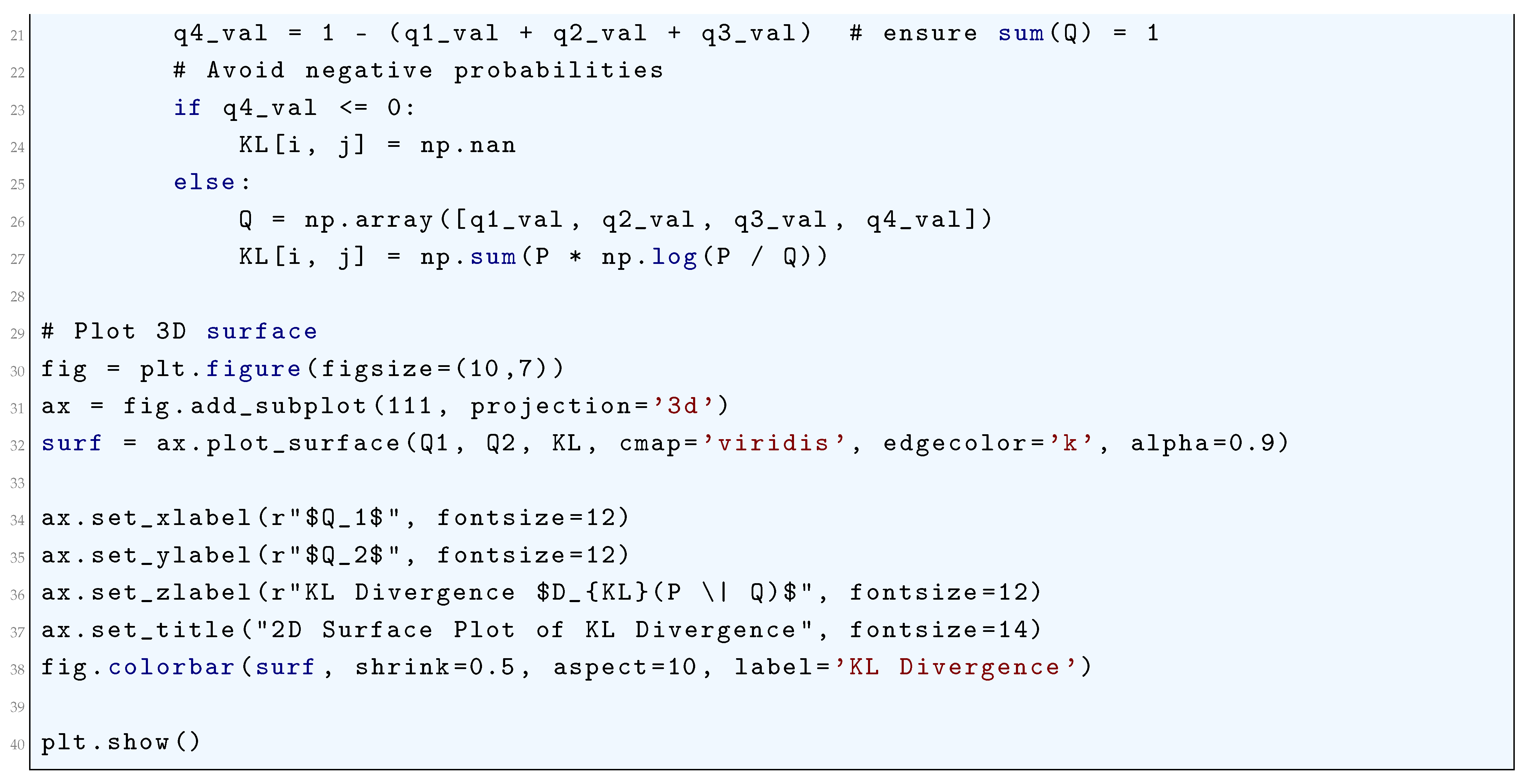

10.5.2.11 Python Code to Generate Figure 75 Illustrating KL Divergence
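A minimal sketch of the discrete KL divergence $D_{\mathrm{KL}}(P \,\|\, Q) = \sum_i p_i \log (p_i / q_i)$, using the usual conventions that terms with $p_i = 0$ contribute nothing and that the divergence is infinite when $q_i = 0$ while $p_i > 0$. The function name and the two example distributions are illustrative assumptions.

```python
import math


def kl_divergence(p, q):
    """KL(P || Q) in nats for two discrete distributions of equal length."""
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue            # 0 * log(0 / q) is taken to be 0
        if qi == 0.0:
            return math.inf     # P puts mass where Q has none
        total += pi * math.log(pi / qi)
    return total


p = [0.5, 0.5]
q = [0.9, 0.1]

print("KL(p || q) =", round(kl_divergence(p, q), 4))
print("KL(q || p) =", round(kl_divergence(q, p), 4))
print("KL(p || p) =", kl_divergence(p, p))
```

The two directions give different values, a reminder that KL divergence is not symmetric and therefore not a metric.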

10.5.2.12 Python Code to Generate Figure 76 Illustrating 2D Surface Plot of KL Divergence

10.5.3. Advanced and Specialized Loss Functions

10.5.3.1 Loss Functions for Generative Adversarial Networks (GANs)

10.5.3.1.1 Wasserstein Loss (Earth Mover’s Distance)

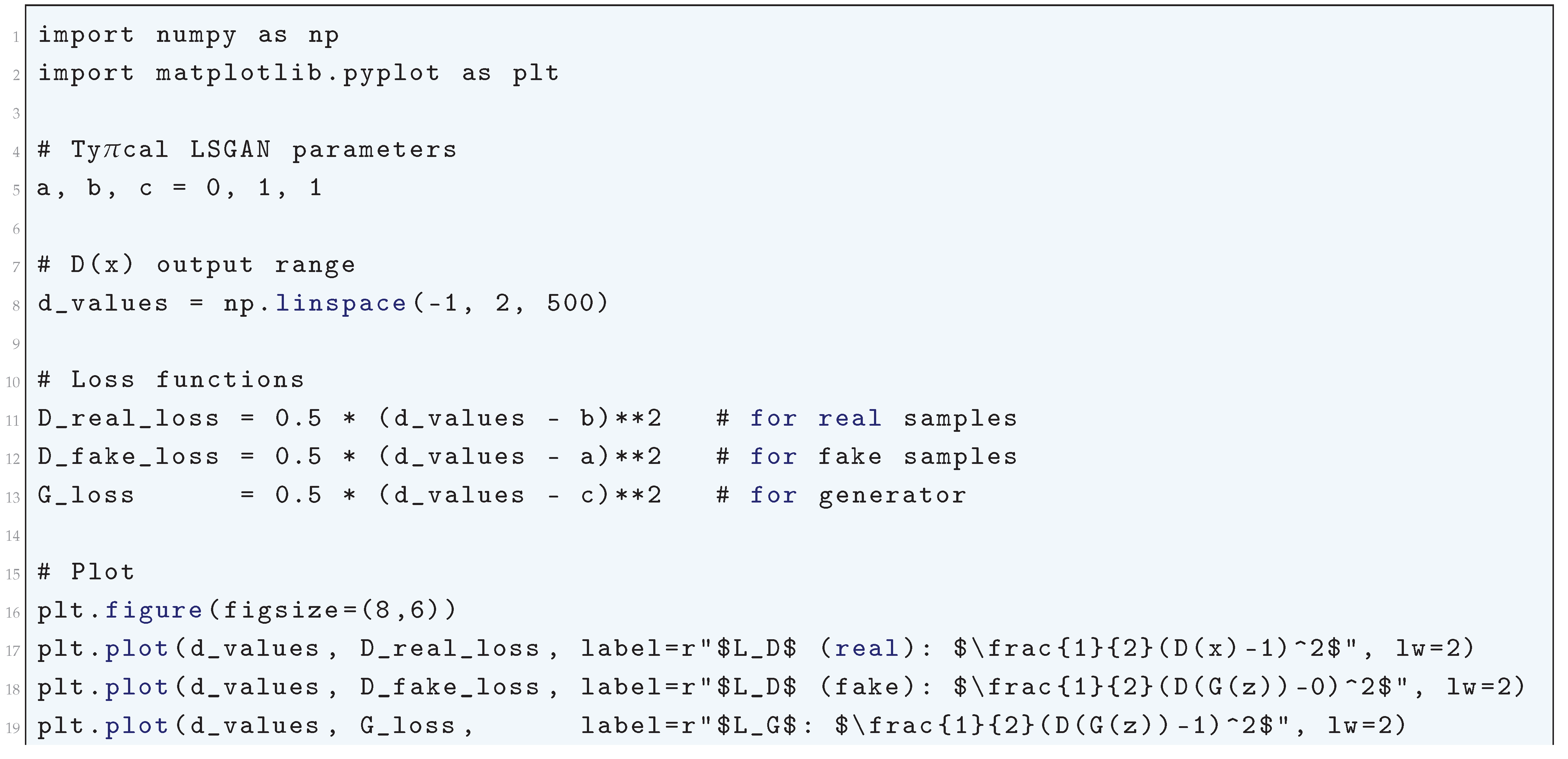

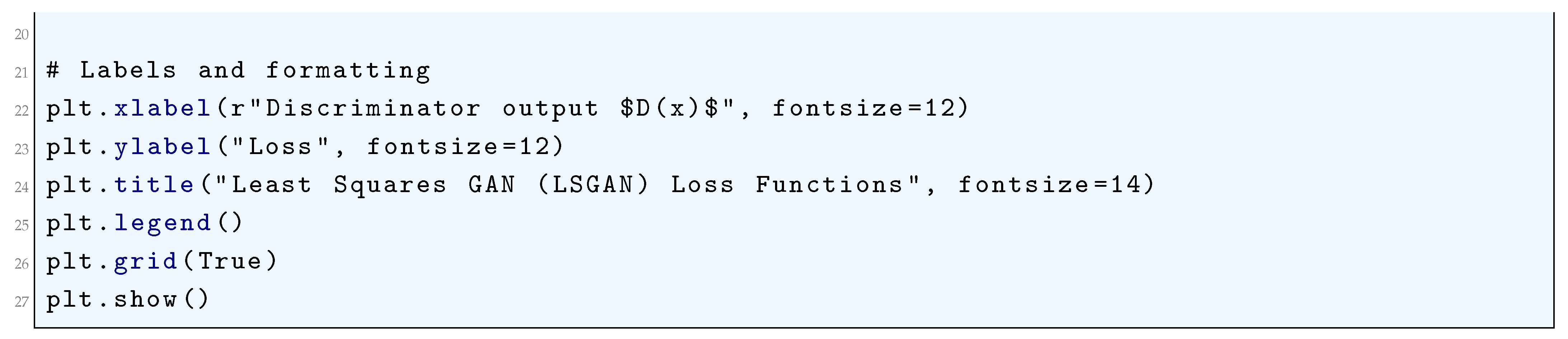

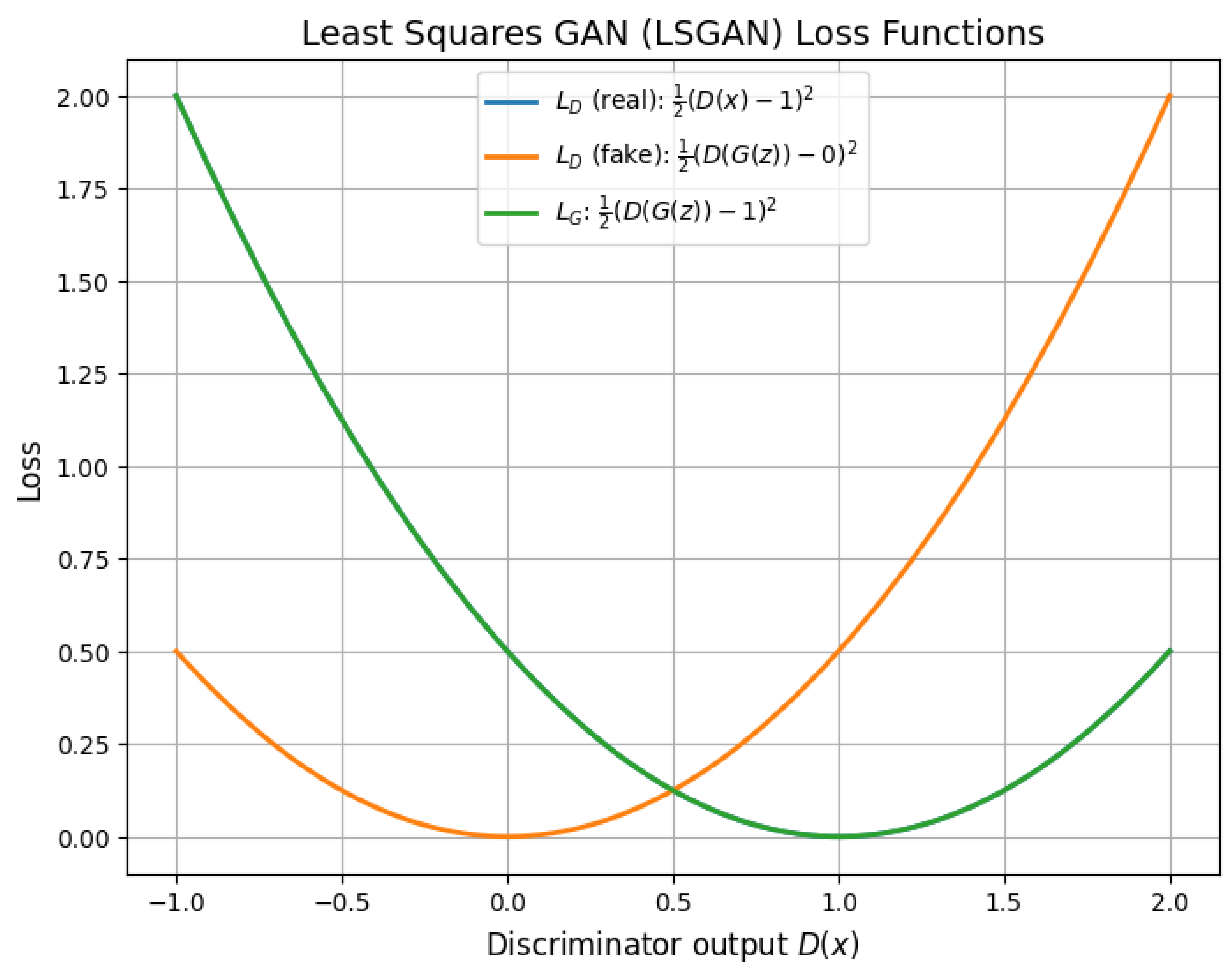

10.5.3.1.2 Least Squares GAN (LSGAN) Loss

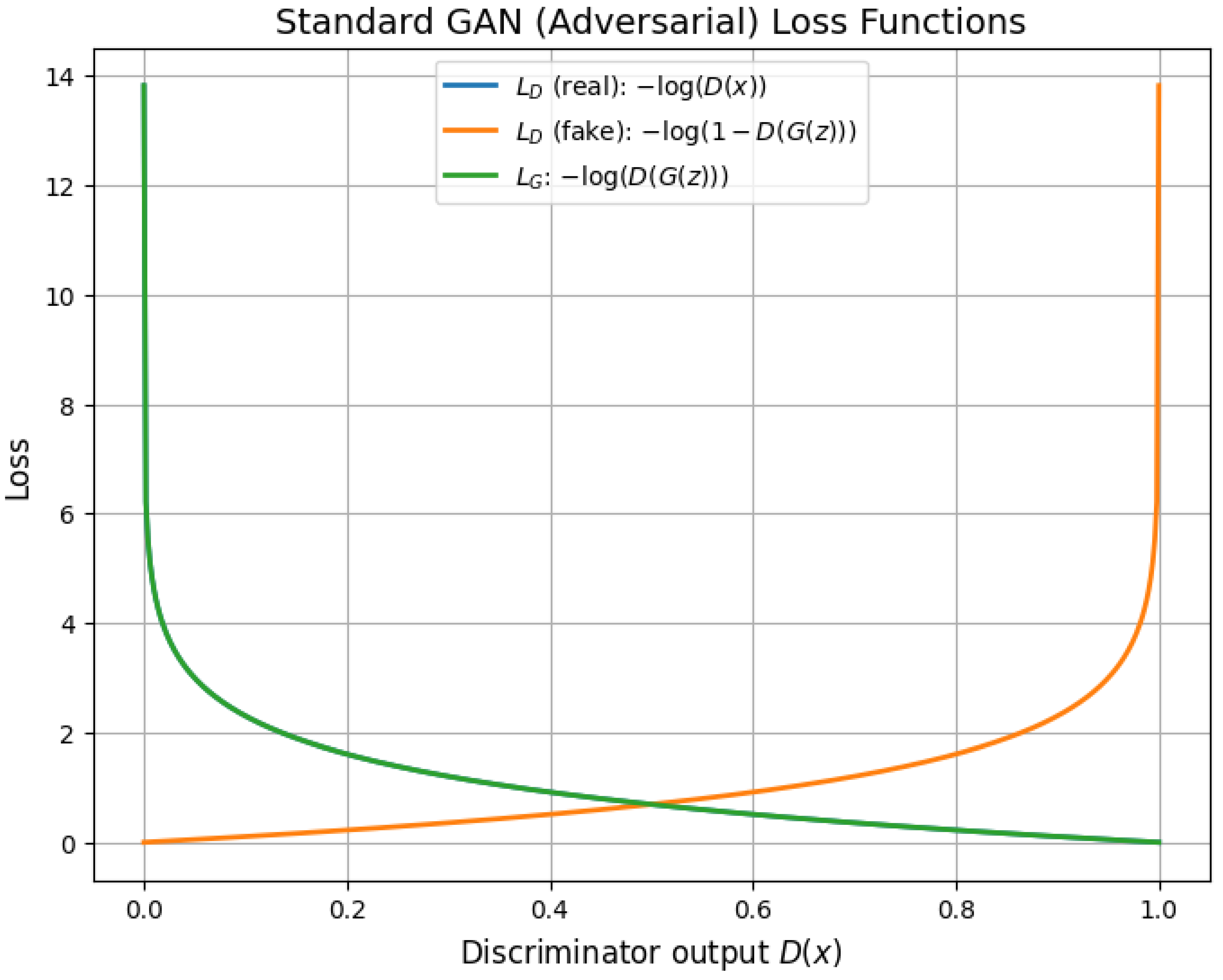

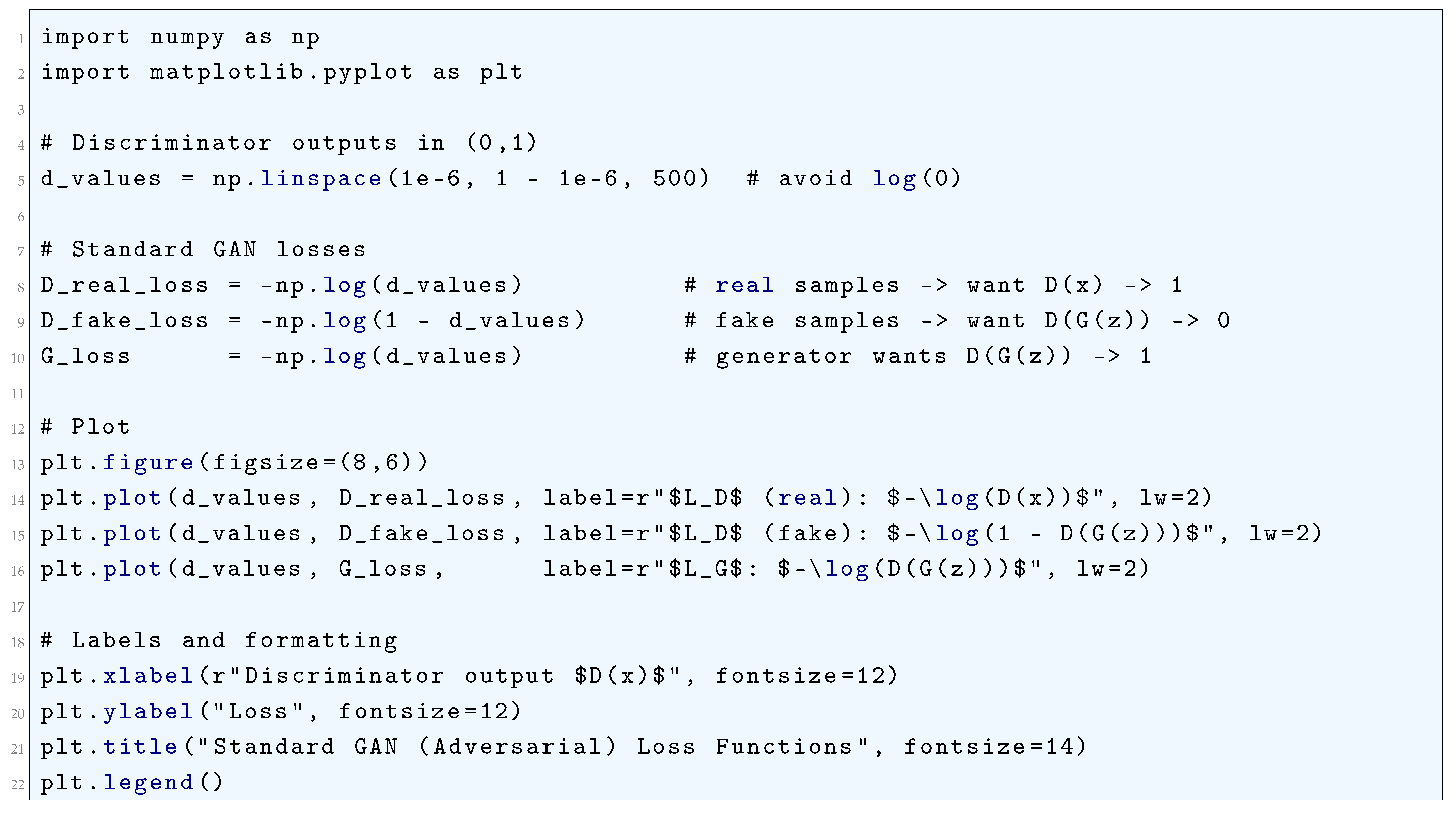

10.5.3.1.3 Adversarial Loss (Standard GAN Loss)

10.5.3.2 Loss Functions for Siamese and Metric Learning

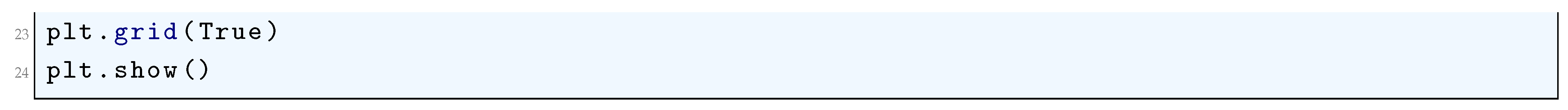

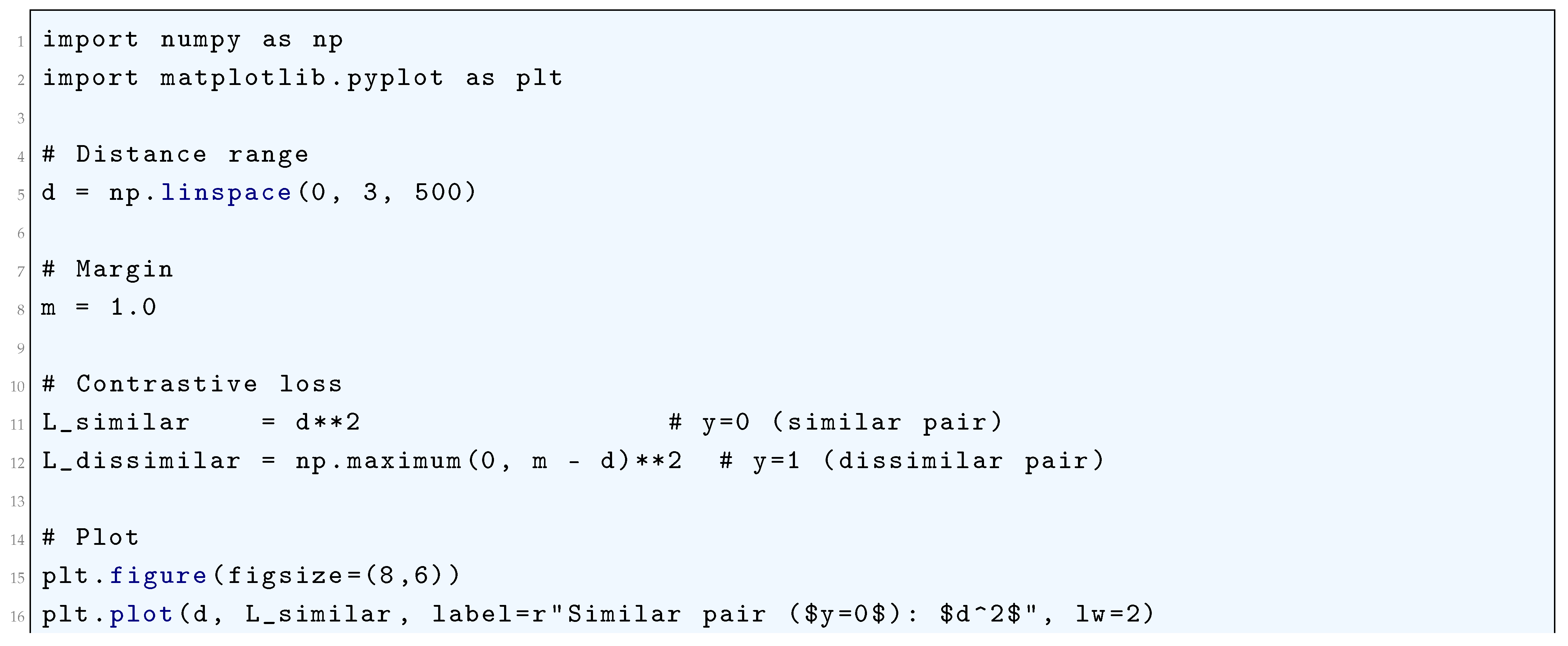

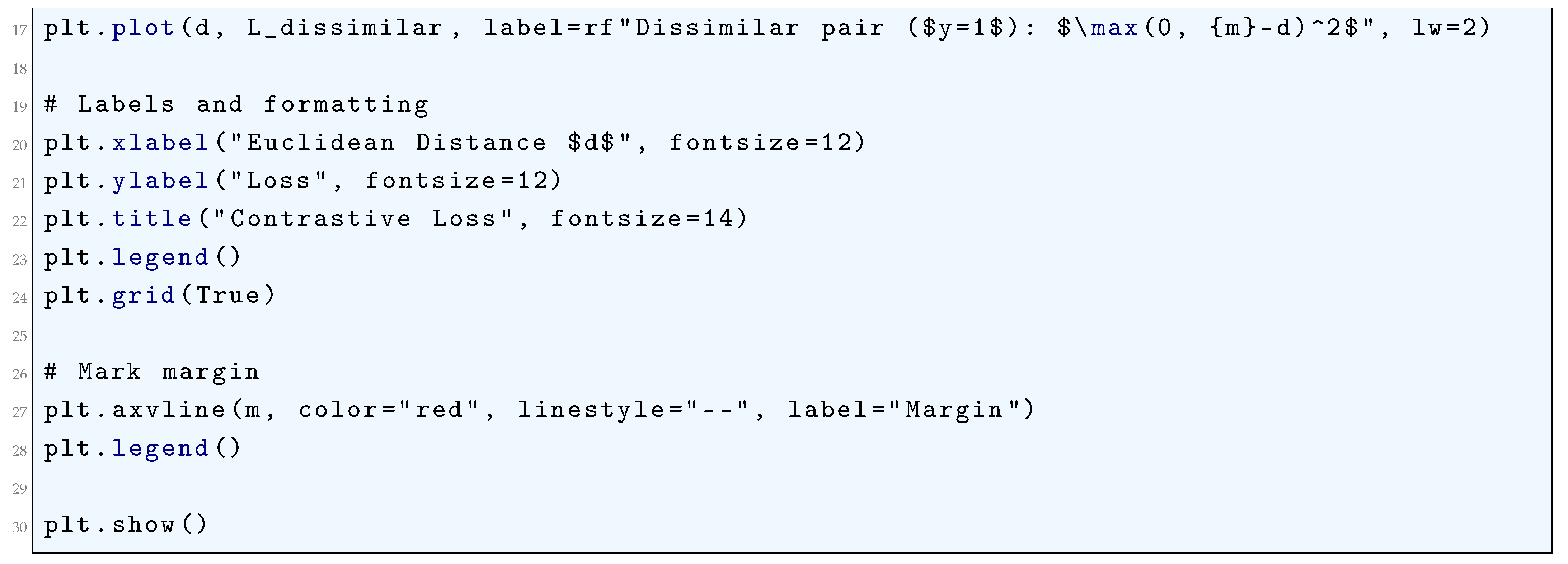

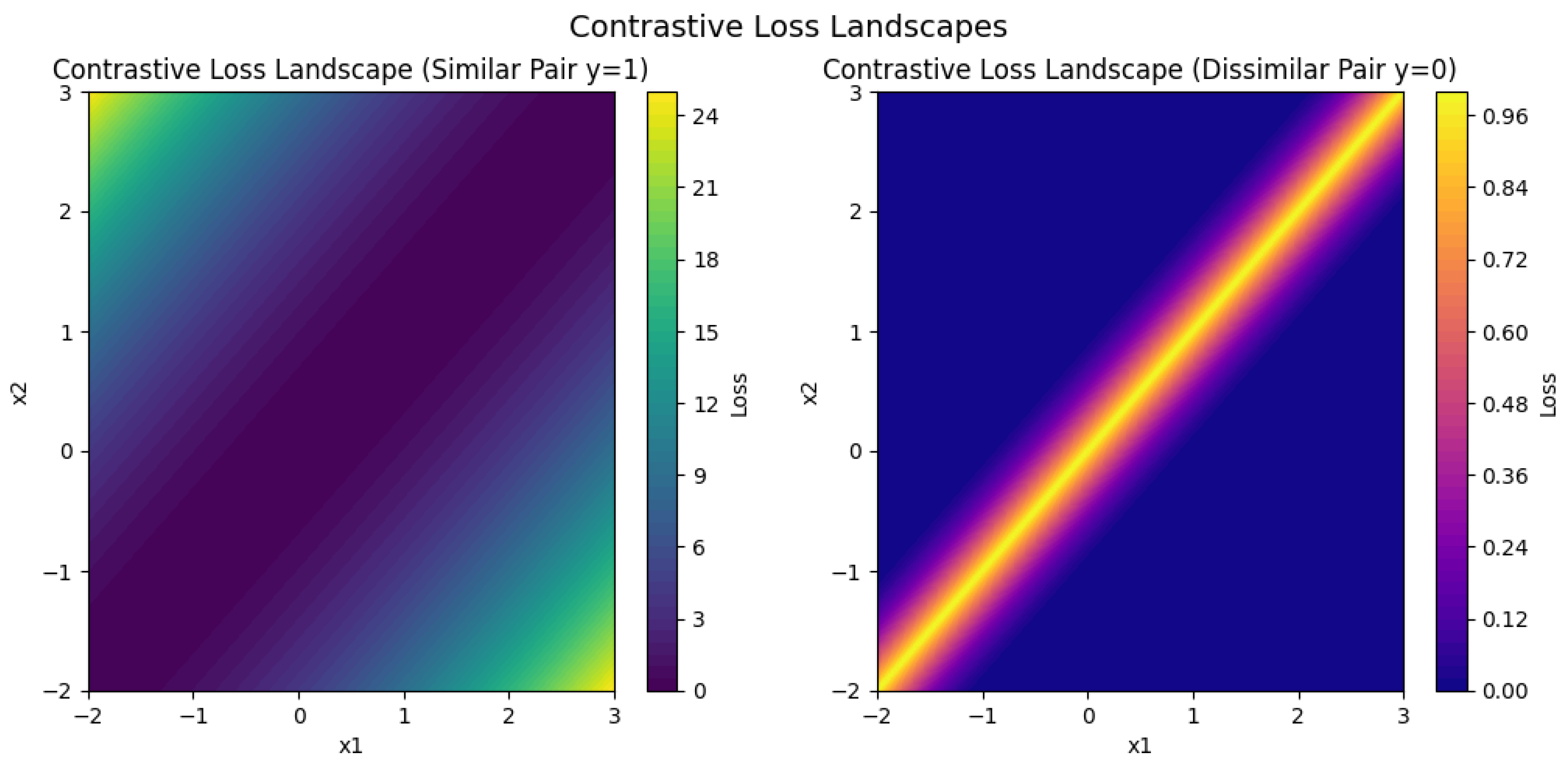

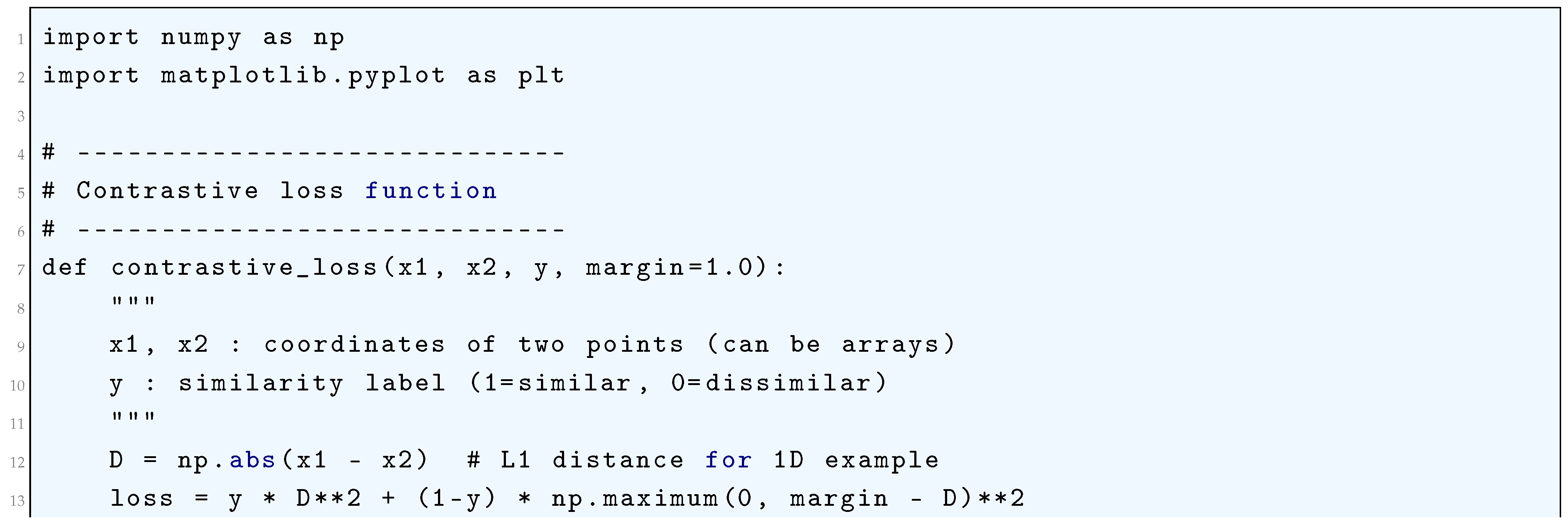

10.5.3.2.1 Contrastive Loss

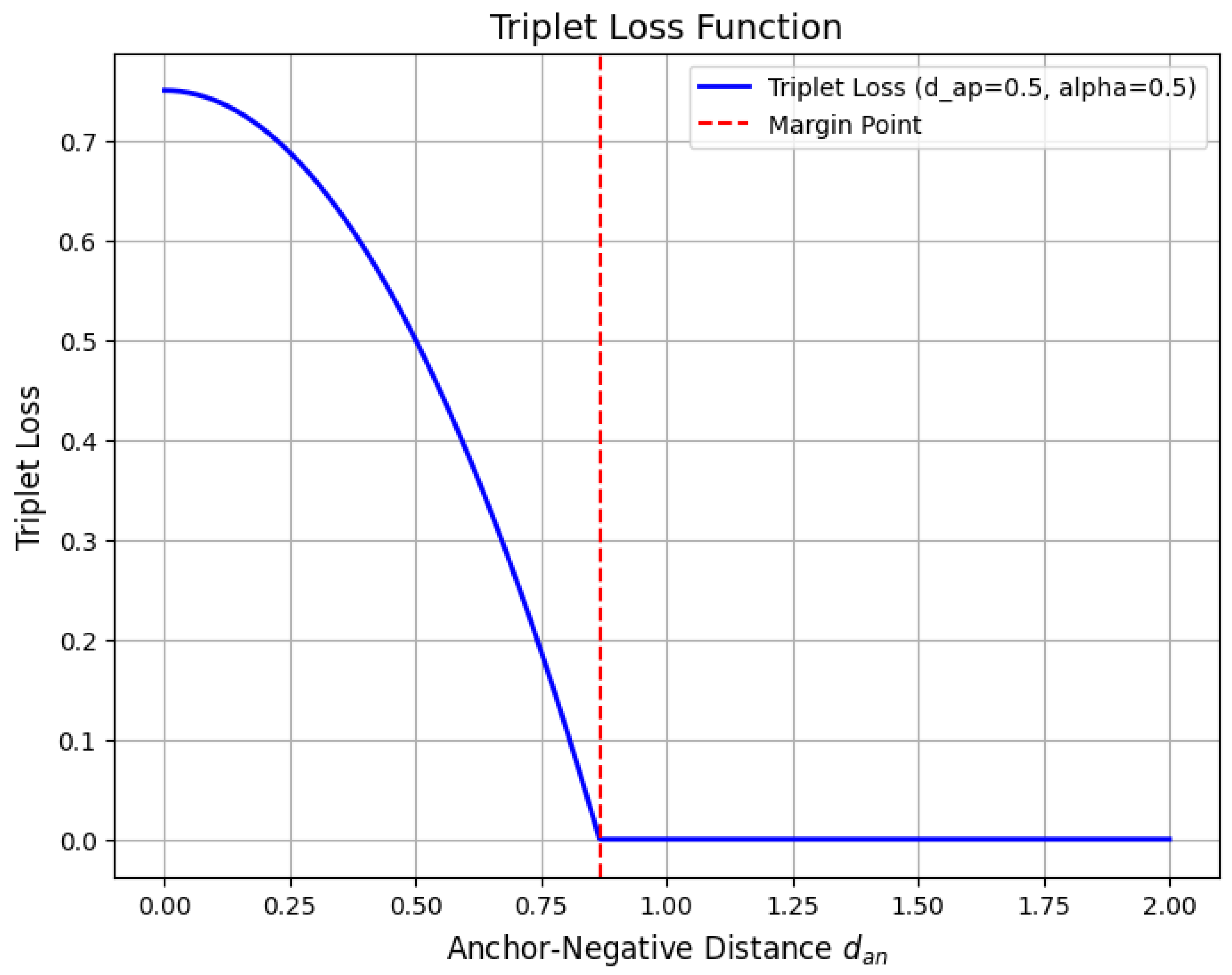

10.5.3.2.2 Triplet Loss

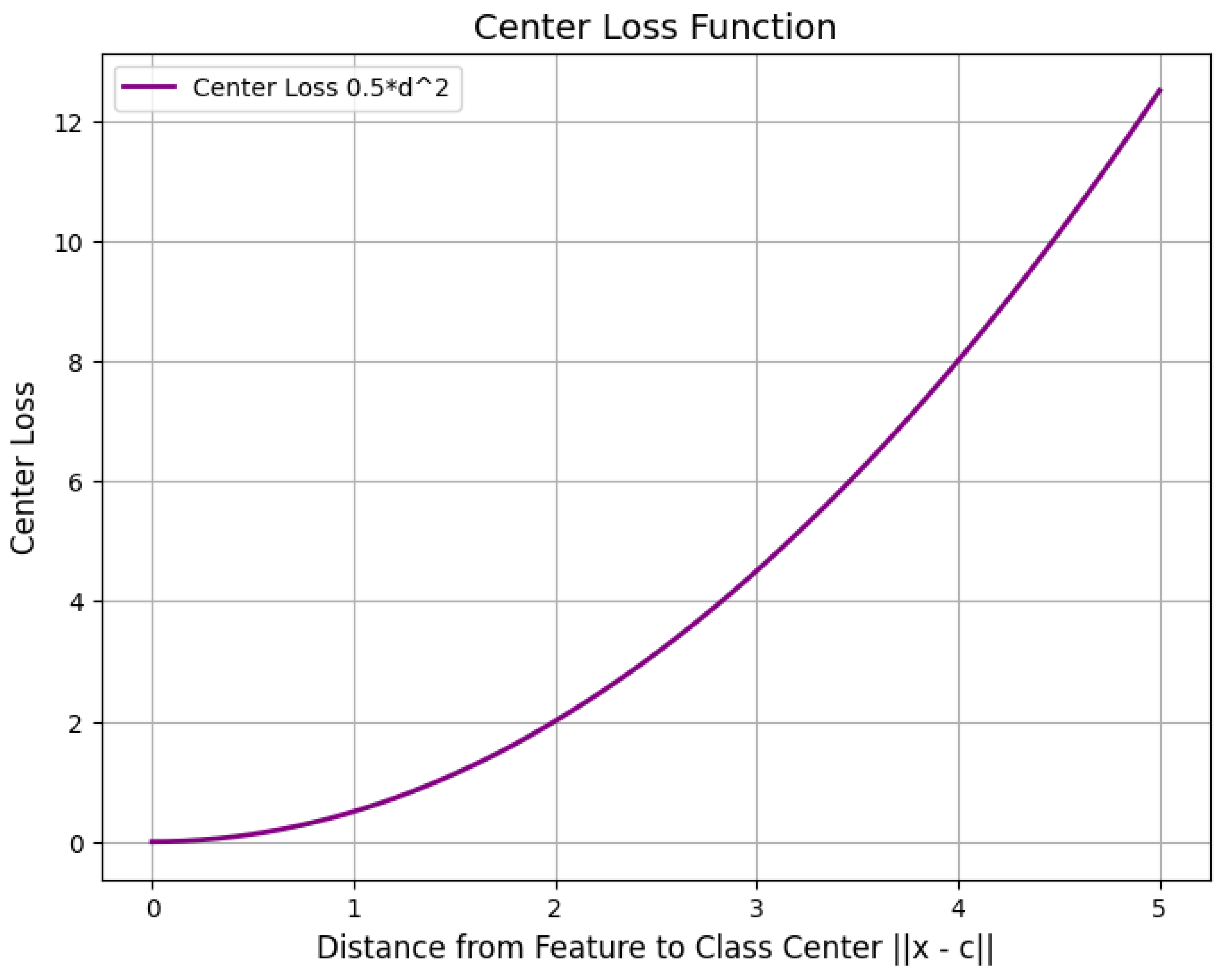

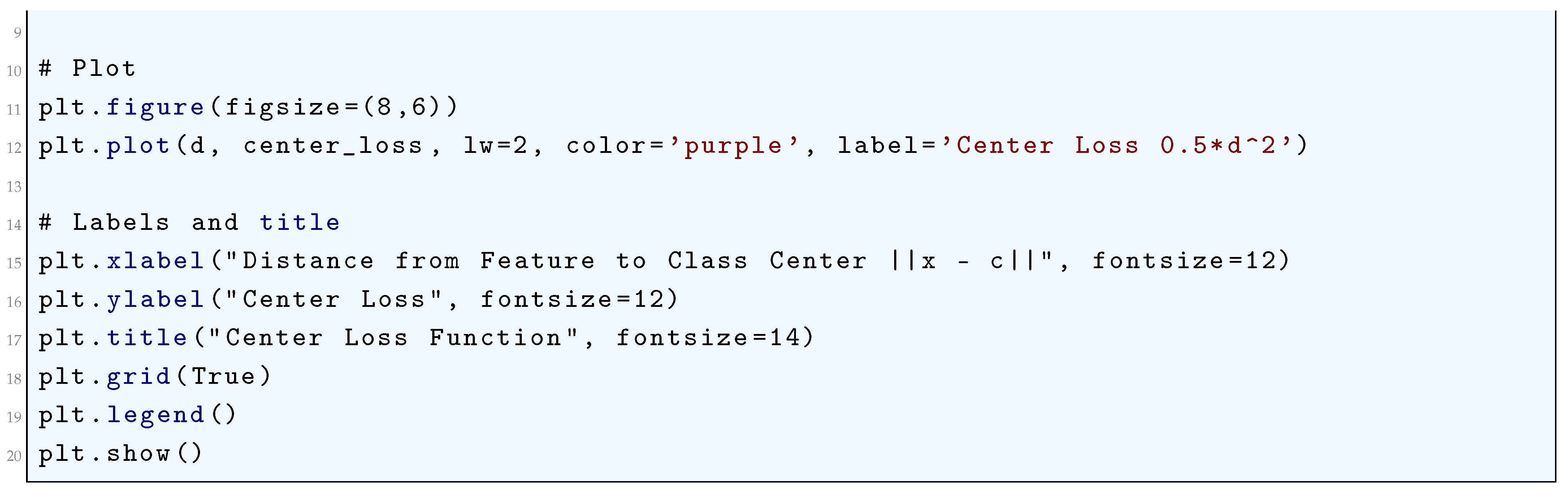

10.5.3.2.3 Center Loss

10.5.3.3 Loss Functions for Style Transfer and Super-Resolution

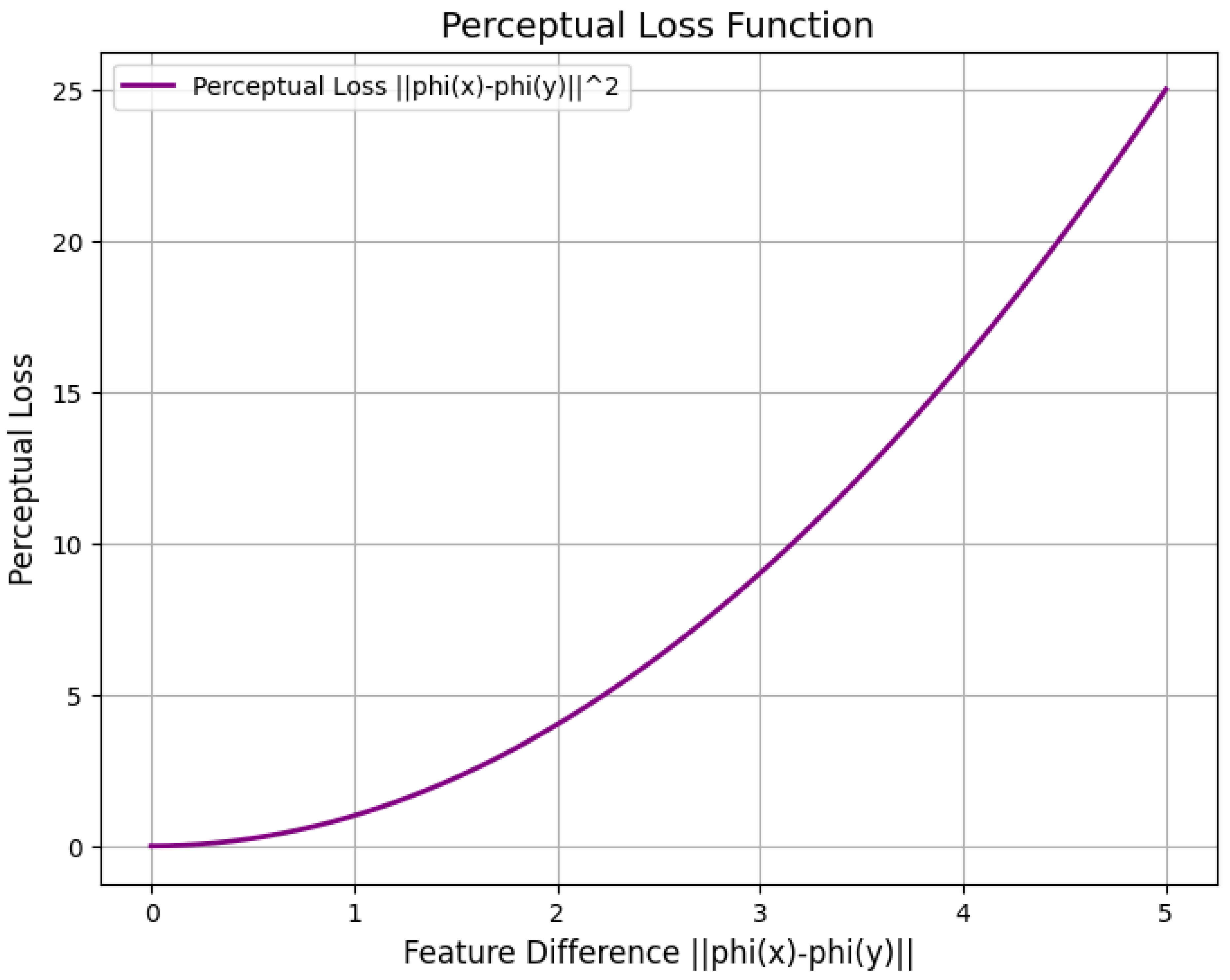

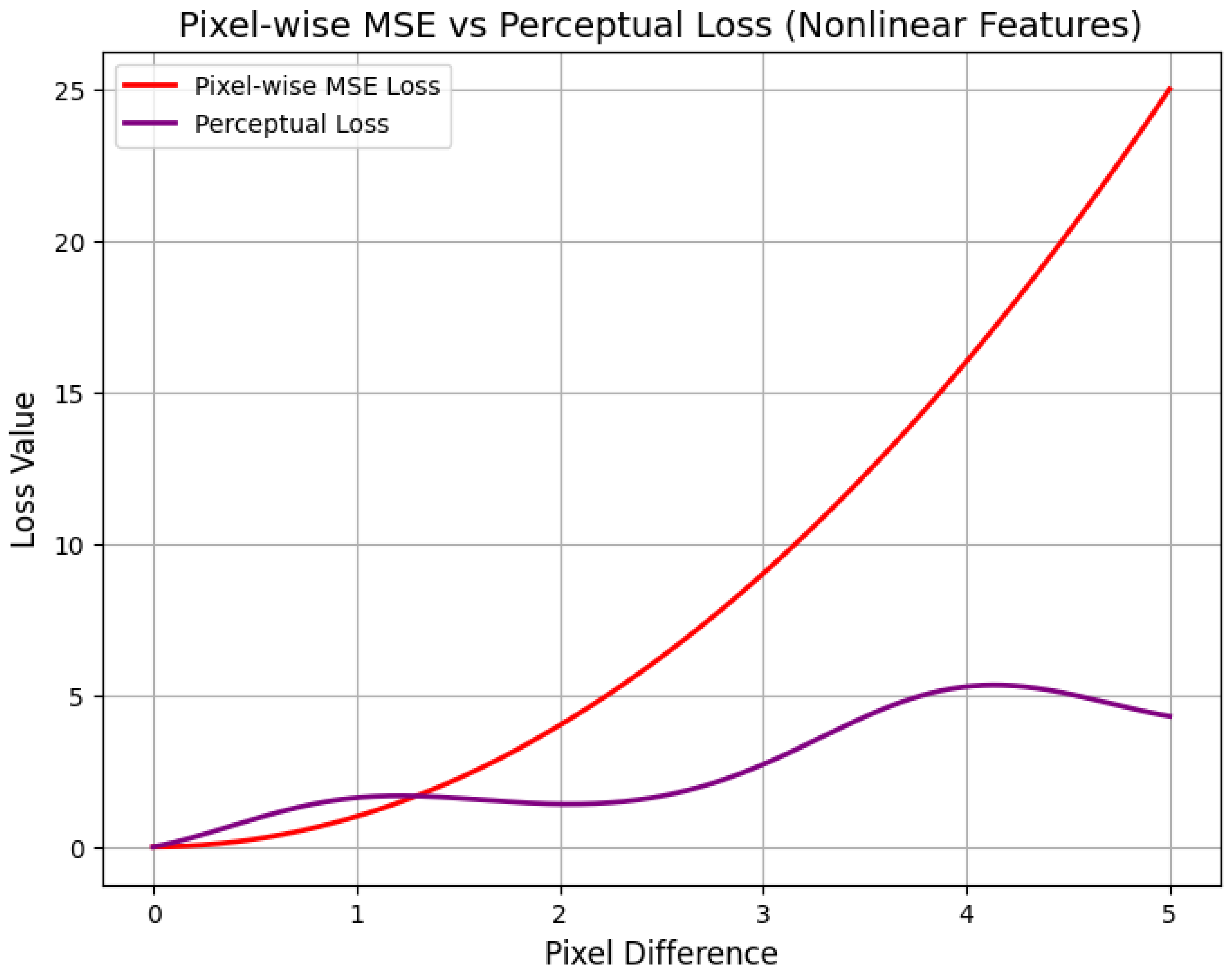

10.5.3.3.1 Perceptual Loss

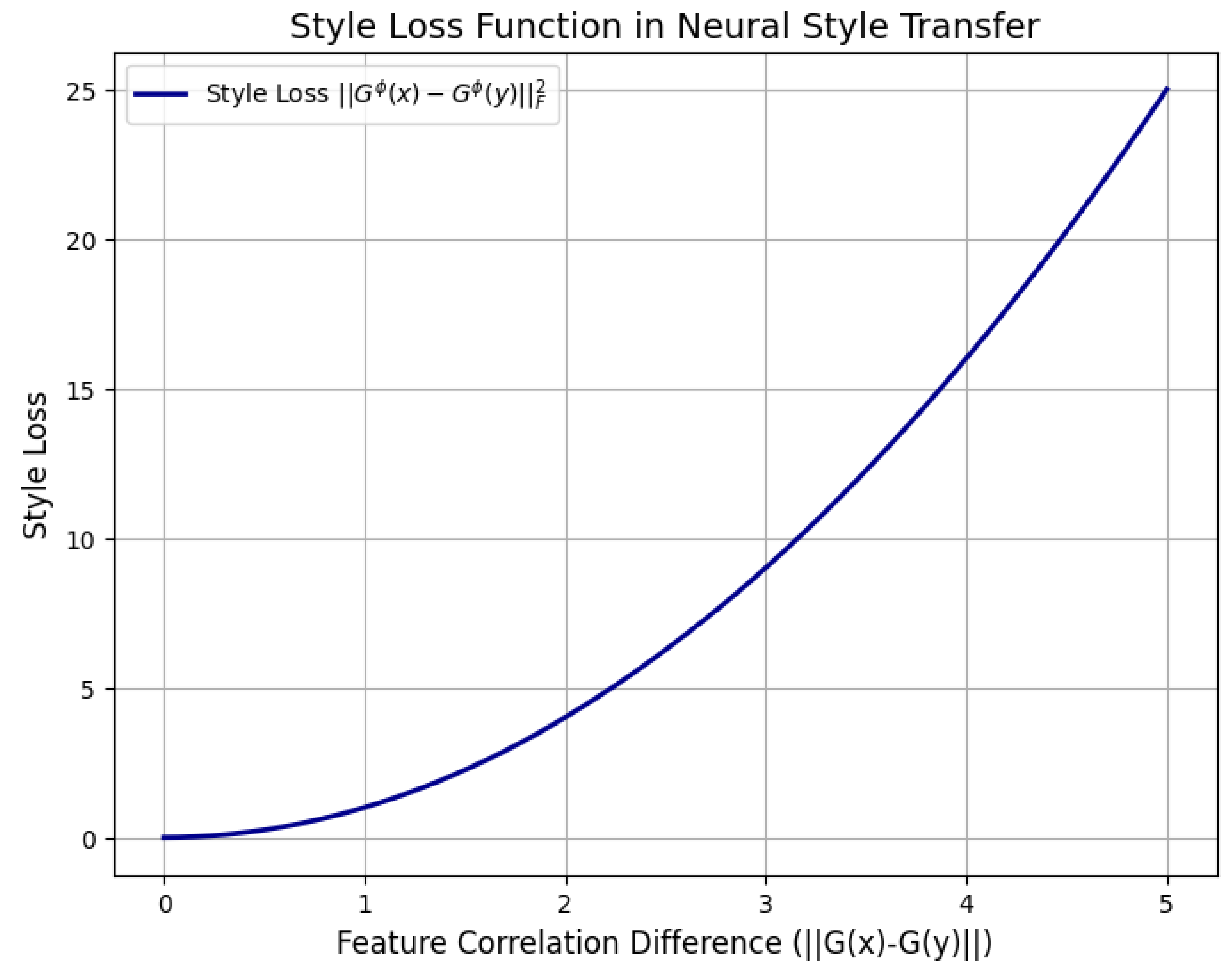

10.5.3.3.2 Style Loss

10.5.3.3.3 Total Variation (TV) Loss

10.5.3.4 Loss Functions for Uncertainty Estimation and Bayesian Deep Learning

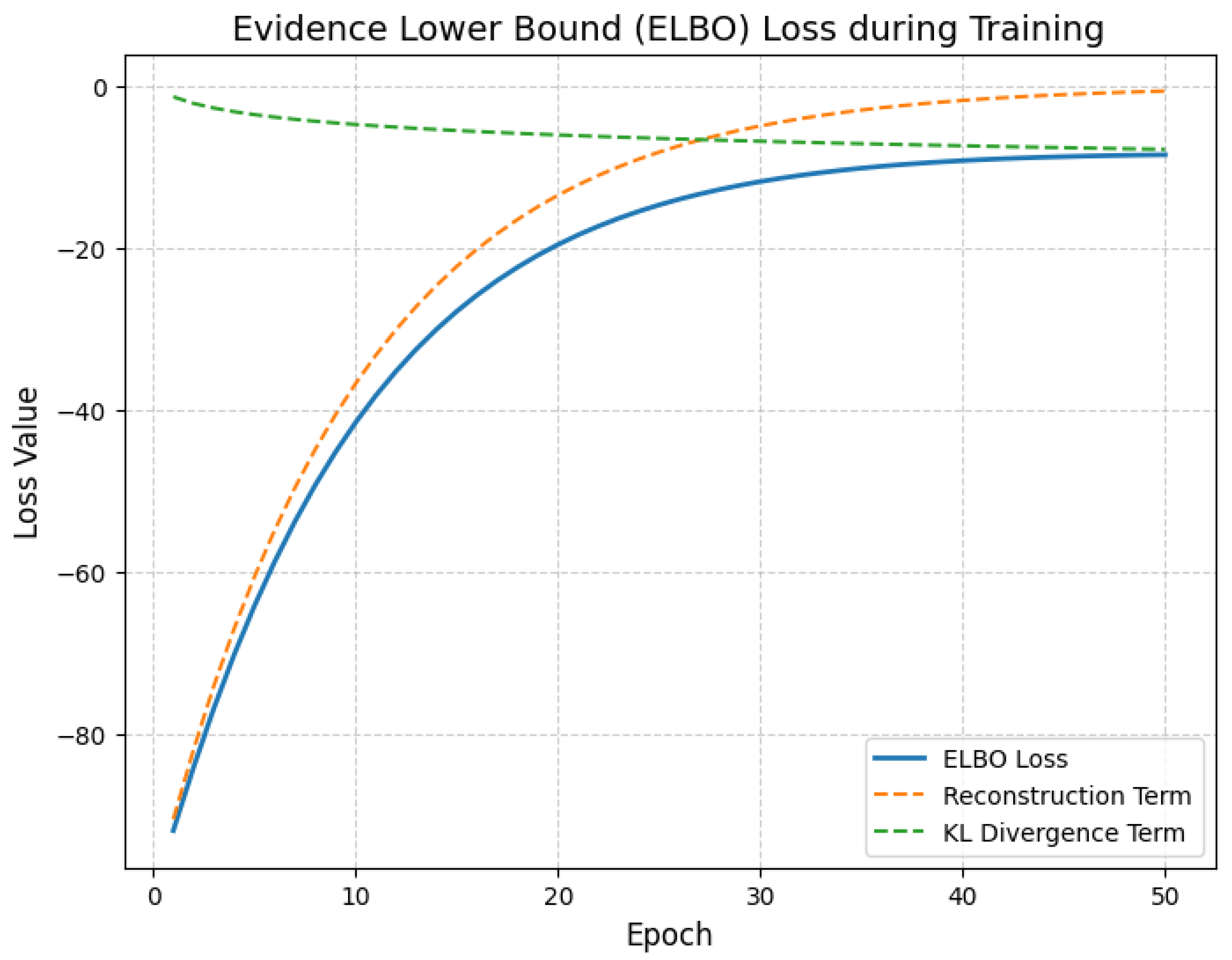

10.5.3.4.1 Evidence Lower Bound (ELBO) Loss

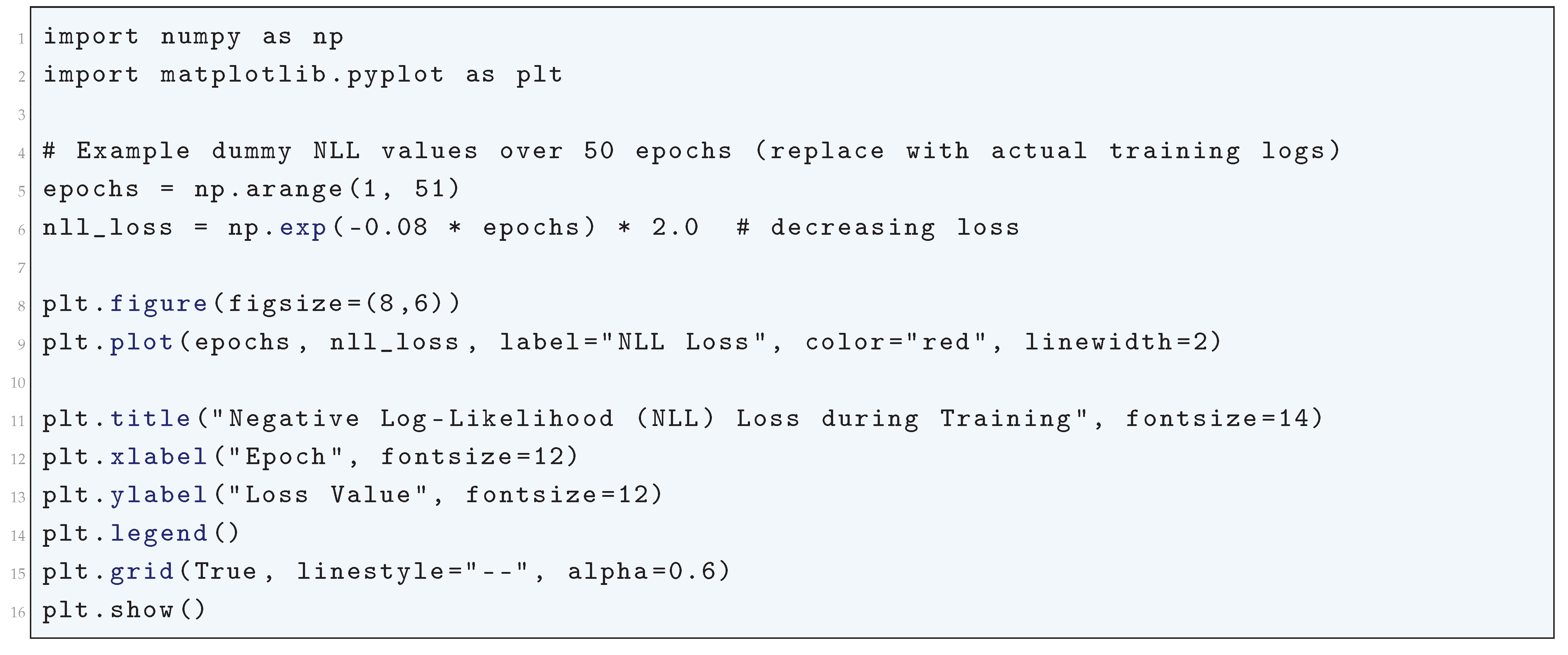

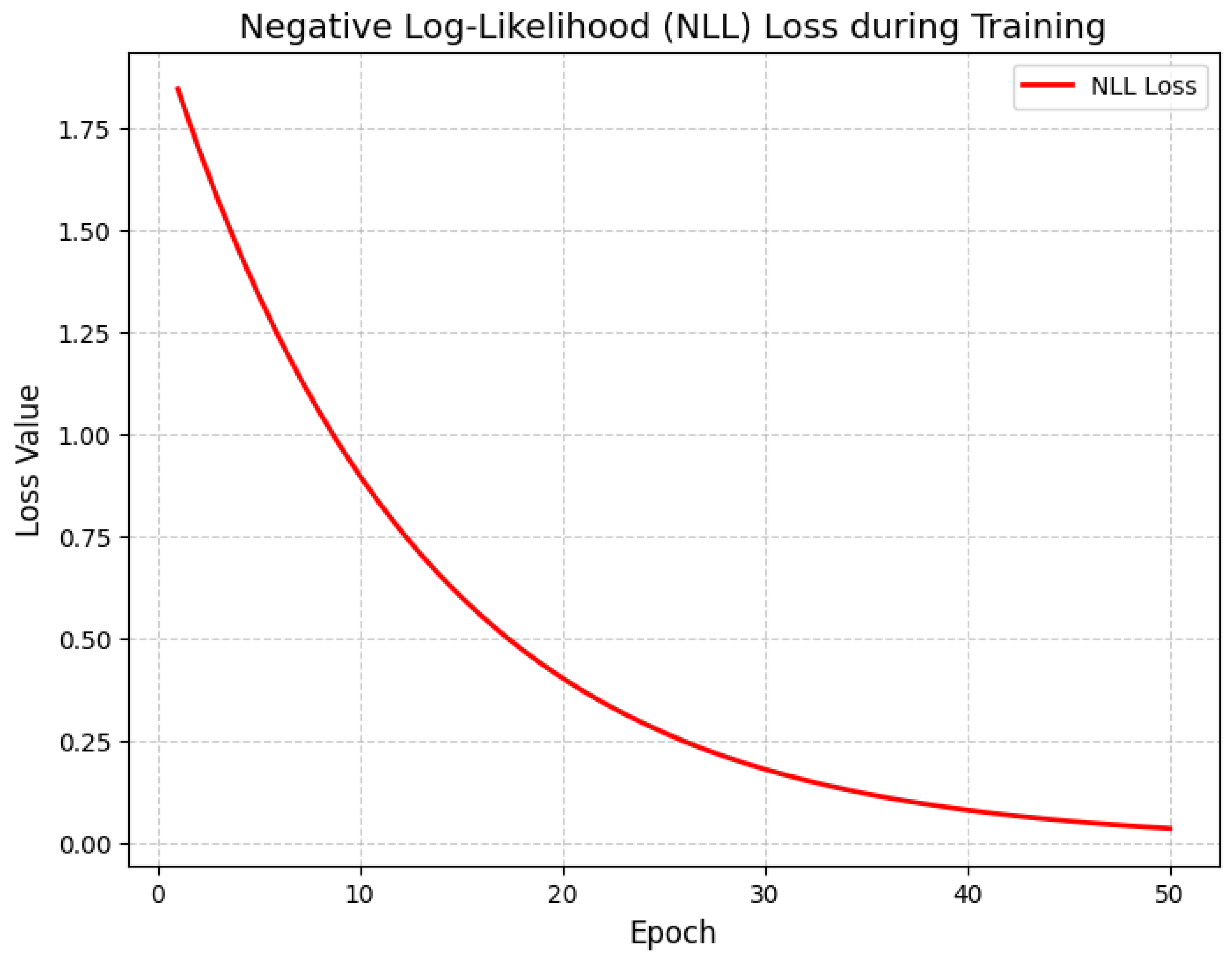

10.5.3.4.2 Negative Log-Likelihood (NLL) Loss

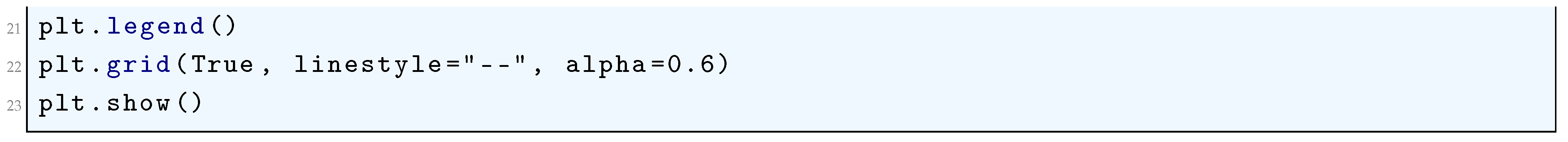

10.5.3.5 Loss Functions for Domain Adaptation

10.5.3.5.1 Domain Adversarial Loss

10.5.3.5.2 Maximum Mean Discrepancy (MMD) Loss

10.5.3.6 Loss Functions for Object Detection and Segmentation

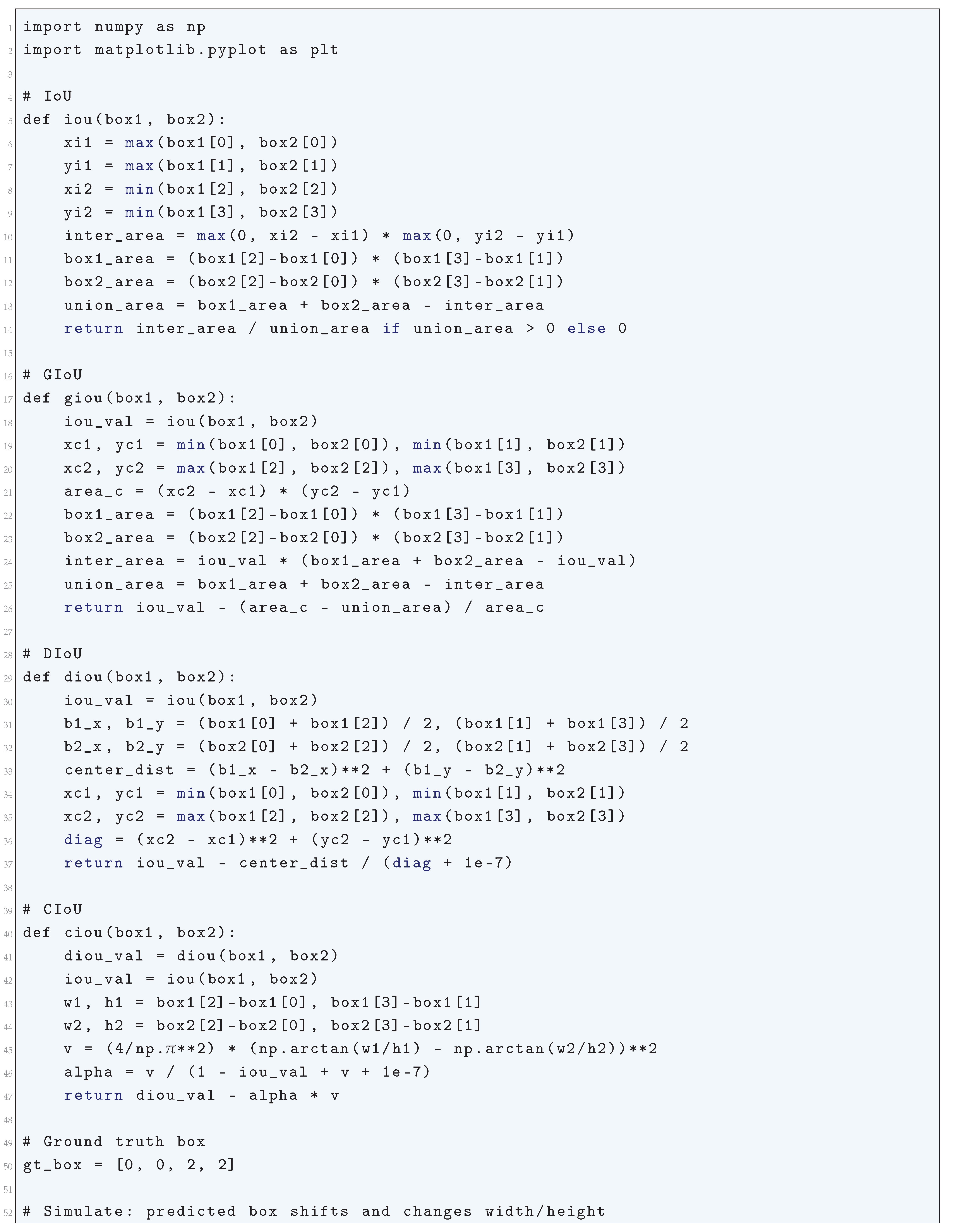

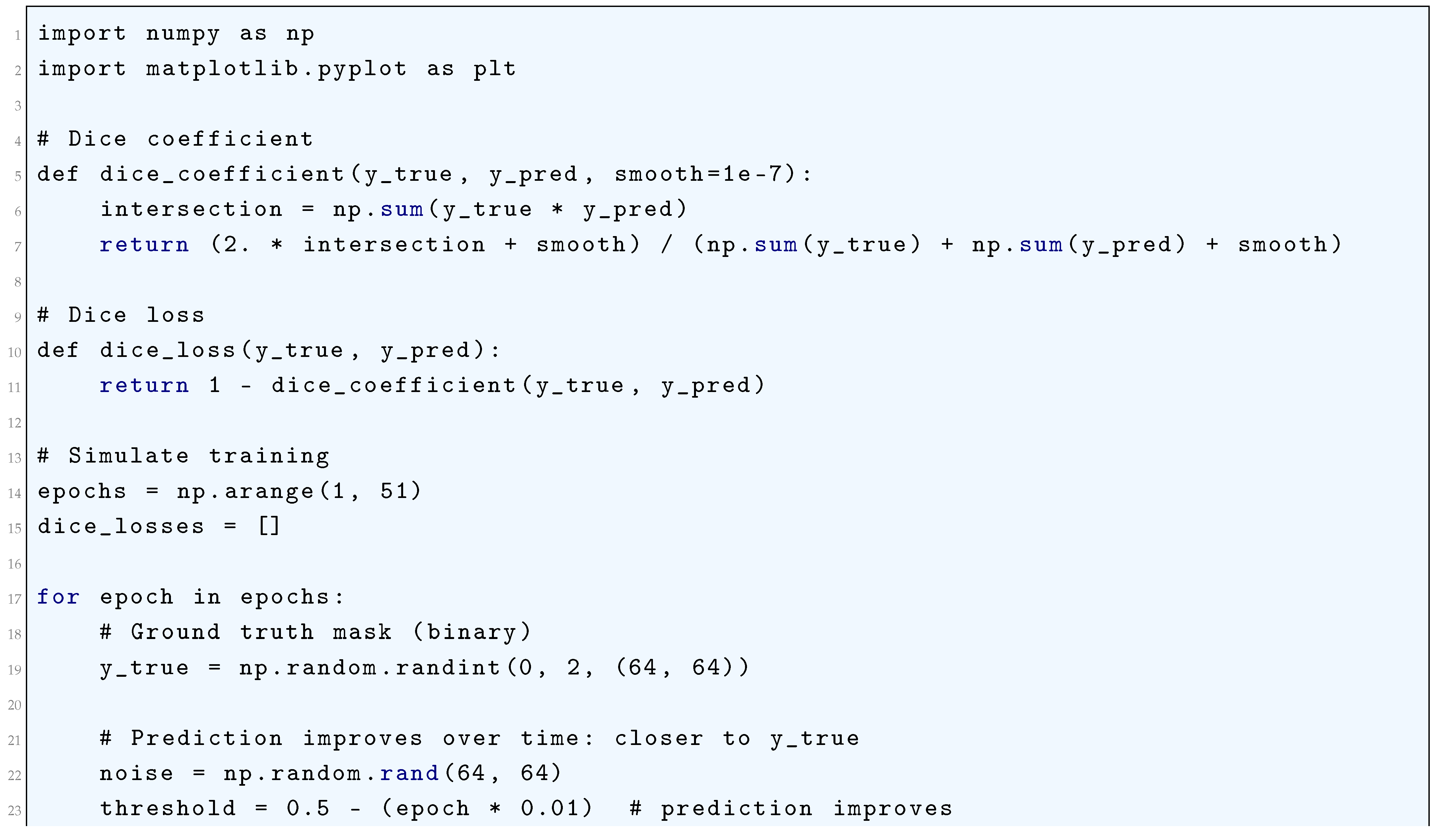

10.5.3.6.1 IoU Loss (Intersection over Union) and Its Variants (GIoU, DIoU, CIoU)

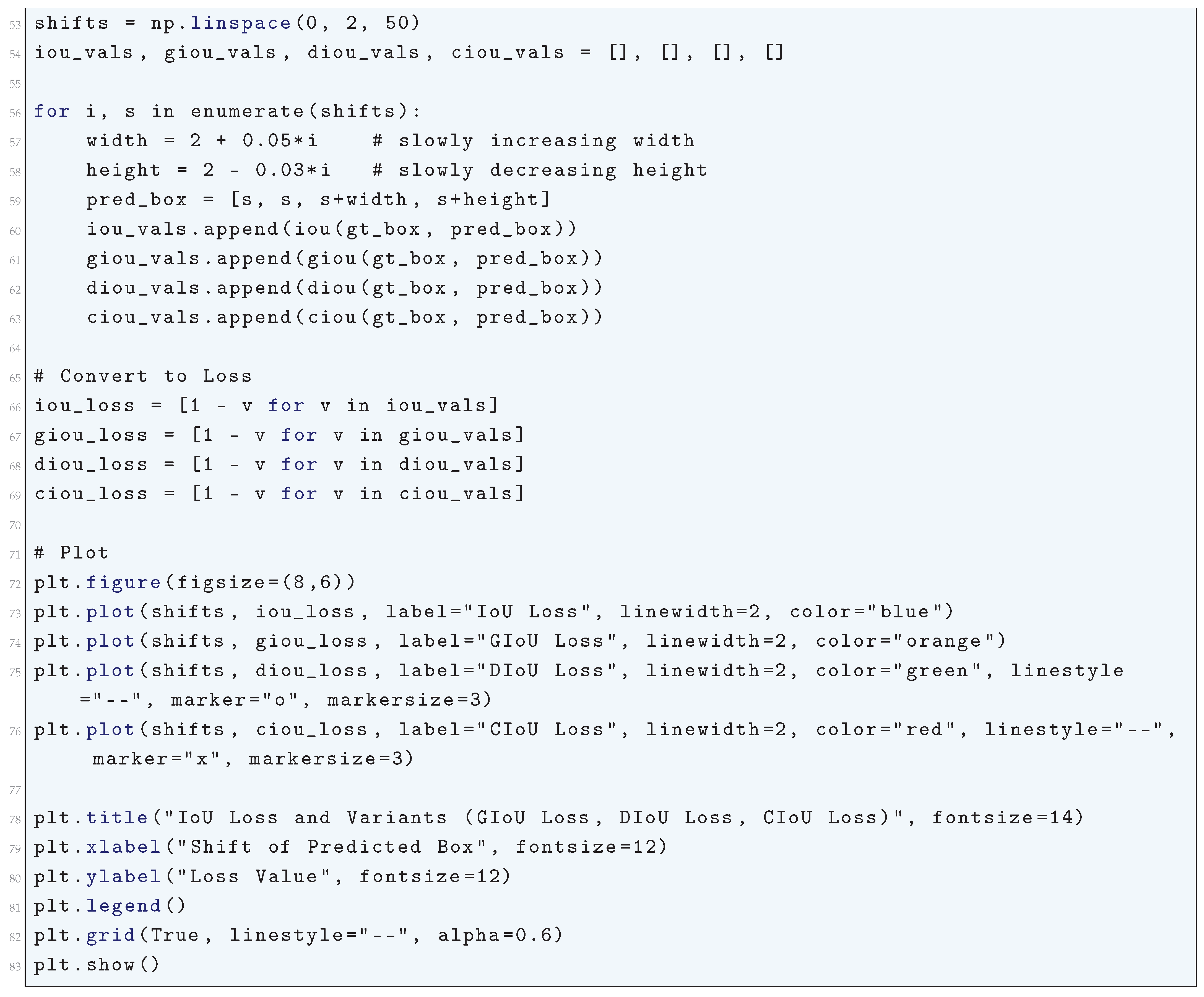

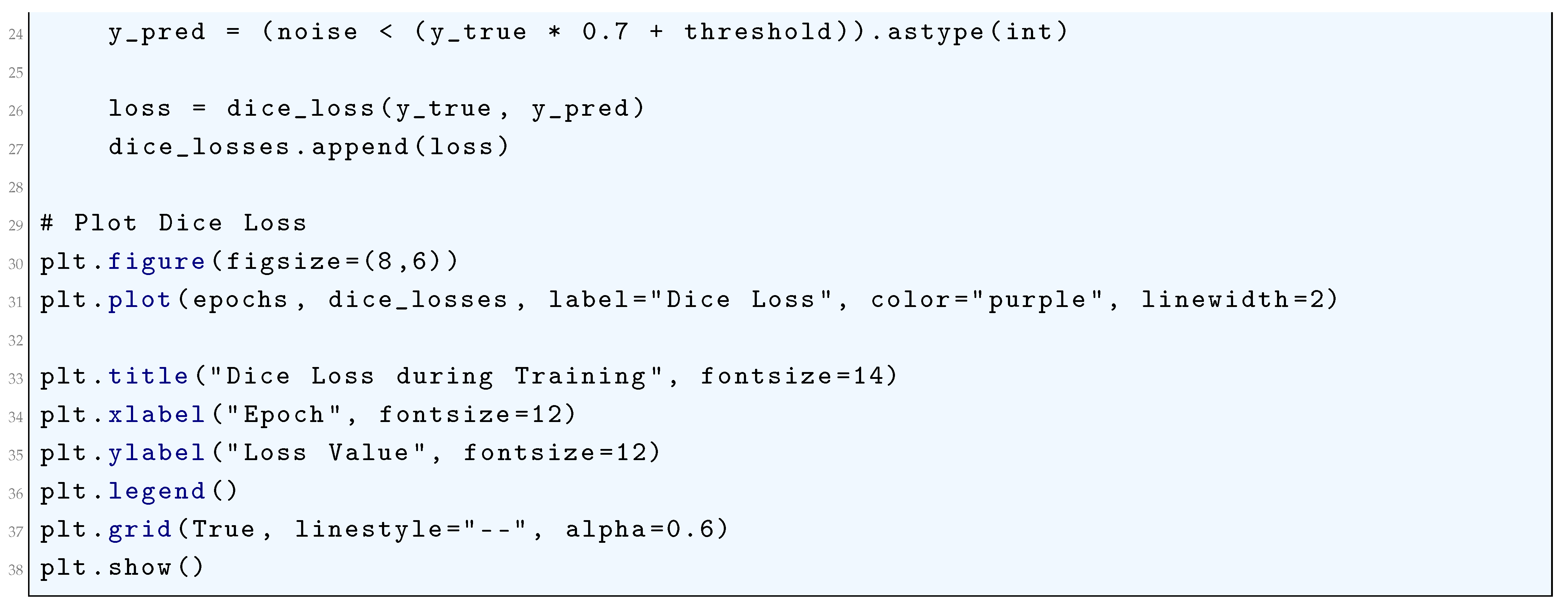

10.5.3.6.2 Dice Loss

10.5.3.6.3 Focal Loss

10.5.3.7 Loss Functions for Knowledge Distillation

10.5.3.8 Loss Functions for Reinforcement Learning

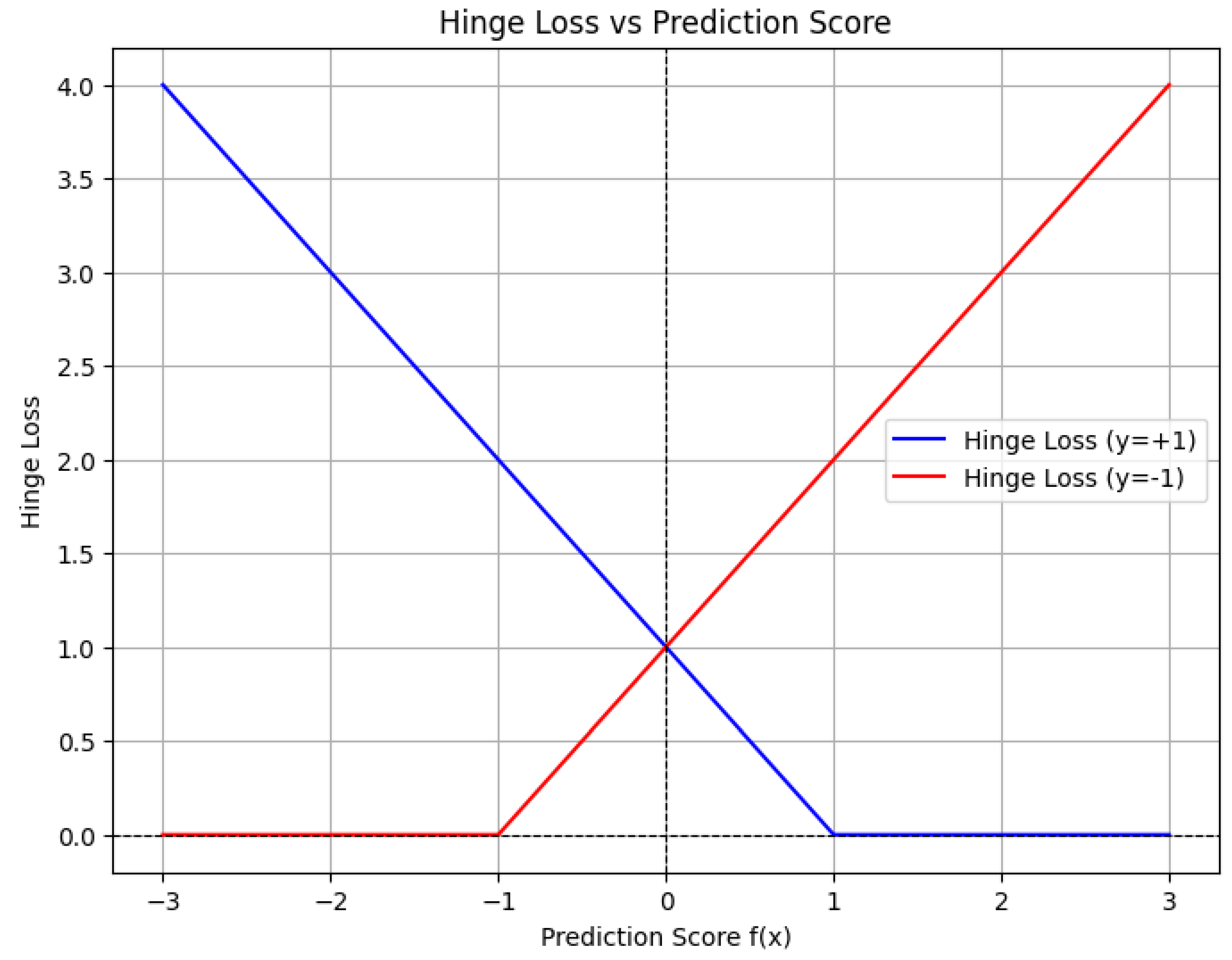

10.5.3.8.1 Hinge Loss

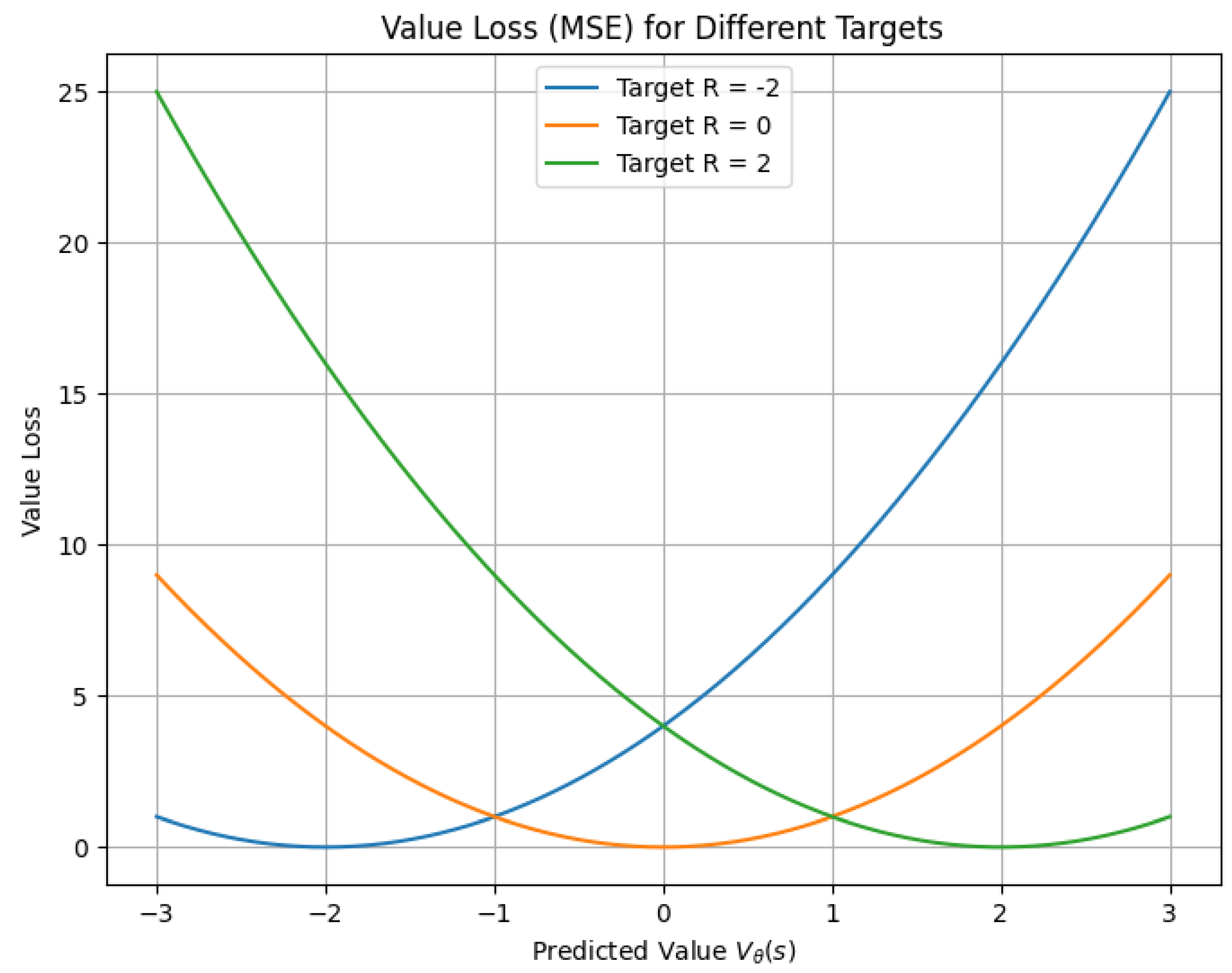

10.5.3.8.2 Value Loss

10.5.3.8.3 Policy Loss

10.5.3.8.4 Entropy Regularization

10.5.3.9 Loss Functions for Sparse and Structured Outputs

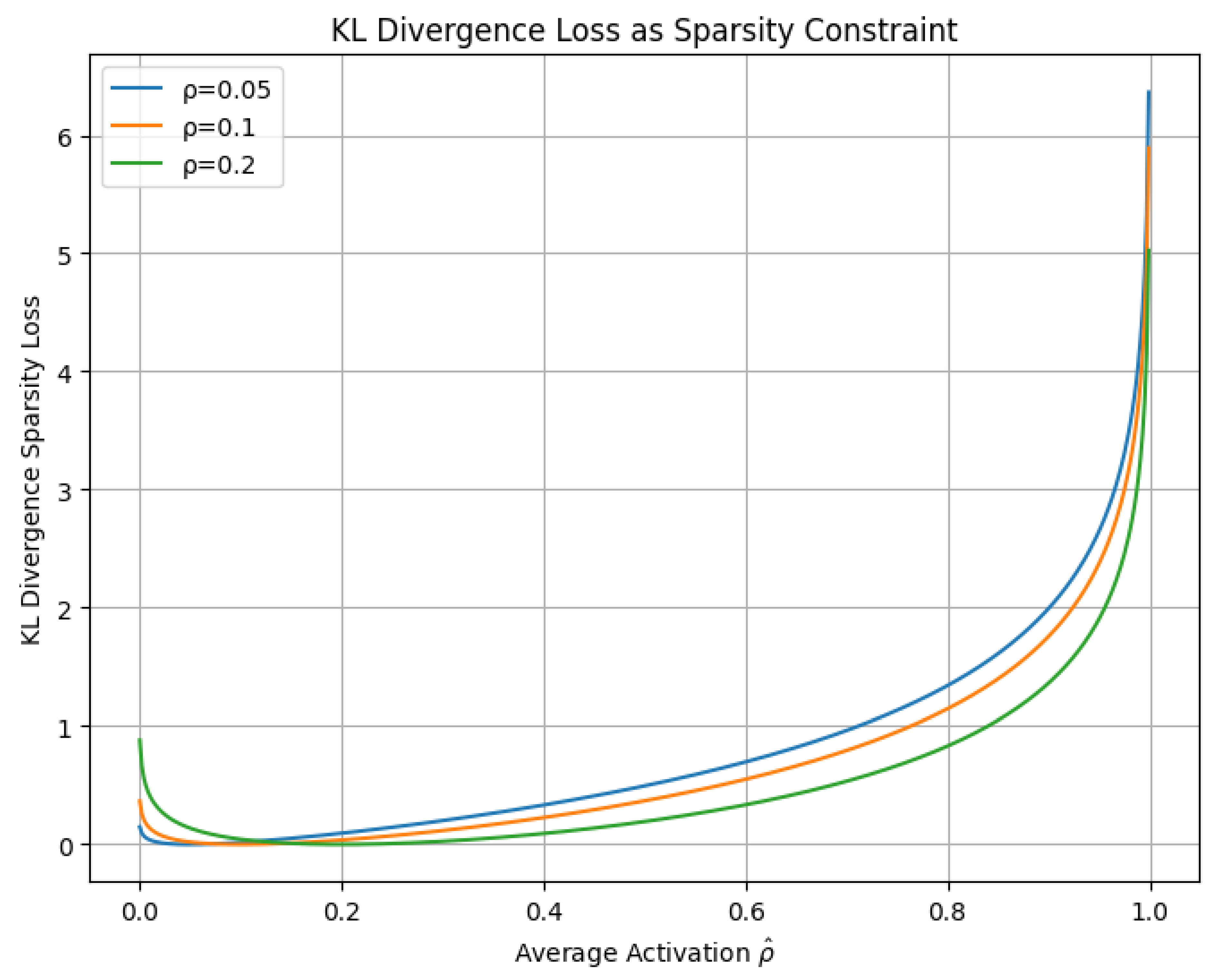

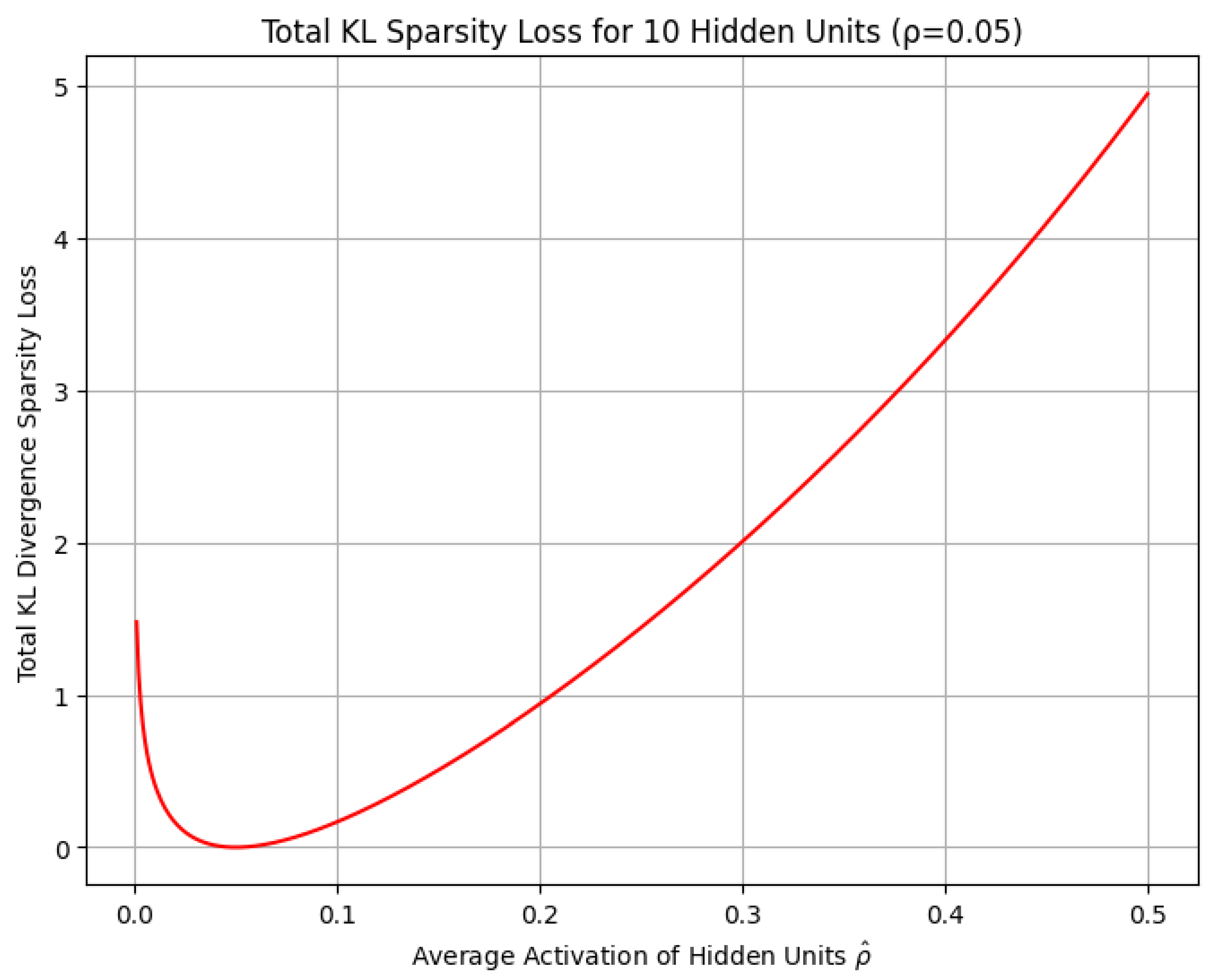

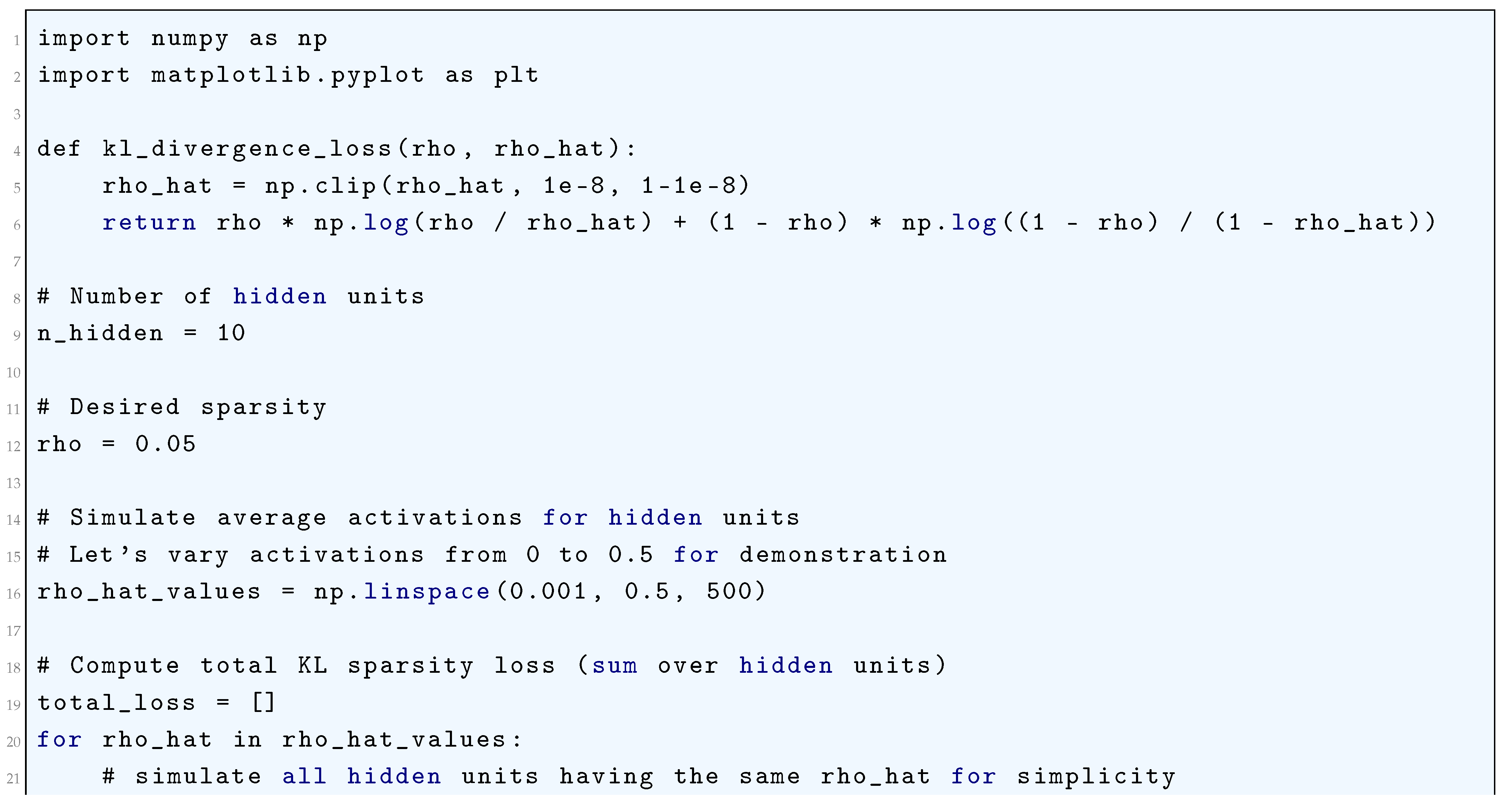

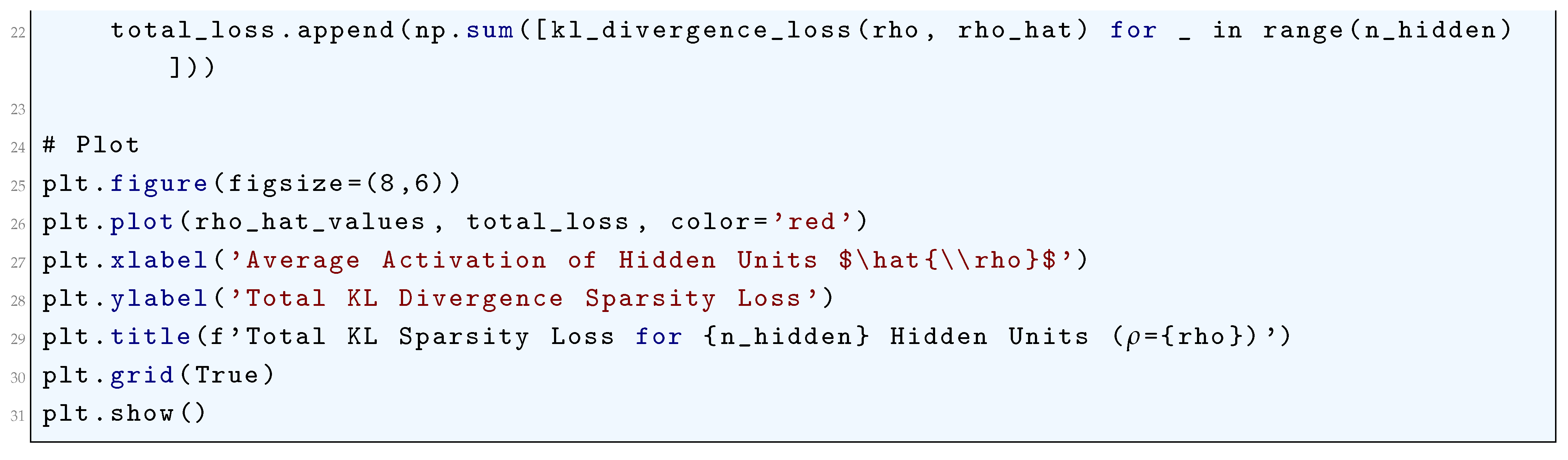

10.5.3.9.1 Kullback-Leibler (KL) Divergence Loss (as a Sparsity Constraint)

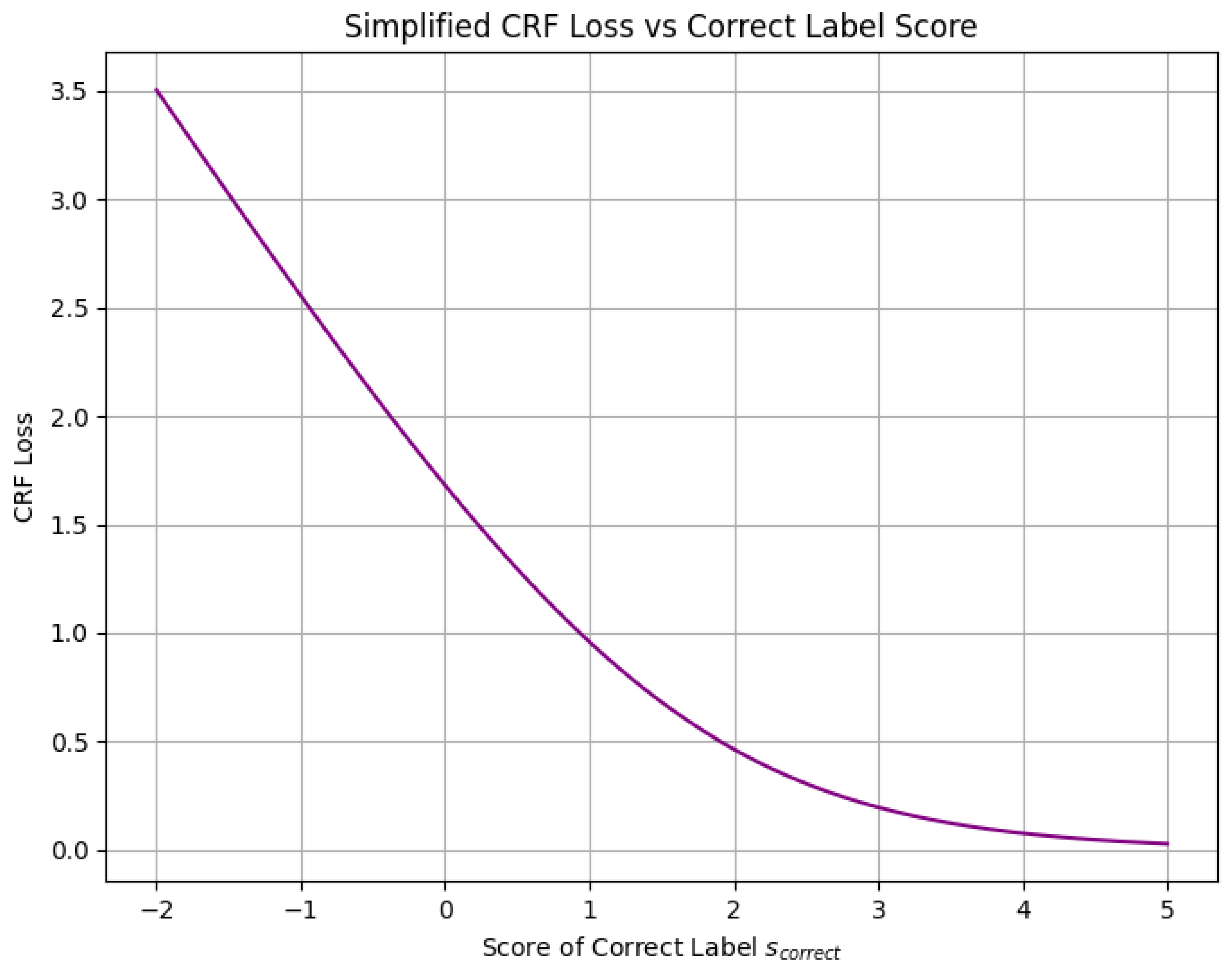

10.5.3.9.2 Structured Support Vector Machine (SSVM) Loss

10.5.3.9.3 Conditional Random Field (CRF) Loss

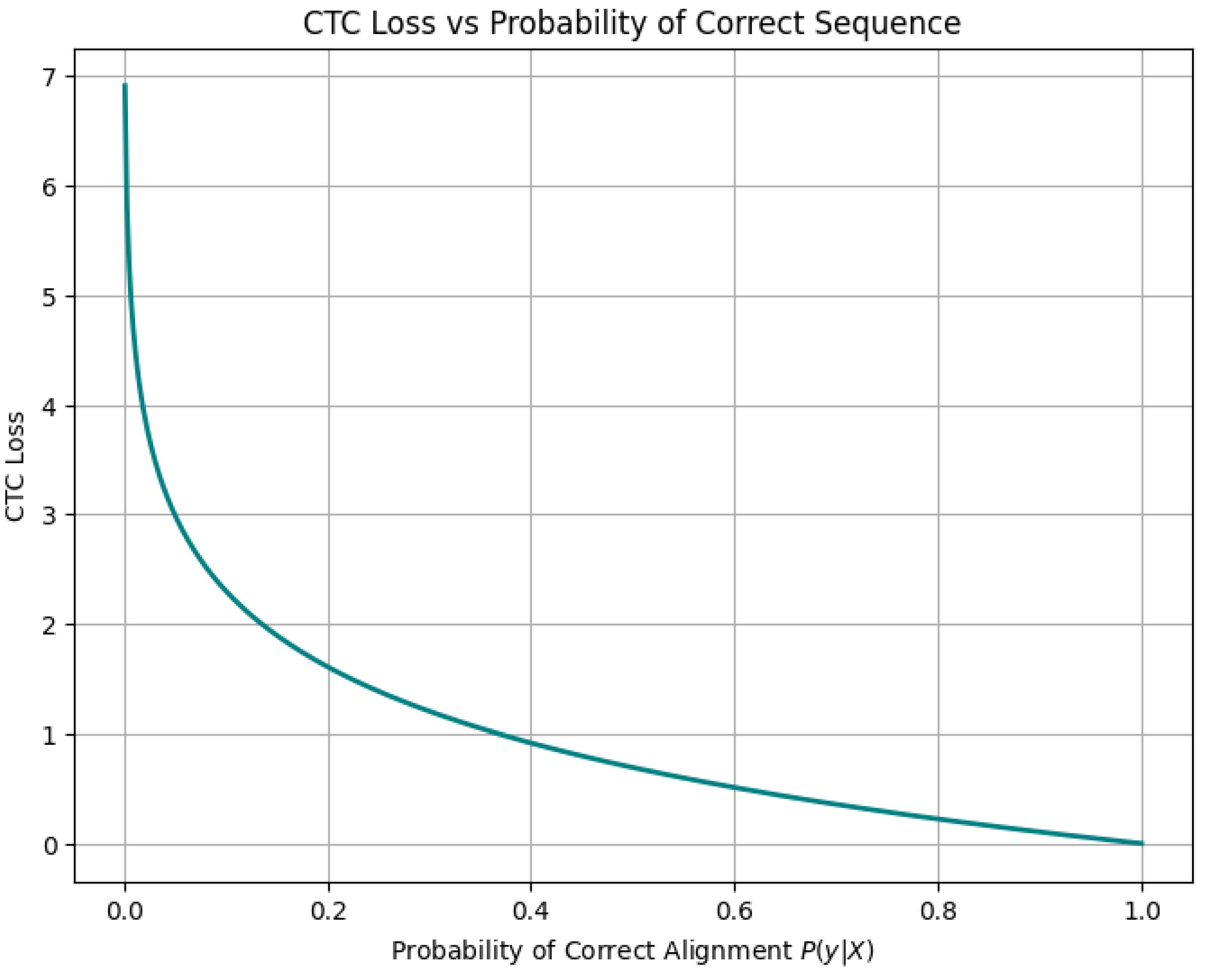

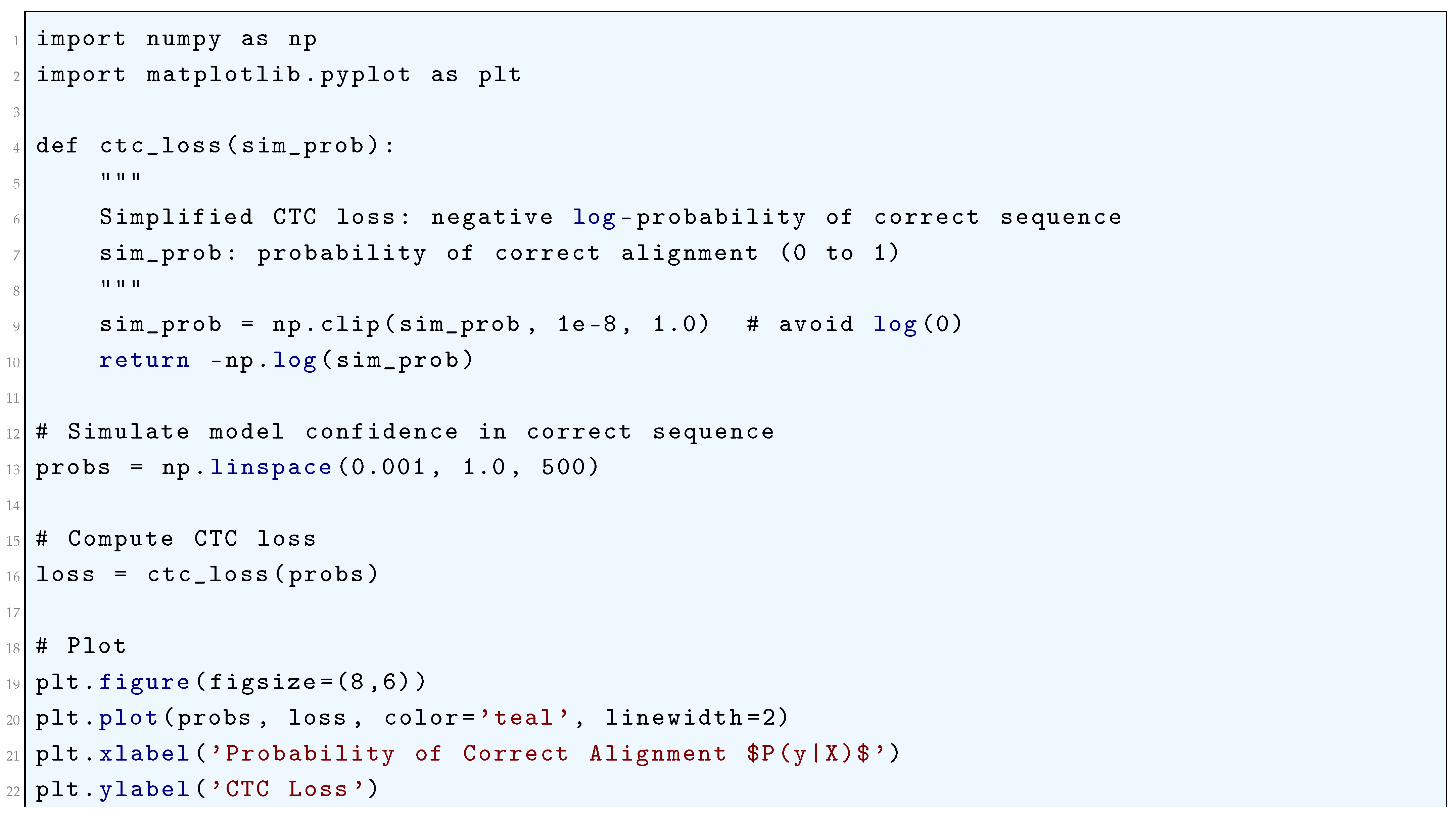

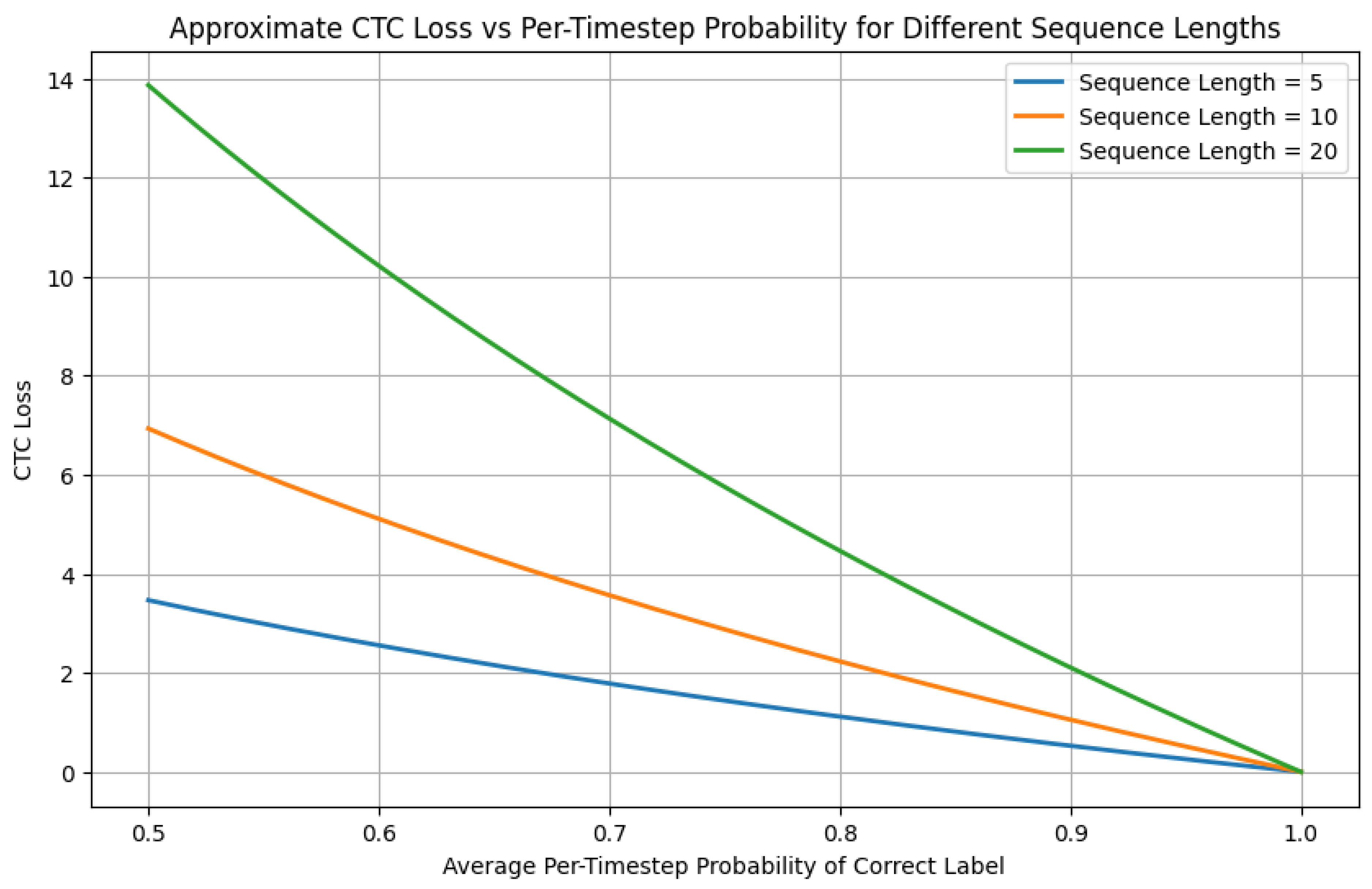

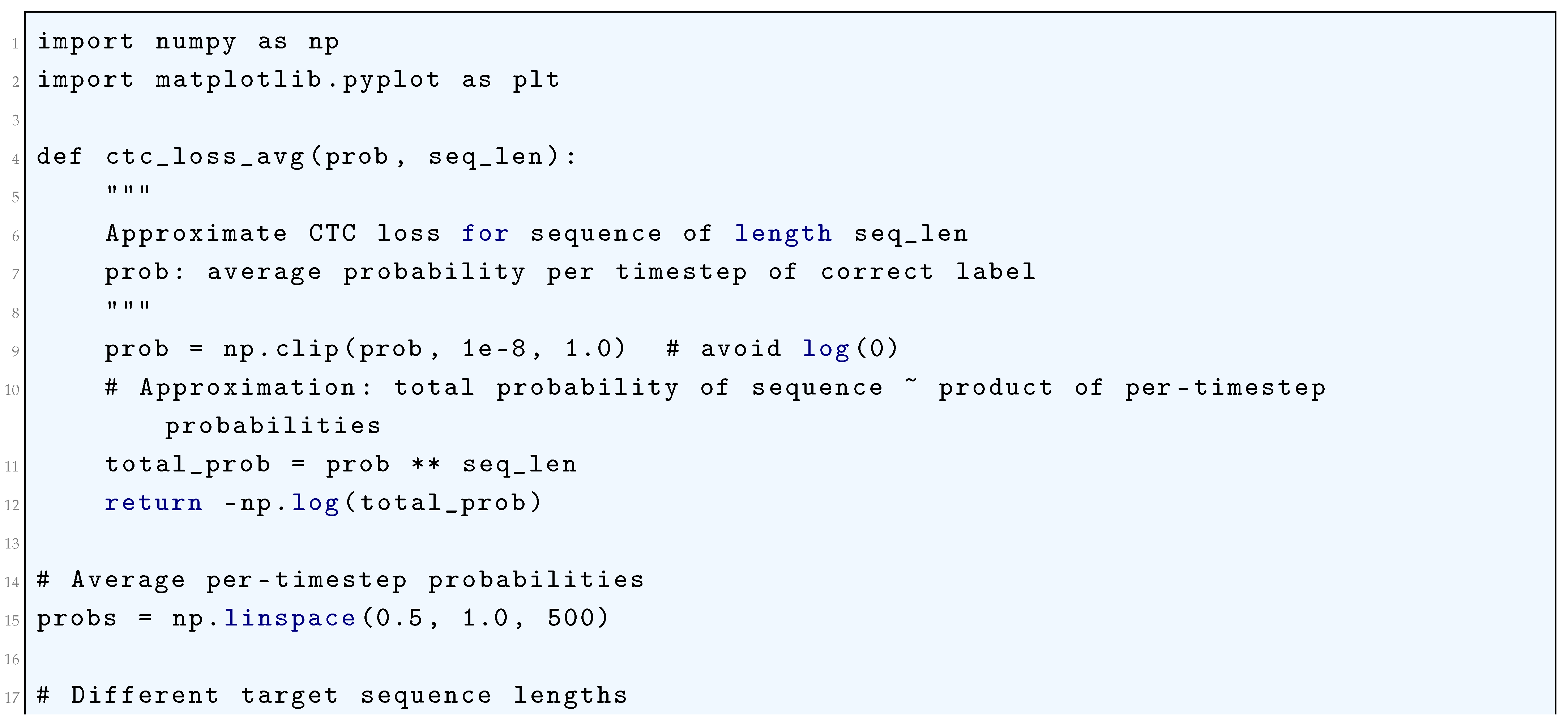

10.5.3.9.4 Connectionist Temporal Classification (CTC) Loss

10.5.3.9.5 Maximum Margin Markov Networks Loss

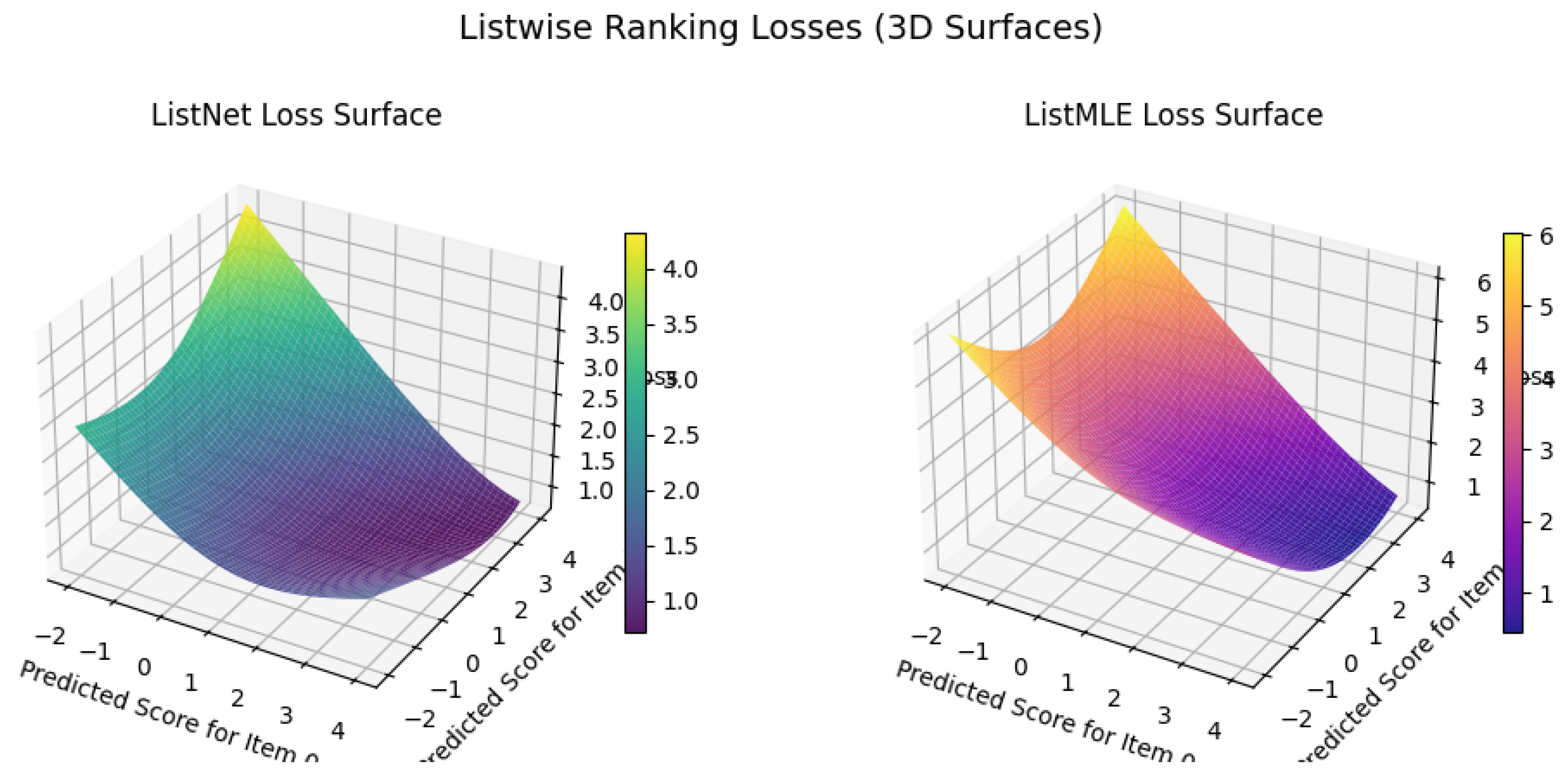

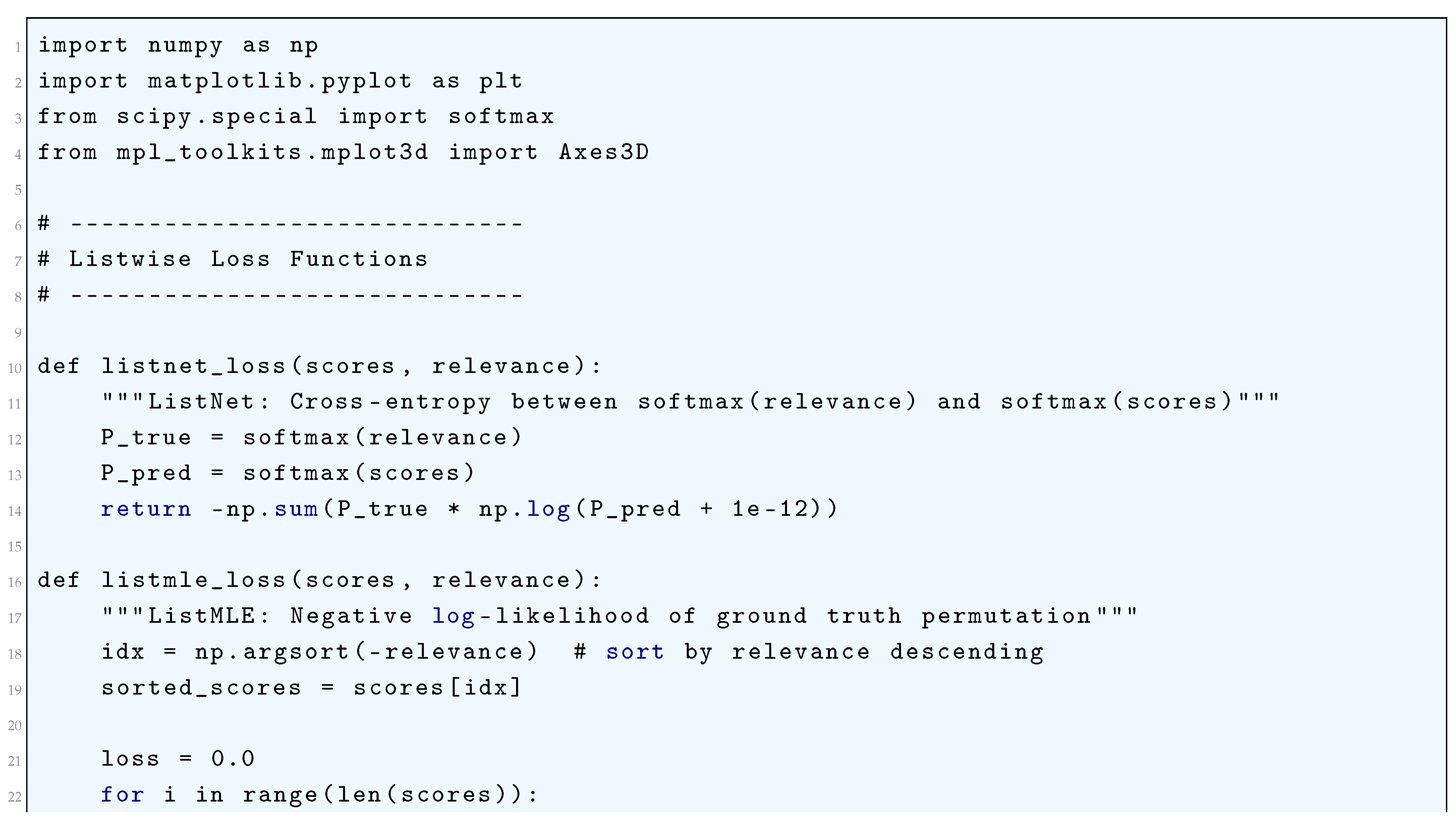

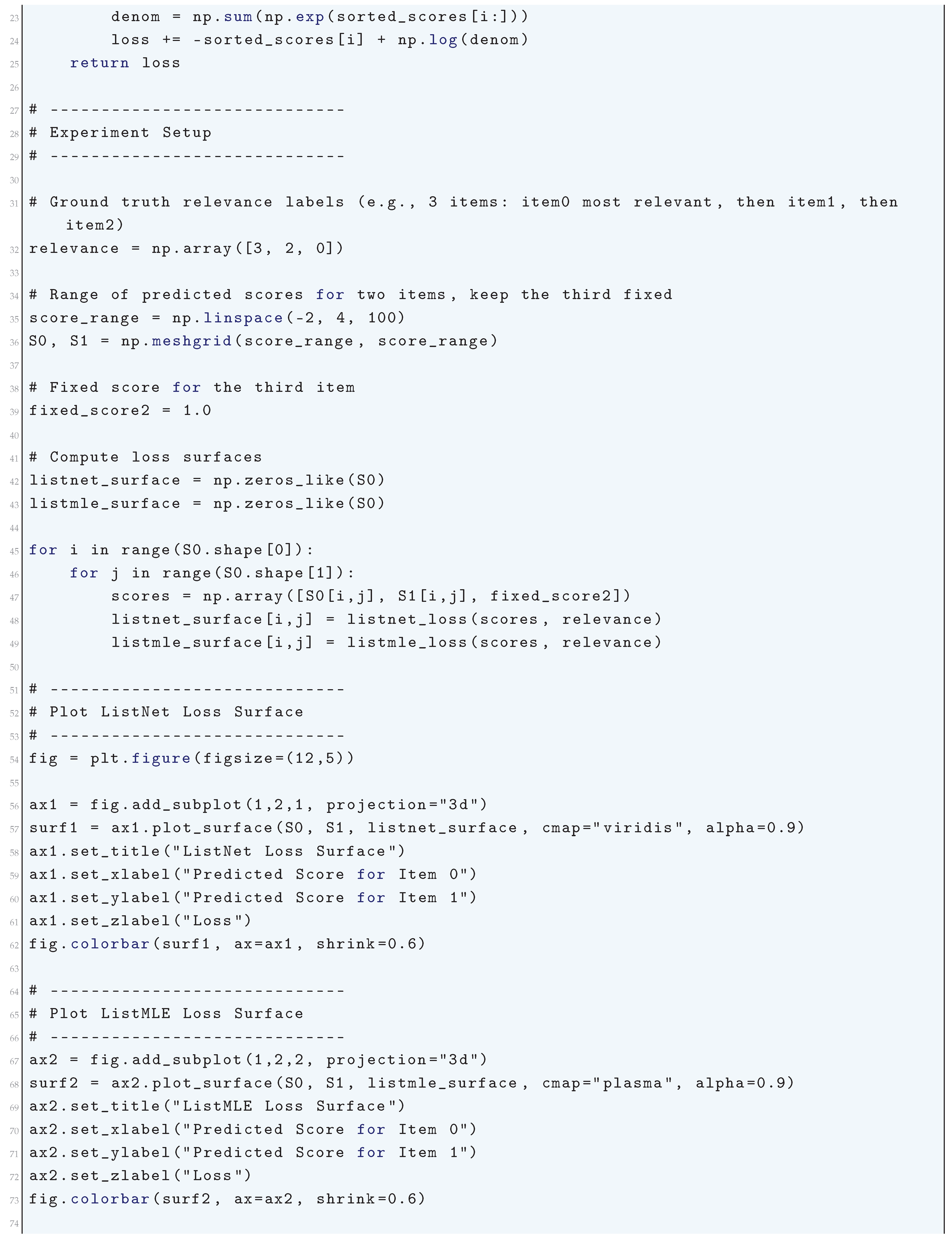

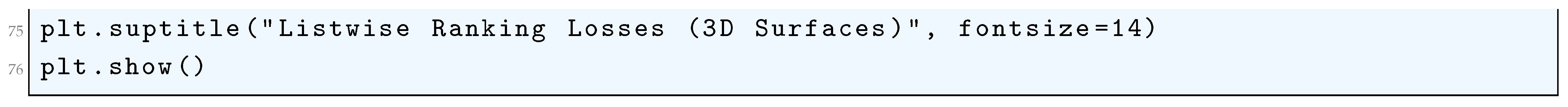

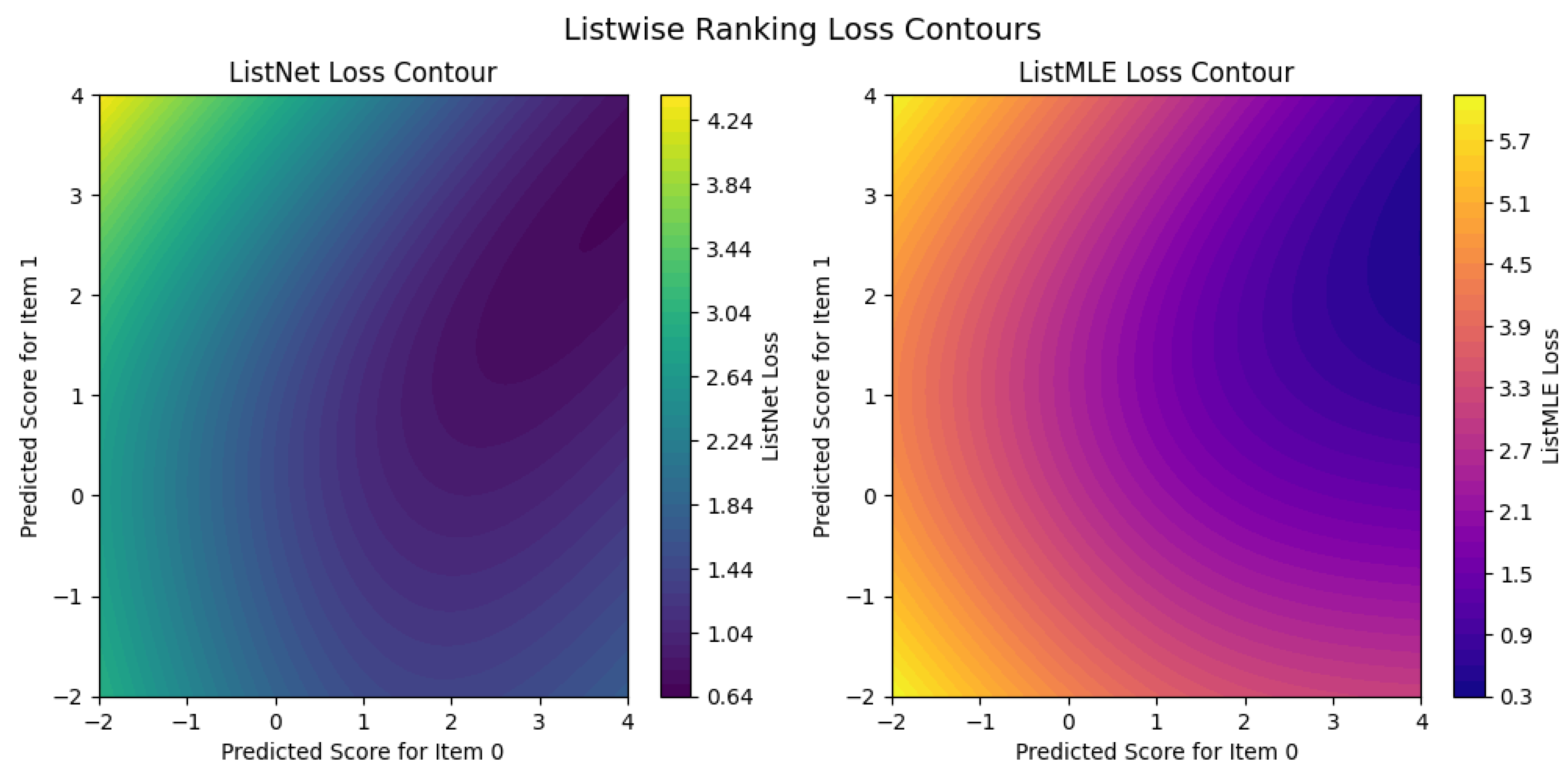

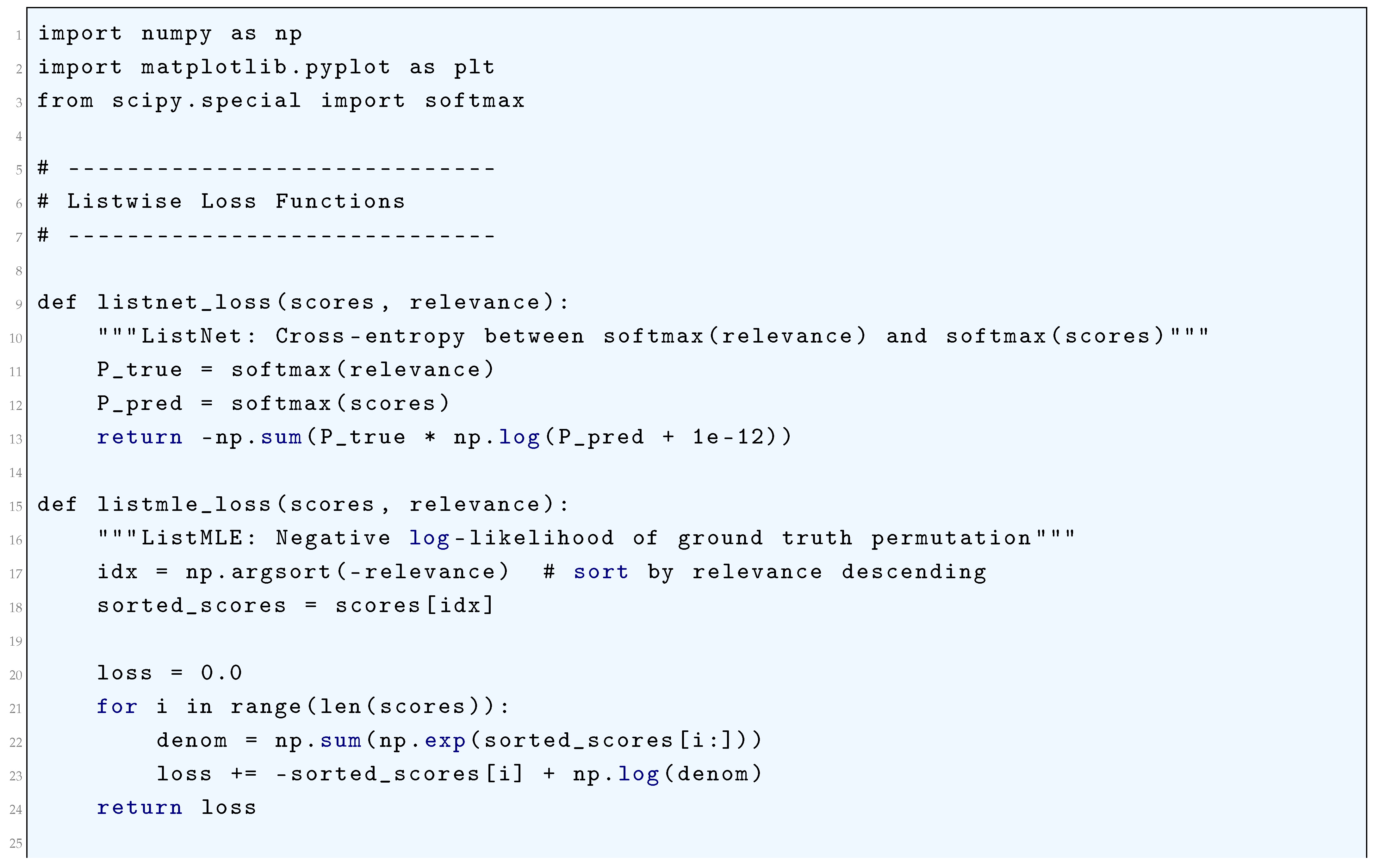

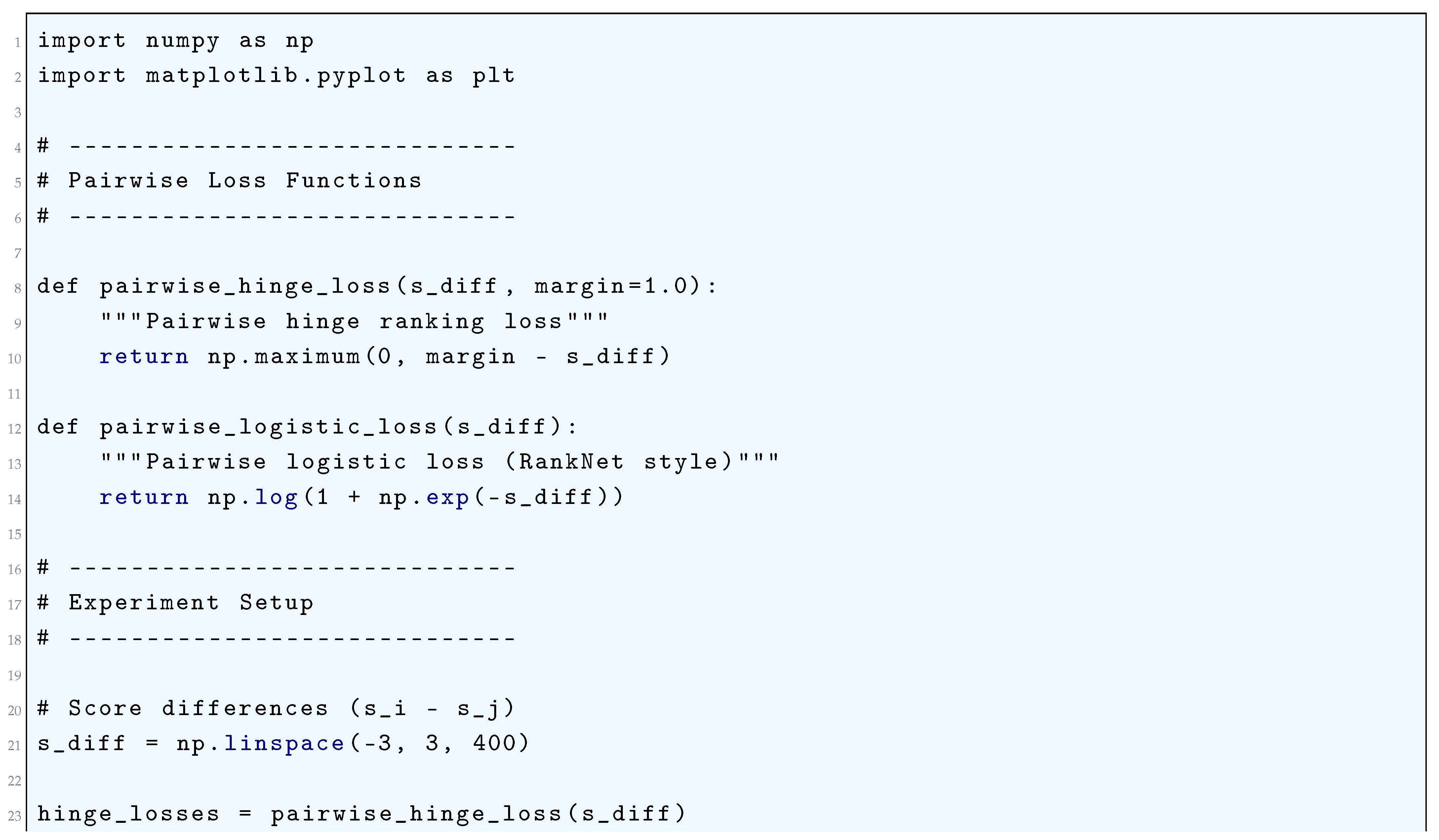

10.5.3.9.6 Listwise Ranking Losses

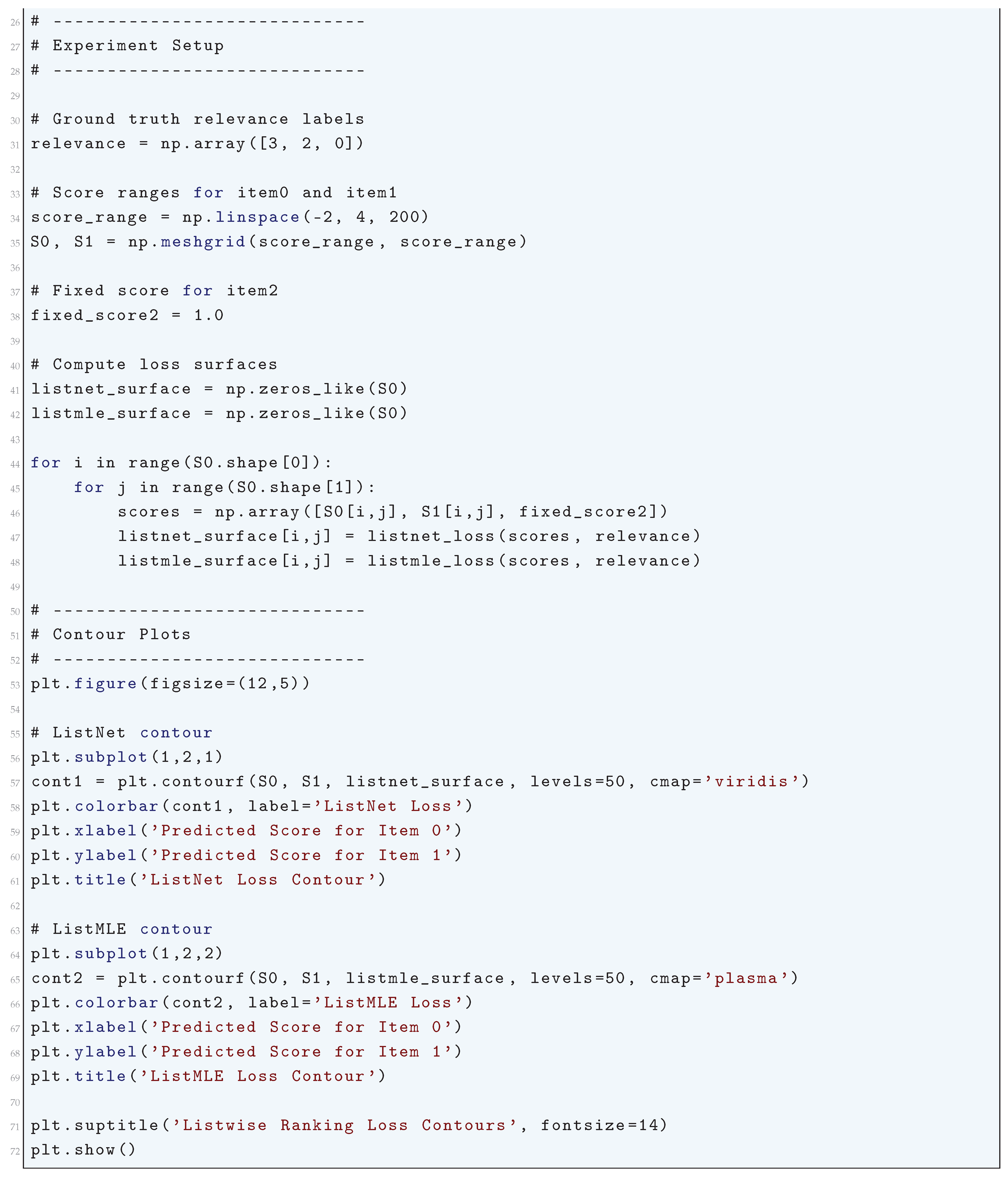

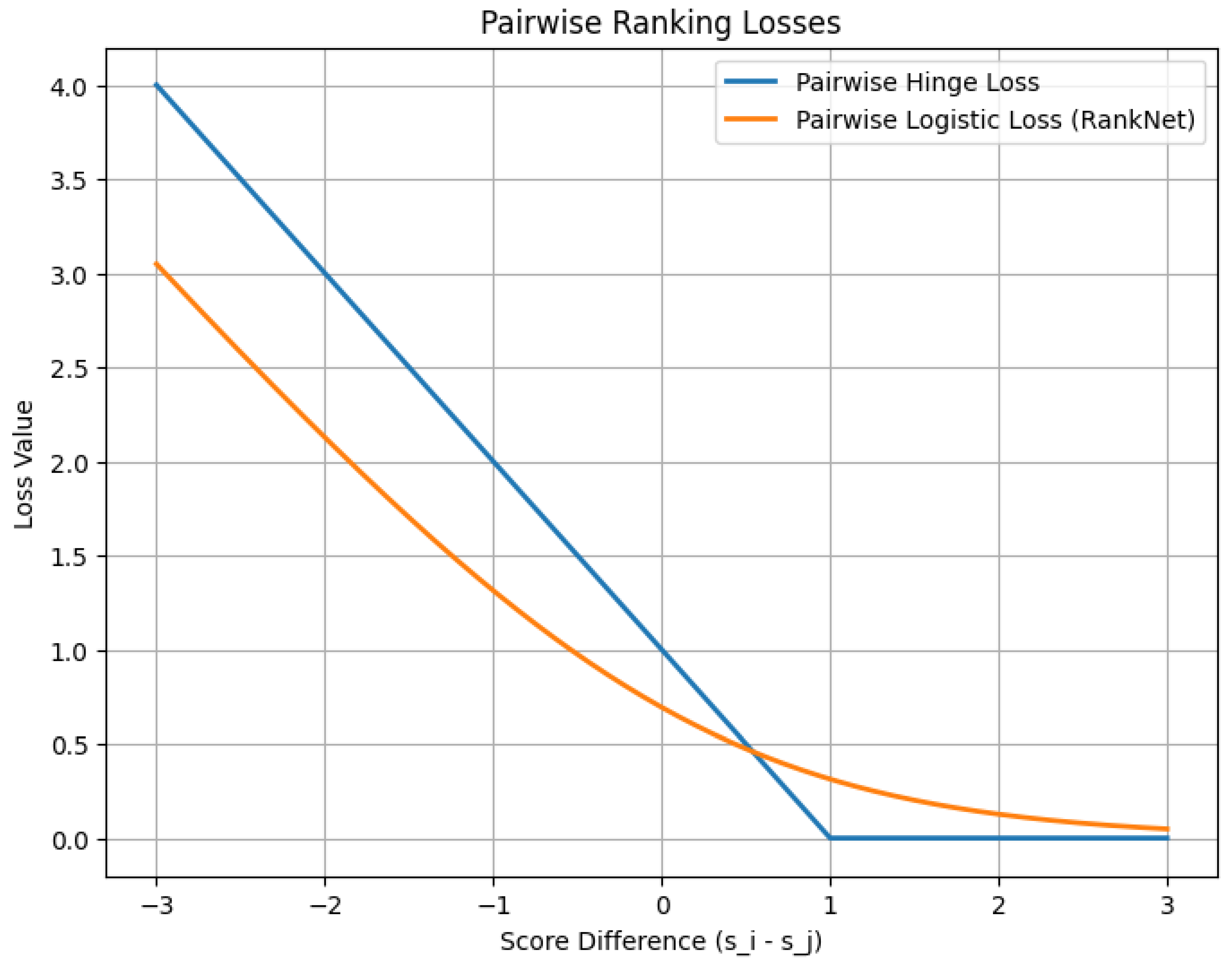

10.5.3.9.7 Pairwise Ranking Losses

10.5.3.9.8 Contrastive Divergence (CD) Loss

10.5.3.9.9 Persistent Contrastive Divergence (PCD) Loss

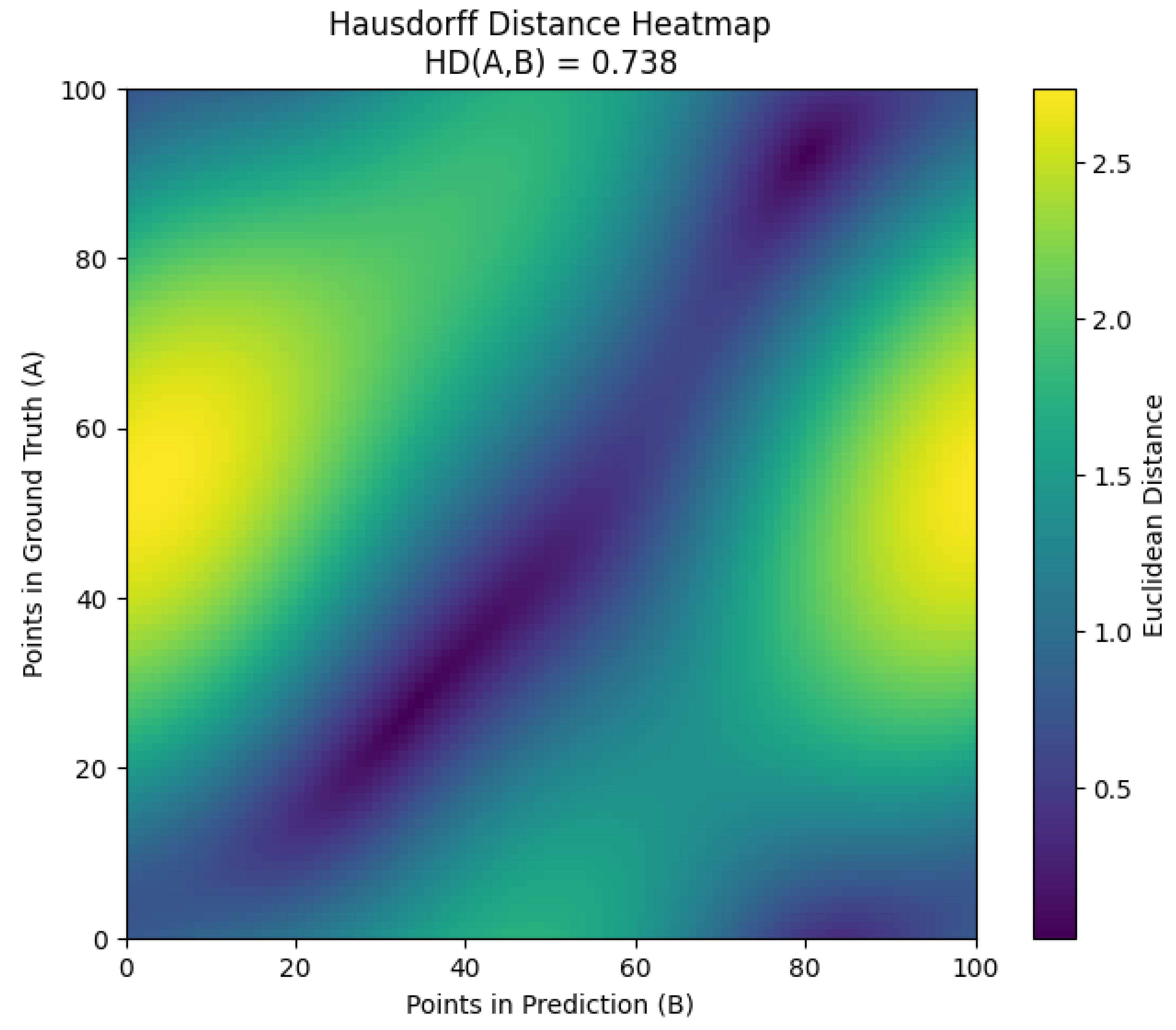

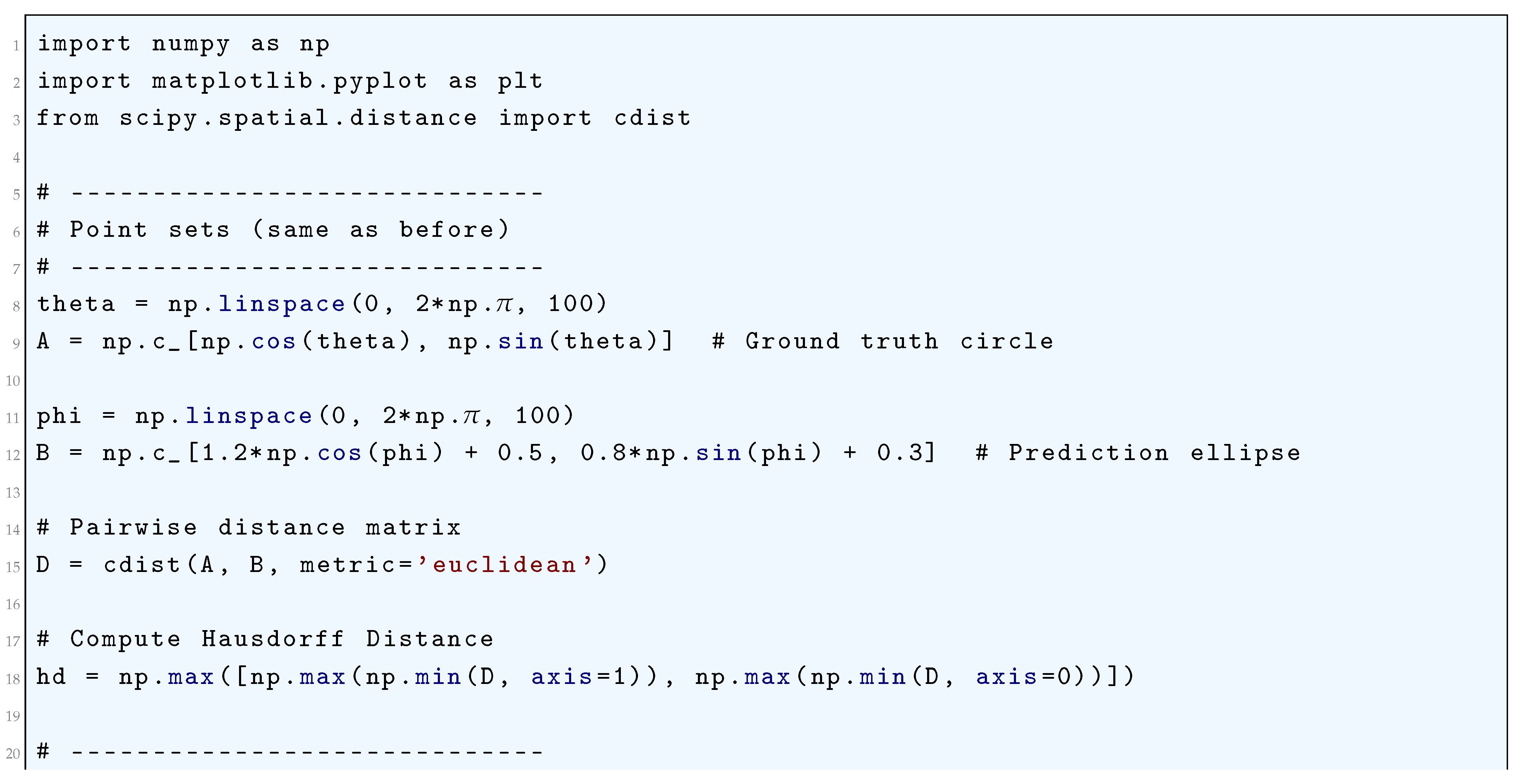

10.5.3.9.10 Hausdorff Distance Loss

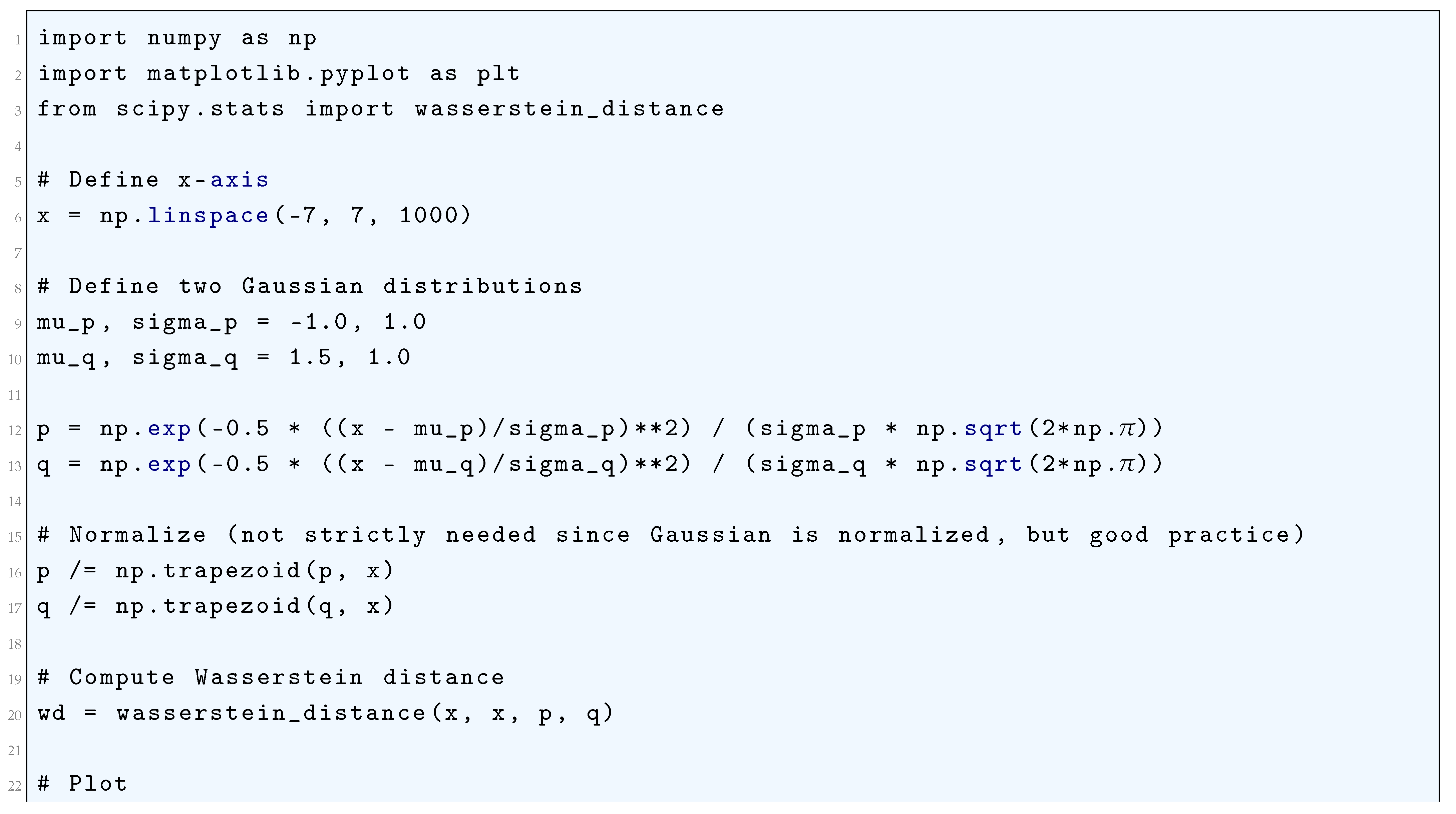

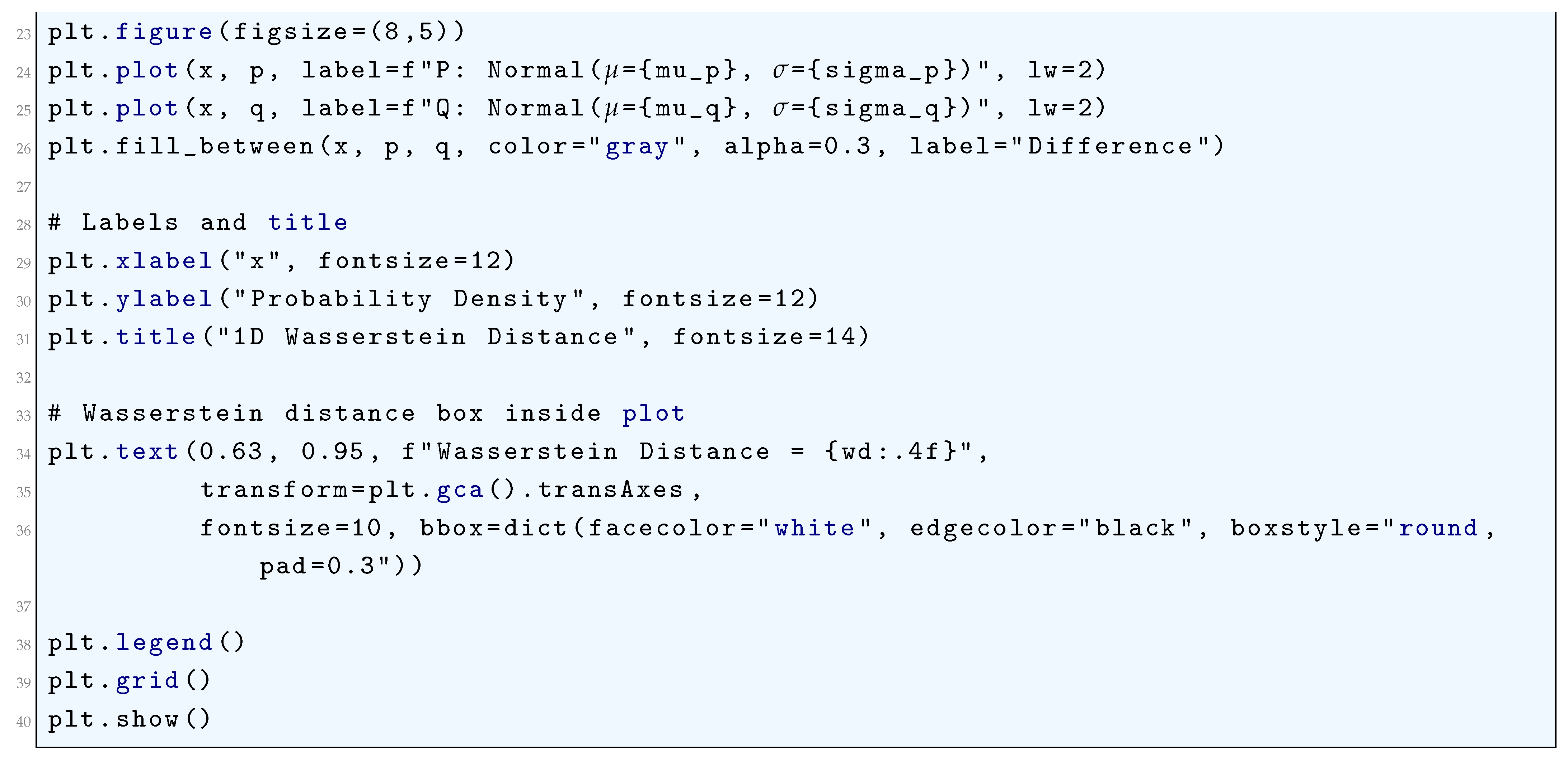

10.5.3.10 Python Code to Generate Figure 77 Illustrating 1D Wasserstein Distance of Two Distributions Having Parameters: Normal() and Normal()
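A minimal sketch of the one-dimensional Wasserstein-1 distance between two empirical samples of equal size, where the optimal transport plan simply matches sorted values. The function name and the sample values are illustrative assumptions and are not the parameters of the figure; the second sample is a copy of the first shifted by 2, so the distance should equal the shift.

```python
def wasserstein_1d(xs, ys):
    """W1 between two equal-size empirical samples: mean gap between sorted values."""
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes samples of equal size")
    xs_sorted, ys_sorted = sorted(xs), sorted(ys)
    return sum(abs(a - b) for a, b in zip(xs_sorted, ys_sorted)) / len(xs)


# Illustrative samples: a symmetric grid and the same grid shifted by 2.
sample_a = [i / 10 for i in range(-20, 21)]
sample_b = [x + 2.0 for x in sample_a]

w1 = wasserstein_1d(sample_a, sample_b)
print("W1 =", w1)
```

Because a pure shift moves every unit of mass by the same amount, the distance equals the size of the shift, which matches the "earth mover" reading of the Wasserstein loss.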

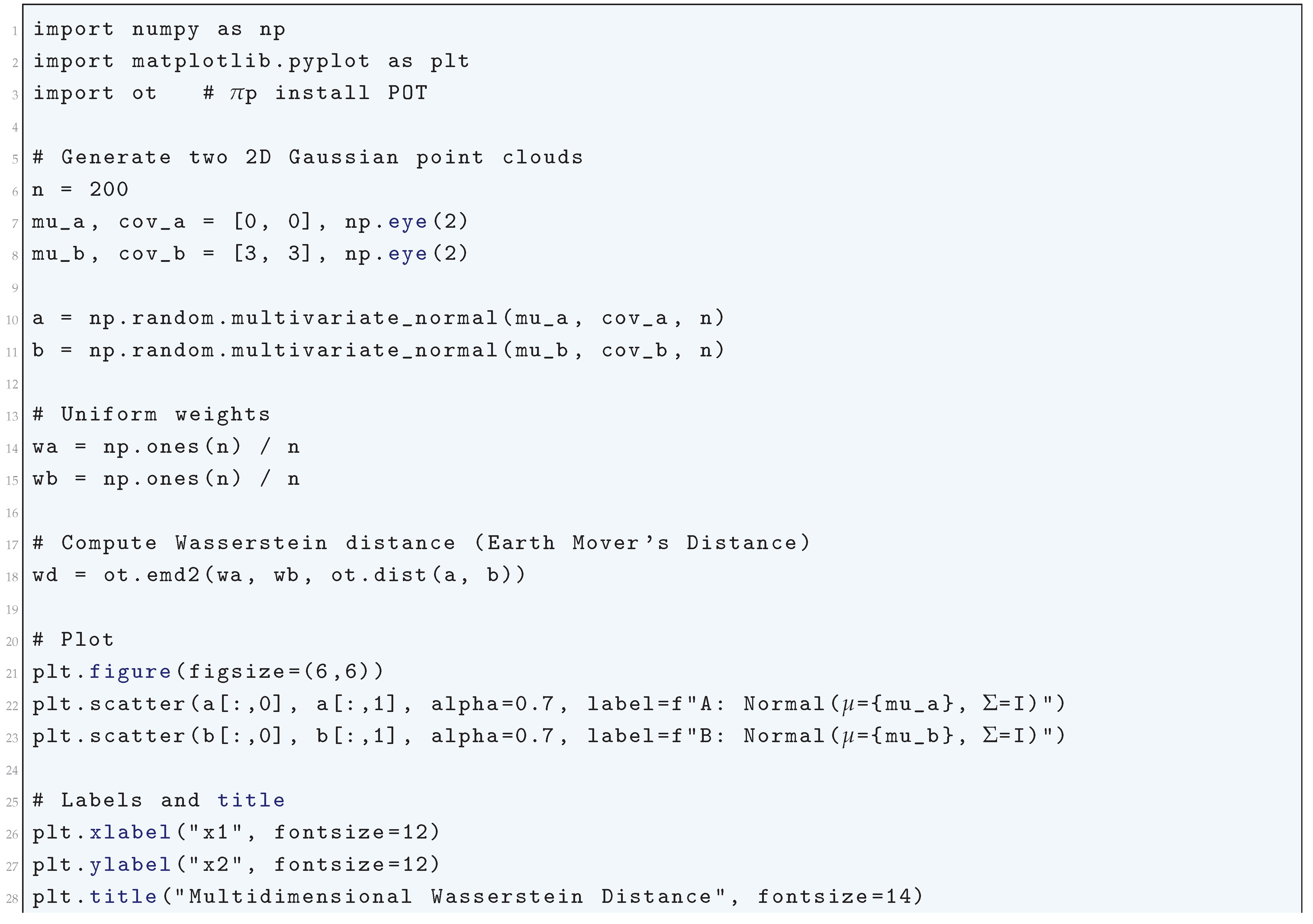

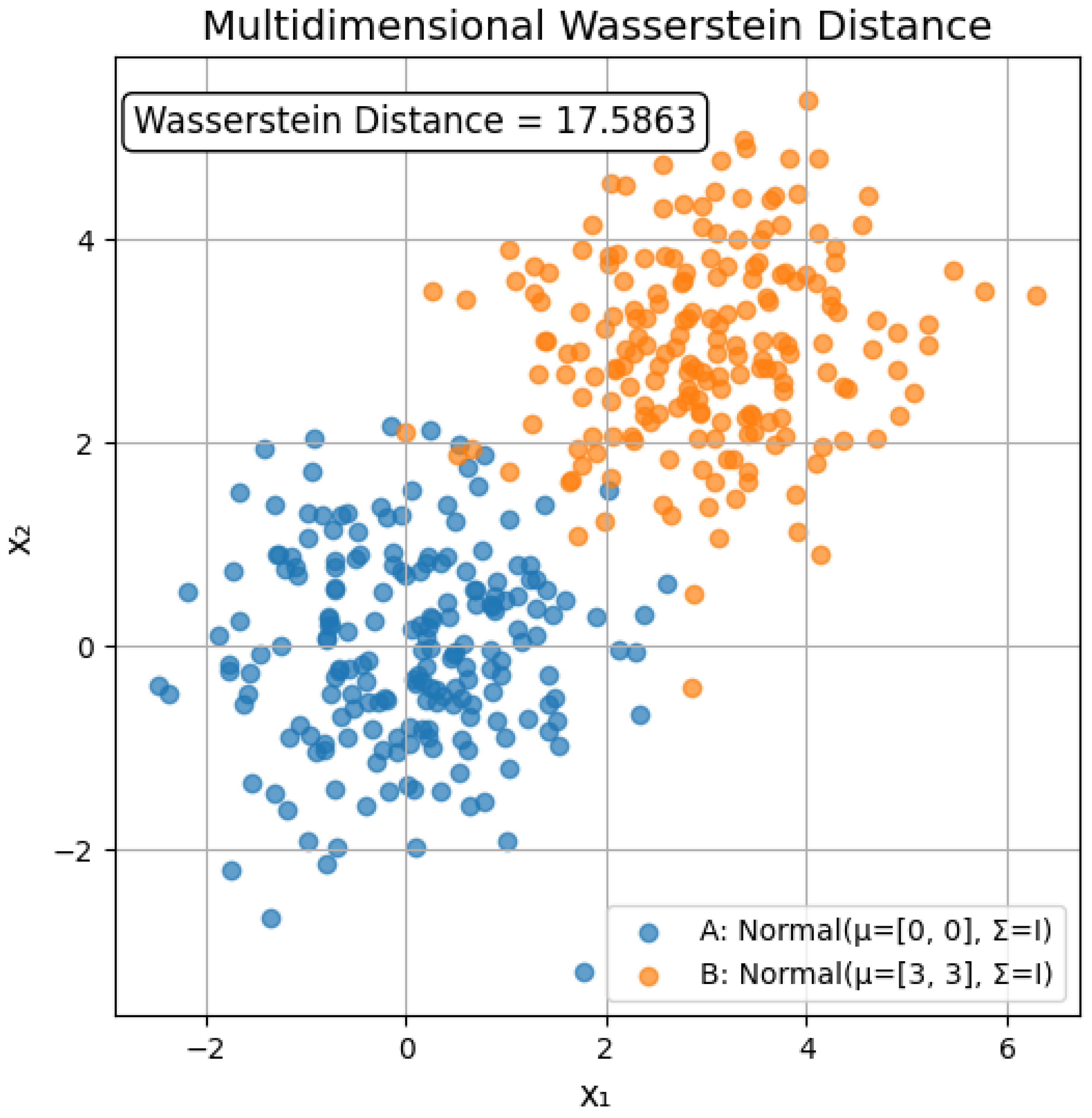

10.5.3.11 Python Code to Generate Figure 78 Illustrating Multidimensional Wasserstein Distance of Two Distributions Having Parameters, Distribution A: , Distribution B:

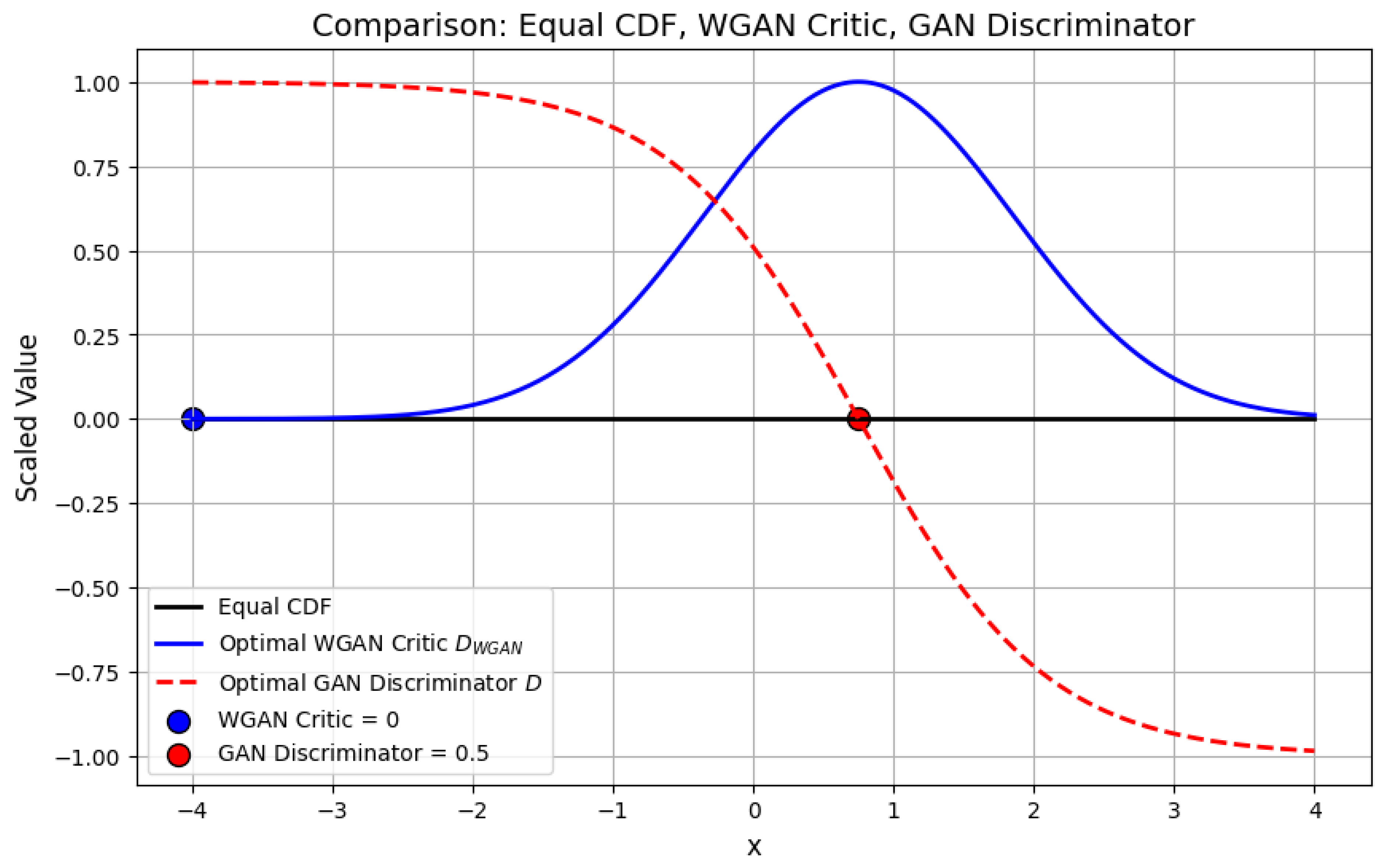

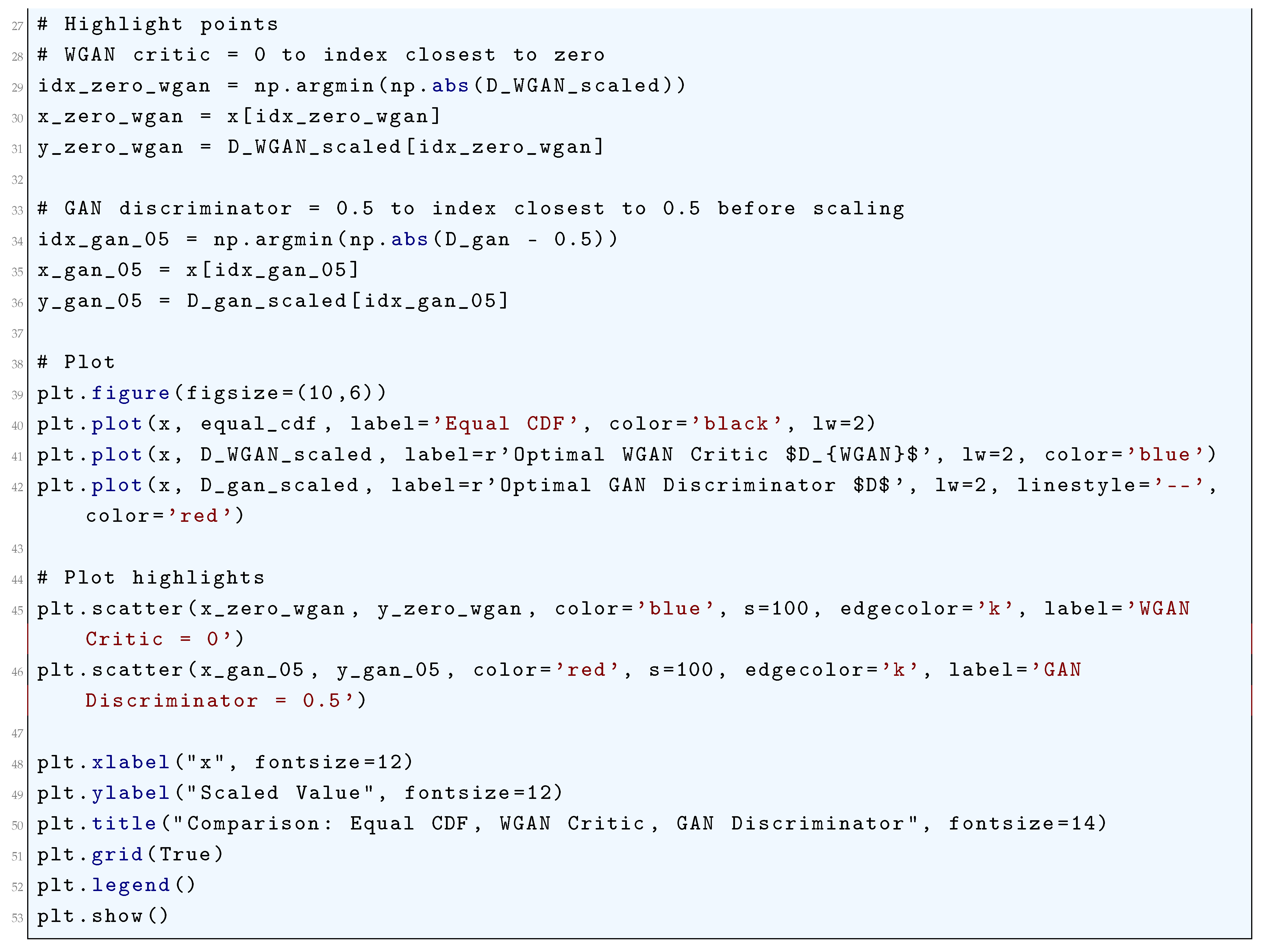

10.5.3.12 Python Code to Generate Figure 79 Comparing Equal CDF, WGAN Critic, GAN Discriminator

10.5.3.13 Python Code to Generate Figure 80 Illustrating Least Squares GAN (LSGAN) Loss Functions

10.5.3.14 Python Code to Generate Figure 81 Illustrating Standard GAN (Adversarial) Loss Functions

10.5.3.15 Python Code to Generate Figure 82 Illustrating Contrastive Loss Functions

10.5.3.16 Python Code to Generate Figure 83 Illustrating Contrastive Loss Landscapes

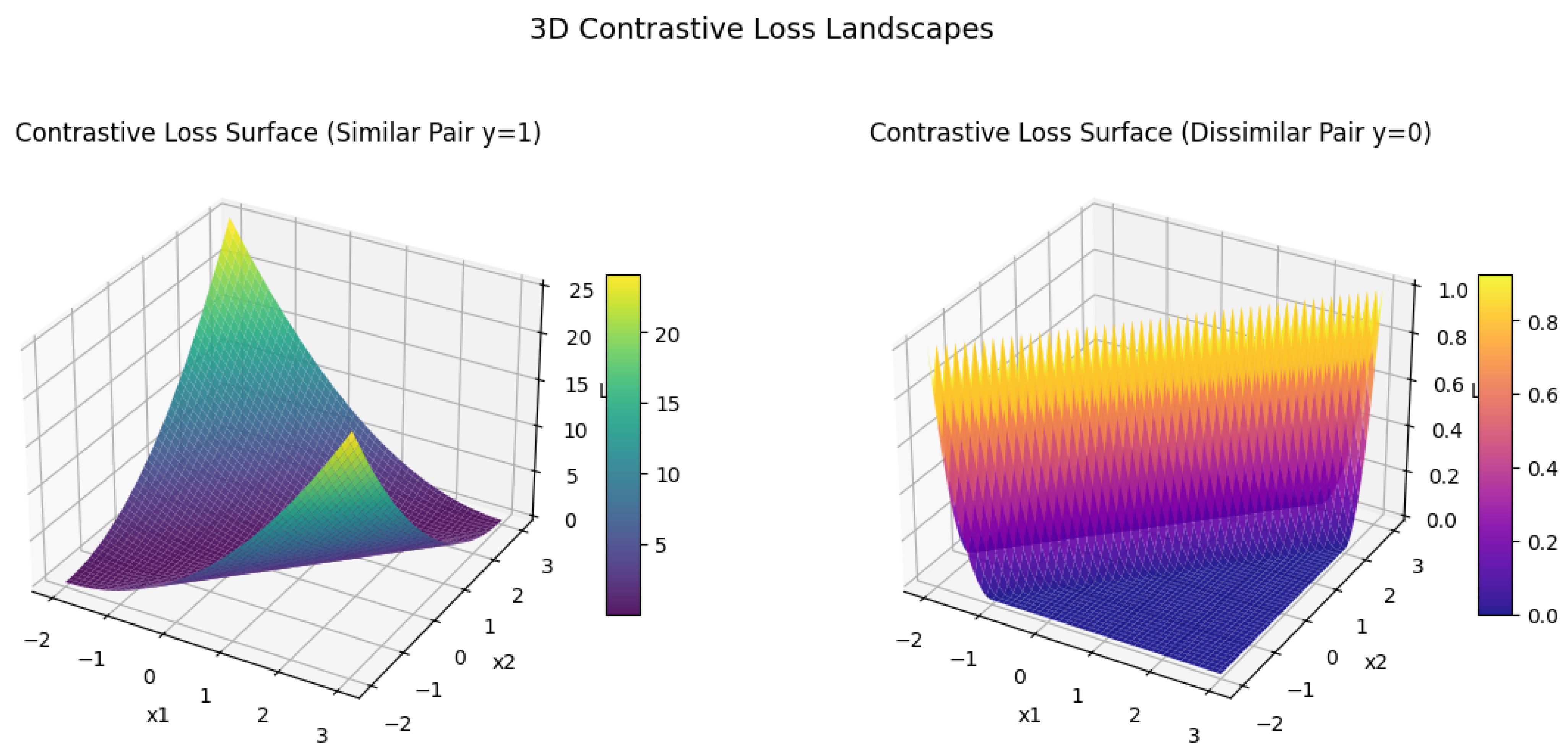

10.5.3.17 Python Code to Generate Figure 84 Illustrating 3D Contrastive Loss Landscapes

10.5.3.18 Python Code to Generate Figure 85 Illustrating Triplet Loss Function
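A minimal sketch of the triplet loss for one (anchor, positive, negative) triple. It assumes the common squared-Euclidean form $\max(0, \|a - p\|^2 - \|a - n\|^2 + m)$; other variants use unsquared distances. The function names, the margin $m = 1$, and the embedding values are illustrative assumptions.

```python
def squared_distance(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Zero once the negative is farther than the positive by at least the margin."""
    d_pos = squared_distance(anchor, positive)
    d_neg = squared_distance(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)


anchor = (0.0, 0.0)
positive = (0.0, 1.0)

easy_negative = (3.0, 0.0)   # far from the anchor: margin already satisfied
hard_negative = (1.0, 0.0)   # as close as the positive: margin violated

print("easy:", triplet_loss(anchor, positive, easy_negative))
print("hard:", triplet_loss(anchor, positive, hard_negative))
```

Only triples that violate the margin produce a nonzero loss, which is why hard-negative selection matters in practice.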

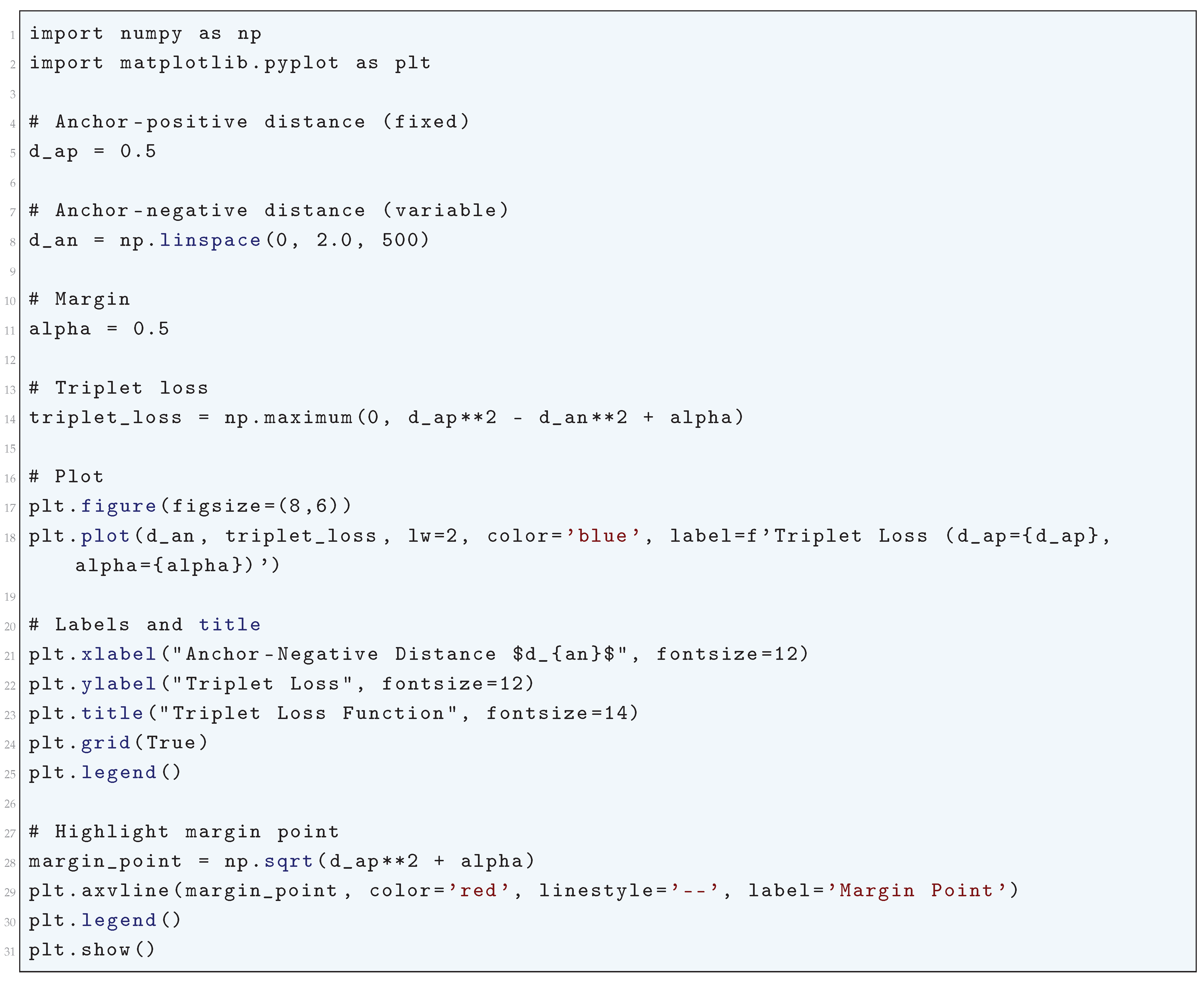

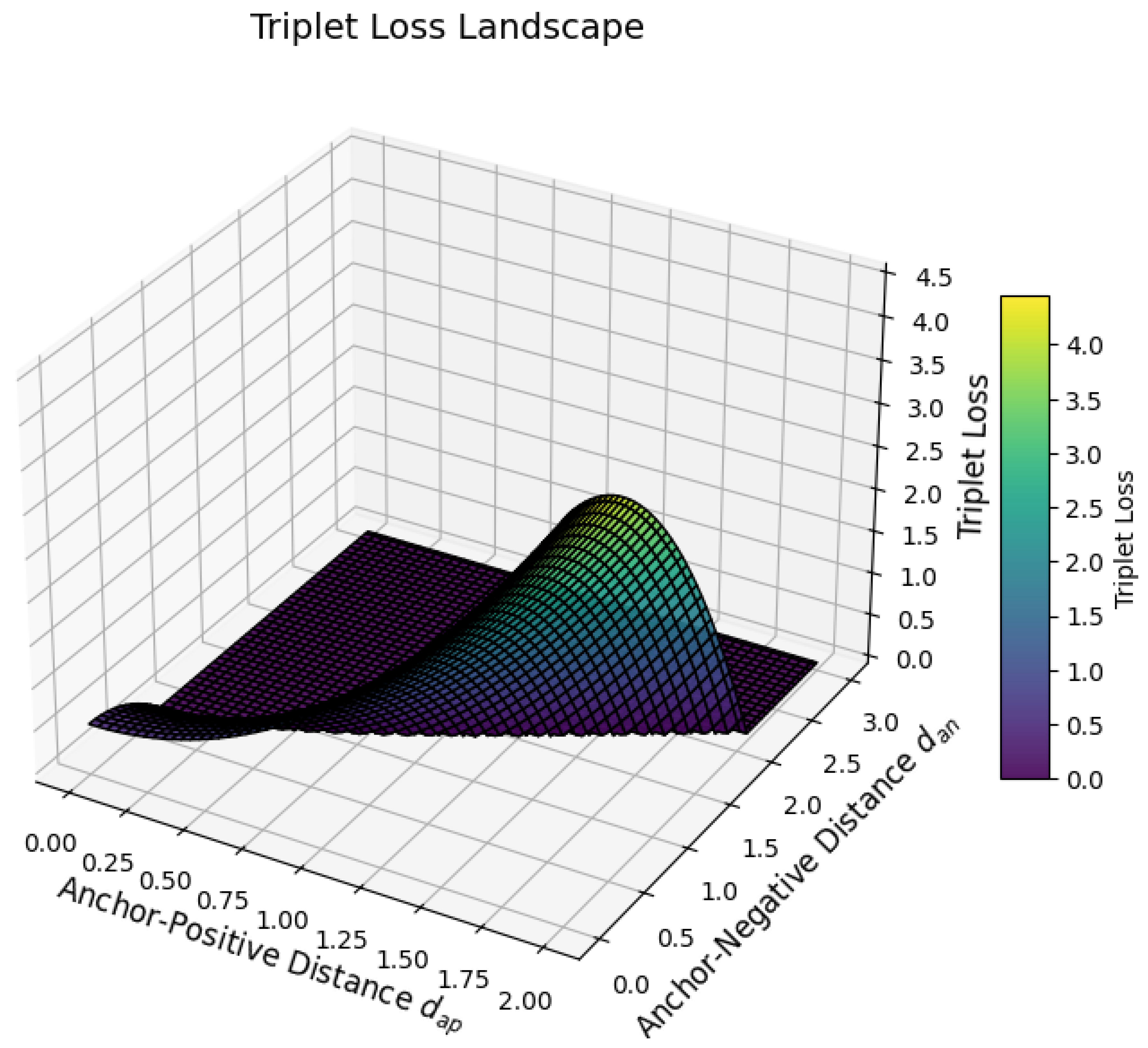

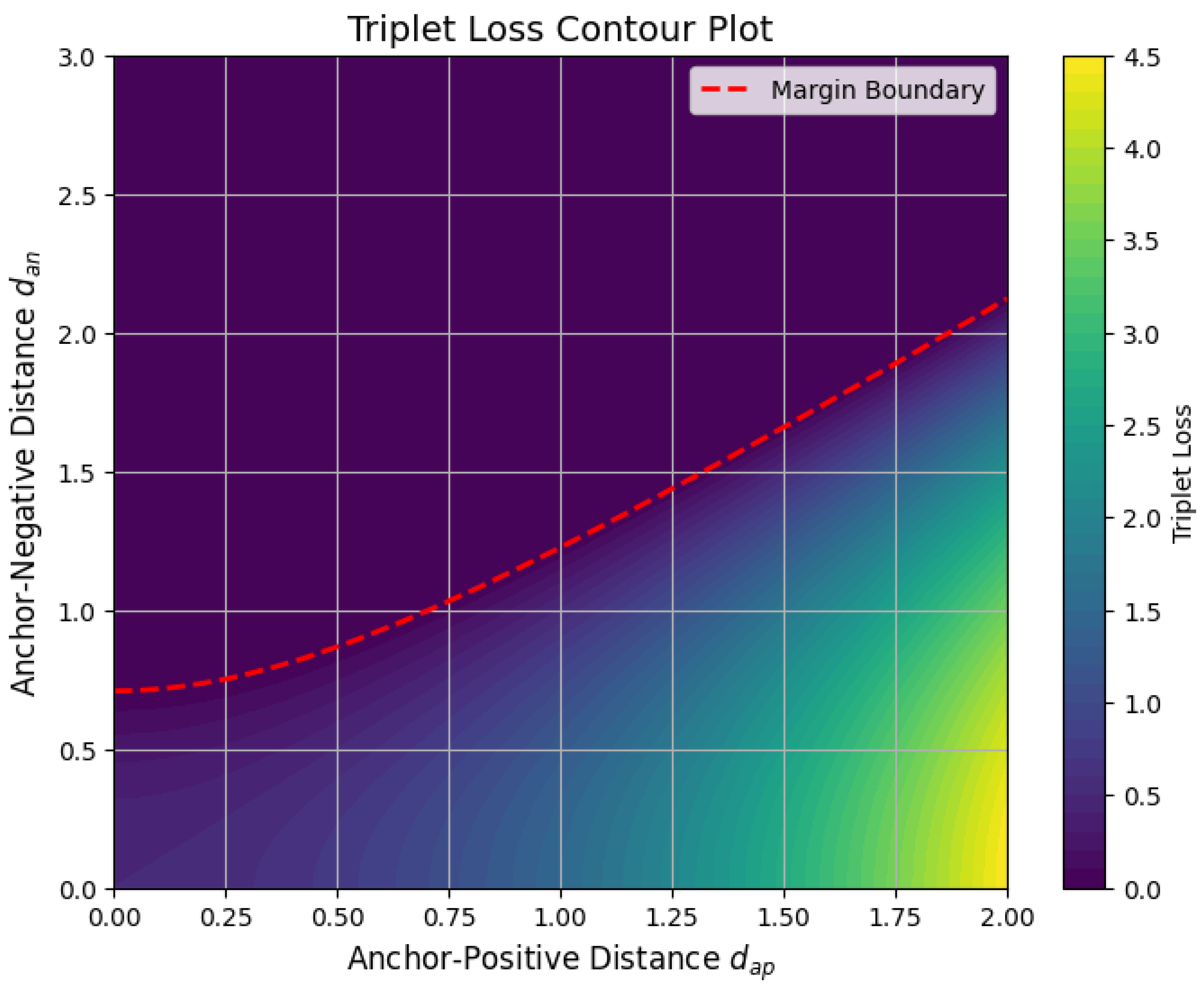

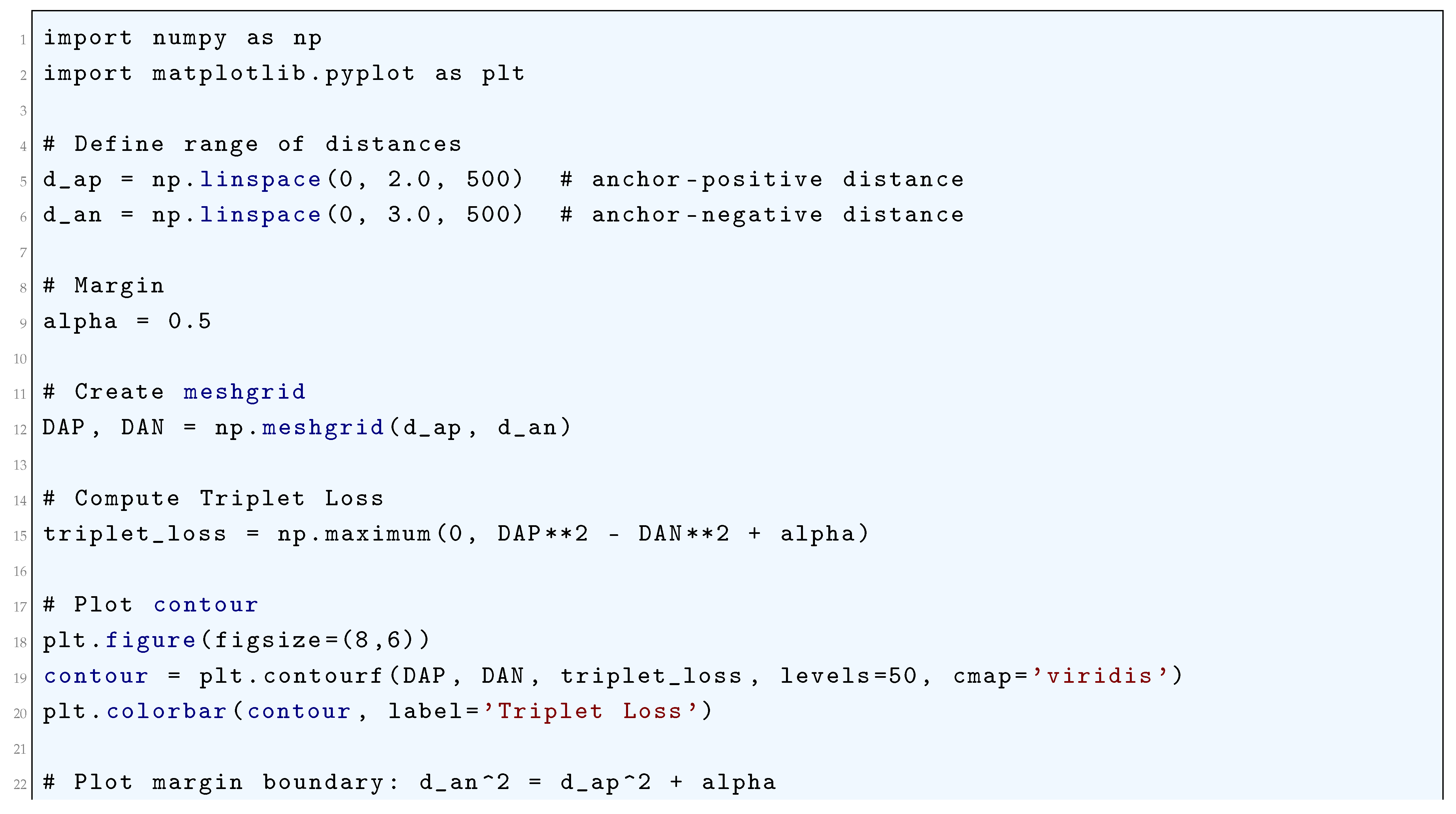

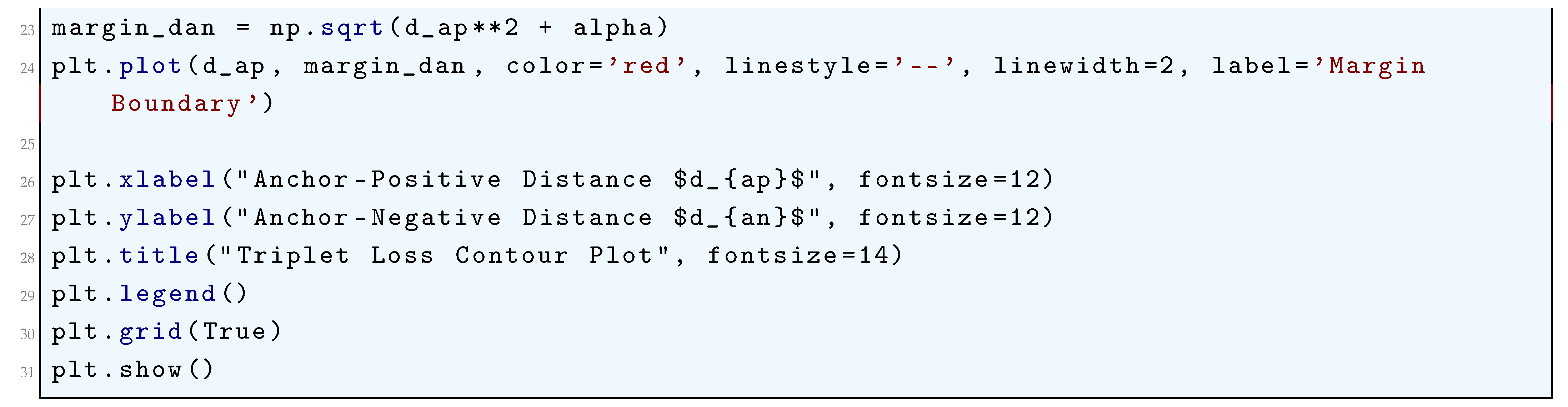

10.5.3.19 Python Code to Generate Figure 86 Illustrating Triplet Loss Landscape

10.5.3.20 Python Code to Generate Figure 87 Illustrating Triplet Loss Contour Plot

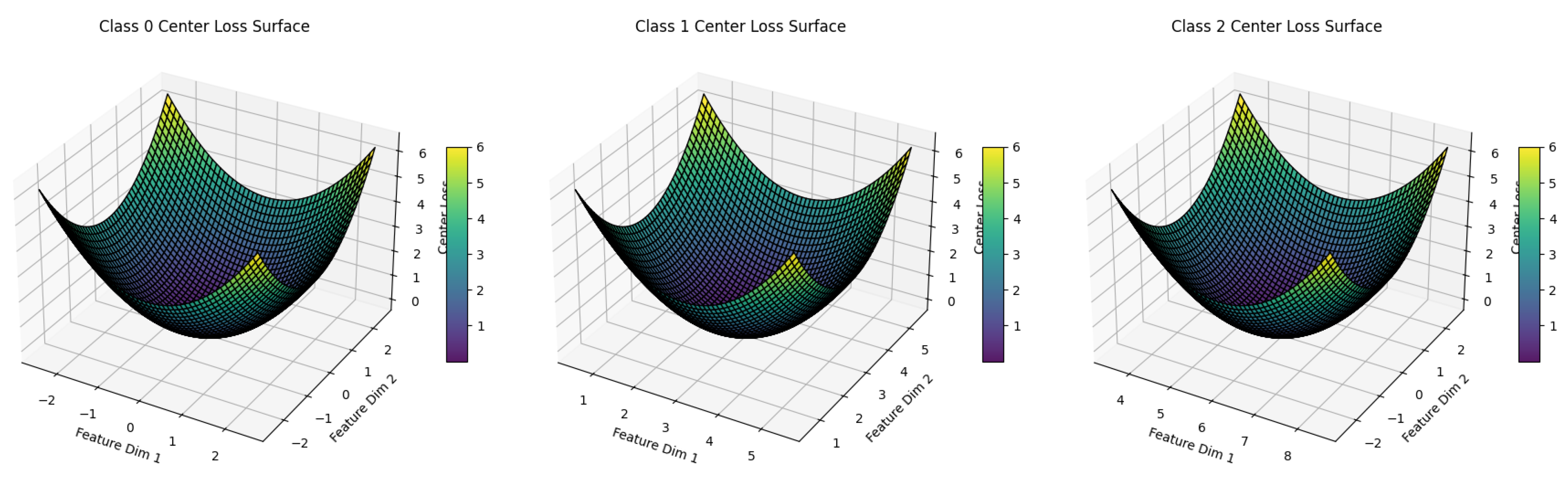

10.5.3.21 Python Code to Generate Figure 88 Illustrating Center Loss Function

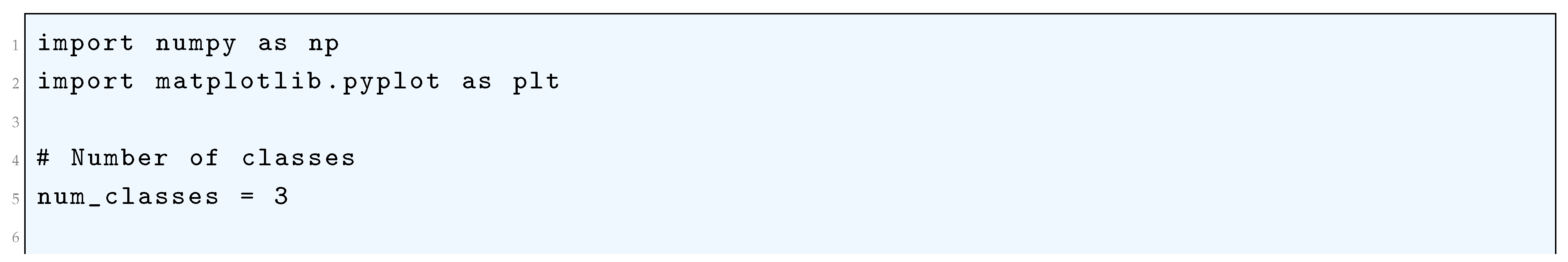

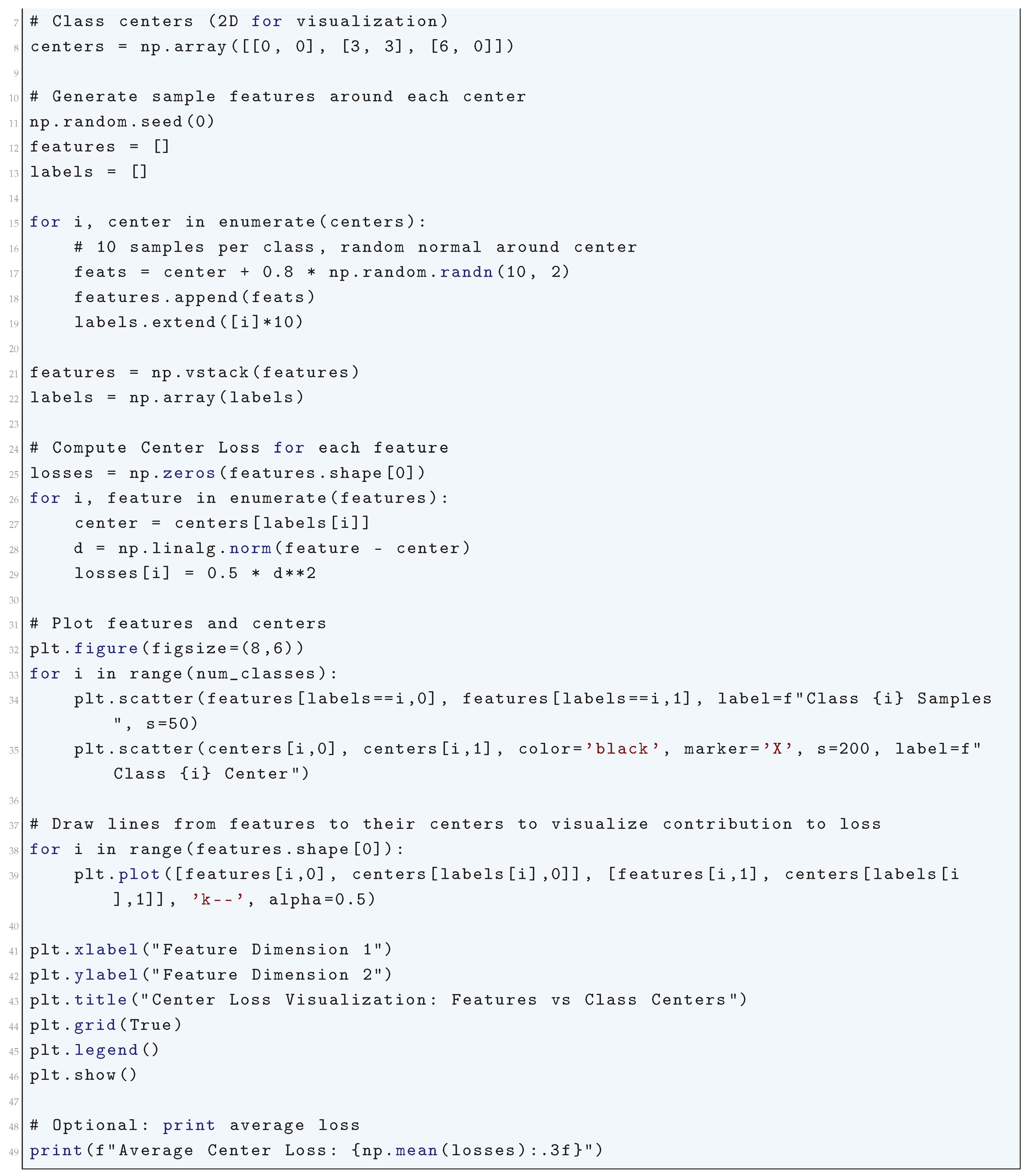

10.5.3.22 Python Code to Generate Figure 89 Illustrating Center Loss Visualization: Features vs Class Centers

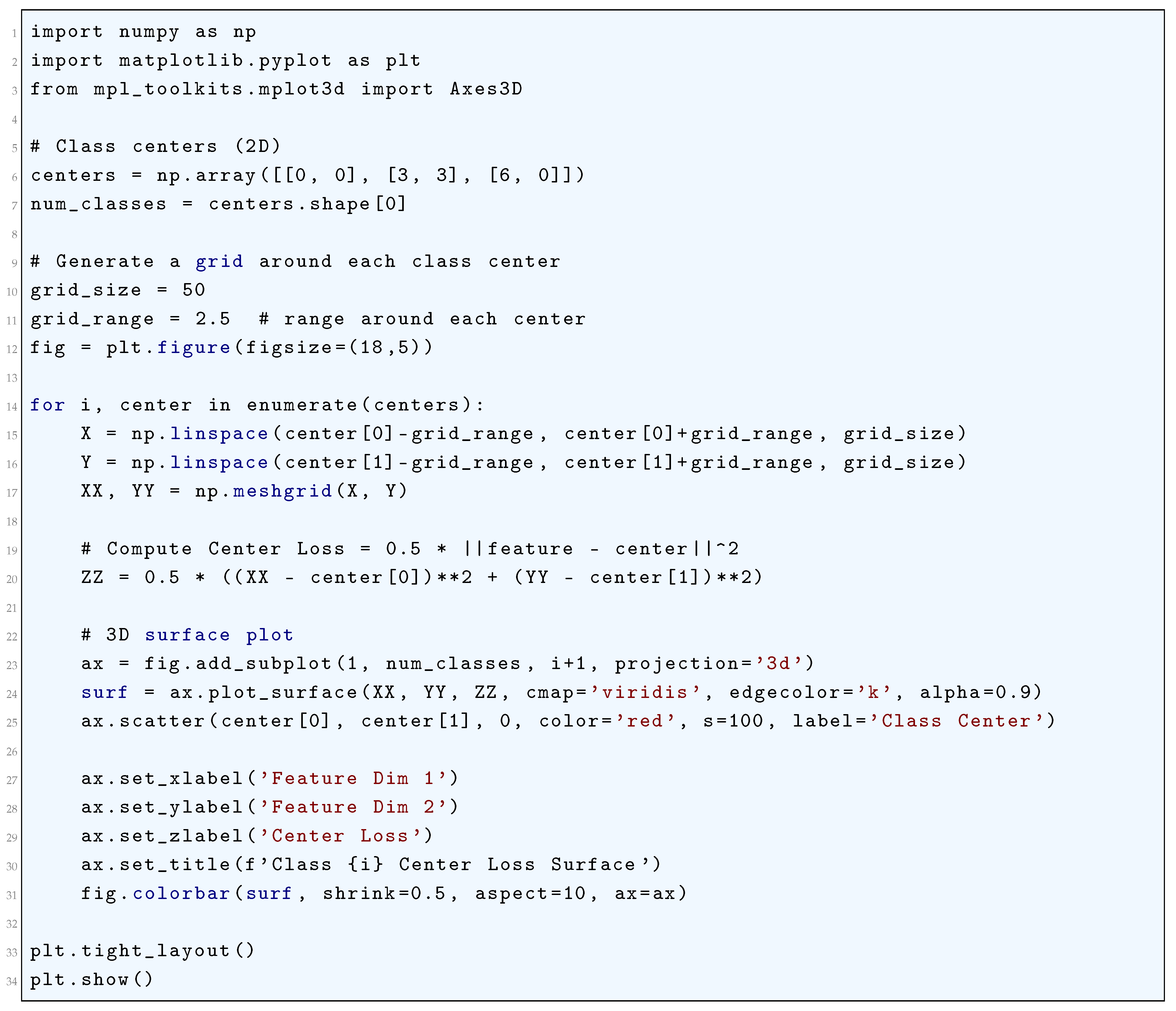

10.5.3.23 Python Code to Generate Figure 90 Illustrating 3D Center Loss Surface

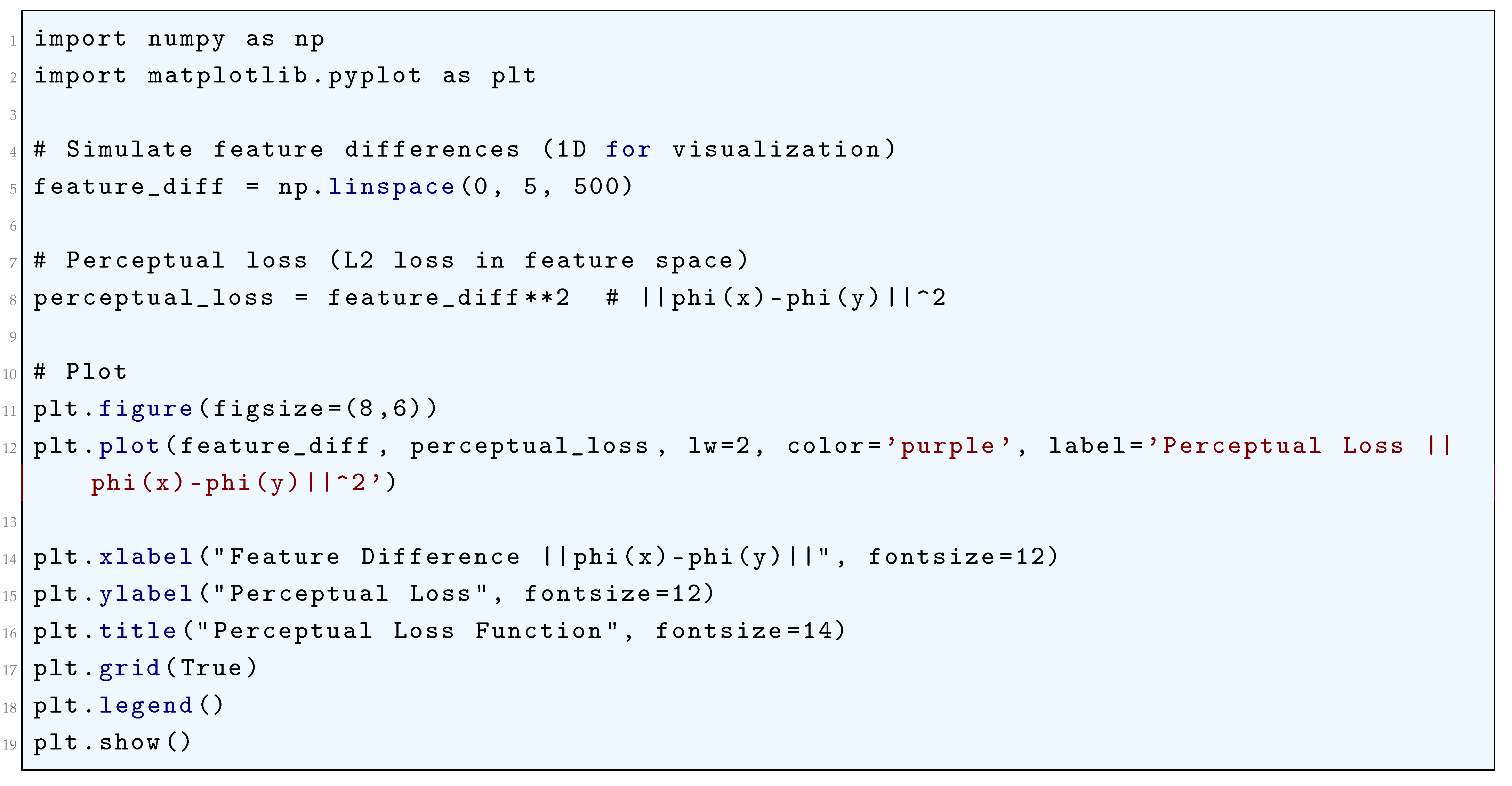

10.5.3.24 Python Code to Generate Figure 91 Illustrating Perceptual Loss Function

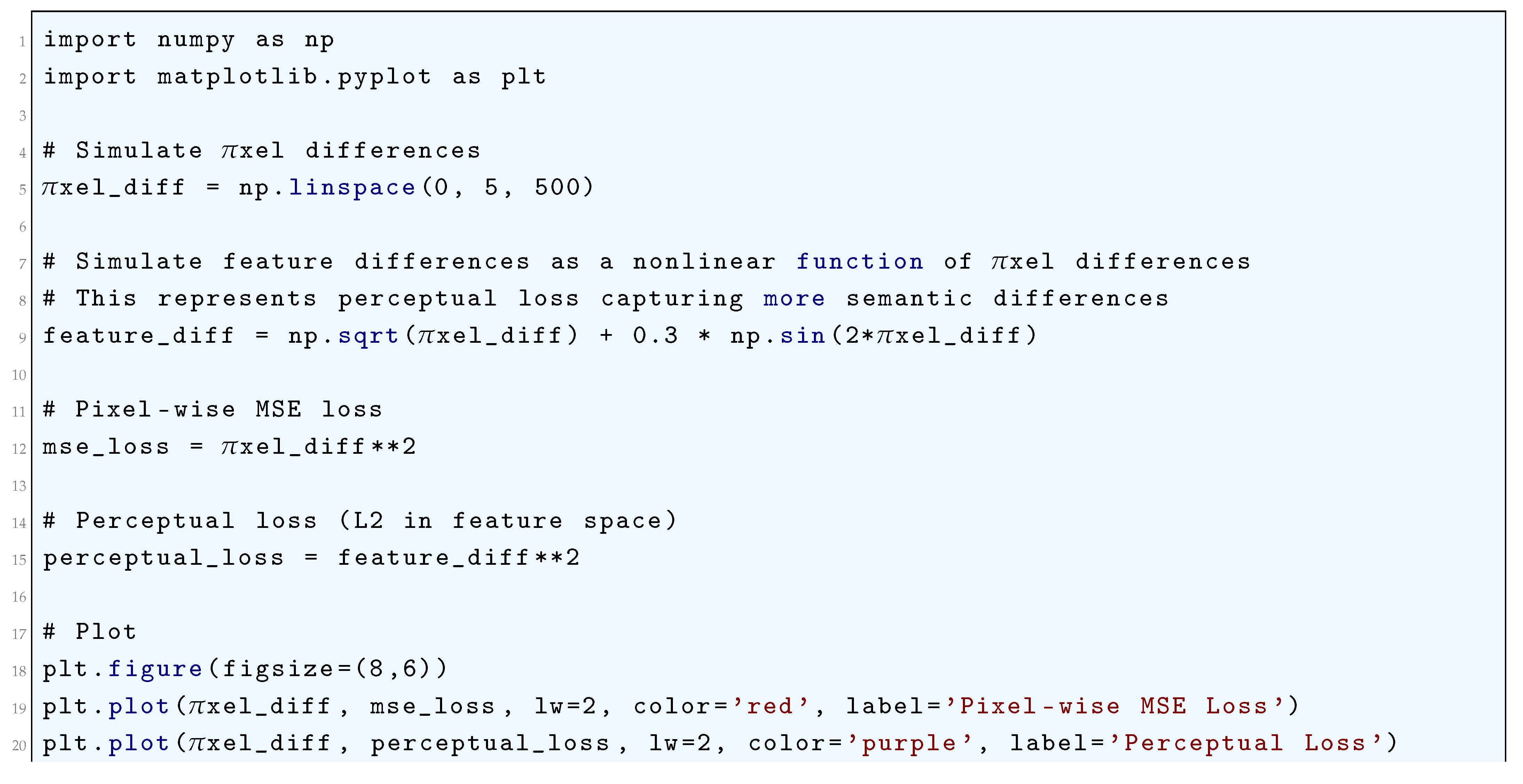

10.5.3.25 Python Code to Generate Figure 92 Illustrating Pixel-Wise MSE vs Perceptual Loss (Nonlinear Features)

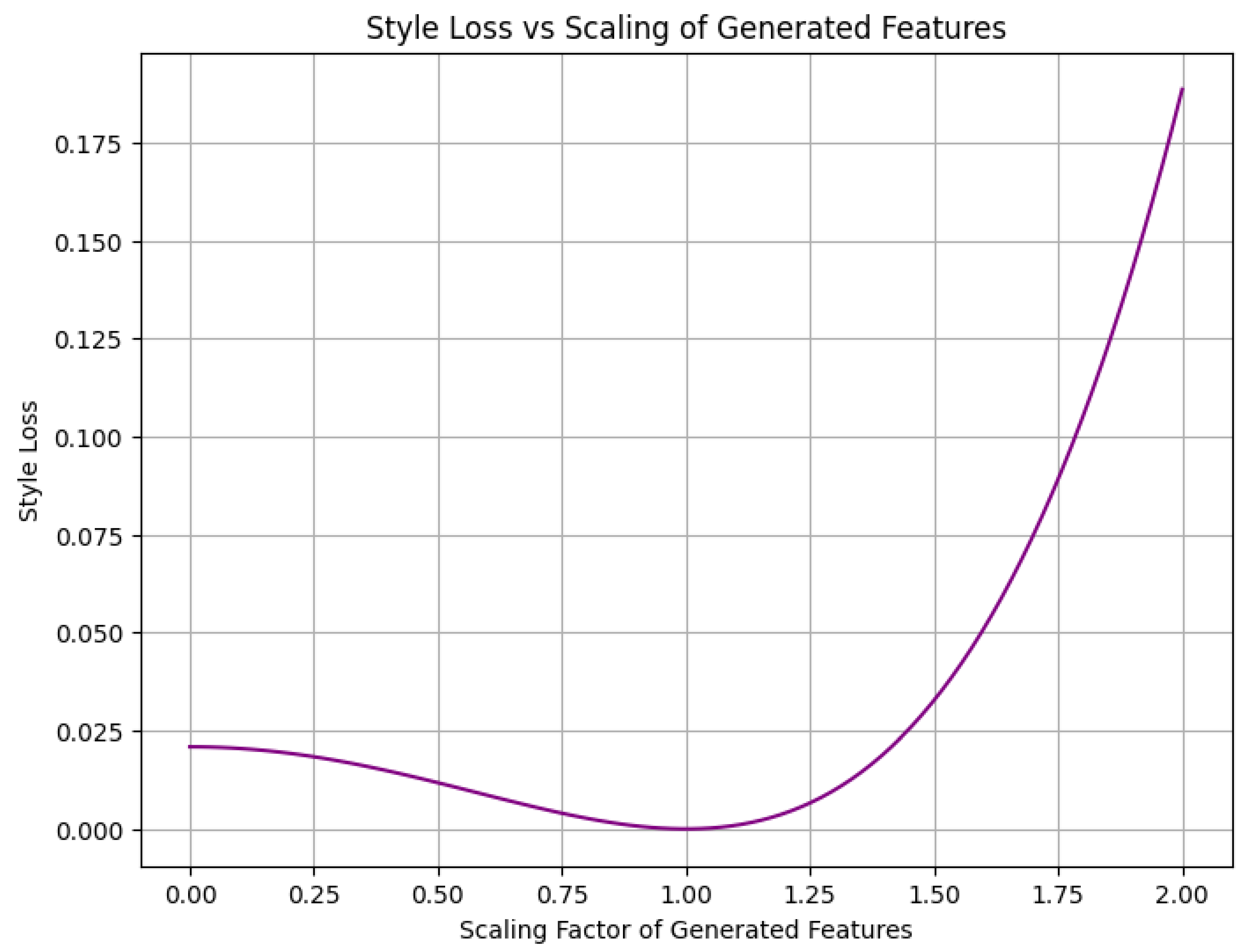

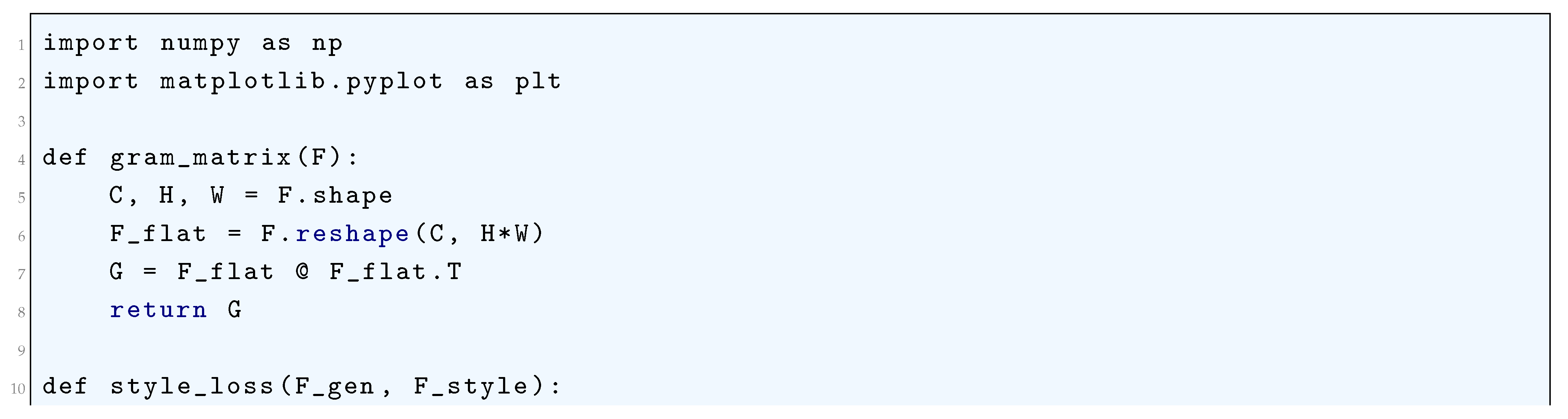

10.5.3.26 Python Code to Generate Figure 93 Illustrating Style Loss Function in Neural Style Transfer

10.5.3.27 Python Code to Generate Figure 94 Illustrating Style Loss vs Scaling of Generated Features

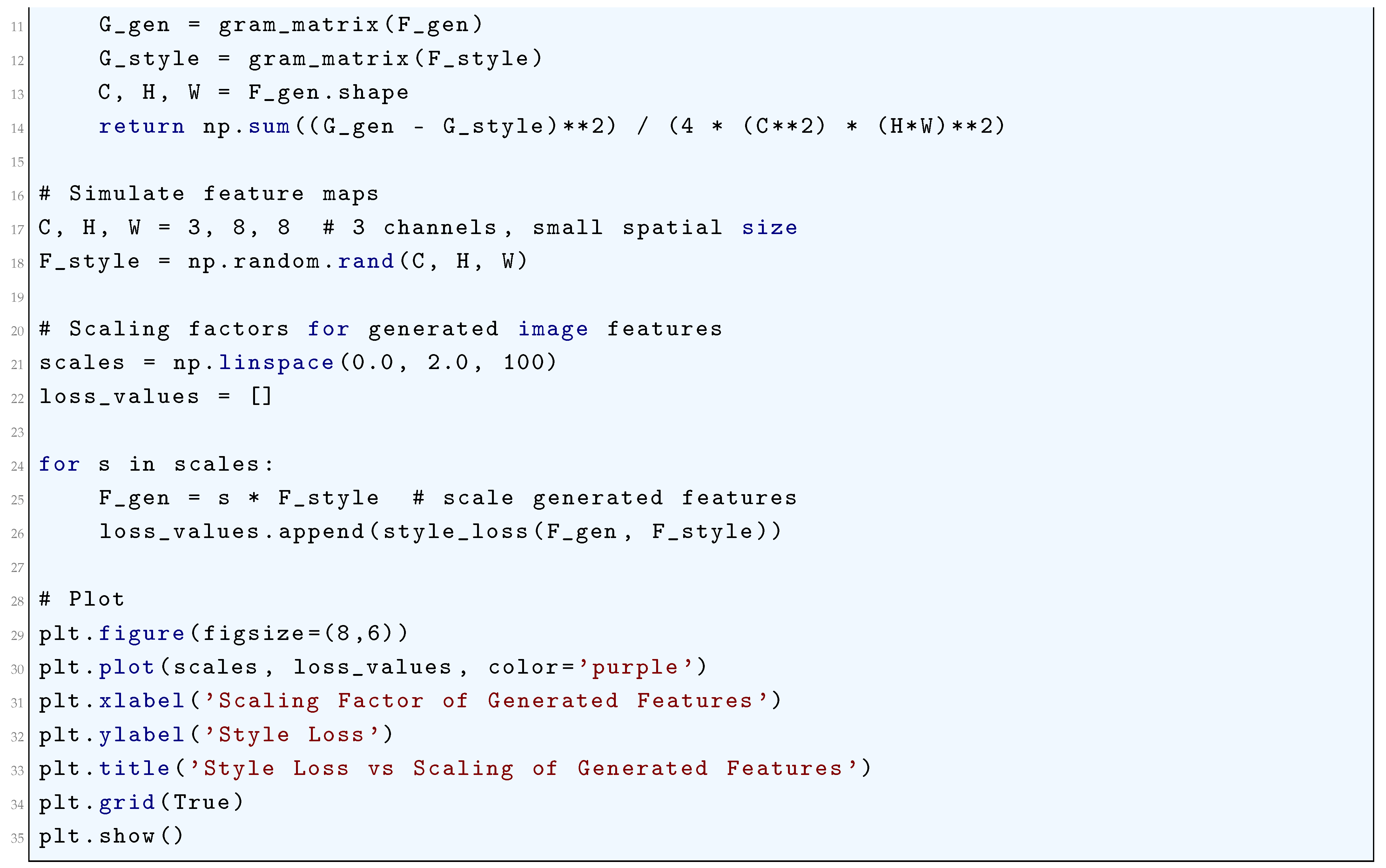

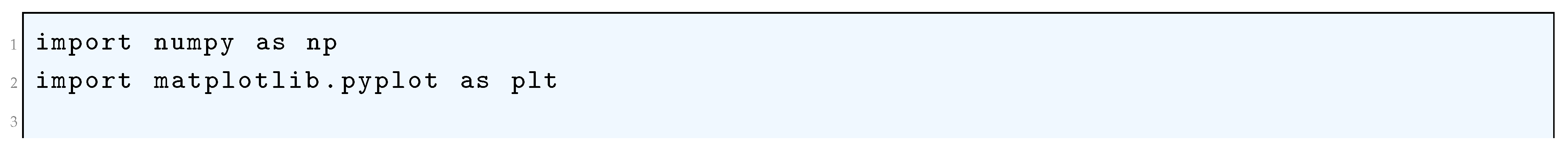

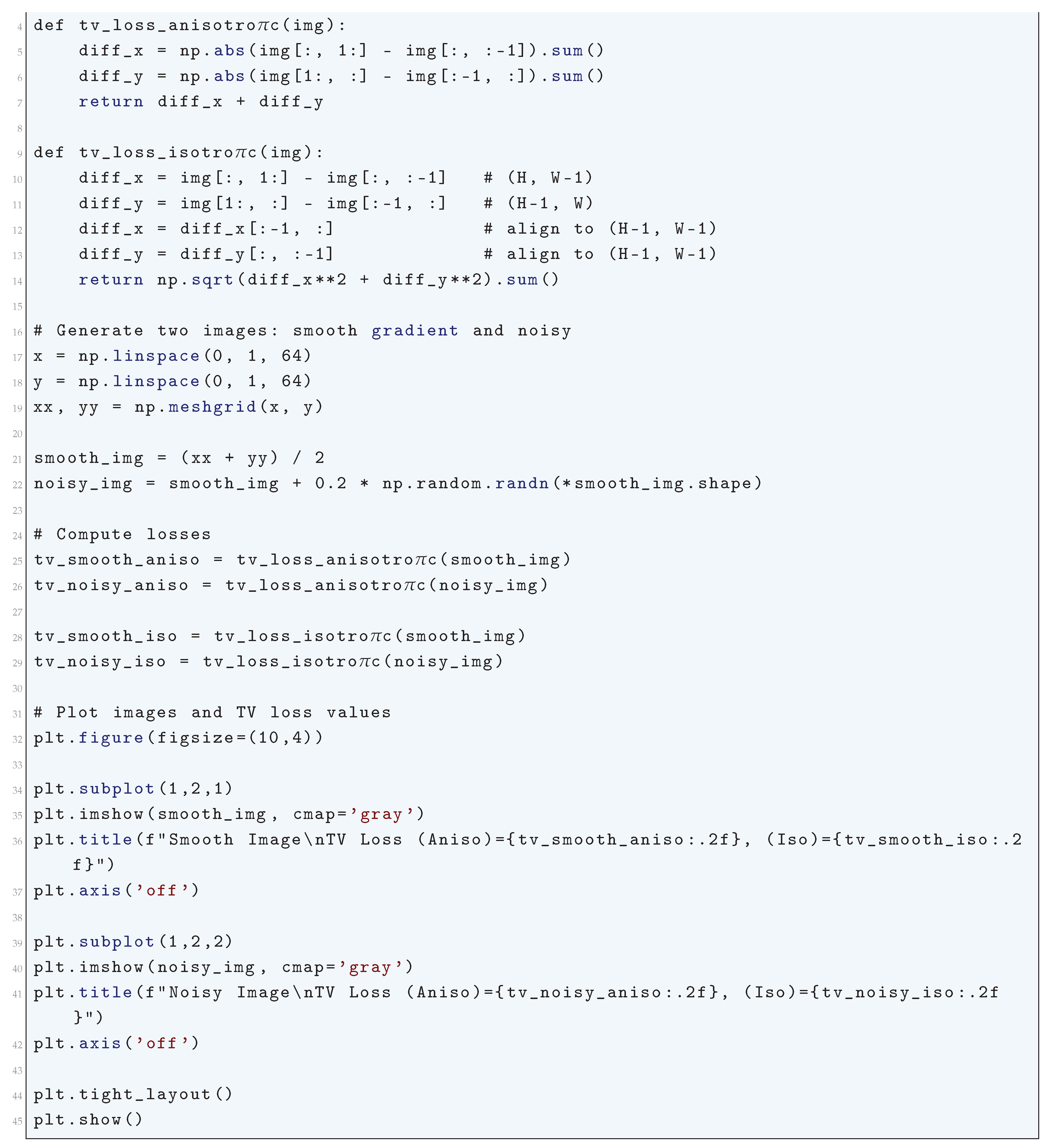

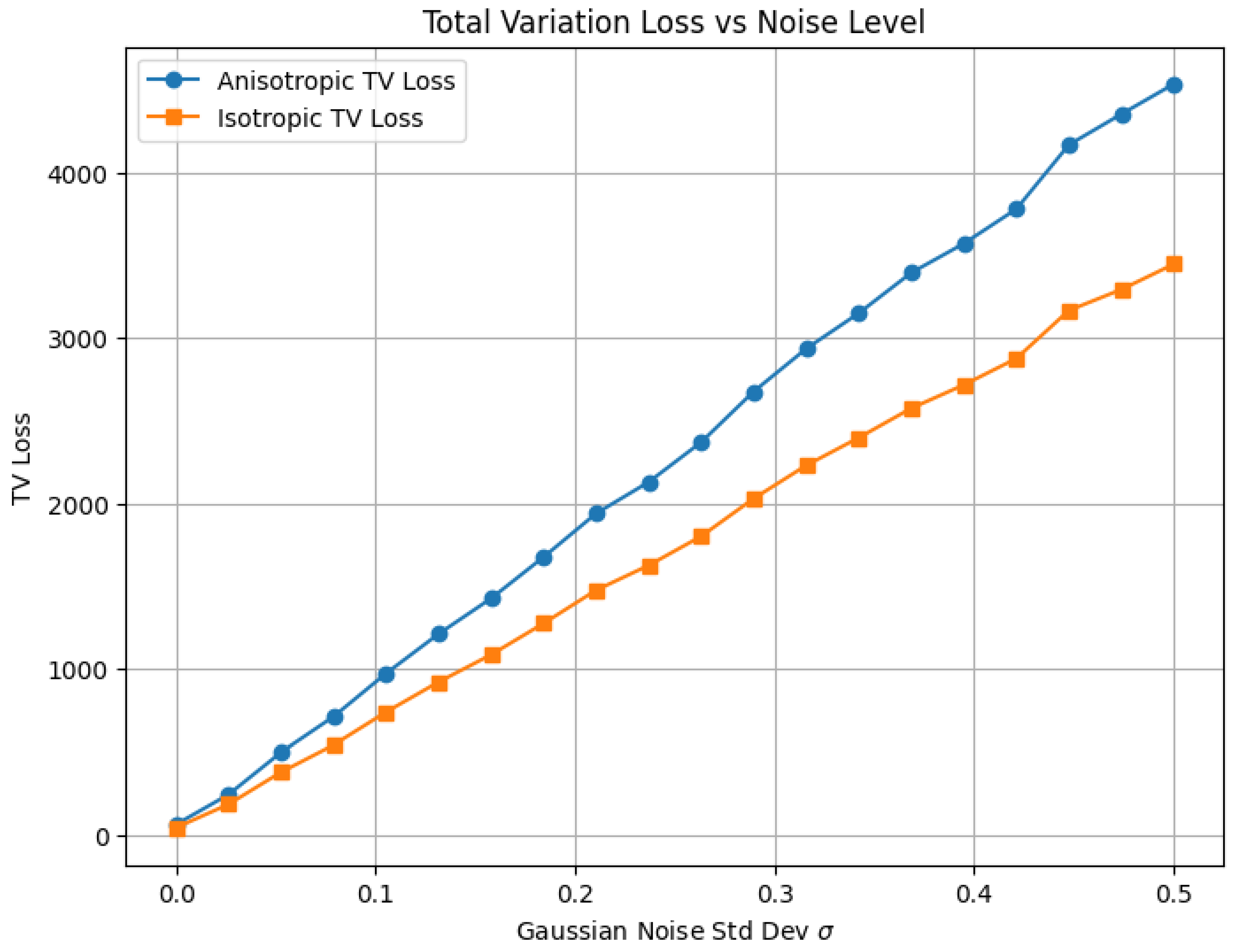

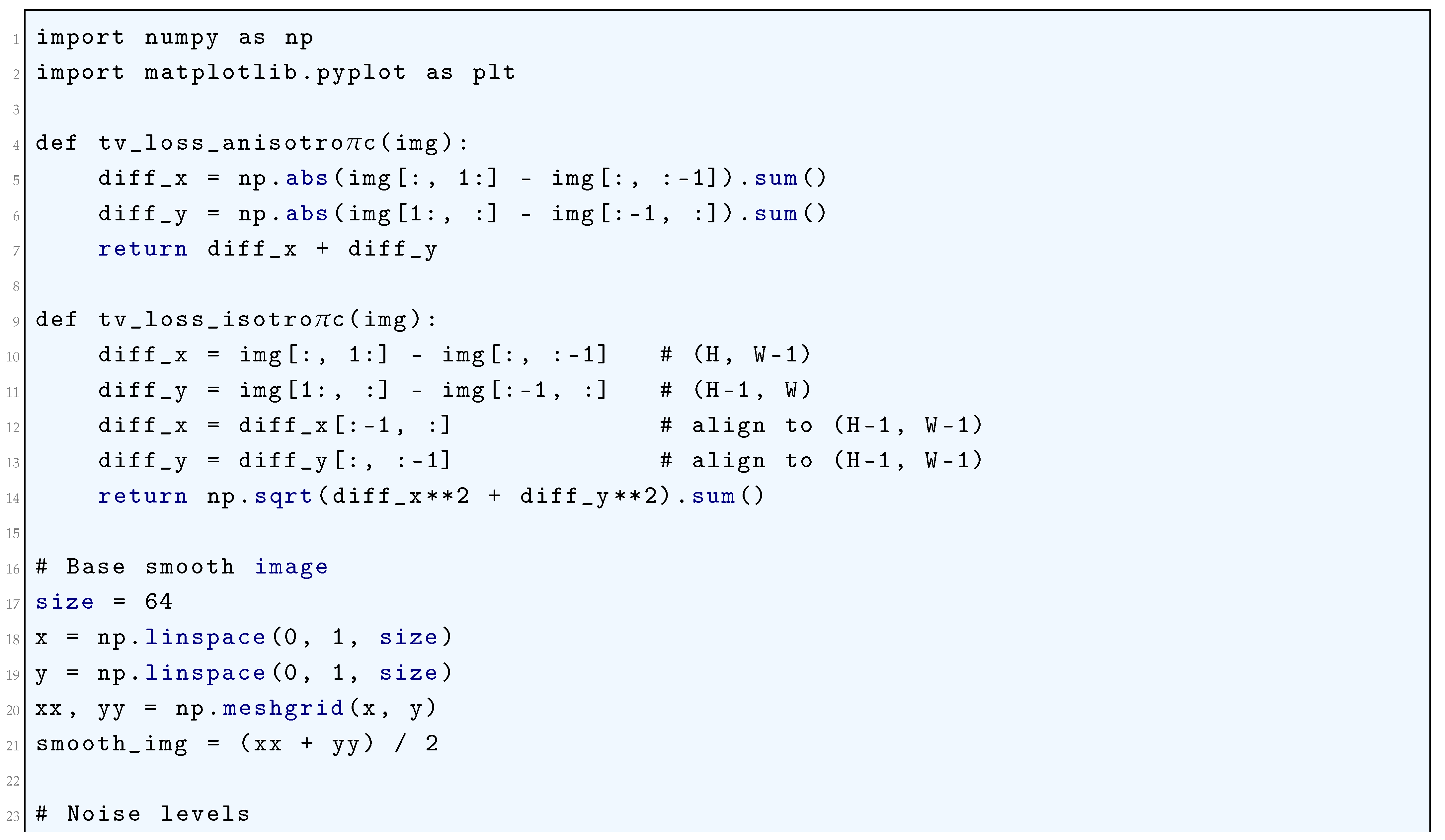

10.5.3.28 Python Code to Generate Figure 95 Illustrating Total Variation (TV) Loss

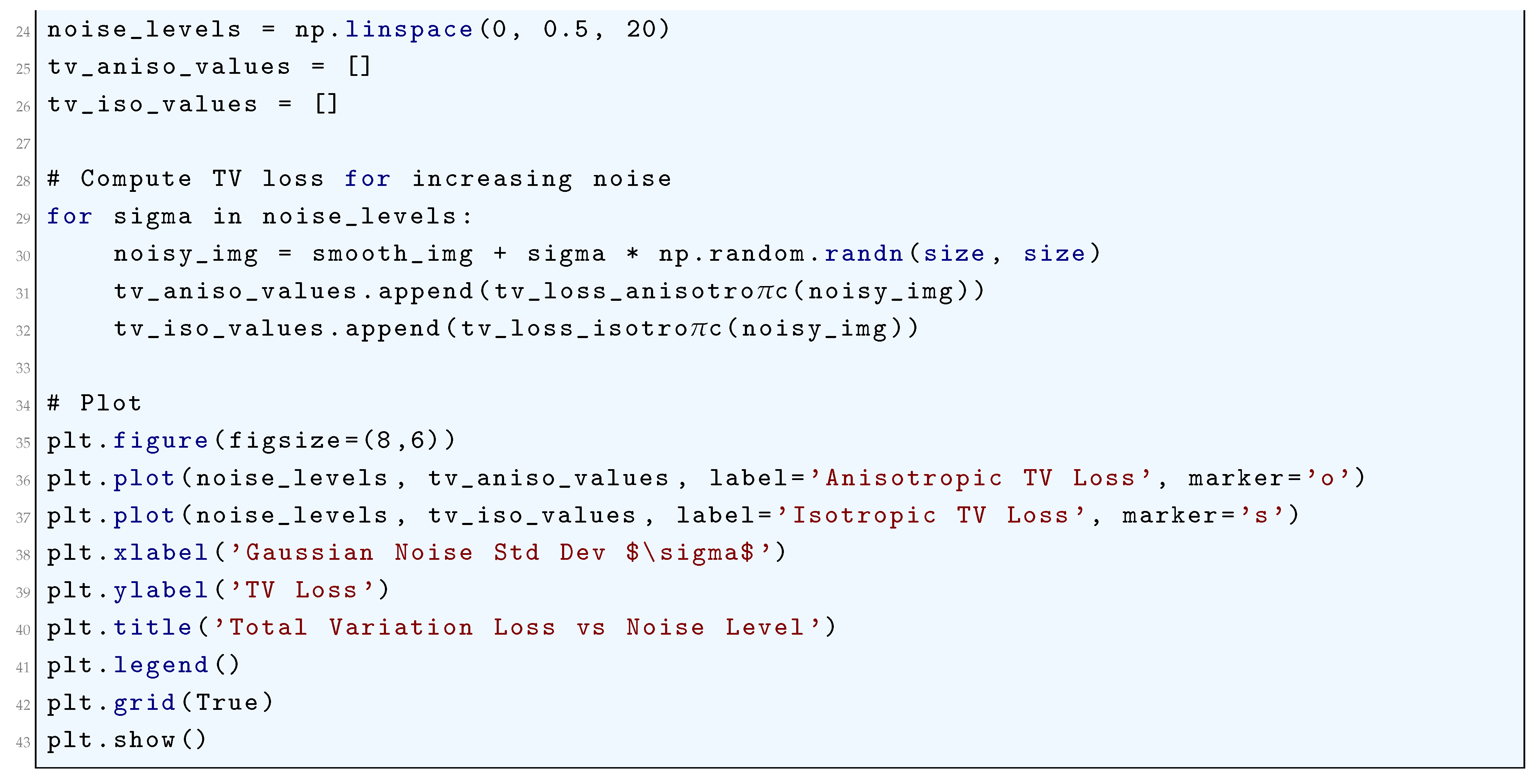

10.5.3.29 Python Code to Generate Figure 96 Illustrating Total Variation Loss vs Noise Level

10.5.3.30 Python Code to Generate Figure 97 Illustrating Evidence Lower Bound (ELBO) Loss During Training

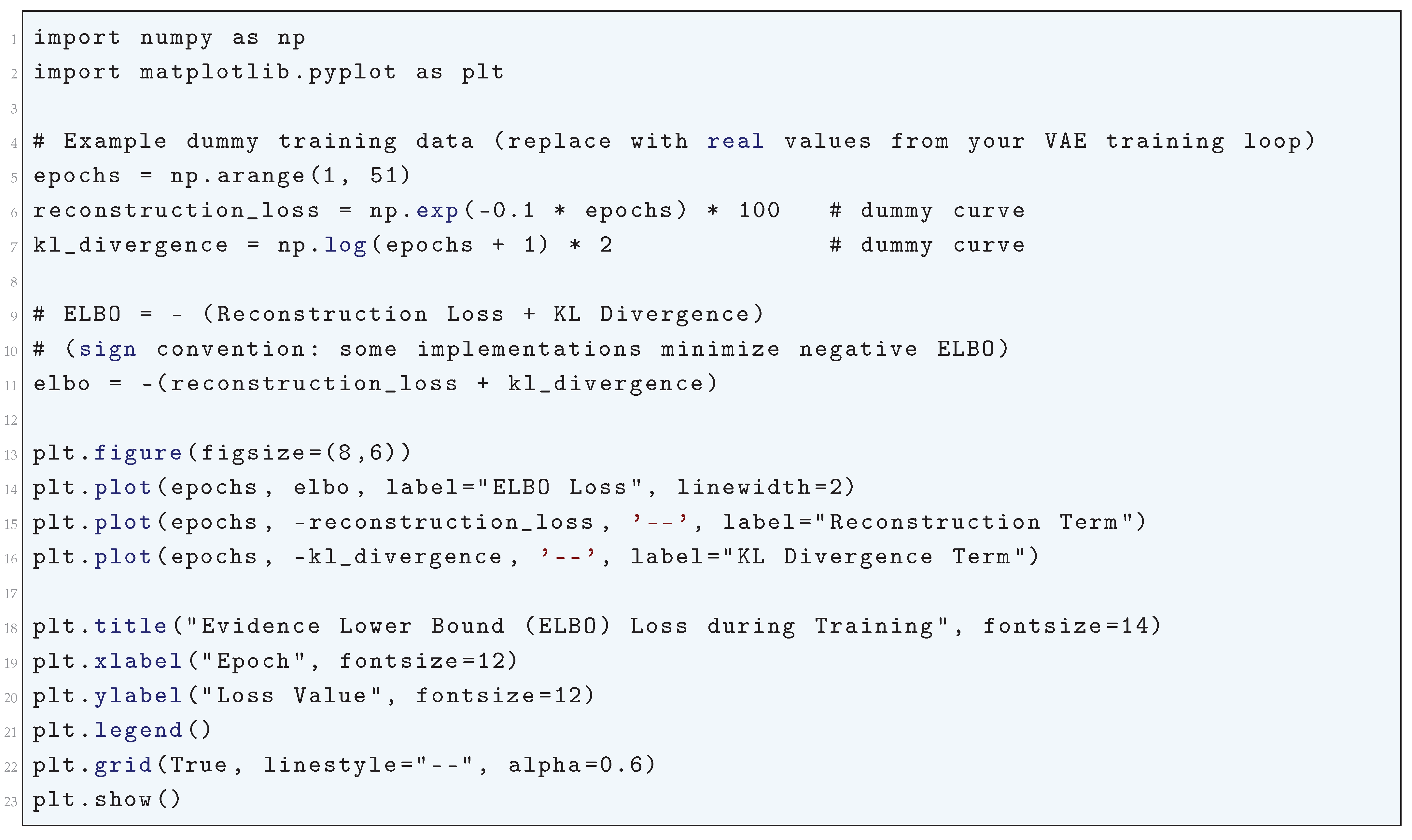

10.5.3.31 Python Code to Generate Figure 98 Illustrating Negative Log-Likelihood (NLL) Loss

10.5.3.32 Python Code to Generate Figure 99 Illustrating Domain Adversarial Loss During Training

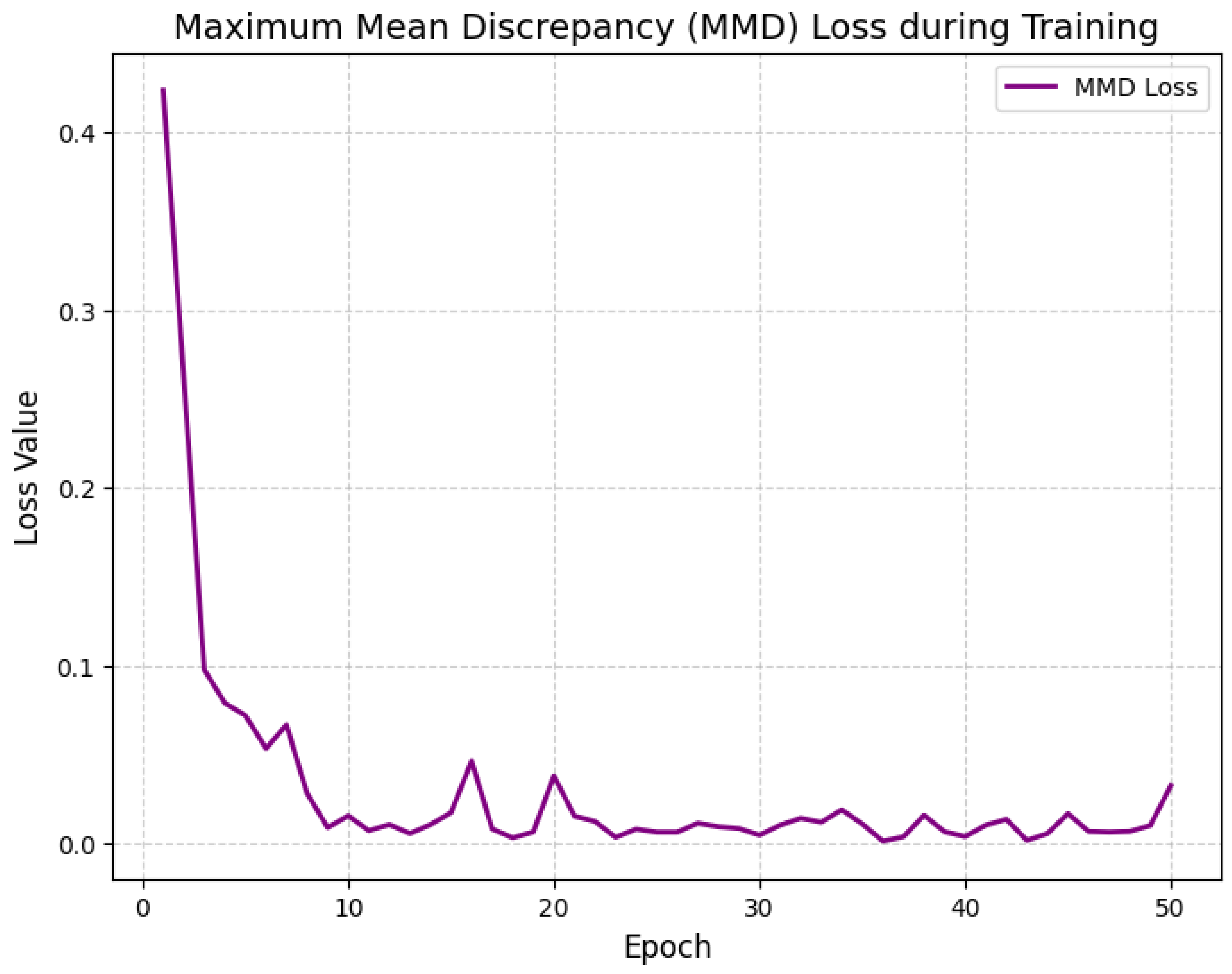

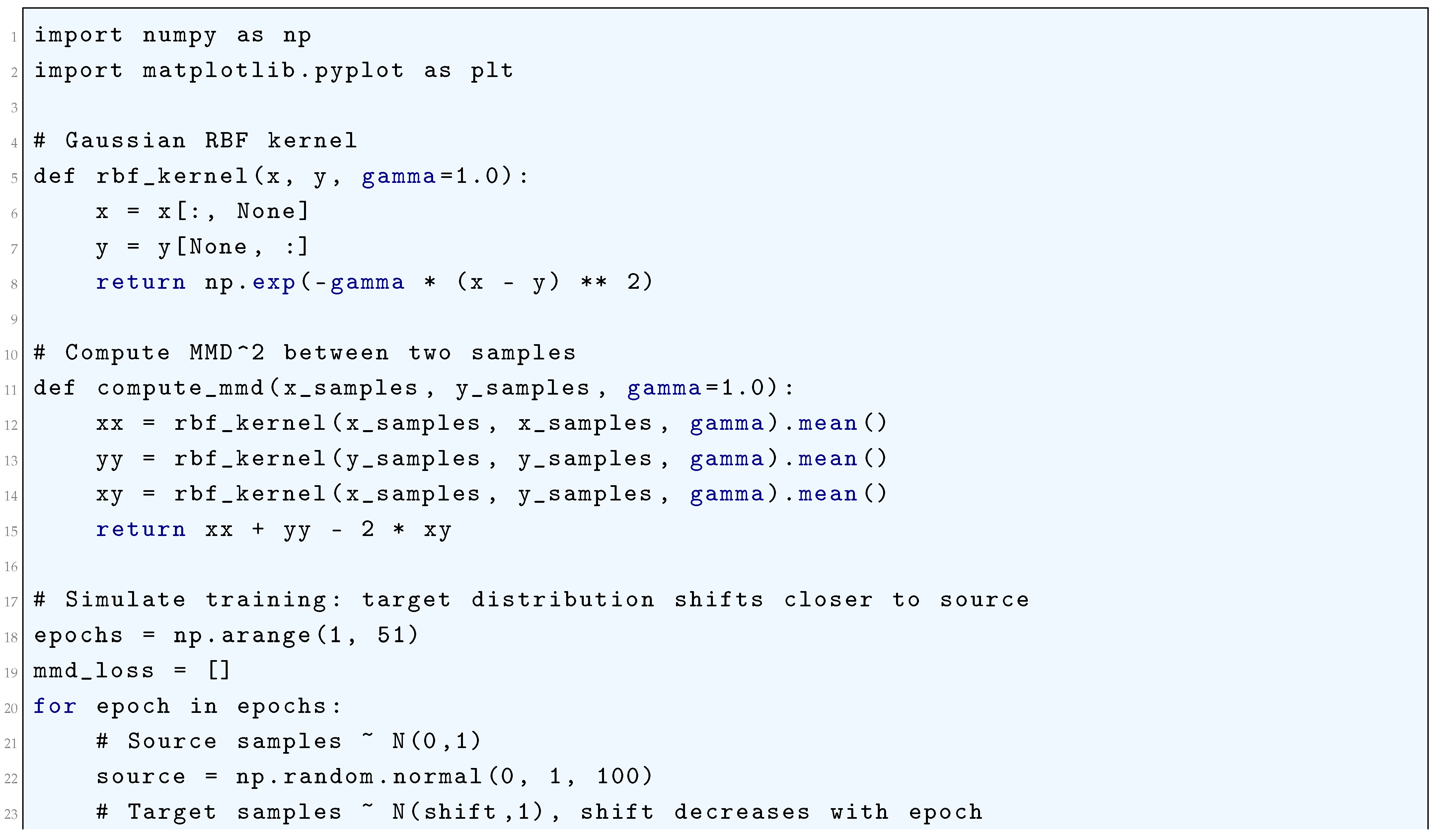

10.5.3.33 Python Code to Generate Figure 100 Illustrating Maximum Mean Discrepancy (MMD) Loss During Training

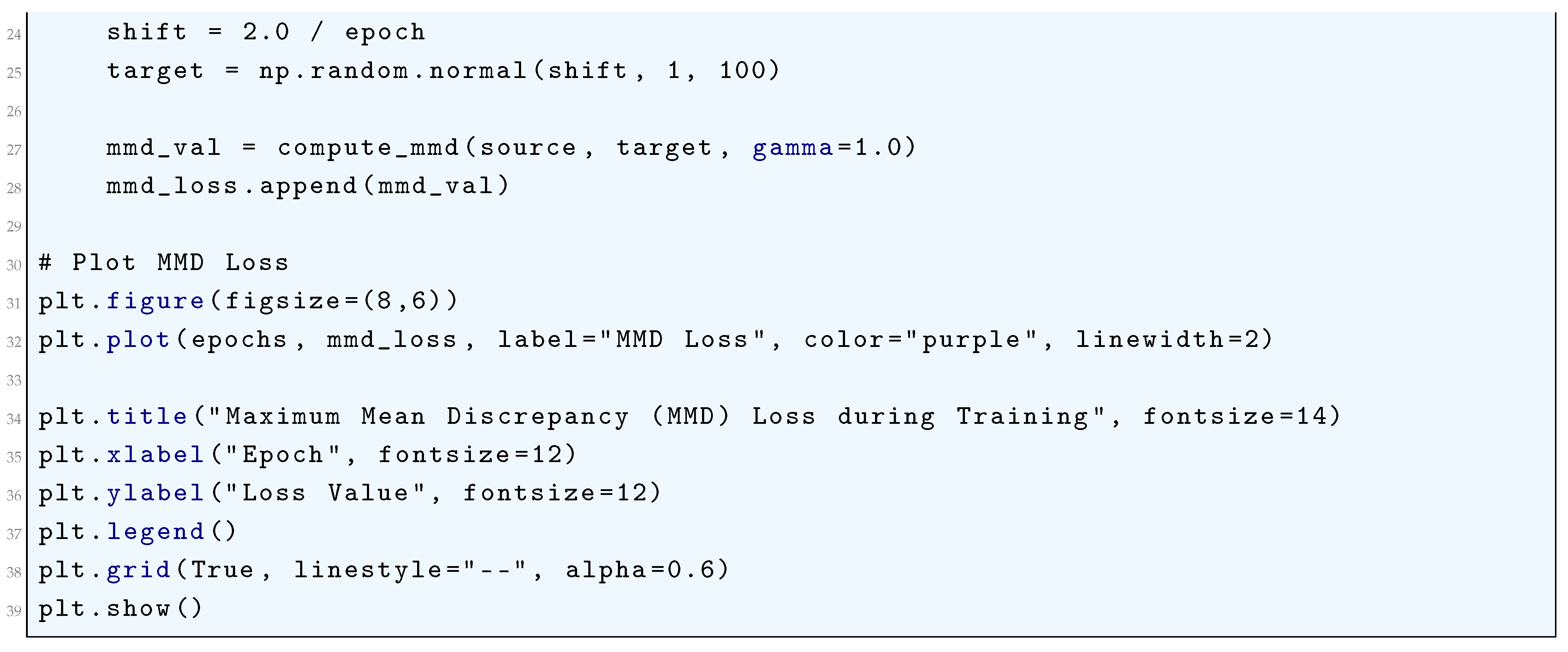

10.5.3.34 Python Code to Generate Figure 101 Illustrating IoU Loss and Variants (GIoU Loss, DIoU Loss, CIoU Loss)
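A minimal sketch of the IoU loss and its GIoU variant for axis-aligned boxes given as `(x1, y1, x2, y2)`. It assumes the standard definitions $\mathcal{L}_{\mathrm{IoU}} = 1 - \mathrm{IoU}$ and $\mathrm{GIoU} = \mathrm{IoU} - |C \setminus (A \cup B)| / |C|$ with $C$ the smallest enclosing box; DIoU and CIoU are omitted. Function names and box coordinates are illustrative.

```python
def _area(box):
    x1, y1, x2, y2 = box
    return max(0.0, x2 - x1) * max(0.0, y2 - y1)


def _intersection_and_union(a, b):
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    return inter, _area(a) + _area(b) - inter


def iou_loss(a, b):
    inter, union = _intersection_and_union(a, b)
    iou = inter / union if union > 0 else 0.0
    return 1.0 - iou


def giou_loss(a, b):
    inter, union = _intersection_and_union(a, b)
    iou = inter / union if union > 0 else 0.0
    # Smallest axis-aligned box enclosing both inputs.
    enclosing = (min(a[0], b[0]), min(a[1], b[1]), max(a[2], b[2]), max(a[3], b[3]))
    c_area = _area(enclosing)
    giou = iou - (c_area - union) / c_area if c_area > 0 else iou
    return 1.0 - giou


overlapping = ((0, 0, 2, 2), (1, 1, 3, 3))
disjoint = ((0, 0, 1, 1), (2, 2, 3, 3))

for name, (a, b) in [("overlapping", overlapping), ("disjoint", disjoint)]:
    print(name, round(iou_loss(a, b), 4), round(giou_loss(a, b), 4))
```

For disjoint boxes the IoU loss saturates at 1 regardless of how far apart the boxes are, whereas the GIoU loss still varies with their separation, which is the motivation for the variant.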

10.5.3.35 Python Code to Generate Figure 102 Illustrating Dice Loss During Training

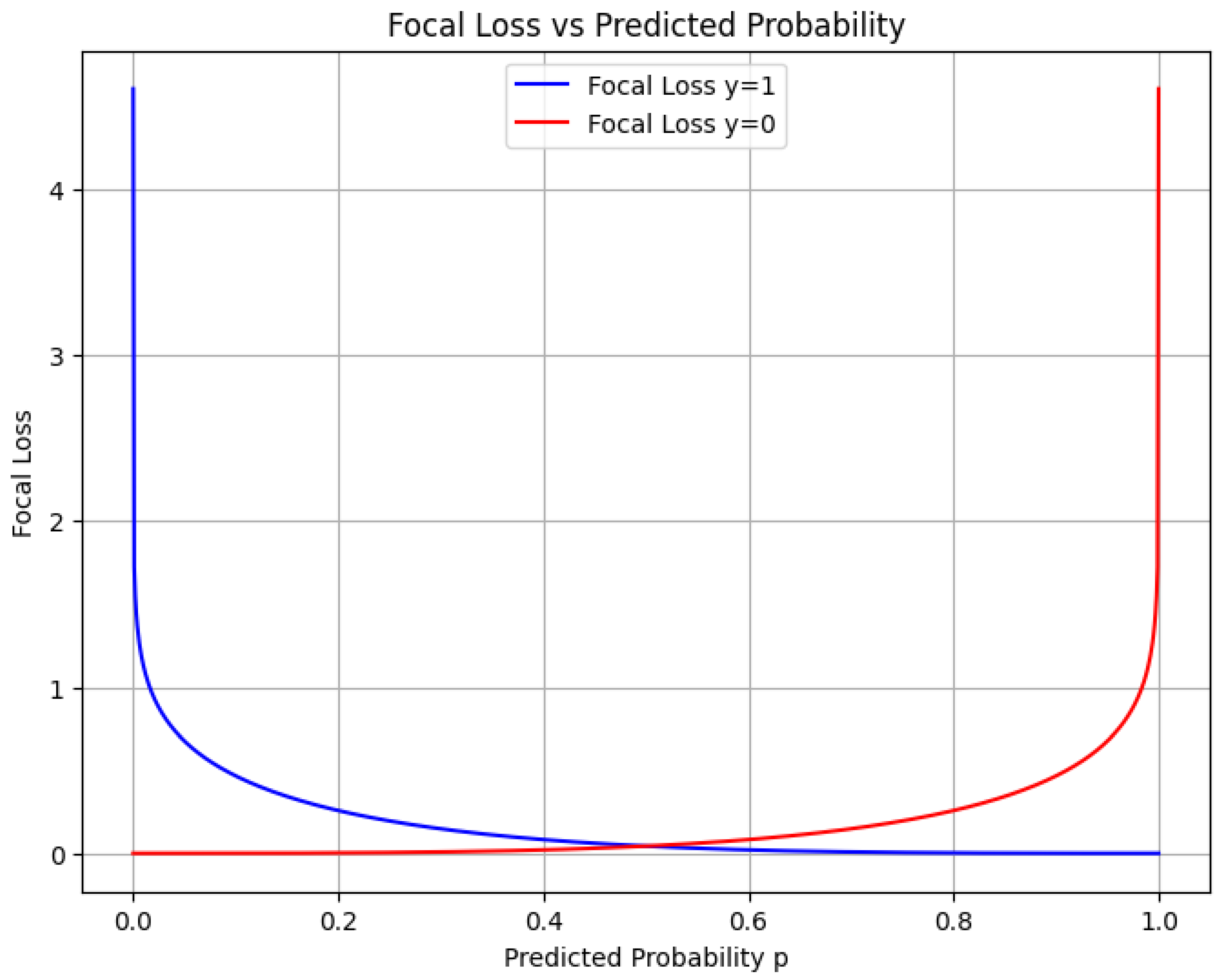

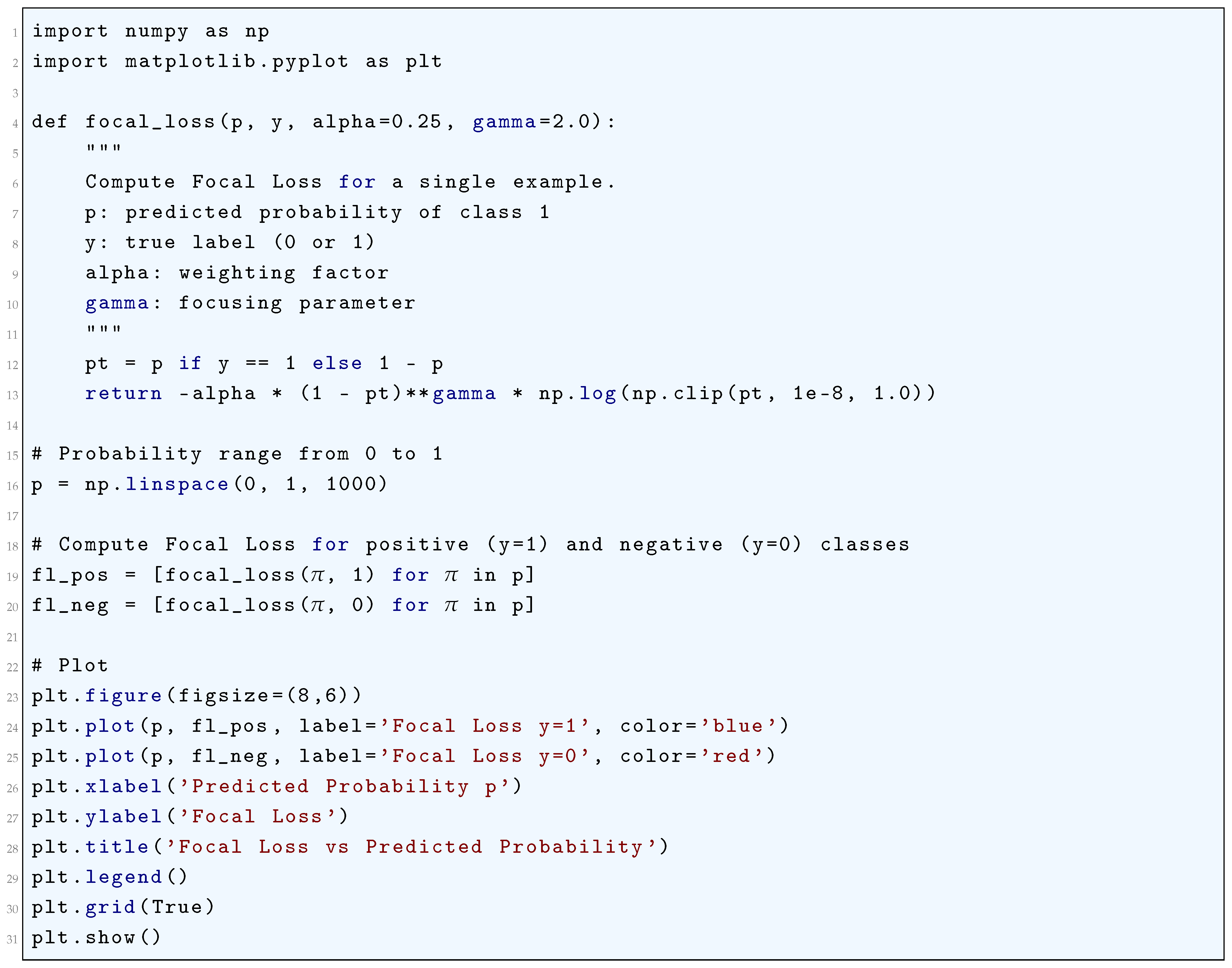

10.5.3.36 Python Code to Generate Figure 103 Illustrating Focal Loss vs Predicted Probability
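A minimal sketch of the binary focal loss $-\alpha (1 - p_t)^{\gamma} \log p_t$, where $p_t$ is the probability assigned to the true class. For simplicity it applies a single weight `alpha` to both classes, which is an assumption of this sketch (the class-balanced form uses a different weight per class). The function name and the default $\gamma = 2$ are illustrative.

```python
import math


def focal_loss(p_pred, y_true, gamma=2.0, alpha=1.0, eps=1e-12):
    """Binary focal loss for one sample; gamma = 0 and alpha = 1 give cross-entropy."""
    p_t = p_pred if y_true == 1 else 1.0 - p_pred
    p_t = min(max(p_t, eps), 1.0)          # keep the logarithm finite
    return -alpha * (1.0 - p_t) ** gamma * math.log(p_t)


for p in (0.1, 0.5, 0.9):
    ce = focal_loss(p, 1, gamma=0.0)
    fl = focal_loss(p, 1, gamma=2.0)
    print(f"p_t={p:.1f}  cross-entropy={ce:.4f}  focal={fl:.4f}")
```

The modulating factor $(1 - p_t)^{\gamma}$ shrinks the loss on well-classified samples far more than on misclassified ones, so training concentrates on the hard examples.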

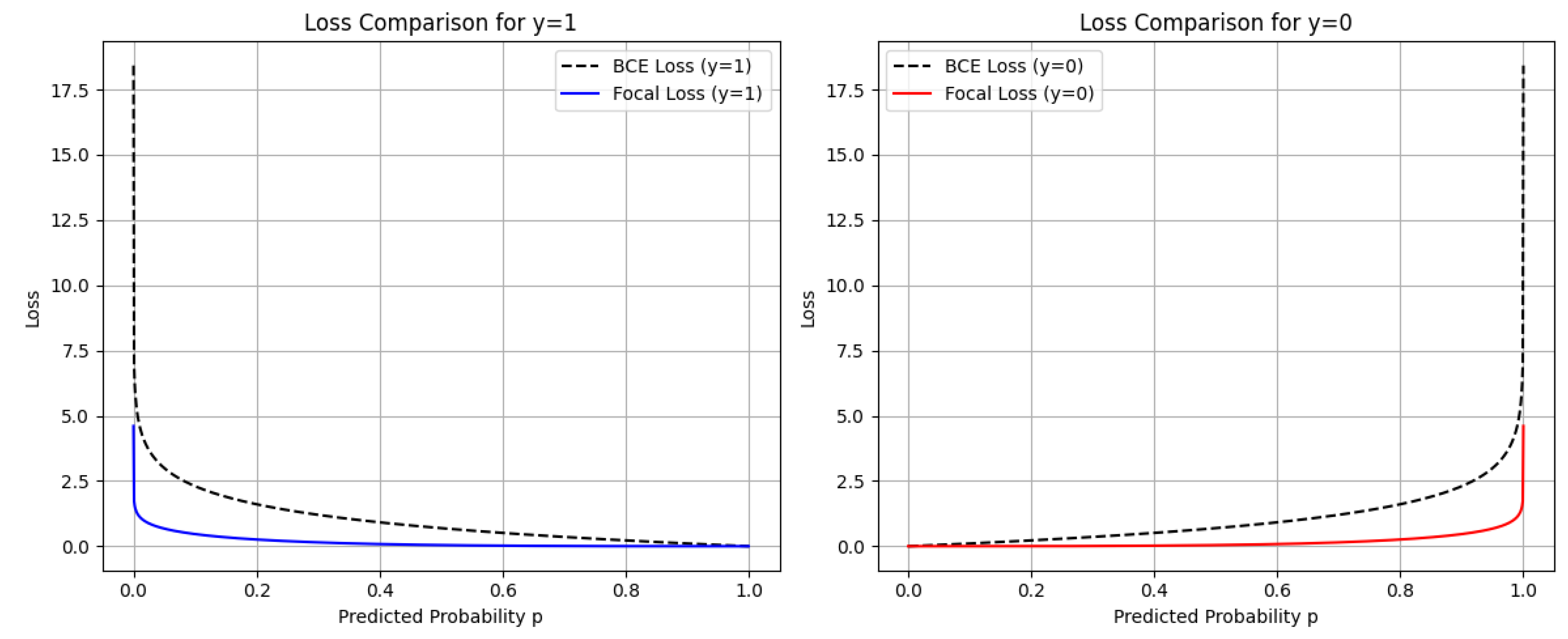

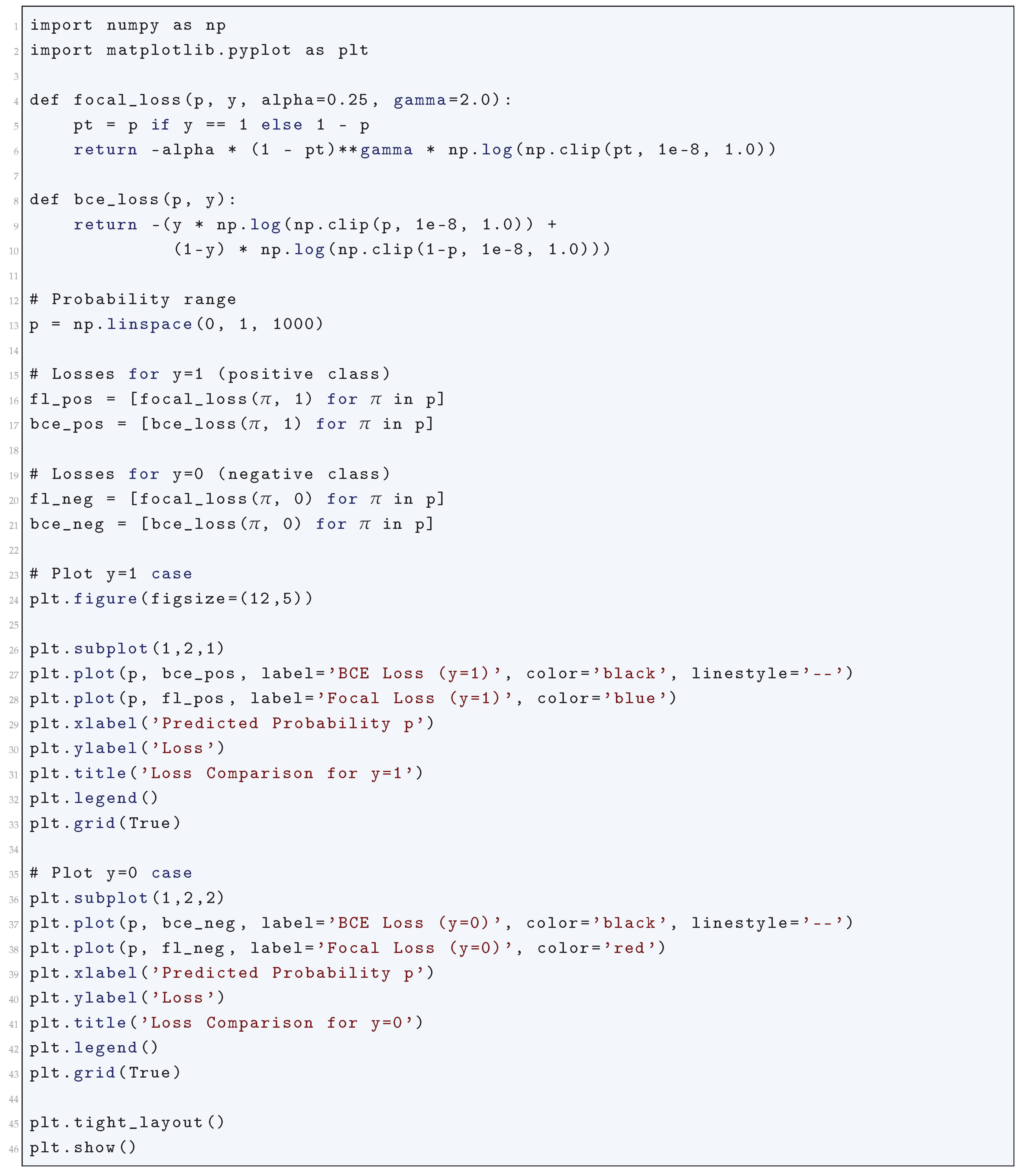

10.5.3.37 Python Code to Generate Figure 104 Illustrating Focal Loss vs Standard Binary Cross-Entropy

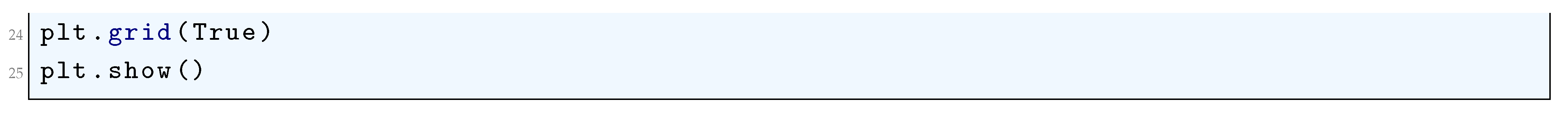

10.5.3.38 Python Code to Generate Figure 105 Illustrating Hinge Loss vs Prediction Score

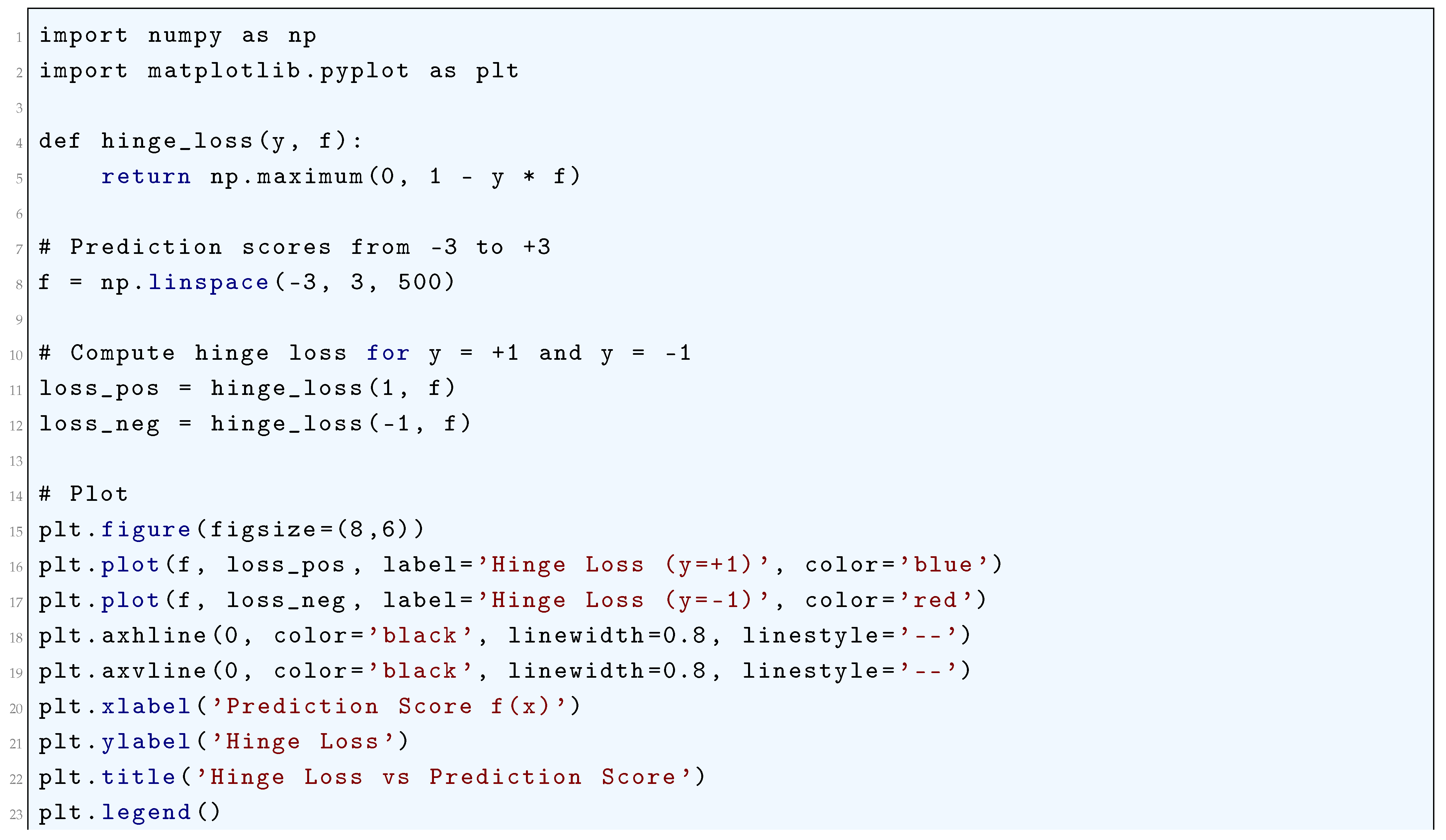

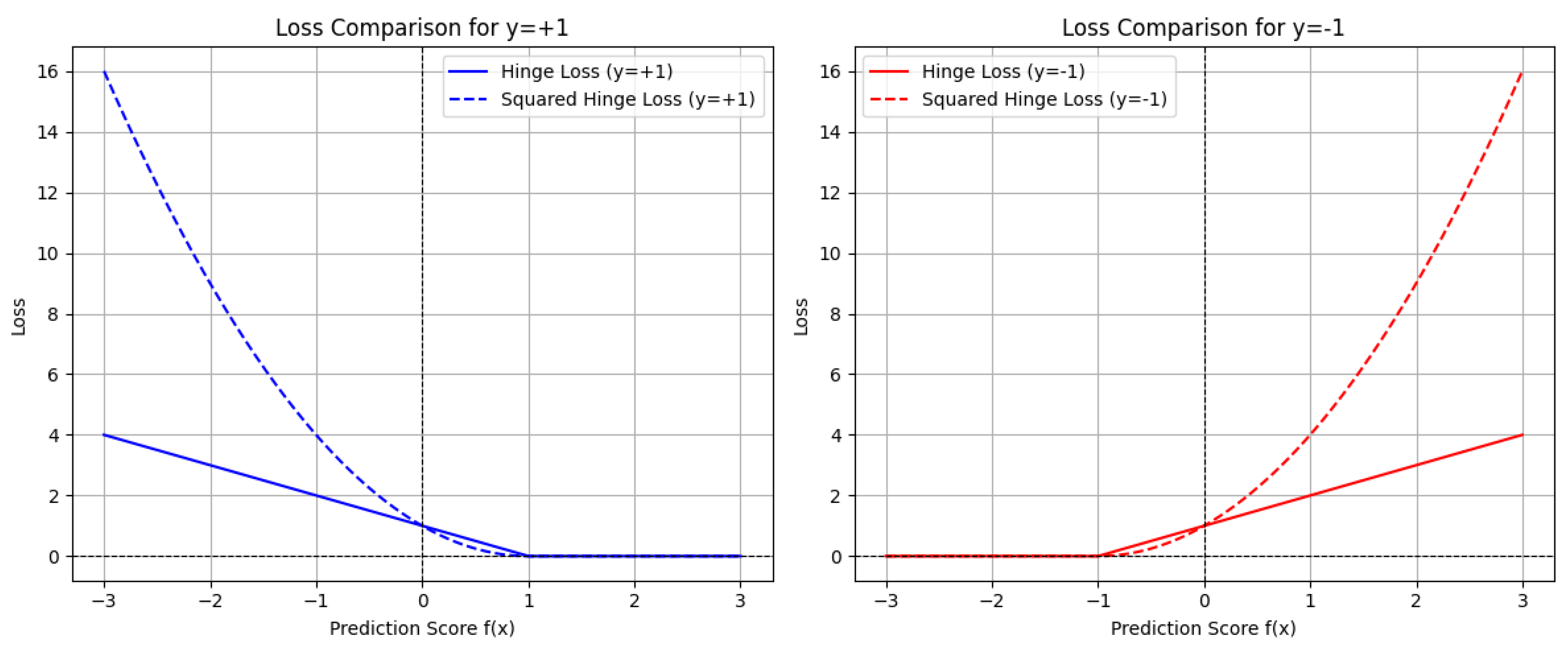

10.5.3.39 Python Code to Generate Figure 106 Illustrating Comparison of the Standard Hinge Loss and the Squared Hinge Loss

10.5.3.40 Python Code to Generate Figure 107 Illustrating Value Loss (MSE) for Different Targets

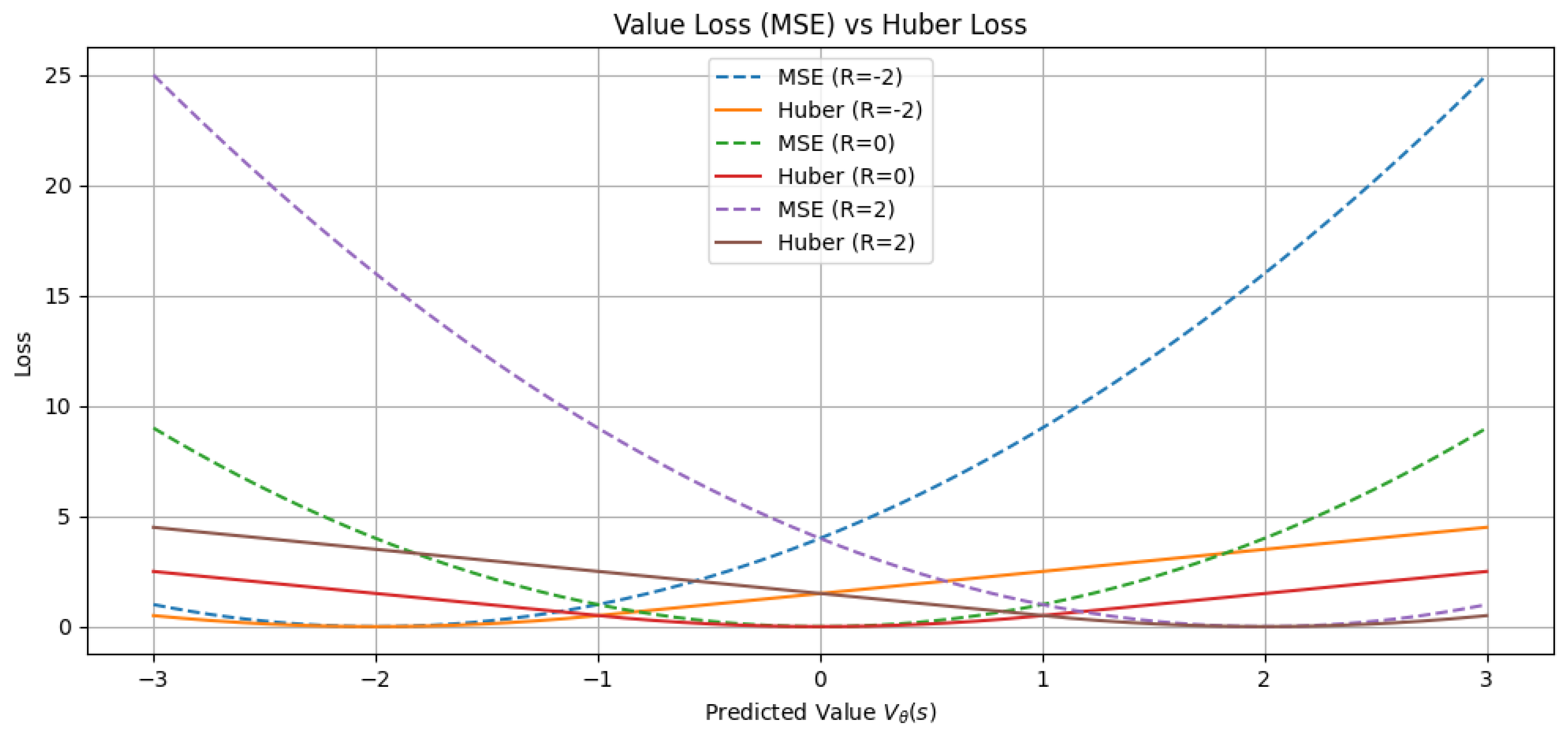

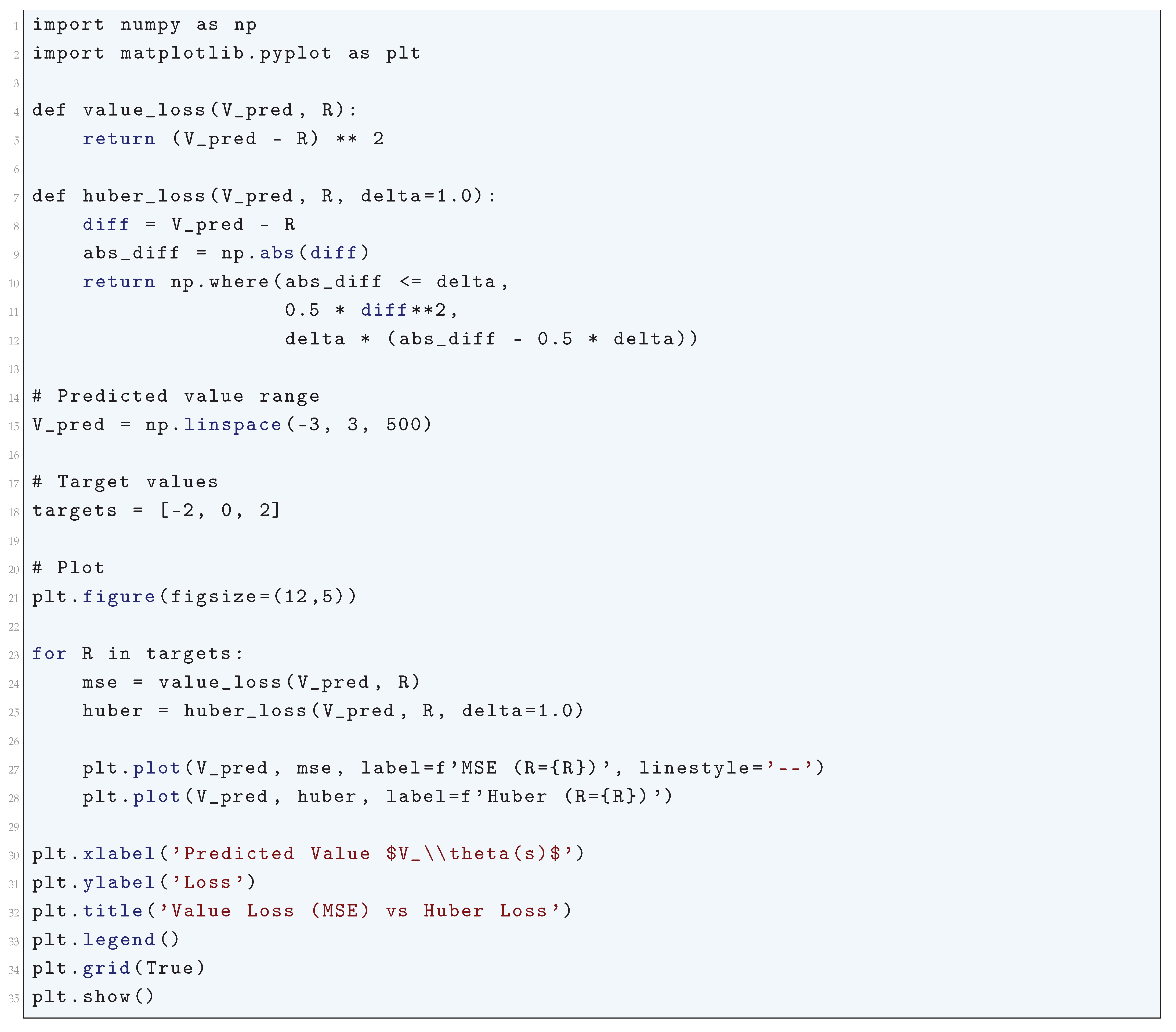

10.5.3.41 Python Code to Generate Figure 108 Illustrating Value Loss (MSE) vs Huber Loss

10.5.3.42 Python Code to Generate Figure 109 Illustrating Policy Loss vs Action Probability

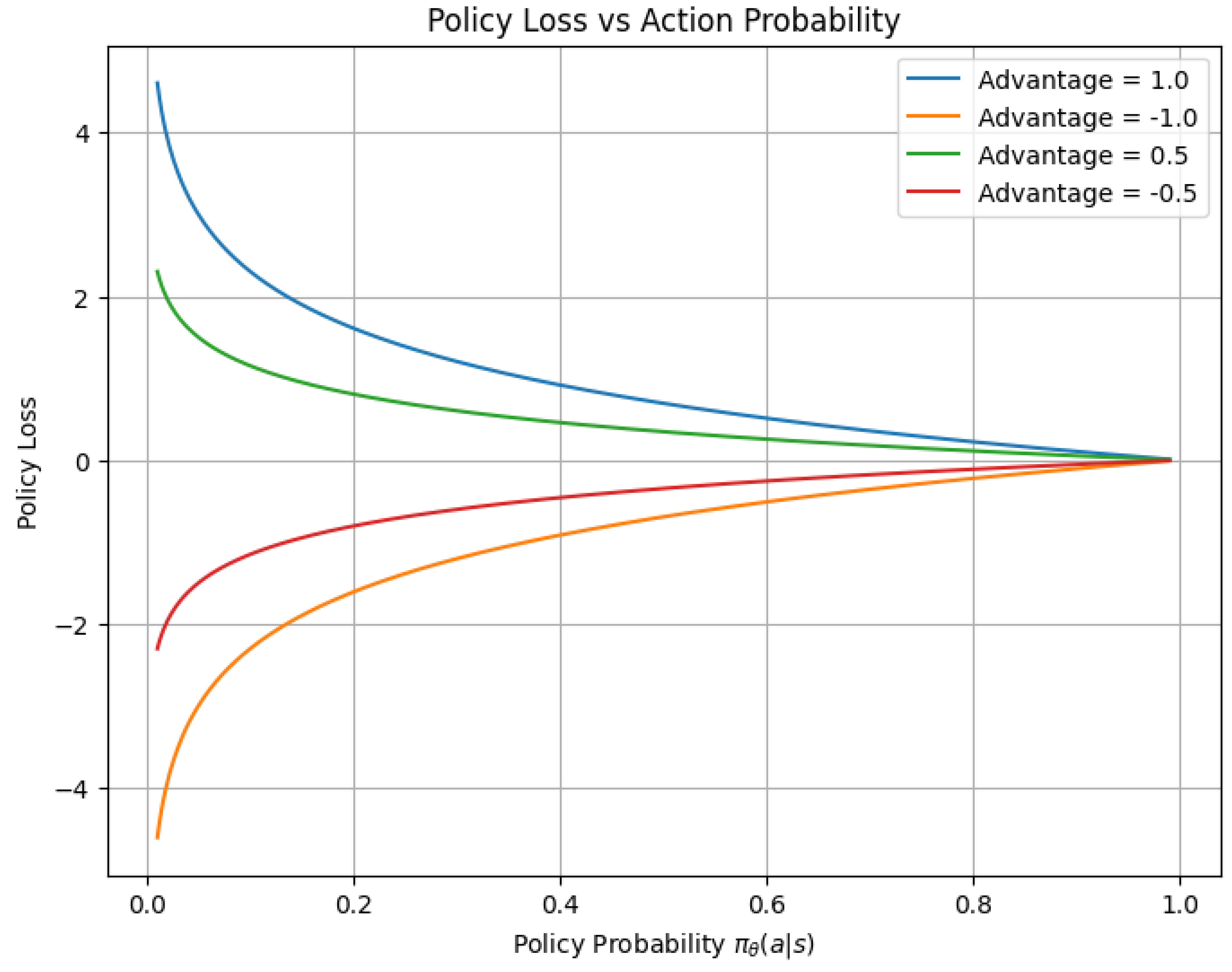

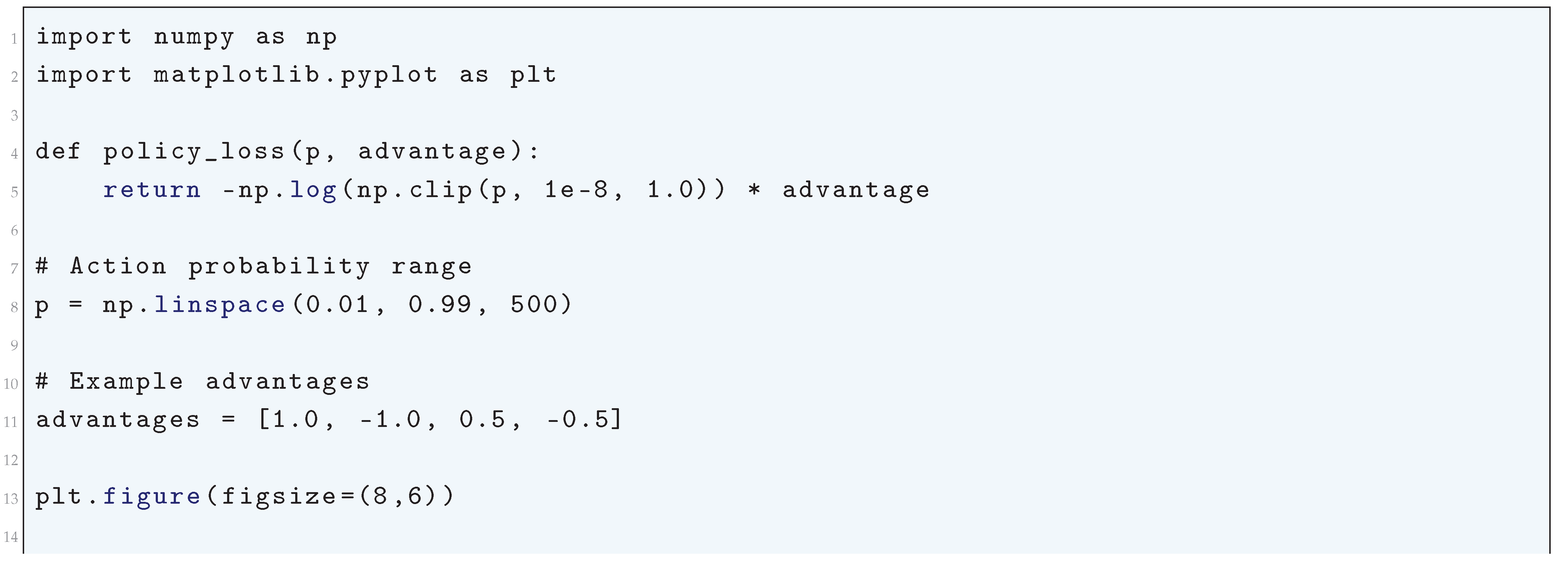

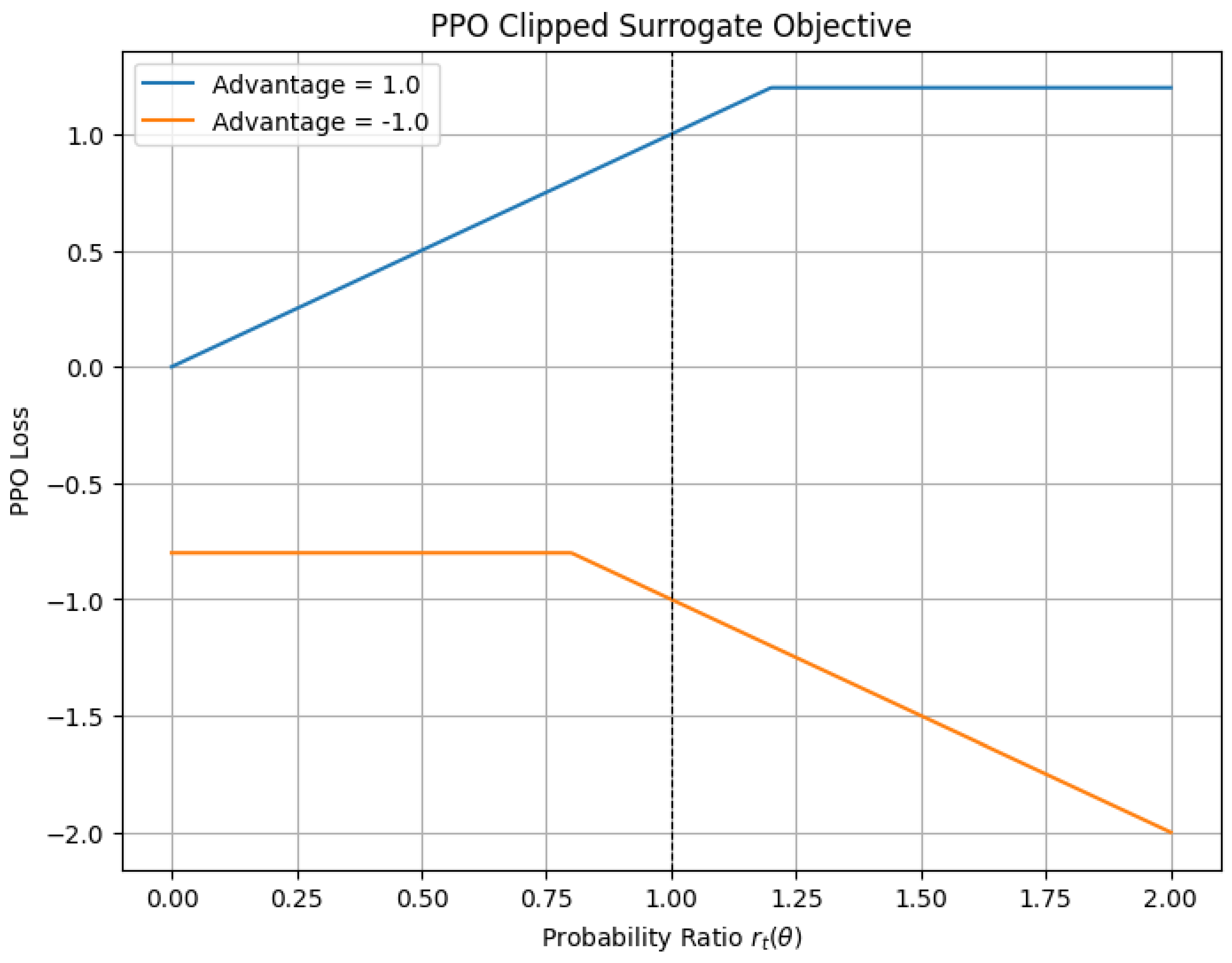

10.5.3.43 Python Code to Generate Figure 110 Illustrating PPO Clipped Surrogate Objective
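A minimal sketch of the PPO clipped surrogate objective for a single sample, $\min\big(r A,\ \mathrm{clip}(r, 1 - \epsilon, 1 + \epsilon)\, A\big)$, where $r$ is the probability ratio between the new and old policies and $A$ is the advantage estimate. The function names and $\epsilon = 0.2$ are illustrative assumptions; in training, the loss is the negative of the batch mean of this quantity.

```python
def clip(value, low, high):
    return max(low, min(high, value))


def ppo_clipped_objective(ratio, advantage, epsilon=0.2):
    """Per-sample clipped surrogate objective (to be maximized)."""
    unclipped = ratio * advantage
    clipped = clip(ratio, 1.0 - epsilon, 1.0 + epsilon) * advantage
    return min(unclipped, clipped)


cases = [
    (1.5, 1.0),    # good action, ratio too high: gain is capped
    (0.5, 1.0),    # good action, ratio low: unclipped term is used
    (0.5, -1.0),   # bad action, ratio already low: penalty is capped
    (1.5, -1.0),   # bad action, ratio high: full penalty applies
]
for ratio, advantage in cases:
    print(ratio, advantage, ppo_clipped_objective(ratio, advantage))
```

Taking the minimum makes the objective a pessimistic bound: the policy gains nothing from pushing the ratio outside the clipping range in the favorable direction, but is still penalized in full when it moves in the unfavorable one.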

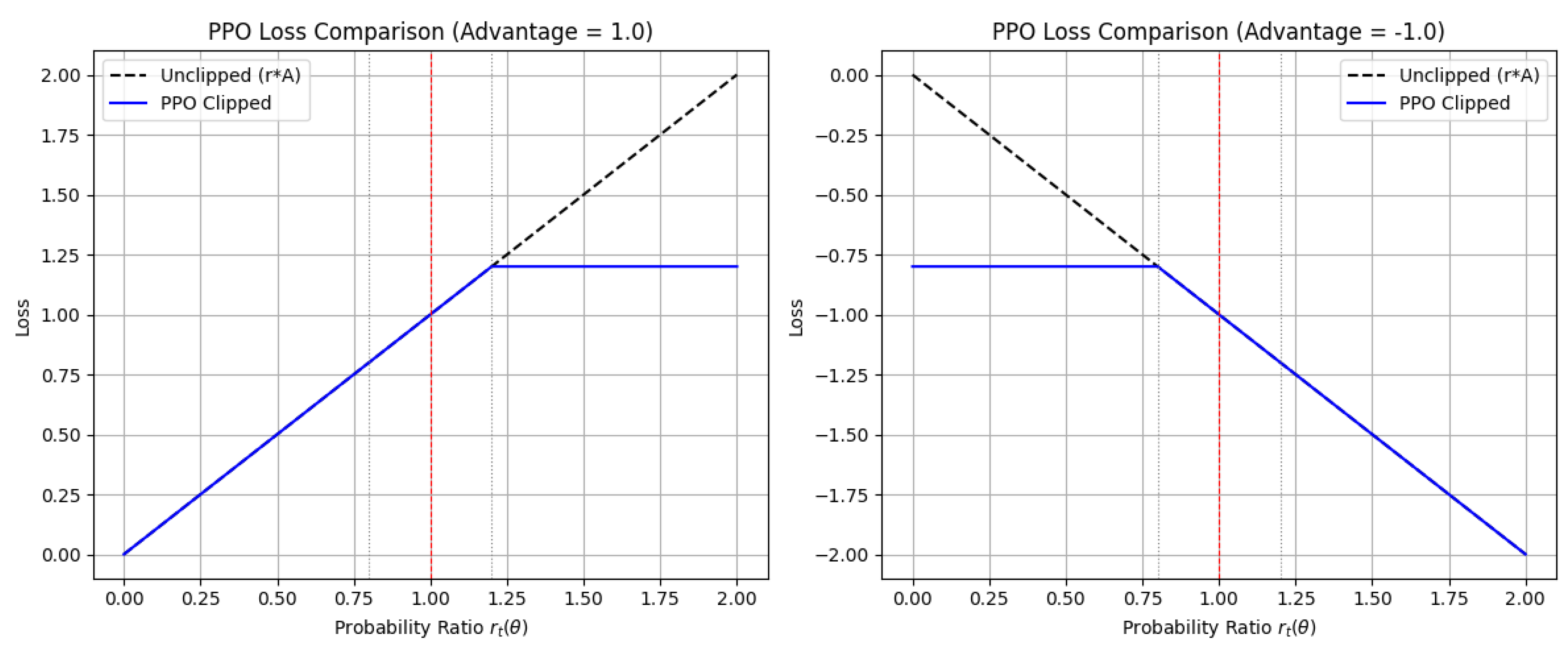

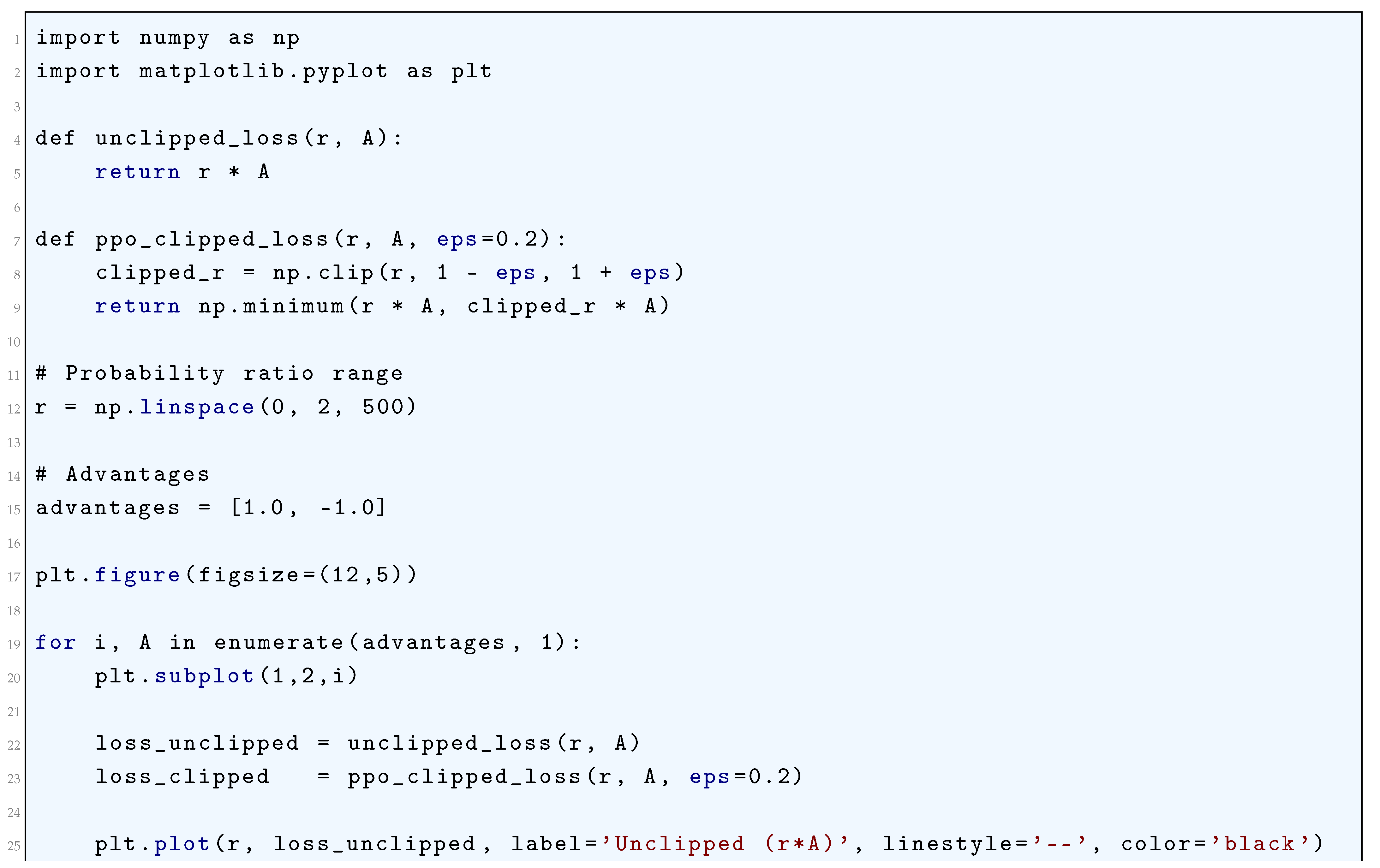

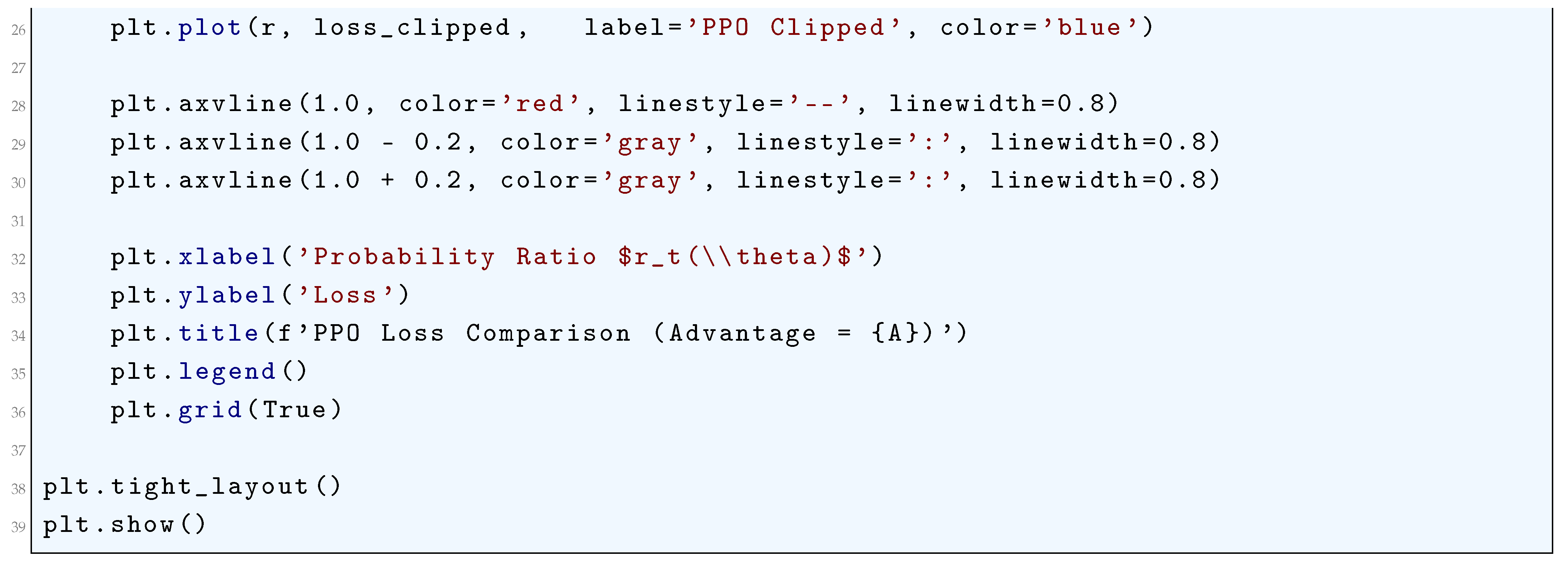

10.5.3.44 Python Code to Generate Figure 111 Illustrating PPO Loss Comparison

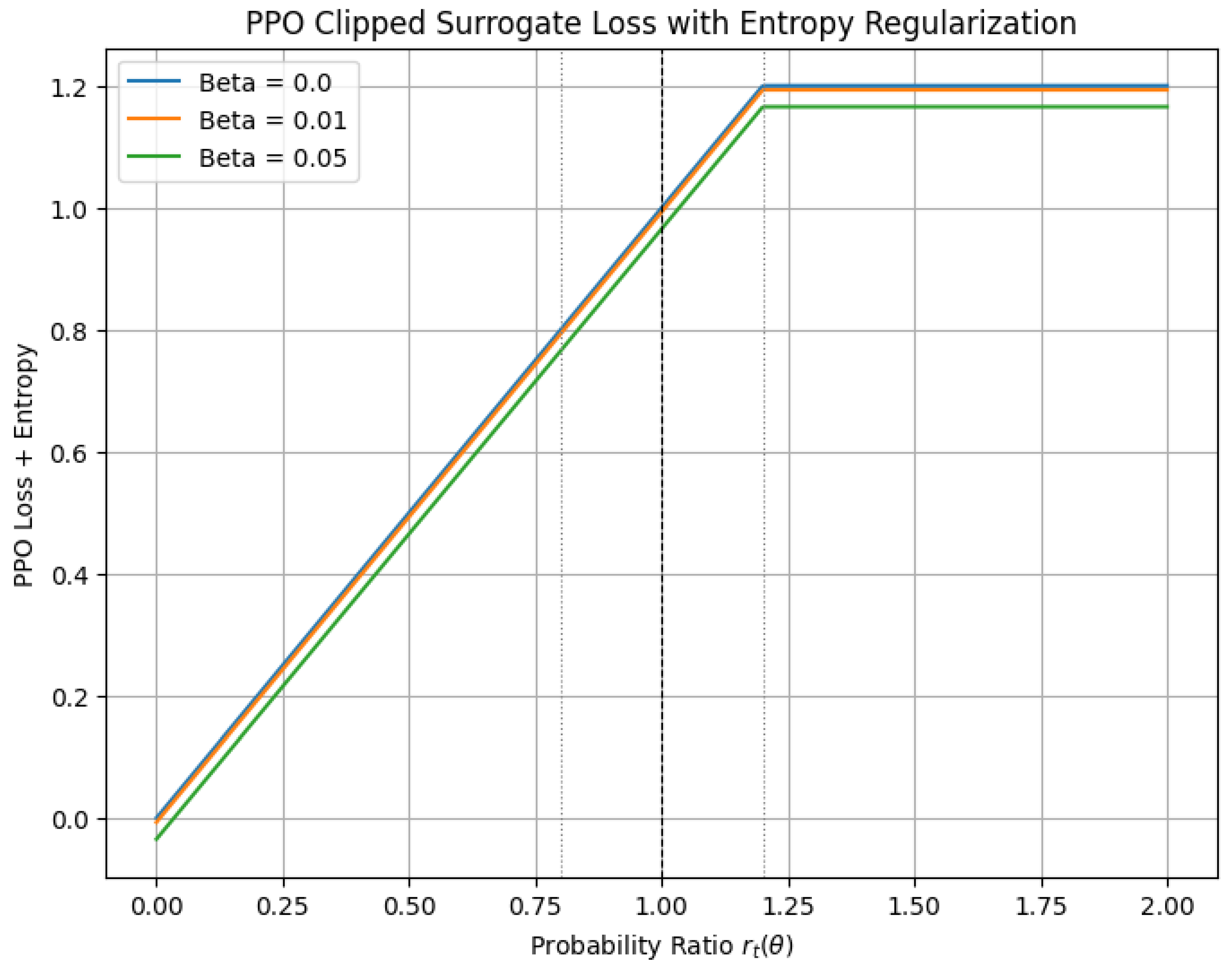

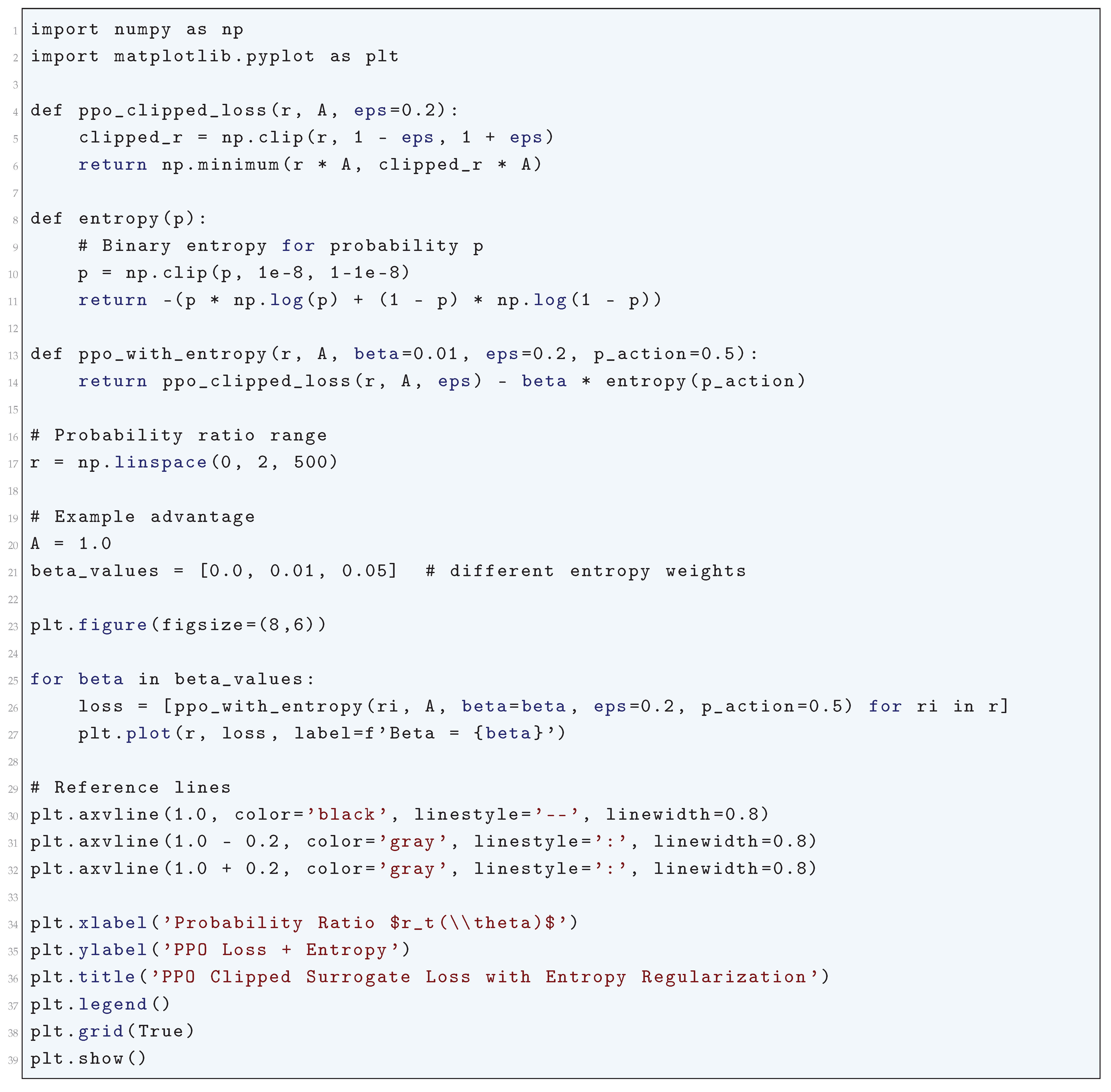

10.5.3.45 Python Code to Generate Figure 112 Illustrating PPO Clipped Surrogate Loss with Entropy Regularization

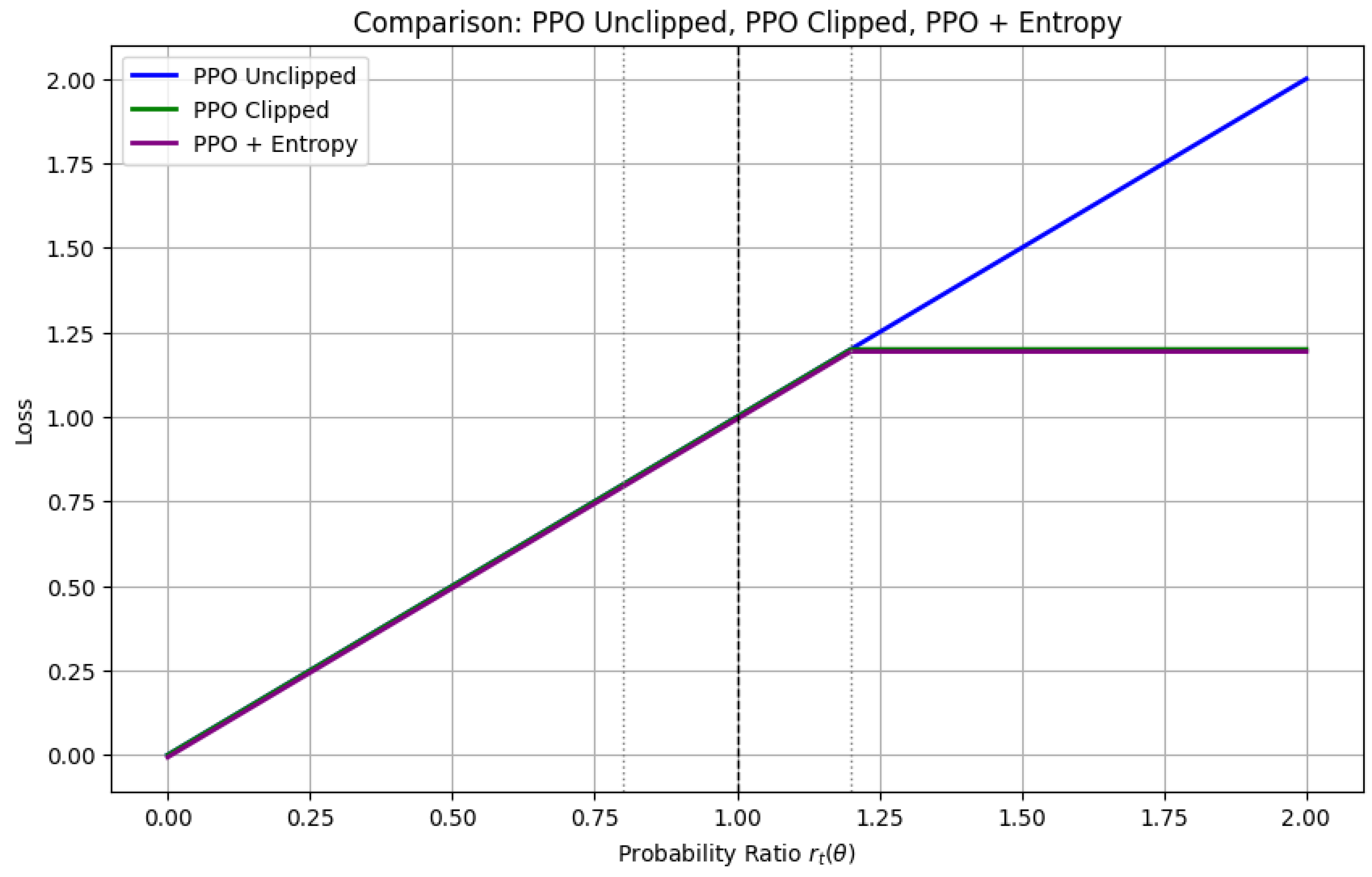

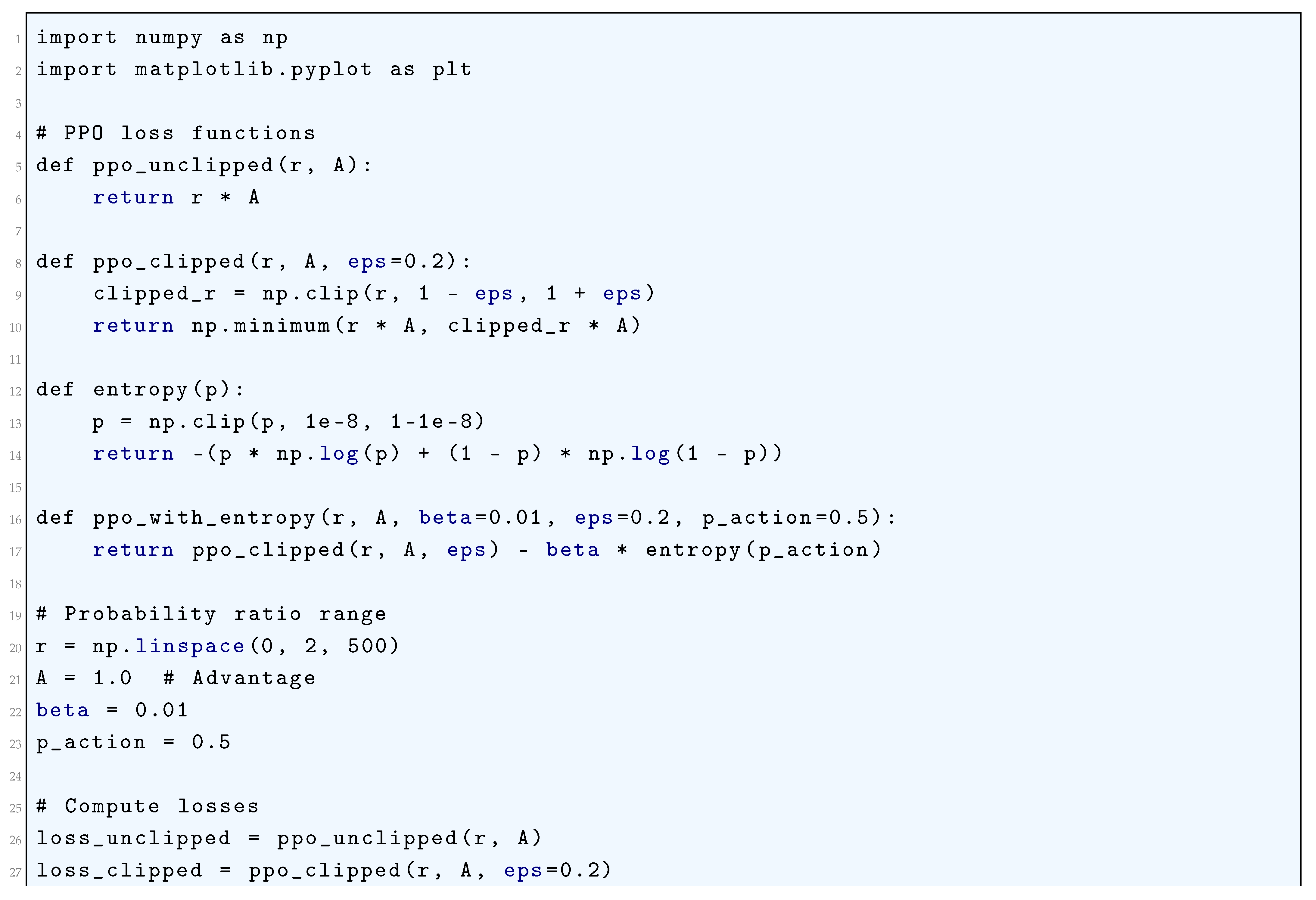

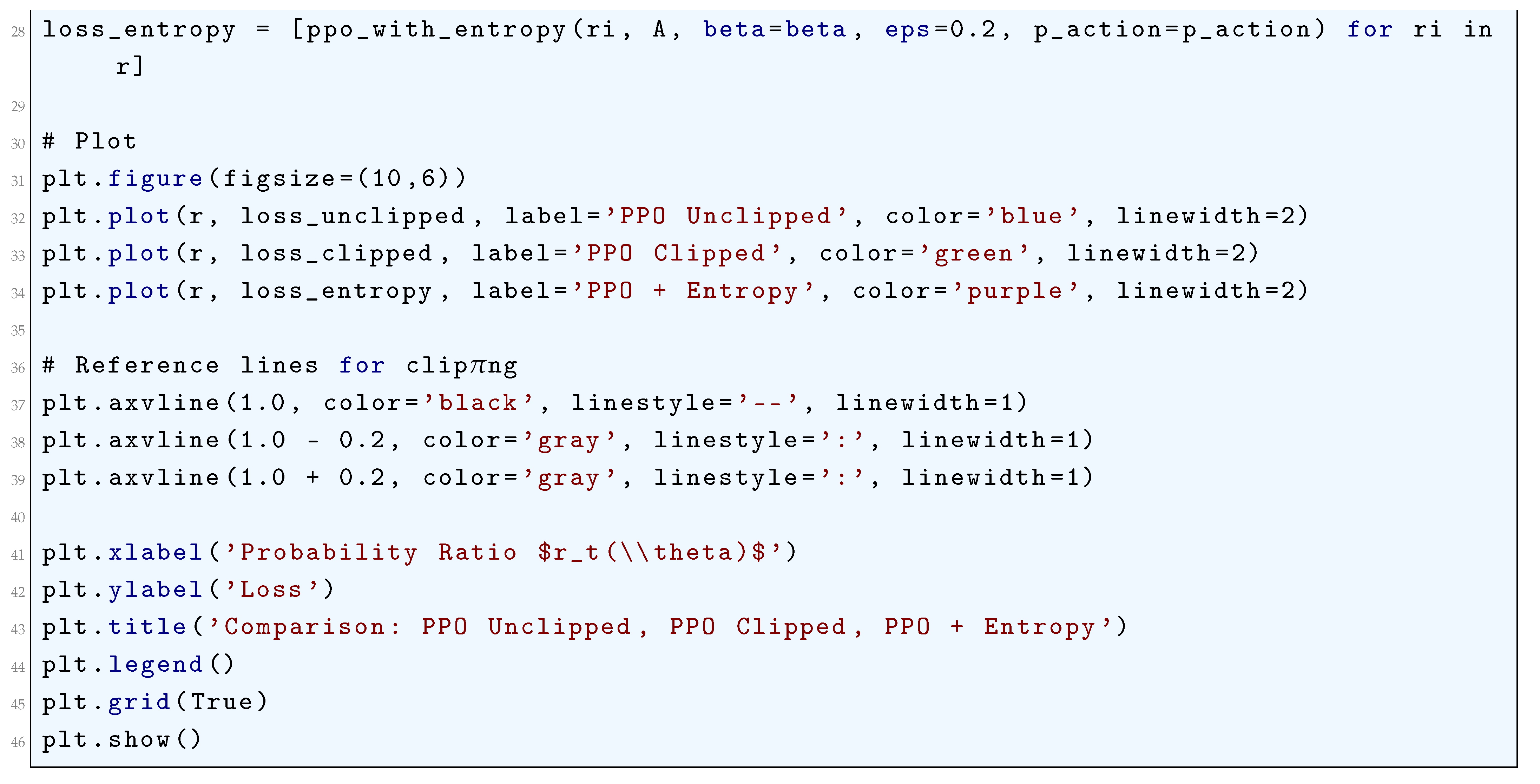

10.5.3.46 Python Code to Generate Figure 113 Comparing PPO Unclipped, PPO Clipped, PPO + Entropy

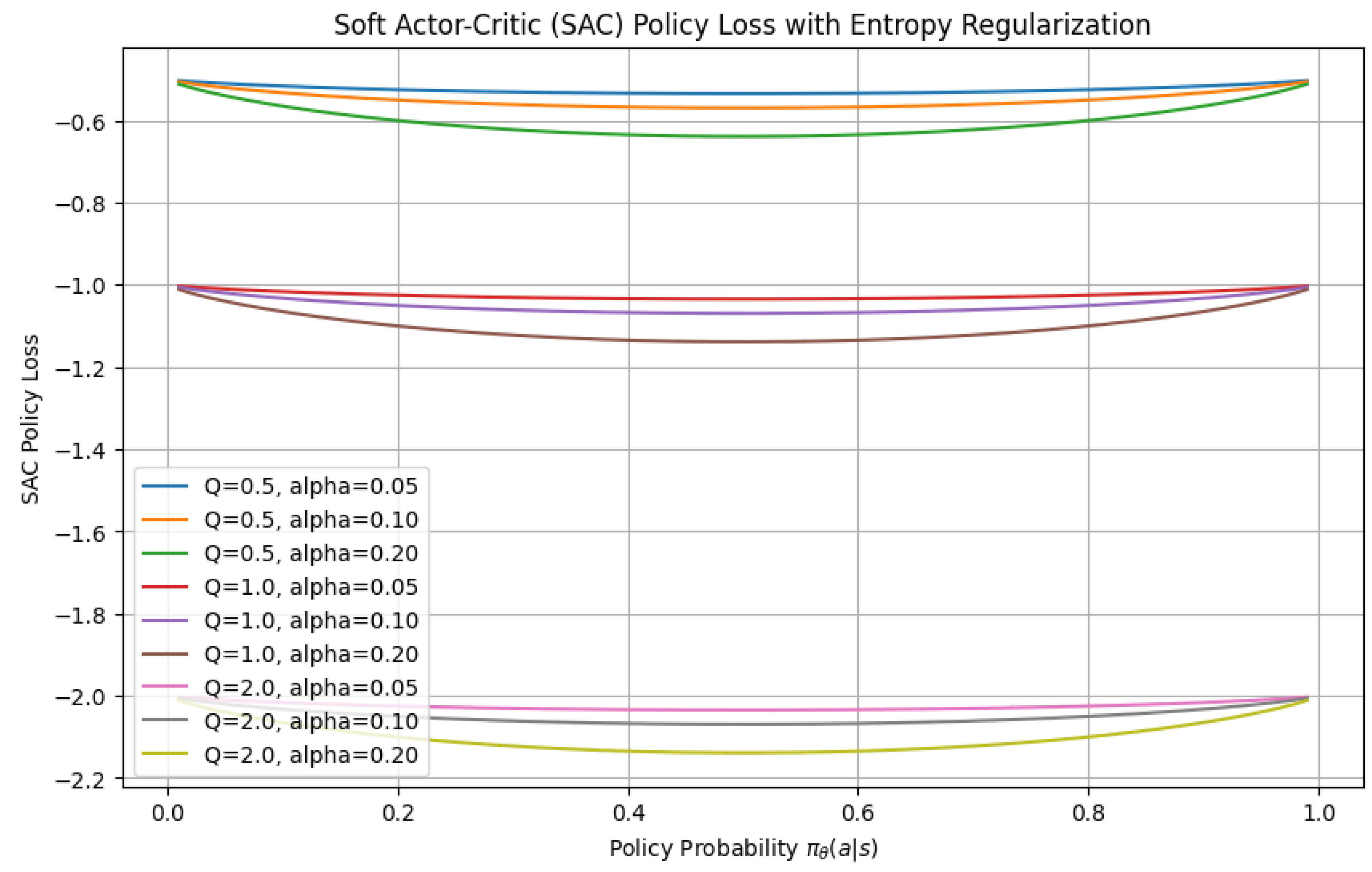

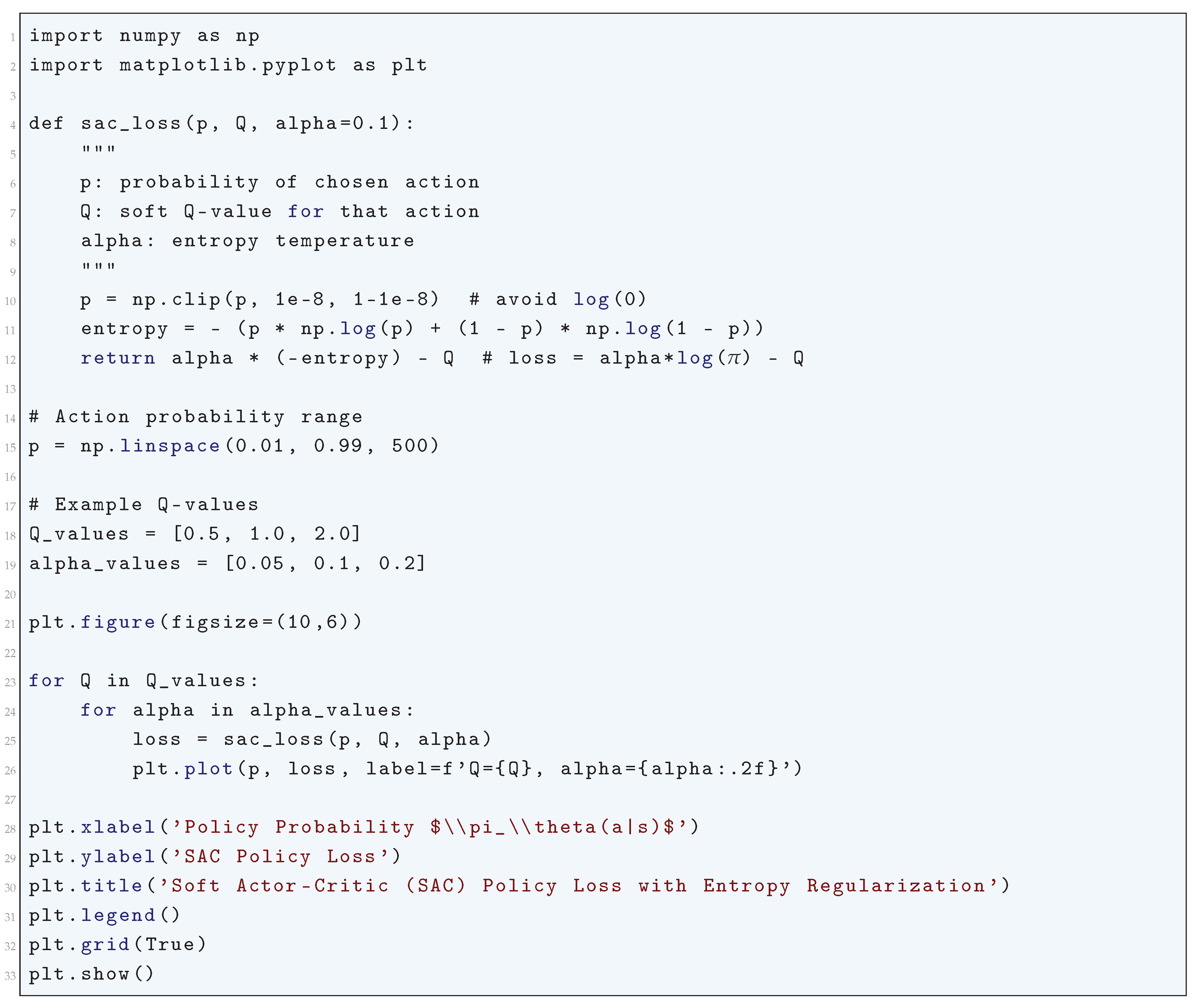

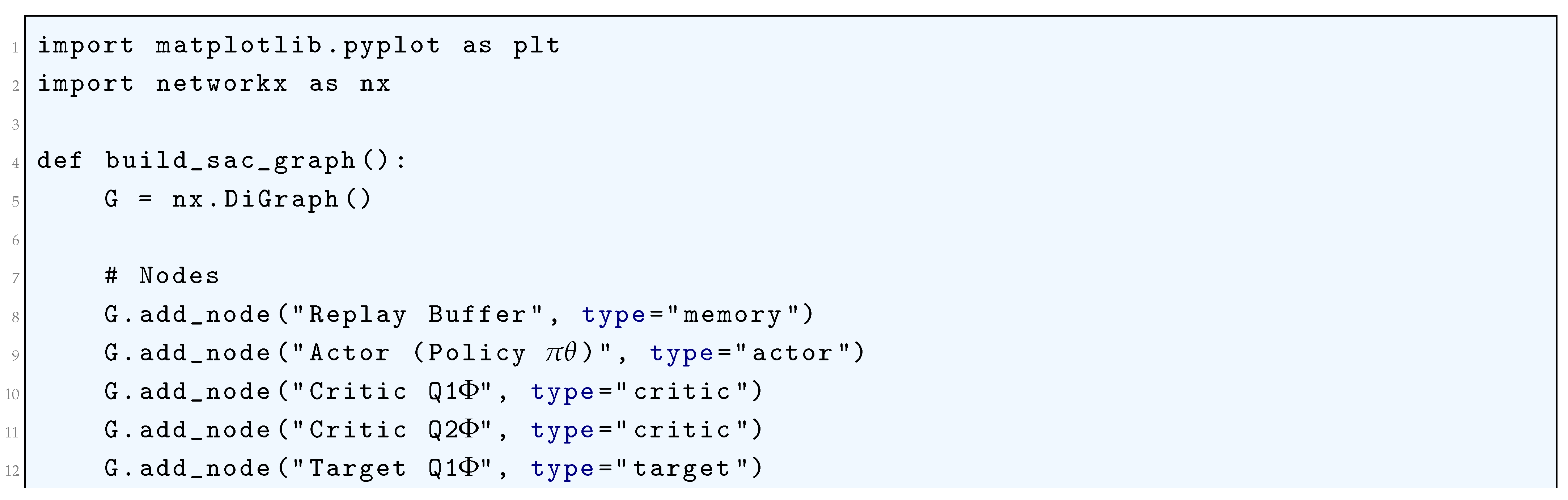

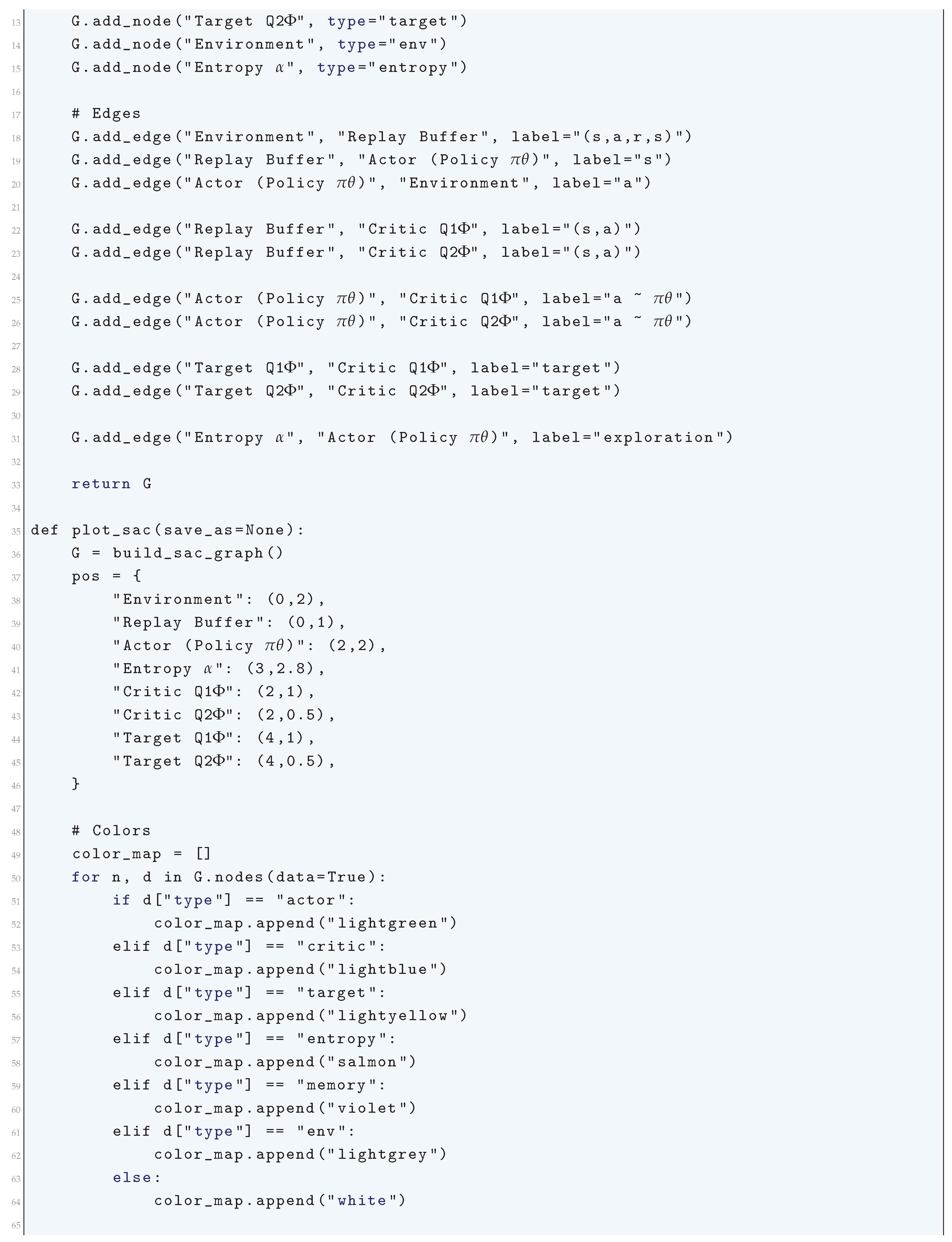

10.5.3.47 Python Code to Generate Figure 114 Illustrating Soft Actor-Critic (SAC) Policy Loss with Entropy Regularization

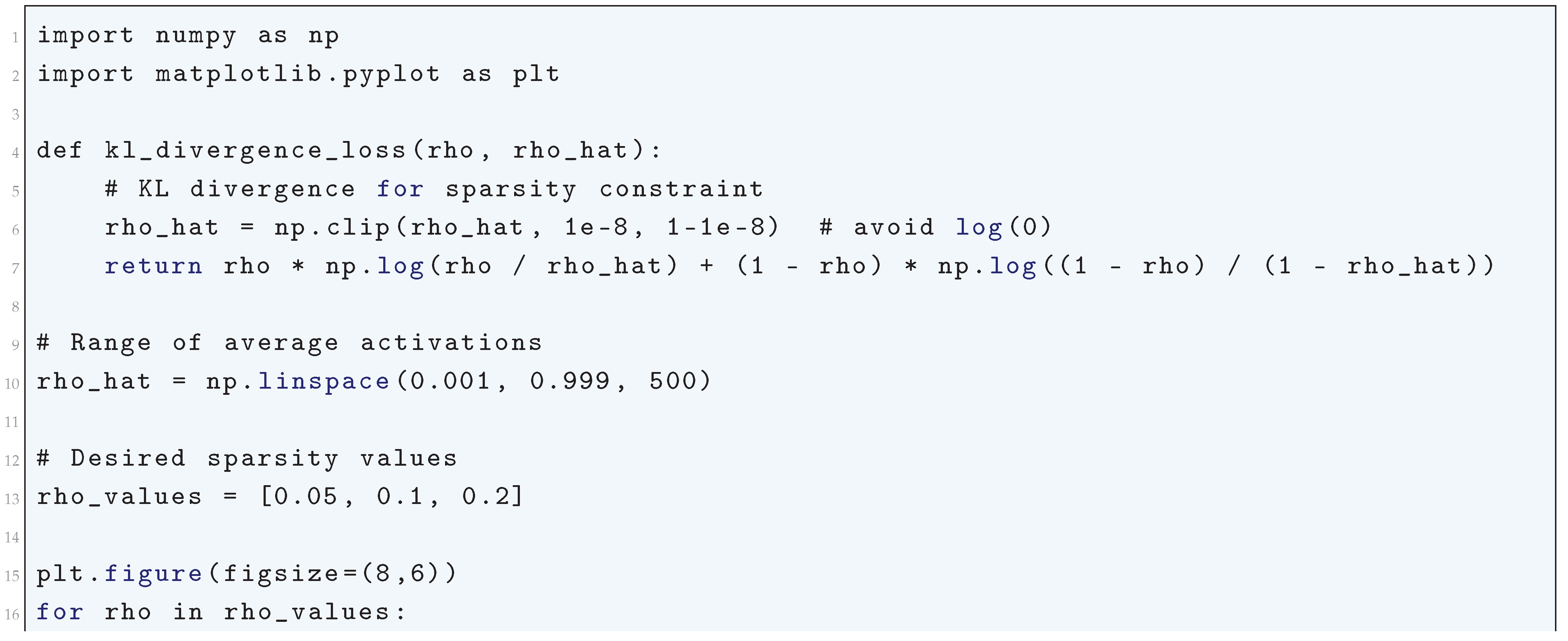

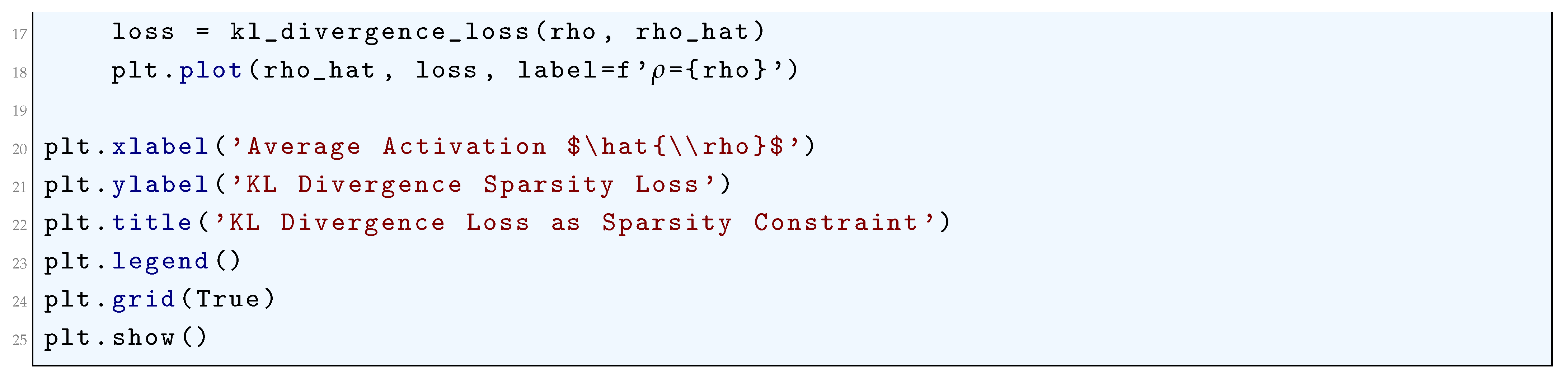

10.5.3.48 Python Code to Generate Figure 115 Illustrating KL Divergence Loss as Sparsity Constraint

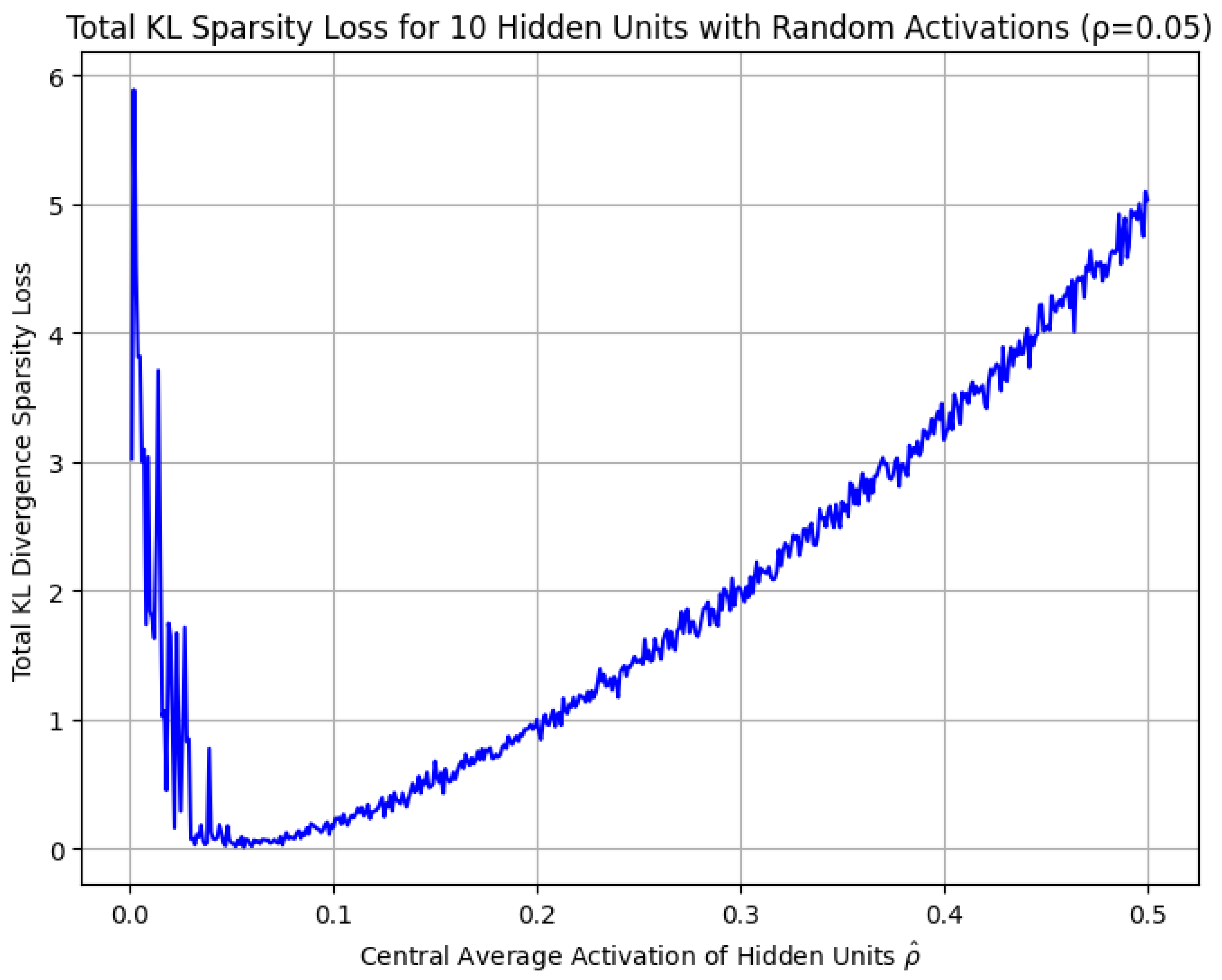

10.5.3.49 Python Code to Generate Figure 116 Illustrating Total KL Sparsity Loss for 10 Hidden Units ()

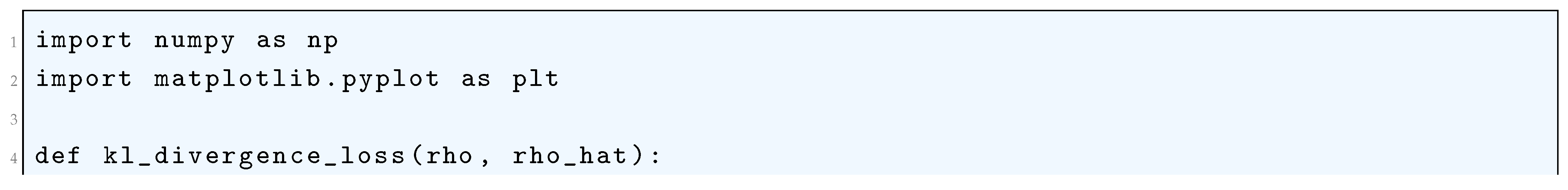

10.5.3.50 Python Code to Generate Figure 117 Illustrating Total KL Sparsity Loss for 10 Hidden Units with Random Activations ()

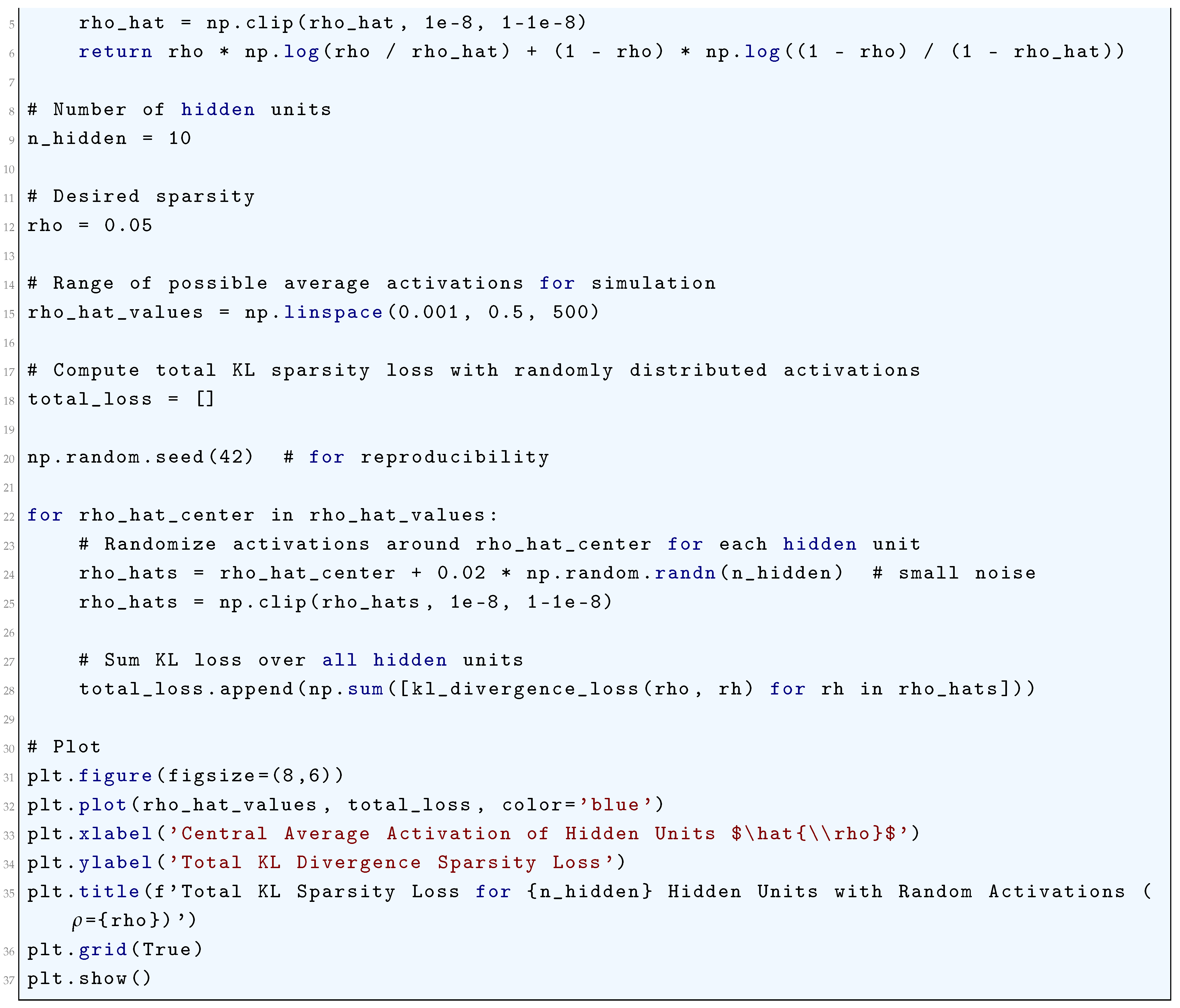

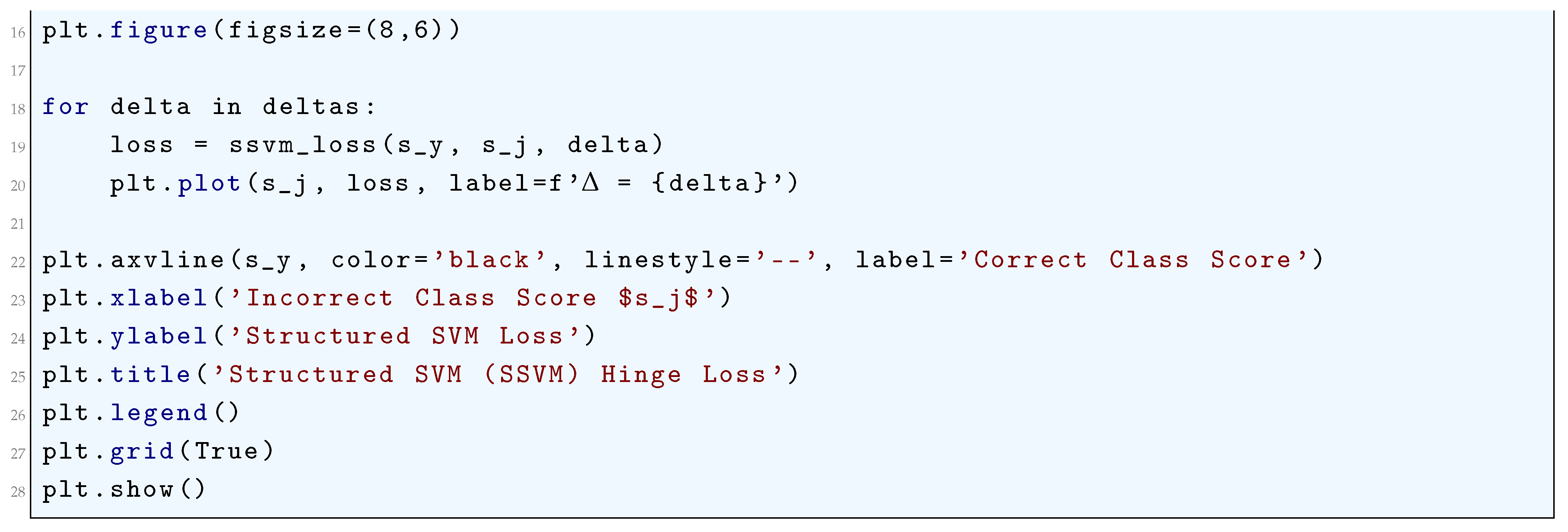

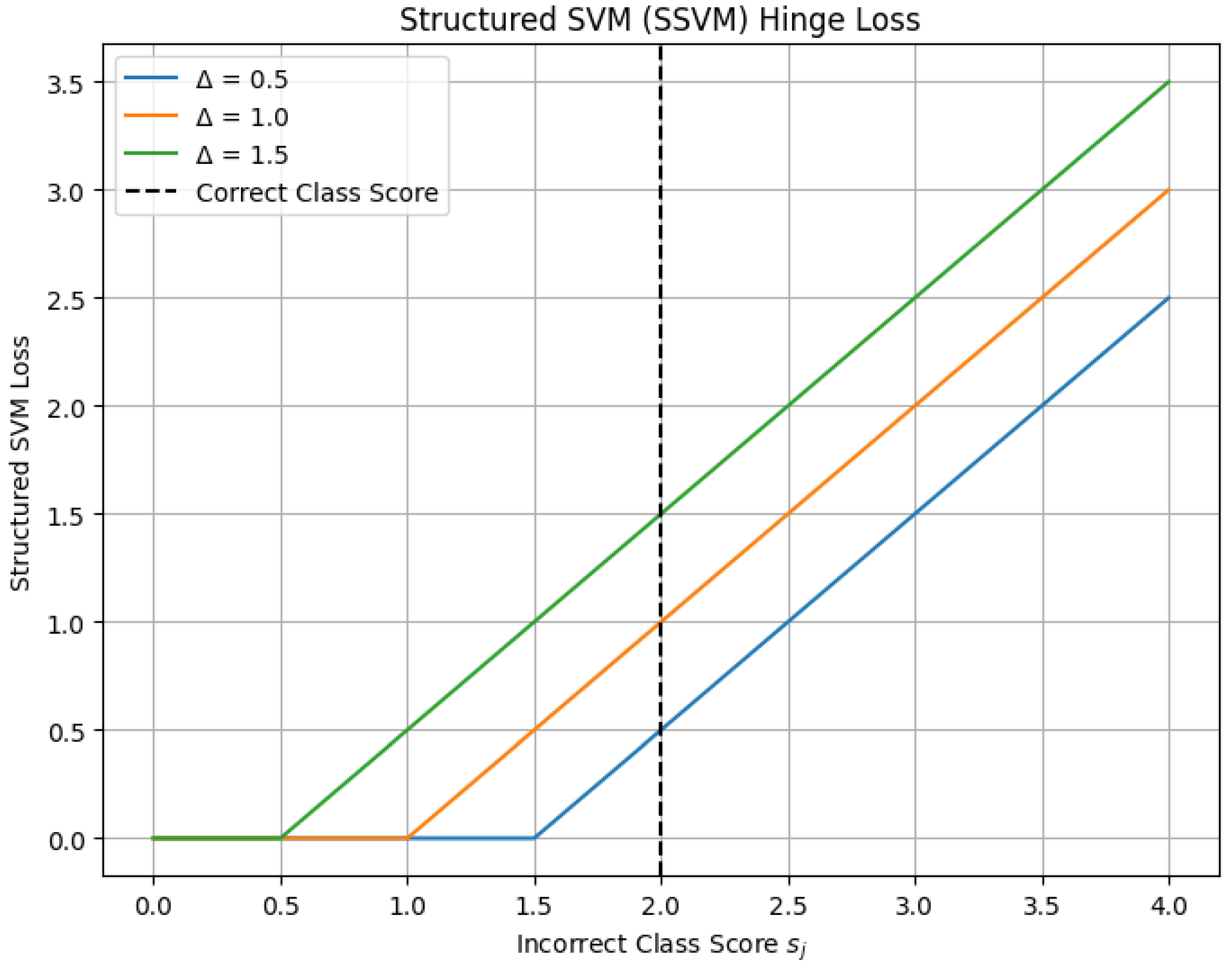

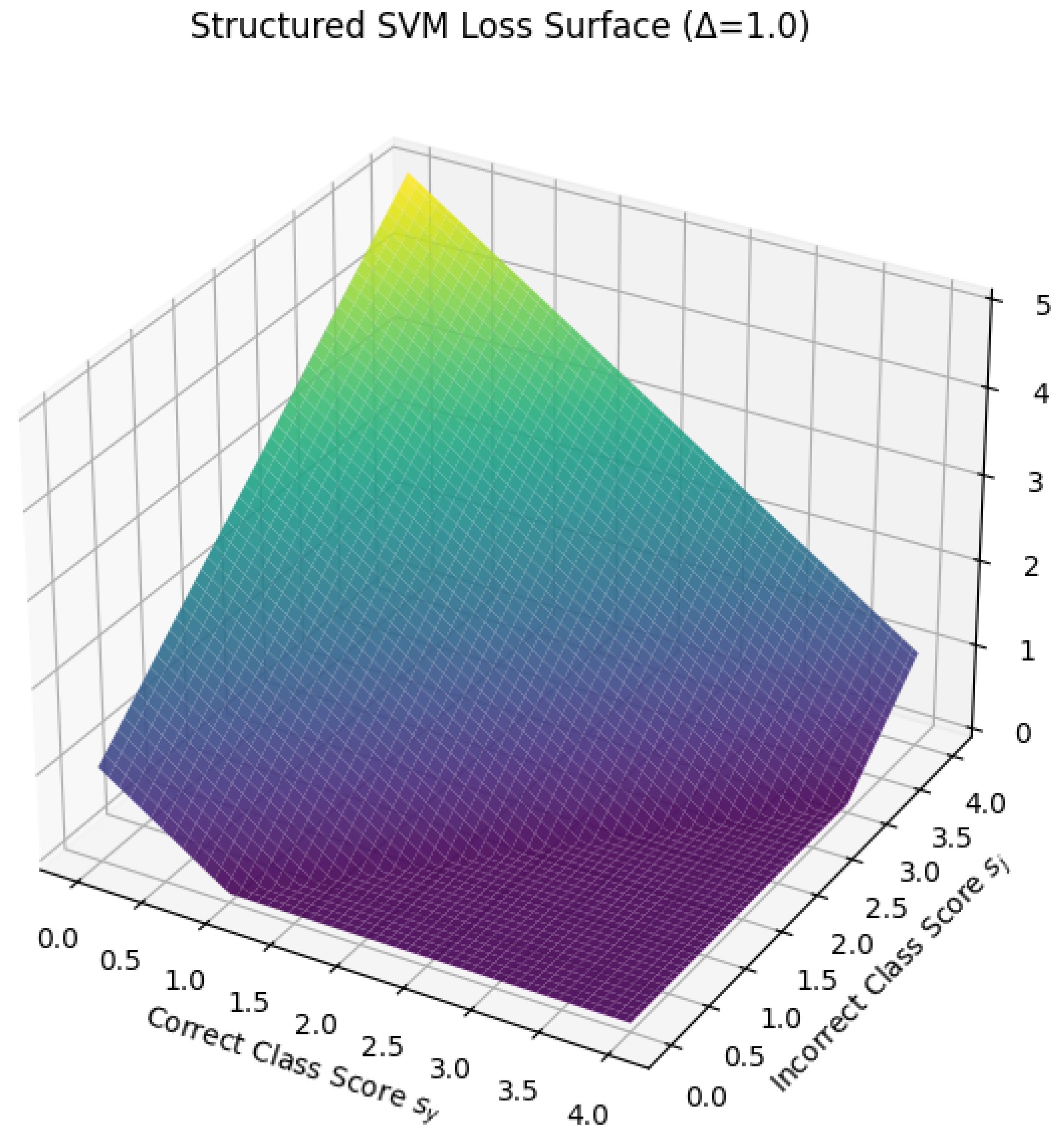

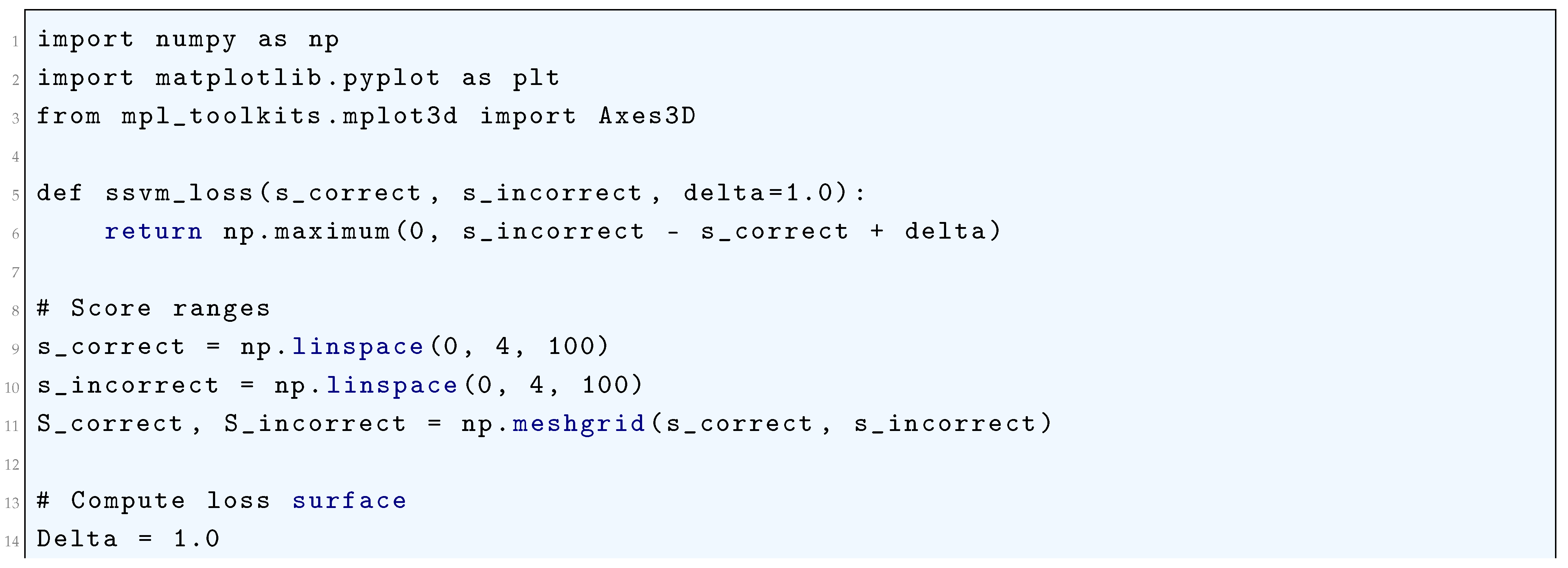

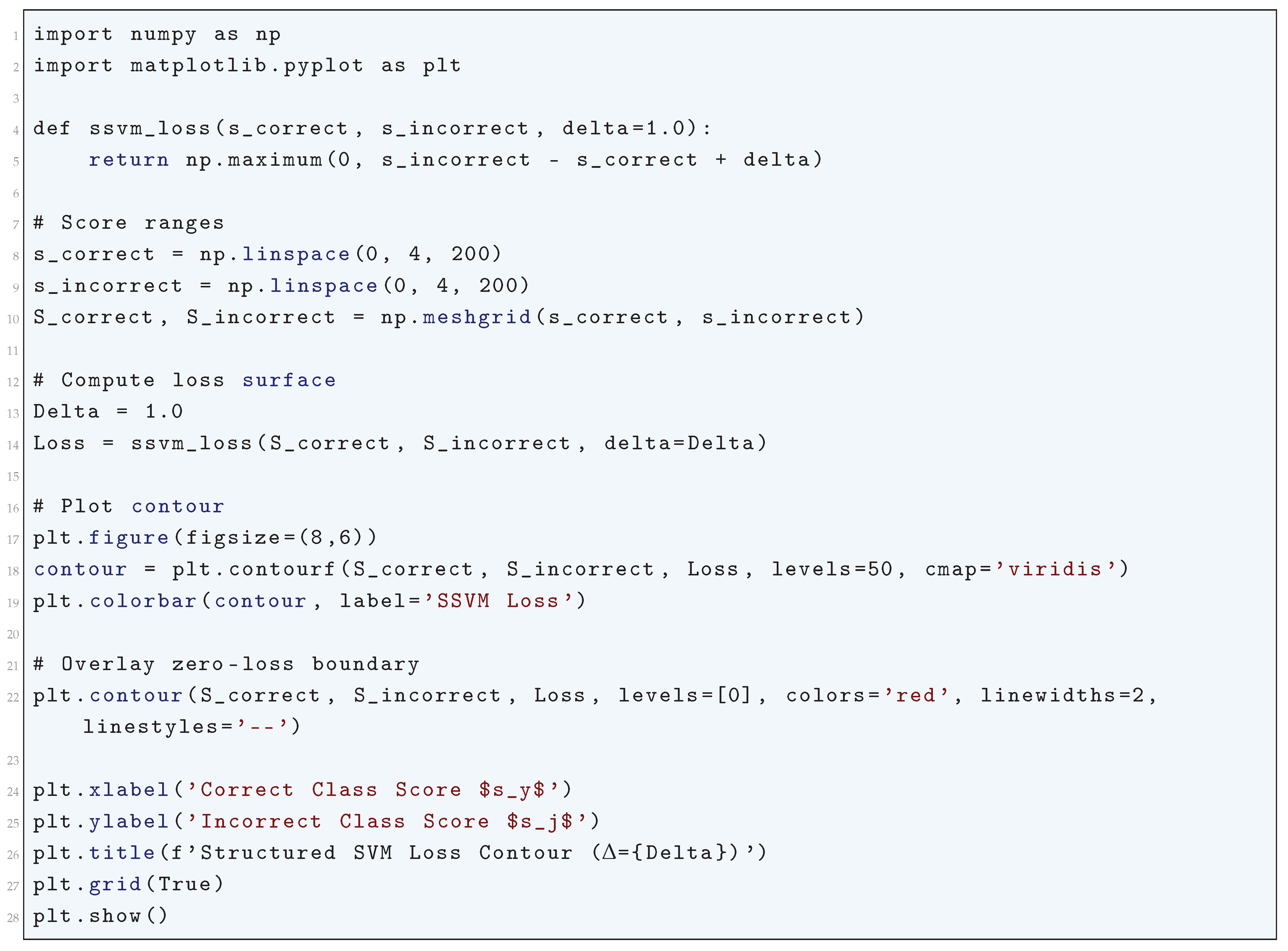

10.5.3.51 Python Code to Generate Figure 118 Illustrating Structured SVM (SSVM) Hinge Loss

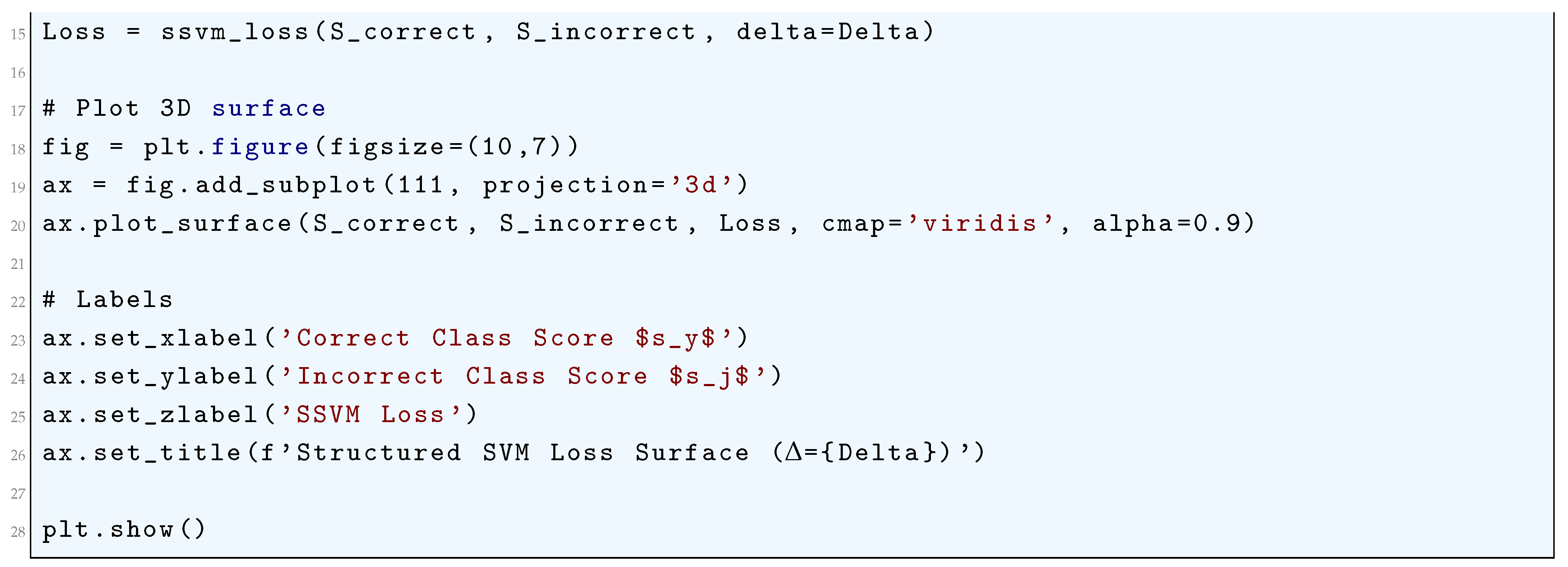

10.5.3.52 Python Code to Generate Figure 119 Illustrating Structured SVM Loss Surface ()

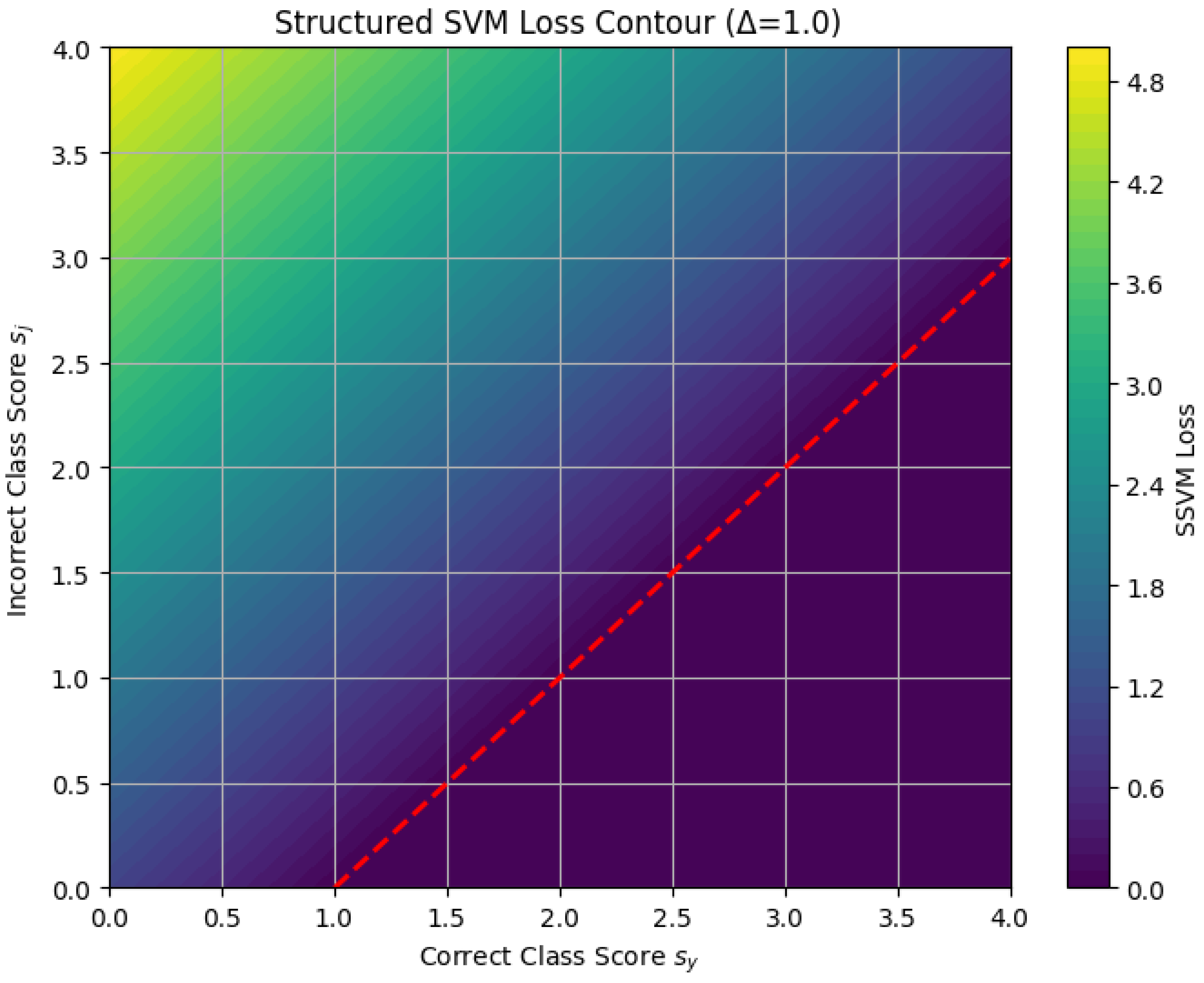

10.5.3.53 Python Code to Generate Figure 120 Illustrating Structured SVM Loss Contour ()

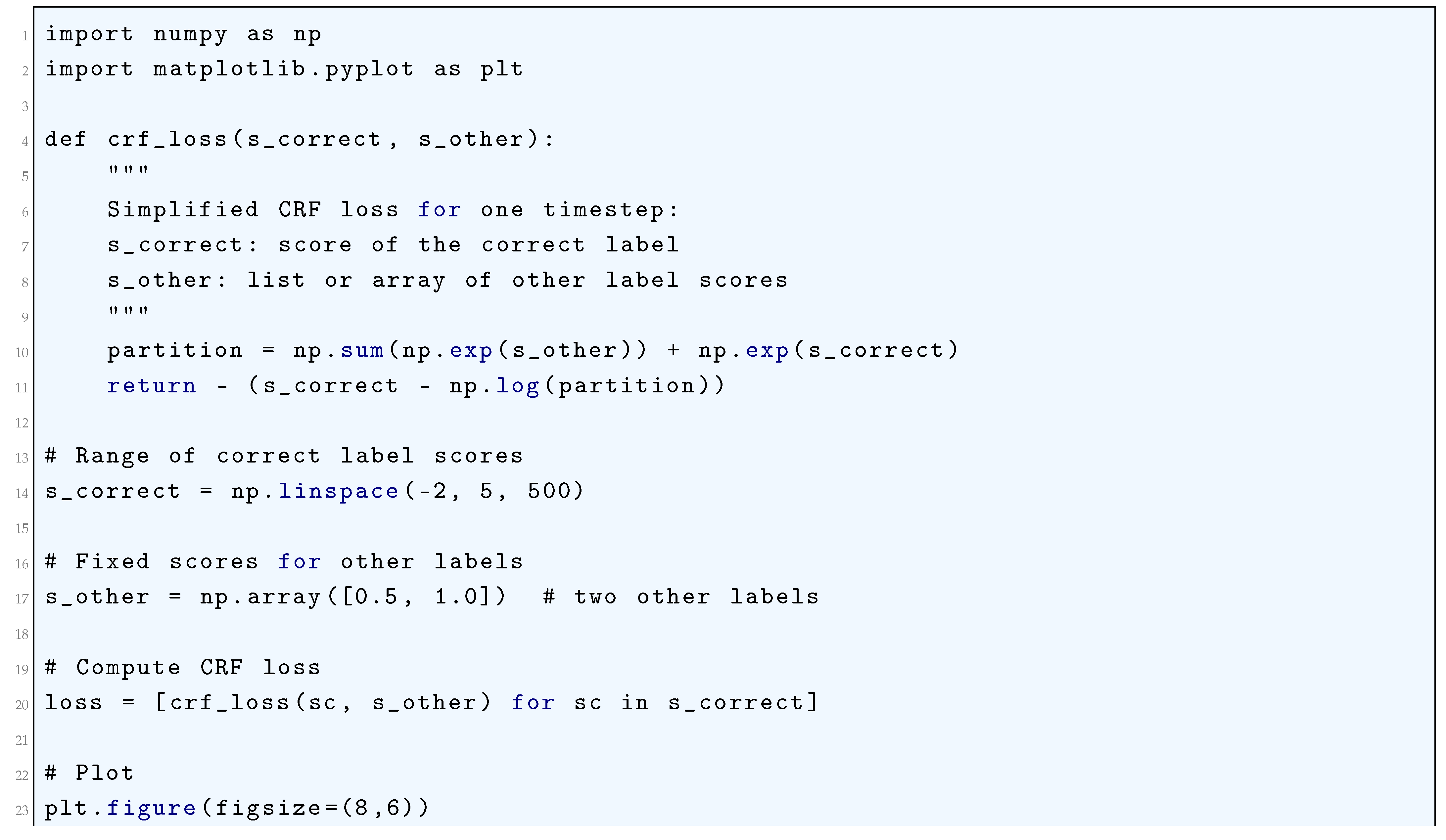

10.5.3.54 Python Code to Generate Figure 121 Illustrating Simplified CRF Loss vs Correct Label Score

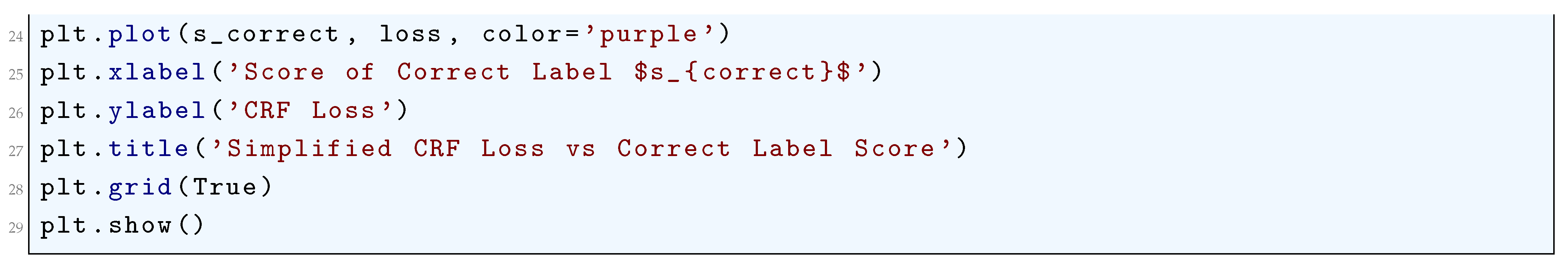

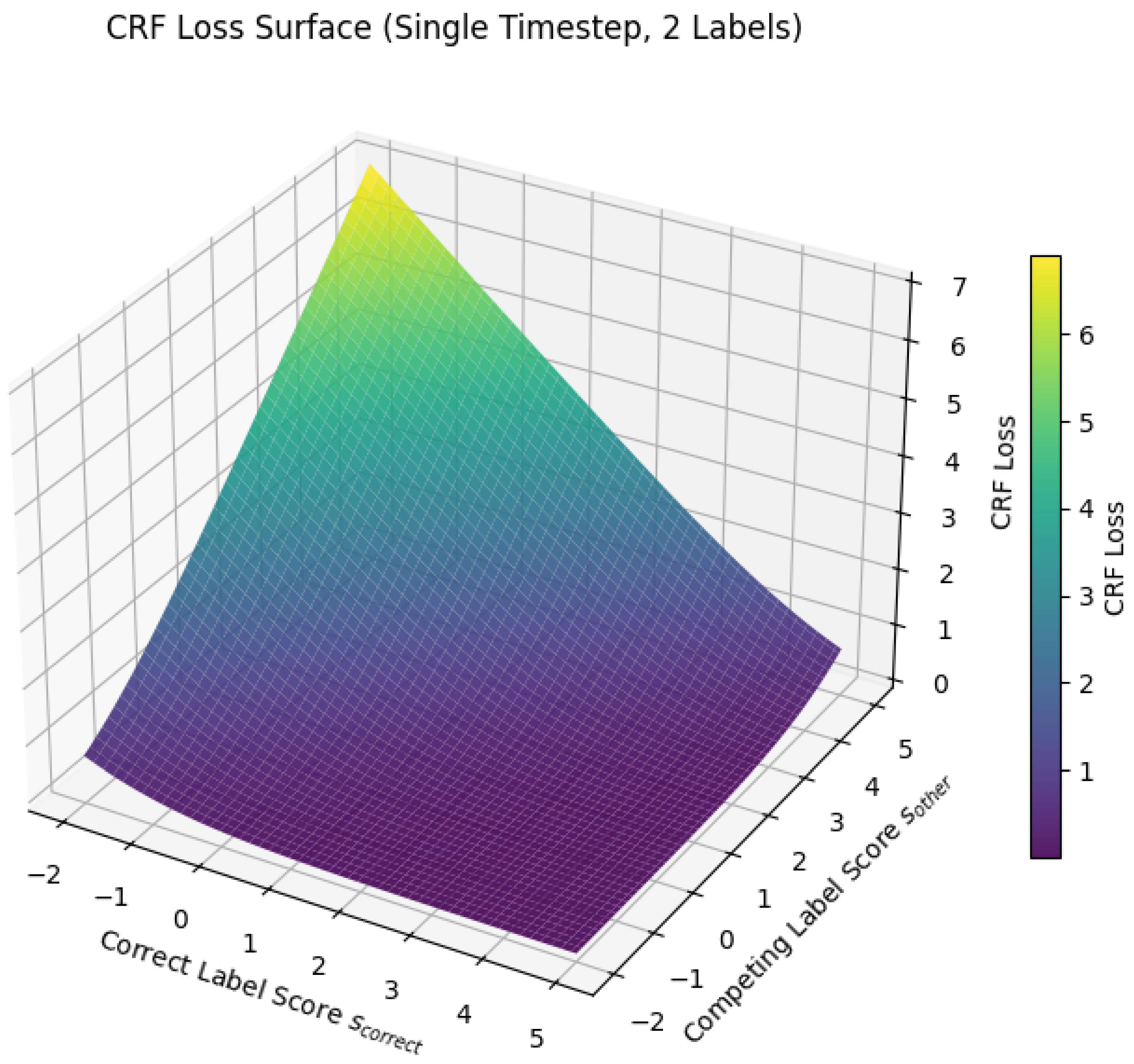

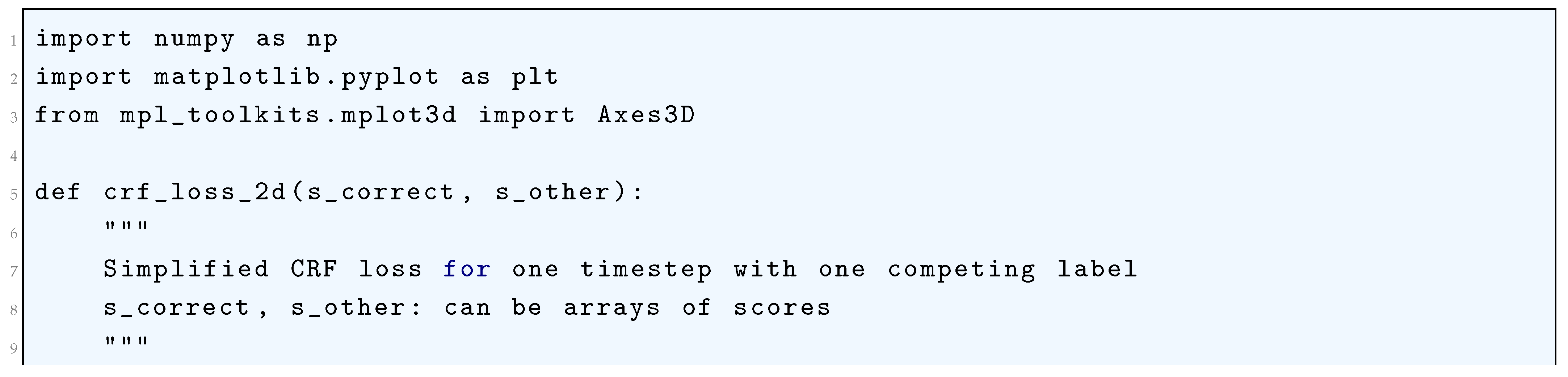

10.5.3.55 Python Code to Generate Figure 122 Illustrating CRF Loss Surface (Single Timestep, 2 Labels)

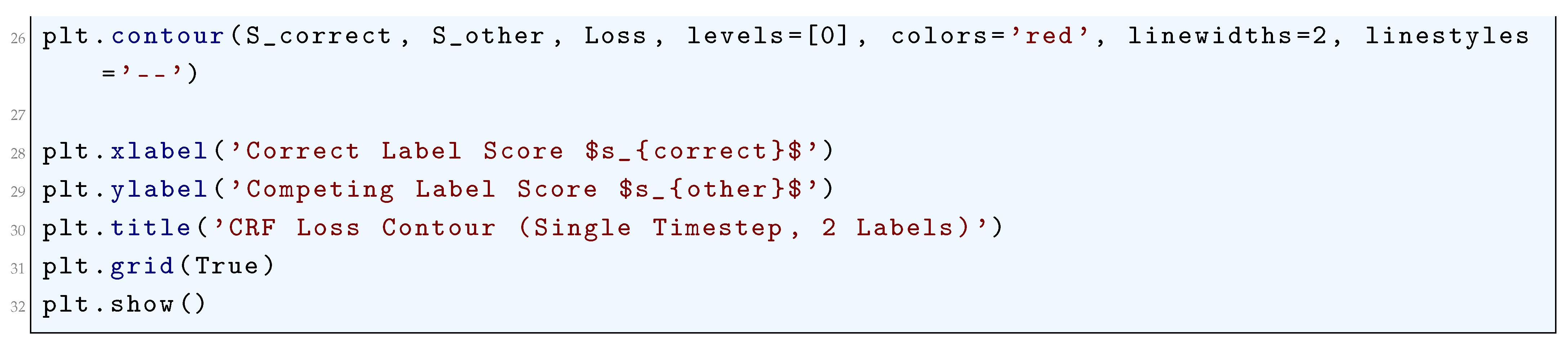

10.5.3.56 Python Code to Generate Figure 123 Illustrating CRF Loss Contour (Single Timestep, 2 Labels)

10.5.3.57 Python Code to Generate Figure 124 Illustrating CTC Loss vs Probability of Correct Sequence

10.5.3.58 Python Code to Generate Figure 125 Illustrating Approximate CTC Loss vs Per-Timestep Probability for Different Sequence Lengths

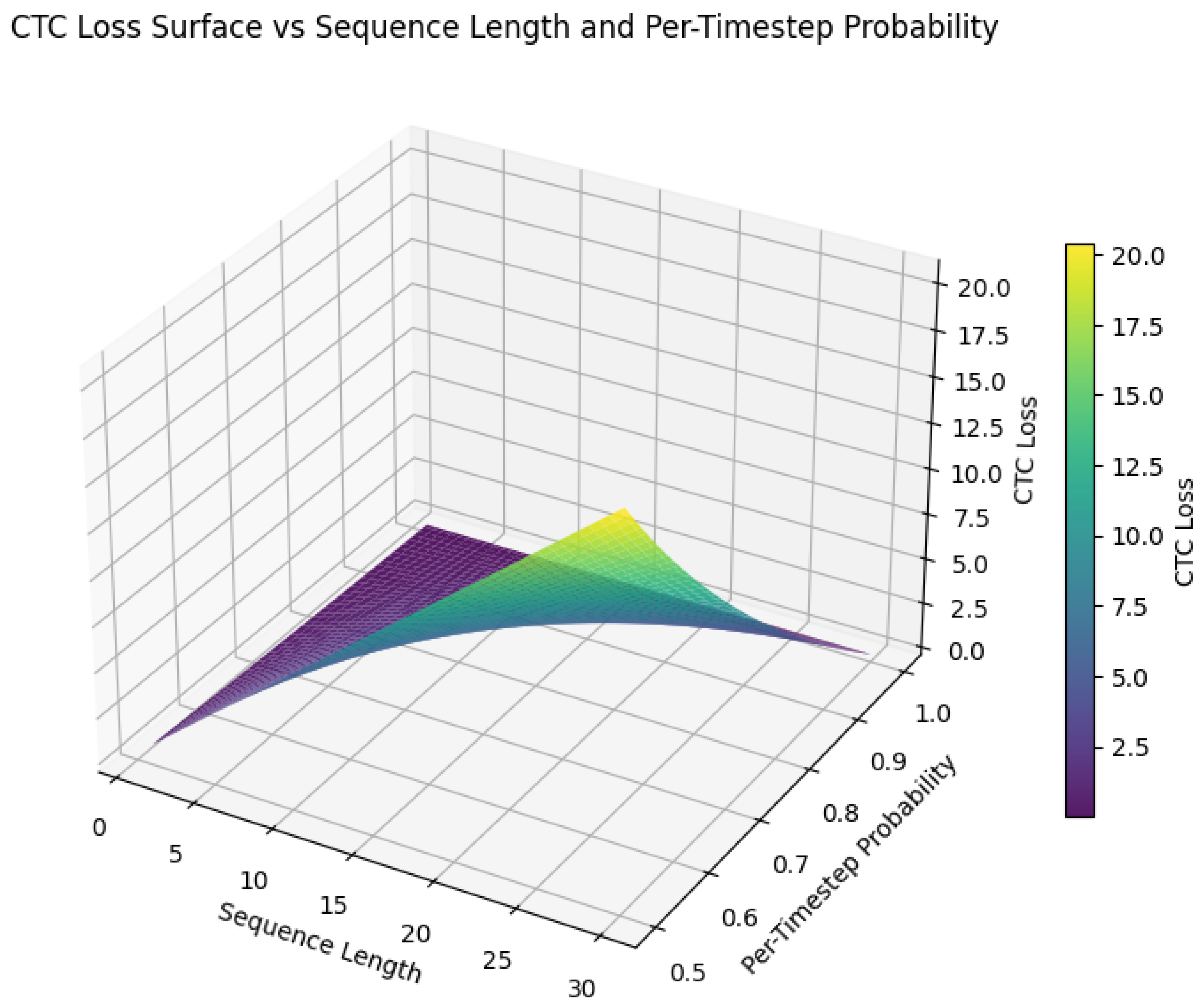

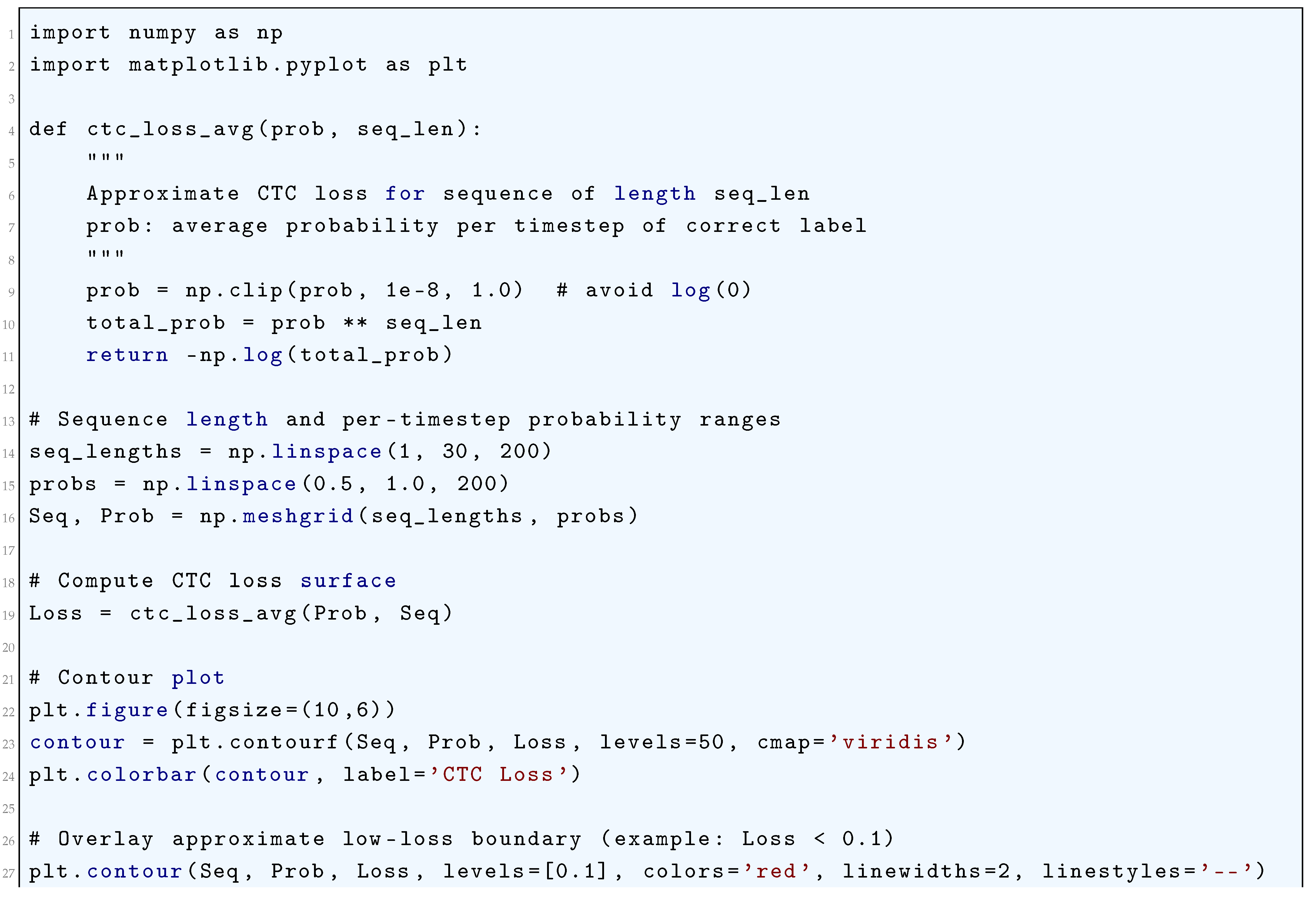

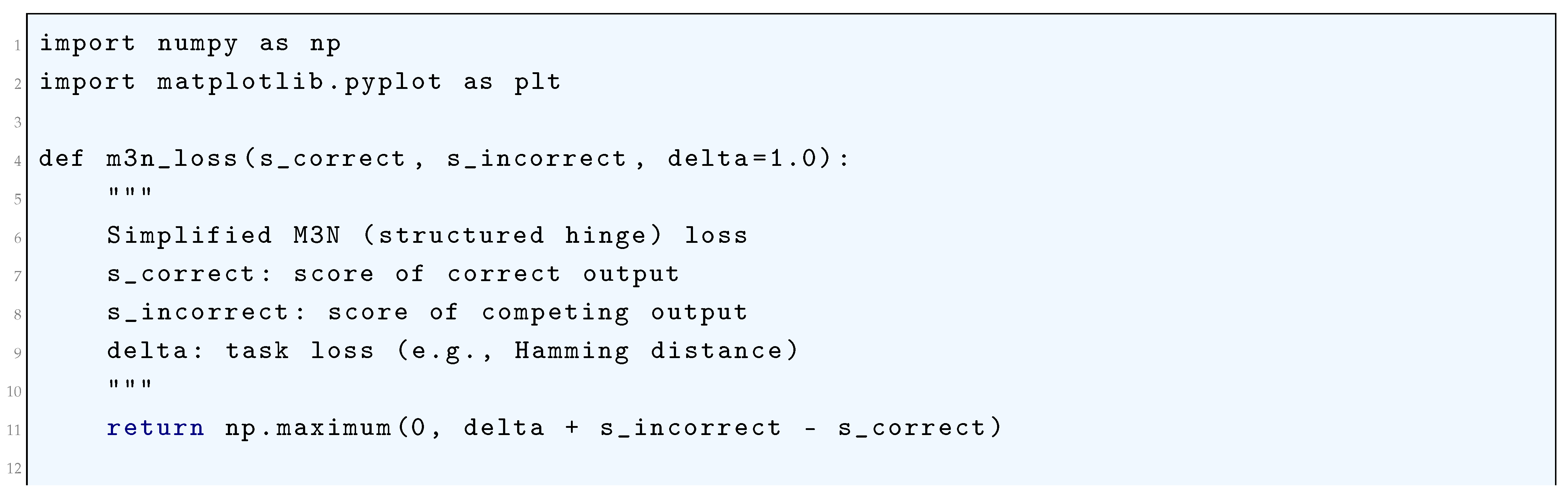

10.5.3.59 Python Code to Generate Figure 126 Illustrating CTC Loss Surface vs Sequence Length and Per-Timestep Probability

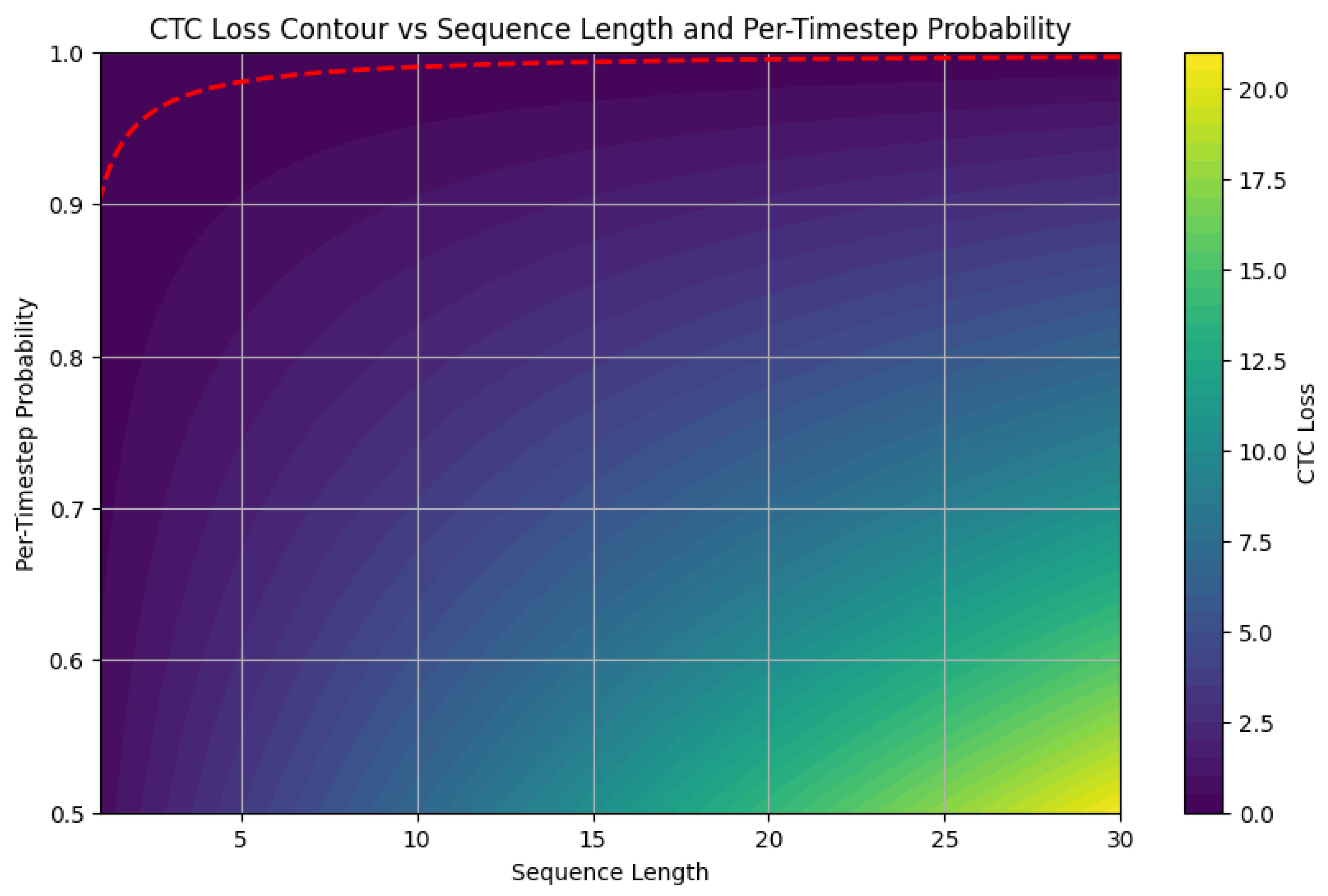

10.5.3.60 Python Code to Generate Figure 127 Illustrating CTC Loss Contour vs Sequence Length and Per-Timestep Probability

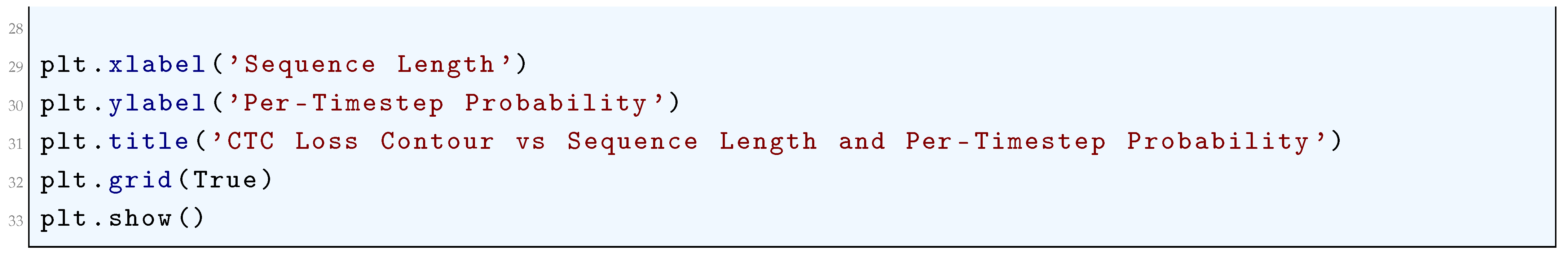

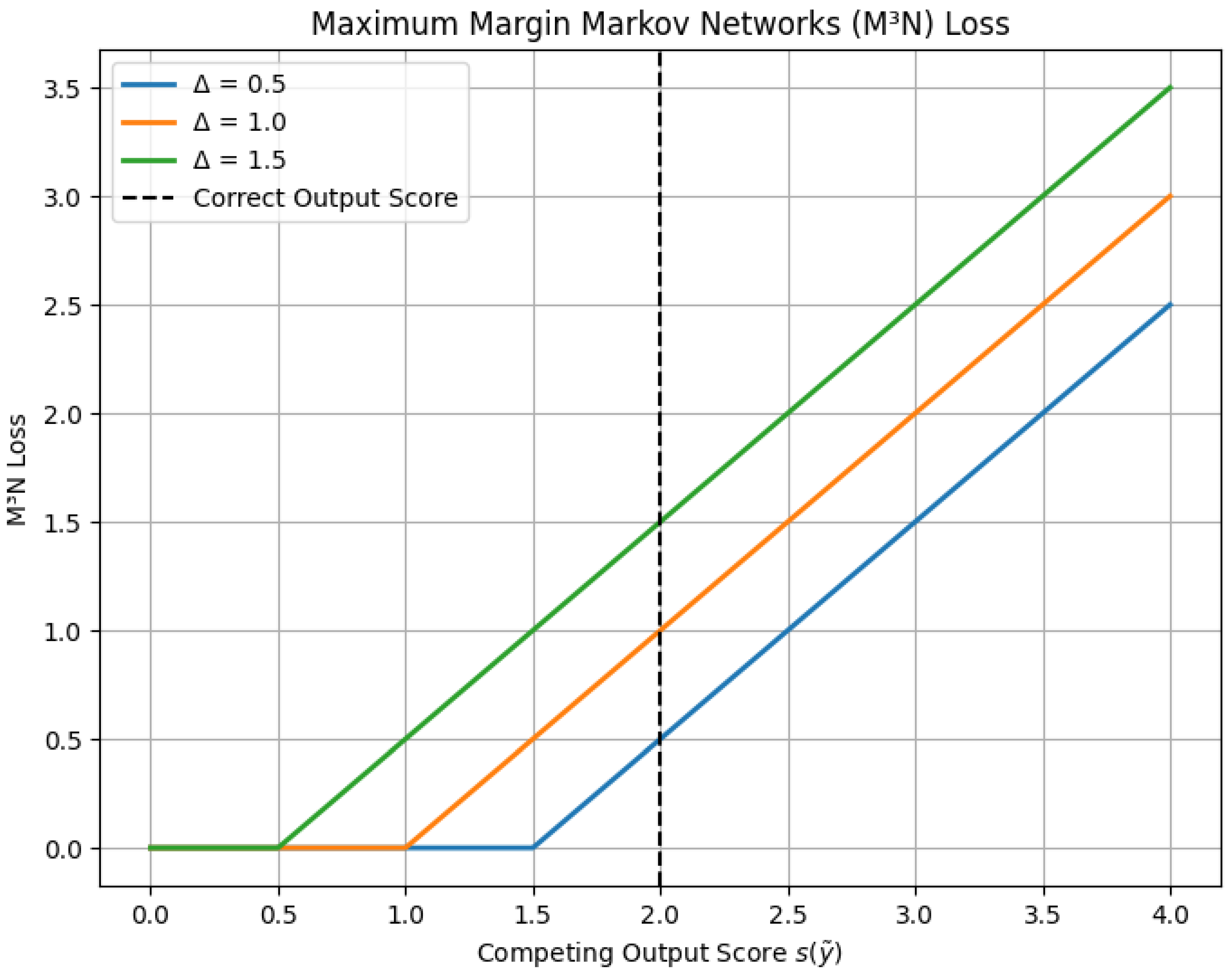

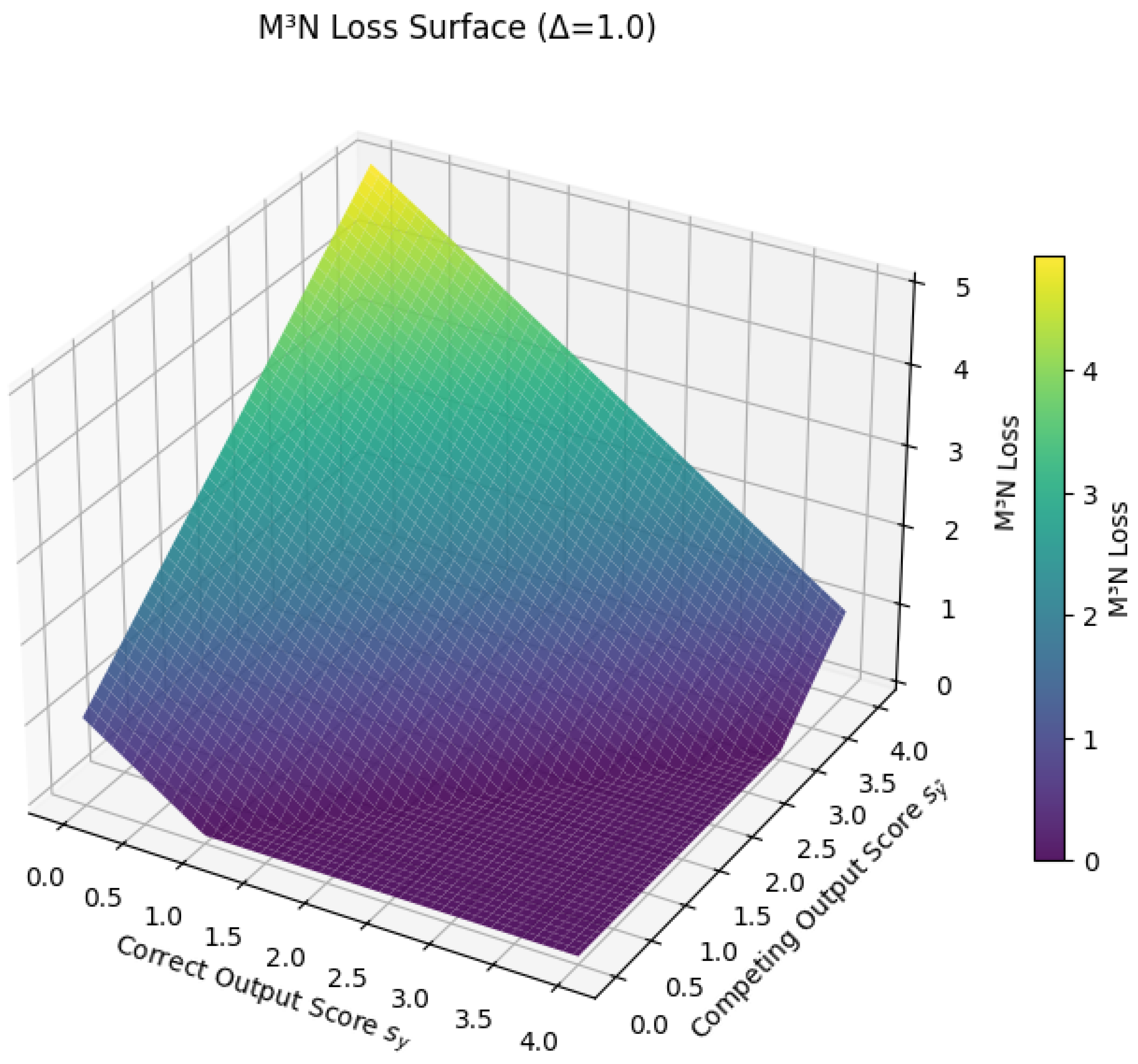

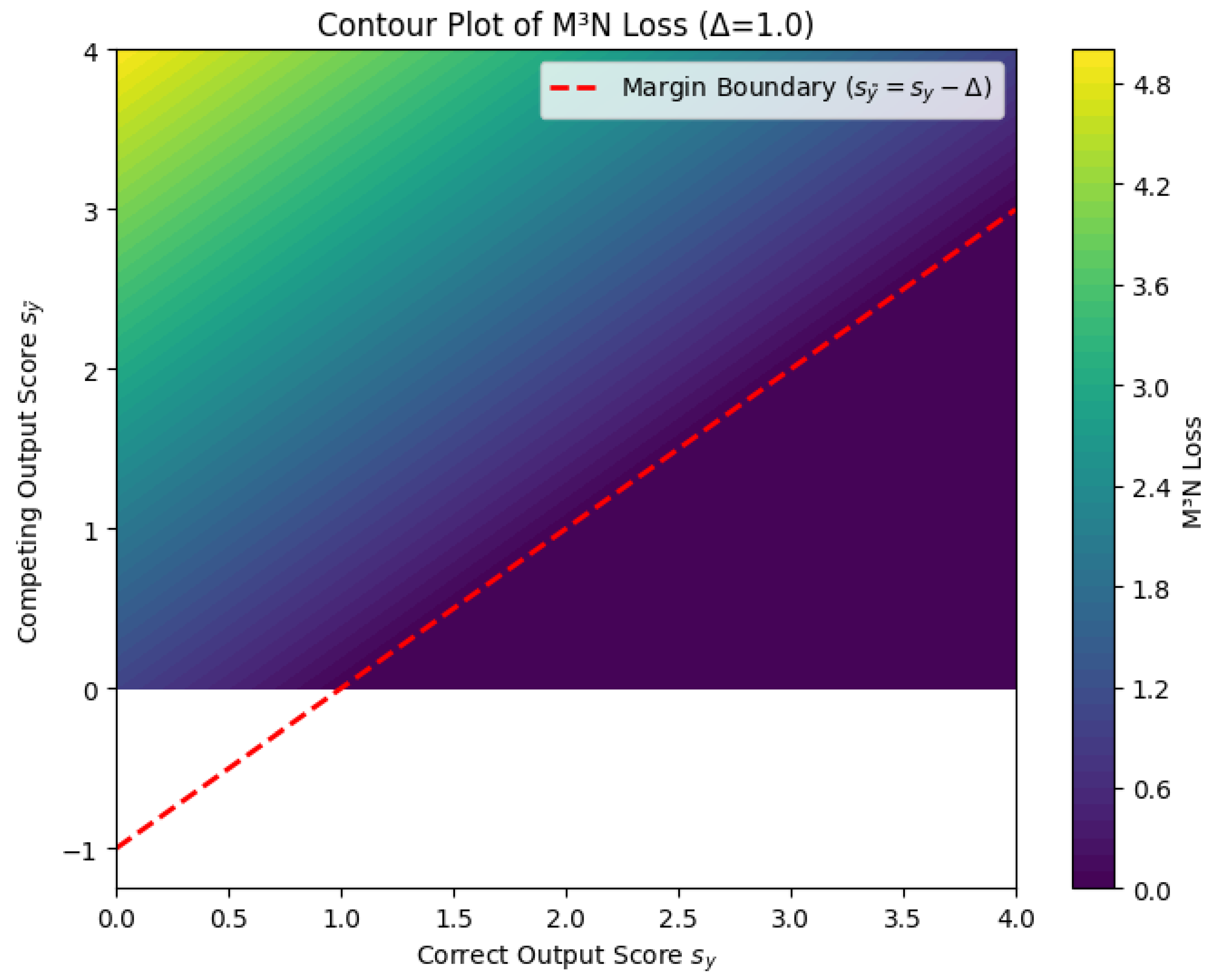

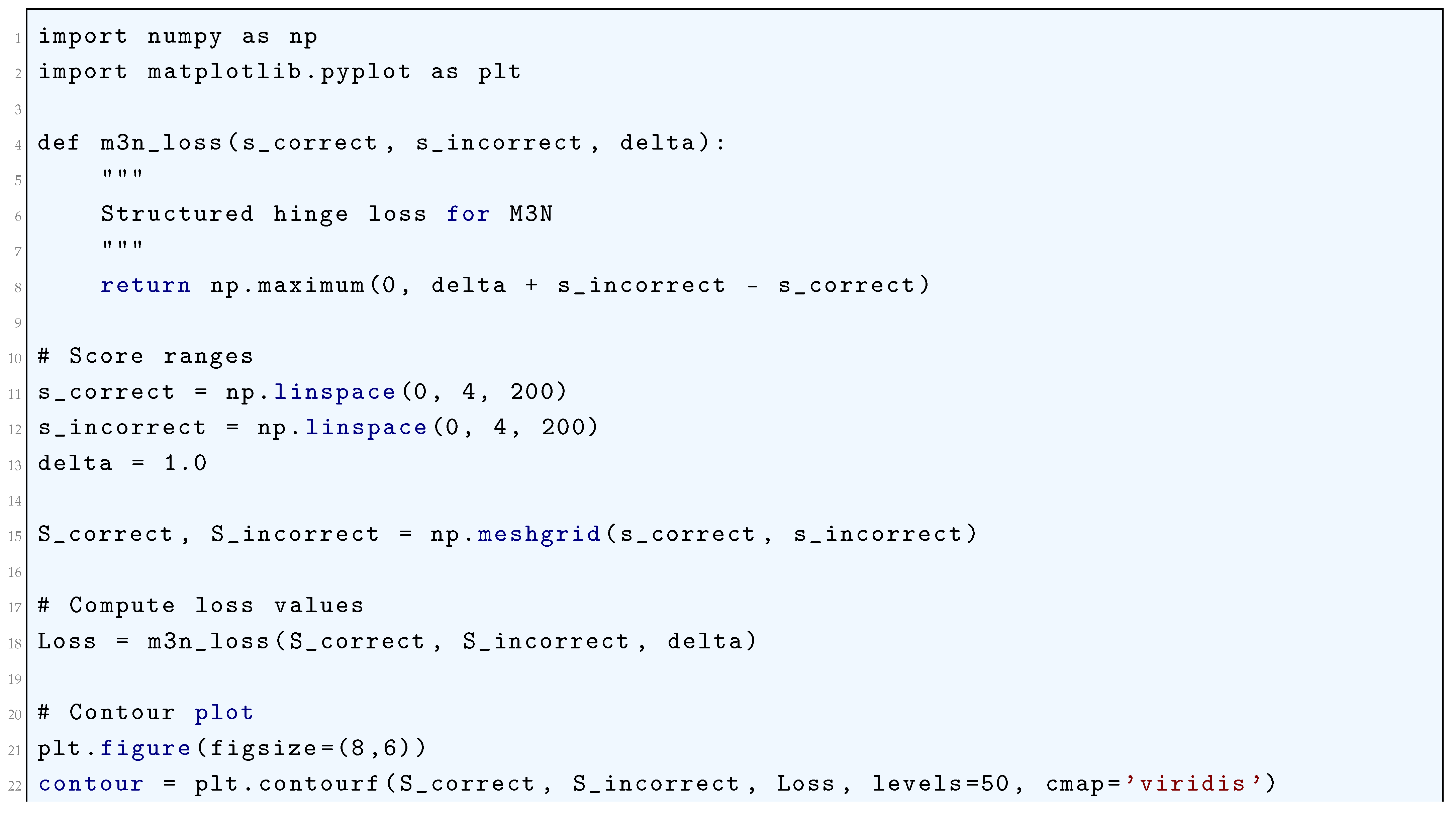

10.5.3.61 Python Code to Generate Figure 128 Illustrating Maximum Margin Markov Networks (M³N) Loss

10.5.3.62 Python Code to Generate Figure 129 Illustrating M³N Loss Surface ()

10.5.3.63 Python Code to Generate Figure 130 Illustrating Contour Plot of M³N Loss ()

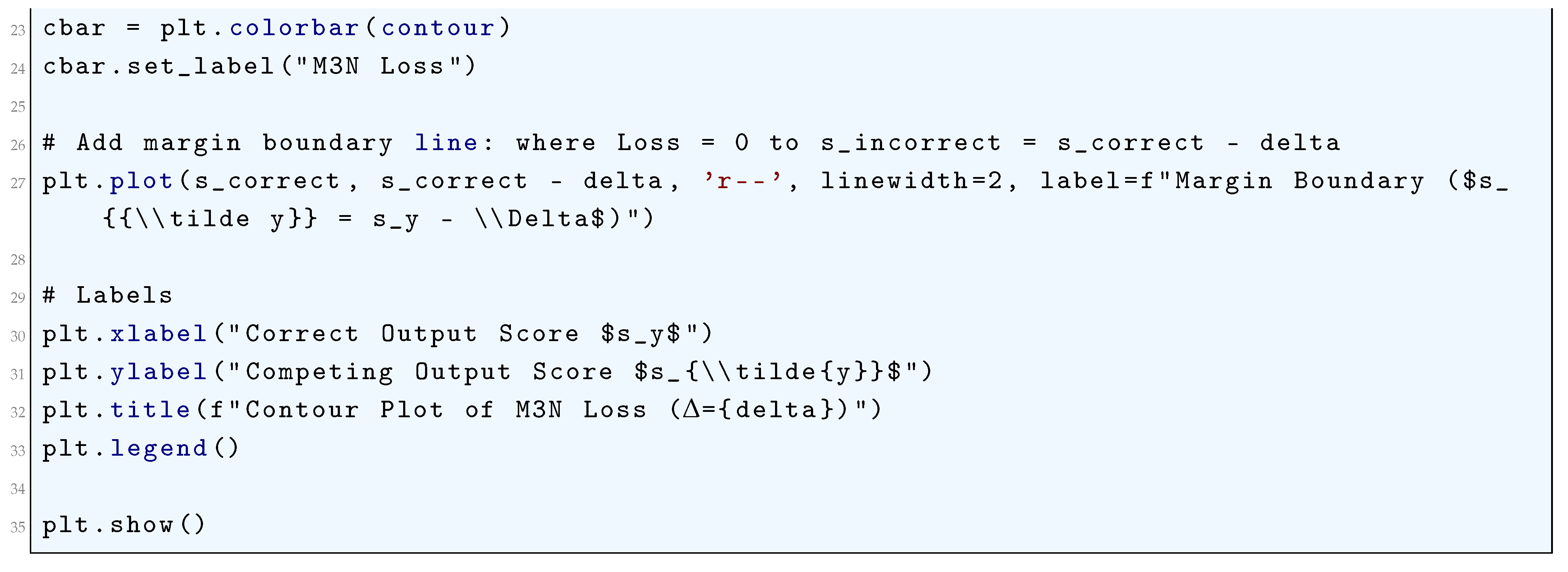

10.5.3.64 Python Code to Generate Figure 131 Illustrating Listwise Ranking Losses (ListNet vs ListMLE)

10.5.3.65 Python Code to Generate Figure 132 Illustrating Listwise Ranking Losses (3D Surfaces)

10.5.3.66 Python Code to Generate Figure 133 Illustrating Listwise Ranking Loss Contours

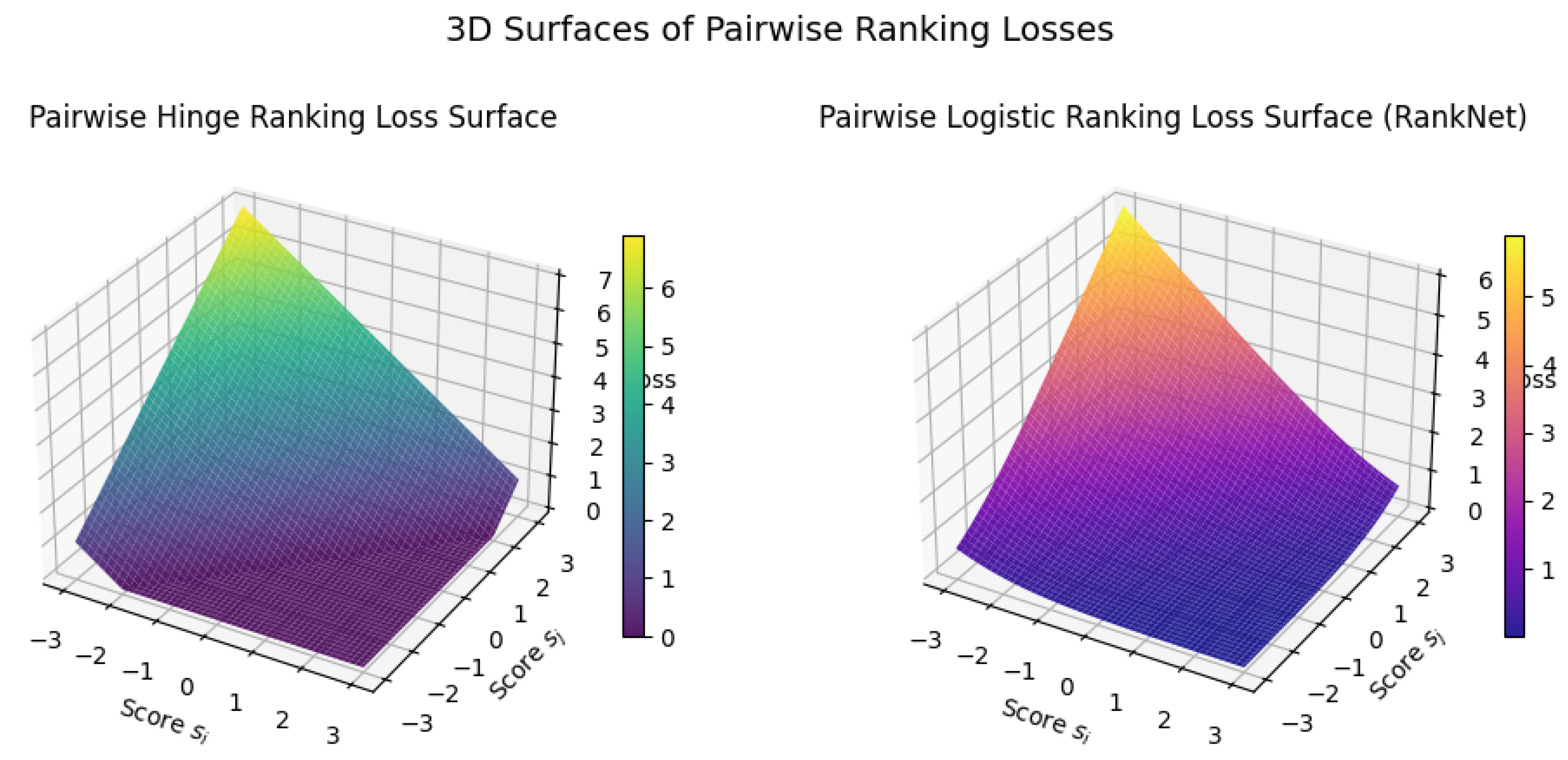

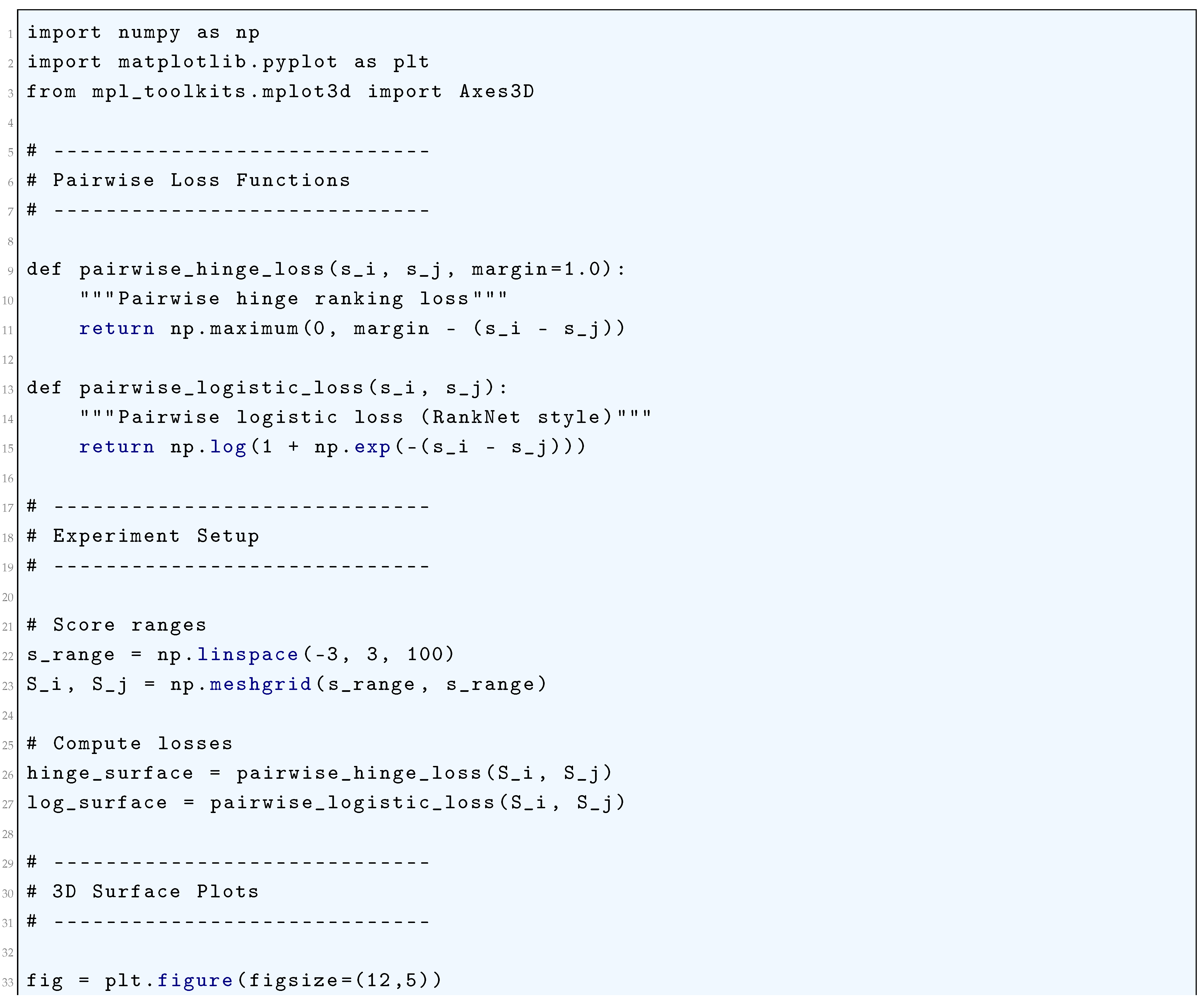

10.5.3.67 Python Code to Generate Figure 134 Illustrating Pairwise Ranking Losses

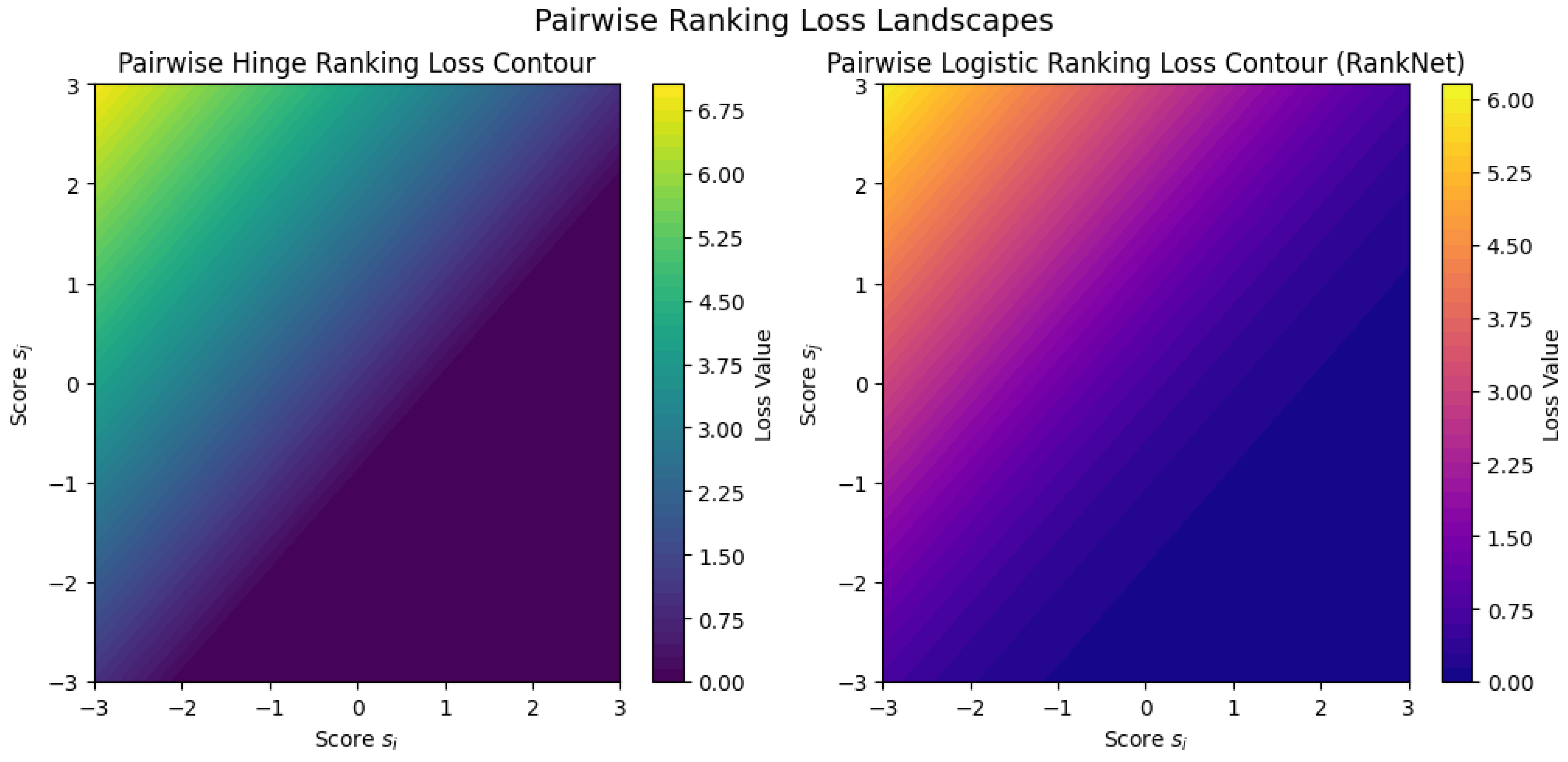

10.5.3.68 Python Code to Generate Figure 135 Illustrating Pairwise Ranking Loss Landscapes

10.5.3.69 Python Code to Generate Figure 136 Illustrating 3D Surfaces of Pairwise Ranking Losses

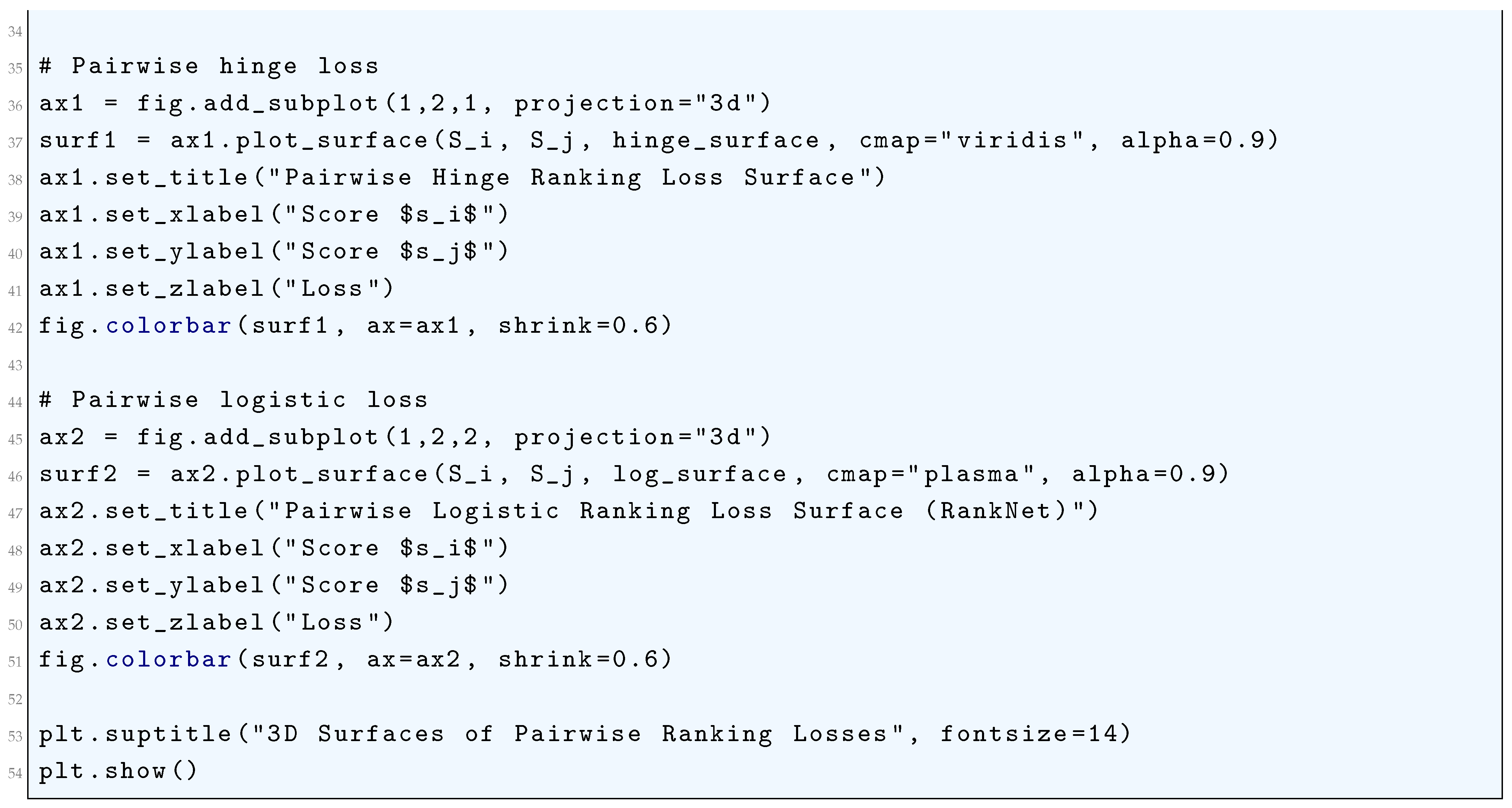

10.5.3.70 Python Code to Generate Figure 137 Illustrating Contrastive Divergence (CD) Loss vs Weight

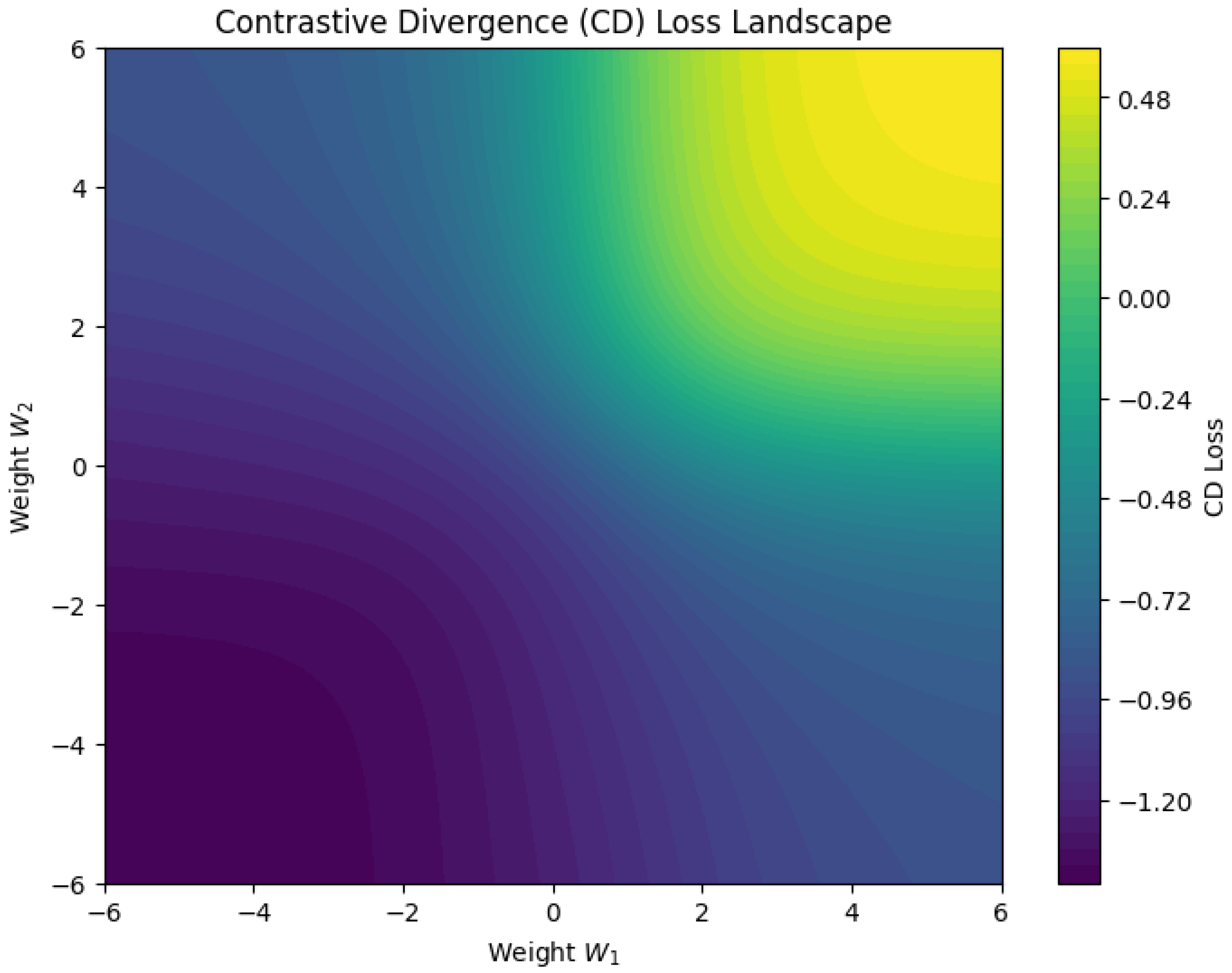

10.5.3.71 Python Code to Generate Figure 138 Illustrating Contrastive Divergence (CD) Loss Landscape

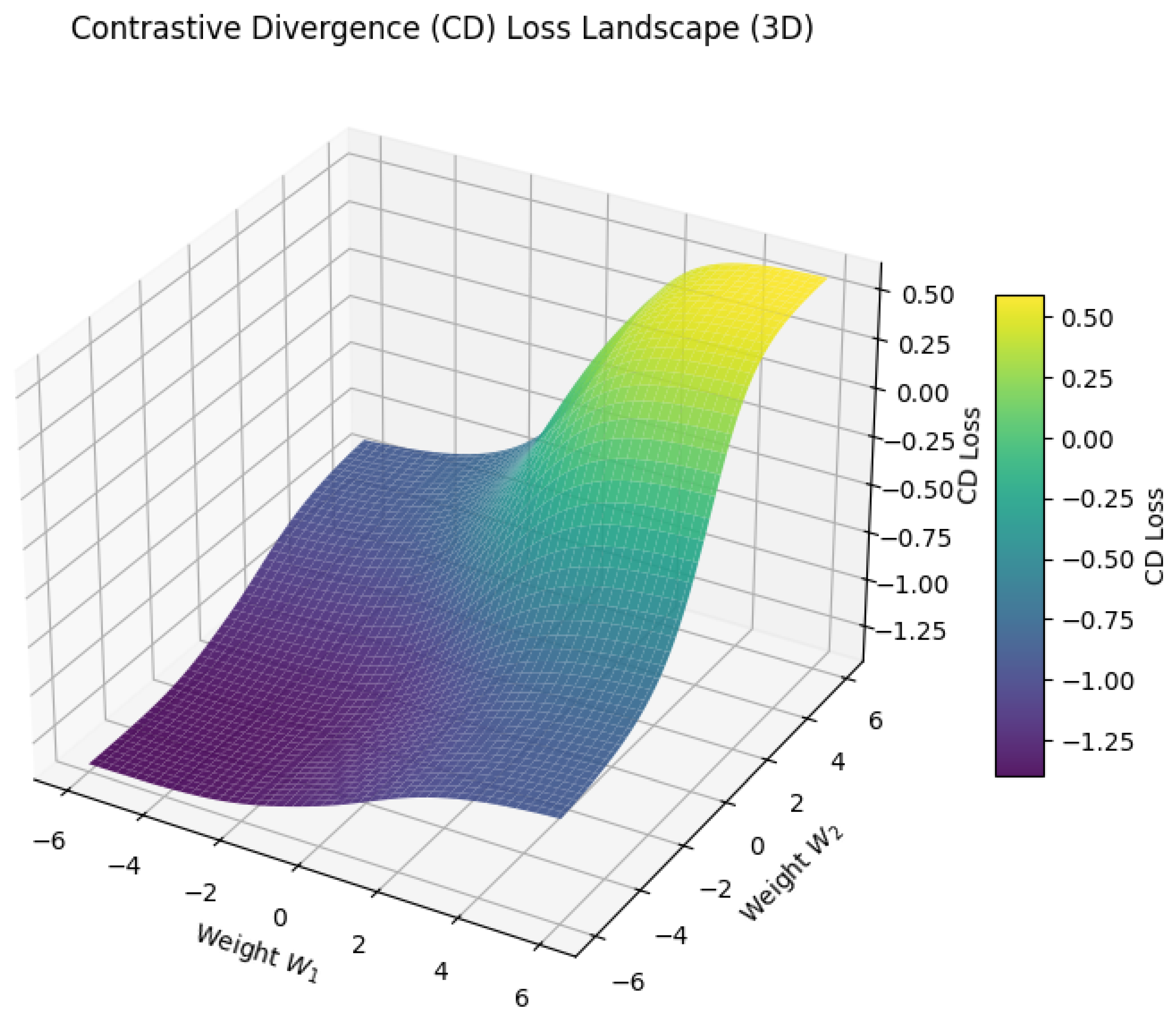

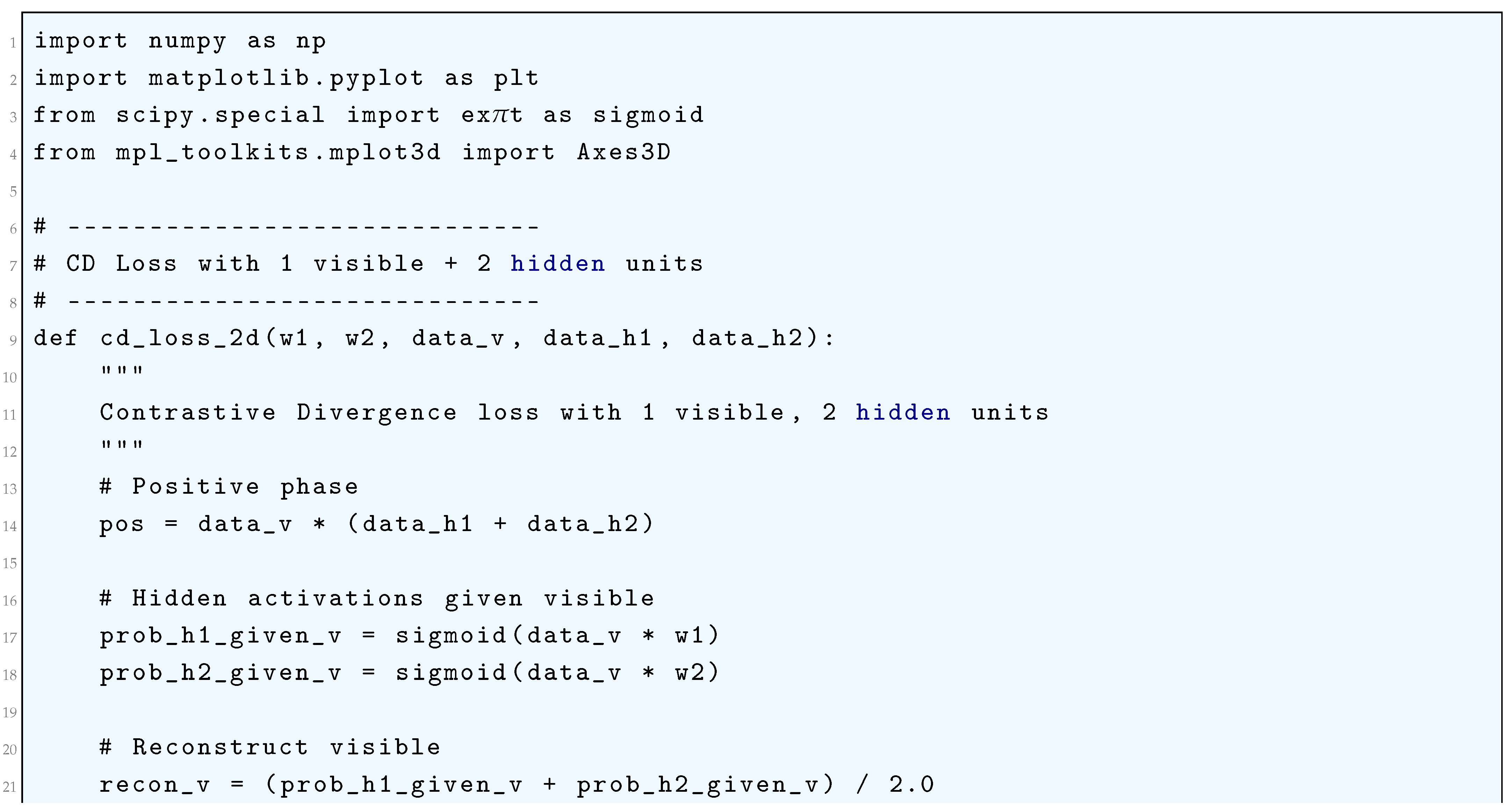

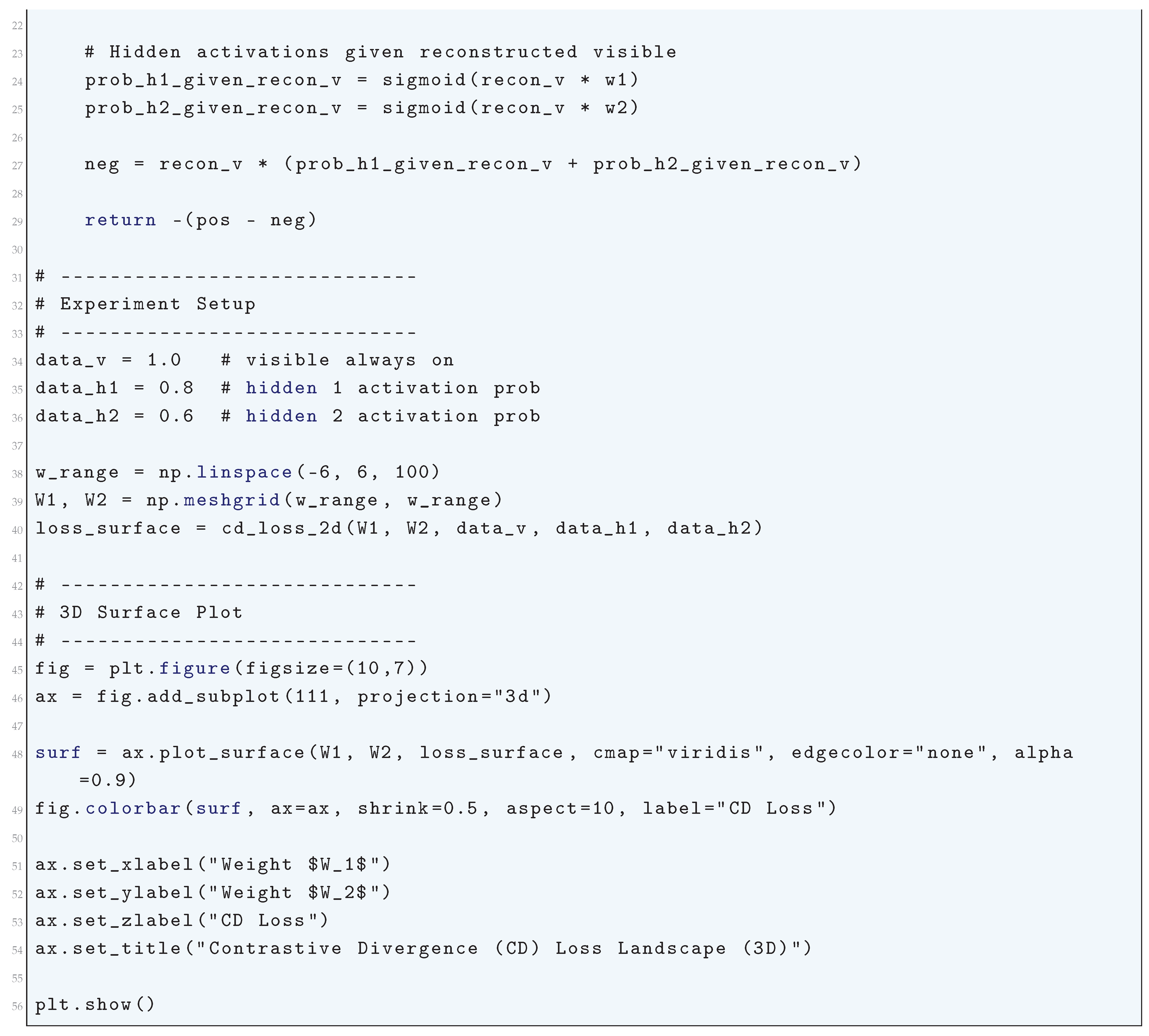

10.5.3.72 Python Code to Generate Figure 139 Illustrating Contrastive Divergence (CD) Loss Landscape (3D)

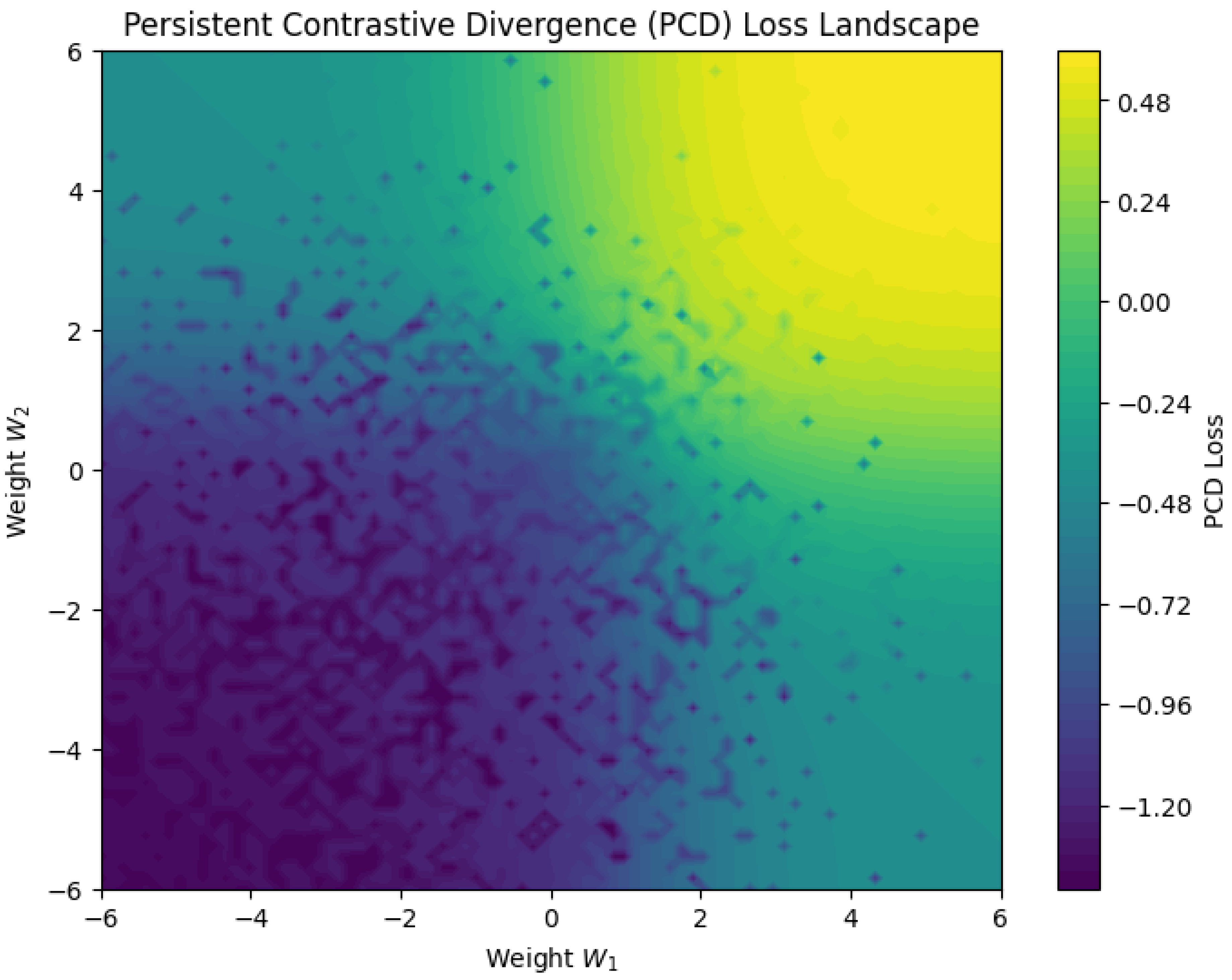

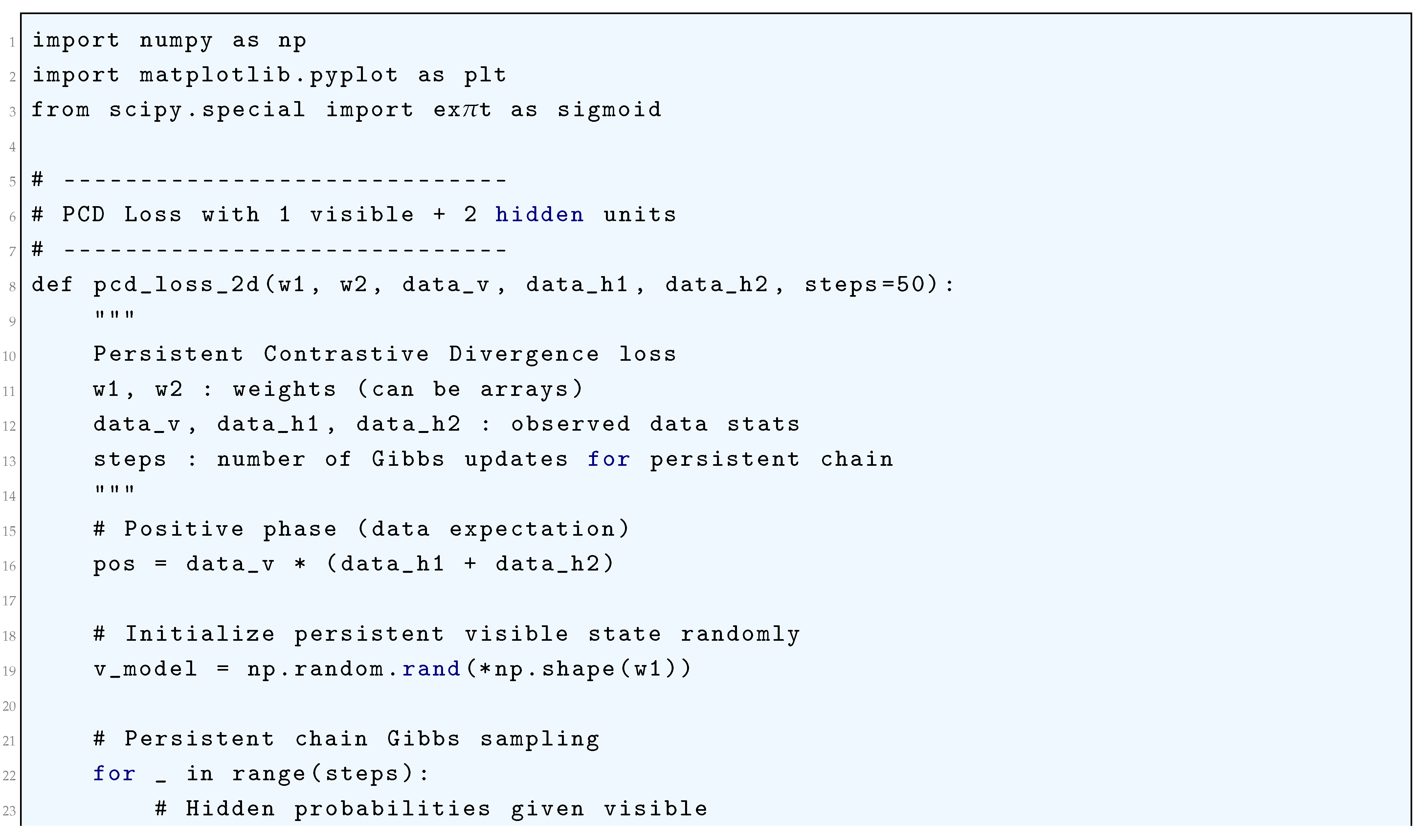

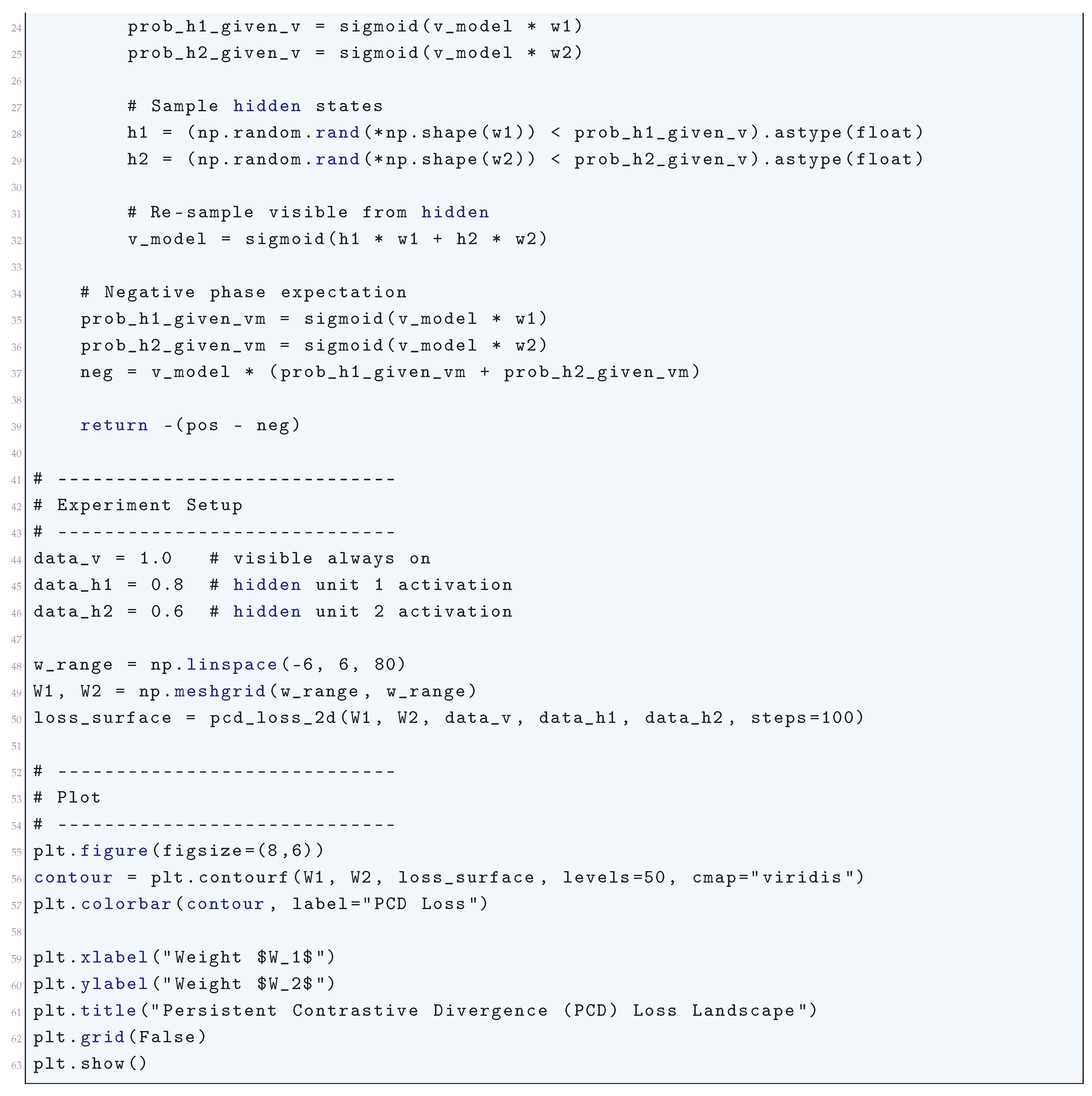

10.5.3.73 Python Code to Generate Figure 140 Illustrating Persistent Contrastive Divergence (PCD) Loss Landscape

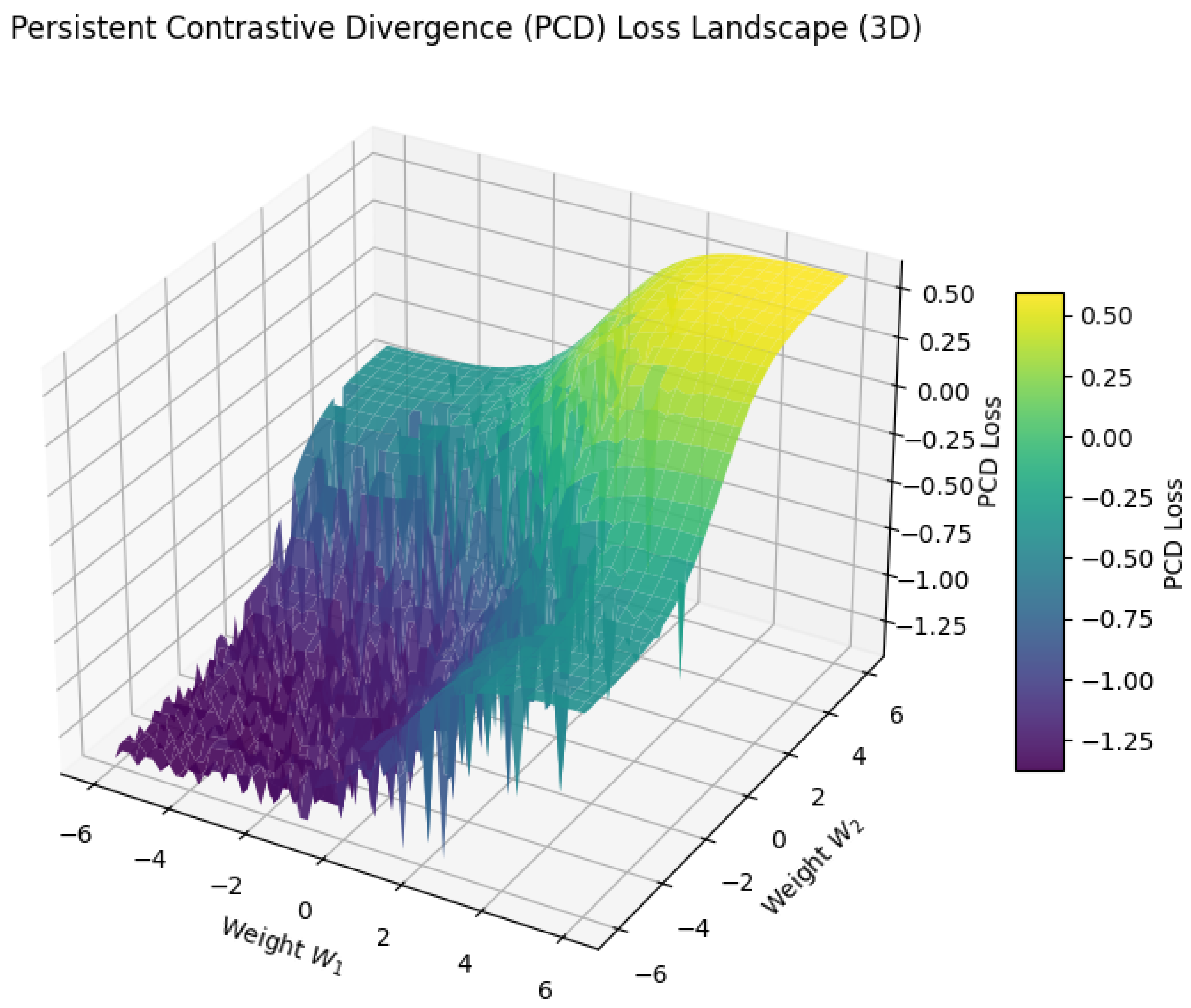

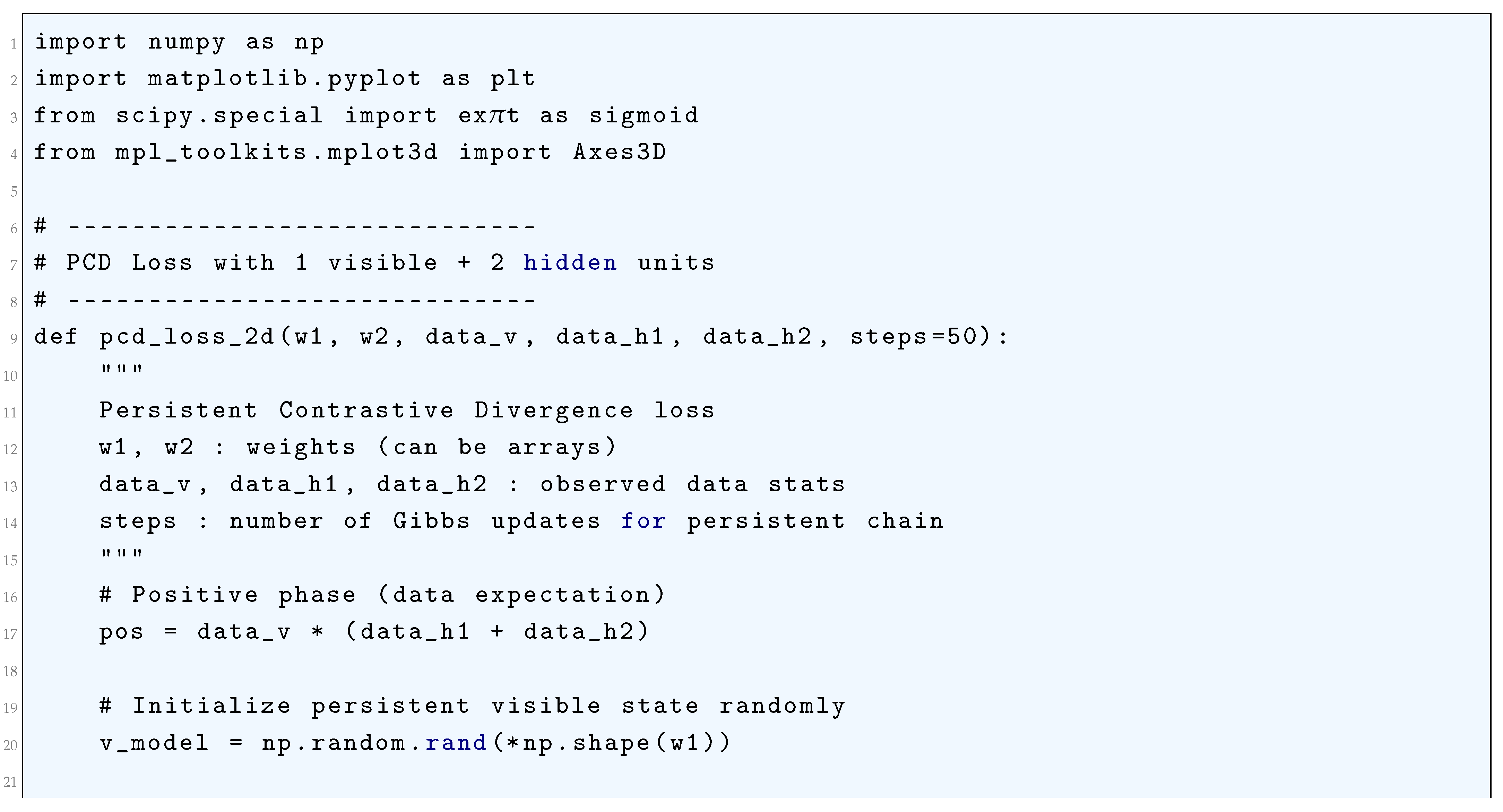

10.5.3.74 Python Code to Generate Figure 141 Illustrating Persistent Contrastive Divergence (PCD) Loss Landscape (3D)

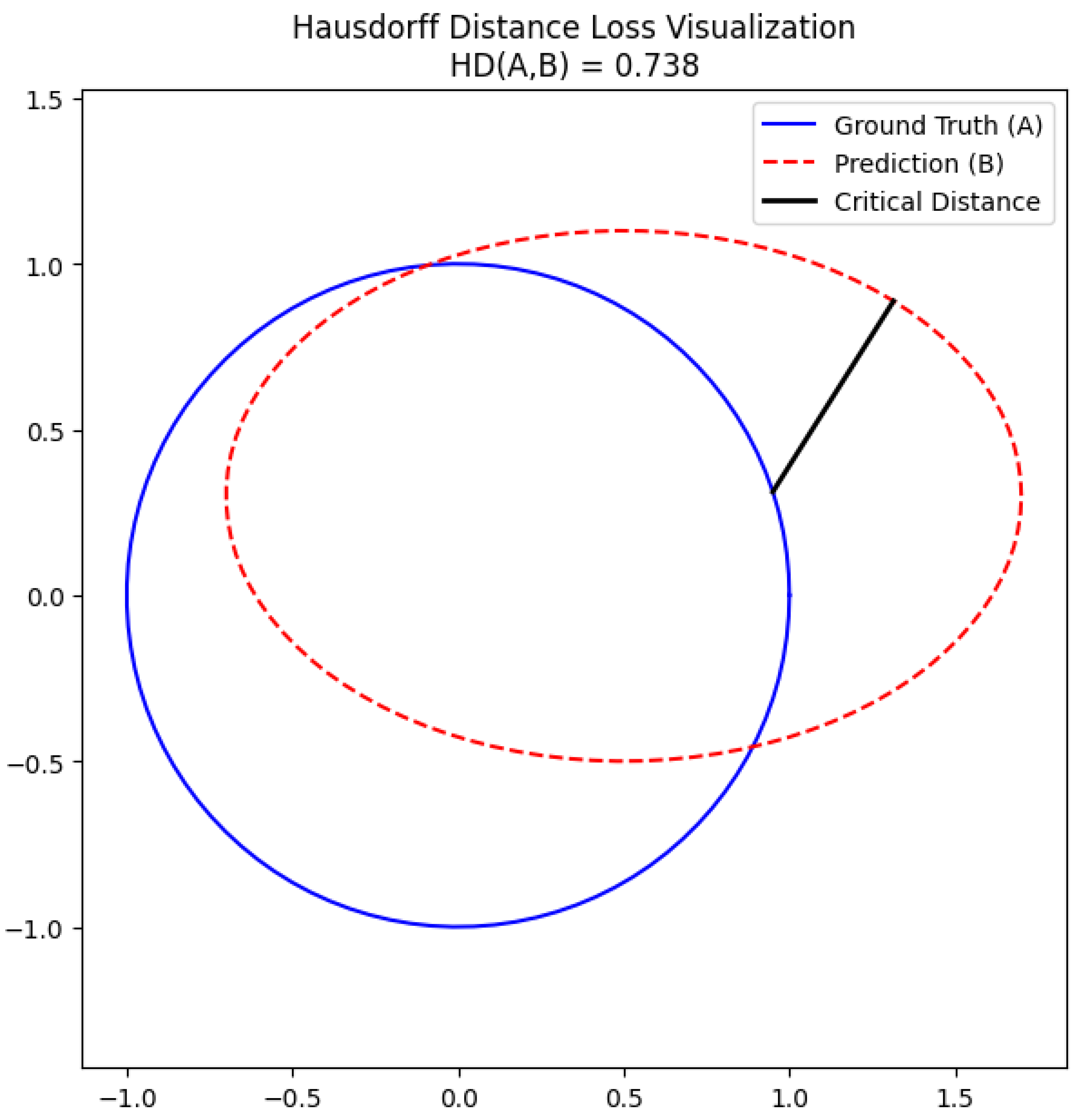

10.5.3.75 Python Code to Generate Figure 142 Illustrating Hausdorff Distance Loss Visualization

10.5.3.76 Python Code to Generate Figure 143 Illustrating Hausdorff Distance Heatmap

11. Few-Shot Learning

11.1. Meta-Learning Formulation in Few Shot Learning

11.2. Bayesian Methods in Few Shot Learning

11.3. Prototypical Networks in Few Shot Learning

11.4. Model-Agnostic Meta-Learning (MAML) in Few Shot Learning

11.5. Metric-Based Learning in Few Shot Learning

11.6. Bayesian Methods in Few Shot Learning

12. Metric Learning

12.1. Large Margin Nearest Neighbors (LMNN) Approach to Metric Learning

12.2. Information-Theoretic Metric Learning (ITML) Framework Approach to Metric Learning

12.3. Deep Metric Learning

12.4. Normalized Temperature-Scaled Cross-Entropy Loss (NT-Xent) Approach to Metric Learning

13. Adversarial Learning

13.1. Fast Gradient Sign Method (FGSM) in Adversarial Learning

13.2. Projected Gradient Descent (PGD) Method in Adversarial Learning

13.3. Generative Approach in Adversarial Learning

13.4. Interpreting Generative Adversarial Networks Within the Framework of Energy-Based Models

14. Causal Inference in Deep Neural Networks

14.1. Structural Causal Model (SCM)

- Abduction: Infer exogenous variables using the observed data:

- Action: Modify the SCM by replacing X with the intervened value $x'$:

- Prediction: Solve for the counterfactual outcome $Y_{x'}$ in the modified SCM:
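
The three steps above (abduction, action, prediction) can be sketched on a toy linear SCM. The structural equation $Y = aX + U_Y$, the coefficient `a`, and all function and variable names are assumptions made for illustration; they are not the model used in this section.

```python
def counterfactual_y(x_obs: float, y_obs: float, x_new: float, a: float = 2.0) -> float:
    """Counterfactual outcome in the toy SCM  Y = a * X + U_Y."""
    # Abduction: recover the exogenous noise consistent with the observation.
    u_y = y_obs - a * x_obs
    # Action: replace the mechanism for X by the constant x_new.
    x = x_new
    # Prediction: re-evaluate Y in the modified SCM, keeping the inferred noise.
    return a * x + u_y


# Observed unit: X = 1.0, Y = 2.5. What would Y have been had X been 3.0?
y_cf = counterfactual_y(x_obs=1.0, y_obs=2.5, x_new=3.0)
print(f"counterfactual Y = {y_cf}")
```

The inferred noise term is held fixed between the factual and counterfactual worlds. That is what distinguishes a counterfactual query from a purely interventional one.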

14.2. Counterfactual Reasoning in Causal Inference for Deep Neural Networks

14.3. Domain Adaptation in Causal Inference Within Deep Neural Networks

14.4. Invariant Risk Minimization (IRM) in Causal Inference for Deep Neural Networks

14.5. Empirical Risk Minimization (ERM) in Causal Inference for Deep Neural Networks

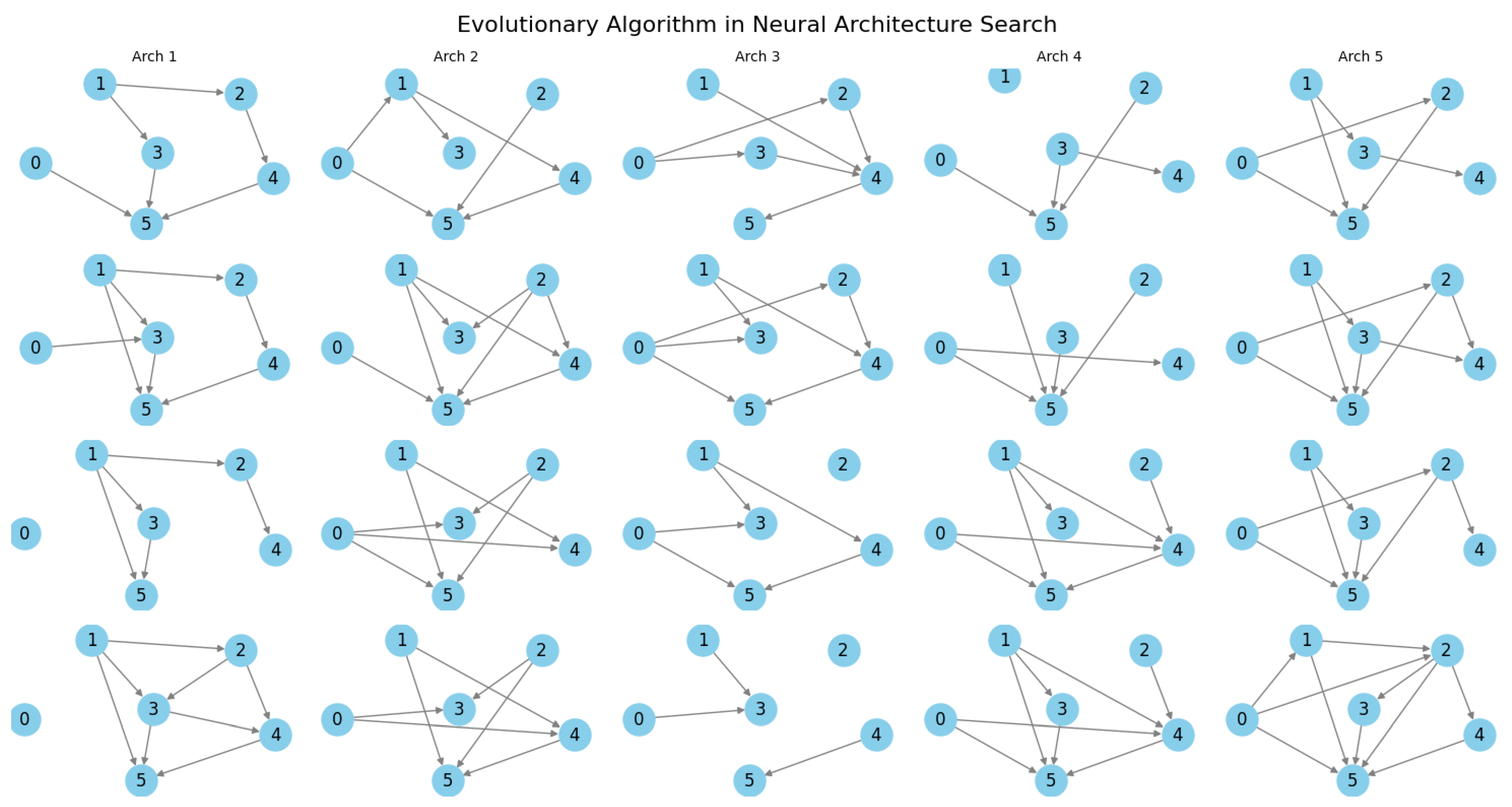

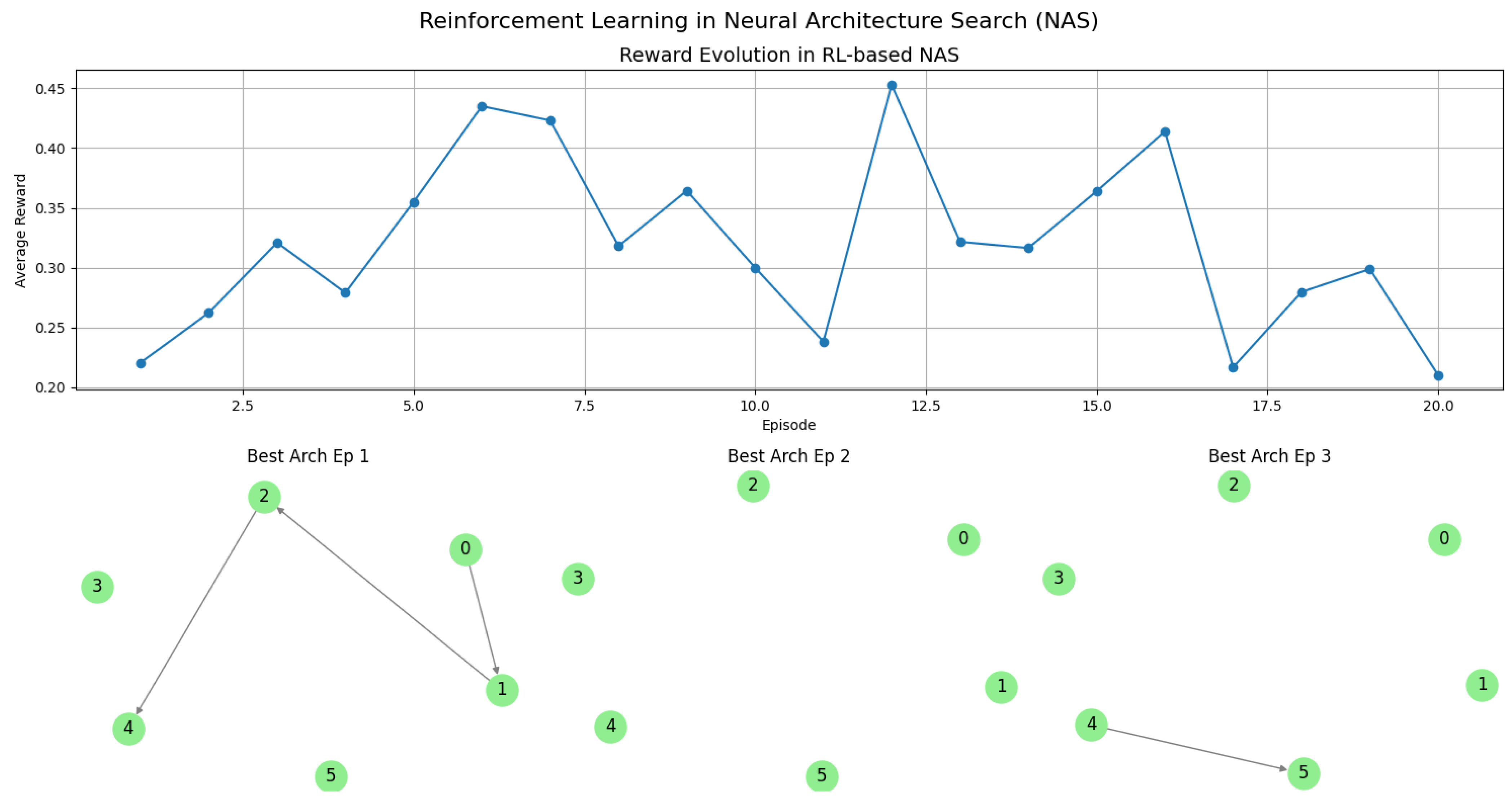

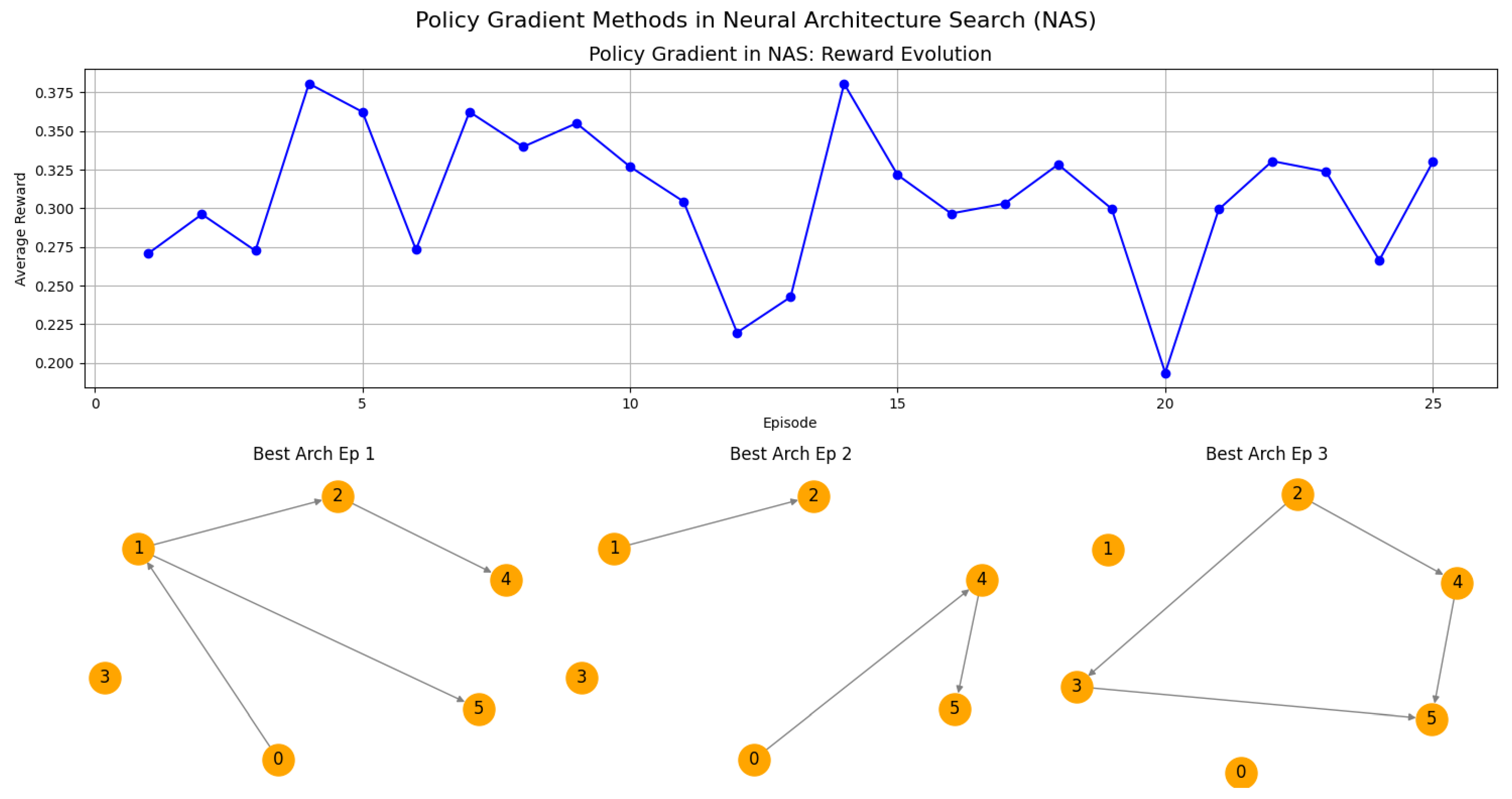

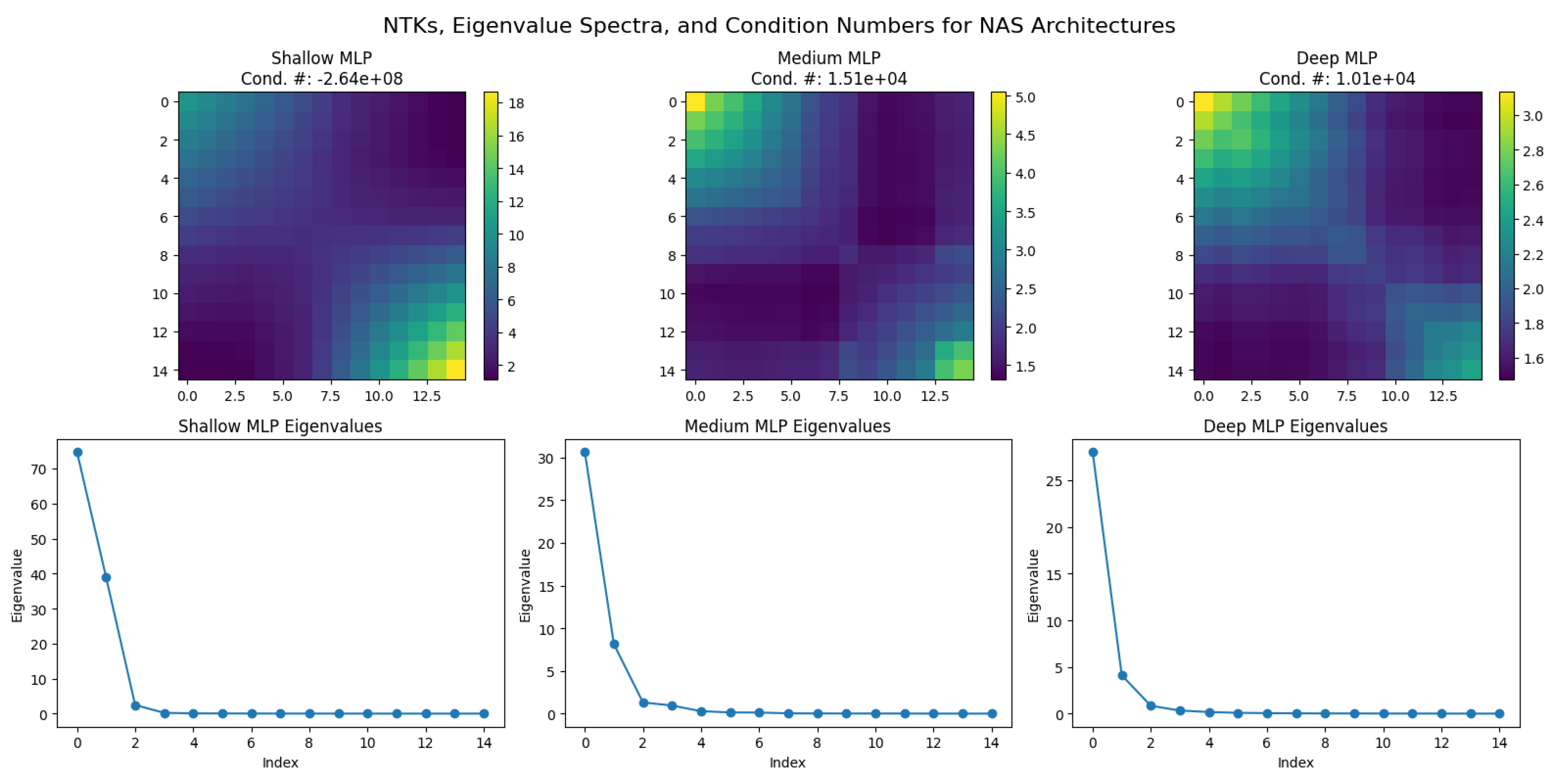

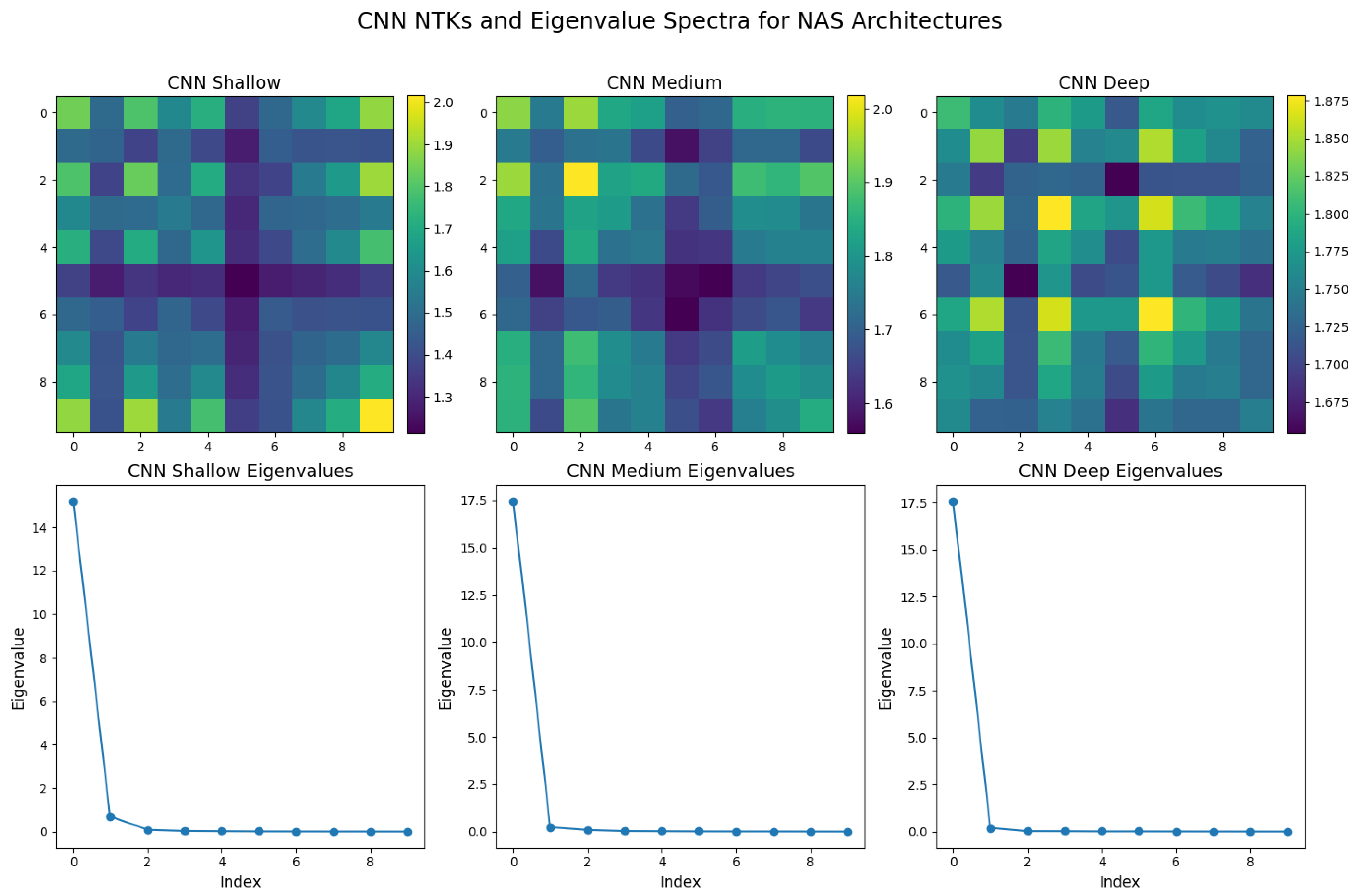

15. Network Architecture Search (NAS) in Deep Neural Networks

15.1. Evolutionary Algorithms in Network Architecture Search

15.2. Reinforcement Learning in Network Architecture Search

15.3. Policy Gradient Methods in Network Architecture Search

15.4. Neural Tangent Kernels (NTKs) in Network Architecture Search

16. Learning Paradigms

16.1. Unsupervised Learning

16.1.1. Literature Review of Unsupervised Learning

| Authors (Year) | Contribution |

|---|---|

| MacQueen (1967) [1035] | Introduced the k-means algorithm, a foundational clustering method that minimizes intra-cluster variance through iterative centroid updates and point reassignment based on Euclidean distance. |

| Dempster, Laird, and Rubin (1977) [1036] | Developed the Expectation-Maximization (EM) algorithm, a general framework for maximizing likelihood estimates in models with latent variables, forming the basis for Gaussian Mixture Models (GMMs). |

| Kohonen (1982) [1037] | Proposed self-organizing maps (SOMs), a neural-inspired model for competitive learning, which preserves topological relationships and has been instrumental in feature extraction. |

| Belkin and Niyogi (2003) [1038] | Introduced Laplacian Eigenmaps, which use a graph Laplacian to capture local geometric properties of data manifolds, providing a foundation for spectral clustering and nonlinear dimensionality reduction. |

| Tishby, Pereira, and Bialek (2000) [1039] | Proposed the Information Bottleneck (IB) method, optimizing mutual information to balance compression and predictive efficiency, influencing representation learning in autoencoders. |

| Hinton and Salakhutdinov (2006) [1040] | Demonstrated deep belief networks (DBNs), where layer-wise training of restricted Boltzmann machines (RBMs) enables hierarchical unsupervised representation learning. |

| Kingma and Welling (2013) [1041] | Developed variational autoencoders (VAEs), leveraging variational inference for probabilistic generative modeling of complex data distributions. |

| Goodfellow et al. (2020) [121] | Introduced generative adversarial networks (GANs), an adversarial framework where a generator and discriminator compete, leading to advances in synthetic data generation. |

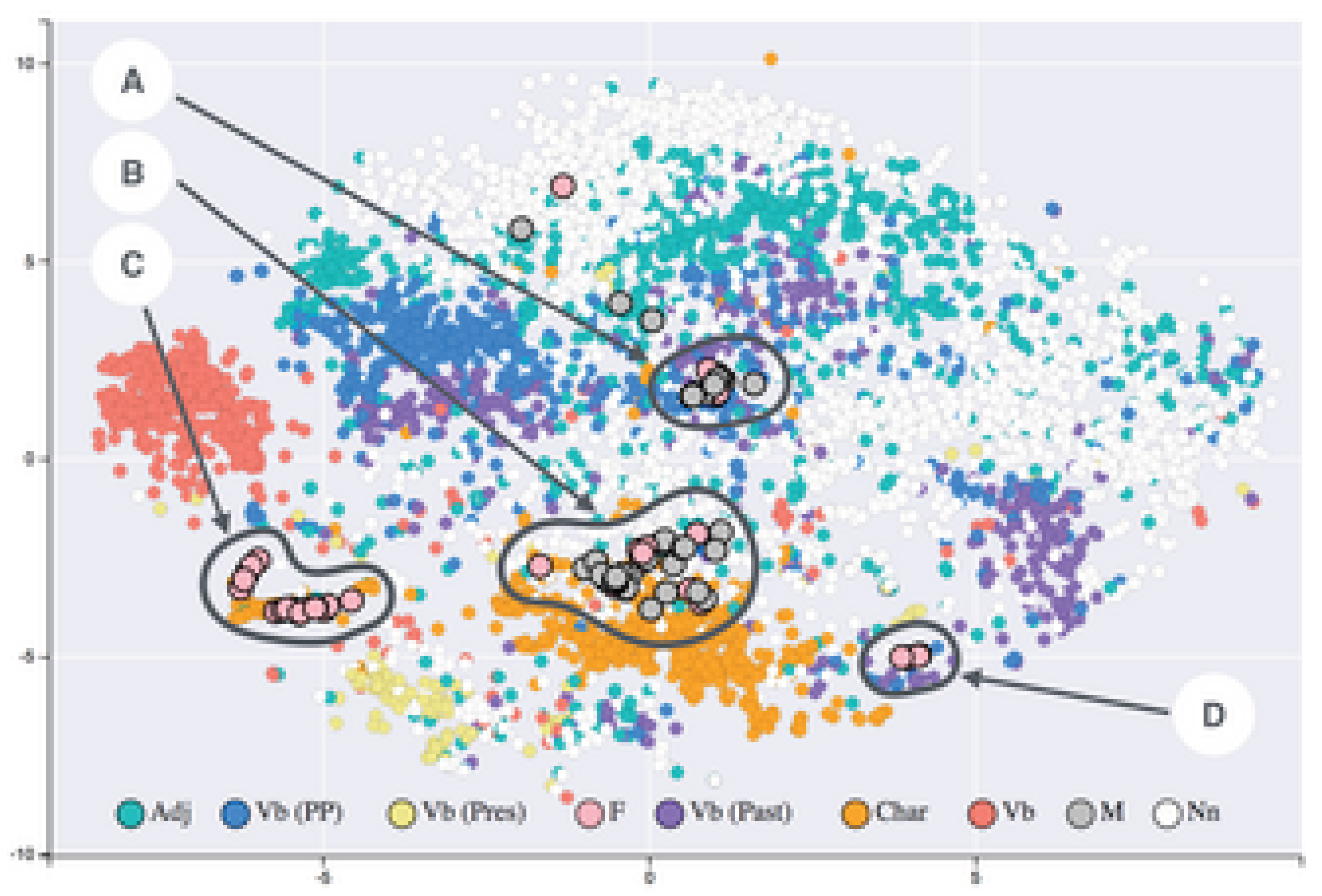

| van der Maaten and Hinton (2008) [1042] | Developed t-distributed stochastic neighbor embedding (t-SNE), a probabilistic approach for high-dimensional data visualization that preserves local similarities. |

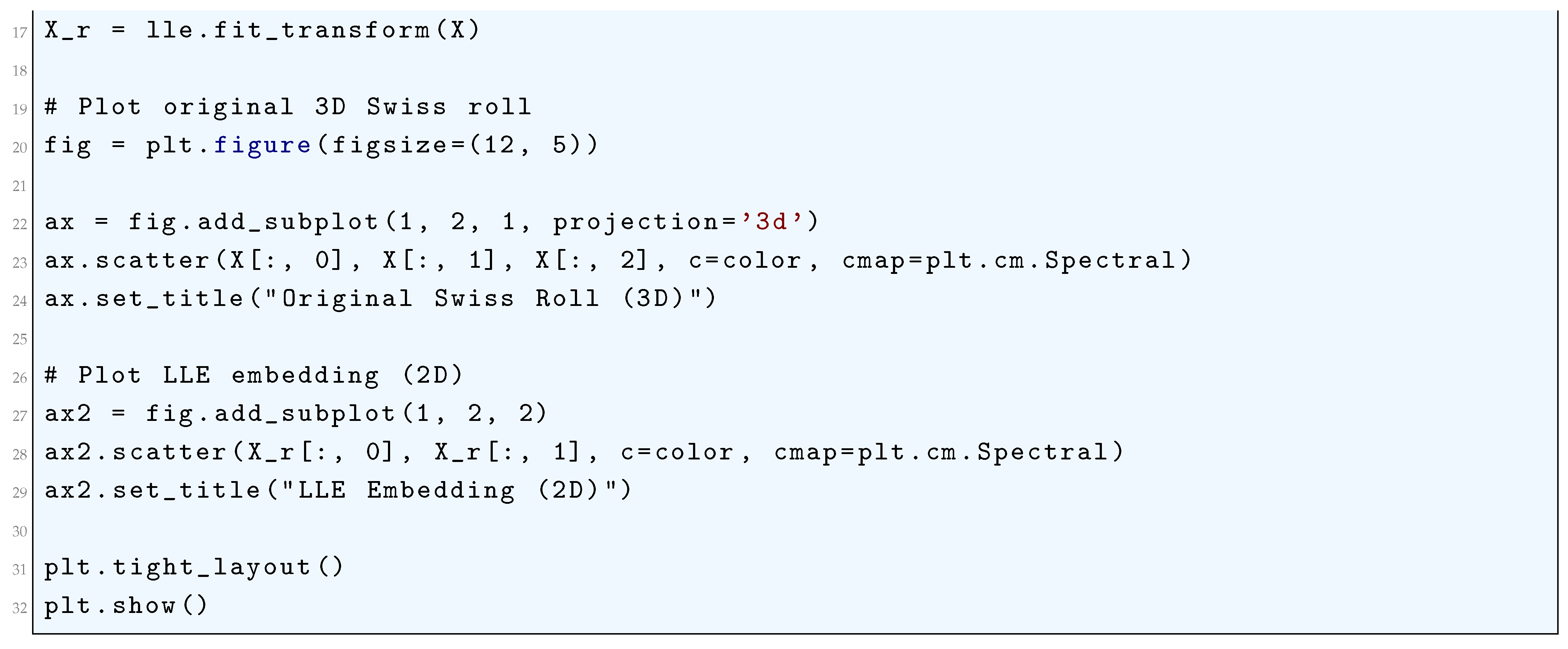

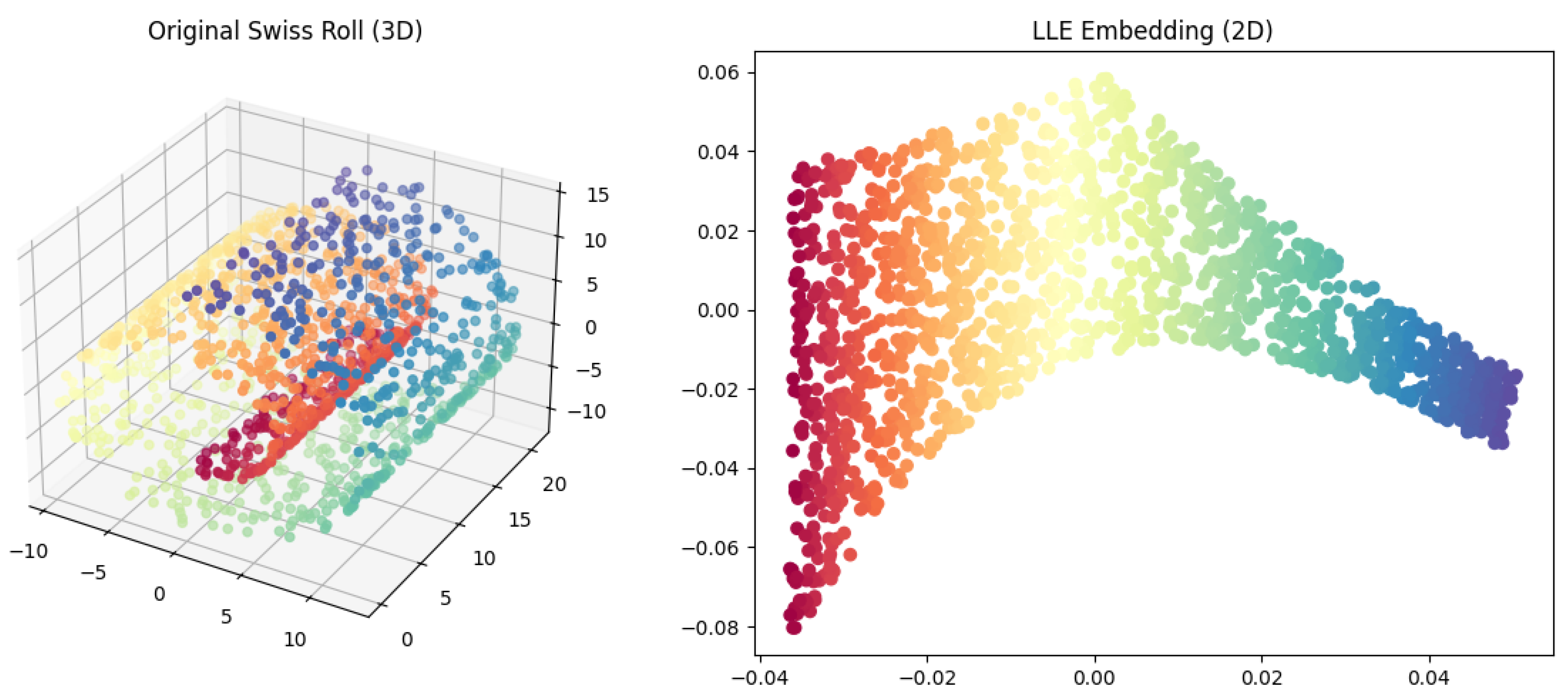

| Roweis and Saul (2000) [1043] | Introduced Locally Linear Embedding (LLE), a nonlinear dimensionality reduction technique that preserves local geometric relationships and is effective for manifold learning. |

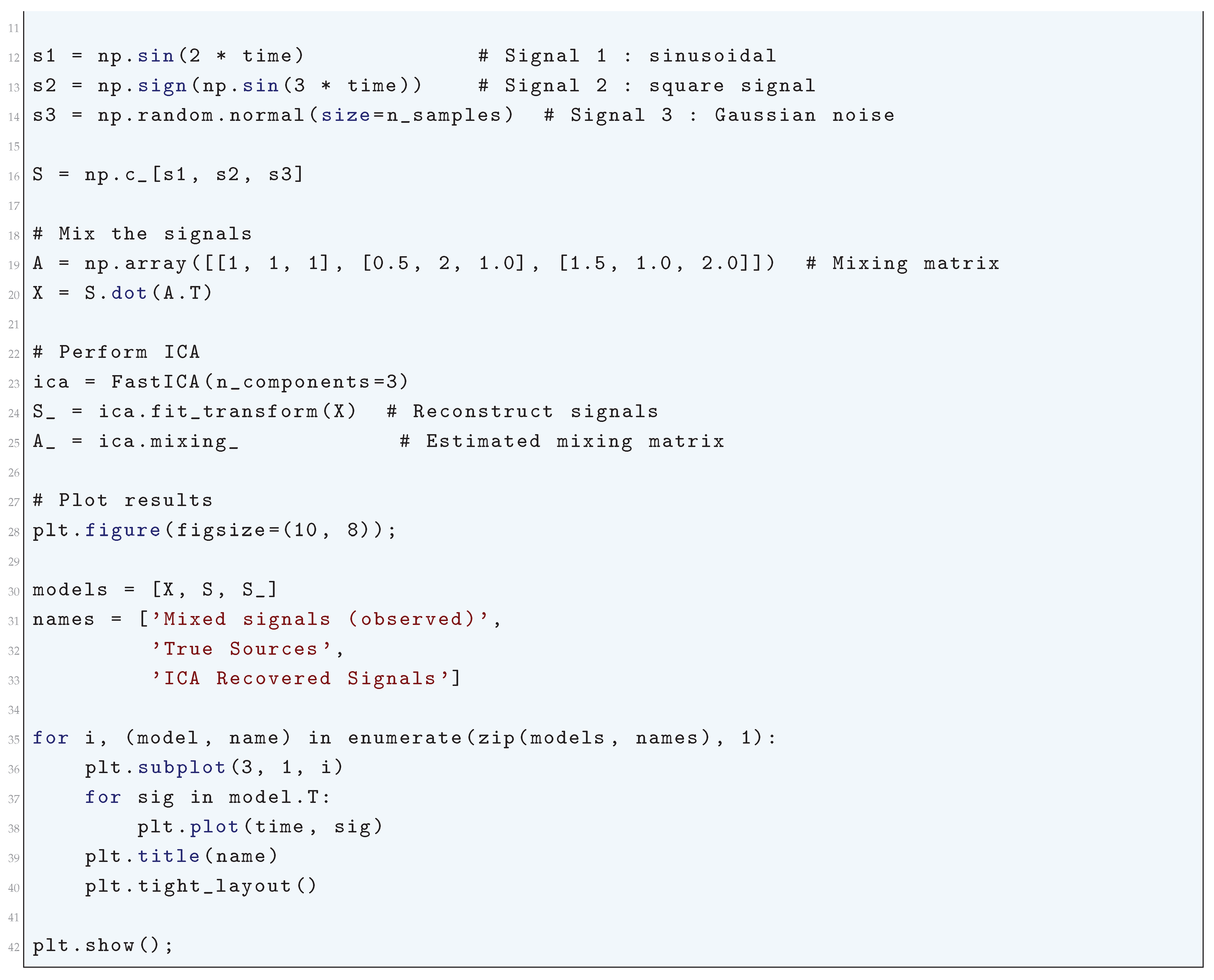

| Bell and Sejnowski (1995) [1044] | Developed Independent Component Analysis (ICA), an information-theoretic method for blind source separation, leveraging higher-order statistics to extract statistically independent signals. |

16.1.2. Recent Literature Review of Unsupervised Learning

| Authors (Year) | Contribution |

|---|---|

| Parmar (2025) [1045] | Introduced an unsupervised learning framework for identifying unknown defects in semiconductor manufacturing, leveraging clustering and anomaly detection to improve quality control in industrial settings. |

| Raikwar and Gupta (2025) [1046] | Developed an AI-driven trust management framework for wireless ad hoc networks, combining unsupervised and supervised learning to classify network nodes based on trustworthiness and detect malicious activity. |

| Moustakidis et al. (2025) [1047] | Proposed deep learning autoencoders for FFT-based clustering in structural health monitoring, enabling automated detection of temporal damage evolution in composite materials. |

| Liu et al. (2025) [1048] | Designed an unsupervised feature selection algorithm using $L_{2,p}$-norm feature reconstruction, reducing redundant features and improving clustering performance for high-dimensional datasets. |

| Zhou et al. (2025) [1049] | Applied unsupervised clustering techniques to metabolic profiles, identifying hidden metabolic subtypes associated with hypertriglyceridemia and disease risks, advancing personalized medicine. |

| Lin et al. (2025) [1050] | Developed an unsupervised learning-based risk control model for health insurance fund management, effectively identifying high-risk groups and fraudulent claims through anomaly detection. |

| Huang et al. (2025) [1051] | Proposed an unsupervised domain adaptation method for open-world object detection, enabling models to generalize across different environments without extensive labeled datasets. |

| Wu and Liu (2025) [1052] | Designed a VQ-VAE-2-based unsupervised detection algorithm for concrete crack identification, automating structural health monitoring and reducing manual inspection efforts. |

| Nagelli and Saleena (2025) [1053] | Developed an aspect-based sentiment analysis model using self-attention mechanisms, enabling multilingual sentiment analysis without labeled training data. |

| Ekanayake (2025) [1054] | Applied deep learning-based unsupervised learning for MRI reconstruction and super-resolution, reducing scan times while maintaining high image quality in medical imaging. |

16.1.3. Mathematical Analysis of Unsupervised Learning

16.1.4. Information Bottleneck (IB) Method

16.1.4.1 Literature Review of Information Bottleneck (IB) Method

| Authors (Year) | Contribution |

|---|---|

| Tishby et al. (1999) [1068] | Introduced the Information Bottleneck (IB) method, formulating an optimization problem that balances mutual information terms to extract relevant information from a random variable while minimizing redundancy. Developed an iterative variational algorithm for solving the IB problem, demonstrating its application in clustering and representation learning. |

| Chechik et al. (2003) [1069] | Extended the IB method to jointly Gaussian variables, deriving analytical solutions that connect IB with canonical correlation analysis and PCA. Provided a rigorous foundation for applying IB to real-world Gaussian data. |

| Chechik and Tishby (2002) [1070] | Developed a variant of the IB framework incorporating side information, enabling extraction of representations that retain information about one target while obfuscating another. Applied to privacy-preserving and fairness-aware machine learning. |

| Tishby and Zaslavsky (2015) [1071] | Proposed that deep neural network training follows an IB perspective, consisting of an initial fitting phase followed by a compression phase, explaining generalization through information-theoretic principles. |

| Saxe et al. (2019) [1072] | Critically examined the IB hypothesis in deep learning, showing that the presence of a compression phase depends on network architecture and activation functions, challenging the universality of IB in training dynamics. |

| Shwartz-Ziv and Tishby (2017) [1073] | Conducted empirical analysis of information flow in deep networks using information plane visualizations, providing evidence for compression in networks trained with SGD and reinforcing IB-based interpretations. |

| Noshad et al. (2019) [1074] | Developed a new mutual information estimator using dependence graphs to improve the scalability and accuracy of IB-based analyses in high-dimensional settings, addressing limitations of traditional estimators. |

| Goldfeld et al. (2018) [1075] | Provided refined mutual information estimation techniques for deep networks, offering rigorous mathematical justifications for compression effects in neural representations. |

| Geiger (2021) [1077] | Reviewed information plane analyses in neural classifiers, evaluating the strengths and weaknesses of IB interpretations, highlighting cases where IB fails to accurately characterize training dynamics. |

| Kawaguchi et al. (2023) [1078] | Analyzed generalization properties of neural networks under IB, linking information compression to generalization error bounds and establishing IB as a regularization mechanism for improved performance. |

16.1.4.2 Recent Literature Review of Information Bottleneck (IB) Method

| Authors (Year) | Contribution |

|---|---|

| Dardour et al. (2025) [1079] | Introduced a novel approach to enhance adversarial robustness in stochastic neural networks. By leveraging inter-separability and intra-concentration, their study demonstrated that IB constraints help neural networks learn more robust latent features, effectively mitigating adversarial perturbations. |

| Krinner et al. (2025) [1080] | Applied IB principles to reinforcement learning by designing state-space world models that accelerate learning efficiency. Their study showed that IB-based methods help an agent discard irrelevant environmental noise while retaining essential features, leading to improved exploration efficiency. |

| Yildirim et al. (2024) [1081] | Explored how IB constraints affect StyleGAN-based image editing. They demonstrated that GAN-based inversion techniques often suffer from excessive compression-induced detail loss, proposing refined inversion methods that better preserve fine-grained image features. |

| Yang et al. (2025) [1082] | Developed a cognitive-load-aware activation mechanism for large language models (LLMs), improving efficiency by dynamically activating only the necessary model parameters. Their study used IB principles to retain relevant contextual representations while discarding redundant computations, reducing computational overhead. |

| Liu et al. (2025) [1083] | Incorporated IB principles in a structure-aware Vision Mamba network for crack segmentation in infrastructure. Their method efficiently filters out redundant spatial information, enhancing computational efficiency and segmentation accuracy, making it crucial for real-time applications in structural health monitoring. |

| Stierle and Valtere (2025) [1084] | Applied IB theory to medical innovation, examining how information bottlenecks in regulatory and patent frameworks slow down gene therapy advancements. Their work analyzed how such bottlenecks in medical research and policy impede technological progress. |

| Chen et al. (2025) [1085] | Applied IB concepts to quantum computing, particularly in optimizing construction supply chains. Their work demonstrated that quantum models integrated with IB techniques efficiently compress relevant data while filtering out extraneous information, improving decision-making processes. |

| Yuan et al. (2025) [1086] | Extended IB applications to plant metabolomics by proposing a novel feature selection approach that retains highly informative metabolite interactions while discarding non-essential data. This method improved interpretability in plant metabolic studies. |

| Dey et al. (2025) [1087] | Utilized IB principles in spatio-temporal prediction models for NDVI (Normalized Difference Vegetation Index), which is crucial for rice crop yield forecasting. Their IB-augmented neural network improved prediction accuracy by filtering out irrelevant environmental variables. |

| Li (2025) [1088] | Applied IB principles in robotic path planning, developing an optimized method for navigation path extraction in mobile robots. Their approach eliminated irrelevant environmental noise while preserving crucial navigational data, improving robotic movement efficiency. |

16.1.4.3 Mathematical Analysis of Information Bottleneck (IB) Method

- $I(X;T)$ is the mutual information between the input X and the compressed representation T, which measures the amount of information retained about X in T.

- $I(T;Y)$ is the mutual information between T and the target variable Y, ensuring that the compressed representation remains useful for predicting Y.

- $\beta$ is a Lagrange multiplier that controls the trade-off between compression and prediction accuracy.

- $Z(x,\beta)$ is a normalization constant ensuring that $p(t \mid x)$ is a valid probability distribution.

- $D_{\mathrm{KL}}\left[p(y \mid x)\,\|\,p(y \mid t)\right]$ is the Kullback-Leibler (KL) divergence between the posterior distributions $p(y \mid x)$ and $p(y \mid t)$, ensuring that T retains relevant information about Y.
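
The quantities listed above can be tied together in a short sketch of one iterative IB update for discrete variables. It assumes the standard self-consistent form $p(t \mid x) = \frac{p(t)}{Z(x,\beta)} \exp\!\big(-\beta\, D_{\mathrm{KL}}[p(y \mid x)\,\|\,p(y \mid t)]\big)$; the toy distributions, the value $\beta = 5$, and the function names are illustrative assumptions.

```python
import math


def kl(p, q):
    """KL divergence D_KL(p || q) in nats for discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def ib_step(p_x, p_y_given_x, p_t_given_x, beta):
    """One self-consistent IB update of the encoder p(t|x)."""
    n_x, n_t, n_y = len(p_x), len(p_t_given_x[0]), len(p_y_given_x[0])
    # Marginal over clusters: p(t) = sum_x p(x) p(t|x).
    p_t = [sum(p_x[x] * p_t_given_x[x][t] for x in range(n_x)) for t in range(n_t)]
    # Decoder: p(y|t) = sum_x p(y|x) p(t|x) p(x) / p(t).
    p_y_given_t = [
        [sum(p_y_given_x[x][y] * p_t_given_x[x][t] * p_x[x] for x in range(n_x)) / p_t[t]
         for y in range(n_y)]
        for t in range(n_t)
    ]
    # Encoder: p(t|x) proportional to p(t) * exp(-beta * KL[p(y|x) || p(y|t)]).
    new_encoder = []
    for x in range(n_x):
        weights = [p_t[t] * math.exp(-beta * kl(p_y_given_x[x], p_y_given_t[t]))
                   for t in range(n_t)]
        z = sum(weights)  # normalization constant Z(x, beta)
        new_encoder.append([w / z for w in weights])
    return new_encoder


# Toy problem: four inputs, two targets, two clusters (all values hypothetical).
p_x = [0.25, 0.25, 0.25, 0.25]
p_y_given_x = [[0.9, 0.1], [0.8, 0.2], [0.2, 0.8], [0.1, 0.9]]
p_t_given_x = [[0.6, 0.4], [0.55, 0.45], [0.45, 0.55], [0.4, 0.6]]

for _ in range(50):
    p_t_given_x = ib_step(p_x, p_y_given_x, p_t_given_x, beta=5.0)

for x, row in enumerate(p_t_given_x):
    print(x, [round(v, 3) for v in row])
```

Larger values of `beta` favor preserving information about Y, while smaller values favor compression and push the encoder toward ignoring X. The sketch assumes every probability stays strictly positive, which holds for this toy setup but is not guarded against in general.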

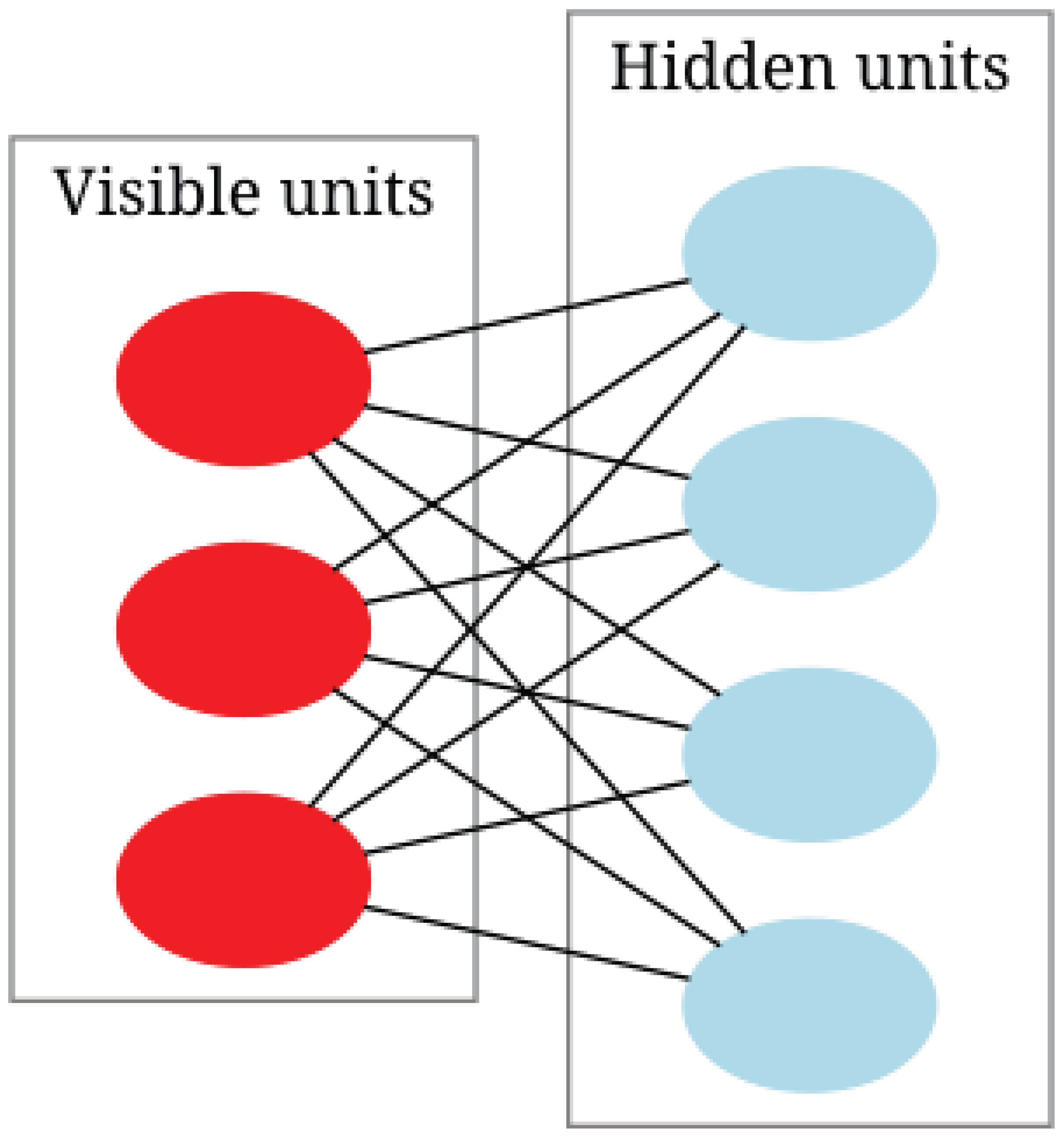

16.1.5. Restricted Boltzmann Machines (RBMs)

16.1.5.1 Literature Review of Restricted Boltzmann Machines (RBMs)

| Authors (Year) | Contribution |

|---|---|

| Smolensky (1986) [1090] | Introduced the concept of the Harmonium, providing the theoretical foundation for energy-based models and probabilistic representations in neural networks. |

| Hinton and Salakhutdinov (2006) [1040] | Demonstrated how RBMs could be stacked to form Deep Belief Networks (DBNs), enabling efficient unsupervised pretraining and improving deep learning architectures. |

| Carreira-Perpiñán and Hinton (2005) [1091] | Analyzed the Contrastive Divergence (CD) algorithm, providing insights into its convergence properties and limitations for RBM training. |

| Hinton (2012) [1092] | Provided a practical guide for training RBMs, detailing hyperparameter tuning, initialization strategies, and best practices. |

| Fischer and Igel (2014) [1093] | Offered a comprehensive introduction to RBMs, covering theoretical foundations, training methodologies, and practical applications. |

| Larochelle and Bengio (2008) [1094] | Introduced a discriminative variant of RBMs tailored for classification tasks, demonstrating their adaptability to supervised learning. |

| Salakhutdinov, Mnih, and Hinton (2007) [1095] | Applied RBMs to collaborative filtering, showing their effectiveness in recommender systems by capturing latent user-item interactions. |

| Coates, Lee, and Ng (2011) [1096] | Analyzed RBMs for unsupervised feature learning, demonstrating their ability to extract hierarchical representations from raw data. |

| Salakhutdinov and Hinton (2009) [1097] | Proposed the Replicated Softmax model, extending RBMs for modeling word counts in natural language processing tasks. |

| Adachi and Henderson (2015) [1098] | Investigated the use of quantum annealing for RBM training, exploring potential acceleration of learning using quantum computing techniques. |

16.1.5.2 Recent Literature Review of Restricted Boltzmann Machines (RBMs)

| Authors (Year) | Contribution |

|---|---|

| Salloum et al. (2024) [1099] | Compared classical RBMs with quantum-restricted Boltzmann machines for MNIST classification, demonstrating that quantum models exhibit superior performance in certain optimization scenarios. |

| Joudaki (2025) [1100] | Conducted a comprehensive literature review on RBMs and Deep Belief Networks (DBNs) for human action recognition, identifying key challenges such as overfitting and slow convergence. |

| Prat Pou et al. (2025) [1101] | Proposed an improved method for evaluating the partition function in RBMs using annealed importance sampling, which enhances accuracy in statistical physics applications. |

| Decelle et al. (2025) [1102] | Investigated the ability of RBMs to infer high-order dependencies in complex systems, particularly in protein interaction networks and spin glasses. |

| Savitha et al. (2025) [1103] | Integrated RBMs within DBNs for cardiovascular disease prediction, leveraging optimization techniques such as the Harris Hawks Search algorithm to improve diagnostic accuracy. |

| Béreux et al. (2025) [1104] | Developed an efficient training strategy for RBMs that accelerates convergence while maintaining strong generalization capabilities in large-scale machine learning problems. |

| Thériault et al. (2024) [1105] | Explored structured learning in RBMs within a teacher-student setting, demonstrating that incorporating structured priors enhances generalization beyond seen data. |

| Manimurugan et al. (2024) [1106] | Combined Bi-LSTM networks with RBMs for underwater object detection, showcasing the ability of RBMs to effectively capture spatial dependencies in sonar and optical imagery. |

| Hossain et al. (2025) [1107] | Benchmarked RBMs against classical and deep learning models for human activity recognition, highlighting their effectiveness in extracting latent features. |

| Qin et al. (2025) [1108] | Integrated RBMs with magnetic tunnel junctions for magnetic anomaly detection, demonstrating their potential in neuromorphic computing for energy-efficient AI systems. |

16.1.5.3 Mathematical Analysis of Restricted Boltzmann Machines (RBMs)

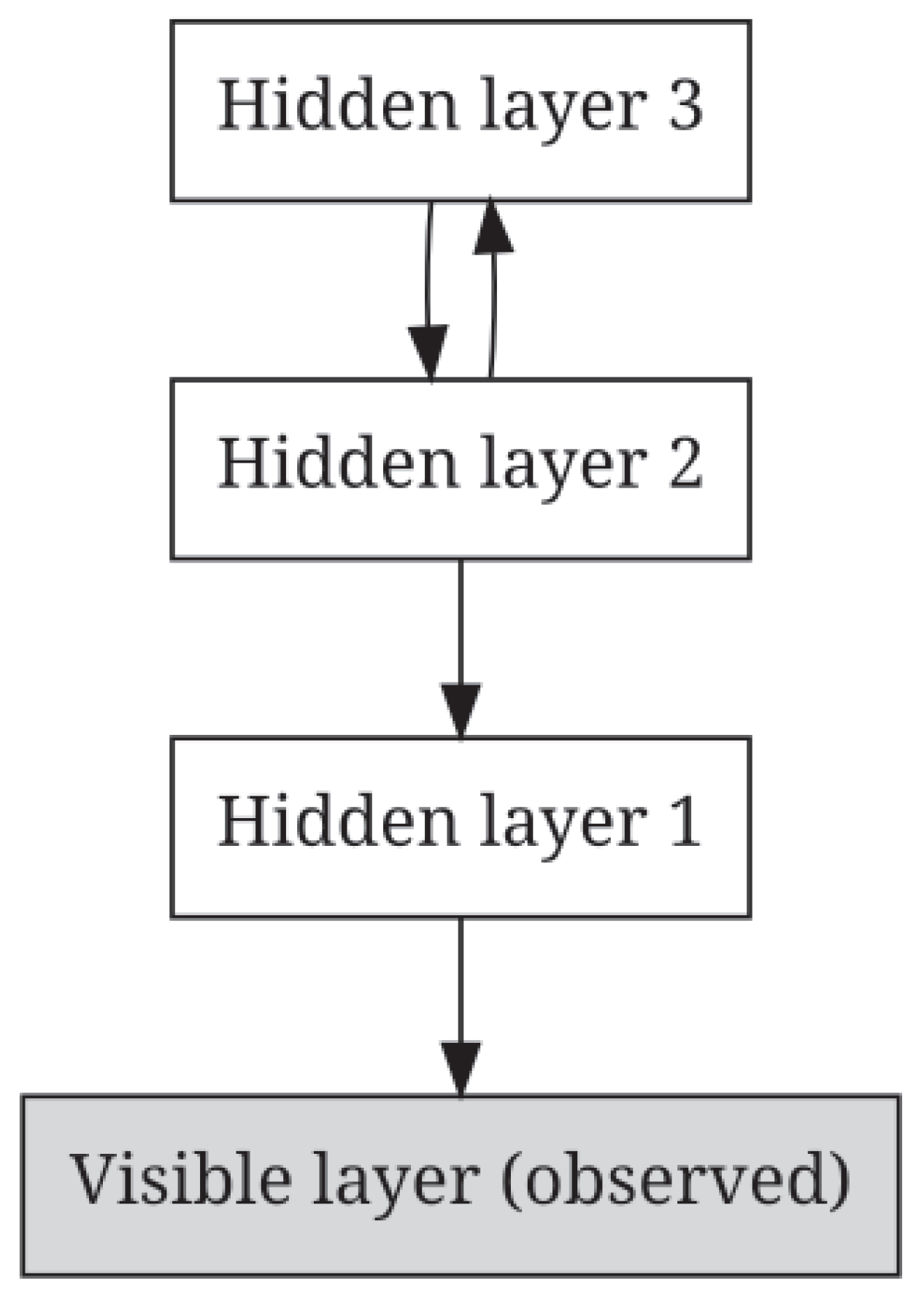

16.1.6. Deep Belief Networks (DBNs)

16.1.6.1 Literature Review of Deep Belief Networks (DBNs)

| Authors (Year) | Contribution |

|---|---|

| Hinton et al. (2006) [854] | Introduced a fast learning algorithm for DBNs using a greedy layer-wise pre-training strategy based on Restricted Boltzmann Machines (RBMs). Addressed the vanishing gradient problem and established DBNs as foundational deep learning architectures. |